Evolutionary Insights from Comparative Gene Co-expression Networks: From Molecular Mechanisms to Biomedical Applications

Abstract

This comprehensive review explores how comparative analysis of gene co-expression networks reveals evolutionary patterns of gene module conservation, duplication, and rewiring across species. We examine foundational concepts of gene co-expression networks as tools for evolutionary studies, methodological approaches for cross-species network comparison and alignment, challenges in computational analysis and biological interpretation, and validation frameworks connecting evolutionary patterns to disease mechanisms. By integrating recent advances in multi-omics data integration, machine learning, and large-scale comparative transcriptomics, this article provides researchers and drug development professionals with practical insights for leveraging evolutionary principles to understand disease origins and identify therapeutic targets.

The Evolutionary Language of Gene Co-expression: Conservation and Rewiring Across Species

Gene Co-expression Networks as Windows into Evolutionary Processes

Gene co-expression networks (GCNs) represent a powerful data-driven approach for understanding evolutionary relationships by analyzing coordinated gene expression patterns across species. These networks model genes as nodes connected by edges representing their co-expression strength, typically calculated using correlation coefficients from transcriptomic data [1]. The fundamental premise is that evolutionary pressures act not merely on individual genes but on functional modules—groups of genes that work in concert to execute biological processes. By comparing how these modules are conserved or reconfigured across species, researchers can infer molecular adaptations that underlie phenotypic diversity [1].

The comparative analysis of GCNs has become increasingly valuable with the expansion of publicly available gene expression data, enabling studies in both model and non-model organisms. This approach reveals how evolutionary innovations often arise from the rewiring of biological networks rather than solely through gene sequence evolution. Through techniques like differential co-expression analysis and multilayer community detection, scientists can systematically identify evidence of conservation and adaptation in transcriptional programs across evolutionary lineages [1].

Theoretical Framework: How GCNs Illuminate Evolutionary Processes

Core Principles of Evolutionary Network Analysis

Gene co-expression networks provide unique insights into evolution because they capture functional relationships between genes that have been shaped by selective pressures. The organization of these networks reflects underlying biological constraints and evolutionary histories:

Evolutionary Conservation: Highly conserved co-expression relationships often indicate essential biological functions maintained across species. Studies have found that the co-expression connectivity of homologous genes tends to be negatively correlated with molecular evolution rates, and that connectivity changes are more likely in evolutionarily younger genes with initially low connectivity [1].

Network Rewiring: Changes in co-expression relationships between orthologous genes across species reveal evolutionary adaptations. Genes with lower connectivity—fewer edges connecting them to other genes—tend to be co-expressed with other young genes, gradually becoming more connected as they gain functional importance [1].

Modular Evolution: Biological systems evolve through changes to interactions in pre-existing modules. Comparing co-expression modules across species helps identify which functional units are conserved and which have diverged, informing our understanding of evolutionary relationships [1].

Comparative GCN Methodologies for Evolutionary Studies

Table 1: Primary Methodological Approaches for Comparative GCN Analysis in Evolutionary Studies

| Method Type | Key Principle | Evolutionary Application | Key References |
|---|---|---|---|
| Differential Co-expression Analysis | Identifies changes in co-expression relationships between two conditions or species | Reveals species-specific adaptations and conserved coregulation | [1] [2] |
| Network Alignment | Maps nodes between networks to find conserved subnetworks | Discovers evolutionarily conserved functional modules | [1] |
| Multilayer Community Detection | Identifies communities across multiple tissue-specific or species-specific networks | Distinguishes generalist (multi-tissue) vs specialist (tissue-specific) modules | [3] |
| Module Preservation Analysis | Quantifies how well modules from one network reproduce in another | Assesses evolutionary conservation of functional modules | [4] |

Methodological Approaches: Constructing and Comparing Evolutionary GCNs

Standard Workflow for GCN Construction

The standard pipeline for building gene co-expression networks involves several stages, with Weighted Gene Co-expression Network Analysis (WGCNA) being one of the most robust and widely used methodologies [5] [4] [6]:

Data Preprocessing: RNA-seq or microarray data undergoes normalization, batch effect correction, and quality control. For evolutionary comparisons, data should be processed consistently across species using comparable tissues, developmental stages, and experimental conditions.

Network Construction: Pearson correlation coefficients are typically calculated between all gene pairs across samples. These correlations are transformed into connection strengths using a soft thresholding approach that preserves the continuous nature of co-expression relationships while emphasizing stronger correlations [5].

Module Detection: Genes are clustered into modules based on their co-expression patterns using topological overlap matrices, which measure network interconnectedness beyond direct correlations. The dynamic tree cut algorithm is then applied to define modules [5] [4].

Module Characterization: Eigengenes (first principal components) represent each module's expression profile. Modules are functionally annotated using enrichment analysis, and hub genes (highly connected genes) are identified [5] [6].
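The network construction and topological overlap steps above can be sketched in a few lines. This is a minimal illustration rather than the WGCNA implementation: it assumes an unsigned network with adjacency a_ij = |cor(i, j)|^β and the standard topological overlap formula, the power β = 6 is only an illustrative choice, and the gene names and expression values are invented toy data.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length expression vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0

def soft_threshold_adjacency(expr, beta=6):
    """Unsigned adjacency a_ij = |cor(i, j)| ** beta, with a zero diagonal."""
    genes = list(expr)
    adj = {g: {h: 0.0 for h in genes} for g in genes}
    for i, g in enumerate(genes):
        for h in genes[i + 1:]:
            a = abs(pearson(expr[g], expr[h])) ** beta
            adj[g][h] = adj[h][g] = a
    return adj

def topological_overlap(adj):
    """TOM_ij = (sum_u a_iu * a_uj + a_ij) / (min(k_i, k_j) + 1 - a_ij)."""
    genes = list(adj)
    k = {g: sum(adj[g].values()) for g in genes}  # connectivity of each gene
    tom = {g: {} for g in genes}
    for g in genes:
        for h in genes:
            if g == h:
                tom[g][h] = 1.0
                continue
            shared = sum(adj[g][u] * adj[u][h] for u in genes)
            tom[g][h] = (shared + adj[g][h]) / (min(k[g], k[h]) + 1 - adj[g][h])
    return tom

# Toy expression matrix (hypothetical values): gene -> expression in five samples.
expr = {
    "geneA": [1.0, 2.0, 3.0, 4.0, 5.0],
    "geneB": [2.1, 3.9, 6.2, 8.0, 9.8],
    "geneC": [5.0, 1.0, 4.0, 2.0, 3.0],
}
adj = soft_threshold_adjacency(expr, beta=6)
tom = topological_overlap(adj)
```

Raising the correlation to a power keeps every gene pair in the network while pushing weak correlations toward zero, which is why the weakly correlated geneA/geneC pair ends up with an adjacency close to 0 while geneA/geneB stays close to 1.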

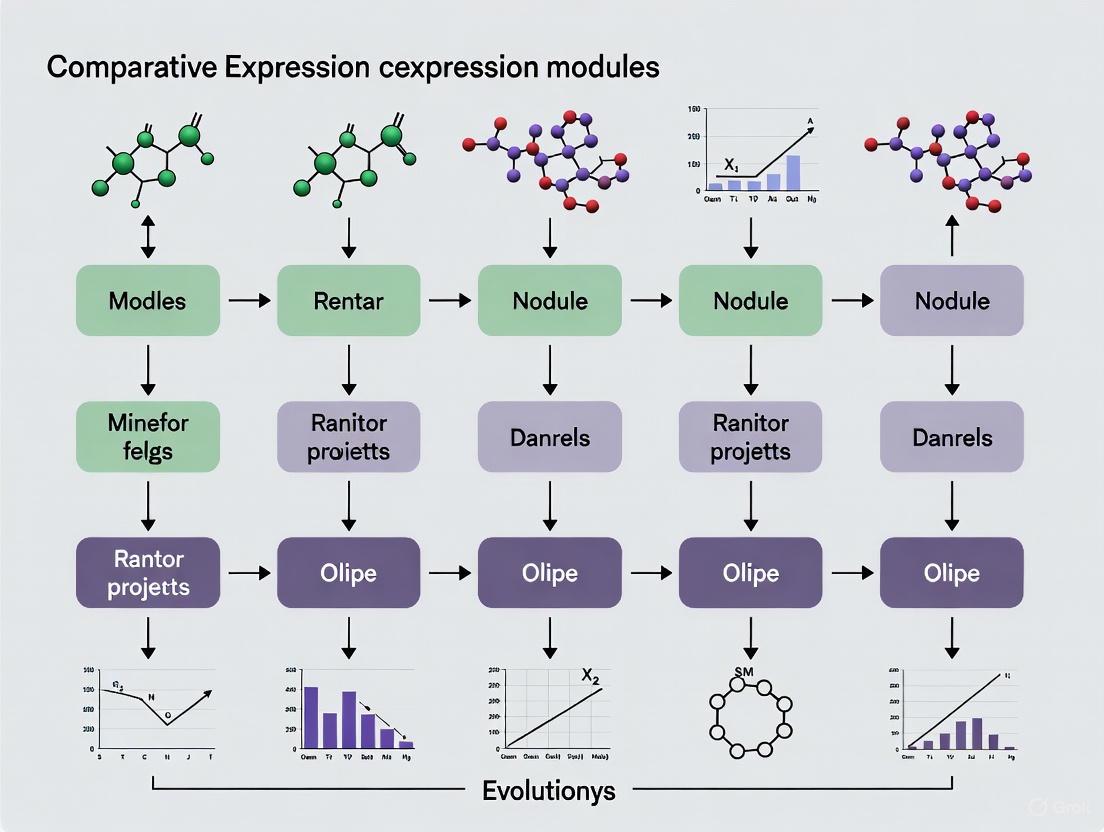

The following diagram illustrates this standard workflow for constructing gene co-expression networks:

Specialized Techniques for Evolutionary Comparisons

Differential Co-expression Analysis

This approach identifies genes with significantly different co-expression patterns between species, which may indicate evolutionary adaptations:

The methodology involves calculating partial correlation coefficients between gene pairs in each species separately, then identifying pairs with statistically significant differences in correlation strength. For example, in a study of breast cancer evolution under drug treatment, researchers used Spearman's partial correlation coefficients with a false discovery rate threshold of α = 0.01 to identify co-expressed gene pairs, validating results through permutation testing [2].
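A permutation test for a single gene pair might look like the sketch below. It is a simplification under stated assumptions: it uses plain Spearman correlation rather than the partial correlation described above, it assumes expression values from the two groups are comparable enough to pool (the rank transform helps but does not guarantee this), and the sample values are invented. In a genome-wide analysis the resulting p-values would still need false discovery rate control.

```python
import math
import random

def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0

def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for idx in order[i:j + 1]:
            result[idx] = (i + j) / 2 + 1
        i = j + 1
    return result

def spearman(x, y):
    return pearson(ranks(x), ranks(y))

def correlation_difference(samples_a, samples_b):
    """|rho_A - rho_B| for one gene pair; each sample is (expr_gene1, expr_gene2)."""
    return abs(spearman(*zip(*samples_a)) - spearman(*zip(*samples_b)))

def permutation_pvalue(samples_a, samples_b, n_perm=1000, seed=0):
    """Shuffle group labels to estimate how often a difference this large arises by chance."""
    rng = random.Random(seed)
    observed = correlation_difference(samples_a, samples_b)
    pooled = list(samples_a) + list(samples_b)
    n_a = len(samples_a)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        if correlation_difference(pooled[:n_a], pooled[n_a:]) >= observed:
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)

# Toy data: the pair is positively co-expressed in group A and negatively in group B.
species_a = [(float(i), 2.0 * i) for i in range(1, 9)]
species_b = [(float(i), -1.0 * i) for i in range(1, 9)]
observed, p_value = permutation_pvalue(species_a, species_b)
```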

Multilayer Network Analysis for Cross-Species Comparison

This advanced framework constructs a multilayer network where each layer represents a different species or tissue:

In this approach, intralayer edges represent species-specific co-expression, while interlayer edges connect the same gene across species. Communities that span multiple layers represent conserved co-expression modules ("generalists"), while those confined to single layers represent species-specific adaptations ("specialists") [3].
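As a rough sketch of the bookkeeping involved, the snippet below builds interlayer edges between copies of the same gene and labels communities as generalist or specialist. It assumes community detection has already been run by some external method, that genes have been harmonized to shared orthogroup identifiers, and that all identifiers and assignments shown are hypothetical.

```python
from collections import defaultdict
from itertools import combinations

def interlayer_edges(layers):
    """Connect each gene to its own copy in every other layer that contains it.

    layers: dict mapping layer name (species or tissue) -> set of gene identifiers.
    """
    edges = []
    for (name_a, genes_a), (name_b, genes_b) in combinations(sorted(layers.items()), 2):
        for gene in sorted(genes_a & genes_b):
            edges.append(((gene, name_a), (gene, name_b)))
    return edges

def classify_communities(membership):
    """Label a community 'generalist' if it spans more than one layer, else 'specialist'.

    membership: dict mapping (gene, layer) node -> community id.
    """
    layers_by_community = defaultdict(set)
    for (_gene, layer), community in membership.items():
        layers_by_community[community].add(layer)
    return {
        community: "generalist" if len(layer_set) > 1 else "specialist"
        for community, layer_set in layers_by_community.items()
    }

# Hypothetical two-layer example using orthogroup identifiers.
layers = {"species1": {"OG1", "OG2", "OG3"}, "species2": {"OG1", "OG2"}}
membership = {
    ("OG1", "species1"): 0, ("OG2", "species1"): 0,
    ("OG1", "species2"): 0, ("OG2", "species2"): 0,
    ("OG3", "species1"): 1,
}
edges = interlayer_edges(layers)
labels = classify_communities(membership)
```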

Key Research Applications and Findings

Evolutionary Conservation of Core Biological Processes

Studies across diverse taxa have revealed remarkable conservation of co-expression modules representing fundamental biological processes:

Table 2: Evolutionarily Conserved Co-expression Modules Across Species

| Biological Process | Species Studied | Key Findings | Reference |
|---|---|---|---|
| Mitosis & Cell Proliferation | Human glioblastoma, multiple cancers | 4-fold enrichment of anticancer drug targets in proliferation modules; connectivity correlated with number of targeting drugs | [7] |
| Photosynthesis & Light Response | Arabidopsis thaliana | 14 light-associated modules with conserved hub genes; functional validation of novel regulators | [6] |
| Developmental Processes | Maize (Zea mays) | 24 coexpression modules strongly associated with tissue types; 67% showed tissue-specific expression patterns | [8] |
| Exocrine Gland Functions | Human tissues (multilayer) | Five generalist modules across four tissues; two specialist pancreas-specific modules with physical gene clustering | [3] |

The maize co-expression network study exemplifies how GCNs reveal evolutionary insights. Researchers examined transcript abundance at 50 developmental stages and identified 24 robust coexpression modules with strong associations to tissue types and biological processes. Sixteen modules (67%) showed preferential transcript abundance in specific tissues, while one-third exhibited absence of expression in particular tissues, revealing the evolutionary patterning of transcriptional programs [8].

Evolutionary Insights from Network Topology and Hub Genes

The position and connectivity of genes within co-expression networks provide evolutionary insights:

Hub Gene Conservation: Highly connected hub genes in co-expression networks often represent evolutionarily constrained genes with essential cellular functions. In glioblastoma networks, anticancer drug targets were significantly enriched in proliferation modules, and their connectivity within these modules correlated with the number of drugs targeting them [7].

Evolutionary Rate Correlation: Studies have found that genes with lower connectivity tend to be evolutionarily younger and evolve faster, while highly connected hub genes show greater evolutionary constraint [1].

Context-Dependent Essentiality: Gene essentiality can evolve through changes in network architecture. In breast cancer studies, influence network analysis identified different essential genes in untreated tumors versus those that had adapted to drug treatment, demonstrating evolutionary adaptation at the network level [9] [2].

Practical Implementation: Research Toolkit

Table 3: Essential Research Reagents and Computational Tools for Evolutionary GCN Analysis

| Resource Type | Specific Tools/Databases | Function in Evolutionary GCN Analysis | Key Features |
|---|---|---|---|
| Software Packages | WGCNA R package [5] [4] | Construction of weighted co-expression networks | Soft thresholding, topological overlap, module detection |
| Software Packages | Cytoscape [4] | Network visualization and analysis | Interactive exploration, customizable layouts |
| Software Packages | clusterProfiler [4] | Functional enrichment analysis | GO, KEGG, Reactome pathway annotation |
| Data Resources | NCBI GEO [7] [2] | Source of evolutionary transcriptomic data | Standardized format, multiple species |
| Data Resources | GTEx Consortium [3] | Tissue-specific human expression data | Normalized multi-tissue data |
| Data Resources | IMP webserver [2] | Functional relationship prediction | Integrates multiple evidence sources |
| Analysis Scripts | DynamicTreeCut [5] | Module identification in hierarchical clusters | Flexible branch cutting algorithm |
| Analysis Scripts | Preservation analysis [4] | Cross-species module comparison | Quantifies module conservation |

Experimental Protocol: Cross-Species Module Preservation Analysis

For researchers comparing co-expression modules across species, the following protocol provides a standardized approach:

Data Collection and Harmonization:

- Obtain RNA-seq or microarray data for comparable tissues/developmental stages across species

- Map genes to orthologous groups using databases like OrthoDB or Ensembl Compara

- Apply consistent normalization procedures across all datasets

Network Construction in Reference Species:

- Construct WGCNA network using standard parameters (soft thresholding, topological overlap)

- Identify modules using dynamic tree cutting

- Characterize modules functionally through enrichment analysis

Preservation Analysis in Target Species:

- Calculate preservation statistics (Zsummary) using module labels from reference species

- Apply permutation testing (typically 100-200 permutations) to assess significance

- Classify modules as preserved (Zsummary > 10), moderately preserved (2 < Zsummary < 10), or not preserved (Zsummary < 2) [4]

Biological Interpretation:

- Analyze functionally enriched terms in preserved versus non-preserved modules

- Identify conserved versus rewired hub genes

- Relate network changes to evolutionary divergences

This protocol typically requires 2-3 hours of hands-on time and 8-12 hours of computational time, depending on dataset size and permutation number [4].
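The classification step of the protocol can be sketched as follows. The thresholds come from the protocol above; how to treat values exactly equal to 2 or 10 is not specified there, so the boundary handling here is an assumption. The `density_z` helper is a deliberately simplified stand-in that scores only mean intramodular correlation against random gene sets; it is not the composite Zsummary statistic, and the toy correlations are invented.

```python
import random
import statistics
from itertools import combinations

def classify_preservation(z_summary):
    """Map a preservation Z score to the categories used in the protocol.

    Assumption: boundary values (exactly 2 or 10) fall into the lower category.
    """
    if z_summary > 10:
        return "preserved"
    if z_summary > 2:
        return "moderately preserved"
    return "not preserved"

def mean_abs_cor(genes, cor):
    """Mean absolute correlation over all gene pairs in a set."""
    return statistics.mean(abs(cor[frozenset(pair)]) for pair in combinations(genes, 2))

def density_z(module, all_genes, cor, n_perm=200, seed=0):
    """Simplified permutation Z: module density in the target network versus
    the density of random gene sets of the same size."""
    rng = random.Random(seed)
    observed = mean_abs_cor(module, cor)
    null = [mean_abs_cor(rng.sample(all_genes, len(module)), cor) for _ in range(n_perm)]
    return (observed - statistics.mean(null)) / statistics.stdev(null)

# Toy target-species correlations: a four-gene reference module stays tightly
# co-expressed (0.9) against a weak background (0.1).
genes = [f"g{i}" for i in range(12)]
module = genes[:4]
cor = {
    frozenset(pair): 0.9 if set(pair) <= set(module) else 0.1
    for pair in combinations(genes, 2)
}
z = density_z(module, genes, cor)
label = classify_preservation(z)
```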

Current Challenges and Future Directions

Despite considerable progress, several challenges remain in the evolutionary analysis of gene co-expression networks:

Technical Variation: Differences in experimental protocols, platforms, and data processing can introduce artifacts that confound evolutionary interpretations. Integration methods must distinguish technical noise from biological signals [1] [10].

Computational Complexity: Network alignment algorithms can be computationally intractable for large datasets, requiring heuristic approaches that may not find optimal solutions [1].

Functional Interpretation: While co-expression suggests functional relationships, it does not distinguish between direct and indirect interactions. Integration with regulatory networks (e.g., transcription factor binding) provides more mechanistic insights [3] [6].

Future directions include developing more sophisticated multilayer network methods that simultaneously analyze co-expression across multiple species and tissues, creating unified frameworks for comparing networks across evolutionary distances, and improving integration with other omics data to connect expression changes to regulatory evolution and phenotypic diversification [1] [3].

The continued expansion of transcriptomic datasets across diverse species, coupled with advanced network analysis methods, promises to further establish gene co-expression networks as fundamental tools for understanding the evolutionary processes that shape biological diversity.

Modularity represents a fundamental architectural principle across biological systems, describing the organization of complex systems into cohesive entities that are loosely coupled and can often function independently [11]. This concept, widespread in computer science, cognitive science, and organization theory, allows complex biological tasks to be broken down into smaller, simpler functions that can be modified or operated independently [11]. In biological contexts, modularity manifests at multiple scales—from structural domains within proteins that serve as reusable building blocks, to developmental modules in embryogenesis, to functional modules within cellular networks [11]. Functional modules constitute discrete molecular entities whose functions are separable from other modules, composed of various interacting molecules that collectively perform specific biological roles [11]. The availability of complete genome sequences and functional genomics datasets has revealed the profoundly modular organization of cellular systems, including protein-protein interaction networks, gene regulatory circuits, and metabolic pathways [11]. This article examines the conserved genetic programs that constitute these stable functional modules across evolutionary timescales, comparing their performance characteristics and experimental methodologies for their identification and validation.

Defining Modules Across Biological Networks

Conceptual Foundations and Identification Criteria

Functional modules in cellular networks are discrete units that accomplish specific biological functions with a degree of isolation from other network components [11]. This isolation can manifest through spatial, chemical, or temporal separation. Several overlapping criteria help define these modules:

- Strong internal cohesion: Modules display strong interactions within and weak connections outside the module [11]

- Network topology: Functional modules often correspond to network cliques with more connections among components than with outside elements [11]

- Evolutionary conservation: Modules may be conserved across species as identifiable functional units [11]

- Functional separability: Modules can frequently be reconstituted or operate independently from the larger network [11]

Not all modules require physical interactions; metabolic pathways represent functional modules connected by substrate-product relationships rather than physical associations [11]. Similarly, signal transduction cascades form information-processing modules through sequential interactions separated in time and space [11].

Types of Biological Modules

Table: Classification of Biological Modules by Network Type

| Module Type | Defining Interactions | Examples | Key Characteristics |
|---|---|---|---|
| Protein Complexes | Stable physical contacts between proteins | F1Fo ATP synthase | Large buried interfaces (>2500 Ų), hydrophobic contacts, strong connectivity [11] |
| Transient Complexes | Transient physical interactions | Cyclin-dependent kinase-cyclin dimers | Smaller interfaces (<2000 Ų), hydrophilic contacts, assemble/disassemble rapidly [11] |
| Metabolic Pathways | Substrate-product relationships | Metabolic pathways | Functional connectivity without physical interaction, process-oriented [11] |
| Signal Transduction Cascades | Sequential molecular interactions | Yeast MAPK mating pathway | Information processing through succession of interactions, temporal separation [11] |
| Gene Co-expression Modules | Coordinated transcriptional regulation | Spermatogenesis genetic program | Co-regulated genes, conserved expression patterns across species [12] |

Evolutionary Origins and Conservation of Genetic Modules

Deep Evolutionary Conservation of Core Genetic Programs

Male germ cells provide a compelling model for studying deeply conserved genetic modules. Research demonstrates that male germ cells share a common origin across animal species and retain a conserved genetic program defining their cellular identity [12]. Through network analysis of spermatocyte transcriptomes across vertebrates and invertebrates, researchers have identified a genetic scaffold of deeply conserved genes retained throughout evolution [12]. This scaffold contains approximately 10,000 protein-coding genes, with about one-third representing a deeply conserved core that has persisted across evolutionary timescales [12].

Phylostratigraphy analysis, which maps the evolutionary age of gene groups by identifying their last common ancestor in a species tree, reveals that the majority (65.2-70.3%) of genes expressed in male germ cells map to the oldest phylostrata containing orthogroups common to all Metazoa [12]. This conservation persists despite the rapid evolution typically associated with reproduction-related genes and the widespread transcriptional leakage in male germ cells [12]. The conserved genetic module of spermatogenesis includes 79 functional associations between 104 gene expression regulators, representing a core component of the metazoan spermatogenic program [12].
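The core idea of phylostratum assignment can be illustrated with a small sketch. It assumes a focal lineage described as nested clades and an orthogroup represented by the set of species in which it was detected; the species labels and clade memberships are a hypothetical toy example, and real analyses depend heavily on homology-detection sensitivity, which is ignored here.

```python
def assign_phylostratum(orthogroup_species, lineage):
    """Return the narrowest clade on the focal lineage that contains every
    species in which the orthogroup is found (its inferred last common ancestor).

    lineage: list of (clade_name, species_set) ordered from broadest (oldest)
    to narrowest (youngest), each clade nested inside the previous one.
    """
    for clade_name, members in reversed(lineage):
        if orthogroup_species <= members:
            return clade_name
    raise ValueError("orthogroup contains species outside the lineage")

# Hypothetical nested clades for a vertebrate focal species.
lineage = [
    ("Metazoa", {"sponge", "fly", "zebrafish", "mouse", "human"}),
    ("Bilateria", {"fly", "zebrafish", "mouse", "human"}),
    ("Vertebrata", {"zebrafish", "mouse", "human"}),
]

orthogroups = {
    "OG_a": {"human", "mouse", "sponge"},
    "OG_b": {"human", "fly"},
    "OG_c": {"human", "zebrafish"},
}
strata = {og: assign_phylostratum(species, lineage) for og, species in orthogroups.items()}

# Fraction of orthogroups that map to the oldest phylostratum.
oldest_fraction = sum(s == "Metazoa" for s in strata.values()) / len(strata)
```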

Conserved Modules in Plant Systems

Similar evolutionary conservation appears in plant reproductive systems. Comparative transcriptomics across 22 Solanaceae species reveals evolutionary conservation in expression patterns of reproductive organ-specific genes [13]. Flowers and fruits exhibit the highest proportion of organ-specific genes (49.45% at top 2% specificity threshold), with particularly high similarity among flower/fruit-specific genes across species [13]. This conservation highlights fundamental modular programs underlying plant reproduction.

Functional enrichment analysis identifies conserved transcription factor families in these modules, including YABBY and MADS-box genes critical for floral development [13]. These conserved regulatory modules shape reproductive organ development across Solanaceae species despite their diverse fruit morphologies [13].

Experimental Methodologies for Module Identification

Comparative Transcriptomics Approaches

Comparative transcriptomics has emerged as a powerful methodology for identifying conserved modules across species [13]. This approach typically involves:

- Data Collection: Compiling transcriptome samples across multiple species, tissues, and developmental stages (e.g., 293 samples across 22 Solanaceae species) [13]

- Expression Quantification: Generating expression matrices with normalized values (e.g., TPM - transcripts per million) [13]

- Organ-Specificity Analysis: Calculating specificity measures (SPM) to identify genes with restricted expression patterns [13]

- Cross-Species Comparison: Employing similarity metrics (e.g., Jaccard similarity coefficient) to assess conservation of gene sets across species [13]

- Network Construction: Building co-expression networks to identify functionally related gene groups [13]
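Two of the measures in this list are simple enough to sketch directly. The Jaccard coefficient is standard; the SPM formula used here (a gene's expression in one organ divided by the Euclidean norm of its expression across all organs) is one common definition and is an assumption, since the text above does not spell it out. The expression values and orthogroup identifiers are invented.

```python
import math

def spm(expression_by_organ, organ):
    """Specificity measure: expression in `organ` divided by the Euclidean norm
    of the gene's expression vector across all organs (assumed definition)."""
    norm = math.sqrt(sum(value * value for value in expression_by_organ.values()))
    return expression_by_organ[organ] / norm if norm else 0.0

def jaccard(set_a, set_b):
    """Jaccard similarity: size of the intersection divided by size of the union."""
    set_a, set_b = set(set_a), set(set_b)
    union = set_a | set_b
    return len(set_a & set_b) / len(union) if union else 0.0

# Toy TPM values for one gene across organs.
gene_tpm = {"flower": 3.0, "leaf": 4.0}
flower_specificity = spm(gene_tpm, "flower")

# Toy flower-specific orthogroup sets from two species.
species1_flower = {"OG1", "OG2", "OG3"}
species2_flower = {"OG2", "OG3", "OG4"}
similarity = jaccard(species1_flower, species2_flower)
```

Comparing sets of orthogroups rather than raw gene identifiers is what makes the Jaccard coefficient meaningful across species, so the orthology mapping has to happen before this step.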

Gene Co-expression Network Analysis

Gene co-expression network (GCN) analysis provides a powerful systems biology approach for identifying functional modules [14]. The modular GCN (mGCN) pipeline typically involves:

- Data Acquisition: Obtaining gene expression datasets from public repositories (e.g., GEO) [14]

- Correlation Calculation: Computing pairwise correlations (e.g., Pearson correlation coefficient) between genes across samples [14]

- Network Construction: Building scale-free topological networks using soft thresholding to optimize power law fit (R² > 0.8) [14]

- Module Detection: Identifying co-expression modules with minimum gene thresholds (e.g., ≥20 genes) [14]

- Module-Phenotype Association: Assessing module activities across conditions using enrichment scores (e.g., normalized enrichment score - NES) [14]

- Hub Gene Identification: Detecting central regulators within modules based on connectivity metrics [14]

Table: Key Analytical Metrics in Co-expression Network Analysis

| Analysis Stage | Metric | Typical Threshold | Interpretation |
|---|---|---|---|
| Network Construction | Scale-free topology fit (R²) | >0.8 | Indicates network follows power law distribution [14] |
| Gene-Gene Correlation | Pearson correlation coefficient (PCC) | ≥0.80 | Strong co-expression relationship [14] |
| Module Detection | Minimum genes per module | ≥20 | Ensures biological relevance [14] |
| Module Significance | Normalized enrichment score (NES) | >1 | Significant module activity in phenotype [14] |
| Statistical Significance | Benjamini-Hochberg p-value | <0.05 | False discovery rate-adjusted significance [14] |
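The Benjamini-Hochberg adjustment in the last row of the table is easy to state in code. The sketch below is a plain implementation of the standard step-up procedure with made-up p-values; in practice an established statistics library would normally be used instead.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original order.

    Each p-value is scaled by m / rank, then a running minimum taken from the
    largest rank downward enforces monotonicity of the adjusted values.
    """
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, pvalues[index] * m / rank)
        adjusted[index] = running_min
    return adjusted

# Toy enrichment p-values for four modules.
raw = [0.01, 0.04, 0.03, 0.005]
adjusted = benjamini_hochberg(raw)
significant = [p < 0.05 for p in adjusted]
```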

Single-Cell Resolution Approaches

Advanced single-cell technologies now enable module identification at cellular resolution. Single-cell RNA sequencing (scRNA-seq) generates high-resolution gene expression data but introduces integration challenges due to batch effects [15]. Deep learning approaches address this by learning biologically conserved gene expression representations [15]. The scVI framework utilizes conditional variational autoencoders to embed single-cell data while treating batches as conditional variables to remove technical artifacts [15]. Extension to scANVI incorporates partial cell-type annotations through semi-supervised learning to improve biological conservation [15].

Comparative single-cell transcriptomic mapping (CSCTM) constructs integrated maps across species using orthologous genes, enabling identification of conserved and divergent cell types [16]. This approach revealed a sea-island cotton-specific cell cluster and conserved pigment gland development modules in cotton leaves [16].

Case Studies of Conserved Modules in Disease and Development

The Conserved Spermatogenesis Program

The genetic program governing male gamete formation represents a deeply conserved functional module with remarkable stability across evolution [12]. This module contains approximately 10,000 protein-coding genes, with a core scaffold of ancient genes maintained across metazoan evolution [12]. Functional studies demonstrate that disrupting this ancient genetic program leads to reproductive disease, including human infertility [12]. The conservation of this module across 600 million years of evolution highlights the exceptional stability of core developmental programs despite rapid evolution of reproductive proteins.

Disease Modules in Human Pathology

Gene co-expression module analysis of familial hypercholesterolemia (FH) reveals conserved modules underpinning atherosclerosis development [14]. Three significant modules (M2, M4, M5) show upregulated activity in FH patients and involvement in atherosclerosis-related processes: cell migration, cholesterol metabolism, inflammation, innate immunity, and shear stress response [14]. Hub genes within these modules (RHOA, ACTB, LYN, ACTG1, BCL6, BCL2L1, and STAT3) represent potential biomarkers and therapeutic targets [14]. This demonstrates how conserved functional modules can inform human disease mechanisms and treatment strategies.

Research Reagent Solutions Toolkit

Table: Essential Research Resources for Module Analysis

| Resource Category | Specific Tools | Application Purpose | Key Features |
|---|---|---|---|
| Computational Frameworks | CEMiTool R package [14] | Gene co-expression network analysis | Modular network construction, module-phenotype association, functional enrichment |
| Deep Learning Platforms | scVI [15], scANVI [15] | Single-cell data integration | Probabilistic modeling, batch effect correction, semi-supervised learning |
| Data Resources | Gene Expression Omnibus (GEO) [14] | Public data repository | Standardized expression data, clinical annotations, cross-study comparisons |
| Benchmarking Metrics | scIB/scIB-E metrics [15] | Integration performance evaluation | Batch correction quantification, biological conservation assessment |
| Orthology Databases | Orthologous gene sets [12] [16] | Cross-species comparisons | Evolutionary relationships, functional conservation analysis |

Conserved genetic modules represent fundamental organizational units in biological systems that maintain stable functions across evolutionary timescales. These modules exhibit defining characteristics including strong internal connectivity, functional separability, and evolutionary conservation [11]. Experimental approaches from comparative transcriptomics to single-cell analysis consistently identify these stable functional units across biological contexts—from reproductive development [12] [13] to disease processes [14]. The deep conservation of these modules, particularly in essential processes like reproduction, highlights their fundamental importance in biological systems. As analytical methods advance, particularly in deep learning and single-cell technologies [15] [16], our capacity to identify and characterize these ancient genetic programs continues to grow, offering insights into both fundamental biology and disease mechanisms.

For decades, phylogenetic profiling (PP) has been a powerful genomic-context method for predicting functional gene linkages by correlating gene presence and absence across species [17]. This approach operates on the principle that functionally related genes are retained or lost together during evolution. However, PP possesses a significant limitation: it cannot analyze genes that are never lost from genomes, which includes many essential cellular components [18].

Phylogenetic Expression Profiling (PEP) represents a methodological evolution. Instead of tracking gene presence/absence, PEP analyzes evolutionary covariation in gene expression levels across orthologs from widely diverged species [18]. This approach can reveal coordinated evolution in fundamental cellular machinery that is inaccessible to traditional PP, providing new insights into the evolution of pathways and protein complexes over deep evolutionary timescales.

Core Methodology: The PEP Workflow

The implementation of PEP involves a multi-stage analytical process, designed to handle the challenges of cross-species transcriptome comparison.

Ortholog Identification and Expression Matrix Construction

A primary challenge in PEP is identifying orthologous genes across highly divergent species. One established workflow involves [18]:

- Transcript-to-Protein Matching: Assembled transcripts are matched to clusters of related protein sequences (e.g., UniProt100 clusters) using BLASTP.

- Merging Redundant Hits: Redundant matches are iteratively merged. If a transcript's top match is uninformative, subsequent best matches are tested.

- Expression Summation: Within a sample, multiple transcripts matching the same protein cluster are summed to represent the total expression level of that gene family.

- Matrix Assembly: This process yields a unified gene expression matrix (e.g., 4,219 genes x 657 samples) [18], which serves as the foundation for all downstream analyses.
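The summation and matrix assembly steps above can be sketched as follows. The transcript identifiers, cluster names, and expression values are hypothetical, and two choices are assumptions rather than part of the described workflow: transcripts without a cluster match are dropped, and a cluster that is absent from a sample is recorded as 0.0 (a real analysis might prefer to mark it as missing).

```python
from collections import defaultdict

def sum_by_cluster(transcript_expr, transcript_to_cluster):
    """Sum the expression of all transcripts in one sample that match the same protein cluster."""
    totals = defaultdict(float)
    for transcript, value in transcript_expr.items():
        cluster = transcript_to_cluster.get(transcript)
        if cluster is not None:  # assumption: unmatched transcripts are dropped
            totals[cluster] += value
    return dict(totals)

def assemble_matrix(samples, transcript_to_cluster):
    """Build a cluster-by-sample matrix as {cluster: [value per sample]}."""
    per_sample = {
        sample: sum_by_cluster(expr, transcript_to_cluster)
        for sample, expr in samples.items()
    }
    sample_ids = sorted(per_sample)
    clusters = sorted({c for totals in per_sample.values() for c in totals})
    # Assumption: clusters not observed in a sample are filled with 0.0.
    matrix = {c: [per_sample[s].get(c, 0.0) for s in sample_ids] for c in clusters}
    return sample_ids, matrix

# Toy input: two samples from different species with their own transcript IDs.
samples = {
    "sp1_rep1": {"t1": 5.0, "t2": 3.0, "t3": 1.0},
    "sp2_rep1": {"u1": 4.0, "u2": 2.0},
}
transcript_to_cluster = {"t1": "clusterX", "t2": "clusterX", "t3": "clusterY", "u1": "clusterX"}
sample_ids, matrix = assemble_matrix(samples, transcript_to_cluster)
```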

Detecting Coordinated Evolution

With the expression matrix, coordinated evolution is detected using a Phylogenetic Expression Profiling (PEP) correlation [18]:

- Correlation Calculation: Pairwise Spearman correlations between all genes in the expression matrix are computed.

- Accounting for Phylogenetic Structure: A critical step involves correcting for phylogenetic non-independence, which can inflate correlations. A permutation-based null model is created by randomizing gene sets, providing an expectation for correlations due to shared phylogeny alone.

- Significance Testing: Gene sets whose observed PEP correlation significantly exceeds the null distribution are identified as showing coordinated evolution.
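The three steps above can be sketched as a small permutation test. This is a minimal illustration under stated assumptions, not the published pipeline: the summary statistic (mean pairwise Spearman correlation within a gene set), the number of permutations, and the simulated expression matrix are all choices made for this example. Because random gene sets are drawn from the same samples, they inherit the same phylogenetic structure as the tested set.

```python
import math
import random
from itertools import combinations
from statistics import mean

def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for idx in order[i:j + 1]:
            result[idx] = (i + j) / 2 + 1
        i = j + 1
    return result

def spearman(x, y):
    rx, ry = ranks(x), ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy) if sx and sy else 0.0

def mean_pairwise(genes, cor):
    return mean(cor[frozenset(pair)] for pair in combinations(genes, 2))

def pep_permutation_test(expr, gene_set, n_perm=500, seed=0):
    """Compare a gene set's mean pairwise correlation with random sets of equal size."""
    genes = sorted(expr)
    cor = {frozenset((g, h)): spearman(expr[g], expr[h]) for g, h in combinations(genes, 2)}
    observed = mean_pairwise(gene_set, cor)
    rng = random.Random(seed)
    hits = sum(
        mean_pairwise(rng.sample(genes, len(gene_set)), cor) >= observed
        for _ in range(n_perm)
    )
    return observed, (hits + 1) / (n_perm + 1)

# Simulated matrix: 5 genes share a latent signal across 30 samples; 25 genes are noise.
data_rng = random.Random(1)
latent = [data_rng.gauss(0, 1) for _ in range(30)]
expr = {f"set{i}": [v + 0.3 * data_rng.gauss(0, 1) for v in latent] for i in range(5)}
expr.update({f"bg{i}": [data_rng.gauss(0, 1) for _ in range(30)] for i in range(25)})
observed, p_value = pep_permutation_test(expr, [f"set{i}" for i in range(5)])
```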

Table 1: Key Steps in a Standard PEP Workflow

| Step | Primary Action | Key Outcome |
|---|---|---|
| 1. Data Collection | RNA-seq from diverse species | Multi-species transcriptome data (e.g., 309 species) [18] |
| 2. Ortholog Identification | Map transcripts to protein clusters | Unified cross-species gene identifiers |
| 3. Expression Quantification | Calculate total expression per gene/sample | Unified gene expression matrix |
| 4. PEP Correlation | Compute pairwise Spearman correlations | Matrix of gene-gene expression evolutionary correlations |
| 5. Phylogenetic Correction | Compare against permuted null model | Statistically significant coordinately evolving gene sets |

The following diagram illustrates the logical workflow and data transformation at each stage of the PEP analysis:

Key Comparative Findings: PEP vs. Traditional Methods

PEP has been systematically compared to other functional genomics methods, revealing both overlaps and unique insights.

Validation Against Phylogenetic Profiling (PP)

PEP validates known functional modules identified by PP while extending beyond its limitations.

- Ciliary Genes: Ciliary genes, one of the most significant gene sets identified by PP, also show a strong coordinated evolutionary signal in PEP analysis (permutation p = 5.1 x 10⁻⁵) [18].

- Pairwise Agreement: Specific ciliary gene pairs with the strongest PP signal (based on co-loss) also exhibit high PEP correlations (permutation p = 3.4 x 10⁻²²) [18].

- Broad-Scale Overlap: A large set of 327 coordinately evolving modules from a previous PP study were significantly enriched for high PEP correlations [18].

Novel Discoveries Beyond PP and Within-Species Co-expression

The power of PEP lies in its ability to discover coordinated evolution where other methods cannot.

- Essential Complexes: PEP identified hundreds of coordinately evolving gene sets, including essential complexes like the proteasome, nuclear pore complex, and RNA degradation pathways, which PP rarely detects due to the extreme rarity of gene loss in these complexes [18].

- Orthogonality to Within-Species Co-expression: PEP correlations show little correspondence with co-expression patterns observed across environmental or genetic perturbations in yeast. This indicates PEP captures evolutionary constraints that are distinct from and complementary to those revealed by condition-specific co-expression within a single species [18].

Table 2: Comparative Performance of PEP vs. Other Functional Genomics Methods

| Method | Basis of Association | Key Strength | Key Limitation |
|---|---|---|---|
| Phylogenetic Expression Profiling (PEP) | Coordinated evolution of expression levels across species | Reveals evolutionary constraints on essential, never-lost genes | Requires diverse transcriptome data; complex phylogeny correction |
| Phylogenetic Profiling (PP) | Co-occurrence of gene presence/absence across genomes | Powerful for non-essential, frequently lost pathways | Blind to essential genes and complexes |
| Within-Species Co-expression | Expression correlation across conditions/perturbations | Identifies condition-specific regulation and responses | Captures short-term regulatory links, not deep evolutionary constraints |

Experimental Validation and Practical Application

Case Study: Adaptive Evolution of tRNA Ligase

PEP can detect signatures of adaptive evolution. One study found that expression levels of tRNA ligase evolved to match the genome-wide codon usage across diverse eukaryotic lineages [18]. This is a clear example of physiological adaptation through coordinated evolution of gene expression, where the expression of a gene evolves in tune with a global genomic feature to maintain optimal translation efficiency.

Protocol: Implementing a PEP Analysis

Researchers can implement PEP using the following detailed methodology, adapted from Martin et al. (2018) [18]:

- Data Acquisition: Obtain RNA-seq data from a broad phylogenetic range of species (e.g., data from the Marine Microbial Eukaryote Transcriptome Sequencing Project (MMETSP): 657 samples from 309 species).

- Ortholog Definition:

  - Perform de novo transcriptome assembly for each species if required.

  - Use a two-step BLASTP approach against a reference protein database (e.g., UniProt100) with an E-value cutoff (e.g., 1e-5).

  - For each transcript, test up to five top UniProt100 matches. Merge isoforms/paralogs by summing read counts for the same UniProt100 ID within a sample.

  - Retain only ortholog groups with detectable expression in a sufficient number of samples (e.g., >100 samples) for robust analysis.

- Expression Matrix Construction: Build a gene (rows) × sample (columns) matrix of normalized expression values (e.g., TPM or FPKM).

- PEP Correlation Calculation:

  - Compute pairwise Spearman correlations between all genes, using only samples where both genes are detectably expressed.

  - To correct for phylogenetic non-independence, generate a null distribution of correlations: randomly select gene sets matched for phylogenetic distribution and calculate their median correlation. Repeat 10,000 times.

  - Compare observed gene set correlations to this null distribution to assign empirical p-values.

  - Control for multiple testing using a False Discovery Rate (FDR) threshold (e.g., 5% FDR).
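Two pieces of this protocol are easy to get subtly wrong: restricting each Spearman correlation to the samples in which both genes are detected, and converting a list of p-values into FDR-adjusted values. The sketch below illustrates both in plain Python. The function names, the `min_expr` detection cutoff, and the `min_samples` guard are illustrative assumptions, and the Benjamini-Hochberg procedure is used as one common way to control the FDR; the protocol above does not specify which procedure was applied.

```python
def ranks(values):
    """Average ranks (1-based); tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            out[order[k]] = mean_rank
        i = j + 1
    return out


def pearson(x, y):
    """Pearson correlation; NaN if either vector has zero variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5 if sxx > 0 and syy > 0 else float("nan")


def masked_spearman(x, y, min_expr=0.0, min_samples=3):
    """Spearman correlation over samples where both genes exceed min_expr."""
    kept = [(a, b) for a, b in zip(x, y) if a > min_expr and b > min_expr]
    if len(kept) < min_samples:
        return float("nan")
    xs, ys = zip(*kept)
    return pearson(ranks(xs), ranks(ys))


def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values, returned in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted
```

Gene sets whose adjusted value falls at or below 0.05 would then pass a 5% FDR threshold. Because each gene pair is correlated over a different subset of samples, pairs supported by very few samples are best excluded, which is the role of the `min_samples` guard.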

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Resources for PEP Studies

| Reagent/Resource | Function in PEP Analysis | Example or Source |
|---|---|---|
| Multi-Species RNA-seq Data | Provides raw gene expression measurements across phylogeny | Marine Microbial Eukaryote Transcriptome Sequencing Project (MMETSP) [18] |
| Reference Protein Database | Serves as a common scaffold for ortholog identification | UniProt100 cluster database [18] |
| BLAST Suite | Identifies homologous sequences between transcriptomes and reference DB | NCBI BLAST+ [18] |
| Ortholog Clusters | Defines groups of evolutionarily related genes for analysis | Custom-built from BLAST results [18] |
| Statistical Software (R) | Performs correlation calculations, permutation tests, and FDR correction | R packages for statistics (e.g., stats, multtest) |

Phylogenetic Expression Profiling (PEP) represents a significant advance in comparative genomics. By analyzing the coordinated evolution of gene expression across deep evolutionary timescales, it reveals functional relationships within essential cellular machinery that remain invisible to gene presence/absence methods. The method has proven effective in identifying novel coordinately evolved pathways, validating known functional modules, and detecting adaptive evolution. As transcriptomic data from an ever-widening range of species becomes available, PEP is poised to become an increasingly powerful tool for deciphering the evolutionary forces that have shaped gene regulatory networks and complex cellular systems.

Co-expression Conservation as a Measure of Functional Divergence

The evolution of new phenotypes in organisms often results not from the emergence of entirely new genes, but from changes to interactions within pre-existing biological networks [19]. Gene co-expression networks (GCNs), which represent patterns of coordinated gene expression across various conditions, tissues, or developmental stages, have emerged as a powerful framework for quantifying these evolutionary changes [19] [20]. The fundamental premise underlying this approach is that the conservation or divergence of co-expression relationships between orthologous genes can serve as a sensitive measure of functional conservation or divergence [20].

The increasing abundance of transcriptomic data from both model and non-model organisms has positioned GCNs as an invaluable tool for evolutionary studies, complementing traditional sequence-based analyses [19]. This review provides a comprehensive comparison of GCN methodologies and their applications in measuring functional divergence, offering experimental protocols, analytical frameworks, and empirical findings to guide researchers in designing and interpreting comparative co-expression studies.

Theoretical Framework: Co-expression Networks as Evolutionary Indicators

Fundamental Concepts in Co-expression Network Analysis

Gene co-expression networks are typically represented as undirected graphs where nodes correspond to genes and edges represent the strength of co-expression between gene pairs, often derived from correlation coefficients or mutual information measures [19]. Unlike protein-protein interaction networks that often have unweighted edges, GCNs typically employ weighted graphs where edge weights can range from -1 to 1 when using correlation coefficients, capturing both positive and negative regulatory relationships [19].

The construction of GCNs has been greatly facilitated by advancing technologies such as RNA-seq and single-cell RNA-seq, which enable comprehensive profiling of transcriptomic activity closely tied to phenotypic expression [19]. In evolutionary contexts, GCN analysis allows researchers to move beyond studying genes as independent units to investigating how networks are "re-wired" throughout evolution, potentially revealing the molecular underpinnings of phenotypic diversification [19].

Evolutionary Principles Underlying Co-expression Conservation

The evolutionary analysis of GCNs rests on several key principles. First, homologous genes tend to show negative correlations between their evolutionary rates and co-expression connectivity changes, with genes of more recent evolutionary origin typically displaying lower connectivity [19]. Second, regulatory evolution appears to be a major driver of species differentiation, with divergence in gene regulation often preceding coding sequence changes [20]. Third, different functional categories of genes exhibit distinct patterns of evolutionary conservation, with regulatory processes (e.g., signal transducers, transcription factors) generally displaying higher plasticity than core processes (e.g., metabolism, transport) [21].

Table 1: Evolutionary Patterns in Gene Co-expression Networks

| Evolutionary Pattern | Description | Functional Implication |
|---|---|---|
| Conserved Modules | Groups of genes maintaining co-expression across species | Core biological processes essential across taxa |
| Diverged Modules | Co-expression relationships specific to certain lineages | Species-specific adaptations and novel functions |
| Hub Gene Evolution | Changes in highly connected genes within networks | Potential rewiring of regulatory architectures |
| Network Motif Turnover | Changes in small recurrent network patterns | Evolution of regulatory logic and circuit structures |

Methodological Approaches for Comparative Co-expression Analysis

Network Construction and Feature Detection

The initial step in comparative co-expression analysis is to construct robust GCNs for each species under investigation. The choice of association measure significantly influences network properties. Pearson correlation is the most common choice, though mutual information combined with context likelihood of relatedness (CLR) has been reported to perform best in comparative studies [20]. Mutual information can capture non-linear relationships, but it typically requires discretized data, which may lead to signal loss [19].
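For reference, the CLR step rescales each mutual information value against the background distribution of both genes involved. A common formulation, assumed here rather than taken from [20], is given below. In it, $I_{ij}$ is the mutual information between genes $i$ and $j$, and $\mu_i$ and $\sigma_i$ are the mean and standard deviation of gene $i$'s mutual information with all other genes:

```latex
z_i = \max\!\left(0,\ \frac{I_{ij} - \mu_i}{\sigma_i}\right), \qquad
z_j = \max\!\left(0,\ \frac{I_{ij} - \mu_j}{\sigma_j}\right), \qquad
\mathrm{CLR}_{ij} = \sqrt{z_i^{2} + z_j^{2}}
```

A high score therefore requires the pair's mutual information to stand out against the backgrounds of both genes, which down-weights genes that share information broadly with many partners.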

Network construction typically involves applying a threshold to association measures to determine whether gene pairs should be connected. Both hard thresholds (strict cut-offs) and soft thresholds (raising association values to a power) can be applied, with the latter better preserving the continuous nature of co-expression relationships [19] [22]. An essential consideration is that network properties, including scale-free characteristics, often emerge only above certain co-expression thresholds [20].
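The difference between the two thresholding strategies can be seen in a small sketch. The function names and the default values of `tau` and `beta` are illustrative assumptions; in practice the soft-threshold power is usually chosen by checking how well the resulting network approximates a scale-free topology.

```python
def hard_threshold(corr, tau=0.8):
    """Unweighted adjacency: 1 where |r| >= tau, else 0 (no self-loops)."""
    n = len(corr)
    return [[1 if i != j and abs(corr[i][j]) >= tau else 0 for j in range(n)]
            for i in range(n)]


def soft_threshold(corr, beta=6):
    """Weighted, unsigned adjacency a_ij = |r_ij| ** beta (no self-loops)."""
    n = len(corr)
    return [[abs(corr[i][j]) ** beta if i != j else 0.0 for j in range(n)]
            for i in range(n)]


def connectivity(adjacency):
    """Per-gene connectivity: the sum of a gene's edge weights."""
    return [sum(row) for row in adjacency]
```

A hard threshold discards the distinction between a correlation of 0.81 and one of 0.99, whereas the power transform keeps both as weights while pushing weak correlations toward zero.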

Once networks are constructed, module detection algorithms identify groups of highly interconnected genes potentially involved in related biological processes. Hierarchical clustering coupled with dynamic tree cutting is commonly employed, followed by calculation of the module eigengene (the first principal component of a module's expression matrix), which serves as a representative profile for the entire module [22].
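To make the eigengene concrete, the sketch below computes the first principal component of a module by power iteration, using only the standard library. It is an illustration under assumptions, not the routine used by any particular package: the function names are hypothetical, genes are standardized before the decomposition, zero-variance genes are assumed to have been filtered out, and the sign is oriented to follow the module's mean profile.

```python
def standardize(row):
    """Center a gene's expression to mean 0 and scale to unit variance."""
    n = len(row)
    mean = sum(row) / n
    sd = (sum((v - mean) ** 2 for v in row) / (n - 1)) ** 0.5
    return [(v - mean) / sd for v in row]


def module_eigengene(expr, n_iter=200):
    """First principal component across samples for a genes x samples module.

    expr: list of gene rows, each a list with one expression value per sample.
    Returns a unit-length vector with one value per sample.
    """
    z = [standardize(row) for row in expr]
    n_samples = len(z[0])
    v = [1.0 / (k + 1) for k in range(n_samples)]  # arbitrary non-constant start
    for _ in range(n_iter):
        # One power-iteration step: v <- Z^T (Z v), then renormalize.
        scores = [sum(g[k] * v[k] for k in range(n_samples)) for g in z]
        v = [sum(scores[i] * z[i][k] for i in range(len(z)))
             for k in range(n_samples)]
        norm = sum(x * x for x in v) ** 0.5
        v = [x / norm for x in v]
    # The sign of a principal component is arbitrary; align it with the mean profile.
    mean_profile = [sum(g[k] for g in z) / len(z) for k in range(n_samples)]
    if sum(a * b for a, b in zip(v, mean_profile)) < 0:
        v = [-x for x in v]
    return v
```

Correlating each gene's profile with this vector gives a measure of module membership, which is one common way hub genes within a module are ranked.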

Cross-Species Network Alignment and Comparison

Multiple computational approaches exist for comparing GCNs across species, each with distinct advantages and limitations. Local and global network alignment methods address the challenge of mapping nodes between networks, though the optimal methods for GCN alignment specifically remain an area of active research [19]. Alignment-free methods provide alternative approaches that do not require explicit node-to-node mapping.

The choice of network analysis strategy (node-based vs. community-based) often has a stronger impact on biological interpretation than the specific network modeling approach [10]. Node-based methods focus on individual genes and their direct connections, while community-based approaches consider modules as functional units, with the largest differences in biological interpretation typically observed between these two strategies [10].

Table 2: Methods for Cross-Species Co-expression Network Comparison

| Method Type | Key Features | Representative Tools | Best Applications |
|---|---|---|---|
| Local Alignment | Identifies conserved local network regions | - | Studying specific functional modules |
| Global Alignment | Maps entire networks to each other | IsoRankN | System-level conservation patterns |
| Orthology-Based | Relies on sequence similarity between genes | - | Initial cross-species mapping |
| Topology-Based | Uses network structure similarity | - | Discovering convergent evolution |
| Hybrid Methods | Combines orthology and topology information | - | Comprehensive evolutionary analysis |

Experimental Protocols for Comparative Co-expression Studies

Standard Workflow for Cross-Species GCN Analysis

The following experimental protocol outlines a comprehensive approach for comparing co-expression networks across species to assess functional divergence, integrating best practices from multiple studies [20] [23] [22].

Detailed Methodology for Key Steps

Data Collection and Preprocessing: Compile gene expression datasets from comparable tissues, developmental stages, or experimental conditions across species. For plants, studies have successfully utilized Affymetrix microarray data from public repositories like GEO [20] [14]. For mammalian systems, single-cell RNA-seq data enables cell-type-specific comparisons [24]. Filter genes with low counts or low variation across samples to reduce noise, while being cautious not to over-filter and lose biological signal [22].

Orthology Mapping: Identify orthologous genes across species using established methods such as OrthoMCL, which accounts for gene families and paralogs [20]. Note that genes often have multiple predicted orthologs, presenting challenges for one-to-one mapping. Interestingly, the most sequence-similar ortholog is not necessarily the one with the most conserved gene regulation [20].

Network Construction: Calculate pairwise gene co-expression using appropriate association measures. Mutual information with CLR correction has been shown to outperform simple correlation in comparative studies [20]. Apply soft thresholding to achieve scale-free topology when using methods like WGCNA [14] [22].

Cross-Species Comparison: Quantify conservation at multiple levels: individual links, network neighborhoods, and entire modules. For network neighborhood conservation, compute similarity scores between orthologous genes based on their connection patterns [20]. Consider analyzing networks across a range of co-expression thresholds, as conservation patterns may vary with threshold stringency [20].
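One simple way to score network neighborhood conservation is sketched below. It maps a gene's neighbors in one species through an orthology table and measures their Jaccard overlap with the neighbors of its ortholog in the other species. The Jaccard index is an assumption chosen for illustration, and the score used in the cited study may differ; the function names, the one-to-one `orthologs` dictionary, and the default threshold are likewise hypothetical.

```python
def neighbors(corr, genes, gene, threshold):
    """IDs of genes whose |correlation| with `gene` meets the threshold."""
    i = genes.index(gene)
    return {g for j, g in enumerate(genes)
            if j != i and abs(corr[i][j]) >= threshold}


def neighborhood_conservation(corr_a, genes_a, corr_b, genes_b,
                              orthologs, gene_a, threshold=0.7):
    """Jaccard overlap between a gene's neighborhood and its ortholog's.

    orthologs: dict mapping species-A gene IDs to species-B gene IDs
    (one-to-one in this sketch). Neighbors lacking an ortholog are ignored.
    """
    gene_b = orthologs[gene_a]
    mapped_a = {orthologs[g]
                for g in neighbors(corr_a, genes_a, gene_a, threshold)
                if g in orthologs}
    nbrs_b = neighbors(corr_b, genes_b, gene_b, threshold)
    union = mapped_a | nbrs_b
    return len(mapped_a & nbrs_b) / len(union) if union else 0.0
```

Calling the function over a range of `threshold` values shows how the conservation estimate changes with stringency. For genes with several predicted orthologs, scoring each candidate separately makes it possible to ask which one retains the most similar neighborhood.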

Key Findings from Empirical Studies

Evolutionary Patterns in Plant Systems

Comparative analysis of co-expression networks in Arabidopsis thaliana, Populus, and Oryza sativa revealed that although individual gene-gene co-expression links had diverged extensively, approximately 80% of genes still maintained significantly conserved network neighborhoods [20]. This suggests substantial conservation of functional relationships despite extensive divergence at the level of individual links.

A particularly significant finding was that for genes with multiple predicted orthologs, about half had one ortholog with conserved regulation and another with diverged or non-conserved regulation [20]. In over half of these cases, the most sequence-similar ortholog was not the one with the most conserved gene regulation, highlighting the importance of incorporating regulatory information into orthology assessments for functional predictions.

The study also found that scale-free characteristics in co-expression networks emerged only above certain co-expression thresholds in all three plant species, and that genes with high centrality (hub genes) tended to be conserved across species [20]. This conservation of network architecture suggests evolutionary constraints on the overall organization of transcriptional programs.

Mammalian Neocortex Evolution

A comprehensive single-cell multiomics study of the primary motor cortex in human, macaque, marmoset, and mouse revealed both conserved and divergent gene regulatory programs [24]. The research identified 2,689 (~20%) mammal-conserved genes with similar expression patterns across cell types in all four species, and 2,638 (~20%) genes with conserved patterns only among primates.

The study demonstrated that conserved and divergent gene regulatory features were reflected in the evolution of the three-dimensional genome, with transposable elements contributing to nearly 80% of human-specific candidate cis-regulatory elements in cortical cells [24]. Despite significant sequence divergence, the genomic regulatory syntax (the DNA motifs recognized by sequence-specific DNA-binding proteins) was highly preserved from rodents to primates.

Functional enrichment analysis revealed that ubiquitous mammal-conserved genes were primarily involved in basic cellular processes, while non-ubiquitous mammal-conserved genes showed enrichment for transcriptional regulation and nervous system development [24]. Human-biased genes were enriched for extracellular matrix organization, suggesting potential mechanisms for human-specific cortical features.

Tissue-Specific Co-expression Conservation

Multilayer network analysis of co-expression patterns across four exocrine gland tissues revealed both "generalist" communities (co-expressed in multiple tissues) and "specialist" communities (co-expressed in just one tissue) [23]. This approach enabled researchers to distinguish between fundamental regulatory programs operating across tissues and tissue-specific adaptations.

The study further found that some co-expression communities showed significant physical clustering in the genome, with genes from the same community located proximally on chromosomes 1 and 11 [23]. This clustering suggests underlying regulatory elements, such as shared enhancers or topologically associated domains, that coordinate expression of these gene groups across individuals and cell types.

Research Reagent Solutions Toolkit

Table 3: Essential Tools and Resources for Comparative Co-expression Studies

| Tool/Resource | Function | Application Notes |
|---|---|---|
| GWENA R Package | Extended co-expression network analysis | Integrates network construction, module characterization, and differential co-expression [22] |
| WGCNA | Weighted gene co-expression network analysis | Widely used for correlation network construction and module detection [14] [22] |
| CEMiTool | Gene co-expression network analysis | Identifies co-expression modules and performs enrichment analysis [14] |
| ComPlEx Web Tool | Comparative analysis of plant co-expression networks | Specifically designed for cross-species comparisons in plants [20] |
| GTEx Database | Tissue-specific gene expression data | Essential for studying conservation patterns across tissues [23] |
| OrthoMCL | Orthology mapping between species | Identifies orthologous groups for cross-species comparisons [20] |
| Single-cell Multiome | Simultaneous profiling of transcriptome and epigenome | Enables cell-type-specific cross-species comparisons [24] |

Analytical Framework for Differential Co-expression

Conceptual Model of Co-expression Conservation

The relationship between sequence conservation, co-expression conservation, and functional divergence can be visualized as a conceptual framework guiding analytical decisions in comparative studies.

Interpretation Guidelines for Conservation Patterns

Interpreting co-expression conservation patterns requires considering multiple biological and technical factors. Strong conservation of co-expression relationships between orthologs typically indicates functional constraints preserving these regulatory relationships. However, several caveats merit consideration:

Technical artifacts: Batch effects, differences in experimental protocols, or tissue composition disparities can create spurious conservation or divergence signals.

Evolutionary timing: Conservation patterns may reflect different evolutionary timescales, with some functions conserved across deep evolutionary distances while others show lineage-specific adaptations [21] [24].

Functional redundancy: Genes with multiple orthologs may show subfunctionalization, where different orthologs maintain different aspects of the ancestral function [20].

Network position: Hub genes and genes in central network positions often show different evolutionary constraints compared to peripheral genes [20].

Comparative analysis of gene co-expression networks provides a powerful approach for quantifying functional divergence across species. The methodologies and findings summarized in this review highlight the value of moving beyond sequence-based comparisons to understand the evolutionary rewiring of regulatory relationships that underlies phenotypic diversity. As single-cell multiomics technologies continue to advance and computational methods for network alignment improve, comparative co-expression analysis will offer increasingly nuanced insights into the molecular mechanisms of evolution. The integration of co-expression conservation measures with other functional genomic data will further enhance our ability to interpret genetic variants contributing to species-specific traits and disease susceptibility.

The phytohormone auxin, primarily indole-3-acetic acid (IAA), functions as a master regulator of plant growth and development, coordinating processes ranging from embryogenesis to environmental responses. The nuclear auxin pathway (NAP), with its three dedicated components—TIR1/AFB receptors, Aux/IAA repressors, and ARF transcription factors—forms the core signaling machinery that translates auxin perception into transcriptional reprogramming [25]. This case study employs a comparative framework to examine how auxin signaling modules have evolved across plant lineages, focusing on evolutionary origins, functional diversification, and comparative analysis of co-expression networks. By integrating phylogenomic reconstructions with transcriptomic data, we trace the molecular trajectory of auxin response components from streptophyte algae to modern angiosperms, revealing how gene duplication and functional specialization have shaped this essential signaling system.

The evolutionary history of auxin signaling provides a remarkable model for understanding how plants developed molecular complexity to adapt to terrestrial environments. Recent evidence indicates that while auxin itself is present across diverse lineages including algae and bacteria, the canonical NAP originated during plant terrestrialization [25] [26]. This case study will analyze the stepwise evolution of auxin response components, their functional diversification into specialized classes, and how these evolutionary innovations are reflected in modern signaling networks across species. Through this approach, we aim to establish a comprehensive framework for understanding the principles governing the evolution of complex signaling systems in plants.

Evolutionary Origins of Auxin Signaling Components

Deep Phylogenetic Roots of ARF Transcription Factors

The Auxin Response Factors (ARFs), which function as the central effectors of auxin-responsive transcription, exhibit deep evolutionary origins predating the emergence of land plants. Phylogenomic analyses reveal that the ARF DNA-binding domain (DBD) fold shares ancestral homology with the crypto-Tudor (cTudor) domain found in the PHIP/BRWD family of eukaryotic chromatin reader proteins [27]. Structural comparisons demonstrate remarkable conservation between the DD-AD fold in ARF DBDs and the double Tudor-like domain of PHIPcTudor, with an overall Root Mean Square Deviation (RMSD) of 1.10 Å [27]. This evolutionary relationship suggests that ARFs originated from pre-existing chromatin-associated proteins, with the B3 DNA-binding domain representing a Viridiplantae-specific insertion that created the modern ARF DNA-binding architecture.

The complete ARF protein architecture, incorporating B3, DD, AD, and PB1 domains, first emerged in streptophyte algae, the closest relatives of land plants [27] [25]. Genomic surveys of chlorophyte and streptophyte algae have identified partial ARF precursors in species such as Coleochaete orbicularis and Spirogyra pratensis, though these lack full domain complements [28]. The PB1 domain, which mediates oligomerization between ARF and Aux/IAA proteins, represents another critical evolutionary innovation with deep eukaryotic roots but plant-specific modifications [25]. The assembly of these pre-existing domains into the complete ARF architecture represented a key step in the evolution of the nuclear auxin response system.

Origin and Diversification of the Full Nuclear Auxin Pathway

The complete nuclear auxin signaling pathway, comprising TIR1/AFB receptors, Aux/IAA repressors, and ARF transcription factors, is a land plant innovation [25]. Deep phylogenomics across more than 1,000 plant species has demonstrated that while individual domains exist in algal lineages, the integrated three-component system first appeared in the common ancestor of land plants [25]. Notably, algal TIR1/AFB homologs lack critical auxin-binding residues, and the corresponding Aux/IAA proteins lack the TIR1-interacting degron motif [27], indicating that the co-receptor system for auxin perception emerged during terrestrialization.

Following its origin in early land plants, the NAP underwent substantial diversification through gene duplication and functional specialization. In bryophytes, the system displays limited genetic redundancy, with minimal gene families (e.g., a single TIR1/AFB ortholog in Marchantia polymorpha) [25]. In contrast, angiosperms exhibit expanded gene families, with Arabidopsis thaliana encoding 6 TIR1/AFBs, 29 Aux/IAAs, and 23 ARFs [25]. This expansion enabled subfunctionalization and neofunctionalization, allowing for more complex and tissue-specific auxin responses in vascular plants.

Table 1: Evolutionary Expansion of Nuclear Auxin Pathway Components Across Plant Lineages

| Plant Lineage | TIR1/AFB Genes | Aux/IAA Genes | ARF Genes | Key Evolutionary Innovations |
|---|---|---|---|---|
| Chlorophyte Algae | 0 | 0 | 0 (partial domains) | Auxin biosynthesis and transport components present |
| Streptophyte Algae | 0 (partial) | 0 (partial) | 1-2 (partial) | Precursor ARFs with incomplete domains |
| Bryophytes | 1 | 3-5 | 5-7 | Complete NAP with minimal redundancy |
| Lycophytes | 2-3 | 8-12 | 10-15 | Initial expansion of gene families |
| Angiosperms | 4-6 | 20-30 | 20-25 | Substantial expansion and functional diversification |

Functional Diversification of Auxin Signaling Modules

ARF Classification and Specialized Functions

ARF transcription factors have diversified into three functionally distinct classes (A, B, and C) with specialized roles in auxin signaling [27]. Class A ARFs function as transcriptional activators regulated by auxin through the NAP, while Class B and C ARFs act as transcriptional repressors [27]. This functional diversification represents a key evolutionary innovation that enabled more sophisticated regulation of auxin responses. In Marchantia polymorpha, the minimal auxin response system requires the antagonistic interplay between a single A-ARF activator (MpARF1) and a single B-ARF repressor (MpARF2) that compete for the same target sites [27]. This core regulatory module appears conserved across land plants, though with increased complexity in angiosperms.

The DNA-binding specificity and regulatory mechanisms differ between ARF classes. A- and B-class ARFs share DNA-binding specificity for auxin response elements (AuxREs), while C-class ARFs recognize different target sequences [27]. This divergence in DNA-binding specificity likely contributed to functional specialization during evolution. The middle region (MR) of ARFs, which lies between the DNA-binding and PB1 domains, appears to have been a major target for evolutionary innovation, acquiring activation or repression domains that define the functional differences between ARF classes [25].

Table 2: Functional Classes of ARF Transcription Factors and Their Characteristics

| ARF Class | Transcriptional Activity | Regulation by Auxin | DNA-Binding Specificity | Representative Members |
|---|---|---|---|---|
| A-ARF | Activator | Yes (via Aux/IAA degradation) | Same as B-ARFs | AtARF5 (MP), AtARF6, AtARF7, AtARF8, AtARF19 |
| B-ARF | Repressor | No | Same as A-ARFs | AtARF1, AtARF2, AtARF3 (ETT), AtARF4 |
| C-ARF | Repressor | No | Different from A/B-ARFs | AtARF10, AtARF16, AtARF17 |

Evolution of Auxin Biosynthesis and Transport Mechanisms

Auxin biosynthesis and transport mechanisms exhibit complex evolutionary histories that parallel the diversification of signaling components. The tryptophan aminotransferase (TAA) and YUCCA (YUC) families, which catalyze the two-step conversion of tryptophan to IAA via the IPyA pathway, are deeply conserved across land plants [26]. Phylogenetic analyses reveal that TAA genes diverged into two clades encoding alliinase domain-containing proteins, with streptophyte algae possessing orthologs containing both Alliinase-EGF and Alliinase-C domains [26]. The YUC gene family similarly diverged into two clades, with land plants possessing both YUC and sister YUC (sYUC) clades, while some green algae sequences form a sister clade basal to both [26].

Auxin transport mechanisms also show evolutionary innovation across plant lineages. PIN-FORMED (PIN) efflux carriers, AUX1/LIKE AUX1 (LAX) influx carriers, and ABCB/PGP transporters have been identified in streptophyte algae, suggesting that directional auxin transport mechanisms predate land plants [28] [26]. However, these transport components have undergone substantial diversification in land plants, with PIN genes expanding into distinct subfamilies with specialized functions in development [25]. The co-evolution of biosynthesis, transport, and signaling components has enabled increasingly sophisticated auxin-mediated development across plant evolution.

Comparative Analysis of Auxin Response Networks

Co-expression Network Analysis Across Species

Comparative transcriptomic and co-expression network analyses have revealed both conserved and species-specific aspects of auxin signaling networks. In maize (Zea mays), weighted gene co-expression network analysis (WGCNA) of aleurone development identified a specific module associated with auxin signaling where the naked endosperm1 (nkd1) and nkd2 genes negatively regulate auxin signaling to maintain normal cell patterning and differentiation [29]. The DR5 auxin reporter, a widely used synthetic auxin response element, showed significantly enhanced expression in nkd1,2 mutant endosperm, confirming altered auxin signaling [29]. This demonstrates how co-expression network analysis can identify novel regulators of auxin response in a tissue-specific context.

Similar approaches in foxtail millet (Setaria italica) mesocotyl elongation identified co-expression modules where auxin-responsive proteins (IAA1, IAA17, and SAUR36) and ethylene genes (EBF1 and EIL3) showed positive correlation with elongation [30]. The application of exogenous IAA and ethylene precursor ethephon promoted mesocotyl elongation, confirming the functional significance of these network associations [30]. These studies illustrate how comparative analysis of co-expression networks across species can identify conserved auxin signaling modules while also revealing lineage-specific adaptations.

Divergent Auxin Responses in Monocots and Dicots

Comparative studies have revealed significant divergence in auxin signaling between monocot and dicot species, particularly in root system architecture. In dicots such as Arabidopsis thaliana, lateral roots originate from founder cells in the xylem pole pericycle, with auxin maxima establishing initiation sites [31]. Monocots like maize develop more complex root systems comprising primary, seminal, and crown roots that emerge at different developmental stages [31]. These architectural differences reflect modifications in auxin transport, response, and crosstalk with other hormones.

The mechanism of lateral root emergence also shows lineage-specific variations. In both monocots and dicots, emerging lateral roots must grow through overlying cell layers (endodermis, cortex, and epidermis), a process requiring auxin-mediated spatial accommodation [31]. However, the specific transcriptional programs and cell wall remodeling enzymes involved display significant variation. Auxin facilitates this process by regulating aquaporin expression, influencing water flow and turgor pressure dynamics in lateral roots and adjacent tissues [31]. These comparative analyses highlight how core auxin signaling mechanisms have been adapted to support divergent developmental programs in different plant lineages.

Experimental Protocols for Analyzing Auxin Signaling Evolution

Phylogenomic Reconstruction and Ancestral State Prediction

Objective: To reconstruct the evolutionary history of auxin signaling components across plant lineages.

Methodology:

- Sequence Collection: Compile protein sequences for NAP components (ARFs, Aux/IAAs, TIR1/AFBs) from diverse species spanning chlorophyte algae, streptophyte algae, bryophytes, lycophytes, ferns, gymnosperms, and angiosperms using databases such as OneKP [25].

- Domain Analysis: Identify protein domains using HMMER searches against the Pfam database, focusing on B3, DD, AD, MR, and PB1 domains in ARFs; TIR1-interacting degron and PB1 domains in Aux/IAAs; and LRR and F-box domains in TIR1/AFBs [27] [25].

- Phylogenetic Reconstruction: Perform multiple sequence alignment using MAFFT or ClustalOmega, followed by phylogenetic tree construction using maximum likelihood (RAxML) or Bayesian (MrBayes) methods [27].

- Ancestral State Reconstruction: Infer gene content at evolutionary nodes using parsimony- or likelihood-based methods, accounting for potential underestimation due to incomplete transcriptome data [25].

Key Considerations: Transcriptome-based analyses may underestimate gene numbers if genes are not expressed under sampled conditions. Including multiple species per evolutionary node helps compensate for this limitation [25].
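The parsimony-based option in the ancestral state reconstruction step can be illustrated with the bottom-up pass of Fitch's algorithm applied to presence/absence of a single gene family. This is a minimal sketch: the tree topology, the species labels, and the presence calls are hypothetical toy values, not data from [25], and a full analysis would add the top-down pass and would treat absence in a transcriptome as uncertain.

```python
# Fitch small-parsimony sketch (bottom-up pass only) for one gene family.
# The tree and the leaf states are hypothetical toy values.

def fitch(tree, leaf_states):
    """Return (candidate state set per node, minimum number of changes).

    tree: nested 2-tuples of leaf names, e.g. (("A", "B"), "C")
    leaf_states: dict mapping leaf name -> observed state (0 = absent, 1 = present)
    """
    sets = {}
    changes = 0

    def visit(node):
        nonlocal changes
        if isinstance(node, str):            # leaf: observed state
            sets[node] = {leaf_states[node]}
            return sets[node]
        left, right = (visit(child) for child in node)
        common = left & right
        if common:                           # children agree: keep the intersection
            sets[node] = common
        else:                                # children disagree: count one change
            sets[node] = left | right
            changes += 1
        return sets[node]

    visit(tree)
    return sets, changes


toy_tree = ((("alga1", "alga2"), "moss"), ("fern", "angiosperm"))
toy_presence = {"alga1": 0, "alga2": 0, "moss": 1, "fern": 1, "angiosperm": 1}

node_sets, n_changes = fitch(toy_tree, toy_presence)
root_states = node_sets[toy_tree]            # candidate states at the root
```

Because an unexpressed gene is indistinguishable from an absent one in transcriptome data, the "absent" calls at the leaves are the weakest input to this procedure, which is why sampling several species per node matters.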

Comparative Transcriptomics and Co-expression Network Analysis

Objective: To identify conserved and lineage-specific auxin signaling modules across species.

Methodology:

- Experimental Design: Collect tissue samples under comparable developmental stages and experimental conditions (e.g., auxin treatment, tissue-specific expression) across multiple species [29] [30].

- RNA Sequencing: Isolate RNA, prepare libraries, and perform RNA-seq on an Illumina platform with a minimum of three biological replicates per condition [30] [32].

- Differential Expression Analysis: Process reads (quality control, adapter trimming), map to respective reference genomes using STAR or HISAT2, and identify differentially expressed genes using DESeq2 or edgeR [30] [32].

- Co-expression Network Construction: Perform Weighted Gene Co-expression Network Analysis (WGCNA) to identify modules of co-expressed genes, associating modules with traits of interest [29] [30] [33].

- Hub Gene Identification: Calculate module membership and gene significance to identify highly connected "hub" genes within significant modules [30] [33].

Validation: Confirm network predictions using transgenic approaches (e.g., DR5 reporter expression [29]), hormone measurements [30], or functional characterization of candidate genes.
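The hub gene identification step can be sketched as follows. Module membership is taken here as the Pearson correlation between each gene and the module eigengene, with the eigengene computed as the first principal component of the standardized module expression matrix. The function names, the simulated expression matrix, and the noise levels are assumptions made for illustration; this is not the WGCNA implementation.

```python
import numpy as np

def module_eigengene(expr):
    """First principal component of a (samples x genes) module matrix."""
    z = (expr - expr.mean(axis=0)) / expr.std(axis=0, ddof=1)
    u, _, _ = np.linalg.svd(z, full_matrices=False)
    eigengene = u[:, 0]
    # Orient the eigengene so it tracks the average expression of the module.
    if np.corrcoef(eigengene, z.mean(axis=1))[0, 1] < 0:
        eigengene = -eigengene
    return eigengene

def module_membership(expr, eigengene):
    """Correlation of every gene with the module eigengene."""
    return np.array([np.corrcoef(expr[:, g], eigengene)[0, 1]
                     for g in range(expr.shape[1])])

# Hypothetical toy module: 60 samples, 5 genes sharing one signal with
# increasing amounts of gene-specific noise.
rng = np.random.default_rng(0)
shared = rng.normal(size=60)
noise_sd = np.array([0.1, 0.3, 0.6, 1.0, 3.0])
expr = shared[:, None] + rng.normal(size=(60, 5)) * noise_sd

me = module_eigengene(expr)
kme = module_membership(expr, me)
hub_candidates = np.argsort(-np.abs(kme))    # genes ranked by module membership
```

In practice the ranking would be combined with gene significance for the trait of interest before candidates are taken forward to validation.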

The Scientist's Toolkit: Key Research Reagents and Solutions

Table 3: Essential Research Reagents for Studying Evolution of Auxin Signaling

| Research Reagent | Composition/Type | Function in Research | Example Applications |
|---|---|---|---|
| DR5 Reporter System | Synthetic promoter with AuxREs fused to GFP/GUS/RFP | Visualizing auxin response maxima and dynamics | Validation of auxin signaling in nkd1,2 mutants [29] |
| Auxin Analogues | IAA, NAA, 2,4-D | Experimental manipulation of auxin levels and responses | Mesocotyl elongation assays in foxtail millet [30] |
| Transport Inhibitors | NPA, TIBA | Disrupting polar auxin transport | Analyzing role of auxin transport in root development [31] |
| Proteasome Inhibitors | MG132 | Blocking Aux/IAA degradation | Confirming proteasome-dependent regulation of Aux/IAAs [25] |
| Cross-species Antibodies | Anti-ARF, Anti-Aux/IAA | Detecting protein expression and localization across species | Comparative protein level analysis in algae and plants [28] |
| Heterologous Systems | Yeast, Xenopus oocytes | Studying protein-protein interactions and auxin perception | Analysis of TIR1-Aux/IAA interactions [25] |

Visualization of Auxin Signaling Pathways and Experimental Workflows

Figure: Evolutionary Origin and Structure of ARF DNA-Binding Domain

Figure: Nuclear Auxin Signaling Pathway and Experimental Analysis Workflow

Comparative Network Analysis: Methods, Algorithms, and Biomedical Applications

In comparative research on the evolution of co-expression modules, constructing robust biological networks is a foundational step. The choice of correlation measure and network weighting strategy shapes the topology of the resulting network and therefore influences module detection, biological interpretation, and the validity of evolutionary comparisons. This guide compares the predominant methods for constructing gene co-expression networks in terms of their performance characteristics, computational requirements, and suitability for different research contexts. We focus on their application in evolutionary studies, where researchers must balance sensitivity to complex relationships against computational feasibility and statistical robustness.

Correlation Measures in Network Construction

Various correlation measures are employed to quantify co-expression relationships, each with distinct mathematical properties, advantages, and limitations.

Comparative Analysis of Correlation Measures

Table 1: Comparison of Correlation Measures for Co-expression Network Analysis

| Measure | Relationship Type | Robustness to Outliers | Distributional Assumptions | Computational Complexity | Biological Context Recommendation |
|---|---|---|---|---|---|
| Pearson | Linear | Low | Normality preferred | Low | Normally distributed data, linear relationships |
| Spearman | Monotonic | Medium | None | Medium | Non-normal data, ordinal relationships |
| Biweight Midcorrelation | Linear | High | None | Medium | Typical gene expression data with potential outliers |
| Distance Correlation | Linear and non-linear | High | None | High | Complex, non-linear biological relationships |
| Mutual Information (MI) | All types | Medium | None | High | Hypothesized complex non-linear dependencies |
| Maximal Information Coefficient (MIC) | All types | Medium | None | High | Exploratory analysis of diverse relationship types |

Detailed Methodological Protocols

Pearson Correlation Protocol:

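The Pearson correlation coefficient measures the strength and direction of a linear relationship between two genes' expression profiles. For genes i and j with expression profiles $x_i$ and $x_j$ measured across m samples, the unsigned similarity is calculated as:

$$s_{ij} = \left| \mathrm{cor}(x_i, x_j) \right| = \left| \frac{\mathrm{cov}(x_i, x_j)}{\sigma_{x_i}\,\sigma_{x_j}} \right|$$

where cov denotes covariance and σ represents standard deviation [34]. Taking the absolute value discards the sign of the correlation, so strongly anti-correlated genes score as highly as strongly co-expressed ones. This measure is best suited to approximately normally distributed data where linear relationships are expected.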
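As a minimal sketch of this step, the unsigned Pearson similarity matrix for a samples-by-genes expression matrix can be computed with NumPy. The function name and the toy expression values are illustrative assumptions.

```python
import numpy as np

def pearson_similarity(expr):
    """Unsigned similarity |cor(x_i, x_j)| for a (samples x genes) matrix."""
    return np.abs(np.corrcoef(expr, rowvar=False))

# Hypothetical toy data: 4 samples, 3 genes. Gene 1 rises with gene 0,
# gene 2 falls as gene 0 rises.
expr = np.array([[1.0, 2.0, 9.0],
                 [2.0, 4.1, 7.0],
                 [3.0, 5.9, 5.2],
                 [4.0, 8.0, 2.9]])

s = pearson_similarity(expr)   # 3 x 3 symmetric matrix with ones on the diagonal
```

Both off-diagonal entries involving gene 0 come out close to 1 here, which illustrates that the unsigned similarity does not distinguish co-expression from anti-correlation.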

Biweight Midcorrelation Protocol: The biweight midcorrelation (bicor) is a robust alternative to Pearson correlation that is less sensitive to outliers. For two vectors x and y, it is calculated using median and median absolute deviation (MAD) instead of mean and standard deviation [35]. This method is particularly valuable for gene expression data where outliers can disproportionately influence results.
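The sketch below implements bicor for a single pair of vectors following the commonly published definition: deviations from the median are scaled by 9 × MAD, and observations beyond that range receive zero weight. The scaling constant, the error raised when MAD is zero, and the toy vectors are assumptions of this sketch and are not taken from [35].

```python
import numpy as np

def bicor(x, y):
    """Biweight midcorrelation of two equal-length vectors."""
    def weighted_deviation(v):
        v = np.asarray(v, dtype=float)
        med = np.median(v)
        mad = np.median(np.abs(v - med))          # raw MAD, no scaling constant
        if mad == 0:
            raise ValueError("MAD is zero; bicor is undefined for this vector")
        u = (v - med) / (9.0 * mad)
        # Tukey biweight: smooth down-weighting, zero weight once |u| >= 1.
        w = np.where(np.abs(u) < 1.0, (1.0 - u ** 2) ** 2, 0.0)
        d = (v - med) * w
        return d / np.sqrt(np.sum(d ** 2))
    return float(np.sum(weighted_deviation(x) * weighted_deviation(y)))

# Hypothetical toy vectors: y equals x except for one extreme outlier.
x = np.arange(1.0, 11.0)
y = x.copy()
y[-1] = -50.0

pearson_r = np.corrcoef(x, y)[0, 1]   # dominated by the single outlier
robust_r = bicor(x, y)                # the outlier receives zero weight
```

In this toy case the Pearson coefficient turns negative while bicor stays clearly positive, which is the behavior the robustness claim refers to.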

Distance Correlation Protocol: Distance correlation measures both linear and nonlinear associations between variables. Unlike Pearson correlation, distance correlation is zero only if the variables are independent [36]. The calculation involves:

- Computing Euclidean distances between all pairs of observations for each variable

- Double-centering the distance matrices

- Calculating the covariance of the double-centered distances and normalizing it by the corresponding distance variances

This method requires significantly more computational resources than linear measures but can capture complex biological relationships that they miss.
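The three steps above can be sketched for a single pair of genes as follows. This is the plain sample estimator written for readability, not the DC-WGCNA implementation of [36]; the function names and the toy vectors are illustrative assumptions.

```python
import numpy as np

def _double_centered_distances(v):
    """Pairwise absolute distances, with row, column and grand means removed."""
    v = np.asarray(v, dtype=float)
    d = np.abs(v[:, None] - v[None, :])
    return (d - d.mean(axis=0, keepdims=True)
              - d.mean(axis=1, keepdims=True) + d.mean())

def distance_correlation(x, y):
    """Sample distance correlation between two equal-length vectors."""
    a = _double_centered_distances(x)
    b = _double_centered_distances(y)
    dcov2 = (a * b).mean()                       # squared distance covariance
    denom = np.sqrt((a * a).mean() * (b * b).mean())
    if denom == 0:
        return 0.0
    return float(np.sqrt(max(dcov2, 0.0) / denom))

# Hypothetical toy example: a symmetric quadratic dependence that a linear
# measure cannot detect.
x = np.linspace(-1.0, 1.0, 41)
y = x ** 2

pearson_r = np.corrcoef(x, y)[0, 1]
dcor = distance_correlation(x, y)
```

Each gene pair needs two m × m distance matrices for m samples, which is where the additional memory and run time relative to Pearson correlation come from.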

Mutual Information Protocol: Mutual information measures the mutual dependence between two variables by quantifying the information gained about one variable through knowledge of the other. For continuous gene expression data, MI estimation typically involves:

- Discretizing the continuous expression values into bins

- Calculating the joint and marginal probability distributions

- Applying the formula [35]:

$$\mathrm{MI}(X, Y) = \sum_{x, y} p(x, y) \log \frac{p(x, y)}{p(x)\,p(y)}$$

While theoretically powerful for detecting non-linear relationships, MI estimation in practice often shows strong agreement with correlation measures for most gene pairs in empirical datasets [35].
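A minimal histogram-based estimator following these steps is sketched below. The equal-width binning, the default of 8 bins, and the simulated vectors are assumptions chosen for illustration; the estimate depends on the binning, and the plug-in estimator is biased upward for small samples.

```python
import numpy as np

def mutual_information(x, y, bins=8):
    """Plug-in MI estimate (in nats) from an equal-width 2D histogram."""
    joint_counts, _, _ = np.histogram2d(x, y, bins=bins)
    p_xy = joint_counts / joint_counts.sum()     # joint distribution
    p_x = p_xy.sum(axis=1, keepdims=True)        # marginal of x, shape (bins, 1)
    p_y = p_xy.sum(axis=0, keepdims=True)        # marginal of y, shape (1, bins)
    expected = p_x @ p_y                         # p(x) p(y) under independence
    nonzero = p_xy > 0                           # empty cells contribute nothing
    return float(np.sum(p_xy[nonzero] * np.log(p_xy[nonzero] / expected[nonzero])))

# Hypothetical toy vectors: one non-linear dependence, one independent pair.
rng = np.random.default_rng(1)
x = rng.normal(size=2000)
y_dependent = x ** 2
y_independent = rng.normal(size=2000)

mi_dependent = mutual_information(x, y_dependent)
mi_independent = mutual_information(x, y_independent)
```

The independent pair still yields a small positive value, which is the estimator bias; comparisons across gene pairs are therefore more informative than the absolute MI values.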

Network Weighting and Adjacency Transformation

After calculating pairwise correlations, the resulting similarity matrix must be transformed into an adjacency matrix representing the network structure.

Weighting Strategies

Table 2: Network Weighting Strategies and Their Properties

| Weighting Method | Formula | Topological Properties | Module Characteristics | Implementation Considerations |
|---|---|---|---|---|
| Hard Thresholding | $a_{ij} = 1$ if $s_{ij} \geq \tau$, 0 otherwise | Discrete connections, information loss | Well-defined but potentially unstable | Sensitive to threshold choice τ |
| Soft Thresholding (Signed) | $a_{ij} = \left(\frac{1 + \mathrm{cor}(x_i, x_j)}{2}\right)^{\beta}$ | Preserves continuous information, emphasizes positive correlations | More robust modules, preserves sign information | Requires power β selection (often β=12) |
| Soft Thresholding (Unsigned) | $a_{ij} = \lvert \mathrm{cor}(x_i, x_j) \rvert^{\beta}$ | Emphasizes strong correlations regardless of sign | Groups negatively correlated genes | Requires power β selection (often β=6) |
| Topological Overlap Matrix | $\mathrm{TOM}_{ij} = \frac{\sum_{u} a_{iu} a_{uj} + a_{ij}}{\min(k_i, k_j) + 1 - a_{ij}}$ | Measures network interconnectedness | Biologically meaningful modules | Computationally intensive for large networks |
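The four transformations in Table 2 can be written compactly with NumPy. The sketch below assumes a precomputed gene-by-gene correlation matrix; the function names and the 3 × 3 toy matrix are illustrative, and the diagonal of each adjacency is set to zero so that a gene does not count as its own neighbor in the topological overlap.

```python
import numpy as np

def hard_threshold(s, tau):
    """Binary adjacency: 1 where similarity s_ij >= tau."""
    a = (s >= tau).astype(float)
    np.fill_diagonal(a, 0.0)
    return a

def soft_threshold(cor, beta, signed=False):
    """Weighted adjacency from a correlation matrix."""
    a = ((1.0 + cor) / 2.0) ** beta if signed else np.abs(cor) ** beta
    np.fill_diagonal(a, 0.0)
    return a

def topological_overlap(a):
    """TOM_ij = (sum_u a_iu a_uj + a_ij) / (min(k_i, k_j) + 1 - a_ij)."""
    k = a.sum(axis=1)                  # connectivity of each gene
    shared = a @ a                     # zero diagonal drops the u = i and u = j terms
    tom = (shared + a) / (np.minimum.outer(k, k) + 1.0 - a)
    np.fill_diagonal(tom, 1.0)
    return tom

# Hypothetical toy correlation matrix for three genes.
cor = np.array([[ 1.0,  0.9, -0.8],
                [ 0.9,  1.0, -0.7],
                [-0.8, -0.7,  1.0]])

a_hard = hard_threshold(np.abs(cor), tau=0.75)
a_unsigned = soft_threshold(cor, beta=6)
a_signed = soft_threshold(cor, beta=12, signed=True)
tom = topological_overlap(a_unsigned)
```

In the signed network the strongly anti-correlated pair (genes 0 and 2) receives an adjacency near zero, whereas the unsigned network keeps it connected; this is the practical difference between the two soft-thresholding rows of the table.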

Parameter Selection Methodology

Scale-Free Topology Criterion: A key methodological approach for selecting the soft thresholding power β involves evaluating the scale-free topology fit. The process involves:

- Calculating the connectivity distribution p(k) for a range of β values

- Fitting a linear model between log(p(k)) and log(k)

- Selecting the smallest β that achieves a high model fit (R²) while maintaining reasonable mean connectivity [34] [37]

This approach is grounded in the empirical observation that biological networks often exhibit scale-free properties, where the connectivity distribution follows a power law.
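The selection procedure can be sketched as a scan over candidate powers. This is a simplified illustration, not the WGCNA routine: the number of connectivity bins, the candidate range of β, and the R² cutoff of 0.8 are assumptions, and the mean connectivity is reported for manual inspection without being thresholded.

```python
import numpy as np

def scale_free_fit(connectivity, n_bins=10):
    """R^2 and slope of the regression of log10 p(k) on log10 k."""
    k = np.asarray(connectivity, dtype=float)
    counts, edges = np.histogram(k, bins=n_bins)
    centers = 0.5 * (edges[:-1] + edges[1:])
    keep = (counts > 0) & (centers > 0)          # the log is undefined otherwise
    if keep.sum() < 3:
        return 0.0, 0.0
    log_k = np.log10(centers[keep])
    log_p = np.log10(counts[keep] / counts.sum())
    if log_p.var() == 0:
        return 0.0, 0.0
    slope, intercept = np.polyfit(log_k, log_p, 1)
    residuals = log_p - (slope * log_k + intercept)
    return float(1.0 - residuals.var() / log_p.var()), float(slope)

def pick_soft_power(cor, betas=range(1, 21), r2_cutoff=0.8):
    """Smallest beta with a negative slope and R^2 >= cutoff, plus the full scan."""
    table, best = [], None
    for beta in betas:
        a = np.abs(cor) ** beta                  # unsigned soft thresholding
        np.fill_diagonal(a, 0.0)
        k = a.sum(axis=1)
        r2, slope = scale_free_fit(k)
        table.append((beta, r2, slope, float(k.mean())))
        if best is None and slope < 0 and r2 >= r2_cutoff:
            best = beta
    return best, table

# Hypothetical toy input: correlations among 50 simulated genes, 20 samples.
rng = np.random.default_rng(2)
cor = np.corrcoef(rng.normal(size=(20, 50)), rowvar=False)
best, table = pick_soft_power(cor)               # best may be None for such data
```

When no power reaches the cutoff, as can happen with unstructured data like this toy input, the function returns `None`, and the scan table is the place to look for a compromise between fit and mean connectivity.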

Experimental Workflow for Method Comparison: Reference [35] describes a protocol for comparing correlation measures by:

- Calculating pairwise associations using multiple methods on the same dataset

- Transforming associations into adjacency matrices using standardized approaches

- Applying topological overlap matrix (TOM) transformation to the adjacency matrices

- Performing hierarchical clustering and module detection

- Evaluating biological significance through gene ontology enrichment analysis
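The clustering and module detection step of this workflow can be sketched with SciPy, using 1 − TOM as the dissimilarity. Note the substitution: the tree is cut into a fixed number of clusters with `fcluster`, which is a static cut and stands in for the dynamic tree cut typically used with WGCNA. The function name, the toy TOM, and the requested number of modules are assumptions.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage
from scipy.spatial.distance import squareform

def modules_from_tom(tom, n_modules):
    """Average-linkage clustering on 1 - TOM, cut into n_modules clusters."""
    dissim = 1.0 - np.asarray(tom, dtype=float)
    dissim = (dissim + dissim.T) / 2.0           # guard against rounding asymmetry
    np.fill_diagonal(dissim, 0.0)
    condensed = squareform(dissim, checks=False) # upper triangle as a vector
    tree = linkage(condensed, method="average")
    return fcluster(tree, t=n_modules, criterion="maxclust")

# Hypothetical toy TOM: two blocks of three genes with high within-block overlap.
tom = np.full((6, 6), 0.05)
tom[:3, :3] = 0.8
tom[3:, 3:] = 0.8
np.fill_diagonal(tom, 1.0)

labels = modules_from_tom(tom, n_modules=2)      # one integer label per gene
```

The resulting labels are the input to the final step, where each module's gene list is tested for gene ontology enrichment against the background of all genes in the network.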

Figure: Network Construction and Comparison Workflow

Experimental Performance Data

Empirical Comparisons

The study in [35] is one of the most comprehensive comparisons of correlation measures, testing mutual information against Pearson, Spearman, and biweight midcorrelation across eight diverse gene expression datasets from yeast, mouse, and human. The evaluation criteria included:

- Strength of association between different measures

- Ability to produce biologically meaningful modules (assessed by gene ontology enrichment)

- Robustness to outliers and non-normal distributions

The key finding was that biweight midcorrelation coupled with topological overlap transformation consistently produced modules with the most significant functional enrichment, outperforming mutual information-based approaches [35].

Domain-Specific Performance

The study in [36] evaluated distance correlation specifically for gene co-expression network analysis, comparing it with Pearson correlation, Spearman correlation, and MIC on both microarray and RNA-seq datasets. The authors' implementation (DC-WGCNA) demonstrated:

- Enhanced enrichment analysis results compared to traditional WGCNA

- Improved module stability across different analytical conditions

- Better performance on complex, non-linear relationships present in biological systems

However, the authors noted the high computational cost of distance correlation, requiring substantially more memory and processing time [36].

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Function | Application Context | Implementation Considerations |
|---|---|---|---|
| WGCNA R Package | Comprehensive weighted correlation network analysis | General co-expression network construction | Provides multiple correlation options and visualization tools |
| Biweight Midcorrelation | Robust linear correlation measure | Typical gene expression data with potential outliers | Implemented in WGCNA package; preferred over Pearson for robustness |
| Topological Overlap Matrix (TOM) | Measuring network interconnectedness | Module detection in all network types | Computationally intensive but biologically meaningful |
| Scale-Free Topology Fitting | Parameter selection for network construction | Choosing soft thresholding power β | Not applicable when very large modules dominate |
| Dynamic Tree Cut Algorithm | Module detection from hierarchical clustering | Identifying cohesive gene modules | Preferable to static height cutting for complex dendrograms |