Evolutionary Algorithms for GRN Parameter Inference: From Foundations to Clinical Applications

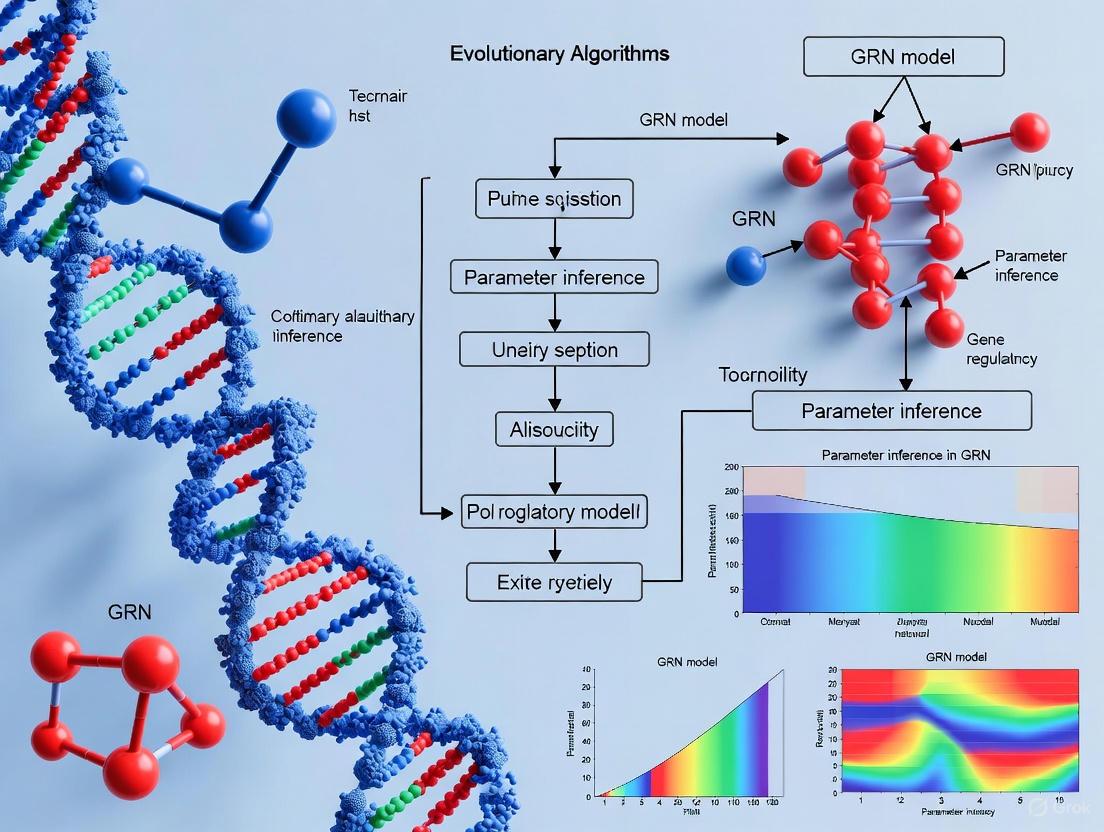

This article provides a comprehensive overview of evolutionary algorithms (EAs) for inferring parameters in Gene Regulatory Network (GRN) models.

Evolutionary Algorithms for GRN Parameter Inference: From Foundations to Clinical Applications

Abstract

This article provides a comprehensive overview of evolutionary algorithms (EAs) for inferring parameters in Gene Regulatory Network (GRN) models. It explores the foundational principles that make EAs suited for tackling the high-dimensional, non-linear optimization challenges inherent in GRN reverse-engineering from noisy expression data. We delve into key methodological approaches, including the use of S-system models, parallel computing frameworks, and sensitivity analysis. The content further addresses critical troubleshooting and optimization strategies to enhance robustness and scalability. Finally, we present validation frameworks and comparative analyses of state-of-the-art EA methods, highlighting their growing impact on drug discovery and the development of clinical biomarkers.

The Foundation of Evolutionary Algorithms in GRN Modeling

The Reverse-Engineering Challenge in Systems Biology

Gene Regulatory Networks (GRNs) are complex systems that determine the development, differentiation, and function of cells and organisms, as well as their response to environmental stimuli [1]. GRN inference, or reverse engineering, refers to the process of reconstructing these networks from gene expression data, modeling them as directed graphs where nodes represent genes and edges show regulatory relationships between them [2]. The fundamental challenge lies in deciphering the intricate causal interactions between genes and other molecules from observational data, which is often noisy, high-dimensional, and incomplete [1] [3].

The reverse-engineering process represents a significant computational challenge because GRNs are not just constellations of genes toiling individually; these genes mutually inhibit or activate one another, establishing feedback loops through which cellular processes can be exquisitely fine-tuned [3]. In systems where tissue patterning and tissue morphogenesis are coupled and occurring simultaneously, the problem becomes even more complex, as GRNs alone cannot account for the resulting patterns, requiring novel methodologies that explicitly accommodate cell movements and tissue shape changes [4].

Current Methodologies and Machine Learning Approaches

Modern GRN inference methodologies have evolved from classical statistical approaches to sophisticated machine learning techniques. Table 1 summarizes the primary computational approaches used in GRN inference, categorized by their learning paradigms and key technologies.

Table 1: Machine Learning Methods for GRN Inference

| Algorithm Name | Learning Type | Deep Learning | Input Data Type | Year | Key Technology |
|---|---|---|---|---|---|
| GENIE3 | Supervised | No | Bulk RNA-seq | 2010 | Random Forest |
| SIRENE | Supervised | No | Bulk | 2009 | Support Vector Machine |
| DeepIMAGER | Supervised | Yes | Single-cell | 2024 | Convolutional Neural Network |
| GRNFormer | Supervised | Yes | Single-cell | 2025 | Graph Transformer |
| ARACNE | Unsupervised | No | Bulk | 2006 | Information Theory |
| CLR | Unsupervised | No | Bulk | 2007 | Mutual Information |
| GRN-VAE | Unsupervised | Yes | Single-cell | 2020 | Variational Autoencoder |
| GRGNN | Semi-Supervised | Yes | Single-cell | 2020 | Graph Neural Network |
| GCLink | Contrastive | Yes | Single-cell | 2025 | Graph Contrastive Learning |
| BIO-INSIGHT | Evolutionary | No | Multiple | 2025 | Many-objective Evolutionary Algorithm |

The diversity of approaches highlights the evolution of GRN modeling from classical machine learning methods to more recent deep learning frameworks [1]. Supervised learning methods enable the prediction of direct downstream targets of transcription factors by leveraging labeled datasets containing experimentally validated regulatory interactions [1]. Unsupervised methods like ARACNE and CLR utilize information theory to identify regulatory relationships without labeled training data [1]. More recently, semi-supervised and contrastive learning approaches have emerged to leverage both labeled and unlabeled data, with graph neural networks showing particular promise for capturing the inherent graph structure of GRNs [1] [5].

Evolutionary Algorithms for Parameter Inference in GRN Models

Evolutionary Algorithms (EAs) are a family of population-based optimization algorithms inspired by Darwinian evolution that have demonstrated significant potential for GRN model parameter inference [6]. They are particularly valuable for navigating the complex, high-dimensional parameter spaces characteristic of GRN models, because they can find good solutions while searching only a relatively small portion of the entire space [6].

The generic methodology of fitting a GRN model to data using EAs involves evolving model parameters over generations. A population of parameter sets, representing different models, undergoes genetic operations including mutation and crossover, with selection pressure favoring individuals with better fitness (model accuracy) [6]. This approach is especially effective for parameterizing complex model formalisms like S-Systems, which are differential equation systems based on power-law formalism capable of capturing complex network dynamics [6].
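The generational cycle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure of any cited tool: the function name `evolve`, the truncation selection, the uniform crossover, the Gaussian mutation, and the toy single power-law model are all choices made here for brevity.

```python
import random

def evolve(fitness, bounds, pop_size=40, generations=60,
           mutation_sigma=0.1, seed=0):
    """Minimise `fitness` over real-valued parameter vectors within `bounds`."""
    rng = random.Random(seed)

    def clip(value, low, high):
        return max(low, min(high, value))

    # Initial population: uniform samples inside the allowed ranges.
    population = [[rng.uniform(lo, hi) for lo, hi in bounds]
                  for _ in range(pop_size)]
    for _ in range(generations):
        # Selection: keep the better half as parents (truncation selection).
        population.sort(key=fitness)
        parents = population[:pop_size // 2]
        offspring = []
        while len(parents) + len(offspring) < pop_size:
            mother, father = rng.sample(parents, 2)
            # Uniform crossover: each parameter comes from either parent.
            child = [rng.choice(pair) for pair in zip(mother, father)]
            # Gaussian mutation, scaled to each parameter's range and clipped.
            child = [clip(x + rng.gauss(0.0, mutation_sigma * (hi - lo)), lo, hi)
                     for x, (lo, hi) in zip(child, bounds)]
            offspring.append(child)
        population = parents + offspring
    return min(population, key=fitness)

# Toy data generated from y = a * x**g with a = 2.0 and g = 0.5
# (a single power-law term, chosen only for illustration).
xs = [0.5, 1.0, 2.0, 4.0]
ys = [2.0 * x ** 0.5 for x in xs]

def mse(params):
    """Mean squared error between the toy model and the toy data."""
    a, g = params
    return sum((a * x ** g - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

best = evolve(mse, bounds=[(0.0, 5.0), (-2.0, 2.0)])
```

Because the parents survive into the next generation, the best error never increases from one generation to the next. Real GRN models have many more parameters and usually need larger populations and more careful operators.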

Recent advances in evolutionary approaches include BIO-INSIGHT, a parallel asynchronous many-objective evolutionary algorithm that optimizes consensus among multiple inference methods guided by biologically relevant objectives [7]. This approach has demonstrated statistically significant improvement in performance metrics (AUROC and AUPR) compared to primarily mathematical approaches, showing that biologically guided optimization can generate more accurate and biologically feasible networks [7].

[Figure: Evolutionary Algorithm Workflow for GRN Inference]

Experimental Protocols and Workflows

Protocol 1: AGETs Methodology for GRN Inference with Cell Movements

The Approximated Gene Expression Trajectories (AGETs) methodology addresses the critical challenge of reverse-engineering GRNs in tissues undergoing morphogenetic changes, where pattern formation and cell movements are coupled [4].

Materials and Reagents:

- Live zebrafish embryos (22nd to 25th somite stages)

- Fixation reagents for tissue preservation

- HCR (Hybridization Chain Reaction) reagents for tbxta, tbx16, tbx6 staining

- DAPI stain for nuclear visualization

- Antibodies for Wnt and FGF signaling pathways

- Two-photon microscope with imaging capability

Methodology:

1. Live Imaging and Cell Tracking:

- Image the developing zebrafish tailbud with fluorescently labeled nuclei for 2 hours at 2-minute intervals, generating 61 consecutive frames

- Process each frame using tracking algorithms (e.g., Imaris software) to obtain 3D positions of single cells over time

- Manually validate selected tracks to ensure accuracy

2. Gene Expression Quantification:

- Fix tailbud samples at 23-25 somite stages

- Perform HCR staining for T-box gene products (tbxta, tbx16, tbx6)

- Conduct antibody stains for Wnt and FGF signaling pathways

- Acquire 3D images using appropriate microscopy systems

- Quantify nuclear gene expression using computational pipelines

3. AGETs Construction:

- Align point clouds from live imaging with gene expression data

- Project 3D spatial quantifications of gene expression onto cell tracks

- Assign gene and signaling expression levels to every cell in the time lapse

- Generate approximated gene expression trajectories for each cell

4. GRN Reverse Engineering:

- Use a subset of AGETs with a Markov Chain Monte Carlo (MCMC) approach to reverse-engineer candidate GRNs

- Assess the fit of the resulting candidate GRNs through "live-modeling" simulations

- Validate networks by challenging them with experimental perturbations [4]

Protocol 2: Evolutionary Algorithm Implementation for GRN Inference

Computational Requirements:

- Java framework for Evolutionary Algorithms (EvA2)

- High-performance computing resources for population-based optimization

- Data preprocessing and normalization pipelines

Implementation Steps:

1. Model Formalism Selection:

- Choose an appropriate mathematical formalism (S-Systems, ANN, etc.)

- Define the parameter space and constraints based on biological knowledge

2. EA Parameter Configuration:

- Set the population size (typically 50-500 individuals)

- Set mutation and crossover rates based on parameter space complexity

- Define the fitness function (e.g., mean squared error between model and data)

- Establish termination criteria (generation limit or convergence threshold)

3. Optimization Process:

- Generate the initial population of model parameters randomly

- Iterate through selection, genetic operations, and fitness evaluation

- Preserve the best solutions through elitism and maintain diversity through diversity-preservation mechanisms

- Converge on parameter sets that best explain expression data [6]

4. Validation and Consensus Building:

- Implement multiple runs with different initial conditions

- Apply consensus strategies to identify robust interactions

- Validate against held-out data or experimental perturbations [7]
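One simple way to picture the consensus step is to count how often each regulatory edge appears across independent runs and keep the edges that recur. The sketch below assumes a plain frequency vote; the function name `consensus_edges`, the 0.6 support threshold, and the gene names are hypothetical, and this is not the consensus strategy of BIO-INSIGHT [7].

```python
from collections import Counter

def consensus_edges(runs, min_support=0.6):
    """Return edges found in at least `min_support` of the runs, with their support."""
    # Use set(run) so that an edge counts at most once per run.
    counts = Counter(edge for run in runs for edge in set(run))
    n_runs = len(runs)
    return {edge: count / n_runs
            for edge, count in counts.items()
            if count / n_runs >= min_support}

# Hypothetical (regulator, target) edge lists from three independent EA runs.
runs = [
    [("geneA", "geneB"), ("geneB", "geneC"), ("geneA", "geneC")],
    [("geneA", "geneB"), ("geneB", "geneC")],
    [("geneA", "geneB"), ("geneC", "geneA")],
]
robust = consensus_edges(runs)
```

Here `geneA -> geneB` appears in all three runs and `geneB -> geneC` in two of three, so both pass the threshold, while the two edges seen only once are dropped.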

[Figure: AGETs Workflow for GRN Inference with Cell Movements]

Research Reagent Solutions and Computational Tools

Table 2: Essential Research Reagents and Computational Tools for GRN Inference

| Category | Item | Function/Application | Key Features |
|---|---|---|---|
| Experimental Reagents | HCR (Hybridization Chain Reaction) reagents | Multiplexed gene expression quantification | High sensitivity and specificity for RNA detection |
| Experimental Reagents | Antibody stains for signaling pathways | Protein-level expression quantification | Targets specific signaling molecules (Wnt, FGF) |
| Experimental Reagents | DAPI stain | Nuclear visualization and segmentation | DNA intercalation for cell identification |
| Experimental Reagents | Fluorescent labeling kits | Live cell tracking and lineage tracing | Non-toxic, photostable markers |
| Computational Tools | GENIE3 | Supervised GRN inference | Random Forest-based, high accuracy [1] |
| Computational Tools | BIO-INSIGHT | Evolutionary consensus inference | Many-objective optimization, biological guidance [7] |
| Computational Tools | GTAT-GRN | Graph neural network inference | Topology-aware attention mechanism [5] |
| Computational Tools | GRN-VAE | Unsupervised deep learning | Variational autoencoder for pattern discovery [1] |
| Data Resources | DREAM Challenges | Benchmark datasets and evaluation | Standardized GRN inference benchmarks [1] |
| Data Resources | Single-cell RNA-seq data | Cell-type specific expression patterns | Reveals cellular heterogeneity [3] |
| Data Resources | Time-series expression data | Dynamic regulatory relationships | Enables temporal network inference [3] |

Future Directions and Concluding Remarks

The field of GRN inference continues to evolve with emerging methodologies that integrate multi-source features and leverage advanced machine learning paradigms. The integration of graph topological attention with multi-source feature fusion, as demonstrated by approaches like GTAT-GRN, shows promise for substantially improving the characterization of true GRN structures [5]. Similarly, evolutionary approaches like BIO-INSIGHT demonstrate that biologically guided optimization can outperform primarily mathematical approaches, opening new avenues for more accurate and biologically feasible network inference [7].

A critical frontier in GRN inference involves developing methodologies that explicitly accommodate tissue morphogenesis and cell movements, as traditional approaches that assume separability of pattern formation and morphogenesis are inadequate for many developmental systems [4]. The AGETs methodology represents an important step in this direction, enabling reverse-engineering of GRNs underlying pattern formation in tissues undergoing complex rearrangements [4].

As high-throughput technologies continue to generate increasingly large and diverse genomic datasets, the development of robust, scalable, and biologically informed inference methods will remain essential for advancing our understanding of gene regulation in development, disease, and evolution.

Why Evolutionary Algorithms? Overcoming Noisy and Insufficient Data

Inferring Gene Regulatory Networks (GRNs) is a foundational challenge in systems biology, crucial for understanding cellular mechanisms, disease pathways, and therapeutic target discovery [1]. This process involves reconstructing the complex web of causal interactions between genes and other regulatory molecules from experimental data, most commonly gene expression profiles [7] [1]. However, real-world biological data is often characterized by high levels of noise and insufficient sample sizes, posing significant obstacles for conventional machine learning and statistical inference methods [6].

Evolutionary Algorithms (EAs) have emerged as a powerful class of optimization techniques that are particularly robust to these challenges. Inspired by Darwinian principles of natural selection, EAs maintain a population of candidate solutions that undergo iterative selection, recombination, and mutation to evolve toward an optimal fit for the data [6]. Their population-based, stochastic search makes them well suited to navigating high-dimensional, noisy solution spaces where gradient-based methods often struggle [8] [9]. This application note details how EAs overcome the specific hurdles of noisy and insufficient data in GRN parameter inference, providing structured protocols and resources for researchers.

Core Strengths of Evolutionary Algorithms in GRN Inference

Robustness to Noisy Data

Noise in gene expression data can arise from technical measurement errors (e.g., in microarrays or RNA-seq) or intrinsic biological variability. This noise can mislead inference algorithms by corrupting fitness evaluations. EAs demonstrate inherent robustness to noise through several mechanisms:

- Population-Based Buffering: A single poor evaluation due to noise is less detrimental as EAs maintain a diverse population of solutions. The collective search process averages out stochastic errors [9].

- Implicit Noise Tolerance: Recent theoretical analyses surprisingly indicate that EAs can be more robust to noise when they avoid frequent re-evaluation of solutions. One study proved that the (1+1) EA could optimize the LeadingOnes benchmark with constant noise rates, outperforming strategies that re-evaluate solutions whenever comparisons are made [8].

- Explicit Noise Handling: Advanced EA variants incorporate specific strategies to mitigate noise. The E-NSGA-II algorithm, for instance, uses an Elman neural network for dynamic fitness estimation, which acts as a filter against noisy evaluations [9]. Furthermore, adaptive re-evaluation methods, as developed for the CMA-ES algorithm, dynamically determine the optimal number of re-evaluations based on estimated noise levels and problem characteristics, balancing accuracy and computational cost [10].
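To make the re-evaluation idea concrete, the sketch below averages a fixed number of noisy fitness evaluations and compares the spread of the result with that of single evaluations. It is only an illustration: the adaptive scheme for CMA-ES in [10] chooses the number of re-evaluations dynamically, whereas the count of 10, the Gaussian noise model, and the function names here are assumptions.

```python
import random
import statistics

def noisy_fitness(params, rng, noise_sd=0.5):
    """True fitness (sum of squares) plus additive Gaussian measurement noise."""
    true_value = sum(p * p for p in params)
    return true_value + rng.gauss(0.0, noise_sd)

def averaged_fitness(params, rng, n_evals=10, noise_sd=0.5):
    """Average several noisy evaluations to reduce the variance of the estimate."""
    return statistics.fmean(noisy_fitness(params, rng, noise_sd)
                            for _ in range(n_evals))

rng = random.Random(42)
candidate = [1.0, 2.0]  # the noise-free fitness of this candidate is 5.0

single = [noisy_fitness(candidate, rng) for _ in range(200)]
averaged = [averaged_fitness(candidate, rng) for _ in range(200)]

spread_single = statistics.stdev(single)      # close to noise_sd
spread_averaged = statistics.stdev(averaged)  # roughly noise_sd / sqrt(n_evals)
```

Averaging costs `n_evals` model simulations per candidate, which is the accuracy-versus-cost trade-off that adaptive schemes try to balance. As noted above, re-evaluation is not always beneficial [8].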

Effectiveness with Insufficient Data

Biological experiments are often limited by cost, time, or sample availability, leading to datasets where the number of genes (features) far exceeds the number of samples (observations). EAs excel in these data-constrained environments:

- No Gradient Requirement: Unlike many deep learning models that require large datasets for stable gradient estimation, EAs operate through selection and variation operators, making them effective even with small sample sizes [6].

- Leveraging Domain Knowledge: EAs can seamlessly integrate existing biological knowledge into the optimization process. For example, the BIO-INSIGHT framework uses biologically guided objective functions to optimize consensus among multiple inference methods, thereby amortizing the cost of optimization in high-dimensional spaces and achieving high biological coverage even with limited direct data [7].

- Hybrid and Transfer Learning: EAs can be integrated with transfer learning strategies. A model trained on a data-rich species (e.g., Arabidopsis thaliana) can provide a prior, and an EA can then efficiently fine-tune the network parameters for a target species with limited data (e.g., poplar or maize), effectively overcoming data insufficiency [11].

Table 1: Comparison of EA-based GRN Inference Methods and Their Data Handling Capabilities

| Method / Algorithm | Model Type | Key Innovation | Handling of Noisy Data | Handling of Insufficient Data |
|---|---|---|---|---|
| BIO-INSIGHT [7] | Many-Objective EA (Consensus) | Biologically guided optimization functions | High (Robust consensus across methods) | High (Amortizes optimization cost) |
| E-NSGA-II [9] | Multi-Objective EA (NSGA-II) | Elman neural network for fitness estimation | High (Explicit denoising via neural network) | Medium |
| CMA-ES with Adaptive Re-evaluation [10] | Evolution Strategy | Theoretical optimum for re-evaluation count | High (Adapts to noise level) | Medium |
| GA for Synthetic Data Generation [12] | Genetic Algorithm | Generates synthetic data for class balance | Medium (Generates data for imbalance) | High (Creates synthetic samples) |
| S-System Parameter Inference [6] | Various EAs (GA, ES, DE) | Fine-grained differential equation models | Medium (Population-based search) | Low (Requires more data for many parameters) |

Application Notes & Experimental Protocols

Protocol: Inferring a GRN using a Many-Objective Evolutionary Algorithm

This protocol outlines the steps for employing a many-objective EA, such as BIO-INSIGHT, to infer a consensus GRN from noisy gene expression data [7].

1. Input Data Preparation:

- Gene Expression Matrix: Obtain a normalized gene expression matrix (e.g., TMM-normalized RNA-seq counts) with dimensions m genes × n samples [11].

- Prior Biological Knowledge (Optional): Compile a list of known or hypothesized regulatory interactions from databases (e.g., AGRIS, PlantRegMap) to guide the inference [7] [11].

2. Algorithm Initialization:

- Parameter Setup: Configure the EA parameters: population size (e.g., 100-500), number of generations, crossover and mutation rates.

- Objective Function Definition: Define multiple objective functions. Example objectives include:

- Accuracy: Fit to the observed expression data.

- Sparsity: Minimize the number of edges in the network.

- Biological Plausibility: Maximize agreement with prior knowledge or known network motifs [7].

3. Evolutionary Optimization:

- Initialization: Generate an initial population of candidate GRNs.

- Evaluation: Evaluate each candidate GRN against all defined objective functions.

- Selection & Variation: Apply non-dominated sorting (e.g., NSGA-II) to select parents. Create offspring through crossover (recombining network edges) and mutation (adding/removing edges).

- Termination: Repeat the selection-variation-evaluation cycle for a fixed number of generations or until convergence.

4. Output and Validation:

- Pareto-Optimal Solutions: The output is a set of Pareto-optimal networks representing trade-offs between the objectives.

- Consensus Network: Select a final consensus network from the Pareto front or use the entire set for robust inference [7].

- Validation: Validate key predicted regulatory interactions in silico and in vitro (e.g., using ChIP-seq or perturbation experiments).
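The notion of a Pareto-optimal set in step 4 can be illustrated with a small dominance filter. This is a sketch under assumptions: all three objectives are expressed so that lower is better, and the candidate names and scores are invented for the example. Algorithms such as NSGA-II add ranking and diversity mechanisms on top of this basic test.

```python
def dominates(a, b):
    """True if `a` is no worse than `b` on every objective and better on at least one."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))

def pareto_front(candidates):
    """Return the names of candidates that no other candidate dominates."""
    return [name for name, objs in candidates.items()
            if not any(dominates(other, objs)
                       for other_name, other in candidates.items()
                       if other_name != name)]

# Hypothetical candidate networks scored as
# (expression fit error, number of edges, disagreement with prior knowledge).
candidates = {
    "net1": (0.10, 40, 0.30),
    "net2": (0.15, 25, 0.20),
    "net3": (0.20, 45, 0.35),  # worse than net1 on every objective
    "net4": (0.30, 10, 0.25),
}
front = pareto_front(candidates)
```

In this toy set, `net3` is removed because `net1` beats it on all three objectives. The remaining networks each represent a different trade-off between fit, sparsity, and agreement with prior knowledge.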

[Figure: Workflow of the multi-objective optimization process]

Protocol: Generating Synthetic Data with Genetic Algorithms for Imbalanced Learning

This protocol uses a Genetic Algorithm (GA) to generate synthetic samples for the minority class in an imbalanced dataset, thereby improving the performance of downstream GRN inference models [12].

1. Problem Identification:

- Identify a pronounced class imbalance in your labeled regulatory data (e.g., few known positive TF-target interactions versus many unknown/negative ones).

2. GA-Based Oversampling:

- Fitness Function: Define a fitness function using a classifier like Logistic Regression or SVM to capture the underlying data distribution. The goal is to generate synthetic data that maximizes the classifier's ability to distinguish the minority class [12].

- Population Initialization: Create an initial population of potential synthetic data points, often by applying small perturbations to existing minority class instances.

- Evolution: Evolve the population of synthetic data points over multiple generations. Selection is based on how well the synthetic data improves the classifier's performance on a validation set. Crossover and mutation operators are used to explore the feature space.

3. Dataset Augmentation and Model Training:

- Augmentation: Add the fittest synthetic data points from the final GA population to the original training set, creating a balanced dataset.

- Training: Train the final GRN inference model (e.g., a classifier to predict edges) on this augmented, balanced dataset. This approach has been shown to significantly outperform traditional oversampling methods like SMOTE and ADASYN in metrics like F1-score and AUROC [12].

[Figure: Logical flow of the synthetic data generation process]

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools for EA-driven GRN Research

| Item / Resource | Type | Function in EA-based GRN Inference | Example Sources / Tools |
|---|---|---|---|
| Normalized Expression Data | Data | The fundamental input for inferring regulatory relationships. | RNA-seq, Microarray data (e.g., from SRA) [11] |
| Validated TF-Target Interactions | Data (Gold Standard) | Serves as ground truth for training supervised models and validating predictions. | AGRIS, PlantRegMap, species-specific databases [11] |
| BIO-INSIGHT / GENECI Software | Software | Implements the many-objective EA for robust, biologically-informed consensus inference. | Python PyPI package: GENECI [7] |
| EvoJAX / PyGAD | Software | Toolkits for running evolutionary algorithms; EvoJAX supports hardware (GPU/TPU) acceleration to reduce computation time. | EvoJAX (Google), PyGAD [13] |
| EvA2 Framework | Software | A Java-based framework for designing and executing Evolutionary Algorithms. | EvA2 [6] |
| S-System Model Formalism | Model | A powerful fine-grained differential equation model for representing GRN dynamics. | Mathematical framework for quantitative modeling [6] |

Evolutionary Algorithms provide a uniquely powerful and flexible framework for tackling the pervasive challenges of noise and data insufficiency in Gene Regulatory Network inference. Their ability to perform robust, global optimization without relying on gradients, to integrate diverse biological constraints, and to generate novel solutions makes them indispensable in the computational biologist's toolkit. As the field moves towards more personalized medicine and the analysis of increasingly complex datasets, the synergy between EAs and other AI technologies, such as deep learning and large language models, promises to further unlock the regulatory secrets of the cell [10].

The reverse engineering of Gene Regulatory Networks (GRNs) from high-throughput expression data represents a fundamental challenge in systems biology and computational drug discovery [6]. Evolutionary Algorithms (EAs) have emerged as powerful optimization tools for this task, capable of navigating the high-dimensional, multi-modal parameter spaces characteristic of biological network models [6] [14]. These population-based stochastic algorithms mimic principles of Darwinian evolution—selection, recombination, and mutation—to iteratively refine candidate solutions toward an optimal fit between model predictions and experimental data [6].

The appeal of EAs in GRN inference stems from their ability to handle nonlinear models, avoid local optima through global search, and incorporate diverse biological constraints directly into the optimization process [14]. Unlike deterministic methods that may converge rapidly to local solutions, EAs maintain a diverse population of potential parameter sets, providing resilience to noise and incompleteness in biological datasets [14]. This robustness is particularly valuable for pharmaceutical researchers seeking to identify therapeutic targets from noisy transcriptomic data, where model accuracy directly impacts downstream validation experiments.

Core Conceptual Framework

Populations in Evolutionary Search

In EA terminology, a population refers to a collection of candidate solutions—in GRN inference, these are typically complete parameter sets for a specified network model [6]. For a system of differential equations modeling gene interactions, each individual in the population represents one possible instantiation of kinetic parameters, interaction strengths, and rate constants. Population-based approaches enable parallel exploration of the solution landscape, maintaining diversity to prevent premature convergence while selectively reinforcing promising regions of parameter space [6] [14].

Population initialization can significantly impact optimization performance. In practice, parameters are often drawn from uniform random distributions spanning biologically plausible ranges, though informed initialization using prior knowledge can accelerate convergence [15]. Population size represents a critical trade-off between computational expense and search effectiveness, with typical GRN inference experiments employing hundreds of individuals per generation [15].

Fitness Functions for Biological Plausibility

The fitness function quantitatively measures how well a candidate parameter set explains experimental data, serving as the selection pressure driving evolutionary improvement [15] [6]. For GRN inference, the mean absolute error between simulated and experimental expression values provides a straightforward fitness metric, though more sophisticated functions may incorporate additional biological constraints [15].
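As a small worked example of the mean absolute error fitness, the sketch below compares simulated and observed expression matrices entry by entry. The data values and the function name are illustrative assumptions. Rows are genes and columns are time points.

```python
def mean_absolute_error(simulated, observed):
    """Mean absolute error over all genes and time points (lower is better)."""
    errors = [abs(s - o)
              for sim_gene, obs_gene in zip(simulated, observed)
              for s, o in zip(sim_gene, obs_gene)]
    return sum(errors) / len(errors)

# Hypothetical expression levels: two genes measured at three time points.
observed = [[1.0, 1.5, 2.0],
            [0.5, 0.4, 0.3]]
simulated = [[1.0, 1.0, 2.5],
             [0.5, 0.6, 0.3]]

# Absolute errors are 0, 0.5, 0.5, 0, 0.2, 0, so the mean is 1.2 / 6 = 0.2.
fitness = mean_absolute_error(simulated, observed)
```

Since lower values mean a better fit, an EA using this function would minimise it. Penalty terms for implausible parameters could simply be added to the returned value.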

Advanced applications employ multi-objective optimization frameworks that simultaneously maximize multiple aspects of biological plausibility. The BIO-INSIGHT algorithm, for example, expands the objective space to achieve high biological coverage during inference, optimizing consensus among multiple inference methods while incorporating biologically relevant objectives [7]. Such approaches demonstrate that biologically guided optimization outperforms primarily mathematical approaches in both AUROC and AUPR metrics [7].

Genetic Operators for Parameter Space Exploration

Genetic operators manipulate individuals to create new candidate solutions, balancing exploration of new parameter regions with exploitation of known promising areas:

Mutation introduces random variations to parameter values, maintaining population diversity and enabling escape from local optima [15]. In GRN parameter estimation, polynomial bounded mutation functions ensure parameters remain within biologically realistic ranges while exploring the immediate neighborhood of existing solutions [15].

Crossover (recombination) combines information from parent solutions to create offspring, potentially merging beneficial traits from different lineages [15]. Two-point crossover is commonly employed, exchanging contiguous parameter blocks between parents to create novel combinations [15].

Selection determines which individuals proceed to the next generation, typically based on tournament selection where randomly chosen subsets compete based on fitness [15]. This probabilistic approach preserves some less-fit individuals that may contain useful genetic material absent from the current best solutions.

Table 1: Genetic Operators in Evolutionary Algorithms for GRN Inference

| Operator Type | Implementation Example | Biological Analogy | Role in GRN Inference |
|---|---|---|---|
| Selection | Tournament selection | Natural selection | Preserves parameter sets that best fit expression data |
| Crossover | Two-point crossover | Sexual reproduction | Combines regulatory interactions from different network models |
| Mutation | Polynomial bounded mutation | Random mutation | Explores new parameter values within biologically plausible bounds |
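The three operators can be written out in plain Python to show what each one does to a real-valued parameter vector. This is a standalone sketch, not the code of DEAP or any cited study: the function names and defaults are assumptions, and the mutation follows the commonly described form of polynomial mutation with a distribution index `eta`.

```python
import random

def tournament_select(population, fitnesses, rng, size=4):
    """Pick `size` random individuals and return a copy of the one with lowest error."""
    contenders = rng.sample(range(len(population)), size)
    winner = min(contenders, key=lambda i: fitnesses[i])
    return list(population[winner])

def two_point_crossover(parent_a, parent_b, rng):
    """Swap the contiguous block between two cut points (needs length >= 3)."""
    i, j = sorted(rng.sample(range(1, len(parent_a)), 2))
    child_a = parent_a[:i] + parent_b[i:j] + parent_a[j:]
    child_b = parent_b[:i] + parent_a[i:j] + parent_b[j:]
    return child_a, child_b

def polynomial_bounded_mutation(individual, bounds, rng, eta=20.0, prob=0.1):
    """Perturb each parameter with probability `prob`, staying inside its bounds."""
    mutant = list(individual)
    for k, (low, high) in enumerate(bounds):
        if rng.random() >= prob:
            continue
        x, span = mutant[k], high - low
        u = rng.random()
        if u < 0.5:
            room = 1.0 - (x - low) / span
            val = 2.0 * u + (1.0 - 2.0 * u) * room ** (eta + 1.0)
            delta = val ** (1.0 / (eta + 1.0)) - 1.0
        else:
            room = 1.0 - (high - x) / span
            val = 2.0 * (1.0 - u) + 2.0 * (u - 0.5) * room ** (eta + 1.0)
            delta = 1.0 - val ** (1.0 / (eta + 1.0))
        # Larger eta keeps the perturbed value closer to the original.
        mutant[k] = min(max(x + delta * span, low), high)
    return mutant

rng = random.Random(1)
bounds = [(0.0, 5.0)] * 6
population = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(10)]
fitnesses = [sum(ind) for ind in population]  # placeholder error to minimise

mother = tournament_select(population, fitnesses, rng)
father = tournament_select(population, fitnesses, rng)
child_a, child_b = two_point_crossover(mother, father, rng)
mutant = polynomial_bounded_mutation(child_a, bounds, rng)
```

Tournament selection only compares fitness values within a small random subset, which is why weaker individuals can still be chosen occasionally.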

Quantitative Comparison of EA Approaches

The landscape of evolutionary algorithms for GRN inference encompasses diverse strategies with varying performance characteristics. Recent benchmarking efforts have systematically evaluated these approaches across multiple kinetic formulations and noise conditions, providing empirical guidance for algorithm selection [14].

Table 2: Performance Comparison of Evolutionary Algorithms for GRN Parameter Inference

| Algorithm | Best Suited Kinetic Models | Noise Resilience | Computational Efficiency | Key Strengths |
|---|---|---|---|---|
| CMA-ES | GMA, Linear-logarithmic [14] | Low [14] | High (a fraction of the others' cost) [14] | Rapid convergence on clean data |
| SRES | GMA, Michaelis-Menten, Linear-logarithmic [14] | High [14] | Moderate [14] | Versatile application across kinetics |
| ISRES | GMA [14] | High [14] | Low [14] | Reliability with increasing noise |
| G3PCX | Michaelis-Menten [14] | High [14] | High (many-fold savings) [14] | Efficacy regardless of noise |
| DE | Not recommended [14] | Poor [14] | Poor [14] | Dropped due to poor performance |

Comparative studies reveal that self-adaptive Evolution Strategies (ESs) generally outperform both genetic algorithms (GAs) and simulated annealing (SA) for continuous parameter optimization in biological contexts [14]. This advantage stems from their specialized design for continuous search spaces and capacity for self-adapting strategy parameters during optimization [14]. The terrain of biological parameter space is particularly challenging, characterized by numerous steep optima, inconsistency due to experimental variability, and sensitivity to small parameter changes [14].

Experimental Protocol: EA for GRN Parameter Estimation

Workflow for Evolutionary Parameter Inference

[Figure: Iterative cycle of evolutionary parameter estimation for GRN models]

Detailed Protocol Steps

This protocol implements an evolutionary approach to parameter estimation using the DEAP (Distributed Evolutionary Algorithms in Python) framework, following established methodologies with modifications for GRN inference [15].

Phase 1: Population Initialization

- Step 1.1: Define parameter bounds based on biological constraints for each kinetic parameter in the GRN model.

- Step 1.2: Generate initial population of 500 individuals by sampling parameters from uniform random distributions within specified bounds [15].

- Step 1.3: Encode parameters as real-valued vectors for evolutionary operations.

Phase 2: Simulation and Evaluation

- Step 2.1: For each individual in the population, simulate the GRN model using numerical integration methods appropriate for the specific formalism (S-system, ODE, etc.) [6].

- Step 2.2: Calculate fitness using mean absolute error between simulated and experimental expression values across all genes and time points [15].

- Step 2.3: Apply additional penalty terms to fitness score for biologically implausible parameter combinations when necessary.

Phase 3: Evolutionary Operations

- Step 3.1: Perform tournament selection with size 4-7 to identify parent individuals for reproduction [15].

- Step 3.2: Apply two-point crossover to selected parents with probability 0.8 to create offspring solutions [15].

- Step 3.3: Implement polynomial bounded mutation with probability 0.1 per parameter to maintain diversity [15].

- Step 3.4: Create new generation by combining elite individuals (top 5%) with offspring.

Phase 4: Termination and Validation

- Step 4.1: Repeat phases 2-3 for 100 generations or until the convergence criteria are met [15].

- Step 4.2: Validate best parameter set on held-out experimental data not used during training.

- Step 4.3: Perform sensitivity analysis to identify well-constrained versus poorly-identified parameters.
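
The four phases above can be sketched in plain Python. This is a minimal illustration and not the DEAP-based pipeline of [15]: `toy_model` is a hypothetical two-parameter stand-in for a GRN simulation, the population size and generation count are scaled down from the protocol values so the example runs quickly, and a clipped Gaussian perturbation is swapped in for polynomial bounded mutation.

```python
import random

def mean_absolute_error(simulated, observed):
    """Step 2.2: mean absolute error over all observed values."""
    return sum(abs(s - o) for s, o in zip(simulated, observed)) / len(observed)

def toy_model(params, times):
    """Hypothetical stand-in for a GRN simulation: x(t) = a * (1 - b**t)."""
    a, b = params
    return [a * (1.0 - b ** t) for t in times]

def tournament(population, errors, size, rng):
    """Step 3.1: lowest-error individual among `size` random contestants."""
    contestants = rng.sample(range(len(population)), size)
    return population[min(contestants, key=lambda i: errors[i])]

def two_point_crossover(p1, p2, rng):
    """Step 3.2: swap the segment between two cut points."""
    i, j = sorted(rng.sample(range(len(p1) + 1), 2))
    return p1[:i] + p2[i:j] + p1[j:], p2[:i] + p1[i:j] + p2[j:]

def bounded_mutation(ind, bounds, prob, rng, scale=0.1):
    """Step 3.3 (simplified): Gaussian perturbation clipped to the bounds."""
    out = []
    for x, (lo, hi) in zip(ind, bounds):
        if rng.random() < prob:
            x = min(hi, max(lo, x + rng.gauss(0.0, scale * (hi - lo))))
        out.append(x)
    return out

def run_ga(observed, times, bounds, pop_size=60, generations=40,
           cx_prob=0.8, mut_prob=0.1, tourn_size=4, elite_frac=0.05, seed=0):
    rng = random.Random(seed)
    # Phase 1: uniform sampling within the parameter bounds.
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    n_elite = max(1, int(elite_frac * pop_size))
    for _ in range(generations):
        # Phase 2: simulate each individual and score it.
        errors = [mean_absolute_error(toy_model(ind, times), observed) for ind in pop]
        ranked = sorted(range(pop_size), key=lambda i: errors[i])
        # Phase 3: elites survive unchanged, the rest are offspring.
        next_pop = [pop[i][:] for i in ranked[:n_elite]]
        while len(next_pop) < pop_size:
            a = tournament(pop, errors, tourn_size, rng)
            b = tournament(pop, errors, tourn_size, rng)
            if rng.random() < cx_prob:
                a, b = two_point_crossover(a, b, rng)
            next_pop.append(bounded_mutation(a, bounds, mut_prob, rng))
            if len(next_pop) < pop_size:
                next_pop.append(bounded_mutation(b, bounds, mut_prob, rng))
        pop = next_pop
    errors = [mean_absolute_error(toy_model(ind, times), observed) for ind in pop]
    best = min(range(pop_size), key=lambda i: errors[i])
    return pop[best], errors[best]

# Synthetic "experimental" data generated from known parameters (a=2.0, b=0.5).
times = [0, 1, 2, 3, 4, 5]
observed = toy_model([2.0, 0.5], times)
bounds = [(0.0, 5.0), (0.0, 1.0)]
best_params, best_error = run_ga(observed, times, bounds)
```

Because elites are copied unchanged, the best error never increases from one generation to the next. Validation on held-out data (Step 4.2) and sensitivity analysis (Step 4.3) are not covered by this sketch.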

The Scientist's Toolkit: Essential Research Reagents

Table 3: Computational Tools and Resources for EA-based GRN Inference

| Resource Category | Specific Examples | Function in GRN Research |

|---|---|---|

| Evolutionary Algorithm Frameworks | DEAP (Python) [15], EvA2 (Java) [6] | Provide implemented EA components for custom optimization pipelines |

| GRN Modeling Formalisms | S-Systems [6], Artificial Neural Networks [6] | Mathematical representations of gene regulatory interactions |

| Biological Databases | Gene Expression Omnibus (GEO), ArrayExpress | Sources of experimental transcriptomic data for model fitting |

| Model Evaluation Metrics | AUROC, AUPR [7] | Quantitative assessment of inferred network accuracy and biological plausibility |

S-System Modeling Framework for GRN Inference

The S-system formalism provides a particularly powerful representation for GRN modeling within evolutionary inference frameworks, capturing complex network dynamics through power-law approximations of biochemical interactions [6].

The S-system model represents each gene's rate of change as the difference between synthesis and degradation terms, both approximated as power-law functions of all system variables [6]. For a GRN with n genes, the dynamics of gene i are described by:

\[ \frac{dX_i}{dt} = \alpha_i \prod_{j=1}^{n} X_j^{g_{ij}} - \beta_i \prod_{j=1}^{n} X_j^{h_{ij}} \]

where αᵢ and βᵢ are rate constants, and gᵢⱼ and hᵢⱼ are kinetic orders representing the influence of gene j on the synthesis and degradation of gene i's product, respectively [6]. Evolutionary algorithms optimize these parameters to minimize discrepancy between simulated and experimental expression patterns.
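
To make the formalism concrete, the following sketch evaluates the S-system right-hand side and integrates it with a fixed-step explicit Euler scheme. The integrator, the step size, the positivity floor, and the two-gene example parameters are illustrative assumptions; a production pipeline would more likely use an adaptive ODE solver.

```python
import math

def s_system_rhs(x, alpha, beta, g, h):
    """dX_i/dt = alpha_i * prod_j X_j^g_ij - beta_i * prod_j X_j^h_ij."""
    n = len(x)
    dx = []
    for i in range(n):
        synthesis = alpha[i] * math.prod(x[j] ** g[i][j] for j in range(n))
        degradation = beta[i] * math.prod(x[j] ** h[i][j] for j in range(n))
        dx.append(synthesis - degradation)
    return dx

def simulate(x0, alpha, beta, g, h, dt=0.01, steps=2000, floor=1e-9):
    """Explicit Euler integration; values are floored to stay positive."""
    x = list(x0)
    trajectory = [x[:]]
    for _ in range(steps):
        dx = s_system_rhs(x, alpha, beta, g, h)
        x = [max(floor, xi + dt * dxi) for xi, dxi in zip(x, dx)]
        trajectory.append(x[:])
    return trajectory

# Hypothetical two-gene example: gene 1 is synthesized at a constant rate,
# gene 2 is activated by gene 1, and both degrade with first-order kinetics.
alpha = [2.0, 1.0]
beta = [1.0, 1.0]
g = [[0.0, 0.0], [1.0, 0.0]]
h = [[1.0, 0.0], [0.0, 1.0]]
trajectory = simulate([1.0, 1.0], alpha, beta, g, h)
final_state = trajectory[-1]
```

For these parameters the equations reduce to dX₁/dt = 2 - X₁ and dX₂/dt = X₁ - X₂, so both genes approach a steady state of 2, which gives a quick sanity check on the simulator.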

Advanced Applications and Future Directions

Evolutionary algorithms have demonstrated particular utility in addressing the consensus inference problem in GRN modeling, where multiple inference techniques produce disparate results with preferences for specific datasets [7]. The BIO-INSIGHT algorithm represents a state-of-the-art approach that employs a parallel asynchronous many-objective evolutionary algorithm to optimize consensus among multiple inference methods guided by biologically relevant objectives [7]. This approach has shown statistically significant improvement in both AUROC and AUPR on benchmarks of 106 GRNs, demonstrating the advantage of biologically guided optimization over purely mathematical approaches [7].

In pharmaceutical applications, EA-based GRN inference has revealed disease-specific regulatory patterns in complex conditions like myalgic encephalomyelitis and fibromyalgia, suggesting clinical utility in biomarker identification and potential therapeutic target discovery [7]. The robustness of these methods against experimental noise and their ability to integrate diverse biological constraints positions them as valuable tools for drug development pipelines seeking to prioritize molecular targets for experimental validation.

In the field of systems biology, gene regulatory network (GRN) models are abstract constructs used to represent the complex interactions between genes and their products [16]. These models can be classified along a spectrum of granularity, from coarse-grained models that capture broad, logical relationships to fine-grained models that provide detailed, quantitative dynamics [6]. Coarse-grained models, such as Boolean networks, typically rely on discrete variables and are suited for global, high-level analysis of network architecture. In contrast, fine-grained models, such as those based on systems of differential equations, employ continuous variables to capture the detailed kinetics of regulatory interactions, albeit with increased parameter complexity [6].

S-system models within Biochemical Systems Theory (BST) offer a particularly powerful framework for quantitative GRN modeling because they provide a canonical nonlinear representation that can be tailored to different levels of granularity while maintaining a consistent mathematical structure [17]. These models have the format:

\[ \dot{X}_i = \alpha_i \prod_{j=1}^{n+m} X_j^{g_{ij}} - \beta_i \prod_{j=1}^{n+m} X_j^{h_{ij}} \]

where \(X_i\) represents the state variable (e.g., gene expression level), \(\alpha_i\) and \(\beta_i\) are non-negative rate constants, and \(g_{ij}\) and \(h_{ij}\) are real-valued kinetic orders capturing the regulatory influence of variable \(j\) on the synthesis and degradation of variable \(i\), respectively [17]. This power-law formalism enables a flexible modeling approach that can range from coarse-grained representations with limited interactions to fine-grained models with highly detailed parameterization.

S-system Models in Evolutionary Algorithms Parameter Inference

Theoretical Foundation of S-systems for Parameter Inference

The inference of GRN parameters from experimental data represents a significant challenge in systems biology, particularly due to the nonlinear nature of regulatory interactions and the typically limited quantity of available data [6]. Evolutionary algorithms (EAs), including genetic algorithms, evolution strategies, and differential evolution, have emerged as powerful tools for addressing this challenge due to their ability to navigate large, complex solution spaces effectively [6] [17].

S-system models are exceptionally well-suited for parameter inference with EAs due to their analytical tractability at steady state. After logarithmic transformation, the steady-state equations of an S-system can be represented as a system of linear equations:

\[ A_D \cdot y_D + A_I \cdot y_I = b \]

where \(y_D\) and \(y_I\) are vectors of the logarithms of dependent and independent variables, respectively, and the matrices \(A_D\) and \(A_I\) contain the differences \(g_{ij} - h_{ij}\) for all interactions [17]. This linearization enables efficient computation of fitness functions during evolutionary optimization, significantly accelerating the parameter inference process.
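
The linear form follows from setting the S-system rate equation to zero and taking logarithms. The derivation below is a sketch under stated assumptions: all rate constants and variables are strictly positive, the first n variables are the dependent ones, and the remaining m are independent. The explicit expression for b is not given in the text above; it is what the algebra yields.

```latex
\begin{aligned}
0 &= \alpha_i \prod_{j=1}^{n+m} X_j^{g_{ij}} - \beta_i \prod_{j=1}^{n+m} X_j^{h_{ij}} \\
\ln \alpha_i + \sum_{j=1}^{n+m} g_{ij}\, y_j &= \ln \beta_i + \sum_{j=1}^{n+m} h_{ij}\, y_j,
  \qquad y_j = \ln X_j \\
\sum_{j=1}^{n} (g_{ij} - h_{ij})\, y_j + \sum_{j=n+1}^{n+m} (g_{ij} - h_{ij})\, y_j
  &= \ln \frac{\beta_i}{\alpha_i} \\
A_D\, y_D + A_I\, y_I &= b, \qquad b_i = \ln \frac{\beta_i}{\alpha_i} \\
y_D &= A_D^{-1} \left( b - A_I\, y_I \right) \quad \text{if } A_D \text{ is invertible}
\end{aligned}
```

When \(A_D\) is invertible, a candidate parameter set therefore yields its steady state from one linear solve rather than a full numerical integration.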

Table 1: Comparison of Coarse-Grained and Fine-Grained Modeling Approaches

| Characteristic | Coarse-Grained Models | Fine-Grained Models | S-system Flexibility |

|---|---|---|---|

| Computational Demand | Lower | Higher | Configurable based on network size |

| Parameter Requirements | Fewer parameters | Extensive parameterization | Scalable parameter space |

| Data Requirements | Moderate | Substantial | Adaptable to data availability |

| Biological Detail | Qualitative relationships | Quantitative dynamics | Adjustable granularity |

| Regulatory Specificity | Logical interactions | Kinetic parameters | Tunable resolution |

| Typical Applications | Network motif identification, architecture analysis | Dynamic simulation, quantitative prediction | Both structural and dynamic analysis |

Multi-Species Inference with Evolutionary Algorithms

Recent advances in evolutionary algorithms have enabled the inference of GRN models across multiple species, incorporating phylogenetic information to improve inference accuracy. The Multi-species Regulatory neTwork LEarning (MRTLE) algorithm represents a sophisticated approach that uses a phylogenetic framework to infer regulatory networks in multiple species simultaneously [18].

In MRTLE, the regulatory network of each species is modeled as a probabilistic graphical model, with phylogenetic information incorporated through a prior probability distribution over edge gain and loss from ancestral to extant species [18]. This approach models the probability of edge gain and loss as a continuous-time Markov process parameterized by a rate matrix Q and branch length t₂, allowing for branch-specific probabilities of regulatory change. The evolutionary prior significantly enhances inference accuracy, particularly when working with the limited data typically available for non-model organisms [18].
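
As an illustration of how a rate matrix and a branch length translate into edge gain and loss probabilities, the sketch below uses the closed-form transition matrix P(t) = exp(Qt) of a two-state continuous-time Markov chain (edge absent or present). The two-state reduction, the function name, and the example rates are assumptions made for illustration; this is not MRTLE's implementation.

```python
import math

def edge_transition_probs(gain_rate, loss_rate, t):
    """P(t) = exp(Qt) for Q = [[-gain, gain], [loss, -loss]].

    State 0 means the edge is absent, state 1 means it is present.
    Row r, column c holds the probability of moving from r to c over
    a branch of length t.
    """
    total = gain_rate + loss_rate
    decay = math.exp(-total * t)
    p_gain = gain_rate / total * (1.0 - decay)  # absent -> present
    p_loss = loss_rate / total * (1.0 - decay)  # present -> absent
    return [[1.0 - p_gain, p_gain],
            [p_loss, 1.0 - p_loss]]

# Hypothetical rates: edges are lost three times faster than they are gained.
short_branch = edge_transition_probs(gain_rate=0.1, loss_rate=0.3, t=0.5)
long_branch = edge_transition_probs(gain_rate=0.1, loss_rate=0.3, t=50.0)
```

On a short branch the matrix stays close to the identity, so an edge present in the ancestor is likely to be retained. On a long branch both rows approach the stationary distribution, and the ancestral state carries little information.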

Application Notes: Experimental Protocols

Protocol 1: Fine-Grained S-system Parameter Inference Using Evolutionary Algorithms

Purpose: To infer fine-grained S-system parameters from time-series gene expression data using evolutionary algorithms.

Materials:

- High-quality gene expression data (microarray or RNA-seq)

- Computational framework for evolutionary algorithms (e.g., EvA2 Java framework)

- Prior biological knowledge (e.g., known regulatory interactions from databases)

- High-performance computing resources

Procedure:

- Data Preprocessing: Normalize expression data, handle missing values, and transform to logarithmic scale if necessary.

- Model Scope Definition: Identify dependent and independent variables based on biological context and research questions.

- EA Parameter Initialization:

- Population size: 100-500 individuals

- Representation: Real-valued encoding for α, β, g, h parameters

- Mutation rate: 0.01-0.05 per parameter

- Crossover rate: 0.7-0.9

- Selection strategy: Tournament or fitness-proportional selection

- Fitness Function Definition: Implement mean squared error between simulated and experimental data, optionally incorporating regularization terms for parameter sparsity.

- Evolutionary Optimization: Run EA for 1000-5000 generations or until convergence criteria are met.

- Model Validation: Perform cross-validation, assess predictive capability on withheld data, and compare with known biological interactions.
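
The fitness function in the procedure above could be written as follows. This is a sketch that assumes an L1 penalty on the kinetic orders as the sparsity regularizer; the penalty form and the weight `lam` are illustrative choices, not values prescribed by the protocol.

```python
def regularized_mse(simulated, observed, kinetic_orders, lam=0.01):
    """Mean squared error plus an L1 sparsity penalty (lower is better).

    `simulated` and `observed` are lists of per-gene time series of equal
    shape; `kinetic_orders` is a flat list of all g and h values.
    """
    n_values = sum(len(series) for series in observed)
    squared_error = sum(
        (s - o) ** 2
        for sim_series, obs_series in zip(simulated, observed)
        for s, o in zip(sim_series, obs_series)
    )
    mse = squared_error / n_values
    l1_penalty = sum(abs(k) for k in kinetic_orders)
    return mse + lam * l1_penalty

# One gene, two time points, two kinetic orders.
score = regularized_mse([[1.0, 2.0]], [[1.0, 4.0]], [0.5, -0.5], lam=0.1)
```

Raising `lam` pushes more kinetic orders toward zero, which corresponds to a sparser inferred network at the cost of a looser fit to the data.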

Troubleshooting:

- For premature convergence: Increase the mutation rate or add diversity-preserving measures

- For overfitting: Implement stronger regularization or reduce model complexity

- For poor convergence: Verify data quality and consider parameter scaling

Protocol 2: Multi-Species S-system Inference with MRTLE

Purpose: To infer S-system parameters across multiple phylogenetically related species using the MRTLE framework.

Materials:

- Gene expression data for multiple species

- Phylogenetic tree with branch lengths

- Gene orthology relationships, including gene duplication events

- Sequence-specific motif information (if available)

Procedure:

- Data Integration: Compile expression datasets, ensuring comparable conditions across species.

- Phylogenetic Prior Specification: Define rate parameters for regulatory edge loss and gain based on phylogenetic distances.

- MRTLE Initialization:

- Set prior probability for regulatory interactions combining phylogenetic and sequence information

- Initialize species-specific networks with orthology mappings

- Iterative Optimization: Execute MRTLE algorithm to simultaneously optimize all species networks:

- E-step: Estimate expected edge states given current parameters

- M-step: Update parameters to maximize expected complete-data log-likelihood

- Cross-Species Validation: Assess conserved regulatory interactions against known biological pathways.

- Network Analysis: Identify evolutionarily conserved and diverged regulatory modules.

Validation Methods:

- Comparison with ChIP-based transcription factor binding data

- Functional enrichment analysis of regulated gene sets

- Prediction of regulatory changes in experimentally perturbed systems

Table 2: Research Reagents and Computational Tools for S-system Modeling

| Resource | Type | Function | Applicability |

|---|---|---|---|

| EvA2 Java Framework [6] | Software | Evolutionary algorithms framework | Parameter inference for S-system models |

| BioTapestry [19] | Software | GRN visualization and modeling | Network representation and analysis |

| SBML-Compatible Tools [19] | Software Standard | Model exchange and simulation | Interoperability between modeling platforms |

| Microarray/RNA-seq Data | Experimental Data | Gene expression measurements | Model training and validation |

| Phylogenetic Tree Data | Evolutionary Data | Species relationship information | Multi-species inference with MRTLE |

| ChIP-seq Data | Experimental Data | Transcription factor binding sites | Model validation and prior knowledge integration |

Visualization and Workflow Diagrams

S-system Parameter Inference Workflow

Multi-Species S-system Inference with Phylogenetic Prior

S-system Steady-State Solution Space

Discussion and Future Perspectives

The evolution from coarse-grained to fine-grained S-system models represents a significant advancement in quantitative GRN modeling, enabling researchers to capture both the architectural and dynamic properties of gene regulatory systems. The integration of evolutionary algorithms with S-system formalism provides a powerful framework for parameter inference, particularly when dealing with the limited and noisy data typical of biological experiments [6].

Future developments in this field are likely to focus on several key areas. Multi-scale modeling approaches that integrate fine-grained dynamic models with coarse-grained architectural analysis will provide more comprehensive understanding of regulatory systems [16]. Improved evolutionary algorithms with better convergence properties and reduced computational requirements will enable larger-scale network inference. Additionally, the integration of diverse data types - including sequence motifs, protein-protein interactions, and epigenetic information - will enhance the biological relevance and predictive power of inferred models [18] [16].

The application of these methods to drug development offers promising avenues for identifying therapeutic targets and understanding mechanisms of action. By modeling the differential operation of GRNs in healthy and diseased states, fine-grained S-system models can identify critical regulatory nodes whose manipulation may restore normal cellular function [17]. As these methodologies continue to mature, they will play an increasingly important role in both basic biological research and translational applications.

The inference of Gene Regulatory Networks (GRNs) is a fundamental challenge in systems biology, critical for deciphering the complex interactions that control cellular functions and for identifying novel therapeutic targets [20] [7]. Traditional GRN inference techniques often exhibit disparities in their results and a clear preference for specific datasets, limiting their robustness and general applicability [7]. To address these challenges, the field is increasingly turning to evolutionary algorithms (EAs), which harness the principles of Darwinian evolution as a powerful, general-purpose search engine. This paradigm, sometimes termed Natural Generative AI (NatGenAI), offers a pathway to explore solution spaces beyond the limits of available data, fostering greater diversity and creativity in the solutions discovered [21]. These methods are particularly potent for personalizing Boolean network models of GRNs using data such as steady-state gene expression profiles from specific cell lines or patients, enabling the development of tailored models with significant implications for drug target discovery and personalized medicine [20]. These Application Notes and Protocols provide a detailed guide for researchers and drug development professionals to implement these cutting-edge, biologically-inspired computational methods.

Key Quantitative Findings in Evolutionary GRN Research

Empirical evaluations of evolutionary methods for GRN inference and related tasks demonstrate their significant advantages. The table below summarizes key performance data from recent studies.

Table 1: Performance of evolutionary algorithms in GRN and microbial evolution tasks

| Algorithm or Method | Key Comparative Finding | Performance Metrics | Context of Use |

|---|---|---|---|

| BIO-INSIGHT [7] | Statistically significant improvement against MO-GENECI and other consensus strategies. | Improved AUROC (Area Under the Receiver Operating Characteristic Curve) and AUPR (Area Under the Precision-Recall Curve). | Consensus inference of GRNs on a benchmark of 106 networks. |

| Lexicase Selection [22] | Generally outperformed commonly used directed evolution approaches (elite and top 10% selection). | Enhanced performance when selecting for multiple functional traits. | Directed evolution of microbial populations for multiple traits. |

| Non-Dominated Elite Selection [22] | Generally outperformed commonly used directed evolution approaches (elite and top 10% selection). | Enhanced performance when selecting for multiple functional traits. | Directed evolution of microbial populations for multiple traits. |

| In Silico Replication (Eco/Evo) [23] | A study with 'strong' evidence (P=0.001) has a replicability of ~75%. Requires a twofold increase in sample size to reach ~90% replicability. | Replicability Probability | Field-wide replication project in ecology and evolution. |

Experimental Protocols

This section provides detailed methodologies for implementing key evolutionary algorithms in GRN research.

Protocol: Personalizing a Stochastic Boolean Network Model from a Steady-State Expression Profile

This protocol details the process of calibrating a parameterized stochastic Boolean network (SBN) to create a cell-type or patient-specific GRN model using a single steady-state gene expression measurement [20].

Applications: Creating personalized models for non-small cell lung cancer (NSCLC) cell lines or patient samples to predict individual responses to perturbations and identify therapeutic strategies [20].

Materials:

- Hardware: Standard computer workstation.

- Software: Programming environment (e.g., Python, R, Julia) or specialized software like MaBoSS [20].

- Input Data:

- A generic Boolean GRN model (topology and logical rules).

- A single steady-state gene expression measurement (e.g., from RNA-seq) for the condition or patient of interest.

Procedure:

- Model Formulation: Define a parameterized stochastic Boolean network model. This involves introducing node-specific noise parameters that control the probability of a gene flipping its state instead of following its deterministic update rule.

- Problem Reformulation: Under the assumption that the provided gene expression profile represents the steady-state distribution of the Markov chain associated with the SBN, reformulate the parameter estimation problem as a system of linear equations. This ensures ergodicity and the existence of a unique solution.

- Parameter Estimation: Given the high computational demand of explicitly solving the linear system, employ a simulation-based estimation approach: a. Simulate the SBN across a range of parameter values. b. For each parameter set, run the network until it reaches a steady-state distribution. c. Compare the simulated steady-state distribution to the provided experimental gene expression profile. d. Identify the parameter set that minimizes the difference between the simulation and experimental data. Optimization algorithms can be used to efficiently search the parameter space.

- Model Validation: Validate the personalized SBN by testing its ability to reproduce known behaviors or by comparing its predictions to additional, held-out experimental data.
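
The simulation-based estimation step can be sketched as below. The assumptions are deliberate simplifications: updates are synchronous, the steady-state distribution is summarized by each node's marginal probability of being ON, the expression profile is taken to be already normalized to [0, 1], and a grid search over one node's noise parameter stands in for a full optimizer. This does not reproduce MaBoSS or the method of [20].

```python
import random

def simulate_sbn(rules, noise, initial, steps=20000, burn_in=1000, seed=0):
    """Estimate steady-state P(node = ON) by time-averaging one long run.

    Each node follows its Boolean rule, then flips with probability noise[node].
    """
    rng = random.Random(seed)
    state = dict(initial)
    counts = {node: 0 for node in rules}
    for step in range(steps + burn_in):
        new_state = {}
        for node, rule in rules.items():
            value = bool(rule(state))
            if rng.random() < noise[node]:
                value = not value
            new_state[node] = value
        state = new_state
        if step >= burn_in:
            for node, value in state.items():
                counts[node] += int(value)
    return {node: counts[node] / steps for node in rules}

def profile_distance(simulated, observed):
    """Mean absolute difference over the nodes present in `observed`."""
    return sum(abs(simulated[n] - observed[n]) for n in observed) / len(observed)

def fit_noise(rules, observed, node, grid, base_noise, initial):
    """Grid search over one node's noise parameter."""
    best = None
    for p in grid:
        noise = dict(base_noise)
        noise[node] = p
        simulated = simulate_sbn(rules, noise, initial, steps=5000)
        distance = profile_distance(simulated, observed)
        if best is None or distance < best[1]:
            best = (p, distance)
    return best

# Hypothetical two-node network: A is constitutively ON and B copies A.
rules = {"A": lambda s: True, "B": lambda s: s["A"]}
start = {"A": False, "B": False}
best_p, best_distance = fit_noise(
    rules, {"A": 0.8}, "A", [0.0, 0.1, 0.2, 0.3, 0.4], {"A": 0.0, "B": 0.0}, start
)
```

In this toy network the probability that A is ON equals one minus its noise parameter, so an observed value of 0.8 should be matched best by a noise level of 0.2.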

Protocol: Biologically-Guided Consensus GRN Inference using BIO-INSIGHT

This protocol describes the use of the BIO-INSIGHT algorithm to optimize the consensus of multiple GRN inference methods, guided by biologically relevant objectives [7].

Applications: Inferring more accurate and biologically feasible GRNs from gene expression data; revealing disease-specific GRN patterns in complex conditions like myalgic encephalomyelitis/chronic fatigue syndrome (ME/CFS) and fibromyalgia (FM) [7].

Materials:

- Hardware: Computer cluster or high-performance computing (HPC) environment capable of parallel processing.

- Software: BIO-INSIGHT Python library (available on PyPI: GENECI).

- Input Data:

- Gene expression dataset(s) for the biological condition of interest.

- (Optional) Prior biological knowledge (e.g., known pathways, interactions).

Procedure:

- Initialization: Execute multiple base GRN inference methods (e.g., GENIE3, PIDC, etc.) on the input gene expression data to generate a set of initial candidate networks.

- Algorithm Execution: Run the BIO-INSIGHT algorithm, which implements a parallel asynchronous many-objective evolutionary algorithm. a. Representation: Encode the consensus network and its features as an individual in the evolutionary population. b. Evaluation: Evaluate individuals against multiple objectives, which are designed to maximize consensus among the base methods while ensuring high biological coverage and relevance. This expands the objective space beyond purely mathematical fits. c. Variation and Selection: Apply evolutionary operators (crossover, mutation) and selection based on non-dominated sorting (e.g., similar to NSGA-II) to create new generations of candidate consensus networks.

- Output: The algorithm outputs an optimized consensus GRN that demonstrates statistically significant improvement in standard metrics (AUROC, AUPR) over other consensus strategies and base methods [7].

- Downstream Analysis: Analyze the final consensus network to identify key regulatory interactions, hubs, and modules specific to the disease condition, which can inform biomarker identification and target discovery.

Protocol: Applying Multi-Objective Selection for Directed Microbial Evolution

This protocol adapts artificial selection methods from evolutionary computing, specifically multi-objective selection techniques, for directing the evolution of microbial populations in the laboratory [22].

Applications: Enhancing multiple functional traits in a single microbial strain or producing a set of diverse strains that each specialize in different traits, for biotech, medical, or agricultural applications [22].

Materials:

- Biological: Library of microbial variants (e.g., communities, genomes).

- Lab Equipment: Culturing devices, continuous culture devices (e.g., morbidostats), or equipment for high-throughput screening.

- Computational: Software to implement selection algorithms (e.g., Python, R).

Procedure:

- Population Growth & Evaluation: Grow the library of microbial variants. Measure the performance of each variant (or population) against the multiple traits of interest (e.g., plastic degradation efficiency, molecule production yield, growth rate).

- Progenitor Selection: Instead of simply selecting the top-performing variants (elite selection), apply a multi-objective selection algorithm: a. Lexicase Selection: Randomize the order of the traits (test cases). Filter the population sequentially, keeping only the variants that are in the elite group for the first trait, then repeat this filtering on the survivors for each subsequent trait in the random order until one or a few variants remain. These are selected as progenitors. b. Non-Dominated Elite Selection: Identify the Pareto front, that is, the set of variants that are not dominated: no other variant is at least as good on every trait and strictly better on at least one. All variants on this non-dominated front are selected as progenitors for the next generation.

- Propagation: Use the selected progenitor variants to produce the next generation. This may involve partitioning high-performing populations or using individuals from these populations to found new cultures.

- Iteration: Repeat the cycle of growth, evaluation, multi-objective selection, and propagation for multiple generations until the desired level of performance or trait combination is achieved.
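
Both selection schemes in the procedure above are short enough to sketch directly. The code assumes that higher trait values are better and that the "elite group" for a trait consists of the variants tied for the best value; both are assumptions, and a tolerance-based elite group would be an equally reasonable variant. The trait scores are invented for illustration.

```python
import random

def dominates(a, b):
    """True if a is at least as good as b on every trait and better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def non_dominated_front(scores):
    """Indices of variants that no other variant dominates."""
    return [i for i, s in enumerate(scores)
            if not any(dominates(t, s) for j, t in enumerate(scores) if j != i)]

def lexicase_select(scores, rng):
    """Pick one progenitor by filtering on traits in random order."""
    candidates = list(range(len(scores)))
    traits = list(range(len(scores[0])))
    rng.shuffle(traits)
    for trait in traits:
        best = max(scores[i][trait] for i in candidates)
        candidates = [i for i in candidates if scores[i][trait] == best]
        if len(candidates) == 1:
            break
    return rng.choice(candidates)

# Hypothetical measurements: rows are variants, columns are three traits.
scores = [(9, 1, 2), (1, 9, 2), (5, 5, 5), (4, 4, 4), (9, 1, 1)]
front = non_dominated_front(scores)
rng = random.Random(0)
progenitors = [lexicase_select(scores, rng) for _ in range(20)]
```

Variant 3 is dominated by variant 2 and variant 4 by variant 0, so neither is on the front. With strict best-value filtering, every lexicase winner is itself non-dominated, which is why both schemes tend to preserve specialists that plain elite selection on an aggregate score could discard.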

Visualization of Workflows and Relationships

The following diagrams, generated with Graphviz, illustrate the logical flow and key relationships of the protocols described above.

Personalized Boolean Network Calibration

BIO-INSIGHT Consensus GRN Inference

Multi-Objective Microbial Directed Evolution

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key research reagents and computational tools for evolutionary GRN research

| Item Name | Function / Application | Specifications / Examples |

|---|---|---|

| Base GRN Inference Methods | Generate initial candidate networks from expression data for consensus algorithms. | GENIE3, PIDC, or other methods integrated by BIO-INSIGHT [7]. |

| Stochastic Boolean Network Simulator | Simulate the dynamics of a parameterized BN to generate steady-state distributions. | MaBoSS [20], or custom scripts in Python/R. |

| Evolutionary Computing Framework | Provide the infrastructure for implementing selection algorithms and evolutionary operators. | DEAP (Python), GAUL (C), or custom code in a high-performance language [22] [21]. |

| Gene Expression Dataset | Serve as the primary input data for inferring or personalizing GRN models. | RNA-seq or microarray data from public repositories (e.g., GEO) or in-house experiments [20] [7]. |

| High-Performance Computing (HPC) Cluster | Amortize the cost of optimization in high-dimensional spaces and enable parallel execution. | Required for running BIO-INSIGHT and computationally intensive stochastic simulations [7]. |

Methodologies and Real-World Applications of EA in GRNs

Inference of Gene Regulatory Networks (GRNs) from high-throughput gene expression data is a cornerstone problem in modern systems biology. The goal is to reconstruct the causal, directional interactions between genes, often represented as a graph where edges signify regulation [6]. Given the high-dimensional, noisy, and non-linear nature of biological data, coupled with the combinatorial complexity of possible network structures, this reverse-engineering task presents a significant optimization challenge [6] [24].

Evolutionary Algorithms (EAs), a family of population-based, stochastic optimization methods inspired by natural selection, have emerged as powerful tools for navigating this complex solution space. Unlike gradient-based methods, EAs do not require derivative information, making them well-suited for black-box optimization problems where the functional form of the model is unknown or difficult to characterize [25]. This application note details three prominent EA variants—Genetic Algorithms (GAs), Evolution Strategies (ES), and the Covariance Matrix Adaptation Evolution Strategy (CMA-ES)—and their specific protocols for inferring parameters and structures of GRN models.

The General EA Framework for GRNs

The generic process of fitting a GRN model using an EA involves several key components, which are shared across different flavors of EAs [6]:

- Model Selection: A mathematical formalism is chosen to represent the GRN (e.g., a system of differential equations).

- Parameter Encoding: The parameters of the chosen model (e.g., interaction strengths, rate constants) are encoded into a data structure called a "chromosome" or "individual."

- Population Evolution: A population of such individuals evolves over multiple generations. In each generation:

- The fitness of each individual is evaluated by how well the corresponding model fits the experimental gene expression data.

- Genetic operators (selection, crossover, mutation) are applied to create a new, hopefully better-adapted, population of candidate solutions.

This process continues until a satisfactory model is found or a termination criterion is met [6]. The differences between GA, ES, and CMA-ES lie primarily in how they represent solutions, the genetic operators they employ, and their specific strategies for adapting the search distribution.

Quantitative Performance Comparison

Evaluations like the BEELINE framework have systematically benchmarked various GRN inference algorithms, including those based on evolutionary principles, on both synthetic and real-world datasets [24]. Performance is often measured using metrics like the Area Under the Precision-Recall Curve (AUPRC) and its ratio over a random predictor.
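
A small sketch of how such a ratio can be computed from ranked edge predictions follows. It makes two assumptions: average precision is used as the estimator of AUPRC, and the random predictor's expected AUPRC is taken to be the fraction of candidate edges that are true (the network density). The scores and labels are invented.

```python
def average_precision(scores, labels):
    """Mean of the precision values at the rank of each true edge."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits = 0
    precisions = []
    for rank, i in enumerate(order, start=1):
        if labels[i]:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / hits if hits else 0.0

def auprc_ratio(scores, labels):
    """Average precision divided by the density baseline of a random predictor."""
    baseline = sum(labels) / len(labels)
    return average_precision(scores, labels) / baseline

# Four candidate edges with predicted confidences and ground-truth labels.
edge_scores = [0.9, 0.8, 0.1, 0.2]
edge_labels = [1, 0, 0, 1]
ratio = auprc_ratio(edge_scores, edge_labels)
```

A ratio near 1 means the method ranks edges no better than chance, which is why ratios are easier to compare across networks of different density than raw AUPRC values.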

The table below summarizes the performance of a selection of algorithms, including some EA-based methods, on synthetic network topologies, illustrating the variation in performance across different network structures [24].

Table 1: Performance of GRN Inference Algorithms on Synthetic Networks (AUPRC Ratio). A higher ratio indicates better performance relative to a random predictor. Data adapted from a large-scale benchmarking study [24].

| Algorithm | Linear Network | Cycle Network | Bifurcating Network | Trifurcating Network |

|---|---|---|---|---|

| SINCERITIES | >5.0 | ~1.5 | ~1.5 | <1.5 |

| SINGE | >2.0 | ~2.5 | ~1.5 | <1.5 |

| GENIE3 | >2.0 | ~1.5 | ~1.5 | <1.5 |

| PIDC | >2.0 | ~1.5 | ~1.5 | ~2.0 |

| PPCOR | >2.0 | ~1.5 | ~1.5 | <1.5 |

Detailed Methodologies and Protocols

This section provides detailed protocols for implementing three key EA flavors for GRN inference.

Genetic Algorithms (GAs)

GAs are one of the most traditional EA forms. In the context of GRNs, they are often used with a binary or real-valued encoding to evolve network models.

Table 2: Key Research Reagents for GA-based GRN Inference

| Item | Function in Protocol |

|---|---|

| Expression Data Matrix | The primary input; each row represents a gene's expression profile across time or conditions [6]. |

| S-system Model Formalism | A powerful differential equation model that defines the synthesis and degradation of gene products [6]. |

| Fitness Function (e.g., MSE) | Quantifies the discrepancy between the simulated expression from the candidate model and the real data [6]. |

| Real-valued Encoding | Represents S-system parameters (α, β, g, h) directly in the chromosome as a vector of real numbers [6]. |

Protocol 3.1.1: GA for S-system Model Inference

Objective: To infer the parameters of an S-system GRN model from time-series gene expression data using a Real-valued Genetic Algorithm.

Initialization:

- Define the S-system model for n genes. The number of parameters to be inferred is 2n(n+1).

- Set GA parameters: population size (e.g., 100-500), crossover probability (e.g., 0.8-0.9), mutation probability (e.g., 1/#parameters), and maximum generations.

- Initialize a population of individuals, where each individual is a real-valued vector of length 2n(n+1). Parameter values can be randomly initialized within a plausible biological range.

Fitness Evaluation:

- For each individual in the population, decode the parameter vector into its corresponding S-system model.

- Numerically simulate the S-system equations to generate predicted gene expression time series.

- Calculate the fitness, for example, using the Negative Mean Squared Error (MSE) between the simulated data and the actual experimental data:

Fitness = -MSE(actual, simulated).
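
The decoding and scoring steps might look like the sketch below. The chromosome layout (all α values, then all β values, then the g matrix row by row, then the h matrix row by row) is an assumption chosen for illustration; any fixed layout of the 2n(n+1) values works as long as encoding and decoding agree.

```python
def decode_s_system(vector, n):
    """Split a flat chromosome into alpha, beta, g and h for n genes."""
    expected = 2 * n * (n + 1)
    if len(vector) != expected:
        raise ValueError(f"expected {expected} parameters, got {len(vector)}")
    alpha = list(vector[:n])
    beta = list(vector[n:2 * n])
    g_flat = vector[2 * n:2 * n + n * n]
    h_flat = vector[2 * n + n * n:]
    g = [list(g_flat[i * n:(i + 1) * n]) for i in range(n)]
    h = [list(h_flat[i * n:(i + 1) * n]) for i in range(n)]
    return alpha, beta, g, h

def negative_mse(actual, simulated):
    """Fitness = -MSE, so that a higher fitness means a better fit."""
    squared = [(a - s) ** 2 for a, s in zip(actual, simulated)]
    return -sum(squared) / len(squared)

# A two-gene model has 2 * 2 * (2 + 1) = 12 parameters.
alpha, beta, g, h = decode_s_system(list(range(12)), n=2)
fitness = negative_mse([1.0, 2.0], [1.0, 4.0])
```

Negating the error lets the selection step treat fitness as a quantity to maximize, which matches the wording "favoring individuals with higher fitness".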

Selection:

- Use a selection strategy (e.g., tournament selection or roulette wheel) to choose parents for reproduction, favoring individuals with higher fitness.

Crossover:

- Apply a crossover operator (e.g., simulated binary crossover - SBX) to pairs of selected parents to create offspring. This recombines parameters from two solutions.

Mutation:

- Apply a mutation operator (e.g., polynomial mutation) to the offspring. This introduces small random changes to the parameter values, maintaining population diversity.

Termination Check:

- If the maximum generation is reached or the fitness has converged, terminate and output the best individual. Otherwise, replace the old population with the new offspring population and return to the Fitness Evaluation step.
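
The two variation operators named in this protocol can be sketched as follows. The code uses the basic, unbounded form of simulated binary crossover and the basic form of polynomial mutation with clipping to the bounds; the distribution indices `eta` and the example values are illustrative assumptions, and library implementations often add bound-aware refinements not shown here.

```python
import random

def sbx_crossover(p1, p2, eta, rng):
    """Simulated binary crossover (basic form, children are not clipped)."""
    c1, c2 = [], []
    for x1, x2 in zip(p1, p2):
        u = rng.random()
        if u <= 0.5:
            spread = (2.0 * u) ** (1.0 / (eta + 1.0))
        else:
            spread = (1.0 / (2.0 * (1.0 - u))) ** (1.0 / (eta + 1.0))
        c1.append(0.5 * ((1.0 + spread) * x1 + (1.0 - spread) * x2))
        c2.append(0.5 * ((1.0 - spread) * x1 + (1.0 + spread) * x2))
    return c1, c2

def polynomial_mutation(ind, bounds, eta, prob, rng):
    """Polynomial mutation (basic form), applied per gene with probability prob."""
    out = []
    for x, (lo, hi) in zip(ind, bounds):
        if rng.random() < prob:
            u = rng.random()
            if u < 0.5:
                delta = (2.0 * u) ** (1.0 / (eta + 1.0)) - 1.0
            else:
                delta = 1.0 - (2.0 * (1.0 - u)) ** (1.0 / (eta + 1.0))
            x = min(hi, max(lo, x + delta * (hi - lo)))
        out.append(x)
    return out

rng = random.Random(0)
parent_a = [1.0, 0.5, -0.2]
parent_b = [2.0, 0.1, 0.4]
bounds = [(0.0, 5.0), (0.0, 1.0), (-1.0, 1.0)]
child_a, child_b = sbx_crossover(parent_a, parent_b, eta=15.0, rng=rng)
mutant = polynomial_mutation(child_a, bounds, eta=20.0, prob=1.0 / 3.0, rng=rng)
```

SBX preserves the per-gene mean of the two parents, and larger values of `eta` keep children closer to their parents. The mutation probability of 1/3 corresponds to the 1/#parameters heuristic for this three-gene chromosome.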

The following workflow diagram illustrates this iterative process:

Evolution Strategies (ES)

Evolution Strategies are a class of EAs specifically designed for continuous optimization problems. They primarily rely on mutation as the search operator and explicitly adapt the strategy parameters (e.g., step-size) of the search distribution.

Protocol 3.2.1: Simple Gaussian Evolution Strategy for GRN Inference

Objective: To optimize a GRN model by adapting the mean and a single, isotropic step-size of a Gaussian search distribution.

Initialization:

- Initialize the mean vector μ (representing the model parameters) and a step-size σ.

- Set the population size λ (number of offspring).

Sampling (Ask):

- For i = 1 to λ, sample a new candidate solution: x_i = μ + σ * y_i, where y_i ~ N(0, I) (a vector of independent standard normal random variables).

Evaluation:

- Evaluate the fitness f(x_i) for each of the λ offspring.

Update (Tell):

- Select the top μ best offspring based on fitness (here μ denotes the number of selected parents, not the mean vector).

- Update the mean: μ_new = average of the top μ offspring.

- Update the step-size σ using a method like self-adaptation or the "1/5th success rule".

Iteration:

- Repeat steps 2-4 until a termination criterion is met.
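The ask/evaluate/tell loop above can be sketched in a few lines. This is one possible reading of the protocol, not a canonical implementation: it minimizes a loss (the negative of the fitness), and it applies a simplified 1/5th-style rule that compares offspring against the current mean. The adaptation factors 1.22 and 0.82, the name `n_parents`, and the other defaults are assumptions of this example.

```python
import numpy as np

def simple_es(loss, mean0, sigma0=0.5, lam=20, n_parents=5,
              generations=200, seed=0):
    """Isotropic Gaussian ES minimizing `loss` (loss = -fitness)."""
    rng = np.random.default_rng(seed)
    mean, sigma = np.asarray(mean0, dtype=float), float(sigma0)
    for _ in range(generations):
        # Ask: sample lam offspring around the current mean.
        offspring = mean + sigma * rng.standard_normal((lam, mean.size))
        # Evaluate every offspring.
        losses = np.array([loss(x) for x in offspring])
        # Tell: fraction of offspring that improve on the current mean.
        success_rate = np.mean(losses < loss(mean))
        best = offspring[np.argsort(losses)[:n_parents]]
        mean = best.mean(axis=0)
        # Simplified 1/5th-style rule: grow sigma when successes are frequent.
        sigma *= 1.22 if success_rate > 0.2 else 0.82
    return mean, sigma
```

In a GRN setting, `loss` would wrap the model simulation and return, for example, the MSE against the measured expression time series.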

Covariance Matrix Adaptation ES (CMA-ES)

CMA-ES is a state-of-the-art evolution strategy that overcomes the limitations of a single isotropic step-size by adapting the full covariance matrix of the search distribution. This allows it to learn the pairwise dependencies between model parameters and to rescale the search along each direction [25] [26] [27].

Table 3: Key Internal Parameters of the CMA-ES Algorithm

| Parameter | Description |
|---|---|
| Mean (μ) | The center of the current search distribution, representing the current best estimate of the solution. |
| Step-size (σ) | The overall scale of the search distribution. |
| Covariance Matrix (C) | Defines the shape and orientation of the search distribution, capturing correlations between parameters. |
| Evolution Paths (p_σ, p_c) | Memory vectors that track the path of the mean over generations, used to adapt σ and C. |

Protocol 3.3.1: CMA-ES for GRN Inference

Objective: To infer GRN model parameters by efficiently adapting a multivariate normal search distribution using the CMA-ES algorithm.

Initialization:

- Initialize μ, σ, and set the covariance matrix C = I (identity matrix). Initialize evolution paths p_σ = 0, p_c = 0.

- Set algorithm parameters like population size λ, and recombination weights.

Sampling:

- For k = 1, ..., λ, sample new offspring: x_k ~ N(μ, σ²C). This is equivalent to x_k = μ + σ * B * D * z_k, where C = B D² Bᵀ is the eigendecomposition of C, and z_k ~ N(0, I).
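The equivalence between sampling from N(μ, σ²C) and transforming standard normal vectors can be checked numerically. The sketch below assumes NumPy and uses a hypothetical helper name, `sample_offspring`; with enough samples, the empirical covariance should approach σ²C.

```python
import numpy as np

def sample_offspring(mean, sigma, C, lam, rng):
    """Draw lam samples x_k = mean + sigma * B * D * z_k with z_k ~ N(0, I)."""
    eigvals, B = np.linalg.eigh(C)           # C = B diag(eigvals) B^T
    D = np.sqrt(np.maximum(eigvals, 0.0))    # D holds the square roots of the eigenvalues
    z = rng.standard_normal((lam, mean.size))
    return mean + sigma * (z * D) @ B.T      # row k equals mean + sigma * B @ (D * z_k)

rng = np.random.default_rng(0)
C = np.array([[2.0, 0.8], [0.8, 1.0]])
samples = sample_offspring(np.array([1.0, -1.0]), 0.5, C, 200_000, rng)
empirical_cov = np.cov(samples.T)            # should be close to 0.25 * C
```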

Evaluation and Selection:

- Evaluate the fitness f(x_k) for all offspring.

- Sort and select the top μ offspring with the best fitness (μ here is the number of parents kept out of the λ offspring).

Update Equations:

- Update Mean: μ_new = Σ (w_i * x_i:λ), where w_i are recombination weights and x_i:λ is the i-th best of the λ offspring.

- Update Evolution Paths:

  - p_σ update: incorporates the path of the mean, measuring whether consecutive steps are correlated.

  - p_c update: tracks the path for the covariance update.

- Update Covariance Matrix C: combines rank-μ update (using information from the current population) and rank-1 update (using the evolution path).

- Update Step-size σ: based on the length of the evolution path p_σ. If the path is long (consecutive steps are correlated), the step-size is increased; if it is short (consecutive steps cancel out), the step-size is decreased.

Termination:

- Repeat from Step 2 until the solution is satisfactory or other termination criteria are met.
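For readers who want to see the update equations in one place, the following is a compact sketch of a CMA-ES loop in NumPy. It follows the commonly published default settings for the learning rates and weights, minimizes a loss (the negative of the fitness), and omits restarts, boundary handling, and termination tests. The function name `cma_es` and its arguments are choices made for this example; for real work a maintained library is preferable.

```python
import numpy as np

def cma_es(loss, mean0, sigma0, generations=300, seed=0):
    """Minimal CMA-ES sketch minimizing `loss`; no restarts or bounds."""
    rng = np.random.default_rng(seed)
    mean, sigma = np.asarray(mean0, dtype=float).copy(), float(sigma0)
    n = mean.size
    lam = 4 + int(3 * np.log(n))                   # population size
    mu = lam // 2                                  # number of parents
    w = np.log(mu + 0.5) - np.log(np.arange(1, mu + 1))
    w /= w.sum()                                   # recombination weights
    mueff = 1.0 / np.sum(w ** 2)
    cc = (4 + mueff / n) / (n + 4 + 2 * mueff / n)
    cs = (mueff + 2) / (n + mueff + 5)
    c1 = 2 / ((n + 1.3) ** 2 + mueff)
    cmu = min(1 - c1, 2 * (mueff - 2 + 1 / mueff) / ((n + 2) ** 2 + mueff))
    damps = 1 + 2 * max(0.0, np.sqrt((mueff - 1) / (n + 1)) - 1) + cs
    chi_n = np.sqrt(n) * (1 - 1 / (4 * n) + 1 / (21 * n ** 2))  # E||N(0, I)||
    C, p_sigma, p_c = np.eye(n), np.zeros(n), np.zeros(n)
    for g in range(generations):
        # Sampling: x_k = mean + sigma * B * D * z_k
        eigvals, B = np.linalg.eigh(C)
        D = np.sqrt(np.maximum(eigvals, 1e-20))
        y = (rng.standard_normal((lam, n)) * D) @ B.T
        x = mean + sigma * y
        # Evaluation and selection of the mu best offspring
        order = np.argsort([loss(xi) for xi in x])[:mu]
        y_sel = y[order]
        y_w = w @ y_sel
        mean = mean + sigma * y_w                  # weighted mean update
        # Evolution paths
        inv_sqrt_C = B @ np.diag(1.0 / D) @ B.T
        p_sigma = (1 - cs) * p_sigma + np.sqrt(cs * (2 - cs) * mueff) * (inv_sqrt_C @ y_w)
        norm_ps = np.linalg.norm(p_sigma)
        h_sig = float(norm_ps / np.sqrt(1 - (1 - cs) ** (2 * (g + 1))) / chi_n
                      < 1.4 + 2 / (n + 1))
        p_c = (1 - cc) * p_c + h_sig * np.sqrt(cc * (2 - cc) * mueff) * y_w
        # Covariance: rank-one (path) plus rank-mu (population) updates
        rank_one = np.outer(p_c, p_c) + (1 - h_sig) * cc * (2 - cc) * C
        rank_mu = (y_sel * w[:, None]).T @ y_sel
        C = (1 - c1 - cmu) * C + c1 * rank_one + cmu * rank_mu
        # Step-size: grow when the path is longer than expected, else shrink
        sigma *= np.exp((cs / damps) * (norm_ps / chi_n - 1))
    return mean, sigma, C
```

On a poorly scaled test function such as `sum(scales * x**2)`, the learned `C` stretches the search distribution along the flat directions, which is the behavior the protocol describes.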

The diagram below visualizes how CMA-ES adapts its search distribution over generations, effectively "deforming" the search space to navigate towards the optimum.

Advanced Applications and Consensus Approaches

Beyond applying a single EA, recent research explores hybrid and consensus methods to improve robustness and accuracy. For instance, the GENECI framework functions as an "evolutionary organizer" that optimizes a consensus network derived from the outputs of multiple individual inference techniques (e.g., GENIE3, GRNBoost2) according to their confidence levels and topological characteristics [28]. This meta-optimization approach demonstrates improved generalizability across diverse datasets.

Another advanced approach, GRNMOPT, integrates a multi-objective EA (NSGA-II) to simultaneously optimize multiple critical parameters in an ODE model, such as gene decay rates and regulatory time delays, which are often hard to determine a priori [29]. This framework seeks to maximize both the AUROC and AUPR metrics, resulting in a Pareto front of optimal solutions that represent the best trade-offs between these objectives.
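The notion of a Pareto front over two maximized objectives can be illustrated with a small non-dominated filter. This is not the NSGA-II algorithm itself, only the dominance test it builds on; the function name and the (AUROC, AUPR) values below are hypothetical and are not results from [29].

```python
def pareto_front(points):
    """Keep the points not dominated by any other (all objectives maximized)."""
    def dominates(a, b):
        # a dominates b if it is at least as good everywhere and better somewhere.
        return (all(x >= y for x, y in zip(a, b))
                and any(x > y for x, y in zip(a, b)))
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical (AUROC, AUPR) scores for four candidate parameter settings.
candidates = [(0.90, 0.50), (0.80, 0.60), (0.70, 0.40), (0.85, 0.55)]
front = pareto_front(candidates)
```

Here the third candidate is dropped because every other candidate is better on both metrics, while the remaining three represent different trade-offs.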

The Scientist's Toolkit

Table 4: Essential Software and Data Resources for EA-based GRN Inference

| Tool/Resource | Type | Description | Key Application |
|---|---|---|---|
| PyCMA [30] | Software Library | A Python implementation of the CMA-ES algorithm. | Provides a robust, readily usable function for optimizing GRN model parameters. |
| BEELINE [24] | Benchmarking Framework | A platform for standardized evaluation of GRN inference algorithms on synthetic and real data. | Used to validate and compare the performance of newly developed EA methods against benchmarks. |
| BoolODE [24] | Data Simulator | A tool that simulates single-cell expression data from GRN models using stochastic ODEs. | Generates realistic, ground-truth datasets for controlled testing of inference algorithms. |
| S-system Formalism [6] | Mathematical Model | A power-law based differential equation model capable of capturing complex GRN dynamics. | Serves as the quantitative model whose parameters are inferred by the EA. |
| DREAM Challenges & IRMA Network [28] | Benchmark Data | Community-standard in silico and real-world benchmark datasets for GRN inference. | Provides gold-standard datasets for training and validation. |

The S-system formalism is a canonical nonlinear modeling framework within Biochemical Systems Theory (BST) that provides a powerful representation for capturing the dynamics of gene regulatory networks (GRNs). It describes the system dynamics using coupled ordinary differential equations (ODEs) where the change in each molecular species is represented as the difference between a production term and a degradation term, each taking a power-law form [31] [32]. For a network with N genes, the dynamics of each gene's expression level, X_i, is described by:

$$\frac{dX_i}{dt} = \alpha_i \prod_{j=1}^{N} X_j^{g_{ij}} - \beta_i \prod_{j=1}^{N} X_j^{h_{ij}}, \qquad i = 1, \dots, N$$

Here, α_i and β_i are non-negative rate constants for production and degradation, respectively, while g_{ij} and h_{ij} are real-valued kinetic orders that quantify the effect of variable X_j on the production and degradation of X_i [31]. The kinetic orders capture the regulatory logic of the network: positive values for g_{ij} indicate activation of gene i by gene j, negative values indicate repression, and zero values indicate no direct regulatory effect. Similarly, in the degradation term, positive values for h_{ij} typically represent enhanced degradation [31].
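As a worked instance, writing out the products for a two-gene network (N = 2) gives the pair of equations below. The comments on specific kinetic orders are illustrative readings of the sign convention, not values taken from the cited studies.

```latex
\begin{aligned}
\frac{dX_1}{dt} &= \alpha_1 X_1^{g_{11}} X_2^{g_{12}} - \beta_1 X_1^{h_{11}} X_2^{h_{12}} \\
\frac{dX_2}{dt} &= \alpha_2 X_1^{g_{21}} X_2^{g_{22}} - \beta_2 X_1^{h_{21}} X_2^{h_{22}}
\end{aligned}
% Example reading: g_{12} < 0 means gene 2 represses the production of gene 1;
% g_{21} > 0 means gene 1 activates the production of gene 2;
% g_{11} = 0 means gene 1 has no direct effect on its own production.
```

This two-gene system has 2 rate constants of each kind and 4 kinetic orders of each kind, for a total of 12 parameters.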

The S-system model offers several unique advantages for GRN modeling. It provides a systematic mapping between the network topology and model parameters, where the structure of the power-law terms directly reflects the biological interactions [32]. The formalism can represent regulations in both production and degradation phases, capturing complex regulatory mechanisms that simpler models might miss [31]. Additionally, despite its nonlinear form, the S-system can be decoupled for parameter estimation, significantly reducing computational complexity [31] [32].

Table: Key Parameters in the S-system Formalism

| Parameter | Biological Interpretation | Typical Range | Regulatory Role |
|---|---|---|---|
| α_i | Production rate constant | 0 to 20 [31] | Controls maximum production rate |
| β_i | Degradation rate constant | 0 to 20 [31] | Controls basal degradation rate |
| g_{ij} | Kinetic order in production | -3.00 to 3.00 [31] | Positive: activation; Negative: inhibition |
| h_{ij} | Kinetic order in degradation | -3.00 to 3.00 [31] | Positive: enhanced degradation; Negative: suppressed degradation |

S-system Applications in Gene Regulatory Networks

Reverse Engineering of Network Topology

The primary application of S-system models in GRN research is the reverse engineering of network topology from time-series gene expression data. The inverse problem of identifying both the structure (connectivity) and parameters (kinetic orders and rate constants) of GRNs represents a cornerstone challenge in systems biology [32]. The S-system framework is particularly valuable for this task because it uniquely maps dynamical and topological information onto its parameters [32]. Each interaction in the network corresponds to specific kinetic order parameters, enabling researchers to infer regulatory relationships from expression dynamics.

Experimental and computational studies have demonstrated the effectiveness of S-system approaches for network inference. For example, the method has been successfully applied to infer regulations in the IRMA network, a real-life in-vivo synthetic network constructed within Saccharomyces cerevisiae yeast, and the SOS DNA repair network in Escherichia coli [31]. In these applications, the S-system model proved capable of extracting meaningful network topology from noisy experimental data, highlighting its practical utility for deciphering regulatory mechanisms.

Addressing Stochasticity in Biological Systems

Conventional deterministic S-system models face limitations when dealing with the inherent stochasticity of biological systems. Microarray gene expression data shows unpredictable variations in the range of 20-30% of original expression values due to both biological and technical factors [31]. To address this challenge, stochastic S-system variants have been developed that incorporate noise terms to better represent the biological reality.

These stochastic extensions investigate the incorporation of various standard noise measurements, including:

- Additive noise: Mimics the effect of nature's random processes

- Multiplicative noise: Models external noise imposed on GRNs

- Langevin noise: Represents internal noise due to small copy numbers of key molecular species [31]

Such stochastic models are particularly necessary for accurate inference of GRNs from noisy microarray data, as they can accommodate the biological variations (changes in mRNA levels) and technical variations (sampling, labeling, and hybridization artifacts) that characterize experimental data [31].
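To make the difference between additive and multiplicative noise concrete, the sketch below shows a single Euler-Maruyama step for a generic drift function. The noise forms used here (a constant diffusion term for additive noise, a state-proportional term for multiplicative noise) are common textbook choices and are assumptions of this example; the exact formulations in [31] may differ, and Langevin noise is deliberately left out.

```python
import numpy as np

def euler_maruyama_step(x, drift, dt, noise_scale, kind, rng):
    """One Euler-Maruyama step: x + drift(x)*dt + diffusion(x)*dW."""
    dW = rng.standard_normal(x.shape) * np.sqrt(dt)   # Wiener increment
    if kind == "additive":
        diffusion = noise_scale * np.ones_like(x)      # independent of state
    elif kind == "multiplicative":
        diffusion = noise_scale * x                    # scales with expression level
    else:
        raise ValueError(f"unsupported noise kind: {kind}")
    # Clamp to a small positive value so power-law terms stay defined.
    return np.maximum(x + drift(x) * dt + diffusion * dW, 1e-8)
```

In use, `drift` would be the deterministic S-system right-hand side, and setting `noise_scale` to zero recovers a plain Euler step.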

Model Condensation and Simplification

Beyond network inference, S-system modeling has been applied for purposeful simplification of complex dynamic models through a process called model condensation [33]. This approach is valuable in cases where only part of the system dynamics is to be investigated, allowing researchers to reduce complex models to their essential components while preserving dynamic behavior for the variables of interest.

The condensation procedure involves generating S-system equations that reflect interactions and processes of the complex model, with parameter values estimated using optimization techniques. This method has been successfully demonstrated in ecological modeling, such as condensing the complex TREEDYN3 forest simulation model into a simpler S-system representation that maintained predictive accuracy for key variables like tree height, diameter, and canopy closure [33]. This application highlights the flexibility of the S-system approach for creating computationally efficient models that retain essential system dynamics.

Parameter Inference Using Evolutionary Algorithms

The Parameter Estimation Challenge

Parameter estimation for S-system models represents a significant computational challenge due to the high-dimensional search space and nonlinear nature of the equations. For a network of N genes, the number of parameters that must be estimated is 2×N×(N+1), creating a complex optimization landscape with many local minima [31] [32]. Traditional optimization methods often struggle with this problem, leading to the adoption of evolutionary algorithms and other metaheuristic approaches.
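A quick calculation shows how fast the search space grows with network size. The helper name below is hypothetical.

```python
def s_system_param_count(n_genes):
    """n alphas + n betas + n*n production orders + n*n degradation orders."""
    return 2 * n_genes * (n_genes + 1)

for n in (2, 5, 10, 50):
    print(n, s_system_param_count(n))
```

A 5-gene network already has 60 parameters, a 10-gene network has 220, and a 50-gene network has 5100, which is why the dimensionality quickly dominates the cost of the search.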

The parameter identification problem can be formulated as an optimization task where the goal is to minimize the difference between the model predictions and experimental data. This is typically measured using the sum of squared errors between the slopes of the optimized system and the true slopes derived from time-series data [32]. The decoupled S-system approach, which decomposes the canonical system into smaller problems, significantly reduces computational burden by allowing the 2(N+1) parameters of each variable (gene or metabolite) to be computed separately [31] [32].
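The slope-based, decoupled objective can be sketched for a single gene as follows. The per-gene parameter layout `[α_i, β_i, g_i1..g_iN, h_i1..h_iN]`, the finite-difference slope estimate via `np.gradient`, and the function names are assumptions of this example; in practice, slopes are often estimated after smoothing the data.

```python
import numpy as np

def predicted_slope(params_i, X):
    """Model slope of gene i at every time point; X has shape (T, N), X > 0.
    params_i = [alpha_i, beta_i, g_i1..g_iN, h_i1..h_iN] (assumed layout)."""
    n = X.shape[1]
    alpha_i, beta_i = params_i[0], params_i[1]
    g_i, h_i = params_i[2:2 + n], params_i[2 + n:2 + 2 * n]
    log_X = np.log(X)
    return alpha_i * np.exp(log_X @ g_i) - beta_i * np.exp(log_X @ h_i)

def decoupled_sse(params_i, X, slopes_i):
    """Sum of squared errors between observed and model slopes for gene i."""
    return float(np.sum((slopes_i - predicted_slope(params_i, X)) ** 2))

def estimate_slopes(X, t):
    """Finite-difference slope estimates from the measured time series."""
    return np.gradient(X, t, axis=0)
```

Because `decoupled_sse` needs no numerical integration and involves only the 2(N+1) parameters of one gene, each gene can be fitted as a separate, much smaller optimization problem.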

Table: Evolutionary Algorithm Performance for S-system Parameter Estimation

| System Dimension | Computational Time | Success Rate | Error Magnitude | Key Challenges |
|---|---|---|---|---|
| 2-variable system | Variable | Moderate | ~10⁻⁵ | Oscillatory behavior, phase shifts |
| 4-variable system | ~5 seconds per case | High | ~10⁻⁵ | Well-behaved dynamics |
| 5-variable system | 23 seconds total | High (with exceptions) | ~10⁻⁵ | Problematic parameters (g₃₂, h₃₂) |
| 10-variable system | 75 seconds total | Good | ~10⁻⁵ | Scalability, parameter interactions |

Evolutionary Computation Protocols

Evolutionary algorithms for S-system parameter estimation follow a structured protocol that mimics natural selection processes [34]: