Evolutionary Algorithms and Artificial Neural Networks for Advanced Landslide Susceptibility Mapping: A Comprehensive Guide


Abstract

Landslide Susceptibility Mapping (LSM) is a critical tool for disaster risk reduction and land-use planning. This article explores how Evolutionary Algorithms (EAs) can be integrated with Artificial Neural Networks (ANNs) to build robust, accurate, and interpretable landslide susceptibility models. We cover the foundational principles of this hybrid approach, detail the implementation of optimization algorithms such as the Cuckoo Optimization Algorithm (COA), Harmony Search (HS), Stochastic Fractal Search (SFS), and Teaching-Learning-Based Optimization (TLBO), and address key challenges such as hyperparameter tuning, non-landslide sample selection, and model overfitting. The article also presents validation and comparative analysis techniques, including performance metrics such as AUC-ROC and geomorphic plausibility tests, to benchmark these models against traditional methods. Aimed at researchers, geoscientists, and engineers, this guide synthesizes current methodologies for geohazard assessment.

The Foundation of Hybrid EA-ANN Models for Landslide Risk Assessment

Landslide Susceptibility Mapping (LSM) is a fundamental proactive tool in geological risk management: it identifies areas prone to landsliding based on local terrain conditions and triggering factors. Landslides are destructive natural disasters that damage vegetation, infrastructure, and property, often with substantial loss of life and economic cost [1]. The integration of computational approaches, particularly evolutionary algorithms combined with artificial neural networks (ANNs), has significantly advanced the predictive accuracy of LSM models in recent years. These advances coincide with growing recognition of the socio-economic consequences of landslides, which extend beyond immediate physical damage to long-term impacts on community resilience, economic stability, and sustainable development, particularly in impoverished regions where recovery capacity is limited [1]. This article explores the integration of evolutionary algorithm-based ANN approaches in LSM and examines their relationship with socio-economic impact assessment, providing application notes and experimental protocols for researchers and disaster risk management professionals.

Theoretical Foundations and Current Approaches

Landslide susceptibility refers to the spatial probability of landslide occurrences, helping to identify high-risk areas based on the interaction of multiple causative factors [1]. Current LSM methodologies generally fall into two categories: qualitative (knowledge-driven) and quantitative (data-driven) approaches [2]. Qualitative methods, including the analytical hierarchy process (AHP) and fuzzy logic, rely on expert judgment and are inherently subjective [3] [1]. Quantitative approaches encompass statistical, probabilistic, and increasingly, machine learning techniques that learn the complex, non-linear relationships between landslide occurrences and multiple predisposing factors [4] [2].

The integration of socio-economic factors into LSM represents a paradigm shift from purely geological approaches to more holistic risk assessment frameworks. Traditional models relying purely on geological data fail to address social vulnerabilities that may be most critical in determining impact scenarios of disaster events [5]. Social vulnerability encompasses socio-economic factors like population density, economic status, and infrastructure quality, influencing a community's preparedness, response, and recovery capacity [5]. This integration is particularly crucial given the significant socio-economic impacts of landslides, which claim tens of thousands of lives globally and cause an estimated $20 billion in annual economic losses [6].

Table 1: Key Socio-Economic Impacts of Landslides

| Impact Category | Specific Consequences | Regional Examples |
|---|---|---|
| Human Costs | Fatalities, injuries, displacement | 66,438 deaths globally (1900-2020) [7] |
| Direct Economic Losses | Infrastructure damage, property destruction | $10 billion economic losses (1900-2020) [7], $300 million annual average in Germany [6] |
| Indirect Economic Impacts | Disrupted transportation, reduced agricultural productivity, decreased property values | Hindered resource development and economic growth in mountainous regions [1] |
| Social Disruption | Community displacement, psychological trauma, public service interruption | Exacerbated poverty in contiguous impoverished areas of Liangshan, China [1] |

Evolutionary Algorithms and ANN in LSM: Mechanisms and Workflows

Evolutionary algorithms (EAs) represent a class of population-based metaheuristic optimization algorithms inspired by biological evolution. In LSM, EAs are primarily employed to optimize the structural parameters of ANN models and select optimal feature subsets from multiple landslide conditioning factors [2]. The synergy between EAs and ANN addresses several limitations of standalone ANN applications, including computational complexity, over-fitting problems, and challenges in tuning structural parameters [2].

The most commonly implemented evolutionary algorithms in LSM include Genetic Algorithms (GA), Particle Swarm Optimization (PSO), Non-dominated Sorting Genetic Algorithm II (NSGA-II), and Evolutionary Non-dominated Radial Slots-Based Algorithm (ENORA) [2] [7]. These algorithms enhance ANN performance through two primary mechanisms: feature selection and parameter tuning. Feature selection reduces the effects of the "curse of dimensionality" by identifying the most relevant landslide conditioning factors, while parameter tuning optimizes ANN hyperparameters such as learning rate, number of hidden layers, and activation functions [2].

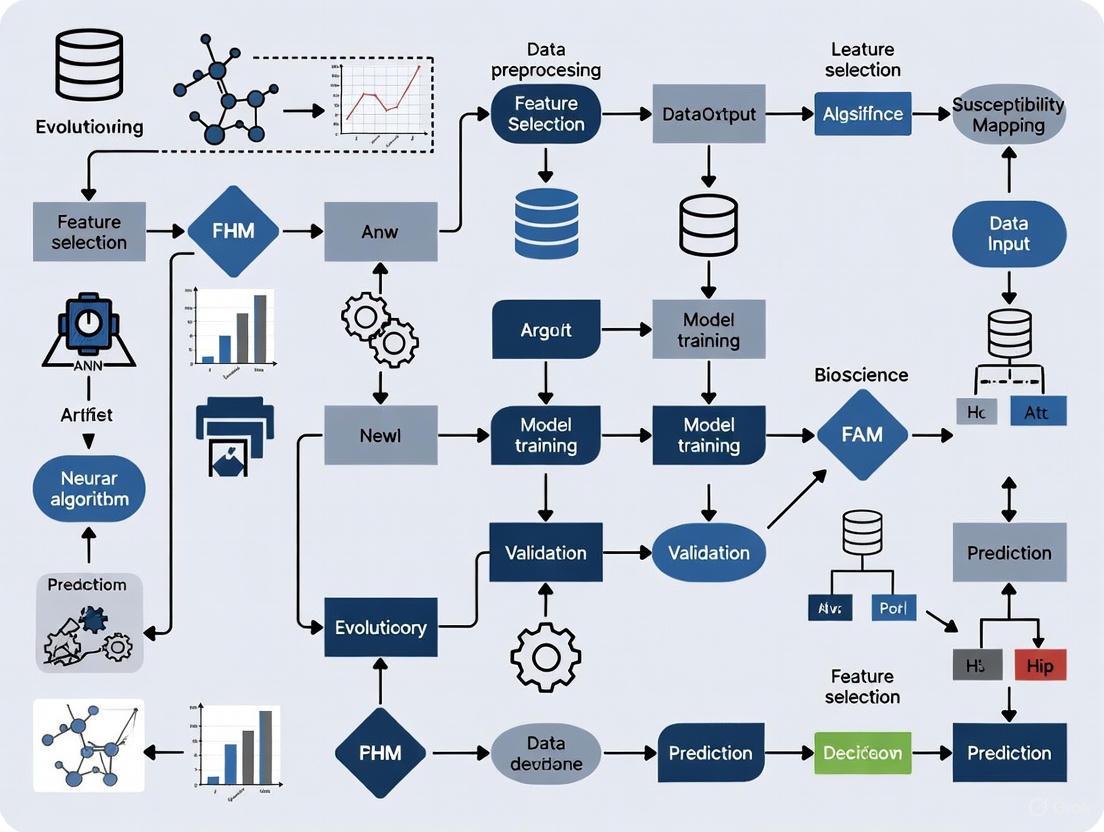

Diagram 1: Integrated workflow for evolutionary algorithm-ANN based landslide susceptibility mapping and socio-economic impact assessment

Experimental Protocols and Application Notes

Protocol 1: Development of Evolutionary ANN for LSM

Objective: To create an optimized ANN model using evolutionary algorithms for accurate landslide susceptibility mapping with integration of socio-economic factors.

Materials and Software Requirements:

- Geographical Information System (GIS) software (e.g., ArcGIS, QGIS)

- Programming environment (e.g., Python with TensorFlow/Keras, MATLAB)

- Spatial database management system

- High-resolution digital elevation models (DEM)

- Remote sensing data (e.g., Landsat imagery, InSAR data)

Methodological Steps:

Landslide Inventory Mapping:

Conditioning Factor Selection:

- Select relevant landslide conditioning factors based on literature review and regional characteristics

- Key factors typically include: topographic (elevation, slope, aspect), geological (lithology, distance to faults), hydrological (distance to rivers, rainfall), environmental (NDVI, land use), and socio-economic factors (population density, infrastructure) [1] [7]

- Process all factors to a consistent spatial resolution and coordinate system

Evolutionary Algorithm Optimization:

- Initialize population of potential solutions (ANN parameters and feature subsets)

- Define fitness function based on prediction accuracy (e.g., AUC, F1-score)

- Implement selection, crossover, and mutation operations (for GA) or position/velocity updates (for PSO)

- Iterate until convergence criteria met (e.g., maximum generations, fitness threshold)
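The position and velocity updates mentioned for PSO can be sketched as follows. This is a minimal global-best PSO under stated assumptions, not the configuration used in any cited study: the inertia and acceleration coefficients are common textbook defaults, and `toy_loss` is a hypothetical stand-in for "1 - validation AUC" as a function of two ANN hyperparameters. In a real run the objective would train and score an ANN.

```python
import random


def pso_minimize(objective, bounds, n_particles=20, n_iters=100,
                 inertia=0.7, c_cognitive=1.5, c_social=1.5, seed=0):
    """Minimise `objective` inside a box with a basic global-best PSO."""
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]            # personal best positions
    best_val = [objective(p) for p in pos]    # personal best values
    g = min(range(n_particles), key=best_val.__getitem__)
    gbest_pos, gbest_val = best_pos[g][:], best_val[g]

    for _ in range(n_iters):                  # stop at a maximum iteration count
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # velocity update: inertia + pull to personal best + pull to global best
                vel[i][d] = (inertia * vel[i][d]
                             + c_cognitive * r1 * (best_pos[i][d] - pos[i][d])
                             + c_social * r2 * (gbest_pos[d] - pos[i][d]))
                lo, hi = bounds[d]
                # position update, clamped to the search box
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            val = objective(pos[i])
            if val < best_val[i]:
                best_val[i], best_pos[i] = val, pos[i][:]
                if val < gbest_val:
                    gbest_val, gbest_pos = val, pos[i][:]
    return gbest_pos, gbest_val


def toy_loss(x):
    """Hypothetical loss surface over (log10 learning rate, hidden units)."""
    log_lr, hidden = x
    return (log_lr + 2.0) ** 2 + ((hidden - 24.0) / 10.0) ** 2


best, loss = pso_minimize(toy_loss, bounds=[(-5.0, 0.0), (4.0, 64.0)])
print(best, loss)
```

Because each fitness evaluation in practice means training a network, the swarm size and iteration count usually dominate the run time.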

ANN Model Training and Validation:

- Train ANN model using optimized parameters and feature subset

- Validate model performance using area under receiver operating characteristic curve (AUC), accuracy, precision, recall, and F1-score [4]

- Generate final landslide susceptibility map classified into very low, low, moderate, high, and very high susceptibility zones
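The final classification step can be illustrated with a short sketch. The protocol does not say how the class breaks are chosen, so equal-count quantiles are assumed here; other schemes, such as natural breaks, are also used in practice. The `lsi` values are made up for illustration.

```python
LABELS = ["very low", "low", "moderate", "high", "very high"]


def quantile_breaks(values, n_classes=5):
    """Return n_classes - 1 cut points taken at equal-count quantiles."""
    ordered = sorted(values)
    n = len(ordered)
    return [ordered[min(n - 1, (k * n) // n_classes)] for k in range(1, n_classes)]


def classify(value, breaks):
    """Map one susceptibility index to a class label using the cut points."""
    return LABELS[sum(value >= b for b in breaks)]


# Made-up susceptibility indices for ten map cells
lsi = [0.02, 0.11, 0.35, 0.48, 0.52, 0.67, 0.71, 0.88, 0.93, 0.97]
breaks = quantile_breaks(lsi)
zones = [classify(v, breaks) for v in lsi]
print(breaks)
print(zones)
```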

Table 2: Performance Metrics of Evolutionary Algorithm-Optimized ANN Models in LSM

| Algorithm Combination | Study Region | Performance Metrics | Key Conditioning Factors Identified |
|---|---|---|---|
| COA-MLP [4] | Gilan, Iran | AUC: 0.995 (testing) | 16 topographic, geomorphologic, geological, land use, and hydrological factors |
| PSO-ANN [2] | Achaia, Greece | AUC: 0.969 (training), 0.800 (validation) | Elevation, slope angle, slope aspect, curvature, distance to faults |
| NSGA-II-Fuzzy [7] | Khalkhal, Iran | AUC: 0.867, RMSE: 0.43 (validation) | Lithology, land cover, altitude |
| Hybrid RF-GB [5] | Multiple | Accuracy: 92%, Precision: 0.89, F1-score: 0.90 | Geological and social vulnerability factors |

Protocol 2: Integration of Socio-Economic Vulnerability Assessment

Objective: To incorporate socio-economic vulnerability factors into LSM for comprehensive risk assessment.

Methodological Steps:

Socio-Economic Data Collection:

- Collect demographic data (population density, age distribution)

- Gather economic data (income levels, property values, infrastructure distribution)

- Acquire land use and planning data (settlement patterns, critical facilities)

Social Vulnerability Index Calculation:

- Normalize socio-economic indicators to common scale

- Apply principal component analysis (PCA) to reduce dimensionality [5]

- Calculate composite social vulnerability index
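The three steps above can be sketched in plain Python. Several choices here are assumptions, not taken from the cited work: indicators are min-max normalized, every indicator is oriented so that higher means more vulnerable, and the composite index is the score on the first principal component (found by power iteration) rescaled to [0, 1]. The indicator names and values are hypothetical.

```python
def min_max(column):
    """Rescale a list of numbers to the range [0, 1]."""
    lo, hi = min(column), max(column)
    return [(v - lo) / (hi - lo) if hi > lo else 0.0 for v in column]


def first_principal_component(rows, n_iters=200):
    """Leading eigenvector of the covariance matrix via power iteration."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    cov = [[sum(c[a] * c[b] for c in centered) / (n - 1) for b in range(d)]
           for a in range(d)]
    vec = [1.0] * d
    for _ in range(n_iters):
        nxt = [sum(cov[a][b] * vec[b] for b in range(d)) for a in range(d)]
        norm = sum(x * x for x in nxt) ** 0.5
        vec = [x / norm for x in nxt]
    if sum(vec) < 0:  # eigenvector sign is arbitrary; make loadings point "up"
        vec = [-x for x in vec]
    return vec, centered


def vulnerability_index(indicators):
    """indicators: dict of name -> list of values, one value per spatial unit."""
    cols = [min_max(col) for col in indicators.values()]
    rows = [list(r) for r in zip(*cols)]
    loadings, centered = first_principal_component(rows)
    scores = [sum(l * x for l, x in zip(loadings, row)) for row in centered]
    return min_max(scores)


# Hypothetical indicators for five districts
svi = vulnerability_index({
    "population_density": [120, 450, 900, 60, 300],
    "poverty_rate": [0.10, 0.25, 0.40, 0.05, 0.20],
    "informal_housing_share": [0.05, 0.20, 0.35, 0.02, 0.15],
})
print([round(v, 3) for v in svi])
```

A single component is kept for brevity; an index built from several components would need an explicit weighting rule, which the protocol leaves open.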

Integrated Risk Assessment:

- Combine physical susceptibility map with social vulnerability index

- Apply catastrophe theory to model discontinuous changes and threshold effects [1]

- Implement the Landslide Misjudgment Potential Societal Loss Evaluation Index (LMPSLEI) to quantify potential losses from false negatives and false positives [8]

Climate Change Scenario Integration:

- Utilize CMIP6 climate projections under different SSP-RCP scenarios [9]

- Model changes in rainfall patterns and extreme weather events

- Project future landslide susceptibility under climate change scenarios

- Assess future population and economic exposure to landslide hazards

Diagram 2: Evolutionary algorithm optimization process for ANN parameter tuning in LSM

Table 3: Essential Research Toolkit for Evolutionary Algorithm-Based LSM Research

| Tool Category | Specific Tools/Software | Application in LSM Research | Key Functions |
|---|---|---|---|
| GIS Software | ArcGIS, QGIS, GRASS GIS | Spatial data management, analysis, and visualization | Geoprocessing, map algebra, susceptibility visualization |
| Remote Sensing Data | Landsat, Sentinel, ASTER DEM, LiDAR | Terrain analysis, land cover classification, change detection | Deriving conditioning factors (slope, aspect, curvature, NDVI) |
| Machine Learning Libraries | TensorFlow, Keras, Scikit-learn, WEKA | Implementing ANN and evolutionary algorithms | Model development, training, and validation |
| Evolutionary Algorithm Frameworks | DEAP, Platypus, JMetal | Implementing optimization algorithms | Parameter tuning, feature selection |
| Statistical Analysis Tools | R, SPSS, MATLAB | Statistical analysis and model validation | Performance evaluation, significance testing |
| Climate Projection Data | CMIP6 model outputs | Future scenario analysis | Projecting climate change impacts on landslide susceptibility [9] |
| Socio-Economic Data | Census data, night light data, land use maps | Social vulnerability assessment | Quantifying socioeconomic exposure and vulnerability [9] |

Data Analysis and Interpretation Guidelines

Model Validation Techniques

Robust validation of LSM models is essential for reliability in practical applications. The area under the receiver operating characteristic curve (AUC) represents the most widely adopted validation metric, with values above 0.8 indicating good performance and above 0.9 indicating excellent performance [4] [2]. Additional statistical measures including accuracy, precision, recall, F1-score, and root mean square error (RMSE) provide comprehensive assessment of model performance [5] [7].
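As a concrete reference for these metrics, the sketch below computes AUC from its rank interpretation (the probability that a randomly chosen landslide cell scores higher than a randomly chosen non-landslide cell) together with accuracy, precision, recall, and F1 at a fixed threshold. The labels, scores, and the 0.5 threshold are illustrative assumptions.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def classification_report(labels, scores, threshold=0.5):
    """Accuracy, precision, recall and F1 after thresholding the scores."""
    pred = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for p, y in zip(pred, labels) if p == 1 and y == 1)
    fp = sum(1 for p, y in zip(pred, labels) if p == 1 and y == 0)
    fn = sum(1 for p, y in zip(pred, labels) if p == 0 and y == 1)
    tn = sum(1 for p, y in zip(pred, labels) if p == 0 and y == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": (tp + tn) / len(labels), "precision": precision,
            "recall": recall, "f1": f1}


# Illustrative data: 1 = landslide cell, 0 = non-landslide cell
labels = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.4, 0.7, 0.3, 0.2, 0.1]
auc_value = roc_auc(labels, scores)
report = classification_report(labels, scores)
print(auc_value, report)
```

AUC is threshold-free, while the other four metrics change with the chosen cut-off, which is one reason the two kinds of measure are usually reported together.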

Spatial validation through field verification represents a critical step in model assessment. This involves selecting random points across different susceptibility classes and conducting ground truthing to verify model predictions [3]. Comparative analysis with independent landslide inventories or historical records further validates model robustness and temporal transferability.

Interpretation of Integrated Socio-Economic Results

The integration of socio-economic factors necessitates specialized interpretation frameworks. The Landslide Misjudgment Potential Societal Loss Evaluation Index (LMPSLEI) provides a quantitative measure of potential societal losses resulting from model errors, giving greater weight to false negatives (undetected landslides) due to their typically more severe consequences [8]. This approach represents a significant advancement beyond pure statistical metrics by explicitly incorporating the asymmetric impact of different error types.

Future scenario analysis under climate change and socioeconomic development pathways enables proactive risk management. Studies project potential landslide activities over mainland China to increase by 20.6% to 46.5% by the end of the 21st century depending on emission scenarios, with parallel increases in population and economic exposure in most scenarios [9]. Such analyses help prioritize regions for intervention and guide adaptation planning.

The integration of evolutionary algorithms with artificial neural networks represents a powerful methodological advancement in landslide susceptibility mapping, significantly enhancing model accuracy and robustness through optimized parameter tuning and feature selection. The concurrent incorporation of socio-economic factors transforms LSM from a purely physical assessment to a comprehensive risk evaluation tool that directly addresses the human dimensions of landslide impacts.

Implementation of these advanced LSM approaches provides valuable insights for disaster prevention, poverty alleviation, and sustainable development strategies, particularly in vulnerable regions [1]. The proposed protocols and application notes offer researchers and practitioners a structured framework for developing integrated physical-socioeconomic landslide risk assessments. Future research directions should focus on enhancing model transferability across regions, improving the temporal resolution of susceptibility assessments, and strengthening the linkage between susceptibility mapping and decision-making processes for land use planning and emergency preparedness.

The Role of Artificial Neural Networks (ANNs) in Capturing Complex Landslide Patterns

Landslides represent one of the most destructive natural hazards globally, causing significant loss of life and extensive damage to infrastructure and the environment [4]. The complex, nonlinear interactions between multiple conditioning factors—including topography, geology, hydrology, and land use—make landslide pattern recognition and susceptibility mapping particularly challenging. Artificial Neural Networks (ANNs) have emerged as powerful computational tools capable of learning these complex, high-dimensional relationships from geospatial data, offering significant advantages over traditional statistical methods for landslide susceptibility assessment [10] [11].

When integrated with evolutionary optimization algorithms, ANNs demonstrate enhanced capability to identify optimal network architectures and parameters, substantially improving prediction accuracy for landslide patterns [4] [10]. This integration represents a significant advancement in geohazard assessment, enabling more reliable identification of susceptible areas for disaster mitigation and land-use planning.

Performance Analysis of Evolutionary Algorithm-Optimized ANNs

Extensive research has validated the performance improvements achieved by coupling ANNs with various optimization algorithms for landslide susceptibility mapping. The table below summarizes quantitative performance comparisons from recent studies:

Table 1: Performance of ANN models optimized with different algorithms for landslide susceptibility mapping

| Optimization Algorithm | Study Area | Training AUC | Testing AUC | Key Advantages |
|---|---|---|---|---|
| COA-MLP [4] | Gilan, Iran | 0.998 | 0.995 | Best swarm size = 450; high accuracy |
| SFS-MLP [4] | Gilan, Iran | 0.999 | 0.996 | Highest accuracy; dependable susceptibility zoning |
| TLBO-MLP [4] | Gilan, Iran | 0.999 | 0.995 | Excellent training and testing performance |
| HS-MLP [4] | Gilan, Iran | 0.997 | 0.995 | Consistent high performance |
| PSO-ANN [10] | Karakoram, Pakistan | Comparable to BO_TPE | ~1.84% lower than BO_TPE | Optimizes weights, biases, and architecture |
| GA-ANN [10] | Karakoram, Pakistan | Comparable to BO_TPE | ~0.32% lower than BO_TPE | Effective weight adjustment via genetic operators |
| BO_TPE-ANN [10] | Karakoram, Pakistan | High | Benchmark performance | Optimal hyperparameter configuration |
| Transfer Learning ANN [11] | Pacitan, Indonesia | - | 0.97 | Superior performance in data-scarce regions |

These optimization algorithms enhance ANN performance through distinct mechanisms. Particle Swarm Optimization (PSO) and Genetic Algorithms (GA) optimize ANN weights, biases, and architecture [10], while Bayesian Optimization methods (BO_GP, which uses a Gaussian process surrogate, and BO_TPE, which uses a tree-structured Parzen estimator) tune hyperparameters such as learning rate, regularization strength, and network architecture [10]. In the Gilan study, all four optimized MLPs reached a testing AUC of 0.995 or higher [4], and the transfer learning ANN reached 0.97 in Pacitan [11], which indicates that these integrated models can capture complex landslide patterns.

Advanced Protocols for Landslide Pattern Recognition

Protocol: Evolutionary Algorithm-Optimized ANN for Landslide Susceptibility Mapping

Application: Developing high-accuracy landslide susceptibility models in data-rich environments

Reagents & Solutions:

- Landslide inventory database (historical landslide locations)

- Sixteen causal factor layers (topographic, geomorphologic, geological, land use, hydrological, hydrogeological)

- Normalization algorithms for data preprocessing

- Optimization algorithms (COA, HS, SFS, TLBO, PSO, GA, or Bayesian variants)

Procedure:

Data Preparation and Causal Factor Selection

- Compile landslide inventory map using verified sources and aerial photograph analysis [4]

- Select sixteen causal factors based on sensitivity analysis, prior research, and empirical landslide data [4]

- Apply feature selection algorithms (Information Gain, Variance Inflation Factor, Relief Attribute Evaluator, etc.) to determine geospatial variable importance [10]

- Partition data into training (70%), validation (20%), and testing (10%) sets [12]
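The 70/20/10 partition can be sketched as a stratified random split, so that each subset keeps the same ratio of landslide to non-landslide samples. Stratification and the fixed seed are assumptions added here for reproducibility; the cited protocol only gives the proportions.

```python
import random


def stratified_split(labels, fractions=(0.7, 0.2, 0.1), seed=42):
    """Return index lists (train, val, test) that preserve the class ratio."""
    rng = random.Random(seed)
    train, val, test = [], [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        n_train = round(fractions[0] * len(idx))
        n_val = round(fractions[1] * len(idx))
        train.extend(idx[:n_train])
        val.extend(idx[n_train:n_train + n_val])
        test.extend(idx[n_train + n_val:])   # remainder goes to the test set
    return train, val, test


# Illustrative inventory: 50 landslide and 50 non-landslide samples
labels = [1] * 50 + [0] * 50
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```

A purely random split ignores spatial clustering of landslides; splitting by spatial blocks is a stricter alternative when nearby samples are strongly correlated.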

Model Optimization and Training

- Initialize ANN architecture with input neurons matching causal factors

- Apply optimization algorithm to determine optimal weights, biases, and hyperparameters:

- Train model using backpropagation with optimization-guided parameter adjustments

- Validate model performance using validation dataset to prevent overfitting

Model Evaluation and Susceptibility Mapping

- Calculate Area Under the Receiver Operating Characteristic Curve (AUROC) for training and testing datasets [4]

- Generate landslide susceptibility index (LSI) values for the study area

- Classify susceptibility into zones (low, moderate, high) based on LSI thresholds

- Compare susceptibility patterns with known landslide events for validation

Troubleshooting:

- If model shows poor convergence, adjust optimization algorithm parameters (swarm size for COA, population size for GA)

- If overfitting occurs, increase regularization strength or implement early stopping

- If feature importance varies significantly, apply multiple feature selection techniques for consensus
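The early stopping remedy for overfitting can be expressed independently of any training framework. The sketch below scans a sequence of per-epoch validation losses and reports where training would stop; the `patience` and `min_delta` parameters and the loss values are illustrative assumptions.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of validation losses.

    Training stops once the loss has failed to improve by more than
    `min_delta` for `patience` consecutive epochs.
    """
    best_loss, best_epoch, waited = float("inf"), -1, 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return best_epoch, epoch
    return best_epoch, len(val_losses) - 1


# Illustrative validation curve: improves, then starts to overfit
losses = [0.69, 0.55, 0.48, 0.45, 0.46, 0.47, 0.49, 0.52]
best_epoch, stop_epoch = early_stopping_epoch(losses)
print(best_epoch, stop_epoch)
```

In use, the model weights saved at `best_epoch` are the ones restored for mapping.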

Protocol: Transfer Learning ANN for Data-Scarce Regions

Application: Landslide susceptibility mapping in regions with limited landslide inventory data

Reagents & Solutions:

- Source area dataset with complete landslide inventory

- Target area with limited landslide data

- Pre-trained ANN model from source area

- Fine-tuning algorithms for model adaptation

Procedure:

Source Model Development

- Train ANN model on data-rich source area using the evolutionary algorithm-optimized ANN protocol above

- Validate model performance using comprehensive testing

- Archive model architecture, weights, and preprocessing parameters

Knowledge Transfer and Model Adaptation

- Initialize target model with pre-trained source model architecture and weights

- Freeze early layers to retain general feature extraction capabilities

- Replace and retrain final layers using limited target area data

- Fine-tune model with reduced learning rate to adapt to target area characteristics [11]
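The freeze-and-retrain idea can be shown on a deliberately tiny network. In this sketch the hidden layer stands in for the pre-trained source model and is never updated, while a fresh output layer is trained on a handful of target-area samples with a small learning rate. The weights, samples, and learning rate are all hypothetical; a real workflow would use a deep learning framework and the archived source model.

```python
import math


def hidden_layer(x, weights, biases):
    """Frozen feature extractor: one tanh layer with fixed parameters."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, biases)]


def predict(x, frozen, head):
    """Susceptibility probability from frozen features and a trainable head."""
    h = hidden_layer(x, *frozen)
    z = sum(w * hi for w, hi in zip(head["w"], h)) + head["b"]
    return 1.0 / (1.0 + math.exp(-z))


def fine_tune(samples, labels, frozen, n_hidden, lr=0.05, epochs=200):
    """Train only the replaced output layer; frozen weights are never touched."""
    head = {"w": [0.0] * n_hidden, "b": 0.0}   # new, re-initialised final layer
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            h = hidden_layer(x, *frozen)
            err = predict(x, frozen, head) - y  # gradient of log loss w.r.t. z
            head["w"] = [w - lr * err * hi for w, hi in zip(head["w"], h)]
            head["b"] -= lr * err
    return head


# Hypothetical pre-trained hidden layer (weights, biases) from a source area
source_frozen = ([[1.5, 0.2], [-0.3, 1.1]], [0.0, 0.1])
# Small target-area sample: two scaled factors per cell, 1 = landslide
target_x = [(1.0, 0.2), (0.8, -0.1), (-0.9, 0.1), (-1.0, -0.3)]
target_y = [1, 1, 0, 0]

head = fine_tune(target_x, target_y, source_frozen, n_hidden=2)
probs = [predict(x, source_frozen, head) for x in target_x]
print([round(p, 3) for p in probs])
```

Unfreezing more layers gives the model more freedom to adapt but also more parameters to fit from the limited target data.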

Interpretation and Plausibility Assessment

- Apply SHAP (SHapley Additive exPlanations) values to identify influential factors [11]

- Generate partial dependence plots to visualize feature relationships

- Assess geomorphic plausibility by comparing susceptibility patterns with terrain behavior [11]

- Validate model using qualitative assessment of susceptibility distribution across slope, TWI, and curvature features [11]

Troubleshooting:

- If transfer performance is poor, adjust the number of frozen layers

- If limited data causes overfitting, implement data augmentation techniques

- If model interpretation reveals implausible relationships, incorporate domain expertise to constrain model

Workflow Visualization

Diagram 1: Workflow for ANN landslide pattern recognition

Research Reagent Solutions

Table 2: Essential research reagents and computational tools for ANN-based landslide analysis

| Reagent/Tool | Function | Application Example | Implementation Considerations |
|---|---|---|---|
| Airborne LiDAR [13] | High-resolution DEM generation; penetrates vegetation to capture micro-topography | Landslide trace identification in vegetated areas [13] | Requires specialized equipment; data processing expertise needed |
| Optimization Algorithms (PSO, GA) [10] | Optimize ANN weights, biases, and architecture | Enhancing ANN performance in Karakoram Highway susceptibility mapping [10] | Parameter tuning critical; computational resource intensive |
| Bayesian Optimization (BO_GP, BO_TPE) [10] | Hyperparameter tuning; probabilistic model-based optimization | Finding optimal learning rates and network structures [10] | More efficient than grid search; handles complex parameter spaces |
| Feature Selection Algorithms [10] | Identify relevant geospatial variables; reduce dimensionality | Determining key landslide conditioning factors along Karakoram Highway [10] | Multiple methods (Information Gain, VIF, etc.) provide validation through consensus |
| SHAP (SHapley Additive exPlanations) [11] | Model interpretation; feature importance quantification | Explaining ANN predictions in Pacitan, Indonesia study [11] | Computationally intensive for large datasets; provides both global and local interpretability |
| Ensemble Learning Methods [12] | Combine multiple models; reduce variance and improve accuracy | Landslide detection from satellite images using multiple CNN models [12] | Requires training multiple models; strategies include majority vote, weighted average, stacking |
| Transfer Learning Framework [11] | Knowledge transfer from data-rich to data-scarce regions | Applying models from source areas to target areas with limited inventory [11] | Effective for regions with similar geological characteristics; requires careful fine-tuning |

The integration of Artificial Neural Networks with evolutionary optimization algorithms represents a significant advance in landslide pattern recognition and susceptibility mapping. The protocols and methodologies outlined in this application note provide researchers with frameworks for implementing these computational techniques. With optimization algorithms, ANNs reached testing AUC values of 0.995 to 0.996 in the Gilan study [4] and came within about 2% of a Bayesian-optimized benchmark along the Karakoram Highway [10], capturing complex, nonlinear relationships between multiple landslide conditioning factors.

The complementary approaches of evolutionary optimization for data-rich environments and transfer learning for data-scarce regions [11] significantly expand the applicability of ANN-based methods across diverse geographical contexts. Furthermore, the incorporation of interpretability frameworks like SHAP values [11] and advanced visualization techniques such as LiDAR-enhanced terrain mapping [13] addresses the critical need for model transparency and geomorphic plausibility in landslide risk assessment.

These computational advancements, supported by the comprehensive reagent solutions and standardized protocols detailed herein, empower researchers to develop more accurate, reliable, and interpretable landslide susceptibility models, ultimately contributing to more effective disaster risk reduction and sustainable land-use planning strategies globally.

Why Evolutionary Algorithms? Overcoming the Limitations of Traditional ANN Training

In the specialized field of landslide susceptibility mapping (LSM), Artificial Neural Networks (ANNs) have emerged as a powerful tool for modeling the complex, non-linear relationships between landslide occurrences and their contributing factors. However, the performance of an ANN is highly dependent on the optimal configuration of its parameters and structure. Traditional training methods, such as backpropagation, are often plagued by limitations including convergence to local minima, sensitivity to initial weights, and the curse of dimensionality when dealing with numerous conditioning factors. Evolutionary Algorithms (EAs) offer a robust meta-heuristic solution to these challenges. This application note details how EAs can be systematically integrated with ANNs to overcome these hurdles, providing researchers with structured protocols and tools to enhance their LSM models.

Quantitative Superiority of EA-ANN Hybrid Models

Empirical studies conducted in various landslide-prone regions quantitatively demonstrate the enhanced performance of EA-ANN hybrids over traditional ANNs. The following table summarizes key performance metrics from recent research.

Table 1: Performance Comparison of EA-ANN Models in Landslide Susceptibility Mapping

| Study Location | EA-ANN Model | Key Performance Metrics (AUC) | Comparative Traditional Model | Reference |
|---|---|---|---|---|
| Gilan, Iran | SFS-MLP | Training: 0.999, Testing: 0.996 | N/A | [4] |
| Gilan, Iran | COA-MLP | Training: 0.998, Testing: 0.995 | N/A | [4] |
| Gilan, Iran | HS-MLP | Training: 0.997, Testing: 0.995 | N/A | [4] |
| Gilan, Iran | TLBO-MLP | Training: 0.999, Testing: 0.995 | N/A | [4] |
| Achaia, Greece | PSO-ANN | Prediction Accuracy: 0.800 | SVM (0.750) | [2] |
| Khalkhal, Iran | NSGA-II-Fuzzy | AUC: 0.867, RMSE: 0.43 (Validation) | ENORA (AUC: 0.844) | [7] |

The four EA-MLP models from Gilan, Iran reach Area Under the Curve (AUC) values of 0.997 to 0.999 in training and 0.995 to 0.996 in testing, so the gap between training and testing performance is small and there is little sign of overfitting [4]. Results elsewhere are more modest: the PSO-ANN in Achaia, Greece scored 0.800 against 0.750 for an SVM [2], and NSGA-II-Fuzzy in Khalkhal, Iran reached a validation AUC of 0.867 with a Root Mean Square Error (RMSE) of 0.43, ahead of ENORA at 0.844 [7]. In both cases where a comparison model is reported, the listed EA-based model scores higher.

Core Protocols for EA-ANN Integration in Landslide Susceptibility Mapping

The following protocols outline the primary methodologies for implementing EA-ANN models, synthesizing procedures from validated studies.

Protocol 1: EA for ANN Parameter Optimization

This protocol uses EAs to find the optimal set of ANN parameters (e.g., weights, biases, learning rate).

Workflow Diagram: EA-driven ANN Parameter Optimization

Detailed Procedure:

- Initialization: Generate an initial population of candidate solutions. Each solution is a vector representing a complete set of ANN parameters (e.g., connection weights and biases) [14].

- Fitness Evaluation: For each candidate solution in the population:

- Configure the ANN with the parameters from the solution.

- Train the ANN on a subset of the landslide inventory data (typically 70%).

- Evaluate the trained ANN on a separate held-out subset (typically 30%).

- Calculate the fitness score, typically using a metric like the Area Under the Receiver Operating Characteristic Curve (AUC). A higher AUC indicates a better solution [4] [2].

- Reproduction:

- Selection: Choose parent solutions from the population with a probability proportional to their fitness scores.

- Crossover: Create offspring solutions by combining parts of the parameter vectors from two parents.

- Mutation: Introduce small random changes to the offspring's parameters to maintain population diversity [14].

- Replacement: Form a new generation by replacing less-fit individuals with the newly created offspring.

- Termination: Repeat fitness evaluation, reproduction, and replacement until a stopping condition is met (e.g., a maximum number of generations, or fitness convergence). The best solution is used to configure the final ANN model for generating the landslide susceptibility map [2].
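The loop described in this protocol can be sketched as a small genetic algorithm that evolves the weights of a toy MLP directly. This is a simplified illustration under several assumptions: the network has two inputs and three hidden units, fitness is AUC on a single synthetic dataset (no separate held-out subset and no backpropagation step), and the operator settings are arbitrary. The names `mlp_predict` and `evolve` are hypothetical.

```python
import math
import random

N_HIDDEN = 3
N_GENES = 4 * N_HIDDEN + 1  # per hidden unit: w1, w2, bias, output weight; plus output bias


def mlp_predict(genes, x):
    """Forward pass of a tiny 2-input MLP parameterised by a flat gene vector."""
    out = genes[-1]
    for h in range(N_HIDDEN):
        w1, w2, b, v = genes[4 * h: 4 * h + 4]
        out += v * math.tanh(w1 * x[0] + w2 * x[1] + b)
    out = max(-50.0, min(50.0, out))  # keep exp() in a safe range
    return 1.0 / (1.0 + math.exp(-out))


def auc(labels, scores):
    """Fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def evolve(X, y, pop_size=30, generations=40, mut_rate=0.1, mut_sigma=0.3, seed=1):
    rng = random.Random(seed)

    def fitness(genes):
        return auc(y, [mlp_predict(genes, x) for x in X])

    # Initialization: random weight vectors
    pop = [[rng.uniform(-1.0, 1.0) for _ in range(N_GENES)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        scores = [fitness(g) for g in pop]                  # fitness evaluation
        best_idx = max(range(pop_size), key=scores.__getitem__)
        history.append(scores[best_idx])
        children = [pop[best_idx][:]]                       # elitism: keep the best
        while len(children) < pop_size:
            # selection with probability proportional to fitness
            mum, dad = rng.choices(pop, weights=[s + 1e-9 for s in scores], k=2)
            cut = rng.randrange(1, N_GENES)                 # one-point crossover
            child = mum[:cut] + dad[cut:]
            child = [g + rng.gauss(0.0, mut_sigma) if rng.random() < mut_rate else g
                     for g in child]                        # Gaussian mutation
            children.append(child)
        pop = children                                      # replacement
    scores = [fitness(g) for g in pop]
    best_idx = max(range(pop_size), key=scores.__getitem__)
    history.append(scores[best_idx])
    return pop[best_idx], history


# Synthetic stand-in for a landslide inventory: two scaled factors per sample
data_rng = random.Random(0)
X = [(data_rng.random(), data_rng.random()) for _ in range(60)]
y = [1 if a + b > 1.0 else 0 for a, b in X]

best_genes, history = evolve(X, y)
print(history[0], history[-1])
```

Keeping the best individual unchanged in each generation means the best fitness never decreases from one generation to the next, which makes convergence easy to monitor.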

Protocol 2: EA for Landslide Conditioning Factor Selection

This protocol uses EAs as a feature selection mechanism to identify the most relevant landslide conditioning factors, reducing model complexity and improving performance.

Workflow Diagram: Feature Selection for LSM

Detailed Procedure:

- Initialization: Create a population where each individual is a binary string representing a subset of all available conditioning factors (e.g., slope, lithology, NDVI, distance to roads) [2] [15].

- Fitness Evaluation: For each factor subset:

- Train an ANN model using only the selected factors.

- Evaluate the model's performance on a validation set.

- The fitness function is a combination of model accuracy (e.g., AUC) and a penalty for larger numbers of factors to promote parsimony.

- Reproduction: Apply selection, crossover, and mutation operators to generate new candidate subsets.

- Termination: The process iterates until the optimal trade-off between model simplicity and predictive power is achieved. Studies have shown that this method effectively identifies the most influential factors, such as lithology, land cover, and altitude [7].
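A compact sketch of this feature selection loop follows. To stay short and runnable it replaces the ANN with a stand-in scorer (the sum of the selected factors), uses synthetic data in which only the first two factors carry signal, and applies truncation selection with elitism. All of these are assumptions for illustration, as are the penalty weight and the factor names.

```python
import random

FACTORS = ["slope", "lithology", "ndvi", "dist_to_roads", "aspect", "curvature"]


def auc(labels, scores):
    """Fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def make_toy_data(n=120, seed=0):
    """Synthetic samples: only the first two factors are informative."""
    rng = random.Random(seed)
    X, y = [], []
    for _ in range(n):
        label = rng.randint(0, 1)
        row = [label + rng.gauss(0, 1.0), label + rng.gauss(0, 1.0)]
        row += [rng.gauss(0, 1.0) for _ in range(len(FACTORS) - 2)]
        X.append(row)
        y.append(label)
    return X, y


def fitness(mask, X, y, penalty=0.02):
    """AUC of the stand-in scorer minus a parsimony penalty per selected factor."""
    if not any(mask):
        return 0.0
    scores = [sum(v for v, m in zip(row, mask) if m) for row in X]
    return auc(y, scores) - penalty * sum(mask)


def select_factors(X, y, pop_size=20, generations=30, seed=1):
    rng = random.Random(seed)
    n = len(X[0])
    # Initialization: the full factor set plus random binary masks
    pop = [[1] * n] + [[rng.randint(0, 1) for _ in range(n)]
                       for _ in range(pop_size - 1)]
    for _ in range(generations):
        ranked = sorted(pop, key=lambda m: fitness(m, X, y), reverse=True)
        parents = ranked[: pop_size // 2]            # truncation selection
        children = [ranked[0][:]]                    # elitism
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, n)                # one-point crossover
            child = a[:cut] + b[cut:]
            child = [bit ^ 1 if rng.random() < 0.1 else bit for bit in child]  # bit-flip mutation
            children.append(child)
        pop = children
    return max(pop, key=lambda m: fitness(m, X, y))


X, y = make_toy_data()
best_mask = select_factors(X, y)
selected = [name for name, bit in zip(FACTORS, best_mask) if bit]
print(best_mask, selected)
```

The penalty weight controls the trade-off: a larger value favors smaller factor sets at the cost of some accuracy.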

The Researcher's Toolkit for EA-ANN Landslide Modeling

Table 2: Essential Research Reagents and Computational Tools for EA-ANN Protocols

| Category/Item | Specification/Function | Application Context in LSM |
|---|---|---|
| Evolutionary Algorithms | | |
| Genetic Algorithm (GA) | Feature selection; optimizes factor set for ANN input. | Reduces model dimensionality, mitigates overfitting [2]. |
| Particle Swarm Optimization (PSO) | Tunes structural parameters (e.g., weights) of ANN and SVM. | Enhances prediction accuracy; used in Achaia, Greece [2]. |
| Non-dominated Sorting GA II (NSGA-II) | Multi-objective optimizer for fuzzy rules in a GIS. | Generates high-accuracy LSM; applied in Khalkhal, Iran [7]. |
| Data & Validation | | |
| Landslide Inventory Map | Geospatial database of historical landslide locations. | Essential for model training and validation; base for non-landslide points [4] [15]. |
| Landslide Conditioning Factors | Raster layers (Topography, Geology, Hydrology, Anthropogenic). | Model inputs (e.g., slope, lithology, distance to river) [7] [15]. |
| Area Under Curve (AUC) | Primary metric for evaluating model prediction performance. | Standardized validation; values >0.8 indicate good model [4] [7]. |
| Software & Platforms | | |
| Geographic Info System (GIS) | Platform for spatial data management, analysis, and LSM visualization. | Core environment for processing spatial data and generating final maps [7] [16]. |
| Google Earth Engine (GEE) | Cloud platform for processing satellite imagery and deriving factors. | Efficiently calculates factors like NDVI, MNDWI from satellite data [15]. |

The integration of Evolutionary Algorithms with Artificial Neural Networks presents a formidable methodology for advancing landslide susceptibility research. By systematically overcoming the key limitations of traditional ANN training—specifically through global search capabilities, automated feature selection, and direct performance optimization—EA-ANN hybrids deliver quantifiable improvements in predictive accuracy and model robustness. The structured protocols and toolkit provided herein offer a clear roadmap for researchers to implement these advanced techniques, ultimately contributing to the development of more reliable tools for geohazard risk assessment and mitigation.

Landslide Susceptibility Mapping (LSM) is a critical proactive measure for risk management, sustainable development, and the protection of human lives, infrastructure, and the environment [4]. In recent years, the integration of Artificial Neural Networks (ANNs) with evolutionary optimization algorithms has significantly enhanced the predictive accuracy of LSM models [4] [17]. These hybrid approaches address the limitations of conventional ANN models, such as convergence to local minima and sensitivity to initial parameters, by systematically optimizing the network's weights and architecture [4] [18]. This application note provides a comprehensive technical overview of four key evolutionary algorithms—Cuckoo Optimization Algorithm (COA), Harmony Search (HS), Stochastic Fractal Search (SFS), and Teaching-Learning-Based Optimization (TLBO)—for enhancing ANN performance in geohazard assessment, with particular emphasis on landslide susceptibility mapping.

Algorithm Performance Comparison and Quantitative Analysis

Table 1: Performance Metrics of Optimization Algorithms for ANN in Landslide Susceptibility Mapping

| Algorithm | Full Name | Training AUC | Testing AUC | Key Advantages | Key Limitations |

|---|---|---|---|---|---|

| COA-MLP | Cuckoo Optimization Algorithm-Multilayer Perceptron | 0.998 [4] | 0.995 [4] | Powerful global search capabilities [4] | Computationally intensive, sensitive to parameter tuning [4] |

| HS-MLP | Harmony Search-Multilayer Perceptron | 0.997 [4] | 0.995 [4] | Maintains diversity in search space [4] | Struggles with premature convergence [4] |

| SFS-MLP | Stochastic Fractal Search-Multilayer Perceptron | 0.999 [4] | 0.996 [4] | High accuracy, dependable for susceptibility zoning [4] | May lack strong theoretical foundation [4] |

| TLBO-MLP | Teaching-Learning-Based Optimization-Multilayer Perceptron | 0.999 [4] | 0.995 [4] | No algorithm-specific parameters required [19] | May suffer from slow convergence [4] |

| EFO-MLP | Electromagnetic Field Optimization-Multilayer Perceptron | 0.879 [17] | N/A | Quick training time (1161s) [17] | Lower AUC compared to other optimizers [17] |

Table 2: Computational Efficiency and Implementation Considerations

| Algorithm | Convergence Speed | Parameter Sensitivity | Implementation Complexity | Robustness to Noisy Data |

|---|---|---|---|---|

| COA-MLP | Medium [4] | High [4] | Medium [4] | Robust [4] |

| HS-MLP | Fast initially [4] | Medium [4] | Low to Medium [4] | Medium [4] |

| SFS-MLP | Fast [4] | Low to Medium [4] | Medium [4] | Robust [4] |

| TLBO-MLP | May be slow [4] | Low [19] | Low [19] | Medium [4] |

| EFO-MLP | Fast [17] | Medium [17] | Medium [17] | Information not available |

Detailed Experimental Protocols

General Workflow for Hybrid Evolutionary Algorithm-ANN Implementation

Protocol 1: TLBO-ANN Implementation for LSM

Principle: TLBO mimics the teaching-learning process in a classroom, operating without algorithm-specific parameters [19]. The algorithm progresses through a Teacher Phase (global exploration) and Learner Phase (local refinement) [19] [18].

Step-by-Step Procedure:

- Initialize ANN Architecture: Define input neurons corresponding to the landslide conditioning factors (e.g., the 16 factors used in the Gilan, Iran study [4]), the hidden layers, and an output neuron representing the susceptibility value.

- Set TLBO Parameters:

- Population size (typically 50-100 individuals)

- Maximum iterations (typically 500-1000)

- Dimension size (equal to number of ANN weights and biases) [19]

- Teacher Phase:

- Identify best solution (teacher) in current population

- Calculate mean of all solutions

- Update each solution using: \( X_{new} = X_{old} + r \times (X_{teacher} - T_F \times X_{mean}) \)

- Where \( T_F \) is the teaching factor (1 or 2) and \( r \) is a random number in [0, 1] [19]

- Learner Phase:

- Randomly select two different solutions \( X_i \) and \( X_j \)

- Update solutions based on mutual interaction:

- If \( f(X_i) < f(X_j) \): \( X_{new} = X_{old} + r \times (X_i - X_j) \)

- Else: \( X_{new} = X_{old} + r \times (X_j - X_i) \) [19]

- Fitness Evaluation: Use Mean Square Error (MSE) between predicted and actual landslide occurrences as fitness function

- Termination Check: Continue until maximum iterations or convergence criterion met

- Model Validation: Evaluate using Area Under Curve (AUC) with testing dataset [4]

Enhanced TLBO Variants: For improved performance, implement strengthened TLBO (STLBO) with:

- Linear increasing teaching factor

- Elite system with new teacher and class leader

- Cauchy mutation to escape local optima [18]
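The teacher and learner phases of Protocol 1 can be sketched as below. This is an illustrative sketch of basic TLBO, not the STLBO variant and not the code of the cited studies. Two details are assumptions that the protocol does not state: a candidate replaces the old solution only if it lowers the cost (greedy acceptance), and in the learner phase the old solution is taken to be \( X_i \). The objective `f` is a placeholder; for ANN training it would return the MSE of a network whose weights and biases are the vector `x`.

```python
import random

def tlbo_minimize(f, dim, bounds, pop_size=20, iterations=100, seed=0):
    """Minimize f over a box using the basic teacher and learner phases."""
    rng = random.Random(seed)
    lo, hi = bounds
    clip = lambda x: [min(max(v, lo), hi) for v in x]
    pop = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(pop_size)]
    cost = [f(x) for x in pop]

    def try_replace(i, candidate):
        # Greedy acceptance (assumption): keep the candidate only if it improves.
        candidate = clip(candidate)
        c = f(candidate)
        if c < cost[i]:
            pop[i], cost[i] = candidate, c

    for _ in range(iterations):
        # Teacher phase: move each learner using the best solution and the mean.
        teacher = pop[cost.index(min(cost))][:]
        mean = [sum(x[d] for x in pop) / pop_size for d in range(dim)]
        for i in range(pop_size):
            tf = rng.choice((1, 2))  # teaching factor T_F
            new = [pop[i][d] + rng.random() * (teacher[d] - tf * mean[d])
                   for d in range(dim)]
            try_replace(i, new)
        # Learner phase: interact with one randomly chosen different learner.
        for i in range(pop_size):
            j = rng.choice([k for k in range(pop_size) if k != i])
            sign = 1.0 if cost[i] < cost[j] else -1.0
            new = [pop[i][d] + rng.random() * sign * (pop[i][d] - pop[j][d])
                   for d in range(dim)]
            try_replace(i, new)

    best = cost.index(min(cost))
    return pop[best], cost[best]

# Toy objective (assumption): a 5-dimensional sphere function instead of ANN MSE.
best_x, best_cost = tlbo_minimize(lambda x: sum(v * v for v in x), dim=5, bounds=(-5, 5))
```

The only settings are population size and iteration count, which matches the protocol's point that TLBO has no algorithm-specific tuning parameters.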

Protocol 2: COA-ANN Implementation for LSM

Principle: COA is inspired by the brood parasitism of some cuckoo species, combining Lévy flight behavior with competitive population elimination [4].

Step-by-Step Procedure:

- Initialize Cuckoo Habitats: Create initial population of nests representing ANN parameters

- Set COA Parameters:

- Lévy Flight Generation:

- Calculate step size: \( s = \frac{u}{|v|^{1/\beta}} \)

- Where \( u \) and \( v \) are drawn from normal distributions and \( \beta = 1.5 \) [4]

- Egg Laying: Each cuckoo lays 5-20 eggs in different nests within specified radius

- Population Evaluation: Calculate profit value (fitness) for each habitat

- Immigration: Less profitable habitats migrate toward better regions

- Elimination: Worst habitats are eliminated and new ones generated

- ANN Training: Use best habitat parameters to train final ANN model

- Validation: Assess using AUC, MSE, and other statistical measures [4]
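The Lévy flight step in Protocol 2 can be sketched as follows. The protocol only states that u and v are normally distributed with β = 1.5. The sketch assumes Mantegna's method, in which v has unit variance and the standard deviation of u is derived from β. That choice, and the helper names, are assumptions rather than details taken from [4].

```python
import math
import random

def mantegna_sigma(beta=1.5):
    """Standard deviation of u in Mantegna's method (assumed here)."""
    num = math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
    den = math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2)
    return (num / den) ** (1 / beta)

def levy_step(rng, beta=1.5):
    """Draw one step s = u / |v|^(1/beta)."""
    u = rng.gauss(0.0, mantegna_sigma(beta))
    v = rng.gauss(0.0, 1.0)
    while v == 0.0:  # guard against division by zero
        v = rng.gauss(0.0, 1.0)
    return u / abs(v) ** (1 / beta)

def perturb(weights, rng, scale=0.01):
    """Hypothetical helper: jitter a candidate weight vector with Levy steps."""
    return [w + scale * levy_step(rng) for w in weights]

rng = random.Random(0)
steps = [levy_step(rng) for _ in range(1000)]
candidate = perturb([0.1, -0.2, 0.3], rng)
```

Most steps are small, but the heavy tail occasionally produces a large jump, which is the property that supports global exploration.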

Protocol 3: Integrated Optimization Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Critical Data Components for Evolutionary Algorithm-ANN Landslide Modeling

| Component Category | Specific Elements | Function in LSM | Data Sources |

|---|---|---|---|

| Topographic Factors | Elevation, Slope, Aspect, Profile Curvature, Plan Curvature [20] [17] | Determine terrain stability and water flow patterns | Digital Elevation Model (DEM), Aerial Photographs [4] |

| Geological Factors | Lithology, Soil Type, Distance to Faults [20] [17] | Define subsurface composition and structural weaknesses | Geological Society of Iran (GSI), Soil Conservation and Watershed Management Research Institute (SCWMRI) [17] |

| Hydrological Factors | Distance to Rivers, River Density, TWI, SPI [20] [17] | Model hydrological impact on slope stability | DEM-derived indices, Local hydrographic maps [17] |

| Land Cover Factors | NDVI, Land Use Type [20] [17] | Assess vegetation stabilization and anthropogenic impact | Satellite Imagery (Landsat, Sentinel), Land Cover Maps [17] |

| Triggering Factors | Annual Rainfall [20] | Represent primary landslide trigger in study region | Meteorological Stations, Climate Databases [20] |

| Landslide Inventory | Historical Landslide Locations [4] [17] | Provide training and validation data for models | National Geoscience Database of Iran (NGDIR), Field Surveys, Aerial Photograph Interpretation [4] [17] |

Technical Considerations and Optimization Strategies

Algorithm Selection Guidelines

For high-precision requirements, SFS-MLP demonstrates the best performance among the compared optimizers, with a testing AUC of 0.996 [4]. For computational efficiency, EFO-MLP offers a short training time (1161 seconds) at a lower accuracy (training AUC = 0.879; no testing AUC is reported) [17]. When implementation simplicity is prioritized, TLBO requires no algorithm-specific parameters, reducing tuning complexity [19].

Performance Enhancement Techniques

Population Sizing: Optimal swarm size for COA-MLP is approximately 450, as determined in the Gilan case study [4]. For other algorithms, population sizes between 50-100 typically provide balanced performance [4].

Data Splitting Strategy: A 70/30 training/testing split is used across multiple studies [4] [20] [17]. This ratio leaves enough samples to represent spatial patterns during training while retaining an adequate validation set.

Conditioning Factor Selection: Incorporate 12-16 representative factors covering topographic, geological, hydrological, and land cover aspects [4] [20]. Factor importance analysis using Random Forest or similar methods can optimize model efficiency by eliminating redundant variables [17].

Hybrid Approach: Combine multiple optimization algorithms to leverage their complementary strengths. The ensemble approach has been shown to produce outstanding results with AUC reaching 99.4% in some applications [21].

The integration of evolutionary optimization algorithms with ANN architectures substantially enhances landslide susceptibility mapping accuracy, with SFS-MLP achieving exceptional testing AUC of 0.996 [4]. Successful implementation requires careful consideration of algorithm-specific characteristics, appropriate parameter tuning, and comprehensive validation using multiple statistical measures. These optimized hybrid models provide decision-makers with reliable tools for identifying landslide-prone areas, enabling proactive risk management and land-use planning in vulnerable regions.

Application Notes

The integration of Evolutionary Algorithms (EAs) with Artificial Neural Networks (ANNs) represents a paradigm shift in landslide susceptibility mapping (LSM). This hybrid approach directly addresses critical challenges in model performance, including overfitting, convergence on suboptimal solutions, and poor generalization to new geographic areas [4] [7]. The EA-ANN framework leverages the global search capabilities of evolutionary computation to systematically design and optimize the architecture and parameters of neural networks, resulting in models with significantly enhanced predictive robustness [22].

The synergistic advantages of this integration are quantifiable. Research from Gilan, Iran, demonstrated that EA-optimized ANNs achieved exceptional performance metrics, with Area Under the Receiver Operating Characteristic Curve (AUROC) values reaching 0.998–0.999 on training data and 0.995–0.996 on testing data across four different optimization algorithms [4]. This indicates not only high accuracy but also good generalizability: the small gap between training and testing performance suggests limited overfitting. Other studies report lower but still useful values, with models in Khalkhal, Iran, achieving AUROCs of 0.867 [7], and ensemble models in China maintaining AUROCs above 0.84 while significantly improving spatial prediction consistency [15] [23].
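The AUROC values and the training-testing gap discussed above can be computed as in the following sketch. It uses the rank interpretation of AUC: the probability that a randomly chosen landslide sample scores higher than a randomly chosen non-landslide sample, with ties counted as one half. The function names are illustrative. The pairwise loop is quadratic in the number of samples and is intended for clarity rather than speed.

```python
def auc_roc(labels, scores):
    """AUC as the fraction of (landslide, non-landslide) pairs ranked correctly."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a correct ranking
    return wins / (len(pos) * len(neg))

def train_test_gap(train_auc, test_auc):
    """Difference between training and testing AUC; a large gap hints at overfitting."""
    return train_auc - test_auc

example_auc = auc_roc([1, 0, 1, 0], [0.9, 0.8, 0.4, 0.1])
sfs_gap = train_test_gap(0.999, 0.996)  # SFS-MLP values reported in [4]
```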

Table 1: Performance Metrics of EA-ANN Models in Landslide Susceptibility Mapping

| Study Location | EA Algorithm | ANN Model | Training AUC | Testing AUC | Key Advantage |

|---|---|---|---|---|---|

| Gilan, Iran [4] | SFS-MLP | MLP | 0.999 | 0.996 | Highest Accuracy |

| Gilan, Iran [4] | COA-MLP | MLP | 0.998 | 0.995 | Robust Swarm Optimization |

| Eastern Himalaya [22] | SNN (Level-3) | Custom SNN | Comparable to DNN | Comparable to DNN | Full Interpretability |

| Khalkhal, Iran [7] | NSGA-II | Fuzzy ANN | 0.867 (Overall) | - | Multi-objective Optimization |

| Dujiangyan, China [23] | Bagging-REPT | REPT Tree | 0.857 (Overall) | - | Overfitting Control |

The robustness of EA-ANN models stems from their explicit optimization for generalization. Unlike traditional ANNs that may overfit to training data, EA-ANNs employ mechanisms that maintain population diversity within the search space, effectively avoiding local optima [4]. Furthermore, multi-objective EAs can simultaneously optimize for accuracy and model complexity, creating simpler, more generalizable networks [7]. This was evidenced in Dujiangyan, China, where hybrid models exhibited minimal performance differences between training and testing sets, indicating effective overfitting mitigation [23].

Table 2: Optimization Outcomes and Robustness Improvements

| Optimization Target | EA Mechanism | Impact on Robustness | Evidence |

|---|---|---|---|

| Network Architecture | Global search for optimal hidden layers/neurons | Prevents over-parameterization | Higher testing accuracy [4] |

| Connection Weights | Population-based weight initialization | Avoids local minima | Reduced overfitting [4] [23] |

| Input Feature Selection | Fitness-based feature evaluation | Eliminates redundant factors | Improved generalizability [24] [15] |

| Hyperparameter Tuning | Adaptive parameter optimization | Enhances model stability | Consistent performance across regions [22] |

Experimental Protocols

Protocol 1: Comprehensive EA-ANN Model Development for LSM

Application: Developing an optimized landslide susceptibility model with enhanced generalizability

Background: This protocol outlines the complete workflow for integrating evolutionary algorithms with artificial neural networks to create robust landslide susceptibility models, adapted from multiple validated studies [4] [7] [22].

Materials and Reagents:

- Geospatial Software: QGIS, ArcGIS for data preparation [25]

- Programming Environment: Python with Scikit-learn, TensorFlow/PyTorch [24] [22]

- Computational Resources: Multi-core processors for parallel EA operations [4]

Procedure:

Landslide Inventory Preparation

Conditioning Factors Processing

- Select 12-16 relevant conditioning factors based on geological expertise and literature review [4] [15]

- Critical factors include: slope angle, lithology, distance to faults, rainfall, land cover, NDVI, distance to roads and rivers [23] [7] [25]

- Perform multicollinearity analysis using VIF (<5) or PCA to eliminate redundant factors [24] [15]

EA-ANN Integration Phase

- Encoding Strategy: Represent ANN architecture (layers, neurons) and parameters (weights, activation functions) as chromosomes [4] [22]

- Population Initialization: Create initial population of 100-500 candidate ANNs with diverse architectures [4]

- Fitness Function: Define objective function combining AUC-ROC and regularization terms to prevent overfitting [7] [26]

Evolutionary Optimization Cycle

- Evaluation: Train each ANN candidate on training dataset and evaluate using AUC-ROC [4] [26]

- Selection: Apply tournament or roulette wheel selection to choose parents for reproduction [7]

- Crossover: Implement single-point or uniform crossover to exchange architectural elements between parent ANNs

- Mutation: Introduce random modifications to network weights, layers, or learning parameters with low probability (0.01-0.1)

- Elitism: Preserve top 5-10% performers unchanged in next generation [4]

Termination and Extraction

Validation Methods:

- Statistical Validation: AUC-ROC, accuracy, precision, recall, F1-score, RMSE [4] [26]

- Spatial Validation: Overlay susceptibility maps with historical landslide locations [15] [26]

- Comparative Analysis: Benchmark against standalone ANNs and traditional models [22]
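One pass of the evolutionary optimization cycle in the procedure above (selection, crossover, mutation, elitism) can be sketched as follows. This is a simplified illustration on real-valued chromosomes. The `fitness` callable is a placeholder for "decode the chromosome into an ANN, train it, and return its validation AUC-ROC", and the rates shown are assumed values within the ranges given in the protocol.

```python
import random

def next_generation(pop, fitness, rng, elite_frac=0.1, mutation_rate=0.05,
                    mutation_scale=0.1, tournament_size=3):
    """Build the next population from the current one."""
    ranked = sorted(pop, key=fitness, reverse=True)
    # Elitism: copy the top performers unchanged.
    n_elite = max(1, int(elite_frac * len(pop)))
    new_pop = [ind[:] for ind in ranked[:n_elite]]
    while len(new_pop) < len(pop):
        # Tournament selection: best of a random handful, once per parent.
        p1 = max(rng.sample(pop, tournament_size), key=fitness)
        p2 = max(rng.sample(pop, tournament_size), key=fitness)
        # Uniform crossover: each gene comes from either parent with equal odds.
        child = [a if rng.random() < 0.5 else b for a, b in zip(p1, p2)]
        # Mutation: small Gaussian change applied to a gene with low probability.
        child = [g + rng.gauss(0.0, mutation_scale) if rng.random() < mutation_rate else g
                 for g in child]
        new_pop.append(child)
    return new_pop

# Toy fitness (assumption): closeness to a known target instead of ANN AUC-ROC.
rng = random.Random(1)
target = [0.5, -1.0, 2.0]
fitness = lambda ind: -sum((g - t) ** 2 for g, t in zip(ind, target))
pop = [[rng.uniform(-3, 3) for _ in target] for _ in range(30)]
start_best = max(map(fitness, pop))
for _ in range(40):
    pop = next_generation(pop, fitness, rng)
end_best = max(map(fitness, pop))
```

Because the elite are copied unchanged, the best fitness in the population never decreases from one generation to the next.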

Protocol 2: Interpretable EA-ANN using Superposable Neural Networks

Application: Developing physically interpretable landslide models without sacrificing accuracy

Background: This protocol adapts the Superposable Neural Network (SNN) approach to create fully interpretable EA-ANN models that maintain high predictive performance while providing insights into landslide causation mechanisms [22].

Procedure:

Input Feature Engineering

- Prepare Level-1 features (individual conditioning factors): slope, aspect, curvature, lithology, etc. [22]

- Generate Level-2 composite features (pairwise interactions): slope×precipitation, NDVI×lithology, etc.

- Create Level-3 composite features for complex multivariate interactions

Additive ANN Optimization

- Initialize separate neural networks for each feature and composite feature [22]

- Apply evolutionary algorithms to select optimal combination of features and composite features

- Train individual feature networks using radial basis functions with gradient-free optimizers

- Assemble final model as sum of individual feature network outputs: \( S_t = \sum_j S_j \) [22]

Feature Importance Quantification

- Calculate relative contribution of each feature to final susceptibility output

- Identify critical feature interactions through composite feature analysis

- Validate physical plausibility of identified relationships through geological expertise

Validation:

- Compare performance against black-box DNNs using AUC-ROC [22]

- Assess model interpretability through explicit contribution quantification

- Verify identified relationships against known landslide mechanics
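The additive structure of Protocol 2, in which total susceptibility is the sum of per-feature outputs, can be illustrated with the sketch below. It is not the SNN implementation from [22]. Each feature function is assumed to be a small radial-basis expansion with hand-picked centers, weights, and widths, and composite features are assumed to be precomputed by the caller (for example, slope multiplied by precipitation).

```python
import math

def rbf_feature_fn(centers, weights, width):
    """Return S_j(x): a weighted sum of Gaussian bumps over one feature."""
    def s_j(x):
        return sum(w * math.exp(-((x - c) ** 2) / (2 * width ** 2))
                   for c, w in zip(centers, weights))
    return s_j

def total_susceptibility(feature_fns, sample):
    """Return S_t = sum_j S_j together with each feature's contribution."""
    contributions = {name: fn(sample[name]) for name, fn in feature_fns.items()}
    return sum(contributions.values()), contributions

def relative_contributions(contributions):
    """Share of the total absolute contribution attributed to each feature."""
    total = sum(abs(v) for v in contributions.values())
    return {k: abs(v) / total for k, v in contributions.items()} if total else {}

# Hypothetical feature functions; all parameter values are placeholders.
feature_fns = {
    "slope": rbf_feature_fn(centers=[30.0], weights=[1.0], width=10.0),
    "ndvi": rbf_feature_fn(centers=[0.2], weights=[-0.5], width=0.1),
}
s_t, parts = total_susceptibility(feature_fns, {"slope": 30.0, "ndvi": 0.2})
shares = relative_contributions(parts)
```

Because the model is a plain sum, each entry of `parts` can be read directly as that feature's effect on the susceptibility score, which is what makes the approach interpretable.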

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational and Data Resources for EA-ANN Landslide Research

| Research Reagent | Function | Example Applications | Implementation Notes |

|---|---|---|---|

| Optimization Algorithms | Global search for optimal ANN parameters | COA, HS, SFS, TLBO, NSGA-II [4] [7] | Balance exploration/exploitation; Population size: 100-500 [4] |

| ANN Architectures | Nonlinear pattern recognition from conditioning factors | MLP, RBFN, SNN, Custom [4] [22] | Adaptive architecture evolution outperforms fixed designs [22] |

| Conditioning Factors | Landslide causative factors for model input | Slope, lithology, distance to roads, NDVI, rainfall [23] [15] [25] | 12-16 factors recommended; Apply multicollinearity check [24] |

| Validation Metrics | Model performance and generalizability assessment | AUC-ROC, accuracy, precision, spatial validation [26] | Multi-criteria evaluation essential for reliable selection [26] |

| Fitness Functions | Guide evolutionary search toward optimal solutions | Multi-objective: accuracy + complexity [7] | Incorporate regularization terms to prevent overfitting [4] |

Technical Specifications

The architectural specification illustrates the integrated EA-ANN framework where evolutionary algorithms dynamically optimize the neural network configuration based on performance feedback, creating a self-improving system for landslide prediction. This synergistic integration enables the discovery of optimal model configurations that would be intractable through manual design or isolated optimization approaches, directly contributing to enhanced robustness and generalizability across diverse geological environments.

Implementing EA-ANN Models: A Step-by-Step Methodological Framework

Data preparation forms the foundational stage of any landslide susceptibility mapping (LSM) study, directly influencing the reliability and accuracy of the final predictive models. For research utilizing evolutionary artificial neural networks (ANN), this phase is particularly critical, as the performance of these sophisticated algorithms is contingent upon the quality, resolution, and appropriate processing of input data [4] [2]. This protocol details the systematic procedures for compiling two essential datasets: the landslide inventory map and the landslide conditioning factors. The guidelines are framed within the context of advanced statistical and machine learning methodologies, with specific considerations for their integration with evolutionary algorithm-based ANN approaches, which require optimized input data to efficiently navigate the solution space and avoid local minima [4] [2].

Compiling the Landslide Inventory

The landslide inventory is a spatially referenced database of past and present landslide occurrences and serves as the response variable in susceptibility models.

A multi-source approach is recommended for constructing a comprehensive and accurate inventory:

- Remote Sensing: Analyze high-resolution satellite imagery (e.g., SPOT, Pleiades) and aerial photographs to identify landslide scarps, deposits, and altered geomorphological features [27].

- Field Verification: Ground-truthing is essential for validating remotely identified landslides, classifying landslide types, and determining the state of activity [27].

- Existing Databases: Utilize publicly available landslide inventories, such as those provided by national geological surveys (e.g., the United States Geological Survey's "Landslide Inventories across the United States") [28].

Inventory Requirements for Evolutionary ANN Modeling

For use with evolutionary ANNs, the inventory must be partitioned to facilitate model training and validation.

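Data Partitioning: The inventory data should be randomly split into two subsets: a training set (e.g., 70%) used to fit the model and a testing set (e.g., 30%) held out for validation [7].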

Spatial Representation: The inventory should be representative of the study area's geomorphological diversity to prevent model bias.

Table 1: Key characteristics of a landslide inventory for evolutionary ANN modeling

| Characteristic | Description | Importance for Evolutionary ANN |

|---|---|---|

| Inventory Type | Polygons representing the spatial extent of landslides are preferred over point data [29]. | Provides more precise spatial data for the model to learn from. |

| Temporal Quality | Ideally, landslides should be from a similar temporal period and trigger event. | Reduces noise in the training data, leading to more robust models. |

| Partitioning | Random split into training (e.g., 70%) and testing (e.g., 30%) sets [7]. | Essential for unbiased training and rigorous validation of the model's performance. |

Compiling Landslide Conditioning Factors

Landslide conditioning factors (LCFs) are the independent variables representing the predisposing environmental and anthropogenic factors that contribute to slope instability.

Selection of Conditioning Factors

The selection of LCFs should be guided by the specific geo-environmental context of the study area, data availability, and literature review. Common factor groups include:

- Topographic Factors: Derived from a Digital Elevation Model (DEM), these are often the most influential. Key factors include slope angle, slope aspect, elevation, plan and profile curvature, Topographic Wetness Index (TWI), and Stream Power Index (SPI) [7] [27].

- Geological Factors: Lithology and distance to faults or lineaments [7] [27].

- Hydrological Factors: Distance to rivers and average annual rainfall [7].

- Land Cover/Use Factors: NDVI, land cover type, and distance to roads [7] [27].

Factor Processing and Classification

A crucial step in data preparation is the processing of continuous LCFs, which significantly impacts model performance [29] [30].

- The Classification Challenge: Continuous data (e.g., slope angle) must often be classified into discrete intervals for many statistical models. The method and number of classifications can be highly subjective and impact results [29] [30].

- Classification Criteria Comparison: Studies have tested various criteria, including natural breaks, quantiles, geometrical intervals, equal intervals, and methods based on studentized contrast [29]. Research indicates that using a larger number of classes (e.g., more than 10) or even continuous "stretched" values can yield more reliable models, especially for machine learning methods [29].

- Optimal Parameter-based Geographical Detector (OPGD): To overcome subjectivity, novel methods like the OPGD can be employed. This approach automatically determines the optimal grading strategy and number of classes for each conditioning factor based on the principle of spatial stratified heterogeneity, thereby enhancing modeling efficiency and objectivity [30].

Table 2: Common landslide conditioning factors and data sources

| Factor Group | Specific Factor | Typical Data Source | Brief Description of Function |

|---|---|---|---|

| Topographic | Slope Angle | DEM | Measures steepness; primary control on shear stress. |

| Topographic | Aspect | DEM | Orientation of slope; influences microclimate and weathering. |

| Topographic | Curvature | DEM | Describes surface convexity/concavity; affects water flow. |

| Topographic | TWI | Derived from DEM | Quantifies topographic control on soil moisture. |

| Geological | Lithology | Geological Map | Rock and soil type influencing strength and permeability. |

| Geological | Distance to Fault | Geological Map | Proximity to zones of rock weakness and fracturing. |

| Hydrological | Rainfall | Meteorological Records | Primary trigger for landslide initiation. |

| Hydrological | Distance to River | Hydrographic Data | Influence of riverbank erosion and soil saturation. |

| Anthropogenic | Distance to Road | Transport Maps | Impact of slope cutting and vibration from traffic. |

| Anthropogenic | Land Use | Satellite Imagery | Influence of vegetation root strength and water infiltration. |

Experimental Protocols for Data Preparation

Protocol 1: Landslide Inventory Development and Validation

Objective: To create a spatially accurate and temporally consistent landslide inventory map for model training and validation.

- Data Collection: Acquire multi-temporal high-resolution satellite imagery and aerial photographs. Compile all existing reports and maps of landslide events in the study area.

- Landslide Mapping: Manually digitize landslide polygons based on visual interpretation of geomorphological features (e.g., scarps, hummocky terrain) in a GIS environment.

- Field Survey: Conduct a targeted field campaign to verify a representative sample of the mapped landslides, noting type, volume, and activity. Adjust the digital inventory based on field findings.

- Inventory Partitioning: Randomly split the final, validated landslide inventory into a training set (e.g., 70% of landslides) and a testing set (e.g., 30%). Ensure splits are statistically representative.
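The inventory partitioning step can be sketched as below. To keep the two subsets representative, the sketch stratifies the random split by a grouping key such as landslide type. The stratification, the record layout, and the fixed seed are assumptions for illustration and are not prescribed by the protocol.

```python
import random

def stratified_split(records, key, train_frac=0.7, seed=42):
    """Randomly split records into train/test while preserving group shares."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    groups = {}
    for record in records:
        groups.setdefault(key(record), []).append(record)
    train, test = [], []
    for name in sorted(groups):
        members = groups[name]
        rng.shuffle(members)
        n_train = round(train_frac * len(members))
        train.extend(members[:n_train])
        test.extend(members[n_train:])
    return train, test

# Hypothetical inventory: (landslide_id, landslide_type) pairs.
inventory = [(i, "slide" if i < 60 else "flow") for i in range(100)]
train_set, test_set = stratified_split(inventory, key=lambda r: r[1])
```

A purely random split ignores spatial autocorrelation between neighboring landslides. If that is a concern, the same function can stratify by a spatial block identifier instead of landslide type.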

Protocol 2: Optimized Processing of Conditioning Factors using OPGD

Objective: To objectively determine the optimal classification scheme for continuous conditioning factors prior to modeling.

- Factor Raster Preparation: Compile all continuous conditioning factors as raster layers in a GIS, ensuring they share the same spatial extent and cell size.

- Factor Grading: For each factor, use the OPGD method to test a range of classification schemes (e.g., from 5 to 15 classes) and different classification methods (e.g., natural breaks, quantiles).

- Optimal Scheme Selection: The OPGD algorithm will calculate the q-statistic (a measure of spatial stratified heterogeneity) for each scheme. Select the classification parameters that yield the highest q-value for each factor, indicating the strongest explanatory power [30].

- Input Data Calculation: Using the optimal classification scheme, calculate the input values for the model (e.g., Frequency Ratio) for each class of each factor.
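The selection and input-calculation steps of Protocol 2 can be sketched as follows. The q-statistic uses the standard geographical-detector definition, \( q = 1 - \sum_h N_h \sigma_h^2 / (N \sigma^2) \), and the frequency ratio is the share of landslide cells in a class divided by the share of all cells in that class. The sketch is deliberately simplified: it tests only equal-interval classification, whereas a full OPGD run also compares classification methods. The data values are made up.

```python
def q_statistic(classes, response):
    """q = 1 - (within-class sum of squares) / (total sum of squares)."""
    n = len(response)
    mean = sum(response) / n
    total = sum((y - mean) ** 2 for y in response)  # equals N * sigma^2
    if total == 0:
        return 0.0
    strata = {}
    for c, y in zip(classes, response):
        strata.setdefault(c, []).append(y)
    within = 0.0
    for ys in strata.values():
        m = sum(ys) / len(ys)
        within += sum((y - m) ** 2 for y in ys)  # equals N_h * sigma_h^2
    return 1 - within / total

def equal_interval(values, k):
    """Assign each value to one of k equal-width classes (0 .. k-1)."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / k or 1.0
    return [min(int((v - lo) / width), k - 1) for v in values]

def best_class_count(values, response, candidates=range(5, 16)):
    """Pick the class count whose classification gives the highest q."""
    return max(candidates,
               key=lambda k: q_statistic(equal_interval(values, k), response))

def frequency_ratio(classes, response):
    """FR per class; response is 1 for landslide cells and 0 otherwise."""
    n, n_slides = len(response), sum(response)
    fr = {}
    for c in set(classes):
        in_class = [y for cc, y in zip(classes, response) if cc == c]
        fr[c] = (sum(in_class) / n_slides) / (len(in_class) / n)
    return fr

# Made-up slope values (degrees) and landslide presence for twelve cells.
slope = [2, 5, 8, 12, 15, 18, 22, 25, 28, 32, 35, 38]
slide = [0, 0, 0, 0, 0, 1, 0, 1, 1, 1, 1, 1]
k = best_class_count(slope, slide, candidates=range(2, 5))
fr = frequency_ratio(equal_interval(slope, k), slide)
```

An FR above 1 marks a class in which landslides are over-represented relative to its area, and an FR below 1 marks one in which they are under-represented.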

Workflow Visualization

The following diagram illustrates the integrated data preparation workflow for an evolutionary algorithm-based ANN study, from raw data compilation to the creation of analysis-ready datasets.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential materials and tools for landslide susceptibility data preparation

| Tool/Reagent | Function in Data Preparation |

|---|---|

| High-Resolution DEM | The foundational dataset for deriving topographic conditioning factors (slope, aspect, curvature, TWI, SPI). |

| GIS Software (e.g., QGIS, ArcGIS) | The primary platform for spatial data management, layer creation, factor derivation, and map algebra operations. |

| Geological & Land Use Maps | Provide vector data for factors like lithology and land cover, which are converted to raster formats. |

| Optimal Parameters-based Geographical Detector (OPGD) | An algorithm used to objectively determine the optimal classification method and number of classes for continuous conditioning factors [30]. |

| Frequency Ratio (FR) / Weight of Evidence (WoE) | Statistical metrics calculated after factor classification to establish the nonlinear relationship between factors and landslides, often used as model inputs [7] [30]. |

| Evolutionary Algorithm Library (e.g., for Python, R) | Software libraries containing implementations of algorithms like NSGA-II, PSO, etc., used to optimize the ANN model [4] [7] [2]. |

The integration of Artificial Neural Networks (ANNs) into landslide susceptibility mapping represents a significant advancement in geohazard prediction. However, a primary challenge remains: the determination of the optimal network structure and hyperparameters to ensure high predictive accuracy and model generalizability. This process is often complex, time-consuming, and heavily reliant on expert knowledge. Evolutionary algorithms (EAs) provide a powerful, systematic solution to this challenge by automating the search for optimal ANN architectures and their tuning parameters. This document outlines application notes and detailed protocols for leveraging evolutionary optimization techniques to architect ANNs specifically for landslide susceptibility assessments, providing researchers and scientists with a structured methodology to enhance their predictive models.

Core Concepts and Rationale

The Need for Optimization in Landslide Susceptibility Modeling

Landslide susceptibility modeling is a complex, non-linear problem influenced by numerous geo-environmental factors. While ANNs excel at capturing these complex relationships, their performance is highly sensitive to the choice of hyperparameters. Manual tuning of these parameters is inefficient and often fails to locate the global optimum, leading to suboptimal model performance [31] [32]. Factors such as learning rate, number of hidden layers, and the number of neurons in each layer directly impact the network's ability to learn from spatial data on landslide conditioning factors.

Evolutionary algorithms, a class of metaheuristic optimization techniques, mimic natural selection processes to efficiently navigate vast and complex search spaces. When applied to ANN architecting, EAs can automatically identify high-performing network configurations that might be overlooked by manual tuning [4] [2]. This is particularly crucial in landslide mapping, where model accuracy directly influences risk mitigation strategies and land-use planning decisions.
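To make the idea of searching over network configurations concrete, the sketch below decodes a chromosome of three genes in [0, 1] into the hyperparameters named above. The gene order and the value ranges (learning rate from 1e-4 to 1e-1 on a log scale, 1 to 3 hidden layers, 4 to 64 neurons per layer) are illustrative assumptions, not settings reported by the cited studies.

```python
def decode(chromosome):
    """Map three genes in [0, 1] to ANN hyperparameters (assumed ranges)."""
    g_lr, g_layers, g_neurons = chromosome
    return {
        # Log-scale mapping so small learning rates are sampled as densely as large ones.
        "learning_rate": 10 ** (-4 + 3 * g_lr),
        # Integer genes are binned; min() keeps a gene of exactly 1.0 in range.
        "hidden_layers": 1 + min(int(g_layers * 3), 2),
        "neurons_per_layer": 4 + min(int(g_neurons * 61), 60),
    }

smallest = decode([0.0, 0.0, 0.0])
largest = decode([1.0, 1.0, 1.0])
```

An evolutionary algorithm then only has to manipulate the real-valued genes, while the fitness function decodes each chromosome, trains the corresponding network, and returns its validation score.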

Several evolutionary and metaheuristic algorithms have been successfully applied to optimize ANNs for landslide susceptibility mapping. These algorithms can be broadly categorized into swarm intelligence and evolutionary computation techniques.

- Swarm Intelligence Algorithms: These include Particle Swarm Optimization (PSO), which simulates social behavior patterns like bird flocking. PSO has been effectively used to optimize the structural parameters of ANN and Support Vector Machine (SVM) models [2].

- Evolutionary Algorithms: This category includes Genetic Algorithms (GA), which mimic natural selection, and other population-based methods like the Gradient-based optimizer (GBO). For instance, one study used GBO to optimize the hyperparameters of a Backpropagation Neural Network (BPNN), including the number of hidden layers and learning rate, resulting in a significant increase in the Area Under the Curve (AUC) value [31].

- Advanced Hybrid and Niche Algorithms: Research has validated the use of several other powerful optimizers, including:

- Cuckoo Optimization Algorithm (COA)

- Harmony Search (HS)

- Stochastic Fractal Search (SFS)

- Teaching-Learning-Based Optimization (TLBO) [4]

Comparative studies have shown that these optimization algorithms can increase the performance and accuracy of neural networks, with some models achieving AUC values exceeding 0.99 on training datasets [4].
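
To make the swarm-based search concrete, the following is a minimal sketch of a basic global-best PSO, written here for illustration only. The function name `pso_minimize`, the coefficient values (inertia 0.7, cognitive and social weights 1.5), and the `sphere` test function are assumptions and are not taken from the cited studies. In an LSM setting the objective would instead be something like one minus the validation AUC of the network.

```python
import random

def pso_minimize(objective, bounds, n_particles=20, n_iters=100,
                 inertia=0.7, c_cognitive=1.5, c_social=1.5, seed=0):
    """Minimise `objective` inside the box `bounds` with a basic global-best PSO."""
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]                      # best position seen by each particle
    pbest_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=pbest_val.__getitem__)
    gbest, gbest_val = pbest[g][:], pbest_val[g]     # best position seen by the swarm

    for _ in range(n_iters):
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(bounds):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (inertia * vel[i][d]
                             + c_cognitive * r1 * (pbest[i][d] - pos[i][d])
                             + c_social * r2 * (gbest[d] - pos[i][d]))
                # Move the particle and clip it to the search box.
                pos[i][d] = min(hi, max(lo, pos[i][d] + vel[i][d]))
            val = objective(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val

def sphere(x):
    # Illustrative test function with its minimum (0) at the origin.
    return sum(v * v for v in x)

best_x, best_f = pso_minimize(sphere, [(-5.0, 5.0)] * 3)
```

Each particle is pulled towards its own best position and the swarm's best position, which is the "social behavior" the description above refers to.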

Performance Comparison of Optimization Algorithms

The table below summarizes the performance of various evolutionary algorithms as reported in landslide susceptibility studies.

Table 1: Performance Comparison of Evolutionary Algorithms for ANN Optimization

| Optimization Algorithm | ANN Model Type | Reported Performance (AUC) | Key Optimized Hyperparameters | Reference Study Area |
|---|---|---|---|---|
| Gradient-based Optimizer (GBO) | Backpropagation (BPNN) | Training AUC increased by ~4% [31] | Number of hidden layers, Learning rate, Num_epochs [31] | Sinan County, China [31] |
| Coot Optimization (COA) | Multilayer Perceptron (MLP) | Training: 0.998, Testing: 0.995 [4] | Swarm size, Network weights/structure | Gilan, Iran [4] |
| Stochastic Fractal Search (SFS) | Multilayer Perceptron (MLP) | Training: 0.999, Testing: 0.996 [4] | Network weights/structure | Gilan, Iran [4] |
| Particle Swarm Optimization (PSO) | Multilayer Perceptron (MLP) | Overall accuracy of RF model boosted by 3-5% [32] | Feature selection, Structural parameters [2] | Achaia, Greece [2] |
| Genetic Algorithm (GA) | Multilayer Perceptron (MLP) | Used for feature selection [2] | Feature subset, Model parameters [2] | Achaia, Greece [2] |

Experimental Protocols

Protocol 1: Optimizing a BPNN using a Gradient-based Optimizer (GBO)

This protocol details the methodology for optimizing a Backpropagation Neural Network using a GBO, as validated in a study of Sinan County, China [31].

1. Research Objectives: To optimize the hyperparameters of a BPNN model for landslide susceptibility mapping, thereby improving prediction accuracy and reliability.

2. Materials and Reagents:

- Software: Python with libraries such as TensorFlow/Keras or PyTorch for ANN development, and Scikit-learn for data preprocessing and validation.

- Hardware: A computer with a multi-core CPU; a GPU is recommended to accelerate the neural network training and optimization process.

3. Experimental Workflow:

Step 1: Data Preparation and Preprocessing

- Construct a spatial database from 167 historical landslide events [31].

- Select 12 landslide conditioning factors (e.g., slope, aspect, lithology, distance to roads, etc.).

- Address the critical challenge of non-landslide sample selection. Employ a method like Multi-Sample Label Learning (MSLL) to reduce uncertainty. Studies show MSLL can improve AUC by approximately 3% compared to simpler methods like Buffer Control Sampling [31].

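- Randomly split the landslide and non-landslide samples into training and testing sets (e.g., 70%/30%). Reserve part of the training set as a validation set for the fitness evaluation in Step 4, so that the test set is never used during optimization.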
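
The split can be sketched in plain Python as shown below. This is an illustration under stated assumptions, not the procedure of the cited study: the helper `stratified_split` is hypothetical, the 1:1 ratio of landslide to non-landslide samples is assumed, and the 60%/10%/30% fractions simply carve a validation subset out of the 70% training portion while leaving 30% for testing.

```python
import random

def stratified_split(labels, fractions=(0.6, 0.1, 0.3), seed=42):
    """Return index lists (train, val, test) that preserve the class ratio."""
    assert abs(sum(fractions) - 1.0) < 1e-9
    rng = random.Random(seed)
    parts = ([], [], [])
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)                              # shuffle within each class
        n_train = round(fractions[0] * len(idx))
        n_val = round(fractions[1] * len(idx))
        parts[0].extend(idx[:n_train])
        parts[1].extend(idx[n_train:n_train + n_val])
        parts[2].extend(idx[n_train + n_val:])        # remainder goes to the test set
    return parts

# ASSUMPTION: 167 landslide samples (label 1) paired with 167 non-landslide samples (label 0).
labels = [1] * 167 + [0] * 167
train_idx, val_idx, test_idx = stratified_split(labels)
```

Splitting each class separately keeps the landslide to non-landslide ratio identical in all three subsets, which makes AUC values comparable across them.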

Step 2: Define the Search Space for Hyperparameters

- Identify the key BPNN hyperparameters to be optimized and their plausible ranges:

  - learning_rate: Continuous (e.g., 0.001 to 0.1)

  - n_hidden_layers: Integer (e.g., 1 to 3)

  - n_units_per_layer: Integer (e.g., 10 to 100)

  - num_epochs: Integer (e.g., 100 to 1000) [31]
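
One way to expose this search space to a population-based optimizer is to let every individual be a vector of numbers in [0, 1] and decode it into concrete hyperparameters. The sketch below uses the example ranges listed above. The names `SEARCH_SPACE` and `decode` are hypothetical, and sampling the learning rate on a logarithmic scale is an assumption made here, not something the source specifies.

```python
# Example ranges from the protocol; the "log_float" / "int" tags are illustrative.
SEARCH_SPACE = {
    "learning_rate": ("log_float", 0.001, 0.1),
    "n_hidden_layers": ("int", 1, 3),
    "n_units_per_layer": ("int", 10, 100),
    "num_epochs": ("int", 100, 1000),
}

def decode(vector, space=SEARCH_SPACE):
    """Map a vector with entries in [0, 1] to concrete hyperparameter values."""
    params = {}
    for u, (name, (kind, lo, hi)) in zip(vector, space.items()):
        u = min(1.0, max(0.0, u))                     # guard against out-of-range genes
        if kind == "log_float":
            params[name] = lo * (hi / lo) ** u        # ASSUMPTION: log-uniform scaling
        else:
            params[name] = min(hi, lo + int(u * (hi - lo + 1)))
    return params

example = decode([0.5, 0.0, 1.0, 0.5])
```

With this encoding the optimizer only ever handles a fixed-length real vector, and integer or log-scaled parameters are handled in one place.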

Step 3: Initialize the GBO Algorithm

- Set the GBO population size and maximum number of iterations.

- Define the objective function, which is to maximize the validation AUC (Area Under the ROC Curve) of the BPNN model.
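
For reference, the AUC used as the objective can be computed directly from ranks, since it equals the probability that a randomly chosen landslide sample receives a higher score than a randomly chosen non-landslide sample (the Mann-Whitney formulation). The function name `roc_auc` below is illustrative. In practice a library routine such as scikit-learn's `roc_auc_score` would normally be used instead.

```python
def roc_auc(y_true, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    order = sorted(range(len(scores)), key=scores.__getitem__)
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied scores.
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1                    # average 1-based rank for the tie group
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    n_pos = sum(1 for y in y_true if y == 1)
    n_neg = len(y_true) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUC needs at least one sample of each class")
    rank_sum_pos = sum(r for r, y in zip(ranks, y_true) if y == 1)
    return (rank_sum_pos - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)

# Small illustrative check with made-up scores.
auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation of landslide and non-landslide samples.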

Step 4: Execute the Optimization Loop

- For each individual in the GBO population:

  - Configure the BPNN with the hyperparameters represented by the individual.

  - Train the BPNN on the training dataset.

  - Evaluate the trained model on the validation set and compute the AUC.

  - The AUC value is returned as the fitness score for the individual.

- The GBO algorithm then updates the population based on fitness, moving towards hyperparameter combinations that yield higher AUC values.
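
The structure of this loop can be sketched as follows. Two substitutions are made deliberately and should be read as assumptions: the population update is a generic elitist Gaussian-mutation scheme standing in for GBO (it is not the GBO update rule), and `toy_validation_auc` is a hypothetical surrogate that replaces the expensive "train the BPNN, return validation AUC" step so that the example runs instantly.

```python
import random

def evolve(fitness, dim, pop_size=12, n_iters=30, sigma=0.15, seed=1):
    """Maximise `fitness` over [0, 1]^dim with elitist Gaussian mutation.

    NOTE: generic stand-in for the population update; this is not GBO.
    """
    rng = random.Random(seed)
    pop = [[rng.random() for _ in range(dim)] for _ in range(pop_size)]
    scored = sorted(((fitness(ind), ind) for ind in pop),
                    key=lambda t: t[0], reverse=True)
    history = [scored[0][0]]                          # best fitness per iteration
    for _ in range(n_iters):
        parents = scored[: pop_size // 2]             # keep the fitter half
        children = []
        for _, parent in parents:
            child = [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in parent]
            children.append((fitness(child), child))  # one fitness call per new individual
        scored = sorted(parents + children, key=lambda t: t[0], reverse=True)
        history.append(scored[0][0])
    best_score, best_ind = scored[0]
    return best_ind, best_score, history

def toy_validation_auc(ind):
    # HYPOTHETICAL surrogate: in a real run this would decode `ind` into
    # hyperparameters, train the BPNN, and return the validation AUC.
    target = [0.3, 0.6, 0.5, 0.8]
    return 0.95 - sum((g - t) ** 2 for g, t in zip(ind, target))

best_ind, best_score, history = evolve(toy_validation_auc, dim=4)
```

Because the fitter half of the population is always retained, the best fitness in `history` never decreases. The cost of a real run is dominated by the number of fitness calls, each of which trains one network.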

Step 5: Model Validation and Susceptibility Mapping

- Once the optimization converges, retrieve the best hyperparameter set.

- Train a final BPNN model on the entire training set using these optimized parameters.

- Evaluate the final model's performance on the held-out test set to obtain an unbiased measure of accuracy.

- Apply the model to the entire study area to generate the Landslide Susceptibility Map (LSM).

Protocol 2: Multi-Algorithm Validation for ANN Optimization

This protocol is based on a comparative study from Gilan, Iran, which validated four different optimization algorithms combined with ANN [4].

1. Research Objectives: To comprehensively compare the performance of multiple evolutionary algorithms (COA, HS, SFS, TLBO) in optimizing an ANN for landslide susceptibility mapping and to identify the most effective optimizer for the specific study area.

2. Materials and Reagents:

- Software: GIS software (e.g., ArcGIS, QGIS) for spatial data management, and a programming environment (e.g., MATLAB, Python) for implementing ANN and optimization algorithms.

- Data: A landslide inventory map with 370 historical landslide locations and sixteen causal factor layers [4].

3. Experimental Workflow:

Step 1: Database Construction

- Compile and preprocess sixteen landslide conditioning factors from topographic, geomorphologic, geological, land use, and hydrological data [4].

- Perform a correlation analysis to check for multicollinearity among factors.
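
A pairwise Pearson correlation screen is one simple way to carry out this check. The sketch below is illustrative: the helper names, the |r| > 0.7 threshold, and the factor values are all assumptions (the values are made up and are not data from the Gilan study).

```python
from itertools import combinations
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def collinear_pairs(factors, threshold=0.7):
    """Return (name_a, name_b, r) for factor pairs with |r| above the threshold."""
    flagged = []
    for a, b in combinations(factors, 2):
        r = pearson(factors[a], factors[b])
        if abs(r) > threshold:
            flagged.append((a, b, round(r, 3)))
    return flagged

# Made-up sample values for three conditioning factors (illustration only).
factors = {
    "slope": [5, 12, 20, 27, 35, 41],
    "elevation": [210, 340, 480, 610, 790, 905],
    "ndvi": [0.62, 0.15, 0.48, 0.71, 0.22, 0.55],
}
flagged = collinear_pairs(factors)
```

Pairs that are flagged are candidates for dropping one factor or for a closer look before the factor set is passed to the optimizers.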

Step 2: Algorithm Configuration

- Implement four optimization algorithms: COA, HS, SFS, and TLBO.

- For each algorithm, set a common ANN architecture (e.g., a Multilayer Perceptron) as the base model to be optimized.

- Define a consistent search space for hyperparameters relevant to the ANN's structure and the learning process.

Step 3: Parallel Optimization and Evaluation

- Run each optimization algorithm independently to tune the ANN model.

- For each optimizer, use k-fold cross-validation (e.g., 10-fold) to obtain a robust estimate of model performance and to detect overfitting.

- Record the optimal hyperparameters found by each algorithm and the corresponding training/testing performance metrics (AUC, RMSE, Accuracy).
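
The fold bookkeeping behind k-fold cross-validation can be sketched without any library, as below. The generator name `k_fold_indices` is hypothetical, and the total of 740 samples assumes the 370 landslide locations are paired with an equal number of non-landslide points, which the source does not state.

```python
import random

def k_fold_indices(n_samples, k=10, seed=0):
    """Yield (train_idx, test_idx) pairs; every sample is tested exactly once."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]             # k roughly equal folds
    for i, test_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, test_idx

# ASSUMPTION: 370 landslide + 370 non-landslide samples.
fold_sizes = [(len(tr), len(te)) for tr, te in k_fold_indices(740, k=10)]
```

Using the same `seed` for every optimizer gives COA, HS, SFS, and TLBO identical folds, so differences in their scores are not caused by different data partitions.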

Step 4: Comparative Analysis and Model Selection

- Compare the final performance of the four optimized ANN models (e.g., COA-MLP, HS-MLP, SFS-MLP, TLBO-MLP) using the testing dataset.

- Select the model with the highest predictive accuracy and generalizability for the final susceptibility mapping. The study in Gilan found SFS-MLP to have the highest training AUC (0.999) and testing AUC (0.996) [4].

The following diagram illustrates the high-level logical workflow common to both protocols, from data preparation to the generation of a susceptibility map.

Diagram 1: Workflow for Evolutionary Algorithm-based ANN Optimization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational "Reagents" for Evolutionary ANN Optimization

| Reagent / Tool | Function / Purpose | Example / Notes |
|---|---|---|
| Landslide Inventory | The fundamental response variable for model training and validation. | A map of 501 documented events [33] or 335 landslides [2], created via field work, satellite imagery, and historical records. |
| Conditioning Factors | The predictive variables representing geo-environmental conditions. | Common factors: Lithology, Slope, Aspect, Distance to roads/faults/rivers, Land use, NDVI, Rainfall, Elevation, Curvature [34] [33] [2]. |
| Genetic Algorithm (GA) | An evolutionary optimizer used for feature selection to reduce dimensionality and improve model generalization [2]. | Selects an optimal subset of conditioning factors, removing redundant information. |
| Particle Swarm Optimization (PSO) | A swarm intelligence optimizer used for tuning the structural parameters of ML models [2]. | Effective for optimizing parameters like the number of neurons, learning rate, and kernel parameters for SVMs. |
| Gradient-based Optimizer (GBO) | A metaheuristic algorithm for optimizing model hyperparameters [31]. | Used to optimize BPNN hyperparameters (hidden layers, epochs, learning rate), increasing AUC by 3-4% [31]. |
| Performance Metrics | Quantitative measures to evaluate model accuracy and generalizability. | AUC (Area Under ROC Curve): Primary metric for binary classification [31] [4]. RMSE, Accuracy, Precision are also used [31] [7]. |
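
To illustrate the GA-based feature selection listed in the table, the sketch below evolves binary masks over the conditioning factors using tournament selection, one-point crossover, bit-flip mutation, and elitism. Everything specific here is an assumption: the function `ga_select`, its parameter values, and the `toy_fitness` function, which stands in for "train the model on the selected factors and return its validation score".

```python
import random

def ga_select(fitness, n_features, pop_size=20, n_gens=40,
              p_cross=0.8, p_mut=0.05, seed=3):
    """Maximise `fitness(mask)` over binary masks of length n_features."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_features)] for _ in range(pop_size)]
    best = max(pop, key=fitness)

    def tournament():
        # Pick two individuals at random and keep the fitter one.
        a, b = rng.sample(pop, 2)
        return a if fitness(a) >= fitness(b) else b

    for _ in range(n_gens):
        nxt = [best[:]]                               # elitism: carry the best mask over
        while len(nxt) < pop_size:
            p1, p2 = tournament(), tournament()
            if rng.random() < p_cross:
                cut = rng.randint(1, n_features - 1)  # one-point crossover
                child = p1[:cut] + p2[cut:]
            else:
                child = p1[:]
            child = [1 - g if rng.random() < p_mut else g for g in child]
            nxt.append(child)
        pop = nxt
        best = max(pop, key=fitness)
    return best, fitness(best)

INFORMATIVE = {0, 2, 5, 7}   # HYPOTHETICAL: indices of the useful factors

def toy_fitness(mask):
    # Reward informative factors, penalise redundant ones (illustration only).
    hits = sum(1 for i, g in enumerate(mask) if g and i in INFORMATIVE)
    extras = sum(1 for i, g in enumerate(mask) if g and i not in INFORMATIVE)
    return hits - 0.25 * extras

mask, score = ga_select(toy_fitness, n_features=10)
```

A mask entry of 1 means the corresponding conditioning factor is kept. The penalty on extra factors is what pushes the search towards smaller subsets.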

Architecting an ANN for landslide susceptibility mapping is a non-trivial task that is greatly enhanced by the application of evolutionary algorithms. The protocols and data presented herein demonstrate that methods like GBO, PSO, COA, and SFS can systematically and automatically discover high-performing network architectures and hyperparameters, leading to substantial improvements in predictive accuracy (AUC) over manually tuned models. By following the structured experimental protocols, researchers can implement these powerful optimization techniques to develop more reliable and accurate landslide susceptibility models, thereby providing a stronger scientific basis for land-use planning and hazard mitigation in vulnerable regions.

This application note provides a detailed protocol for integrating four optimization algorithms—Coyote Optimization Algorithm (COA), Genetic Algorithm (GA), Particle Swarm Optimization (PSO), and Bayesian Optimization (BO)—to enhance the performance of Artificial Neural Networks (ANN) in landslide susceptibility mapping (LSM). The workflow addresses critical challenges in model tuning, feature selection, and computational efficiency, which are paramount for producing reliable geospatial risk assessments. Designed for researchers and scientists in geohazard modeling, the document includes structured performance data, step-by-step experimental procedures, and visual workflows to facilitate implementation and reproducibility.

Landslide Susceptibility Mapping (LSM) is a critical tool for identifying landslide-prone areas, supporting disaster risk management, and informing land-use planning [24] [35]. Machine learning (ML) models, particularly Artificial Neural Networks (ANN), have demonstrated superior performance in handling the complex, non-linear relationships between landslide causative factors [36] [2]. However, these models present significant challenges, including computational complexity, the curse of dimensionality, and the need for precise tuning of structural parameters [2]. Suboptimal parameter configuration can lead to overfitting, reduced generalization ability, and unreliable susceptibility maps [2].

Evolutionary and Bayesian optimization algorithms offer a robust solution to these challenges by automating the search for optimal model parameters and feature subsets. For instance, studies have confirmed that integrating optimization algorithms can increase prediction accuracy significantly, from nearly 77% to around 86% [2]. This document outlines a synthesized workflow leveraging the strengths of COA, GA, PSO, and BO to create a hybrid optimization framework for ANN-based LSM, enhancing both model accuracy and operational efficiency.

Performance Comparison of Optimization Algorithms

The selection of an optimization algorithm depends on the specific requirements of the LSM project, including dataset size, available computational resources, and desired performance metrics. The following tables summarize the characteristic strengths and documented performance of the discussed algorithms.

Table 1: Characteristic Strengths and Computational Profiles of Optimization Algorithms

| Algorithm | Primary Strength | Computational Profile | Ideal Use Case |
|---|---|---|---|
| COA (Coyote Optimization Algorithm) | High predictive accuracy in complex landscapes [36] | Computationally intensive; requires parameter tuning [36] | Final model tuning for high-stakes mapping where accuracy is critical |
| GA (Genetic Algorithm) | Effective feature selection; reduces model complexity [2] | Moderately intensive; efficient for feature subset exploration [37] [2] | Pre-processing stage for identifying optimal causative factors |
| PSO (Particle Swarm Optimization) | Fast convergence; excellent for parameter tuning [37] [2] | Highly parallelizable; suitable for distributed computing [38] | Rapid optimization of ANN parameters (e.g., weights, learning rate) |
| Bayesian Optimization (BO) | Sample-efficient for expensive-to-evaluate functions [37] [38] | Sequential nature can limit parallelization [38] | Optimizing complex models with limited computational budget |

Table 2: Documented Performance in Landslide Susceptibility Mapping and Related Optimization Tasks

| Algorithm | Application Context | Reported Performance | Citation |
|---|---|---|---|
| COA-MLP | LSM in Gilan, Iran (ANN optimization) | AUC (Training): 0.998; AUC (Testing): 0.995 | [36] |
| PSO | Set-point tracking for MPC (not LSM) | Achieved power load tracking error of <2% | [37] |
| GA | Set-point tracking for MPC (not LSM) | Reduced power load tracking error from 16% to 8% | [37] |
| BO | Tuning MPC controllers | Reduced computational cost vs. traditional methods | [37] |
| PSO-SVM | LSM in Achaia, Greece (Parameter tuning) | AUC (Training): 0.977; AUC (Testing): 0.750 | [2] |