Dynamic Neighbor Multi-Task Particle Swarm Optimization: Advanced Algorithms and Applications in Drug Discovery

Abstract

This article examines the integration of dynamic neighborhood topologies with Multi-Task Particle Swarm Optimization (MT-PSO) for complex optimization problems in pharmaceutical research and development. We cover foundational principles, advanced methodological adaptations, and practical troubleshooting strategies for researchers and drug development professionals. Drawing on benchmark studies and real-world case examples, including molecular optimization and kinetic parameter estimation, we show how dynamic neighbor MT-PSO improves convergence behavior, mitigates premature convergence, and increases solution diversity in multi-objective drug discovery problems. The content synthesizes recent research to offer a practical guide for implementing these techniques in biomedical optimization scenarios.

Understanding Dynamic Neighbor Multi-Task PSO: Core Principles and Biological Inspiration

The Fundamental Mechanics of Particle Swarm Optimization

Particle Swarm Optimization (PSO) is a population-based stochastic optimization technique inspired by the social behavior of biological organisms, such as bird flocking or fish schooling [1] [2]. Since its introduction by Kennedy and Eberhart in 1995, PSO has gained significant popularity due to its simple implementation, rapid convergence characteristics, and robust performance across diverse optimization landscapes [2] [3]. The algorithm maintains a population of candidate solutions, called particles, that navigate the search space by adjusting their trajectories based on their own experience and the collective knowledge of the swarm.

In the context of multi-task optimization and dynamic neighbor research, understanding the fundamental mechanics of PSO becomes crucial. Recent advances in multi-task PSO leverage the inherent parallelism of population-based search to simultaneously address multiple optimization problems, transferring knowledge between tasks to accelerate convergence and improve solution quality [4] [5] [6]. The dynamic neighborhood topology plays a pivotal role in balancing exploration and exploitation, preventing premature convergence while maintaining swarm diversity throughout the optimization process [1] [2].

Core Algorithmic Framework

Fundamental Equations and Parameters

The standard PSO algorithm operates through two fundamental update equations that govern particle movement in the search space. For each particle (i) in dimension (d) at iteration (k+1), the velocity and position are updated as follows [1] [2]:

[ \mathcal{V}_{i}^{d}(k+1) = \omega \mathcal{V}_{i}^{d}(k) + c_1 r_1 (\mathcal{P}_{i}^{d}(k) - \mathcal{X}_{i}^{d}(k)) + c_2 r_2 (\mathcal{P}_{g}^{d}(k) - \mathcal{X}_{i}^{d}(k)) ]

[ \mathcal{X}_{i}^{d}(k+1) = \mathcal{X}_{i}^{d}(k) + \mathcal{V}_{i}^{d}(k+1) ]

Where:

- (\mathcal{V}_{i}^{d}(k)) represents the velocity of particle (i) in dimension (d) at iteration (k)

- (\mathcal{X}_{i}^{d}(k)) represents the position of particle (i) in dimension (d) at iteration (k)

- (\mathcal{P}_{i}^{d}(k)) is the best position found by particle (i) in dimension (d) (personal best)

- (\mathcal{P}_{g}^{d}(k)) is the best position found by the entire neighborhood in dimension (d) (global best or local best)

- (\omega) is the inertia weight controlling the influence of previous velocity

- (c_1) and (c_2) are acceleration coefficients (cognitive and social parameters)

- (r_1) and (r_2) are random numbers uniformly distributed between 0 and 1
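
The two update equations can be sketched for a single particle as follows. This is a minimal illustration, not a reference implementation: the function name `update_particle` and the default values for `w`, `c1`, and `c2` are assumptions chosen for the example, and fresh random numbers are drawn per dimension, which is one common convention.

```python
import random

def update_particle(x, v, p_best, n_best, w=0.7, c1=1.5, c2=1.5, rng=random):
    """Return (new_x, new_v) for one particle using the standard PSO update.

    x, v     : current position and velocity (lists of floats)
    p_best   : personal best position of this particle
    n_best   : best position in the particle's neighborhood (gbest or lbest)
    w, c1, c2: inertia weight and acceleration coefficients (illustrative defaults)
    """
    new_x, new_v = [], []
    for d in range(len(x)):
        r1, r2 = rng.random(), rng.random()  # uniform draws in [0, 1)
        vd = (w * v[d]
              + c1 * r1 * (p_best[d] - x[d])   # cognitive term
              + c2 * r2 * (n_best[d] - x[d]))  # social term
        new_v.append(vd)
        new_x.append(x[d] + vd)                # position update uses the new velocity
    return new_x, new_v
```

When a particle already sits on both its personal best and the neighborhood best, the cognitive and social terms vanish and only the inertia term moves it.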

Table 1: Core Parameters in Standard PSO

| Parameter | Symbol | Typical Range | Function | Impact on Search |
|---|---|---|---|---|
| Inertia Weight | (\omega) | 0.4-0.9 | Controls momentum | High: exploration; Low: exploitation |
| Cognitive Coefficient | (c_1) | 1.5-2.0 | Attraction to personal best | Maintains individual diversity |
| Social Coefficient | (c_2) | 1.5-2.0 | Attraction to neighborhood best | Promotes convergence |
| Velocity Clamping | (V_{max}) | Problem-dependent | Limits maximum step size | Prevents explosive growth |

Neighborhood Topologies

The social structure of PSO significantly influences its exploration-exploitation balance. The neighborhood topology defines how particles communicate and share information within the swarm [2]. Research has shown that dynamic neighborhood strategies based on Euclidean distance can enhance performance by preventing premature convergence and maintaining diversity [1].

Table 2: Common PSO Neighborhood Topologies

| Topology | Structure | Convergence Speed | Diversity Maintenance | Applications |
|---|---|---|---|---|
| Global Best (gbest) | Fully connected | Fast | Low | Unimodal problems, smooth landscapes |
| Local Best (lbest) | Ring topology | Slow | High | Multimodal problems, avoiding local optima |
| Von Neumann | Grid-based | Moderate | Moderate | Balanced performance across problems |
| Dynamic Euclidean | Distance-based adaptive | Variable | High | Multimodal, nonlinear equation systems [1] |
| Small-World | Random rewiring | Moderate-High | Moderate-High | Complex, high-dimensional problems |
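
To make the difference between topologies concrete, the sketch below selects the neighborhood best under a ring (lbest) topology for a minimization problem. The function name `ring_neighborhood_best` and the `radius` parameter are illustrative assumptions; setting the radius large enough to cover the whole swarm reproduces the gbest behavior.

```python
def ring_neighborhood_best(p_best_fitness, radius=1):
    """For each particle, return the index of the best personal best
    among its ring neighbors (itself plus `radius` particles on each side).

    Assumes minimization: a lower fitness value is better.
    """
    n = len(p_best_fitness)
    best = []
    for i in range(n):
        # Indices wrap around so the first and last particles are neighbors.
        neighbors = [(i + off) % n for off in range(-radius, radius + 1)]
        best.append(min(neighbors, key=lambda j: p_best_fitness[j]))
    return best
```

With a small radius, a good solution spreads through the swarm only one neighborhood at a time, which is why the ring topology converges slowly but preserves diversity.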

Advanced Mechanisms in Modern PSO

Parameter Adaptation Strategies

Contemporary PSO variants employ sophisticated parameter adaptation strategies to dynamically balance exploration and exploitation during the search process [2]. These approaches have shown particular relevance in multi-task optimization environments where problem characteristics may vary across tasks [4] [5].

Inertia Weight Adaptation Methods:

- Time-varying Decrease: Linear or nonlinear reduction from high ((\omega \approx 0.9)) to low ((\omega \approx 0.4)) values [2]

- Randomized Inertia: Stochastic selection within a specified range to prevent coordinated stagnation [2]

- Feedback-Adaptive: Adjustment based on swarm diversity, velocity dispersion, or fitness improvement rates [2]

- Chaotic Sequences: Deterministic yet non-repeating variation using logistic maps or similar functions [2]
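
Three of these inertia schedules are simple enough to sketch directly. The function names and default ranges below are assumptions for illustration; the feedback-adaptive variant is omitted because it depends on which swarm statistic is monitored.

```python
import random

def linear_inertia(k, k_max, w_start=0.9, w_end=0.4):
    """Time-varying decrease: linear interpolation from w_start to w_end."""
    return w_start - (w_start - w_end) * k / k_max

def random_inertia(rng=random, low=0.4, high=0.9):
    """Randomized inertia: a fresh uniform draw from [low, high]."""
    return rng.uniform(low, high)

def chaotic_inertia(z, low=0.4, high=0.9):
    """Chaotic inertia: advance the logistic map z <- 4 z (1 - z)
    and rescale the new state into [low, high].

    Returns (new_z, inertia); keep new_z as the state for the next iteration.
    The initial z should lie in (0, 1) and avoid fixed points such as 0.75.
    """
    z = 4.0 * z * (1.0 - z)
    return z, low + (high - low) * z
```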

Acceleration Coefficient Adaptation: Advanced PSO implementations often simultaneously adapt cognitive and social parameters alongside inertia weight. For instance, the ADIWACO variant demonstrates that co-adapting all three parameters significantly outperforms standard PSO on benchmark functions [2]. In multi-task environments, adaptive acceleration coefficients can regulate knowledge transfer intensity between tasks based on their interdependencies [4].

Hybridization with Other Techniques

Integration with complementary optimization strategies has enhanced PSO's capability to handle complex, high-dimensional problems:

Levy Flight Strategies: The incorporation of Levy flight mechanisms into velocity updates helps balance global and local search capabilities. The heavy-tailed distribution of step sizes enables more efficient exploration of the search space while maintaining exploitation near promising regions [1] [7].
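
One widely used way to draw heavy-tailed steps is Mantegna's algorithm, sketched below. Whether the cited algorithms use this exact generator is not stated in the text, so treat the choice, the function name `levy_step`, and the default index `lam=1.5` as assumptions.

```python
import math
import random

def levy_step(lam=1.5, rng=random):
    """Draw one heavy-tailed step via Mantegna's algorithm.

    lam is the stability index, assumed to satisfy 1 < lam < 2.
    """
    sigma_u = (math.gamma(1 + lam) * math.sin(math.pi * lam / 2)
               / (math.gamma((1 + lam) / 2) * lam * 2 ** ((lam - 1) / 2))) ** (1 / lam)
    u = rng.gauss(0.0, sigma_u)
    v = rng.gauss(0.0, 1.0)
    while v == 0.0:  # guard against division by zero (practically never happens)
        v = rng.gauss(0.0, 1.0)
    return u / abs(v) ** (1 / lam)
```

Most draws are small, which supports local refinement, while occasional very large draws let a particle jump out of a crowded region.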

Discrete Crossover Operations: For high-dimensional nonlinear equations and feature selection problems, discrete crossover strategies enhance PSO's performance by facilitating information exchange between particles [1] [6]. The Dynamic Neighborhood PSO with Euclidean distance (EDPSO) employs this approach to effectively locate multiple roots of nonlinear equation systems in a single run [1].
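
The exact crossover operator used by EDPSO is not specified here. As a stand-in, the sketch below shows plain discrete (uniform) recombination, where each coordinate of the child is copied from one of two parents; the function name and the `rate` parameter are hypothetical.

```python
import random

def discrete_crossover(parent_a, parent_b, rate=0.5, rng=random):
    """Build a child by taking each coordinate from parent_b with
    probability `rate`, and from parent_a otherwise."""
    return [b if rng.random() < rate else a
            for a, b in zip(parent_a, parent_b)]
```

Because coordinates are copied rather than blended, the child always lies on a corner of the box spanned by the two parents, which keeps existing coordinate values in circulation.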

Archive-Guided Mutation: External archives storing historical best solutions guide mutation operations, particularly in multi-objective implementations. The TAMOPSO algorithm uses archive information to automatically increase mutation probability when population convergence is detected, expanding search range dynamically [7].

Application Notes: PSO in Scientific Domains

Solving Nonlinear Equation Systems

The EDPSO algorithm demonstrates PSO's effectiveness in locating all roots of nonlinear equation systems (NESs) in a single run [1]. This capability addresses a fundamental challenge in computational mathematics: traditional approaches such as Newton's method typically find only one root per run and are highly sensitive to the initial guess.

Key Enhancement for NES:

- Dynamic neighborhood formation based on Euclidean distance

- Levy flight integration in velocity update

- Discrete crossover for high-dimensional problems

Performance Metrics: On 20 NES benchmark problems, EDPSO achieved a success rate (SR) of 0.992 and root rate (RR) of 0.999, outperforming comparison methods including LSTP, NSDE, KSDE, NCDE, HNDE, and DR-JADE [1].

Multi-Task Optimization Frameworks

Multi-task PSO (MTPSO) represents a paradigm shift from single-problem optimization to simultaneous optimization of multiple related tasks [4] [5] [6]. By leveraging implicit parallelism of population-based search, MTPSO transfers knowledge between tasks to improve overall optimization performance.

MOMTPSO Framework: This innovative algorithm integrates objective space division with adaptive transfer mechanisms [4]. Key components include:

- Adaptive Knowledge Transfer Probability (AKTP): Adjusts transfer intensity based on swarm state

- Guiding Particle Selection (GPS): Divides objective spaces into subspaces, selecting guides from low-density regions

- Adaptive Acceleration Coefficient (AAC): Configures transfer guiding particle coefficients based on inter-task relationships

Experimental Validation: MOMTPSO demonstrates superior performance on CEC evolutionary multi-task optimization benchmarks compared to state-of-the-art alternatives, particularly in handling the intensity, timing, and source selection of knowledge transfer [4].

Chemistry and Drug Development Applications

PSO has emerged as a valuable tool in chemical research and drug development, particularly for molecular docking, quantitative structure-activity relationship (QSAR) modeling, and chemical process optimization [3]. The algorithm's ability to navigate high-dimensional, multimodal search spaces makes it suitable for molecular conformation analysis and protein-ligand binding optimization.

Feature Selection in High-Dimensional Chemical Data: The Multi-task Evolutionary Learning (MEL) approach employs PSO for feature selection on high-dimensional chemical and biological data [6]. By leveraging multi-task learning, MEL identifies compact feature subsets that maximize classification accuracy while reducing dimensionality, a crucial capability in biomarker discovery and molecular profiling.

Experimental Protocols and Methodologies

Standard Implementation Protocol

Initialization Phase:

- Define search space boundaries ([L, U]^T) for each dimension

- Initialize population size (N) (typically 20-50 particles)

- Set maximum iteration count or convergence threshold

- Initialize particle positions uniformly random within search bounds

- Initialize velocities to zero or small random values

- Configure parameters: (\omega), (c_1), (c_2), (V_{max})

Iteration Phase:

- Evaluate fitness (F(x) = \sum_{i=1}^m f_i(x)) for all particles

- Update personal best positions (\mathcal{P}_i) if current position yields better fitness

- Identify neighborhood best positions (\mathcal{P}_g) based on topology

- Update velocities using fundamental PSO equation

- Apply velocity clamping if necessary: (\mathcal{V}_{i}^{d} = \min(\max(\mathcal{V}_{i}^{d}, -V_{max}), V_{max}))

- Update positions using fundamental position equation

- Apply boundary conditions if particles exceed search space

- Check termination criteria (maximum iterations or convergence threshold)

Termination Phase:

- Extract global best solution (\mathcal{P}_g) from the swarm

- Return optimal solution and associated fitness value
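
The three phases above can be combined into a compact gbest PSO. The sketch below is one possible reading of the protocol, not a definitive implementation: the `sphere` test function, the parameter defaults, the choice of (V_{max}) as 20% of the range, and clipping positions to the bounds are all assumptions. The global best is updated as soon as a particle improves (asynchronous update).

```python
import random

def sphere(x):
    """Illustrative test objective with its minimum of 0 at the origin."""
    return sum(xi * xi for xi in x)

def pso(fitness, dim, lower, upper, n_particles=30, iters=200,
        w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    v_max = 0.2 * (upper - lower)  # assumed clamping level
    # Initialization phase: uniform random positions, zero velocities.
    xs = [[rng.uniform(lower, upper) for _ in range(dim)] for _ in range(n_particles)]
    vs = [[0.0] * dim for _ in range(n_particles)]
    p_best = [x[:] for x in xs]
    p_fit = [fitness(x) for x in xs]
    g = min(range(n_particles), key=lambda i: p_fit[i])
    g_best, g_fit = p_best[g][:], p_fit[g]
    # Iteration phase.
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                v = (w * vs[i][d]
                     + c1 * r1 * (p_best[i][d] - xs[i][d])
                     + c2 * r2 * (g_best[d] - xs[i][d]))
                v = min(max(v, -v_max), v_max)                    # velocity clamping
                vs[i][d] = v
                xs[i][d] = min(max(xs[i][d] + v, lower), upper)   # boundary handling
            f = fitness(xs[i])
            if f < p_fit[i]:                                      # personal best update
                p_best[i], p_fit[i] = xs[i][:], f
                if f < g_fit:                                     # global best update
                    g_best, g_fit = xs[i][:], f
    # Termination phase: return the best solution and its fitness.
    return g_best, g_fit
```

A call such as `pso(sphere, dim=2, lower=-5.0, upper=5.0)` returns a position close to the origin; replacing the fixed iteration count with a convergence threshold is a straightforward extension.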

Dynamic Neighborhood PSO Protocol

Based on the EDPSO algorithm for nonlinear equation systems [1]:

Specialized Initialization:

- Formulate the NES as a minimization problem, e.g. (\min F(x) = \sum_{i=1}^m f_i^2(x)), so that every root of the system is a global minimizer with (F(x) = 0)

- Initialize swarm with random positions within defined bounds

- Set dynamic neighborhood parameters (Euclidean distance threshold)

Enhanced Iteration Process:

- Evaluate fitness for all particles

- Compute Euclidean distances between all particle pairs

- Form dynamic neighborhoods based on current distance thresholds

- Identify neighborhood best positions within each dynamic neighborhood

- Update velocities incorporating the Levy flight strategy: [ \mathcal{V}_{i}^{d}(k+1) = \omega \mathcal{V}_{i}^{d}(k) + c_1 r_1 (\mathcal{P}_{i}^{d}(k) - \mathcal{X}_{i}^{d}(k)) + c_2 r_2 (\mathcal{P}_{l}^{d}(k) - \mathcal{X}_{i}^{d}(k)) + \alpha \cdot \text{Levy}(\lambda) ] where (\mathcal{P}_{l}^{d}) is the local best within the dynamic neighborhood, and (\alpha) scales the Levy flight step

- Apply discrete crossover between selected particles to enhance diversity

- Update positions and enforce boundary constraints

- Archive identified roots to maintain multiple solutions
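
The neighborhood-formation steps can be sketched with a fixed distance threshold. How EDPSO sets or adapts its threshold is not described here, so the `radius` argument and the function name are assumptions; the example requires Python 3.8 or later for `math.dist`.

```python
import math

def euclidean_neighborhood_best(positions, p_best_fitness, radius):
    """For each particle, return the index of the best particle among all
    particles within Euclidean distance `radius` (the particle itself is
    always included because its distance to itself is 0).

    Assumes minimization and radius >= 0.
    """
    best = []
    for xi in positions:
        hood = [j for j, xj in enumerate(positions)
                if math.dist(xi, xj) <= radius]
        best.append(min(hood, key=lambda j: p_best_fitness[j]))
    return best
```

Because neighborhoods are recomputed from current positions at every iteration, particles clustered around different roots stop influencing each other, which is what allows several roots to be tracked in one run.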

Validation Metrics:

- Success Rate (SR): Proportion of successful runs finding all roots within precision

- Root Rate (RR): Proportion of actual roots identified across all runs
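
Both metrics follow directly from the number of roots found in each independent run. The helper below is a small sketch; its name and input format (one integer per run) are assumptions.

```python
def success_and_root_rate(roots_found_per_run, total_roots):
    """Compute (SR, RR) from the number of distinct roots found in each run.

    SR: fraction of runs that found every known root.
    RR: fraction of all known roots found, averaged over runs.
    """
    runs = len(roots_found_per_run)
    sr = sum(1 for found in roots_found_per_run if found == total_roots) / runs
    rr = sum(roots_found_per_run) / (total_roots * runs)
    return sr, rr
```

For example, four runs on a system with four roots that find 4, 4, 3, and 4 roots give SR = 3/4 = 0.75 and RR = 15/16 = 0.9375.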

Multi-Task PSO Implementation

Adapted from MOMTPSO for multi-objective multi-task optimization [4]:

Multi-Task Setup:

- Define (K) optimization tasks with objective functions (f_1, f_2, \dots, f_K)

- Initialize separate swarm (P_k) for each task in unified search space

- Initialize knowledge transfer probability (ktp_k) for each task

- Create external archive (A_k) for non-dominated solutions per task

Adaptive Knowledge Transfer Cycle:

- Evaluate particles on their respective tasks

- Update archives (A_k) with non-dominated solutions

- Compute task relatedness measures based on archive distributions

- Adjust knowledge transfer probabilities (ktp_k) based on swarm state:

  - Increase if high proportion of quality particles

  - Decrease if poor performance or negative transfer detected

- Divide objective space into subspaces for each task

- Select guiding particles from low-density regions to enhance diversity

- For each particle, with probability (ktp_k):

  - Identify source task for knowledge transfer

  - Compute adaptive acceleration coefficient based on task distance

  - Incorporate transfer guiding particle into velocity update

- Update particle velocities and positions using modified PSO equations
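
The transfer step of this cycle can be illustrated with a third attraction term that is switched on with probability (ktp_k). This is a simplified sketch and not the MOMTPSO update itself: the coefficient `c3`, the threshold rule in `adapt_ktp`, and all names and defaults are hypothetical.

```python
import random

def transfer_velocity(x, v, p_best, n_best, guide, ktp,
                      w=0.7, c1=1.5, c2=1.5, c3=1.0, rng=random):
    """Velocity update with an optional knowledge-transfer term.

    With probability ktp, the particle is additionally attracted toward
    `guide`, a guiding particle taken from a source task (assumed to be
    expressed in the same unified search space).
    """
    use_transfer = rng.random() < ktp
    new_v = []
    for d in range(len(x)):
        vd = (w * v[d]
              + c1 * rng.random() * (p_best[d] - x[d])
              + c2 * rng.random() * (n_best[d] - x[d]))
        if use_transfer:
            vd += c3 * rng.random() * (guide[d] - x[d])  # cross-task attraction
        new_v.append(vd)
    return new_v

def adapt_ktp(ktp, quality_fraction, threshold=0.5, step=0.05, low=0.05, high=0.95):
    """Hypothetical adaptation rule: raise ktp when the fraction of quality
    particles reaches the threshold, lower it otherwise, and keep the
    result inside [low, high]."""
    ktp = ktp + step if quality_fraction >= threshold else ktp - step
    return min(max(ktp, low), high)
```

In a fuller implementation, `c3` would be set per particle from the measured task distance, which is the role the adaptive acceleration coefficient plays in the steps above.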

Performance Assessment:

- Hypervolume indicator: Measures volume of objective space dominated by solutions

- Inverted Generational Distance (IGD): Quantifies convergence and diversity

- Task-based metrics: Evaluate performance improvement relative to single-task optimization
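
The first two indicators are easy to compute for small fronts. The sketch below assumes minimization; the hypervolume routine handles only two objectives and expects mutually non-dominated points that all dominate the reference point. Function names are illustrative.

```python
import math

def igd(reference_front, approximation):
    """Inverted Generational Distance: mean distance from each reference
    point to its nearest point in the approximation set (lower is better)."""
    return sum(min(math.dist(r, a) for a in approximation)
               for r in reference_front) / len(reference_front)

def hypervolume_2d(front, ref_point):
    """Area dominated by a two-objective front and bounded by ref_point
    (higher is better)."""
    hv, prev_f2 = 0.0, ref_point[1]
    # Sorted by f1 ascending, a non-dominated front has f2 descending,
    # so each point contributes one horizontal slab.
    for f1, f2 in sorted(front):
        hv += (ref_point[0] - f1) * (prev_f2 - f2)
        prev_f2 = f2
    return hv
```

IGD requires a reference front (for benchmarks, usually a sampling of the known Pareto front), whereas hypervolume needs only a reference point.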

Visualization of PSO Mechanisms

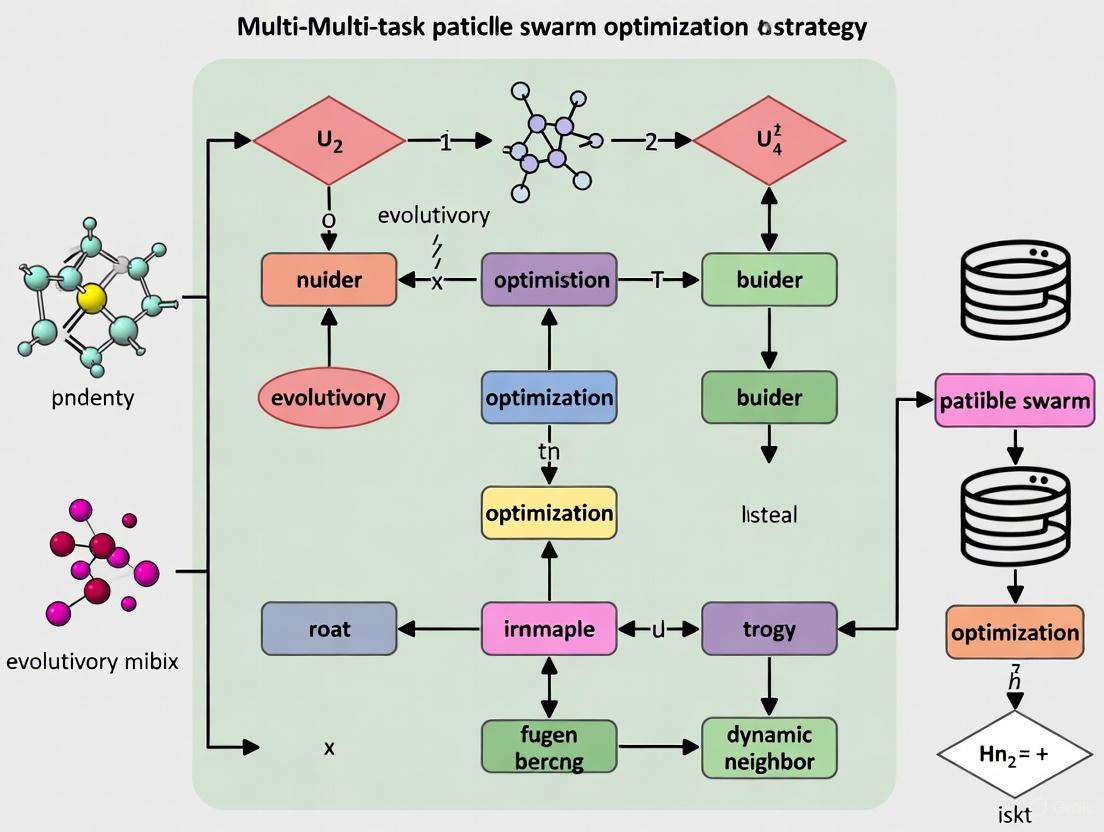

PSO Architecture and Relationships - This diagram illustrates the core components, advanced mechanisms, and application domains of Particle Swarm Optimization, highlighting interconnections between fundamental processes and specialized enhancements.

PSO Experimental Workflow - This flowchart depicts the standard PSO iteration process with extensions for dynamic neighborhood formation and multi-task knowledge transfer, highlighting key decision points and cyclic nature of the algorithm.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for PSO Research

| Tool Category | Specific Implementation | Function | Application Context |
|---|---|---|---|
| Benchmark Suites | CEC Competition Problems | Algorithm validation and comparison | General optimization, Multi-task testing [4] [8] |
| Benchmark Suites | Nonlinear Equation Systems | Root-finding capability assessment | EDPSO validation [1] |
| Performance Metrics | Success Rate (SR) | Proportion of successful runs | Algorithm reliability assessment [1] |
| Performance Metrics | Root Rate (RR) | Completeness of solution identification | Multi-modal problem solving [1] |
| Performance Metrics | Hypervolume Indicator | Quality assessment of Pareto fronts | Multi-objective optimization [4] [8] |
| Performance Metrics | Inverted Generational Distance | Convergence and diversity measurement | Multi-objective algorithm comparison [4] |
| Specialized Operators | Levy Flight Step | Long-tailed random walk | Global exploration enhancement [1] [7] |
| Specialized Operators | Discrete Crossover | Information exchange between particles | Diversity maintenance [1] |
| Specialized Operators | Archive Mechanism | Storage of non-dominated solutions | Multi-objective optimization [7] [4] |
| Specialized Operators | Dynamic Neighborhood | Adaptive communication topology | Prevention of premature convergence [1] [2] |

The fundamental mechanics of Particle Swarm Optimization provide a robust foundation for solving complex optimization problems across scientific domains. The algorithm's simple yet powerful paradigm of social learning and collaborative search has evolved significantly since its inception, with modern variants incorporating dynamic neighborhood structures, adaptive parameter control, and sophisticated knowledge transfer mechanisms. These advancements have expanded PSO's applicability to challenging problem domains including nonlinear equation systems, multi-task environments, chemical optimization, and high-dimensional feature selection.

In the context of multi-task PSO with dynamic neighbors, the research trajectory points toward increasingly self-adaptive systems capable of autonomously adjusting their search strategies based on problem characteristics and optimization progress. The integration of PSO with other computational intelligence techniques, coupled with theoretical advances in convergence analysis and parameter control, will further solidify its position as a versatile and effective optimization tool for researchers, scientists, and drug development professionals tackling complex, high-dimensional problems.

The field of optimization has undergone a significant paradigm shift, evolving from single-task approaches to the simultaneous handling of multiple tasks. Evolutionary Multitask Optimization (EMTO) represents a breakthrough in computational intelligence, enabling parallel problem-solving by leveraging potential synergies and complementarities between different optimization tasks [9] [10]. This evolution mirrors concepts from transfer learning and multitask learning in mainstream machine learning, allowing knowledge gained from one task to accelerate and improve the optimization of other related tasks [11].

The application of this paradigm through Particle Swarm Optimization (PSO) has created particularly powerful algorithms for complex scientific domains. In drug discovery and development, multi-task PSO addresses critical challenges including the optimization of multi-parametric kinetic schemes, interpretation of complex biological datasets, and prediction of pharmacokinetic properties [12] [13]. The ability to efficiently handle these computationally expensive problems has positioned multitask PSO as a valuable methodology for researchers tackling the intricate optimization challenges inherent in pharmaceutical research and development.

Core Methodologies in Multi-Task PSO

Fundamental Principles and Algorithmic Framework

Multi-task PSO extends the traditional PSO framework by creating mechanisms for knowledge transfer between concurrently optimized tasks. Where single-task PSO maintains a single swarm searching for one optimal solution, multi-task PSO manages multiple swarms (or a unified population) that collaboratively solve several tasks simultaneously [14]. The mathematical description for an MTO problem involving (K) tasks is formalized as: [ \{x_1^*, x_2^*, \dots, x_K^*\} = \arg\min \{f_1(x_1), f_2(x_2), \dots, f_K(x_K)\} ] where each candidate solution ( x_j ) and its global optimum ( x_j^* ) reside in a ( D_j )-dimensional search space ( X_j ), and ( f_j ) represents the objective function for task ( T_j ) [14].

The paradigm leverages the implicit parallelism of population-based search, where candidate solutions implicitly carry knowledge about their respective tasks. Through carefully designed transfer mechanisms, this knowledge can benefit other tasks being optimized concurrently, often leading to accelerated convergence and improved solution quality compared to single-task optimization [10].

Advanced Knowledge Transfer Strategies

Recent research has focused on developing sophisticated transfer mechanisms to maximize positive knowledge exchange while minimizing negative transfer:

Variable Chunking with Local Meta-Knowledge Transfer: This approach constructs auxiliary transfer individuals using variable chunking and Latin Hypercube Sampling, enabling information exchange among variables of different dimensions. It incorporates a local meta-knowledge transfer strategy based on population clustering to identify local similarities between tasks, even when global similarity is low [15].

Level-Based Inter-Task Learning: Inspired by pedagogical principles, this strategy separates particles into different levels with distinct inter-task learning methods. Particles with diverse search preferences explore the search space using cross-task knowledge while maintaining refinement capabilities [16].

Self-Regulated Knowledge Transfer: This method dynamically adapts task relatedness through evolving population characteristics. It evaluates each particle's ability on different tasks and adjusts knowledge transfer accordingly, creating an adaptive system that responds to the changing optimization landscape [14].

Table 1: Comparison of Multi-Task PSO Knowledge Transfer Strategies

| Strategy | Core Mechanism | Advantages | Limitations Addressed |
|---|---|---|---|
| Variable Chunking with Local Meta-Knowledge [15] | Variable chunking with LHS and local similarity clustering | Enables information exchange between different dimensional variables; utilizes local similarities | Addresses ignorance of local information in low-similarity tasks; enables cross-dimensional information exchange |
| Level-Based Inter-Task Learning [16] | Dynamic neighbor topology with level-based learning assignments | Adapts teaching strategies to particle levels; balances exploration and exploitation | Prevents poor performance in later search stages when particles find different optimal areas |
| Self-Regulated Transfer [14] | Ability vector-based task selection and impact adaptation | Automatically adjusts to dynamic task relatedness; reduces negative transfer | Eliminates dependence on fixed matching probabilities; adapts to changing optimization landscape |
| Adaptive Transfer Probability [15] | Dynamic adjustment based on task similarity measurements | Reduces irrelevant information transfer between dissimilar tasks | Mitigates negative transfer from fixed probability schemes |

Dynamic Neighborhood Management

A critical advancement in multi-task PSO involves the implementation of dynamic neighbor topologies that reform the local structure across inter-task particles through methodical sampling, evaluating, and selecting processes [16]. Unlike static approaches, these dynamic topologies allow the algorithm to adapt to changing search landscapes and inter-task relationships throughout the optimization process. The dynamic neighborhood enables more efficient knowledge transfer by connecting particles that can benefit most from information exchange at different stages of optimization, significantly enhancing the algorithm's ability to maintain diversity while refining promising solutions.

Application Notes: Multi-Task PSO in Drug Discovery

Kinetic Modeling of Protein Oligomerization

A compelling application of multi-task PSO in pharmaceutical research involves analyzing complex protein oligomerization equilibria. Researchers applied PSO to examine the effects of a small-molecule inhibitor on the oligomerization equilibrium of the HSD17β13 enzyme, which displayed unusually large thermal shifts inconsistent with simple binding models [12].

The optimization challenge involved determining optimal parameters for a kinetic scheme modeling HSD17β13 in monomeric, dimeric, and tetrameric states. Traditional gradient-based methods struggled with this multi-parametric problem due to multiple local minima in the parameter space. The PSO approach successfully navigated this complex landscape by leveraging its population-based search capabilities, ultimately revealing that the inhibitor shifted the protein equilibrium toward the dimeric state [12]. This finding was subsequently validated experimentally through mass photometry data, confirming the predictive power of the PSO-optimized model.

Pharmacokinetic Prediction Modeling

In another pharmaceutical application, researchers developed a PSO-backpropagation artificial neural network (PSO-BPANN) model to predict omeprazole plasma concentrations in Chinese populations [13]. This study addressed significant interindividual variations in omeprazole pharmacokinetics while investigating effects of age and gender.

After identifying significant differences in key pharmacokinetic parameters (( C_{max} ), ( AUC_{0-t} ), ( AUC_{0-\infty} ), and ( t_{1/2} )) between age groups, the researchers used PSO to optimize the BPANN model. The metaheuristic approach efficiently located network parameters that would be difficult to find through traditional gradient-based methods alone. The resulting model achieved correlation coefficients of 0.949, 0.903, and 0.874 for the training, validation, and test groups, respectively [13].

Table 2: Multi-Task PSO Applications in Pharmaceutical Research

| Application Area | Optimization Challenge | PSO Variant | Key Outcomes |
|---|---|---|---|
| Protein Oligomerization Kinetics [12] | Multi-parametric model with multiple local minima | Global PSO with gradient descent refinement | Identified inhibitor-induced shift to dimeric state; validated with mass photometry |
| Pharmacokinetic Prediction [13] | Interindividual variation with multiple influencing factors | PSO-BPANN hybrid model | High prediction accuracy (MSE: 0.000355); identified age-based PK differences |
| Drug Mechanism Elucidation [12] | Complex biological system with numerous components | Multi-parameter PSO with linear gradient descent | Uncovered unusual stabilization mechanism; enabled bias-free interpretation |

Experimental Protocols

Protocol: Kinetic Model Optimization for Protein Oligomerization

Objective: To determine the optimal kinetic parameters for protein oligomerization equilibrium under inhibitor influence using multi-task PSO.

Materials and Reagents:

- Purified target protein (e.g., HSD17β13)

- Small molecule inhibitor compounds

- Fluorescent dye for thermal shift assay (e.g., SYPRO Orange)

- LC-MS/MS system for concentration quantification

- Thermal cycler with fluorescence detection capability

Computational Resources:

- PSO implementation with linear gradient descent refinement

- Parameter optimization framework (e.g., hydroPSO package in R)

- Global analysis software for kinetic modeling

Methodology:

Experimental Data Collection:

- Perform fluorescent thermal shift assays across a range of inhibitor concentrations

- Collect protein melting curves at each concentration (typically 0-100 µM)

- Record fluorescence intensity at 0.5-1.0°C intervals from 25°C to 95°C

- Validate binding through orthogonal techniques (e.g., surface plasmon resonance)

Model Formulation:

- Define the oligomerization kinetic scheme with monomer-dimer-tetramer equilibria

- Establish ordinary differential equations describing the system dynamics

- Identify key parameters for optimization (( K_D ), ( \Delta H ), ( \Delta S ), inhibition constants)

PSO Configuration:

- Initialize particle swarm with random positions in parameter space

- Set inertia weight (ω) to 0.729 and acceleration constants (c₁, c₂) to 1.49

- Define fitness function as sum of squared residuals between experimental and simulated melting curves

- Implement boundary constraints based on biophysical plausibility
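
The fitness definition in this configuration is a sum of squared residuals (SSR) between measured and simulated melting curves. The sketch below shows only that structure: the two-state Boltzmann sigmoid in `melt_curve` is a hypothetical stand-in for the monomer-dimer-tetramer ODE model, and the parameter names `tm` and `slope` are assumptions.

```python
import math

def melt_curve(temps, tm, slope):
    """Hypothetical stand-in model: fraction unfolded at each temperature
    for a simple two-state transition with midpoint tm (slope must be nonzero)."""
    return [1.0 / (1.0 + math.exp((tm - t) / slope)) for t in temps]

def ssr_fitness(params, temps, observed):
    """Sum of squared residuals between observed and simulated curves.

    params is the particle position; here (tm, slope). A real kinetic model
    would replace melt_curve with a simulation of the oligomerization scheme.
    """
    tm, slope = params
    simulated = melt_curve(temps, tm, slope)
    return sum((o - s) ** 2 for o, s in zip(observed, simulated))
```

A PSO routine then minimizes `ssr_fitness` over the bounded parameter space, with bounds chosen from biophysical plausibility as described above.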

Optimization Execution:

- Execute PSO for global exploration (typically 100-200 iterations)

- Refine best solution using linear gradient descent for local improvement

- Repeat optimization with different initial conditions to verify global optimum identification

- Validate model with hold-out experimental data not used in optimization

Model Validation:

- Compare PSO-optimized parameters with experimental mass photometry data

- Perform statistical analysis of residuals to assess goodness-of-fit

- Conduct sensitivity analysis to identify most influential parameters

Figure 1: Workflow for Kinetic Model Optimization Using Multi-Task PSO

Protocol: PSO-Optimized Neural Network for Pharmacokinetic Prediction

Objective: To develop a PSO-optimized backpropagation neural network for predicting drug plasma concentrations with demographic and clinical variables.

Materials and Data:

- Patient demographic data (age, gender, BMI)

- Clinical laboratory test results (liver/kidney function, metabolic panels)

- Drug concentration measurements across multiple time points

- Principal component analysis (PCA) preprocessing framework

- PSO-BPANN implementation platform (MATLAB, Python, or R)

Methodology:

Data Preparation and Preprocessing:

- Collect pharmacokinetic data from clinical trials with appropriate ethical approvals

- Perform principal component analysis to reduce dimensionality and eliminate correlations

- Normalize all input variables to standard ranges (typically 0-1 or z-scores)

- Partition dataset into training (70%), validation (15%), and testing (15%) subsets
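
The normalization and partitioning steps can be sketched with the standard library alone. The helper names and the use of integer percentages are assumptions; PCA is omitted because it normally relies on a numerical library.

```python
import random

def min_max_normalize(column):
    """Scale a list of values to the range [0, 1]; a constant column maps to 0."""
    lo, hi = min(column), max(column)
    return [(v - lo) / (hi - lo) if hi > lo else 0.0 for v in column]

def split_indices(n, train_pct=70, val_pct=15, seed=0):
    """Shuffle record indices and split them into training, validation,
    and test subsets; the test subset receives the remainder."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = n * train_pct // 100
    n_val = n * val_pct // 100
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]
```

To avoid information leakage, the minimum and maximum used for scaling are usually taken from the training subset only and then applied to the validation and test subsets.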

Network Architecture Definition:

- Determine optimal hidden layer structure through preliminary experimentation

- Initialize connection weights and bias terms within defined ranges

- Select appropriate activation functions (sigmoid, tanh, or ReLU)

PSO-BPANN Hybrid Implementation:

- Configure PSO parameters: swarm size (20-50 particles), inertia weight (decreasing from 0.9 to 0.4), acceleration constants (c₁=c₂=2.0)

- Define particle position as vector of all network weights and biases

- Implement fitness function as mean squared error between predicted and actual concentrations

- Set velocity clamping to 10-20% of search space range
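
Encoding the network as a particle amounts to flattening all weights and biases into one vector and evaluating the mean squared error of the decoded network. The sketch below assumes a single hidden layer with tanh activation and one linear output; the layout of the flat vector and the function names are illustrative choices.

```python
import math

def unpack(particle, n_in, n_hidden):
    """Split a flat particle into (W1, b1, W2, b2) for an
    n_in -> n_hidden -> 1 network.

    Expected length: n_hidden * n_in + n_hidden + n_hidden + 1.
    """
    i = n_hidden * n_in
    w1 = [particle[h * n_in:(h + 1) * n_in] for h in range(n_hidden)]
    b1 = particle[i:i + n_hidden]
    w2 = particle[i + n_hidden:i + 2 * n_hidden]
    b2 = particle[i + 2 * n_hidden]
    return w1, b1, w2, b2

def predict(particle, x, n_hidden):
    """Forward pass: tanh hidden layer followed by a linear output unit."""
    w1, b1, w2, b2 = unpack(particle, len(x), n_hidden)
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w1, b1)]
    return sum(w * h for w, h in zip(w2, hidden)) + b2

def mse_fitness(particle, inputs, targets, n_hidden):
    """Mean squared error between predicted and actual concentrations."""
    errors = [(predict(particle, x, n_hidden) - t) ** 2
              for x, t in zip(inputs, targets)]
    return sum(errors) / len(errors)
```

The search-space dimension equals the number of network parameters, so even small networks produce particles with dozens of coordinates, which is one reason velocity clamping matters in this setting.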

Training and Optimization:

- Execute PSO to locate promising regions in weight space

- Refine best solutions with limited backpropagation iterations

- Implement early stopping based on validation set performance

- Monitor for overfitting through regular validation checks

Model Evaluation:

- Calculate performance metrics on independent test set

- Analyze residual patterns for systematic prediction errors

- Compare with traditional pharmacokinetic modeling approaches

- Perform sensitivity analysis on input variables

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for Multi-Task PSO in Pharmaceutical Research

| Tool/Reagent | Function/Role | Application Context | Implementation Notes |
|---|---|---|---|
| Fluorescent Thermal Shift Assay [12] | Measures protein thermal stability changes | Protein-ligand interaction studies | Provides rich dataset for complex model fitting; detects oligomerization shifts |
| LC-MS/MS Systems [13] | Quantifies drug concentrations in biological matrices | Pharmacokinetic studies | Generates high-quality concentration data for model training and validation |
| Principal Component Analysis [13] | Reduces dimensionality of clinical variables | Data preprocessing for PSO-BPANN | Creates independent input variables; improves model convergence |
| HydroPSO Package [12] | Enhanced PSO implementation with tuning capabilities | General multi-parameter optimization | Offers improved performance over standard PSO; configurable topology |
| Linear Gradient Descent | Local refinement algorithm | Hybrid optimization strategies | Combines with PSO for fine-tuning after global exploration |
| Mass Photometry [12] | Measures protein oligomeric state distribution | Model validation technique | Provides experimental confirmation of PSO-predicted oligomerization states |

Conceptual Framework and Signaling Pathways

The transition from single-task to multi-task optimization represents a fundamental shift in computational problem-solving for biological and pharmaceutical applications. The conceptual framework integrates several interconnected principles:

Information Exchange Mechanisms: Multi-task PSO creates channels for knowledge transfer between optimization tasks through various strategies. The variable chunking approach enables information exchange between different dimensional variables, while local meta-knowledge transfer leverages similarities between population clusters [15]. These mechanisms allow the algorithm to utilize complementary information across tasks, often leading to performance improvements that would be impossible with isolated optimization.

Dynamic Adaptation: Advanced multi-task PSO implementations incorporate self-regulation and adaptive probability mechanisms that continuously monitor task relatedness and optimization progress [15] [14]. This dynamic adjustment enables the algorithm to respond to changing search landscapes and modify knowledge transfer strategies accordingly, maximizing positive transfer while minimizing interference between dissimilar tasks.

Unified Representation Space: A critical enabler for multi-task optimization is the creation of a unified representation that accommodates different task domains [14]. The random key approach, which encodes decision variables as normalized values between 0 and 1, provides a common search space where knowledge transfer becomes feasible without complex transformation operations.
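A minimal sketch of random key decoding is shown below. It assumes linear scaling from [0, 1] to each task's bounds and assumes that a task with fewer variables reads only the leading keys. Both choices are common conventions rather than details confirmed by the cited work, and the function name `decode_random_keys` is hypothetical.

```python
import numpy as np


def decode_random_keys(keys, lower, upper):
    """Map normalized keys in [0, 1] onto one task's own variable bounds.

    A task with fewer variables than the unified space uses only the
    leading entries of the key vector (an assumption of this sketch).
    """
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    k = np.asarray(keys, dtype=float)[: lower.size]
    return lower + k * (upper - lower)


# Two hypothetical tasks sharing one 3-dimensional unified space.
keys = np.array([0.0, 0.5, 1.0])
task_a = decode_random_keys(keys, lower=[-5, -5, -5], upper=[5, 5, 5])
task_b = decode_random_keys(keys, lower=[0, 10], upper=[1, 20])
```

The same key vector yields a valid candidate for both tasks, which is what allows a particle from one task to be handed to another without a task-specific transformation.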

Figure 2: Conceptual Framework of Paradigm Evolution from Single-Task to Multi-Task Optimization

The evolution from single-task to multi-task optimization represents a significant advancement in computational problem-solving for pharmaceutical research and development. Multi-task PSO has demonstrated considerable potential in addressing complex challenges in drug discovery, from elucidating protein oligomerization mechanisms to predicting pharmacokinetic properties. The paradigm shift enables researchers to leverage implicit parallelism and knowledge transfer between related tasks, often resulting in accelerated convergence and improved solution quality compared to traditional single-task approaches.

Future developments in multi-task PSO will likely focus on enhanced adaptive mechanisms, more sophisticated transfer strategies, and tighter integration with experimental validation methodologies. As these algorithms continue to evolve, their application within pharmaceutical research promises to accelerate drug development processes, improve predictive modeling accuracy, and provide deeper insights into complex biological systems. Dynamic neighborhood strategies offer a particularly promising direction for developing more efficient multi-task optimization frameworks that adapt automatically to changing problem landscapes and inter-task relationships.

In Particle Swarm Optimization (PSO), communication topology defines the information-sharing network between particles, fundamentally governing the algorithm's balance between exploration and exploitation. While traditional static topologies like Star (gbest), Ring (lbest), and Von Neumann provide fixed interaction patterns, they struggle to adapt to the complex, evolving landscapes of real-world optimization problems. Dynamic neighborhood topologies represent a significant evolutionary step, enabling the swarm's communication structure to adapt during the optimization process based on search state, diversity metrics, or performance feedback. This adaptability is particularly important in dynamic-neighbor multi-task PSO, where maintaining population diversity while accelerating convergence requires sophisticated topological control mechanisms.

Research demonstrates that dynamic topologies directly address PSO's perennial challenge of premature convergence. By modifying information flow patterns, these approaches help particles escape local optima while refining search in promising regions. The integration of machine learning, particularly reinforcement learning (RL), has further advanced this domain by enabling data-driven topology selection. In pharmaceutical and drug development contexts, these adaptive PSO variants show exceptional promise for complex tasks like molecular docking, quantitative structure-activity relationship (QSAR) modeling, and multi-objective therapy optimization, where solution landscapes are typically high-dimensional, constrained, and multimodal.

Categorization of Dynamic Topology Approaches

Algorithmically-Driven Topology Switching

Algorithmically-driven approaches employ predetermined rules or metrics to trigger topological changes based on swarm state characteristics. The Dynamic Neighborhood Balancing-based MOPSO (DNB-MOPSO) exemplifies this category, incorporating a dynamic neighborhood reform strategy for non-overlapping regions that enhances exploration while maintaining population diversity in decision space [17]. This approach combines niching methods with Euclidean distance-based particle division to preserve Pareto optimal solution sets in multi-modal optimization. Similarly, the Multi-Swarm PSO (MSPSO) employs a Dynamic sub-swarm Number Strategy (DNS) that partitions the population into numerous parallel sub-swarms during early exploration stages, then systematically reduces sub-swarm count to enhance later exploitation capability [18]. This method periodically regroups sub-swarms based on stagnation information of the global best position, facilitating information diffusion across different population segments.

Learning-Based Topology Adaptation

Learning-based approaches utilize formal machine learning mechanisms to dynamically select optimal topologies based on search performance and landscape characteristics. The Q-Learning-Based Multi-Strategy Topology PSO (MSTPSO) represents the state-of-the-art in this category, implementing a reinforcement learning-driven topological switching framework that dynamically selects among Fully Informed Particle Swarm (FIPS), small-world, and exemplar-set topologies [19]. This method employs a dual-layer experience replay mechanism integrating short-term and long-term memories to stabilize parameter control and improve learning efficiency. The algorithm further incorporates stagnation detection with differential evolution perturbations and global restart strategies to enhance population diversity and escape local optima.

Hybrid and Multi-Objective Dynamic Topologies

For complex multi-objective problems, specialized dynamic topologies have emerged that maintain diverse Pareto solutions while advancing convergence. The Multi-Objective Particle Swarm Optimizer (MOIPSO) incorporates fast non-dominated sorting with crowding distance mechanisms to approximate Pareto optimal solution sets [8]. Similarly, the Angular Segmentation Archive and Dynamic Update Tactics PSO (ASDMOPSO) implements angular division of the external archive region for efficient classification of non-dominated solutions, removing solutions from highest density regions using crowding distance metrics when archives overflow [20]. These approaches demonstrate particular relevance for drug development applications where multiple conflicting objectives (efficacy, toxicity, cost) must be balanced simultaneously.

Table 1: Comparative Analysis of Dynamic Neighborhood Topologies in PSO

| Topology Approach | Core Mechanism | Primary Advantages | Representative Variants |

|---|---|---|---|

| Algorithmically-Driven Switching | Rule-based triggers using diversity/stagnation metrics | Simple implementation, minimal computational overhead | DNB-MOPSO [17], MSPSO [18] |

| Learning-Based Adaptation | Reinforcement learning for topology selection | Adapts to problem landscape, self-optimizing | MSTPSO [19], QLPSO [19] |

| Hybrid Multi-Objective | Combines archiving with dynamic structures | Maintains diverse Pareto solutions, balances convergence-diversity tradeoff | MOIPSO [8], ASDMOPSO [20] |

| Explainable Topologies | Interpretable topology-performance relationships | Enhanced transparency, trustworthy decision-making | IOHxplainer framework [21] |

Quantitative Performance Analysis

Benchmark Function Performance

Dynamic topology PSO variants have demonstrated superior performance across standardized benchmark suites. The MSTPSO algorithm was rigorously evaluated on 29 CEC2017 benchmark functions, showing significantly improved fitness performance and stronger stability on high-dimensional complex functions compared to various PSO variants and other advanced evolutionary algorithms [19]. Ablation studies confirmed the critical contribution of Q-learning-based multi-topology control and stagnation detection mechanisms to this performance improvement. Similarly, DNB-MOPSO was validated on 11 multi-modal multi-objective test functions, outperforming five popular multi-objective optimization algorithms, particularly in locating more optimal solutions in decision space while obtaining well-distributed Pareto fronts [17].

Diversity and Convergence Metrics

The fundamental advantage of dynamic topologies manifests in improved diversity maintenance and convergence control. Research using the IOHxplainer framework demonstrated that different topologies produce markedly different diversity profiles throughout optimization processes [21]. The Von Neumann topology consistently maintains higher population diversity compared to Star and Ring topologies, while dynamically switching topologies can optimize diversity at different search stages. The MSPSO with dynamic sub-swarm numbering demonstrated enhanced balance between exploration and exploitation capabilities, with the purposeful detecting strategy effectively helping populations escape local optima [18].

Table 2: Performance Metrics of Dynamic Topology PSO Variants on Standardized Benchmarks

| PSO Variant | Test Benchmark | Key Performance Metrics | Comparative Advantage |

|---|---|---|---|

| MSTPSO [19] | CEC2017 (29 functions) | Superior fitness, stability on high-dimensional functions | Q-learning topology selection outperforms fixed topologies |

| DNB-MOPSO [17] | Multi-modal multi-objective problems, MMMOPs (11 test functions) | Locates more Pareto sets (PSs), well-distributed Pareto fronts (PFs) | Excels in decision space diversity maintenance |

| MOIPSO [8] | CEC2020 multi-modal multi-objective | Improved convergence, solution diversity | Competitive on engineering problems like foundation pit design |

| ASDMOPSO [20] | 22 benchmark functions | IGD value of 0.032 on ZDT4 | Enhanced convergence speed and diversity preservation |

Experimental Protocols and Implementation Guidelines

Protocol 1: Implementing Q-Learning Topology Selection

The MSTPSO protocol implements reinforcement learning for dynamic topology selection through the following methodology [19]:

State Definition: Define the state space using swarm diversity metrics (e.g., position variance), convergence measures (fitness improvement rate), and iteration progress (normalized generation count).

Action Space: Configure three topology options: Fully Informed PSO (FIPS) topology for intensive local exploitation, Small-World topology balancing local and global search, and Exemplar-Set topology for enhanced exploration.

Reward Function: Design a composite reward function incorporating fitness improvement (normalized fitness gain), diversity maintenance (population spatial distribution), and stagnation penalty (lack of improvement over consecutive iterations).

Q-Table Implementation: Initialize a Q-table with dimensions states × actions. Implement an ε-greedy policy for the exploration-exploitation balance (start with ε = 0.3 and multiply ε by 0.95 every 50 generations).

Experience Replay: Deploy dual-layer experience replay with short-term memory (50 recent experiences) and long-term memory (500 significant experiences), with batch sampling of 32 experiences per update.

Topology Switching: Evaluate swarm state every 10 generations, select topology via Q-learning policy, update Q-values using reward observations with learning rate α = 0.1 and discount factor γ = 0.9.
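The Q-table, ε-greedy policy, and update rule from the steps above can be sketched as follows. This is a simplified tabular illustration, not the MSTPSO implementation: the number of discrete states is an assumption, the reward is supplied by the caller, and the dual-layer experience replay is omitted.

```python
import numpy as np

TOPOLOGIES = ["FIPS", "small-world", "exemplar-set"]  # actions named in the protocol
N_STATES = 9            # hypothetical discretization of the swarm state
ALPHA, GAMMA = 0.1, 0.9  # learning rate and discount factor from the protocol

rng = np.random.default_rng(42)
q_table = np.zeros((N_STATES, len(TOPOLOGIES)))


def epsilon_at(generation, eps0=0.3, decay=0.95, period=50):
    """Exploration rate, multiplied by `decay` once every `period` generations."""
    return eps0 * decay ** (generation // period)


def select_topology(state, generation):
    """Epsilon-greedy choice of a topology index for the given state."""
    if rng.random() < epsilon_at(generation):
        return int(rng.integers(len(TOPOLOGIES)))
    return int(np.argmax(q_table[state]))


def update_q(state, action, reward, next_state):
    """One-step tabular Q-learning update."""
    target = reward + GAMMA * q_table[next_state].max()
    q_table[state, action] += ALPHA * (target - q_table[state, action])


# One illustrative transition: state 0, action 1, reward 1.0, next state 2.
update_q(state=0, action=1, reward=1.0, next_state=2)
```

In a full run, `select_topology` would be called every 10 generations, and the reward would combine fitness gain, diversity, and a stagnation penalty as described in the Reward Function step.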

Protocol 2: Dynamic Neighborhood Balancing for Multi-Modal Problems

The DNB-MOPSO protocol specializes in multi-modal multi-objective optimization through these key steps [17]:

Niching Implementation: Calculate Euclidean distances between all particles in decision space. Apply adaptive niching with clearing radius R = 0.1 × search space diameter to identify distinct neighborhoods.

Parameter Adaptation: Implement time-varying inertia weight decreasing linearly from 0.9 to 0.4 over iterations. Adjust cognitive and social coefficients based on niche characteristics: c₁ = 0.5 + 0.2 × (niche diversity) and c₂ = 1.5 - 0.2 × (niche diversity).

Mutation Operation: Apply adaptive Gaussian mutation to 20% of particles with probability based on iteration progress: P_mutation = 0.1 × (1 - current_iteration / max_iteration). Mutation strength decreases exponentially with iterations.

Neighborhood Reform: Monitor evolutionary states through fitness improvement rates. Trigger dynamic neighborhood regrouping when global best stagnation exceeds 15 generations. Reform neighborhoods based on current particle positions using k-means clustering (k = current niche count).

Elite Preservation: Maintain an external archive of non-dominated solutions using crowding distance-based pruning when archive exceeds capacity (typically 100-200 solutions).
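The parameter schedules in this protocol reduce to a few small functions. The sketch below writes them out directly from the formulas given in the steps. It assumes that niche diversity is normalized to [0, 1], which the protocol does not state, and the function names are illustrative.

```python
def inertia_weight(t, t_max, w_start=0.9, w_end=0.4):
    """Inertia weight decreasing linearly from w_start to w_end."""
    return w_start - (w_start - w_end) * t / t_max


def acceleration_coefficients(niche_diversity):
    """Cognitive (c1) and social (c2) coefficients as given in the protocol.

    niche_diversity is assumed to lie in [0, 1].
    """
    c1 = 0.5 + 0.2 * niche_diversity
    c2 = 1.5 - 0.2 * niche_diversity
    return c1, c2


def mutation_probability(t, t_max):
    """Mutation probability 0.1 * (1 - t / t_max), shrinking to zero at t_max."""
    return 0.1 * (1.0 - t / t_max)


def clearing_radius(search_space_diameter):
    """Niching radius R = 0.1 * search space diameter."""
    return 0.1 * search_space_diameter
```

With these definitions, a more diverse niche raises c1 and lowers c2, so particles in diverse niches rely more on their own memory and less on the niche best.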

Protocol 3: Multi-Swarm Dynamic Topology Framework

The MSPSO protocol implements population partitioning with dynamic regrouping [18]:

Initial Sub-Swarm Creation: Partition initial population of N particles into k = N/5 sub-swarms during initialization phase (first 20% of iterations).

Dynamic Sub-Swarm Reduction: Implement logarithmic reduction in sub-swarm count: k(t) = k_max × (1 - log(t + 1)/log(T_max)), where k_max is the initial sub-swarm count, t is the current iteration, and T_max is the maximum number of iterations. Because the expression reaches zero as t approaches T_max, the result must be rounded to an integer and kept at or above one sub-swarm.

Stagnation Detection: Monitor global best fitness improvements over a sliding window of 20 generations. Trigger regrouping when the improvement stays below ε (typically 1e-6) for 10 consecutive generations.

Sub-Swarm Regrouping: Dissolve worst-performing 30% of sub-swarms (based on average fitness) and redistribute particles to remaining sub-swarms using fitness-based probabilistic assignment.

Purposeful Detecting: Implement directed exploration by identifying promising regions from historical search data. When stagnation detected, reinitialize 15% of particles in regions with high fitness potential based on previously discovered good solutions.
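The sub-swarm schedule and the stagnation trigger can be sketched as below. The rounding and the floor of one sub-swarm are assumptions added so that the schedule stays usable near the final iteration. The detector counts consecutive small improvements only and does not implement the 20-generation sliding window. The class name `StagnationDetector` is hypothetical.

```python
import math


def sub_swarm_count(t, t_max, k_max):
    """k(t) = k_max * (1 - log(t + 1) / log(t_max)), rounded and kept >= 1.

    Requires t_max > 1 so that log(t_max) is nonzero.
    """
    k = k_max * (1.0 - math.log(t + 1) / math.log(t_max))
    return max(1, round(k))


class StagnationDetector:
    """Reports stagnation after `patience` consecutive small improvements."""

    def __init__(self, eps=1e-6, patience=10):
        self.eps = eps
        self.patience = patience
        self.best = math.inf
        self.stalled = 0

    def update(self, gbest_fitness):
        """Feed the current global best fitness (minimization assumed)."""
        improvement = self.best - gbest_fitness
        self.stalled = 0 if improvement >= self.eps else self.stalled + 1
        self.best = min(self.best, gbest_fitness)
        return self.stalled >= self.patience
```

A caller would invoke `update` once per generation and regroup the sub-swarms whenever it returns `True`, then continue with the count given by `sub_swarm_count`.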

Visualization of Dynamic Topology Mechanisms

Figure: Dynamic Topology Selection Workflow

Research Reagent Solutions: Computational Tools for Dynamic Topology PSO

Table 3: Essential Computational Tools and Frameworks for Dynamic Topology PSO Research

| Tool/Framework | Primary Function | Application Context | Implementation Notes |

|---|---|---|---|

| IOHxplainer [21] | Explainable benchmarking and performance analysis | Algorithm diagnostics and parameter impact assessment | Integrated with SHAP for feature importance, supports continuous and categorical parameters |

| CEC Benchmark Suites [19] | Standardized performance evaluation | Algorithm validation and comparison | CEC2017, CEC2020 provide diverse function types (unimodal, multimodal, hybrid, composition) |

| Q-Learning Framework [19] | Reinforcement learning for topology selection | Adaptive topology control in MSTPSO | Requires state space definition, reward function design, experience replay mechanism |

| Niching Techniques [17] | Diversity maintenance in decision space | Multi-modal optimization problems | Clearing, crowding, sharing methods with adaptive parameter control |

| Differential Evolution Operators [19] | Hybrid search perturbations | Escaping local optima, enhancing exploration | Mutation and crossover operations applied to stagnant particles |

| Pareto Archive Methods [8] [20] | Non-dominated solution management | Multi-objective optimization problems | Crowding distance, angular segmentation, adaptive grid techniques |

Application Notes for Drug Development Research

Molecular Docking Optimization

Dynamic topology PSO presents significant advantages for molecular docking simulations, where multiple binding modes and conformational spaces create highly multimodal landscapes. The DNB-MOPSO approach [17] with its diversity preservation mechanisms enables simultaneous exploration of multiple binding pockets and poses. Implementation guidelines for docking applications include:

Representation: Encode docking solutions as six-dimensional vectors (three positional and three rotational components) with additional dimensions for the torsional angles of flexible ligand bonds.

Fitness Function: Combine binding energy scoring (e.g., AutoDock Vina, Glide SP) with steric complementarity and chemical compatibility metrics.

Topology Strategy: Employ small-world topologies during initial exploration phases to identify potential binding regions, transitioning to Von Neumann or fully-connected topologies for local refinement of promising poses.

Multi-Objective Extension: Formulate as multi-objective problem balancing binding affinity, drug-likeness (Lipinski rules), and synthetic accessibility metrics.
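The pose representation described above can be decoded as in the following sketch. It assumes the rotation is stored as three angles in radians and that all angles are wrapped into [-π, π). Docking programs differ in how they parameterize orientation, so treat the layout and the names `wrap_angle` and `decode_pose` as illustrative.

```python
import numpy as np


def wrap_angle(a):
    """Wrap angles (radians) into the interval [-pi, pi)."""
    return (np.asarray(a, dtype=float) + np.pi) % (2 * np.pi) - np.pi


def decode_pose(position, n_torsions):
    """Split a particle into translation, rotation, and torsion components."""
    position = np.asarray(position, dtype=float)
    if position.size != 6 + n_torsions:
        raise ValueError("expected 6 rigid-body values plus one per torsion")
    translation = position[:3]             # x, y, z of the ligand centre
    rotation = wrap_angle(position[3:6])   # three rotation angles
    torsions = wrap_angle(position[6:])    # one angle per rotatable bond
    return translation, rotation, torsions


translation, rotation, torsions = decode_pose(
    [1.0, 2.0, 3.0, 0.0, np.pi, 4 * np.pi, 0.5, 7.0], n_torsions=2
)
```

Wrapping keeps equivalent poses on one encoding, which prevents particles from drifting to very large angle values that describe the same geometry.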

QSAR Model Parameter Optimization

Quantitative Structure-Activity Relationship modeling requires simultaneous optimization of multiple descriptor selection and model parameters. The ASDMOPSO algorithm [20] with angular archive segmentation provides effective solutions for this multi-objective challenge:

Solution Representation: Combined binary (descriptor selection) and continuous (model parameters) dimensions with appropriate encoding schemes.

Objective Functions: Minimize model complexity (number of descriptors), maximize predictive accuracy (cross-validated R²), and improve robustness (lower error variance).

Archive Management: Implement angular segmentation in objective space to maintain diverse model alternatives with different complexity-accuracy tradeoffs.

Decision Support: Present multiple Pareto-optimal QSAR models to medicinal chemists for selection based on additional domain knowledge.
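One way to realize the combined binary and continuous representation is to threshold the first part of the particle into a descriptor mask, as sketched below. The 0.5 threshold and the function names are assumptions. The cross-validated R² and error variance would come from fitting a model on the selected descriptors, which is outside this sketch.

```python
import numpy as np


def decode_qsar_particle(position, n_descriptors, threshold=0.5):
    """Split a particle into a descriptor mask and continuous model parameters."""
    position = np.asarray(position, dtype=float)
    mask = position[:n_descriptors] > threshold   # binary part: keep descriptor?
    params = position[n_descriptors:]             # continuous part
    return mask, params


def objectives(mask, cv_r2, error_variance):
    """Objective tuple to minimize: (complexity, 1 - accuracy, variance)."""
    return int(mask.sum()), 1.0 - cv_r2, error_variance


mask, params = decode_qsar_particle([0.9, 0.1, 0.7, 0.2, 1.5], n_descriptors=4)
objs = objectives(mask, cv_r2=0.8, error_variance=0.05)
```

Expressing all three goals as quantities to minimize lets the same non-dominated sorting or archive logic handle them uniformly.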

Multi-Target Therapy Optimization

Drug development increasingly focuses on multi-target therapies, particularly for complex diseases like cancer and neurological disorders. The MOIPSO framework [8] provides effective optimization for balancing efficacy, toxicity, and resistance management:

Problem Formulation: Define objective space encompassing potency against multiple targets, selectivity ratios, toxicity predictors, and pharmacokinetic properties.

Constraint Handling: Implement penalty functions or feasibility preservation for physicochemical constraints (molecular weight, logP, polar surface area).

Dynamic Topology Role: Employ reinforcement learning-based topology selection [19] to adapt search strategy based on progress in different objective dimensions.

Validation Protocol: Incorporate iterative feedback from experimental assays to refine objective weights and search direction.
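The penalty-function option for constraint handling can be sketched as follows. The property names, the bounds, and the penalty weight are illustrative assumptions, loosely based on commonly quoted drug-likeness ranges, and are not values from the cited framework.

```python
def penalized_fitness(raw_fitness, properties, bounds, weight=10.0):
    """Add a penalty proportional to each constraint violation (minimization)."""
    penalty = 0.0
    for name, (low, high) in bounds.items():
        value = properties[name]
        penalty += max(0.0, low - value) + max(0.0, value - high)
    return raw_fitness + weight * penalty


# Illustrative bounds only (assumed, not taken from the source).
BOUNDS = {
    "mol_weight": (0.0, 500.0),   # daltons
    "logp": (-0.4, 5.0),
    "tpsa": (0.0, 140.0),         # polar surface area, square angstroms
}

feasible = penalized_fitness(
    1.0, {"mol_weight": 400.0, "logp": 2.0, "tpsa": 100.0}, BOUNDS
)
too_heavy = penalized_fitness(
    1.0, {"mol_weight": 510.0, "logp": 2.0, "tpsa": 100.0}, BOUNDS
)
```

Because the properties are on different scales, a practical version would normalize each violation by its allowed range before summing. The unnormalized form is kept here for brevity.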

Swarm Intelligence (SI) is a form of artificial intelligence based on the collective behavior of decentralized, self-organized systems in nature. The concept originates from observations of social insects, bird flocks, fish schools, and other animal societies where simple individuals follow basic rules that collectively produce complex, intelligent group behavior. This phenomenon demonstrates how relatively simple individuals can, through local interactions, solve problems that would be too difficult for any single individual [22].

Particle Swarm Optimization (PSO) is a prominent population-based stochastic optimization technique inspired by the social behavior of bird flocking, developed by Eberhart and Kennedy in 1995 [23] [24]. The algorithm simulates a simplified social system where each potential solution, called a "particle," flies through the problem space following the optimal particles discovered by itself and its neighbors. PSO has gained widespread adoption due to its simple implementation, minimal parameter requirements, and strong global optimization capabilities compared to other evolutionary algorithms [24].

The connection between biological inspiration and computational optimization represents a fascinating example of biomimicry in computer science. Just as birds in a flock coordinate without central control to find food sources, PSO particles collaborate to locate optimal solutions in complex search spaces. This biological foundation provides both intuitive understanding and proven effectiveness for solving challenging optimization problems across numerous domains, including the computationally intensive field of drug discovery [25].

Biological Foundations and Algorithmic Principles

From Natural Systems to Computational Models

The biological inspiration for PSO stems from observing the elegant efficiency of bird flocking behavior during foraging. Kennedy and Eberhart noted that while individual birds search randomly for food, they continuously adjust their search patterns based on both personal discoveries and the successes of nearby birds [23]. This social sharing of information enables the entire flock to converge on food sources more efficiently than any single bird could achieve alone [24].

The mathematical formulation of PSO captures this biological phenomenon through a set of simple update equations. In a D-dimensional search space, each particle i has:

- A current position vector $X_i = (x_{i1}, x_{i2}, \ldots, x_{iD})$

- A velocity vector $V_i = (v_{i1}, v_{i2}, \ldots, v_{iD})$

- A memory of its personal best position $P_i = (p_{i1}, p_{i2}, \ldots, p_{iD})$

The swarm also tracks the global best position $G = (g_1, g_2, \ldots, g_D)$ found by any particle [24]. These elements work together to balance exploration of new areas and exploitation of known promising regions.

Table 1: Biological Correspondence of PSO Concepts

| Biological Concept | PSO Representation | Functional Role |

|---|---|---|

| Individual bird in flock | Particle | Represents a candidate solution in search space |

| Bird's movement | Velocity vector | Determines direction and magnitude of position update |

| Bird's memory of best food location | Personal best (pBest) | Retains the best solution found by individual particle |

| Flock's knowledge of best food location | Global best (gBest) | Retains the best solution found by entire swarm |

| Social information sharing | Neighborhood topology | Defines communication structure among particles |

| Food source quality | Fitness function | Evaluates solution quality for optimization problem |

Core Algorithmic Framework

The standard PSO algorithm operates through iterative application of velocity and position update equations. The velocity update rule combines three influential components:

- Inertia component: Preserves a portion of the particle's previous velocity

- Cognitive component: Attracts the particle toward its personal best position

- Social component: Attracts the particle toward the neighborhood's best position

Mathematically, the velocity and position updates for each particle i in dimension d are expressed as [24]:

$$v_{id} \leftarrow w\,v_{id} + c_1 r_1 (p_{id} - x_{id}) + c_2 r_2 (p_{gd} - x_{id})$$

$$x_{id} \leftarrow x_{id} + v_{id}$$

Where:

- $w$ represents the inertia weight controlling exploration-exploitation balance

- $c_1$ and $c_2$ are acceleration coefficients (typically $c_1 = c_2 = 2$)

- $r_1$ and $r_2$ are random values between 0 and 1

- $p_{id}$ is the particle's personal best position in dimension d

- $p_{gd}$ is the neighborhood's best position in dimension d

The algorithm proceeds iteratively until meeting termination criteria such as maximum iterations, fitness threshold achievement, or stagnation detection. This elegant balance of simple rules creates emergent optimization behavior capable of navigating complex, high-dimensional search spaces effectively.
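A vectorized form of the velocity and position updates is sketched below, assuming NumPy arrays of shape (particles, dimensions) and fresh random factors for every particle and dimension. The function name `pso_step` is illustrative.

```python
import numpy as np


def pso_step(x, v, pbest, nbest, w, c1, c2, rng):
    """Apply one velocity and position update to the whole swarm.

    x, v, pbest, nbest: arrays of shape (n_particles, n_dims), where nbest
    holds each particle's neighborhood best position.
    """
    r1 = rng.random(x.shape)   # random factors in [0, 1) for the cognitive term
    r2 = rng.random(x.shape)   # random factors in [0, 1) for the social term
    v_new = w * v + c1 * r1 * (pbest - x) + c2 * r2 * (nbest - x)
    return x + v_new, v_new


rng = np.random.default_rng(0)
x0 = np.zeros((3, 2))
v0 = np.ones((3, 2))
# With pbest == nbest == x, only the inertia term remains.
x1, v1 = pso_step(x0, v0, pbest=x0, nbest=x0, w=0.5, c1=2.0, c2=2.0, rng=rng)
```

The usage lines isolate the inertia component: when a particle already sits on both attractors, its velocity simply shrinks by the factor w.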

PSO Variants and Advanced Topologies

Addressing PSO Limitations Through Enhanced Topologies

Despite its effectiveness, standard PSO faces several challenges including premature convergence to local optima and insufficient precision in fine-grained search [23]. These limitations stem largely from the rapid loss of population diversity and over-reliance on a single global best particle. Research has shown that modifying the communication topology between particles significantly impacts swarm behavior and performance [23].

The concept of dynamic neighborhoods represents a particularly promising approach for multi-task optimization environments. Unlike standard PSO where all particles influence each other through a global best solution, dynamic neighborhood PSO restricts information sharing to subsets of particles, creating localized social networks within the swarm [23]. This approach mirrors the observation that in natural bird flocks, individuals typically respond only to their nearest neighbors rather than the entire flock.

Table 2: Comparison of PSO Neighborhood Topologies

| Topology Type | Information Flow Pattern | Convergence Speed | Diversity Preservation | Best Suited Problems |

|---|---|---|---|---|

| Global (gbest) | Fully connected; all particles communicate directly | Fastest | Lowest | Simple unimodal problems |

| Ring (lbest) | Each particle connects to k immediate neighbors | Slow | High | Complex multimodal problems |

| Von Neumann | Grid-based connections in four directions | Moderate | Moderate | Mixed complexity problems |

| Dynamic | Adaptive connections based on spatial or fitness similarity | Variable | Adaptive | Multi-task, dynamic environments |

| Small World | Mostly local with occasional long-range connections | Moderate-High | High | Rugged fitness landscapes |

Kennedy's research demonstrated that smaller neighborhoods with limited connectivity generally perform better on complex multimodal problems, while larger neighborhoods excel on simpler unimodal functions [23]. This occurs because restricted information flow creates multiple simultaneous exploration pathways, reducing the probability of the entire swarm converging to suboptimal regions.

Multi-Task PSO with Dynamic Neighborhoods

The dynamic neighborhood approach is particularly valuable in multi-task optimization scenarios where a single swarm addresses multiple related objectives simultaneously. In such environments, particles can self-organize into specialized subgroups focused on different tasks or search regions, with neighborhood structures adapting based on current performance metrics [26].

The Multi-Agent Chaos Bird Swarm Algorithm (MACBSA) exemplifies this advanced approach, incorporating competitive-cooperative mechanisms between intelligent agents and chaotic search strategies to enhance both diversity and feedback within the swarm [27]. This hybrid algorithm demonstrates how biological inspiration can be extended beyond simple flocking models to incorporate more sophisticated ecological interactions.

Implementation of dynamic neighborhood strategies typically involves one of the following:

- Spatial neighborhoods based on distance in search space

- Index-based neighborhoods using particle indices regardless of position

- Fitness-based neighborhoods grouping particles with similar performance levels

- Randomized neighborhoods that periodically reconfigure connections

These adaptive topologies help maintain population diversity throughout the optimization process, enabling more effective exploration of complex search landscapes while retaining the convergence properties necessary for precise local search.
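Two of the neighborhood definitions listed above, index-based and spatial, can be sketched with NumPy as follows, together with the lookup of each particle's neighborhood best. The function names and the choice of k are illustrative, and minimization is assumed.

```python
import numpy as np


def ring_neighbors(n_particles, k=1):
    """Index-based ring: each particle sees itself and k neighbors on each side."""
    idx = np.arange(n_particles)
    offsets = np.arange(-k, k + 1)
    return (idx[:, None] + offsets[None, :]) % n_particles


def spatial_neighbors(positions, k=2):
    """Spatial neighborhood: each particle plus its k nearest by Euclidean distance."""
    diff = positions[:, None, :] - positions[None, :, :]
    dist = np.linalg.norm(diff, axis=2)
    # Column 0 is the particle itself, barring duplicate positions.
    return np.argsort(dist, axis=1)[:, : k + 1]


def neighborhood_best(neighbors, pbest, pbest_fitness):
    """Best personal-best position inside each particle's neighborhood."""
    local_fitness = pbest_fitness[neighbors]               # (n, neighborhood size)
    rows = np.arange(len(neighbors))
    winner = neighbors[rows, local_fitness.argmin(axis=1)]
    return pbest[winner]


pbest = np.arange(5, dtype=float)[:, None]
fit = np.array([3.0, 1.0, 4.0, 1.5, 0.5])
nbest = neighborhood_best(ring_neighbors(5, k=1), pbest, fit)
```

Recomputing `spatial_neighbors` every few iterations gives a simple dynamic topology, since the neighbor sets change as particles move, whereas the ring stays fixed for the whole run.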

Experimental Protocols and Implementation Guidelines

Standard PSO Implementation Protocol

Materials and Software Requirements:

- Programming environment (Python, MATLAB, or C++)

- Numerical computation libraries (NumPy, SciPy)

- Visualization tools for convergence monitoring

- Benchmark fitness functions for validation

Procedure:

Swarm Initialization:

- Set population size (typically 20-50 particles)

- Randomize initial positions within search bounds

- Initialize velocities to small random values

- Define cognitive and social parameters (c1, c2)

- Set inertia weight (w) or employ adaptive strategy

Iteration Loop:

- For each particle, evaluate fitness function

- Update personal best positions if improved

- Identify neighborhood best positions

- Calculate new velocities using update equation

- Update particle positions

- Apply boundary constraints if violated

- Record performance metrics

Termination Check:

- Maximum iterations reached

- Fitness improvement below threshold

- Global best position stabilization

- Computational budget exhausted

Parameter Configuration: For standard test functions, the following parameter settings provide robust performance:

- Population size: 30 particles

- Inertia weight: Linearly decreasing from 0.9 to 0.4

- Acceleration coefficients: c1 = c2 = 2.0

- Velocity clamping: 10-20% of search space range

- Maximum iterations: 1000-5000 depending on problem complexity
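The procedure and parameter settings above fit into a short global-best PSO loop. The sketch below is one possible arrangement, not a reference implementation. It assumes minimization, clamps velocities at 20% of the range, and handles bounds by clipping. The sphere function and the reduced iteration count are chosen only to keep the example fast.

```python
import numpy as np


def pso_minimize(f, lower, upper, n_particles=30, n_iter=200, seed=0):
    """Global-best PSO with linearly decreasing inertia and velocity clamping."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    v_max = 0.2 * (upper - lower)                       # clamp at 20% of range
    x = rng.uniform(lower, upper, size=(n_particles, lower.size))
    v = 0.1 * rng.uniform(-v_max, v_max, size=x.shape)  # small initial velocities
    pbest = x.copy()
    pbest_f = np.apply_along_axis(f, 1, x)
    g = pbest[pbest_f.argmin()].copy()
    for t in range(n_iter):
        w = 0.9 - 0.5 * t / max(1, n_iter - 1)          # inertia from 0.9 to 0.4
        r1, r2 = rng.random(x.shape), rng.random(x.shape)
        v = w * v + 2.0 * r1 * (pbest - x) + 2.0 * r2 * (g - x)
        v = np.clip(v, -v_max, v_max)
        x = np.clip(x + v, lower, upper)                # boundary handling
        fx = np.apply_along_axis(f, 1, x)
        improved = fx < pbest_f
        pbest[improved] = x[improved]
        pbest_f[improved] = fx[improved]
        g = pbest[pbest_f.argmin()].copy()
    return g, float(pbest_f.min())


def sphere(z):
    """Simple unimodal benchmark with its minimum of 0 at the origin."""
    return float(np.sum(z ** 2))


best_x, best_f = pso_minimize(sphere, lower=[-5, -5], upper=[5, 5])
```

Replacing the single global best `g` with a per-particle neighborhood best turns this into an lbest variant without touching the rest of the loop.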

Dynamic Neighborhood PSO Protocol for Multi-Task Optimization

Specialized Requirements:

- Additional memory for multiple best positions

- Neighborhood tracking data structures

- Task similarity measurement metrics

- Knowledge transfer mechanisms

Procedure:

Multi-Swarm Initialization:

- Initialize K subpopulations for K tasks

- Define initial neighborhood radii for each particle

- Set migration frequency and selection criteria

- Initialize knowledge transfer matrix

Adaptive Neighborhood Formation:

- For each particle, identify neighbors within radius r

- Update r based on convergence metrics

- If diversity drops below threshold, increase r

- If convergence stagnates, decrease r

Cross-Task Knowledge Transfer:

- At predefined intervals, evaluate task relatedness

- Select elite particles from each task

- Transfer promising solutions to other tasks

- Apply mutation to transferred solutions for task specialization

Performance Monitoring and Adjustment:

- Track convergence rates for each task separately

- Measure population diversity metrics

- Adjust neighborhood sizes based on performance

- Modify knowledge transfer frequency as needed
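The radius adaptation and cross-task transfer steps can be sketched as two small functions. The radius rule follows the procedure as stated. The growth and shrink factors, the diversity threshold, the shared [0, 1] search space, and the policy of overwriting the worst target particles are all assumptions of this sketch.

```python
import numpy as np


def adapt_radius(r, diversity, stagnating, div_threshold=0.1,
                 grow=1.2, shrink=0.8, r_min=1e-3, r_max=1.0):
    """Radius rule from the procedure: grow on low diversity, shrink on stagnation."""
    if diversity < div_threshold:
        r *= grow
    elif stagnating:
        r *= shrink
    return float(np.clip(r, r_min, r_max))


def transfer_elites(source_pos, source_fit, target_pos, target_fit,
                    n_elite=2, sigma=0.05, rng=None):
    """Replace the worst target particles with mutated copies of source elites.

    Positions are assumed to live in a shared [0, 1] search space and
    fitness is minimized.
    """
    rng = np.random.default_rng() if rng is None else rng
    elite = source_pos[np.argsort(source_fit)[:n_elite]]        # best of source
    worst = np.argsort(target_fit)[-n_elite:]                   # worst of target
    mutated = elite + rng.normal(0.0, sigma, size=elite.shape)  # Gaussian mutation
    out = target_pos.copy()
    out[worst] = np.clip(mutated, 0.0, 1.0)
    return out, worst


src = np.array([[0.1, 0.1], [0.9, 0.9], [0.5, 0.5]])
new_target, replaced = transfer_elites(
    src, np.array([0.0, 2.0, 1.0]),
    np.zeros((4, 2)), np.array([1.0, 5.0, 2.0, 9.0]),
    n_elite=1, sigma=0.0, rng=np.random.default_rng(0),
)
```

Setting `sigma=0.0` in the usage lines disables the mutation so the copied elite is easy to inspect. A nonzero value gives the transferred solutions room to specialize to the receiving task.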

Application in Drug Discovery and Development

The pharmaceutical industry faces enormous challenges in drug discovery, including astronomical costs, lengthy development timelines, and high failure rates. PSO algorithms offer powerful approaches for addressing several computationally intensive aspects of the drug discovery pipeline [25].

Target Identification and Validation

In the initial target discovery phase, PSO can analyze complex biological networks to identify disease-associated proteins with high druggability potential. By integrating genomic, proteomic, and clinical data, PSO-based feature selection can pinpoint the most promising therapeutic targets from thousands of candidates [28].

Application Protocol:

- Formulate target identification as a multi-objective optimization problem

- Define fitness function incorporating disease relevance, druggability, and safety

- Employ multi-swarm PSO to explore target space

- Validate top candidates through molecular dynamics simulations

Compound Screening and Optimization

PSO excels in virtual screening of compound libraries, significantly reducing the experimental burden. The algorithm can navigate high-dimensional chemical spaces to identify molecules with optimal binding affinity, selectivity, and pharmacokinetic properties [25].

Table 3: PSO Applications in Drug Discovery Pipeline

| Drug Discovery Stage | PSO Application | Key Optimization Parameters | Reported Efficiency Gains |
|---|---|---|---|
| Target Identification | Feature selection from omics data | Disease relevance, Druggability, Safety | 3-5x faster than exhaustive search |
| Virtual Screening | Molecular docking pose optimization | Binding energy, Complementarity, Interaction quality | 50-80% reduction in experimental screening |
| Lead Optimization | QSAR model parameter estimation | Potency, Selectivity, ADMET properties | 40-60% reduction in synthesis cycles |
| Clinical Trial Design | Patient stratification optimization | Response prediction, Risk minimization, Diversity | 30% improvement in recruitment efficiency |

A notable example is the application of PSO in predicting drug-target interactions (DTI), where the algorithm optimizes the alignment between compound structures and protein binding sites. Advanced implementations incorporate deep learning features from graph neural networks to enhance prediction accuracy [25].

Formulation Development and Manufacturing

Beyond discovery, PSO contributes to pharmaceutical development by optimizing formulation compositions and manufacturing processes. The algorithm can simultaneously maximize multiple competing objectives including stability, bioavailability, production yield, and cost efficiency.

Implementation Framework:

- Define design space with ingredient ratios and process parameters

- Establish predictive models for critical quality attributes

- Apply constrained multi-objective PSO to identify Pareto-optimal solutions

- Validate predictions through small-scale experimental batches

Research Reagents and Computational Tools

Successful implementation of PSO in research requires both computational resources and domain-specific toolkits. The following table outlines essential components for establishing a PSO research pipeline in drug discovery contexts.

Table 4: Essential Research Reagents and Computational Tools for PSO in Drug Discovery

| Resource Category | Specific Tools/Libraries | Primary Function | Application Context |
|---|---|---|---|
| PSO Frameworks | PySwarms, MEALPY, Optuna | Algorithm implementation | General optimization infrastructure |
| Cheminformatics | RDKit, Open Babel, ChemAxon | Molecular representation | Compound screening and optimization |
| Molecular Docking | AutoDock Vina, Schrödinger, GOLD | Binding affinity prediction | Virtual screening and DTI prediction |
| Structure Prediction | AlphaFold 2/3, Rosetta | Protein 3D modeling | Target identification and validation |
| Biological Networks | Cytoscape, NetworkX, igraph | Pathway analysis | Target prioritization and validation |
| ADMET Prediction | ADMET Predictor, pkCSM, ProTox | Compound property profiling | Lead optimization and toxicity assessment |
| High-Performance Computing | SLURM, Apache Spark, CUDA | Parallel processing | Large-scale virtual screening |

Integration of these tools creates a comprehensive workflow from target identification through lead optimization. For example, a typical pipeline might employ AlphaFold for protein structure prediction, RDKit for compound handling, AutoDock Vina for binding affinity assessment, and custom PSO algorithms to navigate the optimization landscape [25] [28].

Critical considerations for implementation include:

- Data quality and standardization to ensure reliable fitness evaluations

- Appropriate representation of chemical and biological entities

- Computational efficiency for handling large-scale problems

- Model validation through experimental verification

- Result interpretability for scientific insight generation

The continuing evolution of PSO algorithms, particularly through dynamic neighborhood strategies and multi-task optimization frameworks, promises to further enhance their utility in accelerating drug discovery and addressing the complex challenges of pharmaceutical development.

The drug discovery process is characterized by its exceptional complexity, lengthy timelines, and high costs, with estimates suggesting a development period of 10–15 years and costs ranging from $90 million to $2.6 billion [29]. This complexity arises from the need to navigate a vast chemical space of approximately \(10^{60}\) molecules while balancing numerous conflicting objectives, including efficacy, safety, and pharmacokinetic properties [29]. Traditional optimization approaches often fail to adequately address these challenges, as they typically focus on a limited number of objectives simultaneously or use scalarization methods that obscure important trade-offs between critical parameters.

In recent years, artificial intelligence and computational intelligence approaches have emerged as promising tools to accelerate and enhance the drug development pipeline. Among these, Particle Swarm Optimization (PSO) has demonstrated particular utility in addressing the complex, multi-objective nature of drug design. PSO is a population-based stochastic optimization algorithm inspired by social behavior patterns in nature, such as bird flocking and fish schooling [30]. In the context of drug discovery, PSO and its advanced variants offer sophisticated mechanisms for exploring high-dimensional chemical spaces while efficiently balancing multiple competing objectives.

This application note examines the key advantages of advanced PSO approaches, particularly multi-task and dynamic neighborhood strategies, for handling complex parameter spaces and multiple objectives in drug discovery. We present experimental protocols, performance comparisons, and practical implementation guidelines to enable researchers to leverage these powerful optimization techniques in their drug development workflows.

Key Advantages of PSO in Drug Discovery

Efficient Navigation of Complex Chemical Spaces

The chemical space of potential drug molecules is astronomically large and high-dimensional, presenting a significant challenge for traditional search algorithms. PSO excels in this environment through its population-based approach, which maintains diversity while efficiently exploring promising regions. The algorithm operates by simulating the movement of particles (representing potential drug candidates) through the search space, with each particle adjusting its position based on its own experience and that of its neighbors [29] [30].
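
As a minimal sketch of the particle movement described above, the following uses the standard inertia-weight velocity update on a continuous search space. Real molecular design needs a problem-specific encoding of candidates; the coefficient values, the box bounds, and the global-best topology in `minimize` are assumptions made for illustration.

```python
import random


def pso_step(positions, velocities, pbest, nbest, w=0.7, c1=1.5, c2=1.5,
             bounds=(-5.0, 5.0), rng=random):
    """One in-place velocity and position update for every particle."""
    lo, hi = bounds
    for i in range(len(positions)):
        for d in range(len(positions[i])):
            r1, r2 = rng.random(), rng.random()
            velocities[i][d] = (w * velocities[i][d]
                                + c1 * r1 * (pbest[i][d] - positions[i][d])   # own experience
                                + c2 * r2 * (nbest[i][d] - positions[i][d]))  # neighbors' experience
            positions[i][d] = min(max(positions[i][d] + velocities[i][d], lo), hi)


def minimize(f, dim, n_particles=20, iters=100, bounds=(-5.0, 5.0), seed=0):
    """Minimize f with a global-best swarm; returns (best_position, best_value)."""
    rng = random.Random(seed)
    lo, hi = bounds
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_f = [f(p) for p in pos]
    for _ in range(iters):
        g = min(range(n_particles), key=lambda i: pbest_f[i])
        nbest = [pbest[g]] * n_particles  # every particle sees the swarm's best
        pso_step(pos, vel, pbest, nbest, bounds=bounds, rng=rng)
        for i, p in enumerate(pos):
            fp = f(p)
            if fp < pbest_f[i]:
                pbest[i], pbest_f[i] = p[:], fp
    g = min(range(n_particles), key=lambda i: pbest_f[i])
    return pbest[g], pbest_f[g]
```

A dynamic-neighborhood variant would replace the `nbest` line with the best personal-best position found among each particle's current neighbors.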

Advanced PSO variants incorporate specialized mechanisms to enhance this exploration further. Dynamic neighborhood formation enables particles to exchange information effectively, preventing premature convergence to suboptimal solutions [17] [16]. The variable neighborhood search strategy alternates between different neighborhood structures, using small neighborhoods for rapid improvement and larger neighborhoods for deep optimization [31]. These capabilities are particularly valuable in drug discovery, where the relationship between molecular structure and activity is often highly nonlinear and complex.

Robust Handling of Multiple Objectives

Drug design inherently involves multiple conflicting objectives, including binding affinity, toxicity, synthetic accessibility, and drug-likeness properties. While conventional multi-objective approaches typically handle only two or three objectives simultaneously, many-objective PSO variants can effectively manage more than three objectives using Pareto-based optimization [29].

Pareto-based many-objective optimization generates a set of high-quality drug candidates representing optimal trade-offs among all objectives, allowing medicinal chemists to select compounds based on the most relevant criteria for their specific context [29]. Multi-task PSO with dynamic neighbor and level-based inter-task learning further enhances this capability by separating particles into different levels with distinct learning methods, enabling efficient knowledge transfer across related optimization tasks [16]. This approach is particularly valuable in drug discovery, where similar molecular scaffolds might be optimized for different target proteins or disease indications.
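
The Pareto-based selection described above rests on a dominance test. The sketch below assumes every objective is expressed so that lower is better (for example, negated binding affinity); the candidate values are invented for illustration only.

```python
def dominates(a, b):
    """True if a is no worse than b everywhere and strictly better somewhere."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def pareto_front(objectives):
    """Return the indices of the non-dominated objective vectors."""
    return [i for i, a in enumerate(objectives)
            if not any(dominates(b, a)
                       for j, b in enumerate(objectives) if j != i)]


# Hypothetical candidates: (docking score, toxicity, synthetic accessibility, 1 - QED)
candidates = [(-9.1, 0.2, 2.5, 0.30),
              (-8.0, 0.1, 2.5, 0.30),
              (-8.0, 0.3, 3.0, 0.40)]
front = pareto_front(candidates)  # the third candidate is dominated by the first
```

The first two candidates trade docking score against toxicity, so neither dominates the other and both remain on the front.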

Adaptive Balancing of Exploration and Exploitation

A critical challenge in any optimization algorithm is maintaining the appropriate balance between exploring new regions of the search space and exploiting known promising areas. PSO addresses this challenge through several adaptive mechanisms:

- Adaptive parameter adjustment strategies dynamically balance local and global search based on the characteristics of the search space and the current state of the optimization process [17].

- Mutation operators are incorporated to help particles escape local optima, enhancing population diversity in the decision space [17] [7].

- Lévy flight strategies enable adaptive switching between global exploration and local refinement based on population convergence metrics, reducing parameter sensitivity while improving effective mutation rates [7].
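
Two of the mechanisms above are sketched here in simplified form: a linearly decreasing inertia weight as one common form of adaptive parameter adjustment, and a Lévy-flight mutation drawn with Mantegna's algorithm. The switching rule (mutate only when diversity is low), the step scale, and the weight range are assumptions and are simpler than the strategies in the cited work.

```python
import math
import random


def inertia_weight(t, t_max, w_start=0.9, w_end=0.4):
    """Linearly decrease the inertia weight from w_start to w_end."""
    return w_start - (w_start - w_end) * t / t_max


def levy_step(beta=1.5, rng=random):
    """Draw one heavy-tailed step using Mantegna's algorithm."""
    sigma_u = (math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
               / (math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2))
               ) ** (1 / beta)
    u = rng.gauss(0.0, sigma_u)
    v = rng.gauss(0.0, 1.0)
    return u / abs(v) ** (1 / beta)


def levy_mutate(position, diversity, div_threshold, scale=0.01, rng=random):
    """Perturb a particle with Lévy steps only when swarm diversity is low."""
    if diversity >= div_threshold:
        return list(position)  # swarm is still diverse: leave unchanged
    return [x + scale * levy_step(rng=rng) for x in position]
```

Because Lévy steps are heavy-tailed, most perturbations are small while occasional large jumps help a particle leave a local optimum.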

These adaptive capabilities allow PSO to maintain search efficiency throughout the optimization process, transitioning smoothly from broad exploration of chemical space to focused refinement of promising molecular scaffolds.

Table 1: Key PSO Mechanisms and Their Benefits in Drug Discovery

| PSO Mechanism | Technical Description | Drug Discovery Benefit |
|---|---|---|
| Dynamic Neighborhood Formation | Particles dynamically adjust their interaction topology based on evolutionary states | Prevents premature convergence; enhances solution diversity |
| Many-Objective Optimization | Pareto-based handling of >3 objectives without scalarization | Enables comprehensive optimization of ADMET, efficacy, and synthesizability |
| Adaptive Parameter Control | Automatic adjustment of inertia weight and learning factors based on search progress | Maintains optimal exploration/exploitation balance throughout optimization |
| Variable Neighborhood Search | Alternation between different neighborhood structures during search | Combines rapid local improvement with thorough global exploration |
| Level-Based Inter-Task Learning | Transfer of knowledge between related optimization tasks | Accelerates optimization of related molecular scaffolds or target classes |

Performance Analysis and Comparative Evaluation

Quantitative Assessment of PSO Variants

To evaluate the performance of advanced PSO approaches in drug discovery contexts, we reviewed published studies comparing different optimization strategies. The results indicate that many-objective methods, including PSO variants, offer clear advantages over traditional approaches.

In a comprehensive study comparing six different many-objective metaheuristics for drug design, including both evolutionary algorithms and PSO variants, the Multi-objective Evolutionary Algorithm based on Dominance and Decomposition (MOEA/DD) performed most effectively in finding molecules satisfying multiple objectives simultaneously [29]. However, PSO-based approaches demonstrated competitive performance, particularly in terms of convergence speed and computational efficiency.

The Dynamic Neighborhood Balancing-based Multi-objective PSO (DNB-MOPSO) has shown exceptional capability in locating multiple optimal solutions in the decision space while obtaining well-distributed Pareto fronts [17]. This is particularly valuable in drug discovery, where multiple distinct molecular scaffolds may provide similar therapeutic effects, offering alternatives when certain compounds present development challenges.

Table 2: Performance Comparison of Optimization Algorithms in Drug Design Tasks

| Algorithm | Binding Affinity Improvement | ADMET Profile | Chemical Diversity | Computational Efficiency |
|---|---|---|---|---|
| Traditional PSO | Moderate | Limited assessment | Low to moderate | High |
| Many-Objective PSO | High | Comprehensive optimization | High | Moderate |
| Genetic Algorithms | Moderate to high | Moderate assessment | High | Low to moderate |
| Deep Learning Approaches | Variable | Comprehensive but data-intensive | Moderate | Low (training) / High (deployment) |
| DNB-MOPSO | High | Comprehensive optimization | High | Moderate to high |

Case Study: Application to Cancer-Related Target

A specific case study applying many-objective PSO to the optimization of drug candidates for human lysophosphatidic acid receptor 1 (a cancer-related protein target) demonstrated the practical utility of these approaches [29]. The study incorporated multiple objectives, including binding affinity, quantitative estimate of drug-likeness (QED), log octanol-water partition coefficient (logP), synthetic accessibility score (SAS), and ADMET properties.