Differential Evolution Algorithm: A Complete Guide for Biomedical Research and Drug Discovery


Abstract

This article provides a comprehensive introduction to the Differential Evolution (DE) algorithm, a powerful evolutionary optimization technique. Tailored for researchers, scientists, and professionals in drug development, it covers foundational concepts, practical implementation, and advanced strategies for overcoming common challenges like parameter sensitivity and premature convergence. The guide highlights cutting-edge enhancements, including reinforcement learning for adaptive parameter control, and demonstrates DE's practical utility through real-world applications such as drug-target binding affinity prediction and hyperparameter optimization for deep learning models. Finally, it offers a rigorous framework for validating DE performance against other state-of-the-art heuristic methods, equipping practitioners with the knowledge to leverage DE for complex optimization problems in biomedicine.

What is Differential Evolution? Core Principles and Evolutionary Concepts

Differential Evolution (DE) is a versatile and robust evolutionary algorithm widely used for solving complex global optimization problems. First introduced by Storn and Price in the mid-1990s, DE has gained significant popularity due to its simple structure, fast convergence properties, and ability to handle non-differentiable, nonlinear, and multimodal objective functions [1] [2] [3]. This technical guide provides a comprehensive examination of DE's theoretical foundations, algorithmic components, and practical applications, with particular emphasis on its growing utilization in drug discovery and development. We present detailed experimental protocols, performance comparisons, and implementation guidelines to assist researchers in effectively applying this powerful optimization technique to challenging real-world problems.

Differential Evolution belongs to the class of evolutionary algorithms (EAs) and operates on principles inspired by natural selection and genetics [4]. Unlike traditional gradient-based optimization methods that require differentiable objective functions, DE makes few or no assumptions about the underlying optimization problem and can search very large spaces of candidate solutions [1] [5]. This characteristic makes DE particularly valuable for optimizing complex, real-world problems where objective functions may be noisy, non-continuous, or change over time [1].

DE processes a population of candidate solutions through iterative application of mutation, crossover, and selection operations [2]. The algorithm maintains a population of candidate solutions that are gradually improved over generations. What distinguishes DE from other evolutionary approaches is its unique mutation strategy, which utilizes weighted differences between population vectors to explore the search space [4]. This differential mutation operation enables DE to automatically adapt the step size and orientation during the optimization process, allowing for efficient exploration and exploitation of the solution space.

The significance of DE in scientific computing is evidenced by its successful application across diverse domains, including chemometrics [5], structural engineering [6], neural network training [3], and drug discovery [7]. A notable bibliometric analysis reveals a steadily growing interest in DE, with citation counts of foundational DE papers exceeding 1,000 annually in recent years [8]. Despite its empirical success, theoretical analyses of DE remain relatively limited compared to its practical applications, presenting opportunities for further research into its convergence properties and population dynamics [8].

Theoretical Foundations

Historical Context and Development

Differential Evolution was introduced by Rainer Storn and Kenneth Price in 1995 as a means of quickly optimizing functions that lack desirable mathematical properties like differentiability or continuity [1] [5]. Their pioneering work addressed the need for robust optimization techniques capable of handling real-world problems where traditional methods often failed. The algorithm rapidly gained recognition for its simplicity, efficiency, and remarkable performance across diverse optimization landscapes [2].

DE emerged during a period of growing interest in nature-inspired metaheuristic algorithms. While it shares the population-based approach of other evolutionary algorithms, DE differentiates itself through its unique differential mutation strategy and one-to-one selection mechanism [9] [4]. The development of DE represented a significant advancement in the field of evolutionary computation, offering improved convergence characteristics compared to earlier approaches like Genetic Algorithms (GAs) [5].

Fundamental Concepts and Principles

At its core, DE is a stochastic, population-based optimization algorithm designed for continuous search spaces [3]. The algorithm maintains a population of candidate solutions, represented as vectors of real numbers, which evolve over generations through the application of differential mutation, crossover, and selection operations [2] [4].

The philosophical underpinning of DE revolves around the concept of utilizing vector differences to explore the search space. This approach allows DE to automatically adapt its step size and search direction based on the distribution of the current population [8]. As the population converges toward promising regions, the magnitude of vector differences naturally decreases, providing an inherent adaptation mechanism that transitions from exploration to exploitation [8].

Unlike many other evolutionary algorithms that rely on predefined probability distributions for mutation, DE uses the natural coordinate system defined by the current population distribution [9]. Because mutation steps are built from differences between population members rather than from fixed coordinate axes, this approach contributes to DE's robustness across diverse problem domains. Theoretical studies have shown that DE exhibits several invariance properties, including rotation invariance and scale invariance of the differential mutation operator [8]. Binomial crossover with CR < 1 acts coordinate-wise, so rotation invariance of the full algorithm holds only when CR = 1.

Algorithmic Framework

Core Components and Operations

The Differential Evolution algorithm operates through four fundamental operations: initialization, mutation, crossover, and selection. These components work together to efficiently explore and exploit the search space, gradually improving the population of candidate solutions.

Population Initialization

The algorithm begins by initializing a population of NP candidate solutions, often called agents or individuals [2]. Each individual is represented as a D-dimensional vector, where D corresponds to the number of parameters in the optimization problem:

\[ x_i = [x_{i,1}, x_{i,2}, \ldots, x_{i,D}] \quad \text{for} \quad i = 1, 2, \ldots, NP \]

Initial values for each parameter are typically generated randomly within specified search boundaries:

\[ x_{i,j} = \text{low}[j] + \text{rand} \cdot (\text{high}[j] - \text{low}[j]) \]

where \(\text{low}[j]\) and \(\text{high}[j]\) define the lower and upper bounds for the j-th dimension, and \(\text{rand}\) is a random number uniformly distributed in [0,1] [2].
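The initialization rule can be sketched in a few lines of Python. This is a minimal illustration using only the standard library; the function name `init_population` and the toy bounds are assumptions made for the example, not part of the original formulation.

```python
import random

def init_population(np_size, low, high, rng=None):
    """Return np_size vectors drawn uniformly inside the box [low, high]."""
    rng = rng or random.Random()
    return [
        # x_ij = low[j] + rand * (high[j] - low[j]), one fresh rand per component
        [lo + rng.random() * (hi - lo) for lo, hi in zip(low, high)]
        for _ in range(np_size)
    ]

# Toy usage: five 2-D individuals, seeded so the run is repeatable.
pop = init_population(5, low=[-5.0, 0.0], high=[5.0, 1.0], rng=random.Random(0))
```

Drawing a separate random number for every component, rather than one per individual, is what spreads the population over the whole box instead of along its diagonal.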

Mutation Operation

The mutation operation generates mutant vectors by combining randomly selected individuals from the population. The most common mutation strategy, known as "DE/rand/1", produces a mutant vector \(v_i\) for each target vector \(x_i\) as follows:

\[ v_i = x_{r1} + F \cdot (x_{r2} - x_{r3}) \]

where \(x_{r1}\), \(x_{r2}\), and \(x_{r3}\) are three distinct randomly selected population vectors different from \(x_i\), and \(F\) is a scaling factor controlling the amplification of differential variations [6] [2]. Several alternative mutation strategies have been proposed in the literature:

- DE/best/1: \(v_i = x_{best} + F \cdot (x_{r1} - x_{r2})\)

- DE/rand/2: \(v_i = x_{r1} + F \cdot (x_{r2} - x_{r3}) + F \cdot (x_{r4} - x_{r5})\)

- DE/current-to-best/1: \(v_i = x_i + F \cdot (x_{best} - x_i) + F \cdot (x_{r1} - x_{r2})\) [6]

The choice of mutation strategy influences the balance between exploration and exploitation, with rand-based strategies typically favoring exploration and best-based strategies promoting faster convergence.
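The four strategies listed above differ only in the base vector and the number of difference terms, which a small sketch makes concrete. The helper names `pick_distinct` and `mutate`, the strategy labels, and the toy population are assumptions for illustration; `best` is taken to be the index of the current best individual.

```python
import random

def pick_distinct(n, exclude, k, rng):
    """Pick k distinct indices from range(n), none equal to `exclude`."""
    return rng.sample([j for j in range(n) if j != exclude], k)

def mutate(pop, i, best, F, strategy, rng):
    """Return a mutant vector for target index i using the named strategy."""
    x, D = pop, len(pop[i])
    if strategy == "rand/1":
        r1, r2, r3 = pick_distinct(len(x), i, 3, rng)
        return [x[r1][j] + F * (x[r2][j] - x[r3][j]) for j in range(D)]
    if strategy == "best/1":
        r1, r2 = pick_distinct(len(x), i, 2, rng)
        return [x[best][j] + F * (x[r1][j] - x[r2][j]) for j in range(D)]
    if strategy == "rand/2":
        r1, r2, r3, r4, r5 = pick_distinct(len(x), i, 5, rng)
        return [x[r1][j] + F * (x[r2][j] - x[r3][j])
                + F * (x[r4][j] - x[r5][j]) for j in range(D)]
    if strategy == "current-to-best/1":
        r1, r2 = pick_distinct(len(x), i, 2, rng)
        return [x[i][j] + F * (x[best][j] - x[i][j])
                + F * (x[r1][j] - x[r2][j]) for j in range(D)]
    raise ValueError(f"unknown strategy: {strategy}")

# Toy usage: six 2-D vectors (DE/rand/2 needs at least six individuals).
pop = [[float(k), float(-k)] for k in range(6)]
mutant = mutate(pop, i=0, best=3, F=0.8, strategy="rand/1", rng=random.Random(1))
```

Setting `F = 0` collapses each formula to its base vector, which is a convenient sanity check when implementing a new strategy.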

Crossover Operation

Following mutation, DE performs a crossover operation to increase population diversity by combining each target vector with its corresponding mutant vector [2]. The most common approach is binomial crossover, which generates a trial vector \(u_i\):

\[ u_{i,j} = \begin{cases} v_{i,j} & \text{if } rand(j) \leq CR \text{ or } j = j_{rand} \\ x_{i,j} & \text{otherwise} \end{cases} \]

where \(CR\) is the crossover probability in [0,1], \(rand(j)\) is a uniform random number in [0,1] for the j-th dimension, and \(j_{rand}\) is a randomly chosen index ensuring at least one parameter is inherited from the mutant vector [1] [2].
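A minimal sketch of binomial crossover follows, assuming vectors are plain Python lists; the function name `binomial_crossover` and the toy vectors are illustrative choices rather than anything prescribed by the source.

```python
import random

def binomial_crossover(target, mutant, CR, rng):
    """Mix target and mutant component-wise into a trial vector."""
    D = len(target)
    j_rand = rng.randrange(D)  # this position always comes from the mutant
    return [
        mutant[j] if (rng.random() <= CR or j == j_rand) else target[j]
        for j in range(D)
    ]

# Toy usage: zeros mark components kept from the target, ones come from the mutant.
target = [0.0] * 5
mutant = [1.0] * 5
trial = binomial_crossover(target, mutant, CR=0.9, rng=random.Random(42))
```

With `CR = 1` the trial equals the mutant, and with `CR = 0` only the `j_rand` component is taken from it, which is why the forced index matters: without it a trial could be an exact copy of its target.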

Selection Operation

The selection operation determines whether the target vector or the trial vector survives to the next generation. DE employs a one-to-one tournament selection, where the trial vector replaces the target vector if it has better or equal fitness:

\[ x_i^{t+1} = \begin{cases} u_i^t & \text{if } f(u_i^t) \leq f(x_i^t) \\ x_i^t & \text{otherwise} \end{cases} \]

for minimization problems [2] [4]. This greedy selection strategy provides strong selection pressure, contributing to DE's fast convergence properties.
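In code, the selection rule is a single comparison. The sketch below assumes minimization and caches the target's fitness so that each generation costs one objective evaluation per individual; the names `select` and `sphere` are hypothetical.

```python
def select(target, f_target, trial, f):
    """Greedy one-to-one selection for minimization; returns (survivor, fitness)."""
    f_trial = f(trial)
    if f_trial <= f_target:  # ties go to the trial vector
        return trial, f_trial
    return target, f_target

def sphere(x):
    """Toy objective with its minimum of 0 at the origin."""
    return sum(v * v for v in x)

survivor, fitness = select([2.0], sphere([2.0]), [1.0], sphere)
```

Accepting ties lets the population drift across flat regions of the objective instead of freezing on a plateau.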

Algorithmic Workflow

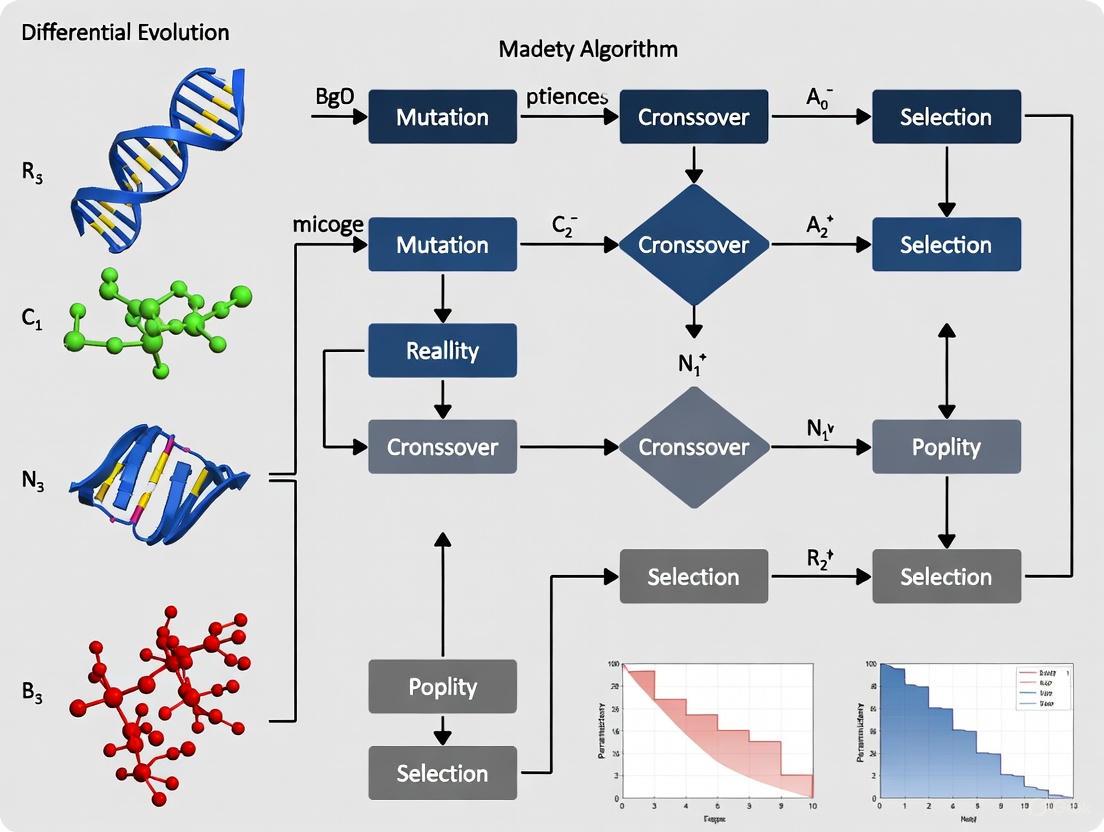

The complete DE algorithm integrates these components into an iterative optimization process. The following diagram illustrates the fundamental workflow of the Differential Evolution algorithm:

DE Algorithm Workflow

The pseudocode below summarizes the complete Differential Evolution algorithm:
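As a runnable stand-in for that summary, here is a minimal Python sketch of DE/rand/1 with binomial crossover and greedy selection, following the equations given earlier. Clipping mutant components to the bounds, the default settings, and the `sphere` test function are assumptions made for this illustration, not part of the original description.

```python
import random

def differential_evolution(f, low, high, NP=20, F=0.8, CR=0.9,
                           generations=200, seed=0):
    """Minimize f over the box [low, high] with DE/rand/1 and binomial crossover."""
    rng = random.Random(seed)
    D = len(low)
    # Initialization: uniform random vectors inside the bounds.
    pop = [[low[j] + rng.random() * (high[j] - low[j]) for j in range(D)]
           for _ in range(NP)]
    fit = [f(x) for x in pop]
    for _ in range(generations):
        new_pop, new_fit = list(pop), list(fit)
        for i in range(NP):
            # Mutation: three distinct indices, all different from i.
            r1, r2, r3 = rng.sample([k for k in range(NP) if k != i], 3)
            j_rand = rng.randrange(D)
            trial = list(pop[i])
            for j in range(D):
                # Crossover: take the mutant component with probability CR.
                if rng.random() <= CR or j == j_rand:
                    v = pop[r1][j] + F * (pop[r2][j] - pop[r3][j])
                    trial[j] = min(max(v, low[j]), high[j])  # assumption: clip to bounds
            # Selection: the trial replaces its target if it is no worse.
            f_trial = f(trial)
            if f_trial <= fit[i]:
                new_pop[i], new_fit[i] = trial, f_trial
        pop, fit = new_pop, new_fit
    best = min(range(NP), key=lambda i: fit[i])
    return pop[best], fit[best]

def sphere(x):
    """Toy objective with its minimum of 0 at the origin."""
    return sum(v * v for v in x)

best_x, best_f = differential_evolution(sphere, [-5.0] * 3, [5.0] * 3)
```

The sketch builds each generation from the previous one (a synchronous update), matching the \(x_i^{t+1}\) notation of the selection rule. Some implementations instead overwrite individuals in place as soon as a trial wins.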

Performance Analysis and Comparison

Comparative Performance Studies

Differential Evolution has been extensively evaluated against other optimization algorithms across various problem domains. A comprehensive comparison study examining ten PSO and ten DE variants on numerous single-objective numerical benchmarks and 22 real-world problems revealed that DE algorithms clearly outperform PSO variants on average [9]. This performance advantage is particularly noteworthy given that PSO algorithms are two to three times more frequently used in the literature than DE methods [9].

The table below summarizes key performance comparisons between DE and other optimization approaches:

Table 1: Performance Comparison of Differential Evolution with Other Optimization Algorithms

| Comparison Metric | DE vs. Genetic Algorithms | DE vs. Particle Swarm Optimization | DE vs. Gradient-Based Methods |
|---|---|---|---|
| Convergence Speed | Faster convergence, particularly in high-dimensional problems [4] | Mixed results, with DE generally superior on complex benchmarks [9] | Slower for smooth convex functions but applicable to non-differentiable problems [10] |
| Solution Quality | Generally produces more stable, higher-quality solutions [5] | Clear advantage for DE on average across diverse problems [9] | Can find global optima where gradient methods get stuck in local optima [10] |
| Parameter Sensitivity | Fewer parameters to tune (F, CR, NP) [6] | More parameters requiring careful adjustment [9] | Step size selection critical for performance |
| Application Domain | Effective on both continuous and discrete (with modifications) spaces [5] | Primarily continuous optimization | Requires differentiable objective functions |
| Theoretical Foundation | Limited theoretical analysis despite empirical success [8] | Similar limited theoretical understanding | Well-established convergence theories |

Constrained Optimization Performance

In constrained optimization problems, which are prevalent in engineering applications, DE has demonstrated remarkable effectiveness [6]. A comparative study of DE variants in constrained structural optimization examined five well-known benchmark structures in 2D and 3D, evaluating algorithms based on final optimum results and convergence rate [6]. The study employed a penalty function approach to handle constraints:

\[ F(x) = f(x) + P(x) = f(x) + \mu \sum_{k=1}^{N} H_k(x) \, g_k^2(x) \]

where \(f(x)\) is the objective function, \(P(x)\) is the penalty term, \(g_k(x)\) is the k-th constraint, \(\mu \geq 0\) is a penalty factor, and \(H_k(x)\) indicates constraint violation [6].

The results demonstrated DE's reliability and robustness in handling complex constrained problems, with adaptive DE variants particularly effective for structural optimization tasks [6].
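The penalty formulation can be sketched as a wrapper around the objective. This example assumes constraints are written as \(g_k(x) \leq 0\), so that \(H_k(x) = 1\) when \(g_k(x) > 0\) and 0 otherwise; that sign convention, the name `penalized`, and the toy problem are assumptions rather than details taken from the cited study.

```python
def penalized(f, constraints, mu):
    """Wrap f so that violated constraints g_k(x) <= 0 add mu * g_k(x)^2."""
    def F(x):
        # max(0, g)^2 equals H_k(x) * g_k(x)^2 under the g_k(x) <= 0 convention.
        penalty = sum(max(0.0, g(x)) ** 2 for g in constraints)
        return f(x) + mu * penalty
    return F

# Toy problem: minimize x0^2 subject to x0 >= 1, written as 1 - x0 <= 0.
objective = lambda x: x[0] ** 2
F = penalized(objective, [lambda x: 1.0 - x[0]], mu=100.0)
```

Here `F([2.0])` equals the raw objective because the point is feasible, while `F([0.0])` is dominated by the penalty. The wrapped function can then be handed to any unconstrained DE loop.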

Applications in Drug Discovery and Development

Drug-Target Binding Affinity Prediction

Differential Evolution has found significant application in drug discovery, particularly in predicting drug-target interactions (DTIs). Traditional experimental approaches to DTI investigation are time-consuming and require substantial financial resources [7]. Machine learning-based methodologies have been adopted to reduce costs and save time, but their effectiveness has been limited by binary classification approaches and lack of empirically validated negative samples [7].

A novel approach redefines the DTI problem as a regression task, leveraging abundant DTI datasets and protein structure data [7]. Researchers have proposed a deep learning approach integrating Convolutional Neural Network blocks with self-attention mechanisms to create an attention-based bidirectional Long Short-Term Memory model (CSAN-BiLSTM-Att) [7]. Due to the model's complexity, proper hyperparameter tuning is essential, and DE has been successfully employed to select optimal hyperparameters [7].

Experimental results demonstrate that the DE-based CSAN-BiLSTM-Att model outperforms previous approaches, achieving a concordance index of 0.898 and a mean squared error of 0.228 on the DAVIS dataset, and a concordance index of 0.971 with a mean squared error of 0.014 on the KIBA dataset [7].

Experimental Protocol for Drug-Target Affinity Prediction

The following protocol outlines the methodology for applying DE to optimize deep learning models for drug-target binding affinity prediction:

Table 2: Experimental Protocol for DE in Drug-Target Affinity Prediction

| Step | Procedure | Parameters | Output |
|---|---|---|---|
| 1. Problem Formulation | Define drug-target affinity prediction as regression problem | Drug descriptors (SMILES), protein sequences, binding affinity data | Structured dataset with features and targets |
| 2. Model Architecture Design | Design CSAN-BiLSTM-Att network architecture | Convolution filters, LSTM units, attention mechanisms | Model framework ready for hyperparameter optimization |
| 3. DE Parameter Setup | Initialize DE parameters | Population size NP=50, F=0.8, CR=0.9, generations=200 | Initial population of hyperparameter vectors |
| 4. Hyperparameter Encoding | Encode neural network hyperparameters as DE vectors | Learning rate, batch size, filter sizes, layer dimensions | D-dimensional vectors representing hyperparameter sets |
| 5. Fitness Evaluation | Train model with candidate hyperparameters and evaluate on validation set | Concordance index, mean square error | Fitness score for each hyperparameter set |
| 6. DE Optimization | Run DE algorithm to evolve hyperparameters | Mutation strategy DE/rand/1, binomial crossover | Optimized hyperparameter values |
| 7. Model Validation | Evaluate final model with optimized hyperparameters on test set | Concordance index, mean square error | Final performance metrics |
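Step 4 of the protocol is the part most often left implicit, so a small sketch may help. It assumes DE searches the unit cube and that each vector is decoded into concrete hyperparameters before training; the three hyperparameters, their ranges, and the names `BATCH_CHOICES` and `decode` are hypothetical and are not taken from the CSAN-BiLSTM-Att study.

```python
BATCH_CHOICES = [16, 32, 64, 128]  # hypothetical candidate batch sizes

def decode(vec):
    """Map a DE vector in [0, 1]^3 to a dictionary of hyperparameters."""
    # Clamp first so that out-of-range mutants still decode to valid settings.
    u_lr, u_batch, u_filters = (min(max(v, 0.0), 1.0) for v in vec)
    learning_rate = 10 ** (-5 + 4 * u_lr)  # log-uniform over [1e-5, 1e-1]
    idx = min(int(u_batch * len(BATCH_CHOICES)), len(BATCH_CHOICES) - 1)
    num_filters = 32 + round(u_filters * (256 - 32))  # integer in [32, 256]
    return {
        "learning_rate": learning_rate,
        "batch_size": BATCH_CHOICES[idx],
        "num_filters": num_filters,
    }

params = decode([0.5, 0.5, 0.5])
```

The fitness function for step 5 would train the model with `decode(vec)` and return a validation score to minimize, for example mean squared error. Searching the learning rate on a log scale is a common choice because plausible values span several orders of magnitude.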

The optimization process for drug-target binding affinity prediction can be visualized as follows:

Figure: DE for Drug-Target Affinity Prediction

Experimental Results in Drug Discovery

The application of DE to optimize deep learning models for drug-target binding affinity prediction has demonstrated remarkable success. The DE-optimized CSAN-BiLSTM-Att model achieved state-of-the-art performance on standard benchmarks, substantially outperforming previous methods like KronRLS, SimBoost, and other deep learning approaches [7].

The significant improvement in prediction accuracy enables more reliable in silico drug screening, reducing the need for expensive and time-consuming laboratory experiments. This application exemplifies how DE can enhance complex machine learning workflows by automatically discovering optimal configurations that would be difficult to identify through manual tuning.

Implementation Guidelines

Parameter Selection and Tuning

The performance of Differential Evolution depends largely on the appropriate setting of its control parameters: population size (NP), scaling factor (F), and crossover rate (CR) [1] [5]. The table below provides practical guidance for parameter selection based on empirical studies and theoretical analyses:

Table 3: Differential Evolution Parameter Selection Guide

| Parameter | Typical Range | Effect on Optimization | Recommendations |
|---|---|---|---|
| Population Size (NP) | [5D, 10D] where D is problem dimension [1] | Larger values increase diversity but slow convergence | Start with NP=10D, adjust based on problem complexity |
| Scaling Factor (F) | [0.4, 1.0] with optimal range [0.7, 0.9] [6] | Higher values promote exploration, lower values aid exploitation | Use F=0.8 as default, adapt dynamically for complex landscapes |
| Crossover Rate (CR) | [0.7, 1.0] with optimal range [0.8, 0.95] [6] | Higher values preserve more mutant information | Start with CR=0.9, reduce for separable problems |
| Mutation Strategy | DE/rand/1, DE/best/1, DE/current-to-best/1 [6] | Different balances between exploration and exploitation | Use DE/rand/1 for multimodal problems, DE/best/1 for unimodal |

Adaptive and self-adaptive DE variants have been developed to automatically adjust control parameters during the optimization process. Algorithms such as JADE, jDE, and SaDE have demonstrated improved performance while reducing the need for manual parameter tuning [6] [8].
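To give a feel for how lightweight self-adaptation can be, the sketch below follows the jDE rule as it is commonly described: each individual carries its own F and CR, and before producing a trial vector each value is resampled with a small probability. The constants (0.1 for both probabilities, F drawn from [0.1, 1.0]) are the commonly quoted defaults and should be treated as assumptions here, as should the function name `jde_update`.

```python
import random

def jde_update(F_i, CR_i, rng, tau1=0.1, tau2=0.1, F_low=0.1, F_up=0.9):
    """Return the (F, CR) pair an individual uses for its next trial vector."""
    # With probability tau1, draw a fresh F in [F_low, F_low + F_up).
    new_F = F_low + rng.random() * F_up if rng.random() < tau1 else F_i
    # With probability tau2, draw a fresh CR in [0, 1).
    new_CR = rng.random() if rng.random() < tau2 else CR_i
    return new_F, new_CR

F_next, CR_next = jde_update(0.5, 0.9, random.Random(3))
```

The resampled values are stored with the trial vector, so they survive only if that trial wins selection. Parameter settings that produce good offspring therefore spread through the population without any extra bookkeeping.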

Constraint Handling Techniques

Many practical optimization problems involve constraints that must be satisfied. DE employs various constraint-handling techniques, with penalty functions being the most common approach [1] [6]. The basic penalty function method transforms a constrained problem into an unconstrained one by adding a penalty term to the objective function:

\[ f_{\text{penalty}}(x) = f(x) + \rho \times CV(x) \]

where \(CV(x)\) measures the constraint violation and \(\rho\) is a penalty coefficient [1]. The main challenge with this approach is selecting an appropriate penalty coefficient \(\rho\) [1]. Alternative constraint-handling methods include:

- Projection onto feasible set: Moving infeasible solutions to the nearest feasible point

- Repair algorithms: Transforming infeasible solutions into feasible ones

- Multi-objective approaches: Treating constraints as separate objectives

- Feasibility preservation: Designing mutation and crossover to maintain feasibility

The choice of constraint-handling technique depends on the problem characteristics, including the number of constraints, the size of the feasible region, and the landscape of the objective function.
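Two of the simpler alternatives can be sketched briefly: projection for box bounds, and a feasibility-first comparison that replaces the plain fitness test in selection. The comparison follows the widely used feasibility rules (feasible beats infeasible, two infeasible points are ranked by violation), which the source does not spell out, so treat its details as an assumption. Constraints are assumed to be written as \(g(x) \leq 0\), and all function names are illustrative.

```python
def clip_to_bounds(x, low, high):
    """Project x onto the box [low, high], component by component."""
    return [min(max(v, lo), hi) for v, lo, hi in zip(x, low, high)]

def violation(x, constraints):
    """Total constraint violation CV(x) for constraints of the form g(x) <= 0."""
    return sum(max(0.0, g(x)) for g in constraints)

def trial_wins(trial, target, f, constraints):
    """Feasibility-first replacement test for minimization."""
    cv_trial = violation(trial, constraints)
    cv_target = violation(target, constraints)
    if cv_trial == 0 and cv_target == 0:
        return f(trial) <= f(target)   # both feasible: compare objectives
    if cv_trial == 0 or cv_target == 0:
        return cv_trial == 0           # exactly one feasible: it wins
    return cv_trial <= cv_target       # both infeasible: smaller violation wins

# Toy problem: minimize x0^2 subject to x0 >= 1, written as 1 - x0 <= 0.
objective = lambda x: x[0] ** 2
constraints = [lambda x: 1.0 - x[0]]
```

Unlike a penalty function, this comparison needs no penalty coefficient, at the cost of ignoring objective values entirely while a point is infeasible.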

Table 4: Research Reagent Solutions for Differential Evolution Applications

| Tool/Resource | Function | Application Context |
|---|---|---|
| Standard DE Variants (DE/rand/1, DE/best/1) | Basic optimization engine | General-purpose continuous optimization |
| Adaptive DE Variants (JADE, SaDE, CoDE) | Self-tuning parameter adaptation | Complex problems with unknown parameter sensitivities |
| Constraint Handling Modules (Penalty functions, feasibility rules) | Managing problem constraints | Engineering design, resource allocation |
| Parallel DE Frameworks | Distributed fitness evaluation | Computationally expensive objective functions |
| Hybrid DE-Local Search | Memetic algorithms combining global and local search | Refining solutions after initial convergence |
| Multi-objective DE Extensions (MODE, NSDE) | Handling multiple conflicting objectives | Design trade-off analysis, Pareto front identification |

Differential Evolution represents a powerful, versatile, and robust approach to global optimization problems across diverse scientific and engineering domains. Its simple yet effective combination of differential mutation, crossover, and selection operations enables efficient exploration and exploitation of complex search spaces. The algorithm's ability to handle non-differentiable, multimodal, and constrained optimization problems makes it particularly valuable for real-world applications where traditional methods often fail.

The growing application of DE in drug discovery, particularly in optimizing deep learning models for drug-target binding affinity prediction, demonstrates its practical significance in accelerating scientific research and development. As computational challenges in fields like drug discovery continue to increase in complexity, DE's role as an effective optimization tool is likely to expand further.

Future research directions include developing more sophisticated theoretical foundations for DE, creating enhanced adaptive mechanisms for parameter control, and exploring hybrid approaches that combine DE with other optimization techniques. Continued refinement and application of Differential Evolution is likely to contribute to solving increasingly complex optimization problems in science and industry.

Differential Evolution (DE), introduced by Storn and Price in a 1995 technical report and published as a journal article in 1997, represents a paradigm of evolutionary computation that directly translates the principles of natural selection into a robust optimization framework [5] [11] [12]. As a population-based metaheuristic, DE operates on the fundamental Darwinian tenets of variation, inheritance, and selection to solve complex optimization problems across continuous spaces. Unlike its predecessor, the Genetic Algorithm (GA), which classically operates on binary-encoded representations, DE uses real-number encoding and vector operations, which often yields better, more stable solutions for continuous optimization problems [5] [12]. This technical guide examines the core biological mechanisms embedded within DE's architecture, providing researchers and drug development professionals with a comprehensive understanding of its operational paradigm.

Biological Foundations and Computational Analogies

The DE algorithm meticulously mirrors evolutionary processes through specific computational operations. Table 1 delineates the direct correspondence between biological evolutionary concepts and their algorithmic implementations in DE.

Table 1: Mapping Natural Selection to Differential Evolution

| Biological Concept | DE Implementation | Function in Optimization |
|---|---|---|
| Population | Set of candidate solution vectors | Maintains genetic diversity for exploration |
| Chromosome | D-dimensional parameter vector | Encodes a potential solution to the problem |
| Gene | Single vector parameter | Represents one variable in the solution |
| Mutation | Differential variation of vectors | Introduces new genetic material/exploration |
| Recombination | Crossover operation | Combines traits from parent and donor vectors |
| Natural Selection | Greedy selection operator | Preserves fitter individuals for next generation |
| Fitness | Objective function evaluation | Quantifies solution quality |

The Evolutionary Workflow in DE

The following diagram illustrates the complete evolutionary cycle of DE, demonstrating how the algorithm iteratively improves its population through simulated evolutionary pressures.

Core Evolutionary Operators: A Technical Examination

Population Initialization: Genetic Diversity Foundation

The evolutionary process begins with the creation of an initial population, representing the gene pool from which subsequent generations will evolve. DE typically employs uniform random initialization across the defined search space to maximize initial genetic diversity [13]. For a population of NP individuals, each D-dimensional parameter vector is initialized as:

\[ x_{ij} = x_{ij}^{L} + rand_{ij}(0,1) \cdot (x_{ij}^{U} - x_{ij}^{L}), \quad i = 1, \ldots, NP, \quad j = 1, \ldots, D \]

Where rand_ij(0,1) is a uniformly distributed random number, and x_ij^U and x_ij^L represent the upper and lower bounds of the j-th parameter [13]. Advanced variants may use sequences like the Halton sequence for more uniform initialization, improving the ergodicity of the initial solution set [13].
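As an illustration of the Halton option, the sketch below builds the sequence from the radical inverse in successive prime bases and scales it to the search box. It is an unscrambled textbook construction written for clarity, limited here to six dimensions; the function names and the `PRIMES` list are assumptions, and production code would more likely rely on a library implementation.

```python
PRIMES = [2, 3, 5, 7, 11, 13]  # one base per dimension (sketch covers up to 6-D)

def radical_inverse(index, base):
    """Mirror the base-`base` digits of `index` around the radix point."""
    result, fraction = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * fraction
        index //= base
        fraction /= base
    return result

def halton_population(np_size, low, high):
    """Return np_size Halton points scaled to the box [low, high]."""
    D = len(low)
    if D > len(PRIMES):
        raise ValueError("extend PRIMES to cover more dimensions")
    return [
        [low[j] + radical_inverse(i, PRIMES[j]) * (high[j] - low[j])
         for j in range(D)]
        for i in range(1, np_size + 1)  # start at 1 to skip the all-zero point
    ]

pop = halton_population(3, [0.0, 0.0], [1.0, 1.0])
```

In base 2 the first three values are 0.5, 0.25, and 0.75, which shows how the sequence keeps filling the largest remaining gaps instead of clustering the way independent random draws can.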

Mutation: The Engine of Variation

The mutation operator in DE introduces genetic variation by creating donor vectors through differential perturbations, simulating random genetic mutations in biological evolution. Unlike the random mutations in Genetic Algorithms, DE employs directed mutations using vector differences, creating a more efficient search mechanism [13] [11].

The most common mutation strategy, DE/rand/1, generates a donor vector v_i for each target vector x_i in the population:

\[ v_i = x_{r1} + F \cdot (x_{r2} - x_{r3}) \]

Where r1, r2, r3 are distinct random indices different from i, and F is the scaling factor controlling the amplification of the differential variation [13] [11]. This differential mutation represents a fundamental departure from other evolutionary algorithms, creating a self-organizing adaptive step size that automatically scales based on the population distribution.

Table 2: Common DE Mutation Strategies

| Strategy | Formula | Characteristics |
|---|---|---|
| DE/rand/1 | v_i = x_r1 + F·(x_r2 - x_r3) | Balanced exploration/exploitation |
| DE/best/1 | v_i = x_best + F·(x_r1 - x_r2) | Faster convergence, reduced diversity |
| DE/current-to-best/1 | v_i = x_i + F·(x_best - x_i) + F·(x_r1 - x_r2) | Combines individual and best information |
| DE/rand/2 | v_i = x_r1 + F·(x_r2 - x_r3) + F·(x_r4 - x_r5) | Enhanced exploration capability |

Crossover: Genetic Recombination

Following mutation, the crossover operation combines genetic information from the donor vector v_i with the target vector x_i to produce a trial vector u_i, simulating biological recombination. DE primarily uses binomial crossover:

\[ u_{ij} = \begin{cases} v_{ij} & \text{if } rand_{ij}(0,1) \leq CR \text{ or } j = rn(i) \\ x_{ij} & \text{otherwise} \end{cases} \]

Where CR is the crossover probability controlling the fraction of parameters inherited from the donor vector, and rn(i) is a randomly chosen index ensuring at least one parameter comes from the donor vector [13] [11]. This process introduces new genetic combinations while preserving some characteristics of the parent solution.

Selection: Survival of the Fittest

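The selection operator implements Darwinian survival of the fittest through greedy competition between parent and offspring. For a minimization problem with objective f, target vector x_i, and trial vector u_i at generation t:

\[ x_i^{t+1} = \begin{cases} u_i^{t} & \text{if } f(u_i^{t}) \leq f(x_i^{t}) \\ x_i^{t} & \text{otherwise} \end{cases} \]

This one-to-one selection mechanism ensures that improvements are immediately retained in the population, driving successive generations toward higher fitness regions of the search space [13] [11]. Because a parent is replaced only by an offspring that is at least as fit, the best objective value in the population never deteriorates from one generation to the next.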

Advanced Evolutionary Mechanisms

Parameter Adaptation: Evolutionary Plasticity

Basic DE requires manual setting of control parameters (F and CR), which significantly impact performance. Advanced DE variants introduce adaptive parameter control mechanisms that enable the algorithm to self-adjust its parameters during evolution, mimicking the evolutionary plasticity found in natural systems [13] [14].

Reinforcement Learning-based DE (RLDE) establishes a dynamic parameter adjustment mechanism using a policy gradient network within a reinforcement learning framework. This enables online adaptive optimization of the scaling factor and crossover probability based on the evolutionary state [13]. Similarly, JADE introduces a parameter adaptation technique where F and CR values that successfully generate improved offspring are retained and used to guide future parameter choices [14].
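The JADE idea of learning from successful parameter values can be sketched as a running-mean update. The form below follows the commonly described scheme, in which the location parameter for CR moves toward the arithmetic mean of successful CR values and the one for F moves toward their Lehmer mean, with learning rate c. Treat the constants and function names as assumptions rather than a faithful reproduction of the published algorithm.

```python
def lehmer_mean(values):
    """Lehmer mean sum(v^2) / sum(v), which weights larger values more heavily."""
    return sum(v * v for v in values) / sum(values)

def jade_update_means(mu_F, mu_CR, successful_F, successful_CR, c=0.1):
    """Shift the sampling means toward parameter values that produced winners."""
    if successful_F:
        mu_F = (1 - c) * mu_F + c * lehmer_mean(successful_F)
    if successful_CR:
        mean_CR = sum(successful_CR) / len(successful_CR)
        mu_CR = (1 - c) * mu_CR + c * mean_CR
    return mu_F, mu_CR

# Toy usage: two trials won this generation, with these (F, CR) settings.
mu_F, mu_CR = jade_update_means(0.5, 0.5, [0.4, 0.8], [0.2, 0.6])
```

In each generation, every individual would draw its own F and CR from distributions centered on `mu_F` and `mu_CR`, and the values used by winning trials are collected into the two success lists. The Lehmer mean biases F upward, which counteracts the tendency of small, safe step sizes to dominate the success list.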

Multi-objective Optimization: Evolutionary Trade-offs

Many real-world problems in drug development involve multiple conflicting objectives. Multi-objective DE (MODE) extends the basic algorithm to handle such problems by incorporating Pareto-based selection [14]. MODE algorithms maintain a diverse population of solutions representing different trade-offs between objectives, ultimately converging toward the Pareto-optimal front.

The MODE-FDGM algorithm introduces a directional generation mechanism that leverages current and historical information to guide the search toward superior regions of the Pareto front while maintaining diversity through crowding distance evaluation and niche preservation strategies [14].
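Pareto-based selection and crowding distance are the two building blocks mentioned here, and both are short to express. The sketch below is a generic version in the style popularized by NSGA-II, assuming all objectives are minimized; it is not the MODE-FDGM algorithm itself, and the function names are illustrative.

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and better in at least one."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))

def nondominated(points):
    """Return the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

def crowding_distance(front):
    """Per-point crowding distance; boundary points get infinity."""
    n = len(front)
    dist = [0.0] * n
    for m in range(len(front[0])):
        order = sorted(range(n), key=lambda i: front[i][m])
        lo, hi = front[order[0]][m], front[order[-1]][m]
        dist[order[0]] = dist[order[-1]] = float("inf")
        if hi == lo:
            continue  # all points share this objective value
        for k in range(1, n - 1):
            gap = front[order[k + 1]][m] - front[order[k - 1]][m]
            dist[order[k]] += gap / (hi - lo)
    return dist

points = [(1, 5), (2, 3), (4, 1), (3, 4), (5, 5)]
front = nondominated(points)
distances = crowding_distance(front)
```

In a multi-objective DE loop, a trial vector typically replaces its target when it dominates it, and crowding distance breaks ties when the population must be truncated, favoring solutions in sparse regions of the front.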

Experimental Framework and Validation

Standard Testing Methodology

DE performance is typically validated using standardized benchmark functions that simulate various optimization landscapes. Table 3 summarizes common benchmark categories and their significance in evaluating DE's evolutionary capabilities.

Table 3: Benchmark Functions for DE Performance Evaluation

| Function Type | Characteristics | Evaluation Purpose |
|---|---|---|
| Unimodal | Single global optimum, no local optima | Convergence velocity measurement |
| Multimodal | Multiple local optima | Global exploration capability |
| Hybrid | Combination of different function types | Search adaptability assessment |
| Composition | Weighted sum of multiple functions | Overall performance evaluation |

Recent comparative studies employ rigorous statistical testing, including the Wilcoxon signed-rank test for pairwise comparisons and the Friedman test for multiple algorithm comparisons, to validate performance improvements statistically [11]. These tests determine whether observed differences in performance are statistically significant, accounting for DE's stochastic nature.

Experimental Protocol for DE Evaluation

A standardized experimental protocol for evaluating DE variants includes:

- Test Function Selection: 26 standard test functions encompassing unimodal, multimodal, hybrid, and composition categories [13]

- Dimensionality Analysis: Performance evaluation across multiple dimensions (typically 10D, 30D, 50D, and 100D) to assess scalability [11]

- Population Settings: Population size (NP) typically set between 50-500 depending on problem complexity [5]

- Parameter Configuration: For classic DE, F=0.5 and CR=0.9 are common starting points [15]

- Termination Criteria: Maximum function evaluations (e.g., 10,000×D) or convergence threshold [13]

- Independent Runs: 30-51 independent runs per algorithm to account for stochasticity [11]

- Performance Metrics: Mean error, standard deviation, success rate, and convergence speed [15]
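The protocol above amounts to a small harness that repeats seeded runs and summarizes the errors. The sketch below assumes an optimizer with the signature `optimizer(f, low, high, max_evals, seed)` returning the best objective value found. That signature, the `random_search` stand-in, and the default budget are assumptions for illustration; a DE implementation with the same signature would be dropped in its place.

```python
import random
import statistics

def random_search(f, low, high, max_evals, seed):
    """Stand-in optimizer: best value over max_evals uniform random samples."""
    rng = random.Random(seed)
    best = float("inf")
    for _ in range(max_evals):
        x = [lo + rng.random() * (hi - lo) for lo, hi in zip(low, high)]
        best = min(best, f(x))
    return best

def evaluate(optimizer, f, low, high, runs=30, evals_per_dim=100,
             f_opt=0.0, tol=1e-8):
    """Run independent seeded trials and summarize the error f_best - f_opt."""
    budget = evals_per_dim * len(low)
    errors = [optimizer(f, low, high, budget, seed) - f_opt
              for seed in range(runs)]
    return {
        "mean_error": statistics.mean(errors),
        "std_error": statistics.stdev(errors),
        "success_rate": sum(e <= tol for e in errors) / runs,
    }

def sphere(x):
    """Unimodal toy benchmark with optimum value 0 at the origin."""
    return sum(v * v for v in x)

report = evaluate(random_search, sphere, [-5.0] * 2, [5.0] * 2, runs=5)
```

Using the run index as the seed makes every result reproducible and lets two algorithms be compared on paired seeds, which is what the pairwise statistical tests mentioned earlier expect.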

The Researcher's Toolkit: Essential Components for DE Implementation

Table 4: Research Reagent Solutions for DE Experimentation

| Component | Function | Implementation Example |
|---|---|---|
| Population Initializer | Generates initial candidate solutions | Halton sequence for uniform distribution [13] |
| Mutation Strategy | Creates genetic variation | DE/rand/1, DE/best/1, or adaptive strategies [11] |
| Crossover Operator | Recombines genetic material | Binomial crossover with CR parameter [5] |
| Selection Mechanism | Implements survival of the fittest | Greedy one-to-one selection [11] |
| Parameter Controller | Adapts F and CR parameters | Reinforcement learning policy network [13] |
| Archive Mechanism | Preserves diversity | External archive for discarded solutions [13] |
| Constraint Handler | Manages feasible solutions | Penalty functions or feasibility rules [12] |

| Constraint Handler | Manages feasible solutions | Penalty functions or feasibility rules [12] |

Application in Drug Development and Chemometrics

DE has demonstrated significant utility in drug development and chemometrics, particularly in optimizing experimental designs and model parameters [12]. Applications include:

- Molecular Docking Optimization: DE efficiently explores conformational spaces to predict ligand-receptor binding affinities

- Quantitative Structure-Activity Relationship (QSAR) Modeling: Optimizes parameters in statistical models linking chemical structure to biological activity [12]

- Experimental Design Optimization: Finds optimal configurations for chemical experiments involving the Arrhenius equation, reaction rates, and concentration measures [12]

- Chemical Mixture Optimization: Determines optimal proportions in mixture experiments to maximize desired properties [12]

In these applications, DE's evolutionary approach enables robust optimization without requiring gradient information or differentiable objective functions, making it particularly valuable for complex, noisy, or multi-modal optimization landscapes common in pharmaceutical research.

Differential Evolution embodies a computationally efficient translation of Darwinian evolutionary principles into a powerful optimization methodology. Through its population-based approach employing mutation, crossover, and selection operations, DE demonstrates how biological concepts of genetic variation and natural selection can solve complex engineering and scientific problems. The algorithm's continued evolution through parameter adaptation, hybrid mechanisms, and multi-objective extensions ensures its relevance for contemporary challenges in drug development and chemometrics. As DE variants incorporate advanced learning mechanisms and adaptive strategies, they increasingly mirror the sophisticated adaptability of natural evolutionary systems while maintaining the mathematical rigor required for computational optimization.

Differential Evolution (DE), introduced by Storn and Price, is a population-based evolutionary algorithm (EA) designed for optimizing problems over continuous domains [16] [11]. Its prominence in the computational intelligence community stems from a simple structure coupled with remarkable performance when tackling optimization problems that are intractable for traditional, gradient-based methods. Specifically, DE possesses an inherent ability to handle non-differentiable, nonlinear, and multimodal cost functions, making it a robust and versatile optimizer for complex real-world scenarios [16]. Unlike traditional convex optimization techniques that rely on gradient information and can easily become trapped in local optima, DE operates through the iterative application of mutation, crossover, and selection operators on a population of candidate solutions, enabling effective exploration of rugged search spaces [16] [17].

This guide details the core mechanisms of DE that confer these key advantages, supported by experimental methodologies and quantitative data. It is structured within the broader context of DE research, highlighting the algorithm's foundational principles and its proven efficacy in demanding fields, including engineering design and drug development.

Core Mechanisms and Advantages

The proficiency of DE in navigating complex function landscapes arises from the synergistic operation of its core components. The following subsections and visual workflow detail these mechanisms.

Algorithmic Workflow and Core Operators

The DE process evolves a population of candidate solutions over successive generations to converge towards the global optimum. The sequence of operations is logically depicted in the workflow below.

Diagram 1: DE Algorithm Workflow.

Advantage Analysis of Core Operators

Handling Non-Differentiable and Nonlinear Functions: DE is a gradient-free optimizer, meaning it does not require the objective function to be differentiable or convex [16] [18]. It navigates the search space using a population of solutions, relying solely on direct function evaluations. This makes it well suited to discontinuous, noisy, or irregular functions where classical gradient-based methods (such as BFGS) fail or are inapplicable [16] [19].

Navigating Multimodal Functions: DE's population-based nature and its differential mutation operator are key to escaping local optima. Mutation, by creating new vectors based on the scaled difference of randomly selected population members, introduces a spontaneous and directed diversity that allows the algorithm to explore new regions of the search space [20] [11]. This is crucial in multimodal optimization, which involves identifying multiple global and local optima to provide decision-makers with diverse alternative solutions [20]. Enhanced variants further improve this by integrating niching techniques to form stable subpopulations around different optima simultaneously [20].

Balancing Exploration and Exploitation: The performance of DE hinges on balancing global exploration (searching new areas) and local exploitation (refining good solutions). This balance is influenced by the choice of mutation strategies and their control parameters [16].

- Mutation Strategies: The DE/rand/1 strategy is highly explorative, favoring global search, whereas DE/best/1 is more exploitative, potentially leading to faster convergence but also to premature convergence in multimodal landscapes [16].

- Control Parameters: The mutation factor (F) and crossover rate (CR) are critical. A higher F (e.g., 0.5-1.0) emphasizes exploration, while a lower F (e.g., 0.3-0.5) favors exploitation. Similarly, a high CR (e.g., 0.7-0.9) is often better for non-separable, nonlinear problems [16].

Table 1: DE Mutation Strategies and Their Characteristics

| Strategy | Formula | Exploration/Exploitation Balance | Best Suited For |

|---|---|---|---|

| DE/rand/1 | v_i = x_r1 + F*(x_r2 - x_r3) | High Exploration | Multimodal problems, avoiding premature convergence [16] |

| DE/best/1 | v_i = x_best + F*(x_r1 - x_r2) | High Exploitation | Unimodal problems, faster convergence [16] [18] |

| DE/current-to-pbest/1 | v_i = x_i + F*(x_pbest - x_i) + F*(x_r1 - x_r2) | Adaptive Balance | Complex composite functions [18] |
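The three formulas in Table 1 can be written as a single NumPy helper. This is a sketch under stated assumptions: the function name `mutate`, the `p` fraction used to define the "pbest" pool, and the choice to draw the random indices from all members other than the target are illustrative and not taken from the cited implementations.

```python
import numpy as np


def mutate(pop, fitness, i, F, strategy, rng, p=0.2):
    """Return a mutant vector for target index i (minimization assumed)."""
    NP = len(pop)
    # Three distinct random indices, all different from the target i.
    candidates = [k for k in range(NP) if k != i]
    r1, r2, r3 = rng.choice(candidates, size=3, replace=False)

    if strategy == "rand/1":
        return pop[r1] + F * (pop[r2] - pop[r3])
    if strategy == "best/1":
        best = np.argmin(fitness)
        return pop[best] + F * (pop[r1] - pop[r2])
    if strategy == "current-to-pbest/1":
        # pbest is drawn from the top p fraction of the population.
        n_top = max(1, int(round(p * NP)))
        top = np.argsort(fitness)[:n_top]
        pbest = rng.choice(top)
        return pop[i] + F * (pop[pbest] - pop[i]) + F * (pop[r1] - pop[r2])
    raise ValueError(f"unknown strategy: {strategy}")


rng = np.random.default_rng(0)
pop = rng.uniform(-5.0, 5.0, size=(8, 3))   # 8 individuals, 3 dimensions
fitness = np.sum(pop ** 2, axis=1)          # sphere function as a stand-in
for name in ("rand/1", "best/1", "current-to-pbest/1"):
    print(name, mutate(pop, fitness, 0, 0.8, name, rng))
```

Setting F to 0 makes the structure visible: rand/1 collapses to a random member, best/1 to the best member, and current-to-pbest/1 to the target itself, which is why the last two are increasingly exploitative.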

Experimental Validation and Performance

Robust evaluation of DE algorithms requires standardized benchmark functions and rigorous statistical testing, as commonly employed in contemporary research.

Standard Benchmark Functions and Evaluation

The IEEE Congress on Evolutionary Computation (CEC) benchmark suites (e.g., CEC2017, CEC2022) are widely adopted for testing. These suites contain functions categorized to probe specific algorithmic capabilities [11] [19]:

- Unimodal Functions: Test convergence speed and exploitation.

- Multimodal Functions: Test the ability to escape local optima and global exploration.

- Hybrid and Composition Functions: Combine different landscapes to test overall robustness on complex, real-world-like problems [11].

Performance is typically measured by solution accuracy (the difference between the found optimum and the known global optimum) and convergence speed (the number of function evaluations required to reach a target accuracy). Statistical tests like the Wilcoxon signed-rank test (for pairwise comparison) and the Friedman test (for multiple algorithm comparisons) are used to establish statistical significance of results [11].

Quantitative Performance Comparison

Modern DE variants demonstrate superior performance on these benchmarks. The table below summarizes results from recent studies comparing state-of-the-art DE algorithms.

Table 2: Performance Comparison of Modern DE Variants on CEC Benchmarks

| Algorithm | Key Mechanism | Reported Performance (CEC Suite) | Statistical Significance |

|---|---|---|---|

| RLDE [13] | Reinforcement learning for parameter adaptation; Halton sequence initialization | Significantly enhanced global optimization performance on 26 standard test functions vs. other heuristic algorithms | Superior performance verified in 10D, 30D, and 50D |

| ARRDE [18] | Nonlinear population reduction; Adaptive restart | Ranked 1st across CEC2011, CEC2017, CEC2019, CEC2020, and CEC2022 (212 problem instances) | Consistently top-tier, demonstrating high robustness |

| MHDE [21] | Multi-hybrid mutation; Weibull crossover; Gaussian sampling | Superior results on CEC2005, CEC2014, CEC2017; Effective on frame structure design problems | Friedman and Wilcoxon tests confirmed better performance |

| LSHADESPA [19] | Simulated Annealing scaling factor; Oscillating crossover | 1st rank on CEC2014, CEC2017, and CEC2022 benchmark functions | Lowest f-rank value (41, 77, 26 respectively) |

Case Study: DE vs. Gradient-Based Optimizer

A direct comparison highlights DE's advantage on multimodal problems. Consider optimizing a convex function versus a rugged, multimodal function [16].

- Convex Function: Both a gradient-based method (BFGS) and DE found the global optimum. However, BFGS required only 6 iterations and 7 function evaluations, while DE used 56 generations with a population of 50, resulting in several thousand evaluations [16].

- Multimodal Function: When a nonlinear periodic term was added, creating many local optima, BFGS converged to a poor local optimum (objective value: 12.12) from a given starting point. DE consistently found a much better solution (objective value: 1.61), likely the global optimum. Restarting BFGS from 500 random starting points yielded a best result of 1.855, still worse than DE and at considerable computational cost [16].

This demonstrates that while DE may be less efficient for simple convex problems, it is uniquely powerful and reliable for complex, multimodal landscapes where traditional methods fail.
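A comparison of this kind can be reproduced in a few lines with SciPy. The sketch below does not use the function from [16]; it substitutes the 2-D Rastrigin function as an assumed stand-in for "a convex bowl plus a periodic term", and the starting point and seed are arbitrary choices.

```python
import numpy as np
from scipy.optimize import differential_evolution, minimize


def rastrigin(x):
    """Quadratic bowl plus a cosine term; global minimum f(0, 0) = 0."""
    x = np.asarray(x)
    return float(10 * x.size + np.sum(x ** 2 - 10 * np.cos(2 * np.pi * x)))


bounds = [(-5.12, 5.12)] * 2
x0 = np.array([3.3, -2.7])   # arbitrary starting point for the local method

# Gradient-based local search from a single starting point.
bfgs = minimize(rastrigin, x0, method="BFGS")

# Population-based global search over the bounded region.
de = differential_evolution(rastrigin, bounds, seed=0)

print(f"BFGS: f = {bfgs.fun:.4f} after {bfgs.nfev} evaluations")
print(f"DE:   f = {de.fun:.4f} after {de.nfev} evaluations")
```

The evaluation counts make the trade-off in the case study concrete: the local method is cheap but depends on where it starts, while DE spends more evaluations to search the whole bounded region.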

For researchers aiming to implement or experiment with DE, the following "toolkit" comprises essential algorithmic components and resources.

Table 3: Essential Computational Toolkit for Differential Evolution Research

| Tool/Component | Function/Description | Example/Note |

|---|---|---|

| Population | A set of candidate solutions explored in parallel. | Size (NP) is critical; often 5-10x the number of dimensions [16]. |

| Mutation Factor (F) | Scales the differential variation, controlling step size. | Typical range [0.3, 1.0]; can be fixed or adaptive [16] [18]. |

| Crossover Rate (CR) | Controls the mixing of genetic information between target and mutant vectors. | Typical range [0.7, 0.9] for non-separable problems [16]. |

| Mutation Strategy | The scheme used to create a mutant/donor vector. | DE/rand/1 (robust), DE/best/1 (fast), DE/current-to-pbest/1 (modern) [18]. |

| Benchmark Suites | Standardized test functions for algorithmic validation. | IEEE CEC test suites (e.g., CEC2017, CEC2022) [11] [19]. |

| Statistical Tests | Methods to validate performance results. | Wilcoxon signed-rank test, Friedman test, Mann-Whitney U-score test [11]. |

| Software Libraries | Pre-implemented code for development and testing. | scipy.optimize.differential_evolution in Python [16]; Minion framework [18]. |

Differential Evolution stands as a powerful and robust solver for some of the most challenging optimization problems in science and engineering. Its fundamental advantages in handling non-differentiable, nonlinear, and multimodal functions are rooted in its gradient-free, population-based stochastic architecture. Through mechanisms like differential mutation and greedy selection, it effectively explores complex landscapes and escapes local optima where traditional optimizers falter.

Ongoing research continues to enhance DE's capabilities, with adaptive parameter control, hybridization, and sophisticated initialization strategies further solidifying its position as a leading tool for real-world problems. For researchers in drug development and other computationally intensive fields, mastery of DE offers a pathway to solving high-dimensional, non-convex optimization problems that are otherwise intractable.

Within the field of evolutionary computation, Differential Evolution (DE) and Genetic Algorithms (GA) stand as two prominent pillars for solving complex optimization problems. While both are population-based metaheuristics inspired by principles of natural evolution, they differ fundamentally in their representation of solutions and the design of their search operations. These distinctions make each algorithm uniquely suited to particular classes of problems, from high-dimensional continuous optimization to discrete combinatorial challenges. This guide provides a detailed technical comparison of DE and GA, framing their operational mechanisms within the context of modern research and practical applications, including the critical domain of drug development. Understanding these differential characteristics is essential for researchers and scientists to select the appropriate algorithm for their specific optimization needs.

Fundamental Concepts and Algorithmic Frameworks

Genetic Algorithms (GA)

Genetic Algorithms operate on a population of potential solutions, typically encoded as fixed-length binary strings (chromosomes), though other representations like integers or real numbers are also used [22] [23]. The algorithm iteratively improves this population through the application of selection, crossover, and mutation operators. Selection mimics natural selection by favoring individuals with higher fitness (better solutions) to pass their genetic material to the next generation. Crossover (or recombination) combines genetic information from two parent solutions to produce offspring, exploiting the search space by blending promising solution features [23]. Mutation introduces random changes to individual genes, helping the algorithm explore new regions of the search space and maintain population diversity [23]. A significant conceptual challenge in GAs is ensuring that the crossover operation produces offspring that are fitter than their parents, rather than merely increasing the variance of fitness, which can also be achieved through more intense mutation [23].

Differential Evolution (DE)

Differential Evolution, specifically designed for continuous optimization in real-valued search spaces, distinguishes itself through a unique differential mutation strategy [13] [11]. Unlike GA, DE creates new candidate solutions by calculating weighted differences between randomly selected population vectors. The core DE/rand/1 mutation strategy is defined as:

$$v_{i}(t+1) = x_{r1}(t) + F \cdot (x_{r2}(t) - x_{r3}(t))$$

Here, $v_{i}(t+1)$ is the resulting mutant (or donor) vector, $x_{r1}, x_{r2}, x_{r3}$ are three distinct randomly selected parent vectors from the population, and $F$ is the scaling factor controlling the amplification of the differential variation [13] [11]. Following mutation, a crossover operation (typically binomial) is performed between the mutant vector and the original target vector ($x_{i}$) to generate a trial vector ($u_{i}$). Finally, a greedy selection mechanism is applied: the trial vector replaces the target vector in the next generation only if it has better or equal fitness [13] [11]. This structure makes DE a particularly effective and robust optimizer for real-parameter spaces.

Comparative Analysis: Representation, Operations, and Performance

Table 1: Core Algorithmic Comparison between DE and GA

| Feature | Differential Evolution (DE) | Genetic Algorithms (GA) |

|---|---|---|

| Solution Representation | Real-valued vectors (continuous parameters) [11] | Typically binary strings, but also integers, real-values, etc. [22] [23] |

| Core Exploration Mechanism | Differential mutation (vector differences) [13] [11] | Crossover (recombination of parents) [23] |

| Core Disruption Mechanism | Crossover (binomial or exponential) [13] | Mutation (bit-flips, etc.) [23] |

| Selection Type | Greedy (deterministic one-to-one survival) [13] [11] | Probabilistic (e.g., roulette wheel, tournament) [22] |

| Parameter Sensitivity | Sensitive to scaling factor (F) and crossover rate (CR) [13] | Sensitive to crossover and mutation rates [24] |

| Typical Search Space | Continuous [11] | Discrete, combinatorial, or continuous [24] |

| Strengths | Powerful local search, efficiency on continuous problems, simple structure [13] [11] | Flexibility in representation, good for combinatorial problems [24] [22] |

Analysis of Key Operational Differences

The comparative analysis reveals that the most profound difference lies in their primary search drivers. DE relies heavily on its differential mutation operator, which uses the spatial distribution information of the current population to guide its search. This makes DE a highly self-organizing algorithm, as the step size and direction for exploration automatically adapt as the population converges or disperses [13] [11]. In contrast, the traditional GA search is primarily driven by crossover, which seeks to recombine high-quality "building blocks" from parent solutions [23]. The greedy selection mechanism in DE often leads to a more exploitative search with faster convergence on continuous problems, whereas GA's probabilistic selection can help maintain diversity for a longer duration, which can be beneficial in complex multimodal landscapes [23].

Advanced Modern Variants and Performance Evaluation

Contemporary Enhancements

Recent research has focused on overcoming the inherent limitations of both algorithms, particularly their parameter sensitivity and the risk of premature convergence.

For DE, one significant advancement is the integration with Reinforcement Learning (RL) to create an adaptive parameter control mechanism. For instance, the RLDE algorithm uses a policy gradient network to dynamically adjust the scaling factor (F) and crossover probability (CR) during the search process, thereby optimizing performance without manual tuning [13]. Furthermore, to enhance population diversity and initial coverage, RLDE and other modern variants employ quasi-random sequences like the Halton sequence for population initialization, improving the ergodicity of the initial solution set [13]. Another major research direction is multimodal DE, which incorporates niching techniques and specialized mutation strategies to identify multiple optimal solutions in a single run, a valuable capability for real-world decision-making [20].

GAs have also evolved, finding new relevance in 2025 within AutoML platforms for hyperparameter optimization of machine learning models [24]. Their model-agnostic and gradient-free nature makes them suitable for tuning complex models like deep neural networks, where the hyperparameter space (e.g., number of layers, learning rates) is encoded as a chromosome [24]. Furthermore, GAs remain a tool of choice for tasks involving semantic similarity and recommendation systems, where solutions are effectively encoded as vectors of floating-point values and optimized using fitness functions like Mean Absolute Error (MAE) [22].

Rigorous Performance Testing Protocols

Evaluating the performance of stochastic optimizers like DE and GA requires robust statistical methodologies. Standard practice involves running each algorithm multiple independent times (e.g., 30-51 runs) on a suite of benchmark functions to account for random variation [13] [11].

- Benchmark Functions: Testing typically employs standard test function sets, such as those from the CEC (Congress on Evolutionary Computation) competitions. These include unimodal, multimodal, hybrid, and composition functions to assess an algorithm's exploitation, exploration, and ability to escape local optima [11]. Performance is evaluated across varying dimensions (e.g., 10D, 30D, 50D, 100D) to test scalability [13] [11].

- Statistical Analysis: Non-parametric statistical tests are preferred because the results of evolutionary algorithms often violate the normality assumptions of parametric tests [11].

- The Wilcoxon signed-rank test is used for pairwise algorithm comparison, ranking the absolute differences in performance across multiple benchmark functions [11].

- The Friedman test is used for multiple algorithm comparisons, ranking each algorithm's performance on every function [11].

- The Mann-Whitney U-score test (or Wilcoxon rank-sum test) can also be employed to determine if one algorithm tends to outperform another [11].

Table 2: Summary of Statistical Tests for Algorithm Performance Validation

| Statistical Test | Purpose | Key Principle |

|---|---|---|

| Wilcoxon Signed-Rank Test | Pairwise comparison of two algorithms [11] | Ranks the absolute differences in performance for each function. Compares the sum of ranks for positive and negative differences. |

| Friedman Test | Multiple comparison of several algorithms [11] | Ranks algorithms for each function (best=1). Tests if the average ranks across all functions are significantly different. |

| Mann-Whitney U-Score Test | Pairwise comparison to assess performance tendency [11] | Ranks all results from both algorithms together. Tests if the ranks for one algorithm are significantly higher than the other. |
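All three tests in Table 2 are available in `scipy.stats`. The sketch below runs them on synthetic numbers generated purely for illustration; the algorithm labels, the number of benchmark functions, and the error distributions are invented and do not correspond to any result reported in this guide.

```python
import numpy as np
from scipy.stats import friedmanchisquare, mannwhitneyu, wilcoxon

rng = np.random.default_rng(42)
n_functions = 12

# Synthetic mean errors on 12 benchmark functions for three hypothetical
# algorithms (lower is better). B is constructed to be worse than A everywhere.
alg_a = rng.lognormal(mean=-2.0, sigma=1.0, size=n_functions)
alg_b = alg_a * rng.uniform(1.1, 3.0, size=n_functions)
alg_c = alg_a * rng.uniform(0.8, 2.0, size=n_functions)

# Pairwise comparison across functions (paired samples).
w_stat, w_p = wilcoxon(alg_a, alg_b)

# Multiple-algorithm comparison across functions (needs at least 3 samples).
f_stat, f_p = friedmanchisquare(alg_a, alg_b, alg_c)

# Comparison of raw results from independent runs on a single function.
runs_a = rng.lognormal(mean=-2.0, sigma=0.5, size=30)
runs_b = rng.lognormal(mean=-1.5, sigma=0.5, size=30)
u_stat, u_p = mannwhitneyu(runs_a, runs_b, alternative="two-sided")

print(f"Wilcoxon signed-rank: p = {w_p:.4g}")
print(f"Friedman:             p = {f_p:.4g}")
print(f"Mann-Whitney U:       p = {u_p:.4g}")
```

The Wilcoxon and Friedman tests take one value per benchmark function for each algorithm, whereas the Mann-Whitney test here compares the unpaired run-level results on a single function.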

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational and Algorithmic Tools for Evolutionary Computation Research

| Item / Concept | Function / Purpose |

|---|---|

| Benchmark Suites (CEC) | Standardized sets of test functions (unimodal, multimodal, etc.) for fair and reproducible performance evaluation of optimization algorithms [11]. |

| Halton Sequence | A low-discrepancy sequence for quasi-random population initialization. Improves initial space coverage and ergodicity compared to purely random initialization [13]. |

| Policy Gradient Network (in RL) | A reinforcement learning component used in adaptive DE variants (e.g., RLDE) to dynamically control algorithm parameters (F, CR) based on the evolutionary state [13]. |

| Niching Methods | Techniques (e.g., crowding, fitness sharing) integrated into EAs to subdivide the population and maintain diversity, enabling the location of multiple optima in multimodal problems [20]. |

| Non-parametric Statistical Tests | Essential tools (Wilcoxon, Friedman) for robustly comparing stochastic optimization algorithms, as their performance data often violates the assumptions of parametric tests [11]. |

Workflow and Algorithmic Structures

The following diagrams illustrate the high-level logical workflows of the standard DE and GA processes, highlighting their distinct operational sequences.

Genetic Algorithm High-Level Workflow

Differential Evolution High-Level Workflow

The principles of DE and GA have significant implications in drug development, a field rife with complex optimization problems. DE's strength in high-dimensional continuous optimization makes it suitable for tasks like molecular docking simulations, where the goal is to find the optimal conformation and orientation of a small molecule (ligand) within a protein's binding site—a continuous search in 3D space. Its ability to perform a powerful, directed local search allows for fine-tuning molecular conformations efficiently. Furthermore, advanced multimodal DE variants can be used to identify multiple potential binding modes, providing researchers with a set of alternative candidate interactions to explore [20].

Conversely, GAs are highly effective for combinatorial challenges in drug discovery. A prime application is in de novo molecular design, where the chemical structure of a novel compound is built piece-by-piece. This process can be encoded as a GA chromosome, where genes represent molecular fragments or specific functional groups. The crossover and mutation operators then generate new candidate molecules by recombining and altering these fragments, with the fitness function evaluating properties like drug-likeness, binding affinity, or synthetic accessibility [24]. GAs are also instrumental in optimizing chemical synthesis pathways, which involve sequencing discrete reaction steps.

In conclusion, the choice between DE and GA is not a matter of which algorithm is universally superior, but rather which is more appropriate for the problem's structure. DE, with its unique differential mutation and greedy selection, often demonstrates superior performance and faster convergence on numerical and continuous optimization problems, a fact supported by its prominence in ongoing research and CEC competitions [11]. GA, with its flexible representation and well-understood operators, remains a powerful and versatile tool for combinatorial and discrete problems. The ongoing evolution of both algorithms, through hybridization with machine learning and adaptive parameter control, continues to expand their capabilities and solidify their relevance for researchers and scientists tackling the intricate optimization challenges of today, including the critical pursuit of new therapeutics.

Within the broader study of evolutionary computation, the Differential Evolution (DE) algorithm stands out for its simplicity, robustness, and effectiveness in solving complex optimization problems [2] [25]. First introduced by Storn and Price in the mid-1990s, DE is a population-based stochastic optimizer that has become a widely used tool for tackling non-linear, non-differentiable, and multimodal optimization challenges across diverse fields, including engineering design, machine learning, and notably, drug development [4] [25]. Unlike gradient-based methods, DE does not require the problem to be differentiable, treating it instead as a black box where only the measure of quality is needed [1]. This technical guide deconstructs the core components of the DE algorithm (population initialization, mutation, crossover, and selection), providing researchers with detailed methodologies and visualizations to understand and apply this powerful algorithm effectively.

The Core Operational Cycle of Differential Evolution

The Differential Evolution algorithm follows an iterative process to evolve a population of candidate solutions toward an optimum. Its canonical workflow can be visualized as a cycle, as shown in the diagram below.

This cycle of mutation, crossover, and selection repeats, driving the population toward better regions of the search space until a termination criterion is met, such as a maximum number of generations or convergence to a satisfactory solution [2] [4].

Detailed Breakdown of Core Components

Population Initialization

The algorithm begins by generating an initial population of NP candidate solutions, often called individuals or parameter vectors [2]. Each individual is a D-dimensional vector, where D is the number of parameters in the optimization problem. A common initialization method for the j-th parameter of the i-th vector is:

Xi,j = low[j] + rand · (high[j] - low[j]) [2]

Here, low[j] and high[j] define the lower and upper bounds for the j-th parameter, and rand is a random number uniformly distributed between 0 and 1 [2].

Table: Comparison of Population Initialization Techniques [26] [27]

| Technique | Type | Principle | Key Characteristics |

|---|---|---|---|

| Random (RNG) | Stochastic | Simple random sampling | Most common method; can lead to clustering or empty regions (high discrepancy). |

| Latin Hypercube (LHC) | Stochastic | Stratified sampling | Improves coverage by ensuring each parameter range is divided into segments. |

| Sobol Sequence | Deterministic | Low-discrepancy sequence | Generates points that are more evenly distributed (low discrepancy); enhances early exploration. |

| Halton Sequence | Deterministic | Low-discrepancy sequence | Similar to Sobol, offers high uniformity in point distribution. |

While simple random initialization is widely used, advanced low-discrepancy sequences like Sobol and Halton can generate a more uniform spread of initial points, potentially enhancing the algorithm's exploration ability in the early iterations [26] [27]. For problems with a known promising region, a population can also be initialized using a predefined set of guesses to speed up convergence [27].
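The uniform-random formula and a low-discrepancy alternative can be placed side by side. This is a sketch assuming SciPy's `scipy.stats.qmc` module for the Halton sequence; the helper names `init_random` and `init_halton`, the bounds, and the population size are illustrative.

```python
import numpy as np
from scipy.stats import qmc


def init_random(NP, low, high, rng):
    """X[i, j] = low[j] + rand * (high[j] - low[j]) with rand ~ U[0, 1)."""
    low, high = np.asarray(low, float), np.asarray(high, float)
    return low + rng.random((NP, low.size)) * (high - low)


def init_halton(NP, low, high, seed=None):
    """Scrambled Halton points in the unit cube, rescaled to the bounds."""
    sampler = qmc.Halton(d=len(low), scramble=True, seed=seed)
    return qmc.scale(sampler.random(NP), low, high)


low = np.array([-5.0, 0.0])
high = np.array([5.0, 10.0])
NP = 16

pop_rand = init_random(NP, low, high, np.random.default_rng(1))
pop_halton = init_halton(NP, low, high, seed=1)
print(pop_rand[:3])
print(pop_halton[:3])
```

Both helpers return an array of shape (NP, D), so either can seed the same DE loop; only the spread of the starting points differs.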

Mutation

The mutation operation introduces new genetic material into the population by generating a mutant vector, Vi, for each target vector, Xi, in the current population [2]. The most common mutation strategy, known as "rand/1," is defined as:

Vi = Xr1 + F · (Xr2 - Xr3) [2] [25]

Here, Xr1, Xr2, and Xr3 are three distinct vectors randomly selected from the population, and F is the mutation scale factor, a positive real number that controls the amplification of the differential variation (Xr2 - Xr3) [2] [25]. The flow of this process is detailed below.

The mutation strategy is a key differentiator of DE, and several variants exist. For instance, "best/1" uses the best solution in the population as the base vector, promoting faster convergence but potentially increasing the risk of premature convergence on local optima [28].

Crossover

Following mutation, a crossover operation is applied to increase the diversity of the population. It combines the mutant vector, Vi, with the target vector, Xi, to produce a trial vector, Ui [2]. The most common is binomial crossover, performed as follows for each component j:

$$U_{i,j} = \begin{cases} V_{i,j} & \text{if } rand(j) \leq CR \text{ or } j = j_{rand} \\ X_{i,j} & \text{otherwise} \end{cases}$$ [2]

Here, CR is the crossover probability within [0, 1], rand(j) is a uniform random number, and j_rand is a randomly chosen index ensuring the trial vector gets at least one component from the mutant vector [2]. The logical relationship of this operation is shown in the following diagram.

The crossover operation allows the algorithm to explore new combinations of parameters by mixing information from the mutant and the parent, while the CR parameter controls the influence of the mutant vector [2] [29].

Selection

The final step in the DE cycle is selection, which determines whether the target vector Xi or the trial vector Ui survives to the next generation. DE employs a simple one-to-one greedy selection mechanism:

$$X_{i,new} = \begin{cases} U_i & \text{if } f(U_i) \leq f(X_i) \\ X_i & \text{otherwise} \end{cases}$$ [2] [25]

For a minimization problem, if the trial vector Ui has an objective function value, f(Ui), equal to or lower than that of the target vector, f(Xi), it replaces the target vector in the next generation. Otherwise, the target vector is retained [2]. This greedy strategy ensures that no individual's objective value ever worsens, so the population's best and mean objective values are non-increasing from one generation to the next.
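Putting initialization, rand/1 mutation, binomial crossover, and greedy selection together gives a compact DE loop. The sketch below is one possible reading of the cycle, not a reference implementation: clipping mutants to the bounds and replacing individuals immediately within a generation are assumptions, and the function name `de_rand_1_bin` is illustrative.

```python
import numpy as np


def de_rand_1_bin(f, low, high, NP=20, F=0.8, CR=0.9, generations=100, seed=None):
    """Minimize f over the box [low, high] with DE/rand/1/bin."""
    rng = np.random.default_rng(seed)
    low, high = np.asarray(low, float), np.asarray(high, float)
    D = low.size

    # Initialization: uniform random points inside the bounds.
    pop = low + rng.random((NP, D)) * (high - low)
    fit = np.array([f(x) for x in pop])
    history = [fit.min()]

    for _ in range(generations):
        for i in range(NP):
            # Mutation: three distinct members, none equal to the target.
            others = [k for k in range(NP) if k != i]
            r1, r2, r3 = rng.choice(others, size=3, replace=False)
            v = pop[r1] + F * (pop[r2] - pop[r3])
            v = np.clip(v, low, high)  # assumption: simple bound handling

            # Binomial crossover: j_rand guarantees one mutant component.
            mask = rng.random(D) <= CR
            mask[rng.integers(D)] = True
            u = np.where(mask, v, pop[i])

            # Greedy one-to-one selection (ties go to the trial vector).
            fu = f(u)
            if fu <= fit[i]:
                pop[i], fit[i] = u, fu
        history.append(fit.min())

    best = int(np.argmin(fit))
    return pop[best], fit[best], history


best_x, best_f, history = de_rand_1_bin(
    lambda x: float(np.sum(x ** 2)), [-5.0] * 3, [5.0] * 3, seed=0
)
print(best_x, best_f)
```

Because selection never accepts a worse trial, the recorded best value in `history` can only stay the same or decrease, which is the monotonic behavior described above.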

Experimental Protocols and Parameter Analysis

A Numerical Example of a Single DE Iteration

To solidify understanding, consider minimizing the simple function $f(x) = x^2$ [25]. Suppose the current population of five 1-D individuals is $P = \{-5, 3, -2, -3, -1\}$ with fitness values $f(P) = \{25, 9, 4, 9, 1\}$ [25].

Individual 1 (x = -5, f=25):

- Mutation: Select $X_{r1}=3, X_{r2}=-2, X_{r3}=-1$. With $F=0.8$, $V_1 = 3 + 0.8 \cdot (-2 - (-1)) = 2.2$.

- Crossover: With a high CR, the trial vector $U_1 = 2.2$ (in one dimension the $j_{rand}$ rule passes the single mutant component through regardless of CR). Fitness $f(U_1)=4.84$.

- Selection: Since $4.84 < 25$, $X_1$ is replaced by $U_1$. New $X_1 = 2.2$.

Individual 5 (x = -1, f=1):

- Mutation: Select $X_{r1}=-2, X_{r2}=2.2, X_{r3}=3$, where 2.2 is the already updated $X_1$. $V_5 = -2 + 0.8 \cdot (2.2 - 3) = -2.64$.

- Crossover: $U_5 = -2.64$. Fitness $f(U_5) \approx 6.97$.

- Selection: Since $6.97 > 1$, $X_5$ is retained. $X_5 = -1$.

This process is repeated for all individuals, and over multiple iterations, the population converges towards the global minimum at $x=0$ [25].
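The arithmetic in this example is easy to check directly. The snippet below replays the two updates with the same population, the same selected indices, and F = 0.8; the list indices and variable names are the only additions.

```python
F = 0.8


def f(x):
    return x ** 2


# Population from the example; list index 0 holds individual 1, and so on.
population = [-5.0, 3.0, -2.0, -3.0, -1.0]

# Individual 1: X_r1 = 3, X_r2 = -2, X_r3 = -1.
v1 = population[1] + F * (population[2] - population[4])
u1 = v1                      # 1-D problem: the trial equals the mutant
if f(u1) <= f(population[0]):
    population[0] = u1       # trial is better, so it replaces the target

# Individual 5: X_r1 = -2, X_r2 = updated X_1, X_r3 = 3.
v5 = population[2] + F * (population[0] - population[1])
u5 = v5
if f(u5) <= f(population[4]):
    population[4] = u5       # not executed here: the trial is worse

print(f"V1 = {v1:.2f}, f(U1) = {f(u1):.2f}")
print(f"V5 = {v5:.2f}, f(U5) = {f(u5):.4f}")
print("population:", population)
```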

Parameter Settings and Control Methods

The performance of DE is highly influenced by the choice of its parameters: population size (NP), mutation factor (F), and crossover rate (CR).

Table: Key Parameters of Differential Evolution [2] [1] [30]

| Parameter | Symbol | Typical Range | Influence on Algorithm Behavior |

|---|---|---|---|

| Population Size | NP | [4, 10*D] | A larger population increases diversity and exploration but raises computational cost per generation. |

| Mutation Factor | F | [0.4, 1.0] | A smaller F favors exploitation (local search), while a larger F favors exploration (global search). Values above 1.0 can be used but may slow convergence. |

| Crossover Rate | CR | [0.7, 1.0] | A low CR is more conservative, inheriting more from the parent. A high CR gives the mutant more influence, increasing diversity. |

While static parameter settings are common (e.g., ( F=0.8 ), ( CR=0.9 )), more advanced DE variants employ adaptive or self-adaptive parameter control [30]. For example, the jDE algorithm adapts F and CR for each individual based on previous success [30], and other methods use fuzzy logic or reinforcement learning to dynamically tune parameters during the run [30].
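The self-adaptive idea behind jDE can be sketched in a few lines. The version below follows the rule as it is commonly described for jDE: each individual carries its own F and CR, and before its trial vector is built each value is either regenerated with a small probability or inherited unchanged. The constants (regeneration probabilities of 0.1, F drawn from [0.1, 1.0]) and the function name `jde_update` are assumptions for illustration, not values taken from [30].

```python
import random

# Assumed constants, in the style commonly reported for jDE.
F_LOWER, F_RANGE = 0.1, 0.9   # regenerated F lies in [0.1, 1.0]
TAU_F, TAU_CR = 0.1, 0.1      # probabilities of regenerating F and CR


def jde_update(F_i, CR_i, rng=random):
    """Return the (F, CR) pair used to build individual i's trial vector."""
    # With probability TAU_F draw a fresh F, otherwise inherit the old one.
    new_F = F_LOWER + rng.random() * F_RANGE if rng.random() < TAU_F else F_i
    # With probability TAU_CR draw a fresh CR in [0, 1), otherwise inherit.
    new_CR = rng.random() if rng.random() < TAU_CR else CR_i
    return new_F, new_CR


# Each individual starts from the static defaults mentioned above.
params = [(0.8, 0.9) for _ in range(5)]
params = [jde_update(F_i, CR_i) for F_i, CR_i in params]
print(params)
```

In a full algorithm the new pair would be kept only if the trial vector it produced survives selection; that is how earlier success shapes the parameter values that persist in the population.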

The Scientist's Toolkit: Research Reagents & Computational Solutions

For researchers implementing and applying Differential Evolution, the following tools are essential.

Table: Essential Computational Tools for Differential Evolution Research

| Tool / "Reagent" | Type | Function in Research | Examples & Notes |
|---|---|---|---|
| Algorithmic Framework | Software Library | Provides pre-built, tested implementations of DE and other evolutionary algorithms for rapid prototyping and application. | DEAP (Python), ECJ (Java), MOEA Framework (Java) [25]. |
| Numerical & Optimization Environment | Software Library | Offers built-in DE functions for quick integration into scientific computing workflows, often with robust constraint handling. | SciPy (scipy.optimize.differential_evolution) [27]. |
| Benchmark Problem Set | Standardized Functions | A suite of test functions for fairly comparing the performance of different DE variants and parameter settings. | CEC (Congress on Evolutionary Computation) Benchmark Suites [30]. |
| Low-Discrepancy Sequences | Initialization Method | Used to generate a more uniform initial population, potentially improving the algorithm's initial exploration phase. | Sobol, Halton, Latin Hypercube sequences [26] [27]. |
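As a brief illustration of the SciPy entry in the table, the sketch below calls `scipy.optimize.differential_evolution` on a toy problem. The objective (`sphere`), the bounds, and all parameter values are assumptions chosen for demonstration; `init="sobol"` selects a Sobol-based initial population, corresponding to the low-discrepancy row above.

```python
import numpy as np
from scipy.optimize import differential_evolution


def sphere(x):
    """Toy objective (assumed for illustration): sum of squares, minimum at 0."""
    return float(np.sum(x ** 2))


bounds = [(-5.0, 5.0)] * 3          # one (lower, upper) pair per dimension

result = differential_evolution(
    sphere,
    bounds,
    strategy="rand1bin",            # SciPy's name for DE/rand/1/bin
    popsize=15,                     # population size multiplier per dimension
    mutation=0.8,                   # F
    recombination=0.9,              # CR
    init="sobol",                   # low-discrepancy initial population
    maxiter=200,
    seed=1,                         # fixed seed for reproducibility
)

print(result.x, result.fun, result.nfev)
```

By default SciPy also polishes the best population member with a local optimizer at the end of the run (`polish=True`), so the reported optimum is not produced by DE alone unless that option is disabled.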

Advanced Strategies and Research Directions

To enhance DE's performance, researchers have developed numerous advanced strategies. Ensemble methods utilize multiple mutation strategies or parameter settings simultaneously during the optimization process [30]. For example, the EPSDE algorithm maintains a pool of mutation strategies and parameter values, allowing the algorithm to dynamically choose the most effective combination [30]. Another advanced variant, JADE, introduces a novel "current-to-pbest" mutation strategy that incorporates information from high-quality solutions to improve convergence speed [30].
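The "current-to-pbest" idea can be sketched as follows. This is a simplified illustration that assumes the commonly stated form of the operator, \( v_i = x_i + F (x_{pbest} - x_i) + F (x_{r1} - x_{r2}) \), where \( x_{pbest} \) is drawn at random from the best 100p% of the population. JADE's optional external archive and its parameter adaptation are omitted, and the function name and default values are hypothetical.

```python
import numpy as np


def current_to_pbest_mutation(pop, fitness, i, F=0.5, p=0.1, rng=None):
    """Mutant for individual i, pulled toward a randomly chosen top-p member.

    pop is an (NP, D) array, fitness an (NP,) array (minimization).
    """
    rng = np.random.default_rng() if rng is None else rng
    NP = len(pop)
    n_top = max(1, int(round(p * NP)))
    top = np.argsort(fitness)[:n_top]          # indices of the best members
    pbest = pop[rng.choice(top)]
    # Two distinct partners, both different from i.
    candidates = [k for k in range(NP) if k != i]
    r1, r2 = rng.choice(candidates, size=2, replace=False)
    return pop[i] + F * (pbest - pop[i]) + F * (pop[r1] - pop[r2])


rng = np.random.default_rng(0)
pop = rng.uniform(-5.0, 5.0, size=(10, 3))
fitness = np.sum(pop ** 2, axis=1)
print(current_to_pbest_mutation(pop, fitness, i=0, rng=rng))
```

Compared with a purely random base vector, the first difference term biases the mutant toward good solutions, while the second term keeps the random perturbation that maintains diversity.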

Hybrid methods combine DE with other optimization algorithms to leverage their complementary strengths. For instance, DE has been hybridized with the Gravitational Search Algorithm (GSA) and the Bat Algorithm to create more powerful optimizers [30]. Furthermore, machine learning techniques, such as Reinforcement Learning (RL), are being integrated to intelligently adapt the DE's parameters based on the state of the search [30]. These ongoing research efforts continue to expand the capabilities and applications of the Differential Evolution algorithm.

The Differential Evolution algorithm derives its power from a straightforward yet effective cycle of population-based operations. A solid grasp of its core components—initialization, which sets the starting point; mutation, which explores the landscape through differential vectors; crossover, which diversifies the search by combining solutions; and selection, which greedily refines the population—is fundamental for researchers aiming to apply or extend this versatile optimizer. By leveraging established computational tools and understanding the impact of key parameters and advanced strategies, scientists and engineers can effectively harness DE to tackle complex optimization challenges in drug development and other scientific domains.

Implementing Differential Evolution: From Basic Steps to Advanced Biomedical Applications

A Step-by-Step Walkthrough of the DE Algorithm (DE/rand/1/bin)

Differential Evolution (DE) is a population-based stochastic optimization algorithm belonging to the field of Evolutionary Computation, a subfield of Computational Intelligence. Proposed by Storn and Price in the mid-1990s, DE has established itself as a powerful and versatile optimizer for nonlinear and non-differentiable continuous space functions. Its simple structure, minimal control parameters, and robust performance have led to successful applications across numerous scientific and engineering domains, including machine learning, engineering design, and computational biology [17] [31]. Within the broader context of evolutionary algorithm research, DE distinguishes itself through a unique differential mutation operator that leverages vector differences between population members to navigate the search space effectively. This technical guide provides an in-depth examination of the canonical DE/rand/1/bin variant, detailing its mechanistic operations, parameter tuning, and implementation considerations for researchers and practitioners.

The DE algorithm follows the standard evolutionary computing paradigm, maintaining a population of candidate solutions which it iteratively improves through cycles of mutation, crossover, and selection. The algorithm's notation "DE/x/y/z" classifies variants based on their operational strategies: "x" denotes the base vector to be mutated (e.g., "rand" for random, "best" for the best solution in the population), "y" indicates the number of difference vectors used, and "z" specifies the crossover type (e.g., "bin" for binomial) [32] [33]. The DE/rand/1/bin variant, the focus of this guide, utilizes a randomly selected base vector with one difference vector and binomial crossover, striking an effective balance between exploration and exploitation throughout the search process.

Algorithmic Framework and Mathematical Formulation

Population Initialization

The DE algorithm begins by generating an initial population of NP candidate solutions, often called individuals or parameter vectors. Each individual is a D-dimensional vector representing a potential solution to the optimization problem within the defined search space. Formally, the population at generation g is denoted as \( P_{x,g} = (x_{i,g}) \), where \( i \in \{1, 2, \ldots, NP\} \), \( g \in \{0, 1, \ldots, g_{max}\} \), and \( x_{i,g} = (x_{j,i,g}) \), \( j \in \{1, 2, \ldots, D\} \) [34]. Each component of every individual is randomly initialized within the specified lower and upper bounds of the search space:

\[ x_{j,i,0} = x_j^L + \mathrm{rand}_{ij}(0,1) \cdot (x_j^U - x_j^L) \]

Here, \( \mathrm{rand}_{ij}(0,1) \) represents a uniformly distributed random number within [0, 1], while \( x_j^U \) and \( x_j^L \) denote the upper and lower bounds for the j-th dimension, respectively [13]. Because every component is drawn uniformly between its bounds, the initial population is spread across the whole search space in expectation, giving the subsequent evolutionary operations broad coverage to work from.
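The initialization rule translates directly into array code. The following is a minimal sketch using NumPy; the function name `initialize_population` and the example bounds are assumptions made for illustration.

```python
import numpy as np


def initialize_population(NP, lower, upper, rng=None):
    """Draw NP individuals uniformly at random between per-dimension bounds."""
    rng = np.random.default_rng() if rng is None else rng
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    # x_{j,i,0} = x_j^L + rand_ij(0,1) * (x_j^U - x_j^L), for all i and j at once
    return lower + rng.random((NP, lower.size)) * (upper - lower)


# Example: 6 individuals in a 2-D space with different bounds per dimension.
pop = initialize_population(6, lower=[-5.0, 0.0], upper=[5.0, 1.0],
                            rng=np.random.default_rng(0))
print(pop.shape)   # (6, 2)
```

Broadcasting applies each dimension's bounds to the corresponding column, so the per-component formula does not need an explicit loop.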

Table 1: Standard Parameter Ranges and Settings for DE/rand/1/bin

| Parameter | Symbol | Recommended Range | Default Value | Remarks |
|---|---|---|---|---|
| Population Size | NP | 30-50, or 5-10×D | 50 | Larger values improve exploration but increase computational cost |
| Scaling Factor | F | [0.4, 1.0] | 0.5 | Controls amplification of differential variation |
| Crossover Rate | CR | [0, 1] | 0.9 | Higher values favor exploration; lower values aid exploitation |
| Maximum Generations | gmax | Problem-dependent | 1000 | Determined by computational budget and convergence requirements |

Core Operational Mechanisms

The DE algorithm employs three principal operations—mutation, crossover, and selection—to evolve the population toward improved regions of the search space. These operations are applied sequentially to each individual in the population across successive generations.

Mutation: Differential Variation

The mutation operation generates a mutant vector \( v_{i,g} \) for each target vector \( x_{i,g} \) in the current population. For the DE/rand/1/bin variant, the mutation strategy combines a randomly selected base vector with a scaled difference between two other distinct randomly selected population members:

\[ v_{i,g} = x_{r_0,g} + F \cdot (x_{r_1,g} - x_{r_2,g}) \]

In this formulation, \( r_0 \), \( r_1 \), and \( r_2 \) are mutually distinct random indices, each also different from the current index i, and F is the scaling factor controlling the amplification of the differential variation \( (x_{r_1,g} - x_{r_2,g}) \) [34] [32]. This differential mutation strategy explores the search space by generating new solutions along the direction defined by the difference of two population members.
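A minimal NumPy sketch of this mutation step is shown below. The function name `mutate_rand_1` is hypothetical, and the population is assumed to be an (NP, D) array with NP of at least 4 so that three distinct partners exist.

```python
import numpy as np


def mutate_rand_1(pop, i, F=0.5, rng=None):
    """DE/rand/1 mutant for target index i."""
    rng = np.random.default_rng() if rng is None else rng
    # Candidate indices exclude the target, so r0, r1, r2 all differ from i.
    candidates = [k for k in range(len(pop)) if k != i]
    # Sampling without replacement makes r0, r1, r2 mutually distinct.
    r0, r1, r2 = rng.choice(candidates, size=3, replace=False)
    return pop[r0] + F * (pop[r1] - pop[r2])


rng = np.random.default_rng(0)
pop = rng.uniform(-5.0, 5.0, size=(6, 2))
print(mutate_rand_1(pop, i=0, F=0.5, rng=rng))
```

The mutant is not guaranteed to stay within the search bounds, which is why a bounds-handling step usually follows.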

Crossover: Binomial Recombination

Following mutation, DE applies a crossover operation to increase population diversity by combining parameters from the target vector \( x_{i,g} \) and its corresponding mutant vector \( v_{i,g} \). This process produces a trial vector \( u_{i,g} \). The binomial crossover scheme, denoted by "bin" in the algorithm's name, operates as follows:

\[ u_{j,i,g} = \begin{cases} v_{j,i,g} & \text{if } \mathrm{rand}(j) \le CR \text{ or } j = j_{rand} \\ x_{j,i,g} & \text{otherwise} \end{cases} \]

Here, rand(j) is a uniformly distributed random number in [0,1] generated for each dimension j, CR is the crossover probability controlling the fraction of parameters inherited from the mutant, and \( j_{rand} \) is a randomly selected dimension index ensuring the trial vector inherits at least one component from the mutant [34] [32]. This dimension-wise recombination creates a trial vector that shares characteristics with both parent solutions, balancing the introduction of new genetic material with the preservation of beneficial traits from the target vector.
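Binomial crossover amounts to building a Boolean mask over the dimensions. The sketch below is one way to write it with NumPy; the function name `binomial_crossover` is an assumption for illustration.

```python
import numpy as np


def binomial_crossover(target, mutant, CR=0.9, rng=None):
    """Build a trial vector by mixing target and mutant component-wise."""
    rng = np.random.default_rng() if rng is None else rng
    D = target.size
    take_mutant = rng.random(D) <= CR      # rand(j) <= CR, one draw per dimension
    take_mutant[rng.integers(D)] = True    # j_rand: force one mutant component
    return np.where(take_mutant, mutant, target)


target = np.zeros(5)
mutant = np.ones(5)
trial = binomial_crossover(target, mutant, CR=0.5, rng=np.random.default_rng(0))
print(trial)   # a mix of 0s (from the target) and 1s (from the mutant)
```

With CR close to 0 the trial differs from the target in little more than the forced component, while CR = 1 copies the mutant entirely.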

Selection: Greedy Competition

The final operation in the DE cycle is selection, which determines whether the trial vector \( u_{i,g} \) replaces the target vector \( x_{i,g} \) in the next generation. DE employs a greedy one-to-one selection strategy where the trial vector must demonstrate equal or superior fitness to survive:

\[ x_{i,g+1} = \begin{cases} u_{i,g} & \text{if } f(u_{i,g}) \le f(x_{i,g}) \\ x_{i,g} & \text{otherwise} \end{cases} \]

For minimization problems, if the trial vector's objective function value \( f(u_{i,g}) \) is less than or equal to that of the target vector \( f(x_{i,g}) \), the trial vector replaces the target vector in the subsequent generation; otherwise, the target vector is retained [32] [31]. This elitist selection mechanism ensures the population's average fitness never deteriorates, guiding the search toward progressively better solutions with each generation.
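Applied to a whole generation at once, the selection rule is a pair of element-wise choices. The sketch below assumes minimization and NumPy arrays; the function name `greedy_selection` and the toy numbers are hypothetical.

```python
import numpy as np


def greedy_selection(pop, fitness, trials, trial_fitness):
    """One-to-one survivor selection for minimization."""
    keep_trial = trial_fitness <= fitness                 # f(u) <= f(x)
    new_pop = np.where(keep_trial[:, None], trials, pop)  # row-wise choice
    new_fitness = np.where(keep_trial, trial_fitness, fitness)
    return new_pop, new_fitness


pop = np.array([[1.0, 1.0], [2.0, 2.0]])
fitness = np.array([2.0, 8.0])
trials = np.array([[0.0, 0.0], [3.0, 3.0]])
trial_fitness = np.array([0.0, 18.0])

new_pop, new_fitness = greedy_selection(pop, fitness, trials, trial_fitness)
print(new_pop)       # first row replaced by its trial, second row kept
print(new_fitness)
```

Since each entry of the new fitness array is the smaller of the two competing values, neither the best nor the mean fitness can get worse.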

Figure 1: Differential Evolution (DE/rand/1/bin) Algorithm Workflow

Implementation Protocol

Step-by-Step Algorithmic Procedure

Implementing the DE/rand/1/bin algorithm requires careful attention to the sequence of operations and parameter settings. The following step-by-step protocol provides a reproducible methodology for researchers:

1. Algorithm Parameterization: Set the population size (NP), scaling factor (F), crossover rate (CR), and termination criteria. For initial experimentation, the default values of NP=50, F=0.5, and CR=0.9 provide a robust starting point [34].

2. Population Initialization: Generate NP individuals uniformly distributed across the search space using the initialization formula given under Population Initialization. For a D-dimensional problem with bounds \( [x^L, x^U] \) for each dimension:

   \[ P_{x,0} = x^L + \mathrm{random}(NP, D) \odot (x^U - x^L) \]

   where random(NP, D) generates an NP×D matrix of uniform random numbers in [0,1], \( \odot \) denotes element-wise multiplication, and the bounds are applied to every row.

3. Fitness Evaluation: Compute the objective function value \( f(x_i) \) for each individual in the initial population.

4. Generational Loop: While termination criteria are not met, iterate through the following steps for each generation:

   a. Mutation: For each target vector \( x_{i,g} \), randomly select three individuals \( x_{r_0,g} \), \( x_{r_1,g} \), \( x_{r_2,g} \) from the population such that the indices i, \( r_0 \), \( r_1 \), and \( r_2 \) are mutually distinct. Generate a mutant vector:

      \[ v_{i,g} = x_{r_0,g} + F \cdot (x_{r_1,g} - x_{r_2,g}) \]

      Implement bounds checking to ensure \( v_{i,g} \) remains within the feasible search space [31].

   b. Crossover: For each dimension j in the solution vector, generate a trial vector through binomial crossover:

      \[ u_{j,i,g} = \begin{cases} v_{j,i,g} & \text{if } \mathrm{rand}(j) \le CR \text{ or } j = j_{rand} \\ x_{j,i,g} & \text{otherwise} \end{cases} \]

      where \( j_{rand} \) is a randomly selected dimension index ensuring at least one component is inherited from the mutant vector.

   c. Selection: Evaluate the trial vector \( u_{i,g} \) and compare its fitness with the target vector \( x_{i,g} \):

      \[ x_{i,g+1} = \begin{cases} u_{i,g} & \text{if } f(u_{i,g}) \le f(x_{i,g}) \\ x_{i,g} & \text{otherwise} \end{cases} \]

5. Termination Check: After each generation, evaluate termination criteria such as maximum function evaluations, convergence threshold, or maximum generations. Common termination conditions include:

   - Maximum function evaluations (e.g., FE ≥ 20000)

   - Maximum computation time (e.g., TIME_MIN > 10)

   - Stagnation criteria (e.g., BESTREMAINSFE > threshold) [34]

6. Solution Extraction: Upon termination, return the best solution found in the final population.
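The protocol above can be condensed into a short, self-contained routine. The following is a minimal sketch rather than a reference implementation: the function name `de_rand_1_bin`, the use of clipping for bounds handling, the once-per-generation check of the evaluation budget, and the toy `sphere` objective are all assumptions made for illustration.

```python
import numpy as np


def de_rand_1_bin(objective, lower, upper, NP=50, F=0.5, CR=0.9,
                  max_generations=1000, max_evals=20000, seed=None):
    """Minimize `objective` over box bounds with DE/rand/1/bin."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    D = lower.size

    # Initialization and initial fitness evaluation.
    pop = lower + rng.random((NP, D)) * (upper - lower)
    fitness = np.array([objective(x) for x in pop])
    evals = NP

    for _ in range(max_generations):
        if evals >= max_evals:          # budget checked once per generation
            break
        new_pop, new_fitness = pop.copy(), fitness.copy()
        for i in range(NP):
            # (a) Mutation: r0, r1, r2 mutually distinct and different from i.
            candidates = [k for k in range(NP) if k != i]
            r0, r1, r2 = rng.choice(candidates, size=3, replace=False)
            mutant = pop[r0] + F * (pop[r1] - pop[r2])
            mutant = np.clip(mutant, lower, upper)   # bounds check by clipping

            # (b) Binomial crossover with a forced component j_rand.
            take_mutant = rng.random(D) <= CR
            take_mutant[rng.integers(D)] = True
            trial = np.where(take_mutant, mutant, pop[i])

            # (c) Greedy one-to-one selection.
            f_trial = objective(trial)
            evals += 1
            if f_trial <= fitness[i]:
                new_pop[i], new_fitness[i] = trial, f_trial
        pop, fitness = new_pop, new_fitness

    best = int(np.argmin(fitness))
    return pop[best], float(fitness[best]), evals


def sphere(x):
    """Toy objective with its minimum of 0 at the origin."""
    return float(np.sum(x ** 2))


best_x, best_f, used = de_rand_1_bin(sphere, [-5.0] * 5, [5.0] * 5,
                                     NP=20, max_evals=5000, seed=42)
print(best_x, best_f, used)
```

Survivors are written into a copy of the population, so every mutant in a generation is built from the previous generation's vectors. Updating the population in place is a common alternative that lets improvements spread within the same generation.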

Practical Implementation Considerations

Boundary Constraint Handling: When mutant vectors violate search space boundaries, apply a bounding method such as:

- Random reinitialization within bounds

- Reflection from boundaries

- Clipping to the nearest boundary [31]
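The three bounding methods listed above can be sketched as small NumPy helpers. The function names are hypothetical, and the reflection variant adds a final clip as a safeguard for violations larger than the width of the search interval, which is an assumption of this sketch rather than part of the cited description.

```python
import numpy as np


def clip_to_bounds(v, lower, upper):
    """Move each violating component to the nearest boundary."""
    return np.clip(v, lower, upper)


def reflect_into_bounds(v, lower, upper):
    """Mirror each violating component back across the boundary it crossed."""
    v = np.where(v < lower, 2 * lower - v, v)
    v = np.where(v > upper, 2 * upper - v, v)
    return np.clip(v, lower, upper)   # safeguard for very large violations


def reinitialize_out_of_bounds(v, lower, upper, rng=None):
    """Replace each violating component with a fresh uniform sample."""
    rng = np.random.default_rng() if rng is None else rng
    outside = (v < lower) | (v > upper)
    fresh = lower + rng.random(v.shape) * (upper - lower)
    return np.where(outside, fresh, v)


lower = np.full(3, -5.0)
upper = np.full(3, 5.0)
mutant = np.array([-6.0, 2.0, 7.5])

print(clip_to_bounds(mutant, lower, upper))       # [-5.  2.  5.]
print(reflect_into_bounds(mutant, lower, upper))  # [-4.   2.   2.5]
print(reinitialize_out_of_bounds(mutant, lower, upper,
                                 rng=np.random.default_rng(0)))
```

Clipping tends to pile solutions up on the boundary, whereas reflection and reinitialization keep them in the interior; which behavior is preferable depends on whether the optimum is expected to lie on a bound.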

Solution Representation: DE operates on real-valued parameter vectors, making it suitable for continuous optimization problems. For mixed-integer or discrete problems, appropriate encoding schemes are necessary.

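Computational Efficiency: Excluding objective function evaluations, the algorithm's own operations (mutation, crossover, and selection) scale as O(NP × D × gmax). In practice, evaluating the population is typically the most computationally expensive component. Because the trial vectors of a generation can be evaluated independently of one another, parallelizing fitness evaluations can substantially reduce run time for computationally intensive objective functions.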
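One simple way to parallelize fitness evaluation is to map the objective over the population with a worker pool. The sketch below uses a thread pool from Python's standard library; the function name `evaluate_population` and the toy objective are hypothetical. Threads mainly help when the objective releases the interpreter lock, for example by calling an external simulation or compiled code; for pure-Python objectives a process pool would be the more likely choice.

```python
from concurrent.futures import ThreadPoolExecutor


def evaluate_population(objective, population, max_workers=4):
    """Evaluate every candidate; results are returned in population order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(objective, population))


def sphere(x):
    """Toy stand-in for an expensive objective function."""
    return sum(xi ** 2 for xi in x)


population = [[1.0, 2.0], [0.5, -0.5], [3.0, 0.0]]
print(evaluate_population(sphere, population))   # [5.0, 0.5, 9.0]
```

This pattern fits the generational form of DE, where all trial vectors are created first and then evaluated as a batch before selection.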

Parameter Tuning and Performance Analysis

Experimental Analysis of Control Parameters

The performance of DE/rand/1/bin is significantly influenced by the appropriate setting of three key control parameters: population size (NP), scaling factor (F), and crossover rate (CR). Through extensive empirical studies, researchers have established heuristic guidelines for parameter selection based on problem characteristics:

Table 2: Parameter Configuration Guidelines for Different Problem Types

| Problem Characteristics | Population Size (NP) | Scaling Factor (F) | Crossover Rate (CR) | Rationale |

|---|---|---|---|---|

| Low-dimensional (D < 10) | 30-50 | 0.5-0.6 | 0.8-0.9 | Smaller populations with higher CR for efficient search |

| High-dimensional (D > 30) | 5×D to 10×D | 0.4-0.5 | 0.9-1.0 | Larger populations maintain diversity; lower F prevents overshooting |