Decoding the Cellular Control System: A Guide to Machine Learning Classification of Gene Regulatory Network Topological Features


Abstract

This article provides a comprehensive exploration of machine learning (ML) techniques for classifying topological features within Gene Regulatory Networks (GRNs). Aimed at researchers, scientists, and drug development professionals, it covers the foundational principles of key GRN topological metrics—such as degree, Knn, and PageRank—and their biological significance in distinguishing regulators from targets and identifying life-essential subsystems. The scope extends to a review of state-of-the-art methodologies, including Graph Neural Networks (GNNs) and Topological Deep Learning (TDL), and addresses critical challenges like data sparsity and noise. Finally, the article outlines rigorous validation frameworks and benchmarks, synthesizing how topological feature classification can drive advances in understanding disease mechanisms and accelerating therapeutic discovery.

The Blueprint of Life: Understanding GRN Topology and Its Key Features

What is a Gene Regulatory Network? Defining the Cellular Wiring Diagram

A Gene Regulatory Network (GRN) is a collection of molecular regulators that interact with each other and with other substances in the cell to govern the gene expression levels of mRNA and proteins, which in turn determine cellular function [1]. Think of a GRN as the cell's wiring diagram—a complex, hierarchical circuit that directs the flow of genetic information, enabling a cell to respond to its environment, undergo development, and maintain its identity [1] [2]. These networks are central to morphogenesis (the creation of body structures) and are fundamental to understanding evolutionary developmental biology [1].

In practical terms, GRNs consist of genes, transcription factors (TFs), microRNAs, and other regulatory molecules represented as nodes. The regulatory interactions between them—such as activation or repression—are represented as edges [3]. The structure of these networks is not random; they often approximate a hierarchical, scale-free topology with a few highly connected hubs and many poorly connected nodes [1]. This organization supports key biological properties like robustness and adaptability [3].

The Biological Foundation of GRNs

At its core, a GRN describes the regulatory logic that controls when and where genes are turned on or off. In multicellular organisms, this process is vital for directing cellular fate [2].

- Key Components: The physical components of a GRN include cis-regulatory elements (stretches of DNA), transcription factors (proteins), and signaling molecules [2]. For example, in neural development, transcription factors like SOX9 and REST are targeted by microRNAs such as miR-124 to fine-tune the process of neural stem cell differentiation [2].

- Recurring Circuit Motifs: GRNs are characterized by recurring patterns of interaction known as network motifs. A quintessential example is the feed-forward loop, which consists of three genes connected in a specific pattern [1]. This motif can perform defined functions, such as accelerating the activation of a target operon in E. coli or acting as a fold-change detector in the Wnt signaling pathway of Xenopus, thus providing speed and noise resistance to the network [1].

- Dynamic Operation: The operation of GRNs is dynamic. They can include feedback loops that stabilize cellular states or ensure progression through developmental pathways. A 'self-sustaining feedback loop' can help a cell maintain its identity across divisions [1]. Furthermore, morphogen gradients provide a positioning system that tells a cell its location in the body, thereby influencing its fate [1].
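
A feed-forward loop is easy to detect computationally once the GRN is stored as a directed edge list. The sketch below is a minimal illustration, not code from the cited sources. It enumerates every triple (X, Y, Z) with edges X→Y, Y→Z and X→Z. The gene names are hypothetical, and because edge signs (activation or repression) are ignored, it does not separate coherent from incoherent loops.

```python
from collections import defaultdict


def find_feed_forward_loops(edges):
    """Return every (x, y, z) with edges x->y, y->z and x->z (self-loops ignored)."""
    targets = defaultdict(set)
    for src, dst in edges:
        if src != dst:
            targets[src].add(dst)
    loops = []
    for x, x_targets in list(targets.items()):
        for y in x_targets:
            # z must be reached both indirectly (via y) and directly from x.
            for z in targets.get(y, ()):
                if z != x and z in x_targets:
                    loops.append((x, y, z))
    return sorted(loops)


# Hypothetical toy network: X -> Y -> Z plus the shortcut X -> Z, and one extra edge.
toy_edges = [("X", "Y"), ("Y", "Z"), ("X", "Z"), ("Z", "W")]
print(find_feed_forward_loops(toy_edges))  # [('X', 'Y', 'Z')]
```

Counting such triples in a real GRN and comparing the count with randomized networks of the same degree sequence is the usual way a pattern is established as an enriched motif.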

Computational Inference: Mapping the Wiring Diagram

Inferring the structure of GRNs from experimental data is a central challenge in systems biology. The goal is to predict the directed, regulatory relationships between transcription factors and their target genes. The field has evolved significantly with the advent of high-throughput technologies.

Table 1: Essential Research Reagents and Data Types for GRN Inference

The following table details key experimental reagents and data types crucial for generating inputs for GRN inference algorithms.

| Reagent/Data Type | Primary Function in GRN Research |
|---|---|
| scRNA-seq Data (Single-cell RNA sequencing) | Profiles genome-wide gene expression at the level of individual cells, enabling the study of cellular heterogeneity and the inference of GRNs in specific cell types [3] [4]. |
| ChIP-seq Data (Chromatin Immunoprecipitation sequencing) | Identifies genome-wide binding sites for a specific transcription factor or histone modification, providing evidence for direct physical interactions between a TF and DNA [5] [3]. |
| ATAC-seq Data (Assay for Transposase-Accessible Chromatin) | Maps regions of open, accessible chromatin, which often correspond to active regulatory elements like promoters and enhancers [3]. |
| Perturb-seq Data | Involves coupling genetic perturbations (e.g., CRISPR-based) with single-cell RNA sequencing to uncover causal gene relationships by observing downstream effects [6]. |
| Prior GRN Databases (e.g., STRING) | Collections of known molecular interactions from curated databases, often used as prior knowledge to guide or validate computational inferences [4]. |

Evolution of GRN Inference Methodologies

The methods for inferring GRNs have transitioned from traditional statistical approaches to modern machine learning and deep learning techniques.

- Classical Machine Learning Methods: Early approaches included:

  - GENIE3: A tree-based ensemble method (Random Forests) that frames inference as one supervised regression problem per target gene and ranks candidate regulators by their feature importance [3] [7].

  - ARACNE: An unsupervised method based on mutual information that infers interactions while eliminating indirect edges [7].

  - LASSO: A regression-based method that uses regularization to infer sparse network structures [3].

- Modern Deep Learning Approaches: Current state-of-the-art methods leverage deep learning to model complex, non-linear relationships and integrate diverse data types [3]. These can be categorized by their learning paradigms:

  - Supervised Learning: Methods like STGRNS and GRNFormer use architectures like Transformers, trained on known TF-target gene interactions to predict new edges [3].

  - Unsupervised Learning: Methods like GRN-VAE use variational autoencoders to learn latent representations of gene expression data and infer relationships without labeled data [3].

  - Graph-Based Learning: Methods like GRLGRN and GCNG represent the prior knowledge of gene interactions as a graph and use Graph Neural Networks (GNNs) or Graph Transformers to learn gene embeddings that predict new regulatory dependencies [3] [4].
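
GENIE3 and LASSO share one idea: infer the network one target gene at a time by regressing its expression on candidate regulators, then score each regulator→target edge by how much that regulator contributes to the fit. The sketch below assumes scikit-learn and uses synthetic data. The hyperparameters (200 trees, `max_features="sqrt"`, `alpha=0.05`) are illustrative, and the published GENIE3 tool adds details omitted here, so treat this as a simplified reading rather than either method's reference implementation.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import Lasso


def score_edges(expr, regulators, method="rf", seed=0):
    """Per-target regression scoring in the spirit of GENIE3 ("rf") or LASSO ("lasso").

    expr: (n_samples, n_genes) expression matrix.
    regulators: column indices of candidate transcription factors.
    Returns W with W[i, j] = evidence for the edge regulator i -> gene j.
    """
    n_genes = expr.shape[1]
    W = np.zeros((n_genes, n_genes))
    for target in range(n_genes):
        inputs = [r for r in regulators if r != target]  # exclude self-regulation
        X, y = expr[:, inputs], expr[:, target]
        if method == "rf":
            model = RandomForestRegressor(n_estimators=200, max_features="sqrt",
                                          random_state=seed)
            model.fit(X, y)
            weights = model.feature_importances_
        else:
            model = Lasso(alpha=0.05)  # illustrative regularization strength
            model.fit(X, y)
            weights = np.abs(model.coef_)
        W[inputs, target] = weights
    return W


# Synthetic data: genes 0 and 1 are TFs; gene 2 follows TF 0, gene 3 follows TF 1,
# and gene 4 is pure noise.
rng = np.random.default_rng(0)
tf = rng.normal(size=(200, 2))
expr = np.column_stack([
    tf,
    2.0 * tf[:, 0] + 0.1 * rng.normal(size=200),
    -1.5 * tf[:, 1] + 0.1 * rng.normal(size=200),
    rng.normal(size=200),
])
for method in ("rf", "lasso"):
    W = score_edges(expr, regulators=[0, 1], method=method)
    print(method, "score 0->2:", round(W[0, 2], 2), "score 1->2:", round(W[1, 2], 2))
```

Sorting all off-diagonal entries of `W` from highest to lowest gives the ranked edge list that benchmark metrics such as AUROC and AUPRC are computed on.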

Comparative Analysis of GRN Inference Methods

A critical step for researchers is selecting the appropriate inference algorithm. The performance of different methods can be benchmarked on standardized scRNA-seq datasets from various cell lines, with ground-truth networks derived from sources like STRING or ChIP-seq [4]. Common evaluation metrics include the Area Under the Receiver Operating Characteristic Curve (AUROC) and the Area Under the Precision-Recall Curve (AUPRC).
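
Both metrics are computed from a ranked list of candidate edges scored against the ground-truth network. A minimal sketch using scikit-learn's `roc_auc_score` and `average_precision_score` follows. The toy matrices are hypothetical, and `average_precision_score` is a step-wise estimate of AUPRC rather than a trapezoidal one. Because true GRNs are sparse, a random ranking scores about 0.5 AUROC but only about the fraction of positive edges in AUPRC, which is why AUPRC is usually the more demanding of the two.

```python
import numpy as np
from sklearn.metrics import average_precision_score, roc_auc_score


def evaluate_grn(scores, truth, regulators):
    """AUROC and AUPRC over all candidate regulator -> gene pairs (self-pairs excluded).

    scores, truth: (n_genes, n_genes) arrays; truth[i, j] = 1 marks a known edge i -> j.
    """
    y_true, y_score = [], []
    for i in regulators:
        for j in range(truth.shape[1]):
            if i != j:
                y_true.append(int(truth[i, j]))
                y_score.append(float(scores[i, j]))
    return roc_auc_score(y_true, y_score), average_precision_score(y_true, y_score)


# Hypothetical 4-gene example: genes 0 and 1 are TFs; true edges are 0->2 and 1->3.
truth = np.zeros((4, 4), dtype=int)
truth[0, 2] = truth[1, 3] = 1
perfect = truth.astype(float)
auroc, auprc = evaluate_grn(perfect, truth, regulators=[0, 1])
print(auroc, auprc)  # 1.0 1.0
```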

Table 2: Performance Comparison of Selected GRN Inference Methods

This table summarizes the reported performance of a selection of classical and modern methods, highlighting the advancements brought by deep learning.

| Method | Type | Key Technology | Reported Performance |
|---|---|---|---|
| GRLGRN [4] | Deep Learning (Graph-based) | Graph Transformer Network | Achieved the best AUROC on 78.6% of benchmark datasets, with an average improvement of 7.3% over other models. |
| GENIE3 [3] [7] | Classical ML | Random Forest | A widely used benchmark; performance is generally strong but often surpassed by newer deep learning models on complex datasets. |
| ARACNE [7] | Classical ML | Mutual Information | Effective at removing indirect edges, but may struggle to recover the full network because of its strict statistical filtering. |
| GRN-VAE [3] | Deep Learning (Unsupervised) | Variational Autoencoder | Demonstrates the ability to infer networks in an unsupervised manner, capturing complex data distributions. |
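
The indirect-edge removal credited to ARACNE rests on the data processing inequality (DPI): if gene A influences gene C only through gene B, then I(A;C) cannot exceed min(I(A;B), I(B;C)), so in every fully connected triple the weakest edge is the most likely to be indirect. The sketch below assumes a mutual-information table has already been estimated and thresholded (both steps are omitted), and its fractional `tolerance` parameter is an assumption modeled loosely on ARACNE's DPI tolerance.

```python
import itertools


def dpi_prune(mi, tolerance=0.0):
    """Remove likely indirect edges with the data processing inequality (DPI).

    mi maps frozenset({gene_a, gene_b}) -> mutual information (already estimated
    and thresholded). In every fully connected triple, the weakest edge is dropped
    if it is weaker than the other two by more than the fractional `tolerance`.
    """
    genes = sorted({g for pair in mi for g in pair})
    removed = set()
    for a, b, c in itertools.combinations(genes, 3):
        triangle = [frozenset(p) for p in ((a, b), (b, c), (a, c))]
        if all(e in mi for e in triangle):
            weakest = min(triangle, key=mi.get)
            next_weakest = min(mi[e] for e in triangle if e != weakest)
            if mi[weakest] < next_weakest * (1.0 - tolerance):
                removed.add(weakest)
    # Triangles are judged on the original MI values, so removal order does not matter.
    return {e: v for e, v in mi.items() if e not in removed}


# Hypothetical chain A -> B -> C: the A-C dependence is weaker and only indirect.
mi = {frozenset({"A", "B"}): 0.9, frozenset({"B", "C"}): 0.8, frozenset({"A", "C"}): 0.4}
kept = dpi_prune(mi)
print(sorted(tuple(sorted(e)) for e in kept))  # [('A', 'B'), ('B', 'C')]
```

A larger tolerance keeps more triangles intact, trading some indirect edges for fewer wrongly removed true ones, which matches the table's note that strict filtering can cost recall.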

Experimental Protocols for GRN Benchmarking

To generate the comparative data found in studies and tables like the one above, a standardized experimental protocol is essential. The following workflow, as implemented in studies benchmarking tools like GRLGRN [4], provides a template for rigorous comparison.

Detailed Workflow for GRN Inference and Evaluation

- Dataset Curation: Collect multiple benchmark scRNA-seq datasets from public resources like the BEELINE database. These should span different cell lines (e.g., human embryonic stem cells, mouse dendritic cells) and include pre-defined ground-truth networks derived from experimental evidence (e.g., STRING, ChIP-seq) [4].

- Data Preprocessing: For each dataset, preprocess the gene expression matrix (e.g., normalization, log-transformation) and format the corresponding ground-truth adjacency matrix, where an entry of 1 indicates a validated regulatory interaction.

- Model Training and Inference:

  - For Deep Learning Models (e.g., GRLGRN): The model architecture typically includes:

    - A Gene Embedding Module that uses a graph transformer network to extract implicit links from a prior GRN and a Graph Convolutional Network (GCN) to generate gene embeddings from both the prior graph and the gene expression matrix [4].

    - A Feature Enhancement Module that uses an attention mechanism (e.g., CBAM) to refine the extracted gene features [4].

    - An Output Module that takes the refined embeddings of a transcription factor and a potential target gene to predict the likelihood of a regulatory edge [4].

  - The model is trained using a loss function that often includes a regularization term, such as graph contrastive learning, to prevent over-smoothing [4].


- Model Evaluation: For each method, compute the ranked list of all possible TF-gene edges. Use this ranking to calculate performance metrics like AUROC and AUPRC against the held-out ground-truth network. Perform cross-validation and report results across multiple datasets to ensure robustness [4].

- Downstream Analysis and Validation: Conduct case studies on top-ranked novel predictions. Perform enrichment analysis on hub genes in the inferred network and visualize the resulting GRN structure to assess its biological plausibility [4].
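
The preprocessing step can be sketched in a few lines of NumPy. This minimal version assumes raw counts and rescales each cell to a common total of 10,000 before `log1p`; that target is a widely used convention assumed here, not a value taken from the GRLGRN study. It then builds the ground-truth adjacency matrix from a list of validated (TF, target) pairs, dropping pairs whose genes are absent from the expression data. Gene names are hypothetical.

```python
import numpy as np


def preprocess_counts(counts, target_sum=1e4):
    """Scale each cell (row) to `target_sum` total counts, then apply log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    library_size = counts.sum(axis=1, keepdims=True)
    library_size[library_size == 0] = 1.0  # leave empty cells at zero instead of dividing by 0
    return np.log1p(counts / library_size * target_sum)


def edges_to_adjacency(edge_list, genes):
    """A[i, j] = 1 when (genes[i] -> genes[j]) is a validated regulatory edge."""
    index = {g: k for k, g in enumerate(genes)}
    A = np.zeros((len(genes), len(genes)), dtype=int)
    for tf, target in edge_list:
        if tf in index and target in index:  # skip edges to genes not in the data
            A[index[tf], index[target]] = 1
    return A


# Hypothetical inputs: 2 cells x 3 genes, and two reference edges (one unmappable).
X = preprocess_counts([[10, 0, 30], [0, 0, 0]])
A = edges_to_adjacency([("TF1", "G1"), ("TF1", "MISSING")], genes=["TF1", "G1", "G2"])
print(X.round(2))
print(A)
```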

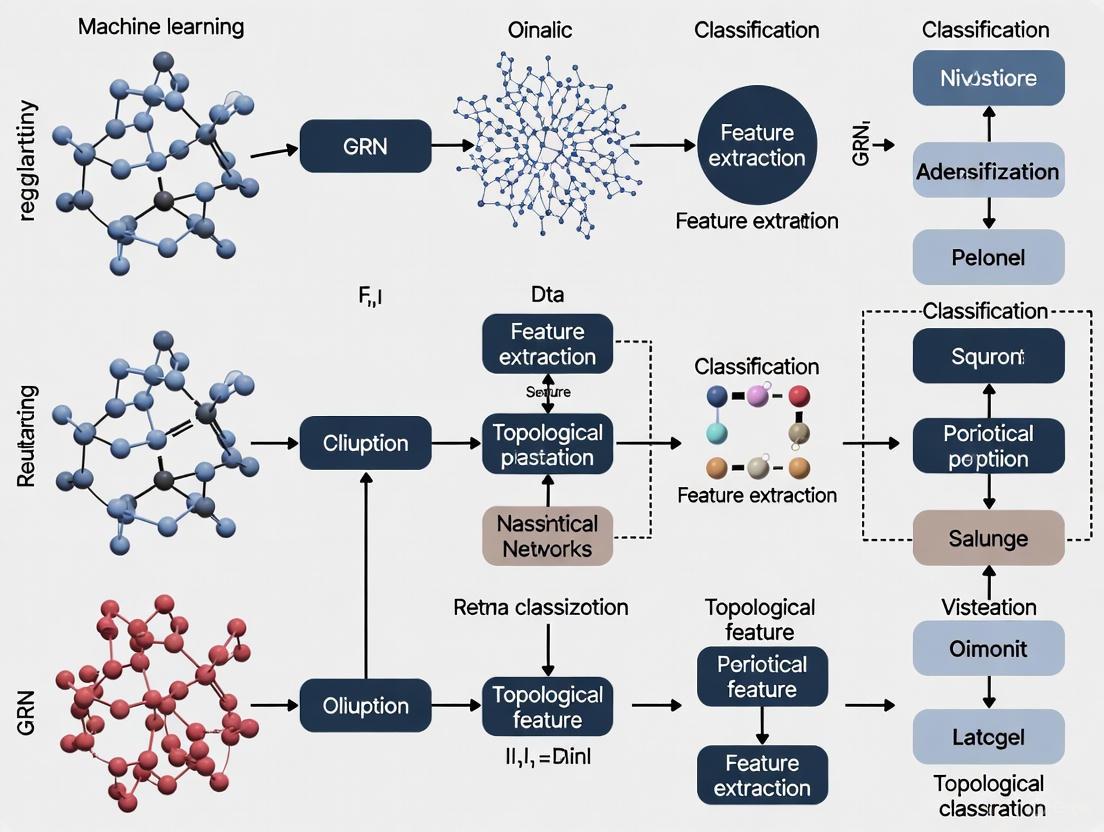

GRN Inference Workflow

The Role of GRNs in Disease and Therapeutics

Understanding GRNs has profound implications for biomedicine. Dysregulation of GRNs is a fundamental mechanism in many diseases, especially neurological and psychiatric disorders [2].

- Neurological Disorders: In Huntington's disease, widespread alterations in cortical and striatal GRNs lead to the repression of key neuronal genes. In Alzheimer's disease, network analysis has identified dysregulated functional modules related to immune system and microglial function, with TYROBP emerging as a key driver gene [2].

- Cancer Research: GRNs can reveal the molecular underpinnings of oncogenesis. For example, the loss of feedback processes in regulatory networks can lead to uncontrolled cell proliferation, a hallmark of cancer [1]. Network-based approaches are being used to identify key driver genes and potential therapeutic targets [7].

- Drug Development: The perspective of GRNs enables a shift from targeting single molecules to targeting dysregulated networks. Interventions could aim to restore a network to its healthy state by modulating the activity of key hubs, such as transcription factors or epigenetic regulators [2].

The study of Gene Regulatory Networks represents a paradigm shift from a reductionist view of biology to a systems-level understanding. The "cellular wiring diagram" is not static; it is a dynamic, context-specific, and hierarchical system that dictates cellular phenotype. The field is rapidly advancing due to the convergence of single-cell multi-omics technologies and sophisticated AI-driven inference models, particularly deep learning methods that can integrate diverse data types and learn complex regulatory logic.

Future progress will depend on several key factors: the development of more accurate and scalable inference algorithms; the creation of comprehensive, gold-standard benchmarking resources; and a continued focus on biological validation. As these tools mature, the application of GRN knowledge in clinical settings, such as identifying novel drug targets and enabling personalized medicine strategies, will move from a promising prospect to a tangible reality.

In the field of systems biology, the analysis of Gene Regulatory Networks (GRNs) has become a cornerstone for understanding cellular processes, disease mechanisms, and identifying potential drug targets. GRNs represent the complex web of interactions where transcription factors regulate target genes, controlling gene expression across different conditions and developmental stages [8]. The topological analysis of these networks provides a powerful, structure-based approach to uncover their functional organization and identify critically important elements. Among the myriad of topological metrics available, four features have consistently proven essential for classifying genes and understanding their roles: Degree, Knn (Average Nearest Neighbor Degree), PageRank, and Betweenness Centrality. This guide provides a comparative analysis of these core topological features, examining their performance characteristics, computational methodologies, and applications within machine learning frameworks for GRN analysis, offering researchers an evidence-based resource for selecting appropriate metrics for their investigations.

Methodological Framework for Topological Feature Analysis

Definition and Computation of Core Features

The meaningful application of topological features in GRN analysis requires a clear understanding of their mathematical definitions and computational approaches. In graph theory terms, a GRN is represented as a directed graph G = (V, E) where vertices (V) correspond to genes and directed edges (E) represent regulatory interactions [9].

Degree Centrality: This fundamental measure counts the number of direct connections a node possesses. In directed GRNs, this separates into in-degree (number of regulators targeting the gene) and out-degree (number of targets regulated by the gene) [8] [10]. Degree is computed as \( C_{\text{deg}}(v) = d(v) \), where \( d(v) \) represents the number of edges incident to vertex v. Its computational simplicity (O(|V|)) makes it scalable to large networks.
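
Computing in- and out-degree directly from an edge list takes a single pass over the edges, O(|E|); the O(|V|) figure above assumes degrees are already stored with each node. The toy network and gene names below are hypothetical, and the sketch assumes the edge list contains no duplicates.

```python
from collections import Counter


def degree_table(edges):
    """In-degree, out-degree and total degree for each node of a directed edge list."""
    out_deg = Counter(src for src, _ in edges)
    in_deg = Counter(dst for _, dst in edges)
    nodes = sorted(set(out_deg) | set(in_deg))
    return {v: (in_deg[v], out_deg[v], in_deg[v] + out_deg[v]) for v in nodes}


# Hypothetical toy GRN: TF_A regulates g1, g2 and TF_B; TF_B regulates g2.
grn = [("TF_A", "g1"), ("TF_A", "g2"), ("TF_A", "TF_B"), ("TF_B", "g2")]
for node, (k_in, k_out, k_total) in degree_table(grn).items():
    print(f"{node}: in={k_in} out={k_out} total={k_total}")
```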

Knn (Average Nearest Neighbor Degree): This measure captures the connectivity patterns of a node's immediate neighborhood. For a node i, \( K_{nn}(i) = \frac{1}{|N_i|} \sum_{j \in N_i} k_j \), where \( N_i \) is the set of neighbors of i and \( k_j \) is the degree of neighbor j [11]. Knn helps identify whether highly connected nodes tend to link with other highly connected nodes (assortative mixing) or with poorly connected nodes (disassortative mixing).
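
The definition translates directly into code. One modeling choice is needed for directed GRNs: the sketch treats every regulatory edge as undirected when collecting neighbors and degrees, which is an assumption (a study may instead use in- or out-neighbors). The hypothetical star-shaped example shows the pattern described later in this section: a hub regulator wired to single-regulator targets has a low Knn, while each of its targets has a high one.

```python
from collections import defaultdict


def average_neighbor_degree(edges):
    """Knn(i) = (1/|N_i|) * sum of k_j over neighbors j, with edges treated as undirected."""
    neighbors = defaultdict(set)
    for u, v in edges:
        if u != v:  # ignore self-regulation
            neighbors[u].add(v)
            neighbors[v].add(u)
    degree = {node: len(nbrs) for node, nbrs in neighbors.items()}
    return {node: sum(degree[j] for j in nbrs) / len(nbrs)
            for node, nbrs in neighbors.items()}


# Hypothetical hub: TF_A regulates four targets that have no other regulator.
star = [("TF_A", f"g{i}") for i in range(1, 5)]
knn = average_neighbor_degree(star)
print(knn["TF_A"], knn["g1"])  # 1.0 4.0
```

Once degrees are known, each edge contributes once to each endpoint's sum, so the whole table costs O(|E|), in line with the complexity listed for Knn later in this section.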

PageRank: Originally developed for web page ranking, PageRank measures node importance based on both the quantity and quality of incoming connections. The PageRank score of a node i is computed as \( PR(i) = \frac{1-d}{|V|} + d \sum_{j \in N_i} \frac{PR(j)}{L(j)} \), where d is a damping factor (typically 0.85), \( N_i \) is the set of nodes linking to i, and \( L(j) \) is the number of outgoing links from j [9] [11]. This recursive definition requires iterative computation until convergence.
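
The recursive definition is usually solved by power iteration. The sketch below follows the formula above with one addition the formula leaves implicit: nodes with no outgoing edges (in a GRN, every pure target) redistribute their score uniformly, so the scores keep summing to 1; this mirrors the default dangling-node handling of NetworkX's `pagerank`. Edge orientation also matters. On regulator→target edges, score accumulates on targets, while running the same code on reversed edges ranks genes by downstream reach instead; which orientation a study uses is a modeling choice. The toy network is hypothetical.

```python
def pagerank(edges, d=0.85, tol=1e-10, max_iter=1000):
    """Power iteration for PR(i) = (1-d)/|V| + d * sum over j->i of PR(j)/L(j).

    Dangling nodes (no outgoing edges) spread their score uniformly over all nodes.
    """
    nodes = sorted({v for edge in edges for v in edge})
    n = len(nodes)
    out_links = {v: [] for v in nodes}
    for src, dst in edges:
        out_links[src].append(dst)
    pr = {v: 1.0 / n for v in nodes}
    for _ in range(max_iter):
        dangling = sum(pr[v] for v in nodes if not out_links[v])
        new = {v: (1.0 - d) / n + d * dangling / n for v in nodes}
        for src in nodes:
            for dst in out_links[src]:
                new[dst] += d * pr[src] / len(out_links[src])
        if sum(abs(new[v] - pr[v]) for v in nodes) < tol:  # L1 convergence check
            return new
        pr = new
    return pr


toy = [("TF_A", "g1"), ("TF_A", "g2"), ("TF_A", "TF_B"), ("TF_B", "g2")]
scores = pagerank(toy)
for node, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{node}: {score:.3f}")
```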

Betweenness Centrality: This metric quantifies a node's influence over information flow by measuring how frequently it lies on shortest paths between other nodes. Formally, \( C_{\text{spb}}(v) = \sum_{s \neq v} \sum_{t \neq v,\, t \neq s} \frac{\sigma_{st}(v)}{\sigma_{st}} \), where \( \sigma_{st} \) is the total number of shortest paths from s to t, and \( \sigma_{st}(v) \) is the number of those paths passing through v [9]. With a computational complexity of O(|V||E|) using Brandes' algorithm, it is the most computationally expensive of the four features.
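
Brandes' algorithm reaches its O(|V||E|) bound by running one breadth-first search per source node to count shortest paths, then accumulating path dependencies in reverse order of distance, instead of enumerating all paths explicitly. The compact pure-Python sketch below handles unweighted directed graphs, returns unnormalized scores, and assumes no duplicate edges; for real networks a library routine such as NetworkX's `betweenness_centrality` is the practical choice.

```python
from collections import deque


def betweenness(edges):
    """Unnormalized shortest-path betweenness (Brandes) for an unweighted directed graph."""
    nodes = sorted({v for edge in edges for v in edge})
    successors = {v: [] for v in nodes}
    for u, v in edges:
        successors[u].append(v)
    bc = dict.fromkeys(nodes, 0.0)
    for s in nodes:
        # Phase 1: BFS from s counts shortest paths (sigma) and their predecessors.
        order, preds = [], {v: [] for v in nodes}
        sigma = dict.fromkeys(nodes, 0)
        dist = dict.fromkeys(nodes, -1)
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in successors[v]:
                if dist[w] < 0:  # first time w is reached
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:  # v lies on a shortest path to w
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Phase 2: accumulate dependencies from the farthest nodes back toward s.
        delta = dict.fromkeys(nodes, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return bc


chain = [("a", "b"), ("b", "c"), ("c", "d")]
print(betweenness(chain))  # {'a': 0.0, 'b': 2.0, 'c': 2.0, 'd': 0.0}
```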

Experimental Protocols for Feature Evaluation

Robust evaluation of topological features requires standardized experimental protocols. Based on recent GRN research, the following methodological framework has emerged:

Network Data Curation: Studies typically compile GRNs from multiple organisms to ensure biological diversity. For example, one comprehensive analysis used GRNs from Escherichia coli, Saccharomyces cerevisiae, Drosophila melanogaster, Arabidopsis thaliana, and Homo sapiens, comprising 49,801 regulatory interactions and 12,319 nodes (1,073 regulators and 11,246 targets) after filtering [11]. This cross-species approach enhances the generalizability of findings.

Feature Selection and Model Training: Attribute selection algorithms (such as wrapper methods or information gain analysis) identify the most discriminative topological features for classifying regulators versus targets. Decision tree classifiers with 9-15 leaves have been effectively trained using these features, with performance evaluated through correctly classified instances (CCI) and ROC analysis [11].

Cross-Validation and Statistical Testing: Stratified k-fold cross-validation (typically 10-fold) assesses model performance, with additional validation on randomized datasets to confirm that performance exceeds chance levels (CCI ≈ 50% for random data) [11].
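
The training and control steps can be sketched with scikit-learn. The data below are synthetic and balanced, invented only to exercise the code; they are not the values of the cited study, where targets vastly outnumber regulators. The tree is capped at 15 leaves to mirror the 9-15 leaf range described above, and a run on shuffled labels provides the chance-level control.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.tree import DecisionTreeClassifier


def regulator_vs_target_cv(features, labels, max_leaves=15, seed=0):
    """Mean 10-fold stratified CV accuracy (the CCI fraction) of a small decision tree."""
    clf = DecisionTreeClassifier(max_leaf_nodes=max_leaves, random_state=seed)
    cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
    return cross_val_score(clf, features, labels, cv=cv, scoring="accuracy").mean()


# Synthetic stand-in data (NOT from the cited study); columns are Knn, PageRank, degree.
rng = np.random.default_rng(0)
n = 500
regulators = np.column_stack([rng.normal(2, 0.5, n), rng.gamma(2.0, 0.5, n), rng.poisson(20, n)])
targets = np.column_stack([rng.normal(6, 1.0, n), rng.gamma(2.0, 0.3, n), rng.poisson(2, n)])
X = np.vstack([regulators, targets])
y = np.array([1] * n + [0] * n)  # 1 = regulator, 0 = target

real = regulator_vs_target_cv(X, y)
shuffled = regulator_vs_target_cv(X, rng.permutation(y))  # chance-level control
print(f"CCI with real labels: {real:.1%}, with shuffled labels: {shuffled:.1%}")
```

With imbalanced real data, the chance baseline is no longer 50%, so balancing the classes (or reporting ROC alongside CCI) keeps the randomized-label comparison meaningful.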

Biological Validation: Topological predictions require validation through biological knowledge. Gene ontology enrichment analysis of genes classified into different topological categories determines whether specific topological profiles correlate with essential biological functions or specialized subsystems [11].

The following diagram illustrates the experimental workflow for evaluating topological features in GRN research:

Comparative Performance Analysis

Classification Accuracy Across Organisms

Experimental evidence demonstrates that Knn, PageRank, and degree collectively provide strong discriminatory power for distinguishing regulators from targets in GRNs. Research evaluating these features across multiple organisms shows consistent performance:

Table 1: Classification Performance of Topological Features Across Organisms

| Organism | Network Size (Nodes) | Top Features | Correctly Classified Instances (CCI) | ROC Score |
|---|---|---|---|---|
| E. coli | 2,212 | Knn, PageRank, Degree | 85.2% | 87.1% |
| S. cerevisiae | 1,897 | Knn, PageRank, Degree | 84.7% | 86.8% |
| D. melanogaster | 2,405 | Knn, PageRank, Degree | 83.9% | 86.2% |
| A. thaliana | 2,118 | Knn, PageRank, Degree | 85.6% | 87.4% |
| H. sapiens | 2,687 | Knn, PageRank, Degree | 84.3% | 86.5% |
| Consensus Model | 12,319 | Knn, PageRank, Degree | 84.91% | 86.86% |

Data derived from multi-species GRN analysis [11]

The consensus model, trained on combined data from all organisms, achieved an average CCI of 84.91% and ROC of 86.86%, indicating robust performance across diverse biological contexts [11]. Betweenness centrality, while valuable for identifying bottleneck positions in networks, did not rank among the top three features for regulator-target classification in these experiments.

Computational Characteristics

The practical implementation of these topological features requires consideration of their computational demands, especially for large-scale GRNs:

Table 2: Computational Characteristics of Topological Features

| Feature | Computational Complexity | Scalability | Primary Biological Interpretation |
|---|---|---|---|
| Degree | O(\|V\|) | Excellent | Direct regulatory influence/causality |
| Knn | O(\|E\|) | Very Good | Neighborhood connectivity pattern |
| PageRank | O(k·\|E\|) for k iterations | Good | Overall influence considering network structure |
| Betweenness | O(\|V\|·\|E\|) | Moderate | Control over information flow, bottleneck positions |

Complexity analysis based on standard graph algorithm implementations [9]

Notably, Knn emerged as the most significant feature in decision tree models for classifying regulators versus targets, followed by PageRank and degree [11]. The high discriminatory power of Knn stems from its ability to capture the distinct connectivity patterns between regulators (which typically have low Knn, connecting to sparsely connected targets) and targets (which often have high Knn) [11].

Functional Correlations and Biological Significance

Association with Essential and Specialized Subsystems

Topological features show distinct correlations with biological function, providing a structure-function mapping that enhances their utility for gene classification:

Knn-Profile Correlations: Transcription factors with low Knn values predominantly regulate specialized subsystems (e.g., cell differentiation), whereas targets with high Knn typically participate in essential cellular processes [11]. This suggests that high Knn for targets may provide robustness against random perturbations, ensuring reliable signal reception for vital subsystems.

PageRank/Degree Functional Associations: Regulatory elements with high PageRank or degree values frequently control life-essential subsystems [11]. The high PageRank scores ensure robustness of essential functions against random perturbations, as these nodes maintain influence through multiple network pathways.

Betweenness Centrality in Disease Contexts: While not foremost in regulator classification, betweenness centrality excels at identifying disease-related genes through network diffusion approaches [12]. Genes with high betweenness act as critical bottlenecks, and their disruption can have widespread network consequences, making them prime candidates for disease association studies.

The relationship between topological features and their functional implications can be visualized as follows:

Evolutionary Conservation and Duplication Effects

Topological features exhibit evolutionary conservation patterns, with Knn, PageRank, and degree maintaining their discriminative power across diverse organisms from prokaryotes to mammals [11]. Gene duplication events significantly influence these topological properties:

Target Duplication: Increasing the degree of regulators (through target duplication) gradually decreases the regulator's Knn [11].

Regulator Duplication: Increasing the degree of targets (through regulator duplication) increases the regulator's Knn [11].

These evolutionary dynamics shape the characteristic topological profiles observed in modern GRNs, with TF-hubs typically exhibiting low Knn values, indicating they primarily connect to sparsely connected targets [11].
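
Both duplication effects can be checked on a hypothetical three-gene toy network. The sketch assumes a simple duplication model in which the copy inherits every regulatory edge of the original gene, and it computes Knn over undirected neighbors.

```python
from collections import defaultdict


def knn(edges, node):
    """Average (undirected) degree of the neighbors of `node`."""
    nbrs = defaultdict(set)
    for u, v in edges:
        nbrs[u].add(v)
        nbrs[v].add(u)
    return sum(len(nbrs[j]) for j in nbrs[node]) / len(nbrs[node])


def duplicate(edges, original, copy):
    """Gene duplication: `copy` inherits every regulatory edge of `original`."""
    new_edges = list(edges)
    for u, v in edges:
        if u == original:
            new_edges.append((copy, v))  # copy regulates the same targets
        if v == original:
            new_edges.append((u, copy))  # copy has the same regulators
    return new_edges


# Toy network: regulator R controls T1 and T2; T1 is also controlled by R2.
base = [("R", "T1"), ("R", "T2"), ("R2", "T1")]
print(knn(base, "R"))                          # 1.5
print(knn(duplicate(base, "T2", "T2b"), "R"))  # target duplication: 4/3, about 1.333
print(knn(duplicate(base, "R", "Rb"), "R"))    # regulator duplication: 2.5
```

Repeating the target duplication keeps adding degree-1 neighbors to R, so its Knn drifts toward 1, which is the gradual decrease described above.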

Integration in Machine Learning Frameworks

Advanced Graph Neural Network Approaches

Recent advancements in GRN analysis have incorporated topological features into sophisticated Graph Neural Network (GNN) architectures. The GTAT-GRN (Graph Topology-Aware Attention method) exemplifies this approach, integrating multi-source feature fusion with topological attention mechanisms [8] [10]. This framework combines:

- Temporal Features: Gene expression dynamics across time points

- Expression-Profile Features: Baseline expression levels and variability

- Topological Features: Structural properties including degree, Knn, PageRank, and betweenness centrality

The GTAT component dynamically captures high-order dependencies and asymmetric topological relationships among genes, significantly improving inference accuracy over traditional methods like GENIE3 and GreyNet [8] [10]. Experimental results on benchmark datasets (DREAM4 and DREAM5) demonstrate that GTAT-GRN achieves superior performance in AUC (Area Under Curve), AUPR (Area Under Precision-Recall Curve), and Top-k metrics (Precision@k, Recall@k, F1@k) [8].

Stable Learning for Enhanced Generalization

A significant challenge in GRN analysis is the Out-of-Distribution (OOD) problem, where models trained on one data distribution perform poorly on data from different distributions. Stable-GNN approaches address this by:

- Applying sample reweighting that decorrelates features in a random Fourier feature space

- Eliminating spurious correlations while preserving genuine causal features

- Maintaining predictive performance on both training distribution and unseen test distributions [13]

These methods demonstrate that traditional GNN models can suffer 5.66-20% performance degradation under OOD settings, while Stable-GNN architectures maintain robust performance across distributions [13].

Practical Implementation Guide

Research Reagent Solutions

Implementing topological feature analysis requires specific computational tools and resources:

Table 3: Essential Research Reagents and Computational Tools

| Resource Type | Specific Tools/Databases | Primary Function | Application Context |
|---|---|---|---|
| GRN Datasets | DREAM4, DREAM5, RegulonDB, STRING | Benchmarking & Validation | Standardized performance evaluation [13] [8] |
| Network Analysis | NetworkX, igraph, Cytoscape | Topological Feature Computation | Centrality calculation, visualization [9] |
| Machine Learning | Scikit-learn, PyTorch, TensorFlow | Classifier Implementation | Decision trees, GNNs, model training [11] |
| Biological Validation | Gene Ontology, DisGeNET | Functional Enrichment Analysis | Biological significance assessment [11] [12] |

Selection Guidelines for Research Applications

Choosing appropriate topological features depends on specific research objectives:

Regulator-Target Classification: Prioritize Knn, PageRank, and degree, which collectively provide ~85% classification accuracy [11].

Essential Gene Identification: Focus on PageRank and degree, as high values strongly correlate with life-essential subsystems [11].

Disease Gene Prioritization: Include betweenness centrality in network diffusion models, as it effectively identifies critical bottlenecks in disease pathways [12].

Large-Scale Network Analysis: For massive GRNs, consider computational complexity, potentially focusing on lower-complexity metrics (degree, Knn) before incorporating more demanding measures (betweenness).

The integration of multiple topological features within GNN architectures like GTAT-GRN represents the state-of-the-art, leveraging the complementary strengths of different metrics to achieve superior inference accuracy and biological insight [8] [10].

In the realm of systems biology, network topology—the architectural arrangement of connections between biological components—serves as a fundamental determinant of cellular behavior and function. Rather than being mere abstractions, the structural properties of biological networks directly govern information processing, signal propagation, and functional outcomes within cells. The emergence of sophisticated machine learning approaches for topological feature classification is now enabling researchers to move beyond static descriptions to predictive models that accurately link network structure to biological activity. This paradigm shift is particularly evident in the study of Gene Regulatory Networks (GRNs), where topological analysis is revealing how hierarchical arrangements, modular organization, and specific network motifs encode functional capabilities and constrain evolutionary possibilities.

The integration of topological features into machine learning frameworks represents a frontier in computational biology, allowing scientists to decode the biological information embedded in network architecture. From identifying key regulatory hubs in disease processes to predicting the functional impact of structural variations, topology-aware models are providing unprecedented insights into the design principles of biological systems. This guide examines the current landscape of topological analysis in GRN research, comparing the performance of leading computational methods and providing the experimental protocols necessary for implementing these approaches in drug discovery and basic research.

Quantitative Comparison of Topology-Aware GRN Inference Methods

The performance advantages of topology-aware methods for GRN inference become evident when comparing their accuracy against traditional approaches across standardized benchmarks. The table below summarizes quantitative performance metrics for leading methods on the DREAM4 and DREAM5 benchmark datasets, which represent community standards for evaluating GRN inference algorithms.

Table 1: Performance Comparison of GRN Inference Methods on Standardized Benchmarks

| Method | Approach Category | AUC | AUPR | Key Topological Features Leveraged |
|---|---|---|---|---|
| GTAT-GRN | Graph Topology-Aware Attention | 0.812 | 0.785 | Multi-source feature fusion, topological attributes, graph structure information [8] |
| GENIE3 | Traditional Machine Learning | 0.721 | 0.693 | Expression patterns only [8] |
| GreyNet | Statistical Inference | 0.698 | 0.674 | Linear dependencies [8] |
| Hybrid CNN-ML | Hybrid Deep Learning | >0.950 | N/A | Integrated prior knowledge, nonlinear regulatory relationships [14] |
| TGPred | Integrated Optimization | N/A | N/A | Statistics, machine learning, optimization [14] |

The superior performance of topology-aware methods stems from their ability to capture the non-linear regulatory relationships and higher-order dependencies that characterize biological networks. GTAT-GRN specifically achieves its performance edge through a graph topology-aware attention mechanism that dynamically captures asymmetric topological relationships between genes, going beyond predefined graph structures to uncover latent regulatory patterns [8]. Similarly, hybrid models that combine convolutional neural networks with machine learning demonstrate exceptional accuracy by integrating prior biological knowledge with learned topological features, achieving over 95% accuracy on holdout test datasets [14].

When evaluating these methods, it's important to consider their performance on specific topological metrics that measure their ability to recover key network structures. The following table compares methods on their precision in identifying different network components and motifs.

Table 2: Topological Precision Metrics for GRN Inference Methods

| Method | Precision@K | Recall@K | F1@K | Hub Gene Identification Accuracy | Regulatory Motif Recovery |
|---|---|---|---|---|---|
| GTAT-GRN | High | High | High | Improved | Enhanced [8] |
| Hybrid CNN-ML | Highest | High | Highest | Superior | Superior [14] |
| Traditional ML | Moderate | Moderate | Moderate | Limited | Limited [8] [14] |

The high performance of topology-aware methods on these metrics demonstrates their particular strength in identifying biologically significant network elements, including master regulators and key hub genes. For instance, hybrid approaches have demonstrated superior precision in ranking known master regulators such as MYB46 and MYB83, along with upstream regulators from the VND, NST, and SND families [14]. This capability has direct implications for drug development, as these regulatory hubs often represent promising therapeutic targets.

Experimental Protocols for Topological Feature Analysis

Protocol 1: Graph Topology-Aware Attention for GRN Inference

The GTAT-GRN framework represents a sophisticated approach for inferring gene regulatory networks by integrating multi-source biological features with graph structural information [8].

Step 1: Multi-Source Feature Extraction

- Temporal Features: Extract time-series expression trajectories including mean, standard deviation, maximum/minimum values, skewness, kurtosis, and directional trends from expression data. Apply Z-score normalization to standardize expression values across timepoints using the formula:

  $$\hat{X}_{ti} = \frac{X_{ti} - \mu_i}{\sigma_i}$$

  where $X_{ti}$ is the expression of gene $i$ at time point $t$, and $\mu_i$ and $\sigma_i$ represent the mean and standard deviation of gene $i$'s expression [8].

- Expression-Profile Features: Compute baseline expression levels, expression stability across conditions, expression specificity, pattern classification, and pairwise correlation coefficients between genes.

- Topological Features: Calculate network centrality measures including degree centrality, in-degree/out-degree distributions, clustering coefficient, betweenness centrality, local efficiency, PageRank scores, and k-core indices [8].
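The temporal features in Step 1 can be sketched in plain Python. This is a minimal illustration, not the GTAT-GRN code: the function names are hypothetical, the population standard deviation is assumed for σ_i, and the "directional trend" is assumed to be the least-squares slope of expression against the time index.

```python
import statistics

def zscore(series):
    """Standardize one gene's trajectory: (X_ti - mu_i) / sigma_i."""
    mu = statistics.fmean(series)
    sigma = statistics.pstdev(series)  # population SD (an assumption; sample SD also works)
    if sigma == 0:
        return [0.0] * len(series)  # constant expression: nothing to standardize
    return [(x - mu) / sigma for x in series]

def temporal_features(series):
    """Summary statistics of one gene's expression time series."""
    n = len(series)
    mu = statistics.fmean(series)
    z = zscore(series)
    # Moment-based skewness and excess kurtosis, computed on the z-scores.
    skewness = sum(v ** 3 for v in z) / n
    kurtosis = sum(v ** 4 for v in z) / n - 3.0
    # Directional trend: least-squares slope of expression against time index.
    t_mean = (n - 1) / 2
    num = sum((t - t_mean) * (x - mu) for t, x in enumerate(series))
    den = sum((t - t_mean) ** 2 for t in range(n))
    trend = num / den if den else 0.0
    return {"mean": mu, "std": statistics.pstdev(series), "max": max(series),
            "min": min(series), "skewness": skewness, "kurtosis": kurtosis,
            "trend": trend}
```

Each gene's feature dictionary would then be one row of the temporal block that the fusion module in Step 2 combines with the other feature families.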

Step 2: Feature Fusion and Representation Learning

- Integrate the multi-source features through a dedicated fusion module that jointly models temporal dynamics, baseline expression patterns, and topological attributes.

- Transform heterogeneous features into unified node representations with enriched multidimensional expressiveness for downstream graph learning tasks [8].

Step 3: Graph Topology-Aware Attention Mechanism

- Implement the Graph Topology-Aware Attention Network (GTAT) which combines graph structure information with multi-head attention.

- Dynamically capture high-order dependencies and asymmetric topological relationships between genes during graph learning.

- Generate attention weights that reflect both node feature similarity and structural relationships within the network [8].
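The exact GTAT formulation is not given here, so the following is only a hypothetical single-head sketch of the idea in Step 3. Each attention logit adds a structural bias (here simply the directed adjacency entry, scaled by an assumed weight `beta`) to a scaled dot-product feature similarity, and a softmax turns each row into weights. Raw features stand in for the learned query and key projections a real model would use; multi-head attention would repeat this with separate projections per head.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def topology_aware_attention(features, adjacency, beta=1.0):
    """Return an n x n matrix of attention weights alpha[i][j].

    features:  list of n feature vectors (stand-ins for learned queries/keys).
    adjacency: n x n 0/1 matrix, adjacency[i][j] = 1 if gene j regulates gene i
               (directed, hence asymmetric).
    beta:      assumed weight of the structural bias relative to feature similarity.
    """
    n, d = len(features), len(features[0])
    weights = []
    for i in range(n):
        logits = []
        for j in range(n):
            similarity = sum(a * b for a, b in zip(features[i], features[j])) / math.sqrt(d)
            logits.append(similarity + beta * adjacency[i][j])
        weights.append(softmax(logits))
    return weights

def attend(features, weights):
    """Each node's output is the attention-weighted mix of all nodes' features."""
    d = len(features[0])
    return [[sum(w[j] * features[j][k] for j in range(len(features))) for k in range(d)]
            for w in weights]
```

Because `adjacency` is directed, gene i can attend more strongly to its regulator j than j attends to i, which is the kind of asymmetry Step 3 describes.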

Step 4: GRN Prediction and Validation

- Process the enriched node representations through feedforward networks with residual connections.

- Generate final GRN predictions through an output layer that estimates regulatory relationships.

- Validate against benchmark datasets (DREAM4, DREAM5) using AUC, AUPR, and Top-k metrics (Precision@k, Recall@k, F1@k) [8].
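The Top-k metrics in Step 4 are simple to compute from a ranked list of candidate edges. A minimal sketch, assuming predictions arrive as a dictionary from (regulator, target) pairs to scores; AUROC is computed through its rank interpretation (the Mann-Whitney statistic), which is quadratic in the number of edges and meant only for small examples.

```python
def top_k_metrics(scores, true_edges, k):
    """Precision@k, Recall@k and F1@k for ranked regulatory-edge predictions.

    scores:     dict mapping (regulator, target) -> predicted confidence
    true_edges: set of (regulator, target) pairs in the gold-standard network
    """
    ranked = sorted(scores, key=scores.get, reverse=True)[:k]
    hits = sum(1 for edge in ranked if edge in true_edges)
    precision = hits / k
    recall = hits / len(true_edges) if true_edges else 0.0
    f1 = 2 * precision * recall / (precision + recall) if hits else 0.0
    return precision, recall, f1

def auroc(scores, true_edges):
    """Probability that a random true edge outscores a random non-edge (ties count 1/2)."""
    pos = [s for e, s in scores.items() if e in true_edges]
    neg = [s for e, s in scores.items() if e not in true_edges]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))
```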

Graph Topology-Aware Attention Workflow

Protocol 2: Topological Data Analysis with Persistent Homology

Persistent homology provides a powerful mathematical framework for extracting robust topological features from biomolecular data by capturing enduring topological characteristics across multiple scales [15].

Step 1: Molecular Dynamics Simulation and Data Generation

- Generate molecular dynamics trajectories of biological membranes at varying temperatures (280-330K).

- Construct lipid bilayer systems with 117 DPPC lipids per leaflet using CHARMM-GUI.

- Solvate and ionize systems with 150mM NaCl using CHARMM36m force field parameters and TIP3P water model.

- Perform equilibration runs followed by 100-ns production simulations for each temperature replica using NAMD.

- Extract coordinate frames for analysis, typically using the last 200 frames (2 ns) to minimize degenerate starting condition effects [15].

Step 2: Simplicial Complex Construction and Filtration

- Represent atomic coordinates as point clouds in 3D space (0-simplices).

- Grow n-dimensional spheres around each vertex with increasing radius (filtration parameter α).

- Track intersection patterns between spheres as they expand, forming simplicial complexes.

- Record formation and disappearance of topological features (connected components, loops, voids) during the filtration process [15].
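For 0-dimensional features (connected components), the filtration in Step 2 reduces to a minimum-spanning-tree computation: every point is born at radius 0, and a component dies at the radius where its ball first touches a ball of another component. The sketch below assumes the radius convention of Step 2 (two balls touch at half the inter-point distance) and handles only components; loops and voids require a full boundary-matrix reduction, which dedicated topological data analysis libraries provide.

```python
import math
from itertools import combinations

def h0_persistence(points):
    """0-dimensional persistence of a growing-ball filtration.

    Balls of radius alpha around p and q touch once alpha >= dist(p, q) / 2,
    so pairs are processed in order of that radius and merged with union-find.
    Returns (birth, death) pairs; the last surviving component never dies.
    """
    parent = list(range(len(points)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    edges = sorted((math.dist(points[i], points[j]) / 2, i, j)
                   for i, j in combinations(range(len(points)), 2))
    diagram = []
    for alpha, i, j in edges:
        ri, rj = find(i), find(j)
        if ri != rj:  # two components merge: one of them dies at this radius
            parent[ri] = rj
            diagram.append((0.0, alpha))
    diagram.append((0.0, math.inf))
    return diagram
```

For example, three atoms at x = 0, 1 and 5 produce deaths at radii 0.5 and 2.0 plus one infinite component.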

Step 3: Persistent Homology Feature Extraction

- Compute persistence diagrams capturing the birth and death scales of topological features.

- Transform persistence diagrams into persistence images for machine learning compatibility.

- Extract topological fingerprints that encode multiscale structural information about lipid configurations [15].
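Step 3's conversion of a persistence diagram into a persistence image can be sketched as follows. This is a simplified version of the usual construction under stated assumptions: points are mapped to (birth, persistence) coordinates, weighted linearly by persistence, spread with an unnormalized Gaussian of assumed width `sigma`, and sampled at pixel centres instead of integrated over pixels. The grid ranges are placeholders that should be set from the data.

```python
import math

def persistence_image(diagram, resolution=5, sigma=0.1,
                      birth_range=(0.0, 1.0), pers_range=(0.0, 1.0)):
    """Rasterize a persistence diagram into a resolution x resolution grid.

    diagram: list of (birth, death) pairs; infinite deaths are dropped.
    Rows index persistence (death - birth) and columns index birth.
    """
    points = [(b, d - b) for b, d in diagram if math.isfinite(d)]
    max_pers = max((p for _, p in points), default=0.0)
    image = [[0.0] * resolution for _ in range(resolution)]
    if max_pers == 0:
        return image  # no finite features with positive persistence
    (b_lo, b_hi), (p_lo, p_hi) = birth_range, pers_range
    for row in range(resolution):
        y = p_lo + (row + 0.5) * (p_hi - p_lo) / resolution      # pixel-centre persistence
        for col in range(resolution):
            x = b_lo + (col + 0.5) * (b_hi - b_lo) / resolution  # pixel-centre birth
            for b, p in points:
                weight = p / max_pers  # linear ramp: short-lived features count less
                # Unnormalized isotropic Gaussian centred on (b, p).
                image[row][col] += weight * math.exp(
                    -((x - b) ** 2 + (y - p) ** 2) / (2 * sigma ** 2))
    return image
```

Flattening the grid gives a fixed-length vector, which is what makes the diagrams usable as input to the image models in Step 4.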

Step 4: Neural Network-Based Temperature Prediction

- Train neural networks (an attention-based Vision Transformer or the convolutional ConvNeXt) on persistence image data.

- Predict effective lipid temperatures from static coordinate data.

- Validate predictions against known temperature values from MD simulations [15].

Topological Data Analysis with Persistent Homology

Protocol 3: Topology-Based Negative Example Selection for Protein Function Prediction

Automated protein function prediction represents a challenging classification problem where negative examples are rarely documented in biological databases. Topological features derived from protein networks provide critical information for identifying reliable negative examples [16].

Step 1: Protein Network Construction

- Retrieve protein-protein interaction networks from STRING database (version 10.0 or later).

- Filter connections using a combined score threshold of 700 to ensure high-confidence interactions.

- Normalize network edge weights to the [0,1] interval for comparative analysis.

- Construct graphs G〈V,W〉 where V represents proteins and W contains confidence-weighted interactions [16].
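Step 1 of this protocol can be sketched as a small parser. It assumes whitespace-separated rows of the form `protein1 protein2 combined_score` with scores on a 0 to 1000 scale, modelled on STRING's interaction files, and normalizes by dividing by 1000; min-max scaling over the retained edges would be an equally valid reading of "normalize to [0,1]". The function name is hypothetical.

```python
def build_string_graph(lines, threshold=700, max_score=1000):
    """Build an undirected, confidence-weighted graph G<V, W> from STRING-style rows.

    lines: iterable of "protein1 protein2 combined_score" strings; the header
           and malformed rows are skipped.
    Returns {protein: {neighbor: weight}} with weights rescaled to [0, 1].
    """
    graph = {}
    for line in lines:
        fields = line.split()
        if len(fields) != 3 or not fields[2].isdigit():
            continue  # header or malformed row
        p1, p2, score = fields[0], fields[1], int(fields[2])
        if score < threshold:
            continue  # keep only high-confidence interactions
        w = score / max_score
        graph.setdefault(p1, {})[p2] = w
        graph.setdefault(p2, {})[p1] = w
    return graph
```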

Step 2: Term-Aware and Term-Unaware Feature Calculation

- Term-Unaware Features: Compute network centrality measures including weighted degree, betweenness centrality, and clustering coefficient. Calculate protein multifunctionality metrics independent of specific Gene Ontology terms.

- Term-Aware Features: Calculate positive neighborhood metrics, mean positive neighborhood, and other features dependent on specific GO term annotations [16].
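The two feature families in Step 2 differ in whether a GO term enters the computation. A minimal sketch on a graph stored as `{protein: {neighbor: weight}}`: weighted degree and multifunctionality (taken here as the number of annotated GO terms) are term-unaware; the positive neighborhood is taken as the summed edge weight to neighbors annotated with the term, and the mean positive neighborhood as that sum divided by the number of such neighbors. These definitions are illustrative assumptions rather than the exact formulas of [16].

```python
def term_unaware_features(graph, annotations):
    """Features independent of any particular GO term.

    graph:       {protein: {neighbor: weight}}
    annotations: {protein: set of GO term IDs}
    """
    return {p: {"weighted_degree": sum(nbrs.values()),
                "multifunctionality": len(annotations.get(p, ()))}  # number of annotated terms
            for p, nbrs in graph.items()}

def term_aware_features(graph, annotations, term):
    """Features that depend on one GO term (illustrative definitions)."""
    feats = {}
    for p, nbrs in graph.items():
        # Edge weights to neighbors currently annotated with `term`.
        pos_weights = [w for q, w in nbrs.items() if term in annotations.get(q, ())]
        pos_nb = sum(pos_weights)
        feats[p] = {"positive_neighborhood": pos_nb,
                    "mean_positive_neighborhood":
                        pos_nb / len(pos_weights) if pos_weights else 0.0}
    return feats
```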

Step 3: Feature Selection and Negative Example Identification

- Apply feature selection algorithms to identify topological features most discriminative for reliable negative examples.

- Utilize temporal holdout validation by comparing GO annotation releases from different time periods.

- Define category Cnp (negative proteins that become positive) as those receiving new annotations during the holdout period [16].
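The temporal holdout in Step 3 amounts to set operations over two annotation releases. A sketch, assuming (as is common when negatives are not recorded) that any protein lacking the term in the older release counts as negative; the function name is hypothetical.

```python
def temporal_holdout_categories(old_release, new_release, proteins, term):
    """Split proteins by their status for `term` across two annotation releases.

    old_release, new_release: {protein: set of GO terms} at times t0 < t1.
    Returns (positives at t0, negatives that stay negative, C_np), where C_np
    holds proteins negative at t0 that acquire the term by t1.
    """
    positives = {p for p in proteins if term in old_release.get(p, set())}
    negatives = set(proteins) - positives
    c_np = {p for p in negatives if term in new_release.get(p, set())}
    return positives, negatives - c_np, c_np
```

Proteins in C_np are exactly the "negatives" that a good negative-selection procedure should have avoided, which is why they serve as the evaluation target.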

Step 4: Protein Function Prediction and Validation

- Train classifiers using the selected topological features to predict protein-GO term associations.

- Validate predictions against subsequent GO annotation releases.

- Evaluate precision-recall metrics for specific GO term categories (Biological Process, Molecular Function, Cellular Component) [16].

Topological Determinants of Biological Network Function

Local Network Motifs and Regulatory Patterns

Local topological motifs serve as fundamental computational units within larger biological networks, generating characteristic functional capabilities through specific connection patterns. The diamond motif (bi-parallel) and triangle motif (feed-forward loop) represent two particularly important topological patterns that distinctly influence signal processing and genetic variance propagation [17].

In regulatory networks, the sign consistency across paths within these motifs determines their operational characteristics. Coherent motifs, where all paths from regulator to target have the same effect (activation or repression), amplify trans-acting genetic variance and enhance signal propagation. Conversely, incoherent motifs with opposing effects along different paths generate negative covariance terms that buffer against variation [17]. If each edge is independently activating with probability $p_+$ (the fraction of activators), the expected product of the signs of $k$ edges is $(2p_+ - 1)^k$, so a motif whose paths together contain $k$ edges is coherent with probability $\tfrac{1}{2}\left[1 + (2p_+ - 1)^k\right]$. Because $|2p_+ - 1| < 1$ whenever $0 < p_+ < 1$, this bias toward coherence decays geometrically with $k$, creating a direct link between topological structure and functional output.
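The sign arithmetic behind coherence can be checked by brute force. The sketch enumerates every sign assignment of k independent edges, each activating with probability `p_plus`, and its expected sign product matches (2p+ - 1)^k. A second function shows, for a regulator acting on a target through parallel paths with fixed linear effects (a simplifying assumption), that coherent paths add a positive cross term to the target's variance while incoherent paths cancel.

```python
from itertools import product

def expected_path_sign(p_plus, k):
    """Exact E[product of signs] over k independent edges, each +1 (activating)
    with probability p_plus and -1 (repressing) otherwise."""
    total = 0.0
    for signs in product((1, -1), repeat=k):
        prob, sign = 1.0, 1
        for s in signs:
            prob *= p_plus if s == 1 else 1 - p_plus
            sign *= s
        total += prob * sign
    return total

def variance_through_paths(path_effects, var_regulator=1.0):
    """Target variance induced by one regulator via parallel linear paths:
    (sum of path effects)^2 * Var(regulator). The cross terms 2*e_i*e_j are
    positive for coherent paths and negative for incoherent ones."""
    return sum(path_effects) ** 2 * var_regulator
```

With two paths of effect 0.5 each, the coherent motif doubles the variance of the two paths considered separately (1.0 versus 0.5), while the incoherent pair (0.5 and -0.5) cancels to zero.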

Experimental validation demonstrates that these local motifs significantly impact the distribution of expression heritability, with coherent motifs substantially increasing the trans-acting variance contribution to specific genes. This explains why master regulators operating through coherent feed-forward loops typically exhibit outsized effects on network behavior and represent promising intervention points for therapeutic development [17].

Hierarchical Organization and Modular Architecture

Biological networks frequently exhibit hierarchical organization with master regulators controlling coherent functional modules, a topological arrangement that profoundly shapes their genetic architecture and functional capabilities. This hierarchical structure creates short network paths that concentrate regulatory influence and genetic effects at specific hub genes [17].

The modular architecture of biological networks provides both functional specialization and evolutionary robustness. Analysis of heritability distributions in human gene expression demonstrates that realistic GRN architectures must be sparse yet enriched for master regulators and modular groups to explain observed patterns of cis- and trans-acting heritability [17]. This topological arrangement creates a system where most trans-acting expression variance flows through short paths and concentrates at key pleiotropic genes.

From a machine learning perspective, these global topological properties provide critical constraints for network inference algorithms. Methods that incorporate hierarchical priors or modularity constraints demonstrate significantly improved accuracy in recovering true biological networks compared to approaches that treat all potential connections equally [14] [17].

Cross-Species Topological Conservation and Transfer Learning

The conservation of topological principles across species enables powerful transfer learning approaches for GRN inference, particularly valuable for non-model organisms with limited experimental data [14]. By leveraging topological regularities conserved through evolution, models trained on well-characterized organisms can accurately predict regulatory relationships in less-studied species.

Protocol: Cross-Species GRN Inference via Transfer Learning

- Train topology-aware models on Arabidopsis thaliana using comprehensive transcriptomic compendia (22,093 genes across 1,253 expression samples).

- Identify orthologous gene pairs between source and target species using sequence similarity and synteny analysis.

- Map topological features from source to target network using orthology relationships.

- Fine-tune models on limited target-species data (poplar: 34,699 genes across 743 samples; maize: 39,756 genes across 1,626 samples).

- Validate predictions against known regulatory relationships from literature and experimental data [14].
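The feature-mapping step of this protocol can be sketched as a lookup through an ortholog table. This is a hypothetical illustration: gene identifiers are placeholders, and averaging the features of several source orthologs is one assumed way to resolve one-to-many relationships (keeping only the best sequence-similarity hit is another).

```python
def map_features_by_orthology(source_features, orthologs):
    """Transfer per-gene topological features from a source to a target species.

    source_features: {source_gene: {feature_name: value}}
    orthologs:       {target_gene: [source_gene, ...]} (one-to-many allowed)
    Target genes with several source orthologs receive the mean of their
    features; target genes whose orthologs have no features are omitted.
    """
    mapped = {}
    for target, sources in orthologs.items():
        profiles = [source_features[s] for s in sources if s in source_features]
        if not profiles:
            continue
        names = profiles[0].keys()  # assumes all profiles share feature names
        mapped[target] = {n: sum(p[n] for p in profiles) / len(profiles) for n in names}
    return mapped
```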

This approach demonstrates that topological principles remain sufficiently conserved across evolutionary distances to enable accurate cross-species predictions, significantly outperforming species-specific models when training data is limited. The success of transfer learning underscores the fundamental nature of topological constraints in shaping biological network architecture across diverse organisms [14].

Research Reagent Solutions for Topological Analysis

Table 3: Essential Research Tools for Network Topology Analysis

| Resource Name | Type | Function | Application Context |
|---|---|---|---|
| STRING Database | Protein Network Resource | Provides confidence-weighted protein-protein interactions | Network construction for topological feature extraction [16] |
| CHARMM-GUI | Simulation Toolset | Membrane bilayer construction and molecular dynamics setup | Persistent homology analysis of lipid membranes [15] |
| DREAM Challenges | Benchmark Datasets | Standardized GRN inference benchmarks | Method performance validation [8] [18] |
| MembTDA | Topological Analysis Tool | Persistent homology-based lipid order characterization | Effective temperature prediction from static coordinates [15] |
| TopoDoE | Experimental Design Tool | Topology-guided perturbation selection | GRN refinement through targeted experimentation [18] |
| 3Prop | Feature Extraction Algorithm | Network feature propagation | Protein function prediction [16] |
| Viz Palette | Accessibility Tool | Color palette evaluation for data visualization | Accessible scientific communication [19] |

The integration of topological analysis with machine learning represents a paradigm shift in computational biology, moving beyond descriptive network maps to predictive models that accurately link structure to function. The performance advantages of topology-aware methods—from GTAT-GRN's graph attention mechanisms to persistent homology approaches—demonstrate that explicitly modeling network architecture is essential for accurate biological prediction.

For drug development professionals, these approaches offer new opportunities for target identification by pinpointing topologically significant hub genes and master regulators that disproportionately influence network behavior. The conservation of topological principles across species further enables knowledge transfer from model organisms to human pathophysiology, accelerating therapeutic discovery.

As topological feature classification continues to evolve, the integration of multiscale network analysis with deep learning frameworks promises to further unravel the complex relationship between biological structure and function, ultimately enabling the rational design of therapeutic interventions that target not just individual components, but the overarching architecture of biological systems.

Inference of Gene Regulatory Networks (GRNs) is a cornerstone of systems biology, aiming to elucidate the complex web of interactions where regulator genes control the expression of their target genes [20] [10]. Accurately distinguishing regulators from targets is not merely a topological exercise; it is fundamental to understanding cellular behavior and disease mechanisms, and to identifying potential therapeutic targets [10]. Within the architecture of a GRN, regulators, such as transcription factors, often occupy structurally distinct positions compared to their targets. This article posits that machine learning (ML) classifiers, such as K-Nearest Neighbors (KNN), that leverage key topological features like PageRank and degree centrality are powerful tools for deciphering this regulatory code from network structure. We frame this discussion within a broader thesis on GRN topological feature classification, arguing that the integration of these features provides a robust, computationally efficient framework for regulatory role identification, especially in data-scarce scenarios prevalent in biological research.

The challenge of GRN inference is multifaceted. Gene expression data is often noisy, and many deep learning approaches require large amounts of labeled data—known regulatory interactions—that are costly and difficult to obtain for less-studied cell types or species [20]. Furthermore, conventional methods struggle with high computational complexity and often fail to capture the non-linear dependencies inherent in gene regulation [10]. Topology-based classification offers a compelling solution by capitalizing on the inherent structural patterns of regulatory networks. By treating the GRN as a graph where genes are nodes and regulatory interactions are edges, we can quantify the importance and role of each node through features derived from its connections.

Topological Features as Classifiers: A Comparative Analysis

The structural properties of a GRN provide a rich source of information for distinguishing between regulators and targets. The underlying hypothesis is that these two classes of genes occupy distinct topological niches: regulators tend to be hubs with significant influence over the network, while targets often reside in more peripheral positions. The following section provides a detailed comparative analysis of three key topological classifiers, summarizing their core principles, advantages, and limitations when applied to GRN inference.

Table 1: Comparative Analysis of Topological Classifiers for GRN Inference

| Classifier | Core Principle | Advantages in GRN Context | Limitations |
|---|---|---|---|
| Degree Centrality | Quantifies the number of direct connections a node has. In directed GRNs, in-degree (inputs) and out-degree (outputs) are distinguished [10]. | Computationally simple and intuitive; high out-degree may indicate a transcription factor regulating many targets; serves as a foundational feature for more complex metrics. | Local view; ignores the broader network context; cannot identify influential nodes that are not highly connected (e.g., bottlenecks). |
| PageRank | Measures node importance based on the quantity and quality of its incoming connections, simulating a "random walk" on the graph [21] [22] [10]. | Global perspective of node influence; can identify key regulators that are highly influential even with moderate direct connections; robust against noise. | Higher computational cost than degree; may be less effective in very sparse, tree-like networks without shared neighbors [22]. |
| K-Nearest Neighbors (KNN) | A non-parametric ML algorithm that classifies a node based on the majority label of its k most similar nodes in the feature space (e.g., a space of topological features) [23] [24]. | Flexibility without strict data distribution assumptions [23]; robustness to label noise in large-scale biological datasets [23]; can be enhanced for confidence calibration [23]. | Performance can degrade with many noisy, non-informative features [24]; "curse of dimensionality" in high-dimensional feature spaces [24]. |
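Degree centrality and PageRank can both be computed in a few lines of pure Python on a directed edge list. The sketch normalizes total degree by n - 1 and runs PageRank by power iteration with the conventional damping factor 0.85, redistributing the rank of nodes without outgoing edges uniformly. NetworkX's `degree_centrality` and `pagerank` functions compute the same quantities on a `DiGraph`; the function names here are illustrative.

```python
def degree_centrality(edges, nodes):
    """In-degree, out-degree and normalized total degree for (regulator, target) edges."""
    indeg = {v: 0 for v in nodes}
    outdeg = {v: 0 for v in nodes}
    for u, v in edges:
        outdeg[u] += 1
        indeg[v] += 1
    n = len(nodes)
    return {v: {"in": indeg[v], "out": outdeg[v],
                "centrality": (indeg[v] + outdeg[v]) / (n - 1)} for v in nodes}

def pagerank(edges, nodes, damping=0.85, tol=1e-10, max_iter=200):
    """PageRank by power iteration; rank flows along regulator -> target edges.
    Dangling nodes (no outgoing edges) spread their rank uniformly."""
    n = len(nodes)
    out = {v: [] for v in nodes}
    for u, v in edges:
        out[u].append(v)
    rank = {v: 1.0 / n for v in nodes}
    for _ in range(max_iter):
        dangling = sum(rank[v] for v in nodes if not out[v])
        new = {v: (1 - damping) / n + damping * dangling / n for v in nodes}
        for u in nodes:
            for v in out[u]:
                new[v] += damping * rank[u] / len(out[u])
        converged = sum(abs(new[v] - rank[v]) for v in nodes) < tol
        rank = new
        if converged:
            break
    return rank
```

Because rank flows from regulator to target, this PageRank favors genes targeted by influential regulators, matching its description as importance derived from incoming connections; reversing the edges first would instead reward genes that regulate influential genes.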

Advanced Methodologies and Hybrid Approaches

The baseline capabilities of these classifiers can be significantly enhanced through advanced methodologies. For KNN, a major innovation addresses the reliability of its predictions. The calibrated kNN approach introduces confidence-awareness through a two-layered neighborhood analysis [23]. For a given query gene, it first finds its k1 nearest neighbors (first layer). Then, for each of these neighbors, it finds their k2 nearest neighbors (second layer). A confidence score is calculated based on the label agreement within this second-layer neighborhood, leading to more reliable classification, which is critical for biomedical applications [23].
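The two-layer idea behind calibrated kNN can be sketched as follows. The exact confidence formula of [23] is not reproduced here; as an assumption, the prediction is the majority label of the k1 nearest neighbors, and the confidence is the fraction of second-layer neighbors (the k2 nearest neighbors of each first-layer neighbor) whose labels agree with that prediction. Feature vectors might hold topological metrics such as degree and PageRank.

```python
import math
from collections import Counter

def nearest(points, x, k, exclude=None):
    """Indices of the k training points closest to x (Euclidean), optionally skipping one."""
    order = sorted((math.dist(p, x), i) for i, p in enumerate(points) if i != exclude)
    return [i for _, i in order[:k]]

def calibrated_knn(points, labels, query, k1=3, k2=3):
    """Predict a label for `query` and attach a two-layer neighborhood confidence."""
    first = nearest(points, query, k1)
    prediction = Counter(labels[i] for i in first).most_common(1)[0][0]
    # Second layer: neighbors of each first-layer neighbor, excluding that neighbor itself.
    second = [j for i in first for j in nearest(points, points[i], k2, exclude=i)]
    agreement = sum(1 for j in second if labels[j] == prediction) / len(second)
    return prediction, agreement
```

A query deep inside a homogeneous cluster gets confidence 1.0, while one near a class boundary inherits lower agreement from its neighbors' neighborhoods, which is the property that makes the score useful as a reliability signal.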

Similarly, PageRank's utility can be extended beyond simple influence measurement. It can be combined with local similarity-based methods for link prediction, a task at the heart of GRN inference. This hybrid approach helps predict new regulatory interactions between nodes that do not share common neighbors, a known weakness of local methods, thereby improving the precision of network reconstruction [22].

Ultimately, the most powerful modern approaches involve feature fusion. Instead of relying on a single metric, methods like GTAT-GRN integrate multiple topological features—including degree centrality, PageRank, and others like betweenness centrality and clustering coefficient—alongside temporal and expression-profile features [10]. This multi-source fusion enriches the representation of each gene, allowing a classifier to learn from a comprehensive profile that captures both its structural role and biological context.

Experimental Protocols and Benchmarking

Evaluating the performance of topological classifiers requires rigorous experimentation on standardized datasets and against established baseline methods. The following protocols and data are drawn from recent state-of-the-art research in GRN inference.

Protocol 1: Few-Shot GRN Inference with Graph Meta-Learning

- Objective: To infer GRNs for target cell lines with only a limited number of known regulatory interactions, framing the problem as a few-shot learning task.

- Method: The Meta-TGLink model uses a structure-enhanced graph meta-learning framework [20].

  - Meta-Task Construction: The model is trained on multiple meta-tasks. Each task is a subgraph-level link prediction problem consisting of a support set (a few known regulatory links) and a query set (links to be predicted). This teaches the model to transfer knowledge across different parts of the network.

  - Model Architecture (TGLink): The model incorporates a GNN combined with a Transformer architecture to integrate relational and positional information. This enhances its ability to capture long-range dependencies in the network.

  - Bi-Level Optimization: A meta-training process updates the model's parameters so it can quickly adapt to new, unseen meta-tasks (i.e., new cell lines with sparse known data) with only a few gradient steps.

- Benchmarking: Meta-TGLink was evaluated against nine baseline methods (including GENIE3, DeepSEM, and other GNN-based methods) on four human cell line datasets (A375, A549, HEK293T, PC3). Performance was measured using Area Under the Receiver Operating Characteristic Curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC) [20].
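The support/query structure of a meta-task can be illustrated with a simplified, edge-level sampler. This is a hypothetical sketch, not Meta-TGLink's subgraph-level task construction: positive edges are split into disjoint support and query sets, and each set is padded with an equal number of negative gene pairs drawn from pairs not in the known network (an assumed negative-sampling scheme).

```python
import random

def sample_meta_task(known_edges, genes, n_support=5, n_query=10, seed=None):
    """Sample one few-shot link-prediction task from known regulatory edges.

    known_edges: set of (regulator, target) pairs; genes: list of gene IDs.
    Returns (support, query), each a list of ((regulator, target), label) tuples.
    """
    rng = random.Random(seed)
    positives = rng.sample(sorted(known_edges), n_support + n_query)
    known = set(known_edges)
    negatives = set()
    while len(negatives) < n_support + n_query:
        u, v = rng.sample(genes, 2)  # a random ordered pair of distinct genes
        if (u, v) not in known:
            negatives.add((u, v))
    negatives = sorted(negatives)
    rng.shuffle(negatives)
    support = ([(e, 1) for e in positives[:n_support]]
               + [(e, 0) for e in negatives[:n_support]])
    query = ([(e, 1) for e in positives[n_support:]]
             + [(e, 0) for e in negatives[n_support:]])
    return support, query
```

The caller must supply at least `n_support + n_query` known edges and enough unobserved gene pairs for the negatives; otherwise `rng.sample` raises an error or the negative-sampling loop never finishes.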

Protocol 2: Multi-Source Feature Fusion with Graph Topological Attention

- Objective: To accurately infer GRNs by integrating multiple biological data sources and explicitly modeling topological dependencies.

- Method: The GTAT-GRN model employs a deep graph neural network with a graph topology-aware attention mechanism [10].

  - Feature Fusion: A module jointly models three information streams:

    - Temporal Features: Statistical indicators (mean, standard deviation, trend) from gene expression time-series data.

    - Expression-Profile Features: Expression levels, stability, and specificity under wild-type and multiple conditions.

    - Topological Features: Metrics including degree centrality, PageRank, betweenness centrality, and clustering coefficient, calculated from the GRN graph structure.

  - Graph Topology-Aware Attention (GTAT): This module combines the graph structure with a multi-head attention mechanism to dynamically capture high-order and potential regulatory dependencies between genes.

  - Training and Prediction: The fused features are processed through the GTAT module and a feedforward network to output a score for each potential regulatory link.

- Benchmarking: GTAT-GRN was evaluated on standard benchmark datasets (DREAM4, DREAM5) and compared against state-of-the-art methods like GENIE3 and GreyNet. Performance was assessed using AUC, AUPR, and Top-k metrics (Precision@k, Recall@k) [10].

Table 2: Performance Benchmarking of Advanced Models on GRN Inference Tasks

| Model | Core Approach | Dataset | Key Metric | Reported Performance | Comparative Note |
|---|---|---|---|---|---|
| Meta-TGLink [20] | Graph Meta-Learning | A375, A549, HEK293T, PC3 | AUROC | Outperformed 9 baseline methods | Showed ~26% average improvement in AUROC over unsupervised methods. |
| GTAT-GRN [10] | Multi-Source Feature Fusion + Topological Attention | DREAM4, DREAM5 | AUC & AUPR | Consistently higher than benchmarks | Confirmed robustness and capacity to capture key regulatory links. |
| Calibrated kNN (MaMi) [23] | Two-Layer Neighborhood Analysis | Clinical EHR Data | Classification Accuracy & Certainty | Improved accuracy and certainty assessment | Demonstrated effectiveness in providing reliable confidence scores. |

The following workflow diagram illustrates the typical process for integrating topological features into a machine learning model for GRN inference, as seen in protocols like GTAT-GRN.

The Scientist's Toolkit: Research Reagent Solutions

The application of these computational methods relies on a suite of foundational data resources and software tools. The table below details essential "research reagents" for scientists embarking on GRN inference using topological features.

Table 3: Essential Research Reagents for Topological GRN Classification

| Item Name | Type | Primary Function in Research |
|---|---|---|
| Gene Expression Time-Series Data | Data | Provides dynamic expression levels for calculating temporal features and serves as the primary input for inferring initial network structures. |
| Prior Regulatory Network (e.g., from ChIP-Atlas) | Data/Known Interactions [20] | Supplies a set of known gene-regulatory relationships for model training (supervised learning) and validation of predictions. |
| Topological Feature Calculator (e.g., NetworkX) | Software Tool | A Python library used to compute key graph metrics from a network, including Degree Centrality, PageRank, betweenness, and clustering coefficient. |
| Benchmark Datasets (DREAM4, DREAM5) | Data | Standardized, gold-standard datasets used to evaluate and compare the performance of different GRN inference methods objectively [10]. |
| Graph Neural Network (GNN) Framework (e.g., PyTorch Geometric) | Software Tool | Provides the building blocks for implementing and training advanced models like Meta-TGLink and GTAT-GRN that learn from network structure. |

The distinction between regulators and targets in Gene Regulatory Networks is a fundamental problem in computational biology, with direct implications for understanding disease and guiding drug development. As evidenced by the latest research, topological features provide a powerful lexicon for this task. Degree centrality offers a simple yet effective initial filter for hub regulators, while PageRank delivers a more nuanced measure of influence that captures a gene's importance within the broader network context. When used as features for a KNN or a more sophisticated Graph Neural Network classifier, these metrics enable robust prediction of regulatory roles.

The trajectory of research clearly points toward hybrid, multi-source approaches. The most accurate models, such as GTAT-GRN and Meta-TGLink, do not rely on a single feature but successfully fuse topological, temporal, and expression-profile data. Furthermore, the development of meta-learning frameworks addresses the critical challenge of data scarcity, enabling reliable inference in few-shot scenarios that are common in practice. For researchers and drug development professionals, this evolving toolkit offers increasingly sophisticated and dependable methods to illuminate the dark corners of the gene regulatory map, ultimately accelerating the discovery of novel therapeutic targets.

Gene regulatory networks (GRNs) represent the complex orchestration of transcriptional interactions that control cellular processes. Within these networks, life-essential subsystems—those governing fundamental processes like energy metabolism and DNA repair—and specialized subsystems—responsible for context-specific functions like cell differentiation—exhibit distinct organizational principles. Emerging research demonstrates that machine learning (ML) models can classify gene regulators based on topological features extracted from GRNs, revealing consistent patterns that distinguish these functionally distinct subsystems [11]. This classification capability provides a powerful analytical framework for predicting gene function, identifying drug targets, and understanding the fundamental architecture of cellular control systems.

The foundation of this approach lies in the insight that GRNs are scale-free networks possessing specific topological properties that can be quantified using graph theory metrics [11]. By applying ML algorithms to these topological features, researchers can now predict whether a transcription factor (TF) primarily regulates essential core processes or specialized adaptive functions with remarkable accuracy. This guide compares the performance of different topological features and ML approaches in classifying subsystem regulators, providing experimental protocols and data to guide research in computational biology and drug development.

Analytical Framework: Topological Features for Subsystem Classification

Defining Key Topological Metrics

Machine learning classification of GRN subsystems relies on quantifying specific topological properties that capture distinct aspects of a gene's position and influence within the network. Research has consistently identified three features as particularly discriminative: the average nearest neighbor degree (Knn), PageRank, and degree centrality [11]. The table below defines these and other important topological features used in GRN analysis.

Table 1: Key Topological Features in GRN Analysis

| Feature Name | Mathematical Definition | Biological Interpretation | Measurement Scale |
|---|---|---|---|
| Knn (Average Nearest Neighbor Degree) | Average degree of a node's direct neighbors | Measures the connectivity pattern of a gene's interaction partners; indicates whether hubs connect to other hubs or to less connected genes | Local |
| PageRank | Iterative algorithm weighting incoming links based on their own importance | Quantifies the relative influence of a gene based on how many important regulators target it | Global |
| Degree Centrality | Number of direct connections a node has | Simple measure of a gene's connectivity; hub genes have high degree | Local |
| Betweenness Centrality | Sum, over all pairs of other nodes, of the fraction of shortest paths between them that pass through the node | Identifies genes that act as bridges between different network modules | Global |
| Clustering Coefficient | Fraction of pairs of a node's neighbors that are themselves connected | Indicates the presence of tightly-knit functional modules or complexes | Local |
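Knn and the clustering coefficient can be computed directly from an undirected adjacency structure. A minimal sketch, assuming the GRN has been symmetrized into `{gene: set_of_neighbors}` with comparable (e.g., string) gene IDs; NetworkX's `average_neighbor_degree` and `clustering` provide the same measures for unweighted undirected graphs.

```python
def average_neighbor_degree(adj):
    """Knn(v): mean degree of v's neighbors; 0.0 for isolated nodes."""
    return {v: (sum(len(adj[u]) for u in nbrs) / len(nbrs) if nbrs else 0.0)
            for v, nbrs in adj.items()}

def clustering_coefficient(adj):
    """Fraction of pairs of v's neighbors that are themselves connected."""
    cc = {}
    for v, nbrs in adj.items():
        k = len(nbrs)
        if k < 2:
            cc[v] = 0.0  # fewer than two neighbors: no pairs to close
            continue
        # Count each neighbor pair once (a < b) and check whether it is linked.
        links = sum(1 for a in nbrs for b in nbrs if a < b and b in adj[a])
        cc[v] = 2 * links / (k * (k - 1))
    return cc
```

In a small example where a transcription factor links to two interconnected genes and one leaf gene, the factor's Knn is 5/3 and its clustering coefficient is 1/3, while the leaf's Knn equals the factor's degree of 3.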

Performance Comparison of Topological Features

Decision tree models built exclusively on Knn, PageRank, and degree have demonstrated strong performance in distinguishing regulators from target genes, achieving an average of 84.91% correctly classified instances (CCI) and an average ROC area of 86.86% across multiple species [11]. The comparative strength of these three key features is detailed in the table below.

Table 2: Performance Comparison of Key Topological Features in Subsystem Classification

| Topological Feature | Classification Accuracy | Strength in Discriminating Subsystems | Robustness to Sampling Bias |
|---|---|---|---|
| Knn | High (Primary split in decision trees) | Excellent separator: Low Knn → specialized subsystems; Intermediate Knn → essential subsystems | Generally robust (local measure) [25] |
| PageRank | High (Secondary decision node) | Strong identifier: High PageRank → life-essential subsystems | Less robust (global measure) [25] |
| Degree Centrality | High (Tertiary decision node) | Good indicator: High degree → essential subsystems; Low degree → specialized functions | Generally robust (local measure) [25] |
| Betweenness Centrality | Moderate | Identifies bridge genes connecting modules | Variable depending on network type |
| Clustering Coefficient | Moderate | Detects tightly-coupled functional modules | Generally robust |

Experimental evidence from GRNs of Escherichia coli, Saccharomyces cerevisiae, Drosophila melanogaster, Arabidopsis thaliana, and Homo sapiens confirms that these topological relationships are evolutionarily conserved, suggesting they represent fundamental design principles of transcriptional regulation [11]. The decision tree logic consistently classifies TFs with low Knn as regulators of specialized subsystems, while TFs with intermediate Knn combined with high PageRank or degree typically control life-essential subsystems.
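The decision logic described above can be written as a few nested conditions. Every threshold below is a hypothetical placeholder, since the split points in [11] are learned from data and are not listed here; the sketch only shows the shape of the rule (Knn first, then PageRank or degree).

```python
def classify_regulator(knn, pagerank, degree, knn_low=5.0, knn_high=50.0,
                       pagerank_cut=1e-3, degree_cut=20):
    """Toy version of the Knn -> PageRank/degree decision logic.

    All threshold defaults are hypothetical; a trained tree learns its own
    split points from labelled data.
    """
    if knn < knn_low:
        return "specialized"     # low Knn: regulator of specialized subsystems
    if knn <= knn_high and (pagerank >= pagerank_cut or degree >= degree_cut):
        return "life-essential"  # intermediate Knn plus high PageRank or degree
    return "undetermined"        # outside the two patterns described in the text
```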

Experimental Protocols for Topological Feature Analysis

Core Methodology for GRN Topological Classification

The standard workflow for classifying life-essential versus specialized subsystems based on topological features involves a structured pipeline from data collection to model validation. Below is the experimental protocol implemented in foundational studies [11].

Table 3: Experimental Protocol for GRN Topological Classification

| Step | Procedure | Parameters | Output |
|---|---|---|---|
| 1. Data Collection | Compile regulatory interactions from species-specific databases | 49,801 regulatory interactions; 12,319 nodes (1,073 regulators, 11,246 targets) | Raw GRN structure |
| 2. Network Filtering | Apply quality filters to remove spurious interactions | Scale-free property verification (R² ≈ 1) | Filtered GRN |
| 3. Feature Calculation | Compute topological metrics for each node | Knn, PageRank, degree centrality, betweenness, etc. | Feature matrix |
| 4. Model Training | Train decision tree classifiers on topological features | 12 balanced training sets; 1,938 instances/set | Trained classifier |
| 5. Validation | Test model on held-out datasets | CCI (correctly classified instances), ROC analysis | Performance metrics |
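Steps 4 and 5 of the protocol can be sketched in plain Python. This is a minimal illustration, not the pipeline used in [11]: the helper names `balanced_training_sets` and `cci` are hypothetical, balancing is assumed to be done by random undersampling of each class, and the toy labels are invented. The classifier itself is omitted; any decision-tree implementation (for example scikit-learn's `DecisionTreeClassifier`) could be fitted to each set.

```python
import random


def balanced_training_sets(labels, n_sets, per_class, seed=0):
    """Draw n_sets samples containing exactly per_class nodes of every class.

    labels maps node -> class label. Balancing by random undersampling is an
    assumption of this sketch, not a documented detail of the original study.
    """
    rng = random.Random(seed)
    by_class = {}
    for node, label in labels.items():
        by_class.setdefault(label, []).append(node)
    training_sets = []
    for _ in range(n_sets):
        sample = []
        for label in sorted(by_class):
            # random.sample raises ValueError if a class has fewer than per_class nodes
            chosen = rng.sample(by_class[label], per_class)
            sample.extend((node, label) for node in chosen)
        training_sets.append(sample)
    return training_sets


def cci(y_true, y_pred):
    """Correctly classified instances (CCI) as a percentage of all instances."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and of equal length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return 100.0 * correct / len(y_true)


# Hypothetical toy labels: 5 regulators and 20 targets
labels = {f"r{i}": "regulator" for i in range(5)}
labels.update({f"t{i}": "target" for i in range(20)})
sets = balanced_training_sets(labels, n_sets=3, per_class=4, seed=1)
print([len(s) for s in sets])  # [8, 8, 8]
```

Undersampling keeps every set balanced even though targets vastly outnumber regulators (11,246 vs 1,073 in Table 3), so a classifier cannot score well simply by predicting "target" everywhere.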

The following diagram illustrates the logical decision process used by the classification model to distinguish regulators from target genes based on topological features:

Advanced Machine Learning Approaches

While decision trees provide interpretable models, recent advances incorporate more sophisticated ML and deep learning architectures. GTAT-GRN uses a graph topology-aware attention mechanism with multi-source feature fusion, combining temporal expression patterns, baseline expression levels, and structural topological attributes [8]. This approach shows how topological features can be enriched with complementary data types to improve classification performance.

Hybrid models that combine convolutional neural networks (CNNs) with traditional machine learning have shown particularly strong performance, achieving over 95% accuracy on held-out test data for GRN inference [14]. These models excel at identifying known transcription factors regulating specific pathways and demonstrate higher precision in ranking key master regulators.

For non-model species with limited training data, transfer learning strategies successfully leverage models trained on well-characterized species (e.g., Arabidopsis thaliana) to predict regulatory relationships in less-characterized species (e.g., poplar, maize) [14]. This approach demonstrates that topological relationships conserved across evolution can facilitate knowledge transfer between species.

Functional Implications of Topological Signatures

Distinct Topological Roles of Life-Essential vs. Specialized Subsystems

The classification of subsystems based on topological features reveals fundamental design principles of GRNs. Life-essential subsystems, encompassing processes like transcription, protein transport, and energy metabolism, are predominantly governed by TFs with intermediate Knn combined with high PageRank or degree centrality [11]. This specific topological signature ensures two critical properties: (1) high probability that TFs will be accessed by random signals, and (2) high probability of signal propagation to target genes, thereby ensuring subsystem robustness.

In contrast, specialized subsystems, such as those controlling cell differentiation, are mainly regulated by TFs with low Knn [11]. This topological arrangement creates more modular, self-contained regulatory units that can be activated or silenced without destabilizing core cellular functions. The following diagram illustrates how gene duplication events shape these distinct topological configurations over evolutionary timescales:

Experimental Validation of Topological Predictions

Biological evidence supports the functional implications of these topological classifications. Genes classified into target and regulator leaves of consensus decision trees correspond to cellular processes consistent with their predicted roles [11]. The high PageRank associated with life-essential subsystems provides robustness against random perturbation, ensuring maintenance of core cellular functions despite stochastic events or environmental challenges.

Specialized subsystems, characterized by low Knn regulators, exhibit more flexible evolutionary patterns, allowing for species-specific adaptation without compromising essential functions. This topological arrangement creates evolutionary "sandboxes" where innovation can occur with minimal risk to core processes.

Table 4: Research Reagent Solutions for GRN Topological Analysis

| Resource Category | Specific Tools/Databases | Function in Analysis | Application Context |
|---|---|---|---|
| GRN Databases | BioGRID, STRING, Species-specific regulatory databases | Provide validated regulatory interactions for network construction | Ground truth data for all topological analyses [25] |
| Topology Calculation | NetworkX (Python), igraph (R) | Compute Knn, PageRank, degree, and other centrality measures | Feature extraction for classification models [25] |
| ML Frameworks | Scikit-learn, PyTorch, TensorFlow | Implement decision trees, GNNs, and hybrid models | Model training and classification [11] [14] |
| Specialized GRN Tools | GTAT-GRN, DiffGRN, GENIE3 | Network inference and topology-aware analysis | Advanced topological feature integration [26] [8] |
| Validation Resources | ChIP-seq, DAP-seq, Y1H experimental data | Biological validation of topological predictions | Experimental confirmation of classifications [14] |

The classification of life-essential versus specialized subsystems based on topological features represents a powerful application of machine learning in systems biology. The comparative analysis reveals that Knn, PageRank, and degree centrality collectively provide the strongest discriminatory power for identifying subsystem types, with each feature contributing unique information about network organization.

While decision trees based on these three features achieve approximately 85% accuracy in distinguishing regulators from targets [11], emerging approaches that integrate topological features with additional data types show promise for further improvement. Graph neural networks with topology-aware attention mechanisms [8] and hybrid CNN-ML models [14] demonstrate how topological features can be fruitfully combined with temporal expression patterns and other biological data to enhance predictive performance.

For drug development professionals, these topological classifications offer strategic insights for identifying potential therapeutic targets. Essential subsystem regulators, with their high PageRank and intermediate Knn, represent potential targets for fundamental cellular processes, while specialized subsystem regulators may offer opportunities for more targeted interventions with reduced side-effect profiles. As topological analysis frameworks continue to evolve, they will increasingly enable predictive modeling of network perturbations, accelerating the identification of therapeutic interventions that specifically modulate disease-relevant subsystems while preserving essential cellular functions.

Gene regulatory networks (GRNs) represent the complex circuits of interactions through which transcription factors (TFs) regulate target genes, ultimately controlling cellular processes, development, and environmental responses [11]. The topological structure of these networks, meaning the arrangement of nodes (genes) and edges (regulatory interactions), fundamentally influences their functional robustness, evolutionary adaptability, and control over essential biological subsystems. Among evolutionary mechanisms, gene duplication is a principal force that shapes and reshapes GRN topology over evolutionary timescales.

This review examines how gene and whole-genome duplication events drive the structural evolution of GRNs, with significant implications for topological feature classification in machine learning research. We explore the specific topological metrics most sensitive to duplication events, present comparative experimental data on their evolutionary dynamics, and detail methodologies for quantifying these relationships. Understanding these evolutionary principles provides researchers with powerful insights for improving GRN inference algorithms, identifying disease-associated regulatory disruptions, and discovering novel therapeutic targets through network-based approaches.

Key Topological Features for GRN Classification and Evolution

Essential Topological Metrics for GRN Analysis

Machine learning classification of GRN components relies heavily on specific topological metrics that distinguish regulatory roles and evolutionary histories. Research has identified three particularly informative features for understanding duplication-driven network evolution [11]:

Knn (Average Nearest Neighbor Degree): Measures the average degree of a node's direct neighbors. This metric effectively distinguishes regulators from targets, with regulators typically exhibiting lower Knn values. Gene duplication significantly influences Knn values, with target duplication decreasing regulator Knn and regulator duplication increasing it [11].

PageRank: Assesses node importance based on both the quantity and quality of incoming connections. TFs with high PageRank typically control life-essential subsystems, ensuring signal propagation robustness [11].

Degree Centrality: Counts direct regulatory connections (out-degree for regulators, in-degree for targets). Degree often correlates with evolutionary age, with hub genes frequently resulting from ancient duplication events [11].
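The three metrics can be computed on a toy regulator→target edge list with the sketch below. It assumes edges are treated as undirected for degree and Knn (the Knn of node i is the mean degree of i's direct neighbours) and runs PageRank by power iteration on the directed edges as given. The edge direction used for PageRank in [11] is not stated here, so running it on reversed edges (target→regulator, which rewards TFs with many targets) is shown as an alternative. The function names are hypothetical; in practice NetworkX provides `average_neighbor_degree` and `pagerank` for these quantities.

```python
from collections import defaultdict


def degree_and_knn(edges):
    """Return (degree, knn) dicts, treating each (regulator, target) edge as undirected."""
    neighbors = defaultdict(set)
    for regulator, target in edges:
        neighbors[regulator].add(target)
        neighbors[target].add(regulator)
    degree = {node: len(nbrs) for node, nbrs in neighbors.items()}
    # Knn(i): mean degree of i's direct neighbours
    knn = {
        node: sum(degree[m] for m in nbrs) / len(nbrs)
        for node, nbrs in neighbors.items()
    }
    return degree, knn


def pagerank(edges, alpha=0.85, tol=1e-12, max_iter=1000):
    """Power-iteration PageRank on directed edges; dangling nodes spread rank uniformly."""
    nodes = sorted({node for edge in edges for node in edge})
    out_links = {node: [] for node in nodes}
    for source, dest in edges:
        out_links[source].append(dest)
    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(max_iter):
        dangling = sum(rank[node] for node in nodes if not out_links[node])
        new_rank = {node: (1.0 - alpha) / n + alpha * dangling / n for node in nodes}
        for source in nodes:
            targets = out_links[source]
            if targets:
                share = alpha * rank[source] / len(targets)
                for dest in targets:
                    new_rank[dest] += share
        converged = sum(abs(new_rank[v] - rank[v]) for v in nodes) < tol
        rank = new_rank
        if converged:
            break
    return rank


# Hypothetical toy GRN: two TFs regulating four genes
edges = [("TF1", "g1"), ("TF1", "g2"), ("TF1", "g3"), ("TF2", "g3"), ("TF2", "g4")]
degree, knn = degree_and_knn(edges)
pr_tf = pagerank([(t, r) for r, t in edges])  # reversed edges: TFs gather rank
print(knn["TF1"], knn["g1"])  # TF1 ≈ 1.33, g1 = 3.0
```

In this toy network the regulators' Knn values (about 1.33 and 1.5) sit below every target's (2.0 to 3.0), the low-Knn signature of regulators described above.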

Table 1: Key Topological Features for GRN Classification and Their Evolutionary Significance

| Topological Feature | Biological Interpretation | Response to Duplication Events | Classification Value |
|---|---|---|---|
| Knn (Average Nearest Neighbor Degree) | Measures connectivity pattern of direct neighbors | Target duplication decreases regulator Knn; Regulator duplication increases regulator Knn | Primary discriminator between regulators and targets |
| PageRank | Measures node influence based on connection importance | High PageRank often conserved in essential TFs after duplication | Identifies TFs controlling life-essential subsystems |
| Degree Centrality | Number of direct regulatory connections | Increases through both target and regulator duplication | Distinguishes hub genes from peripheral nodes |
| Betweenness Centrality | Measures control over information flow in network | Can increase substantially after duplication events | Identifies bottleneck genes with strategic network positions |

Machine Learning Classification of GRN Topology

Decision tree models utilizing Knn, PageRank, and degree achieve approximately 85% accuracy in classifying nodes as regulators or targets [11]. The classification logic follows a structured hierarchy:

- Primary Split: Nodes with low Knn (categories "A-B") are classified as regulators, while high Knn ("D-F") indicates targets

- Secondary Split: Intermediate Knn ("C") requires PageRank evaluation

- Tertiary Split: Remaining ambiguous cases resolved by degree centrality
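The split hierarchy can be written as a small rule function. The order of the splits follows the description above; everything numeric is a hypothetical placeholder. The five Knn cutoffs that define bins A to F, the PageRank and degree thresholds, and the assumption that high PageRank or degree favours the regulator label at intermediate Knn are illustrative choices, not values reported in [11].

```python
KNN_LABELS = "ABCDEF"


def knn_category(knn, cutoffs):
    """Map a Knn value to an ordinal bin 'A'..'F' using five ascending (hypothetical) cutoffs."""
    if len(cutoffs) != len(KNN_LABELS) - 1:
        raise ValueError("expected five ascending cutoffs")
    for label, cut in zip(KNN_LABELS, cutoffs):
        if knn <= cut:
            return label
    return KNN_LABELS[-1]


def classify_node(knn, pagerank, degree, cutoffs, pagerank_cut, degree_cut):
    """Rule-based sketch of the primary/secondary/tertiary split order."""
    category = knn_category(knn, cutoffs)
    if category in ("A", "B"):       # primary split: low Knn
        return "regulator"
    if category in ("D", "E", "F"):  # primary split: high Knn
        return "target"
    # Category C (intermediate Knn): secondary split on PageRank
    if pagerank >= pagerank_cut:
        return "regulator"
    # Tertiary split: degree resolves the remaining cases
    return "regulator" if degree >= degree_cut else "target"


cuts = (1.5, 2.0, 2.5, 3.0, 3.5)  # hypothetical bin edges
print(classify_node(2.2, 0.1, 5, cuts, pagerank_cut=0.2, degree_cut=4))  # regulator
```

A trained tree would learn its own cutoffs from data; hard-coding them here only makes the decision order explicit.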

This classification scheme reveals important biological insights: TFs with low Knn typically regulate specialized processes (e.g., cell differentiation), while those with high PageRank or degree often control life-essential subsystems [11]. These topological signatures directly reflect evolutionary histories including duplication events.

Experimental Evidence: Quantifying Duplication Effects on GRN Topology

Whole-Genome Duplication Studies

Recent long-term evolution experiments with snowflake yeast (Saccharomyces cerevisiae) provide direct evidence of whole-genome duplication (WGD) dynamics. In the Multicellular Long-Term Evolution Experiment (MuLTEE), spontaneous WGD occurred within the first 50 days and remained stable for over 1,000 days (∼3,000 generations) – a previously unobserved laboratory phenomenon [27]. This WGD provided immediate selective advantages by generating larger cells and bigger multicellular clusters, demonstrating how genome duplication can drive rapid evolutionary adaptation through morphological changes.

Table 2: Experimental Evidence of Duplication Effects on GRN Topology

| Experimental System | Duplication Type | Key Topological Effects | Functional Consequences |
|---|---|---|---|
| MuLTEE (S. cerevisiae) [27] | Whole-genome duplication | Increased network complexity; Emergence of aneuploidy patterns | Larger cell size; Enhanced multicellular clustering; Long-term evolutionary stability |
| E. coli GRN analysis [11] | Target gene duplication | Decreased Knn of connected regulators | Specialized subsystem regulation; Network resilience |
| S. cerevisiae GRN analysis [11] | Regulator duplication | Increased Knn of duplicated regulators | Expansion of regulatory control; Increased network modularity |
| H. sapiens GRN analysis [11] | Segmental duplication | Altered PageRank distribution of TFs | Rewiring of disease-associated regulatory pathways |

Segmental Duplication and Network Analysis

Network-based analysis of segmental duplications in the human genome has revealed principles governing their distribution and evolutionary impact. By representing duplication events as edges and affected genomic sites as nodes, researchers can reconstruct duplication histories and identify genomic features associated with increased duplication rates [28]. This approach has revealed that segmental duplications are non-randomly distributed and frequently associate with specific repeat classes, influencing GRN topology through the duplication of both genes and their regulatory elements.
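A minimal sketch of the site-as-node, event-as-edge representation follows, assuming each duplication event is recorded as a pair of hypothetical site labels. Connected components group sites linked by chains of duplication events, and a site's degree counts the events it takes part in, which is one simple way to flag candidate duplication hotspots. This is not the method of [28]: an undirected graph discards the order and direction of events, which full history reconstruction requires.

```python
from collections import defaultdict, deque


def build_duplication_graph(events):
    """Nodes are genomic sites; each duplication event (site_a, site_b) adds an undirected edge."""
    adjacency = defaultdict(set)
    for site_a, site_b in events:
        adjacency[site_a].add(site_b)
        adjacency[site_b].add(site_a)
    return adjacency


def duplication_families(adjacency):
    """Connected components (BFS): sites linked by chains of duplication events."""
    seen, families = set(), []
    for start in sorted(adjacency):
        if start in seen:
            continue
        seen.add(start)
        queue, component = deque([start]), []
        while queue:
            site = queue.popleft()
            component.append(site)
            for neighbor in adjacency[site]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        families.append(sorted(component))
    return families


# Hypothetical events between labelled genomic sites
events = [("s1", "s2"), ("s2", "s3"), ("s4", "s5"), ("s2", "s6")]
adj = build_duplication_graph(events)
hotspots = {site: len(nbrs) for site, nbrs in adj.items()}  # events per site
print(duplication_families(adj))  # [['s1', 's2', 's3', 's6'], ['s4', 's5']]
```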

Methodologies for Analyzing Duplication-Driven Topological Evolution

Computational Simulation of Duplication Events

Network dynamic simulations model how topological features emerge through evolutionary processes including duplication. Starting from a hypothetical ancestral network, simulations implementing target duplication demonstrate a gradual decrease in regulator Knn values, while regulator duplication increases regulator Knn [11]. These simulations replicate the topological patterns observed in empirical GRN data, supporting gene duplication as a fundamental mechanism shaping modern network architectures.
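The two duplication operations can be illustrated on a toy regulator→target network. This is a single-step sketch under stated assumptions (edges treated as undirected for Knn, no TF-to-TF edges, and each duplicate inheriting all edges of the original), not the simulation protocol of [11]; the gene names and helper functions are hypothetical.

```python
from collections import defaultdict


def knn(edges):
    """Average nearest-neighbour degree of every node (edges treated as undirected)."""
    neighbors = defaultdict(set)
    for regulator, target in edges:
        neighbors[regulator].add(target)
        neighbors[target].add(regulator)
    degree = {node: len(nbrs) for node, nbrs in neighbors.items()}
    return {node: sum(degree[m] for m in nbrs) / len(nbrs)
            for node, nbrs in neighbors.items()}


def duplicate_regulator(edges, regulator, copy):
    """The copy inherits every outgoing edge of the duplicated regulator."""
    return edges | {(copy, t) for r, t in edges if r == regulator}


def duplicate_target(edges, target, copy):
    """The copy inherits every incoming edge of the duplicated target."""
    return edges | {(r, copy) for r, t in edges if t == target}


# Hypothetical ancestral network
ancestral = {("TF1", "g1"), ("TF1", "g2"), ("TF2", "g2"), ("TF2", "g3"), ("TF3", "g2")}
before = knn(ancestral)
after_reg = knn(duplicate_regulator(ancestral, "TF1", "TF1_copy"))
after_tgt = knn(duplicate_target(ancestral, "g1", "g1_copy"))
print(before["TF1"], after_reg["TF1"], after_tgt["TF1"])  # 2.0 3.0 1.6666666666666667
```

In this toy model, duplicating a regulator raises its Knn by exactly one, because each of its targets gains one extra regulator. Duplicating a target lowers a regulator's Knn only when the copied target's degree is below that regulator's current Knn, which is the case here because g1 has a single regulator.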

Advanced GRN Inference Methodologies