Decoding GRNs: How Decision Tree Models Leverage Topological Features for Biomedical Discovery

Abstract

This article provides a comprehensive exploration of decision tree models for analyzing Gene Regulatory Network (GRN) topological features, a critical methodology in systems biology and computational genomics. Tailored for researchers, scientists, and drug development professionals, it details how topological features like Knn, PageRank, and degree centrality are identified and applied to distinguish regulatory roles, predict key regulators, and associate network structures with biological function. The content spans from foundational concepts and practical implementation strategies to advanced optimization techniques and validation against state-of-the-art methods, offering a complete guide for leveraging interpretable machine learning to uncover the logic of gene regulation.

The Essential Guide to GRN Topology and Decision Tree Fundamentals

Analytical Framework for GRN Topology

Gene Regulatory Networks (GRNs) are complex systems that represent the intricate interactions between genes, transcription factors (TFs), and other regulatory molecules [1] [2]. Understanding their topology is fundamental to deciphering the molecular mechanisms that control cellular functions, development, and disease progression [3]. Topological analysis provides a quantitative framework for moving beyond mere interaction maps to reveal the organizational principles, key regulatory components, and dynamic control properties of these networks [2].

Within the specific context of decision tree models in GRN research, topological features serve as critical inputs for predicting gene function, identifying master regulators, and understanding system robustness [1] [4]. For instance, decision tree models can leverage these features to classify the functional importance of genes or to predict novel regulatory interactions [4]. The integration of degree centrality, average nearest-neighbor degree (Knn), and PageRank offers a multi-faceted perspective on a gene's role, capturing its local connectivity, the connectivity of its neighbors, and its global influence within the network [3].

Core Topological Features and Their Biological Significance

The following table summarizes the definitions, biological interpretations, and applications of the three key topological features in GRN analysis.

Table 1: Core Topological Features in Gene Regulatory Network Analysis

| Feature | Mathematical Definition | Biological Interpretation | Application in Decision Tree Models |
|---|---|---|---|
| Degree | Number of direct connections (edges) a node (gene) has in the network [3]. | Indicates local connectivity and potential functional influence; high-degree "hub" genes are often master regulators or stable controllers essential for network integrity [5] [3]. | Serves as a primary feature for identifying candidate master regulator genes and assessing node criticality [4]. |
| Knn (average nearest-neighbor degree) | Average degree of the nearest neighbors of a node [3]. | Reveals network assortativity; high Knn indicates genes connected to other highly-connected genes, often forming functional modules or "rich clubs" crucial for coherent network operation [3]. | Helps in identifying functional modules and conserved sub-networks across cell types or species, informing feature selection for lineage-specific predictions [6]. |
| PageRank | Algorithm measuring node importance based on the quantity and quality of its incoming connections, where a link from an important node counts more [3]. | Identifies genes with global influence through downstream cascades; high PageRank genes are key downstream effectors or integrators of multiple pathways [3]. | Used to rank genes by their systemic influence, providing a robust feature for predicting phenotypic outcomes from regulatory perturbations [4] [7]. |

Experimental Protocols for Topological Analysis

The process of calculating these key metrics involves a structured workflow from data acquisition to final interpretation. The following diagram outlines the primary steps for a standard topological analysis of a GRN.

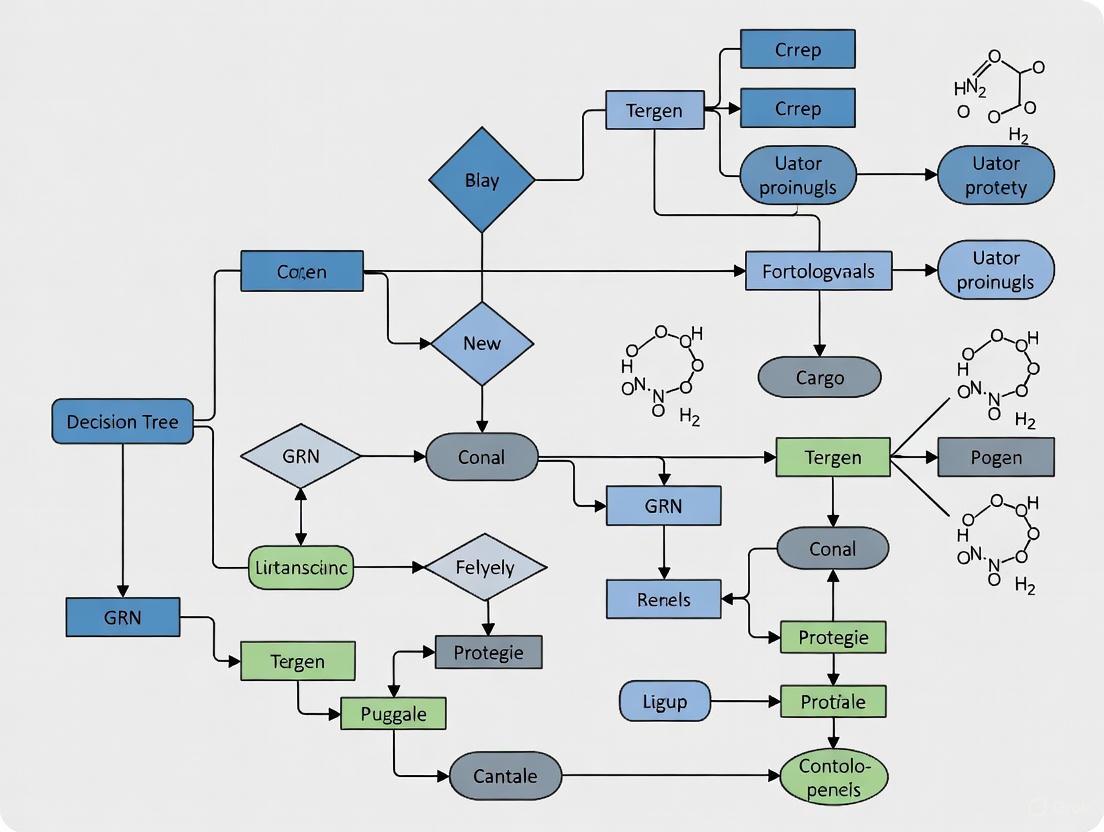

Figure 1: Workflow for Topological Analysis of Gene Regulatory Networks.

GRN Inference and Network Construction

The first step involves reconstructing the GRN from gene expression data. High-throughput techniques like single-cell RNA sequencing (scRNA-seq) provide the necessary input data [7] [6]. For analysis centered on decision tree models, methods like GENIE3 (which uses Random Forests) are particularly relevant, as they directly align with the model's logic and provide a robust set of inferred interactions [1] [4] [6]. The output is a list of regulatory interactions, which is formalized into a network graph comprising nodes (genes, TFs) and directed edges (representing regulatory links) [2] [3]. This graph is typically stored as an adjacency matrix for computational processing.
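As a minimal sketch of this formalization step, the snippet below turns a scored edge list into a binary adjacency matrix. The gene names, scores, and the `threshold` cut-off are hypothetical illustrations, not output from GENIE3 or any specific tool; the convention that rows are regulators and columns are targets is likewise an assumption.

```python
def build_adjacency(edges, threshold=0.0):
    """Return (genes, matrix) with matrix[i][j] = 1 if gene i regulates gene j."""
    genes = sorted({g for reg, tgt, _ in edges for g in (reg, tgt)})
    index = {g: i for i, g in enumerate(genes)}
    matrix = [[0] * len(genes) for _ in genes]
    for reg, tgt, score in edges:
        if score >= threshold:  # keep only edges at or above the cut-off
            matrix[index[reg]][index[tgt]] = 1
    return genes, matrix


# Hypothetical scored edges: (regulator, target, importance score).
edges = [
    ("TF_A", "TF_B", 0.9),
    ("TF_A", "TF_C", 0.8),
    ("TF_B", "GENE_X", 0.7),
    ("TF_C", "GENE_X", 0.6),
    ("TF_B", "TF_C", 0.1),  # falls below the threshold used here
]
genes, adj = build_adjacency(edges, threshold=0.5)

# Row sums count targets (out-degree); column sums count regulators (in-degree).
out_degree = {g: sum(adj[i]) for i, g in enumerate(genes)}
in_degree = {g: sum(row[j] for row in adj) for j, g in enumerate(genes)}
```

Where the threshold is set determines how dense the resulting graph is, so downstream topological features should be interpreted with that choice in mind.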

Computational Calculation of Topological Metrics

Once the network is constructed, topological features are computed using graph analysis libraries:

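- Degree is calculated by summing the rows or columns of the adjacency matrix for each node [3]. In a directed GRN the two sums differ: with rows as regulators and columns as targets, row sums give out-degree (number of targets) and column sums give in-degree (number of regulators).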

- Knn for a node is computed by first identifying its direct neighbors, then calculating the average degree of those neighbors [3].

- PageRank uses an iterative algorithm that simulates a "random walk" on the network, where the importance of a node is determined by the importance of nodes that link to it [3]. This is computationally more intensive than degree calculation.
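The Knn and PageRank calculations described above can be sketched in plain Python. This is an illustrative implementation under stated assumptions, not the code used in the cited studies: Knn is computed on the undirected version of the graph, PageRank uses a damping factor of 0.85 with a fixed number of power iterations, and the toy edge list is hypothetical. Libraries such as NetworkX provide tested equivalents.

```python
from collections import defaultdict


def knn(edges):
    """Average degree of each node's neighbors, ignoring edge direction."""
    nbrs = defaultdict(set)
    for src, dst in edges:
        nbrs[src].add(dst)
        nbrs[dst].add(src)
    degree = {n: len(v) for n, v in nbrs.items()}
    return {n: sum(degree[m] for m in v) / len(v) for n, v in nbrs.items()}


def pagerank(edges, damping=0.85, iterations=100):
    """Power-iteration PageRank on a directed edge list (regulator, target)."""
    nodes = sorted({n for e in edges for n in e})
    out_links = defaultdict(list)
    for src, dst in edges:
        out_links[src].append(dst)
    rank = {n: 1.0 / len(nodes) for n in nodes}
    for _ in range(iterations):
        # Rank held by nodes without outgoing edges is spread uniformly.
        dangling = sum(rank[n] for n in nodes if not out_links[n])
        base = (1.0 - damping + damping * dangling) / len(nodes)
        new_rank = {n: base for n in nodes}
        for src in nodes:
            for dst in out_links[src]:
                new_rank[dst] += damping * rank[src] / len(out_links[src])
        rank = new_rank
    return rank


# Hypothetical toy network: TF_A is a hub, GENE_X integrates two inputs.
toy_edges = [
    ("TF_A", "TF_B"), ("TF_A", "TF_C"), ("TF_A", "GENE_Y"),
    ("TF_B", "GENE_X"), ("TF_C", "GENE_X"),
]
knn_values = knn(toy_edges)
pr_values = pagerank(toy_edges)
top_gene = max(pr_values, key=pr_values.get)
```

In this toy network the hub TF_A has a lower Knn than its single-input target GENE_Y, and GENE_X, which collects inputs from two regulators, receives the highest PageRank.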

Table 2: Key Software Tools for GRN Topology Analysis

| Tool/Platform | Primary Function | Application in Topological Analysis |
|---|---|---|
| Cytoscape [3] | Network visualization and analysis. | GUI-based platform for calculating centrality measures, visualizing hubs, and exploring community structure. |
| NetworkX [3] | Python package for network analysis. | Programmatic calculation of degree, Knn, PageRank, and other complex metrics on graph objects. |
| Igraph [3] | Efficient network analysis library (R/C/Python). | Handles large-scale GRNs for fast computation of all key topological features. |

Comparative Performance Data

The predictive power of these topological features has been validated in multiple studies. The table below summarizes quantitative data on their performance in identifying key regulatory genes.

Table 3: Performance Comparison of Topological Features in GRN Studies

| Study Context | Topological Feature | Performance Metric | Result | Experimental Validation |
|---|---|---|---|---|
| Arabidopsis Lignin Biosynthesis GRN [4] | Degree & PageRank | Ranking of known master regulators (e.g., MYB46, MYB83) | Top 5% of candidate lists | Known TFs for lignin biosynthesis ranked highly [4]. |
| Hematopoiesis GRN Inference (NetID) [6] | Integrated Topological Features | Early Precision Rate (EPR) & AUROC vs. ground truth | Significant improvement over imputation-based methods | Benchmarking against ChIP-seq curated networks [6]. |
| Scale-Free Network Analysis [5] | Degree Distribution | Power-law exponent | Fit to scale-free topology | Agreement with network theory models [5]. |

Successful GRN topological analysis relies on a combination of computational tools, data resources, and prior knowledge databases.

Table 4: Essential Research Reagent Solutions for GRN Topology Studies

| Category & Item | Function/Description | Example Use Case |
|---|---|---|
| Data Generation | | |
| scRNA-seq Platform | Profiles gene expression at single-cell resolution. | Generating input expression data for cell-type-specific GRN inference [7]. |
| GRN Inference Software | | |
| GENIE3 [1] [6] | Random Forest-based GRN inference. | Constructing a baseline network for topological feature extraction. |
| LINGER [7] | Lifelong learning neural network for GRN inference. | Inferring high-accuracy GRNs from single-cell multiome data by incorporating external bulk data. |
| Prior Knowledge Databases | | |
| Motif Databases | Collections of transcription factor binding motifs. | Validating inferred TF-target edges or as priors in methods like LINGER [7]. |
| ChIP-seq Validation Data [7] [6] | Experimentally determined TF binding sites. | Serving as ground truth for benchmarking the accuracy of topology-based predictions. |
| Computational Analysis | | |
| NetworkX Library [3] | Python library for network analysis. | Calculating degree, Knn, and PageRank from an adjacency matrix. |

Integrated Analysis of Topological Features in a Signaling Pathway

To illustrate how these features interact in a biological system, consider a simplified model of a signaling pathway and its regulated GRN. The following diagram integrates the concepts of degree, Knn, and PageRank into a cohesive regulatory module.

Figure 2: Integrated topological roles in a simplified GRN module. TF A is a high-degree hub, TFs B and C form a high-Knn module, and Gene X is a high-PageRank effector.

This model shows:

- TF A acts as a high-degree hub, directly regulating multiple targets and initiating the regulatory cascade.

- TFs B and C form a high-Knn module, indicating they are interconnected and likely co-regulate common targets, enhancing functional robustness.

- Gene X is a high-PageRank effector, receiving inputs from multiple important regulators (TF B, TF C, and indirectly from TF A), marking it as a key downstream effector with significant global influence on the network's output.

In conclusion, a multi-feature topological approach incorporating degree, Knn, and PageRank provides a powerful, quantitative framework for deciphering the complex architecture of GRNs. When integrated with machine learning models like decision trees, these features enable the identification of master regulators, functional modules, and key effector genes, directly supporting advanced research in systems biology and drug development.

Gene Regulatory Networks (GRNs) represent the complex orchestration of molecular interactions that control cellular identity, function, and response. Understanding these networks requires more than just cataloging individual components; it demands insight into their organizational architecture, or topology. Topology refers to the structural arrangement of connections within a network, characterizing which elements interact and how these interaction patterns influence system-wide behavior. In biological systems, topological analysis has revealed that GRNs are not random collections of interactions but are organized with specific structural patterns that confer functional advantages [8]. These patterns include scale-free properties, where a few highly connected "hub" genes regulate many targets, and small-world properties, enabling efficient information flow between distant network regions [9].

The relationship between network topology and biological function represents a fundamental frontier in systems biology. Research has demonstrated that life-essential subsystems are governed by distinct topological signatures compared to specialized subsystems [8]. This architectural difference suggests that natural selection has shaped not just the molecular components themselves but the very structure of their interactions. By analyzing topological features, researchers can now predict which genes are functionally indispensable, identify key regulatory points in disease processes, and uncover novel therapeutic targets that might remain hidden when studying genes in isolation.

This guide provides a comparative analysis of how different computational approaches leverage topological features to reconstruct GRNs and link network structure to biological function. We focus specifically on the context of decision tree models that utilize topological features for GRN analysis, examining their experimental performance, methodological frameworks, and practical applications in biomedical research.

Topological Features of GRNs: A Comparative Framework

Defining Key Topological Metrics

Topological features quantify the structural roles and importance of individual genes within a GRN. Different features capture distinct aspects of network architecture, from local connectivity patterns to global influence.

Table 1: Key Topological Features in GRN Analysis

| Feature Name | Description | Biological Interpretation | Role in Decision Trees |
|---|---|---|---|
| Knn (Average Nearest Neighbor Degree) | The average degree of a node's direct neighbors [8] | Measures the connectivity of a gene's interaction partners; indicates network modularity | Primary splitter in consensus decision trees; distinguishes regulators from targets [8] |
| PageRank | Measures node importance based on both quantity and quality of connections [10] [11] | Identifies influential genes through recursive "voting" by neighbors | Resolves classification ambiguity in intermediate Knn ranges [8] |
| Degree Centrality | Number of direct connections a node has [10] [11] | Identifies hub genes with numerous regulatory relationships | Secondary classifier; distinguishes targets from regulators when Knn and PageRank are ambiguous [8] |
| Betweenness Centrality | Measures how often a node lies on shortest paths between other nodes [10] [11] | Identifies bridge genes connecting different network modules | Not featured in core decision tree but important for network robustness [8] |
| Clustering Coefficient | Measures how interconnected a node's neighbors are to each other [10] [11] | Identifies densely connected functional modules | Captures local network organization beyond direct connections |

Methodological Comparison: How Approaches Leverage Topology

Different computational methods utilize topological features in distinct ways for GRN inference and analysis. The following table compares how various approaches incorporate topological information.

Table 2: Methodological Comparison of Topological Approaches to GRN Analysis

| Method/Approach | Core Methodology | Topological Features Utilized | Biological Insights Generated |
|---|---|---|---|
| Decision Tree Consensus Model [8] | Machine learning classification using Knn, PageRank, and degree | Knn, PageRank, degree | Distinguishes regulators from targets; links topological features to subsystem essentiality |
| INSPRE [9] | Causal discovery using interventional data and sparse regression | Eigencentrality, in-degree, out-degree | Discovers scale-free networks; relates eigencentrality to gene essentiality and heritability |
| GTAT-GRN [10] [11] | Graph neural network with topology-aware attention | Degree centrality, clustering coefficient, betweenness centrality, PageRank | Integrates multi-source features for improved GRN inference accuracy |
| GRLGRN [12] | Graph representation learning with transformer networks | Implicit topological links from prior networks | Captures latent regulatory dependencies through graph structure |
| TAFS [13] | Topology-aware functional similarity | Extended neighborhood connectivity | Improves protein function prediction using network topology |

Decision Tree Models: Topological Features as Classification Predictors

Experimental Protocol and Workflow

The decision tree approach to GRN topology analysis follows a structured experimental pipeline that transforms raw network data into biological insights:

Network Compilation: Researchers gathered GRNs from multiple species including Escherichia coli, Saccharomyces cerevisiae, Drosophila melanogaster, Arabidopsis thaliana, and Homo sapiens [8]. After filtering, the dataset contained 49,801 regulatory interactions with 12,319 nodes (1,073 regulators and 11,246 targets).

Topological Feature Calculation: For each node in the compiled networks, researchers computed multiple topological features including Knn (average nearest neighbor degree), PageRank, degree, and others [8]. The networks demonstrated scale-free properties, fitting a power-law distribution (R² ≈ 1).

Attribute Selection and Model Training: Through feature importance analysis, Knn, PageRank, and degree were identified as the most relevant attributes [8]. Decision trees with 9-15 leaves were trained using these three features exclusively.

Model Validation: The trained models were validated against randomized datasets. The consensus model built on the real networks reached 84.91% correctly classified instances (CCI), compared with 51.82% CCI for the randomized data [8].

Biological Interpretation: The decision tree leaves were analyzed for functional enrichment, revealing associations between topological profiles and biological processes [8].

Diagram 1: Decision Tree Analysis Workflow for GRN Topology
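The validation step in this workflow compares the percentage of correctly classified instances (CCI) on real data against a randomized baseline. The sketch below illustrates that comparison under an assumption: the source does not describe how the randomized datasets were built, so shuffling the class labels is used here as one plausible stand-in. The labels and predictions are hypothetical.

```python
import random


def cci(predicted, actual):
    """Percentage of correctly classified instances."""
    correct = sum(p == a for p, a in zip(predicted, actual))
    return 100.0 * correct / len(actual)


def shuffled_label_baseline(predicted, actual, rounds=1000, seed=0):
    """Mean CCI when the true labels are randomly permuted (assumed baseline)."""
    rng = random.Random(seed)
    shuffled = list(actual)
    scores = []
    for _ in range(rounds):
        rng.shuffle(shuffled)
        scores.append(cci(predicted, shuffled))
    return sum(scores) / len(scores)


# Hypothetical predictions for eight nodes.
actual = ["regulator"] * 4 + ["target"] * 4
predicted = ["regulator", "regulator", "regulator", "target",
             "target", "target", "target", "regulator"]

observed = cci(predicted, actual)
baseline = shuffled_label_baseline(predicted, actual)
```

A model that has learned real structure should score well above the shuffled baseline, which for balanced classes hovers near 50%.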

Decision Tree Consensus Rules and Biological Interpretation

The consensus decision tree generated classification rules based on three topological features, creating a hierarchical decision framework that distinguishes regulators from targets and links topology to biological function:

Primary Split (Knn): Nodes with very low or high Knn values are initially classified as regulators or targets, respectively [8]. This indicates that the connectivity patterns of a gene's neighbors provide strong predictive power for identifying its regulatory role.

Secondary Split (PageRank): For nodes with intermediate Knn values, PageRank resolves ambiguity [8]. High PageRank nodes are classified as regulators, reflecting their influential position in the network.

Tertiary Split (Degree): Remaining ambiguous cases are resolved using degree, with high-degree nodes classified as regulators [8]. This captures the hub property common to many transcription factors.
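The three-level rule hierarchy above can be written as a short function. This is a sketch of the logic only: the cut-off values (`knn_low`, `knn_high`, `pagerank_cut`, `degree_cut`) are hypothetical placeholders, since the thresholds learned by the consensus tree are not given here.

```python
def classify_node(knn, pagerank, degree, *, knn_low, knn_high,
                  pagerank_cut, degree_cut):
    """Apply the Knn -> PageRank -> degree rule hierarchy to one node."""
    # Primary split: extreme Knn values decide the class directly.
    if knn < knn_low:
        return "regulator"
    if knn > knn_high:
        return "target"
    # Secondary split: intermediate Knn, resolved by PageRank.
    if pagerank >= pagerank_cut:
        return "regulator"
    # Tertiary split: remaining cases, resolved by degree.
    return "regulator" if degree >= degree_cut else "target"


# Hypothetical thresholds, chosen only to exercise each branch.
cuts = dict(knn_low=5.0, knn_high=50.0, pagerank_cut=0.001, degree_cut=10)

low_knn = classify_node(2.0, 0.0001, 3, **cuts)
high_knn = classify_node(80.0, 0.01, 30, **cuts)
mid_knn_high_pr = classify_node(20.0, 0.005, 2, **cuts)
mid_knn_high_degree = classify_node(20.0, 0.0001, 15, **cuts)
mid_knn_low_everything = classify_node(20.0, 0.0001, 2, **cuts)
```

Writing the rules this way makes the interpretability argument concrete: every prediction can be traced to at most three comparisons.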

The topological classification revealed striking biological patterns: specialized processes like cell differentiation were primarily regulated by transcription factors with low Knn values, while essential subsystems were governed by regulators with high PageRank or degree [8]. This suggests that life-essential functions require robust regulatory control achieved through influential network positions, while specialized functions operate through more modular, segregated regulatory structures.

Advanced Topological Inference Methods

Causal Network Discovery with INSPRE

The INSPRE (inverse sparse regression) approach represents a methodological advancement in causal network discovery by leveraging large-scale interventional data from CRISPR-based experiments [9]. The method applies a two-stage procedure:

Marginal Effect Estimation: Using guide RNA as instrumental variables, INSPRE first estimates the marginal average causal effect of every feature on every other feature [9].

Sparse Inverse Optimization: The method then estimates a sparse approximate inverse of the causal effect matrix through constrained optimization, which is used to reconstruct the underlying causal graph [9].

When applied to a genome-wide Perturb-seq dataset targeting 788 essential genes in K562 cells, INSPRE discovered a network with distinct small-world and scale-free properties [9]. The network contained 10,423 edges (1.68% density) with an exponential decay in both in-degree and out-degree distributions. Analysis revealed that 47.5% of gene pairs were connected by at least one path, with a median path length of 2.67, indicating efficient information flow [9].
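The reported density can be checked with a short calculation. The sketch assumes the network is a directed graph over the 788 targeted genes with no self-loops, so the number of possible edges is the number of ordered gene pairs; that assumption is not stated in the text but reproduces the quoted figure.

```python
n_genes = 788
n_edges = 10_423

# Ordered pairs of distinct genes: each pair can carry an edge in either direction.
possible_edges = n_genes * (n_genes - 1)
density = n_edges / possible_edges
mean_out_degree = n_edges / n_genes

print(f"{possible_edges} possible edges, density = {density:.2%}")
print(f"mean out-degree = {mean_out_degree:.1f}")
```

Under that assumption the density rounds to 1.68%, in line with the value above, and each gene has about 13 outgoing edges on average.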

A key finding was the relationship between topological centrality and gene essentiality: eigencentrality was significantly associated with multiple measures of loss-of-function intolerance [9]. This provides strong evidence that evolutionarily constrained, essential genes occupy central positions in regulatory networks, making them topologically identifiable.

Graph Neural Network Approaches

Recent advances in graph neural networks (GNNs) have created new opportunities for topology-aware GRN inference. The GTAT-GRN framework integrates multi-source feature fusion with a graph topology-aware attention mechanism to improve inference accuracy [10] [11]. The model architecture includes:

- Multi-Source Feature Fusion: Jointly models temporal expression patterns, baseline expression levels, and structural topological attributes [10] [11]

- Graph Topology-Aware Attention: Combines graph structure information with multi-head attention to capture potential regulatory dependencies [10] [11]

- Topological Feature Integration: Specifically incorporates degree centrality, clustering coefficient, betweenness centrality, and PageRank [10] [11]

In comparative evaluations on benchmark datasets, GTAT-GRN consistently achieved higher inference accuracy and improved robustness compared to methods like GENIE3 and GreyNet [10] [11]. This demonstrates the value of explicitly modeling topological relationships in GRN inference.

Similarly, GRLGRN utilizes graph representation learning with transformer networks to extract implicit links from prior GRNs [12]. The model employs a graph transformer network to capture latent topological relationships, then uses these enriched representations to infer regulatory dependencies. On benchmark evaluations across seven cell lines, GRLGRN achieved average improvements of 7.3% in AUROC and 30.7% in AUPRC compared to existing methods [12].

Diagram 2: GTAT-GRN Multi-Source Feature Fusion Architecture

Experimental Data and Performance Comparison

Quantitative Performance Metrics

Different topological approaches to GRN analysis demonstrate distinct performance characteristics across various evaluation metrics. The following table summarizes comparative performance data from multiple studies.

Table 3: Experimental Performance Comparison of Topological GRN Methods

| Method | AUROC | AUPRC | Precision | Recall | F1-Score | Structural Hamming Distance |
|---|---|---|---|---|---|---|
| Decision Tree Consensus [8] | 86.86% (average ROC) | Not reported | Not reported | Not reported | Not reported | Not reported |
| INSPRE [9] | Not reported | Not reported | High (varies by condition) | Variable (precision-focused) | Competitive | Lowest among compared methods |
| GTAT-GRN [10] [11] | Highest on DREAM4/5 benchmarks | Highest on DREAM4/5 benchmarks | High Precision@k | High Recall@k | High F1@k | Not reported |
| GRLGRN [12] | 7.3% average improvement | 30.7% average improvement | Not reported | Not reported | Not reported | Not reported |
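For readers less familiar with the AUROC and AUPRC columns, the sketch below shows one common way to compute both from a list of scored candidate edges and binary ground-truth labels. It is an illustration, not the evaluation code of any cited study: AUPRC is approximated by average precision, and the scores and labels are hypothetical.

```python
def auroc(scores, labels):
    """Probability that a true edge outscores a false edge (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(scores, labels):
    """Mean precision at the rank of each true edge (an AUPRC estimate)."""
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / rank
    return total / hits


# Hypothetical edge scores and ground-truth labels (1 = true regulatory edge).
scores = [0.9, 0.8, 0.7, 0.4, 0.2]
labels = [1, 0, 1, 1, 0]

roc_value = auroc(scores, labels)
ap_value = average_precision(scores, labels)
```

Because true edges are rare in GRN benchmarks, AUPRC is usually the more demanding metric, which may be why relative AUPRC gains tend to be larger than AUROC gains.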

Biological Validation Findings

Beyond computational metrics, topological approaches have generated biologically validated insights:

Essential vs. Specialized Subsystems: Analysis of decision tree leaves revealed that essential biological processes are predominantly regulated by transcription factors with intermediate Knn and high PageRank or degree, while specialized functions are governed by TFs with low Knn [8]. This topological signature suggests essential functions require robust, influential regulators.

Centrality-Essentiality Relationship: INSPRE analysis found statistically significant associations between eigencentrality and loss-of-function intolerance metrics including gnomad_pLI (padj = 2.9×10⁻⁸), sHet (padj = 4.9×10⁻⁸), and haploinsufficiency scores [9]. This establishes that evolutionarily constrained genes occupy central network positions.

Hub Gene Identification: Topological analysis of the K562 network identified high-out-degree regulators including DYNLL1 (out-degree: 422), HSPA9 (out-degree: 374), and PHB (out-degree: 355) [9]. These represent influential regulatory hubs controlling essential cellular processes.

Duplication Effects: Network simulations demonstrated that gene/genome duplication significantly affects topological features, with target duplication decreasing regulator Knn and regulator duplication increasing regulator Knn [8]. This reveals how evolutionary mechanisms shape network topology.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Resources for Topological GRN Analysis

| Resource Type | Specific Examples | Function in Topological Analysis |
|---|---|---|
| Genome-Wide Perturbation Platforms | CRISPR-based Perturb-seq [9] | Generates interventional data for causal network inference; enables large-scale knockout studies with transcriptional profiling |
| Prior Knowledge Databases | STRING [12], Cell-type-specific ChIP-seq [12], Non-specific ChIP-seq [12] | Provides established regulatory relationships for initial network construction; serves as ground truth for method validation |
| Single-Cell RNA Sequencing Datasets | BEELINE benchmark datasets [12] (hESCs, hHEPs, mDCs, mESCs, mHSCs) | Supplies gene expression matrices for topological feature calculation; enables cell-type-specific GRN reconstruction |
| Topological Feature Calculators | Custom algorithms for Knn, PageRank, centrality metrics [8] [10] | Computes structural metrics from network graphs; generates features for machine learning classification |
| Graph Neural Network Frameworks | GTAT-GRN [10] [11], GRLGRN [12] | Implements topology-aware deep learning for GRN inference; captures complex nonlinear regulatory relationships |

The integration of topological analysis with GRN research has established network structure as a fundamental determinant of biological function and essentiality. The consensus across multiple methodologies is clear: distinct topological signatures characterize genes with different functional roles and evolutionary constraints. Decision tree models demonstrate that simple topological rules can effectively classify regulatory elements and predict their functional associations [8]. Advanced causal discovery methods reveal that network centrality measures correlate strongly with gene essentiality and evolutionary constraint [9]. Graph neural networks show that explicit topological modeling significantly improves GRN inference accuracy [10] [12] [11].

These findings have direct implications for biomedical research. Topological analysis provides a framework for identifying critical regulatory hubs in disease networks, potentially revealing new therapeutic targets. The relationship between network position and gene essentiality suggests topology could help prioritize candidate genes in genetic studies. As single-cell technologies continue to generate increasingly detailed maps of cellular states, topological approaches will be essential for extracting functional insights from these complex datasets. The convergence of network science and molecular biology continues to show that a gene's position in the regulatory network carries information about its functional importance.

In biological data analysis, machine learning models must balance predictive power with interpretability to generate actionable scientific insights. Decision trees are a cornerstone of interpretable machine learning, offering a transparent methodology for classification and regression tasks by learning simple decision rules inferred from data features [14]. Unlike "black box" models such as neural networks, decision trees are white-box models: any prediction can be explained by the sequence of Boolean conditions along the path from root to leaf [14]. This characteristic makes them particularly valuable for biological research areas including gene regulatory network (GRN) analysis, variant pathogenicity prediction, and disease gene identification, where understanding the reasoning behind predictions is as crucial as the predictions themselves.

The fundamental structure of a decision tree consists of nodes that test specific features, branches that represent outcomes of these tests, and leaf nodes that provide final classifications or predictions [15]. This hierarchical, rule-based structure mirrors human decision-making processes, allowing researchers to trace the complete logic path from input data to final outcome. For computational biologists studying GRN topological features, this interpretability enables validation of findings against domain knowledge and generation of testable hypotheses about regulatory mechanisms.

Fundamental Principles of Decision Tree Algorithms

Core Mathematical Framework

Decision tree algorithms operate by recursively partitioning the feature space based on optimization criteria that evaluate the quality of potential splits [16]. The process begins with the entire dataset at the root node and employs impurity measures to select features that best separate the data into homogeneous subgroups. Two common impurity measures are:

Entropy and Information Gain: Entropy measures the disorder or impurity in a dataset, calculated as \( I = -\sum_{i=1}^{m} p_i \log p_i \), where \( p_i \) represents the fraction of items belonging to class \( i \) [16]. Information gain quantifies the reduction in entropy after splitting based on a particular attribute, with higher values indicating better separation.

Gini Index: The Gini index measures the probability of misclassifying a randomly chosen element if it were randomly labeled according to the class distribution in the subset [15]. Calculated as \( 1 - \sum_{i=1}^{m} p_i^2 \), lower Gini values indicate purer node partitions.

The algorithm evaluates all possible splits and selects the one that maximizes information gain or minimizes impurity, continuing recursively until stopping conditions are met, such as maximum tree depth or minimum samples per leaf node [17].
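The two impurity measures and information gain can be sketched as follows. The logarithm base is not specified above; base 2 is assumed here so that entropy is measured in bits. The regulator/target labels are hypothetical.

```python
from collections import Counter
from math import log2


def class_fractions(labels):
    """Fraction p_i of items in each class."""
    n = len(labels)
    return [count / n for count in Counter(labels).values()]


def entropy(labels):
    """I = -sum(p_i * log2(p_i)), in bits (base 2 is an assumption)."""
    return -sum(p * log2(p) for p in class_fractions(labels))


def gini(labels):
    """1 - sum(p_i ** 2)."""
    return 1.0 - sum(p * p for p in class_fractions(labels))


def information_gain(parent, left, right):
    """Entropy of the parent minus the size-weighted entropy of the children."""
    n = len(parent)
    weighted = (len(left) / n) * entropy(left) + (len(right) / n) * entropy(right)
    return entropy(parent) - weighted


# Hypothetical node labels before and after a perfect split.
parent = ["regulator"] * 4 + ["target"] * 4
left, right = parent[:4], parent[4:]

parent_entropy = entropy(parent)
parent_gini = gini(parent)
gain = information_gain(parent, left, right)
```

A balanced two-class node has the maximum entropy of 1 bit and a Gini index of 0.5; a split that separates the classes perfectly recovers the full bit as information gain.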

Tree Construction and Optimization

Practical decision tree implementations incorporate strategies to prevent overfitting, where models become too complex and capture noise rather than underlying patterns [14]. These include:

Pre-pruning: Stopping growth early by setting constraints on maximum depth, minimum samples per leaf, or minimum impurity decrease.

Post-pruning: Growing the tree completely and then removing branches that provide little predictive power, typically using validation set performance [16].

Ensemble methods: Combining multiple trees through random forests or boosting to improve generalization, though this sacrifices some interpretability [16].

For biological applications, the optimal tree complexity captures meaningful biological patterns without overfitting to dataset-specific noise. The scikit-learn implementation provides parameters such as max_depth, min_samples_split, and min_impurity_decrease to control tree growth [14].
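To show how pre-pruning constraints limit tree growth, here is a small from-scratch tree builder using the Gini index. It is a teaching sketch, not the scikit-learn implementation: the `max_depth` and `min_samples_leaf` arguments only mirror the kind of constraints discussed above, and the feature rows (Knn, PageRank, degree) are hypothetical.

```python
from collections import Counter


def gini(labels):
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_split(rows, labels, min_samples_leaf):
    """Return (feature index, threshold) with the lowest weighted Gini, or None."""
    best, best_score = None, gini(labels)
    for f in range(len(rows[0])):
        for threshold in sorted({r[f] for r in rows}):
            left = [y for r, y in zip(rows, labels) if r[f] <= threshold]
            right = [y for r, y in zip(rows, labels) if r[f] > threshold]
            if len(left) < min_samples_leaf or len(right) < min_samples_leaf:
                continue  # pre-pruning: both children must be large enough
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if score < best_score:
                best, best_score = (f, threshold), score
    return best


def grow(rows, labels, max_depth, min_samples_leaf=1, depth=0):
    """Recursively build a tree; leaves are majority-class labels."""
    split = None if depth >= max_depth else best_split(rows, labels, min_samples_leaf)
    if split is None:
        return Counter(labels).most_common(1)[0][0]
    f, t = split
    go_left = [r[f] <= t for r in rows]
    l_rows = [r for r, g in zip(rows, go_left) if g]
    l_labels = [y for y, g in zip(labels, go_left) if g]
    r_rows = [r for r, g in zip(rows, go_left) if not g]
    r_labels = [y for y, g in zip(labels, go_left) if not g]
    return {"feature": f, "threshold": t,
            "left": grow(l_rows, l_labels, max_depth, min_samples_leaf, depth + 1),
            "right": grow(r_rows, r_labels, max_depth, min_samples_leaf, depth + 1)}


def predict(tree, row):
    while isinstance(tree, dict):
        tree = tree["left"] if row[tree["feature"]] <= tree["threshold"] else tree["right"]
    return tree


# Hypothetical rows of (Knn, PageRank, degree).
rows = [(2.0, 0.02, 40), (3.0, 0.03, 35), (20.0, 0.02, 12),
        (25.0, 0.001, 3), (60.0, 0.001, 1), (70.0, 0.002, 2)]
labels = ["regulator"] * 3 + ["target"] * 3

tree = grow(rows, labels, max_depth=3)
stump = grow(rows, labels, max_depth=3, min_samples_leaf=4)
```

With six samples, requiring four per leaf leaves no admissible split, so `stump` collapses to a single leaf, while the unconstrained call finds one Knn threshold that separates the classes.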

Decision Trees for GRN Topological Feature Analysis

Key Topological Features in GRN Research

Gene regulatory networks represent complex systems where transcription factors regulate target genes through intricate interactions [8]. When modeled as graphs with genes as nodes and regulatory relationships as edges, several topological features emerge as biologically significant:

Table 1: Key Topological Features in Gene Regulatory Networks

| Feature | Mathematical Definition | Biological Interpretation |
|---|---|---|
| Degree | Number of connections a node has | Indicates how many genes a transcription factor regulates or how many regulators a target gene has [11] |
| Knn (Average Nearest Neighbor Degree) | Average degree of a node's neighbors | Measures the connectivity pattern among a gene's direct interaction partners [8] |
| PageRank | Importance measure based on connection structure | Identifies influential hub genes in regulatory networks [11] |
| Betweenness Centrality | Number of shortest paths passing through a node | Highlights genes that act as bridges between different regulatory modules [11] |
| Clustering Coefficient | Measures how connected a node's neighbors are to each other | Quantifies the presence of local regulatory complexes or feedback loops [11] |

Research has demonstrated that these topological features are not random but correlate with biological function. For instance, life-essential subsystems are governed mainly by transcription factors with intermediate Knn and high PageRank or degree, whereas specialized subsystems are primarily regulated by transcription factors with low Knn [8]. This topological organization provides robustness to essential cellular functions while allowing plasticity in specialized responses.
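As a rough illustration of how the features in Table 1 can be computed, the sketch below uses NetworkX on a small, made-up network. The gene names and edges are hypothetical, and two conventions are assumptions of this example rather than of the cited studies: degree, Knn, betweenness, and clustering are computed on the undirected version of the graph, while PageRank is computed on the directed graph with edges pointing from regulator to target.

```python
import networkx as nx

# Toy directed GRN: edges point from regulator to target (hypothetical genes).
edges = [("TF1", "g1"), ("TF1", "g2"), ("TF1", "g3"),
         ("TF2", "g3"), ("TF2", "g4"), ("TF1", "TF2")]
G = nx.DiGraph(edges)
U = G.to_undirected()  # assumption: direction is ignored for degree and Knn

degree = dict(U.degree())                    # number of connections per node
knn = nx.average_neighbor_degree(U)          # mean degree of each node's neighbors
pagerank = nx.pagerank(G, alpha=0.85)        # importance from connection structure
betweenness = nx.betweenness_centrality(U)   # share of shortest paths through a node
clustering = nx.clustering(U)                # how interconnected the neighbors are

for gene in G.nodes:
    print(f"{gene}: degree={degree[gene]}, Knn={knn[gene]:.2f}, "
          f"PageRank={pagerank[gene]:.3f}, "
          f"betweenness={betweenness[gene]:.3f}, "
          f"clustering={clustering[gene]:.2f}")
```

In this toy network the hub TF1 has degree 4 but a Knn of only 1.75, because most of its neighbors are sparsely connected targets. Note that the edge-direction convention changes which nodes receive high PageRank, so it should be stated explicitly in any analysis.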

Decision Tree Classification of Regulatory Elements

In a multi-species study of GRN topology, decision trees classified nodes as regulators or targets based solely on topological features [8]. The study analyzed GRNs from five species (Escherichia coli, Saccharomyces cerevisiae, Drosophila melanogaster, Arabidopsis thaliana, and Homo sapiens), comprising 49,801 regulatory interactions and 12,319 nodes (1,073 regulators and 11,246 targets).

The resulting decision tree correctly classified 84.91% of instances on average, with an average ROC area of 86.86%, using only three features: Knn, PageRank, and degree [8]. The classification rules revealed that:

- Small Knn values primarily indicate regulators, while high Knn values indicate targets

- Intermediate Knn regions require additional page rank information for classification

- High page rank nodes are classified as regulators, while low page rank areas require degree for final classification

This decision tree model not only provides accurate classification but also reveals biological insights about network organization. The finding that TF-hubs have small Knn (meaning their targets have few connections) suggests these regulators operate early in regulatory cascades and likely control specialized modules with fewer connections [8].
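The rule hierarchy described above (Knn first, then PageRank, then degree) can be written as a small function. This is only a sketch of the logic: the four cut-offs are caller-supplied placeholders because no numeric thresholds are given here, and the function name and argument names are hypothetical.

```python
def classify_node(knn, pagerank, degree, *, knn_low, knn_high,
                  pagerank_cut, degree_cut):
    """Follow the Knn -> PageRank -> degree hierarchy described in the text.

    All four cut-offs are placeholders supplied by the caller; they are not
    values reported by the study.
    """
    if knn < knn_low:             # small Knn: regulator
        return "regulator"
    if knn > knn_high:            # high Knn: target
        return "target"
    if pagerank >= pagerank_cut:  # intermediate Knn, high PageRank: regulator
        return "regulator"
    # Intermediate Knn and low PageRank: degree makes the final call.
    return "regulator" if degree >= degree_cut else "target"


# Illustrative thresholds only.
cuts = dict(knn_low=2.0, knn_high=10.0, pagerank_cut=0.01, degree_cut=5)
print(classify_node(1.0, 0.001, 30, **cuts))   # small Knn
print(classify_node(5.0, 0.001, 2, **cuts))    # falls through to degree
```

Writing the rules out this way shows why the tree is easy to audit: each node's label is explained by at most three comparisons.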

Performance Comparison: Decision Trees vs Alternative Methods

Classification Accuracy Across Biological Domains

Decision trees demonstrate variable performance across different biological applications, with their effectiveness dependent on data characteristics and problem complexity:

Table 2: Performance Comparison Across Biological Applications

| Application Domain | Decision Tree Performance | Alternative Methods | Key Insights |

|---|---|---|---|

| GRN Topological Analysis [8] | 84.91% CCI*, 86.86% ROC | Not reported | Knn, PageRank, degree sufficient for regulator/target classification |

| Pathogenic Mutation Prediction [18] | 85.3% accuracy | 91% accuracy (best supervised ML) | Simpler interpretation advantage over higher-performing black boxes |

| Alzheimer's Disease Gene Identification [19] | 85.3% accuracy | 96% accuracy (ANN - best) | Network topology features enhance all models |

| Diabetes Prediction [15] | 95.08% accuracy (deep tree); 97.19% accuracy (max_depth=2) | 95.83% (logistic regression) | Proper parameter tuning critical for performance |

*CCI: Correctly Classified Instances

The performance comparison reveals that while decision trees may not always achieve the highest absolute accuracy, they provide an excellent balance between performance and interpretability. In the diabetes prediction example, a simpler tree with max_depth=2 actually outperformed both a more complex tree and logistic regression, while providing clinically meaningful thresholds that aligned with medical guidelines (HbA1c threshold of 6.75% vs clinical standard of 6.5%) [15].

Strengths and Limitations in Biological Contexts

Decision trees offer particular advantages for biological data analysis:

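- Handling mixed data types: Tree algorithms can in principle work with both numerical and categorical features without extensive preprocessing, although the scikit-learn implementation requires categorical features to be numerically encoded first [14]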

- Missing value tolerance: Some algorithm implementations can handle missing values directly [16]

- Nonlinear pattern capture: Ability to model complex, nonlinear relationships without transformation [15]

- Visual interpretability: Tree structures can be visualized and understood by domain experts without machine learning expertise [15]

However, limitations include:

- Instability: Small data variations can produce completely different trees [14]

- Overfitting tendency: Can create over-complex trees that don't generalize without proper pruning [14]

- Linear relationship weakness: Not optimal for capturing linear relationships between highly correlated features [15]

Experimental Protocols for GRN Topological Analysis

Standardized Workflow for Topological Feature Extraction

Reproducible GRN analysis requires systematic procedures for network construction and feature calculation:

1. Network Construction: Compile regulatory interactions from curated databases (e.g., RegNetwork, TRRUST) or infer them from expression data using tools such as GENIE3 or GTAT-GRN [11]

2. Topological Feature Calculation:

- Compute node-level metrics: degree, Knn, PageRank, betweenness centrality, clustering coefficient

- Use network analysis libraries (NetworkX, igraph) for efficient computation

- Normalize features to account for network size variations [11]

3. Data Partitioning:

- Create balanced training sets with equal representation of regulator and target classes

- Implement cross-validation strategies to assess model stability [8]

4. Model Training and Validation:

- Train the decision tree with an impurity-based split criterion (Gini index or information gain)

- Apply pruning to prevent overfitting

- Validate on held-out test sets from multiple species to assess generalizability [8]
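The data partitioning and training steps above can be sketched as follows. The feature matrix here is synthetic (three columns standing in for Knn, PageRank, and degree), the class sizes and the number of balanced sets are arbitrary, and `balanced_subset` is a hypothetical helper written for this example; none of it reproduces the cited study's actual data or settings.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.tree import DecisionTreeClassifier

rng = np.random.default_rng(0)

# Synthetic stand-in: 3 feature columns, label 1 = regulator, 0 = target.
n_reg, n_tgt = 100, 1000
X = np.vstack([rng.normal(0.0, 1.0, (n_reg, 3)),
               rng.normal(1.5, 1.0, (n_tgt, 3))])
y = np.array([1] * n_reg + [0] * n_tgt)


def balanced_subset(X, y, rng):
    """Undersample every class to the size of the smallest class."""
    classes, counts = np.unique(y, return_counts=True)
    n = counts.min()
    idx = np.concatenate([
        rng.choice(np.flatnonzero(y == c), n, replace=False) for c in classes
    ])
    return X[idx], y[idx]


scores = []
for _ in range(5):  # several balanced sets, each drawn independently
    Xb, yb = balanced_subset(X, y, rng)
    tree = DecisionTreeClassifier(criterion="gini", max_depth=4, random_state=0)
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
    scores.append(cross_val_score(tree, Xb, yb, cv=cv).mean())

print("mean CV accuracy per balanced set:", np.round(scores, 3))
```

The spread of accuracies across the balanced sets gives a quick read on model stability; a large spread suggests the tree is sensitive to which targets were sampled.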

Experimental Design for Method Comparison

Robust comparison of decision trees against alternative methods requires:

1. Consistent Evaluation Metrics: Use multiple metrics, including accuracy, sensitivity, specificity, ROC-AUC, and precision-recall curves [18]

2. Appropriate Baselines: Compare against:

- Traditional statistical models (logistic regression)

- Alternative machine learning approaches (SVM, k-NN, neural networks)

- Ensemble versions (random forests, boosted trees) [16]

3. Biological Validation: Where possible, correlate predictions with experimental evidence (e.g., essential gene screens, ChIP-seq validation) [8]

4. Interpretability Assessment: Evaluate not just predictive performance but also model-derived biological insights and hypothesis generation capability
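One way to apply consistent metrics across baselines is scikit-learn's `cross_validate` with a dictionary of scorers, as in the sketch below. The data are synthetic, the three models are just examples of the baseline categories listed above, and specificity is computed here as recall on the negative class, which is an implementation choice of this example.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import make_scorer, recall_score
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.tree import DecisionTreeClassifier

# Synthetic binary classification data (not real GRN features).
X, y = make_classification(n_samples=500, n_features=5, n_informative=3,
                           random_state=0)

models = {
    "decision_tree": DecisionTreeClassifier(max_depth=4, random_state=0),
    "logistic_regression": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=100, random_state=0),
}
scoring = {
    "accuracy": "accuracy",
    "sensitivity": "recall",                                 # recall on class 1
    "specificity": make_scorer(recall_score, pos_label=0),   # recall on class 0
    "roc_auc": "roc_auc",
    "avg_precision": "average_precision",                    # PR-curve summary
}
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

results = {}
for name, model in models.items():
    out = cross_validate(model, X, y, cv=cv, scoring=scoring)
    results[name] = {m: out[f"test_{m}"].mean() for m in scoring}

for name, metrics in results.items():
    print(name, {m: round(v, 3) for m, v in metrics.items()})
```

Using the same folds and the same scorers for every model keeps the comparison fair; differences in the table then reflect the models rather than the evaluation setup.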

Effective implementation of decision trees for GRN analysis requires specific computational tools:

Table 3: Essential Computational Resources for GRN Topological Analysis

| Resource Category | Specific Tools | Primary Function | Application Notes |

|---|---|---|---|

| Machine Learning Libraries | scikit-learn (Python) | Decision tree implementation | Provides DecisionTreeClassifier with visualization support [14] |

| Network Analysis | NetworkX, igraph | Topological feature calculation | Efficient computation of degree, centrality, PageRank [11] |

| Tree Visualization | Graphviz export | Model interpretation | Convert trees to interpretable diagrams [14] |

| Specialized GRN Tools | GTAT-GRN | Graph neural network approach | Alternative method for comparison [11] |

| Data Processing | pandas, NumPy | Data manipulation | Preprocessing of biological datasets |

High-quality biological datasets are prerequisite for meaningful GRN analysis:

- Regulatory Interaction Databases: RegNetwork, TRRUST, RegulonDB for curated TF-target relationships

- Expression Data Repositories: GEO, ArrayExpress for time-series and perturbation data

- Variant Annotation: ClinVar, dbNSFP for mutation impact analysis [18]

- Protein-Protein Interactions: STRING, BioGRID for extended network context

Decision trees provide a powerful yet interpretable approach for analyzing GRN topological features, with particular strength in identifying meaningful biological patterns from complex network data. Their performance, while sometimes exceeded by more complex models, is frequently sufficient for biological discovery when balanced against their superior interpretability.

For researchers implementing these methods, key recommendations include:

Start Simple: Begin with standard decision trees before progressing to ensemble methods, as simpler models often provide adequate performance with greater interpretability [15]

Prioritize Biological Validation: Always correlate computational findings with biological knowledge and, when possible, experimental validation [8]

Leverage Topological Features: The consistent importance of Knn, PageRank, and degree across evolutionary diverse GRNs suggests these are fundamental features worth calculating in any network analysis [8]

Optimize Complexity: Use pruning and cross-validation to identify the optimal trade-off between model complexity and generalizability [14]

As biological datasets continue growing in size and complexity, decision trees will remain an essential tool in the computational biologist's arsenal, providing a transparent pathway from raw data to biological insight in gene regulatory network analysis.

Identifying the Most Relevant Topological Features for Classification Tasks

In systems biology, accurate inference of Gene Regulatory Networks (GRNs) is fundamental to understanding cellular dynamics, disease mechanisms, and developmental processes. A GRN is a graph representation in which nodes represent genes and edges represent regulatory interactions between transcription factors (TFs) and their target genes [12]. The topological structure of these networks, that is, the arrangement and connection patterns between nodes, holds critical information about their function and robustness. Consequently, identifying the most relevant topological features for classifying network components and predicting regulatory relationships has become a central task in computational biology. This guide compares the performance of different models and analytical approaches that use topological features for classification tasks within GRNs, with an emphasis on decision tree models. We summarize experimental data, provide detailed methodologies, and describe key concepts for researchers, scientists, and drug development professionals.

Topological Features of GRNs: A Primer

Topological features are quantitative metrics derived from the structural properties of nodes and edges in a GRN graph. They characterize a gene's position, importance, and interaction patterns within the complex web of regulation [10] [11]. The accurate computation of these features is a prerequisite for any classification or inference model.

The following table summarizes the key topological features commonly used in GRN analysis, their definitions, and their biological significance for classification tasks.

Table 1: Key Topological Features in Gene Regulatory Networks

| Feature Name | Mathematical/Graph Definition | Biological Interpretation in GRNs |

|---|---|---|

| Degree Centrality | The total number of direct connections (edges) a node has. | Indicates a gene's overall connectivity. Hubs (high-degree genes) are often key regulators or stable core components. |

| In-Degree | The number of incoming edges to a node. | For a gene, this represents the number of transcription factors that directly regulate it. |

| Out-Degree | The number of outgoing edges from a node. | For a TF, this represents the number of target genes it directly regulates. |

| Knn (Average Nearest Neighbor Degree) | The average degree of a node's direct neighbors [8]. | Helps distinguish regulators with low-Knn (controlling specialized subsystems) from targets with high-Knn (involved in essential subsystems) [8]. |

| PageRank | An algorithm measuring node importance based on the quantity and quality of its incoming connections. | Identifies genes with high influence in the network, often crucial for life-essential subsystems and network robustness [8]. |

| Betweenness Centrality | The number of shortest paths between all node pairs that pass through a given node. | Highlights "bottleneck" genes that control information flow and are potential critical control points. |

| Clustering Coefficient | A measure of how interconnected a node's neighbors are to each other. | Quantifies the presence of tightly-knit regulatory modules or feedback loops around a gene. |

Experimental Comparison of Model Performance

Various models have been developed to leverage these topological features, among other data types, for GRN inference and node classification. The following experiments and benchmarks illustrate how different approaches perform in practice.

Decision Tree Model for Classifying Regulators and Targets

A foundational study constructed decision tree models using topological features to classify network nodes as either regulators (TFs) or targets [8].

Table 2: Performance of Decision Tree Model in Node Classification

| Evaluation Metric | Performance Score | Experimental Context |

|---|---|---|

| Correctly Classified Instances (CCI) | 84.91% (average) | Model trained on GRNs from multiple species (E. coli, S. cerevisiae, D. melanogaster, A. thaliana, H. sapiens) [8]. |

| ROC Area | 86.86% (average) | Same multi-species training set as above [8]. |

| Feature Importance Ranking | 1. Knn 2. PageRank 3. Degree | Attribute selection identified these three as the most relevant features for the classification task [8]. |

Experimental Protocol:

- Data Curation: Regulatory interactions were compiled from species-specific databases for E. coli, S. cerevisiae, D. melanogaster, A. thaliana, and H. sapiens, resulting in 49,801 interactions and 12,319 nodes (1,073 regulators and 11,246 targets) after filtering [8].

- Feature Calculation: Topological features, including Knn, PageRank, and degree, were calculated for each node in the networks [8].

- Model Training and Validation: Decision trees were trained using the Waikato Environment for Knowledge Analysis (WEKA) software. The model was built on 12 balanced training sets and its performance was validated against randomized datasets, which resulted in low performance (CCI ~51.82%), confirming the model's reliability [8].

[Figure: logic of the resulting consensus decision tree, showing how the key topological features are used for classification.]

Advanced Graph Neural Network Models for GRN Inference

More recently, advanced deep learning models have been developed that integrate topological features with other data sources for superior GRN inference.

GTAT-GRN Model: This model uses a Graph Topology-Aware Attention (GTAT) mechanism and fuses multi-source features [10] [11].

Table 3: GTAT-GRN Performance on Benchmark Datasets

| Benchmark Dataset | Key Performance Metrics vs. State-of-the-Art (e.g., GENIE3, GreyNet) |

|---|---|

| DREAM4 | Consistently higher inference accuracy and improved robustness across datasets [10] [11]. |

| DREAM5 | Outperformed existing methods in overall metrics, including Area Under the Curve (AUC) and Area Under the Precision-Recall Curve (AUPR) [10] [11]. |

| General Performance | Demonstrated high-confidence predictive performance on Top-k metrics (Precision@k, Recall@k, F1@k) [10] [11]. |

Experimental Protocol:

- Multi-Source Feature Fusion: The model integrates three streams of information:

  - Temporal Features: Statistical indicators (mean, standard deviation, trend) from gene expression time-series data.

  - Expression-Profile Features: Baseline expression levels, stability, and specificity from wild-type and multi-condition data.

  - Topological Features: The network-based metrics listed in Table 1, calculated from a prior GRN structure [10] [11].

- Graph Topology-Aware Attention: The GTAT module dynamically captures high-order dependencies and asymmetric relationships between genes by combining graph structure information with multi-head attention mechanisms [10] [11].

- Model Training and Evaluation: The model was trained and evaluated on standard benchmark datasets like DREAM4 and DREAM5, with performance quantified using AUC, AUPR, and Top-k metrics against other established methods [10] [11].

GRLGRN Model: This model employs a graph transformer network to infer GRNs from single-cell RNA-sequencing data [12].

Table 4: GRLGRN Performance on scRNA-seq Benchmarks

| Evaluation Context | Performance Improvement Over Prevalent Models |

|---|---|

| Seven Cell-Line Datasets (hESCs, hHEPs, mDCs, etc.) | Achieved the best predictions in Area Under the Receiver Operating Characteristic (AUROC) and AUPRC on 78.6% and 80.9% of datasets, respectively [12]. |

| Average Performance Gain | Achieved an average improvement of 7.3% in AUROC and 30.7% in AUPRC [12]. |

Experimental Protocol:

- Data Preprocessing: Utilized scRNA-seq data from the BEELINE database, comprising seven cell lines with three different ground-truth networks (STRING, cell type-specific ChIP-seq, non-specific ChIP-seq) [12].

- Implicit Link Extraction: A graph transformer network was used to extract implicit links from a prior GRN, going beyond explicit connections to capture latent regulatory dependencies [12].

- Feature Enhancement and Output: Gene embeddings were refined using a Convolutional Block Attention Module (CBAM) and a graph contrastive learning regularization term was added to prevent over-fitting. The final output layer predicts gene regulatory relationships [12].

The Scientist's Toolkit: Research Reagent Solutions

The experiments cited rely on a suite of computational tools and data resources. The following table details these essential components.

Table 5: Key Research Reagents and Computational Resources

| Resource Name | Type | Function in Research |

|---|---|---|

| BEELINE Database [12] | Benchmark Data | Provides standardized scRNA-seq datasets and ground-truth networks from multiple cell lines for fair evaluation and benchmarking of GRN inference algorithms. |

| DREAM4 & DREAM5 [10] [11] | Benchmark Data | Community-standard in silico challenge datasets used to objectively compare the performance of GRN inference methods. |

| WEKA [8] | Software | A suite of machine learning software written in Java, used for building and validating the decision tree models in the foundational study. |

| STRING DB [20] | Biological Database | A database of known and predicted protein-protein interactions, often used as a source of prior biological knowledge to guide and validate network models. |

| Graph Transformer Network [12] | Algorithm | A type of graph neural network that uses self-attention to model dependencies between all nodes in a graph, used in GRLGRN to extract implicit links. |

| CRISPR-Cas9 Screens (e.g., DepMap) [20] | Experimental Data | Functional genomic screens that measure gene dependency scores, which are used as a gold standard to validate the functional relevance of predicted network interactions and biomarkers. |

Integrated Workflow and Biological Significance

The process of leveraging topological features for GRN analysis, from data input to biological insight, integrates the components of the various models discussed, with topological features central to the classification and inference process.

[Figure: integrated workflow from data input to biological insight.]

The biological significance of topological features is profound. The decision tree study revealed that life-essential subsystems are predominantly governed by TFs with intermediate Knn and high PageRank or degree [8]. This combination suggests a structure where robustness against random perturbation is ensured by the high probability of signal propagation (high PageRank/degree) through well-connected nodes. In contrast, specialized subsystems (e.g., cell differentiation) are mainly regulated by TFs with low Knn [8]. These TF-hubs, which likely emerged from gene duplication events, act early in regulatory cascades and control more modular, specialized functions with fewer connections to other subsystems. This topological arrangement elegantly maps form to function in cellular regulation.

This guide compares the performance of decision tree models in identifying evolutionarily conserved topological features within Gene Regulatory Networks (GRNs). The analysis synthesizes experimental data from multiple studies to evaluate how topological characteristics, including average nearest-neighbor degree (Knn), PageRank, and degree, serve as robust classifiers for distinguishing regulatory elements and reveal conserved patterns across species. The conservation of these features is closely linked to gene and genome duplication events, which shape network architecture and subsystem control. Below, we present structured quantitative data, detailed experimental protocols, and essential research tools to support the evaluation and application of these models in research and drug development.

Quantitative Comparison of Topological Features and Model Performance

Core Topological Features Across Species

The following table summarizes the three most relevant topological features identified from GRNs of multiple species and their roles in classifying network components and essential subsystems [8].

| Topological Feature | Role in Classifying Regulators vs. Targets | Association with Subsystems | Evolutionary Influence |

|---|---|---|---|

| Knn (average nearest-neighbor degree) | Primary classifier; Regulators have low Knn, targets have high Knn [8]. | Low Knn regulators control specialized subsystems; Targets with high Knn operate in life-essential subsystems [8]. | Gene/genome duplication is the main process that increases Knn [8]. |

| Page Rank | Secondary classifier; High page rank indicates regulators [8]. | High page rank regulators control life-essential subsystems, ensuring robustness [8]. | Conserved along evolution; A primary trait in cell development [8]. |

| Degree | Tertiary classifier; High degree indicates regulators [8]. | High degree regulators control life-essential subsystems [8]. | Conserved along evolution [8]. |

Decision Tree Model Performance Metrics

Analysis of GRNs from Escherichia coli, Saccharomyces cerevisiae, Drosophila melanogaster, Arabidopsis thaliana, and Homo sapiens demonstrated the high performance of decision tree models built on these three features [8].

| Model / Dataset | Correctly Classified Instances (CCI) | ROC Area | Model Complexity (Tree Leaves) |

|---|---|---|---|

| Consensus Decision Tree (Normal Data) | 84.91% (Average) | 86.86% (Average) | 9 to 15 leaves [8] |

| Independent Test Set Classification | 68.23% to 100% | ≥ 0.8 (Predictive Score) | Not Specified [8] |

| Model Trained on Randomized Data | 51.82% (Average) | 51% (Average) | Up to 17 leaves [8] |

Experimental Protocols and Workflows

Protocol 1: GRN Topological Analysis and Decision Tree Classification

This methodology was used to identify Knn, page rank, and degree as the most relevant features and build the classifier [8].

1. Data Acquisition and Network Filtering:

- Obtain species-specific GRN data from curated databases.

- Apply filtering steps to select high-confidence regulatory interactions. The studied networks represented up to 51.17% of all genes in each genome [8].

2. Topological Feature Calculation:

- For each node (TF or target gene) in the filtered network, calculate its degree (number of connections), Knn (average degree of its neighbors), and page rank (measure of node importance based on incoming connections) [8].

- Verify the scale-free property of the filtered networks by fitting a power-law function (R² ≈ 1) [8].

3. Attribute Selection and Model Training:

- Use attribute selection algorithms to rank the importance of all topological features. Knn, page rank, and degree consistently rank highest [8].

- Construct decision trees using only these three attributes. Generate multiple balanced training sets (e.g., 12 sets with ~1900 instances each) [8].

- Train the decision tree model, resulting in trees with 9-15 leaves [8].

4. Model Validation and Testing:

- Validate model performance using k-fold cross-validation and independent test sets.

- Benchmark against randomized data to confirm reliability (low performance on random data supports model reliability) [8].
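The randomized-data benchmark in step 4 can be approximated by shuffling the class labels and repeating the same cross-validation, as sketched below. The data are synthetic and balanced by construction, and label permutation is one common way to build a randomized control; the study's own randomization procedure may differ.

```python
import numpy as np
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

rng = np.random.default_rng(0)

# Balanced synthetic set: 3 feature columns, 500 nodes per class.
n = 500
X = np.vstack([rng.normal(0.0, 1.0, (n, 3)),
               rng.normal(1.5, 1.0, (n, 3))])
y = np.repeat([1, 0], n)  # 1 = regulator, 0 = target

tree = DecisionTreeClassifier(max_depth=4, random_state=0)

# Cross-validated accuracy with the true labels.
real_cci = cross_val_score(tree, X, y, cv=10).mean()

# Control: break the feature-label link by permuting the labels.
y_shuffled = rng.permutation(y)
random_cci = cross_val_score(tree, X, y_shuffled, cv=10).mean()

print(f"true labels:     {real_cci:.3f}")
print(f"shuffled labels: {random_cci:.3f}")
```

On a balanced set, accuracy near 50% with shuffled labels is what chance predicts, so a large gap between the two numbers supports the claim that the tree has learned a real feature-label relationship.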

Protocol 2: In Silico Network Evolution Simulation

This protocol tests how gene duplication events influence the emergence of Knn as a key topological feature [8].

1. Initial Network Construction:

- Create a hypothetical initial GRN with a simple, defined architecture [8].

2. Simulation of Duplication Events:

- Target Duplication: Duplicate the target genes of a given regulator. This increases the regulator's degree and leads to a smooth decrease in the regulator's Knn [8].

- Regulator Duplication: Duplicate regulator nodes. This increases the degree of the targets and leads to an increase in the regulator's Knn [8].

3. Topological Analysis Post-Duplication:

- After each duplication event, recalculate the Knn, page rank, and degree for all nodes in the simulated network.

- Track changes in these features to confirm that duplication is a key evolutionary process shaping Knn [8].
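A minimal sketch of the duplication experiment is shown below, using a plain adjacency dictionary. The initial network, the node names, and the helper functions are hypothetical, and only degree and Knn are tracked here. In this toy topology, duplicating a low-degree target lowers the regulator's Knn and duplicating the regulator raises it, in line with the protocol; the direction of the target-duplication effect depends on which target is copied.

```python
def build_network(edges):
    """Undirected adjacency dict from (regulator, target) pairs."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj


def duplicate_node(adj, node, copy_name):
    """Return a new network in which copy_name inherits all edges of node."""
    new_adj = {n: set(nbrs) for n, nbrs in adj.items()}
    new_adj[copy_name] = set(adj[node])
    for nbr in adj[node]:
        new_adj[nbr].add(copy_name)
    return new_adj


def knn(adj, node):
    """Average degree of the node's direct neighbors."""
    nbrs = adj[node]
    return sum(len(adj[n]) for n in nbrs) / len(nbrs)


# Hypothetical initial GRN: two regulators sharing one target.
initial = build_network([("R1", "t1"), ("R1", "t2"),
                         ("R2", "t2"), ("R2", "t3")])

after_target_dup = duplicate_node(initial, "t1", "t1_copy")
after_regulator_dup = duplicate_node(initial, "R1", "R1_copy")

for label, net in [("initial", initial),
                   ("target duplicated", after_target_dup),
                   ("regulator duplicated", after_regulator_dup)]:
    print(f"{label}: degree(R1)={len(net['R1'])}, Knn(R1)={knn(net, 'R1'):.3f}")
```

Duplicating a regulator adds one connection to each of its targets, so the regulator's Knn rises by exactly one in this model; that is the mechanism by which regulator duplication pushes Knn upward.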

Logical Workflow of GRN Topological Analysis

[Figure: core logic and process flow for using topological features to classify network components and understand their evolutionary conservation.]

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and computational tools essential for conducting research on GRN topological features and their evolution.

| Resource/Tool | Function in Research | Relevance to Topological Conservation |

|---|---|---|

| NoC Classification Model [8] | A decision tree model for classifying regulators and targets based on topological features. | Provides the foundational model demonstrating Knn, page rank, and degree as evolutionarily conserved classifiers. |

| Graphlet Degree Vector (GDV) [21] | A 73-dimensional vector describing the local wiring patterns of a node in a network. | Used in protein-protein interaction networks to find topology-function relationships conserved between species (topological orthology). |

| Biologically Informed Neural Networks (BINNs) [22] | Sparse neural networks with layers mapped to biological pathways for enhanced interpretability. | Offers an alternative, highly accurate method for integrating network biology and identifying important proteins/pathways. |

| TopoDoE Strategy [23] | A design of experiment strategy to refine GRN topology using perturbation simulations. | Helps validate and correct GRN topologies inferred from data, crucial for accurate evolutionary studies. |

| Power-Law Distribution Analysis [8] | A statistical test to verify the scale-free property of a biological network. | Confirms that filtered GRNs maintain a key topological property (scale-freeness), supporting evolutionary analysis. |

| Descendants Variance Index (DVI) [23] | A topological index measuring variability in a gene's regulatory interactions across candidate GRNs. | Identifies genes with the most uncertain regulatory connections, prime targets for experimental refinement. |

Discussion of Comparative Performance

Decision tree models based on Knn, page rank, and degree provide a highly accurate and interpretable framework for classifying GRN components and linking topology to biological function across evolution. The high performance scores (CCI ~85%, ROC ~87%) on multi-species data and the stark contrast with models trained on randomized data underscore their reliability [8].

The primary advantage of this approach is its ability to distill complex network architecture into simple, evolutionarily conserved rules. The finding that gene duplication directly shapes the most relevant feature, Knn, provides a mechanistic link between evolutionary processes and network topology [8]. This offers a significant edge in generating testable hypotheses about subsystem control.

Alternative methods, such as Biologically Informed Neural Networks (BINNs), can achieve superior predictive accuracy (ROC-AUC up to 0.99) for specific tasks like patient subphenotyping [22]. However, they are typically more complex and require pre-defined pathway databases. Similarly, graphlet-based correlation analysis can identify topologically orthologous functions between species [21] but operates on protein-protein interaction networks. For the specific goal of identifying broad, evolutionarily conserved architectural principles in GRNs, the decision tree model offers a strong balance of performance, simplicity, and biological insight.

Building and Applying Decision Tree Models to GRN Data

Gene Regulatory Networks (GRNs) represent the complex web of interactions where transcription factors (TFs) regulate the expression of target genes. Reconstructing these networks from omics data is fundamental for understanding cellular identity, differentiation, and disease mechanisms [24]. The field has evolved through distinct phases, from early computational tools using transcriptomic data alone to contemporary methods that leverage single-cell multi-omics measurements [24]. This progression has enabled more robust modeling of regulatory processes by integrating information about TF binding site accessibility from assays like ATAC-seq and ChIP-seq alongside gene expression data [24].

Within this context, topological features of GRNs provide a powerful, abstract representation of network structure that captures relationships beyond simple gene co-expression. These features describe the connectivity patterns, hierarchical organization, and relational roles of genes within the regulatory network. When combined with decision tree models—notably gradient-boosted trees like XGBoost—they create a framework for predicting key regulatory elements, classifying cell states, and identifying dynamically changing network components across biological conditions. This pipeline details the comprehensive process from raw data preprocessing to model training, emphasizing the extraction of topological features and their application in tree-based machine learning models.

GRN Data Preprocessing and Network Construction

Data Source Preparation and Initial Processing

The first stage involves preparing and validating the input data. For GRN construction, this typically comes from transcriptomic (e.g., scRNA-seq) and epigenomic (e.g., scATAC-seq) sources.

- Single-cell RNA-seq Data Processing: Raw count matrices require rigorous preprocessing. This includes quality control (mitochondrial content, number of detected genes), normalization (e.g., library size normalization), and log-transformation (log2(counts + 1)) to stabilize variance [24]. Highly variable genes are often selected to reduce computational complexity before network inference.

- Single-cell Multi-omics Data: When using paired or integrated multi-omics data, chromatin accessibility information from scATAC-seq is integrated with gene expression. This allows for the mapping of accessible cis-regulatory elements (CREs) to potential target genes, providing critical context for TF binding [24].

- Data Formatting for Analysis: Processed data is formatted into a gene expression matrix (cells x genes) for tools like SCENIC. As per best practices, ensure file paths and variable names do not contain spaces or special characters and do not conflict with function names in the computing environment (e.g., MATLAB, R) [25].
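The scRNA-seq preprocessing steps listed above can be sketched in plain NumPy. This is a simplified illustration on simulated counts: the `preprocess` function and its default values are hypothetical, the mitochondrial-content filter is omitted, and highly variable genes are chosen by raw variance of the log-transformed values, which is cruder than the methods used by dedicated single-cell toolkits.

```python
import numpy as np

rng = np.random.default_rng(0)

# Simulated raw counts: 50 cells x 200 genes (not real data).
gene_means = rng.gamma(2.0, 2.0, size=200)
counts = rng.poisson(lam=gene_means, size=(50, 200)).astype(float)


def preprocess(counts, min_genes=10, target_sum=1e4, n_top_genes=50):
    """QC filter, library-size normalization, log2(x + 1), variable-gene selection."""
    # Quality control: keep cells with enough detected genes.
    detected = (counts > 0).sum(axis=1)
    kept = counts[detected >= min_genes]

    # Library-size normalization: scale each cell to the same total count.
    lib_size = kept.sum(axis=1, keepdims=True)
    normalized = kept / lib_size * target_sum

    # Log-transformation to stabilize variance.
    logged = np.log2(normalized + 1.0)

    # Keep the genes with the highest variance across cells.
    order = np.argsort(logged.var(axis=0))[::-1]
    top_genes = np.sort(order[:n_top_genes])
    return logged[:, top_genes], top_genes


expr, gene_idx = preprocess(counts)
print(expr.shape)  # cells x selected genes matrix, ready for GRN inference
```

The returned matrix has cells as rows and selected genes as columns, matching the cells x genes layout described above.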

Core GRN Inference and Topological Feature Extraction

Once data is preprocessed, regulatory networks are inferred. The following table compares several prominent GRN inference tools, highlighting their data requirements and modeling approaches.

Table 1: Comparison of Multi-omics GRN Inference Tools

| Tool | Possible Inputs | Type of Multimodal Data | Type of Modelling | Type of Interactions | Statistical Framework |
|---|---|---|---|---|---|
| SCENIC+ [24] | Groups, contrasts, trajectories | Paired or integrated | Linear | Signed, weighted | Frequentist |
| CellOracle [24] [26] | Groups, trajectories | Unpaired | Linear | Signed, weighted | Frequentist or Bayesian |
| Pando [24] | Groups | Paired or integrated | Linear or non-linear | Signed, weighted | Frequentist or Bayesian |
| GRaNIE [24] | Groups | Paired or integrated | Linear | Weighted | Frequentist |
| FigR [24] | Groups | Paired or integrated | Linear | Signed, weighted | Frequentist |
| Gene2role [26] | Inferred GRNs | N/A (works on networks) | Role-based embedding | N/A | Frequentist |

For tools that infer signed interactions, the output is a signed GRN, formally represented as ( G = (V, E^+, E^-) ), where ( V ) is the set of genes (nodes), and ( E^+ ) and ( E^- ) are sets of positive (activation) and negative (inhibition) regulatory interactions (edges) [26].

From this network, foundational topological features are computed for each gene:

- Signed-degree: A 2-dimensional vector ( \mathbf{d} = [d^+, d^-] ) where ( d^+ ) and ( d^- ) are the number of positive and negative regulatory interactions for a gene [26].

- Multi-hop Neighborhood Topology: Advanced methods like Gene2role go beyond direct connections. They construct a multilayer graph that reflects structural similarities between nodes (genes) at different depths (e.g., 1-hop, 2-hop neighbors). This captures a gene's role in the broader network architecture, which is crucial for comparative analysis [26]. The similarity between genes is calculated using a distance function like Exponential Biased Euclidean Distance (EBED) to account for the scale-free nature of GRNs [26].
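As a concrete illustration of the signed-degree feature, the sketch below counts positive and negative interactions per gene from a signed edge list. It assumes that edges are given as `(regulator, target, sign)` tuples and that ( d^+ ) and ( d^- ) count both incoming and outgoing edges; the source does not specify either choice, and the gene names are placeholders. The multi-hop embedding step of Gene2role is not reproduced here.

```python
from collections import defaultdict

def signed_degrees(edges):
    """Compute per-gene signed degree features from (regulator, target, sign) edges.

    Returns a dict: gene -> {"d": [d_plus, d_minus], "out+", "out-", "in+", "in-"}.
    Counting both edge directions in d is an assumption of this sketch.
    """
    counts = defaultdict(lambda: {"out+": 0, "out-": 0, "in+": 0, "in-": 0})
    for regulator, target, sign in edges:
        suffix = "+" if sign > 0 else "-"
        counts[regulator]["out" + suffix] += 1
        counts[target]["in" + suffix] += 1
    features = {}
    for gene, c in counts.items():
        features[gene] = {
            "d": [c["out+"] + c["in+"], c["out-"] + c["in-"]],
            **c,
        }
    return features

# Toy signed GRN; names and signs are illustrative only.
edges = [
    ("TF_A", "gene_1", +1),
    ("TF_A", "gene_2", -1),
    ("TF_B", "gene_1", -1),
    ("TF_B", "TF_A", +1),
]
features = signed_degrees(edges)
```

Keeping the in/out split alongside the combined vector makes it straightforward to use either representation as columns in a downstream feature matrix.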

The diagram below illustrates the complete workflow from raw data to a topologically enriched GRN ready for model training.

Graphical Abstract: GRN Preprocessing to Topological Feature Extraction

Integration with Decision Tree Models and Experimental Protocols

Feature Vector Construction and Model Training

The topological features extracted from the GRN are structured into a feature matrix suitable for machine learning. Each row corresponds to a gene, and columns represent features such as signed in-degree, signed out-degree, clustering coefficient, and multi-dimensional embeddings from role-based methods like Gene2role [26]. These features can be supplemented with node-level attributes (e.g., gene expression variance) and, for multi-omics GRNs, edge-level data like TF-binding scores from integrated epigenomics [24].

Decision tree models, particularly XGBoost (Extreme Gradient Boosting), are well-suited for this data. XGBoost is an ensemble method that builds decision trees sequentially, with each new tree fitted to correct the errors of the ensemble built so far. It handles mixed data types well, provides feature importance scores, and has performed strongly on topology-derived features in other domains: in topological materials research it reached accuracies of up to 85.2% in a five-class setting and 92.4% in binary classification [27]. These figures come from materials science rather than biology, so they indicate the suitability of tree-based models for topological features rather than a GRN-specific benchmark. The training protocol involves:

- Dataset Splitting: Partitioning the data into training, validation, and test sets, often using Nested Cross-Validation (NCV) to robustly tune hyperparameters and evaluate performance without data leakage [27].

- Hyperparameter Tuning: Optimizing parameters such as learning rate, maximum tree depth, number of estimators, and regularization terms (L1/L2) via grid or random search on the validation set.

- Model Training: Fitting the XGBoost model on the training set and monitoring performance on the held-out validation set to prevent overfitting.
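The splitting logic behind nested cross-validation can be sketched independently of any model library. The example below only generates index sets; the helper names `k_fold_indices` and `nested_cv_splits` and the fold counts are assumptions for illustration. In a real pipeline, a gradient-boosted model would be fitted on each inner training set, tuned on the inner validation set, and scored once on the outer test fold.

```python
import random

def k_fold_indices(indices, k, rng):
    """Shuffle indices and split them into k nearly equal folds."""
    shuffled = list(indices)
    rng.shuffle(shuffled)
    return [shuffled[i::k] for i in range(k)]

def nested_cv_splits(n_samples, outer_k=5, inner_k=3, seed=0):
    """Yield (inner_splits, test_idx) for each outer fold.

    inner_splits is a list of (train_idx, val_idx) pairs drawn only from the
    outer training portion, so test samples never influence tuning.
    """
    rng = random.Random(seed)
    outer_folds = k_fold_indices(range(n_samples), outer_k, rng)
    for i, test_idx in enumerate(outer_folds):
        outer_train = [j for f, fold in enumerate(outer_folds) if f != i for j in fold]
        inner_folds = k_fold_indices(outer_train, inner_k, rng)
        inner_splits = []
        for v, val_idx in enumerate(inner_folds):
            train_idx = [j for f, fold in enumerate(inner_folds) if f != v for j in fold]
            inner_splits.append((train_idx, val_idx))
        yield inner_splits, test_idx

# 30 genes (rows of the feature matrix), 5 outer folds, 3 inner folds.
splits = list(nested_cv_splits(n_samples=30, outer_k=5, inner_k=3))
```

Because the inner folds are built only from the outer training indices, hyperparameter selection never sees the genes used for the final performance estimate, which is the leakage the protocol is designed to avoid.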

Key Experiments and Performance Comparison

To evaluate the utility of GRN topological features in conjunction with decision tree models, several experimental paradigms are employed. The performance of different models and feature sets is typically compared using accuracy, F1 score, and area under the receiver operating characteristic curve (AUC-ROC).

Table 2: Experimental Performance Comparison of Models and Features

| Experiment Description | Model / Feature Set | Key Performance Metric | Interpretation / Top Feature |
|---|---|---|---|
| Five-type topological material classification [27] | XGBoost | 85.2% Accuracy | Demonstrates high efficacy of tree-based models on topological data. |
| Binary classification (Trivial vs. Non-trivial) [27] | XGBoost | 92.4% Accuracy | Highlights model strength in simpler discriminative tasks. |
| Identification of key topological influencers [27] | XGBoost Feature Importance | Max Packing Efficiency (MPE), Fraction of p valence electrons (FPV) | Topological properties can be linked to compositional/structural features. |
| Quantifying gene module stability [26] | Gene2role Embeddings + Distance Metrics | N/A | Enables measurement of topological changes in gene modules across cell states. |

A critical experiment is the identification of Differentially Topological Genes (DTGs). This involves:

- GRN Construction: Inferring cell-type-specific GRNs for two or more biological states (e.g., healthy vs. diseased, different differentiation stages) using a consistent tool like CellOracle or from single-cell co-expression [26].

- Embedding Generation: Using a role-based embedding method like Gene2role to project genes from each GRN into a unified latent space based on their multi-hop topological identities [26].

- Distance Calculation: Computing the Euclidean or cosine distance between the embeddings of the same gene across the two different cellular states.

- Statistical Analysis: Ranking genes by their embedding distance and selecting the top N as DTGs. These genes have undergone significant changes in their regulatory context, which may not be apparent from differential expression analysis alone [26].
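The distance and ranking steps above can be sketched as follows. The example assumes that embeddings for both states already live in a shared latent space, as the protocol requires, and are stored as plain dictionaries mapping gene names to vectors. The function names, gene names, and vectors are illustrative and are not taken from Gene2role.

```python
import math

def cosine_distance(u, v):
    """1 - cosine similarity; defined as 1.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 1.0
    return 1.0 - dot / (norm_u * norm_v)

def rank_dtgs(emb_a, emb_b, top_n, distance=cosine_distance):
    """Rank genes present in both states by embedding distance, largest first."""
    shared = sorted(set(emb_a) & set(emb_b))
    scored = [(gene, distance(emb_a[gene], emb_b[gene])) for gene in shared]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_n]

# Toy 2-dimensional embeddings for two cellular states (made-up values).
state_a = {"GATA1": [1.0, 0.0], "SPI1": [0.0, 1.0], "MYC": [1.0, 1.0]}
state_b = {"GATA1": [0.0, 1.0], "SPI1": [0.0, 2.0], "MYC": [1.0, 0.5]}
dtgs = rank_dtgs(state_a, state_b, top_n=2)
```

Here the first gene's embedding rotates by 90 degrees between states and ranks first, while the second gene only changes in magnitude and therefore has zero cosine distance. Passing a Euclidean distance function as `distance` would make magnitude changes count as well.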

The logical flow of this key experiment is detailed below.

Workflow for Identifying Differentially Topological Genes

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful execution of the GRN pipeline requires a suite of computational tools and data resources. The table below catalogs essential "research reagents" for this field.

Table 3: Essential Computational Reagents for GRN Topological Analysis

| Tool / Resource Name | Type | Primary Function | Key Application in Pipeline |
|---|---|---|---|
| SCENIC/SCENIC+ [24] | GRN Inference Tool | Infers regulons from scRNA-seq data using co-expression and motif enrichment. | Core network construction from transcriptomics. |
| CellOracle [24] [26] | GRN Inference & Simulation | Models GRNs from multi-omics data and simulates perturbation responses. | Network construction and in silico validation. |
| Gene2role [26] | Topological Embedding | Generates role-based gene embeddings from signed GRNs for comparison. | Extracting comparable topological features across networks. |
| XGBoost [27] | Machine Learning Library | Implements gradient-boosted decision trees for classification/regression. | Predictive modeling using topological features. |
| PyTorch Geometric | Deep Learning Library | Provides graph neural network primitives and layers. | Building custom GNNs for feature extraction (as in MFTReNet [28]). |
| Single-cell Omics Datasets (e.g., from cell atlas projects) | Data Resource | Provides raw count matrices for gene expression and chromatin accessibility. | Primary input data for GRN inference. |
| CisTarget Databases [24] | Motif Discovery Resource | Contains ranked lists of genomic regions for motif discovery (used by SCENIC). | Identifying direct targets of transcription factors. |

The integration of GRN-derived topological features with decision tree models creates a powerful, interpretable framework for computational biology. This step-by-step pipeline—from stringent data preprocessing and robust network inference to sophisticated topological feature extraction and model training—enables researchers to move beyond static network descriptions. It facilitates the prediction of key regulators, the classification of cellular states based on network architecture, and the identification of genes whose topological roles are dynamically altered in development and disease. As GRN inference methods continue to mature with multi-omics integration and topological deep learning, their synergy with robust tree-based models will remain a cornerstone of quantitative, network-based biological discovery.

Gene Regulatory Networks (GRNs) represent the complex interactions between transcription factors (TFs) and their target genes, playing essential roles in development, phenotype plasticity, and evolution [8]. Analyzing these networks requires extracting quantitative topological features that can describe their structure and function. Topological metrics provide a mathematical framework to characterize these complex systems, enabling researchers to identify key regulatory elements, understand robustness mechanisms, and predict system behavior under perturbation.

The structure of GRNs is typically scale-free, meaning that their degree distribution follows a power law. This provides resilience against random node removal and is consistent with genome evolution by gene duplication [8]. This property makes certain topological features particularly informative for understanding the functional organization of regulatory systems. Research has shown that three topological features, Knn (average nearest neighbor degree), PageRank, and degree, are the most relevant attributes for distinguishing regulators from targets in GRNs and are conserved across evolution [8].

Key Topological Metrics and Their Biological Significance

Fundamental Metrics and Computational Definitions

Degree: The number of connections a node has to other nodes. In GRNs, TFs often serve as hubs (high-degree nodes) [8]. Degree is calculated as ( d(i) = \sum_{j} A_{ij} ), where ( A ) is the adjacency matrix.

Knn (Average Nearest Neighbor Degree): Measures the average degree of a node's neighbors, quantifying assortativity (the tendency of nodes to connect to similar nodes) [8]. Knn is calculated as ( k_{nn}(i) = \frac{1}{d(i)} \sum_{j} A_{ij} d(j) ).

PageRank: An algorithm that measures the importance of a node based on the importance of its neighbors. It was originally developed for web search but applies well to biological networks for identifying master regulators [8].

Betweenness Centrality: Quantifies the number of shortest paths passing through a node, identifying bottlenecks in the network [29].

Assortativity: Measures the tendency of nodes to connect to similar nodes, typically calculated as the Pearson correlation coefficient of degree between pairs of connected nodes [29].

Network Efficiency: Quantifies how efficiently a network exchanges information, related to its robustness to perturbations [29].
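The degree and Knn formulas above, together with PageRank, can be computed on a small example to make the definitions concrete. The sketch below is a plain-Python illustration under stated assumptions: the graph is stored as an adjacency-list dictionary with symmetric (undirected) edges so that it matches the adjacency-matrix formulas, the PageRank routine is a standard power iteration with an assumed damping factor of 0.85, and the node names are placeholders.

```python
def degree(adj):
    """d(i) = sum_j A_ij, i.e. the number of neighbors of each node."""
    return {i: len(neighbors) for i, neighbors in adj.items()}

def knn(adj):
    """k_nn(i) = (1 / d(i)) * sum_j A_ij d(j); 0.0 for isolated nodes."""
    d = degree(adj)
    return {
        i: (sum(d[j] for j in neighbors) / d[i] if d[i] else 0.0)
        for i, neighbors in adj.items()
    }

def pagerank(adj, damping=0.85, tol=1e-10, max_iter=200):
    """Power-iteration PageRank; adj[i] lists the nodes that i points to."""
    nodes = list(adj)
    n = len(nodes)
    rank = {i: 1.0 / n for i in nodes}
    for _ in range(max_iter):
        # Rank held by nodes without outgoing edges is spread uniformly.
        dangling = sum(rank[i] for i in nodes if not adj[i])
        new = {i: (1 - damping) / n + damping * dangling / n for i in nodes}
        for i in nodes:
            for j in adj[i]:
                new[j] += damping * rank[i] / len(adj[i])
        delta = sum(abs(new[i] - rank[i]) for i in nodes)
        rank = new
        if delta < tol:
            break
    return rank

# Toy undirected network: one hub ("TF") plus a short chain (illustrative).
adj = {
    "TF": ["g1", "g2", "g3"],
    "g1": ["TF"],
    "g2": ["TF"],
    "g3": ["TF", "g4"],
    "g4": ["g3"],
}
deg = degree(adj)
k_nn = knn(adj)
pr = pagerank(adj)
```

In this toy network the hub has the highest degree and PageRank but the lowest Knn, because its neighbors are mostly leaves. For a directed GRN, the same `pagerank` function can be applied to out-neighbor lists, as long as every node appears as a key.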

Biological Interpretation of Key Metrics

The relationship between topological features and biological function reveals fundamental design principles of regulatory networks. Research analyzing GRNs from Escherichia coli, Saccharomyces cerevisiae, Drosophila melanogaster, Arabidopsis thaliana, and Homo sapiens has demonstrated that life-essential subsystems are governed mainly by TFs with intermediate Knn and high PageRank or degree, whereas specialized subsystems are primarily regulated by TFs with low Knn [8].

This distribution suggests that two properties ensure the robustness of essential subsystems: a high probability that a TF is traversed by random signals (high PageRank) and a high probability that signals propagate to target genes (high degree). Conversely, TF hubs with low Knn (meaning their neighbors have low connectivity) typically operate early in regulatory cascades and control specialized modules with fewer connections [8]. This topological organization provides insights into how networks maintain stability while enabling specialized functions.

Experimental Protocols for Topological Metric Calculation

Benchmarking Framework for GRN Inference Algorithms

Accurately inferring GRN topology from experimental data presents significant computational challenges. The STREAMLINE pipeline provides a three-step benchmarking framework specifically designed to quantify the ability of inference algorithms to capture topological properties and identify hubs [29]. This approach addresses limitations of previous benchmarking studies that focused primarily on local features like gene-gene interactions rather than global structural properties.

The STREAMLINE protocol employs:

- Diverse Ground Truth Networks: Synthetic networks from four classes (Random, Scale-Free, Semi-Scale-Free, Small-World) and curated GRNs from biological systems [29].

- Real Experimental Validation: Application to real scRNA-seq datasets from yeast, mouse, and human to compare against silver standard networks derived from ChIP-chip, ChIP-seq, or gene perturbations [29].

- Topological Performance Metrics: Evaluation based on network efficiency (related to robustness) and hub identification accuracy rather than just interaction prediction [29].
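To make the two topological performance metrics more tangible, the sketch below compares a ground-truth network with an inferred one. It is an illustration under assumptions, not the STREAMLINE implementation: network efficiency is computed with the common global-efficiency definition (mean inverse shortest-path length over ordered node pairs), hubs are taken as the top-k nodes by out-degree, and hub agreement is scored with a Jaccard index. The function names and toy networks are hypothetical.

```python
from collections import deque

def global_efficiency(adj):
    """Mean of 1/shortest-path-length over ordered node pairs (0 if unreachable)."""
    nodes = list(adj)
    n = len(nodes)
    if n < 2:
        return 0.0
    total = 0.0
    for source in nodes:
        # Breadth-first search gives hop distances from `source`.
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(1.0 / d for node, d in dist.items() if node != source)
    return total / (n * (n - 1))

def top_hubs(adj, k):
    """Top-k nodes by out-degree; ties are broken alphabetically."""
    return set(sorted(adj, key=lambda node: (-len(adj[node]), node))[:k])

def jaccard(a, b):
    """Overlap of two sets; defined as 1.0 when both are empty."""
    return len(a & b) / len(a | b) if a | b else 1.0

# Toy directed networks: adj[regulator] lists its targets (illustrative).
truth = {"TF1": ["g1", "g2", "g3"], "TF2": ["g3"], "g1": [], "g2": [], "g3": []}
inferred = {"TF1": ["g1", "g2"], "TF2": ["g1"], "g1": [], "g2": [], "g3": []}

eff_true = global_efficiency(truth)
eff_inferred = global_efficiency(inferred)
hub_overlap = jaccard(top_hubs(truth, 1), top_hubs(inferred, 1))
```

In this example the inferred network recovers the main hub but has lower efficiency than the ground truth. An interaction-level score alone would not separate these two aspects.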

Data Simulation and Network Generation

For synthetic benchmarks, STREAMLINE uses parameter-controlled network generation:

- Random Networks: Created with the Erdős-Rényi G(n, p) model where each node pair connects with probability p [29].

- Scale-Free Networks: Generated with degree distributions following a power law ( P(d) \sim d^{-\alpha} ) with different parameters for in-degree (( \alpha_{in} )) and out-degree (( \alpha_{out} )) [29].

- Semi-Scale-Free Networks: Feature power law out-degree distribution but uniform in-degree distribution, with only 50% of nodes having outgoing edges [29].

- Small-World Networks: Created using the Watts-Strogatz model, which starts from a regular ring lattice of n nodes, each with degree k, and then rewires each edge with probability p [29].
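Two of the generators listed above are simple enough to sketch directly. The code below is a minimal, undirected illustration using only the standard library; it is not the STREAMLINE generator, and the function names, the undirected edge representation, and the parameter values are assumptions. The scale-free and semi-scale-free classes are omitted because the source does not describe their sampling procedure in enough detail.

```python
import random

def erdos_renyi(n, p, seed=None):
    """G(n, p): each unordered node pair becomes an edge with probability p."""
    rng = random.Random(seed)
    return {(i, j) for i in range(n) for j in range(i + 1, n) if rng.random() < p}

def watts_strogatz(n, k, p, seed=None):
    """Ring lattice with degree k (k even), then rewire each edge with probability p."""
    if k % 2 or k >= n:
        raise ValueError("k must be even and smaller than n")
    rng = random.Random(seed)
    edges = set()
    # Step 1: connect every node to its k/2 nearest neighbors on each side.
    for i in range(n):
        for offset in range(1, k // 2 + 1):
            j = (i + offset) % n
            edges.add((min(i, j), max(i, j)))
    # Step 2: visit each lattice edge once and rewire its far end with probability p.
    for i in range(n):
        for offset in range(1, k // 2 + 1):
            j = (i + offset) % n
            old = (min(i, j), max(i, j))
            if old in edges and rng.random() < p:
                candidates = [
                    t for t in range(n)
                    if t != i and (min(i, t), max(i, t)) not in edges
                ]
                if candidates:
                    t = rng.choice(candidates)
                    edges.remove(old)
                    edges.add((min(i, t), max(i, t)))
    return edges

random_net = erdos_renyi(n=20, p=0.2, seed=1)
lattice = watts_strogatz(n=20, k=4, p=0.0, seed=1)
small_world = watts_strogatz(n=20, k=4, p=0.3, seed=1)
```

Rewiring replaces one edge with another, so the small-world network keeps the same number of edges as the starting lattice (n·k/2); only the placement of edges changes.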