CRE-DDC Models for Complex Traits: A Comprehensive Guide from Development to Clinical Translation

Abstract

This article provides a comprehensive resource for researchers and drug development professionals on the application of Cre-recombinase and Drug Discovery Center (CRE-DDC) models in deciphering complex polygenic traits. We explore the foundational principles of genetic engineering and polygenic architecture, detail methodological approaches for model design and high-throughput screening, address critical troubleshooting and optimization challenges, and present rigorous validation and comparative analysis frameworks. By synthesizing current methodologies and real-world case studies, this guide aims to advance the use of CRE-DDC models in identifying novel therapeutic targets and accelerating drug discovery for complex diseases.

Decoding Complex Traits: Genetic Architecture and CRE-DDC Model Fundamentals

The study of complex traits requires frameworks that bridge the gap between genetic association and biological mechanism. Within the context of CRE-DDC (Cis-Regulatory Element - Disease Development Context) model research, understanding polygenic risk involves dissecting how non-coding genetic variants in regulatory elements collectively influence disease phenotypes through specific developmental and cellular contexts. Genome-wide association studies (GWAS) have identified hundreds of thousands of genomic loci associated with human traits and diseases [1]. However, over 90% of these variants fall in noncoding regions of the genome, predominantly in regulatory elements that exhibit cell type-specific usage [1]. This enrichment suggests that many disease-associated noncoding variants affect gene expression through cis-regulatory elements (CREs), including enhancers, promoters, silencers, and insulators [1].

The CRE-DDC model posits that the phenotypic expression of polygenic risk requires understanding the dynamic activity of CREs across specific disease-relevant developmental contexts and cell types. This framework is essential because complex diseases often involve multiple organ systems, implicating multiple tissues and cell types [1]. Noncoding regulatory elements have exquisitely cell type-specific usage, suggesting that a disease-associated noncoding variant in a given regulatory element may exert its effects only in the specific cell types that use this regulatory element [1]. The DDC component thus provides the necessary context for interpreting how CREs collectively contribute to polygenic risk across different disease trajectories.

From GWAS Signals to Causal Variants: Analytical Prioritization Methods

The Challenge of Linkage Disequilibrium

GWAS summary statistics provide the set of variants most strongly associated with a trait, but linkage disequilibrium (LD) obscures causative variants among a co-inherited set at a given locus [1]. LD, the nonrandom association of alleles, depends on population-level factors such as natural selection and genetic bottlenecks, and cellular-level factors such as meiotic recombination frequency between variants [1]. High LD in a locus can render non-causative variants statistically indistinguishable from the true causative variant(s), a challenge exacerbated by the use of SNP arrays rather than whole-genome sequencing in most GWAS to date [1].
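To make the LD problem concrete, the following is a minimal sketch of how pairwise r² can be estimated from genotype data. It assumes genotypes are coded as effect-allele dosages (0, 1, 2) and uses the squared Pearson correlation of those dosages as a stand-in for haplotype-based r². The function name `ld_r2` and the toy genotype vectors are illustrative assumptions, not part of any cited method.

```python
from statistics import mean

def ld_r2(geno_a, geno_b):
    """Squared Pearson correlation between two genotype dosage vectors (0/1/2)."""
    if len(geno_a) != len(geno_b):
        raise ValueError("genotype vectors must have the same length")
    mean_a, mean_b = mean(geno_a), mean(geno_b)
    cov = sum((a - mean_a) * (b - mean_b) for a, b in zip(geno_a, geno_b))
    var_a = sum((a - mean_a) ** 2 for a in geno_a)
    var_b = sum((b - mean_b) ** 2 for b in geno_b)
    if var_a == 0 or var_b == 0:
        raise ValueError("monomorphic variant: r2 is undefined")
    return cov ** 2 / (var_a * var_b)

# Toy data (hypothetical): eight individuals, three variants.
lead = [0, 1, 2, 1, 0, 2, 1, 0]
tag = [0, 1, 2, 1, 0, 2, 1, 0]        # always co-inherited with the lead variant
unlinked = [1, 0, 1, 2, 0, 1, 2, 0]   # inherited largely independently

r2_tag = ld_r2(lead, tag)             # perfect LD: association cannot separate the two
r2_unlinked = ld_r2(lead, unlinked)   # weak LD: signals are distinguishable
```

When r² between two variants is close to 1, their association statistics are nearly identical, which is why association strength alone cannot single out the causative variant.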

Statistical Fine-Mapping Approaches

Post-GWAS analyses aiming to predict causative variant(s) in disease-associated GWAS loci are collectively referred to as fine-mapping [1]. Several computational approaches have been developed to address the LD challenge:

- LD-based filtering: Filtering variants based on an arbitrary LD threshold (pairwise correlation, r²) with the lead variant. This strategy has limitations as it fails to account for potential joint effects of multiple causative variants in the locus (allelic heterogeneity) and does not provide a measure of confidence that a given variant is causative [1].

- Penalized regression models: Methods including lasso and elastic net that jointly analyze all variants in a locus, simultaneously estimate effect sizes, and shrink the contribution of variants with small effect sizes toward zero. These allow for allelic heterogeneity but tend to be sparse, potentially excluding true causative variants when they are highly correlated [1].

- Bayesian fine-mapping: Approaches that determine posterior inclusion probabilities (PIPs) for each variant in a locus, providing a statistical framework for causal variant prioritization [1].

Table 1: Comparison of Statistical Fine-Mapping Methods

| Method | Key Principle | Advantages | Limitations |
|---|---|---|---|
| LD-based Filtering | Filters variants exceeding LD threshold with lead variant | Simple implementation | Does not account for allelic heterogeneity; uses arbitrary thresholds |
| Penalized Regression | Simultaneous effect size estimation with shrinkage | Allows for multiple causal variants; provides effect sizes | May exclude true causal variants in high LD |
| Bayesian Fine-Mapping | Calculates posterior inclusion probabilities (PIPs) | Provides probability measures for causality | Computational complexity; depends on prior specifications |
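As an illustration of how posterior inclusion probabilities can be derived from summary statistics, the sketch below uses Wakefield-style approximate Bayes factors under two simplifying assumptions that the text above does not prescribe: exactly one causal variant per locus and an equal prior for every variant. The prior effect-size variance of 0.04 and the variant names are likewise illustrative assumptions.

```python
import math

def log_abf(beta, se, prior_var=0.04):
    """Log approximate Bayes factor for association versus the null."""
    v = se ** 2                           # sampling variance of the effect estimate
    shrink = prior_var / (v + prior_var)
    z = beta / se
    return 0.5 * math.log(1.0 - shrink) + 0.5 * z * z * shrink

def posterior_inclusion_probabilities(summary_stats, prior_var=0.04):
    """PIPs assuming one causal variant per locus and flat priors."""
    log_bfs = {vid: log_abf(b, s, prior_var) for vid, (b, s) in summary_stats.items()}
    peak = max(log_bfs.values())          # subtract the maximum for numerical stability
    scaled = {vid: math.exp(lb - peak) for vid, lb in log_bfs.items()}
    total = sum(scaled.values())
    return {vid: s / total for vid, s in scaled.items()}

# Hypothetical locus: variant id -> (effect estimate, standard error)
locus = {
    "variant_a": (0.10, 0.02),
    "variant_b": (0.09, 0.02),
    "variant_c": (0.02, 0.02),
}
pips = posterior_inclusion_probabilities(locus)
```

Unlike a hard LD threshold, the resulting PIPs sum to one across the locus and give each variant a graded measure of confidence. Methods that allow several causal variants per locus are considerably more involved than this sketch.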

Functionally Informed Fine-Mapping

Refinements to fine-mapping incorporate functional genomic data to improve resolution. This integration leverages the understanding that noncoding variants can affect cellular functions and gene expression through multiple mechanisms:

- Alteration of transcription factor binding dynamics due to sequence changes in regulatory elements [1]

- Changes to three-dimensional chromatin conformation affecting enhancer-promoter interactions [1]

- Disruption of regulatory element function through various molecular mechanisms [1]

These approaches use functional genomic annotations as priors to prioritize variants more likely to have biological effects, significantly improving fine-mapping resolution [1].
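A minimal sketch of the idea of annotation-informed priors follows, again assuming a single causal variant per locus. The posterior for each variant is taken as proportional to its Bayes factor multiplied by a prior weight. The fivefold weight given to a variant in an active enhancer is an arbitrary illustrative value, not an estimate from any dataset.

```python
def pips_with_priors(bayes_factors, prior_weights):
    """Posterior inclusion probabilities proportional to prior weight x Bayes factor."""
    weighted = {vid: bayes_factors[vid] * prior_weights[vid] for vid in bayes_factors}
    total = sum(weighted.values())
    return {vid: w / total for vid, w in weighted.items()}

# Two hypothetical variants in near-perfect LD: identical statistical evidence.
bayes_factors = {"enhancer_variant": 100.0, "intergenic_variant": 100.0}

flat = pips_with_priors(bayes_factors, {"enhancer_variant": 1.0, "intergenic_variant": 1.0})
informed = pips_with_priors(bayes_factors, {"enhancer_variant": 5.0, "intergenic_variant": 1.0})
# flat     -> 0.5 each: LD leaves the variants indistinguishable
# informed -> 5/6 versus 1/6: the annotation breaks the tie
```

The example shows why functional priors matter most when LD is high: the statistical evidence is tied, so the annotation alone determines the ranking.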

Experimental Validation of Causal Variants

High-Throughput Empirical Prioritization

After analytical prioritization, empirical methods enable functional validation of putative causal variants:

- Massively Parallel Reporter Assays (MPRAs): These enable high-throughput testing of thousands of sequences for regulatory activity, identifying variants that alter transcriptional regulation [1].

- CRISPR-based screens (CRISPRi/a): CRISPR interference and activation approaches allow targeted perturbation of noncoding regions to assess their effects on gene expression and cellular phenotypes [1].

Endogenous Validation Methods

Once candidate causal variants are identified through high-throughput methods, validation in endogenous contexts is essential:

- Allelic imbalance analysis: Measuring differential expression of alleles in primary human tissue samples to confirm regulatory effects of variants in their native genomic context [1].

- Genome editing in cellular and animal models: Using techniques like CRISPR-Cas9 to introduce specific variants and assess their effects on molecular and cellular phenotypes in relevant model systems [1].
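The allelic imbalance analysis described above can be sketched as an exact two-sided binomial test on allele-specific read counts from a heterozygous individual. This is a simplified illustration: the null hypothesis of equal expression (p = 0.5) and the read counts are assumptions, and real analyses typically also need to handle reference mapping bias and overdispersion, which this sketch ignores.

```python
from math import comb

def allelic_imbalance_p(ref_reads, alt_reads, p_null=0.5):
    """Exact two-sided binomial P-value for imbalance between two alleles."""
    n = ref_reads + alt_reads
    if n == 0:
        raise ValueError("no reads cover this heterozygous site")
    probs = [comb(n, k) * p_null ** k * (1 - p_null) ** (n - k) for k in range(n + 1)]
    observed = probs[ref_reads]
    # Sum the probability of every outcome at least as unlikely as the observed one.
    p_value = sum(p for p in probs if p <= observed * (1 + 1e-9))
    return min(1.0, p_value)

p_balanced = allelic_imbalance_p(50, 50)     # no evidence of a regulatory effect
p_imbalanced = allelic_imbalance_p(70, 30)   # reference allele over-represented
```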

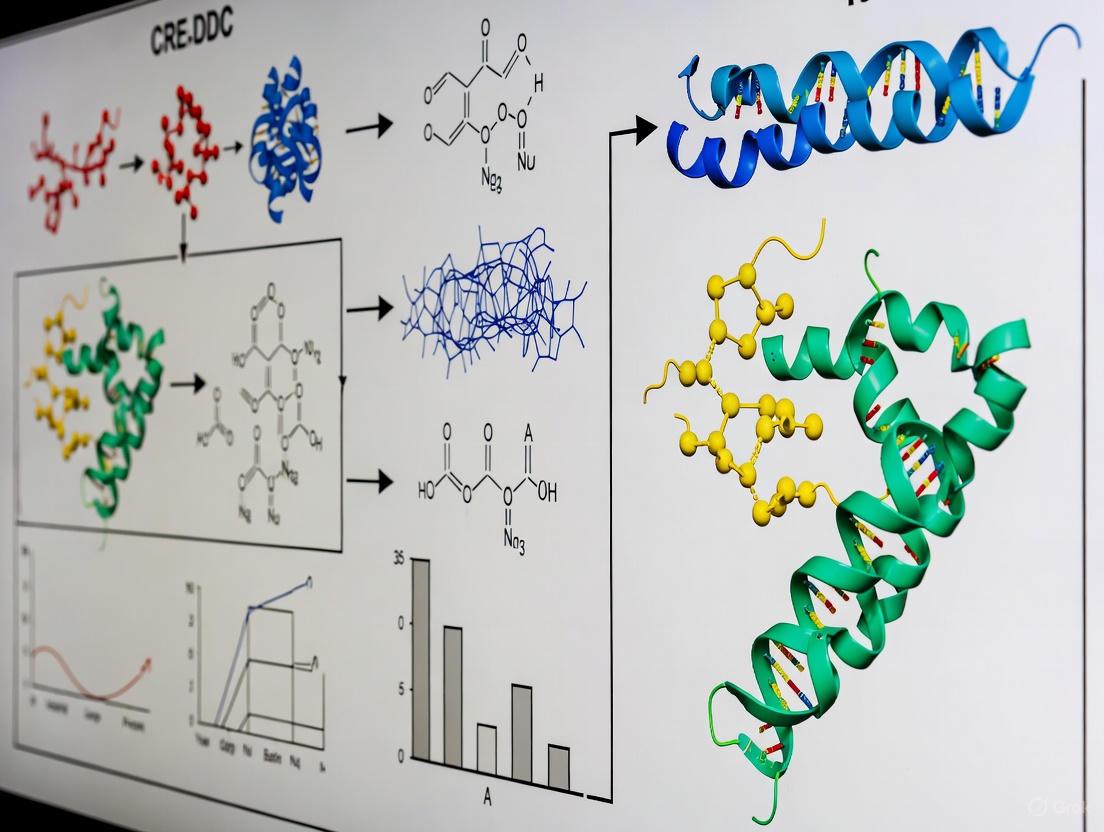

Diagram 1: Causal Variant Identification Workflow. This diagram outlines the sequential process from initial GWAS findings through to the validation of causal genetic variants.

Polygenic Risk Score Calculation and Application

Foundation of PRS

Polygenic risk scores (PRS) are defined as single value estimates of an individual's common genetic liability to a phenotype, calculated as a sum of their genome-wide genotypes weighted by corresponding genotype effect size estimates derived from GWAS summary statistics [2]. PRS analyses require two input datasets: (1) base data from GWAS summary statistics, and (2) target data comprising genotypes and phenotypes in individuals of the target sample [2].
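The weighted-sum definition above can be written out directly. The sketch below assumes that genotypes are expressed as dosages of the effect allele (0, 1, or 2) and that the weights are per-allele effect size estimates from the base GWAS. Variant names and values are hypothetical.

```python
def polygenic_score(dosages, weights):
    """Sum of effect-allele dosages weighted by GWAS effect size estimates."""
    shared = dosages.keys() & weights.keys()   # score only variants present in both datasets
    return sum(dosages[vid] * weights[vid] for vid in shared)

# Base data: hypothetical per-allele effect sizes from GWAS summary statistics.
weights = {"snp1": 0.12, "snp2": -0.05, "snp3": 0.30}

# Target data: one individual's effect-allele dosages.
individual = {"snp1": 2, "snp2": 1, "snp3": 0}

score = polygenic_score(individual, weights)   # 2*0.12 + 1*(-0.05) + 0*0.30 = 0.19
```

In practice the score is computed for every individual in the target sample and then tested for association with the phenotype. The choice of which variants to include and how to shrink their weights is a separate step.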

The predictive power of PRS is fundamentally limited by the accuracy of GWAS effect size estimates and by differences between base and target samples. While PRS could theoretically explain the SNP-heritability (h²snp) of a trait given perfect effect size estimates, predictive power is typically substantially lower, although it tends toward h²snp as GWAS sample sizes increase [2].

Quality Control Procedures

The power and validity of PRS analyses depend on rigorous quality control of both base and target data [2]:

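Base Data QC:

- Heritability check: The base GWAS should have a SNP-heritability estimate (h²snp) above 0.05, to avoid misleading conclusions from low-heritability traits (see Table 2).

- Effect allele: The effect allele must be clearly identified, because a reversed effect direction produces spurious results (see Table 2).

Target Data QC:

- Sample size: PRS analyses involving association testing should use target sample sizes of at least 100 individuals to minimize misleading results from less stringent QC and underpowered association tests [2].

Base and Target Data QC:

- Standard GWAS QC: Both base and target data should undergo standard GWAS quality control, including genotyping rate > 0.99, sample missingness < 0.02, Hardy-Weinberg equilibrium P > 1×10⁻⁶, minor allele frequency > 1%, and imputation info score > 0.8 [2].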
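As a rough sketch of how variant-level thresholds of this kind can be applied, the snippet below filters a list of variants on genotyping rate, minor allele frequency, and imputation info score. It covers only a subset of the listed criteria, and the `VariantStats` record and its field names are hypothetical. In practice these filters are usually applied with dedicated tools such as PLINK.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class VariantStats:
    variant_id: str
    genotyping_rate: float   # fraction of samples with a called genotype
    maf: float               # minor allele frequency
    info_score: float        # imputation quality

def passes_variant_qc(v, min_call_rate=0.99, min_maf=0.01, min_info=0.8):
    """Apply the genotyping rate, MAF, and info score thresholds to one variant."""
    return (
        v.genotyping_rate > min_call_rate
        and v.maf > min_maf
        and v.info_score > min_info
    )

variants = [
    VariantStats("var_ok", 0.998, 0.12, 0.95),
    VariantStats("var_rare", 0.999, 0.004, 0.97),        # fails MAF > 1%
    VariantStats("var_poor_imputation", 0.995, 0.20, 0.62),  # fails info > 0.8
    VariantStats("var_low_call_rate", 0.97, 0.30, 0.99),     # fails genotyping rate > 0.99
]
kept = [v.variant_id for v in variants if passes_variant_qc(v)]
```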

Methodological Considerations

Important challenges in PRS construction include selecting SNPs for inclusion and determining appropriate shrinkage of GWAS effect size estimates [2]. When parameters for generating optimal PRS are unknown, the target sample can be used for model training with appropriate cross-validation to avoid overfitting [2].

Table 2: Key Quality Control Measures for PRS Analysis

| Data Type | QC Measure | Threshold/Requirement | Rationale |
|---|---|---|---|
| Base Data | Heritability (h²snp) | > 0.05 | Avoid misleading conclusions from low-heritability traits |
| Base Data | Effect allele identification | Must be clearly defined | Prevents spurious results from reversed effect direction |
| Target Data | Sample size | ≥ 100 individuals | Minimizes misleading results from underpowered tests |
| Both | Genotyping rate | > 0.99 | Ensures data quality for accurate scoring |
| Both | Minor allele frequency | > 1% | Filters rare variants with unstable effect estimates |
| Both | Imputation quality | Info score > 0.8 | Ensures high-quality imputed genotypes |

Technical Challenges and Limitations

Ancestry-Related Performance Disparities

A significant limitation of current PRS applications is their reduced performance in diverse populations. Most available PRS were built with genetic data from predominantly European-ancestry populations, and performance declines when they are applied to populations different from those in which they were derived [3]. This disparity creates an urgent need to improve PRS performance in currently under-studied populations.

Multi-ancestry approaches that combine GWAS data from multiple populations produce PRS that perform better across diverse populations than approaches utilizing smaller single-population GWAS results matched to the target population [3]. Specifically, multi-ancestry scores built with methods like PRS-CSx outperform other approaches across diverse populations [3].

Biological Interpretation Challenges

Beyond statistical challenges, biological interpretation of polygenic risk presents several difficulties:

- Variant-to-gene mapping: GWAS-nominated noncoding variants are often assigned to the nearest gene, but enhancers can skip nearby genes and regulate genes more than 1 Mb away in linear distance [1].

- Pleiotropy: Single or multiple gene variants can increase risk of some diseases while decreasing risk of others, creating challenges for therapeutic interventions [4].

- Cell type specificity: Identifying the specific cell types in which genetic variants affect active regulatory elements is critical for understanding disease mechanisms [1].

Research Reagents and Experimental Tools

Table 3: Essential Research Reagents for Polygenic Risk Studies

| Reagent/Tool | Category | Function/Application | Examples/Notes |
|---|---|---|---|
| GWAS Summary Statistics | Data | Base data for PRS calculation | Must include effect sizes, allele information, and P-values [2] |
| Genotyped Target Dataset | Data | Target for PRS application and validation | Requires both genotypes and phenotypes for association testing [2] |
| PLINK | Software | Quality control and basic genetic analysis | Standard tool for performing QC procedures [2] |
| LD Score Regression | Software | Heritability estimation and QC | Estimates h²snp from GWAS summary statistics [2] |
| CRISPR-based Screens | Experimental | Functional validation of noncoding variants | CRISPRi/a for perturbing regulatory elements [1] |
| MPRAs | Experimental | High-throughput regulatory activity testing | Assesses thousands of sequences for regulatory function [1] |
| Transgenic Models | Experimental | In vivo functional validation | e.g., Ucp1-Cre models for brown fat research [5] |

Future Directions and Ethical Considerations

Emerging Technologies

As GWAS sample sizes increase and functional genomics advances, several emerging technologies promise to enhance polygenic risk research:

- Multiplex gene editing: The development of technologies enabling simultaneous editing of multiple genomic loci opens possibilities for studying polygenic effects in model systems [4]. Although it is not currently possible to target hundreds or thousands of polymorphisms simultaneously, the rapid development of gene editing technology suggests this may become feasible in the coming decades [4].

- Improved functional annotation: As more causal variants for common diseases are identified through larger sample sizes, increased genome coverage, and improved functional annotation, fine-mapping resolution will continue to improve [4].

Ethical Implications

The advancing capabilities in polygenic risk prediction and manipulation raise significant ethical considerations:

- Heritable polygenic editing (HPE): Theoretical models suggest that editing multiple variants associated with diseases could dramatically reduce lifetime risks [4]. For example, editing ten variants for Alzheimer's disease could reduce lifetime prevalence from 5% to under 0.6% [4]. However, the putatively positive consequences at the individual level may deepen health inequalities at the population level [4].

- Clinical implementation: PRS show promise for clinical application, such as stratifying risk of progression among individuals with subclinical hypothyroidism [6]. However, careful consideration is needed for implementation to avoid misinterpretation and misuse of genetic risk information.

Diagram 2: Evolution of Polygenic Risk Research. This diagram contrasts current limitations in polygenic risk research with promising future directions that address these challenges.

Understanding polygenic risk from GWAS insights to causal variant identification requires integration of statistical genetics, functional genomics, and experimental validation. The CRE-DDC model provides a valuable framework for contextualizing how noncoding variants in regulatory elements collectively influence disease risk through specific developmental and cellular contexts. While significant challenges remain—particularly regarding ancestry-related performance disparities and biological interpretation—advances in fine-mapping methods, functional validation techniques, and multi-ancestry approaches promise to enhance both the predictive power and biological insights gained from polygenic risk research. As these methods continue to evolve, careful attention to ethical implications will be essential for responsible translation of polygenic risk findings into clinical applications.

Core Principles of Cre-Lox Technology and Inducible Systems in Complex Trait Modeling

Cre-Lox technology represents one of the most powerful tools in the geneticist's toolbox, enabling unprecedented precision in dissecting gene function. This site-specific recombinase system allows researchers to bypass embryonic lethality and investigate gene-phenotype relationships in a cell-type-specific and temporally controlled manner. When applied to complex trait modeling, particularly within the framework of the CRE-DDC model (Conditional Recombinase-Enabled Determinants of Complex Traits), this technology provides a sophisticated methodological approach for unraveling the intricate genetic architecture of polygenic characteristics. This technical guide comprehensively outlines the core principles of Cre-Lox systems, details advanced inducible platforms, and provides practical experimental frameworks for implementing these technologies in complex trait research, specifically designed for researchers, scientists, and drug development professionals.

The Cre-Lox system is a site-specific recombinase technology derived from bacteriophage P1 that enables deletions, insertions, translocations, and inversions at specific sites in cellular DNA [7]. The system consists of two fundamental components: the Cre recombinase enzyme and loxP recognition sequences [8]. This technology has revolutionized mouse genetics by allowing conditional gene manipulation that circumvents the embryonic lethality often caused by systemic inactivation of genes essential for development [9] [7].

The Cre protein is a 38 kDa site-specific DNA recombinase that recognizes 34-base-pair loxP sequences [8] [10]. Each loxP site consists of two 13-bp palindromic repeats that function as Cre binding sites, flanking an asymmetric 8-bp core spacer sequence that gives the site directionality [7]. The canonical loxP sequence is: ATAACTTCGTATA-GCATACAT-TATACGAAGTTAT [8]. The length and specificity of this sequence ensure it does not occur randomly in known genomes, allowing for highly specific genetic manipulations [8].
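The structure of the loxP site described above can be checked directly from its sequence. The short sketch below splits the 34-bp site into its two 13-bp arms and 8-bp spacer, then confirms that the arms are inverted repeats of each other while the spacer is not its own reverse complement, which is what gives the site a direction. The helper names are illustrative.

```python
LEFT_ARM = "ATAACTTCGTATA"
SPACER = "GCATACAT"
RIGHT_ARM = "TATACGAAGTTAT"
LOXP = LEFT_ARM + SPACER + RIGHT_ARM

COMPLEMENT = str.maketrans("ACGT", "TGCA")

def reverse_complement(seq):
    """Return the reverse complement of a DNA sequence."""
    return seq.translate(COMPLEMENT)[::-1]

def split_lox_site(site):
    """Split a 34-bp lox site into (left arm, spacer, right arm)."""
    if len(site) != 34:
        raise ValueError("a lox site is 34 bp long")
    return site[:13], site[13:21], site[21:]

left, spacer, right = split_lox_site(LOXP)

# The two Cre-binding arms mirror each other on opposite strands.
arms_are_inverted_repeats = reverse_complement(left) == right

# The spacer differs from its own reverse complement, so the site is directional.
spacer_is_asymmetric = reverse_complement(spacer) != spacer
```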

The molecular mechanism involves Cre recombinase proteins binding to the first and last 13 bp regions of a lox site, forming a dimer. This dimer then binds to a dimer on another lox site to create a tetramer [7]. The double-stranded DNA is cut at both loxP sites within the core spacer region, and the strands are exchanged and rejoined by Cre itself, without a separate DNA ligase, in an efficient process [7] [8]. The outcome of recombination depends entirely on the orientation and relative position of the loxP sites [8].

Molecular Mechanisms and Genetic Outcomes

Figure 1: Cre-Lox Recombination Outcomes Based on loxP Orientation and Location

The Cre-Lox system enables three primary genetic outcomes based on the arrangement of loxP sites [7] [8]:

Excision/Deletion: When two loxP sites are positioned on the same DNA molecule in the same orientation, Cre-mediated recombination results in the excision of the intervening DNA sequence as a circular molecule, while the original DNA molecule is left with a single loxP site. This is the principal mechanism for creating conditional knockouts [8].

Inversion: When loxP sites are on the same DNA molecule in opposite orientations, recombination causes the inversion of the intervening DNA sequence. The inverted sequence can be flipped back to its original orientation through subsequent recombination events [7].

Translocation: When loxP sites are located on different DNA molecules (such as different chromosomes), Cre-mediated recombination results in a reciprocal translocation. This application is particularly valuable for modeling chromosomal rearrangements found in human diseases [7].

The system functions independently of other accessory proteins or co-factors, allowing broad application across various experimental systems including transgenic animals, embryonic stem cells, and tissue-specific cell types [8].
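The orientation rules above can be captured in a small simulation. The sketch below is a deliberately simplified model: a DNA molecule is a list of named segments, `">"` and `"<"` mark loxP sites in the two possible orientations, and only the excision and inversion cases on a single molecule are handled. Translocation between molecules is not modelled, and the inversion reverses segment order without tracking strand. All names are illustrative.

```python
def recombine(segments):
    """Apply Cre recombination to a molecule carrying exactly two lox sites.

    Returns (outcome, resulting molecule, excised segments).
    """
    lox_positions = [i for i, seg in enumerate(segments) if seg in (">", "<")]
    if len(lox_positions) != 2:
        raise ValueError("expected exactly two lox sites")
    first, second = lox_positions
    between = segments[first + 1:second]

    if segments[first] == segments[second]:
        # Same orientation: the intervening DNA leaves as a circle,
        # and a single lox site remains on the original molecule.
        remaining = segments[:first + 1] + segments[second + 1:]
        return "excision", remaining, between

    # Opposite orientation: the intervening DNA is flipped in place.
    inverted = segments[:first + 1] + between[::-1] + segments[second:]
    return "inversion", inverted, []

floxed = ["exon1", ">", "exon2", "exon3", ">", "exon4"]
flipped = ["exon1", ">", "exon2", "exon3", "<", "exon4"]

excision_result = recombine(floxed)
inversion_result = recombine(flipped)
```

Because the inverted molecule still carries two sites in opposite orientation, running `recombine` on it again restores the original order, mirroring the reversibility noted for inversion.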

Advanced Inducible Cre Systems for Temporal Control

While conventional Cre-Lox systems provide spatial control through tissue-specific promoters, many research questions require precise temporal control to address gene function at specific developmental stages or in response to particular stimuli. Two primary inducible systems have been developed to address this need:

Tamoxifen-Inducible Cre System (CreERT2)

The tamoxifen-inducible Cre system utilizes a modified Cre recombinase fused with a mutated ligand-binding domain of the estrogen receptor (ER) [10]. This fusion protein, known as CreERT or the improved CreERT2 version, remains sequestered in the cytoplasm in complex with heat shock protein 90 (HSP90) under basal conditions [10]. Upon administration of tamoxifen, a synthetic estrogen receptor ligand, or its active metabolite 4-hydroxytamoxifen (4-OHT), the resulting conformational change disrupts the HSP90 interaction, leading to nuclear translocation of CreERT2 and subsequent recombination at loxP sites [10]. The CreERT2 variant demonstrates approximately tenfold greater sensitivity to 4-OHT in vivo compared to the original CreERT, making it the preferred choice for most applications [10].

Tetracycline-Inducible Cre System

The tetracycline (Tet)-inducible system offers an alternative approach for temporal control, utilizing the tetracycline derivative doxycycline (Dox) as the inducing agent [10]. This system operates in two complementary configurations:

Tet-On System: The reverse tetracycline-controlled transactivator (rtTA) binds to tetracycline response elements (TRE) and activates Cre expression only in the presence of doxycycline [10].

Tet-Off System: The tetracycline-controlled transactivator (tTA) binds to TRE and activates Cre expression under basal conditions, but is inhibited when doxycycline is administered [10].

Doxycycline is typically administered via feed or drinking water, making this system particularly suitable for long-term or chronic induction studies [10].
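The behaviour of the inducible systems described in this section can be summarized as a simple truth table. The sketch below is an idealized on/off model under the assumption of no leaky background activity and complete induction, which real systems do not guarantee. The function and system labels are illustrative.

```python
def cre_active(system, inducer_present):
    """Idealized on/off logic for inducible Cre systems (no leakiness modelled)."""
    if system == "CreERT2":
        # Tamoxifen or 4-OHT releases CreERT2 from HSP90 so it can enter the nucleus.
        return inducer_present
    if system == "Tet-On":
        # rtTA binds the TRE and drives Cre expression only when doxycycline is present.
        return inducer_present
    if system == "Tet-Off":
        # tTA drives Cre expression by default and is inhibited by doxycycline.
        return not inducer_present
    raise ValueError(f"unknown system: {system}")

truth_table = {
    (system, inducer): cre_active(system, inducer)
    for system in ("CreERT2", "Tet-On", "Tet-Off")
    for inducer in (False, True)
}
```

The table makes the practical difference explicit: with Tet-Off, animals must be kept on doxycycline to hold recombination off, whereas the other two systems are silent until the inducer is given.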

Figure 2: Molecular Mechanisms of Inducible Cre Systems

Experimental Design and Breeding Strategies

Core Breeding Scheme for Tissue-Specific Knockouts

The most efficient breeding scheme for generating tissue-specific knockout mice involves a multi-generational approach [9]:

Initial Cross: Mate a homozygous loxP-flanked ("floxed") mouse with a Cre transgenic mouse strain. Approximately 50% of the offspring will be heterozygous for the loxP allele and hemizygous/heterozygous for the Cre transgene [9].

Experimental Cross: Mate these double heterozygous mice back to homozygous loxP-flanked mice. Approximately 25% of the progeny will be homozygous for the loxP-flanked allele and carry the Cre transgene, serving as experimental animals [9].

Control Animals: Approximately 25% will be homozygous for the loxP-flanked allele but lack the Cre transgene, serving as ideal controls for distinguishing between Cre-mediated recombination effects and potential confounding factors [9].

This breeding scheme requires careful genotyping at each generation and may need adaptation based on the specific genetic backgrounds and characteristics of the loxP-flanked and Cre strains utilized [9].
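The expected proportions in this breeding scheme follow from Mendelian segregation and can be verified by enumerating gametes. The sketch below assumes the floxed locus and the Cre transgene segregate independently, the Cre parent is hemizygous, and no genotype affects viability. The genotype encoding (`"fl"`, `"+"`, `"Cre"`, `"-"`) is an illustrative convention.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

def gametes(genotype):
    """Gamete probabilities for a genotype given as one allele pair per locus."""
    out = Counter()
    for combo in product(*genotype):
        out[combo] += Fraction(1, 2 ** len(genotype))
    return out

def cross(parent_a, parent_b):
    """Offspring genotype probabilities, assuming independent assortment."""
    offspring = Counter()
    for (ga, pa), (gb, pb) in product(gametes(parent_a).items(), gametes(parent_b).items()):
        child = tuple(tuple(sorted(pair)) for pair in zip(ga, gb))
        offspring[child] += pa * pb
    return offspring

floxed_homozygote = (("fl", "fl"), ("-", "-"))   # fl/fl, no Cre
cre_driver = (("+", "+"), ("Cre", "-"))          # wild-type locus, hemizygous Cre

# Initial cross: half the offspring are fl/+ and carry Cre.
f1 = cross(floxed_homozygote, cre_driver)
double_het = (("+", "fl"), ("-", "Cre"))

# Experimental cross: double heterozygote back to the floxed homozygote.
f2 = cross(double_het, floxed_homozygote)
experimental = (("fl", "fl"), ("-", "Cre"))      # fl/fl; Cre+
control = (("fl", "fl"), ("-", "-"))             # fl/fl; Cre-
```

Under these assumptions `f1[double_het]` is 1/2, and `f2[experimental]` and `f2[control]` are each 1/4, matching the proportions stated above.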

Methodologies for Mapping Transgene Integration Sites

Random integration of Cre transgenes via pronuclear microinjection can disrupt endogenous genes or create unexpected phenotypes, making integration site mapping crucial [11]. Several methods are available:

Targeted Locus Amplification (TLA): This method enables selective amplification and next-generation sequencing of transgene integration loci without requiring detailed prior knowledge of the region. TLA involves crosslinking, fragmentation, re-ligation, and selective amplification of DNA, yielding over 100 kb of sequence information flanking the transgene [11].

Inverse PCR (iPCR): This traditional method relies on knowledge of restriction sites within the transgene to amplify flanking genomic regions. While effective for simple integrations, it works best for low-copy-number integrations and provides limited information about structural changes [11].

Splinkerette PCR: Developed for cloning retroviral integration sites, this method is suited for single or low-copy integrations but shares similar limitations with iPCR regarding structural variant detection [11].

For comprehensive characterization, TLA represents the most powerful approach as it identifies exact integration sites, breakpoint sequences, and structural changes occurring at the integration site [11].

Table 1: Tissue-Specific Promoters for Cre Driver Lines

| System | Tissue/Cell Type | Targeted Promoter/Enhancer | Primary Applications |
|---|---|---|---|
| Nervous | Cerebral Neurons | CaMKIIα | Forebrain-specific gene deletion |
| Nervous | Astrocytes | GFAP | Astrocyte-specific manipulation |
| Nervous | Dopaminergic Neurons | Slc6a3 (DAT) | Parkinson's disease modeling |
| Immune | Macrophages | Lyz2 | Innate immunity studies |
| Immune | Dendritic Cells | CD11c (Itgax) | Antigen presentation research |
| Immune | T-cells | CD4 | T-cell function and development |
| Immune | B-cells | CD19 | B-cell biology and humoral immunity |
| Metabolic | Liver | Alb | Liver-specific gene function |
| Metabolic | Pancreatic β-cells | Ins1 (MIP) | Diabetes modeling |
| Metabolic | Adipose Tissue | Lepr | Obesity and metabolic syndrome |
| Musculoskeletal | Osteoblasts | BGLAP (OC) | Bone formation and remodeling |
| Musculoskeletal | Skeletal Muscle | ACTA1 (HSA) | Muscular dystrophy models |
| Musculoskeletal | Chondrocytes | Col10a1 | Skeletal development and arthritis |
| Other | Kidney | Aqp2 | Renal function and disease |
| Other | Skin Epidermis | Krt14 | Epithelial biology and carcinogenesis |

Source: Adapted from commonly used Cre promoters [10]

Applications in Complex Trait Modeling and CRE-DDC Framework

The Cre-Lox system provides an indispensable methodological foundation for the CRE-DDC (Conditional Recombinase-Enabled Determinants of Complex Traits) model, which addresses fundamental challenges in complex trait genetics:

Addressing Context Dependency in Complex Traits

Complex traits demonstrate substantial context dependency, where genetic effects are modulated by environmental variables, age, sex, or cellular milieu [12]. The CRE-DDC framework leverages inducible Cre systems to model these gene-by-environment (GxE) interactions by enabling precise temporal control over gene perturbation, allowing researchers to administer manipulations after specific environmental exposures [12]. This approach is particularly valuable for traits where SNP heritability estimates fall substantially short of pedigree-based heritability predictions, suggesting additional genetic architectures beyond simple additive models [13].

Elucidating Somatic Genetic Contributions

Recent evidence suggests that somatic variants interacting with heritable variants may represent an underappreciated component of complex trait architecture [13]. Somatic mutation rates are almost two orders of magnitude higher than germline rates, and certain disease-associated genes appear characteristically hypermutable [13]. The CRE-DDC model utilizes Cre-Lox technology to engineer somatic genetic alterations in specific cell types at defined developmental timepoints, enabling direct investigation of how somatic variants contribute to complex trait variation and potentially explain portions of the "missing heritability" observed in genome-wide association studies [13].

Overcoming Technical Limitations in Traditional Genetics

Traditional GWAS approaches estimate marginal additive effects of alleles across multidimensional contexts, potentially obscuring significant context-specific effects [12]. The CRE-DDC framework addresses this limitation through tissue-specific and inducible genetic manipulation, allowing for direct testing of candidate genes in specific cell types under controlled environmental conditions. This approach is particularly powerful for validating effector genes identified through statistical genetics and elucidating their mechanistic roles in complex trait pathophysiology [13] [14].

Table 2: Research Reagent Solutions for Cre-Lox Experiments

| Reagent Category | Specific Examples | Function and Application |
|---|---|---|
| Cre Driver Lines | ACTB-Cre (Ubiquitous) | General deletion across tissues |
| Cre Driver Lines | Cdh5-CreERT2 (Endothelial) | Inducible vascular-specific recombination |
| Cre Driver Lines | Syn1-Cre (Neuronal) | Neuron-specific gene manipulation |
| Floxed Alleles | Commercial loxP-flanked mice | Conditional knockout targets |
| Floxed Alleles | Custom-designed targeting vectors | Creating novel conditional alleles |
| Reporter Strains | Rosa26-LSL-tdTomato | Fate mapping and lineage tracing |
| Reporter Strains | Ai14 (Rosa-CAG-LSL-tdTomato) | Cre activity detection and visualization |
| Inducing Agents | Tamoxifen | CreERT2 system activation |
| Inducing Agents | 4-Hydroxytamoxifen | More potent CreERT2 activation |
| Inducing Agents | Doxycycline | Tet-on/Tet-off system regulation |
| Validation Tools | TLA kits | Transgene integration site mapping |
| Validation Tools | Quantitative PCR assays | Zygosity determination and copy number analysis |

Source: Compiled from multiple references [9] [11] [8]

Technical Considerations and Limitations

Potential Artifacts and Confounding Factors

Several technical considerations must be addressed when implementing Cre-Lox technology:

Cre Toxicity: Cre recombinase itself can produce phenotypic effects independent of loxP recombination, necessitating appropriate Cre-only controls [9]. Some cell types, particularly in the nervous system, demonstrate sensitivity to high Cre expression levels.

Incomplete Recombination: Most Cre lines do not achieve 100% recombination efficiency, potentially resulting in mosaic animals with mixed populations of recombined and non-recombined cells [7]. This can be advantageous for studying cell-autonomous effects but complicates phenotypic interpretation.

Unexpected Recombination: Cre can recognize cryptic pseudo-loxP sites in the genome, leading to unintended recombination events and potential DNA damage [7]. Computational screening of target loci for such sequences is recommended.

Compensatory Mechanisms: Recent research has identified that certain cell types, particularly dendritic cells and Langerhans cells, can overcome Cre-Lox induced gene deficiencies by acquiring cytosolic material from surrounding cells through a novel mechanism termed "intracellular monitoring" [15]. This potential compensatory pathway should be considered when interpreting null phenotypes.
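For the cryptic pseudo-loxP sites noted under Unexpected Recombination, a first-pass computational screen might look like the sketch below. It slides a 34-bp window along a sequence and counts mismatches against the two 13-bp Cre-binding arms of loxP, ignoring the spacer. The mismatch threshold is an arbitrary assumption, the test sequence is synthetic, and this simple scoring is not a validated predictor of Cre activity at a site.

```python
LEFT_ARM = "ATAACTTCGTATA"
RIGHT_ARM = "TATACGAAGTTAT"

def hamming(a, b):
    """Number of mismatching positions between two equal-length strings."""
    return sum(x != y for x, y in zip(a, b))

def find_pseudo_lox_sites(sequence, max_arm_mismatches=4):
    """Return (start, mismatches) for 34-bp windows whose arms resemble loxP.

    Because the two arms are inverted repeats, a lox-like site read from the
    opposite strand has the same arm layout, so scanning one strand suffices
    for this arm-only comparison.
    """
    seq = sequence.upper()
    hits = []
    for start in range(len(seq) - 33):
        window = seq[start:start + 34]
        mismatches = hamming(window[:13], LEFT_ARM) + hamming(window[21:], RIGHT_ARM)
        if mismatches <= max_arm_mismatches:
            hits.append((start, mismatches))
    return hits

# Synthetic locus: a lox-like site with one arm mismatch and an unrelated spacer.
locus = "GGGGG" + "ATAACTTCGTCTA" + "ACGTACGT" + RIGHT_ARM + "CCCCC"
hits = find_pseudo_lox_sites(locus, max_arm_mismatches=2)
```

Candidate windows flagged this way would still need experimental follow-up, since sequence similarity alone does not establish that Cre recombines at a site.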

Optimization Strategies for Complex Trait Studies

Characterization of New Cre Lines: Thoroughly validate recombination efficiency, specificity, and potential off-target effects using reporter strains before undertaking complex trait studies [11].

Genetic Background Control: Maintain consistent genetic backgrounds through backcrossing and utilize appropriate littermate controls to minimize confounding effects from modifier genes [9].

Temporal Control Optimization: For inducible systems, titrate inducer concentrations and administration protocols to balance recombination efficiency with potential toxicity [10].

Integration Site Analysis: For transgenic Cre lines, map integration sites to identify potential disruptions of endogenous genes that might complicate phenotypic interpretation [11].

Cre-Lox technology and its advanced inducible derivatives provide an exceptionally powerful methodological platform for complex trait modeling within the CRE-DDC framework. The precise spatiotemporal control afforded by these systems enables researchers to move beyond correlation to causation in dissecting the genetic architecture of polygenic traits. By integrating tissue-specific promoters with temporal control systems, implementing rigorous breeding strategies, and accounting for potential technical artifacts, researchers can leverage these technologies to address fundamental questions in complex trait biology. As the field advances, continued refinement of these tools—including the development of more specific Cre drivers, reduced-toxicity recombinases, and sophisticated multiplexing approaches—will further enhance their utility in unraveling the intricate relationship between genotype and phenotype in complex biological systems.

Target validation represents a critical gateway in the drug development pipeline, determining whether potential therapeutic targets progress toward clinical investment. This technical guide examines the integration of Domain-Disease Context (DDC) frameworks within modern validation paradigms, particularly for complex traits research. We detail how DDC models enhance validation stringency by incorporating multi-dimensional biological context (spanning human genetic evidence, tissue expression profiles, and clinical datasets) to build mechanistic confidence before substantial resource allocation. By framing established validation principles within the specific context of CRE-DDC models for complex traits, this whitepaper provides researchers with structured experimental methodologies, quantitative assessment tools, and visual workflows to systematically prioritize targets with the highest therapeutic potential.

The Critical Foundation of Target Validation

In the drug development continuum, target validation ensures that engagement of a putative biological target (e.g., a gene, protein, or pathway) yields a potential therapeutic benefit with an acceptable safety profile [16]. This process is paramount; failure to adequately validate a target is a primary contributor to the high attrition rates observed in Phase II clinical trials, where approximately 66% of novel compounds fail due to insufficient efficacy or safety concerns [16]. The core objective is to establish a causal link between target modulation and disease phenotype, moving beyond mere correlation.

The emergence of complex trait research, which investigates conditions governed by multiple genetic and environmental factors, has necessitated more sophisticated validation frameworks. The CRE-DDC (Cis-Regulatory Element - Domain-Disease Context) model addresses this need by emphasizing the biological and pathological context in which a target operates. This model integrates:

- Domain Knowledge: Existing biological understanding of pathways and systems.

- Disease Mechanisms: Insights into the specific pathophysiology of complex traits.

- Contextual Data: Multi-omic profiles (genomic, transcriptomic, proteomic) across tissues, cell types, and disease states.

This integrated approach provides a systematic method for building confidence in a target's role in a disease, which we designate as "target confidence building" [17]. It shifts the paradigm from validating targets in isolation to validating them within their precise functional and disease-relevant domains.

A Structured Framework for DDC Integration in Validation

The DDC framework structures the validation process around three core components derived from human data and three from preclinical qualification, as synthesized from established metrics [16]. The following table summarizes the key components and their ascending metrics for building confidence in a target.

Table 1: Key Components for DDC-Driven Target Validation and Qualification

| Component | Description | Key Ascending Metrics for Confidence |
|---|---|---|
| Human Genetic Evidence [16] | Using human genetics to link target to disease. | Variant association → Segregation in pedigrees → Causative mutation identified |
| Tissue/Pathway Expression [16] | Assessing target presence in disease-relevant tissues/pathways. | mRNA/protein detected → Expression in relevant cells → Altered expression in disease state |
| Clinical Experience [16] | Leveraging known clinical data related to the target. | Known drug target class → Clinical data on related targets → Human proof-of-concept (POC) with target modulation |
| Preclinical Pharmacology [16] | Using tool compounds to probe target function in vitro/vivo. | In vitro binding → In vitro functional effect → In vivo POC in model |
| Genetically Engineered Models [16] | Manipulating target genetics in model systems. | Cellular phenotype from knockdown/overexpression → Phenotype in animal model → Humanized model phenotype |
| Translational Endpoints [16] | Measuring biomarkers translatable to human trials. | Biomarker change in model → Biomarker predicts efficacy in model → Biomarker is direct mediator of disease |

The power of this framework is its iterative nature. Evidence from one component, such as human genetics, should inform and be tested against evidence from another, such as tissue expression or preclinical models. This creates a reinforcing loop of evidence that solidifies the target's validity within its specific domain and disease context.
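The ascending metrics in Table 1 can be tracked as a simple evidence ladder per component. The sketch below is one possible bookkeeping scheme, not a published scoring method: the component keys, the shortened level labels, and the unweighted `summary_score` are all assumptions introduced for illustration.

```python
from typing import Dict, List

# Ascending evidence levels per component, paraphrased from Table 1.
# Index 0 is the weakest rung; the last entry is the strongest.
# Keys and label wording are illustrative assumptions.
EVIDENCE_LADDERS: Dict[str, List[str]] = {
    "human_genetic_evidence": [
        "variant association",
        "segregation in pedigrees",
        "causative mutation identified",
    ],
    "tissue_pathway_expression": [
        "mRNA/protein detected",
        "expression in relevant cells",
        "altered expression in disease state",
    ],
    "clinical_experience": [
        "known drug target class",
        "clinical data on related targets",
        "human proof-of-concept",
    ],
    "preclinical_pharmacology": [
        "in vitro binding",
        "in vitro functional effect",
        "in vivo proof-of-concept",
    ],
    "genetically_engineered_models": [
        "cellular phenotype",
        "animal model phenotype",
        "humanized model phenotype",
    ],
    "translational_endpoints": [
        "biomarker change in model",
        "biomarker predicts efficacy in model",
        "biomarker is direct mediator of disease",
    ],
}


def confidence_profile(evidence: Dict[str, str]) -> Dict[str, int]:
    """Return the rung reached per component (0 means no evidence yet).

    Raises ValueError if a supplied level is not on that component's ladder.
    """
    profile = {}
    for component, ladder in EVIDENCE_LADDERS.items():
        level = evidence.get(component)
        profile[component] = 0 if level is None else ladder.index(level) + 1
    return profile


def summary_score(profile: Dict[str, int]) -> float:
    """Hypothetical unweighted summary: fraction of all rungs reached."""
    max_total = sum(len(ladder) for ladder in EVIDENCE_LADDERS.values())
    return sum(profile.values()) / max_total


# Example target with partial evidence (values are made up).
example = {
    "human_genetic_evidence": "segregation in pedigrees",
    "preclinical_pharmacology": "in vitro binding",
}
profile = confidence_profile(example)
print(profile, round(summary_score(profile), 3))
```

Keeping the per-component profile alongside the summary makes gaps visible, which fits the iterative use of the framework: a target with strong genetics but no expression evidence points to the next experiment rather than to a single pass/fail number.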

Quantitative Modeling and Computational DDC Tools

Quantitative modeling is indispensable for predicting the potential impact of target modulation, especially for polygenic complex traits. Recent analyses of heritable polygenic editing (HPE) demonstrate its theoretical power. For instance, modeling the effect of editing known risk variants for common diseases reveals dramatic potential reductions in lifetime risk among individuals with edited genomes [4].

Table 2: Predicted Impact of Polygenic Editing on Disease Risk in Edited Genomes

| Disease | Baseline Lifetime Prevalence | Prevalence After Editing 10 Top Variants | Key Candidate Genes/Loci |
|---|---|---|---|
| Alzheimer's Disease [4] | 5% | < 0.6% | APOE, etc. |
| Coronary Artery Disease [4] | 6% | 0.1% | LDLR, PCSK9, etc. |
| Type 2 Diabetes [4] | 10% | 0.2% | Various |
| Schizophrenia [4] | 1% | 0.1% | Various |
| Major Depressive Disorder [4] | 15% | 9.0% | Various |

These models, while currently speculative for germline editing, provide a quantitative framework for setting expectations about the degree of phenotypic change required for therapeutic benefit. They underscore the importance of understanding variant effect sizes, allele frequency, and pleiotropy—all core considerations in a DDC framework.
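One common way to reason about how a shift in genetic liability translates into prevalence is the liability-threshold model. The sketch below assumes liability is standard normal in the population and that editing lowers mean liability by a fixed number of standard deviations; it is not necessarily the model used in [4], and the shift values in the example are hypothetical.

```python
from statistics import NormalDist

# Assumption: liability follows a standard normal distribution.
_STD_NORMAL = NormalDist()


def prevalence_after_shift(baseline_prevalence: float,
                           liability_shift_sd: float) -> float:
    """Prevalence after lowering mean liability by `liability_shift_sd` SDs.

    The disease threshold is fixed by the baseline prevalence; lowering
    liability by d is equivalent to raising the threshold by d.
    """
    if not 0.0 < baseline_prevalence < 1.0:
        raise ValueError("baseline_prevalence must be strictly between 0 and 1")
    threshold = _STD_NORMAL.inv_cdf(1.0 - baseline_prevalence)
    return 1.0 - _STD_NORMAL.cdf(threshold + liability_shift_sd)


# Hypothetical liability shifts for a trait with 5% baseline prevalence.
for shift in (0.0, 0.5, 1.0):
    print(shift, round(prevalence_after_shift(0.05, shift), 4))
```

Because the tail of the normal distribution is thin, modest shifts in mean liability produce disproportionately large drops in prevalence for rare diseases, which is the intuition behind the large predicted reductions in Table 2.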

Computational tools are critical for operationalizing the DDC approach:

- Genome-Wide Association Studies (GWAS) and Fine-Mapping: Large-scale GWAS in diverse populations are essential for identifying disease-associated variants. Subsequent statistical fine-mapping, as demonstrated in a study of 50,309 Holstein bulls that identified 381 significant association peaks, helps prioritize putative causal variants and genes (e.g., AOPEP, GC, VPS13B) for further validation [18].

- Proteomics for Target Identification and Validation: Proteomic technologies aim to globally analyze protein expression and post-translational modifications, which can directly implicate proteins in disease pathways. However, challenges remain, including the immense complexity of proteomes and technical limitations in analyzing membrane proteins, a key class of drug targets [17].

The following diagram illustrates the integrated computational and experimental workflow for a DDC-driven target validation pipeline.

Experimental Protocols for DDC-Driven Validation

Protocol: In-Depth Phenotypic Characterization of Transgenic Models

The comprehensive validation of any genetic model, especially those expressing auxiliary elements like Cre-recombinase, is a cornerstone of rigorous research. Unexamined assumptions about model fidelity can introduce profound confounding effects.

Background: The Ucp1-CreEvdr mouse line, widely used for brown adipose tissue research, was recently subjected to rigorous validation. This revealed that the transgene itself, independently of any conditional knockout, caused major transcriptomic dysregulation in fat tissues, growth retardation, craniofacial abnormalities, and high mortality in homozygotes. This was traced to a complex genomic insertion event on chromosome 1 that disrupted several endogenous genes and retained an extra, potentially expressed, Ucp1 gene copy [5].

Methodology:

- Genomic Mapping: Precisely map the transgene insertion site using techniques like whole-genome sequencing or junction PCR. This identifies potential disruptions to endogenous genes and the structure of the integrated concatemer [5].

- Copy Number Assay: Develop and employ a quantitative PCR (qPCR) assay to determine transgene copy number in experimental animals, moving beyond simple qualitative genotyping. This is crucial for identifying homozygous animals and interpreting dose-dependent effects [5].

- Phenotypic Screening: Conduct systematic phenotyping of hemizygous and homozygous animals against wild-type littermate controls. This should include:

  - Longitudinal Monitoring: Track survival, body weight, and overall health from weaning to adulthood [5].

  - Tissue Dissection and Weights: At endpoint, systematically dissect and weigh key metabolic tissues (e.g., iBAT, pgWAT, rWAT) and lean masses (e.g., quadriceps) to identify tissue-specific growth defects [5].

  - Morphological Analysis: For observed abnormalities (e.g., skull shape), perform detailed morphological assessments on dissected tissues, such as Alizarin Red staining for bone structure [5].

- Transcriptomic Analysis: Perform RNA sequencing on relevant tissues (e.g., BAT, WAT) from transgenic and control animals to identify transcriptomic dysregulation that may indicate altered tissue function independent of the intended genetic manipulation [5].
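The copy number assay step in the methodology above can be sketched with the widely used 2^-ΔΔCt relative quantification approach. The source describes a qPCR assay but not its arithmetic, so the details here are assumptions: a known hemizygous animal serves as the calibrator, amplification efficiency is taken as roughly 100% (a factor of 2 per cycle), and the zygosity cutoffs are hypothetical and would need empirical calibration.

```python
def relative_copy_number(ct_target_sample: float,
                         ct_ref_sample: float,
                         ct_target_calibrator: float,
                         ct_ref_calibrator: float,
                         calibrator_copies: float = 1.0,
                         efficiency: float = 2.0) -> float:
    """Estimate transgene copies relative to a calibrator (2^-ddCt method).

    ct_target_*: Ct of the transgene amplicon (e.g., Cre) in genomic DNA.
    ct_ref_*:    Ct of a reference amplicon present at two copies per
                 diploid genome in both sample and calibrator.
    efficiency:  fold amplification per cycle (2.0 assumes ~100% efficiency).
    """
    delta_ct_sample = ct_target_sample - ct_ref_sample
    delta_ct_calibrator = ct_target_calibrator - ct_ref_calibrator
    delta_delta_ct = delta_ct_sample - delta_ct_calibrator
    return calibrator_copies * efficiency ** (-delta_delta_ct)


def call_zygosity(copy_ratio: float, tolerance: float = 0.35) -> str:
    """Hypothetical cutoffs: ~1x calibrator = hemizygous, ~2x = homozygous."""
    if abs(copy_ratio - 1.0) <= tolerance:
        return "hemizygous"
    if abs(copy_ratio - 2.0) <= tolerance:
        return "homozygous"
    return "ambiguous"


# Made-up Ct values: the sample's transgene signal appears one cycle earlier
# than the hemizygous calibrator's, at equal reference Ct.
ratio = relative_copy_number(24.0, 22.0, 25.0, 22.0)
print(ratio, call_zygosity(ratio))
```

Returning "ambiguous" rather than forcing a call reflects the purpose of the assay: animals that fall between the expected ratios should be re-tested instead of being assigned a genotype.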

Protocol: Functional Validation of Target Engagement in Complex Traits

This protocol outlines a multi-layered approach to confirm that a candidate gene or variant, prioritized by DDC, functionally influences a complex trait.

Background: For complex traits, establishing a causal link from a genetic association to a molecular function and ultimately to a phenotype is non-trivial. This requires moving from statistical association to mechanistic insight, a process advanced by fine-mapping and functional genomics [18].

Methodology:

- Variant-to-Function (V2F) Analysis:

  - Fine-Mapping: Following a GWAS hit, use statistical fine-mapping methods (e.g., Bayesian approaches) on large-scale genomic data to narrow the association signal to a set of putative causal variants with high posterior probability [18].

  - Functional Annotation: Annotate prioritized variants using epigenomic data (e.g., ChIP-seq for histone marks, ATAC-seq for chromatin accessibility) from disease-relevant cell types to determine if they reside in functional regulatory elements.

- In Vitro Mechanistic Studies:

  - Gene Editing: Use CRISPR/Cas9-based genome editing in relevant cell models to introduce or correct the candidate risk variant in its endogenous genomic context.

  - Functional Assays: Quantify the impact of the edited variant on candidate gene expression (e.g., qPCR, RNA-seq), protein function, and pathway activity using reporter assays, Western blotting, or targeted proteomics [17].

- In Vivo Phenotypic Confirmation:

  - Animal Models: Develop and characterize animal models (e.g., knock-in, humanized models) carrying the human risk or protective allele.

  - Challenge Paradigms: Subject these models to physiological or environmental challenges relevant to the human disease (e.g., high-fat diet for metabolic traits, behavioral tests for neurological traits) to assess the in vivo consequence of the variant on the complex phenotype.
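The fine-mapping step in the protocol above can be illustrated with a minimal Bayesian sketch. It uses Wakefield's approximate Bayes factor under the simplifying assumption of exactly one causal variant per locus, which is one of several possible approaches and not necessarily the method used in [18]. The prior standard deviation, z-scores, and standard errors are hypothetical.

```python
import math
from typing import List, Sequence


def log_abf(z: float, se: float, prior_sd: float = 0.2) -> float:
    """Log approximate Bayes factor in favour of association (Wakefield).

    z: association z-score; se: standard error of the effect estimate;
    prior_sd: assumed prior SD of the true effect (hypothetical default).
    """
    v = se ** 2
    w = prior_sd ** 2
    shrink = w / (v + w)
    return 0.5 * math.log(1.0 - shrink) + 0.5 * z * z * shrink


def posterior_inclusion_probabilities(zs: Sequence[float],
                                      ses: Sequence[float],
                                      prior_sd: float = 0.2) -> List[float]:
    """PIPs assuming one causal variant and equal prior weight per variant."""
    logs = [log_abf(z, se, prior_sd) for z, se in zip(zs, ses)]
    top = max(logs)  # subtract the maximum for numerical stability
    weights = [math.exp(value - top) for value in logs]
    total = sum(weights)
    return [weight / total for weight in weights]


def credible_set(pips: Sequence[float], coverage: float = 0.95) -> List[int]:
    """Indices of the smallest variant set whose PIPs sum to >= coverage."""
    order = sorted(range(len(pips)), key=lambda i: pips[i], reverse=True)
    chosen, cumulative = [], 0.0
    for index in order:
        chosen.append(index)
        cumulative += pips[index]
        if cumulative >= coverage:
            break
    return chosen


# Made-up summary statistics for three variants at one locus.
pips = posterior_inclusion_probabilities([6.0, 2.0, 1.0], [0.05, 0.05, 0.05])
print([round(p, 4) for p in pips], credible_set(pips))
```

Variants in the resulting credible set are the natural inputs for the functional annotation and gene editing steps that follow; loci with several independent signals need methods that relax the single-causal-variant assumption.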

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential reagents and their applications for implementing the experimental protocols within a DDC validation framework.

Table 3: Essential Research Reagents for DDC-Centric Target Validation

| Reagent / Tool | Function in Validation | Key Considerations |
|---|---|---|
| Validated Cre-driver Lines [5] | Enables cell-type-specific genetic manipulation (e.g., knockout, knock-in) in model organisms. | Requires rigorous validation of insertion site, copy number, and off-target phenotypic effects to avoid misinterpretation. |
| CRISPR-Cas9 Systems [4] | Facilitates targeted genome editing for creating isogenic cell lines or animal models with specific variants (knock-in, knockout). | Efficiency, specificity, and delivery are critical. Off-target effects must be assessed. |
| Polygenic Risk Score (PRS) Calculators [4] | Computational tools that aggregate the effects of many genetic variants to estimate an individual's genetic predisposition to a complex trait. | Highly dependent on the size and diversity of the underlying GWAS summary statistics. |
| Quantitative Proteomics Kits [17] | Reagents for mass spectrometry-based profiling of protein expression, post-translational modifications, and protein-protein interactions. | Crucial for assessing target expression and engagement. Challenges include membrane protein analysis and dynamic range. |
| BAC Transgenic Constructs [5] | Bacterial Artificial Chromosomes used to generate transgenic models, as they contain large genomic regions for more physiological transgene expression. | Random genomic integration can cause disruptive insertions and passenger gene effects, necessitating thorough characterization. |
| Translational Biomarker Assays [16] | Kits for measuring biomarkers (e.g., in plasma, CSF) that are mechanistically linked to the target and can be used across species. | Essential for demonstrating target engagement and pharmacodynamic effects in preclinical models and human trials. |
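The polygenic risk score entry in Table 3 describes aggregating the effects of many variants. In its simplest additive form this is a weighted sum of risk-allele dosages, as sketched below. The variant identifiers and effect sizes are hypothetical, and practical calculators add steps not shown here, such as linkage disequilibrium adjustment and ancestry-matched standardization.

```python
from typing import Mapping


def polygenic_score(effect_sizes: Mapping[str, float],
                    dosages: Mapping[str, float]) -> float:
    """Additive polygenic score: sum of (per-allele effect x allele dosage).

    effect_sizes: per-allele effects (e.g., log odds ratios) from GWAS.
    dosages: risk-allele counts per variant, each between 0 and 2.
    Variants without a genotype are skipped rather than imputed.
    """
    score = 0.0
    for variant_id, beta in effect_sizes.items():
        dosage = dosages.get(variant_id)
        if dosage is None:
            continue
        if not 0.0 <= dosage <= 2.0:
            raise ValueError(f"dosage for {variant_id} must be in [0, 2]")
        score += beta * dosage
    return score


# Hypothetical variants and effects, for illustration only.
effects = {"variant_a": 0.10, "variant_b": -0.05, "variant_c": 0.20}
genotype = {"variant_a": 2, "variant_b": 1}  # variant_c not genotyped
print(polygenic_score(effects, genotype))
```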

The integration of Domain-Disease Context (DDC) frameworks into target validation represents a necessary evolution in the pursuit of therapies for complex human traits. By systematically incorporating human genetic evidence, multi-omic data, and clinical context, the DDC model moves target validation beyond a simple confirmatory step and establishes it as an iterative, confidence-building process. This approach, powered by quantitative modeling and stringent experimental protocols—including the essential step of deeply characterizing research tools like Cre-driver lines—directly addresses the high failure rates in drug development. As the field advances toward manipulating polygenic risk, the principles of context, causality, and quantitative rigor outlined in this guide will be paramount. The CRE-DDC model provides a structured path forward for researchers to prioritize and validate targets with a higher probability of clinical success, ultimately accelerating the delivery of new medicines.

Complex traits, including many common human diseases, do not follow simple Mendelian inheritance patterns. Instead, they arise from the interplay of multiple genetic and environmental factors, creating a multifaceted architectural landscape. The genetic architecture of a trait describes how genetic factors contribute to its development and manifestation [19]. While some cardiovascular conditions like hypertrophic cardiomyopathy often fit a simple Mendelian paradigm, most complex traits exhibit marked locus heterogeneity, allelic heterogeneity, and polygenic influences [19].

Emerging evidence suggests that a substantial proportion of dilated cardiomyopathy (DCM) may have an oligogenic basis, where multiple rare variants from different, unlinked loci collectively determine the disease phenotype [19]. Preliminary data indicate this may explain 20-30% of DCM cases, with one European cohort reporting up to 38% with oligogenic contributions [19]. Beyond rare coding variants, the complete genetic architecture encompasses low-frequency variations, common polymorphisms, non-coding regulatory elements, epigenetic modifications, and gene-environment interactions [19].

Table 1: Components of Complex Trait Architecture

| Genetic Component | Description | Example in Disease |
|---|---|---|
| Rare Variants | Protein-altering variants with large effect sizes | Monogenic DCM subtypes |
| Oligogenic Contributions | Multiple rare variants across unlinked loci | Up to 38% of DCM cases [19] |
| Common Variants | Small-effect polymorphisms identified via GWAS | Polygenic risk for common diseases |
| Gene-Environment Interactions | Environmental exposure effects modified by genetics | Alcohol- or chemotherapy-induced DCM [19] |
| Non-Coding Regulatory Elements | Variants affecting gene regulation | Promoter/enhancer variants influencing expression |

Methodologies for Delineating Complex Traits

Genome-Wide Association Studies (GWAS)

GWAS has become a fundamental tool for identifying common genetic variants associated with complex traits. This approach tests hundreds of thousands to millions of single-nucleotide polymorphisms (SNPs) across the genome to identify statistical associations with specific diseases or quantitative traits.

Experimental Protocol: A recent large-scale DCM GWAS protocol [20] involved:

- Sample Collection: Assembling 14,256 DCM cases and 1,199,156 controls from 16 studies participating in the Heart Failure Molecular Epidemiology for Therapeutic Targets (HERMES) Consortium

- Phenotype Standardization: Applying consistent DCM definitions characterized by left ventricular systolic dysfunction with left ventricular enlargement after excluding known clinical causes

- Genome-Wide Genotyping: Using array-based technologies followed by imputation to increase genomic coverage

- Meta-Analysis: Combining results across participating studies using fixed-effects inverse-variance weighted approaches

- Multi-Trait Analysis: Integrating data from three left ventricular traits in 36,203 UK Biobank participants

- Functional Annotation: Linking associated loci to putative effector genes through integration with single-nucleus transcriptomic data

This methodology identified 80 genomic risk loci for DCM and prioritized 62 putative effector genes, including several with established rare variant DCM associations such as MAP3K7, NEDD4L, and SSPN [20].
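The meta-analysis step in the protocol above combines per-study estimates with fixed-effects inverse-variance weighting. A minimal sketch of that calculation for a single variant follows; the per-study effect estimates and standard errors are made up, and real pipelines also handle allele alignment, genomic control, and heterogeneity testing.

```python
import math
from typing import Sequence, Tuple


def fixed_effects_ivw(betas: Sequence[float],
                      ses: Sequence[float]) -> Tuple[float, float, float]:
    """Fixed-effects inverse-variance weighted meta-analysis for one variant.

    betas: per-study effect estimates (e.g., log odds ratios).
    ses:   per-study standard errors, all strictly positive.
    Returns (pooled beta, pooled standard error, z-score).
    """
    if len(betas) != len(ses) or not betas:
        raise ValueError("betas and ses must be non-empty and of equal length")
    weights = [1.0 / (se * se) for se in ses]  # weight = 1 / variance
    total_weight = sum(weights)
    pooled_beta = sum(w * b for w, b in zip(weights, betas)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled_beta, pooled_se, pooled_beta / pooled_se


# Made-up estimates from three studies; the most precise study dominates.
beta, se, z = fixed_effects_ivw([0.12, 0.08, 0.15], [0.04, 0.02, 0.06])
print(round(beta, 4), round(se, 4), round(z, 2))
```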

Figure 1: DCM GWAS Workflow from Sample to Discovery

Transgenic Model Validation

Bacterial artificial chromosome (BAC) transgenic models enable spatial and temporal genetic manipulation but require rigorous validation to avoid misinterpretation of results. The Ucp1-Cre model widely used in brown adipose tissue research exemplifies both the utility and limitations of such approaches [5].

Experimental Protocol: Comprehensive validation of the Ucp1-CreEvdr line included [5]:

- Genetic Crosses: Generating control, hemizygous, and homozygous littermates through controlled breeding strategies

- Quantitative Copy Number Assay: Developing a Cre copy number detection method in genomic DNA rather than relying on endpoint PCR genotyping

- Phenotypic Characterization: Monitoring mortality, body weights, tissue-specific growth patterns, and craniofacial abnormalities

- Transcriptomic Analysis: Performing comprehensive gene expression profiling in brown and white adipose tissues

- Insertion Site Mapping: Identifying transgene integration location and accompanying genomic alterations through molecular techniques

- Thermogenic Challenge Tests: Assessing gene expression under high thermogenic burden conditions

This rigorous approach revealed that the Ucp1-CreEvdr transgene insertion in chromosome 1 was accompanied by large genomic alterations disrupting several genes, and the transgene retained an extra Ucp1 gene copy that may be highly expressed under high thermogenic burden [5].

Table 2: Key Research Reagents for Complex Trait Analysis

| Research Reagent | Function/Application | Technical Considerations |
|---|---|---|
| BAC Transgenic Models | Cell type-specific genetic manipulation | Random integration can cause genomic disruptions; requires validation [5] |
| CRISPR-Cas9 Systems | Targeted genome editing | Enables multiplex editing for polygenic trait modeling [4] |
| Polygenic Scores | Cumulative genetic risk assessment | Predictive accuracy increases with larger GWAS sample sizes [4] |
| Single-Nucleus RNA-seq | Cell type-specific expression profiling | Identifies cellular states and communication networks [20] |
| Deregressed Breeding Values | Phenotypic prediction in animal models | Accounts for varying reliability across individuals [18] |

Case Studies in Complex Trait Pathophysiology

Dilated Cardiomyopathy: From Monogenic to Polygenic

DCM provides a compelling example of how complex genetic architecture bridges genetic associations with pathophysiology. The classic definition of DCM includes left ventricular systolic dysfunction with left ventricular enlargement after exclusion of known clinical causes (except genetic) [19]. While initially considered primarily a monogenic disorder, emerging evidence reveals a more complex architecture.

Pathophysiological Insights: Recent research has demonstrated that polygenic scores can predict DCM in the general population and modify penetrance in carriers of rare DCM variants [20]. This finding has profound implications for genetic testing strategies, suggesting that incorporating polygenic background may improve risk prediction and clinical management. The molecular etiology of DCM involves diverse biological pathways including sarcomeric function, myocardial energy metabolism, calcium handling, and transcriptional regulation [20].

Huntington's Disease: Somatic Expansion and Selective Vulnerability

Huntington's disease (HD), while caused by a CAG repeat expansion in the HTT gene, exhibits complex features in its pathophysiology through tissue-specific somatic instability and modifier genes.

Experimental Protocol: Investigating mismatch repair (MMR) genes in HD [21] involved:

- Genetic Crosses: Generating HD mice with 140 inherited CAG repeats (Q140) crossed with knockout models of 9 HD GWAS/MMR genes

- Somatic Expansion Tracking: Quantifying CAG repeat length changes over time in striatal and cortical neurons

- Molecular Phenotyping: Assessing transcription patterns, mHtt aggregation, and chromatin accessibility (ATAC-seq)

- Functional Assessment: Evaluating synaptic function, astrocytic activity, and locomotor behavior

- Threshold Determination: Establishing repeat length thresholds for pathological manifestations

This research revealed that distinct MMR complex genes set neuronal CAG-repeat expansion rates to drive selective pathogenesis [21]. Specifically, Msh3 and Pms1 deficiency dramatically reduced the fast linear rate of mHtt modal-CAG-repeat expansion in striatal medium-spiny neurons (from 8.8 repeats/month to nearly zero) and prevented mHtt aggregation by keeping somatic CAG length below a critical threshold of 150 repeats [21].

Figure 2: MMR-Driven Somatic Expansion in Huntington's Disease

Metabolic Traits and Model System Limitations

The Ucp1-Cre transgenic model case study highlights how experimental tools themselves can complicate the interpretation of complex trait pathophysiology. This widely used model for brown adipose tissue research was found to exhibit major unexpected phenotypes independent of intended genetic manipulations [5].

Pathophysiological Insights: Hemizygous Ucp1-CreEvdr mice exhibited significant brown and white fat transcriptomic dysregulation, suggesting altered tissue function even before experimental manipulation [5]. Homozygous animals showed high mortality (40% from 3-6 weeks), tissue-specific growth defects, and craniofacial abnormalities. The transgene insertion caused large genomic alterations disrupting several genes expressed across multiple tissues [5]. This case underscores the critical importance of comprehensive validation for models used in complex trait research, as unnoticed confounding factors can lead to erroneous conclusions about gene function and disease mechanisms.

Advanced Approaches and Future Directions

Polygenic Editing and Therapeutic Horizons

As genetic knowledge advances, the potential for therapeutic interventions grows more sophisticated. Heritable polygenic editing (HPE) represents a frontier approach that could theoretically yield extreme reductions in disease susceptibility by simultaneously editing multiple genomic variants [4].

Methodological Framework: Computational modeling of HPE for common diseases suggests that editing a relatively small number of variants could dramatically alter disease risk [4]:

- Editing 10 variants for Alzheimer's disease could reduce lifetime risk from 5% to 0.6%

- Editing 10 variants for coronary artery disease could reduce risk from 6% to 0.1%

- Editing 5 lipid-associated loci could reduce LDL cholesterol by approximately 2 mmol/L

While currently speculative and facing significant ethical considerations, these models demonstrate the potential power of targeting multiple variants simultaneously for complex disease prevention [4].
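The modelled figures listed above can be restated as absolute and relative risk reductions, which are often easier to compare across diseases with different baseline prevalence. The short sketch below does only that arithmetic on the prevalence values quoted from [4]; it does not reproduce the underlying model.

```python
from typing import Dict, Tuple


def risk_reduction(baseline: float, edited: float) -> Tuple[float, float]:
    """Return (absolute, relative) risk reduction for two prevalence values."""
    if not 0.0 < baseline <= 1.0 or not 0.0 <= edited <= baseline:
        raise ValueError("expected 0 < baseline <= 1 and 0 <= edited <= baseline")
    absolute = baseline - edited
    return absolute, absolute / baseline


# Lifetime prevalence before and after editing 10 variants, as quoted in the text.
MODELLED: Dict[str, Tuple[float, float]] = {
    "Alzheimer's disease": (0.05, 0.006),
    "Coronary artery disease": (0.06, 0.001),
}

for disease, (baseline, edited) in MODELLED.items():
    absolute, relative = risk_reduction(baseline, edited)
    print(f"{disease}: absolute {absolute:.3f}, relative {relative:.0%}")
```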

Integrating Multi-Omics for Pathway Elucidation

Future complex trait research increasingly requires integration of diverse data types to bridge genetic associations with pathophysiology. Single-nucleus transcriptomics in DCM research has identified cellular states, biological pathways, and intracellular communications that drive pathogenesis [20]. Similar approaches across complex traits will be essential for moving beyond association to mechanistic understanding.

Experimental Framework: A comprehensive multi-omics protocol includes:

- Genetic Association Data: GWAS summary statistics and polygenic risk scores

- Epigenomic Profiling: ATAC-seq, ChIP-seq, and methylation arrays

- Transcriptomic Analysis: Single-cell and single-nucleus RNA sequencing

- Proteomic and Metabolomic Characterization: Mass spectrometry-based profiling

- Computational Integration: Bayesian fine-mapping, network analysis, and machine learning

This integrated approach enables prioritization of putative causal genes and pathways, as demonstrated in DCM research where Bayesian fine-mapping provided statistical prioritization of candidate genes over conventional proximity-based assignment [20].

Table 3: Quantitative Effects of Polygenic Editing on Disease Risk [4]

| Disease/Trait | Baseline Prevalence | Number of Variants Edited | Predicted Prevalence After Editing |
|---|---|---|---|
| Alzheimer's Disease | 5% | 1 variant (APOE ε4) | 2.9% |
| Alzheimer's Disease | 5% | 10 variants | 0.6% |
| Coronary Artery Disease | 6% | 10 variants | 0.1% |
| Type 2 Diabetes | 10% | 10 variants | 0.2% |
| Major Depressive Disorder | 15% | 10 variants | 9% |
| LDL Cholesterol | - | 5 variants | Reduction of ~2 mmol/L |

Selecting Appropriate Model Organisms and Genetic Backgrounds for Trait-Specific Studies

The central goal of genetics is to understand the links between genetic variation and disease, but for complex traits, association signals tend to be spread across most of the genome, including near many genes without an obvious connection to disease [22]. This reality presents significant challenges for researchers working with CRE-DDC (Comprehensive Research Entity-Drug Development Center) models, who must select appropriate biological systems for studying trait-specific mechanisms. The prevailing "omnigenic" model proposes that gene regulatory networks are sufficiently interconnected that all genes expressed in disease-relevant cells can affect the functions of core disease-related genes, with most heritability explained by effects on genes outside core pathways [22]. This framework fundamentally impacts how researchers approach model organism selection, as it suggests that meaningful insights require systems that capture this network complexity rather than focusing exclusively on presumed core pathways.

For drug development professionals, this understanding is crucial for translating basic research into clinical applications. The selection of appropriate model organisms and their genetic backgrounds must be guided by both the omnigenic architecture of complex traits and practical research constraints. This technical guide provides a comprehensive framework for making these critical decisions in complex trait research with CRE-DDC models, integrating theoretical foundations with practical experimental design considerations.

Theoretical Framework: The Omnigenic Model and Its Implications

Foundations of Complex Trait Architecture

The contemporary understanding of complex traits has evolved significantly from early monogenic paradigms. Throughout the 20th century, human geneticists expected that even complex traits would be driven by a handful of moderate-effect loci, leading to mapping studies that were greatly underpowered by modern standards [22]. Genome-wide association studies (GWAS) have since revealed that for typical traits, even the most important loci in the genome have small effect sizes, with significant hits explaining only a modest fraction of predicted genetic variance—a phenomenon initially described as "missing heritability" [22].

Subsequent analyses have demonstrated that common single nucleotide polymorphisms (SNPs) with effect sizes well below genome-wide statistical significance account for most of this missing heritability for many traits [22]. In contrast to Mendelian diseases largely caused by protein-coding changes, complex traits are mainly driven by noncoding variants that presumably affect gene regulation [22]. This regulatory focus necessitates model systems that accurately capture gene regulatory networks.

The Omnigenic Model in Practice

The omnigenic model provides a framework for understanding how effects spread across regulatory networks. This hypothesis suggests that core genes with direct effects on disease are influenced by peripheral genes with indirect effects through interconnected regulatory networks [23]. Research on ulcerative colitis demonstrates that identified core genes are characterized by tissue-specific expression and trait-relevant network connections, with approximately one-third of overexpression or knockdown perturbations impacting core genes differently than peripheral genes—a pattern not observed for GWAS or random genes [23].

This coordinated perturbation response by core genes appears robust across traits and cell lines, despite differing causal perturbagens, suggesting a universal core-gene property [23]. Furthermore, co-perturbation simulations indicate frequent genetic interactions between core genes, highlighting the role of non-additive interactions previously not considered in the omnigenic model [23]. For researchers, this means that model organisms must capture not just individual gene effects but these network properties.

Figure 1: The Omnigenic Model of Complex Traits. Core genes (yellow) directly influence the disease phenotype, while peripheral genes (gray) indirectly affect the phenotype through regulatory networks that influence core gene function. This interconnectedness explains the highly polygenic nature of complex traits.

Model Organism Selection Criteria

Fundamental Selection Parameters

Model organisms are non-human species extensively studied to understand biological phenomena, with the expectation that discoveries will provide insight into the workings of other organisms [24]. When selecting model organisms for complex trait research, scientists consider multiple factors:

- Phylogenetic relatedness: The evolutionary principle that all organisms share some degree of relatedness and genetic similarity due to common ancestry provides the foundation for comparative biology. Humans and chimpanzees last shared a common ancestor about 6 million years ago, making them close genetic relatives for disease mechanism studies [24].

- Practical experimental attributes: Ideal model organisms typically have short life cycles, established techniques for genetic manipulation (inbred strains, stem cell lines, transformation methods), non-specialist living requirements, and compact genomes with a low proportion of junk DNA [24].

- Genetic tractability: The capacity for precise genetic manipulation remains paramount, particularly with the increasing importance of optogenetic and thermogenetic tools for circuit mapping in behavioral neurobiology [25].

Trait-Specific Selection Considerations

Different complex traits require different considerations in model selection:

- Metabolic and physiological traits: For conditions like obesity, diabetes, or lipid disorders, mammalian models with conserved metabolic pathways are often essential. Research in dogs in the early 1920s led to the discovery of insulin and its use in treating diabetes, demonstrating the value of physiologically relevant systems [24].

- Neurological and behavioral traits: The neural basis of behavior is being established at cellular resolution in genetic model organisms [25]. Zebrafish, with their translucent embryonic phase and vertebrate neuroanatomy, provide unique advantages for studying nervous system development and function [26].

- Immune and inflammatory traits: Mouse models have been indispensable for understanding autoimmune diseases like ulcerative colitis, with their highly conserved immune systems and abundant research tools [23].

Comparative Analysis of Model Organisms

Table 1: Model Organisms for Complex Trait Research

| Organism | Genetic Tractability | Generation Time | Key Advantages | Complex Trait Applications | CRE-DDC Utility |
|---|---|---|---|---|---|
| Mouse (Mus musculus) | High (inbred strains, CRISPR, transgenics) | 10-12 weeks | Mammalian physiology, extensive genetic tools, humanized models possible | Autoimmune diseases, metabolic disorders, cancer, neurological conditions | High - Gold standard for preclinical therapeutic testing |
| Fruit Fly (Drosophila melanogaster) | High (Gal4/UAS system, RNAi libraries) | 8-10 days | Conserved developmental pathways, complex behavior, low maintenance cost | Neurodegeneration, circadian rhythms, innate immunity, metabolic regulation | Medium - Initial pathway screening, genetic networks |
| Zebrafish (Danio rerio) | Medium-High (CRISPR, transparent embryos) | 3 months | Vertebrate development, in vivo imaging, high fecundity | Cardiovascular development, neurobiology, toxicology, regenerative medicine | Medium - Developmental toxicity, phenotypic screening |
| Nematode (C. elegans) | Very High (CRISPR, RNAi, full connectome) | 3-4 days | Simple nervous system (302 neurons), full cell lineage, high-throughput | Aging, neurobiology, metabolic regulation, cell death | Medium - High-throughput genetic screening |
| Arabidopsis (A. thaliana) | High (T-DNA insertion, natural variants) | 4-6 weeks | Plant-specific traits, natural variation, ecological genetics | Polygenic adaptation, stress responses, flowering time | Low - Plant-specific trait models only |
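The comparison in Table 1 can also be applied programmatically when shortlisting organisms for a study. The sketch below is illustrative only: it transcribes the generation times and CRE-DDC utility ratings from Table 1, converts weeks and months to days (treating one month as roughly 30 days, which is an approximation), and filters on two constraints. The names `ModelOrganism`, `TABLE_1`, and `shortlist` are hypothetical and not part of any established tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOrganism:
    name: str
    tractability: str          # rating as listed in Table 1
    max_generation_days: int   # upper bound of the Table 1 generation time
    creddc_utility: str        # "High", "Medium", or "Low" from Table 1


# Values transcribed from Table 1. Unit conversion is an assumption:
# 1 week = 7 days, 1 month is approximated as 30 days.
TABLE_1 = [
    ModelOrganism("Mouse", "High", 12 * 7, "High"),
    ModelOrganism("Fruit fly", "High", 10, "Medium"),
    ModelOrganism("Zebrafish", "Medium-High", 3 * 30, "Medium"),
    ModelOrganism("Nematode", "Very High", 4, "Medium"),
    ModelOrganism("Arabidopsis", "High", 6 * 7, "Low"),
]

UTILITY_RANK = {"Low": 0, "Medium": 1, "High": 2}


def shortlist(max_days, min_utility="Medium"):
    """Return organisms that satisfy both constraints, fastest first."""
    floor = UTILITY_RANK[min_utility]
    hits = [
        organism
        for organism in TABLE_1
        if organism.max_generation_days <= max_days
        and UTILITY_RANK[organism.creddc_utility] >= floor
    ]
    return sorted(hits, key=lambda organism: organism.max_generation_days)


if __name__ == "__main__":
    # Organisms with a generation time of at most 30 days and at least
    # "Medium" CRE-DDC utility.
    for organism in shortlist(max_days=30):
        print(organism.name, organism.max_generation_days)
```

A filter like this captures only the practical columns of Table 1. Trait-specific biology, such as whether the relevant tissue or pathway is conserved, still has to be judged separately.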

Vertebrate Models

Mouse (Mus musculus)

The mouse has been used extensively as a model organism and is associated with many important biological discoveries of the 20th and 21st centuries [24]. Its status as a mammalian model with physiological systems highly comparable to humans makes it invaluable for drug development pipelines. The systematic generation of inbred strains began with William Ernest Castle's collaboration with Abbie Lathrop, leading to the DBA ("dilute, brown and non-agouti") strain and numerous others [24]. These defined genetic backgrounds are crucial for controlling variability in complex trait studies.

Zebrafish (Danio rerio)

Zebrafish are vertebrates and therefore share more anatomy with humans than invertebrate models do, including muscles, hearts, kidneys, and eyes [26]. Their translucent embryonic phase allows researchers to observe internal development, including blood vessel formation, making them excellent for studying cardiovascular development [26]. For complex traits involving developmental origins, zebrafish provide unique insights into how genetic variation influences tissue morphogenesis.

Invertebrate and Non-Mammalian Models

Fruit Fly (Drosophila melanogaster)

Drosophila melanogaster became one of the first, and for some time the most widely used, model organisms for genetics [24]. Thomas Hunt Morgan's work between 1910 and 1927 identified chromosomes as the vector of inheritance for genes [24]. The fruit fly's digestive and nervous systems share similarities with those of mammals, and despite a relatively simple nervous system (approximately 100,000 brain cells), flies exhibit complex behaviors [26]. For high-throughput genetic studies of complex traits, Drosophila remains one of the strongest options in terms of speed and genetic tool availability.

Nematode (Caenorhabditis elegans)

C. elegans offers the unique advantage of a completely mapped connectome with only 302 neurons, whose activity can be imaged simultaneously in the intact animal using genetically encoded Ca²⁺ indicators [25]. This comprehensive neural mapping capability makes it ideal for studying how genetic variation influences the neuronal networks underlying behavior, a key aspect of complex neurobehavioral traits.

Genetic Background Considerations

Impact of Genetic Background on Trait Expression

The genetic background in which specific variants are studied can dramatically influence phenotypic outcomes. Research on height, often considered the quintessential polygenic trait, reveals that its genetic architecture is broadly similar to many other quantitative traits and diseases [22]. Remarkably, analyses suggest that 62% of all common SNPs are associated with non-zero effects on height, implying that most 100 kb windows in the genome include variants that affect this trait [22]. This extreme polygenicity means that genetic background effects are substantial and must be controlled in experimental designs.
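A short calculation shows why a 62% per-SNP figure implies that most genomic windows carry trait-associated variants. The sketch below assumes, purely for illustration, that SNPs in a window are independent draws. That assumption does not hold in real genomes because of linkage disequilibrium, and the number of common SNPs per window is a free parameter here, not a value from the source. The function name is hypothetical.

```python
def prob_window_has_associated_snp(fraction_associated, snps_per_window):
    """Probability that a window holds at least one associated SNP.

    Simplifying assumption: each SNP is associated independently with
    probability `fraction_associated`. Linkage disequilibrium violates
    this, so the result is an illustration, not an estimate.
    """
    if not 0.0 <= fraction_associated <= 1.0:
        raise ValueError("fraction_associated must lie in [0, 1]")
    if snps_per_window < 0:
        raise ValueError("snps_per_window must be non-negative")
    # P(at least one) = 1 - P(none) = 1 - (1 - f)^n
    return 1.0 - (1.0 - fraction_associated) ** snps_per_window


if __name__ == "__main__":
    # 0.62 is the fraction reported in the text; the window sizes are
    # arbitrary illustrative choices.
    for n in (1, 2, 5, 10):
        print(n, prob_window_has_associated_snp(0.62, n))
```

Under this toy model, even a window with only a handful of common SNPs is very likely to contain at least one with a non-zero effect, which is the intuition behind the need to control genetic background.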

Epigenetic Contributions to Complex Traits

Beyond DNA sequence variation, epigenetic modifications like DNA methylation (DNAm) contribute significantly to complex trait variability. DNAm predictors for smoking, alcohol, education, and waist-to-hip ratio can predict mortality in multivariate models, showing moderate discrimination for obesity, alcohol consumption, and HDL cholesterol, and excellent discrimination for current smoking status [27]. These epigenetic predictors explain varying proportions of phenotypic variance, from small amounts for educational attainment (0.6%) to large amounts for smoking (60.9%) [27]. This highlights the need for model organisms that either naturally exhibit the relevant epigenetic regulation or can be engineered to study it.
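To make "proportion of phenotypic variance explained" concrete, the sketch below computes one common definition for a continuous trait: the squared Pearson correlation between a predictor score and the measured phenotype. This is an assumption about the metric, since the cited study may use a different estimator, particularly for binary traits. The function name and the numbers in the usage example are made up for illustration and are not data from any study.

```python
def variance_explained(predictor, trait):
    """Squared Pearson correlation (R^2) between a score and a trait."""
    n = len(predictor)
    if n != len(trait) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_p = sum(predictor) / n
    mean_t = sum(trait) / n
    # Sums of cross-products and squares around the means.
    sxy = sum((p - mean_p) * (t - mean_t) for p, t in zip(predictor, trait))
    sxx = sum((p - mean_p) ** 2 for p in predictor)
    syy = sum((t - mean_t) ** 2 for t in trait)
    if sxx == 0 or syy == 0:
        raise ValueError("predictor and trait must both vary")
    return (sxy * sxy) / (sxx * syy)


if __name__ == "__main__":
    # Toy values only: a hypothetical methylation score and phenotype.
    toy_score = [1.0, 2.0, 3.0, 4.0]
    toy_trait = [1.0, 3.0, 2.0, 4.0]
    print(variance_explained(toy_score, toy_trait))
```

On this scale, a value near 0.006 corresponds to the 0.6% reported for educational attainment and a value near 0.609 to the 60.9% reported for smoking.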

Table 2: Genetic Background and Epigenetic Considerations

| Factor | Impact on Complex Traits | Research Implications | Control Strategies |
|---|---|---|---|
| Strain Background | Phenotypic expression varies significantly between strains due to modifier genes | Results may not generalize across genetic contexts | Use defined inbred strains, F1 hybrids, or collaborative cross designs |
| Genetic Load | Accumulation of deleterious variants in lab strains affects trait variance | May confound specific genetic effects | Regular outcrossing, use of multiple strains, genome sequencing |
| Epigenetic Background | DNA methylation patterns influence trait penetrance and expressivity | Intergenerational effects, environmental interactions | Controlled breeding, environmental standardization, epigenetic profiling |
| Microbiome Composition | Gut microbiota influences metabolic, immune, and neurological traits | Non-genetic source of variation, host-genome interactions | Co-housing, fecal transplants, gnotobiotic animals |
| Sex Chromosomes | Sex-specific effects on complex traits, hormonal interactions | Sexual dimorphism in disease risk and progression | Study both sexes separately, include as biological variable |

Experimental Design and Methodological Approaches

Circuit Mapping and Functional Validation

The past decade has witnessed the development of powerful, genetically encoded tools for manipulating and monitoring neuronal function in freely moving animals [25]. These tools are most readily deployed in genetic model organisms, and efforts to map behavioral circuits have increasingly focused on worms, flies, zebrafish, and mice [25]. The traditional virtues of these animals for genetic studies—small size, short generation times, and ease of laboratory husbandry—have facilitated rapid progress when combined with new genetic tools for neuronal manipulation and monitoring [25].

Figure 2: Model Organism Selection Workflow for Complex Trait Studies. The decision process begins with assessing trait complexity, proceeds through key experimental design considerations informed by the omnigenic model, and incorporates validation across systems to maximize translational relevance.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents for Complex Trait Studies

| Reagent Category | Specific Examples | Function in Complex Trait Research | Compatible Model Organisms |
|---|---|---|---|
| Genome Editing Tools | CRISPR-Cas9, TALENs, Zinc Finger Nucleases | Precise genetic manipulation to validate candidate genes and create disease models | Mouse, zebrafish, Drosophila, C. elegans, plants |
| Optogenetic/Thermogenetic Actuators | Channelrhodopsin, NpHR, TRPA1 | Acute neuronal manipulation to establish causal circuit relationships | C. elegans, Drosophila, zebrafish, mouse |
| Genetically Encoded Sensors | GCaMP (calcium), pHluorin (pH), iGluSnFR (glutamate) | Monitoring neuronal activity and signaling events in living animals | All major model organisms |
| Barcoded Viral Tracers | Rabies virus, AAV, lentivirus with barcodes | Mapping connectivity between neurons in complex circuits | Mouse, zebrafish, primates |
| Single-Cell Multiomics Platforms | 10X Genomics, Slide-seq, CITE-seq | Characterizing cellular diversity and gene expression networks | All model organisms with reference genomes |
| Perturbation Libraries | RNAi collections, CRISPR libraries, small molecule screens | High-throughput functional screening for gene discovery | Cell cultures, C. elegans, Drosophila, zebrafish |

Integrated Research Strategies for CRE-DDC Models

Cross-Species Validation Frameworks

Given the omnigenic nature of complex traits, validation across multiple model systems provides stronger evidence for therapeutic target identification. The coordinated perturbation response observed in core genes across different traits and cell lines suggests conserved properties that can be leveraged in multi-system approaches [23]. A strategic approach might employ:

- Primary discovery in high-throughput systems (Drosophila, C. elegans)

- Circuit mechanism elucidation in translucent models (zebrafish)

- Physiological validation in mammalian systems (mouse)

- Human biomarker correlation using epigenetic predictors [27]
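The four stages above can be treated as an ordered series of gates, where a candidate advances only while it continues to pass. The sketch below is one hypothetical way to track this. The tier labels paraphrase the list, the candidate names are placeholders, and the pass/fail flags stand in for whatever evidence criteria a lab actually uses.

```python
# Ordered validation tiers, paraphrasing the staged approach in the text.
TIERS = [
    "high_throughput_discovery",    # Drosophila, C. elegans
    "circuit_mechanism",            # zebrafish
    "physiological_validation",     # mouse
    "human_biomarker_correlation",  # epigenetic predictors
]


def furthest_tier(evidence):
    """Count consecutive tiers passed, starting from the first.

    `evidence` maps tier name -> bool. A missing tier counts as not
    passed, and a failure stops progression even if later tiers are True.
    """
    passed = 0
    for tier in TIERS:
        if not evidence.get(tier, False):
            break
        passed += 1
    return passed


def prioritize(candidates):
    """Order candidate names by how far they progressed, best first."""
    return sorted(
        candidates,
        key=lambda name: furthest_tier(candidates[name]),
        reverse=True,
    )


if __name__ == "__main__":
    # Hypothetical candidates; the flags are placeholders, not results.
    candidates = {
        "gene_a": {"high_throughput_discovery": True},
        "gene_b": {tier: True for tier in TIERS},
        "gene_c": {"physiological_validation": True},
    }
    print(prioritize(candidates))
```

Requiring consecutive passes is a deliberate design choice in this sketch: a mouse phenotype without support from the earlier tiers does not count as cross-species validation.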

Artificial Intelligence in Model Selection

Emerging technologies are expanding model organism options. Artificial intelligence can help scientists choose the right model organism by comparing genomic similarity between potential model organisms and the species of interest [26]. In the future, AI might assemble genome sequences from different model organisms to create idealized virtual models for specific research questions [26]. For CRE-DDC pipelines, these computational approaches can optimize resource allocation by predicting which model systems will most efficiently answer specific therapeutic questions.
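As a much simpler stand-in for the AI-driven comparison described above, the sketch below ranks candidate organisms by a basic sequence similarity measure: the Jaccard index over shared k-mers. This is not an AI method and not the approach of any tool cited in the text. The function names are hypothetical, and the sequences in the usage example are toy strings rather than real genomic data.

```python
def kmers(sequence, k):
    """Return the set of all length-k substrings of a sequence."""
    sequence = sequence.upper()
    return {sequence[i:i + k] for i in range(len(sequence) - k + 1)}


def jaccard_similarity(seq_a, seq_b, k=3):
    """Jaccard index of the two k-mer sets: |A & B| / |A | B|."""
    set_a, set_b = kmers(seq_a, k), kmers(seq_b, k)
    if not set_a and not set_b:
        return 0.0  # both sequences shorter than k
    return len(set_a & set_b) / len(set_a | set_b)


def rank_models(target_seq, model_seqs, k=3):
    """Order model names by similarity to the target, most similar first."""
    return sorted(
        model_seqs,
        key=lambda name: jaccard_similarity(target_seq, model_seqs[name], k),
        reverse=True,
    )


if __name__ == "__main__":
    # Toy sequences for illustration only.
    target = "ACGTA"
    models = {"model_1": "CCCCC", "model_2": "ACGTT"}
    print(rank_models(target, models))
```

In practice the comparison would run over orthologous genes or regulatory regions relevant to the trait rather than arbitrary strings, and k would be far larger than 3.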

Selecting appropriate model organisms and genetic backgrounds for trait-specific studies requires integration of omnigenic principles with practical research constraints. The extreme polygenicity of complex traits, evidenced by the distribution of GWAS signals across most of the genome, necessitates model systems that capture network-level biology rather than focusing exclusively on presumed core pathways [22]. Effective strategies combine evolutionary considerations (phylogenetic relatedness), practical experimental attributes, and trait-specific biological requirements.

For complex trait research with CRE-DDC models, a hierarchical approach that leverages multiple model systems provides the most robust path from genetic discovery to therapeutic development. Cross-species validation, attention to genetic background effects, and integration of emerging technologies like AI and single-cell multiomics will continue to enhance the predictive value of model organism research for human complex traits. As our understanding of omnigenic architecture deepens, so too must our strategies for selecting biological systems that capture this complexity.

Advanced Methodologies: From Model Engineering to High-Throughput Screening

Designing Multiplexed Genome Editing Strategies for Polygenic Trait Recapitulation

Polygenic traits, which are controlled by the cumulative effect of many small-effect genes, represent a fundamental challenge in complex traits research. Within the CRE-DDC model (Characterization, Recapitulation, and Engineering for Drug Development Core), the ability to precisely recapitulate these traits in model systems is crucial for understanding disease mechanisms and advancing therapeutic development. The emergence of multiplexed genome editing technologies, particularly CRISPR/Cas systems, has transformed our approach to polygenic trait engineering by enabling simultaneous modification of multiple genomic loci. This technical guide provides an in-depth framework for designing effective multiplexed genome editing strategies specifically for polygenic trait recapitulation, integrating the latest technological innovations with practical implementation considerations for researchers, scientists, and drug development professionals. By leveraging these advanced genome engineering approaches, research within the CRE-DDC framework can accelerate the translation of genetic discoveries into targeted interventions for complex diseases influenced by polygenic architectures, such as type 2 diabetes, coronary artery disease, and psychiatric disorders [28] [29].

Technological Foundations of Multiplex Genome Editing

CRISPR/Cas Systems for Multiplex Editing