Constrained Optimization Problems (COPs): A Foundational Guide for Biomedical Research and Drug Development


Abstract

This article provides a comprehensive exploration of Constrained Optimization Problems (COPs), tailored for researchers and professionals in drug development. It covers the foundational mathematics of COPs, details key algorithmic solutions from linear programming to evolutionary methods, and addresses practical challenges in troubleshooting and optimization. With a focus on validation and comparative analysis, the guide also highlights cutting-edge applications in precision medicine, including personalized drug target identification and model-informed drug development, offering a vital resource for leveraging computational optimization in biomedical innovation.

What is a Constrained Optimization Problem? Core Principles and Definitions for Scientists

Constrained Optimization Problems (COPs) represent a fundamental pillar in applied mathematics, engineering, computer science, and economics [1] [2]. A precise understanding of their three core components (objective functions, decision variables, and constraints) is essential. This framework allows researchers and practitioners to systematically model complex decision-making scenarios, transforming real-world challenges into mathematically tractable forms. In fields such as drug development, this formalism enables the optimization of molecular structures, the minimization of side effects, and the efficient allocation of research resources subject to biological, chemical, and economic limitations [3].

This technical guide provides an in-depth examination of the mathematical structure of COPs, offering a rigorous foundation for scientists and researchers engaged in optimization-driven innovation.

Core Mathematical Components of a COP

A Constrained Optimization Problem is fundamentally characterized by three interconnected components that define its structure and solution space [4].

Decision Variables

Decision variables are the unknown quantities within the system that the optimizer can manipulate to achieve the best outcome [5]. These variables represent the fundamental degrees of freedom in the model and are often denoted as a vector ( \mathbf{x} = (x_1, x_2, \ldots, x_n)^T ) belonging to an ( n )-dimensional space [3]. The nature of these variables classifies the optimization problem type, which can be continuous (taking any real value within bounds), discrete (limited to integer values or specific combinatorial structures), or mixed [3] [2]. In drug development, these might represent continuous variables like dosage concentrations or discrete variables representing the presence or absence of specific molecular subgroups.

Objective Function

The objective function, denoted ( f(\mathbf{x}) ), is a scalar function that quantitatively measures the performance or cost of a solution [1] [4]. The goal is to either minimize (e.g., cost, energy, deviation) or maximize (e.g., profit, utility, efficacy) this function by selecting appropriate values for the decision variables [1] [3]. In the context of drug development, this could correspond to maximizing therapeutic efficacy, minimizing toxicity, or minimizing the cost of production. The objective function is also referred to as a fitness function, merit function, or cost function depending on the domain [3].

Constraints

Constraints define the conditions that any feasible solution must satisfy, representing physical, logical, or resource limitations inherent to the problem [1] [4]. They restrict the allowable values of the decision variables and shape the feasible region within the broader search space. Constraints are categorized as follows:

- Equality Constraints: Expressed as ( g_i(\mathbf{x}) = c_i ) for ( i = 1, \ldots, n ) [1].

- Inequality Constraints: Expressed as ( h_j(\mathbf{x}) \geq d_j ) or ( h_j(\mathbf{x}) \leq d_j ) for ( j = 1, \ldots, m ) [1].

- Domain Constraints: Direct bounds on variables, e.g., ( (x_i)_L \leq x_i \leq (x_i)_U ) [3].

A solution satisfying all constraints is termed feasible, and the set of all feasible solutions constitutes the feasible search space, denoted by ( \Omega ) [3].

Formal Mathematical Definition

The standard form of a constrained minimization problem is expressed as follows [1] [3]:

[ \begin{aligned} & \underset{\mathbf{x}}{\text{minimize}} & & f(\mathbf{x}) \\ & \text{subject to} & & g_i(\mathbf{x}) = c_i \quad \text{for } i = 1, \ldots, n \quad \text{(Equality constraints)} \\ & & & h_j(\mathbf{x}) \geq d_j \quad \text{for } j = 1, \ldots, m \quad \text{(Inequality constraints)} \end{aligned} ]

Maximization problems are handled by negating the objective function: ( \max f(\mathbf{x}) ) is equivalent to ( \min -f(\mathbf{x}) ) [2]. Some formulations also include an explicit open set ( S \subseteq \mathbb{R}^n ) representing an underlying domain for the decision variables (e.g., where functions are defined), with the feasible set given by ( S \cap \{\mathbf{x} \in \mathbb{R}^n : g_i(\mathbf{x}) = c_i, \; h_j(\mathbf{x}) \geq d_j\} ) [6].

Table 1: Fundamental Components of a Constrained Optimization Problem

| Component | Mathematical Notation | Description | Role in COP |
|---|---|---|---|
| Decision Variables | ( \mathbf{x} = (x_1, x_2, \ldots, x_n)^T ) | Quantities representing choices or system parameters [5] [3]. | Define the solution space to be explored. |
| Objective Function | ( f(\mathbf{x}) ) | Scalar function measuring solution quality (cost, reward, etc.) [1] [4]. | Determines the goal of the optimization. |
| Equality Constraints | ( g_i(\mathbf{x}) = c_i ) | Conditions that must be satisfied exactly [1]. | Restrict the feasible region to a surface or manifold. |
| Inequality Constraints | ( h_j(\mathbf{x}) \geq d_j ) | Conditions that define allowable ranges (can also use ( \leq )) [1]. | Restrict the feasible region to a defined volume. |
| Feasible Set | ( \Omega ) | The set of all points ( \mathbf{x} ) satisfying all constraints [3]. | Contains all candidate solutions eligible for evaluation. |
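The components in Table 1 map naturally onto a small data structure. The following is a minimal sketch, not a reference implementation: the class name `COP`, its field names, and the toy problem are all illustrative assumptions. It stores an objective plus lists of equality pairs (g_i, c_i) and inequality pairs (h_j, d_j), and tests whether a point belongs to the feasible set.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Sequence, Tuple

Vector = Sequence[float]
Constraint = Tuple[Callable[[Vector], float], float]  # (function, right-hand side)


@dataclass
class COP:
    """Container for: minimize f(x) s.t. g_i(x) = c_i and h_j(x) >= d_j."""
    objective: Callable[[Vector], float]
    equalities: List[Constraint] = field(default_factory=list)
    inequalities: List[Constraint] = field(default_factory=list)

    def is_feasible(self, x: Vector, tol: float = 1e-9) -> bool:
        """Return True if x satisfies every constraint within a tolerance."""
        eq_ok = all(abs(g(x) - c) <= tol for g, c in self.equalities)
        ineq_ok = all(h(x) >= d - tol for h, d in self.inequalities)
        return eq_ok and ineq_ok


# Hypothetical toy problem: minimize x0^2 + x1^2 s.t. x0 + x1 = 1 and x0 >= 0.2
toy = COP(
    objective=lambda x: x[0] ** 2 + x[1] ** 2,
    equalities=[(lambda x: x[0] + x[1], 1.0)],
    inequalities=[(lambda x: x[0], 0.2)],
)

print(toy.is_feasible([0.5, 0.5]))  # both constraints hold
print(toy.is_feasible([0.1, 0.9]))  # violates x0 >= 0.2
```

Keeping the feasibility test separate from the objective mirrors the distinction between the feasible set and the quantity being optimized.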

A Simplified Illustrative Example

Consider the problem of maximizing the area of a rectangle given a fixed perimeter length. This can be formulated as a COP [4]:

- Decision Variables: Let ( a ) and ( b ) represent the unknown lengths of the two sides of the rectangle.

- Objective Function: ( f(a, b) = a \cdot b ) (Area to be maximized).

- Constraint: ( 2a + 2b = P ), where ( P ) is the fixed perimeter (e.g., ( a + b = 10 ) if ( P=20 )).

This problem can be solved by substitution. Using ( b = 10 - a ) from the constraint, the objective becomes ( p(a) = a(10 - a) = 10a - a^2 ). Taking the derivative and setting it to zero, ( \frac{dp}{da} = 10 - 2a = 0 ), yields the optimal solution ( a = 5, b = 5 ), confirming a square maximizes the area [4].
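The substitution argument can also be checked numerically. The sketch below is illustrative only: the function name `max_rectangle_area` and the choice of ternary search are assumptions, not part of the cited example. It eliminates ( b ) through the perimeter constraint and searches the remaining one-dimensional concave objective.

```python
def max_rectangle_area(perimeter: float, iterations: int = 200):
    """Maximize a * b subject to 2a + 2b = perimeter via substitution."""
    half = perimeter / 2.0  # the constraint reduces to a + b = P / 2

    def area(a: float) -> float:
        return a * (half - a)  # b eliminated using b = P / 2 - a

    # Ternary search: valid because area(a) is concave on [0, P / 2].
    lo, hi = 0.0, half
    for _ in range(iterations):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if area(m1) < area(m2):
            lo = m1  # the maximum lies to the right of m1
        else:
            hi = m2  # the maximum lies to the left of m2
    a = (lo + hi) / 2.0
    return a, half - a, area(a)


a, b, best = max_rectangle_area(20.0)
print(a, b, best)  # sides close to 5 and area close to 25
```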

Classification of Constrained Optimization Problems

COPs are categorized based on the characteristics of their core components, which dictates the choice of suitable solution algorithms [3].

Table 2: Classification of Optimization Problems

| Classification Criteria | Problem Type | Defining Characteristics | Common Solution Approaches |
|---|---|---|---|
| Variable Type | Continuous [3] [2] | Variables take real values. | Gradient-based methods, Interior-point methods. |
| | Discrete/Integer [3] [2] | Variables take integer or binary values. | Branch-and-Bound, Cutting planes. |
| | Combinatorial [3] [2] | Variables represent combinatorial structures (permutations, graphs). | Specialized algorithms (e.g., for traveling salesman). |
| | Mixed-integer [3] | Contains both continuous and discrete variables. | Mixed-Integer Programming (MIP) solvers. |
| Constraints | Unconstrained [3] | No constraints on the variables. | Gradient descent, Newton's method. |
| | Constrained [1] [3] | Explicit equality/inequality constraints are present. | Lagrange multipliers, Penalty methods, KKT conditions. |
| Objective Function | Single-objective [3] | Single scalar function to optimize. | Most classical optimization algorithms. |
| | Multi-objective [3] | Multiple, often conflicting, objective functions. | Pareto-optimal methods, weighted sum. |
| Linearity | Linear Programming (LP) [1] [3] | Objective and constraints are linear; a special, well-solved case. | Simplex method, Interior-point methods. |
| | Nonlinear Programming (NLP) [1] [3] | Objective or constraints are nonlinear. | Sequential Quadratic Programming (SQP). |
| | Quadratic Programming (QP) [1] | Objective is quadratic, constraints are linear. | Ellipsoid method, active-set methods. |

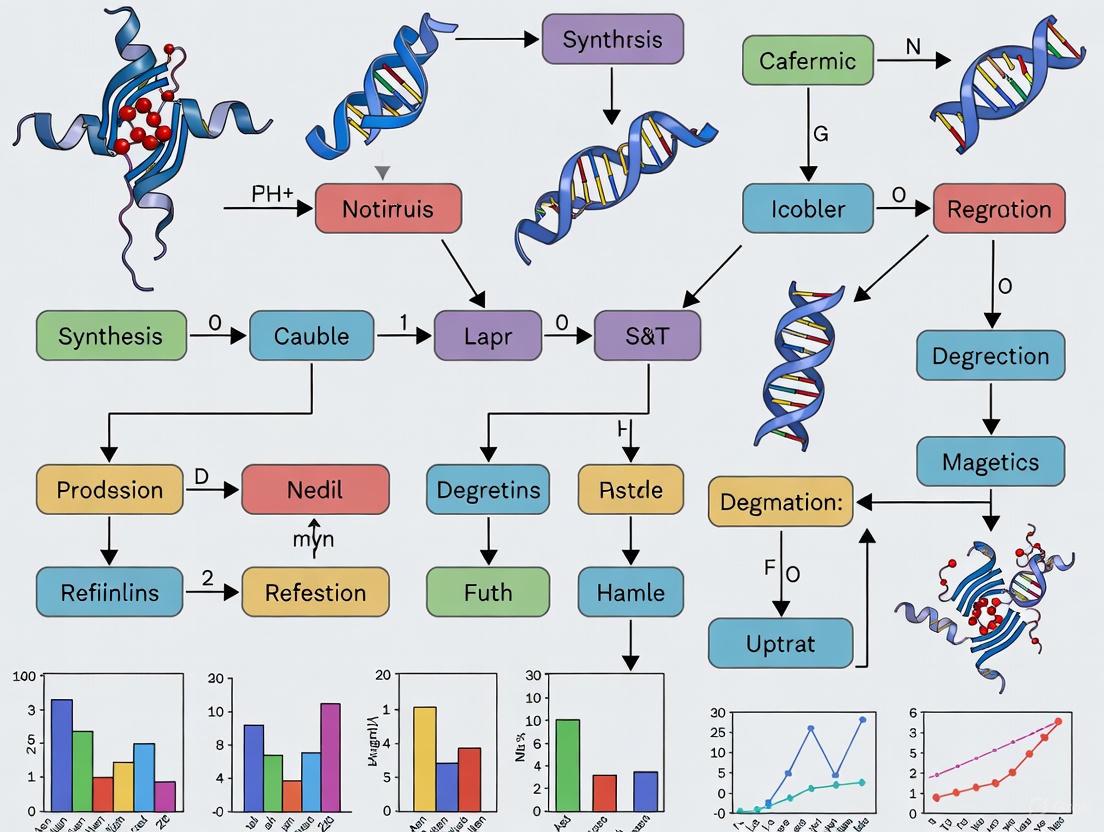

The following diagram illustrates the logical relationships between different types of optimization problems based on their core characteristics.

Solution Methodologies and The Researcher's Toolkit

The approach to solving a COP depends critically on its classification. This section outlines key methodologies and their associated "research reagents" – the core algorithmic tools.

Table 3: Research Reagent Solutions: Key Algorithmic Tools for COPs

| Solver / Method | Underlying Principle | Best-Suited Problem Type | Key Function in 'Experimentation' |
|---|---|---|---|
| Lagrange Multipliers [1] | Incorporates equality constraints into a new objective function (Lagrangian). | Problems with continuous variables and equality constraints. | Transforms a constrained problem into an unconstrained one via dual variables. |
| Karush-Kuhn-Tucker (KKT) Conditions [1] | Generalizes Lagrange multipliers to handle inequality constraints; provides necessary conditions for optimality. | Differentiable nonlinear problems with inequalities. | Serves as an optimality certificate; a target condition for solvers. |
| Branch-and-Bound [1] | A tree-based search that partitions the feasible region and prunes suboptimal branches. | Discrete, integer, and mixed-integer problems. | Systematically explores a combinatorial solution space without full enumeration. |
| Penalty Methods [1] | Adds a term to the objective that penalizes constraint violations. | Problems where a feasible initial point is hard to find. | Allows the use of unconstrained solvers on constrained problems. |
| Simplex Method [1] | Moves along the edges of the feasible polytope to find an optimal vertex. | Linear Programming (LP) problems. | An efficient algorithm for LPs that, while exponential in the worst case, is fast in practice. |
| CP-SAT Solver [7] | Uses satisfiability (SAT) and constraint propagation techniques. | Combinatorial problems with many discrete decisions (e.g., scheduling). | Effectively narrows down a large set of possibilities by applying constraints. |

Advanced Considerations in COP Formulation

The Feasible Set and Search Space

The search space is the set of all candidate solutions defined by the decision variables and their domains [2]. For continuous optimization, this is often a multidimensional real-valued domain, while for discrete problems, it is a finite set of combinations or permutations [2]. The feasible set is the subset of the search space that satisfies all explicit constraints [3] [6]. Understanding the structure of this set (e.g., whether it is convex) is critical for selecting an algorithm and predicting problem difficulty.

Constraint Programming vs. Optimization

Constraint Programming (CP) is a related paradigm often used for feasibility problems with discrete variables. CP focuses on finding solutions that satisfy all constraints, potentially without an objective function [7]. When an objective is added, it becomes a constraint optimization problem solvable by techniques like Russian Doll Search, which solves a series of subproblems to bound the solution cost [1].

The Role of Duality

Duality theory, particularly through the Lagrangian dual function, provides a powerful mechanism for analyzing COPs. It offers lower bounds (for minimization) on the optimal value and is foundational for many algorithms [6]. The definition of the underlying domain ( S ) can significantly simplify the dual problem by incorporating some constraints directly into the domain, thereby reducing the number of dual variables [6].
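The lower-bound property can be illustrated on a one-variable problem. The sketch below is a hand-picked toy, not taken from the cited sources: minimize ( x^2 ) subject to ( x \geq 1 ), whose primal optimum is 1. With a multiplier ( \mu \geq 0 ), the Lagrangian ( x^2 - \mu (x - 1) ) is minimized at ( x = \mu / 2 ), which gives the dual function in closed form. The names `dual_function` and `primal_optimum` are illustrative assumptions.

```python
def dual_function(mu: float) -> float:
    """q(mu) = min over x of x^2 - mu * (x - 1), attained at x = mu / 2."""
    x_min = mu / 2.0
    return x_min ** 2 - mu * (x_min - 1.0)


primal_optimum = 1.0  # minimize x^2 subject to x >= 1 gives x = 1, f = 1

multipliers = [0.0, 0.5, 1.0, 2.0, 3.0, 4.0]
bounds = [dual_function(mu) for mu in multipliers]

for mu, q in zip(multipliers, bounds):
    # Weak duality: every q(mu) with mu >= 0 is a lower bound on the optimum.
    print(f"mu = {mu:.1f}  q(mu) = {q:.4f}  (<= {primal_optimum})")
```

In this convex toy problem the best lower bound, reached at ( \mu = 2 ), equals the primal optimum; for general nonconvex problems a gap between the two can remain.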

Distinguishing Hard Constraints vs. Soft Constraints in Practical Models

Constrained Optimization Problems (COPs) represent a fundamental pillar in scientific and engineering disciplines, particularly in complex fields like drug development. A critical aspect of formulating and solving these problems lies in the correct identification and application of hard and soft constraints. Hard constraints define the non-negotiable boundaries of a feasible solution, whereas soft constraints express desirable goals whose violation is permitted but penalized. This guide provides researchers and scientists with a structured framework for distinguishing between these constraint types, complete with quantitative comparison tables, experimental protocols from chemometrics, and visual workflows to inform model design in pharmacological research.

In mathematical optimization, a Constrained Optimization Problem involves optimizing an objective function with respect to several variables while satisfying certain restrictions, known as constraints [1]. The general form of a COP can be expressed as:

[ \begin{aligned} & \underset{\mathbf{x}}{\text{minimize}} & & f(\mathbf{x}) \\ & \text{subject to} & & g_i(\mathbf{x}) = c_i \quad \text{for } i = 1, \ldots, n \quad \text{(Equality constraints)} \\ & & & h_j(\mathbf{x}) \geq d_j \quad \text{for } j = 1, \ldots, m \quad \text{(Inequality constraints)} \end{aligned} ]

Here, ( f(\mathbf{x}) ) is the objective function to be minimized (or maximized), and ( g_i(\mathbf{x}) ) and ( h_j(\mathbf{x}) ) are the constraint functions that define the feasible set of solutions [1]. Constraints are categorized as either hard constraints, which are absolute and must be satisfied for a solution to be considered valid, or soft constraints, which are preferable but not mandatory; violating a soft constraint incurs a penalty in the objective function, proportional to the extent of the violation [8] [1]. Properly classifying constraints is paramount for developing models that are both realistic and computationally tractable.

Theoretical Foundations: Hard vs. Soft Constraints

Definition and Characteristics of Hard Constraints

Hard constraints represent inviolable conditions stemming from physical laws, safety regulations, or fundamental operational limits. In a pharmacokinetic model, a hard constraint could enforce that a drug concentration cannot be negative. In a scheduling problem, a hard constraint might enforce that an employee cannot work more than a legally mandated maximum number of hours [8]. Solutions that violate any hard constraint are deemed infeasible and are unacceptable. These constraints strictly define the feasible region of the optimization problem.

Definition and Characteristics of Soft Constraints

Soft constraints, in contrast, model preferences, desires, or goals. They should be satisfied if possible, but the model can tolerate their violation at a cost. This cost is quantified by a penalty term added to the objective function. For example, a soft constraint might state that an employee should work no more than 40 hours per week. If this is violated, the objective function (e.g., total cost) is penalized, say, by £150 for every overtime hour worked [8]. The optimization algorithm then seeks a solution that optimally balances the primary objective with the penalties incurred from violating soft constraints.

Comparative Analysis

The table below summarizes the core differences between hard and soft constraints.

Table 1: Key Differences Between Hard and Soft Constraints

| Feature | Hard Constraints | Soft Constraints |
|---|---|---|
| Enforcement | Absolute, mandatory | Preferential, flexible |
| Solution Validity | Violation renders solution infeasible | Violation permitted |
| Effect on Objective | None directly; defines feasible space | Penalty term added for violations |
| Modeling Purpose | Capture fundamental limits | Capture goals and preferences |
| Example | x + y ≤ 60 (Max weekly hours) | x + y - z ≤ 40 with +150z in objective (Overtime cost) [8] |
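The example row of the table can be sketched in a few lines. This is a minimal illustration under stated assumptions: `x` and `y` are taken to be hours worked on two hypothetical tasks, `base_rate` is an invented hourly cost, and the function name `weekly_cost` is not from the source. The hard limit rejects a schedule outright, while the soft limit only adds the 150-per-hour penalty through the auxiliary overtime variable `z`.

```python
def weekly_cost(x: float, y: float, base_rate: float = 20.0) -> float:
    """Cost of a schedule with a hard 60-hour cap and a soft 40-hour target."""
    total = x + y
    if total > 60:
        # Hard constraint x + y <= 60: violating it makes the schedule infeasible.
        raise ValueError("infeasible: hard limit of 60 weekly hours exceeded")
    # Soft constraint x + y - z <= 40: z is the smallest non-negative overtime
    # that satisfies it, and each unit of z costs 150 in the objective.
    z = max(0.0, total - 40.0)
    return base_rate * total + 150.0 * z


print(weekly_cost(20, 15))  # within the soft target, no penalty term
print(weekly_cost(30, 20))  # 10 overtime hours are penalized
```

A schedule such as `weekly_cost(40, 25)` raises an error instead of returning a large cost, which is the practical difference between the two constraint types.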

A Framework for Distinguishing Constraints in Practical Models

Distinguishing between hard and soft constraints requires a systematic approach, analyzing the source and nature of the requirement.

The "What Happens If..." Test

A practical method for differentiation is to interrogate the consequence of violating a constraint. If a violation makes the solution impossible or unlawful in the real world, the constraint is hard. If a violation makes the solution less desirable or more costly, the constraint is soft [8]. For instance, exceeding a drug's lethal dosage threshold (hard) versus deviating from an ideal storage temperature that merely reduces efficacy over time (soft).

Identifying Penalty Structures in the Objective Function

In a formulated model, the most direct indicator of a soft constraint is the presence of a penalty term associated with it in the objective function [8] [1]. Scrutinize the objective for terms that minimize deviation from a target or minimize a cost that can be triggered by a specific condition. Hard constraints appear only in the constraint set of the problem.

Table 2: Interpreting Constraint Types in Model Formulations

| Model Component | What to Look For | Indicates |
|---|---|---|
| Objective Function | Terms minimizing z (e.g., +150z), where z measures violation | Soft Constraint |
| Constraint Equations | Inequalities/equalities with no linked penalty in the objective | Hard Constraint |
| Constraint Equations | Inequalities/equalities that include an auxiliary variable (e.g., x + y - z ≤ 40) which appears in the objective | Soft Constraint |

Domain-Specific Context: The Case of Drug Development

In pharmaceutical research, constraints often originate from different layers of the scientific process. Hard constraints are frequently derived from biophysical and biochemical laws (e.g., mass balance, non-negative concentrations) or toxicological limits. Soft constraints often relate to efficacy goals, operational preferences in an experimental setup, or kinetic fitting ideals. The following diagram illustrates the logical decision process for classifying a constraint in this domain.

Figure 1: A decision workflow for classifying hard and soft constraints in pharmacological models.

Experimental Protocol: Hard-and-Soft Modelling in Drug Uptake Studies

Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS) with hard-and-soft modelling provides a powerful experimental framework for studying drug uptake and cellular responses, demonstrating the application of constraints in chemometric analysis [9].

Detailed Methodology

The following workflow details the key steps in applying hard and soft constraints within the MCR-ALS protocol to resolve concentration and spectral profiles from Raman microspectroscopy data.

Figure 2: MCR-ALS workflow with hard and soft kinetic constraints.

1. Problem Formulation & Data Collection: Raman spectral data is acquired from cell cultures (e.g., A549 and Calu-1 human lung cells) inoculated with a drug (e.g., doxorubicin) over a time series (e.g., 0 to 72 hours) [9]. The data matrix D is constructed, where rows represent spectra at different times and columns represent spectral variables.

2. Initialization: The number of components (I) in the system (e.g., free drug, bound drug, cellular metabolites) is estimated using Singular Value Decomposition (SVD) or Evolving Factor Analysis. An initial concentration matrix C⁰ is estimated [9].

3. Iterative Optimization with Constraints: The MCR-ALS algorithm iteratively solves the bilinear equation ( \mathbf{D} = \mathbf{C} \mathbf{S}^\text{T} ) using an alternating least squares approach. Within each iteration:

- Hard Constraints are Applied: These are non-negotiable, mathematical conditions applied to the concentration and spectral profiles. The most common hard constraints are non-negativity (concentrations and spectral intensities cannot be negative) and unimodality (for a single-component concentration profile) [9].

- Soft Kinetic Constraints are Applied: A system of ordinary differential equations (ODEs) describing the phenomenological kinetics of drug uptake and cellular response is incorporated as a soft constraint. The kinetic constants ( k_1, k_2, \ldots ) are fitted using a non-linear least squares solver (e.g., lsqcurvefit in MATLAB) to the current concentration estimates [9]. The resulting updated kinetic model provides a refined, kinetically plausible estimate for the concentration profiles, which is fed back into the ALS iteration. This step is "soft" because the model is guided, not forced, to follow these kinetics; the final fit is a balance between the spectral data and the kinetic model.

4. Convergence: The iteration continues until the relative change in the residual error (the difference between the reconstructed and original data matrix) falls below a set threshold (e.g., <1% change for 20 iterations) or a maximum number of iterations is reached [9].
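The alternating structure of step 3 can be sketched for the simplest possible case. The code below is a deliberately reduced illustration, not the MCR-ALS toolbox and not the protocol of [9]: it assumes a single component, synthetic noise-free data, a fixed iteration count instead of a convergence test, and enforces non-negativity by clipping rather than by a dedicated non-negative least squares solver. The soft kinetic step is omitted. All names (`als_rank1_nonneg`, `true_c`, `true_s`) are hypothetical.

```python
from typing import List, Tuple

Matrix = List[List[float]]


def als_rank1_nonneg(D: Matrix, iterations: int = 50) -> Tuple[List[float], List[float]]:
    """Fit D ~ c s^T by alternating least squares with non-negativity clipping."""
    n_rows, n_cols = len(D), len(D[0])
    c = [1.0] * n_rows  # crude initial concentration profile (assumption)
    s = [0.0] * n_cols
    for _ in range(iterations):
        # Spectral step: least-squares s for fixed c, then hard non-negativity.
        cc = sum(v * v for v in c)
        s = [max(0.0, sum(D[i][j] * c[i] for i in range(n_rows)) / cc)
             for j in range(n_cols)]
        # Concentration step: least-squares c for fixed s, then non-negativity.
        ss = sum(v * v for v in s)
        c = [max(0.0, sum(D[i][j] * s[j] for j in range(n_cols)) / ss)
             for i in range(n_rows)]
    return c, s


# Synthetic data: 4 time points (rows) by 3 spectral channels (columns).
true_c = [0.0, 0.5, 1.0, 1.5]
true_s = [1.0, 2.0, 0.5]
D = [[ci * sj for sj in true_s] for ci in true_c]

c, s = als_rank1_nonneg(D)
residual = max(abs(D[i][j] - c[i] * s[j]) for i in range(4) for j in range(3))
print(residual)  # reconstruction error of the bilinear model
```

The recovered profiles match the true ones only up to a scale factor, which reflects the scaling ambiguity of bilinear decompositions.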

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Raman-Based Drug Uptake Studies

| Research Reagent / Material | Function in the Experimental Protocol |

|---|---|

| Human Lung Cell Lines (A549, Calu-1) | In vitro model system for studying human physiological response to drug inoculation [9]. |

| Doxorubicin (or drug of interest) | Chemotherapeutic agent whose uptake, binding, and metabolic influence are being quantified [9]. |

| Raman Microspectrometer | Label-free analytical instrument for acquiring molecularly specific spectral data from live or fixed cells [9]. |

| MCR-ALS Software Toolbox | Computational environment (e.g., MATLAB with MCR-ALS toolbox) for implementing the decomposition algorithm and applying constraints [9]. |

| Non-linear Least Squares Solver | Software component (e.g., lsqcurvefit in MATLAB's Optimization Toolbox) for fitting kinetic constants within the soft modelling step [9]. |

The rigorous distinction between hard and soft constraints is a critical success factor in constructing meaningful and solvable constrained optimization models in drug development and scientific research. Hard constraints encode the non-negotiable realities of the system, while soft constraints guide the solution toward optimal performance by incorporating preferences and goals via penalty mechanisms. The MCR-ALS framework with integrated kinetic constraints serves as a powerful exemplar of how this distinction is applied in practice, enabling the elucidation of complex pharmacokinetic parameters from spectroscopic data. Mastering this conceptual framework allows researchers to build more accurate, interpretable, and effective models for scientific discovery.

Constrained Optimization Problems (COPs) represent a fundamental class of problems in mathematical optimization where the goal is to optimize an objective function subject to restrictions on its variables [1]. In applied mathematics, engineering, and scientific research, optimization rarely involves finding an unconditional extremum; instead, practical problems almost always involve constraints that define feasible solutions based on physical, economic, or technical limitations [10]. The constrained optimization framework provides a powerful formalism for modeling such real-world problems across diverse domains, from drug development and systems biology to manufacturing and logistics.

A COP is a significant generalization of the classic constraint-satisfaction problem (CSP) model, incorporating an objective function to be optimized in addition to the constraints that must be satisfied [1]. This combination of constraints with optimization objectives makes COPs particularly valuable for scientific and engineering applications where researchers must balance multiple competing requirements while seeking optimal solutions. In drug development specifically, constrained optimization enables researchers to maximize therapeutic efficacy while respecting safety thresholds, dosage limitations, and physiological constraints.

The general COP formulation encompasses both hard constraints, which must be satisfied for a solution to be feasible, and soft constraints, which may be violated at some cost [1]. This distinction is particularly relevant in biological and pharmaceutical applications where certain constraints represent absolute physical limits (hard constraints) while others represent preferences or desirable ranges (soft constraints). The ability to formally distinguish between these constraint types makes COPs invaluable for modeling complex biological systems and pharmaceutical optimization problems.

Mathematical Formulation of COPs

Standard Mathematical Notation

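The general form of a constrained minimization problem can be written as follows [1]:

[ \begin{aligned} & \underset{x}{\text{minimize}} & & f(x) \\ & \text{subject to} & & g_i(x) = c_i \quad \text{for } i = 1, \ldots, n \\ & & & h_j(x) \geq d_j \quad \text{for } j = 1, \ldots, m \end{aligned} ]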

Here, f(x) represents the objective function to be minimized, while the functions g_i(x) and h_j(x) define the equality and inequality constraints respectively [1]. The variables x = [x_1, x_2, ..., x_k] constitute the decision variables over which the optimization is performed. For maximization problems, the objective function is typically transformed by negation (max f(x) ≡ min -f(x)).

In this formulation, the equality constraints g_i(x) = c_i define specific relationships that must be satisfied exactly, while the inequality constraints h_j(x) ≥ d_j set lower bounds (or upper bounds, through appropriate transformation) on function values [1]. The feasible region comprises all points x that satisfy all constraints simultaneously, and the optimal solution is the feasible point that yields the most favorable value of the objective function.

Alternative Formulations

Different disciplines may employ variations of the general COP formulation. In engineering contexts, constrained optimization problems are often written with explicit bound constraints on the variables (e.g., x_k^min ≤ x_k ≤ x_k^max) in addition to general inequality and equality constraints [10]. This representation highlights that constraints can be classified as behavioral constraints (representing system performance requirements) or regional constraints (representing physical or geometric limitations).

Table 1: Classification of Constraints in Optimization Problems

| Constraint Type | Mathematical Form | Interpretation | Application Examples |
|---|---|---|---|
| Equality Constraints | g_i(x) = c_i | Exact relationships that must be satisfied | Mass balance equations, stoichiometric relationships |
| Inequality Constraints | h_j(x) ≥ d_j | Minimum or maximum requirements | Safety thresholds, dosage limits, resource capacities |
| Bound Constraints | x_k^min ≤ x_k ≤ x_k^max | Variable domain restrictions | Physiological parameter ranges, concentration limits |
| Hard Constraints | Must be satisfied | Absolute requirements | Physical laws, material balances |
| Soft Constraints | May be violated with penalty | Desirable but flexible targets | Preference-based objectives, ideal ranges |

Solution Methodologies for COPs

Analytical Approaches

For problems with specific structural properties, analytical methods provide powerful solution approaches. The method of Lagrange multipliers converts an equality-constrained problem into the problem of finding stationary points of an unconstrained function [1]. For a problem with only equality constraints, the Lagrangian function takes the form:

[ L(x, \lambda) = f(x) + \sum_{i} \lambda_i \left( g_i(x) - c_i \right) ]

where the λ_i values are Lagrange multipliers. The optimal solution then satisfies ∇L(x, λ) = 0, providing a system of equations that can be solved for both the primal variables x and the dual variables λ [10].
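As a worked illustration, consider a toy problem chosen here for its simplicity (it is not drawn from the cited sources): minimize x² + y² subject to x + y = 1. Assuming the Lagrangian is formed by adding the multiplier times the constraint residual to the objective, setting every partial derivative to zero gives a linear system that can be solved by hand.

```latex
\begin{aligned}
&\text{minimize } f(x, y) = x^2 + y^2 \quad \text{subject to } x + y = 1 \\
&L(x, y, \lambda) = x^2 + y^2 + \lambda\,(x + y - 1) \\
&\frac{\partial L}{\partial x} = 2x + \lambda = 0, \qquad
 \frac{\partial L}{\partial y} = 2y + \lambda = 0, \qquad
 \frac{\partial L}{\partial \lambda} = x + y - 1 = 0 \\
&\Rightarrow\; x = y = -\tfrac{\lambda}{2}, \qquad -\lambda = 1
 \;\Rightarrow\; \lambda = -1, \quad x = y = \tfrac{1}{2}, \quad f = \tfrac{1}{2}
\end{aligned}
```

The third equation simply recovers the original constraint, which is why solving ∇L = 0 yields a point that is both stationary and feasible.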

When dealing with inequality constraints, the Karush-Kuhn-Tucker (KKT) conditions generalize the method of Lagrange multipliers [1]. The KKT conditions provide necessary criteria for optimality in problems with inequality constraints, requiring:

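- Stationarity: ∇f(x) + Σ_i λ_i ∇g_i(x) − Σ_j μ_j ∇h_j(x) = 0 (the minus sign follows from writing the inequalities as h_j(x) ≥ d_j in a minimization problem)

- Primal feasibility: g_i(x) = c_i for all i and h_j(x) ≥ d_j for all j

- Dual feasibility: μ_j ≥ 0 for all inequality constraints

- Complementary slackness: μ_j (h_j(x) − d_j) = 0 for all j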
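The four conditions can be checked mechanically at a candidate point. The sketch below is illustrative and rests on two assumptions: a hand-picked toy problem (minimize x² + y² subject to x + y ≥ 2 and x ≥ 0) and the sign convention in which the multiplier terms of constraints written as h_j(x) ≥ d_j are subtracted in the stationarity condition. The function name `kkt_report` is hypothetical.

```python
def kkt_report(x: float, y: float, mu1: float, mu2: float, tol: float = 1e-9) -> dict:
    """Check the KKT conditions for: minimize x^2 + y^2 s.t. x + y >= 2, x >= 0."""
    grad_f = (2.0 * x, 2.0 * y)
    grad_h1 = (1.0, 1.0)  # h1(x, y) = x + y, d1 = 2
    grad_h2 = (1.0, 0.0)  # h2(x, y) = x,     d2 = 0
    # Stationarity under the h >= d convention: grad f - sum_j mu_j * grad h_j = 0
    stationarity = tuple(
        grad_f[k] - mu1 * grad_h1[k] - mu2 * grad_h2[k] for k in range(2)
    )
    slack1 = x + y - 2.0
    slack2 = x - 0.0
    return {
        "stationarity": all(abs(v) <= tol for v in stationarity),
        "primal_feasibility": slack1 >= -tol and slack2 >= -tol,
        "dual_feasibility": mu1 >= -tol and mu2 >= -tol,
        "complementary_slackness": abs(mu1 * slack1) <= tol and abs(mu2 * slack2) <= tol,
    }


optimum = kkt_report(1.0, 1.0, mu1=2.0, mu2=0.0)
non_optimum = kkt_report(2.0, 0.0, mu1=0.0, mu2=0.0)
print(optimum)      # every condition holds at (1, 1)
print(non_optimum)  # feasible point, but stationarity fails
```

At (1, 1) the first constraint is active with a positive multiplier and the second is inactive with a zero multiplier, which is exactly the pattern complementary slackness describes.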

For very simple problems with few variables and constraints, the substitution method may be applicable, where constraints are solved for some variables in terms of others, and these expressions are substituted into the objective function [1].

Numerical and Computational Approaches

For complex problems where analytical solutions are infeasible, numerical methods provide practical alternatives. Linear programming applies when both the objective function and all constraints are linear [1]. The simplex method or interior point methods can solve such problems efficiently, even for large-scale instances.
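A small two-variable example shows why vertices matter in linear programming. The sketch below is not the simplex method and not an interior point method; it is a brute-force enumeration of constraint intersections, written under the assumption that the feasible region is bounded and non-empty. The function name `solve_lp_2d` and the numbers in the example are invented for illustration.

```python
from itertools import combinations


def solve_lp_2d(c, A, b, tol=1e-9):
    """Maximize c.x subject to A x <= b (two variables) by checking each vertex."""
    best_point, best_value = None, float("-inf")
    for (a1, b1), (a2, b2) in combinations(list(zip(A, b)), 2):
        det = a1[0] * a2[1] - a1[1] * a2[0]
        if abs(det) < tol:
            continue  # parallel boundaries do not meet in a single point
        # Cramer's rule for the intersection of the two boundary lines.
        x = (b1 * a2[1] - a1[1] * b2) / det
        y = (a1[0] * b2 - b1 * a2[0]) / det
        # Keep the intersection only if it satisfies every constraint.
        if all(row[0] * x + row[1] * y <= rhs + tol for row, rhs in zip(A, b)):
            value = c[0] * x + c[1] * y
            if value > best_value:
                best_point, best_value = (x, y), value
    return best_point, best_value


# Maximize 3x + 2y subject to x + y <= 4, x + 3y <= 6, x >= 0, y >= 0.
A = [(1, 1), (1, 3), (-1, 0), (0, -1)]
b = [4, 6, 0, 0]
point, value = solve_lp_2d((3, 2), A, b)
print(point, value)
```

Enumeration scales combinatorially with the number of constraints, which is the practical reason dedicated LP algorithms are used instead.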

When the objective function is quadratic and constraints are linear, quadratic programming approaches are appropriate [1]. If the quadratic objective function is convex, polynomial-time solutions exist; otherwise, the problem may be NP-hard.

For general nonlinear problems, nonlinear programming techniques are required [1]. These include:

- Penalty methods: Convert constrained problems to unconstrained ones by adding penalty terms for constraint violations [1]

- Barrier methods: Transform constraints into barrier terms that prevent leaving the feasible region

- Augmented Lagrangian methods: Combine Lagrangian approaches with penalty functions
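The penalty idea from the list above can be sketched on a one-variable problem. This is a minimal illustration under stated assumptions: the toy problem (minimize x² subject to x ≥ 1), the quadratic form of the penalty, the fixed gradient step, and the name `minimize_penalized` are all choices made here rather than details from the cited sources.

```python
def minimize_penalized(rho: float, x0: float = 0.0, steps: int = 2000) -> float:
    """Minimize x^2 + rho * max(0, 1 - x)^2 by plain gradient descent."""
    step = 1.0 / (2.0 + 2.0 * rho)  # inverse of the largest curvature of this objective
    x = x0
    for _ in range(steps):
        violation = max(0.0, 1.0 - x)  # amount by which x >= 1 is violated
        grad = 2.0 * x - 2.0 * rho * violation
        x -= step * grad
    return x


penalties = [1.0, 10.0, 100.0, 1000.0]
solutions = [minimize_penalized(rho) for rho in penalties]
for rho, x in zip(penalties, solutions):
    print(f"rho = {rho:7.1f}  x = {x:.6f}")  # approaches the constrained optimum x = 1
```

Each unconstrained minimizer sits slightly outside the feasible region and moves toward x = 1 as the penalty weight grows, which is why penalty methods typically solve a sequence of problems with increasing weights.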

Branch-and-bound algorithms systematically explore the solution space while using bounds to prune suboptimal regions [1]. These algorithms store the cost of the best solution found during execution and use it to avoid exploring parts of the search space that cannot yield better solutions.
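The incumbent-and-bound mechanism can be sketched on a 0/1 knapsack problem. The example is an assumption made for illustration: it is a maximization problem, so the stored incumbent is the best value rather than the lowest cost, and the bound comes from a greedy fractional relaxation. The function name `knapsack_branch_and_bound` and the item data are hypothetical, and weights are assumed to be positive.

```python
def knapsack_branch_and_bound(values, weights, capacity):
    """Maximize total value of chosen items without exceeding the capacity."""
    # Sorting by value density makes the greedy fractional fill a valid upper bound.
    items = sorted(zip(values, weights), key=lambda vw: vw[0] / vw[1], reverse=True)
    best = 0  # incumbent: value of the best complete solution found so far

    def bound(index, value, room):
        """Upper bound from filling the remaining room, allowing one fractional item."""
        for v, w in items[index:]:
            if w <= room:
                value, room = value + v, room - w
            else:
                return value + v * room / w
        return value

    def explore(index, value, room):
        nonlocal best
        best = max(best, value)
        if index == len(items) or bound(index, value, room) <= best:
            return  # prune: this subtree cannot beat the incumbent
        v, w = items[index]
        if w <= room:
            explore(index + 1, value + v, room - w)  # branch: take the item
        explore(index + 1, value, room)  # branch: skip the item

    explore(0, 0, capacity)
    return best


print(knapsack_branch_and_bound([60, 100, 120], [10, 20, 30], 50))
```

The quality of the bound determines how much of the tree is pruned; a looser bound still gives a correct answer but explores more branches.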

Table 2: Optimization Algorithms and Their Applications

| Algorithm Class | Key Characteristics | Applicable Problem Types | Theoretical Guarantees |
|---|---|---|---|
| Linear Programming | Linear objective and constraints | Resource allocation, network flows | Polynomial time (e.g., interior-point methods) |
| Quadratic Programming | Quadratic objective, linear constraints | Portfolio optimization, model predictive control | Polynomial time if convex |
| Nonlinear Programming | Nonlinear objective or constraints | Chemical process optimization, pharmacokinetics | Local convergence guarantees |
| Integer Programming | Discrete decision variables | Scheduling, resource planning | NP-hard in general |
| Stochastic Programming | Uncertainty in parameters | Financial planning under uncertainty | Probabilistic guarantees |

Specialized Methods for Structured Problems

Many practical problems exhibit special structures that enable more efficient solution approaches. Constraint graphs capture the structure of constraint satisfaction problems, where nodes represent variables and edges connect variables involved in the same constraint [11]. For problems with sparse constraint graphs, tree decomposition techniques can significantly reduce computational complexity by exploiting the graph structure [11].
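A constraint graph is straightforward to build from the variable scopes of the constraints. The sketch below is illustrative only: the helper names `constraint_graph` and `is_tree` and the pharmacological variable names are assumptions. It also tests whether the resulting graph is a tree, the structure for which decomposition and message-passing approaches are simplest.

```python
from collections import defaultdict
from itertools import combinations


def constraint_graph(scopes):
    """Map each variable to the set of variables sharing a constraint with it."""
    graph = defaultdict(set)
    for scope in scopes:
        for variable in scope:
            graph[variable]  # register variables that appear alone in a constraint
        for u, v in combinations(scope, 2):
            graph[u].add(v)
            graph[v].add(u)
    return dict(graph)


def is_tree(graph):
    """A connected graph with (number of nodes - 1) edges is a tree."""
    if not graph:
        return False
    edge_count = sum(len(neighbours) for neighbours in graph.values()) // 2
    start = next(iter(graph))
    seen, stack = {start}, [start]
    while stack:  # depth-first traversal to test connectivity
        for neighbour in graph[stack.pop()]:
            if neighbour not in seen:
                seen.add(neighbour)
                stack.append(neighbour)
    return len(seen) == len(graph) and edge_count == len(graph) - 1


# Hypothetical constraint scopes: each tuple lists the variables of one constraint.
scopes = [("dose", "interval"), ("dose", "clearance"), ("clearance", "weight")]
graph = constraint_graph(scopes)
cyclic = constraint_graph(scopes + [("interval", "weight")])
print(is_tree(graph), is_tree(cyclic))
```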

In distributed settings, Distributed Constraint Optimization Problems (DCOP) frameworks enable multiple agents to coordinate their decisions while satisfying inter-agent constraints [11]. Message-passing algorithms like Max-Sum can find optimal solutions for tree-structured constraint graphs [11].

For problems with both continuous and discrete aspects, mixed-integer programming combines linear or nonlinear programming with integer variables, enabling modeling of fixed costs, logical conditions, and discrete choices [10].

Experimental Protocols and Implementation

Workflow for COP Formulation and Solution

Implementing constrained optimization in practice requires a systematic approach. The following workflow outlines key steps for formulating and solving COPs in scientific and engineering contexts:

- Problem Definition: Identify the objective function, decision variables, and constraints based on domain knowledge and requirements

- Mathematical Formulation: Translate the practical problem into a precise mathematical model with objective function and constraints

- Algorithm Selection: Choose appropriate solution methods based on problem structure, size, and complexity

- Implementation: Code the model using appropriate optimization frameworks or languages

- Validation: Verify that solutions satisfy all constraints and make sense in the problem context

- Sensitivity Analysis: Examine how solutions change with variations in parameters or constraints

Figure 1: Constrained Optimization Workflow

Handling Qualitative Constraints in Scientific Applications

In scientific domains such as drug development and systems biology, researchers often encounter qualitative data that can be incorporated as inequality constraints [12]. The approach involves:

- Converting qualitative observations to inequalities: Qualitative data such as "increased," "decreased," "activated," or "inhibited" can be formalized as inequality constraints on model outputs

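- Combining qualitative and quantitative data: The overall objective function combines both types of information:

[ f_{\text{tot}}(x) = f_{\text{quant}}(x) + f_{\text{qual}}(x) ]

where f_quant(x) represents the sum of squared errors for quantitative data:

[ f_{\text{quant}}(x) = \sum_j \left( y_{j,\text{model}}(x) - y_{j,\text{data}} \right)^2 ]

and f_qual(x) represents the penalty for qualitative constraint violations:

[ f_{\text{qual}}(x) = \sum_i C_i \cdot \max\left(0, g_i(x)\right) ]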

Here, each qualitative observation is expressed as an inequality g_i(x) < 0, and C_i is a problem-specific constant that weights the importance of each qualitative constraint [12].

- Solving the combined optimization problem: The resulting scalar function can be minimized using standard optimization algorithms such as differential evolution or scatter search [12].
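The combination of f_quant and f_qual described above can be sketched in a few lines of Python. This is a minimal illustration, assuming a static penalty of the form C_i · max(0, g_i(x)); the one-parameter model `model`, the data values, the threshold 6.5, and the weight 10.0 are all hypothetical and chosen only to make the arithmetic easy to follow.

```python
from typing import Sequence


def f_quant(predicted: Sequence[float], observed: Sequence[float]) -> float:
    """Sum of squared errors between model outputs and quantitative data."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed))


def f_qual(g_values: Sequence[float], weights: Sequence[float]) -> float:
    """Static penalty: a violated inequality (g_i > 0) costs C_i * g_i."""
    return sum(c * max(0.0, g) for g, c in zip(g_values, weights))


def model(k: float, times: Sequence[float]) -> list:
    """Hypothetical one-parameter model y(t) = k * t."""
    return [k * t for t in times]


TIMES = [1.0, 2.0, 3.0]
OBSERVED = [2.1, 3.9, 6.2]  # hypothetical quantitative measurements


def total_objective(k: float) -> float:
    """Scalar objective combining quantitative fit and qualitative penalty."""
    predicted = model(k, TIMES)
    # Hypothetical qualitative observation "the output at t = 3 stays
    # below 6.5", written as g(k) = y(3) - 6.5 < 0.
    g_values = [model(k, [3.0])[0] - 6.5]
    return f_quant(predicted, OBSERVED) + f_qual(g_values, [10.0])


if __name__ == "__main__":
    for k in (2.0, 2.5):
        print(k, round(total_objective(k), 4))
```

The resulting scalar function can be passed to any derivative-free minimizer; the penalty term is zero whenever every qualitative inequality holds, so it only steers the search away from parameter values that contradict the qualitative observations.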

Applications in Scientific Research and Drug Development

Systems Biology and Pharmaceutical Applications

Constrained optimization has proven particularly valuable in systems biology, where researchers build mathematical models of cellular processes. For example, in parameterizing models of cell cycle regulation in yeast, researchers incorporated both quantitative time courses (561 data points) and qualitative phenotypes of 119 mutant yeast strains (1647 inequalities) to identify 153 model parameters [12]. This approach enabled automated parameter identification that would be infeasible through manual tuning alone.

In drug development, constrained optimization facilitates pharmacokinetic-pharmacodynamic (PK-PD) modeling, where researchers must identify model parameters that simultaneously satisfy:

- Physiological constraints (e.g., non-negative concentrations, mass balance)

- Experimental observations (both quantitative measurements and qualitative trends)

- Stability requirements (e.g., bounded oscillations, convergence to steady states)

The Raf inhibition model provides a concrete example of how constrained optimization enables parameter identification from limited data [12]. With only partial quantitative information, qualitative constraints on protein behavior significantly improve confidence in parameter estimates, directly impacting drug development decisions.

Resource-Constrained Optimization in Experimental Design

Constrained optimization approaches have demonstrated remarkable effectiveness in resource-constrained experimental settings. Recent studies show that constraint programming models can achieve up to 95% reduction in computation time compared to linear programming approaches for parallel machine scheduling problems involving resource constraints [13]. These advances enable researchers to solve complex scheduling instances with up to 20 machines, 40 resources, and 90 operations per resource—scenarios that traditional linear models cannot handle within reasonable computational limits [13].

In pharmaceutical manufacturing and clinical trial scheduling, these resource-constrained optimization approaches enable more efficient allocation of limited equipment, personnel, and materials while respecting temporal constraints and dependency relationships between experimental steps.

The Scientist's Toolkit: Research Reagent Solutions

Implementing constrained optimization in experimental research requires both computational tools and physical resources. The following table outlines essential materials and their functions in optimization-driven research.

Table 3: Essential Research Reagents and Computational Tools

| Item | Function | Application Context |

|---|---|---|

| Parameter Estimation Software (e.g., COPASI, PottersWheel) | Automated parameter identification for biological models | Systems biology, pharmacokinetics |

| Constraint Programming Solvers (e.g., Google CP-SAT, Gecode) | Solving combinatorial optimization problems with constraints | Experimental design, resource scheduling |

| Nonlinear Optimization Tools (e.g., MATLAB fmincon, SciPy optimize) | Local and global optimization of nonlinear objectives with constraints | Model calibration, dose optimization |

| Sensitivity Analysis Packages | Quantifying parameter uncertainty and identifiability | Model validation, experimental planning |

| High-Throughput Screening Platforms | Generating quantitative data for constraint formulation | Drug discovery, phenotype characterization |

| Kinase Activity Assays | Measuring quantitative responses for optimization constraints | Raf inhibition studies, signaling pathway analysis |

Visualization of Constraint Relationships

Understanding the relationships between variables and constraints is essential for effective COP formulation. Constraint graphs provide powerful visual representations of these relationships.

Figure 2: Constraint Graph Representation

The constraint graph visualization reveals the structure of the optimization problem, showing how variables participate in different constraints. Sparse constraint graphs (with limited connections between variables) often enable more efficient solution approaches, while dense graphs typically indicate more challenging problems requiring advanced computational methods [11].
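As a rough illustration of how a constraint graph can be derived from constraint scopes, the sketch below links two variables whenever they appear in the same constraint and reports the edge density as a crude sparsity measure. The function names and the variable names (`dose`, `clearance`, and so on) are hypothetical.

```python
from itertools import combinations


def build_constraint_graph(scopes):
    """Return adjacency sets: variables are linked when they share a scope."""
    graph = {}
    for scope in scopes:
        for var in scope:
            graph.setdefault(var, set())
        for a, b in combinations(scope, 2):
            graph[a].add(b)
            graph[b].add(a)
    return graph


def edge_density(graph):
    """Fraction of possible variable pairs that are directly connected."""
    n = len(graph)
    if n < 2:
        return 0.0
    edges = sum(len(neighbors) for neighbors in graph.values()) / 2
    return edges / (n * (n - 1) / 2)


# Hypothetical constraint scopes: each tuple lists the variables of one constraint.
scopes = [("dose", "clearance"), ("clearance", "half_life"), ("dose", "toxicity")]
graph = build_constraint_graph(scopes)

if __name__ == "__main__":
    print(graph)
    print(edge_density(graph))
```

A low density suggests, but does not guarantee, that decomposition-based methods may pay off; tree width is the more precise measure.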

Constrained optimization provides a powerful framework for addressing complex problems across scientific domains, particularly in drug development and systems biology. The general form of COPs—with its distinction between equality constraints, inequality constraints, and objective functions—offers a mathematically rigorous yet flexible approach to balancing multiple requirements and objectives.

The integration of both quantitative data and qualitative constraints represents a particularly promising direction for scientific applications, where diverse data types must be incorporated into coherent modeling frameworks [12]. As optimization algorithms continue to advance, especially in constraint programming and distributed optimization, researchers gain increasingly powerful tools for tackling the complex constrained optimization problems that arise throughout scientific discovery and pharmaceutical development.

Future research directions include the development of more efficient algorithms for non-convex problems, improved methods for handling uncertainty in constraints, and enhanced techniques for multi-scale optimization problems spanning from molecular interactions to clinical outcomes. These advances will further solidify the role of constrained optimization as an indispensable methodology in scientific research and drug development.

How COPs Generalize Constraint Satisfaction Problems (CSPs)

Constraint Satisfaction Problems (CSPs) and Constraint Optimization Problems (COPs) represent two fundamental paradigms in artificial intelligence, operations research, and decision science. While CSPs focus on finding any feasible solution that satisfies all given constraints, COPs extend this formulation by introducing an objective function to be optimized amid those constraints [1] [14]. This generalization transforms the problem from merely identifying feasible states to finding the best possible solution according to a quantifiable metric, thereby significantly expanding the modeling capability and practical applicability of constraint-based reasoning.

The relationship between these two problem classes is fundamental: every CSP is essentially a COP with a constant objective function, whereas COPs represent a broader class that includes optimization components [1]. This hierarchical relationship means that techniques developed for CSPs often provide foundations for COP solvers, though COPs require additional mechanisms to handle the optimization dimension. In domains such as drug development and healthcare resource allocation, this optimization capability becomes crucial where trade-offs between cost, efficacy, and safety must be quantitatively balanced [15].

Formal Definitions and Structural Relationships

Constraint Satisfaction Problems (CSPs)

Formally, a CSP is defined as a triple ⟨X, D, C⟩, where [16] [17]:

- X = {X₁, ..., Xₙ} is a set of variables

- D = {D₁, ..., Dₙ} is a set of domains for each variable

- C = {C₁, ..., Cₘ} is a set of constraints

Each constraint Cⱼ is defined as a pair ⟨tⱼ, Rⱼ⟩ where tⱼ ⊆ {1, ..., n} is the scope of the constraint and Rⱼ is a relation that defines the allowed combinations of values [16]. A solution to a CSP is a complete assignment of values to all variables that satisfies every constraint [16].

Figure 1: The formal structure of a Constraint Satisfaction Problem (CSP), consisting of variables, domains, and constraints [16].

Constraint Optimization Problems (COPs)

A COP extends the CSP framework by introducing an objective function to be optimized [1] [14]. Formally, a COP can be represented as:

minimize f(x)

subject to gᵢ(x) = cᵢ for i = 1, ..., n

hⱼ(x) ≥ dⱼ for j = 1, ..., m [1]

Where:

- f(x) is the objective function to be minimized (or maximized)

- gᵢ(x) = cᵢ are equality constraints

- hⱼ(x) ≥ dⱼ are inequality constraints

- x represents the decision variables

The key distinction is that while CSPs seek any feasible solution, COPs must find the optimal feasible solution with respect to the objective function [1] [14].

Figure 2: Constraint Optimization Problem (COP) structure, showing the additional objective function component that generalizes beyond CSPs [1].

Key Differences: CSPs vs. COPs

Table 1: Structural and methodological differences between CSPs and COPs

| Aspect | Constraint Satisfaction Problems (CSPs) | Constraint Optimization Problems (COPs) |

|---|---|---|

| Primary Goal | Find any assignment satisfying all constraints [16] | Find optimal assignment satisfying constraints [1] |

| Solution Space | Set of all feasible solutions | Feasible solutions with quality measure |

| Objective Function | None (implicitly constant) [1] | Explicit function to minimize/maximize [1] |

| Constraint Types | Typically hard constraints only [16] | Hard and soft constraints (with penalties) [1] |

| Solution Approaches | Backtracking, constraint propagation, local search [16] [18] | Branch-and-bound, Lagrange multipliers, KKT conditions [1] |

| Problem Examples | Map coloring, N-queens, Sudoku [18] [17] | Resource allocation, portfolio optimization, treatment planning [15] |

The Generalization Relationship

The fundamental generalization relationship can be formally expressed: A CSP is a special case of COP where the objective function is constant (f(x) = c) for all x [1]. This means:

CSP ⊂ COP

This subset relationship has important implications:

- COP algorithms can solve CSP problems, though potentially less efficiently than specialized CSP solvers

- CSP solution techniques (constraint propagation, backtracking) form building blocks for COP solvers

- Complexity results for CSPs inform the development of COP algorithms

Table 2: Quantitative comparison of solution characteristics

| Characteristic | CSP | COP |

|---|---|---|

| Solution Count | 0 to dⁿ (d=domain size, n=variables) [17] | 0 to dⁿ, but seeking single optimum |

| Typical Approach | Depth-first search with backtracking [18] | Branch-and-bound with cost estimation [1] |

| Optimality Criteria | Satisfaction of all constraints | Best objective value subject to constraints |

| Theoretical Complexity | NP-complete in general (decision problem) [17] | NP-hard (general case) [1] |

Algorithmic Implications of the Generalization

From Backtracking to Branch-and-Bound

The transition from CSP to COP algorithms mirrors the conceptual generalization:

CSP Approach: Backtracking Search

Algorithm 1: Basic backtracking algorithm for CSPs [18] [19]
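A minimal Python sketch of backtracking search is given here, assuming that each constraint is supplied as a predicate that accepts a partial assignment and returns False only when it is already violated. The helper `different` and the three-region map-coloring instance are illustrative and not taken from the cited sources.

```python
def different(a, b):
    """Constraint: variables a and b must take different values."""
    def check(assignment):
        # Unassigned variables cannot violate the constraint yet.
        return a not in assignment or b not in assignment or assignment[a] != assignment[b]
    return check


def backtrack(assignment, variables, domains, constraints):
    """Return a complete consistent assignment, or None if none exists."""
    if len(assignment) == len(variables):
        return dict(assignment)
    var = next(v for v in variables if v not in assignment)
    for value in domains[var]:
        assignment[var] = value
        if all(check(assignment) for check in constraints):
            result = backtrack(assignment, variables, domains, constraints)
            if result is not None:
                return result
        del assignment[var]  # undo the choice and try the next value
    return None


# Three mutually adjacent regions, three colors.
variables = ["A", "B", "C"]
domains = {v: ["red", "green", "blue"] for v in variables}
constraints = [different("A", "B"), different("B", "C"), different("A", "C")]
solution = backtrack({}, variables, domains, constraints)

if __name__ == "__main__":
    print(solution)
```

The search stops at the first consistent complete assignment, which is all a CSP asks for; no notion of solution quality is involved.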

COP Extension: Branch-and-Bound

Algorithm 2: Branch-and-bound extension for COPs, showing the additional bounding step [1]

The critical addition in COP algorithms is the bounding step, which uses the objective function to prune search spaces that cannot yield better solutions than the current best [1]. This leverages the objective function to reduce computational effort, a capability absent in pure CSP solving.
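The bounding step can be sketched as follows. This is an illustrative depth-first branch-and-bound for a small discrete COP, assuming an additive objective (a sum of per-variable costs) and an optimistic lower bound obtained by giving every unassigned variable its cheapest value while ignoring the constraints. The cost table and the helper `different` are hypothetical.

```python
import math


def different(a, b):
    """Constraint: variables a and b must take different values."""
    def check(assignment):
        return a not in assignment or b not in assignment or assignment[a] != assignment[b]
    return check


def branch_and_bound(variables, domains, constraints, cost):
    """Minimise the sum of per-variable costs subject to the constraints."""
    best = {"value": math.inf, "assignment": None}
    # Cheapest value of each variable, used for the optimistic lower bound.
    cheapest = {v: min(cost[v][val] for val in domains[v]) for v in variables}

    def search(index, assignment, cost_so_far):
        if index == len(variables):
            if cost_so_far < best["value"]:
                best["value"], best["assignment"] = cost_so_far, dict(assignment)
            return
        lower_bound = cost_so_far + sum(cheapest[v] for v in variables[index:])
        if lower_bound >= best["value"]:
            return  # bounding step: this subtree cannot beat the incumbent
        var = variables[index]
        for value in domains[var]:
            assignment[var] = value
            if all(check(assignment) for check in constraints):
                search(index + 1, assignment, cost_so_far + cost[var][value])
            del assignment[var]

    search(0, {}, 0.0)
    return best["assignment"], best["value"]


variables = ["A", "B", "C"]
domains = {v: ["red", "green", "blue"] for v in variables}
constraints = [different("A", "B"), different("B", "C"), different("A", "C")]
cost = {
    "A": {"red": 3, "green": 1, "blue": 2},
    "B": {"red": 1, "green": 2, "blue": 3},
    "C": {"red": 2, "green": 3, "blue": 1},
}
best_assignment, best_value = branch_and_bound(variables, domains, constraints, cost)

if __name__ == "__main__":
    print(best_assignment, best_value)
```

Setting every cost to the same constant makes the bound uninformative and reduces the procedure to plain backtracking, which mirrors the view of a CSP as a COP with a constant objective.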

Constraint Handling: From Hard to Soft Constraints

CSPs typically involve only hard constraints that must be satisfied [16]. COPs generalize this by incorporating soft constraints that can be violated at a cost [1]. This is frequently implemented through penalty functions in the objective:

Weighted CSP Formulation: A weighted CSP (WCSP) is a common COP formulation where constraints have associated violation costs, and the goal is to minimize the total cost of violated constraints [14].
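A weighted CSP of this kind can be sketched with soft constraints stored as (predicate, weight) pairs. The example below enumerates all assignments exhaustively, which is only sensible for tiny instances; the two binary variables and the weights are hypothetical.

```python
from itertools import product


def wcsp_cost(assignment, soft_constraints):
    """Total weight of the soft constraints that the assignment violates."""
    return sum(weight for predicate, weight in soft_constraints
               if not predicate(assignment))


def solve_wcsp(variables, domains, soft_constraints):
    """Exhaustively search for the assignment with minimum violation cost."""
    best_assignment, best_cost = None, float("inf")
    for values in product(*(domains[v] for v in variables)):
        assignment = dict(zip(variables, values))
        cost = wcsp_cost(assignment, soft_constraints)
        if cost < best_cost:
            best_assignment, best_cost = assignment, cost
    return best_assignment, best_cost


variables = ["x", "y"]
domains = {"x": [0, 1], "y": [0, 1]}
soft_constraints = [
    (lambda a: a["x"] != a["y"], 5),  # prefer different values (weight 5)
    (lambda a: a["x"] == 1, 2),       # prefer x = 1 (weight 2)
    (lambda a: a["y"] == 1, 3),       # prefer y = 1 (weight 3)
]
wcsp_solution = solve_wcsp(variables, domains, soft_constraints)

if __name__ == "__main__":
    print(wcsp_solution)
```

Here no assignment satisfies all three preferences, so the minimizer gives up the cheapest one (x = 1) and pays a cost of 2.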

Practical Applications in Scientific Domains

Drug Development and Healthcare Applications

COPs provide powerful modeling capabilities for healthcare decision-making, where CSPs would be insufficient due to the need for optimization. Example applications include:

HIV Program Optimization [15]

- Decision Variables: Investment levels in different intervention programs

- Constraints: Budget limits, program capacity constraints

- Objective Function: Maximize overall health benefits (e.g., QALYs)

- COP Advantage: Optimal resource allocation rather than just feasible allocation

Public Defibrillator Placement [15]

- Decision Variables: Placement locations for defibrillators

- Constraints: Budget, coverage requirements

- Objective Function: Maximize coverage of high-risk areas

- COP Advantage: Quantitative optimization of life-saving potential

Table 3: COP components in healthcare resource allocation

| COP Component | HIV Program Example | Defibrillator Placement Example |

|---|---|---|

| Decision Variables | Funding allocation to each program | Binary variables for placement at each location |

| Parameters | Program cost, effectiveness estimates | Population density, risk factors, distance |

| Constraints | Total budget, implementation capacity | Budget, minimum coverage requirements |

| Objective Function | Maximize infections prevented | Maximize coverage of high-risk populations |
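The defibrillator placement column of the table can be sketched as a small budget-constrained coverage problem. The snippet below is a toy illustration, assuming unweighted coverage (each covered area counts once) and exhaustive enumeration of site subsets; the site names, costs, coverage sets, and budget are hypothetical and not drawn from the cited study.

```python
from itertools import combinations


def best_placement(sites, costs, covers, budget):
    """Pick the affordable subset of sites that covers the most areas."""
    best_sites, best_covered = (), set()
    for size in range(1, len(sites) + 1):
        for subset in combinations(sites, size):
            if sum(costs[s] for s in subset) > budget:
                continue  # budget constraint violated
            covered = set().union(*(covers[s] for s in subset))
            if len(covered) > len(best_covered):
                best_sites, best_covered = subset, covered
    return best_sites, best_covered


sites = ["station", "mall", "clinic"]
costs = {"station": 2, "mall": 3, "clinic": 2}
covers = {
    "station": {"a", "b"},
    "mall": {"b", "c", "d"},
    "clinic": {"d", "e"},
}
chosen, covered = best_placement(sites, costs, covers, budget=4)

if __name__ == "__main__":
    print(chosen, sorted(covered))
```

Realistic instances have far too many candidate sites for enumeration and would typically be passed to an integer programming solver, with one binary variable per location as in the table.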

The Scientist's Toolkit: Essential Methods for COP Research

Table 4: Key computational methods and their applications in COP research

| Method/Algorithm | Function | Application Context |

|---|---|---|

| Branch-and-Bound [1] | Systematically explores solution space with pruning | General COPs, especially with discrete variables |

| Lagrange Multipliers [1] [20] | Handles equality constraints through relaxation | Continuous COPs with equality constraints |

| Karush-Kuhn-Tucker (KKT) Conditions [1] [20] | Generalizes Lagrange multipliers to inequality constraints | Nonlinear programming with inequality constraints |

| Russian Doll Search [1] | Solves subproblems to provide bounds | Complex COPs with decomposable structure |

| Bucket Elimination [1] | Variable elimination with constraint processing | COPs with specific structural properties |

| Linear/Integer Programming [21] [15] | Solves problems with linear objectives and constraints | Resource allocation, scheduling problems |

Experimental and Methodological Considerations

Protocol for COP Formulation and Solution

Based on analysis of successful COP applications across domains [15], the following methodological protocol provides a systematic approach:

Phase 1: Problem Formulation

- Identify Decision Variables: Determine what quantities can be controlled (e.g., resource allocations, placement decisions)

- Define Parameters: Collect fixed numerical data describing the problem instance

- Specify Constraints: Formulate both hard constraints (must be satisfied) and soft constraints (can be violated with penalty)

- Establish Objective Function: Define the quantitative measure to optimize

Phase 2: Computational Implementation

- Select Appropriate Solver: Choose based on problem characteristics (linear/nonlinear, discrete/continuous)

- Implement Model: Code the COP using appropriate modeling languages (e.g., Python with the gurobipy interface to Gurobi)

- Validate Formulation: Verify that the model accurately represents the real-world problem

- Execute and Verify: Solve the COP and verify solution feasibility and optimality

Phase 3: Analysis and Interpretation

- Sensitivity Analysis: Examine how solutions change with parameter variations

- Solution Implementation: Translate mathematical solution to practical implementation

- Performance Monitoring: Track actual outcomes against predicted optimization results

Figure 3: Comprehensive methodology for formulating and solving COPs, showing the three-phase approach with detailed sub-steps [15].

The generalization from Constraint Satisfaction Problems to Constraint Optimization Problems represents a significant expansion of modeling capability and practical applicability. While CSPs answer the question "Is there a feasible solution?", COPs address the more complex and practically relevant question "What is the best feasible solution?" [1] [14].

This generalization is not merely theoretical but has profound implications for algorithmic development and practical application across domains, particularly in scientifically rigorous fields like drug development and healthcare planning [15]. The incorporation of an objective function transforms constraint-based reasoning from a satisfiability framework to an optimization paradigm, enabling more nuanced and effective decision support for complex real-world problems.

The relationship between these two problem classes ensures that advances in CSP research continue to inform COP methodologies, while COP challenges drive further innovation in constraint processing and optimization techniques. This symbiotic relationship continues to yield powerful tools for scientific decision-making in increasingly complex domains.

Constrained Optimization Problems (COPs) form the backbone of numerous scientific and engineering disciplines, providing a mathematical framework for making optimal decisions within specified boundaries. In pharmaceutical research and development, these techniques enable scientists to optimize drug formulations, predict molecular behavior, and streamline clinical trial designs while adhering to biological, chemical, and regulatory constraints. This whitepaper presents an in-depth technical examination of three fundamental concepts in constrained optimization: the feasible region, optimal solutions, and convexity. Understanding the interplay between these concepts is crucial for developing efficient optimization algorithms and guaranteeing reliable solutions to complex problems encountered in drug discovery and development pipelines. We explore the mathematical foundations, properties, and practical implications of these concepts within the broader context of COP research, with particular attention to applications in scientific domains.

Foundational Definitions

Constrained Optimization Problems

A Constrained Optimization Problem (COP) aims to find the best solution from all feasible alternatives, where "best" is defined by an objective function and "feasible" means satisfying all constraints. Formally, a COP can be stated as:

- Minimize or maximize an objective function, ( f(x) ), where ( f: \mathbb{R}^n \rightarrow \mathbb{R} ) [22]

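- Subject to constraints defined by inequality constraints ( g_i(x) \leq 0 ) for ( i = 1, \dots, m ) and equality constraints ( h_j(x) = 0 ) for ( j = 1, \dots, p )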

The domain of the variables ( x ) is typically a subset of ( \mathbb{R}^n ), though it may be restricted to integers or other discrete structures in some problem classes [22].

Feasible Region

The feasible region, also known as the feasible set or solution space, is the collection of all points that satisfy the problem's constraints [24] [23]. Mathematically, it is defined as:

[ S = \{ x \in \mathbb{R}^n \mid g_i(x) \leq 0 \ \forall i = 1, \dots, m, \ h_j(x) = 0 \ \forall j = 1, \dots, p \} ]

This region represents the admissible search space for candidate solutions [23]. In linear programming problems, the feasible region takes the form of a convex polyhedron formed by the intersection of half-spaces defined by linear constraints [24]. The feasible region may be bounded (containable within a finite sphere) or unbounded (extending infinitely in some directions) [24]. In some cases, constraints may be mutually contradictory, resulting in an empty feasible region and thus no solution to the problem [24].
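Membership in the feasible region can be tested point by point. The sketch below assumes constraints are given as Python callables in the form g(x) ≤ 0 and h(x) = 0, with a small numerical tolerance; the specific constraints are hypothetical.

```python
def is_feasible(x, inequalities=(), equalities=(), tol=1e-9):
    """True when x satisfies every g(x) <= 0 and h(x) = 0 within tol."""
    return (all(g(x) <= tol for g in inequalities)
            and all(abs(h(x)) <= tol for h in equalities))


inequalities = [
    lambda x: x[0] + x[1] - 4.0,  # x0 + x1 <= 4
    lambda x: -x[0],              # x0 >= 0
    lambda x: -x[1],              # x1 >= 0
]
equalities = [lambda x: x[0] - x[1]]  # x0 = x1

if __name__ == "__main__":
    print(is_feasible((1.0, 1.0), inequalities, equalities))
    print(is_feasible((3.0, 3.0), inequalities, equalities))
```

A point check of this kind can confirm that a candidate solution is feasible, but it cannot show that a feasible region is empty; that requires analysis of the constraints themselves or a solver that reports infeasibility.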

Optimal Solutions

An optimal solution ( x^* ) is a feasible point that yields the best value of the objective function among all points in the feasible region [22]. For minimization problems, this means:

[ f(x^*) \leq f(x) \ \forall x \in S ]

Solutions may be classified as:

- Global optimum: The best solution across the entire feasible region [22]

- Local optimum: The best solution within a neighborhood of points [22]

- Strong local optimum: Strictly better than all neighboring points [25]

- Weak local optimum: Equal to some neighboring points but not worse [25]

Convexity

Convexity is a fundamental geometric property with profound implications for optimization. A set ( \mathcal{X} ) is convex if for any two points ( a, b \in \mathcal{X} ), the entire line segment connecting them is also contained in ( \mathcal{X} ) [26]. Mathematically:

[ \lambda a + (1-\lambda) b \in \mathcal{X} \ \forall \lambda \in [0, 1] ]

A function ( f: \mathcal{X} \to \mathbb{R} ) is convex if for any ( x, x' \in \mathcal{X} ) and ( \lambda \in [0, 1] ) [26]:

[ \lambda f(x) + (1-\lambda) f(x') \geq f(\lambda x + (1-\lambda) x') ]
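The defining inequality suggests a simple numerical spot check: sample pairs of points and weights, and look for a violation. The following sketch does this for a one-dimensional function on an interval; the function name `find_convexity_violation`, the sampling scheme, and the tolerance are assumptions made for illustration.

```python
import random


def find_convexity_violation(f, lo, hi, trials=1000, seed=0, tol=1e-9):
    """Return (x, y, lam) violating the convexity inequality, or None."""
    rng = random.Random(seed)
    for _ in range(trials):
        x, y, lam = rng.uniform(lo, hi), rng.uniform(lo, hi), rng.random()
        chord = lam * f(x) + (1 - lam) * f(y)   # value on the line segment
        curve = f(lam * x + (1 - lam) * y)      # value of the function itself
        if chord < curve - tol:
            return (x, y, lam)
    return None


if __name__ == "__main__":
    print(find_convexity_violation(lambda x: x * x, -5.0, 5.0))
    print(find_convexity_violation(lambda x: -(x * x), -5.0, 5.0))
```

Finding a violation proves that the function is not convex on the interval. Finding none is only evidence, not proof, so sampling complements rather than replaces an analytical argument such as checking the sign of the second derivative.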

Table 1: Properties of Convex vs. Non-Convex Optimization Problems

| Property | Convex Problems | Non-Convex Problems |

|---|---|---|

| Local Optima | All local optima are global optima [26] | Multiple local optima possible [27] |

| Solution Time | Polynomial-time algorithms exist for many classes (e.g., LP, convex QP) [28] | Generally NP-hard [28] |

| Feasible Region | Single convex region [27] | Possible multiple disjoint regions [27] |

| Verification of Optimality | Straightforward [28] | Computationally challenging [27] |

| Applications in Drug Development | Linear dosing models, convex QSAR relationships | Molecular docking, protein folding, complex trial designs |

Key Properties and Their Implications

Properties of Feasible Regions

Feasible regions in optimization problems exhibit several critical properties that directly impact solution approaches and outcomes:

Convexity: When the feasible region is defined by convex inequality constraints and affine equality constraints, it forms a convex set [23]. This property ensures that any convex combination of feasible points remains feasible, significantly simplifying optimization [23].

Boundedness and Unboundedness: A feasible region is bounded if it can be contained within a sphere of finite radius; otherwise, it is unbounded [23]. When the feasible region is nonempty, closed, and bounded (that is, compact), a continuous objective function is guaranteed to attain its optimal value within the region (by the Weierstrass extreme value theorem). Boundedness alone is not sufficient, because an optimum that lies on an excluded boundary is never attained. Unbounded regions may lead to unbounded objective functions with no finite optimum [23].

Empty Feasible Regions: When constraints are mutually contradictory, the feasible region becomes empty, indicating the problem is infeasible and has no solution [24]. This situation commonly arises in poorly formulated practical problems where requirements cannot be simultaneously satisfied.

Properties of Convex Functions and Sets

Convexity introduces powerful properties that make optimization problems more tractable:

Intersection Property: The intersection of any collection of convex sets remains convex [26]. This is particularly important in optimization since the feasible region is defined by the intersection of constraint sets.

Jensen's Inequality: For a convex function ( f ), the value at a convex combination of points is less than or equal to the convex combination of the function values [26]. Mathematically:

[ \sum_i \alpha_i f(x_i) \geq f\left(\sum_i \alpha_i x_i\right) ]

where ( \alpha_i \geq 0 ) and ( \sum_i \alpha_i = 1 ). This inequality enables powerful bounds in probabilistic settings and variational methods [26].
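Jensen's inequality is easy to verify numerically for a specific function. The sketch below computes the gap between the weighted average of function values and the function at the weighted average; the helper name `jensen_gap`, the points, and the weights are hypothetical.

```python
import math


def jensen_gap(f, points, weights):
    """Weighted mean of f(x_i) minus f at the weighted mean of x_i."""
    if any(w < 0 for w in weights) or abs(sum(weights) - 1.0) > 1e-12:
        raise ValueError("weights must be non-negative and sum to 1")
    mean_point = sum(w * x for w, x in zip(weights, points))
    mean_value = sum(w * f(x) for w, x in zip(weights, points))
    return mean_value - f(mean_point)


points = [0.0, 1.0, 2.0]
weights = [0.2, 0.3, 0.5]
gap_exp = jensen_gap(math.exp, points, weights)                   # convex function
gap_affine = jensen_gap(lambda x: 2 * x + 1, points, weights)     # affine function

if __name__ == "__main__":
    print(gap_exp, gap_affine)
```

For a convex function the gap is non-negative, and for an affine function it vanishes up to rounding error, since affine functions satisfy the inequality with equality.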

Global Optimality: For convex optimization problems, any local minimum is automatically a global minimum [28] [26]. This property eliminates the need to search for potentially better solutions in distant regions of the feasible space.

Structure of Optimal Solutions

The mathematical structure of optimal solutions varies depending on problem characteristics:

Extreme Points in Linear Programming: In linear programming problems, if an optimal solution exists and the feasible polyhedron has at least one vertex, then at least one optimal solution occurs at an extreme point (vertex) of the polyhedron [23]. This property enables algorithms like the simplex method to search only these vertices rather than the entire feasible region.
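The extreme-point property can be illustrated by brute force in two dimensions: intersect every pair of constraint boundaries, keep the feasible intersections, and compare objective values. This is not the simplex method, only a sketch of why inspecting vertices suffices; it assumes a bounded, nonempty feasible region, and the example problem is hypothetical.

```python
from itertools import combinations


def solve_lp_by_vertices(c, A, b, tol=1e-9):
    """Maximise c.x subject to A x <= b in 2D by enumerating vertices."""
    best_point, best_value = None, float("-inf")
    for (a1, b1), (a2, b2) in combinations(list(zip(A, b)), 2):
        det = a1[0] * a2[1] - a1[1] * a2[0]
        if abs(det) < tol:
            continue  # parallel boundaries have no unique intersection
        # Cramer's rule for the intersection of the two boundary lines.
        x = (b1 * a2[1] - a1[1] * b2) / det
        y = (a1[0] * b2 - b1 * a2[0]) / det
        if all(row[0] * x + row[1] * y <= rhs + tol for row, rhs in zip(A, b)):
            value = c[0] * x + c[1] * y
            if value > best_value:
                best_point, best_value = (x, y), value
    return best_point, best_value


# maximise 3x + 2y  subject to  x + y <= 4,  x <= 3,  x >= 0,  y >= 0
A = [(1, 1), (1, 0), (-1, 0), (0, -1)]
b = [4, 3, 0, 0]
lp_point, lp_value = solve_lp_by_vertices((3, 2), A, b)

if __name__ == "__main__":
    print(lp_point, lp_value)
```

The number of candidate intersections grows combinatorially with the number of constraints and dimensions, which is why practical solvers move between adjacent vertices or through the interior instead of enumerating them.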

Convex Combinations in General Problems: For optimization problems in convex spaces with affine objective functions and J affine constraints, there exists an optimal solution representable as a convex combination of no more than J+1 extreme points of the feasible region [29] [30]. This fundamental result limits the search space for optimal solutions.

Table 2: Classification of Optimization Problems by Feasible Region and Objective Function Properties

| Problem Type | Feasible Region | Objective Function | Solution Methods | Pharmaceutical Applications |

|---|---|---|---|---|

| Linear Programming | Convex polyhedron [24] | Linear [28] | Simplex, interior point [28] | Resource allocation, supply chain optimization |

| Convex Programming | Convex set [28] | Convex (minimization) [28] | Gradient descent, interior point [28] | Drug formulation optimization, pharmacokinetic modeling |

| Integer Programming | Discrete points [22] | Linear or nonlinear [22] | Branch and bound, cutting planes [22] | Clinical trial site selection, molecular structure optimization |

| Nonlinear Programming | Convex or non-convex [22] | Nonlinear [22] | Sequential quadratic programming, heuristic methods [22] | Dose-response modeling, enzyme kinetics |

Visualization of Core Concepts

Feasible Region Classification and Properties

Convexity Relationships in Optimization

Solution Methodologies and Algorithms

Algorithmic Approaches for Different Problem Classes

The choice of optimization algorithm depends critically on the properties of the feasible region and objective function:

Interior Point Methods: These approaches handle convex optimization problems by adding a barrier function that keeps search points within the feasible region's interior [28] [27]. They are particularly effective for large-scale convex problems, requiring relatively few iterations (typically fewer than 50), a count that is largely independent of problem size [27].

Simplex Method: Designed specifically for linear programming, this algorithm navigates along the edges of the feasible polyhedron from one vertex to an adjacent one with improved objective value until reaching an optimum [24] [23]. Its efficiency stems from the fundamental property that optimal solutions occur at vertices of the feasible region [23].

Stochastic Gradient Methods: For problems with complex constraints that introduce inter-sample dependencies (such as rate constraints in fair machine learning), specialized stochastic gradient descent-ascent (SGDA) algorithms can solve the Lagrangian formulation of the constrained problem [31].

Branch and Bound: This algorithm addresses integer programming problems by systematically enumerating candidate solutions through a state space search, using bounds on the objective function to prune suboptimal branches [22].

Lagrangian Methods for Constrained Problems

The Lagrangian approach transforms constrained optimization problems into unconstrained ones through the introduction of Lagrange multipliers [28]. For a problem with objective function ( f(x) ) and constraints ( g_i(x) \leq 0 ), the Lagrangian function is:

[ L(x, \lambda_0, \lambda_1, \ldots, \lambda_m) = \lambda_0 f(x) + \lambda_1 g_1(x) + \cdots + \lambda_m g_m(x) ]

This reformulation enables the application of unconstrained optimization techniques to constrained problems, with the optimal solution satisfying the Karush-Kuhn-Tucker (KKT) conditions under appropriate constraint qualifications [28].
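As a worked illustration, consider minimizing x² + y² subject to the single constraint 1 − x − y ≤ 0, taking the multiplier on the objective to be 1. The sketch below evaluates the four KKT conditions (stationarity, primal feasibility, dual feasibility, complementary slackness) at a candidate point; the problem instance and the function names are hypothetical.

```python
def kkt_residuals(x, y, lam):
    """KKT residuals for: minimise x^2 + y^2 subject to 1 - x - y <= 0."""
    grad_f = (2.0 * x, 2.0 * y)   # gradient of the objective
    grad_g = (-1.0, -1.0)         # gradient of g(x, y) = 1 - x - y
    g = 1.0 - x - y
    return {
        "stationarity": max(abs(grad_f[0] + lam * grad_g[0]),
                            abs(grad_f[1] + lam * grad_g[1])),
        "primal_feasibility": max(0.0, g),
        "dual_feasibility": max(0.0, -lam),
        "complementary_slackness": abs(lam * g),
    }


def satisfies_kkt(x, y, lam, tol=1e-8):
    """True when every KKT residual is within tol of zero."""
    return all(r <= tol for r in kkt_residuals(x, y, lam).values())


if __name__ == "__main__":
    print(kkt_residuals(0.5, 0.5, 1.0))   # candidate with active constraint
    print(satisfies_kkt(0.0, 0.0, 0.0))   # unconstrained minimiser, infeasible
```

The point (0.5, 0.5) with multiplier 1 satisfies all four conditions, and because this problem is convex the KKT conditions are also sufficient, so it is the global minimum. The unconstrained minimizer (0, 0) fails primal feasibility.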

Experimental Protocols and Research Applications

Methodologies for COP Analysis in Research Settings

Researchers employ several established methodologies to analyze and solve constrained optimization problems:

Convexity Verification Protocol: Before selecting solution algorithms, researchers systematically verify problem convexity by:

- Checking if all inequality constraint functions are convex

- Verifying that equality constraints are affine

- Confirming the objective function is convex (for minimization) or concave (for maximization)

- Using automated convexity testing tools when available [27]

Feasibility Analysis Workflow:

- Formulate all constraints mathematically

- Check for constraint contradictions that might yield an empty feasible region

- Determine boundedness through analysis of the recession cone [23]

- Identify active constraints at potential solution points

Solution Approach Selection Framework:

- Classify the optimization problem type (LP, QP, SOCP, SDP, etc.)

- Assess problem scale (number of variables and constraints)

- Verify special properties (convexity, sparsity, separability)

- Select appropriate algorithm based on classification and properties

Research Reagent Solutions for COP Experimentation

Table 3: Essential Computational Tools for Constrained Optimization Research

| Research Tool | Function | Application Context |

|---|---|---|

| Convex Optimization Solvers | Implement interior point, simplex, or other algorithms to find solutions [27] | General convex programming, linear programming, conic optimization |

| Automatic Differentiation Frameworks | Compute precise gradients and Hessians for objective and constraint functions | Gradient-based optimization methods, sensitivity analysis |

| KKT Condition Verifiers | Check necessary and sufficient optimality conditions at candidate solutions [28] | Validation of solution optimality, identification of active constraints |

| Feasible Region Visualizers | Generate 2D/3D representations of feasible regions and objective function contours | Problem analysis, educational purposes, algorithm debugging |

| Integer Programming Solvers | Implement branch-and-bound, cutting plane, and heuristic methods [22] | Discrete optimization problems in drug design and trial management |

| Global Optimization Platforms | Apply stochastic, heuristic, and complete search methods for non-convex problems [27] | Molecular docking, protein folding, complex pharmaceutical formulations |

Applications in Pharmaceutical Research

Constrained optimization techniques find diverse applications throughout drug discovery and development:

Drug Formulation Optimization: Pharmaceutical scientists use convex optimization to determine optimal component ratios in drug formulations, maximizing efficacy while respecting constraints on excipient compatibility, stability requirements, and manufacturing limitations [27]. The convexity of these problems ensures reliable, reproducible solutions.

Clinical Trial Design: Integer and linear programming techniques optimize patient allocation, site selection, and monitoring schedules in clinical trials while satisfying regulatory constraints, ethical requirements, and budgetary limitations [22].

Pharmacokinetic Modeling: Nonlinear programming estimates drug absorption, distribution, metabolism, and excretion parameters from experimental data, with constraints representing physiological boundaries and known biological relationships [22].

Molecular Structure Optimization: Quantum chemistry calculations employ constrained optimization to predict molecular geometries, with constraints representing known bond lengths and angles, enabling efficient in silico drug design [27].

The fundamental concepts of feasible regions, optimal solutions, and convexity provide the mathematical foundation for constrained optimization problems with significant applications in pharmaceutical research and development. The feasible region defines the space of possible solutions, while optimality criteria identify the best among them. Convexity serves as the "great watershed" in optimization, distinguishing problems that can be solved efficiently from those that are computationally challenging [27]. Understanding the properties of these concepts, including boundedness, convexity preservation under intersection, and the structure of optimal solutions, enables researchers to select appropriate solution methods and interpret results correctly. As drug development faces increasing complexity in molecular design, clinical trials, and manufacturing processes, sophisticated constrained optimization techniques will continue to play an essential role in balancing multiple objectives and constraints while ensuring scientifically valid and economically viable outcomes.

Solving COPs: Algorithms and Their Impact on Drug Discovery and Precision Medicine

A Constrained Optimization Problem (COP) is formally defined as finding the vector of decision variables ( \mathbf{x} ) that minimizes (or maximizes) a scalar objective function ( f(\mathbf{x}) ) subject to a set of constraints. The standard form is [32]:

[ \begin{aligned} & \underset{\mathbf{x}}{\text{minimize}} & & f(\mathbf{x}) \\ & \text{subject to} & & g_i(\mathbf{x}) \leq 0, \quad i = 1, \ldots, m \\ & & & h_j(\mathbf{x}) = 0, \quad j = 1, \ldots, p \end{aligned} ]

where ( \mathbf{x} \in \mathbb{R}^n ) is the vector of ( n ) decision variables, ( f(\mathbf{x}): \mathbb{R}^n \rightarrow \mathbb{R} ) is the objective function, ( g_i(\mathbf{x}) \leq 0 ) are ( m ) inequality constraints, and ( h_j(\mathbf{x}) = 0 ) are ( p ) equality constraints. The feasible set ( \mathcal{F} ) is defined as all points ( \mathbf{x} ) satisfying the constraints [32].
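To make the standard form concrete, the following minimal sketch encodes a two-variable toy problem with one inequality and one equality constraint and passes it to SciPy's SLSQP solver. The objective, the constraint functions, and the starting point are illustrative assumptions, not taken from the cited work. Note that SciPy expects inequality constraints written as fun(x) >= 0, so the standard-form g(x) <= 0 is passed with its sign flipped.

```python
import numpy as np
from scipy.optimize import minimize

# Toy problem (illustrative assumption):
#   minimize   f(x) = (x0 - 1)^2 + (x1 - 2)^2
#   subject to g(x) = x0 + x1 - 2 <= 0
#              h(x) = x0 - x1      = 0

def f(x):
    return (x[0] - 1.0) ** 2 + (x[1] - 2.0) ** 2

def g(x):
    return x[0] + x[1] - 2.0

def h(x):
    return x[0] - x[1]

constraints = [
    # SciPy's "ineq" convention is fun(x) >= 0, hence the sign flip on g.
    {"type": "ineq", "fun": lambda x: -g(x)},
    {"type": "eq", "fun": h},
]

result = minimize(f, x0=np.zeros(2), method="SLSQP", constraints=constraints)
x_opt = result.x
print("x* =", x_opt, "f(x*) =", result.fun)
```

On the equality line x0 = x1 the unconstrained minimizer sits at 1.5, which violates the inequality, so the solver should return the point where the inequality becomes active, x = (1, 1).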

Constrained optimization provides an accountable mathematical framework for embedding requirements—such as fairness, safety, robustness, and interpretability—directly into the training process of machine learning models. This is particularly critical in safety-critical domains like medical diagnosis and drug discovery, where solutions must satisfy explicit regulatory, industry, or ethical standards [32].

Linear Programming (LP) and Quadratic Programming (QP) are two fundamental classes of COPs. LP problems feature a linear objective function and linear constraints, while QP problems have a quadratic objective function with linear constraints. Their canonical forms are summarized in Table 1.

Table 1: Canonical Forms of Linear and Quadratic Programs

| Feature | Linear Program (LP) | Quadratic Program (QP) |
|---|---|---|
| Objective Function | ( \mathbf{c}^T \mathbf{x} ) | ( \frac{1}{2} \mathbf{x}^T \mathbf{P} \mathbf{x} + \mathbf{q}^T \mathbf{x} ) |
| Inequality Constraints | ( \mathbf{A} \mathbf{x} \leq \mathbf{b} ) | ( \mathbf{A} \mathbf{x} \leq \mathbf{b} ) |
| Equality Constraints | ( \mathbf{G} \mathbf{x} = \mathbf{h} ) | ( \mathbf{G} \mathbf{x} = \mathbf{h} ) |
| Key Properties | Convex | Convex (if ( \mathbf{P} \succeq 0 )) |
| Solution Methods | Simplex, Interior-Point | Active-Set, Interior-Point, Gradient Projection |

Core Algorithmic Methodologies

Linear Programming Algorithms

The Simplex method, developed by George Dantzig, is a cornerstone algorithm for LP. It operates by traversing the vertices of the feasible polyhedron, at each step moving to an adjacent vertex that improves the objective function until an optimum is found. While efficient in practice, its worst-case complexity is exponential.

Interior-point methods (IPMs) represent a modern alternative. Instead of moving along the boundary, IPMs traverse the interior of the feasible region. The primal-dual path-following algorithm is a prominent IPM that applies Newton's method to a perturbed version of the Karush-Kuhn-Tucker (KKT) conditions, shrinking the perturbation as the iterates approach the optimum. IPMs have polynomial worst-case complexity: path-following variants need ( O(\sqrt{n} \log(1/\epsilon)) ) iterations to achieve ( \epsilon )-accuracy.
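As a small illustration of the two algorithm families, the sketch below solves the same toy LP with SciPy's `linprog`, once with the HiGHS dual simplex backend (`method="highs-ds"`) and once with the HiGHS interior-point backend (`method="highs-ipm"`). The problem data are an illustrative assumption. The LP maximizes x + 2y, so the cost vector is negated to fit the minimization form ( \mathbf{c}^T \mathbf{x} ) with ( \mathbf{A} \mathbf{x} \leq \mathbf{b} ).

```python
import numpy as np
from scipy.optimize import linprog

# Toy LP (illustrative assumption):
#   maximize   x + 2y
#   subject to x +  y <= 4
#              x + 3y <= 6
#              x, y   >= 0
c = np.array([-1.0, -2.0])          # negate to turn maximization into minimization
A = np.array([[1.0, 1.0],
              [1.0, 3.0]])
b = np.array([4.0, 6.0])
bounds = [(0, None), (0, None)]

# Simplex-type solver: moves between vertices of the feasible polyhedron.
res_simplex = linprog(c, A_ub=A, b_ub=b, bounds=bounds, method="highs-ds")

# Interior-point solver: approaches the optimum through the interior.
res_ipm = linprog(c, A_ub=A, b_ub=b, bounds=bounds, method="highs-ipm")

print("simplex:        x =", res_simplex.x, "objective =", res_simplex.fun)
print("interior point: x =", res_ipm.x, "objective =", res_ipm.fun)
```

Both runs should agree on the vertex where the two constraints intersect, (3, 1), with a maximized value of 5 (reported as -5 in the minimization form).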

Quadratic Programming Algorithms

Active-set methods are widely used for convex QP. These methods iteratively predict which constraints are active (i.e., hold with equality) at the solution. The algorithm solves an equality-constrained QP subproblem at each iteration, then updates the active set based on the computed step and Lagrange multipliers.
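The core of one active-set iteration can be sketched as follows: the constraints in the current working set are treated as equalities, the resulting equality-constrained QP is solved through its KKT linear system, and the sign of each multiplier indicates whether the constraint should stay in the working set. The helper name `solve_equality_qp`, the variable names `A_work` and `b_work`, and the toy data are assumptions made for illustration. A complete active-set solver would also add blocking constraints and loop until no multiplier is negative.

```python
import numpy as np

def solve_equality_qp(P, q, A_work, b_work):
    """Minimize 0.5 x^T P x + q^T x subject to A_work x = b_work.

    Solves the KKT system
        [ P       A_work^T ] [ x   ]   [ -q     ]
        [ A_work  0        ] [ lam ] = [ b_work ]
    """
    n, k = P.shape[0], A_work.shape[0]
    K = np.block([[P, A_work.T], [A_work, np.zeros((k, k))]])
    rhs = np.concatenate([-q, b_work])
    sol = np.linalg.solve(K, rhs)
    return sol[:n], sol[n:]

# Toy objective (illustrative): (x0 - 1)^2 + (x1 - 2)^2, up to a constant.
P = np.array([[2.0, 0.0], [0.0, 2.0]])
q = np.array([-2.0, -4.0])
row = np.array([[1.0, 1.0]])

# Case 1: the constraint x0 + x1 <= 1 is guessed to be active.
x_keep, lam_keep = solve_equality_qp(P, q, row, np.array([1.0]))

# Case 2: the constraint x0 + x1 <= 5 is guessed to be active.
x_drop, lam_drop = solve_equality_qp(P, q, row, np.array([5.0]))

print("case 1: x =", x_keep, "multiplier =", lam_keep)  # multiplier >= 0: keep
print("case 2: x =", x_drop, "multiplier =", lam_drop)  # multiplier < 0: drop
```

In the first case the multiplier is positive, so the constraint genuinely binds at the solution. In the second case it is negative, which signals that the objective can still decrease by moving into the interior, so an active-set method would remove that constraint from the working set.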

Gradient projection methods are particularly effective for large-scale QP problems with simple bounds. These methods descend along the negative gradient of the objective function, then project the result back onto the feasible set. The projection step must be computationally efficient for practical utility.
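For simple bounds the projection is just a componentwise clip, which is what makes the method cheap. The sketch below applies projected gradient descent to a small box-constrained QP. The matrix, vector, bounds, fixed step size 1/L (with L the largest eigenvalue of P), and iteration count are illustrative assumptions.

```python
import numpy as np

def projected_gradient_qp(P, q, lower, upper, n_iter=500):
    """Minimize 0.5 x^T P x + q^T x subject to lower <= x <= upper."""
    step = 1.0 / np.linalg.eigvalsh(P).max()   # 1/L for a convex quadratic
    x = np.clip(np.zeros_like(q), lower, upper)
    for _ in range(n_iter):
        grad = P @ x + q                        # gradient of the quadratic objective
        x = np.clip(x - step * grad, lower, upper)  # descend, then project onto the box
    return x

# Toy data (illustrative assumption)
P = np.array([[2.0, 0.5],
              [0.5, 1.0]])
q = np.array([-2.0, -4.0])
lower = np.zeros(2)
upper = np.ones(2)

x_box = projected_gradient_qp(P, q, lower, upper)
print("x* =", x_box)
```

The unconstrained minimizer of this objective is (0, 4), which lies outside the box. The iteration should settle at (0.75, 1), where the second variable sits on its upper bound and the first satisfies the reduced stationarity condition.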

For the general form QP, interior-point methods can be extended from LP. The primal-dual IPM for QP solves the following perturbed KKT system via Newton's method:

[ \begin{aligned} \mathbf{P} \mathbf{x} + \mathbf{q} + \mathbf{A}^T \boldsymbol{\lambda} + \mathbf{G}^T \boldsymbol{\nu} &= 0 \\ \mathbf{G} \mathbf{x} - \mathbf{h} &= 0 \\ \mathbf{A} \mathbf{x} - \mathbf{b} + \mathbf{s} &= 0 \\ s_i \lambda_i &= \mu, \quad i = 1, \ldots, m \\ \mathbf{s} \geq 0, \quad \boldsymbol{\lambda} &\geq 0 \end{aligned} ]

where ( \boldsymbol{\lambda} ) and ( \boldsymbol{\nu} ) are Lagrange multipliers, and ( \mathbf{s} ) are slack variables for the inequality constraints.
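A bare-bones Newton iteration on this perturbed KKT system can be sketched as follows. It is a teaching sketch under stated assumptions, not a production solver: the centering parameter `sigma`, the fraction-to-boundary factor, the all-ones starting point for the slacks and multipliers, the dense linear solve, and the toy problem data are all choices made here for illustration.

```python
import numpy as np

def max_step(v, dv, frac=0.99):
    """Largest step in (0, 1] that keeps v + alpha * dv strictly positive."""
    shrinking = dv < 0
    if not np.any(shrinking):
        return 1.0
    return min(1.0, frac * np.min(-v[shrinking] / dv[shrinking]))

def qp_interior_point(P, q, A, b, G, h, sigma=0.1, tol=1e-8, max_iter=50):
    n, m, p = P.shape[0], A.shape[0], G.shape[0]
    x, nu = np.zeros(n), np.zeros(p)
    lam, s = np.ones(m), np.ones(m)
    for _ in range(max_iter):
        gap = s @ lam / m                        # average complementarity
        r_dual = P @ x + q + A.T @ lam + G.T @ nu
        r_eq = G @ x - h
        r_ineq = A @ x - b + s
        if max(np.linalg.norm(r_dual), np.linalg.norm(r_eq),
               np.linalg.norm(r_ineq), gap) < tol:
            break
        mu = sigma * gap                         # shrink the perturbation each pass
        r_comp = s * lam - mu
        # Jacobian of the perturbed KKT system, unknowns ordered (dx, dnu, dlam, ds).
        K = np.block([
            [P, G.T, A.T, np.zeros((n, m))],
            [G, np.zeros((p, p)), np.zeros((p, m)), np.zeros((p, m))],
            [A, np.zeros((m, p)), np.zeros((m, m)), np.eye(m)],
            [np.zeros((m, n)), np.zeros((m, p)), np.diag(s), np.diag(lam)],
        ])
        step = np.linalg.solve(K, -np.concatenate([r_dual, r_eq, r_ineq, r_comp]))
        dx, dnu, dlam, ds = np.split(step, [n, n + p, n + p + m])
        alpha = min(max_step(s, ds), max_step(lam, dlam))  # keep s, lam > 0
        x, nu = x + alpha * dx, nu + alpha * dnu
        lam, s = lam + alpha * dlam, s + alpha * ds
    return x, lam, nu, s

# Toy QP (illustrative): minimize (x0 - 1)^2 + (x1 - 2.5)^2 up to a constant,
# subject to x0 + x1 <= 2, x >= 0, and x0 = x1.
P = np.array([[2.0, 0.0], [0.0, 2.0]])
q = np.array([-2.0, -5.0])
A = np.array([[1.0, 1.0], [-1.0, 0.0], [0.0, -1.0]])
b = np.array([2.0, 0.0, 0.0])
G = np.array([[1.0, -1.0]])
h = np.array([0.0])

x_ipm, lam_ipm, nu_ipm, s_ipm = qp_interior_point(P, q, A, b, G, h)
print("x =", x_ipm, "lambda =", lam_ipm, "nu =", nu_ipm)
```

For this toy problem the analytic solution is x = (1, 1), with the first inequality active and multiplier 1.5, which gives a simple check on the iterates.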

Applications in Drug Discovery and Healthcare

Computer-Aided Drug Design (CADD)

CADD methods are broadly classified as either structure-based or ligand-based approaches [33]. Structure-based methods require 3D structures of both target and ligand, employing techniques like molecular docking and pharmacophore modeling. Ligand-based methods use only ligand information, utilizing quantitative structure-activity relationships (QSAR) and similarity searches [33]. Optimization algorithms are fundamental to both paradigms.

Table 2: CADD Applications of Optimization Algorithms

| Application Area | Optimization Problem Type | Specific Use Cases |
|---|---|---|
| Virtual High-Throughput Screening (vHTS) | Mixed-Integer Linear Programming, Quadratic Programming | Ranking compounds from virtual libraries by predicted binding affinity [33] |
| Lead Optimization | Nonlinear Programming, Quadratic Programming | Improving binding affinity and optimizing drug metabolism/pharmacokinetics (DMPK) properties [33] |
| De Novo Drug Design | Evolutionary Algorithms, Mixed-Integer Nonlinear Programming | Designing novel molecular structures from scratch [34] |
| Pharmacokinetic Modeling | Linear Programming, Quadratic Programming | Predicting absorption, distribution, metabolism, excretion, and toxicity (ADMET) [33] |

Pharmaceutical Supply Chain Optimization

A recent study demonstrated a QP-based machine learning model for designing pharmaceutical supply chains with soft time windows and perishable products [35]. The model incorporates two objective functions: minimizing total cost and minimizing delivery penalties due to schedule violations.

The researchers employed quadratic regression (QR) as a machine learning algorithm to forecast medicine demand, showing it outperformed linear regression (LR) in prediction accuracy [35]. The overall optimization was solved using the Goal Attainment (GA) method, which transforms the multi-objective problem into a single-objective formulation by introducing aspiration levels for each objective.
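The goal attainment idea can be sketched on a toy problem. In the usual formulation, an auxiliary scalar gamma is minimized subject to each objective exceeding its aspiration level by at most its weight times gamma. The two objective functions, the aspiration levels, the weights, and the use of SciPy's SLSQP solver below are illustrative assumptions and do not reproduce the model in [35].

```python
import numpy as np
from scipy.optimize import minimize

# Two competing toy objectives of one decision variable (hypothetical stand-ins
# for total cost and delivery penalty).
def cost(x):
    return x[0] ** 2

def penalty(x):
    return (x[0] - 2.0) ** 2

def objectives(x):
    return np.array([cost(x), penalty(x)])

goals = np.array([0.0, 0.0])     # aspiration level for each objective
weights = np.array([1.0, 1.0])   # relative tolerance for missing each goal

# Decision vector z = [x, gamma]; minimize gamma subject to
#   objectives(x) - weights * gamma <= goals.
constraints = [{
    "type": "ineq",  # SciPy convention: fun(z) >= 0
    "fun": lambda z: goals + weights * z[-1] - objectives(z[:-1]),
}]

result = minimize(lambda z: z[-1], x0=np.array([0.0, 5.0]),
                  method="SLSQP", constraints=constraints)
x_star, gamma_star = result.x[:-1], result.x[-1]
print("x* =", x_star, "gamma* =", gamma_star)
```

With equal weights and goals of zero, the compromise lands where both objectives are equally far from their goals, x = 1 with gamma = 1. Changing the weights shifts the trade-off toward one objective or the other.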

Biomedical Imaging for Cancer Detection

In microwave imaging for breast cancer detection, researchers have combined the Born iterative method (BIM), which is based on a quadratic programming approach, with convolutional neural networks (CNNs) [36]. This hybrid method addresses the ill-posed inverse scattering problem to accurately recover the permittivity of breast tissues from measured scattering data.

The QP formulation discretizes the imaging domain and solves for the contrast function ( \chi(r) ) that maps to the dielectric properties of tissues. The incorporation of CNNs significantly reduced reconstruction time while maintaining accuracy exceeding 90% in validation tests [36].

Experimental Protocols and Workflows

Quadratic Programming for Microwave Imaging

Protocol Title: QP-Based Microwave Imaging for Breast Cancer Detection [36]

Objective: To accurately reconstruct the dielectric properties of breast tissues from scattered field measurements for tumor identification.

Materials and Reagents:

- Electromagnetic Simulator: For solving forward scattering problems

- Measurement Apparatus: Antenna array for S-parameter measurements

- Breast Phantom: Tissue-equivalent dielectric materials

- Computational Framework: MATLAB/Python for implementing BIM and CNN

Procedure:

- Data Acquisition: Measure scattered electric fields ( E^s ) at receiver positions for multiple transmitter locations.

- Forward Solution: Compute the total electric field ( E^t ) inside the domain using the discretized state equation: ( \overline{E}^t = \overline{E}^i + \overline{G}_D \cdot \overline{J} ), where ( \overline{J} = \text{diag}(\overline{\chi}) \cdot \overline{E}^t ) is the contrast current density.

- Inverse Solution Setup: Formulate the QP problem to minimize the misfit between computed and measured scattered fields.

- CNN Integration: Train a CNN to accelerate the solution of the inverse problem using simulated data.

- Iterative Reconstruction: Alternate between updating the contrast function ( \chi ) and the total field until convergence criteria are met.

- Image Formation: Reconstruct the permittivity map from the optimized contrast function.

Validation: Compare reconstructed images with ground truth phantom geometry and known dielectric properties. Calculate accuracy metrics including structural similarity index and relative permittivity error.
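The alternation in the procedure above (forward solve for the total field, then a least-squares update of the contrast) can be sketched on a tiny synthetic problem. Everything numeric here is an assumption for illustration: the operators `G_D` and `G_S` are small real-valued random stand-ins for the discretized Green's operators, the fields are real rather than complex, the Tikhonov weight `reg` is arbitrary, and the CNN acceleration step is omitted. It is not the implementation used in [36].

```python
import numpy as np

rng = np.random.default_rng(0)
n_cells, n_rx, n_tx = 4, 8, 3

G_D = 0.02 * rng.standard_normal((n_cells, n_cells))  # domain operator (stand-in)
G_S = rng.standard_normal((n_rx, n_cells))            # cells-to-receivers operator (stand-in)
E_inc = 1.0 + 0.5 * rng.random((n_tx, n_cells))       # incident field per transmitter
chi_true = np.array([0.2, 0.0, 0.1, 0.3])             # synthetic ground-truth contrast

def total_field(chi, e_inc):
    # State equation: E^t = E^i + G_D diag(chi) E^t
    # rearranged to (I - G_D diag(chi)) E^t = E^i.
    return np.linalg.solve(np.eye(n_cells) - G_D @ np.diag(chi), e_inc)

def scattered_field(chi, e_tot):
    # Data equation: E^s = G_S diag(chi) E^t
    return G_S @ (chi * e_tot)

# Synthetic "measurements" generated from the ground-truth contrast.
E_meas = np.array([scattered_field(chi_true, total_field(chi_true, e)) for e in E_inc])

chi = np.zeros(n_cells)   # start from the Born approximation (E^t = E^i)
reg = 1e-10               # small Tikhonov weight (assumed)
for _ in range(30):
    # Forward step: total field under the current contrast estimate.
    E_tot = [total_field(chi, e) for e in E_inc]
    # Inverse step: with E^t frozen, the data misfit is quadratic in chi.
    A = np.vstack([G_S * e for e in E_tot])   # G_S * e equals G_S @ diag(e)
    rhs = E_meas.reshape(-1)
    chi = np.linalg.solve(A.T @ A + reg * np.eye(n_cells), A.T @ rhs)

rel_err = np.linalg.norm(chi - chi_true) / np.linalg.norm(chi_true)
print("reconstructed contrast:", chi, "relative error:", rel_err)
```

The relative error computed at the end plays the role of the permittivity error metric named in the validation step. With measured data, noise and model mismatch would require a much larger regularization weight than the one used in this noise-free toy.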

Pharmaceutical Supply Chain Optimization

Protocol Title: ML-Enhanced QP for Pharmaceutical Supply Chain Design [35]

Objective: To design an efficient pharmaceutical supply chain network that minimizes total costs and delivery penalties while accounting for product perishability and soft time windows.

Materials:

- Demand Dataset: Historical pharmaceutical demand data

- Optimization Software: CPLEX, Gurobi, or MATLAB Optimization Toolbox

- ML Framework: Python with scikit-learn for regression analysis

Procedure:

- Data Preprocessing: Clean historical demand data and extract relevant features.

- Demand Forecasting: Apply quadratic regression to predict future medicine demand.

- Model Formulation: Develop the bi-objective optimization model with cost and delivery penalty functions.

- Goal Attainment Method: Transform the multi-objective problem using aspiration levels.

- QP Solution: Solve the resulting quadratic program using an interior-point method.

- Sensitivity Analysis: Evaluate solution robustness to parameter variations.

- Implementation: Deploy the optimized supply chain network design.
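The demand forecasting step can be illustrated with a holdout comparison between linear and quadratic regression. The demand series below is synthetic and the fit uses NumPy's `polyfit` rather than scikit-learn, both assumptions made to keep the sketch short; it does not reproduce the data or results of [35].

```python
import numpy as np

# Synthetic monthly demand with curvature plus a small oscillation (assumed data).
months = np.arange(12, dtype=float)
demand = 100.0 + 5.0 * months + 2.0 * months**2 + 2.0 * np.sin(months)

train = slice(0, 9)    # fit on the first nine months
test = slice(9, 12)    # forecast the last three

def holdout_rmse(degree):
    """Fit a polynomial of the given degree and score it on the held-out months."""
    coeffs = np.polyfit(months[train], demand[train], deg=degree)
    forecast = np.polyval(coeffs, months[test])
    return float(np.sqrt(np.mean((forecast - demand[test]) ** 2)))

rmse_linear = holdout_rmse(1)
rmse_quadratic = holdout_rmse(2)
print("holdout RMSE, linear:   ", rmse_linear)
print("holdout RMSE, quadratic:", rmse_quadratic)
```

Scoring on held-out months matters here because a quadratic fit can never have a larger in-sample error than a linear one, so only out-of-sample error says whether the extra term actually improves the forecast.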

Validation Metrics: Total operational costs, service level (percentage of on-time deliveries), shortage rates, and computational time.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Optimization in Drug Discovery

| Tool/Category | Function | Example Applications |
|---|---|---|
| QP Solvers (Gurobi, CPLEX) | Solve convex quadratic programs with linear constraints | Supply chain optimization, portfolio management [35] |
| Structure-Based Docking Software | Predict binding poses and affinities of small molecules | Virtual high-throughput screening, lead optimization [33] |
| QSAR Modeling Tools | Build predictive models of biological activity from chemical structure | Lead optimization, toxicity prediction [33] |
| Molecular Dynamics Packages | Simulate physical movements of atoms and molecules | Binding free energy calculations, conformational analysis [37] |
| Quantum Chemistry Software | Compute electronic structure of molecules | Reaction mechanism studies, catalyst design |
| Bioinformatics Suites | Analyze biological data including sequences and structures | Target identification, pathway analysis [38] |

Advanced Methodologies and Emerging Trends

Hybrid Machine Learning-Optimization Frameworks