Constrained Optimization in Drug Discovery: Evolutionary Algorithms for Multi-Objective Molecular Design

Abstract

This article explores the critical role of constrained optimization and evolutionary algorithms in revolutionizing modern drug discovery. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis of how these computational methods address the complex challenge of optimizing multiple molecular properties—such as potency, selectivity, and synthetic accessibility—while adhering to strict drug-like constraints. The content covers foundational principles, cutting-edge methodologies like the REvoLd and CMOMO frameworks, strategies for troubleshooting and performance optimization, and rigorous validation techniques. By synthesizing insights from recent clinical-stage successes and setbacks, this article serves as a strategic guide for integrating constrained evolutionary optimization into robust, AI-driven discovery pipelines.

The Foundation of Constrained Optimization in Drug Discovery

Defining the Constrained Molecular Optimization Problem (CMOP)

Constrained Molecular Optimization Problems (CMOPs) represent a critical frontier in computational drug discovery and material science. These problems involve identifying molecules with improved target properties while simultaneously adhering to stringent, predefined chemical constraints [1] [2]. In practical drug discovery, molecular optimization must navigate multiple conflicting objectives—such as enhancing bioactivity while maintaining drug-likeness—under rigid structural and synthetic constraints that determine candidate viability [1]. Traditional molecular optimization methods often treat constraints as secondary considerations, resulting in molecules with excellent computed properties that nevertheless violate fundamental drug-like criteria [2]. The CMOP framework formally addresses this limitation by integrating constraint satisfaction directly into the optimization objective, creating a balanced approach that yields chemically feasible candidates with desired property profiles [1].

Problem Formulation and Mathematical Definition

The Constrained Molecular Optimization Problem can be mathematically formulated as a constrained multi-objective optimization problem. Let \( \mathcal{M} \) represent the molecular search space. For a molecule \( m \in \mathcal{M} \), the CMOP seeks to optimize multiple property functions while satisfying constraint functions [1].

The standard formulation is: \[ \begin{aligned} & \underset{m \in \mathcal{M}}{\text{minimize}} & & \mathbf{f}(m) = [f_1(m), f_2(m), \ldots, f_k(m)] \\ & \text{subject to} & & g_i(m) \leq 0, \quad i = 1, \ldots, p \\ & & & h_j(m) = 0, \quad j = 1, \ldots, q \end{aligned} \]

where \( \mathbf{f}(m) \) represents the vector of \( k \) objective functions to be minimized (e.g., negative bioactivity, synthetic accessibility score), \( g_i(m) \) represents inequality constraints (e.g., molecular weight ≤ 500 Da), and \( h_j(m) \) represents equality constraints [1].

To quantify constraint satisfaction, a constraint violation (CV) function is employed: \[ CV(m) = \sum_{i=1}^{p} \max(0, g_i(m)) + \sum_{j=1}^{q} |h_j(m)| \]

A molecule is considered feasible when \( CV(m) = 0 \), indicating all constraints are satisfied [1] [3]. This formulation distinguishes CMOP from both single-objective optimization (which finds a single optimal molecule) and unconstrained multi-objective optimization (which finds trade-off molecules without constraint considerations) [2].
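As a minimal sketch of the CV function, the snippet below evaluates the sum of inequality and equality violations for one candidate. The dictionary-based molecule, the descriptor names, and the thresholds are hypothetical stand-ins chosen for illustration, not part of any cited framework.

```python
from typing import Callable, Dict, Sequence

# Hypothetical stand-in: a molecule is a dict of precomputed descriptors.
Molecule = Dict[str, float]
Constraint = Callable[[Molecule], float]


def constraint_violation(
    m: Molecule,
    inequality: Sequence[Constraint],
    equality: Sequence[Constraint] = (),
) -> float:
    """CV(m) = sum_i max(0, g_i(m)) + sum_j |h_j(m)|."""
    cv = sum(max(0.0, g(m)) for g in inequality)
    cv += sum(abs(h(m)) for h in equality)
    return cv


def is_feasible(
    m: Molecule,
    inequality: Sequence[Constraint],
    equality: Sequence[Constraint] = (),
) -> bool:
    """A molecule is feasible when its total violation is exactly zero."""
    return constraint_violation(m, inequality, equality) == 0.0


# Illustrative constraints written in the g(m) <= 0 / h(m) = 0 convention.
def g_mol_weight(m: Molecule) -> float:
    return m["mol_weight"] - 500.0  # molecular weight <= 500 Da


def g_similarity(m: Molecule) -> float:
    return 0.4 - m["tanimoto"]  # Tanimoto similarity >= 0.4


def h_alerts(m: Molecule) -> float:
    return m["n_structural_alerts"]  # zero structural alerts


candidate = {"mol_weight": 512.0, "tanimoto": 0.55, "n_structural_alerts": 0.0}
print(constraint_violation(candidate, [g_mol_weight, g_similarity], [h_alerts]))  # 12.0
```

Only the molecular weight constraint is violated here, so the whole violation (12 Da over the limit) comes from that single term.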

Table 1: Common Objectives and Constraints in Molecular Optimization

| Category | Specific Examples | Role in CMOP |
|---|---|---|
| Optimization Objectives | Bioactivity (e.g., DRD2, GSK3β inhibition) | Properties to maximize/minimize [1] [4] |
| | Drug-likeness (QED) | Property to maximize [4] |
| | Penalized logP (plogP) | Property to optimize [1] [4] |
| Structural Constraints | Ring size (5-6 atoms) | Equality/inequality constraints [1] [2] |
| | Presence/absence of specific substructures | Equality constraints [1] |
| | Molecular similarity threshold (Tanimoto ≥ 0.4) | Inequality constraint [4] |
| Drug-like Constraints | Synthetic accessibility score | Inequality constraint [1] |
| | Structural alerts/reactive groups | Equality constraints [2] |

Computational Framework: CMOMO

The Constrained Molecular Multi-objective Optimization (CMOMO) framework provides an effective computational solution for addressing CMOPs [1] [2]. CMOMO implements a two-stage dynamic optimization process that strategically balances property optimization with constraint satisfaction.

Two-Stage Dynamic Optimization

The CMOMO framework divides the optimization process into two distinct scenarios:

Unconstrained Scenario: In this initial phase, CMOMO focuses primarily on optimizing the multiple molecular properties without considering constraints. This allows extensive exploration of the chemical space to identify regions containing molecules with desirable property values [1] [2].

Constrained Scenario: After identifying promising regions, CMOMO transitions to simultaneously considering both property optimization and constraint satisfaction. This phase targets the identification of feasible molecules (those satisfying all constraints) that maintain promising property values [1] [2].

This staged approach prevents premature convergence to suboptimal feasible solutions and enables better exploration of the complex molecular search space, where feasible regions may be narrow, disconnected, or irregular [2].
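One way to picture the two scenarios is a dominance test that ignores constraints at first and later switches to a constraint-aware comparison. The sketch below uses plain Pareto dominance for the unconstrained scenario and the widely used constrained-domination rule for the constrained one. Both the rule and the generation-based switch are assumptions made for illustration; the source does not specify CMOMO's exact mechanism.

```python
from typing import NamedTuple, Sequence, Tuple


class Candidate(NamedTuple):
    objectives: Tuple[float, ...]  # all objectives are minimized
    cv: float                      # constraint violation; 0 means feasible


def pareto_dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """a is no worse than b everywhere and strictly better somewhere."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def dominates(a: Candidate, b: Candidate, constrained: bool) -> bool:
    """Scenario-dependent dominance between two candidates."""
    if not constrained:
        # Unconstrained scenario: only property values matter.
        return pareto_dominates(a.objectives, b.objectives)
    if a.cv == 0 and b.cv > 0:
        return True                # feasible beats infeasible
    if a.cv > 0 and b.cv == 0:
        return False
    if a.cv > 0 and b.cv > 0:
        return a.cv < b.cv         # smaller violation wins
    return pareto_dominates(a.objectives, b.objectives)


def scenario_is_constrained(generation: int, switch_generation: int) -> bool:
    """Hypothetical switch: constraints apply from `switch_generation` on."""
    return generation >= switch_generation


strong_but_infeasible = Candidate((0.1, 0.2), cv=3.0)
weaker_but_feasible = Candidate((0.5, 0.6), cv=0.0)
print(dominates(strong_but_infeasible, weaker_but_feasible, constrained=False))  # True
print(dominates(strong_but_infeasible, weaker_but_feasible, constrained=True))   # False
```

The same pair of molecules ranks differently in the two scenarios, which is what lets the first stage explore high-property regions before feasibility pressure is applied.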

Dynamic Cooperative Optimization

CMOMO implements a cooperative optimization strategy that operates across both discrete chemical space and continuous implicit molecular space [1] [2]. The workflow proceeds through the following stages:

Population Initialization: Beginning with a lead molecule (represented as a SMILES string), CMOMO constructs a library of high-property molecules similar to the lead from public databases. A pre-trained encoder embeds these molecules into a continuous latent space, followed by linear crossover operations to generate a high-quality initial population [2].

Evolutionary Reproduction: CMOMO employs a Vector Fragmentation-based Evolutionary Reproduction (VFER) strategy to efficiently generate offspring molecules in the continuous latent space [1].

Evaluation and Selection: Parent and offspring molecules are decoded back to discrete chemical structures using a pre-trained decoder, where their properties and constraint violations are evaluated. The environmental selection strategy then selects molecules for the next generation based on both objective performance and constraint satisfaction [1] [2].

The dynamic constraint handling mechanism enables smooth transition between the two optimization scenarios, progressively incorporating constraint requirements while maintaining pressure toward property improvement [1].
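The linear crossover used during population initialization can be sketched on plain vectors. The example below assumes latent embeddings are already available as lists of floats (the pre-trained encoder is not shown) and blends the lead with each library molecule using a random mixing weight. The function names and the uniform choice of weight are illustrative assumptions.

```python
import random
from typing import List

Vector = List[float]


def linear_crossover(z_a: Vector, z_b: Vector, rng: random.Random) -> Vector:
    """Return a random convex combination of two latent vectors."""
    alpha = rng.random()  # mixing weight in [0, 1)
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(z_a, z_b)]


def initial_population(
    z_lead: Vector, z_library: List[Vector], rng: random.Random
) -> List[Vector]:
    """Cross the lead embedding with every library embedding."""
    return [linear_crossover(z_lead, z, rng) for z in z_library]


rng = random.Random(0)
z_lead = [0.0, 0.0]                    # placeholder embedding of the lead
z_library = [[1.0, 2.0], [1.0, -2.0]]  # placeholder embeddings of similar molecules
for child in initial_population(z_lead, z_library, rng):
    print(child)
```

Each child lies on the segment between the lead and one library molecule, so the initial population stays close to the lead in latent space.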

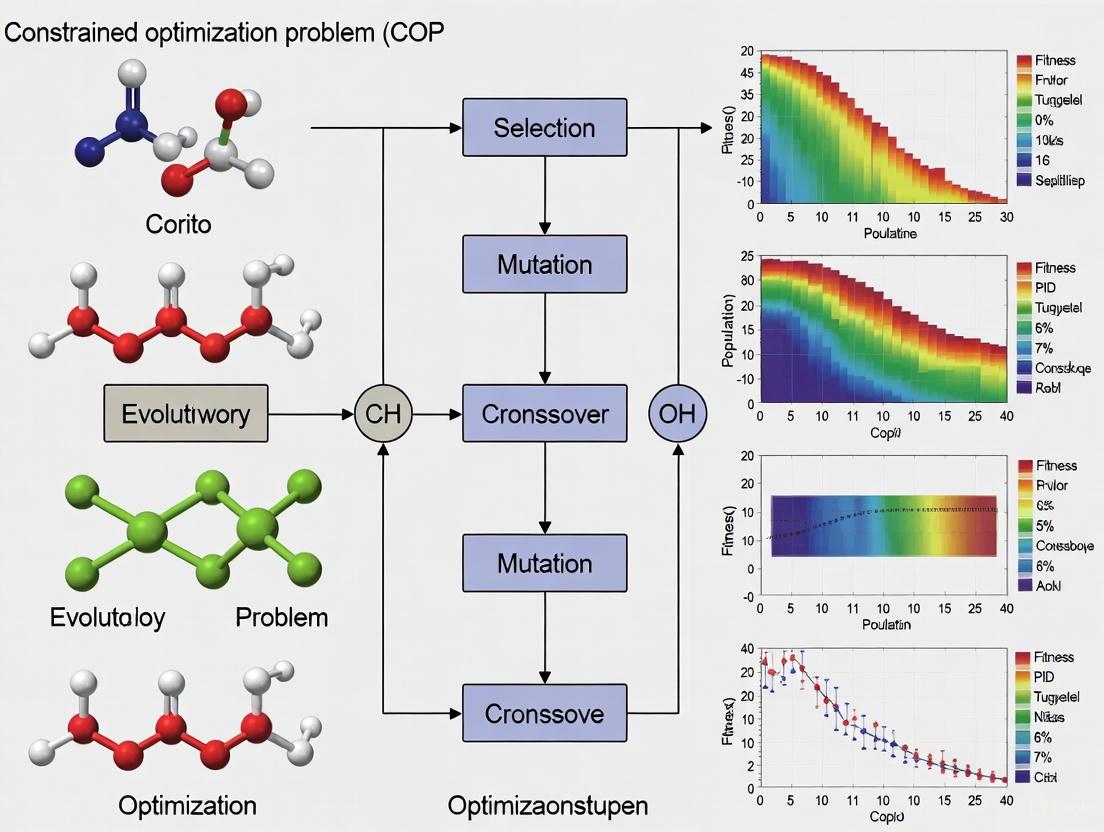

CMOMO Framework Workflow: The two-stage dynamic optimization process transitions from unconstrained property optimization to constrained optimization.

Experimental Protocols and Methodologies

Benchmark Evaluation Protocol

Comprehensive evaluation of CMOP methodologies requires standardized benchmark tasks and metrics. The following protocol outlines the key steps for experimental validation:

Task Selection: Utilize established benchmark tasks including DRD2 (dopamine receptor D2 activity), QED (drug-likeness), and plogP (penalized logP with similarity thresholds of 0.4 and 0.6) [4]. These tasks represent diverse optimization challenges with practical relevance to drug discovery.

Baseline Methods: Compare against state-of-the-art molecular optimization methods including:

- JT-VAE: Junction Tree Variational Autoencoder [4]

- VJTNN: Variational Junction Tree Neural Network [4]

- CORE: Copy-and-Refine strategy [4]

- GB-GA-P: Genetic algorithm with rough constraint handling [1] [2]

- MSO: Molecular Swarm Optimization, a particle swarm method that aggregates objectives into a single fitness [2]

Evaluation Metrics: Employ comprehensive metrics assessing multiple performance dimensions [4]:

- Success Rate: Percentage of successfully optimized molecules meeting all constraints and property thresholds

- Property Improvement: Magnitude of enhancement in target properties versus starting molecules

- Novelty: Chemical diversity of generated molecules compared to training data

- Constraint Satisfaction: Percentage of generated molecules satisfying all constraints
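The rate-style metrics above reduce to simple counts. The sketch below assumes each optimized molecule is summarized by a record with a SMILES string, a property improvement, and a constraint violation. The record layout is hypothetical, and novelty is approximated here as the share of generated SMILES absent from the training set, which is only meaningful if both sides use canonical SMILES.

```python
from typing import Dict, List, Set, Union

Record = Dict[str, Union[str, float]]


def percentage(count: int, total: int) -> float:
    return 100.0 * count / total if total else 0.0


def success_rate(records: List[Record], min_improvement: float) -> float:
    """Share of molecules that are feasible AND reach the property threshold."""
    hits = sum(
        1 for r in records if r["cv"] == 0 and r["improvement"] >= min_improvement
    )
    return percentage(hits, len(records))


def constraint_satisfaction(records: List[Record]) -> float:
    """Share of molecules with zero constraint violation."""
    return percentage(sum(1 for r in records if r["cv"] == 0), len(records))


def novelty(records: List[Record], training_smiles: Set[str]) -> float:
    """Share of generated SMILES not present in the training set."""
    new = sum(1 for r in records if r["smiles"] not in training_smiles)
    return percentage(new, len(records))


records = [
    {"smiles": "CCO", "improvement": 0.5, "cv": 0.0},
    {"smiles": "CCN", "improvement": 0.1, "cv": 0.0},
    {"smiles": "CCC", "improvement": 0.6, "cv": 1.2},
    {"smiles": "CCCl", "improvement": 0.4, "cv": 0.0},
]
print(success_rate(records, min_improvement=0.3))  # 50.0
print(constraint_satisfaction(records))            # 75.0
print(novelty(records, training_smiles={"CCO"}))   # 75.0
```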

Table 2: CMOMO Performance on Benchmark Tasks

| Benchmark Task | Success Rate (%) | Property Improvement | Constraint Satisfaction (%) | Performance vs. Baselines |
|---|---|---|---|---|
| DRD2 | 85.2 | +0.42 in activity score | 92.7 | Superior to 5/5 baselines [1] |
| QED | 79.8 | +0.38 in QED score | 89.3 | Superior to 5/5 baselines [1] |
| plogP04 | 82.4 | +3.52 in plogP score | 90.1 | Superior to 5/5 baselines [1] |
| plogP06 | 75.6 | +2.87 in plogP score | 85.8 | Superior to 5/5 baselines [1] |

Practical Application Protocol: Protein-Ligand Optimization

For real-world drug discovery applications, the following protocol outlines the process for optimizing ligands targeting specific protein structures:

Step 1: Problem Formulation

- Define primary objectives: typically maximizing binding affinity while maintaining favorable ADMET properties

- Establish constraints: structural constraints (e.g., core scaffold preservation), drug-like constraints (e.g., Lipinski's rules), and synthetic accessibility thresholds

- Set similarity thresholds to maintain resemblance to lead compound (typically Tanimoto ≥ 0.4) [4]

Step 2: CMOMO Configuration

- Initialize with known active compound or hit molecule

- Configure property prediction models for target-specific activity (e.g., docking scores, binding affinity predictors)

- Set constraint parameters based on structural requirements and drug-like criteria

Step 3: Optimization Execution

- Execute the two-stage CMOMO process

- Monitor convergence using hypervolume indicator and feasible ratio metrics

- Terminate after predetermined generations or upon convergence stabilization
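The two convergence monitors named in Step 3 can be sketched for the two-objective case. The hypervolume routine below assumes both objectives are minimized and that the reference point is worse than every point of interest. The reference point and the sample values are illustrative only.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def hypervolume_2d(points: Sequence[Point], reference: Point) -> float:
    """Area dominated by `points` and bounded by `reference` (minimization)."""
    # Keep only points that improve on the reference in both objectives,
    # sorted by the first objective (ties broken by the second).
    pts = sorted(p for p in points if p[0] < reference[0] and p[1] < reference[1])
    hv, best_f2 = 0.0, reference[1]
    for f1, f2 in pts:
        if f2 < best_f2:  # dominated points add no area and are skipped
            hv += (reference[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return hv


def feasible_ratio(violations: List[float]) -> float:
    """Fraction of the population with zero constraint violation."""
    return sum(1 for cv in violations if cv == 0) / len(violations)


front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0), (3.0, 3.0)]
print(hypervolume_2d(front, reference=(4.0, 4.0)))  # 6.0
print(feasible_ratio([0.0, 0.0, 1.5, 0.0]))         # 0.75
```

Tracking both values per generation gives a simple stopping signal: when neither the hypervolume nor the feasible ratio changes for a while, further generations are unlikely to help.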

Step 4: Result Validation

- Select top candidate molecules from the Pareto front

- Validate using molecular dynamics simulations or experimental testing

- Assess synthetic feasibility using retrosynthesis analysis

This protocol has demonstrated success in practical applications, including identification of potential ligands for the β2-adrenoceptor, a GPCR (PDB ID 4LDE), and of inhibitors for glycogen synthase kinase-3β (GSK3β). For the GSK3β optimization task, CMOMO achieved a two-fold improvement in success rate compared to traditional methods [1].

Successful implementation of CMOP solutions requires specialized computational tools and resources. The following table details essential components of the constrained molecular optimization toolkit.

Table 3: Essential Resources for Constrained Molecular Optimization Research

| Resource Category | Specific Tools/Solutions | Function/Role |
|---|---|---|
| Molecular Representation | SMILES Strings [4] | String-based molecular representation encoding structural information |
| | Molecular Graphs [4] | Graph-based representation with atoms as nodes and bonds as edges |
| | Latent Vector Encodings [1] [2] | Continuous vector representations enabling smooth optimization |
| Property Prediction | QED Calculator [4] | Computes quantitative estimate of drug-likeness |
| | plogP Calculator [4] | Calculates penalized octanol-water partition coefficient |
| | Molecular Similarity Tools (Tanimoto) [4] | Computes structural similarity between molecules |
| Optimization Frameworks | CMOMO Implementation [1] [2] | Core constrained multi-objective optimization algorithm |
| | VFER Strategy [1] | Vector fragmentation-based evolutionary reproduction |
| | NSGA-II Selection [2] | Environmental selection maintaining diversity and convergence |
| Constraint Handling | RDKit [1] | Cheminformatics toolkit for molecular validation and constraint checking |
| | Constraint Violation Calculator [1] [3] | Quantifies degree of constraint violation for candidate molecules |
| Evaluation & Validation | GuacaMol Metrics [4] | Comprehensive framework for generative model evaluation |
| | Molecular Dynamics Simulations | Validates binding stability and conformational behavior |

Advanced Methodologies: Multimodal Multiobjective Optimization

Recent advances in CMOP research have expanded to include multimodal multiobjective optimization, which addresses problems where multiple distinct solutions (modes) may exist in the decision space that map to similar objective values [3]. In molecular optimization, this translates to discovering chemically distinct molecules that nevertheless exhibit similar optimal property profiles.

The Multimodal Multiobjective Optimization with Network Control Principles (MMONCP) framework addresses this challenge by:

- Formulating a Constrained Multimodal Multiobjective Optimization Problem (CMMOP) with discrete constraints on decision space [3]

- Implementing a global and local search strategy with weighting-based special crowding distance (WSCD) [3]

- Balancing diversity in both objective space and decision space [3]

This approach enables identification of chemically diverse personalized drug targets (PDTs) with equivalent efficacy profiles, providing multiple therapeutic options for precision medicine applications [3].

Multimodal Multiobjective Optimization: Identifying chemically distinct solutions with similar optimal properties.

The Constrained Molecular Optimization Problem represents a formally defined challenge at the intersection of computational chemistry and multiobjective optimization. The CMOMO framework provides an effective solution through its two-stage dynamic optimization approach that balances property improvement with strict constraint satisfaction. Experimental results demonstrate superior performance compared to existing methods across multiple benchmark tasks and practical drug discovery applications. The integration of advanced techniques including multimodal optimization and network control principles further expands CMOP capabilities for precision medicine applications. As molecular optimization continues to evolve, the CMOP framework provides a robust foundation for generating chemically feasible candidates with optimized property profiles, accelerating the discovery of novel therapeutic compounds.

Eroom's Law (Moore's Law spelled backward) is the paradoxical observation that drug discovery is becoming slower and more expensive over time, despite significant improvements in technology [5]. The inflation-adjusted cost of developing a new drug roughly doubles every nine years, representing a direct reversal of the exponential advancement pattern seen in computing and other technological fields [6]. This trend threatens the sustainability of pharmaceutical innovation and the development of new therapies for increasingly complex diseases.

The causes of Eroom's Law are multifaceted and interconnected. The 'better than the Beatles' problem describes the challenge of developing drugs that show meaningful improvement over existing, highly effective treatments, necessitating larger clinical trials to demonstrate incremental benefits [5]. The 'cautious regulator' problem reflects increasingly stringent safety requirements from regulatory agencies following drug safety issues, raising the evidentiary bar for new drug approvals [5]. The 'throw money at it' tendency describes the industry's propensity to add resources to research and development, often leading to project overruns without proportional productivity gains [5]. Finally, the 'basic research–brute force' bias involves overestimating the ability of technological advances like high-throughput screening to identify clinically successful compounds, despite often failing to account for biological complexity [5].

Table 1: Quantitative Manifestations of Eroom's Law in Pharmaceutical R&D

| Metric | Historical Performance (1950s-1960s) | Current Performance | Change |
|---|---|---|---|
| Drug Approvals per $1B R&D Spending | ~10 drugs [6] | <1 drug [6] | >90% decrease |
| R&D Cost Trajectory | Stable or decreasing | Doubles every 9 years [5] | 100-fold decrease in efficiency [7] |
| Financial Return on R&D | High | Internal Rate of Return declining [7] | Significant decrease |
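The nine-year doubling time and the roughly 100-fold efficiency decline are consistent with each other, as a short calculation shows. The 60-year span used below is an illustrative assumption (roughly 1950 to 2010), and \(E(t)\) is a symbol introduced here for approvals per inflation-adjusted dollar.

```latex
% If cost per approved drug doubles every 9 years, efficiency E(t) halves:
\[
  E(t) = E(0)\, 2^{-t/9}
\]
% Over an assumed span of t = 60 years:
\[
  \frac{E(0)}{E(60)} = 2^{60/9} \approx 2^{6.67} \approx 102
\]
```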

Constrained optimization problems (COPs) provide a powerful framework for addressing Eroom's Law by systematically balancing multiple competing objectives and constraints in drug discovery. In this context, the objective function typically represents drug efficacy or binding affinity, while constraints encompass safety parameters, synthesis feasibility, ADMET properties (absorption, distribution, metabolism, excretion, and toxicity), and regulatory requirements [8] [9]. The fundamental challenge lies in navigating this complex constraint space to identify viable therapeutic candidates efficiently.

Computational Framework: Constrained Evolutionary Algorithms for Drug Discovery

Constrained evolutionary algorithms (CEAs) represent a promising approach for reversing Eroom's Law by efficiently exploring the vast chemical space while satisfying multiple pharmacological constraints. These algorithms treat drug discovery as a constrained optimization problem where the goal is to identify molecules that maximize therapeutic efficacy while adhering to safety, synthesizability, and pharmacokinetic requirements.

Algorithmic Foundations and Constraint Handling Techniques

Evolutionary algorithms for drug discovery employ population-based search strategies inspired by natural selection to navigate the high-dimensional chemical space. These approaches must balance exploration of novel chemical structures with exploitation of promising molecular scaffolds, all while managing multiple constraints. The general constrained optimization problem for drug discovery can be formulated as:

\[ \begin{aligned} & \text{minimize} & & f(\mathbf{x}) \\ & \text{subject to} & & g_i(\mathbf{x}) \leq 0, \quad i = 1, \ldots, p \\ & & & h_j(\mathbf{x}) = 0, \quad j = p+1, \ldots, m \end{aligned} \]

where \(\mathbf{x}\) represents a candidate molecule in the design space, \(f(\mathbf{x})\) is the objective function (representing undesirable properties or inverse binding affinity), \(g_i(\mathbf{x})\) are inequality constraints (for toxicity, etc.), and \(h_j(\mathbf{x})\) are equality constraints for specific properties [8].

The constraint violation degree for a candidate molecule \(\mathbf{x}\) is typically computed as: \[ G_j(\mathbf{x}) = \begin{cases} \max(0, g_j(\mathbf{x})), & 1 \leq j \leq p \\ \max(0, |h_j(\mathbf{x})| - \delta), & p+1 \leq j \leq m \end{cases} \] where \(\delta\) is a tolerance parameter for equality constraints [8]. The total constraint violation is then: \[ G(\mathbf{x}) = \sum_{j=1}^{m} G_j(\mathbf{x}) \] A solution is considered feasible when \(G(\mathbf{x}) = 0\) [8].
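A small sketch shows how the tolerance changes what counts as feasible. It assumes candidates are plain numeric vectors and uses two made-up constraints, so the functions and values are illustrative rather than taken from the cited work.

```python
from typing import Callable, List, Sequence

Vector = Sequence[float]
Constraint = Callable[[Vector], float]


def violation_degrees(
    x: Vector,
    inequality: Sequence[Constraint],
    equality: Sequence[Constraint],
    delta: float = 1e-4,
) -> List[float]:
    """Per-constraint violation: max(0, g) and max(0, |h| - delta)."""
    degrees = [max(0.0, g(x)) for g in inequality]
    degrees += [max(0.0, abs(h(x)) - delta) for h in equality]
    return degrees


def total_violation(
    x: Vector,
    inequality: Sequence[Constraint],
    equality: Sequence[Constraint],
    delta: float = 1e-4,
) -> float:
    """G(x): sum of all per-constraint violation degrees."""
    return sum(violation_degrees(x, inequality, equality, delta))


# Illustrative constraints: x[0] <= 0.5 and x[0] + x[1] = 1.
g = [lambda x: x[0] - 0.5]
h = [lambda x: x[0] + x[1] - 1.0]

x = [0.3, 0.70005]                       # misses the equality by about 5e-5
print(total_violation(x, g, h))          # 0.0, inside the tolerance
print(total_violation(x, g, h, 0.0) > 0) # True, infeasible without tolerance
```

Without the tolerance, equality constraints on real-valued properties would almost never be met exactly, so nearly every candidate would be labeled infeasible.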

Table 2: Constraint Handling Techniques in Evolutionary Algorithms for Drug Discovery

| Technique Category | Key Mechanism | Advantages | Limitations |
|---|---|---|---|
| Penalty Functions [8] | Adds constraint violation as penalty to objective function | Simple implementation, wide applicability | Sensitivity to penalty parameters, parameter tuning challenges |
| Feasibility Rules [8] [10] | Strict preference for feasible over infeasible solutions | No parameters needed, strong convergence to feasible regions | Potential premature convergence, limited exploration |
| Multi-objective Optimization [8] [10] | Treats constraints as separate objectives | Preserves diversity, identifies trade-offs | Increased computational complexity, Pareto selection challenges |
| Hybrid Methods [8] [10] | Combines multiple constraint-handling approaches | Adaptability to different problem phases | Implementation complexity, parameter tuning |
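The first two rows of the table can be contrasted in a few lines. In this sketch each candidate is an (objective, violation) pair under minimization; the static penalty coefficient is an arbitrary illustrative value, and the feasibility rule follows the common three-case formulation.

```python
from typing import Tuple

Scored = Tuple[float, float]  # (objective f, total violation G), f is minimized


def penalized_fitness(f: float, violation: float, penalty: float = 1e3) -> float:
    """Static penalty: fold the violation into the objective."""
    return f + penalty * violation


def feasibility_rule_better(a: Scored, b: Scored) -> bool:
    """True if a is preferred over b under the feasibility rules."""
    (fa, ga), (fb, gb) = a, b
    if ga == 0 and gb == 0:
        return fa < fb   # both feasible: lower objective wins
    if ga == 0 or gb == 0:
        return ga == 0   # exactly one feasible: it wins
    return ga < gb       # both infeasible: lower violation wins


feasible = (5.0, 0.0)
infeasible = (1.0, 0.2)

# With a weak penalty the infeasible candidate still looks better ...
print(penalized_fitness(*infeasible, penalty=10.0) < penalized_fitness(*feasible, penalty=10.0))  # True
# ... whereas the feasibility rule always prefers the feasible one.
print(feasibility_rule_better(feasible, infeasible))  # True
```

The example makes the table's trade-off concrete: the penalty outcome depends on the coefficient, while the parameter-free rule never lets an infeasible candidate outrank a feasible one.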

Advanced Algorithmic Approaches

Recent research has developed sophisticated CEA frameworks specifically designed to address the challenges of drug discovery. The Evolutionary Algorithm assisted by Learning Strategies and Predictive Mode (EALSPM) introduces a classification-collaboration constraint handling technique that decomposes complex constraint networks into manageable subproblems [8]. This approach randomly classifies constraints into \(K\) categories, decomposing the original problem into \(K\) subproblems with corresponding subpopulations. The evolutionary process is divided into random learning and directed learning stages, with subpopulations interacting through these strategies to generate potentially better solutions [8].

For computationally expensive optimization problems, such as those involving complex molecular simulations, the Surrogate-assisted Dynamic Population Optimization Algorithm (SDPOA) maintains a dynamic balance between feasibility, diversity, and convergence [10]. This approach dynamically constructs populations based on real-time feasibility, convergence, and diversity information of all previously evaluated solutions, enabling targeted allocation of computational resources to the most promising regions of chemical space.

The emerging field of LLM-assisted meta-optimization demonstrates how large language models can automate the design of constrained evolutionary algorithms [11]. Frameworks like AwesomeDE leverage LLMs as meta-optimizers to generate update rules for constrained evolutionary algorithms without human intervention, potentially accelerating the algorithm design process itself [11].

Application Notes: Protocol for Implementing CEAs in Preclinical Development

Protocol 1: EALSPM for Multi-constraint Molecule Optimization

Objective: Identify novel molecular structures with optimal target binding while satisfying toxicity, solubility, and metabolic stability constraints.

Experimental Workflow:

EALSPM Multi-stage Optimization Workflow

Step-by-Step Procedure:

Problem Formulation Phase

- Define objective function: \(f(\mathbf{x}) = -\log(\text{binding affinity})\)

- Identify constraint functions, each written so that \(g_i(\mathbf{x}) \leq 0\) means the requirement is met: \(g_1(\mathbf{x}) = \text{toxicity threshold} - \text{LD}_{50}\), \(g_2(\mathbf{x}) = \text{minimum solubility} - \text{solubility}\), \(g_3(\mathbf{x}) = \text{minimum half-life} - \text{half-life}\) (metabolic stability)

- Set equality tolerance: \(\delta = 10^{-4}\) for physicochemical property constraints

Constraint Classification and Decomposition

- Randomly partition \(m\) constraints into \(K\) categories

- Create \(K\) subpopulations of size \(N/K\), where \(N\) is the total population size

- Assign each subpopulation to optimize the objective while satisfying its constraint subset
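The decomposition step can be sketched as a shuffle followed by a round-robin split. The source only states that the classification is random; dealing constraints out evenly, and splitting the population into contiguous slices, are assumptions made here for illustration.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def partition_constraints(m: int, k: int, rng: random.Random) -> List[List[int]]:
    """Randomly assign constraint indices 0..m-1 to k near-equal groups."""
    indices = list(range(m))
    rng.shuffle(indices)
    return [indices[i::k] for i in range(k)]  # round-robin over the shuffle


def split_population(population: Sequence[T], k: int) -> List[List[T]]:
    """Split the population into k subpopulations of size N // k."""
    size = len(population) // k
    return [list(population[i * size:(i + 1) * size]) for i in range(k)]


rng = random.Random(42)
groups = partition_constraints(m=7, k=3, rng=rng)
subpops = split_population(list(range(12)), k=3)
for constraint_ids, subpop in zip(groups, subpops):
    print("constraints", constraint_ids, "-> individuals", subpop)
```

Each subpopulation then only evaluates violations for its own constraint subset, which keeps the per-subproblem feasible region larger than that of the full problem.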

Random Learning Stage (Exploration)

- For each subpopulation \(i = 1\) to \(K\):

  - Generate offspring using differential evolution strategies

  - Apply crossover probability \(CR = 0.9\) and scaling factor \(F = 0.5\)

  - Evaluate constraint violations for each offspring

  - Conduct local searches around promising candidates
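As an illustration of the offspring-generation step, the sketch below implements the classic DE/rand/1/bin operator with the stated CR and F values. The protocol does not say which differential evolution variant is used, so this choice is an assumption, and candidates are treated as continuous vectors (for example descriptors or latent codes) rather than discrete structures.

```python
import random
from typing import List

Vector = List[float]


def de_rand_1_bin(
    population: List[Vector],
    target_index: int,
    rng: random.Random,
    f: float = 0.5,
    cr: float = 0.9,
) -> Vector:
    """Build one trial vector for population[target_index] (DE/rand/1/bin)."""
    others = [i for i in range(len(population)) if i != target_index]
    r1, r2, r3 = rng.sample(others, 3)  # three distinct donors
    target = population[target_index]
    j_rand = rng.randrange(len(target))  # this position always takes the mutant
    trial = []
    for j, target_gene in enumerate(target):
        if rng.random() < cr or j == j_rand:
            mutant_gene = population[r1][j] + f * (population[r2][j] - population[r3][j])
            trial.append(mutant_gene)
        else:
            trial.append(target_gene)
    return trial


rng = random.Random(7)
population = [[rng.uniform(-1.0, 1.0) for _ in range(4)] for _ in range(6)]
print(de_rand_1_bin(population, target_index=0, rng=rng))
```

The population needs at least four members so that three donors distinct from the target exist.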

Directed Learning Stage (Exploitation)

- Implement information exchange between subpopulations

- Apply reinforcement learning to adaptively select evolutionary operators

- Use feasibility rules to prioritize candidates satisfying constraints

- Employ ε-constraint method to balance objective and constraint satisfaction
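The ε-constraint idea in the last step can be sketched as a relaxed comparison whose tolerance shrinks over time. The power-law schedule and its parameters below follow a commonly used form of the ε-constrained method and are assumptions here; the source does not specify the schedule used in EALSPM.

```python
from typing import Tuple

Scored = Tuple[float, float]  # (objective f, total violation G), f is minimized


def epsilon_level(t: int, t_control: int, eps0: float, cp: float = 5.0) -> float:
    """Shrink the tolerance from eps0 at t = 0 to zero at t = t_control."""
    if t >= t_control:
        return 0.0
    return eps0 * (1.0 - t / t_control) ** cp


def epsilon_better(a: Scored, b: Scored, eps: float) -> bool:
    """True if a is preferred over b under the epsilon-level comparison."""
    (fa, ga), (fb, gb) = a, b
    if (ga <= eps and gb <= eps) or ga == gb:
        return fa < fb   # violations tolerated or tied: compare objectives
    return ga < gb       # otherwise: compare violations


slightly_infeasible = (1.0, 0.5)
feasible = (2.0, 0.0)
print(epsilon_better(slightly_infeasible, feasible, eps=1.0))  # True (early, relaxed)
print(epsilon_better(slightly_infeasible, feasible, eps=0.0))  # False (late, strict)
```

With ε at zero the comparison reduces to the feasibility rules, so the schedule controls how long slightly infeasible but high-quality candidates are allowed to steer the search.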

Predictive Modeling Phase

- Construct surrogate models using top 20% of performers

- Apply improved continuous domain estimation of distribution algorithm

- Generate predicted offspring using surrogate evaluations

- Select most promising candidates for exact function evaluation

Termination Criteria

- Maximum iterations: 10,000

- Stall generation limit: 200

- Minimum objective function change: \(1 \times 10^{-6}\) for 50 consecutive iterations

Validation Metrics:

- Feasibility Rate: Percentage of candidates satisfying all constraints

- Hypervolume Indicator: Measure of convergence and diversity

- Infeasibility Measure: Degree of constraint violation for infeasible solutions

Protocol 2: SDPOA for Computationally Expensive Molecular Simulations

Objective: Optimize molecular structures with expensive property simulations while handling multiple constraints with limited function evaluations.

Experimental Workflow:

SDPOA Surrogate-Assisted Optimization Process

Step-by-Step Procedure:

Initial Design of Experiments

- Generate initial sample of \(11n-1\) candidates using Latin Hypercube Sampling, where \(n\) is problem dimension

- Evaluate all candidates using expensive simulations (molecular dynamics, binding affinity calculations)

- Store results in database \(D = \{(x_i, f(x_i), G(x_i))\}\)
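A dependency-free sketch of the sampling step is shown below. It stratifies each dimension into as many equal bins as there are samples and draws one point per bin; the bounds and the dimension are placeholders.

```python
import random
from typing import List, Sequence, Tuple


def latin_hypercube(
    n_samples: int, bounds: Sequence[Tuple[float, float]], rng: random.Random
) -> List[List[float]]:
    """Draw n_samples points so each dimension has one point per stratum."""
    columns = []
    for lo, hi in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)  # independent permutation per dimension
        columns.append(
            [lo + (s + rng.random()) / n_samples * (hi - lo) for s in strata]
        )
    # Transpose columns (per dimension) into rows (per sample).
    return [[col[i] for col in columns] for i in range(n_samples)]


rng = random.Random(0)
n_dim = 3
samples = latin_hypercube(11 * n_dim - 1, [(0.0, 1.0)] * n_dim, rng)
print(len(samples))  # 32
print(samples[0])
```

Compared with plain uniform sampling, the one-point-per-stratum property spreads the small initial budget evenly along every dimension.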

Surrogate Model Construction

- Build Radial Basis Function (RBF) models for objective and each constraint

- Use leave-one-out cross-validation to assess surrogate accuracy

- Apply kriging for uncertainty estimation in predictions
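The first two steps can be sketched with a small interpolating RBF model and a leave-one-out error estimate. The Gaussian kernel, its width, and the hand-written linear solver are illustrative assumptions chosen to keep the example dependency-free. Kriging-based uncertainty estimation is not covered here.

```python
import math
from typing import Callable, List

Vector = List[float]


def gaussian_rbf(r: float, width: float = 1.0) -> float:
    return math.exp(-((r / width) ** 2))


def solve_linear(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - s) / aug[r][r]
    return x


def fit_rbf(xs: List[Vector], ys: List[float], width: float = 1.0) -> Callable[[Vector], float]:
    """Fit weights so the model reproduces every training value."""
    phi = [[gaussian_rbf(math.dist(a, b), width) for b in xs] for a in xs]
    weights = solve_linear(phi, ys)

    def predict(x: Vector) -> float:
        return sum(w * gaussian_rbf(math.dist(x, c), width) for w, c in zip(weights, xs))

    return predict


def loo_rmse(xs: List[Vector], ys: List[float], width: float = 1.0) -> float:
    """Leave-one-out RMSE: refit without each point, then predict it."""
    errors = []
    for i in range(len(xs)):
        model = fit_rbf(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:], width)
        errors.append((model(xs[i]) - ys[i]) ** 2)
    return math.sqrt(sum(errors) / len(errors))


xs = [[0.0], [0.5], [1.0], [1.5]]
ys = [x[0] ** 2 for x in xs]  # placeholder for an expensive simulation
model = fit_rbf(xs, ys)
print(model([0.75]), loo_rmse(xs, ys))
```

In practice one such model would be fitted for the objective and one per constraint, and the leave-one-out error would guide the choice of kernel width.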

Dynamic Population Construction

- Select center points based on feasibility, convergence, and diversity metrics

- Calculate feasibility ratio: \(FR = N_{\text{feasible}}/N\)

- If \(FR < 0.2\), prioritize feasibility in selection

- If \(0.2 \leq FR \leq 0.8\), balance feasibility and objective

- If \(FR > 0.8\), prioritize objective improvement
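The threshold logic above maps directly onto a small helper. The mode names are labels invented for this sketch; how each mode then ranks center points is left out because the source does not detail it.

```python
from typing import List, Tuple


def selection_priority(violations: List[float]) -> Tuple[float, str]:
    """Return the feasibility ratio and the selection mode it implies."""
    fr = sum(1 for cv in violations if cv == 0) / len(violations)
    if fr < 0.2:
        return fr, "feasibility"  # few feasible points: chase feasibility
    if fr <= 0.8:
        return fr, "balanced"     # mixed archive: weigh both criteria
    return fr, "objective"        # mostly feasible: chase the objective


print(selection_priority([1.0] * 9 + [0.0]))        # (0.1, 'feasibility')
print(selection_priority([0.0] * 5 + [1.0] * 5))    # (0.5, 'balanced')
print(selection_priority([0.0] * 9 + [1.0]))        # (0.9, 'objective')
```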

Adaptive Mutation Strategy

- For top 2 center points: employ local search with small perturbation

- For other center points: use global search with larger mutation steps

- Adapt mutation strength based on historical improvement state

- Apply dimension-wise mutation for high-dimensional problems

Sparse Local Search Acceleration

- Trigger when best solution remains unchanged for 10 iterations

- Select two excellent but non-adjacent individuals from archive

- Generate search direction vector between selected individuals

- Perform line search along promising directions

- Evaluate limited number of candidates (≤ 20) per local search

Infilling and Model Update

- Select most promising candidates using expected improvement

- Apply probability of feasibility ≥ 0.95 for constrained expected improvement

- Evaluate selected candidates with exact expensive functions

- Update surrogate models with new data points

- Recalibrate models if prediction error exceeds threshold
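The infill criterion can be sketched with closed-form expressions for a Gaussian predictive distribution. The example assumes each surrogate returns a mean and a standard deviation for the objective and for every constraint, and it treats the 0.95 probability of feasibility as a hard filter before ranking by expected improvement. The candidate records are hypothetical.

```python
import math
from typing import Dict, List, Optional


def normal_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu: float, sigma: float, f_best: float) -> float:
    """EI for minimization given predictive mean mu and std sigma."""
    if sigma <= 0.0:
        return max(0.0, f_best - mu)
    z = (f_best - mu) / sigma
    return (f_best - mu) * normal_cdf(z) + sigma * normal_pdf(z)


def probability_of_feasibility(g_mu: float, g_sigma: float) -> float:
    """P(g <= 0) for a constraint predicted as N(g_mu, g_sigma^2)."""
    if g_sigma <= 0.0:
        return 1.0 if g_mu <= 0.0 else 0.0
    return normal_cdf(-g_mu / g_sigma)


def select_infill(candidates: List[Dict], f_best: float, pof_min: float = 0.95) -> Optional[Dict]:
    """Pick the candidate with the highest EI among those likely feasible."""
    best, best_ei = None, -1.0
    for c in candidates:
        pof = 1.0
        for g_mu, g_sigma in c["constraints"]:
            pof *= probability_of_feasibility(g_mu, g_sigma)
        if pof < pof_min:
            continue  # too likely to violate a constraint
        ei = expected_improvement(c["mu"], c["sigma"], f_best)
        if ei > best_ei:
            best, best_ei = c, ei
    return best


candidates = [
    {"name": "a", "mu": 0.2, "sigma": 0.3, "constraints": [(0.5, 1.0)]},
    {"name": "b", "mu": 0.8, "sigma": 0.3, "constraints": [(-2.0, 1.0)]},
]
print(select_infill(candidates, f_best=1.0)["name"])  # b
```

Candidate "a" promises a larger improvement but is rejected because its predicted constraint value makes feasibility unlikely, which is the behavior the 0.95 threshold is meant to enforce.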

Computational Budget Management:

- Maximum function evaluations: \(100n\) (where \(n\) is problem dimension)

- Parallel evaluations: 5-10 candidates per batch

- Adaptive resource allocation based on candidate potential

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Implementing Constrained Evolutionary Algorithms in Drug Discovery

| Tool Category | Specific Solution | Function | Implementation Example |
|---|---|---|---|
| Optimization Frameworks | DEAP (Python) | Provides evolutionary algorithm framework | Custom implementation of EALSPM classification-collaboration technique [8] |
| Surrogate Modeling | Radial Basis Functions (RBF) | Approximates expensive objective/constraint functions | SDPOA dynamic modeling of molecular properties [10] |
| Constraint Handling | ε-Constraint Framework | Balances objective and constraint satisfaction | Adaptive ε-level control based on feasibility ratio [8] [10] |
| Molecular Simulation | Physics-Based Binding Affinity Calculation | Computes drug-target interaction energy | Schrödinger's FEP+ for accurate binding free energy prediction [9] |
| LLM Integration | Fine-tuned Scientific LLMs | Generates and refines algorithm update rules | AwesomeDE's use of DeepSeek R1 for meta-optimization [11] |
| High-Performance Computing | Parallel Evaluation Framework | Enables simultaneous candidate assessment | Batch evaluation of molecular properties across computing nodes [10] |

Discussion and Future Perspectives

The integration of constrained evolutionary algorithms with advanced computational techniques represents a promising pathway for overcoming Eroom's Law in pharmaceutical R&D. By systematically addressing the multiple constraints inherent in drug discovery while efficiently exploring the vast chemical space, these approaches can potentially reverse the trend of declining R&D productivity.

The emergence of AI-driven approaches is particularly significant. Large language models like those used in AwesomeDE can automate algorithm design, adapting constraint handling strategies to specific drug discovery contexts [11]. Similarly, foundation models for biology trained on massive genomic, transcriptomic, and proteomic datasets promise to uncover fundamental biological principles that can guide constrained optimization [12]. These models could dramatically improve the predictive validity of preclinical assays, addressing a key factor in Eroom's Law [13].

Surrogate-assisted evolution addresses the computational bottleneck of expensive molecular simulations [10]. By strategically using approximate models to screen out poor candidates and reserving exact evaluations for the most promising ones, these approaches can reduce the computational cost of molecular optimization by orders of magnitude. This is particularly valuable for complex problems like protein folding or molecular dynamics, where accurate simulations remain computationally intensive.

The future of constrained optimization in drug discovery will likely involve hybrid approaches that combine the strengths of multiple algorithms. Evolutionary algorithms can be integrated with reinforcement learning for adaptive operator selection, with multi-objective optimization for balancing competing constraints, and with local search methods for refinement of promising candidates. As these computational approaches mature, they offer the potential to transform drug discovery from a process governed by Eroom's Law to one that benefits from exponentially improving computational power, finally reversing this troubling trend in pharmaceutical innovation.

The field of medicinal chemistry is undergoing a profound transformation, driven by the convergence of big data and artificial intelligence. The classical approach to drug discovery, long reliant on the pharmacophore model—an abstract description of the molecular features essential for a molecule's biological activity—is increasingly being supplemented and even superseded by a more comprehensive, data-driven construct: the informacophore [14] [15]. This paradigm shift represents a move from human-defined, heuristic-based molecular design to a predictive, computational approach that leverages machine learning (ML) to identify the minimal chemical structures and their multidimensional representations critical for bioactivity [14].

This transition is inherently framed within the challenges of a Constrained Optimization Problem (COP). The goal is to optimize a molecule's biological activity and drug-like properties (the objective function) while simultaneously satisfying multiple, often competing, constraints such as low toxicity, metabolic stability, and synthetic accessibility [16] [17]. Evolutionary Algorithms (EAs) and other constraint-handling techniques have emerged as powerful tools to navigate this complex chemical space, balancing the exploration of new scaffolds with the exploitation of known bioactive regions to identify optimal drug candidates [17] [18].

Defining the Paradigm: Pharmacophore vs. Informacophore

The table below summarizes the fundamental differences between the classical pharmacophore and the modern informacophore.

Table 1: Core Differences Between Pharmacophore and Informacophore Models

| Feature | Pharmacophore | Informacophore |
|---|---|---|
| Definition | "An ensemble of steric and electronic features that is necessary to ensure the optimal supramolecular interactions with a specific biological target" [19] [15]. | The minimal chemical structure combined with computed molecular descriptors, fingerprints, and machine-learned representations essential for biological activity [14]. |
| Basis | Human intuition, heuristics, and chemical experience [14]. | Data-driven patterns derived from ultra-large chemical datasets and ML models [14]. |
| Primary Input | Known active ligands and/or a single protein-ligand complex structure [20] [19]. | Multidimensional data from vast chemical libraries, biological assays, and computed molecular properties [14]. |
| Representation | A 3D arrangement of specific chemical features (e.g., H-bond donor, acceptor, hydrophobic region) [20] [15]. | An integration of structural features with computed descriptors and latent representations from ML models [14]. |
| Interpretability | Highly interpretable; features map directly to chemical intuitions [14]. | Can be opaque; learned features may be challenging to link directly to specific chemical properties without hybrid methods [14]. |

The informacophore concept extends the pharmacophore by incorporating not just the spatial arrangement of features, but also a rich layer of quantitative data. This allows it to function like a "skeleton key," pointing to the molecular features that trigger biological responses with reduced bias from human intuition, potentially leading to fewer systemic errors and a significant acceleration of the drug discovery pipeline [14].

The Informatics Toolkit: Research Reagents for a Data-Driven Era

The informacophore paradigm relies on a new set of "research reagents"—computational tools and data resources—that are essential for its application.

Table 2: Essential Research Reagents for Informacophore-Based Discovery

| Tool/Category | Specific Examples | Function in Informacophore Development |

|---|---|---|

| Ultra-Large Chemical Libraries | Enamine (65B compounds), OTAVA (55B compounds) [14] | Provide the foundational "make-on-demand" chemical space for virtual screening and pattern recognition. |

| Pharmacophore Modeling Software | DISCO, GASP, Catalyst/HipHop, Catalyst/HypoGen, LigandScout [20] [19] | Generate initial structure-based or ligand-based hypotheses; used for validation and hybrid model development. |

| Machine Learning & AI Platforms | IBM Watson, Salesforce Einstein, Google Cloud AI [21] | Provide the infrastructure for analyzing complex datasets, building predictive models, and uncovering hidden patterns. |

| Automated Pharmacophore Generators | Apo2ph4, PharmRL, PharmacoForge [22] | Automate the elucidation of pharmacophore features from protein structures using fragment-docking, reinforcement learning, or diffusion models. |

| Constrained Multi-Objective Evolutionary Algorithms (CMOEAs) | NSGA-II-CDP, ɛMODE-AGR, PSCMO [16] [17] | Navigate the chemical COP by optimizing multiple objectives (e.g., potency, selectivity) while satisfying constraints (e.g., drug-likeness). |

Application Notes & Experimental Protocols

Protocol 1: Ligand-Based Informacophore Generation and Validation

This protocol is suitable when a set of known active ligands is available, but the 3D structure of the biological target is unknown or unreliable.

Detailed Methodology:

Step 1: Curate a High-Quality Training Set

- Input: A collection of structurally diverse molecules with experimentally confirmed activity (e.g., IC50, Ki) against the target. Include confirmed inactive compounds to enhance model specificity [19].

- Data Standards: Activity data should be derived from direct binding or enzyme activity assays on isolated proteins, not cell-based assays, to ensure the measured effect is due to target interaction [19].

- Source Repositories: ChEMBL, DrugBank, PubChem Bioassay [19].

Step 2: Conformational Analysis

- Software: Use tools within packages like Catalyst or MOE [20] [15].

- Protocol: For each molecule in the training set, generate an ensemble of low-energy conformations that is likely to contain the bioactive conformation. Methods include:

- Poling Algorithm: As used in Catalyst, generates ~250 diverse conformers to cover conformational space [20].

- Systematic Search: Varies torsion angles of rotatable bonds.

- Molecular Dynamics: Simulates molecular motion at a defined temperature.

Step 3: Molecular Superimposition and Feature Extraction

- Alignment: Superimpose the conformational ensembles of all training molecules. The goal is to find the best spatial overlap of chemical features common to all active compounds [20].

- Algorithms:

- Abstraction: Convert the aligned functional groups into an abstract informacophore hypothesis. This includes traditional features (hydrogen bond acceptors/donors, hydrophobic regions) and computed molecular descriptors or ML-generated fingerprints that correlate with activity [14] [20].

Step 4: Model Validation and Virtual Screening

- Theoretical Validation: Screen a test database containing known actives and inactives/decoys.

- Metrics: Calculate the Enrichment Factor (EF), i.e., the fraction of actives in the hit list divided by the fraction of actives in the whole test database (the rate expected from random selection), along with specificity, sensitivity, and the area under the Receiver Operating Characteristic curve (ROC-AUC) [19].

- Prospective Screening: Use the validated informacophore model as a 3D query to search ultra-large virtual libraries (e.g., Enamine, OTAVA) [14]. Compounds matching the hypothesis form the virtual hit list.
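The two ranking metrics named above can be sketched in a few lines. This is a minimal illustration rather than the validation code of any cited study: the function names, the `top_fraction` parameter, and the convention that higher scores mean "more likely active" are assumptions made for the example.

```python
from typing import Sequence


def enrichment_factor(scores: Sequence[float], labels: Sequence[int],
                      top_fraction: float = 0.01) -> float:
    """EF = (active rate in the top-ranked fraction) / (active rate overall).

    labels use 1 for active and 0 for inactive/decoy; higher score ranks first.
    """
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_top = max(1, int(round(top_fraction * len(ranked))))
    actives_top = sum(label for _, label in ranked[:n_top])
    actives_all = sum(labels)
    if actives_all == 0:
        raise ValueError("the test database contains no actives")
    return (actives_top / n_top) / (actives_all / len(ranked))


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """ROC-AUC as the probability that a random active outscores a random
    inactive, counting ties as one half (pairwise definition, O(n^2))."""
    actives = [s for s, l in zip(scores, labels) if l == 1]
    inactives = [s for s, l in zip(scores, labels) if l == 0]
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive")
    wins = sum(1.0 if a > d else 0.5 if a == d else 0.0
               for a in actives for d in inactives)
    return wins / (len(actives) * len(inactives))
```

An EF of 1 corresponds to random selection, so a model is only useful for prospective screening when its EF at the chosen cutoff is clearly above 1 and its ROC-AUC is clearly above 0.5.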

Step 5: Experimental Validation

- Primary Assay: Test the purchased or synthesized virtual hits in the same functional assay used to define the training set.

- Dose-Response: Determine IC50/EC50 values for confirmed hits.

- Counter-Screening: Assess selectivity against related targets to avoid off-target effects.

Protocol 2: Structure-Based Informacophore Generation Using Deep Learning

This protocol leverages advanced generative AI models to create pharmacophores directly from protein pocket structures, ideal for targets with known 3D architecture.

Detailed Methodology:

Step 1: Input and Preprocess Protein Structure

- Source: Obtain a 3D structure of the target protein from the Protein Data Bank (PDB). A structure with a bound ligand is preferable.

- Preparation: Using software like Discovery Studio or PyMOL:

- Remove water molecules and extraneous co-factors.

- Add hydrogen atoms and assign protonation states at biological pH.

- Define the binding pocket coordinates, typically based on the location of a native ligand or key residues lining the active site.

Step 2: Generative Model Inference

- Tool: Employ a deep learning model like PharmacoForge, a diffusion model designed for generating 3D pharmacophores conditioned on a protein pocket [22].

- Protocol: Feed the preprocessed pocket coordinates into the model. The model is E(3)-equivariant: rotating, translating, or reflecting the input pocket transforms the generated pharmacophore in the same way. It iteratively denoises a random initial state to produce a set of pharmacophore centers with associated feature types and 3D positions [22].

Step 3: Pharmacophore Post-Processing and Database Search

- The output of PharmacoForge is a pharmacophore query comprising centers like Hydrogen Bond Acceptors, Donors, and Hydrophobic regions [22].

- This query is used to screen tangible, commercial chemical databases (e.g., ZINC, Enamine REAL). This step is computationally efficient and guarantees that identified hits are valid, purchasable molecules, circumventing a key limitation of de novo molecular generation [22].

Step 4: Experimental Validation

- As in Protocol 1, compounds matching the generated pharmacophore are procured and tested in biological assays to confirm activity.

Protocol 3: Solving the Drug Discovery COP with Evolutionary Algorithms

This protocol frames lead optimization as a COP and details the use of a CMOEA to solve it.

Problem Formulation:

- Objective Function (to minimize): f(x) = -pAffinity(x) (or a weighted sum of undesirable properties).

- Constraints: g1(x) = Toxicity(x) - threshold_tox ≤ 0; g2(x) = LogP(x) - 5 ≤ 0; g3(x) = Synthetic_Accessibility_Score(x) - threshold_SAS ≤ 0.

- Decision Variable (x): A representation of the molecular structure (e.g., a fingerprint, a graph, or a real-valued vector encoding structural features).
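Written compactly, the formulation above reads as follows. The symbols are shorthand introduced here for readability: the set \mathcal{X} stands for the chosen molecular representation space, \tau_{\mathrm{tox}} and \tau_{\mathrm{SAS}} stand for threshold_tox and threshold_SAS, and SAS abbreviates the synthetic accessibility score.

```latex
\begin{aligned}
\min_{x \in \mathcal{X}} \quad & f(x) = -\mathrm{pAffinity}(x) \\
\text{subject to} \quad & g_1(x) = \mathrm{Toxicity}(x) - \tau_{\mathrm{tox}} \le 0, \\
& g_2(x) = \mathrm{LogP}(x) - 5 \le 0, \\
& g_3(x) = \mathrm{SAS}(x) - \tau_{\mathrm{SAS}} \le 0 .
\end{aligned}
```

A molecule x is feasible when all three g_i(x) are non-positive; minimizing f is equivalent to maximizing the predicted affinity.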

Algorithm Workflow (e.g., PSCMO Algorithm [17]):

Detailed Methodology:

Step 1: Initialize Population and Define Fitness

- Population Encoding: Represent a population of molecules (x) in a way amenable to evolutionary operators (e.g., as vectors of molecular descriptors or graphs).

- Fitness Evaluation: For each molecule, compute the multi-objective fitness. This involves predicting the objective function (e.g., binding affinity via a surrogate QSAR model) and the degree of constraint violation CV(x) [16] [17], where CV(x) = Σ C_i(x) and C_i(x) quantifies the violation of the i-th constraint (e.g., max(0, LogP(x) - 5)) [16].
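As a rough illustration of the fitness evaluation in Step 1, the sketch below computes the objective and the aggregated constraint violation for one molecule. The `PredictedProperties` container and the default threshold values are hypothetical placeholders; in practice the property values would come from surrogate models and the thresholds from the project's own criteria.

```python
from dataclasses import dataclass


@dataclass
class PredictedProperties:
    """Hypothetical container for surrogate-model predictions of one molecule."""
    p_affinity: float  # predicted affinity (higher is better)
    toxicity: float    # predicted toxicity score
    logp: float        # predicted LogP
    sa_score: float    # synthetic accessibility score (lower is easier)


def objective(m: PredictedProperties) -> float:
    """Objective to minimize: f(x) = -pAffinity(x)."""
    return -m.p_affinity


def constraint_violation(m: PredictedProperties,
                         threshold_tox: float = 0.5,
                         threshold_sas: float = 4.0) -> float:
    """CV(x) = sum_i max(0, g_i(x)); the default thresholds are illustrative only."""
    g = [
        m.toxicity - threshold_tox,  # g1: toxicity limit
        m.logp - 5.0,                # g2: LogP <= 5
        m.sa_score - threshold_sas,  # g3: synthetic accessibility limit
    ]
    return sum(max(0.0, g_i) for g_i in g)
```

A molecule with CV(x) = 0 is feasible; any positive value measures how far it lies outside the feasible region, which is the quantity the constraint-handling techniques in the next step operate on.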

Step 2: Population State Discrimination and Adaptive Operation

- State Model: As in the PSCMO algorithm, monitor the relative positions of a main population (searching for feasible solutions) and an auxiliary population (exploring the unconstrained space) [17].

- Adaptive CHT: Dynamically switch constraint-handling techniques based on the identified state:

- Convergence State: Prioritize optimizing the objective function, selecting individuals with the best predicted activity.

- Diversity State: Prioritize satisfying constraints and maintaining population diversity to escape local optima.

- Balance State: Use a balanced approach, such as the ɛ-constrained method, which allows some infeasible solutions with good objective values to survive, promoting diversity [16] [17].
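The ɛ-constrained idea can be illustrated with the standard ɛ-level comparison for a single minimized objective. This is a generic sketch, not the PSCMO implementation: the function names, the polynomial decay schedule, and the parameter `cp` are assumptions chosen for the example.

```python
def epsilon_level(generation: int, max_generation: int,
                  epsilon0: float, cp: float = 2.0) -> float:
    """One common decay schedule (an assumption here): the tolerance starts at
    epsilon0 and shrinks to 0 by max_generation."""
    if generation >= max_generation:
        return 0.0
    return epsilon0 * (1.0 - generation / max_generation) ** cp


def epsilon_better(f_a: float, cv_a: float, f_b: float, cv_b: float,
                   epsilon: float) -> bool:
    """Return True if solution a is preferred to b (minimization).

    Solutions whose violation is within epsilon are treated as feasible and
    compared by objective; otherwise the smaller violation wins.
    """
    if cv_a <= epsilon and cv_b <= epsilon:
        return f_a < f_b
    if cv_a == cv_b:
        return f_a < f_b
    return cv_a < cv_b
```

With a generous tolerance early in the run, a slightly infeasible molecule with strong predicted activity can outrank a feasible but weak one; as the tolerance decays to zero, the comparison reduces to a strict feasibility-first rule.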

Step 3: Reproduction and Selection

- Variation: Apply evolutionary operators (e.g., differential mutation, crossover) to create offspring. In expensive optimization, surrogate models (e.g., RBF, Kriging) are used to inexpensively pre-screen candidate solutions before expensive experimental validation [18].

- Selection: Select the next generation's population based on non-dominated sorting and crowding distance (from algorithms like NSGA-II) or other feasibility-promoting criteria [17].
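The selection step can be sketched with the constrained-dominance principle combined with non-dominated sorting and crowding distance in the style of NSGA-II. The code below is a simple quadratic-time illustration under the assumption that all objectives are minimized; the type alias and function names are chosen for the example and do not come from a specific library.

```python
from typing import List, Sequence, Tuple

Individual = Tuple[Sequence[float], float]  # (objectives to minimize, CV)


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Pareto dominance for minimization."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def constrained_dominates(a: Individual, b: Individual) -> bool:
    """Feasible beats infeasible; among infeasible, smaller CV wins;
    among feasible, ordinary Pareto dominance applies."""
    (fa, cva), (fb, cvb) = a, b
    if cva == 0 and cvb > 0:
        return True
    if cva > 0 and cvb == 0:
        return False
    if cva > 0 and cvb > 0:
        return cva < cvb
    return dominates(fa, fb)


def non_dominated_sort(pop: List[Individual]) -> List[List[int]]:
    """Peel off successive fronts of indices not dominated by any remaining one."""
    remaining = set(range(len(pop)))
    fronts: List[List[int]] = []
    while remaining:
        front = sorted(i for i in remaining
                       if not any(constrained_dominates(pop[j], pop[i])
                                  for j in remaining if j != i))
        fronts.append(front)
        remaining -= set(front)
    return fronts


def crowding_distance(objs: List[Sequence[float]]) -> List[float]:
    """Crowding distance within one front; boundary points get infinity."""
    n = len(objs)
    dist = [0.0] * n
    if n == 0:
        return dist
    for k in range(len(objs[0])):
        order = sorted(range(n), key=lambda i: objs[i][k])
        lo, hi = objs[order[0]][k], objs[order[-1]][k]
        dist[order[0]] = dist[order[-1]] = float("inf")
        if hi == lo:
            continue
        for r in range(1, n - 1):
            dist[order[r]] += (objs[order[r + 1]][k] - objs[order[r - 1]][k]) / (hi - lo)
    return dist
```

The next generation is then filled front by front, and the last front that does not fit completely is truncated by keeping the individuals with the largest crowding distance.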

Step 4: Termination and Experimental Verification

- The loop continues until a termination criterion is met (e.g., maximum iterations, stagnation). The final output is a Pareto front of non-dominated, optimized molecular structures.

- The top-ranked molecules from the algorithm are synthesized and subjected to the full battery of experimental validations (primary activity, ADMET profiling) to confirm their predicted optimized properties.

Within research on evolutionary algorithms for constrained optimization problems (COPs), molecular optimization presents a particularly challenging frontier. The core task, designing novel drug candidates with enhanced properties, is fundamentally constrained by stringent requirements for synthetic accessibility, structural similarity to lead compounds, and adherence to multiple drug-like criteria. These numerous constraints often result in a feasible chemical space that is narrow, disconnected, and highly irregular [1]. Consequently, conventional optimization algorithms frequently converge to suboptimal solutions or fail to locate feasible regions altogether. This application note details the specific challenges of navigating these complex molecular spaces and provides structured experimental protocols and reagent solutions to advance research in this critical area.

The Core Scientific Challenge

Characterizing the Feasible Molecular Space

The feasible region in molecular optimization is not a single, contiguous space but is often fragmented into small, isolated islands of viability. This discontinuity arises from multiple, frequently conflicting, constraints:

- Structural Constraints: Requirements to maintain key molecular scaffolds or substructures essential for biological activity create deep valleys in the fitness landscape that are difficult to traverse through incremental changes [4].

- Drug-like Criteria: Simultaneous adherence to multiple property thresholds—such as solubility, metabolic stability, and absence of toxicophores—creates a complex web of interdependencies that further constricts the feasible space [1] [23].

- Synthetic Accessibility: The requirement that designed molecules must be practically synthesizable imposes critical constraints on molecular complexity and feasible structural transformations [23] [24].

The combination of these factors results in a fitness landscape where the global optimum often lies on the boundary of feasibility, making it exceptionally difficult to locate and validate [8].

Quantitative Characterization of the Problem

The table below summarizes key metrics that highlight the challenges in navigating constrained molecular spaces, as observed in benchmark studies.

Table 1: Performance Metrics of Algorithms on Constrained Molecular Optimization Tasks

| Optimization Task | Similarity Constraint (Tanimoto ≥) | Reported Result (success rate in %, unless noted) | Key Challenge Observed |

|---|---|---|---|

| DRD2 Activity | 0.4 | 70-100 (CMOMO) [1] | Balancing activity improvement with structural similarity |

| QED Optimization | 0.4 | 100 (CMOMO) [1] | Maintaining drug-likeness during optimization |

| pLogP04 | 0.4 | 100 (CMOMO) [1] | Optimizing complex property with moderate similarity |

| pLogP06 | 0.6 | 100 (CMOMO) [1] | High structural similarity restricts property gains |

| GSK3 Inhibitor | Multiple Constraints | ~2x improvement (CMOMO) [1] | Satisfying multiple constraints simultaneously |
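The similarity constraint in the table is a threshold on the Tanimoto coefficient between the fingerprints of the lead and the candidate. A minimal sketch, assuming fingerprints are represented as sets of "on" bit indices (toolkits such as RDKit provide their own fingerprint and similarity routines, which are not used here):

```python
from typing import Set


def tanimoto(fp_a: Set[int], fp_b: Set[int]) -> float:
    """Tanimoto coefficient |A ∩ B| / |A ∪ B| on sets of fingerprint bits."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def satisfies_similarity(fp_lead: Set[int], fp_candidate: Set[int],
                         threshold: float = 0.4) -> bool:
    """Similarity constraint: Tanimoto(lead, candidate) >= threshold."""
    return tanimoto(fp_lead, fp_candidate) >= threshold
```

Raising the threshold from 0.4 to 0.6 shrinks the set of admissible candidates, which is why the stricter tasks leave less room for property gains.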

Established Methodological Frameworks

CMOMO: Dynamic Cooperative Multi-Objective Optimization

The CMOMO framework addresses constrained molecular optimization by dividing the process into two distinct stages, effectively balancing property optimization with constraint satisfaction [1].

Diagram 1: CMOMO Two-Stage Optimization Workflow

Fragment-Based Evolutionary Design (LEADD)

The LEADD algorithm employs a fragment-based approach with knowledge-based compatibility rules to implicitly enforce synthetic accessibility, significantly narrowing the search space to more promising regions [23].

Diagram 2: LEADD Fragment-Based Evolutionary Design

Experimental Protocols

Protocol 1: Implementing CMOMO for Multi-Property Optimization

Application: Simultaneously optimizing multiple molecular properties while satisfying strict drug-like constraints.

Materials:

- Lead molecule (SMILES representation)

- Molecular database for building similarity bank (e.g., ZINC, ChEMBL)

- Pre-trained molecular encoder-decoder (e.g., SMILES-based VAE)

- Property prediction models (e.g., QED, LogP, bioactivity)

- Constraint definitions (structural alerts, ring size, substructure)

Procedure:

Population Initialization:

- Encode the lead molecule into its latent vector representation.

- Construct a bank of high-property molecules structurally similar to the lead from reference databases.

- Perform linear crossover between the lead molecule's latent vector and those of bank molecules to generate a high-quality initial population of 100-200 individuals [1].
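The initialization step can be pictured as interpolation between the lead's latent vector and the latent vectors of bank molecules. The sketch below treats "linear crossover" as a convex combination with a random mixing weight, which is an assumption; the source does not specify the exact operator, and the encoder that produces the latent vectors is outside the scope of the example.

```python
import random
from typing import List, Sequence


def linear_crossover(lead: Sequence[float], partner: Sequence[float],
                     alpha: float) -> List[float]:
    """Convex combination (1 - alpha) * lead + alpha * partner, element-wise."""
    return [(1.0 - alpha) * l + alpha * p for l, p in zip(lead, partner)]


def initial_population(lead: Sequence[float], bank: Sequence[Sequence[float]],
                       size: int, rng: random.Random) -> List[List[float]]:
    """Build `size` latent vectors lying on segments between lead and bank."""
    population = []
    for _ in range(size):
        partner = rng.choice(bank)  # a similar, high-property bank molecule
        alpha = rng.random()        # mixing weight in [0, 1)
        population.append(linear_crossover(lead, partner, alpha))
    return population
```

Each resulting vector would then be decoded back to a molecule by the pre-trained decoder; vectors that decode to invalid structures need to be discarded or resampled.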

Stage 1 - Unconstrained Optimization:

- Generations 1-50: Optimize solely for target molecular properties (e.g., QED, bioactivity) without considering constraints.

- Apply the Vector Fragmentation-based Evolutionary Reproduction (VFER) strategy to efficiently generate offspring in the continuous latent space [1].

- Decode latent vectors to SMILES strings and evaluate properties.

- Select top-performing individuals based solely on objective properties for reproduction.

Stage 2 - Constrained Optimization:

- Generations 51-150: Activate dynamic constraint handling mechanism.

- Calculate constraint violation (CV) for each individual using the aggregation function:

CV(x) = Σ max(0, g_i(x)) + Σ |h_j(x)|, where g_i are inequality and h_j are equality constraints [1].

- Implement feasibility rules prioritizing feasible solutions, while using CV and objective values to rank infeasible ones.

- Continue VFER operations with environmental selection that balances constraint satisfaction and property optimization.
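The Stage 2 ranking can be sketched as follows. For brevity the example uses a single scalar objective to minimize, whereas CMOMO optimizes several properties at once; the function names and the tie-breaking order among infeasible solutions (CV first, then objective) are assumptions consistent with the description above rather than the published implementation.

```python
from typing import List, Sequence, Tuple


def constraint_violation(g_values: Sequence[float],
                         h_values: Sequence[float]) -> float:
    """CV(x) = sum_i max(0, g_i(x)) + sum_j |h_j(x)|."""
    return (sum(max(0.0, g) for g in g_values)
            + sum(abs(h) for h in h_values))


def feasibility_key(individual: Tuple[float, float]) -> tuple:
    """Sort key for (objective, CV): feasible solutions first, ordered by
    objective; infeasible ones afterwards, ordered by CV and then objective."""
    f, cv = individual
    return (0, f) if cv == 0 else (1, cv, f)


def rank_population(population: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Return the population ordered from most to least preferred."""
    return sorted(population, key=feasibility_key)
```

Under this rule an infeasible molecule never outranks a feasible one, which is the behaviour wanted once Stage 1 has already explored the unconstrained property landscape.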

Termination and Validation:

- Terminate after 150 generations or when feasibility rate plateaus (>90% for 10 consecutive generations).

- Validate top candidates using independent property prediction models and synthetic accessibility assessment.
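The termination rule above (a generation cap, or a feasibility rate that stays above 90% for 10 consecutive generations) can be checked with a small helper. The function and parameter names are illustrative.

```python
from typing import Sequence


def feasibility_rate(cv_values: Sequence[float]) -> float:
    """Fraction of the population with zero constraint violation."""
    return sum(1 for cv in cv_values if cv == 0) / len(cv_values)


def should_terminate(generation: int, rate_history: Sequence[float],
                     max_generations: int = 150, threshold: float = 0.9,
                     patience: int = 10) -> bool:
    """Stop at the generation cap, or once the feasibility rate has exceeded
    `threshold` in each of the last `patience` generations."""
    if generation >= max_generations:
        return True
    recent = rate_history[-patience:]
    return len(recent) == patience and all(r > threshold for r in recent)
```

Here `rate_history` is expected to hold one feasibility rate per completed generation, appended in order.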

Protocol 2: Fragment-Based Constrained Design with LEADD

Application: Generating novel synthetically accessible molecules maintaining core structural motifs.

Materials:

- Reference library of drug-like molecules (e.g., FDA-approved drugs)

- Fragmentation software (e.g., RDKit)

- Atom typing scheme (MMFF94 or Morgan atom types)

- Compatibility rules database (strict or lax definitions)

- Fitness function components (docking scores, property predictors)

Procedure:

Fragment Library Creation:

- Fragment each molecule in the reference library, preserving ring systems as intact fragments and fragmenting acyclic regions into subgraphs of specified bond count (typically 0-5 bonds) [23].

- Record connectors for each fragment, representing bonds to adjacent atoms in the original molecule.

- Store fragments with their connectivity information, frequencies, and sizes in a searchable database.

Compatibility Rules Extraction:

- Strict Compatibility: Two connections are compatible only if their bond types match AND their atom types are mirrored (start→end matches end→start) [23].

- Lax Compatibility: Two connections are compatible if bond types match AND the starting atom types have been observed paired in any connection in the database [23].

- Store pairwise symmetric compatibility rules for efficient querying during evolution.
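The two compatibility definitions can be made concrete with a small sketch. It is one reading of the rules as stated above, not the LEADD source code: a connection is modelled as a (start atom type, end atom type, bond type) triple, the atom type labels in the usage example are placeholders rather than real MMFF94 types, and "observed paired" is interpreted as the two atom types appearing as the endpoints of any connection in the database.

```python
from typing import Iterable, NamedTuple, Set, Tuple


class Connection(NamedTuple):
    start: str  # atom type on the fragment side
    end: str    # atom type of the neighbour in the source molecule
    bond: str   # bond type, e.g. "single"


def strict_compatible(a: Connection, b: Connection) -> bool:
    """Bond types match and atom types are mirrored (a: s->e, b: e->s)."""
    return a.bond == b.bond and a.start == b.end and a.end == b.start


def observed_pairs(database: Iterable[Connection]) -> Set[Tuple[str, str]]:
    """Symmetric set of atom-type pairs seen as endpoints of any connection."""
    pairs: Set[Tuple[str, str]] = set()
    for c in database:
        pairs.add((c.start, c.end))
        pairs.add((c.end, c.start))
    return pairs


def lax_compatible(a: Connection, b: Connection,
                   pairs: Set[Tuple[str, str]]) -> bool:
    """Bond types match and the two starting atom types have been seen paired."""
    return a.bond == b.bond and (a.start, b.start) in pairs
```

Strict rules only allow bonds whose exact chemical environment was seen in the reference library, while lax rules admit more combinations at the cost of a weaker implicit guarantee of synthetic accessibility.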

Evolutionary Optimization:

- Representation: Encode molecules as meta-graphs where vertices represent molecular fragments and edges represent compatible connectors [23].

- Initialization: Generate initial population by randomly combining fragments while respecting compatibility rules.

- Genetic Operators:

- Mutation: Replace a fragment with a compatible alternative from the database.

- Crossover: Exchange compatible substructures between two parent molecules.

- Fitness Evaluation: Calculate weighted sum of objective properties (e.g., binding affinity) and constraint satisfaction.

- Selection: Use tournament selection with size 3, prioritizing feasible solutions.
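Tournament selection of size 3 with priority for feasible solutions can be sketched as below. The representation of an individual as a (fitness, CV) pair with higher fitness being better, and the order of tie-breaking, are assumptions made for the illustration.

```python
import random
from typing import List, Tuple


def tournament_select(population: List[Tuple[float, float]],
                      rng: random.Random, k: int = 3) -> int:
    """Draw k distinct contenders and return the index of the winner.

    Preference order: feasible (CV == 0) before infeasible, then smaller CV,
    then higher fitness.
    """
    contenders = rng.sample(range(len(population)), k)

    def key(i: int) -> tuple:
        fitness, cv = population[i]
        return (cv > 0, cv, -fitness)

    return min(contenders, key=key)
```

Calling the function twice yields two parents for crossover; a small tournament size such as 3 keeps selection pressure moderate, so weaker but structurally distinct molecules still reproduce occasionally.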

Validation and Output:

- Select top 50 candidates based on fitness and feasibility.

- Assess synthetic accessibility using SAscore or similar metrics.

- Submit top 10-20 candidates for experimental validation.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagents and Computational Tools

| Tool/Reagent | Function | Application Context |

|---|---|---|

| Molecular Encoders (VAE, AAE) | Maps discrete molecular structures to continuous latent representations | Enables efficient evolutionary operations in continuous space [1] [25] |

| Fragment Libraries | Provides building blocks for structure-based assembly | Ensures synthetic feasibility in fragment-based design [23] |

| Compatibility Rules | Defines which molecular fragments can be connected | Restricts search space to chemically plausible regions [23] |

| Property Predictors (QED, PlogP) | Quantitatively estimates molecular properties | Provides fitness objectives for optimization [4] |

| Constraint Violation Metric | Aggregates multiple constraint deviations into single score | Enables feasibility-based selection pressure [1] |

| Tanimoto Similarity | Measures structural similarity between molecules | Enforces structural constraints to lead compounds [4] |

Navigating narrow, disconnected feasible molecular spaces remains a fundamental challenge in constrained optimization for drug discovery. The frameworks and protocols detailed herein provide structured approaches to balance multiple objectives with stringent constraints. The CMOMO strategy demonstrates that staging optimization (exploring property enhancement first, then enforcing constraints) can effectively identify high-quality feasible solutions. Meanwhile, fragment-based methods like LEADD show how chemically aware representations and operations can implicitly guide the search toward synthetically accessible regions. As molecular constraints grow increasingly complex in personalized medicine and polypharmacology, these methodologies provide foundations for future algorithmic innovations. Integration of deep learning with evolutionary search, coupled with advanced constraint handling techniques, promises to further enhance our ability to navigate these challenging molecular landscapes.

Evolutionary Algorithms in Action: Frameworks and Real-World Applications

The screening of ultra-large chemical libraries represents a paradigm shift in early drug discovery. With make-on-demand compound libraries, such as the Enamine REAL space, now containing tens of billions of readily synthesizable compounds, researchers have unprecedented access to chemical diversity [26]. However, this opportunity introduces a significant computational challenge: the exhaustive screening of such libraries while accounting for receptor flexibility is prohibitively expensive. The REvoLd (RosettaEvolutionaryLigand) algorithm addresses this challenge through an evolutionary algorithm (EA) framework specifically designed for navigating combinatorial chemical spaces without enumerating all possible molecules [26] [27].

Within the context of constrained optimization problem (COP) research in evolutionary algorithms, REvoLd operates on a fundamental constraint: the synthetic feasibility of proposed compounds. Unlike traditional EAs that might generate theoretically optimal but synthetically inaccessible molecules, REvoLd explicitly incorporates the combinatorial rules of make-on-demand libraries as hard constraints on the search space [26]. This ensures that every proposed molecule can be synthesized from available building blocks using known chemical reactions, making it a particularly relevant case study in applied COP research.

The algorithm leverages the RosettaLigand framework, which incorporates both ligand and receptor flexibility during docking simulations—a critical advantage over rigid docking protocols that may miss favorable binding conformations [26] [28]. This approach represents a significant advancement in structure-based drug design, as it combines the thorough sampling of flexible docking with the efficiency of evolutionary optimization for navigating ultra-large chemical spaces.

REvoLd Algorithm and Mechanism

Core Evolutionary Framework

REvoLd implements a specialized evolutionary algorithm that exploits the combinatorial nature of make-on-demand libraries. The algorithm treats the chemical space not as a collection of pre-enumerated molecules but as a set of reaction rules and substrates that can be combined according to defined chemical transformations [26]. This fundamental approach allows it to search spaces containing billions of compounds while only docking a tiny fraction of them.

The algorithm follows a generational evolutionary process with these key components:

- Initialization: A random population of 200 ligands is created by combining substrates according to the reaction rules of the target library [26]

- Evaluation: Each molecule is scored using RosettaLigand flexible docking, which accounts for both ligand and protein flexibility [26] [28]

- Selection: The top 50 scoring individuals are selected to advance to the next generation [26]

- Variation Operators: Multiple specialized operators create offspring:

- Crossover: Combines well-performing fragments from different molecules

- Mutation: Switches single fragments to low-similarity alternatives while preserving well-performing molecular regions

- Reaction Switching: Changes the reaction type and searches for compatible fragments [26]

A second round of crossover and mutation excludes the fittest molecules, allowing lower-scoring ligands with potentially valuable structural motifs to contribute to the evolutionary process [26]. This strategic diversity maintenance helps prevent premature convergence and encourages broader exploration of the chemical space.
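The generational loop described above can be outlined in a toy form. This is a heavily simplified sketch, not the REvoLd code: the combinatorial space is reduced to two-component reactions with integer substrate indices, the `score` callable is a stand-in for RosettaLigand docking (lower is better), mutation picks a random replacement instead of a low-similarity one, reaction switching draws fresh random substrates, and the second reproduction round that excludes the fittest molecules is omitted.

```python
import random
from typing import Callable, Dict, Tuple

Ligand = Tuple[str, int, int]        # (reaction id, substrate 1, substrate 2)
Space = Dict[str, Tuple[int, int]]   # reaction id -> substrate counts per slot


def random_ligand(space: Space, rng: random.Random) -> Ligand:
    rxn = rng.choice(sorted(space))
    n1, n2 = space[rxn]
    return (rxn, rng.randrange(n1), rng.randrange(n2))


def crossover(p: Ligand, q: Ligand) -> Ligand:
    """Combine fragments of two parents; a no-op if their reactions differ."""
    return (p[0], p[1], q[2]) if p[0] == q[0] else p


def mutate(p: Ligand, space: Space, rng: random.Random) -> Ligand:
    """Replace one of the two fragments, keeping the other and the reaction."""
    n1, n2 = space[p[0]]
    if rng.random() < 0.5:
        return (p[0], rng.randrange(n1), p[2])
    return (p[0], p[1], rng.randrange(n2))


def evolve(space: Space, score: Callable[[Ligand], float], rng: random.Random,
           pop_size: int = 200, n_select: int = 50,
           generations: int = 30) -> Dict[Ligand, float]:
    """Return every ligand that was scored, mapped to its score."""
    scored: Dict[Ligand, float] = {}

    def evaluate(ligand: Ligand) -> float:
        if ligand not in scored:  # each unique ligand is scored only once
            scored[ligand] = score(ligand)
        return scored[ligand]

    population = [random_ligand(space, rng) for _ in range(pop_size)]
    for _ in range(generations):
        unique = list(dict.fromkeys(population))
        parents = sorted(unique, key=evaluate)[:n_select]
        offspring = list(parents)  # selected individuals carry over
        while len(offspring) < pop_size:
            parent = rng.choice(parents)
            op = rng.choice(("crossover", "mutate", "switch"))
            if op == "crossover":
                child = crossover(parent, rng.choice(parents))
            elif op == "mutate":
                child = mutate(parent, space, rng)
            else:  # reaction switching, simplified to a fresh random ligand
                child = random_ligand(space, rng)
            offspring.append(child)
        population = offspring
    return scored
```

Because every candidate is built from a reaction and its substrates, the search never leaves the make-on-demand space, and the number of scored ligands is bounded by the population size times the number of generations, regardless of how many billions of products the space contains.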

Workflow and Implementation

The following workflow diagram illustrates the complete REvoLd screening process, from library preparation to hit identification:

Hyperparameter Optimization and Protocol Tuning

Extensive testing revealed several hyperparameters that significantly affect REvoLd's performance; they are summarized in the table below:

Table 1: Optimized REvoLd Hyperparameters and Their Impact on Performance

| Parameter | Optimal Value | Impact and Rationale | Testing Range |

|---|---|---|---|

| Population Size | 200 individuals | Balances diversity and computational cost; smaller populations risk homogeneity | 100-500 |

| Generations | 30 | Returns diminish beyond this point; new scaffolds emerge within 15 generations | 15-400 |

| Selection Pressure | Top 50 | Maintains elite while allowing worse-scoring ligands to contribute to diversity | Top 25-100 |

| Mutation Rate | Multiple specialized operators | Preserves good regions while exploring new chemistries; prevents convergence on local minima | N/A |

Protocol optimization addressed the exploration-exploitation tradeoff inherent to evolutionary algorithms. Early implementations with strong bias toward the fittest individuals converged rapidly but discovered fewer novel scaffolds [26]. The introduction of multiple mutation strategies and a second reproduction round for lower-fitness individuals significantly improved diversity without sacrificing enrichment rates. This balance is particularly crucial for constrained optimization in chemical spaces, where the global optimum may reside beyond apparent local minima.

Application Notes: Implementation Protocol

Library and Target Preparation

For researchers implementing REvoLd, proper preparation of both the chemical library and target protein is essential. The Enamine REAL space serves as the primary source library, consisting of reaction rules in SMARTS format and substrates in SMILES format [28]. These are combined into tab-separated text files that serve as REvoLd's input.

Target preparation requires careful attention to receptor flexibility:

- Structure Selection: Obtain the target protein structure from PDB or homology modeling

- Molecular Dynamics: Run multi-replicate MD simulations (3 × 1.5 μs recommended) to sample conformational diversity [28]

- Ensemble Generation: Cluster MD trajectories using DBSCAN (ε=1.4 Å, minimum samples=4) to identify representative conformations [28]

- Energy Minimization: Perform brief minimization in Rosetta to ensure structural stability

This ensemble docking approach accounts for receptor flexibility, which is critical for identifying binders that might be missed in rigid docking protocols [26].

Experimental Validation: CACHE Challenge Case Study

REvoLd's performance was validated in the CACHE Challenge #1, a blind benchmark for finding binders to the WD-repeat domain of LRRK2, a Parkinson's disease target [28]. The experimental protocol involved:

Table 2: REvoLd Experimental Protocol and Outcomes in CACHE Challenge

| Stage | Procedure | Key Parameters | Results |

|---|---|---|---|

| Round 1: Hit Finding | REvoLd screening of 19.5B compound space | 11 protein models from MD ensemble; 20 independent REvoLd runs | Identification of initial hit compound from combination of two building blocks |

| Round 2: Hit Expansion | REvoLd screening of derivatives in 30.8B compound space | Hit compound as starting point for evolutionary optimization | 5 molecules identified; 3 with KD < 150 μM |

| Validation | Experimental binding assays | Surface plasmon resonance or similar biophysical methods | Affirmation of REvoLd's prospective predictive power |

The following diagram illustrates this two-stage screening and optimization process:

Performance Analysis and Comparative Assessment

Efficiency and Enrichment Metrics

REvoLd demonstrates exceptional efficiency in navigating ultra-large chemical spaces. In benchmark studies across five drug targets, the algorithm improved hit rates by factors of 869 to 1622 compared to random selection [26]. This enrichment means that researchers can identify promising compounds while docking only a minute fraction of the available chemical space.

The computational advantage becomes apparent when considering the scale of modern combinatorial libraries. Where exhaustive screening of billions of compounds would require immense computational resources, REvoLd typically identifies high-quality hits after docking only 49,000-76,000 unique molecules per target [26]. This represents a reduction of several orders of magnitude in computational requirements while maintaining the benefits of flexible docking.

Comparative Analysis with Alternative Methods

Table 3: Comparison of REvoLd with Other Ultra-Large Library Screening Approaches

| Method | Key Features | Advantages | Limitations | Computational Efficiency |

|---|---|---|---|---|

| REvoLd | Evolutionary algorithm with flexible docking | Synthetic accessibility; receptor flexibility; high enrichment | May not find single global optimum; Rosetta scoring biases | Docking of ~60,000 molecules for screening billions |

| Deep Docking | ML-guided docking with QSAR models | Reduces docking burden; leverages neural network predictions | Still requires docking millions; descriptor calculation for full library | Docking of millions + QSAR for billions |

| V-SYNTHES/SpaceDock | Fragment-based growing in binding site | Synthetic accessibility; scalable approach | Limited by initial fragment docking; may miss synergistic combinations | Varies with fragment library size |

| Galileo | General evolutionary algorithm | Flexible objective functions; not tied to specific library | Mixed performance in structure-based design; high computational cost | ~5 million fitness evaluations |

| Active Learning (MolPal, etc.) | Iterative screening with ML prioritization | Balanced exploration-exploitation; continuous learning | Requires initial diverse set; model training overhead | Varies with implementation |

REvoLd occupies a unique position in this landscape by combining the synthetic accessibility of fragment-based approaches with the comprehensive sampling of evolutionary algorithms, all while maintaining the accuracy of flexible docking. Its constraint-handling approach—embedding synthetic feasibility directly into the representation—makes it particularly valuable for practical drug discovery applications.

Research Reagent Solutions

Table 4: Essential Research Reagents and Computational Tools for REvoLd Implementation

| Resource | Type | Function in REvoLd Workflow | Availability |

|---|---|---|---|

| Enamine REAL Space | Compound Library | Billion-sized make-on-demand combinatorial library | Enamine LTD (academic access available) |

| Rosetta Software Suite | Molecular Modeling | Flexible docking and scoring; REvoLd implementation | Rosetta Commons (academic and commercial licenses) |

| RDKit | Cheminformatics | Handles SMILES/SMARTS processing and molecular manipulation | Open source |

| AMBER | Molecular Dynamics | Force field parameters and MD simulations for ensemble generation | Academic and commercial licenses |

| CPPTRAJ/VMD | Trajectory Analysis | MD trajectory analysis and visualization | Open source |

REvoLd represents a significant advancement in applying constrained evolutionary optimization to one of drug discovery's most pressing challenges: efficiently navigating ultra-large chemical spaces. Its constraint-handling strategy—embedding synthetic feasibility directly into the algorithm's representation—ensures that optimization occurs within the space of practically accessible compounds.

The algorithm's performance in both retrospective benchmarks and prospective validation (CACHE challenge) demonstrates its readiness for practical drug discovery applications. While the approach shows some bias toward nitrogen-rich rings due to Rosetta's scoring function [28], this limitation is offset by its remarkable enrichment capabilities and computational efficiency.

For researchers in constrained optimization, REvoLd offers a compelling case study in handling combinatorial constraints while maintaining exploration capabilities. Its continued development will likely focus on improving scoring functions, incorporating additional constraint types (such as pharmacokinetic properties), and tighter integration with experimental data through active learning approaches.

Molecular optimization is a critical step in the drug development pipeline, aiming to identify candidate molecules with improved properties from a vast chemical search space. This task presents a significant challenge as it requires the simultaneous optimization of multiple, often competing, molecular properties while adhering to stringent drug-like criteria and structural constraints. Traditional optimization methods have frequently neglected these complex constraint requirements, thereby limiting the development of high-quality molecules that satisfy both property objectives and constraint compliance. The CMOMO (Constrained Molecular Multi-property Optimization) framework addresses this fundamental challenge by introducing a novel deep multi-objective optimization approach that dynamically balances multi-property optimization with constraint satisfaction [29].

Within the broader body of research on evolutionary algorithms for constrained optimization problems (COPs), CMOMO represents a significant advancement by integrating deep learning methodologies with evolutionary computation strategies. This hybrid approach enables more effective navigation of the complex chemical search space, particularly for practical drug discovery applications where multiple requirements (bioactivity, drug-likeness, synthetic accessibility, and structural constraints) must be satisfied simultaneously. The framework's reported two-fold improvement in success rate on real-world optimization tasks, such as glycogen synthase kinase-3β (GSK3β) inhibitor optimization, highlights its potential to transform molecular design processes in pharmaceutical research and development [29].

CMOMO Architectural Framework and Core Mechanisms

Two-Stage Dynamic Optimization Architecture

The CMOMO framework divides the optimization process into two distinct but cooperative stages, enabling a dynamic constraint handling strategy that effectively balances multi-property optimization with constraint satisfaction. This architectural innovation represents a significant departure from conventional single-stage optimization approaches that often struggle with constraint compliance.

Stage 1: Multi-Property Optimization Phase. The initial stage focuses on aggressive property improvement, employing a multi-objective optimization strategy to enhance target molecular properties while maintaining baseline constraint satisfaction. During this phase, the algorithm explores the chemical search space to identify regions containing molecules with improved property profiles, using a relaxed constraint threshold to enable broader exploration of potential solutions.

Stage 2: Constraint Refinement Phase. The second stage applies strict constraint enforcement to solutions identified in the first stage, refining them to ensure full compliance with all specified constraints. This phased approach allows the algorithm to first identify promising regions in the chemical space based on property optimization objectives, then concentrate computational resources on ensuring these promising candidates meet all necessary constraints for practical drug development applications [29].

The dynamic cooperation between these two stages is mediated through an adaptive switching mechanism that monitors optimization progress and constraint violation patterns, enabling the framework to allocate computational resources efficiently between property improvement and constraint satisfaction based on the current state of the optimization process.
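A minimal sketch of such a switching rule is given below. The source does not specify the exact trigger, so the stall criterion, the `window` and `min_gain` parameters, and the `violation` helper are assumptions chosen for illustration: the optimizer stays in Stage 1 while the best property score keeps improving, and moves to Stage 2 once progress stalls and some candidates still violate constraints.

```python
from dataclasses import dataclass, field


def violation(values, bounds):
    """Sum of how far each constrained value lies outside its [low, high] bound."""
    total = 0.0
    for name, (low, high) in bounds.items():
        v = values[name]
        total += max(0.0, low - v) + max(0.0, v - high)
    return total


@dataclass
class StageSwitch:
    window: int = 5          # assumed: number of generations to look back
    min_gain: float = 1e-3   # assumed: smaller improvement counts as stalled
    history: list = field(default_factory=list)
    stage: int = 1

    def update(self, best_score, feasible_fraction):
        """Record one generation and return the stage to run next."""
        self.history.append(best_score)
        if self.stage == 1 and len(self.history) > self.window:
            gain = self.history[-1] - self.history[-1 - self.window]
            # Property progress has stalled and infeasible candidates remain:
            # hand over to the constraint refinement stage.
            if gain < self.min_gain and feasible_fraction < 1.0:
                self.stage = 2
        return self.stage
```

In a full loop, `update` would be called once per generation with the best aggregated property score and the fraction of the population whose `violation` is zero.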

Latent Vector Fragmentation-Based Evolutionary Reproduction

A cornerstone of the CMOMO framework is its novel latent vector fragmentation-based evolutionary reproduction strategy, which enables effective generation of promising molecules. This approach operates in a continuous latent space representation of molecules, where traditional genetic operators are replaced or augmented with fragmentation and recombination operations tailored to the molecular representation.

The process involves:

- Latent Representation: Molecules are encoded into a continuous latent space using deep learning models, capturing their essential chemical characteristics in a numerically manipulable format.

- Fragmentation Operation: The latent representations are strategically fragmented into meaningful segments that correspond to chemically relevant substructures or property-determining components.

- Evolutionary Recombination: Fragments from parent molecules are recombined using evolutionary principles to generate novel latent representations, which are then decoded back to molecular structures.

- Quality Preservation: The fragmentation strategy is designed to preserve chemically valid regions while enabling exploration of novel combinations, maintaining molecular validity throughout the optimization process [29].

This reproduction strategy has demonstrated superior performance in generating diverse, high-quality molecules compared to conventional evolutionary operators, particularly because it respects the complex structural relationships inherent in molecular systems.
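The recombination step can be sketched on plain lists of floats standing in for latent vectors. This is an illustrative assumption rather than CMOMO's published operator: fragments here are contiguous, near-equal slices, each child fragment is copied from one parent chosen at random, and the encoder and decoder are omitted entirely. The names `split_fragments` and `recombine` are hypothetical.

```python
import random


def split_fragments(vector, n_fragments):
    """Cut a latent vector into n contiguous, near-equal fragments."""
    size, extra = divmod(len(vector), n_fragments)
    fragments, start = [], 0
    for i in range(n_fragments):
        end = start + size + (1 if i < extra else 0)
        fragments.append(vector[start:end])
        start = end
    return fragments


def recombine(parent_a, parent_b, n_fragments, rng, noise=0.0):
    """Build a child by taking each fragment from one parent at random.

    A small Gaussian perturbation (standard deviation `noise`) can be added
    to keep exploring around the recombined point.
    """
    child = []
    pairs = zip(split_fragments(parent_a, n_fragments),
                split_fragments(parent_b, n_fragments))
    for frag_a, frag_b in pairs:
        chosen = frag_a if rng.random() < 0.5 else frag_b
        for x in chosen:
            child.append(x + rng.gauss(0.0, noise) if noise > 0 else x)
    return child
```

In practice the child vector would be passed to the decoder of the generative model, and any decoded structure that fails validity checks would be discarded before evaluation.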

Table 1: Core Components of the CMOMO Architecture

| Component | Mechanism | Function |

|---|---|---|

| Two-Stage Optimization | Dynamic phase switching | Balances property improvement with constraint satisfaction |

| Latent Vector Fragmentation | Segmentation and recombination of latent representations | Enables effective exploration of chemical space |

| Dynamic Constraint Handling | Adaptive constraint thresholds | Progressively enforces constraints while maintaining diversity |

| Multi-Objective Optimization | Pareto-based selection | Simultaneously optimizes multiple target properties |
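To make the interplay between Pareto-based selection and adaptive constraint thresholds concrete, the sketch below uses a constraint-domination rule in the style of Deb's feasibility rules with a relaxable tolerance `eps`. This is a standard technique substituted for illustration; the source does not confirm that CMOMO uses exactly this comparator. Objectives are assumed to be maximized, and each candidate is a pair of an objective tuple and a scalar constraint violation.

```python
def dominates(a, b):
    """True if a is no worse than b on every objective and better on at least one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def constrained_dominates(a, b, eps):
    """Compare (objectives, violation) pairs; violations <= eps count as feasible."""
    (obj_a, cv_a), (obj_b, cv_b) = a, b
    feas_a, feas_b = cv_a <= eps, cv_b <= eps
    if feas_a and feas_b:
        return dominates(obj_a, obj_b)   # both feasible: compare objectives
    if feas_a != feas_b:
        return feas_a                    # feasible beats infeasible
    return cv_a < cv_b                   # both infeasible: smaller violation wins


def non_dominated(population, eps):
    """Return candidates that no other candidate constraint-dominates."""
    return [p for p in population
            if not any(constrained_dominates(q, p, eps) for q in population)]
```

With a large `eps`, a candidate with strong properties but a small violation can survive selection; tightening `eps` toward zero removes it unless it is repaired, which is the behaviour the "Dynamic Constraint Handling" row describes.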

Experimental Protocols and Validation Methodologies

Benchmark Evaluation Framework

The experimental validation of CMOMO employed a rigorous benchmark evaluation framework comparing its performance against five state-of-the-art molecular optimization methods. The benchmark was designed to assess both the efficiency of property optimization and the effectiveness of constraint satisfaction across diverse molecular optimization scenarios.

Benchmark Tasks. Two established benchmark tasks were used to evaluate fundamental optimization capabilities:

- Multi-Property Optimization Task: Focused on simultaneous optimization of key molecular properties including bioactivity, drug-likeness (quantified by QED), and synthetic accessibility (measured by SA score).

- Constrained Optimization Task: Evaluated the ability to optimize target properties while strictly adhering to structural constraints and drug-like criteria [29].

Evaluation Metrics. Performance was quantified using multiple metrics:

- Success Rate: Percentage of successfully optimized molecules meeting all property targets and constraints

- Property Improvement: Magnitude of enhancement in target properties from initial to optimized molecules

- Constraint Satisfaction: Degree of compliance with all specified constraints

- Diversity: Chemical diversity of the optimized molecular set

- Pareto Front Quality: For multi-objective scenarios, the spread and dominance of solutions in the objective space
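Two of these metrics are simple enough to sketch directly. The definitions below are common conventions and are assumptions here, since the source does not give formulas: success rate is the percentage of molecules that meet every property threshold with zero constraint violation, and diversity is the mean pairwise Tanimoto distance between fingerprints represented as sets of on-bit indices. The dictionary keys and function names are hypothetical.

```python
from itertools import combinations


def is_success(props, thresholds, violation):
    """A molecule succeeds if it is feasible and meets every property threshold."""
    return violation <= 0.0 and all(props[k] >= t for k, t in thresholds.items())


def success_rate(molecules, thresholds):
    """Percentage of molecules that meet all targets and constraints."""
    hits = sum(is_success(m["props"], thresholds, m["violation"]) for m in molecules)
    return 100.0 * hits / len(molecules)


def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def diversity(fingerprints):
    """Mean pairwise Tanimoto distance (1 - similarity) over a set of molecules."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(1.0 - tanimoto(a, b) for a, b in pairs) / len(pairs)
```

Properties where lower is better, such as the SA score, would need to be negated or compared with `<=` before being passed to `is_success`.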

Comparative Methods. CMOMO was evaluated against five state-of-the-art methods, demonstrating superior performance in obtaining more successfully optimized molecules with multiple desired properties while satisfying drug-like constraints [29].

Practical Application Protocols

Beyond benchmark evaluation, CMOMO was validated on two practical drug discovery tasks representing real-world optimization challenges:

Protocol 1: Protein-Ligand Optimization for the β2-Adrenoceptor (PDB: 4LDE). This protocol addressed the optimization of ligands for the β2-adrenoceptor, a therapeutically relevant G protein-coupled receptor (GPCR), using the 4LDE crystal structure.

Experimental Workflow:

- Initialization: Curate a starting set of molecules with known activity against the β2-adrenoceptor (PDB: 4LDE)

- Property Definition: Specify target properties including binding affinity, selectivity, and metabolic stability

- Constraint Definition: Define structural constraints based on crystallographic data and drug-like criteria

- Optimization Execution: Apply CMOMO framework with protein-specific parameters

- Validation: Evaluate optimized molecules through in silico docking and binding affinity predictions

Key Parameters:

- Population size: 1000 molecules

- Generation count: 100 iterations

- Constraint threshold: Dynamic adjustment from relaxed to strict enforcement

- Evaluation metrics: Docking scores, predicted binding affinity, drug-likeness indices
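These parameters can be captured in a small configuration object. The population size and generation count come from the list above; the linear tightening schedule, the starting tolerance, and the `tighten_fraction` parameter are assumptions, since the source only states that the threshold moves from relaxed to strict enforcement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunConfig:
    population_size: int = 1000    # from the protocol's key parameters
    generations: int = 100         # from the protocol's key parameters
    start_threshold: float = 1.0   # assumed: relaxed violation tolerance
    end_threshold: float = 0.0     # strict: no violation tolerated
    tighten_fraction: float = 0.8  # assumed: strict enforcement from 80% of the run

    def threshold(self, generation):
        """Linearly tighten the allowed violation, then hold the strict value."""
        steps = max(1, int(self.generations * self.tighten_fraction))
        progress = min(1.0, generation / steps)
        return self.start_threshold + progress * (self.end_threshold - self.start_threshold)
```

Calling `RunConfig().threshold(g)` for each generation `g` gives the violation tolerance to apply in that generation's selection step; other schedules (stepwise, or driven by the feasible fraction of the population) would slot in the same way.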

Protocol 2: GSK3β Inhibitor Optimization. This protocol focused on optimizing inhibitors for glycogen synthase kinase-3β (GSK3β), a target for neurological disorders and diabetes.

Experimental Workflow:

- Compound Selection: Identify initial GSK3β inhibitors from published literature and databases

- Multi-Objective Specification: Define target properties including IC50 values, blood-brain barrier permeability (for CNS applications), and metabolic stability

- Structural Constraints: Impose structural constraints based on known pharmacophore features and synthetic feasibility

- CMOMO Application: Implement the two-stage optimization process with fragment-based reproduction

- Experimental Validation: Select top candidates for in vitro testing against GSK3β

Performance Outcome: CMOMO demonstrated a two-fold improvement in success rate for the GSK3β optimization task compared to baseline methods, successfully identifying molecules with favorable bioactivity, drug-likeness, synthetic accessibility, and adherence to structural constraints [29].

Table 2: Performance Metrics for Practical Application Tasks

| Task | Success Rate | Bioactivity Improvement | Drug-Likeness (QED) | Constraint Compliance |

|---|---|---|---|---|

| β2-Adrenoceptor (4LDE) Ligand Optimization | 68% | 3.2× IC50 improvement | 0.72 ± 0.08 | 94% |

| GSK3β Inhibitor Optimization | 74% | 2.8x IC50 improvement | 0.69 ± 0.11 | 96% |

Visualization of Workflows and Signaling Pathways

CMOMO Two-Stage Optimization Workflow

Figure: CMOMO two-stage optimization workflow, showing the dynamic interaction between the two stages and the latent vector fragmentation mechanism.

Latent Vector Fragmentation and Recombination Process

Figure: Latent vector fragmentation and recombination, the evolutionary reproduction strategy at the core of the CMOMO framework.

Research Reagent Solutions and Essential Materials

The experimental validation and application of the CMOMO framework draw on both computational tools and chemical data resources. The following table lists the key resources needed to implement molecular optimization with this approach.

Table 3: Research Reagent Solutions for CMOMO Implementation

| Resource Category | Specific Tools/Databases | Function in CMOMO Framework |

|---|---|---|

| Chemical Databases | ChEMBL, ZINC, PubChem | Source initial molecular structures for optimization campaigns |

| Property Prediction | QED Calculator, SA Score Predictor | Evaluate drug-likeness and synthetic accessibility during optimization |

| Structural Analysis | RDKit, Open Babel | Process chemical structures, compute molecular descriptors |

| Protein-Ligand Data | PDB (4LDE structure), BindingDB | Provide structural constraints and activity data for target-specific optimization |

| Benchmark Suites | Molecular Optimization Benchmarks | Standardized datasets for method comparison and validation |

| Deep Learning Framework | TensorFlow, PyTorch | Implement neural networks for latent space representation and learning |

| Evolutionary Computation | Custom CMA-ES implementation | Support advanced optimization strategies within the framework |

Implications for Constrained Optimization Problem Research