Constrained and Multi-Objective Evolutionary Algorithms: Principles, Methods, and Applications in Drug Discovery

Abstract

Evolutionary Algorithms (EAs) are powerful global optimization tools, but their application to real-world problems in drug discovery and engineering requires sophisticated methods to handle constraints. This article provides a comprehensive overview of Constraint-Handling Techniques (CHTs) for single- and multi-objective optimization. We explore foundational concepts, categorize mainstream methodologies from penalty functions to hybrid and multi-objective approaches, and detail advanced strategies for troubleshooting and performance optimization. A special focus is placed on biomedical applications, including personalized drug target recognition and de novo drug design, providing researchers and drug development professionals with insights into selecting, validating, and applying these techniques to complex constrained problems.

The Core Challenge: Why Evolutionary Algorithms Need Constraint-Handling Techniques

Defining Constrained Optimization Problems (COPs) and Constrained Multi-Objective Problems (CMOPs)

Constrained optimization represents a fundamental class of problems in mathematical optimization where an objective function must be optimized with respect to decision variables while simultaneously satisfying constraint conditions. These constraints can be either hard constraints, which set conditions that must be strictly satisfied, or soft constraints, where violations are penalized in the objective function rather than strictly prohibited [1]. In scientific and engineering contexts, Constrained Optimization Problems (COPs) and their multi-objective counterparts, Constrained Multi-Objective Problems (CMOPs), appear extensively in domains ranging from drug development and portfolio optimization to engineering design and logistics scheduling [2] [3].

The fundamental distinction between constrained and unconstrained optimization lies in the feasible region—the set of points satisfying all constraints where an optimal solution must be sought [4]. For COPs, bringing infeasible designs into the feasible region is critical, and gradients of active constraints are considered when determining the search direction for the next design iteration [4]. In contrast, Constrained Multi-Objective Optimization must reconcile multiple conflicting objectives with complex constraints, generating not a single optimal solution but rather a set of trade-off solutions known as the Pareto front [5] [3].

Table 1: Core Terminology in Constrained Optimization

| Term | Mathematical Definition | Interpretation |
|---|---|---|
| Decision Variables | \( \vec{x} = (x_1, x_2, \ldots, x_D)^T \) | Parameters the optimizer can control |
| Objective Function | \( f(\vec{x}) \) | Quantity to minimize/maximize |
| Inequality Constraint | \( g_i(\vec{x}) \leq 0 \) | Limit that must not be exceeded |
| Equality Constraint | \( h_j(\vec{x}) = 0 \) | Condition that must be exactly met |
| Feasible Solution | \( G(\vec{x}) = 0 \) | Solution satisfying all constraints (total constraint violation \( G \) is zero) |
| Infeasible Solution | \( G(\vec{x}) > 0 \) | Solution violating at least one constraint |

Mathematical Formulations

Canonical COP Formulation

A general constrained minimization problem can be mathematically formulated as follows [1] [2]:

\[ \begin{array}{rcll} \min & ~ & f(\mathbf{x}) & \\ \mathrm{subject~to} & ~ & g_i(\mathbf{x}) \leq c_i & \text{for } i=1,\ldots,n \quad \text{(inequality constraints)} \\ & ~ & h_j(\mathbf{x}) = d_j & \text{for } j=1,\ldots,m \quad \text{(equality constraints)} \end{array} \]

where \( f(\mathbf{x}) \) is the objective function to be minimized, \( g_i(\mathbf{x}) \) represent inequality constraints, and \( h_j(\mathbf{x}) \) represent equality constraints. The feasible region comprises all points \( \mathbf{x} \) satisfying all constraints simultaneously [1] [4].

Canonical CMOP Formulation

For problems with multiple conflicting objectives, the CMOP formulation extends naturally to [5] [3]:

\[ \begin{array}{l} \min \vec{F}(\vec{x}) = \left( f_{1}(\vec{x}), f_{2}(\vec{x}), \ldots, f_{m}(\vec{x})\right)^{\text{T}} \\ \text{s.t. } \left\{ \begin{array}{l} g_{i}(\vec{x}) \leq 0, \; i=1, \ldots, l \\ h_{i}(\vec{x}) = 0, \; i=1, \ldots, k \\ \vec{x} = \left( x_{1}, x_{2}, \ldots, x_{D}\right)^{\text{T}} \in \mathbb{R}^{D} \end{array}\right. \end{array} \]

where \( \vec{F} \) denotes the objective vector with \( m \) conflicting objectives, and the constraints remain similar to the single-objective case [3].

Constraint Violation Measurement

For evolutionary algorithms, the total constraint violation provides a quantitative measure of how much a solution violates constraints [2] [3]:

\[ CV(\vec{x}) = \sum_{i=1}^{l+k} cv_{i}(\vec{x}) \]

where each constraint violation is computed as:

\[ cv_{i}(\vec{x}) = \left\{ \begin{array}{ll} \max \left( 0, g_{i}(\vec{x})\right), & i=1, \ldots, l \\ \max \left( 0, \left| h_{i}(\vec{x}) \right| -\delta \right), & i=1, \ldots, k \end{array}\right. \]

Here, \( \delta \) is a small positive tolerance value that relaxes equality constraints to make them more manageable in numerical optimization [2].
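As a minimal sketch of the violation measure above, the following Python function sums the inequality and relaxed-equality terms. The function name, the callable-based constraint representation, and the toy constraints are assumptions made for illustration, not part of any cited implementation.

```python
from typing import Callable, Sequence

Vector = Sequence[float]
Constraint = Callable[[Vector], float]


def total_violation(
    x: Vector,
    inequalities: Sequence[Constraint],
    equalities: Sequence[Constraint],
    delta: float = 1e-4,
) -> float:
    """Return CV(x); a value of 0.0 means x is feasible."""
    # Inequalities g_i(x) <= 0 contribute only when g_i(x) is positive.
    cv = sum(max(0.0, g(x)) for g in inequalities)
    # Equalities h_i(x) = 0 are relaxed to |h_i(x)| <= delta.
    cv += sum(max(0.0, abs(h(x)) - delta) for h in equalities)
    return cv


# Hypothetical toy problem: x0 + x1 <= 1 and x0 - x1 = 0.
g = [lambda x: x[0] + x[1] - 1.0]
h = [lambda x: x[0] - x[1]]

print(total_violation([0.5, 0.5], g, h))  # feasible point
print(total_violation([1.0, 0.5], g, h))  # violates both constraints
```

Keeping the violation as a single scalar is what lets the selection schemes discussed later compare solutions by "how infeasible" they are.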

Classification of Constrained Optimization Problems

Constrained optimization problems can be categorized based on the characteristics of their objective functions and constraints, which directly influences the choice of appropriate solution algorithms [6].

Table 2: Classification of Constrained Optimization Problems

| Problem Type | Objective Function | Constraints | Example Formulation |
|---|---|---|---|
| Linear Programming (LP) | Linear | Linear | \( \min c^T x \) s.t. \( Ax \leq b \) |
| Integer Linear Programming (ILP) | Linear | Linear, integer variables | \( \min c^T x \) s.t. \( Ax \leq b, x \in \mathbb{Z} \) |
| Quadratic Programming (QP) | Quadratic | Linear | \( \min \frac{1}{2}x^T Q x + c^T x \) s.t. \( Ax \leq b \) |
| Nonlinear Programming (NLP) | Nonlinear | Nonlinear | \( \min f(x) \) s.t. \( g(x) \leq 0 \) |
| Mixed-Integer Nonlinear Programming (MINLP) | Nonlinear | Nonlinear, integer variables | \( \min f(x) \) s.t. \( g(x) \leq 0, x_i \in \mathbb{Z} \) |

The presence of integer constraints requires special handling, as they restrict variables to discrete values, making the problem combinatorially complex [6] [7]. Similarly, binary variables (0-1 constraints) enable modeling of "yes/no" decisions common in resource allocation and feature selection problems [7].

Constraint Handling Techniques in Evolutionary Algorithms

Evolutionary Algorithms (EAs) have emerged as powerful global optimization methods for solving complex COPs and CMOPs due to their population-based nature, which enables exploring diverse regions of the search space simultaneously [2] [8]. However, EAs require specialized Constraint Handling Techniques (CHTs) to effectively guide the search toward feasible regions while maintaining optimization performance [8] [3].

Major Categories of CHTs

Research in constraint handling for evolutionary computation has identified four primary methodological approaches [2] [8]:

Penalty Function Methods: These techniques use penalty factors to balance the influence of objective function and constraint violations. Penalty methods transform constrained problems into unconstrained ones by adding a penalty term to the objective function that quantifies constraint violations [2]. Adaptive penalty methods that utilize evolutionary feedback to adjust penalty factors have shown particular promise [2].

Feasibility Preference Methods: These approaches prioritize feasible solutions over infeasible ones through mechanisms such as feasibility rules, stochastic ranking, and ε-constrained methods [2]. The feasibility rule, for instance, strictly prefers feasible solutions over infeasible ones, and between two feasible solutions, selects the one with better objective function value [2].

Multi-Objective Optimization Techniques: These methods transform constraints into additional objectives, converting a COP into a multi-objective optimization problem [2] [8]. This allows using the well-established Pareto dominance concept to balance objective optimization and constraint satisfaction [2].

Hybrid Constraint Handling Techniques: Recent approaches combine multiple CHTs to leverage their complementary strengths, often adapting the strategy based on the current population characteristics [2]. These methods can switch between different techniques depending on whether the population contains only feasible solutions, only infeasible solutions, or a mixture of both [2].
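The feasibility rule described above can be sketched as a pairwise comparison. The first two branches follow the text; the third branch (between two infeasible solutions, prefer the smaller violation) is the usual completion of the rule and is stated here as an assumption, since the text does not spell it out. The `Candidate` type and function name are hypothetical.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    objective: float  # f(x), to be minimized
    violation: float  # CV(x), 0.0 when feasible


def better_by_feasibility_rule(a: Candidate, b: Candidate) -> bool:
    """Return True if a is preferred over b."""
    a_feasible = a.violation == 0.0
    b_feasible = b.violation == 0.0
    if a_feasible and b_feasible:
        # Both feasible: the better objective value wins.
        return a.objective < b.objective
    if a_feasible != b_feasible:
        # Exactly one is feasible: the feasible one wins.
        return a_feasible
    # Both infeasible (assumed completion): smaller violation wins.
    return a.violation < b.violation


print(better_by_feasibility_rule(Candidate(10.0, 0.0), Candidate(1.0, 0.3)))
```

Note that the objective value of an infeasible solution never influences the outcome, which is the source of the greediness discussed for this family of methods.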

The ε-Active Strategy

In gradient-based optimization, the ε-active strategy provides a systematic approach to identify which constraints should be considered active at a given design point [4]. This strategy normalizes constraints and defines an active set based on a tolerance ε:

\[ \begin{aligned} b_i &= \frac{g_i}{g_i^u} - 1 \leq 0, \quad i=1,\ldots,m \\ e_j &= \frac{h_j}{h_j^u} - 1, \quad j=1,\ldots,p \end{aligned} \]

When \( b_i \) (or \( e_j \)) falls between parameters CT (usually -0.03) and CTMIN (usually 0.005), the corresponding constraint \( g_i \) (or \( h_j \)) is considered active [4]. This approach helps optimization algorithms focus computational resources on the most critical constraints.
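A small sketch of this test, assuming the normalized form above and the quoted default thresholds. The function names are hypothetical, and the "inactive" and "violated" labels for values outside the band are assumptions added for illustration; the text only defines the active band.

```python
def normalized_constraint(g_value: float, g_upper: float) -> float:
    """Normalize g <= g_upper into b = g / g_upper - 1 <= 0."""
    return g_value / g_upper - 1.0


def classify_constraint(b: float, ct: float = -0.03, ctmin: float = 0.005) -> str:
    """Label a normalized constraint value relative to the [CT, CTMIN] band."""
    if b < ct:
        return "inactive"  # comfortably satisfied (assumed label)
    if b <= ctmin:
        return "active"    # inside the band described in the text
    return "violated"      # beyond the tolerance (assumed label)


# Hypothetical limit of 100 with three candidate response values.
for g_value in (50.0, 98.0, 110.0):
    b = normalized_constraint(g_value, 100.0)
    print(g_value, classify_constraint(b))
```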

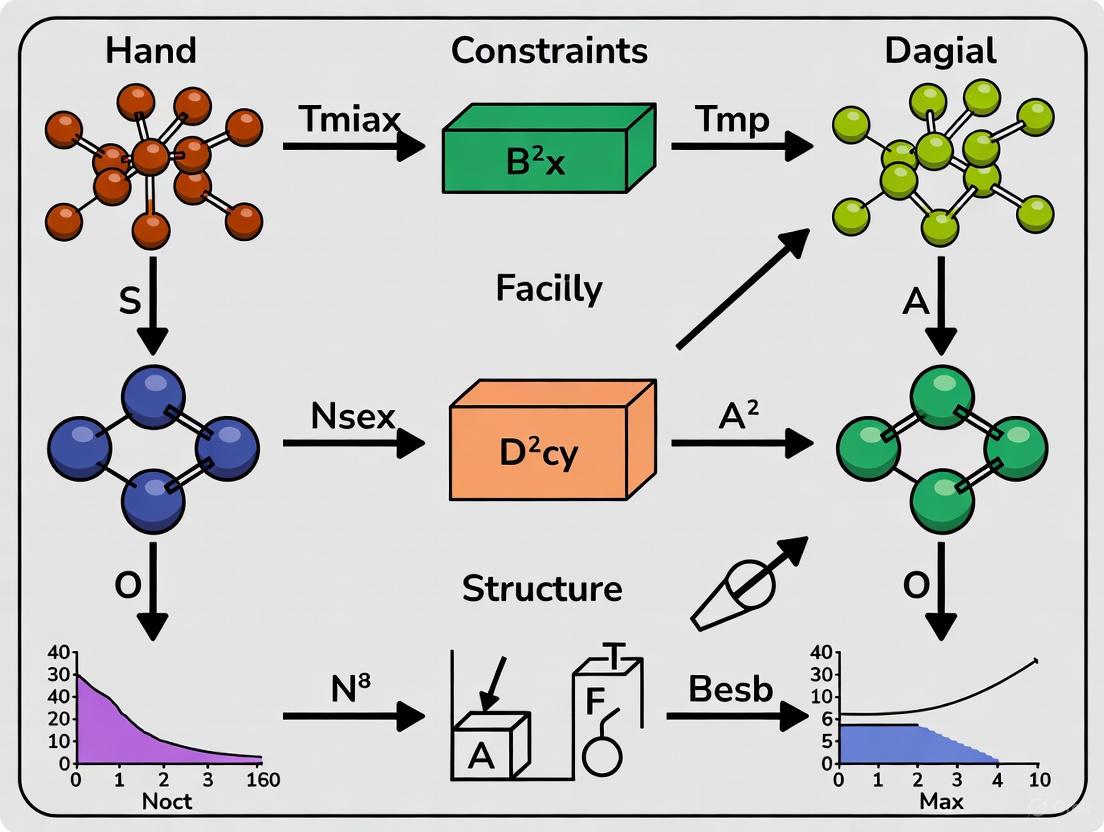

Figure 1: ε-Active Constraint Strategy Workflow

Solution Methods and Algorithms

Classical Mathematical Programming Approaches

Traditional approaches to constrained optimization include both direct methods and transformation techniques [6]:

Substitution Method: For simple problems with equality constraints, this approach solves constraints for some variables and substitutes them into the objective function [1] [6]. While conceptually straightforward, it becomes impractical for complex nonlinear constraints [6].

Lagrange Multipliers: This method converts equality-constrained problems into unconstrained ones by incorporating constraints into a Lagrangian function [1]. The approach can be extended to inequality constraints through the Karush-Kuhn-Tucker (KKT) conditions, which provide necessary optimality conditions for constrained optimization [1] [6].

Sequential Linear Programming (SLP): This technique linearizes nonlinear problems around the current design point and solves a sequence of linear programming subproblems [4]. SLP uses move limits to control the step size and ensure the linear approximation remains reasonable [4].

Sequential Quadratic Programming (SQP): SQP methods solve a sequence of quadratic programming subproblems, typically demonstrating faster convergence than SLP for nonlinear problems [4].
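To make the Lagrange multiplier approach listed above concrete, consider a small worked example chosen purely for illustration (it does not come from the cited sources): minimize \( f(x, y) = x^2 + y^2 \) subject to \( h(x, y) = x + y - 1 = 0 \).

\[ \begin{aligned} \mathcal{L}(x, y, \lambda) &= x^2 + y^2 + \lambda (x + y - 1) \\ \frac{\partial \mathcal{L}}{\partial x} &= 2x + \lambda = 0, \qquad \frac{\partial \mathcal{L}}{\partial y} = 2y + \lambda = 0, \qquad \frac{\partial \mathcal{L}}{\partial \lambda} = x + y - 1 = 0 \\ \Rightarrow \quad x &= y = \tfrac{1}{2}, \qquad \lambda = -1, \qquad f\left(\tfrac{1}{2}, \tfrac{1}{2}\right) = \tfrac{1}{2} \end{aligned} \]

The first two stationarity conditions force \( x = y \), and the constraint then fixes both at \( 1/2 \). An EA would need to find this point without access to the gradients used here, which is why constraint-handling techniques are needed.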

Evolutionary Algorithm Frameworks

Evolutionary Algorithms for constrained optimization typically follow a population-based iterative process with specialized constraint handling mechanisms [2] [3]. Recent advanced frameworks include:

EALSPM (Evolutionary Algorithm assisted by Learning Strategies and Predictive Model): This approach uses a classification-collaboration constraint handling technique where constraints are randomly classified into K classes, decomposing the original problem into K subproblems [2]. Each subpopulation addresses a subproblem, with interaction through random learning and directed learning strategies [2].

Two-Stage Evolutionary Algorithms: These methods separate the search process into distinct phases, such as first focusing on feasibility followed by objective optimization, or using different techniques based on the current population's characteristics [2].

Multi-Objective Based COEAs: These algorithms transform single-objective constrained problems into multi-objective ones, treating constraint satisfaction as separate objectives [2] [3]. This allows using Pareto dominance concepts to balance constraint satisfaction and objective optimization [2].

Table 3: Comparison of Constrained Optimization Algorithms

| Algorithm | Problem Type | Key Mechanism | Advantages | Limitations |
|---|---|---|---|---|
| SLP | NLP with linear constraints | Sequential linearization with move limits | Memory efficient, numerically stable | Poor performance on highly nonlinear problems |
| SQP | General NLP | Sequential quadratic approximation | Fast convergence, handles nonlinearities | Computationally expensive for large problems |
| Branch-and-Bound | MILP, MIQP | Tree search with bounds | Guaranteed optimality for convex problems | Exponential worst-case complexity |
| Penalty Function EA | General COPs | Adaptive penalty coefficients | Simple implementation, general applicability | Sensitive to penalty parameter tuning |
| Feasibility Rule EA | General COPs | Strict preference for feasible solutions | Effective when feasible region is large | May stagnate with disconnected feasible regions |
| Multi-Objective EA | Complex CMOPs | Pareto dominance with constraint objectives | Balanced approach, maintains diversity | Increased computational complexity |

Figure 2: Evolutionary Algorithm Framework for Constrained Optimization

Experimental Protocols and Benchmarking

Performance Evaluation Methodology

Rigorous experimental protocols are essential for evaluating and comparing constrained optimization algorithms. Standard methodologies include [2] [3]:

Benchmark Test Problems: Well-established test suites such as CEC2010, CEC2017, and other specialized constrained multi-objective problem sets provide standardized evaluation environments [2] [3]. These benchmarks include problems with diverse characteristics, including linear/nonlinear constraints, connected/disconnected feasible regions, and varying objective-constraint interactions [3].

Performance Metrics: For single-objective COPs, common metrics include solution feasibility, objective function value at the best feasible solution, and convergence speed [2]. For CMOPs, metrics assess both convergence to the true Pareto front and diversity along the front, such as Inverted Generational Distance (IGD) and Hypervolume (HV) indicators [3].

Statistical Significance Testing: Multiple independent runs with different random seeds are essential to account for the stochastic nature of evolutionary algorithms, with results typically reported as mean and standard deviation, accompanied by statistical tests like Wilcoxon signed-rank test to establish significance [2].
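As an illustration of the CMOP metrics mentioned above, the following is a minimal sketch of Inverted Generational Distance in its common form: the mean Euclidean distance from each reference-front point to its nearest obtained point. The function name and the three-point reference front are assumptions for the example; published variants differ in normalization.

```python
import math
from typing import Sequence

Point = Sequence[float]


def igd(reference_front: Sequence[Point], approximation: Sequence[Point]) -> float:
    """Mean distance from each reference point to the closest obtained point."""
    if not reference_front or not approximation:
        raise ValueError("both point sets must be non-empty")
    total = sum(
        min(math.dist(ref, sol) for sol in approximation)
        for ref in reference_front
    )
    return total / len(reference_front)


# Hypothetical reference front and a sparser approximation of it.
reference = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
obtained = [(0.0, 1.0), (1.0, 0.0)]

print(igd(reference, reference))  # perfect coverage
print(igd(reference, obtained))   # the middle of the front is missed
```

Lower values are better. Because every reference point must have a nearby solution, the metric penalizes both poor convergence and gaps in coverage.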

Case Study: EALSPM Experimental Protocol

The EALSPM algorithm exemplifies modern experimental methodology in constrained optimization research [2]:

Parameter Settings: Population size = 300, maximum function evaluations = 200,000, tolerance for equality constraints δ = 0.0001 [2].

Constraint Decomposition: Original constraints randomly classified into K=3 classes, generating 3 subpopulations of 100 individuals each [2].

Two-Stage Evolution:

- Random Learning Stage: Subpopulations interact through random learning strategies to explore promising regions.

- Directed Learning Stage: Subpopulations interact through directed learning strategies for refined local search [2].

Predictive Model: Improved continuous domain estimation of distribution model predicts offspring based on information from high-quality individuals [2].

This approach demonstrated competitive performance against state-of-the-art methods on CEC2010 and CEC2017 benchmark problems, plus two practical engineering problems [2].
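The constraint decomposition step can be pictured with a short sketch. This is not the EALSPM implementation: the equal-size round-robin split after shuffling, the function name, and the example count of nine constraints are assumptions; only K = 3 and the population size of 300 come from the settings above.

```python
import random
from typing import List


def classify_constraints(n_constraints: int, k: int, rng: random.Random) -> List[List[int]]:
    """Randomly partition constraint indices 0..n-1 into k classes."""
    if not 1 <= k <= n_constraints:
        raise ValueError("need 1 <= k <= n_constraints for non-empty classes")
    indices = list(range(n_constraints))
    rng.shuffle(indices)
    # Deal the shuffled indices round-robin so class sizes differ by at most one.
    return [sorted(indices[c::k]) for c in range(k)]


rng = random.Random(42)
classes = classify_constraints(n_constraints=9, k=3, rng=rng)
subpopulation_size = 300 // len(classes)  # 100 individuals per subproblem

for class_id, members in enumerate(classes):
    print(f"subproblem {class_id}: constraints {members}, {subpopulation_size} individuals")
```

Each subpopulation would then evaluate violation only over its own class of constraints, with the learning strategies exchanging information between subpopulations.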

Research Reagent Solutions: Algorithmic Tools for Constrained Optimization

Table 4: Essential Algorithmic Tools for Constrained Optimization Research

| Tool Category | Specific Methods/Software | Primary Function | Application Context |
|---|---|---|---|
| Optimization Solvers | CPLEX, Gurobi, lp_solve | Exact solution of LP, QP, MILP problems | Medium to large-scale convex problems |
| Evolutionary Frameworks | PlatEMO, jMetal, DEAP | Implementation of COEAs and CMOEAs | Complex nonlinear, nonconvex problems |
| Constraint Handling Modules | Custom implementations of penalty functions, feasibility rules, multi-objective transformations | Managing constraints within EA frameworks | Experimental algorithm development |
| Benchmark Suites | CEC2010, CEC2017, NCTP | Standardized performance evaluation | Algorithm comparison and validation |
| Visualization Tools | MATLAB, Python matplotlib, VOSviewer | Performance metrics and Pareto front visualization | Results analysis and research reporting |

Constrained Optimization Problems and Constrained Multi-Objective Problems represent fundamental challenges in optimization theory with extensive practical applications. While significant progress has been made in developing efficient constraint handling techniques, particularly within evolutionary computation frameworks, several research frontiers remain active [8] [3]:

Large-Scale CMOPs: Developing efficient algorithms for problems with high-dimensional decision spaces and numerous constraints remains challenging [3].

Dynamic Constrained Optimization: Many real-world problems feature time-varying constraints and objectives, requiring adaptive algorithms that can track moving optima [3].

Multimodal CMOPs: Problems with multiple equivalent Pareto optimal sets need specialized techniques to locate and maintain diverse solutions [3].

Theoretical Foundations: Despite empirical success, theoretical understanding of convergence properties for constrained evolutionary algorithms requires further development [8].

Real-World Applications: Bridging the gap between benchmark performance and practical effectiveness in domains like drug development, energy systems, and healthcare optimization necessitates problem-specific algorithm customization [2] [3].

The integration of machine learning techniques with evolutionary algorithms, exemplified by approaches like EALSPM that incorporate predictive models, represents a promising direction for enhancing constraint handling capabilities in complex optimization scenarios [2]. As research continues, the development of more sophisticated, efficient, and robust constrained optimization methods will remain critical for addressing increasingly complex real-world optimization challenges.

Evolutionary Algorithms (EAs) are population-based metaheuristics inspired by natural selection, where a collection of candidate solutions evolves under specified selection rules to optimize a general cost function [9]. In their fundamental formulation, EAs excel as unconstrained optimizers, leveraging their population-based nature and global search capabilities to explore complex solution spaces without relying on gradient information. This inherent strength, however, becomes their primary limitation when confronting real-world problems where constraints are inevitable. The "fundamental hurdle" thus emerges: the core mechanisms that make EAs powerful for unconstrained search—their ability to freely explore the entire solution space—are precisely what render them unsuitable for constrained optimization problems (COPs) without significant modification [2].

The transition from unconstrained to constrained optimization represents a paradigm shift in evolutionary computation. In scientific research and engineering practice, many problems can be reduced to function optimization with constraints [2]. The challenge is particularly acute in domains like drug design, where molecules must satisfy multiple physiological properties including efficacy, safety, and synthetic feasibility [10] [9]. This article examines the inherent limitations of EAs as unconstrained optimizers, surveys the constraint-handling techniques that have evolved to address these limitations, and provides experimental frameworks for evaluating these methods within the context of drug discovery applications.

The Unconstrained Foundation of Evolutionary Algorithms

Core Principles and Mechanisms

EAs operate on principles of selection, variation, and population dynamics—mechanisms inherently designed for unconstrained search spaces. The algorithm begins with an initial population of candidate solutions, typically generated randomly across the search domain. Through iterative processes of recombination (crossover) and mutation, new offspring solutions are created. Selection operators then determine which solutions survive to the next generation based on their fitness, typically measured solely by objective function value in unconstrained settings [9].
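The loop described above can be sketched in a few lines. This is a generic elitist EA on an unconstrained toy objective, with uniform crossover, Gaussian mutation, and truncation selection chosen as assumptions for brevity; it is not any specific algorithm from the cited works.

```python
import random
from typing import Callable, List


def sphere(x: List[float]) -> float:
    """Toy unconstrained objective with its minimum at the origin."""
    return sum(v * v for v in x)


def evolve(
    objective: Callable[[List[float]], float],
    dim: int = 5,
    pop_size: int = 20,
    generations: int = 50,
    sigma: float = 0.3,
    seed: int = 0,
) -> List[float]:
    rng = random.Random(seed)
    # Random initialization across the search domain [-5, 5]^dim.
    population = [[rng.uniform(-5.0, 5.0) for _ in range(dim)] for _ in range(pop_size)]
    for _ in range(generations):
        offspring = []
        for _ in range(pop_size):
            p1, p2 = rng.sample(population, 2)
            child = [rng.choice(pair) for pair in zip(p1, p2)]      # uniform crossover
            child = [v + rng.gauss(0.0, sigma) for v in child]      # Gaussian mutation
            offspring.append(child)
        # Selection uses the objective value only: no notion of feasibility.
        population = sorted(population + offspring, key=objective)[:pop_size]
    return min(population, key=objective)


best = evolve(sphere)
print(sphere(best))
```

Nothing in this loop knows about constraints, which is exactly the gap the techniques in the following sections are designed to fill.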

The population-based approach allows EAs to maintain diversity while exploring multiple regions of the solution space simultaneously. This characteristic enables them to avoid local optima more effectively than point-based search methods. Furthermore, their black-box nature means they require no specific problem knowledge beyond the ability to evaluate solution quality. These attributes make EAs exceptionally well-suited for unconstrained optimization across various domains, from mathematical function optimization to parameter tuning in complex systems [11].

Inherent Limitations for Constrained Problems

When constraints are introduced, fundamental conflicts arise with the EA's core operating principles:

- Blind Exploration: The unrestricted exploration mechanism of EAs frequently generates infeasible solutions that violate problem constraints, wasting computational resources [2].

- Selection Criterion Mismatch: Traditional fitness-based selection has no inherent mechanism for evaluating constraint satisfaction, often leading populations toward high-fitness but infeasible regions [2].

- Feasible Region Topology: In many practical problems, feasible regions may be discontinuous, small, or located near constraint boundaries, making them difficult to locate through undirected search [12] [2].

Table 1: Fundamental Conflicts Between Unconstrained EAs and Constrained Problems

| EA Mechanism | Unconstrained Operation | Challenge in Constrained Context |
|---|---|---|
| Population Initialization | Random across entire search space | High probability of generating infeasible solutions |
| Selection Criteria | Based solely on objective function value | No consideration for constraint violations |
| Variation Operators | Blind mutation and recombination | Often produce offspring violating constraints |
| Convergence | Toward global optimum | May lead to infeasible regions |

Constraint Handling Techniques: Bridging the Gap

To overcome the limitations of unconstrained EAs, researchers have developed four primary categories of constraint-handling techniques, each addressing the fundamental hurdle through different strategies.

Penalty Function Methods

Penalty methods transform constrained problems into unconstrained ones by adding a penalty term to the objective function that increases with constraint violation severity. The fitness function takes the form:

F(x) = f(x) + P(G(x))

Where f(x) is the original objective function, G(x) represents the constraint violation, and P is a penalty function [2]. The key challenge lies in determining appropriate penalty factors that effectively guide the search toward feasible regions without overwhelming the objective function. Three approaches have emerged:

- Fixed penalty factors use predetermined weights but require extensive parameter tuning

- Dynamic penalty factors increase penalties over generations to first encourage exploration then enforce feasibility

- Adaptive penalty factors utilize evolutionary feedback to automatically adjust penalty pressure [2]

Recent approaches like the ν-level penalty function have shown promise in handling complex nonlinear constraints by transforming COPs into unconstrained problems [2].
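The difference between fixed and dynamic penalties can be sketched as follows, using the form F(x) = f(x) + P(G(x)) given above. The linear fixed penalty, the polynomial generation schedule, and all coefficient values are assumptions chosen for illustration; they are one common way to instantiate P, not the formulation of any cited method.

```python
def fixed_penalty_fitness(f: float, violation: float, r: float = 1000.0) -> float:
    """F(x) = f(x) + r * G(x) with a predetermined weight r."""
    return f + r * violation


def dynamic_penalty_fitness(
    f: float,
    violation: float,
    generation: int,
    c: float = 0.5,
    alpha: float = 2.0,
    beta: float = 2.0,
) -> float:
    """F(x) = f(x) + (c * t)^alpha * G(x)^beta, growing with generation t."""
    return f + (c * generation) ** alpha * violation ** beta


# The same slightly infeasible solution is tolerated early and punished late.
for generation in (1, 10, 100):
    print(generation, dynamic_penalty_fitness(f=1.0, violation=0.5, generation=generation))
```

With a fixed weight, a value of `r` that is too small lets infeasible solutions win, while one that is too large flattens the objective landscape; the dynamic schedule trades that single choice for a choice of growth rate.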

Feasibility Preference Methods

These methods modify selection mechanisms to prioritize feasible over infeasible solutions through three main approaches:

- Feasibility rules strictly prefer feasible solutions, but may over-constrain search and miss promising regions near constraint boundaries [2]

- Stochastic ranking introduces a probability P of comparing infeasible solutions based on objective function rather than constraint violation, balancing objective and feasibility pressures [2]

- ε-constraint methods use a tolerance parameter ε to control the acceptance of slightly infeasible solutions, particularly effective when the global optimum lies near feasible region boundaries [2]

Advanced implementations like the FROFI framework mitigate the greediness of pure feasibility rules by incorporating objective function information through specialized replacement mechanisms and mutation strategies [2].
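Stochastic ranking, as described in the list above, is usually presented as a bubble-sort-like pass over the population. The sketch below follows that structure under stated assumptions: individuals are `(objective, violation)` pairs, minimization is assumed, and the default probability of 0.45 is an illustrative choice rather than a value taken from the text.

```python
import random
from typing import List, Optional, Tuple

Individual = Tuple[float, float]  # (objective, violation)


def stochastic_rank(
    population: List[Individual],
    pf: float = 0.45,
    rng: Optional[random.Random] = None,
) -> List[Individual]:
    """Return the population ordered from best to worst."""
    rng = rng or random.Random()
    ranked = list(population)
    n = len(ranked)
    for _ in range(n):  # at most n sweeps
        swapped = False
        for j in range(n - 1):
            (f_a, cv_a), (f_b, cv_b) = ranked[j], ranked[j + 1]
            both_feasible = cv_a == 0.0 and cv_b == 0.0
            if both_feasible or rng.random() < pf:
                out_of_order = f_a > f_b    # compare by objective
            else:
                out_of_order = cv_a > cv_b  # compare by violation
            if out_of_order:
                ranked[j], ranked[j + 1] = ranked[j + 1], ranked[j]
                swapped = True
        if not swapped:
            break
    return ranked


pop = [(5.0, 0.0), (1.0, 2.0), (3.0, 0.0), (0.5, 0.1)]
print(stochastic_rank(pop, pf=0.0, rng=random.Random(0)))  # violation-first ordering
print(stochastic_rank(pop, pf=1.0, rng=random.Random(0)))  # objective-only ordering
```

Setting `pf` to 0 recovers a strict feasibility-first ordering, and setting it to 1 ignores constraints entirely; intermediate values let promising infeasible solutions survive occasionally.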

Multi-Objective Optimization Techniques

These methods reformulate constrained optimization as multi-objective problems, treating constraint satisfaction as separate objectives to be optimized alongside the primary objective [2]. This approach leverages the EA's inherent ability to handle multiple objectives simultaneously.

- Bi-objective transformation converts COPs into problems with two objectives: optimizing the original function and minimizing constraint violation [2]

- Dynamic constrained multi-objective optimization creates equivalent dynamic problems that can be solved with multi-objective EAs [2]

- Decomposition-based methods like DeCODE exploit multi-objective optimization potential through problem decomposition [2]

- Helper objectives transform COPs into problems with helper and equivalent objectives to reduce the time needed to cross wide feasibility gaps [2]
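The bi-objective transformation in the list above can be illustrated with plain Pareto dominance over `(objective, violation)` pairs, both minimized. The function names and sample points are hypothetical; this is a quadratic-time filter meant only to show the idea.

```python
from typing import List, Sequence, Tuple


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """a dominates b if it is no worse in every objective and better in at least one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def non_dominated(points: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Keep the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Each point is (f(x), CV(x)) for a hypothetical candidate solution.
candidates = [(1.0, 0.5), (2.0, 0.0), (3.0, 0.2), (0.5, 1.0)]
print(non_dominated(candidates))
```

Here the point `(3.0, 0.2)` is discarded because `(2.0, 0.0)` is better on both counts, while slightly infeasible solutions with good objective values are retained alongside the feasible one. That retention is what helps the search approach the optimum from the infeasible side.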

Table 2: Classification of Constraint-Handling Techniques in Evolutionary Algorithms

| Technique Category | Key Variants | Strengths | Weaknesses |
|---|---|---|---|
| Penalty Functions | Fixed, Dynamic, Adaptive | Simple implementation, Wide applicability | Parameter sensitivity, Premature convergence |
| Feasibility Preference | Feasibility Rules, Stochastic Ranking, ε-Constraint | Strong feasibility pressure, Good boundary search | Overly greedy selection, Population diversity loss |
| Multi-Objective Reformulation | Bi-objective, Dynamic CMOP, Decomposition | Pareto approach, Maintains diversity | Computational complexity, Specialized algorithms needed |
| Hybrid Techniques | Multi-stage, Situation-based | Adaptability, Robust performance | Implementation complexity, Parameter tuning |

Hybrid and Advanced Approaches

Recent research focuses on hybrid methodologies that combine multiple techniques or introduce learning mechanisms:

- Classification-collaboration constraint handling randomly classifies constraints into K classes, decomposing the original problem into K subproblems with corresponding subpopulations [2]

- Two-stage evolutionary frameworks divide the optimization process into random learning and directed learning stages where subpopulations interact through different strategies [2]

- Learning-based approaches like EALSPM incorporate estimation of distribution models to predict offspring based on information from high-quality individuals [2]

- LLM-assisted meta-optimization uses large language models as meta-optimizers to automatically generate update rules for constrained evolutionary algorithms without human intervention [12]

Experimental Frameworks and Evaluation Methodologies

Standardized Benchmark Problems

Rigorous evaluation of constrained EAs requires standardized benchmark problems with diverse characteristics. The CEC2010 benchmark suite for constrained real-parameter optimization provides 18 scalable problems with features including [12]:

- Separable and non-separable objectives

- Mixed inequality and equality constraints

- Rotated constraint geometries that eliminate coordinate system bias

- Varying feasibility ratios from 0 to 1

- Hybrid constraint types per problem

Problems follow the standard constrained minimization form, with each equality constraint relaxed by a tolerance ϵ = 10^-4 [12]. Typical experimental configurations use search space dimensionalities of D=10 and 30, with maximum function evaluations set to 2×10^5 and 6×10^5, respectively [12].

Performance Metrics and Statistical Validation

Comprehensive evaluation requires multiple performance metrics:

- Solution quality: Best, median, and worst objective values across multiple runs

- Feasibility rate: Percentage of runs converging to feasible solutions

- Computational efficiency: Normalized computation times, distinguishing pure evaluation cost (T1) from full optimization overhead (T2) [12]

- Statistical significance: Experiments typically conducted over 25-31 independent runs with non-parametric statistical tests [12] [2]
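The solution-quality and feasibility-rate metrics listed above can be aggregated with a short helper. The input format (one `(best objective, violation)` pair per independent run), the function name, and the sample numbers are assumptions made for this sketch; the sample values are invented and are not experimental results.

```python
import statistics
from typing import Dict, List, Tuple


def summarize_runs(runs: List[Tuple[float, float]], tol: float = 0.0) -> Dict[str, float]:
    """Summarize final (objective, violation) results over independent runs."""
    if not runs:
        raise ValueError("at least one run is required")
    feasible = [f for f, cv in runs if cv <= tol]
    summary = {"feasibility_rate": len(feasible) / len(runs)}
    if feasible:
        # Quality statistics are computed over feasible runs only.
        summary["best"] = min(feasible)
        summary["median"] = statistics.median(feasible)
        summary["worst"] = max(feasible)
    return summary


# Made-up outcomes of four runs; the last run ended infeasible.
example_runs = [(1.0, 0.0), (3.0, 0.0), (2.0, 0.0), (0.5, 0.2)]
print(summarize_runs(example_runs))
```

Excluding infeasible runs from the quality statistics keeps an attractive but invalid objective value (0.5 in the example) from distorting the comparison.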

Experimental Workflow for Constrained Optimization

The following diagram illustrates a generalized experimental workflow for evaluating constrained evolutionary algorithms:

Application in Drug Design and Discovery

The pharmaceutical domain presents particularly challenging constrained optimization problems that demonstrate the real-world importance of effective constraint handling in EAs.

Constrained Multi-Objective Optimization in Drug Design

De novo drug design (dnDD) aims to create novel molecules satisfying multiple conflicting objectives, naturally forming many-objective optimization problems (ManyOOPs) with more than three objectives [9]. Key objectives include:

- Maximizing drug potency against specific targets

- Maximizing structural novelty

- Optimizing pharmacokinetic profiles

- Minimizing synthesis costs

- Minimizing unwanted side effects [9]

These objectives are typically conflicting (improving one degrades another) and non-commensurable (having different units of measurement), eliminating single optimal solutions and necessitating Pareto-optimal trade-offs [9].

Knowledge-Embedded Constrained Multi-Objective Evolutionary Algorithms

The KMCEA algorithm exemplifies advanced constraint handling for personalized drug target recognition in cancer treatment [13]. This approach:

- Analyzes relationships between objectives (minimizing driver nodes, maximizing prior-known drug-target information) and constraints (guaranteeing network control)

- Creates single-objective auxiliary tasks to optimize individual objectives separately

- Designs a local auxiliary task to maintain diversity for objectives with complex inverse constraint relationships

- Develops specialized population initialization for objectives with simple positive constraint relationships [13]

Research Reagent Solutions for Pharmaceutical Applications

Table 3: Essential Research Reagents and Computational Tools for Constrained EA Applications in Drug Discovery

| Tool/Resource | Type | Function in Constrained Optimization |
|---|---|---|
| CEC2010/2017 Benchmark Suites | Computational Benchmark | Standardized constrained problems for algorithm validation |
| Structural Network Control Models | Biological Network Model | Framework for formulating drug target recognition as CMOPs |
| KMCEA Algorithm | Constrained Multi-Objective EA | Solves structural network control problems with embedded knowledge |
| Decomposition-based Multi-Objective EA | Algorithmic Framework | Handles COPs through problem decomposition |
| LLM-assisted Meta-Optimizer | Advanced EA Framework | Generates update rules for CEAs without human intervention |
| Quantitative Structure-Activity Relationship (QSAR) | Predictive Model | Estimates biological activity for molecular fitness evaluation |
| Molecular Docking Tools | Simulation Software | Evaluates drug-target binding affinity as objective function |

Implementation Framework and Code Structure

Generalized Algorithmic Framework for Constrained EAs

The following diagram illustrates the architecture of a modern constrained evolutionary algorithm, incorporating advanced constraint-handling techniques:

LLM-Assisted Meta-Optimization Framework

Recent advances incorporate large language models as meta-optimizers to automatically design update rules for constrained evolutionary algorithms:

The LLM-assisted approach employs specialized prompt engineering with five key components [12]:

- Role Definition: Specifies the LLM's function as a meta-optimizer

- Task Description: Provides decision variables, constraint violations, and objective values

- Operating Requirement: Details steps for generating update rules

- History Feedback: Includes previous rules and their performance

- Output Format: Defines structured output for automated processing
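The five components above can be pictured as sections of a single text prompt. The following is a minimal sketch of how such a prompt might be assembled; the `Candidate` class, the `build_meta_prompt` function, the section wording, and the JSON reply format are all illustrative assumptions and are not taken from [12].

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    x: list            # decision variables
    violation: float   # total constraint violation
    objective: float   # objective value


def build_meta_prompt(population, history):
    """Assemble the five prompt components into one text prompt.

    population: list of Candidate (hypothetical container)
    history: list of (rule_text, score) pairs from earlier iterations
    """
    task_lines = [
        f"x={c.x}, violation={c.violation:.3g}, objective={c.objective:.3g}"
        for c in population
    ]
    history_lines = [f"rule={rule!r}, score={score:.3g}" for rule, score in history]
    # Section texts below are placeholders, not the prompts used in the cited work.
    sections = [
        ("Role Definition", "You are a meta-optimizer that designs update rules "
                            "for a constrained evolutionary algorithm."),
        ("Task Description", "\n".join(task_lines)),
        ("Operating Requirement", "Propose one update rule; reduce constraint "
                                  "violation first, then the objective."),
        ("History Feedback", "\n".join(history_lines) or "none"),
        ("Output Format", 'Reply with JSON of the form {"rule": "<expression>"}.'),
    ]
    return "\n\n".join(f"[{name}]\n{body}" for name, body in sections)


prompt = build_meta_prompt(
    [Candidate([0.2, 1.5], 0.3, 4.1), Candidate([0.9, 0.4], 0.0, 5.7)],
    history=[("x + 0.1 * (best - x)", 4.8)],
)
print(prompt)
```

Keeping the output format machine-readable is what lets the returned rule be parsed and evaluated automatically in the next iteration.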

Because EAs are at their core unconstrained optimizers, decades of research have gone into sophisticated constraint-handling techniques. From simple penalty functions to knowledge-embedded multitasking algorithms and LLM-assisted meta-optimizers, the field has evolved to address increasingly complex real-world problems. In pharmaceutical applications like drug design, these advanced constrained EAs enable the simultaneous optimization of multiple conflicting objectives while satisfying critical pharmacological constraints. As evolutionary computation continues to integrate with machine learning and artificial intelligence, the capacity to handle constraints effectively will remain central to applying these powerful optimization techniques to the most challenging problems in science and engineering.

Constrained optimization problems are ubiquitous in real-world applications, from engineering design to drug development. When solving these problems, Evolutionary Algorithms (EAs) must effectively manage constraints to locate feasible solutions with optimal performance. The methods for achieving this fall into two fundamental categories: explicit and implicit constraint handling. This dichotomy represents a core conceptual division in how algorithms perceive and respond to constraints during the search process. Explicit techniques maintain constraints as separate, defined entities within the algorithm's logic, while implicit techniques embed constraint satisfaction directly into the problem's representation or operational mechanics. Within the broader thesis on how evolutionary algorithms handle constraints, understanding this dichotomy is essential for selecting, designing, and improving algorithms for complex optimization tasks in research and industry [8].

The challenge of constraints is particularly pronounced in multi-objective optimization problems (MOOPs), where algorithms must balance competing objectives while satisfying a set of constraints. Real-world problems in fields like drug development often involve physical, geometrical, or operational constraints, which can make it challenging to find even a single feasible solution [14]. This paper provides an in-depth technical examination of the explicit-implicit dichotomy, presenting a structured comparison, detailed experimental methodologies, and practical resources for researchers.

Defining the Dichotomy: Explicit and Implicit Techniques

Explicit Constraint Handling Techniques

Explicit constraint handling techniques are characterized by the direct and separate evaluation of constraints during the evolutionary process. These methods explicitly define constraints as part of the problem formulation and are designed to respect and enforce them by guiding the search towards feasible regions of the solution space [14]. The algorithm typically computes a measure of constraint violation, which is then used alongside the objective function to guide selection.

Common explicit approaches include [8] [15]:

- Penalty Functions: Transform constrained problems into unconstrained ones by adding a penalty to the objective function based on the degree of constraint violation. Variations include static, dynamic, and adaptive penalties.

- Constraint Dominance Principles (CDP): Feasible solutions are always preferred over infeasible ones. Among feasible solutions, dominance applies, while among infeasible solutions, the one with smaller constraint violation is better.

- Multi-objective Optimization Techniques: Treat constraints as additional objectives to be minimized, converting a constrained single-objective problem into an unconstrained multi-objective one.
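As an illustration of the second item, the feasibility rules reduce to a three-case comparison. The sketch below assumes a single-objective minimization problem (so "dominance" among feasible solutions becomes a plain objective comparison) and represents each solution as a hypothetical `(objective, violation)` pair.

```python
def cdp_better(a, b):
    """Return True if solution a is preferred over b under the feasibility rules.

    a, b: (objective, violation) pairs; minimization; violation == 0 means feasible.
    """
    f_a, cv_a = a
    f_b, cv_b = b
    if cv_a == 0 and cv_b == 0:
        return f_a < f_b      # both feasible: compare objectives
    if cv_a == 0 or cv_b == 0:
        return cv_a == 0      # exactly one feasible: the feasible one wins
    return cv_a < cv_b        # both infeasible: smaller violation wins


print(cdp_better((5.0, 0.0), (1.0, 0.2)))  # feasible beats infeasible despite worse objective
```

Note that the objective value of an infeasible solution never enters the comparison, which is why this rule needs no penalty parameter.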

Implicit Constraint Handling Techniques

Implicit constraint handling techniques, in contrast, do not require the explicit form of constraints to be directly evaluated during the search process. Instead, they inherently satisfy constraints through specialized representations, operators, or dynamic adjustments to the search space. This approach avoids the need for additional objective function evaluations specifically for constraint checking [14].

Notable implicit methods include [16] [14]:

- Special Representations and Operators: Use problem-specific chromosome encodings and genetic operators that ensure only feasible solutions are produced. For example, permutations for sequencing problems or tree structures for genetic programming.

- Boundary Update (BU) Methods: An implicit technique that dynamically updates variable bounds using the constraints themselves, effectively cutting the infeasible search space over iterations.

- Constraint Consensus Methods: Techniques such as the Maximum Direction-based Method (DBmax) guide infeasible solutions toward feasibility without explicit penalty functions.

The fundamental distinction lies in how constraints are integrated into the search mechanism. Explicit methods evaluate and use constraint violation as a separate, visible component of the fitness evaluation, while implicit methods embed constraint satisfaction into the very fabric of the search process, often making constraints "invisible" to the core algorithm.

Table 1: Comparative Analysis of Explicit and Implicit Constraint Handling Techniques

| Feature | Explicit Techniques | Implicit Techniques |

|---|---|---|

| Core Principle | Constraints are directly evaluated and handled as separate entities [14] | Constraints are satisfied inherently through representation or space alteration [14] |

| Common Methods | Penalty functions, CDP, ɛ-constraint, multi-objective ranking [8] [15] | Special representations/operators, decoders, Boundary Update (BU) [16] [14] |

| Implementation | Easier to implement for general problems | Often requires deep problem knowledge for representation/operator design |

| Search Space | Searches the entire space but penalizes infeasible regions | Often restricts search to feasible regions or repairs infeasible solutions |

| Feasibility Guarantee | Does not guarantee feasible solutions | Can guarantee feasibility with proper representation/repair |

| Primary Challenge | Balancing objectives and constraints; parameter tuning (e.g., penalty factors) | Potential loss of diversity; designing effective representations/operators |

Table 2: Quantitative Performance Comparison of Techniques on Benchmark Problems (Illustrative Data based on cited research)

| Technique Category | Sample Method | Typical Feasibility Rate (%) | Convergence Speed | Remarks on Performance |

|---|---|---|---|---|

| Explicit | Adaptive Penalty [15] | High (90-100%) | Medium | Performance highly dependent on penalty parameter tuning |

| Explicit | Feasibility Rules (CDP) [8] | High (90-100%) | Fast in early, slow in late | Simple and effective; may stagnate with disconnected feasible regions |

| Implicit | Boundary Update (BU) [14] | Very High (95-100%) | Fast initial feasibility | Rapidly finds feasible region; may twist search space |

| Implicit | Special Representations [17] | 100% by design | Varies by problem | Guarantees feasibility; search limited to feasible space only |

Experimental Protocols and Methodologies

Protocol for Evaluating Explicit Methods: Adaptive Penalty Approach

A common and powerful explicit method is the adaptive penalty function. The following protocol outlines its implementation and evaluation [15]:

- Problem Formulation: Define the constrained optimization problem as minimizing f(x) subject to g_i(x) ≤ 0, i = 1, ..., n.

- Algorithm Selection: Choose a base EA (e.g., Genetic Algorithm, Differential Evolution).

- Fitness Assignment: For each individual in the population, calculate the fitness F(x) using an adaptive penalty function: F(x) = f(x) + Σ [ α_i * max(0, g_i(x))^β ], where α_i are penalty coefficients that adapt based on the current generation's performance (e.g., increased if most solutions are infeasible, decreased otherwise) and β is a constant, often set to 2 for a quadratic penalty.

- Selection and Variation: Apply selection, crossover, and mutation operators based on the computed fitness F(x).

- Termination and Analysis: Run the algorithm for a fixed number of generations or until convergence. Record key metrics: best solution found, feasibility rate, number of function evaluations to find the first feasible solution, and convergence history.
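The fitness-assignment step of this protocol can be sketched as follows. The function names, the multiplicative update factor, and the 50% feasibility threshold are assumptions chosen for illustration; [15] may use a different adaptation rule.

```python
def penalized_fitness(f_value, g_values, alphas, beta=2.0):
    """F(x) = f(x) + sum_i alpha_i * max(0, g_i(x))**beta."""
    return f_value + sum(a * max(0.0, g) ** beta for a, g in zip(alphas, g_values))


def adapt_alphas(alphas, feasible_fraction, threshold=0.5, factor=1.5):
    """Assumed rule: raise penalties when most solutions are infeasible, lower them otherwise."""
    if feasible_fraction < threshold:
        return [a * factor for a in alphas]
    return [a / factor for a in alphas]


# One individual with f(x) = 1.0; g_1 is violated by 0.5, g_2 is satisfied.
print(penalized_fitness(1.0, [0.5, -1.0], [10.0, 10.0]))  # 1.0 + 10 * 0.5**2
print(adapt_alphas([1.0, 1.0], feasible_fraction=0.2))    # mostly infeasible: penalties grow
```

`adapt_alphas` would be called once per generation, after the feasible fraction of the current population has been measured.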

Protocol for Evaluating Implicit Methods: Boundary Update (BU) with Switching

The Boundary Update (BU) method is a sophisticated implicit technique. The following protocol details its application, including novel switching mechanisms to mitigate search space distortion [14]:

- Initialization: Define the original variable bounds [LB, UB] and initialize a population.

- BU Phase: For each generation during this phase:

  - Identify Repairing Variable: Select a variable x_i that participates in the largest number of active constraints.

  - Update Bounds: For the selected x_i, compute new bounds [lb_i^u, ub_i^u] using the constraints. For a constraint g_j(x) ≤ 0, solve for x_i to find the feasible interval. The new bounds are the intersection of all such intervals and the original [LB_i, UB_i]:

    lb_i^u = min(max( l_i,1(x_{≠i}), l_i,2(x_{≠i}), ..., LB_i ), UB_i)

    ub_i^u = max(min( u_i,1(x_{≠i}), u_i,2(x_{≠i}), ..., UB_i ), LB_i)

  - Evolve Population: The EA operates with these dynamically updated bounds, effectively narrowing the search towards the feasible region.

- Switching Mechanism: To prevent the twisted search space from hindering optimization after the feasible region is found, implement a switch to deactivate BU.

  - Hybrid-cvtol: Switch when the overall constraint violation of the population reaches zero (or a very low tolerance).

  - Hybrid-ftol: Switch when the objective function value shows no significant improvement over a number of generations.

- Post-Switch Phase: Continue the optimization using a standard EA (e.g., with an explicit CHT like Feasibility Rules) on the original search space.
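The bound-update formulas and the Hybrid-cvtol switch can be sketched as below. The sketch assumes that each constraint has already been solved for the repairing variable by hand, giving functions of the remaining variables that return a lower or upper limit; the function names and the two-constraint example are hypothetical.

```python
def updated_bounds(lower_fns, upper_fns, x_rest, LB_i, UB_i):
    """Compute [lb_i^u, ub_i^u] for the repairing variable x_i.

    lower_fns / upper_fns: callables mapping the other variables (x_rest) to a
    lower / upper limit on x_i implied by one constraint.
    """
    lo = max([fn(x_rest) for fn in lower_fns] + [LB_i])
    hi = min([fn(x_rest) for fn in upper_fns] + [UB_i])
    # Clamp so the updated bounds never leave the original interval.
    return min(lo, UB_i), max(hi, LB_i)


def cvtol_switch(population_violations, tol=0.0):
    """Hybrid-cvtol: deactivate BU once the population's summed violation is within tol."""
    return sum(population_violations) <= tol


# Hypothetical example: repair x0 in [0, 5] given x1 = 2, under
#   x0 + x1 <= 4    ->  x0 <= 4 - x1
#   x1 - 2*x0 <= 0  ->  x0 >= x1 / 2
lower_fns = [lambda rest: rest[0] / 2]
upper_fns = [lambda rest: 4 - rest[0]]
print(updated_bounds(lower_fns, upper_fns, x_rest=[2.0], LB_i=0.0, UB_i=5.0))
print(cvtol_switch([0.0, 0.0, 0.0]))
```

Because the limits depend on the other variables of each individual, the bounds are recomputed per solution, which is what reshapes the search space and motivates the later switch back to the original bounds.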

The Researcher's Toolkit: Essential Materials and Reagents

Table 3: Key Research Reagent Solutions for Constrained Evolutionary Optimization

| Reagent / Tool | Function in Research | Example Use Case |

|---|---|---|

| Benchmark Test Suites (e.g., LIR-CMOP, DAS-CMOP) | Provides standardized, non-trivial problems with known properties to fairly evaluate and compare algorithm performance [18] | Testing an algorithm's ability to handle complex, disconnected feasible regions before real-world application. |

| Differential Evolution (DE) Framework | Serves as a robust and versatile base EA for implementing and testing new CHTs, especially for continuous optimization [16] | Acting as the core search engine in a cooperative co-evolution framework for large-scale constrained optimization. |

| Constraint Consensus Method (DBmax) | A mathematical tool to guide infeasible solutions towards feasibility by identifying the direction of maximum constraint violation reduction [16] | Integrated with an EA to rapidly improve feasibility of subpopulations in large-scale problems. |

| Feasibility Rules (CDP) | A simple, parameter-less explicit CHT that provides a baseline for comparison and is often hybridized with more complex methods [14] | Used as the primary selection criterion in the post-switch phase of a hybrid BU method. |

| Multi-objective EA (e.g., NSGA-II) | Allows constraints to be handled by treating them as additional objectives, a powerful explicit approach [8] | Solving problems where the trade-off between objective performance and constraint satisfaction is itself informative. |

Advanced Hybrids and Future Directions

The explicit-implicit dichotomy is not absolute, and modern research often focuses on hybrid approaches that leverage the strengths of both. The Knowledge-driven Two-stage Co-evolutionary Algorithm (KTCOEA) is a prime example, where one population explicitly co-evolves towards the constrained Pareto front (CPF), while another explores the unconstrained Pareto front (UPF), generating both explicit and implicit knowledge to guide the final search [18]. Another trend is the integration of LLMs as subjective judges in evolutionary loops, which replaces the traditional objective function with a describable, qualitative standard, opening new avenues for constraint handling in open-ended domains [19].

The dichotomy between explicit and implicit constraint handling remains a foundational framework for understanding and advancing evolutionary computation. Explicit methods offer generality and conceptual simplicity, while implicit methods can provide efficiency and guarantee feasibility by leveraging problem structure. The current trajectory of research points toward intelligent, adaptive, and knowledge-driven hybrids that dynamically select the most effective strategies from both paradigms. For researchers and scientists in computationally intensive fields like drug development, a deep understanding of this dichotomy is crucial for selecting the right tool for the problem at hand and for contributing to the development of the next generation of robust optimization algorithms.

In the broader research on how evolutionary algorithms handle constraints, the accurate quantification of constraint violation and the strategic identification of feasible solutions are foundational to algorithm performance. Constrained Multi-Objective Evolutionary Algorithms (CMOEAs) are designed to solve problems that require optimizing multiple conflicting objectives simultaneously while satisfying various constraints [3]. The performance of these algorithms hinges on the effectiveness of their Constraint Handling Techniques (CHTs), which guide the search process by balancing the drive for optimal performance with the necessity of remaining within feasible regions. This guide details the core metrics and methodologies that enable this balance, providing researchers, particularly those in computationally complex fields like drug development, with the tools to implement and evaluate advanced CMOEAs.

Fundamental Concepts and Definitions

A Constrained Multi-Objective Optimization Problem (CMOP) can be mathematically formulated as follows [20] [3]:

[ \begin{array}{l} \min \vec{F}(\vec{x}) = \left( f_{1}(\vec{x}), f_{2}(\vec{x}), \ldots, f_{m}(\vec{x}) \right)^{\text{T}} \\ \text{s.t. } \left\{ \begin{array}{l} g_{i}(\vec{x}) \le 0, \; i = 1, \ldots, l \\ h_{i}(\vec{x}) = 0, \; i = 1, \ldots, k \\ \vec{x} = \left( x_{1}, x_{2}, \ldots, x_{D} \right)^{\text{T}} \in \mathbb{R}^{D} \end{array} \right. \end{array} ]

Here, ( \vec{F} ) is the objective vector with ( m ) functions, ( \vec{x} ) is a ( D )-dimensional decision vector within the search space ( \mathbb{R}^{D} ), and ( g_{i}(\vec{x}) ) and ( h_{i}(\vec{x}) ) represent the ( l ) inequality and ( k ) equality constraints, respectively [3].

A solution ( \vec {x} ) is feasible if it satisfies all constraints. The set of all feasible solutions is the feasible region. The Pareto optimal set is the collection of solutions where no objective can be improved without worsening another; its image in the objective space is the Pareto front (PF). For CMOPs, the goal is to find the constrained Pareto set (CPS) and constrained Pareto front (CPF), which are the Pareto optimal solutions residing within the feasible region [3].

Quantifying Constraint Violation

The degree of constraint violation is a numerical measure of how much a solution breaches constraints. For a solution ( \vec{x} ), the violation for each constraint is calculated as [21] [3]:

[ cv_{i}(\vec{x}) = \left\{ \begin{array}{ll} \max\left( 0, g_{i}(\vec{x}) \right), & i = 1, \ldots, l \\ \max\left( 0, \left| h_{i}(\vec{x}) \right| - \delta \right), & i = 1, \ldots, k \end{array} \right. ]

The parameter ( \delta ) is a small positive tolerance value (e.g., ( 10^{-6} )) that converts equality constraints into inequality constraints [21]. The total constraint violation ( CV(\vec{x}) ) is the sum of all individual violations [3]:

[ CV(\vec{x}) = \sum_{i=1}^{l+k} cv_{i}(\vec{x}) ]

A solution is feasible if ( CV(\vec{x}) = 0 ); otherwise, it is infeasible.
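A direct translation of these definitions into code might look like the following sketch. The function name and the two example constraints are assumptions made for illustration.

```python
def total_violation(x, inequalities, equalities, delta=1e-6):
    """CV(x): sum of max(0, g_i(x)) over inequalities and max(0, |h_i(x)| - delta) over equalities."""
    cv = [max(0.0, g(x)) for g in inequalities]
    cv += [max(0.0, abs(h(x)) - delta) for h in equalities]
    return sum(cv)


# Hypothetical constraints: g(x) = x0 + x1 - 1 <= 0 and h(x) = x0 - x1 = 0.
inequalities = [lambda x: x[0] + x[1] - 1.0]
equalities = [lambda x: x[0] - x[1]]

print(total_violation((1.0, 1.0), inequalities, equalities))  # violates g by 1.0
print(total_violation((0.5, 0.5), inequalities, equalities))  # feasible: CV == 0
```

The tolerance `delta` matters in practice: without it, floating-point rounding would make almost every solution violate an equality constraint.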

Techniques for Feasibility Identification and Balancing

Simply distinguishing between feasible and infeasible solutions is often insufficient for effective optimization. Advanced CMOEAs employ sophisticated strategies to leverage information from infeasible solutions, particularly those that are promising for convergence or diversity.

The Adaptive Constraint Regulation Strategy

This strategy regulates the constraint violation of an infeasible solution based on its ( K )-nearest neighbors in the population [20]. The core idea is to achieve different trade-offs between constraints and objectives in different regions of the search space. The regulated constraint violation ( G_r(x) ) for a solution ( x ) is calculated as [20]:

[ G_r(x) = G(x) \times \left( 1 + \eta \times \frac{R_x}{K} \right) ]

- ( G(x) ): Original total constraint violation.

- ( R_x ): Number of feasible solutions among the ( K )-nearest neighbors of ( x ).

- ( \eta ): A scaling factor.

- ( K ): The neighborhood size, adaptively adjusted based on the number of feasible solutions and evolutionary generations.

This approach allows some infeasible solutions with good convergence properties to be treated as feasible, preserving their valuable information [20].
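The regulation formula can be sketched in a few lines. The source does not state in which space the neighbors are measured, so this sketch assumes Euclidean distance between the supplied points; the function name and the example data are hypothetical, and the adaptive adjustment of K is omitted.

```python
import math


def regulated_violation(idx, points, violations, K, eta):
    """G_r(x) = G(x) * (1 + eta * R_x / K) for the solution at position idx.

    points: coordinates used for the neighbor search (assumption: Euclidean distance)
    violations: total constraint violation G of each solution (0 means feasible)
    """
    others = [j for j in range(len(points)) if j != idx]
    others.sort(key=lambda j: math.dist(points[idx], points[j]))
    neighbours = others[:K]
    R_x = sum(1 for j in neighbours if violations[j] == 0)  # feasible neighbors
    return violations[idx] * (1 + eta * R_x / K)


points = [(0, 0), (1, 0), (0, 1), (5, 5)]
violations = [2.0, 0.0, 0.0, 3.0]
print(regulated_violation(0, points, violations, K=2, eta=0.5))  # both neighbors feasible
```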

Feasibility-Based Grouping

This method divides the population into distinct feasible and infeasible groups [22]. Evaluation and ranking are performed separately within each group. Parents for reproduction are then selected from both groups according to a tunable parameter ( S_p ), which determines the proportion of parents chosen from the feasible group. This ensures infeasible solutions with useful genetic information can contribute to the evolutionary process [22].
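A minimal sketch of this grouping scheme is shown below. It assumes a single objective to keep the ranking simple: feasible solutions are ranked by objective value and infeasible ones by violation, and parents are taken from the top of each ranking. These ranking choices and the function name are assumptions, not details from [22].

```python
def select_parents(population, n_parents, S_p):
    """Pick parents from separately ranked feasible and infeasible groups.

    population: list of (objective, violation) pairs, minimization
    S_p: proportion of parents taken from the feasible group
    """
    feasible = sorted((s for s in population if s[1] == 0), key=lambda s: s[0])
    infeasible = sorted((s for s in population if s[1] > 0), key=lambda s: s[1])
    n_feasible = min(len(feasible), round(S_p * n_parents))
    n_infeasible = min(len(infeasible), n_parents - n_feasible)
    return feasible[:n_feasible] + infeasible[:n_infeasible]


population = [(3.0, 0), (1.0, 0), (2.0, 0), (0.5, 1.0), (0.2, 0.1)]
print(select_parents(population, n_parents=4, S_p=0.75))
```

With `S_p = 0.75`, one of the four parent slots is reserved for the least-violating infeasible solution even though feasible candidates are available.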

Two-Stage Archive Framework

Some algorithms use a multi-stage approach with an archive. In the first stage, constraints are relaxed based on the proportion of feasible solutions and their constraint violation degrees, allowing the population to explore a wider search space. In the second stage, the archive and main population share information, and strict constraint dominance principles are applied to refine solutions and enhance feasibility [21].

Feasibility-Guided and Predictor Models

For computationally expensive problems, such as pump station design, a Feasibility Predictor Model (FPM) can be integrated [23]. The FPM is a machine learning classifier trained to identify feasible solutions before performing complex simulations. This hybrid approach significantly reduces computational burden by filtering out infeasible solutions early [23].

Quantitative Comparison of Constraint Handling Techniques

The table below summarizes the core mechanisms, advantages, and challenges of different CHTs.

Table 1: Classification and Comparison of Constraint Handling Techniques

| Technique Category | Core Mechanism | Key Advantage | Primary Challenge |

|---|---|---|---|

| Penalty Function [20] [3] | Adds constraint violation to objectives, scaled by a penalty factor. | Simple to implement and widely applicable. | Performance highly dependent on the choice of penalty factor. |

| Feasibility-Based Ranking (e.g., CDP) [20] [3] | Feasible solutions are always preferred over infeasible ones. | Parameter-free and straightforward. | May discard valuable infeasible solutions, leading to local optima. |

| Multi-Objective (e.g., C-TAEA) [20] [21] | Treats constraints as additional objectives to be optimized. | Effectively utilizes information from infeasible solutions. | Requires careful design of bias/search strategy for the new objectives. |

| Hybrid/Multi-Stage (e.g., PPS, CMOEA-TA) [20] [21] | Divides search into distinct phases (e.g., push/pull, explore/exploit). | Can effectively balance exploration and exploitation. | Requires design of switching criteria and phase-specific strategies. |

| Adaptive Regulation [20] | Adjusts a solution's violation based on its local neighborhood. | Context-aware balancing of feasibility, convergence, and diversity. | Sensitive to the choice of neighborhood size ( K ) and scaling factor. |

Experimental Protocols for Evaluating CHTs

To ensure reproducible and comparable results in CMOEA research, standardized experimental protocols are essential. The following methodology outlines a robust evaluation framework.

Benchmark Test Problems

- Selection: Use established benchmark suites like C-DTLZ, CTP, LIR-CMOP, and DOC to test algorithms on problems with diverse characteristics, including disconnected feasible regions and complex constraint landscapes [20] [3].

- Scale: Testing should involve a substantial number of problems (e.g., 41 to 54 test instances) so that conclusions generalize across problem characteristics rather than reflecting a few favorable instances [20] [21].

Performance Metrics

Two primary metrics are used to evaluate the final population's quality:

- Inverted Generational Distance (IGD): Measures convergence and diversity by averaging, over a set of reference points sampled from the true constrained Pareto front (CPF), the distance from each reference point to its nearest solution found by the algorithm. A lower IGD indicates better performance [21].

- Hypervolume (HV): Measures the volume of the objective space dominated by the solution set and bounded by a reference point. A higher HV indicates better convergence and diversity [21].
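Both metrics are short to compute in the two-objective case. The sketch below assumes minimization, uses Euclidean distance for IGD, and restricts hypervolume to two objectives (higher dimensions need a dedicated algorithm); the function names are illustrative.

```python
import math


def igd(reference_front, solutions):
    """Mean distance from each reference point to its nearest obtained solution."""
    return sum(
        min(math.dist(r, s) for s in solutions) for r in reference_front
    ) / len(reference_front)


def hypervolume_2d(solutions, ref_point):
    """Area dominated by the solutions and bounded by ref_point (two objectives, minimization)."""
    pts = sorted(p for p in solutions if p[0] < ref_point[0] and p[1] < ref_point[1])
    hv, best_f2 = 0.0, ref_point[1]
    for f1, f2 in pts:             # sweep in order of increasing f1
        if f2 < best_f2:           # point is non-dominated so far
            hv += (ref_point[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return hv


print(igd([(0.0, 1.0), (1.0, 0.0)], [(0.0, 1.0), (1.0, 1.0)]))
print(hypervolume_2d([(1, 3), (2, 2), (3, 1)], ref_point=(4, 4)))
```

IGD needs a sampled true CPF, so it is mainly usable on benchmarks, while HV only needs a reference point and therefore also applies to real-world problems with unknown fronts.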

Comparative Analysis

- Baseline Algorithms: Compare the new algorithm against multiple state-of-the-art CMOEAs (e.g., 7 or more competitors) [20] [21].

- Statistical Testing: Perform multiple independent runs of each algorithm on each test problem. Report the mean and variance of the performance metrics to facilitate statistical significance tests [20].

Protocol for Adaptive Parameter Tuning

For algorithms with adaptive parameters, such as the neighborhood size ( K ) in ACR [20]:

- Initialization: Set an initial value for ( K ) (e.g., a percentage of the population size).

- Update Rule: Define a rule that adjusts the parameter during evolution. For example, ( K ) can be decreased as the number of generations increases or as the number of feasible solutions in the population rises, thereby tightening the feasibility criterion over time [20].

- Validation: Test the sensitivity of the algorithm's performance to different initializations and update rules to ensure robustness.
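One possible update rule for the second step is sketched below. It is purely an assumed schedule for illustration (the rule in [20] may differ): K shrinks linearly with whichever is larger, the elapsed fraction of generations or the current feasible ratio, and never drops below a floor `K_min`.

```python
def update_K(K0, K_min, generation, max_generations, feasible_ratio):
    """Assumed rule: shrink K as the run progresses or as feasibility rises."""
    progress = max(generation / max_generations, feasible_ratio)
    return max(K_min, round(K0 * (1 - progress)))


for gen, ratio in [(0, 0.0), (50, 0.1), (100, 1.0)]:
    print(gen, ratio, update_K(K0=20, K_min=2, generation=gen,
                               max_generations=100, feasible_ratio=ratio))
```

A sensitivity study as described in the validation step would rerun the algorithm with different `K0`, `K_min`, and schedule shapes and compare the resulting metrics.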

Visualization of a General CMOEA Workflow

The following diagram illustrates the core workflow of a generic CMOEA, highlighting the critical role of feasibility identification and constraint violation assessment in the evolutionary process.

The Scientist's Toolkit: Essential Research Reagents

The table below lists key computational "reagents" and tools necessary for researching and implementing CMOEAs.

Table 2: Essential Research Reagents and Tools for CMOEA Development

| Research Reagent / Tool | Function / Purpose | Application Example |

|---|---|---|

| Benchmark Test Suites (C-DTLZ, DOC, etc.) | Provides standardized, scalable test problems with known Pareto fronts to validate and compare algorithm performance [20] [3]. | Used in experimental studies to demonstrate an algorithm's effectiveness against state-of-the-art methods [20] [21]. |

| Performance Metrics (IGD, HV) | Quantifies the convergence and diversity of the obtained solution set, enabling objective comparison of different CMOEAs [21]. | Calculated at the end of multiple algorithm runs to produce statistical performance reports [20]. |

| Feasibility Predictor Model (FPM) | A machine learning classifier (e.g., XGBoost, Random Forest) that pre-filters infeasible solutions to reduce computational cost [23]. | Integrated into the evolutionary loop to avoid expensive simulations for solutions predicted to be infeasible [23]. |

| Adaptive Parameter Controller | A mechanism to dynamically adjust algorithm parameters (e.g., neighborhood size K, penalty factors) during the search process [20]. | Improves robustness by allowing the algorithm to adapt its search strategy based on the current population's state [20]. |

A Taxonomy of Modern Constraint-Handling Techniques and Their Real-World Uses

Constrained optimization problems (COPs) are ubiquitous in engineering and scientific fields, from structural design to drug discovery. Evolutionary Algorithms (EAs), while powerful for global optimization, require specialized mechanisms to handle constraints. Penalty function methods represent a fundamental approach to incorporating constraints into EAs by modifying the objective function to penalize infeasible solutions. The core challenge lies in setting the penalty coefficient, which balances objective function optimization with constraint satisfaction. This guide traces the evolution of these methods from simple static approaches to sophisticated adaptive techniques, framing them within the broader context of how evolutionary algorithms handle constraints.

The fundamental penalty function formulation combines the objective function f(x) and constraint violation G(x) into an expanded function: F(x) = f(x) + rf · G(x), where rf is the penalty coefficient that determines the severity of constraint violation penalties [24]. The effectiveness of this approach hinges critically on selecting an appropriate rf value, which has driven decades of research into increasingly sophisticated penalty methodologies.

Historical Development and Classification

Penalty function methods have evolved significantly from their initial formulations. The historical progression has moved from one-size-fits-all static approaches to context-aware adaptive systems that respond to population characteristics and search progress.

Table: Evolution of Penalty Function Methods

| Era | Method Type | Key Characteristics | Limitations |

|---|---|---|---|

| Early Approaches | Static Penalty | Fixed penalty coefficient throughout evolution [24] | Poor performance across diverse problem types [24] |

| 1990s-2000s | Dynamic Penalty | Predefined variation of coefficient with generations [24] | Difficulty defining general trend functions [24] |

| 2000s-Present | Adaptive Penalty | Automatic adjustment using population information [24] | Increased computational complexity [24] |

| Recent Trends | Learning-Driven | Integration with machine learning and co-evolution [25] | Implementation complexity [25] |

The classification of penalty function methods extends beyond this historical timeline to include specialized approaches:

- Death Penalty: Immediately discards all infeasible solutions

- Self-Adaptive Penalty: Automatically adjusts parameters based on search progress [26]

- Co-evolutionary Penalty: Maintains multiple populations with different penalty factors [27]

Static Penalty Methods

Static penalty methods employ a constant penalty coefficient throughout the evolutionary process. The expanded objective function maintains the same balance between objective optimization and constraint satisfaction from initial population to final solution.

Fundamental Formulation

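The traditional static penalty approach defines the expanded objective function as:

F(x) = f(x) + rf · G(x)

Where rf remains constant regardless of the generation or population distribution [24]. The constraint violation G(x) is typically calculated as the sum of individual constraint violations:

G(x) = Σ_j G_j(x)

With each constraint violation computed differently for inequality and equality constraints [24]: G_j(x) = max(0, g_j(x)) for an inequality constraint and G_j(x) = max(0, |h_j(x)| − δ) for an equality constraint with tolerance δ, matching the definitions given earlier.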
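To see how strongly the result depends on rf in F(x) = f(x) + rf · G(x), consider a toy problem chosen for illustration: minimize f(x) = x² subject to x ≥ 1, whose constrained optimum is x = 1. The helper names and the grid search below are assumptions made to keep the sketch self-contained.

```python
def make_penalized(f, G, rf):
    """Return F(x) = f(x) + rf * G(x) with a fixed (static) penalty coefficient."""
    return lambda x: f(x) + rf * G(x)


f = lambda x: x * x                # objective
G = lambda x: max(0.0, 1.0 - x)    # violation of the constraint 1 - x <= 0
grid = [i / 100 for i in range(201)]   # candidate x values in [0, 2]


def best_on_grid(rf):
    """Minimizer of the penalized function over the grid."""
    return min(grid, key=make_penalized(f, G, rf))


print(best_on_grid(0.5))   # penalty too weak: minimizer is infeasible
print(best_on_grid(10.0))  # penalty strong enough: minimizer is the constrained optimum
```

With rf = 0.5 the penalized minimum sits at x = 0.25, well inside the infeasible region, while rf = 10 recovers x = 1.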

Limitations and Applications

The primary limitation of static penalty methods is their problem-dependent performance. A penalty value suitable for one problem may severely hinder performance on another [24]. Furthermore, as optimization progresses, the same penalty coefficient may not be appropriate throughout different stages of the search process.

Despite these limitations, static penalty methods remain relevant due to their implementation simplicity and computational efficiency. They can perform adequately on problems where the relationship between objective function and constraint violations is well-understood, allowing for informed setting of the penalty coefficient.

Dynamic Penalty Methods

Dynamic penalty methods address the rigidity of static approaches by varying the penalty coefficient according to a predefined schedule throughout the evolutionary process.

Core Mechanism

In dynamic penalty methods, the penalty coefficient rf becomes a function of the generation number t:

rf = f(t)

Where f(t) is a predefined function of the generation counter (not to be confused with the objective f(x)), typically increasing over time to apply greater selective pressure toward feasibility as evolution progresses [24]. This temporal variation allows for more flexible search behavior across different evolutionary stages.

Implementation Considerations

The design of the dynamic function f(t) represents the critical implementation decision. Common approaches include:

- Linear increase: Simple but may not match problem characteristics

- Exponential growth: Rapidly increases selection pressure

- Step functions: Applies discrete changes at predetermined generations

While more effective than static approaches, dynamic methods still require prior knowledge to set appropriate trend functions [24]. The performance remains problem-dependent, as different problems may benefit from distinct dynamic profiles.
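The three schedule shapes listed above can be written as small functions of the generation t and the generation budget T. The start and end values and the step levels below are arbitrary illustrative choices, not recommended settings.

```python
def linear_schedule(t, T, r0=1.0, r_max=100.0):
    """Penalty coefficient grows linearly from r0 at t=0 to r_max at t=T."""
    return r0 + (r_max - r0) * t / T


def exponential_schedule(t, T, r0=1.0, r_max=100.0):
    """Penalty coefficient grows geometrically from r0 to r_max."""
    return r0 * (r_max / r0) ** (t / T)


def step_schedule(t, T, levels=(1.0, 10.0, 100.0)):
    """Penalty coefficient jumps between fixed levels at equally spaced generations."""
    idx = min(len(levels) - 1, t * len(levels) // T)
    return levels[idx]


for t in (0, 45, 90):
    print(t, linear_schedule(t, 90), exponential_schedule(t, 90), step_schedule(t, 90))
```

Each function would replace the constant rf in the static formulation, so that the same individual is penalized more heavily late in the run than early on.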

Adaptive Penalty Methods

Adaptive penalty methods represent a significant advancement by automatically adjusting penalty coefficients based on information extracted from the current population. These methods respond to search progress rather than following predetermined patterns.

Information-Driven Adaptation

Adaptive methods leverage various population characteristics to guide penalty adjustment:

- Feasibility Proportion: The percentage of feasible solutions in the population [24]

- Objective-Constraint Relationship: Correlation between objective values and constraint violations [2]

- Search Progress: Convergence metrics and diversity measures

The adaptive fuzzy penalty method (AFPDE) exemplifies this approach by operating at two levels. At the individual level, each solution selects a penalty coefficient from a predefined domain using fuzzy rules based on normalized objective and constraint violation values. At the population level, the output domain is adjusted adaptively using population information [24].

Fuzzy Logic Approaches

Fuzzy penalty methods incorporate expert knowledge through fuzzy rules and membership functions. The AFPDE method uses normalized objective function values and constraint violations as antecedent variables in fuzzy rules to determine appropriate penalty coefficients for each individual [24]. This approach reduces problem dependency by normalizing the scales of objective functions and constraint violations.

Table: Adaptive Penalty Method Variations

| Method | Adaptation Mechanism | Key Features | Application Context |

|---|---|---|---|

| Adaptive Fuzzy Penalty (AFPDE) | Two-level fuzzy rules | Normalized objectives and constraints [24] | General constrained optimization |

| Co-evolutionary Penalty | Multiple subpopulations | Parallel exploration of penalty factors [27] | Complex constrained problems |

| Two-Stage Adaptive Penalty (TAPCo) | Co-evolution + shuffle stages | Balance exploration/exploitation [27] | Problems with deceptive feasible regions |

Advanced Adaptive Frameworks

Co-evolutionary Approaches

Co-evolutionary frameworks represent a sophisticated adaptive paradigm where penalty factors and solutions evolve simultaneously. The Two-Stage Adaptive Penalty method based on Co-evolution (TAPCo) divides the evolutionary process into distinct phases:

- Co-evolution Stage: The population divides into multiple subpopulations, each associated with a different penalty factor. These subpopulations co-evolve, enabling exploration of diverse regions of the search space [27].

- Shuffle Stage: All subpopulations merge into a single population, using the best-performing penalty factor from the co-evolution stage to intensify exploitation [27].

This coordinated approach enables more effective balancing of exploration and exploitation while adapting penalty pressure to problem characteristics.
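The two stages can be sketched on a toy problem. This is a minimal illustration of the idea, not the TAPCo algorithm of [27]: the test problem, the (mu + mu) Gaussian-mutation search, and the rule used to pick the "best-performing" penalty factor (least violation of each subpopulation's best individual, then objective) are all assumptions.

```python
import random


def objective(x):
    return x[0] ** 2 + x[1] ** 2


def violation(x):
    # Single constraint x0 + x1 >= 1, written as g(x) = 1 - x0 - x1 <= 0.
    return max(0.0, 1.0 - x[0] - x[1])


def penalized(x, r):
    return objective(x) + r * violation(x)


def evolve(pop, r, generations, rng, sigma=0.1):
    """(mu + mu) selection with Gaussian mutation under penalty factor r."""
    for _ in range(generations):
        children = [[xi + rng.gauss(0.0, sigma) for xi in x] for x in pop]
        pop = sorted(pop + children, key=lambda x: penalized(x, r))[:len(pop)]
    return pop


def two_stage_penalty(factors, sub_size=10, stage_gens=(60, 60), seed=0):
    rng = random.Random(seed)
    # Co-evolution stage: one subpopulation per penalty factor.
    subpops = {}
    for r in factors:
        init = [[rng.uniform(-2.0, 2.0), rng.uniform(-2.0, 2.0)]
                for _ in range(sub_size)]
        subpops[r] = evolve(init, r, stage_gens[0], rng)
    # Assumed scoring of penalty factors: least violation, then best objective.
    best_r = min(factors, key=lambda r: (violation(subpops[r][0]),
                                         objective(subpops[r][0])))
    # Shuffle stage: merge all subpopulations and exploit with best_r only.
    merged = [x for pop in subpops.values() for x in pop]
    rng.shuffle(merged)
    final = evolve(merged, best_r, stage_gens[1], rng)
    return final[0], best_r


best, best_r = two_stage_penalty([0.1, 1.0, 10.0, 100.0])
```

On this problem the constrained optimum is at (0.5, 0.5). Very small penalty factors settle in the infeasible region, so the scoring rule tends to select one of the larger factors for the shuffle stage.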

Constraint Decomposition Methods

Recent innovations decompose complex constrained problems into simpler components. The Coevolutionary Algorithm based on Constraints Decomposition (CCMOEA) breaks down CMOPs into multiple helper subproblems, each with a single constraint. This decoupling strategy manages complex constraint interactions more effectively [26].

The algorithm employs a two-stage strategy:

- Evolution Stage: Multiple subpopulations explore feasible regions from different directions by optimizing single-constraint problems.

- Improvement Stage: The main population collects valuable information from subpopulations to solve the original CMOP [26].
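The decomposition step can be illustrated with a short sketch that turns a list of constraints into single-constraint helper violation functions. The function names and the example constraints are hypothetical, and the sketch covers only the decomposition idea, not the full CCMOEA procedure of [26].

```python
from typing import Callable, List, Sequence

# A constraint is a function g with g(x) <= 0 meaning "satisfied".
Constraint = Callable[[Sequence[float]], float]


def decompose(constraints: List[Constraint]) -> List[Constraint]:
    """Return one violation function per constraint (one per helper subproblem)."""
    def make(g: Constraint) -> Constraint:
        return lambda x: max(0.0, g(x))
    return [make(g) for g in constraints]


def total_violation(constraints: List[Constraint], x: Sequence[float]) -> float:
    """Violation of the original problem, which enforces all constraints at once."""
    return sum(max(0.0, g(x)) for g in constraints)


# Hypothetical constraints for illustration.
constraints = [
    lambda x: 1.0 - x[0] - x[1],   # x0 + x1 >= 1
    lambda x: x[0] - 2.0,          # x0 <= 2
    lambda x: x[1] ** 2 - 1.0,     # |x1| <= 1
]
helpers = decompose(constraints)
x = [0.25, 0.25]
per_helper = [h(x) for h in helpers]
overall = total_violation(constraints, x)
```

Here `x` is feasible for the second and third helper subproblems but not for the first, so subpopulations assigned to different helpers would treat the same point differently.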

Experimental Protocols and Methodologies

Benchmarking Frameworks

Rigorous evaluation of penalty methods requires standardized testing protocols. Researchers typically employ established benchmark suites:

- CEC2010 and CEC2017 Constrained Optimization Benchmarks: Standardized test functions for comparing constrained optimization algorithms [2]

- Mechanical Design Problems: Real-world engineering problems including structural optimization [24]

- Cancer Drug Target Recognition Problems: Biological optimization challenges with clinical relevance [13]

Experimental comparisons typically evaluate multiple performance metrics:

- Feasibility Rate: Percentage of runs successfully finding feasible solutions

- Solution Quality: Objective function values of discovered feasible solutions

- Convergence Speed: Generations or function evaluations required to reach satisfactory solutions

- Algorithm Robustness: Performance consistency across multiple runs
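These metrics can be computed from per-run summaries as in the sketch below. The `RunResult` record, the feasibility tolerance, and the sample numbers are made up for illustration. Robustness is approximated here by the standard deviation of the feasible objective values.

```python
from statistics import mean, pstdev
from typing import List, NamedTuple


class RunResult(NamedTuple):
    best_objective: float   # objective value of the best solution in the run
    best_violation: float   # its total constraint violation
    evaluations: int        # function evaluations used by the run


def summarize(runs: List[RunResult], tol: float = 1e-4) -> dict:
    """Aggregate feasibility rate, quality, speed, and robustness over runs."""
    feasible = [r for r in runs if r.best_violation <= tol]
    objs = [r.best_objective for r in feasible]
    return {
        "feasibility_rate": len(feasible) / len(runs),
        "mean_objective": mean(objs) if objs else None,
        "std_objective": pstdev(objs) if objs else None,
        "mean_evaluations": mean(r.evaluations for r in feasible) if feasible else None,
    }


# Hypothetical results of four independent runs.
runs = [
    RunResult(1.0, 0.0, 1000),
    RunResult(2.0, 0.0, 3000),
    RunResult(0.5, 0.2, 5000),   # infeasible run: excluded from quality statistics
    RunResult(3.0, 0.0, 2000),
]
summary = summarize(runs)
```

Excluding infeasible runs from the quality statistics is a design choice. Reporting the feasibility rate alongside them keeps the comparison honest when an algorithm finds good objective values only by violating constraints.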

Implementation Protocols

The adaptive fuzzy penalty method (AFPDE) employs this experimental workflow:

Adaptive Fuzzy Penalty Workflow

- Initialization: Generate initial population and parameter settings

- Normalization: Normalize objective function values and constraint violations using population extremes [24]

- Fuzzy Rule Application: For each individual, determine penalty coefficient using fuzzy rules with normalized objective and constraint values as antecedents [24]

- Population-Level Adjustment: Adapt the output domain of penalty coefficients using population feasibility information [24]

- Solution Reproduction: Generate new solutions using evolutionary operators (e.g., differential evolution)

- Diversity Maintenance: Apply specialized mutation schemes to preserve population diversity [24]

- Termination Check: Evaluate stopping criteria; return to the normalization step if continuing
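The normalization step can be sketched with min-max scaling over the current population. Min-max scaling is an assumption here, since the exact formula used by AFPDE is not reproduced in this article, and the sample values are hypothetical.

```python
from typing import List, Sequence


def min_max(values: Sequence[float]) -> List[float]:
    """Scale values to [0, 1] using the population minimum and maximum."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # Degenerate population: every individual has the same value.
        return [0.0] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical objective values and total violations for three individuals.
objectives = [3.0, 5.0, 11.0]
violations = [0.0, 2.0, 8.0]

norm_objectives = min_max(objectives)
norm_violations = min_max(violations)
```

After scaling, objectives and violations share the range [0, 1], so rules that combine them do not depend on the original units of either quantity.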

The two-stage adaptive penalty method based on co-evolution (TAPCo) follows an alternative protocol, the co-evolutionary penalty workflow, which runs the co-evolution stage followed by the shuffle stage [27].

Applications in Drug Discovery and Development

Penalty function methods have demonstrated particular utility in pharmaceutical research, where multiple constraints naturally arise.

De Novo Drug Design

De novo drug design (dnDD) represents a natural many-objective optimization problem with multiple constraints. Molecular properties function as either objectives or constraints in the optimization process [9]. Penalty methods help balance conflicting objectives such as:

- Maximizing drug potency and selectivity

- Minimizing toxicity and side effects

- Optimizing pharmacokinetic properties

- Maintaining synthetic accessibility

Personalized Drug Target Recognition

The knowledge-embedded multitasking constrained multiobjective evolutionary algorithm (KMCEA) applies constrained optimization to identify personalized drug targets in cancer treatment [13]. This approach formulates drug target recognition as a constrained multiobjective optimization problem that:

- Maximizes prior-known drug-target information

- Minimizes the number of driver nodes in cancer networks

- Maintains network controllability constraints [13]

The algorithm incorporates domain knowledge through specialized initialization methods and auxiliary tasks, demonstrating how penalty methods can integrate biological insights for improved performance [13].

The Scientist's Toolkit

Table: Essential Research Reagents for Penalty Method Implementation

| Tool/Component | Function | Implementation Example |
|---|---|---|
| Differential Evolution (DE) | Search algorithm | Solution reproduction through mutation and crossover [24] |
| Fuzzy Inference System | Penalty adaptation | Mapping normalized objectives/constraints to penalty coefficients [24] |
| Co-evolution Framework | Parallel optimization | Simultaneous evolution of multiple subpopulations with different penalty factors [27] |
| Normalization Module | Scale balancing | Normalizing objective and constraint violation values to comparable ranges [24] |
| Constraint Decomposition | Problem simplification | Breaking complex CMOPs into single-constraint subproblems [26] |
| Evolutionary State Detection | Progress monitoring | Determining evolutionary stage to trigger strategy adaptation [26] |

Future Perspectives

The evolution of penalty function methods continues toward increasingly sophisticated learning-driven approaches. Future developments will likely focus on:

- Deep Learning Integration: Using neural networks to predict effective penalty strategies based on problem characteristics [25]

- Transfer Learning: Applying knowledge from previously solved problems to new optimization challenges [25]

- Hybrid CHTs: Combining penalty methods with other constraint-handling techniques for improved performance [8]

- Automated Configuration: Self-configuring penalty systems that require minimal expert intervention

As these methods evolve, they will enable more effective optimization of complex real-world problems across scientific and engineering domains, particularly in data-rich fields like pharmaceutical research where multiple constraints and objectives naturally occur [9].

Constrained optimization problems are ubiquitous in science and engineering, requiring the minimization or maximization of an objective function subject to various constraints. Evolutionary Algorithms (EAs) have emerged as powerful tools for solving such problems, though they initially lacked explicit mechanisms for handling constraints. Among the various constraint-handling techniques developed for EAs, feasibility-preference methods represent a significant class that prioritizes feasible solutions while carefully managing promising infeasible solutions to guide the search process effectively. This technical guide focuses on two prominent feasibility-preference methods: Feasibility Rules and Stochastic Ranking, examining their theoretical foundations, implementation details, and performance characteristics within the broader context of constrained evolutionary optimization.

The fundamental challenge in constrained optimization is balancing the search between feasible and infeasible regions. While the global optimum typically resides within the feasible region, maintaining some infeasible solutions in the population can be beneficial, particularly when dealing with disconnected feasible regions or when the optimum lies on feasibility boundaries. Feasibility-preference methods address this challenge by systematically incorporating preference for feasible solutions while preserving useful information from selected infeasible solutions [8].

Theoretical Foundations

Constrained Optimization Problem Formulation

Without loss of generality, we consider minimization. The general nonlinear programming (NLP) problem can be formulated as:

$$\min_{x} f(x), \quad x = (x_1, \ldots, x_n) \in \mathbb{R}^n$$

where f(x) is the objective function, x ∈ S ∩ F, and S is an n-dimensional rectangular space in Rⁿ bounded by the parametric constraints:

$$l_i \le x_i \le u_i, \quad i = 1, \ldots, n$$

with lᵢ and uᵢ denoting the lower and upper bounds of xᵢ. The feasible region F is defined by a set of m additional linear or nonlinear constraints (m ≥ 0):

$$g_j(x) \le 0, \quad j = 1, \ldots, q$$

$$h_j(x) = 0, \quad j = q + 1, \ldots, m$$

where q is the number of inequality constraints and m - q is the number of equality constraints [28]. An inequality constraint that satisfies gⱼ(x) = 0 (1 ≤ j ≤ q) at a point x in F is said to be active at x. All equality constraints are considered active at all points in the feasible region F.

Constraint Violation Measurement

For constraint handling, the degree of constraint violation must be quantified. For an individual x, the constraint violation for the j-th constraint is defined as:

$$G_j(x) = \begin{cases} \max\{0,\, g_j(x)\}, & 1 \le j \le q \\ \max\{0,\, |h_j(x)| - \delta\}, & q + 1 \le j \le m \end{cases}$$

where δ is a small positive tolerance value for relaxing equality constraints (generally set to 0.0001) [28] [29]. The total constraint violation is then calculated as:

$$G(x) = \sum_{j=1}^{m} G_j(x)$$

Some approaches standardize constraints to prevent any single constraint from dominating the violation measure due to scaling differences, for example:

$$G_{\text{norm}}(x) = \sum_{j=1}^{m} \frac{G_j(x)}{G_{\max,j}}$$

where G_max,ⱼ is the maximum violation of the j-th constraint in the current population [29].
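The violation measures described in this subsection can be sketched as follows, assuming inequality constraints of the form g(x) ≤ 0 and equality constraints of the form h(x) = 0 relaxed by the tolerance δ. The function names and the example constraints are hypothetical. The standardized variant shown is a plain sum of per-constraint ratios, which is one possible form.

```python
from typing import Callable, List, Sequence

Func = Callable[[Sequence[float]], float]


def per_constraint_violations(x: Sequence[float], ineqs: List[Func],
                              eqs: List[Func], delta: float = 1e-4) -> List[float]:
    """Violation of each constraint: max(0, g(x)) and max(0, |h(x)| - delta)."""
    g_part = [max(0.0, g(x)) for g in ineqs]
    h_part = [max(0.0, abs(h(x)) - delta) for h in eqs]
    return g_part + h_part


def total_violation(per_constraint: Sequence[float]) -> float:
    return sum(per_constraint)


def standardized_violation(per_constraint: Sequence[float],
                           g_max: Sequence[float]) -> float:
    """Sum of violations, each divided by its population-wide maximum."""
    return sum(v / m for v, m in zip(per_constraint, g_max) if m > 0.0)


# Hypothetical constraints: x0 + x1 <= 1 and x0 == x1.
ineqs = [lambda x: x[0] + x[1] - 1.0]
eqs = [lambda x: x[0] - x[1]]

population = [[1.0, 0.5], [0.2, 0.2], [3.0, 0.0]]
per_ind = [per_constraint_violations(x, ineqs, eqs) for x in population]
g_max = [max(col) for col in zip(*per_ind)]   # maximum per constraint
totals = [total_violation(v) for v in per_ind]
standardized = [standardized_violation(v, g_max) for v in per_ind]
```

The second individual satisfies both constraints, so both of its measures are zero. The third individual holds the population maximum for each constraint, so its standardized violation equals the number of constraints.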

Feasibility Rules

Fundamental Principles

Feasibility Rules, often attributed to Deb's work, employ a set of deterministic criteria to compare solutions during selection operations. These rules establish a strict hierarchy where feasibility takes precedence over objective function quality [28] [8].

Table 1: Deb's Feasibility Rules for Tournament Selection

| Rule | Description | Rationale |
|---|---|---|
| Rule 1 | Any feasible solution is preferred to any infeasible solution | Establishes feasibility as the primary criterion |
| Rule 2 | Between two feasible solutions, the one with better objective function value is preferred | Guides search toward optimality within feasible region |
| Rule 3 | Between two infeasible solutions, the one with smaller constraint violation is preferred | Drives population toward feasibility |

The implementation of these rules typically occurs during selection operations, such as tournament selection, where candidate solutions are paired and compared based on these criteria.
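A minimal sketch of the three rules as a pairwise comparator inside tournament selection, assuming minimization and a precomputed total constraint violation per individual. The `Individual` record and the function names are illustrative.

```python
import random
from typing import List, NamedTuple


class Individual(NamedTuple):
    objective: float   # value to minimize
    violation: float   # total constraint violation (0 means feasible)


def is_better(a: Individual, b: Individual) -> bool:
    """Return True if a is preferred to b under the feasibility rules."""
    a_feasible = a.violation <= 0.0
    b_feasible = b.violation <= 0.0
    if a_feasible != b_feasible:        # Rule 1: feasible beats infeasible
        return a_feasible
    if a_feasible:                      # Rule 2: both feasible, compare objective
        return a.objective < b.objective
    return a.violation < b.violation    # Rule 3: both infeasible, compare violation


def tournament(pop: List[Individual], rng: random.Random, k: int = 2) -> Individual:
    """Pick k distinct individuals at random and return the preferred one."""
    contestants = rng.sample(pop, k)
    winner = contestants[0]
    for challenger in contestants[1:]:
        if is_better(challenger, winner):
            winner = challenger
    return winner


pop = [Individual(10.0, 0.0), Individual(1.0, 0.5), Individual(5.0, 0.1)]
selected = tournament(pop, random.Random(1), k=3)
```

With `k=3` every individual enters the tournament, and the only feasible one wins despite having the worst objective value. This is exactly the strict hierarchy that the rules impose.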

Implementation Considerations

A key advantage of Feasibility Rules is their simplicity and lack of parameters requiring tuning. However, this method faces challenges when dealing with problems featuring disconnected feasible regions or when the global optimum lies on the boundary of the feasible region. In such cases, the strict preference for feasible solutions may cause the algorithm to converge to local optima within a feasible component, potentially missing better solutions in other feasible regions [28].

To mitigate these limitations, some implementations incorporate additional mechanisms such as niching or crowding to maintain diversity within the feasible population. Furthermore, the method can be hybridized with other techniques to preserve useful infeasible solutions in specific scenarios.

Stochastic Ranking

Conceptual Framework

Stochastic Ranking, introduced by Runarsson and Yao, represents a more nuanced approach to balancing objective function improvement and constraint satisfaction [30]. This method addresses the limitation of Feasibility Rules by allowing controlled comparisons between infeasible solutions with good objective values and feasible solutions with poorer objective values.

The fundamental insight behind Stochastic Ranking is that maintaining promising infeasible solutions can be beneficial, particularly in the early stages of evolution or when solving problems with complex feasibility boundaries. Instead of deterministic rules, Stochastic Ranking employs a probability parameter Pƒ to decide whether comparisons between individuals should be based on objective function value or constraint violation [28] [30].

Ranking Mechanism

The Stochastic Ranking process involves ranking the population through a bubble-sort-like procedure where adjacent individuals are compared and potentially swapped. For each comparison, a random decision is made:

- With probability Pƒ, the comparison is based on objective function value (ignoring constraints)

- With probability 1 - Pƒ, the comparison is based solely on constraint violation

This stochastic approach allows infeasible solutions with excellent objective values to remain in the population, potentially guiding the search toward better regions that might be adjacent to the infeasible space [28].
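The ranking procedure can be sketched as a bubble-sort-like sweep over population indices. The sketch follows the original formulation by Runarsson and Yao, in which a pair of feasible individuals is always compared by objective value and the random decision applies only when at least one of the two is infeasible. The function name and the default `pf=0.45` are choices made for this illustration.

```python
import random
from typing import List, Optional, Sequence


def stochastic_rank(objectives: Sequence[float], violations: Sequence[float],
                    pf: float = 0.45,
                    rng: Optional[random.Random] = None) -> List[int]:
    """Return population indices ordered from best to worst (minimization)."""
    rng = rng or random.Random()
    n = len(objectives)
    order = list(range(n))
    for _ in range(n):                      # at most n sweeps
        swapped = False
        for j in range(n - 1):
            a, b = order[j], order[j + 1]
            both_feasible = violations[a] == 0 and violations[b] == 0
            if both_feasible or rng.random() < pf:
                swap = objectives[a] > objectives[b]    # compare by objective
            else:
                swap = violations[a] > violations[b]    # compare by violation
            if swap:
                order[j], order[j + 1] = b, a
                swapped = True
        if not swapped:                     # stop early once a sweep changes nothing
            break
    return order


f = [3.0, 1.0, 2.0, 0.5]
phi = [0.0, 0.5, 0.2, 0.0]
by_objective = stochastic_rank(f, phi, pf=1.0)   # pure objective ranking
by_violation = stochastic_rank(f, phi, pf=0.0)   # violation dominates
```

The two extreme settings make the role of the parameter visible. With `pf=1.0` the procedure degenerates to sorting by objective value alone, while with `pf=0.0` infeasible individuals are ordered purely by violation and end up behind the feasible ones.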

Figure 1: Stochastic Ranking Process Flow

Dynamic Stochastic Selection

Recent advances in Stochastic Ranking have introduced dynamic adjustments of the comparison probability Pƒ during the evolutionary process. In Dynamic Stochastic Selection (DSS), the value of Pƒ typically decreases as evolution progresses, reflecting the changing needs of the search process [28].

In early generations, a higher Pƒ allows more exploration of the search space, including promising infeasible regions. As evolution continues, reducing Pƒ shifts focus toward constraint satisfaction, refining solutions toward feasibility. Two common dynamic schemes include:

- Linear decrease: Pƒ(G) = Pƒ_initial - (Pƒ_initial - Pƒ_final) × (G/MAX_GEN)

- Square root adjusted: Pƒ(G) = Pƒ_initial × √(1 - G/MAX_GEN)

where G is the current generation and MAX_GEN is the maximum number of generations [28].
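Both schedules translate directly into code. In the sketch below the default values for the initial and final probabilities (0.45 and 0.25) are placeholders chosen for illustration and are not taken from [28].

```python
import math


def pf_linear(g: int, max_gen: int, pf_initial: float = 0.45,
              pf_final: float = 0.25) -> float:
    """Linear decrease from pf_initial at g = 0 to pf_final at g = max_gen."""
    return pf_initial - (pf_initial - pf_final) * (g / max_gen)


def pf_sqrt(g: int, max_gen: int, pf_initial: float = 0.45) -> float:
    """Square-root schedule: decays slowly at first and reaches 0 at max_gen."""
    return pf_initial * math.sqrt(1.0 - g / max_gen)


max_gen = 100
linear_schedule = [pf_linear(g, max_gen) for g in (0, 50, 100)]
sqrt_schedule = [pf_sqrt(g, max_gen) for g in (0, 75, 100)]
```

The linear schedule ends at a chosen nonzero floor, whereas the square-root schedule drops to zero in the final generation. At that point any comparison involving an infeasible individual is decided by constraint violation alone.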