Conquering Technical Variation: Advanced Strategies for Robust Single-Cell GRN Inference


Abstract

Inference of Gene Regulatory Networks (GRNs) from single-cell RNA sequencing (scRNA-seq) data is fundamentally challenged by pervasive technical variation, notably zero-inflation or 'dropout' events, where true gene expression is erroneously measured as zero. This article provides a comprehensive resource for researchers and drug development professionals, exploring the foundational causes of this variation, presenting cutting-edge computational methods designed to overcome it, and offering practical guidance for model optimization and troubleshooting. We further synthesize insights from recent large-scale benchmark studies, enabling informed method selection. By addressing these critical technical hurdles, the field moves closer to deriving accurate, biologically meaningful regulatory networks that can power the discovery of novel therapeutic targets.

Understanding the Enemy: Deconstructing Technical Variation and Dropout in Single-Cell Data

Frequently Asked Questions

What is the difference between a biological zero and a technical dropout? A biological zero represents the true absence of a gene's transcripts (mRNAs) in a cell. This is a meaningful biological signal indicating that a gene is not expressed in that particular cell state [1]. In contrast, a technical dropout (also called a non-biological zero) is an artifact where a gene is actively expressed in a cell, but its transcripts are not detected by the sequencing technology. This creates a "false zero" or missing value in the data [1] [2]. Dropouts are a major source of technical variation that can confound downstream analysis.

Why are dropouts so prevalent in single-cell RNA-seq data? The high sparsity in scRNA-seq data arises from the combination of extremely low starting amounts of mRNA in individual cells and technical limitations throughout the library preparation process [3]. Key factors include:

- Low mRNA quantity: A single cell contains only picograms of total RNA [3].

- Technical inefficiencies: Steps like reverse transcription and cDNA amplification are inefficient, leading to transcript loss [1].

- Limited sequencing depth: Even with modern protocols, only a fraction of a cell's transcriptome is sampled, meaning lowly expressed genes are easily missed [1]. In droplet-based protocols, over 90% of the data matrix can be zeros, a significant proportion of which are technical dropouts [1] [4].
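The sampling argument above can be made concrete with a small simulation. This is a minimal sketch that assumes, for illustration only, Poisson-distributed true mRNA counts with hypothetical log-normal gene means and a uniform 10% capture efficiency modeled as binomial thinning. It is not a calibrated model of any particular protocol, and `simulate_capture` and `zero_fraction` are illustrative helper names.

```python
import numpy as np

def zero_fraction(counts):
    """Fraction of entries in a cells x genes matrix that are exactly zero."""
    return float((np.asarray(counts) == 0).mean())

def simulate_capture(true_counts, capture_efficiency, rng):
    """Binomially thin true mRNA counts: each molecule is kept with prob. capture_efficiency."""
    return rng.binomial(true_counts, capture_efficiency)

rng = np.random.default_rng(0)
# Hypothetical per-gene mean mRNA copies per cell, skewed toward low expression.
gene_means = rng.lognormal(mean=0.5, sigma=1.2, size=2000)
true_counts = rng.poisson(gene_means, size=(500, 2000))  # 500 cells x 2000 genes
observed = simulate_capture(true_counts, capture_efficiency=0.1, rng=rng)

print(f"zeros in true counts:    {zero_fraction(true_counts):.2f}")
print(f"zeros after 10% capture: {zero_fraction(observed):.2f}")
```

Even though no gene is switched off in the simulation, thinning alone turns many low-count entries into zeros; these are sampling zeros in the sense of Table 1 below.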

How do technical dropouts impact Gene Regulatory Network (GRN) inference? Technical dropouts present a significant challenge for GRN inference. They can:

- Break correlation structures: The artificial zeros bias the estimation of gene-gene expression correlations, which are fundamental to many GRN inference methods [1] [5].

- Reduce statistical power: The high noise level makes it difficult to reliably distinguish true regulatory relationships [6].

- Cause model overfitting: Computational models may over-fit to the dropout noise instead of learning the underlying biological signal, leading to unstable and inaccurate networks [5] [6].
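To illustrate the first point, the sketch below builds a hypothetical TF/target pair with a strong linear relationship and then zeroes out entries independently at random. The 50% dropout rate, the gamma-distributed TF values and the helper `apply_dropout` are assumptions chosen for illustration, not estimates from real data.

```python
import numpy as np

rng = np.random.default_rng(1)
n_cells = 2000
# Hypothetical log-scale expression of a TF and a target it strongly regulates.
tf = rng.gamma(shape=4.0, scale=0.5, size=n_cells)
target = 0.8 * tf + rng.normal(0.0, 0.2, size=n_cells)

def apply_dropout(x, rate, rng):
    """Independently set each entry to zero with probability `rate`."""
    keep = rng.random(x.shape) >= rate
    return np.where(keep, x, 0.0)

r_true = np.corrcoef(tf, target)[0, 1]
r_obs = np.corrcoef(apply_dropout(tf, 0.5, rng), apply_dropout(target, 0.5, rng))[0, 1]
print(f"correlation without dropout: {r_true:.2f}")
print(f"correlation with 50% dropout: {r_obs:.2f}")
```

Because the zeros in the two genes are placed independently, they dilute the shared signal, and the measured correlation falls far below the true one; a correlation-based GRN method would rank this genuine edge much lower.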

Should I use imputation or model regularization to handle dropouts for GRN inference? Both are valid strategies, and the choice depends on your goal. Imputation methods (e.g., RESCUE, MAGIC, scImpute) aim to "fill in" the missing values by borrowing information from similar cells or genes before performing downstream analysis [3] [2]. Model regularization approaches such as Dropout Augmentation (DA) take a different view: instead of filling in zeros, DA deliberately adds synthetic dropout noise during model training so that the algorithm becomes more robust to it, which improves the stability and performance of GRN inference tools such as DAZZLE [5] [6].

Is a zero-inflated model always necessary for analyzing scRNA-seq data? Not always. The need for a zero-inflated model is context-dependent and can be influenced by the sequencing protocol and the specific genes being analyzed. Some research suggests that for UMI-based droplet protocols, a Negative Binomial (NB) distribution alone may sufficiently model the count variation, with zeros largely explained by low sampling rates [4]. Other studies find that a Zero-Inflated Negative Binomial (ZINB) model, which explicitly models an extra probability of zero, provides a better fit for certain genes or datasets [4]. Bayesian model selection can help determine the best model for your data [4].
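A quick way to see what "extra zeros" means is to compare the observed zero fraction of each gene with the zero probability implied by a fitted NB. The sketch below uses the standard NB zero probability $(\theta/(\theta+\mu))^\theta$ and its ZINB extension $\pi + (1-\pi)(\theta/(\theta+\mu))^\theta$, with a simple method-of-moments fit. It is a rough diagnostic under these assumptions, not the Bayesian model selection described above, and the function names are illustrative.

```python
import numpy as np

def nb_zero_prob(mu, theta):
    """P(Y = 0) under a negative binomial with mean mu and inverse dispersion theta."""
    return (theta / (theta + mu)) ** theta

def zinb_zero_prob(mu, theta, pi):
    """P(Y = 0) under a ZINB: an extra zero with probability pi, otherwise NB."""
    return pi + (1.0 - pi) * nb_zero_prob(mu, theta)

def excess_zeros(counts):
    """Observed minus NB-expected zero fraction per gene (method-of-moments NB fit).

    counts: cells x genes UMI matrix. Genes whose variance does not exceed
    the mean are treated as Poisson (theta -> infinity, P(0) = exp(-mu)).
    """
    counts = np.asarray(counts, dtype=float)
    mu, var = counts.mean(axis=0), counts.var(axis=0)
    observed = (counts == 0).mean(axis=0)
    expected = np.exp(-mu)
    over = var > mu
    theta = mu[over] ** 2 / (var[over] - mu[over])
    expected[over] = nb_zero_prob(mu[over], theta)
    return observed - expected

rng = np.random.default_rng(2)
mu, theta = 2.0, 1.5
# numpy's NB(n, p) has mean n(1-p)/p, so p = theta / (theta + mu) gives mean mu.
nb = rng.negative_binomial(theta, theta / (theta + mu), size=(50000, 1))
zinb = np.where(rng.random((50000, 1)) < 0.3, 0, nb)  # 30% extra zeros
print(excess_zeros(nb), excess_zeros(zinb))
```

For the pure NB gene the excess is close to zero, while the zero-inflated gene shows a positive excess. The excess is smaller than the 30% of injected zeros because the moment-based NB fit absorbs part of them into a larger dispersion, which is one reason a formal model comparison is preferable to this quick check.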

A Researcher's Guide to Zero-Inflation and Dropouts

Classifying Zeros in Your Data

Understanding the source of zeros in your count matrix is the first step in addressing them. The table below categorizes the types of zeros you will encounter.

Table 1: Classification of Zero Observations in scRNA-seq Data

| Type of Zero | Definition | Origin | Interpretation |
|---|---|---|---|
| Biological Zero | True absence of a gene's mRNA in a cell [1]. | Biology | The gene is not expressed in that cell's state. This is a meaningful signal. |
| Technical Zero | A gene's mRNA is present but lost during library prep (e.g., failed reverse transcription) [1]. | Sequencing Technology | A missing value; an artifact that obscures the true biological state. |
| Sampling Zero | A gene's mRNA is present but not sampled due to limited sequencing depth or inefficient amplification [1]. | Sequencing Technology | A missing value; reflects the random, finite sampling of the cell's transcriptome. |

Quantitative Impact on Data Analysis

The high proportion of zeros in scRNA-seq data has measurable consequences. The following table summarizes key quantitative findings from recent studies.

Table 2: Documented Impacts of Dropouts on scRNA-seq Analysis

| Impact Area | Quantitative/Descriptive Finding | Source |
|---|---|---|
| Data Sparsity | Up to 90% of entries in a scRNA-seq count matrix can be zeros, compared to 10-40% in bulk RNA-seq [1]. | [1] |
| Clustering Stability | Under high dropout rates, cluster homogeneity (cells in a cluster are the same type) can remain high, but cluster stability (consistent grouping of the same cell pairs) decreases significantly [7]. | [7] |
| GRN Inference | A study of nine datasets found that 57% to 92% of observed counts were zeros, which can cause models to overfit and degrade the quality of inferred networks [6]. | [6] |
| Imputation Performance | The RESCUE imputation method achieved a median reduction in absolute count estimation error of 50% in simulated data, leading to greatly improved cell-type identification [2]. | [2] |

Methodologies for Handling Dropouts

Imputation via RESCUE

RESCUE is an ensemble imputation method designed to address the bias in cell neighbor identification caused by dropouts.

- Input: A normalized and log-transformed expression matrix [2].

- Feature Selection: Identify the top 1,000 highly variable genes (HVGs) [2].

- Bootstrap & Cluster: Repeatedly subsample a proportion of the HVGs with replacement, and cluster the cells using the genes in each subsample [2].

- Impute: For each cluster in each subsample, calculate the average within-cluster expression for every gene. This serves as a sample-specific imputation [2].

- Aggregate: Average all sample-specific imputation values to produce the final, imputed dataset [2].
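The RESCUE steps above can be sketched in NumPy as follows. This is a simplified illustration, not the published RESCUE implementation: plain k-means stands in for RESCUE's own clustering step, the gene fraction, number of bootstraps and k are arbitrary, and overwriting only the zero entries with the aggregated estimate is an assumption. `kmeans_labels` and `rescue_like_impute` are hypothetical names.

```python
import numpy as np

def kmeans_labels(X, k, rng, n_iter=25):
    """Plain Lloyd's k-means (stand-in for RESCUE's clustering step)."""
    centers = X[rng.choice(len(X), size=k, replace=False)].astype(float)
    for _ in range(n_iter):
        dist = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dist.argmin(axis=1)
        for j in range(k):
            if np.any(labels == j):
                centers[j] = X[labels == j].mean(axis=0)
    return labels

def rescue_like_impute(log_expr, n_hvg=1000, n_boot=50, gene_frac=0.6, k=5, seed=0):
    """Bootstrap HVGs, cluster, average within clusters, aggregate; fill zeros only."""
    rng = np.random.default_rng(seed)
    n_hvg = min(n_hvg, log_expr.shape[1])
    hvg = np.argsort(log_expr.var(axis=0))[::-1][:n_hvg]  # most variable genes
    estimate = np.zeros_like(log_expr, dtype=float)
    for _ in range(n_boot):
        genes = rng.choice(hvg, size=max(1, int(gene_frac * n_hvg)), replace=True)
        labels = kmeans_labels(log_expr[:, genes], k, rng)
        for j in np.unique(labels):
            members = labels == j
            # Sample-specific imputation: within-cluster mean of every gene.
            estimate[members] += log_expr[members].mean(axis=0)
    estimate /= n_boot  # aggregate over bootstrap samples
    imputed = log_expr.astype(float)
    zeros = log_expr == 0
    imputed[zeros] = estimate[zeros]
    return imputed

rng = np.random.default_rng(3)
groups = np.repeat([0, 1], 100)                    # two hypothetical cell types
profile = np.where(np.arange(40) < 20, 2.0, 0.5)   # type 0 is high on the first 20 genes
true = np.where(groups[:, None] == 0, profile, profile[::-1])
true = np.clip(true + rng.normal(0, 0.1, true.shape), 0.05, None)
observed = np.where(rng.random(true.shape) < 0.3, 0.0, true)  # 30% simulated dropout
imputed = rescue_like_impute(observed, n_hvg=40, n_boot=20, k=2, seed=0)
```

Averaging over many gene subsamples is what makes the estimate less sensitive to any single, dropout-distorted choice of cell neighbors.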

Model Regularization via Dropout Augmentation (DA)

DA is a counter-intuitive but effective regularization technique for improving model robustness.

- Input: A (log-transformed) gene expression matrix [5] [6].

- Augmentation: During model training, at each iteration, randomly select a small proportion of the non-zero expression values and set them to zero. This simulates additional dropout noise [5] [6].

- Model Training: Train the model (e.g., an autoencoder for GRN inference) on this repeatedly noised data. This forces the model to learn to be less sensitive to missing values [5] [6].

- Output: A trained model that is more stable and robust to the dropout noise inherent in real scRNA-seq data [6].
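The augmentation step itself is small. Below is a minimal NumPy sketch, assuming a cells x genes log-expression matrix and an illustrative rate of 10%. `dropout_augment` is a hypothetical helper, not DAZZLE's code, and the commented `model.train_step` call stands in for whatever training step your model uses.

```python
import numpy as np

def dropout_augment(X, rate, rng):
    """Copy X and set a random `rate` fraction of its non-zero entries to zero.

    Returns the augmented matrix and a boolean mask marking the injected zeros.
    """
    rows, cols = np.nonzero(X)
    n_drop = int(round(rate * rows.size))
    pick = rng.choice(rows.size, size=n_drop, replace=False)
    mask = np.zeros(X.shape, dtype=bool)
    mask[rows[pick], cols[pick]] = True
    X_aug = np.where(mask, 0.0, X)
    return X_aug, mask

rng = np.random.default_rng(4)
counts = rng.poisson(1.0, size=(100, 50))
log_expr = np.log1p(counts)
for epoch in range(3):
    # A fresh augmentation every epoch, so the model sees a different noise pattern each time.
    x_aug, injected = dropout_augment(log_expr, rate=0.1, rng=rng)
    # model.train_step(x_aug, target=log_expr)  # hypothetical training call
```

Sampling only among non-zero entries keeps the injected noise of the same kind as real dropout (observed expression turned into zero), and the returned mask makes it easy to check how many zeros were added.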

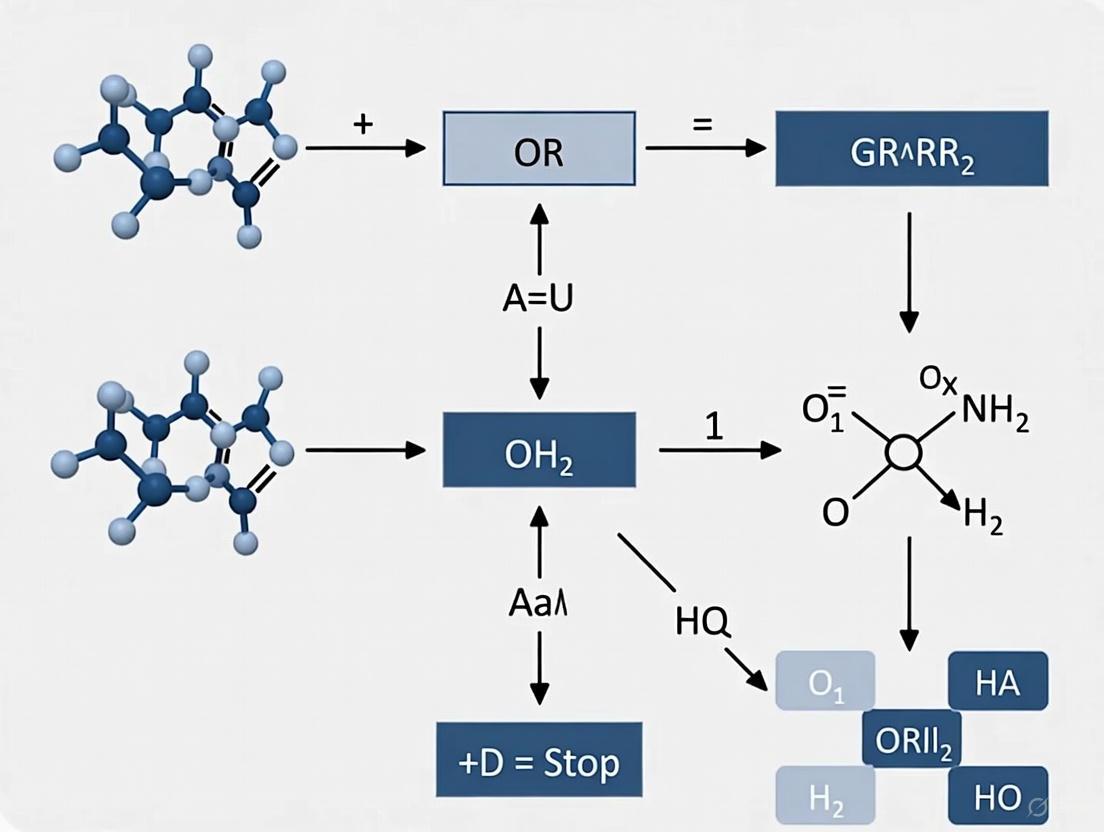

Workflow Diagram: Strategies for Handling Dropouts

The following diagram illustrates the decision pathway for choosing between two major strategies to handle technical dropouts in scRNA-seq data analysis.

Research Reagent Solutions

The following table lists key computational tools and their functions for addressing dropouts in single-cell analysis.

Table 3: Key Computational Tools for Addressing Dropouts

| Tool / Reagent | Function / Purpose | Use Case |
|---|---|---|
| RESCUE [2] | An ensemble-based imputation method that uses bootstrapping of highly variable genes to mitigate bias in cell clustering. | Recovers under-detected expression to improve cell-type identification. |
| DAZZLE [5] [6] | A GRN inference method that uses Dropout Augmentation for regularization, leading to improved robustness and stability. | Infers gene regulatory networks from zero-inflated single-cell data. |
| scVI (NB vs ZINB) [4] | A probabilistic framework that allows model selection between Negative Binomial (NB) and Zero-Inflated Negative Binomial (ZINB) likelihoods. | Data-driven decision-making on whether a zero-inflated model is needed for a given dataset. |
| Co-occurrence Clustering [3] | A clustering algorithm that uses binarized data (0/1 for zero/non-zero), treating the dropout pattern itself as a useful signal. | Identifying cell populations based on gene co-detection patterns beyond highly variable genes. |

The Impact of Dropout on Downstream GRN Inference Accuracy

Single-cell RNA sequencing (scRNA-seq) provides unprecedented resolution for analyzing cellular heterogeneity. However, a major technical challenge is the prevalence of "dropout," a phenomenon where transcripts present in a cell are not detected by the sequencing technology. This results in zero-inflated count data: across nine datasets examined in one study, 57% to 92% of observed counts were zeros [6]. For Gene Regulatory Network (GRN) inference, which aims to reconstruct regulatory relationships between transcription factors and their target genes, these false zeros represent significant noise that can obscure true biological signals and lead to inaccurate network predictions [6] [8]. Understanding and mitigating dropout effects is therefore crucial for researchers, scientists, and drug development professionals working with single-cell data to ensure reliable biological insights.

Frequently Asked Questions (FAQs)

Q1: What exactly is "dropout" in scRNA-seq data, and how does it differ from true biological zeros?

Dropout refers to technical zeros in scRNA-seq data where transcripts are expressed at low or moderate levels in a cell but are not captured by the sequencing technology. This contrasts with true biological zeros where a gene is genuinely not expressed. The distinction is crucial for GRN inference because false zeros can create misleading correlation structures between genes, potentially suggesting regulatory relationships that don't exist or masking ones that do [6] [8].

Q2: Why does dropout particularly affect GRN inference compared to other analyses?

GRN inference relies on accurately estimating co-expression and regulatory relationships between genes. Dropout events:

- Create false independence between genuinely correlated genes

- Reduce statistical power to detect true regulatory interactions

- Introduce spurious correlations that can lead to false positive edges in networks

- Disproportionately affect moderately expressed transcription factors that are crucial for network inference [6] [8] [9]

Q3: What strategies exist to mitigate dropout effects in GRN inference?

Two primary philosophical approaches have emerged:

- Data Imputation: Methods that fill in missing values before GRN inference

- Model Regularization: Methods that make inference algorithms more robust to zero-inflation, such as Dropout Augmentation (DA) which intentionally adds synthetic dropout during training to improve model resilience [6]

Q4: How can I evaluate whether dropout is significantly affecting my GRN inference results?

Performance can be assessed using:

- Benchmarking against known gold-standard networks (when available)

- Evaluation metrics like Area Under the Precision Recall Curve (AUPRC)

- Stability analysis across bootstrap samples or subsampled data

- Comparison of results before and after applying dropout correction methods [10] [11]
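For the AUPRC comparison, a predicted adjacency matrix has to be turned into a ranked edge list and scored against the gold standard. The sketch below uses average precision, a common step-wise estimate of AUPRC; self-loops are excluded and ties are broken by input order (assumptions you may want to change). The helper names are illustrative.

```python
import numpy as np

def edge_scores_and_labels(pred_adj, gold_adj):
    """Flatten off-diagonal entries: |predicted weight| as score, gold edge as 0/1 label."""
    pred_adj, gold_adj = np.asarray(pred_adj, dtype=float), np.asarray(gold_adj)
    off_diag = ~np.eye(pred_adj.shape[0], dtype=bool)
    return np.abs(pred_adj[off_diag]), (gold_adj[off_diag] != 0).astype(int)

def auprc(scores, labels):
    """Average precision: mean of the precision at the rank of each true edge."""
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    y = np.asarray(labels)[order]
    if y.sum() == 0:
        raise ValueError("gold standard contains no positive edges")
    precision = np.cumsum(y) / np.arange(1, y.size + 1)
    return float(precision[y == 1].mean())

# True edges at ranks 1 and 3: average of the precisions 1/1 and 2/3.
print(auprc([0.9, 0.8, 0.7, 0.6], [1, 0, 1, 0]))
```

A random ranking scores roughly the fraction of positive edges, so it helps to report results relative to that baseline when comparing runs with and without dropout correction.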

Technical Troubleshooting Guides

Problem: High False Positive Rates in Inferred GRNs

Symptoms: Inferred network contains many edges not supported by biological knowledge; poor validation rate with ChIP-seq or perturbation data.

Solutions:

- Apply Dropout Augmentation: Use methods like DAZZLE that incorporate synthetic dropout during training to improve robustness [6]

- Integrate Prior Knowledge: Leverage databases of known TF-target interactions to constrain the solution space [9]

- Use Methods with Uncertainty Quantification: Implement approaches like PMF-GRN that provide confidence estimates for each predicted interaction [11]

Validation Protocol:

- Run inference with and without dropout correction

- Compare overlap with known regulatory databases

- Perform stability analysis with data subsampling

- Validate key predictions experimentally if possible
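The stability step can be quantified by comparing the strongest edges from runs on different subsamples (or seeds). The sketch below takes the top-k edges by absolute weight from each adjacency matrix and reports the mean pairwise Jaccard overlap; the choice of k and of Jaccard as the overlap measure are assumptions, and the matrices in the example are toy values.

```python
import numpy as np
from itertools import combinations

def top_k_edges(adj, k):
    """(regulator, target) pairs of the k largest |weights|, self-loops excluded."""
    w = np.abs(np.asarray(adj, dtype=float))
    np.fill_diagonal(w, -np.inf)
    flat = np.argsort(w, axis=None)[::-1][:k]
    rows, cols = np.unravel_index(flat, w.shape)
    return {(int(r), int(c)) for r, c in zip(rows, cols)}

def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def edge_stability(adjs, k):
    """Mean pairwise Jaccard overlap of top-k edge sets across runs."""
    sets = [top_k_edges(a, k) for a in adjs]
    return float(np.mean([jaccard(s, t) for s, t in combinations(sets, 2)]))

# Toy networks from two hypothetical runs: they agree on one of their two top edges.
A1 = np.zeros((3, 3)); A1[0, 1], A1[1, 2], A1[2, 0] = 0.9, 0.8, 0.1
A2 = np.zeros((3, 3)); A2[0, 1], A2[2, 0], A2[1, 2] = 0.9, 0.8, 0.1
print(edge_stability([A1, A2], k=2))
```

Running this on networks inferred with and without dropout correction gives a direct, if coarse, measure of whether the correction makes the top predictions more reproducible.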

Problem: Inconsistent GRNs Across Similar Datasets

Symptoms: Networks inferred from biologically similar samples show poor reproducibility; key regulators vary unexpectedly.

Solutions:

- Employ Multi-dataset Integration: Use batch correction methods like sysVI before GRN inference when combining datasets [12]

- Implement Privacy-preserving Federated Learning: For multi-institutional studies, use tools like FedscGen that enable collaborative analysis while addressing technical variations [13]

- Leverage Cell State-specific Inference: Apply methods like inferCSN that account for cellular trajectories and state transitions [10]

Validation Protocol:

- Calculate normalized mutual information between networks from similar biological conditions

- Assess conservation of hub genes across replicates

- Check cell-type specificity of inferred regulatory relationships
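"Normalized mutual information between networks" can be computed in several ways. One possible interpretation, sketched below as an assumption, treats every off-diagonal gene pair as an observation whose value is edge present/absent in each network and normalizes the mutual information by the arithmetic mean of the two entropies. `edge_nmi` is a hypothetical name, and the networks must already be binarized (for example by keeping the top-k edges).

```python
import numpy as np

def _entropy(p):
    p = p[p > 0]
    return float(-(p * np.log(p)).sum())

def edge_nmi(adj_a, adj_b):
    """NMI between two binary networks over all off-diagonal gene pairs."""
    adj_a, adj_b = np.asarray(adj_a), np.asarray(adj_b)
    off = ~np.eye(adj_a.shape[0], dtype=bool)
    a = (adj_a[off] != 0).astype(int)
    b = (adj_b[off] != 0).astype(int)
    joint = np.zeros((2, 2))
    np.add.at(joint, (a, b), 1)  # 2x2 contingency table of edge presence
    joint /= joint.sum()
    pa, pb = joint.sum(axis=1), joint.sum(axis=0)
    nz = joint > 0
    mi = float((joint[nz] * np.log(joint[nz] / np.outer(pa, pb)[nz])).sum())
    ha, hb = _entropy(pa), _entropy(pb)
    return 1.0 if ha + hb == 0 else 2.0 * mi / (ha + hb)

A = np.array([[0, 1, 1], [1, 0, 0], [0, 0, 0]])
B = np.array([[0, 1, 0], [0, 0, 1], [0, 0, 0]])
print(edge_nmi(A, A), edge_nmi(A, B))
```

Values near 1 indicate that the two networks agree on which pairs are connected; values near 0 indicate that edge presence in one network says nothing about the other.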

Problem: Poor Performance on Dynamic Biological Processes

Symptoms: Network fails to capture known developmental transitions; missing key temporal regulators.

Solutions:

- Incorporate Pseudotime Information: Use methods like inferCSN that leverage pseudotime ordering to construct state-specific GRNs [10]

- Account for Cell Density in Trajectories: Implement sliding window approaches that normalize for uneven cell distribution across pseudotime [10]

- Combine with RNA Velocity: Integrate transcriptional dynamics through tools like scVelo within comprehensive pipelines like scDown [14]

Validation Protocol:

- Check recovery of known stage-specific regulators

- Validate predicted temporal ordering of regulatory events

- Assess coherence of network transitions along developmental trajectories

Quantitative Comparison of GRN Inference Methods

Table 1: Performance comparison of GRN inference methods on benchmark datasets

| Method | Approach | Handling of Dropout | AUPRC on Benchmark Data | Scalability | Uncertainty Quantification |
|---|---|---|---|---|---|
| DAZZLE | VAE with Dropout Augmentation | Active regularization via synthetic dropout | 0.28-0.35 (BEELINE) | High (GPU compatible) | Limited |
| PMF-GRN | Probabilistic Matrix Factorization | Generative modeling of zero-inflation | 0.24-0.32 (Yeast) | High (GPU compatible) | Yes (well-calibrated) |
| inferCSN | Sparse regression + pseudotime | Windowing across cell states | Superior on multiple metrics | Moderate | No |
| GENIE3 | Random forest | Not specifically addressed | Variable performance | Low for large networks | No |
| SCENIC | Co-expression + TF motif analysis | Not specifically addressed | Moderate | Moderate | No |

Table 2: Impact of dropout correction on GRN inference accuracy

| Correction Strategy | False Positive Reduction | False Negative Reduction | Computational Overhead | Ease of Implementation |
|---|---|---|---|---|
| Dropout Augmentation (DA) | Significant (15-25%) | Moderate (10-15%) | Low | Moderate (requires retraining) |
| Data Imputation | Variable (can introduce bias) | Moderate (10-20%) | Medium-High | Easy (preprocessing step) |
| Probabilistic Modeling | Moderate (10-15%) | Significant (15-25%) | Medium | Difficult (specialized expertise) |
| Prior Knowledge Integration | Significant (20-30%) | Limited (5-10%) | Low | Moderate (depends on data availability) |

Experimental Protocols

Protocol: Dropout Augmentation for GRN Inference using DAZZLE

Purpose: To improve GRN inference robustness against dropout noise through model regularization.

Materials:

- Single-cell RNA-seq count matrix

- High-performance computing environment (GPU recommended)

- DAZZLE software (https://github.com/TuftsBCB/dazzle)

Procedure:

- Data Preprocessing:

- Transform raw counts using $\log(x+1)$ to reduce variance

- Select highly variable genes if working with large gene sets

- Optional: Basic quality control to remove low-quality cells

Dropout Augmentation Implementation:

- During model training, randomly set a small percentage of non-zero values to zero

- Recommended augmentation rate: 5-15% of non-zero values

- Apply augmentation independently for each training epoch

Model Training:

- Use the same VAE-based structural equation model (SEM) framework as DeepSEM

- Implement augmented data in both encoder and decoder components

- Train until convergence with early stopping to prevent overfitting

Network Inference:

- Extract the parameterized adjacency matrix as the inferred GRN

- Apply sparsity constraints based on biological plausibility

- Validate stability through multiple runs with different random seeds

Troubleshooting:

- If model instability occurs, reduce augmentation rate

- For memory issues, subset genes or use batch processing

- Validate on synthetic data with known ground truth if available [6]

Protocol: Cell State-Aware GRN Inference using inferCSN

Purpose: To construct cell type and state-specific GRNs while accounting for dropout effects across cellular trajectories.

Materials:

- scRNA-seq data with cell type annotations

- Computing environment with R/Python and inferCSN package

- Prior regulatory knowledge (optional)

Procedure:

- Pseudotime Inference:

- Calculate pseudotime ordering using appropriate tools (Monocle3, etc.)

- Reorder cells based on pseudotemporal information

Cell State Partitioning:

- Identify transition points between cell states based on cell density in pseudotime

- Partition cells into multiple windows representing distinct states

- Balance cell numbers across windows to mitigate density biases

Network Inference:

- Apply sparse regression model with L0 and L2 regularization within each window

- Incorporate reference network information if available

- Construct state-specific GRNs for each window

Network Integration and Analysis:

- Compare GRNs across different states to identify dynamic regulations

- Perform differential network analysis to find state-specific regulators

- Validate key findings through pathway enrichment analysis [10]
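The partitioning step can be sketched by defining windows by cell count rather than by pseudotime span, so that dense and sparse stretches of the trajectory contribute equally many cells. This is a simple stand-in under that assumption; inferCSN's own density-based rule for locating transition points may differ, and both function names are hypothetical.

```python
import numpy as np

def equal_count_windows(pseudotime, n_windows):
    """Split cells, ordered by pseudotime, into n_windows groups of near-equal size."""
    order = np.argsort(pseudotime, kind="stable")
    return np.array_split(order, n_windows)

def sliding_windows(pseudotime, window_size, step):
    """Overlapping windows with a fixed number of cells each (trailing cells may be dropped)."""
    order = np.argsort(pseudotime, kind="stable")
    last_start = len(order) - window_size
    return [order[s:s + window_size] for s in range(0, last_start + 1, step)]

rng = np.random.default_rng(5)
pt = rng.exponential(1.0, size=100)  # skewed: most cells sit early in pseudotime
wins = equal_count_windows(pt, 4)
slides = sliding_windows(pt, window_size=30, step=10)
print([len(w) for w in wins], len(slides))
```

Each returned array holds cell indices, so `expr[window]` selects the cells for one state-specific network. Overlapping windows trade independence between states for smoother transitions.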

Signaling Pathways and Workflow Visualizations

Diagram Title: Comprehensive GRN Inference Workflow with Dropout Mitigation Strategies

Diagram Title: Impact Cascade of Dropout Events on GRN Inference Accuracy

Research Reagent Solutions

Table 3: Essential computational tools for dropout-resistant GRN inference

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| DAZZLE | Software Package | GRN inference with dropout augmentation | General scRNA-seq data; high dropout scenarios |
| PMF-GRN | Probabilistic Model | GRN inference with uncertainty quantification | When confidence estimates are needed; integrative analysis |
| inferCSN | Algorithm | Cell state-specific network inference | Dynamic processes; differentiation trajectories |
| sysVI | Integration Method | Batch correction for multi-dataset analysis | Cross-study comparisons; atlas-scale projects |
| FedscGen | Federated Learning Tool | Privacy-preserving batch correction | Multi-institutional studies; clinical data |
| scDown | Analysis Pipeline | Downstream analysis integration | Comprehensive single-cell workflow including GRN inference |

Frequently Asked Questions

What are the main limitations of data imputation for handling single-cell noise? Many imputation methods depend on restrictive assumptions, and some require additional information, such as existing GRNs or bulk transcriptomic data, which may not be available. Imputation aims to eliminate zeros, but this can sometimes introduce new biases or obscure the true underlying biological variation [5] [6].

Is there an alternative to replacing missing data? Yes. Instead of replacing zeros (imputation), you can choose to build models that are inherently robust to this noise. This philosophy focuses on model regularization rather than data alteration. The core idea is to train your model to be insensitive to dropout events, so that its predictions remain reliable even in the presence of missing data [5] [6].

How can I make my GRN inference model more robust to dropout noise? A technique called Dropout Augmentation (DA) can be applied. During model training, you intentionally set a small, random subset of gene expression values to zero. This simulates additional dropout events, effectively teaching the model to ignore this type of noise. Counter-intuitively, adding this noise during training leads to a more stable and robust model [5] [6].

My data has batch effects in addition to technical noise. Can these be handled simultaneously? Yes. Methods like iRECODE are designed to address this exact challenge. They integrate technical noise reduction and batch correction into a single, cohesive framework. This simultaneous approach is more effective than performing these steps sequentially, as it prevents the propagation of errors from one step to the next [15].

How can I leverage prior knowledge to improve GRN inference? You can integrate prior knowledge, such as known regulatory interactions from curated databases or multi-omic data (e.g., from scATAC-seq). This information helps constrain the solution space of possible networks, guiding the inference algorithm toward more biologically plausible results and significantly enhancing reliability [9].

Troubleshooting Guides

Problem: Inferred Gene Regulatory Network is Unstable or Poorly Reproducible

Potential Cause: The model is overfitting to the technical dropout noise present in the single-cell data, rather than learning the true biological signals.

Solution: Implement Dropout Augmentation (DA). Adopt the DAZZLE framework, which uses DA. The workflow involves intentionally adding zeros to your training data to build a model that generalizes better.

Protocol: Implementing Dropout Augmentation

- Input Your Data: Start with your single-cell gene expression matrix $X$, where rows are cells and columns are genes. Transform counts using $\log(x+1)$.

- Augment with Zeros: In each training iteration (epoch), randomly select a small proportion $p$ (e.g., 1-5%) of the non-zero values in $X$ and set them to zero, creating a noisier matrix $X'$.

- Train a Robust Model: Use $X'$ as input to your model (e.g., a variational autoencoder). The model learns to reconstruct the original $X$ despite the augmented zeros, forcing it to become resilient to missing data.

- Infer the Network: The trained model yields a more stable adjacency matrix $A$, representing the inferred GRN [5] [6].

Potential Cause: The data is affected by both technical dropouts and batch effects, and addressing them separately is ineffective.

Solution: Use a Unified Noise Reduction Framework. Apply a method like iRECODE that performs technical noise reduction and batch correction simultaneously within a high-dimensional statistical framework.

Protocol: Simultaneous Technical and Batch Noise Reduction with iRECODE

- Input Multi-Batch Data: Provide your scRNA-seq expression matrix alongside a batch identifier for each cell.

- Noise Variance-Stabilizing Normalization (NVSN): The data is mapped to an "essential space" using NVSN and singular value decomposition (SVD). This step stabilizes the technical noise.

- Integrated Batch Correction: Within this stabilized, lower-dimensional space, a batch correction algorithm (e.g., Harmony) is applied to remove non-biological variation across batches.

- Reconstruct Denoised Data: The processed data is transformed back to the original gene space, yielding a denoised expression matrix in which both technical noise and batch effects are reduced [15].
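The overall shape of this workflow (stabilize, move to a low-rank space, correct batches there, map back) can be illustrated with a deliberately crude sketch. It is not iRECODE: per-gene z-scoring is an assumed stand-in for NVSN, and subtracting each batch's mean in the SVD embedding is an assumed stand-in for Harmony. `lowrank_batch_center` is a hypothetical name.

```python
import numpy as np

def lowrank_batch_center(X, batch, rank):
    """Crude sketch: z-score genes, keep top SVD components, center batches, map back.

    X: cells x genes (log-normalized). batch: per-cell batch labels.
    """
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    mean, sd = X.mean(axis=0), X.std(axis=0)
    sd[sd == 0] = 1.0
    Z = (X - mean) / sd                      # stand-in for variance stabilization
    U, S, Vt = np.linalg.svd(Z, full_matrices=False)
    emb = U[:, :rank] * S[:rank]             # low-dimensional "essential" space
    for b in np.unique(batch):
        emb[batch == b] -= emb[batch == b].mean(axis=0)  # stand-in for Harmony
    return (emb @ Vt[:rank]) * sd + mean     # back to gene space

rng = np.random.default_rng(6)
batch = np.repeat(["a", "b"], 100)
X = rng.normal(0.0, 1.0, size=(200, 30))
X[batch == "b"] += 2.0                       # simulated batch shift
out = lowrank_batch_center(X, batch, rank=5)
gap_before = np.abs(X[batch == "a"].mean(0) - X[batch == "b"].mean(0)).max()
gap_after = np.abs(out[batch == "a"].mean(0) - out[batch == "b"].mean(0)).max()
print(gap_before, gap_after)
```

Truncating to a few components discards much of the per-cell noise, and correcting in that space is cheap. The caveat is that plain per-batch centering also removes genuine biological differences whenever cell-type composition differs between batches, which is why dedicated integration methods use cluster-aware corrections instead.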

Problem: Simple GRNs Do Not Capture Dynamic Biological Processes

Potential Cause: The regulatory relationships between genes change as cells transition between states (e.g., during differentiation). A single, static network cannot capture these dynamics.

Solution: Infer Cell State-Specific Networks. Use methods like inferCSN that incorporate pseudo-temporal ordering to construct networks specific to different cell states.

Protocol: Constructing Dynamic, State-Specific GRNs

- Determine Cell States: Calculate pseudo-time ordering for your cells to reconstruct their biological trajectory (e.g., using Monocle, PAGA).

- Segment the Trajectory: Divide the continuous pseudo-time into discrete windows or states based on cell density to avoid bias.

- Infer Networks per State: For each window, use a regularized regression model (e.g., one combining L0 and L2 penalties) to infer the GRN specific to that cell state.

- Compare Networks: Analyze differences in regulatory edges between states to identify key dynamic changes driving the biological process [10].
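Within one window, the per-target regression can be sketched with iterative hard thresholding (IHT), which solves the L0-constrained form (at most k regulators per target) with an L2 penalty. This is a substitute technique chosen for brevity, not inferCSN's solver; k, `lam2` and the iteration count are illustrative assumptions, and `l0l2_iht` is a hypothetical name.

```python
import numpy as np

def l0l2_iht(X, y, k, lam2=0.1, n_iter=300):
    """IHT for min ||y - Xb||^2 / (2n) + lam2/2 * ||b||^2 subject to ||b||_0 <= k."""
    if k < 1:
        raise ValueError("k must be at least 1")
    n, p = X.shape
    step = 1.0 / (np.linalg.norm(X, 2) ** 2 / n + lam2)  # 1 / Lipschitz constant
    beta = np.zeros(p)
    for _ in range(n_iter):
        grad = X.T @ (X @ beta - y) / n + lam2 * beta
        beta = beta - step * grad
        beta[np.argsort(np.abs(beta))[:-k]] = 0.0  # keep only the k largest |coefficients|
    return beta

rng = np.random.default_rng(7)
tf_expr = rng.normal(size=(200, 10))  # cells in one window x 10 candidate TFs
target = 2.0 * tf_expr[:, 1] - 1.5 * tf_expr[:, 4] + rng.normal(0, 0.1, 200)
beta = l0l2_iht(tf_expr, target, k=2)
print(np.flatnonzero(beta), beta[[1, 4]])
```

Repeating this for every target gene in a window (with that gene removed from its own predictors) and stacking the coefficient vectors gives one weighted, state-specific adjacency matrix; comparing these matrices across windows is the network comparison step.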

Performance Comparison of Alternative Methods

The table below summarizes key methods that move beyond simple imputation, highlighting their core philosophy and performance.

| Method Name | Core Philosophical Approach | Reported Performance Advantages |
|---|---|---|
| DAZZLE [5] [6] | Model regularization via Dropout Augmentation | Improved model stability & robustness; ~50% faster inference and ~22% fewer parameters than DeepSEM [5]. |
| iRECODE [15] | High-dimensional statistics for simultaneous technical and batch noise reduction | Effectively reduces sparsity and batch effects; ~10x more computationally efficient than combining separate noise reduction and batch correction [15]. |
| ZILLNB [16] | Hybrid statistical-deep learning (ZINB regression + latent factor learning) | Superior performance in cell type classification (ARI improvements of 0.05-0.2) and differential expression analysis [16]. |
| inferCSN [10] | Leveraging pseudo-time to infer dynamic, state-specific networks | Outperforms other methods (GENIE3, SINCERITIES) in accuracy on both simulated and real datasets [10]. |

Research Reagent Solutions

| Item / Resource | Function in Experiment |
|---|---|
| Prior Knowledge Databases (e.g., TF-target interactions from ChIP-seq) | Provides a graph-based prior to constrain GRN inference, improving accuracy by incorporating known biology [9]. |
| Pseudo-Time Inference Tool (e.g., Monocle, PAGA) | Orders cells along a hypothetical timeline, enabling the construction of dynamic, state-specific GRNs [10]. |
| Benchmarking Platform (e.g., BEELINE) | Provides standardized datasets and ground-truth networks to fairly evaluate the performance of new GRN inference methods [5] [6]. |
| 4sU (4-thiouridine) Labelling | Enables metabolic labeling of newly transcribed RNA, improving the inference of transcriptional bursting kinetics and providing more dynamic data [17]. |

FAQ: Addressing Common Single-Cell GRN Inference Challenges

FAQ 1: What is the most significant source of technical variation in single-cell RNA-seq data? A primary source of technical variation is "dropout," a phenomenon where transcripts with low or moderate expression are not detected, resulting in an excess of zero values in the data. In various single-cell datasets, 57% to 92% of observed counts can be zeros [5] [6]. This zero-inflation poses a major challenge for downstream analyses like Gene Regulatory Network (GRN) inference, as it can obscure true biological signals.

FAQ 2: How does transcriptome diversity confound gene expression analysis? Transcriptome diversity, measured using Shannon entropy, is a key biological factor that systematically explains a large portion of global gene expression variability [18] [19]. It reflects the evenness of transcript distribution across genes. In highly diverse transcriptomes, reads are evenly distributed, whereas in low-diversity transcriptomes, a few highly expressed genes dominate. This factor is so pervasive that it is often the strongest signal encoded in hidden covariates (PEER factors) used for normalization, and it can be correlated with numerous technical and biological variables [18]. Not accounting for it can lead to spurious results in differential expression or eQTL mapping.

FAQ 3: What strategies exist to mitigate the impact of dropout noise in GRN inference? Beyond traditional data imputation, a novel approach called Dropout Augmentation (DA) has been developed to improve model robustness [5] [6]. This method involves augmenting the input data with a small number of artificially introduced zeros during model training. This seemingly counter-intuitive process acts as a form of regularization, exposing the model to multiple versions of the data with different noise patterns and preventing it from overfitting to the specific dropout noise in the original dataset. This approach is used in the GRN inference tool DAZZLE [5].

FAQ 4: What are the overarching data science challenges in single-cell genomics? The field of single-cell data science faces several grand challenges, including the critical need to quantify measurement uncertainty due to the limited biological material per cell, scaling computational methods to handle millions of cells and multiple data modalities, and developing robust methods for integrating data across different samples, experiments, and measurement types [20].

Troubleshooting Guides & Solutions

Troubleshooting Guide 1: Handling Data Sparsity and Dropout in GRN Inference

Problem: Inferred Gene Regulatory Networks are unstable or inaccurate due to zero-inflated single-cell data.

Solution: Implement model regularization via Dropout Augmentation.

Detailed Protocol:

- Data Preprocessing: Transform your raw count matrix $X$ using $\log(X+1)$ to reduce variance and avoid undefined values [5] [6].

- Dropout Augmentation: During each training iteration of your GRN inference model (e.g., an autoencoder), randomly select a small proportion of the non-zero expression values and set them to zero. This simulates additional dropout events.

- Model Training: Train the model on this augmented data. The DAZZLE model, for instance, incorporates a noise classifier that learns to identify which zeros are likely technical artifacts, helping the decoder reconstruct the original data more robustly [5].

- Sparsity Control: Improve stability by delaying the sparsity-inducing loss term on the GRN adjacency matrix until the model has completed a number of initial training epochs [5].
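The delayed sparsity term amounts to a simple schedule on the penalty weight. The sketch below assumes an L1 penalty on the adjacency matrix and illustrative values for the delay and weight; it shows the scheduling idea only and does not reproduce DAZZLE's actual loss. Both function names are hypothetical.

```python
import numpy as np

def sparsity_weight(epoch, delay_epochs, lam):
    """Zero during the first `delay_epochs` epochs, then the full penalty weight."""
    return 0.0 if epoch < delay_epochs else lam

def total_loss(recon_loss, adjacency, epoch, delay_epochs=5, lam=1e-3):
    """Reconstruction loss plus a delayed L1 penalty on the adjacency matrix (assumed form)."""
    return recon_loss + sparsity_weight(epoch, delay_epochs, lam) * np.abs(adjacency).sum()

A = np.array([[0.0, 0.5], [-0.5, 0.0]])
print(total_loss(1.0, A, epoch=0), total_loss(1.0, A, epoch=5))
```

Letting the reconstruction term shape the adjacency matrix first means the sparsity penalty prunes edges from a partly trained network rather than pushing all weights toward zero from a random start.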

Troubleshooting Guide 2: Accounting for Systematic Variation from Transcriptome Diversity

Problem: Global patterns in gene expression, driven by transcriptome diversity rather than specific biological questions, are confounding differential expression or eQTL analyses.

Solution: Calculate and correct for transcriptome diversity using Shannon entropy.

Detailed Protocol:

- Calculation: For each sample (or cell) \( s \) in your expression matrix, calculate transcriptome diversity \( H_s \) using Shannon's entropy formula: \( H_s = -\sum_{i=1}^{G} p_i \log(p_i) \), where \( G \) is the total number of expressed genes and \( p_i \) is the proportion of transcripts belonging to gene \( i \) (calculated from normalized counts such as TMM) [18] [19].

- Visualization and Assessment: Perform a Principal Component Analysis (PCA) on the gene expression matrix and color the samples by their transcriptome diversity value. This will often reveal that a primary principal component (e.g., PC2) is highly correlated with diversity [18] [19].

- Correction: Include the calculated transcriptome diversity values as a covariate in your linear models during differential expression or eQTL analysis. This directly controls for its effect.
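The assessment and correction steps can be sketched with NumPy alone. The example assumes a samples × genes matrix of normalized, log-scale expression, a precomputed diversity vector, and a single binary condition of interest. It fits an ordinary least-squares model for one gene rather than calling a dedicated differential-expression or eQTL package, so treat it as an illustration of the covariate idea only. The data are simulated so that diversity differs between groups while the gene has no true group effect.

```python
import numpy as np


def pc_diversity_correlation(expr: np.ndarray, diversity: np.ndarray, n_pcs: int = 5) -> np.ndarray:
    """Pearson correlation of each leading principal component's scores with diversity.

    expr: samples x genes matrix (e.g., log-normalized expression).
    """
    centered = expr - expr.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    scores = u[:, :n_pcs] * s[:n_pcs]      # PC scores for each sample
    return np.array([np.corrcoef(scores[:, k], diversity)[0, 1] for k in range(n_pcs)])


def fit_with_diversity(y: np.ndarray, group: np.ndarray, diversity: np.ndarray) -> np.ndarray:
    """OLS fit of y ~ 1 + group + diversity; returns [intercept, group, diversity] coefficients."""
    design = np.column_stack([np.ones_like(diversity), group, diversity])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef


rng = np.random.default_rng(1)
n = 500
group = rng.integers(0, 2, n).astype(float)
diversity = 8.0 + 0.5 * group + rng.normal(0.0, 0.3, n)   # diversity confounded with group
y = 1.0 + 2.0 * diversity + rng.normal(0.0, 0.1, n)       # no true group effect on this gene
naive = np.linalg.lstsq(np.column_stack([np.ones(n), group]), y, rcond=None)[0]
adjusted = fit_with_diversity(y, group, diversity)
print(naive[1], adjusted[1])  # naive group effect is inflated; adjusted estimate is near 0
```

In this simulation the naive model attributes the diversity-driven shift to the group, while the model with the diversity covariate recovers an effect close to zero.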

The table below summarizes key quantitative findings from recent studies relevant to addressing systematic challenges.

Table 1: Quantitative Benchmarks for Single-Cell and RNA-seq Challenges

| Challenge Area | Metric | Value / Finding | Source |
|---|---|---|---|
| Data Sparsity | Prevalence of zeros in scRNA-seq data | 57% to 92% of observed counts | [5] [6] |
| Transcriptome Diversity | Genes significantly associated with diversity (in a D. melanogaster dataset) | 68.9% of genes (FDR < 0.05) | [18] [19] |
| Long-read RNA-seq (lrRNA-seq) | Key finding for transcript identification | Longer, more accurate reads produce more accurate transcripts than increased depth | [21] |
| Long-read RNA-seq (lrRNA-seq) | Key finding for transcript quantification | Greater read depth improves quantification accuracy | [21] |

Experimental Protocols for Key Methodologies

Protocol 1: GRN Inference with DAZZLE

Methodology: DAZZLE uses a variational autoencoder (VAE) based on a structural equation model (SEM) framework, regularized with Dropout Augmentation [5] [6].

- Input: A single-cell gene expression matrix (cells × genes), preprocessed with a \( \log(X+1) \) transformation.

- Model Architecture:

  - The adjacency matrix \( A \), representing the GRN, is a parameterized matrix learned during training.

  - The encoder network processes the input data to create a latent representation.

  - The decoder network uses the latent representation and the adjacency matrix \( A \) to reconstruct the input.

- Dropout Augmentation: During training, synthetic dropout noise is added by zeroing random values.

- Training: The model is trained to minimize reconstruction error while simultaneously learning a sparse adjacency matrix ( A ). DAZZLE uses a single optimizer and a closed-form prior for the latent space to improve stability and reduce runtime compared to earlier methods [5].
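The role of the adjacency matrix \( A \) on both sides of the autoencoder can be illustrated with the linear structural equation model that DeepSEM-style methods build on. This is a simplified sketch under stated assumptions: rows are cells, \( A_{ij} \) is read as the effect of gene \( i \) on gene \( j \), and the neural-network layers, variational sampling and dropout augmentation are all omitted. The encoder maps expression to the SEM noise term \( Z = X(I - A) \), and the decoder inverts that map with the same \( A \).

```python
import numpy as np


def sem_encode(x: np.ndarray, adj: np.ndarray) -> np.ndarray:
    """Linear SEM encoder: from x = x @ A + z (rows are cells), recover z = x @ (I - A)."""
    n_genes = adj.shape[0]
    return x @ (np.eye(n_genes) - adj)


def sem_decode(z: np.ndarray, adj: np.ndarray) -> np.ndarray:
    """Linear SEM decoder: x = z @ inv(I - A), computed with a linear solve."""
    n_genes = adj.shape[0]
    # x (I - A) = z  is equivalent to  (I - A)^T x^T = z^T
    return np.linalg.solve((np.eye(n_genes) - adj).T, z.T).T


rng = np.random.default_rng(0)
adj = rng.normal(0.0, 0.1, size=(5, 5))
np.fill_diagonal(adj, 0.0)                # no self-regulation in this toy GRN
x = rng.normal(size=(10, 5))              # 10 cells x 5 genes
z = sem_encode(x, adj)
x_rec = sem_decode(z, adj)                # recovers x up to floating-point error
```

Solving the linear system instead of forming \( (I - A)^{-1} \) explicitly is the numerically safer choice; it requires \( I - A \) to be invertible, which holds for this small random \( A \).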

Protocol 2: Calculating Transcriptome Diversity

Methodology: This protocol quantifies the evenness of gene expression within a sample using Shannon Entropy [18] [19].

- Input Data: A normalized gene expression count matrix (e.g., TMM-normalized counts) for multiple samples.

- Normalization per Sample: For each sample, calculate the proportional expression for each gene \( i \): \( p_i = \frac{\text{count}_i}{\sum_{j=1}^{G} \text{count}_j} \), where \( G \) is the number of genes.

- Entropy Calculation: Compute the Shannon entropy \( H_s \) for each sample: \( H_s = -\sum_{i=1}^{G} p_i \log(p_i) \).

- Output: A vector of transcriptome diversity values, one for each sample, ready to be used as a covariate in downstream analyses.
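Protocol 2 translates directly into a few lines of NumPy. A minimal sketch, assuming the input is already normalized (e.g., TMM-scaled counts) with samples as rows; it uses the natural logarithm, and a different base would only rescale every \( H_s \) by a constant. Genes with zero counts are skipped, following the convention \( 0 \log 0 = 0 \).

```python
import numpy as np


def transcriptome_diversity(counts: np.ndarray) -> np.ndarray:
    """Shannon entropy H_s = -sum_i p_i log(p_i) for each row (sample) of `counts`."""
    counts = np.asarray(counts, dtype=float)
    p = counts / counts.sum(axis=1, keepdims=True)   # p_i within each sample
    with np.errstate(divide="ignore", invalid="ignore"):
        terms = np.where(p > 0, p * np.log(p), 0.0)   # 0 * log(0) treated as 0
    return -terms.sum(axis=1)


counts = np.array([[5, 5, 5, 5],    # perfectly even: H = log(4)
                   [10, 0, 0, 0],   # one gene only: H = 0
                   [1, 1, 0, 2]])
h = transcriptome_diversity(counts)
```

The resulting vector `h` has one entry per sample and can be passed straight into a model as a covariate.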

Table 2: Key Computational Tools and Resources for Addressing Systematic Challenges

| Resource / Tool | Function / Description | Application in Challenge |
|---|---|---|
| DAZZLE | A stabilized autoencoder-based model for GRN inference. | Mitigates dropout effects in single-cell data using Dropout Augmentation [5]. |
| PEER Factors | A method (Probabilistic Estimation of Expression Residuals) to infer hidden covariates. | Corrects for unmeasured systematic technical and biological variation [18] [19]. |
| Shannon Entropy | A simple metric from information theory, calculated from normalized counts. | Quantifies transcriptome diversity, a major source of expression variation [18] [19]. |
| TMM / Median of Ratios | Normalization methods for RNA-seq data. | Account for RNA composition effects and sequencing depth differences across samples [18] [19]. |

Workflow and Relationship Diagrams

Diagram Title: From Single-Cell Data to Robust GRN

Diagram Title: DAZZLE Model Architecture

The Modern Toolkit: Computational Methods for Robust GRN Inference

Gene Regulatory Network (GRN) inference from single-cell RNA sequencing (scRNA-seq) data offers a contextual model of interactions between genes in vivo, which is crucial for understanding development, pathology, and key regulatory points amenable to therapeutic intervention [6] [5]. However, a predominant technical challenge in scRNA-seq data analysis is the prevalence of "dropout"—events where transcripts are erroneously not captured by the sequencing technology, leading to zero-inflated count data [6] [5]. In various datasets, 57% to 92% of observed counts can be zeros [6] [5]. This technical variation complicates many downstream analyses, including GRN inference, as it can obscure true biological signals.

Traditional approaches to handling dropout have focused on data imputation methods that replace missing values [6] [5]. In contrast, Dropout Augmentation (DA) introduces a novel regularization perspective by augmenting training data with synthetic dropout events, thereby increasing model robustness against this inherent technical noise [6] [5]. The DAZZLE model (Dropout Augmentation for Zero-inflated Learning Enhancement) implements this approach within an autoencoder-based structural equation modeling framework, providing a stabilized method for GRN inference that demonstrates improved performance and stability over existing approaches [6] [5].

Table: Key Challenges in Single-Cell GRN Inference and DAZZLE's Approach

| Challenge in scRNA-seq Data | Traditional Approach | DAZZLE's Innovative Solution |
|---|---|---|
| High dropout rates (zero-inflation) | Data imputation | Dropout Augmentation regularization |
| Model overfitting to noise | Early stopping, parameter penalty | Synthetic dropout noise during training |
| Computational complexity | Large parameter networks | Simplified structure & closed-form prior |
| Instability in network inference | Multiple training runs | Stabilized adjacency matrix learning |

Core Methodology: Understanding Dropout Augmentation and DAZZLE Architecture

Theoretical Foundation of Dropout Augmentation

Dropout Augmentation (DA) represents a paradigm shift from eliminating zeros through imputation to actively incorporating them as a regularization mechanism. While seemingly counter-intuitive, DA improves model resilience by exposing the model to multiple versions of the same data with slightly different batches of dropout noise across training iterations [6] [5]. This approach has solid theoretical foundations in machine learning: Bishop showed that, for small noise amplitudes, training with noise added to the input data is equivalent to Tikhonov regularization [6] [5].
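The intuition behind the noise-regularization link is easiest to see in the simplest case, which is an illustrative assumption rather than a description of DA itself: a linear model \( f(x) = w^\top x \) trained with squared error, with zero-mean Gaussian noise \( \varepsilon \sim \mathcal{N}(0, \sigma^2 I) \) added to the input. Dropout-style masking is multiplicative rather than additive, so the penalty it induces is data-dependent rather than a plain ridge term, but the mechanism is the same.

```latex
\begin{aligned}
\mathbb{E}_{\varepsilon}\!\left[\big(y - w^\top (x + \varepsilon)\big)^2\right]
  &= \big(y - w^\top x\big)^2
     - 2\big(y - w^\top x\big)\, w^\top \mathbb{E}[\varepsilon]
     + w^\top \mathbb{E}[\varepsilon \varepsilon^\top]\, w \\
  &= \big(y - w^\top x\big)^2 + \sigma^2 \lVert w \rVert_2^2 .
\end{aligned}
```

Averaged over the noise, the objective is the clean squared error plus a ridge (Tikhonov) penalty whose strength is set by the noise variance \( \sigma^2 \). For this linear case the identity is exact; for nonlinear models it holds approximately when the noise is small.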

DA differs from conventional dropout regularization in deep learning, which randomly disables neurons during training to prevent overfitting [22] [23] [24]. Instead, DA specifically targets the input data layer by artificially introducing additional zeros that simulate the technical variation characteristic of scRNA-seq protocols [6]. This approach aligns with the concept that training with input noise enhances model smoothness and resilience to input perturbations [24].

DAZZLE Architecture and Implementation

DAZZLE builds upon the structural equation model (SEM) framework previously employed by DeepSEM and DAG-GNN [6] [5]. The model takes as input a single-cell gene expression matrix where rows represent cells and columns represent genes, with raw counts transformed as log(x+1) to reduce variance and avoid taking the logarithm of zero [6] [5].

The key architectural components of DAZZLE include:

- Parameterized Adjacency Matrix: A learned adjacency matrix (A) that encapsulates the GRN structure and is used on both sides of the autoencoder [6] [5].

- Dropout Augmentation Module: Introduces synthetic dropout noise during training by sampling a proportion of expression values and setting them to zero [6] [5].

- Noise Classifier: Predicts the probability that each zero is an augmented dropout value, helping the model assign less weight to likely dropout events during reconstruction [5].

- Stabilization Mechanisms: Implements delayed introduction of sparse loss terms and uses a closed-form Normal distribution as prior, reducing model size and computational time [5].
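One way the noise classifier's output could enter training is sketched below. This is a hypothetical NumPy illustration, not DAZZLE's loss: it assumes the classifier emits a probability for every entry, trains it with binary cross-entropy on the zeros of the augmented matrix (label 1 when the zero was injected), and down-weights likely-dropout zeros in a squared reconstruction error with weight \( 1 - p \).

```python
import numpy as np


def noise_classifier_bce(probs, injected, x_aug, eps=1e-7):
    """Binary cross-entropy over the zero entries of the augmented input.

    probs: predicted probability that each entry is an injected (augmented) zero.
    injected: boolean mask of zeros introduced by dropout augmentation.
    """
    zeros = x_aug == 0
    p = np.clip(probs[zeros], eps, 1.0 - eps)   # avoid log(0)
    y = injected[zeros].astype(float)
    return float(-np.mean(y * np.log(p) + (1.0 - y) * np.log(1.0 - p)))


def weighted_reconstruction(target, x_hat, probs, x_aug):
    """Weighted squared error: zeros that look like dropout count less (weight 1 - p)."""
    weights = np.where(x_aug == 0, 1.0 - probs, 1.0)
    return float(np.sum(weights * (target - x_hat) ** 2) / np.sum(weights))


x_aug = np.array([[0.0, 1.0], [0.0, 2.0]])
injected = np.array([[True, False], [False, False]])
probs = np.array([[0.9, 0.1], [0.2, 0.1]])     # toy classifier output
bce = noise_classifier_bce(probs, injected, x_aug)
recon = weighted_reconstruction(x_aug, x_aug + 0.1, probs, x_aug)
```

With this weighting, an entry the classifier is certain was injected contributes nothing to the reconstruction error, so the decoder is not pushed to reproduce artificial zeros.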

Table: DAZZLE Architecture Components and Functions

| Component | Function | Improvement over DeepSEM |
|---|---|---|
| Dropout Augmentation | Regularizes model with synthetic zeros | Increases robustness to technical noise |
| Noise Classifier | Identifies likely dropout events | Reduces overfitting to false zeros |
| Delayed Sparse Loss | Controls adjacency matrix sparsity | Improves training stability |
| Closed-form Prior | Replaces estimated latent variable | 21.7% parameter reduction |
| Unified Optimizer | Single training optimizer | 50.8% reduction in running time |

Diagram Title: DAZZLE Model Architecture with Dropout Augmentation

Performance Benchmarks and Experimental Validation

Quantitative Performance Metrics

DAZZLE has been evaluated against established GRN inference methods using the BEELINE benchmark framework [6] [5]. The benchmarks employ datasets with approximately known ground-truth networks, enabling quantitative comparison of inference accuracy. Performance improvements are observed across multiple metrics, including the area under the precision-recall curve (AUPRC) and the early precision ratio (EPR).

Notably, DAZZLE demonstrates superior stability during training compared to DeepSEM, where the quality of inferred networks in DeepSEM may degrade quickly after model convergence due to overfitting to dropout noise [6] [5]. DAZZLE's implementation also shows significant computational efficiency gains, requiring 21.7% fewer parameters and achieving 50.8% reduction in running time on the BEELINE-hESC dataset with 1,410 genes [5].

Practical Application on Real-World Data

The practical utility of DAZZLE was demonstrated on a longitudinal mouse microglia dataset containing over 15,000 genes, where it efficiently handled real-world single-cell data with minimal gene filtration [6] [5]. This application highlighted DAZZLE's ability to facilitate interpretation of typical-sized datasets and explain expression dynamics across biological conditions—in this case, microglial changes across the mouse lifespan [6] [5].

Table: Performance Comparison of GRN Inference Methods

| Method | Theoretical Basis | Handling of Dropout | Stability | Computational Efficiency |
|---|---|---|---|---|
| DAZZLE | Autoencoder SEM with DA | Active regularization via augmentation | High | 50.8% shorter running time than DeepSEM (BEELINE-hESC) |
| DeepSEM | Variational Autoencoder SEM | Vulnerable to overfitting | Degrades with training | Baseline |
| GENIE3/GRNBoost2 | Tree-based ensembles | No special handling | Moderate | Moderate |
| PIDC | Partial Information Decomposition | Models heterogeneity | Moderate | Computationally intensive |
| SINUM | Mutual Information | Statistical independence testing | Moderate | Moderate |

Experimental Workflow and Protocol Specifications

Standard DAZZLE Implementation Protocol

Implementing DAZZLE for GRN inference requires careful attention to data preprocessing, model configuration, and training procedures. The following workflow outlines the key steps for reproducible results:

Diagram Title: DAZZLE Experimental Workflow for GRN Inference

Critical Experimental Parameters

Successful implementation of DAZZLE requires careful configuration of several key parameters:

- Dropout Augmentation Rate: Typically ranges from 0.1 to 0.3, simulating additional technical noise beyond natural dropout [6] [5].

- Sparse Loss Delay: Number of epochs before introducing sparse loss term, crucial for training stability [5].

- Learning Rate Schedule: Adaptive learning rate optimization for the unified training approach [5].

- Adjacency Matrix Sparsity Constraint: Controls the density of the inferred GRN topology [6] [5].
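These parameters can be gathered in a small configuration object, and the delayed sparse loss reduces to a switch on the epoch counter. The sketch below is hypothetical: the field names and default values are placeholders chosen for illustration (the dropout rate default sits in the 0.1 to 0.3 range quoted above), not DAZZLE's actual option names or defaults, and the sparsity term is assumed to be an L1 penalty on the adjacency matrix.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class GRNTrainingConfig:
    """Hypothetical training settings; names and defaults are placeholders."""
    dropout_rate: float = 0.1        # fraction of non-zero values zeroed per iteration
    sparse_delay_epochs: int = 30    # epochs before the sparsity term is switched on
    sparsity_weight: float = 1e-3    # strength of the L1 penalty on the adjacency matrix
    learning_rate: float = 1e-3

    def __post_init__(self):
        if not 0.0 <= self.dropout_rate < 1.0:
            raise ValueError("dropout_rate must lie in [0, 1)")
        if self.sparse_delay_epochs < 0 or self.sparsity_weight < 0:
            raise ValueError("delay and sparsity weight must be non-negative")


def total_loss(recon_loss: float, adj: np.ndarray, epoch: int, cfg: GRNTrainingConfig) -> float:
    """Reconstruction loss, plus an L1 sparsity penalty on `adj` once the delay has passed."""
    if epoch < cfg.sparse_delay_epochs:
        return recon_loss
    return recon_loss + cfg.sparsity_weight * float(np.abs(adj).sum())
```

Keeping the delay as an explicit epoch threshold makes it easy to sweep alongside the augmentation rate when tuning.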

Key Research Reagent Solutions

Table: Essential Materials and Computational Resources for DAZZLE Implementation

| Resource Category | Specific Tool/Platform | Function/Purpose |
|---|---|---|
| Data Processing | Scanpy, Seurat | scRNA-seq quality control and normalization |
| Implementation Framework | PyTorch, TensorFlow | Deep learning model implementation |
| Benchmarking | BEELINE framework | Performance validation against ground truth |
| Computational Environment | H100 GPU or equivalent | Accelerated model training |
| Biological Validation | STRING database, human interactome | Network validation against known interactions |

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions

Q: What distinguishes Dropout Augmentation from traditional dropout regularization in deep learning? A: Traditional dropout randomly disables neurons during training to prevent overfitting [22] [23], while Dropout Augmentation specifically adds synthetic zeros to input data to mimic technical noise in scRNA-seq data, thereby regularizing the model against this specific data artifact [6] [5].

Q: Why does adding more zeros help with zero-inflated data? Shouldn't we instead impute missing values? A: While imputation attempts to eliminate zeros, DA takes an alternative regularization approach. By exposing the model to controlled dropout patterns during training, it learns to become robust to this noise rather than relying on potentially inaccurate imputations. This approach recognizes that some zeros represent true biological absence while others are technical artifacts, and the model learns to distinguish these through the noise classifier [6] [5].

Q: How do I determine the optimal dropout augmentation rate for my dataset? A: The optimal rate depends on your dataset's specific dropout characteristics. Start with a rate between 0.1 and 0.3 and perform ablation studies. Higher rates may be needed for datasets with particularly sparse expression patterns. Monitor reconstruction loss and network stability during training as indicators [6] [5].
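One way to make "network stability" concrete when comparing augmentation rates is to train with a few random seeds per rate and measure how much the strongest edges agree between runs. The overlap score below (Jaccard index of the top-\( k \) edges by absolute weight) is an assumption of this sketch, not a metric prescribed by DAZZLE, and `k` is a user choice.

```python
import numpy as np


def top_k_edges(adj: np.ndarray, k: int) -> set:
    """The k off-diagonal (regulator, target) pairs with the largest |weight|."""
    scores = np.abs(np.asarray(adj, dtype=float))
    np.fill_diagonal(scores, -np.inf)             # exclude self-loops from the ranking
    flat = np.argsort(scores, axis=None)[::-1][:k]
    rows, cols = np.unravel_index(flat, scores.shape)
    return set(zip(rows.tolist(), cols.tolist()))


def edge_jaccard(adj_a: np.ndarray, adj_b: np.ndarray, k: int) -> float:
    """Jaccard overlap of the top-k edge sets of two inferred adjacency matrices."""
    a, b = top_k_edges(adj_a, k), top_k_edges(adj_b, k)
    return len(a & b) / len(a | b)
```

Values close to 1 across seeds suggest the top of the edge ranking is stable at that rate; a rate that gives low overlap between seeds is a poor candidate even if its reconstruction loss looks good.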

Q: My inferred GRN appears too dense/too sparse. How can I adjust this? A: Adjust the sparsity constraint on the adjacency matrix and consider modifying the delay before introducing the sparse loss term. Earlier introduction typically results in sparser networks, while delayed introduction allows more connections to form before pruning [5].

Q: How does DAZZLE compare to mutual information-based methods like SINUM for GRN inference? A: SINUM uses mutual information to construct single-cell networks by determining gene-gene dependencies in individual cells [25], while DAZZLE employs a structural equation model within an autoencoder framework. DAZZLE specifically addresses dropout through augmentation, while SINUM captures non-linear relationships through information theory without special dropout handling [6] [25].

Common Error Conditions and Solutions

Table: Troubleshooting Guide for DAZZLE Implementation

| Problem | Potential Causes | Solution Approaches |
|---|---|---|
| Training instability | Learning rate too high, sparse loss introduced too early | Reduce learning rate, increase sparse loss delay epoch |
| Overfitting to training data | Insufficient dropout augmentation, too many parameters | Increase DA rate, simplify model architecture |
| Poor reconstruction accuracy | Excessive dropout augmentation, too few training epochs | Reduce DA rate, increase training iterations |
| Computational memory issues | Large gene set, excessive batch size | Filter low-expression genes, reduce batch size |
| Biologically implausible GRN | Improper sparsity constraints, insufficient validation | Adjust sparsity parameters, validate against known interactions |

Inferring Gene Regulatory Networks (GRNs) from single-cell data is a fundamental challenge in computational biology, essential for understanding cellular identity, fate decisions, and dysregulation in disease. Traditional regression-based methods for GRN inference often lack estimates of uncertainty and require complete re-engineering when new data or assumptions are integrated. PMF-GRN (Probabilistic Matrix Factorization for Gene Regulatory Network Inference) addresses these limitations by using a probabilistic matrix factorization approach coupled with variational inference, providing a flexible framework that delivers well-calibrated uncertainty estimates for each predicted regulatory interaction. This technical guide details the methodology, implementation, and troubleshooting of PMF-GRN, supporting robust and interpretable GRN reconstruction.

Methodology and Core Concepts

What is the PMF-GRN Model?

PMF-GRN is a generative model designed to infer latent factors from single-cell gene expression data. Its goal is to decompose the observed gene expression matrix \( W \in \mathbb{R}^{N \times M} \) (for \( N \) cells and \( M \) genes) into two key latent components, such that \( W \approx U V^\top \) [11]:

- A Transcription Factor Activity (TFA) matrix \( U \in \mathbb{R}_{>0}^{N \times K} \), representing the activity of \( K \) transcription factors in each cell.

- A TF-target gene interaction matrix \( V \in \mathbb{R}^{M \times K} \), representing the regulatory relationships.

The interaction matrix \( V \) is further decomposed into the element-wise product of two matrices [11]:

\[ V = A \odot B \]

where:

- \( A \in (0, 1)^{M \times K} \) represents the degree of existence of a regulatory interaction.

- \( B \in \mathbb{R}^{M \times K} \) represents the interaction strength and its direction (activation or repression).

The model incorporates prior knowledge (e.g., from TF motif databases or chromatin accessibility data) through the hyperparameters of \( A \), allowing for data integration [11]. The posterior distribution over \( A \), specifically the mean and variance of its entries, provides the confidence and uncertainty for each predicted TF-target gene interaction [11].
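To make the shapes concrete, the factorization can be simulated forward. This sketch assumes illustrative distributions that are not taken from PMF-GRN itself (log-normal \( U \), a Beta distribution for \( A \) centered on the prior probabilities, standard normal \( B \), and Gaussian observation noise with standard deviation \( \sigma_{\text{obs}} \)) and omits the \( d \) term; its only purpose is to show how \( U \), \( A \) and \( B \) combine into \( W \approx U (A \odot B)^\top \).

```python
import numpy as np


def sample_pmf_grn(n_cells, n_genes, n_tfs, prior_a, sigma_obs=0.1, concentration=10.0, seed=0):
    """Draw one synthetic dataset from a PMF-GRN-shaped generative model.

    prior_a: (n_genes, n_tfs) array of prior interaction probabilities in (0, 1).
    All distribution choices here are illustrative only.
    """
    rng = np.random.default_rng(seed)
    u = rng.lognormal(mean=0.0, sigma=0.5, size=(n_cells, n_tfs))    # TF activity, > 0
    a = rng.beta(concentration * prior_a, concentration * (1.0 - prior_a))  # existence
    b = rng.normal(0.0, 1.0, size=(n_genes, n_tfs))                  # strength and sign
    v = a * b                                        # element-wise V = A * B, genes x TFs
    w = u @ v.T + rng.normal(0.0, sigma_obs, size=(n_cells, n_genes))  # cells x genes
    return w, u, a, b


prior = np.full((20, 3), 0.5)   # uninformative prior for 20 genes and 3 TFs
w, u, a, b = sample_pmf_grn(n_cells=50, n_genes=20, n_tfs=3, prior_a=prior)
```

Inference runs this story in reverse: given only `w` and the prior, it recovers distributions over `u`, `a` and `b`.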

The Role of Variational Inference

Exact inference in this probabilistic model is intractable. PMF-GRN uses variational inference to approximate the true posterior distribution \( p(U, A, B, \sigma_{\text{obs}}, d \mid W) \) with a simpler, tractable variational distribution \( q(U, A, B, \sigma_{\text{obs}}, d) \) [11].

The core of variational inference is the maximization of the Evidence Lower Bound (ELBO), which is equivalent to minimizing the Kullback-Leibler (KL) divergence between the approximate distribution (q) and the true posterior (p) [11]. Upon convergence, the variational distribution (q) provides the estimated interactions and their uncertainties.
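The equivalence stated above follows from a standard identity. Writing \( \Theta = (U, A, B, \sigma_{\text{obs}}, d) \) for all latent quantities, the log evidence splits into the ELBO and a KL term:

```latex
\begin{aligned}
\log p(W)
  &= \mathbb{E}_{q(\Theta)}\big[\log p(W, \Theta) - \log p(\Theta \mid W)\big] \\
  &= \underbrace{\mathbb{E}_{q(\Theta)}\big[\log p(W, \Theta) - \log q(\Theta)\big]}_{\mathrm{ELBO}(q)}
   + \underbrace{\mathbb{E}_{q(\Theta)}\big[\log q(\Theta) - \log p(\Theta \mid W)\big]}_{\mathrm{KL}\left(q(\Theta)\,\Vert\,p(\Theta \mid W)\right)} .
\end{aligned}
```

Because \( \log p(W) \) does not depend on \( q \) and the KL divergence is non-negative, the ELBO is a lower bound on the log evidence, and any increase in the ELBO is matched by an equal decrease in the KL divergence.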

The following diagram illustrates the core structure of the PMF-GRN model and its inference process [11].

Diagram Title: Core Structure of the PMF-GRN Model

How does PMF-GRN compare to other GRN inference methods?

PMF-GRN belongs to the category of probabilistic models for GRN inference, which aim to model the dependence between variables like TFs and their target genes [26]. The table below contrasts its approach with other common methodologies.

| Method Category | Underlying Principle | Key Advantages | Key Limitations |
|---|---|---|---|
| PMF-GRN (Probabilistic) | Probabilistic matrix factorization; variational inference [11]. | Provides well-calibrated uncertainty estimates; principled hyperparameter search; integrates prior knowledge. | Assumes specific distributions; can be computationally intensive. |
| Correlation-based | Guilt-by-association; measures like Pearson correlation or mutual information [26]. | Simple and fast to compute. | Cannot distinguish direct/indirect relationships; confounded by co-regulation. |
| Regression-based | Models gene expression as a function of TF expression/accessibility [26]. | Infers directionality; some interpretability via coefficients. | Prone to overfitting with many predictors; often lacks uncertainty quantification. |
| Dynamical Systems | Models gene expression changes over time using differential equations [26]. | Captures temporal dynamics; highly interpretable parameters. | Requires temporal data; complex to scale to large networks. |

Implementation and Experimental Protocols

Key Experiment: Benchmarking on Saccharomyces cerevisiae Data

A key experiment validating PMF-GRN involved its application to single-cell gene expression datasets for S. cerevisiae (yeast) [11].

1. Objective: To evaluate the accuracy of inferred TF-target interactions against a database-derived gold standard and compare performance against state-of-the-art methods (Inferelator, SCENIC, Cell Oracle) [11].

2. Input Data Preparation:

   - Single-cell RNA-seq Data: A preprocessed gene expression matrix \( W \) of dimensions (number of cells × number of genes).

   - Prior Knowledge Matrix: A binary or probabilistic matrix for \( A \), derived from integrating TF motif information (e.g., from databases like JASPAR) with chromatin accessibility data (e.g., from scATAC-seq) to indicate potential binding [11].

3. Model Setup and Execution:

   - Hyperparameter Selection: Use the built-in variational inference framework for principled hyperparameter search to select the optimal model [11].

   - Stochastic Gradient Descent (SGD): Run the model on a GPU using SGD for efficient optimization and scalability [11].

   - Output: The approximate posterior distributions \( q(A) \) and \( q(B) \), from which the posterior mean of \( A \) (interaction confidence), its variance (uncertainty), and the posterior of \( B \) (interaction strength and direction) are extracted.

4. Validation and Evaluation:

   - Gold Standard: Compare the ranked list of predicted TF-target edges against a curated set of known interactions.

   - Metric: Calculate the Area Under the Precision-Recall Curve (AUPRC) to evaluate performance [11].
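The evaluation step can be sketched as ranking every TF-target pair by a score (for PMF-GRN, the posterior mean of \( A \)) and computing average precision against a binary gold-standard matrix of the same shape. The function below is an assumption of this sketch: a step-wise AUPRC estimate that should agree with scikit-learn's `average_precision_score` when there are no tied scores. The published benchmark may have computed AUPRC differently.

```python
import numpy as np


def average_precision(scores: np.ndarray, gold: np.ndarray) -> float:
    """Step-wise AUPRC: mean precision@k taken at the rank k of each true edge."""
    scores = np.ravel(np.asarray(scores, dtype=float))
    gold = np.ravel(np.asarray(gold)).astype(bool)
    n_pos = gold.sum()
    if n_pos == 0:
        raise ValueError("gold standard contains no positive edges")
    order = np.argsort(-scores, kind="stable")      # highest-scoring edges first
    hits = gold[order]
    precision_at_k = np.cumsum(hits) / np.arange(1, hits.size + 1)
    return float(precision_at_k[hits].sum() / n_pos)


# Toy genes x TFs example: posterior means of A and a binary gold standard.
posterior_mean_a = np.array([[0.9, 0.1], [0.8, 0.05]])
gold = np.array([[1, 0], [0, 1]])
ap = average_precision(posterior_mean_a, gold)
```

Here the first true edge is ranked first (precision 1.0) and the second is ranked last (precision 0.5), giving an average precision of 0.75.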

Quantitative Benchmarking Results

The following table summarizes typical quantitative outcomes from the benchmarking experiment, demonstrating PMF-GRN's performance advantage [11].

| Evaluation Metric | PMF-GRN | Inferelator | SCENIC | Cell Oracle |
|---|---|---|---|---|
| AUPRC (Yeast Dataset 1) | 0.88 | 0.75 | 0.79 | 0.81 |
| AUPRC (Yeast Dataset 2) | 0.85 | 0.72 | 0.74 | 0.78 |
| Uncertainty Calibration | Well-calibrated | Not provided | Not provided | Not provided |
| Scalability (to 10k cells) | Supported via GPU | Variable | Moderate | Moderate |

The workflow for this benchmark is outlined below.

Diagram Title: PMF-GRN Benchmarking Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Research Reagent / Resource | Function in PMF-GRN Workflow |
|---|---|
| Single-cell RNA-seq Data | Provides the observed gene expression matrix \( W \), the fundamental input for inference [11]. |
| scATAC-seq Data | Used to identify accessible chromatin regions, which are integrated with TF motifs to create the prior interaction matrix for \( A \) [11]. |
| TF Motif Database (e.g., JASPAR) | Provides position weight matrices (PWMs) of TF binding specificities, essential for constructing the prior knowledge matrix [11]. |
| Gold Standard GRN Databases | Curated sets of known TF-target interactions (e.g., for yeast or from resources like CRISPR screens) used for model validation and benchmarking [11]. |
| GPU Computing Resource | Accelerates the stochastic gradient descent optimization in variational inference, enabling analysis of large-scale single-cell datasets [11]. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What format should the prior knowledge matrix be in, and how critical is its quality? The prior matrix for \( A \) should be a matrix of dimensions (Genes × TFs) with entries in \( (0, 1) \), representing the prior probability of an interaction. While PMF-GRN can run with an uninformative prior (e.g., the same constant probability for every entry), the quality of the prior is crucial for accuracy. A high-quality prior, derived from integrating motif scanning with chromatin accessibility (e.g., scATAC-seq), significantly enhances performance by focusing the model on biologically plausible interactions [11].
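A prior in this format can be assembled from two boolean genes × TFs matrices, one marking a motif match for the TF near the gene and one marking that the matched site is accessible. The combination rule and the two probability levels below are hypothetical placeholders, not values from PMF-GRN; the check function simply enforces the shape and open-interval requirements described above.

```python
import numpy as np


def build_prior(motif_hits, accessible, p_supported=0.8, p_background=0.05):
    """Hypothetical genes x TFs prior: high where a motif hit lies in accessible chromatin."""
    supported = np.asarray(motif_hits, dtype=bool) & np.asarray(accessible, dtype=bool)
    return np.where(supported, p_supported, p_background)


def check_prior(prior, n_genes, n_tfs):
    """Raise if the prior has the wrong shape or entries outside the open interval (0, 1)."""
    prior = np.asarray(prior, dtype=float)
    if prior.shape != (n_genes, n_tfs):
        raise ValueError(f"expected shape {(n_genes, n_tfs)}, got {prior.shape}")
    if not np.all((prior > 0.0) & (prior < 1.0)):
        raise ValueError("prior entries must lie strictly between 0 and 1")


hits = np.array([[True, False], [True, True]])
acc = np.array([[True, True], [False, True]])
prior = build_prior(hits, acc)
check_prior(prior, n_genes=2, n_tfs=2)
uninformative = np.full((2, 2), 0.5)   # same constant probability for every entry
```

Keeping a small non-zero background probability lets the data still propose interactions that the motif and accessibility evidence missed.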

Q2: My model inference is slow. What steps can I take to improve performance? Ensure you are leveraging GPU acceleration, as PMF-GRN's use of Stochastic Gradient Descent is designed for this. Check the size of your input data; for very large datasets (e.g., >10,000 cells or genes), consider a preliminary feature selection step to filter to highly variable genes and key TFs. Furthermore, the variational inference framework includes a hyperparameter search—monitor the ELBO convergence to ensure the model is not running for an unnecessarily long time [11].
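Because SGD produces a noisy ELBO trace, a convergence check is more reliable on windowed averages than on single steps. A minimal sketch with a hypothetical stopping rule; the window size and tolerance are illustrative, not PMF-GRN defaults.

```python
def elbo_converged(history, window=50, rel_tol=1e-4):
    """True if the mean ELBO of the last `window` steps differs from the previous
    window's mean by at most `rel_tol`, relative to the previous mean."""
    if len(history) < 2 * window:
        return False                      # not enough steps to compare two windows
    recent = sum(history[-window:]) / window
    previous = sum(history[-2 * window:-window]) / window
    return abs(recent - previous) <= rel_tol * abs(previous)
```

Calling it after each logging step and stopping once it returns `True` avoids running far past the plateau.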

Q3: How should I interpret the uncertainty estimates provided by PMF-GRN for a predicted regulatory edge? The variance of the approximate posterior \( q(A) \) for a specific TF-target pair quantifies the model's uncertainty about that interaction's existence. A low variance indicates high confidence, meaning the data strongly supports the prediction (whether present or absent). A high variance indicates the data is ambiguous. You can use these estimates to filter predictions, prioritizing high-confidence interactions (low uncertainty) for downstream experimental validation [11].
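Filtering on both summaries can be sketched as follows, assuming you can draw Monte Carlo samples from \( q(A) \) as an array of shape (draws, genes, TFs). The thresholds are hypothetical and should be tuned to your data; the function returns the retained (gene, TF) index pairs, highest posterior mean first.

```python
import numpy as np


def summarize_posterior(samples: np.ndarray):
    """Per-edge posterior mean and variance from draws of q(A), shape (draws, genes, TFs)."""
    return samples.mean(axis=0), samples.var(axis=0)


def confident_edges(mean, var, min_mean=0.5, max_var=0.01):
    """(gene, TF) pairs with high posterior mean and low posterior variance, sorted by mean."""
    keep = (mean >= min_mean) & (var <= max_var)
    genes, tfs = np.nonzero(keep)
    order = np.argsort(-mean[genes, tfs], kind="stable")
    return list(zip(genes[order].tolist(), tfs[order].tolist()))


draws = np.empty((4, 2, 2))
draws[:, 0, 0] = 0.9                      # confidently present
draws[:, 0, 1] = [0.1, 0.9, 0.1, 0.9]     # mean 0.5 but highly uncertain
draws[:, 1, 0] = 0.7
draws[:, 1, 1] = 0.2                      # confidently absent
mean, var = summarize_posterior(draws)
edges = confident_edges(mean, var)
```

The edge at (0, 1) passes a mean-only cutoff but is dropped because its variance is high, which is exactly the case the uncertainty estimate is meant to catch.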

Q4: Can PMF-GRN be applied to human data, such as PBMCs? Yes. The method is not organism-specific. It has been successfully applied to human Peripheral Blood Mononuclear Cell (PBMC) data, where it inferred TFA profiles that correlated with annotated cell types and identified key immune-related TFs [11]. The same workflow—integrating human scRNA-seq and scATAC-seq data with human TF motifs—is directly applicable.

Common Error Messages and Solutions

| Issue / Error | Possible Cause | Solution |
|---|---|---|
| Dimension mismatch during model initialization. | The dimensions of the gene expression matrix \( W \) and the prior matrix \( A \) do not align correctly. | Verify that the number of genes (columns) in \( W \) matches the number of genes (rows) in \( A \), and that the number of TFs (columns) in \( A \) is consistent with the model's \( K \) parameter. |
| ELBO fails to converge or is unstable. | The learning rate might be too high, or the data may require different scaling. | Reduce the learning rate in the SGD optimizer. Ensure the input gene expression data is properly normalized. |
| Predictions appear random or no high-confidence edges are found. | The prior knowledge may be too weak or incorrect, or the hyperparameters may be suboptimal. | Check the source and construction of your prior matrix. Use the built-in hyperparameter search to find a better model fit for your specific dataset. |

Gene Regulatory Network (GRN) inference is fundamental for understanding the causal relationships that govern cellular identity and function. While single-cell multiome data (paired gene expression and chromatin accessibility) offers unprecedented resolution for this task, learning such complex mechanisms from limited data points remains a significant challenge. Technical variations, including data sparsity and noise, further complicate accurate inference. This guide explores how the LINGER (Lifelong neural network for gene regulation) framework addresses these issues by integrating atlas-scale external bulk data, providing troubleshooting and best practices for researchers.

Frequently Asked Questions (FAQs) and Troubleshooting

1. Question: What is the core innovation of LINGER in handling technical variation? Answer: LINGER's core innovation is the use of lifelong learning (also called continual learning) to integrate knowledge from large-scale external bulk datasets. It first pre-trains a model on hundreds of diverse bulk tissue samples (e.g., from the ENCODE project) to learn a general regulatory landscape. This pre-trained model is then refined on your specific single-cell multiome data using Elastic Weight Consolidation (EWC). The EWC loss function acts as a regularizer, preventing the model from forgetting the general knowledge acquired from the bulk data while it adapts to the new, potentially noisy single-cell data. This process significantly enhances the model's robustness to the technical noise and sparsity inherent in scRNA-seq and scATAC-seq data [27].

2. Question: My single-cell multiome dataset is from a specific tissue. What are the requirements for the external bulk data? Answer: The power of LINGER comes from using external bulk data that covers a diverse range of cellular contexts. You do not need bulk data from the exact same tissue. The model is pre-trained on a compendium of bulk data (like from ENCODE) spanning many different cell types and states. This helps the model learn a generalized, foundational understanding of gene regulation. When you subsequently fine-tune it on your specific single-cell data, it effectively applies these general principles to your specific context, leading to more accurate inference than using the single-cell data alone [27].

3. Question: How does LINGER incorporate prior knowledge like transcription factor motifs? Answer: LINGER integrates prior knowledge of transcription factor (TF) binding motifs through a technique called manifold regularization. In the neural network's second layer, which consists of regulatory modules, the model is designed to enrich for TF motifs that bind to regulatory elements (REs) belonging to the same module. This means the learning process is guided by biological knowledge, encouraging the model to group TFs and REs with established binding relationships, which improves the biological plausibility of the inferred networks [27].
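Manifold regularization is commonly written as a graph-Laplacian penalty that pulls together the representations of nodes linked in a prior graph. The sketch shows that generic form, assuming a symmetric similarity matrix (for instance, links derived from motif matches) over nodes whose module loadings are stored as rows of a weight matrix; it is not LINGER's exact loss term.

```python
import numpy as np


def laplacian_penalty(weights: np.ndarray, similarity: np.ndarray) -> float:
    """Manifold penalty sum_ij S_ij ||w_i - w_j||^2, computed as 2 * tr(W^T L W).

    weights: nodes x modules matrix; row i is node i's loading on each module.
    similarity: symmetric nodes x nodes prior graph S; L = D - S is its Laplacian.
    """
    s = np.asarray(similarity, dtype=float)
    laplacian = np.diag(s.sum(axis=1)) - s
    return float(2.0 * np.trace(weights.T @ laplacian @ weights))


rng = np.random.default_rng(0)
w = rng.normal(size=(4, 3))
s = rng.random((4, 4))
s = (s + s.T) / 2.0            # symmetrize the prior graph
penalty = laplacian_penalty(w, s)
```

Adding a multiple of this penalty to a training loss makes it costly for strongly linked nodes to load on different modules, which is how prior links can steer module membership.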

4. Question: What are the key performance metrics for validating LINGER's output, and what values can I expect? Answer: LINGER's performance is typically validated using independent experimental data. Key metrics and reported performance include:

Table 1: LINGER Performance Benchmarks

| Validation Method | Metric | Reported Performance | Context |
|---|---|---|---|
| ChIP-seq (Trans-regulation) | Area under the ROC curve (AUC) | Significantly higher than scNN, BulkNN, and PCC | 20 ground truth datasets in blood cells [27] |
| ChIP-seq (Trans-regulation) | Area under the Precision-Recall curve (AUPR) ratio | Significantly higher than scNN, BulkNN, and PCC | 20 ground truth datasets in blood cells [27] |
| eQTL data (Cis-regulation) | AUC & AUPR ratio | Higher than scNN across different RE-TG distance groups | Validation using GTEx and eQTLGen data in whole blood [27] |

5. Question: I have a novel cell type that wasn't present in the bulk pre-training data. Will LINGER still work? Answer: Yes. The purpose of pre-training is not to memorize specific cell types, but to learn the fundamental "grammar" of gene regulation—how TFs and REs generally combine to influence target gene expression. The lifelong learning approach allows LINGER to adapt these general rules to novel cell types present in your single-cell multiome data. The EWC regularization ensures this adaptation is stable and does not catastrophically forget the foundational principles [27].

Experimental Protocols and Workflows

LINGER's Core Workflow

The following diagram illustrates the multi-stage process of the LINGER method, from data input to final GRN output.

Protocol: Implementing LINGER for GRN Inference

Objective: To infer a context-specific Gene Regulatory Network from single-cell multiome data by leveraging lifelong learning from external bulk resources.

Step 1: Data Preparation and Input

- Single-cell Multiome Data: You will need a cell-by-gene expression matrix (from scRNA-seq) and a cell-by-peak (or bin) accessibility matrix (from scATAC-seq). LINGER also requires cell type annotation for each cell.

- External Bulk Data: Obtain a large-scale bulk compendium, such as from the ENCODE project, which includes hundreds of samples from diverse cellular contexts.

- Prior Knowledge: Prepare a file of transcription factor binding motifs.
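Before any model is trained, the expression matrix, the accessibility matrix, and the cell type annotation from Step 1 must refer to the same cells. A minimal sketch, assuming each matrix comes with a list of cell barcodes as its row labels and the annotation is a barcode-to-label mapping; `align_multiome_inputs` is a hypothetical helper, not part of LINGER.

```python
from typing import Dict, List, Sequence, Tuple


def align_multiome_inputs(
    rna_barcodes: Sequence[str],
    atac_barcodes: Sequence[str],
    cell_types: Dict[str, str],
) -> Tuple[List[str], List[int], List[int], List[str]]:
    """Keep cells present in the RNA matrix, the ATAC matrix and the annotation.

    Returns the shared barcodes (in RNA order), the matching row indices into
    the cell-by-gene and cell-by-peak matrices, and each cell's type label.
    """
    for name, bcs in (("RNA", rna_barcodes), ("ATAC", atac_barcodes)):
        if len(set(bcs)) != len(bcs):
            raise ValueError(f"duplicate barcodes in {name} matrix")
    atac_index = {bc: i for i, bc in enumerate(atac_barcodes)}
    shared, rna_rows, atac_rows, labels = [], [], [], []
    for i, bc in enumerate(rna_barcodes):
        if bc in atac_index and bc in cell_types:
            shared.append(bc)
            rna_rows.append(i)
            atac_rows.append(atac_index[bc])
            labels.append(cell_types[bc])
    if not shared:
        raise ValueError("no cells shared across RNA, ATAC and annotation")
    return shared, rna_rows, atac_rows, labels


# Toy barcodes: one cell lacks ATAC data, one lacks RNA data.
shared, rna_rows, atac_rows, labels = align_multiome_inputs(
    ["AAAC-1", "CCTG-1", "GGTA-1"],
    ["GGTA-1", "AAAC-1", "TTCA-1"],
    {"AAAC-1": "B cell", "GGTA-1": "T cell", "CCTG-1": "NK cell"},
)
```

The returned row indices can then be used to subset both matrices so that row `k` of each refers to the same cell.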

Step 2: Model Pre-training (BulkNN)

- Train the initial neural network model (BulkNN) on the external bulk data.

- The model is designed to predict target gene (TG) expression using TF expression and regulatory element (RE) accessibility as input.

- The second layer of the network uses manifold regularization to incorporate TF motif information, guiding the formation of biologically plausible regulatory modules [27].
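Manifold regularization of this kind is commonly written as a graph-Laplacian penalty: inputs that are connected in a prior graph are pushed toward similar weight vectors. The NumPy sketch below is a generic version of that technique, assuming a symmetric TF-RE adjacency matrix `A` built from motif matches and a weight matrix `W` with one row per TF or RE input; it illustrates the idea and is not LINGER's exact loss.

```python
import numpy as np


def laplacian_penalty(W: np.ndarray, A: np.ndarray) -> float:
    """Graph-Laplacian penalty sum_ij A_ij * ||W_i - W_j||^2 == 2 * trace(W^T L W).

    W: (n_inputs, n_hidden) weights, one row per TF or RE input.
    A: symmetric (n_inputs, n_inputs) prior graph, e.g. motif-match scores.
    """
    L = np.diag(A.sum(axis=1)) - A  # unnormalized graph Laplacian
    return float(2.0 * np.trace(W.T @ L @ W))


def regularized_loss(y_true, y_pred, W, A, lam=0.01):
    """Mean squared error on target-gene expression plus the manifold penalty."""
    mse = float(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2))
    return mse + lam * laplacian_penalty(W, A)


# Toy check: inputs 0 and 1 are linked (e.g. a TF whose motif lies in an RE).
W = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
A = np.array([[0.0, 1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 0.0]])
brute = sum(A[i, j] * np.sum((W[i] - W[j]) ** 2) for i in range(3) for j in range(3))
assert np.isclose(laplacian_penalty(W, A), brute)
```

The trace form avoids the explicit double loop, and the penalty is zero only when every linked pair of inputs has identical weight rows.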

Step 3: Model Refinement with EWC

- Refine the pre-trained BulkNN model on your single-cell multiome data.

- This step uses Elastic Weight Consolidation (EWC). The EWC loss penalizes changes to model parameters that were identified as important during bulk pre-training (measured by Fisher information). This prevents "catastrophic forgetting" and ensures the model retains general regulatory knowledge while adapting to the single-cell context [27].
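In its standard form, the EWC objective adds a quadratic penalty weighted by the diagonal Fisher information: loss = L_sc(θ) + (λ/2) Σ_i F_i (θ_i - θ*_i)^2, where θ* are the bulk-pretrained parameters. The NumPy sketch below assumes a flat parameter vector and per-sample gradients already produced by whatever framework trains the model; λ, the toy numbers, and the function names are illustrative.

```python
import numpy as np


def diagonal_fisher(per_sample_grads) -> np.ndarray:
    """Empirical diagonal Fisher information at the bulk-pretrained parameters:
    mean squared per-sample gradient of the log-likelihood.
    per_sample_grads has shape (n_samples, n_params)."""
    return np.mean(np.asarray(per_sample_grads) ** 2, axis=0)


def ewc_penalty(theta, theta_bulk, fisher, lam=1.0) -> float:
    """(lam / 2) * sum_i F_i * (theta_i - theta_bulk_i)^2"""
    diff = np.asarray(theta) - np.asarray(theta_bulk)
    return 0.5 * lam * float(np.sum(fisher * diff ** 2))


def ewc_penalty_grad(theta, theta_bulk, fisher, lam=1.0) -> np.ndarray:
    """Gradient of the penalty; added to the single-cell loss gradient at each step."""
    return lam * fisher * (np.asarray(theta) - np.asarray(theta_bulk))


# Parameter 0 mattered more for the bulk task (larger Fisher value), so moving it costs more.
fisher = diagonal_fisher([[1.0, 0.0], [3.0, 2.0]])  # -> [5.0, 2.0]
theta_bulk = np.zeros(2)
theta = np.array([1.0, 1.0])
total_loss = 0.2 + ewc_penalty(theta, theta_bulk, fisher, lam=1.0)  # 0.2 = placeholder single-cell loss
```

Larger λ keeps the refined model closer to the bulk-pretrained parameters; smaller λ lets it adapt more freely to the single-cell data.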

Step 4: GRN Extraction using Interpretable AI

- After training, use Shapley value analysis to infer the regulatory strength of TF-TG and RE-TG interactions from the refined model. Shapley values quantify the contribution of each feature (TF/RE) to the prediction of each target gene.

- The TF-RE binding strength is derived from the correlation of their parameters in the network's second layer.

- The final output is a comprehensive GRN that can be analyzed at the cell population, cell-type-specific, or even cell-level resolution [27].
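Shapley values average a feature's marginal contribution over every subset of the other features. For a handful of inputs they can be computed exactly, which the pure-Python sketch below does for a toy target-gene model. Replacing "absent" features with a baseline value is an assumption, practical tools approximate this computation for models with many TF and RE inputs, and `exact_shapley` and `toy_model` are hypothetical.

```python
from itertools import combinations
from math import factorial
from typing import Callable, List, Sequence


def exact_shapley(f: Callable[[List[float]], float], x: Sequence[float],
                  baseline: Sequence[float]) -> List[float]:
    """Exact Shapley value of each input feature for prediction f(x).

    Features outside a coalition are replaced by their baseline value.
    Cost grows as 2^n, so this is only practical for a few features.
    """
    n = len(x)

    def value(coalition: set) -> float:
        z = [x[k] if k in coalition else baseline[k] for k in range(n)]
        return f(z)

    phi = [0.0] * n
    for i in range(n):
        others = [k for k in range(n) if k != i]
        for size in range(n):  # coalition sizes 0 .. n-1 drawn from the other features
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Toy target-gene model: one additive TF (z0) and a TF-RE interaction (z1 * z2).
def toy_model(z):
    return 2.0 * z[0] + 3.0 * z[1] * z[2]


phi = exact_shapley(toy_model, x=[1.0, 1.0, 1.0], baseline=[0.0, 0.0, 0.0])
```

Here the values are 2, 1.5 and 1.5 (up to floating-point rounding): the additive term is credited to z0, the interaction is split evenly between z1 and z2, and the values sum to f(x) - f(baseline) = 5.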

Performance Benchmarking and Validation

To ensure the reliability of your inferred GRNs, benchmark LINGER against other methods and validate its output with independent data. The following table summarizes the core approach, input data, handling of technical variation, and reported advantages of several GRN inference methods and one benchmarking framework, as reported in the cited studies.

Table 2: GRN Method Benchmarking Overview

| Method | Core Approach | Key Input Data | Handling of Technical Variation | Reported Advantage |
|---|---|---|---|---|
| LINGER | Lifelong Learning + EWC | scMultiome + External Bulk | Leverages bulk data as a robust prior to mitigate single-cell noise | 4-7x relative increase in accuracy over existing methods [27] |
| DAZZLE | Dropout Augmentation | scRNA-seq | Augments data with synthetic zeros to regularize model against dropout | Improved stability and robustness vs. DeepSEM [6] [5] |
| CausalCell | Causal Discovery (PC algorithm) | scRNA-seq | Uses kernel-based conditional independence tests tolerant to noise/missing values | Works well with incomplete models and missing values [28] |
| inferCSN | Sparse Regression + Pseudotime | scRNA-seq | Divides cells into state-specific windows to address density bias | High accuracy and robustness on linear/bifurcating trajectories [10] |
| AnomalGRN | Graph Anomaly Detection | scRNA-seq | Uses network sparsification to remove "fake" links (structural noise) | Outperforms models like GNNLink on benchmark datasets [29] |
| BEELINE | Benchmarking Framework | Various (Evaluation) | Provides standardized datasets and metrics to assess method performance | Enables fair comparison of GRN inference algorithms [30] |
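The core idea behind DAZZLE's Dropout Augmentation, adding synthetic zeros during training so the model cannot rely too heavily on any single observed value, can be sketched in a few lines of NumPy. The `rate` parameter, the toy matrix, and drawing a fresh mask at every training step are assumptions for illustration; DAZZLE's actual augmentation schedule and model architecture are described in [5] [6].

```python
import numpy as np


def dropout_augment(X: np.ndarray, rate: float = 0.1, rng=None):
    """Return a copy of the cell-by-gene matrix X with a random fraction `rate`
    of entries forced to zero, plus the boolean mask of augmented positions.

    Entries that were already zero stay zero; X itself is not modified.
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    rng = np.random.default_rng() if rng is None else rng
    mask = rng.random(X.shape) < rate
    X_aug = X.copy()
    X_aug[mask] = 0.0
    return X_aug, mask


# Hypothetical training loop: draw a fresh mask for every epoch (or mini-batch).
rng = np.random.default_rng(0)
X = rng.poisson(2.0, size=(200, 50)).astype(float)  # toy expression matrix
for epoch in range(3):
    X_aug, mask = dropout_augment(X, rate=0.1, rng=rng)
    # model.train_step(X_aug)  # placeholder for the GRN model's update
```

Because the extra zeros land at different positions each time, the model sees many corrupted versions of the same data, which acts as a regularizer against the zero-inflation already present in scRNA-seq.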

Table 3: Key Research Reagent Solutions for GRN Inference

| Item | Function / Description | Example / Source |
|---|---|---|
| Single-cell Multiome Kit | Simultaneous profiling of gene expression and chromatin accessibility in the same cell. | 10x Genomics Single Cell Multiome ATAC + Gene Expression |
| Bulk Data Compendium | A large-scale collection of bulk genomic data for pre-training and building foundational models. | ENCODE (Encyclopedia of DNA Elements) Project |
| Transcription Factor Motif Database | A curated collection of position weight matrices (PWMs) representing DNA binding preferences of TFs. | JASPAR, CIS-BP |
| Curated TF-Target Interactions | Prior knowledge networks of known or predicted TF-target gene relationships for validation. | DoRothEA, TRRUST [31] |
| Benchmarking Datasets & Ground Truths | Experimental data with validated interactions to assess the accuracy of inferred GRNs. | ChIP-seq data, eQTL data (from GTEx/eQTLGen) [27] |
| BEELINE Framework | A software environment with standardized datasets and metrics for benchmarking GRN methods. | https://github.com/Murali-group/Beeline [30] |
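A PWM from a database such as JASPAR or CIS-BP scores how well a DNA window matches a TF's binding preference. The sketch below converts per-position base counts to log2-odds scores against a uniform background and scans the forward strand. The pseudocount, the uniform background, and the function names are assumptions; a real scan would also check the reverse complement and apply a score threshold.

```python
import math
from typing import List, Sequence, Tuple

BASES = "ACGT"


def counts_to_log_odds(counts: Sequence[Sequence[float]], pseudocount: float = 1.0,
                       background: Sequence[float] = (0.25, 0.25, 0.25, 0.25)) -> List[List[float]]:
    """Convert per-position [A, C, G, T] counts into a log2-odds PWM."""
    pwm = []
    for row in counts:
        total = sum(row) + 4 * pseudocount
        pwm.append([math.log2(((c + pseudocount) / total) / bg) for c, bg in zip(row, background)])
    return pwm


def best_hit(pwm: List[List[float]], seq: str) -> Tuple[float, int]:
    """Highest-scoring window on the forward strand; windows with non-ACGT bases are skipped."""
    width = len(pwm)
    best_score, best_start = -math.inf, -1
    seq = seq.upper()
    for start in range(len(seq) - width + 1):
        window = seq[start:start + width]
        if any(b not in BASES for b in window):
            continue
        score = sum(pwm[j][BASES.index(b)] for j, b in enumerate(window))
        if score > best_score:
            best_score, best_start = score, start
    return best_score, best_start


# Toy motif "ACG" built from 10 aligned binding sites.
pwm = counts_to_log_odds([[10, 0, 0, 0], [0, 10, 0, 0], [0, 0, 10, 0]])
score, start = best_hit(pwm, "ttACGtt")
```

Positive scores mean the window looks more like the motif than like background sequence; the pseudocount keeps unseen bases from producing a score of negative infinity.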

Validation Logic Pathway

After generating a GRN with LINGER, a systematic validation approach is critical. The following diagram outlines a logical pathway for confirming the biological relevance of your results.

Fundamental Concepts and Workflows

What is single-cell multiome data, and what insights does it provide?

Single-cell multiome data refers to datasets where multiple molecular modalities—most commonly gene expression (from scRNA-seq) and chromatin accessibility (from scATAC-seq)—are measured from the same single cell or nucleus [32] [33]. This approach provides a more comprehensive view of cellular identity and function by simultaneously capturing:

- Transcriptional Output: The current expression levels of genes (scRNA-seq) [32].

- Regulatory Landscape: The accessibility of chromatin, which reveals active regulatory elements and poised regulatory states (scATAC-seq) [32] [34].

The key advantage is the ability to directly link regulatory elements to target gene expression within the same cell, overcoming the limitations of analyzing each modality separately [34] [35]. This is crucial for understanding complex biological processes like cellular differentiation, where changes in chromatin accessibility often precede and prime cells for changes in gene expression during lineage commitment [34].

What are the primary experimental techniques for generating multiome data?

The field has moved from profiling modalities separately on matched cell populations to technologies that co-profile them from the same cell.

Table 1: Key Experimental Technologies for Multiome Data Generation

| Technology Name | Measured Modalities | Key Feature | Example Source |
|---|---|---|---|
| 10x Genomics Multiome ATAC + Gene Expression | Chromatin accessibility (scATAC-seq) & 3' gene expression (scRNA-seq) | Commercially available, widely used kit for parallel library generation from the same nucleus [36]. | 10x Genomics Support [36] |
| SHARE-Seq (Simultaneous High-throughput ATAC and RNA Expression with Sequencing) | Chromatin accessibility & gene expression | Highly scalable, low-cost method with a low cell multiplet rate; uses multiple rounds of hybridization barcoding [34]. | PMC (Cell, 2020) [34] |
| ASAP-Seq (ATAC with Select Antigen Profiling by Sequencing) | Chromatin accessibility & surface proteins | Profiles chromatin accessibility alongside hundreds of cell surface and intracellular protein markers using oligo-labeled antibodies [33]. | PMC (Nature Biotechnology, 2021) [33] |
| DOGMA-Seq | Chromatin accessibility, gene expression, & proteins | A variant of CITE-seq that allows co-measurement of all three modalities from the same cells [33]. | PMC (Nature Biotechnology, 2021) [33] |

The following diagram illustrates a generalized experimental workflow for generating single-cell multiome data, integrating steps common to technologies like the 10x Genomics Multiome kit and SHARE-seq:

Experimental Design and Quality Control

What are the critical sample preparation requirements for a successful multiome experiment?

Successful multiome experiments depend heavily on the quality of the starting material.

- Cell Viability and Concentration: Ideally, samples should have a high concentration (e.g., 1,000–1,600 cells/μL) and high viability (>90%) with minimal debris and aggregation [37].

- Sample Buffer: Cells should be delivered in a buffer free of components that inhibit reverse transcription, such as high concentrations of EDTA. Phosphate-buffered saline (PBS) with 0.04% BSA is often recommended [37].

- Biological Replicates: Just like any other sequencing experiment, biological replicates are necessary for robust statistical testing. Treating individual cells as replicates is a form of pseudoreplication that can dramatically increase false-positive rates in differential expression analysis. Pseudobulking approaches are recommended to account for between-sample variation [37].
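Pseudobulking sums raw counts over all cells from the same biological sample (typically within one cell type), so that downstream tests treat samples rather than individual cells as replicates. A minimal NumPy sketch, assuming a dense cell-by-gene count matrix with one sample ID and one cell type label per cell; `pseudobulk` is a hypothetical helper.

```python
import numpy as np
from typing import Dict, Sequence, Tuple


def pseudobulk(counts, sample_ids: Sequence[str],
               cell_types: Sequence[str]) -> Dict[Tuple[str, str], np.ndarray]:
    """Sum raw counts over all cells sharing the same (sample, cell type).

    counts: (n_cells, n_genes) raw UMI counts (not normalized).
    Returns {(sample, cell_type): summed gene vector}; each key is one replicate.
    """
    counts = np.asarray(counts)
    samples = np.asarray(sample_ids)
    types = np.asarray(cell_types)
    if not (len(samples) == len(types) == counts.shape[0]):
        raise ValueError("one sample ID and one cell type label are needed per cell")
    profiles = {}
    for s, t in sorted(set(zip(samples.tolist(), types.tolist()))):
        in_group = (samples == s) & (types == t)
        profiles[(s, t)] = counts[in_group].sum(axis=0)
    return profiles


# Four cells from two donors; donor s2 contributes one T cell and one B cell.
profiles = pseudobulk(
    [[1, 0], [2, 1], [0, 3], [4, 4]],
    sample_ids=["s1", "s1", "s2", "s2"],
    cell_types=["T", "T", "T", "B"],
)
```

The resulting sample-level profiles can then be passed to a bulk differential-expression tool such as DESeq2 or edgeR.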

How do I assess the quality of my multiome data?

Quality control should be performed separately on each modality and then jointly.

- scRNA-seq Quality Metrics: Assess the number of unique genes detected per cell, total counts per cell, and the percentage of mitochondrial reads.

- scATAC-seq Quality Metrics: Assess the total number of unique fragments per cell, the fraction of fragments in peak regions (a measure of signal-to-noise), and the Transcription Start Site (TSS) enrichment score, which indicates the quality of the chromatin accessibility profile [33].

- Joint Quality: For technologies like SHARE-seq, high-quality data should show clear separation of cell types based on both modalities, a low doublet rate, and a significant correlation between accessibility at key regulatory elements and the expression of associated genes [34].
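Two of the per-cell metrics above are straightforward to compute directly. The sketch assumes a dense cell-by-gene UMI matrix, mitochondrial genes identified by an `MT-` prefix (matched case-insensitively so that mouse `mt-` symbols are also caught), and fragments and peaks given as half-open (chrom, start, end) intervals. TSS enrichment and filtering thresholds are dataset-specific and not shown, and the function names are illustrative.

```python
import numpy as np
from typing import Dict, List, Sequence, Tuple

Interval = Tuple[str, int, int]  # (chrom, start, end), half-open


def rna_qc(counts, gene_names: Sequence[str], mito_prefix: str = "MT-"):
    """Per-cell total UMIs, genes detected, and percent mitochondrial UMIs."""
    counts = np.asarray(counts, dtype=float)
    total = counts.sum(axis=1)
    n_genes = (counts > 0).sum(axis=1)
    is_mito = np.array([g.upper().startswith(mito_prefix.upper()) for g in gene_names])
    pct_mito = 100.0 * counts[:, is_mito].sum(axis=1) / np.maximum(total, 1.0)
    return total, n_genes, pct_mito


def frip(fragments: Sequence[Interval], peaks: Sequence[Interval]) -> float:
    """Fraction of one cell's fragments that overlap at least one peak."""
    if not fragments:
        return 0.0
    by_chrom: Dict[str, List[Tuple[int, int]]] = {}
    for chrom, start, end in peaks:
        by_chrom.setdefault(chrom, []).append((start, end))
    hits = sum(
        any(start < p_end and p_start < end for p_start, p_end in by_chrom.get(chrom, []))
        for chrom, start, end in fragments
    )
    return hits / len(fragments)


total, n_genes, pct_mito = rna_qc([[5, 0, 5], [0, 10, 0]], ["GAPDH", "ACTB", "MT-CO1"])
```

The linear scan over peaks keeps the example short; for genome-scale data an interval index or sorted sweep would be used instead.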

Table 2: Key Quality Control Metrics for Multiome Data

| Data Modality | Key Metric | Interpretation | Example from Literature |
|---|---|---|---|
| scRNA-seq | Unique Molecular Identifiers (UMIs) per cell | Indicates sequencing depth and capture efficiency per cell. | SHARE-seq: ~2,545 RNA UMIs per cell (mouse skin) [34]. |
| scATAC-seq | Unique Fragments per cell | Indicates the complexity and depth of the chromatin accessibility library. | SHARE-seq: ~8,252 unique ATAC fragments per cell (mouse skin) [34]. |
| scATAC-seq | Fragments in Peaks | Measures the signal-to-noise ratio; higher percentages indicate cleaner data. | SHARE-seq: 65.5% of fragments in peaks [34]. |
| scATAC-seq | TSS Enrichment Score | Measures the signal strength at transcription start sites; higher is better. | ASAP-seq showed no impact on TSS score from protein staining [33]. |
| Multiome | Correlation between accessibility and expression | Validates biological consistency; e.g., accessibility at a promoter should correlate with its gene's expression. | In SHARE-seq, chromatin accessibility at the NFkB1 locus correlated with its gene expression (Spearman ρ = 0.31) [34]. |
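The accessibility-expression check in the last row is a rank correlation across cells. Because single-cell vectors contain many tied zeros, ranks should be averaged over ties; the pure-Python sketch below does this and then takes the Pearson correlation of the ranks, which is the standard definition of Spearman's ρ. The inputs are assumed to be per-cell accessibility at one element and per-cell expression of one gene, and the toy values are invented.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho = Pearson correlation of tie-averaged ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length vectors with at least two cells")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    sxy = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation undefined: one vector is constant across cells")
    return sxy / math.sqrt(sxx * syy)


# Per-cell accessibility at one element vs. expression of its putative target gene (toy values).
accessibility = [0, 0, 1, 2, 0, 3]
expression = [0, 1, 1, 4, 0, 5]
rho = spearman(accessibility, expression)
```

For these toy vectors ρ is about 0.91; on real sparse data, per-cell correlations are usually much weaker, which is one reason values like the ρ = 0.31 above can still be meaningful.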

Data Integration and Analysis Methods

How can I integrate unmatched scRNA-seq and scATAC-seq data?

While multiome technologies are powerful, a vast amount of existing data consists of unmatched scRNA-seq and scATAC-seq datasets. Several computational methods have been developed for this "diagonal integration" task [38].

- Challenge: The goal is to integrate these datasets into a shared space where batch effects are removed and joint cell identities or trajectories are revealed, without having direct pairwise cell measurements [38].

- Traditional Approach: Many methods (e.g., Seurat v3, Liger) use a pre-defined Gene Activity Matrix (GAM) to convert scATAC-seq data into a scRNA-seq-like matrix by aggregating accessibility near gene promoters. A limitation is that this GAM is often based solely on genomic proximity and assumes a linear relationship [38].

- Advanced Methods: Newer tools like scDART overcome these limitations. scDART is a deep learning framework that integrates unmatched data and learns the cross-modality relationship simultaneously, without relying on a fixed GAM. It is particularly effective at preserving continuous cell trajectory structures in the integrated latent space [38].
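To make the "pre-defined GAM" concrete, the NumPy sketch below builds a simplified proximity-only gene activity matrix by summing the accessibility of peaks that overlap a fixed window around each gene's TSS. The ±2 kb window and the function name are assumptions; real tools define the region differently (for example gene body plus upstream sequence, or distance weighting), but share the fixed, proximity-based mapping described above.

```python
import numpy as np
from typing import Sequence, Tuple

Peak = Tuple[str, int, int]   # (chrom, start, end), half-open
Gene = Tuple[str, str, int]   # (name, chrom, TSS position)


def gene_activity_matrix(peak_counts, peaks: Sequence[Peak], genes: Sequence[Gene],
                         window: int = 2000) -> np.ndarray:
    """Cell-by-gene activity: summed accessibility of peaks overlapping TSS +/- window.

    peak_counts: (n_cells, n_peaks) accessibility matrix.
    The mapping is fixed, proximity-only and linear (a plain sum).
    """
    peak_counts = np.asarray(peak_counts, dtype=float)
    gam = np.zeros((peak_counts.shape[0], len(genes)))
    for g, (_, chrom, tss) in enumerate(genes):
        lo, hi = tss - window, tss + window
        cols = [p for p, (c, start, end) in enumerate(peaks)
                if c == chrom and start < hi and lo < end]
        if cols:
            gam[:, g] = peak_counts[:, cols].sum(axis=1)
    return gam


gam = gene_activity_matrix(
    [[1, 2, 3], [0, 5, 1]],
    peaks=[("chr1", 900, 1100), ("chr1", 5000, 5200), ("chr2", 100, 300)],
    genes=[("GENE_A", "chr1", 1000), ("GENE_B", "chr2", 10000)],
)
```

A distal enhancer such as the `chr1:5000-5200` peak contributes nothing here, which illustrates why methods like scDART learn the cross-modality relationship instead of fixing it in advance.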

What computational methods can link regulatory elements to genes and infer Gene Regulatory Networks (GRNs)?

A primary goal of multiome analysis is to build GRNs. Several advanced methods have been developed for this purpose.

Table 3: Computational Methods for Enhancer-Gene Linking and GRN Inference

| Method Name | Primary Function | Key Innovation | Reported Performance |
|---|---|---|---|
| SCARlink (Single-cell ATAC + RNA linking) | Predicts gene expression from chromatin accessibility and identifies enhancers. | Uses regularized Poisson regression on tile-level accessibility data to jointly model all regulatory effects across a gene's locus (±250 kb) [39]. | Outperformed ArchR gene score and DORC-based methods in predicting gene expression, especially in high-coverage datasets [39]. |
| LINGER (Lifelong neural network for gene regulation) | Infers cell-type-specific Gene Regulatory Networks (GRNs). | Uses "lifelong learning" to incorporate knowledge from large-scale external bulk data (e.g., from ENCODE), mitigating the challenge of limited single-cell data points [27]. | Achieved a 4x to 7x relative increase in accuracy over existing GRN inference methods when validated against ChIP-seq ground truth [27]. |
| DAZZLE | Infers GRNs from scRNA-seq data alone, addressing data dropout. | Employs "Dropout Augmentation," a model regularization technique that adds synthetic zeros during training to improve robustness to the zero-inflation characteristic of scRNA-seq [5]. | Showed improved performance, stability, and reduced run time compared to its predecessor, DeepSEM [5]. |
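SCARlink's core model is described as a regularized Poisson regression from tile-level accessibility to a gene's expression. The NumPy sketch below fits a generic L2-penalized Poisson regression by plain gradient descent. It is not SCARlink's implementation, whose penalty, constraints, and optimizer may differ. Each column of `X` is assumed to hold one tile's accessibility across cells, `y` the gene's counts, and `lam`, `lr` and `n_iter` are illustrative defaults.

```python
import numpy as np


def fit_poisson_l2(X, y, lam=0.1, lr=0.05, n_iter=2000):
    """Fit log(E[y]) = X @ w + b by gradient descent on the mean Poisson negative
    log-likelihood plus (lam / 2) * ||w||^2 (the intercept b is not penalized)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, p = X.shape
    w = np.zeros(p)
    b = np.log(y.mean() + 1e-8)  # start at the log of the mean expression
    for _ in range(n_iter):
        mu = np.exp(X @ w + b)       # predicted mean expression per cell
        resid = mu - y               # gradient of the NLL w.r.t. the linear predictor
        w -= lr * (X.T @ resid / n + lam * w)
        b -= lr * resid.mean()
    return w, b


# Synthetic check: 3 tiles, of which tile 0 activates and tile 2 represses the gene.
rng = np.random.default_rng(1)
X = rng.random((2000, 3))
true_w = np.array([1.0, 0.0, -0.5])
y = rng.poisson(np.exp(X @ true_w + 0.5))
w, b = fit_poisson_l2(X, y, lam=1e-3, lr=0.1, n_iter=3000)
```

Tiles with large positive coefficients are candidate enhancers for the gene; in practice the regularization strength would typically be chosen by cross-validation rather than fixed by hand.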

The following diagram illustrates the conceptual workflow for inferring Gene Regulatory Networks (GRNs) from multiome data, as implemented by methods like LINGER and SCARlink: