Comparative Gene Regulatory Network Analysis: Decoding Sequence Expression Modules for Evolution and Disease

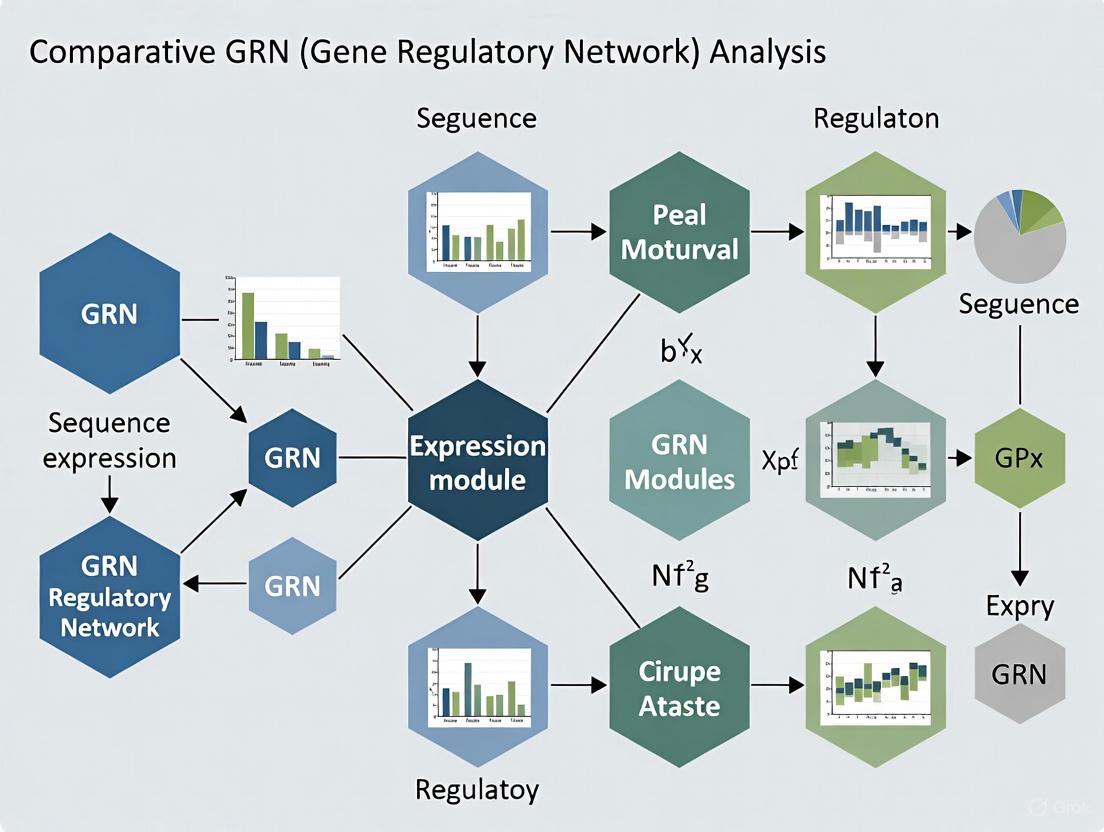

Abstract

This article provides a comprehensive guide to comparative Gene Regulatory Network (GRN) analysis, a powerful approach for understanding the regulatory logic behind phenotypic diversity, cellular differentiation, and disease states. Tailored for researchers, scientists, and drug development professionals, we explore the foundational principles of GRNs as blueprints for development and evolution. The content details state-of-the-art methodologies for inferring and comparing GRNs from bulk and single-cell RNA-seq data, including advanced techniques like graph embedding and transformers. We address critical challenges in experimental design, data preprocessing, and analysis optimization. Furthermore, we cover validation strategies and comparative frameworks for identifying conserved and divergent regulatory modules, concluding with the translational implications of these insights for identifying novel therapeutic targets.

GRNs as Blueprints: Unraveling the Core Principles of Developmental Programs and Evolution

A gene regulatory network (GRN) is a collection of molecular regulators that interact with each other and with other substances in the cell to govern the gene expression levels of mRNA and proteins, which in turn determine cellular function [1]. GRNs play a central role in morphogenesis (the creation of body structures) and are fundamental to evolutionary developmental biology (evo-devo) [1]. These networks represent a complex wiring diagram of transcriptional regulation that controls all aspects of cellular behavior, from development and metabolism to disease progression [2]. The ability to define and analyze GRNs provides researchers with powerful insights into the molecular mechanisms underlying phenotypic outcomes, enabling advances in basic biological understanding and therapeutic development.

Table 1: Core Components of a Gene Regulatory Network

| Component | Description | Biological Correlate |
|---|---|---|
| Nodes (Genes/Regulators) | Represent genes, their products (proteins, mRNA), or other regulatory elements (e.g., transcription factors, miRNAs). | A transcription factor protein or its target gene [1] [3]. |
| Nodes (Regulatory Regions) | Represent non-coding DNA sequences that control gene expression. | Gene promoters, enhancers, or 3' untranslated regions (3'UTRs) [3]. |
| Edges (Directed) | Represent physical and/or functional interactions between nodes; are typically directional. | A transcription factor (TF) binding to a gene's promoter to activate or repress its expression [3] [2]. |
| Edges (Inductive) | An increase in the concentration of one node leads to an increase in another. Represented by arrowheads or a '+' sign. | A TF that activates a target gene [1]. |
| Edges (Inhibitory) | An increase in the concentration of one node leads to a decrease in another. Represented by filled circles, blunt arrows, or a '-' sign. | A TF that represses a target gene [1]. |

The Architecture of GRNs: Nodes, Edges, and Network Properties

Nodes: The Fundamental Entities

The nodes in a GRN are the fundamental players involved in regulatory processes. These are primarily of two types:

- Genes and their Products: This includes the genes themselves, their mRNA transcripts, and the proteins they encode. Some proteins serve as transcription factors (TFs), which are the main players in regulatory networks [1]. In C. elegans, for example, approximately 940 genes (5% of the genome) encode TFs [3].

- Regulatory Elements: These are non-coding genomic sequences that participate in the control of gene expression. The most studied are promoters, the genomic regions upstream of the transcription start site where TFs bind. Other crucial regulatory nodes include 3' untranslated regions (3'UTRs) of mRNAs, which are targets for post-transcriptional regulators like microRNAs and RNA-binding proteins [3].

Edges: The Interactions and Causality

The edges in a GRN represent the physical and/or functional relationships between the nodes. A key distinction from other biological networks (e.g., protein-protein interaction networks) is that GRNs are bipartite (containing two types of nodes) and directional [3]. Directionality means that a regulator (like a TF) controls a target gene, and not typically the reverse [4] [2]. Edges can be categorized based on their nature:

- Direct vs. Indirect Edges: A direct edge implies a physical interaction, such as a TF directly binding to a promoter. An indirect edge represents a regulatory effect mediated through intermediate nodes, such as a TF regulating another TF that then controls a target gene [2].

- Causal Relationships: Establishing causality—identifying a "cause" node (C) and an "effect" node (E)—is a central challenge. Methods like Granger Causality (for time-series data) or leveraging prior knowledge (e.g., assuming a causal relationship from a TF to a correlated non-TF gene) are used to infer directionality [2].

Diagram 1: Core GRN structure showing activation, repression, and indirect edges.

Global and Local Network Features

GRNs are not random; they exhibit distinct topological features that influence their robustness and function.

- Global Structure: GRNs approximate a hierarchical scale-free network. This means they consist of a few highly connected nodes (hubs) and many poorly connected nodes. This structure is thought to evolve through the preferential attachment of duplicated genes to hubs and is favored by natural selection for its robustness [1].

- Local Structure: Network Motifs: GRNs are enriched with repetitive sub-networks called network motifs. The most abundant three-node motif is the feed-forward loop [1]. These motifs are considered potential "optimal designs" for specific regulatory tasks, such as accelerating metabolic transitions or acting as fold-change detectors that resist fluctuations in input signals [1].
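
The hub and motif concepts above can be made concrete on a toy network. The following is a minimal sketch, assuming a signed, directed GRN stored as a dictionary of edges; the gene names and the wiring are hypothetical and chosen only so that the network contains one feed-forward loop.

```python
from itertools import permutations

# Toy signed, directed GRN: (regulator, target) -> +1 (activation) or -1 (repression).
# All names and edges are hypothetical.
edges = {
    ("TF_A", "TF_B"): +1,
    ("TF_A", "gene_X"): +1,
    ("TF_B", "gene_X"): +1,
    ("TF_A", "gene_Y"): -1,
    ("TF_B", "gene_Z"): +1,
}

def out_degree(edges):
    """Number of targets per regulator; high values point to candidate hubs."""
    degree = {}
    for regulator, _target in edges:
        degree[regulator] = degree.get(regulator, 0) + 1
    return degree

def feed_forward_loops(edges):
    """Return (X, Y, Z) triples where X->Y, Y->Z and X->Z all exist."""
    nodes = {node for edge in edges for node in edge}
    return sorted(
        (x, y, z)
        for x, y, z in permutations(nodes, 3)
        if (x, y) in edges and (y, z) in edges and (x, z) in edges
    )

hubs = out_degree(edges)
ffls = feed_forward_loops(edges)
print(hubs)
print(ffls)
```

Enumerating all ordered triples is only practical for small networks; genome-scale motif counting normally relies on dedicated graph libraries and comparison against randomized networks.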

Practical Protocols for GRN Construction and Analysis

Protocol 1: Weighted Gene Co-expression Network Analysis (WGCNA)

WGCNA is a widely used method to identify modules of highly correlated genes from transcriptomic data, which often correspond to functional units [5]. The following protocol is adapted for analyzing paired tumor and normal datasets to identify conserved and condition-specific modules.

A. Software and Data Preparation

- Install R Packages: Install the necessary packages in R: `WGCNA`, `tidyverse`, `dendextend`, `gplots`, `ggplot2`, `ggpubr`, `VennDiagram`, `dplyr`, `GO.db`, `DESeq2`, `genefilter`, `clusterProfiler`, and `org.Hs.eg.db` [5].

- Data Acquisition: Download gene expression data (e.g., RNA-seq count data from the TCGA portal for Oral Squamous Cell Carcinoma (OSCC), including both tumor and normal solid tissue samples). Preprocess the data: normalize read counts, filter lowly expressed genes, and split the dataset into separate matrices for tumor and normal samples [5].

B. Network Construction and Module Detection

- Data Preprocessing: For each dataset (tumor and normal), check for excessive missing values and outliers. Use the `goodSamplesGenes` function in WGCNA to remove offending genes and samples.

- Soft-Thresholding: Choose an appropriate soft-thresholding power (β) to achieve a scale-free topology. Use the `pickSoftThreshold` function to select a β value for which the scale-free topology fit index reaches ~0.90.

- Module Detection: Construct a weighted co-expression network and identify modules using a one-step or step-by-step network construction function in WGCNA (`blockwiseModules` is recommended for large datasets). Modules are identified as branches of a dendrogram generated by hierarchical clustering of a Topological Overlap Matrix (TOM) [5].
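
To make the soft-thresholding and TOM steps less abstract, here is a minimal NumPy sketch of the underlying arithmetic for an unsigned network. It is not the WGCNA implementation and does not call the R package; the simulated expression matrix, the choice of β = 6, and the function names are assumptions made for illustration.

```python
import numpy as np

def soft_threshold_adjacency(expr, beta=6):
    """Unsigned adjacency a_ij = |cor(i, j)|**beta; expr is samples x genes."""
    cor = np.corrcoef(expr, rowvar=False)
    adj = np.abs(cor) ** beta
    np.fill_diagonal(adj, 0.0)  # no self-connections
    return adj

def topological_overlap(adj):
    """TOM_ij = (l_ij + a_ij) / (min(k_i, k_j) + 1 - a_ij)."""
    shared = adj @ adj               # l_ij: connection strength via shared neighbours
    k = adj.sum(axis=1)              # connectivity of each gene
    min_k = np.minimum.outer(k, k)
    tom = (shared + adj) / (min_k + 1.0 - adj)
    np.fill_diagonal(tom, 1.0)
    return tom

# Simulated data: 50 samples, two groups of three co-expressed genes (hypothetical).
rng = np.random.default_rng(0)
factor_1 = rng.normal(size=(50, 1))
factor_2 = rng.normal(size=(50, 1))
expr = np.hstack([
    factor_1 + 0.3 * rng.normal(size=(50, 3)),
    factor_2 + 0.3 * rng.normal(size=(50, 3)),
])

adj = soft_threshold_adjacency(expr, beta=6)
tom = topological_overlap(adj)
dissimilarity = 1.0 - tom  # the input to hierarchical clustering
print(np.round(tom, 2))
```

Genes driven by the same factor end up with high topological overlap, which is what lets hierarchical clustering on `1 - TOM` separate them into modules.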

C. Module Preservation Analysis

- Compare Networks: Use the `modulePreservation` function in WGCNA to assess whether modules identified in the reference network (e.g., normal tissue) are preserved in the test network (e.g., tumor tissue).

- Interpret Results: High preservation Z-scores indicate modules that are conserved between conditions (representing core biological processes). Low Z-scores indicate modules that are disrupted or unique to one condition (potentially related to disease pathogenesis) [5]. In the WGCNA literature, a Zsummary above 10 is commonly read as strong evidence of preservation and a value below 2 as no evidence.
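
The idea behind a preservation Z-score can be illustrated with a much simpler statistic than the composite score reported by `modulePreservation`. The sketch below is an assumption-laden simplification: it compares the mean absolute correlation among a module's genes in the test data with the same quantity for random gene sets of equal size, on simulated data.

```python
import numpy as np

def mean_abs_correlation(expr, gene_idx):
    """Average |correlation| over all gene pairs in gene_idx; expr is samples x genes."""
    cor = np.corrcoef(expr[:, gene_idx], rowvar=False)
    upper = np.triu_indices(len(gene_idx), k=1)
    return np.abs(cor[upper]).mean()

def density_preservation_z(test_expr, module_idx, n_perm=200, seed=0):
    """Z-score of module density in the test data against random gene sets."""
    rng = np.random.default_rng(seed)
    observed = mean_abs_correlation(test_expr, module_idx)
    n_genes = test_expr.shape[1]
    null = np.array([
        mean_abs_correlation(
            test_expr, rng.choice(n_genes, size=len(module_idx), replace=False)
        )
        for _ in range(n_perm)
    ])
    return (observed - null.mean()) / null.std(ddof=1)

# Simulated test datasets (hypothetical): the module is genes 0-9 of 60.
rng = np.random.default_rng(1)
n_samples, n_genes = 40, 60
module = np.arange(10)

latent = rng.normal(size=(n_samples, 1))
preserved = rng.normal(size=(n_samples, n_genes))
preserved[:, module] = latent + 0.5 * rng.normal(size=(n_samples, module.size))
disrupted = rng.normal(size=(n_samples, n_genes))  # module genes no longer co-vary

z_preserved = density_preservation_z(preserved, module)
z_disrupted = density_preservation_z(disrupted, module)
print(round(z_preserved, 1), round(z_disrupted, 1))
```

The real analysis combines several density and connectivity statistics, so this single-statistic Z-score should be read as an illustration of the permutation logic only.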

D. Downstream Analysis

- Functional Enrichment: Use the `clusterProfiler` package to perform Gene Ontology (GO) and Kyoto Encyclopedia of Genes and Genomes (KEGG) pathway enrichment analysis on the gene modules to infer their biological functions.

- Hub Gene Identification: Identify genes with high intramodular connectivity (hub genes) within significant modules, as they are often key drivers of the module's function.

- Network Visualization: Export the network for key modules (e.g., using an edge list of the top connections) and visualize them in Cytoscape to explore topology and interactions [5] [6].
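
Hub gene identification and edge-list export reduce to simple operations on the adjacency matrix. The following sketch assumes a small symmetric adjacency matrix with made-up weights and hypothetical gene names; the tab-separated output is one plausible layout for import into a network viewer, not a required format.

```python
import numpy as np

def intramodular_connectivity(adj, genes, module_genes):
    """Sum of adjacencies between each module gene and the other module genes."""
    idx = [genes.index(g) for g in module_genes]
    sub = adj[np.ix_(idx, idx)].copy()
    np.fill_diagonal(sub, 0.0)
    return dict(zip(module_genes, sub.sum(axis=1)))

def top_edges(adj, genes, module_genes, n_top=3):
    """Strongest within-module edges as (source, target, weight) tuples."""
    idx = [genes.index(g) for g in module_genes]
    edges = [
        (genes[i], genes[j], float(adj[i, j]))
        for pos, i in enumerate(idx)
        for j in idx[pos + 1:]
    ]
    return sorted(edges, key=lambda edge: edge[2], reverse=True)[:n_top]

# Hypothetical adjacency for four genes; g1-g3 form the module of interest.
genes = ["g1", "g2", "g3", "g4"]
adj = np.array([
    [0.00, 0.80, 0.60, 0.10],
    [0.80, 0.00, 0.50, 0.05],
    [0.60, 0.50, 0.00, 0.02],
    [0.10, 0.05, 0.02, 0.00],
])
module_genes = ["g1", "g2", "g3"]

k_im = intramodular_connectivity(adj, genes, module_genes)
hub = max(k_im, key=k_im.get)
edge_list = top_edges(adj, genes, module_genes, n_top=2)

print("hub gene:", hub)
for source, target, weight in edge_list:
    print(f"{source}\t{target}\t{weight:.2f}")
```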

Diagram 2: Key steps in the WGCNA protocol for comparative GRN analysis.

Protocol 2: Gene-Centered Yeast One-Hybrid (Y1H) Assay for Direct TF-Promoter Interactions

While WGCNA infers functional relationships from correlation, the Y1H assay is a gene-centered method that physically maps direct interactions (edges) between a TF and a regulatory DNA sequence (the two node types) [3].

A. Experimental Setup

- Clone Regulatory Region: Clone the promoter or other regulatory region of the gene of interest (the DNA "bait") into a Y1H vector. This vector contains a reporter gene (e.g., LacZ or HIS3) downstream of the cloned sequence.

- Prepare TF Library: Create a library of known or predicted TFs (the "preys") cloned into an activation domain vector.

- Yeast Transformation: Co-transform the promoter-reporter construct and the TF library into a suitable yeast strain. The yeast strain should have the reporter gene integrated into its genome or carried on a plasmid.

B. Selection and Interaction Detection

- Select for Interactions: Plate the transformed yeast on selective media that lacks specific nutrients (e.g., lacking histidine if HIS3 is the reporter) and contains a chromogenic substrate (e.g., X-Gal for LacZ). The growth of colonies and/or a color change indicates a physical interaction between the TF and the regulatory region, which activates the reporter gene.

- Identify Interacting TFs: Isolate the TF plasmid from positive yeast colonies and sequence it to identify the specific TF that interacted with the regulatory DNA.

C. Data Integration

- Network Construction: Use the identified TF-promoter interactions to build a small-scale, high-confidence GRN. The nodes are the TF and the target gene, connected by a directed edge from the TF to the gene.

- Validation: Validate key interactions using independent methods, such as electrophoretic mobility shift assays (EMSAs) or chromatin immunoprecipitation (ChIP) [3].

A Toolkit for GRN Research: Software and Reagents

Successfully defining GRNs requires a combination of computational tools and experimental reagents. The following table catalogs essential resources for researchers in the field.

Table 2: Research Reagent Solutions for GRN Analysis

| Resource Name | Type | Primary Function | Relevance to GRN Research |
|---|---|---|---|
| WGCNA R Package [5] | Software / Algorithm | Identifies modules of highly correlated genes from expression data. | Infers functional co-expression networks from transcriptomic compendia. A cornerstone of module detection. |
| Cytoscape [7] [6] | Software Platform | Visualizes complex networks and integrates them with attribute data. | The standard for GRN visualization and exploration; enables topological analysis and integration with other data types. |
| Inferelator [7] | Software / Algorithm | Infers predictive, causal regulatory networks from gene expression and prior knowledge. | Moves beyond correlation to infer directionality and causality in regulatory interactions. |
| cMonkey [7] | Software / Algorithm | Discovers co-regulated modules (biclusters) by integrating diverse data (e.g., expression, sequence motifs). | Identifies modules that are co-expressed only under specific conditions, handling local co-expression. |
| BioTapestry [7] | Software Platform | Builds, visualizes, and simulates genetic regulatory networks. | Particularly useful for modeling developmental GRNs and their dynamics over time. |
| NetworkAnalyst [8] | Web-based Platform | Statistical, visual, and network-based meta-analysis of gene expression data. | Provides a user-friendly interface for network visualization and analysis without requiring programming. |
| Promoterome [3] | Experimental Reagent | A comprehensive collection of cloned gene promoters for an organism (e.g., C. elegans). | Enables high-throughput screening of TF-promoter interactions using assays like Y1H. |
| C. elegans ORFeome [3] | Experimental Reagent | A resource of open reading frames (ORFs) cloned in a flexible system. | Provides the source for building a comprehensive library of TF "preys" for interaction screens. |

Comparative Analysis of GRN Methodologies

Different GRN inference methods leverage distinct types of data and have complementary strengths and weaknesses. A comprehensive evaluation of module detection methods found that decomposition methods (e.g., Independent Component Analysis) generally outperform other strategies in recovering known regulatory modules [9]. Surprisingly, methods designed to handle local co-expression (biclustering) or model regulatory relationships (network inference) did not consistently outperform classical clustering on large gene expression datasets, though top performers like GENIE3 (a network inference method) can be competitive in specific contexts [9].

Table 3: Performance Overview of Module Detection Approaches

| Method Category | Key Features | Example Algorithms | Performance Notes |
|---|---|---|---|
| Decomposition | Handles local co-expression; allows overlap between modules. | ICA (Independent Component Analysis) variants | Overall best performance in identifying known regulatory modules [9]. |
| Clustering | Groups genes co-expressed across all samples; generally no overlap. | FLAME, hierarchical clustering, WGCNA | Robust performance; WGCNA is a widely used and powerful standard [9] [5]. |
| Biclustering | Finds genes co-expressed only in a subset of samples; allows overlap. | FABIA, ISA, QUBIC | Lower overall performance, but can excel in human and synthetic data contexts [9]. |
| Network Inference (NI) | Models regulatory relationships between genes. | GENIE3, MERLIN | Does not clearly outperform clustering on large datasets, but valuable for inferring causality [9] [2]. |

Defining GRNs through their fundamental components—nodes and edges—provides a powerful conceptual and practical framework for understanding how phenotypic outcomes emerge from complex molecular interactions. The integration of complementary methodologies, from co-expression analysis with tools like WGCNA to physical interaction mapping with Y1H, allows for the construction of more accurate and comprehensive models of transcriptional regulation. As the field progresses, the systematic application and integration of these protocols, supported by the growing toolkit of software and reagents, will continue to refine our understanding of biological systems in health and disease, ultimately informing novel therapeutic strategies in drug development.

Application Notes: Core Principles and Quantitative Frameworks

The integration of Gene Regulatory Networks (GRNs) into evolutionary developmental biology (EvoDevo) has provided a systems-level framework for understanding how developmental processes evolve. GRNs are graph-based representations where nodes symbolize genes and edges depict regulatory interactions, typically activating or inhibitory relationships between transcription factors and their target genes [10]. In EvoDevo, the architecture of these networks—their topology, modularity, hierarchy, and sparsity—is a major determinant of evolutionary outcomes, either facilitating or constraining phenotypic variation [11].

Central to the EvoDevo perspective is the concept that evolution acts by rewiring GRNs, altering the strengths and connections of regulatory interactions rather than inventing new genes de novo. This rewiring modulates the output of developmental systems, leading to morphological diversity. Key architectural features that influence evolutionary trajectories include:

- Hierarchical Structure: GRNs are often organized hierarchically, with a core of few, highly interconnected "hub" genes governing large sub-networks. This structure confers stability but can also create evolutionary bottlenecks [11].

- Modularity: Functional modules within GRNs allow for independent modification of specific traits without pleiotropic effects on the entire organism, thereby facilitating evolutionary change [11].

- Sparsity: Biological GRNs are sparse, meaning each gene is regulated by only a small number of other genes. This sparsity limits connectivity, dampens the effects of perturbations, and makes the network more tractable for evolution to modify [11].

- Scale-Free Topology: The in- and out-degree of nodes in GRNs often follows a power-law distribution. This property leads to emergent features like motif enrichment and the "small-world" phenomenon, where most nodes are connected by short paths [11].

Quantitative Descriptors of GRN Architecture

The following table summarizes key quantitative metrics used in comparative GRN analysis to quantify network architecture and its impact on evolutionary potential.

Table: Key Quantitative Metrics for Comparative GRN Analysis

| Metric | Description | Interpretation in EvoDevo | Typical Value Range |
|---|---|---|---|
| Sparsity [11] | Assessed from the proportion of possible regulatory connections that are actually present; the lower this proportion, the sparser the network. | Higher sparsity dampens perturbation effects and may constrain evolutionary paths. | Networks are sparse; e.g., only 2.4-3.1% of gene pairs show perturbation effects [11]. |
| Signed-Degree [10] | A 2D vector $\mathbf{d} = [d^+, d^-]$ representing a gene's number of activating ($d^+$) and repressing ($d^-$) connections. | Captures the regulatory role of a gene (e.g., activator vs. repressor hub). | Varies per gene; follows an approximate power-law distribution [10] [11]. |
| Local Response Coefficient ($r_{ij}$) [12] | Quantifies the logarithmic change in steady-state concentration of gene $i$ with respect to gene $j$ following a perturbation. | Measures the direction and intensity of a specific regulatory interaction during a cell fate. | Calculated from perturbation data; sign indicates activation/inhibition [12]. |
| Degree Dispersion [11] | The variance in the number of connections (degree) across all nodes in the network. | High dispersion indicates a few hub genes, increasing network robustness but also vulnerability to hub mutations. | Characteristic of scale-free networks [11]. |
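
Three of these descriptors can be computed directly from a signed adjacency matrix. The sketch below uses a hypothetical four-gene network and makes two assumptions that the table does not fix: the signed-degree vector counts outgoing edges only, and degree dispersion is taken over total (in plus out) degree.

```python
import numpy as np

# Signed adjacency (hypothetical): S[i, j] = +1 if gene i activates gene j,
# -1 if gene i represses gene j, 0 if there is no edge.
S = np.array([
    [0,  1,  1, -1],
    [0,  0,  1,  0],
    [0,  0,  0,  0],
    [0, -1,  0,  0],
])

n = S.shape[0]

# Fraction of possible directed edges (excluding self-loops) that are present.
density = np.count_nonzero(S) / (n * (n - 1))

# Signed-degree [d+, d-] per gene, counting outgoing edges (assumption).
signed_degree = np.column_stack([(S > 0).sum(axis=1), (S < 0).sum(axis=1)])

# Total degree = out-degree + in-degree; its variance is the dispersion.
total_degree = np.count_nonzero(S, axis=1) + np.count_nonzero(S, axis=0)
degree_dispersion = total_degree.var()

print(f"density = {density:.3f}")
print("signed degree per gene:\n", signed_degree)
print("degree dispersion =", degree_dispersion)
```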

Experimental Protocols

This section provides detailed methodologies for key experiments in comparative GRN analysis.

Protocol: Inferring GRN Topology Using Systematic Perturbation and Local Response Analysis

This protocol outlines a method for inferring network topology and identifying differences across cell states (e.g., during differentiation) using perturbation data and statistical analysis of local response matrices [12].

Table: Research Reagent Solutions for Perturbation-Based GRN Inference

| Reagent/Material | Function in Protocol |
|---|---|
| CRISPR-based Perturbation Library | Enables systematic knockout or knockdown of individual genes (nodes) in the network. |
| scRNA-seq Reagents | Profiles the transcriptome of single cells in both unperturbed and perturbed states, providing gene expression data. |
| Cell Culture System | Supports the growth and maintenance of the cell type of interest (e.g., erythroid progenitors, differentiating stem cells). |
| ODE Modeling Software | Used to simulate network dynamics and steady states, validating the inferred local response matrix. |

Procedure:

1. System Setup and Basal State Definition: For a GRN with $n$ molecules, define the system dynamics with a set of ordinary differential equations (ODEs): $\frac{d\mathbf{x}}{dt} = \mathbf{f}(\mathbf{x}; \mathbf{p})$, where $\mathbf{x}$ is a vector of molecular concentrations and $\mathbf{p}$ is a parameter vector. Identify a set of $n$ sensitive parameters (e.g., degradation rates) where each parameter $p_i$ directly affects gene $i$. Culture the cells and establish a stable, unperturbed steady state $\bar{\mathbf{x}}$ at the basal parameter set $\mathbf{p}_b$ [12].

2. Systematic Perturbation: For each sensitive parameter $p_k$ ($k = 1$ to $n$), perform a mild perturbation, changing its value from the basal $p_{b,k}$ to a perturbed value $p_{s,k}$. For each perturbation, allow the system to reach a new stable steady state $\bar{\mathbf{x}}_k$. This requires $n$ separate perturbation experiments [12].

3. Calculate Local Response Matrix: For each ordered pair of genes $(i, j)$, compute the local response coefficient $r_{ij}$ using the formula: $r_{ij} = \frac{\bar{x}_j}{\bar{x}_i} \cdot \frac{\partial x_i}{\partial x_j} \approx \frac{\bar{x}_j}{\bar{x}_i} \cdot \frac{\Delta x_i}{\Delta x_j}$. In practice, this is derived from the changes in steady-state concentrations between the perturbed and unperturbed conditions. The diagonal elements $r_{ii}$ are defined as -1. This generates the local response matrix $\mathbf{R}$, which quantitatively represents the direct regulatory links in the network [12].

4. Statistical Analysis and Sparsity Enforcement: To account for noise and determine significant regulations, perform multiple replicates of the perturbation experiments. Calculate the confidence interval (CI) for each element $r_{ij}$ in the local response matrix. A regulation is considered statistically significant and included in the final network topology only if the CI for its $r_{ij}$ does not cross zero. This step ensures the inferred network meets biological sparsity constraints [12].

5. Differential Analysis Across Cell States: To compare GRN architectures between different cell fates (e.g., epithelial vs. mesenchymal), repeat Steps 1-4 for each distinct cell state. Then, compute a relative local response matrix by comparing the $r_{ij}$ values between states. This highlights which regulatory interactions have significantly changed in strength, identifying the key rewiring events driving cell fate decisions [12].
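
The local response calculation and the confidence-interval filter described above can be sketched numerically. Steady-state changes measured after a perturbation mix direct and indirect effects, so the sketch uses the matrix-inversion relation from modular response analysis, $\mathbf{r} = -[\mathrm{diag}(\mathbf{R}_g^{-1})]^{-1}\mathbf{R}_g^{-1}$, where $\mathbf{R}_g$ holds the measured log fold changes. Whether [12] uses exactly this relation is an assumption, as are the three-gene network, the noise level, and the normal-approximation CI.

```python
import numpy as np

def global_response(basal, perturbed):
    """R_g[i, k] = ln(x_i after perturbing parameter k / basal x_i)."""
    return np.log(perturbed / basal[:, None])

def local_response_matrix(global_resp):
    """r = -[diag(R_g^-1)]^-1 R_g^-1, which fixes every r_ii at -1."""
    inv = np.linalg.inv(global_resp)
    return -inv / np.diag(inv)[:, None]

def significant_edges(replicate_r, z=1.96):
    """Keep mean r_ij only where the approximate 95% CI excludes zero."""
    mean = replicate_r.mean(axis=0)
    sem = replicate_r.std(axis=0, ddof=1) / np.sqrt(replicate_r.shape[0])
    keep = (mean - z * sem > 0) | (mean + z * sem < 0)
    return np.where(keep, mean, 0.0)

# Hypothetical ground truth: r_true[i, j] is the direct effect of gene j on gene i.
r_true = np.array([[-1.0, 0.0, 0.5],
                   [0.8, -1.0, 0.0],
                   [0.0, -0.6, -1.0]])
direct_effect = np.diag([0.10, 0.20, 0.15])  # effect of perturbation k on its own gene
R_exact = -np.linalg.inv(r_true) @ direct_effect

# Simulate five noisy replicates of the n perturbation experiments.
basal = np.array([1.0, 2.0, 0.5])
rng = np.random.default_rng(0)
replicates = []
for _ in range(5):
    noisy_log_change = R_exact + rng.normal(scale=1e-3, size=R_exact.shape)
    perturbed = basal[:, None] * np.exp(noisy_log_change)
    replicates.append(local_response_matrix(global_response(basal, perturbed)))

network = significant_edges(np.array(replicates))
print(np.round(network, 2))
```

Running the same pipeline on a second cell state and subtracting the two resulting matrices gives a simple version of the relative local response matrix used for differential analysis.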

GRN Inference via Perturbation Workflow

Protocol: Comparative Analysis of GRN Architecture Using Role-Based Embedding (Gene2role)

This protocol uses the Gene2role method to project genes from different GRNs into a unified embedding space based on their multi-hop topological roles, enabling a deep comparative analysis of network structure across species, cell types, or states [10].

Procedure:

- Network Preparation: Construct or obtain signed GRNs ($G = (V, E^{+}, E^{-})$) for the conditions to be compared. Networks can be inferred from scRNA-seq data using algorithms like EEISP, from multi-omics data (e.g., CellOracle), or from curated databases [10].

- Topological Representation: For each gene in each network, calculate its signed-degree vector $\mathbf{d} = [d^+, d^-]$, which encapsulates its direct regulatory role [10].

- Similarity Calculation: The topological similarity between two genes $u$ and $v$ is not just based on direct neighbors. Gene2role constructs a multilayer graph that encodes node similarities for different neighborhood depths ($k$-hops). The similarity at each layer $k$ is calculated using a specialized distance function like Exponential Biased Euclidean Distance (EBED) on the signed-degree vectors of nodes in their $k$-hop neighborhoods, which is robust to the scale-free nature of GRNs [10].

- Embedding Generation: Using the struc2vec framework, generate a low-dimensional vector embedding for each gene that preserves its multi-hop topological identity. This step projects all genes from all networks being compared into a common latent space [10].

- Downstream Comparative Analysis:

  - Identify Differentially Topological Genes (DTGs): Calculate the Euclidean distance between the embeddings of the same gene across two different cellular states (e.g., State A vs. State B). Genes with large embedding distances have undergone significant topological role changes and are prioritized as DTGs [10].

  - Gene Module Stability Analysis: For a pre-defined gene module (e.g., a co-expression module), calculate the average pairwise embedding distance for the module's genes between two states. A smaller average distance indicates a more stable module across the compared conditions [10].
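
Once embeddings exist, the downstream comparisons are plain distance calculations. The sketch below does not run Gene2role or struc2vec; it assumes the embeddings are already available as dictionaries and uses invented two-dimensional vectors and hypothetical gene names. Module stability is computed here as the mean cross-state distance of the module's genes, which is one reading of the description above.

```python
import numpy as np

def embedding_shift(emb_a, emb_b):
    """Euclidean distance between each shared gene's embeddings in two states."""
    shared = sorted(set(emb_a) & set(emb_b))
    return {
        gene: float(np.linalg.norm(np.asarray(emb_a[gene]) - np.asarray(emb_b[gene])))
        for gene in shared
    }

def module_stability(shift, module_genes):
    """Mean cross-state embedding distance over the module's genes (lower = more stable)."""
    return float(np.mean([shift[g] for g in module_genes if g in shift]))

# Invented embeddings for three hypothetical genes in two cell states.
state_a = {"gene_1": [0.10, 0.90], "gene_2": [0.20, 0.80], "gene_3": [0.90, 0.10]}
state_b = {"gene_1": [0.10, 0.90], "gene_2": [0.25, 0.80], "gene_3": [0.10, 0.90]}

shift = embedding_shift(state_a, state_b)
dtg_ranking = sorted(shift, key=shift.get, reverse=True)  # largest role change first
stability = module_stability(shift, ["gene_1", "gene_2"])

print(dtg_ranking)
print(round(stability, 3))
```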

Visualization of GRN Architecture and Evolutionary Constraints

Understanding the flow of information and hierarchical organization within a GRN is crucial for EvoDevo studies. The following diagram illustrates a generic, hierarchically structured GRN, highlighting architectural features that influence evolutionary trajectories.

Hierarchical GRN Architecture Features

This diagram illustrates a GRN with a hierarchical and modular structure, characterized by a core regulatory layer of transcription factors (TF1, TF2, TF3) that is highly constrained and evolves slowly. This core receives external signals and governs downstream modules (e.g., the group involving G1 and G2). The network contains both activating (green) and inhibitory (red) edges, forming specific motifs like feedback loops (dashed line). Evolutionary changes in this structure, such as rewiring a connection in a downstream module (e.g., G2 to Effector4), are more likely to produce viable phenotypic variation than alterations to the core, demonstrating how network architecture shapes evolutionary potential.

Gene Regulatory Networks (GRNs) represent the complex web of interactions between transcription factors (TFs) and their target genes, governing critical biological processes. Within these networks, sequence expression modules (SEMs) refer to groups of co-regulated genes that share common regulatory logic and exhibit coordinated expression patterns under specific conditions. The identification of SEMs provides a powerful framework for reducing the complexity of transcriptomic data by grouping genes into functionally coherent units, thereby revealing the modular organization of biological systems. In the context of comparative GRN analysis, SEMs enable researchers to identify conserved and divergent regulatory programs across species, tissues, developmental stages, or environmental conditions, offering fundamental insights into evolutionary biology, disease mechanisms, and potential therapeutic interventions.

Recent advances in machine learning and the availability of large-scale epigenomic data have significantly enhanced our ability to identify SEMs with high precision. Studies have demonstrated that convolutional neural networks (CNN) and random forest classifiers (RFC) can predict transcription factor binding site co-operativity with high accuracy (AUC 0.94 vs. 0.88, respectively) [13]. The integration of these computational approaches with transcriptomic data from various conditions has enabled the systematic mapping of co-regulatory modules (CRMs) and their associated gene regulatory networks, providing a more comprehensive understanding of biological systems [13]. This progress is particularly evident in cardiac research, where such approaches have identified 1,784 cardiac-specific CRMs containing at least four cardiac TFs, revealing novel regulators and highlighting the importance of the NKX family of transcription factors in cardiac development [13].

Computational Methodologies for Module Identification

Weighted Gene Co-expression Network Analysis (WGCNA)

Weighted Gene Co-expression Network Analysis (WGCNA) represents one of the most robust and widely employed methods for identifying sequence expression modules from transcriptomic data. This systematic approach constructs scale-free topological networks based on pairwise correlations between genes across multiple samples, organizing thousands of genes into functionally cohesive modules [14]. The WGCNA algorithm implements several key steps: (1) construction of a gene co-expression similarity matrix, (2) transformation of the similarity matrix into an adjacency matrix using a soft-thresholding power to approximate scale-free topology, (3) conversion of the adjacency matrix into a topological overlap matrix (TOM) to measure network interconnectedness, and (4) hierarchical clustering of genes based on TOM dissimilarity to identify modules of highly co-expressed genes [14].

A significant advantage of WGCNA lies in its ability to relate identified modules to specific experimental traits or conditions through module-trait correlations. This approach has been successfully applied across diverse biological contexts, from identifying light-responsive modules in Arabidopsis to unraveling stem development programs in sorghum [14] [15]. In practice, researchers have identified 14 distinct co-expression modules significantly associated with different light treatments in Arabidopsis, with the honeydew1 and ivory modules showing particularly strong associations with dark-grown seedlings [14]. The hub genes identified within these modules demonstrated significant enrichment in light responses, including responses to red, far-red, blue light, and photosynthesis-related processes [14].
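
Module-trait correlation rests on summarizing each module by an eigengene, the first principal component of its standardized expression, and correlating that profile with the trait. The following is a minimal NumPy sketch on simulated data; the binary trait coding, effect size, and sample numbers are assumptions, and the code does not call the WGCNA package.

```python
import numpy as np

def module_eigengene(expr_module):
    """First principal component of a standardized samples x genes module matrix."""
    z = (expr_module - expr_module.mean(axis=0)) / expr_module.std(axis=0, ddof=1)
    u, _s, _vt = np.linalg.svd(z, full_matrices=False)
    eigengene = u[:, 0]
    # The sign of a principal component is arbitrary; orient it along average expression.
    if np.corrcoef(eigengene, z.mean(axis=1))[0, 1] < 0:
        eigengene = -eigengene
    return eigengene

# Simulated module of 8 genes in 12 samples; the trait is a hypothetical binary
# treatment label (for example 0 = dark-grown, 1 = light-grown).
rng = np.random.default_rng(0)
trait = np.array([0] * 6 + [1] * 6)
expr_module = 2.0 * trait[:, None] + rng.normal(scale=0.5, size=(12, 8))

eigengene = module_eigengene(expr_module)
module_trait_cor = np.corrcoef(eigengene, trait)[0, 1]
print(round(module_trait_cor, 2))
```

Repeating this for every module and every trait yields the module-trait correlation matrix that is usually displayed as a heatmap.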

Table 1: Key Parameters for WGCNA Implementation

| Parameter | Description | Typical Setting |
|---|---|---|
| Soft-thresholding Power | Power value applied to correlation matrix to achieve scale-free topology | β = 6-12 (determined by scale-free topology fit) |
| Minimum Module Size | Smallest number of genes allowed in a module | 30 genes |
| Module Detection Sensitivity | `deepSplit` parameter controlling branch splitting in clustering | 0-4 (0=low, 4=high) |
| Module Merging Threshold | Cut height (`mergeCutHeight`) for merging similar modules | 0.25 |
| Network Type | Type of correlation network | signed hybrid (recommended) |

Advanced Machine Learning Approaches

Beyond traditional correlation-based methods, advanced machine learning techniques have emerged as powerful tools for identifying co-regulatory modules from epigenomic data. These approaches leverage the wealth of information available from resources like the UniBind database, which contains ChIP-Seq data from over 1,983 samples and 232 TFs [13]. By employing a combination of random forest classifiers and convolutional neural networks, researchers can predict transcription factor binding site co-operativity and identify all potential clusters of co-binding TFs with high precision (F1 scores of 0.938 for CNN vs. 0.872 for RFC) [13].

These machine learning pipelines have demonstrated remarkable versatility in predicting putative CRMs, successfully identifying approximately 200,000 CRMs for over 50,000 human genes with complete overlap when validated against recent CRM prediction methods [13]. The adaptability of these approaches extends their utility to diverse biological systems and datasets beyond the specific contexts in which they were developed, making them particularly valuable for comparative GRN analysis across species [13].

Figure 1: WGCNA Workflow for SEM Identification

Experimental Design for Transcriptomic Studies

RNA-Seq Experimental Considerations

The reliability of sequence expression module identification depends fundamentally on appropriate experimental design for transcriptomic studies. A clearly defined hypothesis and specific objectives should guide the experimental design from the choice of model system through to sequencing setup and quality control parameters [16]. Key considerations include determining whether the project requires a global, unbiased readout or a targeted approach, anticipating the expected patterns of differential expression, and selecting model systems appropriate for capturing the biological phenomena of interest [16].

Sample size and statistical power significantly impact the quality and reliability of SEM identification. Statistical power refers to the ability to identify genuine differential gene expression in naturally variable datasets, with larger sample sizes generally providing more robust results [16]. Practical constraints, particularly when working with precious patient samples from biobanks, often limit sample availability, making careful planning essential. Consulting with bioinformaticians during the experimental design phase can help optimize study design and ensure appropriate statistical power given sample size limitations [16].

Table 2: Replicate Considerations for RNA-Seq Experiments

| Replicate Type | Definition | Purpose | Recommended Number |
|---|---|---|---|
| Biological Replicates | Different biological samples or entities | Assess biological variability and ensure findings are reliable and generalizable | 3-8 per condition |
| Technical Replicates | Same biological sample measured multiple times | Assess and minimize technical variation from sequencing runs and lab workflows | 2-3 per sample |

Sample Processing and Quality Control

The wet lab workflow for RNA-Seq experiments requires careful consideration of multiple factors, including RNA extraction methods, sample pre-treatment, and library preparation protocols [16]. For large-scale drug screens based on cultured cells aiming to assess gene expression patterns or pathways, 3'-Seq approaches with library preparation directly from lysates offer significant advantages by omitting RNA extraction, thereby saving time and resources while enabling larger sample numbers [16]. When isoforms, fusions, non-coding RNAs, or variants are of interest, whole transcriptome approaches combined with mRNA enrichment or ribosomal RNA (rRNA) depletion are more appropriate [16].

Batch effects represent a critical consideration in large-scale transcriptomic studies. These systematic, non-biological variations arise from how samples are collected and processed over time [16]. Strategic experimental design, including proper plate layout and randomization, can minimize batch effects and enable computational correction during data analysis. The use of artificial spike-in controls, such as SIRVs, provides valuable tools for measuring assay performance, particularly dynamic range, sensitivity, reproducibility, and quantification accuracy [16]. Pilot studies with representative sample subsets are highly recommended to validate experimental parameters and workflows before committing to full-scale experiments [16].
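As a minimal sketch of the randomization idea described above, the following Python snippet shuffles samples before assigning them to plate wells, so that treatment groups are not confounded with well position or processing order. The sample names, the 96-well layout, and the fixed seed are illustrative assumptions and are not taken from the cited workflow [16].

```python
import random
from itertools import product


def randomized_plate_layout(samples, rows="ABCDEFGH", n_cols=12, seed=0):
    """Assign samples to wells in random order (hypothetical helper).

    A fixed seed keeps the layout reproducible, so it can be recorded with
    the experiment and reused later as a batch covariate.
    """
    wells = [f"{r}{c}" for r, c in product(rows, range(1, n_cols + 1))]
    if len(samples) > len(wells):
        raise ValueError("more samples than wells on one plate")
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    # Wells are filled in row-major order (A1, A2, ...) with shuffled samples.
    return dict(zip(wells, shuffled))


# Illustrative input: 3 conditions x 4 biological replicates.
samples = [f"{cond}_rep{i}" for cond in ("ctrl", "drugA", "drugB") for i in range(1, 5)]
layout = randomized_plate_layout(samples)
```

Simple shuffling is only one option; blocked designs that place every condition on every plate are often preferable when several plates or processing days are involved.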

Protocol: Identifying SEMs Using WGCNA

RNA-seq Data Preprocessing

The initial phase of SEM identification involves rigorous preprocessing of RNA-seq data to ensure data quality and reliability. This process begins with quality assessment of raw sequencing reads using tools such as FastQC and MultiQC, followed by removal of low-quality samples and adapter sequences [14]. For the sorghum stem development study, researchers analyzed 96 samples from various organ types (stem, leaf, root, panicle, peduncle, seed) collected at five developmental stages, retaining genes with a mean transcripts per million (TPM) ≥ 5 in at least one organ for downstream analysis [15].
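The expression filter described above (mean TPM ≥ 5 in at least one organ) can be sketched in a few lines of Python. This is an illustration under stated assumptions: the nested-dictionary input format, the function name, and the toy gene and sample labels are hypothetical, and the original study [15] may have implemented the filter differently.

```python
from statistics import mean


def filter_by_organ_tpm(tpm, sample_organ, min_mean_tpm=5.0):
    """Keep genes whose mean TPM reaches the cutoff in at least one organ.

    tpm:          {gene: {sample: TPM value}}
    sample_organ: {sample: organ label}
    """
    # Group sample names by organ.
    organs = {}
    for sample, organ in sample_organ.items():
        organs.setdefault(organ, []).append(sample)

    kept = []
    for gene, values in tpm.items():
        organ_means = [mean(values[s] for s in members) for members in organs.values()]
        if max(organ_means) >= min_mean_tpm:
            kept.append(gene)
    return kept


# Toy data (hypothetical genes and samples).
sample_organ = {"s1": "stem", "s2": "stem", "s3": "leaf", "s4": "leaf"}
tpm = {
    "geneA": {"s1": 8.0, "s2": 6.0, "s3": 0.5, "s4": 0.2},  # stem mean 7.0
    "geneB": {"s1": 1.0, "s2": 2.0, "s3": 3.0, "s4": 4.0},  # max organ mean 3.5
    "geneC": {"s1": 0.0, "s2": 0.0, "s3": 4.0, "s4": 6.0},  # leaf mean 5.0
}
kept_genes = filter_by_organ_tpm(tpm, sample_organ)
```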

Read alignment and quantification typically employ tools like Kallisto, which uses pseudoalignment to rapidly determine read compatibility with reference transcripts [14]. The resulting transcript abundance values are then imported with tximport into DESeq2 for differential expression analysis [14]. The varianceStabilizingTransformation function from DESeq2 normalizes and variance-stabilizes gene-level counts, and significant genes are typically defined as those with adjusted p-values < 0.05 [14]. In the Arabidopsis light signaling study, this process identified 24,447 differentially expressed genes that were subsequently used for WGCNA [14].

Network Construction and Module Detection

The core WGCNA algorithm is implemented using the WGCNA R package, which transforms normalized expression data into co-expression networks [14]. A critical step involves selecting an appropriate soft-thresholding power (β) to achieve approximate scale-free topology, which is typically determined by evaluating the scale-free topology fit index for a range of power values [14]. The resulting adjacency matrix is then transformed into a topological overlap matrix (TOM) to measure network interconnectedness, followed by hierarchical clustering of genes based on TOM dissimilarity to identify modules of highly co-expressed genes [15].
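To make the adjacency and topological overlap steps concrete, the sketch below re-implements them with NumPy. It is not the WGCNA R package itself: the function names are hypothetical, the soft-thresholding power β is assumed to have been chosen already (the scale-free fit scan is not shown), and the adjacency follows the signed hybrid convention, in which negative correlations are set to zero.

```python
import numpy as np


def signed_hybrid_adjacency(expr, beta):
    """Soft-thresholded adjacency from a samples x genes expression array.

    Positive correlations are raised to the power beta; negative ones are
    set to 0. Genes with zero variance would yield NaN and should be
    removed beforehand.
    """
    cor = np.corrcoef(expr, rowvar=False)
    adj = np.where(cor > 0, cor, 0.0) ** beta
    np.fill_diagonal(adj, 0.0)  # ignore self-connections
    return adj


def topological_overlap(adj):
    """TOM_ij = (sum_u a_iu * a_uj + a_ij) / (min(k_i, k_j) + 1 - a_ij)."""
    k = adj.sum(axis=0)   # connectivity of each gene
    shared = adj @ adj    # shared-neighbour term (diagonal of adj is 0)
    denom = np.minimum.outer(k, k) + 1.0 - adj
    tom = (shared + adj) / denom
    np.fill_diagonal(tom, 1.0)
    return tom


# Toy example: 20 samples x 6 genes of random data, beta = 6 (assumed).
rng = np.random.default_rng(1)
expr = rng.normal(size=(20, 6))
adj = signed_hybrid_adjacency(expr, beta=6)
tom = topological_overlap(adj)
dissimilarity = 1.0 - tom  # input for hierarchical clustering of genes
```

Hierarchical clustering on `dissimilarity` followed by a tree-cutting step would then yield candidate modules; that part is omitted here.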

Figure 2: Network Construction Process in WGCNA

Hub Gene Identification and Functional Validation

Within each identified module, hub genes represent highly interconnected genes that often play central regulatory roles. These genes are typically identified by high gene significance (GS) and module membership (MM) values, with common thresholds set at MM > 0.8 and GS > 0.3 with p-value < 0.05 [14]. In the sorghum stem development study, this approach identified SbTALE03 and SbTALE04 as stem hub transcription factors, which were subsequently empirically validated for their stem specificity across developmental stages [15].
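The hub gene criteria above can be illustrated with a short NumPy sketch. It assumes that module membership (MM) is the correlation of each gene with the module eigengene (the first principal component of the standardized module expression) and that gene significance (GS) is the absolute correlation with a sample trait. The function names and toy data are hypothetical, and the p-value filter mentioned in the text is omitted for brevity.

```python
import numpy as np


def module_eigengene(expr_module):
    """First principal component of standardized expression (samples x genes)."""
    z = (expr_module - expr_module.mean(axis=0)) / expr_module.std(axis=0, ddof=1)
    u, _, _ = np.linalg.svd(z, full_matrices=False)
    eigengene = u[:, 0]
    # Orient the eigengene so it tracks average module expression.
    if np.corrcoef(eigengene, z.mean(axis=1))[0, 1] < 0:
        eigengene = -eigengene
    return eigengene


def hub_genes(expr_module, trait, gene_names, mm_cut=0.8, gs_cut=0.3):
    """Return genes with MM > mm_cut and GS > gs_cut."""
    me = module_eigengene(expr_module)
    n_genes = expr_module.shape[1]
    mm = [np.corrcoef(expr_module[:, j], me)[0, 1] for j in range(n_genes)]
    gs = [abs(np.corrcoef(expr_module[:, j], trait)[0, 1]) for j in range(n_genes)]
    return [g for g, m, s in zip(gene_names, mm, gs) if m > mm_cut and s > gs_cut]


# Toy module: three genes follow a shared latent signal, one does not.
latent = np.linspace(-1.0, 1.0, 30)
noise = np.random.default_rng(0).normal(0.0, 0.05, size=(30, 3))
expr_module = np.column_stack([latent[:, None] + noise, latent ** 2])
hubs = hub_genes(expr_module, trait=latent, gene_names=["g1", "g2", "g3", "g4"])
```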

Functional enrichment analysis of module genes provides critical insights into the biological processes, molecular functions, and cellular components associated with each SEM. Gene ontology (GO) enrichment analysis is typically performed using tools such as DAVID (The Database for Annotation, Visualization and Integrated Discovery), with significantly enriched terms identified based on false discovery rate (FDR) ≤ 0.05 [14]. In the Arabidopsis light signaling study, hub genes from light-responsive modules showed significant enrichment in responses to specific light wavelengths, light stimulus, auxin responses, and photosynthesis-related processes [14].

Protocol: Advanced Machine Learning Approaches for CRM Identification

Data Integration and Feature Extraction

The machine learning-based pipeline for identifying co-regulatory modules begins with comprehensive data integration from diverse epigenomic sources. The foundation typically involves curating data from resources like the UniBind database, which contains extensive ChIP-Seq data from hundreds of samples and transcription factors [13]. This data provides the necessary information for evaluating and learning features that predict the co-binding of transcription factors, enabling the identification of all potential clusters of co-binding TFs [13].

Feature extraction focuses on identifying TF binding characteristics that facilitate biologically significant co-binding. This process involves analyzing various binding patterns and sequence features that distinguish functional cooperativity from random co-occurrence [13]. The extracted features serve as input for machine learning models trained to predict co-binding between transcription factors, with performance typically evaluated using precision-recall and Receiver Operating Characteristic (ROC) curves and F1 scores [13].

Model Training and Validation

The core of this protocol involves implementing and comparing multiple machine learning models, with convolutional neural networks (CNN) and random forest classifiers (RFC) representing two prominent approaches [13]. In comparative analyses, CNN has demonstrated superior performance over RFC, achieving higher AUC (0.94 vs. 0.88) and F1 scores (0.938 vs. 0.872) in predicting transcription factor binding site cooperativity [13]. This performance advantage makes CNNs particularly valuable for identifying putative CRMs from DNA motifs.
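For readers less familiar with the two metrics compared above, the following sketch shows how an F1 score and a ROC AUC are computed from first principles. The confusion counts and prediction scores are invented toy values; they are not the data behind the figures reported in [13].

```python
def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall from confusion counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def roc_auc(scores, labels):
    """Probability that a random positive outranks a random negative.

    Ties count as half a win. This pairwise form is equivalent to the
    area under the ROC curve.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy values: 90 true positives, 10 false positives, 5 false negatives.
toy_f1 = f1_score(tp=90, fp=10, fn=5)
# Toy scores for two co-binding (1) and two non-co-binding (0) TF pairs.
toy_auc = roc_auc([0.9, 0.8, 0.3, 0.1], [1, 0, 1, 0])
```

Because F1 depends on a decision threshold while AUC does not, the two metrics can rank models differently, which is one reason to report both.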

The CRMs generated by clustering algorithms require rigorous validation against established databases such as ChipAtlas and MCOT [13]. This validation process not only confirms the accuracy of predictions but often reveals additional motifs forming CRMs that may have been overlooked in previous analyses [13]. Successful validation typically demonstrates complete (100%) overlap with recent CRM prediction methods while potentially identifying novel regulatory relationships [13].

Table 3: Machine Learning Performance Comparison for CRM Identification

| Model Type | AUC Score | F1 Score | Precision | Recall |
|---|---|---|---|---|
| Convolutional Neural Network (CNN) | 0.94 | 0.938 | Not specified | Not specified |
| Random Forest Classifier (RFC) | 0.88 | 0.872 | Not specified | Not specified |

Table 4: Key Research Reagent Solutions for SEM Identification

| Resource | Function | Application Context |
|---|---|---|
| UniBind Database | Repository of ChIP-Seq data from 1,983 samples and 232 TFs | Identifying transcription factor binding sites and co-binding patterns [13] |
| Spike-in Controls (SIRVs) | Internal standards for quantifying RNA levels between samples | Normalizing data, assessing technical variability, quality control for large-scale experiments [16] |
| Kallisto | Tool for quantifying transcript abundance using pseudoalignment | Rapid determination of read compatibility with target transcripts [14] |
| DESeq2 | R package for differential expression analysis | Normalizing gene-level counts and identifying statistically significant differentially expressed genes [14] |
| WGCNA R Package | Comprehensive tool for weighted gene co-expression network analysis | Constructing scale-free co-expression networks and identifying modules of co-expressed genes [14] [15] |
| DAVID | Functional annotation tool for gene ontology enrichment analysis | Identifying biological processes, molecular functions, and cellular components associated with gene modules [14] |

Applications in Drug Discovery and Development

The identification of sequence expression modules has profound implications for drug discovery and development, particularly in target identification, biomarker discovery, and mode-of-action studies [16]. By grouping genes into functionally coherent modules, researchers can identify key regulatory networks and hub genes that represent potential therapeutic targets for various diseases. In cardiac research, for example, the identification of 1,784 cardiac-specific CRMs revealed potential novel regulators such as ARID3A and RXRB for SCAD, alongside known TFs such as PPARG for F11R [13]. These findings provide valuable targets for further investigation in cardiac disease and potential therapeutic development.

In the context of pharmacogenomics, SEM analysis can reveal how drug treatments alter coordinated gene expression patterns, providing insights into both therapeutic mechanisms and potential side effects. Weighted gene co-expression network analysis has been successfully applied to study drug effects on gene expression networks, identify biomarker candidates, and understand the molecular basis of treatment response variability [16]. The application of these approaches to large-scale drug screening projects, particularly those using cultured cells, benefits significantly from 3'-Seq approaches with library preparation directly from lysates, enabling efficient processing of large sample numbers [16].

Figure 3: SEM Application in Drug Discovery Pipeline

The identification of sequence expression modules represents a powerful approach for deciphering the complex regulatory logic underlying biological systems. Through methods such as WGCNA and machine learning-based CRM prediction, researchers can reduce the dimensionality of transcriptomic data while preserving biologically meaningful patterns. The integration of these approaches across multiple species, tissues, and conditions enables comparative GRN analysis that reveals both conserved and divergent regulatory programs, advancing our understanding of evolutionary biology, disease mechanisms, and potential therapeutic interventions.

Future developments in this field will likely focus on multi-omics integration, combining transcriptomic data with epigenomic, proteomic, and metabolomic information to construct more comprehensive regulatory networks. Additionally, advances in single-cell sequencing technologies will enable the identification of SEMs at cellular resolution, revealing regulatory heterogeneity within tissues and providing unprecedented insights into cellular differentiation and function. As these technologies mature, the systematic identification of sequence expression modules will continue to transform our understanding of biological systems and accelerate the development of novel therapeutic strategies.

Gene Regulatory Networks (GRNs) represent the complex circuits of interactions between transcription factors (TFs) and their target genes, governing cellular processes and phenotypic outcomes. Comparative GRN analysis across species—particularly between model organisms like mice and humans—reveals both deeply conserved regulatory principles and critically diverged network connections that underlie phenotypic similarities and differences. Understanding this evolutionary rewiring is paramount for translational research, as it explains why mouse models often fail to recapitulate human diseases despite highly conserved gene sequences [17] [18]. The ∼100-million-year evolutionary divergence between humans and mice has resulted in substantial rewiring of regulatory relationships, affecting functional modules and contributing to species-specific phenotypes [17]. This framework provides essential context for interpreting disease mechanisms and developing therapeutic strategies.

Quantitative Foundations of GRN Conservation and Divergence

Systematic analyses reveal quantitative patterns of GRN evolution. The divergence in regulatory networks can be measured through phenotypic similarity scores, transcription factor binding site conservation, and the presence of species-specific regulatory elements.

Table 1: Quantitative Measures of GRN Conservation and Divergence Between Mice and Humans

| Measurement Dimension | Conservation Level | Divergence Level | Key Findings |
|---|---|---|---|
| Phenotypic Similarity (PS) Score [17] | High PS (HPG): Conserved phenotypes | Low PS (LPG): Diverged phenotypes | LPGs are enriched in mouse genes that fail to mimic human disease phenotypes [17] |
| REST Cistrome Conservation [19] | 15.3% of hESC REST peaks | 84.7% of hESC peaks are human-specific | Conserved binding is associated with core neural development genes [19] |
| Regulatory Element Sequence Conservation [20] | ~10% of heart enhancers | ~90% of heart enhancers | Most cis-regulatory elements lack sequence conservation despite functional conservation [20] |
| Trans-regulatory Circuitry [17] | Highly conserved TF-to-TF networks | Substantial plasticity in cis-regulatory regions | Changes in cis-regulatory regions often have minimal impact due to trans-conservation [17] |

Table 2: Functional Consequences of Regulatory Divergence

| Biological System | Conserved Elements | Diverged Elements | Functional Impact |
|---|---|---|---|
| Neural Development (REST) [19] | Genes important for neural development and function | >3000 genes with human-specific REST regulation | Human-specific targeting of learning/memory genes (APP, HTT) and disease genes [19] |
| Immune System [18] | Core transcriptional programs | Species-specific SNVs and structural variants | Altered effector molecule expression and differential drug responses [18] |
| Heart Development [20] | Developmental gene expression patterns | ~90% of enhancer sequences | Maintained functional conservation despite sequence divergence [20] |

Experimental Protocols for Comparative GRN Analysis

Protocol 1: Quantifying Phenotypic Divergence and Regulatory Rewiring

This protocol enables researchers to systematically measure the relationship between regulatory network rewiring and phenotypic divergence between species.

Materials:

- Phenotype ontology databases (HPO, MPO)

- Ortholog mapping resources (Ensembl)

- Gene expression data (RNA-seq)

- Regulatory interaction databases (RegNetwork, TRRUST)

Procedure:

Calculate Phenotype Similarity (PS) Scores:

- Collect human gene–phenotype relationships from OMIM and HPO databases

- Compile mouse gene–phenotype relationships from MGI database

- Perform semantic comparisons utilizing PhenoDigm to calculate quantitative PS scores for orthologous genes

- Normalize PS scores by computing Z-scores and classify genes as High PS Genes (HPGs) or Low PS Genes (LPGs) [17]
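The normalization and classification step can be sketched as follows. The Z-score cutoff of ±1 and the "intermediate" label are assumptions made purely for illustration; the source [17] does not state the thresholds here, and the gene names and PS values are invented.

```python
from statistics import mean, pstdev


def classify_by_ps(ps_scores, z_cut=1.0):
    """Z-score PS values and label genes as HPG, LPG, or intermediate.

    ps_scores: {gene: phenotype similarity score}
    z_cut:     hypothetical cutoff; not taken from the cited study.
    """
    mu = mean(ps_scores.values())
    sd = pstdev(ps_scores.values())
    if sd == 0:
        raise ValueError("PS scores have zero variance")
    labels = {}
    for gene, score in ps_scores.items():
        z = (score - mu) / sd
        if z >= z_cut:
            labels[gene] = "HPG"           # high phenotype similarity
        elif z <= -z_cut:
            labels[gene] = "LPG"           # low phenotype similarity
        else:
            labels[gene] = "intermediate"
    return labels


# Toy PS scores for four hypothetical ortholog pairs.
ps_labels = classify_by_ps({"G1": 0.9, "G2": 0.5, "G3": 0.5, "G4": 0.1})
```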

Construct Species-Specific Regulatory Networks:

- For a gene of interest, build a human functional module by selecting genes involved in the same biological process (from the GSEA Molecular Signatures Database, MSigDB)

- Transfer the functional module to the mouse orthologous gene using one-to-one orthology relationships

- Gather TF–target relationships from Regulatory Network Repository (RegNetwork) or TRRUST, using only experimentally validated connections [17]

Identify Rewired Regulatory Connections:

- Use hypergeometric testing to identify significant regulatory connections (RCs) between TFs and functional modules in each species (adjusted p-value < 0.01)

- Compare human and mouse networks to identify conserved versus rewired connections

- Correlate network rewiring extent with PS scores [17]
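The enrichment test and the network comparison in this step can be sketched with the Python standard library. The sketch assumes a one-sided hypergeometric test for over-representation of a TF's targets within a functional module and Benjamini-Hochberg adjustment of the p-values; the source specifies only "hypergeometric testing" with an adjusted p-value cutoff, so the correction method, the function names, and all toy data are assumptions.

```python
from math import comb


def hypergeom_sf(k, n_genes, n_targets, n_module):
    """P(overlap >= k) for a module of n_module genes drawn from n_genes."""
    upper = min(n_targets, n_module)
    total = sum(comb(n_targets, i) * comb(n_genes - n_targets, n_module - i)
                for i in range(k, upper + 1))
    return total / comb(n_genes, n_module)


def benjamini_hochberg(pvals):
    """Return BH-adjusted p-values in the original order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted, running_min = [0.0] * m, 1.0
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def significant_tfs(tf_targets, module, n_genes, alpha=0.01):
    """TFs whose target sets are enriched in the module (adjusted p < alpha)."""
    tfs = sorted(tf_targets)
    pvals = [hypergeom_sf(len(tf_targets[tf] & module), n_genes,
                          len(tf_targets[tf]), len(module)) for tf in tfs]
    return {tf for tf, q in zip(tfs, benjamini_hochberg(pvals)) if q < alpha}


# Toy universe of 100 genes with a 10-gene functional module.
module = {f"m{i}" for i in range(10)}
strong = {f"m{i}" for i in range(8)} | {"x1", "x2"}  # 8 of 10 targets in module
weak = {"m0"} | {f"x{i}" for i in range(1, 10)}      # 1 of 10 targets in module
human = {"TF_A": strong, "TF_B": strong, "TF_C": weak}
mouse = {"TF_A": strong, "TF_B": weak, "TF_C": weak}

human_rc = significant_tfs(human, module, n_genes=100)
mouse_rc = significant_tfs(mouse, module, n_genes=100)
conserved = human_rc & mouse_rc  # RCs present in both species
rewired = human_rc ^ mouse_rc    # RCs present in only one species
```

The size of `rewired` relative to `conserved` gives a simple per-module rewiring measure that could then be correlated with PS scores.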

Validate with Expression Divergence:

- Analyze transcriptomic data from equivalent tissues/developmental stages

- Correlate expression divergence of target genes with rewired RCs and phenotypic differences [17]

Protocol 2: Identifying Indirectly Conserved Regulatory Elements

This protocol uses synteny-based approaches to identify functional regulatory elements that lack obvious sequence conservation, enabling discovery of "covert" orthologs across large evolutionary distances.

Materials:

- Chromatin profiling data (ATAC-seq, ChIPmentation, Hi-C)

- Multiple genome assemblies (target and bridging species)

- IPP algorithm software

- In vivo enhancer validation system (e.g., transgenic mice)

Procedure:

Profile Regulatory Genomes:

- Generate comprehensive chromatin profiles from equivalent developmental stages in both species (e.g., E10.5 mouse and HH22 chicken hearts)

- Perform ATAC-seq, histone modification ChIP-seq (H3K27ac, H3K4me3), Hi-C, and RNA-seq

- Identify high-confidence enhancers and promoters by integrating chromatin accessibility and gene expression data [20]

Apply Interspecies Point Projection (IPP):

- Select appropriate bridging species (e.g., 14 species from reptilian and mammalian lineages for mouse-chicken comparison)

- Build collection of anchor points from pairwise alignments between all species

- Project regulatory elements from source to target genome using IPP's synteny-based algorithm

- Classify projections as: Directly Conserved (DC, <300 bp from direct alignment), Indirectly Conserved (IC, >300 bp but summed distance <2.5 kb), or Nonconserved (NC) [20]
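The three-way classification can be expressed as a small decision function. This is only a sketch of the thresholds quoted above, not the IPP software: the function name, the two distance arguments, and the handling of missing values are hypothetical, and how IPP actually derives these distances from anchor points is not reproduced here.

```python
def classify_projection(direct_dist_bp, summed_dist_bp, dc_max=300, ic_max=2500):
    """Label a projected element as DC, IC, or NC from two distances in bp.

    direct_dist_bp: distance to the nearest direct alignment (None if absent)
    summed_dist_bp: summed distance to anchor points via bridging species
                    (None if no bridged projection exists)
    """
    if direct_dist_bp is not None and direct_dist_bp < dc_max:
        return "DC"  # directly conserved
    if summed_dist_bp is not None and summed_dist_bp < ic_max:
        return "IC"  # indirectly conserved
    return "NC"      # nonconserved


# Toy projections (distances are invented).
calls = [
    classify_projection(120, 120),    # close to a direct alignment
    classify_projection(4000, 1800),  # recovered only via bridging species
    classify_projection(9000, 6000),  # too far from any anchor
]
```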

Validate Functional Conservation:

- Select IC enhancers for functional testing using in vivo reporter assays

- Test enhancer activity in appropriate model systems (e.g., mouse transgenic assays for chicken enhancers)

- Confirm tissue-specific activity patterns comparable to native context [20]

Advanced Computational Methods for GRN Comparison

Recent computational advances enable more nuanced comparison of GRN topology and dynamics across species. The Gene2role method employs role-based graph embedding to capture multi-hop topological information within signed GRNs, moving beyond simple direct connectivity metrics [10]. This approach allows identification of genes with significant topological changes across cell types or states, providing a fresh perspective beyond traditional differential gene expression analyses [10].

The DuCGRN framework utilizes a dual context-aware model with K-hop aggregation and multiscale feature extraction to capture both direct and indirect regulatory relationships [21]. By employing adversarial training to address data sparsity, this method generates more robust GRN predictions that facilitate more accurate cross-species comparisons [21].

Table 3: Key Research Reagents and Computational Tools for Comparative GRN Analysis

| Resource Category | Specific Tools/Databases | Function and Application |
|---|---|---|
| Phenotype Ontology Databases | HPO, MPO, OMIM, MGI | Provide standardized phenotype annotations for semantic similarity calculations and PS score derivation [17] |
| Regulatory Interaction Databases | RegNetwork, TRRUST | Curated repositories of experimentally validated TF-target relationships for network construction [17] |
| Chromatin Profiling Tools | ATAC-seq, ChIPmentation, Hi-C | Identify active regulatory elements and 3D chromatin architecture in specific cell types [19] [20] |
| Synteny-Based Algorithms | Interspecies Point Projection (IPP) | Identify orthologous regulatory regions independent of sequence conservation using synteny [20] |
| Network Embedding Methods | Gene2role | Capture multi-hop topological information for comparative network analysis [10] |
| Advanced GRN Inference | DuCGRN, SCENIC, CellOracle | Infer regulatory networks from single-cell data using sophisticated machine learning approaches [22] [21] |
| Experimental Validation Systems | Transgenic reporter assays, CRISPR-Cas9 | Functionally test putative regulatory elements and their cross-species activity [19] [20] |

Implications for Disease Modeling and Therapeutic Development

The rewiring of GRNs between species has profound implications for biomedical research. Studies reveal that many disease-associated genes, including those involved in Alzheimer's disease (APP, PSEN2), Huntington's disease (HTT), and Parkinson's disease (PARK2, PARK7), exhibit human-specific REST regulation absent in mouse models [19]. This regulatory divergence likely contributes to the limited translational success of neurobiological findings from mice to humans.

Similarly, in immunology, divergent gene regulation between mouse and human immune cells affects the expression of key effector molecules, potentially explaining differential drug responses and the failure of some therapeutic candidates that showed promise in mouse models [18]. Incorporating comparative GRN analysis into the drug development pipeline could help identify these discordances earlier, saving resources and improving clinical translation success rates.

Understanding both conserved and divergent aspects of GRNs enables more informed use of model organisms. Rather than abandoning mouse models, researchers can leverage insights from GRN comparisons to design more sophisticated experiments that account for species-specific regulatory architectures, ultimately accelerating the development of effective therapies for human diseases.

From Data to Networks: A Methodological Toolkit for GRN Inference and Comparison

The inference of Gene Regulatory Networks (GRNs) is a central problem in computational biology, essential for understanding how cells perform diverse functions, respond to environments, and how non-coding genetic variants cause disease [23]. GRN analysis seeks to unravel the complex web of interactions where transcription factors (TFs) bind DNA regulatory elements to activate or repress the expression of target genes [23]. The choice between bulk and single-cell RNA sequencing (RNA-seq) technologies represents a fundamental strategic decision in experimental design for GRN research, with profound implications for resolution, scale, and biological insight. Bulk RNA-seq provides a population-average perspective of gene expression, while single-cell RNA-seq (scRNA-seq) deconvolutes this average into its cellular constituents, enabling the discovery of heterogeneity, rare cell types, and cell-state transitions [24] [25]. This application note provides a structured framework for selecting and implementing these technologies within comparative GRN analysis research, offering detailed protocols, analytical workflows, and resource guidance tailored for researchers, scientists, and drug development professionals.

Technology Comparison: Resolution and Application Landscape

The strategic selection between bulk and single-cell RNA-seq hinges on research goals, sample characteristics, and resource constraints. Each method offers distinct advantages and limitations for GRN inference and expression module analysis.

Bulk RNA-seq is a next-generation sequencing (NGS) method to measure the whole transcriptome across a population of thousands to millions of cells. It provides an average gene expression profile for the entire sample, yielding a composite readout of all cellular constituents [24] [26]. This approach is analogous to viewing an entire forest from a distance. Its key strength lies in identifying consistent, population-level transcriptional changes, making it ideal for differential gene expression analysis between conditions (e.g., disease vs. healthy, treated vs. control) [24] [27]. It is also well-suited for transcriptome annotation, including identifying novel transcripts, isoforms, and gene fusions [24] [26]. However, its primary limitation is the inability to resolve cellular heterogeneity, potentially masking biologically significant rare cell populations or distinct expression programs of minority cell types [24] [25].

Single-cell RNA-seq profiles the whole transcriptome of individual cells. This high-resolution view is akin to examining every tree in a forest [24]. The core technological advance enabling this is the physical separation and barcoding of individual cells, often using microfluidic systems like the 10x Genomics Chromium platform, which partitions cells into Gel Beads-in-emulsion (GEMs) [24] [25]. Each cell's RNA is labeled with a unique cellular barcode, and each mRNA molecule with a Unique Molecular Identifier (UMI), allowing transcripts to be traced back to their cell of origin and mitigating PCR amplification biases [25] [28] [29]. scRNA-seq is uniquely powerful for characterizing heterogeneous cell populations, discovering novel cell types or states, and reconstructing developmental trajectories [24] [29]. For GRN analysis, it enables the inference of cell-type-specific regulatory networks [23]. The trade-offs include higher per-sample costs, more complex sample preparation (requiring viable single-cell suspensions), and computationally intensive data analysis [24].

The following table provides a quantitative comparison to guide experimental design.

Table 1: Strategic Comparison of Bulk and Single-Cell RNA-seq for GRN Research

| Feature | Bulk RNA-seq | Single-Cell RNA-seq |
|---|---|---|
| Resolution | Population average [24] | Single cell [24] |
| Key Applications | Differential expression, biomarker discovery, isoform & fusion detection [24] [26] | Cell type identification, heterogeneity analysis, developmental trajectories, cell-type-specific GRN inference [24] [26] |
| Tissue Input | Homogeneous or heterogeneous tissues | Requires dissociation into single-cell suspension [24] |
| Cost per Sample | Lower [24] | Higher [24] |
| Data Complexity | Lower; established analysis pipelines [24] | Higher; specialized tools for preprocessing and downstream analysis [24] [28] |
| Ideal for GRN Studies | Inferring aggregate network models from population data | Inferring context-specific, cell-type-resolved regulatory networks [23] |

Experimental Protocols: From Sample to Sequence

Bulk RNA-seq Workflow Protocol

The bulk RNA-seq workflow converts a tissue or cell sample into a gene expression count matrix. Adherence to protocol is critical for data quality.

Diagram 1: Bulk RNA-seq workflow. RIN: RNA Integrity Number.

Step 1: Sample Collection and RNA Extraction. Begin with tissue or a pooled cell population. Lyse cells using mechanical (bead beating), chemical (detergents), or enzymatic (proteinase K) methods, often with RNase inhibitors to preserve RNA integrity [30]. Isolate total RNA using phenol-chloroform (e.g., TRIzol) or silica column-based purification kits [30].

Step 2: RNA Quality Control (QC). Assess RNA concentration and purity spectrophotometrically (NanoDrop) or fluorometrically (Qubit). Critically, evaluate integrity using capillary electrophoresis (Agilent Bioanalyzer/TapeStation), aiming for an RNA Integrity Number (RIN) >7 [30].

Step 3: mRNA Selection. Enrich for messenger RNA using either:

- Poly(A) Selection: Uses oligo(dT) primers to bind polyadenylated tails of mRNAs. Ideal for high-quality RNA and focusing on protein-coding genes [30].

- rRNA Depletion: Removes abundant ribosomal RNAs via hybridization. Preferred for degraded samples (e.g., FFPE) or to capture non-polyadenylated RNAs [30].

Steps 4-6: Library Preparation. Fragment purified RNA (~200 bp) enzymatically or chemically [30]. Reverse-transcribe fragments into cDNA using reverse transcriptase with random hexamer or oligo(dT) primers [30]. Prepare sequencing libraries by blunting ends, adding 'A' tails, ligating platform-specific adapters (including sample barcodes for multiplexing), and performing PCR amplification [30].

Step 7: Sequencing. Pooled libraries are sequenced on high-throughput platforms (e.g., Illumina NovaSeq), generating FASTQ files for downstream analysis [30].

Single-Cell RNA-seq Workflow Protocol

The scRNA-seq workflow introduces critical steps for partitioning and labeling individual cells.

Diagram 2: Single-cell RNA-seq workflow. GEMs: Gel Beads-in-emulsion; RT: Reverse Transcription; UMI: Unique Molecular Identifier.

Step 1: Tissue Dissociation and Single-Cell Suspension. Digest tissue using enzymatic or mechanical dissociation to create a single-cell suspension [24] [29]. This is a critical and delicate step; harsh conditions can induce artifactual stress gene expression. Performing dissociation at lower temperatures (e.g., 4°C) can minimize this [29]. Assess cell concentration, viability (typically >80%), and absence of clumps or debris [24].

Step 2: Cell Partitioning and Barcoding. In platforms like 10x Genomics, a single-cell suspension is loaded onto a microfluidic chip with gel beads. Each bead contains millions of oligonucleotides with a unique cell barcode, a UMI, and a poly(dT) sequence. The instrument partitions thousands of cells into nanoliter-scale GEMs, each ideally containing a single cell and a single gel bead [24] [25].

Step 3: Cell Lysis and Barcoding within GEMs. Inside each GEM, the gel bead dissolves, releasing the oligos. The cell is lysed, and its polyadenylated mRNA is captured by the poly(dT) primers. Reverse transcription occurs, creating cDNA molecules tagged with the cell barcode (indicating cell of origin) and a UMI (identifying the unique mRNA molecule) [24] [25].

Step 4: cDNA Amplification and Library Construction. The barcoded cDNA from all GEMs is pooled. Following cleanup, the cDNA is amplified via PCR, and a sequencing library is constructed by fragmentation, end-repair, and adapter ligation [24] [29].

Step 5: Sequencing. Libraries are sequenced on Illumina platforms. Sequencing depth must be sufficient to detect a robust number of genes per cell, typically requiring deeper sequencing than bulk RNA-seq [24].
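The role of cell barcodes and UMIs described in Steps 2 and 3 can be illustrated with a toy counting routine: reads sharing the same cell barcode, UMI, and gene are treated as PCR copies of one original mRNA molecule and counted once. This is a deliberately simplified sketch with invented barcodes and reads; production pipelines additionally correct sequencing errors in barcodes and UMIs, which is not shown.

```python
from collections import defaultdict


def umi_count_matrix(reads):
    """Collapse PCR duplicates and count molecules per cell and gene.

    reads: iterable of (cell_barcode, umi, gene) tuples, one per aligned read.
    Returns {cell_barcode: {gene: molecule count}}.
    """
    seen = set()
    counts = defaultdict(lambda: defaultdict(int))
    for cell, umi, gene in reads:
        key = (cell, umi, gene)
        if key in seen:  # same molecule sequenced again (PCR duplicate)
            continue
        seen.add(key)
        counts[cell][gene] += 1
    return {cell: dict(genes) for cell, genes in counts.items()}


# Toy reads: the first two are duplicates of the same molecule.
reads = [
    ("AAAC", "UMI1", "GATA4"),
    ("AAAC", "UMI1", "GATA4"),
    ("AAAC", "UMI2", "GATA4"),
    ("AAAC", "UMI3", "TBX5"),
    ("TTTG", "UMI1", "GATA4"),
]
cell_gene_counts = umi_count_matrix(reads)
```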

Analytical Workflows: From Sequence Data to GRN Inference

Bulk RNA-seq Data Analysis

The goal of bulk RNA-seq analysis is to identify differentially expressed genes (DEGs) between conditions, which can inform GRN models.

Diagram 3: Bulk RNA-seq analysis workflow. DEG: Differentially Expressed Gene.

Preprocessing and Quantification: Process raw FASTQ files with quality control (FastQC) and adapter/quality trimming (Trimmomatic) [31]. A recommended best practice is a hybrid alignment-quantification approach: align reads to the genome using a splice-aware aligner like STAR to generate BAM files for QC, then use Salmon (in alignment-based mode) to accurately quantify transcript abundances, handling uncertainty in read assignment [27]. The output is a gene-level count matrix (rows=genes, columns=samples).

Differential Expression Analysis: In R/Bioconductor, the count matrix is used for statistical testing. The limma package (with its voom function to handle count-based data) is a powerful tool for this purpose [27]. It fits a linear model to the expression data for each gene and computes moderated t-statistics to identify DEGs between experimental groups, adjusting for multiple testing.

Single-Cell RNA-seq Data Analysis

scRNA-seq analysis requires specialized steps to handle technical noise and extract cellular heterogeneity.

Preprocessing and Quality Control: Pipelines such as Cell Ranger demultiplex sequencing reads by cell barcode, align them to the genome, and collapse reads by UMI to generate a cell-by-gene count matrix [28]. Critical QC is then performed on this matrix per barcode, filtering out:

- Low-quality cells: with low total counts, few detected genes, and high mitochondrial RNA fraction (indicating broken cells) [28].

- Doublets/multiplets: with unexpectedly high counts and gene numbers [28].
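
The filtering criteria above can be sketched as a simple per-barcode filter. This is a minimal illustration, assuming counts are held in a hypothetical `barcode -> {gene: count}` dictionary; the function name and all thresholds are assumptions chosen for the toy data, not recommended values, and count-based doublet removal is only a heuristic compared with dedicated doublet-detection tools.

```python
def qc_filter(counts, mito_genes, min_counts, min_genes,
              max_counts, max_mito_frac):
    """Return barcodes passing simple per-barcode QC thresholds.

    `counts` maps barcode -> {gene: UMI count}. Thresholds are supplied
    by the caller and are dataset-specific.
    """
    kept = []
    for barcode, genes in counts.items():
        total = sum(genes.values())
        n_genes = sum(1 for c in genes.values() if c > 0)
        mito = sum(c for g, c in genes.items() if g in mito_genes)
        mito_frac = mito / total if total else 1.0
        if total < min_counts or n_genes < min_genes:
            continue  # low quality: too few counts or detected genes
        if mito_frac > max_mito_frac:
            continue  # high mitochondrial fraction: likely broken cell
        if total > max_counts:
            continue  # unexpectedly high counts: possible doublet
        kept.append(barcode)
    return kept


# Toy matrix (assumed values for illustration only).
counts = {
    "cell_ok": {"GATA1": 30, "SPI1": 25, "MT-CO1": 5},
    "cell_empty": {"GATA1": 2, "MT-CO1": 1},
    "cell_broken": {"GATA1": 10, "SPI1": 10, "MT-CO1": 40},
    "cell_doublet": {"GATA1": 400, "SPI1": 500, "MT-CO1": 20},
}
kept = qc_filter(counts, mito_genes={"MT-CO1"}, min_counts=20,
                 min_genes=2, max_counts=500, max_mito_frac=0.2)
```

In practice, thresholds are usually chosen by inspecting the distribution of each metric across barcodes rather than fixed in advance.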

Normalization, Dimensionality Reduction, and Clustering: Counts are normalized to account for sequencing depth (e.g., by library size). Highly variable genes are selected for downstream analysis [28]. Technical noise is modeled, and the data is scaled. Principal Component Analysis (PCA) is performed, followed by graph-based clustering and non-linear dimensionality reduction (e.g., UMAP or t-SNE) to visualize cell groups [28]. These clusters represent putative cell types or states.
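
Library-size normalization, the first step described above, can be sketched as follows. The example assumes a per-cell dictionary of counts, a target total of 10,000 counts per cell, and a subsequent log(1 + x) transform; the function name is hypothetical, and packages such as Seurat and Scanpy provide their own implementations.

```python
import math


def normalize_log1p(cell_counts, target_sum=10000):
    """Scale one cell's counts to `target_sum` total, then apply log(1 + x)."""
    total = sum(cell_counts.values())
    if total == 0:
        return {gene: 0.0 for gene in cell_counts}
    return {
        gene: math.log1p(count * target_sum / total)
        for gene, count in cell_counts.items()
    }


# Two toy cells with identical composition but a 10-fold depth difference
# (assumed values for illustration only).
shallow = {"GATA1": 10, "SPI1": 30}
deep = {"GATA1": 100, "SPI1": 300}
norm_shallow = normalize_log1p(shallow)
norm_deep = normalize_log1p(deep)
```

After normalization the two cells have the same values, so the depth difference no longer masquerades as a difference in expression.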

Differential Expression and GRN Inference: Marker genes for each cluster are identified by performing differential expression between clusters. For GRN inference, methods like SCENIC or LINGER can be applied. LINGER is a recent advancement that uses lifelong learning on atlas-scale external bulk data to dramatically improve the accuracy of inferring trans-regulatory (TF-TG) and cis-regulatory (RE-TG) interactions from single-cell multiome (RNA+ATAC) data [23].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagents and Computational Tools for RNA-seq

| Category | Item | Function & Application |
|---|---|---|
| Wet-Lab Reagents | TRIzol / Qiazol | Monophasic solution of phenol and guanidine isothiocyanate for effective cell lysis and RNA stabilization during homogenization. [30] |
| Wet-Lab Reagents | RNase Inhibitors | Enzymes that non-competitively bind and inactivate RNases, crucial for preserving RNA integrity throughout the extraction process. [30] |
| Wet-Lab Reagents | Oligo(dT) Beads | Magnetic beads coated with oligo(dT) sequences for isolation of eukaryotic mRNA from total RNA via poly(A) tail binding. [30] |
| Wet-Lab Reagents | 10x Genomics Barcoded Gel Beads | Core component of single-cell partitioning; contain cell barcode, UMI, and oligo(dT) for mRNA capture in each reaction vessel. [24] [25] |
| Computational Tools | STAR | Spliced aligner for mapping RNA-seq reads to a reference genome, accounting for intron junctions. [27] [31] |
| Computational Tools | Salmon / kallisto | Ultra-fast alignment-free tools for transcript-level quantification from RNA-seq data using pseudoalignment. [27] |
| Computational Tools | Seurat / Scanpy | Comprehensive R and Python packages, respectively, for the preprocessing, normalization, analysis, and exploration of single-cell genomics data. [28] |
| Computational Tools | LINGER | Machine-learning method for inferring Gene Regulatory Networks (GRNs) from single-cell multiome data with high accuracy by incorporating external data. [23] |

Bulk and single-cell RNA-seq are complementary technologies that form the data foundation for modern transcriptomics and Gene Regulatory Network analysis. Bulk RNA-seq remains a powerful, cost-effective tool for profiling homogeneous samples or conducting large-scale differential expression studies. In contrast, single-cell RNA-seq is indispensable for deconstructing heterogeneity, identifying novel cell states, and inferring context-specific GRNs. The experimental and analytical protocols outlined herein provide a roadmap for generating high-quality data. For GRN research, the integration of scRNA-seq with other modalities (e.g., scATAC-seq via multiome assays) and advanced computational methods like LINGER represents the cutting edge, enabling a more precise and comprehensive dissection of the regulatory logic underlying development, disease, and treatment response. Strategic experimental design, beginning with a clear definition of biological questions, is paramount for selecting the appropriate sequencing technology and unlocking the full potential of transcriptomic data.

Gene Regulatory Networks (GRNs) are graph-level representations that describe the complex regulatory interactions between transcription factors (TFs) and their target genes, providing a systems-level understanding of cellular function, development, and disease pathology [32] [33]. The reconstruction of these networks from high-throughput genomic data represents a fundamental challenge in computational systems biology, with implications for drug target discovery and personalized medicine [32] [34]. The evolution of GRN inference has paralleled technological advancements in data generation—from microarray to single-cell RNA sequencing (scRNA-seq)—and methodological progress from simple correlation-based approaches to sophisticated machine learning and deep learning frameworks [35]. This review provides a comprehensive overview of GRN inference methodologies, detailing their experimental protocols, performance characteristics, and practical applications within the context of comparative GRN analysis.

Methodological Spectrum of GRN Inference

Correlation and Information-Theoretic Approaches

Early GRN inference methods primarily relied on statistical measures of association between gene expression profiles. Relevance Networks (RN) use pairwise correlation coefficients to identify potential regulatory relationships. ARACNE and CLR build on mutual information instead: ARACNE prunes likely indirect interactions using the data processing inequality, while CLR scores each interaction against the background distribution of mutual information values for the genes involved (context likelihood) [32] [34]. These unsupervised methods are computationally efficient and scale to large datasets, but they often struggle to distinguish direct causal relationships from indirect associations [34].
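
A relevance network of the kind described above can be sketched in a few lines. This is a minimal illustration using Pearson correlation with a fixed cutoff; the function names, the threshold of 0.8, and the toy expression profiles are all assumptions, and the sketch does not implement the mutual-information methods.

```python
import math
from itertools import combinations


def pearson(x, y):
    """Pearson correlation of two equal-length expression profiles."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0  # a constant profile carries no correlation signal
    return cov / math.sqrt(vx * vy)


def relevance_network(expr, threshold=0.8):
    """Return undirected edges (gene1, gene2, r) with |r| >= threshold."""
    edges = []
    for g1, g2 in combinations(sorted(expr), 2):
        r = pearson(expr[g1], expr[g2])
        if abs(r) >= threshold:
            edges.append((g1, g2, r))
    return edges


# Toy expression profiles across five samples (assumed values).
expr = {
    "TF_A": [1.0, 2.0, 3.0, 4.0, 5.0],
    "GENE_B": [2.1, 3.9, 6.2, 8.0, 9.8],  # tracks TF_A closely
    "GENE_C": [5.0, 1.0, 4.0, 2.0, 3.0],  # unrelated to either
}
edges = relevance_network(expr)
```

The resulting edge is undirected and says nothing about whether the association is direct, which is the limitation noted above.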

Machine Learning and Deep Learning Frameworks

More recent approaches leverage machine learning to capture the non-linear and combinatorial nature of gene regulation. GENIE3 employs tree-based ensemble methods to rank potential regulatory interactions, while SIRENE applies supervised learning using known regulatory interactions as training data [32] [34]. The field has since evolved to incorporate deep learning architectures:

- DeepSEM and DAZZLE utilize autoencoder-based structural equation models to parameterize the regulatory adjacency matrix while reconstructing gene expression inputs [36] [37].

- GRLGRN implements graph transformer networks to extract implicit links from prior GRN structures and refines gene embeddings using attention mechanisms [33].

- HyperG-VAE employs hypergraph variational autoencoders to model scRNA-seq data, simultaneously addressing cellular heterogeneity and gene module identification [38].

These neural network-based approaches demonstrate enhanced capability to model complex regulatory relationships but require substantial computational resources and careful regularization to prevent overfitting [36] [33].

Multi-Omic Integration Approaches

Methods such as PANDA and SPIDER bridge the gap between network reconstruction and epigenetic data by integrating transcriptomic information with chromatin accessibility data from DNase-seq or ATAC-seq [39]. SPIDER specifically overlaps transcription factor motif locations with open chromatin regions to construct an initial "seed" network, which is then refined through message-passing algorithms to estimate cell-line-specific regulatory interactions [39]. This epigenetic integration allows for the recovery of ChIP-seq verified transcription factor binding events even in the absence of corresponding sequence motifs [39].

Table 1: Classification of Representative GRN Inference Methods

| Method Class | Representative Methods | Core Algorithm | Data Requirements | Key Advantages |
|---|---|---|---|---|
| Correlation-Based | RN, WGCNA | Pearson/Spearman Correlation | Steady-state or time-series expression | Computational simplicity, fast execution |
| Information-Theoretic | ARACNE, CLR, PIDC | Mutual Information | Steady-state expression | Captures non-linear relationships |
| Machine Learning | GENIE3, SIRENE | Random Forests, Supervised Learning | Expression data, known interactions for supervised | Handles complex feature interactions |
| Deep Learning | DeepSEM, DAZZLE, GRLGRN | Autoencoders, Graph Neural Networks | scRNA-seq data, prior networks (optional) | High accuracy, models complex dependencies |
| Multi-Omic Integration | PANDA, SPIDER | Message Passing, Epigenetic Seeding | Expression + epigenetic data (DNase-seq, ATAC-seq) | Cell-type-specific predictions, incorporates chromatin accessibility |

Experimental Protocols and Workflows

Protocol for Epigenetically-Informed GRN Inference with SPIDER

The SPIDER pipeline enables GRN reconstruction by incorporating chromatin accessibility data [39].

Input Requirements:

- Genomic locations of transcription factor motifs (from position weight matrices mapped using FIMO)

- Open chromatin regions (from DNase-seq or ATAC-seq narrowPeak files)

- Gene regulatory regions (typically defined as 2kb windows centered on transcriptional start sites)

Procedure:

- Seed Network Construction: Intersect transcription factor motif locations with open chromatin regions and gene regulatory regions to create a binary seed network.

- Degree Normalization: Normalize edge weights in the seed network to emphasize connections to high-degree transcription factors and genes.

- Message Passing Optimization: Apply the PANDA message-passing algorithm to harmonize connections across all transcription factors and genes, optimizing the network structure.

- Network Validation: Compare predicted regulatory interactions against independently derived ChIP-seq data using AUC-ROC analysis.

Technical Notes: SPIDER networks have been validated using ENCODE ChIP-seq data across six human cell lines, demonstrating recovery of binding events without corresponding sequence motifs [39].
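
The seed network construction step can be sketched as an interval intersection. This is a simplified reading of the step, not the SPIDER implementation: it assumes half-open `(chrom, start, end)` intervals, treats a TF-gene edge as present when a motif hit overlaps both an open chromatin region and the gene's regulatory window, and uses a brute-force scan where real pipelines would use an interval tool. All names and coordinates are hypothetical.

```python
def overlaps(a, b):
    """True if two (chrom, start, end) half-open intervals overlap."""
    return a[0] == b[0] and a[1] < b[2] and b[1] < a[2]


def build_seed_network(motif_hits, open_regions, gene_windows):
    """Binary TF -> gene edges: motif in open chromatin within a gene window.

    motif_hits:   list of (tf_name, interval)
    open_regions: list of intervals (e.g., from narrowPeak files)
    gene_windows: dict gene_name -> regulatory interval around the TSS
    """
    edges = set()
    for tf, motif in motif_hits:
        # Discard motif hits that fall in closed chromatin.
        if not any(overlaps(motif, peak) for peak in open_regions):
            continue
        for gene, window in gene_windows.items():
            if overlaps(motif, window):
                edges.add((tf, gene))
    return edges


# Toy inputs (assumed coordinates for illustration only).
motif_hits = [
    ("GATA1", ("chr1", 1000, 1010)),  # open and inside the GENE_X window
    ("GATA1", ("chr1", 9000, 9010)),  # open but outside any gene window
    ("SPI1", ("chr1", 1500, 1512)),   # inside the window but not open
]
open_regions = [("chr1", 900, 1200), ("chr1", 8900, 9100)]
gene_windows = {"GENE_X": ("chr1", 0, 2000)}  # 2 kb window, TSS at 1000
seed = build_seed_network(motif_hits, open_regions, gene_windows)
```

Only the first motif hit yields an edge, showing how chromatin accessibility removes motif matches that a sequence-only scan would retain.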

Protocol for scRNA-seq GRN Inference with DAZZLE

DAZZLE addresses zero-inflation in single-cell data through dropout augmentation [36] [37].

Input Requirements:

- scRNA-seq count matrix (cells × genes) transformed using log(x+1)

Procedure:

- Dropout Augmentation: During each training iteration, introduce simulated dropout noise by randomly setting a proportion of expression values to zero.

- Model Architecture: Implement a variational autoencoder with parameterized adjacency matrix, incorporating a noise classifier to identify likely dropout events.

- Stabilized Training: Delay the introduction of the sparsity loss term by a configurable number of epochs, and use a closed-form Normal distribution prior to improve training stability.

- Network Extraction: Extract weights from the trained adjacency matrix as the inferred GRN.

Technical Notes: DAZZLE reduces parameter count by 21.7% and runtime by 50.8% compared to DeepSEM while maintaining improved stability and robustness to dropout noise [36].
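
The dropout augmentation step can be sketched independently of the model. The example below is a minimal illustration, not DAZZLE's code: it assumes a list-of-lists expression matrix and a hypothetical `rate` parameter, and it returns a mask of the zeroed positions on the assumption that a noise classifier would need such labels during training.

```python
import random


def augment_with_dropout(matrix, rate=0.1, rng=None):
    """Return (augmented copy, mask) with roughly `rate` of entries zeroed.

    The input matrix is left unchanged. `mask[i][j]` is True where a value
    was replaced by a simulated dropout zero.
    """
    rng = rng or random.Random()
    augmented, mask = [], []
    for row in matrix:
        row_mask = [rng.random() < rate for _ in row]
        augmented.append(
            [0.0 if drop else value for value, drop in zip(row, row_mask)]
        )
        mask.append(row_mask)
    return augmented, mask


# Toy log-transformed matrix, cells x genes (assumed values).
matrix = [[1.2, 0.0, 3.4], [0.5, 2.2, 0.0]]
# A fresh mask would be drawn at every training iteration; the seed is
# fixed here only to make the example reproducible.
aug, mask = augment_with_dropout(matrix, rate=0.5, rng=random.Random(0))
```

Resampling the mask at each iteration exposes the model to many different dropout patterns, which is the intuition behind the robustness described above.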

Protocol for Prior-Informed GRN Inference with GRLGRN

GRLGRN leverages graph representation learning to incorporate prior network information [33].

Input Requirements:

- scRNA-seq expression matrix

- Prior GRN graph (can be from databases like STRING)

Procedure:

- Implicit Link Extraction: Use graph transformer network to extract implicit regulatory relationships from the prior GRN by analyzing five graph perspectives (TF→target, target→TF, TF→TF, reverse TF→TF, and self-connections).

- Gene Embedding Generation: Encode gene features using both the implicit link adjacency matrix and gene expression matrix.

- Feature Enhancement: Apply convolutional block attention module (CBAM) to refine gene embeddings.

- Regulatory Relationship Prediction: Feed refined embeddings into output module to infer gene regulatory relationships while using graph contrastive learning regularization to prevent over-smoothing.

Technical Notes: GRLGRN achieves average improvements of 7.3% in AUROC and 30.7% in AUPRC compared to baseline methods across seven cell-line datasets [33].

Performance Assessment and Validation

Benchmarking Standards and Metrics

GRN inference methods are typically evaluated using both synthetic and empirical datasets with known interactions [32] [34]. The Area Under the Receiver Operating Characteristic Curve (AUC-ROC) serves as the primary metric for method comparison, as it provides threshold-independent assessment of prediction accuracy [39] [34]. Additional measures including Area Under the Precision-Recall Curve (AUPRC), F1 score, and Matthews Correlation Coefficient offer complementary insights, particularly for imbalanced datasets [34] [33].
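
Both headline metrics can be computed directly from a ranked list of predicted edges. The sketch below assumes edge scores paired with binary ground-truth labels; AUROC is computed through its rank-based (Mann-Whitney) identity, and AUPRC is approximated by average precision, one common estimator. Function names and the toy data are illustrative.

```python
def auroc(scores, labels):
    """AUROC as the probability that a true edge outranks a false edge.

    Ties between a positive and a negative score count as 0.5.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative edge")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(scores, labels):
    """Average precision, a step-wise estimate of AUPRC."""
    ranked = sorted(zip(scores, labels), key=lambda t: -t[0])
    hits, total = 0, 0.0
    for k, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            total += hits / k  # precision at each recovered true edge
    return total / hits if hits else 0.0


# Toy predictions: six candidate edges, two of them true (assumed values).
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
labels = [1, 0, 1, 0, 0, 0]
roc = auroc(scores, labels)
ap = average_precision(scores, labels)
```

Because true regulatory edges are usually a small fraction of all candidate TF-gene pairs, precision-based metrics tend to be more sensitive to false positives near the top of the ranking than AUROC is.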

Table 2: Performance Comparison of GRN Inference Methods

| Method | Data Type | AUC-ROC Range | Key Strengths | Limitations |

|---|---|---|---|---|

| SPIDER | Bulk + Epigenetic | 0.57-0.60 (Naïve) to significantly improved with epigenetics | Cell-type-specific predictions, recovers non-motif binding | Requires epigenetic data |

| DAZZLE | scRNA-seq | Superior to DeepSEM in benchmarks | Robust to dropout, improved stability | Computational intensity |