Comparative Functional Genomics: Decoding the Evolution and Regulation of Gene Circuits



Abstract

This article provides a comprehensive overview of comparative functional genomics and its pivotal role in deciphering the architecture and evolution of gene regulatory circuits. It explores the foundational principles of regulatory network conservation across species, details cutting-edge methodological and computational tools for circuit mapping and analysis, and addresses key challenges in data interpretation and network optimization. By integrating validation and comparative frameworks, we highlight how these approaches yield insights into phenotypic divergence and disease mechanisms, offering powerful strategies for identifying novel therapeutic targets and advancing personalized medicine.

Blueprint of Life: Evolutionary Principles of Gene Regulatory Networks

Defining Gene Regulatory Networks (GRNs) and Their Core Components

Gene Regulatory Networks (GRNs) are collections of molecular regulators that interact with each other and determine gene activation and silencing in specific cellular contexts [1]. A comprehensive understanding of GRNs is fundamental to explaining cellular functions, responses to environmental changes, and how genetic variants cause disease [1]. In functional genomics, comparing the performance of GRN inference methods is crucial for selecting the right tool to uncover the regulatory mechanisms underlying complex phenotypes.

Core Components and Architecture of GRNs

GRNs are structured as interconnected, modular components with a hierarchical architecture [2]. The nodes of a GRN consist of genes and their cis-regulatory modules (CRMs), which control spatio-temporal gene expression patterns, while trans-acting transcription factors (TFs) and signaling pathways serve as the network "edges" [2]. This hierarchy ranges from evolutionarily stable "kernels" that specify essential developmental fields, through reusable "plug-in" modules, down to highly labile "differentiation gene batteries" responsible for cell type-specific processes [2].

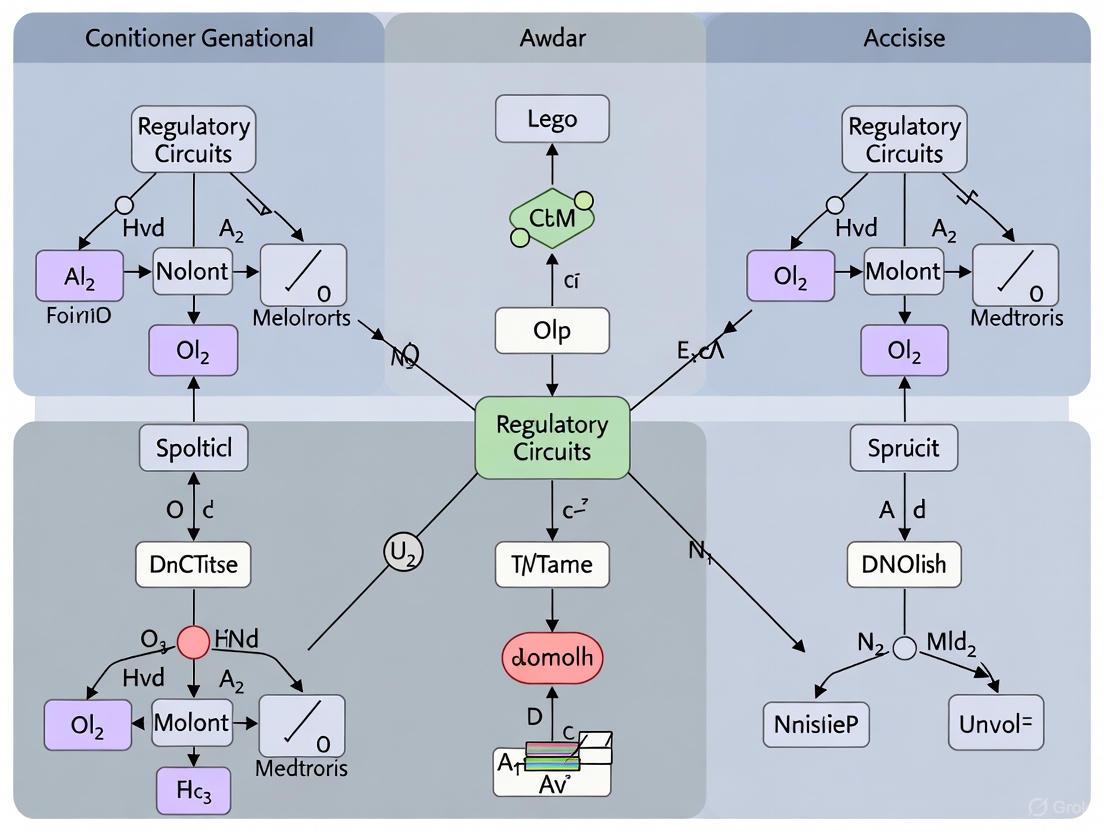

The following diagram illustrates the fundamental flow of information within a GRN and the hierarchical organization of its subcircuits.

Comparative Analysis of GRN Inference Methods

Inferring accurate GRNs from genomic data remains a major computational challenge [3]. Key desired properties of GRNs include sparsity (each gene regulated by few TFs), modular organization, hierarchical structure, and a scale-free topology where node connectivity follows a power-law distribution [4]. The following methods represent state-of-the-art approaches for GRN inference.

Performance Benchmarking of GRN Inference Methods

Table 1: Comparative Performance of GRN Inference Methods

| Method | Underlying Approach | Key Innovation | Reported Accuracy | Computational Speed | Best Use Case |
|---|---|---|---|---|---|
| LINGER [1] | Lifelong neural network | Integrates atlas-scale external bulk data with single-cell multiome data via elastic weight consolidation | 4-7x relative increase in AUC over existing methods; significantly higher AUPR ratio [1] | Moderate (neural network training) | Cell type-specific GRNs from single-cell multiome data; disease variant interpretation |
| SCORPION [5] | Message-passing algorithm + meta-cells | Coarse-grains single-cell data to reduce sparsity; integrates protein-protein interaction and motif data | 18.75% higher precision and recall than 12 benchmarked methods [5] | Fast (message-passing on desparsified data) | Population-level comparisons; large single-cell atlases (e.g., cancer cohorts) |
| LSCON [6] | Normalized least squares regression | Adds normalization to LSCO to prevent hyper-connected genes from extreme expression values | Better or equal accuracy to LASSO, especially with extreme values in data [6] | Very fast (order of magnitude faster than LASSO) [6] | Large-scale perturbation data (e.g., L1000); rapid screening |
| Hybrid ML/DL [7] | Combined CNN + machine learning | Hybrid models leveraging convolutional neural networks and ensemble methods | >95% accuracy on holdout test datasets [7] | Moderate (model training) | Non-model species via transfer learning; plant genomics |

Detailed Experimental Protocols

LINGER Protocol for Single-Cell Multiome Data

Objective: Infer cell population, cell type-specific, and cell-level GRNs from single-cell multiome (RNA+ATAC) data.

Input Requirements:

- Count matrices of gene expression and chromatin accessibility

- Cell type annotations

- External bulk data from diverse cellular contexts (e.g., ENCODE)

- TF-motif prior knowledge

Methodology:

- Pre-training: Train a neural network model (BulkNN) on external bulk data to predict target gene expression from TF expression and regulatory element accessibility [1].

- Refinement: Apply Elastic Weight Consolidation (EWC) loss to fine-tune on single-cell data, using bulk data parameters as a prior to prevent catastrophic forgetting [1].

- Regularization: Incorporate TF-RE motif matching through manifold regularization, enriching for TF motifs binding to REs in the same regulatory module [1].

- Inference: Extract regulatory strengths using Shapley values to estimate feature contributions for each gene [1].

- Network Construction: Build cell type-specific GRNs based on the general GRN and cell type-specific profiles [1].
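The refinement step above can be written compactly. The expression below is the standard Elastic Weight Consolidation objective, given as a sketch rather than as LINGER's exact loss, which may include additional terms. Here $\theta^{*}$ denotes the parameters pre-trained on bulk data, $F_i$ the diagonal Fisher information estimated on the bulk data, $\mathcal{L}_{\text{sc}}$ the prediction loss on single-cell data, and $\lambda$ a weighting hyperparameter (the symbol names are assumptions introduced for illustration):

```latex
\mathcal{L}(\theta) \;=\; \mathcal{L}_{\text{sc}}(\theta) \;+\; \frac{\lambda}{2} \sum_{i} F_i \left(\theta_i - \theta_i^{*}\right)^{2}
```

The quadratic penalty keeps parameters that mattered for the bulk data (large $F_i$) close to their pre-trained values, which is how the bulk model acts as a prior during fine-tuning.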

Validation: Compare against ChIP-seq ground truth data using AUC and AUPR metrics; validate cis-regulatory predictions against eQTL data from GTEx and eQTLGen [1].
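As an illustration of the validation metrics, the sketch below scores a ranked list of predicted TF-target edges against binary ground-truth labels (for example, ChIP-seq support). It is a minimal pure-Python version, not the benchmarking code used in [1]; the function names and toy data are hypothetical, and the AUPR ratio is taken here as AUPR divided by the fraction of positives (the expected AUPR of a random predictor), which is an assumption about the definition.

```python
def auroc(scores, labels):
    """Area under the ROC curve via the rank-sum (Mann-Whitney U) identity."""
    pairs = sorted(zip(scores, labels))
    ranks = [0.0] * len(pairs)
    i = 0
    while i < len(pairs):
        j = i
        # Extend j over a run of tied scores so they share an average rank.
        while j + 1 < len(pairs) and pairs[j + 1][0] == pairs[i][0]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # 1-based average rank of the tied run
        for k in range(i, j + 1):
            ranks[k] = avg_rank
        i = j + 1
    n_pos = sum(label for _, label in pairs)
    n_neg = len(pairs) - n_pos
    rank_sum = sum(r for r, (_, label) in zip(ranks, pairs) if label == 1)
    return (rank_sum - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)


def aupr(scores, labels):
    """Average precision: mean of the precision at each true positive."""
    ranked = sorted(zip(scores, labels), key=lambda p: -p[0])
    hits, total = 0, 0.0
    for k, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / k
    return total / hits


# Toy example: five predicted edges, two supported by ground truth.
scores = [0.9, 0.8, 0.4, 0.3, 0.1]
labels = [1, 0, 1, 0, 0]
roc = auroc(scores, labels)
pr = aupr(scores, labels)
pr_ratio = pr / (sum(labels) / len(labels))  # AUPR relative to random
```

Because true regulatory edges are rare, the AUPR ratio is usually more informative than AUC alone when comparing methods on the same ground truth.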

LSCON Protocol for Perturbation Data

Objective: Infer GRN from gene perturbation data (e.g., knockout) while minimizing false positives from extreme expression values.

Input Requirements:

- Gene expression fold-change matrix from perturbation experiments

- Experimental design matrix specifying perturbation conditions

Methodology:

- Data Processing: Calculate fold changes as log2 ratios between perturbed and wild-type expression levels [6].

- Model Fitting: Apply least squares regression to fit gene expression responses to perturbations [6].

- Normalization: Perform column-wise normalization using the equation $X_{ij} = A_{ij} \big/ \left(\frac{1}{N}\sum_{k=1}^{N} |A_{kj}|\right)$, where $N$ is the gene count, $A$ is the predicted GRN, $j$ indexes the regulator, and $i$ indexes the target [6].

- Thresholding: Apply cut-off to identify significant regulatory interactions.
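The normalization and thresholding steps can be sketched in a few lines. This is an illustrative pure-Python version that assumes the least squares fit has already produced a matrix `A` with targets as rows and regulators as columns; it is not the LSCON implementation, and the function names, toy matrix, and cut-off value are hypothetical.

```python
def normalize_columns(A):
    """Divide each entry A[i][j] by the mean absolute value of column j."""
    n_rows, n_cols = len(A), len(A[0])
    X = [[0.0] * n_cols for _ in range(n_rows)]
    for j in range(n_cols):
        col_mean_abs = sum(abs(A[i][j]) for i in range(n_rows)) / n_rows
        for i in range(n_rows):
            # An all-zero column stays zero instead of dividing by zero.
            X[i][j] = A[i][j] / col_mean_abs if col_mean_abs > 0 else 0.0
    return X


def apply_cutoff(X, cutoff):
    """Keep only interactions whose normalized magnitude reaches the cut-off."""
    return [[x if abs(x) >= cutoff else 0.0 for x in row] for row in X]


# Toy 2x2 network: regulator 1 (second column) has extreme raw weights.
A = [[0.5, 10.0],
     [-0.5, 30.0]]
X = normalize_columns(A)        # columns are now on a comparable scale
grn = apply_cutoff(X, cutoff=1.0)
```

After normalization the second regulator no longer dominates purely because of its scale, which is the effect the protocol aims for when extreme expression values would otherwise create hyper-connected genes.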

Validation: Benchmark using synthetic data from GeneSPIDER and GeneNetWeaver with known ground truth; compare to GENIE3, LASSO, and Ridge regression using precision-recall metrics [6].

Visualization of GRN Inference Workflows

SCORPION Algorithm for Single-Cell Data

The SCORPION algorithm addresses the challenge of high sparsity in single-cell RNA-seq data through a multi-step message-passing approach.
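The effect of coarse-graining on sparsity can be illustrated with a toy example. The sketch below sums counts over predefined groups of cells to form meta-cells and compares the fraction of zeros before and after. How SCORPION chooses which cells to group, and its message-passing step, are not modeled; the group labels, function names, and counts are all hypothetical.

```python
def coarse_grain(counts, groups):
    """Sum per-cell count vectors within each group to form meta-cells.

    counts: list of per-cell count lists (genes in a fixed order)
    groups: one group label per cell
    """
    meta = {}
    for cell, group in zip(counts, groups):
        if group not in meta:
            meta[group] = [0] * len(cell)
        meta[group] = [a + b for a, b in zip(meta[group], cell)]
    return meta


def zero_fraction(rows):
    """Fraction of entries equal to zero across all rows."""
    values = [v for row in rows for v in row]
    return sum(1 for v in values if v == 0) / len(values)


# Four sparse cells, three genes, grouped into two meta-cells.
counts = [[0, 1, 0], [2, 0, 0], [0, 0, 3], [0, 4, 0]]
groups = ["a", "a", "b", "b"]
meta_cells = coarse_grain(counts, groups)
sparsity_before = zero_fraction(counts)
sparsity_after = zero_fraction(list(meta_cells.values()))
```

In this toy case the zero fraction drops from two thirds to one third, which is the kind of desparsification that makes downstream correlation estimates more stable.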

Key Properties of Biological GRNs

Understanding the structural properties of GRNs is essential for developing accurate inference methods and interpreting their results.

Table 2: Key Research Reagent Solutions for GRN Studies

| Resource Category | Specific Examples | Function in GRN Research | Key Applications |
|---|---|---|---|
| Sequencing Assays | scRNA-seq, scATAC-seq, Multiome (10x Genomics) | Profile gene expression and chromatin accessibility at single-cell resolution | Cell type-specific GRN inference; regulatory heterogeneity analysis [5] [1] |
| Perturbation Tools | CRISPR-based Perturb-seq, shRNA knockdown (LINCS L1000) | Systematically perturb genes and measure transcriptomic effects | Causal inference of regulatory relationships; validation of TF-target interactions [6] [4] |
| Prior Knowledge Bases | STRING (protein-protein interactions), JASPAR (TF motifs), ENCODE | Provide validated regulatory information for integration with omics data | Message-passing algorithms (SCORPION); neural network regularization (LINGER) [5] [1] |
| Validation Resources | ChIP-seq data, eQTL datasets (GTEx, eQTLGen) | Ground truth data for benchmarking GRN inference accuracy | Method validation; calculation of AUC/AUPR performance metrics [1] |
| Synthetic Data Tools | GeneSPIDER, GeneNetWeaver (GNW) | Generate simulated data with known ground truth networks | Method development and benchmarking without experimental noise [6] |

The field of GRN inference has evolved from correlation-based methods to sophisticated approaches that integrate multi-omics data, prior knowledge, and advanced machine learning. Method selection should be guided by data type (bulk, single-cell, or multiome), biological question, and computational constraints. LINGER excels for single-cell multiome data with available external references, SCORPION is ideal for population-level comparisons across many single-cell samples, LSCON offers speed for large perturbation datasets, and hybrid ML/DL methods facilitate cross-species knowledge transfer. Understanding the core architectural principles of GRNs—their sparsity, modularity, and hierarchy—enhances the interpretation of inferred networks and their biological implications in comparative functional genomics.

Conservation of Regulatory Network Structures Across Metazoans

A fundamental question in evolutionary biology is how the diverse body plans and physiological traits of metazoans are encoded by genomic regulatory programs. Gene regulatory networks (GRNs), comprising transcription factors, their target cis-regulatory elements, and the interactions between them, represent the core control systems governing development and cellular functions [8]. Understanding the extent to which the structures of these networks are conserved across evolution provides crucial insights into the mechanisms driving both phenotypic stability and innovation. Comparative functional genomics approaches have begun to unravel the complex interplay between network conservation and rewiring, revealing both remarkably preserved architectural principles and species-specific adaptations. This guide objectively compares the conservation of regulatory network structures across metazoan species, synthesizing experimental data from large-scale comparative studies to provide researchers with a framework for analyzing GRN evolution.

Comparative Analysis of Regulatory Network Properties

Structural Conservation Amidst Functional Divergence

Large-scale comparative studies have revealed a paradoxical relationship between regulatory network structure and function: while global architectural properties show remarkable conservation, the specific regulatory connections undergo extensive evolutionary rewiring.

Table 1: Conservation of Regulatory Network Properties Across Metazoans

| Network Property | Human | D. melanogaster | C. elegans | Conservation Pattern |
|---|---|---|---|---|
| High-Occupancy Target (HOT) Regions | ~50% of binding events | ~50% of binding events | ~50% of binding events | Highly Conserved proportion [9] |
| Feed-Forward Loop Motif | Most abundant | Most abundant | Most abundant | Highly Conserved enrichment pattern [9] |
| Cascade Motif | Least abundant | Least abundant | Least abundant | Highly Conserved depletion pattern [9] |
| Network Hierarchy | 33% master regulators | 7% master regulators | 13% master regulators | Divergent organizational structure [9] |
| Upward-Flowing Edges | 30% | 7% | 22% | Variable feedback patterns [9] |
| TF Binding Motif Recognition | Similar motifs for 12/31 families | Similar motifs for 12/31 families | Similar motifs for 12/31 families | Conserved for orthologous families [9] |
| Target Gene Function | Limited conservation | Limited conservation | Limited conservation | Extensive rewiring of connections [9] |

A landmark study mapping 1,019 genome-wide transcription factor binding datasets across human, fly, and worm demonstrated that structural properties of regulatory networks remain remarkably conserved despite extensive functional divergence of individual network connections [9]. This conservation is particularly evident in the prevalence of high-occupancy target regions, which consistently account for approximately 50% of all regulatory factor binding events across these evolutionarily distant species [9]. Similarly, local network motifs show consistent enrichment patterns, with feed-forward loops representing the most abundant motif type and cascade motifs being consistently depleted across all three species [9].
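To make the two motif types concrete, the sketch below counts feed-forward loops (A regulates B, B regulates C, and A also regulates C) and cascades (A regulates B, B regulates C, with no direct A to C edge) in a small directed network. It is a simple enumeration for illustration, not the motif-detection pipeline of [9]; the function name and toy edge list are hypothetical.

```python
def count_three_node_motifs(edges):
    """Return (feed_forward_loops, cascades) for a directed edge list."""
    targets = {}
    for src, dst in edges:
        if src != dst:  # ignore self-regulation
            targets.setdefault(src, set()).add(dst)

    ffl, cascade = 0, 0
    for a, a_targets in targets.items():
        for b in a_targets:
            for c in targets.get(b, ()):
                if c == a:
                    continue  # a two-node feedback loop, not a three-node motif
                if c in a_targets:
                    ffl += 1      # a->b, b->c and the shortcut a->c
                else:
                    cascade += 1  # a->b, b->c only
    return ffl, cascade


# Toy network: TF1 regulates TF2; both regulate G1; only TF2 regulates G2.
edges = [("TF1", "TF2"), ("TF2", "G1"), ("TF1", "G1"), ("TF2", "G2")]
n_ffl, n_cascade = count_three_node_motifs(edges)
```

Raw counts like these only become evidence of enrichment or depletion when compared with counts from randomized networks of matching size and degree.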

Mechanisms of Network Evolution

The evolution of regulatory networks occurs primarily through alterations in cis-regulatory elements, which serve as the functional nodes where transcription factors interact with DNA to control gene expression.

Table 2: Types of Cis-Regulatory Changes and Their Functional Consequences

| Type of Change | Sequence Alteration | Potential Functional Consequence | Evidence |
|---|---|---|---|
| Internal Changes | Appearance of new TF binding site | Input gain within GRN; Cooptive redeployment | Site gains enable new regulatory connections [8] |
| Internal Changes | Loss of existing TF binding site | Input loss within GRN; Loss of function | Site losses disrupt ancestral regulation [8] |
| Internal Changes | Change in site number/spacing | Quantitative output change | Alters expression levels without changing pattern [8] |
| Contextual Changes | Translocation of module to new gene | Cooptive redeployment to new GRN | Mobile elements translocate regulatory modules [8] |
| Contextual Changes | Module deletion | Loss of function | Eliminates regulatory control [8] |
| Contextual Changes | Module duplication | Subfunctionalization | Enables specialization of paralogous genes [8] |

The evolution of cis-regulatory elements follows distinct patterns depending on the type of regulatory change. While the identity of transcription factor binding sites is crucial for determining regulatory function, the arrangement, spacing, and number of these sites often show considerable flexibility [8]. Studies of Drosophila eve stripe enhancers across drosophilid species revealed that >70% of specific binding sites were not conserved, yet these modules produced identical expression patterns because they responded to the same qualitative inputs [8]. This demonstrates that cis-regulatory function can be preserved despite extensive sequence divergence, provided that the critical regulatory logic is maintained.

Experimental Approaches for Comparative GRN Analysis

Methodologies for Mapping Regulatory Networks

Chromatin Immunoprecipitation with Sequencing (ChIP-seq)

Protocol Summary: Cells are cross-linked to preserve protein-DNA interactions, followed by chromatin fragmentation and immunoprecipitation with specific transcription factor antibodies. After reversing cross-links, purified DNA is sequenced and mapped to the reference genome to identify binding sites [9].

Quality Control: The modENCODE/ENCODE standards require extensive antibody characterization and at least two independent biological replicates per experiment. Binding sites are identified using Irreproducible Discovery Rate analysis to ensure robust peak calling [9].

Applications: Used to map 165 human, 93 worm, and 52 fly transcription factors across diverse cell types and developmental stages, generating 1,019 datasets for comparative analysis [9].

Single-Cell Multiomics Assays

Protocol Summary: Single-nucleus sequencing approaches simultaneously profile multiple molecular modalities from the same cells. The 10x Multiome assay couples gene expression (RNA-seq) with chromatin accessibility (ATAC-seq) in the same cell, while snm3C-seq profiles DNA methylation together with 3D genome architecture [10].

Cross-Species Integration: Cells are clustered without supervision based on gene expression or DNA methylation patterns, and datasets are integrated across species using orthologous genes as shared features for comparative analysis [10].

Applications: Enabled comparison of primary motor cortex regulatory programs across human, macaque, marmoset, and mouse, profiling over 200,000 cells total [10].

Computational Framework for GRN Comparison

Network Construction and Motif Analysis

Regulatory networks are constructed by predicting gene targets of each transcription factor using algorithms like TIP (Transcriptional Interaction Predictor) [9]. Simulated annealing algorithms then reveal network organization into hierarchical layers of master regulators, intermediate regulators, and low-level regulators [9]. Network motifs are identified by searching for enriched sub-graphs within the overall network structure, with statistical significance determined through comparison to randomized networks [9].
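Once regulators have been assigned to hierarchical layers, summary statistics such as the fraction of upward-flowing edges follow directly. The sketch below assumes the layer assignment is already available as a mapping from regulator to integer level (higher means closer to the top); the simulated annealing step that produces it is not implemented, and the function name and toy data are hypothetical.

```python
def upward_edge_fraction(edges, level):
    """Fraction of edges that point from a lower layer to a higher layer.

    edges: iterable of (regulator, target) pairs
    level: dict mapping each regulator to an integer layer (larger = higher)
    """
    # Only edges between regulators with a known layer can be classified.
    scored = [(level[s], level[t]) for s, t in edges if s in level and t in level]
    if not scored:
        return 0.0
    upward = sum(1 for src_level, dst_level in scored if dst_level > src_level)
    return upward / len(scored)


# Toy hierarchy: master (2), intermediate (1), low-level (0).
level = {"M": 2, "I": 1, "L": 0}
edges = [("M", "I"), ("I", "L"), ("M", "L"), ("L", "I")]
fraction_up = upward_edge_fraction(edges, level)
```

A higher fraction indicates more feedback from lower to higher layers, which is the quantity contrasted across species in the network hierarchy comparison.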

Self-Organizing Maps for Co-Association Patterns

Self-organizing maps provide an approach to detect contextual transcription factor co-associations at distinct genomic regions, enabling exploration of the full combinatorial space of regulatory factor binding beyond traditional co-association methods [9]. This method reveals that specific contextual co-associations are often conserved for orthologous regulatory factors, with few being entirely organism-specific [9].

Diagram 1: Hierarchical organization of gene regulatory networks showing master regulators, intermediate regulators, and target genes. Feed-forward loops (blue) represent the most conserved network motif, while cascade connections (green) show variable conservation across species.

Case Studies in Regulatory Network Evolution

Vertebrate Brain Evolution

Recent single-cell multiomics analysis of the primary motor cortex across human, macaque, marmoset, and mouse revealed both conserved and divergent aspects of regulatory programs [10]. The study profiled over 200,000 cells, identifying 2,689 mammal-conserved genes with similar expression patterns across all four species, representing approximately 20% of expressed orthologues [10]. These conserved genes primarily function in fundamental processes including nervous system development and cation channel regulation.

Notably, the research demonstrated that species-biased candidate cis-regulatory elements are more likely to contribute to divergent gene expression patterns, with transposable elements contributing to nearly 80% of human-specific candidate cis-regulatory elements in cortical cells [10]. This highlights the importance of repetitive elements in driving regulatory innovation during mammalian evolution.

Adaptive Radiation in Cichlid Fishes

The spectacular adaptive radiation of East African cichlid fishes provides an exceptional model for studying regulatory network evolution associated with ecological adaptation. Comparative GRN analysis of five cichlid species revealed extensive network rewiring events associated with phenotypic traits under selection [11].

A novel computational pipeline predicted regulators for co-expression modules along the cichlid phylogeny, identifying 7,587 orthologous genes (40% of total) exhibiting state changes in module assignment across evolutionary branches [11]. This transcriptional rewiring from the last common ancestor included several developmental transcription factors such as tbx20, nkx3-1, and hoxd10, with unique state changes observed in 655 genes along ancestral nodes [11]. In the visual system, discrete regulatory variants in transcription factor binding sites disrupted regulatory edges across species and segregated according to lake species phylogeny and ecology, demonstrating GRN rewiring associated with visual adaptation [11].

Diagram 2: Model of gene regulatory network rewiring during cichlid fish adaptive radiation. Transcription factor binding site mutations drive the evolution of distinct regulatory networks in different lake environments, leading to ecological adaptations through modified gene expression.

Table 3: Key Research Reagents for Comparative GRN Studies

| Reagent/Resource | Function | Application Examples |
|---|---|---|
| ChIP-Validated Antibodies | Immunoprecipitation of specific transcription factors for binding site mapping | Profiling 165 human, 93 worm, and 52 fly transcription factors [9] |
| Single-Cell Multiome Kits | Simultaneous profiling of gene expression and chromatin accessibility in same cell | Comparing regulatory programs across human, macaque, marmoset, mouse motor cortex [10] |
| Cross-Species Orthologue Annotations | Mapping homologous genes and regulatory elements across species | Identifying 2,689 mammal-conserved genes with similar expression patterns [10] |
| Genome Assemblies & Annotations | Reference sequences for mapping functional genomic data | Cape coral snake genome (1.82 Gb, 704 scaffolds, N50 80.2 Mb) for venom gland analysis [12] |
| Motif Discovery Tools | Identification of enriched transcription factor binding motifs | Finding conserved motifs across 12 of 31 orthologous transcription factor families [9] |
| Network Inference Algorithms | Construction of regulatory networks from binding and expression data | TIP algorithm for predicting gene targets of transcription factors [9] |
| Self-Organizing Map Software | Analysis of contextual transcription factor co-associations | Revealing complex combinatorial binding patterns at distinct genomic regions [9] |

The comparative analysis of regulatory networks across metazoans reveals a complex evolutionary landscape characterized by deeply conserved architectural principles alongside extensive rewiring of specific regulatory connections. The structural properties of networks—including the prevalence of high-occupancy target regions and specific network motifs—show remarkable preservation across large evolutionary distances, while the functional implementation of these networks through specific gene regulatory connections demonstrates considerable divergence. This evolutionary dynamic enables both phenotypic stability in fundamental biological processes and innovation in species-specific adaptations. The integration of functional genomics approaches across multiple species and cell types provides researchers with powerful experimental frameworks for deciphering the regulatory logic underlying metazoan diversity, with important implications for understanding the genetic basis of evolutionary innovations and human disease.

Evolutionary Divergence and Re-wiring of Regulatory Connections

The divergence of phenotypes across species is driven not merely by changes in gene sequences, but profoundly by the rewiring of gene regulatory networks (GRNs)—the control systems that govern when, where, and to what extent genes are expressed [13] [14]. This paradigm shift, prefigured by the insight that evolutionary innovation often stems from molecular changes "other than sequence differences in proteins," places the evolution of regulatory logic at the center of comparative functional genomics [14]. Rewiring—the gain, loss, or alteration of regulatory connections between transcription factors (TFs) and their target genes—serves as a fundamental mechanism for the evolution of novel traits, disease states, and species-specific adaptations [15] [16]. By comparing GRNs across species and conditions, researchers can illuminate the genetic basis of diverse phenotypes, from fungal morphology to cardiometabolic disease in humans [13] [15] [17]. This guide objectively compares the performance of different experimental approaches for dissecting regulatory rewiring, providing a foundational resource for scientists investigating the evolution of regulatory circuits.

Comparative Analysis of Key Model Systems and Their Findings

The investigation of regulatory rewiring employs diverse model systems, each offering unique insights and technical advantages. The table below synthesizes core findings from key studies in fungal and bacterial systems, which provide tractable models for unraveling evolutionary principles.

Table 1: Comparative Findings from Key Rewiring Studies in Model Organisms

| Study System | Key Regulatory Factor | Core Finding on Rewiring | Phenotypic Consequence | Experimental Evidence |
|---|---|---|---|---|
| Aspergillus nidulans vs. A. flavus [15] | NsdD (GATA-type TF) | Extensive GRN rewiring despite conserved DNA-binding domain; 502 vs. 674 direct targets identified. | Species-specific differences in conidiophore morphology and mycotoxin (ST/AF) production. | RNA-seq, ChIP-seq, cross-complementation. |
| Pseudomonas fluorescens [16] | NtrC & PFLU1132 (RpoN-EBPs) | Hierarchical rewiring; alternative pathways unmasked only upon deletion of preferred TF (NtrC). | Rescue of flagellar motility in a ΔfleQ mutant. | Whole-genome resequencing, knockout/complementation, RNA-seq. |

These studies demonstrate that rewiring is a pervasive mechanism for innovation. The fungal study reveals how a conserved transcription factor can be redeployed through network changes to generate species-specific traits [15]. The bacterial system illustrates that rewiring potential is hierarchical and constrained by network architecture, with some TFs being "preferred" for co-option due to specific molecular properties [16].

Detailed Methodologies for Mapping Regulatory Rewiring

A multi-faceted, omics-driven approach is essential to conclusively demonstrate evolutionary rewiring. The following protocols detail key methodologies used in the featured studies.

Protocol 1: Comparative GRN Analysis Using Multi-Omics

This protocol, adapted from the Aspergillus study, identifies rewiring by comparing regulatory networks across two species [15].

Strain and Growth Conditions:

- Utilize wild-type and transcription factor knockout strains (e.g., ΔnsdD) for both species under comparison.

- Culture biological replicates under defined conditions relevant to the phenotype (e.g., vegetative growth, asexual development). Harvest cells at specific, comparable developmental stages.

Transcriptomic Profiling (RNA-seq):

- Extract total RNA using a standardized kit (e.g., Qiagen RNeasy). Assess RNA integrity (RIN > 8.0).

- Prepare sequencing libraries (e.g., Illumina TruSeq) and sequence on an appropriate platform (e.g., Illumina NovaSeq) to generate >20 million paired-end reads per sample.

- Process data: Quality-trim reads (Trimmomatic), align to respective reference genomes (HISAT2), and quantify gene-level counts (featureCounts).

- Identify differentially expressed genes (DEGs) using a statistical framework (e.g., DESeq2) with a threshold of |log2FoldChange| > 1 and adjusted p-value < 0.05.
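The DEG threshold in the last step amounts to a two-condition filter. The sketch below applies it to a table of per-gene results, assuming these have been exported (for example from DESeq2) as a mapping from gene ID to a `(log2FoldChange, padj)` pair; the function name, gene IDs, and values are hypothetical.

```python
import math


def select_degs(results, lfc_cutoff=1.0, padj_cutoff=0.05):
    """Return sorted gene IDs passing |log2FC| > cutoff and padj < cutoff."""
    degs = []
    for gene, (lfc, padj) in results.items():
        # Genes without a usable adjusted p-value (None or NaN) are skipped.
        if padj is None or math.isnan(padj):
            continue
        if abs(lfc) > lfc_cutoff and padj < padj_cutoff:
            degs.append(gene)
    return sorted(degs)


# Toy results table: gene -> (log2FoldChange, adjusted p-value).
results = {
    "geneA": (2.3, 0.001),         # passes both thresholds
    "geneB": (-1.5, 0.2),          # fails on adjusted p-value
    "geneC": (0.4, 0.0001),        # fails on effect size
    "geneD": (-3.0, 0.01),         # passes both (down-regulated)
    "geneE": (1.8, float("nan")),  # no adjusted p-value available
}
degs = select_degs(results)
```

Using the absolute fold change keeps both up- and down-regulated genes, which matters when the TF acts as an activator at some targets and a repressor at others.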

Genome-Wide TF Binding Mapping (ChIP-seq):

- For each species, engineer a strain expressing a functional, epitope-tagged version of the TF (e.g., NsdD::3xFLAG) from its native locus.

- Cross-link cells (1% formaldehyde, 10 min), quench with glycine, and lyse. Sonicate chromatin to an average fragment size of 200–500 bp.

- Immunoprecipitate DNA-protein complexes using an antibody against the tag (e.g., anti-FLAG M2 antibody). Reverse crosslinks and purify DNA.

- Prepare and sequence ChIP-seq libraries. Use input DNA as a control.

- Process data: Align reads (Bowtie2), call peaks (MACS2) with a q-value < 0.01. Identify high-confidence direct targets.

Data Integration and Network Inference:

- Integrate ChIP-seq (direct targets) and RNA-seq (DEGs) data to define the core, direct regulon of the TF in each species.

- Compare the sets of direct targets between species to identify conserved targets (orthologous genes bound in both) and rewired targets (genes bound only in one species).

- Perform motif analysis (HOMER) on the bound regions to identify and validate the conserved binding motif.
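The integration and comparison steps reduce to set operations once targets and orthologs are tabulated. The sketch below assumes a one-to-one ortholog map from species A to species B gene IDs and defines the direct regulon as genes that are both bound (ChIP-seq) and differentially expressed (RNA-seq). All identifiers and function names are hypothetical, and genes without an ortholog are left unclassified.

```python
def direct_regulon(bound_genes, degs):
    """Genes that are both TF-bound and TF-dependent in expression."""
    return set(bound_genes) & set(degs)


def classify_targets(regulon_a, regulon_b, orthologs):
    """Split targets into conserved and species-specific (rewired) sets.

    orthologs: dict mapping species-A gene IDs to species-B gene IDs.
    Returns (conserved in B IDs, rewired in A IDs, rewired in B IDs).
    """
    mapped_a = {orthologs[g] for g in regulon_a if g in orthologs}
    conserved = mapped_a & regulon_b
    rewired_a = {g for g in regulon_a
                 if g in orthologs and orthologs[g] not in regulon_b}
    b_with_ortholog = set(orthologs.values())
    rewired_b = {g for g in regulon_b
                 if g in b_with_ortholog and g not in mapped_a}
    return conserved, rewired_a, rewired_b


# Toy data for two species.
regulon_a = direct_regulon({"a1", "a2", "a3"}, {"a1", "a2", "a4"})
regulon_b = direct_regulon({"b1", "b3"}, {"b1", "b3", "b5"})
orthologs = {"a1": "b1", "a2": "b2", "a3": "b3"}
conserved, rewired_a, rewired_b = classify_targets(regulon_a, regulon_b, orthologs)
```

Restricting the comparison to genes with orthologs avoids counting lineage-specific genes as rewiring events, since their absence from the other species' regulon is not informative.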

Protocol 2: Experimental Evolution for Hierarchical Rewiring

This protocol, based on the P. fluorescens motility rescue model, reveals hidden rewiring potential and TF hierarchy [16].

Strain Construction:

- Start with a base strain deleted for a master regulator of a selectable phenotype (e.g., ΔfleQ, rendering the bacterium non-motile).

- Construct a double-knockout strain by additionally deleting the "preferred" rewiring TF (e.g., ΔfleQ ΔntrC).

Selection for Phenotypic Rescue:

- Plate each knockout strain onto soft agar plates (e.g., 0.25% LB agar) that impose strong selection for the lost phenotype (e.g., motility is required to access nutrients).

- Incubate and monitor for the emergence of motile variants. Isolate independent motile clones from the expansion frontier.

Genetic Analysis of Motile Variants:

- Perform whole-genome resequencing (Illumina) of motile isolates and the ancestral strain.

- Identify causal mutations via variant calling (e.g., BCFtools) by comparing isolate genomes to the ancestor.

- Confirm causality by reintroducing the identified mutation into the original non-motile strain via allelic exchange and testing for phenotype restoration.
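The comparison of isolate and ancestor genomes can be sketched as a set difference over called variants. The example assumes variants have already been called (for example with BCFtools) and parsed into `(contig, position, ref, alt)` tuples; parsing of VCF files is not shown, and the function name, contig name, and coordinates are hypothetical.

```python
def candidate_mutations(isolate_variants, ancestor_variants):
    """Variants present in the evolved isolate but absent from the ancestor."""
    return sorted(set(isolate_variants) - set(ancestor_variants))


# Variants as (contig, position, ref, alt). The ancestor already differs
# from the reference at position 100, so that site is not a candidate.
ancestor = [("contig1", 100, "A", "G")]
isolate = [("contig1", 100, "A", "G"), ("contig1", 2500, "C", "T")]
candidates = candidate_mutations(isolate, ancestor)
```

Subtracting ancestral variants removes differences between the laboratory strain and the reference genome, leaving only mutations that arose during selection; these still require the allelic exchange test to establish causality.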

Transcriptomic Validation:

- Conduct RNA sequencing (following the Transcriptomic Profiling steps of Protocol 1) on the evolved motile variant and the non-motile ancestor.

- Analyze the transcriptome to confirm that the rewiring event has restored expression of the genes required for the selected phenotype (e.g., flagellar genes).

Visualization of Core Concepts and Workflows

Transcriptional Network Rewiring Logic

Multi-Omics Workflow for Comparative GRN Analysis

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful dissection of regulatory rewiring relies on a suite of specialized reagents and tools. The following table catalogues critical solutions employed in the featured studies.

Table 2: Key Research Reagent Solutions for Rewiring Studies

| Reagent / Solution | Function / Application | Example Use-Case |
|---|---|---|
| Epitope-Tagged TF Strains | Enables immunoprecipitation of TF-DNA complexes in ChIP-seq experiments. | Constructing NsdD::3xFLAG strains in A. nidulans and A. flavus for genome-wide binding site mapping [15]. |
| TF-Knockout Mutant Strains | Provides a baseline to identify TF-dependent gene expression and phenotypes through comparison with wild-type. | ΔnsdD strains used to define the NsdD regulon via RNA-seq [15]; ΔfleQ and ΔfleQΔntrC strains used to select for rewiring events [16]. |
| Chromatin Immunoprecipitation (ChIP) Kits | Standardized protocols and buffers for efficient and reproducible cross-linking, shearing, and IP of chromatin. | Mapping direct targets of NsdD using an anti-FLAG antibody [15]. |
| RNA-seq Library Prep Kits | Facilitate the conversion of purified RNA into sequencing-ready libraries with high fidelity and minimal bias. | Profiling gene expression in wild-type vs. mutant strains across different cell types and conditions [15] [16]. |
| Soft Agar Motility Assay | A phenotypic selection platform that imposes strong selection for motility, enabling experimental evolution of rewiring. | Selecting for P. fluorescens mutants that have rewired motility regulation in a ΔfleQ background [16]. |
| Phylogenetic Inference Algorithms (e.g., MRTLE) | Computational tools that leverage evolutionary relationships to improve the accuracy of regulatory network predictions across species. | Inferring ancestral GRN states and tracing the evolution of network connections [18] [14]. |

High-Occupancy Target (HOT) Regions and Their Dynamic Roles

High-Occupancy Target (HOT) regions represent one of the most intriguing findings in modern genomics, constituting compact genomic loci bound by a surprisingly large number of transcription factors (TFs). These regulatory hubs were initially identified in invertebrate model organisms like Caenorhabditis elegans and Drosophila melanogaster, where they were found to be bound by 15 or more different TFs, often functionally unrelated and sometimes lacking their consensus binding motifs [19]. Subsequent research has confirmed that HOT regions are a ubiquitous feature of the human gene-regulation landscape, serving as critical integration points where signals from diverse regulatory pathways converge to quantitatively tune promoters for RNA polymerase II recruitment [20].

The central open question about HOT regions is how hundreds of transcription factors coordinate their clustered binding to regulatory DNA, and what these regions contribute to gene regulation. Proposed functions include mediators of ubiquitously expressed genes, sinks for sequestering excess TFs, insulators, DNA origins of replication, and patterned developmental enhancers [19]. In comparative functional genomics, HOT regions are treated as specialized regulatory architectures that may operate as master control nodes within broader gene regulatory networks, with particular relevance to developmental processes and disease pathogenesis [21].

Comparative Analysis of Methodologies for HOT Region Identification

Computational Versus Experimental Approaches

The identification and characterization of HOT regions have proceeded along two primary methodological pathways: computational motif-based prediction and experimental ChIP-seq based discovery. Each approach offers distinct advantages and limitations, with significant implications for the resulting HOT region catalogs and their biological interpretations.

Table 1: Comparison of Computational vs. Experimental HOT Region Identification Methods

| Feature | Computational Motif-Based Approach | Experimental ChIP-Seq Approach |

|---|---|---|

| Data Source | DNase I hypersensitive sites (DHS) combined with TF motif scanning [19] | Chromatin immunoprecipitation followed by sequencing [20] |

| TF Coverage | 542 TFs using position weight matrices (PWMs) [19] | 96 DNA-associated proteins across 5 cell lines [20] |

| Identification Basis | Colocalization of TF motif binding sites ("TFBS complexity") [19] | Empirical binding peaks from multiple TF ChIP-seq experiments [20] |

| Key Advantage | Not limited by antibody availability; consistent analysis pipeline [19] | Captures in vivo binding including indirect recruitment [20] |

| Key Limitation | Predictive rather than empirically confirmed binding [19] | Limited to TFs with available antibodies/chip-grade reagents [20] |

| Typical HOT Region Count | 59,986 distinct HOT regions across 154 cells/tissues [19] | 7,227 regions with 75 canonical TFs after filtering [20] |

| Cell-Type Coverage | Broad coverage across many cell types [19] | Deeper coverage in specific well-studied cell lines [20] |

The computational approach, as exemplified by the iFORM method applied to DHS data, identifies HOT regions through TF motif scanning using position weight matrices for hundreds of TFs [19]. This method defined a "TFBS complexity" score based on the number and proximity of contributing transcription factor binding sites, with regions exhibiting high scores designated as HOT regions. In contrast, the experimental approach identifies HOT regions through comprehensive analysis of ChIP-seq data from multiple DNA-associated proteins, considering regions occupied by many different TFs as HOT regions [20].

Notably, these approaches identify different sets of genomic regions with varying properties. Computational HOT regions demonstrate stronger skewing toward occupancy by large numbers of transcription factors (median = 9 TFs in H1 cells) compared to experimental HOT regions (median = 2 TFs in H1 cells) [19]. Furthermore, the proportion of motifless HOT regions (those without recognizable binding motifs for the bound TFs) differs significantly between methods, with computational HOT regions having a higher percentage (36% vs 20%) [19]. This discrepancy highlights the fundamental distinction between predicted binding potential and empirically demonstrated occupancy.

Functional Correlates and Validation

Both methodological approaches enable the correlation of HOT regions with various genomic features and functional elements. The majority of HOT regions colocalize with RNA polymerase II binding sites, though many are not near the promoters of annotated genes [20]. HOT regions identified through ChIP-seq data show strong enrichment at promoters, with 61% located at consensus promoters in H1-hESC cells, compared to only 22-39% in other cell types like HeLa-S3 and GM12878 [20]. This pattern suggests heightened HOT region activity in pluripotent cells, potentially reflecting a more interconnected regulatory architecture in stem cells.

At HOT promoters, transcription factor occupancy demonstrates strong predictive power for transcription preinitiation complex recruitment and moderate predictive value for initiating Pol II recruitment, but only weak correlation with elongating Pol II and RNA transcript abundance [20]. This finding suggests that HOT regions primarily function in the initial stages of transcription initiation rather than later stages of elongation or RNA processing.

Experimental Protocols for HOT Region Analysis

ChIP-Seq Workflow for Empirical HOT Region Identification

The Chromatin Immunoprecipitation followed by sequencing (ChIP-seq) protocol represents the gold standard for empirical identification of HOT regions. The detailed methodology encompasses several critical stages:

Cell Culture and Crosslinking: Human cell lines (e.g., GM12878, H1-hESC, HeLa-S3, HepG2, K562) are cultured under standard conditions. Proteins are crosslinked to DNA using 1% formaldehyde for 10 minutes at room temperature, followed by quenching with 125 mM glycine [20].

Chromatin Preparation and Shearing: Crosslinked cells are lysed, and chromatin is fragmented by sonication to generate 200-600 bp fragments. Optimal shearing efficiency is verified by agarose gel electrophoresis [20].

Immunoprecipitation: Sheared chromatin is incubated with target-specific antibodies against transcription factors of interest. Immune complexes are recovered using protein A/G magnetic beads. Multiple individual ChIP experiments are performed for each transcription factor [20].

Library Preparation and Sequencing: Immunoprecipitated DNA is reverse-crosslinked, purified, and converted into sequencing libraries using standard kits. Libraries are quantified by qPCR and sequenced on high-throughput platforms (typically Illumina) to generate 25-50 million reads per sample [20].

Peak Calling and HOT Region Identification: Sequence reads are aligned to the reference genome (hg19). The UniPeak software extends the QuEST peak-calling algorithm to parallel analysis of multiple samples, employing kernel density estimation to compute smooth density profiles and identify enriched regions where the profile exceeds a threshold of fold enrichment relative to background [20]. After normalizing peak intensities with variance-stabilizing transformations, regions occupied by numerous TFs are classified as HOT regions.

Figure 1: ChIP-seq workflow for empirical HOT region identification. The process begins with wet-lab procedures (yellow) followed by computational analysis (green), culminating in HOT region identification (red).
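
The final classification step of this workflow can be illustrated with a minimal sketch. It assumes peaks have already been called and are supplied as simple tuples; it merges overlapping peaks into regions and keeps regions bound by many distinct TFs. The function name `find_hot_regions` and the `min_tfs` cutoff are hypothetical choices for illustration and are not taken from UniPeak or from [20].

```python
from collections import defaultdict

def find_hot_regions(peaks, min_tfs=3):
    """Merge overlapping peaks and keep regions bound by >= min_tfs distinct TFs.

    peaks: iterable of (chrom, start, end, tf_name) with half-open coordinates.
    min_tfs: illustrative cutoff (an assumption, not a published threshold).
    """
    by_chrom = defaultdict(list)
    for chrom, start, end, tf in peaks:
        by_chrom[chrom].append((start, end, tf))

    hot = []
    for chrom in sorted(by_chrom):
        cur_start, cur_end, tfs = None, None, set()
        for start, end, tf in sorted(by_chrom[chrom]):
            if cur_start is not None and start < cur_end:
                # Peak overlaps the region being built: extend it.
                cur_end = max(cur_end, end)
                tfs.add(tf)
            else:
                # Close the previous region (if any) and start a new one.
                if cur_start is not None and len(tfs) >= min_tfs:
                    hot.append((chrom, cur_start, cur_end, sorted(tfs)))
                cur_start, cur_end, tfs = start, end, {tf}
        if cur_start is not None and len(tfs) >= min_tfs:
            hot.append((chrom, cur_start, cur_end, sorted(tfs)))
    return hot

# Toy input: three TFs pile up on chr1:100-500, the other peaks stand alone.
example_peaks = [
    ("chr1", 100, 300, "TF_A"),
    ("chr1", 250, 400, "TF_B"),
    ("chr1", 380, 500, "TF_C"),
    ("chr1", 2000, 2200, "TF_A"),
    ("chr2", 50, 150, "TF_B"),
]
hot_regions = find_hot_regions(example_peaks, min_tfs=3)
```

In practice the cutoff would be chosen from the distribution of TF counts per region rather than fixed in advance, and peak intensities would be normalized before comparison, as described above.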

Computational Identification Using DHS and Motif Scanning

The computational pipeline for HOT region identification leverages DNase I hypersensitivity data and transcription factor motif analysis:

DNase-Seq Data Collection: DNase I hypersensitive sites are identified through DNase-seq experiments from ENCODE and Roadmap Epigenomics for 154 human cell and tissue types. Only regions of open chromatin are considered for subsequent analysis [19].

Transcription Factor Motif Scanning: The iFORM algorithm scans DHS regions with position weight matrices for 542 transcription factors to identify potential binding sites. The FIMO (Find Individual Motif Occurrences) algorithm is typically employed with a significance threshold of p < 1×10⁻⁵ [19].

TFBS Complexity Calculation: A "TFBS complexity" score is computed for each region based on the number and proximity of contributing transcription factor binding sites. Gaussian kernel density estimation is applied across binding profiles to identify TFBS-clustered regions [19].

HOT Region Classification: Regions with complexity scores in the top 10% are classified as HOT regions, while the remaining regions are designated LOT (low-occupancy target) regions. Validation against experimental ChIP-seq data confirms the predictive power of this approach [19].

Saturation Analysis: To assess catalog completeness, saturation analysis is performed by sampling subsets of cell types and extrapolating to the total number of HOT regions genome-wide (an estimated 107,184). Against this estimate, the 59,986 regions in the current catalog cover more than half of all potential HOT regions [19].

Figure 2: Computational workflow for HOT region identification using DHS data and motif scanning. The process integrates epigenetic data with bioinformatic prediction to generate genome-wide HOT region catalogs.
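
The scoring and classification steps above can be sketched in a few lines. This is an illustrative approximation, not the scoring function used in [19]: it assumes the complexity of a region is a Gaussian-weighted count of motif sites around the region centre, and the bandwidth value and function names are hypothetical.

```python
import math

def tfbs_complexity(site_positions, center, bandwidth=75.0):
    """Gaussian-weighted count of motif sites around a region centre.

    Sites at the centre contribute 1.0; distant sites contribute less.
    The bandwidth (in bp) is an assumed value for illustration.
    """
    return sum(
        math.exp(-((pos - center) ** 2) / (2.0 * bandwidth ** 2))
        for pos in site_positions
    )

def classify_hot(scores, top_fraction=0.10):
    """Label the top `top_fraction` of regions by score as HOT, the rest as LOT."""
    n_hot = max(1, round(len(scores) * top_fraction))
    ranked = sorted(scores, key=scores.get, reverse=True)
    hot = set(ranked[:n_hot])
    return {region: ("HOT" if region in hot else "LOT") for region in scores}

# Toy catalog: one DHS with six clustered sites, nine with at most two sites.
regions = {
    "dhs_dense": ([480, 495, 500, 505, 510, 520], 500),
    "dhs_sparse": ([100, 900], 500),
}
for i in range(8):
    regions[f"dhs_bg{i}"] = ([500 + 40 * i], 500)

scores = {name: tfbs_complexity(sites, c) for name, (sites, c) in regions.items()}
labels = classify_hot(scores, top_fraction=0.10)
```

With ten toy regions and a 10% cutoff, exactly one region is labelled HOT, and it is the one whose sites are both numerous and tightly clustered.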

Functional Roles of HOT Regions in Development and Disease

Association with Developmental Processes and Cell Identity

HOT regions demonstrate strong associations with genes that control and define developmental processes of respective cell and tissue types. During embryonic stem cell differentiation, HOT regions show dynamic regulation, with evidence of developmental persistence at primitive enhancers [19]. This pattern suggests that HOT regions function as stable regulatory hubs that maintain core transcriptional programs while allowing for coordinated responses to developmental cues.

The functional significance of HOT regions is further underscored by their unique epigenetic signatures that distinguish them from typical enhancers and super-enhancers. HOT regions are associated with decreased nucleosome density and increased nucleosome turnover, primarily occurring in open chromatin regions marked by DNase I hypersensitivity [19]. These features facilitate the coordinated binding of multiple transcription factors and enable precise control of gene expression during critical developmental transitions.

In the context of brain development, HOT regions have been implicated in the regulatory genomic circuitry that determines brain age, with specific HOT regions associated with genes like RUNX2 and KLF3 that connect to diverse aging-related biological pathways [22]. Furthermore, hub transcription factors such as KLF3 and SOX10, identified through HOT region analysis, function as regulators of pleiotropic risk genes from diverse brain disorders [22].

HOT Regions as Regulatory Hubs in Disease

The central positioning of HOT regions within gene regulatory networks renders them potentially critical in disease pathogenesis. In cancer, for example, inappropriate HOT region activity can disrupt normal transcriptional programs, leading to malignant transformation. The SNP rs339331, located in a HOT region, increases prostate cancer risk by creating a novel binding site for HOXB13, which in combination with FOXA1 and AR, activates RFX6 and promotes cell migration and metastatic disease [21].

The finding that the vast majority of trait-associated SNPs from genome-wide association studies are non-exonic and occur within putative regulatory elements more often than expected by chance further highlights the potential disease relevance of HOT regions [21]. These noncoding variants likely disrupt the precise combinatorial code that determines cell-specific transcription factor occupancy at HOT regions, leading to altered gene expression programs that contribute to disease susceptibility.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Essential Research Reagents for HOT Region Analysis

| Reagent/Category | Specific Examples | Function/Application |

|---|---|---|

| Cell Lines | H1-hESC, GM12878, K562, HepG2, HeLa-S3 [20] | Provide cellular context for HOT region mapping across diverse tissues and developmental stages |

| Antibodies | TF-specific ChIP-grade antibodies [20] | Enable immunoprecipitation of specific transcription factors for ChIP-seq experiments |

| Sequencing Kits | Illumina sequencing kits [20] | Generate high-throughput sequencing libraries from immunoprecipitated DNA |

| Software Tools | UniPeak [20], iFORM [19], FIMO [19], HOMER [19] | Analyze ChIP-seq data, identify peaks, scan for motifs, and classify HOT regions |

| Databases | ENCODE ChIP-seq data [20], DHS sites [19], GWAS catalog [21] | Provide reference data for comparative analysis and validation |

| Genome Engineering | CRISPR/Cas9 systems [21] | Enable functional validation through targeted perturbation of HOT regions |

| Epigenetic Marks | H3K4me3, H3K27ac antibodies [20] | Characterize chromatin state at HOT regions and correlate with activity |

High-Occupancy Target regions represent specialized regulatory architectures that function as integration hubs within gene regulatory networks. Comparative analysis of methodological approaches reveals distinct advantages to both computational and empirical strategies for HOT region identification, with the former offering broader coverage and the latter providing deeper biological validation. The dynamic nature of HOT regions during development and their involvement in disease pathogenesis highlight their significance as key regulatory nodes. Future research leveraging single-cell methodologies and advanced genome engineering approaches will further elucidate the precise mechanisms by which HOT regions coordinate transcriptional programs and how their dysfunction contributes to human disease.

Conserved Transcription Factor Binding Motifs and Co-associations

The precise mapping of transcription factor (TF) binding sites is fundamental to deciphering the regulatory code that controls gene expression. A major challenge in functional genomics is distinguishing functional regulatory interactions from the vast background of non-functional TF binding events. A significant portion of transcription factor binding does not result in measurable changes in gene expression of nearby genes, highlighting the need for more sophisticated predictive models [23] [24]. This guide objectively compares the leading computational and experimental methodologies for identifying functional transcription factor binding motifs and their cooperative interactions, providing researchers with a structured analysis of their performance, applications, and limitations.

Methodological Comparison

Core Computational Approaches

The table below summarizes the primary methodologies for identifying functional TF binding motifs.

Table 1: Comparison of Core Methodological Approaches

| Method | Core Principle | Data Inputs | Key Outputs | Strengths | Limitations |

|---|---|---|---|---|---|

| Affinity-Based Conservation [25] | Compares total predicted TF affinity across orthologous promoters | TF Position-Specific Scoring Matrix (PSSM), Orthologous promoter sequences | Conserved promoter affinity (NC), Functional regulatory targets | Identifies low-affinity functional sites; Independent of local alignment | Requires multiple sequenced genomes |

| Binding-Expression Correlation [26] | Correlates TF binding profiles with gene expression across multiple conditions/cell types | ChIP-seq data, RNA-seq data from multiple cell types/conditions | Correlation scores (PC, SC, CARS) predictive of functional targets | Uses "guilt-by-association"; High predictive value for knockdown outcomes | Requires extensive multi-condition datasets |

| Combinatorial Motif Discovery [27] | Data mines genome for over-represented pairs of distinct TF motifs | Genome sequence, Library of TF Position Weight Matrices (PWMs) | Association rules (Support, Confidence) for TF pairs; Prioritized cooperative TF pairs | Predicts novel TF cooperativity; Genome-wide scale | Does not directly measure function |

| Functional Fine-Mapping [28] | Integrates functional genomic annotations with statistical genetics | GWAS summary statistics, ATAC-seq/ChIP-seq data, Chromatin interaction data | Credible sets of putative causal variants, Element PIP (ePIP) scores | Links non-coding variants to genes and molecular mechanisms | Complex integration pipeline; Cell-type specificity of data |
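
The support and confidence outputs listed for combinatorial motif discovery can be illustrated with the standard association-rule definitions. This is a sketch under that assumption; the exact definitions and filtering used in [27] may differ, and the function name and toy motif labels are hypothetical.

```python
from itertools import combinations

def pair_rules(motif_sets):
    """Compute support and confidence for every co-occurring motif pair.

    motif_sets: list of sets, each holding the TF motifs found in one region.
    support(A, B)    = fraction of regions containing both A and B
    confidence(A->B) = regions with both / regions with A
    """
    n = len(motif_sets)
    single, pair = {}, {}
    for motifs in motif_sets:
        for m in motifs:
            single[m] = single.get(m, 0) + 1
        for a, b in combinations(sorted(motifs), 2):
            pair[(a, b)] = pair.get((a, b), 0) + 1

    return {
        (a, b): {
            "support": count / n,
            "confidence_a_to_b": count / single[a],
            "confidence_b_to_a": count / single[b],
        }
        for (a, b), count in pair.items()
    }

# Toy data: four regions with placeholder motif labels.
toy_regions = [
    {"TF_A", "TF_B"},
    {"TF_A", "TF_B", "TF_C"},
    {"TF_A"},
    {"TF_C"},
]
rules = pair_rules(toy_regions)
```

Here TF_B never occurs without TF_A, so the rule TF_B -> TF_A has confidence 1.0, while the reverse rule is weaker because TF_A also occurs alone.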

Performance Metrics and Experimental Validation

The performance of these methods is validated through their ability to predict functional outcomes, such as gene expression changes in perturbation experiments and enrichment for biological knowledge.

Table 2: Experimental Validation and Performance Metrics

| Method | Validation Experiment | Key Performance Result | Biological Enrichment |

|---|---|---|---|

| Affinity-Based Conservation [25] | Correlation with TF deletion expression microarrays, MA-Networker coupling T-values | Conserved affinity (NC) showed dramatically improved correlation with functional data vs. single-genome affinity | NC showed greater bias toward relevant Gene Ontology (GO) categories |

| Binding-Expression Correlation [26] | TF knockdown/knockout with measurement of differential expression | Correlation across cell types was significantly more predictive of functional targets than binding in a single cell type | N/A |

| Combinatorial Motif Discovery [27] | Literature co-citation analysis in PubMed abstracts | High-confidence, high-significance mined TF pairs showed enrichment for co-citation | Prioritized pairs were often readily verifiable in existing literature |

| Functional Fine-Mapping [28] | Massively Parallel Reporter Assays (MPRA), Luciferase assays | Experimentally validated allele-specific regulatory properties of candidate causal variants | Prioritized effector genes were enriched for immune and inflammatory responses |

Experimental Protocols

Protocol 1: Affinity-Based Conservation Analysis

This protocol identifies functional TF targets by evolutionary conservation of total promoter affinity [25].

- Obtain Orthologous Promoter Sequences: For the organism of interest (e.g., S. cerevisiae), extract promoter sequences (e.g., 500 bp upstream of start codons). Obtain orthologous promoter sequences from multiple closely related species (e.g., S. bayanus, S. mikatae, S. paradoxus).

- Convert TF Specificity to an Affinity Model: Obtain a Position-Specific Scoring Matrix (PSSM) for the TF of interest. Convert the PSSM to a Position-Specific Affinity Matrix (PSAM) using the transformation $w_{jb} = 2^{s_{jb}}$, where $s_{jb}$ is the log-likelihood score from the PSSM for base $b$ at position $j$. Normalize each column of the PSAM so the highest affinity base has a weight of 1.

- Calculate Total Promoter Affinity: For each promoter sequence in each species, use the PSAM to calculate the total predicted occupancy (Ng) using a sliding window approach. This value is proportional to the sum of association constants for all subsequences within the promoter.

- Define Conserved and Unconserved Affinity: For each promoter in the reference species, define:

  - Total Affinity (NT): Ng in the reference species.

  - Conserved Affinity (NC): The minimum Ng among all orthologous promoters.

  - Unconserved Affinity (NU): NT - NC.

- Validate with Functional Genomics Data: Correlate NC and NU with functional data such as gene expression changes in TF deletion mutants or nucleosome occupancy data. NC is expected to show a stronger correlation with functional outcomes.

Workflow for Affinity-Based Conservation Analysis
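
The protocol steps can be sketched as follows. This is a simplified illustration rather than the MatrixREDUCE implementation: it assumes base-2 PSSM scores, scans the forward strand only, and includes the reference promoter when taking the minimum so that NU cannot be negative. All function names and the toy matrix are hypothetical.

```python
BASES = "ACGT"

def pssm_to_psam(pssm):
    """Convert log2 scores to relative affinities, w = 2**s, scaled per column."""
    psam = []
    for column in pssm:
        weights = {b: 2.0 ** column[b] for b in BASES}
        top = max(weights.values())
        psam.append({b: w / top for b, w in weights.items()})
    return psam

def total_affinity(sequence, psam):
    """Sum the window affinities (product of per-position weights) along a promoter."""
    width = len(psam)
    total = 0.0
    for i in range(len(sequence) - width + 1):
        window_affinity = 1.0
        for j in range(width):
            window_affinity *= psam[j][sequence[i + j]]
        total += window_affinity
    return total

def conserved_affinity(reference_seq, ortholog_seqs, psam):
    """Return total (NT), conserved (NC) and unconserved (NU) affinity."""
    n_t = total_affinity(reference_seq, psam)
    # Assumption: the reference itself is included in the minimum.
    n_c = min([n_t] + [total_affinity(s, psam) for s in ortholog_seqs])
    return {"NT": n_t, "NC": n_c, "NU": n_t - n_c}

# Toy two-column matrix that prefers the dinucleotide "AC".
toy_pssm = [
    {"A": 2.0, "C": 0.0, "G": 0.0, "T": 0.0},
    {"A": 0.0, "C": 1.0, "G": 0.0, "T": 0.0},
]
toy_psam = pssm_to_psam(toy_pssm)
result = conserved_affinity("ACG", ["AGG"], toy_psam)
```

In the toy case the ortholog has lost the preferred "AC" site, so only part of the reference affinity counts as conserved. Because affinities are summed over all windows, weak sites contribute as well, which is how the approach can recover low-affinity functional targets without relying on a local alignment.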

Protocol 2: Binding-Expression Correlation Across Compendia

This protocol distinguishes functional TF binding by correlating binding and expression profiles across diverse cellular contexts [26].

- Data Collection and Harmonization:

  - Binding Data: Download ChIP-seq peak files and corresponding mapped read files (BED) for the TF across multiple cell types or conditions from resources like ENCODE. Calculate normalized coverage counts (e.g., using BEDTools) to quantify peak height.

  - Expression Data: Download matching RNA-seq data (e.g., RPKM values) for the same cell types. Perform quantile normalization to make expression levels comparable across samples.

- Map Binding to Genes: Using a defined regulatory model (e.g., peaks within 5 kb of the Transcription Start Site (TSS)), create a gene-by-cell-type matrix of cumulative binding signals for the TF.

- Calculate Correlation: For each gene, calculate the correlation between its TF-binding profile and its expression profile across the compendium of cell types. Use multiple correlation measures:

  - Pearson Correlation (PC): Captures linear relationships.

  - Spearman Correlation (SC): Captures monotonic relationships, including non-linear ones.

  - Combined Angle Ratio Statistic (CARS): A variant of the Angle Ratio Statistic designed to detect associations with outlier cell types.

- Predict Functional Targets: Genes with high correlation scores (individually or in combination) are prioritized as functional targets of the TF. The performance of this prediction is validated against independent TF perturbation data (e.g., genes differentially expressed upon TF knockdown).
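
The correlation step of this protocol can be sketched with plain Python. The example covers only the Pearson and Spearman measures; CARS is omitted because its definition is not given here. The function names, the ranking rule (maximum of the two scores) and the toy profiles are assumptions for illustration.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length profiles (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)

def ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))

def rank_targets(binding, expression):
    """Score each gene across cell types and sort by the better of PC and SC."""
    scores = {
        gene: {
            "PC": pearson(binding[gene], expression[gene]),
            "SC": spearman(binding[gene], expression[gene]),
        }
        for gene in binding
    }
    return sorted(scores.items(), key=lambda kv: max(kv[1].values()), reverse=True)

# Toy compendium of five cell types (values are made up).
binding = {"geneA": [1, 2, 3, 4, 5], "geneB": [1, 2, 3, 4, 5]}
expression = {"geneA": [2, 4, 8, 16, 32], "geneB": [5, 1, 4, 2, 3]}
ranked = rank_targets(binding, expression)
```

For geneA, expression rises monotonically but not linearly with binding, so the Spearman score reaches 1.0 while the Pearson score stays below it. This is the reason for computing more than one measure.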

Visualization of Regulatory Relationships

Transcription Factor Co-association and Cooperativity

Transcription factors often function in combination, binding DNA cooperatively to regulate target genes. The following diagram illustrates major models of TF co-association and their functional outcomes, integrating concepts from affinity conservation, combinatorial binding, and lineage-specific deployment [25] [29] [24].

Models of Functional TF Binding and Co-association

The Scientist's Toolkit

This section details key reagents and computational resources essential for research on conserved TF binding motifs.

Table 3: Essential Research Reagents and Resources

| Tool / Resource | Type | Primary Function | Example Sources / Formats |

|---|---|---|---|

| Position Weight Matrix (PWM) | Computational Model | Represents the DNA binding specificity of a TF, quantifying nucleotide preference at each position. | JASPAR [30], CIS-BP [30] |

| ChIP-seq Data | Experimental Data (NGS) | Provides genome-wide mapping of in vivo TF binding locations under specific cellular conditions. | ENCODE Consortium [26] [24] |

| DNase I Hypersensitive Sites (DHS) | Experimental Data (NGS) | Identifies nucleosome-depleted, accessible chromatin regions harboring active regulatory elements. | ENCODE Consortium [30] |

| Orthologous Genomic Sequences | Genomic Data | Enables phylogenetic footprinting and evolutionary conservation analysis of regulatory sequences. | UCSC Genome Browser, Ensembl |

| MatrixREDUCE | Software Package | Implements affinity-based conservation analysis to predict functional TF targets. | Bussemaker Lab [25] |

| Massively Parallel Reporter Assay (MPRA) | Experimental Method | High-throughput functional validation of thousands of candidate regulatory sequences and their variants. | Used in fine-mapping studies [28] |

| MOA-seq (MNase-defined Cistrome Occupancy Analysis) | Experimental Method | Identifies TF-occupied loci and footprints at high resolution in a single, quantitative experiment. | Alternative to ChIP-seq [31] |

From Data to Discovery: Tools and Applications for Mapping Regulatory Circuits

The emergence of genome-wide mapping technologies has revolutionized our understanding of genomic architecture and gene regulation. Chromatin Immunoprecipitation followed by sequencing (ChIP-seq) and Hi-C represent two pivotal methodologies that capture distinct yet complementary aspects of genome organization. ChIP-seq identifies protein-DNA interactions and histone modifications, providing a one-dimensional landscape of regulatory elements. In contrast, Hi-C captures chromatin conformation and three-dimensional spatial contacts, revealing the structural framework that facilitates gene regulation. This guide provides a comprehensive comparison of these technologies, their integration, and their collective application in deciphering gene regulatory circuits.

ChIP-seq: Mapping Protein-DNA Interactions

Principle: ChIP-seq combines chromatin immunoprecipitation with high-throughput sequencing to map transcription factor binding sites and histone modifications genome-wide. The method begins with formaldehyde cross-linking to preserve protein-DNA interactions, followed by chromatin fragmentation and immunoprecipitation with specific antibodies. The purified DNA is then sequenced, and the resulting reads are aligned to a reference genome to identify enriched regions (peaks) representing protein-binding sites or histone marks [32].

Key Applications:

- Transcription factor binding site identification

- Histone modification profiling (e.g., H3K4me3 at promoters, H3K27ac at enhancers)

- Epigenetic state characterization through chromatin states [32]

Hi-C: Capturing 3D Chromatin Architecture

Principle: Hi-C is an extension of the chromosome conformation capture (3C) technique that enables genome-wide, unbiased profiling of chromatin interactions. Cells are cross-linked with formaldehyde, and chromatin is digested with restriction enzymes. The resulting DNA fragments are labeled with biotin and ligated under dilute conditions to favor proximity ligation of spatially adjacent DNA fragments. After reversing cross-links, the ligation products are purified and sequenced using paired-end sequencing [33]. The analysis of chimeric sequences reveals long-range chromatin interactions across the entire genome.

Key Applications:

- Identification of topologically associating domains (TADs)

- Compartment analysis (A/B compartments)

- Chromatin loop detection

- Nuclear organization studies [34]

Direct Comparison of Technical Specifications

Table 1: Core Characteristics of ChIP-seq and Hi-C

| Feature | ChIP-seq | Hi-C |

|---|---|---|

| Primary Focus | Protein-DNA interactions | 3D chromatin architecture |

| Resolution | Tens to hundreds of base pairs (limited by fragment size) | 1 kb - 100 kb (dependent on sequencing depth) |

| Input Material | 100,000 - 1 million cells | 1 - 10 million cells |

| Key Output | Binding sites/peaks | Contact probability maps |

| Sequencing Depth | 20-50 million reads | 500 million - 3 billion reads |

| Data Interpretation | 1D linear genome annotation | 3D spatial interaction networks |

| Primary Limitations | Antibody quality dependency, limited to known factors | High sequencing cost, computational complexity |

Table 2: Performance Metrics and Experimental Considerations

| Parameter | ChIP-seq | Hi-C |

|---|---|---|

| Typical Timeline | 3-5 days | 5-7 days |

| Cost per Sample | $$ | $$$$ |

| Technical Variability | Moderate (antibody efficiency dependent) | High (ligation efficiency dependent) |

| Data Analysis Complexity | Moderate | High |

| Single-cell Applications | scChIP-seq, scCUT&Tag (CUT&RUN for low-input samples) | scHi-C |

| Integration Potential | High with RNA-seq, ATAC-seq | High with genomic annotations, ChIP-seq |

Integrated Analysis Approaches

Multi-Omics Integration Strategies

Integrating ChIP-seq and Hi-C data enables researchers to connect linear epigenetic information with 3D genome architecture, providing unprecedented insights into gene regulatory mechanisms. Several computational approaches have been developed for this purpose:

Hidden Markov Models (HMMs) and Chromatin State Discovery: Tools like ChromHMM and Segway use combinatorial patterns of histone modifications from ChIP-seq data to segment the genome into chromatin states, which can then be correlated with Hi-C contact maps to understand how epigenetic states influence 3D organization [32].

Self-Organizing Maps (SOMs): SOMs provide an unsupervised machine learning approach to integratively analyze high-dimensional ChIP-seq data by identifying recurrent patterns of transcription factor co-localization and their relationship to chromatin features observed in Hi-C data [32].

Regression-Based Integration: Methods like Mixture Poisson Regression Models (MPRM) enable the identification of specific chromatin interactions in Hi-C data that are significantly associated with particular transcription factor binding or histone modifications identified through ChIP-seq [33].

Advanced Integrated Technologies

ChIA-PET (Chromatin Interaction Analysis by Paired-End Tag Sequencing): This method combines chromatin immunoprecipitation with proximity ligation to identify long-range chromatin interactions mediated by specific protein factors. While offering protein-specific interaction data, ChIA-PET requires substantial sequencing depth and large cell numbers compared to Hi-C [35].

HiChIP: An efficient alternative to ChIA-PET that incorporates in situ ligation and transposase-mediated on-bead library construction. HiChIP improves the yield of conformation-informative reads by over 10-fold and lowers input requirements over 100-fold relative to ChIA-PET, providing enhanced signal-to-background for protein-directed interactions [35].

Micro-C-ChIP: A recent innovation that combines Micro-C (which uses MNase for nucleosome-resolution fragmentation) with chromatin immunoprecipitation to map 3D genome organization for defined histone modifications at nucleosome resolution. This approach provides high-resolution, cost-efficient mapping of histone-mark-specific chromatin folding [36].

Experimental Protocols

Standardized ChIP-seq Protocol

Cell Cross-linking and Lysis:

- Cross-link cells with 1% formaldehyde for 10 minutes at room temperature

- Quench cross-linking with 125 mM glycine for 5 minutes

- Wash cells with cold PBS and resuspend in cell lysis buffer (10 mM Tris-HCl pH 8.0, 10 mM NaCl, 0.2% NP-40)

- Centrifuge and resuspend nuclei in nuclear lysis buffer (50 mM Tris-HCl pH 8.0, 10 mM EDTA, 1% SDS)

Chromatin Immunoprecipitation:

- Sonicate chromatin to 200-500 bp fragments

- Dilute sonicated chromatin 10-fold in ChIP dilution buffer

- Pre-clear with protein A/G beads for 1 hour at 4°C

- Incubate with specific antibody overnight at 4°C

- Add protein A/G beads and incubate for 2 hours

- Wash beads sequentially with low salt, high salt, LiCl, and TE buffers

- Elute chromatin with elution buffer (1% SDS, 0.1 M NaHCO3)

Library Preparation and Sequencing:

- Reverse cross-links at 65°C overnight

- Treat with RNase A and proteinase K

- Purify DNA using phenol-chloroform extraction

- Prepare sequencing library using standard protocols

- Sequence on appropriate platform (Illumina recommended)

Comprehensive Hi-C Protocol

Cell Cross-linking and Digestion:

- Cross-link cells with 2% formaldehyde for 10 minutes at room temperature

- Quench with 125 mM glycine for 15 minutes

- Lyse cells with ice-cold lysis buffer (10 mM Tris-HCl pH 8.0, 10 mM NaCl, 0.2% Igepal CA-630, protease inhibitors)

- Digest chromatin with 100 units of MboI or HindIII restriction enzyme overnight at 37°C

Marking and Ligation:

- Fill restriction fragment overhangs with biotin-14-dATP using Klenow fragment

- Perform proximity ligation with T4 DNA ligase for 4 hours at 16°C

- Reverse cross-links overnight at 65°C with proteinase K

- Purify DNA with phenol-chloroform extraction

Library Preparation:

- Shear DNA to 300-500 bp using sonication

- Perform size selection using SPRI beads

- Enrich biotin-containing fragments using streptavidin beads

- Prepare sequencing library using standard methods

- Sequence using paired-end sequencing on Illumina platform

Computational Analysis Pipelines

ChIP-seq Data Analysis

Quality Control and Read Alignment:

- FastQC for read quality assessment

- Alignment with Bowtie2 or BWA to reference genome

- PCR duplicate removal using Picard Tools

Peak Calling and Annotation:

- MACS2 for transcription factor peak calling

- SICER or BroadPeak for broad histone marks

- HOMER for motif discovery and annotation

- Integration with genome browsers for visualization
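
The idea behind peak calling can be illustrated with a simple Poisson enrichment test on a single genomic window. This is a teaching sketch and not the MACS2 implementation: it assumes read counts per window are already available, and the function names and the `genome_lambda` background parameter are hypothetical.

```python
import math

def poisson_sf(k, lam):
    """Upper-tail probability P(X >= k) for X ~ Poisson(lam)."""
    if k <= 0:
        return 1.0
    term = math.exp(-lam)      # P(X = 0)
    cdf = term
    for i in range(1, k):      # accumulate P(X = 1) ... P(X = k - 1)
        term *= lam / i
        cdf += term
    return max(0.0, 1.0 - cdf)

def window_enrichment(chip_count, control_count, chip_total, control_total,
                      genome_lambda):
    """Compare ChIP reads in a window with a scaled control expectation.

    genome_lambda: genome-wide expected reads per window (must be > 0); it acts
    as a floor so that windows with no control reads do not look infinitely
    enriched.
    """
    scale = chip_total / control_total
    lam = max(control_count * scale, genome_lambda)
    return {
        "lambda": lam,
        "fold": chip_count / lam,
        "p_value": poisson_sf(chip_count, lam),
    }

# Toy window: 30 ChIP reads against 5 control reads, equal library sizes.
result = window_enrichment(30, 5, 1_000_000, 1_000_000, genome_lambda=2.0)
```

Dedicated peak callers add steps this sketch leaves out, such as fragment-size modelling, local background estimation at several scales, and multiple-testing correction.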

Hi-C Data Analysis

Data Processing and Normalization:

- HiC-Pro or Juicer for raw data processing

- ICE or KR normalization for technical bias correction

- Identification of valid interaction pairs
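
The iterative correction behind ICE normalization can be sketched on a small contact matrix. This is a minimal illustration of the balancing idea, not the HiC-Pro or Juicer code: it assumes a symmetric matrix of raw counts with the diagonal already zeroed, and the function name and fixed iteration count are hypothetical.

```python
def ice_normalize(matrix, n_iter=50):
    """Balance a symmetric contact matrix so every non-empty bin has equal coverage.

    Each round divides entry (i, j) by the relative coverage of bins i and j
    and accumulates those factors into a per-bin bias vector.
    """
    n = len(matrix)
    m = [row[:] for row in matrix]          # work on a copy
    bias = [1.0] * n
    for _ in range(n_iter):
        sums = [sum(row) for row in m]
        nonzero = [s for s in sums if s > 0]
        mean = sum(nonzero) / len(nonzero)
        # Empty bins keep a factor of 1.0 so they are left untouched.
        factors = [s / mean if s > 0 else 1.0 for s in sums]
        for i in range(n):
            for j in range(n):
                m[i][j] /= factors[i] * factors[j]
        bias = [b * f for b, f in zip(bias, factors)]
    return m, bias

# Toy 3-bin map with unequal coverage (row sums 6, 10 and 8).
raw = [
    [0.0, 4.0, 2.0],
    [4.0, 0.0, 6.0],
    [2.0, 6.0, 0.0],
]
balanced, bias = ice_normalize(raw)
row_sums = [sum(row) for row in balanced]
```

After balancing, all three bins have the same total coverage, so remaining differences between entries reflect contact preference rather than bin-level technical bias. Production tools additionally filter low-coverage bins and stop on a convergence criterion instead of a fixed number of rounds.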

Feature Identification:

- HiCCUPS for loop identification

- Arrowhead for TAD boundary calling

- PCA for A/B compartment analysis

- Comparison methods for differential analysis [37]

Integrated Analysis Tools

MAGICAL (Multiome Accessibility Gene Integration Calling and Looping): A hierarchical Bayesian approach that leverages paired single-cell RNA sequencing and single-cell ATAC-seq data to map regulatory circuits by modeling signal variation across cells and conditions [38].

DeepChIA-PET: A supervised deep learning approach that predicts ChIA-PET interactions from Hi-C and ChIP-seq data using dilated residual convolutional networks, effectively learning the mapping between these data types at high resolution [39].

Loop Calling Comparisons: Comprehensive benchmarking of loop detection tools reveals variations in performance across resolutions, with methods like HiCCUPS, FitHiC2, and Mustache showing robust performance under different conditions [40].

Research Reagent Solutions

Table 3: Essential Research Reagents and Their Applications

| Reagent/Kit | Function | Application Notes |
|---|---|---|
| Formaldehyde | Cross-linking agent | Preserves protein-DNA and protein-protein interactions |
| Protein A/G Magnetic Beads | Antibody binding | Efficient immunoprecipitation with low background |
| MNase/MboI/HindIII | Chromatin digestion | Enzyme choice affects resolution and bias |
| Biotin-14-dATP | Marking ligation junctions | Enables pull-down of ligation products |
| Streptavidin Beads | Enrichment of biotinylated fragments | Critical for Hi-C library complexity |
| T4 DNA Ligase | Proximity ligation | Forms chimeric molecules from spatially proximal fragments |
| Klenow Fragment | Fill-in of restriction ends | Incorporates biotinylated nucleotides for labeling |
| ChIP-grade Antibodies | Target-specific IP | Quality critically affects ChIP-seq specificity |

Signaling Pathways and Workflow Integration

The following diagram illustrates the integrated experimental workflow and analytical pipeline for combining ChIP-seq and Hi-C data to decipher gene regulatory circuits:

Integrated Workflow for Regulatory Circuit Mapping

Applications in Regulatory Circuit Research

Elucidating Gene Regulatory Mechanisms

The integration of ChIP-seq and Hi-C data has been instrumental in uncovering the principles of gene regulation across multiple biological contexts:

Enhancer-Promoter Communication: Studies integrating H3K27ac ChIP-seq (marking active enhancers) with Hi-C contact maps have revealed that spatial proximity is a stronger predictor of functional enhancer-promoter relationships than linear genomic distance, explaining how distal regulatory elements control gene expression [33] [32].

Transcription Factor-Mediated Chromatin Organization: Research in K562 cells demonstrated that transcription factors like GATA1 and GATA2 not only bind to specific genomic loci but also mediate long-range chromatin interactions. Knockdown experiments confirmed that these factors regulate expression of genes in both nearby and spatially interacting loci, establishing causal relationships between 3D genome organization and transcriptional programs [33].

Disease-Associated Regulatory Circuits: In infectious disease research, integrated analysis of single-cell multiomics data using approaches like MAGICAL has identified sepsis-associated regulatory circuits in CD14+ monocytes that respond differently to methicillin-resistant versus methicillin-susceptible Staphylococcus aureus infections, revealing epigenetic circuit biomarkers that distinguish these clinical states [38].

Advancing Therapeutic Development

The application of integrated ChIP-seq and Hi-C analyses in drug development has enabled:

Identification of Disease-Relevant Non-Coding Variants: By mapping GWAS variants to regulatory elements through ChIP-seq and connecting them to target genes through Hi-C, researchers can prioritize functional non-coding variants in complex diseases and identify potential therapeutic targets.

Epigenetic Therapy Assessment: Comprehensive evaluation of epigenetic drug effects requires understanding both the direct binding changes (via ChIP-seq) and the consequent alterations in 3D genome organization (via Hi-C), providing a systems-level view of therapeutic mechanisms.

Cell-Type Specific Circuit Mapping: Single-cell multiomics approaches now enable the reconstruction of cell-type-specific regulatory circuits, essential for understanding cell-type-specific functions in heterogeneous tissues and developing targeted therapies [38].
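
The variant-prioritization logic described above (variant in a ChIP-seq peak, peak in a Hi-C loop anchor, other anchor at a gene promoter) can be sketched as a chain of interval overlaps. All identifiers, coordinates, and gene names below are hypothetical toy values, and the function `link_variants_to_genes` is an illustrative assumption rather than an established tool; real analyses would use indexed interval structures and curated annotations.

```python
def overlaps(a, b):
    """True if two (chrom, start, end) half-open intervals overlap."""
    return a[0] == b[0] and a[1] < b[2] and b[1] < a[2]


def link_variants_to_genes(variants, peaks, loops, promoters):
    """Map each variant to genes whose promoter is looped to the variant's peak."""
    links = {}
    for var_id, chrom, pos in variants:
        site = (chrom, pos, pos + 1)
        genes = set()
        for peak in (p for p in peaks if overlaps(site, p)):
            for left, right in loops:
                # A loop is undirected: the peak may sit in either anchor
                for near, far in ((left, right), (right, left)):
                    if overlaps(peak, near):
                        genes.update(
                            g for g, prom in promoters.items() if overlaps(prom, far)
                        )
        links[var_id] = sorted(genes)
    return links


# Hypothetical toy inputs
variants = [("rs_demo1", "chr1", 1_050_200), ("rs_demo2", "chr1", 3_000_000)]
peaks = [("chr1", 1_050_000, 1_050_800)]  # e.g. H3K27ac peak
loops = [(("chr1", 1_045_000, 1_055_000), ("chr1", 1_400_000, 1_410_000))]
promoters = {
    "GENE_X": ("chr1", 1_404_000, 1_406_000),
    "GENE_Y": ("chr1", 2_000_000, 2_002_000),
}
links = link_variants_to_genes(variants, peaks, loops, promoters)
print(links)
```

Here `rs_demo1` falls in a peak whose loop partner contains the `GENE_X` promoter, whereas `rs_demo2` overlaps no peak and receives no candidate target.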

Future Perspectives

The continuing evolution of genome-wide mapping technologies points toward several promising directions:

Multi-Scale Integration: Future methods will likely bridge nucleosome-resolution interactions with higher-order chromosomal structures through techniques like Micro-C-ChIP, providing a more complete understanding of chromatin organization across spatial scales [36].

Single-Cell Multi-Omics: Approaches that simultaneously profile chromatin conformation, histone modifications, and transcription factor binding in the same single cells would sidestep many of the challenges of integrating separately generated datasets and enable direct observation of regulatory principles in heterogeneous cell populations.

Machine Learning Enhancement: Deep learning models like DeepChIA-PET are likely to become increasingly sophisticated and more accurate at predicting chromatin interaction maps from sequence and epigenetic features, which could reduce experimental costs while expanding predictive capabilities [39].

Dynamic Circuit Analysis: Time-resolved studies capturing the dynamics of 3D genome reorganization during cellular differentiation and in response to stimuli will provide insights into the causal relationships between chromatin architecture and gene regulatory programs.

As these technologies mature, their integration will continue to illuminate the complex regulatory circuits that govern cellular identity and function, ultimately advancing both basic biological knowledge and therapeutic development for human disease.

Functional Genomics and High-Throughput Perturbation Screens

Functional genomics aims to elucidate the roles and interactions of genes and genetic elements, providing crucial insights into their involvement in biological processes and disease. Although more than two decades have passed since the first draft of the human genome was completed, a substantial proportion of human genes remain poorly characterized. Perturbomics has emerged as a functional genomics approach that systematically annotates gene function based on phenotypic changes resulting from targeted gene perturbations [41]. This methodology operates on the principle that gene function can be inferred most directly by altering gene activity and measuring the consequent phenotypic changes across multiple molecular layers.

The field has evolved significantly from its early applications using arrayed small interfering RNAs (siRNAs) to contemporary CRISPR–Cas-based screening platforms. High-throughput perturbation screens represent the methodological core of perturbomics, enabling systematic functional characterization of gene networks at unprecedented scale and resolution. Within comparative functional genomics research, these screens provide the empirical foundation for deciphering regulatory circuits that control cellular processes across different biological contexts, from development to disease states [41]. The integration of perturbation screens with single-cell genomics and other multidimensional readouts has transformed our capacity to map regulatory networks with cellular precision, advancing both basic science and therapeutic discovery.

Comparative Analysis of Screening Modalities

The landscape of high-throughput perturbation screens has diversified significantly with the development of various CRISPR-based systems, each offering distinct advantages and limitations for specific research applications in regulatory circuit mapping.

Table 1: Comparison of Major Perturbation Screening Modalities

| Screening Modality | Mechanism of Action | Primary Applications | Key Advantages | Technical Limitations |
|---|---|---|---|---|
| CRISPR Knockout | Cas9 nuclease induces double-strand breaks causing frameshift indels [41] | Identification of essential genes; resistance/sensitivity screens [41] | Complete, permanent gene disruption; high efficiency | Limited to protein-coding genes; DNA break toxicity [41] |
| CRISPR Interference (CRISPRi) | dCas9-KRAB fusion protein mediates transcriptional repression [41] | lncRNA functional studies; enhancer mapping; essential gene screening [41] | Reversible knock-down; minimal off-target effects; targets non-coding regions [41] | Partial suppression only; variable efficiency across genomic contexts |
| CRISPR Activation (CRISPRa) | dCas9 fused to transcriptional activators (VP64, VPR, SAM) [41] | Gain-of-function studies; suppressor screens; gene dosage effects | Controlled overexpression; identifies synthetic rescue interactions | Potential for non-physiological expression levels |
| Base Editing | Cas9 nickase fused to deaminase enzymes enables precise nucleotide conversion [41] | Functional analysis of single-nucleotide variants; disease modeling [41] | Single-base resolution; no double-strand breaks; models patient mutations | Restricted editing windows; limited to specific nucleotide transitions [41] |
| Prime Editing | Cas9-reverse transcriptase fusions enable small insertions, deletions, and all base-to-base conversions [41] | Saturation mutagenesis; pathological variant modeling [41] | Versatile editing outcomes; no double-strand breaks | Lower efficiency compared to other methods; complex gRNA design |

The selection of an appropriate screening modality depends heavily on the biological question and regulatory circuit under investigation. For comprehensive mapping of genetic interactions within a pathway, complementary screening approaches (e.g., CRISPR knockout and CRISPRa) provide orthogonal validation and enhance confidence in candidate genes [41]. For instance, while knockout screens effectively identify essential genes, they may miss genes whose partial inhibition produces phenotypic effects—a gap effectively addressed by CRISPRi screens. Similarly, base editing and prime editing screens enable functional assessment of disease-associated variants at nucleotide resolution, bridging the gap between human genetics and functional mechanism [41].

Experimental Frameworks for Perturbation Screening

Core Workflow for Pooled CRISPR Screens

The fundamental workflow for pooled CRISPR screens has been standardized through extensive community adoption and refinement, encompassing key stages from library design to hit validation [41].

Diagram 1: Pooled CRISPR screen workflow

Library design is the critical first step and involves computational selection of guide RNAs (gRNAs) targeting the genes of interest. For genome-wide screens, current libraries typically include 3-10 gRNAs per gene to ensure statistical robustness and mitigate off-target effects [41]. These gRNA collections are synthesized as chemically modified oligonucleotide pools and cloned into lentiviral or other viral vectors for efficient delivery. The resulting viral library is transduced into Cas9-expressing cells at a low multiplicity of infection (MOI ≈ 0.3) so that most transduced cells receive a single gRNA, enabling clear genotype-to-phenotype associations [41].
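
The rationale for a low MOI can be illustrated with a short calculation. The sketch below assumes that the number of viral integrations per cell follows a Poisson distribution with mean equal to the MOI, which is a common simplifying assumption rather than something stated in the source; the function names are illustrative.

```python
import math


def poisson_pmf(k, moi):
    """Probability that a cell receives exactly k integrations (Poisson model)."""
    return math.exp(-moi) * moi**k / math.factorial(k)


def single_guide_fraction(moi):
    """Fraction of transduced cells (>= 1 integration) carrying exactly one gRNA."""
    p0 = poisson_pmf(0, moi)
    p1 = poisson_pmf(1, moi)
    return p1 / (1.0 - p0)


for moi in (0.1, 0.3, 1.0):
    transduced = 1.0 - poisson_pmf(0, moi)
    print(
        f"MOI {moi}: {transduced:.1%} of cells transduced, "
        f"{single_guide_fraction(moi):.1%} of those carry a single gRNA"
    )
```

Under this model, an MOI of 0.3 leaves roughly three quarters of cells untransduced, but about 86% of the transduced cells carry exactly one gRNA; raising the MOI increases transduction at the cost of more multiply-infected cells.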

Following transduction, cells undergo phenotypic selection relevant to the biological question—this may include drug treatment for resistance/sensitivity screens, fluorescence-activated cell sorting (FACS) for marker expression, or simple viability monitoring for essential gene identification [41]. After selection, genomic DNA is extracted, gRNAs are amplified via PCR, and their abundance is quantified by next-generation sequencing. Computational analysis using specialized tools (e.g., MAGeCK, CERES) identifies gRNAs significantly enriched or depleted under selection, linking specific genetic perturbations to phenotypic outcomes [41]. Candidate hits then proceed to validation phases employing individual gene knockouts, mechanistic studies, and assessment of therapeutic potential.
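
The quantification step can be sketched as a depth-normalized log2 fold change per gRNA, summarized per gene by the median. This is a deliberately simplified illustration and not the statistical model used by MAGeCK or CERES; the function names, the pseudocount, and the toy counts are assumptions.

```python
import math
from statistics import median


def log2_fold_changes(before, after, pseudocount=1.0):
    """Per-gRNA log2 fold change after scaling each sample to counts per million."""
    total_before = sum(before.values())
    total_after = sum(after.values())
    lfc = {}
    for guide, count_before in before.items():
        cpm_before = count_before / total_before * 1e6 + pseudocount
        cpm_after = after.get(guide, 0) / total_after * 1e6 + pseudocount
        lfc[guide] = math.log2(cpm_after / cpm_before)
    return lfc


def gene_scores(lfc, guide_to_gene):
    """Summarize gRNA-level fold changes as a per-gene median."""
    per_gene = {}
    for guide, value in lfc.items():
        per_gene.setdefault(guide_to_gene[guide], []).append(value)
    return {gene: median(values) for gene, values in per_gene.items()}


# Hypothetical read counts before and after selection
before = {"gA_1": 500, "gA_2": 500, "gB_1": 500, "gB_2": 500}
after = {"gA_1": 50, "gA_2": 100, "gB_1": 900, "gB_2": 950}
guide_to_gene = {"gA_1": "GENE_A", "gA_2": "GENE_A", "gB_1": "GENE_B", "gB_2": "GENE_B"}

scores = gene_scores(log2_fold_changes(before, after), guide_to_gene)
for gene, score in sorted(scores.items()):
    print(f"{gene}: median log2FC = {score:.2f}")
```

In this toy data set the gRNAs targeting `GENE_A` are depleted (negative score) and those targeting `GENE_B` are enriched (positive score); dedicated tools add variance modeling, multiple-testing correction, and, in the case of CERES, copy-number correction.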

Advanced Screening Readouts and Applications

Traditional CRISPR screens relied primarily on cell viability or surface marker expression as phenotypic readouts, substantially limiting the complexity of addressable biological questions. Recent technological advances have dramatically expanded the phenotypic landscape measurable in perturbation screens.

Single-cell RNA sequencing coupled with CRISPR screening (Perturb-seq) represents a particularly powerful approach for regulatory circuit mapping [41]. This method enables comprehensive transcriptomic characterization of individual cells following genetic perturbation, revealing not just primary phenotypic effects but entire gene regulatory networks downstream of targeted genes. The resulting data provide unprecedented resolution of how individual perturbations rewire transcriptional programs across diverse cell states and types [41].

Spatial functional genomics extends this paradigm by preserving tissue architecture context during perturbation screening. Emerging approaches combine in situ CRISPR perturbations with spatial transcriptomics or multiplexed protein imaging, enabling direct investigation of how genetic perturbations affect cellular organization, cell-cell communication, and niche-dependent functions [22]. These methods are particularly valuable for studying complex tissues like the brain, where spatial positioning fundamentally influences cellular function in health and disease [22].