Comparative Analysis of Gene Regulatory Networks: From Sequence-Based Prediction to Expression-Driven Inference

Abstract

This article provides a comprehensive comparative analysis of modern computational approaches for constructing Gene Regulatory Networks (GRNs), bridging sequence-based deep learning with expression-based network inference. Tailored for researchers and drug development professionals, it explores foundational concepts in GRN modeling, evaluates cutting-edge methodologies including Graph Neural Networks (GNNs) and transformer architectures, addresses key troubleshooting and optimization challenges in single-cell data analysis, and establishes rigorous validation frameworks. By synthesizing insights from recent benchmark studies and community challenges, this review serves as a strategic guide for selecting appropriate GRN inference methods based on data availability and research objectives, ultimately accelerating discovery in functional genomics and therapeutic development.

Decoding Gene Regulatory Networks: From DNA Sequence to Expression Patterns

Fundamental Principles of Gene Regulation and Network Biology

Gene Regulatory Networks (GRNs) are foundational to systems biology, offering a contextual model of the intricate interactions between genes that control development, cell identity, and disease pathology [1] [2]. The inference of these networks from high-throughput data, particularly single-cell RNA sequencing (scRNA-seq), has become a central challenge in functional genomics. Single-cell technologies provide unprecedented resolution to analyze cellular diversity, but they also introduce specific challenges such as data sparsity, cellular heterogeneity, and technical noise like "dropout" events, in which expressed transcripts go undetected [1] [3] [4]. This comparative guide examines the current landscape of GRN inference methodologies, evaluating their performance, underlying assumptions, and applicability to different biological questions. We focus on objective performance comparisons across a range of algorithms, from co-expression networks and message-passing approaches to modern machine learning and hybrid methods, providing researchers with a framework for selecting appropriate tools based on experimental design and analytical goals.

Methodological Approaches and Comparative Performance

Gene-Gene Co-expression Network Analysis

Gene-gene co-expression network analysis has been widely applied to both bulk and single-cell RNA sequencing data to investigate phenotypic variation. A comprehensive study comparing co-expression network approaches for analyzing cell differentiation on scRNA-seq data revealed that the choice of network analysis strategy has a more substantial impact on biological interpretation than the specific network model itself [5] [6]. Key findings include:

- Combined time point modeling demonstrates greater stability compared to single time point modeling when analyzing dynamic processes like cell differentiation [5].

- Differential gene expression-based methods most effectively model cell differentiation processes [5].

- The largest differences in biological interpretation emerge between node-based and community-based network analysis methods, representing fundamentally different analytical philosophies [5].
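
As a rough illustration of how a co-expression network of the kind compared above can be built, the sketch below thresholds absolute Pearson correlations between genes. The function name `coexpression_edges`, the 0.8 cutoff, and the toy matrix are assumptions made for illustration; they are not the settings of the cited study [5].

```python
import numpy as np

def coexpression_edges(expr, gene_names, threshold=0.8):
    """Return undirected edges (gene_a, gene_b, r) with |Pearson r| >= threshold.

    expr: array of shape (n_cells, n_genes).
    threshold: illustrative cutoff, not a recommended value.
    """
    corr = np.corrcoef(expr, rowvar=False)  # gene-by-gene correlation matrix
    edges = []
    n_genes = len(gene_names)
    for i in range(n_genes):
        for j in range(i + 1, n_genes):
            if abs(corr[i, j]) >= threshold:
                edges.append((gene_names[i], gene_names[j], float(corr[i, j])))
    return edges

# Toy data: geneB tracks geneA closely, geneC is independent noise.
rng = np.random.default_rng(0)
a = rng.normal(size=200)
expr = np.column_stack([a, a + 0.1 * rng.normal(size=200), rng.normal(size=200)])
edges = coexpression_edges(expr, ["geneA", "geneB", "geneC"])
```

Under this sketch, combined time point modeling would amount to stacking the cells from all time points into one `expr` matrix before calling the function, whereas single time point modeling would call it once per time point.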

Table 1: Comparison of Gene-Gene Co-expression Network Approaches

| Method Category | Stability | Differentiation Modeling | Key Strengths |
|---|---|---|---|
| Single Time Point Modeling | Lower | Variable | Context-specific snapshots |
| Combined Time Point Modeling | Higher | Good | Captures dynamic processes |
| Node-based Analysis | N/A | N/A | Focus on individual gene properties |
| Community-based Analysis | N/A | N/A | Identifies functional modules |

Message-Passing and Multi-Omic Integration Approaches

SCORPION (Single-Cell Oriented Reconstruction of PANDA Individually Optimized gene regulatory Networks) represents a distinct class of algorithms that use message-passing to integrate multiple data sources [4]. This approach addresses data sparsity through coarse-graining: similar cells are collapsed into "SuperCells" or "MetaCells", which makes the underlying correlation structure easier to detect. The methodology integrates three network types:

- Co-regulatory network: Built from correlation analyses of coarse-grained transcriptomic data

- Cooperativity network: Derived from protein-protein interaction data (e.g., from STRING database)

- Regulatory network: Based on transcription factor footprint motifs in promoter regions

In systematic benchmarking using BEELINE, SCORPION outperformed 12 other GRN reconstruction techniques, generating networks that were 18.75% more precise and sensitive than competing methods [4]. The algorithm consistently ranked first across seven evaluation metrics, demonstrating its robustness for transcriptome-wide network inference.
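
The coarse-graining idea can be pictured as averaging expression over groups of similar cells. The sketch below is a minimal illustration, assuming group labels already exist (for example from a prior clustering step that is not shown); `coarse_grain` is a hypothetical helper and not part of the SCORPION package.

```python
import numpy as np

def coarse_grain(expr, labels):
    """Average expression over cells that share a group label.

    expr: (n_cells, n_genes) array.
    labels: length n_cells group assignment (assumed to be given).
    Returns the sorted unique labels and an (n_groups, n_genes) mean matrix.
    """
    labels = np.asarray(labels)
    groups = np.unique(labels)
    pooled = np.vstack([expr[labels == g].mean(axis=0) for g in groups])
    return groups, pooled

# Four sparse cells collapsed into two pooled profiles.
expr = np.array([[0, 2], [2, 0], [0, 0], [4, 0]], dtype=float)
groups, pooled = coarse_grain(expr, [0, 0, 1, 1])
sparsity_before = float((expr == 0).mean())   # fraction of zeros per cell matrix
sparsity_after = float((pooled == 0).mean())  # fraction of zeros after pooling
```

In this toy case the fraction of zero entries falls after pooling, which is the effect that makes correlations between genes easier to estimate.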

Machine Learning and Deep Learning Frameworks

Machine learning approaches, particularly hybrid models combining convolutional neural networks (CNNs) with traditional machine learning, have shown remarkable performance in GRN construction. Studies integrating prior knowledge and large-scale transcriptomic data from Arabidopsis thaliana, poplar, and maize have demonstrated that:

- Hybrid models combining CNNs and machine learning consistently outperform traditional machine learning and statistical methods, achieving over 95% accuracy on holdout test datasets [7].

- These models identify more known transcription factors regulating biological pathways and demonstrate higher precision in ranking key master regulators [7].

- Transfer learning enables effective cross-species GRN inference by applying models trained on data-rich species (e.g., Arabidopsis) to species with limited data (e.g., poplar, maize) [7].

Table 2: Performance Comparison of GRN Inference Methods

| Method Type | Representative Tools | Accuracy Range | Data Requirements | Strengths |
|---|---|---|---|---|
| Co-expression Networks | PIDC, PPCOR | Variable | scRNA-seq | Captures correlation structures |
| Message-Passing | SCORPION, PANDA | High (Precision/Recall +18.75%) | Multi-omic preferred | Integrates multiple prior knowledge sources |
| Hybrid ML/DL | CNN-ML Hybrids | >95% | Large transcriptomic datasets | Captures nonlinear relationships |
| Autoencoder-based | DAZZLE, DeepSEM | High on benchmarks | scRNA-seq | Handles zero-inflation effectively |

Addressing Technical Challenges in Single-Cell Data

The prevalence of "dropout" events in scRNA-seq data (57-92% zero values across datasets) presents a major challenge for GRN inference [1] [3]. Unlike imputation methods that attempt to replace missing values, Dropout Augmentation (DA) takes a novel regularization approach by intentionally adding synthetic dropout noise during training [1] [3]. The DAZZLE model implements this approach within an autoencoder-based structural equation model framework, demonstrating:

- Improved model stability and robustness compared to DeepSEM

- Reduced parameter count (21.7% reduction) and faster computation (50.8% reduction in running time)

- Enhanced performance on real-world single-cell data with minimal gene filtration

Additional innovations in DAZZLE include delayed introduction of sparse loss terms, closed-form Normal distribution priors, and a noise classifier to predict augmented dropout values [1].
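
To make the dropout augmentation idea concrete, the following sketch zeroes a random fraction of entries in an expression matrix and returns a mask of the augmented positions. It is a simplified illustration, not DAZZLE's implementation; the function name `dropout_augment` and the 10% rate are assumptions.

```python
import numpy as np

def dropout_augment(expr, rate=0.1, rng=None):
    """Set a random fraction of entries to zero to mimic additional dropout.

    Returns the augmented matrix and a boolean mask marking the entries that
    were zeroed by augmentation (the kind of label a noise classifier could
    be trained to predict). The rate is an illustrative value.
    """
    if rng is None:
        rng = np.random.default_rng()
    mask = rng.random(expr.shape) < rate
    augmented = np.where(mask, 0.0, expr)
    return augmented, mask

# Toy matrix of strictly positive values so augmented zeros are easy to spot.
rng = np.random.default_rng(0)
expr = rng.random((100, 50)) + 1.0
augmented, mask = dropout_augment(expr, rate=0.1, rng=rng)
```

In a training loop, a fresh mask would typically be drawn at every iteration so the model never sees the same synthetic zeros twice.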

Experimental Protocols and Benchmarking Frameworks

Standardized Evaluation Using BEELINE

The BEELINE framework provides systematic evaluation of GRN inference algorithms using synthetic and curated real datasets with known ground truth interactions [4]. Standard protocols include:

- Network Construction: Algorithms process expression matrices without additional information

- Precision-Recall Analysis: Comparison of inferred networks against established ground truth interactions

- Multi-Metric Assessment: Evaluation across seven complementary metrics including precision, recall, and F1-score

In these standardized assessments, methods like SCORPION have demonstrated superior performance, though simpler methods like PPCOR and PIDC can show competitive results for specific network sizes and structures [4].
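
The precision, recall, and F1 comparison against a ground truth network can be sketched as follows. The edge lists are toy data and `edge_metrics` is a hypothetical helper, not a BEELINE function.

```python
def edge_metrics(predicted, truth):
    """Precision, recall and F1 of predicted directed edges against a reference set."""
    predicted, truth = set(predicted), set(truth)
    true_positives = len(predicted & truth)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Edges are (regulator, target) pairs; names are made up for the example.
truth = {("TF1", "G1"), ("TF1", "G2"), ("TF2", "G3")}
predicted = [("TF1", "G1"), ("TF2", "G3"), ("TF2", "G1"), ("TF3", "G2")]
precision, recall, f1 = edge_metrics(predicted, truth)
```

Here two of the four predicted edges are correct and two of the three true edges are recovered, giving a precision of 0.5 and a recall of about 0.67.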

Expression Forecasting Benchmarking with PEREGGRN

The PEREGGRN (PErturbation Response Evaluation via a Grammar of Gene Regulatory Networks) platform provides a specialized benchmarking framework for expression forecasting methods [8]. Key experimental protocols include:

- Nonstandard Data Splitting: No perturbation condition appears in both training and test sets

- Handling of Directly Targeted Genes: Omission of samples where a gene is directly perturbed when training models to predict that gene's expression

- Multi-Metric Evaluation: Assessment using mean absolute error, mean squared error, Spearman correlation, direction-of-change accuracy, and cell type classification accuracy

This framework has revealed that expression forecasting methods frequently struggle to outperform simple baselines when predicting responses to novel genetic perturbations [8].
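
The data splitting rule, in which no perturbation condition appears in both training and test sets, can be sketched by splitting at the level of conditions rather than samples. This is an illustrative helper under simplifying assumptions (control samples are not treated specially here), not the PEREGGRN code.

```python
import random

def split_by_perturbation(sample_perturbations, test_fraction=0.25, seed=0):
    """Split sample indices so that each perturbation lands in exactly one set.

    sample_perturbations: one perturbation label per sample.
    test_fraction: share of distinct perturbations held out (illustrative).
    """
    conditions = sorted(set(sample_perturbations))
    rng = random.Random(seed)
    rng.shuffle(conditions)
    n_test = max(1, round(len(conditions) * test_fraction))
    test_conditions = set(conditions[:n_test])
    train_idx = [i for i, p in enumerate(sample_perturbations) if p not in test_conditions]
    test_idx = [i for i, p in enumerate(sample_perturbations) if p in test_conditions]
    return train_idx, test_idx

# Toy labels: several samples share the same perturbed gene.
samples = ["GATA1", "GATA1", "SPI1", "TP53", "TP53", "MYC", "KLF4", "KLF4"]
train_idx, test_idx = split_by_perturbation(samples)
train_conditions = {samples[i] for i in train_idx}
test_conditions = {samples[i] for i in test_idx}
```

Splitting by condition is what makes the benchmark a test of generalization to unseen perturbations; a random split over samples would leak replicates of the same perturbation into both sets.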

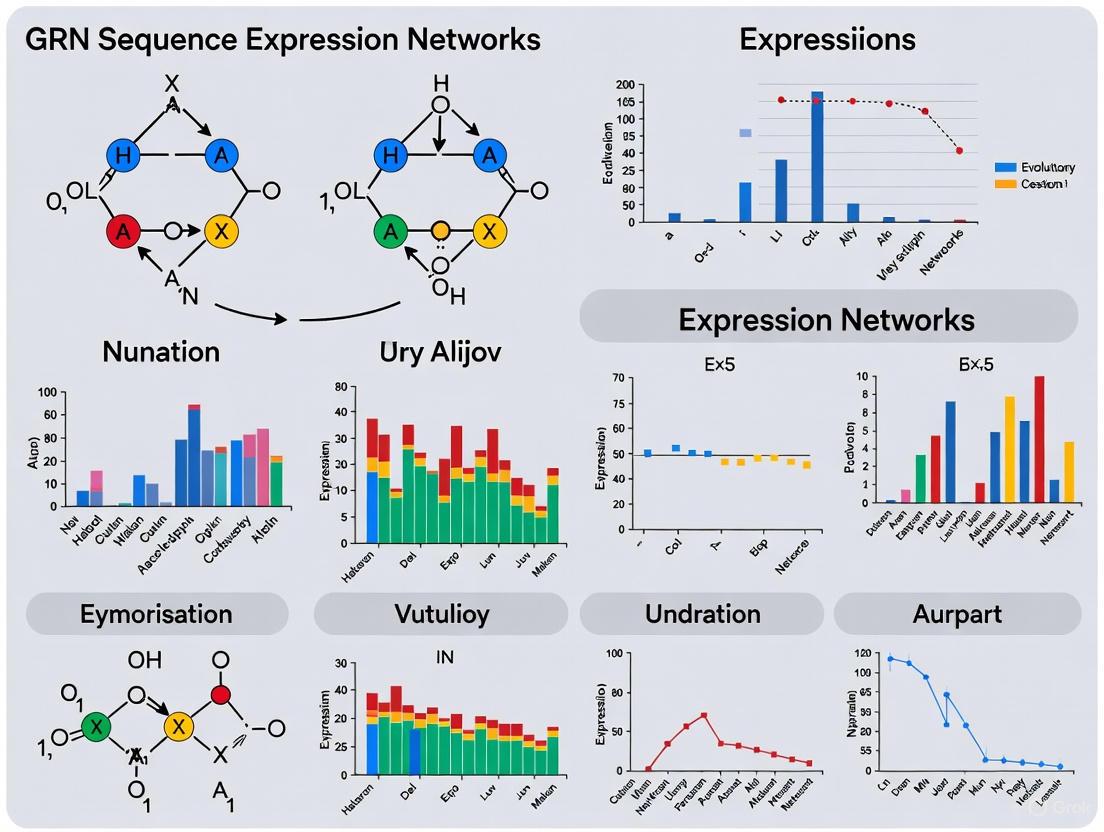

Diagram Title: GRN Inference Experimental Workflow

Advanced Network Analysis and Comparison Techniques

Role-Based Network Embedding for Comparative Analysis

Gene2role introduces a novel approach to GRN comparison by applying role-based graph embedding to signed regulatory networks [9]. This method enables:

- Multi-hop topological analysis: Capturing structural information beyond direct connections through 1-hop and 2-hop neighborhoods

- Cross-network comparability: Projecting genes from separate networks into closely positioned embedding spaces using structural similarity

- Differentially Topological Gene identification: Detecting genes with significant structural changes across cell types or states

The framework uses signed-degree vectors (d = [d⁺, d⁻]) to represent each gene's positive and negative regulatory relationships, with Exponential Biased Euclidean Distance (EBED) accounting for the scale-free nature of GRNs [9].
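
A signed-degree vector of the form d = [d⁺, d⁻] can be computed directly from a signed adjacency matrix. The sketch below assumes that edges are counted regardless of direction, which is a simplification chosen for illustration; the EBED distance is not reproduced because its formula is not given here.

```python
import numpy as np

def signed_degrees(adj):
    """Return an (n_genes, 2) array of [d_plus, d_minus] per gene.

    adj[i, j] is +1 (activation), -1 (repression) or 0 for an edge i -> j.
    Assumption: an edge counts toward both of its endpoints, ignoring direction.
    """
    adj = np.asarray(adj)
    positive = (adj > 0) | (adj.T > 0)
    negative = (adj < 0) | (adj.T < 0)
    return np.column_stack([positive.sum(axis=1), negative.sum(axis=1)])

# Toy network: gene0 activates gene1, gene0 represses gene2, gene1 activates gene2.
adj = np.array([[0, 1, -1],
                [0, 0, 1],
                [0, 0, 0]])
d = signed_degrees(adj)
```

Genes with similar signed-degree vectors play structurally similar roles even when they sit in different networks, which is the intuition behind comparing embeddings across cell types.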

Structural Properties and Perturbation Analysis

Understanding GRN architecture provides critical insights into their functional properties and perturbation responses. Key structural characteristics include:

- Sparsity: Most genes are directly regulated by few transcription factors (41% of transcript-targeting perturbations significantly affect other genes) [2]

- Hierarchical Organization: Directional relationships with pervasive feedback loops (3.1% of ordered gene pairs show perturbation effects) [2]

- Scale-free Topology: Power-law distribution of node in- and out-degrees [2]

- Modularity: Group-like structure with enrichment for specific structural motifs [2]

Simulation frameworks that incorporate these properties demonstrate that network structure significantly influences perturbation effect distributions, with biological networks tending to dampen perturbation effects through their organizational principles [2].

Diagram Title: GRN Structural Properties and Modules

Research Reagent Solutions and Essential Materials

Table 3: Key Research Reagents and Computational Tools for GRN Studies

| Resource Type | Specific Examples | Function/Purpose | Application Context |
|---|---|---|---|
| Single-Cell Platforms | 10X Genomics Chromium, inDrops | Generate scRNA-seq data | Data generation for network inference |
| Prior Knowledge Databases | STRING (protein interactions), Motif databases (GimmeMotifs) | Provide regulatory priors | Message-passing algorithms (SCORPION, PANDA) |
| Benchmarking Frameworks | BEELINE, PEREGGRN | Standardized algorithm evaluation | Method validation and comparison |
| Perturbation Databases | CRISPR-based Perturb-seq datasets | Ground truth for causal inference | Expression forecasting validation |
| Software Tools | SCORPION, DAZZLE, Gene2role, GENIE3, GRNBoost2 | Implement specific inference algorithms | Network construction from expression data |
| Visualization & Analysis | Graph embedding tools, Network visualization software | Interpret and explore inferred networks | Downstream analysis and hypothesis generation |

The comparative analysis of GRN inference methods reveals that methodological performance is highly context-dependent, with different approaches excelling in specific biological and computational scenarios. Co-expression networks provide valuable insights when analyzing dynamic processes across time points, while message-passing algorithms like SCORPION demonstrate superior performance when integrating multiple prior knowledge sources. Machine learning hybrids offer exceptional accuracy when sufficient training data exists, and innovative approaches like dropout augmentation address specific technical challenges in single-cell data.

For researchers embarking on GRN analysis, selection criteria should include data type and quality, availability of prior knowledge, biological question, and computational resources. No single method universally outperforms all others across all scenarios, emphasizing the importance of method selection aligned with specific research objectives. As the field advances, improved benchmarking frameworks, standardized evaluation metrics, and more biologically realistic simulation models will further enhance our ability to reconstruct accurate gene regulatory networks and elucidate the fundamental principles governing gene regulation and network biology.

The quantitative understanding of cis-regulation represents a major challenge in genomics, requiring sophisticated models that can decipher the complex language encoded in DNA sequences [10]. For decades, genetic analysis focused predominantly on open reading frames (ORFs) and their protein-coding potential. However, the regulatory genome, once dismissed as "junk" DNA, is now recognized as containing critical instructions that govern gene expression through an intricate system of promoters, enhancers, and transcription factor binding sites [11]. Sequence-based paradigms have evolved from simply identifying coding regions to modeling the complex regulatory code that controls when, where, and to what extent genes are expressed.

This evolution has been driven by technological advances in high-throughput sequencing and computational methods. Where initial approaches could only analyze individual regulatory elements, modern frameworks now model entire gene regulatory networks (GRNs) from sequence data [12]. The emergence of neural networks in genomics has mirrored progress in computer vision and natural language processing, though the field has historically lacked standardized benchmarks for proper comparison [10]. The recent development of gold-standard datasets and community challenges has finally enabled rigorous evaluation of how model architectures and training strategies impact performance on genomics tasks [10] [13]. This guide provides a comparative analysis of current sequence-based approaches for modeling gene regulation, examining their experimental foundations, performance characteristics, and optimal applications for research and drug development.

Experimental Frameworks for Benchmarking Regulatory Models

Community-Driven Benchmarking: The DREAM Challenge

To address the lack of standardized evaluation in genomics modeling, the Random Promoter DREAM Challenge was organized as a community effort to optimize sequence-based deep learning models of gene regulation [10] [13]. This competition provided participants with a massive-scale experimental dataset containing 6,739,258 random promoter sequences of 80-bp length and their corresponding mean expression values measured in yeast through fluorescence-activated cell sorting (FACS) [10]. Competitors were tasked with designing sequence-to-expression models that could predict expression levels from regulatory DNA sequences alone, with strict restrictions against using external datasets or ensemble methods to ensure fair comparison of architectures [10].

The evaluation framework employed a comprehensive suite of 71,103 test sequences designed to probe different aspects of model performance [10]. These included not only random sequences and native yeast genomic sequences, but also strategically designed challenge sets:

- High-expression and low-expression extremes to test performance boundaries

- Single-nucleotide variants (SNVs) with the highest evaluation weight due to their relevance to complex trait genetics [10]

- Motif perturbation pairs differing in specific transcription factor binding sites

- Motif tiling pairs testing context-dependence of regulatory elements

- Challenging sequences designed to maximize disagreement between previous model types [10]

Performance was quantified using both Pearson's r² and Spearman's ρ, with weighted sums across test subsets producing final Pearson and Spearman scores [10]. This robust evaluation framework enabled direct comparison of diverse architectural approaches on identical training data and evaluation metrics.
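
The scoring scheme can be sketched as a weighted combination of per-subset metrics. The subset names, weights, and normalization by the total weight below are assumptions for illustration; the challenge's actual weights are not listed in this article.

```python
import numpy as np

def pearson_r2(y_true, y_pred):
    """Squared Pearson correlation between measured and predicted expression."""
    return float(np.corrcoef(y_true, y_pred)[0, 1] ** 2)

def spearman_rho(y_true, y_pred):
    """Spearman correlation via rank transform (assumes no tied values)."""
    def rank(values):
        return np.argsort(np.argsort(values))
    return float(np.corrcoef(rank(y_true), rank(y_pred))[0, 1])

def weighted_score(subsets, weights, metric):
    """Weighted sum of a per-subset metric, normalized by the total weight."""
    total = sum(weights[name] for name in subsets)
    return sum(weights[name] * metric(*subsets[name]) for name in subsets) / total

# Hypothetical subsets: (measured, predicted) expression per test category.
subsets = {
    "snv": (np.array([1.0, 2.0, 3.0, 4.0]), np.array([2.0, 4.0, 6.0, 8.0])),
    "random": (np.array([1.0, 2.0, 3.0, 4.0]), np.array([1.0, 3.0, 2.0, 4.0])),
}
weights = {"snv": 3.0, "random": 1.0}  # illustrative: SNVs weighted highest
pearson_score = weighted_score(subsets, weights, pearson_r2)
spearman_score = weighted_score(subsets, weights, spearman_rho)
```

Because the SNV subset carries the largest weight, a model that ranks variant effects well gains more than one that only fits random sequences.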

Single-Cell RNA Sequencing Validation Protocols

For gene regulatory network inference, benchmark experiments typically employ single-cell RNA sequencing (scRNA-seq) data from both human and mouse cell lines [12] [1]. Standard evaluation datasets include human embryonic stem cells (hESC), human hepatocytes (hHep), mouse dendritic cells (mDC), mouse embryonic stem cells (mESC), and mouse hematopoietic stem cell lineages (mHSC-E, mHSC-L, mHSC-GM) [12].

The evaluation process involves several standardized steps:

- Data Preprocessing: Gene count matrices are filtered to retain only highly variable genes, and counts are normalized using scran pooling-based normalization [12]

- Ground Truth Definition: Experimentally validated regulatory interactions from resources like REGNetwork and TRRUST serve as reference networks [12]

- Performance Metrics: Models are evaluated using Area Under the Precision-Recall Curve (AUPR) and Area Under the Receiver Operating Characteristic Curve (AUROC) against ground truth networks [12]

- Ablation Studies: Systematic removal of model components tests the contribution of each architectural element [12]

This protocol ensures consistent comparison across GRN inference methods while accounting for the sparse, high-dimensional nature of single-cell data.
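
AUPR and AUROC both score a ranked list of candidate edges against binary ground truth labels. The sketch below computes a step-wise AUPR (average precision) and a pairwise AUROC with NumPy only; in practice a library such as scikit-learn would normally be used, and the scores here are toy values.

```python
import numpy as np

def average_precision(scores, labels):
    """Step-wise area under the precision-recall curve (average precision)."""
    order = np.argsort(-np.asarray(scores, dtype=float), kind="stable")
    ranked = np.asarray(labels)[order]
    hits = np.cumsum(ranked)
    precision_at_k = hits / np.arange(1, len(ranked) + 1)
    return float((precision_at_k * ranked).sum() / ranked.sum())

def auroc(scores, labels):
    """Probability that a random true edge outscores a random false edge."""
    scores = np.asarray(scores, dtype=float)
    is_true = np.asarray(labels).astype(bool)
    pos, neg = scores[is_true], scores[~is_true]
    wins = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((wins + 0.5 * ties) / (len(pos) * len(neg)))

# Five candidate edges with confidence scores; 1 marks an edge in the reference network.
scores = [0.9, 0.8, 0.7, 0.6, 0.5]
labels = [1, 0, 1, 0, 0]
aupr = average_precision(scores, labels)
roc = auroc(scores, labels)
```

Since true edges are rare in real reference networks, AUPR is usually the more informative of the two: AUROC can look high even when most top-ranked predictions are false.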

Table 1: Standardized Evaluation Datasets for GRN Inference

| Dataset | Species | Cell Type | Key Features | Primary Application |
|---|---|---|---|---|
| hESC [12] | Human | Embryonic stem cells | Pluripotency regulation | Differentiation studies |
| hHep [12] | Human | Hepatocytes | Metabolic function | Disease modeling |
| mESC [12] | Mouse | Embryonic stem cells | Developmental potential | Stem cell biology |
| mDC [12] | Mouse | Dendritic cells | Immune response | Immunogenomics |
| mHSC lineages [12] | Mouse | Hematopoietic stem cells | Lineage commitment | Cellular differentiation |

Comparative Analysis of Model Architectures and Performance

Sequence-to-Expression Models

The DREAM Challenge revealed significant differences in how model architectures perform on sequence-based expression prediction tasks. While all top-performing submissions used neural networks, they diverged substantially in their architectural choices and training strategies [10].

The top-performing solution, developed by team Autosome.org, adapted the EfficientNetV2 architecture from computer vision and transformed the regression task into a soft-classification problem by predicting expression bin probabilities [10]. This approach effectively mirrored the experimental data generation process. Notably, this model achieved state-of-the-art performance with only 2 million parameters—the smallest among top submissions—demonstrating that efficient design can outperform larger parameter-heavy models [10].
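
The soft-classification idea can be illustrated by turning per-bin probabilities back into a single expression estimate. Taking the probability-weighted mean of the bin indices, as below, is one common choice and is an assumption here, as are the four-bin toy example and the function names.

```python
import numpy as np

def softmax(logits):
    """Row-wise softmax turning raw model outputs into bin probabilities."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def expected_expression(bin_probs, bin_values=None):
    """Collapse per-bin probabilities into one expression value per sequence.

    bin_values defaults to the bin indices 0..n_bins-1 (an assumption).
    """
    bin_probs = np.asarray(bin_probs, dtype=float)
    if bin_values is None:
        bin_values = np.arange(bin_probs.shape[1])
    return bin_probs @ bin_values

# Two sequences, four hypothetical expression bins.
probs = np.array([[0.0, 0.5, 0.5, 0.0],
                  [1.0, 0.0, 0.0, 0.0]])
estimates = expected_expression(probs)
row_sums = softmax(np.array([[2.0, 1.0, 0.0, -1.0]])).sum(axis=1)
```

Predicting a distribution over bins rather than a single number lets the training target resemble how sorted cells are distributed across expression bins in the experiment.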

Other leading approaches included:

- Fully Convolutional Networks: Teams achieving 4th and 5th places used ResNet-based architectures [10]

- Transformer Models: One transformer architecture placed 3rd, incorporating random masking of 5% of input sequences and joint prediction of masked nucleotides and gene expression [10]

- Bidirectional LSTM: The 2nd-place solution employed recurrent networks with bidirectional long short-term memory layers [10]

- Augmented Encoding: Some teams extended traditional one-hot encoding with additional channels indicating sequence measurement conditions and orientation [10]

The modular Prix Fixe framework, developed to dissect architectural contributions, revealed that hybrid approaches combining successful elements from different models could further improve performance beyond individual submissions [10].

Table 2: Performance Comparison of Sequence-Based Model Architectures

| Model Architecture | Key Features | Training Innovations | Performance Highlights |
|---|---|---|---|
| EfficientNetV2 [10] | Soft-classification output, minimal parameters (2M) | Expression bin probability prediction, augmented encoding | 1st place DREAM Challenge, most parameter-efficient |
| Bidirectional LSTM [10] | Recurrent structure for sequence dependencies | Standard Adam/AdamW optimization | 2nd place DREAM Challenge |
| Transformer [10] | Attention mechanisms, contextual sequence processing | Masked nucleotide prediction as regularizer | 3rd place DREAM Challenge, stabilized training |
| ResNet-based CNN [10] | Fully convolutional, residual connections | Traditional one-hot encoding | 4th and 5th place DREAM Challenge |
| Scover [14] | Single convolutional layer, interpretable filters | k-nearest neighbor pooling for scRNA-seq sparsity | Explains 29% of expression variance in mouse tissues |

Gene Regulatory Network Inference Models

Beyond sequence-to-expression prediction, significant architectural innovation has occurred in GRN inference from gene expression data. Current methods can be broadly categorized into statistical, machine learning, and deep learning approaches, each with distinct strengths and limitations.

The DuCGRN framework represents an advanced graph neural network approach that employs K-hop aggregation to capture both direct and indirect regulatory relationships, along with multiscale feature extraction to model diverse regulatory mechanisms [12]. This dual context-aware model explicitly addresses the challenges of feedback loops and combinatorial regulation that simpler models struggle to capture [12].
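
K-hop aggregation in general can be sketched as repeatedly averaging features over graph neighbors and keeping the result of every hop. The code below is a generic illustration of that idea, not the DuCGRN architecture; the mean aggregator, the edge direction convention, and the function name are assumptions.

```python
import numpy as np

def k_hop_aggregate(adj, features, k=2):
    """Concatenate a node's own features with mean-aggregated features for hops 1..k.

    adj[i, j] = 1 means an edge i -> j; node i aggregates from the nodes it
    points to (a convention chosen for this sketch).
    """
    adj = np.asarray(adj, dtype=float)
    degree = adj.sum(axis=1, keepdims=True)
    # Row-normalize so each hop is an average; rows without edges stay zero.
    norm_adj = np.divide(adj, degree, out=np.zeros_like(adj), where=degree > 0)
    hops = [features]
    current = features
    for _ in range(k):
        current = norm_adj @ current
        hops.append(current)
    return np.concatenate(hops, axis=1)

# Chain gene0 -> gene1 -> gene2 with one scalar feature per gene.
adj = np.array([[0, 1, 0],
                [0, 0, 1],
                [0, 0, 0]])
features = np.array([[1.0], [2.0], [3.0]])
aggregated = k_hop_aggregate(adj, features, k=2)
```

In the toy chain, the third column of the first row carries the feature of gene2, which gene0 only reaches through gene1; this is how indirect relationships enter a gene's representation.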

DAZZLE introduces a different approach specifically designed to handle the zero-inflation (dropout) characteristic of single-cell RNA sequencing data [1]. Rather than imputing missing values, DAZZLE uses dropout augmentation as a regularization strategy, synthetically generating additional dropout events during training to improve model robustness [1]. This approach demonstrates how domain-specific data characteristics can drive architectural innovations.

GT-GRN leverages transformer architectures to integrate multiple information sources, including autoencoder-based embeddings, structural embeddings from previously inferred GRNs, and positional encodings capturing network topology [15]. This multi-network integration mitigates methodological bias by combining strengths across inference techniques [15].

Table 3: Comparative Analysis of GRN Inference Methods

| Method | Architecture | Data Input | Key Innovations | Reported Performance |
|---|---|---|---|---|
| DuCGRN [12] | Graph Neural Network | scRNA-seq | K-hop aggregation, multiscale feature extraction | Superior AUPR on 7 benchmark datasets |
| DAZZLE [1] | VAE with regularization | scRNA-seq | Dropout augmentation, noise classifier | Improved stability vs. DeepSEM, handles zero-inflation |
| GT-GRN [15] | Graph Transformer | Multi-network + expression | Multimodal embedding fusion, global attention | Enhanced cell-type-specific reconstruction |
| Scover [14] | Shallow CNN | scRNA-seq + sequence | De novo motif discovery, pool-based sparsity reduction | 29% variance explained in mouse tissues |
| DeepSEM [1] | Variational Autoencoder | scRNA-seq | Structural equation modeling, parameterized adjacency | Baseline performance on BEELINE benchmarks |

Cross-Species and Cross-Tissue Generalization

A critical test for sequence-based models is their ability to generalize across species and tissue contexts. The top DREAM Challenge models demonstrated remarkable transfer learning capabilities, consistently surpassing existing benchmarks not only on the yeast data they were trained on, but also on Drosophila and human genomic datasets [10]. This cross-species performance suggests that these models capture fundamental aspects of transcriptional regulation that transcend specific organisms.

In human contexts, Scover has been successfully applied to identify cell type-specific motif activities in both kidney and developing human brain datasets [14]. In the kidney, the model identified 16 reproducible motif families corresponding to known regulators, explaining 15% of gene expression variance in validation sets [14]. Application to human fetal and adult kidney scRNA-seq data further revealed distinct regulatory programs between nephron progenitors and nephron epithelium cells along developmental trajectories [14].

Experimental Protocols and Methodological Details

Massively Parallel Reporter Assays (MPRAs)

MPRAs represent a powerful experimental framework for characterizing sequence determinants of gene regulation at unprecedented scale [16]. These assays systematically test the transcriptional activity of DNA sequences that together span a sequence space roughly 100 times larger than the human genome [16]. The standard protocol involves:

Library Design:

- Cloning putative regulatory elements into reporter constructs

- Using STARR-seq designs where enhancers are cloned downstream of a minimal promoter

- Generating ultrahigh complexity libraries (billions of unique fragments) [16]

Transfection and Measurement:

- Delivering libraries to target cells (e.g., GP5d colon carcinoma, HepG2 hepatocellular carcinoma)

- Purifying total poly(A)+ RNA after appropriate incubation

- Recovering transcribed sequences via reverse-transcription PCR

- Quantifying sequence abundance by massively parallel sequencing [16]

Data Analysis:

- Calculating enhancer activities from RNA/DNA ratios

- Generating activity position weight matrices from single-base substitutions

- Comparing transcriptional activities with DNA-binding activities from complementary assays [16]

This protocol enables systematic characterization of how individual motifs, their combinations, spacing, and orientation contribute to regulatory activity, providing crucial training data for sequence-based models.
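
The RNA/DNA ratio step of the data analysis can be sketched as a log2 ratio of library-size-normalized counts. The optional pseudocount and the function name are assumptions for illustration; the cited protocol [16] may normalize differently.

```python
import numpy as np

def enhancer_activity(rna_counts, dna_counts, pseudocount=1.0):
    """log2 ratio of normalized RNA to normalized DNA counts per sequence.

    Counts are converted to fractions of their library so that sequencing
    depth cancels out; the pseudocount guards against division by zero.
    """
    rna = np.asarray(rna_counts, dtype=float) + pseudocount
    dna = np.asarray(dna_counts, dtype=float) + pseudocount
    rna_fraction = rna / rna.sum()
    dna_fraction = dna / dna.sum()
    return np.log2(rna_fraction / dna_fraction)

# Four library members present at equal DNA input but transcribed unequally.
rna = [40, 10, 10, 20]
dna = [10, 10, 10, 10]
activity = enhancer_activity(rna, dna, pseudocount=0.0)
```

Positive values indicate sequences that are over-represented in RNA relative to their DNA input, and negative values indicate the opposite.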

Model Training and Optimization Protocols

Training performant sequence-based models requires specialized protocols adapted to genomic data:

Data Preprocessing:

- DNA sequences are typically one-hot encoded into 4-channel representations

- Additional channels may encode sequence metadata (e.g., measurement conditions) [10]

- scRNA-seq data is transformed using log(x+1) to reduce variance while handling zeros [1]
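
The preprocessing steps above can be sketched in a few lines. The handling of ambiguous bases (left as all-zero rows) and the use of the natural logarithm are choices made for this illustration.

```python
import numpy as np

BASES = "ACGT"

def one_hot(seq):
    """Encode a DNA string as a (length, 4) array with channels A, C, G, T.

    Bases outside ACGT (for example N) are left as all-zero rows.
    """
    encoded = np.zeros((len(seq), 4), dtype=np.float32)
    for position, base in enumerate(seq.upper()):
        channel = BASES.find(base)
        if channel >= 0:
            encoded[position, channel] = 1.0
    return encoded

encoded = one_hot("ACGTN")

# log(x + 1) transform of a toy count matrix: zeros stay exactly zero.
counts = np.array([[0, 1, 9], [3, 0, 99]])
log_counts = np.log1p(counts)
```

Extra metadata channels, such as a flag for measurement conditions, would be appended as additional columns next to the four base channels.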

Regularization Strategies:

- Dropout augmentation: synthetic zero-inflation to improve robustness to scRNA-seq dropout [1]

- Masked nucleotide prediction: jointly predicting expression and randomly masked bases [10]

- Adversarial training: generating realistic expression patterns through discriminator networks [12]
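
Masked nucleotide prediction needs a masking step that hides some bases and remembers what they were. The sketch below masks 5% of positions in a one-hot sequence; reading the 5% figure as a fraction of positions, and zeroing the masked rows, are assumptions of this illustration rather than details confirmed by [10].

```python
import numpy as np

def mask_positions(one_hot_seq, mask_fraction=0.05, rng=None):
    """Zero out a random subset of positions in a (length, 4) one-hot sequence.

    Returns the masked copy, the masked indices, and the original base index
    at each masked position (the targets a model would try to reconstruct).
    """
    if rng is None:
        rng = np.random.default_rng()
    length = one_hot_seq.shape[0]
    n_mask = max(1, int(round(length * mask_fraction)))
    idx = np.sort(rng.choice(length, size=n_mask, replace=False))
    targets = one_hot_seq[idx].argmax(axis=1)
    masked = one_hot_seq.copy()
    masked[idx] = 0.0
    return masked, idx, targets

# Random 80-bp sequence in one-hot form (columns A, C, G, T).
rng = np.random.default_rng(0)
sequence = np.eye(4)[rng.integers(0, 4, size=80)]
masked, idx, targets = mask_positions(sequence, mask_fraction=0.05, rng=rng)
```

A model trained this way would receive `masked` as input and be penalized both for its expression error and for misclassifying the bases at `idx`, which acts as a regularizer.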

Architecture-Specific Optimization:

- Convolutional networks: using multiple filter widths to capture motifs of varying lengths [14]

- Graph networks: employing K-hop aggregation to propagate information across network neighbors [12]

- Transformer models: leveraging self-attention to capture long-range dependencies in sequences [15]

The Prix Fixe framework exemplifies a systematic approach to architecture optimization, decomposing models into modular components that can be mixed and matched to identify optimal configurations [10].

Visualization of Model Architectures and Workflows

Sequence-to-Expression Prediction Workflow

Diagram 1: Sequence-to-expression model workflow comparing architectural approaches. Top DREAM Challenge models diverged in fundamental architecture while converging on strong performance.

GRN Inference from Single-Cell Data

Diagram 2: GRN inference workflow highlighting method-specific approaches to handling single-cell data challenges like zero-inflation and network sparsity.

Table 4: Key Experimental Resources for Sequence-Based Regulatory Analysis

| Resource Category | Specific Tools/Datasets | Function and Application | Key Features |
|---|---|---|---|
| Benchmark Datasets | DREAM Challenge random promoters [10] | Training and evaluation of sequence-to-expression models | 6.7M random sequences with expression measurements |
| Benchmark Datasets | BEELINE scRNA-seq benchmarks [1] | Standardized GRN inference evaluation | Multiple cell types with reference networks |
| Software Frameworks | Prix Fixe [10] | Modular model architecture analysis | Component-wise testing and optimization |
| Software Frameworks | Scover [14] | De novo motif discovery from scRNA-seq | Interpretable CNN with motif influence scoring |
| Software Frameworks | DAZZLE [1] | GRN inference with dropout robustness | Augmentation-based regularization |
| Experimental Assays | MPRA/STARR-seq [16] | High-throughput regulatory activity measurement | Billions of sequences tested in parallel |
| Experimental Assays | scRNA-seq [12] | Single-cell expression profiling | Cellular resolution of transcriptional states |
| Experimental Assays | ATI assay [16] | Transcription factor binding activity | Complementary to transcriptional measurements |
| Reference Databases | CIS-BP [14] | Motif discovery and annotation | Curated transcription factor binding specificities |
| Reference Databases | REGNetwork/TRRUST [12] | Validated regulatory interactions | Ground truth for GRN inference evaluation |

The comparative analysis of sequence-based paradigms reveals several emerging trends in gene regulatory modeling. First, community-driven benchmarks have catalyzed rapid progress by enabling direct comparison of diverse architectural approaches [10]. Second, the best-performing models increasingly combine insights from multiple domains—incorporating elements from computer vision, natural language processing, and graph theory while addressing genomics-specific challenges like sequence sparsity and zero-inflation [10] [1]. Third, interpretability remains crucial, with leading methods providing not just predictions but also mechanistic insights through discovered motifs and influence scores [14].

The most impactful advances have come from models that successfully balance architectural sophistication with biological plausibility. The top DREAM Challenge performers approached the estimated inter-replicate experimental reproducibility for some sequence types, suggesting that models are approaching fundamental limits of predictability for certain regulatory tasks [10]. However, considerable improvement remains necessary for other sequence types, particularly in predicting the effects of non-coding variants and understanding complex regulatory grammars [10].

For researchers and drug development professionals, selecting appropriate sequence-based models requires careful consideration of experimental context and regulatory questions. Convolutional approaches excel at motif discovery and expression prediction from sequence alone [10] [14], while graph-based methods provide superior performance for network inference from expression data [12] [15]. As these paradigms continue to converge and evolve, they promise to unlock deeper understanding of regulatory mechanisms and their implications for human health and disease.

The emergence of single-cell RNA sequencing (scRNA-seq) has fundamentally transformed our ability to decipher Gene Regulatory Networks (GRNs), providing unprecedented resolution to analyze cellular heterogeneity and gene expression dynamics at the single-cell level. scRNA-seq technology enables high-throughput profiling of gene expression in individual cells, capturing cell-to-cell biological variability and identifying cell-type-specific expression patterns that are often obscured in bulk sequencing approaches [12] [15]. This technological advancement has revolutionized GRN inference—the process of mapping complex regulatory interactions between genes—by providing the data resolution necessary to uncover regulatory mechanisms driving cellular processes, development, differentiation, and disease progression [12]. The ensuing sections provide a comparative analysis of contemporary computational methods leveraging scRNA-seq data for GRN inference, examining their methodological foundations, performance characteristics, and applicability to different biological contexts.

Comparative Analysis of scRNA-seq-Based GRN Inference Methods

Method Categories and Underlying Principles

Computational methods for GRN inference from scRNA-seq data have evolved significantly, ranging from traditional statistical approaches to sophisticated deep learning frameworks. Table 1 summarizes the key methodological categories, their underlying principles, and representative algorithms.

Table 1: Computational Method Categories for GRN Inference from scRNA-seq Data

| Method Category | Underlying Principle | Key Algorithms/Examples | Typical Applications |

|---|---|---|---|

| Statistical & Information-Theoretic | Infers associations based on correlation, mutual information, or differential equations | LEAP [12], ARACNE, CLR, MRNET [15] | Initial network inference, hypothesis generation |

| Supervised Machine Learning | Treats GRN inference as a classification task using labeled training data | Support Vector Machines (SVM) [15], GRADIS [15] | Prediction when partial ground truth networks exist |

| Graph Neural Network (GNN) Models | Models gene interactions as graph structures using neural networks | GRGNN [15], GNE [15] | Capturing local network topology and dependencies |

| Graph Transformer Models | Employs self-attention mechanisms to capture global regulatory contexts | GT-GRN [15], DuCGRN [12] | Integrating multimodal data, capturing long-range dependencies |
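
To make the first category in Table 1 concrete, the following minimal sketch scores gene pairs by absolute Pearson correlation and keeps the pairs above a cutoff. It is an illustration of correlation-based association, not an implementation of LEAP, ARACNE, CLR, or MRNET; the gene names, expression values, and the `threshold` parameter are hypothetical.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def coexpression_edges(expression, threshold=0.8):
    """Return undirected edges (gene_a, gene_b, r) with |r| >= threshold."""
    edges = []
    for a, b in combinations(sorted(expression), 2):
        r = pearson(expression[a], expression[b])
        if abs(r) >= threshold:
            edges.append((a, b, r))
    return edges


# Hypothetical toy data: expression of three genes across five cells.
toy_expression = {
    "TF1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "GeneA": [2.1, 3.9, 6.2, 8.0, 9.8],  # rises with TF1
    "GeneB": [5.0, 1.0, 4.0, 2.0, 3.0],  # only weakly related to TF1
}
edges = coexpression_edges(toy_expression, threshold=0.8)
```

Correlation is symmetric, so edges produced this way carry no direction and cannot separate direct from indirect regulation. That limitation is one reason such scores are typically used for initial network inference and hypothesis generation.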

Performance Comparison Across Methodologies

Recent benchmarking studies on diverse scRNA-seq datasets enable objective performance comparisons between different GRN inference approaches. Table 2 presents quantitative performance metrics for several advanced methods across multiple biological contexts, highlighting their predictive accuracy and robustness.

Table 2: Performance Comparison of Advanced GRN Inference Methods on Benchmark Datasets

| Method | Core Architecture | hESC (AUROC) | mESC (AUROC) | mDC (AUROC) | Key Strengths |

|---|---|---|---|---|---|

| GT-GRN [15] | Graph Transformer | 0.912 | 0.896 | 0.885 | Superior integration of multimodal embeddings, excellent capture of global context |

| DuCGRN [12] | Dual Context-Aware GNN | 0.874 | 0.862 | 0.841 | Effective capture of direct/indirect regulation via K-hop aggregation |

| GNE [15] | Graph Neural Network | 0.832 | 0.819 | 0.798 | Scalable integration of known interactions and expression profiles |

| GRGNN [15] | Graph Neural Network | 0.815 | 0.801 | 0.782 | Formulates GRN inference as graph classification problem |

| NSCGRN [15] | Network Structure Control | 0.791 | 0.783 | 0.769 | Combines global partitioning with local network motif refinement |

The performance data reveal that transformer-based architectures (GT-GRN) consistently achieve superior predictive accuracy across diverse cell types, including human embryonic stem cells (hESC), mouse embryonic stem cells (mESC), and mouse dendritic cells (mDC) [15]. The strength of these models lies in their ability to integrate multiple data sources—including gene expression patterns, network topology, and prior biological knowledge—through self-attention mechanisms that capture both local and global regulatory contexts [15]. Methods like DuCGRN demonstrate particular effectiveness in modeling complex regulatory interactions, including indirect relationships, feedback loops, and combinatorial regulation through their K-hop aggregation and multiscale feature extraction modules [12].

Experimental Protocols for GRN Inference

Standardized Workflow for scRNA-seq Data Analysis

A robust GRN inference pipeline requires meticulous data preprocessing and analysis. The following workflow, implemented using tools like Seurat, represents a community-standard approach for scRNA-seq data analysis [17]:

- Quality Control (QC) and Filtering: Filtering cells on the number of detected genes, total molecular counts, and the proportion of mitochondrial gene expression to remove low-quality cells and technical artifacts [18].

- Data Normalization: Normalizing gene expression counts to account for technical variability (e.g., sequencing depth) without introducing biases, enabling valid cross-cell comparisons [19].

- Feature Selection: Identifying highly variable genes that drive biological heterogeneity, often focusing on transcription factors and potential regulatory elements for GRN construction [17].

- Dimensionality Reduction: Applying techniques like Principal Component Analysis (PCA) or t-distributed Stochastic Neighbor Embedding (t-SNE) to reduce data complexity while preserving biological signal [20].

- Cell Clustering and Annotation: Grouping cells based on gene expression patterns and annotating cell types using marker gene databases (e.g., CellMarker, PanglaoDB) or reference-based correlation methods [18].

- Differential Expression Analysis: Identifying statistically significant gene expression changes between conditions or cell populations to inform potential regulatory relationships [17].

- GRN Inference: Applying specialized computational methods (compared in Tables 1 and 2 above) to predict regulatory interactions from the processed single-cell data.

Diagram 1: Standard scRNA-seq Data Analysis Workflow
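
The first two workflow steps can be sketched in plain Python as follows. This is a simplified illustration rather than Seurat's implementation: the thresholds (`min_genes`, `max_mito_fraction`), the `MT-` prefix convention for mitochondrial genes, and the toy counts are assumptions, and the normalization shown (scale each cell to a fixed total, then take log(1 + x)) is only similar in spirit to common log-normalization schemes.

```python
from math import log1p


def filter_cells(cells, min_genes=2, max_mito_fraction=0.2):
    """Keep cells with enough detected genes and a low mitochondrial fraction."""
    kept = {}
    for cell_id, counts in cells.items():
        total = sum(counts.values())
        if total == 0:
            continue  # empty droplets carry no information
        detected = sum(1 for c in counts.values() if c > 0)
        mito = sum(c for gene, c in counts.items() if gene.startswith("MT-"))
        if detected >= min_genes and mito / total <= max_mito_fraction:
            kept[cell_id] = counts
    return kept


def log_normalize(counts, scale_factor=10_000):
    """Scale one cell's counts to a fixed total, then apply log(1 + x)."""
    total = sum(counts.values())
    return {gene: log1p(c / total * scale_factor) for gene, c in counts.items()}


# Hypothetical raw counts for three cells.
raw_cells = {
    "cell1": {"GATA1": 6, "SPI1": 3, "MT-CO1": 1},  # passes both filters
    "cell2": {"GATA1": 1, "SPI1": 0, "MT-CO1": 9},  # 90% mitochondrial reads
    "cell3": {"GATA1": 0, "SPI1": 4, "MT-CO1": 0},  # only one detected gene
}
kept_cells = filter_cells(raw_cells)
normalized = {cell_id: log_normalize(c) for cell_id, c in kept_cells.items()}
```

In practice the cutoffs are chosen per dataset from the distribution of QC metrics rather than fixed in advance.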

Specialized Protocol for Advanced GRN Inference Methods

For implementing advanced methods like GT-GRN and DuCGRN, specialized protocols are required:

GT-GRN Implementation Protocol [15]:

- Multimodal Embedding Generation:

  - Gene Expression Embedding: Process normalized expression data through an autoencoder to extract biologically meaningful latent representations.

  - Structural Embedding: Convert previously inferred GRNs into node sequences, then apply Bidirectional Encoder Representations from Transformers (BERT) to learn global gene representations.

  - Positional Encoding: Capture each gene's topological role within the network structure.

- Feature Fusion and Processing: Integrate the three embedding types and process through a Graph Transformer model using self-attention mechanisms.

- Model Training and Validation: Train the integrated model using adversarial training for robustness, then validate on benchmark datasets with known regulatory interactions.

DuCGRN Implementation Protocol [12]:

- Graph Construction: Represent partially known regulatory interactions as a graph structure G = (V,E) where V represents genes and E represents verified regulatory relationships.

- Dual Context-Aware Feature Extraction:

  - Employ K-hop aggregation to capture both direct and indirect regulatory relationships by aggregating information from multi-hop neighbors.

  - Apply multiscale feature extraction using parallel graph convolution layers to capture diverse regulatory mechanisms.

- Adversarial Training: Implement a Generative Adversarial Network (GAN) framework to address data sparsity and generate biologically plausible gene expression patterns.

- GRN Prediction: Frame the inference task as a link prediction problem to identify novel regulatory interactions E_pred not present in the initially observed network E_obs.

Diagram 2: Advanced GRN Inference Architecture
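
The idea behind K-hop aggregation in the DuCGRN protocol can be illustrated with a small, non-learned sketch: each gene's feature vector is augmented with the mean features of the genes first reached at 1, 2, ..., K hops. The fixed `decay` weighting, the function names, and the toy graph are assumptions made for illustration; DuCGRN itself learns its aggregation inside a graph neural network [12].

```python
from collections import deque


def k_hop_neighbors(adjacency, source, k):
    """Map hop distance (1..k) to the set of nodes first reached at that distance."""
    distance = {source: 0}
    queue = deque([source])
    hops = {h: set() for h in range(1, k + 1)}
    while queue:
        node = queue.popleft()
        if distance[node] == k:
            continue  # do not expand beyond k hops
        for neighbor in adjacency.get(node, ()):
            if neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                hops[distance[neighbor]].add(neighbor)
                queue.append(neighbor)
    return hops


def k_hop_aggregate(adjacency, features, k=2, decay=0.5):
    """Add decay**h times the mean feature of hop-h neighbors to each node's own feature."""
    aggregated = {}
    for node, own in features.items():
        result = list(own)
        for hop, members in k_hop_neighbors(adjacency, node, k).items():
            if not members:
                continue
            weight = decay ** hop
            for i in range(len(result)):
                mean_i = sum(features[m][i] for m in members) / len(members)
                result[i] += weight * mean_i
        aggregated[node] = result
    return aggregated


# Hypothetical chain TF1 - GeneA - GeneB with one-dimensional features.
toy_adjacency = {"TF1": ["GeneA"], "GeneA": ["TF1", "GeneB"], "GeneB": ["GeneA"]}
toy_features = {"TF1": [1.0], "GeneA": [2.0], "GeneB": [4.0]}
aggregated = k_hop_aggregate(toy_adjacency, toy_features, k=2, decay=0.5)
```

With `k=2`, TF1 receives a contribution from GeneB even though the two are not directly connected, which is how multi-hop aggregation exposes indirect regulatory relationships to the downstream link predictor.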

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of scRNA-seq-based GRN inference requires both computational tools and biological resources. Table 3 catalogues essential research reagents, databases, and computational tools that form the foundation of this research domain.

Table 3: Essential Research Reagent Solutions for scRNA-seq GRN Studies

| Resource Category | Specific Resource | Key Function | Application Context |

|---|---|---|---|

| Marker Gene Databases | CellMarker 2.0 [18] | Provides cell-type-specific marker genes | Cell type annotation and validation |

| | PanglaoDB [18] | Curated database of cell type markers | Cross-referencing and cell identity confirmation |

| Reference Atlases | Human Cell Atlas (HCA) [18] | Multi-organ single-cell reference data | Contextualizing findings within human tissues |

| | Tabula Muris [18] | Comprehensive mouse cell atlas | Mouse model studies and cross-species comparison |

| | Allen Brain Atlas [18] | Brain-specific single-cell data | Neuroscience-focused GRN studies |

| Computational Tools | Seurat [17] | Comprehensive scRNA-seq analysis toolkit | Data preprocessing, clustering, and visualization |

| | bigPint [19] | Interactive visualization for RNA-seq data | Quality assessment and differential expression visualization |

| | SCTrans [18] | Deep learning for gene selection | Automatic feature discovery and marker gene identification |

| Experimental Validation | ChIP-seq [12] | Transcription factor binding site mapping | Experimental confirmation of predicted regulatory interactions |

| | CRISPR-Cas9 Screening [21] | Functional perturbation of candidate genes | Validation of regulatory relationships through knockout studies |

The revolution in expression-based approaches leveraging single-cell RNA-seq data has fundamentally advanced GRN inference, enabling researchers to decipher complex regulatory landscapes with unprecedented cellular resolution. The comparative analysis presented herein demonstrates that while traditional statistical methods provide foundational approaches, advanced deep learning architectures—particularly graph transformer models—consistently achieve superior performance by effectively integrating multimodal data and capturing complex regulatory contexts. As the field progresses, key challenges remain in addressing data sparsity, improving model interpretability, and dynamically updating marker gene databases through integration of deep learning feature selection with biological validation [18]. The continued development of specialized computational frameworks that can handle the unique characteristics of single-cell data—including its heterogeneity, technical noise, and complex hierarchical structure—will further empower researchers to unravel the intricate gene regulatory mechanisms underlying development, disease, and cellular function.

Gene Regulatory Networks (GRNs) are mathematical representations of the complex interactions between transcription factors (TFs) and their target genes, serving as crucial models for understanding cellular fate, development, and disease mechanisms [22]. The inference of these networks from omics data has evolved significantly over the past two decades, transitioning from bulk transcriptomic analyses to sophisticated single-cell and multi-omics approaches [22]. This evolution addresses a fundamental challenge in computational biology: reconstructing accurate causal relationships from observational and interventional data despite cellular heterogeneity, technical noise, and the inherent complexity of biological systems [23] [2].

Current GRN inference methods grapple with several persistent challenges. Single-cell RNA sequencing (scRNA-seq) data, while offering unprecedented resolution, is characterized by significant "dropout" events—erroneous zero counts that create zero-inflated data and obscure true biological signals [3] [1]. Furthermore, regulatory relationships are highly dynamic, changing across cell types and states, which traditional bulk methods fail to capture [23]. The integration of diverse data types, particularly sequence-based information (e.g., chromatin accessibility) with expression data, has emerged as a promising path toward more comprehensive GRN maps, though this integration presents its own computational challenges [22] [24].

This guide provides a comparative analysis of contemporary GRN inference methodologies, focusing on their approaches to data integration, handling of single-cell specific challenges, and performance in realistic benchmarking environments. We examine experimental protocols, key findings, and practical implementations to equip researchers with the knowledge needed to select appropriate tools for their specific biological questions.

Methodological Approaches: From Single-Cell to Multi-Omics Integration

Overcoming Single-Cell Data Challenges

Single-cell RNA sequencing data presents unique obstacles for GRN inference, primarily due to dropout events where transcripts are not captured by sequencing technology, resulting in 57-92% zero values in typical datasets [3] [1]. Several innovative methods have been developed to address this fundamental limitation:

DAZZLE (Dropout Augmentation for Zero-inflated Learning Enhancement) introduces a counter-intuitive but effective regularization strategy called Dropout Augmentation (DA) [3] [1]. Rather than imputing missing values, DAZZLE augments training data with synthetic dropout events, exposing the model to multiple versions of the data with different dropout patterns. This approach builds upon an autoencoder-based structural equation model (SEM) framework similar to DeepSEM but incorporates several modifications: improved sparsity control for the adjacency matrix, a simplified model structure, and a closed-form prior distribution [3]. These innovations result in a 21.7% parameter reduction and 50.8% faster computation compared to DeepSEM while demonstrating improved stability and robustness in benchmarks [1].
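
The core idea of Dropout Augmentation, adding synthetic zeros instead of imputing them away, can be sketched in a few lines. This is a hedged illustration only: the function name, the uniform per-entry `rate`, and the toy matrix are assumptions, and the sampling scheme DAZZLE actually uses is not reproduced here.

```python
import random


def dropout_augment(matrix, rate=0.1, seed=None):
    """Return a copy of a cells-by-genes matrix with extra entries set to zero."""
    rng = random.Random(seed)
    return [
        [0.0 if rng.random() < rate else value for value in row]
        for row in matrix
    ]


# Hypothetical log-transformed expression for two cells and three genes.
counts = [[3.0, 0.0, 5.0], [1.0, 2.0, 0.0]]

# A different seed per training epoch exposes the model to a different
# dropout pattern each time, while the original matrix stays untouched.
augmented_epochs = [dropout_augment(counts, rate=0.5, seed=epoch) for epoch in range(3)]
```

Because each epoch sees a differently corrupted copy, a model trained this way is discouraged from relying on any individual zero being a true absence of expression.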

PMF-GRN utilizes a probabilistic matrix factorization approach to decompose observed gene expression into latent factors representing transcription factor activity and regulatory relationships [24]. This variational inference framework incorporates prior knowledge from genomic databases and chromatin accessibility measurements to guide the factorization process. A key advantage of PMF-GRN is its well-calibrated uncertainty estimates for each predicted regulatory interaction, providing researchers with confidence metrics for downstream analyses [24].

inferCSN addresses cellular heterogeneity and dynamic network changes by incorporating pseudotemporal ordering of cells [23]. The method accounts for uneven cell distribution across pseudotime by partitioning cells into windows to eliminate density-related biases, then applies a sparse regression model combined with reference network information to construct cell state-specific regulatory networks [23].
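
One simple way to keep dense regions of pseudotime from dominating is to give every window the same number of cells. The sketch below assumes equal-count windows; the source does not state how inferCSN sizes its windows, so the function name, the windowing rule, and the toy pseudotime values are all hypothetical.

```python
def equal_count_windows(pseudotime, n_windows):
    """Split cell IDs, ordered by pseudotime, into n_windows groups of near-equal size."""
    ordered = sorted(pseudotime, key=pseudotime.get)
    base, extra = divmod(len(ordered), n_windows)
    windows, start = [], 0
    for w in range(n_windows):
        size = base + (1 if w < extra else 0)  # spread any remainder over early windows
        windows.append(ordered[start:start + size])
        start += size
    return windows


# Hypothetical pseudotime values: four cells crowd the start of the trajectory.
toy_pseudotime = {"c1": 0.01, "c2": 0.02, "c3": 0.03, "c4": 0.04, "c5": 0.50, "c6": 0.95}
windows = equal_count_windows(toy_pseudotime, n_windows=3)
```

Equal-width windows on the same data would place four of the six cells in the first window; equal-count windows give each state-specific regression the same amount of data.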

Table 1: Key Methodological Approaches for GRN Inference

| Method | Core Approach | Data Requirements | Unique Features | Scalability |

|---|---|---|---|---|

| DAZZLE | Autoencoder SEM with dropout augmentation | scRNA-seq | Enhanced robustness to dropout events; No gene filtration needed | Handles 15,000+ genes efficiently [3] |

| PMF-GRN | Probabilistic matrix factorization with VI | scRNA-seq + prior networks | Uncertainty quantification; Hyperparameter optimization | GPU acceleration via stochastic gradient descent [24] |

| inferCSN | Sparse regression + pseudotime analysis | scRNA-seq | Cell state-specific networks; Density-aware windowing | Robust across datasets of different scales [23] |

| HyperG-VAE | Hypergraph variational autoencoder | scRNA-seq | Captures gene modules and cellular heterogeneity simultaneously | Effective for B cell development analysis [25] |

| SCENIC | TF coexpression + motif analysis | scRNA-seq | Regulon identification; Cell-type specific regulators | Widely adopted; extensive community support [22] |

Multi-Omics Integration Strategies

The integration of transcriptomic and epigenomic data provides a more robust foundation for GRN inference by incorporating direct evidence of potential regulatory interactions through chromatin accessibility measurements [22]. ATAC-seq data reveals accessible genomic regions where transcription factors can bind, complementing expression-based inference with structural evidence.

Multiple tools have been developed specifically for multi-omics GRN inference, employing diverse statistical frameworks:

Pando utilizes a flexible framework that integrates single-cell ATAC-seq and RNA-seq data, employing either linear or non-linear models to infer signed, weighted regulatory interactions [22]. It operates within both frequentist and Bayesian statistical paradigms, allowing for different assumptions about the underlying data distributions.

SCENIC+ extends the popular SCENIC framework to incorporate chromatin accessibility data, enabling the identification of candidate enhancer elements and their target genes [22]. This expansion allows for more precise mapping of regulatory interactions by combining co-expression patterns with physical evidence of regulatory potential.

GRaNIE and FigR both employ linear modeling approaches but differ in their implementation details. GRaNIE works with both paired and integrated multi-omics data, while FigR provides signed, weighted interaction scores based on frequentist statistics [22].

Table 2: Multi-Omics GRN Inference Tools

| Tool | Multimodal Data Type | Modeling Approach | Interaction Type | Statistical Framework |

|---|---|---|---|---|

| ANANSE | Unpaired | Linear | Weighted | Frequentist [22] |

| CellOracle | Unpaired | Linear | Signed, weighted | Frequentist/Bayesian [22] |

| DIRECT-NET | Paired/Integrated | Non-linear | Binary | Frequentist [22] |

| FigR | Paired/Integrated | Linear | Signed, weighted | Frequentist [22] |

| GLUE | Paired/Integrated | Non-linear | Weighted | Frequentist [22] |

| GRaNIE | Paired/Integrated | Linear | Weighted | Frequentist [22] |

| Pando | Paired/Integrated | Linear/Non-linear | Signed, weighted | Frequentist/Bayesian [22] |

| SCENIC+ | Paired/Integrated | Linear | Signed, weighted | Frequentist [22] |

Figure 1: Workflow for Integrated GRN Inference from Multi-Omics Data

Experimental Protocols and Benchmarking Frameworks

Standardized Evaluation Methodologies

Robust benchmarking of GRN inference methods requires carefully designed experimental protocols and evaluation metrics. The BEELINE benchmark has emerged as a standard framework, providing synthetic and real datasets with approximately known "ground truth" networks for method validation [3] [24]. Typical evaluation workflows include:

Data Preprocessing: Raw sequencing data in FASTQ format undergoes quality control using tools like FastQC, adapter trimming with Trimmomatic, alignment to reference genomes with STAR, and gene-level quantification [7]. Count normalization methods like the weighted trimmed mean of M-values (TMM) from edgeR are applied to correct for technical variability [7].

Performance Metrics: Area Under the Precision-Recall Curve (AUPRC) and Area Under the Receiver Operating Characteristic curve (AUROC) serve as primary metrics for evaluating binary classification performance in network inference [23] [24]. The two are complementary: because true edges are sparse in GRNs, AUROC can look favorable even when many top-ranked predictions are wrong, whereas AUPRC is sensitive to this class imbalance.
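
Both metrics can be computed from a ranked edge list in a few lines. The sketch below uses the pairwise-comparison definition of AUROC and average precision as a step-wise estimate of AUPRC; the labels and scores are hypothetical, and the average-precision function assumes no tied scores.

```python
def auroc(labels, scores):
    """Probability that a random true edge outscores a random non-edge (ties count 0.5)."""
    positives = [s for l, s in zip(labels, scores) if l == 1]
    negatives = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


def average_precision(labels, scores):
    """Step-wise area under the precision-recall curve (assumes no tied scores)."""
    ranked = sorted(zip(scores, labels), reverse=True)
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank  # precision at each recovered true edge
    return total / sum(labels)


# Hypothetical candidate edges: 2 true edges among 8 candidates.
toy_labels = [1, 0, 1, 0, 0, 0, 0, 0]
toy_scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2]

roc = auroc(toy_labels, toy_scores)
ap = average_precision(toy_labels, toy_scores)
baseline = sum(toy_labels) / len(toy_labels)  # expected AUPRC of a random ranking
```

Dividing the AUPRC by this random baseline gives a ratio that is comparable across networks with different edge densities.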

CausalBench Evaluation: The CausalBench framework introduces biologically-motivated metrics including mean Wasserstein distance and false omission rate (FOR) to evaluate performance on large-scale single-cell perturbation data [26]. This suite utilizes data from genetic perturbation experiments (CRISPRi) in cell lines like RPE1 and K562, containing over 200,000 interventional datapoints [26].
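
The two quantities named here have simple textbook definitions, sketched below. The code shows the generic formulas only and does not reproduce how CausalBench aggregates them over predicted edges; the equal-sample-size restriction, the function names, and the toy values are assumptions.

```python
def wasserstein_1d(sample_a, sample_b):
    """1-Wasserstein distance between two equal-size one-dimensional samples."""
    if len(sample_a) != len(sample_b):
        raise ValueError("this sketch assumes equal sample sizes")
    paired = zip(sorted(sample_a), sorted(sample_b))
    return sum(abs(a - b) for a, b in paired) / len(sample_a)


def false_omission_rate(false_negatives, true_negatives):
    """FOR = FN / (FN + TN): the share of rejected edges that were actually real."""
    return false_negatives / (false_negatives + true_negatives)


# Hypothetical expression of a target gene in control cells versus cells
# where a candidate regulator was knocked down.
control = [5.0, 6.0, 7.0, 8.0]
perturbed = [1.0, 2.0, 3.0, 4.0]

shift = wasserstein_1d(control, perturbed)
omission = false_omission_rate(false_negatives=5, true_negatives=95)
```

A large distributional shift for a predicted regulator-target pair supports the edge, while a high false omission rate indicates that many real interactions were left out of the predicted network.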

Comparative Performance Analysis

Recent benchmarking studies reveal distinct performance patterns across method categories:

CausalBench Results: In comprehensive evaluations using real-world perturbation data, methods like Mean Difference and Guanlab demonstrated superior performance in statistical evaluations, while GRNBoost achieved high recall but with lower precision [26]. Notably, methods specifically designed to utilize interventional data did not consistently outperform those using only observational data, contrary to theoretical expectations [26].

BEELINE Benchmarks: PMF-GRN consistently outperformed state-of-the-art methods including Inferelator, SCENIC, and CellOracle in recovering true underlying GRNs across multiple datasets [24]. The method demonstrated particular strength in providing well-calibrated uncertainty estimates, with prediction accuracy increasing as uncertainty decreased [24].

inferCSN Validation: When tested on both simulated and real scRNA-seq datasets, inferCSN outperformed competing methods (GENIE3, SINCERITIES, PPCOR, LEAP, SCINET) across multiple performance metrics [23]. The method demonstrated robust performance across different dataset types (steady-state, linear) and scales (varying cell and gene numbers) [23].

Table 3: Performance Comparison Across Benchmarking Studies

| Method | AUROC Range | AUPRC Range | Key Strengths | Limitations |

|---|---|---|---|---|

| DAZZLE | Not reported | Not reported | Stability; Handles zero-inflation; Minimal gene filtration | Less effective without dropout characteristics [3] |

| PMF-GRN | High | 0.85-0.95 (yeast) | Uncertainty quantification; Hyperparameter optimization | Requires prior network information [24] |

| inferCSN | 0.75-0.92 (simulated) | Not reported | Cell state-specific networks; Robust to dataset scale | Complex parameter tuning [23] |

| GENIE3 | Moderate | Moderate | Widely adopted; No species restrictions | High false positive rate; Ignores cellular heterogeneity [23] [22] |

| SCENIC | Moderate | Moderate | Regulon identification; Extensive validation | Performance varies by cell type [24] |

Implementation and Practical Application

Research Reagent Solutions

Successful GRN inference requires not only computational tools but also appropriate data resources and software implementations:

Table 4: Essential Research Reagents and Resources for GRN Inference

| Resource | Type | Function | Example Sources/Implementations |

|---|---|---|---|

| CisTarget Databases | Motif collection | TF binding site enrichment analysis | SCENIC reference databases [22] |

| Prior Network Information | Network database | Guides probabilistic inference | Genomic databases integrated in PMF-GRN [24] |

| BEELINE Datasets | Benchmark data | Method validation and comparison | Synthetic networks with partial ground truth [3] [24] |

| CausalBench Suite | Evaluation framework | Performance metrics on perturbation data | RPE1 and K562 CRISPRi datasets [26] |

| Single-Cell Multi-Omics | Paired datasets | Integrated sequence + expression analysis | SCENIC+, Pando, GRaNIE inputs [22] |

Workflow Implementation

Figure 2: Standard GRN Inference Workflow

Implementation of GRN inference methods follows a general workflow with method-specific adaptations:

DAZZLE Implementation: The method preprocesses raw count data using a log(x+1) transformation to reduce variance and avoid undefined values [3] [1]. Training incorporates alternating optimization between the adjacency matrix and other network parameters, with delayed introduction of sparsity constraints to improve stability [1].

PMF-GRN Execution: This framework utilizes stochastic gradient descent on GPUs for scalable inference, enabling application to large-scale single-cell datasets [24]. The variational inference approach automatically performs hyperparameter selection through evidence lower bound (ELBO) optimization, replacing heuristic model selection with principled probabilistic comparison [24].

SCENIC Pipeline: The standard SCENIC workflow includes co-expression network construction using GENIE3, regulon identification through motif enrichment analysis, and cellular network activity scoring using AUCell [22]. This multi-step process generates both the global regulatory network and cell-specific regulatory activities.

The field of GRN inference continues to evolve with several promising research directions. Transfer learning approaches that leverage knowledge from data-rich species (e.g., Arabidopsis) to inform networks in less-characterized organisms have shown potential for cross-species analysis [7]. Hybrid models that combine convolutional neural networks with traditional machine learning consistently outperform single-method approaches, achieving over 95% accuracy in some holdout tests [7].

The development of more realistic benchmarking frameworks like CausalBench, which utilizes real-world perturbation data rather than synthetic networks, represents a crucial advancement for proper method evaluation [26]. Additionally, methods that explicitly model network properties including sparsity, hierarchical organization, and modular structure show promise for better capturing biological reality [2].

In conclusion, no single GRN inference method universally outperforms all others across all data types and biological contexts. DAZZLE offers particular advantages for single-cell data with significant dropout characteristics, while PMF-GRN provides crucial uncertainty estimates for probabilistic interpretation. inferCSN enables the discovery of dynamic, cell-state-specific networks, and multi-omics tools like SCENIC+ and Pando leverage complementary data types for more accurate inference. Researchers should select methods based on their specific data characteristics, biological questions, and need for interpretability versus scalability.

As the field progresses, the integration of more diverse data types, improved scalability for ever-larger single-cell datasets, and more sophisticated modeling of regulatory dynamics will continue to enhance our ability to map the complex regulatory landscapes underlying cellular function and disease.

Key Applications in Drug Discovery and Functional Genomics

Functional genomics is an emerging field that aims to deconvolute the link between genotype and phenotype by utilizing large -omic datasets and next-generation gene editing tools [27]. This discipline has become increasingly transformative for drug discovery, as many complex diseases, including diabetes, autoimmune diseases, cancer, and neurological disorders, arise from dysregulated interactions among many genes rather than from a single gene [27]. The incorporation of functional genomic capabilities into conventional drug development pipelines is predicted to expedite the development of first-in-class therapeutics by improving disease modeling and identifying novel drug targets with higher validation rates [27] [28].

Gene Regulatory Network (GRN) inference is a crucial methodology within functional genomics that systematically maps the interactions between genes, transcription factors, and regulatory elements [12]. By elucidating the regulatory mechanisms driving cellular processes, GRN analysis provides a framework for understanding disease pathogenesis and identifying therapeutic intervention points [12] [29]. The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized this field by enabling gene expression profiling at single-cell resolution, providing unprecedented insights into cellular heterogeneity and disease mechanisms [12] [15] [29].

Comparative Analysis of Modern GRN Inference Methods

Methodologies and Technical Approaches

Recent advances in computational methods have significantly improved the accuracy and biological relevance of GRN inference. Several innovative approaches have emerged that leverage different computational frameworks to address the challenges of data sparsity, noise, and complex regulatory relationships in single-cell data.

Table 1: Key Methodological Features of Modern GRN Inference Approaches

| Method | Computational Framework | Key Innovation | Data Integration Capabilities |

|---|---|---|---|

| LINGER [29] | Lifelong neural network with elastic weight consolidation | Incorporates atlas-scale external bulk data as prior knowledge | Single-cell multiome data + external bulk resources + TF motif prior |

| DuCGRN [12] | Graph Neural Networks with K-hop aggregation | Dual context-aware mechanism for topological/contextual feature extraction | Single-cell RNA-seq data + partially observed regulatory networks |

| GT-GRN [15] | Graph Transformer with multimodal embedding | Integrates topological, expression, and positional gene embeddings | Multiple inferred networks + gene expression profiles + network structures |

| Gene2role [9] | Role-based graph embedding (SignedS2V) | Focuses on comparative analysis of signed GRNs across cell states | Single-cell co-expression networks + multi-omics networks |

| NeighbourNet [30] | Local regression within k-nearest neighbors | Constructs cell-specific co-expression networks without predefined clusters | Single-cell RNA-seq data (requires no prior cluster definitions) |

LINGER (Lifelong neural network for gene regulation) employs a sophisticated lifelong learning framework that pre-trains a neural network on external bulk data from diverse cellular contexts, then refines the model on single-cell multiome data using elastic weight consolidation (EWC) to prevent catastrophic forgetting of prior knowledge [29]. The model architecture consists of a three-layer neural network that fits target gene expression using transcription factor expression and regulatory element accessibility as inputs, with the second layer forming regulatory modules guided by TF-RE motif matching through manifold regularization [29].
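
Elastic weight consolidation is usually written as a quadratic penalty that pulls each parameter toward its pre-trained value, weighted by how important that parameter was for the earlier task. The sketch below shows that generic penalty, not LINGER's exact loss; the function names, the `strength` parameter, and the toy numbers are assumptions.

```python
def ewc_penalty(params, anchor_params, fisher, strength=1.0):
    """Generic EWC term: (strength / 2) * sum_i fisher[i] * (params[i] - anchor[i])**2."""
    return 0.5 * strength * sum(
        f * (p - a) ** 2 for p, a, f in zip(params, anchor_params, fisher)
    )


def total_loss(task_loss, params, anchor_params, fisher, strength=1.0):
    """Loss on the new (single-cell) data plus the penalty for drifting from the prior."""
    return task_loss + ewc_penalty(params, anchor_params, fisher, strength)


# Hypothetical two-parameter model pre-trained on bulk data.
bulk_params = [1.0, -2.0]   # values learned during pre-training
importance = [4.0, 0.0]     # Fisher-style importance of each parameter
refined_params = [1.5, 3.0]  # values after refinement on single-cell data

penalty = ewc_penalty(refined_params, bulk_params, importance)
loss = total_loss(0.25, refined_params, bulk_params, importance)
```

The second parameter moves far from its pre-trained value at no cost because its importance is zero, while the first is penalized for a much smaller move. This selectivity is what lets refinement adapt to new data without catastrophic forgetting.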

DuCGRN (Dual Context-aware model for GRN prediction) addresses the challenge of capturing complex regulatory interactions by introducing a K-hop aggregation mechanism that updates gene representations by aggregating information from both immediate and distant neighbors in the network [12]. This approach is complemented by a multiscale feature extractor composed of multiple parallel graph convolution layers to capture features at varying scales, enabling the model to reflect diverse regulatory mechanisms and combinatorial effects on target genes [12].

GT-GRN leverages a Graph Transformer framework that integrates three complementary sources of information: autoencoder-based embeddings capturing high-dimensional gene expression patterns; structural embeddings derived from previously inferred GRNs and encoded via random walks with a BERT-based language model; and positional encodings capturing each gene's role within the network topology [15]. This multimodal embedding approach allows the joint modeling of both local and global regulatory structures through attention mechanisms [15].

Performance Comparison and Validation Metrics

Rigorous benchmarking against experimental validation datasets demonstrates the superior performance of these modern methods compared to traditional approaches.

Table 2: Performance Comparison of GRN Inference Methods on Validation Benchmarks

| Method | Trans-regulation AUC | Trans-regulation AUPR Ratio | Cis-regulation AUC | Experimental Validation |
|---|---|---|---|---|
| LINGER [29] | ~4-7x relative improvement | ~4-7x relative improvement | Significant improvement over scNN | ChIP-seq targets (20 blood cell datasets) |
| DuCGRN [12] | Outperforms existing methods | Outperforms existing methods | Not explicitly reported | Seven scRNA-seq datasets (human and mouse) |
| GT-GRN [15] | Outperforms existing methods | High predictive accuracy | Not explicitly reported | Benchmark datasets + cell-type classification |
| Traditional Methods [29] | Marginally better than random | Low precision | Limited accuracy | Various experimental validations |

LINGER achieved a fourfold to sevenfold relative increase in accuracy over existing methods when validated against ChIP-seq data from 20 blood cell datasets [29]. For cis-regulatory inference, validated against eQTL data from GTEx and eQTLGen, LINGER also showed significantly higher AUC and AUPR ratio than neural network baselines across the different distance groups between regulatory elements and target genes [29].

DuCGRN was comprehensively evaluated on seven real-world scRNA-seq datasets comprising two human and five mouse cell lines, including human embryonic stem cells (hESC), human hepatocytes (hHep), mouse dendritic cells (mDC), mouse embryonic stem cells (mESC), and three mouse hematopoietic stem cell lineages [12]. Experimental results demonstrated that DuCGRN effectively learns complex gene regulatory interactions and outperforms existing methods in GRN prediction [12].

A critical finding from comparative studies of network analysis approaches reveals that the network modeling choice has less impact on downstream results than the network analysis strategy selected [5] [6]. The largest differences in biological interpretation were observed between node-based and community-based network analysis methods, with additional differences noted between single time point and combined time point modeling [5] [6].

Experimental Protocols for GRN Inference

LINGER Experimental Workflow and Validation

The LINGER framework follows a systematic protocol for GRN inference from single-cell multiome data:

Step 1: Data Preprocessing and Integration

- Input: Count matrices of gene expression and chromatin accessibility with cell type annotations

- Integration of external bulk data from ENCODE project (hundreds of samples across diverse cellular contexts)

- Matrix normalization and quality control

Step 2: Model Pre-training

- Neural network pre-training on external bulk data (BulkNN)

- Architecture: Three-layer neural network fitting TG expression using TF expression and RE accessibility

- Incorporation of TF-RE motif matching as manifold regularization

Step 3: Model Refinement

- Application of Elastic Weight Consolidation (EWC) loss using bulk data parameters as prior

- Fisher information calculation to set how strongly each parameter is penalized for deviating from its bulk-trained value

- Bayesian updating of posterior distribution combining prior knowledge with new data likelihood
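The refinement step can be sketched with the standard EWC penalty, (λ/2) Σ F_i (θ_i − θ*_i)², where θ* are the pre-trained (bulk) parameters and F_i is the Fisher information for parameter i. The code below is a minimal illustration of that general formula with made-up numbers; the function name and values are hypothetical, and LINGER's exact loss may differ in detail.

```python
def ewc_penalty(params, prior_params, fisher, lam=1.0):
    """Standard EWC penalty: (lam / 2) * sum_i F_i * (theta_i - theta_prior_i)^2."""
    return 0.5 * lam * sum(
        f * (p - p0) ** 2 for p, p0, f in zip(params, prior_params, fisher)
    )


# Hypothetical bulk-trained parameters and their Fisher information
prior_params = [0.5, -1.0]
fisher = [10.0, 0.1]  # first parameter mattered a lot for the bulk data

# Moving the high-Fisher parameter by 1.0 is penalized heavily ...
penalty_important = ewc_penalty([1.5, -1.0], prior_params, fisher)
# ... while the same shift in the low-Fisher parameter is nearly free.
penalty_flexible = ewc_penalty([0.5, 0.0], prior_params, fisher)

# During refinement this term would be added to the single-cell data loss:
# total_loss = data_loss + ewc_penalty(params, prior_params, fisher, lam)
```

The Fisher weighting is what lets the model adapt parameters that the bulk data left poorly determined while keeping well-supported prior knowledge close to its pre-trained values.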

Step 4: Regulatory Inference

- Calculation of regulatory strength of TF-TG and RE-TG interactions using Shapley values

- TF-RE binding strength generation by correlation of TF and RE parameters from second layer

- Construction of cell type-specific and cell-level GRNs based on general GRN and cell type-specific profiles
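To make the Shapley-value step concrete, the sketch below computes exact Shapley values by enumerating all feature subsets for a toy two-regulator model. The value function, its coefficients, and the zero baseline are invented for illustration. Exact enumeration is only feasible for a handful of features, so practical implementations typically rely on approximations; this is not LINGER's procedure.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, n_features):
    """Exact Shapley values; value_fn maps a frozenset of 'present' features to a number."""
    phi = [0.0] * n_features
    for i in range(n_features):
        others = [j for j in range(n_features) if j != i]
        for size in range(len(others) + 1):
            # Weight of a coalition of this size in the Shapley average
            weight = (
                factorial(size) * factorial(n_features - size - 1) / factorial(n_features)
            )
            for subset in combinations(others, size):
                s = frozenset(subset)
                phi[i] += weight * (value_fn(s | {i}) - value_fn(s))
    return phi


# Hypothetical target-gene model: TG = 2*TF_a + 0.5*TF_b + 1.0*TF_a*TF_b
x = [1.0, 1.0]  # observed TF expression; absent features fall back to baseline 0


def predicted_expression(present):
    a = x[0] if 0 in present else 0.0
    b = x[1] if 1 in present else 0.0
    return 2.0 * a + 0.5 * b + 1.0 * a * b


phi = shapley_values(predicted_expression, 2)
```

The two attributions sum to the difference between the full prediction and the baseline prediction, and the interaction term is shared equally between the two regulators.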

Validation Framework:

- Trans-regulation validation: 20 ChIP-seq datasets from blood cells as ground truth

- Cis-regulation validation: eQTL data from GTEx (whole blood) and eQTLGen

- Performance metrics: Area Under ROC Curve (AUC) and Area Under Precision-Recall Curve (AUPR) ratio
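The two metrics can be computed as in the following sketch. It assumes that the AUPR ratio is the AUPR divided by the AUPR expected from a random predictor (the fraction of true edges), and it uses average precision as a step-wise estimate of AUPR; tied scores are not treated specially in the precision-recall calculation. The scores and labels are toy values.

```python
def roc_auc(scores, labels):
    """AUC as the probability that a true edge outranks a false edge (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(scores, labels):
    """Mean of the precision values at the rank of each true edge."""
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits = 0
    total = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank
    return total / hits


def aupr_ratio(scores, labels):
    """AUPR relative to a random predictor, whose expected AUPR is the positive rate."""
    baseline = sum(labels) / len(labels)
    return average_precision(scores, labels) / baseline


# Toy predicted TF-TG scores and ChIP-seq-style ground truth (1 = supported edge)
scores = [0.9, 0.8, 0.4, 0.3, 0.2, 0.1]
labels = [1, 0, 1, 0, 0, 0]

auc = roc_auc(scores, labels)
ratio = aupr_ratio(scores, labels)
```

Reporting AUPR as a ratio makes results comparable across ground-truth sets with different edge densities, since a ratio of 1 corresponds to random ranking regardless of how sparse the true network is.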

DuCGRN Model Architecture and Training

The DuCGRN framework employs these specific experimental procedures:

Network Representation:

- GRN represented as G = (V, E), where V is the gene set and E is the set of regulatory relationships

- Partially observed network G_obs = (V, E_obs), where E_obs contains the experimentally verified edges

Model Components:

- K-hop aggregator: Captures long-range regulatory relationships by propagating information across multi-hop neighbors

- Multiscale feature extractor: Multiple parallel graph convolution layers capturing features at varying scales

- Dual context-aware mechanisms: Extract topological and contextual features from GRNs

- Adversarial training: Generates realistic gene expression patterns using GAN framework

Training Procedure:

- Encoder: Graph convolutional network combined with K-hop graph attention network (GAT)

- Decoder: Inner product decoder predicting potential regulatory relationships

- Loss function: Binary cross-entropy loss with adversarial training component
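The decoder and loss described above can be sketched as follows, assuming the encoder has already produced one embedding vector per gene. The embeddings and labels are toy values, the adversarial component is omitted, and the function names are hypothetical rather than DuCGRN's own.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def decode_edge(z_u, z_v):
    """Inner product decoder: edge probability from two gene embeddings."""
    return sigmoid(sum(a * b for a, b in zip(z_u, z_v)))


def binary_cross_entropy(probs, labels, eps=1e-12):
    """Mean BCE between predicted edge probabilities and 0/1 edge labels."""
    return -sum(
        y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
        for p, y in zip(probs, labels)
    ) / len(labels)


# Hypothetical encoder outputs (2-dimensional embeddings)
z = {"TF1": [1.0, 2.0], "G1": [1.0, 1.0], "G2": [-1.0, -1.0]}

p_edge = decode_edge(z["TF1"], z["G1"])     # embeddings point the same way
p_no_edge = decode_edge(z["TF1"], z["G2"])  # embeddings point opposite ways

loss = binary_cross_entropy([p_edge, p_no_edge], [1, 0])
```

Note that a plain inner product is symmetric in its two arguments, so this toy decoder scores the pair (TF1, G1) the same as (G1, TF1).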

Datasets for Evaluation:

- Seven scRNA-seq datasets: hESC, hHep, mDC, mESC, mHSC-E, mHSC-L, mHSC-GM

- Pre-processing: Gene count matrices filtered to include only highly variable genes

- Data partitioning: 70% for training, 15% for validation, 15% for testing
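A 70/15/15 partition can be produced with a seeded shuffle, as in this sketch. It assumes that labelled TF-gene pairs are the unit being split, which the description above does not state explicitly; the pair names and the seed are placeholders.

```python
import random


def split_pairs(pairs, train=0.70, val=0.15, seed=0):
    """Shuffle labelled pairs reproducibly and split into train/validation/test."""
    items = list(pairs)
    random.Random(seed).shuffle(items)
    n_train = round(train * len(items))
    n_val = round(val * len(items))
    return (
        items[:n_train],
        items[n_train:n_train + n_val],
        items[n_train + n_val:],  # remainder becomes the test set
    )


# Hypothetical labelled TF-gene pairs
pairs = [(f"TF{i % 10}", f"G{i}") for i in range(100)]

train_set, val_set, test_set = split_pairs(pairs)
```

Fixing the seed keeps the partition identical across runs, which matters when several methods are compared on the same held-out edges.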

Visualization of GRN Inference Workflows

[Figure: LINGER method workflow]

[Figure: DuCGRN model architecture]

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Functional Genomics and GRN Analysis

| Reagent/Technology | Function | Application in GRN Studies |
|---|---|---|
| Next-Generation Sequencing Kits [31] | Library preparation for high-throughput sequencing | scRNA-seq library construction for gene expression profiling |
| CRISPR Screening Tools [27] [28] | High-throughput gene editing and functional validation | Identification of critical disease genes and drug targets |
| Single-cell Multiome Kits [29] | Simultaneous profiling of gene expression and chromatin accessibility | Paired scRNA-seq + scATAC-seq for enhanced GRN inference |
| Chromatin Immunoprecipitation Kits [29] | Protein-DNA interaction mapping | Experimental validation of TF binding sites (ChIP-seq) |
| Quality Control Reagents [31] | Nucleic acid quality assessment and quantification | Ensure data integrity for accurate GRN reconstruction |
| Transcription Factor Assays [9] | TF activity measurement and profiling | Validation of predicted regulatory interactions |
| Bioinformatics Platforms [28] [15] | Data analysis and visualization | Implementation of computational GRN inference methods |

The functional genomics market reflects the importance of these research tools: kits and reagents are expected to hold the largest market share, 68.1%, in 2025 [31]. Within the technology segment, Next-Generation Sequencing is projected to lead with a 32.5% share [31]. The global functional genomics market is estimated at USD 11.34 billion in 2025 and is expected to reach USD 28.55 billion by 2032, which indicates sustained investment in tools that support drug discovery and therapeutic development [31].

Applications in Drug Discovery and Therapeutic Development

The integration of advanced GRN inference methods with functional genomics approaches has enabled several key applications in drug discovery:

Target Identification and Validation

Functional genomics approaches that combine CRISPR screens with GRN analysis have improved the identification and validation of novel drug targets [27] [28]. By mapping regulatory relationships in specific disease contexts, researchers can prioritize targets with higher confidence in their therapeutic relevance. For example, LINGER's reported fourfold to sevenfold relative improvement in accuracy could enable more reliable identification of master regulator transcription factors that drive disease phenotypes [29]. These factors are promising therapeutic targets because modulating them can potentially reset entire disease-associated regulatory programs.

Personalized Medicine and Biomarker Discovery

GRN analysis at single-cell resolution enables the identification of patient-specific regulatory programs that can guide personalized treatment strategies [28] [29]. Methods like LINGER can estimate transcription factor activity solely from bulk or single-cell gene expression data, leveraging the abundance of available gene expression data to identify driver regulators from case-control studies [29]. This capability facilitates the development of companion diagnostics and patient stratification biomarkers based on regulatory network activity rather than single gene expression levels.

Disease Mechanism Elucidation

The application of comparative GRN analysis across different cell states or disease conditions provides unprecedented insights into disease mechanisms [9]. Gene2role enables the identification of genes with significant topological changes across cell types or states, offering a fresh perspective beyond traditional differential gene expression analyses [9]. This approach can reveal master regulator genes whose regulatory influence changes dramatically in disease states, potentially uncovering novel pathogenic mechanisms and therapeutic intervention points.

Drug Repurposing and Combination Therapy

GRN-based approaches can identify new indications for existing drugs by revealing shared regulatory programs between apparently unrelated diseases [27]. Additionally, analysis of regulatory networks can inform rational combination therapy design by identifying co-regulatory modules that control disease resilience or resistance mechanisms. The ability of methods like GT-GRN to integrate multiple networks and capture global regulatory structures makes them particularly valuable for understanding complex drug response mechanisms [15].

The integration of advanced GRN inference methods with functional genomics approaches represents a paradigm shift in drug discovery and therapeutic development. Methods like LINGER, DuCGRN, and GT-GRN demonstrate that substantial improvements in accuracy and biological relevance are achievable through innovative computational frameworks that leverage multiple data modalities and prior knowledge [12] [15] [29]. These approaches enable researchers to move beyond static gene expression analysis to dynamic regulatory network modeling, providing deeper insights into disease mechanisms and more reliable target identification.

As the field continues to evolve, several trends are likely to shape future developments: the increasing integration of multi-omics data at single-cell resolution, the adoption of continuous learning frameworks that accumulate knowledge across studies, and the development of more sophisticated visualization and interpretation tools for complex network data [28] [31]. With the functional genomics market poised for significant growth—projected to reach USD 28.55 billion by 2032—the continued innovation in GRN inference methodologies will play a crucial role in accelerating the development of novel therapeutics for complex diseases [31].

Advanced Methodologies: Graph Neural Networks, Transformers and Hybrid Models for GRN Construction

In the field of genomics, accurately modeling gene regulation represents a fundamental challenge with profound implications for understanding cellular biology and advancing therapeutic development. Sequence-based deep learning architectures have emerged as powerful tools for deciphering the complex relationship between DNA sequences and gene expression levels, enabling researchers to move beyond traditional statistical methods. Among these architectures, Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and Transformers have demonstrated particular promise, each offering distinct mechanisms for processing genomic information [32]. These models have been increasingly applied to predict gene expression from regulatory sequences and to reconstruct Gene Regulatory Networks (GRNs), which map the causal relationships between transcription factors and their target genes [33] [34].

The performance of these architectures varies significantly based on their structural inductive biases, training requirements, and ability to capture both local cis-regulatory elements and long-range genomic dependencies. This comparative analysis examines these architectures within the specific context of GRN and gene expression prediction research, synthesizing evidence from recent benchmarking studies and experimental implementations to guide researchers in selecting appropriate models for their scientific inquiries.

Core Architectural Principles

Each major architecture brings fundamentally different approaches to processing biological sequences:

Convolutional Neural Networks (CNNs) employ hierarchical filters that scan local regions of input sequences to detect motifs and regulatory elements. This architecture excels at identifying spatially local patterns through weight sharing and translational invariance, making it particularly suitable for recognizing transcription factor binding sites regardless of their precise position within a regulatory region [32] [10].
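The position-independent motif detection described above can be shown with a single hand-written filter. The sketch one-hot encodes a DNA sequence, slides one filter along it, and max-pools the activations; the filter weights (a "TGA" detector with a mismatch penalty) and the sequences are hypothetical, whereas a trained CNN would learn many such filters from data.

```python
ALPHABET = "ACGT"


def one_hot(seq):
    """Encode a DNA string as a list of 4-element rows (A, C, G, T)."""
    return [[1.0 if base == a else 0.0 for a in ALPHABET] for base in seq]


def conv1d_scan(seq, kernel):
    """Slide one filter along the sequence; the same weights are reused at every position."""
    x = one_hot(seq)
    width = len(kernel)
    return [
        sum(kernel[j][c] * x[i + j][c] for j in range(width) for c in range(4))
        for i in range(len(seq) - width + 1)
    ]


# Hypothetical filter: +1 for each base matching "TGA", -1 for each mismatch
kernel = [[1.0 if a == b else -1.0 for a in ALPHABET] for b in "TGA"]

acts_start = conv1d_scan("TGACCCCC", kernel)  # motif at the 5' end
acts_end = conv1d_scan("CCCCCTGA", kernel)    # same motif at the 3' end

# Global max pooling discards the position and keeps the best match score
score_start = max(acts_start)
score_end = max(acts_end)
```

Both sequences receive the same pooled score even though the motif sits at opposite ends, which is the practical meaning of weight sharing combined with pooling.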

Recurrent Neural Networks (RNNs), including Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) variants, process sequences sequentially while maintaining an internal hidden state that functions as a memory mechanism. This design allows them to capture temporal dependencies and dynamic patterns in time-series gene expression data, making them valuable for modeling the temporal aspects of gene regulation [35] [36].
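The memory mechanism can be seen in a one-unit recurrent cell applied to an expression time series. This is a plain Elman-style update with fixed, hand-picked weights, not an LSTM or GRU (which add gating on top of the same idea), and the time series are toy values.

```python
import math


def rnn_states(series, w_x=1.0, w_h=0.5, b=0.0):
    """Run a single-unit recurrent cell: h_t = tanh(w_x * x_t + w_h * h_{t-1} + b)."""
    h = 0.0
    states = []
    for x in series:
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states


# The same three expression values in opposite temporal order
rising = rnn_states([0.0, 0.5, 1.0])
falling = rnn_states([1.0, 0.5, 0.0])
```

The final hidden states differ even though both series contain the same values, and the falling series ends with a nonzero state despite a final input of zero, because the hidden state carries information from earlier time points.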

Transformer architectures utilize a self-attention mechanism that computes pairwise interactions between all positions in a sequence simultaneously. This global receptive field enables Transformers to model long-range dependencies and complex interactions between distant regulatory elements without the constraint of sequential processing inherent in RNNs [34] [37].
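A minimal sketch of scaled dot-product self-attention follows. To keep it short it assumes identity projections, so queries, keys, and values are all the input vectors themselves; real Transformers learn separate projection matrices and use multiple heads. The three input vectors stand in for embedded sequence positions and are illustrative only.

```python
import math


def softmax(row):
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """Scaled dot-product attention with Q = K = V = x (no learned projections)."""
    d = len(x[0])
    scores = [
        [sum(q * k for q, k in zip(xi, xj)) / math.sqrt(d) for xj in x] for xi in x
    ]
    weights = [softmax(row) for row in scores]
    output = [
        [sum(w * xj[c] for w, xj in zip(row, x)) for c in range(d)] for row in weights
    ]
    return weights, output


# Positions 0 and 2 carry the same feature; position 1 carries a different one
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
weights, output = self_attention(x)
```

Position 0 attends to position 2 as strongly as to itself, regardless of how far apart they are in the sequence, which is the property that allows distal regulatory elements to interact directly in a single layer.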

Quantitative Performance Comparison

Experimental benchmarking across genomic prediction tasks reveals distinct performance profiles for each architecture. The following table summarizes key quantitative findings from recent studies: