Bridging Deep Time and Modern Data: Integrating Fossils into Phylogenetic Comparative Methods for Evolutionary Insight and Drug Discovery

Abstract

This article provides a comprehensive overview of the methods, applications, and challenges of integrating fossil data with phylogenetic comparative analyses. Tailored for researchers, scientists, and drug development professionals, it explores the foundational importance of this integration for accurate evolutionary time scaling and macroevolutionary hypothesis testing. The content details cutting-edge methodological approaches like tip dating and total-evidence analysis, addresses common pitfalls and biases, and outlines frameworks for model validation. By synthesizing perspectives from paleontology and modern genomics, this guide aims to equip scientists with the knowledge to harness the full power of the fossil record in phylogenetic research, with specific implications for identifying drug targets and understanding pathogen evolution.

Why Fossils Are Indispensable: The Foundational Role of Paleontological Data in Evolutionary Frameworks

The reconstruction of evolutionary relationships is a cornerstone of modern biological sciences, providing critical insights into the history of life on Earth. Phylogenetic trees serve as powerful tools for visualizing relationships between both extinct and extant organisms, enabling researchers to estimate the timing of significant evolutionary events such as speciation [1]. Traditionally, paleontological data derived from the fossil record and genomic data from living organisms have been analyzed in separate methodological silos, limiting the comprehensive understanding of evolutionary processes across deep time. This division has persisted despite the recognized value of integrating these complementary data sources to create more robust and accurate phylogenetic hypotheses.

The fossilized birth-death (FBD) process, introduced a decade ago, represents a groundbreaking statistical framework that explicitly models fossil sampling through time, allowing for the joint estimation of phylogeny and divergence times using both extinct and extant taxa [1]. This model family has revolutionized phylogenetic inference by providing a coherent approach to integrating molecular sequences from living organisms, fossil age information, and morphological character data within a single analytical framework. The FBD model acknowledges that both extinct and extant observations originate from the same generating process, thereby offering a more biologically realistic approach to phylogenetic reconstruction than previous methods that treated these data sources separately [1].

Theoretical Foundation: The Fossilized Birth-Death Model

Core Model Assumptions and Parameters

The fossilized birth-death (FBD) model operates on several fundamental assumptions about evolutionary processes. As a generative model, it simulates the diversification of species through time while explicitly accounting for both fossil preservation and modern sampling. The model incorporates four key parameters: birth rate (λ, speciation rate), death rate (μ, extinction rate), fossil sampling rate (ψ), and modern sampling fraction (ρ) [1]. These parameters allow the model to estimate phylogenetic trees that include both living species and fossils as tips, with fossils positioned along the branches of the tree according to their geological ages.

The FBD process represents a significant advancement over previous phylogenetic methods because it treats fossils not as supplementary information but as integral components of the evolutionary tree. This approach recognizes that a fossil taxon may be a direct ancestor of other sampled taxa (a sampled ancestor) or may belong to an extinct side lineage, and that its placement in the tree should reflect its chronological position in evolutionary history. Importantly, the model accommodates the reality that not all organisms and environments are equally preserved in the fossil record, providing a flexible framework for working with the inherent incompleteness of paleontological data [1].
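To make the generative view concrete, the following is a minimal sketch of a constant-rate FBD process that tracks only lineage counts and fossil ages, not the tree itself. The function name `simulate_fbd_counts` and the example rate values are illustrative assumptions, not part of any published implementation; real analyses rely on packages such as BEAST2, MrBayes, or RevBayes.

```python
import random


def simulate_fbd_counts(lam, mu, psi, rho, t_origin, seed=0):
    """Forward-simulate lineage counts under a constant-rate FBD process.

    Time runs from t_origin (in the past) down to 0 (the present).
    lam, mu, psi are per-lineage rates of speciation, extinction and
    fossil sampling; rho is the probability that a lineage alive at the
    present is sampled. Returns (n_alive, n_sampled, fossil_ages).
    """
    rng = random.Random(seed)
    n_alive = 1                      # the process starts with one lineage
    t = t_origin
    fossil_ages = []
    per_lineage_rate = lam + mu + psi
    while n_alive > 0:
        # Waiting time to the next event across all living lineages.
        t -= rng.expovariate(n_alive * per_lineage_rate)
        if t <= 0:
            break                    # reached the present
        u = rng.random() * per_lineage_rate
        if u < lam:
            n_alive += 1             # speciation
        elif u < lam + mu:
            n_alive -= 1             # extinction
        else:
            fossil_ages.append(t)    # fossil sampled; lineage continues
    # Each surviving lineage is sampled at the present with probability rho.
    n_sampled = sum(1 for _ in range(n_alive) if rng.random() < rho)
    return n_alive, n_sampled, fossil_ages


# Hypothetical rates (events per lineage per million years).
alive, sampled, fossils = simulate_fbd_counts(0.5, 0.3, 0.2, 0.8, t_origin=5.0, seed=1)
print(alive, sampled, len(fossils))
```

Because fossils and extant samples come out of the same simulated process, the sketch illustrates why the model can treat both kinds of observation jointly.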

Advantages Over Traditional Approaches

The FBD model offers several distinct advantages compared to traditional phylogenetic methods:

Unified Treatment of Extant and Fossil Data: Unlike approaches that analyze molecular and morphological data separately, the FBD model allows for simultaneous analysis of all available data, providing more accurate estimates of evolutionary relationships and divergence times [1].

Explicit Modeling of Fossil Sampling: The model incorporates a dedicated parameter for fossil preservation rate (ψ), which accounts for the uneven probability of fossilization across different lineages and time periods [1].

Natural Handling of Stratigraphic Ranges: The FBD model can incorporate information about the first and last appearance dates of fossil taxa, providing a more nuanced representation of their known temporal distributions [1].

Coherent Uncertainty Quantification: As a Bayesian method, the FBD framework naturally accommodates and quantifies uncertainty in fossil ages, morphological character scoring, and evolutionary parameters [1].

Quantitative Framework: FBD Model Parameters and Extensions

Table 1: Core Parameters of the Fossilized Birth-Death Model

| Parameter | Symbol | Description | Biological Interpretation |

|---|---|---|---|

| Speciation Rate | λ | Rate at which lineages split into new species | Measures evolutionary diversification potential |

| Extinction Rate | μ | Rate at which lineages go extinct | Quantifies species turnover through time |

| Fossil Sampling Rate | ψ | Rate at which fossils are sampled along a lineage per unit time | Reflects taphonomic and preservation biases |

| Modern Sampling Fraction | ρ | Proportion of extant species included in analysis | Accounts for incomplete taxonomic sampling |

| Clock Model | - | Models rate of evolutionary change | Can be strict, relaxed, or autocorrelated |
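The rates in Table 1 are often reported through derived quantities that are easier to interpret and to place priors on. The sketch below computes three commonly used ones: net diversification (λ − μ), turnover (μ/λ), and sampling proportion (ψ/(μ + ψ)). The function name `fbd_derived` and the numeric inputs are illustrative assumptions.

```python
def fbd_derived(lam, mu, psi):
    """Convert FBD rates into commonly reported derived quantities.

    lam: speciation rate, mu: extinction rate, psi: fossil sampling rate
    (all per lineage per unit time).
    """
    if lam <= 0 or mu < 0 or psi < 0 or (mu + psi) == 0:
        raise ValueError("need lam > 0, mu >= 0, psi >= 0 and mu + psi > 0")
    return {
        "net_diversification": lam - mu,         # growth rate of lineage numbers
        "turnover": mu / lam,                    # relative extinction
        "sampling_proportion": psi / (mu + psi), # chance a lineage leaves a fossil before dying
    }


# Hypothetical rate values, for illustration only.
print(fbd_derived(lam=0.5, mu=0.25, psi=0.25))
```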

Table 2: Software Implementations of FBD Models

| Software | Primary Function | FBD Extensions | Data Types Supported |

|---|---|---|---|

| BEAST2 | Joint estimation of tree topology and divergence times | Skyline and stratigraphic range implementations | Molecular, morphological, fossil occurrence |

| MrBayes | Bayesian phylogenetic inference | FBD model for total-evidence dating | Molecular, morphological, continuous characters |

| RevBayes | Modular phylogenetic analysis | Custom FBD model specifications | Molecular, morphological, biogeographic |

Experimental Protocols for Integrated Analysis

Protocol 1: Total-Evidence Dating with FBD Models

Purpose: To simultaneously infer phylogenetic relationships and divergence times using combined molecular, morphological, and fossil data under the FBD process.

Materials:

- Molecular sequence data from extant taxa (DNA or amino acid sequences)

- Morphological character matrix for extant and fossil taxa

- Fossil occurrence data with age estimates and uncertainties

- Computational resources for Bayesian phylogenetic analysis

Procedure:

- Data Compilation: Assemble molecular sequence data for extant taxa and morphological character data for both extant and fossil taxa. Ensure consistent taxonomic alignment across datasets.

- Fossil Age Modeling: Specify prior distributions for fossil ages based on geological evidence, incorporating uncertainty in stratigraphic placement.

- Model Specification: Configure the FBD model with appropriate birth-death priors, fossil sampling rate, and modern sampling fraction.

- Clock Model Selection: Choose among strict, relaxed, or autocorrelated clock models based on preliminary analyses or prior knowledge.

- MCMC Configuration: Set up Markov Chain Monte Carlo parameters with sufficient chain length and sampling frequency to ensure convergence.

- Analysis Execution: Run the Bayesian analysis, monitoring convergence using diagnostic tools such as Tracer.

- Post-processing: Summarize posterior tree distributions, parameter estimates, and divergence time uncertainties.
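As an illustration of the fossil age modeling step, the sketch below places a uniform prior on each fossil age between its stratigraphic bounds, which is one simple way to express age uncertainty. The fossil names, the age bounds, and the helper names `log_uniform_age_prior` and `sample_fossil_ages` are hypothetical; inference software specifies such priors in its own configuration format.

```python
import math
import random


def log_uniform_age_prior(age, min_age, max_age):
    """Log density of a uniform prior on a fossil age (in Ma)."""
    if min_age >= max_age:
        raise ValueError("min_age must be smaller than max_age")
    if min_age <= age <= max_age:
        return -math.log(max_age - min_age)
    return float("-inf")             # ages outside the bounds are excluded


def sample_fossil_ages(age_ranges, n_draws=1000, seed=0):
    """Draw ages from the uniform prior of each fossil.

    age_ranges maps a fossil name to (min_age, max_age) in Ma.
    """
    rng = random.Random(seed)
    return {
        name: [rng.uniform(lo, hi) for _ in range(n_draws)]
        for name, (lo, hi) in age_ranges.items()
    }


# Hypothetical fossils with stratigraphic age bounds in Ma.
ranges = {"fossil_A": (56.0, 59.2), "fossil_B": (66.0, 72.1)}
draws = sample_fossil_ages(ranges, n_draws=5)
print(draws["fossil_A"])
```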

Troubleshooting:

- If MCMC convergence is poor, consider adjusting prior distributions or increasing chain length.

- If computational demands are excessive, explore data partitioning strategies or approximate methods.

- If fossil placement is problematic, verify morphological character scoring and fossil age assignments.

Protocol 2: Morphological Clock Calibration

Purpose: To establish evolutionary rates for morphological characters when molecular data are unavailable for fossil taxa.

Materials:

- Morphological character matrix with comprehensive taxon sampling

- Fossil specimens with reliable age constraints

- Phylogenetic framework with established node relationships

- Bayesian evolutionary analysis software (e.g., BEAST2, MrBayes)

Procedure:

- Character Coding: Develop a morphological character matrix with clear character state definitions and ordered/unordered specifications.

- Age Priors: Establish conservative prior distributions for fossil ages based on stratigraphic evidence.

- Clock Model Setup: Configure a morphological clock model (strict or relaxed) with appropriate rate priors.

- Tree Model Specification: Implement the FBD process as the tree prior to account for fossil sampling.

- MCMC Analysis: Execute Bayesian analysis with adequate chain length to sample posterior distributions effectively.

- Rate Estimation: Extract posterior estimates of morphological evolutionary rates and their variation across characters.

- Validation: Compare rate estimates with independent assessments from molecular dating or other fossil evidence.

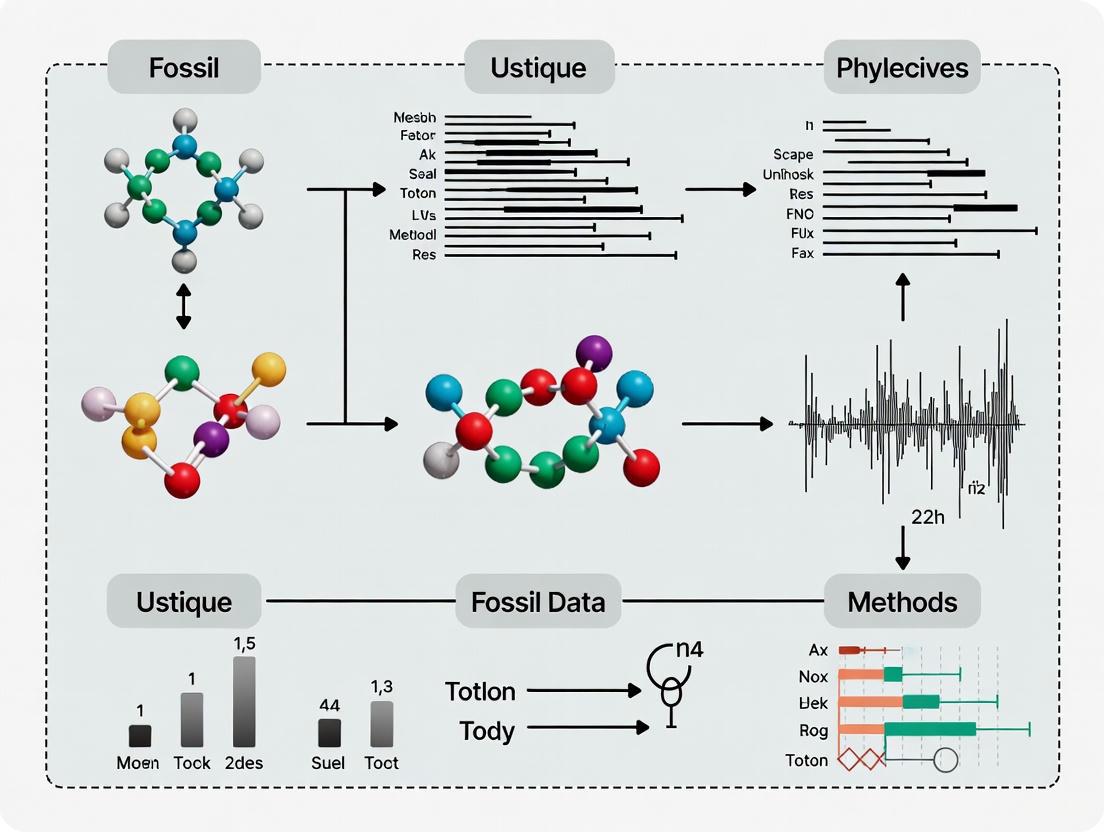

Visualizing Integrated Phylogenetic Workflows

[Figure: Integrated Phylogenetic Analysis Workflow]

[Figure: Fossilized Birth-Death Process Diagram]

Table 3: Research Reagent Solutions for Integrated Phylogenetics

| Resource Type | Specific Solution | Function and Application |

|---|---|---|

| Phylogenetic Software | BEAST2 with the SA (Sampled Ancestors) package | Implements FBD models for total-evidence dating [1] |

| Morphological Data Tools | MorphoBank | Collaborative platform for scoring morphological characters |

| Fossil Calibration Databases | Fossil Calibration Database | Curated fossil constraints for divergence time estimation |

| Molecular Sequence Repositories | GenBank, EMBL-EBI | Source of molecular data for extant taxa |

| Evolutionary Model Libraries | RevBayes model library | Customizable model specifications for FBD analyses |

| Taxonomic Name Resolvers | Global Names Resolver | Standardizes taxonomic names across data sources |

| Biogeographic Data Tools | BioGeoBEARS | Integrates biogeographic history with phylogenetic inference |

Applications in Evolutionary Research and Drug Development

The integration of fossil data with genomic information through FBD models has transformative potential for applied research, including drug development. By providing more accurate estimates of evolutionary rates and divergence times, these integrated approaches can inform several critical areas:

Protein Evolution and Functional Divergence: Integrated phylogenetic analyses enable researchers to trace the evolutionary history of protein families, identifying key functional shifts that occurred through deep time. For example, phylogenetic analysis of carbonic anhydrases has revealed how different families (α, β, γ, δ, ζ) independently evolved to catalyze the same biochemical reaction through convergent evolution [2]. Understanding these evolutionary patterns can inform drug target selection by identifying conserved functional domains and lineage-specific adaptations.

Gene Family Expansion and Diversification: The FBD framework allows researchers to reconstruct the timing of gene duplication events and subsequent functional specialization. Studies of carbonic anhydrase evolution show how groups like CA I/II/III (cytosolic), CA IV/IX/XII (membrane-bound), and CA VA/VB (mitochondrial) arose through duplication events and specialized over time [2]. Such analyses can reveal evolutionary constraints on potential drug targets and predict functional redundancy.

Ancestral Sequence Reconstruction: With robust time-calibrated phylogenies, researchers can infer ancestral protein sequences and experimentally resurrect these molecules to study functional evolution. This approach can identify historically conserved regions that may represent critical functional domains for therapeutic targeting.

Evolutionary Rate Variation: Integrated analyses can identify lineages with accelerated evolutionary rates, which may indicate periods of functional innovation or adaptive evolution. Such signals can highlight proteins or domains that have undergone significant functional changes, potentially revealing new therapeutic opportunities.

The application of these methods extends beyond basic evolutionary questions to practical challenges in biotechnology and medicine. For instance, phylogenetic analysis of carbonic anhydrase diversity has informed the selection of enzyme candidates for biotechnological applications such as microbially induced calcium carbonate precipitation (MICP), with potential applications in sustainable construction and carbon sequestration [2]. Similarly, understanding the evolutionary history of disease-related genes can provide insights into conserved functional mechanisms and potential therapeutic vulnerabilities.

Future Directions and Implementation Challenges

Despite significant advances, several challenges remain in the widespread implementation of integrated phylogenetic approaches. The complexity of FBD models requires a working knowledge of paleontological data, Bayesian phylogenetics, and evolutionary model assumptions, creating a substantial barrier for empirical researchers [1]. Future developments should focus on creating more user-friendly implementations, comprehensive documentation, and specialized training resources to make these powerful methods more accessible.

Technical challenges include developing more realistic models of fossil preservation that account for geographic and temporal heterogeneity in sampling, incorporating additional sources of uncertainty in fossil age estimates, and creating efficient computational algorithms to handle increasingly large datasets. Furthermore, better integration between phylogenetic inference and comparative methods will enable researchers to directly test evolutionary hypotheses using the time-calibrated trees produced by FBD analyses.

The continued development and refinement of integrated approaches will require close collaboration between paleontologists, molecular biologists, computational scientists, and statisticians. As these fields become increasingly interdisciplinary, the unification of genomic and fossil data will provide ever more powerful insights into the evolutionary processes that have shaped the diversity of life on Earth.

Phylogenetic trees, the graphs representing evolutionary histories, are foundational to evolutionary biology and genomic epidemiology [3]. Modern phylogenetics increasingly relies on molecular data, with technological advances enabling the construction of trees from millions of genomic sequences [3]. However, an over-reliance on molecular data alone creates a significant information gap in macroevolutionary studies, particularly concerning deep-time evolutionary processes, trait evolution, and diversification patterns. Molecular-only phylogenies face challenges in accurately modeling evolutionary rates, reconciling gene tree-species tree discordance, and accounting for the role of chromosomal and genomic changes in diversification. This Application Note details the quantitative challenges arising from molecular-only approaches and provides protocols for integrating fossil and phenotypic data to bridge the micro- and macroevolutionary divide, framed within a thesis advocating for the integration of fossil data into phylogenetic comparative methods.

Molecular-only phylogenetic approaches face several critical limitations that can obscure macroevolutionary patterns. The table below summarizes the primary challenges and their quantitative impacts, as revealed by recent research.

Table 1: Key Challenges of Molecular-Only Phylogenies and Their Macroevolutionary Consequences

| Challenge | Quantitative Impact | Evidence |

|---|---|---|

| Computational Limitations & Lack of Confidence Assessment | Traditional bootstrap methods require 2+ orders of magnitude more runtime/memory than newer methods (SPRTA) and become computationally prohibitive for pandemic-scale trees (e.g., >2M SARS-CoV-2 genomes) [3]. | SPRTA enables confidence assessment on million-tip trees where Felsenstein's bootstrap and its approximations fail [3]. |

| Sensitivity to Phylogenetic Misspecification | False positive rates in phylogenetic regression can soar to nearly 100% under incorrect tree choice (e.g., using a species tree for a trait evolving along a gene tree) [4]. This risk increases with more data (more traits/species). | Simulation studies show robust regression can reduce false positive rates from 56-80% down to 7-18% under tree misspecification [4]. |

| Discordance between Microevolutionary Predictors and Macroevolutionary Outcomes | Developmental bias (mutational covariance, M) in Drosophila melanogaster wing shape predicts 40 million years of divergence across Drosophilidae [5]. This alignment persists over 185 million years across >900 dipteran species, challenging constraint-based hypotheses [5]. | Genetic constraints alone are a poor fit for the data; correlational selection is a more plausible explanation for the long-term alignment [5]. |

| Unaccounted Chromosomal Drivers of Diversification | Dysploidy (chromosome number change without genome size change) is more frequent and persistent over macroevolutionary time than polyploidy in angiosperms [6]. Chromosomal rearrangements are more strongly linked to trait differentiation at micro- than macroevolutionary scales [6]. | Karyotype diversity from dysploidy is challenging to link to diversification rates at a macroevolutionary scale, creating a knowledge gap [6]. |

Detailed Experimental Protocols

Protocol 1: Assessing Phylogenetic Confidence at Scale with SPRTA

Background: Felsenstein’s bootstrap, the standard method for assessing phylogenetic confidence, is computationally infeasible for massive datasets, leaving large molecular phylogenies without uncertainty measures. Subtree Pruning and Regrafting-based Tree Assessment (SPRTA) provides an efficient, placement-focused alternative [3].

Application: This protocol is essential for evaluating the reliability of phylogenetic inferences in large-scale molecular studies, such as those tracking pandemic-scale pathogen evolution.

Table 2: Research Reagent Solutions for Phylogenetic Confidence Assessment

| Reagent / Software Solution | Function | Application Note |

|---|---|---|

| SPRTA Algorithm | Calculates branch support as the approximate probability that a lineage evolved directly from its inferred ancestor. | Shifts support measurement from clade membership (topological) to evolutionary origin (mutational/placement). |

| MAPLE Software | Performs efficient maximum-likelihood phylogenetic inference and calculates the tree likelihoods \(\Pr(D \mid T)\) required for SPRTA scores [3]. | Efficiently computes likelihoods for the original tree and SPR-altered topologies. |

| Multiple Sequence Alignment (D) | The input genetic data matrix, where rows are taxon sequences and columns are homologous nucleotides [3]. | Foundation for all subsequent likelihood calculations. |

| Inferred Rooted Phylogenetic Tree (T) | The phylogenetic tree whose branches b are to be assessed [3]. | The tree T is divided for each branch b into subtree S_b and its complement T\S_b. |

Methodology:

- Input: Begin with a multiple sequence alignment `D` and an inferred rooted phylogenetic tree `T` [3].

- Branch Selection: For a target branch `b` (with ancestor A and descendant B), define subtree `S_b` (all descendants of B) and the complement subtree `T\S_b`.

- Generate Alternative Topologies: For branch `b`, perform a series of single Subtree Pruning and Regrafting (SPR) moves. Each move `i` relocates `S_b` to a different node `A_i` within `T\S_b`, creating an alternative topology `T_i^b`. The first topology (`i=1`) is the original tree `T` [3].

- Compute Likelihoods: Calculate the likelihood \(\Pr(D \mid T_i^b)\) for each topology `T_i^b`, including the original tree.

- Calculate SPRTA Support: Compute the SPRTA support score for branch `b` using the formula:

\[ \mathrm{SPRTA}(b) = \frac{\Pr(D \mid T)}{\sum_{1 \leqslant i \leqslant I_b} \Pr(D \mid T_i^b)} \]

This score approximates the probability \(\Pr(b \mid D, T \setminus b)\) that B evolved directly from A along branch `b` [3].
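The support score is a ratio of likelihoods, which in practice are available as log-likelihoods of very large magnitude. The sketch below evaluates the ratio with a log-sum-exp shift to avoid numerical underflow. The function name `sprta_support` and the example log-likelihood values are illustrative assumptions; this is not the MAPLE implementation.

```python
import math


def sprta_support(loglik_original, loglik_alternatives):
    """SPRTA-style support for one branch from log-likelihoods.

    loglik_original: log Pr(D | T) for the inferred tree (topology i = 1).
    loglik_alternatives: log Pr(D | T_i^b) for the SPR alternatives
    (i = 2..I_b), i.e. excluding the original tree.
    """
    logs = [loglik_original] + list(loglik_alternatives)
    shift = max(logs)                          # log-sum-exp stabilisation
    denominator = sum(math.exp(x - shift) for x in logs)
    return math.exp(loglik_original - shift) / denominator


# Hypothetical values: one alternative placement is three times less likely.
print(sprta_support(-1000.0, [-1000.0 - math.log(3.0)]))
```

A branch with no competitive alternative placements receives a score close to 1, while two equally likely placements each receive 0.5.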

The following workflow diagram illustrates the SPRTA process for a single branch b.

Protocol 2: Implementing Robust Phylogenetic Regression to Mitigate Tree Misspecification

Background: Phylogenetic comparative methods (PCMs) assume the chosen tree accurately reflects trait evolution. Using an incorrect tree (e.g., a species tree for a trait with a distinct gene tree history) can lead to catastrophically high false positive rates, a risk that intensifies with larger datasets [4].

Application: This protocol is critical for any study correlating traits across species (e.g., genotype-phenotype mapping, comparative genomics) where the true underlying phylogenetic history of the traits is unknown.

Methodology:

- Trait and Tree Data Collection: Compile the dataset of traits for the `n` species and the set of candidate phylogenetic trees (e.g., species tree, gene trees).

- Model Specification: Define the phylogenetic regression model. For a simple bivariate regression for `p` traits across `n` species, the model is:

\[ \mathbf{Y} = \mathbf{X}\beta + \mathbf{\epsilon} \]

where `Y` is an `n x p` matrix of trait values, `X` is an `n x 1` matrix of the predictor variable, `β` is the regression coefficient, and `ε` contains phylogenetically correlated errors [4].

- Conventional Regression: Perform standard phylogenetic generalized least squares (PGLS) regression under the assumed phylogenetic tree.

- Robust Regression: Apply a robust sandwich estimator to the same model to account for potential misspecification of the phylogenetic covariance structure. This estimator adjusts the standard errors of the regression coefficients, making them less sensitive to an incorrect tree [4].

- Result Comparison: Compare the statistical significance (e.g., p-values) of the regression coefficients obtained from the conventional and robust methods. A result that is significant under conventional regression but non-significant under robust regression indicates potential sensitivity to tree misspecification and should be interpreted with caution.
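The comparison between conventional and robust standard errors can be sketched for a single trait and a single predictor. The code below whitens the data with the Cholesky factor of the assumed phylogenetic covariance matrix `V`, fits ordinary least squares, and then computes both the model-based standard error and an HC0-type sandwich standard error for the slope. This is one simple sandwich form chosen for illustration; the estimator used in [4] may differ, and all function names here are hypothetical.

```python
import math


def cholesky(V):
    """Lower-triangular Cholesky factor of a positive-definite matrix."""
    n = len(V)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(V[i][i] - s)
            else:
                L[i][j] = (V[i][j] - s) / L[j][j]
    return L


def forward_solve(L, b):
    """Solve L z = b for lower-triangular L."""
    z = []
    for i in range(len(b)):
        z.append((b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i])
    return z


def pgls_slope(x, y, V):
    """Return (slope, conventional SE, sandwich SE) for y = b0 + b1 * x."""
    n = len(y)
    L = cholesky(V)
    u = forward_solve(L, [1.0] * n)   # whitened intercept column
    v = forward_solve(L, x)           # whitened predictor
    w = forward_solve(L, y)           # whitened response
    a, b, d = sum(p * p for p in u), sum(p * q for p, q in zip(u, v)), sum(q * q for q in v)
    det = a * d - b * b
    inv = [[d / det, -b / det], [-b / det, a / det]]   # (Z'Z)^-1
    g0 = sum(p * r for p, r in zip(u, w))
    g1 = sum(q * r for q, r in zip(v, w))
    b0 = inv[0][0] * g0 + inv[0][1] * g1
    b1 = inv[1][0] * g0 + inv[1][1] * g1
    resid = [r - b0 * p - b1 * q for p, q, r in zip(u, v, w)]
    # Conventional SE assumes V is the correct covariance structure.
    sigma2 = sum(e * e for e in resid) / (n - 2)
    se_conventional = math.sqrt(sigma2 * inv[1][1])
    # HC0 sandwich: (Z'Z)^-1 [sum e_i^2 z_i z_i'] (Z'Z)^-1, slope entry.
    r0, r1 = inv[1]
    se_sandwich = math.sqrt(sum(e * e * (r0 * p + r1 * q) ** 2
                                for e, p, q in zip(resid, u, v)))
    return b1, se_conventional, se_sandwich
```

A slope whose conventional standard error is much smaller than its sandwich standard error is a candidate for the cautious interpretation described in the last step.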

The logical relationship between tree choice and regression outcomes is shown below.

Bridging Micro and Macroevolution: The Critical Role of Fossil and Phenotypic Data

The protocols above address specific analytical gaps, but closing the macroevolutionary information gap requires integrating beyond-molecular data.

- Calibrating Evolutionary Timescales: Molecular clocks alone provide estimates of divergence times, but these can be uncertain. Fossil data provide absolute, minimum-age calibrations that are indispensable for anchoring phylogenetic trees in geological time, transforming relative branch lengths into a meaningful timeline of diversification.

- Testing Macroevolutionary Hypotheses: Molecular phylogenies can identify shifts in diversification rates, but explaining the causes of these shifts requires phenotypic and environmental data. For example, the finding that developmental bias in fly wings predicts macroevolution over 185 million years was only testable by integrating extensive morphological wing shape data from taxonomic illustrations and photographs with the molecular phylogeny [5]. This bypasses the limitations of a molecular-only approach.

- Understanding Diversification Drivers: Chromosomal evolution is a key driver of plant diversification [6]. Molecular phylogenies can track changes in chromosome numbers, but inferring the macroevolutionary consequences—such as whether dysploidy is associated with higher speciation rates—requires integrating karyotypic and fossil evidence to model diversification dynamics through time [6]. This integration reveals that chromosomal dynamics fixed over macroevolutionary time provide the variation for selection at microevolutionary scales.

Molecular data alone are insufficient to capture the complex fabric of macroevolution. The challenges of computational intensity, extreme sensitivity to model misspecification, and the discordance between different evolutionary scales create a significant information gap. The protocols outlined here—SPRTA for confidence assessment at scale and robust regression for mitigating tree error—provide actionable paths forward for researchers. However, these methods must be employed within a broader framework that actively seeks to integrate fossil calibrations, phenotypic trait data, and genomic structural variants. Only by synthesizing molecular, morphological, and paleontological evidence can we truly bridge the gap between micro- and macroevolution and achieve a predictive understanding of evolutionary processes across deep time.

In phylogenetic comparative methods research, establishing an accurate timescale is paramount. The evolutionary time tree of life is not inferred from molecular sequences alone; it requires the anchoring points provided by the fossil record. Fossils provide the absolute chronological framework that transforms a relative branching pattern into a calibrated timeline, enabling researchers to date divergence events, track the origins of traits, and understand the tempo of evolutionary processes such as those underlying disease susceptibility and drug target conservation [7]. This protocol outlines the rigorous application of fossil data to calibrate molecular clocks, a foundational practice for generating robust, time-scaled phylogenetic hypotheses essential for comparative oncology, pathogen evolution studies, and drug discovery [8] [7].

Quantitative Evidence: The Impact of Fossil Calibration

The critical influence of fossil calibration strategy on divergence time estimates is empirically demonstrated by the case of crown Palaeognathae birds. The discrepancy between a proposed Early Eocene age (~51 million years ago) and the more widely supported K-Pg boundary age (~66 million years ago) was investigated by testing the effects of calibration strategy versus phylogenomic data type [9].

Table 1: Impact of Calibration Strategy on Crown Palaeognathae Age Estimates

| Study/Dataset | Calibration Strategy | Ingroup Palaeognathae Fossils? | Estimated Age (Million Years) |

|---|---|---|---|

| Prum et al. (2015) - Original | All priors restricted to Neognathae clade | No | ~51 (Early Eocene) [9] |

| Prum et al. (2015) - Reanalyzed | Priors at Neornithine root & within Palaeognathae | Yes | ~62-68 (K-Pg boundary) [9] |

| Mitogenomic (MTG) Dataset | Multiple internal calibrations | Yes | ~62-68 (K-Pg boundary) [9] |

| Nuclear (nu) Dataset | Multiple internal calibrations | Yes | ~62-68 (K-Pg boundary) [9] |

The data consistently show that including multiple internal fossil calibrations, particularly for deep nodes, yields congruent and robust age estimates across different data types. The absence of such calibrations can lead to significant underestimation of node ages, potentially misdirecting evolutionary inferences [9].

Application Notes & Protocols

Workflow for Fossil-Based Molecular Dating

The following diagram outlines the standard protocol for integrating fossil data into Bayesian molecular clock analyses.

Detailed Experimental Protocol

Protocol: Bayesian Molecular Dating with Fossil Calibration Priors

Objective: To estimate a time-calibrated phylogeny using genomic data and carefully selected fossil calibration points.

Materials:

- Molecular Sequence Alignment: Genomic or mitogenomic data in FASTA or PHYLIP format [9].

- Fossil Calibration Data: Information on fossil specimens and their stratigraphic ranges.

- Software:

- BEAST2: Bayesian Evolutionary Analysis Sampling Trees package for Bayesian molecular dating [9].

- Tracer: For analyzing Markov Chain Monte Carlo (MCMC) output and assessing convergence.

- TreeAnnotator: For generating a maximum clade credibility tree from the posterior tree distribution.

- FigTree or IcyTree: For visualizing the final time-scaled phylogeny.

Procedure:

Sequence Alignment and Partitioning:

- Assemble and align molecular data (e.g., conserved non-exonic elements, ultraconserved elements, coding sequences, or mitogenomes) [9].

- Partition the data and select appropriate nucleotide substitution models for each partition using model-testing software (e.g., ModelTest-NG).

Fossil Prior Selection and Justification:

- Identify Fossils: Identify fossil specimens that can be reliably assigned to specific clades (stem or crown) based on shared derived morphological characteristics [9].

- Establish Minimum Age Bounds: The minimum age of a calibration is defined by the geochronological date of the fossil. This provides a hard minimum constraint, as the clade must be at least this old.

- Define Probability Distributions: Assign a calibrated prior density for the node age. Common choices include:

- Lognormal Distribution: Ideal for representing a hard minimum bound (the offset) with a soft maximum, reflecting the likelihood that the true divergence is somewhat older than the fossil [9].

- Exponential Distribution: Useful when there is less prior information about the upper bound.

- Uniform Distribution: Applied when only minimum and maximum bounds are known with confidence.
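To see what an offset lognormal calibration implies before running an analysis, it helps to inspect its density and quantiles. The sketch below assumes the node age equals the fossil minimum age (the offset) plus a lognormal variable whose `mu` and `sigma` are on the log scale. Software packages may parameterize the lognormal differently, and the function names and numeric values here are hypothetical.

```python
import math
from statistics import NormalDist


def offset_lognormal_logpdf(age, offset, mu, sigma):
    """Log prior density of a node age under an offset lognormal."""
    x = age - offset
    if x <= 0:
        return float("-inf")          # hard minimum: no mass below the fossil age
    return (-math.log(x * sigma * math.sqrt(2.0 * math.pi))
            - (math.log(x) - mu) ** 2 / (2.0 * sigma ** 2))


def offset_lognormal_quantile(p, offset, mu, sigma):
    """Age below which a fraction p of the prior mass lies."""
    z = NormalDist().inv_cdf(p)
    return offset + math.exp(mu + sigma * z)


# Hypothetical calibration: fossil minimum age of 56 Ma, log-scale mu=1.0, sigma=0.5.
median = offset_lognormal_quantile(0.5, 56.0, 1.0, 0.5)
soft_max = offset_lognormal_quantile(0.975, 56.0, 1.0, 0.5)
print(median, soft_max)
```

Checking the 97.5% quantile in this way shows how far above the fossil age the soft maximum effectively extends.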

Bayesian Molecular Clock Analysis:

- Set up the BEAST2 XML file, specifying:

- The sequence alignment and partition models.

- The tree prior (e.g., Birth-Death model).

- The clock model (e.g., Relaxed Clock Log Normal).

- The fossil calibration priors on the corresponding tree nodes.

- Execute the MCMC analysis for a sufficient number of generations (typically tens to hundreds of millions) to achieve adequate sampling of the posterior distribution.

Diagnostics and Summarization:

- Use Tracer to assess MCMC performance. Ensure all parameters have an Effective Sample Size (ESS) > 200, a common rule of thumb indicating that the chain contains enough effectively independent samples from the posterior.

- If convergence is poor, extend the MCMC run length or re-parameterize the model.

- Use TreeAnnotator to combine the posterior tree sample, discarding an appropriate burn-in (e.g., 10%), to produce a single maximum clade credibility tree with median node heights.
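The ESS idea can be illustrated with a simple autocorrelation-based estimate: the chain length divided by one plus twice the sum of positive-lag autocorrelations, truncated at the first non-positive value. This is a minimal sketch under that truncation rule; Tracer's own estimator may differ in detail, and the function name is hypothetical.

```python
def effective_sample_size(samples):
    """Rough ESS estimate for one parameter trace."""
    n = len(samples)
    mean = sum(samples) / n
    dev = [s - mean for s in samples]
    var = sum(d * d for d in dev) / n
    if var == 0:
        return float(n)               # constant trace: nothing to correct
    rho_sum = 0.0
    for lag in range(1, n):
        acov = sum(dev[i] * dev[i + lag] for i in range(n - lag)) / n
        rho = acov / var
        if rho <= 0:
            break                     # truncate at first non-positive autocorrelation
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)


def discard_burn_in(samples, fraction=0.1):
    """Drop the first `fraction` of the chain before summarising."""
    return samples[int(len(samples) * fraction):]


# A strongly autocorrelated toy trace: long runs of identical values.
trace = ([0.0] * 50 + [1.0] * 50) * 2
print(effective_sample_size(discard_burn_in(trace)))
```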

Interpretation and Visualization:

- Analyze the final time tree, focusing on the mean/median age estimates and the 95% highest posterior density (HPD) intervals for key nodes of interest.

- Visually inspect the tree using visualization software, ensuring the fossil calibration priors are consistent with the final estimated node ages.
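For readers who want to verify an HPD interval by hand, the sketch below computes the narrowest interval containing a given fraction of posterior samples for a node age. The function name `hpd_interval` is an assumption for illustration, and the approach presumes a unimodal posterior.

```python
import math


def hpd_interval(samples, mass=0.95):
    """Narrowest interval containing at least `mass` of the samples."""
    s = sorted(samples)
    n = len(s)
    k = max(1, math.ceil(mass * n))   # number of samples the interval must cover
    # Slide a window of k consecutive sorted samples and keep the narrowest.
    best = min(range(n - k + 1), key=lambda i: s[i + k - 1] - s[i])
    return s[best], s[best + k - 1]


# Hypothetical posterior sample of a node age (Ma) with one extreme value.
ages = [1, 2, 3, 4, 5, 6, 7, 8, 9, 100]
print(hpd_interval(ages, mass=0.5))
```

Unlike an equal-tailed interval, the HPD interval shifts away from a long tail, which is why it is the usual summary for skewed node-age posteriors.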

Conceptual Framework of Evolutionary Models

Phylogenetic comparative methods rely on models of trait evolution, which are built upon the phylogenetic variance-covariance matrix derived from the time-scaled tree.
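Under Brownian motion, the covariance between two tips is proportional to the branch length they share between the root and their most recent common ancestor. The sketch below builds that matrix from a small time-scaled tree stored as parent pointers. The tree, the tip names, and the function name `bm_vcv` are hypothetical; the example includes one fossil tip `F` to show how a non-contemporaneous tip receives a smaller variance.

```python
def bm_vcv(parent, branch_length, tips):
    """Phylogenetic variance-covariance matrix under Brownian motion.

    parent: maps each non-root node to its parent node.
    branch_length: maps each non-root node to the length of the branch above it.
    tips: ordered list of tip names; rows and columns follow this order.
    """
    def path_to_root(node):
        path = []
        while node in parent:         # the root has no parent entry
            path.append(node)
            node = parent[node]
        return path

    paths = {t: path_to_root(t) for t in tips}
    vcv = []
    for a in tips:
        on_path_a = set(paths[a])
        # Shared history = branches lying on both root-to-tip paths.
        vcv.append([sum(branch_length[n] for n in paths[b] if n in on_path_a)
                    for b in tips])
    return vcv


# Tree ((A:1,B:1)AB:2,C:3,F:1.5)root; F is a fossil tip sampled 1.5 Ma before the present.
parent = {"A": "AB", "B": "AB", "AB": "root", "C": "root", "F": "root"}
lengths = {"A": 1.0, "B": 1.0, "AB": 2.0, "C": 3.0, "F": 1.5}
for row in bm_vcv(parent, lengths, ["A", "B", "C", "F"]):
    print(row)
```

The diagonal holds each tip's root-to-tip distance, so extant tips share the tree height (3.0) while the fossil tip has 1.5.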

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Phylogenetic Dating and Comparative Methods

| Research Reagent / Resource | Function & Application | Example Tools / Databases |

|---|---|---|

| Genomic Data Repositories | Provides raw molecular data (DNA, protein sequences) for constructing phylogenetic matrices. | NCBI GenBank, RefSeq [9] |

| Bayesian Evolutionary Analysis Software | Implements relaxed molecular clock models and integrates fossil calibration priors to estimate divergence times. | BEAST2, MrBayes [9] |

| Fossil Calibration Databases | Curated resources providing fossil specimen data and suggested calibration priors for specific clades. | Fossil Calibration Database, Paleobiology Database |

| Phylogenetic Comparative Methods (PCM) Packages | Statistical software for fitting models of trait evolution (e.g., Brownian Motion, OU) to time-scaled trees. | phytools (R), geiger (R), caper (R) [7] |

| Evolutionary Model Testing Tools | Determines the best-fit model of sequence evolution for different genomic partitions. | ModelTest-NG, PartitionFinder |

| MCMC Diagnostics & Visualization Software | Analyzes convergence of Bayesian runs and visualizes final time-scaled phylogenetic trees. | Tracer, TreeAnnotator, FigTree [9] |

Total-evidence dating and the fossilized birth-death (FBD) process represent a paradigm shift in Bayesian phylogenetic analysis, enabling direct integration of molecular, morphological, and stratigraphic data to infer evolutionary relationships and divergence times for both living and extinct species. This framework moves beyond treating fossils as mere calibration points, instead modeling them as samples directly derived from the diversification process [10]. For researchers in comparative biology and drug discovery, where understanding deep evolutionary relationships can inform functional analyses of genes and proteins [11] [8], these methods provide a statistically robust approach for incorporating paleontological data. This protocol outlines the core principles and practical steps for implementing total-evidence analysis with morphological clocks and the FBD model, using RevBayes software as an exemplar platform [10] [12].

Core Principles and Definitions

Total-Evidence Analysis

Total-evidence analysis is a Bayesian phylogenetic approach that jointly models multiple data partitions—typically molecular sequences from extant taxa and morphological characters from both extant and fossil taxa—to infer a single, time-calibrated phylogeny [13]. This method avoids the potential biases of a priori fossil placement by allowing the morphological data to determine the phylogenetic positions of fossils within the context of the molecular tree and the FBD tree prior [14].

The Fossilized Birth-Death Process

The FBD process is a probabilistic model that describes the generation of phylogenetic trees containing both extant samples and fossil samples. It defines a joint prior distribution on tree topology and divergence times based on five key parameters [15] [10]:

- Speciation rate (λ): The rate at which lineages split.

- Extinction rate (μ): The rate at which lineages go extinct.

- Fossil recovery rate (ψ): The rate at which fossils are sampled along lineages.

- Extant sampling proportion (ρ): The probability of sampling a living species at the present.

- Origin time (φ): The time when the process starts.

The model accounts for the probability of sampled ancestors, where a fossil may be a direct ancestor of a later-sampled taxon [10]. An important extension, the FBD Range Process, incorporates stratigraphic ranges (the time between the first and last appearance of a fossil species) rather than treating individual fossil specimens as separate tips, using a model of asymmetric (budding) speciation to assign specimens to species [15] [12].
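
To make the roles of the five parameters concrete, the sketch below forward-simulates lineage counts and fossil sampling events under the FBD process. It is a simplified illustration: it tracks only the number of lineages and the ages of sampled fossils, not the tree itself, and the parameter values and the `max_lineages` safety cap are assumptions chosen for the example.

```python
import random

def simulate_fbd(lam, mu, psi, rho, origin, rng, max_lineages=10000):
    """Simulate lineage counts and fossil ages under an FBD process.

    Time runs from `origin` (before present) down to 0 (the present).
    Returns (n_extant, n_sampled_extant, fossil_ages).
    """
    t = origin
    n_lineages = 1
    fossil_ages = []
    per_lineage_rate = lam + mu + psi
    while 0 < n_lineages < max_lineages and per_lineage_rate > 0:
        # Waiting time to the next event across all living lineages
        t -= rng.expovariate(n_lineages * per_lineage_rate)
        if t <= 0:
            break  # reached the present
        u = rng.random() * per_lineage_rate
        if u < lam:
            n_lineages += 1          # speciation
        elif u < lam + mu:
            n_lineages -= 1          # extinction
        else:
            fossil_ages.append(t)    # fossil recovered along a lineage
    # Each surviving lineage is sampled at the present with probability rho
    n_sampled = sum(1 for _ in range(n_lineages) if rng.random() < rho)
    return n_lineages, n_sampled, fossil_ages

rng = random.Random(42)
n_extant, n_sampled, fossils = simulate_fbd(
    lam=0.3, mu=0.1, psi=0.2, rho=0.8, origin=10.0, rng=rng
)
print(f"extant lineages: {n_extant}, sampled: {n_sampled}, fossils: {len(fossils)}")
```

Setting `psi` to zero recovers an ordinary birth-death process with incomplete extant sampling, which shows that the fossil recovery rate is the only ingredient separating the two tree priors.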

Morphological Clocks

The morphological clock refers to models of evolutionary rate for discrete morphological characters. Unlike molecular relaxed clocks that often allow rate variation across branches, a strict morphological clock (constant rate across the tree) is frequently used due to the typically smaller size of morphological matrices [10] [12]. The Mk model is the standard for morphological character evolution, representing a generalization of the Jukes-Cantor model for discrete morphological data [10]. It is crucial to account for sampling bias in morphological datasets, as the exclusion of invariant characters and autapomorphies (characters unique to a single taxon) can artificially inflate branch length estimates [10] [12].
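
The relationship between the Mk model and Jukes-Cantor can be checked numerically. The sketch below computes Mk transition probabilities for a k-state character, assuming the usual scaling in which the branch length `v` is the expected number of changes per character; with k = 4 the result coincides with the Jukes-Cantor formula.

```python
import math

def mk_transition_matrix(k, v):
    """Transition probabilities of the k-state Mk model over branch length v.

    v is the expected number of changes per character along the branch.
    """
    decay = math.exp(-k * v / (k - 1))
    p_same = 1.0 / k + (k - 1) / k * decay   # probability of ending in the starting state
    p_diff = (1.0 - decay) / k               # probability of ending in any one other state
    return [[p_same if i == j else p_diff for j in range(k)] for i in range(k)]

binary = mk_transition_matrix(2, 0.1)
four_state = mk_transition_matrix(4, 0.1)
jukes_cantor_same = 0.25 + 0.75 * math.exp(-4.0 * 0.1 / 3.0)

print("binary Mk, v = 0.1:", [[round(p, 4) for p in row] for row in binary])
print("4-state Mk matches JC69:", math.isclose(four_state[0][0], jukes_cantor_same))
```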

Table 1: Core Components of a Total-Evidence Model

| Component | Description | Typical Model |

|---|---|---|

| Tree Prior | Fossilized Birth-Death (FBD) Process | $\mathcal{T} \sim FBD(\lambda, \mu, \psi, \rho, \phi)$ |

| Molecular Evolution | Nucleotide substitution model | GTR+Γ or partitioned equivalent |

| Morphological Evolution | Discrete morphological character model | Mk model (often with bias correction) |

| Molecular Clock | Model of rate variation for molecular data | Uncorrelated relaxed clock (e.g., UExp or ULognormal) |

| Morphological Clock | Model of rate variation for morphological data | Strict clock |

Experimental Protocol

Data Compilation and Alignment

1. Molecular Data:

- Compile nucleotide sequences for extant taxa. The dataset can be a concatenated alignment or partitioned by gene or codon position.

- Align sequences using appropriate tools (e.g., MAFFT). Visually inspect and refine alignments as necessary.

- Format the aligned sequences into a NEXUS file. Taxa include all extant species; fossil taxa are listed but can be represented as entirely missing data (`?`) [13].

2. Morphological Data:

- Code discrete morphological characters for all taxa (extant and fossil). Characters are typically binary (0/1) or multi-state.

- Critical Consideration: Document whether the dataset includes only parsimony-informative characters or also includes parsimony-uninformative variable characters (autapomorphies). This determines the bias correction applied in the Mk model [10] [12].

- Format the data into a NEXUS file using the `standard` data type and define the `symbols` used (e.g., `symbols="012"`) [13]. Ambiguities can be denoted with curly braces (e.g., `{01}`) [13].

3. Fossil Age Data:

- For the FBD model, compile the age information for each fossil taxon. This can be:

Table 2: Essential Data Files for a Total-Evidence Analysis

| File Type | Contents | Format | Key Consideration |

|---|---|---|---|

| Molecular Alignment | Nucleotide sequences for extant taxa. | NEXUS | Fossil taxa should be included but can be all missing data. |

| Morphological Matrix | Discrete character states for all taxa. | NEXUS | Document the inclusion/exclusion of autapomorphies. |

| Fossil Age Table | Age estimates or ranges for fossil taxa. | TSV/CSV | Distinguish between specimen-level age uncertainty and species-level stratigraphic ranges. |
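
As an illustration of the morphological matrix and fossil age table described above, the sketch below writes both files as plain text. The taxon names, character codings, ages, and the TSV column names (`taxon`, `min_age`, `max_age`) are hypothetical; check the column names expected by the software you use before adopting this layout.

```python
import re

def count_characters(coded_row):
    """Count characters in a coded row, treating an ambiguity like {01} as one character."""
    return len(re.findall(r"\{[^}]*\}|.", coded_row))

def morphology_to_nexus(matrix, symbols="012"):
    """Format a dict of taxon -> coded character string as a NEXUS DATA block."""
    lengths = {count_characters(row) for row in matrix.values()}
    if len(lengths) != 1:
        raise ValueError("all taxa must be coded for the same number of characters")
    nchar = lengths.pop()
    width = max(len(name) for name in matrix)
    lines = [
        "#NEXUS",
        "",
        "BEGIN DATA;",
        f"  DIMENSIONS NTAX={len(matrix)} NCHAR={nchar};",
        f'  FORMAT DATATYPE=STANDARD SYMBOLS="{symbols}" MISSING=? GAP=-;',
        "  MATRIX",
    ]
    for name, row in matrix.items():
        lines.append(f"    {name.ljust(width)}  {row}")
    lines += ["  ;", "END;"]
    return "\n".join(lines) + "\n"

def fossil_ages_to_tsv(ages):
    """Format a dict of taxon -> (min_age, max_age) as a tab-separated table."""
    lines = ["taxon\tmin_age\tmax_age"]  # hypothetical column names
    for name, (min_age, max_age) in ages.items():
        lines.append(f"{name}\t{min_age}\t{max_age}")
    return "\n".join(lines) + "\n"

# Hypothetical data: two extant taxa and one fossil with an ambiguous and a missing state
morphology = {"Taxon_A": "0120", "Taxon_B": "1{01}10", "Fossil_X": "1?2-"}
ages = {"Taxon_A": (0.0, 0.0), "Taxon_B": (0.0, 0.0), "Fossil_X": (11.6, 16.0)}

nexus_text = morphology_to_nexus(morphology)
tsv_text = fossil_ages_to_tsv(ages)
print(nexus_text)
print(tsv_text)
```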

Model Specification and Configuration in RevBayes

The following workflow outlines the key steps for model specification. The subsequent diagram illustrates the logical relationships between these steps and the model components.

Figure 1. Workflow for configuring a total-evidence phylogenetic analysis in RevBayes. The process integrates multiple data types and model components into a single cohesive analysis.

Step 1: Define the FBD Tree Prior

- Specify probability distributions (priors) for the FBD parameters: $\lambda$, $\mu$, $\psi$, $\rho$, and $\phi$ [10].

- Choose between the specimen-level FBD process (`FBDP`) or the stratigraphic range FBD process (`FBDRP`). Use `FBDRP` when multiple fossils can be assigned to a single species lineage [12].

- Account for fossil age uncertainty by specifying a uniform distribution for the fossil's age within its observed interval [10].

Step 2: Specify Site Models

- Molecular Data: Apply a suitable nucleotide substitution model (e.g., GTR) with among-site rate heterogeneity (e.g., +Γ). Use model selection tools like PartitionFinder to determine the best partitioning scheme and models [16].

- Morphological Data: Apply the Mk model to the discrete morphological matrix. Correct for sampling bias using the `+v` indicator to exclude unobserved character states if autapomorphies and invariant characters were not collected [10] [12].
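
The effect of the sampling-bias correction can be seen in the smallest possible case: two tips separated by a path of length `v`. The sketch below conditions the Mk likelihood on each character being variable by dividing by one minus the probability of a constant pattern. The two-tip setting and the five variable characters are assumptions made to keep the example short; real analyses apply the same conditioning across a full tree.

```python
import math

def mk_probs(k, v):
    """Return (P(same state), P(one specific different state)) under Mk over length v."""
    decay = math.exp(-k * v / (k - 1))
    return 1.0 / k + (k - 1) / k * decay, (1.0 - decay) / k

def pattern_prob(x, y, k, v):
    """Probability of observing states x and y at two tips separated by path length v."""
    p_same, p_diff = mk_probs(k, v)
    return (1.0 / k) * (p_same if x == y else p_diff)

def prob_constant(k, v):
    """Probability that a character shows the same state at both tips."""
    return sum(pattern_prob(state, state, k, v) for state in range(k))

def log_likelihood(patterns, k, v, condition_on_variable):
    lnl = sum(math.log(pattern_prob(x, y, k, v)) for x, y in patterns)
    if condition_on_variable:
        # Ascertainment correction: renormalize over variable patterns only
        lnl -= len(patterns) * math.log(1.0 - prob_constant(k, v))
    return lnl

# Hypothetical matrix of binary characters in which only variable characters were scored
patterns = [(0, 1), (1, 0), (0, 1), (1, 0), (0, 1)]

for v in (0.1, 1.0, 5.0):
    raw = log_likelihood(patterns, 2, v, condition_on_variable=False)
    corrected = log_likelihood(patterns, 2, v, condition_on_variable=True)
    print(f"v = {v}: uncorrected lnL = {raw:.3f}, corrected lnL = {corrected:.3f}")
```

Without the correction, the likelihood keeps rising as `v` grows because every scored character differs between the tips, which is the branch length inflation described above. With the correction, the likelihood in this two-tip case no longer rewards longer branches.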

Step 3: Specify Clock Models

- Molecular Clock: Use an uncorrelated relaxed clock model (e.g., an uncorrelated exponential or lognormal distribution) to allow substitution rates to vary independently across branches [10] [12].

- Morphological Clock: Typically, apply a strict clock model, which assumes a constant rate of morphological change across all branches of the tree [10] [12]. For very large morphological datasets, exploring multiple morphological clocks for different character partitions is feasible [16].

Step 4: Combine Model Components and Run MCMC

- Create the full model by combining the FBD tree prior, the site models, and the clock models [10].

- Configure the Markov chain Monte Carlo (MCMC) algorithm to sample from the posterior distribution of trees and model parameters. Run the analysis until convergence is achieved, assessing effective sample sizes (ESS) for all parameters (ESS > 200 is a common benchmark) [13].
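
The sampling step can be illustrated with a toy Metropolis-Hastings chain for a single parameter. The sketch below samples the fossil recovery rate ψ given a hypothetical count of fossils observed over a hypothetical total lineage duration, using an exponential prior and a multiplicative (scale) proposal. The data values, prior rate, and tuning constant are assumptions; a real total-evidence analysis samples the tree and all parameters jointly.

```python
import math
import random

N_FOSSILS = 20          # hypothetical number of sampled fossils
LINEAGE_TIME = 100.0    # hypothetical summed lineage duration (Myr)
PRIOR_RATE = 10.0       # rate of the Exponential prior on psi

def log_posterior(psi):
    """Unnormalized log posterior: Poisson-process likelihood times exponential prior."""
    return N_FOSSILS * math.log(psi) - psi * (LINEAGE_TIME + PRIOR_RATE)

def run_mcmc(n_iter, rng, tuning=1.0, start=1.0):
    psi = start
    current = log_posterior(psi)
    samples = []
    for _ in range(n_iter):
        multiplier = math.exp(tuning * (rng.random() - 0.5))
        proposal = psi * multiplier
        candidate = log_posterior(proposal)
        # The Hastings ratio of a scale proposal equals the multiplier itself
        log_accept = candidate - current + math.log(multiplier)
        if rng.random() < math.exp(min(0.0, log_accept)):
            psi, current = proposal, candidate
        samples.append(psi)
    return samples

rng = random.Random(2024)
chain = run_mcmc(20000, rng)
burn_in = len(chain) // 10
kept = chain[burn_in:]
posterior_mean = sum(kept) / len(kept)

# With these choices the posterior is Gamma(N_FOSSILS + 1, LINEAGE_TIME + PRIOR_RATE)
analytic_mean = (N_FOSSILS + 1) / (LINEAGE_TIME + PRIOR_RATE)
print(f"MCMC mean = {posterior_mean:.4f}, analytic mean = {analytic_mean:.4f}")
```

Because this toy posterior has a closed form, the chain can be checked against the exact answer, a luxury that is not available for the full phylogenetic model and the reason ESS and trace diagnostics matter there.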

Post-Analysis and Tree Summarization

- Use software like `TreeAnnotator` (BEAST2) or analogous functions in RevBayes to generate a maximum clade credibility (MCC) tree from the posterior sample of trees [13].

- The final MCC tree will include:

- Divergence time estimates for all nodes, with 95% highest posterior density (HPD) intervals.

- Phylogenetic positions of fossil taxa inferred from their morphological data.

- Potential identification of sampled ancestors.
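
The idea behind an MCC summary can be sketched in a few lines: compute the posterior frequency of every clade, score each sampled tree by the product of its clade frequencies, and keep the highest-scoring tree. Representing each tree as a tuple of clades (sets of tip names) is a simplification assumed for this example, and the posterior sample is hypothetical; node-height summarization is omitted.

```python
import math
from collections import Counter

def clade_frequencies(trees):
    """Posterior frequency of each clade across the sampled trees."""
    counts = Counter(clade for tree in trees for clade in tree)
    return {clade: count / len(trees) for clade, count in counts.items()}

def maximum_clade_credibility(trees):
    """Return the sampled tree with the highest product of clade frequencies."""
    freqs = clade_frequencies(trees)

    def log_credibility(tree):
        return sum(math.log(freqs[clade]) for clade in tree)

    return max(trees, key=log_credibility)

# Hypothetical topologies for tips A, B, C and Fossil_X (non-trivial clades only)
tree_1 = (frozenset({"A", "B"}), frozenset({"A", "B", "Fossil_X"}))
tree_2 = (frozenset({"A", "B"}), frozenset({"C", "Fossil_X"}))
tree_3 = (frozenset({"B", "C"}), frozenset({"B", "C", "Fossil_X"}))

# Hypothetical posterior sample after burn-in
posterior = [tree_1] * 6 + [tree_2] * 3 + [tree_3] * 1

freqs = clade_frequencies(posterior)
mcc = maximum_clade_credibility(posterior)
print("clade {A, B} frequency:", freqs[frozenset({"A", "B"})])
print("MCC tree clades:", [sorted(clade) for clade in mcc])
```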

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Resources for Total-Evidence Analysis

| Tool/Resource | Function | Application Note |

|---|---|---|

| RevBayes [10] [12] | Bayesian phylogenetic inference using probabilistic graphical models. | Highly flexible for implementing custom models like FBD; steep learning curve but powerful. |

| BEAST2 [13] | Bayesian evolutionary analysis with BEAUti GUI for setup. | More accessible for standard analyses; requires MM and SA packages for morphology/FBD. |

| Tracer [13] | Diagnose MCMC convergence and summarize parameter estimates. | Check ESS values and parameter traces post-analysis. |

| Mesquite [17] | Code and manage morphological character matrices. | Integral for the morphological data compilation step. |

| MAFFT [17] | Multiple sequence alignment of molecular data. | Produces the input molecular alignment. |

| PartitionFinder [16] | Select best-fit substitution models and partitioning schemes. | Used prior to analysis to determine optimal molecular model. |

Critical Considerations and Troubleshooting

- Data Conflict: Be aware of potential strong signal conflict between molecular and morphological data partitions, which can affect the inferred topology [14]. Prior sensitivity analysis is recommended.

- Morphological Clock Models: The assumption of a single, strict morphological clock is a simplification. Morphological evolution is likely heterogeneous, but reliably estimating multiple rates is often challenging without large datasets [18] [16].

- Time Structure: The FBD process relies on the morphological data to provide the time structure for placing fossils. If the morphological data have weak phylogenetic signal, time estimates can be poor [18].

- Concordance Testing: Before a full total-evidence analysis, compare divergence times inferred from molecular data alone versus morphological data from extant taxa alone to check for major discordance [18].

The modular graphical model below depicts how the different components of a combined-evidence analysis interact within the RevBayes framework.

Figure 2. Modular graphical model of a combined-evidence analysis. The FBD process and fossil age data jointly model the time tree, which, together with substitution and clock models for molecular and morphological data, forms the complete phylogenetic model. Adapted from RevBayes tutorials [10] [12].

From Theory to Practice: Methodological Approaches and Their Applications in Biomedicine

Integrating fossil data into phylogenetic analyses is a cornerstone of macroevolutionary research, providing a temporal dimension essential for understanding evolutionary timelines and processes. Two principal Bayesian analytical frameworks exist for this integration: the traditional node dating approach and the increasingly prominent tip dating method, the latter often being a key component of total-evidence dating [19] [20]. The fundamental distinction between them lies in how fossil information is incorporated. Node dating uses fossils to construct a priori probability distributions on the ages of specific internal nodes (calibration points). In contrast, tip dating, referred to as total-evidence dating when fossil morphology is analyzed jointly with molecular data from extant taxa, includes fossils as direct participants in the analysis: it treats them as terminal tips whose ages are constrained by their stratigraphic ranges and uses their morphological data, alongside the molecular data, to infer phylogenetic relationships and divergence times simultaneously [19] [20] [21]. This protocol details the application of both frameworks within the context of a broader research program on phylogenetic comparative methods, providing a structured comparison and practical guidance for their implementation.

Comparative Framework: Tip Dating vs. Node Dating

Table 1: Core conceptual and methodological differences between Node Dating and Tip Dating.

| Feature | Node Dating | Tip Dating (Total-Evidence Dating) |

|---|---|---|

| Primary Citation | (Ronquist et al., 2012) [19] | (Ronquist et al., 2012; Zhang et al., 2016) [19] [21] |

| Role of Fossils | Used to calibrate node age a priori via probability distributions. | Included as tips in the matrix; directly inform topology and node ages. |

| Data Utilization | Typically uses only the oldest fossil for a clade; discards younger/ambiguous fossils. | Uses all available fossil specimens, including those with uncertain placement. |

| Fossil Placement | Fixed to a node prior to analysis; no uncertainty in placement is incorporated. | Placement is inferred during analysis, with phylogenetic uncertainty integrated. |

| Handling of Uncertainty | Uncertainty is primarily on the node age (via the calibration density). | Uncertainty encompasses topology, node age, and fossil placement. |

| Tree Prior | Typically Yule or Birth-Death process for extant taxa. | Fossilized Birth-Death (FBD) process, which models speciation, extinction, and fossil sampling [20] [21]. |

| Key Challenge | Translating fossil evidence into an appropriate node calibration prior [19]. | Requires explicit modeling of the fossil sampling process and morphological evolution [21]. |

Table 2: Quantitative data comparison from a Hymenoptera study applying both methods [19].

| Parameter | Node Dating Analysis | Total-Evidence Dating Analysis |

|---|---|---|

| Total Taxa | 76 (68 extant, 8 outgroups) | 113 (68 extant, 45 fossil, 8 outgroups) |

| Molecular Data | ~5 kb from 7 markers for extant taxa | ~5 kb from 7 markers for extant taxa |

| Morphological Data | Not used for extant taxa in dating | 343 characters for 45 fossil and 68 extant taxa |

| Calibration Points | 9 fixed node calibrations | 0 fixed node calibrations; fossil ages used directly |

| Crown Group Age (Ma) | Not explicitly stated (less precise) | ~309 Ma (95% HPD: 291-347 Ma) |

| Sensitivity to Priors | Higher sensitivity | Lower sensitivity; more robust posterior |

| Resulting Precision | Less precise posterior age distributions | More precise posterior age distributions |

Workflow and Analytical Procedures

The logical progression from data preparation to final time-scaled tree inference differs significantly between the two frameworks. The following diagram illustrates the core workflows for Node Dating and Tip Dating, highlighting their distinct approaches to handling fossil data.

Protocol for Node Dating Analysis

Objective: To infer a time-calibrated phylogeny by applying age constraints derived from the fossil record to specific internal nodes.

Procedure:

- Phylogenetic and Fossil Data Assembly:

- Assemble a molecular sequence alignment for extant taxa.

- Conduct a separate, morphology-based phylogenetic analysis (e.g., using parsimony or Bayesian inference) to determine the probable placement of key fossils.

- Select fossils to be used as calibrations. This typically involves choosing the oldest unequivocal fossil for a clade.

Calibration Prior Selection:

- For each selected fossil, define a calibration prior on the corresponding internal node (the most recent common ancestor of the clade the fossil belongs to).

- The prior must account for the fact that the fossil provides a minimum age for the node. The actual node age is therefore ≥ the fossil's age.

- Use statistical distributions with soft bounds (e.g., lognormal, gamma, or exponential) to model the probability density of the node age, allowing for a small probability of the node being younger than the fossil [19]. Setting hard minimum bounds is also common practice.

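- Example: For a fossil dated at 100 Ma, one might use a lognormal prior offset by 100 Ma, with log-scale parameters chosen to reflect how much older than the fossil the node could plausibly be (illustrative values: log-mean 2.0, log-sd 1.0). The offset gives a hard minimum age of 100 Ma, while the long right tail acts as a soft maximum; with these values the prior median is about 107 Ma.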
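
Before committing to a calibration density, it helps to inspect the ages it implies. The sketch below computes quantiles of an offset lognormal prior, assuming the parameters are given on the log scale. The offset of 100 Ma follows the example above; the log-mean of 2.0 and log-sd of 1.0 are illustrative assumptions, and software packages differ in whether the mean is specified in log space or real space.

```python
import math
from statistics import NormalDist

def offset_lognormal_quantile(p, offset, log_mean, log_sd):
    """Quantile of offset + LogNormal(log_mean, log_sd), parameters on the log scale."""
    z = NormalDist().inv_cdf(p)
    return offset + math.exp(log_mean + log_sd * z)

OFFSET = 100.0    # fossil age (Ma): hard minimum on the node age
LOG_MEAN = 2.0    # illustrative assumption
LOG_SD = 1.0      # illustrative assumption

lower = offset_lognormal_quantile(0.025, OFFSET, LOG_MEAN, LOG_SD)
prior_median = offset_lognormal_quantile(0.5, OFFSET, LOG_MEAN, LOG_SD)
upper = offset_lognormal_quantile(0.975, OFFSET, LOG_MEAN, LOG_SD)

print(f"2.5% quantile:  {lower:.1f} Ma")
print(f"median:         {prior_median:.1f} Ma")
print(f"97.5% quantile: {upper:.1f} Ma")
```

If the upper quantile is older than any age the fossil record can reasonably support, the log-scale parameters should be tightened before the analysis is run.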

Bayesian Divergence Time Analysis:

- Use software such as BEAST2 or MrBayes [21] [22].

- Input the molecular alignment and the tree model (e.g., Yule or Birth-Death process).

- Specify a relaxed clock model (e.g., uncorrelated lognormal) to account for rate variation among lineages [19].

- Apply the calibration priors defined in Step 2 to their respective nodes.

- Run a Markov Chain Monte Carlo (MCMC) simulation to approximate the posterior distribution of tree topologies and node ages.

Protocol for Total-Evidence Tip Dating Analysis

Objective: To jointly infer phylogenetic relationships (including the placement of fossils) and divergence times in a single analysis by directly incorporating fossil specimens as tips.

Procedure:

- Total-Evidence Matrix Construction:

- Assemble a combined data matrix:

- Code morphological characters as discrete states (e.g., binary or multi-state).

- Compile stratigraphic age ranges for all fossil taxa (e.g., minimum and maximum ages).
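
A minimal sketch of the combined matrix: every taxon with morphological data receives a molecular entry, and fossil taxa without sequences are padded with missing data (`?`). The taxon names, sequences, and character codings are hypothetical.

```python
def build_total_evidence_matrix(molecular, morphology):
    """Combine partitions; taxa without sequence data get an all-missing molecular row."""
    seq_lengths = {len(seq) for seq in molecular.values()}
    if len(seq_lengths) != 1:
        raise ValueError("molecular sequences must be aligned to the same length")
    seq_len = seq_lengths.pop()
    missing_in_morphology = set(molecular) - set(morphology)
    if missing_in_morphology:
        raise ValueError(f"no morphological data for: {sorted(missing_in_morphology)}")
    combined = {}
    for taxon, characters in morphology.items():
        dna = molecular.get(taxon, "?" * seq_len)  # fossils: entirely missing sequence
        combined[taxon] = {"dna": dna, "morphology": characters}
    return combined

# Hypothetical aligned sequences (extant taxa only) and morphology (all taxa)
molecular = {"Taxon_A": "ACGTACGT", "Taxon_B": "ACGTTCGT"}
morphology = {"Taxon_A": "0120", "Taxon_B": "1010", "Fossil_X": "1?2-"}

matrix = build_total_evidence_matrix(molecular, morphology)
for taxon, parts in matrix.items():
    print(taxon.ljust(9), parts["dna"], parts["morphology"])
```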

Model Specification:

- Substitution Models:

- Apply a nucleotide substitution model (e.g., GTR+Γ) to the molecular partition.

- Apply a morphological evolution model (e.g., the Mk model) to the morphological partition. Correcting for ascertainment bias (`coding=variable`) is often necessary [21].

- Clock Models:

- Specify a relaxed clock model for the molecular data.

- Specify a clock model for the morphological data (often a simple strict or relaxed clock with an exponential prior on the rate) [21].

- Tree Prior: Implement the Fossilized Birth-Death (FBD) process as the tree prior. This model explicitly parameterizes speciation, extinction, and fossil recovery rates, and it naturally accommodates fossils as sampled ancestors or extinct lineages [20] [21].

Bayesian Total-Evidence Analysis:

- Use software that implements the FBD model and total-evidence dating, such as RevBayes [21] or MrBayes [19].

- Input the combined data matrix and the stratigraphic information for the fossils.

- Run an MCMC analysis to sample from the joint posterior distribution of the tree topology (including fossil placement), divergence times, and all model parameters.

Post-Processing and Summarization:

- After the MCMC run, check for convergence using diagnostic tools (e.g., Tracer).

- Summarize the posterior sample of trees to generate a maximum clade credibility (MCC) tree.

- Visualize the resulting time-scaled tree, which will include both extant and fossil taxa. Use specialized viewers like IcyTree to properly represent sampled ancestors [21].

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key software, packages, and models required for implementing tip and node dating frameworks.

| Tool Name | Type | Primary Function | Relevance |

|---|---|---|---|

| BEAST 2 | Software Package | Bayesian evolutionary analysis sampling trees. | Node dating; molecular dating with relaxed clocks. |

| RevBayes | Software Package | Probabilistic graphical modeling for phylogenetics. | Highly flexible; implements both node and tip dating with FBD process [21]. |

| MrBayes | Software Package | Bayesian phylogenetic inference. | Implements total-evidence dating as described in Ronquist et al. (2012) [19]. |

| Fossilized Birth-Death (FBD) Process | Probabilistic Model | Tree prior modeling speciation, extinction, and fossil sampling. | Essential tree prior for coherent tip-dating analyses [20] [21]. |

| Mk Model | Evolutionary Model | Models discrete morphological character evolution. | Standard model for analyzing morphological character matrices in tip dating [21]. |

| Tracer | Software Tool | MCMC diagnostic and posterior analysis. | Analyzing convergence and summarizing parameter estimates (e.g., from BEAST/RevBayes) [21]. |

| IcyTree | Web Tool | Browser-based tree visualization. | Particularly effective for viewing trees with sampled ancestors [21]. |

Critical Considerations for Method Selection

The choice between node dating and tip dating involves trade-offs. The following diagram outlines the key decision points and their implications for analysis outcomes.

Key Decision Factors

- Fossil Record Quality and Abundance: Tip dating is uniquely powerful when analyzing groups with a rich fossil record, as it allows all available specimens—including those with uncertain phylogenetic affinity—to contribute to the analysis. In contrast, node dating often requires discarding younger or morphologically ambiguous fossils [19].

- Handling of Uncertainty: A principal advantage of tip dating is its ability to integrate over uncertainty in fossil placement. The method simultaneously estimates the phylogenetic position of fossils and their impact on divergence times, leading to posterior distributions that are often more precise and less sensitive to prior assumptions than those from node dating [19].

- Model Complexity and Computational Demand: Tip dating analyses are inherently more complex. They require explicit models for morphological evolution (e.g., Mk), the fossil sampling process (FBD), and a joint inference framework. This complexity increases computational time and requires careful model specification to avoid biases [20] [21].

The selection between node dating and tip dating is a fundamental decision in any phylogenetic analysis aiming to incorporate fossil evidence. Node dating, with its longer history and simpler workflow, remains a valid approach, particularly when fossil data is sparse or computational resources are limited. However, total-evidence tip dating represents a more rigorous and powerful framework. It makes fuller use of paleontological data, explicitly models the processes that generate the observed data (fossils and extant species), and directly integrates over key sources of uncertainty. As computational power and Bayesian modeling techniques continue to advance, tip dating under the FBD process is poised to become the standard for integrating fossil data into phylogenetic comparative methods, ultimately providing a more robust and detailed understanding of the evolutionary timescale.

The integration of morphological data from extant and fossil taxa represents a cornerstone for advancing phylogenetic comparative methods. Such integration allows researchers to trace evolutionary trajectories, calibrate divergence times, and understand the processes that shape phenotypic diversity. A fundamental challenge in this endeavor is the robust handling of both discrete characters (e.g., presence/absence of a feature) and continuous characters (e.g., measurements of size or shape) within a unified analytical framework. This protocol provides a detailed guide for constructing such datasets, with a particular emphasis on the practical stages of data acquisition, processing, and preparation for phylogenetic analysis. The principles outlined are broadly applicable across organismal biology, from paleontology to drug discovery, where high-content cellular phenotyping relies on similar quantitative morphological profiling [23] [24].

Foundational Concepts: Data Types in Morphology

A critical first step in dataset construction is the accurate identification of data types, as this classification dictates subsequent analytical choices. Morphological data can be fundamentally categorized as follows [25]:

- Categorical Variables: Describe qualities or characteristics. These are subdivided into:

- Nominal Variables: Categories with no inherent order (e.g., blood types A, B, AB, O).

- Ordinal Variables: Categories with a logical sequence (e.g., Fitzpatrick skin types I-VI).

- Dichotomous (Binary) Variables: A subtype with only two categories (e.g., presence or absence of a skeletal feature).

- Numerical Variables: Represent quantifiable measurements. These are subdivided into:

- Discrete Variables: Counts that can only take specific integer values (e.g., number of vertebrae).

- Continuous Variables: Measurements on a continuous scale that can take any value within a range (e.g., bone length, branch thickness) [26].

Table 1: Classification and Presentation of Morphological Data Types

| Data Type | Subtype | Key Characteristics | Example in Morphology | Recommended Summary Table | Recommended Visualization |

|---|---|---|---|---|---|

| Categorical | Nominal | Unordered categories | Suture type: planar, sutured, fused | Frequency table (Absolute & Relative %) | Bar chart, Pie chart |

| Categorical | Ordinal | Ordered categories | Tooth wear score: low, medium, high | Frequency table (Absolute & Relative %) | Bar chart |

| Categorical | Dichotomous | Two mutually exclusive states | Wing presence: Yes/No | Frequency table (Absolute & Relative %) | Bar chart, Pie chart |

| Numerical | Discrete | Countable integers | Number of dentary teeth | Frequency table (Absolute, Relative & Cumulative %) | Bar chart, Frequency polygon |

| Numerical | Continuous | Infinitely divisible measures | Femur length (mm), Branch thickness (px) [26] | Table with summary statistics (Mean, SD, etc.) | Histogram, Box plot |

Workflow for Morphological Data Generation and Integration

The process of building a robust morphological dataset, from specimen to phylogenetic matrix, involves a series of methodical steps. The following workflow integrates both discrete and continuous data collection.

Step 1: Data Acquisition & Character Definition

Objective: To capture high-quality raw data (images, measurements) and define a character list encompassing both discrete and continuous traits.

Protocol:

Imaging and Raw Data Collection:

- Fossils/Macro-specimens: Use high-resolution photography, computed tomography (CT), or laser scanning. Ensure consistent lighting and scale across all specimens.

- Micro-specimens/Cells: For cellular morphological phenotyping, use automated microscopy. Protocols like the Cell Painting assay [23] are recommended. This assay uses up to six fluorescent dyes to stain eight major organelles and sub-cellular compartments (see Table 3), providing a rich, multiparametric morphological profile.

- Document all imaging parameters (e.g., magnification, resolution, exposure time) for reproducibility.

Character Definition:

- Discrete Characters: Compile a list of categorical traits relevant to your taxonomic group. Pre-define all possible states for each character (e.g., Character: Tooth Cusp Shape; States: 0=Sharp, 1=Rounded, 2=Absent). Avoid ambiguous state definitions.

- Continuous Characters: Identify measurable traits. These can be traditional linear measurements (e.g., greatest skull length) or quantitative features extracted from images (e.g., `branch_thickness`, `branch_angle`, `cell_nuclear_area`) [26] [23].

Step 2: Data Processing & Feature Extraction

Objective: To convert raw images into quantifiable morphological data.

Protocol:

Image Pre-processing [26]:

- Color to Grayscale Conversion: Convert color images to 8-bit grayscale.

- Thresholding: Use automated methods (e.g., Otsu's method) to create a binary image (foreground vs. background). Manually adjust the threshold if necessary to best represent the original structure.

- Morphological Operations: Apply "opening" (erosion followed by dilation) to remove stray foreground pixels and "closing" (dilation followed by erosion) to fill small holes in the foreground. This cleans the binary image.
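
The pre-processing steps above can be sketched in pure Python on small arrays. The grayscale weights (0.299, 0.587, 0.114), the 3x3 structuring element, and the treatment of pixels outside the image as background are assumptions made for this example; Fiji/ImageJ or another image library would normally perform these operations.

```python
def to_grayscale(rgb):
    """Convert an (R, G, B) pixel to 8-bit grayscale using common luminance weights."""
    r, g, b = rgb
    return int(round(0.299 * r + 0.587 * g + 0.114 * b))

def otsu_threshold(pixels):
    """Return the threshold t maximizing between-class variance (foreground is > t)."""
    hist = [0] * 256
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    sum_all = sum(i * h for i, h in enumerate(hist))
    best_t, best_var = 0, -1.0
    weight_bg, sum_bg = 0, 0
    for t in range(256):
        weight_bg += hist[t]
        sum_bg += t * hist[t]
        weight_fg = total - weight_bg
        if weight_bg == 0:
            continue
        if weight_fg == 0:
            break
        mean_bg = sum_bg / weight_bg
        mean_fg = (sum_all - sum_bg) / weight_fg
        between_var = weight_bg * weight_fg * (mean_bg - mean_fg) ** 2
        if between_var > best_var:
            best_t, best_var = t, between_var
    return best_t

def _filter_3x3(image, reducer):
    """Apply min (erosion) or max (dilation) over each 3x3 neighborhood."""
    rows, cols = len(image), len(image[0])
    out = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            window = [image[rr][cc] if 0 <= rr < rows and 0 <= cc < cols else 0
                      for rr in (r - 1, r, r + 1) for cc in (c - 1, c, c + 1)]
            out[r][c] = reducer(window)
    return out

def opening(image):
    """Erosion followed by dilation: removes stray foreground pixels."""
    return _filter_3x3(_filter_3x3(image, min), max)

def closing(image):
    """Dilation followed by erosion: fills small holes in the foreground."""
    return _filter_3x3(_filter_3x3(image, max), min)

# Hypothetical 7x7 binary image: a 3x3 block plus one stray pixel in the corner
block = [[1 if 2 <= r <= 4 and 2 <= c <= 4 else 0 for c in range(7)] for r in range(7)]
noisy = [row[:] for row in block]
noisy[0][6] = 1
cleaned = opening(noisy)

holed = [row[:] for row in block]
holed[3][3] = 0
filled = closing(holed)

threshold = otsu_threshold([10] * 50 + [200] * 50)
print("Otsu threshold:", threshold)
print("stray pixel removed:", cleaned == block)
print("hole filled:", filled == block)
```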

Feature Extraction:

- For Complex Branching Structures (e.g., plants, vascular systems) [26]:

- Skeletonization: Apply a thinning algorithm to the binary image to reduce it to a single-pixel-wide skeleton. This preserves the topology and connectivity of the structure.

- Graph Generation: From the skeleton, detect key features: junctions (branch points), terminals (end points), and branches.

- Quantification: Use the original image and the skeleton to calculate measurements.

- Branch Length: The number of pixels between two junctions or a junction and a terminal.

- Branch/Junction/Terminal Thickness: Twice the mean distance from skeleton points to the nearest background pixel within the local foreground region.

- Branch Angle: The angle between connected branches at a junction.

- For Cellular and Sub-cellular Phenotyping [23] [24]:

- Use open-source software like CellProfiler.

- Illumination Correction: Correct for uneven fluorescence distribution across the image field.

- Cell Identification: Identify individual cells and sub-cellular compartments (nuclei, cytoplasm) based on fluorescent markers.

- Morphological Feature Extraction: For each cell, extract hundreds of size, shape, intensity, and texture features (e.g., `Area`, `Eccentricity`, `Zernike moments`, `Granularity`).
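
The branch measurements defined above for branching structures can be sketched as follows. This is a simplified illustration, not the Branchometer implementation: the thickness function searches all background pixels by brute force rather than restricting the search to a local foreground region, and the image, skeleton, and coordinates are hypothetical.

```python
import math

def branch_length(skeleton_points):
    """Branch length as the number of skeleton pixels along the branch."""
    return len(skeleton_points)

def branch_thickness(binary, skeleton_points):
    """Twice the mean distance from skeleton pixels to the nearest background pixel."""
    background = [(r, c) for r, row in enumerate(binary)
                  for c, value in enumerate(row) if value == 0]
    distances = [min(math.hypot(r - br, c - bc) for br, bc in background)
                 for r, c in skeleton_points]
    return 2.0 * sum(distances) / len(distances)

def branch_angle(junction, end_a, end_b):
    """Angle in degrees between two branches that meet at a junction."""
    ax, ay = end_a[0] - junction[0], end_a[1] - junction[1]
    bx, by = end_b[0] - junction[0], end_b[1] - junction[1]
    cosine = (ax * bx + ay * by) / (math.hypot(ax, ay) * math.hypot(bx, by))
    cosine = max(-1.0, min(1.0, cosine))  # guard against rounding outside [-1, 1]
    return math.degrees(math.acos(cosine))

# Hypothetical binary image: a horizontal bar three pixels thick (rows 1 to 3)
bar = [[1 if 1 <= r <= 3 else 0 for _ in range(7)] for r in range(5)]
skeleton = [(2, c) for c in range(7)]  # single-pixel-wide centerline

length = branch_length(skeleton)
thickness = branch_thickness(bar, skeleton)
right_angle = branch_angle((0, 0), (0, 5), (5, 0))
diagonal = branch_angle((0, 0), (0, 5), (5, 5))

print("length (px):", length)
print("thickness (px):", thickness)
print("angles (degrees):", round(right_angle, 1), round(diagonal, 1))
```

Because distances are measured between pixel centers, this estimator returns 4.0 for a bar that is three pixels wide; any systematic offset of this kind should be applied consistently across specimens.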

Step 3: Data Integration & Quality Control

Objective: To assemble the extracted data into a final matrix ready for phylogenetic analysis.

Protocol:

Create the Integrated Data Matrix:

- Construct a matrix where rows represent operational taxonomic units (OTUs; e.g., species, specimens) and columns represent all characters.

- Continuous Characters: Input the actual measured values (e.g., `8.72`, `15.41`). It is often useful to log-transform these values to conform to assumptions of normality.

- Discrete Characters: Input the state codes (e.g., `0`, `1`, `2`). Use a standard like "?" for missing data and "-" for inapplicable data.

Quality Control (QC):

- Profile Averaging: In high-content screens, average features across all cells in a well or across replicate samples to create a stable morphological profile for each treatment or taxon [23].

- Data Validation: Check for outliers and inconsistencies. Re-inspect the specimens or images associated with extreme values to determine whether they reflect biological reality or measurement error.

- Missing Data: Document the proportion and pattern of missing data. Apply appropriate strategies (e.g., pruning, imputation) as required by the chosen phylogenetic method.
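
The three QC checks can be sketched as small helper functions. The outlier rule used here (a modified z-score based on the median absolute deviation, with a cutoff of 3.5) is one common choice and an assumption of this example, not a method prescribed by the protocol; the feature names and values are hypothetical.

```python
from statistics import mean, median

def average_profiles(cell_features):
    """Average each feature across cells (or replicates) to obtain one profile."""
    feature_names = cell_features[0].keys()
    return {name: mean(cell[name] for cell in cell_features) for name in feature_names}

def flag_outliers(values, cutoff=3.5):
    """Return indices whose modified z-score (MAD-based) exceeds the cutoff."""
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        return []  # no spread to compare against
    return [i for i, v in enumerate(values)
            if abs(0.6745 * (v - center) / mad) > cutoff]

def missing_proportion(matrix, missing_code="?"):
    """Proportion of matrix cells coded as missing."""
    cells = [state for row in matrix.values() for state in row]
    return sum(1 for state in cells if state == missing_code) / len(cells)

# Hypothetical per-cell measurements from one well
cells = [{"Area": 100.0, "Eccentricity": 0.5}, {"Area": 120.0, "Eccentricity": 0.7}]
profile = average_profiles(cells)

# Hypothetical skull lengths (mm); the last value looks like a unit or entry error
skull_lengths = [45.2, 52.1, 38.9, 48.5, 480.0]
suspect = flag_outliers(skull_lengths)

# Hypothetical discrete matrix with one missing state
discrete = {"Taxon_A": ["0", "1"], "Fossil_X": ["1", "?"]}
share_missing = missing_proportion(discrete)

print("averaged profile:", profile)
print("indices to re-inspect:", suspect)
print("proportion missing:", share_missing)
```

Flagged values are candidates for re-inspection, not automatic exclusions, since an extreme measurement may be biologically real.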

Table 2: Example Integrated Data Matrix for Phylogenetic Analysis

| Taxon/Specimen | Discrete Character 1 (Tooth Cusp Shape) | Discrete Character 2 (Foramen Presence) | Continuous Character 1 (Skull Length, mm) | Continuous Character 2 (Branch Thickness, px) |

|---|---|---|---|---|

| Taxon_A | 0 (Sharp) | 1 (Yes) | 45.2 | 12.5 |

| Taxon_B | 1 (Rounded) | 0 (No) | 52.1 | 8.7 |

| Taxon_C | 2 (Absent) | 1 (Yes) | 38.9 | 15.4 |

| Fossil_X | 1 (Rounded) | ? (Missing) | 48.5 | - |

The Scientist's Toolkit: Essential Research Reagents & Software

This section details key reagents, software, and materials essential for generating quantitative morphological datasets, particularly in high-content screening and image-based profiling.

Table 3: Essential Toolkit for Morphological Data Generation

| Category | Item/Reagent | Specific Example | Function in Protocol |

|---|---|---|---|

| Imaging & Hardware | Automated Microscope | ImageXpress Micro XLS [23] | High-throughput, automated image acquisition of multi-well plates. |

| | Binocular Microscope with Camera | Nikon Coolpix P6000 [26] | High-resolution 2D imaging of small biological specimens. |

| Fluorescent Dyes (Cell Painting) [23] | Hoechst 33342 | Nucleus stain (DNA) | Labels the nucleus for identification and segmentation. |

| | Concanavalin A, Alexa Fluor 488 | Endoplasmic reticulum stain | Visualizes the structure of the endoplasmic reticulum. |

| | SYTO 14 green | Nucleoli & cytoplasmic RNA stain | Highlights RNA-rich regions within the cell. |

| | Phalloidin & WGA, Alexa Fluor 594 | F-actin, Golgi, plasma membrane stain (AGP) | Labels the actin cytoskeleton, Golgi apparatus, and plasma membrane. |

| | MitoTracker Deep Red | Mitochondria stain | Visualizes mitochondrial network and location. |

| Image Analysis Software | CellProfiler [23] | Open-source | Extracts morphological features from images; used for illumination correction, cell identification, and measurement. |

| | Fiji / ImageJ [26] | Open-source | Performs image pre-processing: conversion to grayscale, thresholding, and morphological operations. |

| | Custom Branchometer Software [26] | C-based, GNU GPL | Quantifies 2D images of complex branching forms (skeletonization, measures branch length/angle/thickness). |

| Data Analysis Environment | R Statistical Software [24] [26] | Open-source | Used for downstream statistical analysis, canonical discriminant analysis, and data visualization. |

Detailed Experimental Protocol: Cell Painting Assay for Morphological Profiling

The following is a detailed protocol for the Cell Painting assay, a cornerstone method for generating high-dimensional continuous morphological data in cellular systems [23].

Objective: To stain multiple cellular compartments for subsequent high-content imaging and quantitative morphological profiling.

Materials:

- U2OS cells (or other relevant cell line)

- 384-well plates

- Compound library for treatment (optional)

- Staining dyes (as listed in Table 3)

- Formaldehyde (for fixation)

- Triton X-100 (for permeabilization)

- Automated plate washer and liquid handler (recommended for throughput)

- High-content microscope with at least 5 fluorescent channels

Procedure:

Cell Plating and Treatment:

- Plate cells in 384-well plates at a density optimized so that the wells reach the desired confluency by the end of the assay.

- Incubate cells with small-molecule compounds or other perturbations in quadruplicate to ensure robustness.

Live Cell Staining:

- Mitochondrial Stain: Add MitoTracker Deep Red dye to the live cells in culture medium. Incubate for 30-45 minutes at 37°C.

Fixation and Permeabilization:

- Aspirate the medium containing the live-cell dye.

- Fix cells with a 3.7% formaldehyde solution for 20-30 minutes at room temperature.

- Wash cells with a buffer solution.

- Permeabilize cells with a 0.1% Triton X-100 solution for 10-15 minutes.

Staining of Fixed Cells:

- Prepare a master mix containing the remaining dyes:

- Hoechst 33342 (DNA/Nucleus)

- Concanavalin A, Alexa Fluor 488 (ER)

- SYTO 14 (RNA)

- Phalloidin, Alexa Fluor 594 (F-actin)

- Wheat Germ Agglutinin (WGA), Alexa Fluor 594 (Golgi/Plasma Membrane)

- Add the dye mix to the permeabilized cells and incubate for 30-60 minutes at room temperature, protected from light.

- Perform a final wash to remove unbound dye.

Image Acquisition:

- Image the plates using an automated microscope (e.g., ImageXpress Micro) with a 20x objective.

- Acquire images in 5 fluorescent channels corresponding to each dye (see Table 1 in [23]).

- Image 6 fields of view per well to ensure adequate cell sampling.

Image Analysis and Feature Extraction (as described in Section 3.2):

- Process the raw images using CellProfiler pipelines for illumination correction, quality control, and feature extraction.

- Output single-cell and per-well averaged morphological profiles for downstream analysis.

Table 4: Cell Painting Assay Dye Channels and Targets

| Dye | Primary Cellular Target | CellProfiler Channel Name | Example ImageXpress Wavelength |

|---|---|---|---|

| Hoechst 33342 | Nucleus (DNA) | DNA | w1 |

| Concanavalin A, Alexa Fluor 488 | Endoplasmic Reticulum | ER | w2 |

| SYTO 14 green | Nucleoli, Cytoplasmic RNA | RNA | w3 |

| Phalloidin/WGA, Alexa Fluor 594 | F-actin, Golgi, Plasma Membrane | AGP | w4 |

| MitoTracker Deep Red | Mitochondria | Mito | w5 |
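The acquisition settings in the protocol imply a fixed image count per plate, which is worth working out before planning storage and analysis time. The sketch below encodes the dye-to-channel mapping from Table 4 and does the arithmetic. The constant names are illustrative, and the treatment count assumes that all four replicates sit on one plate with no wells reserved for controls, so it is an upper bound rather than a recommended layout.

```python
# Dye -> CellProfiler channel name, as listed in Table 4.
# Phalloidin and WGA share the AGP channel, so six dyes map to five channels.
DYE_TO_CHANNEL = {
    "Hoechst 33342": "DNA",
    "Concanavalin A, Alexa Fluor 488": "ER",
    "SYTO 14 green": "RNA",
    "Phalloidin, Alexa Fluor 594": "AGP",
    "WGA, Alexa Fluor 594": "AGP",
    "MitoTracker Deep Red": "Mito",
}

WELLS_PER_PLATE = 384   # plate format from the materials list
FIELDS_PER_WELL = 6     # fields of view per well from the protocol
REPLICATES = 4          # quadruplicate treatment from the protocol

channels = sorted(set(DYE_TO_CHANNEL.values()))

# One image per well, field, and channel.
images_per_plate = WELLS_PER_PLATE * FIELDS_PER_WELL * len(channels)

# Upper bound: assumes no control wells and all replicates on the same plate.
max_treatments_per_plate = WELLS_PER_PLATE // REPLICATES

print(len(channels), images_per_plate, max_treatments_per_plate)
```

Under these assumptions a single plate yields 11,520 images across 5 channels and accommodates at most 96 treatments.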

The foundational science of plant taxonomy is facing a critical capacity crisis, particularly in biodiversity-rich regions where species may become extinct before being scientifically described [27]. A comprehensive global survey reveals that 48% of countries have fewer than ten active plant taxonomists, creating severe limitations in documenting, studying, and conserving biodiversity [27]. This taxonomic impediment directly affects research integrating fossil data with phylogenetic comparative methods, because inaccurate species delimitation compromises evolutionary analyses and leads to misinterpretation of evolutionary relationships.

The challenge is compounded by the tension between cryptic species (genetically distinct but morphologically similar lineages) and phenotypic noise (non-genetic phenotypic variations within a single genotype), creating substantial complications for developing clear taxonomy and understanding evolutionary processes [28]. This application note provides structured frameworks and methodological solutions to address these challenges, with particular emphasis on quantitative data presentation and standardized protocols for species-level phenotypic characterization.

Quantitative Assessment of Taxonomic Challenges

Table 1: Global Disparities in Taxonomic Capacity and Infrastructure [27]

| Region Type | Active Plant Taxonomists | Access to Basic Tools | Limitations Index |

|---|---|---|---|

| Low-income, biodiversity-rich | <10 experts in 48% of countries | Severely limited | High |

| High-income regions | Substantially higher | Full access | Low |

| Most affected countries | Notable gaps | Critical shortages | Severe challenges |

| Angola, Benin, Botswana | Fewer than 10 experts | Laboratory equipment, literature | Extreme limitations |

| Colombia, Sierra Leone, Venezuela | Insufficient training capacity | Computational resources | Major constraints |

Table 2: Cryptic Species vs. Phenotypic Noise in Evolutionary Studies [28]

| Characteristic | Cryptic Species Concept | Phenotypic Noise Concept |

|---|---|---|

| Genetic Basis | Genetically distinct evolutionary lineages | Isogenic population (same genotype) |

| Morphological Features | Morphologically indistinguishable | Phenotypic variations expressed |

| Reproductive Compatibility | Reproductively isolated | Fully interbreeding |

| Primary Drivers | Genetic divergence, reproductive isolation | Environmental influences, developmental plasticity |

| Impact on Taxonomy | Leads to underestimation of species diversity | Leads to overestimation of species diversity |

| Recommended Detection Method | Molecular phylogenetics, genomic analyses | Common garden experiments, environmental controls |

Experimental Protocols for Phenotypic Characterization

Protocol: Integrated Morphometric Analysis for Species Delimitation

Purpose: To quantitatively distinguish cryptic species from phenotypic noise through standardized morphological characterization.

Materials:

- Digital calipers (precision ±0.01 mm)

- Standardized imaging setup with scale reference

- Morphometric software (ImageJ, MorphoJ)

- Multivariate statistical package (R with vegan, ape packages)

- Herbarium specimens or living material from multiple populations

Procedure:

- Sample Selection: Select a minimum of 20 specimens per putative taxonomic group, sampled across its geographical range

- Character Scoring: Measure 30+ continuous morphological characters (leaf dimensions, floral parts, reproductive structures)

- Data Standardization: Apply log-transformation to allometric measurements to reduce size-dependent variation

- Multivariate Analysis: Perform Principal Components Analysis (PCA) to identify major axes of morphological variation

- Statistical Validation: Implement Discriminant Function Analysis to test predetermined groupings

- Integration with Molecular Data: Correlate morphological clusters with genetic distances from DNA barcode regions

Expected Outcomes: Quantitative assessment of morphological discontinuities corresponding to genetic divergences; identification of diagnostic characters for cryptic species recognition.
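The log-transformation and PCA steps of this protocol can be illustrated with a small, dependency-free sketch. The measurements below are hypothetical (leaf length, leaf width, petal length for six specimens), and the first principal component is obtained by power iteration on the covariance matrix, a simple stand-in for the full eigendecomposition that a statistics package would perform. It assumes the data are not degenerate (non-zero variance) and that the starting vector is not orthogonal to the leading axis, which holds for typical size-dominated morphometric data.

```python
import math

def log_center(rows):
    """Log-transform every measurement, then subtract each column's mean."""
    logged = [[math.log(v) for v in row] for row in rows]
    n = len(logged)
    means = [sum(col) / n for col in zip(*logged)]
    return [[v - m for v, m in zip(row, means)] for row in logged]

def covariance(centered):
    """Sample covariance matrix of already-centered data."""
    n = len(centered)
    cols = list(zip(*centered))
    return [[sum(a * b for a, b in zip(ci, cj)) / (n - 1) for cj in cols]
            for ci in cols]

def first_pc(cov, iterations=500):
    """Leading eigenvector and eigenvalue of cov by power iteration."""
    vec = [1.0] * len(cov)
    for _ in range(iterations):
        nxt = [sum(c * v for c, v in zip(row, vec)) for row in cov]
        norm = math.sqrt(sum(x * x for x in nxt))
        vec = [x / norm for x in nxt]
    eigenvalue = sum(v * sum(c * w for c, w in zip(row, vec))
                     for v, row in zip(vec, cov))
    return vec, eigenvalue

# Hypothetical measurements (mm): three small and three large specimens.
rows = [
    [40.0, 20.0, 10.0],
    [42.0, 21.5, 10.4],
    [44.0, 22.0, 11.1],
    [80.0, 41.0, 19.8],
    [84.0, 42.0, 21.0],
    [88.0, 44.5, 22.3],
]

centered = log_center(rows)
cov = covariance(centered)
pc1, eigenvalue = first_pc(cov)
explained = eigenvalue / sum(cov[i][i] for i in range(len(cov)))
scores = [sum(v * w for v, w in zip(row, pc1)) for row in centered]
```

In this toy data set PC1 is essentially a size axis and separates the two groups by sign of their scores. With real specimens the later components, which capture shape after size is accounted for, are usually the ones compared against genetic clusters.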

Protocol: Common Garden Experiments for Phenotypic Plasticity Assessment

Purpose: To discriminate genetically fixed traits from environmentally induced phenotypic variation.

Materials:

- Climate-controlled growth facilities

- Standardized potting medium and container size

- Automated environmental monitoring system

- DNA extraction kit for genetic verification

- Portable photosynthesis system for physiological measurements

Procedure:

- Genetic Material Collection: Propagate plant material from cuttings or seeds from multiple natural populations

- Experimental Design: Implement a randomized complete block design with 10 replicates per population in each of 3 environmental treatments

- Environmental Manipulation: Apply controlled variations in light intensity, water availability, and nutrient regimes

- Phenotypic Monitoring: Record growth parameters, photosynthetic rates, and reproductive traits weekly

- Statistical Analysis: Calculate reaction norms and plasticity indices for each trait

- Heritability Estimation: Partition variance components using mixed models

Expected Outcomes: Quantification of phenotypic plasticity magnitude; identification of canalized traits with taxonomic value; assessment of genotype-by-environment interactions.
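The reaction-norm and plasticity-index step can be made concrete with a short sketch. The protocol does not specify which index to use; the one below, (max - min) / max of the environment means, is a commonly used formulation and is assumed here for illustration. The leaf-area values and treatment names are hypothetical, and two replicates per treatment are shown instead of the protocol's ten to keep the example short. The index as written assumes strictly positive trait values.

```python
from statistics import mean

def reaction_norm(measurements):
    """Mean trait value per environment for one population."""
    return {env: mean(values) for env, values in measurements.items()}

def plasticity_index(norm):
    """(max - min) / max of the environment means; 0 means no plastic response."""
    means = list(norm.values())
    return (max(means) - min(means)) / max(means)

# Hypothetical leaf-area measurements (cm^2) under three light treatments.
population_a = {
    "low_light": [10.0, 12.0],
    "medium_light": [15.0, 15.0],
    "high_light": [19.0, 21.0],
}
population_b = {
    "low_light": [14.0, 16.0],
    "medium_light": [15.0, 15.0],
    "high_light": [15.0, 17.0],
}

norm_a = reaction_norm(population_a)
norm_b = reaction_norm(population_b)
pi_a = plasticity_index(norm_a)
pi_b = plasticity_index(norm_b)
```

Here population A responds strongly to light (index 0.45) while population B is nearly invariant (index 0.0625). A trait that behaves like B across populations is a better candidate for a canalized, taxonomically informative character.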

Visualizing Methodological Approaches

Figure 1: Integrated workflow for species delimitation combining morphological, genetic, and experimental approaches.

Figure 2: Phylogenetic comparative methods framework integrating fossil calibration data.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for Taxonomic and Phylogenetic Studies

| Reagent/Material | Function | Application Notes |

|---|---|---|

| DNA Extraction Kits (CTAB protocol) | High-quality DNA isolation from diverse tissue types | Essential for degraded herbarium specimens; modified protocols for recalcitrant taxa |

| DNA Barcode Primers | Amplification of standard marker regions (rbcL, matK, ITS) | Enable species identification and cryptic species detection |

| Herbarium Specimen Materials | Long-term preservation of voucher specimens | Critical for morphological reference and typification |

| Morphometric Software (ImageJ, MorphoJ) | Quantitative analysis of morphological characters | Enables statistical discrimination of subtle phenotypic differences |

| Phylogenetic Analysis Packages (BEAST, RAxML) | Bayesian molecular dating (BEAST) and maximum-likelihood tree inference (RAxML) | BEAST integrates fossil calibration points with molecular data |

| Common Garden Infrastructure | Controlled environment plant growth facilities | Discrimination of genetic vs. environmental variation in phenotypes |

Data Presentation Standards for Taxonomic Research

Effective presentation of taxonomic data requires careful consideration of data types and appropriate visualization methods [29] [30]. Continuous data (measurements of morphological characters) should be presented using histograms, box plots, or scatterplots to show full data distributions, while discrete data (counts of meristic characters) are better represented with bar graphs or line graphs [29].

For complex multivariate morphological data, table presentation is recommended when precise values are required or when dealing with multiple units of measure [29] [30]. Well-designed tables should have clearly defined categories, sufficient spacing, clearly defined units, and easy-to-read typography [30]. All non-textual elements should be self-explanatory with clear titles and legends that enable them to stand alone from the main text [30].

Addressing the critical need for species-level phenotypic data requires concerted efforts to build taxonomic capacity, particularly in biodiversity-rich regions facing the greatest expertise shortages [27]. Strategic investment in inclusive training programs, improved infrastructure access, and strengthened collaboration between molecular systematists and morphologists is essential to overcome current taxonomic hurdles [27]. The protocols and frameworks presented here provide actionable methodologies for robust species delimitation that effectively integrates phenotypic data with fossil-calibrated phylogenetic analyses, enabling more accurate reconstruction of evolutionary history and informed biodiversity conservation decisions.