Beyond the Data Limit: Advanced Strategies for Maximizing Molecular Information Extraction in Drug Discovery

Abstract

This article addresses the critical challenge of extracting robust and meaningful information from limited molecular datasets, a common bottleneck in early-stage drug discovery. Aimed at researchers and development professionals, we explore the foundational principles of treating molecules as a chemical language, detail cutting-edge methodological approaches including multimodal feature fusion and AI-driven fragmentation, provide practical troubleshooting for data scarcity and model overfitting, and present a framework for the rigorous validation and comparison of extraction techniques. The synthesis of these areas provides a comprehensive guide for optimizing predictive models and accelerating the identification of viable drug candidates, even with constrained data.

The Language of Molecules: Foundational Principles for Extracting Meaning from Limited Data

Artificial Intelligence holds transformative potential for drug discovery, promising to address the field's persistent challenges of high costs, lengthy timelines, and low success rates [1]. However, AI's effectiveness is fundamentally constrained by a critical bottleneck: limited molecule counts in training data. This data scarcity affects model reliability, generalizability, and ultimately the translation of AI predictions into viable clinical candidates. This section provides practical strategies for extracting more information from limited molecular datasets, enabling more robust AI-driven discovery despite data constraints.

FAQs: Addressing Critical Bottlenecks

How does limited data specifically impact AI model performance in drug discovery?

Limited molecular data directly compromises AI model performance through three primary mechanisms:

- Reduced Predictive Accuracy: Models trained on small datasets often fail to learn the complex structure-activity relationships needed to predict bioactivity, ADMET properties, and binding affinities accurately. With too few examples, models struggle to generalize beyond their training data [1].

- Increased Overfitting Risk: Sparse data increases the likelihood that models will memorize training examples rather than learning underlying patterns. This results in excellent training performance but poor generalization to new molecular structures [1].

- Limited Chemical Space Exploration: AI-driven generative models produce less diverse and novel molecular structures when trained on limited data, constraining their ability to propose innovative chemical matter for difficult targets [2].

What strategies can improve AI model training with limited molecule sets?

Several methodologies can enhance learning from limited molecular data:

- Transfer Learning: Pre-train models on large, general chemical databases (like PubChem or ChEMBL), then fine-tune them on your specific, smaller dataset. This approach leverages general chemical knowledge while specializing for particular targets [1].

- Data Augmentation: Apply controlled molecular transformations (e.g., functional group interconversion on a preserved scaffold) to artificially expand training sets while maintaining biochemical relevance [1].

- Multi-Task Learning: Train models to predict multiple endpoints simultaneously (e.g., activity, solubility, toxicity) to leverage shared representations across related tasks and improve data efficiency [3].

- Active Learning: Implement iterative cycles where models selectively identify the most informative molecules for experimental testing, maximizing knowledge gain from limited synthesis and screening resources [2].

How can we validate AI models developed with limited data?

Robust validation is crucial when working with limited molecular datasets:

- Use Strict Validation Protocols: Employ nested cross-validation with outer loops for performance estimation and inner loops for hyperparameter tuning. This prevents over-optimistic performance estimates [1].

- Implement External Testing: Reserve a completely held-out test set that is never used during model development or training. This provides the most realistic estimate of real-world performance [1].

- Apply Domain-Aware Splitting: Use scaffold-based or temporal splits instead of random splits to better simulate performance on truly novel chemical classes [1].

- Quantify Uncertainty: Implement methods that provide confidence estimates alongside predictions, such as Bayesian neural networks or ensemble approaches, to flag less reliable predictions for researcher review [4].
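Domain-aware splitting is simple to prototype. The sketch below is a minimal standard-library illustration, not a reference implementation: it assumes each molecule's scaffold key was computed beforehand (for example a Bemis-Murcko scaffold SMILES, which RDKit can produce with `MurckoScaffold.MurckoScaffoldSmiles`), and the function name `scaffold_split` and the largest-families-to-training heuristic are our own choices.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split molecule indices so that no scaffold appears in both sets.

    scaffolds: list where scaffolds[i] is a precomputed scaffold key
    (e.g. a Bemis-Murcko scaffold SMILES) for molecule i.
    Returns (train_indices, test_indices).
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Largest scaffold families are placed in training first, so the test
    # set ends up holding the rarer scaffolds the model has never seen.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n_test = round(len(scaffolds) * test_fraction)
    n_train_target = len(scaffolds) - n_test

    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= n_train_target:
            train.extend(group)
        else:
            test.extend(group)  # the whole family moves together
    return sorted(train), sorted(test)


# Hypothetical scaffold keys for ten molecules.
keys = ["A"] * 5 + ["B"] * 3 + ["C", "D"]
train_idx, test_idx = scaffold_split(keys, test_fraction=0.2)
print(train_idx, test_idx)  # [0, 1, 2, 3, 4, 5, 6, 7] [8, 9]
```

Because whole scaffold families move together, the realized test fraction can drift from `test_fraction`; in this toy case it happens to match exactly.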

Troubleshooting Guides

Problem: Poor Model Generalization to Novel Scaffolds

Symptoms:

- Excellent performance on training molecules but poor prediction on new structural classes

- Model consistently recommends molecules similar to training data without structural innovation

Solutions:

- Apply Scaffold-Based Splitting during validation to identify this issue early

- Incorporate External Chemical Information through pre-trained molecular representations

- Use Multi-objective Optimization that balances predicted activity with structural novelty

- Implement Domain Adaptation Techniques to bridge knowledge from data-rich chemical domains to your specific target

Problem: High-Variance Performance Across Validation Folds

Symptoms:

- Dramatically different performance metrics across cross-validation folds

- Model performance sensitive to specific molecules in training set

Solutions:

- Increase Dataset Size through strategic augmentation of high-value regions of chemical space

- Implement Robust Regularization techniques including dropout, weight decay, and early stopping

- Use Ensemble Methods that combine predictions from multiple models trained on different data subsets

- Apply Bayesian Optimization for hyperparameter tuning to find more stable configurations

Experimental Protocols for Limited Data Scenarios

Protocol 1: Transfer Learning for Activity Prediction

Purpose: Optimize predictive performance for target-specific activity using limited proprietary data enhanced with public chemical databases.

Materials:

- Limited proprietary molecule set with assay data (50-500 compounds)

- Large public compound database (e.g., ChEMBL, PubChem)

- Computing resources capable of deep learning (GPU recommended)

Methodology:

Pre-training Phase:

- Curate a large-scale molecular dataset from public sources (1M+ compounds)

- Pre-train a deep neural network or graph convolutional network using self-supervised learning (e.g., masked atom prediction) or multi-task learning across diverse assays

- Validate the base model on benchmark datasets to confirm competitive performance

Fine-tuning Phase:

- Initialize the model with the pre-trained weights from the first phase

- Continue training on the proprietary dataset with a smaller learning rate

- Apply progressive unfreezing of layers if the dataset is very small (<100 compounds)

Validation:

- Use time-based or scaffold-based splits to simulate real-world performance

- Compare against a baseline model trained from scratch on proprietary data only

- Test statistical significance with a paired t-test across multiple data splits
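The significance test in the last step can be sketched as follows, assuming per-split test errors for both models were recorded on identical splits. The function name is hypothetical; if SciPy is available, `scipy.stats.ttest_rel` computes the same statistic together with a p-value.

```python
import math
import statistics


def paired_t_statistic(baseline_errors, candidate_errors):
    """Paired t statistic on per-split error differences.

    Positive t means the candidate model has lower error than the baseline.
    Returns (t, degrees_of_freedom).
    """
    if len(baseline_errors) != len(candidate_errors) or len(baseline_errors) < 2:
        raise ValueError("need two equal-length lists covering at least 2 splits")
    diffs = [b - c for b, c in zip(baseline_errors, candidate_errors)]
    mean_diff = statistics.fmean(diffs)
    std_err = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return mean_diff / std_err, len(diffs) - 1


# Hypothetical test-set MSE on the same five scaffold splits.
scratch_mse = [1.00, 1.20, 0.90, 1.10, 1.00]
transfer_mse = [0.80, 1.00, 0.80, 0.90, 0.85]
t, df = paired_t_statistic(scratch_mse, transfer_mse)
print(f"t = {t:.2f} with {df} degrees of freedom")  # t = 8.50 with 4 degrees of freedom
```

Overlapping training sets across folds make the per-split differences correlated, so a plain paired t-test tends to overstate significance; treat it as a screening check rather than proof.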

Expected Outcomes: Models implementing this protocol typically show a 15-30% reduction in mean squared error and better calibration on external test sets compared to models trained exclusively on limited proprietary data [1].

Protocol 2: Active Learning for Hit Expansion

Purpose: Intelligently select molecules for testing to maximize information gain and hit rates while minimizing experimental resources.

Materials:

- Initial screening dataset (100-1000 compounds)

- Access to larger virtual compound library (10,000+ structures)

- Experimental capacity for iterative testing cycles

Methodology:

Initial Model Training:

- Train an initial model on the available screening data

- Quantify model uncertainty using ensemble methods or Bayesian approaches

Selection Strategy Implementation:

- Apply an acquisition function (e.g., expected improvement, upper confidence bound) to rank unexplored compounds

- Balance exploration (high uncertainty regions) and exploitation (high predicted activity)

- Select top candidates for experimental testing based on budget constraints

Iterative Cycle:

- Test selected compounds experimentally

- Incorporate new data into training set

- Retrain model and repeat selection process

- Continue for 3-5 cycles or until performance plateaus
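The selection step can be sketched with an upper-confidence-bound (UCB) acquisition over an ensemble. This is a minimal sketch under stated assumptions: ensemble predictions for each candidate are precomputed, the population standard deviation across members stands in for uncertainty, and `ucb_select` and `kappa` are illustrative names rather than part of any library.

```python
import statistics


def ucb_select(ensemble_predictions, batch_size, kappa=1.0):
    """Pick a batch of compounds by upper confidence bound (UCB).

    ensemble_predictions: dict mapping compound ID -> list of predicted
    activities, one per ensemble member (higher = more active).
    kappa: weight on uncertainty; 0 gives pure exploitation.
    """
    scores = {}
    for cid, preds in ensemble_predictions.items():
        mean = statistics.fmean(preds)      # exploitation term
        spread = statistics.pstdev(preds)   # ensemble disagreement (exploration)
        scores[cid] = mean + kappa * spread
    # Highest score first; ties broken by ID for reproducibility.
    ranked = sorted(scores, key=lambda cid: (-scores[cid], cid))
    return ranked[:batch_size]


# Hypothetical predictions from a two-member ensemble.
predictions = {
    "cmpd-a": [6.0, 6.0],  # confident and good
    "cmpd-b": [4.0, 6.0],  # mediocre on average, but uncertain
    "cmpd-c": [3.0, 3.0],  # confident and poor
}
print(ucb_select(predictions, batch_size=1, kappa=0.0))  # ['cmpd-a']
print(ucb_select(predictions, batch_size=1, kappa=2.0))  # ['cmpd-b']
```

Raising `kappa` shifts the batch toward exploration; an expected-improvement acquisition would replace only the scoring line.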

Validation Metrics:

- Hit rate improvement over random selection

- Maximum potency achieved across cycles

- Diversity of discovered active scaffolds

- Rate of model improvement per compound tested

Expected Outcomes: Active learning implementations typically achieve 2-5x higher hit rates compared to random screening and identify more diverse chemotypes [2].

Data Presentation: Performance Metrics with Limited Data

Table 1: AI Model Performance Degradation with Decreasing Training Data (Simulated Analysis)

| Training Set Size | R² (Activity Prediction) | AUC (Classification) | Novel Scaffold Success Rate |
|---|---|---|---|
| 10,000 compounds | 0.78 | 0.91 | 35% |
| 1,000 compounds | 0.65 | 0.83 | 22% |
| 500 compounds | 0.52 | 0.74 | 14% |
| 100 compounds | 0.31 | 0.62 | 5% |

Table 2: Data Enhancement Technique Efficacy with Limited Base Data (n=200 compounds)

| Enhancement Technique | R² Improvement | Hit Rate Increase | Required Computational Overhead |
|---|---|---|---|
| Transfer Learning | +0.21 | +185% | Medium |
| Data Augmentation | +0.14 | +95% | Low |
| Multi-Task Learning | +0.17 | +130% | Medium |
| Active Learning | +0.23 | +210% | High (requires iterations) |

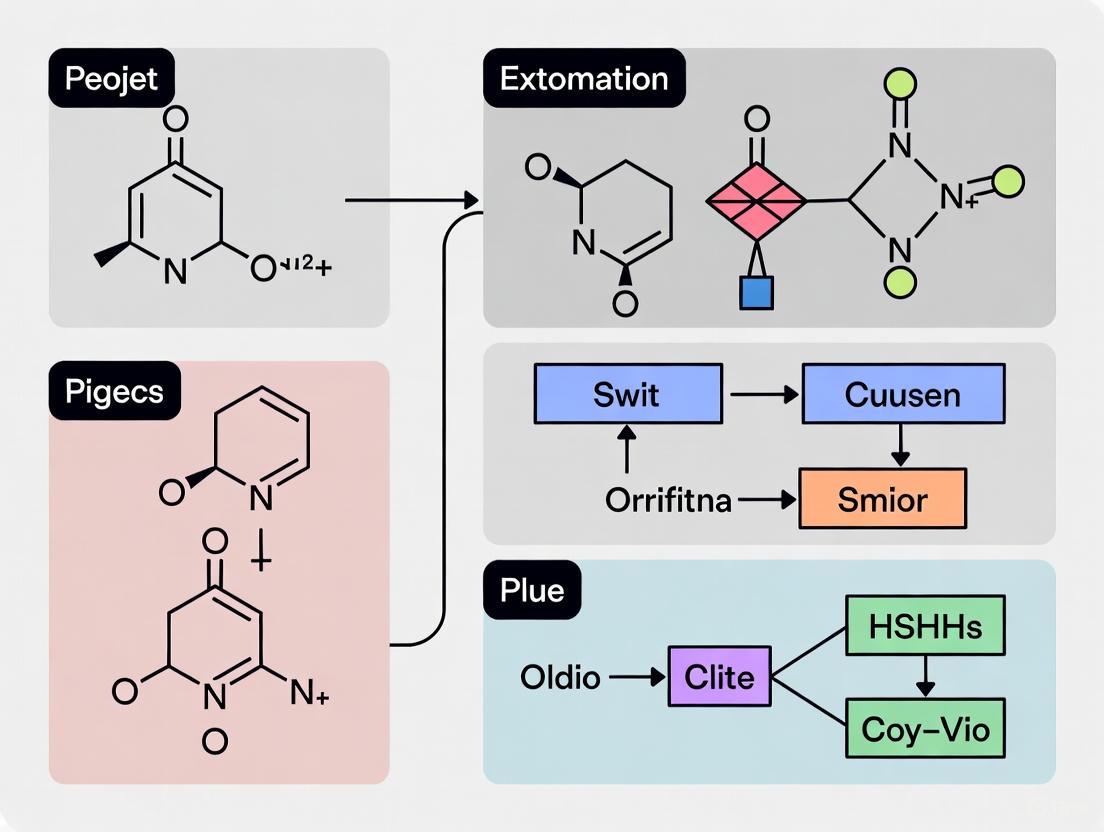

Workflow Visualization: Optimizing Limited Data Utilization

The Scientist's Toolkit: Essential Research Reagents & Platforms

Table 3: Key Research Reagent Solutions for Limited Data Challenges

| Tool/Platform | Primary Function | Application Context | Data Requirements |
|---|---|---|---|
| AIDDISON | AI-driven molecule design & optimization | Hit identification & lead optimization | Can start with small seed sets (tens of compounds) [5] |
| SYNTHIA | Retrosynthesis planning | Synthetic feasibility assessment of AI-proposed molecules | Large reaction database enables pathway prediction [5] |
| LLM-AIx Pipeline | Information extraction from unstructured text | Mining existing literature & reports for additional data points | Flexible to available textual data [6] |
| Digital Twins | In silico control arms for preclinical studies | Reducing animal studies while generating comparative data | Can be built from historical experimental data [3] |
| Graph Neural Networks | Learning molecular structure-activity relationships | Predictive modeling with limited labeled data | Leverages molecular graph representation [1] |

Limited molecule counts present a fundamental constraint in AI-driven drug discovery, but strategic approaches can significantly mitigate this challenge. By implementing transfer learning, active learning, and data augmentation techniques—validated through robust evaluation frameworks—researchers can extract maximum value from limited datasets. The integration of these methods with practical experimental design creates a virtuous cycle of knowledge generation, progressively enhancing AI capabilities while respecting the practical constraints of drug discovery research. As these methodologies mature, they promise to unlock more efficient discovery pipelines capable of addressing previously intractable therapeutic targets.

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of using Fragment-Based Drug Discovery (FBDD) over traditional High-Throughput Screening (HTS)?

FBDD screens smaller, less complex molecules than HTS. Initial hits bind more weakly, but they tend to be more "atom-efficient" (more binding energy per heavy atom), and because fragments are small, a far smaller library can cover a much broader region of chemical space. This makes FBDD particularly valuable for identifying leads for hard-to-drug targets [7].
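Atom efficiency is commonly quantified as ligand efficiency (LE): the binding free energy divided by the number of heavy (non-hydrogen) atoms. The sketch below uses that heavy-atom definition at 298.15 K; the affinity values and atom counts are hypothetical and chosen only to show why a weak fragment can still be the more efficient starting point.

```python
import math

R_KCAL_PER_MOL_K = 1.98720425864083e-3  # gas constant, kcal/(mol*K)


def ligand_efficiency(p_affinity, heavy_atoms, temperature_k=298.15):
    """Ligand efficiency: -ΔG per heavy atom, in kcal/mol per heavy atom.

    p_affinity: -log10 of the dissociation constant (pKd). pIC50 is often
    used as a stand-in, which is only an approximation.
    """
    # ΔG = RT ln(Kd) = -ln(10) * RT * pKd  (negative for favorable binding)
    delta_g = -math.log(10) * R_KCAL_PER_MOL_K * temperature_k * p_affinity
    return -delta_g / heavy_atoms


# Hypothetical hits: a 10 µM fragment vs. a 100 nM HTS hit.
fragment_le = ligand_efficiency(p_affinity=5.0, heavy_atoms=12)
hts_le = ligand_efficiency(p_affinity=7.0, heavy_atoms=35)
print(f"fragment LE = {fragment_le:.2f}, HTS hit LE = {hts_le:.2f}")
# fragment LE = 0.57, HTS hit LE = 0.27
```

The fragment binds 100-fold more weakly, yet each of its atoms contributes roughly twice as much binding energy, which leaves more room to grow it into a potent lead.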

Q2: Our team is new to FBDD. What are the key properties that define a good fragment for our library?

A good fragment is typically a small organic molecule, often defined by the "Rule of Three" (Ro3) [7]:

- Molecular weight ≤ 300 Da

- Hydrogen bond donors ≤ 3

- Hydrogen bond acceptors ≤ 3

- cLogP ≤ 3

Many successful fragments may violate one of these rules, most commonly the hydrogen bond acceptor count [7].

Q3: How does molecular fragmentation relate to modern AI models in drug discovery?

Molecular fragmentation is a fundamental step in applying powerful AI models, like Generative Pre-trained Transformers (GPT), to chemistry. By breaking down molecules into smaller, meaningful substructures (fragments), we can treat them as the "words" of a chemical language. This allows the AI model to learn the underlying "grammar" and semantic relationships between substructures, significantly enhancing its understanding of compounds and its ability to generate novel, valid molecular structures [8].

Q4: We have a limited set of active compounds. How can fragmentation help us extract more information for our research?

Fragmenting your existing active compounds allows you to move the analysis from the whole-molecule level to the substructure level. This helps identify the specific chemical motifs that are crucial for biological activity. By understanding these key fragments, you can design new compounds that combine these active elements more efficiently, thereby maximizing the informational yield from your limited initial dataset [8] [9].

Troubleshooting Common Experimental Issues

Issue 1: Low Hit Rate or No Confirmed Binders from a Fragment Screen

| Potential Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Insufficient library diversity | Analyze the physicochemical property space (e.g., molecular weight, logP, polar surface area) and pharmacophore diversity of your library. [7] | Curate or supplement your fragment library to ensure broad coverage of chemical space. Incorporate fragments with greater 3D character to escape planarity. [7] |
| Low fragment solubility | Check for precipitate in assay buffers. Use techniques like NMR to assess solubility directly. [7] | Prioritize fragments with higher solubility or use specialized "high solubility" fragment sets. Adjust buffer conditions if possible. |
| Weak affinity below detection limit | Use sensitive, orthogonal biophysical methods to validate binding. [7] | Employ more sensitive techniques like NMR or Surface Plasmon Resonance (SPR). Consider X-ray crystallography to detect very weak binders. |

Issue 2: Challenges in AI-Based Molecular Design Using Fragments

| Potential Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Fragmentation method is not chemically logical | Check if the generated fragments consistently break important functional groups or rings. | Adopt a retrosynthetically-inspired fragmentation method like BRICS or RECAP, which respect chemical logic by breaking bonds in a way that mimics synthetic chemistry. [8] |
| Fragment vocabulary is too large or sparse | Calculate the size of your unique fragment set and the frequency of each fragment. | Tune the fragmentation parameters (e.g., minimum/maximum fragment size) or use a predefined fragment library to create a manageable, focused set of building blocks for AI models. [8] |
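The vocabulary diagnostic in the last row can be sketched in plain Python. The input format (one list of fragment strings per molecule) and the `min_count` threshold are assumptions for illustration; in practice the lists would come from a BRICS or RECAP run, and the fragment strings below are toy values.

```python
from collections import Counter


def vocabulary_report(fragmented_molecules, min_count=2):
    """Summarize a fragment vocabulary.

    fragmented_molecules: one list of fragment strings per molecule.
    A fragment's count is the number of molecules that contain it.
    """
    counts = Counter(
        frag for frags in fragmented_molecules for frag in set(frags)
    )
    singletons = sum(1 for c in counts.values() if c == 1)
    kept = sorted(f for f, c in counts.items() if c >= min_count)
    return {
        "vocab_size": len(counts),
        "singleton_fraction": singletons / len(counts) if counts else 0.0,
        "kept_vocab": kept,
    }


# Toy fragment strings for three molecules (illustrative, not real RECAP output).
molecules = [
    ["c1ccccc1", "C(=O)N"],
    ["c1ccccc1", "CCO"],
    ["c1ccncc1", "C(=O)N"],
]
report = vocabulary_report(molecules, min_count=2)
print(report)
```

Here half of the vocabulary consists of fragments seen in only one molecule; on a small dataset, a high singleton fraction like this suggests the fragmentation parameters may need tuning.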

Experimental Protocols for Key Methodologies

Protocol 1: Constructing a Diverse Fragment Library for Screening

Objective: To assemble a collection of 1,000-2,000 fragments that maximizes the exploration of chemical space for a primary screen.

Materials:

- Commercial Fragment Libraries: Source compounds from vendors providing pre-filtered fragments.

- In-house Compound Collection: Include simple, drug-like molecules from internal archives.

- Software: RDKit or Open Babel for computational filtering and property calculation. [8]

Methodology:

- Property Filtering: Apply the "Rule of Three" as an initial filter:

- Molecular weight ≤ 300 Da

- Hydrogen Bond Donors (HBD) ≤ 3

- Hydrogen Bond Acceptors (HBA) ≤ 3

- cLogP ≤ 3

- (Optional) Rotatable bonds ≤ 3 and Polar Surface Area ≤ 60 Ų [7]

- Diversity Selection: Use computational tools to analyze the filtered set. Select fragments that maximize:

- Structural Diversity: Ensure a variety of ring systems, linkers, and functional groups.

- Shape Diversity: Prioritize fragments with high three-dimensionality (high Fsp3) to avoid flat, aromatic-heavy libraries. [7]

- Solubility Assessment: Experimentally validate solubility in your standard assay buffer to confirm that the required screening concentrations (at least 0.1 to 1 mM, depending on the assay) are achievable. [7]

- Orthogonal Validation: Plan to use at least two biophysical methods (e.g., NMR and SPR) to confirm any initial hits from the screen. [7]
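The property-filtering and shape-diversity steps can be sketched as below. The property values are hypothetical and would normally be computed by a toolkit (with RDKit, functions such as `Descriptors.MolWt`, `Crippen.MolLogP` and `rdMolDescriptors.CalcTPSA` cover several of them); the `FragmentProps` class and `passes_ro3` function are illustrative names. Fsp3 is taken as the fraction of carbon atoms that are sp3-hybridized.

```python
from dataclasses import dataclass


@dataclass
class FragmentProps:
    """Precomputed properties for one fragment (values supplied by the user)."""
    name: str
    mol_weight: float  # Da
    hbd: int           # hydrogen bond donors
    hba: int           # hydrogen bond acceptors
    clogp: float
    rot_bonds: int
    tpsa: float        # polar surface area, Å²
    n_carbon: int
    n_sp3_carbon: int

    @property
    def fsp3(self):
        # Fraction of carbon atoms that are sp3-hybridized.
        return self.n_sp3_carbon / self.n_carbon if self.n_carbon else 0.0


def passes_ro3(p, include_optional=False):
    """Core Rule of Three, optionally with the rotatable-bond and PSA limits."""
    ok = p.mol_weight <= 300 and p.hbd <= 3 and p.hba <= 3 and p.clogp <= 3
    if include_optional:
        ok = ok and p.rot_bonds <= 3 and p.tpsa <= 60
    return ok


# Hypothetical property values, for illustration only.
library = [
    FragmentProps("frag-flat", 121.0, 1, 1, 0.6, 1, 43.0, 7, 0),
    FragmentProps("frag-3d", 141.0, 1, 2, 0.9, 1, 32.0, 7, 5),
    FragmentProps("frag-polar", 190.0, 2, 3, -0.5, 2, 75.0, 6, 2),
    FragmentProps("too-big", 352.0, 2, 4, 3.8, 5, 70.0, 20, 6),
]
selected = [p for p in library if passes_ro3(p, include_optional=True)]
selected.sort(key=lambda p: p.fsp3, reverse=True)  # favor 3D character
print([p.name for p in selected])  # ['frag-3d', 'frag-flat']
```

Sorting by Fsp3 is only a simple proxy for the shape-diversity criterion; a full selection would also cluster by structure.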

Protocol 2: Implementing RECAP Fragmentation for AI Representation Learning

Objective: To systematically fragment a dataset of molecules into chemically meaningful, retrosynthetically derived substructures for training AI models.

Materials:

- Molecular Dataset: A set of small molecules in SMILES format.

- Software: RDKit or other cheminformatics toolkit that supports the RECAP algorithm.

Methodology:

- Data Preprocessing: Standardize the molecular structures in your dataset (e.g., neutralize charges, remove duplicates).

- Define Cleavage Rules: RECAP uses a set of 11 predefined chemical rules for fragmentation, prioritizing the cleavage of bonds in retrosynthetically interesting ways (e.g., amide, ester, ether linkages). [8]

- Execute Fragmentation: Process each molecule in the dataset through the RECAP algorithm. The output is a set of molecular fragments.

- Post-process Fragments: Filter the generated fragments based on size (e.g., heavy atom count) and frequency to create a final vocabulary of fragments.

- Encode Molecules: Represent each original molecule as a sequence or a set of its constituent RECAP fragments, which can then be used as input for AI models like Transformers. [8]
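The encoding step can be sketched as a vocabulary lookup that supports both the sequence and the set representation. This is a minimal sketch: the dummy-atom fragment strings are hand-written stand-ins for RECAP output (with RDKit, fragments could be taken from the leaves returned by `Recap.RecapDecompose`), the function names are hypothetical, and ID 0 is reserved for fragments missing from the training vocabulary.

```python
from collections import Counter


def build_vocab(fragment_lists):
    """Map each distinct fragment to an integer ID; ID 0 is reserved for unknowns."""
    unique = sorted({frag for frags in fragment_lists for frag in frags})
    return {"<unk>": 0, **{frag: i + 1 for i, frag in enumerate(unique)}}


def encode_sequence(fragments, vocab):
    """Sequence encoding (e.g. for a Transformer): one token ID per fragment."""
    return [vocab.get(frag, 0) for frag in fragments]


def encode_bag(fragments, vocab):
    """Set-style encoding: a count vector over the whole vocabulary."""
    counts = Counter(vocab.get(frag, 0) for frag in fragments)
    return [counts.get(i, 0) for i in range(len(vocab))]


# Toy fragment lists for two training molecules.
training = [["[*]C(C)=O", "[*]Nc1ccccc1"], ["[*]Nc1ccccc1", "[*]OC"]]
vocab = build_vocab(training)
print(encode_sequence(["[*]Nc1ccccc1", "[*]Cl"], vocab))        # [2, 0]
print(encode_bag(["[*]OC", "[*]OC", "[*]C(C)=O"], vocab))       # [0, 1, 0, 2]
```

RECAP yields an unordered set of fragments, so a sequence model needs a fixed ordering convention; the bag encoding sidesteps that choice.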

Workflow Visualization

Molecular Fragmentation to AI Analysis

FBDD Hit-to-Lead Optimization Path

The Scientist's Toolkit: Key Research Reagents & Materials

| Item | Function / Application |
|---|---|
| Rule of Three (Ro3) | A guideline for selecting fragment-like molecules with suitable physicochemical properties for screening, emphasizing low molecular weight and polarity. [7] |
| RECAP (Retrosynthetic Combinatorial Analysis Procedure) | A fragmentation algorithm that breaks molecules around retrosynthetically interesting chemical substructures, generating chemically meaningful fragments for AI learning and library design. [8] |
| BRICS (Breaking of Retrosynthetically Interesting Chemical Substructures) | Another key fragmentation methodology used to decompose molecules into plausible synthetic building blocks, useful for in silico fragment generation. [7] |
| Fragment Library | A curated collection of 1,000-2,000 small, simple compounds designed to efficiently sample a vast chemical space for initial binding hits against a biological target. [7] |
| Generative Pre-trained Transformer (GPT) Models | A class of AI models that, when trained on molecular fragments as "words," can learn the complex relationships between chemical substructures and generate novel, valid molecular designs. [8] |

In artificial intelligence-assisted molecular discovery, the choice of how a molecule is represented is a limiting factor in model performance and explainability [10]. Unlike natural language processing or image recognition, the field lacks a naturally applicable, complete "raw" molecular representation [10]. This technical guide explores three predominant molecular representation schemes (SMILES strings, molecular graphs, and molecular fingerprints), focusing on their practical implementation, common challenges, and optimization strategies for research involving limited molecule counts. Efficient representation becomes particularly crucial when working with sparse data, as it directly determines how much chemical information is retained, including physicochemical properties, pharmacophores, and functional groups [10].

Molecular Representation Frameworks: Technical Specifications

SMILES and Its Advanced Variants

The Simplified Molecular-Input Line-Entry System (SMILES) is a line notation using short ASCII strings to describe chemical structures [11]. In SMILES, atoms are represented by their atomic symbols (with two-character symbols like Cl requiring the second letter in lowercase), bonds are denoted with symbols (- for single, = for double, # for triple, : for aromatic), branches are specified with parentheses, and rings are represented by breaking one bond and designating the closure point with a digit [11]. For example, benzene is c1ccccc1 and cyclohexane is C1CCCCC1 [11].

Despite its widespread use, classical SMILES has known limitations. Parentheses (branches) and ring-closure digits must occur in matched pairs, often separated by long or deeply nested spans, so models that generate SMILES token by token, especially those trained on small datasets, can produce syntactically invalid strings [10]. Several advanced variants have been developed to address these issues:

- DeepSMILES (DSMILES): Resolves most syntactical mistakes caused by long-term dependencies but still allows semantically incorrect strings [10].

- SELFIES (Self-referencing Embedded Strings): Ensures every string specifies a valid chemical graph, though this robustness can make representations more challenging to read [10].

- t-SMILES (tree-based SMILES): A fragment-based, multiscale molecular representation framework that describes molecules using SMILES-type strings obtained by performing a breadth-first search on a full binary tree formed from a fragmented molecular graph [10]. Introduces only two new symbols ("&" and "^") to encode multi-scale and hierarchical molecular topologies [10].

- R-SMILES (Root-aligned SMILES): Specifically designed for chemical reaction prediction, it establishes a tightly aligned one-to-one mapping between product and reactant SMILES by selecting the same root atom, significantly reducing edit distance and improving synthesis prediction efficiency [12].
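The pairing rule for branches and ring closures is easy to check mechanically, which is one reason these errors are so visible in generated output. The sketch below is a simplified checker written for illustration, not part of any SMILES toolkit: it tokenizes bracket atoms, `%nn` two-digit ring labels, single-digit labels and parentheses, and verifies only that they pair up. It does not check valence or element symbols, so a string that passes may still be chemically invalid.

```python
import re

# Bracket atoms, two-digit ring labels (%nn), single digits, branches,
# two-letter organic-subset atoms, then any other single character.
TOKEN_RE = re.compile(r"\[[^\]]*\]|%\d{2}|\d|\(|\)|Br|Cl|.")


def check_smiles_syntax(smiles):
    """Return (ok, message) for branch and ring-closure pairing only."""
    depth = 0
    open_rings = set()
    for tok in TOKEN_RE.findall(smiles):
        if tok == "[":
            return False, "unclosed bracket atom"
        if tok == "(":
            depth += 1
        elif tok == ")":
            depth -= 1
            if depth < 0:
                return False, "')' without matching '('"
        elif tok.isdigit() or tok.startswith("%"):
            # A label opens a ring bond the first time it appears and closes
            # it the second time; the same label may then be reused.
            if tok in open_rings:
                open_rings.remove(tok)
            else:
                open_rings.add(tok)
    if depth != 0:
        return False, "unclosed branch '('"
    if open_rings:
        return False, f"unclosed ring label(s): {sorted(open_rings)}"
    return True, "ok"


print(check_smiles_syntax("c1ccccc1"))  # (True, 'ok')
print(check_smiles_syntax("c1cccc"))    # (False, "unclosed ring label(s): ['1']")
```

SELFIES removes this whole class of error by construction, since its grammar has no paired symbols that can be left open.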

Molecular Graphs

Molecular graphs explicitly describe the topological structure of a molecule, with atoms represented as nodes and bonds as edges [12]. This representation is the foundation for Graph Neural Networks (GNNs), and graph-based generative models can reach 100% validity because valence constraints and verification rules are straightforward to enforce during generation [10].

However, standard message-passing GNNs are bounded in expressive power by the Weisfeiler-Leman (1-WL) graph isomorphism test and may fail to model long-range interactions and higher-order structures [10]. Recent research has proposed improvements based on subgraph isomorphism, message-passing simplicial networks, and other techniques to enhance the expressive power of standard GNNs [10].
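The Weisfeiler-Leman bound can be demonstrated with 1-WL color refinement on hydrogen-suppressed carbon skeletons. The sketch below is illustrative (function names are our own): decalin (two fused six-membered rings) and bicyclopentyl (two five-membered rings joined by a single bond) are not isomorphic, yet refinement assigns both graphs identical color histograms, so a standard message-passing GNN given identical atom and bond features cannot separate them either.

```python
from collections import Counter


def graph_from_edges(edges):
    """Undirected graph as {atom: set(neighbors)}; hydrogens omitted."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def ring(atoms):
    """Edges closing a ring through the given atom indices."""
    return list(zip(atoms, atoms[1:] + atoms[:1]))


def wl_histograms(graphs, iterations=3):
    """Run 1-WL color refinement jointly on several graphs.

    All atoms start with the same color (every atom here is carbon).
    Returns the final color histogram of each graph.
    """
    colors = [{v: 0 for v in g} for g in graphs]
    for _ in range(iterations):
        palette = {}  # shared, so colors are comparable across graphs
        new_colors = []
        for graph, col in zip(graphs, colors):
            updated = {}
            for v, nbrs in graph.items():
                signature = (col[v], tuple(sorted(col[u] for u in nbrs)))
                updated[v] = palette.setdefault(signature, len(palette))
            new_colors.append(updated)
        colors = new_colors
    return [Counter(c.values()) for c in colors]


decalin = graph_from_edges(ring([0, 1, 2, 3, 4, 5]) + ring([0, 5, 6, 7, 8, 9]))
bicyclopentyl = graph_from_edges(ring([0, 1, 2, 3, 4]) + ring([5, 6, 7, 8, 9]) + [(0, 5)])
cyclohexane = graph_from_edges(ring([0, 1, 2, 3, 4, 5]))
hexane = graph_from_edges([(0, 1), (1, 2), (2, 3), (3, 4), (4, 5)])

same = wl_histograms([decalin, bicyclopentyl])
print(same[0] == same[1])  # True: 1-WL cannot tell these apart
diff = wl_histograms([cyclohexane, hexane])
print(diff[0] == diff[1])  # False: chain ends have a different degree
```

Ring size is exactly the kind of higher-order structure that subgraph-aware and simplicial extensions are designed to expose.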

Molecular Fingerprints

Molecular fingerprints encode structural characteristics as vectors for fast similarity comparisons, forming the basis for structure-activity relationship studies, virtual screening, and chemical space mapping [13]. Different fingerprint types excel in different scenarios:

- Substructure fingerprints (e.g., ECFP4, Morgan fingerprints): Perform best for small molecules like drugs but have poor perception of global molecular features and may struggle to distinguish structural differences in larger molecules [13].

- Atom-pair fingerprints: Encode molecular shape and are preferable for large molecules like peptides, often used for scaffold-hopping, but perform poorly in small molecule benchmarks compared to substructure fingerprints [13].

- Hybrid fingerprints (e.g., MAP4): Combine substructure and atom-pair concepts to create a universal fingerprint suitable for both small and large molecules [13]. MAP4 writes circular substructures as SMILES strings for each atom in a pair, combines them with topological distance, and uses MinHashing to form the fingerprint vector [13].
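The MinHashing step can be illustrated generically: the fraction of positions where two MinHash signatures agree estimates the Jaccard similarity of the underlying shingle sets (for binary fingerprints, the Jaccard similarity of the on-bit sets is the Tanimoto coefficient). This is a sketch with hypothetical shingle strings and seeded BLAKE2 hashes standing in for random permutations; MAP4's published implementation has its own shingle format and hash functions.

```python
import hashlib


def _hash(token, seed):
    """Deterministic 64-bit hash of a token under a given seed."""
    digest = hashlib.blake2b(f"{seed}:{token}".encode(), digest_size=8).digest()
    return int.from_bytes(digest, "big")


def minhash_signature(shingles, num_perm=256):
    """One minimum hash value per seeded hash function."""
    return [min(_hash(s, seed) for s in shingles) for seed in range(num_perm)]


def estimated_jaccard(sig_a, sig_b):
    """Fraction of signature positions where the two minima agree."""
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)


def jaccard(a, b):
    return len(a & b) / len(a | b)


# Hypothetical shingle sets (stand-ins for substructure or atom-pair strings).
set_a = {f"shingle-{i}" for i in range(100)}
set_b = {f"shingle-{i}" for i in range(50, 150)}
sig_a, sig_b = minhash_signature(set_a), minhash_signature(set_b)
print(f"exact {jaccard(set_a, set_b):.3f}, estimate {estimated_jaccard(sig_a, sig_b):.3f}")
```

The signature has a fixed length regardless of molecule size, which is what lets a MinHashed fingerprint compare a small drug and a large peptide on the same footing.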

Table 1: Performance Comparison of Molecular Fingerprints Across Molecule Types

| Fingerprint Type | Small Molecule Performance | Large Molecule Performance | Key Strengths |
|---|---|---|---|
| Substructure (ECFP4) | Excellent [13] | Poor [13] | Predictive of bioactivity for small molecules [13] |
| Atom-Pair | Poor [13] | Excellent [13] | Excellent perception of molecular shape [13] |
| Hybrid (MAP4) | Outperforms substructure fingerprints [13] | Outperforms other atom-pair fingerprints [13] | Universal description across molecule sizes [13] |

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My AI model trained on SMILES strings produces a high rate of invalid molecules. What steps can I take to improve validity?

A1: This common issue typically arises because models must learn both SMILES syntax and chemical rules simultaneously. Consider these approaches:

- Switch to Robust Representations: Implement SELFIES to ensure 100% theoretical validity by design, as every SELFIES string corresponds to a valid molecular graph [10].

- Adopt Fragment-Based Methods: Use t-SMILES or similar fragment-based approaches that significantly reduce the search space and generate chemically valid fragments, increasing the probability of valid molecule generation [10].

- Apply Data Augmentation: Enumerate multiple valid, non-canonical SMILES strings (different atom orderings) for each molecule in your training set to help the model learn SMILES syntax more effectively. Note that this is incompatible with approaches that rely on a single canonical SMILES per molecule [12].

Q2: For limited data scenarios, which molecular representation approach is most effective at preventing overfitting?

A2: When working with limited molecule counts, fragment-based representations like t-SMILES have demonstrated superior performance. Systematic evaluations show that t-SMILES can avoid overfitting and achieve higher novelty scores while maintaining reasonable similarity on labeled low-resource datasets, regardless of whether the model is original, data-augmented, or pre-trained then fine-tuned [10]. The reduced search space of fragment-based strategies provides a regularization effect that is particularly beneficial in data-scarce environments.

Q3: How do I choose the right molecular fingerprint for a diverse compound library containing both small drug-like molecules and larger peptide compounds?

A3: Traditional fingerprints specialize in one molecule type, but newer hybrid approaches offer unified solutions:

- Use MAP4 Fingerprint: The MinHashed Atom-Pair fingerprint with a diameter of four bonds (MAP4) is specifically designed for this scenario, combining substructure and atom-pair concepts to work effectively across molecule sizes [13].

- Benchmark Performance: MAP4 has been shown to significantly outperform other fingerprints on extended benchmarks combining small molecule evaluation with peptide benchmarks recovering BLAST analogs [13].

- Chemical Space Mapping: MAP4 produces well-organized chemical space tree-maps (TMAPs) for diverse databases including DrugBank, ChEMBL, SwissProt, and the Human Metabolome Database [13].

Q4: In chemical reaction prediction tasks, how can I minimize the syntactic complexity that models must learn to focus on the actual chemical transformation?

A4: Standard SMILES representations create significant syntactic divergence between reactants and products despite minimal structural changes. The R-SMILES (Root-aligned SMILES) representation addresses this by:

- Establishing Atom Mapping: Using atom mapping or substructure matching algorithms to find common structures between products and reactants [12].

- Root Alignment: Selecting the same root atom for both product and reactant SMILES strings, creating a tight one-to-one mapping [12].

- Reducing Edit Distance: This approach minimizes the syntactic differences between input and output, bringing reaction prediction closer to an autoencoding problem where models can focus on learning chemical knowledge rather than complex syntax [12].
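The edit-distance argument can be made concrete with a Levenshtein distance on SMILES strings. The strings below are hand-written for the reaction acetyl chloride + aniline → acetanilide in the spirit of root alignment; they are not output of the R-SMILES code, and `edit_distance` is a generic helper.

```python
def edit_distance(a, b):
    """Levenshtein distance (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        prev = curr
    return prev[-1]


product = "CC(=O)Nc1ccccc1"                  # acetanilide
reactants_unaligned = "c1ccc(N)cc1.ClC(C)=O"  # same reactants, different roots
reactants_aligned = "CC(=O)Cl.Nc1ccccc1"      # traversal mirrors the product
print(edit_distance(product, reactants_aligned))    # 3
print(edit_distance(product, reactants_unaligned))
```

The aligned string is the product string with "Cl." inserted, giving a distance of 3; the differently rooted string describes the same reactants but is at least 5 edits away because it is already 5 characters longer.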

Troubleshooting Common Experimental Challenges

Problem: Poor Model Generalization on Unseen Molecular Scaffolds

- Symptoms: Good performance on molecules similar to training data but failure on novel scaffolds or structural motifs.

- Diagnosis: The molecular representation may be too focused on local features without capturing global molecular shape.

- Solution:

- Implement hybrid fingerprint approaches like MAP4 that capture both local substructures and global shape features [13].

- Supplement substructure fingerprints with atom-pair fingerprints to enhance shape awareness [13].

- For graph-based models, investigate advanced GNN architectures that go beyond standard message-passing to capture higher-order structures [10].

Problem: Significant Performance Discrepancies Between Similarity Search Methods

- Symptoms: Different fingerprint types or similarity metrics return substantially different nearest neighbors for the same query compound.

- Diagnosis: This often reflects the fundamental differences in what various fingerprints encode (local substructures vs. global shape).

- Solution:

- Understand that this is expected behavior rather than a technical bug—different fingerprints answer different similarity questions.

- Characterize your dataset using tools like AssayInspector to detect distributional differences and inconsistencies before modeling [14].

- Select fingerprints based on your specific goal: substructure fingerprints for bioactivity prediction, atom-pair fingerprints for scaffold hopping, or hybrid fingerprints for general-purpose applications [13].

Problem: Data Integration Issues When Combining Multiple Molecular Datasets

- Symptoms: Model performance decreases when additional datasets are incorporated, despite increased training data volume.

- Diagnosis: Likely caused by distributional misalignments, batch effects, or inconsistent experimental annotations between datasets [14].

- Solution:

- Conduct systematic data consistency assessment before integration using specialized tools like AssayInspector [14].

- Identify and address outliers, batch effects, and annotation discrepancies between data sources [14].

- Perform statistical comparisons of endpoint distributions and molecular feature spaces to ensure compatibility [14].
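Two of these checks can be prototyped with the standard library: a two-sample Kolmogorov-Smirnov statistic for comparing endpoint distributions, and a scan for compounds measured in both sources whose values disagree. This is a hedged sketch, not AssayInspector's API; the data, the 0.5 log-unit tolerance and the function names are hypothetical, and `scipy.stats.ks_2samp` can supply a p-value if SciPy is available.

```python
from bisect import bisect_right


def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between the ECDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    gap = 0.0
    for x in sorted(set(a) | set(b)):
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


def discordant_compounds(source_a, source_b, tolerance=0.5):
    """Shared compound IDs whose endpoint values differ by more than tolerance."""
    shared = source_a.keys() & source_b.keys()
    return sorted(c for c in shared if abs(source_a[c] - source_b[c]) > tolerance)


# Hypothetical pIC50 values keyed by compound ID from two sources.
assay_1 = {"m1": 6.0, "m2": 7.0, "m3": 5.5}
assay_2 = {"m1": 6.2, "m2": 8.1, "m4": 7.9}
print(ks_statistic(assay_1.values(), assay_2.values()))
print(discordant_compounds(assay_1, assay_2))  # ['m2']
```

A large KS statistic together with discordant shared compounds points to a systematic offset between sources rather than random noise, which is worth resolving before the datasets are merged.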

Table 2: Troubleshooting Guide for Common Molecular Representation Issues

| Problem | Root Cause | Solution Approaches | Expected Outcome |
|---|---|---|---|
| High invalid molecule generation | SMILES syntax complexity [10] | Switch to SELFIES or t-SMILES [10] | Near 100% theoretical validity [10] |
| Overfitting on small datasets | High-dimensional search space [10] | Implement fragment-based methods (t-SMILES) [10] | Higher novelty, maintained similarity [10] |
| Poor cross-size performance | Specialized fingerprint limitations [13] | Adopt hybrid fingerprints (MAP4) [13] | Consistent performance across molecule sizes [13] |
| Low reaction prediction accuracy | Large syntactic divergence in SMILES [12] | Apply R-SMILES for aligned representations [12] | Reduced edit distance, improved accuracy [12] |

Experimental Protocols & Methodologies

Protocol: Implementing t-SMILES for Low-Resource Molecular Generation

Purpose: To generate valid, novel molecules while avoiding overfitting when training data is limited.

Materials: Chemical dataset (e.g., ChEMBL, ZINC, QM9), t-SMILES implementation, sequence-based model architecture (e.g., Transformer).

Procedure:

- Molecular Fragmentation: Fragment each molecule in your dataset using a validated algorithm (JTVAE, BRICS, MMPA, or Scaffold) [10].

- Tree Construction: For each fragmented molecule, generate an acyclic molecular tree (AMT) and transform it into a full binary tree (FBT) [10].

- String Generation: Perform breadth-first traversal of the FBT to yield a t-SMILES string, using only two additional symbols ("&" and "^") beyond standard SMILES [10].

- Model Training: Train your sequence-based model on the resulting t-SMILES strings.

- Multi-Code System (Optional): Implement multiple t-SMILES code algorithms (TSSA, TSDY, TSID) so that their different descriptions of the same molecule complement each other and improve overall performance [10].

Validation: Evaluate using distribution-learning benchmarks, goal-directed benchmarks, and Wasserstein distance metrics for physicochemical properties [10]. In the original study, t-SMILES significantly outperformed classical SMILES, DeepSMILES, SELFIES, and baseline models on goal-directed tasks, while achieving higher novelty and maintaining reasonable similarity to the training distribution [10].
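The Wasserstein metric in this validation step compares the distribution of a physicochemical property in the generated molecules against the same property in the training set. Below is a minimal sketch for a single property. It assumes the property values have already been computed, and the molecular weights shown are hypothetical. `scipy.stats.wasserstein_distance` computes the same quantity.

```python
from bisect import bisect_right


def wasserstein_1d(u, v):
    """First Wasserstein distance between two 1-D samples, computed as the
    area between their empirical CDFs."""
    u, v = sorted(u), sorted(v)
    points = sorted(set(u) | set(v))
    total = 0.0
    for left, right in zip(points, points[1:]):
        # Both empirical CDFs are constant on the interval [left, right).
        f_u = bisect_right(u, left) / len(u)
        f_v = bisect_right(v, left) / len(v)
        total += abs(f_u - f_v) * (right - left)
    return total


# Hypothetical molecular weights (Da) of training vs. generated molecules.
train_mw = [250.3, 310.4, 342.1, 398.5, 410.2]
generated_mw = [265.0, 301.7, 355.9, 420.8]
print(round(wasserstein_1d(train_mw, generated_mw), 2))
```

The distance is in the property's own units, here Da, so lower values mean the generated distribution sits closer to the training distribution. Values for different properties are not directly comparable unless the properties are normalized first.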

Protocol: Calculating and Applying MAP4 Fingerprints for Diverse Compound Libraries

Purpose: To create a unified molecular representation that performs well across both small molecules and large biomolecules.

Materials: Molecular structures in canonical isomeric SMILES format, RDKit cheminformatics toolkit, MAP4 implementation.

Procedure:

- Input Preparation: Ensure all molecules are represented as canonical and isomeric SMILES [13].

- Circular Substructure Generation: For each non-hydrogen atom in the molecule, write the circular substructures at radii 1 to r (default r=2 for MAP4) as canonical, non-isomeric, rooted SMILES strings [13].

- Topological Distance Calculation: Compute the minimum topological distance separating each atom pair in the molecule [13].

- Atom-Pair Shingle Creation: For each atom pair (j, k) and each radius r, write an atom-pair shingle of the form CS_r(j) | TP_j,k | CS_r(k), where CS_r(j) is the circular substructure of radius r rooted at atom j and TP_j,k is the topological distance between atoms j and k. Place the two SMILES strings in lexicographical order [13].

- Hashing and MinHashing: Hash the resulting set of atom-pair shingles using SHA-1 mapping, then apply MinHashing to form the final MAP4 fingerprint vector [13].

Validation: MAP4 significantly outperforms both substructure fingerprints on small molecule benchmarks and other atom-pair fingerprints on peptide benchmarks, while producing well-organized chemical space maps for diverse databases [13].
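The following toy sketch illustrates the shingling and MinHashing steps. It assumes the circular-substructure SMILES and topological distances have already been computed, for example with RDKit, and the shingle inputs below are hypothetical. This is not the reference MAP4 implementation. Truncating SHA-1 to 32 bits and using the (a*x + b) mod p hash family are illustrative choices.

```python
import hashlib
import random


def atom_pair_shingle(cs_j, cs_k, distance):
    """Build a 'CS_r(j)|TP_j,k|CS_r(k)' shingle with the two substructure
    SMILES in lexicographical order, so the pair is order-independent."""
    first, second = sorted((cs_j, cs_k))
    return f"{first}|{distance}|{second}"


def sha1_to_int(text):
    # Map a shingle to a 32-bit integer using the first 4 bytes of its SHA-1 digest.
    return int.from_bytes(hashlib.sha1(text.encode("utf-8")).digest()[:4], "big")


def minhash_signature(shingles, num_perm=128, seed=0):
    """MinHash: for each random hash h(x) = (a*x + b) mod p, keep the
    minimum value over the set of hashed shingles."""
    prime = (1 << 61) - 1
    rng = random.Random(seed)
    params = [(rng.randrange(1, prime), rng.randrange(0, prime)) for _ in range(num_perm)]
    hashed = [sha1_to_int(s) for s in set(shingles)]
    if not hashed:
        raise ValueError("need at least one shingle")
    return [min((a * h + b) % prime for h in hashed) for a, b in params]


def estimated_jaccard(sig1, sig2):
    # The fraction of matching positions estimates the Jaccard similarity of the shingle sets.
    return sum(x == y for x, y in zip(sig1, sig2)) / len(sig1)


# Hypothetical shingles (substructure SMILES, topological distance) for two molecules.
mol_a = [atom_pair_shingle("cc(c)O", "CC(N)=O", 4), atom_pair_shingle("ccc", "cc(c)O", 2)]
mol_b = [atom_pair_shingle("CC(N)=O", "cc(c)O", 4), atom_pair_shingle("ccc", "cc(c)O", 3)]
print(estimated_jaccard(minhash_signature(mol_a), minhash_signature(mol_b)))
```

Because both molecules must be hashed with the same seed and `num_perm`, signatures are only comparable when they are produced with identical MinHash parameters.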

Workflow: Molecular Representation Selection for Limited Data Scenarios

The following workflow provides a systematic approach for selecting molecular representations when working with limited molecule counts:

Diagram 1: Representation selection workflow for limited data

Table 3: Essential Computational Tools for Molecular Representation Research

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| RDKit [13] | Cheminformatics Library | Calculate molecular descriptors, fingerprints, and process SMILES | Fundamental toolkit for all molecular representation tasks |
| t-SMILES Framework [10] | Molecular Representation | Fragment-based molecular representation with SMILES-type strings | Low-resource molecular generation avoiding overfitting |
| MAP4 Fingerprint [13] | Hybrid Fingerprint | Unified molecular representation for small and large molecules | Virtual screening across diverse compound libraries |
| R-SMILES [12] | Specialized SMILES | Root-aligned representation for chemical reaction prediction | Forward and retrosynthesis prediction tasks |
| AssayInspector [14] | Data Quality Tool | Detect dataset discrepancies and distributional misalignments | Data consistency assessment before model training |
| PubChem [15] | Chemical Database | Access to chemical structures, properties, and bioactivities | Compound searching and retrieval for training data |
| ChEMBL [15] | Bioactivity Database | Curated bioactive molecules with drug-like properties | Structure-activity relationship analysis |
| SciFinder [16] | Research Database | Comprehensive chemical information resource | Literature and compound research for experimental design |

Choosing the right molecular representation is especially important when molecule counts are limited, because each training example must be used as efficiently as possible. As this guide shows, the choice between SMILES variants, molecular graphs, and fingerprints should be driven by the research goal, the types of molecules involved, and validity requirements. Fragment-based approaches such as t-SMILES are promising for low-resource scenarios because they shrink the search space and maintain novelty while reducing the risk of overfitting [10]. Unified representations such as MAP4 fingerprints support screening across molecules of very different sizes [13]. Task-specific approaches such as R-SMILES are optimized for particular problems, such as reaction prediction [12]. Together, the troubleshooting steps, experimental protocols, and selection workflow outlined here can help researchers get more out of constrained datasets in molecular design and discovery.

Troubleshooting Guide: Molecular Segmentation & NLP-Based Drug Discovery

This guide addresses common challenges researchers face when applying Natural Language Processing (NLP) principles to molecular segmentation for drug discovery.

Problem: Poor Semantic Meaning of Generated Chemical Words

- Symptoms: Models built on the segmented "chemical words" perform poorly on downstream tasks such as property prediction, or the generated molecules are chemically invalid.

- Possible Causes & Solutions:

| Cause | Solution |
|---|---|
| Inappropriate Segmentation Method | Choose a method aligned with chemical logic. Data-driven methods often outperform naive character slicing [17]. |
| Lack of Fragment Library | Utilize established fragment libraries (e.g., RECAP, BRICS) that contain chemically meaningful and synthetically accessible building blocks [17]. |

Problem: Inefficient Exploration of Chemical Space

- Symptoms: AI models generate molecules with high similarity to the training set, failing to propose novel scaffolds with desired properties.

- Possible Causes & Solutions:

| Cause | Solution |
|---|---|
| Over-reliance on Local Search | Implement algorithms that combine global and local search strategies, such as genetic algorithms with crossover and mutation operations [18]. |
| Limited Fragment Diversity | Move beyond predefined fragment libraries. Employ data-driven fragmentation methods that do not depend on expert-defined rules to expand the diversity of chemical building blocks [17]. |

Problem: Difficulty Identifying Key Functional Groups

- Symptoms: Models struggle to correlate specific molecular substructures (chemical words) with target protein binding or other biological activities.

- Possible Causes & Solutions:

| Cause | Solution |
|---|---|
| Lack of Interpretability | Apply interpretation pipelines to highlight which "chemical words" are most important for a model's prediction, allowing validation against known pharmacophores [19]. |
| General-Purpose Word Embeddings | Train domain-specific word embedding models (e.g., using FastText) on a specialized corpus of scientific literature to better capture chemical semantics [20] [21]. |

Frequently Asked Questions (FAQs)

Q1: Why should we treat molecules as a language? Text-based representations of chemicals (like SMILES) and proteins can be considered unstructured languages codified by humans. Advances in NLP allow us to unearth hidden knowledge in these representations to predict properties or design new molecules, accelerating drug discovery [22].

Q2: What is the main advantage of fragment-based drug discovery (FBDD) over high-throughput screening (HTS)? FBDD screens smaller, lower molecular weight compounds. This allows it to explore a broader chemical space with fewer compounds and provides more efficient optimization paths, often leading to higher-quality lead compounds [17].

Q3: My molecular optimization is stuck in a local optimum. What can I do? Consider using a Pareto-based genetic algorithm (GA). Unlike methods that aggregate properties into a single score, Pareto-based GAs can perform a multi-objective optimization, identifying a set of optimal trade-off solutions, which helps in exploring the chemical space more globally [18].
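The key selection step in a Pareto-based GA keeps only candidates that are not beaten by any other candidate on every objective at once. The minimal sketch below shows only that non-dominated filter and assumes all objectives are maximized. The scores are hypothetical. Crossover, mutation, and crowding-distance ranking (as used in NSGA-II) are omitted.

```python
def dominates(p, q):
    """p dominates q if it is at least as good on every objective and
    strictly better on at least one (all objectives maximized)."""
    return all(a >= b for a, b in zip(p, q)) and any(a > b for a, b in zip(p, q))


def pareto_front(scores):
    """Return the indices of the non-dominated candidates."""
    return [
        i for i, p in enumerate(scores)
        if not any(dominates(q, p) for j, q in enumerate(scores) if j != i)
    ]


# Hypothetical (predicted pIC50, QED) scores for five candidate molecules.
candidates = [(6.1, 0.82), (7.4, 0.65), (8.0, 0.41), (7.0, 0.60), (5.5, 0.50)]
print(pareto_front(candidates))  # [0, 1, 2]
```

Candidates 0, 1, and 2 represent different trade-offs between potency and drug-likeness. A GA that keeps the whole front as parents preserves this diversity, whereas a single weighted score would collapse it into one preferred trade-off.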

Q4: How can I ensure my segmented 'chemical words' are chemically meaningful? Recent research indicates that data-driven segmentation methods can produce "chemical words" that correspond to known pharmacophores and functional groups. You can validate this by interpreting your model to see if the key chemical words it uses align with established chemical knowledge [19].

The table below summarizes key characteristics of various molecular fragmentation approaches to aid in method selection [17].

Table 1: Comparison of Molecular Fragmentation Techniques

| Method / Aspect | Fragmentation Logic | Preserves Ring Structures? | Retains Fragmentation Information? | Requires Pre-defined Library? | Key Application Tasks |
|---|---|---|---|---|---|
| Library-Based (e.g., RECAP, BRICS) | Pre-defined chemical rules | Yes | Yes | Yes | Fragment-Based Drug Discovery (FBDD), Virtual Screening |
| Character Slicing (CS) | Sequential character split | No | No | No | Basic sequence model input (e.g., DeepDTA) |
| SMILES Enumeration | Multiple SMILES strings per molecule | Varies | No | No | Data augmentation for neural network training |
| Data-Driven Segmentation | Statistical learning from corpus | Varies | Yes | No | De novo drug design, interpretable ML models |

Experimental Protocols: Key Methodologies

Protocol 1: Building a Domain-Specific Chemical Language Model

This methodology is adapted from a study on identifying reagents for nano-FeCu synthesis [20] [21].

- Corpus Creation: Collect a specialized text corpus from scientific literature focused on your domain (e.g., "Fe, Cu, synthesis").

- Pre-processing: Clean the text, split it into sentences, and perform tokenization.

- Model Training: Train a word embedding model (like FastText) on the specialized corpus in an unsupervised manner. FastText is recommended for its ability to handle rare words using subword information.

- Hyperparameter Tuning: Perform a grid search, paying close attention to the learning rate. In the cited study, the learning rate correlated strongly (r = 0.8962) with average cosine similarity, the key performance metric.

- Validation: Use metrics such as average cosine similarity, t-SNE visualization, and synonym analysis to validate that the model captures chemical relationships effectively.
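The average-cosine-similarity metric used in Protocol 1 can be sketched as follows. This assumes word vectors have already been trained, for example with gensim's `FastText` class, and that you have a hypothetical list of word pairs that should be related. The Pearson helper shows how a correlation such as the reported learning-rate result could be computed across grid-search runs. All numbers here are invented for illustration.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def average_cosine_similarity(vectors, pairs):
    """Mean cosine similarity over word pairs that are expected to be related."""
    return sum(cosine(vectors[w1], vectors[w2]) for w1, w2 in pairs) / len(pairs)


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Hypothetical 3-d vectors standing in for trained FastText embeddings.
vectors = {
    "ferric": [0.9, 0.1, 0.0], "iron": [0.8, 0.2, 0.1],
    "cupric": [0.1, 0.9, 0.0], "copper": [0.2, 0.8, 0.1],
}
print(average_cosine_similarity(vectors, [("ferric", "iron"), ("cupric", "copper")]))

# Hypothetical grid-search results: learning rate vs. average cosine similarity.
learning_rates = [0.01, 0.025, 0.05, 0.1]
avg_similarities = [0.41, 0.48, 0.55, 0.62]
print(pearson_r(learning_rates, avg_similarities))
```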

Protocol 2: Interpreting Chemical Words as Pharmacophores

This pipeline is used to validate that data-driven chemical words capture meaningful chemistry [19].

- Model Training: Train a chemical-word-based model on a specific protein family bioactivity dataset.

- Importance Identification: Apply an interpretation method (e.g., SHAP) to identify which chemical words are most important for the model's strong binding predictions.

- Substructure Mapping: Map the key chemical words back to their corresponding molecular substructures.

- Literature Validation: Conduct an extensive literature review to find evidence that the identified substructures are known pharmacophores or functional groups for that protein family.
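The importance-identification and substructure-mapping steps can be reduced to aggregating per-molecule attributions by chemical word and then ranking the words. The sketch below assumes attribution scores, such as SHAP values, have already been computed for each molecule with whatever explainer you use. The words and scores are hypothetical.

```python
from collections import defaultdict


def rank_chemical_words(attributions, top_k=3):
    """attributions: one dict per molecule mapping chemical word -> attribution
    score. Returns the top_k words by mean attribution across the molecules
    in which each word occurs (ties broken alphabetically)."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for mol in attributions:
        for word, score in mol.items():
            totals[word] += score
            counts[word] += 1
    means = {w: totals[w] / counts[w] for w in totals}
    return sorted(means, key=lambda w: (-means[w], w))[:top_k]


# Hypothetical per-molecule attributions toward a "strong binder" prediction.
attributions = [
    {"c1ccncc1": 0.42, "C(=O)N": 0.10, "CC": -0.05},
    {"c1ccncc1": 0.38, "S(=O)(=O)N": 0.25},
    {"C(=O)N": 0.12, "CC": 0.01},
]
print(rank_chemical_words(attributions))
```

Signed means are used here because the protocol looks for words that push predictions toward strong binding. Ranking by mean absolute value would instead surface words that influence the prediction in either direction. The top-ranked words are then mapped back to substructures and checked against the literature.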

Workflow Visualization

Diagram 1: Molecular Segmentation and NLP Application Workflow

Diagram 2: Chemical Word Interpretation Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for NLP-Driven Molecular Segmentation

| Item Name | Function / Explanation |
|---|---|
| RDKit | An open-source cheminformatics toolkit used for fragmenting molecules, working with SMILES strings, and computing molecular descriptors [17]. |
| Pre-defined Fragment Libraries (RECAP, BRICS) | Libraries of chemically relevant and synthetically accessible molecular fragments used for heuristic-based fragmentation in FBDD [17]. |
| FastText | A word embedding model effective for creating domain-specific chemical language models due to its ability to handle morphological variations and rare words [20] [21]. |
| SELFIES | A robust molecular representation (string-based) that guarantees 100% chemical validity in generated molecules, useful for genetic algorithm-based optimization [18]. |
| Word2Vec / BERT | Alternative word embedding models. BERT, in particular, uses a deep transformer architecture to understand word context but requires significant computational resources [21]. |

Frequently Asked Questions

What does "limited data" typically mean in drug discovery? In drug discovery, "limited data" refers to scenarios where the volume of available data is insufficient for standard data-hungry deep learning models to perform effectively. This is common in tasks involving novel target classes, rare diseases, or newly discovered molecular structures where only a small number of known active compounds or experimental data points exist [23].

What are the main challenges of working with limited molecule counts? The primary challenge is that deep learning approaches, which have shown great promise in drug discovery, are notoriously data-hungry. In low-data regimes, these models are at high risk of overfitting and may fail to learn generalized, reliable patterns, ultimately limiting their predictive power for identifying new drug candidates [23].

How can I extract more information from a small set of molecules? Strategies include using specialized AI techniques and leveraging multiple data modalities. Low-data-learning approaches are an active area of research. Furthermore, information extraction can be optimized by mining existing scientific literature at the page level to discover previously overlooked molecular structures and reaction data, thereby enriching your small dataset [24] [25].

Are there tools designed specifically for low-data information extraction? Yes, new tools are emerging. For instance, the MolMole toolkit is a vision-based AI framework designed to automatically detect and extract molecular structures and reaction data directly from the full pages of scientific documents (e.g., PDFs). This can help build datasets from literature where manual extraction is too time-consuming [24].

Troubleshooting Guides

Problem: Poor Performance of AI Models on a Small Dataset

| Troubleshooting Step | Description & Action |
|---|---|
| 1. Diagnose Data Quality | Manually review a sample of your data for inconsistencies, noise, or errors. Low data quality has a magnified negative impact in small datasets [26]. |
| 2. Explore Data Augmentation | Systematically increase the size and diversity of your training data using techniques appropriate for your data type (e.g., generating similar molecular structures) [23]. |
| 3. Implement a Model-in-the-Loop Pipeline | Adopt an iterative labeling process. Use your model to identify data points where it is most uncertain, have a human expert label only those, then retrain the model. This optimizes human effort [27]. |
| 4. Consider a Multimodal AI Approach | Integrate diverse data sources (e.g., genomic, clinical, structural) to create a richer information context, which can compensate for limited data in any single modality [25]. |
| 5. Verify Tool Performance | If using automated extraction tools, confirm their accuracy on your specific document types. Consult benchmark performance tables to set realistic expectations [24]. |

Problem: Difficulty Extracting Molecules from Scientific Literature

| Troubleshooting Step | Description & Action |
|---|---|
| 1. Check Document Layout Compatibility | Older tools may fail on documents with complex layouts. Use a modern, vision-based framework like MolMole that processes full page images without relying on error-prone layout parsers [24]. |
| 2. Validate OCSR Output | After using an Optical Chemical Structure Recognition (OCSR) tool, spot-check the generated machine-readable files (e.g., SMILES, MOLfiles) against the original image to catch conversion errors [24]. |
| 3. Assess Reaction Parsing | Ensure your tool can distinguish between simple molecular structures and complex reaction diagrams, correctly identifying roles like "reactant," "product," and "condition" [24]. |

Experimental Protocols & Data

Protocol 1: Model-in-the-Loop Training for Named Entity Recognition (NER)

This protocol is designed to efficiently build a training dataset for identifying drug-like molecules in text with minimal human labeling effort [27].

- Bootstrap Dataset Creation: Assemble a small, initial set of text samples (e.g., 50-100 sentences from scientific papers) and have a human labeler identify and tag all drug-like molecule names.

- Initial Model Training: Use this bootstrap dataset to train a preliminary NER model (e.g., a model based on SpaCy or a Keras LSTM).

- Iterative Labeling and Retraining:

- Use the current model to predict entities on a large, unlabeled corpus.

- Select the samples where the model's prediction confidence is lowest.

- Present only these low-confidence samples to the human labeler for verification and correction.

- Add the newly labeled data to the training set and retrain the model.

- Convergence Check: Repeat the iterative labeling and retraining step until the model's performance (e.g., F1 score) on a held-out validation set stops improving significantly.
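The selection and stopping rules in this protocol can be written as two small helpers. This is a sketch under stated assumptions: `predict_confidence` is a hypothetical callable that returns the current model's confidence for a sample. The `min_gain` and `patience` thresholds are illustrative and are not values from the cited work.

```python
def select_for_labeling(unlabeled, predict_confidence, batch_size=20):
    """Least-confidence sampling: return (samples to label, remaining pool)."""
    ranked = sorted(unlabeled, key=predict_confidence)
    return ranked[:batch_size], ranked[batch_size:]


def has_converged(f1_history, min_gain=0.005, patience=2):
    """Stop when validation F1 has improved by less than min_gain
    for `patience` consecutive rounds."""
    if len(f1_history) <= patience:
        return False
    recent = f1_history[-(patience + 1):]
    return all(b - a < min_gain for a, b in zip(recent, recent[1:]))


# Hypothetical per-sentence confidences from the current NER model.
confidence = {"s1": 0.95, "s2": 0.41, "s3": 0.63, "s4": 0.22}
batch, rest = select_for_labeling(list(confidence), confidence.get, batch_size=2)
print(batch)  # ['s4', 's2']
```

Each round, the sentences in `batch` go to the human labeler. The corrected examples are added to the training set, the model is retrained, and the new validation F1 is appended to the history checked by `has_converged`.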

The workflow for this protocol is outlined below.

Protocol 2: Page-Level Molecular Information Extraction with MolMole

This protocol uses the MolMole toolkit to automatically find and extract molecular data directly from scientific publication PDFs [24].

- Document Preparation: Convert your source documents (scientific articles or patents in PDF format) into high-resolution PNG images.

- Run MolMole Pipeline: Process the images through the unified MolMole framework, which executes three core tasks in parallel:

- Molecule Detection (ViDetect): Identifies and draws bounding boxes around all molecular structures on the page.

- Reaction Diagram Parsing (ViReact): Detects reaction diagrams and parses them to label reactants, products, and conditions.

- Structure Recognition (ViMore): Converts the detected molecular images into machine-readable MOLfiles or SMILES strings.

- Data Consolidation: The tool outputs the final extracted data, which can be saved in structured formats like JSON or Excel for further analysis.

The following diagram illustrates this automated pipeline.

Performance Benchmark: Molecule Detection & Recognition

The table below summarizes the page-level performance of MolMole compared to other tools, demonstrating its effectiveness in accurately extracting information [24].

| Model / Toolkit | Test Set | Average Precision (AP) | Average Recall (AR) | F1 Score |
|---|---|---|---|---|
| MolMole (ViDetect) | Articles | 0.928 | 0.949 | 0.938 |
| DECIMER Segmentation | Articles | 0.872 | 0.895 | 0.883 |
| OpenChemIE (MolDetect) | Articles | 0.785 | 0.823 | 0.804 |
| MolMole (ViDetect) | Patents | 0.914 | 0.938 | 0.926 |
| DECIMER Segmentation | Patents | 0.854 | 0.886 | 0.870 |
| OpenChemIE (MolDetect) | Patents | 0.763 | 0.802 | 0.782 |
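As a sanity check when comparing tools, the F1 column above can be reproduced as the harmonic mean of the precision and recall columns:

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


print(round(f1_score(0.928, 0.949), 3))  # 0.938 (MolMole, articles)
print(round(f1_score(0.914, 0.938), 3))  # 0.926 (MolMole, patents)
```

Because the harmonic mean is pulled toward the lower of the two values, a tool cannot reach a high F1 score by maximizing recall at the expense of precision, or the reverse.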

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational tools and materials essential for experiments in low-data drug discovery and information extraction.

| Item | Function & Application |
|---|---|
| Named Entity Recognition (NER) Model | A statistical model (e.g., based on SpaCy or LSTM) trained to identify and extract names of drug-like molecules from free text in scientific literature [27]. |
| MolMole Toolkit | An end-to-end vision-based framework that unifies molecule detection, reaction parsing, and OCSR to extract chemical data directly from page-level document images [24]. |
| Data Use Agreement (DUA) | A required legal contract when sharing or receiving a "Limited Data Set" of patient information for research. It establishes permitted uses and mandates security safeguards to protect privacy [28]. |
| Multimodal AI Platform | A system that integrates diverse data types (genomic, chemical, clinical) to create a holistic view for drug discovery, helping to overcome limitations posed by scarce data in any single domain [25]. |
| OCSR Model (e.g., ViMore) | An Optical Chemical Structure Recognition model that converts images of molecular structures into machine-readable formats (e.g., SMILES, MOLfiles), enabling computational analysis [24]. |

From Theory to Practice: Methodologies for Maximizing Information Yield from Small Datasets

Troubleshooting Guide: Common FBDD Experimental Issues

Q1: Our fragment screen yielded an unusually high hit rate. What could be the cause? A high hit rate often indicates non-specific binding or assay interference.

- Potential Causes and Solutions:

- Cause: Compound aggregation, leading to promiscuous inhibition.

- Solution: Validate hits using a biophysical method such as Surface Plasmon Resonance (SPR), which is less prone to aggregation artifacts [29].

- Cause: Contaminants or chemical reactivity in the fragment library.

- Solution: Implement quality control (LC-MS) on library compounds and include counter-screens to identify reactive compounds.

- Cause: The assay buffer or conditions are inadvertently promoting weak interactions.

- Solution: Review and optimize buffer composition, including salt and detergent concentrations.

Q2: We have a confirmed fragment hit, but it lacks a measurable IC50 in our functional assay. How should we proceed? This is a common scenario due to the weak potency (high micromolar to millimolar) of initial fragments.

- Potential Causes and Solutions:

- Cause: The fragment's binding affinity is below the detection limit of the functional assay.

- Solution: Use more sensitive, direct binding techniques like NMR or X-ray crystallography to confirm binding and obtain a structural starting point for optimization [29]. Isothermal Titration Calorimetry (ITC) can provide precise affinity measurements for stronger hits.

Q3: During fragment optimization, our "grown" molecules are becoming too lipophilic and are failing solubility assays. What are the best practices to avoid this? This is a typical challenge in fragment-to-lead chemistry, often called "molecular obesity."

- Potential Causes and Solutions:

- Cause: Adding large, hydrophobic groups to increase potency.

- Solution: Prioritize structural information from X-ray crystallography to guide the addition of polar or charged functional groups that form specific hydrogen bonds or electrostatic interactions with the target [29].

- Cause: Insufficient monitoring of physicochemical properties during design.

- Solution: Apply strict property-based criteria (e.g., the Rule of Three for starting fragments and Lipinski's Rule of Five for grown compounds), and track optimization efficiency with metrics such as Ligand Lipophilicity Efficiency (LLE).
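These efficiency metrics are easy to track alongside each design round. Below is a minimal sketch using common conventions: LLE = pIC50 - cLogP, and LE ≈ 1.37 × pIC50 / heavy-atom count in kcal/mol per heavy atom, where 1.37 ≈ 2.303RT at about 298 K. It also checks the core Rule of Three fragment criteria (MW < 300 Da, cLogP ≤ 3, at most 3 H-bond donors and 3 acceptors). All compound values are hypothetical.

```python
import math


def p_activity(concentration_molar):
    """Convert an IC50 or KD in mol/L to its negative base-10 logarithm."""
    return -math.log10(concentration_molar)


def ligand_efficiency(p_act, heavy_atoms):
    """Approximate ligand efficiency (kcal/mol per heavy atom)."""
    return 1.37 * p_act / heavy_atoms


def lipophilic_ligand_efficiency(p_act, clogp):
    """LLE (also written LipE): potency minus lipophilicity."""
    return p_act - clogp


def passes_rule_of_three(mw, clogp, hbd, hba):
    """Core Rule of Three fragment criteria."""
    return mw < 300 and clogp <= 3 and hbd <= 3 and hba <= 3


# Hypothetical fragment hit and "grown" analogue.
fragment = {"ic50": 200e-6, "heavy_atoms": 13, "clogp": 1.2}
grown = {"ic50": 150e-9, "heavy_atoms": 27, "clogp": 3.9}
for name, cpd in [("fragment", fragment), ("grown", grown)]:
    p = p_activity(cpd["ic50"])
    print(name, round(ligand_efficiency(p, cpd["heavy_atoms"]), 2),
          round(lipophilic_ligand_efficiency(p, cpd["clogp"]), 2))
```

If potency gains during growing come mainly from added lipophilicity, LLE stays flat while cLogP climbs. This is an early warning of the "molecular obesity" described above.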

Q4: Our X-ray crystallography efforts are failing to produce a co-crystal structure with our bound fragment. What alternatives exist? Without a structure, optimization becomes significantly more challenging.

- Potential Causes and Solutions:

- Cause: The fragment binding is too weak to stabilize the protein for crystallization.

- Solution: Use computational methods to compensate. Molecular docking can model a plausible binding pose based on known ligand structures or homologous proteins, and Free Energy Perturbation (FEP) calculations can then estimate the relative binding affinities of proposed analogues [29].

- Cause: Crystallography is not feasible for the target.

- Solution: Rely on NMR-based structural constraints or employ cryo-Electron Microscopy (cryo-EM) if the target is a large complex.

Experimental Protocols for Key FBDD Workflows

Table 1: Comparison of Primary Fragment Screening Methodologies

| Method | Detection Principle | Typical Sample Consumption | Key Advantage(s) | Primary Limitation(s) |
|---|---|---|---|---|
| X-ray Crystallography | Electron density map of bound fragment | High (requires crystal) | Provides direct, atomic-resolution structural data [29] | Technically challenging; not all targets crystallize |
| Surface Plasmon Resonance (SPR) | Change in refractive index at sensor surface | Low | Provides real-time kinetics (on/off rates) [29] | Susceptible to nonspecific binding; requires immobilization |
| Nuclear Magnetic Resonance (NMR) | Chemical shift perturbation or signal loss | High | Detects weak binding; can identify binding site [29] | Low throughput; requires isotopic labeling for large proteins |
| Thermal Shift Assay (TSA) | Protein thermal stabilization upon binding | Very Low | Low cost, high throughput initial screen [29] | Indirect measure; prone to false positives/negatives |

Protocol 1: Validating a Fragment Hit from a Primary Screen

- Confirmatory Assay: Re-test the primary hit in a dose-response format using the original screening assay.

- Orthogonal Biophysical Assay: Employ a method with a different detection principle to rule out assay-specific artifacts. For example, follow up a TSA hit with SPR or NMR.

- Selectivity Check: Test the fragment against an unrelated protein to check for promiscuous binding.

- Determine Affinity: Use ITC or SPR to measure the dissociation constant (KD) of the validated hit.

- Initial SAR: Test commercially available analogues of the hit to establish a preliminary Structure-Activity Relationship (SAR).
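For the affinity step, steady-state SPR data are often analyzed with a 1:1 binding model, R = Rmax × C / (KD + C). The sketch below assumes that model and equilibrium responses measured at each concentration. It fits KD with a simple log-spaced grid search, solving for the best Rmax in closed form at each candidate KD. This is a stand-in for the nonlinear fitting your instrument software would normally perform. The data are synthetic.

```python
def fit_steady_state_kd(concs, responses, kd_grid):
    """Fit R = Rmax * C / (KD + C). For each candidate KD, the least-squares
    Rmax has a closed form; keep the KD with the lowest squared error."""
    best = None
    for kd in kd_grid:
        x = [c / (kd + c) for c in concs]  # fractional occupancy at each concentration
        rmax = sum(r * xi for r, xi in zip(responses, x)) / sum(xi * xi for xi in x)
        sse = sum((r - rmax * xi) ** 2 for r, xi in zip(responses, x))
        if best is None or sse < best[0]:
            best = (sse, kd, rmax)
    return best[1], best[2]


# Log-spaced KD candidates from 0.1 uM to 10 mM in 0.01-decade steps.
kd_grid = [10 ** (-7 + k * 0.01) for k in range(501)]
# Synthetic, noise-free responses for a fragment with KD = 50 uM and Rmax = 100 RU.
concs = [3.125e-6, 6.25e-6, 12.5e-6, 25e-6, 50e-6, 100e-6, 200e-6, 400e-6]
responses = [100 * c / (50e-6 + c) for c in concs]
kd, rmax = fit_steady_state_kd(concs, responses, kd_grid)
print(f"KD ~ {kd * 1e6:.1f} uM, Rmax ~ {rmax:.1f} RU")
```

For weak fragments, the concentration series should extend well above the expected KD. Otherwise, the response never approaches Rmax, and KD and Rmax become poorly determined.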

Protocol 2: Structure-Guided Fragment Optimization via "Growing"

- Obtain Structure: Solve a high-resolution co-crystal structure of the protein-fragment complex.

- Analyze Binding Pose: Identify key interactions and map adjacent unexplored sub-pockets.

- Design Analogs: Chemically modify the fragment core to add functional groups that interact with the nearby sub-pocket.

- Synthesize & Test: Synthesize a focused library of "grown" fragments and test for improved potency and maintained ligand efficiency.

- Iterate: Repeat steps 1-4 using the new structural data to guide further optimization into a lead compound [29].

Workflow and Pathway Visualizations

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Research Reagents and Materials for FBDD

| Item | Function in FBDD | Key Considerations |
|---|---|---|
| Pre-defined Fragment Library | A collection of 500-5000 low molecular weight (<300 Da) compounds for screening [29]. | Optimize for chemical diversity, solubility, and synthetic tractability for future chemistry. |
| Stabilized Target Protein | The purified, recombinant protein used for binding and structural studies. | Purity, monodispersity, and conformational stability are critical for successful assays and crystallization. |
| Crystallization Screening Kits | Sparse matrix screens to identify initial conditions for growing protein and protein-fragment co-crystals. | Include commercial and custom screens to maximize the chance of obtaining diffractable crystals. |
| NMR Isotopes (¹⁵N, ¹³C) | Isotopically labeled protein for NMR-based screening and binding site characterization. | Required for protein-observed NMR techniques; cost can be a limiting factor. |
| Biophysical Assay Kits (e.g., SPR Chips, TSA Dyes) | Reagents for configuring specific, sensitive binding assays. | Choose kits and surfaces compatible with your target protein and buffer systems. |
| AI/ML Computational Tools | Software for virtual screening, binding pose prediction, and optimization guidance [29]. | Integration with experimental data streams is key for iterative design cycles. |

Within the field of AI-driven drug discovery, efficiently extracting meaningful information from a limited number of available molecules is a significant challenge. Sequence-based molecular fragmentation is a pivotal technique for addressing this, breaking down complex molecular representations into smaller, manageable units that computational models can process. This guide provides troubleshooting and methodological support for two primary sequence-based techniques: Character Slicing and SMILES processing, enabling researchers to optimize their workflows for fragment-based drug discovery (FBDD) [30].

Troubleshooting Guide: Common Issues and Solutions

1. Issue: Generated SMILES Strings are Chemically Invalid

- Problem: The model outputs SMILES strings that do not correspond to valid chemical structures, for example, with incorrect ring closure numbers or mismatched parentheses [31].

- Solution:

- Review Training Data: Ensure the model was trained on a large dataset of valid SMILES strings to learn proper syntax and grammar [31].

- Implement Validity Checks: Incorporate a chemical validation tool or library (e.g., RDKit) in your workflow to automatically filter out invalid SMILES during the generation process.

- Adjust Model Complexity: If using a Recurrent Neural Network (RNN), consider using a more advanced architecture like Long Short-Term Memory (LSTM) or Gated Recurrent Unit (GRU), which are better at learning long-range dependencies in sequence data [31].
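A validity filter is best implemented with RDKit, whose `Chem.MolFromSmiles` returns `None` for strings it cannot parse or sanitize. If a cheap pre-filter before that call is useful, the dependency-free sketch below catches only the two syntax errors named above: unpaired ring-closure labels and unbalanced parentheses. It does not check valences or aromaticity, so it complements RDKit rather than replacing it.

```python
def smiles_syntax_ok(smiles):
    """Cheap syntactic pre-check: balanced parentheses, closed ring bonds,
    and terminated bracket atoms. Passing does NOT imply chemical validity."""
    depth = 0
    open_rings = set()
    i = 0
    while i < len(smiles):
        c = smiles[i]
        if c == "[":
            # Skip bracket atoms such as [13CH3] or [NH4+]; digits inside are not ring labels.
            end = smiles.find("]", i)
            if end == -1:
                return False
            i = end + 1
            continue
        if c == "]":
            return False
        if c == "(":
            depth += 1
        elif c == ")":
            depth -= 1
            if depth < 0:
                return False
        elif c == "%":
            # Two-digit ring-closure label, e.g. %12.
            label = smiles[i + 1:i + 3]
            if len(label) != 2 or not label.isdigit():
                return False
            open_rings ^= {label}  # toggle: first occurrence opens, second closes
            i += 3
            continue
        elif c.isdigit():
            open_rings ^= {c}  # ring labels may be reused after they close
        i += 1
    return depth == 0 and not open_rings


for s in ["c1ccccc1O", "c1ccccc", "CC(C", "[13CH3]C(=O)O"]:
    print(s, smiles_syntax_ok(s))
```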

2. Issue: Model Fails to Capture Essential Structural Features

- Problem: The fragmented sequences or generated molecules do not retain the key functional groups or scaffolds necessary for biological activity.

- Solution:

- Re-evaluate Fragmentation Method: Simple Character Slicing may break apart important functional groups. Consider a data-driven method such as Byte-Pair Encoding (BPE), whose frequency-based merges tend to capture recurring substructures, or a method that incorporates existing fragment libraries to respect chemical logic [30].

- Inspect Fragment Vocabulary: Analyze the generated fragment vocabulary to ensure it contains a comprehensive set of chemically meaningful substructures. A limited or skewed vocabulary can hinder the model's representational power [30].

3. Issue: Sparse or Uninterpretable Feature Vectors from SMILES

- Problem: Features extracted from SMILES strings are too sparse or lack clear chemical meaning, making it difficult to build effective machine learning models or understand predictions [32].

- Solution:

- Use N-gram Based Feature Extraction: Instead of single characters, use N-grams (contiguous sequences of N characters) from the SMILES string. This captures local atomic associations and produces more interpretable features [32].

- Combine with Dense Features: Sparse NLP-based features can be blended with dense features like gene expression data to build better-performing predictive models for tasks like personalized drug screening [32].
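Below is a minimal sketch of N-gram feature extraction. It assumes plain character n-grams over the raw SMILES string, although the cited work's exact tokenization may differ. scikit-learn's `CountVectorizer(analyzer="char", ngram_range=(n, n))` builds similar vectors. If you use it, set `lowercase=False`, because its default lowercasing would merge aromatic `c` with aliphatic `C`.

```python
from collections import Counter


def smiles_ngrams(smiles, n=3):
    """Contiguous character n-grams of a SMILES string."""
    return [smiles[i:i + n] for i in range(len(smiles) - n + 1)]


def ngram_count_vectors(smiles_list, n=3):
    """Bag-of-n-grams count vectors over a shared, sorted vocabulary."""
    counts = [Counter(smiles_ngrams(s, n)) for s in smiles_list]
    vocab = sorted(set().union(*counts))
    return vocab, [[c[g] for g in vocab] for c in counts]


vocab, X = ngram_count_vectors(["CCO", "CCN", "CCCO"], n=2)
print(vocab)  # ['CC', 'CN', 'CO']
print(X)      # [[1, 0, 1], [1, 1, 0], [2, 0, 1]]
```

Each row is a sparse count vector that can be concatenated with dense features, such as gene expression profiles, before model training. Character n-grams split two-letter atoms such as `Cl`, so computing n-grams over atom-level tokens instead is a reasonable variant.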

4. Issue: High Computational Cost for Large-Scale Fragmentation

- Problem: Fragmenting a large library of molecules is time-consuming and resource-intensive.

- Solution:

- Use Automated, Data-Driven Methods: Move away from manual, expert-driven fragmentation. Large-scale automated methods such as BPE or other data-driven tokenization algorithms can process large compound libraries efficiently [30].

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of using sequence-based fragmentation like SMILES processing in AI-based drug design?

SMILES processing allows molecular structures to be treated as a language. By breaking them into fragments (akin to words), Generative Pre-trained Transformer (GPT) models and other natural language processing (NLP) architectures can learn the underlying "chemical grammar." This enables the generation of novel, synthetically accessible molecules with desired properties, significantly expanding the explorable chemical space compared to traditional methods [30] [31].

Q2: How does Character Slicing differ from more advanced methods like Byte-Pair Encoding (BPE)?

- Character Slicing is a naive method that breaks a SMILES string into individual characters or fixed-length blocks. It is simple but often severs chemically important substructures, as it does not consider the semantic meaning of atomic groupings [30].

- Byte-Pair Encoding (BPE) is a data-driven compression algorithm adapted for molecular fragmentation. It iteratively merges the most frequent adjacent pair of characters or existing fragments in the dataset, building a vocabulary of common sub-sequences. The resulting fragments tend to resemble functional groups or common ring fragments more closely than the output of character slicing, although frequency alone does not guarantee chemical meaning [30].
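The contrast between the two methods can be made concrete with a minimal, textbook-style BPE learner. This is a sketch and not the implementation evaluated in [30]. The function names and the three-molecule corpus are hypothetical, and ties between equally frequent pairs are broken by first occurrence.

```python
from collections import Counter


def char_slice(smiles: str) -> list:
    """Naive character slicing: every character becomes its own token."""
    return list(smiles)


def merge_pair(seq: list, pair: tuple) -> list:
    """Replace every non-overlapping occurrence of `pair` with one merged token."""
    out, i = [], 0
    while i < len(seq):
        if i + 1 < len(seq) and (seq[i], seq[i + 1]) == pair:
            out.append(seq[i] + seq[i + 1])
            i += 2
        else:
            out.append(seq[i])
            i += 1
    return out


def learn_bpe(corpus: list, num_merges: int) -> list:
    """Learn an ordered list of merges from SMILES strings."""
    seqs = [list(s) for s in corpus]
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for seq in seqs:
            pairs.update(zip(seq, seq[1:]))  # count adjacent token pairs
        if not pairs:
            break
        best, freq = pairs.most_common(1)[0]
        if freq < 2:  # stop once no pair repeats
            break
        merges.append(best)
        seqs = [merge_pair(seq, best) for seq in seqs]
    return merges


def apply_bpe(smiles: str, merges: list) -> list:
    """Tokenize a new SMILES string by replaying the learned merges in order."""
    seq = list(smiles)
    for pair in merges:
        seq = merge_pair(seq, pair)
    return seq


corpus = ["CCl", "ClCl", "OCl"]
merges = learn_bpe(corpus, num_merges=10)
print(char_slice("ClCCl"))         # ['C', 'l', 'C', 'C', 'l']
print(apply_bpe("ClCCl", merges))  # ['Cl', 'C', 'Cl']
```

Character slicing splits the chlorine atom `Cl` into the meaningless tokens `C` and `l`. On this corpus, BPE learns `Cl` as a single token because it is the most frequent pair. On real libraries, BPE can equally learn merges that cut across rings or bonds, which is consistent with the table below.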

Q3: Why are my NLP-based molecular features not performing well in property prediction models?

This could be due to several reasons. The fragmentation method may not be generating features that adequately capture the structural elements responsible for the target property. It is also crucial to ensure that the feature vectors, while potentially sparse, are distinctive enough to differentiate between molecules. Combining these sparse NLP features with other relevant biological data (e.g., gene expression profiles) often improves model performance for specific tasks like personalized drug efficacy prediction [32].

Q4: Can I use standard NLP models directly on SMILES strings without fragmentation?

While it is possible, performance is often suboptimal. Standard NLP models were designed around words, not atoms. Fragmenting SMILES strings into chemically logical units (e.g., via BPE) before feeding them into a model gives it more meaningful input units to learn from, much as words, rather than individual letters, are the basic units for understanding sentences in natural language [30].

Comparative Analysis of Fragmentation Techniques

The table below summarizes key sequence-based fragmentation methods to guide selection.

| Method Name | Core Principle | Key Characteristics | Best Suited For |
|---|---|---|---|
| Character Slicing (CS) [30] | Divides SMILES string into individual characters. | Simple; breaks cyclic structures and double bonds; does not retain bond information. | Basic sequence processing and initial prototyping. |
| Byte-Pair Encoding (BPE) [30] | Data-driven; iteratively merges frequent character pairs. | Builds a vocabulary of common sub-sequences; breaks cyclic structures. | Interaction prediction and molecular generation tasks. |
| Frequent Consecutive Sub-sequence (FCS) [30] | Identifies and uses the most common consecutive sub-sequences. | Data-driven tokenization; breaks cyclic structures and double bonds. | General interaction prediction tasks [30]. |
| Sequential Piecewise Encoding (SPE) [30] | Segments the sequence based on a learned model. | Does not break cyclic structures; is a data-driven tokenization algorithm. | Molecular generation tasks [30]. |

Essential Research Reagent Solutions

The following reagents and tools are fundamental for experimental work in this field.

| Item / Reagent | Function / Application |
|---|---|
| Validated SMILES Dataset | A large, curated set of chemically valid SMILES strings for training generative AI models like RNNs and Transformers [31]. |
| NLP-Based Feature Extraction Tool | A software library (e.g., custom Python code using N-grams) to convert drug SMILES into interpretable, sparse feature vectors for machine learning [32]. |
| Chemical Validation Library | Software (e.g., RDKit) to check the validity of generated SMILES strings and filter out chemically impossible structures [31]. |
| Fragment Library | A curated collection of molecular fragments used in traditional FBDD for screening against biological targets, providing a benchmark for fragmentation quality [30]. |

Experimental Workflow and Conceptual Relationships

The diagram below outlines a standard workflow for applying sequence-based fragmentation in AI-driven molecular generation.

Diagram Title: Workflow for AI-Driven Molecular Generation Using SMILES Fragmentation

The diagram below illustrates the conceptual relationship between molecular fragmentation, AI model processing, and the resulting chemical space exploration.

Diagram Title: Logical Relationship of Fragmentation in AI-Based Drug Discovery

FAQs: Core Concepts

Q1: What is multimodal fusion in the context of molecular property prediction? Multimodal data fusion is the process of integrating disparate data sources or types—such as 1D descriptors, 2D molecular graphs, and fingerprints—into a common representational space. This leverages the complementarity and unique characteristics of each modality to create a more comprehensive understanding of a molecule, which enhances the accuracy and robustness of predictive models in drug discovery [33].

Q2: Why should I use multimodal fusion instead of relying on a single molecular representation? Mono-modal learning is inherently limited as it relies solely on a single modality of molecular representation, which restricts a comprehensive understanding of drug molecules. Multimodal fusion overcomes this by harnessing comprehensive information from multiple data sources, leading to higher predictive accuracy, improved reliability, better noise resistance, and the ability to process intricate bioinformatics data more effectively [34].

Q3: What are the primary levels of multimodal fusion, and how do I choose? The three primary fusion levels are Early (Data-Level), Intermediate (Feature-Level), and Late (Decision-Level) fusion [33]. The choice depends on your data availability and project goals, as summarized in the table below:

| Fusion Level | Description | Best Use Case | Key Consideration |
|---|---|---|---|
| Early Fusion | Integrates raw or low-level data (e.g., concatenated 1D and 2D vectors) before model input [35] [33]. | All modalities are always available; you want to extract a large amount of information [33]. | Sensitive to noise and modality-specific variations; can lead to high-dimensional data [33]. |
| Intermediate Fusion | Combines extracted features from each modality into a joint representation using deep learning models [35] [33]. | Capturing complex cross-modal interactions at the feature level; often yields superior performance [35] [34]. | Requires all modalities to be present for each sample; requires careful model design [33]. |
| Late Fusion | Integrates decisions or outputs from modality-specific models after independent processing [35] [33]. | Handling missing data; leveraging highly specialized, pre-trained models for each modality [35] [33]. | May lose some cross-modal interactions and is less effective in capturing deep relationships [33]. |
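The difference between early and late fusion can be seen in a small numerical sketch. It uses closed-form ridge regression as a stand-in model and random matrices as stand-ins for descriptor and fingerprint features. All names and data are hypothetical. Intermediate fusion is omitted because it needs learned per-modality encoders.

```python
import numpy as np


def ridge_fit(X, y, alpha=1.0):
    """Closed-form ridge regression with an unpenalized intercept column."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    reg = alpha * np.eye(Xb.shape[1])
    reg[-1, -1] = 0.0  # do not shrink the intercept
    return np.linalg.solve(Xb.T @ Xb + reg, Xb.T @ y)


def ridge_predict(w, X):
    return np.hstack([X, np.ones((len(X), 1))]) @ w


def early_fusion(train_mods, y, test_mods, alpha=1.0):
    """Concatenate all modality features, then fit a single model."""
    w = ridge_fit(np.hstack(train_mods), y, alpha)
    return ridge_predict(w, np.hstack(test_mods))


def late_fusion(train_mods, y, test_mods, alpha=1.0):
    """Fit one model per modality, then average their predictions."""
    preds = [ridge_predict(ridge_fit(Xtr, y, alpha), Xte)
             for Xtr, Xte in zip(train_mods, test_mods)]
    return np.mean(preds, axis=0)


def rmse(y, p):
    return float(np.sqrt(np.mean((y - p) ** 2)))


rng = np.random.default_rng(0)
desc = rng.normal(size=(200, 5))  # stand-in for 1D descriptors
fp = rng.normal(size=(200, 8))    # stand-in for fingerprint-derived features
y = desc[:, 0] + fp[:, 1] + 0.1 * rng.normal(size=200)
tr, te = slice(0, 150), slice(150, 200)

rmse_early = rmse(y[te], early_fusion([desc[tr], fp[tr]], y[tr], [desc[te], fp[te]]))
rmse_late = rmse(y[te], late_fusion([desc[tr], fp[tr]], y[tr], [desc[te], fp[te]]))
```

This toy target is additive across modalities, so each late-fusion model sees only half of the signal and early fusion wins. That outcome is specific to the synthetic setup. The practical advantage of late fusion shows up when a modality is missing: the average can then be taken over whichever per-modality predictions are available.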

Q4: Can I benefit from multimodal data if some modalities are missing in my downstream task? Yes. Frameworks like MMFRL (Multimodal Fusion with Relational Learning) are designed for this. They leverage multimodal data during a pre-training phase to enrich the embedding initialization for molecular graphs. This allows downstream models to benefit from the auxiliary modalities, even when they are absent during inference [35] [36].

FAQs: Implementation & Troubleshooting

Q5: What are some common model architectures for fusing 1D and 2D molecular data? One published approach is a triple-modal learning model that uses a different neural network for each representation [34]. For instance, a Graph Convolutional Network (GCN) processes 2D molecular graphs, a Transformer-Encoder or Bidirectional Gated Recurrent Unit (BiGRU) processes 1D SMILES strings, and a Multi-Layer Perceptron (MLP) processes ECFP fingerprints. The resulting feature vectors are then fused at an intermediate stage.

Q6: My multimodal model is not outperforming my best mono-modal model. What could be wrong? This is a common challenge. Consider the following troubleshooting guide:

| Symptom | Possible Cause | Solution |
|---|---|---|
| Poor overall performance | Improper data alignment or high data heterogeneity [33]. | Ensure meticulous data preprocessing and normalization across modalities. |
| Poor overall performance | The fusion method is mismatched to the data characteristics [35]. | Re-evaluate your fusion strategy; if one modality is very noisy, switch from early to late fusion. |
| One modality dominates | Large scale differences between feature vectors from different modalities [33]. | Apply feature-level normalization or scaling to balance the influence of each modality. |
| Model fails to generalize | Overfitting on the training set. | Incorporate regularization techniques (e.g., dropout, weight decay) and use relational learning during pre-training to enhance the model's ability to generalize [35] [36]. |
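For the "one modality dominates" row, one common heuristic is block scaling. Each modality is standardized with training-set statistics and then divided by the square root of its feature count, so that every block contributes the same total variance. This weighting rule is an assumption chosen for illustration, not a method prescribed by [33]. The data below are synthetic.

```python
import numpy as np


def fit_block_scalers(blocks):
    """Per-modality mean, std and block weight, estimated on training data only."""
    stats = []
    for X in blocks:
        mu = X.mean(axis=0)
        sd = X.std(axis=0)
        sd[sd == 0] = 1.0  # avoid division by zero for constant columns
        stats.append((mu, sd, 1.0 / np.sqrt(X.shape[1])))
    return stats


def apply_block_scalers(blocks, stats):
    """Standardize each block, down-weight wide blocks, then concatenate."""
    return np.hstack([(X - mu) / sd * w for X, (mu, sd, w) in zip(blocks, stats)])


rng = np.random.default_rng(1)
big_descriptors = rng.normal(400.0, 80.0, size=(100, 3))  # e.g., weight-like magnitudes
bits = rng.integers(0, 2, size=(100, 64)).astype(float)   # binary fingerprint bits

stats = fit_block_scalers([big_descriptors, bits])
fused = apply_block_scalers([big_descriptors, bits], stats)
```

Without scaling, the three large-magnitude descriptor columns would swamp the 0/1 bits. Without the block weight, the 64 bit columns would outweigh the 3 descriptors simply by number. Validation and test data should reuse the `stats` fitted on the training split.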

Q7: How can I assess the contribution of each modality to the final prediction? To ensure interpretability, perform a post-hoc analysis of the learned representations. Techniques like t-SNE can be used to visualize the fused embeddings in a lower-dimensional space. Furthermore, you can analyze the assigned contribution of each modal model by examining attention weights or conducting ablation studies where you systematically remove one modality at a time to observe the performance drop [35] [34].
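A retrain-based ablation study, as described above, can be sketched as follows. A ridge model stands in for the fused network, and three random feature blocks stand in for the graph, SMILES and ECFP modalities. The names and the synthetic target are hypothetical. The target is constructed so that "graph" matters most, "ecfp" a little and "smiles" not at all.

```python
import numpy as np


def ridge_fit_predict(Xtr, ytr, Xte, alpha=1.0):
    """Fit closed-form ridge (unpenalized intercept) and predict on Xte."""
    A = np.hstack([Xtr, np.ones((len(Xtr), 1))])
    reg = alpha * np.eye(A.shape[1])
    reg[-1, -1] = 0.0
    w = np.linalg.solve(A.T @ A + reg, A.T @ ytr)
    return np.hstack([Xte, np.ones((len(Xte), 1))]) @ w


def rmse(y, p):
    return float(np.sqrt(np.mean((y - p) ** 2)))


def modality_ablation(train_mods, ytr, test_mods, yte, alpha=1.0):
    """Retrain without each modality in turn.

    Returns the full-model RMSE and, per modality, the RMSE increase
    observed when that modality is removed.
    """
    def score(keep):
        Xtr = np.hstack([train_mods[k] for k in keep])
        Xte = np.hstack([test_mods[k] for k in keep])
        return rmse(yte, ridge_fit_predict(Xtr, ytr, Xte, alpha))

    names = list(train_mods)
    full = score(names)
    return full, {m: score([k for k in names if k != m]) - full for m in names}


rng = np.random.default_rng(2)
mods = {name: rng.normal(size=(400, 4)) for name in ("graph", "smiles", "ecfp")}
y = 2.0 * mods["graph"][:, 0] + 0.5 * mods["ecfp"][:, 0] + 0.1 * rng.normal(size=400)

train = {k: v[:300] for k, v in mods.items()}
test = {k: v[300:] for k, v in mods.items()}
full_rmse, increase = modality_ablation(train, y[:300], test, y[300:])
```

A large increase means the model relied on that modality. A near-zero or negative increase suggests the modality adds little beyond the others. In practice, repeating the procedure over several random seeds or cross-validation folds makes the ranking more trustworthy.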

Experimental Protocol: Implementing a Basic Multimodal Fusion Workflow

This protocol provides a foundational methodology for integrating 1D representations (SMILES strings and ECFP fingerprints) with 2D molecular graphs, based on established approaches in the literature [34].

Objective: To predict molecular properties (e.g., solubility, toxicity) by fusing 1D SMILES strings and 2D molecular graphs.

Materials & Reagents:

| Research Reagent Solution | Function in Experiment |
|---|---|
| Molecular Dataset (e.g., from MoleculeNet) | Provides standardized benchmarks (e.g., ESOL, Lipophilicity, BACE) for training and evaluation [35] [36]. |
| Extended-Connectivity Fingerprints (ECFPs) | Fixed-length bit vectors that encode circular atom neighborhoods, capturing key substructures such as functional groups; used here as a 1D/vector representation of molecular structure [34]. |
| Graph Convolutional Network (GCN) | The primary deep learning model for processing the 2D molecular graph representation [34]. |
| Transformer-Encoder or BiGRU | Deep learning models used to process the sequential data of SMILES strings, capturing contextual information [34]. |
| Joint Representation Layer | The layer in the neural network where feature vectors from the GCN and Transformer/BiGRU are combined (e.g., via concatenation) [34]. |

Step-by-Step Procedure:

Data Preparation:

- Input Data: Obtain a molecular dataset (e.g., Lipophilicity from MoleculeNet).

- Modality 1 - 2D Graph: Represent each molecule as a graph where atoms are nodes and bonds are edges.

- Modality 2 - 1D Descriptor: Generate SMILES strings and ECFP fingerprints for each molecule.

- Split Data: Randomly split the dataset into training, validation, and test sets (e.g., 80/10/10).
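The 80/10/10 split step can be written as a short, seeded helper so that the split is reproducible. The function name and default seed are hypothetical. Random splits of molecules tend to give optimistic estimates, and a scaffold-based split is a common, stricter alternative.

```python
import numpy as np


def random_split(n_samples, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle indices once and cut them into train/validation/test blocks."""
    if len(fractions) != 3 or not np.isclose(sum(fractions), 1.0):
        raise ValueError("fractions must be three values summing to 1")
    idx = np.random.default_rng(seed).permutation(n_samples)
    n_train = int(round(fractions[0] * n_samples))
    n_val = int(round(fractions[1] * n_samples))
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


train_idx, val_idx, test_idx = random_split(1000)
```

The same index arrays should be used to slice every modality (graphs, SMILES, ECFPs and labels), so that the views of each molecule stay aligned across branches.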

Model Construction (Intermediate Fusion):

- 2D Graph Branch: Build a GCN that takes the molecular graph as input and outputs a feature vector.

- 1D Descriptor Branch: Build a model that takes the 1D input and outputs a feature vector, e.g., a Transformer-Encoder or BiGRU for SMILES strings, or a multi-layer perceptron for ECFP fingerprints [34].

- Fusion: Concatenate the feature vectors from both branches into a single, joint representation vector.

- Prediction Head: Feed the joint representation into a final fully connected layer to produce the property prediction.
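The model-construction steps can be traced through an untrained forward pass in NumPy. In this minimal sketch, the graph branch is one GCN layer using the Kipf and Welling propagation rule, followed by mean pooling over atoms. The 1D branch is a one-layer MLP on a fingerprint vector. The two outputs are concatenated and fed to a linear head. The weights are random, the 16-bit "ECFP" is a random stand-in (real ECFPs would come from a cheminformatics toolkit such as RDKit), and all names are hypothetical. Training would normally be done in a deep learning framework.

```python
import numpy as np


def normalized_adjacency(A):
    """GCN propagation matrix D^-1/2 (A + I) D^-1/2."""
    A_hat = A + np.eye(len(A))
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    return A_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def relu(x):
    return np.maximum(x, 0.0)


def graph_branch(A, H, W):
    """One GCN layer, then mean pooling over atoms -> one molecule vector."""
    return relu(normalized_adjacency(A) @ H @ W).mean(axis=0)


def fingerprint_branch(fp, W):
    """One-layer MLP over the fingerprint bit vector."""
    return relu(fp @ W)


def joint_representation(A, H, fp, p):
    """Intermediate fusion: concatenate the two branch outputs."""
    return np.concatenate([graph_branch(A, H, p["W_gcn"]),
                           fingerprint_branch(fp, p["W_fp"])])


def predict(A, H, fp, p):
    """Linear prediction head on the joint representation."""
    return float(joint_representation(A, H, fp, p) @ p["w_head"] + p["b_head"])


rng = np.random.default_rng(0)
A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)  # ethanol heavy atoms C-C-O
H = np.array([[1, 0], [1, 0], [0, 1]], dtype=float)            # one-hot atom type [C, O]
fp = rng.integers(0, 2, size=16).astype(float)                  # stand-in 16-bit fingerprint
params = {"W_gcn": rng.normal(size=(2, 8)), "W_fp": rng.normal(size=(16, 8)),
          "w_head": rng.normal(size=16), "b_head": 0.0}
y_hat = predict(A, H, fp, params)
```

Concatenation is the simplest fusion operator. Attention-weighted or gated combinations slot into `joint_representation` without changing the rest of the pipeline.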

Training & Evaluation:

- Loss Function: Use a task-appropriate loss function (e.g., Mean Squared Error for regression, Cross-Entropy for classification).

- Training: Train the model on the training set and use the validation set for hyperparameter tuning and early stopping.

- Evaluation: Assess the final model's performance on the held-out test set using standard metrics (e.g., RMSE, ROC-AUC). Compare its performance against mono-modal baselines.
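The training and evaluation logic (validation-based early stopping, weight decay, RMSE on a held-out test set, and comparison with a baseline) is independent of the model. The sketch below shows it with a linear model trained by full-batch gradient descent, standing in for the fused network. The hyperparameters and synthetic data are assumptions. For classification tasks, `sklearn.metrics.roc_auc_score` would replace RMSE.

```python
import numpy as np


def rmse(y, p):
    return float(np.sqrt(np.mean((y - p) ** 2)))


def train_with_early_stopping(Xtr, ytr, Xval, yval, lr=0.05, weight_decay=1e-3,
                              max_epochs=500, patience=20):
    """Gradient descent on MSE + L2 penalty; keep the weights with the best
    validation RMSE and stop after `patience` epochs without improvement."""
    w, b = np.zeros(Xtr.shape[1]), 0.0
    best_rmse, best_w, best_b, best_epoch = np.inf, w.copy(), b, -1
    stale = 0
    for epoch in range(max_epochs):
        err = Xtr @ w + b - ytr
        w = w - lr * (2.0 * Xtr.T @ err / len(ytr) + 2.0 * weight_decay * w)
        b = b - lr * 2.0 * err.mean()
        val_rmse = rmse(yval, Xval @ w + b)
        if val_rmse < best_rmse:
            best_rmse, best_w, best_b, best_epoch = val_rmse, w.copy(), b, epoch
            stale = 0
        else:
            stale += 1
            if stale >= patience:
                break
    return best_w, best_b, best_rmse, best_epoch


rng = np.random.default_rng(3)
X = rng.normal(size=(300, 10))
y = X @ rng.normal(size=10) + 0.5 * rng.normal(size=300)
Xtr, Xval, Xte = X[:200], X[200:250], X[250:]
ytr, yval, yte = y[:200], y[200:250], y[250:]

w, b, best_val_rmse, best_epoch = train_with_early_stopping(Xtr, ytr, Xval, yval)
test_rmse = rmse(yte, Xte @ w + b)
mean_baseline_rmse = rmse(yte, np.full(len(yte), ytr.mean()))  # trivial baseline
```

The test set is touched only once, after model selection on the validation set. A multimodal model is worth keeping only if its test RMSE beats both trivial baselines and the mono-modal models trained under the same protocol.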

The following workflow diagram illustrates the experimental protocol for intermediate fusion:

Diagram 1: Intermediate Fusion Workflow for Molecular Property Prediction

Visualizing Fusion Strategies

The following diagram outlines the three core fusion strategies to help you select the right architectural approach for your project.

Diagram 2: A Comparison of Multimodal Fusion Strategies

FAQs: Architecture and Implementation

Q1: What is the advantage of using a hybrid CNN-Bi-LSTM model over either model alone for molecular data?

Hybrid CNN-Bi-LSTM architectures combine the strengths of both components. The CNN layers are well suited to extracting local patterns, for instance specific functional groups or structural motifs, from molecular fingerprints or SMILES string representations [37] [38]. The Bi-LSTM layers then process these extracted features as a sequence, capturing long-range dependencies and context in both the forward and backward directions. This matters for complex molecular structures in which the relationship between distant atoms affects the property of interest [37] [39]. Finally, attention mechanisms can be integrated to dynamically weigh the importance of different features or sequence positions, which can further improve performance and interpretability [37] [40].
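The data flow described above can be traced in an untrained NumPy sketch, with attention omitted. A one-hot SMILES matrix passes through a 1D convolution that acts as local motif detectors. An LSTM then reads the convolved sequence left-to-right and a second LSTM reads it right-to-left, and their final hidden states are concatenated. The alphabet, layer sizes and random weights are assumptions. A framework such as PyTorch or Keras would be used for real training.

```python
import numpy as np


def one_hot(smiles, alphabet):
    """(len(smiles), len(alphabet)) one-hot matrix, one row per character."""
    X = np.zeros((len(smiles), len(alphabet)))
    for t, ch in enumerate(smiles):
        X[t, alphabet.index(ch)] = 1.0
    return X


def conv1d_relu(X, K, b):
    """Valid 1D convolution over positions; K has shape (width, C_in, C_out)."""
    k = K.shape[0]
    windows = [np.einsum("kc,kco->o", X[t:t + k], K) for t in range(len(X) - k + 1)]
    return np.maximum(np.stack(windows) + b, 0.0)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def lstm_last_state(X, Wx, Wh, b):
    """Standard LSTM cell; gate pre-activations stacked as [input, forget, cell, output]."""
    h = np.zeros(Wh.shape[0])
    c = np.zeros(Wh.shape[0])
    for x_t in X:
        i, f, g, o = np.split(x_t @ Wx + h @ Wh + b, 4)
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h = sigmoid(o) * np.tanh(c)
    return h


def cnn_bilstm_encode(smiles, alphabet, p):
    F = conv1d_relu(one_hot(smiles, alphabet), p["K"], p["b_conv"])
    h_fwd = lstm_last_state(F, *p["fwd"])        # reads left-to-right
    h_bwd = lstm_last_state(F[::-1], *p["bwd"])  # reads right-to-left
    return np.concatenate([h_fwd, h_bwd])


rng = np.random.default_rng(0)
alphabet = list("CNOc1()=")
n_filters, hidden, width = 6, 4, 3


def lstm_params():
    return (rng.normal(scale=0.5, size=(n_filters, 4 * hidden)),
            rng.normal(scale=0.5, size=(hidden, 4 * hidden)),
            np.zeros(4 * hidden))


params = {"K": rng.normal(scale=0.5, size=(width, len(alphabet), n_filters)),
          "b_conv": np.zeros(n_filters), "fwd": lstm_params(), "bwd": lstm_params()}
embedding = cnn_bilstm_encode("CC(=O)Oc1ccccc1C(=O)O", alphabet, params)  # aspirin
```

The convolution sees only three characters at a time, so it can respond to motifs such as `(=O`. The two LSTM passes carry information along the whole string in both directions. An attention layer would typically replace "last hidden state" with a learned weighted sum over all positions.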

Q2: Our model is achieving high training accuracy but poor validation accuracy on a small molecular dataset. What could be the cause?