Beyond Structure: How Dynamical Modules Drive Whole-Network Behavior in Development and Disease


Abstract

This article explores the paradigm shift from structural to dynamical modularity in understanding complex biological networks. For researchers and drug development professionals, we dissect how identifiable, dissociable dynamical processes—rather than static structural subunits—orchestrate core network functions in development, from pattern formation to cell fate decision. The scope spans foundational concepts, methodological advances for identifying these modules, challenges in their optimization and control, and comparative validation against traditional structural approaches. We highlight the profound implications of this framework for predicting emergent drug toxicities, identifying novel therapeutic targets, and advancing network-based drug discovery by targeting dynamic network processes rather than isolated components.

From Static Blueprints to Dynamic Engines: Redefining Biological Modules

The Critical Limitation of Structural Modularity in Dense Networks

In the analysis of complex biological networks, modularity is a fundamental measure of structure, characterizing the strength of a network's division into groups or communities of densely interconnected nodes that have sparse connections to nodes in other groups [1]. This structural definition has been powerfully applied across diverse fields, from mapping brain connectivity to analyzing gene regulatory networks. However, a significant limitation emerges when this structural perspective is exclusively relied upon in dense, dynamic systems: structural modularity does not determine function [2] [3]. The assumption that a network's physical architecture directly corresponds to its operational capabilities represents a critical oversimplification that can misdirect research, particularly in developmental biology and drug discovery.

This article examines the fundamental disconnect between structural topology and dynamic function in dense biological networks. Drawing on experimental evidence and computational models, we show that networks can exhibit robust modular behavior without structural modularity and, conversely, that structurally modular networks need not yield functionally independent subsystems. For researchers and drug development professionals, this distinction matters because therapeutic interventions often act on dynamic network behaviors rather than on static architectures. The following sections summarize the quantitative evidence for this limitation, present methods for analyzing dynamical modules, and list practical tools for moving beyond purely structural analyses in network-based research.

Quantitative Evidence: The Structural-Functional Disconnect

Empirical research across multiple biological domains consistently reveals the limitations of structural modularity as a proxy for understanding network function. The following comparative analysis synthesizes key findings from neurodevelopmental and gene regulatory studies:

Table 1: Comparative Evidence on Structural Modularity Limitations

| Biological System | Structural Findings | Functional/Behavioral Evidence | Implication |
|---|---|---|---|
| Human Brain Networks (N=882, ages 8-22) | Structural modules become more segregated with age (decreased participation coefficient, p<1×10⁻¹⁰) [4] | Modular segregation mediates executive function development; follows non-linear trajectory with greatest changes in childhood/adolescence [4] | Structural changes support but do not determine functional maturation |
| Gap Gene Network (Drosophila melanogaster) | The network lacks clear structural modularity with extensive cross-regulation [3] | Exhibits distinct dynamical modules driving specific expression features; differential evolvability of outputs [3] | Identical structure produces multiple functional modules through dynamical regulation |
| Multifunctional GRNs (Computational Screen) | Spectrum from hybrid (disjoint) to emergent (fully overlapping) structures [3] | Most networks show partial structural overlap between functional modules; same nodes/connections implement different behaviors [3] | Structural modularity is neither necessary nor sufficient for functional modularity |
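
The brain-network row reports segregation as a decrease in the participation coefficient. As a minimal sketch, assuming the standard definition P_i = 1 − Σ_s (k_is / k_i)², where k_i is the (weighted) degree of node i and k_is is the weight of its edges into module s, the coefficient can be computed from an adjacency mapping and a module assignment. The function name, input format, and toy graph are illustrative choices, not the pipeline used in [4].

```python
from collections import defaultdict


def participation_coefficients(adj, modules):
    """Participation coefficient P_i = 1 - sum_s (k_is / k_i)^2 for every node.

    adj: dict mapping node -> dict of neighbor -> edge weight (undirected graph).
    modules: dict mapping node -> module label.
    """
    result = {}
    for node, nbrs in adj.items():
        k_i = sum(nbrs.values())
        if k_i == 0:
            result[node] = 0.0  # isolated node: no edges to distribute
            continue
        per_module = defaultdict(float)
        for nbr, weight in nbrs.items():
            per_module[modules[nbr]] += weight  # k_is: edge weight into module s
        result[node] = 1.0 - sum((k_is / k_i) ** 2 for k_is in per_module.values())
    return result


# Toy example: node "a" splits its edges evenly between modules 1 and 2.
adj = {"a": {"b": 1.0, "c": 1.0}, "b": {"a": 1.0}, "c": {"a": 1.0}}
modules = {"a": 1, "b": 1, "c": 2}
P = participation_coefficients(adj, modules)
print(P)
```

A node whose edges all fall within one module scores 0; edges spread evenly over S modules give 1 − 1/S, so a falling average coefficient indicates more segregated modules.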

The evidence underscores a fundamental principle: while structural modularity may be present and even developmentally refined, it does not reliably predict functional outcomes. The context-dependence of network behavior—where quantitative parameters, boundary conditions, and regulatory dynamics determine function—emerges as the critical factor missing from purely structural analyses [2] [3].

Methodological Approaches: From Structure to Dynamics

Experimental Framework for Dynamical Modularity Analysis

Research into dynamical modularity requires methodologies that capture the temporal, contextual, and quantitative dimensions of network behavior. The following experimental protocols represent established approaches in the field:

Table 2: Key Methodologies for Analyzing Dynamical Modularity

| Methodology | Experimental Protocol | Application Example | Limitations |
|---|---|---|---|
| Activity-Function Decomposition | (1) Perturb system parameters extensively; (2) map parameter changes to output features; (3) cluster behavioral responses; (4) identify critical regulatory inputs for each behavioral cluster [2] | Partitioning gap gene network into dynamical modules driving specific expression features [3] | Requires comprehensive perturbation space exploration; computationally intensive |
| Criticality Analysis | (1) Measure system responses to graded perturbations; (2) calculate sensitivity metrics across parameter space; (3) identify parameter regions with maximal information transmission; (4) correlate critical regions with evolutionary plasticity [3] | Explaining differential evolvability of expression features in dipteran gap gene systems [3] | Difficult to apply in vivo; often requires precise parameter quantification |
| Network Perturbation Mapping | (1) Systematically knock down network components; (2) quantify phenotypic outcomes across multiple dimensions; (3) construct perturbation-response matrices; (4) identify co-functional components through correlated outcomes [2] | Classifying Drosophila segmentation genes into gap, pair-rule, segment-polarity modules [2] | May miss redundant functions; limited by pleiotropic effects |
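
The last step of network perturbation mapping, identifying co-functional components through correlated outcomes, can be sketched as follows. This assumes a hypothetical perturbation-response matrix in which each knocked-down component has one score per phenotypic dimension, and it uses a simple correlation-threshold grouping (connected components of the graph of strongly correlated profiles) as a stand-in for whatever clustering a given study applies. The gene names, values, and threshold are invented for illustration.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length profiles (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0


def cofunctional_groups(responses, threshold=0.8):
    """Group components whose perturbation-response profiles correlate at r >= threshold.

    responses: dict mapping component -> list of phenotype scores (one per dimension).
    Returns a list of sets: connected components of the thresholded correlation graph.
    """
    names = list(responses)
    parent = {name: name for name in names}  # union-find forest

    def find(name):
        while parent[name] != name:
            parent[name] = parent[parent[name]]  # path halving
            name = parent[name]
        return name

    for i, a in enumerate(names):
        for b in names[i + 1:]:
            if pearson(responses[a], responses[b]) >= threshold:
                parent[find(a)] = find(b)

    groups = {}
    for name in names:
        groups.setdefault(find(name), set()).add(name)
    return list(groups.values())


# Hypothetical matrix: rows are knockdowns, columns are four phenotypic readouts.
responses = {
    "gene_A": [1.0, 0.9, 0.1, 0.0],
    "gene_B": [0.9, 1.0, 0.0, 0.1],
    "gene_C": [0.0, 0.1, 1.0, 0.8],
    "gene_D": [0.1, 0.0, 0.9, 1.0],
}
groups = cofunctional_groups(responses, threshold=0.8)
print(groups)
```

Single-linkage grouping like this is easy to inspect but can chain weakly related components together; the pleiotropy and redundancy caveats in the table apply to it as well.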

Visualizing the Structural-Dynamic Relationship

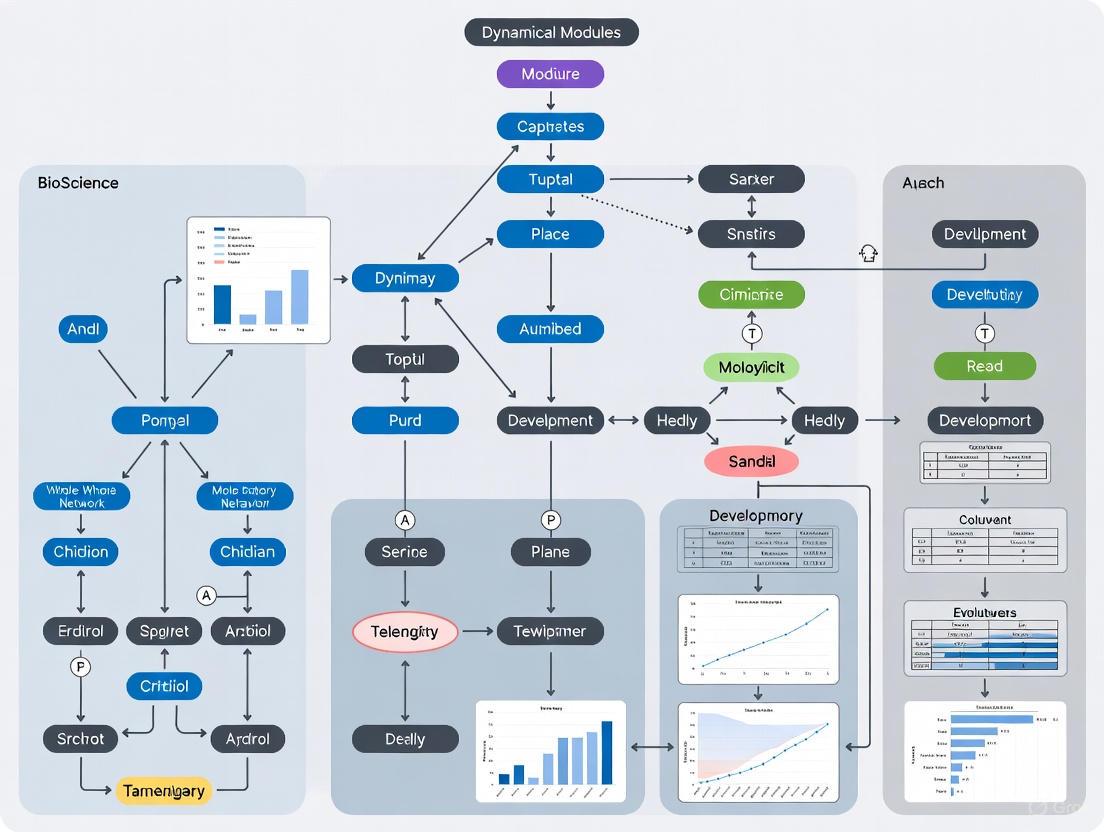

The relationship between structural networks and dynamical modules can be conceptually visualized through the following diagram:

Diagram 1: Structural constraints versus dynamic determination of function. Structural topology constrains but does not determine dynamic behavior, which is primarily governed by quantitative parameters and context.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Dynamical Modularity Analysis

| Reagent/Resource | Function/Application | Example Use Case |
|---|---|---|
| Diffusion MRI & Tractography | Reconstructs structural brain networks from white matter connectivity [4] | Mapping developmental changes in structural modularity (PNC study, N=882) [4] |
| Deterministic/Probabilistic Tractography | Estimates structural connectivity between brain regions; multiple algorithms available [4] | Quantifying within-module vs between-module connectivity strength [4] |
| Community Detection Algorithms | Data-driven identification of network modules (e.g., Newman-Girvan) [1] | Calculating modularity quality index (Q) for structural networks [4] |
| Gene Perturbation Tools (CRISPR, RNAi) | Targeted disruption of network components to test functional independence [2] | Establishing necessity of genes for specific dynamical outputs [3] |
| Quantitative Live Imaging | Tracks dynamic expression patterns in real-time with spatial resolution [3] | Measuring kinematic shifts of gap gene expression boundaries [3] |
| Configuration Models | Statistical null models that randomize edges while preserving node degrees [1] | Calculating expected connectivity for modularity comparison [1] |
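
The community-detection and configuration-model rows meet in the modularity quality index Q. A minimal sketch, assuming an undirected, unweighted edge list and a fixed module assignment, uses the per-module form Q = Σ_c [L_c/m − (d_c/2m)²], where m is the number of edges, L_c the number of edges inside module c, and d_c the summed degree of its nodes; the squared term is the fraction of edges expected inside c under a degree-preserving configuration model. The function and variable names are illustrative. For real analyses, NetworkX provides a `modularity` function in `networkx.algorithms.community`.

```python
from collections import defaultdict


def modularity(edges, membership):
    """Newman modularity Q of an undirected, unweighted graph.

    edges: list of (u, v) pairs, each undirected edge listed once.
    membership: dict mapping node -> module label.
    Q = sum over modules c of [L_c / m - (d_c / (2m))^2], where the squared
    term is the configuration-model expectation for edges inside c.
    """
    m = len(edges)
    internal = defaultdict(int)    # L_c: edges with both ends in module c
    degree_sum = defaultdict(int)  # d_c: total degree of nodes in module c
    for u, v in edges:
        degree_sum[membership[u]] += 1
        degree_sum[membership[v]] += 1
        if membership[u] == membership[v]:
            internal[membership[u]] += 1
    return sum(internal[c] / m - (degree_sum[c] / (2 * m)) ** 2 for c in degree_sum)


# Two triangles joined by a single bridging edge (2, 3).
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
membership = {0: "A", 1: "A", 2: "A", 3: "B", 4: "B", 5: "B"}
Q = modularity(edges, membership)
print(round(Q, 3))  # 0.357
```

With each triangle as a module, Q = 2(3/7 − 1/4) = 5/14 ≈ 0.357. A high Q describes structure only; as the sections above argue, it says nothing on its own about dynamical independence.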

Case Study: The Gap Gene Network

The gap gene system of Dipteran insects provides a compelling case study of the limitations of structural modularity and the primacy of dynamical organization. This gene regulatory network patterns the anterior-posterior axis during embryonic development and exhibits precisely defined dynamical outputs despite lacking clear structural modularity [3].

Experimental Workflow for Dynamical Decomposition

The methodological approach for analyzing this system illustrates how functional modules can be identified without structural correlates:

Diagram 2: Experimental workflow for dynamical module identification in gene regulatory networks through systematic perturbation and quantitative phenotyping.

Key Findings and Implications

Research on the gap gene network reveals several critical insights that challenge structural determinism:

Shared Structure, Multiple Functions: The same structural network (genes hb, Kr, kni, gt with cross-regulatory interactions) produces distinct dynamical modules responsible for different expression features [3].

Differential Evolvability: Various expression features exhibit different evolutionary plasticity, explained by the fact that some dynamical subcircuits operate near criticality while others do not [3].

Context-Dependent Behavior: The regulatory influence of connections varies based on quantitative parameters and spatial context, meaning the "function" of a connection cannot be determined from structure alone [3].
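
Operating near criticality implies that small parameter changes produce large output changes. One crude proxy, in the spirit of the "sensitivity metrics across parameter space" listed for criticality analysis, is the semi-logarithmic sensitivity dy/d(ln p): the change in output per fractional change in a parameter. The sketch below estimates it by central finite differences and applies it to a hypothetical Hill-type response y = pⁿ/(Kⁿ + pⁿ), whose analytical value is n·y·(1 − y). This is an illustrative metric under those assumptions, not the analysis used in [3].

```python
import math


def semilog_sensitivity(f, p, h=1e-4):
    """Estimate dy/d(ln p) with a central finite difference in log-parameter space."""
    return (f(p * math.exp(h)) - f(p * math.exp(-h))) / (2.0 * h)


def hill(p, K=1.0, n=4):
    """Hypothetical Hill-type regulatory response with threshold K and steepness n."""
    return p ** n / (K ** n + p ** n)


def hill_sensitivity_exact(p, K=1.0, n=4):
    """Analytical dy/d(ln p) for the Hill function: n * y * (1 - y)."""
    y = hill(p, K, n)
    return n * y * (1.0 - y)


for p in (0.1, 1.0, 10.0):
    print(p, semilog_sensitivity(hill, p), hill_sensitivity_exact(p))
```

With n = 4 the sensitivity peaks at the threshold (1.0 at p = K) and falls to about 0.0004 at p = 0.1K or p = 10K. That contrast is the kind that separates a subcircuit poised near a transition from one operating in a saturated or silent regime.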

These findings have direct implications for drug development, suggesting that therapeutic strategies targeting network structures may have unpredictable functional outcomes, while interventions tuned to specific dynamical regimes could achieve more precise modulation of network behavior.

The critical limitation of structural modularity in dense networks necessitates a paradigm shift in how we conceptualize, analyze, and therapeutically target biological systems. The evidence from brain development, gene regulation, and computational modeling consistently demonstrates that structural topology constrains but does not determine functional output [2] [4] [3]. For researchers and drug development professionals, this insight demands new approaches that prioritize dynamical analysis over structural mapping.

Future research must develop more sophisticated methods for identifying dynamical modules—subsystems defined by their coordinated activity patterns rather than their connection density. This will require advances in live imaging, parameter quantification, and computational modeling that capture the temporal and contextual dimensions of network behavior. The potential payoff is substantial: a more predictive understanding of how network perturbations translate to functional outcomes, enabling more precise therapeutic interventions that account for the dynamic complexity of biological systems.

Moving beyond structural modularity represents not just a technical challenge but a conceptual evolution in systems biology—from seeing networks as static architectures to understanding them as dynamic processes that generate function through their temporal coordination and contextual adaptation.

Dynamical Patterning Modules (DPMs) represent a foundational concept for understanding the evolution and development of complex multicellular forms. This technical guide delineates the core principle that DPMs are defined by their activity-functions, the dynamic, physicogenetic processes they execute, rather than by their static structural architecture. We advance the thesis that these activity-functions are the fundamental drivers of whole-network behavior in developmental systems. Framed within evolutionary developmental biology (Evo-Devo), this perspective explains how a limited set of conserved modules can generate immense morphological diversity. The guide defines DPMs, details experimental methodologies for their characterization, and visualizes their operational logic, offering researchers a framework for investigating pattern formation across biological scales.

The concept of Dynamical Patterning Modules (DPMs) provides a mechanistic framework for integrating physical processes with molecular genetics to explain the development and evolution of multicellular organisms [5] [6]. Traditionally, biological modules were often identified as structural components within regulatory networks—'cliques' of densely connected genes or proteins. The DPM framework challenges this structuralist view by positing that the essential unit of morphogenesis is a reusable process, not a fixed architectural entity.

A DPM is defined as a set of conserved gene products and molecular networks, operating in conjunction with the physical morphogenetic and patterning processes they mobilize [5] [7]. These physical processes—including adhesion, diffusion, oscillation, and viscoelasticity—are characteristic of chemically and mechanically excitable mesoscopic systems like cell aggregates [8]. The core thesis of this guide is that by prioritizing the activity-function of these modules—their specific, context-dependent dynamical behavior—over their compositional inventory, researchers can achieve a more predictive and unifying understanding of how complex forms arise and evolve. This activity-centric view reveals that the morphological motifs defining body plans constitute a "pattern language" generated by DPMs acting singly and in combination [8].

Core Principles: Reconceptualizing Modularity

The Activity-Function as the Defining Feature

The distinction between structural and dynamical modularity is critical. A structural module is identified by local topology in a network, such as a set of nodes with a high density of internal connections [2]. Its identity is tied to its fixed composition and arrangement. In contrast, a dynamical module (or DPM) is identified by its characteristic activity or behavior, which can persist even if its underlying structural components change [2].

- Context Autonomy: Dynamical modules exhibit internal causal cohesion and can operate robustly across a range of circumstances, which makes them reusable and evolvable [2]. This relative independence from any single structural instantiation is what allows the same morphogenetic outcome (e.g., tissue elongation) to be achieved by non-orthologous genes in different lineages [5].

- Physical-Genetic Coupling: The activity-function of a DPM invariably couples specific gene products to generic physical processes. For example, the production of extracellular adhesives like collagen in animals or extensins in plants links to the physical process of cohesion, forming a primary DPM for multicellularity [5] [6]. The module's function is the cohesive activity itself, not merely the list of adhesive molecules.

Table 1: Comparison of Structural vs. Dynamical Modularity

| Feature | Structural Module | Dynamical Patterning Module (DPM) |
|---|---|---|
| Defining Characteristic | Network topology & connectivity | Specific activity-function or process |
| Identity | Based on component list & arrangement | Based on dynamical behavior & outcome |
| Context Dependence | High; function sensitive to network structure | Lower; activity can be robust across contexts |
| Role in Evolution | Can be a constraint on variation | Enabler of phenotypic exploration & novelty |

DPMs in Evolutionary and Developmental Context

The activity-function perspective resolves a key paradox in evolutionary developmental biology: how widely divergent organisms utilize a shared molecular toolkit yet generate vastly different forms. The explanation is that the toolkit components are assembled into DPMs whose outputs are determined by their dynamic activity, which can be reconfigured without fundamental rewiring of the genome [8].

DPMs originated in the co-option of molecular species already present in unicellular ancestors. For instance, pathways controlling cell shape and polarity in unicellular organisms were mobilized by novel animal proteins such as Wnt to form the metazoan DPMs for lumen formation [5] [6]. This trajectory indicates that the underlying activity-functions predated multicellularity; their recruitment into DPMs placed pre-existing dynamical processes in new scales and contexts.

Quantitative Characterization of DPMs

Empirical research supports the existence of core DPMs responsible for major evolutionary transitions. The table below summarizes the primary DPMs involved in the evolution of plant multicellularity and illustrates how similar activity-functions can be achieved by different molecular components.

Table 2: Primary Dynamical Patterning Modules in Plant Evolution [5] [6]

| DPM Activity-Function | Core Physical Process | Example Molecular Players (Plant Lineages) | Phyletic Distribution |
|---|---|---|---|
| Cell-Cell Adhesion | Cohesion | Extensin superfamily glycoproteins | Multiple algal lineages, embryophytes |
| Cell Division & Wall Formation | Viscoelasticity, Self-assembly | Phragmoplastic (Streptophyta) vs. Phycoplastic (Chlorophyta) division | Different mechanisms within plant clades |
| Cell Differentiation | Diffusion, Activator-Inhibitor dynamics | Symplastic transport via plasmodesmata | Critical for complex multicellularity in plants |
| Cell/Tissue Polarity | Symmetry breaking | PIN/PAN1 proteins, auxin transport | Land plants |

As the table shows, a DPM can be implemented in different ways even within a single clade. For example, within the green plants (Viridiplantae), the DPM for cell division and wall formation is instantiated by the phragmoplastic mechanism in Streptophyta and by the phycoplastic mechanism in Chlorophyta [5]. Both execute the same core activity-function, partitioning the cytoplasm and depositing new wall material, but through different structural architectures.

Experimental Protocols for DPM Analysis

Validating a Dynamical Patterning Module requires demonstrating that a specific activity-function, arising from a gene-physics interaction, is responsible for a defined morphogenetic pattern. The following protocols outline a multidisciplinary approach.

Protocol 1: Mapping a Morphogenetic Activity-Function

Objective: To identify and characterize the components and dynamics of a putative DPM responsible for a specific morphological motif (e.g., a segmented pattern, a branched structure).

Materials:

- Genetic Model Organism: (e.g., Arabidopsis thaliana for plants, Drosophila melanogaster for animals).

- Perturbation Tools: CRISPR-Cas9 for targeted gene knockout, chemical inhibitors for disrupting physical processes (e.g., cytoskeletal disruptors like latrunculin B).

- Live Imaging Setup: Confocal or light-sheet microscope with environmental control.

- Reporters: Fluorescent protein fusions for key proteins (e.g., PIN:GFP for auxin flux).

- Computational Tools: Software for image analysis (e.g., Fiji/ImageJ) and for simulating reaction-diffusion systems or mechanical models.

Method:

- Phenotypic Characterization: Use live imaging to quantitatively describe the spatiotemporal emergence of the morphological pattern in wild-type embryos/tissues.

- Genetic Perturbation Screen: Systematically perturb candidate genes from the "developmental-genetic toolkit" and quantify the effect on the pattern. Classify genes based on the specific aspect of the pattern they disrupt (e.g., gap, pair-rule) [2].

- Physical Perturbation: Modulate the physical environment (e.g., ambient pressure, viscosity) or inhibit key physical processes (e.g., adhesion, diffusion) to test if the pattern is disrupted in ways analogous to genetic mutations.

- Dynamical Profiling: Measure the rates and localization of key processes (e.g., ligand diffusion, adhesion strength, mechanical stress) in wild-type and perturbed states.

- Network Reconstruction: Integrate genetic and physical perturbation data to build a functional interaction network that describes the module, prioritizing causal influences over mere structural connections.
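
The physical-perturbation and network-reconstruction steps hinge on asking whether a physical treatment disrupts the pattern "in ways analogous to genetic mutations." One minimal way to score this, assuming each perturbation is summarized as a vector of changes in pattern features relative to wild type, is to find, for each physical perturbation, the genetic perturbation with the most similar vector by cosine similarity. The feature set, perturbation names, and values below are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two phenotype-change vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0


def best_phenocopy(physical, genetic):
    """Map each physical perturbation to (most similar genetic perturbation, similarity)."""
    matches = {}
    for name, profile in physical.items():
        scores = {g: cosine(profile, vec) for g, vec in genetic.items()}
        best = max(scores, key=scores.get)
        matches[name] = (best, scores[best])
    return matches


# Hypothetical changes relative to wild type in
# [pattern period, boundary sharpness, number of domains].
genetic = {"mutant_x": [0.0, -0.8, -0.5], "mutant_y": [0.6, 0.0, 0.1]}
physical = {"adhesion_inhibitor": [0.0, -0.7, -0.6]}
matches = best_phenocopy(physical, genetic)
print(matches)
```

A high similarity is only suggestive: it flags candidate links between a gene and a physical process that the network reconstruction step should then test causally.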

Protocol 2: Validating a DPM via Evolutionary Comparison

Objective: To test if the same activity-function is implemented by non-identical structural modules in phylogenetically divergent species.

Materials:

- Comparative Species Set: Select species from different clades that exhibit a similar morphological motif (e.g., filamentous growth in algae and fungi).

- Cross-Species Molecular Tools: Heterologous expression systems, antibody staining for conserved proteins.

- Bioinformatics Pipeline: For comparative genomics and transcriptomics.

Method:

- Identify Shared Motifs: Document the convergent or analogous morphological pattern in the target species.

- Profile Molecular Components: Use transcriptomics and proteomics to identify the genes and proteins expressed during the pattern formation in each species.

- Test Functional Equivalence: Attempt to "rescue" a genetic perturbation in one species by expressing a key gene from another species. This tests if the activity-function is conserved even if the specific gene has diverged.

- Assess Physical Conservation: Determine whether the same core physical process (e.g., Turing-type instability, viscoelastic buckling) underlies the pattern in both species, using physical modeling and experimentation.
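
For the Turing-type instability named in the last step, a standard linear-stability test indicates whether a two-species reaction-diffusion model can form patterns through diffusion-driven instability. The sketch assumes the model has already been linearized about a homogeneous steady state, giving Jacobian entries f_u, f_v, g_u, g_v and diffusion coefficients D_u, D_v. It checks the four classical conditions and, as a cross-check, scans wavenumbers for a positive growth rate of the linearized system. The parameter values are illustrative, not measurements from any organism.

```python
import math


def turing_unstable(fu, fv, gu, gv, Du, Dv):
    """Classical conditions for diffusion-driven (Turing) instability of a
    two-species reaction-diffusion system linearized about a steady state."""
    trace = fu + gv              # must be < 0: stable without diffusion
    det = fu * gv - fv * gu      # must be > 0: stable without diffusion
    cross = Dv * fu + Du * gv    # must be > 0 and large enough for some k to grow
    return trace < 0 and det > 0 and cross > 0 and cross ** 2 > 4 * Du * Dv * det


def max_growth_rate(fu, fv, gu, gv, Du, Dv, k_max=10.0, steps=2000):
    """Largest real part of the eigenvalues of J - k^2 D over sampled wavenumbers k."""
    best = -math.inf
    for i in range(steps + 1):
        k2 = (k_max * i / steps) ** 2
        a, d = fu - Du * k2, gv - Dv * k2
        tr, dt = a + d, a * d - fv * gu
        disc = tr * tr - 4 * dt
        re = (tr + math.sqrt(disc)) / 2 if disc >= 0 else tr / 2
        best = max(best, re)
    return best


# Illustrative activator-inhibitor Jacobian: f_u > 0, f_v < 0, g_u > 0, g_v < 0.
J = (1.0, -1.0, 2.0, -1.5)
print(turing_unstable(*J, Du=1.0, Dv=20.0))  # True: inhibitor diffuses 20x faster
print(turing_unstable(*J, Du=1.0, Dv=1.0))   # False: equal diffusivities
```

Comparing the two calls shows the hallmark of a Turing mechanism: the same local kinetics become pattern-forming only when the inhibitor diffuses sufficiently faster than the activator.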

Visualization of DPM Logic and Workflows

The following diagrams, generated using Graphviz DOT language, illustrate the core conceptual and experimental workflows for defining and analyzing DPMs.

The Logic of a Dynamical Patterning Module

This diagram depicts the fundamental principle of a DPM: the integration of genetic components and physical laws to execute a specific activity-function that generates a morphological motif.

Diagram 1: Core Logic of a DPM

Experimental Workflow for DPM Analysis

This flowchart outlines the multi-pronged experimental strategy for characterizing a putative DPM, integrating genetic, physical, and computational approaches.

Diagram 2: DPM Analysis Workflow

The Scientist's Toolkit: Essential Research Reagents

Research into DPMs requires reagents that target both molecular and physical aspects of the system. The following table details key resources for a typical DPM research program.

Table 3: Research Reagent Solutions for DPM Investigation

| Reagent / Tool Category | Specific Example | Function in DPM Research |
|---|---|---|
| Genetic Perturbation | CRISPR-Cas9 knockout lines | To disrupt candidate toolkit genes and assess impact on the activity-function and resulting morphology. |
| Live Imaging Reporters | PIN:GFP (Auxin efflux carrier) [5] | Visualizes dynamic polarity and transport processes in real-time, revealing the spatiotemporal dynamics of the module. |
| Cytoskeletal Probes | Fluorescent phalloidin (F-actin), GFP-TUBULIN | Labels cytoskeletal architecture to correlate cellular mechanics with morphogenetic changes. |
| Physical Perturbation Agents | Latrunculin B (Actin disruptor) | Used to dissect the contribution of mechanical structures to the module's activity, separate from genetic function. |
| Computational Software | Morpheus and custom PDE solvers (simulation); Fiji/ImageJ (image analysis) | Simulates the integrated genetic-physical systems to test whether hypothesized DPM interactions can generate the observed pattern, and quantifies the patterns observed in images. |

Defining Dynamical Patterning Modules by their activity-functions provides a unifying lens for research in evolutionary developmental biology. The framework integrates the roles of genetic networks and physical processes and treats the dynamic, executable process as the central unit of morphogenesis. For drug development professionals, this perspective suggests that interventions targeting network dynamics may be more effective than those targeting static components. A related emphasis on dynamics already appears in clinical pharmacology through Disease Progression Models [9]; these are also abbreviated "DPMs" but are a distinct concept from Dynamical Patterning Modules.

Future research will be driven by the ability to acquire high-dimensional quantitative data on developing systems. The challenge lies in developing new analytical methods to decompose this data into dynamical, rather than structural, modules. Success in this endeavor will not only illuminate the deep principles of biological form but also provide a more robust foundation for therapeutic interventions in complex diseases, where network dynamics, not just static components, dictate pathological outcomes.

The gap gene network of the fruit fly Drosophila melanogaster represents one of the most thoroughly studied developmental gene regulatory networks and serves as a powerful model for understanding how dynamical modules drive whole-network behavior in development [10]. This network operates at the most upstream regulatory layer of the segmentation gene hierarchy, where it solves a fundamental problem in embryonic patterning: how to establish discrete territories of gene expression from continuous maternal protein gradients [10] [11]. The gap gene system exemplifies a dynamical patterning module—a set of conserved gene products and molecular networks that mobilize specific physical patterning processes within multicellular contexts [2] [12]. Unlike structural modules defined by network topology alone, the gap gene network operates through dynamic regulatory interactions that generate precise spatiotemporal expression patterns through cross-regulatory feedback and hierarchical control mechanisms [10] [11] [13]. This case study examines the operational principles of this network, focusing on its quantitative dynamics, experimental methodologies for its characterization, and its implications for understanding modularity in developmental systems.

Biological Foundations of the Gap Gene Network

Network Components and Hierarchical Organization

The gap gene network functions within the broader segmentation hierarchy that patterns the anterior-posterior (A-P) axis of the Drosophila embryo. The hierarchy begins with maternal coordinate genes that establish initial polarity. The gap genes translate this maternal information into broad expression domains; gap gene products then regulate the pair-rule genes, which establish segmental periodicity, and the final tier, the segment-polarity genes, defines cellular identities within segments [10] [13]. The trunk gap genes, hunchback (hb), Krüppel (Kr), knirps (kni), and giant (gt), are among the earliest zygotic targets of maternal gradients and exhibit broad, overlapping expression domains approximately 10-20 nuclei wide [10] [14].

The initial patterning of the embryo occurs during the syncytial blastoderm stage, when nuclei divide rapidly in a shared cytoplasm without intervening cell membranes, allowing transcription factors to diffuse between nuclei [10]. During cleavage cycles 13 and 14A (approximately 1.5-3 hours after egg laying), the gap gene network becomes active and establishes its expression patterns through a combination of maternal input and cross-regulatory interactions [14] [13]. This dynamic process results in the formation of discrete expression domains that prefigure the segmented body plan of the larva and adult fly.

Maternal Inputs and Initial Pattern Formation

The gap gene network receives its initial regulatory inputs from three maternal systems that establish the A-P axis:

- The anterior system localizes bicoid (bcd) mRNA to the anterior pole, producing a diffusion-based Bcd protein gradient that declines exponentially from anterior to posterior [10].

- The posterior system localizes nanos (nos) mRNA to the posterior pole; Nanos protein then represses translation of maternal hunchback mRNA in posterior regions.

- The terminal system activates Torso signaling at both poles [10].

These maternal gradients activate zygotic gap gene expression in a concentration-dependent manner. For example, Bcd protein functions as a concentration-dependent activator of target gap genes, with different affinity binding sites responding to different threshold levels of the morphogen [10]. Simultaneously, Bcd represses translation of the maternal caudal (cad) mRNA, establishing a complementary Cad protein gradient that increases toward the posterior [10]. The combination of these opposing gradients provides positional information along the A-P axis.
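
The concentration-dependent readout described above can be made concrete with an idealized exponential gradient, C(x) = C₀ exp(−x/λ). A target gene that responds above a threshold θ is then expressed anterior to x* = λ ln(C₀/θ), so lower thresholds place boundaries farther posterior. The sketch assumes positions are measured as fractions of egg length (EL) and uses λ = 0.2 EL, roughly in the range reported for the Bcd gradient; both the length constant and the thresholds are assumptions for illustration, and real target genes integrate additional inputs.

```python
import math


def threshold_position(c0, length_constant, threshold):
    """Position where C(x) = c0 * exp(-x / length_constant) falls to `threshold`.

    Returned in the same units as length_constant (here: fraction of egg length).
    """
    if not 0 < threshold <= c0:
        raise ValueError("threshold must lie in (0, c0]")
    return length_constant * math.log(c0 / threshold)


LAMBDA = 0.2  # assumed Bcd length constant, in units of egg length (EL)
for frac in (0.5, 0.25, 0.1):  # hypothetical thresholds as fractions of C0
    x = threshold_position(1.0, LAMBDA, frac)
    print(f"threshold {frac:.2f} * C0 -> boundary at {x:.3f} EL")
```

The three hypothetical thresholds give boundaries at about 0.139, 0.277, and 0.461 EL. Because x* is proportional to λ, a gradient whose length constant scaled with embryo length would place each boundary at a fixed fraction of the embryo; the sketch does not address whether or how the real Bcd gradient achieves this.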

Table 1: Key Maternal Inputs to the Gap Gene Network

| Maternal Factor | Type | Expression Pattern | Primary Role in Gap Gene Regulation |
|---|---|---|---|
| Bicoid (Bcd) | Transcription factor | Anterior-to-posterior gradient | Concentration-dependent activator of anterior gap genes |
| Caudal (Cad) | Transcription factor | Posterior-to-anterior gradient | Activator of posterior gap genes |
| Nanos | RNA-binding protein | Posterior gradient | Translational repressor of hunchback mRNA |
| Torso | Receptor tyrosine kinase | Activated at terminal ends | Patterns terminal regions through MAPK signaling |

Dynamical Modules Framework for Gap Gene Patterning

Conceptualizing Dynamical Modules

The concept of dynamical patterning modules (DPMs) provides a framework for understanding how conserved gene products and molecular networks mobilize physical processes to generate morphological patterns during development [2] [12]. Unlike structural modules defined solely by network topology, dynamical modules are characterized by their activity-functions—specific behaviors that contribute to pattern formation regardless of the structural implementation [2]. In this framework, the gap gene network constitutes a dynamical module responsible for converting continuous morphogen gradients into discrete transcriptional domains through specific regulatory dynamics.

Dynamical modules exhibit internal causal cohesion coupled with a degree of context autonomy, enabling them to operate robustly across varying conditions [2]. The gap gene system demonstrates these properties through its ability to establish consistent expression patterns despite variations in embryo size or environmental conditions, a property known as size-regulation [10]. This robustness emerges from the dynamical properties of the network rather than specific structural features.

Operational Principles of the Gap Gene Dynamical Module

The gap gene network operates through several key dynamical principles:

- Differential Threshold Response: Gap genes exhibit different activation thresholds in response to maternal gradients, enabling distinct expression domains from the same morphogen inputs [10].

- Cross-Regulatory Feedback: Repressive interactions between complementary gap genes create sharp boundaries and stabilize expression domains [11] [14].

- Temporal Progression: Gap domain formation occurs progressively, with posterior domains exhibiting anterior shifts during cycle 14A due to dynamical regulatory interactions [13].

- Posterior Dominance: Repressive interactions show anteroposterior asymmetry, with posterior factors dominating over anterior ones—a phenomenon also known as posterior prevalence [11].

These principles collectively enable the gap gene network to function as a dynamical module that transforms continuous input into discrete output patterns through its intrinsic regulatory logic.

Diagram 1: Hierarchical structure and regulatory logic of the gap gene network. Maternal gradients (yellow) activate zygotic gap genes (blue), which engage in cross-regulatory interactions (red) before activating downstream targets (green).

Quantitative Analysis of Network Dynamics

Regulatory Interactions and Their Functional Roles

Mathematical modeling of the gap gene network has revealed specific functional roles for individual regulatory interactions. Through gene circuit models and reverse engineering approaches, researchers have quantified the strength and nature of these interactions, leading to a refined understanding of network dynamics [11] [14]. These models reproduce gap gene expression with high accuracy and temporal resolution, enabling detailed analysis of regulatory mechanisms.
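
Gene circuit models of this kind typically write the concentration g_a of gene product a in nucleus i as dg_a/dt = R_a Φ(u_a) − λ_a g_a + D_a ∇²g_a, with u_a = Σ_b W_ab g_b + (weighted maternal inputs) + h_a, where W is the matrix of regulatory weights and Φ is a sigmoid regulation-expression function; Φ(u) = ½(u/√(u² + 1) + 1) is one common choice. The sketch below integrates a deliberately small, hypothetical instance of that form: two genes that repress each other along a one-dimensional row of nuclei, one activated by an anterior gradient and the other by a posterior gradient. All parameter values are invented for illustration; this is not a fitted model from [11] or [14], and it uses simple forward-Euler integration.

```python
import math


def phi(u):
    """Sigmoid regulation-expression function, 0.5 * (u / sqrt(u^2 + 1) + 1)."""
    return 0.5 * (u / math.sqrt(u * u + 1.0) + 1.0)


def simulate_toy_circuit(n=50, steps=2000, dt=0.01, R=1.0, lam=1.0, D=0.05,
                         w_rep=-2.0, m=6.0, h=-3.0, length_constant=0.2):
    """Forward-Euler integration of a hypothetical two-gene circuit.

    Genes A and B repress each other (weight w_rep); A receives an anterior
    maternal input and B a posterior one (weight m); h is a threshold term.
    Returns nucleus positions (fraction of egg length) and final A and B levels.
    """
    xs = [i / (n - 1) for i in range(n)]
    anterior = [math.exp(-x / length_constant) for x in xs]
    posterior = [math.exp(-(1.0 - x) / length_constant) for x in xs]
    A = [0.0] * n
    B = [0.0] * n

    def laplacian(g, i):
        # Discrete diffusion between neighboring nuclei, zero-flux at the ends.
        left = g[i - 1] if i > 0 else g[i]
        right = g[i + 1] if i < n - 1 else g[i]
        return left - 2.0 * g[i] + right

    for _ in range(steps):
        new_A, new_B = A[:], B[:]
        for i in range(n):
            u_A = w_rep * B[i] + m * anterior[i] + h
            u_B = w_rep * A[i] + m * posterior[i] + h
            new_A[i] = A[i] + dt * (R * phi(u_A) - lam * A[i] + D * laplacian(A, i))
            new_B[i] = B[i] + dt * (R * phi(u_B) - lam * B[i] + D * laplacian(B, i))
        A, B = new_A, new_B
    return xs, A, B


xs, A, B = simulate_toy_circuit()
print(f"A: anterior {A[0]:.2f}, posterior {A[-1]:.2f}")
print(f"B: anterior {B[0]:.2f}, posterior {B[-1]:.2f}")
```

Re-running with w_rep=0.0 removes the cross-repression, which is one simple way to explore how much each domain depends on the mutual repression rather than on the maternal inputs alone.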

Table 2: Key Regulatory Interactions in the Gap Gene Network

| Regulatory Interaction | Type | Functional Role | Evidence |
|---|---|---|---|
| Bcd → Hb | Activation | Establishes anterior Hb domain | Mutant analysis, binding studies [10] |
| Hb auto-activation | Positive feedback | Maintains Hb expression | Modeling, mutant analysis [14] |
| Hb → Kr | Repression | Sharpens anterior Kr boundary | Gene circuit models [11] [14] |
| Kr → Hb | Repression | Sharpens posterior Hb boundary | Gene circuit models [11] |
| Kni → Kr | Repression | Defines posterior Kr boundary | Gene circuit models, mutants [11] [14] |
| Cad → Kr | Activation | Promotes central Kr expression | Modeling predictions [11] |
| Gt → Kni | Repression | Defines anterior kni boundary | Mutant analysis, modeling [14] |

The regulatory weights of individual transcription factor binding sites show weak correlation with their position weight matrix (PWM) scores, indicating that functional importance is not determined solely by binding affinity [13]. Furthermore, functionally important sites are not exclusively located in classical cis-regulatory modules but are often dispersed throughout regulatory regions [13].
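To make the comparison concrete, a PWM score is typically the sum of per-position log-odds of the observed base against a background model. The sketch below converts a count matrix into a log2-odds PWM using a pseudocount and a uniform background, then scans a sequence on the forward strand. The 4-bp count matrix and the test sequence are hypothetical and do not correspond to any published gap gene motif. The point made in [13] is that scores like these correlate only weakly with the regulatory weights inferred in models.

```python
import math

BASES = "ACGT"

def pwm_from_counts(counts, pseudocount=0.5, background=0.25):
    """Convert per-position base counts into a log2-odds position weight matrix."""
    pwm = []
    for col in counts:
        total = sum(col[b] for b in BASES) + 4 * pseudocount
        pwm.append({b: math.log2((col[b] + pseudocount) / total / background) for b in BASES})
    return pwm

def score_site(pwm, site):
    """Sum of per-position log-odds; expects an uppercase A/C/G/T site as long as the PWM."""
    return sum(col[base] for col, base in zip(pwm, site))

def scan(pwm, seq):
    """Score every full-length window on the forward strand."""
    w = len(pwm)
    return [(i, score_site(pwm, seq[i:i + w])) for i in range(len(seq) - w + 1)]

# Hypothetical counts for a 4-bp motif (not a published matrix).
COUNTS = [
    {"A": 1, "C": 0, "G": 1, "T": 8},
    {"A": 9, "C": 0, "G": 0, "T": 1},
    {"A": 8, "C": 1, "G": 0, "T": 1},
    {"A": 0, "C": 1, "G": 0, "T": 9},
]
pwm = pwm_from_counts(COUNTS)
best_pos, best_score = max(scan(pwm, "GGCTAATCCG"), key=lambda hit: hit[1])
```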

Temporal Dynamics of Domain Formation

Gap gene expression patterns undergo significant temporal progression during cycles 13 and 14A. In the posterior half of the embryo, gap domains shift anteriorly during cycle 14A, a dynamical behavior that cannot be inferred from static observations alone [13]. These shifts result from the interplay between maternal gradients and gap gene cross-regulation, particularly the repressive interactions that show posterior dominance [11].

Gene circuit models have demonstrated that the timing of gap domain boundary formation correlates with regulatory contributions from the terminal maternal system, suggesting integrated timing mechanisms across different patterning systems [11]. The dynamic nature of gap gene expression highlights the importance of temporal regulation in addition to spatial control, with the network implementing a sequential activation and refinement process that culminates in stable expression domains by the end of cycle 14A.

Experimental and Computational Methodologies

Genetic and Molecular Analysis Techniques

The characterization of the gap gene network has relied on a combination of genetic, molecular, and computational approaches:

- Mutagenesis Screens: Large-scale genetic screens identified segmentation mutants, classifying genes into gap, pair-rule, and segment-polarity classes based on mutant phenotypes [10].

- Gene Expression Analysis: In situ hybridization and antibody staining visualize spatial and temporal expression patterns of gap genes in wild-type and mutant backgrounds [10] [14].

- Cis-Regulatory Analysis: DNA-binding assays (e.g., DNase I footprinting) and transgenic reporter constructs identify functional transcription factor binding sites and regulatory elements [13].

- Quantitative Imaging: High-resolution imaging coupled with image processing techniques provides quantitative spatiotemporal expression data at cellular resolution [13].

Table 3: Key Experimental Methodologies for Gap Gene Network Analysis

| Methodology | Application | Key Insights Generated | Technical Considerations |
|---|---|---|---|
| Genetic mutagenesis screens | Identify segmentation genes | Classification of gap, pair-rule, segment-polarity genes | Limited to genes with scorable mutant phenotypes |
| In situ hybridization | Visualize spatial mRNA patterns | Expression domains and boundaries | Qualitative to semi-quantitative |
| Immunofluorescence | Visualize protein patterns | Protein expression dynamics and shifts | Antibody quality critical |
| DNA-binding assays | Map transcription factor binding sites | Identification of functional cis-regulatory elements | In vitro conditions may not reflect in vivo |
| Transgenic reporter assays | Test regulatory element function | Dissection of cis-regulatory logic | Genomic position effects |
| Quantitative image analysis | Extract concentration profiles | Spatiotemporal dynamics of expression | Requires standardized fixation and imaging |
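One core step in quantitative image analysis is turning per-nucleus intensities into a one-dimensional profile along the AP axis. The sketch below assumes that nuclear segmentation and background subtraction have already been done upstream, and that the x-coordinates of the anterior and posterior poles are known. It bins nuclei by relative AP position and averages intensity in each bin. The function name, bin count and synthetic data are illustrative assumptions, not a published pipeline.

```python
import numpy as np

def ap_profile(nuc_x, intensity, anterior_x, posterior_x, n_bins=100):
    """Average nuclear intensity in equal-width bins of relative AP position
    (0 = anterior pole, 1 = posterior pole); empty bins are returned as NaN."""
    rel = (np.asarray(nuc_x, dtype=float) - anterior_x) / (posterior_x - anterior_x)
    vals = np.asarray(intensity, dtype=float)
    keep = (rel >= 0.0) & (rel <= 1.0)              # drop nuclei outside the pole-to-pole axis
    rel, vals = rel[keep], vals[keep]
    idx = np.minimum((rel * n_bins).astype(int), n_bins - 1)
    sums = np.bincount(idx, weights=vals, minlength=n_bins)
    counts = np.bincount(idx, minlength=n_bins)
    profile = np.full(n_bins, np.nan)
    nonempty = counts > 0
    profile[nonempty] = sums[nonempty] / counts[nonempty]
    centers = (np.arange(n_bins) + 0.5) / n_bins    # bin centres in relative AP units
    return centers, profile

# Synthetic example: an anterior-high gradient sampled at 1000 nuclei.
nuc_x = np.linspace(0.0, 500.0, 1000)
intensity = np.exp(-nuc_x / 100.0)
centers, prof = ap_profile(nuc_x, intensity, anterior_x=0.0, posterior_x=500.0, n_bins=10)
```

Because position is normalized by the pole coordinates, the same function works whether the embryo's anterior pole lies on the left or the right of the image.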

Mathematical Modeling Approaches

Mathematical modeling has been essential for understanding the dynamical properties of the gap gene network. Three major classes of models have been applied:

- Boolean Models: Represent regulatory relationships as logic gates and use the network's feedback loops to explain the steady-state expression patterns implied by its topology [13].

- Differential Equation-Based Models (Gene Circuits): Describe regulatory networks using kinetic equations that capture spatiotemporal dynamics of mRNA and protein concentrations [11] [14] [13].

- Thermodynamic Models: Use biophysical descriptions of DNA-protein interactions to predict gene expression from transcription factor binding sites [13].

More recently, sequence-based dynamical models have emerged that combine thermodynamic calculations of transcriptional activation with reaction-diffusion equations describing mRNA and protein dynamics [13]. These integrated models incorporate detailed DNA-based information alongside transcription factor concentration data to simulate gap gene expression patterns in wild-type and mutant embryos.
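The thermodynamic half of such models converts transcription factor concentrations and binding sites into a probability of activation. The sketch below uses a deliberately simplified rule. Sites bind independently with occupancy Kc/(1 + Kc), and the enhancer counts as "on" when at least one activator site is bound and no repressor site is bound. Published thermodynamic models add cooperativity, competition and quenching terms, which this sketch omits. The site list and association constants are hypothetical.

```python
def occupancy(K, conc):
    """Probability that a single site with association constant K is bound at concentration conc."""
    q = K * conc
    return q / (1.0 + q)

def expression_probability(sites, conc):
    """P(at least one activator site bound AND no repressor site bound), assuming
    independent, non-interacting sites (a simplified thermodynamic-style rule)."""
    p_no_activator = 1.0
    p_no_repressor = 1.0
    for tf, K, role in sites:
        p = occupancy(K, conc[tf])
        if role == "activator":
            p_no_activator *= 1.0 - p
        else:
            p_no_repressor *= 1.0 - p
    return (1.0 - p_no_activator) * p_no_repressor

# Hypothetical enhancer: two Bcd activator sites and one Kr repressor site (arbitrary units).
SITES = [("Bcd", 2.0, "activator"), ("Bcd", 0.5, "activator"), ("Kr", 1.0, "repressor")]
p_anterior = expression_probability(SITES, {"Bcd": 1.0, "Kr": 0.0})
```

In a sequence-based dynamical model, a probability like this would replace the sigmoid synthesis term of a gene circuit and be evaluated in every nucleus at every time step.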

Diagram 2: Integrated experimental and computational workflow for gap gene network analysis, showing the iterative cycle of data collection, model construction, and experimental validation.

Reverse Engineering Network Structure

Reverse engineering approaches have been particularly valuable for inferring regulatory relationships directly from quantitative expression data. Gene circuit models combined with optimization algorithms can efficiently fit different types of regulatory rules and test alternative network structures [14]. This approach has enabled researchers to:

- Compare different modeling formalisms (continuous vs. logical) for representing regulatory relationships [14].

- Test network structures suggested by the literature against quantitative expression data [14].

- Identify core regulatory relationships necessary and sufficient for gap gene patterning [14].

- Resolve ambiguities in qualitative models, such as the regulatory effects of Hb on Kr and Kr on kni [14].

These computational studies have led to revised network models that eliminate certain links present in traditional textbook models while confirming the essential role of repressive feedback between complementary gap genes [14].
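Reverse engineering of this kind treats each candidate network structure as a constrained parameter-fitting problem and compares the best achievable fits. The toy sketch below generates synthetic "expression data" from a known activating rule and then fits two alternative structures, one where the input activates and one where it represses. It uses an exhaustive grid search as a stand-in for the global optimizers used in practice. The input gradient, grid ranges and true parameters are all hypothetical.

```python
import numpy as np

def phi(u):
    """Sigmoid regulation-expression function (same form as in gene circuit models)."""
    return 0.5 * (u / np.sqrt(u ** 2 + 1.0) + 1.0)

def fit_rule(inputs, target, w_grid, h_grid):
    """Grid search for the (w, h) minimising the squared error of phi(w * input + h)."""
    best_cost, best_w, best_h = np.inf, None, None
    for w in w_grid:
        for h in h_grid:
            cost = float(np.sum((phi(w * inputs + h) - target) ** 2))
            if cost < best_cost:
                best_cost, best_w, best_h = cost, float(w), float(h)
    return best_cost, best_w, best_h

x = np.linspace(0.0, 1.0, 50)
bcd = np.exp(-x / 0.2)                  # hypothetical input gradient
target = phi(6.0 * bcd - 2.0)           # synthetic data from a known activating rule

h_grid = np.linspace(-5.0, 5.0, 101)
activation = fit_rule(bcd, target, np.linspace(0.0, 10.0, 101), h_grid)   # structure A: w >= 0
repression = fit_rule(bcd, target, np.linspace(-10.0, 0.0, 101), h_grid)  # structure B: w <= 0
```

The structure whose best fit leaves the larger residual (here, repression) is the one the data argue against. This is the logic behind removing textbook links that cannot be reconciled with quantitative expression data.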

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents for Gap Gene Network Studies

| Reagent/Category | Specific Examples | Function/Application | Key Considerations |
|---|---|---|---|
| Antibodies | α-Bcd, α-Hb, α-Kr, α-Kni, α-Gt | Protein localization and quantification | Species specificity, validation required |
| DNA Probes | hb, Kr, kni, gt mRNA antisense probes | In situ hybridization for mRNA patterns | Probe design for specificity |
| Transgenic Reporter Lines | LacZ/GFP reporters with gap gene regulatory elements | Cis-regulatory analysis in vivo | Genomic position effects must be controlled |
| Mutant Strains | bcd⁻, hb⁻, Kr⁻, kni⁻, gt⁻ loss-of-function | Functional analysis of network components | Maternal vs. zygotic phenotypes |
| Position Weight Matrices | Bcd, Cad, Hb, Kr, Kni, Gt binding motifs | TFBS prediction in regulatory elements | Quality and specificity of matrix critical |
| Mathematical Modeling Tools | Gene circuit models, thermodynamic models | Quantitative simulation of network dynamics | Parameter identifiability, validation |

Discussion: Implications for Developmental and Evolutionary Biology

Dynamical Modules in Development

The gap gene network exemplifies how dynamical modules operate as functional units in development, generating specific morphological outcomes through their characteristic activities [2]. Unlike structural modules defined by physical connectivity alone, the gap gene system demonstrates dynamical modularity through its reproducible spatiotemporal patterning behavior across varying contexts [2]. This perspective emphasizes the importance of network dynamics rather than just topology for understanding developmental processes.

The concept of dynamical modules provides a framework for comparing patterning mechanisms across different biological systems. For example, similar principles of gradient interpretation and boundary formation through cross-repression operate in various developmental contexts beyond Drosophila segmentation, suggesting conserved dynamical motifs in pattern formation [2] [12].

Evolutionary Implications

The gap gene network has played a crucial role in the evolution of insect segmentation strategies. While most segmented animals add segments sequentially during growth, higher insects like Drosophila employ long-germband development where segments form simultaneously by subdividing the embryo [10]. This evolutionary transition likely involved the recruitment of gap genes into the segmentation network, with their dynamical properties enabling simultaneous rather than sequential segment determination [10].

Comparative studies across insect species reveal both conserved and divergent aspects of gap gene regulation, suggesting evolutionary tinkering with network dynamics rather than complete rewiring of network structure [10]. The modular nature of the gap gene system—with its combination of maternal inputs, cross-regulatory interactions, and downstream outputs—may facilitate evolutionary changes by allowing partial modification of network components without disrupting overall functionality.

Future Directions and Applications

Recent advances in spatial transcriptomics and single-cell analysis offer new opportunities for studying gap gene network dynamics with unprecedented resolution. Methods like NicheCompass—a graph deep-learning approach that models cellular communication—enable quantitative characterization of cell niches based on signaling events [15]. Applying such approaches to early embryonic patterning could reveal new aspects of gap gene network operation and regulation.

The principles uncovered through studying the gap gene network have broader implications for understanding developmental disorders and designing synthetic biological systems. The dynamical modules perspective may inform strategies for engineering pattern formation in synthetic tissues or organoids, while insights into network robustness could shed light on buffering mechanisms that prevent developmental defects. As a model system, the gap gene network continues to provide fundamental insights into how dynamical regulatory processes generate biological form.

The Link Between Dynamical Modules, Criticality, and Evolutionary Evolvability

The existence of discrete phenotypic traits suggests that the complex regulatory processes underlying them must be functionally modular to evolve independently. Traditionally, functional modularity has been approximated by detecting structural modularity in network architecture, based on the assumption that densely connected subnetworks correspond to functional units [3]. However, a growing body of evidence reveals that the correlation between network structure and function is often loose [3]. Many regulatory networks exhibit modular behavior without structural modularity, challenging this traditional view [3].

This whitepaper introduces dynamical modules as an alternative framework for understanding how complex biological systems are partitioned into functional units. Unlike structural approaches that identify network motifs or communities based on connection density, dynamical modularity focuses on decomposing system behavior into elementary activity-functions that drive different aspects of whole-network behavior [2]. This perspective is particularly relevant for understanding evolvability, the capacity of evolving systems to generate adaptive change [16] [3]. Drawing on [3], we show how specific dynamical modules can exist in states of criticality, which can explain the differential evolvability of various expression features within the same regulatory network.

Theoretical Foundations: From Structural to Dynamical Modules

Limitations of Structural Modularity

Structural definitions of modularity, while useful in many contexts, face several fundamental limitations [3]:

- Context Dependence: Even simple subcircuits exhibit rich dynamic repertoires depending on boundary conditions, parameter values, and specific regulation-expression functions [3].

- Structural Overlap: Most functionally modular networks show partial rather than complete structural separation between modules [3].

- Behavioral Plasticity: Network structure constrains but does not determine function, as even simple topologies can generate multiple dynamical behaviors [2].

The gap gene system of dipteran insects exemplifies these limitations. Although not structurally modular, this system is composed of dynamical modules driving different aspects of whole-network behavior [3].

Defining Dynamical Modules

Dynamical modules represent dissociable causal processes within the genotype-phenotype map that generate specific phenotypic outcomes [2]. They are defined not by physical structure but by their activity-functions—orchestrated patterns of dynamic behavior that contribute to specific system-level functions [2].

| Module Type | Basis of Definition | Key Characteristics | Limitations |
|---|---|---|---|
| Structural | Network topology & connection density | Statistical enrichment of motifs; dense intra-module connections | Loose structure-function correlation; context dependence |
| Variational | Statistical independence of traits | Covariation of functionally related traits | Identifies patterns but not mechanistic causes |
| Functional | Contribution to organismal organization | Classified via perturbatory approaches (e.g., mutagenesis) | Difficult to recompose internal module workings |
| Dynamical | Activity-functions & behavioral decomposition | Internal causal cohesion with contextual autonomy; can operate without structural modularity | Complex to identify and characterize empirically |

Experimental Evidence: Evolvability and the Emergence of Adaptive Mutation Systems

Experimental Evolution of Evolvability

Research at the Max Planck Institute for Evolutionary Biology provides direct experimental evidence that natural selection can shape evolvability itself [16]. In a three-year experiment with microbial populations, researchers subjected lineages to intense selection that required repeated transitions between phenotypic states under fluctuating environmental conditions [16].

Key Experimental Protocol:

- Selection Regime: Microbial populations underwent repeated environmental fluctuations requiring phenotypic transitions [16].

- Lineage Replacement: Lineages unable to develop required phenotypes were eliminated and replaced by successful ones [16].

- Mutation Analysis: Researchers analyzed more than 500 mutations across evolving populations [16].

Results: The experiment revealed the emergence of a localized hyper-mutable locus through a multi-step evolutionary process [16]. This mechanism exhibited a mutation rate up to 10,000 times higher than the original lineage and enabled rapid, reversible phenotypic transitions [16]. This genetic mechanism resembles contingency loci in pathogenic bacteria, allowing microbes to "anticipate" environmental changes through evolutionary history embedded in genetic architecture [16].

Quantitative Analysis of Evolved Mutational Systems

Table: Quantitative Characteristics of Evolved Hypermutable Locus in Microbial Evolution Experiment

| Parameter | Original Lineage | Evolved Hyper-mutable Locus | Functional Significance |
|---|---|---|---|
| Mutation Rate | Baseline | Up to 10,000x higher | Enables rapid adaptation to fluctuating environments |
| Phenotypic Transitions | Limited capacity | Rapid and reversible | Allows population survival despite repeated environmental changes |
| Genetic Mechanism | Standard mutation rates | Similar to bacterial contingency loci | Provides evolutionary "foresight" through embedded history |
| Evolutionary Process | Random mutation | Multi-step evolutionary trajectory | Demonstrates natural selection can act on evolvability itself |
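A back-of-the-envelope calculation shows why a 10,000-fold higher local mutation rate matters for repeated switching. If N cell divisions occur per generation and each produces a switching mutant with probability μ, the chance of at least one mutant per generation is 1 − (1 − μ)^N, and the mean waiting time is its reciprocal. Only the 10,000-fold factor comes from the text. The population size and baseline rate below are hypothetical, and the calculation ignores selection, drift and reversion.

```python
import math

def expected_generations_to_first_mutant(pop_size, rate_per_division):
    """Mean (geometric) waiting time in generations until the first mutant appears,
    assuming pop_size independent divisions per generation at a constant rate."""
    # P(at least one mutant per generation) = 1 - (1 - mu)^N, computed in a numerically stable way
    p = -math.expm1(pop_size * math.log1p(-rate_per_division))
    return 1.0 / p

N = 1e6                      # hypothetical divisions per generation
mu_base = 1e-9               # hypothetical baseline rate at the switching locus
mu_hyper = mu_base * 1e4     # "up to 10,000x higher", as reported for the evolved locus
base_wait = expected_generations_to_first_mutant(N, mu_base)
hyper_wait = expected_generations_to_first_mutant(N, mu_hyper)
```

Under these assumptions the baseline lineage waits on the order of a thousand generations for a switching mutant, whereas the hyper-mutable lineage produces one almost every generation.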

Case Study: Dynamical Modules in the Gap Gene System

The gap gene network in Drosophila melanogaster represents an ideal model for studying dynamical modularity [3]. This gene regulatory network is involved in pattern formation and segment determination during early embryogenesis, reading and interpreting morphogen gradients along the antero-posterior axis [3].

Key Components:

- Maternal coordinate genes: bicoid (bcd), caudal (cad), hunchback (hb)

- Trunk gap genes: hunchback (hb), Krüppel (Kr), knirps (kni), giant (gt)

- Extensive cross-regulation: Particularly during cleavage cycle 14A [3]

Dynamical Decomposition

Research reveals that although the gap gene system lacks strict structural modularity, it can be decomposed into dynamical modules that drive different aspects of pattern formation [3]. These subcircuits share the same regulatory structure but differ in their components and sensitivity to regulatory interactions [3].

Critically, some of these subcircuits exist in a state of criticality, while others do not, which directly explains the differential evolvability of various expression features within the system [3]. This finding provides a mechanistic basis for understanding why some aspects of developmental systems are more evolutionarily flexible than others.

Diagram: Dynamical Modules in the Gap Gene Network. The system decomposes into functional modules despite shared regulatory structure, with some modules (like Module 2) exhibiting criticality that enhances evolvability.
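In this context, "criticality" refers to a subcircuit operating close to a bifurcation, where a small parameter change alters the number or stability of steady states. The sketch below shows what that means in a generic self-activating gene, dx/dt = b + v·xⁿ/(Kⁿ + xⁿ) − x. It counts steady states by looking for sign changes of dx/dt on a fine grid while the maximal activation rate v is varied. This is a hypothetical toy model, not the analysis of gap gene subcircuits in [3]. It only illustrates how a bifurcation scan detects a critical transition (here, a saddle-node fold from one to three steady states).

```python
import numpy as np

def steady_states(v, basal=0.05, K=1.0, n=4, x_max=10.0, n_grid=20001):
    """Locate steady states of dx/dt = basal + v*x^n/(K^n + x^n) - x by sign changes on a grid."""
    x = np.linspace(0.0, x_max, n_grid)
    f = basal + v * x ** n / (K ** n + x ** n) - x
    crossings = np.flatnonzero(np.sign(f[:-1]) != np.sign(f[1:]))
    return x[crossings]

# Scan the activation strength and record how many steady states exist at each value.
vs = np.linspace(0.5, 3.0, 26)
counts = [len(steady_states(v)) for v in vs]
fold_v = float(vs[counts.index(3)])   # first scanned value at which the switch is bistable
```

A subcircuit whose fitted parameters sit just below `fold_v` in such a scan is poised near the transition, so small mutational changes can produce qualitatively new behavior. This is the intuition behind linking criticality to evolvability.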

Methodological Framework: Identifying and Analyzing Dynamical Modules

Experimental Workflow for Dynamical Decomposition

Diagram: Experimental Workflow for Identifying Dynamical Modules. The process involves systematic perturbation, quantitative measurement, and behavioral decomposition to identify functionally autonomous modules.

Research Reagent Solutions for Dynamical Analysis

Table: Essential Research Reagents and Computational Tools for Analyzing Dynamical Modules

| Reagent/Tool | Function | Application Example |
|---|---|---|
| Live Imaging & Quantitative Microscopy | High-resolution time-series measurement of expression patterns | Tracking gap gene expression dynamics in Drosophila embryos [3] |
| CRISPR-Cas9 Mutagenesis | Targeted gene knockout and regulatory perturbation | Testing necessity of specific interactions for module function [3] |
| Fluorescent Reporter Constructs | Visualizing expression dynamics of multiple genes simultaneously | Monitoring overlapping expression domains in gap gene system [3] |
| Parameter Optimization Algorithms | Inferring regulatory parameters from quantitative data | Reconstructing data-compatible models of network dynamics [3] |
| Bifurcation Analysis Tools | Identifying critical transitions in parameter space | Detecting states of criticality in specific subcircuits [3] |
| Data-Driven Model Discovery Methods | Inferring model structure directly from measurements | Complementary approach to classical mechanistic modeling [17] |

Implications for Evolutionary Developmental Biology and Biomedical Research

The recognition that evolvability itself can evolve through selection on mutational mechanisms represents a paradigm shift in evolutionary biology [16]. The emergence of hyper-mutable loci under specific selective regimes demonstrates that evolution can favor genetic architectures that enhance future adaptive potential [16].

In the context of developmental evolution, the identification of dynamical modules provides a mechanistic explanation for differential evolvability—why some traits evolve more readily than others even within integrated developmental systems [3]. Modules in critical states may be more responsive to evolutionary change, directing phenotypic variation along specific axes.

For biomedical research, particularly in drug development, understanding dynamical modularity has significant implications:

- Network Medicine: Identifying critical dynamical modules in disease networks reveals new therapeutic targets beyond single-gene approaches.

- Antibiotic Resistance: Understanding how contingency loci evolve informs strategies to combat rapidly adapting pathogens [16].

- Cancer Evolution: Analyzing dynamical modules in tumor progression may predict evolutionary trajectories and resistance mechanisms.

The dynamical perspective bridges evolutionary and developmental biology, providing a unified framework for understanding how complex phenotypes are generated and evolve. By focusing on activity-functions rather than structural components, researchers can identify the fundamental building blocks of evolvable biological systems.

Modularity, the organization of systems into discrete, interconnected units, is a fundamental architectural principle observed across biological networks, from neural circuits to metabolic pathways. This whitepaper synthesizes cutting-edge research demonstrating how dynamical modules—functionally cohesive units with distinct regulatory dynamics—drive whole-network behavior in developmental and regulatory contexts. We present a detailed analysis of modular architecture's role in conferring robustness, evolvability, and functional specialization across biological scales. For researchers and drug development professionals, understanding these principles provides novel frameworks for tackling complex diseases, where dysregulation of modular coordination often underlies pathological phenotypes. Supported by experimental data and quantitative analyses from recent studies, this review establishes modularity as a universal design principle governing biological complexity.

Biological systems exhibit extraordinary complexity, yet this complexity is hierarchically organized through modular architectures that facilitate robust functionality and adaptive evolution. Modularity describes systems composed of "sets of strongly interacting parts that are relatively autonomous with respect to each other" [18]. In both neural and metabolic networks, this manifests as densely interconnected subunits with sparser between-module connections, creating functional compartments that can operate semi-autonomously while contributing to integrated network behavior [19] [20].

The dynamical modules framework posits that the fundamental building blocks of biological regulation are robust regulatory switches controlling discrete sets of phenotypic outcomes [21]. These modules maintain dynamical autonomy despite being embedded in densely wired cellular networks, enabling the combinatorial generation of distinct phenotypic states through their coordinated interactions [21]. This perspective shifts focus from static structural descriptions to the functional dynamics that ultimately determine physiological and pathological behaviors.

For drug development professionals, understanding modular principles is particularly crucial when tackling complex diseases like cancer, where breakdown in the coordination between multiple functional modules creates unhealthy phenotype-combinations [21]. Traditional target-based approaches often fail because they disregard this modular architecture and the emergent behaviors it produces. This whitepaper examines the principles of modularity through case studies from neural and metabolic networks, providing researchers with experimental frameworks and analytical tools for studying modular systems.

Theoretical Foundations of Dynamical Modularity

Defining Modularity Across Biological Scales

Modularity represents a universal organizing principle with consistent features across scales:

- Structural Modularity: Densely connected network components with higher intra-module than inter-module connection density [19]

- Functional Modularity: Units performing specific, separable functions within the larger system [2]

- Variational Modularity: Sets of traits that vary together with statistical independence from other modules [18]

- Dynamical Modularity: Regulatory subsystems that maintain discrete behavioral states and can toggle between them [21]

The dynamical modularity concept is particularly powerful because it directly addresses how modules generate distinct phenotypic outcomes through their coordinated activities. As Jaeger et al. argue, "All biological traits are generated by some underlying regulatory dynamics" [2], making the identification of dynamical modules essential for understanding phenotype generation.

Advantages of Modular Architectures

Modular organization confers several evolutionarily advantageous properties:

Table 1: Key Advantages of Modular Biological Networks

| Advantage | Mechanism | Example |
|---|---|---|
| Robustness | Containment of perturbations within modules | Sigma factor regulatory networks in bacteria maintaining function despite individual factor dysfunction [19] |
| Evolvability | Quasi-independent modification of modules | Independent evolution of fore- and hind-limbs in aerial vertebrates [2] |
| Functional Specialization | Division of labor among specialized modules | Distinct neural circuits for segregated information processing [22] |
| Efficient Learning | Reuse and recombination of existing modules | Curriculum learning in artificial neural networks via modular growth [23] |

These advantages explain modularity's pervasive evolution across biological systems. As Wagner et al. noted, modularity enables the evolution of complexity by allowing parts to evolve independently without disrupting overall function [18]. In neural networks, this facilitates the coexistence of segregated (specialized) and integrated (binding) information processes [22]. In metabolic systems, modular organization allows for the efficient coordination of biochemical pathways under changing environmental conditions [19].

Modularity in Neural Networks: From Biological to Artificial Systems

Structural and Functional Modularity in Biological Neural Networks

The brain's connectivity follows a modular and hierarchical organization at different spatial and functional scales [22]. This architecture is suggested to facilitate the coexistence of segregation and integration of information: neuronal circuits associated with specific functions are densely connected with each other, while long-range connections and network hubs allow for integration of different information streams [22].

Experimental studies on cultured neuronal networks with engineered modular traits demonstrate how modular architecture confers robustness to damage. When these modular networks suffered focal lesions, the frequency of spontaneous collective activity events initially declined but recovered to pre-damage levels within 24 hours [24]. Numerical models incorporating spike-timing-dependent plasticity (STDP) captured this recovery phenomenon, demonstrating that the combination of modularity and plasticity prevents total loss of network-wide activity and facilitates functional restoration [24].

Emergence and Maintenance of Neural Modules

A crucial question in neural connectivity is understanding how modular organization naturally emerges as a consequence of functional needs. Bergoin et al. demonstrated that simple STDP rules, based only on pre- and post-synaptic spike times, can lead to the stable encoding of memories in spiking neural networks without control mechanisms [22]. Their model incorporated both excitatory and inhibitory neurons with Hebbian and anti-Hebbian STDP, revealing that only the combination of two inhibitory STDP sub-populations allows for the formation of stable modules [22].
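STDP rules of the kind described here depend only on the time difference between pre- and postsynaptic spikes. The sketch below implements a generic pair-based exponential STDP window. In the Hebbian form, pre-before-post pairs potentiate and post-before-pre pairs depress. The anti-Hebbian form is modelled as the same window with the sign flipped, which is one common simplification. Amplitudes and time constants are illustrative assumptions. The rules used by Bergoin et al., particularly for their inhibitory sub-populations, may differ in shape and parameters.

```python
import math

def stdp_dw(dt_ms, a_plus=0.01, a_minus=0.012, tau_plus=20.0, tau_minus=20.0, anti_hebbian=False):
    """Weight change for one spike pair, with dt_ms = t_post - t_pre (pair-based exponential STDP)."""
    if dt_ms > 0:        # pre fires before post: potentiation under the Hebbian rule
        dw = a_plus * math.exp(-dt_ms / tau_plus)
    elif dt_ms < 0:      # post fires before pre: depression under the Hebbian rule
        dw = -a_minus * math.exp(dt_ms / tau_minus)
    else:
        dw = 0.0
    return -dw if anti_hebbian else dw   # anti-Hebbian variant: same window, opposite sign

def total_dw(pre_spikes, post_spikes, **kwargs):
    """Sum the pair-based updates over all pre/post spike pairs (all-to-all pairing)."""
    return sum(stdp_dw(t_post - t_pre, **kwargs) for t_pre in pre_spikes for t_post in post_spikes)

# Spike times in ms: each presynaptic spike is followed 5 ms later by a postsynaptic spike.
hebbian_change = total_dw([0.0, 100.0], [5.0, 105.0])
anti_hebbian_change = total_dw([0.0, 100.0], [5.0, 105.0], anti_hebbian=True)
```

Repeated causal pairings strengthen a synapse under the Hebbian rule and weaken it under the anti-Hebbian rule. Combining the two across different neuron populations is what allows selectivity and stabilizing control to coexist in such models.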

Table 2: Key Findings from Neural Network Modularity Studies

| Study | Network Type | Key Finding | Mechanism |
|---|---|---|---|
| Bergoin et al. [22] | Spiking neural network with STDP | Two inhibitory STDP sub-populations enable stable module formation | Hebbian neurons control firing activity; anti-Hebbian neurons promote pattern selectivity |
| Montalà-Flaquer et al. [24] | Cultured neuronal networks on engineered substrates | Modular structure enhances recovery from focal damage | STDP-mediated reorganization preserves network-wide activity |
| Béna & Bourne [25] | Artificial neural networks | Functional specialization requires meaningful separability in environment and resource constraints | Limited network resources drive specialization in separable environments |

After learning phases, these networks settle into asynchronous irregular resting-state activity associated with spontaneous memory recalls, which prove fundamental for long-term memory consolidation [22]. This demonstrates how modular architecture supports both active learning and offline memory maintenance through naturally emerging dynamics.

Implications for Artificial Intelligence and Neuromorphic Systems

Research on biological neural modularity has profound implications for artificial intelligence. Studies comparing modular versus non-modular artificial neural networks found that modular networks consistently outperform their non-modular counterparts across multiple metrics, including training time, generalizability, and robustness to perturbations [23].

The modular growth approach—adding specialized modules incrementally through curriculum learning—enables more efficient learning of complex tasks by building on previously acquired capabilities [23]. This mirrors evolutionary processes where new functionalities emerge through the duplication and specialization of existing modules. Furthermore, modular architectures demonstrate superior robustness to connection errors, though they can be sensitive to changes in processing timescales [23].

Modularity in Metabolic and Regulatory Networks

Dynamical Modules in Metabolic Control

Metabolic networks exhibit pronounced hierarchical modular organization, with highly connected modules composed of smaller, less connected modules [20]. This hierarchical structure correlates with functional classification of metabolic reactions, suggesting modularity is essential for efficient metabolic functioning. From an evolutionary perspective, modularity in metabolic networks enables organisms to adapt to diverse environmental challenges by reconfiguring metabolic fluxes through modular pathways [19].

Dynamical modeling suggests that cellular control, in metabolism and beyond, is often organized through coupled regulatory switches that toggle between discrete functional states. A well-characterized example outside metabolism is the mammalian cell cycle, in which three bistable switches control commitment to division (Restriction Point), entry into mitosis (G2/M transition), and exit from mitosis (Spindle Assembly Checkpoint) [21]. When modeled as a Boolean network, these switches display discrete attractor states corresponding to distinct phenotypic outcomes, with regulatory barriers ensuring sharp transitions between cell cycle phases [21].
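The attractor analysis described here can be illustrated on a much smaller network. The sketch below finds all attractors of a synchronously updated Boolean network by following every possible start state until it revisits a state. It then applies the method to a hypothetical three-node switch that is not the published cell cycle model of [21]. In this switch, a constant input S turns on a self-sustaining node A, and a node B antagonizes A. Synchronous updating is one modeling choice among several. Asynchronous schemes can yield different attractors.

```python
from itertools import product

def step(state, rules):
    """Synchronous update: every node applies its rule to the current state."""
    return tuple(rule(state) for rule in rules)

def attractors(rules, n_nodes):
    """Follow all 2^n start states and collect the attractors (fixed points or cycles) they reach."""
    found = set()
    for start in product((0, 1), repeat=n_nodes):
        trajectory, s = [], start
        while s not in trajectory:
            trajectory.append(s)
            s = step(s, rules)
        cycle = trajectory[trajectory.index(s):]
        k = cycle.index(min(cycle))              # rotate so each cycle has one canonical form
        found.add(tuple(cycle[k:] + cycle[:k]))
    return found

# Hypothetical 3-node switch; state = (S, A, B).
rules = [
    lambda s: s[0],                               # S: input signal held at its initial value
    lambda s: int(s[0] or (s[1] and not s[2])),   # A: switched on by S, or maintained while B is off
    lambda s: int(not s[1]),                      # B: on whenever A is off
]
switch_attractors = attractors(rules, 3)
```

With S off, the switch has two fixed points (A on or B on), so it remembers its history. With S on, only the A-on state remains. Once the switch has been pushed into that state, it stays there even after S is removed. This is the kind of irreversible commitment that the Restriction Point switch implements.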

Principles of Dynamical Modularity in Regulatory Networks

Analysis of coupled switch ensembles reveals three general principles governing their coordinated function [21]:

- Combinatorial Generation of Global States: Global cellular states emerge as discrete combinations of switch-level phenotypes

- Hierarchical Dependencies: Specific hierarchies and dependencies exist among switches

- Context-Dependent Coupling: The functional effect of a switch depends on the state of other switches

These principles explain how a limited number of regulatory switches can generate a diverse repertoire of coordinated phenotypic responses. In cancer cells, for example, breakdown in the normal coordination of these switches enables the emergence of pathological phenotype-combinations, such as simultaneous proliferation, resistance to cell death, and invasive migration [21].

Experimental Approaches and Methodologies

Engineering Modular Neuronal Networks

Montalà-Flaquer et al. described a protocol for creating modular neuronal cultures on topographically modulated substrates [24]:

Fabrication of Engineered Topographical Substrates:

- Materials: Polydimethylsiloxane (PDMS) base and curing agent (Sylgard 184); fiberglass-copper molds with parallel stripe patterns (300 μm wide, 70 μm high)

- Method: PDMS mixture (90% base, 10% curing agent) poured onto the mold and cured at 90 °C for 2 hours

- Result: PDMS substrates with parallel tracks guiding neuronal growth and creating strongly connected modules along tracks with weaker connections across them

Cell Culture and Monitoring:

- Primary neurons from rat embryonic cortices (E18-19) seeded at a density of ~400 neurons/mm²

- Transduction with the GCaMP6s calcium indicator on DIV 1 via AAV9.Syn.GCaMP6s.WPRE.SV40

- Wide-field calcium imaging at 50 frames/s using an inverted fluorescence microscope (Zeiss Axiovert 25C) with a high-speed camera (Hamamatsu Orca Flash 4.0v3)

- Focal lesion induced with a scalpel, with activity monitored before and after damage

This experimental system enables precise investigation of how modular architecture influences network response to injury and subsequent recovery dynamics.
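The sketch below shows one way to quantify spontaneous collective activity from recordings like these. It converts wide-field GCaMP traces into ΔF/F0 and flags network-wide events. It assumes traces arrive as an (ROIs × frames) NumPy array sampled at 50 frames/s. The 10th-percentile baseline, the median + 3·MAD activation threshold, and the 50% co-activation criterion are illustrative assumptions, not the analysis parameters used in [24].

```python
import numpy as np

FPS = 50.0  # acquisition rate reported for the imaging setup (frames/s)


def delta_f_over_f(traces: np.ndarray, baseline_percentile: float = 10.0) -> np.ndarray:
    """Convert raw fluorescence (ROIs x frames) to dF/F0.

    F0 is a low percentile of each ROI's trace, an assumed baseline choice
    suited to sparse spontaneous activity.
    """
    f0 = np.percentile(traces, baseline_percentile, axis=1, keepdims=True)
    return (traces - f0) / f0


def detect_network_events(dff: np.ndarray, k: float = 3.0,
                          min_fraction: float = 0.5) -> np.ndarray:
    """Return the frame indices at which network-wide events begin.

    An ROI is active when its dF/F exceeds its median + k * MAD; a network
    event starts at the first frame where at least `min_fraction` of ROIs
    are active together. Both thresholds are illustrative assumptions.
    """
    med = np.median(dff, axis=1, keepdims=True)
    mad = np.median(np.abs(dff - med), axis=1, keepdims=True)
    active = dff > med + k * mad                  # ROIs x frames, boolean
    above = active.mean(axis=0) >= min_fraction   # enough ROIs co-active?
    previous = np.concatenate(([False], above[:-1]))
    return np.flatnonzero(above & ~previous)      # rising edges only


def event_rate_per_min(onsets: np.ndarray, n_frames: int, fps: float = FPS) -> float:
    """Events per minute of recording."""
    return len(onsets) / (n_frames / fps / 60.0)
```

To get a simple before/after measure of collective activity, run `event_rate_per_min` on the pre-lesion and post-lesion recordings of the same culture and compare the two rates.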

Computational Modeling of Modular Networks

Spiking Neural Network (SNN) Model with STDP [24] [22]:

- Network architecture: Excitatory and inhibitory neurons with Hebbian and anti-Hebbian STDP rules

- Plasticity mechanism: Spike-timing-dependent plasticity modifies synaptic strength based on relative timing of pre- and postsynaptic spikes

- Modular implementation: Simulated modules with high intra-module and sparse inter-module connectivity

- Damage simulation: Selective silencing of neuronal populations to model focal lesions

- Recovery quantification: Measurement of spontaneous collective activity events post-damage
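Spiking models commonly use a pair-based form of STDP, sketched below. The exponential window, the amplitudes `A_PLUS` and `A_MINUS`, and the 20 ms time constants are assumed textbook-style values, not parameters from [24] [22]. The anti-Hebbian variant here simply mirrors the Hebbian window. Which synapse types use which rule in those models is not specified here.

```python
import math
from typing import Sequence

# Assumed illustrative parameters (not taken from [24] or [22]).
A_PLUS, A_MINUS = 0.01, 0.012      # potentiation / depression amplitudes
TAU_PLUS, TAU_MINUS = 20.0, 20.0   # window time constants in ms


def stdp_kernel(dt_ms: float, anti_hebbian: bool = False) -> float:
    """Weight change for one spike pair, with dt_ms = t_post - t_pre."""
    if dt_ms > 0:      # pre fires before post: Hebbian potentiation
        dw = A_PLUS * math.exp(-dt_ms / TAU_PLUS)
    elif dt_ms < 0:    # post fires before pre: Hebbian depression
        dw = -A_MINUS * math.exp(dt_ms / TAU_MINUS)
    else:
        dw = 0.0
    # The anti-Hebbian rule inverts the sign of the Hebbian window.
    return -dw if anti_hebbian else dw


def update_weight(w: float, pre_spikes: Sequence[float],
                  post_spikes: Sequence[float], anti_hebbian: bool = False,
                  w_max: float = 1.0) -> float:
    """Sum the kernel over all pre/post spike pairs and clip to [0, w_max]."""
    total = sum(stdp_kernel(t_post - t_pre, anti_hebbian)
                for t_pre in pre_spikes for t_post in post_spikes)
    return min(max(w + total, 0.0), w_max)
```

For example, `update_weight(0.5, [10.0], [15.0])` strengthens the synapse slightly because the presynaptic spike leads by 5 ms. The same call with `anti_hebbian=True` weakens it.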

Boolean Modeling of Regulatory Switches [21]:

- Framework: Logical modeling where molecules are ON (active) or OFF (inactive)

- Advantage: Captures qualitative features of regulatory interactions without detailed kinetic parameters

- State transition analysis: Enumeration of the trajectories from all 2^N initial states of an N-node network

- Attractor identification: Stable states (fixed points) or recurring cycles into which trajectories settle, representing distinct phenotypic outcomes

- Coupling analysis: Investigation of how switches toggle each other to generate coordinated dynamics
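Below is a minimal sketch of the exhaustive state-transition analysis, assuming synchronous updating (every node is updated at once from the current state). The two-node network is a hypothetical toy, not the cell-cycle model of [21]. Each node is a self-sustaining switch, and in the coupled version switch Y can remain ON only while switch X is ON. Synchronous updating can produce cyclic attractors that vanish under asynchronous schemes, so the update rule is itself a modeling choice.

```python
from itertools import product
from typing import Callable, Dict, FrozenSet, List, Tuple

State = Tuple[int, ...]
Rule = Callable[[State], int]


def step(state: State, rules: List[Rule]) -> State:
    """Synchronous update: every node reads the same current state."""
    return tuple(int(rule(state)) for rule in rules)


def find_attractors(rules: List[Rule]) -> Dict[FrozenSet[State], int]:
    """Map each attractor (a set of states) to the size of its basin.

    Follows the trajectory from each of the 2^N initial states until a state
    repeats; the repeating segment is the attractor (a fixed point when it
    contains one state, otherwise a cycle).
    """
    basins: Dict[FrozenSet[State], int] = {}
    for init in product((0, 1), repeat=len(rules)):
        first_visit: Dict[State, int] = {}
        trajectory: List[State] = []
        state: State = init
        while state not in first_visit:
            first_visit[state] = len(trajectory)
            trajectory.append(state)
            state = step(state, rules)
        attractor = frozenset(trajectory[first_visit[state]:])
        basins[attractor] = basins.get(attractor, 0) + 1
    return basins


# Hypothetical toy switches: node 0 = X, node 1 = Y (both self-sustaining).
UNCOUPLED: List[Rule] = [lambda s: s[0], lambda s: s[1]]
COUPLED: List[Rule] = [lambda s: s[0], lambda s: s[1] and s[0]]

if __name__ == "__main__":
    for name, rules in (("uncoupled", UNCOUPLED), ("coupled", COUPLED)):
        for attractor, size in find_attractors(rules).items():
            print(name, sorted(attractor), "basin:", size)
```

Without coupling, all four ON/OFF combinations are attractors, mirroring the combinatorial generation of global states. The coupling removes the combination (X OFF, Y ON), a minimal instance of a hierarchical dependency between switches.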

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Modularity Studies

| Reagent/Technology | Function | Example Use |
|---|---|---|
| PDMS Topographical Substrates | Guides neuronal growth to create engineered modular networks | Creating spatially constrained modular neuronal cultures [24] |
| GCaMP6s Calcium Indicator | Fluorescence-based monitoring of neuronal activity | Wide-field calcium imaging of spontaneous network activity [24] |
| STDP Models | Implementation of timing-dependent synaptic plasticity | Simulating activity-dependent reorganization in spiking neural networks [24] [22] |
| Boolean Network Modeling | Logical representation of regulatory dynamics | Identifying attractor states in coupled switch systems [21] |
| Community Detection Algorithms | Identification of modules in network data | Detecting variational modules in correlation matrices [18] |
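The table names community detection only generically, and the specific algorithm behind [18] is not given here. The sketch below uses one standard option. It thresholds a trait correlation matrix into a network, scores partitions with Newman's modularity Q, and splits the network with the leading-eigenvector method. The 0.5 correlation threshold and the synthetic two-factor data are assumptions for illustration.

```python
import numpy as np


def correlation_network(data: np.ndarray, threshold: float = 0.5) -> np.ndarray:
    """Binary adjacency: link traits (rows of data) with |Pearson r| >= threshold."""
    r = np.corrcoef(data)
    adj = (np.abs(r) >= threshold).astype(float)
    np.fill_diagonal(adj, 0.0)  # no self-loops
    return adj


def modularity_matrix(adj: np.ndarray) -> np.ndarray:
    """B_ij = A_ij - k_i k_j / (2m) for an undirected network."""
    k = adj.sum(axis=1)
    return adj - np.outer(k, k) / k.sum()


def modularity(adj: np.ndarray, labels: np.ndarray) -> float:
    """Newman's Q = (1 / 2m) * sum_ij B_ij * delta(c_i, c_j)."""
    same_module = labels[:, None] == labels[None, :]
    return float(modularity_matrix(adj)[same_module].sum() / adj.sum())


def leading_eigenvector_split(adj: np.ndarray) -> np.ndarray:
    """Two-way split by the sign of the leading eigenvector of B."""
    eigvals, eigvecs = np.linalg.eigh(modularity_matrix(adj))
    leading = eigvecs[:, np.argmax(eigvals)]
    return (leading >= 0).astype(int)


if __name__ == "__main__":
    # Synthetic data: traits 0-4 share latent factor 0, traits 5-9 share factor 1.
    rng = np.random.default_rng(1)
    factors = rng.normal(size=(2, 500))
    data = np.vstack([factors[0] + 0.5 * rng.normal(size=(5, 500)),
                      factors[1] + 0.5 * rng.normal(size=(5, 500))])
    adj = correlation_network(data)
    labels = leading_eigenvector_split(adj)
    print("module labels:", labels, "Q =", round(modularity(adj, labels), 3))
```

A single leading-eigenvector split yields two modules. Networks with more modules need repeated subdivision or another method such as Louvain optimization.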

Visualization of Key Concepts

Figure: Modular network response to focal damage

Figure: Coordination of coupled switches in cell cycle regulation

Implications for Drug Development and Disease Treatment

The dynamical modularity perspective offers transformative insights for therapeutic development, particularly for complex diseases characterized by coordinated breakdown of multiple functions. Traditional single-target approaches often fail because they disregard the modular organization of regulatory networks and the emergent behaviors that arise from module interactions [21].

Targeting Modular Coordination in Cancer

Cancer cells exploit modular organization to generate adaptable, therapy-resistant phenotype-combinations. Rather than targeting individual pathways, effective therapeutic strategies might target:

- Module Coordination Mechanisms: Interventions that disrupt the abnormal coupling between switches driving proliferation, survival, and invasion

- Attractor Landscape Modifiers: Treatments that shift the balance of attractor states toward healthy phenotypes rather than eliminating specific molecules

- Robustness Vulnerabilities: Identification of context-dependent module vulnerabilities that emerge only in specific phenotypic states
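A toy Boolean model can make the idea of an attractor landscape modifier concrete. Clamp one node to mimic an intervention, then compare the fraction of initial states that end in each attractor. Everything in this sketch is hypothetical: the three-node network (G: growth signal, P: proliferation, A: apoptosis), its rules, and the use of node pinning as a stand-in for an inhibitor. Updating is synchronous.

```python
from itertools import product
from typing import Callable, Dict, FrozenSet, List, Tuple

State = Tuple[int, ...]
Rule = Callable[[State], int]

# Hypothetical toy rules; node order is (G, P, A).
RULES: List[Rule] = [
    lambda s: s[0],                       # G: sustained growth signal
    lambda s: s[0] and not s[2],          # P: needs G, repressed by A
    lambda s: (not s[0]) and (not s[1]),  # A: on without growth or proliferation
]


def pin(rules: List[Rule], node: int, value: int) -> List[Rule]:
    """Copy of `rules` with one node clamped (0 = inhibition, 1 = activation)."""
    pinned = list(rules)
    pinned[node] = lambda s: value
    return pinned


def basin_fractions(rules: List[Rule]) -> Dict[FrozenSet[State], float]:
    """Fraction of all 2^N initial states that reach each attractor."""
    counts: Dict[FrozenSet[State], int] = {}
    n_states = 2 ** len(rules)
    for init in product((0, 1), repeat=len(rules)):
        first_visit: Dict[State, int] = {}
        path: List[State] = []
        state: State = init
        while state not in first_visit:
            first_visit[state] = len(path)
            path.append(state)
            state = tuple(int(rule(state)) for rule in rules)
        attractor = frozenset(path[first_visit[state]:])
        counts[attractor] = counts.get(attractor, 0) + 1
    return {a: c / n_states for a, c in counts.items()}


if __name__ == "__main__":
    print("untreated:", basin_fractions(RULES))
    print("G inhibited:", basin_fractions(pin(RULES, node=0, value=0)))
```

In this toy model, half of the initial states settle into the proliferative attractor (G, P, A) = (1, 1, 0) and half into the apoptotic attractor (0, 0, 1). Clamping G to 0 moves every initial state into the apoptotic basin. This change in basin occupancy is the readout by which an attractor landscape modifier would be judged.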

Enhancing Neural Repair Through Modularity Principles

In neurological disorders and brain injury, understanding modular architecture suggests novel rehabilitation approaches:

- Activity-Dependent Plasticity: Leveraging STDP mechanisms to reinforce compensatory pathways

- Module-Specific Stimulation: Targeted neuromodulation of specific functional modules to facilitate recovery

- Network-Wide Effects: Recognizing that focal interventions can have distributed benefits through modular network architecture

Modularity represents a universal design principle governing biological organization across scales, from molecular networks to entire ecosystems. The dynamical modules perspective—focusing on robust regulatory switches that control discrete phenotypic outcomes—provides a powerful framework for understanding how local interactions generate global system behaviors.

Future research directions should prioritize:

- Quantitative Measures of Dynamical Modularity: Developing robust metrics to quantify the degree and nature of modular coordination in living systems

- Multi-Scale Integration: Bridging modular phenomena across spatial and temporal scales to understand hierarchical control

- Therapeutic Applications: Exploiting modular principles for developing network-level interventions in complex diseases

- Artificial Intelligence: Implementing biological modularity principles to create more efficient, adaptable, and robust artificial learning systems

For researchers and drug development professionals, embracing this modular perspective requires a shift from reductionist, target-focused approaches to network-level thinking that acknowledges the emergent properties of dynamically coupled regulatory modules. By understanding the universal principles of modularity manifest in neural and metabolic networks, we can develop more effective strategies for addressing complex diseases and engineering adaptive intelligent systems.

Mapping the Dynamics: Computational and Experimental Tools for Module Identification

Decomposing Network Behavior into Elementary Activity-Functions

The analysis of complex biological networks represents a cornerstone of systems biology, particularly in understanding developmental processes and disease mechanisms such as cancer. Traditional structural analyses of network topology provide necessary but insufficient insights into dynamic functional behaviors. This whitepaper presents a methodological framework for decomposing overall network behavior into discrete, quasi-independent elementary activity-functions. Grounded in the theory of dynamical modules—subsystems characterized by internal causal cohesion and contextual autonomy—this approach enables researchers to map specific phenotypic outcomes to specific regulatory dynamics [2]. We provide comprehensive experimental protocols for identifying these modules, quantitative frameworks for their analysis, and visualizations of their hierarchical relationships, creating an essential toolkit for researchers and drug development professionals aiming to target specific network functionalities.

All phenotypic traits, from morphological structures to disease susceptibilities, are generated by underlying regulatory dynamics that constitute the organism's epigenotype [2]. This complex genotype-phenotype map exhibits a modular architecture that enables the quasi-independent evolution and functioning of traits—a principle fundamental to evolvability and, by extension, to the pathological dysregulation seen in diseases like cancer [2]. While variational modules are identified through statistical independence of traits and structural modules through dense network connectivity, these approaches cannot fully explain emergent, context-sensitive behaviors [2].

Dynamical modularity offers a more functionally relevant perspective by decomposing the behavior of a complex regulatory system into elementary activity-functions. These modules are defined by their coherent temporal activity patterns and functional contributions, which may occur even in networks lacking clear structural modularity [2]. For drug development, this is transformative: it shifts the therapeutic target from a static structural component (e.g., a highly connected network node) to a specific, dysfunctional dynamical activity. This paper establishes a framework for this decomposition, with protocols designed for researchers investigating the dynamical modules that drive whole-network behavior in developmental and disease contexts.

Core Theoretical Framework: From Structural to Dynamical Modules

Kinds of Biological Modules

Biological modules can be classified by their defining principles, each with distinct strengths for analysis:

- Variational Modules: Defined by the statistical co-variance of traits, enabling their quasi-independent evolution [2].

- Structural Modules: Identified as densely interconnected subnetworks (e.g., network cliques or motifs) within larger regulatory networks [2].

- Functional Modules: Defined by their contribution to a specific biological function, often identified through perturbation studies (e.g., mutagenesis screens) [2].

- Dynamical Modules: The focus of this framework, these are processes or activities distinguished by coherent temporal patterns and functional outputs, which may or may not align with structural modules [2].

The Concept of Elementary Activity-Functions

An elementary activity-function is the most basic, functionally coherent unit of network dynamics. It is characterized by:

- Temporal Coherence: The activity exhibits a distinct pattern over time (e.g., pulsed, oscillatory, sustained).

- Functional Specificity: The activity contributes to a specific, discrete phenotypic outcome.

- Contextual Autonomy: The activity can be distinguished from, and can operate semi-independently of, other concurrent network activities.

- Generative Capacity: The activity is a building block that can be recomposed with others to generate complex system-level behaviors.

For example, in a developmental signaling network, a "transient pulse generator" and a "bistable switch" are distinct elementary activity-functions that, when combined, can pattern a tissue.
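Below is a minimal sketch of how these two activity-functions might combine. It rests on two assumptions. First, the transient pulse generator is represented abstractly as a morphogen signal that is on only briefly and decays exponentially with distance from its source. Second, each cell runs a positive-feedback switch described by an assumed Hill-type ODE. With the assumed dimensionless parameters, the switch is bistable once the signal is gone, with stable states at 0 and 0.8 and a threshold at 0.2. Cells near the source therefore stay ON after the pulse ends, while distant cells return to OFF.

```python
import numpy as np

# Assumed dimensionless parameters: with no signal, dx/dt = 0 at x = 0 and
# x = 0.8 (stable) and at x = 0.2 (unstable threshold).
BETA, K, HILL, GAMMA = 1.0, 0.4, 2, 1.0


def switch_rate(x, signal):
    """dx/dt for a positive-feedback switch driven by an external signal."""
    return signal + BETA * x**HILL / (K**HILL + x**HILL) - GAMMA * x


def pattern_tissue(n_cells=10, decay_length=2.0, pulse_duration=2.0,
                   t_total=30.0, dt=0.01):
    """Transient graded pulse + per-cell bistable switch (forward Euler).

    The signal is present only for t < pulse_duration and decays
    exponentially with cell distance from the source (cell 0).
    """
    amplitude = np.exp(-np.arange(n_cells) / decay_length)  # signal gradient
    x = np.zeros(n_cells)                                   # all cells start OFF
    for step in range(int(round(t_total / dt))):
        signal = amplitude if step * dt < pulse_duration else 0.0
        x = x + dt * switch_rate(x, signal)
    return x


if __name__ == "__main__":
    final = pattern_tissue()
    print("ON pattern:", (final > 0.5).astype(int))
```

The signal is gone after `pulse_duration`, so the switch alone maintains the final ON/OFF pattern. This memory property lets a transient input leave a stable spatial boundary.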

Experimental Protocols for Identifying Dynamical Modules

The following workflow outlines a multi-stage process for decomposing network behavior.

Protocol 1: High-Resolution Time-Course Data Acquisition

Objective: To capture the high-fidelity dynamical data necessary for identifying activity-functions from a biological system.

- 3.1.1. Experimental Design: Utilize tools like Figure One (an open-source web tool for visualizing experimental designs) to schematize complex studies involving multiple timepoints, perturbations, and data collection phases [26]. This ensures clarity and reproducibility.

- 3.1.2. Data Collection:

  - Live-Cell Imaging: For transcriptional or signaling dynamics, use fluorescent or luminescent reporters (e.g., GFP, luciferase) under the control of key regulatory elements. Acquire images at a temporal resolution sufficient to capture the fastest known dynamics in the system.

  - Single-Cell 'Omics: Perform single-cell RNA-Seq, ATAC-Seq, or proteomics on synchronized cell populations across a dense time series of developmental or drug-response progression.

  - Perturbation Experiments: Combine time-course data with targeted perturbations (genetic such as CRISPRi/a, chemical such as small molecule inhibitors, or environmental) to probe module boundaries and resilience.
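The sampling requirement in the live-cell imaging step can be made explicit. By the Nyquist criterion, a periodic signal must be sampled at least twice per period, and faithfully resolving the shape of an oscillation usually takes several more samples. The default of 10 samples per period below is an assumed rule of thumb, not a value from this protocol, and the 60-minute period in the example is hypothetical.

```python
def max_frame_interval(shortest_period: float, samples_per_period: float = 10.0) -> float:
    """Longest acquisition interval that still samples the fastest oscillation.

    shortest_period is the period of the fastest expected dynamics in any
    time unit; the result is returned in the same unit.
    """
    if samples_per_period < 2:
        raise ValueError("Nyquist criterion requires at least 2 samples per period")
    return shortest_period / samples_per_period


if __name__ == "__main__":
    # Hypothetical example: an oscillation with a 60-minute period.
    print(max_frame_interval(60.0), "min between frames")
```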

Protocol 2: Inferring Activity-Functions from Quantitative Data

Objective: To process raw time-course data into discrete, candidate elementary activity-functions.

- 3.2.1. Data Preprocessing: Normalize data, perform dimensionality reduction (e.g., PCA, UMAP), and align trajectories from multiple replicates.

- 3.2.2. Dynamical Systems Modeling: Fit mathematical models (e.g., systems of ordinary differential equations) to the multivariate time-course data. The terms and parameters of the fitted models can then be interpreted as regulatory interactions and kinetic properties.

- 3.2.3. Activity-Function Decomposition: Apply blind source separation techniques, such as Independent Component Analysis (ICA), to the processed dynamical data. ICA assumes that the observed signals are linear mixtures of statistically independent, non-Gaussian source signals and estimates those sources (putative activity-functions) from the observed complex patterns. Each independent component is a candidate elementary activity-function characterized by its unique temporal signature.
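Below is a from-scratch sketch of steps 3.2.1 and 3.2.3. Traces are first centered and whitened, a PCA-style preprocessing step. They are then separated with the symmetric FastICA fixed-point algorithm using a tanh nonlinearity. This is one standard ICA variant chosen for illustration; in practice a maintained implementation such as scikit-learn's `FastICA` would normally be preferred. The synthetic sources and mixing matrix in the example are hypothetical.

```python
import numpy as np


def whiten(x: np.ndarray) -> np.ndarray:
    """Center each row (signal) and whiten so the rows have identity covariance."""
    x = x - x.mean(axis=1, keepdims=True)
    eigvals, eigvecs = np.linalg.eigh(np.cov(x))
    return eigvecs @ np.diag(1.0 / np.sqrt(eigvals)) @ eigvecs.T @ x


def sym_decorrelate(w: np.ndarray) -> np.ndarray:
    """Symmetric orthogonalization: W <- (W W^T)^(-1/2) W."""
    eigvals, eigvecs = np.linalg.eigh(w @ w.T)
    return eigvecs @ np.diag(1.0 / np.sqrt(eigvals)) @ eigvecs.T @ w


def fast_ica(x: np.ndarray, max_iter: int = 200, tol: float = 1e-6,
             seed: int = 0) -> np.ndarray:
    """Symmetric FastICA with g = tanh; returns one estimated source per row."""
    z = whiten(x)
    n_signals, n_samples = z.shape
    rng = np.random.default_rng(seed)
    w = sym_decorrelate(rng.normal(size=(n_signals, n_signals)))
    for _ in range(max_iter):
        g = np.tanh(w @ z)
        g_prime = 1.0 - g ** 2
        # Fixed-point update for all rows at once: E[z g(w^T z)] - E[g'(w^T z)] w
        w_new = sym_decorrelate(g @ z.T / n_samples
                                - np.diag(g_prime.mean(axis=1)) @ w)
        # Converged when every row keeps its direction (up to sign).
        change = np.max(np.abs(np.abs(np.diag(w_new @ w.T)) - 1.0))
        w = w_new
        if change < tol:
            break
    return w @ z


if __name__ == "__main__":
    # Hypothetical sources: a smooth oscillation and a switch-like square wave.
    t = np.linspace(0, 8, 2000)
    sources = np.vstack([np.sin(2 * t), np.sign(np.sin(3 * t))])
    mixing = np.array([[1.0, 1.0], [0.5, 2.0]])  # assumed mixing matrix
    estimated = fast_ica(mixing @ sources)
    for i, src in enumerate(sources):
        best = max(abs(np.corrcoef(src, est)[0, 1]) for est in estimated)
        print(f"source {i}: best |r| with a recovered component = {best:.3f}")
```

ICA recovers components only up to order, sign, and scale. Each recovered component is therefore matched to a source by absolute correlation rather than by position.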

Protocol 3: Functional Validation of Candidate Modules

Objective: To experimentally confirm that a candidate activity-function is a genuine, quasi-independent dynamical module with a specific phenotypic outcome.