Beyond Relatedness: A Practical Guide to Correcting for Phylogenetic History in Comparative Analysis


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical need to correct for phylogenetic history in comparative analyses. It covers foundational concepts explaining why standard statistical tests fail with phylogenetically structured data and introduces key models of trait evolution. The piece delivers a practical toolkit of methodological approaches, including Phylogenetic Generalized Least Squares (PGLS) and independent contrasts, illustrated with case studies from evolutionary biology and drug discovery. It further addresses common challenges in model selection, data quality, and computational limitations, while outlining robust protocols for validating analytical results and comparing methodological performance. The synthesis empowers scientists to conduct evolutionarily-aware analyses that yield reliable, biologically meaningful insights for fields ranging from basic evolutionary research to applied pharmaceutical development.

Why Phylogeny Matters: The Foundation of Non-Independence in Comparative Data

A foundational principle in evolutionary biology is that species are not independent data points. This phenomenon, known as phylogenetic non-independence, arises because species share portions of their evolutionary history to varying degrees. Standard statistical tests (e.g., ordinary least-squares regression, correlation, t-tests) assume that all data points are independent. When this assumption is violated, as it is with comparative biological data across related species, the result can be inflated Type I error rates (false positives), biased parameter estimates, and ultimately incorrect biological conclusions [1] [2].

This guide explains the core issues, provides solutions for researchers, and outlines methodologies to correctly account for shared evolutionary history.

FAQs on Phylogenetic Non-Independence

1. What is phylogenetic non-independence, and why is it a problem for statistical analysis?

Phylogenetic non-independence arises from phylogenetic signal: the tendency for closely related species to resemble each other more than they resemble species chosen at random from the same tree. This resemblance is a consequence of descent with modification [2].

Treating these related species as independent in an analysis is a statistical flaw known as pseudoreplication. It inflates the apparent number of independent observations (and hence the degrees of freedom), because similar trait values in several species may reflect a single evolutionary change in their common ancestor. As a result, a standard statistical test can detect a significant relationship between two traits when, in fact, none exists [1] [3].

2. My study compares traits across populations within a single species. Do I need to worry about phylogenetics?

Yes, but the source of non-independence is more complex. Within a single species, populations can be non-independent due to two key processes:

- Shared Common Ancestry: Populations that diverged more recently will be more similar, just as species are [3].

- Gene Flow: The exchange of migrants between populations can make their traits more similar [3].

Standard phylogenetic comparative methods designed for species may not be directly applicable. Instead, mixed models that can incorporate a population-level pedigree or a matrix of genetic similarity are often recommended to account for both shared ancestry and gene flow [3].

3. I've used Phylogenetic Independent Contrasts (PIC). What are its key assumptions, and how can I check them?

PIC is a foundational method for accounting for phylogenetic non-independence [1]. Its three major assumptions are:

- The phylogeny's topology is accurate.

- The branch lengths are correct.

- Traits evolve under a Brownian motion model (where trait variance accumulates proportionally with time) [1].

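You can test these assumptions using model diagnostic plots, which are standard in software packages like caper in R (e.g., its caic.diagnostics() function). These include:

- Plotting the absolute values of standardized contrasts against their standard deviations (the square root of the sum of their corrected branch lengths); a significant relationship suggests that the branch lengths are poorly suited to the data.

- Looking for a relationship between contrasts and node heights [1].

Failure to test these assumptions is a common pitfall that can lead to poor model fit and misinterpreted results [1].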
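To make the contrasts concrete, here is a minimal sketch of Felsenstein's independent-contrasts algorithm in plain Python (not the caper implementation). It assumes a fully bifurcating tree stored in a hypothetical nested-tuple format, where a tip is `(name, branch_length)` and an internal node is `((left, right), branch_length)`. For every internal node it records the standardized contrast and its standard deviation, the two quantities used in the first diagnostic plot, and it also returns the REML estimate of the Brownian rate (the mean squared standardized contrast).

```python
import math

# Hypothetical tree format: a tip is (name, branch_length);
# an internal node is ((left_child, right_child), branch_length).
# Example tree ((A:1,B:1):1,(C:1,D:1):1); the root's own branch length is 0.
tree = (
    (
        ((("A", 1.0), ("B", 1.0)), 1.0),
        ((("C", 1.0), ("D", 1.0)), 1.0),
    ),
    0.0,
)
traits = {"A": 1.0, "B": 3.0, "C": 6.0, "D": 10.0}


def pic(node, traits, contrasts):
    """Post-order traversal; returns (node value, corrected branch length).

    Appends (standardized contrast, standard deviation) for every internal node.
    """
    children, branch_length = node
    if isinstance(children, str):  # tip: observed value and its own branch
        return traits[children], branch_length
    left, right = children
    x1, v1 = pic(left, traits, contrasts)
    x2, v2 = pic(right, traits, contrasts)
    sd = math.sqrt(v1 + v2)  # expected SD of the raw contrast under BM
    contrasts.append(((x1 - x2) / sd, sd))
    # A weighted average of the two children estimates the ancestral value ...
    x_anc = (x1 / v1 + x2 / v2) / (1.0 / v1 + 1.0 / v2)
    # ... and its extra uncertainty lengthens the branch above this node.
    return x_anc, branch_length + v1 * v2 / (v1 + v2)


contrasts = []
root_value, _ = pic(tree, traits, contrasts)
# REML estimate of the BM rate: mean squared standardized contrast.
sigma2_reml = sum(c * c for c, _ in contrasts) / len(contrasts)
for c, sd in contrasts:
    print(f"|contrast| = {abs(c):.3f}  sd = {sd:.3f}")
print(f"root estimate = {root_value:.3f}, sigma^2 (REML) = {sigma2_reml:.3f}")
```

A tree with n tips yields n − 1 contrasts; plotting the absolute contrasts against `sd` is the branch-length diagnostic described in the list.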

4. The Ornstein-Uhlenbeck (OU) model is often presented as a better alternative to Brownian motion. What are its caveats?

The OU model is popular as it can model trait evolution under a stabilizing selection constraint. However, it has several well-documented caveats:

- Small Sample Sizes: It is frequently, and incorrectly, favored over simpler models by likelihood ratio tests when datasets are small; [1] cites a median dataset size of 58 taxa.

- Measurement Error: Even small amounts of error in the data can cause an OU model to be favored over Brownian motion, not due to biological process but because OU can accommodate more variance towards the tips of the tree [1].

- Over-interpretation: A good fit to an OU model is often interpreted as evidence of clade-wide stabilising selection, but the literature that introduced the model cautions that this biological interpretation is overly simplistic [1].

5. What are the common pitfalls in trait-dependent diversification models (like BiSSE)?

Models such as BiSSE are used to test if a particular trait influences speciation or extinction rates. A major pitfall is that these methods can infer a strong correlation between a trait and diversification rate from a single, trait-independent diversification rate shift within the tree. This can lead to biologically meaningless false positives [1]. It is critical to test for and account for background rate heterogeneity in the tree that is unrelated to the trait of interest.

Troubleshooting Guides & Experimental Protocols

Guide 1: Diagnosing Phylogenetic Signal in Your Data

Purpose: To determine whether your trait data exhibit significant phylogenetic non-independence, indicating whether phylogenetic correction is necessary.

Materials/Software Needed:

- A phylogenetic tree of your study taxa (with branch lengths).

- Trait data for those taxa.

- Statistical software (e.g., R with packages like ape, phytools, caper).

Methodology:

- Data Preparation: Ensure your trait data and phylogeny are correctly matched, with no missing species.

- Calculate Pagel's λ: This is a commonly used metric of phylogenetic signal. It scales between 0 (no signal, as if traits evolved independently of the phylogeny) and 1 (signal consistent with a Brownian motion model).

- In R, use the phylosig() function from the phytools package with method = "lambda" (its default method computes Blomberg's K instead).

- Statistical Test: With test = TRUE, phylosig() also reports a likelihood ratio test of whether λ is significantly greater than 0.

- Interpretation:

- If λ is not significantly different from 0, standard statistical methods might be appropriate, though caution is still advised.

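- If λ is significantly greater than 0, use phylogenetic comparative methods to avoid pseudoreplication. Strictly, what matters for a regression is phylogenetic signal in the model residuals rather than in the raw traits, so estimating λ jointly with the regression (e.g., in PGLS) is a safe default.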
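The logic behind the λ test can be sketched in plain Python. This is an illustration under stated assumptions, not the phytools code: it takes the Brownian-motion covariance matrix C of a small hypothetical ultrametric tree as given (entry i, j is the shared path length from the root to the common ancestor of species i and j), multiplies the off-diagonal entries by λ, and profiles the Gaussian log-likelihood over a grid of λ values (phytools maximizes the likelihood numerically instead). The likelihood ratio statistic then compares the best λ with λ = 0.

```python
import math


def cholesky(A):
    """Lower-triangular L with A = L L^T (A must be positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L


def chol_solve(L, b):
    """Solve (L L^T) y = b by forward, then backward, substitution."""
    n = len(L)
    z = [0.0] * n
    for i in range(n):
        z[i] = (b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i]
    y = [0.0] * n
    for i in reversed(range(n)):
        y[i] = (z[i] - sum(L[k][i] * y[k] for k in range(i + 1, n))) / L[i][i]
    return y


def lambda_transform(C, lam):
    """Pagel's lambda: scale the off-diagonal (shared-history) entries by lam."""
    n = len(C)
    return [[C[i][j] * (1.0 if i == j else lam) for j in range(n)] for i in range(n)]


def bm_loglik(x, V):
    """Log-likelihood of x ~ N(z0, sigma2 * V) at the ML values of z0 and sigma2."""
    n = len(x)
    L = cholesky(V)
    w1 = chol_solve(L, [1.0] * n)
    wx = chol_solve(L, x)
    z0 = sum(wx) / sum(w1)  # GLS estimate of the root value
    r = [xi - z0 for xi in x]
    sigma2 = sum(ri * wi for ri, wi in zip(r, chol_solve(L, r))) / n
    logdet = 2.0 * sum(math.log(L[i][i]) for i in range(n))
    return -0.5 * n * math.log(2 * math.pi * sigma2) - 0.5 * logdet - 0.5 * n


# Hypothetical 4-species tree ((A:1,B:1):1,(C:1,D:1):1) and trait values.
C = [[2.0, 1.0, 0.0, 0.0],
     [1.0, 2.0, 0.0, 0.0],
     [0.0, 0.0, 2.0, 1.0],
     [0.0, 0.0, 1.0, 2.0]]
x = [1.0, 3.0, 6.0, 10.0]

grid = [i / 100 for i in range(101)]
profile = [(bm_loglik(x, lambda_transform(C, lam)), lam) for lam in grid]
best_ll, best_lambda = max(profile)
ll_zero = bm_loglik(x, lambda_transform(C, 0.0))
lr_stat = 2.0 * (best_ll - ll_zero)  # compare with a chi-squared (1 df) distribution
print(f"lambda_hat = {best_lambda:.2f}, LR statistic = {lr_stat:.3f}")
```

At λ = 0 the covariance matrix is diagonal, so the likelihood reduces to that of an ordinary (non-phylogenetic) normal model, which is why λ = 0 serves as the null.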

Guide 2: Selecting and Applying a Phylogenetic Comparative Method

Purpose: To correctly analyze the relationship between two continuous traits while accounting for phylogeny.

Materials/Software Needed:

- As in Guide 1, plus two continuous trait datasets.

Methodology:

- Initial Diagnosis: Follow Guide 1 to confirm phylogenetic signal in your traits.

- Method Selection: The most straightforward and widely used methods are Phylogenetic Generalized Least Squares (PGLS) and Phylogenetic Independent Contrasts (PIC), which give equivalent slope estimates under Brownian motion. PGLS is more flexible (it accommodates other evolutionary models and multiple predictors) and is generally recommended.

- Model Fitting:

- Use the pgls() function in the caper package in R.

- The function requires a formula (e.g., trait1 ~ trait2) and a comparative.data object that links the trait data to the tree. By default it assumes Brownian motion; branch-length transformations such as Pagel's λ can be estimated instead (e.g., lambda = "ML").

- Model Diagnostics:

- Check the model residuals for homoscedasticity and normality.

- Examine plots of residuals against fitted values.

- Check for a relationship between residuals and node height to see if the evolutionary model is adequate [1].

- Interpretation: Interpret the slope, p-value, and R² from the PGLS summary output as you would in a standard regression, noting that the analysis now controls for phylogenetic non-independence.
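Under the hood, PGLS is generalized least squares with the phylogenetic covariance matrix as the error covariance. The sketch below is a hypothetical stand-alone illustration, not the caper code: it fits y = b0 + b1·x by solving (XᵀV⁻¹X)b = XᵀV⁻¹y for a Brownian-motion covariance matrix V of a small example tree. Replacing V with the identity matrix recovers ordinary least squares, which makes the effect of the phylogeny easy to compare.

```python
import math


def cholesky(A):
    """Lower-triangular L with A = L L^T (A must be positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L


def chol_solve(L, b):
    """Solve (L L^T) y = b by forward, then backward, substitution."""
    n = len(L)
    z = [0.0] * n
    for i in range(n):
        z[i] = (b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i]
    y = [0.0] * n
    for i in reversed(range(n)):
        y[i] = (z[i] - sum(L[k][i] * y[k] for k in range(i + 1, n))) / L[i][i]
    return y


def pgls_simple(x, y, V):
    """GLS intercept and slope for y = b0 + b1 * x with error covariance V."""
    n = len(y)
    L = cholesky(V)
    ones = [1.0] * n
    w1, wx, wy = (chol_solve(L, v) for v in (ones, x, y))  # V^-1 times each column

    def dot(a, b):
        return sum(p * q for p, q in zip(a, b))

    # Entries of the 2x2 system (X^T V^-1 X) b = X^T V^-1 y with X = [1, x].
    a11, a12, a22 = dot(ones, w1), dot(ones, wx), dot(x, wx)
    c1, c2 = dot(ones, wy), dot(x, wy)
    det = a11 * a22 - a12 * a12
    return (a22 * c1 - a12 * c2) / det, (a11 * c2 - a12 * c1) / det


# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1) and trait values.
C = [[2.0, 1.0, 0.0, 0.0],
     [1.0, 2.0, 0.0, 0.0],
     [0.0, 0.0, 2.0, 1.0],
     [0.0, 0.0, 1.0, 2.0]]
trait2 = [1.0, 1.2, 3.0, 3.3]
trait1 = [2.0, 2.5, 5.9, 6.1]
identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
print("PGLS (BM):", pgls_simple(trait2, trait1, C))
print("OLS:      ", pgls_simple(trait2, trait1, identity))
```

Real PGLS software additionally reports standard errors and can estimate λ alongside the coefficients; this sketch only shows where the phylogeny enters the estimate.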

Table 1: Common Phylogenetic Comparative Methods and Their Applications

| Method | Data Type | Key Assumptions | Common Pitfalls |
|---|---|---|---|
| Phylogenetic Independent Contrasts (PIC) [1] | Continuous | Accurate tree topology & branch lengths; Brownian motion evolution. | Not testing assumptions; misinterpreting contrasts as raw data. |
| Phylogenetic Generalized Least Squares (PGLS) [3] | Continuous | Specified model of evolution (e.g., Brownian, OU). | Choosing an inappropriate evolutionary model for the data. |
| Ornstein-Uhlenbeck (OU) Models [1] | Continuous | A defined selective optimum and strength of selection. | Being incorrectly favored in small datasets; biological over-interpretation. |
| Binary State Speciation & Extinction (BiSSE) [1] | Binary | Each species has a single, fixed state; no hidden rate heterogeneity. | Inferring trait-dependent diversification from background rate shifts. |

The Scientist's Toolkit: Essential Analytical Reagents

Table 2: Key Research Reagent Solutions for Phylogenetic Comparative Analysis

| Item/Software | Function/Brief Explanation |
|---|---|
| Molecular Sequence Data | The raw material (e.g., from chloroplast, mitochondrial, or nuclear genomes) used to reconstruct the phylogenetic relationships among your taxa [4]. |
| Multiple Sequence Alignment Tool (e.g., MAFFT) | Software that aligns molecular sequences to identify homologous positions, a crucial step before tree building [4]. |
| Phylogenetic Inference Software (e.g., MrBayes, RAxML) | Tools used to estimate the phylogenetic tree (topology and branch lengths) from the aligned sequence data [5]. |
| R Statistical Environment | The primary platform for conducting statistical analyses, including phylogenetic comparative methods. |
| R Package: ape/phytools | Core packages for reading, manipulating, and visualizing phylogenetic trees and for basic phylogenetic analyses [5]. |
| R Package: caper | Implements Phylogenetic Independent Contrasts and Phylogenetic Generalized Least Squares (PGLS) with robust diagnostic tools [1]. |

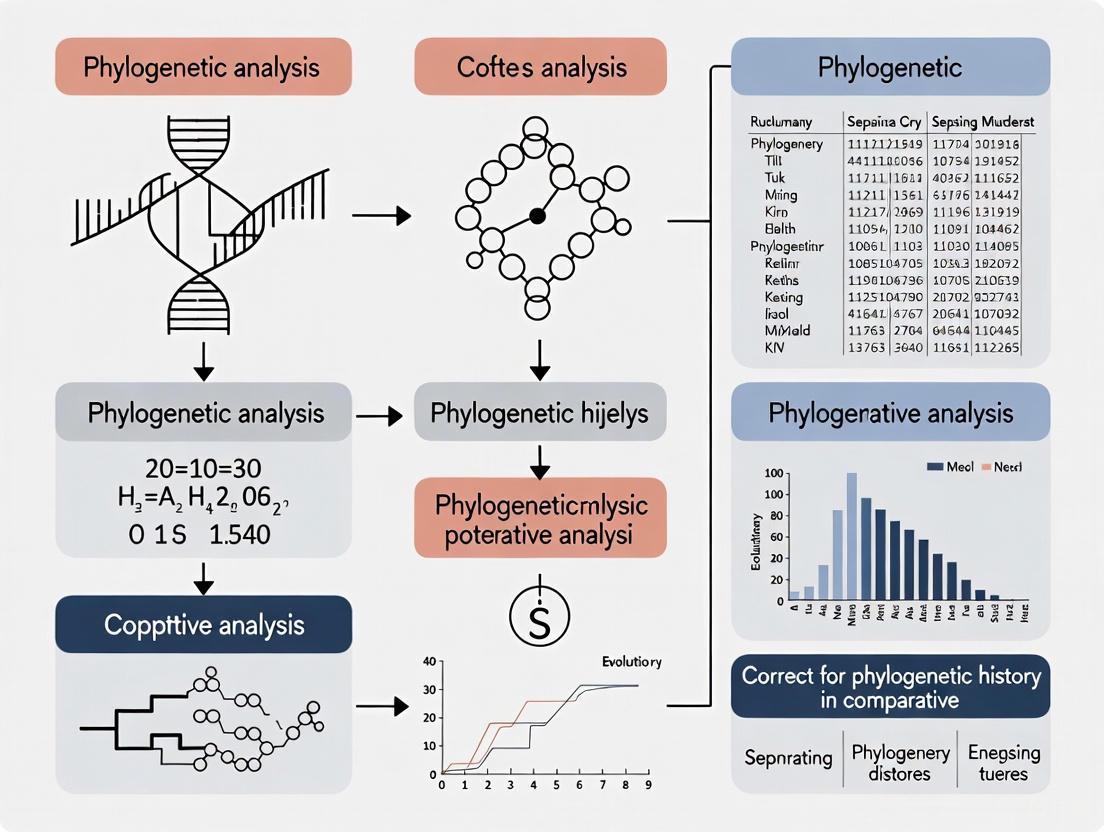

Workflow Visualization

The following diagram illustrates the logical workflow for diagnosing and correcting for phylogenetic non-independence in a comparative study.

Frequently Asked Questions

Q1: What is the fundamental difference between Phylogenetic Signal and Phylogenetic Niche Conservatism? A1: While related, they are distinct concepts. Phylogenetic Signal (PS) is the simple tendency for related species to resemble each other more than distant relatives or random species from a tree [6] [7]. Phylogenetic Niche Conservatism (PNC) is a more specific and restrictive concept. It describes the tendency for species to retain their ancestral ecological niche characteristics over time, and many argue it should imply that niches evolve more slowly than expected under a neutral model like Brownian motion [6] [7]. Not all phylogenetic signal indicates conservatism; labile niches can sometimes produce a strong PS [6].

Q2: My analysis found a significant phylogenetic signal. Can I conclude my trait is under niche conservatism? A2: Not necessarily. A significant phylogenetic signal is consistent with PNC but is not sufficient proof on its own [6]. A finding of significant PS could arise simply from neutral drift (Brownian motion). To robustly infer PNC, you must compare your results to a null model and demonstrate that trait evolution is significantly slower than expected under that model [6] [7]. Strong PNC can sometimes exist without a strong pattern of PS [6].

Q3: I am bewildered by the diversity of genes in my gene family. How can I determine which are comparable for my analysis? A3: Phylogenetic methodology is key to solving this. You should build a gene tree to infer orthology and paralogy [8]. Orthologous genes are those that diverged due to a speciation event and are typically the members of a well-defined clade descending from a single common ancestor. These are ideal for most comparative studies across species. Paralogous genes diverged due to a gene duplication event; comparing these can be misleading as they may have evolved new functions [8].

Q4: Why do I keep getting inconsistent conclusions about PNC in my study system? A4: Inconsistencies often arise from two main issues:

- Definition and Measurement: Different studies use different definitions and measures for PNC (e.g., phylogenetic signal tests, evolutionary rates), which are not directly comparable [6].

- Violation of Model Assumptions: Common measures of PNC rely on assumptions about the underlying model of trait evolution (e.g., Brownian motion). If these assumptions are violated, results and conclusions can be misleading [6]. It is crucial to test these assumptions and compare alternative evolutionary models.

Q5: Where can I find a reliable phylogenetic tree for my group of interest? A5: Several online resources provide phylogenetic trees and contact information for experts.

- TreeBASE: A repository of phylogenetic trees and the data used to generate them [8].

- Angiosperm Phylogeny Website: Provides detailed phylogenetic information for flowering plants [8].

- Consult an Expert: The table below lists phylogenetic consultants for major clades of land plants [8].

| Clade(s) | Contact Person | Affiliation |
|---|---|---|
| Mosses, Liverworts | Jonathan Shaw | Duke University |
| Ferns | Kathleen Pryer | Duke University |
| Basal Angiosperms | Douglas Soltis | University of Florida |
| Monocots | Mark Chase | Royal Botanic Gardens, Kew |
| Poaceae | Elizabeth Kellogg | University of Missouri |
| Rosids | Douglas Soltis | University of Florida |
| Fabaceae | Jeff Doyle | Cornell University |
| Brassicaceae | Ihsan Al-Shehbaz | Missouri Botanical Garden |
| Asterids | Richard Olmstead | University of Washington |

Troubleshooting Experimental Guides

Problem: Choosing the wrong metric or model for phylogenetic signal and niche conservatism.

Solution: Follow a model-comparison framework to select the best-fitting model of evolution for your trait data. Do not rely on a single metric.

Protocol: A Robust Workflow for Testing PNC

- Define Your Trait and Phylogeny: Clearly define the continuous niche-related trait (e.g., climatic optimum, soil pH tolerance) and obtain a robust, dated phylogeny for your study species [6].

- Fit Multiple Evolutionary Models: Use software like geiger (R) or bayou to fit a series of models to your trait data:

- Brownian Motion (BM): Assumes neutral drift.

- Ornstein-Uhlenbeck (OU): Models trait evolution under stabilizing selection toward a central optimum, which is a model of PNC.

- Multiple-Optima OU Models (OUM): Allows different adaptive peaks for different clades or regimes [6].

- Compare Models: Use statistical criteria like AICc (Akaike Information Criterion corrected for small sample sizes) to identify the best-supported model. Strong support for a model with one or a few OU peaks (OUM) is consistent with PNC, especially if the selection strength parameter (α) is high [6]; because OU models can be favored spuriously in small or noisy datasets, treat this as supporting rather than conclusive evidence.

- Estimate Evolutionary Rates: If a BM model is preferred, you can estimate its evolutionary rate (σ²). However, to conclude PNC, you must show this rate is significantly slower than a null expectation, which may require a comparison with another clade or trait [6].
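The AICc comparison in this protocol is simple arithmetic once each model's maximized log-likelihood is available (for example, from geiger's fitContinuous()). In the sketch below, the log-likelihoods and parameter counts are hypothetical placeholders, not results; Akaike weights rescale the AICc differences into relative support values that sum to one.

```python
import math


def aicc(loglik, k, n):
    """AIC with the small-sample correction; requires n > k + 1."""
    if n <= k + 1:
        raise ValueError("AICc is undefined when n <= k + 1")
    return 2 * k - 2 * loglik + 2 * k * (k + 1) / (n - k - 1)


def akaike_weights(scores):
    """Convert a dict of AIC-type scores into weights that sum to 1."""
    best = min(scores.values())
    rel = {m: math.exp(-0.5 * (s - best)) for m, s in scores.items()}
    total = sum(rel.values())
    return {m: r / total for m, r in rel.items()}


n_species = 30
# Hypothetical fits: model -> (maximized log-likelihood, number of parameters).
fits = {"BM": (-20.0, 2), "OU1": (-18.5, 3), "OUM": (-17.9, 5)}
scores = {m: aicc(ll, k, n_species) for m, (ll, k) in fits.items()}
weights = akaike_weights(scores)
best_model = min(scores, key=scores.get)
for m in fits:
    print(f"{m:4s} AICc = {scores[m]:7.3f}  weight = {weights[m]:.3f}")
```

Note how the OUM model has the highest log-likelihood but loses on AICc once its extra parameters are penalized.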

Problem: Misinterpreting the relationship between gene evolution and species evolution.

Solution: Always construct a gene tree to distinguish between orthologs and paralogs before performing comparative analyses [8].

Protocol: Resolving Gene Families for Comparative Analysis

- Sequence Collection: Gather coding sequences for your gene family of interest from a diverse set of species within your clade.

- Multiple Sequence Alignment: Use an aligner like MAFFT or MUSCLE to create a high-quality multiple sequence alignment.

- Gene Tree Construction: Build a phylogenetic tree from the alignment using maximum likelihood (e.g., RAxML, IQ-TREE) or Bayesian methods (e.g., MrBayes).

- Identify Clades: On the gene tree, identify well-supported clades where all genes are related by speciation events. These clades contain your putative orthologs.

- Comparative Analysis: Use only the identified orthologous sequences for downstream cross-species comparative genetic or genomic research. Treat genes from distinct clades (paralogs) separately [8].
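One simple way to automate the clade-identification step is the species-overlap heuristic, swapped in here as an illustration rather than prescribed by the protocol: at each internal node of a rooted gene tree, if the two child clades share any species the node is labeled a duplication, otherwise a speciation. The sketch assumes a hypothetical nested-tuple gene tree whose leaves are named `species|gene`.

```python
def species_of(leaf):
    """Leaf labels are assumed to look like 'species|gene'."""
    return leaf.split("|", 1)[0]


def label_events(node, events):
    """Return (species set, leaf list) for node; append (event, leaves) per internal node."""
    if isinstance(node, str):
        return {species_of(node)}, [node]
    left, right = node
    sp_left, leaves_left = label_events(left, events)
    sp_right, leaves_right = label_events(right, events)
    # Shared species in both child clades can only be explained by a duplication.
    event = "duplication" if sp_left & sp_right else "speciation"
    leaves = leaves_left + leaves_right
    events.append((event, sorted(leaves)))
    return sp_left | sp_right, leaves


# Hypothetical gene family: a duplication before the human-mouse split
# produced paralogs A1 and A2, each with one copy per species.
gene_tree = (("human|A1", "mouse|A1"), ("human|A2", "mouse|A2"))
events = []
label_events(gene_tree, events)
for event, leaves in events:
    print(event, leaves)
```

Leaves joined by a speciation node (here each A1 pair and each A2 pair) are candidate orthologs, while the duplication node separates the two paralogous clades. Real gene trees also need careful rooting and support values, which this sketch ignores.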

Key Metrics and Models for Phylogenetic Signal and Niche Conservatism

Table 1: Common Metrics for Testing Phylogenetic Signal

| Metric | What it Measures | Null Model (No Signal) | Interpretation for PS | Caveats |
|---|---|---|---|---|
| Blomberg's K | Tendency for related species to resemble each other | K = 0 | K > 0 indicates PS. K = 1 matches BM expectation. | Sensitive to tree size and topology [7]. |
| Pagel's λ | Degree to which trait covariances among species match those expected from the phylogeny under BM | λ = 0 | λ = 1 matches BM expectation; 0 < λ < 1 indicates less PS than BM [9]. | A low λ does not necessarily rule out PNC [6]. |

Table 2: Models of Trait Evolution Used in PNC Studies

| Model | Key Parameters | Biological Interpretation | Indicates PNC? |
|---|---|---|---|
| Brownian Motion (BM) | σ² (evolutionary rate) | Neutral drift or random evolution in a constant adaptive landscape. | No, this is the null. |
| Ornstein-Uhlenbeck (OU) | α (selection strength), θ (optimum) | Evolution under stabilizing selection toward a single primary optimum. | Yes, indicates constraining forces. |
| Multiple-Peak OU (OUM) | α, multiple θ values | Evolution under stabilizing selection with shifts to new optima at specific points in history. | Yes, especially if few shifts and/or high α [6]. |

Research Reagent Solutions

Table 3: Essential Materials and Tools for Phylogenetic Comparative Analysis

| Item/Tool Name | Function/Brief Explanation | Example Use Case |
|---|---|---|
| Dated Molecular Phylogeny | The historical framework showing relationships and divergence times between species. | Essential for all comparative analyses to control for shared evolutionary history [8]. |
| Phylogenetic Generalized Least Squares (PGLS) | A statistical method that incorporates the phylogenetic relationships into a regression model. | Testing for a correlation between two continuous traits (e.g., leaf area and rainfall) while accounting for phylogeny [9]. |
| Phylogenetically Independent Contrasts (PIC) | A method that transforms species data into statistically independent values based on the phylogeny. | An alternative to PGLS for testing trait correlations under a Brownian motion model of evolution [9]. |
| Orthologous Gene Set | A group of genes related by speciation events only, not duplication. | Provides a comparable set of genes for cross-species genomic studies, avoiding functional divergence in paralogs [8]. |
| R Package geiger | A tool for fitting diverse models of trait evolution and comparing them. | Testing whether an OU model (PNC) fits your trait data better than a Brownian model (neutral drift). |

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between Brownian Motion and Ornstein-Uhlenbeck models?

Brownian Motion (BM) models trait evolution as a random walk without any constraints, where the variance in trait values increases linearly with time [10] [11]. In contrast, the Ornstein-Uhlenbeck (OU) model incorporates a centralizing force that pulls the trait towards an optimal value, $\theta$ [12] [13]. This "rubber band" effect, governed by the strength of selection parameter $\alpha$, models stabilizing selection and prevents the trait variance from increasing indefinitely, leading to a stationary distribution of trait values around the optimum [12] [13]. When $\alpha = 0$, the OU model collapses to the BM model [12].
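The contrast between the two models is visible directly in their single-lineage moments. The sketch below uses the standard formulas: under BM the variance grows as $\sigma^2 t$, whereas under OU the expected value relaxes toward the optimum as $\theta + (z_0 - \theta)e^{-\alpha t}$ and the variance levels off at $\sigma^2/(2\alpha)$. The parameter values are arbitrary illustrations.

```python
import math


def bm_variance(sigma2, t):
    """BM variance grows linearly with time."""
    return sigma2 * t


def ou_variance(sigma2, alpha, t):
    """Variance after time t from a fixed starting value; tends to sigma2 / (2 alpha)."""
    if alpha == 0:
        return bm_variance(sigma2, t)  # OU collapses to BM
    # -expm1(-u) computes 1 - exp(-u) accurately even for small u
    return sigma2 / (2 * alpha) * -math.expm1(-2 * alpha * t)


def ou_mean(z0, theta, alpha, t):
    """Expected trait value: exponential relaxation from z0 toward theta."""
    return theta + (z0 - theta) * math.exp(-alpha * t)


sigma2, alpha = 1.0, 0.5
for t in (0.5, 1, 2, 5, 10):
    print(f"t={t:>4}: BM var={bm_variance(sigma2, t):6.2f}  "
          f"OU var={ou_variance(sigma2, alpha, t):.4f}")
```

With α = 0.5 and σ² = 1, the OU variance approaches its ceiling of 1 while the BM variance keeps growing.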

2. When should I use an OU model instead of a Brownian Motion model?

An OU model is often more appropriate when you have a biological rationale that a trait is under stabilizing selection towards a specific optimum or when exploring scenarios of convergent evolution [14] [13]. BM is typically used as a neutral model where traits evolve randomly due to genetic drift, without directional selection [10] [15]. Model selection criteria, such as AIC, can help determine which model provides a better fit for your data [16].

3. My OU model analysis suggests high phylogenetic signal. Does this indicate a phylogenetic constraint?

Not necessarily. A common misinterpretation is equating high phylogenetic signal with evolutionary constraint [17]. A high phylogenetic signal (often measured with Pagel's $\lambda$ near 1) can result from unconstrained Brownian motion evolution [17]. Conversely, a lack of phylogenetic signal can result from an OU model with a high $\alpha$ parameter, where evolution away from the optimum is highly constrained [17]. The biological interpretation of parameters should be made cautiously and in context [17] [13].

4. Why are my estimates for the OU parameters $\alpha$ and $\sigma^2$ uncertain or highly correlated?

The parameters $\alpha$ and $\sigma^2$ in the OU model can be difficult to estimate separately because they both influence the long-term (stationary) variance of the process, which equals $\sigma^2 / (2\alpha)$ [12] [13]. When $\alpha$ is high or branches on the phylogeny are long, the process approaches this stationary distribution and the data mainly constrain the ratio, so the two parameters become strongly correlated, leading to flat likelihood surfaces and unreliable estimates [12] [13]. Using a multivariate proposal mechanism in MCMC algorithms or examining the joint posterior distribution can help diagnose this issue [12].
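The identifiability problem can be reproduced numerically. For an ultrametric tree of height $T$, one standard form of the OU tip covariance with a fixed root state is $V_{ij} = \frac{\sigma^2}{2\alpha} e^{-2\alpha (T - s_{ij})} (1 - e^{-2\alpha s_{ij}})$, where $s_{ij}$ is the shared path length from the root to the common ancestor of tips $i$ and $j$. The sketch compares two parameter pairs with the same ratio $\sigma^2/(2\alpha)$ on a hypothetical 4-tip tree: when $\alpha$ is large relative to the tree, their covariance matrices are nearly identical, so the data cannot distinguish them; when $\alpha$ is small, they differ clearly.

```python
import math


def ou_covariance(S, T, alpha, sigma2):
    """OU tip covariance (fixed root) from shared path lengths S on a tree of height T."""
    scale = sigma2 / (2 * alpha)
    return [[scale * math.exp(-2 * alpha * (T - s)) * -math.expm1(-2 * alpha * s)
             for s in row] for row in S]


def max_abs_diff(A, B):
    return max(abs(a - b) for ra, rb in zip(A, B) for a, b in zip(ra, rb))


# Shared path lengths for ((A:1,B:1):1,(C:1,D:1):1); tree height T = 2.
S = [[2.0, 1.0, 0.0, 0.0],
     [1.0, 2.0, 0.0, 0.0],
     [0.0, 0.0, 2.0, 1.0],
     [0.0, 0.0, 1.0, 2.0]]
T = 2.0

# Both pairs have sigma2 / (2 * alpha) = 1, with alpha large relative to T.
strong = max_abs_diff(ou_covariance(S, T, 5.0, 10.0), ou_covariance(S, T, 10.0, 20.0))
# Both pairs again have sigma2 / (2 * alpha) = 1, but alpha is small relative to T.
weak = max_abs_diff(ou_covariance(S, T, 0.1, 0.2), ou_covariance(S, T, 0.2, 0.4))
print(f"large alpha: max difference = {strong:.2e}")
print(f"small alpha: max difference = {weak:.3f}")
```

Because the likelihood depends on the data only through this covariance matrix (and the mean), nearly identical matrices translate into a likelihood ridge along which $\alpha$ and $\sigma^2$ trade off.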

5. Can I model the evolution of traits when species interact or exchange genes?

Yes, standard OU models assume species evolve independently, but recent extensions allow for the inclusion of migration or ecological interactions between species [14]. These models are particularly useful for studying phenotypic evolution among diverging populations within species or between closely related species that hybridize [14]. Ignoring these interactions can lead to misinterpretations, where similarity due to migration is mistaken for very strong convergent evolution [14].

Troubleshooting Guides

Problem: Model selection consistently favors complex OU models even for simple, simulated BM data.

- Potential Cause: Likelihood-ratio tests and information criteria like AIC can have a bias towards preferring more complex models (like OU) even when the true generating process is simpler Brownian Motion [13] [16].

- Solution:

- Simulate and Validate: Simulate new datasets under the fitted BM and OU models. Compare summary statistics (e.g., the distribution of trait values at tips, changes along branches) from these simulations to your empirical data. The best-fitting model should produce simulated data that most closely resemble your real data [13].

- Use Penalized Criteria: Consider using criteria with stronger penalties for model complexity, such as the Bayesian Information Criterion (BIC) [16].

- Explore New Methods: Investigate emerging methods like Evolutionary Discriminant Analysis (EvoDA), which uses supervised learning to predict evolutionary models and can show improved performance, especially with noisy data [16].
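The difference between the two penalties is easy to see with numbers. AIC charges 2 per parameter, while BIC charges ln(n) per parameter, which exceeds 2 once n ≥ 8. With the hypothetical log-likelihoods below for 30 species, AIC prefers the OU model but BIC prefers BM.

```python
import math


def aic(loglik, k):
    return 2 * k - 2 * loglik


def bic(loglik, k, n):
    return k * math.log(n) - 2 * loglik


n_species = 30
fits = {"BM": (-20.0, 2), "OU": (-18.5, 3)}  # hypothetical (log-likelihood, k)
aic_scores = {m: aic(ll, k) for m, (ll, k) in fits.items()}
bic_scores = {m: bic(ll, k, n_species) for m, (ll, k) in fits.items()}
aic_choice = min(aic_scores, key=aic_scores.get)
bic_choice = min(bic_scores, key=bic_scores.get)
print("AIC prefers", aic_choice, aic_scores)
print("BIC prefers", bic_choice, bic_scores)
```

Neither criterion guarantees the right answer; simulating under the competing fitted models remains the more direct check.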

Problem: Parameter estimates for my evolutionary model are unstable or sensitive to small changes in the dataset.

- Potential Cause: This instability can be caused by several factors, including small sample sizes (few species in the phylogeny) or the presence of even small amounts of measurement error in the trait data [13].

- Solution:

- Account for Measurement Error: Explicitly incorporate a parameter for measurement error into your model. This can prevent the model from over-interpreting small, non-phylogenetic variations as a signal of a specific evolutionary process [13] [16].

- Check Sample Size: Be cautious when interpreting models fit to small phylogenies. The power to reliably discriminate between complex models is low with limited data [13].

- Robustness Check: Perform a robustness analysis by systematically removing one species or clade at a time and refitting the model to see if your parameter estimates are consistent.
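The leave-one-out check can be sketched for the simplest case, the Brownian-motion rate. Under BM, dropping a species amounts to deleting its row and column from the phylogenetic covariance matrix C, and the ML rate is $\hat\sigma^2 = (x - \hat z_0 \mathbf{1})^\top C^{-1} (x - \hat z_0 \mathbf{1}) / n$, with $\hat z_0$ the GLS root estimate. The helpers and data below are hypothetical; with real trees you would more likely prune the tree (e.g., with ape's drop.tip()) and refit with your usual software.

```python
import math


def cholesky(A):
    """Lower-triangular L with A = L L^T (A must be positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L


def chol_solve(L, b):
    """Solve (L L^T) y = b by forward, then backward, substitution."""
    n = len(L)
    z = [0.0] * n
    for i in range(n):
        z[i] = (b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i]
    y = [0.0] * n
    for i in reversed(range(n)):
        y[i] = (z[i] - sum(L[k][i] * y[k] for k in range(i + 1, n))) / L[i][i]
    return y


def bm_rate_ml(x, C):
    """ML Brownian rate with the root value estimated by GLS (divides by n, not n - 1)."""
    n = len(x)
    L = cholesky(C)
    w1 = chol_solve(L, [1.0] * n)
    wx = chol_solve(L, x)
    z0 = sum(wx) / sum(w1)
    r = [xi - z0 for xi in x]
    return sum(a * b for a, b in zip(r, chol_solve(L, r))) / n


def drop_species(x, C, i):
    """Remove species i from the data and its row/column from C."""
    keep = [j for j in range(len(x)) if j != i]
    return [x[j] for j in keep], [[C[a][b] for b in keep] for a in keep]


def jackknife_rates(x, C):
    return [bm_rate_ml(*drop_species(x, C, i)) for i in range(len(x))]


# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1) and trait values.
C = [[2.0, 1.0, 0.0, 0.0],
     [1.0, 2.0, 0.0, 0.0],
     [0.0, 0.0, 2.0, 1.0],
     [0.0, 0.0, 1.0, 2.0]]
x = [1.0, 3.0, 6.0, 10.0]
full = bm_rate_ml(x, C)
loo = jackknife_rates(x, C)
print(f"full-data rate = {full:.3f}")
for i, r in enumerate(loo):
    print(f"without species {i}: rate = {r:.3f}")
```

A single species or clade whose removal shifts the estimate far more than the others deserves a closer look before the parameter is interpreted.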

Problem: I need to model heterogeneous evolutionary rates across my phylogeny.

- Potential Cause: The rate of evolution ($\sigma^2$) is not constant but varies between different branches or clades of the tree [17] [11].

- Solution:

- A Priori Rate Tests: If you have hypotheses about specific clades having different rates, you can use methods that allow you to assign different rate categories to pre-specified parts of the phylogeny [11].

- Variable-Rate Models: Implement a variable-rate Brownian motion model where the instantaneous rate of evolution ($\sigma^2$) itself evolves along the branches of the tree (e.g., via geometric Brownian motion) [11]. This can be fit using penalized-likelihood approaches [11].

- Tree Transformations: Use tree transformations like Pagel's $\delta$, which can capture patterns where the rate of evolution speeds up ($\delta > 1$) or slows down ($\delta < 1$) through time [17].
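As a rough illustration of what $\delta$ does, one common formulation raises each root-to-node path length, that is, each entry of the Brownian covariance matrix C, to the power $\delta$. Some software additionally rescales the tree back to its original height, so treat this as a sketch rather than any particular package's exact transform. The share of variance that two sister species hold in common shows the effect: $\delta > 1$ shrinks the shared part (more change late, near the tips), and $\delta < 1$ enlarges it (more change early).

```python
def delta_transform(C, delta):
    """Raise every shared path length (entry of C) to the power delta (> 0)."""
    if delta <= 0:
        raise ValueError("delta must be positive")
    return [[c ** delta for c in row] for row in C]


def shared_fraction(V, i, j):
    """Share of tip i's variance that it has in common with tip j."""
    return V[i][j] / V[i][i]


# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1).
C = [[2.0, 1.0, 0.0, 0.0],
     [1.0, 2.0, 0.0, 0.0],
     [0.0, 0.0, 2.0, 1.0],
     [0.0, 0.0, 1.0, 2.0]]
for delta in (0.5, 1.0, 2.0):
    frac = shared_fraction(delta_transform(C, delta), 0, 1)
    print(f"delta = {delta}: sister species share {frac:.3f} of their variance")
```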

Key Parameters and Model Comparison

Table 1: Core Parameters of Primary Trait Evolution Models

| Model | Key Parameters | Biological Interpretation |
|---|---|---|
| Brownian Motion (BM) | $\sigma^2$: Evolutionary rate parameter; $z_0$: Root trait value | Rate of increase in trait variance over time. Often interpreted as neutral evolution (genetic drift) [10] [11]. |
| Ornstein-Uhlenbeck (OU) | $\alpha$: Strength of selection; $\theta$: Optimal trait value; $\sigma^2$: Evolutionary rate parameter | Strength of pull towards an optimum $\theta$. Models stabilizing selection [12] [13]. |
| Pagel's $\lambda$ | $\lambda$: Phylogenetic signal scalar ($0 \leq \lambda \leq 1$) | Scales the internal branches of the phylogeny. Measures the "phylogenetic signal" or departure from BM expectations ($\lambda=1$ is BM; $\lambda=0$ is no phylogenetic influence) [17]. |
| Pagel's $\delta$ | $\delta$: Time transformation parameter ($\delta > 0$) | Models accelerating ($\delta > 1$) or decelerating ($\delta < 1$) rates of evolution through time [17]. |

Table 2: Model Selection Guide Based on Common Research Questions

| Research Question | Suggested Model(s) | Key Analysis |
|---|---|---|
| Did my trait evolve neutrally? | BM, OU with a single optimum | Compare the fit of BM vs. OU using AIC/AICc. A better fit for BM is consistent with, but does not prove, neutral evolution [13] [16]. |
| Is there evidence for stabilizing selection? | OU with a single optimum, OU with multiple optima | A significantly better fit for an OU model over BM, with $\alpha > 0$, is consistent with stabilizing selection. However, caution in interpretation is needed [13]. |
| Does the evolutionary rate vary across the tree? | Variable-rate BM, OU with multiple rate categories, Pagel's $\delta$ | Fit heterogeneous rate models and compare them to constant-rate models. Also, simulate under the fitted model to validate [11]. |
| Has convergent evolution occurred? | OU with multiple optima | Fit an OU model where distinct clades or species are assigned to the same optimum $\theta$ [14] [13]. |

Experimental Protocols

Protocol 1: Basic Model Fitting and Selection for a Continuous Trait

This protocol outlines the core workflow for fitting and comparing standard models of trait evolution.

- Data and Tree Preparation: Format your data into a table where rows are species and columns are traits. Ensure your trait data for all species is continuous and matches the tip labels in your time-calibrated phylogenetic tree.

- Model Specification: Define the set of models you wish to compare. A standard starting set includes:

- Brownian Motion (BM)

- Ornstein-Uhlenbeck (OU1) with a single optimum

- Early-Burst (EB) / ACDC

- Pagel's $\lambda$

- Parameter Estimation: For each model, use appropriate software (e.g., geiger or phytools in R, or the standalone program RevBayes) to estimate the parameters; maximum-likelihood fitting finds the parameter values that maximize the likelihood of observing your trait data given the phylogeny.

- Model Selection: Calculate the Akaike Information Criterion (AIC) or sample-size corrected AICc for each fitted model. The model with the lowest AIC/AICc score is considered the best fit [16].

- Model Validation: Simulate a large number of trait datasets (e.g., 1000) under the best-fitting model. Compare summary statistics of your empirical trait data with their distribution across the simulated datasets. Under an adequate model, the empirical values should fall within the bulk of the simulated distribution rather than in its extreme tails [13].
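The validation step can be sketched as a parametric bootstrap: draw tip values from the fitted model's multivariate normal distribution and ask how often the simulated data are at least as extreme as the observed data for a chosen statistic. The sketch assumes BM with a known covariance matrix C and uses the among-species variance as the statistic; `z0_hat` and `sigma2_hat` are hypothetical placeholders for estimates from your own model fit.

```python
import math
import random


def cholesky(A):
    """Lower-triangular L with A = L L^T (A must be positive definite)."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L


def simulate_bm(C, sigma2, z0, nsim, seed=1):
    """Draw nsim datasets x = z0 + sqrt(sigma2) * L e, with C = L L^T and e ~ N(0, I)."""
    rng = random.Random(seed)
    L = cholesky(C)
    n = len(C)
    scale = math.sqrt(sigma2)
    sims = []
    for _ in range(nsim):
        e = [rng.gauss(0.0, 1.0) for _ in range(n)]
        sims.append([z0 + scale * sum(L[i][k] * e[k] for k in range(i + 1))
                     for i in range(n)])
    return sims


def among_species_variance(values):
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values) / len(values)


# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1), data, and fitted values.
C = [[2.0, 1.0, 0.0, 0.0],
     [1.0, 2.0, 0.0, 0.0],
     [0.0, 0.0, 2.0, 1.0],
     [0.0, 0.0, 1.0, 2.0]]
observed = [1.0, 3.0, 6.0, 10.0]
z0_hat, sigma2_hat = 5.0, 7.33
sims = simulate_bm(C, sigma2_hat, z0_hat, nsim=1000, seed=42)
stat_obs = among_species_variance(observed)
tail = sum(among_species_variance(s) >= stat_obs for s in sims) / len(sims)
print(f"observed statistic = {stat_obs:.2f}; "
      f"fraction of simulations >= observed = {tail:.3f}")
```

A tail fraction very close to 0 or 1 flags a statistic the model fails to reproduce; in practice several statistics are checked, not just one.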

Protocol 2: Fitting an Ornstein-Uhlenbeck Model in a Bayesian Framework

This protocol details the steps for implementing an OU model using Bayesian inference with RevBayes [12].

- Read the Data: Load your time-calibrated phylogeny and continuous character data into RevBayes.

- Specify Priors:

- Rate parameter ($\sigma^2$): Assign a loguniform prior, e.g., dnLoguniform(1e-3, 1) [12].

- Adaptation parameter ($\alpha$): Assign an exponential prior. A common choice is to set the rate of the exponential to root_age / 2.0 / ln(2.0), so that the prior mean of $\alpha$ is $2\ln(2)/T$ (with $T$ the root age), which corresponds to a phylogenetic half-life of half the tree's age [12].

- Optimum ($\theta$): Assign a vague uniform prior over a biologically realistic range, e.g., dnUniform(-10, 10) [12].

- Define the OU Model: Create the OU model using a function like dnPhyloOrnsteinUhlenbeckREML, providing the tree and the parameter nodes. Clamp the observed trait data to this model [12].

- Run MCMC: Specify monitors to track parameters and run a Markov chain Monte Carlo analysis for a sufficient number of generations (e.g., 50,000), ensuring convergence and an adequate effective sample size (ESS > 200) for all parameters of interest [12].

- Calculate Derived Statistics: Compute the posterior distributions of biologically meaningful transformations:

- Phylogenetic half-life: $t_{1/2} = \ln(2) / \alpha$. This is the expected time for a lineage to evolve halfway to the optimum [12].

- Decrease in variance ($p_{th}$): $p_{th} = 1 - \frac{1 - e^{-2\alpha T}}{2\alpha T}$, where $T$ is the root age. This metric is the proportion by which selection reduces trait variance over the study period, relative to the variance expected under pure drift (BM) [12].
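Both derived statistics are simple functions of $\alpha$ and the root age $T$, so they can be computed for every posterior sample. The plain-Python sketch below (not RevBayes code) evaluates them at $\alpha = 2\ln(2)/T$, the value at which the half-life equals exactly half the tree's age, for a hypothetical tree 10 time units deep; the posterior draws at the end are also hypothetical.

```python
import math


def half_life(alpha):
    """Expected time to close half the distance to the optimum."""
    return math.log(2.0) / alpha


def p_th(alpha, root_age):
    """Proportional reduction in trait variance relative to BM over root_age."""
    u = 2.0 * alpha * root_age
    return 1.0 - (-math.expm1(-u)) / u  # -expm1(-u) equals 1 - exp(-u), accurate for small u


root_age = 10.0
alpha = 2.0 * math.log(2.0) / root_age
print(f"half-life = {half_life(alpha):.3f}")
print(f"p_th      = {p_th(alpha, root_age):.4f}")

# Applied to (hypothetical) posterior draws of alpha:
posterior_alpha = [0.05, 0.12, 0.3]
print([round(half_life(a), 2) for a in posterior_alpha])
```

As $\alpha \to 0$, $p_{th} \to 0$ (no reduction relative to BM), and it approaches 1 as selection becomes very strong.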

Workflow Visualization

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Trait Evolution Modeling

| Tool / Reagent | Function / Description | Example Use Case |
|---|---|---|
| R Statistical Environment | A free software environment for statistical computing and graphics. The primary platform for most phylogenetic comparative methods. | Core platform for all analyses. |
| geiger R Package | A tool for fitting and simulating a wide range of evolutionary models, including BM, OU, and EB. | Initial model fitting and likelihood comparison [13]. |
| phytools R Package | An extensive package for phylogenetic analysis, including visualization, ancestral state reconstruction, and fitting models like Pagel's $\lambda$ and variable-rate BM [11]. | Creating phylogenetic trait graphs; fitting Pagel's models; implementing the multirateBM function [17] [11]. |
| RevBayes Software | A Bayesian framework for phylogenetic inference using probabilistic graphical models. Highly flexible for implementing custom models like OU with specific priors [12]. | Bayesian implementation of OU models; estimating parameters with credible intervals; calculating derived statistics like phylogenetic half-life [12]. |
| Phylogenetic Half-Life ($t_{1/2}$) | A derived parameter from the OU model, calculated as $\ln(2)/\alpha$. Represents the expected time for a trait to evolve halfway to its optimum [12]. | Interpreting the strength of selection in a time-calibrated context. A short half-life suggests rapid adaptation. |
| Measurement Error Parameter | An additional parameter ($\sigma_e^2$) added to the model to account for intraspecific variation or instrument error in the trait data. | Preventing model misidentification by ensuring small errors are not misinterpreted as an evolutionary signal [13] [16]. |

Troubleshooting Guides & FAQs for Phylogenetic Comparative Analysis

Frequently Asked Questions

1. My phylogenetic independent contrasts analysis yields unexpected results. What are the key assumptions I might have violated? Phylogenetic Independent Contrasts (PIC) has three major assumptions that are often overlooked [1]:

- Assumption 1: The topology of the phylogeny is accurate.

- Assumption 2: The branch lengths of the phylogeny are correct.

- Assumption 3: Traits evolve under a Brownian motion model.

Troubleshooting Steps: Always check these assumptions using the model diagnostic plots available in standard R packages such as caper. Look for relationships between standardized contrasts and node heights, and check for heteroscedasticity in the model residuals [1].

2. When should I use an Ornstein-Uhlenbeck (OU) model over a Brownian motion model? The OU model is often interpreted as evidence of stabilising selection or evolutionary constraints. However, it has key caveats [1]:

- It is frequently, and incorrectly, favoured over simpler models in likelihood ratio tests, especially with small datasets (the median dataset size reported is only ~58 taxa).

- Even small amounts of measurement error can cause OU to be favoured, not due to biological process but because it accommodates more variance towards the tips.

- Simple stabilising selection acting uniformly across a clade is an unlikely biological explanation for an OU model fit.

Recommendation: Use OU models cautiously. Ensure that you have a sufficiently large dataset and have accounted for measurement error before drawing strong biological inferences.
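The likelihood ratio test and information criteria mentioned above are easy to compute once each model has been fitted (for example with geiger's fitContinuous()). A minimal sketch with hypothetical log-likelihood values; the helper names are illustrative. It also reports AICc, which penalizes extra parameters more heavily in small datasets:

```python
import math


def lrt_one_df(loglik_simple, loglik_complex):
    """Likelihood ratio test for nested models that differ by one parameter.

    Returns (statistic, p_value) using a chi-square(1) reference, whose
    upper-tail probability equals erfc(sqrt(stat / 2)).
    NOTE: for BM vs OU the extra parameter (alpha = 0) lies on the boundary
    of the parameter space, so this chi-square(1) p-value is only approximate.
    """
    stat = max(2.0 * (loglik_complex - loglik_simple), 0.0)
    return stat, math.erfc(math.sqrt(stat / 2.0))


def aicc(loglik, k, n):
    """Small-sample corrected AIC for a model with k parameters and n taxa."""
    if n - k - 1 <= 0:
        raise ValueError("AICc requires n > k + 1")
    return -2.0 * loglik + 2.0 * k + 2.0 * k * (k + 1) / (n - k - 1)


# Hypothetical fits for 58 taxa: BM (sigma^2, root state) vs OU (adds alpha).
n_taxa = 58
loglik_bm, loglik_ou = -112.4, -110.9
stat, p = lrt_one_df(loglik_bm, loglik_ou)
print(f"LRT = {stat:.2f}, p = {p:.3f}")
print(f"AICc BM = {aicc(loglik_bm, 2, n_taxa):.2f}, "
      f"AICc OU = {aicc(loglik_ou, 3, n_taxa):.2f}")
```

A nominally significant LRT is not, on its own, evidence of stabilising selection; the caveats above about dataset size and measurement error still apply.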

3. How can I effectively visualize a large, annotated phylogenetic tree? For large trees with rich metadata, manual customization is time-consuming. Use tools that support automatic customization via simple file formats [18].

- Recommended Tool: Iroki, a web application that uses tab-separated text files to automatically style tree components (branches, labels, etc.) based on your metadata [18].

- Alternative: The R package ggtree provides a programmable platform within R for complex tree annotation and the integration of diverse data types [19].

Key Experimental Protocols

Protocol 1: Testing for Phylogenetic Signal in Traits This protocol is used to assess whether closely related species tend to have similar trait values, indicating phylogenetic conservatism [20].

- Data Compilation: Compile a species-level trait dataset and a corresponding phylogeny. Ensure trait data is matched correctly to the tips of the phylogenetic tree.

- Model Selection: Choose an evolutionary model to test for phylogenetic signal. Common models include Brownian motion and Ornstein-Uhlenbeck.

- Analysis: Using a software package such as phytools in R, fit the model to your trait data and the phylogeny.

- Interpretation: A significant phylogenetic signal (often measured by metrics such as Pagel's λ or Blomberg's K) indicates that closely related species resemble each other more than expected by chance, a pattern consistent with phylogenetic niche conservatism [20].
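Pagel's λ multiplies the off-diagonal elements of the Brownian-motion covariance matrix (the shared branch lengths) by λ while leaving the diagonal unchanged: λ = 0 removes phylogenetic structure and λ = 1 recovers BM. The sketch below estimates λ by profiling the likelihood over a grid. It is a minimal illustration, assuming a hand-built covariance matrix and hypothetical trait values; in practice, phytools::phylosig(tree, x, method = "lambda") performs this estimation.

```python
import math


def cholesky(a):
    """Lower-triangular L with a = L L^T (a must be symmetric positive definite)."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                d = a[i][i] - s
                if d <= 0:
                    raise ValueError("matrix is not positive definite")
                L[i][j] = math.sqrt(d)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L


def forward_solve(L, b):
    """Solve L x = b for lower-triangular L."""
    x = []
    for i in range(len(b)):
        x.append((b[i] - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x


def lambda_transform(vcv, lam):
    """Multiply the off-diagonal (shared-history) entries by lambda."""
    n = len(vcv)
    return [[vcv[i][j] if i == j else lam * vcv[i][j] for j in range(n)]
            for i in range(n)]


def bm_loglik(y, vcv):
    """Log-likelihood of y under BM with ML root state and ML sigma^2."""
    n = len(y)
    L = cholesky(vcv)
    w = forward_solve(L, y)            # L^-1 y
    u = forward_solve(L, [1.0] * n)    # L^-1 1
    root = sum(a * b for a, b in zip(u, w)) / sum(a * a for a in u)  # GLS mean
    r = [wi - root * ui for wi, ui in zip(w, u)]                    # whitened residuals
    sigma2 = sum(ri * ri for ri in r) / n
    logdet = 2.0 * sum(math.log(L[i][i]) for i in range(n))
    return -0.5 * (n * math.log(2.0 * math.pi * sigma2) + logdet + n)


def estimate_lambda(y, vcv, steps=100):
    """Grid search for the lambda in [0, 1] that maximizes the likelihood."""
    grid = [i / steps for i in range(steps + 1)]
    return max(grid, key=lambda lam: bm_loglik(y, lambda_transform(vcv, lam)))


# Hypothetical 4-taxon tree ((A:1,B:1):1,(C:1.5,D:1.5):0.5) and trait values.
vcv = [[2.0, 1.0, 0.0, 0.0],
       [1.0, 2.0, 0.0, 0.0],
       [0.0, 0.0, 2.0, 0.5],
       [0.0, 0.0, 0.5, 2.0]]
y = [1.2, 1.4, 3.1, 2.7]
print("lambda_hat =", estimate_lambda(y, vcv))
```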

Protocol 2: Conducting a Phylogenetic Generalized Least Squares (PGLS) Regression PGLS is used to test the relationship between two or more continuous variables while accounting for phylogenetic non-independence [9].

- Model Specification: Define your regression model (e.g., y ~ x).

- Define Error Structure: Incorporate the phylogenetic tree into the variance-covariance matrix (often denoted V) of the model residuals. This matrix is defined by the phylogeny and an evolutionary model (e.g., Brownian motion) [9].

- Parameter Estimation: Co-estimate the parameters of the regression model and the parameters of the evolutionary model.

- Significance Testing: Evaluate the significance of the regression slope using phylogenetically corrected standard errors.
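The error-structure, estimation, and testing steps amount to generalized least squares. A minimal sketch for a single predictor with a known Brownian-motion covariance matrix V (it does not co-estimate λ or α): premultiplying the data by the inverse Cholesky factor of V "whitens" the phylogenetically correlated residuals, after which ordinary least squares applies. This is the computation that nlme::gls() performs with corBrownian(); the tree and trait values here are hypothetical.

```python
import math


def cholesky(a):
    """Lower-triangular L with a = L L^T (a symmetric positive definite)."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][i] = math.sqrt(a[i][i] - s)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L


def forward_solve(L, b):
    """Solve L x = b for lower-triangular L."""
    x = []
    for i in range(len(b)):
        x.append((b[i] - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def pgls(x, y, vcv):
    """GLS fit of y = b0 + b1 * x with residual covariance proportional to vcv.

    Returns (b0, b1, se_b1), where se_b1 is the phylogenetically corrected SE.
    """
    n = len(y)
    L = cholesky(vcv)
    one_w = forward_solve(L, [1.0] * n)  # whitened intercept column
    x_w = forward_solve(L, x)            # whitened predictor
    y_w = forward_solve(L, y)            # whitened response
    # Normal equations (X'V^-1 X) b = X'V^-1 y for the two-column design [1, x].
    s11, s12, s22 = dot(one_w, one_w), dot(one_w, x_w), dot(x_w, x_w)
    t1, t2 = dot(one_w, y_w), dot(x_w, y_w)
    det = s11 * s22 - s12 * s12
    b0 = (s22 * t1 - s12 * t2) / det
    b1 = (s11 * t2 - s12 * t1) / det
    resid = [yw - b0 * ow - b1 * xw for yw, ow, xw in zip(y_w, one_w, x_w)]
    sigma2 = dot(resid, resid) / (n - 2)  # unbiased residual variance
    se_b1 = math.sqrt(sigma2 * s11 / det)
    return b0, b1, se_b1


# Hypothetical tree ((A:1,B:1):1,(C:1.5,D:1.5):0.5) and trait values.
vcv = [[2.0, 1.0, 0.0, 0.0],
       [1.0, 2.0, 0.0, 0.0],
       [0.0, 0.0, 2.0, 0.5],
       [0.0, 0.0, 0.5, 2.0]]
x = [1.0, 2.0, 3.0, 4.0]
y = [1.0, 2.5, 2.9, 4.2]
b0, b1, se = pgls(x, y, vcv)
print(f"slope = {b1:.3f}, SE = {se:.3f}, t = {b1 / se:.2f} on {len(y) - 2} df")
```

Under BM, the slope and its SE depend on V only up to a constant factor, so multiplying every branch length by the same number changes only the rate parameter, not the regression results.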

Table 1: Summary of Key Findings from the Dipterocarpaceae Case Study [20]

| Analysis Type | Key Finding | Interpretation |
|---|---|---|
| Phylogenetic Signal | Moderate to strong phylogenetic signal found for plant traits. | Trait variation is not independent; closely related species share similar traits due to common ancestry (Phylogenetic Niche Conservatism). |
| Species Distribution | Elevational gradient identified as a key driver of species distribution. | Species are phylogenetically structured across environmental gradients. |
| Trait-Environment Relationship | Morphological traits (height, diameter) show phylogenetically dependent relationships with soil type. | The relationship between species' traits and their environment is influenced by shared evolutionary history. |
| Conservation Status | Conservation status is related to phylogeny and correlated with population trends. | Threatened species and those with decreasing population trends are not randomly distributed across the phylogeny. |

Table 2: Essential Research Reagent Solutions for Phylogenetic Comparative Analysis

| Item | Function / Explanation |
|---|---|
| Phylogenetic Tree | The historical hypothesis of relationships among lineages. It is the essential data structure for all PCMs to account for non-independence [9]. |
| Trait Dataset | A matrix of species-specific phenotypic or ecological measurements for the traits of interest (e.g., height, leaf mass, diet). |
| R Statistical Environment | A programming language and environment for statistical computing. It is the primary platform for implementing PCMs [19]. |
| ggtree R Package | An R package for the visualization and annotation of phylogenetic trees with associated data. It allows for complex, layered plots and integrates with the ggplot2 syntax [19]. |
| caper R Package | An R package that provides functions for performing phylogenetic independent contrasts and related analyses, including key diagnostic tests [1]. |
| Evolutionary Model (e.g., BM, OU) | A statistical model describing how a trait is hypothesized to have evolved along the branches of a phylogeny. Model choice can influence biological interpretation [9] [1]. |

Experimental Workflow Visualization

Frequently Asked Questions

FAQ 1: My protein sequences are too divergent for a reliable sequence-based phylogeny. What are my options? You can use structural phylogenetics. Because protein 3D structure evolves more slowly than the underlying sequence, it can resolve evolutionary relationships where sequence-based methods fail. A recommended approach is FoldTree, which uses a structural alphabet to create alignments and infer trees, proving particularly effective for fast-evolving protein families like the RRNPPA quorum-sensing receptors [21].

FAQ 2: What software can I use to visualize and annotate phylogenetic trees for publication? The R package ggtree is a powerful tool for this purpose. It extends the ggplot2 system, allowing you to visualize trees using a layered grammar of graphics. You can create various layouts (rectangular, circular, slanted, etc.) and annotate trees with associated data from different sources [19] [22]. The basic workflow is summarized in the protocol "Annotating a Tree with ggtree" under Troubleshooting Guides below.

FAQ 3: How can I test if my phylogenetic tree adheres to a molecular clock? The Taxonomic Congruence Score (TCS) offers an indirect check rather than a formal clock test. It assesses the congruence of your reconstructed gene tree with the known species taxonomy; a higher TCS indicates a topology more congruent with expected vertical inheritance, which is often associated with adherence to a molecular clock. Structure-informed methods such as FoldTree have been shown to produce trees with better TCS on divergent datasets [21].

FAQ 4: My tree visualization needs to highlight specific clades and add experimental data. How can I do this programmatically?

In ggtree, you can use geom_hilight() to highlight clades and geom_cladelab() to label them. These layers can be combined with other ggplot2-compatible geoms to map experimental data onto your tree. First, you may need to identify internal nodes using geom_text(aes(label=node)) or the MRCA() function with a vector of tip names [22].

Troubleshooting Guides

Problem: Low Branch Support in Deep Phylogeny

- Symptoms: Short internal branches and low bootstrap values in parts of the tree representing deep evolutionary divergences.

- Diagnosis: The phylogenetic signal in the sequence data has been eroded by multiple substitutions at the same site (saturation).

- Solution: Employ structural phylogenetics. Use AI-based protein structure prediction tools (e.g., AlphaFold2) to generate 3D models for your protein homologs. Then, use a pipeline like FoldTree to infer the phylogeny based on structural alignments, which are more conserved over long timescales [21].

- Protocol: Structural Phylogenetics with FoldTree

- Data Collection: Gather amino acid sequences for the protein family of interest.

- Structure Prediction: Generate 3D structural models for each sequence using AlphaFold2 or a similar tool.

- Structure Alignment: Perform an all-against-all structural alignment using Foldseek to obtain a statistically corrected similarity score (Fident) [21].

- Tree Building: Use the neighbor-joining algorithm with the Foldseek-derived distance matrix to reconstruct the phylogenetic tree.
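The tree-building step can be illustrated with a textbook neighbor-joining implementation. This is a minimal sketch, not FoldTree's code: it assumes you already have a symmetric pairwise distance matrix, and the conversion distance = 1 - Fident in the helper below is a hypothetical placeholder, since FoldTree applies its own correction to Foldseek scores.

```python
def neighbor_joining(names, dist):
    """Textbook neighbor-joining; returns a Newick string.

    names: taxon labels; dist: symmetric matrix (list of lists) of distances.
    Branch lengths can come out negative for non-additive data; they are
    reported as computed.
    """
    nodes = list(names)
    d = [[float(v) for v in row] for row in dist]
    while len(nodes) > 2:
        n = len(nodes)
        r = [sum(row) for row in d]
        # Choose the pair (i, j) that minimizes the Q criterion.
        best = None
        for i in range(n):
            for j in range(i + 1, n):
                q = (n - 2) * d[i][j] - r[i] - r[j]
                if best is None or q < best[0]:
                    best = (q, i, j)
        _, i, j = best
        # Branch lengths from the new internal node to i and j.
        li = 0.5 * d[i][j] + (r[i] - r[j]) / (2.0 * (n - 2))
        lj = d[i][j] - li
        joined = f"({nodes[i]}:{li:.4f},{nodes[j]}:{lj:.4f})"
        keep = [k for k in range(n) if k not in (i, j)]
        # Distances from the new node to every remaining node.
        new_row = [0.5 * (d[i][k] + d[j][k] - d[i][j]) for k in keep]
        d = [[d[a][b] for b in keep] + [new_row[idx]] for idx, a in enumerate(keep)]
        d.append(new_row + [0.0])
        nodes = [nodes[k] for k in keep] + [joined]
    # Place the root at the midpoint of the last remaining edge.
    half = d[0][1] / 2.0
    return f"({nodes[0]}:{half:.4f},{nodes[1]}:{half:.4f});"


def fident_to_distance(fident_matrix):
    """HYPOTHETICAL conversion: distance = 1 - Fident (FoldTree's correction differs)."""
    return [[1.0 - f for f in row] for row in fident_matrix]


# Hypothetical all-against-all Fident scores for four proteins.
taxa = ["P1", "P2", "P3", "P4"]
fident = [[1.0, 0.7, 0.5, 0.4],
          [0.7, 1.0, 0.4, 0.3],
          [0.5, 0.4, 1.0, 0.3],
          [0.4, 0.3, 0.3, 1.0]]
print(neighbor_joining(taxa, fident_to_distance(fident)))
```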

Problem: Inability to Reconcile Gene Tree with Species Tree

- Symptoms: A gene tree topology that is strongly incongruent with the established species taxonomy.

- Diagnosis: This could be due to deep sequence divergence, horizontal gene transfer, or other non-vertical evolutionary processes.

- Solution: Use the Taxonomic Congruence Score (TCS) to quantitatively evaluate the congruence of your gene tree with the known species tree. Benchmark your tree against others built with different methods (e.g., maximum likelihood on sequences vs. FoldTree on structures) to identify the most plausible evolutionary history [21].

Problem: Visualizing Complex Annotations on a Phylogenetic Tree

- Symptoms: Needing to display associated data (e.g., experimental measurements, geographic location) alongside tree tips but lacking an easy method.

- Diagnosis: Standard tree visualization software has limited, pre-defined annotation functions.

- Solution: Use the ggtree R package. It allows for the integration of diverse data types and uses a layered approach to visualization, similar to ggplot2 [19] [22].

- Protocol: Annotating a Tree with ggtree

- Import Data: Read your tree file (e.g., Newick format) using read.tree() and any associated data (e.g., a CSV file) into R.

- Basic Tree: Create a basic tree plot using the ggtree() function.

- Merge Data: Attach your associated data to the tree plot using the %<+% operator, or join it to the tree object with the full_join() function from dplyr.

- Add Layers: Use + to add annotation layers such as geom_tippoint(), geom_tiplab(), or geom_facet() to map your data onto the tree.


Diagnostic Data and Benchmarks

The table below summarizes a benchmark comparing different phylogenetic approaches, highlighting the performance of structural methods on divergent datasets [21].

Table 1: Benchmarking Phylogenetic Inference Methods

| Method Category | Specific Method | Input Data | Key Metric: Taxonomic Congruence Score (TCS) on Divergent Protein Families | Key Metric: Performance on Highly Divergent Datasets |
|---|---|---|---|---|
| Structure-informed | FoldTree (NJ with Fident distance) | Structural Alphabet Alignment | Top performing | Outperforms sequence-based methods [21] |
| Structure-informed | Partitioned Likelihood | Sequence + Structure | Competitive | Better than sequence-only methods [21] |
| Sequence-based | Maximum-Likelihood | Amino Acid Sequence Alignment | Lower than structure-informed methods | Performance decreases with higher sequence divergence [21] |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Resources for Phylogenetic Analysis

| Item | Function | Resource Link |
|---|---|---|
| Foldseek | Fast and accurate comparison of protein structures, used for structural alignment in pipelines like FoldTree. | https://foldseek.com/ |
| AlphaFold2 | AI system that predicts a protein's 3D structure from its amino acid sequence with high accuracy. | https://github.com/deepmind/alphafold |
| ggtree | An R package for the visualization and annotation of phylogenetic trees with associated data. | https://bioconductor.org/packages/ggtree |
| treeio | An R package for parsing and exporting phylogenetic trees with associated data, often used with ggtree. | https://bioconductor.org/packages/treeio |

Workflow Visualization

The following diagram illustrates the diagnostic workflow for identifying phylogenetic structure when sequence-based methods are insufficient.

The Methodological Toolkit: Implementing Phylogenetic Corrections in Practice

Troubleshooting Guides

Common PGLS Errors and Solutions

| Error Message | Cause | Solution | Relevant Context |
|---|---|---|---|
| "no covariate specified" [23] | A recent update to the ape package requires explicit specification of the taxa covariate. | Add a form argument to the correlation structure, e.g., corBrownian(phy = your_tree, form = ~Species) [23]. | Ensure your data frame contains a column (e.g., "Species") whose values match the tree's tip labels [23]. |
| "non-numeric argument to mathematical function" when comparing procD.pgls models [24] | A bug in which the output needed for model comparison is not generated by default. | Run procD.pgls with the argument verbose = TRUE [24]. This ensures that all required output is available for the anova and model.comparison functions. | This issue is specific to the geomorph package's procD.pgls function and has been addressed in subsequent updates to the RRPP package [24]. |
| Inaccurate parameter estimates when trait data contain measurement error [25] | Standard PGLS does not account for sampling error (measurement variance) in the predictor and response variables. | Use specialized methods such as the pgls.Ives function, which incorporates sampling variances and covariances for both traits [25]. | This method uses a likelihood framework to estimate the regression parameters and the evolutionary rates (σ²) simultaneously while accounting for known measurement error [25]. |
| Failure of corPagel or corMartins models to converge [26] | Optimization issues, often related to the scale of the phylogenetic tree's branch lengths. | Rescale the branch lengths of a copy of the tree (e.g., tempTree <- your_tree; tempTree$edge.length <- tempTree$edge.length * 100) and re-fit the model [26]. | This rescaling affects a nuisance parameter and does not change the biological interpretation of the model results [26]. |

Key Experimental Protocols and Methodologies

Protocol 1: Implementing a Basic PGLS Model in R

This protocol outlines the steps to perform a basic Phylogenetic Generalized Least Squares (PGLS) regression analysis in R, which is a cornerstone of modern phylogenetic comparative methods [27] [26].

- Data and Tree Preparation: Load your trait data and phylogenetic tree into R. Use the geiger package function name.check() to ensure that species names in the data frame match those in the tree [26].

- Model Formulation: Define your model formula. For a bivariate regression, this would be Response_Trait ~ Predictor_Trait [26].

- Model Fitting: Use the gls() function from the nlme package to fit the model. Specify the phylogenetic correlation structure using the correlation argument. For a Brownian motion model of evolution, use correlation = corBrownian(phy = your_tree, form = ~Species) [23] [26].

- Result Interpretation: Examine the output using the summary() function to obtain regression coefficients, t-values, p-values, and other model diagnostics [26].
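corBrownian() derives its correlation structure from the Brownian-motion covariance matrix of the tree, in which the covariance of two species equals the length of the path they share from the root to their most recent common ancestor (ape::vcv() returns this matrix). A minimal sketch of that calculation, using a hypothetical child-to-parent edge map rather than an ape phylo object:

```python
def path_to_root(edges, node):
    """Nodes from `node` up to the root; edges maps child -> (parent, length)."""
    path = [node]
    while path[-1] in edges:
        path.append(edges[path[-1]][0])
    return path


def depth(edges, node):
    """Sum of branch lengths between the root and `node`."""
    total = 0.0
    while node in edges:
        parent, length = edges[node]
        total += length
        node = parent
    return total


def bm_vcv(edges, tips):
    """Brownian-motion covariance: C[i][j] = depth of the MRCA of tips i and j."""
    paths = {t: path_to_root(edges, t) for t in tips}
    C = []
    for a in tips:
        row = []
        for b in tips:
            ancestors_b = set(paths[b])
            mrca = next(node for node in paths[a] if node in ancestors_b)
            row.append(depth(edges, mrca))
        C.append(row)
    return C


# Hypothetical tree ((A:1,B:1)ab:1,(C:1.5,D:1.5)cd:0.5)root;
edges = {"A": ("ab", 1.0), "B": ("ab", 1.0), "C": ("cd", 1.5), "D": ("cd", 1.5),
         "ab": ("root", 1.0), "cd": ("root", 0.5)}
for row in bm_vcv(edges, ["A", "B", "C", "D"]):
    print(row)
```

Because gls() estimates the residual variance separately, only the relative values in this matrix affect the regression coefficients.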

Protocol 2: Phylogenetically Informed Prediction

This methodology outperforms simple predictive equations derived from PGLS or OLS models, especially for traits with weak correlations or when predicting for species with long branch lengths [27].

- Model Training: Fit a phylogenetic regression model (e.g., PGLS) using species with known values for both the predictor and response traits [27].

- Prediction: For a species with an unknown value for the response trait, the phylogenetically informed prediction explicitly uses its phylogenetic position and the known value of its predictor trait. This is done by calculating independent contrasts or using a phylogenetic variance-covariance matrix that includes the new species [27].

- Uncertainty Quantification: Generate prediction intervals, which naturally increase with increasing phylogenetic distance (branch length) from species with known data [27].
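One standard way to make such a prediction is the conditional expectation of a multivariate normal distribution under Brownian motion: fit the regression on the species with data, then shift the regression prediction for the new species by its phylogenetic covariance with the observed residuals. The sketch below is a minimal illustration of that idea under stated assumptions (BM covariance, one predictor, uncertainty in the coefficients ignored); it is not necessarily the exact procedure used in [27], and the tree and data are hypothetical.

```python
import math


def cholesky(a):
    """Lower-triangular L with a = L L^T (a symmetric positive definite)."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][i] = math.sqrt(a[i][i] - s)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L


def forward_solve(L, b):
    """Solve L x = b."""
    x = []
    for i in range(len(b)):
        x.append((b[i] - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x


def back_solve(L, b):
    """Solve L^T x = b."""
    n = len(b)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (b[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def gls_fit(x, y, V):
    """GLS intercept, slope and residual variance for y = b0 + b1 * x."""
    n = len(y)
    L = cholesky(V)
    ow = forward_solve(L, [1.0] * n)
    xw = forward_solve(L, x)
    yw = forward_solve(L, y)
    s11, s12, s22 = dot(ow, ow), dot(ow, xw), dot(xw, xw)
    t1, t2 = dot(ow, yw), dot(xw, yw)
    det = s11 * s22 - s12 * s12
    b0 = (s22 * t1 - s12 * t2) / det
    b1 = (s11 * t2 - s12 * t1) / det
    res = [c - b0 * o - b1 * w for c, o, w in zip(yw, ow, xw)]
    return b0, b1, dot(res, res) / (n - 2)


def predict(x_known, y_known, V_known, x_new, c_new, v_new):
    """Phylogenetically informed prediction of y for one new species.

    c_new: BM covariances between the new species and each known species;
    v_new: the new species' own variance (its root-to-tip distance).
    Returns (predicted value, prediction variance ignoring coefficient error).
    """
    b0, b1, sigma2 = gls_fit(x_known, y_known, V_known)
    L = cholesky(V_known)
    resid = [yi - b0 - b1 * xi for xi, yi in zip(x_known, y_known)]
    vinv_r = back_solve(L, forward_solve(L, resid))  # V^-1 r
    vinv_c = back_solve(L, forward_solve(L, c_new))  # V^-1 c
    mean = b0 + b1 * x_new + dot(c_new, vinv_r)
    var = sigma2 * (v_new - dot(c_new, vinv_c))
    return mean, var


# Hypothetical tree ((A:1,N:1):1,(B:1.5,C:1.5):0.5); N lacks a y value.
V_known = [[2.0, 0.0, 0.0],   # A
           [0.0, 2.0, 0.5],   # B
           [0.0, 0.5, 2.0]]   # C
x_known, y_known = [1.0, 2.0, 3.0], [2.0, 2.5, 4.5]
close = predict(x_known, y_known, V_known, 1.5, [1.0, 0.0, 0.0], 2.0)
distant = predict(x_known, y_known, V_known, 1.5, [0.0, 0.0, 0.0], 2.0)
print("sister of A:", close)
print("attached at root:", distant)
```

The prediction variance shrinks as the new species shares more history with sampled species, which is why prediction intervals widen with phylogenetic distance from species with known data.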

The Scientist's Toolkit: Research Reagent Solutions

Essential R Packages for PGLS Analysis

| Package Name | Function/Brief Explanation |
|---|---|
| ape | A core package for phylogenetic analysis in R; provides functions for reading, manipulating, and visualizing trees, and is a dependency for many other comparative method packages [23]. |
| nlme | Provides the gls() function, which is the standard tool for fitting PGLS models using various phylogenetic correlation structures [26]. |
| geiger | Offers utility functions, such as name.check(), for data management and ensuring congruence between trait datasets and phylogenetic trees [26]. |
| phytools | A comprehensive package for phylogenetic comparative methods. It includes advanced functions, such as pgls.Ives() for PGLS with sampling error [25]. |
| geomorph | Used for the geometric morphometric analysis of shape. Its procD.pgls() function performs PGLS on shape data [24]. |

Frequently Asked Questions (FAQs)

What is the main advantage of PGLS over ordinary least squares (OLS) regression?

PGLS explicitly accounts for the non-independence of species data that arises from shared evolutionary history. Ignoring this phylogenetic structure amounts to pseudo-replication, which can produce overconfident and potentially spurious results as well as biased parameter estimates [27]. PGLS incorporates a model of evolution (e.g., Brownian motion) to correct for this non-independence.

My analysis worked before but now gives a "no covariate specified" error. What happened?

This error is likely due to an update to the ape package. The functions for phylogenetic correlation structures (e.g., corBrownian) now require you to explicitly specify the species covariate using the form argument. The solution is to add, for example, form = ~Species to your correlation function call, assuming you have a "Species" column in your data frame [23].

How do I choose the right correlation structure (e.g., Brownian, OU, Pagel's λ) for my PGLS model?

The choice of correlation structure depends on the assumed model of evolution. Brownian motion (corBrownian) is often the default. More complex structures can model traits under stabilizing selection (Ornstein-Uhlenbeck, corMartins) or estimate the strength of phylogenetic signal (Pagel's λ, corPagel). You can compare the fitted models using information criteria (such as AIC) to find the best fit for your data [26].

What is phylogenetically informed prediction and why is it better than using PGLS coefficients?

Phylogenetically informed prediction is a method that directly uses the phylogenetic relationships and the regression model to predict unknown trait values. It is superior to simply plugging values into an equation derived from PGLS coefficients because it incorporates information on the phylogenetic position of the predicted species. Simulations show that it can be two- to three-fold more accurate, and that predictions from weakly correlated traits using this method can be as good as or better than predictive equations from strongly correlated traits [27].

Can PGLS account for measurement error in my trait data?

Standard PGLS implementations in gls() do not. However, specialized methods exist, such as the one implemented in the pgls.Ives() function, which can incorporate known sampling variances and covariances for both the predictor and response traits, leading to more accurate parameter estimates [25].

Workflow and Conceptual Diagrams

PGLS Analysis and Troubleshooting Workflow

Phylogenetically Informed Prediction Concept

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind Phylogenetically Independent Contrasts (PIC)? PIC operates on the principle that species share traits due to common ancestry, violating the statistical assumption of data independence. The method calculates contrasts, or differences, in trait values between pairs of closely related species or nodes on a phylogenetic tree. These contrasts represent evolutionary changes independent of phylogeny, allowing for statistically valid comparative analyses by transforming raw species data into independent data points [27].

Q2: My PIC analysis shows a significant correlation, but how do I interpret this evolutionarily? A significant correlation between standardized contrasts for two traits indicates that the evolutionary changes in these traits are correlated. This suggests that the traits have evolved in a coordinated manner along the branches of your phylogeny. For example, an increase in one trait is consistently associated with an increase (or decrease) in another trait over evolutionary time, providing evidence for adaptation or constraint [27].

Q3: What should I do if the absolute values of standardized contrasts correlate with their standard deviations? This correlation often indicates that the branch length information in your phylogenetic tree may not be optimal for the traits you are analyzing. You should:

- Check your tree: Ensure the branch lengths are appropriate (e.g., time, genetic distance).

- Transform branch lengths: Try applying a branch length transformation, such as using Pagel's lambda (λ) or log-transforming lengths, to find a model that better fits the data and removes this correlation.

- Re-calculate contrasts: Use the transformed tree to compute new contrasts and check the correlation again [27].
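The diagnostic in Q3 is a simple correlation. A minimal sketch, assuming you already have the standardized contrasts and their standard deviations (for example, the square roots of the variances returned by ape::pic(x, tree, var.contrasts = TRUE)); the helper names and numbers below are hypothetical:

```python
import math


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)


def contrast_sd_check(contrasts, sds):
    """Correlation between |standardized contrast| and its SD, with a t statistic.

    A clearly non-zero correlation suggests that the branch lengths do not
    standardize the contrasts adequately.
    """
    r = pearson([abs(c) for c in contrasts], sds)
    df = len(contrasts) - 2
    t = r * math.sqrt(df / (1.0 - r * r)) if abs(r) < 1.0 else math.copysign(math.inf, r)
    return r, t, df


# Hypothetical contrasts and their standard deviations.
contrasts = [0.42, -1.10, 0.35, 0.88, -0.15, 1.30]
sds = [0.9, 1.4, 0.7, 1.1, 0.5, 1.6]
r, t, df = contrast_sd_check(contrasts, sds)
print(f"r = {r:.2f}, t = {t:.2f} on {df} df")
```

Compare t with a t distribution on df degrees of freedom (for example with pt() in R), and repeat the check after each branch-length transformation.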

Q4: How does PIC performance compare to non-phylogenetic methods? Simulation studies demonstrate that phylogenetically informed prediction, which includes PIC-based methods, significantly outperforms predictive equations from non-phylogenetic models like Ordinary Least Squares (OLS). Performance improvements of two- to three-fold are common. Using PIC with weakly correlated traits (r=0.25) can yield results as good as or better than using OLS with strongly correlated traits (r=0.75) [27].

Q5: What are the best practices for visualizing a tree with PIC results?

The ggtree package in R is a powerful tool for visualizing phylogenetic trees and associated data. You can map your calculated contrasts directly onto the tree using various aesthetic features [19] [28]:

| Visualization Method | Description | ggtree Function Example |
|---|---|---|
| Branch Color | Color branches based on the magnitude or value of evolutionary contrasts. | geom_tree(aes(color=contrast_value)) |
| Node Symbols | Use node shape, size, or color to represent contrast values at internal nodes and tips. | geom_nodepoint(aes(size=contrast)), geom_tippoint(aes(color=contrast)) |
| Metadata Layers | Add adjacent colored bars to display contrast values alongside leaf nodes. | geom_facet(...) |

Troubleshooting Guides

Issue 1: Handling Polytomies in the Phylogenetic Tree

Problem: Your phylogenetic tree contains multifurcating nodes (polytomies), but the PIC algorithm requires a strictly bifurcating tree.

Solution:

- Soft Polytomies: If the polytomy reflects true uncertainty about relationships, you can randomly resolve it into a series of bifurcations by adding branches of negligible length (e.g., 1e-6). It is good practice to repeat the analysis over multiple random resolutions to ensure that your results are robust.

- Hard Polytomies: If the polytomy represents a true simultaneous divergence event, some software packages can handle it automatically by calculating contrasts as the average of all possible resolutions.

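Prevention: Whenever possible, use a fully resolved, bifurcating tree from your phylogenetic analysis. Note that majority-rule consensus trees from Bayesian analyses can themselves contain polytomies where support is low; a maximum clade credibility tree, which is one of the sampled and therefore fully bifurcating trees, avoids this issue.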
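Random resolution of soft polytomies is easy to script. In R, ape::multi2di(tree, random = TRUE) does this using zero-length branches; the Python sketch below illustrates the same idea on a hypothetical nested-dictionary tree, joining randomly chosen children under new internal nodes of negligible length until every node is bifurcating:

```python
import random


def resolve_polytomies(node, rng, eps=1e-6):
    """Return a copy of `node` with every polytomy randomly resolved.

    Nodes are dicts: {"name": str, "length": float, "children": [...]}.
    New internal nodes receive branch length `eps`.
    """
    children = [resolve_polytomies(c, rng, eps) for c in node.get("children", [])]
    while len(children) > 2:
        i, j = rng.sample(range(len(children)), 2)
        pair = [children[i], children[j]]
        children = [c for k, c in enumerate(children) if k not in (i, j)]
        children.append({"name": "", "length": eps, "children": pair})
    return {"name": node.get("name", ""), "length": node.get("length", 0.0),
            "children": children}


def is_bifurcating(node):
    """True if every internal node has exactly two children."""
    kids = node["children"]
    return not kids or (len(kids) == 2 and all(is_bifurcating(k) for k in kids))


def tip_names(node):
    if not node["children"]:
        return [node["name"]]
    return [n for k in node["children"] for n in tip_names(k)]


# Hypothetical tree: an outgroup plus a five-way polytomy.
tree = {"name": "root", "length": 0.0, "children": [
    {"name": "out", "length": 2.0},
    {"name": "", "length": 1.0, "children": [
        {"name": s, "length": 1.0} for s in ["A", "B", "C", "D", "E"]]},
]}

# Generate several random resolutions; rerun the comparative analysis on each.
for seed in range(3):
    resolved = resolve_polytomies(tree, random.Random(seed))
    print(seed, is_bifurcating(resolved), sorted(tip_names(resolved)))
```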

Issue 2: Diagnostics Reveal Non-Brownian Motion Evolution

Problem: Diagnostic checks suggest your trait data does not evolve according to a Brownian motion (BM) model, violating a key assumption of the standard PIC method.

Solution:

- Model Selection: Use a different evolutionary model. Implement Phylogenetic Generalized Least Squares (PGLS) with models like:

- Ornstein-Uhlenbeck (OU): Models trait evolution under a restraining force (stabilizing selection).

- Early Burst (EB): Models rates of evolution that decrease over time.

- Branch Length Transformation: Use PGLS to find the maximum likelihood value of Pagel's λ or δ, which transforms the tree to best fit the data, and then perform your analysis using this transformed tree [27].

Workflow:

Issue 3: Missing Trait Data for Some Taxa

Problem: Trait data is unavailable for some species in your phylogeny, making it impossible to calculate complete contrasts.

Solution:

- Phylogenetic Imputation: Use advanced phylogenetically informed prediction methods to estimate missing values. These methods leverage the phylogenetic relationships and data from known species to provide much more accurate imputations than non-phylogenetic methods [27].

- Prune Taxa: As a simpler alternative, you can prune species with missing data from the tree, though this results in a loss of statistical power and information.

Experimental Protocols & Data Presentation

Standard Protocol for a PIC Analysis

The following workflow outlines the key steps for a robust PIC analysis [29]:

Detailed Methodologies:

- Obtain Phylogenetic Tree: Use a time-calibrated ultrametric tree for comparative analysis. Tree sources can include Tree of Life web projects, published trees from literature, or trees you infer yourself using molecular data and software like BEAST or MrBayes [29].

- Import Trait Data: Compile trait data for the terminal taxa in your tree from literature, databases, or original research. Ensure data is correctly matched to tree tip labels.

- Check/Transform Data:

- Prune the tree and trait data to include only shared taxa.

- Check for normality of continuous traits; apply log or square-root transformations if necessary.

- Calculate Standardized PICs:

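- Use functions such as pic() in the R package ape.

- The algorithm traverses the tree from tips to root, calculating the difference in trait values between each pair of sister nodes or clades and standardizing it by dividing by the square root of the sum of their (adjusted) branch lengths, that is, by the contrast's expected standard deviation under Brownian motion.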


- Run Diagnostics:

- Plot the absolute values of the standardized contrasts against their standard deviations (the square root of the sum of the branch lengths). A lack of correlation indicates that the branch lengths adequately standardize the contrasts.

- Ensure contrasts are normally distributed around zero.

- Analyze Contrasts: Regress the contrasts of one trait against the contrasts of another through the origin. A significant relationship indicates correlated evolution.

- Interpret Results: A positive relationship suggests traits evolve in the same direction; a negative relationship suggests trade-offs.
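The contrast calculation in the "Calculate Standardized PICs" step (Felsenstein's algorithm) and the regression through the origin in the "Analyze Contrasts" step fit in a few lines. The sketch below is a minimal illustration on a hypothetical nested-dictionary tree with strictly positive branch lengths; ape::pic() is the standard implementation:

```python
import math


def pic(node, trait):
    """Felsenstein's independent contrasts for the subtree rooted at `node`.

    Returns (node value, extra branch length, contrasts, contrast SDs).
    Tips are {"name": ..., "length": ...}; internal nodes add "children".
    """
    children = node.get("children")
    if not children:
        return trait[node["name"]], 0.0, [], []
    if len(children) != 2:
        raise ValueError("PIC requires a bifurcating tree")
    results = []
    for child in children:
        value, extra, cs, sds = pic(child, trait)
        results.append((value, child["length"] + extra, cs, sds))
    (x1, v1, c1, s1), (x2, v2, c2, s2) = results
    sd = math.sqrt(v1 + v2)                              # expected SD under BM
    contrast = (x1 - x2) / sd                            # standardized contrast
    value = (x1 / v1 + x2 / v2) / (1.0 / v1 + 1.0 / v2)  # weighted ancestral value
    extra = v1 * v2 / (v1 + v2)                          # added to this node's branch
    return value, extra, c1 + c2 + [contrast], s1 + s2 + [sd]


def slope_through_origin(cx, cy):
    """Least-squares slope of cy on cx with no intercept."""
    return sum(a * b for a, b in zip(cx, cy)) / sum(a * a for a in cx)


# Hypothetical tree ((A:1,B:1):1,C:2) and two traits.
tree = {"children": [
    {"length": 1.0, "children": [{"name": "A", "length": 1.0},
                                 {"name": "B", "length": 1.0}]},
    {"name": "C", "length": 2.0},
]}
x = {"A": 1.0, "B": 3.0, "C": 4.0}
y = {"A": 2.1, "B": 5.9, "C": 8.2}
_, _, cx, sdx = pic(tree, x)
_, _, cy, _ = pic(tree, y)
print("contrasts x:", [round(c, 3) for c in cx])
print("slope through origin:", round(slope_through_origin(cx, cy), 3))
```

Computing both traits' contrasts on the same tree keeps the sign convention (which sister is subtracted from which) consistent, which is required for the regression to be meaningful.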

Quantitative Data Comparison: PIC vs. Other Methods

Simulation studies on ultrametric trees demonstrate the superior performance of phylogenetically informed prediction (which includes PIC) over predictive equations from other regression models [27].

Table 1: Performance Comparison of Prediction Methods on Ultrametric Trees

| Method | Principle | Data Used for Prediction | Variance (σ²) of Prediction Error (r = 0.25) | Share of Trees in Which It Was More Accurate Than Phylogenetically Informed Prediction (r = 0.25) |
|---|---|---|---|---|
| Phylogenetically Informed Prediction (PIC) | Uses evolutionary model and tree structure | Phylogeny + Trait Correlation | 0.007 | (Baseline) |
| PGLS Predictive Equations | Uses regression coefficients from phylogenetic model | Trait Correlation Only | 0.033 | 3.8% |
| OLS Predictive Equations | Uses regression coefficients from non-phylogenetic model | Trait Correlation Only | 0.030 | 4.3% |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Phylogenetic Comparative Analysis

| Item | Function/Description | Example Use in PIC |
|---|---|---|
| Molecular Sequence Data | Raw DNA or protein sequences used to infer the phylogenetic tree. | Obtain from databases like GenBank, EMBL, or DDBJ for tree construction [29]. |
| Sequence Alignment Software | Aligns homologous sequences for phylogenetic analysis. | Software like MAFFT or ClustalW for creating the input for tree-building [29]. |
| Tree Inference Software | Constructs phylogenetic trees from aligned sequences. | Use Maximum Likelihood (RAxML, IQ-TREE) or Bayesian (MrBayes, BEAST) methods to build the essential tree input for PIC [29]. |
| R Statistical Environment | A programming language and environment for statistical computing. | The primary platform for running phylogenetic comparative analyses. |
| ape R Package | A core package for Analyses of Phylogenetics and Evolution. | Provides the foundational pic() function for calculating contrasts [29]. |
| ggtree R Package | An R package for visualizing and annotating phylogenetic trees. | Used to create publication-ready figures of your tree with mapped trait data or contrast values [19]. |
| phytools R Package | A package for phylogenetic comparative biology. | Offers tools for fitting evolutionary models (e.g., BM, OU) and conducting phylogenetic regression [19]. |
| Trait Databases | Repositories for species-level morphological, ecological, and physiological data. | Sources like TRY (plant traits) or AnimalTraits to gather data for analysis. |

Frequently Asked Questions (FAQs)

Q1: What are the primary differences between RAxML and MrBayes in phylogenetic inference? RAxML (Randomized Axelerated Maximum Likelihood) uses maximum likelihood methods, optimizing the likelihood of the tree given the data and evolutionary model. It is known for its computational speed and efficiency on large datasets [30]. In contrast, MrBayes employs Bayesian inference, using Markov Chain Monte Carlo (MCMC) algorithms to approximate the posterior probability distribution of trees. This allows for direct quantification of uncertainty in phylogenetic hypotheses [31] [32].

Q2: How do I choose an appropriate evolutionary model for my analysis?

Automated model selection is recommended for reliability. For nucleotide data, use MrModeltest2, and for protein data, use ProtTest3 [32] [33]. These tools calculate statistical criteria like AIC or BIC to identify the model that best fits your data. RAxML also includes an option for automatic protein model selection with the -m PROTGAMMAAUTO flag [34].

Q3: My RAxML analysis fails with a "could not read data" error. What should I check? This is commonly a file format issue. Ensure your PHYLIP-formatted alignment uses relaxed PHYLIP format: a single space between the taxon name and the sequence, and no blank lines within the data matrix [35]. Also, verify that all sequence names are unique and that no taxon names contain spaces (use underscores instead) [35].

Q4: What does a "too few species" error in RAxML mean? This error often occurs if there is a blank line between the header line (which states the number of taxa and sites) and the start of the sequence data in your PHYLIP file. Removing this blank line typically resolves the issue [35].
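The format problems in Q3 and Q4 can be caught before launching RAxML. A minimal pre-flight sketch, assuming a sequential relaxed PHYLIP file with one line per taxon (interleaved files legitimately contain blank lines and would need a different parser); the function name is hypothetical, and RAxML's own check remains the authoritative test:

```python
def check_relaxed_phylip(text):
    """Return a list of problems found in a sequential relaxed PHYLIP alignment."""
    lines = text.splitlines()
    header = lines[0].split() if lines else []
    if len(header) != 2 or not all(h.isdigit() for h in header):
        return ["first line must give the number of taxa and the number of sites"]
    ntax, nsites = int(header[0]), int(header[1])
    body = lines[1:]
    while body and not body[-1].strip():   # trailing blank lines are harmless
        body.pop()
    problems = []
    if body and not body[0].strip():
        problems.append("blank line between the header and the first sequence")
    if any(not ln.strip() for ln in body[1:]):
        problems.append("blank line(s) inside the data matrix")
    names = []
    records = [ln.split() for ln in body if ln.strip()]
    for k, rec in enumerate(records, start=1):
        if len(rec) != 2:
            problems.append(f"record {k}: expected 'name sequence' (space in a taxon name?)")
            continue
        name, seq = rec
        names.append(name)
        if len(seq) != nsites:
            problems.append(f"{name}: {len(seq)} sites, header says {nsites}")
    if len(records) != ntax:
        problems.append(f"{len(records)} sequences found, header says {ntax}")
    duplicates = sorted({n for n in names if names.count(n) > 1})
    if duplicates:
        problems.append("duplicate taxon names: " + ", ".join(duplicates))
    return problems


good = "3 4\nHomo_sapiens ACGT\nPan_troglodytes ACGA\nGorilla_gorilla ACGG\n"
bad = "3 4\n\nHomo sapiens ACGT\nPan ACGA\nPan ACG\n"
print(check_relaxed_phylip(good))
for problem in check_relaxed_phylip(bad):
    print("-", problem)
```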

Q5: How can I perform an ANOVA that accounts for phylogenetic relationships?

Standard ANOVA assumes data independence, which is violated by phylogenetic relationships. Use the phylANOVA function in the R phytools package or aov.phylo in the geiger package [36]. These functions require your data vector and grouping factor to be properly named to match the tip labels in your phylogenetic tree.
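Most failures with these functions come from names that do not match. One way to catch mismatches before calling `phylANOVA` or `aov.phylo` is to compare the trait names against the tree's tip labels. The Python sketch below assumes both have been exported as lists of strings, and the helper name `check_names` is hypothetical.

```python
def check_names(tip_labels, trait_names):
    """Report names present in only one of the tree tips or the trait data."""
    tips, traits = set(tip_labels), set(trait_names)
    duplicated = sorted({name for name in trait_names if trait_names.count(name) > 1})
    return {
        "missing_from_data": sorted(tips - traits),  # tips with no trait value
        "missing_from_tree": sorted(traits - tips),  # trait rows with no matching tip
        "duplicated_in_data": duplicated,
    }


report = check_names(["Homo_sapiens", "Pan_troglodytes", "Mus_musculus"],
                     ["Homo_sapiens", "Pan troglodytes", "Mus_musculus"])
print(report)
```

In this example, the space in "Pan troglodytes" causes the name to be reported on both sides. That is the same kind of naming problem that breaks tree-file parsing in RAxML.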

Troubleshooting Guides

RAxML Common Errors

Problem: Error reading alignment or "too few species."

- Solution: Validate your PHYLIP file format. Use RAxML's alignment-check option (`-f c`) to identify specific issues like misaligned sequences [35].

Problem: "IMPORTANT WARNING" about identical sequences.

- Solution: RAxML writes a `.reduced` alignment file with the duplicates removed. Exclude identical sequences, as they add no new phylogenetic information [35].

Problem: Determining sufficient computational resources.

- Solution: Estimate memory requirements a priori. RAxML memory consumption depends on the number of taxa (n), the number of distinct alignment patterns (m), and the data type [30].

Table: Estimated RAxML Memory Requirements

| Data Type & Model | Memory Estimation Formula |
|---|---|
| DNA + GAMMA | (n-2) * m * (16 * 8) bytes |
| DNA + CAT | (n-2) * m * (4 * 8) bytes |
| Protein + GAMMA | (n-2) * m * (80 * 8) bytes |
| Protein + CAT | (n-2) * m * (20 * 8) bytes |
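The formulas in this table can be turned into a quick estimator. The minimal sketch below uses the table's factors, which count 8-byte doubles per inner node and per alignment pattern: 4 nucleotide states or 20 amino-acid states, multiplied by 4 rate categories under GAMMA. Treat the result as an approximation of the dominant memory cost, not an exact figure. The function name is hypothetical.

```python
# Bytes per (inner node x alignment pattern), from the table above:
# (states x rate categories) doubles, each 8 bytes.
BYTES_PER_ENTRY = {
    ("DNA", "GAMMA"): 16 * 8,      # 4 nucleotide states x 4 gamma categories
    ("DNA", "CAT"): 4 * 8,         # 4 nucleotide states, one rate per site
    ("PROTEIN", "GAMMA"): 80 * 8,  # 20 amino-acid states x 4 gamma categories
    ("PROTEIN", "CAT"): 20 * 8,    # 20 amino-acid states, one rate per site
}


def raxml_memory_bytes(n_taxa, n_patterns, data_type, model):
    """Approximate memory for RAxML likelihood vectors: (n - 2) * m * factor."""
    if n_taxa < 3:
        raise ValueError("need at least 3 taxa")
    factor = BYTES_PER_ENTRY[(data_type.upper(), model.upper())]
    return (n_taxa - 2) * n_patterns * factor


est = raxml_memory_bytes(1000, 10_000, "DNA", "GAMMA")
print(f"{est} bytes = {est / 1024**3:.2f} GiB")  # 1277440000 bytes = 1.19 GiB
```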

MrBayes Workflow and Diagnostics

A robust MrBayes workflow involves careful setup and diagnostics to ensure MCMC convergence [32] [33].

Problem: MCMC chains fail to converge (average standard deviation of split frequencies remains high).

- Solution: Increase the number of generations (`mcmc ngen=<number>`) in your MrBayes command. Visually inspect trace plots for stationarity using Tracer [32].
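The split-frequency diagnostic compares how often each split (bipartition of the taxa) appears in the trees sampled by independent runs. The Python sketch below shows the idea. It is a simplified re-implementation, not MrBayes's own code, and it makes three assumptions: splits are supplied as consistently canonicalized frozensets, splits below a 0.10 minimum frequency in every run are skipped, and the population standard deviation is taken across runs.

```python
from statistics import pstdev


def asdsf(run_split_freqs, min_freq=0.10):
    """Average standard deviation of split frequencies across independent runs.

    run_split_freqs: one dict per run, mapping a split (here a frozenset of the
    taxa on one side, canonicalized the same way in every run) to the fraction
    of sampled trees containing it. Splits below min_freq in every run are skipped.
    """
    all_splits = set().union(*run_split_freqs)
    sds = []
    for split in all_splits:
        freqs = [run.get(split, 0.0) for run in run_split_freqs]
        if max(freqs) >= min_freq:
            sds.append(pstdev(freqs))  # population SD across runs (assumed convention)
    return sum(sds) / len(sds) if sds else 0.0


ab, abc = frozenset("AB"), frozenset("ABC")
run1 = {ab: 0.95, abc: 0.60}
run2 = {ab: 0.97, abc: 0.40}
print(round(asdsf([run1, run2]), 3))  # 0.055, far above the usual 0.01 target
```

Here the split {A, B} is sampled consistently across runs, but the runs disagree about {A, B, C}. That disagreement is what keeps the average high, and it signals that more generations are needed.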


Problem: "Error reading nexus file" in MrBayes.

Phylogenetic ANOVA in R

Problem: `aov.phylo` error: `'formula' must be of the form 'dat~group'`.

- Solution: Your data vectors are likely not named correctly. Ensure the `dat` vector (continuous trait) and `group` vector (categorical factor) have names that exactly match the species names in the phylogeny [36].

Problem: `phylANOVA` returns `NA` for post-hoc test results.

- Solution: This can occur with small sample sizes or insufficient variation. Check your data for outliers and verify that the tree and data are correctly linked [36].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Software and Resources for Phylogenetic Comparative Analysis

| Tool Name | Function & Purpose | Key Features / Use Case |
|---|---|---|
| RAxML [34] [30] | Maximum Likelihood Tree Inference | High-speed, scalable for large datasets; offers GTRGAMMA, PROTGAMMA models. |
| MrBayes [31] [32] | Bayesian Tree Inference | MCMC sampling; quantifies uncertainty via posterior probabilities. |
| MEGA X [32] [33] | Sequence Alignment & Format Conversion | User-friendly interface; converts FASTA, PHYLIP, NEXUS formats. |
| GUIDANCE2 [32] [33] | Robust Sequence Alignment | Evaluates alignment uncertainty; integrates with MAFFT. |
| MrModeltest2 [32] [33] | Nucleotide Model Selection | Works with PAUP*; selects best-fit model using AIC/BIC. |
| ProtTest3 [32] [33] | Protein Model Selection | Java-based; identifies optimal AA substitution model. |
| Phytools / Geiger [36] | Phylogenetic Comparative Methods | R packages for phylogenetic ANOVA, trait evolution modeling. |
| Dendroscope [34] | Tree Visualization | Handles large trees; views RAxML/MrBayes output. |

Experimental Protocol: Integrated Bayesian Phylogenetic Workflow

This detailed protocol outlines a reproducible workflow for Bayesian phylogenetic analysis, from sequence alignment to tree visualization [32] [33].

A. Sequence Alignment: Upload your multi-sequence FASTA file to the GUIDANCE2 server, selecting MAFFT as the alignment tool. Default parameters work for most datasets. For complex data, adjust the `Max-Iterate` option or choose a pairwise alignment method (`localpair` for sequences sharing local similarities, `genafpair` for sequences containing long unalignable regions) [32] [33]. Download the resulting alignment in FASTA format.

B. Format Conversion: Use MEGA X to convert the FASTA alignment file to NEXUS format. Then refine the NEXUS file in PAUP* to ensure compatibility with MrBayes: the file must begin with `#NEXUS`, and the data block must be non-interleaved [32] [33].

C. Model Selection: For nucleotide data, execute the `MrModelblock` file in PAUP* to generate `mrmodel.scores`, and select the model with the best AIC/BIC score. For protein data, run ProtTest3 from the command line in its directory [32] [33].

D. Bayesian Inference in MrBayes: Execute MrBayes with your NEXUS file and selected model. A typical command block within the NEXUS file is:

Monitor the average standard deviation of split frequencies; a value below 0.01 is generally taken to indicate convergence [32].

E. Validation and Visualization: Check that the Potential Scale Reduction Factor (PSRF) is close to 1.0 for all parameters, which indicates good MCMC convergence. Visualize the final consensus tree with posterior probabilities in Dendroscope [34] [32].
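The PSRF in Step E compares between-chain variance with within-chain variance for a parameter. Below is a minimal sketch of the classic Gelman-Rubin formula. It omits the degrees-of-freedom correction that some implementations apply, so MrBayes's exact computation may differ. The sample values are toy numbers.

```python
import math
from statistics import mean, variance


def psrf(chains):
    """Classic Gelman-Rubin potential scale reduction factor for one parameter.

    chains: list of equal-length lists of post-burn-in samples, one per chain.
    """
    m = len(chains)
    if m < 2:
        raise ValueError("need at least 2 chains")
    n = len(chains[0])
    if n < 2 or any(len(c) != n for c in chains):
        raise ValueError("chains must have equal length >= 2")
    w = mean(variance(c) for c in chains)           # mean within-chain variance
    b_over_n = variance([mean(c) for c in chains])  # between-chain variance / n
    if w == 0:
        raise ValueError("chains have zero within-chain variance")
    var_hat = (n - 1) / n * w + b_over_n            # pooled variance estimate
    return math.sqrt(var_hat / w)


mixed = [[1.0, 2.0, 3.0, 4.0], [2.0, 1.0, 4.0, 3.0]]
stuck = [[1.0, 2.0, 3.0, 4.0], [11.0, 12.0, 13.0, 14.0]]
print(round(psrf(mixed), 3), round(psrf(stuck), 3))  # 0.866 5.545
```

Chains that have mixed well give values near 1. With very short toy chains like these, the value can even fall slightly below 1. Chains stuck in different regions give values well above 1.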

Frequently Asked Questions

Q1: Why do my analyses of drug target conservation yield inconsistent results when I use different phylogenetic trees? Inconsistent results often stem from differences in tree topology or branch lengths, which directly impact calculations like independent contrasts. Ensure your trees are built using robust, comparable methods (e.g., the same sequence alignment algorithm and evolutionary model). The algorithm for Phylogenetic Independent Contrasts (PICs) is sensitive to branch length, as raw contrasts are divided by their expected standard deviation under a Brownian motion model, which is a function of branch length [37].

Q2: How can I troubleshoot a low contrast ratio when calculating evolutionary rates using independent contrasts? A low contrast ratio (indicating little divergence between sister lineages) can be biologically real or a methodological artifact. First, verify the quality of your sequence alignment and the accuracy of the trait values at the tips. Second, check the branch lengths of your tree; very short branches are expected to produce small raw contrasts. If the standardized contrast is unusually low, confirm that the denominator is the expected standard deviation \(\sqrt{v_i + v_j}\). This value must be computed from the correct branch lengths, including any lengthening applied to internal nodes during pruning [37].

Q3: What does it mean if a potential drug target shows a high evolutionary rate (dN/dS) in a pathogen? A high evolutionary rate (dN/dS) suggests that the gene is undergoing positive selection or is less functionally constrained. For a drug target, this is typically undesirable, because it suggests the pathogen can alter the target without losing fitness, potentially leading to rapid drug resistance. Consistent with this, known drug target genes have been reported to have significantly lower evolutionary rates than non-target genes [38].

Q4: What are the essential validation steps after identifying a conserved gene as a potential drug target? After identifying a conserved gene, you must move beyond computational prediction. Key steps include:

- Experimental Deletion/Knockdown: Validate essentiality by knocking out the gene in the pathogen and confirming a loss of fitness or viability.

- In Vitro Inhibition Assays: Test if small molecules or inhibitors targeting the gene product disrupt the pathogen's growth or function.

- Structural Analysis: If possible, solve the protein structure to facilitate rational drug design against the conserved binding pocket.

Troubleshooting Guides

Issue: Phylogenetic Independent Contrasts (PICs) Calculations are Statistically Non-Significant

Problem: Regressing the standardized contrasts of one trait on those of another shows no significant relationship, or the contrasts explain little of the variance.

Solution:

- Verify Evolutionary Model: The PIC method assumes a Brownian motion model of evolution. Use likelihood-based tools to test if your data fits this model better than alternatives (e.g., Ornstein-Uhlenbeck).

- Check for Outliers: Identify if any specific contrasts have exceptionally high leverage. Investigate the biology of those lineages or check for data entry errors.

- Inspect Branch Lengths: PICs are standardized using branch lengths. Ensure your tree has meaningful, non-zero branch lengths. Consider using a different method for estimating branch lengths if necessary.

- Confirm Data Independence: The strength of PICs relies on the assumption of independent evolution after divergence. Ensure your trait data has been properly mapped to the tips of the phylogeny [37].
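For the branch-length check above, one commonly used diagnostic is to correlate the absolute values of the standardized contrasts with their standard deviations, \(\sqrt{v_i + v_j}\). A clear correlation suggests that the branch lengths are not adequately standardizing the contrasts. The minimal sketch below assumes the raw contrasts and their SDs have already been computed. The function name is hypothetical, and a significance test for r is left out.

```python
import math


def branch_length_diagnostic(raw_contrasts, sds):
    """Pearson r between |standardized contrast| and its SD, sqrt(v_i + v_j).

    raw_contrasts: unstandardized contrasts c_ij; sds: the matching sqrt(v_i + v_j).
    """
    abs_std = [abs(c) / s for c, s in zip(raw_contrasts, sds)]
    n = len(abs_std)
    mean_x, mean_y = sum(abs_std) / n, sum(sds) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(abs_std, sds))
    sxx = sum((x - mean_x) ** 2 for x in abs_std)
    syy = sum((y - mean_y) ** 2 for y in sds)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation undefined for constant input")
    return sxy / math.sqrt(sxx * syy)


# Toy values: standardized contrasts grow with their SDs, giving r = 1.
print(round(branch_length_diagnostic([1.0, 4.0, 9.0], [1.0, 2.0, 3.0]), 6))
```

A common response to a strong correlation is to transform the branch lengths (for example, log-transforming them) and then re-check.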

Issue: Poor Conservation Scores for Putative Drug Targets in a Target Pathogen

Problem: BLAST-based conservation analysis reveals low sequence identity for your candidate drug target genes across related species, suggesting it may not be a conserved target.

Solution:

- Refine Your Ortholog Set: Manually curate the orthologous genes used in the analysis. Automated pipelines can sometimes include paralogs (genes related by duplication rather than speciation), which inflate divergence estimates.

- Adjust Conservation Metric: Instead of simple percent identity, use a more nuanced metric like the conservation score from BLAST, which considers the quality and length of alignments. Drug target genes have been shown to have significantly higher conservation scores than non-target genes [38].

- Focus on Functional Domains: The entire protein may not be conserved, but specific functional domains essential for activity often are. Perform a domain-based conservation analysis (e.g., using Pfam domains) to identify conserved, druggable pockets.

- Re-evaluate Target Suitability: A gene with low conservation might be a poor broad-spectrum target but could be excellent for a pathogen-specific therapy.
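A domain-focused comparison can be sketched as percent identity computed over a whole pairwise alignment versus over a domain window. This sketch uses one of several identity definitions: identical residues divided by the columns where neither sequence has a gap. The sequences and domain coordinates are invented for illustration. In practice, the window would come from a domain annotation such as Pfam.

```python
from typing import Optional


def percent_identity(aln_a: str, aln_b: str, start: int = 0, end: Optional[int] = None) -> float:
    """Percent identity over aligned columns [start, end), ignoring gapped columns."""
    if len(aln_a) != len(aln_b):
        raise ValueError("aligned sequences must have equal length")
    pairs = [(x, y) for x, y in zip(aln_a[start:end], aln_b[start:end])
             if x != "-" and y != "-"]
    if not pairs:
        raise ValueError("no ungapped columns in the requested range")
    identical = sum(x == y for x, y in pairs)
    return 100 * identical / len(pairs)


a = "MKTAYIAKQR"
b = "MRSAYIAKQW"
print(percent_identity(a, b))        # 70.0 over the full alignment
print(percent_identity(a, b, 3, 9))  # 100.0 within a hypothetical domain (columns 3 to 8)
```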

Quantitative Data on Evolutionary Features

Table 1: Summary of Evolutionary Rate (dN/dS) Comparisons [38]

This table provides a snapshot of the statistical difference in evolutionary rate between drug target genes and non-target genes across a selection of species.

| Species Code | Median dN/dS (Drug Targets) | Median dN/dS (Non-Targets) | P-value (Wilcoxon Test) |
|---|---|---|---|
| mmus | 0.0910 | 0.1125 | 4.12E-09 |
| btau | 0.1028 | 0.1246 | 7.93E-06 |
| cfam | 0.1057 | 0.1270 | 2.94E-06 |
| ptro | 0.1718 | 0.2184 | 2.73E-06 |
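The P-values above come from Wilcoxon tests comparing the two groups of genes. To show what such a rank-sum comparison does, here is a stdlib-only sketch using the normal approximation. It assigns average ranks to ties but applies no tie or continuity correction, so its P-values can differ slightly from those produced by statistical packages. For very small samples, an exact test is preferable. The input values are toy numbers, not data from [38].

```python
import math


def rank_sum_test(x, y):
    """Two-sided Wilcoxon rank-sum (Mann-Whitney U) test, normal approximation.

    Tied values receive average ranks; no tie or continuity correction is made.
    Returns (U for sample x, two-sided p-value).
    """
    pooled = sorted([(v, 0) for v in x] + [(v, 1) for v in y])
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        for k in range(i, j + 1):
            ranks[k] = (i + j) / 2 + 1  # average 1-based rank of the tied block
        i = j + 1
    n1, n2 = len(x), len(y)
    r1 = sum(r for r, (_, group) in zip(ranks, pooled) if group == 0)
    u1 = r1 - n1 * (n1 + 1) / 2
    z = (u1 - n1 * n2 / 2) / math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    return u1, math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value


# Toy dN/dS values (not from [38]): every "target" value is below every "non-target".
u, p = rank_sum_test([0.08, 0.09, 0.10], [0.11, 0.12, 0.13])
print(u, p)
```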

Table 2: Summary of Conservation Score (Sequence Identity) Comparisons [38]

This table illustrates the higher sequence conservation observed in drug target genes compared to non-target genes.

| Species Code | Median Conservation Score (Drug Targets) | Median Conservation Score (Non-Targets) | P-value (Wilcoxon Test) |
|---|---|---|---|
| amel | 838.00 | 613.00 | 2.44E-34 |
| btau | 840.00 | 615.00 | 6.18E-38 |
| cfam | 859.00 | 622.00 | 1.11E-33 |

Experimental Protocols

Protocol 1: Calculating Phylogenetic Independent Contrasts (PICs) [37]

Purpose: To estimate the amount of character change across nodes in a phylogeny, providing independent data points for comparative analysis corrected for phylogenetic history.

Methodology:

- Input Data: A rooted phylogenetic tree with branch lengths and continuous trait data (e.g., gene expression, biochemical activity) for each tip species.

- Algorithm: Follow a pruning algorithm from the tips towards the root:

- Step 1: Find two adjacent sister tips, i and j, with a common ancestor k.

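- Step 2: Compute the raw contrast: \(c_{ij} = x_i - x_j\), where \(x\) is the trait value.

- Step 3: Calculate the variance of this contrast. Under Brownian motion, it is proportional to \(v_i + v_j\), where \(v\) is the length of the branch leading to each tip.

- Step 4: Compute the standardized contrast: \(s_{ij} = \frac{c_{ij}}{\sqrt{v_i + v_j}}\).

- Step 5: Calculate the ancestral state for node k as a weighted average: \(x_k = \frac{(1/v_i)x_i + (1/v_j)x_j}{(1/v_i) + (1/v_j)}\).

- Step 6: Remove the two tips i and j from the tree and replace them with the new node k, which takes the value \(x_k\). To account for uncertainty in the estimate of \(x_k\), lengthen the branch from k to its ancestor by \(\frac{1}{(1/v_i) + (1/v_j)} = \frac{v_i v_j}{v_i + v_j}\), so that \(v_k' = v_k + \frac{v_i v_j}{v_i + v_j}\). Repeat Steps 1 to 6 until only the root remains; a fully bifurcating tree with n tips yields n - 1 contrasts.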

- Output: A set of independent, standardized contrasts that can be used in regression or correlation analyses.
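The pruning steps can be sketched in Python. The sketch assumes a nested-tuple tree format invented for this example, not a standard format such as Newick, and it requires strictly positive branch lengths. The recursion applies Steps 2 to 6 at every internal node. `slope_through_origin` is an added helper that shows the usual follow-up regression.

```python
import math


def pic(tree, traits):
    """Felsenstein's independent contrasts for a rooted, fully bifurcating tree.

    tree: a tip name (str) or a pair ((left, v_left), (right, v_right)), where
    each v is the length of the branch above that child. traits: tip -> value.
    Returns the standardized contrasts in post-order (n - 1 values for n tips).
    """
    contrasts = []

    def prune(node):
        # Returns (node value, extra length to add to the branch above node).
        if isinstance(node, str):
            return traits[node], 0.0
        (left, v_left), (right, v_right) = node
        x_i, extra_i = prune(left)
        x_j, extra_j = prune(right)
        v_i, v_j = v_left + extra_i, v_right + extra_j
        if v_i <= 0 or v_j <= 0:
            raise ValueError("branch lengths must be positive")
        contrasts.append((x_i - x_j) / math.sqrt(v_i + v_j))  # Steps 2 to 4
        x_k = (x_i / v_i + x_j / v_j) / (1 / v_i + 1 / v_j)   # Step 5
        return x_k, v_i * v_j / (v_i + v_j)                   # Step 6

    prune(tree)
    return contrasts


def slope_through_origin(cx, cy):
    """Least-squares slope of y-contrasts on x-contrasts, forced through zero."""
    return sum(a * b for a, b in zip(cx, cy)) / sum(a * a for a in cx)


# Tree ((A:1, B:1):1, C:2) in the nested-tuple format above.
tree = (((("A", 1.0), ("B", 1.0)), 1.0), ("C", 2.0))
cx = pic(tree, {"A": 1.0, "B": 3.0, "C": 5.0})
cy = pic(tree, {"A": 2.0, "B": 6.0, "C": 10.0})
print(cx, round(slope_through_origin(cx, cy), 6))
```

The regression is forced through the origin because the sign of each contrast depends only on the arbitrary order in which sister nodes are subtracted. Both traits must therefore be pruned over the same tree in the same order, as they are here.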

Protocol 2: Assessing Evolutionary Conservation of Candidate Drug Targets

Purpose: To systematically determine if a candidate drug target gene is evolutionarily conserved, a hallmark of its essentiality and potential as a broad-spectrum target.

Methodology:

- Sequence Acquisition: Obtain the protein sequence of the candidate gene from your target organism.

- Ortholog Identification: Use BLASTP to search against a database of non-redundant proteins from a set of pre-defined reference species (e.g., 21 species from bacteria to mammals) [38].

- Data Extraction: For each species, extract the top hit (best ortholog) and record: