Beyond Model Selection: A Comprehensive Guide to Assessing Phylogenetic Comparative Model Fit

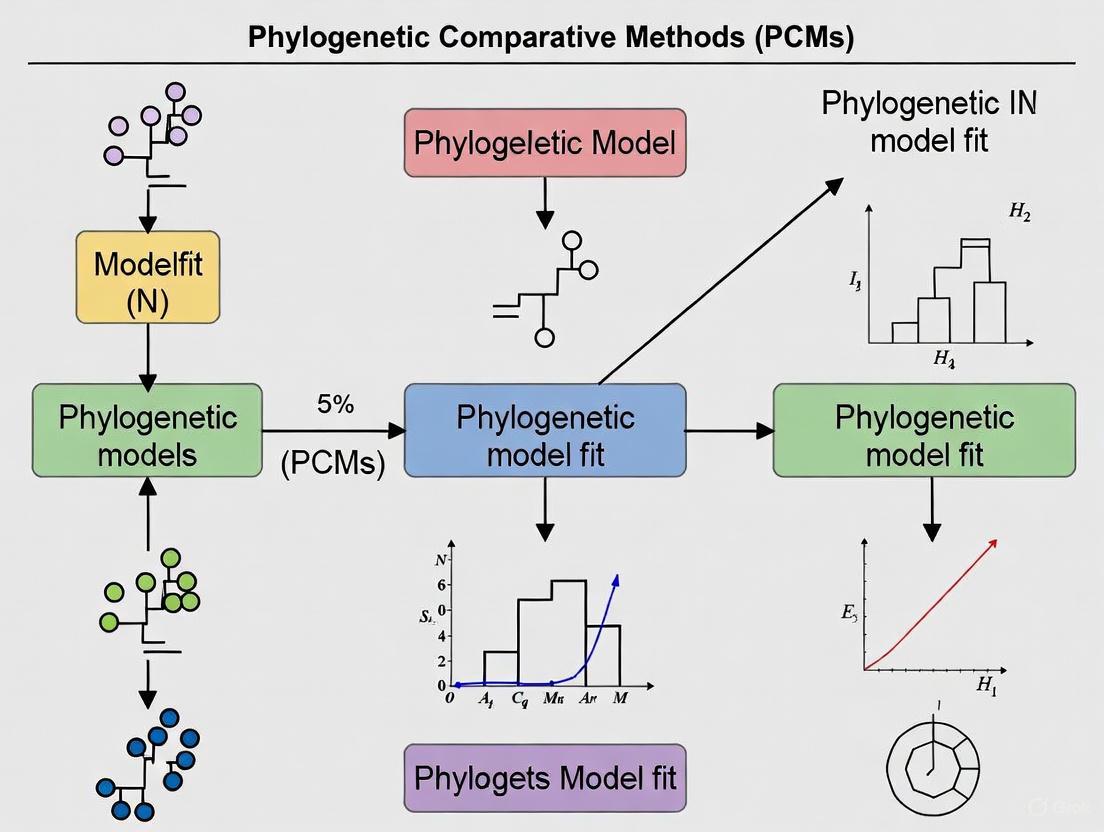


Abstract

This article provides a comprehensive framework for assessing the fit of Phylogenetic Comparative Methods (PCMs), a critical step often overlooked in evolutionary biology and biomedical research. It guides researchers from foundational concepts and the consequences of poor model fit through the application of major model families like Brownian Motion and Ornstein-Uhlenbeck processes. The piece details rigorous methodologies for model validation, including absolute goodness-of-fit tests and posterior predictive simulations, and offers troubleshooting strategies for common model inadequacies. By synthesizing foundational knowledge with advanced validation techniques, this guide empowers scientists to produce more reliable and robust evolutionary inferences, with direct implications for comparative genomics and drug development studies.

Why Model Fit Matters: The Foundations of Phylogenetic Comparative Methods

The Critical Role of Model Fit in Evolutionary Inference

Troubleshooting Guides and FAQs

Common Model Fit Issues and Solutions

| Problem Area | Specific Issue | Potential Causes | Recommended Solutions & Diagnostics |

|---|---|---|---|

| Fit Indices & Reporting | Selective reporting of fit indices; justifying poor-fitting models [1]. | Variability in fit index sensitivity; post-hoc selection of favorable indices [1]. | Adopt standardized reporting (e.g., χ², RMSEA, CFI, SRMR); assess residuals; use multi-step fit assessment [1]. |

| Parameter Estimation | Parameter estimates hitting upper bounds (e.g., in fitPagel) [2]. | Highly correlated trait data creating unstable state combinations; optimization limits too low [2]. | Increase the max.q parameter during model fitting; diagnose and report when bounds are reached [2]. |

| Tree Misspecification | High false positive rates in phylogenetic regression [3]. | Trait evolution history mismatched with assumed species tree (Gene tree-Species tree conflict) [3]. | Use robust regression estimators; consider trait-specific gene trees instead of a single species tree [3]. |

| Model Implementation | Correctness of a new Bayesian model implementation is unknown [4]. | Errors in the model's likelihood function or MCMC sampling mechanism [4]. | Validate the simulator S[ℳ]; then validate the inferential engine I[ℳ] using coverage tests [4]. |

Frequently Asked Questions

Q1: My model fails the chi-square exact fit test with a large sample size, but some approximate fit indices look good. Should I proceed?

Proceed only with extreme caution. With large samples (N > 400), the chi-square test is sensitive to even minor misspecifications. However, ignoring a significant result is unethical. Follow a systematic process: 1) report the exact fit test; 2) examine standardized and correlational residuals for large values (e.g., absolute value > 0.1); and 3) if numerous large residuals exist, reject the model as a poor fit to your data [1].

Q2: When I fit a correlated trait evolution model (e.g., with fitPagel), some rate parameters hit the upper bound. What does this mean?

This often occurs when the data for two traits are highly correlated. Certain state combinations (e.g., 0|0 and 1|1) may be so unstable that the model infers an extremely high transition rate away from them to best explain the observed tip data. While you can increase the upper bound (max.q), the result qualitatively indicates this biological phenomenon. Developers are working on better diagnostics for this issue [2].

Q3: How can I be more confident that my Bayesian evolutionary model is implemented correctly?

A thorough validation is a two-part process [4]:

- Validate the Simulator (S[ℳ]): Ensure that data simulated from your model, given fixed parameters, matches expectations.

- Validate the Inferencer (I[ℳ]): Perform coverage tests. This involves: a) simulating many datasets under known true parameters, b) running your MCMC analysis on each, and c) checking that the 95% credible interval contains the true parameter in ~95% of simulations. Significantly lower or higher coverage indicates an implementation problem [4].

Q4: My analysis uses a large dataset of many traits, but I'm worried the species tree is wrong for some of them. What are the risks?

Your concern is valid. Using an incorrect tree (e.g., a species tree for traits that evolved along different gene trees) can lead to catastrophically high false positive rates in phylogenetic regression. Counterintuitively, this problem gets worse with more data (more traits and more species). To mitigate this, use robust regression methods, which have been shown to be less sensitive to tree misspecification and can rescue analyses under realistic evolutionary scenarios [3].

Experimental Protocols for Model Validation

Protocol 1: Coverage Analysis for Bayesian Model Validation

Purpose: To verify the statistical correctness of a Bayesian model implementation [4].

Workflow:

- Define True Parameters: Select a set of fixed parameter values (θ) from their prior distributions.

- Simulate Data: Use the model simulator S[ℳ] to generate a dataset (D) using the true parameters.

- Infer Parameters: Run the full MCMC analysis I[ℳ] on the simulated dataset D to obtain a posterior distribution for the parameters.

- Check Coverage: Determine if the true parameter value lies within the 95% credible interval of the posterior.

- Repeat: Iterate this process many times (e.g., 100-1000).

- Calculate Coverage Frequency: The proportion of iterations where the true value was contained in the credible interval is the empirical coverage.

- Diagnose: The empirical coverage should be close to the nominal rate (e.g., 95%). Consistently lower coverage indicates overconfident (too narrow) credible intervals, while consistently higher coverage indicates underconfident (too wide) intervals. Both suggest a problem in the model implementation [4].
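The coverage loop above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure of [4]: the "model" is a toy normal model with known standard deviation, and the exact conjugate posterior stands in for the MCMC engine I[ℳ] so that the example runs in well under a second. All function names and default values are hypothetical.

```python
import random

def simulate_data(theta, n_obs, sigma, rng):
    """Simulator S[M] (toy): n_obs normal draws with mean theta and known sd sigma."""
    return [rng.gauss(theta, sigma) for _ in range(n_obs)]

def posterior_interval(data, prior_mean, prior_sd, sigma, z=1.959964):
    """Inference I[M] (toy): exact normal-normal posterior, as a central 95% interval.

    A real validation would run the MCMC sampler here instead.
    """
    n = len(data)
    precision = 1.0 / prior_sd ** 2 + n / sigma ** 2
    post_mean = (prior_mean / prior_sd ** 2 + sum(data) / sigma ** 2) / precision
    post_sd = precision ** -0.5
    return post_mean - z * post_sd, post_mean + z * post_sd

def empirical_coverage(n_reps=2000, n_obs=20, prior_mean=0.0,
                       prior_sd=2.0, sigma=1.0, seed=1):
    """Fraction of replicates whose 95% interval contains the true parameter."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_reps):
        theta = rng.gauss(prior_mean, prior_sd)          # draw truth from the prior
        data = simulate_data(theta, n_obs, sigma, rng)   # simulate a dataset
        low, high = posterior_interval(data, prior_mean, prior_sd, sigma)
        hits += low <= theta <= high                     # check coverage
    return hits / n_reps

coverage = empirical_coverage()
print(f"empirical coverage: {coverage:.3f}")
```

Because the true values are drawn from the same prior used for inference, a correct implementation should land near the nominal 95%; replacing `posterior_interval` with a deliberately wrong formula (for example, dropping the prior term) is a quick way to see the diagnostic react.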

Protocol 2: A Three-Step Framework for Assessing Model Fit in SEM

Purpose: To provide a robust, ethical alternative to over-reliance on selective fit indices when assessing Structural Equation Models [1].

Workflow:

- Step 1: Exact Fit Test

- Fit the model and report the chi-square (χ²) exact fit test.

- If p ≥ α, tentatively retain the model.

- If p < α, tentatively reject the model.

- Step 2: Residual Examination

- Examine standardized and correlational residuals.

- Reject the model if numerous correlational residuals associated with significant standardized residuals have an absolute value > 0.1.

- A model failing Step 1 can be retained if it passes Step 2.

- Step 3: Report Fit Indices

- Report a standardized set of fit indices (e.g., χ², RMSEA, CFI, SRMR) regardless of the outcome, to provide a complete picture for the reader [1].

The Scientist's Toolkit: Essential Research Reagents & Software

| Tool Name | Type | Primary Function in Evolutionary Inference | Key Reference / Link |

|---|---|---|---|

| BEAST 2 | Software Platform | Bayesian evolutionary analysis sampling trees and parameters using MCMC; supports complex joint models [5]. | BEAST 2 |

| RAxML-NG | Software Tool | Extremely large-scale phylogenetic inference under Maximum Likelihood; part of the Exelixis Lab toolset [6]. | Exelixis Lab |

| ggtree | R Package | Visualizing and annotating phylogenetic trees with associated data using the grammar of graphics [7] [8]. | ggtree book |

| phytools | R Package | Performing a wide range of phylogenetic comparative analyses, including fitting models of trait evolution [2]. | phytools blog |

| ColorPhylo | Algorithm / Tool | Automatically generating an intuitive color code that reflects taxonomic/evolutionary relationships for visualization [9]. | ColorPhylo Paper |

| Robust Phylogenetic Regression | Statistical Method | Mitigating high false positive rates in comparative analyses caused by misspecification of the phylogenetic tree [3]. | BMC Ecology & Evolution |

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: How can I visually identify potential model misspecification in my phylogenetic tree?

Examine the visualization of key parameters like branch support values. Unexpected patterns, such as uniformly high confidence across all nodes despite known data incompleteness, can be a red flag. Use tree annotation features in tools like ggtree to map confidence values and other metrics directly onto the tree structure for inspection [7]. Tools like iTOL allow for the coloring of tree branches based on user-specified color gradients calculated from associated bootstrap values, helping to identify potentially inflated support [10].

Q2: What is a key symptom of false precision in my analysis results?

A key symptom is overly narrow confidence intervals on parameter estimates (e.g., ancestral state reconstructions, divergence times) when the model used is known to be overly simplistic for the data. This creates a false sense of security. Detailed inspection of these parameters on the tree, using annotation layers such as geom_range in ggtree to display uncertainty, is a practical diagnostic step [7].

Q3: My model selection test prefers a complex model, but my software struggles with computation. What can I do?

Consider using model adequacy tests on the simpler model. If the simpler model is shown to be a poor fit (e.g., failing a posterior predictive simulation), it justifies the computational investment in the more complex model or the search for a different modeling approach. This moves beyond mere model selection to assessing whether a model is fit for purpose.

Q4: How can poor model fit lead to inflated significance in a hypothesis test?

Poor model fit, such as ignoring rate variation across sites, can cause the analytical framework to misestimate the variance in the data. The test may attribute this unexplained variance to the effect you are testing (e.g., positive selection), making it appear statistically significant when it is not. Using a more appropriate model that accounts for this variation often causes such "significant" results to vanish.

Key Diagnostics and Quantitative Indicators of Poor Fit

The following table summarizes core metrics that can signal issues with phylogenetic model fit.

| Diagnostic Metric | Indicator of Good Fit | Indicator of Poor Fit (False Precision/Inflated Significance) |

|---|---|---|

| Parameter Confidence Intervals | Intervals are reasonably wide, reflecting epistemic uncertainty. | Implausibly narrow confidence intervals on parameters like divergence times or evolutionary rates. |

| Branch Support Values | A mix of support values reflecting the differential resolution of various clades. | Uniformly high support (e.g., all bootstrap values ≥95) in a complex, data-limited analysis. |

| Posterior Predictive P-values | P-values are around 0.5, indicating the data simulated under the model looks like the empirical data. | Extreme P-values (e.g., <0.05 or >0.95), indicating the model cannot recapitulate key statistics of the data. |

| Residual Discrepancies | Small, random, and unsystematic residuals in diagnostic plots. | Large, systematic patterns in residuals, indicating the model is missing a key feature of the data. |

Detailed Experimental Protocol: Assessing Model Adequacy via Posterior Predictive Simulation

This protocol provides a methodology to empirically test whether your phylogenetic model is an adequate fit for your data.

1. Problem Definition: Formulate a specific question about your model's performance. For example, "Does my site-homogeneous model adequately fit the data, or is it producing inflated branch support?"

2. Model Fitting and Simulation:

- Fit your candidate phylogenetic model (e.g., GTR+Γ) to your empirical sequence alignment using Bayesian inference. Save the posterior distribution of trees and model parameters.

- For a large number of samples from the posterior distribution, simulate a new sequence alignment of the same size as your original data. This creates a reference distribution of datasets that would be expected if your model were true.

3. Calculate Test Statistics (Discrepancies):

- For each simulated alignment and the empirical alignment, calculate a test statistic that probes the model's weakness. To investigate false precision/inflated significance, a powerful statistic is the Distribution of Branch Support. Calculate bootstrap support values or posterior clade probabilities for all nodes across the simulated and empirical datasets.

4. Compare and Evaluate:

- Construct a histogram of a summary statistic (e.g., the mean branch support) from the simulated datasets.

- Plot the value of the same statistic from your empirical data on this histogram.

- Interpretation: If the empirical value lies in the tails of the simulated distribution (e.g., empirical data has systematically higher average support), it indicates the model is producing falsely precise and inflated results. The model is inadequate.
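The comparison in step 4 reduces to locating the empirical statistic within the simulated reference distribution. The sketch below is illustrative only: the support values are made-up numbers, the function names are hypothetical, and the 0.05 / 0.95 cut-offs are the example thresholds from the diagnostics table above rather than a universal rule.

```python
def posterior_predictive_p(simulated_stats, empirical_stat):
    """Proportion of simulated statistics at least as large as the empirical one."""
    if not simulated_stats:
        raise ValueError("need at least one simulated statistic")
    n_at_least = sum(1 for s in simulated_stats if s >= empirical_stat)
    return n_at_least / len(simulated_stats)

def is_extreme(p_value, lower=0.05, upper=0.95):
    """Flag p-values in either tail of the reference distribution."""
    return p_value < lower or p_value > upper

# Hypothetical mean branch support from ten posterior predictive datasets.
simulated_mean_support = [0.71, 0.74, 0.69, 0.73, 0.76, 0.70, 0.72, 0.75, 0.68, 0.74]
# Hypothetical mean branch support from the empirical alignment.
empirical_mean_support = 0.93

p_value = posterior_predictive_p(simulated_mean_support, empirical_mean_support)
print(p_value, is_extreme(p_value))
```

Here no simulated dataset reaches the empirical mean support, so the p-value sits in the lower tail and the model would be flagged as inadequate for this statistic. In practice, many more than ten simulated datasets would be used.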

Research Reagent Solutions: Essential Materials for Phylogenetic Analysis

The table below lists key software tools and their primary functions in phylogenetic analysis and visualization.

| Item Name | Primary Function | Application in Assessing Model Fit |

|---|---|---|

| ggtree (R package) | A visualization toolkit for annotating phylogenetic trees with diverse data [7]. | Used to map model adequacy test statistics (e.g., confidence intervals, support values) directly onto the tree structure for visual diagnosis. |

| ETE Toolkit | A programmable environment for building, analyzing, and visualizing trees and tree-associated data [11]. | Its scripting API allows for the automation of analyses and the creation of custom workflows to systematically test model performance across a tree. |

| iTOL (Interactive Tree of Life) | A web-based platform for displaying, manipulating, and annotating phylogenetic trees [10]. | Enables the interactive overlay of various data types (e.g., bootstrap values, branch colors) to visually inspect trees for patterns suggesting model misspecification. |

| ColorPhylo Algorithm | An automatic coloring method that uses a dimensionality reduction technique to project taxonomic "distances" onto a 2D color space [9]. | Can be repurposed to color-code trees based on statistical discrepancies, making it easier to spot clusters of branches where the model fits poorly. |

Workflow for Diagnosing Poor Model Fit

The following diagram illustrates a logical workflow for identifying and addressing the consequences of poor phylogenetic model fit.

Frequently Asked Questions (FAQs)

Q1: How can I visualize uncertainty in branch lengths or node positions on my phylogenetic tree?

Uncertainty in phylogenetic inferences, such as confidence intervals for branch lengths, can be visualized using the geom_range() and geom_rootpoint() layers in ggtree. These layers add error bars or symbols to represent the uncertainty associated with nodes and branches [7] [8].

- Experimental Protocol: After constructing your tree (e.g., with BEAST or MrBayes), import the tree file into R using the treeio package. Use the ggtree() function to create a basic tree plot. Then, add the geom_range() layer to display branch length uncertainty as error bars. The geom_nodepoint() or geom_rootpoint() layers can be used to annotate internal or root nodes with symbolic points, often sized or colored by measures of statistical support like posterior probabilities [7] [8].

Q2: What is the best way to annotate a specific clade to highlight it for a presentation?

The ggtree package provides the geom_hilight() and geom_cladelab() layers for this purpose. The geom_hilight() layer highlights a selected clade with a colored rectangle or round shape behind the clade. The geom_cladelab() layer adds a colored bar and a text label (or even an image) next to the clade [7].

- Experimental Protocol: To highlight a clade, you must first identify its internal node number. Using the ggtree plot, add geom_hilight(node=[node_number], fill="steelblue", alpha=.6) to draw a semi-transparent blue rectangle behind the clade. To label it, add geom_cladelab(node=[node_number], label="Your Label", align=TRUE, offset=.2, textcolor='red', barcolor='red') [7].

Q3: My data includes intraspecific variation for several taxa. How can I represent this on a tree?

Intraspecific variation can be visualized by linking related taxa on the tree. The geom_taxalink() layer in ggtree is designed to draw a curved line between taxa or nodes, explicitly showing their association. This is particularly useful for representing gene flow, host-pathogen interactions, or other non-tree-like processes [7].

- Experimental Protocol: Prepare a data frame that specifies the pairs of taxa you want to link. In the ggtree plot, add the layer geom_taxalink(data=your_data_frame, mapping=aes(taxa1=taxa1, taxa2=taxa2)), where taxa1 and taxa2 are the aesthetics that identify the two ends of each link. You can customize the appearance of these links with parameters like color, alpha (transparency), and linetype to represent different strengths or types of association [7].

Q4: I used Phylogenetic Independent Contrasts (PIC) and the correlation between my traits disappeared. What does this mean?

A significant correlation between traits that disappears after applying PIC suggests that the initial correlation may have been a byproduct of the phylogenetic relatedness of the species, rather than a functional relationship. Closely related species often share similar traits due to common ancestry, creating a pattern that mimics correlation. PIC controls for this non-independence, and a non-significant result post-PIC indicates no evidence for a correlation between the traits independent of phylogeny [12].

- Experimental Protocol: This involves a two-step analytical process. First, calculate the independent contrasts for your trait data using a function like pic() in the ape package. Second, perform a correlation test (e.g., using cor.test()) on the calculated contrasts instead of the original raw trait data. The interpretation should be based on the results of this second test on the contrast data [12].

Q5: How can I ensure that text labels on my tree have sufficient color contrast against their background for accessibility?

For any node that contains text, the text color (fontcolor) must be explicitly set to have high contrast against the node's background color (fillcolor) [13]. The Web Content Accessibility Guidelines (WCAG) define sufficient contrast as a ratio of at least 4.5:1 for normal text. You can use automated tools to choose the color.

- Experimental Protocol: In R, you can programmatically determine the best text color based on the background color. One method uses the prismatic::best_contrast() function within ggplot2's after_scale() feature. For example, in a geom_text() or geom_label() layer, you can set aes(color = after_scale(prismatic::best_contrast(fill, c("white", "black")))) to automatically set the text to either white or black, whichever has the highest contrast with the fill color [13] [14].

Table 1: Key WCAG Color Contrast Requirements for Scientific Visualizations [13]

| Text Type | Minimum Contrast Ratio (Level AA / Level AAA) | Example Application |

|---|---|---|

| Normal Text (small) | 4.5:1 / 7:1 | Tip labels, node annotations, legend text. |

| Large-Scale Text (18pt+, or 14pt+ bold) | 3:1 / 4.5:1 | Figure titles, large axis labels, clade labels. |

| Incidental / Logos | No requirement | Text that is part of a logo or purely decorative. |
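To make the contrast requirement concrete, the following sketch computes the WCAG contrast ratio between two colors from their relative luminance and then picks whichever of white or black text contrasts more with a given fill. It is a plain-Python illustration of the published WCAG 2.x formula, not the prismatic implementation; the function names are hypothetical.

```python
def _linearize(channel_8bit):
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color):
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)

def contrast_ratio(color_a, color_b):
    """WCAG contrast ratio, from 1 (identical colors) to 21 (black on white)."""
    lum_a, lum_b = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)

def best_text_color(fill, candidates=("#FFFFFF", "#000000")):
    """Return the candidate text color with the highest contrast against fill."""
    return max(candidates, key=lambda c: contrast_ratio(fill, c))

# Example: choose a label color for a steelblue clade highlight.
fill = "#4682B4"
text = best_text_color(fill)
print(text, round(contrast_ratio(fill, text), 2))
```

Checking the printed ratio against the threshold for the relevant text size shows whether a given label and fill combination passes.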

Table 2: Essential ggtree Geometric Layers for Addressing Phylogenetic Uncertainty and Variation [7] [8]

| Layer | Primary Function | Key Parameters |

|---|---|---|

| geom_range() | Visualizes uncertainty in branch lengths (e.g., confidence intervals). | x, xmin, xmax, color |

| geom_nodepoint() | Annotates internal nodes, often with support values (e.g., bootstrap). | aes(size=support_value), color, shape |

| geom_hilight() | Highlights a selected clade with a colored shape. | node, fill, alpha (transparency) |

| geom_cladelab() | Annotates a clade with a bar and text or image label. | node, label, offset, barcolor, textcolor |

| geom_taxalink() | Links related taxa to show intraspecific variation or associations. | taxa1, taxa2, color |

Research Reagent Solutions

Table 3: Key Software and Packages for Phylogenetic Analysis and Visualization

| Item | Function | Application in PCMs |

|---|---|---|

| R Statistical Environment | A programming language and environment for statistical computing. | The core platform for running phylogenetic comparative analyses and generating visualizations. |

| ape Package | A fundamental R package for reading, writing, and performing basic analysis of evolutionary trees. | Used for core phylogenetic operations, including reading tree files and calculating Phylogenetic Independent Contrasts (PIC) [12] [15]. |

| ggtree Package | An R package for visualizing and annotating phylogenetic trees using the grammar of graphics. | Essential for creating highly customizable and reproducible tree figures, enabling the visualization of uncertainty, intraspecific variation, and other complex annotations [7] [8] [16]. |

| treeio Package | An R package for parsing and managing phylogenetic data with associated information. | Works with ggtree to import and handle diverse tree data and annotations from various software outputs (BEAST, MrBayes, etc.) [7] [8]. |

| phytools Package | An R package for phylogenetic comparative biology. | Provides a wide array of methods for fitting models of trait evolution and other comparative analyses [8] [16]. |

| FigTree / iTOL | A standalone application (FigTree) and a web-based platform (iTOL) for tree visualization. | Used for quick viewing and initial styling of trees, though often with less programmatic flexibility than ggtree [8] [17] [16]. |

Experimental Workflow for Phylogenetic Comparative Model Assessment

Diagram for Annotating Phylogenetic Uncertainty and Variation

FAQs on Phylogenetic Comparative Methods

Q1: What is the primary goal of using Phylogenetic Comparative Methods (PCMs)? PCMs are statistical models designed to link present-day trait variation across species with the unobserved evolutionary processes that occurred in the past. The primary goal is to identify the model of trait evolution that best explains the variation in your data, which is a critical first step for accurate evolutionary inference, such as estimating ancestral states or testing adaptive hypotheses [18].

Q2: What are the fundamental assumptions of common PCM models? Common models and their key assumptions include:

- Brownian Motion (BM): Assumes that traits evolve by random walks along phylogenetic branches, with changes that are neutral and continuous, and with trait variance that accumulates in proportion to time [18].

- Ornstein-Uhlenbeck (OU): Assumes trait evolution under stabilizing selection, where a trait is pulled towards a specific optimum or adaptive peak. It adds parameters for the strength of selection and the optimum trait value [18].

- Early-Burst (EB): Assumes that rates of trait evolution are highest early in the history of a clade and slow down over time, consistent with a model of adaptive radiation [18].

Q3: My trait data contains measurement error. How does this affect model selection? Measurement error can significantly mislead conventional model selection procedures like AIC. Noisy data can make a Brownian Motion process appear to be under stabilizing selection, and vice versa [18]. It is crucial to account for measurement error in your models whenever possible. Studies have shown that methods like Evolutionary Discriminant Analysis (EvoDA) can be more robust to measurement error than standard AIC-based approaches [18].

Q4: For molecular data, does the choice of model selection software matter? Recent evidence suggests that the choice of software program (e.g., jModelTest2, ModelTest-NG, or IQ-TREE) does not significantly affect the accuracy of identifying the true nucleotide substitution model [19]. However, the choice of information criterion is critical. The Bayesian Information Criterion (BIC) has been shown to consistently outperform AIC and AICc in accurately identifying the true model [19].

Q5: What are Structurally Constrained Substitution (SCS) models, and when should I use them? SCS models incorporate information about protein structure and folding stability into the model of molecular evolution. They are more realistic than traditional empirical models because they consider how the 3D structure of a protein constrains which amino acid changes are acceptable. They are particularly useful when you need high accuracy in phylogenetic inference or ancestral sequence reconstruction, and when studying proteins where folding stability is a key selective pressure, such as in viral proteins [20] [21]. The trade-off is that they demand more computational resources.

Troubleshooting Common Experimental Issues

Problem: Inconsistent model selection results when analyzing the same dataset.

- Potential Cause: The statistical power to discriminate between models like BM and OU can be low, especially with small phylogenies or traits with high measurement error [18].

- Solution:

- Use multiple criteria for model selection (e.g., AIC and BIC) and see if there is a consensus.

- Consider employing newer methods like Evolutionary Discriminant Analysis (EvoDA), which uses supervised learning and may offer improved accuracy, particularly with noisy data [18].

- If using molecular data, consistently apply BIC, as it has demonstrated superior performance in identifying the true model [19].

Problem: My phylogenetic tree or ancestral sequence reconstruction lacks accuracy.

- Potential Cause: The use of an oversimplified or incorrect substitution model for sequence evolution [21].

- Solution:

- Ensure you perform a rigorous model selection process for your nucleotide or amino acid sequence data.

- For protein-coding sequences, explore Structurally Constrained Substitution (SCS) models. These models have been shown to provide more accurate evolutionary inferences than traditional empirical models because they incorporate biophysical constraints [20] [21].

Problem: Computational time for model selection or phylogenetic inference is prohibitively long.

- Potential Cause: Advanced models, particularly SCS models or models applied to large datasets, are computationally intensive [20] [21].

- Solution:

- Start with faster, empirical models to narrow down candidate models.

- For trait evolution, use a subset of models informed by biological knowledge (e.g., don't test an OU model if there is no biological reason to expect a trait optimum).

- Utilize high-performance computing (HPC) resources or cloud computing for the most demanding analyses.

Model Selection & Performance Data

The table below summarizes a quantitative comparison of model selection criteria based on a study of nucleotide substitution models [19].

Table 1: Performance of Information Criteria in Model Selection

| Information Criterion | Full Name | Accuracy in Identifying True Model | Key Characteristic |

|---|---|---|---|

| BIC | Bayesian Information Criterion | Consistently High [19] | Stronger penalty for model complexity than AIC [19] |

| AIC | Akaike Information Criterion | Lower than BIC [19] | Preferable if goal is prediction rather than identification of true model |

| AICc | Corrected Akaike Information Criterion | Lower than BIC [19] | Corrected for small sample sizes |

The following table compares the performance of conventional model selection with a new machine learning approach, EvoDA, under different experimental conditions [18].

Table 2: EvoDA vs. Conventional Model Selection Under Measurement Error

| Methodology | Basis for Selection | Performance with Noiseless Data | Performance with Measurement Error |

|---|---|---|---|

| Conventional (AIC) | Likelihood-based, penalized by parameters | Good | Decreases significantly; prone to selecting wrong model [18] |

| EvoDA | Supervised learning (discriminant analysis) | Good | More robust; maintains higher accuracy [18] |

Experimental Protocol: Model Selection for Trait Evolution

This protocol outlines the steps for identifying the best-fit model of trait evolution using both conventional and machine learning approaches.

1. Define the Candidate Models

- Select a set of candidate models to test (e.g., BM, OU, EB) [18].

2. Prepare the Input Data

- Phylogenetic Tree: A time-calibrated phylogeny of the study species.

- Trait Data: A numerical vector of trait values for the species at the tips of the tree. Ensure data is properly aligned with the tree.

3. Fit the Models and Perform Conventional Selection

- Use a phylogenetic comparative methods framework (e.g., geiger or phytools in R) to fit each candidate model to your trait data.

- Extract the log-likelihood and number of parameters for each fitted model.

- Calculate information criteria (AIC, BIC) for each model.

- Identify the best-fit model as the one with the lowest AIC or BIC value.

4. (Optional) Perform Model Selection with EvoDA

- Format your data for input into the EvoDA framework [18].

- Train the discriminant analysis algorithms (e.g., LDA, QDA) using the provided tools.

- Use the trained EvoDA model to predict the most likely evolutionary model for your empirical trait data.

5. Validate and Report

- Report the best-fit model and all supporting statistics (log-likelihood, AIC, BIC scores).

- Where possible, perform simulations to assess the statistical power of your analysis to correctly distinguish between your candidate models.
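The information-criterion arithmetic in step 3 can be sketched as follows. This is an illustration only: the log-likelihoods and parameter counts are invented, the function names are hypothetical, and the sample size n is assumed here to be the number of tips in the tree. In a real analysis these values would come from the fitted model objects.

```python
import math

def information_criteria(log_lik, k, n):
    """Return AIC, AICc, and BIC for a model with k parameters fitted to n observations."""
    aic = 2 * k - 2 * log_lik
    bic = k * math.log(n) - 2 * log_lik
    # The small-sample correction is undefined when n <= k + 1.
    aicc = aic + (2 * k * (k + 1)) / (n - k - 1) if n - k - 1 > 0 else float("inf")
    return {"AIC": aic, "AICc": aicc, "BIC": bic}

def rank_models(fits, n, criterion="AIC"):
    """Sort models by a criterion; report each score as a difference from the best."""
    scores = {name: information_criteria(ll, k, n)[criterion]
              for name, (ll, k) in fits.items()}
    best = min(scores.values())
    return sorted(((name, score - best) for name, score in scores.items()),
                  key=lambda pair: pair[1])

# Hypothetical fits: model name -> (log-likelihood, number of parameters).
fits = {"BM": (-52.3, 2), "OU": (-50.9, 3), "EB": (-52.1, 3)}
n_tips = 40

for criterion in ("AIC", "AICc", "BIC"):
    print(criterion, rank_models(fits, n_tips, criterion))
```

With these invented numbers, AIC ranks OU first while BIC ranks BM first, because BIC penalizes the extra parameter more heavily. Disagreement of this kind is a signal that the data may not discriminate strongly between the candidates, which is where the power simulations in step 5 become useful.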

Workflow Visualization

The diagram below illustrates the logical workflow for phylogenetic model selection as described in the experimental protocol.

Model Selection Workflow

The Scientist's Toolkit: Key Research Reagents & Software

Table 3: Essential Software and Analytical Tools for PCM Research

| Tool Name | Type | Primary Function in PCM Research |

|---|---|---|

| EvoDA | Software/Method | A suite of supervised learning algorithms for predicting models of trait evolution; offers robustness against measurement error [18]. |

| ProteinEvolver | Software Framework | A computer framework for forecasting protein evolution by integrating birth-death population genetics with structurally constrained substitution models [20]. |

| jModelTest2/ModelTest-NG/IQ-TREE | Software Program | Tools for statistical selection of best-fit nucleotide substitution models for phylogenetic analysis [19]. |

| Structurally Constrained Substitution (SCS) Models | Evolutionary Model | A class of substitution models that use protein structure to inform evolutionary constraints, leading to more accurate phylogenetic inferences [21]. |

| BIC (Bayesian Information Criterion) | Statistical Criterion | An information criterion used for model selection; demonstrated to be highly accurate for selecting nucleotide substitution models [19]. |

A Practical Guide to Major PCMs and Model Selection

Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between Brownian Motion and Ornstein-Uhlenbeck models in phylogenetic comparative methods?

Brownian Motion (BM) models trait evolution as a random walk where variance increases linearly with time, predicting that closely related species are more similar. The Ornstein-Uhlenbeck (OU) model extends BM by adding a parameter (α) that pulls traits toward a theoretical optimum, which is often interpreted as modeling stabilizing selection or adaptation. However, researchers should note that the OU model's α parameter is frequently misinterpreted: it measures the strength of pull toward a central trait value among species, not stabilizing selection within a population in the population genetics sense [22].
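The difference between the two processes is easiest to see in their expected trait variance along a single lineage. The sketch below uses the standard closed-form results for the two processes; only α comes from the text above, while the symbols σ² (rate), θ (optimum), and x0 (starting value) and all function names are assumptions introduced for illustration.

```python
import math

def bm_variance(sigma2, t):
    """Brownian Motion: variance grows linearly with time, Var = sigma2 * t."""
    return sigma2 * t

def ou_variance(sigma2, alpha, t):
    """OU: Var = sigma2 / (2 * alpha) * (1 - exp(-2 * alpha * t)); plateaus as t grows."""
    if alpha == 0:
        return bm_variance(sigma2, t)  # OU reduces to BM when there is no pull
    return sigma2 / (2 * alpha) * -math.expm1(-2 * alpha * t)

def ou_mean(x0, theta, alpha, t):
    """OU: expected trait value decays from the start value x0 toward the optimum theta."""
    return theta + (x0 - theta) * math.exp(-alpha * t)

def phylogenetic_half_life(alpha):
    """Time for the expected distance to the optimum to halve: ln(2) / alpha."""
    return math.log(2) / alpha

for t in (1, 10, 100):
    print(t, bm_variance(1.0, t), round(ou_variance(1.0, 0.5, t), 4))
```

Under BM the variance keeps growing, whereas under OU it approaches the stationary value σ²/(2α). When α is small relative to the depth of the tree the two curves are nearly indistinguishable, which is one way to see why small datasets struggle to separate the models.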

2. My OU model analysis consistently favors the OU model over simpler Brownian Motion, even with small datasets. Is this reliable?

This is a known problem. With small datasets, likelihood ratio tests often wrongly favor the more complex OU model over simpler models [22]. With limited data, the estimate of the OU model's α parameter is biased and prone to overestimation. Best practice recommends:

- Using datasets with sufficient taxonomic sampling (larger n)

- Simulating fitted models and comparing with empirical results

- Being particularly cautious with datasets containing fewer than 20-30 taxa

- Considering that very small amounts of measurement error can profoundly affect OU model inferences [22]

3. How do I implement Phylogenetically Independent Contrasts (PICs) to account for phylogenetic relationships in R?

PICs make species data statistically independent by calculating differences between sister taxa and nodes [23]. In R, the standard implementation is the pic() function in the ape package.

The key steps involve calculating standardized contrasts for each trait using the phylogeny, then fitting a linear model without an intercept. This effectively "controls for phylogeny" when testing trait correlations [24].

4. When I try to set the criterion to likelihood in PAUP*, why does the option sometimes remain unavailable?

To use maximum likelihood in PAUP*, your dataset must consist of DNA, nucleotide, or RNA characters, and the datatype option of the format command must be set to one of these values, for example `format datatype=dna;` [25].

After ensuring the data type is set correctly, you can select the criterion with `set criterion=likelihood;`.

Common Error Messages and Solutions

Error: "OU model convergence issues" or "parameter α at boundary"

Solution: This often occurs with small datasets or when the true evolutionary process is close to Brownian Motion. Try these steps:

- Simulate data under Brownian Motion and test if your analysis incorrectly infers an OU process

- Increase sample size (number of taxa) if possible

- Consider constraining α to reasonable values based on biological knowledge

- Use multiple starting values for optimization algorithms

- Report when α estimates are at boundaries and interpret with caution [22]

Error: "PIC calculation failed" or "negative branch lengths"

Solution: Phylogenetically Independent Contrasts require:

- Fully bifurcating trees (no polytomies)

- All branch lengths to be positive and non-zero

- Complete trait data for all taxa or proper handling of missing data

- A properly rooted tree, as assumed by standard PIC implementations [23] [24]

Quantitative Model Comparison Framework

Table 1: Key Characteristics of Brownian Motion and OU Models

| Characteristic | Brownian Motion Model | Ornstein-Uhlenbeck Model |

|---|---|---|

| Number of Parameters | 2 (σ² and the root state) | 3 or more (σ², α, θ; more with multiple optima) |

| Biological Interpretation | Genetic drift or random evolution | Constrained evolution toward an optimum |

| Trait Distribution | Multivariate normal | Multivariate normal |

| Trait Variance | Increases linearly with time | Approaches stationary variance |

| Best For | Neutral evolution, random walks | Adaptive peaks, constrained evolution |

| Common Issues | May not capture constrained evolution | Overfitting with small datasets, α bias |

Table 2: Troubleshooting Common Model Implementation Problems

| Problem | Diagnostic Signs | Recommended Solutions |

|---|---|---|

| OU Model Overfitting | Likelihood ratio test always favors OU; α estimates at boundaries | Simulate BM data to test false positive rate; increase sample size; use model averaging |

| Poor Model Convergence | Parameter estimates vary widely between runs; warning messages | Check branch lengths; use multiple starting values; simplify model |

| Incorrect Likelihood Calculation | Likelihood values dramatically different between programs | Check tree ultrametricity; verify data scaling; confirm model parameterization |

| PIC Assumption Violation | Contrasts not independent; non-normal residuals | Check tree structure; verify branch lengths; consider alternative methods (PGLS) |

Experimental Protocols and Methodologies

Protocol 1: Standard Implementation of Phylogenetically Independent Contrasts

Data Requirements: A rooted phylogenetic tree with branch lengths and continuous trait measurements for all tips [23]

Algorithm:

- Identify two adjacent tips (i, j) with common ancestor k

- Compute raw contrast: $c_{ij} = x_i - x_j$

- Calculate standardized contrast: $s_{ij} = (x_i - x_j)/\sqrt{v_i + v_j}$, where $v_i$ and $v_j$ are the branch lengths leading to i and j

- Replace tips i and j with their ancestor k, assigning it the value $x_k = (x_i/v_i + x_j/v_j)/(1/v_i + 1/v_j)$

- Lengthen the branch below k to reflect the uncertainty in $x_k$: $v_k' = v_k + (v_i \times v_j)/(v_i + v_j)$

- Repeat until all nodes processed [23]

Verification:

- Standardized contrasts should be independent and identically distributed

- No phylogenetic signal should remain in residuals

- Contrasts should be normally distributed with mean zero
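The pruning algorithm above can be sketched in a few lines. This is a minimal illustration rather than a substitute for an established implementation: the nested-tuple tree encoding, the function name, and the three-species example are all assumptions made for the sketch. Each contrast is standardized by the square root of the summed branch lengths, and the branch below each reconstructed ancestor is lengthened before moving up the tree.

```python
import math

def pic(node, traits, contrasts):
    """Post-order pass returning (trait value, adjusted branch length) for `node`.

    A node is (content, branch_length), where content is either a tip name
    (str) or a pair of child nodes. Standardized contrasts are appended to
    the `contrasts` list as internal nodes are processed.
    """
    content, length = node
    if isinstance(content, str):              # tip: use the observed value
        return traits[content], length
    left, right = content
    x_i, v_i = pic(left, traits, contrasts)
    x_j, v_j = pic(right, traits, contrasts)
    contrasts.append((x_i - x_j) / math.sqrt(v_i + v_j))
    x_k = (x_i / v_i + x_j / v_j) / (1 / v_i + 1 / v_j)   # weighted average
    v_k = length + v_i * v_j / (v_i + v_j)                # lengthened branch
    return x_k, v_k

# Hypothetical tree ((A:1,B:1):1,C:2) with made-up trait values
ab = ((("A", 1.0), ("B", 1.0)), 1.0)
root = ((ab, ("C", 2.0)), 0.0)
traits = {"A": 1.0, "B": 3.0, "C": 5.0}

contrasts = []
root_value, _ = pic(root, traits, contrasts)
print(contrasts, root_value)
```

A tree with n tips yields n - 1 contrasts. Their signs depend on the arbitrary ordering of the two children at each node, which is why regressions on contrasts are fitted through the origin.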

Protocol 2: Model Selection Framework for BM vs. OU Models

Model Fitting:

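- Fit Brownian Motion model to obtain log-likelihood ($\ln L_{BM}$)

- Fit Ornstein-Uhlenbeck model to obtain log-likelihood ($\ln L_{OU}$)

- Calculate difference: $\Delta \ln L = \ln L_{OU} - \ln L_{BM}$

Statistical Testing:

- Use likelihood ratio test: $LR = 2 \times \Delta \ln L$

- Compare to a χ² distribution with degrees of freedom equal to the difference in the number of parameters (df = 1 for a single-optimum OU model versus BM)

- For small sample sizes, also compare the models with the small-sample corrected AIC (AICc), because the likelihood ratio test itself carries no sample-size correction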

Validation:

- Simulate data under BM model to assess false positive rate

- Check parameter estimability (α not at boundaries)

- Evaluate biological plausibility of estimated optimum (θ)
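The testing step of this protocol reduces to a short calculation once the two log-likelihoods are in hand. The sketch below assumes hypothetical log-likelihood values for 25 taxa and counts 2 parameters for BM (σ² and the root state) and 3 for a single-optimum OU model; both the numbers and the parameter counts are assumptions for illustration. The χ² tail probability for one degree of freedom is computed with the complementary error function.

```python
import math

def lrt_df1(lnl_simple: float, lnl_complex: float):
    """Likelihood ratio statistic and chi-square p-value with 1 df."""
    lr = max(0.0, 2.0 * (lnl_complex - lnl_simple))
    p_value = math.erfc(math.sqrt(lr / 2.0))  # survival function of chi2(1)
    return lr, p_value

def aicc(lnl: float, k: int, n: int) -> float:
    """AIC with the small-sample correction; requires n > k + 1."""
    return 2 * k - 2 * lnl + (2 * k * (k + 1)) / (n - k - 1)

# Hypothetical fits to a 25-taxon dataset
lnl_bm, lnl_ou, n_taxa = -42.3, -40.1, 25
lr, p = lrt_df1(lnl_bm, lnl_ou)
print("LR =", round(lr, 3), "p =", round(p, 4))
print("AICc BM =", round(aicc(lnl_bm, 2, n_taxa), 3))
print("AICc OU =", round(aicc(lnl_ou, 3, n_taxa), 3))
```

Because α = 0 lies on the boundary of the parameter space and small trees inflate false positives, a nominal p-value from this calculation is best treated as a starting point for the simulation-based validation listed above.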

Research Reagent Solutions

Table 3: Essential Computational Tools for PCM Implementation

| Tool/Software | Primary Function | Implementation Notes |

|---|---|---|

| R with ape package | Phylogenetic Independent Contrasts | Use pic() function; requires a rooted, fully bifurcating tree with branch lengths |

| R with geiger package | OU model fitting | fitContinuous() function for various models |

| R with ouch package | Multiple optimum OU models | More complex OU implementations with shifting optima |

| PAUP* | General phylogenetic analysis | Set criterion=likelihood for ML implementation [25] |

| Custom simulation code | Model validation | Critical for verifying model performance with your data |

Workflow Visualization

Diagram 1: Phylogenetic Comparative Methods Workflow

Diagram 2: Phylogenetic Independent Contrasts Algorithm

Frequently Asked Questions (FAQs)

FAQ 1: Why is incorporating phylogenetic uncertainty important in Bayesian comparative analyses?

Assuming a single phylogeny is known without error can lead to overconfidence in results, such as falsely narrow confidence intervals and inflated statistical significance [26]. Bayesian approaches address this by integrating over a distribution of plausible trees, providing more honest parameter estimates and uncertainty measures that reflect our actual knowledge [26].

FAQ 2: What software can I use to implement these Bayesian models?

Several flexible software options are available. OpenBUGS and JAGS are general-purpose Bayesian analysis tools that allow custom model specification, including those that incorporate a prior distribution of trees [26]. The BayesTraits program is specifically designed for phylogenetic comparative analyses and can fit multiple regression models [26]. For learning the fundamentals, tutorials in R are available to guide users in writing simple MCMC code for phylogenetic inference [27].

FAQ 3: My model has converged, but how can I assess its absolute performance, not just its relative fit?

Assessment of absolute model performance is critical and can be done via parametric bootstrapping (for maximum likelihood) or posterior predictive simulations (for Bayesian inference) [28]. These methods simulate new datasets under the fitted model and parameters; if the observed data resembles the simulated data, the model performs well. The R package 'Arbutus' implements such procedures for phylogenetic models of continuous trait evolution [28].

FAQ 4: What are the common sources of uncertainty in phylogenetic comparative methods?

The two primary sources are:

- Phylogenetic Uncertainty: Uncertainty in the tree topology (the branching order) and/or branch lengths [26].

- Trait Value Uncertainty: Uncertainty due to intraspecific variation, which can stem from measurement error or natural variation among individuals [26].

Troubleshooting Common Experimental Issues

Issue 1: Analysis yields overly precise results and potentially false significance.

- Problem: The analysis was conducted using a single consensus phylogeny, ignoring phylogenetic uncertainty.

- Solution: Instead of a single tree, use an empirical prior distribution of trees (e.g., a posterior sample of trees from BEAST or MrBayes) in a Bayesian framework. This integrates over multiple plausible trees and provides more realistic confidence intervals [26].

Issue 2: The chosen model of trait evolution is a poor fit for the gene expression data.

- Problem: Evolutionary models derived for complex morphological traits may not adequately describe gene expression data, leading to unreliable inferences.

- Solution: Systematically evaluate model performance, not just perform model selection. After fitting a model (e.g., an Ornstein-Uhlenbeck process), use tools like 'Arbutus' to check if the model's distributional assumptions match your data. Be aware that heterogeneity in the rate of evolution is a common reason for poor model performance [28].

Issue 3: Inaccessible or poorly documented software for Bayesian phylogenetic analysis.

- Problem: Difficulty in finding or using specialized software.

- Solution: Utilize the comprehensive CRAN Task View: Phylogenetics to discover and evaluate R packages for phylogenetic analysis. Core packages like ape, phytools, and geiger provide foundational functions for reading, manipulating, and analyzing phylogenetic trees and comparative data [29].

Quantitative Data and Model Comparison

Table 1: Key Properties of Major Phylogenetic Comparative Models (PCMs). This table summarizes the univariate variance-covariance structures and their biological interpretations for several common models. [30]

| Model | Full Name | Variance-Covariance Structure (Σ) | Free Parameter | Biological Interpretation |

|---|---|---|---|---|

| ID | Independent | I (Identity Matrix) | None | Species traits evolve independently; no phylogenetic signal. |

| FIC | Felsenstein's Independent Contrasts | V (from branch lengths) | None | Traits evolve under a Brownian Motion (BM) process along the phylogeny. |

| PMM | Phylogenetic Mixed Model | λ*V + (1-λ)*I | λ (heritability) | The trait comprises a phylogenetic component (BM) and a species-specific independent component. |

| PA | Phylogenetic Autocorrelation | (I - ρ*W)⁻¹ * I * [(I - ρ*W)⁻¹]' | ρ (autocorrelation) | A species' trait is influenced by the traits of its phylogenetic neighbors. |

| OU | Ornstein-Uhlenbeck | e^(-α*t)*V | α (selection strength) | Traits evolve under stabilizing selection towards an optimum value. |
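To see how the first three rows of the table relate, the following sketch builds the PMM covariance λV + (1-λ)I for a hypothetical three-species tree. The matrix V is assumed to be scaled so that the root-to-tip height is 1, and the helper names are illustrative only. Setting λ = 0 recovers the ID structure and λ = 1 recovers the FIC (Brownian Motion) structure.

```python
def identity(n):
    """n x n identity matrix as nested lists."""
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]

def pmm_cov(V, lam):
    """Phylogenetic mixed model covariance: lam * V + (1 - lam) * I."""
    n = len(V)
    I = identity(n)
    return [[lam * V[i][j] + (1 - lam) * I[i][j] for j in range(n)]
            for i in range(n)]

# Hypothetical tree ((A,B),C): A and B share half of their history, C none.
# Entry [i][j] is the shared root-to-ancestor path length (tree height = 1).
V = [[1.0, 0.5, 0.0],
     [0.5, 1.0, 0.0],
     [0.0, 0.0, 1.0]]

for lam in (0.0, 0.5, 1.0):
    print(lam, pmm_cov(V, lam))
```

Intermediate values of λ shrink only the off-diagonal covariances, which is how the model expresses a partial phylogenetic signal.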

Table 2: Simulation Results Comparing Parameter Estimation Precision. This table is inspired by the simulation study in [26], which compared using a single consensus tree versus an empirical distribution of trees.

| Analysis Method | Tree Input | Mean Estimate of β₁ | 95% Credible/Confidence Interval Width | Coverage of True Parameter |

|---|---|---|---|---|

| Generalized Least Squares (GLS) | Single "Correct" Tree | ~2.0 | Narrow | Good (with the true tree) |

| Generalized Least Squares (GLS) | Single Consensus Tree | ~2.0 | Narrower than true uncertainty | Poor |

| Bayesian MCMC (One Tree - OT) | Single Consensus Tree | ~2.0 | Narrow | Poor |

| Bayesian MCMC (All Trees - AT) | Empirical Prior (100 Trees) | ~2.0 | Wider, more realistic | Good |

Experimental Protocols

Protocol 1: Bayesian Linear Regression Incorporating Phylogenetic Uncertainty

This protocol outlines the steps for performing a Bayesian phylogenetic regression while accounting for uncertainty in the phylogeny [26].

- Obtain a Tree Distribution: Generate a posterior sample of phylogenetic trees (e.g., 100-10,000 trees) using Bayesian phylogenetic software like BEAST [26] or MrBayes.

- Specify the Model in BUGS/JAGS: Define the statistical model. The core regression for a response variable `Y` and predictor `X` is specified as `Y ~ multivariate_normal(mean = X * beta, prec = inverse(Sigma))`, where `Sigma` is the phylogenetic variance-covariance matrix.

- Incorporate the Tree Prior: For each MCMC iteration, the analysis samples a tree from the empirical prior distribution (the tree set from step 1) and constructs the corresponding `Sigma` matrix under a Brownian Motion model.

- Set Priors and Run MCMC: Assign prior distributions for regression parameters (e.g., diffuse normal priors for `beta`) and the residual variance. Run the Markov Chain Monte Carlo (MCMC) simulation to obtain posterior distributions.

- Check Convergence and Analyze Output: Diagnose MCMC convergence using statistics like Gelman-Rubin's R-hat. The final output is a posterior distribution for all parameters (e.g., `beta`) that has integrated over phylogenetic uncertainty.
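For the convergence check in the final step, the basic Gelman-Rubin statistic can be computed directly from the sampled chains. The sketch below implements the classic (non-split) potential scale reduction factor for equal-length chains; the simulated draws stand in for posterior samples of a regression coefficient and are purely hypothetical, as is the function name.

```python
import random
from statistics import mean, variance

def gelman_rubin(chains):
    """Basic potential scale reduction factor (R-hat) for equal-length chains."""
    n = len(chains[0])
    within = mean([variance(c) for c in chains])        # W: mean within-chain variance
    between = n * variance([mean(c) for c in chains])   # B: between-chain variance
    pooled = (n - 1) / n * within + between / n         # pooled variance estimate
    return (pooled / within) ** 0.5

rng = random.Random(1)
# Three hypothetical chains sampling the same posterior
mixed = [[rng.gauss(2.0, 0.3) for _ in range(1000)] for _ in range(3)]
# Same, but with one chain stuck in a different region
stuck = mixed[:2] + [[rng.gauss(3.0, 0.3) for _ in range(1000)]]

print(round(gelman_rubin(mixed), 3), round(gelman_rubin(stuck), 3))
```

Values close to 1 suggest the chains agree, while values well above 1 indicate that at least one chain is exploring a different region and the run should be extended or re-initialized.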

Protocol 2: Assessing Phylogenetic Model Fit with Parametric Bootstrapping

This protocol assesses whether a fitted phylogenetic model provides an adequate description of the data [28].

- Fit the Model: Fit your phylogenetic model (e.g., BM, OU) to the observed trait data using maximum likelihood or Bayesian inference.

- Rescale the Tree: Use the parameter estimates from the fitted model to rescale the branch lengths of the original tree, creating a "unit tree" where the expectations under a Brownian Motion model with a rate of 1 are met.

- Simulate Data: On the rescaled unit tree, simulate a large number (e.g., 1000) of new trait datasets under the BM(1) process.

- Calculate Discrepancy Statistics: For both the observed data and each simulated dataset, calculate a set of test statistics (e.g., C-statistic, slope of a morphological disparity plot) that capture different aspects of the data's distribution.

- Compare and Evaluate: Compare where the test statistics from the observed data fall within the distribution of statistics from the simulated data. If the observed statistics are atypical (e.g., in the extreme tails), the fitted model is deemed inadequate.
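The comparison step can be illustrated with a single test statistic. On a correctly rescaled unit tree, standardized contrasts should behave like independent draws from a standard normal distribution, so their mean square should be close to 1. The sketch below simulates that expectation and locates an observed value within it. The function names, the choice of statistic, and the example contrasts are assumptions for illustration; dedicated packages evaluate several statistics rather than one.

```python
import random
from statistics import mean

def mean_square(contrasts):
    """Test statistic: mean of squared standardized contrasts."""
    return mean([c * c for c in contrasts])

def bootstrap_p_value(observed_contrasts, n_sim=1000, seed=42):
    """Two-tailed p-value of the observed statistic under BM with rate 1."""
    rng = random.Random(seed)
    n = len(observed_contrasts)
    observed = mean_square(observed_contrasts)
    simulated = [mean_square([rng.gauss(0.0, 1.0) for _ in range(n)])
                 for _ in range(n_sim)]
    lower = sum(s <= observed for s in simulated) / n_sim
    upper = sum(s >= observed for s in simulated) / n_sim
    return observed, min(1.0, 2 * min(lower, upper))

# Hypothetical contrasts: one set consistent with rate 1, one far too dispersed
adequate = [(-1.0) ** i for i in range(30)]
inadequate = [3.0 * (-1.0) ** i for i in range(30)]

print(bootstrap_p_value(adequate))
print(bootstrap_p_value(inadequate))
```

An observed statistic in the extreme tails, as in the second case, signals that the fitted model misestimates the overall rate of evolution.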

Workflow Visualization

Bayesian Phylogenetic Regression Workflow

Model Adequacy Assessment Workflow

Research Reagent Solutions

Table 3: Essential Software and Packages for Bayesian Phylogenetic Analysis.

| Item Name | Type | Primary Function | Relevance to Field |

|---|---|---|---|

| BEAST / MrBayes | Software Package | Bayesian phylogenetic inference to generate posterior distributions of trees. | Provides the empirical prior distribution of phylogenies essential for incorporating topological and branch length uncertainty into downstream comparative analyses [26]. |

| OpenBUGS / JAGS | Software Package | General-purpose platforms for Bayesian analysis using MCMC sampling. | Offers flexibility for specifying custom phylogenetic comparative models, including those that integrate over a set of trees and account for measurement error [26]. |

| R (ape, phytools, geiger) | Programming Environment & Packages | Core infrastructure for reading, manipulating, plotting, and analyzing phylogenetic trees and comparative data. | Provides the foundational toolkit for handling phylogenetic data, implementing various PCMs, and connecting different parts of the analytical workflow [29]. |

| Arbutus R Package | R Package | Assesses the absolute fit of phylogenetic models of continuous trait evolution. | Used to diagnose model inadequacy by testing whether the data deviate from the expectations of the best-fit model, which is crucial for reliable inference [28]. |

| BayesTraits | Software Package | Specialized software for performing Bayesian phylogenetic comparative analyses. | Fits multiple regression models to multivariate Normal trait data while allowing for the incorporation of phylogenetic uncertainty [26]. |

FAQs: Core Concepts of AIC and BIC

What are AIC and BIC, and what is their primary purpose? The Akaike Information Criterion (AIC) and Bayesian Information Criterion (BIC) are probabilistic measures used for model selection. They help researchers choose the best model from a set of candidates by balancing how well the model fits the data against its complexity. Their main goal is to prevent overfitting—the creation of models that are too tailored to the specific dataset and perform poorly on new data [31] [32].

How do AIC and BIC differ in their approach? While both criteria aim to select a good model, their underlying philosophies and penalties for model complexity differ.

- AIC (Akaike Information Criterion): Derived from frequentist statistics, AIC seeks to find the model that best explains the data with a reasonable number of parameters. It is more forgiving of complex models. The formula is $\mathrm{AIC} = 2k - 2\ln(L)$ [31] [32].

- BIC (Bayesian Information Criterion): Derived from Bayesian statistics, BIC is stricter, especially with large datasets. It more heavily penalizes models with more parameters. The formula is $\mathrm{BIC} = k\ln(n) - 2\ln(L)$ [31] [33].

Here, k is the number of parameters, n is the number of observations, and L is the model's likelihood.
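Both criteria are simple functions of the maximized log-likelihood. The sketch below computes them for a hypothetical fit (the log-likelihood, parameter count, and sample sizes are made-up values) and shows the sample size at which the BIC penalty overtakes the AIC penalty.

```python
import math

def aic(lnl: float, k: int) -> float:
    """Akaike Information Criterion from log-likelihood and parameter count."""
    return 2 * k - 2 * lnl

def bic(lnl: float, k: int, n: int) -> float:
    """Bayesian Information Criterion; n is the number of observations."""
    return k * math.log(n) - 2 * lnl

# Hypothetical model with 3 parameters and log-likelihood -40.1
lnl, k = -40.1, 3
for n in (7, 8, 25, 100):
    print(n, round(aic(lnl, k), 3), round(bic(lnl, k, n), 3))
```

The per-parameter penalty is 2 for AIC and ln(n) for BIC, so BIC is the stricter criterion whenever ln(n) exceeds 2, which is the case from n = 8 upward.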

When should I use AIC versus BIC? The choice depends on your goals and dataset [31] [34]:

- Use AIC if your primary concern is predictive accuracy. It is often preferred for smaller datasets as it is less harsh on complexity. AIC is efficient and will asymptotically select the model that minimizes prediction error, even if it is not the "true" model.

- Use BIC if your goal is to identify the true underlying model, especially with larger datasets. BIC has a stronger penalty for complexity as the sample size grows, which helps prevent overfitting. It is consistent, meaning that if the true model is among the candidates, BIC will select it as the sample size approaches infinity.

In phylogenetic comparative methods (PCMs), how are these criteria applied? In PCMs, AIC and BIC are used to compare different models of evolution (e.g., Brownian Motion, Ornstein-Uhlenbeck) fitted to trait data across species. A meta-analysis of 122 phylogenetic datasets found that for smaller phylogenies (under 100 taxa), simpler models like Independent Contrasts and non-phylogenetic models often provide the best fit according to AIC [35] [30] [36]. For bivariate analyses, correlation estimates between traits were found to be qualitatively similar across different PCMs, making the choice of method less critical for this specific task [30].

Troubleshooting Guides

Issue 1: Consistently Selecting Overly Complex Models

Problem: Your model selection process always chooses the model with the most parameters, which you suspect is overfitting the data.

Solution:

- Check Your Criterion: If you are using AIC, consider switching to BIC. The BIC's penalty term includes the log of the sample size ($k\ln(n)$), which makes it more cautious about adding parameters, particularly with larger datasets [31] [33].

- Cross-Validation: Use a hold-out test dataset or resampling techniques like k-fold cross-validation to validate the model's performance on unseen data. Probabilistic criteria like AIC and BIC are useful but do not account for model uncertainty, and can sometimes favor simpler models than necessary [32].

- Examine the Penalty: The core of the issue lies in the penalty for complexity. Ensure you are using the correct formula. For example, some software implementations might use different scaling factors. The standard formulas are $\mathrm{AIC} = 2k - 2\ln(L)$ and $\mathrm{BIC} = k\ln(n) - 2\ln(L)$ [31] [32] [33].

Issue 2: Interpreting and Comparing AIC/BIC Values

Problem: You have calculated AIC and BIC for several models but are unsure how to determine the "best" one.

Solution:

- The Lower, The Better: For both AIC and BIC, the model with the lowest value is preferred [31].

- Relative Comparison: The values themselves have no absolute meaning; their power is in relative comparison between models fitted to the same dataset [31]. As a common rule of thumb, models within about 2 units of the best model have comparable support, differences of roughly 4-7 indicate considerably less support for the weaker model, and differences above 10 indicate essentially none.

- Focus on the Goal: Reconcile the differences between AIC and BIC by considering your research question. If the criteria disagree, it may be because AIC is prioritizing predictive performance while BIC is prioritizing the identification of a true model. There is no universal rule to reconcile this; it requires a thoughtful decision by the researcher [34].
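One way to make the relative comparison explicit is to convert the scores into differences from the best model and then into Akaike weights, which sum to 1 across the candidate set. The sketch below uses hypothetical AIC scores for three models; the function name and the values are assumptions for illustration.

```python
import math

def akaike_weights(scores):
    """Return (delta, weight) dictionaries for a dict of model -> AIC score."""
    best = min(scores.values())
    deltas = {model: score - best for model, score in scores.items()}
    relative = {model: math.exp(-0.5 * d) for model, d in deltas.items()}
    total = sum(relative.values())
    weights = {model: r / total for model, r in relative.items()}
    return deltas, weights

# Hypothetical AIC scores for three candidate models fitted to one dataset
scores = {"ID": 95.0, "BM": 88.6, "OU": 86.2}
deltas, weights = akaike_weights(scores)
for model in scores:
    print(model, round(deltas[model], 2), round(weights[model], 3))
```

Reporting the weights alongside the raw scores shows how much support the runner-up models retain, which is more informative than naming a single winner.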

Issue 3: Implementing Model Selection for Phylogenetic Comparative Methods

Problem: You want to implement a model selection workflow for phylogenetic comparative models in R.

Solution:

- Use Specialized Packages: The `PCMFit` R package is a tool designed for the inference and selection of phylogenetic comparative models. It supports complex tasks like fitting models with unknown evolutionary shift points on a phylogeny [37].

- Leverage Parallel Computing: For computationally intensive tasks (e.g., large trees or complex models), use the parallel execution features in `PCMFit`. This can dramatically speed up your analysis [37].

- Ensure Proper Installation: To use `PCMFit`, install it from GitHub. For optimal performance, also install `PCMBaseCpp`, which uses C++ to accelerate likelihood calculations. Ensure you have a C++ compiler configured on your system [37].

Experimental Protocols & Data

Meta-Analysis of Phylogenetic Comparative Methods Fit

Objective: To assess the goodness-of-fit of various Phylogenetic Comparative Methods (PCMs) across many empirical datasets and determine if one method is generally more appropriate [30] [36].

Methodology:

- Data Collection: A systematic literature search was conducted using keywords related to comparative methods and independent contrasts. The final meta-analysis included 122 traits from 47 phylogenetic trees, with the number of species per tree ranging from 9 to 117 [30].

- Models Tested: The study compared several PCMs, including:

- ID: Independent (non-phylogenetic) model.

- FIC: Felsenstein's Independent Contrasts (Brownian motion model).

- PMM: Phylogenetic Mixed Model.

- PA: Phylogenetic Autocorrelation model.

- OU: Ornstein-Uhlenbeck model [30].

- Model Fitting and Selection: For each dataset and model, parameters were estimated using Maximum Likelihood Estimation (MLE). The Akaike Information Criterion (AIC) was then used to compare the fit of the different models to each dataset [30] [36].

Key Quantitative Findings: The following table summarizes the core findings from the meta-analysis regarding model fit and correlation estimates [35] [30] [36]:

| Aspect Investigated | Primary Finding | Implication for Researchers |

|---|---|---|

| Overall Model Fit | For phylogenies with fewer than 100 taxa, the Independent Contrasts (FIC) and the independent, non-phylogenetic models (ID) provided the best fit most frequently. | For smaller trees, simpler evolutionary models may be adequate. |

| Bivariate Correlation | Correlation estimates between two traits were qualitatively similar across different PCMs. | The choice of PCM may have less impact on the sign and general magnitude of estimated correlations between traits. |

| Recommendation | Researchers might apply the PCM they believe best describes the evolutionary mechanisms underlying their data. | The biological justification for a model remains paramount. |

Workflow and Logical Diagrams

Model Selection Workflow for PCMs

The following diagram illustrates a logical workflow for conducting model selection in phylogenetic comparative analysis:

The Complexity vs. Fit Trade-Off

This diagram visualizes the fundamental trade-off that AIC and BIC manage: model fit versus model complexity.

The Scientist's Toolkit: Essential Research Reagents

The following table details key materials, software, and statistical concepts used in model selection, particularly in the context of phylogenetic comparative methods.

| Item | Type | Function / Explanation |

|---|---|---|

| AIC (Akaike Information Criterion) | Statistical Criterion | Scores models based on log-likelihood and number of parameters; prefers models that fit well without unnecessary complexity [31] [32]. |

| BIC (Bayesian Information Criterion) | Statistical Criterion | Scores models more strictly than AIC, with a penalty that grows with sample size; tends to favor simpler models, especially with large datasets [31] [33]. |

| Log-Likelihood (LL) | Statistical Measure | A measure of how well a model explains the observed data. It is the foundation for calculating AIC and BIC [32]. |

| Maximum Likelihood Estimation (MLE) | Statistical Method | A technique for estimating the parameters of a model by maximizing the likelihood function. It provides the L in the AIC/BIC formulas [38] [32]. |

| PCMFit R Package | Software Tool | A specialized R package for fitting and selecting mixed Gaussian phylogenetic comparative models (MGPMs) with unknown evolutionary shifts, using criteria like AIC [37]. |

| Phylogenetic Tree | Data Structure | A graphical representation of the evolutionary relationships among species. It is the essential input structure for all phylogenetic comparative methods [30]. |

| Brownian Motion (BM) Model | Evolutionary Model | A null model of evolution that assumes trait changes are random and independent over time [30]. |

| Ornstein-Uhlenbeck (OU) Model | Evolutionary Model | A model that incorporates stabilizing selection, pulling a trait towards an optimal value [30]. |

FAQs: Core Concepts and Troubleshooting

Q1: Why is the choice of phylogenetic tree critical in gene expression analysis? All Phylogenetic Comparative Methods (PCMs) require an assumed tree to model trait evolution. If the chosen tree does not accurately reflect the evolutionary history of the gene expression traits under study, it can lead to severely inflated false positive rates in your analysis. This risk increases with larger datasets (more traits and species), counter to the intuition that more data mitigates model issues [39].

Q2: What are the common scenarios for tree choice and their potential pitfalls? Researchers often use a species tree estimated from genomic data. However, gene expression evolution may better follow the specific genealogy of the gene itself (gene tree). The mismatch between these trees is a major source of phylogenetic conflict [39]. The following table summarizes the performance of conventional phylogenetic regression under different tree-choice scenarios, where a trait evolves along one tree but is analyzed assuming another.

Table: Impact of Tree Choice on Conventional Phylogenetic Regression

| Scenario Code | Trait Evolved Along | Tree Assumed in Analysis | Impact on False Positive Rate |

|---|---|---|---|

| SS / GG | Species Tree / Gene Tree | Species Tree / Gene Tree | False positive rate remains acceptable (~5%) [39]. |

| GS | Gene Tree | Species Tree | Leads to high false positive rates, exacerbated by more data [39]. |

| SG | Species Tree | Gene Tree | Leads to high false positive rates, but generally performs better than GS [39]. |

| RandTree | Species/Gene Tree | Random Tree | Leads to the worst outcomes, with very high false positive rates [39]. |

| NoTree | Species/Gene Tree | No Tree (Phylogeny ignored) | Leads to high false positive rates, but may be better than assuming a random tree [39]. |

Q3: My dataset includes many gene expression traits, each with its own complex history. How can I manage this? In this realistic scenario, each trait evolves along its own trait-specific gene tree. Assuming a single species tree for all analyses (the GS scenario) consistently yields unacceptably high false positive rates with conventional regression. Using robust regression methods is a promising solution, as they can significantly reduce this sensitivity to tree misspecification [39].

Q4: What tools are available for integrated gene expression and genetic variation analysis?

The exvar R package is designed for this purpose. It provides a user-friendly set of functions for RNA-seq data preprocessing, differential gene expression analysis, and genetic variant calling (SNPs, Indels, CNVs), along with integrated data visualization apps, making it accessible to users with basic programming skills [40].

Troubleshooting Guide: Common Experimental Issues

Issue 1: High False Positive Rates in Phylogenetic Regression

- Problem: Your analysis detects many significant associations, but you suspect many are spurious due to an incorrectly specified phylogenetic tree.

- Solution:

- Robust Regression: Implement a robust sandwich estimator within your phylogenetic regression framework. Simulations show this method can rescue poor tree choices, reducing false positive rates from over 80% to near or below the 5% threshold, even when traits have heterogeneous evolutionary histories [39].

- Sensitivity Analysis: Perform your analysis under multiple plausible tree hypotheses (e.g., species tree vs. relevant gene trees) to check if your core findings are robust [39].
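The idea behind a sandwich estimator can be shown in its simplest, non-phylogenetic form. The sketch below fits an ordinary least squares line and compares the classical standard error of the slope with the heteroscedasticity-consistent (HC0) sandwich version. It is not the phylogenetic estimator evaluated in [39]; the function name and the data are hypothetical and serve only to illustrate how the robust variance is assembled from the residuals.

```python
def ols_with_sandwich(x, y):
    """Slope of y ~ x with classical and HC0 sandwich standard errors."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    intercept = y_bar - slope * x_bar
    resid = [yi - intercept - slope * xi for xi, yi in zip(x, y)]
    # Classical SE assumes a single residual variance for all observations
    se_classic = (sum(e * e for e in resid) / (n - 2) / sxx) ** 0.5
    # Sandwich SE weights each squared residual by its own leverage term
    meat = sum((xi - x_bar) ** 2 * e * e for xi, e in zip(x, resid))
    se_robust = meat ** 0.5 / sxx
    return slope, se_classic, se_robust

# Hypothetical data whose scatter grows with x
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
y = [1.1, 1.9, 3.2, 3.7, 5.6, 5.1, 8.4, 6.5]
print(ols_with_sandwich(x, y))
```

The robust version does not rely on the assumed error structure being correct, which is the property that makes sandwich-type estimators attractive when the assumed tree, and therefore the assumed covariance, may be wrong.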

Issue 2: Inadequate Visualization of Dynamic Gene Expression Patterns

- Problem: Traditional heatmaps and static clusters fail to capture the temporal dynamics of gene expression in your time-course experiment.

- Solution: Employ advanced visualization methods like Temporal GeneTerrain. This technique creates a continuous, integrated view of gene expression trajectories by mapping normalized expression values onto a fixed protein-protein interaction network layout, revealing delayed responses and transient patterns that static methods miss [41].

Issue 3: Complexity of RNA-Seq Data Manipulation Workflows

- Problem: The workflow from raw sequencing data (Fastq files) to biologically significant information is complex and requires expertise in multiple tools.

- Solution:

- Integrated Packages: Use integrated packages like `exvar` to streamline the process. The general workflow is as follows [40]:

- Step 1 - Preprocessing: Use `processfastq()` on raw Fastq files for quality control (via `rfastp`), read trimming, and alignment to a reference genome (via `gmapR`), producing BAM files.

- Step 2 - Expression Analysis: Use `expression()` on BAM files to perform differential expression analysis (via `DESeq2`).

- Step 3 - Variant Calling: Use `callsnp()`, `callindel()`, and `callcnv()` on BAM files to identify genetic variants.

- Step 4 - Visualization: Use `vizexp()`, `vizsnp()`, and `vizcnv()` to generate interactive plots and apps for interpretation.

- User-Friendly Software: For researchers preferring a graphical interface, point-and-click software like Partek Flow can be used for differential expression analysis and generating visualizations like PCA plots and heatmaps [42].

Experimental Protocol: Gene Expression and Phylogenetic Analysis

This protocol outlines the key steps for analyzing gene expression data within a phylogenetic framework, from data generation to evolutionary interpretation.

Step 1: Data Generation and Preprocessing

- RNA-seq Processing: Begin with raw Fastq files from your species of interest. Perform quality control, read trimming, and alignment to a relevant reference genome to produce BAM files. This can be done using the `processfastq()` function in the `exvar` package [40].

- Generate Count Data: Quantify gene expression levels by counting the reads aligned to each gene, resulting in a count matrix for all genes and samples [40].

Step 2: Phylogenetic Tree Selection

- This is a critical step. Consider the genetic architecture of your traits:

- Species Tree: Use a well-supported species-level phylogeny if you are analyzing organism-level phenotypes or expression of many genes across the genome [39].

- Gene Trees: If the analysis focuses on the evolution of a specific gene or set of co-regulated genes, consider using the relevant gene trees, which may differ from the species tree [39].

- Documentation: Clearly state and justify the tree(s) used in your analysis.

Step 3: Phylogenetic Comparative Analysis

- Apply a Phylogenetic Generalized Least Squares (PGLS) model or similar PCM to test your hypotheses about gene expression evolution.

- Crucial Step: Implement robust regression techniques to account for potential phylogenetic uncertainty or tree misspecification [39].
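To make the PGLS step above more concrete, here is a minimal sketch of the generalized least squares point estimate under a Brownian motion covariance matrix. Everything in it is an illustrative assumption: the four-taxon tree, the trait values, and the helper names `solve`, `matmul`, and `pgls` are hypothetical and not taken from any package cited here. It does not implement the robust sandwich estimator described in [39]; it only shows how the tree enters the regression through the covariance matrix.

```python
def solve(a, b):
    """Solve a @ x = b by Gauss-Jordan elimination (a: n x n, b: n x m)."""
    n = len(a)
    m = [list(ra) + list(rb) for ra, rb in zip(a, b)]  # augmented matrix
    for col in range(n):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        p = m[col][col]
        m[col] = [v / p for v in m[col]]
        for r in range(n):
            if r != col:
                f = m[r][col]
                m[r] = [vr - f * vc for vr, vc in zip(m[r], m[col])]
    return [row[n:] for row in m]


def matmul(a, b):
    """Plain matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def pgls(C, x, y):
    """GLS estimate of (intercept, slope) for y ~ x given covariance C."""
    X = [[1.0, xi] for xi in x]          # design matrix with an intercept column
    Y = [[yi] for yi in y]
    Xt = [list(col) for col in zip(*X)]  # transpose of X
    Cinv_X = solve(C, X)                 # C^-1 X without forming C^-1 explicitly
    Cinv_Y = solve(C, Y)
    beta = solve(matmul(Xt, Cinv_X), matmul(Xt, Cinv_Y))
    return beta[0][0], beta[1][0]


# Hypothetical BM covariance for the tree ((A:1,B:1):1,(C:1,D:1):1):
# entry (i, j) is the branch length shared by tips i and j from the root.
C = [[2.0, 1.0, 0.0, 0.0],
     [1.0, 2.0, 0.0, 0.0],
     [0.0, 0.0, 2.0, 1.0],
     [0.0, 0.0, 1.0, 2.0]]
x = [0.5, 1.0, 3.0, 3.5]   # illustrative predictor values (assumed)
y = [1.9, 3.2, 6.8, 8.1]   # illustrative response values (assumed)

intercept, slope = pgls(C, x, y)
print(f"intercept={intercept:.3f}, slope={slope:.3f}")
```

Replacing `C` with an identity matrix reduces the estimate to ordinary least squares, which is one simple way to see how much the assumed tree changes the fitted relationship.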

Step 4: Visualization and Interpretation

- Standard Plots: Generate standard plots for differential expression (e.g., volcano plots, PCA) using tools like exvar or Partek Flow [40] [42].

- Temporal Dynamics: For time-course data, use advanced methods like Temporal GeneTerrain to visualize dynamic expression patterns [41].

- Functional Context: Integrate your results with functional annotations and pathway databases (e.g., Reactome, BioGRID) using tools like Cytoscape to gain biological insights [42].

Experimental Workflow and Signaling Pathways

The diagram below illustrates the integrated workflow for processing gene expression data and analyzing it within a phylogenetic framework.

Integrated Workflow for Phylogenetic Gene Expression Analysis

Research Reagent Solutions

Table: Key Tools and Resources for Phylogenetic Gene Expression Analysis

| Tool / Resource | Function / Application | Key Features / Notes |

|---|---|---|

| exvar R Package [40] | Integrated analysis of gene expression and genetic variation from RNA-seq data. | Includes functions for Fastq processing, differential expression, variant calling (SNPs, Indels, CNVs), and visualization Shiny apps. |

| Robust Regression [39] | Statistical method to reduce sensitivity to phylogenetic tree misspecification. | Employs a robust sandwich estimator to control false positive rates in PCMs when the assumed tree is incorrect. |

| Temporal GeneTerrain [41] | Advanced visualization of dynamic gene expression over time. | Creates continuous trajectories on a fixed network layout, revealing transient patterns and delayed responses. |

| DESeq2 [40] | Differential expression analysis of RNA-seq count data. | A core statistical engine used within packages like exvar for identifying differentially expressed genes. |

| VariantTools [40] | Genetic variant calling from sequencing data. | Used by the exvar package for identifying SNPs and indels. |

| Cytoscape [42] | Network data integration, analysis, and visualization. | Used for visualizing protein interaction networks and functional enrichment from gene lists. |

| Partek Flow [42] | Graphical user interface (GUI) software for bioinformatics analysis. | Enables differential expression analysis and visualization (PCA, heatmaps) without command-line programming. |

Diagnosing and Troubleshooting Common PCM Inadequacies

Frequently Asked Questions

What are the most common red flags indicating poor phylogenetic model performance?

Common red flags include the model failing to converge during analysis, parameter estimates having excessively wide confidence intervals, the model showing poor fit to the data compared to simpler alternatives, and the model producing biologically implausible results [43].

How can I tell if my phylogenetic model is overfitting the data?

A key sign of overfitting is a highly complex model that explains the data no better than a much simpler one. Information criteria such as AIC make this comparison explicit: if the additional parameters do not lower the AIC meaningfully, the complex model may be overfitting [35] [43].

My model's performance drops significantly when applied to new data. What does this indicate?

A sharp drop in performance on new data often signals problems like overfitting or data leakage, where information from the test set inadvertently influenced the training process. In the overfitting case, the model has learned the specific noise in your training data rather than the general evolutionary pattern [44].

What does it mean if the correlations from my comparative analysis are not robust?

In a meta-analysis, correlations estimated with different Phylogenetic Comparative Methods (PCMs) were often qualitatively similar. If your results change drastically between well-fitting models, it is a red flag that the identified evolutionary signal may not be reliable [35].

Why is it a problem if my team cannot clearly articulate the project's goals?

Unclear, non-measurable goals make it impossible to select the right data and algorithms or to evaluate the model's effectiveness. This foundational misalignment is a major red flag that often leads to project failure [43].

Troubleshooting Guide: Diagnosing Common Issues

Overfitting and Underfitting

| Symptom | Description | Diagnostic Check | Corrective Protocol |

|---|---|---|---|

| Overfitting | Model learns noise/training data specifics; performs poorly on new data [43] [44] | Large performance gap (e.g., training accuracy 98% vs. validation accuracy 70%) [43] | Simplify model; use cross-validation; apply regularization; use early stopping [43] |

| Underfitting | Model is too simple; fails to capture underlying data trend [43] | Low accuracy on both training and validation data; high bias [43] | Increase model complexity; add relevant features; reduce constraints [43] |

Data and Validation Problems

| Symptom | Description | Diagnostic Check | Corrective Protocol |

|---|---|---|---|

| Data Leakage | Model uses information during training that is unavailable during prediction, creating overly optimistic performance [44] | Unusually high performance on validation data; relies on features unavailable at prediction time [44] | Ensure proper data splitting; perform preprocessing (e.g., scaling) after split; use time-series validation for temporal data [44] |

| Poor Data Quality | Data is unrepresentative, has insufficient quantity, or contains significant errors [43] | Model struggles to generalize; results are unstable; presence of many missing values or outliers [43] | Perform rigorous data validation; clean data; use data augmentation; address class imbalance [43] |

| Ignored Validation | No robust validation process exists, so model performance is not reliably assessed [43] | No separate validation set; performance metrics only reported on training data [43] | Implement k-fold cross-validation; use a strict hold-out test set [43] |

Model Output and Team Dynamics

| Symptom | Description | Diagnostic Check | Corrective Protocol |

|---|---|---|---|

| Unclear Goals | Project lacks clear, measurable objectives, leading to misaligned efforts [43] | Team cannot articulate a clear business problem or key performance indicators (KPIs) [43] | Engage stakeholders to define precise objectives and measurable KPIs before modeling [43] |

| Skewed Metrics | Reliance on a single or inappropriate performance metric provides a misleading success picture [43] | High accuracy on imbalanced dataset but poor precision/recall; metrics misaligned with project goals [43] | Use a balanced set of metrics (e.g., precision, recall, F1-score) relevant to the biological question [43] |

| Poor Team Dynamics | Lack of collaboration, communication, or key expertise hinders project progress [43] | Inadequate communication between team members; lack of essential ML or domain skills [43] | Foster clear communication and collaboration; ensure team possesses necessary mix of skills [43] |

Specific Red Flags in Phylogenetic Comparative Methods

The table below summarizes quantitative findings from a meta-analysis on the fit of Phylogenetic Comparative Methods, providing a benchmark for model selection [35].

| Number of Taxa | Best-Fitting Model Type | Notes on Correlation Robustness |

|---|---|---|

| Fewer than 100 | Independent Contrasts and Independent (non-phylogenetic) Models [35] | For bivariate analysis, correlations from different PCMs are often qualitatively similar, making actual correlations from real data robust to the PCM chosen for analysis [35]. |

| Not Specified | Varies | Researchers might apply the PCM they believe best describes the underlying evolutionary mechanisms for their data [35]. |

Experimental Protocols for Assessment

Protocol 1: Assessing Model Fit with Akaike Information Criterion (AIC)

Purpose: To compare different phylogenetic comparative models and select the one that best explains the data without overfitting [35].

Methodology:

- Model Fitting: Fit a set of candidate PCMs (e.g., Independent Contrasts, Brownian Motion, Ornstein-Uhlenbeck) to your trait data using Restricted Maximum Likelihood (REML) analysis [35].

- AIC Calculation: Calculate the AIC value for each fitted model. AIC balances model fit with complexity, penalizing models with more parameters [35].

- Model Comparison: Compare the AIC values across all candidate models. The model with the lowest AIC value is considered the best-fit model [35].

Interpretation: A model with many parameters that does not achieve a meaningfully lower AIC than a simpler model is likely overfitting and should be viewed with caution.
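The AIC calculation and comparison steps above can be sketched in a few lines. The log-likelihoods, parameter counts, and sample size below are made-up illustrative values, and the helper names (`aic`, `aicc`, `akaike_table`) are hypothetical. The sketch also reports the small-sample correction (AICc) and Akaike weights, which are standard companions to AIC but are not part of the protocol as stated in [35].

```python
import math


def aic(log_lik, k):
    """Akaike Information Criterion: 2k - 2 ln(L)."""
    return 2 * k - 2 * log_lik


def aicc(log_lik, k, n):
    """Small-sample corrected AIC for n observations (requires n > k + 1)."""
    return aic(log_lik, k) + (2 * k * (k + 1)) / (n - k - 1)


def akaike_table(fits, n):
    """Map model name -> (AICc, delta AICc, Akaike weight).

    fits maps a model name to (maximized log-likelihood, number of parameters).
    """
    scores = {name: aicc(ll, k, n) for name, (ll, k) in fits.items()}
    best = min(scores.values())
    deltas = {name: s - best for name, s in scores.items()}
    rel = {name: math.exp(-0.5 * d) for name, d in deltas.items()}
    total = sum(rel.values())
    return {name: (scores[name], deltas[name], rel[name] / total) for name in fits}


# Hypothetical fits for 40 taxa: (log-likelihood, parameter count).
fits = {
    "BM": (-52.3, 2),   # assumed: rate + root state
    "OU": (-51.9, 3),   # assumed: rate + root state + strength of attraction
}
table = akaike_table(fits, n=40)
for name, (score, delta, weight) in table.items():
    print(f"{name}: AICc={score:.2f}  delta={delta:.2f}  weight={weight:.2f}")
```

A commonly cited rule of thumb treats models within about 2 AIC units of the best model as having comparable support, so a small difference in favor of the more complex model is weak evidence for the extra parameters.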

Protocol 2: Detecting Data Leakage via Proper Data Splitting

Purpose: To ensure that a model's performance estimates are reliable and generalizable by preventing information from the validation set from influencing the training process [44].

Methodology:

- Pre-Split Data Handling: Before any preprocessing or analysis, split your dataset into training and validation (or test) sets. For time-series phylogenetic data, use a chronological split to ensure the model is trained on past data and validated on "future" data [44].

- Independent Preprocessing: Perform all data preprocessing steps (e.g., scaling, normalization, handling missing values) based only on the statistics of the training set. Then, apply the same transformations to the validation set [44].

- Validation: Train your model exclusively on the processed training data. Finally, evaluate its performance on the untouched validation set [44].

Interpretation: A significant drop in performance on the validation set compared to the training set is a strong indicator of overfitting or data leakage.
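The split-then-preprocess order described in this protocol can be illustrated with a short sketch. The trait values and helper names (`split_indices`, `fit_scaler`, `apply_scaler`) are hypothetical, and the random split is an assumption made for brevity; a chronological split, as mentioned above for time-series data, would replace the shuffle.

```python
import random
import statistics


def split_indices(n, test_fraction=0.25, seed=0):
    """Shuffle indices 0..n-1 and return (train_indices, test_indices)."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_test = max(1, round(n * test_fraction))
    return idx[n_test:], idx[:n_test]


def fit_scaler(values):
    """Learn the mean and standard deviation from the training values only."""
    return statistics.mean(values), statistics.stdev(values)


def apply_scaler(values, mean, sd):
    """Standardize values using statistics learned elsewhere."""
    return [(v - mean) / sd for v in values]


# Hypothetical trait measurements for eight species.
traits = [2.1, 3.4, 1.8, 5.6, 4.2, 3.9, 2.7, 6.1]

# 1. Split first, before any preprocessing.
train_idx, test_idx = split_indices(len(traits))
train = [traits[i] for i in train_idx]
test = [traits[i] for i in test_idx]

# 2. Fit the scaler on the training set only.
mean, sd = fit_scaler(train)

# 3. Apply the same transformation to both sets.
train_scaled = apply_scaler(train, mean, sd)
test_scaled = apply_scaler(test, mean, sd)
print(train_scaled, test_scaled)
```

Computing `mean` and `sd` from the full `traits` list instead would let the validation values influence the training inputs, which is exactly the leakage this protocol is meant to prevent.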

Workflow Diagram

The following diagram illustrates the logical workflow for identifying and addressing red flags in phylogenetic model performance.

Diagram: Red Flag Identification and Resolution Workflow

The Scientist's Toolkit: Key Research Reagents & Software

The following table details essential software tools and conceptual "reagents" used in phylogenetic comparative analysis and model assessment.

| Item Name | Type | Function / Application |

|---|---|---|

| PAUP* | Software Package | A comprehensive software package for phylogenetic analysis using parsimony, likelihood, and distance methods. Used for tree inference and implementing comparative methods [25]. |

| Simple Phylogeny | Web Tool | A tool for performing basic phylogenetic analysis on a multiple sequence alignment, useful for quick tree building [45]. |

| Akaike Information Criterion (AIC) | Statistical Criterion | Used to compare the relative quality of statistical models for a given dataset, helping to select the best-fit model while penalizing overfitting [35]. |

| Independent Contrasts (IC) | Phylogenetic Comparative Method | A method that uses differences between sister taxa to analyze trait evolution, correcting for phylogenetic non-independence [35] [46]. |

| Phylogenetic Generalized Least Squares (PGLS) | Phylogenetic Comparative Method | Extends traditional generalized least squares regression to account for phylogenetic relationships in the data [46]. |

| Newick Format | Data Format | A standard format for representing tree structures (e.g., phylogenetic trees) in a computer-readable form, enabling data exchange between different programs [45]. |
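As a concrete illustration of the Independent Contrasts entry in the table above, the following sketch computes standardized contrasts on a small, fully bifurcating tree using the usual pruning recursion. The tree encoding (nested tuples), the trait values, and the function name `contrasts` are assumptions made for this example and do not come from any of the listed tools.

```python
import math


def contrasts(node, traits, out):
    """Return (value, branch_length) for node; append standardized contrasts to out.

    A leaf is (name, branch_length); an internal node is (left, right, branch_length).
    """
    if len(node) == 2:                      # leaf
        name, length = node
        return traits[name], length
    left, right, length = node
    x1, v1 = contrasts(left, traits, out)
    x2, v2 = contrasts(right, traits, out)
    # Difference between the two descendants, scaled by its expected standard deviation.
    out.append((x1 - x2) / math.sqrt(v1 + v2))
    # Weighted average of the descendants, weighting by inverse branch length.
    value = (x1 / v1 + x2 / v2) / (1 / v1 + 1 / v2)
    # Lengthen this node's branch to reflect uncertainty in the estimated value.
    return value, length + v1 * v2 / (v1 + v2)


# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1) with a zero-length root branch.
tree = ((("A", 1.0), ("B", 1.0), 1.0), (("C", 1.0), ("D", 1.0), 1.0), 0.0)
traits = {"A": 1.0, "B": 3.0, "C": 6.0, "D": 8.0}

pics = []
root_value, _ = contrasts(tree, traits, pics)
print(pics, root_value)
```

A tree with n tips yields n - 1 contrasts. Under Brownian motion these are expected to be independent with a common variance, which is why they can be analyzed with standard statistics.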

Addressing Rate Heterogeneity Across the Tree

Why is my phylogenetic analysis detecting unexpected evolutionary patterns, such as a false signal of constrained evolution (OU)?

This often occurs when unmodeled rate heterogeneity is present in your data. Standard single-process models like Brownian motion (BM) assume a constant rate of evolution across the entire tree. When this assumption is violated—for instance, if there are isolated branches or specific clades with accelerated rates—these models can be misled, systematically mislabeling temporal trends in trait evolution [47]. An Ornstein-Uhlenbeck (OU) model might be incorrectly selected as the best fit simply because it can partially accommodate the pattern created by the unaccounted-for rate variation, not because it represents the true generating process [47].
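One simple way to probe the constant-rate assumption described above is to compare a single-rate and a two-rate Brownian motion model on standardized independent contrasts, which under BM are expected to be normally distributed with mean zero and variance equal to the rate. The sketch below is a simplified illustration, not the procedure from [47]: the contrast values are invented, the assignment of each contrast to a "background" or "focal" regime is assumed to be known in advance, and the function names are hypothetical.

```python
import math


def bm_loglik(contrasts):
    """Maximized log-likelihood and rate estimate for zero-mean normal contrasts."""
    n = len(contrasts)
    sigma2 = sum(c * c for c in contrasts) / n          # ML estimate of the rate
    loglik = -0.5 * n * (math.log(2 * math.pi * sigma2) + 1)
    return loglik, sigma2


def compare_rates(background, focal):
    """AIC for one shared rate versus separate background and focal rates."""
    ll_single, _ = bm_loglik(background + focal)
    ll_bg, rate_bg = bm_loglik(background)
    ll_focal, rate_focal = bm_loglik(focal)
    return {
        "aic_single": 2 * 1 - 2 * ll_single,             # one rate parameter
        "aic_two_rate": 2 * 2 - 2 * (ll_bg + ll_focal),  # two rate parameters
        "rate_ratio": rate_focal / rate_bg,
    }


# Hypothetical standardized contrasts: a focal clade with visibly larger changes.
background = [0.5, -0.4, 0.3, -0.6, 0.2, -0.3, 0.4, -0.5]
focal = [2.1, -1.8, 2.5, -2.2]

result = compare_rates(background, focal)
print(result)
```

If the two-rate model has a clearly lower AIC, a single-process model fitted to the same data is being asked to absorb rate variation it cannot represent, which is the situation in which an OU model may be favored for the wrong reason.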

How can I test if rate heterogeneity is affecting my analysis?

The most robust method involves comparing the relative and absolute fit of homogeneous and heterogeneous models [47].