Beyond Brownian Motion: Critical Limitations of the Ornstein-Uhlenbeck Model in Evolutionary Biology and Biomedical Research

This article provides a critical examination of the Ornstein-Uhlenbeck (OU) process as a standard model for continuous trait evolution, specifically tailored for researchers and drug development professionals.


Abstract

This article provides a critical examination of the Ornstein-Uhlenbeck (OU) process as a standard model for continuous trait evolution, specifically tailored for researchers and drug development professionals. We first explore the foundational assumptions of the OU model—its stabilizing selection paradigm and mathematical elegance—and contrast them with biological reality. We then detail methodological challenges in parameter estimation and application to complex, high-dimensional traits like gene expression or disease biomarkers. The troubleshooting section addresses common pitfalls in model fitting, identifiability issues, and violations of core assumptions such as constant selection and single adaptive peak. Finally, we present a comparative analysis of OU against alternative models (e.g., Brownian Motion with trends, multi-OU, Hansen models, and Lévy processes) for validation in phylogenetic comparative methods. The synthesis argues for a more nuanced, multi-model framework to accurately infer evolutionary processes and rates, which is crucial for predicting pathogen evolution, understanding cancer progression, and identifying druggable evolutionary constraints.

What is the OU Model? Core Assumptions vs. Biological Reality in Trait Evolution

Technical Support Center: Ornstein-Uhlenbeck (OU) Process in Trait Evolution Research

Frequently Asked Questions (FAQs)

Q1: My model estimation consistently returns a very low mean-reversion rate (α, written θ in some mathematical treatments). What does this imply about my trait evolution data? A: An α near zero suggests weak or absent stabilizing selection. The trait may be evolving under a neutral process (like Brownian motion) or directional selection rather than stabilizing selection. Check: 1) data quality, including measurement error; 2) phylogeny correctness; 3) whether the 95% confidence interval for α includes zero, in which case the OU model may be overparameterized for your data.

Q2: How do I differentiate between an OU process and a simple Brownian Motion (BM) process in my phylogenetic comparative analysis? A: Use model comparison tools like AICc (Akaike Information Criterion corrected for small sample size). Fit both BM and OU models to your trait data. A lower AICc for OU suggests mean-reverting dynamics. A ΔAICc > 4-7 provides substantial support for the better model. Be wary of overfitting if the OU model's α (selection strength) is estimated with high uncertainty.
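The AICc comparison described above can be sketched in a few lines. This is a minimal illustration in Python rather than R, using the standard AICc formula; the function names `aicc` and `delta_aicc`, the log-likelihoods, the parameter counts, and the 63-tip sample size are all hypothetical.

```python
def aicc(log_lik, k, n):
    """Small-sample corrected AIC for a model with k parameters fit to n tips."""
    if n - k - 1 <= 0:
        raise ValueError("AICc is undefined when n <= k + 1")
    aic = 2 * k - 2 * log_lik
    return aic + (2 * k * (k + 1)) / (n - k - 1)


def delta_aicc(scores):
    """Return each model's AICc minus the best (lowest) AICc."""
    best = min(scores.values())
    return {name: score - best for name, score in scores.items()}


# Hypothetical fits on a 63-tip tree: BM has 2 parameters, OU has 3.
scores = {
    "BM": aicc(log_lik=-23.5, k=2, n=63),
    "OU": aicc(log_lik=-22.9, k=3, n=63),
}
deltas = delta_aicc(scores)
```

In this made-up case the extra OU parameter does not buy enough likelihood, so BM keeps the lower AICc.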

Q3: During stochastic simulation of an OU process for power analysis, what is a common source of unrealistic trait values? A: Incorrect scaling of the stochastic term (σ). The Wiener increment dW_t has variance Δt, so in a discrete-time approximation the noise must be scaled by the square root of the time step (√Δt): X(t+Δt) = X(t) + α(μ - X(t))Δt + σ√Δt · Z, where Z ~ N(0,1).
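A minimal sketch of this discretization follows, written in Python for illustration. The mean-reversion rate is called `alpha` here (θ in some notations); the function name `simulate_ou_euler` and all parameter values are assumptions, not taken from any package.

```python
import math
import random


def simulate_ou_euler(x0, alpha, mu, sigma, dt, n_steps, rng=None):
    """Euler-Maruyama path of dX = alpha * (mu - X) dt + sigma dW."""
    rng = rng or random.Random()
    path = [x0]
    x = x0
    for _ in range(n_steps):
        z = rng.gauss(0.0, 1.0)  # Z ~ N(0, 1)
        # Drift scales with dt; noise scales with sqrt(dt), not dt.
        x = x + alpha * (mu - x) * dt + sigma * math.sqrt(dt) * z
        path.append(x)
    return path


# Hypothetical settings: start far from the optimum and let the trait relax.
path = simulate_ou_euler(x0=5.0, alpha=1.0, mu=0.0, sigma=0.5,
                         dt=0.01, n_steps=2000, rng=random.Random(1))
```

Multiplying the noise by Δt instead of √Δt makes the simulated trait far too quiet, while omitting the scaling altogether inflates it. Both mistakes show up as a long-run variance that disagrees with σ²/(2α).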

Q4: My OU model fitting yields multiple, biologically implausible "optima" (μ). Is this a technical artifact? A: Yes, this is a known limitation called "multi-modal likelihood surfaces." The OU likelihood function can have multiple local maxima. Solutions: 1) Use multiple, widely dispersed starting values for parameters in optimization routines. 2) Employ Bayesian approaches with informed priors to constrain optima to plausible regions. 3) Consider if your model has too many adaptive regimes for the data.

Q5: How sensitive is OU model parameter estimation to errors in the phylogenetic branch lengths? A: Highly sensitive. The OU process is explicitly time-dependent. Incorrect branch lengths (e.g., using divergence time instead of generation time, or poor fossil calibration) will bias estimates of α (selection strength) and σ² (random drift). Always conduct sensitivity analyses by re-fitting under reasonable alternative tree scalings.
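To see why time units matter, consider the simplest case of a uniform rescaling of the tree. Because α and σ² are rates per unit time, multiplying every branch length by a constant divides both by that constant. The Python sketch below illustrates this; the helper name `rescale_ou_parameters`, the parameter values, and the 5-year generation time are hypothetical.

```python
import math


def rescale_ou_parameters(alpha, sigma2, factor):
    """Convert OU parameters when every branch length is multiplied by `factor`.

    Rates are per unit time, so stretching time by `factor` divides them.
    """
    if factor <= 0:
        raise ValueError("factor must be positive")
    return alpha / factor, sigma2 / factor


alpha_myr, sigma2_myr = 0.8, 1.0          # hypothetical estimates per Myr
factor = 1_000_000 / 5                    # assumed 5-year generations: 200,000 per Myr
alpha_gen, sigma2_gen = rescale_ou_parameters(alpha_myr, sigma2_myr, factor)

half_life_myr = math.log(2) / alpha_myr   # half-life in Myr
half_life_gen = math.log(2) / alpha_gen   # the same half-life in generations
stationary_var = sigma2_gen / (2 * alpha_gen)  # unaffected by a uniform rescaling
```

A uniform change of units is therefore harmless as long as α is reported with its units. The bias described above arises when branch lengths are wrong relative to one another, which no single conversion factor can undo.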

Troubleshooting Guides

Issue: Parameter Identifiability Failure in Multi-OU Regime Models Symptoms: Optimization fails to converge, or parameter estimates have extremely large confidence intervals. Diagnostic Steps:

- Check for correlation between estimated α (rate) and σ² (variance) using the variance-covariance matrix. High correlation (>0.9) indicates identifiability problems.

- Simplify the model. Reduce the number of hypothesized selective regimes (optima).

- Verify that your phylogeny has sufficient species in each defined selective regime.

Solution: Use a lasso-OU or Hansen-type penalty to regularize estimates, or revert to a simpler single-optimum OU or BM model.
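The first diagnostic step, checking the correlation between the α and σ² estimates, can be sketched as follows. This Python illustration assumes you have already extracted the variance-covariance matrix of the estimates from your fitting software; the function names and the matrix values are hypothetical.

```python
import math


def correlation_from_covariance(cov):
    """Convert a square covariance matrix (list of lists) to a correlation matrix."""
    sd = [math.sqrt(cov[i][i]) for i in range(len(cov))]
    return [[cov[i][j] / (sd[i] * sd[j]) for j in range(len(cov))]
            for i in range(len(cov))]


def flag_identifiability(cov, names, threshold=0.9):
    """List parameter pairs whose absolute correlation exceeds the threshold."""
    corr = correlation_from_covariance(cov)
    return [(names[i], names[j], corr[i][j])
            for i in range(len(names)) for j in range(i + 1, len(names))
            if abs(corr[i][j]) > threshold]


# Hypothetical covariance matrix of the (alpha, sigma2) estimates.
cov = [[0.040, 0.057],
       [0.057, 0.090]]
flags = flag_identifiability(cov, ["alpha", "sigma2"])
```

Any pair returned in `flags` is a candidate for the simplification steps listed above.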

Issue: Poor Model Fit Despite Significant OU Parameters Symptoms: Significant θ and α parameters, but diagnostic plots (e.g., residuals vs. fitted) show strong patterns, indicating lack of fit. Diagnostic Steps:

- Plot the phylogenetically independent contrasts (PICs). Check if they are normally distributed (QQ-plot).

- Test for omitted variables or shifts in the optimum not specified in your model.

Solution: Consider more complex models such as `OUwie` (OU with multiple optima) or `mvOU` (multivariate OU) if traits are correlated. Account for measurement error in the model.

Issue: Computational Overhead in Large Phylogenies (>500 tips) Symptoms: Extremely long computation times or memory overflow when calculating the variance-covariance matrix. Solution:

- Use `transformPhylo.ML` or similar functions that utilize fast algorithms for the phylogenetic covariance matrix (e.g., `vcv` caching).

- Consider approximations like `phylolm` with `method = "reml"`.

- Use high-performance computing resources for likelihood calculation.

Table 1: Key OU Process Parameters and Their Biological Interpretations

| Parameter | Mathematical Symbol | Typical Notation in Biology | Interpretation in Trait Evolution | Common Pitfall in Estimation |

|---|---|---|---|---|

| Mean Reversion Rate | θ | α (alpha) | Strength of stabilizing selection. High α = rapid return to optimum. | Correlated with σ²; unidentifiable with small phylogenies. |

| Long-Term Mean | μ | θ (theta) | Adaptive optimum trait value. | Can be confounded with the ancestral state. |

| Stochastic Volatility | σ | σ² (sigma-squared) | Rate of random drift or unpredictable change. | Sensitive to branch length scaling. |

| Decay Rate / Half-Life | - | t₁/₂ = ln(2)/α | Time for expected deviation from μ to halve. | Misinterpreted if tree is in units of Myr vs. generations. |

Table 2: Model Comparison Metrics for OU vs. Brownian Motion

| Metric | Brownian Motion (BM) Model | Ornstein-Uhlenbeck (OU) Model | Interpretation |

|---|---|---|---|

| Number of Parameters | 2 (ancestral state, σ²) | 3-4+ (α, μ, σ², sometimes ε) | OU is more complex. |

| AICc Score (Example on 100-tip tree) | -120.5 | -150.2 | Lower AICc favors OU. |

| ΔAICc | 29.7 | 0.0 | Strong support for OU (ΔAICc > 10). |

| Estimated α | 0 (by definition) | 0.8 [CI: 0.3, 1.5] | α > 0 indicates mean reversion. |

| Biological Implication | Neutral evolution / drift. | Stabilizing selection toward an optimum. | - |

Experimental Protocols

Protocol 1: Fitting a Single-Optimum OU Model to Phylogenetic Trait Data

Objective: Estimate the parameters of stabilizing selection (α, μ, σ²) on a continuous trait.

Materials: Time-calibrated phylogeny (Newick format), trait data for all terminal taxa (csv).

Software: R with packages ape, geiger, ouch, or phylolm.

Method:

- Data Preparation: Match trait data to tree tips. Log-transform trait if necessary to stabilize variance.

- Model Specification: Define the OU model structure: dX = α(μ - X)dt + σ dW.

- Likelihood Calculation: Use `phylolm(trait ~ 1, phy = tree, model = "OUfixedRoot")` (or `model = "OUrandomRoot"`), or an equivalent function.

- Parameter Optimization: Employ maximum likelihood (ML) or restricted ML (REML) to find best-fit α, μ, σ².

- Diagnostics: Analyze residuals, conduct likelihood ratio test against BM (where α=0).

- Validation: Perform parametric bootstrap (n=100 simulations) to assess parameter confidence intervals.
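The likelihood ratio test in the Diagnostics step can be sketched as below. This Python illustration assumes the two models differ by exactly one parameter (α), so the reference distribution is chi-square with 1 degree of freedom; the function name `lrt_one_df` and the log-likelihood values are hypothetical.

```python
import math


def lrt_one_df(log_lik_null, log_lik_alt):
    """Likelihood ratio test for nested models differing by one parameter.

    Returns the test statistic and the chi-square (1 df) upper-tail p-value.
    """
    stat = 2.0 * (log_lik_alt - log_lik_null)
    stat = max(stat, 0.0)  # guard against tiny negative values from optimizers
    # For 1 df, P(chi2 > stat) = erfc(sqrt(stat / 2)).
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value


# Hypothetical log-likelihoods for BM (null, alpha = 0) and OU (alternative).
stat, p_value = lrt_one_df(log_lik_null=-25.7, log_lik_alt=-18.1)
```

Because α = 0 lies on the boundary of the parameter space, the plain chi-square p-value is generally regarded as conservative for this comparison. The parametric bootstrap in the Validation step is one way to check it.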

Protocol 2: Power Analysis via OU Simulation for Experimental Design

Objective: Determine the minimum phylogenetic sample size (tips) needed to detect a given selection strength α.

Materials: Hypothetical or resampled phylogenies, target effect sizes for α and σ².

Software: R packages geiger, TreeSim, mvtnorm.

Method:

- Simulation Framework: Generate a set of random phylogenies (e.g., 100 trees) with varying numbers of tips (N=50, 100, 200).

- Parameter Setting: Fix known parameters: μ=0, σ²=1, and a range of α values (e.g., 0.1, 0.5, 1.0).

- Trait Simulation: For each tree and α, simulate trait data under the OU process using `sim.char()` or a custom Euler-Maruyama discretization.

- Model Fitting: Fit an OU model to each simulated dataset and record whether α is estimated as significantly different from zero (p < 0.05).

- Power Calculation: Power = (Number of simulations with significant α) / (Total simulations). Plot power vs. N for each α.
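For the Trait Simulation step, an alternative to Euler-Maruyama is to draw directly from the exact OU transition distribution, which is Gaussian with mean μ + (x - μ)e^(-αΔt) and variance σ²(1 - e^(-2αΔt))/(2α). The Python sketch below does this along a single lineage only; extending it to a full tree means applying the same step down every branch. Function names and parameter values are hypothetical, and α > 0 is assumed.

```python
import math
import random


def ou_exact_step(x, alpha, mu, sigma2, dt, rng):
    """Draw X(t + dt) given X(t) = x from the exact OU transition density."""
    decay = math.exp(-alpha * dt)
    mean = mu + (x - mu) * decay
    var = sigma2 * (1.0 - decay ** 2) / (2.0 * alpha)
    return rng.gauss(mean, math.sqrt(var))


def simulate_lineage(x0, alpha, mu, sigma2, branch_lengths, rng):
    """Trait values at the end of each successive branch along one lineage."""
    values, x = [], x0
    for length in branch_lengths:
        x = ou_exact_step(x, alpha, mu, sigma2, length, rng)
        values.append(x)
    return values


rng = random.Random(2024)
values = simulate_lineage(x0=3.0, alpha=0.5, mu=0.0, sigma2=1.0,
                          branch_lengths=[0.2, 1.0, 5.0], rng=rng)
```

Unlike a discretized scheme, this step has no time-step error, so long branches need no subdivision.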

Visualizations

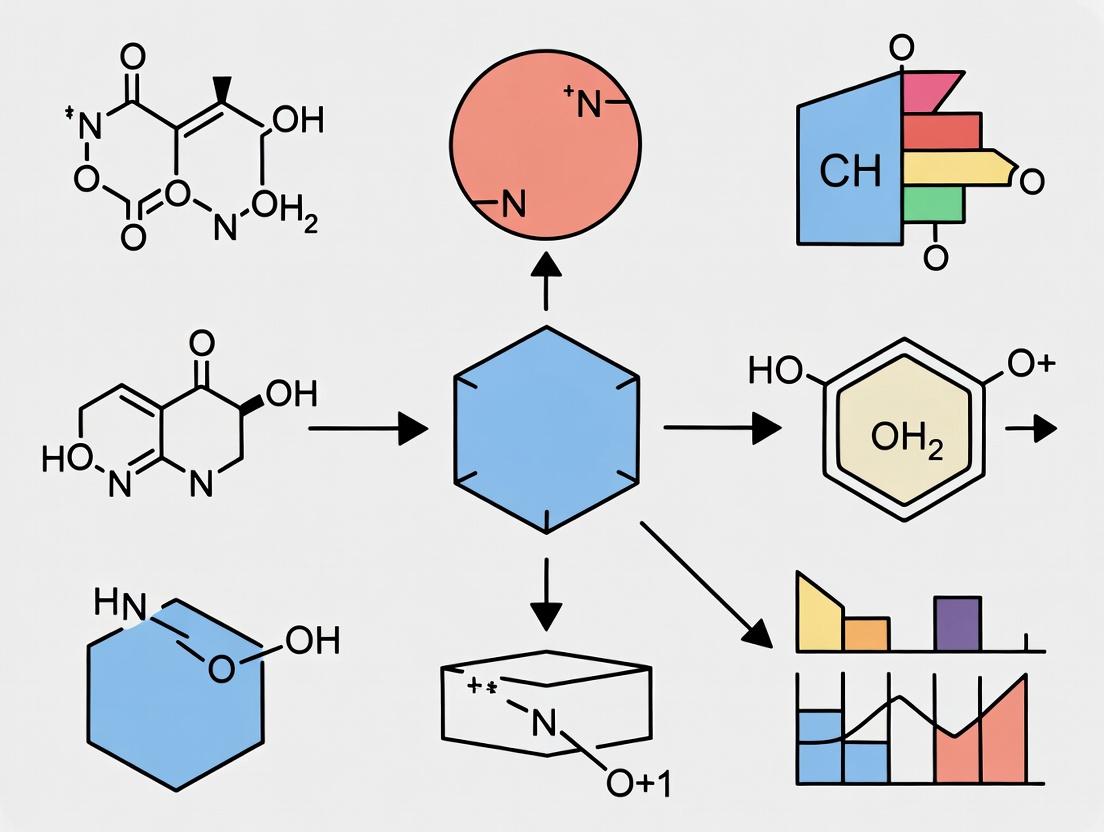

Title: Phylogenetic OU Model Fitting Workflow

Title: OU Process Equation Components

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for OU Analysis in Trait Evolution

| Item/Software Package | Function/Biological Analogue | Brief Explanation of Use |
|---|---|---|
| R Package phylolm | High-throughput estimator. | Efficiently fits phylogenetic regression under BM/OU models. Use for large trees. |
| R Package OUwie | Multi-optima detector. | Fits OU models with multiple, discrete selective regimes across the phylogeny. |
| R Package geiger | Data & tree curator. | Harmonizes trait data with phylogeny; performs simulations (sim.char) for power analysis. |
| R Package mvMORPH | Multivariate trait analyzer. | Fits multivariate OU (mvOU) models for correlated traits evolving under stabilizing selection. |
| R Package bayou | Bayesian OU explorer. | Uses reversible-jump MCMC to sample multiple shift locations and magnitudes in OU parameters. |
| R Package TreeSim | Phylogenetic simulator. | Generates realistic phylogenies for simulation-based power analysis and model validation. |
| Euler-Maruyama Script | Custom simulator. | Discretizes the OU SDE for fine-control simulations (e.g., for population-level data). |

Technical Support Center: Troubleshooting OU Model Analyses in Trait Evolution

Frequently Asked Questions (FAQs)

Q1: My OU model fit consistently returns an optimum (θ) with an unreasonably large confidence interval. What experimental or data issues could cause this? A: Overly wide confidence intervals for the adaptive peak (θ) often indicate insufficient phylogenetic signal or data points relative to the strength of selection (α). This is common when studying traits under very weak stabilizing selection. Troubleshooting Guide:

- Check Data Structure: Ensure your trait data spans a sufficient range of phenotypic values. A cluster of species with nearly identical trait values provides little information about the location of the peak.

- Verify Phylogeny: Use `phylosig` in R to calculate Blomberg's K. A low K (<0.5) suggests weak phylogenetic signal, making α and θ difficult to estimate. Consider whether your trait is truly heritable.

- Protocol – Power Analysis: Simulate trait data under an OU process with your study phylogeny and hypothesized parameters (α, σ², θ). Re-fit models to multiple simulated datasets to determine the sample size (number of species) required for precise θ estimation.

- Experimental Consideration: In laboratory evolution studies, ensure your measurement timepoints capture the approach to equilibrium, not just the initial or final state.

Q2: During model selection, Brownian Motion (BM) is favored over OU for a trait believed to be under stabilizing selection. What does this imply? A: This result suggests that for your specific dataset and phylogeny, a model of unconstrained drift (BM) explains the trait variation as well as or better than a model with constraint (OU). Key interpretations:

- Weak Selection: The strength of stabilizing selection (α) may be too low to be statistically detectable given your phylogenetic tree's length and branching structure.

- Model Misspecification: The single-optimum OU model may be incorrect. The trait might be evolving under multiple selective regimes (shifting peaks) not captured by your model.

- Protocol – Regime Shift Test:

- Use `OUwie` or `bayou` in R to fit multi-optima OU models based on a priori hypothesized selective regimes (e.g., habitat, diet).

- Compare AICc scores of BM, single-optimum OU, and multi-optimum OU models.

- Visually inspect phylogenetic residuals for clade-specific patterns.

Q3: How do I interpret a very low estimate for the selection strength (α) that is not statistically different from zero? A: A non-significant α indicates failure to reject the null hypothesis of α=0, which corresponds to a Brownian Motion process. The biological interpretation is not necessarily "no selection," but that the data lack power to distinguish constrained evolution from drift. Report this as an upper bound on the possible strength of stabilizing selection (e.g., "α < [upper CI value]"). Consider increasing sample size or using experimental approaches (see Toolkit).

Q4: My OU model analysis suggests a shift in the optimum (θ) at a specific node, but the trait data for descendant clades overlap broadly. Is this result valid? A: Possibly not. Overlapping trait distributions weaken evidence for a distinct adaptive peak. This can be a spurious result from overfitting.

- Troubleshooting Protocol:

- Calculate the phenotypic disparity (variance) within each putative regime.

- Perform a phylogenetic ANOVA (`phylANOVA` in `phytools`) to test for a significant difference in means while accounting for phylogeny.

- Use a multi-optima model that estimates a single θ for clades with overlapping distributions and compare its fit via AICc.

Table 1: Common OU Model Output Parameters and Their Biological Interpretations

| Parameter | Symbol | Interpretation | Value Range | Indicates |

|---|---|---|---|---|

| Selection Strength | α | Rate of trait evolution toward the optimum. | α ≥ 0 | High α: Strong stabilizing selection. α=0: Neutral drift (BM). |

| Optimum Trait Value | θ | The adaptive peak or selective optimum. | Real number | The preferred phenotype under the model's regime. |

| Evolutionary Rate | σ² | Rate of stochastic motion under BM. Scaled by α in OU. | σ² > 0 | The background rate of unpredictable change. |

| Phylogenetic Half-life | t₁/₂ = ln(2)/α | Time for expected trait disparity from θ to halve. | t₁/₂ > 0 | Short t₁/₂: Rapid adaptation. Long t₁/₂: Slow approach to peak. |

| Stationary Variance | ν² = σ²/(2α) | Expected trait variance at equilibrium. | ν² > 0 | The balance between stochastic (σ²) and deterministic (α) forces. |
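The two derived quantities in the last rows of the table follow directly from the estimated α and σ². A short Python sketch is given below; the function names, the parameter values, and the tree height are hypothetical.

```python
import math


def half_life(alpha):
    """Phylogenetic half-life t_1/2 = ln(2) / alpha (same time units as the tree)."""
    if alpha <= 0:
        raise ValueError("half-life is only defined for alpha > 0")
    return math.log(2) / alpha


def stationary_variance(sigma2, alpha):
    """Equilibrium trait variance sigma^2 / (2 * alpha)."""
    if alpha <= 0:
        raise ValueError("stationary variance is only defined for alpha > 0")
    return sigma2 / (2.0 * alpha)


# Hypothetical estimates on a tree of total height 10 time units.
alpha, sigma2, tree_height = 0.8, 1.0, 10.0
t_half = half_life(alpha)                    # about 0.87 time units
relative_half_life = t_half / tree_height    # half-life as a fraction of tree height
v_stat = stationary_variance(sigma2, alpha)  # 0.625
```

Expressing the half-life as a fraction of tree height makes it easier to judge whether the pull toward θ is fast or slow relative to the timescale the phylogeny covers.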

Table 2: Troubleshooting Diagnostic Checks for OU Model Fits

| Issue | Diagnostic Check | Acceptable Range | Corrective Action |

|---|---|---|---|

| Unstable θ Estimate | Width of 95% CI for θ | CI should be small relative to total trait range. | Increase species sampling within clades; verify trait heritability. |

| α Not Significant | P-value for α > 0.05 & Upper CI for α | -- | Report as an upper bound; consider power limitations; test for multi-regime models. |

| Poor Model Fit | Model AICc vs. BM AICc | ΔAICc(OU - BM) < -2 favors OU. | Check for regime shifts; consider more complex models (OUMA, OUMV). |

| Poor Data Likelihood | Log-likelihood value | Compare to simulations. | Check for outliers; ensure phylogeny and trait data are correctly aligned. |

Experimental Protocols

Protocol 1: Power Analysis for OU Model Parameter Estimation

- Objective: Determine the minimum number of species required to reliably detect a given strength of stabilizing selection (α).

- Software: R packages `geiger`, `phytools`, `mvMORPH`.

- Method:

- Simulate 1000 trait datasets under an OU process on your study phylogeny, using fixed, biologically plausible values for σ² and θ, and a range of α values you wish to detect.

- For each simulated dataset, fit an OU model and record the estimated α and its 95% confidence interval.

- Calculate statistical power as the proportion of simulations where the estimated α's confidence interval excludes zero.

- Repeat simulations on trimmed/expanded phylogenies to see how power scales with species number.
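Once the simulated fits are collected, the power calculation itself is a simple proportion. The Python sketch below assumes each simulation yields a (lower, upper) confidence interval for α; the function name and the five example intervals are hypothetical.

```python
def power_from_intervals(intervals):
    """Proportion of simulations whose alpha confidence interval excludes zero."""
    if not intervals:
        raise ValueError("need at least one simulated interval")
    detected = sum(1 for lower, upper in intervals if lower > 0 or upper < 0)
    return detected / len(intervals)


# Hypothetical 95% CIs for alpha from five simulated datasets.
intervals = [(0.10, 1.40), (0.00, 0.90), (0.25, 2.10), (-0.05, 0.70), (0.30, 1.80)]
power = power_from_intervals(intervals)
```

An interval whose lower bound sits exactly at zero is counted as a non-detection here, which is the conservative choice when α is constrained to be non-negative.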

Protocol 2: Testing for Multiple Adaptive Regimes

- Objective: Determine if a trait evolves under different selective optima (θ) in different clades or ecological contexts.

- Software: R packages `OUwie`, `bayou`.

- Method:

- Define a priori hypotheses for regime shifts (e.g., based on ecology, morphology). Map these onto your phylogeny.

- Fit at least three models: a single-regime OU model (OU1), a multi-regime model with different θ but shared α and σ² (OUM), and a more complex model with different σ² per regime (OUMV).

- Compare models using sample-size-corrected AIC (AICc). A ΔAICc > 2 suggests meaningful improvement.

- Perform a Likelihood Ratio Test (LRT) between the single-regime OU1 and multi-regime OUM models to assess statistical significance.

Mandatory Visualizations

Diagram Title: OU Model Fitting & Diagnostic Workflow

Diagram Title: Force Dynamics of BM vs. OU Trait Evolution

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Validating OU Model Predictions

| Item / Reagent | Function in Experimental Validation | Example / Specification |

|---|---|---|

| Isogenic Model Organism Lines | Provides controlled genetic background to study trait evolution without confounding variation. | Drosophila melanogaster isofemale lines, Zebrafish clonal strains. |

| Controlled Environmental Chambers | Allows application of defined, sustained selective pressure (e.g., temperature, salinity) to track trait change. | Precision incubators with programmable temperature/humidity cycles. |

| High-Throughput Phenotyping System | Enables precise, longitudinal measurement of quantitative traits (e.g., morphology, growth) across many individuals/generations. | Automated image analysis (e.g., PhenoTyper), multi-well spectrophotometers. |

| Genotyping-by-Sequencing Kit | For tracking allele frequency changes at candidate loci or QTLs underlying the trait, linking model parameters to genetics. | DArTseq, RADseq, or whole-genome sequencing services. |

| Phylogenetic Comparative Software | Core analytical tool for fitting and diagnosing OU and related models. | R packages: geiger, phytools, OUwie, bayou, mvMORPH. |

| Time-Series Evolution Simulation Platform | For power analysis and generating null expectations under BM/OU processes. | R simulate functions in phytools/geiger; MESQUITE software. |

Technical Support Center: Troubleshooting OU Model Analyses

Troubleshooting Guides

Issue 1: Poor Model Fit Indicated by High AICc Scores (Relative to Alternative Models) or Significant Likelihood Ratio Test (LRT) p-values.

- Problem: The single-optimum OU model (a random walk pulled toward a single adaptive peak) is a poor fit for your trait data.

- Diagnosis: This often indicates a violation of the Stationary Optimum assumption. The selective landscape may have shifted during the phylogeny's history.

- Solution: Implement a multi-optimum OU model (e.g., OUwie, l1ou, bayou). Test hypotheses where selective regimes (optima) shift at specific phylogenetic nodes (e.g., habitat transition, key innovation).

- Protocol: Using the `OUwie` R package:

- Prune your phylogeny and match trait data.

- Fit a BM1 (Brownian Motion) model: `fitBM <- OUwie(phy, data, model="BM1", simmap.tree=FALSE)`.

- Fit a single-optimum OU1 model: `fitOU1 <- OUwie(phy, data, model="OU1", simmap.tree=FALSE)`.

- Fit a multi-regime OUM model using a priori regime assignments (set `simmap.tree=TRUE` when they are supplied as a stochastic character map on the tree): `fitOUM <- OUwie(phy, data, model="OUM", simmap.tree=TRUE)`.

- Compare models using AICc: `aicc <- c(fitBM$AICc, fitOU1$AICc, fitOUM$AICc)`.
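Once the AICc values are collected, Akaike weights give a convenient summary of relative support across the candidate models. The Python sketch below uses the standard weight formula; the function name `akaike_weights` and the three AICc values are hypothetical.

```python
import math


def akaike_weights(aicc_scores):
    """Map model name -> Akaike weight from a dict of AICc scores."""
    best = min(aicc_scores.values())
    rel_lik = {m: math.exp(-0.5 * (s - best)) for m, s in aicc_scores.items()}
    total = sum(rel_lik.values())
    return {m: r / total for m, r in rel_lik.items()}


# Hypothetical AICc values for a BM1, an OU1, and a multi-regime OUM fit.
weights = akaike_weights({"BM1": 53.6, "OU1": 45.0, "OUM": 42.5})
best_model = max(weights, key=weights.get)
```

Weights are only meaningful relative to the set of models compared; adding or removing a candidate changes all of them.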

Issue 2: Unrealistically High or Low Estimates of the Selection Strength Parameter (Alpha).

- Problem: Estimated alpha is near zero (effectively BM) or extremely high (indicating extremely rapid adaptation).

- Diagnosis: Likely violates Constant Strength of Selection or interacts with Rate Homogeneity. A single alpha may be inadequate if the rate of adaptation varies across clades or branches.

- Solution: Test models that allow alpha to vary across the tree. Consider early-burst models or adaptive radiation models as alternatives.

- Protocol: Using the `bayou` R package for multi-alpha models:

- Set up priors for alpha and sigma².

- Configure the MCMC chain parameters (iterations, thinning).

- Run the reversible-jump MCMC to sample across models with varying numbers of alpha shifts.

- Check convergence (effective sample size > 200, Gelman-Rubin diagnostic ~1.0).

- Use posterior probabilities to identify branches with different alpha values.
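The Gelman-Rubin check in the convergence step compares within-chain and between-chain variance. The Python sketch below implements the classic form of the statistic for a single parameter, assuming equal-length chains with burn-in already removed. The function name and the sample values are hypothetical, and in practice an established implementation such as `gelman.diag` in the R package `coda` would normally be used instead.

```python
import math


def gelman_rubin(chains):
    """Classic potential scale reduction factor for equal-length chains."""
    m, n = len(chains), len(chains[0])
    if m < 2 or n < 2 or any(len(c) != n for c in chains):
        raise ValueError("need at least two chains of equal length >= 2")
    means = [sum(c) / n for c in chains]
    grand = sum(means) / m
    # Within-chain variance W and between-chain variance B.
    w = sum(sum((x - mu) ** 2 for x in c) / (n - 1)
            for c, mu in zip(chains, means)) / m
    b = n * sum((mu - grand) ** 2 for mu in means) / (m - 1)
    var_hat = (n - 1) / n * w + b / n
    return math.sqrt(var_hat / w)


# Hypothetical post-burn-in alpha samples from two chains.
chain_a = [0.82, 0.79, 0.91, 0.85, 0.77, 0.88]
chain_b = [0.80, 0.86, 0.83, 0.90, 0.78, 0.84]
r_hat = gelman_rubin([chain_a, chain_b])
```

Values well above 1 indicate that the chains are sampling different regions and have not converged.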

Issue 3: Model Cannot Distinguish Between Adaptive and Neutral Evolution (BM vs. OU).

- Problem: Likelihoods for BM and OU1 models are statistically indistinguishable.

- Diagnosis: Low phylogenetic signal or insufficient data. The Rate Homogeneity assumption may be violated if trait evolution rate (sigma²) varies significantly across the tree, confounding alpha estimation.

- Solution: Increase taxon sampling. Test for rate heterogeneity using models like BMS (variable sigma²) before testing OU models.

- Protocol: Using the `geiger` and `OUwie` R packages:

- Fit a homogeneous-rate BM model (`fitContinuous(phy, data, model="BM")`).

- Fit a multi-rate BM model. BMS is an `OUwie` model name rather than a `fitContinuous` option, so use `OUwie(phy, data, model="BMS")` with regimes mapped onto the tree.

- Compare models using LRT or AICc. If BMS is better, account for rate heterogeneity before fitting OU models.

Frequently Asked Questions (FAQs)

Q1: My trait data is multivariate. How do these assumptions apply?

A: The assumptions become more restrictive. A Stationary Optimum assumes a constant multivariate adaptive peak. Constant Strength of Selection extends to a stability matrix (A), and Rate Homogeneity applies to the evolutionary rate matrix (R). Violations are more complex to diagnose. Use multivariate OU (mvOU) implementations in mvMORPH or RPANDA.

Q2: Can I test the Stationary Optimum assumption directly?

A: Yes. Use survival or changepoint analysis on phylogenetic independent contrasts (PICs) to detect significant shifts in trait evolution rate and direction, which may indicate optimum shifts. The l1ou package performs automated detection of shift points.

Q3: What are the practical consequences of ignoring Rate Homogeneity? A: It can lead to spurious detection of selection (nonzero alpha). If a clade has a higher intrinsic rate of evolution (sigma²), an OU model may misinterpret this as strong pull toward an optimum. Always test for rate variation first.

Q4: Are there experimental protocols in drug development that are sensitive to these assumptions? A: Yes. When modeling the evolution of pathogen drug resistance traits or host protein target conservation, an incorrect Stationary Optimum assumption can lead to wrong predictions about future evolutionary pathways. Use model-averaged forecasts that account for optimum shifts.

Table 1: Common OU Model Violations and Diagnostic Signals

| Assumption Violated | Key Diagnostic Model Fit Statistic | Typical Software Output Warning |

|---|---|---|

| Stationary Optimum | Multi-optimum OU (OUM) has ΔAICc > 2 vs. OU1 | High parameter correlation between alpha & optima |

| Constant Strength of Selection | Multi-alpha OU model preferred | Alpha estimate confidence interval includes 0 or is extremely large |

| Rate Homogeneity | Multi-sigma² (BMS) model better than BM | Poor chain mixing in MCMC for alpha/sigma² |

Table 2: Recommended Experimental & Analytical Validation Steps

| Research Phase | Action to Validate Assumptions | Target Metric |

|---|---|---|

| Study Design | Maximize taxon sampling within clades of interest | >20 species per hypothesized selective regime |

| Model Fitting | Always fit a series of nested models | ΔAICc, LRT p-value, parameter confidence intervals |

| Model Checking | Perform phylogenetic residual diagnostics | Check for autocorrelation, outliers |

Visualizations

Diagram Title: OU Model Assumption Testing Workflow

Diagram Title: Core OU Process and Its Foundational Inputs

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for OU Model Analysis

| Tool / Reagent | Function in Analysis | Key Consideration |
|---|---|---|
| R Package: OUwie | Fits OU models under multiple evolutionary hypotheses. Primary tool for testing Stationary Optimum. | Requires a priori regime paintings on the tree. |
| R Package: geiger / phytools | Data and tree manipulation, basic BM/OU fitting, phylogenetic diagnostics. | Essential for checking data integrity before complex analysis. |
| R Package: bayou | Bayesian MCMC fitting of multi-optimum, multi-alpha OU models. Tests Constant Strength & Stationary Optimum. | Computationally intensive; requires careful prior tuning and convergence checks. |
| R Package: mvMORPH | Fits multivariate OU (mvOU) models. Essential for complex, correlated traits. | High parameter count demands large phylogenies for reliable inference. |
| Software: BEAST2 | Bayesian phylogenetic inference with relaxed clock models. Can generate trees that inform Rate Homogeneity tests. | Use calibrated trees with branch lengths in meaningful time units for alpha interpretation. |
| Database: TimeTree.org | Source for divergence time calibrations to create ultrametric phylogenies (required for OU). | Ensure calibration quality matches your study clade. |

This technical support center addresses common issues researchers face when applying the Ornstein-Uhlenbeck (OU) model to study constrained trait evolution, particularly in comparative phylogenetic studies relevant to drug target discovery.

Troubleshooting Guides & FAQs

Q1: My model selection (OU vs. BM) consistently favors a single-optimum OU model for every trait, even when biological intuition suggests neutral drift. What might be wrong? A: This often indicates a model overparameterization or insufficient data issue.

- Check: Run a simulation under a pure Brownian Motion (BM) null. Fit both BM and OU models to this simulated data. If OU is consistently selected even though BM generated the data, your analysis has an inflated false-positive rate and cannot discriminate reliably between the two models. Discrimination improves with more species (>50 for moderate constraints) and stronger constraint (higher α).

- Protocol: Power Analysis Simulation

- Simulate trait data under BM on your empirical phylogeny using `rTraitCont` (`ape`) or `fastBM` (`phytools`).

- Fit both BM (`fitContinuous` in `geiger`) and OU (`fitContinuous` with `model="OU"`, or `hansen` in `ouch`) models.

- Use AICc to select the best model. Repeat 1000x.

- Calculate the percentage of times the true BM model is correctly recovered. If <80%, treat OU support on your empirical data with caution.

Q2: The estimated primary optimum (θ) for my trait of interest seems biologically implausible. How can I validate it? A: The parameter θ is highly sensitive to the root state assumption and taxon sampling.

- Check 1 (Root State): Re-fit the model using different root state reconstruction methods (e.g., maximum likelihood, ancestral state) and compare θ estimates.

- Check 2 (Sampling Bias): If your phylogeny over-represents a specific clade adapted to an extreme environment, θ will be biased toward that clade's mean. Perform a sensitivity analysis by pruning clades iteratively.

- Protocol: Sensitivity Analysis for θ

- Estimate θ for the full phylogeny.

- Systematically prune one major clade at a time and re-estimate θ.

- Create a table of θ estimates vs. pruned clade. Major shifts indicate high sensitivity to that clade's data.

Q3: When fitting a multi-optimum OU model (OUwie), the algorithm fails to converge or yields infinite likelihoods. What steps should I take? A: This is a classic symptom of overly complex models for the available data.

- Check 1 (Parameter Boundaries): Constrain the α (selection strength) and σ² (volatility) parameters to be equal across regimes to reduce the number of estimated parameters.

- Check 2 (Regime Definition): Ensure your a priori shift regimes (e.g., dietary categories, habitat types) are well-represented in the tree. Regimes with very few species (<5) can cause estimation failure.

- Protocol: Simplified Model Workflow

- Start with a BM1 model (single σ²).

- Fit an OUM model (different θ per regime, but shared α and σ²).

- Only if OUM fits significantly better, proceed to OUMV (different σ²) or OUMA (different α).

- Always compare models using AICc or likelihood ratio tests.

Key Data & Parameter Tables

Table 1: Interpreting OU Model Parameters in a Biological Context

| Parameter | Symbol | Interpretation | Common Pitfall |

|---|---|---|---|

| Primary Optimum | θ | The "pull" or adaptive target trait value. | Confusing statistical optimum with a true adaptive peak without external evidence. |

| Selection Strength | α | Rate of adaptation toward θ. High α = strong constraint. | High α can also mask omitted variables or model misspecification. |

| Volatility | σ² | Rate of stochastic motion under random evolution. | Correlated with α; difficult to estimate independently with small datasets. |

| Phylogenetic Half-Life | t₁/₂ = ln(2)/α | Time for the expected trait disparity to reduce by half. | Very short half-lives can make OU indistinguishable from white noise. |

Table 2: Example Model Selection Output (Simulated Data)

| Trait | Model | Log-Lik | AICc | ΔAICc | Parameters | Estimated θ (SE) | Estimated α (SE) |
|---|---|---|---|---|---|---|---|
| Metabolic Rate | BM | -23.5 | 51.2 | 0.0 | 1 | - | - |
| Metabolic Rate | OU1 | -22.9 | 53.1 | 1.9 | 3 | 1.05 (0.45) | 0.8 (1.1) |
| Bone Density | OU1 | -18.1 | 42.5 | 0.0 | 3 | 2.3 (0.2)* | 3.5 (1.4)* |
| Bone Density | BM | -25.7 | 53.6 | 11.1 | 1 | - | - |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in OU Modeling Research |
|---|---|
| Curated Time-Calibrated Phylogeny | The essential scaffold. Branch lengths must be proportional to time (e.g., from fossil calibration or molecular clocks). |
| Comparative Dataset Manager (e.g., rotl, RRphylo) | Tools to acquire, align, and manage trait data for species lists from databases like Open Tree of Life. |
| Model-Fitting Engine (geiger, OUwie, RPANDA) | Software packages implementing likelihood or Bayesian estimation of OU and related model parameters. |
| Phylogenetic Simulation Library (phytools, simulate) | For generating null datasets under BM/OU processes to validate methods and conduct power analyses. |
| High-Performance Computing (HPC) Cluster Access | Essential for bootstrapping, large simulations, and fitting complex multi-regime models across thousands of species. |

Visualizations

Diagram 1: OU Process vs. Brownian Motion

Diagram 2: Multi-Regime OU Model Fitting Workflow

Technical Support Center

FAQ: Common Experimental Issues & Troubleshooting

Q1: My OU model fitting returns an optimal trait value (θ) that is biologically implausible (e.g., outside measurable physiological range). What went wrong? A: This is a classic sign of model misspecification. The OU assumption of a single, constant adaptive peak may be too simple. Consider:

- Check for Shifts: Use phylogenetic hypothesis testing (e.g., `l1ou`, `bayou`) to test for shifts in θ or σ² across clades.

- Validate Parameters: Constrain θ using empirical data from the literature as Bayesian priors.

- Protocol: To test for shifts, use the following workflow in R:

- Load your tree and trait data.

- Run a basic OU fit using `geiger::fitContinuous` or `ouch::hansen`.

- Use `l1ou::estimate_shift_configuration` to detect shift points.

- Compare models via corrected AIC or cross-validation scores.

Q2: The rate parameter (σ²) in my model is near zero, suggesting no evolution, but the trait clearly varies across species. A: A near-zero σ² alongside observed variation can indicate that the variation is due to measurement error or is constrained by an unmodeled factor.

- Troubleshooting:

  - Incorporate Measurement Error: Explicitly include known standard errors of trait measurements in your model (e.g., `mvMORPH` or `phyr::pgls` with error weights).

  - Test for Constraints: The model may be missing a key constraint parameter (α). A very high α (strong pull to θ) can suppress σ². Check if α is estimable or if it's conflated with θ.

- Protocol for Adding Error:
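As a minimal illustration of why known standard errors matter, the sketch below splits the observed tip variance into an OU process term and a measurement-error term. It assumes a single lineage evolving for time `t` from a fixed starting value, and the function names are hypothetical, not part of any package mentioned here. It is a toy calculation, not a substitute for fitting the model with an error term.

```python
import math

def ou_process_variance(sigma2, alpha, t):
    """Variance accumulated by an OU process after time t from a fixed start."""
    if alpha == 0:
        return sigma2 * t  # Brownian motion limit
    return sigma2 / (2 * alpha) * (1 - math.exp(-2 * alpha * t))

def observed_tip_variance(sigma2, alpha, t, se):
    """Process variance plus the squared standard error of the tip measurement."""
    return ou_process_variance(sigma2, alpha, t) + se ** 2

def naive_sigma2(sigma2, alpha, t, se):
    """sigma2 implied if ALL observed variance is attributed to the process
    (alpha and t held fixed), i.e. what ignoring measurement error does."""
    v = observed_tip_variance(sigma2, alpha, t, se)
    if alpha == 0:
        return v / t
    return v * 2 * alpha / (1 - math.exp(-2 * alpha * t))

# Illustrative values only: true sigma2 = 1.0, alpha = 0.5, t = 10, SE = 0.5
inflated = naive_sigma2(1.0, 0.5, 10.0, 0.5)
```

With a non-zero `se`, `naive_sigma2` always exceeds the true `sigma2`, which is the inflation that an explicit error term is meant to remove.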

Q3: How do I handle a trait that is clearly influenced by multiple, interacting selective pressures? The single-peak OU model seems inadequate. A: You are likely encountering the core disconnect between the single-peak OU model and biological reality. Simple OU models cannot capture multi-peak landscapes or gene network interactions. Upgrade your approach:

- Multi-Peak Models: Use `bayou` or `surface` to fit multi-optima OU models.

- Integrate Predictors: Use phylogenetic regression (PGLS) with OU residuals, or OU models where θ is a function of a continuous predictor (e.g., climate variable).

- Protocol for Multi-Predictor PGLS:

  - Fit an OU model to your trait and extract the residuals.

  - Use these residuals as the response in a standard linear model with your environmental or genetic predictors.

  - Account for any remaining phylogenetic structure in the residuals of this second model.

Experimental Protocols for Validating OU Assumptions

Protocol: Testing the Constancy of Evolutionary Rate (σ²) Objective: To empirically test the OU assumption of a constant stochastic rate over time and across lineages. Materials: See "Research Reagent Solutions" table. Method:

- Data Collection: Assemble trait data (e.g., protein expression level, IC50 value) for a set of species with a resolved phylogeny. Include replicate measurements for error estimation.

- Model Fitting: Fit two models using Bayesian (e.g., `bayou`) or maximum likelihood methods:

  - M1: Single-rate OU model.

  - M2: Multi-rate OU model (allows σ² to change at specific branches).

- Model Comparison: Calculate Bayes Factors (for Bayesian fits) or AICc (for ML fits) to compare M1 and M2.

- Validation: If M2 is better, map rate shifts onto the phylogeny. Correlate shift locations with known historical events (e.g., colonization, mass extinction) or the emergence of key genetic regulators.

Protocol: Correlating Trait Evolution with a Signaling Pathway Component Objective: To determine if evolution of a drug target trait is linked to changes in a specific signaling node. Method:

- Quantification: For each species/cell line, quantify: A) The primary trait (e.g., cell proliferation rate), and B) Activity level of a key signaling node (e.g., phosphorylated protein level via ELISA).

- Phylogenetic Independent Contrasts (PIC): Calculate PICs for both the trait and the signaling node activity using the species phylogeny.

- Regression: Perform a linear regression through the origin of the trait PICs on the signaling node PICs.

- Interpretation: A significant relationship suggests correlated evolution between the trait and the signaling component, indicating the pathway's historical role in shaping the trait.
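The regression step above can be sketched as follows. The direction of subtraction at each node is arbitrary, so the sign of a contrast pair is arbitrary as well. Forcing the line through the origin makes the slope invariant to those sign flips. This is a minimal sketch that assumes the contrasts have already been computed elsewhere; the function name is hypothetical.

```python
def slope_through_origin(x_contrasts, y_contrasts):
    """Least-squares slope of y on x with no intercept: sum(xy) / sum(x^2)."""
    if len(x_contrasts) != len(y_contrasts):
        raise ValueError("contrast vectors must have equal length")
    sxx = sum(x * x for x in x_contrasts)
    if sxx == 0:
        raise ValueError("predictor contrasts are all zero")
    sxy = sum(x * y for x, y in zip(x_contrasts, y_contrasts))
    return sxy / sxx

# Illustrative contrasts: flipping the sign of one (x, y) pair leaves the slope unchanged.
slope_a = slope_through_origin([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
slope_b = slope_through_origin([1.0, -2.0, 3.0], [2.0, -4.0, 6.0])
```

Note that standard PICs assume Brownian motion; if the trait is better described by OU, the contrasts should be computed on an appropriately transformed tree.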

Table 1: Common OU Model Parameters and Their Biological Interpretations

| Parameter | Symbol | Typical Output | Biological Meaning | Common Issue |

|---|---|---|---|---|

| Optimal Value | θ (Theta) | Scalar or vector | The "adaptive peak" trait value. | Can be an unrealistic average of multiple true peaks. |

| Selection Strength | α (Alpha) | Rate (1/time) | Strength of pull towards θ. High α = constraint. | Often unidentifiable; conflated with σ². |

| Evolutionary Rate | σ² (Sigma²) | Rate (units²/time) | Rate of stochastic motion (drift, unseen factors). | Assumed constant; may spike during innovations. |

| Stationary Variance | Vy = σ²/(2α) | Variance (units²) | Expected trait variance at equilibrium. | Rarely matches actual, observed variance. |
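To make the relationship between α, σ², and the stationary variance σ²/(2α) concrete, the following sketch simulates a single OU lineage using the exact Gaussian transition over a time step and compares the long-run sample variance with the formula. It is a single-lineage toy with no phylogeny, requires α > 0, and the function names and parameter values are illustrative assumptions.

```python
import math
import random
import statistics

def stationary_variance(sigma2, alpha):
    """Equilibrium variance of an OU process: sigma2 / (2 * alpha)."""
    return sigma2 / (2 * alpha)

def simulate_ou(x0, theta, alpha, sigma2, dt, n_steps, seed=0):
    """Simulate one OU path with the exact transition density (alpha > 0)."""
    rng = random.Random(seed)
    decay = math.exp(-alpha * dt)                      # deterministic pull toward theta
    step_sd = math.sqrt(stationary_variance(sigma2, alpha) * (1 - decay ** 2))
    x, path = x0, [x0]
    for _ in range(n_steps):
        x = theta + (x - theta) * decay + step_sd * rng.gauss(0.0, 1.0)
        path.append(x)
    return path

# Illustrative run: discard an initial stretch so the start value is forgotten.
path = simulate_ou(x0=0.0, theta=0.0, alpha=1.0, sigma2=2.0, dt=1.0, n_steps=20000, seed=1)
sample_var = statistics.pvariance(path[1000:])
expected_var = stationary_variance(2.0, 1.0)
```

Many different (α, σ²) pairs share the same ratio σ²/(2α), which is one way to see why the two parameters are hard to separate from tip data alone.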

Table 2: Comparison of Advanced Phylogenetic Models

| Model | Package/Tool | Key Feature | Use Case | Limitation |
|---|---|---|---|---|
| Multi-Optima OU | `surface`, `bayou` | Allows θ to shift across branches. | Traits evolving toward different peaks. | Computationally intense; many shift configurations. |
| Multi-Rate OU | `bayou`, `RevBayes` | Allows σ² to shift across branches. | Rates of evolution change (e.g., after gene duplication). | Requires strong prior information. |
| OU with Drivers | `phytools`, `mvMORPH` | θ varies as a linear function of a predictor. | Climate or co-evolving trait drives optimal value. | Assumes linear relationship. |
| Early Burst | `geiger` | σ² decays exponentially from root. | Adaptive radiation following an innovation. | Cannot account for later rate increases. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Trait Evolution Research |
|---|---|
| Phylogenetic Tree | The essential scaffold for all comparative models. Represents evolutionary relationships and time. |
| Trait Dataset | Quantitative phenotypic or molecular measurements across species. Must account for measurement error. |
| Comparative Method Software (R: `geiger`, `phytools`, `bayou`) | Fits statistical models (like OU) to trait data on a tree to infer evolutionary processes. |
| Bayesian MCMC Sampler (e.g., RevBayes, JAGS interfaced) | For fitting complex models where parameters are interdependent, providing full posterior distributions. |
| Signaling Pathway Inhibitor/Activator Set | Used in validation experiments to perturb pathways and test causal links between molecular nodes and traits. |

Visualizations

Diagram 1: OU Process Schematic

Diagram 2: Model Selection Workflow

Diagram 3: Pathway-Trait Evolution Correlation

Applying the OU Model: Practical Challenges in Phylogenetic Comparative Methods

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During model fitting, my optimization algorithm fails to converge or returns a "singular Hessian" error. What does this mean and how can I resolve it? A: This typically indicates insufficient phylogenetic signal in your trait data or an over-parameterized model relative to the data. Recommended steps:

- Simplify the Model: Start with a Brownian Motion (BM) model to establish a baseline. Then, fit a single-optimum OU model (OU1). Only proceed to multi-optimum (OUM) or multi-rate models if likelihood ratio tests (LRTs) strongly reject simpler models.

- Check Data: Verify your trait data for outliers and ensure the phylogenetic tree is properly scaled (e.g., in units of time). Use phylogenetic signal metrics (e.g., Pagel's λ, Blomberg's K) to confirm non-random trait distribution.

- Constrain Parameters: Set biologically plausible bounds for parameters (e.g., α > 0, σ² > 0). Use multiple starting points for the optimization routine to avoid local maxima.

- Increase Sample Size: The OU model often requires more species (N > 50) for reliable parameter estimation than BM.

Q2: How do I choose the correct number of evolutionary regimes (selective optima, θ) for my multi-optimum OU model (OUM)? A: Avoid subjective selection. Implement a rigorous model selection protocol:

- A Priori Hypotheses: Define regimes based on independent, non-trait evidence (e.g., habitat, diet, morphology).

- Comparative Fit: Use information-theoretic criteria (AICc, BIC) to compare models with different numbers and arrangements of regimes. The model with the lowest AICc is preferred; a difference of ΔAICc > 2 is conventionally read as meaningful support.

- Statistical Testing: Perform LRTs between nested models (e.g., OUM vs. OU1). A significant p-value suggests the multi-optimum model is justified.

- Visualization: Always map inferred regimes onto the phylogeny to assess biological plausibility.

Q3: The estimated strength of selection (α) is extremely low or high, or the confidence intervals are extremely wide. How should I interpret this? A: Extreme α values often point to model inadequacy or unidentifiable parameters.

- α → 0: The process is indistinguishable from Brownian Motion. Your trait may not be under stabilizing selection as modeled, or the phylogenetic signal is too weak.

- α → very high: The process approaches a white-noise model, suggesting very rapid adaptation to the optimum, potentially faster than the tree can resolve. This can also occur if the model is trying to account for measurement error or within-species variation not included in the model.

- Wide CIs: This indicates low statistical power. Consider: Are there too few species per regime? Is the phylogenetic structure insufficient to disentangle α from σ²?

Q4: How do I account for and diagnose the influence of within-species measurement error in my OU model fitting?

A: Ignoring measurement error can bias parameter estimates, particularly inflating the estimated stochastic variance (σ²). Many modern R packages (e.g., `phylolm`, `OUwie`) allow you to incorporate measurement error variances directly.

- Protocol: Fit two models: one ignoring measurement error (e.g., `mserr="none"` in `OUwie`) and one incorporating known standard errors for each tip value (`mserr="known"`).

- Diagnosis: Compare parameter estimates (especially σ² and α) between the two models. A substantial change suggests measurement error is influential. Use AIC to determine if the model with measurement error provides a better fit to the data.

Table 1: Common OU Model Parameter Interpretations and Diagnostic Flags

| Parameter | Biological Interpretation | Typical Range | Diagnostic Warning Signs |

|---|---|---|---|

| α (alpha) | Strength of selection/trait pull. | > 0. High = strong stabilizing pull. | ≈0 (BM-like); >100 (white-noise-like); very wide CIs. |

| σ² (sigma²) | Evolutionary rate (stochastic variance). | > 0. | Extremely high when measurement error is unaccounted for. |

| θ (theta) | Primary adaptive optimum (trait mean). | Data-dependent. | Unrealistic values given known biology (e.g., negative mass). |

| Half-life (ln(2)/α) | Time for the expected distance from the optimum to halve. | Measured in tree units. | Exceeds tree height (α too weak) or is minuscule (α too strong). |

Table 2: Model Selection Workflow for Multi-Regime OU Models

| Step | Action | Tool/Function (R example) | Goal |
|---|---|---|---|
| 1 | Map a priori regimes onto tree. | `paintSubTree` (`phytools`) | Create a hypothesis-based tree painting. |
| 2 | Fit simpler baseline models. | `fitContinuous` (`geiger`), `brownie.lite` (`phytools`) | Obtain BM and OU1 log-likelihoods. |
| 3 | Fit multi-regime OU model (OUM). | `OUwie` (`OUwie`) | Estimate α, σ², and multiple θ. |
| 4 | Compare models statistically. | `aicc`, `lrtest` | Select best-supported model via AICc or LRT. |
| 5 | Simulate & check model adequacy. | `simulate` (`OUwie`), `phylocurve` | Assess if fitted model can produce data like yours. |

Experimental Protocols

Protocol 1: Standard Pipeline for Fitting a Single-Optimum OU Model

- Data Preparation: Format your ultrametric phylogenetic tree (Newick format) and trait data (CSV with species names matching tree tips). Check for and correct polytomies.

- Model Fitting (R):

- Parameter Extraction: Extract α, σ², θ, and the log-likelihood from the `fit_ou` object.

- Model Comparison: Calculate AIC scores for BM and OU. Perform a Likelihood Ratio Test: `pchisq(2*(fit_ou$opt$lnL - fit_bm$opt$lnL), df=1, lower.tail=FALSE)`.
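For readers who want to check the likelihood ratio test by hand, here is a minimal sketch of the same one-degree-of-freedom calculation. It uses the identity that a chi-square variable with one degree of freedom is a squared standard normal, so the upper-tail probability is `erfc(sqrt(stat / 2))`. The function name and the log-likelihood values are illustrative assumptions.

```python
import math

def lrt_df1(lnl_simple, lnl_complex):
    """Likelihood ratio statistic and chi-square (df = 1) upper-tail p-value.

    lnl_simple  : log-likelihood of the nested model (e.g., BM)
    lnl_complex : log-likelihood of the larger model (e.g., OU1)
    """
    stat = max(2.0 * (lnl_complex - lnl_simple), 0.0)  # guard against tiny negative values
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value

# Illustrative log-likelihoods only
stat, p_value = lrt_df1(lnl_simple=-25.7, lnl_complex=-18.1)
```

Because α = 0 lies on the boundary of the parameter space, the plain chi-square reference distribution tends to be conservative for BM versus OU comparisons; a parametric bootstrap is a common alternative.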

Protocol 2: Incorporating Measurement Error in OU Models

- Variance Calculation: For each species trait value, calculate the standard error (SE) or variance (e.g., from sample size or technical replicates).

- Model Fitting with `phylolm`:

- Comparison: Fit an identical model with `measurement_error=FALSE`. Compare the σ² and α estimates to quantify the bias.

Visualizations

Title: OU Model Fitting and Selection Workflow

Title: OU Process: Deterministic Pull and Stochastic Noise

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Analytical Tools for OU Modeling

| Item (Package/Software) | Primary Function | Key Considerations |
|---|---|---|
| R Statistical Environment | Core platform for analysis and visualization. | Use current version for optimized libraries. |
| `ape`, `phytools`, `geiger` | Phylogenetic data manipulation, visualization, and basic comparative methods. | Foundation for all tree-based analyses. |
| `OUwie`, `phylolm`, `PCMFit` | Specialized packages for fitting OU and other Gaussian process models. | `OUwie` handles multi-regime models; `phylolm` is fast and handles measurement error. |
| `ggplot2`, `ggtree` | High-quality, reproducible graphics for traits-on-tree plots. | Essential for visualizing regimes and model predictions. |
| `diversitree`, `surface` | Advanced model fitting, including hidden-state and macroevolutionary models. | For complex, multi-regime hypotheses but requires more expertise. |
| Bayesian Framework (`RevBayes`, `MCMCglmm`) | Bayesian inference of OU parameters, useful for complex models and incorporating uncertainty. | Computationally intensive but provides full posterior distributions. |

Technical Support Center

Troubleshooting Guides

Issue: Non-Convergence in Parameter Estimation (OU Model)

- Symptom: Parameter estimation algorithm fails to converge or returns implausible values (e.g., Alpha near zero or effectively infinite).

- Diagnosis: Common when the phylogenetic tree is small, trait data lacks signal, or the model is overly complex for the data (e.g., multi-optima model on a simple tree).

- Resolution:

- Simplify the model. Fit a Brownian Motion (BM) model first.

- Check tree ultrametricity and trait units.

- Use parameter bounds (or, in a Bayesian analysis, a prior on Alpha).

- Consider using a simulation approach to check identifiability: simulate data under your estimated parameters and see if re-estimation recovers them.

Issue: Unrealistic or Biased Theta (Optimum) Estimates

- Symptom: Estimated trait optimum seems disconnected from biological reality or varies wildly with model specification.

- Diagnosis: Often indicates confounding between Alpha (selection strength) and Sigma (random drift rate). Weak phylogenetic signal can also be a cause.

- Resolution:

- Fix Alpha to a small positive value and re-estimate Theta to see stability.

- Conduct a sensitivity analysis by fitting models across a range of fixed Alpha values.

- Incorporate measurement error into the model to account for intraspecific variance.

Issue: Poor Model Discrimination (OU vs. BM)

- Symptom: Little difference in AIC/BIC between Brownian Motion (BM) and OU models, making selection hard to infer.

- Diagnosis: The phylogenetic half-life (ln(2)/Alpha) may be longer than the root-to-tip time of your tree, making the OU process indistinguishable from BM.

- Resolution:

- Calculate the phylogenetic half-life from your Alpha estimate.

- Report and acknowledge this limitation; an OU model may not be justified by your data.

- Use model-averaging for downstream analysis instead of relying on a single "best" model.
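The half-life check in the resolution steps above is a one-line calculation. The sketch below expresses the half-life as a fraction of tree height, so that values well above 1 flag a BM-like fit. The function names are hypothetical, and the threshold of 1 is a rule of thumb rather than a formal test.

```python
import math

def phylogenetic_half_life(alpha):
    """Half-life ln(2) / alpha, in the same time units as the tree."""
    if alpha <= 0:
        raise ValueError("alpha must be positive for a finite half-life")
    return math.log(2) / alpha

def half_life_ratio(alpha, tree_height):
    """Half-life relative to root-to-tip time; > 1 suggests OU is hard to tell from BM."""
    return phylogenetic_half_life(alpha) / tree_height

# Illustrative values: alpha = 0.01 per Myr on a 50 Myr tree
ratio = half_life_ratio(alpha=0.01, tree_height=50.0)
```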

Frequently Asked Questions (FAQs)

Q1: Can I estimate different Alpha (selection strength) values for different branches or clades? A: Standard single-optimum OU models assume a constant Alpha across the tree. While technically possible in some software, estimating clade-specific Alphas requires very strong data and risks overparameterization. It is often more interpretable to fit separate Theta (optimum) parameters with a shared, global Alpha.

Q2: My Sigma (rate) parameter increases when I add more species. Is this normal? A: Potentially. Sigma is a per-unit-time rate. Adding more species, especially from previously undersampled, rapidly evolving lineages, can reveal higher overall rate heterogeneity. Consider models that allow Sigma to vary across the tree (e.g., OU with multiple rate regimes).

Q3: How do I interpret Theta (optimum) if my trait is a composite index (e.g., a PC score)? A: Interpret with extreme caution. Theta is in the same units as your trait data. A selective "optimum" for an abstract multivariate index may not correspond to a biologically intuitive state. Always relate findings back to the original traits that load onto the composite axis.

Q4: What are the main data requirements for reliable OU parameter estimation? A: 1) A well-resolved, time-calibrated (ultrametric) phylogeny. 2) Trait data with strong phylogenetic signal (high lambda). 3) Sufficient taxon sampling (>30 tips is often a minimum for simple models). 4) Consideration of intraspecific variation and measurement error.

Table 1: Common Software/Packages for OU Parameter Estimation

| Software/Package | Method | Key Outputs | Key Limitations (Thesis Context) |
|---|---|---|---|
| R: `geiger`/`OUwie` | Maximum Likelihood | Theta, Alpha, Sigma, AIC | Assumes single trait, no within-species sampling error. |
| R: `mvMORPH` | ML, Bayesian (MCMC) | Multi-variate Theta, Alpha matrix | Computationally intensive for large trees/traits. |
| BayesTraits | Bayesian (MCMC) | Theta, Alpha, Sigma with credible intervals | Requires careful prior specification; mixing can be poor. |
| PHYLOPARS | ML, REML | Estimates ancestral states, accounts for within-species variation. | Primarily for missing data imputation; less focused on hypothesis testing. |

Table 2: Impact of Common Data/Model Issues on Parameter Estimates

| Issue | Effect on Theta (Optimum) | Effect on Alpha (Strength) | Effect on Sigma (Rate) |

|---|---|---|---|

| Small Tree (< 30 tips) | High variance, often biased. | Tends toward zero (BM-like). | Unreliable, often overestimated. |

| Weak Phylogenetic Signal | Unstable, sensitive to model choice. | Underestimated; model prefers BM. | May be confounded with Alpha. |

| High Measurement Error | Biased toward grand mean. | Attenuated (underestimated). | Overestimated (error absorbed as drift). |

| Non-Ultrametric Tree | Biased, not interpretable as an optimum. | Meaningless (model assumes time). | Meaningless (model assumes time). |

Experimental Protocols

Protocol 1: Power Analysis via Simulation for OU Model Fitting

- Define Parameters: Choose a working phylogeny and set "true" parameter values for Theta, Alpha, and Sigma.

- Simulate Data: Use `geiger::sim.char()` or `mvMORPH::mvSIM()` to generate trait data along the phylogeny under the OU process.

- Model Fitting: Fit the OU model to the simulated data using ML (e.g., `OUwie`).

- Repeat: Perform 100-1000 iterations.

- Analyze Power: Calculate the proportion of iterations where: a) the true Theta falls within the 95% CI, b) Alpha is significantly > 0 (via likelihood ratio test vs. BM).

Protocol 2: Accounting for Measurement Error in OU Models

- Quantify Error: For each species/tip, calculate the standard error (SE) of the trait mean from sample measurements.

- Data Structure: Create a dataframe with columns: species, trait value, and trait standard error.

- Use Appropriate Software: Employ methods that incorporate known measurement error. In a Bayesian framework (e.g., `MCMCglmm`), use the SE to define trait precision. In an ML framework, consider meta-analytic approaches or use `mvMORPH` with a known error matrix.

- Comparison: Fit the model with and without measurement error incorporation and compare parameter estimates (especially Sigma).

Visualizations

Title: OU Model Parameter Estimation & Diagnostic Workflow

Title: Parameter Interdependencies in OU Model Fitting

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational & Data Resources

| Item | Function/Description | Example/Tool |

|---|---|---|

| Ultrametric Phylogeny | Time-calibrated tree essential for modeling trait evolution as a time-based process. | Tree from TimeTree.org; calibrated using treePL or BEAST. |

| Trait Data Curation Tool | For managing, cleaning, and aligning species trait data with phylogeny tip labels. | R packages tidyr, dplyr, rotl. |

| Phylogenetic Comparative Suite | Core software for fitting OU and other evolutionary models. | R packages geiger, OUwie, mvMORPH, phytools. |

| High-Performance Computing (HPC) Access | Bayesian MCMC and large simulations are computationally intensive. | University cluster, cloud computing (AWS, GCP). |

| Data Simulation Scripts | Critical for power analysis and testing model identifiability. | Custom R scripts using `geiger::sim.char()` or `mvMORPH::mvSIM()`. |

| Model Diagnostic Scripts | For checking parameter identifiability, convergence (MCMC), and residuals. | Custom scripts for profile likelihoods, parametric bootstrapping. |

Challenges with High-Dimensional Trait Data (e.g., Gene Expression, Morphometrics)

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During the fitting of an Ornstein-Uhlenbeck (OU) model to high-dimensional gene expression data, the covariance matrix becomes singular or non-invertible. What causes this and how can I resolve it?

A: This is a classic "curse of dimensionality" issue where the number of traits (p, e.g., 20,000 genes) far exceeds the number of observations (n, e.g., 50 species). The empirical covariance matrix is \( p \times p \), but its rank is at most \( n \), guaranteeing singularity when \( p > n \). This breaks the OU model's likelihood calculation, which requires matrix inversion.

Solution Protocol:

- Dimensionality Reduction First: Apply Principal Component Analysis (PCA) to the expression matrix (species × genes). Retain the top k principal components (PCs) that explain >95% of variance, ensuring \( k < n \).

- Fit OU on PCs: Fit the multivariate OU model to the reduced \( n \times k \) data matrix. The PCs are orthogonal, mitigating collinearity.

- Regularization: Employ a penalized likelihood method, adding a ridge term (\( \lambda I \)) to the empirical covariance matrix \( \Sigma \) to make \( (\Sigma + \lambda I) \) invertible. Use cross-validation on the phylogenetic tree to select \( \lambda \).
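The singularity and the ridge fix can be seen in a toy case with more traits than species. The sketch below builds the empirical covariance for n = 2 observations of p = 3 traits, confirms its determinant is zero, and shows that adding λI makes it strictly positive. It ignores phylogenetic structure entirely, and the data values, λ, and function names are illustrative assumptions.

```python
def covariance(rows):
    """Sample covariance matrix (p x p) from n rows of p trait values."""
    n, p = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(p)]
    return [[sum((r[i] - means[i]) * (r[j] - means[j]) for r in rows) / (n - 1)
             for j in range(p)] for i in range(p)]

def determinant(matrix, tol=1e-12):
    """Determinant via Gaussian elimination with partial pivoting."""
    a = [row[:] for row in matrix]
    n, det = len(a), 1.0
    for c in range(n):
        pivot = max(range(c, n), key=lambda r: abs(a[r][c]))
        if abs(a[pivot][c]) < tol:
            return 0.0  # no usable pivot: the matrix is singular
        if pivot != c:
            a[c], a[pivot] = a[pivot], a[c]
            det = -det
        det *= a[c][c]
        for r in range(c + 1, n):
            factor = a[r][c] / a[c][c]
            for k in range(c, n):
                a[r][k] -= factor * a[c][k]
    return det

def add_ridge(matrix, lam):
    """Return matrix + lam * I."""
    return [[v + (lam if i == j else 0.0) for j, v in enumerate(row)]
            for i, row in enumerate(matrix)]

traits = [[1.0, 2.0, 0.5], [2.0, 1.0, 1.5]]   # n = 2 species, p = 3 traits
sigma = covariance(traits)
det_raw = determinant(sigma)                   # singular: p > n
det_ridge = determinant(add_ridge(sigma, 0.1)) # invertible after regularization
```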

Q2: When testing for convergent evolution in morphometric shape data (high-dimensional Procrustes coordinates) using OU models, the power is low. How can I improve the experimental design?

A: Low power stems from overparameterization. A full OU model for p-dimensional data estimates a \( p \times p \) selection strength matrix (α), which is infeasible for large p.

Solution Protocol:

- Define A Priori Hypotheses: Don't test all traits. Define specific trait modules (e.g., cranial shape indices) hypothesized to be under convergent selection.

- Use SURFACE or l1ou Methods: Implement Stochastic Search Variable Selection (SSVS) or LASSO-type (`l1ou`) methods to identify a sparse set of traits with significant shifts in optimum (θ) on specific lineages. These methods penalize complex models.

- Simulate to Validate Power:

  - Simulate Data: Use `geiger` or `mvMORPH` in R to simulate shape evolution under a known convergent OU regime on your phylogeny.

  - Analyze: Run your convergence test (e.g., `l1ou`) on 100+ simulated datasets.

  - Calculate Power: Power = (Number of simulations where convergence is correctly detected) / (Total simulations). Aim for >0.8.
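The power calculation above is a simple proportion, but with only 100 simulations its uncertainty is not negligible. The sketch below computes the proportion together with a Wilson score interval, which is an addition to the protocol rather than part of it. The detection flags are assumed to come from your own simulation loop, and the function names are hypothetical.

```python
import math

def detection_power(detected_flags):
    """Fraction of simulated datasets in which convergence was correctly detected."""
    flags = list(detected_flags)
    if not flags:
        raise ValueError("no simulation results supplied")
    return sum(1 for f in flags if f) / len(flags)

def wilson_interval(successes, total, z=1.96):
    """Wilson score interval for a binomial proportion (default ~95%)."""
    p = successes / total
    denom = 1 + z ** 2 / total
    center = (p + z ** 2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2)) / denom
    return center - half, center + half

# Illustrative outcome: 85 correct detections in 100 simulated datasets
flags = [True] * 85 + [False] * 15
power = detection_power(flags)
low, high = wilson_interval(sum(flags), len(flags))
```

If the lower bound of the interval falls below the 0.8 target, running more simulations is usually cheaper than redesigning the study on an imprecise power estimate.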

Q3: How do I validate that an inferred adaptive peak (OU optimum) from high-dimensional data is biologically meaningful and not an artifact?

A: Inference of high-dimensional optima is prone to overfitting.

Validation Protocol:

- Phylogenetic Cross-Validation:

- Randomly prune 20% of the species from the phylogeny to form a "test set."

- Fit the OU model on the remaining 80% ("training set") and predict trait values for the test set tips.

- Compare predicted vs. observed values via mean squared error (MSE). Repeat with k-fold pruning.

- Functional Enrichment Analysis (for gene expression):

- Take the genes with the highest loadings in the OU optimum vector.

- Input this gene list into enrichment tools (g:Profiler, Enrichr) to test for overrepresented biological pathways. An artifact peak will show no enrichment.

- Comparison to Null: Simulate trait data under a Brownian Motion (BM) model (no optimum). Fit an OU model to this BM data. Compare the magnitude of your inferred θ to this null distribution.
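The bookkeeping for the phylogenetic cross-validation step above can be sketched as follows. The code only partitions tip labels into k folds and scores predictions with mean squared error. Fitting the OU model and predicting held-out tips must be supplied by your comparative-methods software. All names are hypothetical.

```python
import random

def k_fold_splits(tips, k, seed=0):
    """Partition tip labels into k (train, test) pairs; each tip is held out once."""
    shuffled = list(tips)
    random.Random(seed).shuffle(shuffled)
    folds = [shuffled[i::k] for i in range(k)]
    splits = []
    for test in folds:
        held_out = set(test)
        train = [t for t in shuffled if t not in held_out]
        splits.append((train, test))
    return splits

def mean_squared_error(observed, predicted):
    """Average squared difference between observed and predicted tip values."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("need two equal-length, non-empty sequences")
    return sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed)

# Illustrative use: 10 species, 5 folds, so each test set holds 20% of the tips
species = [f"sp{i}" for i in range(10)]
splits = k_fold_splits(species, k=5, seed=42)
```

Random pruning can leave test tips with very close relatives in the training set; holding out whole clades is a stricter variant worth considering.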

Table 1: Comparison of Multivariate Phylogenetic Models for High-Dimensional Data

| Model | Key Parameters | Computational Complexity (Big O) | Suitable Data Dimension (p) | Primary Limitation with High p |
|---|---|---|---|---|
| Multivariate BM | \( p \times p \) Rate (Σ) matrix | \( O(p^3 \cdot n) \) | p < 30 | Covariance matrix unidentifiable for p >> n. |
| Multivariate OU (Full) | \( p \times p \) Σ, α (strength), θ (optimum) | \( O(p^3 \cdot n \cdot \text{iter}) \) | p < 10 | Severe overparameterization; α, θ non-identifiable. |
| OU with Decomposition | α as a scalar or diagonal, θ as k-d vector | \( O(p \cdot k^2 \cdot n) \) | p ≤ 1000 | Assumes traits evolve independently or share same α. |
| Phylogenetic Factor Analysis | Latent factors (q), q << p | \( O(p \cdot q^2 \cdot n) \) | p ~ 10,000+ | Interpretation of latent factors can be challenging. |
| Regularized (l1) OU | Sparse shifts in θ | \( O(p^2 \cdot n \cdot \text{iter}) \) | p ~ 100-500 | Computationally intensive for very large p. |

Table 2: Power Analysis for Convergence Detection in Simulated Shape Data (n=50 species)

| True Number of Convergent Traits | Mean Traits Detected (BM Background) | Mean Traits Detected (OU Background) | False Positive Rate | Recommended Minimum Effect Size |

|---|---|---|---|---|

| 5 (of 100) | 4.1 | 3.8 | 0.02 | 1.5 SD |

| 10 (of 100) | 8.9 | 7.2 | 0.03 | 1.2 SD |

| 5 (of 10,000) | 4.5 | 1.1 | < 0.001 | 2.5 SD |

Experimental Workflow & Pathway Diagrams

Title: Troubleshooting Workflow for High-Dimensional OU Analysis

Title: Core OU Model Limitations with High-Dimensional Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for High-Dimensional Trait Evolution Research

| Tool / Reagent | Function / Purpose | Key Consideration for High-p Data |
|---|---|---|
| R Package: `mvMORPH` | Fits multivariate BM & OU models. | Use `scale.height=TRUE` and `method="sparse"` or `"ridge"` for regularization. |
| R Package: `RRphylo` | Phylogenetic ridge regression for high-p. | Specifically designed to avoid matrix inversion; good for p >> n. |
| R Package: `l1ou` | Infers convergent shifts under OU with LASSO. | Penalizes number of shift events; critical for identifying sparse signals. |
| Software: `geiger` / `simulate` | Simulates trait evolution under BM/OU on trees. | Essential for power analysis & validating methods before using real data. |
| Tool: g:Profiler | Functional enrichment analysis of gene lists. | Validates biological meaning of inferred OU optima from expression data. |
| Method: Phylogenetic PCA | Dimensionality reduction respecting phylogeny. | Reduces p to a manageable k while accounting for non-independence of species. |
| R Package: `ape` / `phytools` | Core phylogeny manipulation & visualization. | Always prune/check tree alignment with trait matrix dimensions (n species). |

Troubleshooting Guides & FAQs

Q1: I receive a "Likelihood calculation returns NA/Inf" error in OUwie. What are the primary causes?

A: This typically stems from three issues: 1) An incorrectly specified simmap.map (stochastic character map) where a selective regime is missing from the phylogeny's branches. 2) Extreme parameter values (e.g., very high sigma.sq or very low alpha) causing numerical overflow. 3) A mismatch between the order of tip data in your dataframe and the tip labels in the phylogeny.

Experimental Protocol for Diagnosis:

- Validate your phylogenetic tree and trait data using `name.check(tree, data)`.

- Check your `simmap` object structure: Ensure `maps` and `mapped.edge` elements exist for all regimes. Use `plotSimmap` to visually confirm regimes are painted on the tree.

- Run the model with fixed, reasonable starting values (`alpha=1`, `sigma.sq=0.1`, `theta=mean(y)`). Use the `quiet=FALSE` argument to monitor optimization steps.

Q2: How do I choose between the OU1, OUM, OUMV, OUMA, and OUMVA models in OUwie? A: Model choice is based on the biological hypothesis you wish to test regarding which parameters (θ - optimum, α - strength of selection, σ² - evolutionary rate) vary across selective regimes.

Experimental Protocol for Model Comparison:

- Fit a sequence of increasingly complex models (e.g., OU1 → OUM → OUMV).

- For each model, extract the AICc score using `aicc <- model$AICc`.

- Calculate AICc weights to infer the best-supported model. Use `aicw <- aic.w(c(model1$AICc, model2$AICc, ...))`.

- Validate the best model by checking parameter confidence intervals from `print(model)` or `summary(model)`.
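The arithmetic behind the AICc scores and weights in the protocol above can be sketched as follows, for readers who want to verify package output by hand. The function names and the example scores are illustrative assumptions; `n` is the number of tip species and `k` the number of free parameters.

```python
import math

def aicc(lnl, k, n):
    """Small-sample corrected AIC: -2 lnL + 2k + 2k(k + 1) / (n - k - 1)."""
    if n - k - 1 <= 0:
        raise ValueError("AICc is undefined when n <= k + 1")
    return -2.0 * lnl + 2.0 * k + (2.0 * k * (k + 1)) / (n - k - 1)

def akaike_weights(scores):
    """Relative support for each model; weights sum to 1."""
    best = min(scores)
    rel = [math.exp(-0.5 * (s - best)) for s in scores]
    total = sum(rel)
    return [r / total for r in rel]

# Illustrative AICc scores for three candidate models (e.g., OU1, OUM, OUMV)
weights = akaike_weights([53.1, 51.2, 55.0])
```

The correction term grows quickly as k approaches n, which is one reason parameter-rich models such as OUMVA are penalized heavily on small trees.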

Q3: bayou MCMC chains do not converge (ESS < 200, Gelman diagnostic > 1.05). How do I improve mixing? A: Poor mixing in bayou often relates to proposal mechanism tuning or overly diffuse priors.

Experimental Protocol for MCMC Tuning:

- Adjust proposal weights: Increase the frequency of sliding window (`sliding`) and intercept (`theta`) proposals relative to branch length (`sb`) proposals. Example: `probs <- list(alpha=3, sig2=2, k=1, theta=3, slide=1)`.

- Tune proposal widths: Use the pilot adaptation phase. Monitor acceptance rates in the output; target rates are ~0.2-0.4. Manually adjust `move.alpha$tune` or `move.theta$tune` if needed.

- Run longer chains: Substantially increase `Ngen` (e.g., to 2-5 million) and adjust `burnin` and `thin` proportionally.
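To show what the Gelman diagnostic mentioned in the question measures, here is a minimal sketch of the basic potential scale reduction factor for one parameter across several equal-length chains. It is the textbook between/within variance ratio, not necessarily the exact variant any given package reports. The function name and the toy chains are assumptions.

```python
import math
import statistics

def gelman_rubin(chains):
    """Basic potential scale reduction factor (R-hat) for one parameter.

    chains: list of m >= 2 post-burn-in sample lists, each of equal length n >= 2.
    """
    m, n = len(chains), len(chains[0])
    if m < 2 or n < 2 or any(len(c) != n for c in chains):
        raise ValueError("need at least two chains of equal length >= 2")
    chain_means = [statistics.fmean(c) for c in chains]
    within = statistics.fmean([statistics.variance(c) for c in chains])  # W
    between = n * statistics.variance(chain_means)                        # B
    if within == 0:
        raise ValueError("chains have zero within-chain variance")
    pooled = (n - 1) / n * within + between / n
    return math.sqrt(pooled / within)

# Toy chains: the first pair explores the same region, the second pair does not.
rhat_mixed = gelman_rubin([[1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 4.0]])
rhat_stuck = gelman_rubin([[0.0, 1.0, 2.0, 3.0], [10.0, 11.0, 12.0, 13.0]])
```

Values well above 1.05 mean the chains disagree about where the posterior mass lies, so longer runs or better-tuned proposals are needed before summarizing.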

Q4: How can I test for a specific a priori shift location hypothesized from my experimental data in bayou? A: You can set a prior that places high probability on shifts occurring on specific branches.

Experimental Protocol for Testing A Priori Shifts:

- Identify the branch(es) of interest from your phylogeny.

- Construct a custom `distributions$sb` prior. For example, to strongly favor a shift on branch #150: `prior$sb <- function(x) dnorm(x, mean=150, sd=5, log=TRUE)`.

- Compare the posterior probability of this constrained model against an unconstrained model using Bayes Factors (calculated via stepping-stone sampling or harmonic mean estimators from the MCMC samples).

Q5: What is the most effective way to visualize selective regime optima (θ) and their uncertainty from an OUwie or bayou analysis? A: Create a plot of regime-specific θ estimates with confidence/credible intervals.

Experimental Protocol for Visualization:

- For OUwie: Extract the `theta` estimates and their standard errors (`theta.se`) from the model object. Plot as point estimates (e.g., dots) with error bars (θ ± 1.96*SE).

- For bayou: From the posterior distribution, extract all sampled θ values for each regime. Plot the 95% credible interval (2.5% to 97.5% quantiles) as a boxplot or density plot.

- Comparative Table: Summarize key outputs for clarity.

Table 1: Comparison of OUwie and bayou Output Interpretation

| Parameter | OUwie Output | bayou Output | Key Interpretation |

|---|---|---|---|

| Optimum (θ) | MLE estimate per regime. | Posterior distribution per regime. | The adaptive peak for a trait in a regime. |

| Selection Strength (α) | Single or regime-specific MLE. | Posterior distribution. | Rate of trait pull towards θ. Low α implies weak constraint. |

| Evolutionary Rate (σ²) | Single or regime-specific MLE. | Posterior distribution. | Stochastic motion rate around θ. |

| Shift Locations | Not directly estimated. | Posterior set of branches with shifts. | Phylogenetic positions of regime changes. |

| Uncertainty | Standard errors from Hessian matrix. | 95% Credible Intervals from MCMC. | bayou provides full parameter correlation structure. |
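The two kinds of uncertainty summary contrasted in the table can be computed in a few lines: a Wald interval (estimate ± 1.96·SE) for maximum-likelihood output, and an equal-tailed quantile interval for posterior samples. This is a minimal sketch with hypothetical function names. It assumes you have already extracted the estimates, standard errors, or posterior draws from the fitted objects.

```python
import math

def wald_interval(estimate, se, z=1.96):
    """Approximate 95% confidence interval from an MLE and its standard error."""
    return estimate - z * se, estimate + z * se

def credible_interval(samples, lower=0.025, upper=0.975):
    """Equal-tailed interval from posterior draws, using linear interpolation."""
    ordered = sorted(samples)
    if not ordered:
        raise ValueError("no posterior samples supplied")

    def quantile(prob):
        pos = prob * (len(ordered) - 1)
        lo = int(math.floor(pos))
        hi = min(lo + 1, len(ordered) - 1)
        return ordered[lo] + (ordered[hi] - ordered[lo]) * (pos - lo)

    return quantile(lower), quantile(upper)

# Illustrative inputs: an MLE of theta with its SE, and a toy set of posterior draws
ml_low, ml_high = wald_interval(2.0, 0.5)
post_low, post_high = credible_interval(range(0, 101))
```

The Wald interval is symmetric by construction, whereas the quantile interval can be asymmetric, which matters for parameters such as α that are bounded at zero.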

Research Reagent Solutions

Table 2: Essential Toolkit for OU Modeling with Selective Regimes

| Item | Function | Example / Note |
|---|---|---|
| Annotated Time-Calibrated Phylogeny | The evolutionary scaffold for all models. Branch lengths must be in time units (e.g., Myr). | Use `ape::chronos` or `treePL` for calibration. |
| Stochastic Character Map (`simmap`) | Defines probabilistic mappings of discrete selective regimes on the tree. | Created via `phytools::make.simmap`. Run multiple (n=100) for uncertainty. |
| Trait Data Matrix | Continuous trait measurements for tip species. Must align perfectly with tree tips. | Dataframe columns: `Genus_species`, `regime`, `trait_value`. |
| OUwie R Package | Fits predefined multi-regime OU models (OUM, OUMV, etc.) via maximum likelihood. | Primary functions: `OUwie`, `print.OUwie`, `plot.OUwie`. |
| bayou R Package | Bayesian MCMC method to infer shifts in OU parameters without predefined regimes. | Primary functions: `configureMCMC`, `bayou.mcmc`, `summary.bayou`. |
| R Package `phytools` | Critical for tree manipulation, simulation, and simmap creation/plotting. | Key functions: `make.simmap`, `plotSimmap`, `fastAnc`. |
| High-Performance Computing (HPC) Cluster | Essential for running long bayou MCMC chains or large OUwie model sets. | Manages `Ngen` > 1e6 efficiently. Use job arrays for replicates. |

Experimental Workflow Diagrams

[Diagram: Workflow for Multi-Regime OU Modeling]

[Diagram: OU Process Mechanics Within a Single Regime]

Technical Support Center: Troubleshooting OU Model Estimation in Trait Evolution

Troubleshooting Guides & FAQs

Q1: My optimization algorithm (e.g., L-BFGS-B, Nelder-Mead) consistently converges to different parameter estimates (θ, σ², α) from different starting points. How do I know if I've found the global optimum?

A: This is a classic symptom of a complex likelihood surface with multiple local optima. Follow this protocol:

- Systematic Multi-Start: Implement a multi-start optimization strategy. Use at least 50-100 random starting points drawn from a broad prior distribution (e.g., α ~ Uniform(0, 100)). Record the final log-likelihood (lnL) and parameters from each run.

- Identify the Best Mode: Tabulate results. The run with the highest lnL is your candidate global optimum. Consistency can be assessed using the table below from a typical simulation study:

Table 1: Multi-Start Optimization Results for a Simulated 50-Tip Phylogeny

| Number of Random Starts | Runs Converging to Highest lnL (%) | Average lnL Range Among Local Optima | Typical α Estimate Disparity |
|---|---|---|---|
| 10 | 40% | 15.2 | 0.5 - 12.7 |
| 50 | 85% | 15.5 | 0.3 - 14.1 |
| 100 | 98% | 15.6 | 0.2 - 14.3 |

- Profile Likelihood Check: Fix the α (selection strength) parameter at a range of values and optimize over θ and σ². Plot the resulting profile likelihood. A well-identified global optimum will show a single, clear peak.
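The multi-start step of this protocol can be illustrated with a small, self-contained sketch. Everything here is an assumption made for illustration: toy_neg_lnl is a hypothetical one-parameter stand-in for a negative log-likelihood with two local optima (it is not an OU likelihood), and local_search is a simple compass search used in place of L-BFGS-B or Nelder-Mead.

```python
import random


def local_search(f, x0, step=1.0, tol=1e-6, max_iter=10_000):
    """Compass (pattern) search: a basic derivative-free local minimiser."""
    x = list(x0)
    fx = f(x)
    iterations = 0
    while step > tol and iterations < max_iter:
        improved = False
        for i in range(len(x)):
            for delta in (step, -step):
                candidate = x[:]
                candidate[i] += delta
                fc = f(candidate)
                if fc < fx:               # accept any strict improvement
                    x, fx, improved = candidate, fc, True
        if not improved:
            step *= 0.5                   # refine the mesh when stuck
        iterations += 1
    return x, fx


def multi_start(f, bounds, n_starts=50, seed=1):
    """Run local_search from random starts; return runs sorted by objective."""
    rng = random.Random(seed)
    runs = []
    for _ in range(n_starts):
        x0 = [rng.uniform(lo, hi) for lo, hi in bounds]
        runs.append(local_search(f, x0))
    runs.sort(key=lambda run: run[1])     # best (lowest -lnL) first
    return runs


def toy_neg_lnl(params):
    """Hypothetical objective with a global and a local minimum."""
    x = params[0]
    return (x * x - 4.0) ** 2 + x


runs = multi_start(toy_neg_lnl, bounds=[(-5.0, 5.0)], n_starts=30)
best_params, best_value = runs[0]
# Group runs by their rounded end point to see how many distinct optima exist.
distinct_optima = sorted({round(run[0][0], 2) for run in runs})
```

Tabulating distinct_optima and the share of runs reaching best_value gives the kind of summary shown in Table 1. If several end points have nearly identical objective values, treat the surface as flat rather than trusting the single best run.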

Q2: I receive "Model convergence failure" or "Hessian matrix is singular" errors in geiger or OUwie in R. What are the immediate steps?

A: These errors indicate that the optimization routine cannot reliably compute the curvature of the likelihood surface, often due to parameter non-identifiability or data insufficiency.

- Immediate Diagnostics:

- Check for parameter boundary violations (e.g., α ≈ 0, or σ² ≈ 0). This suggests the data may favor a simpler model (BM or white noise).

- Simplify the model: Temporarily fit a Brownian Motion (BM) model. If BM fits as well as or better than OU, the OU model's extra parameters may be unwarranted.

- Reduce regime complexity: If using multi-regime OU models (OUwie), reduce the number of selective regime categories.

- Protocol - Hessian Diagnostics:
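One way a Hessian check might look is sketched below, under stated assumptions. The surface toy_surface is hypothetical: it constrains only the ratio σ²/(2α), mimicking the situation where the data inform the stationary variance but not α and σ² separately. The helper names hessian_2d and eigenvalues_sym2 are illustrative and do not correspond to functions in geiger, OUwie, or numDeriv.

```python
import math


def hessian_2d(f, x, y, h=1e-4):
    """Central finite-difference Hessian of f(x, y); returns (fxx, fxy, fyy)."""
    fxx = (f(x + h, y) - 2.0 * f(x, y) + f(x - h, y)) / h ** 2
    fyy = (f(x, y + h) - 2.0 * f(x, y) + f(x, y - h)) / h ** 2
    fxy = (f(x + h, y + h) - f(x + h, y - h)
           - f(x - h, y + h) + f(x - h, y - h)) / (4.0 * h ** 2)
    return fxx, fxy, fyy


def eigenvalues_sym2(a, b, c):
    """Eigenvalues of the symmetric matrix [[a, b], [b, c]], smallest first."""
    mid = 0.5 * (a + c)
    rad = math.hypot(0.5 * (a - c), b)
    return mid - rad, mid + rad


def toy_surface(alpha, sigma2):
    """Hypothetical -lnL that depends only on sigma2 / (2 * alpha)."""
    return (sigma2 / (2.0 * alpha) - 1.0) ** 2


# Evaluate at a point on the ridge sigma2 / (2 * alpha) = 1.
fxx, fxy, fyy = hessian_2d(toy_surface, 1.0, 2.0)
lam_min, lam_max = eigenvalues_sym2(fxx, fxy, fyy)

# A near-zero smallest eigenvalue means the surface is flat along one
# direction, so the Hessian cannot be inverted to obtain standard errors.
is_near_singular = abs(lam_min) < 1e-6 * abs(lam_max)
```

In a real analysis the same check would be applied to the negative log-likelihood at the reported optimum. A near-singular result points toward fixing one parameter, reparameterising, or falling back to a simpler model as described in the diagnostics above.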

Q3: For large phylogenies (>500 tips), likelihood calculation becomes computationally prohibitive. What are the available workarounds?

A: Inverting the phylogenetic variance-covariance matrix costs O(n³) operations for n tips, so each likelihood evaluation becomes slow on large trees.

- Solution 1: Use Linear-Time Algorithms. Employ methods that exploit the tree structure, such as phylogenetic pruning (via phylolm package in R).

- Solution 2: Variance-Reducing Transformations. Use the mvMORPH package in R which implements efficient linear-time algorithms for OU models.

- Solution 3: Approximate Methods. Consider using INLA (Integrated Nested Laplace Approximation) or Gaussian Markov Random Field approximations for very large trees (>10k tips).
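To see where the cubic cost comes from, the dense calculation that these workarounds avoid can be written out directly. The sketch below is a plain-Python illustration under explicit assumptions: an ultrametric tree, a stationary root distribution, and a single optimum θ shared by all tips, so that Cov(i, j) = σ²/(2α) · exp(−2α(T − s_ij)), where T is tree height and s_ij is the time from the root to the most recent common ancestor of tips i and j. The function names are hypothetical.

```python
import math


def ou_vcv(shared_time, tree_height, alpha, sigma2):
    """OU tip covariance matrix (ultrametric tree, stationary root)."""
    n = len(shared_time)
    stationary_var = sigma2 / (2.0 * alpha)
    return [[stationary_var
             * math.exp(-2.0 * alpha * (tree_height - shared_time[i][j]))
             for j in range(n)] for i in range(n)]


def cholesky(a):
    """Lower-triangular Cholesky factor; the nested loops cost O(n^3)."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                low[i][j] = math.sqrt(a[i][i] - s)
            else:
                low[i][j] = (a[i][j] - s) / low[j][j]
    return low


def gaussian_loglik(y, theta, vcv):
    """Multivariate normal log-likelihood with common mean theta."""
    n = len(y)
    low = cholesky(vcv)
    resid = [yi - theta for yi in y]
    z = []                                # forward substitution: low @ z = resid
    for i in range(n):
        z.append((resid[i] - sum(low[i][k] * z[k] for k in range(i))) / low[i][i])
    log_det = 2.0 * sum(math.log(low[i][i]) for i in range(n))
    quad = sum(zi * zi for zi in z)
    return -0.5 * (n * math.log(2.0 * math.pi) + log_det + quad)


# Two tips that split at the root of a tree of height 1 (illustrative values).
shared = [[1.0, 0.0], [0.0, 1.0]]
vcv = ou_vcv(shared, tree_height=1.0, alpha=1.0, sigma2=2.0)
lnl = gaussian_loglik([0.0, 0.0], theta=0.0, vcv=vcv)
```

Because an optimiser calls this function hundreds of times, the factorisation dominates run time for large n, which is why tree-exploiting algorithms pay off.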

Q4: How can I validate that my inferred selective regimes (OU processes) are statistically robust and not an artifact of the heuristic search?

A: This requires a rigorous model comparison and simulation protocol.

- Simulate Under the Null: Use the inferred parameters from a simpler model (e.g., single-regime OU or BM) to simulate 1000 trait datasets on your phylogeny.

- Fit Complex Model: On each simulated dataset, fit your multi-regime OU model.

- Generate Null Distribution: Record the ΔAIC or log-likelihood gain for each fit. This creates a null distribution of improvement expected by chance.

- Compare to Observed: Compare your empirically observed ΔAIC/log-likelihood gain to this null distribution. A p-value can be calculated as the proportion of simulated gains that exceed the observed gain.
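The final comparison step can be sketched as follows. This is a minimal illustration, assuming the simulated gains have already been collected by refitting both models to each simulated dataset; the function name simulation_p_value is hypothetical. The optional add-one correction, which counts the observed value as one member of the null set so the p-value is never exactly zero, is a common convention rather than something prescribed by the protocol above.

```python
def simulation_p_value(observed_gain, simulated_gains, add_one=True):
    """Share of null-simulated gains at least as large as the observed gain."""
    exceed = sum(1 for gain in simulated_gains if gain >= observed_gain)
    if add_one:
        return (exceed + 1) / (len(simulated_gains) + 1)
    return exceed / len(simulated_gains)


# Illustrative numbers only: 100 null gains spread evenly from 0 to 99.
null_gains = [float(i) for i in range(100)]
p_corrected = simulation_p_value(95.0, null_gains)
p_raw = simulation_p_value(95.0, null_gains, add_one=False)
```

With 1000 simulations the smallest attainable corrected p-value is 1/1001, so the number of simulations sets the resolution of the test.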

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for OU Model Analysis

| Tool / Reagent | Function in Analysis | Example / Package |
|---|---|---|
| Phylogenetic Pruning Algorithm | Enables linear-time likelihood calculation for Gaussian models on trees, bypassing slow matrix inversion. | phylolm R package |
| Multi-Start Optimization Wrapper | Automates running optimization from many random starting points to probe for global optima. | bbmle::mle2 with optimx backend |
| Hessian Matrix Calculator | Numerically computes the matrix of second derivatives to assess parameter identifiability and standard errors. | numDeriv::hessian R function |
| Profile Likelihood Framework | Systematically explores likelihood surface shape for individual parameters to check for flat ridges or multi-modality. | Custom R code using optimize |
| High-Performance Computing (HPC) Cluster | Provides parallel processing for multi-start searches, bootstraps, and simulations. | SLURM, Sun Grid Engine job arrays |

Experimental & Computational Protocols

Protocol 1: Assessing Convergence in Multi-Regime OU Models (OUwie)

- Input: Phylogeny (tree), trait data (data), regime map (map).

- Run: fit <- OUwie(tree, data, model="OUM", simmap.tree=FALSE, root.station=TRUE, algorithm="invert", opt.method="LN_SBPLX")

- Diagnose: Extract the fit$solution matrix. Check for NA or 0 values on the diagonal (α and σ² per regime). This indicates failure.

- Remedy: Set root.station=FALSE or switch algorithm="three.point". Increase max.iter and eps in opt.control list.

- Validate: Run OUwie.boot(fit, nboot=100) to check confidence intervals on parameters. Abnormally large CIs suggest poor identifiability.

Protocol 2: Simulating Traits to Test Power/Identifiability
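A simulation of this kind can be built from the exact OU transition: over a branch of length t, a lineage starting at x₀ ends at a normally distributed value with mean θ + (x₀ − θ)e^(−αt) and variance σ²/(2α) · (1 − e^(−2αt)). The sketch below applies that rule recursively down a tree. It is a minimal illustration under assumptions: a single regime, α > 0, and a hypothetical nested-tuple tree encoding (branch_length, tip_name) or (branch_length, [children]); none of the names come from an existing package.

```python
import math
import random


def ou_step(x0, theta, alpha, sigma2, t, rng):
    """Draw the trait value after time t from the exact OU transition."""
    mean = theta + (x0 - theta) * math.exp(-alpha * t)
    var = sigma2 / (2.0 * alpha) * (1.0 - math.exp(-2.0 * alpha * t))
    return rng.gauss(mean, math.sqrt(var))


def simulate_tips(node, x_parent, theta, alpha, sigma2, rng, out=None):
    """Simulate tip values by evolving the trait along each branch in turn."""
    if out is None:
        out = {}
    length, payload = node
    # A zero-length branch (e.g. the root) leaves the value unchanged.
    x = ou_step(x_parent, theta, alpha, sigma2, length, rng) if length > 0 else x_parent
    if isinstance(payload, str):
        out[payload] = x                  # tip: record the simulated value
    else:
        for child in payload:             # internal node: recurse into children
            simulate_tips(child, x, theta, alpha, sigma2, rng, out)
    return out


# Illustrative three-tip ultrametric tree of height 2: ((A, B), C).
tree = (0.0, [(1.0, [(1.0, "A"), (1.0, "B")]), (2.0, "C")])
rng = random.Random(42)
tips = simulate_tips(tree, x_parent=0.0, theta=1.0, alpha=0.5, sigma2=0.2, rng=rng)
```

Repeating the call with known α, σ², and θ, then refitting the model to each simulated dataset, shows how often the true values are recovered on your tree, which is a direct measure of power and identifiability.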

Visualizations

[Diagram: OU Model Fitting & Validation Workflow]

[Diagram: Common Likelihood Surface Challenges in OU Models]

Diagnosing and Overcoming OU Model Failures in Evolutionary Inference

Technical Support Center: Troubleshooting OU Model Fit in Trait Evolution

Troubleshooting Guides

Guide 1: Diagnosing Poor OU Model Fit in Comparative Phylogenetic Data

Issue: A researcher has fitted an Ornstein-Uhlenbeck (OU) model to trait evolution data but the model diagnostics suggest a poor fit, potentially leading to incorrect inferences about evolutionary optima (θ) or selection strength (α).

Diagnostic Steps:

Conduct a Graphical Residual Analysis.

- Protocol: Plot the standardized residuals from the OU model fit against (a) the predicted values, (b) phylogenetic distance (or node height), and (c) in the order of data collection (if applicable).

- Red Flag: Patterns (e.g., trends, heteroscedasticity) in these plots indicate the model fails to capture the structure of the data. Random scatter is expected under a good fit.

Perform a Phylogenetic Signal Test on Residuals.

- Protocol: Calculate Pagel's λ or Blomberg's K on the residuals of the OU model fit. Use a likelihood ratio test or permutation test (≥ 1000 replicates) to assess significance.

- Red Flag: Significant phylogenetic signal in the residuals indicates the OU process has not adequately accounted for the phylogenetic structure, suggesting missing adaptive shifts or incorrect tree.

Compare with More Complex Models.

- Protocol: Fit alternative models (e.g., OU with multiple adaptive regimes (OUM), Brownian Motion with trends, Early Burst). Use information criteria (AICc, wAIC) for formal comparison. Perform simulation-based goodness-of-fit tests (e.g., gfit in geiger).

- Red Flag: A more complex model (e.g., OUM) is strongly favored (ΔAICc > 4), or the observed data falls outside the distribution of test statistics from simulated data under the OU model.
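The information-criterion comparison in this step rests on a short calculation: AICc = −2 lnL + 2k + 2k(k + 1)/(n − k − 1), where k is the number of free parameters and n the number of tips. The sketch below computes AICc, ΔAICc, and Akaike weights. The log-likelihoods and parameter counts are made-up illustrative values, and the parameter counts (2 for BM, 3 for single-optimum OU, 4 for a two-regime OUM) are assumptions that depend on how the root state is treated.

```python
import math


def aicc(log_lik, k, n):
    """Small-sample corrected AIC for k parameters and n observations."""
    if n - k - 1 <= 0:
        raise ValueError("AICc is undefined when n <= k + 1")
    return -2.0 * log_lik + 2.0 * k + (2.0 * k * (k + 1)) / (n - k - 1)


def akaike_weights(scores):
    """Convert a dict of AICc scores into relative model weights."""
    best = min(scores.values())
    rel = {model: math.exp(-0.5 * (s - best)) for model, s in scores.items()}
    total = sum(rel.values())
    return {model: r / total for model, r in rel.items()}


# Hypothetical fits: model -> (log-likelihood, number of parameters).
fits = {"BM": (-60.2, 2), "OU1": (-58.9, 3), "OUM": (-52.1, 4)}
n_tips = 40

scores = {m: aicc(lnl, k, n_tips) for m, (lnl, k) in fits.items()}
best_score = min(scores.values())
delta = {m: s - best_score for m, s in scores.items()}
weights = akaike_weights(scores)
```

Here delta["OU1"] is the ΔAICc of the single-optimum model relative to the best model; a value above 4 corresponds to the red flag described above.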


Guide 2: Addressing Suspected Time-Varying or State-Dependent Dynamics

Issue: The strength of selection (α) or the optimum (θ) may not be constant over time or across clades, violating a core OU assumption.

Diagnostic Steps:

Implement a surprise Index Test.

- Protocol: Calculate the "surprise" index (Slater & Friscia, 2019) for individual branches or clades. This metric identifies lineages where trait change deviates significantly from model expectations under a constant-parameter OU.

- Red Flag: High surprise values concentrated in specific clades indicate localized model failure, pinpointing where parameters may have shifted.

Apply a Model-Randomization Test (Michele & Michael, 2023).

- Protocol: Randomly shuffle the mapping of trait data across the tips of the phylogeny. Re-fit the OU model to hundreds of randomized datasets. Compare the observed model log-likelihood or α estimate to this null distribution.

- Red Flag: The observed model fit statistic falls within the null distribution, suggesting the fitted OU model is not capturing meaningful evolutionary signal beyond what random chance would produce.
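The shuffling logic of this test can be sketched independently of any fitting package. In the illustration below, the statistic argument is a placeholder for whatever you would compute after refitting the OU model (for example its log-likelihood). To keep the example runnable, a hypothetical stand-in statistic, sister_similarity, scores how alike sister tips are; the tip names, trait values, and sister pairs are invented for the demonstration.

```python
import random


def tip_shuffle_null(trait_by_tip, statistic, n_perm=999, seed=1):
    """Permute trait values across tips and recompute the statistic each time."""
    rng = random.Random(seed)
    tips = list(trait_by_tip)
    values = [trait_by_tip[t] for t in tips]
    observed = statistic(trait_by_tip)
    null = []
    for _ in range(n_perm):
        shuffled = values[:]
        rng.shuffle(shuffled)             # break the link between tips and traits
        null.append(statistic(dict(zip(tips, shuffled))))
    # One-sided p-value with add-one correction: large statistic = more signal.
    p_value = (1 + sum(1 for s in null if s >= observed)) / (n_perm + 1)
    return observed, null, p_value


# Hypothetical sister pairs on the tree and a stand-in fit statistic.
SISTERS = [("A1", "A2"), ("B1", "B2"), ("C1", "C2"), ("D1", "D2")]


def sister_similarity(trait_by_tip):
    """Higher (less negative) when sister tips have similar trait values."""
    return -sum((trait_by_tip[a] - trait_by_tip[b]) ** 2 for a, b in SISTERS)


traits = {"A1": 1.0, "A2": 1.1, "B1": 5.0, "B2": 5.2,
          "C1": 9.0, "C2": 9.1, "D1": 13.0, "D2": 13.1}
observed, null, p_value = tip_shuffle_null(traits, sister_similarity)
```

A small p_value indicates that the observed statistic is rarely matched after shuffling, so the fit reflects real phylogenetic structure; a large one corresponds to the red flag above.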

Frequently Asked Questions (FAQs)

Q1: My OU model converges, and the α parameter is significantly greater than zero. Doesn't this confirm a good fit? A: No. A non-zero α only indicates that an OU model fits better than Brownian Motion in your specific comparison. It does not confirm the OU model is adequate. The model may still be misspecified (e.g., missing shifts in θ, incorrect single-peak assumption). You must perform the diagnostic checks above.

Q2: What are the most common biological reasons for OU model inadequacy in drug target trait evolution? A: Common reasons include:

- Multiple Adaptive Regimes: The protein trait (e.g., binding site architecture) evolved toward different optimal values in different lineages (e.g., in response to different pathogens).

- Time-Varying Selection: The strength of stabilizing selection (α) changed over time (e.g., relaxed selection during a period of low pathogen pressure).

- Punctuated Evolution: Traits change rapidly at speciation events, not via continuous Gaussian processes.

- Measurement Error: Ignoring intraspecific variation or measurement error can bias parameter estimates and lead to poor fit.

Q3: What quantitative thresholds should I look for to flag a problematic fit? A: Use these benchmarks as starting points for investigation:

Table 1: Key Diagnostic Thresholds for OU Model Fit

| Diagnostic Test | Metric | Potential Red Flag Value | Interpretation |
|---|---|---|---|
| Residual Phylogenetic Signal | Pagel's λ (residuals) | λ > 0.3 with p < 0.05 | Significant unmodeled phylogenetic structure. |
| Model Comparison | ΔAICc (vs. OUM) | ΔAICc > 4 | Strong support for a multi-optima model. |
| Parameter Confidence | α 95% CI Lower Bound | CI includes 0 | Selection strength may not be significant. |
| Goodness-of-Fit Test | Simulation p-value | p < 0.05 | Observed data is unlikely under the fitted OU model. |

Q4: What experimental or data protocols can I follow to robustly test an OU hypothesis from the start? A:

- Protocol for A Priori Regime Definition: Use independent ecological/physiological data (e.g., host cell type, environmental niche data from WHO) to a priori define hypothesized selective regimes on the phylogeny. Test an OUM model against this explicit hypothesis.

- Protocol for Data Quality Control: Quantify and incorporate measurement error variances for each trait datum (e.g., from replicate assays) directly into the model fitting procedure using