Beyond Brownian Motion: A Comparative Framework for Diversification Models in Trait Evolution and Biomedical Innovation


Abstract

This article provides a comprehensive analysis of stochastic diversification models used in phylogenetic comparative methods (PCMs) to study trait evolution. Tailored for researchers and drug development professionals, it explores the mathematical foundations of models like Brownian Motion, Ornstein-Uhlenbeck, and Early Burst, detailing their applications for testing evolutionary hypotheses. The content covers methodological implementation, common pitfalls in model selection and parameter estimation, and strategies for model validation and comparison. By synthesizing foundational theory with practical application, this guide aims to equip scientists with the knowledge to robustly analyze evolutionary trajectories, with direct implications for understanding disease mechanisms and informing therapeutic development.

The Stochastic Engine of Evolution: Unpacking Core Concepts in Trait Diversification

Phylogenetic comparative methods (PCMs) are a suite of statistical approaches that use phylogenetic trees to test evolutionary hypotheses, accounting for the statistical non-independence of species due to their shared evolutionary history [1] [2]. This guide objectively compares the performance, applications, and limitations of major PCMs used in studies of trait evolution and diversification.

The Problem of Phylogenetic Non-Independence

Species are related through a branching phylogenetic tree, and they often resemble one another because they inherited traits from a common ancestor rather than evolving them independently [3]. Analyzing cross-species trait data without accounting for this shared history can invalidate statistical tests by inflating Type I error rates, because the data points are not independent [1]. PCMs were developed to control for phylogenetic history. Some, such as phylogenetic independent contrasts, do this by transforming raw species data into statistically independent comparisons for hypothesis testing [1] [3].

Comparison of Major Phylogenetic Comparative Methods

The table below summarizes the key features, applications, and limitations of the primary PCMs.

| Method | Core Principle | Primary Application | Key Assumptions | Statistical Implementation |
|---|---|---|---|---|
| Phylogenetic Independent Contrasts (PIC) [1] [3] | Transforms tip data into statistically independent differences (contrasts) at nodes. | Testing for adaptation or correlation between continuous traits. | Accurate tree topology and branch lengths; trait evolution follows a Brownian motion model. | Special case of Phylogenetic Generalized Least Squares (PGLS). |
| Phylogenetic Generalized Least Squares (PGLS) [1] | Incorporates phylogenetic non-independence into the error structure of a linear model. | Regression analysis of continuous traits while accounting for phylogeny. | The structure of the residuals (not the traits themselves) follows a specified evolutionary model. | A special case of generalized least squares (GLS). |
| Monte Carlo Simulations [1] | Generates a null distribution of test statistics by simulating trait evolution along the phylogeny. | Creating phylogenetically correct null distributions for any test statistic. | The specified model of evolution (e.g., Brownian motion) is appropriate for the data. | Computer-based simulations (e.g., via phytools). |
| Ancestral State Reconstruction (ASR) [4] | Uses extant species' traits and a phylogeny to infer the probable states of ancestors. | Inferring the evolutionary history of a trait and the ancestral state at the root. | The model of discrete or continuous character evolution (e.g., Mk, Brownian) is correct. | Implemented in phytools (fitMk, fastAnc) and other R packages. |
| Ornstein-Uhlenbeck (OU) Models [3] | Models trait evolution under a stabilizing selection constraint towards an optimum value. | Testing for evolutionary constraints, stabilizing selection, or adaptation to niches. | Trait evolution can be modeled with a selective pull towards a specific optimum. | Often compared to Brownian motion models using likelihood ratio tests. |

Experimental Protocols for Key PCM Workflows

Protocol for Phylogenetic Independent Contrasts and PGLS

This protocol outlines the steps for a standard analysis testing the relationship between two continuous traits.

- Phylogeny and Data Preparation: Obtain a rooted, time-calibrated phylogeny for the taxa of interest. Collate continuous trait data for all species in the tree, ensuring data is log-transformed if necessary to meet linear model assumptions.

- Model Selection and Diagnostics: For PIC, check that standardized contrasts are not correlated with their standard deviations or node heights [3]. For PGLS, compare the fit of different evolutionary models (e.g., Brownian motion, Ornstein-Uhlenbeck, Pagel's λ) using the Akaike Information Criterion (AIC) to select the best model for the covariance structure [1].

- Model Fitting: Execute the analysis using the selected model. In PGLS, this involves fitting the regression model with a phylogenetic variance-covariance matrix (V) [1].

- Result Interpretation: Interpret the slope, confidence intervals, and p-value of the relationship between the independent and dependent variables, noting that the analysis now accounts for phylogenetic history.
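The pruning calculation behind PIC can be sketched compactly. The Python below is a minimal illustration of Felsenstein's contrasts algorithm. It assumes a fully bifurcating tree with positive branch lengths. The nested-tuple tree encoding, the helper names `contrasts` and `pic_slope`, and the trait values are all hypothetical. For real analyses, the R packages discussed in this article remain the practical route.

```python
import math

# Hypothetical tree encoding used only for this sketch:
#   tip           -> (name, branch_length)
#   internal node -> ((left_child, right_child), branch_length)


def contrasts(node, traits):
    """Felsenstein's pruning for independent contrasts on a bifurcating tree.

    Returns (node_value, adjusted_branch_length, standardized_contrasts).
    """
    children, length = node
    if isinstance(children, str):  # a tip: its value is the observed trait
        return traits[children], length, []
    left, right = children
    x1, v1, c1 = contrasts(left, traits)
    x2, v2, c2 = contrasts(right, traits)
    # Raw difference divided by its standard deviation under Brownian motion
    pic = (x1 - x2) / math.sqrt(v1 + v2)
    # Weighted average for the node, plus a lengthened branch that reflects
    # the uncertainty in that estimated node value
    x_node = (x1 / v1 + x2 / v2) / (1.0 / v1 + 1.0 / v2)
    v_node = length + v1 * v2 / (v1 + v2)
    return x_node, v_node, c1 + c2 + [pic]


def pic_slope(tree, x, y):
    """Regression of y-contrasts on x-contrasts, forced through the origin."""
    _, _, cx = contrasts(tree, x)
    _, _, cy = contrasts(tree, y)
    return sum(a * b for a, b in zip(cx, cy)) / sum(a * a for a in cx)


# Toy ultrametric tree ((A:1,B:1):1,(C:1,D:1):1) with made-up trait values
ab = ((("A", 1.0), ("B", 1.0)), 1.0)
cd = ((("C", 1.0), ("D", 1.0)), 1.0)
tree = ((ab, cd), 0.0)
x = {"A": 1.0, "B": 2.0, "C": 4.0, "D": 7.0}
y = {sp: 2.0 * v + 3.0 for sp, v in x.items()}

_, _, cx = contrasts(tree, x)
print(len(cx), pic_slope(tree, x, y))
```

A tree with n tips yields n - 1 contrasts. Under BM, contrasts have an expected mean of zero, so the regression is forced through the origin. Here y = 2x + 3, so the intercept cancels in every contrast and the slope recovers 2.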

Protocol for Ancestral State Reconstruction of Discrete Traits

This protocol is for inferring the history of a binary or multi-state character.

- Data and Tree Alignment: Match a discrete character dataset (e.g., presence/absence of a morphological feature) with the tips of the phylogenetic tree.

- Model Fitting: Fit a Markovian model of character evolution (e.g., the Mk model) to the data. This can be an equal-rates (ER) or all-rates-different (ARD) model [5].

- State Estimation: Use the fitted model to compute the marginal probabilities of each character state at the internal nodes of the tree. This can be achieved via maximum likelihood or Bayesian approaches.

- Visualization: Project the ancestral state estimates onto the phylogeny using a tool such as plotBranchbyTrait or contMap in the phytools R package to visualize the evolutionary history of the trait [6] [5].
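Felsenstein's pruning algorithm for an equal-rates (ER) Mk model makes the state-estimation step concrete. This sketch rests on several assumptions:

- the root state has a flat prior;
- the transition rate q is fixed, not estimated by maximum likelihood;
- the tree uses the same hypothetical nested-tuple format as the PIC example.

The sketch returns only the root's marginal probabilities. Marginals at other internal nodes need an additional pass over the tree, which is omitted here.

```python
import math

# Hypothetical tree encoding for this sketch:
#   tip           -> (name, branch_length)
#   internal node -> ((child, child, ...), branch_length)


def er_transition(k, q, t):
    """Transition probabilities of a k-state equal-rates (ER) Mk model over time t."""
    decay = math.exp(-k * q * t)
    same = 1.0 / k + (k - 1.0) / k * decay
    diff = 1.0 / k - decay / k
    return [[same if i == j else diff for j in range(k)] for i in range(k)]


def partial_likelihoods(node, states, k, q):
    """Pruning algorithm: L[s] = P(tip states below node | node in state s)."""
    children, _ = node
    if isinstance(children, str):  # tip: the observed state has probability 1
        return [1.0 if s == states[children] else 0.0 for s in range(k)]
    result = [1.0] * k
    for child in children:
        child_lik = partial_likelihoods(child, states, k, q)
        P = er_transition(k, q, child[1])  # child[1] is the child's branch length
        for s in range(k):
            result[s] *= sum(P[s][u] * child_lik[u] for u in range(k))
    return result


def root_marginals(tree, states, k, q):
    """Log-likelihood and marginal root-state probabilities under a flat root prior."""
    lik = partial_likelihoods(tree, states, k, q)
    total = sum(lik)
    return math.log(total / k), [v / total for v in lik]


ab = ((("A", 1.0), ("B", 1.0)), 1.0)
cd = ((("C", 1.0), ("D", 1.0)), 1.0)
tree = ((ab, cd), 0.0)
states = {"A": 0, "B": 0, "C": 0, "D": 1}  # presence/absence coded 0/1 (made up)

loglik, probs = root_marginals(tree, states, k=2, q=0.5)
print(round(loglik, 3), [round(p, 3) for p in probs])
```

In practice q would be chosen by maximizing `loglik`. An ARD model would replace `er_transition` with a full rate matrix, which requires a matrix exponential.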

Figure 1: A generalized workflow for phylogenetic comparative analysis, highlighting the critical steps of model fitting and diagnostics.

The Scientist's Toolkit: Essential Research Reagents and Software

Successful application of PCMs relies on a suite of computational tools and repositories.

| Tool/Resource | Function/Purpose | Key Features |
|---|---|---|
| R Statistical Environment [5] | The primary computing platform for implementing PCMs. | An open-source environment for statistical computing and graphics. |
| phytools R Package [5] | A comprehensive library for phylogenetic comparative analysis. | Functions for trait evolution, diversification, ancestral state reconstruction, and visualization. |
| ape R Package [5] | A core library for reading, writing, and manipulating phylogenetic trees. | Provides the foundational data structures and functions for phylogenetic analysis in R. |
| Time-Calibrated Phylogeny | The historical hypothesis of species relationships, used as the backbone for all analyses. | Often estimated from molecular data with fossil calibrations; branch lengths represent time. |
| caper R Package [3] | Implements phylogenetic independent contrasts and related diagnostic tests. | Contains functions to calculate contrasts and check their validity against model assumptions. |

Performance Considerations and Limitations

Each PCM has specific limitations that can affect performance and interpretation if not properly considered.

- Phylogenetic Independent Contrasts: A major limitation is the assumption that traits evolve under a Brownian motion model. The method is also sensitive to inaccuracies in the tree topology and branch lengths [3].

- Ornstein-Uhlenbeck Models: These models are often incorrectly favored over simpler Brownian motion models in small datasets. They can also be misinterpreted as evidence of clade-wide stabilizing selection, even when other processes are at play [3].

- Trait-Dependent Diversification: Methods like BiSSE can falsely infer a correlation between a trait and diversification rate if there is underlying rate heterogeneity in the tree that is unrelated to the trait [3].

- General Pitfalls: A common issue across PCMs is the failure to assess whether the chosen method and its underlying model are appropriate for the biological question and dataset. Diagnostic tests to check model assumptions are available but are often underused in empirical studies [3].

Phylogenetic comparative methods are essential for rigorous testing of evolutionary hypotheses. While PGLS and related regression-based frameworks offer flexibility for modeling continuous traits, methods like ASR and OU models provide powerful ways to infer evolutionary history and mode. The performance and reliability of any PCM depend critically on the choice of an appropriate evolutionary model and thorough diagnostic checking. Researchers are encouraged to leverage the robust toolkit available in R, particularly through the phytools and ape packages, to apply these methods correctly [5].

In phylogenetic comparative methods, the Brownian Motion (BM) model serves as the fundamental null model for trait evolution, providing a mathematical baseline for testing evolutionary hypotheses. BM models the random walk of a continuous trait—such as body size or gene expression level—over evolutionary time along the branches of a phylogenetic tree. Under this model, trait changes are random in both direction and magnitude, with an expected mean change of zero and a variance that increases proportionally with time [7]. This characteristic makes BM the standard for modeling neutral genetic drift, where trait changes accumulate randomly without directional selection. Its mathematical simplicity and well-understood statistical properties have cemented its role as the starting point for comparing more complex models of evolutionary processes, such as those involving adaptive peaks or divergent evolutionary rates [7] [8].

Mathematical and Biological Foundations of Brownian Motion

Core Mathematical Properties

Brownian motion as applied to trait evolution is defined by several key mathematical properties that make it statistically tractable for phylogenetic analyses. When we let $\bar{z}(t)$ represent the mean trait value at time t, with $\bar{z}(0)$ as the ancestral starting value, BM exhibits three fundamental characteristics [7]:

- Constant Expected Value: $E[\bar{z}(t)] = \bar{z}(0)$. This means that over many replications, the average trait value does not systematically increase or decrease, reflecting the neutral nature of the process.

- Independent Increments: Changes over any two non-overlapping time intervals are statistically independent. This "memoryless" property simplifies calculations and simulations.

- Normally Distributed Traits: $\bar{z}(t) \sim N(\bar{z}(0),\sigma^2 t)$. The trait value at any time t follows a normal distribution with mean equal to the starting value and variance proportional to both time and the evolutionary rate parameter $\sigma^2$.

The evolutionary rate parameter $\sigma^2$ (sigma-squared) fundamentally controls how rapidly traits wander through phenotypic space. Higher values of $\sigma^2$ produce greater dispersion of trait values among lineages over the same time period, as illustrated in simulations where tripling the rate parameter substantially increased the spread of trait values across replicates [7].
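Simulation gives a quick check of these properties. The Python sketch below builds each endpoint from independent normal increments. It then compares the replicate mean and variance with the theoretical values $\bar{z}(0)$ and $\sigma^2 t$. The settings are arbitrary illustrative values, not those used in [7]:

- $\bar{z}(0) = 0$ and $t = 10$;
- a baseline rate $\sigma^2 = 1$ versus a tripled rate $\sigma^2 = 3$;
- 2000 replicates per rate.

```python
import random
import statistics


def bm_endpoint(z0, sigma2, t, n_steps, rng):
    """Endpoint of one Brownian path built from independent normal increments.

    Each increment over a step of length dt has mean 0 and variance sigma2 * dt.
    """
    dt = t / n_steps
    z = z0
    for _ in range(n_steps):
        z += rng.gauss(0.0, (sigma2 * dt) ** 0.5)
    return z


rng = random.Random(42)  # fixed seed so the sketch is reproducible
t, reps = 10.0, 2000
results = {}
for sigma2 in (1.0, 3.0):  # baseline rate and a tripled rate
    ends = [bm_endpoint(0.0, sigma2, t, 50, rng) for _ in range(reps)]
    results[sigma2] = (statistics.mean(ends), statistics.variance(ends))
    # Theory: mean = z0 = 0 and variance = sigma2 * t (10 and 30 here)
    print(sigma2, round(results[sigma2][0], 2), round(results[sigma2][1], 1))
```

Tripling $\sigma^2$ leaves the expected mean unchanged but roughly triples the spread among replicates.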

Biological Interpretations and Assumptions

Biologically, BM can be derived from several underlying processes, with neutral genetic drift representing the most straightforward interpretation [7]. In this scenario, traits influenced by many genes of small effect evolve through random sampling of alleles across generations in finite populations. The mathematical properties of BM emerge when evolutionary changes accumulate through numerous small, random shifts—analogous to the physical process where particles diffuse due to many random molecular collisions [7].

Critical biological assumptions underlie the application of BM to trait evolution. The model assumes continuous trait values that can be represented as real numbers (e.g., body mass in kilograms). It also assumes that trait variance accumulates in proportion to time, rather than being constrained by optimal values or adaptive zones [7]. This makes BM particularly suitable for traits where stabilizing selection is weak or absent, and where phylogenetic relationships primarily explain trait distributions among species.

Experimental Protocols for BM Model Fitting

Workflow for Comparative Analysis

The following diagram illustrates the standard workflow for fitting and comparing Brownian Motion models with alternative evolutionary models using phylogenetic comparative data:

Detailed Methodological Framework

Implementing BM model fitting requires specific statistical protocols and software tools. Researchers typically employ the following methodology when conducting comparative analyses of trait evolution [8]:

Data Requirements and Preparation:

- Phylogenetic Tree: A fully resolved phylogenetic tree with branch lengths proportional to time or molecular divergence. BM analysis assumes the tree is known rather than estimated simultaneously with trait evolution parameters [9].

- Trait Data: Continuous trait measurements for extant and/or extinct species at the tips of the phylogeny. Data should be appropriately transformed if necessary to meet model assumptions.

- Species-Trait Matrix: A matched dataset ensuring each species in the phylogeny has corresponding trait measurements.

Model Fitting Procedure:

The standard approach fits the BM model by maximum likelihood, for example with the fitContinuous function in the R package geiger [8]. This procedure estimates two key parameters:

- Evolutionary Rate ($\sigma^2$): The rate at which variance accumulates per unit time.

- Root State ($\bar{z}(0)$): The estimated ancestral trait value at the root of the phylogeny.
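Once the tree is summarized as its BM covariance matrix C, both parameters have closed-form maximum likelihood estimates. Each entry $C_{ij}$ is the branch length that tips i and j share from the root. The root state is a generalized least squares mean, and $\sigma^2$ is the phylogenetically weighted residual variance. The plain-Python sketch below assumes an ultrametric toy tree and a hypothetical `fit_bm` helper. It illustrates the estimator and does not reproduce geiger's internals.

```python
import math


def cholesky(a):
    """Lower-triangular L with L L^T = a (a must be symmetric positive definite)."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(a[i][i] - s)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L


def solve_chol(L, b):
    """Solve (L L^T) x = b by forward then backward substitution."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def fit_bm(C, z):
    """ML estimates of the root state and sigma^2, plus the maximized log-likelihood."""
    n = len(z)
    L = cholesky(C)
    cinv_one = solve_chol(L, [1.0] * n)
    cinv_z = solve_chol(L, z)
    root = sum(cinv_z) / sum(cinv_one)  # GLS mean: (1'C^-1 z) / (1'C^-1 1)
    resid = [zi - root for zi in z]
    cinv_r = solve_chol(L, resid)
    sigma2 = sum(r * c for r, c in zip(resid, cinv_r)) / n  # ML divisor n
    logdet_c = 2.0 * sum(math.log(L[i][i]) for i in range(n))
    loglik = -0.5 * (n * math.log(2.0 * math.pi * sigma2) + logdet_c + n)
    return root, sigma2, loglik


# BM covariance for ((A:1,B:1):1,(C:1,D:1):1): shared root-to-MRCA path lengths
C = [[2.0, 1.0, 0.0, 0.0],
     [1.0, 2.0, 0.0, 0.0],
     [0.0, 0.0, 2.0, 1.0],
     [0.0, 0.0, 1.0, 2.0]]
z = [1.0, 2.0, 4.0, 7.0]  # made-up tip values in the same order as C

root, sigma2, loglik = fit_bm(C, z)
print(round(root, 3), round(sigma2, 3), round(loglik, 3))
```

The divisor n gives the ML estimate of $\sigma^2$. A REML estimate divides the same weighted sum of squares by n - 1 instead. For this tree, that sum equals the sum of squared independent contrasts.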

Model Comparison Protocol: Researchers typically fit BM alongside multiple alternative models in a hypothesis-testing framework [8]:

- Fit BM and alternative models (e.g., Ornstein-Uhlenbeck, Early-Burst) to the same dataset.

- Calculate size-corrected Akaike Information Criterion (AICc) scores for each model.

- Compare AICc values to identify the best-supported model, with lower scores indicating better fit.

- Compute model weights to quantify relative support for each evolutionary scenario.
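The AICc and weighting steps reduce to a few lines of arithmetic. In this sketch, the log-likelihoods, parameter counts, and sample size are hypothetical placeholders. In practice they come from the fitted models. The parameter count for each model also depends on how a given package parameterizes it.

```python
import math


def aicc(loglik, k, n):
    """Small-sample corrected AIC: AIC + 2k(k+1)/(n-k-1)."""
    return -2.0 * loglik + 2.0 * k + (2.0 * k * (k + 1)) / (n - k - 1)


def akaike_weights(scores):
    """Relative support for each model from its AICc difference to the best model."""
    best = min(scores.values())
    rel = {m: math.exp(-0.5 * (s - best)) for m, s in scores.items()}
    total = sum(rel.values())
    return {m: v / total for m, v in rel.items()}


# Hypothetical fits for n = 30 species: (log-likelihood, number of parameters)
n = 30
fits = {"BM": (-45.2, 2), "OU": (-43.9, 3), "EB": (-45.1, 3)}
scores = {m: aicc(ll, k, n) for m, (ll, k) in fits.items()}
weights = akaike_weights(scores)
for m in sorted(scores, key=scores.get):
    print(m, round(scores[m], 2), round(weights[m], 3))
```

With these made-up numbers, OU has the lowest AICc, but its margin over BM is small. The weights therefore spread support across models rather than declaring a clear winner.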

Advanced Shift Detection:

For testing hypotheses about changes in evolutionary regimes, packages like mvMORPH enable fitting BM models that allow the tempo and/or mode of evolution to differ across designated points in time (e.g., across mass extinction boundaries) [8].

Comparative Analysis of Evolutionary Models

Quantitative Model Comparison

The Brownian Motion model must be evaluated against alternative evolutionary models to understand its relative performance in explaining observed trait data. The following table summarizes key models commonly compared in trait evolution studies, along with their characteristic parameters and biological interpretations [8]:

| Evolutionary Model | Key Parameters | Biological Interpretation | Typical AIC Performance |
|---|---|---|---|
| Brownian Motion (BM) | $\sigma^2$, $\bar{z}(0)$ | Neutral drift; random walk | Baseline for comparison |
| Ornstein-Uhlenbeck (OU) | $\sigma^2$, $\bar{z}(0)$, $\alpha$, $\theta$ | Constrained evolution toward optimum | Better when traits are stabilized |
| Early-Burst (EB) | $\sigma^2$, $\bar{z}(0)$, $r$ | Rapid early divergence; slowing over time | Better for adaptive radiations |
| BM with Trend | $\sigma^2$, $\bar{z}(0)$, $m$ | Directional selection | Better with consistent trends |
| BM Shift Models | $\sigma^2$, $\bar{z}(0)$, shift parameters | Different rates across regimes | Better with clear historical shifts |

Performance Considerations and Limitations

Studies show that BM model performance depends critically on the match between the assumed phylogenetic tree and the true evolutionary history of the trait. Recent simulations demonstrate that phylogenetic regression under BM assumptions becomes highly sensitive to tree misspecification as dataset size increases. With large numbers of traits and species, false positive rates sometimes approach 100% [10]. This sensitivity is exacerbated when traits evolve along gene trees that conflict with the assumed species tree.

The Ornstein-Uhlenbeck (OU) model typically outperforms BM when traits evolve toward adaptive optima under stabilizing selection, as it incorporates a "pull" toward a preferred trait value [8]. Similarly, Early-Burst models fit better when traits diversify rapidly early in a clade's history and then slow as ecological niches fill. BM often remains the best-supported model for traits evolving through neutral processes. It also tends to win when the tree spans too little evolutionary time to constrain the extra parameters of more complex models.

Notably, robust regression techniques can substantially improve BM model performance under phylogenetic uncertainty. When tree misspecification occurs, robust estimators reduce false positive rates from 56-80% down to 7-18% in analyses of large trees, making BM-based inferences more reliable despite phylogenetic inaccuracies [10].

Successful implementation of Brownian Motion analyses in trait evolution research requires specific computational tools and methodological resources. The following table details essential components of the methodological toolkit for BM-based comparative studies:

| Resource Category | Specific Tools/Packages | Primary Function | Application Context |
|---|---|---|---|
| R Packages | geiger, phytools, mvMORPH, ape | BM model fitting, simulation, visualization | Core phylogenetic comparative analysis |
| Programming Languages | R, Python | Data manipulation, custom analysis | Flexible pipeline development |
| Model Comparison | AICc, likelihood ratio tests | Statistical model selection | Objective model comparison |
| Visualization | phytools, ggplot2 | Trait mapping, rate visualization | Results communication |
| Data Standards | Nexus, Newick formats | Phylogenetic tree representation | Data interoperability |

Contemporary BM analyses increasingly leverage multiple R packages in complementary workflows. For instance, researchers might use ape for tree manipulation, geiger for basic BM model fitting, phytools for visualization, and mvMORPH for more complex multi-regime BM models [9] [8]. Specialized courses in comparative methods provide training in these integrative approaches, covering packages including ape, geiger, phytools, evomap, l1ou, bayou, surface, OUwie, mvMORPH, and geomorph [9].

The robustness of BM-based inferences can be enhanced through sensitivity analyses that test conclusions across multiple plausible phylogenetic hypotheses and through robust regression estimators that reduce vulnerability to tree misspecification [10]. These approaches help maintain the utility of BM as a foundational baseline in comparative biology despite inevitable phylogenetic uncertainties.

Model Comparison: OU Process vs. Alternative Evolutionary Models

The Ornstein-Uhlenbeck (OU) process has become a fundamental tool in phylogenetic comparative methods for modeling trait evolution under stabilizing selection. The table below provides a systematic comparison between the OU process and other primary models used in evolutionary research.

Table 1: Quantitative Comparison of Trait Evolution Models

| Feature | Brownian Motion (BM) | Standard Ornstein-Uhlenbeck (OU) | Extended Multi-Optima OU | Wiener Process (for Prognostics) |
|---|---|---|---|---|
| Core Mechanism | Neutral random drift [11] [12] | Random drift + Stabilizing Selection [13] [11] | Multiple selective regimes [13] | Drift + Diffusion [14] |
| Key Parameters | Rate of drift (σ²) [11] | Selective strength (α), Optimum (θ), Rate (σ) [13] [11] | Multiple θ, α, and/or σ parameters [13] | Drift & Diffusion parameters [14] |
| Trait Variance | Increases linearly with time (unbounded) [14] | Bounded, converges to a stable equilibrium [14] | Bounded, with shifts per regime [13] | Unbounded, diverges over time [14] |
| Biological Interpretation | Genetic drift [11] | Constrained drift with a single adaptive peak [11] | Adaptive shifts, convergent evolution [13] [12] | Not designed for biological traits [14] |
| Strength in Modeling | Neutral evolution; null model [11] | Stabilizing selection around a fixed optimum [13] | Complex, regime-dependent selection [13] | Mathematical tractability [14] |
| Limitation in Modeling | Cannot model stabilizing selection [11] | Assumes constant rate and strength of selection in standard form [13] | Increased complexity requires more data [13] | Variance divergence contradicts physical systems [14] |
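The "Trait Variance" row captures the key behavioral difference between OU and BM. The Python sketch below simulates many independent lineages, once with exact OU transitions and once with Brownian increments. The parameter values ($\theta = 0$, $\alpha = 0.5$, $\sigma^2 = 1$) are arbitrary assumptions. Under OU, the variance should level off near $\sigma^2/(2\alpha)$. Under BM, it keeps growing as $\sigma^2 t$.

```python
import math
import random
import statistics


def ou_step(z, theta, alpha, sigma2, dt, rng):
    """Exact OU transition over dt: pulled toward theta, with bounded noise."""
    decay = math.exp(-alpha * dt)
    mean = theta + (z - theta) * decay
    var = sigma2 / (2.0 * alpha) * (1.0 - decay * decay)
    return rng.gauss(mean, math.sqrt(var))


def bm_step(z, sigma2, dt, rng):
    """Brownian increment: mean unchanged, variance sigma2 * dt."""
    return rng.gauss(z, math.sqrt(sigma2 * dt))


rng = random.Random(7)
theta, alpha, sigma2, dt, reps = 0.0, 0.5, 1.0, 0.5, 3000
ou = [0.0] * reps
bm = [0.0] * reps
checkpoints = {}
for step in range(1, 41):  # 40 steps of 0.5 time units -> t = 20
    ou = [ou_step(z, theta, alpha, sigma2, dt, rng) for z in ou]
    bm = [bm_step(z, sigma2, dt, rng) for z in bm]
    if step in (4, 40):
        checkpoints[step * dt] = (statistics.variance(ou), statistics.variance(bm))

stationary = sigma2 / (2.0 * alpha)  # OU variance ceiling, 1.0 here
for t, (v_ou, v_bm) in sorted(checkpoints.items()):
    print(t, round(v_ou, 2), round(v_bm, 2), "BM theory:", sigma2 * t)
```

At t = 2 the OU variance is already close to its ceiling, $\sigma^2/(2\alpha)\,(1 - e^{-2\alpha t}) \approx 0.86$. By t = 20 the BM variance has grown roughly tenfold.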

Experimental Protocols for Model Validation

Protocol for Simulating and Comparing Evolutionary Models

This protocol outlines the methodology for evaluating the performance of the OU process against other models, such as Brownian Motion, using simulated data where the true evolutionary process is known [13] [11].

1. Phylogeny and Parameter Setup:

- Input: Simulate or use a known phylogenetic tree with a specific number of tips (e.g., 100, 300) [11] [12].

- Parameters: Define true parameter values for the models to be tested. For the OU model, this includes the strength of selection (α), the trait optimum (θ), and the rate of stochastic motion (σ) [13] [11].

2. Data Simulation:

- Process: Simulate trait data along the phylogeny under different evolutionary models (e.g., BM, standard OU, multi-optima OU). This creates datasets where the underlying evolutionary process is known with certainty [13] [11].

- Considerations: Simulations should test various scenarios, such as different tree sizes and the presence of within-species variation, to assess model robustness [11].

3. Model Fitting and Parameter Estimation:

- Procedure: Fit the candidate models (BM, OU, etc.) to the simulated datasets using maximum likelihood or Bayesian inference [13] [11].

- Output: For each model and dataset, record the estimated parameter values and the model's log-likelihood.

4. Performance Evaluation:

- Parameter Accuracy: Compare the estimated parameters from Step 3 to the true parameters defined in Step 1. Calculate bias and precision [13].

- Model Selection Power: Use statistical criteria like AIC (Akaike Information Criterion) to determine how often the correct model (e.g., OU when data was simulated under OU) is identified from the set of candidate models [11].

- Hypothesis Testing: Use likelihood ratio tests to compare nested models (e.g., a model with a single optimum versus a model with multiple optima) to assess the power to detect specific evolutionary processes [13] [11].
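Step 2 can be sketched by simulating trait values down a tree with exact OU transitions on each branch, treating $\alpha = 0$ as Brownian motion. The tree encoding, function name, and parameter values are hypothetical. Steps 3 and 4 would then fit the candidate models to each simulated dataset, using a likelihood routine from an established package.

```python
import math
import random

# Hypothetical tree encoding: tip -> (name, branch_length);
# internal node -> ((child, child, ...), branch_length)


def simulate_ou(node, z_parent, theta, alpha, sigma2, rng, out):
    """Simulate a trait down the tree using exact OU transitions on each branch.

    alpha = 0 is treated as Brownian motion, the usual null model.
    """
    children, length = node
    if alpha > 0:
        decay = math.exp(-alpha * length)
        mean = theta + (z_parent - theta) * decay
        var = sigma2 / (2.0 * alpha) * (1.0 - decay * decay)
    else:
        mean, var = z_parent, sigma2 * length
    z = rng.gauss(mean, math.sqrt(var))
    if isinstance(children, str):  # tip: record its simulated value
        out[children] = z
    else:
        for child in children:
            simulate_ou(child, z, theta, alpha, sigma2, rng, out)
    return out


ab = ((("A", 1.0), ("B", 1.0)), 1.0)
cd = ((("C", 1.0), ("D", 1.0)), 1.0)
tree = ((ab, cd), 0.0)
rng = random.Random(3)

# 100 replicate datasets under each "true" model (illustrative values only)
bm_sets = [simulate_ou(tree, 0.0, 0.0, 0.0, 1.0, rng, {}) for _ in range(100)]
ou_sets = [simulate_ou(tree, 5.0, 0.0, 50.0, 1.0, rng, {}) for _ in range(100)]
print(bm_sets[0])
print(ou_sets[0])
```

With a very strong pull ($\alpha = 50$), tips forget the root value of 5 and cluster tightly around $\theta = 0$. Datasets like these make the known truth available for the accuracy and power checks in Step 4.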

Protocol for Validating OU Models with Empirical Data

This protocol describes the process of applying the OU model to real-world data, such as gene expression datasets, to test specific biological hypotheses [11].

1. Data Collection and Curation:

- Input: Collect comparative trait data (e.g., gene expression levels, morphological measurements) across multiple species or populations [11].

- Phylogeny: Obtain a well-supported phylogenetic tree for the species in the study [12].

- Within-Species Variation: If possible, collect data from multiple individuals per species to account for non-evolutionary variation [11].

2. Model Specification:

- Define Selective Regimes: A priori, formulate biological hypotheses by assigning branches or clades on the phylogeny to different "selective regimes" (e.g., different dietary niches, environments) [13]. Each regime can be associated with a distinct trait optimum (θ).

- Select Models: Choose a set of candidate models to compare. This typically includes:

  - Null Model: Brownian Motion [11].

  - Single-Peak OU: A single OU process for the entire tree [13].

  - Multi-Peak OU: An OU model with different θ parameters for the pre-defined selective regimes [13].

  - Extended OU: Models that allow other parameters, like the strength of selection (α) or the stochastic rate (σ), to also vary between regimes [13].

3. Statistical Analysis:

- Model Fitting: Fit all specified models to the empirical data.

- Model Selection: Compare the fitted models using AIC or likelihood ratio tests to determine which hypothesis best explains the observed data [13] [11].

- Parameter Inference: Extract and interpret the parameters (α, θ, σ) from the best-supported model to make biological inferences about the strength of selection and location of adaptive peaks [13].
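For the likelihood ratio tests, the statistic is twice the log-likelihood difference. It is compared with a chi-square distribution whose degrees of freedom equal the number of extra parameters. The sketch below computes the upper-tail probability from the regularized incomplete gamma series. The log-likelihood values are hypothetical.

One caution applies when the simpler model sits on a parameter boundary of the richer one, for example BM as OU with α = 0. In that case, the chi-square reference is only approximate and tends to be conservative.

```python
import math


def chi2_sf(x, df):
    """Upper-tail chi-square probability via the lower incomplete gamma series."""
    if x <= 0:
        return 1.0
    s, z = df / 2.0, x / 2.0
    term = 1.0 / s
    total = term
    n = 1
    while term > total * 1e-15:  # sum z^n / (s (s+1) ... (s+n)) until negligible
        term *= z / (s + n)
        total += term
        n += 1
    lower = total * math.exp(-z + s * math.log(z) - math.lgamma(s))
    return max(0.0, 1.0 - lower)  # very small p-values lose precision here


def likelihood_ratio_test(loglik_simple, loglik_complex, extra_params):
    """LR statistic and its chi-square p-value for nested models."""
    stat = 2.0 * (loglik_complex - loglik_simple)
    return stat, chi2_sf(stat, extra_params)


# Hypothetical maximized log-likelihoods: single-optimum OU vs two-optimum OU,
# where the second model adds one extra theta parameter
stat, p = likelihood_ratio_test(-40.1, -37.0, extra_params=1)
print(round(stat, 2), round(p, 4))
```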

Workflow and Conceptual Diagrams

Conceptual Workflow for OU Model Selection and Testing

The following diagram illustrates the logical workflow a researcher would follow when conducting an analysis of trait evolution using the OU process.

Dynamics of OU Process vs. Brownian Motion

This diagram visualizes the fundamental behavioral differences between the OU process, which models stabilizing selection, and the Brownian Motion model, which models neutral drift.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Analytical Tools for OU Model-Based Research

| Item | Function in Research |
|---|---|
| Comparative Dataset | A matrix of continuous trait measurements (e.g., gene expression levels, morphological data) across multiple species. This is the primary input for analysis [11]. |
| Time-Calibrated Phylogeny | A phylogenetic tree with branch lengths proportional to time. This provides the evolutionary structure and context for modeling trait change [12]. |
| Model-Fitting Software | Computational tools and packages (e.g., geiger, OUwie in R) used to perform maximum likelihood or Bayesian estimation of OU model parameters [13]. |
| Within-Species Variance Data | Measurements from multiple individuals per species. This allows researchers to account for non-evolutionary variation, preventing its misinterpretation as strong stabilizing selection [11]. |
| Data Simulation Pipeline | Custom scripts or software functions to generate synthetic trait data on a phylogeny under a known model. This is critical for validating methods and testing power [13] [11]. |

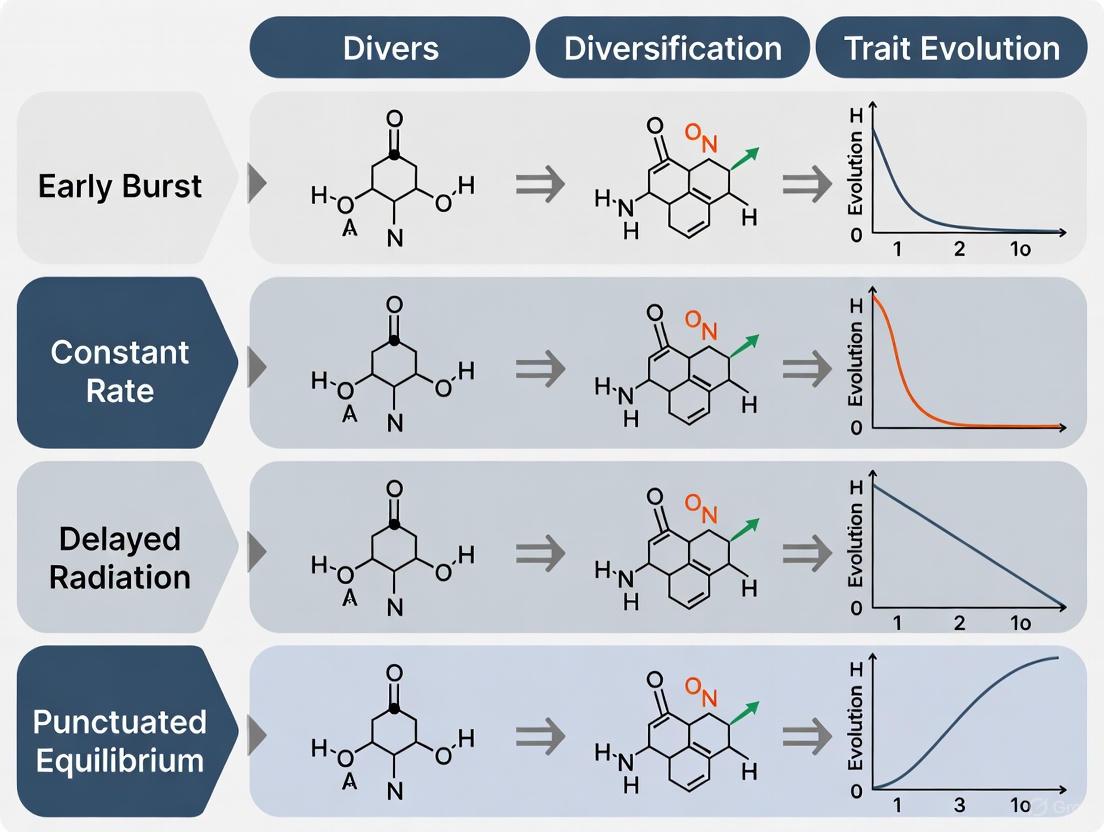

The Early Burst (EB) model represents a cornerstone hypothesis in evolutionary biology, proposing that phenotypic and lineage diversification follows a rapid, explosive trajectory early in a clade's history before slowing as ecological niches become saturated [15] [16] [17]. This model sits at the heart of adaptive radiation theory, which posits that ecological opportunity—such as access to new habitats or resources—drives this rapid initial diversification [16] [17]. Understanding the EB model's applicability, alongside its alternatives, is fundamental for researchers interpreting macroevolutionary patterns from phylogenetic and fossil data. This guide provides a comparative overview of the EB model's performance against other major models of trait evolution, summarizing empirical evidence, detailing key experimental protocols, and equipping scientists with the tools for robust evolutionary inference.

Model Performance: Empirical Evidence and Key Comparisons

The following table synthesizes findings from recent studies that test the fit of the Early Burst model against other evolutionary models across diverse taxa.

Table 1: Empirical Evidence for the Early Burst Model Across Biological Systems

| Study System / Clade | Trait(s) Studied | Best-Supported Model(s) | Key Quantitative Finding | Biological Interpretation |
|---|---|---|---|---|
| Lake Victoria Cichlids [15] | Oral jaw tooth shape (geometric morphometrics) | Early Burst | Largest morphospace expansion occurred within the first 3 millennia after lake formation. | Rapid phenotypic diversification into vacant ecological niches, followed by slowdown as niches filled. |
| Arctic Fjord Macrobenthos [18] | 21 functional traits (e.g., tube-dwelling, feeding mode) | Early Burst (Overall); Brownian Motion (specific traits) | EB best model for overall trait evolution; Pagel's λ ≥ 1.0 for most traits indicating phylogenetic conservatism. | Rapid initial diversification reflects adaptation to extreme Arctic conditions; some traits evolved more gradually. |
| South American Liolaemus Lizards [17] | Lineage diversification; Body size | Density-Dependent diversification; Ornstein-Uhlenbeck (Body size) | Lineage-through-time analysis rejected EB (γ statistic); OU model with 3 optima best for body size. | Continental radiation driven by Andean uplift, but diversification did not follow an early-burst pattern. |
| Global Landbirds [19] | Morphological traits | Models accounting for limited ecological opportunity | Widespread signature of decelerating trait evolution when accounting for similar habitats/diets. | Ecological opportunity, not just time, influences diversification tempo; supports niche-filling predictions. |
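The γ statistic cited for the Liolaemus radiation asks whether branching events sit closer to the root than a constant-rate pure-birth process would predict. Negative values indicate an early concentration of nodes. The sketch below follows Pybus and Harvey's formula, using the internode intervals g_2 ... g_n, where g_k is the time during which k lineages exist. It describes lineage diversification rather than trait evolution, and the interval values are hypothetical.

```python
import math


def gamma_statistic(intervals):
    """Pybus and Harvey gamma from internode intervals.

    intervals[0] is g_2 (time with 2 lineages, starting at the root), ...,
    intervals[-1] is g_n (time with n lineages, ending at the tips).
    """
    n = len(intervals) + 1
    if n < 3:
        raise ValueError("need at least three tips")
    # Entry k holds j * g_j with j = k + 2
    weighted = [(k + 2) * g for k, g in enumerate(intervals)]
    T = sum(weighted)
    cumulative = 0.0
    inner = 0.0
    for i in range(n - 2):  # outer sum over i = 2 .. n-1
        cumulative += weighted[i]
        inner += cumulative
    numerator = inner / (n - 2) - T / 2.0
    return numerator / (T * math.sqrt(1.0 / (12.0 * (n - 2))))


# Hypothetical internode intervals (g_2 ... g_5) for a 5-tip tree
early = gamma_statistic([0.1, 0.1, 0.1, 5.0])  # branching crowded near the root
late = gamma_statistic([5.0, 0.1, 0.1, 0.1])   # branching crowded near the tips
print(round(early, 3), round(late, 3))
```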

Experimental Protocols for Model Testing

Testing evolutionary models like EB involves a workflow of phylogenetic comparative methods. The diagram below outlines the core analytical pathway.

Workflow for Testing Evolutionary Models

Detailed Methodologies for Key Experiments

A. Fossil-Based Trait Time-Series (e.g., Cichlid Fish) [15]

- Sediment Core Sampling: Extract continuous sediment cores from lake bottoms. Core sections are radiometrically dated (e.g., radiocarbon dating) to establish a precise chronological framework from the present to the lake's origin (~17 ka for Lake Victoria).

- Fossil Extraction and Identification: Process sediment samples to isolate microscopic fossilized teeth (or other durable structures). Use morphological criteria to assign fossils to specific taxonomic groups (e.g., haplochromine cichlids).

- Geometric Morphometrics: Capture high-resolution images of fossil teeth. Place digital landmarks on tooth outlines to quantify shape. Analyze landmark data using Principal Components Analysis (PCA) to create a multi-dimensional morphospace.

- Temporal Pattern Analysis: Assign fossil teeth to specific time bins based on their depth in the sediment core. Calculate morphospace occupation (e.g., disparity, volume) for each time bin. Statistically test for trends in morphospace expansion/contraction over time, comparing observed patterns to EB model predictions.
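The disparity calculation in the last step can be sketched as follows. The sum of variances along the principal component axes serves here as one common disparity metric; volume or mean pairwise distance are alternatives. The PC scores and time bins are made up for illustration.

```python
import statistics
from collections import defaultdict


def disparity_by_bin(records):
    """Sum-of-variances disparity for each time bin.

    records: iterable of (time_bin, [pc1, pc2, ...]) pairs, one per fossil.
    Bins with fewer than two specimens are skipped (variance undefined).
    """
    by_bin = defaultdict(list)
    for time_bin, scores in records:
        by_bin[time_bin].append(scores)
    result = {}
    for time_bin, rows in by_bin.items():
        if len(rows) < 2:
            continue
        # zip(*rows) regroups the specimens' scores axis by axis
        result[time_bin] = sum(statistics.variance(axis) for axis in zip(*rows))
    return dict(sorted(result.items()))


# Made-up PC scores; time bins are ages in ka (larger = older)
records = [
    (15, [0.0, 0.0]), (15, [2.0, 0.0]), (15, [0.0, 2.0]), (15, [2.0, 2.0]),
    (5, [1.0, 1.0]), (5, [1.5, 1.0]),
    (1, [0.3, 0.3]),
]
print(disparity_by_bin(records))
```

In real cores, the per-bin values would usually be bootstrapped, because uneven sample sizes across bins affect disparity estimates.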

B. Phylogenetic Comparative Analysis (e.g., Lizards, Macrobenthos) [18] [17]

- Data Compilation:

- Model Fitting Procedure:

- Specify Models: Define the candidate set of evolutionary models. Key models include:

- Brownian Motion (BM): Traits evolve via random walk with constant variance. The null model.

- Early Burst (EB): The rate of trait evolution is highest at the root and decays exponentially over time [17] [20].

- Ornstein-Uhlenbeck (OU): Traits evolve under stabilizing selection toward a central optimum or multiple optima [17].

- Maximum Likelihood Estimation: For each model, use numerical optimization to find the parameter values (e.g., rate decay parameter for EB, strength of selection for OU) that make the observed trait data most probable, given the phylogeny.

- Specify Models: Define the candidate set of evolutionary models. Key models include:

- Model Selection:

- Calculate the Akaike Information Criterion (AIC) for each fitted model. The model with the lowest AIC score is considered the best fit [20].

- Use likelihood ratio tests to determine whether more complex nested models (e.g., EB, which reduces to BM when the decay parameter is zero) provide a statistically significant improvement over simpler ones (e.g., BM).
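The fitting and selection steps above can be sketched for BM versus EB (OU is omitted for brevity). This minimal example is not the implementation used in geiger or any other package. It makes three simplifying choices:

- A tiny, hypothetical ultrametric tree is supplied directly as its matrix of shared root-to-ancestor path lengths.
- EB uses the parameterization σ²(t) = σ²₀ · exp(-rt) with r ≥ 0.
- The root state and rate are estimated in closed form, and only r is optimized numerically.

```python
import numpy as np
from scipy.optimize import minimize_scalar
from scipy.stats import chi2

# Hypothetical ultrametric tree ((A:1,B:1):1,(C:1.5,D:1.5):0.5) with root age 2.0,
# given directly as the matrix of shared root-to-common-ancestor path lengths.
SHARED = np.array([
    [2.0, 1.0, 0.0, 0.0],
    [1.0, 2.0, 0.0, 0.0],
    [0.0, 0.0, 2.0, 0.5],
    [0.0, 0.0, 0.5, 2.0],
])
y = np.array([1.2, 1.0, -0.8, -0.5])  # hypothetical tip trait values (A, B, C, D)

def eb_kernel(shared, r):
    """Covariance up to sigma0^2 when the rate decays as sigma0^2 * exp(-r t)."""
    if r < 1e-10:
        return shared.copy()  # r -> 0 recovers Brownian motion
    return (1.0 - np.exp(-r * shared)) / r

def profile_loglik(y, K):
    """Gaussian log-likelihood with root state and rate set to their closed-form MLEs."""
    n = len(y)
    Kinv = np.linalg.inv(K)
    ones = np.ones(n)
    z0 = (ones @ Kinv @ y) / (ones @ Kinv @ ones)  # GLS estimate of the root state
    resid = y - z0
    sigma2 = (resid @ Kinv @ resid) / n              # ML estimate of the (initial) rate
    _, logdet = np.linalg.slogdet(K)
    return -0.5 * (n * np.log(2 * np.pi * sigma2) + logdet + n)

lnL_bm = profile_loglik(y, SHARED)
fit = minimize_scalar(lambda r: -profile_loglik(y, eb_kernel(SHARED, r)),
                      bounds=(0.0, 10.0), method="bounded")
lnL_eb, r_hat = -fit.fun, fit.x

aic = {"BM": 2 * 2 - 2 * lnL_bm, "EB": 2 * 3 - 2 * lnL_eb}  # k = 2 vs 3 parameters
lr_stat = max(0.0, 2 * (lnL_eb - lnL_bm))
p_value = chi2.sf(lr_stat, df=1)  # conservative: r = 0 lies on the boundary
print(aic, r_hat, p_value)
```

Because r = 0 lies on the boundary of the parameter space, the χ² reference distribution with one degree of freedom is conservative here. With only four tips, the example demonstrates the mechanics rather than realistic statistical power.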

Comparative Analysis of Evolutionary Models

The table below provides a direct comparison of the EB model and its primary alternatives, detailing their mathematical structure, biological interpretation, and strengths/limitations.

Table 2: Comparison of Major Models of Trait Evolution

| Model | Mathematical Foundation & Key Parameters | Core Biological Interpretation | Advantages | Disadvantages/Limitations |
|---|---|---|---|---|
| Early Burst (EB) | Rate of evolution: σ²(t) = σ²₀ · exp(-rt). Parameters: σ²₀ (initial rate), r (decay parameter) | Rapid diversification into empty ecological niches, followed by slowdown as niches fill [15] [17]. | Directly captures the classic prediction of adaptive radiation theory. | Empirical support is often weak; the signature can be erased by high extinction or later diversification pulses [17]. |
| Brownian Motion (BM) | Trait variance increases linearly with time: Var[z(t)] = σ²t. Parameter: σ² (rate of evolution) | Evolution by random drift or under fluctuating selection with no consistent direction. | Simple null model; computationally straightforward. | Lacks biological realism for many traits; cannot model stabilizing selection or adaptive trends. |
| Ornstein-Uhlenbeck (OU) | Traits pulled toward an optimum θ: dz = -α(z - θ)dt + σdW. Parameters: α (selection strength), θ (optimum), σ (stochastic rate) | Evolution under stabilizing selection [18] [17]. Can model adaptation to different niches with multiple optima (e.g., OUM and OUMV models). | Biologically realistic for many functional traits; can model adaptation. | More complex, requiring more parameters; can be mis-specified if the true process is different. |
| Fabric/Fabric-Regression [21] | Identifies localized directional shifts (β) and changes in evolvability (υ) on specific branches. | Detects heterogeneous evolutionary processes across a phylogeny, free from the influence of trait covariates. | More flexible; can pinpoint specific historical events and account for trait correlations. | A newer model; performance and interpretation still being explored. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Studying Trait Evolution

| Tool/Reagent | Function/Application in Evolutionary Studies |
|---|---|
| Mitochondrial Cytochrome c Oxidase I (mtCOI) Gene | A standard DNA barcode region used for species identification and for building molecular phylogenies, especially in invertebrate groups [18]. |
| Geometric Morphometric Software (e.g., MorphoJ, tps series) | Used to quantify and statistically analyze shape from 2D or 3D landmark data, crucial for studying phenotypic disparity in fossils or extant species [15]. |
| R Packages for Phylogenetics (e.g., ape, geiger, laser, phytools) | Software libraries in R that provide functions for reading, plotting, and analyzing phylogenetic trees, including fitting EB, OU, and BM models [17] [20]. |
| Supercomputing Resources (e.g., TACC Frontera, Lonestar6) | High-performance computing (HPC) systems essential for processing large datasets (e.g., genomic data, thousands of MRI/3D images) and running complex model-fitting analyses [22]. |
| UK Biobank-scale Phenomic Data | Large-scale repositories of phenotypic data (e.g., 3D MRI scans, whole-body X-rays) that enable high-resolution mapping of traits across many individuals and species [22]. |

Understanding the dramatic variation in evolutionary diversification rates across lineages and regions remains a central challenge in evolutionary biology. The Evolutionary Arena (EvA) framework has emerged as a synthetic conceptual approach for studying context-dependent species diversification by integrating lineage-specific traits with abiotic and biotic environmental conditions [23]. This framework conceptualizes diversification rate (d) as a function of the abiotic environment (a), biotic environment (b), and clade-specific phenotypes or traits (c), expressed as d ~ a,b,c [23]. The EvA framework provides a heuristic structure for parameterizing relevant processes in evolutionary radiations, stasis, and biodiversity decline, enabling quantitative comparisons across clades and spatial-temporal scales.

Traditional approaches to studying diversification have struggled with terminology proliferation and challenges in quantitative comparison between evolutionary lineages and geographical regions. The EvA framework addresses these limitations by building on current theoretical foundations to provide a conceptual structure for integrative study of diversification rate shifts and stasis. This framework can be applied across biological systems, from cellular to global spatial scales, and spans ecological to evolutionary timeframes [23].

Core Components of the Evolutionary Arena Framework

The EvA framework synthesizes three fundamental components that collectively influence evolutionary diversification rates, creating a comprehensive model for understanding evolutionary patterns across taxa and environments.

Abiotic Environment (a)

The abiotic component encompasses physical environmental factors including climate, geology, geography, and disturbance regimes that create evolutionary opportunities and constraints. According to the framework, species may enter new adaptive zones through climate change or the formation of novel landscapes, such as newly emerged volcanic islands or human-altered environments [23]. These abiotic factors determine the fundamental niche of species and act as environmental filters that shape which species can establish in a given area [24]. The importance of abiotic factors is exemplified by key events in evolutionary history such as mass extinctions, climate change episodes like late Miocene aridification, and orogeny events including the formation of the Andes [23].

Biotic Environment (b)

The biotic component includes interactions between living organisms such as competition, predation, mutualism, and facilitation. The biotic environment corresponds to positive and negative interactions within communities that determine the set of coexisting species [24]. This component defines the realized niche of species along a spectrum from competitive exclusion to facilitation [24]. The EvA framework emphasizes that lack of effective competition in an environment—because no suitably adapted lineages already occur there—represents a crucial condition for adaptive radiation to occur [23]. Biotic interactions can drive trait divergence through ecological speciation when divergent selection pressures from the environment promote reproductive isolation.

Clade-Specific Traits (c)

Clade-specific phenotypes or traits represent morphological, physiological, or phenological heritable features measurable at the individual level that influence diversification potential [24]. These traits are categorized into response traits (influencing how organisms respond to environmental drivers) and effect traits (influencing how organisms affect ecosystem functions) [24]. The framework recognizes that traits can have opposing effects on diversification depending on ecological context, spatiotemporal scale, and associations with other traits [25]. For example, outcrossing may simultaneously increase the efficacy of selection and adaptation while decreasing mate availability, creating contrasting effects on lineage persistence [25].

Table 1: Core Components of the Evolutionary Arena Framework

| Component | Definition | Role in Diversification | Examples |
|---|---|---|---|
| Abiotic Environment (a) | Physical, non-living environmental factors | Creates evolutionary opportunities and constraints; determines fundamental niche | Climate, geology, disturbance regimes, island formation [23] |
| Biotic Environment (b) | Interactions between living organisms | Shapes realized niche through competition, predation, mutualism | Lack of competitors, predator-prey relationships, facilitation [23] [24] |
| Clade-Specific Traits (c) | Heritable morphological, physiological, or phenological features | Determines genetic capacity and adaptability; includes response and effect traits | Key innovations, freezing tolerance, life-history strategies [23] [24] |

Comparative Analysis: EvA Framework Versus Alternative Diversification Models

The EvA framework differs from traditional diversification models in its integrative approach and conceptual structure. The table below provides a systematic comparison of its features against other prominent frameworks in evolutionary biology.

Table 2: Comparison of EvA Framework with Alternative Diversification Models

| Model Feature | EvA Framework | Traditional Adaptive Radiation | Trait-Based Frameworks (TBF) | Phylogenetic Comparative Methods |
|---|---|---|---|---|
| Core Focus | Integration of a,b,c components on diversification [23] | Adaptation and ecological speciation [23] | Linking traits to ecosystem functions [24] | Estimating temporal dynamics of diversification [23] |
| Primary Drivers | Abiotic environment, biotic environment, traits [23] | Ecological opportunity, key innovations [23] | Response and effect traits [24] | Speciation and extinction rates [23] |
| Spatial Scale Application | Cellular to global [23] | Typically population to ecosystem | Community to ecosystem [24] | Clade to biogeographic region |
| Temporal Scale Application | Ecological to evolutionary timeframes [23] | Evolutionary timescales | Ecological to evolutionary [24] | Evolutionary timescales |
| Mathematical Foundation | Conceptual framework with parameterization options [23] | Qualitative paradigm with some quantitative implementations | Statistical models of trait-environment relationships [24] | Maximum likelihood, Bayesian inference [23] |
| Context Dependency | Explicitly incorporated [23] | Implicit | Explicit through environmental filters [24] | Limited |
| Stochastic Processes | Incorporated through abiotic filters [24] | Limited consideration | Recognized through neutral assembly [24] | Fundamental component |

Key Distinctions and Advantages

The EvA framework's primary advantage lies in its integrative capacity to simultaneously address multiple drivers of diversification that are typically treated separately in other models. While traditional adaptive radiation focuses predominantly on adaptation and ecological opportunity, and trait-based frameworks emphasize trait-environment relationships, the EvA framework explicitly models the interactions between all three components (a, b, c) [23]. This integration allows for more nuanced understanding of complex evolutionary scenarios where multiple factors interact to shape diversification patterns.

Another significant distinction is the framework's explicit consideration of context dependency, recognizing that the effects of specific traits on diversification are likely to differ across lineages and timescales [25]. This contrasts with many phylogenetic comparative methods that estimate diversification rates without fully incorporating contextual factors. The framework's applicability across multiple spatial and temporal scales further enhances its utility for comparative studies [23].

Experimental Applications and Case Studies

Conifer Diversification Analysis

The EvA framework was parameterized in a case study on conifers, yielding results consistent with the long-standing scenario that low competition and high rates of niche evolution promote diversification [23]. The experimental protocol for this application involved:

Phylogenetic Reconstruction: Building a comprehensive phylogeny of conifer species using molecular dating approaches (Bayesian molecular dating) and multispecies coalescence methods [23].

Trait Characterization: Quantifying functional traits relevant to environmental adaptation, including morphological and physiological characteristics.

Environmental Data Integration: Incorporating abiotic environmental data and biotic interaction data across the distribution ranges of conifer species.

Model Parameterization: Implementing the d ~ a,b,c relationship using comparative phylogenetic methods to quantify the relative contributions of abiotic environment, biotic environment, and clade-specific traits to diversification rates.

Rate Analysis: Estimating speciation and extinction rates across the conifer phylogeny and correlating these rates with the identified a, b, and c factors.
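The rate-analysis step can be illustrated with a tip-level diversification metric. The sketch below uses the DR statistic: the inverse of a tip's rootward path length, with each successive branch weighted by half the weight of the one before. DR is a commonly used proxy swapped in here for illustration and is not necessarily what the conifer study used. The tree, the trait values, and the use of a Spearman correlation are all hypothetical.

```python
from scipy.stats import spearmanr

# Hypothetical ultrametric tree: child -> (parent, branch length); "root" has no entry.
TREE = {
    "A": ("n1", 1.0), "B": ("n1", 1.0),
    "C": ("n2", 0.5), "D": ("n2", 0.5),
    "n2": ("n1", 0.5), "n1": ("root", 1.0),
    "E": ("root", 2.0),
}

def dr_statistic(tip: str, tree: dict) -> float:
    """Inverse of the tip-to-root path length, halving each branch's weight per step rootward."""
    total, weight, node = 0.0, 1.0, tip
    while node in tree:
        parent, length = tree[node]
        total += length * weight
        weight /= 2.0
        node = parent
    return 1.0 / total

tips = ["A", "B", "C", "D", "E"]
dr = {tip: dr_statistic(tip, TREE) for tip in tips}
# Hypothetical per-species niche-evolution rates (one candidate a, b, or c factor)
niche_rate = {"A": 0.3, "B": 0.35, "C": 0.8, "D": 0.7, "E": 0.1}
rho, _ = spearmanr([dr[t] for t in tips], [niche_rate[t] for t in tips])
print(dr, rho)
```

Tip rates share phylogenetic history, so a naive p-value from this correlation would be anti-conservative. Phylogenetically informed tests, such as simulation-based null distributions, are more appropriate.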

This experimental approach demonstrated how the general EvA model can be operationalized for empirical studies, providing a template for applications to other taxonomic groups.

Conceptual Validation Studies

Beyond the conifer case study, researchers have applied the EvA framework to several conceptual examples that illustrate its utility:

Lupinus radiation in the Andes: Examined in the context of emerging ecological opportunity and fluctuating connectivity due to climatic oscillations [23].

Oceanic island radiations: Studied regarding island formation and erosion dynamics [23].

Biotically driven radiations: Investigated in the Mediterranean orchid genus Ophrys [23].

These conceptual applications demonstrate how the EvA framework helps identify and structure research directions for evolutionary radiations by providing a systematic approach to organizing hypotheses and evidence.

Innovative Mathematical Approaches

Recent research has developed new mathematical models that complement the EvA framework by bridging microevolutionary and macroevolutionary processes. Dr. Simone Blomberg from The University of Queensland has created a mathematical model that combines short-term natural selection (microevolution) with species evolution over millions of years (macroevolution) [26]. This approach:

- Borrows mathematics originally developed in finance to describe share portfolios

- Has been applied to data on Anolis lizards, incorporating genetic variation in eight traits, including leg bone lengths, jaw size, and head width

- Uses advanced geometrical methods to trace evolution while maintaining genetic relationships among traits

- Provides a mathematical foundation for testing theories about trait convergence and natural selection's role in evolutionary history [26]

This innovative approach represents a significant advancement in operationalizing framework concepts like those in EvA through quantitative methods.

Research Protocols and Methodologies

Standardized Experimental Protocol for EvA Framework Application

Implementing the Evolutionary Arena framework in empirical research requires a systematic approach that integrates data from multiple sources and analytical techniques. The following protocol provides a detailed methodology for applying the EvA framework to diversification studies:

Phase 1: Phylogenetic Reconstruction

- Utilize molecular dating approaches (Bayesian molecular dating) and multispecies coalescence methods [23]

- Apply maximum likelihood and Bayesian inference for tree building [23]

- Generate progressively higher quality phylogenies to recognize monophyletic groups and estimate temporal dynamics of evolutionary radiations [23]

Phase 2: Trait Characterization

- Identify and measure functional traits following standardized protocols [24]

- Categorize traits into response traits (influencing how organisms respond to environmental drivers) and effect traits (influencing how organisms affect ecosystem functions) [24]

- Account for intraspecific trait variability, which can constitute a relatively large part of overall community-level trait variability [24]

Phase 3: Environmental Data Collection

- Quantify abiotic environmental factors relevant to the study system (climate, geology, etc.)

- Document biotic interactions through direct observation, experimental manipulation, or literature synthesis

- Consider both contemporary environmental data and historical reconstructions where applicable

Phase 4: Model Parameterization

- Implement the d ~ a,b,c relationship using comparative phylogenetic methods [23]

- Quantify the relative contributions of abiotic environment, biotic environment, and clade-specific traits to diversification rates

- Use statistical approaches to account for phylogenetic non-independence
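Phase 4 can be sketched as a phylogenetic generalized least squares (PGLS) regression of log diversification rate on standardized a, b, and c predictors. This rests on a hypothetical assumption: that clade-level rates covary according to a phylogenetic covariance matrix V. The eight clades, V, and all values are invented for illustration, and the EvA framework itself does not prescribe this particular model.

```python
import numpy as np

def gls_fit(X: np.ndarray, y: np.ndarray, V: np.ndarray) -> np.ndarray:
    """Generalized least squares: beta = (X' V^-1 X)^-1 X' V^-1 y."""
    Vinv = np.linalg.inv(V)
    XtVinv = X.T @ Vinv
    return np.linalg.solve(XtVinv @ X, XtVinv @ y)

def zscore(v: np.ndarray) -> np.ndarray:
    return (v - v.mean()) / v.std(ddof=1)

rng = np.random.default_rng(7)
n = 8
# Hypothetical phylogenetic covariance: two groups of four clades, shared history 0.4.
V = np.zeros((n, n))
V[:4, :4] = V[4:, 4:] = 0.4
np.fill_diagonal(V, 1.0)

a, b, c = rng.normal(size=(3, n))  # hypothetical abiotic, biotic, and trait scores
X = np.column_stack([np.ones(n), zscore(a), zscore(b), zscore(c)])
true_beta = np.array([0.1, 0.3, -0.5, 0.2])  # hypothetical effects on log(d)
log_d = X @ true_beta + rng.multivariate_normal(np.zeros(n), 0.05 * V)

beta_hat = gls_fit(X, log_d, V)
print(dict(zip(["intercept", "a", "b", "c"], beta_hat.round(3))))
```

Because the predictors are z-scored, the magnitudes of the a, b, and c coefficients give a rough comparison of their relative contributions. With real data, uncertainty in V and in the rate estimates themselves should be propagated.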

Phase 5: Hypothesis Testing

- Test specific hypotheses about context-dependent effects of traits on diversification [25]

- Evaluate the relative importance of neutral and niche assembly processes [24]

- Assess the influence of trait dominance or complementarity in ecosystem function provision [24]

Phase 6: Validation

- Compare results across multiple clades or regions

- Validate predictions using independent data sources

- Assess model performance against alternative frameworks

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Research Materials for Implementing EvA Framework Studies

| Research Tool Category | Specific Examples | Function in EvA Research | Implementation Considerations |
|---|---|---|---|
| Molecular Phylogenetics | DNA sequencers, Bayesian molecular dating software, multispecies coalescence tools [23] | Reconstruct evolutionary relationships and time trees | Requires massive DNA sequence datasets and increased computing power [23] |
| Trait Measurement Systems | Morphometric tools, physiological assays, environmental sensors | Quantify functional traits relevant to adaptation | Must account for intraspecific variability [24] |
| Environmental Data Platforms | Climate databases, soil mapping resources, biotic interaction databases | Characterize abiotic and biotic filters | Data should span relevant spatial and temporal scales |
| Comparative Method Software | Diversification rate analysis packages, phylogenetic comparative methods [23] | Parameterize d ~ a,b,c relationships | Should incorporate both speciation and extinction rates |
| Statistical Analysis Tools | R packages for phylogenetic analysis, geometric methods for trait evolution [26] | Test hypotheses about trait-diversification links | New geometrical methods are essential for uniting micro- and macroevolution [26] |

Discussion: Synthesis and Research Implications

The Evolutionary Arena framework represents a significant advancement in diversification research by providing a synthetic structure for comparing evolutionary trajectories across lineages and regions. Its primary contribution lies in making quantitative results comparable between case studies, thereby enabling new syntheses of evolutionary and ecological processes to emerge [23]. By conceptualizing context-dependent species diversification in concert with lineage-specific traits and environmental conditions, the framework promotes a more general understanding of variation in evolutionary rates.

The framework's identification of opposing effects of plant traits on diversification highlights the complexity of pathways linking traits to diversification rates [25]. This complexity suggests that mechanistic interpretations behind trait-diversification correlations may be difficult to parse, with effects likely differing across lineages and timescales [25]. This context dependence underscores the value of the EvA framework's integrated approach, which can accommodate such complexity more effectively than single-factor models.

Future research applying the EvA framework should prioritize taxonomically and context-controlled approaches to studies correlating traits and diversification [25]. The framework's flexibility across spatial and temporal scales provides opportunities for novel research syntheses, particularly when combined with emerging mathematical models that bridge microevolutionary and macroevolutionary processes [26]. As the framework continues to be applied and refined across diverse biological systems, it promises to enhance our understanding of the interacting processes that drive evolutionary diversification, stasis, and decline across the tree of life.

From Theory to Practice: Implementing Diversification Models in Biomedical Research

Stochastic Differential Equations (SDEs) serve as the fundamental mathematical framework for modeling trait evolution across biological scales, from molecular changes to macroevolutionary patterns. These equations extend classical differential equations by incorporating stochastic processes, enabling researchers to model random influences that are intrinsic to evolutionary processes [27]. In evolutionary biology, SDEs provide the mathematical backbone for understanding how phenotypic traits change over time under the simultaneous influences of deterministic evolutionary forces (such as selection) and stochastic processes (including genetic drift and environmental fluctuations) [28] [27]. The power of SDE-based approaches lies in their ability to translate biological hypotheses about evolutionary processes into testable mathematical models, creating a crucial bridge between theoretical predictions and empirical observations across comparative biology.

The application of SDEs to trait evolution represents a significant advance over purely deterministic models, as it formally acknowledges that evolutionary change contains an inherent random component. This mathematical formalism originated in the theory of Brownian motion, with foundational work by Einstein (1905) and Smoluchowski (1906). It was later developed into a rigorous framework through the work of the Japanese mathematician Kiyosi Itô in the 1940s [27]. In biological terms, SDEs allow evolutionary biologists to quantify both the expected direction of evolutionary change (the deterministic component) and the variability around that expectation (the stochastic component). Together, these give a more complete picture of evolutionary dynamics than deterministic models alone.

Core Mathematical Framework: From Brownian Motion to Adaptive Landscapes

Fundamental SDE Formulations in Evolution

At the most basic level, SDEs in evolutionary biology model how a trait value, denoted as X(t), changes over time in response to various evolutionary forces. The general form of these equations mirrors those used in physics and finance:

$$\frac{dX(t)}{dt} = F(X(t)) + \sum_{a=1}^{n} g_a(X(t))\,\xi_a(t)$$

In this biological context, X(t) represents the trait value at time t, F(X(t)) is the deterministic component representing directional evolutionary forces such as natural selection, and the summation term captures the cumulative effect of multiple stochastic influences (ξₐ(t)) on the evolutionary process [27]. These stochastic terms represent random fluctuations that affect evolutionary trajectories, such as environmental variability, random mutations, or genetic drift. The functions gₐ determine how these random influences scale with the trait value itself, allowing for more realistic biological scenarios where the impact of randomness may depend on the current state of the trait.

For practical applications in evolutionary biology, this general form is often implemented in specific models that incorporate biological realism. A typical SDE in evolutionary studies takes the form:

$$dX_t = \mu(X_t, t)\,dt + \sigma(X_t, t)\,dB_t$$

Here, μ(Xₜ,t) represents the directional component of evolution (including selection toward an optimum), while σ(Xₜ,t)dBₜ captures the stochastic component of evolutionary change, with Bₜ denoting a Brownian motion process [27]. In biological terms, over a small time interval of length δ the trait value Xₜ changes by an amount that is approximately normally distributed, with expectation μ(Xₜ,t)δ and variance σ(Xₜ,t)²δ, independently of its past behavior. This formulation closely mirrors the biological understanding of evolution as a process with both deterministic (selection) and stochastic (drift) components operating over time.
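The Euler–Maruyama scheme discretizes the normal-increment description above directly: each step adds drift × dt plus diffusion × a N(0, dt) increment. The sketch below applies it to an Ornstein-Uhlenbeck trait, dXₜ = α(θ - Xₜ)dt + σdBₜ, simulated over many independent paths. The parameter values are hypothetical, and the drift and diffusion functions can be replaced by any μ(Xₜ,t) and σ(Xₜ,t).

```python
import numpy as np

def euler_maruyama(drift, diffusion, x0, t_end, n_steps, n_paths, rng):
    """End-point values of n_paths simulations of dX = drift(X,t) dt + diffusion(X,t) dB."""
    dt = t_end / n_steps
    x = np.full(n_paths, float(x0))
    for k in range(n_steps):
        t = k * dt
        dB = rng.normal(0.0, np.sqrt(dt), size=n_paths)  # Brownian increments ~ N(0, dt)
        x = x + drift(x, t) * dt + diffusion(x, t) * dB
    return x

alpha, theta, sigma = 2.0, 1.0, 0.5  # hypothetical pull strength, optimum, noise
rng = np.random.default_rng(42)
end = euler_maruyama(
    drift=lambda x, t: alpha * (theta - x),
    diffusion=lambda x, t: sigma,
    x0=0.0, t_end=5.0, n_steps=500, n_paths=5000, rng=rng,
)
# After t = 5 the process is near stationarity: mean ~ theta, variance ~ sigma^2 / (2 alpha)
print(end.mean(), end.var(), sigma**2 / (2 * alpha))
```

Milstein's method adds a correction term that matters when the diffusion depends on the state. For the additive noise used here, the two schemes coincide.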

The Moving Optimum Model: SDEs for Climate Change Response

One of the most influential applications of SDEs in evolutionary biology is the "moving optimum" model, which has become particularly relevant for understanding adaptation to climate change. This framework models stabilizing selection with a phenotypic optimum that changes over time, representing the shifting environmental conditions that populations must track to maintain fitness [28].

The core quantitative-genetic model for a single trait follows the Lande equation:

$$\Delta\bar{z} = G\,\nabla \ln \bar{w}$$

Where Δz̄ represents the change in mean phenotype per generation, G is the additive genetic variance, and ∇lnw̄ is the selection gradient [28]. When the phenotypic optimum moves linearly over time, this deterministic equation can be extended to include stochastic components, resulting in an SDE that captures both the systematic response to the moving optimum and random fluctuations due to environmental variability and genetic sampling.
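The moving-optimum recursion can be sketched directly from the Lande equation. The sketch makes the following hypothetical assumptions, with all parameter values invented:

- Gaussian stabilizing selection of width ω² acts on a normally distributed phenotype with variance P. The selection gradient is then (θ - z̄)/(ω² + P), which corresponds to γ = 1/(ω² + P).
- Genetic drift of the mean is a normal deviate with variance G/Nₑ per generation.

```python
import numpy as np

def moving_optimum_lag(G, P, omega2, k, Ne, n_gen, rng):
    """Lag (optimum minus mean phenotype) each generation as the optimum moves by k."""
    zbar, lags = 0.0, []
    for t in range(n_gen):
        theta = k * t                                 # optimum shifts linearly in time
        gradient = (theta - zbar) / (omega2 + P)      # d ln(w_bar) / d z_bar
        drift = rng.normal(0.0, np.sqrt(G / Ne))      # random genetic drift of the mean
        zbar += G * gradient + drift                  # Lande equation plus drift
        lags.append(k * (t + 1) - zbar)               # lag behind the next optimum
    return np.array(lags)

rng = np.random.default_rng(5)
G, P, omega2, k = 0.5, 1.0, 9.0, 0.01
lags = moving_optimum_lag(G, P, omega2, k, Ne=1000, n_gen=2000, rng=rng)
# Deterministic theory: the lag settles at k * (omega2 + P) / G
print(lags[-1000:].mean(), k * (omega2 + P) / G)
```

The equilibrium lag grows with the speed of environmental change k and shrinks with genetic variance G. This is the quantity that, in fuller models, determines whether mean fitness stays high enough for persistence.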

For multivariate trait evolution, the model expands to:

$$\Delta\bar{\mathbf{z}} = \mathbf{G}\boldsymbol{\beta}$$

Where G is the genetic covariance matrix and β is the multivariate selection gradient pointing toward the phenotypic optimum [28]. The structure of the G-matrix influences the response to selection, with genetic correlations potentially constraining or facilitating evolution depending on their alignment with the fitness landscape. The mathematical description of these constraints relies on eigenanalysis of the G-matrix, identifying "genetic lines of least resistance" (gmax) along which evolution proceeds most readily [28].
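The eigenanalysis described above takes only a few lines of NumPy. The three-trait G-matrix and the selection gradient below are hypothetical. The sketch extracts gmax as the leading eigenvector and computes the one-generation response Δz̄ = Gβ, showing how genetic covariances can deflect the response away from the direction of selection.

```python
import numpy as np

# Hypothetical G-matrix for three traits (e.g., limb length, jaw size, head width)
G = np.array([
    [1.0, 0.8, 0.3],
    [0.8, 1.0, 0.4],
    [0.3, 0.4, 0.5],
])
beta = np.array([0.2, -0.2, 0.1])  # hypothetical selection gradient

eigvals, eigvecs = np.linalg.eigh(G)  # symmetric matrix: eigenvalues in ascending order
g_max = eigvecs[:, -1]                # genetic line of least resistance
response = G @ beta                   # multivariate Lande equation, one generation

def angle_deg(u: np.ndarray, v: np.ndarray) -> float:
    """Angle between two directions, ignoring sign (eigenvectors have arbitrary sign)."""
    cos = abs(u @ v) / (np.linalg.norm(u) * np.linalg.norm(v))
    return float(np.degrees(np.arccos(np.clip(cos, 0.0, 1.0))))

print("share of genetic variance along g_max:", eigvals[-1] / eigvals.sum())
print("response:", response.round(3))
print("angle(beta, response):", angle_deg(beta, response))
print("angle(response, g_max):", angle_deg(response, g_max))
```

With these values, selection to reduce the second trait produces essentially no response in that trait. Its positive genetic correlations with the other two traits, which are selected to increase, cancel the direct response.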

Table 1: Key SDE-Based Models in Trait Evolution

| Model Type | Mathematical Formulation | Biological Interpretation | Primary Applications |
|---|---|---|---|
| Geometric Brownian Motion | dXₜ = μXₜdt + σXₜdBₜ | Exponential growth/drift with rate proportional to current state | Population size dynamics; morphological evolution |
| Ornstein-Uhlenbeck Process | dXₜ = α(θ - Xₜ)dt + σdBₜ | Stabilizing selection toward optimum θ with strength α | Adaptation under constrained evolution; niche conservatism |
| Moving Optimum Model | dXₜ = Gγ(θ(t) - Xₜ)dt + σdBₜ | Tracking a changing phenotypic optimum θ(t), with genetic variance G and stabilizing-selection strength γ | Climate change adaptation; evolutionary rescue |

Comparative Framework: SDE Approaches in Trait Evolution

Benchmarking Detection Power in Evolutionary Studies

The practical implementation of SDE-based models requires sophisticated statistical tools for parameter estimation and model selection. Recent benchmarking studies have evaluated the performance of different analytical approaches for detecting selection in evolve-and-resequence (E&R) studies, which provide powerful experimental platforms for studying trait evolution in real-time [29].

These evaluations tested 15 different test statistics implemented in 10 software tools across three evolutionary scenarios: selective sweeps, truncating selection, and polygenic adaptation to a new trait optimum. The performance was assessed using receiver operating characteristic (ROC) curves, with particular attention to the true-positive rate at a low false-positive rate threshold of 0.01 [29]. The results demonstrated that method performance varies substantially across evolutionary scenarios, with no single approach dominating across all contexts.

For selective sweep detection, the LRT-1 test performed best among tools supporting multiple replicates, while the χ² test excelled for single-replicate analyses [29]. In contrast, for detecting polygenic adaptation—particularly relevant for quantitative trait evolution—methods that leverage time series data and replicate information generally outperformed simpler approaches. The CLEAR method provided the most accurate estimates of selection coefficients across scenarios, highlighting the importance of matching analytical tools to the underlying evolutionary model [29].
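The partial AUC criterion used in these benchmarks can be computed from per-SNP scores and known selected or neutral labels. The function below is a minimal sketch that assumes continuous scores without ties. It returns the unnormalized area for FPR ≤ max_fpr, and the simulated scores are hypothetical rather than output from any of the cited tools.

```python
import numpy as np

def partial_auc(scores, labels, max_fpr=0.01):
    """Unnormalized area under the ROC curve for false-positive rates in [0, max_fpr]."""
    order = np.argsort(-np.asarray(scores, dtype=float), kind="mergesort")
    y = np.asarray(labels, dtype=bool)[order]
    n_pos, n_neg = y.sum(), (~y).sum()
    tpr = np.cumsum(y) / n_pos            # TPR after each threshold step
    fpr_end = np.cumsum(~y) / n_neg       # FPR after each step
    fpr_start = fpr_end - (~y) / n_neg    # only neutral SNPs move the FPR
    # Each neutral SNP adds a horizontal ROC segment at the current TPR; clip at max_fpr.
    width = np.clip(np.minimum(fpr_end, max_fpr) - fpr_start, 0.0, None)
    return float(np.sum(width * tpr))

rng = np.random.default_rng(0)
# Hypothetical benchmark: 100 selected SNPs with elevated scores among 9,900 neutral SNPs
labels = np.r_[np.ones(100, dtype=bool), np.zeros(9900, dtype=bool)]
scores = np.r_[rng.normal(2.5, 1.0, 100), rng.normal(0.0, 1.0, 9900)]
print(partial_auc(scores, labels, max_fpr=0.01))
```

In this unnormalized form, a perfect detector scores exactly max_fpr. Other conventions rescale the value, so pAUCs should only be compared within a single convention.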

Table 2: Performance Comparison of Selection Detection Methods

| Software Tool | Selective Sweeps (pAUC) | Truncating Selection (pAUC) | Stabilizing Selection (pAUC) | Computational Time | Data Requirements |
|---|---|---|---|---|---|
| LRT-1 | 0.021 | 0.017 | 0.015 | Fast | Two time points + replicates |
| CLEAR | 0.019 | 0.022 | 0.020 | Moderate | Time series + replicates |
| CMH Test | 0.018 | 0.019 | 0.016 | Fast | Two time points + replicates |
| χ² Test | 0.017 | 0.015 | 0.012 | Very fast | Single replicate |
| WFABC | 0.015 | 0.018 | 0.017 | Very slow | Time series |

The Scientist's Toolkit: Essential Research Reagents

Implementing SDE-based models in trait evolution research requires both conceptual and computational tools. The following table summarizes key "research reagents" essential for this work:

Table 3: Essential Research Reagents for SDE-Based Trait Evolution Studies

| Research Reagent | Function | Implementation Examples |
|---|---|---|
| Phylogenetic Comparative Methods | Characterize macroevolutionary patterns from species trait data | Fabric model; Ornstein-Uhlenbeck models |
| Evolve-and-Resequence (E&R) Data | Experimental evolution with genomic tracking | Drosophila populations; microbial evolution experiments |
| Pool-Seq Sequencing | Measure allele frequencies in entire populations | Time-series allele frequency data |
| Stochastic Calculus Framework | Mathematical foundation for SDE solutions | Itô calculus; Stratonovich calculus |
| Numerical SDE Solvers | Compute solutions to evolution equations | Euler–Maruyama method; Milstein method |
| Genetic Covariance Estimation | Quantify constraints and opportunities for multivariate evolution | G-matrix estimation; principal component analysis |

Methodological Deep Dive: Experimental Protocols and Analytical Workflows

Standardized E&R Experimental Protocol

Evolve-and-resequence studies represent one of the most powerful approaches for generating data to parameterize SDE models of trait evolution. A standardized protocol for such experiments includes the following key steps [29]:

Founder Population Construction: Establish replicate populations from a genetically diverse founder population. Benchmark studies used 10 replicate diploid populations of 1000 individuals founded from 1000 haploid chromosomes capturing natural polymorphisms [29].

Experimental Evolution: Subject replicates to defined selection regimes for multiple generations (typically 50-60 generations). Maintain control populations when possible to distinguish selection from drift.

Time-Series Sampling: Collect genomic samples at regular intervals (e.g., every 10 generations) throughout the experiment. This enables reconstruction of allele frequency trajectories rather than just endpoint comparisons.

Pooled Sequencing: Sequence pooled individuals from each population time point (Pool-Seq) to estimate allele frequencies across the genome.

Variant Calling and Frequency Estimation: Identify segregating SNPs and estimate their frequencies in each population at each time point.

Selection Detection Analysis: Apply multiple statistical tests to identify SNPs showing frequency changes inconsistent with neutral drift.
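The time-series sampling and Pool-Seq steps can be mimicked in a toy forward simulation. This minimal sketch is not the simulator used in the cited benchmark. It assumes:

- genic selection on a single biallelic SNP;
- binomial (Wright–Fisher) drift among 2,000 chromosomes (1,000 diploids);
- sampling every 10 generations up to generation 60;
- binomial read sampling at a hypothetical 50x coverage.

```python
import numpy as np

def wright_fisher(p0, s, n_chrom, generations, rng):
    """Allele-frequency trajectory under genic selection (s) and binomial drift."""
    p = np.empty(generations + 1)
    p[0] = p0
    for t in range(generations):
        p_sel = p[t] * (1 + s) / (1 + p[t] * s)            # deterministic selection
        p[t + 1] = rng.binomial(n_chrom, p_sel) / n_chrom   # random genetic drift
    return p

def pool_seq(p, coverage, rng):
    """Pool-Seq frequency estimate: alternative-allele reads out of `coverage` reads."""
    return rng.binomial(coverage, p) / coverage

rng = np.random.default_rng(3)
sample_gens = np.arange(0, 61, 10)  # generations 0, 10, ..., 60
results = {}
for label, s in [("neutral", 0.0), ("selected", 0.05)]:
    results[label] = np.array([
        pool_seq(wright_fisher(0.2, s, 2000, 60, rng)[sample_gens], 50, rng)
        for _ in range(10)  # 10 replicate populations
    ])
    print(label, results[label].mean(axis=0).round(2))
```

The neutral SNP wanders only slightly from its starting frequency, while the selected SNP rises consistently across replicates. Replicate-aware tests such as CMH exploit exactly this consistency.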

For quantitative trait architectures, the selection protocol involves either truncating selection (selecting top/bottom x% of individuals based on phenotype) or laboratory natural selection (exposing populations to novel environments and allowing polygenic adaptation to occur). In truncating selection, the effect sizes of quantitative trait nucleotides (QTNs) are typically drawn from gamma distributions, with a proportion of individuals culled each generation based on their phenotypic values [29]. For stabilizing selection experiments, populations evolve toward a new trait optimum, with selection strength determined by the fitness function landscape.
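The truncating-selection design can be sketched with an additive polygenic trait. This toy model is an illustration built on stated assumptions, not the benchmark's simulator:

- 50 unlinked diploid QTNs with gamma-distributed effects, all acting to increase the trait;
- an initial heritability of 0.5;
- truncation that keeps the top 20% each generation;
- random mating with free recombination among the selected parents.

```python
import numpy as np

rng = np.random.default_rng(11)
n_ind, n_qtn, keep_frac, h2, n_gen = 1000, 50, 0.2, 0.5, 20  # hypothetical settings

effects = rng.gamma(shape=0.5, scale=1.0, size=n_qtn)   # gamma-distributed QTN effects
geno = rng.binomial(2, rng.uniform(0.1, 0.9, n_qtn), size=(n_ind, n_qtn))  # 0/1/2 counts
start_freq = geno.mean(axis=0) / 2
env_sd = np.sqrt((geno @ effects).var() * (1 - h2) / h2)  # sets initial heritability

mean_pheno = []
for _ in range(n_gen):
    pheno = geno @ effects + rng.normal(0.0, env_sd, n_ind)
    mean_pheno.append(pheno.mean())
    n_keep = int(keep_frac * n_ind)
    parents = geno[np.argsort(pheno)[-n_keep:]]          # truncation: keep the top 20%
    # Random mating with free recombination: each offspring draws one allele per
    # locus from each of two randomly chosen parents (transmission prob = count / 2).
    mums = parents[rng.integers(n_keep, size=n_ind)]
    dads = parents[rng.integers(n_keep, size=n_ind)]
    geno = rng.binomial(1, mums / 2) + rng.binomial(1, dads / 2)

freq_change = geno.mean(axis=0) / 2 - start_freq
print(round(mean_pheno[0], 2), round(mean_pheno[-1], 2))
print("correlation(effect, frequency change):", np.corrcoef(effects, freq_change)[0, 1])
```

Large-effect QTNs tend to show the biggest frequency shifts, while the many small-effect loci change subtly. This is why polygenic responses are harder to detect with per-SNP tests than sweeps are.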

The Fabric-Regression Model Protocol

For macroevolutionary studies across species, the Fabric-regression model provides a method for identifying historical directional shifts and changes in evolvability while accounting for trait covariates [30]. The implementation protocol involves:

Data Collection: Compile trait values and covariates across a phylogeny of species (e.g., 1504 mammalian species for brain/body size evolution) [30].

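Model Specification: Define the Fabric-regression model as:

$$Y_i = \beta_0 + \sum_{j} \beta_j X_{ij} + \sum_{k} \beta_{ik} + e_i$$

where Yᵢ is the trait value of species i, β₀ is the intercept, Xᵢⱼ are covariates, βⱼ are regression coefficients, βᵢₖ are directional shifts on the branches k along the path from the root to species i, and eᵢ ~ N(0,υσ²) is the residual generated by a Brownian process with rate σ² scaled by the evolvability parameter υ [30].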

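Parameter Estimation: Use maximum likelihood estimation to fit the model, with log-likelihood:

$$\log L = -\frac{n}{2}\log(2\pi\sigma^2) - \frac{1}{2}\log\lvert\mathbf{V}(\upsilon)\rvert - \frac{1}{2\sigma^2}(\mathbf{Y}-\boldsymbol{\mu})^{\top}\mathbf{V}(\upsilon)^{-1}(\mathbf{Y}-\boldsymbol{\mu})$$

where n is the number of species, Y is the vector of trait values, μ is the vector of expected trait values implied by the covariate and directional-shift terms of the model, and V(υ) is the variance-covariance matrix determined by the phylogeny and evolvability parameter [30].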

Model Selection: Compare models with and without directional shifts (βᵢₖ) and evolvability changes (υ) using likelihood-ratio tests or information criteria.

Biological Interpretation: Interpret significant βᵢₖ parameters as historical directional changes, and υ significantly different from 1 as a change in evolutionary potential (υ > 1 increased, υ < 1 reduced evolvability), after accounting for covariate influences.
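The estimation and model-selection steps can be sketched for a fixed tree and a fixed, known υ: a directional shift enters as an extra 0/1 column of the design matrix, and the log-likelihood is evaluated at the closed-form generalized-least-squares (GLS) estimates of the coefficients and σ². The four-species tree, υ = 3, the data, and the function names are hypothetical. A real Fabric-regression analysis [30] must also locate shifts and estimate υ, which this sketch does not attempt, and the erfc shortcut for the p-value is valid only when the models differ by one parameter.

```python
import math
import numpy as np

def gls_loglik(y, X, V):
    """Maximized log-likelihood of y = X @ beta + e with e ~ N(0, sigma^2 * V)."""
    n = len(y)
    V_inv = np.linalg.inv(V)
    # Closed-form GLS estimates of the covariate and shift coefficients
    beta = np.linalg.solve(X.T @ V_inv @ X, X.T @ V_inv @ y)
    resid = y - X @ beta
    sigma2 = float(resid @ V_inv @ resid) / n  # ML estimate of the Brownian rate
    _, logdet_V = np.linalg.slogdet(V)
    # At the ML sigma^2 the quadratic form equals n, giving the "+ n" term
    return -0.5 * (n * math.log(2 * math.pi * sigma2) + logdet_V + n), beta

def lrt_one_df(loglik_reduced, loglik_full):
    """Likelihood-ratio test for nested models that differ by one parameter."""
    stat = max(0.0, 2.0 * (loglik_full - loglik_reduced))
    # Survival function of a chi-square with 1 df: erfc(sqrt(stat / 2))
    return stat, math.erfc(math.sqrt(stat / 2.0))

# Hypothetical tree ((A,B),(C,D)) with unit branch lengths; V[i, j] is the shared path length
V = np.array([[2.0, 1.0, 0.0, 0.0],
              [1.0, 2.0, 0.0, 0.0],
              [0.0, 0.0, 2.0, 1.0],
              [0.0, 0.0, 1.0, 2.0]])
upsilon = 3.0
V_ups = V.copy()
V_ups[3, 3] += upsilon - 1.0  # stretch D's unit-length terminal branch by upsilon

y = np.array([1.0, 1.3, 3.2, 2.1])      # hypothetical trait values
x = np.array([0.5, 0.8, 1.4, 1.1])      # hypothetical covariate
shift = np.array([0.0, 0.0, 1.0, 1.0])  # 1 if the (C,D) stem branch lies on the root-to-tip path

X_reduced = np.column_stack([np.ones(4), x])
X_full = np.column_stack([np.ones(4), x, shift])
ll_reduced, _ = gls_loglik(y, X_reduced, V_ups)
ll_full, beta_full = gls_loglik(y, X_full, V_ups)
stat, p_value = lrt_one_df(ll_reduced, ll_full)
```

Because the reduced model is nested within the full one, ll_full can never fall below ll_reduced; the test asks whether the gain is larger than expected by chance.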

Visualization Framework: Conceptualizing SDE Models in Evolution

Workflow Diagram: SDE-Based Evolutionary Analysis

The following diagram illustrates the integrated workflow for applying SDE models in trait evolution research, from experimental design through biological interpretation:

Evolutionary Process Classification Diagram

This diagram categorizes the main evolutionary processes and their corresponding SDE formulations, highlighting the mathematical structure of each model type:

Discussion: Synthesis and Future Directions

The integration of SDE frameworks into trait evolution research has helped shift evolutionary biology from a predominantly historical science toward one capable of making quantitative predictions about evolutionary processes. Benchmarking studies show that method performance is highly context-dependent, with different statistical tools excelling under different evolutionary scenarios [29]. This underscores the importance of matching analytical approaches to biological realities rather than relying on one-size-fits-all solutions.

Emerging methodologies like Evolutionary Discriminant Analysis (EvoDA) demonstrate how supervised learning approaches can complement traditional statistical methods for predicting evolutionary models, particularly for traits subject to measurement error [31]. Similarly, the development of Fabric-regression models represents a significant advance for partitioning trait variation into components shared with covariates and unique components that may reflect independent evolutionary processes [30]. These methodological innovations expand the toolkit available for testing evolutionary hypotheses using SDE-based frameworks.

Looking forward, the field is moving toward more integrated models that simultaneously capture microevolutionary processes operating within populations and macroevolutionary patterns evident across phylogenies. The challenge remains to develop models that are mathematically tractable yet biologically realistic, with sufficient complexity to capture essential evolutionary dynamics but sufficient simplicity to permit parameter estimation with limited data. As genomic and phenotypic datasets continue to grow in breadth and resolution, SDE-based approaches will likely play an increasingly central role in unifying evolutionary theory across biological scales and taxonomic groups.

The accurate parameterization of evolutionary models is fundamental to testing hypotheses about the processes that shape biological diversity. In phylogenetic comparative methods, mathematical models are used to infer unobserved evolutionary processes from present-day trait variation across species [20]. Key parameters within these models quantify core evolutionary concepts: selection strength, which describes the intensity of directional or stabilizing selection; evolutionary optima, which represent trait values favored by selection; and evolutionary rates, which capture the pace of trait change over time [32]. Interpreting these parameters correctly requires understanding their mathematical definitions, biological meanings, and the interrelationships between them.

As model complexity has grown, so too have the challenges in parameter estimation and interpretation. Studies have demonstrated that inadequate data can lead to high error rates in parameter estimation and model selection, potentially resulting in false biological conclusions [32]. For example, estimates of phylogenetic signal (Pagel's λ) can vary dramatically from no signal (λ = 0) to approximately Brownian (λ ≈ 1) when applied to different simulated realizations of the same process, particularly on small phylogenies [32]. This highlights the critical importance of quantifying uncertainty and power in comparative analyses.
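The instability of λ on small trees can be explored with a few lines of code. The sketch below assumes the common definition of Pagel's λ as a multiplier on the off-diagonal elements of the Brownian covariance matrix, restricts λ to [0, 1], estimates it by grid-search maximum likelihood, and applies it to datasets simulated under Brownian motion (true λ = 1) on a hypothetical eight-species tree. It illustrates the phenomenon described in [32] but does not reproduce that study's analysis.

```python
import numpy as np

def pagel_lambda(V, lam):
    """Scale the off-diagonal (shared-history) covariances by lam; keep tip variances."""
    V = np.asarray(V, dtype=float)
    V_lam = lam * V
    np.fill_diagonal(V_lam, np.diag(V))
    return V_lam

def profile_loglik(y, V):
    """Gaussian log-likelihood maximized over the root mean and the rate sigma^2."""
    n = len(y)
    V_inv = np.linalg.inv(V)
    ones = np.ones(n)
    mu = (ones @ V_inv @ y) / (ones @ V_inv @ ones)  # GLS estimate of the root state
    r = y - mu
    sigma2 = (r @ V_inv @ r) / n
    _, logdet = np.linalg.slogdet(V)
    return -0.5 * (n * np.log(2 * np.pi * sigma2) + logdet + n)

def estimate_lambda(y, V, grid=np.linspace(0.0, 1.0, 101)):
    """Grid-search ML estimate of lambda, restricted here to [0, 1]."""
    scores = [profile_loglik(y, pagel_lambda(V, lam)) for lam in grid]
    return float(grid[int(np.argmax(scores))])

# Hypothetical balanced 8-tip tree with unit branches: shared path = 3 - bit_length(i XOR j)
V = np.array([[3 - (i ^ j).bit_length() for j in range(8)] for i in range(8)], dtype=float)
rng = np.random.default_rng(3)
# 200 datasets simulated under pure Brownian motion, i.e. true lambda = 1
sims = rng.multivariate_normal(np.zeros(8), V, size=200)
lambda_hats = np.array([estimate_lambda(y, V) for y in sims])
print(lambda_hats.min(), lambda_hats.max())
```

On a tree this small, the estimates typically scatter across much of the [0, 1] interval even though every dataset was generated with λ = 1.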

Core Parameters and Their Biological Interpretations

Selection Strength (α)

Selection strength, typically denoted by the parameter α in Ornstein-Uhlenbeck (OU) models, quantifies the strength of stabilizing selection pulling a trait toward an optimal value [32]. Mathematically, α is the rate at which the trait is pulled back toward the optimum θ in the stochastic differential equation dYₜ = -α(Yₜ - θ)dt + σdBₜ, where the pull grows in proportion to the trait's distance from θ [32]. Biologically, a higher α value indicates stronger stabilizing selection, meaning traits are more constrained and deviate less from their optimal values. When α = 0, the OU model reduces to a Brownian motion model, indicating the absence of stabilizing selection and allowing traits to drift freely [32].
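The contrast between α > 0 and α = 0 can be checked numerically. This sketch simulates many independent lineages (no phylogeny) using the exact Gaussian transition of the OU process; the parameter values and the function name are arbitrary choices for illustration. Under OU, the expected deviation from θ decays as e^(-αt), halving every ln 2/α time units, and the variance approaches σ²/(2α), whereas with α = 0 the variance grows without bound as σ²t.

```python
import numpy as np

def simulate_ou(y0, theta, alpha, sigma, t_max, n_steps, n_lineages, rng):
    """Simulate dY = -alpha (Y - theta) dt + sigma dB for independent lineages.

    Uses the exact Gaussian transition of the OU process, so the step size
    introduces no discretization error. alpha = 0 gives Brownian motion.
    """
    dt = t_max / n_steps
    if alpha > 0:
        decay = np.exp(-alpha * dt)
        step_var = sigma**2 / (2 * alpha) * (1 - np.exp(-2 * alpha * dt))
    else:
        decay, step_var = 1.0, sigma**2 * dt  # no pull toward theta
    y = np.full(n_lineages, float(y0))
    for _ in range(n_steps):
        # The mean relaxes toward theta; Gaussian noise is added on top
        y = theta + (y - theta) * decay + rng.normal(0.0, np.sqrt(step_var), n_lineages)
    return y

rng = np.random.default_rng(11)
end_ou = simulate_ou(0.0, 0.0, alpha=2.0, sigma=1.0, t_max=10.0, n_steps=200, n_lineages=5000, rng=rng)
end_bm = simulate_ou(0.0, 0.0, alpha=0.0, sigma=1.0, t_max=10.0, n_steps=200, n_lineages=5000, rng=rng)
# OU variance levels off near sigma^2 / (2 alpha) = 0.25; BM variance keeps growing as sigma^2 t = 10
print(end_ou.var(), end_bm.var())
```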

Evolutionary Optima (θ)

Evolutionary optima, represented by the parameter θ in OU models, are the trait values that selection favors in a given selective regime [32]. They act as the "pull" points in the adaptive landscape toward which traits evolve under stabilizing selection. Extended OU models allow multiple θ values across different branches or clades of a phylogeny, representing distinct adaptive zones or selective regimes [32]. Identifying shifts between these optima helps researchers understand how different ecological factors have shaped trait evolution across lineages.
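For a lineage that passes through several selective regimes, the expected trait value follows from applying the OU mean equation segment by segment. This is a minimal sketch assuming a single α shared by all regimes; it covers only the expected value, not the variances and covariances that a full multi-optimum likelihood also needs, and the regime durations and optima in the example are hypothetical.

```python
import math

def ou_expected_mean(y0, segments, alpha):
    """Expected trait value at the end of a lineage under a multi-optimum OU model.

    segments: ordered (duration, theta) pairs from root to tip, one per selective regime.
    """
    mean = y0
    for duration, theta in segments:
        # Within each regime the expected value relaxes exponentially toward its optimum
        mean = theta + (mean - theta) * math.exp(-alpha * duration)
    return mean

# Hypothetical lineage: 2 time units under optimum 0, then a shift to optimum 5 for 1 unit
print(ou_expected_mean(0.0, [(2.0, 0.0), (1.0, 5.0)], alpha=1.0))  # about 3.16
```

After one time unit in the new regime with α = 1, the lineage has covered 1 - e^(-1), roughly 63%, of the distance to the new optimum; larger α would bring it closer.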

Evolutionary Rates (σ²)

Evolutionary rates, typically denoted as σ² (sigma squared) in Brownian motion models, measure the variance accumulated per unit time in a continuously valued trait [33] [32]. Under Brownian motion, trait evolution follows the stochastic differential equation dYₜ = σdBₜ, where Bₜ is standard Brownian motion [32], so that, from a fixed starting value, the trait variance after time t is Var(Yₜ) = σ²t. The rate σ² is therefore often interpreted as the pace of evolutionary change. Recent innovations have modeled evolutionary rates as time-correlated stochastic variables rather than constants, using approaches like autoregressive-moving-average (ARMA) models to capture more complex evolutionary dynamics [33].
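The linear growth of variance under Brownian motion, and one way a rate can be made time-correlated, can be sketched as follows. The time-correlated option lets the log-rate follow an AR(1) process, the simplest ARMA case; this is an illustrative assumption rather than the model of [33], and `ar_phi` and `ar_sd` are hypothetical parameter names.

```python
import numpy as np

def simulate_bm(sigma2, t_max, n_steps, n_lineages, rng, ar_phi=None, ar_sd=0.0):
    """End-point trait values under Brownian motion with increments of variance rate * dt.

    If ar_phi is given, log(rate) follows an AR(1) process around log(sigma2):
    a simplified, hypothetical stand-in for ARMA rate models.
    """
    dt = t_max / n_steps
    mean_log = np.log(sigma2)
    log_rate = np.full(n_lineages, mean_log)
    y = np.zeros(n_lineages)
    for _ in range(n_steps):
        if ar_phi is not None:
            # Each step's log-rate is correlated with the previous step's log-rate
            log_rate = mean_log + ar_phi * (log_rate - mean_log) + rng.normal(0.0, ar_sd, n_lineages)
        y += rng.normal(0.0, np.sqrt(np.exp(log_rate) * dt))
    return y

rng = np.random.default_rng(5)
constant = simulate_bm(2.0, t_max=5.0, n_steps=100, n_lineages=20000, rng=rng)
correlated = simulate_bm(2.0, t_max=5.0, n_steps=100, n_lineages=20000, rng=rng,
                         ar_phi=0.9, ar_sd=0.3)
print(constant.var())  # close to sigma^2 * t = 10
```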

Table 1: Key Parameters in Evolutionary Models and Their Interpretations

| Parameter | Common Symbol | Primary Interpretation | Biological Meaning |
|---|---|---|---|
| Selection Strength | α | Strength of stabilizing selection | How strongly traits are pulled toward an optimum |
| Evolutionary Optima | θ | Ideal trait value in a selective regime | Trait value favored by natural selection |
| Evolutionary Rate | σ² | Pace of trait divergence over time | Rate of variance accumulation per unit time |
| Phylogenetic Signal | λ | Degree to which traits reflect shared ancestry | How well phylogeny predicts trait similarity |

Comparative Analysis of Modeling Frameworks

Conventional Model Selection Approaches

Traditional phylogenetic comparative methods have relied heavily on information-theoretic approaches for model selection, particularly variants of the Akaike Information Criterion (AIC) [20]. These methods compare fitted models based on their likelihood scores, penalized by the number of parameters to mitigate overfitting [20]. The fundamental principle is to balance model complexity against goodness-of-fit, with the optimal model exhibiting the best trade-off between these competing demands [20].
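A minimal sketch of information-theoretic comparison: AICc (AIC with the usual small-sample correction) and Akaike weights computed from maximized log-likelihoods. The log-likelihoods, parameter counts, and tree size below are hypothetical placeholders, not results from any dataset.

```python
import math

def aicc(loglik, k, n):
    """Small-sample corrected AIC: AIC + 2k(k+1)/(n-k-1)."""
    aic = 2 * k - 2 * loglik
    return aic + (2 * k * (k + 1)) / (n - k - 1)

def akaike_weights(scores):
    """Convert AIC or AICc scores into relative model weights that sum to 1."""
    best = min(scores.values())
    rel = {m: math.exp(-0.5 * (s - best)) for m, s in scores.items()}
    total = sum(rel.values())
    return {m: r / total for m, r in rel.items()}

# Hypothetical fits on a 30-taxon tree: (maximized log-likelihood, number of parameters)
fits = {"BM": (-45.2, 2), "OU": (-43.9, 3), "EB": (-45.0, 3)}
n_taxa = 30
scores = {m: aicc(ll, k, n_taxa) for m, (ll, k) in fits.items()}
weights = akaike_weights(scores)
```

Here OU receives the highest weight but BM is close behind, a small-scale illustration of how hard it can be to separate models with a limited number of taxa.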

While widely used, these conventional approaches have notable limitations. Monte Carlo simulation studies have shown that information criteria can have high error rates, particularly for small phylogenies or complex models [32]. The power to distinguish between models depends strongly on both the number of taxa and the structure of the phylogeny, and inadequate data lead to unreliable inferences [32]. These methods also often struggle with traits subject to measurement error, a realistic feature of empirical datasets [20].

Machine Learning Approaches

Evolutionary Discriminant Analysis (EvoDA) represents an innovative machine learning approach that applies supervised learning to predict evolutionary models via discriminant analysis [20]. This framework includes multiple algorithms: linear discriminant analysis (LDA), quadratic discriminant analysis (QDA), regularized discriminant analysis (RDA), mixture discriminant analysis (MDA), and flexible discriminant analysis (FDA) [20]. Unlike conventional methods that focus on model fitting and comparison, EvoDA emphasizes minimizing prediction error by building a predictive function where the response variable (evolutionary model) is predicted from input feature variables [20].

Simulation studies demonstrate that EvoDA offers substantial improvements over conventional AIC-based approaches, particularly when analyzing traits subject to measurement error [20]. The method has shown strength in challenging classification tasks involving multiple candidate models (e.g., distinguishing between seven different evolutionary models), establishing it as a promising framework for the next era of comparative research [20].

Bayesian Inference Methods

Approximate Bayesian Computation (ABC) frameworks, such as the one implemented in ABC-SysBio, provide an alternative approach for parameter estimation and model selection within the Bayesian formalism [34]. These methods are particularly valuable for the challenging problem of fitting stochastic models to data [34]. ABC approaches are "likelihood-free": instead of evaluating a likelihood, they simulate from the model and compare simulated and observed data using a distance function [34].

The key advantage of Bayesian methods is that they naturally accommodate uncertainty through posterior distributions, providing credible intervals for parameter estimates [34] [32]. Although computationally expensive, the additional insight gained from considering joint distributions over parameters often outweighs this cost, especially for complex problems [34]. ABC sequential Monte Carlo (ABC-SMC) approaches move gradually from sampling from the prior to sampling from the posterior by using a decreasing schedule of tolerance values [34].
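The likelihood-free logic can be sketched with plain rejection ABC, the simplest member of the family. ABC-SMC refines this scheme by running a sequence of shrinking tolerances with importance weighting, which the sketch omits. The flat prior bounds, the summary statistic, the tolerance, the eight-species tree, and the function name are assumptions for illustration; this is not the ABC-SysBio interface.

```python
import numpy as np

def abc_rejection(y_obs, V, n_draws=10000, rel_tol=0.1, prior=(0.1, 5.0), seed=0):
    """Rejection ABC for the Brownian rate sigma^2 on a known tree, root state fixed at 0."""
    rng = np.random.default_rng(seed)
    n = len(y_obs)
    V_inv = np.linalg.inv(V)
    chol = np.linalg.cholesky(V)

    def summary(y):
        # Tip variance after removing phylogenetic correlation
        return float(y @ V_inv @ y) / n

    s_obs = summary(y_obs)
    accepted = []
    for sigma2 in rng.uniform(prior[0], prior[1], size=n_draws):  # flat prior on sigma^2
        y_sim = np.sqrt(sigma2) * (chol @ rng.standard_normal(n))
        # Keep the draw if the simulated summary is within rel_tol of the observed one
        if abs(summary(y_sim) - s_obs) <= rel_tol * s_obs:
            accepted.append(sigma2)
    return np.array(accepted)

# Hypothetical balanced 8-tip tree with unit branches; data simulated with sigma^2 = 2
V = np.array([[3 - (i ^ j).bit_length() for j in range(8)] for i in range(8)], dtype=float)
y_obs = np.sqrt(2.0) * (np.linalg.cholesky(V) @ np.random.default_rng(123).standard_normal(8))
posterior = abc_rejection(y_obs, V)
print(len(posterior), posterior.mean())
```

The accepted draws approximate the posterior of σ²; their spread, rather than a single point estimate, conveys the uncertainty that an eight-species tree leaves.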

Table 2: Performance Comparison of Model Selection Frameworks

| Framework | Key Strength | Primary Limitation | Optimal Use Case |
|---|---|---|---|
| Information Criteria (AIC) | Computational efficiency; widely implemented | High error rates with limited data; sensitive to measurement error | Initial model screening; large phylogenies |
| Evolutionary Discriminant Analysis | High accuracy with noisy data; superior predictive accuracy | Less established; limited software implementation | Complex classification tasks; traits with measurement error |
| Bayesian Methods | Natural uncertainty quantification; posterior credible intervals | Computationally intensive; prior specification challenges | Complex stochastic models; problems requiring uncertainty estimates |

Experimental Protocols and Methodologies

Protocol for EvoDA Implementation

The implementation of Evolutionary Discriminant Analysis follows a structured workflow designed to maximize predictive accuracy for evolutionary model selection [20]:

Training Data Generation: Simulate trait data under known evolutionary models (BM, OU, EB, etc.) across the phylogenetic tree of interest. The number of simulations per model should be balanced to avoid classification bias.

Feature Extraction: Calculate summary statistics from the simulated trait data that capture relevant aspects of trait distribution and phylogenetic structure. These features serve as predictor variables in the discriminant analysis.

Classifier Training: Apply EvoDA algorithms (LDA, QDA, RDA, MDA, or FDA) to the simulated dataset, using the known generating models as response variables and the summary statistics as predictors.

Model Validation: Assess classifier performance using cross-validation techniques to estimate prediction accuracy and avoid overfitting. This involves holding out a portion of simulated data during training and testing predictions on the withheld data.

Empirical Application: Apply the trained classifier to empirical trait data to predict the most likely evolutionary model, along with probability estimates for each candidate model.
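The protocol steps can be sketched end to end for a two-model case. This is not the EvoDA implementation of [20]: it hand-codes plain two-class linear discriminant analysis in NumPy, distinguishes only BM from OU, uses two ad hoc summary statistics (log tip variance and a sister-contrast ratio), and a single held-out split in place of full cross-validation. The tree, α = 1, simulation counts, and all function names are hypothetical.

```python
import numpy as np

HEIGHT = 3.0
# Hypothetical balanced 8-tip tree, unit branches: shared path = 3 - bit_length(i XOR j)
V_BM = np.array([[HEIGHT - (i ^ j).bit_length() for j in range(8)] for i in range(8)])

def ou_vcv(V, alpha):
    """OU covariance (sigma^2 = 1, fixed root) on an ultrametric tree with shared paths V."""
    return np.exp(-2 * alpha * (HEIGHT - V)) * (1 - np.exp(-2 * alpha * V)) / (2 * alpha)

def features(y):
    """Two summary statistics: log tip variance and log sister-contrast ratio."""
    sister_diffs = y[0::2] - y[1::2]  # tips (0,1), (2,3), ... are sister pairs
    return np.array([np.log(np.var(y)), np.log(np.mean(sister_diffs**2) / np.var(y))])

def simulate_features(cov, n_sims, rng):
    """Training data generation and feature extraction for one candidate model."""
    chol = np.linalg.cholesky(cov)
    return np.array([features(y) for y in (chol @ rng.standard_normal((8, n_sims))).T])

def fit_lda(X, labels):
    """Two-class LDA with a pooled covariance and equal class priors."""
    X0, X1 = X[labels == 0], X[labels == 1]
    mu0, mu1 = X0.mean(axis=0), X1.mean(axis=0)
    pooled = ((len(X0) - 1) * np.cov(X0, rowvar=False)
              + (len(X1) - 1) * np.cov(X1, rowvar=False)) / (len(X) - 2)
    w = np.linalg.solve(pooled, mu1 - mu0)
    return w, -w @ (mu0 + mu1) / 2

def prob_class1(X, w, b):
    """Posterior probability of class 1 (OU) implied by the LDA Gaussian model."""
    return 1.0 / (1.0 + np.exp(-(X @ w + b)))

rng = np.random.default_rng(7)
# Balanced training set: 500 simulations under BM (label 0) and under OU (label 1)
X = np.vstack([simulate_features(V_BM, 500, rng), simulate_features(ou_vcv(V_BM, 1.0), 500, rng)])
labels = np.repeat([0, 1], 500)
# Hold out 300 simulations to estimate prediction accuracy
order = rng.permutation(len(X))
test_idx, train_idx = order[:300], order[300:]
w, b = fit_lda(X[train_idx], labels[train_idx])
accuracy = np.mean((prob_class1(X[test_idx], w, b) > 0.5) == labels[test_idx])
print(f"held-out accuracy: {accuracy:.2f}")
```

Applying `prob_class1` to the features of an empirical dataset on the same tree would give the predicted probability of OU versus BM, mirroring the empirical-application step.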

This protocol has been validated through case studies of escalating difficulty, demonstrating substantial improvements over conventional approaches when studying traits subject to measurement error [20].

Protocol for Phylogenetic Monte Carlo Analysis

The Phylogenetic Monte Carlo (pmc) approach addresses uncertainty quantification and power analysis in comparative methods [32]:

Model Fitting: Fit candidate evolutionary models to the empirical trait data using maximum likelihood or Bayesian methods.

Parametric Bootstrapping: Simulate new trait datasets under each fitted model, preserving the original phylogenetic tree and estimated parameters.

Model Reselection: Fit all candidate models to each simulated dataset and record the selected model based on information criteria or likelihood ratios.