Beyond Benchmarks: How the Comparative Approach Powers Modern Drug Discovery


Abstract

This article explores the transformative role of comparative methodologies in accelerating biomedical research and drug development. We first establish the foundational principles and historical context of comparative analysis, then examine its cutting-edge applications in target identification, model selection, and predictive analytics. We address common challenges in experimental design and data interpretation, and evaluate validation strategies through case studies in oncology, neurodegenerative diseases, and infectious diseases. Aimed at researchers and drug development professionals, this guide provides actionable insights for implementing robust comparative frameworks to enhance research efficiency and therapeutic innovation.

What is Comparative Analysis? Core Principles for Research and Drug Discovery

The comparative approach is a foundational scientific methodology that infers function, mechanism, and evolutionary history by systematically analyzing similarities and differences across entities. Its origins lie in 19th-century biology, where Charles Darwin and others compared anatomical traits across species to deduce common descent and adaptation. In modern data science, this approach has been computationally scaled, enabling the comparison of molecular datasets, disease states, or drug responses to generate actionable biological insights. This document provides application notes and protocols for implementing the comparative approach in biomedical research, emphasizing practical utility in target discovery and validation.

Key Applications in Modern Research

Cross-Species Genomic Comparison for Target Identification

Comparing conserved genetic elements across species highlights functionally critical genes and regulatory regions, prioritizing them for therapeutic intervention.

Table 1: Key Conserved Pathways in Human and Model Organisms

| Pathway/Element | Human Gene | Mouse Ortholog | Zebrafish Ortholog | Conservation Score (%) | Implication for Drug Targeting |

|---|---|---|---|---|---|

| PD-1/PD-L1 Immune Checkpoint | PDCD1 | Pdcd1 | pdcd1 | 85 | High; validates immuno-oncology models |

| Amyloid Precursor Protein Processing | APP | App | appa, appb | 90 | High; Alzheimer's disease modeling relevant |

| Telomerase Activity | TERT | Tert | tert | 78 | Moderate; cancer target, species-specific nuances |

| ACE2 Receptor (SARS-CoV-2 entry) | ACE2 | Ace2 | ace2 | 82 | High; validates infection & therapeutic models |

Protocol 2.1.1: Phylogenetic Footprinting for Conserved Non-Coding Elements

- Objective: Identify evolutionarily conserved regulatory sequences (e.g., enhancers) near a disease-associated gene.

- Materials: Genomic sequences (FASTA format) for human and at least 5 vertebrate species (e.g., chimp, mouse, rat, chicken, zebrafish) from ENSEMBL or UCSC Genome Browser.

- Software: Tools like phastCons (PHAST package) or web servers like ECR Browser.

- Procedure:

- Data Retrieval: Download a genomic region (± 100 kb from the gene's TSS) for all target species.

- Multiple Alignment: Use a whole-genome aligner (e.g., MULTIZ) pre-computed for vertebrate clusters (available via UCSC).

- Conservation Scoring: Run phastCons on the alignment using a neutral evolutionary model. This assigns a probability score (0-1) of conservation for each base.

- Peak Calling: Define conserved elements as contiguous bases with conservation scores >0.7 and length >100bp.

- Functional Annotation: Overlap identified elements with epigenetic marks (e.g., H3K27ac ChIP-seq data) from relevant cell types to predict regulatory activity.
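The peak-calling step above can be sketched in a few lines of Python. This is a minimal illustration, not part of the PHAST package: the function name `call_conserved_elements` and the toy score vector are assumptions, and real per-base scores would come from the phastCons output.

```python
from typing import List, Tuple


def call_conserved_elements(
    scores: List[float],
    min_score: float = 0.7,
    min_length: int = 100,
) -> List[Tuple[int, int]]:
    """Return half-open (start, end) index intervals of conserved elements.

    A conserved element is a run of consecutive bases whose score is
    > min_score and whose length is > min_length, as in the protocol.
    """
    elements = []
    run_start = None  # index where the current high-scoring run began
    for i, score in enumerate(scores):
        if score > min_score:
            if run_start is None:
                run_start = i
        else:
            if run_start is not None and i - run_start > min_length:
                elements.append((run_start, i))
            run_start = None
    # Close a run that extends to the end of the region.
    if run_start is not None and len(scores) - run_start > min_length:
        elements.append((run_start, len(scores)))
    return elements


# Toy per-base scores (made up): a 150 bp conserved run and an 80 bp run.
toy_scores = [0.1] * 50 + [0.9] * 150 + [0.2] * 30 + [0.95] * 80
conserved = call_conserved_elements(toy_scores)
print(conserved)
```

Only the 150 bp run passes both filters; the 80 bp run is dropped by the length threshold even though its scores are higher.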

Comparative Transcriptomics for Disease Subtyping

Comparing gene expression profiles across patient cohorts identifies disease subtypes, biomarkers, and deregulated pathways.

Table 2: Comparative Transcriptomics in NSCLC Subtyping

| Study (Year) | Cohorts Compared (Sample Size) | Key Comparative Finding | Clinical/Biological Implication |

|---|---|---|---|

| TCGA NSCLC (2023 Update) | Lung Adenocarcinoma (LUAD, n=576) vs. Lung Squamous Cell Carcinoma (LUSC, n=551) | NKX2-1 high in LUAD; TP63 high in LUSC | Defines lineage-specific diagnostic markers and dependencies. |

| Single-Cell Atlas of Lung (2024) | Immune cells from early-stage (n=45) vs. advanced-stage (n=38) NSCLC | Exhausted T-cell signatures increase with stage; specific macrophage subset expands. | Identifies stage-specific immune evasion mechanisms for combo therapy. |

Protocol 2.2.1: Differential Expression and Pathway Analysis (Bulk RNA-Seq)

- Objective: Identify genes and pathways differentially active between two conditions (e.g., treated vs. control, disease vs. healthy).

- Materials: Processed RNA-Seq count matrices, sample metadata.

- Software: R/Bioconductor packages (DESeq2, limma-voom, clusterProfiler).

- Procedure:

- Normalization: Load counts into DESeq2 with DESeq2::DESeqDataSetFromMatrix(). Median-of-ratios size factors are then estimated by estimateSizeFactors(), which DESeq() calls automatically.

- Differential Testing: Run DESeq2::DESeq() followed by results() to obtain log2 fold changes and adjusted p-values for all genes.

- Thresholding: Apply significance filters (e.g., |log2FC| > 1, padj < 0.05) to define differentially expressed genes (DEGs).

- Pathway Enrichment: Using clusterProfiler, perform over-representation analysis (ORA) on the DEG list, or Gene Set Enrichment Analysis (GSEA) on the full ranked gene list, against databases (KEGG, GO, Reactome).

- Visualization: Generate volcano plots (log2FC vs -log10(p-value)) and enriched pathway bar plots.
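The thresholding and volcano-plot steps are simple enough to sketch outside R. The Python below assumes a results table exported from DESeq2 with its standard `log2FoldChange`, `pvalue`, and `padj` columns; the gene names and values are placeholders invented for illustration, and the helper names are hypothetical.

```python
import math


def select_degs(results, lfc_cutoff=1.0, padj_cutoff=0.05):
    """Return gene IDs passing |log2FC| > lfc_cutoff and padj < padj_cutoff.

    Rows whose padj is None (exported NA values) are skipped.
    """
    degs = []
    for row in results:
        padj = row["padj"]
        if padj is None:
            continue
        if abs(row["log2FoldChange"]) > lfc_cutoff and padj < padj_cutoff:
            degs.append(row["gene"])
    return degs


def volcano_points(results):
    """Return (gene, log2FC, -log10(p-value)) tuples for plotting."""
    return [
        (row["gene"], row["log2FoldChange"], -math.log10(row["pvalue"]))
        for row in results
    ]


# Placeholder rows (invented values, not real data).
toy_results = [
    {"gene": "GENE_A", "log2FoldChange": 2.3, "pvalue": 1e-8, "padj": 1e-6},
    {"gene": "GENE_B", "log2FoldChange": -1.7, "pvalue": 2e-4, "padj": 0.004},
    {"gene": "GENE_C", "log2FoldChange": 0.4, "pvalue": 1e-5, "padj": 0.001},
    {"gene": "GENE_D", "log2FoldChange": 3.0, "pvalue": 0.2, "padj": 0.4},
    {"gene": "GENE_E", "log2FoldChange": -2.1, "pvalue": 0.01, "padj": None},
]

degs = select_degs(toy_results)
points = volcano_points(toy_results)
print(degs)
```

GENE_C is significant but below the fold-change cutoff, and GENE_D has a large fold change but is not significant, so neither is reported as a DEG.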


Essential Signaling Pathways: A Comparative View

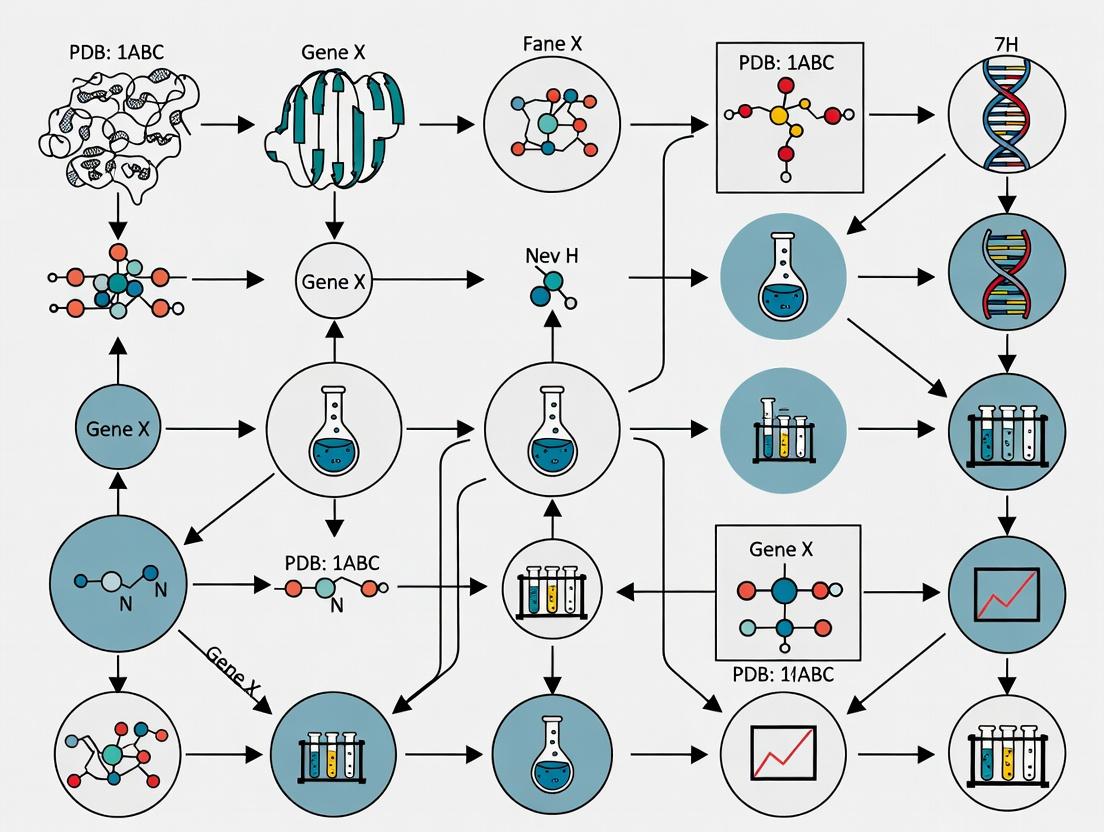

Diagram 1: Core Apoptosis Pathway - Comparative Regulation

Diagram 2: Comparative Transcriptomics Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for Comparative Cell-Based Assays

| Reagent Category | Specific Example(s) | Function in Comparative Approach |

|---|---|---|

| Cell Line Panels | NCI-60 Human Tumor Cell Lines, Cancer Cell Line Encyclopedia (CCLE) panels. | Enable high-throughput comparison of drug sensitivity or genetic dependency across diverse genetic backgrounds. |

| Pathway Reporter Assays | NF-κB, Wnt/β-catenin, or STAT luciferase reporter constructs. | Quantitatively compare pathway activity between experimental conditions (e.g., wild-type vs. mutant, treated vs. untreated). |

| Multiplex Immunoassays | Luminex xMAP or MSD multi-cytokine/phosphoprotein panels. | Simultaneously compare concentrations of multiple analytes from limited sample volumes, profiling signaling states. |

| Live-Cell Imaging Dyes | Fluorescent probes for ROS (CellROX), Ca2+ (Fluo-4), apoptosis (Annexin V-FITC). | Enable kinetic comparison of cellular responses in real-time across different cell types or treatment groups. |

| CRISPR Screening Libraries | Whole-genome (e.g., Brunello) or focused (e.g., kinase) sgRNA libraries. | Systematically compare gene essentiality or drug resistance mechanisms across different cell models in parallel. |

| Species-Specific Antibodies | Anti-human vs. anti-mouse CD3ε for flow cytometry; phospho-specific antibodies validated for cross-reactivity. | Accurately measure and compare protein expression/post-translational modifications in cross-species studies. |

Advanced Protocol: Comparative Drug Sensitivity Screening

Protocol 5.1: High-Throughput Compound Screening Across Cell Line Panels

- Objective: Identify compounds with selective efficacy in a defined genetic context.

- Materials:

- Cell lines (e.g., 10-50 lines representing disease heterogeneity).

- 384-well tissue culture plates.

- Compound library (e.g., 1000+ small molecules in DMSO).

- Automated liquid handler.

- CellTiter-Glo 2.0 Assay (Promega) for viability.

- Plate reader with luminescence detection.

- Procedure:

- Cell Seeding: Harvest and count all cell lines. Seed cells in 384-well plates at density optimized for logarithmic growth after 72h (e.g., 500-1000 cells/well in 30 µL medium) using an automated dispenser. Incubate overnight.

- Compound Transfer/Pinning: Using a liquid handler or pin tool, transfer compounds from source plates to assay plates. Include DMSO-only wells as controls. Final compound concentration is typically 1-10 µM in 0.1% DMSO.

- Incubation: Incubate plates for 72-120 hours at 37°C, 5% CO2.

- Viability Readout: Equilibrate plates to room temperature. Add 30 µL of CellTiter-Glo 2.0 reagent per well. Shake for 2 minutes, incubate for 10 minutes, and record luminescence.

- Data Analysis:

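- Normalization: For each plate, calculate percent viability relative to the median DMSO and median no-cell wells:

$$\%\ \text{viability} = \frac{\text{Lum}_{\text{sample}} - \text{Lum}_{\text{median(no cells)}}}{\text{Lum}_{\text{median(DMSO)}} - \text{Lum}_{\text{median(no cells)}}} \times 100$$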

- Dose-Response (if multiple concentrations): Fit curves using a 4-parameter logistic model (e.g., in the drc R package) to calculate IC50 per cell line.

- Comparative Analysis: Generate heatmaps of % viability or IC50 across the cell line panel. Use biostatistical tests (e.g., ANOVA with post-hoc test) to identify genetic features (mutations, expression) correlated with sensitivity via integration with CCLE genomic data.
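As a rough illustration of the data-analysis steps, the sketch below implements the plate normalization and evaluates a 4-parameter logistic curve. The function names and luminescence values are assumptions made for the example; fitting the curve to data would still require an optimizer such as the one in the drc package.

```python
from statistics import median


def percent_viability(sample_lum, dmso_lum, no_cell_lum):
    """Normalize raw luminescence to % viability on one plate.

    Uses the median DMSO wells as 100% and the median no-cell wells as 0%.
    """
    background = median(no_cell_lum)
    span = median(dmso_lum) - background
    if span <= 0:
        raise ValueError("DMSO signal must exceed no-cell background")
    return [(x - background) / span * 100 for x in sample_lum]


def four_pl(conc, bottom, top, ic50, hill):
    """4-parameter logistic response at a given concentration.

    With hill > 0 the response falls from `top` to `bottom` as the
    concentration rises, and equals their midpoint at conc == ic50.
    """
    return bottom + (top - bottom) / (1 + (conc / ic50) ** hill)


# Made-up luminescence readings for one plate.
dmso_wells = [1000, 1100, 900]
no_cell_wells = [100, 90, 110]
viability = percent_viability([1000, 550, 100], dmso_wells, no_cell_wells)
print(viability)
```

Using medians rather than means for the control wells makes the normalization less sensitive to a single failed or contaminated control well.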

Application Notes

Within the framework of comparative approach research in drug development, the principles of Controlled Contrasts and Contextual Inference provide a rigorous philosophical foundation for experimental design and data interpretation. Controlled Contrasts mandate the systematic comparison of experimental groups where only the variable of interest differs, isolating its effect. Contextual Inference requires the interpretation of results not in isolation, but within the layered context of cellular environment, tissue system, organismal physiology, and patient population.

Application in Target Validation: A candidate oncology target (e.g., a novel kinase) is studied not by single-gene knockdown alone, but through parallel, controlled contrasts: (1) Knockdown vs. wild-type in a sensitive cell line, (2) Knockdown in sensitive vs. inherently resistant cell lines, (3) Pharmacological inhibition vs. genetic knockdown. Contextual inference integrates these data layers to infer the target's role within signaling networks and predict therapeutic windows.

Application in Mechanism of Action (MoA) Elucidation: For a phenotypic screening hit, controlled contrasts are engineered using a series of perturbations (CRISPR, tool compounds, pathway reporters). Inference about the MoA is contextualized against reference databases of genetic and chemical signatures, moving from correlation to causal understanding within the biological system.

Protocols

Protocol 1: Multiplexed Target Validation via Controlled Genetic Contrasts

Objective: To validate a novel metabolic enzyme as a cancer dependency across genetic backgrounds.

Methodology:

- Cell Line Selection: Choose a panel of 5 isogenic cell line pairs, each pair consisting of a wild-type (WT) and a specific cancer-associated mutation (e.g., in KRAS, TP53, or a related pathway).

- Perturbation: Using lentiviral transduction, introduce into each cell line:

- Non-targeting control (NTC) shRNA

- Two independent shRNAs targeting the novel enzyme

- A positive control shRNA (e.g., targeting an essential gene).

- Controlled Contrast Setup:

- Contrast A (Within-genotype efficacy): For each cell line, compare viability (shTarget) vs. (shNTC).

- Contrast B (Across-genotype specificity): For each shRNA, compare fold-change in viability in Mutant vs. WT isogenic pairs.

- Readout: Measure cell viability at 96h and 144h using an ATP-based luminescent assay. Normalize reads to Day 0.

- Contextual Inference: Integrate viability data with transcriptomic (RNA-seq) profiles of each isogenic pair. Perform Gene Set Enrichment Analysis (GSEA) to infer which pathway contexts confer sensitivity.

Table 1: Sample Viability Data (Normalized Luminescence, 144h)

| Cell Line (Genotype) | NTC shRNA | shTarget_1 | shTarget_2 | shPositiveCtrl |

|---|---|---|---|---|

| A549 (KRAS Mut) | 1.00 ± 0.08 | 0.35 ± 0.05 | 0.41 ± 0.06 | 0.15 ± 0.02 |

| Isogenic WT | 1.00 ± 0.07 | 0.92 ± 0.09 | 0.88 ± 0.10 | 0.18 ± 0.03 |

| HCT116 (TP53 Mut) | 1.00 ± 0.09 | 0.90 ± 0.11 | 0.85 ± 0.08 | 0.17 ± 0.02 |

| Isogenic WT | 1.00 ± 0.06 | 0.95 ± 0.07 | 0.91 ± 0.09 | 0.16 ± 0.02 |
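To make the two contrasts concrete, the sketch below recomputes them from the mean values in the table above. Error terms are ignored, the grouping of each "Isogenic WT" row with the mutant row above it is an assumption, and the function names are hypothetical.

```python
# Mean normalized luminescence at 144h, copied from the table above.
viability = {
    ("A549", "Mut"): {"NTC": 1.00, "shTarget_1": 0.35, "shTarget_2": 0.41},
    ("A549", "WT"): {"NTC": 1.00, "shTarget_1": 0.92, "shTarget_2": 0.88},
    ("HCT116", "Mut"): {"NTC": 1.00, "shTarget_1": 0.90, "shTarget_2": 0.85},
    ("HCT116", "WT"): {"NTC": 1.00, "shTarget_1": 0.95, "shTarget_2": 0.91},
}


def contrast_a(line, genotype, shrna):
    """Within-genotype efficacy: viability of shTarget relative to NTC."""
    row = viability[(line, genotype)]
    return row[shrna] / row["NTC"]


def contrast_b(line, shrna):
    """Across-genotype specificity: mutant effect relative to WT effect."""
    return contrast_a(line, "Mut", shrna) / contrast_a(line, "WT", shrna)


for line in ("A549", "HCT116"):
    for shrna in ("shTarget_1", "shTarget_2"):
        print(line, shrna, round(contrast_b(line, shrna), 2))
```

A Contrast B ratio well below 1 (as for the A549 pair) indicates a dependency that is selective for the mutant background, while ratios near 1 (as for the HCT116 pair) indicate no genotype-specific effect.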

Protocol 2: Contextual MoA Deconvolution Using Signature-Based Inference

Objective: To infer the primary pathway affected by a novel compound from a phenotypic screen.

Methodology:

- Reference Signature Generation: Treat a reference cell line (e.g., MCF10A) with a panel of 10 well-characterized tool compounds (e.g., PI3K inhibitor, MEK inhibitor, DNA damage agent) for 6h. Perform RNA-seq in triplicate.

- Test Compound Contrast: Treat the same cell line with 3 concentrations of the novel compound (IC10, IC50, IC90) and vehicle (DMSO) for 6h. Perform RNA-seq in triplicate.

- Differential Analysis: Generate gene expression signatures (list of differentially expressed genes) for each tool compound (vs. DMSO) and for the test compound at each concentration.

- Controlled Comparison: Use a similarity metric (e.g., Connectivity Map's KS statistic) to compare the test compound signature to each reference signature.

- Contextual Inference: The MoA is inferred not from the highest-matching single reference, but from the pattern of matches across concentrations and the biological coherence of the ensemble of top matches.

Table 2: Signature Similarity Scores (Enrichment Scores) for Novel Compound X

| Reference Compound (Pathway) | IC10 Conc. | IC50 Conc. | IC90 Conc. |

|---|---|---|---|

| Torin1 (mTOR inhibitor) | 0.15 | 0.58 | 0.72 |

| Trametinib (MEK inhibitor) | 0.08 | 0.22 | 0.31 |

| Olaparib (PARP inhibitor) | -0.05 | 0.10 | 0.65 |

| Staurosporine (Pan-kinase) | 0.12 | 0.45 | 0.48 |
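The Controlled Comparison step names a KS statistic as one possible similarity metric. A minimal sketch of a one-sided KS-style enrichment score, following the commonly described Connectivity Map formulation, might look like the code below. The gene identifiers and the ranked list are hypothetical, and a full connectivity score would combine separate up- and down-regulated gene sets, which is omitted here.

```python
def ks_enrichment(ranked_genes, gene_set):
    """KS-style enrichment of gene_set within a ranked gene list.

    ranked_genes: genes ordered from most up- to most down-regulated
                  in the reference signature.
    gene_set:     query genes (e.g., the test compound's up-regulated DEGs).
    Returns a score in [-1, 1]; positive values mean the set is
    concentrated near the top of the ranking.
    """
    n = len(ranked_genes)
    # 1-based ranks of the query genes, in ascending order.
    positions = sorted(
        i + 1 for i, gene in enumerate(ranked_genes) if gene in gene_set
    )
    t = len(positions)
    if t == 0:
        return 0.0
    a = max((j + 1) / t - v / n for j, v in enumerate(positions))
    b = max(v / n - j / t for j, v in enumerate(positions))
    return a if a > b else -b


# Hypothetical reference ranking of ten genes.
reference_ranking = [f"g{i}" for i in range(1, 11)]
top_score = ks_enrichment(reference_ranking, {"g1", "g2"})
bottom_score = ks_enrichment(reference_ranking, {"g9", "g10"})
print(top_score, bottom_score)
```

Scores like those in the table above would be obtained by applying such a metric to each reference signature at each test concentration.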

Visualizations

Controlled Contrasts Experimental Workflow

Contextual Inference Logic Diagram

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Comparative Studies

| Reagent / Material | Function in Controlled Contrasts & Inference |

|---|---|

| Isogenic Cell Line Pairs (WT vs. Mutant) | Provides the foundational genetic control for Contrast B, isolating the effect of a specific mutation on compound response or target essentiality. |

| Validated shRNA or CRISPR Libraries (e.g., Broad Institute's) | Ensures specific, reproducible genetic perturbations for creating clean contrasts between target and non-targeting control conditions. |

| Pathway-Focused Tool Compound Set | A collection of well-annotated inhibitors/activators used to generate reference molecular signatures for contextual inference of MoA. |

| Multiplexed Viability Assay Kits (e.g., ATP-based, Caspase-based) | Enables high-throughput, quantitative readouts for multiple contrasts in parallel, minimizing inter-assay variability. |

| Transcriptomic Profiling Service (Bulk or Single-Cell RNA-seq) | Generates the high-dimensional data required for contextual inference, moving beyond single endpoints to system-wide profiles. |

| Signature Analysis Software (e.g., GSEA, Connectivity Map tools) | Computational tools necessary to quantitatively compare experimental signatures to reference databases and infer biological context. |

Application Notes

The comparative approach in biomedical research has transitioned from reliance on whole-organism physiology to high-resolution molecular systematics. This evolution underpins the modern drug development pipeline, where cross-species validation meets targeted human omics profiling for precision medicine.

1. From Phenotypic Screening to Target Identification: Traditional animal models (e.g., murine disease models) provided invaluable in vivo data on systemic physiology, toxicity, and efficacy. The comparative approach here involved translating findings from model organisms to human pathophysiology. A key limitation was frequent translational failure caused by interspecies genomic and physiological discrepancies. Contemporary protocols now begin with comparative omics (e.g., genomic alignment, single-cell RNA-seq across species) to identify evolutionarily conserved disease pathways, so that selected targets have higher translational relevance.

2. Integrative Pharmacogenomics: Drug response data from animal models is now augmented with human population-scale genomic data. This comparative tier identifies genetic variants (e.g., in CYP450 enzymes) that predict adverse drug reactions or efficacy, explaining why compounds safe in animals may fail in specific human sub-populations.

3. Multi-Omic Biomarker Discovery: The shift from histological biomarkers in tissues to multi-omic signatures in liquid biopsies (e.g., cfDNA, exosomes) exemplifies this evolution. Protocols compare omic profiles (methylation, proteomic) from animal model biofluids against human patient samples to validate non-invasive disease monitoring tools.

Table 1: Quantitative Comparison of Research Paradigms

| Aspect | Animal Model-Centric (c. 1990-2010) | Integrated Omics-Centric (Current) |

|---|---|---|

| Primary Data Output | Survival curves, histopathology scores, behavioral metrics. | Sequence reads (DNA/RNA), spectral counts (proteomics), peak intensities (metabolomics). |

| Throughput | Low to moderate (n=10-100 per study). | Very high (n=1000s of samples, 1000s of molecules/sample). |

| Translational Attrition Rate | >90% failure from animal efficacy to human approval. | ~85% failure; omics used to de-risk and stratify. |

| Key Cost Driver | Animal husbandry, long-term in vivo studies. | Sequencing, mass spectrometry, computational infrastructure. |

| Time to Target Validation | 2-5 years. | 6 months - 2 years. |

Protocols

Protocol 1: Cross-Species Conserved Pathway Analysis for Target Prioritization

Objective: To identify high-confidence therapeutic targets by analyzing evolutionarily conserved gene expression signatures across mouse model and human disease tissues.

Materials: See "Research Reagent Solutions" below.

Method:

- Sample Preparation:

- Obtain diseased and control tissues from a validated mouse model (e.g., Apc^Min/+ for colorectal cancer) and matched human biopsy samples (e.g., from biobank).

- Homogenize tissues in TRIzol Reagent. Isolate total RNA following manufacturer's protocol. Assess RNA integrity (RIN > 8.0).

- Transcriptomic Profiling:

- Prepare stranded mRNA sequencing libraries using the NEBNext Ultra II Directional RNA Library Prep Kit.

- Sequence on an Illumina platform to a minimum depth of 30 million 150bp paired-end reads per sample.

- Bioinformatic Analysis:

- Alignment & Quantification: Map mouse reads to GRCm39 and human reads to GRCh38 using STAR aligner. Quantify gene-level counts with featureCounts.

- Differential Expression: Perform analysis using DESeq2 in R (adj. p-value < 0.05, |log2FC| > 1).

- Ortholog Mapping: Map differentially expressed genes (DEGs) between species using Ensembl Compara orthology databases.

- Pathway Enrichment: Input conserved DEGs into Enrichr for joint KEGG/Reactome pathway analysis. Prioritize pathways with significant enrichment (FDR < 0.01) in both species.
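The ortholog-mapping step can be illustrated with a small sketch that keeps only DEGs shared between species. The log2 fold changes below are invented for illustration, the ortholog table stands in for an Ensembl Compara export, and requiring the same direction of change in both species is an added assumption rather than part of the protocol.

```python
def conserved_degs(mouse_degs, human_degs, mouse_to_human):
    """Return human genes that are DEGs in both species.

    mouse_degs / human_degs: dict of gene symbol -> log2 fold change.
    mouse_to_human: dict of mouse symbol -> list of human ortholog symbols.
    Only orthologs changing in the same direction are kept (assumption).
    """
    conserved = {}
    for mouse_gene, mouse_lfc in mouse_degs.items():
        for human_gene in mouse_to_human.get(mouse_gene, []):
            human_lfc = human_degs.get(human_gene)
            if human_lfc is None:
                continue  # ortholog is not a DEG in human tissue
            if (mouse_lfc > 0) == (human_lfc > 0):
                conserved[human_gene] = (mouse_lfc, human_lfc)
    return conserved


# Illustrative values only (not real measurements).
mouse_degs = {"Myc": 2.1, "Ccnd1": 1.6, "Lgr5": 1.9, "Cdx2": -1.4}
human_degs = {"MYC": 1.8, "CCND1": 1.2, "CDX2": 1.1, "AXIN2": 2.0}
orthologs = {
    "Myc": ["MYC"],
    "Ccnd1": ["CCND1"],
    "Lgr5": ["LGR5"],
    "Cdx2": ["CDX2"],
}

shared = conserved_degs(mouse_degs, human_degs, orthologs)
print(sorted(shared))
```

The resulting gene list is what would be passed on to the pathway enrichment step.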

Diagram Title: Workflow for Cross-Species Target Prioritization

Protocol 2: Integrated Metabolomic & Pharmacokinetic Profiling in Preclinical Development

Objective: To correlate systemic drug exposure (PK) with target organ metabolic response in a rodent model, informing translational biomarkers.

Method:

- Dosing and Sampling:

- Administer lead compound or vehicle to Sprague-Dawley rats (n=8/group) via defined route (e.g., oral gavage).

- Collect serial blood samples (e.g., at 0.25, 0.5, 1, 2, 4, 8, 24h) into EDTA tubes via cannulation. Centrifuge (2000xg, 10min, 4°C) to obtain plasma.

- Euthanize animals at trough and peak plasma concentration timepoints. Harvest target organs (e.g., liver, tumor), flash-freeze in liquid N₂.

- Pharmacokinetic (PK) Analysis:

- Quantify compound concentration in plasma using a validated LC-MS/MS method. Perform non-compartmental analysis (NCA) using Phoenix WinNonlin to calculate AUC, Cmax, Tmax, t₁/₂.

- Metabolomic Profiling:

- Homogenize frozen tissue in 80% methanol/H₂O (v/v) at -20°C. Centrifuge (15,000xg, 15min). Dry supernatant under N₂ gas.

- Reconstitute in LC-MS compatible solvent. Analyze using a HILIC/UHPLC-QTOF-MS system in both positive and negative ionization modes.

- Process raw data with XCMS Online for feature detection, alignment, and annotation against HMDB/KEGG.

- Integrative Data Fusion:

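- Use partial least squares (PLS) regression (e.g., via the ropls R package) to correlate PK parameters (X-block) with tissue metabolomic profiles (Y-block); PLS-DA applies only when the response is a class label such as treatment group. Identify metabolites whose levels co-vary with drug exposure.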
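For readers unfamiliar with non-compartmental analysis, the PK parameters named in the protocol can be approximated as follows. This is a simplified sketch, not a substitute for Phoenix WinNonlin: it assumes the linear trapezoidal rule and a log-linear fit of the last three time points, and the concentration values are made up.

```python
import math


def linear_trapezoid_auc(times_h, conc):
    """AUC from first to last sampling time by the linear trapezoidal rule."""
    return sum(
        (t2 - t1) * (c1 + c2) / 2
        for t1, t2, c1, c2 in zip(times_h, times_h[1:], conc, conc[1:])
    )


def terminal_half_life(times_h, conc, n_terminal=3):
    """Half-life from a log-linear least-squares fit of the last points."""
    t_tail = times_h[-n_terminal:]
    ln_c = [math.log(c) for c in conc[-n_terminal:]]
    t_mean = sum(t_tail) / n_terminal
    y_mean = sum(ln_c) / n_terminal
    slope = sum(
        (t - t_mean) * (y - y_mean) for t, y in zip(t_tail, ln_c)
    ) / sum((t - t_mean) ** 2 for t in t_tail)
    if slope >= 0:
        raise ValueError("terminal phase is not declining")
    return math.log(2) / -slope


def nca_summary(times_h, conc):
    """Cmax, Tmax, AUC(0-tlast), and terminal half-life for one profile."""
    cmax = max(conc)
    return {
        "Cmax": cmax,
        "Tmax": times_h[conc.index(cmax)],
        "AUC_0_tlast": linear_trapezoid_auc(times_h, conc),
        "t_half": terminal_half_life(times_h, conc),
    }


# Sampling times from the protocol; concentrations (ng/mL) are invented.
times = [0.25, 0.5, 1, 2, 4, 8, 24]
plasma = [120.0, 310.0, 450.0, 380.0, 210.0, 90.0, 6.0]
summary = nca_summary(times, plasma)
print(summary)
```

Exposure summaries such as AUC and Cmax are the per-animal values that would be related to the tissue metabolomic profiles in the data fusion step.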

Diagram Title: PK-Metabolomics Integration Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Protocol | Example/Catalog |

|---|---|---|

| TRIzol Reagent | Monophasic solution of phenol and guanidine isothiocyanate for simultaneous dissociation of biological samples and isolation of intact total RNA, proteins, and DNA. | Thermo Fisher Scientific, 15596026 |

| NEBNext Ultra II Directional RNA Library Prep Kit | For construction of strand-specific sequencing libraries from purified poly(A)+ mRNA or ribosomal RNA-depleted total RNA. | New England Biolabs, E7760S/L |

| DESeq2 R Package | Statistical software for differential analysis of count-based NGS data (e.g., RNA-seq), using a negative binomial model and shrinkage estimation. | Bioconductor Package |

| Ensembl Compara | Database providing cross-species gene orthology/paralogy predictions, essential for translating findings between model organisms and humans. | ensembl.org/info/genome/compara |

| HILIC Chromatography Column | (e.g., Acquity UPLC BEH Amide). For polar metabolite separation prior to MS, complementing reverse-phase methods. | Waters, 186004802 |

| XCMS Online | Cloud-based platform for automated processing, statistical analysis, and annotation of mass spectrometry-based metabolomics data. | xcmsonline.scripps.edu |

| ropls R Package | Implementation of multivariate regression and classification methods (PCA, PLS-DA) for omics data integration and biomarker analysis. | Bioconductor Package |

Within the paradigm of comparative approach research in biomedical sciences, the precise definition and implementation of controls, benchmarks, and counterfactuals are fundamental to deriving causal inference and validating therapeutic efficacy. This article provides structured Application Notes and Protocols for researchers and drug development professionals, detailing methodologies to design robust experiments, select appropriate reference points, and model unobserved outcomes to advance preclinical and clinical programs.

Practical applications of the comparative approach hinge on a triad of conceptual anchors: Controls (baseline conditions), Benchmarks (standard reference points for performance), and Counterfactuals (inferences about what would have happened in the absence of an intervention). Together, they enable the isolation of treatment effects, contextualization of results, and estimation of causal impact.

Core Concepts & Definitions

Controls

- Purpose: To account for variability not due to the experimental intervention (e.g., plate effects, vehicle toxicity, natural disease progression).

- Types:

- Negative Control: Establishes baseline noise (e.g., vehicle-treated cells, sham surgery, placebo group).

- Positive Control: Verifies experimental system responsiveness (e.g., a known agonist, standard-of-care drug).

- Internal Control: Normalizes data within an experiment (e.g., housekeeping gene in qPCR, untreated well in a plate).

Benchmarks

- Purpose: To provide a standard of comparison for evaluating the performance or efficacy of a novel intervention.

- Types: Includes historical controls, gold-standard therapeutics, clinical endpoints (e.g., overall survival, PFS), and predefined performance thresholds (e.g., IC50 < 100 nM).

Counterfactuals

- Purpose: To estimate the causal effect by reasoning about the outcome that would have occurred if the subject had not received the intervention.

- Application: Central to randomized controlled trial (RCT) analysis and increasingly modeled in real-world evidence (RWE) studies using statistical techniques.

Table 1: Efficacy of Novel Oncology Drug (NX-202) vs. Benchmark & Controls in Phase II RCT

| Group (N=50/arm) | Median Progression-Free Survival (months) | Overall Response Rate (%) | Serious Adverse Events (%) |

|---|---|---|---|

| NX-202 (Intervention) | 8.2 | 42 | 18 |

| Standard of Care (Benchmark) | 6.5 | 35 | 22 |

| Placebo + BSC (Control) | 4.1 | 10 | 12 |

| Counterfactual Estimate (Modeled) | 4.0* | 11* | N/A |

*Estimated via g-computation from trial data. BSC = Best Supportive Care.

Table 2: In Vitro Potency Assay Data for Candidate Molecules

| Compound | IC50 (nM) [95% CI] | Efficacy (% of Max Response) | Z'-Factor (Assay QC) |

|---|---|---|---|

| Test Compound A | 24 [19-31] | 98 | 0.78 |

| Benchmark Drug B | 45 [38-53] | 100 | 0.75 |

| Positive Control C | 10 [8-13] | 102 | 0.81 |

| Vehicle (Negative Control) | N/A | 2 | N/A |

Experimental Protocols

Protocol 4.1: In Vivo Efficacy Study with Integrated Controls & Benchmark

Objective: Evaluate antitumor activity of a novel compound against a xenograft model.

Materials: See Scientist's Toolkit (Section 6).

Method:

- Randomization: 48 mice with established subcutaneous tumors (150-200 mm³) are randomized into 4 groups (n=12).

- Dosing Regimen (28 days):

- Group 1 (Test Article): 10 mg/kg, IP, QD.

- Group 2 (Benchmark): Clinical standard-of-care, 25 mg/kg, PO, BID.

- Group 3 (Vehicle Control): PBS with 0.1% Tween-80, IP, QD.

- Group 4 (Positive Control for Tumor Reduction): Reference cytotoxic agent at MTD.

- Endpoint Measurements:

- Tumor volume (caliper measurements) 3x weekly.

- Body weight 2x weekly (toxicity surrogate).

- Terminal blood collection for PK/PD analysis.

- Counterfactual Analysis: Apply a linear mixed-effects model to tumor growth curves, using vehicle group data to estimate potential growth in treated groups had they received vehicle.

Protocol 4.2: High-Throughput Screening (HTS) Hit Validation

Objective: Confirm activity of primary HTS hits while controlling for assay artifacts.

Method:

- Dose-Response: Test hits in 10-point dose-response in triplicate.

- Control Plates: Include on every plate:

- High Control (0% inhibition): DMSO-only wells (n=16).

- Low Control (100% inhibition): Wells with a known potent inhibitor (n=16).

- Benchmarking: Run a reference drug (benchmark) curve in parallel.

- Counterscreening: Test all hits against a related but irrelevant target (orthogonal counterfactual condition) to identify non-specific inhibitors.

- Data Analysis: Calculate % inhibition, fit curves, and derive IC50. Apply strict thresholds: % inhibition >50% at test concentration, IC50 < 10 µM, and >10-fold selectivity in counterscreen.
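The plate controls and hit thresholds in this protocol translate into a few short calculations. The sketch below uses the standard Z'-factor definition and the thresholds stated above; the function names and well signals are assumptions for illustration, and selectivity is taken here as the ratio of counterscreen IC50 to primary-target IC50.

```python
from statistics import mean, stdev


def z_prime(high_ctrl, low_ctrl):
    """Z'-factor: 1 - 3 * (SD_high + SD_low) / |mean_high - mean_low|."""
    separation = abs(mean(high_ctrl) - mean(low_ctrl))
    return 1 - 3 * (stdev(high_ctrl) + stdev(low_ctrl)) / separation


def percent_inhibition(signal, high_ctrl, low_ctrl):
    """Scale a well signal between high (0%) and low (100%) controls."""
    high, low = mean(high_ctrl), mean(low_ctrl)
    return (high - signal) / (high - low) * 100


def passes_thresholds(pct_inhibition, ic50_um, counterscreen_ic50_um):
    """Apply the hit criteria from the Data Analysis step."""
    selectivity = counterscreen_ic50_um / ic50_um
    return pct_inhibition > 50 and ic50_um < 10 and selectivity > 10


# Made-up control signals (arbitrary units).
high_wells = [100, 102, 98, 100]  # DMSO only, 0% inhibition
low_wells = [10, 12, 8, 10]       # potent inhibitor, 100% inhibition

quality = z_prime(high_wells, low_wells)
inhibition = percent_inhibition(55, high_wells, low_wells)
print(round(quality, 2), inhibition)
```

Checking the Z'-factor on every plate before applying the hit thresholds helps separate genuine inactivity from a plate on which the assay window collapsed.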

Visualizations

Title: The Comparative Research Workflow

Title: Drug Mechanism & Control Pathways

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Comparative Studies

| Reagent / Material | Function in Experimental Design |

|---|---|

| Isotype Control Antibody | Negative control for flow cytometry or IHC; matches the primary antibody's host species and isotype but lacks specific target binding. |

| Pharmacologic Agonist/Antagonist (e.g., Forskolin, Staurosporine) | Positive control for modulating a specific pathway to validate assay responsiveness. |

| Validated siRNA/shRNA (Non-targeting) | Negative control for gene knockdown studies to distinguish sequence-specific effects from off-target or transfection effects. |

| Reference Standard Compound (e.g., WHO International Standard) | Benchmark for calibrating bioassays (e.g., cytokine activity, vaccine potency) to ensure cross-study comparability. |

| Vehicle Matched to Formulation | Critical negative control to dissect drug effects from solvent (e.g., DMSO, cyclodextrin) effects on cells or organisms. |

| Internal Standard (Stable Isotope Labeled) | For mass spectrometry-based assays; corrects for variability in sample processing and instrument response, serving as an internal control. |

| Cell Viability Indicator (e.g., ATP assay) | Quantitative readout of viable cells; run alongside a cytotoxic positive control and no-cell background wells to benchmark compound toxicity. |

Application Notes & Protocols

Application Note: Comparative Dose-Response Analysis in Lead Optimization

Thesis Context: This protocol exemplifies the comparative approach for selecting the most promising drug candidate by systematically comparing efficacy and toxicity profiles under identical experimental conditions.

Objective: To quantitatively compare the in vitro potency and therapeutic window of three candidate small-molecule inhibitors (CM-101, CM-102, CM-103) targeting the same kinase in a cancer cell line.

Quantitative Data Summary:

Table 1: Summary of Dose-Response Parameters for Candidate Molecules (72-hour assay).

| Compound | Target IC₅₀ (nM) | Cell Viability IC₅₀ (nM) | Therapeutic Index (TI)* | Hill Slope |

|---|---|---|---|---|

| CM-101 | 10.2 ± 1.5 | 550 ± 45 | 54 | -1.2 |

| CM-102 | 45.5 ± 6.1 | 2100 ± 310 | 46 | -1.1 |

| CM-103 | 5.8 ± 0.9 | 125 ± 22 | 22 | -1.5 |

*TI = IC₅₀ (Cell Viability) / IC₅₀ (Target Inhibition)

Interpretation: While CM-103 is the most potent (lowest target IC₅₀), CM-101 offers the widest theoretical therapeutic window (highest TI), making it the preferred candidate for progression based on this comparative analysis.
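The therapeutic index values can be reproduced directly from the IC₅₀ means in the table, as in this short sketch (variable and function names are illustrative, and the reported uncertainties are ignored).

```python
# Mean IC50 values in nM, copied from the table above:
# (target inhibition, cell viability)
candidates = {
    "CM-101": (10.2, 550.0),
    "CM-102": (45.5, 2100.0),
    "CM-103": (5.8, 125.0),
}


def therapeutic_index(target_ic50_nm, viability_ic50_nm):
    """TI = IC50 (cell viability) / IC50 (target inhibition)."""
    return viability_ic50_nm / target_ic50_nm


ti = {name: therapeutic_index(*ic50s) for name, ic50s in candidates.items()}
most_potent = min(candidates, key=lambda name: candidates[name][0])
widest_window = max(ti, key=ti.get)

for name, value in ti.items():
    print(name, round(value))
print("most potent:", most_potent, "| widest window:", widest_window)
```

Ranking by potency and ranking by therapeutic index select different compounds here, which is the point of comparing both parameters side by side.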

Experimental Protocol: Parallel Dose-Response Profiling

A. Materials & Reagent Solutions

Table 2: Research Reagent Solutions Toolkit.

| Item | Function & Specification |

|---|---|

| Recombinant Kinase Protein | Target for biochemical IC₅₀ determination. |

| ATP-Glo Max Assay Kit | Homogeneous, luminescent kinase activity assay. |

| Cancer Cell Line (e.g., A549) | Disease-relevant cellular model. |

| CellTiter-Glo 3D Kit | Luminescent assay for cell viability/cytotoxicity. |

| DMSO (Cell Culture Grade) | Universal solvent for compound serial dilution. |

| 384-Well Assay Plates (White) | Optimal for luminescence detection. |

| Automated Liquid Handler | For precise, high-throughput compound dispensing. |

B. Procedure

- Compound Preparation: Prepare 10mM stocks of CM-101, CM-102, CM-103 in DMSO. Using an automated liquid handler, create 11-point, 1:3 serial dilutions in DMSO in a source plate.

- Biochemical Kinase Assay (Target Potency):

- Transfer 20 nL of each compound dilution to a 384-well assay plate (n=4 per concentration).

- Add kinase/substrate mixture in reaction buffer.

- Initiate reaction with ATP. Incubate for 60 min at RT.

- Add ATP-Glo detection reagent, incubate 40 min, record luminescence.

- Calculate % inhibition, fit to a 4-parameter logistic model to derive IC₅₀.

- Cellular Viability Assay (Therapeutic Window):

- Seed A549 cells at 1,000 cells/well in 384-well plates. Culture for 24h.

- Using the identical compound source plate, transfer 20 nL to cell plates (n=4 per concentration).

- Incubate for 72 hours at 37°C, 5% CO₂.

- Equilibrate plate to RT, add CellTiter-Glo 3D reagent, shake, incubate 25 min.

- Record luminescence. Calculate % viability, derive IC₅₀.
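The compound preparation step implies a specific concentration series, which the sketch below computes. The stock concentration, dilution factor, number of points, and 20 nL transfer volume come from the procedure; the assay well volume is not stated in the protocol, so the 20 µL used here is purely an assumed example value.

```python
def serial_dilution(top_conc, fold, n_points):
    """Concentrations of an n-point serial dilution starting at top_conc."""
    return [top_conc / fold ** i for i in range(n_points)]


def final_concentration(source_conc, transfer_nl, well_volume_ul):
    """Concentration in the assay well after transferring from the source plate."""
    transfer_ul = transfer_nl / 1000
    return source_conc * transfer_ul / (well_volume_ul + transfer_ul)


# 10 mM stock expressed in µM; 11-point, 1:3 dilution series in DMSO.
source_plate_um = serial_dilution(10_000, 3, 11)

# Assumed 20 µL assay volume (hypothetical; not given in the protocol).
assay_um = [final_concentration(c, 20, 20) for c in source_plate_um]

print(round(source_plate_um[-1], 3), round(assay_um[0], 2))
```

Because both assays draw from the identical source plate, any pipetting error in the dilution series affects the biochemical and cellular curves equally, which keeps the IC₅₀ comparison fair.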

C. Visualization of Workflow & Interpretation

Comparative Lead Optimization Workflow

Application Note: Comparative Signaling Pathway Analysis via Phospho-Proteomics

Thesis Context: This protocol uses comparative phospho-proteomics to infer mechanism of action (MoA) and off-target effects by contrasting signaling networks before and after treatment.

Objective: To identify differential phosphorylation events induced by CM-101 compared to a known standard-of-care (SoC) inhibitor and a DMSO control.

Quantitative Data Summary:

Table 3: Top Phospho-Site Changes (CM-101 vs. DMSO, 2h treatment).

| Protein (Site) | Fold Change | p-value | Pathway Association |

|---|---|---|---|

| MAPK1 (T185/Y187) | +4.5 | 3.2e-6 | MAPK/ERK Proliferation |

| AKT1 (S473) | -3.2 | 1.1e-5 | PI3K/AKT Survival |

| STAT3 (Y705) | -5.1 | 4.7e-7 | JAK/STAT Immune |

| RPS6 (S235/236) | -2.8 | 2.3e-4 | mTOR Translation |

Interpretation: Comparative analysis confirms on-target kinase inhibition (reduced AKT/mTOR signaling) and reveals a unique suppressive effect on STAT3 not seen with the SoC, suggesting a distinct MoA and potential combinatorial utility.

Experimental Protocol: Comparative Phospho-Proteomic Profiling

A. Materials & Reagent Solutions

Table 4: Phospho-Proteomics Toolkit.

| Item | Function & Specification |

|---|---|

| Titanium Dioxide (TiO₂) Beads | Enrichment of phosphorylated peptides. |

| TMTpro 18plex Reagents | Tandem mass tag reagents for multiplexed comparison. |

| High-pH Reversed-Phase Fractionation Kit | Peptide fractionation to reduce complexity. |

| LC-MS/MS System (e.g., Orbitrap Eclipse) | High-resolution mass spectrometry analysis. |

| Cell Lysis Buffer (RIPA + Phosphatase/Protease Inhibitors) | Preserves post-translational modifications. |

| Anti-Phosphotyrosine Antibody (optional) | For specific pTyr enrichment. |

B. Procedure

- Sample Preparation & Multiplexing:

- Treat A549 cells in triplicate with: a) DMSO, b) SoC (1 µM), c) CM-101 (1 µM) for 2 hours.

- Lyse cells, digest proteins with trypsin.

- Label peptides from each sample with a unique isobaric TMTpro tag. Pool all 9 samples.

- Phosphopeptide Enrichment:

- Desalt pooled sample. Incubate with TiO₂ beads in loading buffer (2 M lactic acid/50% ACN).

- Wash beads, elute phosphopeptides with ammonium hydroxide.

- Optional: Perform subsequent pTyr immunoaffinity purification.

- LC-MS/MS & Data Analysis:

- Fractionate enriched phosphopeptides by high-pH reversed-phase HPLC.

- Analyze each fraction by LC-MS/MS on an Orbitrap platform.

- Database search (e.g., Sequest HT) for identification and TMT quantification.

- Normalize data, perform statistical comparison (ANOVA) to find differentially phosphorylated sites.
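The normalization and statistical comparison step can be illustrated with a short Python sketch. It assumes median scaling across TMT channels (one common choice; the source does not specify a method) and computes only the one-way ANOVA F statistic. A statistics package would supply the corresponding p-value and multiple-testing correction. All function names and input values here are hypothetical.

```python
import math
from statistics import mean, median

def median_normalize(channels):
    """Scale each TMT channel so all channels share the same median intensity."""
    medians = {name: median(values) for name, values in channels.items()}
    target = mean(medians.values())
    return {name: [v * target / medians[name] for v in values]
            for name, values in channels.items()}

def log2_fold_change(treated, control):
    """log2 ratio of mean treated intensity to mean control intensity."""
    return math.log2(mean(treated) / mean(control))

def anova_f(*groups):
    """One-way ANOVA F statistic across treatment groups for one phospho-site."""
    all_values = [v for g in groups for v in g]
    grand = mean(all_values)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)

# Hypothetical triplicate intensities for one site: DMSO, SoC, CM-101.
f_stat = anova_f([1.0, 2.0, 3.0], [2.0, 3.0, 4.0], [5.0, 6.0, 7.0])
```

In practice the test is usually run on log-transformed intensities, one phospho-site at a time, across the three treatment groups.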

C. Visualization of Inferred Signaling Network

Diagram: CM-101 Induced Phospho-Signaling Network

Major Disciplines Utilizing Comparative Methods (Phylogenetics, Genomics, Phenotypic Screening)

Within the broader thesis on the practical applications of the comparative approach in research, this article details the methodologies and protocols central to three disciplines that fundamentally rely on comparative analysis. By systematically contrasting biological entities (species, genomes, or cellular phenotypes), these fields generate actionable insights for evolutionary biology, functional genomics, and therapeutic discovery. The following Application Notes and Protocols provide structured, executable frameworks for researchers.

Application Note 1: Phylogenetics in Pathogen Surveillance & Drug Target Identification

Objective: To construct a phylogeny of viral sequences (e.g., SARS-CoV-2) to track transmission clusters and identify conserved regions for broad-spectrum antiviral targeting.

Quantitative Data Summary:

Table 1: Key Metrics for Phylogenetic Analysis of a Hypothetical Pathogen Dataset

| Metric | Value | Interpretation |

|---|---|---|

| Number of Sequences Analyzed | 1,500 | Sample size for robust clade definition. |

| Sequence Length (bp) | 29,903 | Full genome alignment. |

| Average Genetic Distance | 0.0021 | Low diversity suggests recent emergence. |

| Number of Major Clades (Lineages) | 5 | Identified monophyletic groups. |

| Branch Support (Average Bootstrap) | 92% | High confidence in tree topology. |

| Conserved Region Identified (Spike Protein) | 98.7% identity | Potential target for universal vaccine. |

Experimental Protocol:

Data Acquisition & Curation:

- Source raw sequence reads (FASTQ) from public repositories (NCBI SRA, GISAID).

- Assemble reads de novo or map to a reference genome using tools like SPAdes or BWA.

- Generate a consensus sequence for each isolate.

Multiple Sequence Alignment (MSA):

- Input: Collection of consensus sequences (FASTA format).

- Use MAFFT (with the --auto parameter) or Clustal Omega to generate the MSA.

- Visually inspect and manually refine the alignment in AliView, trimming poorly aligned terminal regions.

Phylogenetic Inference:

- Model Selection: Use ModelTest-NG or jModelTest2 on the alignment to determine the best nucleotide substitution model (e.g., GTR+I+G).

- Tree Building: Execute Maximum Likelihood analysis using IQ-TREE2:

iqtree2 -s alignment.fasta -m GTR+I+G -bb 1000 -alrt 1000 -nt AUTO

- Visualization & Annotation: Load the resulting tree file (.treefile) in FigTree or iTOL to root the tree, collapse nodes by support value, and color-code clades.

Analysis & Reporting:

- Calculate pairwise genetic distances from the alignment using the dist.dna function in R's ape package.

- Identify clade-defining mutations.

- Map metadata (geographic location, date of collection) onto the tree to infer transmission patterns.
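To make the distance calculation concrete, here is a minimal Python sketch of the uncorrected p-distance (the proportion of differing sites) between aligned sequences. It is an illustration only: ape's dist.dna applies a substitution-model correction by default, so its values will differ slightly from these raw proportions. The sequence names and toy alignment are hypothetical.

```python
from itertools import combinations

VALID_BASES = set("ACGT")

def p_distance(seq_a, seq_b):
    """Proportion of differing sites, skipping gaps and ambiguous bases."""
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences must come from the same alignment")
    compared = differing = 0
    for a, b in zip(seq_a.upper(), seq_b.upper()):
        if a in VALID_BASES and b in VALID_BASES:
            compared += 1
            differing += a != b
    return differing / compared if compared else float("nan")

def pairwise_distances(alignment):
    """Distance for every unordered pair of named sequences."""
    return {(x, y): p_distance(alignment[x], alignment[y])
            for x, y in combinations(sorted(alignment), 2)}

# Hypothetical four-site alignment of three isolates.
toy_alignment = {"iso1": "ACGT", "iso2": "ACGA", "iso3": "TCGA"}
distances = pairwise_distances(toy_alignment)
```

Averaging the values in the resulting dictionary gives a summary comparable in spirit to the "Average Genetic Distance" row of the metrics table.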

Research Reagent Solutions:

| Item | Function |

|---|---|

| QIAamp Viral RNA Mini Kit | Extracts high-quality viral RNA from clinical specimens for sequencing. |

| Illumina COVIDSeq Test | Provides an end-to-end solution for amplicon-based whole-genome sequencing of SARS-CoV-2. |

| NEBNext Ultra II FS DNA Library Prep Kit | Prepares sequencing libraries from low-input DNA/cDNA for Illumina platforms. |

| Phusion High-Fidelity DNA Polymerase | Ensures accurate amplification of target viral genomic regions prior to sequencing. |

Title: Phylogenetic Analysis Workflow for Pathogen Genomics

Application Note 2: Comparative Genomics for Gene Family Analysis & Functional Prediction

Objective: To identify and characterize the cytochrome P450 (CYP) gene family across three plant species to infer evolutionary relationships and predict substrate specificity.

Quantitative Data Summary:

Table 2: Comparative Genomics Output for CYP Gene Family Analysis

| Metric | Arabidopsis thaliana | Oryza sativa | Zea mays |

|---|---|---|---|

| Total CYP Genes Identified | 246 | 458 | 261 |

| Number of CYP Subfamilies | 45 | 71 | 52 |

| Avg. Gene Length (bp) | 1,550 | 1,620 | 1,590 |

| Tandem Duplication Events | 28 | 67 | 41 |

| Segmental Duplication Events | 12 | 35 | 19 |

| Species-Specific Expansions | CYP71 | CYP76 | CYP87 |

Experimental Protocol:

Data Retrieval:

- Download annotated genome assemblies (GFF3/GTF & FASTA files) from Ensembl Plants or Phytozome.

Gene Family Identification:

- Compile a set of known seed protein sequences for the target gene family (e.g., 5-10 well-characterized CYP proteins from UniProt).

- Perform a local BLASTP search against each species' proteome:

blastp -db proteome.fasta -query seeds.fasta -out results.txt -evalue 1e-5

- Use HMMER to search with a hidden Markov model (Pfam: PF00067) for additional sensitivity:

hmmsearch CYP.hmm proteome.fasta
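One way to combine the two searches is to intersect and union their hit lists. The sketch below assumes that BLAST was run with tabular output (-outfmt 6) and hmmsearch with --tblout, neither of which appears in the commands above, and the gene identifiers are hypothetical.

```python
def blast_hits(tabular_text, max_evalue=1e-5):
    """Subject IDs from BLAST tabular output (-outfmt 6) passing the E-value cutoff."""
    hits = set()
    for line in tabular_text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.split("\t")
        if float(fields[10]) <= max_evalue:   # column 11 = E-value
            hits.add(fields[1])               # column 2 = subject ID
    return hits

def hmmer_hits(tblout_text, max_evalue=1e-5):
    """Target names from hmmsearch --tblout passing the full-sequence E-value cutoff."""
    hits = set()
    for line in tblout_text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.split()
        if float(fields[4]) <= max_evalue:    # column 5 = full-sequence E-value
            hits.add(fields[0])               # column 1 = target name
    return hits

def candidate_family_members(blast_text, hmmer_text):
    """Split candidates by whether one or both methods support them."""
    b, h = blast_hits(blast_text), hmmer_hits(hmmer_text)
    return {"supported_by_both": b & h, "single_method_only": (b | h) - (b & h)}
```

Candidates found by only one method are natural ones to inspect manually before the phylogenetic step.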

Phylogenetic & Synteny Analysis:

- Align the identified protein sequences using MAFFT. Trim alignment with TrimAl.

- Construct a gene tree (as per the phylogenetic inference steps in Application Note 1). Include known seed sequences to root the tree.

- Use MCScanX to analyze whole-genome synteny and classify duplication events (tandem, segmental, etc.).

Selective Pressure & Motif Analysis:

- Use the CodeML program in PAML to calculate non-synonymous/synonymous substitution rates (dN/dS) across branches to detect positive selection.

- Scan protein sequences for conserved functional motifs using MEME Suite.

Research Reagent Solutions:

| Item | Function |

|---|---|

| KAPA HyperPrep Kit | For preparing high-complexity, whole-genome sequencing libraries from plant genomic DNA. |

| NEBNext Poly(A) mRNA Magnetic Isolation Module | Isolates high-integrity mRNA from plant tissue for transcriptomic studies to validate gene expression. |

| Phire Plant Direct PCR Master Mix | Rapid PCR genotyping directly from plant tissue to confirm gene presence/absence. |

| Gateway LR Clonase II Enzyme Mix | Enables efficient recombination-based cloning of candidate CYP genes into expression vectors for functional characterization. |

Title: Comparative Genomics Pipeline for Gene Family Study

Application Note 3: High-Content Phenotypic Screening for Mechanism of Action (MoA) Studies

Objective: To compare the cellular phenotypic profiles induced by a new chemical entity (NCE) versus known reference compounds to deconvolute its potential Mechanism of Action (MoA).

Quantitative Data Summary:

Table 3: Phenotypic Profiling Data for MoA Classification

| Phenotypic Feature (Channel) | NCE (Mean Intensity) | Reference A: Microtubule Inhibitor | Reference B: DNA Damager | NCE Similarity Score |

|---|---|---|---|---|

| Nuclear Area (DAPI) | 185 ± 22 px² | 210 ± 35 px² | 165 ± 18 px² | 0.85 (vs. A) |

| Microtubule Integrity (Tubulin) | 15 ± 5 (S.D.) | 8 ± 3 (S.D.) | 92 ± 10 (S.D.) | 0.92 (vs. A) |

| Actin Stress Fibers (Phalloidin) | 120 ± 15 (S.D.) | 135 ± 20 (S.D.) | 75 ± 12 (S.D.) | 0.78 (vs. A) |

| Cell Count | 65% of Control | 60% of Control | 30% of Control | 0.95 (vs. A) |

| Predicted MoA Class | - | Microtubule Destabilizer | Topoisomerase Inhibitor | Microtubule Agent |

Experimental Protocol:

Cell Culture & Compound Treatment:

- Seed U2OS cells in 384-well imaging plates (1,500 cells/well) and culture overnight.

- Using a liquid handler, treat cells with the NCE and a panel of 10-15 reference compounds (each with known MoA) across an 8-point dose range (e.g., 1 nM – 10 µM). Include DMSO controls. Incubate for 24-48 hours.

Immunofluorescence & Staining:

- Fix cells with 4% PFA for 15 min. Permeabilize with 0.1% Triton X-100 for 10 min.

- Block with 3% BSA for 1 hour.

- Stain with primary antibodies (e.g., anti-α-tubulin, anti-γH2AX) and appropriate fluorescent secondary antibodies (e.g., Alexa Fluor 488, 568).

- Counterstain with DAPI (nuclei) and Phalloidin-Alexa Fluor 647 (actin). Seal plates.

High-Content Imaging & Feature Extraction:

- Image plates using an automated microscope (e.g., PerkinElmer Opera, ImageXpress Micro).

- Acquire 4 fields/well across 4 channels (DAPI, FITC, TRITC, Cy5).

- Use onboard analysis software (e.g., Harmony, MetaXpress) to segment cells/nuclei and extract 500+ morphological features (size, intensity, texture, shape) per cell.

Data Analysis & MoA Prediction:

- Aggregate single-cell data to well-level median values. Normalize to DMSO controls.

- Perform dimensionality reduction (t-SNE, UMAP) on the feature matrix.

- Compute similarity (e.g., Pearson correlation) between the NCE's phenotypic profile and all reference profiles across doses.

- The reference compound with the highest similarity score indicates the predicted MoA class.
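The aggregation and similarity steps above can be sketched in a few lines of Python. This is a simplified illustration: z-scoring against DMSO wells is one assumed way to "normalize to DMSO controls", the feature vectors are hypothetical, and a real profile would hold hundreds of features per dose.

```python
import math
from statistics import mean, median

def well_profile(cells):
    """Collapse per-cell feature vectors to a well-level median profile."""
    return [median(column) for column in zip(*cells)]

def normalize_to_dmso(profile, dmso_mean, dmso_sd):
    """z-score each feature against the DMSO control wells (assumed scheme)."""
    return [(x - m) / s for x, m, s in zip(profile, dmso_mean, dmso_sd)]

def pearson(x, y):
    """Pearson correlation between two equal-length feature vectors."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def predict_moa(nce_profile, references):
    """Return the best-matching reference name and all similarity scores."""
    scores = {name: pearson(nce_profile, prof) for name, prof in references.items()}
    return max(scores, key=scores.get), scores

# Hypothetical normalized profiles (four features each).
nce = [1.0, -2.0, 0.5, -1.0]
refs = {"microtubule_inhibitor": [0.9, -1.8, 0.7, -1.2],
        "dna_damager": [-1.0, 1.5, -0.2, 0.8]}
best_match, similarity = predict_moa(nce, refs)
```

A single top-scoring reference is only a hypothesis about MoA; inspecting the full score table shows how clearly the best match separates from the rest.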

Research Reagent Solutions:

| Item | Function |

|---|---|

| CellPlayer Kinetic MMP Assay Reagent | Real-time, dye-free measurement of cell health and confluency in living cells. |

| Cell Mask Deep Red Stain | A cytoplasmic stain for accurate cell segmentation in high-content analysis. |

| Anti-α-Tubulin Antibody (DM1A), Alexa Fluor 488 Conjugate | Directly conjugated antibody for streamlined microtubule network visualization. |

| Toxilight BioAssay Kit | Measures adenylate kinase release for quantitative, early cytotoxicity assessment. |

| Cellular Dielectric Spectroscopy (CDS) on xCELLigence RTCA | Label-free, real-time monitoring of dynamic cellular responses to compounds. |

Title: High-Content Screening for Mechanism of Action Prediction

From Theory to Lab Bench: Implementing Comparative Methods in R&D Pipelines

Application Notes

Within the broader thesis on the practical applications of the comparative approach in biomedical research, this document details methodologies for systematically identifying and prioritizing therapeutic targets. The comparative approach, which analyzes differential omics data across disease states, genotypes, or treatments, is central to moving from associative observations to causal, druggable targets. This process directly informs lead discovery and can reduce late-stage attrition in drug development.

Core Comparative Paradigms

Target identification leverages multi-omic comparisons to pinpoint critical nodes. Key comparative datasets include:

- Disease vs. Healthy: Transcriptomic/proteomic profiling of patient tissues versus controls.

- Genetic Perturbation: Comparisons between wild-type and mutant (e.g., CRISPR knockout) cell lines, or genome-wide association studies (GWAS).

- Drug Response: Omics profiles of sensitive versus resistant cell lines or patient cohorts.

- Evolutionary & Structural: Comparing binding sites across pathogen strains or homologous human proteins to achieve selectivity.

Quantitative Prioritization Framework

Prioritization integrates multiple evidence streams into a quantitative score. The following table summarizes common data layers and their scoring metrics.

Table 1: Quantitative Data Layers for Target Prioritization

| Data Layer | Key Metrics | Typical Source | Priority Implication |

|---|---|---|---|

| Genetic Evidence | GWAS p-value, Odds Ratio; LoF mutation burden; CRISPR essentiality score (e.g., DEMETER2, Chronos) | UK Biobank, gnomAD, DepMap | High priority for strong human genetic association and essentiality in relevant cell lines. |

| Omics Differential | Log2 Fold-Change; Adjusted p-value (e.g., DESeq2, limma); Protein Abundance Change | RNA-Seq, Proteomics (LC-MS/MS) | Large, significant dysregulation in disease tissue increases priority. |

| Druggability | PocketDruggability score; Presence of known drug-like binding sites; Tractable protein class (e.g., kinase, GPCR) | PDB, AlphaFold DB, CANCERDRUG | Defines feasibility; targets with known small-molecule binders are lower risk. |

| Pathway Context | Centrality metrics (Betweenness, Degree); Pathway enrichment FDR; Upstream/downstream node analysis | KEGG, Reactome, STRING network | Critical pathway hubs or bottlenecks are preferred over peripheral targets. |

| Safety/Toxicity | Tissue-specific expression (GTEx); Mouse knockout phenotype; Essential gene status in healthy tissues | GTEx, IMPC, Tox21 | Low expression in vital organs and non-essential phenotypes suggest a wider therapeutic window. |

Integrated Workflow Protocol

The following protocol outlines a standard workflow for comparative target identification using transcriptomic and genetic data.

Protocol 1: Integrated Omics and Genetic Prioritization Workflow

Objective: To identify and prioritize druggable protein targets from differential gene expression data, reinforced by human genetic evidence and computational druggability assessment.

Materials & Reagents:

- Disease and Control RNA-Seq Datasets (e.g., from GEO, TCGA).

- High-performance Computing Cluster or local server with sufficient RAM (>32 GB).

- Bioinformatics Software: R/Bioconductor (DESeq2, limma), Python (pandas, numpy), GATK for variant calling if needed.

- Reference Databases: gnomAD, DepMap CRISPR screens, GTEx, PDB, Drug-Gene Interaction Database (DGIdb).

Procedure:

- Differential Expression Analysis:

- Align RNA-Seq reads to a reference genome (e.g., GRCh38) using STAR or HISAT2.

- Quantify gene-level counts using featureCounts or HTSeq.

- Perform differential expression in R using DESeq2. Apply thresholds of |log2FC| > 1 and adjusted p-value < 0.05.
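The thresholding step is easy to reproduce outside R once results are exported. The sketch below shows the Benjamini-Hochberg adjustment (the procedure behind DESeq2's adjusted p-values) and the two-threshold filter in plain Python. Gene names and values are hypothetical, and in a real run the adjusted p-values would be taken directly from the DESeq2 output rather than recomputed.

```python
def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    n = len(pvalues)
    order = sorted(range(n), key=lambda i: pvalues[i])
    adjusted = [0.0] * n
    running_min = 1.0
    for rank in range(n, 0, -1):              # walk from largest p to smallest
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * n / rank)
        adjusted[i] = running_min
    return adjusted

def select_degs(results, lfc_cutoff=1.0, padj_cutoff=0.05):
    """results maps gene -> (log2 fold-change, adjusted p-value)."""
    return sorted(gene for gene, (lfc, padj) in results.items()
                  if abs(lfc) > lfc_cutoff and padj < padj_cutoff)

# Hypothetical DESeq2-style results.
example = {"GENE_A": (2.3, 0.001), "GENE_B": (0.4, 0.001),
           "GENE_C": (-1.8, 0.2), "GENE_D": (-1.5, 0.01)}
degs = select_degs(example)
```

Both up- and down-regulated genes pass the filter because the fold-change threshold is applied to the absolute value.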

Genetic Evidence Integration:

- For the differentially expressed genes (DEGs), query the latest GWAS catalog (ebi.ac.uk/gwas/) and gnomAD (gnomad.broadinstitute.org) via API.

- Extract association p-values and loss-of-function constraint scores (pLI/LOEUF).

- Query the Cancer Dependency Map (depmap.org) for CRISPR essentiality scores (Chronos) in relevant cell lines.

Network & Pathway Analysis:

- Submit the DEG list to Enrichr (maayanlab.cloud/Enrichr) or perform over-representation analysis using the clusterProfiler R package against KEGG and Reactome.

- Construct a Protein-Protein Interaction (PPI) network using STRINGdb (confidence score > 0.7) and calculate network centrality metrics.
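As an illustration of the network step, the following Python sketch filters an edge list by confidence score and computes degree centrality, the simplest of the centrality metrics mentioned. It assumes scores are expressed on a 0-1 scale and uses hypothetical node names. Betweenness and other metrics would normally come from a graph library.

```python
from collections import defaultdict

def degree_centrality(edges, min_score=0.7):
    """Degree centrality after dropping edges at or below the confidence cutoff.

    edges: iterable of (protein_a, protein_b, confidence) tuples.
    Proteins left with no retained edge are omitted from the result.
    """
    neighbors = defaultdict(set)
    for a, b, score in edges:
        if score > min_score and a != b:
            neighbors[a].add(b)
            neighbors[b].add(a)
    n = len(neighbors)
    if n < 2:
        return {node: 0.0 for node in neighbors}
    return {node: len(nbrs) / (n - 1) for node, nbrs in neighbors.items()}

# Hypothetical interactions among four DEG-encoded proteins.
ppi = [("A", "B", 0.9), ("A", "C", 0.8), ("B", "C", 0.5), ("A", "D", 0.95)]
centrality = degree_centrality(ppi)
```

Here protein A connects to every other retained node, so it scores 1.0 and would be flagged as a hub candidate.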

Druggability Assessment:

- For prioritized candidate proteins, retrieve predicted structures from AlphaFold DB or experimental structures from the PDB.

- Run computational druggability pipelines (e.g., fpocket, DoGSiteScorer) to identify and score potential binding pockets.

- Cross-reference with DGIdb and ChEMBL to identify known drugs, tool compounds, or chemical starting points.

Consensus Scoring & Prioritization:

- Normalize each metric (log2FC, -log10(p-value), -log10(GWAS p-value), Essentiality Score, Druggability Score) to a 0-1 scale.

- Apply a weighted sum based on project-specific weights (e.g., Genetic Evidence: 0.3, Druggability: 0.3, Differential Expression: 0.2, Network Centrality: 0.2).

- Rank all candidates by the final composite score and generate a shortlist for experimental validation.
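The consensus scoring described above amounts to min-max scaling each evidence layer and taking a weighted sum. A minimal Python sketch follows. The weights are the example values from the protocol, while the candidate names and raw scores are hypothetical. Differential expression should be supplied as |log2FC| so that strong down-regulation also scores highly.

```python
def min_max(values):
    """Rescale a list of numbers to the 0-1 range."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def composite_scores(candidates, weights):
    """Rank candidates by the weighted sum of their normalized layer scores.

    candidates: gene -> {layer name: raw score}
    weights:    layer name -> weight (expected to sum to 1)
    """
    genes = list(candidates)
    scaled = {layer: dict(zip(genes, min_max([candidates[g][layer] for g in genes])))
              for layer in weights}
    totals = {g: sum(weights[layer] * scaled[layer][g] for layer in weights)
              for g in genes}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)

weights = {"genetic": 0.3, "druggability": 0.3, "expression": 0.2, "centrality": 0.2}
candidates = {
    "T1": {"genetic": 8.0, "druggability": 0.9, "expression": 3.0, "centrality": 0.5},
    "T2": {"genetic": 2.0, "druggability": 0.4, "expression": 1.0, "centrality": 0.1},
    "T3": {"genetic": 5.0, "druggability": 0.9, "expression": 2.0, "centrality": 0.3},
}
ranking = composite_scores(candidates, weights)
```

Because min-max scaling is relative to the candidate set, scores are comparable only within one prioritization run, not across projects.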

Visualization

Diagram 1: Comparative Target ID Workflow

Diagram 2: Multi-Layer Target Prioritization Scoring

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Target Identification & Validation

| Reagent / Material | Provider Examples | Primary Function in Target ID |

|---|---|---|

| CRISPR-Cas9 Knockout Libraries (e.g., Brunello, GeCKO) | Synthego, Horizon Discovery | Genome-wide loss-of-function screens to identify essential genes in disease-specific contexts. |

| siRNA/shRNA Pools (Gene-specific or pathway-focused) | Dharmacon, Sigma-Aldrich | Rapid, transient knockdown of candidate targets for phenotypic validation (proliferation, apoptosis). |

| Phospho-Specific Antibodies | Cell Signaling Technology, Abcam | Detection of pathway activation states (e.g., p-ERK, p-AKT) downstream of target modulation. |

| Activity-Based Probes (ABPs) | ActivX, Thermo Fisher | Chemoproteomic tools to directly profile and quantify the activity of enzyme families (e.g., kinases, proteases) in native lysates. |

| PROTAC Molecules (Bespoke or library) | Arvinas, MedChemExpress | Induce targeted protein degradation; used as chemical probes to validate target dependency acutely. |

| NanoBRET Target Engagement Kits | Promega | Measure intracellular binding of small molecules to target proteins in live cells, confirming compound engagement. |

| Recombinant Human Proteins (Active) | Sino Biological, R&D Systems | Used in biochemical assays (e.g., kinase, binding assays) for direct functional testing of candidate targets and inhibitor screening. |

| Organoid or Primary Cell Co-culture Models | ATCC, STEMCELL Technologies | Provide physiologically relevant in vitro systems for testing target necessity in a more complex, human-derived tissue context. |

Selecting an appropriate model system is a critical, foundational decision in biomedical research and drug development. This application note, framed within a broader thesis on the practical applications of comparative research, provides a structured comparison of four cornerstone models: 2D cell cultures, 3D cell cultures, organoids, and animal models. We present quantitative data, detailed protocols for key experiments, and essential research tools to guide researchers in making informed, context-driven choices.

Comparative Analysis: Key Parameters

The selection of a model system involves trade-offs across multiple dimensions. The following tables summarize core characteristics.

Table 1: Fundamental Characteristics and Applications

| Parameter | 2D Cell Culture | 3D Cell Culture (Spheroids) | Organoids | Animal Models (e.g., Mouse) |

|---|---|---|---|---|

| System Complexity | Low (Monolayer) | Medium (Cell Aggregates) | High (Tissue-like Structures) | Very High (Whole Organism) |

| Cellular Physiology | Altered polarity; High proliferation | Improved cell-cell contact; Gradients (O2, nutrients) | Near-physiological architecture; Multiple cell types | Full physiological context; Systemic interactions |

| Genetic/Pathological Fidelity | Limited (often immortalized lines) | Moderate (can use patient cells) | High (patient-derived; can model disease) | High (transgenic, xenograft, or syngeneic) |

| Throughput & Cost | Very High; Low cost/well | High; Moderate cost | Low-Moderate; High cost | Very Low; Very High cost |

| Typical Applications | High-throughput screening, mechanistic studies, toxicity assays | Drug penetration studies, hypoxia research, intermediate complexity | Disease modeling (e.g., cystic fibrosis), personalized medicine, development | Pre-clinical efficacy, PK/PD, toxicity, complex behavior |

Table 2: Quantitative Performance Metrics (Representative Data)

| Metric | 2D Culture | 3D Spheroid | Organoid | Animal Model |

|---|---|---|---|---|

| Assay Throughput (wells/day) | 10,000+ | 1,000 - 5,000 | 100 - 500 | 10 - 50 |

| Experimental Duration | 1-7 days | 7-21 days | 14-60+ days | 30-180+ days |

| Approximate Cost per Data Point | $1 - $10 | $10 - $100 | $100 - $1,000 | $1,000 - $10,000+ |

| Predictive Validity for Human Response (Correlation)* | ~0.3-0.5 | ~0.5-0.7 | ~0.6-0.8 | ~0.7-0.9 |

| Gene Expression Concordance with Human Tissue* | Low (R² ~0.2-0.4) | Moderate (R² ~0.4-0.6) | High (R² ~0.6-0.8) | Variable (R² ~0.5-0.8) |

*Generalized estimates from literature; context- and disease-dependent.

Detailed Protocols

Protocol 1: Generation and Drug Treatment of 3D Cancer Spheroids using Ultra-Low Attachment Plates

Objective: To establish a mid-throughput 3D model for assessing compound efficacy and penetration.

Materials: See "The Scientist's Toolkit" below.

Workflow:

- Cell Preparation: Harvest adherent cancer cells (e.g., HCT-116, MCF-7) at 80-90% confluence. Prepare a single-cell suspension in complete growth medium.

- Seeding: Count cells and dilute to a density of 500-5,000 cells per 50 µL, depending on desired spheroid size. Seed 50 µL/well into a 96-well U-bottom ultra-low attachment (ULA) plate.

- Spheroid Formation: Centrifuge the plate at 300 x g for 3 minutes to aggregate cells at the well bottom. Incubate at 37°C, 5% CO₂ for 3-5 days. Spheroids will form within 24-72 hours.

- Drug Treatment: On day 3-5, prepare 2X drug solutions in complete medium. Carefully add 50 µL of 2X drug solution to each well containing 50 µL of existing medium, for a final 1X concentration. Include vehicle controls.

- Incubation & Analysis: Incubate for an additional 72-120 hours. Assess viability using assays like CellTiter-Glo 3D. Image spheroids daily using an inverted microscope.

Diagram 1: 3D spheroid generation and assay workflow

Protocol 2: Establishing Patient-Derived Organoid (PDO) Cultures for Personalized Medicine

Objective: To generate a biobank of patient-derived organoids for ex vivo drug sensitivity testing.

Materials: See "The Scientist's Toolkit" below.

Workflow:

- Tissue Processing: Obtain fresh tumor tissue (ethical approval required). Mince tissue into <1 mm³ fragments in cold PBS. Digest with collagenase (e.g., 2 mg/mL) for 30-60 minutes at 37°C with agitation.

- Cell Isolation & Embedding: Dissociate into fragments/crypts. Pellet and resuspend in Basement Membrane Extract (BME, e.g., Matrigel). Plate 30-50 µL BME domes in pre-warmed culture plates. Polymerize for 30 minutes at 37°C.

- Organoid Culture: Overlay domes with organoid-specific complete medium (containing niche factors like Wnt3a, R-spondin, Noggin). Culture at 37°C, 5% CO₂. Change medium every 2-3 days.

- Passaging: Upon confluence (7-14 days), mechanically disrupt and enzymatically digest organoids. Re-embed fragments in fresh BME for expansion.

- Drug Sensitivity Testing (DST): Seed organoid fragments in 384-well plates. After 5-7 days of growth, treat with a compound library for 5-7 days. Quantify viability using ATP-based luminescence.

Diagram 2: Patient-derived organoid culture and testing pipeline

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Featured Experiments

| Item | Function | Example Product/Brand |

|---|---|---|

| Ultra-Low Attachment (ULA) Plates | Prevent cell attachment, forcing 3D aggregation via gravity. | Corning Spheroid Microplates |

| Basement Membrane Extract (BME) | Extracellular matrix scaffold providing structural support and biochemical cues for organoid growth. | Cultrex Basement Membrane Extract, Corning Matrigel |

| Organoid Growth Medium Supplements | Essential niche factors that maintain stemness and drive lineage-specific differentiation. | Recombinant Wnt-3a, R-spondin-1, Noggin (e.g., from R&D Systems) |

| 3D-Viability Assay Reagent | Luminescent ATP detection assay optimized for penetration into 3D structures. | CellTiter-Glo 3D (Promega) |

| Collagenase/Dispase Enzymes | Digest extracellular matrix in patient tissue to isolate viable cells/crypts for organoid culture. | Collagenase Type II (Thermo Fisher) |

| ROCK Inhibitor (Y-27632) | Improves survival of dissociated single cells and organoid fragments by inhibiting apoptosis. | Y-27632 dihydrochloride (Tocris) |

Application Notes

Within the broader thesis on the practical applications of the comparative approach in research, systematic head-to-head assay evaluation is a critical exercise for optimizing experimental strategy and resource allocation. This document provides a framework for comparing three common assay platforms: ELISA, Electrochemiluminescence (ECL), and High-Throughput Flow Cytometry. The comparison is framed around quantifying a soluble inflammatory biomarker (e.g., IL-6) in a drug discovery screening campaign.

Table 1: Assay Platform Comparison Summary

| Parameter | ELISA (Colorimetric) | Electrochemiluminescence (ECL, e.g., MSD) | High-Throughput Flow Cytometry (e.g., FACS) |

|---|---|---|---|

| Detection Mechanism | Enzyme-linked antibody, colorimetric read | Ruthenium-labeled antibody, electrochemical luminescence | Fluorescently-labeled antibody, laser detection |

| Sensitivity (LoD) | ~1-10 pg/mL | ~0.1-1 pg/mL | ~0.5-5 pg/mL (cell-bound); ~10-50 pg/mL (bead-based) |

| Dynamic Range | ~2-3 logs | ~4-6 logs | ~3-4 logs |

| Assay Throughput | Medium (2-4 hours hands-on) | High (1-2 hours hands-on) | Very High (≤1 hour hands-on for plate-based) |

| Sample Throughput | 96-well plate (~40 samples/run) | 96- or 384-well plate (~40-150 samples/run) | 96- or 384-well plate (~40-150 samples/run) |

| Cost per Sample | Low ($2-$5) | Medium ($5-$15) | High ($15-$30, excluding instrument cost) |

| Key Advantages | Inexpensive, widely established, simple. | High sensitivity & broad range, low sample volume. | Multiplex potential, cellular context possible. |

| Key Limitations | Narrow range, lower sensitivity, multiplexing is difficult. | Higher reagent cost, specialized reader required. | High instrument cost, complex data analysis. |

Experimental Protocols

Protocol 1: Comparative Sensitivity & Dynamic Range Determination

Objective: Establish the Lower Limit of Detection (LLoD) and upper limit of quantification (ULoQ) for IL-6 across platforms.

- Prepare a 2-fold serial dilution series of recombinant IL-6 in assay diluent, spanning from 500 pg/mL to approximately 0.5 pg/mL (11 points), plus a zero standard (diluent only).

- Run the dilution series in triplicate on each platform according to manufacturer protocols (see key reagents below).

- For each platform, plot the mean signal (fluorescence, luminescence, or absorbance, as appropriate) vs. log[IL-6].

- Fit a 4-parameter logistic (4PL) curve. LLoD = mean signal of zero standard + (2 × standard deviation of zero standard); interpolate this signal on the fitted curve to report the LLoD as a concentration. ULoQ is the highest concentration where precision (CV%) remains <20%.
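The LLoD and ULoQ rules from this protocol can be written out as a short Python sketch. It is illustrative only: the replicate signals are hypothetical, and CV% is computed here on raw signals, whereas some laboratories compute it on back-calculated concentrations.

```python
from statistics import mean, stdev

def llod_signal(zero_standard_signals, k=2.0):
    """Signal threshold: mean of the zero standard plus k standard deviations."""
    return mean(zero_standard_signals) + k * stdev(zero_standard_signals)

def cv_percent(replicates):
    """Coefficient of variation of replicate readings, in percent."""
    return 100.0 * stdev(replicates) / mean(replicates)

def uloq(standards, max_cv=20.0):
    """Highest standard concentration whose replicate CV% stays below max_cv.

    standards: concentration (pg/mL) -> list of replicate signals.
    Returns None if no standard meets the precision criterion.
    """
    passing = [conc for conc, reps in standards.items() if cv_percent(reps) < max_cv]
    return max(passing) if passing else None

# Hypothetical triplicate readings.
threshold = llod_signal([10.0, 12.0, 14.0])
upper = uloq({500: [100.0, 200.0, 300.0],
              250: [95.0, 100.0, 105.0],
              125: [49.0, 50.0, 51.0]})
```

The threshold returned by llod_signal is in signal units, so it still has to be read back through the fitted 4PL curve to obtain a concentration.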

Protocol 2: Throughput & Practical Workflow Analysis

Objective: Quantify hands-on time and total time-to-result for a batch of 80 test samples.

- Preparatory Step: Aliquot a master set of 80 unknown samples + 16 controls/calibrators.

- Parallel Processing: A single trained technician processes the identical sample set on each platform.

- Timing Metrics: Record: a) Total hands-on time (plate coating, reagent addition, washes), b) Total incubation time, c) Instrument read/analysis time.

- Calculation: Total time-to-result = Hands-on time + Incubation time + Analysis time. Throughput efficiency = (Samples processed) / (Total hands-on time in hours).

Protocol 3: Cost Analysis per Data Point

Objective: Calculate the total direct cost required to generate a single quantifiable data point.

- Reagent Cost: Calculate consumable cost per sample (plate, antibodies, buffers, detection reagents) from current vendor price lists.

- Instrument Cost: Apply a prorated instrument depreciation/lease cost per run. Assume 5-year lifespan, 250 working days/year.

- Labor Cost: Apply a standard hourly rate to the hands-on time per sample (from Protocol 2).

- Formula: Total Cost per Sample = (Reagent Cost) + (Instrument Cost/Run / Samples per Run) + (Labor Cost/hour * Hands-on hours/Sample).
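The formulas in Protocols 2 and 3 translate directly into code. The Python sketch below uses the 5-year, 250-day depreciation assumption stated above. Every monetary value, run count, and time in the example is hypothetical and should be replaced with measured data.

```python
def throughput_efficiency(samples, hands_on_hours):
    """Samples processed per hour of hands-on time (Protocol 2)."""
    return samples / hands_on_hours

def instrument_cost_per_run(purchase_price, runs_per_day,
                            lifespan_years=5, working_days_per_year=250):
    """Straight-line depreciation spread evenly over every run in the lifespan."""
    total_runs = lifespan_years * working_days_per_year * runs_per_day
    return purchase_price / total_runs

def cost_per_sample(reagent_cost, instrument_cost_run, samples_per_run,
                    hourly_rate, hands_on_hours_per_sample):
    """Total direct cost per sample: reagents + instrument share + labor."""
    return (reagent_cost
            + instrument_cost_run / samples_per_run
            + hourly_rate * hands_on_hours_per_sample)

# Hypothetical platform: 80 samples per run, 2 hands-on hours per run.
efficiency = throughput_efficiency(80, 2.0)
per_run = instrument_cost_per_run(125_000, runs_per_day=1)
total = cost_per_sample(3.0, per_run, 80, 40.0, 2.0 / 80)
```

Running the same three functions with each platform's measured inputs yields directly comparable cost and throughput figures.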

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in IL-6 Assay | Example (Vendor) |

|---|---|---|

| Matched Antibody Pair (Capture/Detection) | Specifically bind distinct epitopes on IL-6 for sandwich immunoassay. | DuoSet ELISA (R&D Systems), V-PLEX Plus (Meso Scale Discovery) |

| Streptavidin-Conjugated Label | Bridges biotinylated detection antibody to the reporting enzyme or fluorophore. | Streptavidin-HRP (ELISA), Streptavidin-Ruthenium (ECL), Streptavidin-PE (Flow Cytometry) |

| Assay Diluent/Buffer | Dilutes samples and standards; minimizes non-specific background signal. | PBS/BSA-based diluent, often with proprietary blockers (e.g., MSD Blocker A) |

| Electrochemiluminescence Read Buffer | Contains tripropylamine (TPA); provides coreactant for electrochemical luminescence excitation at the electrode surface. | MSD GOLD Read Buffer B |

| Flow Cytometry Assay Buffer | Contains azide and protein to prevent non-specific antibody binding and maintain cell/bead integrity. | Cell Staining Buffer (BioLegend), FACS Buffer (PBS + 2% FBS) |

| Multiplex Bead Set | For flow cytometry; distinct bead populations with unique spectral signatures, each coated with a different capture antibody. | LEGENDplex Beads (BioLegend), CBA Beads (BD Biosciences) |

Diagram 1: Comparative Assay Evaluation Workflow

Diagram 2: Core Immunoassay Detection Pathways Compared

Within the broader thesis on the practical applications of the comparative approach in research, these application notes detail the implementation of artificial intelligence (AI)-driven in silico comparative tools. These tools are designed to transcend traditional boundaries in biological research by enabling robust, scalable, and predictive analyses across disparate species and heterogeneous datasets. The core value lies in identifying conserved biological mechanisms, translating findings from model organisms to human physiology, and de-risking drug development through cross-validation.

Key Applications:

- Translational Biomarker Discovery: Identify evolutionarily conserved gene signatures or protein networks indicative of disease states (e.g., oncogenic pathways in mouse, zebrafish, and human tumors).

- Drug Target Prioritization & Safety Assessment: Cross-species analysis of target gene expression, essentiality, and pathway context to predict efficacy and potential adverse effects (e.g., comparing heart tissue transcriptomes).

- Meta-Analysis of Public Repositories: Integrate and harmonize data from sources like GEO, ArrayExpress, and TCGA using AI to uncover novel correlations obscured in single-study analyses.

- Phenotypic Prediction from Genomic Variants: Use deep learning models trained on multi-species variant databases (e.g., gnomAD, Ensembl Comparative Genomics) to predict variant pathogenicity.

Table 1: Performance Metrics of Representative AI Models for Cross-Species Analysis

| Model Name | Primary Task | Species Covered | Key Metric | Reported Score | Dataset Used |

|---|---|---|---|---|---|

| DeepOrtho | Gene Orthology Prediction | Human, Mouse, Fly, Worm | Area Under Precision-Recall Curve (AUPRC) | 0.92 | Ensembl Compara v110 |

| CellBERT | Cross-Species Cell Type Annotation | Human, Mouse, Zebrafish | Median F1-Score | 0.89 | Tabula Sapiens, Tabula Muris |

| TransNet | Pathway Activity Translation | Human to Rat | Concordance Correlation Coefficient | 0.81 | LINCS L1000, Rat Toxicogenomics |

| MetaIntegrator | Cross-Dataset Gene Signature Fusion | Pan-mammalian | Stability Score (Scaled) | 0.75 | GEO Meta-Collection (50+ studies) |
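
Of the metrics in Table 1, the concordance correlation coefficient is probably the least familiar, because it penalizes systematic offsets as well as scatter. The following is a minimal sketch, assuming the table refers to the standard Lin's definition; it is not the evaluation code of any model listed above, and the function name and example values are illustrative.

```python
from statistics import fmean


def concordance_ccc(x, y):
    """Lin's concordance correlation coefficient for paired values."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be paired sequences of length >= 2")
    mx, my = fmean(x), fmean(y)
    # Population (1/n) variances and covariance, as in Lin's definition.
    var_x = fmean([(a - mx) ** 2 for a in x])
    var_y = fmean([(b - my) ** 2 for b in y])
    cov = fmean([(a - mx) * (b - my) for a, b in zip(x, y)])
    return 2 * cov / (var_x + var_y + (mx - my) ** 2)


# Illustrative pathway activity scores (not real data).
human_scores = [0.2, 0.8, 1.5, 2.1]
rat_scores_shifted = [v + 1.0 for v in human_scores]

print(concordance_ccc(human_scores, human_scores))        # perfect agreement
print(concordance_ccc(human_scores, rat_scores_shifted))  # same trend, constant offset
```

A constant offset leaves the Pearson correlation at 1 but lowers the concordance coefficient, which is why it suits questions of direct cross-species translation.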

Table 2: Public Data Resources for Comparative Analysis

| Resource | Data Type | Key Comparative Feature | Access |

|---|---|---|---|

| Ensembl Compara | Genomic Alignments, Homologies | Pre-computed gene trees, orthologs/paralogs for >700 species | REST API, BioMart |

| Alliance of Genome Resources | Genotypes & Phenotypes | Curated genotype-phenotype associations across major model organisms | Web Portal, Downloads |

| BioGPS | Gene Expression Profiles | Tissue-specific expression patterns across multiple species | Web Portal, Plugins |

| Harmonizome | Integrated Knowledge | Aggregated datasets from 70+ sources with uniform processing | Downloaded Datasets |

Detailed Experimental Protocols

Protocol 3.1: Cross-Species Transcriptomic Meta-Analysis for Conserved Biomarker Identification

Objective: To identify a core set of conserved differentially expressed genes (DEGs) in lung fibrosis across mouse model and human patient datasets.

Materials: See "The Scientist's Toolkit" below.

Procedure:

Dataset Curation (Harmonization):

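- Source RNA-seq datasets from public repositories (e.g., GEO: GSE12345 [mouse bleomycin model], GSE67890 [human IPF biopsies]). Prefer raw reads (FASTQ) so that both species can be processed identically; precomputed FPKM/TPM values are not suitable input for the count-based DESeq2 analysis in the next step.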

- Perform uniform quality control using FastQC v0.11.9 and MultiQC v1.14.

- Map all reads to respective reference genomes (mm39, GRCh38) using STAR aligner with identical, stringent parameters.

- Generate gene-level counts using featureCounts from the Subread package.

Differential Expression Analysis:

- For each species dataset independently, perform DEG analysis using DESeq2 in R.

- Apply a significance threshold of adjusted p-value (FDR) < 0.05 and absolute log2 fold change > 1.
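
The thresholding step can be sketched in Python once the DESeq2 results have been exported from R. This is a minimal illustration, not part of the protocol itself: `padj` and `log2FoldChange` are DESeq2's result column names, while the `gene` key, the function name, and the toy values are assumptions.

```python
import math


def filter_degs(results, padj_max=0.05, lfc_min=1.0):
    """Return {gene: log2FC} for rows with padj < padj_max and |log2FC| > lfc_min."""
    degs = {}
    for row in results:
        padj = row["padj"]
        lfc = row["log2FoldChange"]
        # DESeq2 reports NA for padj when a gene is filtered out; skip those rows.
        if padj is None or math.isnan(padj):
            continue
        if padj < padj_max and abs(lfc) > lfc_min:
            degs[row["gene"]] = lfc
    return degs


# Toy rows with made-up statistics (not real results).
toy_results = [
    {"gene": "Col1a1", "log2FoldChange": 2.4, "padj": 0.001},
    {"gene": "Actb", "log2FoldChange": 0.1, "padj": 0.80},
    {"gene": "Sftpc", "log2FoldChange": -1.6, "padj": 0.01},
    {"gene": "Gapdh", "log2FoldChange": 1.8, "padj": None},
]

mouse_degs = filter_degs(toy_results)
print(mouse_degs)
```

Keeping the signed fold change alongside each gene makes it possible to check direction of regulation when the two species are compared later.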

Orthology Mapping & Gene List Translation:

- Download the Ensembl Compara homology table for human and mouse via BioMart.

- Map mouse DEGs to their one-to-one orthologs in humans. Discard genes with many-to-many or non-unique orthology relationships.
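
As a sketch of the mapping step, the function below keeps only one-to-one orthologs from a homology table and re-keys mouse DEGs by their human ortholog. The dictionary keys (`mouse_gene`, `human_gene`, `homology_type`) are assumed names for columns exported from BioMart; the `ortholog_one2one` label follows Ensembl's homology-type convention, and the `ToyDup1` entries are hypothetical.

```python
from collections import Counter


def one_to_one_map(homology_rows):
    """Build a mouse -> human gene map from one-to-one orthologs only."""
    rows = [r for r in homology_rows if r["homology_type"] == "ortholog_one2one"]
    # Guard against duplicated identifiers even within the one2one subset.
    mouse_counts = Counter(r["mouse_gene"] for r in rows)
    human_counts = Counter(r["human_gene"] for r in rows)
    return {
        r["mouse_gene"]: r["human_gene"]
        for r in rows
        if mouse_counts[r["mouse_gene"]] == 1 and human_counts[r["human_gene"]] == 1
    }


def translate_degs(mouse_degs, mapping):
    """Re-key mouse DEGs by human ortholog; genes without a mapping are dropped."""
    return {mapping[g]: lfc for g, lfc in mouse_degs.items() if g in mapping}


toy_homology = [
    {"mouse_gene": "Col1a1", "human_gene": "COL1A1", "homology_type": "ortholog_one2one"},
    {"mouse_gene": "Sftpc", "human_gene": "SFTPC", "homology_type": "ortholog_one2one"},
    # Hypothetical one-to-many family: excluded from the map.
    {"mouse_gene": "ToyDup1", "human_gene": "TOYDUP1A", "homology_type": "ortholog_one2many"},
    {"mouse_gene": "ToyDup1", "human_gene": "TOYDUP1B", "homology_type": "ortholog_one2many"},
]
toy_mouse_degs = {"Col1a1": 2.4, "Sftpc": -1.6, "ToyDup1": 3.0}  # made-up fold changes

ortholog_map = one_to_one_map(toy_homology)
print(translate_degs(toy_mouse_degs, ortholog_map))
```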

Conserved Signature Identification (AI-Assisted):

- Intersect the list of human DEGs with the ortholog-mapped mouse DEGs to obtain the conserved set, i.e., genes that pass the significance thresholds in both species.

- Optional: Use a tool like MetaIntegrator or a custom Random Forest classifier trained on gene features (e.g., sequence conservation score, pathway membership) to rank and prioritize the intersecting genes for conservation strength.
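
The intersection itself is a small set operation. The sketch below is one possible reading, with an added assumption that is not stated in the protocol: by default it also requires the same direction of change in both species. The function name, the flag, and the toy fold changes (including `TOYGENE1`) are illustrative.

```python
def conserved_signature(human_degs, mouse_degs_as_human, require_same_direction=True):
    """Genes significant in both species, optionally with concordant sign."""
    conserved = {}
    for gene in sorted(human_degs.keys() & mouse_degs_as_human.keys()):
        h_lfc = human_degs[gene]
        m_lfc = mouse_degs_as_human[gene]
        if require_same_direction and (h_lfc > 0) != (m_lfc > 0):
            continue  # significant in both, but regulated in opposite directions
        conserved[gene] = (h_lfc, m_lfc)
    return conserved


# Made-up log2 fold changes keyed by human gene symbol.
toy_human = {"COL1A1": 2.1, "SFTPC": -1.4, "MMP7": 3.0, "TOYGENE1": 1.2}
toy_mouse = {"COL1A1": 2.4, "SFTPC": -1.6, "TOYGENE1": -1.5}

print(conserved_signature(toy_human, toy_mouse))
print(conserved_signature(toy_human, toy_mouse, require_same_direction=False))
```

Genes that are significant in both species but move in opposite directions are worth reporting separately, since they may mark points where the model diverges from the human disease.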

Validation & Pathway Enrichment:

- Subject the final conserved gene list to functional enrichment analysis using g:Profiler against the KEGG and Reactome databases.

- Validate the expression pattern of top candidate genes in an independent, held-out dataset (e.g., single-cell RNA-seq data from lung tissue).
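
Over-representation tools used for the enrichment step are generally built on a one-sided hypergeometric test. The sketch below shows only that core calculation, under the assumption of a fixed gene universe; it omits the multiple-testing correction that a real tool applies, and the function and parameter names are illustrative.

```python
from math import comb


def hypergeom_enrichment_p(n_universe, n_pathway, n_query, n_overlap):
    """P(overlap >= n_overlap) when n_query genes are drawn without replacement."""
    upper = min(n_pathway, n_query)
    total = comb(n_universe, n_query)
    tail = sum(
        comb(n_pathway, k) * comb(n_universe - n_pathway, n_query - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total


# Toy numbers: 10-gene universe, 4-gene pathway, 3-gene conserved list.
print(hypergeom_enrichment_p(10, 4, 3, 3))  # all three conserved genes in the pathway
print(hypergeom_enrichment_p(10, 4, 3, 0))  # trivially 1.0
```

The choice of universe matters: restricting it to genes with a one-to-one ortholog that were actually tested in both species avoids inflating significance.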

Protocol 3.2: In Silico Target Safety Profiling Using Cross-Tissue Expression Analysis

Objective: To assess potential on- and off-target tissue expression of a novel drug target (e.g., PKMYT1) across species.

Procedure:

Baseline Expression Profiling:

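- Query the BioGPS portal or the GTEx Portal API for baseline RNA expression of the target gene across normal human tissues. Because GTEx covers human donors only, take rat expression from BioGPS or another resource with rat tissue coverage.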

- Extract normalized expression values (e.g., TPM). Summarize data into a table (see Table 2 concept).

Outlier Tissue Identification:

- Calculate the median expression across all tissues for each species.

- Flag tissues where expression is >95th percentile (potential on-target effect sites) and tissues with expression >2 standard deviations above the species median (potential off-target risk tissues).
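
The two flags described above can be computed with the standard library. This is a minimal sketch under stated assumptions: the 95th percentile uses the inclusive quantile method so that it never exceeds the observed maximum, the standard deviation is the sample standard deviation, and the tissue names and TPM values are invented for illustration.

```python
from statistics import median, quantiles, stdev


def flag_tissues(expr_by_tissue):
    """Return (tissues above the 95th percentile, tissues above median + 2 SD)."""
    values = list(expr_by_tissue.values())
    # Last of the 19 cut points from n=20 is the 95th percentile.
    p95 = quantiles(values, n=20, method="inclusive")[-1]
    sd_cutoff = median(values) + 2 * stdev(values)
    above_p95 = sorted(t for t, v in expr_by_tissue.items() if v > p95)
    above_2sd = sorted(t for t, v in expr_by_tissue.items() if v > sd_cutoff)
    return above_p95, above_2sd


# Made-up TPM values for one species.
toy_tpm = {
    "adipose": 1, "brain": 2, "colon": 2, "heart": 3, "kidney": 3,
    "liver": 4, "lung": 4, "muscle": 5, "spleen": 6, "testis": 60,
}

high_p95, high_2sd = flag_tissues(toy_tpm)
print(high_p95, high_2sd)
```

Run the function once per species so that each flag is relative to that species' own distribution, as the procedure specifies.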

Comparative Heatmap Generation & AI Similarity Scoring:

- Generate a cross-species tissue expression heatmap using a tool like pheatmap in R, clustering tissues by expression profile similarity.

- Compute a tissue expression conservation score using a pre-trained model (e.g., a Siamese neural network) that compares the human and rat expression vectors. A low score indicates divergent expression patterns, highlighting a translational risk.
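
No pre-trained Siamese network is specified in this protocol, so the sketch below swaps in a much simpler stand-in: the Spearman rank correlation between the human and rat tissue expression vectors. It is offered only as a baseline conservation score, and the function names and expression values are illustrative assumptions.

```python
from math import sqrt
from statistics import fmean


def average_ranks(values):
    """1-based ranks, with ties sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    mx, my = fmean(x), fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def expression_conservation(human, rat):
    """Spearman correlation over the tissues present in both species."""
    tissues = sorted(human.keys() & rat.keys())
    h = [human[t] for t in tissues]
    r = [rat[t] for t in tissues]
    return pearson(average_ranks(h), average_ranks(r))


# Made-up TPM values.
human_tpm = {"liver": 5, "heart": 50, "brain": 1, "kidney": 12}
rat_similar = {"liver": 8, "heart": 30, "brain": 2, "kidney": 9}
rat_divergent = {"liver": 40, "heart": 3, "brain": 20, "kidney": 5}

print(expression_conservation(human_tpm, rat_similar))
print(expression_conservation(human_tpm, rat_divergent))
```

A rank-based score ignores differences in absolute scale between platforms, which is useful when the two species were profiled with different technologies.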

Integrated Risk Report:

- Compile results, highlighting tissues of high, conserved expression (potential efficacy drivers) and tissues with discordant expression (safety assessment focus).

Visualization Diagrams

Diagram 1: Cross-Species Transcriptomic Analysis Workflow

Diagram 2: Conserved Inflammatory Pathway Derived from Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Materials for In Silico Comparative Analysis

| Item/Category | Function/Benefit | Example/Format |

|---|---|---|

| High-Performance Computing (HPC) Access | Enables processing of large-scale genomic datasets and running complex AI models. | Local cluster (SLURM), Cloud (AWS, GCP), or NIH STRIDES. |

| Containerization Software | Ensures reproducibility of analysis pipelines across different computing environments. | Docker or Singularity containers with pre-installed tools (e.g., Biocontainers). |

| Comparative Genomics Database API Access | Programmatic retrieval of orthology, homology, and conservation data. | Ensembl REST API, NCBI E-utilities, Alliance of Genome Resources API. |

| Integrated Analysis Platform | Provides a unified environment for data wrangling, analysis, and visualization. | R/Bioconductor, Python (Scanpy, SciPy), or commercial platforms (Partek Flow, QIAGEN CLC). |

| AI/ML Framework | Library for building, training, and deploying custom comparative models. | PyTorch with PyTorch Geometric (for graph-based biological data) or scikit-learn. |

| Data Harmonization Tool | Standardizes disparate datasets into a common format for joint analysis. | Harmonizome processed datasets, or custom pipelines using ComBat (sva R package). |

| Visualization Suite | Generates publication-ready comparative graphics (heatmaps, networks, etc.). | R ggplot2 & pheatmap, Python seaborn & matplotlib, or Cytoscape for networks. |

1. Introduction and Thesis Context

This case study applies the comparative approach to early-stage oncology drug discovery. Rather than evaluating candidates in isolation, a comparative framework, executed through standardized application notes and protocols, enables direct, parallel assessment of multiple drug candidates against shared biological targets and disease models. This methodology systematically identifies lead compounds with superior efficacy, safety, and mechanistic profiles, de-risking progression to clinical development.

2. Application Note: Parallel Profiling of PI3Kα/δ/γ Inhibitors in Hematologic Malignancies

2.1 Objective

To comparatively evaluate the in vitro potency, selectivity, and functional activity of three clinical-stage PI3K inhibitors (Idelalisib, Duvelisib, Copanlisib) against a panel of B-cell lymphoma cell lines.

2.2 Quantitative Data Summary

Table 1: Comparative IC₅₀ (nM) in B-Cell Lymphoma Lines (72h viability assay)

| Cell Line | Disease Model | Idelalisib (PI3Kδ) | Duvelisib (PI3Kδ/γ) | Copanlisib (PI3Kα/δ) |

|---|---|---|---|---|

| SU-DHL-4 | GCB-DLBCL | 85 ± 12 | 52 ± 8 | 18 ± 3 |

| JeKo-1 | Mantle Cell Lymphoma | 120 ± 25 | 45 ± 6 | 22 ± 4 |

| Ramos | Burkitt’s Lymphoma | 250 ± 40 | 110 ± 15 | 65 ± 9 |

Table 2: Kinase Selectivity Profile (% Inhibition at 1 µM)

| Kinase Target | Idelalisib | Duvelisib | Copanlisib |

|---|---|---|---|

| PI3Kα | <10% | <15% | 98% |

| PI3Kδ | 99% | 97% | 95% |

| PI3Kβ | <5% | <5% | <10% |

| PI3Kγ | <20% | 94% | <30% |

Table 3: Functional Readouts in SU-DHL-4 Cells (Treatment @ 100 nM, 24h)

| Parameter | Idelalisib | Duvelisib | Copanlisib |

|---|---|---|---|

| pAKT (S473) Reduction | 30% ± 5% | 60% ± 7% | 85% ± 6% |

| Apoptosis (Caspase 3/7+) | 15% ± 4% | 35% ± 5% | 55% ± 6% |

| Cell Cycle Arrest (G1) | 20% increase | 40% increase | 55% increase |

3. Experimental Protocols

3.1 Protocol: Multiparametric In Vitro Screening of Kinase Inhibitors

A. Cell Viability Assay (IC₅₀ Determination)

- Seed cells: Plate relevant oncology cell lines (e.g., SU-DHL-4, JeKo-1) in 96-well plates at 2,500-5,000 cells/well in 80 µL of complete growth medium. Incubate overnight (37°C, 5% CO₂).

- Prepare inhibitor dilutions: Prepare a 10-point, 1:3 serial dilution series of each drug candidate (Idelalisib, Duvelisib, Copanlisib) in DMSO, then dilute further in assay medium to 5× the final concentration, since 20 µL is added to 80 µL of culture. The final top concentration is typically 10 µM. Include DMSO-only controls (0.1% v/v final).

- Treat cells: Add 20 µL of diluted compound or control to each well (n=4 technical replicates). Incubate for 72 hours.

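- Assay viability: Add CellTiter-Glo 2.0 reagent to each well. The manufacturer's protocol calls for a volume equal to the culture volume (100 µL here); if a reduced volume such as 20 µL is used, validate signal linearity first. Shake for 2 minutes, then incubate for 10 minutes at room temperature in the dark.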

- Readout: Measure luminescence on a plate reader. Normalize data to DMSO controls (100% viability). Calculate IC₅₀ values using four-parameter logistic (4PL) curve fitting in analysis software (e.g., GraphPad Prism).
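
The dilution scheme and the curve model can be sketched as follows. This is a simplified illustration, not a replacement for the nonlinear regression performed by dedicated software: the fit is a coarse grid search over IC₅₀ and Hill slope with the top and bottom plateaus fixed at 100% and 0%, and all function names and the synthetic data are assumptions.

```python
import math


def dilution_series(top_nm=10_000.0, fold=3.0, points=10):
    """Final concentrations (nM) for a serial dilution starting at top_nm."""
    return [top_nm / fold ** i for i in range(points)]


def normalize(luminescence, dmso_mean):
    """Express raw luminescence as percent of the DMSO control mean."""
    return [100.0 * v / dmso_mean for v in luminescence]


def four_pl(conc, bottom, top, ic50, hill):
    """Four-parameter logistic model for an inhibitor (response falls with dose)."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def fit_ic50(concs, viability, bottom=0.0, top=100.0):
    """Grid search for (ic50, hill) minimizing squared error; plateaus are fixed."""
    lo, hi = math.log10(min(concs)), math.log10(max(concs))
    best = None
    for step in range(201):
        ic50 = 10 ** (lo + (hi - lo) * step / 200)
        for k in range(26):
            hill = 0.5 + 0.1 * k
            sse = sum(
                (four_pl(c, bottom, top, ic50, hill) - v) ** 2
                for c, v in zip(concs, viability)
            )
            if best is None or sse < best[0]:
                best = (sse, ic50, hill)
    return best[1], best[2]


concs = dilution_series()
# Synthetic, noise-free viability generated from the model (IC50 = 50 nM, Hill = 1).
synthetic = [four_pl(c, 0.0, 100.0, 50.0, 1.0) for c in concs]
ic50_est, hill_est = fit_ic50(concs, synthetic)
print(round(ic50_est, 1), round(hill_est, 1))
```

With a 1:3 series from 10 µM, the lowest of the ten concentrations is about 0.5 nM, so the series brackets the IC₅₀ values reported in Table 1 on both sides.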

B. Intracellular Phospho-Protein Analysis by Western Blot

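- Treat cells: Seed cells in 6-well plates. Once cultures reach ~70% confluency (adherent lines) or log-phase density (suspension lines such as SU-DHL-4 and JeKo-1), treat with inhibitors at the desired concentrations (e.g., 100 nM) or DMSO control for 1-4 hours.

- Lyse cells: Remove the medium and wash with ice-cold PBS; for suspension lines, pellet the cells by centrifugation at each step. Add 100-200 µL of RIPA lysis buffer containing protease and phosphatase inhibitors. Scrape adherent cells or resuspend pellets, then collect lysates.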

- Process samples: Centrifuge lysates (14,000 rpm, 15 min, 4°C). Determine protein concentration via BCA assay. Denature 20-30 µg of protein with Laemmli buffer at 95°C for 5 min.

- Western Blot: Load samples onto 4-12% Bis-Tris gels. Run electrophoresis, then transfer to PVDF membranes. Block with 5% BSA/TBST for 1 hour.

- Probe: Incubate with primary antibodies (e.g., anti-pAKT S473, total AKT, β-Actin) overnight at 4°C. Wash, then incubate with HRP-conjugated secondary antibodies for 1 hour.

- Detect: Apply chemiluminescent substrate and image on a digital imager. Quantify band density.
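
Band densities are typically converted into a pathway readout by normalizing the phospho signal to total protein and then to the vehicle control. The sketch below assumes normalization of pAKT to total AKT (β-Actin could be used instead as a loading control); the function name, lane labels, and density values are illustrative and are not measurements from this study.

```python
def pakt_reduction(bands, control="DMSO"):
    """Percent reduction in the pAKT / total AKT ratio relative to the control lane."""
    ratios = {lane: d["pAKT"] / d["total_AKT"] for lane, d in bands.items()}
    ref = ratios[control]
    return {
        lane: 100.0 * (1.0 - ratio / ref)
        for lane, ratio in ratios.items()
        if lane != control
    }


# Made-up densitometry values in arbitrary units.
toy_bands = {
    "DMSO": {"pAKT": 1000.0, "total_AKT": 2000.0},
    "inhibitor_A": {"pAKT": 300.0, "total_AKT": 2000.0},
    "inhibitor_B": {"pAKT": 800.0, "total_AKT": 1600.0},
}

reductions = pakt_reduction(toy_bands)
print(reductions)
```

Normalizing to total AKT within each lane separates a true loss of phosphorylation from a change in AKT abundance or uneven loading.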

C. Apoptosis and Cell Cycle Analysis by Flow Cytometry

- Treat and harvest: Treat cells in 12-well plates for 24h. Harvest adherent cells by trypsinization and pool them with floating cells; collect suspension lines directly by centrifugation. Wash with PBS.

- Apoptosis (Caspase 3/7): Resuspend cell pellet in 100 µL of serum-free medium containing a Caspase-3/7 green detection reagent (e.g., CellEvent). Incubate for 30-45 min at 37°C. Analyze green fluorescence by flow cytometry (FITC channel).

- Cell Cycle (DNA content): Fix cells in 70% ice-cold ethanol for at least 2 hours. Wash with PBS, then treat with RNase A (100 µg/mL) for 30 min at 37°C. Stain DNA with propidium iodide (50 µg/mL) for 10 min in the dark. Analyze PI fluorescence by flow cytometry (PE-Texas Red channel). Use software (e.g., ModFit) to deconvolute cell cycle phases.

4. Visualizations

PI3K-AKT-mTOR Pathway and Drug Inhibition

Comparative Oncology Drug Screening Workflow

5. The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials for Comparative Screening

| Reagent / Material | Function / Purpose | Example Product (Supplier) |

|---|---|---|

| Validated Oncology Cell Lines | Disease-relevant in vitro models for primary efficacy screening. | SU-DHL-4, JeKo-1 (ATCC, DSMZ) |

| Selective Kinase Inhibitors (Tool Compounds) | Reference standards for target validation and assay calibration. | Idelalisib, Duvelisib, Copanlisib (MedChemExpress) |

| Cell Viability Assay Kit | Luminescent measurement of ATP content as a proxy for live cell count. | CellTiter-Glo 2.0 (Promega) |

| Phospho-Specific Antibodies | Detection of target pathway modulation (e.g., AKT phosphorylation). | anti-pAKT (S473) (Cell Signaling Tech #4060) |