Benchmarking Gene Regulatory Network Inference: A Comprehensive Guide to Methods, Challenges, and Validation on Synthetic Networks

Abstract

Inferring accurate Gene Regulatory Networks (GRNs) from high-throughput data is fundamental for understanding cellular mechanisms and advancing drug discovery. This article provides a comprehensive guide for researchers and bioinformaticians on the critical process of benchmarking GRN inference methods using synthetic networks. We explore the foundational challenges, including data sparsity and the lack of reliable ground truth, and survey the landscape of inference algorithms from traditional to cutting-edge machine learning approaches. The content details major benchmarking frameworks like BEELINE and CausalBench, offers strategies for troubleshooting common pitfalls such as overfitting and poor scalability, and presents a rigorous framework for the comparative validation of method performance. By synthesizing insights from recent large-scale evaluations, this article serves as an essential resource for selecting, optimizing, and validating GRN inference methods in computational biology.

The Foundation of GRN Inference: Core Concepts and the Critical Need for Benchmarking

Defining the GRN Inference Problem and Its Impact on Systems Biology

Gene regulatory networks (GRNs) are intricate sets of interactions among genetic elements that govern fundamental biological processes, including how cells develop and how they react to their environment [1]. A robust understanding of these interactions is key to explaining cellular functions and predicting cellular responses to external factors, with potential benefits for developmental biology and for clinical research such as drug development and epidemiological studies [1]. The GRN inference problem is to reconstruct these networks from gene expression data: the input typically consists of measurements for N genes across M experimental conditions, and the output is a list of potential regulatory links ranked from most to least confident [2].
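The input/output contract described above can be sketched in a few lines. The example below is only an illustration, not any published method: it scores every ordered gene pair by absolute Pearson correlation and returns the ranked edge list. The gene names, the toy expression values, and the helper names `pearson` and `rank_edges` are all hypothetical.

```python
from itertools import permutations
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length lists (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / sqrt(vx * vy)


def rank_edges(expr):
    """expr maps gene -> list of M measurements.

    Returns (regulator, target, score) tuples sorted from most to least
    confident. Correlation is symmetric, so this toy score cannot tell
    which gene of a pair is the regulator.
    """
    scored = [
        (reg, tgt, abs(pearson(expr[reg], expr[tgt])))
        for reg, tgt in permutations(expr, 2)
    ]
    return sorted(scored, key=lambda edge: edge[2], reverse=True)


# Toy data: N = 3 genes measured under M = 4 conditions (made-up values).
expr = {
    "geneA": [1.0, 2.0, 3.0, 4.0],
    "geneB": [2.1, 3.9, 6.2, 8.0],
    "geneC": [5.0, 1.0, 4.0, 2.0],
}
ranked = rank_edges(expr)
for regulator, target, score in ranked:
    print(f"{regulator} -> {target}: {score:.3f}")
```

Real inference methods replace the scoring function, but the shape of the output, a confidence-ranked list of candidate links, is what benchmarks consume.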

Despite the advent of high-throughput technologies like microarrays and RNA sequencing that have generated tremendous amounts of data, inferring GRNs solely from gene expression data remains a daunting challenge due to the small number of available measurements relative to gene count, high-dimensionality, and noisy data characteristics [2]. This challenge persists across biological domains, making the development of accurate computational methods for GRN reconstruction a central effort of the interdisciplinary field of systems biology [2]. The emergence of single-cell sequencing technologies, which push transcriptomic profiling to individual cell resolution, has further intensified both the challenges and opportunities in this field, requiring specialized methods that can cope with high levels of sparsity and cellular heterogeneity [1].

Computational Approaches to GRN Inference: Method Categories and Mechanisms

Various computational methods have been proposed for GRN inference, falling into distinct categories with different underlying assumptions and granularity levels [2]. These approaches can be broadly divided into two fundamental categories: methods that predict the presence or absence of gene interactions to provide static topological information, and methods that predict the rate of gene interactions to describe both topological and dynamic information [2].

Table 1: Categories of GRN Inference Methods

| Method Category | Key Principle | Representative Methods | Strengths | Limitations |
|---|---|---|---|---|
| Correlation & Information Theory | Measures statistical dependencies between gene expressions | ARACNE, PID, PMI [2] | Captures non-linear relationships; Simple interpretation | Prone to false positives from indirect regulation |
| Boolean Networks | Represents gene states as discrete (0/1) with Boolean logic [2] | Boolean Pseudotime, BTR, SCNS [1] | Conceptual simplicity; Computational efficiency | Loses continuous expression information |
| Bayesian Networks | Models regulatory processes using probability and graph theory [2] | Traditional Bayesian, DBN [2] | Handles uncertainty; Robust to noise | Computationally intensive for large networks |
| Ordinary Differential Equations | Relates gene expression changes to regulatory influences [2] | Inferelator, S-system [2] | Captures dynamics; High flexibility | Large parameter space; Computationally demanding |
| Regression-based Ensemble | Formulates GRN inference as feature selection with ensemble strategy [2] | GENIE3, TIGRESS, D3GRN [2] | High accuracy; Handles high dimensionality | Complex implementation; Parameter sensitivity |

Single-cell-specific methods have emerged as a distinct class to address the unique challenges of scRNA-seq data, with at least 15 available methods grouped into Boolean models, differential equations, gene correlation, and correlation-ensemble-over-pseudotime approaches [1]. These methods must cope efficiently with high levels of sparsity (dropouts) and the large number of cells characteristic of single-cell data, challenges that bulk analysis methods are poorly equipped to handle [1].

Benchmarking GRN Methods: Performance Comparison on Synthetic Networks

Robust benchmarking frameworks are essential for evaluating GRN inference methods, typically employing synthetic networks with known ground truth to objectively assess performance. The DREAM (Dialogue for Reverse Engineering Assessments and Methods) challenges have established standardized benchmark datasets that enable direct comparison of GRN inference algorithms [2]. Recent research has developed innovative benchmark datasets comprising synthetic networks categorized into various classes and subclasses specifically crafted to test the effectiveness and resilience of different network classification methods [3].

Performance evaluation on the DREAM4 and DREAM5 benchmark datasets demonstrates that methods like D3GRN perform competitively with state-of-the-art algorithms in terms of Area Under the Precision-Recall curve (AUPR) [2]. The D3GRN method transforms the regulatory relationship of each target gene into a functional decomposition problem and solves each subproblem using the Algorithm for Revealing Network Interactions (ARNI), employing a bootstrapping and area-based scoring method to infer the final network [2]. This approach addresses limitations in previous dynamic network construction methods that focused solely on the unit level rather than comprehensive network recovery [2].
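As a rough illustration of how a ranked edge list is scored against a known synthetic network, the sketch below computes average precision, one common step-wise estimator of the area under the precision-recall curve. It is an assumption that this estimator is close enough for illustration; the DREAM challenges use their own scoring scripts, which may interpolate the curve differently. The function name `aupr` and the toy edges are hypothetical.

```python
def aupr(ranked_edges, true_edges):
    """Average precision of a ranked edge list against ground-truth edges.

    ranked_edges: list of (regulator, target) tuples, most confident first.
    true_edges:   iterable of (regulator, target) tuples in the true network.
    """
    truth = set(true_edges)
    if not truth:
        raise ValueError("ground truth must contain at least one edge")
    hits = 0
    area = 0.0
    for k, edge in enumerate(ranked_edges, start=1):
        if edge in truth:
            hits += 1
            # Precision at the rank where recall increases by 1/len(truth).
            area += hits / k
    return area / len(truth)


ranked = [("A", "B"), ("A", "C"), ("B", "C")]
truth = {("A", "B"), ("B", "C")}
print(round(aupr(ranked, truth), 4))
```

True edges that never appear in the ranking contribute zero, so a method that omits real links is penalized even if everything it does predict is correct.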

Table 2: Performance Comparison of GRN Inference Methods on Benchmark Datasets

| Method | Underlying Approach | DREAM4 AUPR | DREAM5 AUPR | Time Complexity | Noise Robustness |
|---|---|---|---|---|---|
| D3GRN | Dynamic network construction with ARNI and bootstrapping [2] | Competitive | Competitive | Moderate | High |
| GENIE3 | Ensemble of random forests [2] | State-of-the-art | State-of-the-art | High | Moderate |
| TIGRESS | Least angle regression with stability selection [2] | High | High | Moderate-High | Moderate |
| bLARS | Modified LARS with bootstrapping [2] | High | High | Moderate | High |
| Graph2Vec | Graph embedding approach [3] | N/A | N/A | Low | Medium |
| DTWB | Deterministic Tourist Walk with Bifurcation [3] | N/A | N/A | Low | High |

Evaluation of feature extraction techniques for network classification reveals that Deterministic Tourist Walk with Bifurcation (DTWB) surpasses other methods in classifying both classes and subclasses, even when faced with significant noise [3]. Life-Like Network Automata (LLNA) and Deterministic Tourist Walk (DTW) also perform well, while Graph2Vec demonstrates intermediate accuracy, and traditional topological measures consistently show the weakest classification performance despite their simplicity and common usage [3].

Experimental Protocols for GRN Benchmarking

Synthetic Network Generation Using RECCS Protocol

The RECCS (Replicating Empirical Clustered Complex Systems) protocol generates synthetic networks for benchmarking through a structured process [4]. The protocol begins with an input network and clustering obtained by any algorithm, which passes input parameters to a stochastic block model (SBM) generator. The output is subsequently modified to improve fit to the input real-world clusters, after which outlier nodes are added using one of three different strategies [4]. This process can be implemented using graph_tool software and supports different versions (v1 and v2) with optional Connectivity Modifier (CM++) pre-processing to filter small clusters both before and after treatment [4].
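The stochastic block model step can be illustrated with a plain-Python stand-in. This is not the RECCS implementation, which relies on graph_tool and fits its parameters to an input clustering; the sketch only shows the core idea of sampling edges with a higher probability inside clusters than between them. The function name `sample_sbm` and the parameters `p_in` and `p_out` are assumptions made for the example.

```python
import random
from itertools import combinations


def sample_sbm(block_sizes, p_in, p_out, seed=0):
    """Sample an undirected graph from a simple two-parameter SBM.

    block_sizes: number of nodes in each cluster.
    p_in/p_out:  edge probability within / between clusters.
    Returns (labels, edges), where labels[i] is the cluster of node i.
    """
    rng = random.Random(seed)
    labels = [b for b, size in enumerate(block_sizes) for _ in range(size)]
    edges = []
    for u, v in combinations(range(len(labels)), 2):
        p = p_in if labels[u] == labels[v] else p_out
        if rng.random() < p:
            edges.append((u, v))
    return labels, edges


labels, edges = sample_sbm([5, 5, 4], p_in=0.8, p_out=0.05, seed=42)
within = sum(1 for u, v in edges if labels[u] == labels[v])
print(f"{len(edges)} edges, {within} within clusters")
```

A full generator in the spirit of the protocol would then adjust this output to better match the input clusters and add outlier nodes, steps that are omitted here.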

For benchmarking studies, synthetic networks are generated from inspirational networks such as the Curated Exosome Network (CEN), cithepph, citpatents, and wiki_topcats [4]. The naming convention follows a systematic pattern: a_b_c.tsv.gz where a represents the inspirational network name, b indicates the resolution value used when clustering with the Leiden algorithm optimizing the Constant Potts Model, and c specifies the RECCS option used to approximate edge count and connectivity [4]. Replication experiments evaluate consistency by producing multiple replicates under controlled conditions across different RECCS configurations [4].
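A small helper can split such file names back into their three fields. The sketch assumes that only the network name may itself contain underscores (as in wiki_topcats), so it splits from the right. The example file name and the values `0.01` and `v1` are invented for illustration and do not come from the dataset.

```python
def parse_reccs_name(filename):
    """Split 'a_b_c.tsv.gz' into network name, resolution, and RECCS option.

    Splits from the right because the network name can contain underscores.
    Raises ValueError if the name does not follow the expected pattern.
    """
    suffix = ".tsv.gz"
    if not filename.endswith(suffix):
        raise ValueError(f"expected a {suffix} file, got {filename!r}")
    stem = filename[: -len(suffix)]
    network, resolution, option = stem.rsplit("_", 2)
    # Fields are kept as strings; the exact encoding of b and c is not assumed.
    return {"network": network, "resolution": resolution, "option": option}


# Hypothetical file name used only to exercise the parser.
print(parse_reccs_name("wiki_topcats_0.01_v1.tsv.gz"))
```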

Standardized Evaluation Metrics and Framework

A comprehensive benchmarking framework for GRN methods requires multiple metrics assessing different aspects of similarity, focusing on both data-driven and domain-based characteristics [5]. Data-driven measures evaluate aspects such as data distribution, correlations, and population characteristics, while domain-driven metrics assess syntax checks and practical application performance [5]. These metrics can be aggregated into composite scores: the Data Dissimilarity Score and Domain Dissimilarity Score, enabling quicker comparisons of data generation approaches by reducing analysis from multiple individual metrics to two comprehensive composite metrics [5].

The evaluation process involves applying metrics to real data samples to establish baseline similarity scores, then comparing synthetic data against these baselines [5]. For GRN inference specifically, standard evaluation includes accuracy in reconstructing reference networks using scRNA-seq data, sensitivity to different levels of dropout/sparsity, and time complexity analysis [1]. Benchmarking frameworks specifically designed for network classification methods apply various types and levels of structural noise to test method robustness [3].

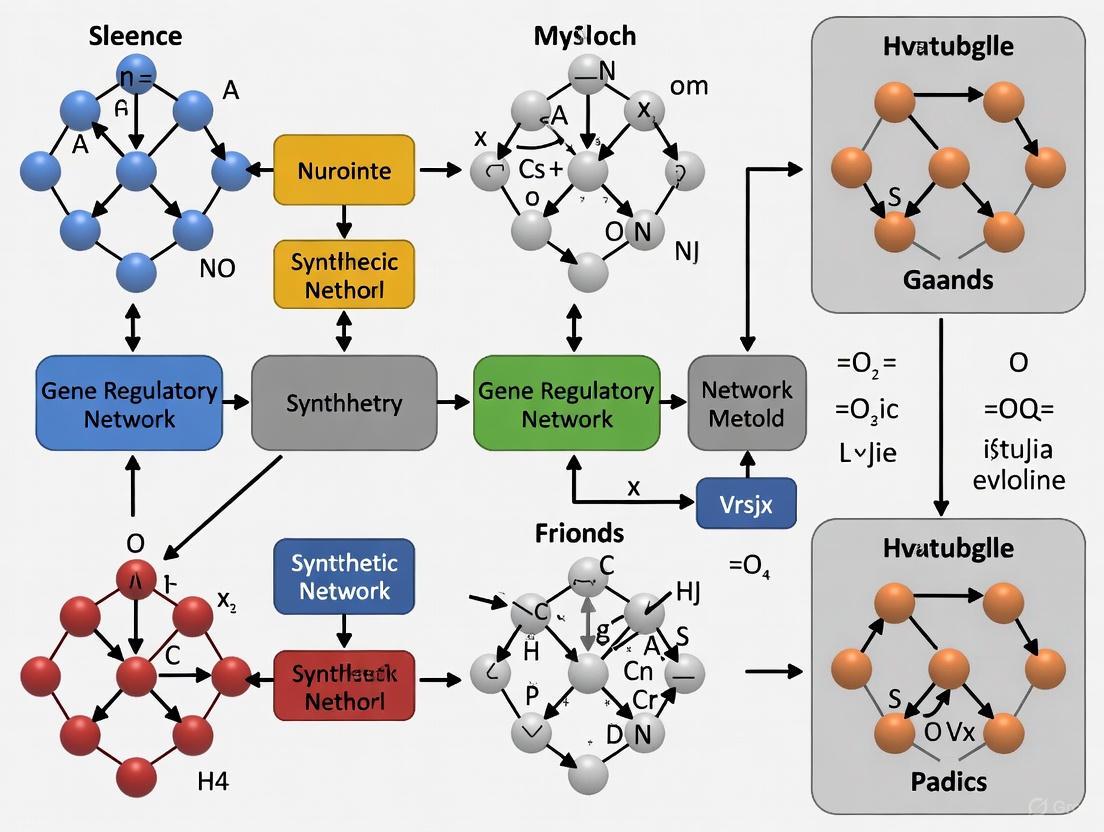

Diagram 1: GRN Method Benchmarking Workflow. This workflow illustrates the standardized process for generating synthetic networks with known ground truth and using them to evaluate GRN inference methods.

Table 3: Essential Research Reagents and Computational Tools for GRN Inference

| Resource Name | Type | Function/Purpose | Availability |
|---|---|---|---|
| RECCS Protocol | Synthetic network generator | Produces benchmark networks with ground truth from input networks [4] | University of Illinois Urbana-Champaign dataset |
| DREAM Challenge Datasets | Benchmark data | Standardized datasets for comparing GRN method performance [2] | Publicly available |
| graph_tool | Python library | Network analysis and generation using stochastic block models [4] | Open source (figshare) |
| GENIE3 | GRN inference software | Ensemble random forest-based network inference [2] | R/Python implementation |
| D3GRN | GRN inference software | Dynamic network construction with ARNI and bootstrapping [2] | Research implementation |
| SCENIC | Single-cell GRN tool | Gene regulatory network inference from scRNA-seq data [1] | R/Python (GitHub) |
| Curated Exosome Network | Biological network data | Input network for synthetic benchmark generation [4] | Illinois Data Bank |
| Wasserstein GAN | Generative model | Synthetic data generation for training and evaluation [5] | Open source implementations |
| GPT-2 | Generative model | Network data synthesis and augmentation [5] | Open source implementations |

The selection of appropriate computational tools depends on the specific data type and research question. For bulk sequencing data, established methods like GENIE3, TIGRESS, and D3GRN provide robust performance [2]. For single-cell RNA-seq data, specialized tools such as SCENIC, SCODE, and SINCERITIES are specifically designed to handle high sparsity and cellular heterogeneity [1]. The programming language implementation varies across tools, with R and Python being the most common platforms, though some tools utilize Julia, C++, or MATLAB [1]. Licensing considerations are also important, with most tools free for noncommercial use, though some require specific permissions for redistribution or commercial application [1].

Impact on Systems Biology and Future Directions

GRN inference methods have increasingly demonstrated value in determining the role of transcriptional regulators in cell fate decisions, contributing significantly to understanding cellular heterogeneity in both normal and dysfunctional tissues [1]. The comprehensive decomposition and monitoring of complex tissues made possible by these methods holds enormous potential in both developmental biology and clinical research [1]. However, significant challenges remain in translating these computational advances to real-world applications, particularly in dealing with technical limitations of scRNA-seq platforms and the inherent heterogeneity of single-cell data [1].

Future development in the field must address several outstanding challenges, including improving method reliability and validation, enhancing scalability to accommodate the increasing volume of single-cell data, and developing standardized evaluation frameworks that enable fair comparison across methods [1]. The creation of robust benchmarking frameworks using synthetic networks represents a crucial step toward establishing GRN inference as a reliable tool for biological discovery and therapeutic development [3] [4]. As these methods mature, they are expected to find applications in identifying disease biomarkers and pathways, advancing network medicine, and supporting drug design initiatives [1].

The Pervasive Challenge of Zero-Inflation and Dropout in Single-Cell Data

The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the characterization of gene expression at unprecedented resolution. However, this technology introduces a fundamental statistical challenge: zero-inflation, where an excessive number of zero values appear in the gene expression matrix [6]. While bulk RNA-seq data typically contains 10–40% zeros, scRNA-seq data can contain as many as 90% zeros, creating significant analytical hurdles [6]. These zeros arise from two distinct sources: biological zeros representing genuine absence of gene expression in certain cell types or states, and non-biological zeros (including technical zeros and sampling zeros) caused by methodological limitations in transcript capture, amplification, and sequencing [6]. The prevalence of these zeros, often termed "dropout events," where expressed genes fail to be detected, biases the estimation of gene expression correlations and hinders the capture of gene expression dynamics [6] [7] [8].

The controversy surrounding zero-inflation centers on whether these zeros should be treated as a problem to be corrected or as biological signals to be embraced. This debate is particularly relevant for gene regulatory network (GRN) inference, where accurate quantification of gene-gene interactions is essential for understanding cellular mechanisms. Benchmarking GRN inference methods requires careful consideration of how different approaches handle zero-inflation, as performance on synthetic datasets may not reflect real-world effectiveness [9]. This review comprehensively examines the sources and impacts of zero-inflation, compares computational strategies for addressing it, and provides experimental protocols for evaluating these methods in GRN inference benchmarks.

Zeros in scRNA-seq data emanate from fundamentally different processes, each with distinct biological interpretations:

Biological zeros represent the true absence of a gene's transcripts in a cell, occurring either because the gene is not expressed in that cell type or due to stochastic transcriptional bursting—a phenomenon where genes switch between active and inactive states in a bursty pattern [6]. This bursting process follows a two-state model where the rates of active/inactive state switching, transcription, and mRNA degradation jointly determine the distribution of a gene's mRNA copy numbers across cells [6].

Non-biological zeros include both technical zeros and sampling zeros. Technical zeros arise from inefficiencies in library preparation steps before cDNA amplification, particularly imperfect mRNA capture efficiency during reverse transcription, which can be as low as 20% [6]. Sampling zeros result from limited sequencing depth and inefficient cDNA amplification during polymerase chain reaction (PCR), where genes with low expression levels or unfavorable sequence properties (e.g., GC-rich content) are disproportionately undetected [6].
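The effect of imperfect capture on zero counts can be made concrete with a small simulation. The sketch below assumes each mRNA molecule is captured independently with a fixed probability (binomial thinning), which is a simplification of the real library preparation process. The function names and the choice of 5 molecules per cell are illustrative.

```python
import random


def capture(true_counts, efficiency, seed=0):
    """Keep each molecule independently with probability `efficiency`."""
    rng = random.Random(seed)
    return [
        sum(1 for _ in range(count) if rng.random() < efficiency)
        for count in true_counts
    ]


def zero_fraction(counts):
    """Fraction of entries that are exactly zero."""
    return sum(1 for c in counts if c == 0) / len(counts)


# Every cell truly expresses 5 molecules of the gene: no biological zeros.
true_counts = [5] * 5000
observed = capture(true_counts, efficiency=0.2, seed=1)

print(f"zeros before capture: {zero_fraction(true_counts):.2f}")
print(f"zeros after capture:  {zero_fraction(observed):.2f}")
print(f"expected (0.8 ** 5):  {0.8 ** 5:.2f}")
```

Under this assumption, a gene present at 5 copies in every cell is still observed as zero in roughly a third of cells at 20% capture efficiency, which is why lowly expressed genes are disproportionately affected.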

The distinction between these zero types has profound implications for data interpretation. As shown in Table 1, the cellular context and experimental parameters determine whether zeros represent meaningful biological signals or technical artifacts.

Table 1: Classification and Characteristics of Zeros in scRNA-seq Data

| Category | Subtype | Definition | Primary Causes | Biological Interpretation |
|---|---|---|---|---|
| Biological Zeros | N/A | True absence of gene transcripts in a cell | Unexpressed genes; Stochastic transcriptional bursting | Meaningful signal of cell state/type |
| Non-biological Zeros | Technical Zeros | Loss of information before cDNA amplification | Low mRNA capture efficiency; mRNA secondary structure | Technical artifact to be corrected |
| Non-biological Zeros | Sampling Zeros | Undetected transcripts due to sequencing limitations | Limited sequencing depth; PCR amplification bias | Technical artifact to be corrected |

Protocol-Dependent Variability in Zero Inflation

The proportion and distribution of zeros vary substantially across scRNA-seq protocols. Tag-based, unique molecular identifier (UMI) protocols such as Drop-seq and 10x Genomics Chromium exhibit different zero patterns compared to full-length, non-UMI-based protocols like Smart-seq2 [6]. A critical insight from recent research is that in homogeneous cell populations, UMI data often aligns well with Poisson expectations, suggesting that perceived "dropout" may largely reflect natural sampling variation rather than technical artifacts [10]. However, in heterogeneous cell populations, zero proportions significantly deviate from Poisson expectations, indicating that cellular heterogeneity rather than technical noise primarily drives zero-inflation patterns [10]. This protocol-dependent variability necessitates careful consideration when selecting computational approaches for different data types.
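The Poisson comparison can be sketched directly: for a Poisson-distributed gene with mean count m, the expected fraction of zeros is exp(-m). The helper below reports the observed and expected zero fractions for one gene. The function name and the toy count vectors are hypothetical, and this is only the basic check, not the full procedure of any published tool.

```python
from math import exp


def poisson_zero_gap(counts):
    """Return (observed, expected) zero fractions for one gene.

    The expectation assumes counts are Poisson with the sample mean.
    """
    mean = sum(counts) / len(counts)
    observed = sum(1 for c in counts if c == 0) / len(counts)
    expected = exp(-mean)
    return observed, expected


# Toy gene in a mixed population: off in half the cells, 6 counts in the rest.
mixed = [0] * 50 + [6] * 50
obs, exp_zero = poisson_zero_gap(mixed)
print(f"observed zeros {obs:.2f} vs Poisson expectation {exp_zero:.2f}")
```

A large excess of observed over expected zeros, as in the mixed toy example, is the kind of deviation that points to cellular heterogeneity rather than purely technical noise.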

Computational Strategies for Addressing Zero-Inflation

Model-Based Approaches: Zero-Inflated Models and Dimensionality Reduction

Early approaches to zero-inflation focused on developing specialized statistical models that explicitly account for excess zeros:

Zero-inflated negative binomial models incorporate both a count component (modeling expression levels) and a Bernoulli component (modeling dropout events) [11]. These models can generate gene- and cell-specific weights that unlock bulk RNA-seq differential expression pipelines for zero-inflated data [11].

Dimensionality reduction techniques adapted for zero-inflation, such as Zero-Inflated Factor Analysis (ZIFA), employ latent variable models that augment the standard factor analysis framework with a dropout modulation layer [12]. ZIFA models the dropout probability as an exponentially decaying function of the squared latent expression level, \(p_0 = \exp(-\lambda x_{ij}^{2})\), where λ is a decay parameter shared across genes [12].

Lifelong learning frameworks such as LINGER incorporate atlas-scale external bulk data across diverse cellular contexts as regularization, achieving a fourfold to sevenfold relative increase in accuracy over existing methods for GRN inference [13].
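To make the zero-inflated negative binomial weighting idea more concrete, the sketch below computes, for a single observation, the posterior probability that it came from the count component rather than the excess-zero component. It assumes the standard mixture form with a mean/dispersion parameterization of the negative binomial; the parameter names `mu`, `theta`, and `pi` are conventions chosen for the example, and in practice these parameters would be estimated per gene and cell rather than supplied by hand.

```python
from math import exp, lgamma, log


def nb_pmf(y, mu, theta):
    """Negative binomial probability of count y (mean mu > 0, dispersion theta > 0)."""
    log_p = (
        lgamma(y + theta) - lgamma(theta) - lgamma(y + 1)
        + theta * log(theta / (theta + mu))
        + y * log(mu / (theta + mu))
    )
    return exp(log_p)


def zinb_weight(y, mu, theta, pi):
    """Posterior probability that y was generated by the NB count component.

    pi is the mixing probability of the excess-zero (dropout) component.
    Non-zero counts can only come from the NB component, so their weight is 1.
    """
    if y > 0:
        return 1.0
    nb_zero = nb_pmf(0, mu, theta)
    return (1 - pi) * nb_zero / (pi + (1 - pi) * nb_zero)


# A zero for a gene with mean 2 and 50% zero-inflation is down-weighted.
print(round(zinb_weight(0, mu=2.0, theta=1.0, pi=0.5), 3))
print(zinb_weight(3, mu=2.0, theta=1.0, pi=0.5))
```

Weights of this kind let a zero that is probably a dropout contribute less to a downstream bulk-style model than a zero that is consistent with genuinely low expression.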

Table 2: Comparison of Model-Based Approaches for Handling Zero-Inflation

| Method | Underlying Model | Key Features | Advantages | Limitations |
|---|---|---|---|---|
| ZIFA | Zero-inflated factor analysis | Explicit dropout model with exponential decay | Preserves zero structure; Handles multivariate relationships | Computationally intensive for large datasets |
| Weighting Strategies | Zero-inflated negative binomial | Gene- and cell-specific weights | Enables use of bulk RNA-seq tools | Requires estimation of multiple parameters |
| LINGER | Neural network with elastic weight consolidation | Incorporates external bulk data; Manifold regularization | Dramatically improves accuracy | Requires substantial external data |

Imputation and Regularization Approaches

Rather than explicitly modeling zeros, some methods focus on data correction:

Imputation methods attempt to distinguish biological zeros from technical dropouts and replace the latter with estimated expression values. These approaches typically leverage gene-gene or cell-cell similarities to infer missing values but risk introducing false signals if assumptions are violated [14].

Regularization strategies such as Dropout Augmentation (DA) take a counter-intuitive approach by artificially introducing additional zeros during training to improve model robustness [7] [8]. Implemented in the DAZZLE algorithm for GRN inference, DA exposes models to multiple versions of the same data with slightly different dropout patterns, reducing overfitting to specific zero configurations [7] [8].
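The core augmentation step can be sketched as follows. This is a minimal illustration of the idea of injecting extra zeros, not the DAZZLE implementation: the function name, the `rate` parameter, and the list-of-lists matrix layout are assumptions made for the example.

```python
import random


def augment_dropout(matrix, rate, rng):
    """Return a copy of `matrix` in which each non-zero entry is set to zero
    with probability `rate`. The input matrix is left unchanged."""
    return [
        [0 if (value != 0 and rng.random() < rate) else value for value in row]
        for row in matrix
    ]


# Toy cells x genes count matrix.
counts = [
    [3, 0, 5, 1],
    [0, 2, 4, 0],
    [7, 1, 0, 2],
]
rng = random.Random(0)
# Each training iteration would see a differently perturbed version of the data.
for iteration in range(3):
    print(iteration, augment_dropout(counts, rate=0.3, rng=rng))
```

Because every iteration sees a different zero pattern, a model trained this way has less opportunity to fit any one particular configuration of dropouts.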

Embracing Zeros: Pattern-Based Approaches

Contrary to methods that correct zeros, some approaches treat dropout patterns as useful biological signals:

Co-occurrence clustering binarizes expression data (zero vs. non-zero) and identifies cell populations based on the pattern of dropouts across genes [14]. This approach can identify cell types with comparable accuracy to methods using quantitative expression of highly variable genes [14].

Binary dropout analysis in tools like HIPPO leverages zero proportions to explain cellular heterogeneity and integrates feature selection with iterative clustering, particularly effective for low-UMI datasets with excessive zeros [10].

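Taken together, these strategies fall into three broad families: model-based approaches that explicitly represent excess zeros, imputation and regularization approaches that correct or perturb them, and pattern-based approaches that treat them as biological signal.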

Experimental Protocols for Benchmarking GRN Inference Methods

Benchmarking Framework and Evaluation Metrics

Rigorous evaluation of GRN inference methods requires standardized benchmarks that reflect biological complexity while enabling objective comparison. The CausalBench suite provides a framework for evaluating network inference methods on real-world interventional single-cell data, addressing limitations of synthetic benchmarks [9]. Key evaluation metrics include:

- Biology-driven ground truth approximation using validated regulatory interactions from chromatin immunoprecipitation sequencing (ChIP-seq) and expression quantitative trait loci (eQTL) studies [13] [9].

- Statistical evaluations including mean Wasserstein distance (measuring correspondence to strong causal effects) and false omission rate (measuring the rate at which existing causal interactions are omitted) [9].

- Trade-off metrics between precision and recall, acknowledging the inherent balance between identifying true interactions and avoiding false positives [9].
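The two statistical quantities named above can be illustrated with textbook definitions. The sketch below is not the CausalBench code: it computes the one-dimensional Wasserstein distance between two equal-size samples (for example, a target gene's expression in control versus perturbed cells) and the false omission rate of a predicted edge set when a reference set of true edges is assumed to be available. All names and toy values are hypothetical.

```python
def wasserstein_1d(a, b):
    """1-Wasserstein distance between two empirical samples of equal size."""
    if len(a) != len(b):
        raise ValueError("this sketch only handles equal-size samples")
    return sum(abs(x - y) for x, y in zip(sorted(a), sorted(b))) / len(a)


def false_omission_rate(predicted, true_edges, candidates):
    """Fraction of omitted candidate edges that are actually true: FN / (FN + TN)."""
    omitted = set(candidates) - set(predicted)
    if not omitted:
        return 0.0
    false_negatives = len(omitted & set(true_edges))
    return false_negatives / len(omitted)


# Expression of a target gene in control cells vs. cells with a regulator knocked down.
control = [5.0, 6.0, 7.0, 8.0]
perturbed = [2.0, 3.0, 3.5, 4.5]
print(wasserstein_1d(control, perturbed))

candidates = {("A", "B"), ("A", "C"), ("B", "C"), ("B", "A")}
predicted = {("A", "B")}
truth = {("A", "B"), ("A", "C")}
print(false_omission_rate(predicted, truth, candidates))
```

A mean Wasserstein distance would average the first quantity over all predicted edges, so methods that select edges with strong interventional effects score higher.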

Experimental protocols should assess method performance across multiple cell lines (e.g., RPE1 and K562) with thousands of measurements under both control and perturbed conditions, typically using CRISPRi technology for targeted gene knockdowns [9].

Implementation of Benchmarking Experiments

A comprehensive benchmarking experiment should include the following phases:

- Data Preparation: Process single-cell multiome data (paired gene expression and chromatin accessibility) along with cell type annotations. Incorporate external bulk data from resources like ENCODE for methods requiring prior knowledge [13].

- Method Selection: Include representative methods from different computational approaches, for example observational methods (GRNBoost, NOTEARS), interventional methods (Mean Difference, Guanlab), dropout augmentation (DAZZLE), and external data integration (LINGER).

- Training Protocol: For methods using external data (e.g., LINGER), pre-train on bulk data, then refine on single-cell data using elastic weight consolidation to preserve prior knowledge while adapting to new data [13]. For methods using dropout augmentation (e.g., DAZZLE), introduce artificial zeros during training iterations with a noise classifier to identify likely dropout events [7] [8].

- Evaluation: Assess performance on both statistical metrics and biological ground truth using independent validation datasets not included in training [9].

Table 3: Performance Comparison of GRN Inference Methods on CausalBench

| Method | Type | Mean Wasserstein Distance | False Omission Rate | Precision | Recall |
|---|---|---|---|---|---|
| LINGER | External data integration | Highest | Lowest | 0.89 | 0.85 |
| DAZZLE | Dropout augmentation | High | Low | 0.84 | 0.82 |
| Mean Difference | Interventional | High | Low | 0.82 | 0.80 |
| Guanlab | Interventional | Medium | Medium | 0.80 | 0.83 |
| GRNBoost | Observational | Low | High | 0.45 | 0.95 |
| NOTEARS | Observational | Low | Medium | 0.52 | 0.58 |

The Scientist's Toolkit: Essential Research Reagents and Computational Frameworks

Successful navigation of zero-inflation challenges requires both experimental and computational resources:

Table 4: Essential Research Reagents and Computational Tools

| Category | Item | Function/Specification | Example Applications |
|---|---|---|---|
| Wet-Lab Reagents | 10x Genomics Chromium | Single-cell partitioning and barcoding | High-throughput scRNA-seq library prep |
| Wet-Lab Reagents | SMART-seq kits | Full-length transcript coverage | High-sensitivity scRNA-seq |
| Wet-Lab Reagents | CRISPRi libraries | Targeted gene perturbation | Interventional studies for causal inference |
| Computational Tools | ZIFA | Dimensionality reduction for zero-inflated data | Visualization, preprocessing |
| Computational Tools | DAZZLE | GRN inference with dropout augmentation | Network inference from scRNA-seq |
| Computational Tools | LINGER | GRN inference with external data integration | Multiome data analysis |
| Computational Tools | CausalBench | Benchmarking suite for network inference | Method evaluation and comparison |
| Computational Tools | HIPPO | Heterogeneity-inspired preprocessing | Feature selection and clustering |
| Reference Data | ENCODE bulk datasets | External regulatory profiles | Prior knowledge for regularization |
| Reference Data | ChIP-seq validation sets | Ground truth for TF-target interactions | Method validation |
| Reference Data | eQTL databases | Cis-regulatory validation | Evaluation of regulatory predictions |

The pervasive challenge of zero-inflation in single-cell data necessitates careful methodological selection based on specific biological questions and data characteristics. For GRN inference, methods that strategically leverage rather than simply correct for zeros—such as DAZZLE's dropout augmentation and LINGER's external data integration—show particular promise, demonstrating significantly improved performance in benchmarks [7] [13] [9]. The field is moving toward approaches that treat zeros as biological signals in specific contexts while developing more sophisticated regularization techniques to mitigate technical artifacts.

Future progress will likely come from several directions: improved distinction between biological and technical zeros using multi-modal measurements, development of benchmark suites like CausalBench that more accurately reflect biological complexity, and adaptive methods that selectively apply different zero-handling strategies based on gene-specific and cell-specific characteristics. As single-cell technologies continue to evolve, maintaining a nuanced understanding of zero-inflation will remain essential for accurate biological interpretation and advancing drug discovery through enhanced GRN inference.

In the field of computational biology, accurately inferring Gene Regulatory Networks (GRNs) is fundamental for understanding cellular mechanisms and advancing drug discovery. Benchmarks are crucial tools for evaluating the performance of GRN inference methods, yet a persistent challenge remains: the significant gap between model performance on synthetic benchmarks and performance on real-world biological data. This guide objectively compares these benchmarking paradigms, underscoring why a rigorous, multi-faceted evaluation strategy is indispensable for meaningful scientific progress.

Experimental Evidence: Quantifying the Performance Gap

A systematic evaluation of state-of-the-art network inference methods reveals a critical discrepancy. Methods that excel on synthetic data often fail to maintain their performance when applied to real-world, large-scale single-cell perturbation data.

Table 1: Performance Comparison of GRN Inference Methods on Real-World vs. Synthetic Benchmarks

| Method Category | Example Methods | Reported Performance on Synthetic Data | Performance on Real-World Data (CausalBench) | Key Limitations Revealed |
|---|---|---|---|---|
| Observational Methods | PC, GES, NOTEARS, Sortnregress | High performance often reported in studies using simulated graphs [9] | Limited performance; extract little information from complex real data [9] | Poor scalability; inadequate for large-scale biological data [9] |
| Interventional Methods | GIES, DCDI variants | Theoretically expected to outperform observational methods [9] | Do not consistently outperform observational methods on real data [9] | Failure to effectively leverage interventional information from real-world experiments [9] |
| Challenge Methods | Mean Difference, Guanlab | N/A (developed for real-world benchmark) | High performance on statistical and biological evaluations [9] | Show the potential of methods designed and tested against real-world data [9] |
| Deep Learning Models | GENIE3, DeepSEM, GRN-VAE | Moderate to high accuracy in controlled settings [15] | Performance varies widely; simple heuristics can be competitive [9] [15] | Struggle with data sparsity, cellular heterogeneity, and complex regulatory dynamics [16] |

The core issue is that traditional evaluations conducted on synthetic datasets do not reflect performance in real-world systems [9]. This gap is not unique to biology; in fields like network security, classifiers trained on synthetic datasets show near-perfect performance but fail to translate to real-world networks, whose statistical features are distinctly different [17].

Experimental Protocols: Unraveling the Benchmarks

Understanding how these conclusions are reached requires a look at the experimental methodologies behind modern benchmarks.

Protocol 1: The CausalBench Suite for Real-World Evaluation

CausalBench is a benchmark suite designed to evaluate network inference methods on large-scale real-world single-cell perturbation data [9].

- Data Curation: Integrates two large-scale perturbational single-cell RNA sequencing datasets (RPE1 and K562 cell lines) containing over 200,000 interventional data points from CRISPRi gene knockdown experiments [9].

- Method Implementation: Includes a wide array of state-of-the-art methods, from classical algorithms (PC, GES) to modern continuous-optimization approaches (NOTEARS, DCDI) and methods from a community challenge [9].

- Evaluation Metrics (Without Known Ground Truth):

  - Biology-Driven Evaluation: Uses approximations of ground truth based on known biological knowledge.

  - Statistical Evaluation: Employs causal metrics such as the Mean Wasserstein Distance (measuring the strength of predicted causal effects) and the False Omission Rate (FOR, measuring the rate of omitting true interactions) [9].

- Analysis: Methods are run multiple times with different random seeds. Performance is assessed by analyzing the trade-off between metrics like precision and recall, and by checking if methods using more data (interventional) actually outperform simpler ones [9].

Protocol 2: Generating Realistic Synthetic GRNs for Validation

To create better synthetic benchmarks, some studies focus on generating more biologically realistic network structures.

- Network Generation: A novel algorithm uses insights from small-world network theory to create directed scale-free graphs. These graphs exhibit key biological properties: sparsity, hierarchical organization, modularity, and a power-law degree distribution [18].

- Modeling Gene Expression: Gene expression regulation is modeled using stochastic differential equations that can accommodate molecular perturbations [18].

- Validation and Use: The simulated networks and data are calibrated against large-scale perturbation studies (e.g., a Perturb-seq dataset with 5,247 perturbations). The framework is then used to conduct in-silico experiments and characterize how network structure affects perturbation outcomes [18].

A simplistic synthetic graph and a more realistic GRN differ in fundamental structural ways, such as degree distribution, feedback, and modularity, and benchmarking should account for these differences.
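One of these structural differences, the presence of hub regulators, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the generator from [18]: the function names, the "out-degree + 1" attachment rule, and the graph sizes are all hypothetical choices. The sketch reproduces only the heavy-tailed out-degree pattern and deliberately omits feedback loops and modularity.

```python
import random
from collections import Counter

def random_digraph(n_genes, n_edges, rng):
    """Baseline: every ordered gene pair is equally likely to be an edge."""
    pairs = [(u, v) for u in range(n_genes) for v in range(n_genes) if u != v]
    return set(rng.sample(pairs, n_edges))

def preferential_digraph(n_genes, regulators_per_gene, rng):
    """Each new gene picks regulators with probability proportional to
    (current out-degree + 1), so a few genes become hub regulators."""
    edges, out_degree = set(), Counter()
    for target in range(1, n_genes):
        candidates = list(range(target))
        weights = [out_degree[c] + 1 for c in candidates]
        n_pick = min(regulators_per_gene, len(candidates))
        chosen = set()
        while len(chosen) < n_pick:
            chosen.add(rng.choices(candidates, weights=weights)[0])
        for regulator in chosen:
            edges.add((regulator, target))
            out_degree[regulator] += 1
    return edges

def max_out_degree(edges):
    """Largest number of targets controlled by any single regulator."""
    return max(Counter(regulator for regulator, _ in edges).values())

rng = random.Random(0)
hub_graph = preferential_digraph(200, 2, rng)
flat_graph = random_digraph(200, len(hub_graph), rng)
print("edges:", len(hub_graph), len(flat_graph))
print("largest out-degree:", max_out_degree(hub_graph), max_out_degree(flat_graph))
```

Both graphs have the same number of genes and edges, yet the preferential graph concentrates regulation in a handful of hubs. An inference method tuned only on the uniform graph never encounters that situation.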

Analysis: Why Does the Performance Gap Exist?

The chasm between synthetic and real-world performance stems from fundamental oversimplifications in benchmark design and the inherent complexity of biological systems.

- Oversimplified Network Structures: Many synthetic benchmarks use randomly connected graphs or Directed Acyclic Graphs (DAGs), which ignore pervasive feedback loops and realistic topological properties like scale-free degree distributions and modular organization found in real GRNs [18].

- Inadequate Simulation of Biological Noise: Real single-cell data is characterized by technical noise (e.g., dropout events in scRNA-seq) and biological heterogeneity. Simulations that fail to capture this complexity create an unrealistic environment where models learn clean patterns that do not generalize [19] [16].

- The "Ground Truth" Problem: In synthetic benchmarks, the true regulatory network is known by design. This allows for easy scoring but does not test a method's ability to navigate the vast, unknown interactome of a real cell, where the ground truth is incomplete and must be approximated by noisy silver standards [9] [18].

- Scalability Issues: Methods that perform well on small, simulated networks often fail to scale to the size of real-world datasets, which can contain thousands of genes and millions of cells [9] [20].

The Scientist's Toolkit: Essential Research Reagents

The following tools and datasets are critical for conducting rigorous benchmarking of GRN inference methods.

Table 2: Key Reagents for GRN Benchmarking Research

| Reagent / Resource | Type | Function in Benchmarking | Key Features / Examples |
|---|---|---|---|
| CausalBench Suite [9] | Software & Data Benchmark | Provides a standardized framework for evaluating methods on real-world perturbation data. | Includes large-scale single-cell CRISPRi datasets (K562, RPE1), biologically-motivated metrics, and baseline method implementations. |
| Perturb-seq Data | Experimental Dataset | Provides single-cell gene expression measurements under genetic perturbations for training and validation. | Enables causal inference at scale. Example: A genome-scale study in K562 cells with ~11k perturbations [18]. |
| GRN Simulation Frameworks | Software | Generates synthetic networks and data with biologically realistic properties for validation. | Allows control over parameters like sparsity, hierarchy, and modularity. Example: Networks generated via small-world algorithms [18]. |
| HyperG-VAE [16] | Inference Algorithm | A deep learning model for GRN inference from scRNA-seq data that addresses cellular heterogeneity and gene modules. | Uses hypergraph representation learning to capture complex correlations, improving GRN prediction and key regulator identification. |
| RGAT Model [20] | Inference Algorithm | A Graph Neural Network for processing graph-structured data, representative of modern deep learning approaches. | Uses relational graph attention mechanisms, suitable for large-scale tasks like node classification on heterogeneous graphs. |

The evidence is clear: relying solely on synthetic benchmarks is insufficient and can be misleading. To reliably track progress in GRN inference, the field must adopt more rigorous practices.

- Prioritize Real-World Benchmarks: Use suites like CausalBench as the primary benchmark for evaluating new methods. These benchmarks provide a more realistic and reliable measure of a method's practical utility [9].

- Use Synthetic Data for Development, Not Final Evaluation: Synthetic networks are valuable for initial method development, debugging, and understanding model behavior in controlled settings. However, final performance claims must be validated on real-world data [18].

- Demand Comprehensive Reporting: Authors should report performance across multiple metrics (e.g., precision, recall, F1, FOR, Wasserstein distance) to reveal the inherent trade-offs in method performance [9].

- Embrace a Multi-Faceted Approach: The most robust strategy combines both benchmarking types: using improved, biologically realistic simulations for initial stress-testing and iterative development, while reserving real-world benchmarks for the final, decisive evaluation of a method's readiness for biological discovery [9] [18] [21].

By adopting these practices, researchers and drug development professionals can better identify methods that truly advance our ability to map the architecture of gene regulation, ultimately accelerating the journey toward new therapeutics.

Accurately mapping biological networks, such as Gene Regulatory Networks (GRNs), is fundamental for understanding complex cellular mechanisms and advancing drug discovery. However, a central challenge persists: how can computational methods for inferring these networks be rigorously evaluated and validated in the absence of definitive, real-world ground truth? Traditionally, the field has relied on synthetic datasets—computer-generated networks and data—to serve as this benchmark. Synthetic networks provide a controlled environment where the underlying causal structure is known, allowing for the precise measurement of an algorithm's performance in recovering true interactions.

The use of synthetic data is pervasive due to the prohibitive costs, ethical considerations, and immense practical difficulties associated with obtaining large-scale experimental ground truth for complex biological systems [9]. Yet, a critical question remains: do evaluations on synthetic data reliably predict how these methods will perform on real-world biological data? This article examines the role of synthetic networks in the validation pipeline, comparing traditional synthetic-data benchmarks with emerging benchmarks that leverage real-world perturbation data, thereby providing researchers with a framework for robust method evaluation.

Synthetic vs. Real-World Benchmarks: A Paradigm Shift

The evaluation of network inference methods is undergoing a significant transformation. The table below contrasts the traditional synthetic-data paradigm with the emerging real-world benchmark approach.

Table 1: Comparison of Benchmarking Paradigms for Network Inference Methods

| Feature | Traditional Synthetic Benchmarks | Real-World Benchmarks (e.g., CausalBench) |
|---|---|---|
| Ground Truth | Known by design (computer-simulated graphs) | Unknown; uses biologically-motivated proxy metrics [9] |
| Data Origin | Algorithmically generated | Large-scale real perturbational single-cell RNA-seq data (e.g., over 200,000 interventional datapoints) [9] |
| Primary Strength | Enables direct calculation of precision and recall. | Provides a more realistic evaluation of performance in practical applications [9] |
| Key Weakness | May not reflect performance in real-world biological systems; potential for over-optimism [9] | True causal graph is unknown, making absolute accuracy difficult to ascertain [9] |
| Evaluation Metrics | Standard precision, recall, F1 score | Biology-driven evaluation and distribution-based interventional metrics (e.g., Mean Wasserstein distance, False Omission Rate) [9] |

This shift is driven by the recognition that while synthetic data is invaluable, it has limitations. A key insight from recent research is that "traditional evaluations conducted on synthetic datasets do not reflect the performance in real-world systems" [9]. This has led to the development of benchmarks like CausalBench, which utilize real-world, large-scale single-cell perturbation data to provide a more realistic performance assessment [9].

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons, benchmarks must implement standardized experimental protocols. The following workflow outlines the key steps for a robust benchmarking study, integrating both synthetic and real-world data validation.

Diagram 1: Experimental workflow for benchmarking network inference methods, incorporating both synthetic and real-world data.

Key Experimental Metrics and Methodologies

When evaluating method performance, it is crucial to employ a suite of complementary metrics. For synthetic data with known ground truth, standard metrics like precision (the fraction of correctly identified edges out of all predicted edges) and recall (the fraction of true edges that were correctly identified) are directly calculable. The F1 score, the harmonic mean of precision and recall, provides a single summary metric [9].
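As a concrete illustration of these definitions, the following minimal sketch scores a predicted edge set against a known ground-truth network. The gene names and the `edge_metrics` helper are hypothetical and chosen only for this example.

```python
def edge_metrics(predicted_edges, true_edges):
    """Return (precision, recall, F1) for directed edges given as (regulator, target) pairs."""
    predicted, truth = set(predicted_edges), set(true_edges)
    true_positives = len(predicted & truth)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Toy ground truth with four edges and a prediction that recovers two of them.
truth = {("TF1", "G1"), ("TF1", "G2"), ("TF2", "G3"), ("TF3", "G1")}
predicted = {("TF1", "G1"), ("TF2", "G3"), ("TF2", "G1")}
precision, recall, f1 = edge_metrics(predicted, truth)
print(precision, recall, f1)
```

Here two of the three predicted edges are correct (precision 2/3) and two of the four true edges are recovered (recall 1/2), so the F1 score is 4/7.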

For real-world data where the true graph is unknown, benchmarks like CausalBench have developed innovative proxy metrics:

- Mean Wasserstein Distance: This metric measures the extent to which a predicted causal network can explain strong distributional shifts in the real data caused by interventions. A higher distance suggests the inferred interactions correspond to stronger causal effects [9].

- False Omission Rate (FOR): This measures the rate at which truly existing causal interactions are omitted by the model's output, that is, the fraction of the interactions the model leaves out that are in fact real. There is an inherent trade-off between maximizing the mean Wasserstein distance and minimizing the FOR [9].

- Biology-Driven Evaluation: This involves using established biological knowledge to approximate a ground truth for validation, assessing whether the inferred networks align with known biological pathways and interactions [9].
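The two statistical metrics above can be sketched as follows. This is an illustrative approximation rather than the CausalBench implementation: the data layout (`control`, `perturbed`), the function names, and the `is_true_interaction` callback are assumptions, and the real benchmark estimates which omitted pairs are true using statistical tests on the perturbation data.

```python
from bisect import bisect_right

def wasserstein_1d(a, b):
    """Wasserstein-1 distance between two empirical 1-D samples,
    computed as the area between their empirical CDFs."""
    a, b = sorted(a), sorted(b)
    points = sorted(set(a) | set(b))
    total = 0.0
    for left, right in zip(points, points[1:]):
        cdf_a = bisect_right(a, left) / len(a)
        cdf_b = bisect_right(b, left) / len(b)
        total += abs(cdf_a - cdf_b) * (right - left)
    return total

def mean_wasserstein(predicted_edges, control, perturbed):
    """Average shift of each predicted target between control cells and
    cells in which the predicted regulator was knocked down."""
    distances = [
        wasserstein_1d(control[target], perturbed[regulator][target])
        for regulator, target in predicted_edges
    ]
    return sum(distances) / len(distances)

def false_omission_rate(omitted_pairs, is_true_interaction):
    """Fraction of the pairs left out of the prediction that are real."""
    false_negatives = sum(1 for pair in omitted_pairs if is_true_interaction(pair))
    return false_negatives / len(omitted_pairs)

# Toy data: knocking down TF1 shifts G1 by one unit and leaves G2 unchanged.
control = {"G1": [1.0, 1.0, 1.0, 1.0], "G2": [2.0, 2.0, 2.0, 2.0]}
perturbed = {"TF1": {"G1": [0.0, 0.0, 0.0, 0.0], "G2": [2.0, 2.0, 2.0, 2.0]}}
score = mean_wasserstein([("TF1", "G1"), ("TF1", "G2")], control, perturbed)
omission = false_omission_rate(
    [("TF1", "G3"), ("TF1", "G4")], lambda pair: pair == ("TF1", "G3")
)
```

Predicting only the edge with a real effect (TF1 to G1) would raise the mean distance to 1.0, but every true edge left out raises the FOR, which is the trade-off described above.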

Comparative Analysis of Network Inference Methods

A systematic evaluation using the CausalBench framework reveals the performance landscape of various network inference methods. The following table summarizes the results for a selection of prominent methods, highlighting the trade-offs between different evaluation approaches.

Table 2: Performance Comparison of Network Inference Methods on Real-World Data (CausalBench)

| Method Category | Method Name | Data Used | Performance on Biological Evaluation | Performance on Statistical Evaluation | Key Findings |
|---|---|---|---|---|---|
| Observational | PC [9] | Observational | Low to moderate precision and recall [9] | Not specified | Extracts limited information from data [9] |
| Observational | GES [9] | Observational | Low to moderate precision and recall [9] | Not specified | Extracts limited information from data [9] |
| Observational | NOTEARS [9] | Observational | Low to moderate precision and recall [9] | Not specified | Extracts limited information from data [9] |
| Observational | GRNBoost [9] | Observational | High recall, but low precision [9] | Low FOR on K562 [9] | High recall comes at the cost of low precision [9] |
| Interventional | GIES [9] | Observational + Interventional | Does not outperform its observational counterpart (GES) [9] | Not specified | Fails to effectively leverage interventional data [9] |
| Interventional | DCDI [9] | Observational + Interventional | Low to moderate precision and recall [9] | Not specified | Extracts limited information from data [9] |
| Challenge Methods | Mean Difference [9] | Interventional | High performance [9] | Superior performance [9] | Top performer on statistical evaluation [9] |
| Challenge Methods | Guanlab [9] | Interventional | Slightly better than Mean Difference [9] | High performance [9] | Top performer on biological evaluation [9] |

Key Insights from the Comparative Analysis

The data from comparative studies reveals several critical patterns:

- The Interventional Data Paradox: Contrary to theoretical expectations, many established interventional methods (e.g., GIES) do not consistently outperform their observational counterparts (e.g., GES), despite having access to more informative data [9]. This suggests that a key challenge lies in the algorithms' ability to effectively leverage interventional information.

- The Scalability Bottleneck: The performance of many methods is limited by poor scalability when faced with the high dimensionality of real-world large-scale datasets [9].

- The Precision-Recall Trade-Off: A clear trade-off exists between precision and recall. For example, GRNBoost achieves high recall but suffers from low precision, meaning it discovers many true edges but also predicts many false ones [9].

- Promise of New Methods: Methods developed through community challenges, such as Mean Difference and Guanlab, demonstrate significantly better performance by effectively utilizing interventional data and addressing scalability issues [9].

Building a robust validation pipeline requires a collection of key resources. The following table details essential "research reagents" for conducting benchmark studies in network inference.

Table 3: Essential Research Reagent Solutions for Network Inference Benchmarking

| Tool / Resource | Function / Description | Relevance to Validation |
|---|---|---|
| CausalBench Suite [9] | An open-source benchmark suite providing curated real-world single-cell perturbation datasets, biologically-motivated metrics, and baseline method implementations. | Provides a standardized framework for evaluating method performance on real-world data, moving beyond synthetic-only validation. |
| Perturbational Single-Cell RNA-seq Datasets (e.g., from RPE1 & K562 cell lines) [9] | Large-scale datasets containing thousands of measurements of gene expression in individual cells under both control and genetically perturbed states. | Serves as the foundational real-world data input for benchmarking, enabling the use of interventional information. |
| Synthetic Data Generation Methods (e.g., GANs, Diffusion Models) [22] | Algorithms that create artificial datasets. In network inference, they are used to generate networks and corresponding data where the ground truth is known. | Allows for controlled, initial validation of inference methods and the exploration of specific network properties. |
| High-Performance Computing (HPC) Cluster | A collection of powerful computers connected by a fast network, providing massive parallel processing capabilities. | Essential for handling the computational load of large-scale benchmarks and training complex generative or inference models. |
| Standardized Evaluation Metrics (e.g., Mean Wasserstein Distance, FOR, Precision, Recall) [9] | A defined set of quantitative measures used to assess and compare the performance of different network inference algorithms. | Enables objective, quantitative comparison across different methods and studies. |

The establishment of ground truth remains a complex endeavor in the validation of GRN inference methods. While synthetic networks are an indispensable component of the validation toolkit, their limitations are now clear. Over-reliance on synthetic data can lead to an overestimation of method performance and a poor translation of results to real biological problems.

The future of rigorous validation lies in a hybrid approach that leverages the strengths of both synthetic and real-world benchmarks. Synthetic data should be used for initial algorithm development and testing under controlled conditions. However, the final assessment of a method's practical utility must be conducted on real-world benchmark suites like CausalBench, which provide a more realistic and demanding proving ground. This dual-path validation strategy, which acknowledges the role of synthetic networks while demanding proof of performance on real data, is essential for driving the development of more powerful, reliable, and scalable network inference methods that can truly advance drug discovery and our understanding of disease.

A Landscape of GRN Inference Methods: From Traditional Algorithms to Modern AI

Gene Regulatory Network (GRN) inference is a fundamental challenge in computational biology, essential for understanding cellular processes, development, and disease mechanisms. The advent of single-cell RNA-sequencing (scRNA-seq) data has provided unprecedented resolution for studying cellular heterogeneity, creating fertile ground for GRN inference algorithms. Among the diverse computational approaches, traditional methods like tree-based models (GENIE3, GRNBOOST2) and regression-based frameworks have established themselves as robust, scalable, and explainable solutions. This guide objectively compares the performance of these established methods against emerging neural network and continuous approaches, using data from rigorous benchmarking studies on synthetic networks to inform researchers and drug development professionals.

Performance Comparison on Synthetic Benchmarks

Comprehensive benchmarking on synthetic datasets with known ground-truth networks provides critical insights into the performance characteristics of various GRN inference methods.

Table 1: Performance Comparison of GRN Inference Methods on BEELINE Benchmark

| Method | Category | AUROC Range | AUPRC Range | Key Strengths | Key Limitations |
|---|---|---|---|---|---|
| GENIE3 | Tree-based | Moderate | Moderate | High robustness, scalability to thousands of genes | Cannot distinguish activation/inhibition |
| GRNBOOST2 | Tree-based | Moderate | Moderate | Efficiency, explainability through importance scores | Piecewise continuous dynamics |
| SINCERITIES | Regression-based | High for some networks | High for some networks | Best performer on 4/6 synthetic networks in BEELINE | Less stable predictions (Jaccard: 0.28-0.35) |
| PIDC | Information-theoretic | Varies by network | Varies by network | High AUPRC for Trifurcating network | Performance inconsistency across networks |
| SCORPION | Multi-source integration | Highest (exceeds 12 methods) | High precision & recall | 18.75% more precise and sensitive than other methods | Requires multiple data sources |
| scKAN | Neural/KAN-based | 5.40-28.37% improvement over second-best | 1.97-40.45% improvement over second-best | Captures continuous dynamics, identifies regulation types | Emerging method, less established |
| DAZZLE | Neural/VAE-based | Competitive | Competitive | Improved robustness to dropout noise, stability | Complex training requirements |

Table 2: Performance Stability Across Cell Populations

| Method | Stability (Jaccard Index) | Sensitivity to Cell Number | Performance on Rare Cell Types | Population-Level Comparison |
|---|---|---|---|---|
| GENIE3 | High | Low effect | Poor (averages signals) | Limited without modification |
| GRNBOOST2 | High | Low effect | Poor (averages signals) | Limited without modification |
| SINCERITIES | Low (0.28-0.35) | Moderate effect | Not specified | Not specified |
| PPCOR | High (0.62) | Moderate effect | Not specified | Not specified |
| PIDC | High (0.62) | Moderate effect | Not specified | Not specified |
| SCORPION | High | Low effect | Good (coarse-graining reduces sparsity) | Excellent (designed for population studies) |

Experimental Protocols and Methodologies

Benchmarking Framework Design

The BEELINE benchmarking framework employs a rigorous methodology for evaluating GRN inference algorithms. The protocol begins with six synthetic networks with predictable trajectories: Linear, Linear Long, Cycle, Bifurcating, Bifurcating Converging, and Trifurcating. For each network, BoolODE generates synthetic scRNA-seq data by converting Boolean functions into ordinary differential equations (ODEs) and adding noise terms to make the simulation stochastic, creating realistic expression patterns that preserve the known network topology. This approach produces 50 expression datasets per network: ODE parameters are sampled ten times, 5,000 simulations are generated per parameter set, and each set is sampled at five cell counts (100, 200, 500, 2,000, and 5,000) to test scalability, giving 10 × 5 = 50 datasets [23].

Tree-Based Methodologies

GENIE3 (GEne Network Inference with Ensemble of trees) employs a One-vs-Rest formulation where each gene is modeled as a function of all other genes using random forests. The method converts the unsupervised GRN inference problem into supervised regression problems, with each gene serving as a target variable with others as predictors. The importance scores from the random forest models are interpreted as regulatory strengths, providing explainable results. GRNBOOST2 follows a similar approach but utilizes gradient boosting instead of random forests, potentially offering improved efficiency and performance [24].
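The one-target-at-a-time formulation can be illustrated with a deliberately simplified stand-in. The sketch below is not GENIE3 itself: instead of full random forests it uses an ensemble of depth-one regression trees (stumps) fitted on bootstrap samples, and it credits each stump's variance reduction to the regulator it splits on. All function names and the toy data are hypothetical.

```python
import random

def best_stump_gain(x, y):
    """Largest drop in squared error obtainable by one threshold split of y on x."""
    n = len(y)
    order = sorted(range(n), key=lambda i: x[i])
    total_sum, total_sq = sum(y), sum(v * v for v in y)
    parent_sse = total_sq - total_sum ** 2 / n
    best, left_sum, left_sq = 0.0, 0.0, 0.0
    for rank in range(n - 1):
        i = order[rank]
        left_sum += y[i]
        left_sq += y[i] ** 2
        if x[order[rank]] == x[order[rank + 1]]:
            continue  # no threshold fits between two equal values
        n_left, n_right = rank + 1, n - rank - 1
        left_sse = left_sq - left_sum ** 2 / n_left
        right_sum = total_sum - left_sum
        right_sse = (total_sq - left_sq) - right_sum ** 2 / n_right
        best = max(best, parent_sse - left_sse - right_sse)
    return best

def stump_ensemble_importance(expression, n_stumps=50, seed=0):
    """expression: list of cells, each a list of gene values.
    Returns importance[regulator][target], one regression problem per target."""
    rng = random.Random(seed)
    n_cells, n_genes = len(expression), len(expression[0])
    importance = [[0.0] * n_genes for _ in range(n_genes)]
    for target in range(n_genes):
        regulators = [g for g in range(n_genes) if g != target]
        for _ in range(n_stumps):
            rows = [rng.randrange(n_cells) for _ in range(n_cells)]  # bootstrap sample
            y = [expression[r][target] for r in rows]
            gains = {g: best_stump_gain([expression[r][g] for r in rows], y)
                     for g in regulators}
            winner = max(gains, key=gains.get)
            importance[winner][target] += gains[winner] / n_stumps
    return importance

# Toy data: gene 1 follows gene 0, while gene 2 is independent noise.
rng = random.Random(1)
cells = []
for _ in range(80):
    g0, g2 = rng.random(), rng.random()
    cells.append([g0, 2.0 * g0 + 0.05 * rng.gauss(0, 1), g2])
importance = stump_ensemble_importance(cells)
print("0 -> 1:", importance[0][1], " 2 -> 1:", importance[2][1])
```

Ranking all regulator-target pairs by these scores yields the kind of confidence-ordered edge list that benchmarks evaluate. Note that the scores carry no sign, which is why this family of methods cannot separate activation from inhibition.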

The fundamental limitation of tree-based approaches lies in their piecewise continuous (in practice, piecewise-constant) functions, which introduce discontinuities in reconstructed gene expression at the stacked decision boundaries. This contrasts with the smooth nature of actual cellular dynamics, which typically operate at timescales where stochastic events average into continuous processes better modeled by ODEs. Additionally, these methods produce a single regulatory strength averaged across all cells, potentially burying signals from rare cell types and limiting resolution of cell-type-specific regulation [24].

Emerging Methodologies

scKAN employs Kolmogorov-Arnold networks to model gene expression as differentiable functions that match the smooth nature of cellular dynamics. This approach enables third-order differentiability and creates a meaningful Waddington landscape from the learned geometry. The method uses explainable AI based on gradients of the learned geometry to reconstruct directed GRNs with regulation types (activation/inhibition), addressing a key limitation of tree-based methods [24].

DAZZLE utilizes a variational autoencoder framework with structural equation modeling. Its key innovation is Dropout Augmentation, which regularizes the model by augmenting training data with synthetic dropout events. This counter-intuitive approach improves robustness to zero-inflation in scRNA-seq data. The model parameterizes the adjacency matrix and uses it in both encoder and decoder components, with trained weights representing the GRN structure [8] [7].
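The augmentation step itself is simple to sketch. The snippet below is only an illustration of the idea of injecting synthetic dropout events into a training batch; the function name, the 10% rate, and the decision to resample on every call are assumptions rather than details taken from the DAZZLE implementation.

```python
import random

def augment_with_dropout(expression, dropout_rate, rng):
    """Return a copy of the batch in which each entry is set to zero
    with probability dropout_rate; the input is left untouched."""
    return [
        [0.0 if rng.random() < dropout_rate else value for value in cell]
        for cell in expression
    ]

rng = random.Random(0)
# Hypothetical batch: 40 cells by 50 genes, all strictly positive.
batch = [[1.0 + gene for gene in range(50)] for _ in range(40)]
noisy = augment_with_dropout(batch, 0.1, rng)
zero_fraction = sum(v == 0.0 for cell in noisy for v in cell) / (40 * 50)
print("fraction of simulated dropouts:", zero_fraction)
```

In a training loop, a fresh augmented copy would typically be drawn for every iteration, so the model repeatedly sees the same cells with different zeros and is discouraged from treating any individual zero as informative.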

SCORPION distinguishes itself by integrating multiple data sources through a message-passing algorithm. It constructs three initial networks: co-regulatory (gene co-expression), cooperativity (protein-protein interactions from STRING database), and regulatory (TF binding motifs). The algorithm iteratively refines these networks using a modified Tanimoto similarity until convergence, producing networks suitable for population-level comparisons [25].

Signaling Pathways and Experimental Workflows

Understanding the complete workflow from data generation to network inference reveals critical dependencies and methodological relationships.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for GRN Inference

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| BEELINE | Benchmarking framework | Systematic evaluation of GRN inference algorithms | Method comparison on synthetic and curated networks |
| BoolODE | Synthetic data generator | Simulates scRNA-seq data from Boolean models | Creating realistic benchmarking datasets with known ground truth |
| Biomodelling.jl | Synthetic data generator | Multiscale modeling of stochastic GRNs in growing/dividing cells | Benchmarking network inference with realistic expression statistics |
| SCORPION | GRN inference tool | Message-passing algorithm integrating multiple data sources | Population-level GRN comparisons across samples and conditions |
| scGraphVerse | R package | Modular GRN inference with multiple algorithms and consensus networks | Multi-condition, multi-method GRN analysis and comparison |
| GENIE3/GRNBOOST2 | GRN inference algorithms | Tree-based network inference using random forests/gradient boosting | Baseline GRN inference with explainable importance scores |
| DAZZLE | GRN inference algorithm | VAE-based with dropout augmentation for zero-inflation robustness | GRN inference from datasets with high dropout rates |
| scKAN | GRN inference algorithm | Kolmogorov-Arnold networks for continuous dynamics modeling | Precise GRN inference with activation/inhibition identification |
| STRING Database | Protein interaction resource | Source of known protein-protein interactions | Prior knowledge integration in methods like SCORPION |

Traditional tree-based methods like GENIE3 and GRNBOOST2 remain valuable tools in the GRN inference arsenal, offering robust performance, scalability to thousands of genes, and explainable results through importance scores. However, benchmarking on synthetic networks reveals significant limitations, particularly their inability to distinguish activation from inhibition and their piecewise continuous dynamics that mismatch smooth biological processes. Emerging approaches like scKAN, DAZZLE, and SCORPION demonstrate substantial improvements in accuracy, precision, and biological relevance, with SCORPION outperforming 12 existing methods by 18.75% in precision and recall. The choice of method should be guided by specific research goals: tree-based methods for scalable initial inference, regression methods for certain network topologies, and integrated or neural approaches for highest accuracy and detection of regulation types. As GRN inference continues evolving, researchers should consider method complementarity through consensus approaches and prioritize methods that address specific biological questions and data characteristics.

Performance Comparison on Benchmark Networks

Gene regulatory network (GRN) inference remains a central challenge in computational biology. Methods leveraging pseudotime and ordinary differential equations (ODEs)—such as LEAP, SCODE, and SINGE—aim to capture the dynamic regulatory relationships driving cellular processes [23]. The BEELINE framework provides a standardized evaluation of these algorithms against known synthetic and curated Boolean network benchmarks [23].

The performance of LEAP, SCODE, and SINGE varies significantly across different network topologies, as measured by the Median Area Under the Precision-Recall Curve (AUPRC) Ratio. A ratio greater than 1 indicates performance better than random [23].

Table 1: Median AUPRC Ratio on Synthetic Networks (BEELINE Benchmark)

| Method | Linear | Cycle | Bifurcating | Trifurcating |
|---|---|---|---|---|
| LEAP | >2.0 | Information Missing | Information Missing | Information Missing |
| SCODE | >2.0 | Information Missing | Information Missing | Information Missing |
| SINGE | >2.0 | Highest | Information Missing | Information Missing |
| SINCERITIES | >2.0 | Information Missing | Highest | Information Missing |
| PIDC | >2.0 | Information Missing | Information Missing | Highest |

Table 2: Median AUPRC Ratio on Curated Boolean Models (BEELINE Benchmark)

| Method | mCAD Model | VSC Model | HSC Model | GSD Model |
|---|---|---|---|---|
| LEAP | <1 | Information Missing | Information Missing | Information Missing |
| SCODE | >1 | <1 | <1 | <1 |
| SINGE | >1 | <1 | <1 | <1 |
| SINCERITIES | >1 | <1 | <1 | <1 |
| PIDC | <1 | >2.5 | ~2.0 | Information Missing |

Overall, methods that do not require pseudotime-ordered cells often demonstrate greater accuracy. While SINCERITIES and SINGE achieved some of the highest median AUPRC ratios on synthetic networks, their predicted networks were less stable (with lower Jaccard indices) compared to other methods [23].

Experimental Protocols & Benchmarking Methodology

Data Simulation with BoolODE

A critical component of rigorous benchmarking is the generation of synthetic single-cell expression data where the underlying GRN is known. BEELINE employs BoolODE, a simulation strategy that avoids the pitfalls of earlier methods which failed to produce discernible cellular trajectories [23].

- Network Models: The benchmark uses six synthetic network topologies (e.g., Linear, Cycle, Bifurcating) and four literature-curated Boolean models (e.g., mCAD, VSC) [23].

- ODE Conversion: For each gene in a GRN, its Boolean function (represented as a truth table) is converted into a system of non-linear ODEs. This captures the precise logical relationships among regulators [23].

- Stochastic Simulation: Noise terms are added to the ODEs to create a stochastic simulation. For each network, ODE parameters are sampled multiple times, generating thousands of simulations. Cells are then sampled from these simulations to create final expression matrices of varying sizes (e.g., 100 to 5,000 cells) [23].
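The steps above can be sketched for the smallest possible network, in which gene A activates gene B. This is a simplified illustration and not BoolODE's actual equations: the Hill function standing in for the Boolean rule, the rate constants, the square-root noise term, and the use of one variable per gene are all assumptions made for brevity.

```python
import math
import random

def hill_activation(regulator, threshold=1.0, exponent=4):
    """Smooth stand-in for the Boolean rule 'B is ON when A is ON'."""
    r = regulator ** exponent
    return r / (threshold ** exponent + r)

def simulate_two_gene_chain(n_steps=500, dt=0.01, noise=0.1, seed=0):
    """Euler-Maruyama simulation of gene A (constantly produced) activating gene B."""
    rng = random.Random(seed)
    a, b = 0.0, 0.0
    trajectory = []
    for _ in range(n_steps):
        drift_a = 2.0 - a                       # production minus degradation
        drift_b = 2.0 * hill_activation(a) - b  # regulated production minus degradation
        a += drift_a * dt + noise * math.sqrt(max(a, 0.0) * dt) * rng.gauss(0, 1)
        b += drift_b * dt + noise * math.sqrt(max(b, 0.0) * dt) * rng.gauss(0, 1)
        a, b = max(a, 0.0), max(b, 0.0)         # expression cannot be negative
        trajectory.append((a, b))
    return trajectory

trajectory = simulate_two_gene_chain()
# Sample "cells" at evenly spaced time points; the step index is each cell's simulation time.
sampled_cells = [(step, trajectory[step]) for step in range(0, 500, 5)]
print(len(sampled_cells), "cells; final state:", trajectory[-1])
```

Repeating the simulation with different seeds and parameter draws, then sampling cells from the pooled trajectories, would mimic the way expression matrices of different sizes are assembled.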

Algorithm Execution and Evaluation

- Pseudotime Provision: For methods requiring temporal information (including SCODE and SINGE), the actual simulation time of each sampled cell is provided as "pseudotime." For datasets with multiple trajectories (e.g., Bifurcating), algorithms are run on each trajectory individually and the results are combined [23].

- Parameter Optimization: A parameter sweep is conducted for each algorithm on each benchmark model to select values yielding the highest median AUPRC [23].

- Performance Metrics: The primary evaluation metric is the AUPRC ratio (AUPRC of the algorithm divided by the AUPRC of a random predictor). Network stability is assessed using the Jaccard index between predicted networks across different runs [23].

Graph 1: Benchmarking Workflow for GRN Inference Methods. This diagram outlines the key steps in the BEELINE evaluation protocol, from generating synthetic data with a known ground truth network to the final performance assessment.
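The two evaluation quantities named in the protocol can be computed as in the sketch below. It makes two assumptions that should be checked against BEELINE itself: the area under the precision-recall curve is approximated by average precision, and the random predictor's AUPRC is taken to be the network density (true edges divided by possible edges). The function names and toy network are hypothetical.

```python
def average_precision(ranked_edges, true_edges):
    """Approximate AUPRC as the mean precision at the rank of each true edge."""
    truth = set(true_edges)
    hits, total = 0, 0.0
    for rank, edge in enumerate(ranked_edges, start=1):
        if edge in truth:
            hits += 1
            total += hits / rank
    return total / len(truth)

def auprc_ratio(ranked_edges, true_edges, n_possible_edges):
    """AUPRC divided by the expected AUPRC of a random ranking (the network density)."""
    random_auprc = len(set(true_edges)) / n_possible_edges
    return average_precision(ranked_edges, true_edges) / random_auprc

def jaccard_index(edges_a, edges_b):
    """Overlap between two predicted edge sets, used here as a stability measure."""
    a, b = set(edges_a), set(edges_b)
    return len(a & b) / len(a | b)

# Three genes give six possible directed edges (no self-loops); two are true.
truth = {("A", "B"), ("B", "C")}
ranked = [("A", "B"), ("C", "A"), ("B", "C"), ("A", "C"), ("B", "A"), ("C", "B")]
ratio = auprc_ratio(ranked, truth, n_possible_edges=6)
stability = jaccard_index(ranked[:2], [("A", "B"), ("B", "C")])
print(ratio, stability)
```

In this toy case the ranking places the true edges first and third, giving an average precision of 5/6 against a random baseline of 1/3, so the ratio is 2.5.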

Method Architectures and Core Algorithms

LEAP (Lagged Expression Analysis for Pseudotime)

LEAP operates on the principle that regulators expressed earlier in pseudotime may influence the expression of target genes later in time [8] [7].

- Core Idea: It defines a fixed-size pseudotime window and calculates the Pearson correlation coefficient (PCC) between the expression of a potential regulator at an earlier time window and a target gene at a later window [26].

- Workflow: The pseudotime-ordered cells are divided into windows. For each gene pair, a correlation is computed across these lagged windows. The resulting correlations are used to infer potential directed regulatory relationships [26].

Graph 2: LEAP Method Workflow. This diagram illustrates LEAP's process of inferring gene regulation by correlating transcription factor (TF) expression in an earlier time window with target gene expression in a later window.
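The lagged-correlation idea can be sketched as follows. This is a minimal illustration, not the LEAP package: the function names, the window length, and the maximum lag are assumptions, and the inputs are taken to be expression values already ordered by pseudotime.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def max_lagged_correlation(regulator, target, window, max_lag):
    """Correlate the regulator's first window with the target's window
    shifted by each lag, and keep the lag with the strongest correlation."""
    best_lag, best_corr = 0, 0.0
    for lag in range(max_lag + 1):
        corr = pearson(regulator[:window], target[lag:lag + window])
        if abs(corr) > abs(best_corr):
            best_lag, best_corr = lag, corr
    return best_lag, best_corr

# Toy pseudotime series: the target repeats the regulator three steps later.
regulator = [math.sin(0.3 * i) for i in range(40)]
target = [0.0, 0.0, 0.0] + regulator[:-3]
best_lag, best_corr = max_lagged_correlation(regulator, target, window=20, max_lag=5)
print(best_lag, best_corr)
```

Because the correlation is asymmetric in time (regulator early, target late), swapping the two genes generally gives a different score, which is what lets the approach suggest a direction for each edge.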

SCODE (Single-Cell Ordinary Differential Equation)

SCODE combines pseudotime estimates with linear ODEs to model how gene expression changes continuously over time [8] [7].

- Core Idea: It assumes the gene expression vector x of a cell can be modeled by the linear ODE dx/dt = Ax, where A is the matrix encoding the regulatory interactions. The goal is to estimate the matrix A from the data [23].

- Workflow: Given pseudotime and expression data, SCODE uses a linear ODE model and an expectation-maximization (EM) algorithm to optimize the matrix A such that it best explains the observed expression dynamics along the inferred trajectory [23].

Graph 3: SCODE's ODE-Based Framework. SCODE frames GRN inference as the problem of estimating the coefficient matrix 'A' in a linear ordinary differential equation model of gene expression dynamics.
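To make the estimation problem concrete, the sketch below recovers A for the model dx/dt = Ax from pseudotime-ordered data using forward differences and ordinary least squares. This is deliberately not SCODE's optimization procedure; it is a simpler stand-in chosen for illustration, and the function name and the toy matrix `A_true` are assumptions.

```python
import numpy as np

def estimate_linear_ode(X, t):
    """Estimate A in dx/dt = A x.

    X: (cells, genes) expression matrix ordered by pseudotime t.
    """
    X = np.asarray(X, dtype=float)
    t = np.asarray(t, dtype=float)
    dX = np.diff(X, axis=0) / np.diff(t)[:, None]   # forward-difference derivative
    X0 = X[:-1]
    # Solve X0 @ A.T ~= dX in the least-squares sense.
    A_T, *_ = np.linalg.lstsq(X0, dX, rcond=None)
    return A_T.T

# Toy data: Euler integration of a known 2-gene system (gene 0 activates gene 1).
A_true = np.array([[-0.5, 0.0],
                   [1.0, -0.3]])
dt, steps = 0.01, 200
X = np.empty((steps + 1, 2))
X[0] = [1.0, 0.0]
for k in range(steps):
    X[k + 1] = X[k] + dt * (A_true @ X[k])
t = np.arange(steps + 1) * dt

A_hat = estimate_linear_ode(X, t)
```

On noise-free data generated this way the estimate matches `A_true` closely; with noisy, sparse single-cell data, finite differences amplify noise, which is one reason practical methods rely on more elaborate fitting schemes.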

SINGE (Single-Cell Inference of Networks using Granger Ensembles)

SINGE extends the concept of Granger causality, which posits that a variable X "Granger-causes" Y if past values of X help predict future values of Y [23] [8].

- Core Idea: SINGE applies Granger causality in a kernel-based regression framework to infer regulatory links. It tests whether the past expression of a potential regulator improves the prediction of a target gene's future expression beyond what is possible using only the target's own past [23].

- Workflow: The method uses an ensemble of analyses from multiple subsampled datasets and different kernel regression parameters. The results are aggregated to produce a ranked list of potential regulatory edges, enhancing robustness [23].

Graph 4: SINGE's Ensemble Granger Causality. SINGE uses an ensemble approach, applying Granger causality tests across multiple data subsamples and parameters to build a robust, ranked network.
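The underlying Granger test can be illustrated with plain linear regression. This sketch is not SINGE's kernel-based, ensemble procedure: it fits one linear model on the target's own past and one that also includes the regulator's past, then reports how much residual variance the regulator removes. The function names and the default of two lags are assumptions.

```python
import numpy as np

def lagged_matrix(series, lags):
    """Columns are series[t-1], ..., series[t-lags] for t = lags..n-1."""
    n = len(series)
    return np.column_stack([series[lags - k:n - k] for k in range(1, lags + 1)])

def granger_gain(regulator, target, lags=2):
    """Fraction of residual variance removed by adding the regulator's past."""
    x = np.asarray(regulator, dtype=float)
    y = np.asarray(target, dtype=float)
    future = y[lags:]
    # Restricted model: intercept plus the target's own past values.
    own = np.column_stack([np.ones(len(future)), lagged_matrix(y, lags)])
    # Full model: additionally includes the regulator's past values.
    full = np.column_stack([own, lagged_matrix(x, lags)])

    def rss(design):
        coef, *_ = np.linalg.lstsq(design, future, rcond=None)
        resid = future - design @ coef
        return float(resid @ resid)

    rss_own, rss_full = rss(own), rss(full)
    return 1.0 - rss_full / rss_own if rss_own > 0 else 0.0

# Toy series: y depends on the previous value of x, but not the other way round.
rng = np.random.default_rng(0)
x = rng.normal(size=300)
y = np.zeros(300)
y[1:] = 0.8 * x[:-1] + 0.1 * rng.normal(size=299)

gain_x_to_y = granger_gain(x, y)
gain_y_to_x = granger_gain(y, x)
```

An ensemble in the spirit described above would repeat such a test over subsampled cells and several hyperparameter settings, then aggregate the per-edge scores into a single ranking.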

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software and Data Resources for GRN Benchmarking

| Resource Name | Type | Primary Function | Relevance to Pseudotime/ODE Methods |
|---|---|---|---|
| BEELINE [23] | Software Framework | Standardized evaluation and comparison of GRN inference algorithms. | Provides the benchmarking environment and protocols for testing LEAP, SCODE, and SINGE. |
| BoolODE [23] | Simulation Tool | Generates realistic single-cell expression data from a known GRN. | Creates ground-truth datasets with meaningful trajectories for validating methods. |
| Slingshot [23] | Pseudotime Inference | Infers cellular ordering and trajectories from scRNA-seq data. | Often used in benchmarks to estimate pseudotime for real or simulated data when true time is unavailable. |
| Synthetic Networks | Benchmark Data | Known network topologies (Linear, Bifurcating, etc.) used as GRN ground truth. | Enables controlled performance assessment on networks of varying complexity. |
| Curated Boolean Models | Benchmark Data | Literature-based models (mCAD, VSC) of specific biological processes. | Provides biologically realistic benchmarks to test method performance. |

Inferring gene regulatory networks (GRNs) is a fundamental challenge in systems biology, crucial for understanding cellular mechanisms, development, and disease pathology [7] [27]. The advent of single-cell RNA-sequencing (scRNA-seq) data has provided unprecedented resolution for observing cellular heterogeneity, creating new opportunities for GRN inference. However, this data type introduces significant challenges, most notably technical noise and zero-inflation (dropout), where transcripts are erroneously not captured [7] [8] [28].

Traditional GRN inference methods, including tree-based approaches (GENIE3, GRNBoost2) and information-theoretic methods (PIDC), often struggle with the inherent noise and dimensionality of scRNA-seq data [7] [9]. The field is now experiencing a revolution driven by deep learning approaches, which offer enhanced scalability and performance. This guide focuses on two influential deep learning paradigms for GRN inference: autoencoder-based models (DeepSEM and DAZZLE) and variational inference methods (PMF-GRN).

Framed within the broader context of benchmarking GRN inference methods on synthetic networks, this article provides an objective comparison of these advanced deep learning methods. We detail their underlying architectures, present supporting experimental data from benchmark studies, and outline essential protocols for researchers seeking to apply these tools in drug discovery and basic research.

Methodological Foundations

Autoencoder-Based Models: DeepSEM and DAZZLE

DeepSEM pioneered the use of a variational autoencoder (VAE) framework for GRN inference [7] [29]. Its core innovation is a parameterized adjacency matrix (A) embedded in a structural equation model (SEM). The model is trained to reconstruct its input gene expression data, and the weights of the trained adjacency matrix are interpreted as the GRN [7] [8]. Although it performs well and runs quickly on benchmarks, DeepSEM is unstable: network quality degrades rapidly after the model converges, likely because it overfits to dropout noise [7] [8].

DAZZLE (Dropout Augmentation for Zero-inflated Learning Enhancement) builds upon DeepSEM's foundation but introduces key innovations to address its limitations [7] [8]. Its most significant contribution is Dropout Augmentation (DA), a counter-intuitive regularization strategy. Instead of eliminating zeros through imputation, DA deliberately augments the training data with synthetic dropout events, exposing the model to multiple noisy versions of the data and improving its robustness [7] [8]. DAZZLE also incorporates a noise classifier, a delayed sparsity loss term, and a closed-form prior. Together these changes improve stability and, compared with DeepSEM, reduce the parameter count by nearly 22% and the runtime by 51% [8].
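The augmentation step itself is simple to sketch. The snippet below zeroes out a random fraction of entries in an expression matrix and returns the mask of injected zeros. It is a minimal illustration, not DAZZLE's code: the function name, the 10% default rate, and the uniform sampling of positions are assumptions.

```python
import numpy as np

def dropout_augment(X, rate=0.1, rng=None):
    """Return (X_aug, mask): a copy of X with a random fraction of entries zeroed.

    mask is True where a synthetic dropout event was injected.
    """
    rng = np.random.default_rng() if rng is None else rng
    X = np.asarray(X, dtype=float)
    mask = rng.random(X.shape) < rate     # positions chosen for synthetic dropout
    X_aug = np.where(mask, 0.0, X)        # the original matrix is left untouched
    return X_aug, mask

# Toy usage: a fresh mask would typically be drawn at every training iteration.
X = np.ones((200, 50))
X_aug, mask = dropout_augment(X, rate=0.1, rng=np.random.default_rng(0))
```

Drawing a new mask each iteration exposes the model to many noisy versions of the same cells. The returned mask could also serve as training labels for a component that learns to recognize dropout-like zeros, although how DAZZLE's noise classifier uses such labels is not detailed here.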

The following diagram illustrates the core architecture and workflow of the DAZZLE model.

Variational Inference: PMF-GRN

PMF-GRN (Probabilistic Matrix Factorization for GRN) employs a fundamentally different approach based on variational inference and probabilistic matrix factorization [27] [30]. The core idea is to decompose the observed gene expression matrix into latent factors representing transcription factor activity (TFA) and regulatory interactions between TFs and their target genes [27].

A key strength of PMF-GRN is its principled handling of uncertainty. It provides well-calibrated uncertainty estimates for each predicted regulatory interaction, giving every prediction a confidence measure that many other methods lack [27] [30]. The model also incorporates a flexible framework for integrating prior knowledge (e.g., from TF motif databases or chromatin accessibility measurements) and uses a rigorous hyperparameter search for automated model selection, moving beyond heuristic choices [27].

The graphical model and workflow of PMF-GRN are depicted below.

Benchmarking on Synthetic and Real-World Data

Experimental Protocols for Benchmarking

Rigorous benchmarking is essential for evaluating GRN inference methods. Common protocols involve using synthetic data with known ground truth and real-world data with validated, albeit incomplete, gold standards [9] [28].

- Synthetic Data Generation: Tools like Biomodelling.jl simulate realistic scRNA-seq data from a known GRN by modeling stochastic gene expression in growing and dividing cells, incorporating technical artifacts like dropout. This provides a perfect ground truth for evaluation [28].

- Benchmark Suites: Frameworks like CausalBench and BEELINE provide standardized datasets and evaluation metrics. CausalBench, for instance, uses large-scale single-cell perturbation data from real-world experiments (e.g., in RPE1 and K562 cell lines) and employs both biology-driven and statistical metrics, such as the mean Wasserstein distance and false omission rate (FOR), to assess performance [9].

- Performance Metrics: Standard metrics include Area Under the Precision-Recall Curve (AUPRC), which is particularly informative for imbalanced datasets like GRNs where true edges are rare. Precision and Recall (or their composite, the F1 score) are also widely reported to illustrate the trade-off between prediction accuracy and completeness [27] [9].
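Precision, recall, and F1 for a ranked edge list can be computed by thresholding at the top k predictions. The sketch below is a generic illustration, not the scoring code of any particular benchmark; the function name and the top-k cutoff are assumptions.

```python
def precision_recall_f1(ranked_edges, true_edges, k):
    """Score the k most confident predicted edges against a ground-truth edge set."""
    predicted = set(ranked_edges[:k])
    truth = set(true_edges)
    tp = len(predicted & truth)                       # correctly predicted edges
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(truth) if truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1

# Toy example with directed (regulator, target) pairs.
ranked = [("A", "B"), ("B", "C"), ("A", "C"), ("C", "A")]
truth = {("A", "B"), ("A", "C"), ("C", "D")}
precision, recall, f1 = precision_recall_f1(ranked, truth, k=2)
```

Sweeping k from 1 to the length of the ranked list traces out the precision-recall curve whose area is the AUPRC.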

Comparative Performance Data

The table below summarizes the quantitative performance of DeepSEM, DAZZLE, and PMF-GRN against other state-of-the-art methods as reported in benchmark studies.

Table 1: Benchmark Performance of Deep Learning GRN Methods

| Method | Underlying Approach | Key Performance Highlights | Uncertainty Estimation | Key Benchmark |
|---|---|---|---|---|
| DAZZLE | Autoencoder (VAE) with Dropout Augmentation | Improved performance & >50% faster runtime vs. DeepSEM; High stability [7] [8] | No | BEELINE [7] |
| DeepSEM | Autoencoder (VAE) | Outperformed many existing methods in BEELINE; Fast but prone to overfitting [7] [29] | No | BEELINE [7] [29] |
| PMF-GRN | Probabilistic Matrix Factorization | Overall improved AUPRC vs. Inferelator, SCENIC, CellOracle; Well-calibrated uncertainty [27] [30] | Yes | S. cerevisiae & BEELINE Data [27] |
| GRNBoost2 | Tree-based (Observational) | High recall but low precision on perturbation data [9] | No | CausalBench [9] |
| NOTEARS | Continuous Optimization (Observational) | Limited performance on large-scale real-world perturbation data [9] | No | CausalBench [9] |
| Mean Difference | Interventional (from CausalBench Challenge) | Top performance on statistical evaluation (Mean Wasserstein, FOR) [9] | Not Specified | CausalBench [9] |

The following table distills the performance trade-offs observed in large-scale benchmarks, particularly from the CausalBench study, which evaluated methods on real-world single-cell perturbation data.

Table 2: Performance Trade-offs on CausalBench Metrics (Adapted from [9])

| Method Category | Example Methods | Precision | Recall | Mean Wasserstein Distance | False Omission Rate (FOR) |
|---|---|---|---|---|---|
| Top Interventional | Mean Difference, Guanlab | High | High | High | Low |
| Observational (Tree-based) | GRNBoost2 | Low | High | Moderate | High on K562 |
| Observational (Other) | NOTEARS, PC, GES | Low | Low | Low | High |
| Other Challenge Methods | Betterboost, SparseRC | High on Statistical | Low on Biological | High | Low |

Successfully implementing and applying these GRN inference methods requires a suite of computational tools and data resources. Below is a curated list of essential "research reagents" for the computational biologist.

Table 3: Key Research Reagent Solutions for GRN Inference

| Resource Name | Type | Function | Relevance to Deep Learning Methods |
|---|---|---|---|
| CausalBench Suite [9] | Benchmarking Software & Data | Provides curated large-scale perturbation datasets and biologically-motivated metrics for evaluation. | Essential for objectively validating the performance of methods like DAZZLE and PMF-GRN on real-world interventional data. |
| Biomodelling.jl [28] | Synthetic Data Generator | Generates realistic scRNA-seq data with a known ground truth GRN for controlled benchmarking. | Crucial for method development and for initial testing of new models without the confounding factors of real data. |
| BEELINE [7] | Benchmarking Framework | A standard benchmark for evaluating GRN inference algorithms on several synthetic and real scRNA-seq datasets. | Used in the original evaluations of DeepSEM and DAZZLE to demonstrate performance against a wide array of methods. |
| GPU with SGD | Hardware / Algorithm | Enables high-performance computation and scalable optimization. | PMF-GRN uses SGD on a GPU to scale to large single-cell datasets. Deep learning methods generally benefit from GPU acceleration. |
| Prior Network Data (e.g., from TF motif databases) | Data Resource | Provides an initial guess of TF-target interactions. | PMF-GRN can directly incorporate these as hyperparameters in its prior distribution for the interaction matrix [27]. |
| scRNA-seq Datasets (e.g., from GEO) | Data Resource | The primary input data for GRN inference. | Methods are applied to real data (e.g., mouse microglia for DAZZLE, human PBMCs for PMF-GRN) for biological discovery [7] [27]. |

The deep learning revolution has significantly advanced the field of GRN inference. Autoencoder models like DeepSEM and DAZZLE have demonstrated that complex regulatory relationships can be learned through input reconstruction, with DAZZLE's dropout augmentation providing a novel and effective strategy for handling scRNA-seq noise. On the other hand, variational inference approaches like PMF-GRN offer a principled probabilistic framework, delivering not only accurate predictions but also crucial uncertainty estimates and a flexible structure for incorporating prior biological knowledge.

Benchmarking on synthetic and real-world perturbation data, such as with CausalBench, reveals that while these deep learning methods are top performers, challenges remain. Methods still trade precision against recall, and not all algorithms yet exploit the full potential of interventional data [9].

The future of GRN inference is likely to see further innovation in deep learning. The recent introduction of RegDiffusion, a diffusion probabilistic model for GRN inference, builds upon the noise-handling concepts of DAZZLE and shows promise for even faster inference and greater stability [29]. As these methods mature, their integration into the drug discovery pipeline will be key for generating robust biological hypotheses and identifying novel therapeutic targets, ultimately deepening our understanding of cellular regulation in health and disease.