Benchmarking Evolutionary Algorithms on CEC 2017 and CEC 2020 Test Suites: A Guide for Biomedical Research

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to effectively utilize the CEC 2017 and CEC 2020 benchmark suites for evaluating evolutionary algorithms (EAs). It covers the foundational principles and design of these competitions, outlines methodologies for implementing and applying EAs to complex optimization problems, presents strategies for troubleshooting and enhancing algorithm performance, and establishes rigorous protocols for validation and comparative analysis. The insights are tailored to support the development of robust, computationally efficient models in biomedical and clinical research, where solving high-dimensional, constrained optimization problems is paramount.

Understanding the CEC Benchmarking Landscape: From Principles to Problem Design

The Role of CEC Competitions in Advancing Evolutionary Computation

The field of evolutionary computation has witnessed remarkable growth over the past decades, with researchers proposing numerous novel algorithms claiming superior performance. Without standardized evaluation methodologies, however, comparing these algorithms objectively remained challenging. The IEEE Congress on Evolutionary Computation (IEEE CEC) addressed this critical gap by establishing a structured framework for algorithmic assessment through its specialized competitions and benchmark test sets. These competitions have fundamentally shaped research practices in evolutionary computation by providing standardized evaluation platforms that enable direct, meaningful comparisons between optimization algorithms across diverse problem landscapes [1] [2].

Within this ecosystem, the CEC2017 and CEC2020 test sets have emerged as particularly influential benchmarks. CEC2017 introduced unprecedented complexity through rotated, shifted, and hybrid functions that more closely mimic real-world optimization challenges [3] [4]. CEC2020 further advanced the field by emphasizing scalability challenges through ultra-high-dimensional problems [5] [6]. Together, these test suites form complementary pillars for assessing algorithmic performance across different dimensions of difficulty, establishing themselves as fundamental tools in the evolutionary computation toolkit [4] [6].

This article analyzes the transformative impact of CEC competitions by examining the experimental frameworks, algorithmic progress, and performance trends emerging from systematic benchmarking on CEC2017 and CEC2020 test sets. Through detailed comparison of results and methodologies, we reveal how these competitions have driven innovation while establishing rigorous standards for claiming algorithmic improvements in the field.

CEC Benchmark Design Philosophy and Evolution

Fundamental Design Principles

CEC benchmarks are meticulously constructed to address specific challenges in optimization algorithm development. Unlike simplistic academic functions, CEC test suites incorporate mathematical transformations like rotation and shifting that eliminate exploitable regularities [7]. This design approach ensures that algorithms demonstrate genuine problem-solving capabilities rather than leveraging specialized tricks that work only on idealized problems. The benchmarks progressively increase in complexity from simple unimodal functions to complex composite structures, systematically testing different algorithmic capabilities including exploration-exploitation balance, local optima avoidance, and search space navigation [4] [6].

The philosophical underpinning of CEC benchmark development centers on creating a hierarchy of difficulty that mirrors real-world optimization challenges. As noted in reports by Professor Liang Jing, a leading contributor to CEC benchmarks, traditional test functions suffered from oversimplification with small dimensions, no variable interactions, and predictable landscapes [7]. Modern CEC test sets specifically address these limitations through non-separable variables (where parameters cannot be optimized independently), adaptive landscape features, and dimensional scalability that allows testing from low to extremely high dimensions [1] [2].

Historical Progression from CEC2017 to CEC2020

The evolution from CEC2017 to CEC2020 represents a strategic shift in focus toward contemporary optimization challenges. CEC2017 established a comprehensive foundation with 30 diverse test functions categorized into unimodal, multimodal, hybrid, and composition types [3] [4]. This structure enabled researchers to identify specific algorithmic strengths and weaknesses across different problem categories. The hybrid functions (F11-F20) combined different basic functions with varying properties in different subcomponents, while composition functions (F21-F30) created even more complex landscapes with multiple global and local optima [4] [6].
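The hybrid construction described above can be illustrated with a minimal sketch. It assumes three illustrative base functions, a seeded random permutation of the variable indices, and a 30/30/40 split; the helper name `make_hybrid` and these settings are hypothetical, and the shift and rotation steps of the official definitions are omitted for brevity.

```python
import math
import random

def sphere(z):
    return sum(v * v for v in z)

def rastrigin(z):
    return sum(v * v - 10.0 * math.cos(2.0 * math.pi * v) + 10.0 for v in z)

def bent_cigar(z):
    return z[0] ** 2 + 1e6 * sum(v * v for v in z[1:])

def make_hybrid(bases, fractions, dim, seed=0):
    """Build a hybrid function: shuffle variable indices, split them into
    groups, and evaluate a different base function on each group."""
    perm = list(range(dim))
    random.Random(seed).shuffle(perm)
    sizes = [round(p * dim) for p in fractions[:-1]]
    sizes.append(dim - sum(sizes))  # last group takes the remaining variables
    groups, start = [], 0
    for size in sizes:
        groups.append(perm[start:start + size])
        start += size

    def hybrid(x):
        # Each subcomponent sees only its own subset of the variables.
        return sum(base([x[i] for i in idx]) for base, idx in zip(bases, groups))

    return hybrid, groups

# Illustrative 10-dimensional hybrid with a 30/30/40 split (assumed values).
hybrid, groups = make_hybrid([bent_cigar, rastrigin, sphere], [0.3, 0.3, 0.4], dim=10)
print([len(g) for g in groups])
print(hybrid([0.0] * 10))
```

Because each subcomponent has different properties, an optimizer that suits one group of variables may be poorly matched to another, which is the difficulty the hybrid category is meant to expose.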

CEC2020 built upon this foundation with a heightened emphasis on scalability and real-world relevance. While maintaining the categorical structure, CEC2020 introduced problems specifically designed to challenge algorithms in high-dimensional spaces (up to 1000 dimensions), addressing the "curse of dimensionality" that plagues many optimization methods [5] [8]. Furthermore, CEC2020 placed greater emphasis on numerical stability and constraint handling, reflecting practical considerations that algorithms must address in applied settings [6] [8]. This progression demonstrates how CEC competitions continuously adapt to push the boundaries of evolutionary computation research.

Table: Comparative Characteristics of CEC2017 and CEC2020 Test Sets

| Feature | CEC2017 Test Set | CEC2020 Test Set |
|---|---|---|
| Total Functions | 29 (originally 30, F2 removed) | 10 |
| Problem Dimensions | Standard 30D, 50D, 100D | Scalable up to 1000D |
| Function Categories | Unimodal, Multimodal, Hybrid, Composition | Unimodal, Multimodal, Hybrid, Composition |
| Key Innovations | Rotation & shift operations, hybrid/composition structures | Extreme scalability, enhanced constraint handling |
| Primary Challenge | Local optima avoidance, multi-modal optimization | Dimensionality curse, computational efficiency |
| Real-world Relevance | Moderate (theoretical foundations) | High (emphasis on practical scalability) |

Experimental Framework for Benchmarking Optimization Algorithms

Standardized Evaluation Methodology

The CEC competitions establish rigorous experimental protocols to ensure fair and meaningful comparisons between optimization algorithms. The standard evaluation approach specifies independent runs (typically 20-30) for each algorithm on every test function to account for stochastic variations [4] [8]. Performance is primarily assessed using mean error values (the difference between the found optimum and the known global optimum), with standard deviations providing indications of algorithmic reliability [4] [6]. To control computational effort, evaluations typically employ a fixed maximum number of function evaluations (usually 10,000 times the problem dimension), making efficiency a critical performance factor [2].
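The protocol above (independent runs, a fixed evaluation budget proportional to the dimension, and error statistics) can be sketched as a small harness. This is a minimal illustration, not an official CEC script: the sphere objective and the random-search optimizer are placeholders, and the names `benchmark` and `random_search` are hypothetical.

```python
import random
import statistics

def sphere(x):
    """Toy objective with known global optimum f(0) = 0 (stand-in for a CEC function)."""
    return sum(v * v for v in x)

def random_search(func, dim, max_fes, rng, lower=-100.0, upper=100.0):
    """Placeholder optimizer: uniform random sampling within a fixed evaluation budget."""
    best = float("inf")
    for _ in range(max_fes):
        candidate = [rng.uniform(lower, upper) for _ in range(dim)]
        best = min(best, func(candidate))
    return best

def benchmark(func, optimum, dim, runs=25, fes_per_dim=10_000, seed=0):
    """Run independent trials and summarize the error f(best) - f(optimum)."""
    max_fes = fes_per_dim * dim  # budget scales with the problem dimension
    errors = []
    for run in range(runs):
        rng = random.Random(seed + run)  # a distinct seed per independent run
        errors.append(random_search(func, dim, max_fes, rng) - optimum)
    return {
        "mean": statistics.mean(errors),
        "std": statistics.stdev(errors),
        "best": min(errors),
        "worst": max(errors),
        "max_fes": max_fes,
    }

# Small settings so the sketch runs quickly; a real study would use the full budget.
summary = benchmark(sphere, optimum=0.0, dim=2, runs=5, fes_per_dim=200)
print(summary)
```

Counting function evaluations rather than wall-clock time keeps the comparison independent of hardware and implementation language.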

Beyond simple solution quality metrics, comprehensive CEC evaluation incorporates multiple statistical measures. Researchers commonly employ Wilcoxon rank-sum tests for pairwise algorithm comparisons, Friedman tests for ranking multiple algorithms across all functions, and performance profiles that visualize the distribution of solution quality across different problems [8]. This multi-faceted assessment methodology ensures that reported performance advantages are statistically significant and consistent across diverse problem types rather than artifacts of selective reporting or favorable parameter tuning on specific functions.

Key Performance Metrics and Statistical Assessment

The CEC benchmarking process employs a hierarchical metrics approach to capture different aspects of algorithmic performance. The primary metric remains the solution accuracy measured through mean error values from multiple independent runs [4]. Additionally, convergence speed is frequently analyzed through generational progression plots, revealing how quickly algorithms approach high-quality solutions [8]. For dynamic and large-scale problems, computational efficiency (measured by CPU time or function evaluations until convergence) becomes increasingly important [5].

Statistical rigor forms the cornerstone of credible CEC benchmarking. As illustrated in experimental reports, proper evaluation must include not just average performance but measures of algorithmic robustness such as standard deviation, worst-case performance, and success rates across multiple runs [4] [8]. The non-parametric Friedman test with corresponding post-hoc analysis has emerged as the standard for determining statistical significance in algorithm rankings, with the critical difference diagram providing intuitive visual representation of performance hierarchies [6] [8].
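As a rough illustration of the Friedman ranking step, the sketch below ranks algorithms per function (lower error is better, ties share the mean rank), averages the ranks, and computes the Friedman chi-square statistic without tie correction. The error values are made up for the example, and the function names are hypothetical; a real study would normally rely on a vetted statistics library and add the post-hoc analysis.

```python
def average_ranks(values):
    """Rank values ascending (1 = lowest error); tied values share the mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def friedman(errors_by_function):
    """Rows are functions, columns are algorithms. Returns (mean ranks, chi-square)."""
    n = len(errors_by_function)       # number of functions
    k = len(errors_by_function[0])    # number of algorithms
    rank_sums = [0.0] * k
    for row in errors_by_function:
        for alg, rank in enumerate(average_ranks(row)):
            rank_sums[alg] += rank
    mean_ranks = [s / n for s in rank_sums]
    chi2 = 12 * n / (k * (k + 1)) * (
        sum(r * r for r in mean_ranks) - k * (k + 1) ** 2 / 4
    )
    return mean_ranks, chi2

# Illustrative mean errors: 4 functions (rows) x 3 algorithms (columns).
errors = [
    [1.0, 2.0, 3.0],
    [0.5, 0.7, 0.6],
    [2.0, 2.0, 5.0],
    [0.1, 0.3, 0.2],
]
mean_ranks, chi2 = friedman(errors)
print(mean_ranks, chi2)
```

The mean ranks are the values usually reported in ranking tables and plotted in critical difference diagrams.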

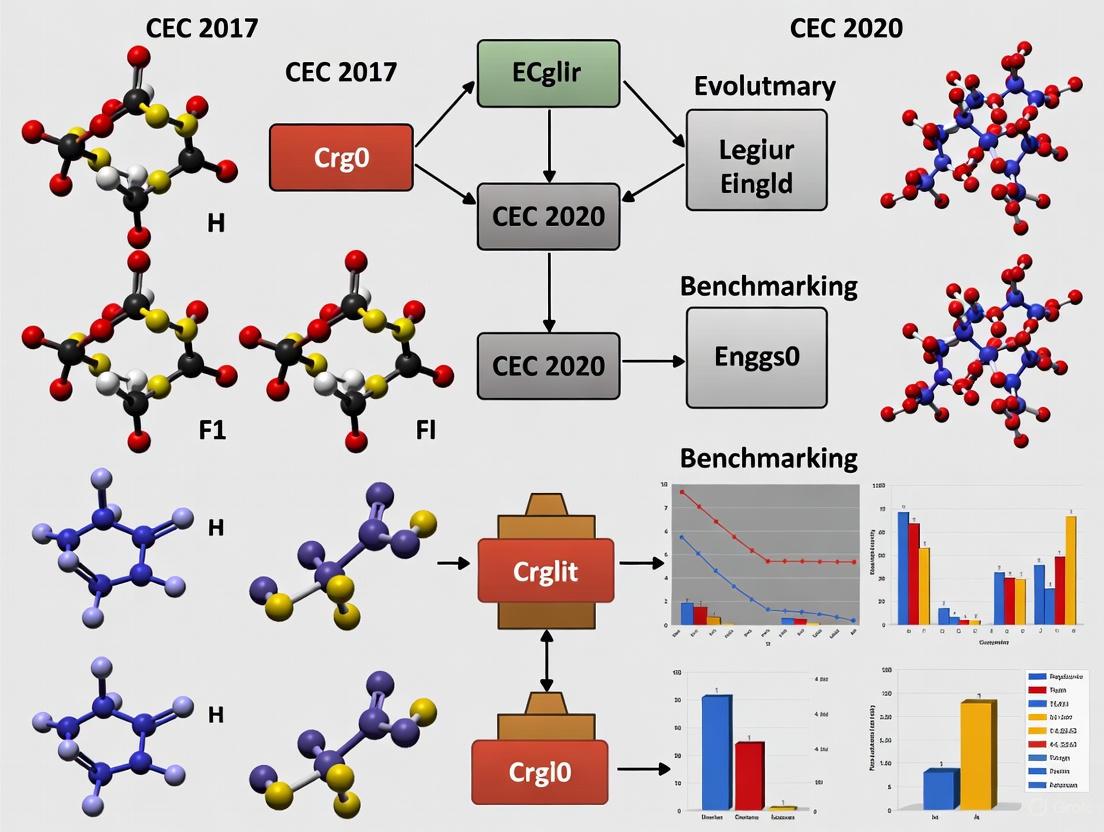

Diagram 1: Standard experimental workflow for CEC benchmark evaluations, highlighting the critical stages of performance metrics collection and statistical analysis.

Comparative Analysis of Algorithm Performance on CEC2017 and CEC2020

Performance Across Function Categories

Systematic evaluation across CEC2017 and CEC2020 test sets reveals distinct algorithmic performance patterns based on problem characteristics. On CEC2017's unimodal functions (F1, F3), algorithms with strong exploitation tendencies typically demonstrate faster convergence, with differential evolution variants often outperforming particle swarm optimization methods [4] [6]. However, on multimodal functions (F4-F10), algorithms incorporating diversity maintenance mechanisms show superior performance in avoiding local optima, with novel approaches like comprehensive learning PSO (CLPSO) displaying particular strength [4] [5].

The clearest performance differences emerge on the hybrid (F11-F20) and composition (F21-F30) functions, the most challenging in CEC2017, where no single algorithm dominates across all problems [6]. The hierarchical and rotated structures of these functions create deceptive landscapes that challenge an algorithm's ability to adapt search strategies dynamically. Similarly, on CEC2020's high-dimensional instances, algorithms with dimension reduction strategies or cooperative coevolution architectures demonstrate marked advantages, exemplified by the success of CCS-TG algorithms in CEC2021 competitions [9].

Champion Algorithm Progression

The historical record of CEC competition winners reveals an evolutionary trajectory in algorithm development, with a clear dominance of differential evolution (DE) variants in recent years. As shown in Table 2, the L-SHADE algorithm and its numerous enhancements have consistently ranked at the top, particularly through incorporating success-history based parameter adaptation and linear population size reduction [10] [5]. These innovations address DE's sensitivity to control parameter settings while maintaining its strong exploratory capabilities.

The progression from SHADE to L-SHADE and subsequently to NL-SHADE variants demonstrates how CEC competitions have driven specific algorithmic improvements. The introduction of non-linear parameter adaptation in NL-SHADE better mirrors the non-linear nature of optimization processes, while neighborhood-based mutation strategies enhance exploitation capabilities without sacrificing diversity [5]. This focused innovation, directly responsive to benchmark challenges, illustrates how CEC competitions serve as a catalyst for algorithmic refinement rather than merely as evaluation arenas.

Table: Champion Algorithms in CEC Competitions (2017-2022)

| Competition Year | Champion Algorithm | Base Algorithm | Key Innovations |
|---|---|---|---|
| CEC 2017 | LSHADE-cnEpSin | L-SHADE | Constraint handling, ensemble sinusoidal adaptation |
| CEC 2018 | LSHADE-SPA | L-SHADE | Semi-parameter adaptation strategy |
| CEC 2019 | EBOwithCMAR | Effective Butterfly Optimizer | Covariance matrix adapted retreat (CMAR) phase |
| CEC 2020 | LSHADE-ND | L-SHADE | Neighborhood-based directed mutation |
| CEC 2021 | NL-SHADE-RSP | L-SHADE | Non-linear parameter adaptation, random scaling |
| CEC 2022 | NL-SHADE-LBC | L-SHADE | Local binary crossover operator |

Critical Research Reagents: The Algorithm Developer's Toolkit

Essential Computational Components

Successful participation in CEC competitions requires mastery of a sophisticated toolkit of computational components and strategies. The foundation consists of standard benchmark functions implemented with precise rotation, shifting, and composition operations to create the prescribed problem landscapes [3] [4]. These are coupled with statistical evaluation frameworks that automate the calculation of performance metrics and significance testing across multiple independent runs [8]. Additionally, visualization utilities for convergence curves, search trajectories, and solution distributions provide critical insights into algorithmic behavior beyond aggregate metrics [4] [6].

Advanced competitors employ specialized components to address specific benchmark challenges. For high-dimensional CEC2020 problems, dimension decomposition strategies break the search space into manageable subcomponents, while adaptive resource allocation directs computational effort toward the most promising regions [9] [8]. For multi-modal and hybrid functions, ensemble approaches combine multiple search strategies with switching mechanisms that activate appropriate behaviors for different problem phases or landscapes [10] [5].

Implementation and Validation Tools

The credibility of CEC competition results depends heavily on rigorous implementation and validation practices. Reference implementations of benchmark functions, available through the CEC website and repositories, ensure consistent problem definitions across research groups [3]. Validation scripts check compliance with competition guidelines regarding function evaluation limits, constraint handling, and measurement protocols [4] [8]. Additionally, comparison templates facilitate standardized reporting of results against reference algorithms, enabling meaningful cross-study comparisons [6] [8].

Table: Essential Research Reagents for CEC Benchmarking

| Tool Category | Specific Examples | Function in Research | Implementation Considerations |
|---|---|---|---|
| Benchmark Functions | CEC2017 (30 functions), CEC2020 (10 functions) | Standardized problem sets for evaluation | Proper rotation matrix implementation, boundary constraint handling |
| Performance Metrics | Mean error, Standard deviation, Success rate | Quantifying solution quality and reliability | Statistical significance testing, multiple run management |
| Reference Algorithms | L-SHADE, CMA-ES, jDE | Baseline for performance comparison | Parameter settings as specified in literature |
| Visualization Tools | Convergence plots, Search history animation | Algorithm behavior analysis | Consistent scales and formats for cross-study comparison |
| Statistical Test Suites | Wilcoxon test, Friedman test | Determining significance of results | Correct implementation of non-parametric procedures |

Signaling Pathways: Algorithmic Innovation Triggered by CEC Benchmarks

The L-SHADE Innovation Cascade

The progression of L-SHADE algorithms represents a paradigmatic example of how CEC benchmarks trigger specific algorithmic innovations. The original SHADE algorithm introduced success-history based parameter adaptation, maintaining memory archives of successful control parameters and using them to guide future parameter choices [10] [5]. This addressed DE's critical sensitivity to the scaling factor F and crossover rate Cr parameters. L-SHADE added linear population size reduction, systematically decreasing population size during evolution to transition from exploratory to exploitative search [5].
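The two mechanisms described above can be sketched in a few lines. The sketch assumes the commonly published formulation: successful parameters are weighted by their fitness improvement, the F memory uses a weighted Lehmer mean, the Cr memory uses a weighted arithmetic mean (as in the original SHADE; later variants differ), and the population shrinks linearly with the number of evaluations used. Function names, the minimum population size of 4, and the sample values are illustrative assumptions.

```python
def improvement_weights(improvements):
    """Normalize fitness improvements so that larger gains carry more weight."""
    total = sum(improvements)
    return [d / total for d in improvements]

def weighted_lehmer_mean(values, weights):
    """sum(w * v^2) / sum(w * v); biased toward larger successful values."""
    return sum(w * v * v for w, v in zip(weights, values)) / sum(
        w * v for w, v in zip(weights, values)
    )

def update_memory(memory_f, memory_cr, slot, succ_f, succ_cr, improvements):
    """Overwrite one memory slot with statistics of this generation's successes."""
    if not succ_f:
        return slot  # no successful trial vectors: memory is left unchanged
    weights = improvement_weights(improvements)
    memory_f[slot] = weighted_lehmer_mean(succ_f, weights)
    memory_cr[slot] = sum(w * cr for w, cr in zip(weights, succ_cr))
    return (slot + 1) % len(memory_f)  # cycle through the memory slots

def population_size(nfes, max_nfes, n_init, n_min=4):
    """Linear population size reduction from n_init down to n_min."""
    return round(n_init + (n_min - n_init) * nfes / max_nfes)

# Illustrative values: two successful trials with improvements 1.0 and 3.0.
memory_f, memory_cr = [0.5] * 5, [0.5] * 5
next_slot = update_memory(memory_f, memory_cr, 0, [0.5, 1.0], [0.2, 0.6], [1.0, 3.0])
print(memory_f[0], memory_cr[0], next_slot)
print([population_size(n, 1000, 100) for n in (0, 500, 1000)])
```

A large population early in the run supports exploration, while the shrinking population concentrates the remaining evaluations on refining the best regions.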

Subsequent enhancements responded directly to challenges posed by CEC2017 and CEC2020 benchmarks. The incorporation of neighborhood-based mutation in L-SHADE-ND improved performance on hybrid functions with variable structures across dimensions [5]. The transition to non-linear parameter adaptation in NL-SHADE variants better reflected the non-linear nature of optimization processes, particularly beneficial for composition functions with multiple funnels and complex basins of attraction [10] [5]. Each innovation targeted specific weaknesses revealed through systematic benchmarking on CEC test suites.

Specialized Strategies for Emerging Challenges

Beyond the L-SHADE lineage, CEC competitions have stimulated diverse innovations targeting specific benchmark characteristics. For CEC2020's large-scale problems, cooperative coevolution with time-dependent grouping (CCS-TG) emerged as a powerful strategy, intelligently decomposing high-dimensional spaces based on variable interactions [9]. This approach proved particularly effective in the CEC2021 energy optimization competition, where it achieved first place by leveraging domain knowledge about temporal couplings in smart grid optimization problems [9].

For dynamic optimization problems in CEC2022, memory-based approaches combined with change detection mechanisms enabled algorithms to track moving optima efficiently [5]. The winning NL-SHADE-LBC algorithm incorporated local binary crossover to maintain diversity while facilitating knowledge transfer from previous environments [5]. These specialized strategies demonstrate how CEC competitions have expanded from testing general-purpose optimization capabilities to fostering domain-specific innovations with practical relevance.

Diagram 2: The innovation cascade in differential evolution algorithms driven by CEC benchmark challenges, showing how specific benchmark characteristics triggered corresponding algorithmic improvements.

The systematic benchmarking approach established through CEC competitions has fundamentally transformed evolutionary computation research practices. By providing standardized, challenging test suites with known global optima, these competitions enable objective comparison and drive targeted innovation. The progression from CEC2017 to CEC2020 demonstrates a strategic shift toward real-world relevance through heightened complexity, scalability demands, and practical constraint handling. The consistent outperformance of L-SHADE variants and their descendants highlights the effectiveness of success-history based parameter adaptation combined with population management strategies specifically refined in response to benchmark characteristics.

Future CEC competitions will likely continue this trajectory with increased emphasis on dynamic environments, multi-objective tradeoffs, and computation-intensive real-world simulations. Emerging shifts toward benchmarking problem families rather than fixed functions, and toward automated algorithm configuration, represent promising directions that could further accelerate progress in evolutionary computation. Through these evolving frameworks, CEC competitions will continue their vital role as both arbiters of performance and catalysts of innovation in the optimization community.

A Critical Review of Real-Valued Constrained Optimization Benchmarks

The rigorous benchmarking of evolutionary algorithms (EAs) and metaheuristics is fundamental to advancement in optimization research. Benchmarks provide the standardized foundation for comparing algorithmic performance, tracking progress, and identifying promising new methodologies. Within evolutionary computation, the benchmark suites developed for the Congress on Evolutionary Computation (CEC) competitions have become widely adopted standards. This review provides a critical examination of two significant benchmarks: the CEC 2017 Constrained Real-Parameter Optimization benchmark and the CEC 2020 Real-World Constrained Engineering Optimization suite. Framed within a broader thesis on benchmarking practices, this analysis contrasts their design philosophies, experimental protocols, and the consequent implications for algorithm evaluation and development. Evidence suggests that the choice of benchmark suite can dramatically alter algorithmic rankings, highlighting a critical methodological concern for researchers [11].

Benchmark Suite Specifications and Design Philosophies

The CEC 2017 and CEC 2020 benchmark suites embody distinct design philosophies that reflect evolving perspectives on how constrained optimization algorithms should be evaluated.

CEC 2017 Constrained Real-Parameter Optimization Benchmark

The CEC 2017 benchmark is a comprehensive set of 28 constrained optimization problems with dimensions (D) ranging from 10 to 100 [12]. The evaluation protocol allows a maximum computational budget of 20,000 × D function evaluations for each problem [13]. This suite is characterized by its breadth, featuring a diverse mixture of objective functions constrained by various combinations of inequality, equality, and boundary constraints. The primary evaluation metric is the quality of the solution obtained within the fixed, relatively limited computational budget, emphasizing an algorithm's efficiency in rapid convergence and effective constraint handling under restricted resources [11].
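To make the constraint-handling aspect concrete, the sketch below computes a mean constraint violation and compares two candidates with feasibility rules. It assumes inequality constraints of the form g(x) <= 0, equality constraints relaxed to |h(x)| <= 1e-4, and Deb-style feasibility rules; these are common conventions in constrained benchmarking, shown here as assumptions rather than as the exact competition code.

```python
def mean_violation(g_values, h_values, eps=1e-4):
    """Average violation over all constraints.

    g_values: inequality constraint values, satisfied when g <= 0.
    h_values: equality constraint values, satisfied when |h| <= eps.
    """
    g_viol = [max(0.0, g) for g in g_values]
    h_viol = [abs(h) if abs(h) > eps else 0.0 for h in h_values]
    count = len(g_values) + len(h_values)
    return (sum(g_viol) + sum(h_viol)) / count if count else 0.0

def is_better(f_a, v_a, f_b, v_b):
    """Feasibility rules: a feasible solution beats an infeasible one; two
    infeasible solutions compare by violation; two feasible ones by objective."""
    if v_a == 0.0 and v_b == 0.0:
        return f_a < f_b
    if v_a == 0.0 or v_b == 0.0:
        return v_a == 0.0
    return v_a < v_b

# Illustrative candidate: one violated inequality and one violated equality.
violation = mean_violation(g_values=[-1.0, 0.5], h_values=[0.00005, 0.2])
print(violation)
print(is_better(10.0, 0.0, 1.0, violation))  # feasible vs. infeasible
```

Under a tight budget such as 20,000 × D evaluations, how quickly an algorithm drives this violation to zero matters as much as the final objective value.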

CEC 2020 Real-World Constrained Engineering Optimization Suite

In contrast, the CEC 2020 suite comprises seven real-world engineering design problems [14]. This benchmark shifts focus toward practical applicability, featuring problems such as the Speed Reducer Weight Minimization, Tension/Compression Spring Design, and Welded Beam Design [14]. While dimensionalities are generally lower (typically 5 to 20), the allocated computational budget is substantially larger—up to 10,000,000 function evaluations for 20-dimensional cases [11]. This design rewards thorough exploration of the search space and favors algorithms with strong global exploration capabilities, even if they converge more slowly [11].

Table 1: Key Specifications of CEC 2017 and CEC 2020 Benchmark Suites

| Feature | CEC 2017 Benchmark | CEC 2020 Benchmark |
|---|---|---|
| Number of Problems | 28 [12] | 7 [14] |
| Problem Types | Synthetic mathematical functions | Real-world engineering problems [14] |
| Dimensionality (D) | 10, 30, 50, 100 [13] [12] | Primarily 5 - 20 [11] |
| Max Function Evaluations | 20,000 × D [13] | Up to 10,000,000 [11] |
| Primary Focus | Solution quality under limited budget | Finding highly precise solutions [11] |

Comparative Analysis of Algorithmic Performance

The structural differences between the CEC 2017 and CEC 2020 benchmarks significantly influence the relative performance and ranking of optimization algorithms. Large-scale studies reveal that algorithms excelling on one suite often achieve only moderate-to-poor performance on the other [11].

Performance Disparities Across Benchmarks

The extended computational budget of the CEC 2020 suite favors explorative algorithms that may initially converge slower but possess robust mechanisms for escaping local optima and thoroughly searching complex landscapes. Conversely, the CEC 2017 benchmark, with its tighter evaluation limit, rewards exploitative algorithms that can quickly converge to a good-quality solution [11]. This dichotomy leads to a notable divergence in rankings; algorithms that top the leaderboard on CEC 2020 frequently achieve only middle-tier results on CEC 2017, and vice versa [11]. Furthermore, strong performance on the synthetic CEC 2017 problems does not necessarily carry over to the real-world problems of the CEC 2020 suite, raising important questions about generalizability [11].

Case Studies of Competitive Algorithms

The performance landscape across these benchmarks is illustrated by the success of various advanced Differential Evolution (DE) variants:

- LSHADE Variants: Algorithms like L-SHADE and its modifications (e.g., LSHADE44-IEpsilon, CAL-SHADE) were highly competitive in CEC 2017 competitions, demonstrating exceptional performance under its specific budget constraints [13].

- IUDE (Improved Unified DE): This algorithm, which draws on advantages from multiple DE variants, won first place in the CEC 2018 constrained optimization competition, a benchmark similar to CEC 2017 [13].

- εMAg-ES: A variant of the matrix adaptation evolution strategy that incorporates a gradient-based repair mechanism, this algorithm secured second place in the CEC 2018 competition [13].

- mpmL-SHADE: A multi-population modified L-SHADE algorithm developed for CEC 2020, which finished 7th in that competition. Its design reflects adaptations needed for the different benchmark profile, such as dynamic control of mutation intensity [15].

- BROMLDE: An enhanced DE incorporating a Bernstein operator and a refracted oppositional-mutual learning strategy, recently evaluated on CEC 2020 benchmarks where it demonstrated high global optimization capability and convergence speed [16].

Table 2: Representative Algorithms and Their Benchmark Performance

| Algorithm | Key Features | Performance Highlights |
|---|---|---|
| IUDE | Improved parameter adaptation and offspring selection; unified framework [13]. | 1st place, CEC 2018 Competition [13]. |
| εMAg-ES | Combines ε-constraint and gradient-based repair with MA-ES [13]. | 2nd place, CEC 2018 Competition [13]. |
| RDR-εMA-ES | Replaces gradient-based repair with Random Direction Repair (RDR) [13]. | Competitive performance on CEC 2017 benchmarks [13]. |
| BROMLDE | Bernstein operator; refracted oppositional-mutual learning; no intrinsic parameter tuning [16]. | High performance on CEC 2020 benchmarks and engineering problems [16]. |
| LSHADESPA | Linear population reduction; SA-based scaling factor; oscillating crossover [17]. | Superior results on CEC 2014, 2017, and 2022 benchmarks [17]. |

Experimental Protocols and Evaluation Methodologies

To ensure fair and reproducible comparisons, researchers adhere to standardized experimental protocols when evaluating algorithms on these benchmarks.

Standard Experimental Workflow

The following diagram illustrates the common workflow for conducting a benchmark comparison study, from algorithm selection to result analysis.

Detailed Methodological Components

- Algorithm Selection and Parameter Configuration: In large-scale comparisons, a diverse set of algorithms (e.g., 73 as in one study) is selected. A critical choice is whether to run algorithms with their author-proposed parameters ("as-is") or to perform parameter tuning for each benchmark. While "as-is" testing is common, tuning is recommended for fair comparison, though computationally expensive [11].

- Execution and Data Collection: For each problem in the benchmark suite, algorithms are typically run 25 independent times to account for stochasticity [13]. Key performance indicators recorded include the mean and standard deviation of the best objective function value found, the convergence trajectory, and the constraint violation measure [13] [16].

- Statistical Testing and Ranking: Non-parametric statistical tests are standard for determining significance. The Friedman test with corresponding average ranking is widely used to establish an overall performance ranking across all problems in a suite [16] [17]. Post-hoc analysis via the Wilcoxon signed-rank test is then employed for pairwise comparisons between algorithms [17].
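The pairwise comparison step can be illustrated by computing the rank sums behind the Wilcoxon signed-rank test. This minimal sketch drops zero differences, ranks the absolute differences with averaged ties, and returns R+ and R-; it does not compute a p-value, which in practice would come from a statistics library or a table. The paired error values are invented for the example.

```python
def signed_rank_sums(errors_a, errors_b):
    """Return (R+, R-) for paired per-problem errors of algorithms A and B.

    R+ sums the ranks of problems where A has the larger error (A is worse);
    R- sums the ranks of problems where A has the smaller error (A is better).
    """
    diffs = [a - b for a, b in zip(errors_a, errors_b) if a != b]  # drop zero differences
    order = sorted(range(len(diffs)), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * len(diffs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1  # tied magnitudes share the mean rank
        i = j + 1
    r_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    r_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    return r_plus, r_minus

# Illustrative mean errors of two algorithms on five problems.
errors_a = [1.0, 2.0, 3.0, 4.0, 5.0]
errors_b = [1.5, 1.0, 3.0, 6.0, 4.5]
r_plus, r_minus = signed_rank_sums(errors_a, errors_b)
print(r_plus, r_minus)
```

When the two rank sums are close, as in this toy data, neither algorithm can be claimed to outperform the other.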

Researchers working with CEC benchmarks utilize a standard set of computational tools and problem definitions.

Table 3: Essential Research Reagents for Constrained Optimization Studies

| Tool/Resource | Type | Function and Purpose |
|---|---|---|
| CEC 2017 Benchmark | Problem Suite | 28 constrained problems for evaluating algorithmic efficiency under limited budgets (20,000 × D FEs) [13] [12]. |
| CEC 2020 Benchmark | Problem Suite | 7 real-world engineering problems for evaluating precision and robustness with high budgets (up to 10M FEs) [11] [14]. |
| Success-History Based Parameter Adaptation (SHADE) | Algorithm Framework | A DE variant with history-based adaptive parameter control, forming the base for many advanced algorithms like L-SHADE [13] [17]. |
| Random Direction Repair (RDR) | Constraint Handling Technique | A repair strategy that guides infeasible solutions using random directions, reducing function evaluation costs vs. gradient-based methods [13]. |
| Friedman Rank Test | Statistical Tool | Non-parametric statistical test used to rank multiple algorithms across various benchmark problems [16] [17]. |

Implications for Research and Practice

The significant performance disparities observed across benchmark suites carry profound implications for both researchers and practitioners in the field.

Impact on Algorithm Development and Evaluation

The demonstrated lack of a universal winner underscores the critical importance of benchmark selection. Relying on a single benchmark set for evaluating new algorithms can lead to biased conclusions and specialized algorithms that lack generalizability [11]. The research community must therefore prioritize comprehensive testing across multiple benchmark suites with varying characteristics, including both synthetic and real-world problems. Furthermore, the common practice of using author-proposed parameters without tuning, while computationally pragmatic, may not reveal an algorithm's true potential or robustness [11].

Guidance for Practitioners

For practitioners seeking suitable algorithms for specific applications, the findings advise a problem-driven selection process. If the target application involves real-world engineering design with sufficient computational resources for high-precision solutions, algorithms ranked highly on the CEC 2020 benchmark may be more appropriate. Conversely, for applications requiring good solutions under strict computational limits, top performers on the CEC 2017 benchmark are likely preferable [11]. This highlights the necessity of aligning the evaluation scenario with the practical operational context.

This critical review demonstrates that the CEC 2017 and CEC 2020 constrained optimization benchmarks serve complementary yet distinct roles in evaluating evolutionary algorithms. The CEC 2017 suite tests efficiency and rapid convergence, while the CEC 2020 suite assesses precision and explorative robustness. The stark differences in algorithmic performance and ranking across these suites confirm that the choice of benchmark is not merely a procedural detail but a fundamental factor that shapes research outcomes and conclusions. Future progress in the field depends on the development of more robust, generalizable algorithms and a commitment to multi-faceted evaluation that acknowledges the "no free lunch" reality, wherein no single algorithm dominates across all problem types [11].

The CEC 2017 test suite represents a cornerstone in the field of evolutionary computation, providing a standardized set of benchmark problems designed to rigorously test and compare the performance of single-objective, real-parameter optimization algorithms. Developed for a special session and competition at the IEEE Congress on Evolutionary Computation (CEC), this suite presents a collection of 29 scalable benchmark functions that encapsulate a wide spectrum of challenges and problem characteristics commonly encountered in real-world optimization scenarios [18] [19].

Benchmarking plays an indispensable role in the development and assessment of evolutionary algorithms (EAs), particularly given the scarcity of theoretical performance results for optimization tasks of notable complexity [19]. The CEC 2017 suite builds upon earlier benchmark environments while introducing enhanced complexities through techniques such as shifting, rotation, and hybridization of basic functions [18]. This article provides a comprehensive deconstruction of the CEC 2017 test suite, examining its problem features, inherent challenges, and performance evaluation methodologies within the broader context of benchmarking evolutionary algorithms.

Problem Formulation and Categorization

The CEC 2017 test suite is structured around a black-box optimization paradigm, where algorithms evaluate candidate solutions without access to the analytical structure of the underlying problems. All test functions in the suite are subject to shifting by a predefined vector (\(\vec{o}\)) and rotation using specific rotation matrices (\(\mathbf{M}_i\)) assigned to each function [20]. The general form of these functions can be represented as:

\[F_i(\vec{x}) = f_i(\mathbf{M}_i(\vec{x}-\vec{o})) + F_i^*\]

where \(f_i(\cdot)\) represents the base function derived from classical mathematical functions, and \(F_i^*\) denotes the known global optimum value [20]. The search space for all functions is defined within \([-100, 100]^d\), where \(d\) represents the dimensionality of the problem [20].
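As a minimal sketch of the shift-and-rotate construction above, the following pure-Python snippet wraps a base function in the general form. The helper name `make_shifted_rotated`, the sphere base function, the toy 2-D rotation matrix, and the shift vector are all assumptions chosen for illustration; the official suite ships its own shift vectors and rotation matrices.

```python
import math

def sphere(z):
    """Base function f(z) = sum(z_j^2), with minimum 0 at z = 0."""
    return sum(v * v for v in z)

def make_shifted_rotated(base, M, o, f_star):
    """Return F(x) = base(M (x - o)) + f_star for a square matrix M."""
    def F(x):
        shifted = [xj - oj for xj, oj in zip(x, o)]
        rotated = [sum(mij * sj for mij, sj in zip(row, shifted)) for row in M]
        return base(rotated) + f_star
    return F

# Hypothetical 2-D instance: rotation by 30 degrees, optimum moved to o.
theta = math.pi / 6
M = [[math.cos(theta), -math.sin(theta)],
     [math.sin(theta),  math.cos(theta)]]
o = [12.5, -40.0]
F1 = make_shifted_rotated(sphere, M, o, f_star=100.0)
```

Evaluating `F1(o)` returns the bias `f_star`, which is how the known optimum value enters the error calculation used later in the evaluation protocol.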

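The suite numbers its functions F1 to F30 and organizes them into four distinct categories, each designed to test specific algorithmic capabilities. F2 was excluded from the official suite because of unstable behavior in higher dimensions, which leaves the 29 functions used in practice: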

Unimodal Functions (F1-F3)

These functions contain only one global optimum without any local optima. They primarily test the exploitation capacity and convergence speed of optimization algorithms. Despite their seemingly simple structure, the inclusion of shifting and rotation mechanisms introduces significant challenges for algorithm performance [18].

Simple Multimodal Functions (F4-F10)

This category introduces multiple local optima alongside the global optimum, creating a more complex fitness landscape. These functions evaluate an algorithm's exploration capability and its ability to escape from local optima while navigating deceptive gradient information [18].

Hybrid Functions (F11-F20)

Hybrid functions combine different subcomponents derived from various basic function types with dissimilar characteristics. These functions feature variable dependencies and non-separability in different dimensions, creating highly challenging optimization landscapes. The subcomponents are assigned to different segments of the decision space through a partitioning procedure [18].
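The partitioning procedure can be sketched as follows. This is an illustrative construction under stated assumptions, not the official definition: the base functions, the 40/60 split, the random permutation seed, and the name `make_hybrid` are hypothetical.

```python
import math
import random

def sphere(z):
    return sum(v * v for v in z)

def rastrigin(z):
    return sum(v * v - 10.0 * math.cos(2.0 * math.pi * v) + 10.0 for v in z)

def make_hybrid(dim, parts, seed=0):
    """parts: list of (base_function, proportion); proportions should sum to 1."""
    rng = random.Random(seed)
    perm = list(range(dim))
    rng.shuffle(perm)  # random assignment of variables to segments
    segments, start = [], 0
    for k, (base, p) in enumerate(parts):
        # The last segment takes the remainder so every variable is used exactly once.
        size = dim - start if k == len(parts) - 1 else int(round(p * dim))
        segments.append((base, perm[start:start + size]))
        start += size

    def F(x):
        # Each base function only sees the variables of its own segment.
        return sum(base([x[j] for j in idx]) for base, idx in segments)

    return F, segments

hybrid, segments = make_hybrid(10, [(sphere, 0.4), (rastrigin, 0.6)])
```

Because each segment is governed by a different base function, an algorithm tuned to one landscape type can stall on the variables that belong to another.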

Composition Functions (F21-F30)

Composition functions represent the most complex category, constructed by combining multiple basic functions with different properties. These functions create asymmetric and non-linear fitness landscapes with varying local optima densities and basin sizes. They test an algorithm's ability to adapt to different function characteristics simultaneously [18].

Table 1: CEC 2017 Test Suite Problem Categories and Characteristics

| Category | Function Numbers | Key Characteristics | Primary Algorithmic Capability Tested |
|---|---|---|---|
| Unimodal | F1-F3 | Single global optimum, no local optima | Exploitation, convergence speed |
| Simple Multimodal | F4-F10 | Multiple local optima | Exploration, local optima avoidance |
| Hybrid | F11-F20 | Combined subcomponents with different properties | Navigating variable dependencies, non-separability |
| Composition | F21-F30 | Multiple basic functions with different features | Adaptation to diverse landscape characteristics |

Key Challenges and Problem Features

The CEC 2017 test suite incorporates several sophisticated design features that significantly increase the difficulty of optimization compared to earlier benchmark sets:

Shifted and Rotated Landscapes

All functions in the suite are subjected to coordinate system transformations through shifting and rotation operations. The shifting mechanism moves the global optimum away from the center of the search space, while rotation introduces variable interactions, making the problems non-separable [20]. This means that variables cannot be optimized independently, effectively disabling coordinate descent approaches and requiring more sophisticated optimization strategies.

Variable Linkages and Non-Separability

Through the application of rotation matrices, the suite creates strong variable linkages, where the effect of changing one variable depends on the values of other variables. This characteristic mirrors the complexity of real-world optimization problems, where parameters often exhibit complex interdependencies that must be considered simultaneously during the optimization process [18].
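A small numerical illustration of this linkage, under assumed settings (a 2-D ellipsoid, a 45-degree rotation, and a coarse grid, none of which come from the official suite): for the separable function the best value of the first variable is the same whatever the second variable is, while after rotation it moves with the second variable.

```python
import math

def ellipsoid(z):
    """Separable, ill-conditioned base function: z1^2 + 100 * z2^2."""
    return z[0] ** 2 + 100.0 * z[1] ** 2

def rotate45(x):
    """Rotate a 2-D point by 45 degrees."""
    c = math.sqrt(0.5)
    return [c * (x[0] - x[1]), c * (x[0] + x[1])]

def best_x1(f, x2, grid):
    """Best first coordinate on a grid while the second coordinate is held fixed."""
    return min(grid, key=lambda x1: f([x1, x2]))

grid = [i / 10.0 for i in range(-50, 51)]
separable = ellipsoid
rotated = lambda x: ellipsoid(rotate45(x))

for x2 in (-2.0, 0.0, 2.0):
    print(x2, best_x1(separable, x2, grid), best_x1(rotated, x2, grid))
```

This is why one-variable-at-a-time strategies lose their advantage on rotated problems: the optimum along one axis is only meaningful relative to the current values of the others.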

High-Dimensional and Scalable Formulations

The test functions are designed to be scalable to different dimensions and are typically evaluated at 10, 30, 50, and 100 dimensions [11]. This scalability allows researchers to assess how algorithm performance degrades as problem dimensionality increases, a critical consideration for real-world applications where high-dimensional parameter spaces are common.

Imbalanced Subcomponents

In hybrid and composition functions, the integration of multiple subfunctions with different properties and scales creates imbalanced fitness landscapes. Some subcomponents may dominate the overall fitness function, while others present much smaller basins of attraction. This imbalance can mislead search algorithms toward prominent but suboptimal regions [18].

Experimental Protocols and Evaluation Methodology

Proper experimental design is crucial for obtaining meaningful and comparable results when using the CEC 2017 test suite. The following protocols represent standard practices in the field:

Performance Assessment Criteria

The CEC competitions typically employ a fixed-budget evaluation approach, where algorithms are allocated a predetermined number of function evaluations (often up to 10,000×D, where D is the problem dimensionality) and ranked based on the quality of solutions found within this computational budget [11]. This contrasts with the Black-Box Optimization Benchmarking (BBOB) approach, which measures the speed at which algorithms reach a desired solution quality [11].
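One way to enforce a fixed budget in practice is to wrap the objective in an evaluation counter. The sketch below is an assumption-laden illustration: the class and function names are hypothetical, random search stands in for a real evolutionary algorithm, and the demo uses a deliberately small budget so it runs quickly.

```python
import random

class BudgetExhausted(Exception):
    """Raised when an algorithm asks for more evaluations than allowed."""

class CountingObjective:
    """Wraps an objective and refuses evaluations beyond evals_per_dim * dim."""
    def __init__(self, func, dim, evals_per_dim=10_000):
        self.func = func
        self.max_evals = evals_per_dim * dim
        self.evals = 0
        self.best = float("inf")

    def __call__(self, x):
        if self.evals >= self.max_evals:
            raise BudgetExhausted
        self.evals += 1
        value = self.func(x)
        self.best = min(self.best, value)
        return value

def random_search(objective, dim, lower=-100.0, upper=100.0, seed=1):
    """Placeholder optimizer: samples uniformly until the budget runs out."""
    rng = random.Random(seed)
    try:
        while True:
            objective([rng.uniform(lower, upper) for _ in range(dim)])
    except BudgetExhausted:
        return objective.best

sphere = lambda x: sum(v * v for v in x)
obj = CountingObjective(sphere, dim=2, evals_per_dim=500)  # small budget for a quick demo
best = random_search(obj, dim=2)
```

Keeping the counter inside the objective, rather than inside each algorithm, makes it harder for one competitor to receive more evaluations than another by accident.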

Statistical Significance Testing

To ensure robust comparisons, researchers typically perform multiple independent runs (commonly 51 runs as mentioned in CEC 2017 documentation) of each algorithm on every test function. Statistical tests, particularly the Wilcoxon signed-rank test and Friedman rank test, are then employed to determine significant performance differences between algorithms [17] [21].
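The ranking step behind a Friedman-style comparison can be sketched in pure Python as below. The error values are made up solely to exercise the code, and the function names are hypothetical. For the p-values themselves, SciPy offers `scipy.stats.friedmanchisquare` and `scipy.stats.wilcoxon`; this sketch only computes the mean ranks.

```python
def average_ranks(values):
    """Rank values ascending (1 = lowest error); tied values share the mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def friedman_mean_ranks(errors):
    """errors[name] is a list of mean errors, one per function; returns mean rank per algorithm."""
    names = list(errors)
    n_funcs = len(errors[names[0]])
    totals = {name: 0.0 for name in names}
    for f in range(n_funcs):
        ranks = average_ranks([errors[name][f] for name in names])
        for name, r in zip(names, ranks):
            totals[name] += r
    return {name: totals[name] / n_funcs for name in names}

# Made-up mean errors on three functions, for illustration only.
toy = {"A": [0.1, 5.0, 2.0], "B": [0.2, 5.0, 1.0], "C": [0.3, 9.0, 3.0]}
mean_ranks = friedman_mean_ranks(toy)
```

Lower mean rank indicates better overall standing across the suite; whether the differences are significant still has to be checked with the statistical test itself.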

Error Measurement

Performance is typically evaluated based on the error value (\(f(x) - f(x^*)\)), where \(f(x)\) is the best objective value found by the algorithm and \(f(x^*)\) is the known global optimum value. This error metric provides a standardized measure of how close an algorithm gets to the true optimum within the allocated computational budget [18].
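A minimal sketch of the error computation follows. The zero threshold of 1e-8 reflects a convention commonly used in CEC technical reports and is treated here as an assumption; check the report for the specific suite before relying on it.

```python
def error_value(best_f, f_star, zero_threshold=1e-8):
    """Distance from the known optimum; errors below the threshold are reported as zero."""
    err = best_f - f_star
    return 0.0 if err < zero_threshold else err
```

For example, `error_value(350.5, 300.0)` gives 50.5, while a result within 1e-8 of the optimum is recorded as solved.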

Result Reporting

Comprehensive reporting should include not only mean and standard deviation values but also ranking statistics across the entire benchmark suite. This holistic view helps identify algorithms that perform consistently well across diverse problem types rather than excelling on only specific function categories [11].
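The per-function summary can be computed with the standard library, as in this sketch. The function name and the choice of reported fields are assumptions; the input is the list of final error values from the independent runs of one algorithm on one function.

```python
import statistics

def summarize_runs(errors):
    """Summary statistics over the final errors of independent runs."""
    return {
        "best": min(errors),
        "worst": max(errors),
        "median": statistics.median(errors),
        "mean": statistics.mean(errors),
        "std": statistics.stdev(errors),  # sample standard deviation
    }

# Made-up final errors from five runs, for illustration only.
summary = summarize_runs([2.0, 4.0, 4.0, 4.0, 6.0])
```

Reporting the median alongside the mean helps when a few failed runs dominate the average.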

Performance Analysis of Representative Algorithms

Extensive testing of various optimization algorithms on the CEC 2017 test suite has revealed distinct performance patterns across different problem categories:

Differential Evolution Variants

Differential Evolution (DE) algorithms and their enhanced variants have demonstrated particularly strong performance on the CEC 2017 problems. Recent DE variants and related metaheuristics evaluated on the suite include:

- LSHADESPA: A DE variant that incorporates a proportional shrinking population mechanism, simulated annealing-based scaling factor, and oscillating inertia weight-based crossover rate [17].

- ACRIME: Enhances the RIME algorithm (not itself a DE variant) with an adaptive hunting mechanism and criss-crossing mechanism to improve solution diversity [21].

These advanced implementations have achieved top rankings in comparative studies, particularly for hybrid and composition functions where their adaptive mechanisms effectively navigate complex fitness landscapes [17].

Algorithm Performance Variations

Recent large-scale comparisons of 73 optimization algorithms on multiple CEC benchmark sets revealed that algorithms performing well on older benchmarks (like CEC 2011 and CEC 2014) often show moderate-to-poor performance on the CEC 2017 set, and vice versa [11]. This highlights the unique challenges posed by the CEC 2017 suite and suggests that algorithm performance is highly benchmark-dependent.

Table 2: Recent Algorithm Performance on CEC 2017 Test Suite

| Algorithm | Key Mechanisms | Performance Highlights | Statistical Significance |
|---|---|---|---|
| LSHADESPA | Population shrinking, SA-based scaling factor, oscillating crossover | Superior on CEC 2014, 2017, 2021, 2022 benchmarks | Friedman rank test: 1st rank on multiple suites [17] |
| ACRIME | Adaptive hunting, criss-crossing mechanism | Excellent performance in CEC 2017 tests | Wilcoxon signed-rank test shows significance [21] |
| iEACOP | Improved evolutionary algorithm | Outperforms basic version on 27 of 29 functions | Comparable to top CEC 2017 competition algorithms [22] |

Comparative Analysis with Other Benchmark Environments

Understanding how the CEC 2017 test suite relates to other benchmark environments provides valuable context for interpreting research findings:

Comparison with CEC 2020 Benchmark

The CEC 2020 benchmark introduced significant changes from earlier suites, including fewer problems (only 10 functions) and a much higher allocation of function evaluations (up to 10,000,000 for 20-dimensional problems) [11]. This shift in evaluation criteria favors more explorative, slower-converging algorithms compared to the CEC 2017 suite, which employs a more constrained computational budget [11].

Comparison with Real-World Problem Benchmarks

While mathematical benchmarks like CEC 2017 provide controlled testing environments, studies have shown that algorithms performing well on these synthetic problems may not necessarily excel on real-world constrained optimization problems [23]. Recent efforts have created benchmark suites containing 57 real-world constrained optimization problems to better evaluate algorithm performance on practical applications [23].

Comparison with COCO/BBOB Framework

The Comparing Continuous Optimizers (COCO) platform, particularly its Black-Box Optimization Benchmarking (BBOB) component, represents an alternative benchmarking approach with different evaluation philosophies. While CEC benchmarks typically fix the computational budget and measure solution quality, BBOB often fixes solution quality targets and measures the computational effort required to achieve them [19] [11].
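The two views can be read off the same run record. In this sketch the trace is a made-up list of per-evaluation error values and both function names are hypothetical.

```python
def fixed_budget_error(trace, budget):
    """Best error seen within the first `budget` evaluations (fixed-budget view)."""
    return min(trace[:budget])

def evaluations_to_target(trace, target):
    """Evaluations needed to reach `target` error, or None if never reached (fixed-target view)."""
    for n, err in enumerate(trace, start=1):
        if err <= target:
            return n
    return None

# Made-up per-evaluation errors, for illustration only.
trace = [9.0, 7.5, 8.0, 3.0, 3.5, 0.9, 1.2, 0.05]
```

With this trace, a budget of 5 evaluations yields an error of 3.0, while a target error of 1.0 is first reached at evaluation 6. Two algorithms can therefore rank differently depending on which question is asked.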

Diagram 1: CEC 2017 Test Suite Structure and Algorithm Challenges. This diagram illustrates the hierarchical organization of the test suite and how different problem features create specific challenges for optimization algorithms.

Successfully conducting research with the CEC 2017 test suite requires familiarity with several key resources and implementation strategies:

Reference Implementations

Official CEC 2017 function implementations are available in multiple programming languages, including MATLAB, C, and Java. These reference implementations ensure consistent evaluation across different studies and prevent implementation discrepancies from affecting performance comparisons [18].

Algorithm Frameworks

Several algorithmic frameworks provide built-in support for the CEC 2017 benchmark suite:

- NEORL: A Python-based framework that includes implementations of all CEC 2017 functions alongside various evolutionary algorithms, facilitating straightforward experimentation and comparison [20].

- PlatEMO: A MATLAB platform for evolutionary multi-objective optimization that has been extended to handle single-objective benchmarks like CEC 2017 [22].

Experimental Design Tools

Proper experimental design requires tools for:

- Statistical analysis: Packages for conducting Wilcoxon signed-rank tests, Friedman tests, and post-hoc analysis to determine statistical significance of performance differences.

- Data visualization: Libraries for creating performance profiles, convergence graphs, and box plots to effectively communicate results.

- Result aggregation: Scripts for calculating mean performance, standard deviations, and ranking metrics across multiple independent runs.

Table 3: Essential Research Resources for CEC 2017 Benchmarking

| Resource Category | Specific Tools/Approaches | Primary Function | Implementation Examples |
|---|---|---|---|
| Benchmark Implementations | Official CEC 2017 code | Provide standardized function evaluations | MATLAB, C, Java versions [18] |
| Algorithm Frameworks | NEORL, PlatEMO | Integrated algorithm and benchmark implementations | Python, MATLAB environments [20] [22] |
| Statistical Analysis | Wilcoxon, Friedman tests | Determine significance of performance differences | SciPy (Python), Statistics and Machine Learning Toolbox (MATLAB) [21] [17] |
| Performance Assessment | Error value, convergence speed | Measure algorithm effectiveness | Custom scripts based on CEC criteria [18] |

The CEC 2017 test suite represents a significant milestone in the evolution of benchmarking environments for single-objective real-parameter optimization. Through its carefully designed categories of unimodal, multimodal, hybrid, and composition functions—enhanced with shifting, rotation, and variable linkage techniques—the suite provides a comprehensive testbed for evaluating algorithm performance across diverse problem characteristics.

Research conducted with this benchmark suite has yielded several important insights. First, the choice of benchmark environment significantly impacts algorithm rankings, with different algorithms excelling on different benchmark sets [11]. Second, advanced adaptive mechanisms, such as those employed in state-of-the-art Differential Evolution variants, have demonstrated remarkable effectiveness on the suite's most challenging problems [17]. Finally, the relationship between performance on synthetic benchmarks like CEC 2017 and real-world optimization problems remains complex, emphasizing the need for continued benchmarking research using both mathematical and practical problems [23].

As the field progresses, the CEC 2017 test suite continues to serve as a vital tool for understanding algorithm strengths and weaknesses, guiding algorithmic development, and fostering innovation in evolutionary computation. Its structured complexity ensures it will remain relevant for evaluating new optimization methodologies while providing insights into how algorithms can be better designed to handle the challenges of real-world optimization problems.

Benchmarking plays a crucial role in the development and assessment of contemporary evolutionary algorithms (EAs), providing a common foundation for comparing algorithmic performance across diverse optimization challenges [19]. The IEEE Congress on Evolutionary Computation (CEC) competitions have established themselves as key platforms for this evaluation, and their test function environments have proven "very popular for benchmarking Evolutionary Algorithms" [19]. This comparison guide examines the significant evolution from the CEC 2017 to the CEC 2020 benchmark suites, analyzing how new problem classes and modified scalability have reshaped performance evaluation standards and algorithm design requirements. Understanding these changes is essential for researchers and practitioners seeking to develop robust optimization algorithms capable of addressing modern computational challenges in fields including drug development and complex systems modeling.

The transition from CEC 2017 to CEC 2020 represents more than a routine update: it constitutes a paradigm shift in testing methodologies and evaluation criteria that has fundamentally altered what constitutes a state-of-the-art optimization algorithm [11]. Where older benchmarks like CEC 2017 typically allowed up to 10,000×D function evaluations and contained 20-30 problems, the CEC 2020 set introduced dramatically different parameters: only ten problems with dimensions from 5 to 20, but with allowed function evaluations increased to as many as 10,000,000 for 20-dimensional cases [11]. This substantial shift "changes the expectations from competing algorithms – those slower and more explorative would be favored over those quicker and more exploitative ones" [11], potentially creating a significant divergence in algorithm rankings between benchmark generations.

Comparative Analysis of Benchmark Suites

Structural Framework and Design Philosophy

The CEC competition benchmarks for constrained real-parameter optimization have evolved through multiple iterations, with CEC 2017 and CEC 2020 representing distinct philosophies in benchmark design. The CEC 2017 benchmark set continued the tradition of previous CEC competitions by providing a comprehensive suite of problems with varying characteristics and complexity levels [19]. These benchmarks were designed to test algorithm performance across a diverse landscape of optimization challenges, including functions with different analytical structures, modality, ruggedness, and conditioning [19].

In contrast, the CEC 2020 benchmark suite introduced a more focused approach with significant modifications to testing parameters and scalability requirements. Rather than simply expanding upon previous designs, CEC 2020 reimagined the fundamental benchmarking paradigm by dramatically increasing the allowed function evaluations while reducing the total number of test problems [11]. This strategic shift enables more thorough exploration of the search space, rewarding algorithms with sustained convergence capabilities over extended evaluation periods.

Technical Specifications and Problem Characteristics

Table 1: Comparative Specifications of CEC 2017 and CEC 2020 Benchmark Suites

| Feature | CEC 2017 Benchmark | CEC 2020 Benchmark |
|---|---|---|
| Number of Problems | 20-30 problems [11] | 10 problems [11] [24] |
| Dimensionality | 10-, 30-, 50-, and 100-D [11] | 5-, 10-, 15-, and 20-D [11] |
| Function Evaluations | Up to 10,000×D [11] | Up to 10,000,000 for 20-D [11] |
| Problem Types | Unimodal, multimodal, hybrid, composite [25] | Unimodal, multimodal, hybrid, composite [25] |
| Primary Focus | Performance under limited budget | Convergence quality with extensive evaluations |

The CEC 2017 benchmark suite maintained the traditional structure of previous CEC competitions, featuring a substantial number of problems (20-30) across various dimensionalities (10-, 30-, 50-, and 100-D) [11]. The maximum number of function evaluations was typically set at 10,000×D, creating a challenging environment where algorithms needed to demonstrate efficiency under constrained computational budgets [11]. This approach mirrored real-world scenarios where objective function evaluations might be computationally expensive or time-consuming.

The CEC 2020 benchmark suite represents a departure from this tradition by focusing on fewer problems (10) at lower dimensionalities (5-, 10-, 15-, and 20-D) but allowing substantially more function evaluations—up to 10,000,000 for 20-dimensional problems [11]. This design shift favors "those slower and more explorative" algorithms over "quicker and more exploitative ones" [11], fundamentally changing the algorithmic traits rewarded by the benchmarking process. The CEC 2020 problems maintain similar taxonomic classifications to their predecessors (unimodal, multimodal, hybrid, and composite functions) but with updated mathematical constructions that present contemporary challenges to optimization algorithms [25].
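The size of the budget gap is easier to appreciate per dimension. The arithmetic below uses only the figures quoted in the text (10,000×D for CEC 2017 and 10,000,000 evaluations at 20-D for CEC 2020); the variable and function names are illustrative.

```python
def cec2017_budget(dim, evals_per_dim=10_000):
    """Maximum function evaluations under the 10,000 x D rule."""
    return evals_per_dim * dim

CEC2020_BUDGET_20D = 10_000_000  # figure quoted in the text for 20-D problems

per_dim_2017 = cec2017_budget(100) / 100  # 10,000 evaluations per dimension
per_dim_2020 = CEC2020_BUDGET_20D / 20    # 500,000 evaluations per dimension
ratio = per_dim_2020 / per_dim_2017       # 50 times more evaluations per dimension
```

At its largest setting, CEC 2020 therefore grants fifty times as many evaluations per decision variable as the 10,000×D rule, which is the quantitative basis for the shift toward slower, more explorative algorithms.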

Performance Evaluation and Experimental Protocols

Standardized Testing Methodologies

The experimental protocols for evaluating algorithm performance on CEC benchmarks follow rigorous methodologies to ensure fair and reproducible comparisons. For both CEC 2017 and CEC 2020 benchmarks, standardized testing procedures include multiple independent runs per problem (typically 20-30 [26]; the CEC 2017 documentation specifies 51) to account for stochastic variations in algorithm performance. The use of fixed evaluation budgets ensures consistent comparison metrics across different algorithmic approaches.

Performance assessment employs quantitative metrics centered on solution quality and computational efficiency. For CEC 2017-style benchmarks with limited function evaluations, the primary metric is the quality of solutions found within the allocated computational budget [11]. In contrast, CEC 2020 benchmarks emphasize convergence behavior over extended evaluation sequences, monitoring how solution quality improves with increasing function evaluations [11]. Statistical significance testing, typically using non-parametric tests like the Wilcoxon rank-sum test, validates performance differences between algorithms [27].

Algorithmic Performance Across Benchmark Generations

Table 2: Performance Comparison of Representative Algorithms on CEC Benchmarks

| Algorithm | CEC 2017 Performance | CEC 2020 Performance | Key Characteristics |
|---|---|---|---|
| CSsin | Competitive results on CEC 2017 benchmarks [25] | Strong performance, utilizes dual search strategy [25] | Linearly decreasing switch probability, linearly decreasing population size |
| LSHADESPA | Effective on CEC 2017 problems [17] | Superior results on CEC 2020 suite [17] | Proportional population reduction, SA-based scaling factor |
| j2020 | Not specifically reported for CEC 2017 | Specifically designed for CEC 2020 challenges [24] | Two subpopulations, crowding mechanism, hybrid mutation |
| AGSK | Moderate performance on older benchmarks [24] | Enhanced performance on CEC 2020 [24] | Adaptive knowledge factor and ratio parameters |
| COLSHADE | Applied to CEC 2017 constrained optimization [24] | Effective on CEC 2020 constrained problems [24] | Adaptive Lévy flight mutation, dynamic tolerance handling |

Comparative studies reveal that algorithm performance rankings can vary significantly between CEC 2017 and CEC 2020 benchmarks due to their divergent evaluation criteria [11]. Algorithms that excel on CEC 2017 benchmarks typically demonstrate rapid initial convergence and efficient exploitation characteristics, enabling them to find reasonable solutions within limited evaluation budgets. In contrast, top performers on CEC 2020 benchmarks often incorporate more sophisticated exploration mechanisms and sustained convergence strategies that continue to refine solutions through millions of function evaluations [11] [25].

The CSsin algorithm, an enhanced Cuckoo Search variant, demonstrates this divergence through its performance across benchmark generations. CSsin incorporates four major modifications: new techniques for global and local search, a dual search strategy, linearly decreasing switch probability, and linearly decreasing population size [25]. These enhancements enable competitive performance on both CEC 2017 and CEC 2020 benchmarks, though its architectural advantages are more pronounced in the extended evaluation environment of CEC 2020 [25].

Similarly, the LSHADESPA algorithm exemplifies specialization for modern benchmarking environments through its incorporation of three significant modifications: proportional shrinking population mechanism, simulated annealing-based scaling factor, and oscillating inertia weight-based crossover rate [17]. These features enable superior performance on CEC 2020 problems by maintaining exploration diversity while progressively refining solution quality across extensive evaluation sequences.

Evolution of Algorithm Design Requirements

Adaptation to Shifting Benchmark Paradigms

The evolution from CEC 2017 to CEC 2020 benchmarks has fundamentally altered algorithm design priorities, necessitating architectural changes to maintain competitiveness. CEC 2017 benchmarks rewarded algorithms capable of rapid initial convergence and effective resource allocation within tight evaluation budgets [11]. Successful algorithms for these environments typically employed aggressive exploitation strategies, efficient memory mechanisms, and adaptive parameter control responsive to immediate performance feedback.

In contrast, CEC 2020 benchmarks favor algorithms with sustained convergence characteristics, balanced exploration-exploitation tradeoffs, and resilience to premature convergence [11] [25]. The dramatically increased evaluation budget enables more sophisticated search strategies that maintain population diversity while progressively focusing on promising regions. Algorithms like j2020 exemplify this approach through their use of multiple subpopulations, crowding mechanisms to preserve diversity, and hybrid mutation strategies that dynamically adapt to search progression [24].

Impact on Real-World Application Performance

The paradigm shift between benchmark generations has important implications for real-world applications, particularly in domains like drug development where optimization challenges may involve complex simulation-based evaluations. Research indicates that "algorithms that perform best on older sets are more flexible than those that perform best on CEC 2020 benchmark" when applied to real-world problems [11]. This suggests that while CEC 2020 benchmarks may better approximate problems requiring extensive computational resources, older benchmarks might more accurately represent scenarios with constrained evaluation budgets.

Studies testing 73 optimization algorithms on multiple benchmark sets including CEC 2011 real-world problems found that "almost all algorithms that perform best on CEC 2020 set achieve moderate-to-poor performance on older sets, including real-world problems from CEC 2011" [11]. This performance cross-over effect highlights the risk of overspecialization and underscores the importance of selecting benchmarks that accurately reflect target application domains.

Visualization of Benchmark Evolution

Diagram: Visualization of Benchmark Evolution and Algorithm Impact. This diagram illustrates the fundamental shifts between CEC 2017 and CEC 2020 benchmarking paradigms and their implications for algorithm design. The evolutionary pathway highlights how changes in problem set composition, dimensionality, and evaluation budgets have driven corresponding adaptations in algorithm architecture and performance characteristics.

Essential Research Toolkit

Table 3: Research Reagent Solutions for CEC Benchmark Experiments

| Research Tool | Function | Implementation Examples |
|---|---|---|
| CEC Benchmark Functions | Standardized problem sets for algorithm comparison | CEC 2017 (30 problems), CEC 2020 (10 problems) [11] [24] |
| Performance Metrics | Quantify solution quality and algorithmic efficiency | Best, median, worst objective values; statistical significance tests [26] [27] |
| Parameter Tuning Methods | Optimize algorithm control parameters for specific benchmarks | SHADE, LSHADE population reduction strategies [17] |
| Constraint Handling Techniques | Manage feasible region search in constrained optimization | Adaptive tolerance, penalty functions, feasibility rules [19] [24] |
| Statistical Testing Frameworks | Validate performance differences between algorithms | Wilcoxon signed-rank test, Friedman test [27] [17] |

| Statistical Testing Frameworks | Validate performance differences between algorithms | Wilcoxon signed-rank test, Friedman test [27] [17] |

The research toolkit for contemporary evolutionary computation experiments requires both standardized benchmarking resources and sophisticated analysis methodologies. CEC benchmark functions provide the foundational testbed for algorithm comparison, with each generation introducing new challenges and refined problem structures [11] [24]. Performance metrics must be carefully selected to align with benchmarking objectives—emphasizing solution quality under limited budgets for CEC 2017-style evaluations versus convergence behavior across extended evaluations for CEC 2020 environments [26] [11].

Advanced parameter control mechanisms have become essential components of competitive algorithms, with methods like the linear population size reduction in LSHADE and simulated annealing-based scaling factors in LSHADESPA demonstrating significant performance improvements [17]. Similarly, sophisticated constraint handling techniques remain crucial for real-world applications, with approaches like dynamic tolerance adjustment in COLSHADE enabling more effective navigation of complex feasible regions [24].
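The linear population size reduction mentioned above can be sketched as a single formula, in the form in which it is commonly stated for LSHADE. The function name and the default minimum size of 4 are assumptions; LSHADESPA's proportional shrinking rule is a different schedule and is not reproduced here.

```python
def lpsr_population_size(nfe, max_nfe, n_init, n_min=4):
    """Population size after `nfe` evaluations, shrinking linearly from n_init to n_min."""
    return round((n_min - n_init) / max_nfe * nfe + n_init)

# Hypothetical schedule: 180 individuals at the start, 100,000 evaluations in total.
schedule = [lpsr_population_size(nfe, 100_000, 180) for nfe in (0, 50_000, 100_000)]
```

A large early population supports exploration, and the shrinking population concentrates the remaining evaluations on refinement as the budget runs out.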

The evolution from CEC 2017 to CEC 2020 benchmarks represents a significant transformation in evolutionary computation evaluation methodologies, with profound implications for algorithm design and performance assessment. The reduction in problem count coupled with dramatically increased evaluation budgets has shifted the competitive landscape, favoring algorithms with sustained convergence properties over those optimized for rapid initial progress. This paradigm shift necessitates careful consideration when selecting benchmarking environments for algorithm development, particularly for real-world applications where computational constraints may align more closely with older benchmarking approaches.

The emergence of specialized algorithms optimized for CEC 2020 challenges—including CSsin, LSHADESPA, and j2020—demonstrates the adaptive response of the research community to these evolving standards [24] [25] [17]. However, the observed performance cross-over effect, where algorithms excelling on CEC 2020 benchmarks show reduced effectiveness on older benchmarks and real-world problems, highlights the ongoing challenge of developing universally capable optimization techniques [11]. Future benchmarking efforts must continue to balance mathematical sophistication with practical relevance, ensuring that evolutionary computation research remains grounded in the authentic challenges facing scientific computing and industrial applications.

Benchmarking forms the cornerstone of progress in evolutionary computation, providing a standardized framework for evaluating and comparing the performance of optimization algorithms. Within this ecosystem, the IEEE Congress on Evolutionary Computation (CEC) benchmark sets, particularly CEC 2017, serve as critical proving grounds for new methodologies. These benchmarks are meticulously designed to represent diverse problem characteristics that mirror challenges found in real-world optimization scenarios, from drug discovery to engineering design. Understanding problem hardness, which is shaped by factors such as modality, constraints, and the structure of feasible regions, is paramount for researchers developing next-generation evolutionary algorithms. The CEC 2017 benchmark suite specifically presents a collection of 30 search problems with diverse characteristics including unimodal, multimodal, hybrid, and composition functions, designed to rigorously test algorithm performance under various conditions [22] [11].

This guide provides a comprehensive analysis of how contemporary evolutionary algorithms perform on these established benchmarks, examining the relationship between problem characteristics and algorithmic performance. We present experimental data from recent studies, detailed methodologies for proper benchmarking, and essential resources for researchers working at the intersection of computational intelligence and applied optimization.

Decoding Problem Hardness in CEC Benchmarks

Problem hardness in evolutionary computation is not an intrinsic property but rather emerges from the interaction between a problem's characteristics and an algorithm's operational mechanics. The CEC benchmarks are explicitly designed to probe specific dimensions of problem hardness through controlled problem features.

Modality and Ruggedness

Modality refers to the number of optima in a search space, directly influencing an algorithm's ability to locate global rather than local solutions. Unimodal functions contain a single optimum, primarily testing an algorithm's convergence behavior and exploitation capabilities. Multimodal functions introduce multiple optima, creating deceptive landscapes that challenge an algorithm's exploration abilities and its capacity to escape local attractors [22]. The CEC 2017 suite includes both unimodal and multimodal functions; the multimodal problems comprise simple multimodal functions, hybrid functions, and composition functions, the last of which combine multiple benchmark functions with different properties within a single search space [11].
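The difference between the two classes can be illustrated with a minimal sketch. The sphere and Rastrigin functions below are standard textbook examples chosen here for illustration, and `hill_climb` is a hypothetical greedy local search, not an algorithm from the cited studies. Started from the same point, the local search reaches the optimum of the unimodal function but stalls in a nearby local optimum of the multimodal one.

```python
import math

def sphere(x):
    """Unimodal: a single optimum at the origin."""
    return sum(v * v for v in x)

def rastrigin(x):
    """Multimodal: many regularly spaced local optima, global optimum at the origin."""
    return 10.0 * len(x) + sum(v * v - 10.0 * math.cos(2.0 * math.pi * v) for v in x)

def hill_climb(f, x, step=0.01, iters=2000):
    """Greedy coordinate-wise local search that only accepts improving moves."""
    x = list(x)
    fx = f(x)
    for _ in range(iters):
        improved = False
        for i in range(len(x)):
            for delta in (step, -step):
                cand = x[:]
                cand[i] += delta
                fc = f(cand)
                if fc < fx:
                    x, fx, improved = cand, fc, True
        if not improved:
            break  # no neighbouring point is better: a (local) optimum
    return x, fx

start = [2.0, 2.0]
_, sphere_result = hill_climb(sphere, start)        # converges to roughly zero
_, rastrigin_result = hill_climb(rastrigin, start)  # trapped near (2, 2)
```

An algorithm with no exploration mechanism behaves like this local search, which is why multimodal functions are used to test resistance to premature convergence.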

Constraints and Feasible Regions

Constrained optimization problems restrict the search to a feasible region, and the constraints often couple variables in complex, non-linear ways. The structure of the feasible region significantly impacts algorithm performance: when feasible regions are disjoint or occupy only a small portion of the overall search space, performance typically degrades because it becomes harder to maintain feasibility while progressing toward optima [22]. A related source of difficulty is variable interaction. The CEC 2017 benchmark includes rotated and shifted functions, in which variables undergo linear transformations, producing non-separable problems whose variables cannot be optimized independently [11].
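The effect of shifting and rotating can be sketched as follows. This is an illustration under stated assumptions: the official suite supplies its own shift vectors and rotation matrices, whereas the shift `o`, the 2-D rotation `M`, and the helper names here are hypothetical. The sketch wraps a separable base function as g(x) = base(M(x - o)) and checks additive separability before and after the transformation.

```python
import math

def ellipsoid(z):
    """Separable, ill-conditioned base function (requires len(z) >= 2)."""
    d = len(z)
    return sum((10.0 ** (6.0 * i / (d - 1))) * v * v for i, v in enumerate(z))

def shift_rotate(base, shift, rotation):
    """Return g(x) = base(M (x - o)) for shift vector o and rotation matrix M."""
    def g(x):
        y = [xi - oi for xi, oi in zip(x, shift)]
        z = [sum(m * yj for m, yj in zip(row, y)) for row in rotation]
        return base(z)
    return g

def separability_gap(f, a, b):
    """Zero for additively separable 2-D functions:
    f(a1, a2) + f(b1, b2) == f(a1, b2) + f(b1, a2)."""
    return abs(f([a[0], a[1]]) + f([b[0], b[1]])
               - f([a[0], b[1]]) - f([b[0], a[1]]))

theta = math.pi / 6                       # illustrative rotation angle
M = [[math.cos(theta), -math.sin(theta)],
     [math.sin(theta),  math.cos(theta)]]
o = [1.5, -0.5]                           # illustrative shift of the optimum
rotated = shift_rotate(ellipsoid, o, M)

gap_base = separability_gap(ellipsoid, [1.0, 2.0], [3.0, 4.0])
gap_rotated = separability_gap(rotated, [1.0, 2.0], [3.0, 4.0])
```

The base function has a gap of zero, while the rotated version does not, so a search that tunes one coordinate at a time no longer follows the function's principal axes.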

Dimensionality and Scalability

Search space volume grows exponentially with the problem dimension (D), an effect commonly known as the "curse of dimensionality." The CEC 2017 benchmark tests algorithms at D = 10, 30, 50, and 100, requiring strategies that remain effective as search spaces expand [11]. Higher-dimensional problems demand sophisticated population management and adaptation strategies to maintain adequate coverage of the search space while still converging to high-quality solutions.
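A short worked calculation makes the scaling concrete. It assumes the 10,000 × D evaluation budget and compares it with a hypothetical grid of only 10 points per axis; the function names are illustrative.

```python
def grid_points(points_per_axis, dim):
    """Number of points on a regular grid with the given resolution per axis."""
    return points_per_axis ** dim

def evaluation_budget(dim, evals_per_dim=10_000):
    """Fixed budget that grows only linearly with the dimension."""
    return evals_per_dim * dim

for dim in (10, 30, 50, 100):
    budget = evaluation_budget(dim)
    coverage = budget / grid_points(10, dim)
    print(f"D={dim:3d}  budget={budget:>9,d}  fraction of grid visited={coverage:.1e}")
```

The budget grows linearly while the grid grows as 10^D, so even at D = 10 the budget covers only about one hundred-thousandth of this coarse grid, and the fraction shrinks rapidly as D increases.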

Table 1: Problem Hardness Characteristics in CEC 2017 Benchmark Suite

| Characteristic | Description | Impact on Algorithm Performance |
|---|---|---|
| Modality | Number of optima in search space | Multimodal functions test exploration capability and premature convergence resistance |
| Variable Interaction | Degree of dependency between variables | Non-separable problems challenge coordinate-based search strategies |
| Constraints | Boundaries defining feasible solutions | Complex feasible regions increase difficulty of maintaining feasibility while optimizing |
| Dimensionality | Number of decision variables | Higher dimensions exponentially increase search space volume |
| Function Landscape | Geometry of fitness landscape | Discontinuous, narrow, or deceptive landscapes challenge convergence |

Experimental Protocols for Benchmark Evaluation

Proper experimental methodology is essential for obtaining valid, comparable results when evaluating evolutionary algorithms on CEC benchmarks. The following protocols represent community-established standards derived from recent literature.

Standard Experimental Setup

The CEC 2017 benchmark specification defines a rigorous experimental framework. Each algorithm should be run 51 times independently on each function with different random seeds to account for stochastic variations [11]. The maximum number of function evaluations (MFE) is typically set to 10,000 × D, where D represents the problem dimension [11]. This fixed budget approach tests an algorithm's efficiency in utilizing limited computational resources, mirroring constraints often encountered in real-world applications like molecular docking simulations or clinical trial optimization in pharmaceutical development.
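A minimal harness for this protocol might look like the sketch below. The names `run_trials` and `random_search` are hypothetical, the sphere function stands in for a real benchmark function, and random search is only a placeholder optimizer. The demo call uses a deliberately reduced budget so that it finishes quickly.

```python
import random

def sphere(x):
    """Stand-in objective; a real study would call the benchmark function here."""
    return sum(v * v for v in x)

def random_search(func, dim, max_evals, rng, lower=-100.0, upper=100.0):
    """Placeholder optimizer: uniform sampling within the box [lower, upper]^dim."""
    best = float("inf")
    for _ in range(max_evals):
        x = [rng.uniform(lower, upper) for _ in range(dim)]
        best = min(best, func(x))
    return best

def run_trials(optimizer, func, dim, runs=51, evals_per_dim=10_000, base_seed=0):
    """Run `runs` independent trials, each with its own seed and a budget of
    evals_per_dim * dim function evaluations; return the best value per run."""
    max_evals = evals_per_dim * dim
    results = []
    for run in range(runs):
        rng = random.Random(base_seed + run)  # distinct, reproducible seed per run
        results.append(optimizer(func, dim, max_evals, rng))
    return results

# Demo with a reduced budget (100 x D instead of 10,000 x D) to keep it fast.
demo_results = run_trials(random_search, sphere, dim=2, runs=51, evals_per_dim=100)
```

Passing a dedicated random generator to each run keeps the trials independent while making every individual run reproducible from its seed.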

Performance is primarily measured using error values, calculated as \( f(x) - f(x^{*}) \), where \( x^{*} \) is the known global optimum. The mean and standard deviation of these error values across independent runs provide robust indicators of algorithm consistency and reliability [17].
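The error statistics can be computed with a few lines of standard-library Python. This sketch makes two assumptions: errors below 1e-8 are treated as zero, a common convention in CEC reporting, and the sample standard deviation is used. The helper names and the example values are hypothetical.

```python
import statistics

def error_values(best_values, f_opt, tol=1e-8):
    """Convert best objective values into errors f(x) - f(x*).
    Errors below `tol` are reported as zero (assumed convention)."""
    errors = []
    for value in best_values:
        err = value - f_opt
        errors.append(0.0 if err < tol else err)
    return errors

def summarize(errors):
    """Best, worst, median, mean, and sample standard deviation of the errors."""
    return {
        "best": min(errors),
        "worst": max(errors),
        "median": statistics.median(errors),
        "mean": statistics.mean(errors),
        "std": statistics.stdev(errors),  # needs at least two runs
    }

# Hypothetical best values from four runs on a function with optimum 100.0.
best_values = [100.0, 100.5, 101.0, 100.000000001]
errors = error_values(best_values, f_opt=100.0)
summary = summarize(errors)
```

In a full study the list would hold one value per independent run, and the summary would be reported per function and per dimension.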

Statistical Validation Methods

To test whether performance differences between algorithms are statistically significant, researchers employ non-parametric tests such as the Wilcoxon signed-rank test at a standard significance level (α = 0.05) [21] [17]. These tests avoid the normality assumptions of parametric alternatives, which often do not hold for algorithm performance data. The Friedman test with corresponding post-hoc analysis can rank multiple algorithms across the entire benchmark suite, providing an overall performance hierarchy [17].
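Both procedures can be sketched in plain Python. This is a simplified illustration, not a replacement for a statistics library: the Wilcoxon p-value uses the normal approximation without tie or continuity correction, which is only reasonable for moderately large samples, and the Friedman part computes mean ranks only, not the test statistic or post-hoc comparisons. All function names and data are hypothetical.

```python
import math

def average_ranks(values):
    """Rank ascending (1 = smallest); tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def friedman_mean_ranks(errors_by_function):
    """errors_by_function[f][a] is the error of algorithm a on function f.
    Returns each algorithm's rank averaged over functions (lower is better)."""
    n_alg = len(errors_by_function[0])
    totals = [0.0] * n_alg
    for row in errors_by_function:
        for a, rank in enumerate(average_ranks(row)):
            totals[a] += rank
    return [t / len(errors_by_function) for t in totals]

def wilcoxon_signed_rank(a, b):
    """Two-sided paired test; returns (W+, W-, approximate p-value).
    Requires at least one non-zero difference."""
    diffs = [x - y for x, y in zip(a, b) if x != y]  # zero differences dropped
    n = len(diffs)
    ranks = average_ranks([abs(d) for d in diffs])
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    mean = n * (n + 1) / 4.0
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24.0)
    z = (min(w_plus, w_minus) - mean) / sd
    return w_plus, w_minus, math.erfc(abs(z) / math.sqrt(2.0))

# Hypothetical mean errors: 4 functions (rows) x 3 algorithms (columns).
table = [[1.0, 2.0, 3.0], [0.5, 0.5, 2.0], [3.0, 1.0, 2.0], [0.1, 0.2, 0.3]]
mean_ranks = friedman_mean_ranks(table)

# Hypothetical paired errors of two algorithms on ten functions.
alg_a = [float(i) for i in range(1, 11)]
alg_b = [x + 0.1 * i for i, x in enumerate(alg_a, start=1)]
w_plus, w_minus, p_value = wilcoxon_signed_rank(alg_a, alg_b)
```

Here the first algorithm has the smaller error on every function, so all rank mass falls on one side and the approximate p-value is below 0.05.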

Recent Experimental Variations

Recent studies have explored variations to these standard protocols. Some researchers employ a proportional shrinking population mechanism that gradually reduces population size throughout a run to decrease computational burden while maintaining optimization pressure [17]. Others have implemented oscillating inertia weight-based crossover rates to dynamically balance exploration and exploitation phases during the search process [17].
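The two ideas can be sketched as simple schedules over the evaluation budget. Neither function reproduces the LSHADESPA formulas from [17]. The first is the linear population size reduction rule familiar from L-SHADE, swapped in as a stand-in for proportional shrinking. The second is a hypothetical cosine oscillation with illustrative parameters `mid`, `amplitude`, and `cycles`.

```python
import math

def linear_population_size(evals_used, max_evals, n_init, n_min=4):
    """Linear population size reduction: shrink from n_init at the start of
    the run to n_min once the evaluation budget is exhausted."""
    frac = evals_used / max_evals
    return max(n_min, round(n_init + (n_min - n_init) * frac))

def oscillating_rate(evals_used, max_evals, mid=0.5, amplitude=0.4, cycles=5):
    """Hypothetical oscillating crossover rate in [mid - amplitude, mid + amplitude].
    High values favour mixing (exploration), low values favour inheritance."""
    frac = evals_used / max_evals
    return mid + amplitude * math.cos(2.0 * math.pi * cycles * frac)

max_evals = 10_000 * 10  # budget for D = 10
schedule = [
    (used, linear_population_size(used, max_evals, n_init=180),
     round(oscillating_rate(used, max_evals), 3))
    for used in range(0, max_evals + 1, max_evals // 10)
]
```

Each entry of `schedule` pairs the evaluations used so far with the population size and crossover rate that would apply at that point in the run.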

Performance Analysis of Contemporary Evolutionary Algorithms

Recent large-scale studies have evaluated numerous evolutionary algorithms on CEC benchmarks, revealing how different algorithmic strategies respond to various problem characteristics. A comprehensive examination of 73 optimization algorithms published between the 1960s and 2022 on four CEC benchmark sets (CEC 2011, 2014, 2017, and 2020) demonstrated that benchmark choice significantly impacts algorithm ranking [11]. Algorithms that excelled on older benchmarks with limited function evaluations (10,000×D) often performed moderately on newer benchmarks allowing millions of evaluations, highlighting how computational budget interacts with problem hardness [11].

Performance on CEC 2017 Benchmarks

The CEC 2017 benchmark presents particular challenges due to its mixture of unimodal, multimodal, hybrid, and composition functions. Recent variants of established algorithms have shown promising results on this diverse problem set.

The LSHADESPA algorithm, which incorporates a proportional shrinking population mechanism, a simulated annealing-based scaling factor, and an oscillating inertia weight-based crossover rate, demonstrated superior performance on CEC 2017 benchmarks [17]. It ranked first in the Friedman test with a reported rank value of 77, significantly outperforming the other metaheuristic algorithms in that comparison [17].

The ACRIME algorithm, which enhances the RIME algorithm with an adaptive hunting mechanism and criss-crossing strategy, also showed excellent performance on CEC 2017 benchmarks [21]. When evaluated against 10 basic algorithms and 9 state-of-the-art approaches, ACRIME demonstrated statistically significant improvements according to Wilcoxon signed-rank tests [21].

For binary optimization problems derived from CEC 2017 benchmarks, the BinDMO algorithm, which applies Z-shaped, U-shaped, and taper-shaped transfer functions to convert continuous search spaces to binary, outperformed other binary heuristic algorithms including Binary SO, Binary PDO, and Binary AFT in average results [28].

Table 2: Algorithm Performance on CEC 2017 Benchmark Suite

| Algorithm | Key Mechanisms | Reported Performance | Strengths |
|---|---|---|---|
| LSHADESPA [17] | Proportional population shrinking, SA-based scaling factor, oscillating crossover | Friedman rank: 77 (1st place) | Effective balance of exploration/exploitation, efficient resource use |
| ACRIME [21] | Adaptive hunting mechanism, criss-crossing strategy | Statistically superior to 19 competitors | Enhanced solution diversity, effective multimodal optimization |
| BinDMO [28] | Z-shaped/U-shaped/taper-shaped transfer functions | Top performer in binary optimization | Effective continuous-to-binary conversion, superior feature selection |
| iEACOP [22] | Modified ensemble approach | Outperformed baseline on 27/29 functions | Strong performance on bound-constrained real-parameter problems |

Algorithmic Strategies for Different Problem Types

Analysis of top-performing algorithms reveals specialized strategies for different problem characteristics. For highly multimodal problems, successful algorithms typically employ: (1) diversity preservation mechanisms to maintain exploration throughout the search process; (2) adaptive parameter control to adjust search behavior based on problem landscape; and (3) multiple search operators to address different phases of optimization [21] [17].

For problems with complex constraints and feasible regions, effective strategies include: (1) dynamic population management to focus computational resources; (2) hybrid approaches that combine global and local search; and (3) problem decomposition techniques that address variable interactions [17].

Benchmarking Platforms and Software

- PlatEMO: A MATLAB platform for evolutionary multi-objective optimization that provides implementations of numerous algorithms and benchmarks, facilitating standardized comparisons [22].

- CEC Official Test Suites: The standardized benchmark functions from CEC competitions (2014, 2017, 2020, 2022) provide controlled environments for algorithm comparison [29] [11] [17].

- LSHADESPA Implementation: The top-performing algorithm variant noted for its proportional population shrinking and adaptive mechanisms [17].

Performance Analysis Tools

- Wilcoxon Signed-Rank Test: Non-parametric statistical test for comparing algorithm performance with minimal assumptions about data distribution [21] [17].

- Friedman Test with Post-hoc Analysis: Statistical approach for ranking multiple algorithms across complete benchmark sets [17].

- Convergence Graph Analysis: Visual tool for understanding algorithm behavior throughout the optimization process [28].
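The data behind a convergence graph can be prepared with a short sketch like the one below. The helper names and the example error sequence are hypothetical. The code tracks the best error found so far after each evaluation and samples it at evenly spaced fractions of the budget.

```python
def best_so_far(values):
    """Running minimum of the error after each function evaluation."""
    curve, best = [], float("inf")
    for value in values:
        best = min(best, value)
        curve.append(best)
    return curve

def sample_curve(curve, n_checkpoints=10):
    """Best-so-far error at evenly spaced fractions of the evaluation budget."""
    n = len(curve)
    return [curve[max(1, round(n * k / n_checkpoints)) - 1]
            for k in range(1, n_checkpoints + 1)]

# Hypothetical errors of ten consecutive evaluations in one run.
evaluated_errors = [5.0, 3.0, 4.0, 1.0, 2.0, 0.5, 0.7, 0.2, 0.9, 0.1]
curve = best_so_far(evaluated_errors)
checkpoints = sample_curve(curve, n_checkpoints=5)
```

Averaging the checkpoint values over all independent runs and plotting them on a logarithmic error axis gives the usual convergence graph, in which a plateau indicates stagnation.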

The characterization of problem hardness through CEC benchmarks provides invaluable insights for researchers selecting or developing evolutionary algorithms for specific applications. The experimental evidence presented shows that no single algorithm dominates across all problem types, which is consistent with the "no free lunch" theorem in optimization [11]. Instead, algorithm performance is closely tied to problem characteristics, particularly modality, variable interactions, and constraint structures.

For researchers working on real-world optimization problems in fields like drug development, these findings suggest that benchmark performance on relevant problem classes may provide better guidance for algorithm selection than overall benchmark rankings. Problems with specific constraint structures or modality patterns similar to a target application should receive greater weight in the evaluation process. Furthermore, the development of specialized algorithm variants for particular problem classes continues to yield significant performance improvements, as demonstrated by the success of approaches like LSHADESPA and ACRIME on the diverse problem types within the CEC 2017 benchmark [21] [17].

As evolutionary computation continues to advance, the rigorous characterization of problem hardness through standardized benchmarks remains essential for meaningful progress. The CEC benchmarks, with their carefully designed problems spanning diverse hardness characteristics, provide an indispensable resource for developing more effective optimization strategies for complex real-world challenges.

Implementing EAs for CEC Benchmarks: From Code to Practical Application

Selecting and Configuring Evolutionary Algorithms for CEC Problems

The IEEE Congress on Evolutionary Computation (CEC) special sessions and competitions have established themselves as the cornerstone for benchmarking and advancing evolutionary algorithms (EAs) in the field of computational intelligence. These competitions provide rigorously designed test suites that mirror the complexities of real-world optimization challenges, serving as a critical proving ground for new algorithmic approaches. For researchers and practitioners—particularly those in demanding fields like drug development where optimization plays a crucial role in tasks such as molecular design and pharmacokinetic modeling—navigating the landscape of high-performing algorithms is essential.

This guide provides an objective comparison of modern EAs, focusing on their performance on the CEC 2017 and CEC 2020 benchmark test suites. We synthesize performance data from multiple studies, detail standardized experimental protocols to ensure reproducible comparisons, and visualize the key relationships and workflows that underpin successful algorithm deployment. The aim is to equip scientists with the knowledge to select and configure the most appropriate evolutionary algorithm for their specific optimization challenges.

Performance Comparison of Modern Evolutionary Algorithms

The following tables summarize the performance of various state-of-the-art algorithms on the CEC 2017 and CEC 2020 benchmark suites, based on published comparative studies.

Performance on CEC 2017 Benchmark Problems

The CEC 2017 test suite comprises 30 single-objective bound-constrained numerical optimization problems, including unimodal, multimodal, hybrid, and composition functions designed to challenge an algorithm's convergence speed, precision, and robustness [21] [25].

Table 1: Algorithm Performance on CEC 2017 Benchmark Problems

| Algorithm | Key Mechanism | Reported Performance (Friedman Rank) | Strengths |
|---|---|---|---|
| ACRIME [21] | Adaptive hunting, Criss-crossing mechanism | 1st (Best) | Excellent exploration/exploitation balance, high solution diversity |
| CSsin [25] | Dual search, Linearly decreasing switch probability | Competitive with SaDE, JADE | Balanced local and global search |
| LSHADESPA [17] | Population shrinking, SA-based scaling factor | 1st (Friedman Rank: 77) | Effective computational burden reduction |
| Original RIME [21] | Soft-rime and hard-rime search | Baseline for ACRIME | Good global search capability |

Performance on CEC 2020 and Other Recent Benchmarks