Balancing Exploration and Exploitation in Salp Swarm Algorithm: Strategies, Applications, and Advances for Complex Optimization

Abstract

This article provides a comprehensive analysis of strategies to balance exploration and exploitation in the Salp Swarm Algorithm (SSA), a prominent swarm intelligence metaheuristic. Tailored for researchers and drug development professionals, we cover the foundational principles of SSA and the exploration-exploitation dilemma, detail advanced hybrid and multi-objective variants, and present practical methodologies for overcoming premature convergence. The content is validated through comparative analyses of state-of-the-art SSA improvements against other optimizers on benchmark functions and in real-world applications, including biomedical problem-solving. The goal is to serve as a definitive guide for leveraging enhanced SSA in complex, high-dimensional optimization challenges.

The Core Principles of Salp Swarm Algorithm and the Exploration-Exploitation Dilemma

Frequently Asked Questions (FAQs)

1. What is the Salp Swarm Algorithm (SSA) and how does it work? The Salp Swarm Algorithm is a nature-inspired metaheuristic optimization technique that mimics the swarming behavior of salps, gelatinous marine organisms, in the deep sea. Salps often form chains for efficient locomotion and foraging. In SSA, the population is divided into a single leader at the forefront and multiple followers. The leader guides the swarm towards a food source (the best solution found), while followers update their positions sequentially behind the leader, creating a chain-like movement through the search space [1].

2. What are the main advantages of using SSA? SSA offers several key advantages, including a simple structure with few control parameters, ease of implementation, and strong performance in various optimization problems such as engineering design, feature selection, and training neural networks [2] [3]. Its design allows it to be a competitive optimizer, particularly in computationally expensive engineering problems like aerofoil and marine propeller design [4].

3. What is the primary challenge when applying the basic SSA? The most significant challenge is effectively balancing exploration (searching new areas of the space) and exploitation (refining good solutions). The basic SSA is often prone to premature convergence, where the population gets trapped in local optima, especially when solving complex, high-dimensional, or multimodal problems [2] [3] [4]. This imbalance can lead to inaccurate solutions or slow convergence speeds.

4. How can I improve SSA's performance if it gets stuck in local optima? Many enhanced SSA variants have been proposed to address this. Common and effective strategies include:

- Hybridization: Integrating strategies from other algorithms, such as the Harris Hawk foraging mechanism [2] or Grey Wolf Optimization [5].

- Random Walk and Mutation: Using a Gaussian random walk [4] or Gaussian mutation [3] to help followers explore more effectively and escape local traps.

- Adaptive Parameters: Replacing the fixed, iteration-based decay of parameter c1 with an adaptive mechanism to better balance global and local search throughout the iterations [2] [3].

- Learning Strategies: Implementing opposition-based learning for population initialization or dynamic mirror learning to strengthen local search capability [3] [6].

5. Can SSA handle real-world problems with constraints? Yes. The SSA framework can be adapted for constrained optimization using techniques like penalty functions, which penalize solutions that violate problem constraints [4]. This has been successfully applied to real-world problems such as the optimal charging scheduling of electric vehicles and engineering design optimization [2] [4].
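For readers implementing the basic algorithm, the leader and follower updates described in FAQ 1 can be sketched in Python with NumPy. This is a minimal sketch under stated assumptions, not a reference implementation: it assumes a single leader (row 0) as described above, the leader rule F ± c1((ub − lb)c2 + lb) with c2 and c3 drawn uniformly from [0, 1] and the sign chosen by comparing c3 with 0.5, the c1 schedule given later in this article, and a sphere test function. The names `ssa_step` and `sphere` are hypothetical.

```python
import numpy as np


def ssa_step(pop, food, lb, ub, c1, rng):
    """One basic SSA position update (single leader in row 0, followers after it).

    pop    : (n, d) salp positions
    food   : (d,) best solution found so far (the food source F)
    lb, ub : (d,) lower and upper bounds
    c1     : exploration coefficient for the current iteration
    """
    n, d = pop.shape
    new = pop.copy()
    # Leader: move around the food source; c2 scales the step, c3 picks its sign.
    c2 = rng.random(d)
    c3 = rng.random(d)
    step = c1 * ((ub - lb) * c2 + lb)
    new[0] = np.where(c3 >= 0.5, food + step, food - step)
    # Followers: midpoint of own position and the (already updated) predecessor,
    # so the leader's move propagates down the chain.
    for i in range(1, n):
        new[i] = 0.5 * (new[i] + new[i - 1])
    return np.clip(new, lb, ub)  # keep every salp inside the bounds


def sphere(x):
    """Toy objective (minimisation): sum of squares along the last axis."""
    return np.sum(x ** 2, axis=-1)


rng = np.random.default_rng(0)
d, n, L = 5, 30, 200
lb, ub = np.full(d, -10.0), np.full(d, 10.0)
pop = rng.uniform(lb, ub, size=(n, d))
fit = sphere(pop)
food, food_fit = pop[fit.argmin()].copy(), float(fit.min())
initial_best = food_fit

for l in range(L):
    c1 = 2 * np.exp(-(4 * l / L) ** 2)  # decaying exploration coefficient
    pop = ssa_step(pop, food, lb, ub, c1, rng)
    fit = sphere(pop)
    if fit.min() < food_fit:  # update the food source greedily
        food, food_fit = pop[fit.argmin()].copy(), float(fit.min())

print(f"best fitness: {initial_best:.4f} -> {food_fit:.6f}")
```

In this sketch the followers only average positions, so all of the swarm's randomness enters through the leader; adding noise to the followers is exactly what several of the variants discussed below do.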

Troubleshooting Common Experimental Issues

Problem: The algorithm converges too quickly to a sub-optimal solution.

- Potential Cause: Poor balance between exploration and exploitation, leading to premature convergence.

- Solutions:

- Implement an improved version of SSA that incorporates a global search enhancement. The Self-learning Salp Swarm Algorithm (SLSSA) uses multiple search strategies and a self-learning mechanism to dynamically select the most effective strategy based on past performance [7].

- Introduce a Gaussian random walk for the follower salps. This enhances their exploration ability and helps the swarm avoid local optima [4].

- Use a multi-point leadership crossover strategy or a re-dispersion mechanism for leaders if stagnation is detected [2] [4].

Problem: The convergence speed is unacceptably slow.

- Potential Cause: Inefficient exploitation of promising areas in the search space.

- Solutions:

- Hybridize SSA with a strong local search algorithm. The adaptive SSA (ASSA) incorporates an enhanced exploitation phase inspired by Grey Wolf Optimization and Cuckoo Search to refine solutions and accelerate convergence [5].

- Apply a dynamic mirror learning strategy, which creates mirrored regions around current solutions to intensify the local search without significantly increasing computational cost [3].

Problem: The results are inconsistent across different runs.

- Potential Cause: High sensitivity to initial population or random parameters.

- Solutions:

Problem: Handling high-dimensional feature selection problems.

- Potential Cause: The basic SSA is designed for continuous problems and may perform poorly on discrete problems like feature selection.

- Solutions:

- Use a binary variant of SSA. The Enhanced OBL SSA (EOSSA) employs a Sigmoid function to transform continuous solutions into binary form (0 or 1), indicating whether a feature is selected or not [6].

Quantitative Comparison of SSA Variants

The table below summarizes the performance of several SSA variants as reported in the literature, providing a reference for algorithm selection.

| Algorithm Variant | Key Improvement Strategy | Reported Performance Enhancement | Best Suited For |
|---|---|---|---|
| SSA-HF [2] | Harris Hawk foraging & multi-point crossover | Superior on 20 benchmark functions (unimodal, multimodal, CEC2014); better engineering problem optimization. | Problems requiring balanced exploitation |
| EKSSA [3] | Adaptive parameters, Gaussian walk, mirror learning | Superior on 32 CEC benchmarks; higher accuracy in seed classification tasks. | Numerical optimization, hyperparameter tuning |
| SLSSA [7] | Self-learning from multiple search strategies | Superior solution accuracy and convergence on CEC2014 benchmarks; effective for MLP training. | Complex, unknown fitness landscapes |
| GRW-SSA [4] | Gaussian random walk & leader re-dispersion | Superior on 23 benchmark functions and CEC2020 real-world problems; effective for EV charging scheduling. | Constrained, multimodal global optimization |
| ASSA [5] | Division of iterations & logarithmic parameters | Reduced function evaluations; competitive in cognitive radio optimization. | Engineering problems with computational constraints |
| EOSSA [6] | Opposition-based learning & variable neighborhood search | Higher accuracy with fewer features on 11 intrusion detection datasets. | High-dimensional discrete (feature selection) problems |

Experimental Protocols for Key Improvements

Protocol 1: Implementing a Gaussian Random Walk for Followers

This protocol is based on the GRW-SSA variant [4].

- Run the standard SSA until the followers' positions have been updated via the standard equation $x_j^i = \frac{1}{2}\left(x_j^i + x_j^{i-1}\right)$, where $x_j^i$ is the position of the $i$-th salp in dimension $j$.

- Apply the Gaussian random walk. For each follower's position in dimension $j$, update it further using $x_j^i(\text{new}) = x_j^i(\text{old}) + \delta$, where the random-walk step $\delta$ is drawn from a Gaussian (normal) distribution with a mean of 0 and a suitably chosen standard deviation.

- Evaluate the new fitness of the follower with the updated position.

- Accept the new position only if it improves the fitness value (greedy selection).
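The follower refinement in Protocol 1 can be sketched as follows. This is an illustrative sketch only: the function name `gaussian_random_walk`, the per-dimension standard deviation `sigma` (set here to 5% of the search range), clipping to the bounds, and the sphere objective are assumptions, not details taken from GRW-SSA [4].

```python
import numpy as np


def gaussian_random_walk(pop, fit, objective, lb, ub, sigma, rng):
    """Perturb each follower (rows 1..n-1) with N(0, sigma^2) steps and keep the
    move only if it lowers the objective (greedy selection, minimisation)."""
    pop, fit = pop.copy(), fit.copy()
    d = pop.shape[1]
    for i in range(1, len(pop)):  # row 0 is the leader and is left unchanged
        trial = np.clip(pop[i] + rng.normal(0.0, sigma, size=d), lb, ub)
        f_trial = objective(trial)
        if f_trial < fit[i]:
            pop[i], fit[i] = trial, f_trial
    return pop, fit


def sphere(x):
    return float(np.sum(x ** 2))


rng = np.random.default_rng(42)
lb, ub = np.full(4, -5.0), np.full(4, 5.0)
pop = rng.uniform(lb, ub, size=(10, 4))
fit = np.array([sphere(x) for x in pop])
new_pop, new_fit = gaussian_random_walk(pop, fit, sphere, lb, ub,
                                        sigma=0.05 * (ub - lb), rng=rng)
print(fit.sum(), "->", new_fit.sum())
```

Because of the greedy acceptance, no follower can get worse; the price is one extra objective evaluation per follower per iteration.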

Protocol 2: Integrating Self-Adaptive Strategy Selection

This protocol is based on the SLSSA variant [7].

- Define a pool of search strategies. These can include the original SSA leader/follower update, a novel multi-food source strategy, generalized oppositional learning, and others.

- Assign a selection probability to each strategy, initially set to be equal.

- Track performance. During iterations, record the fitness improvement achieved by each strategy when it is used.

- Dynamically update probabilities. Periodically, reward strategies that successfully generate improved solutions by increasing their selection probability. Strategies that perform poorly have their probabilities decreased.

- Select strategies for each salp. When updating a salp's position, select a strategy from the pool according to the updated probabilities. This allows the swarm to self-adapt to the most effective search behavior for a given problem.

Protocol 3: Handling Constraints with a Penalty Function Method

This protocol is used to adapt SSA for constrained optimization problems [4].

- Formulate the constrained problem. Define the objective function $f(X)$ and constraint functions $g_i(X) \le 0$ and $h_j(X) = 0$.

- Construct a penalty function. Create a new, unconstrained objective function $F(X)$ to be minimized: $F(X) = f(X) + P(X)$, where $P(X)$ is the penalty term, e.g., $P(X) = \lambda \left( \sum_i \left[\max(0, g_i(X))\right]^2 + \sum_j \left[h_j(X)\right]^2 \right)$ and $\lambda$ is a large, positive penalty coefficient.

- Run the SSA. Optimize the new unconstrained function $F(X)$ using the standard or an improved SSA procedure. The penalty term $P(X)$ heavily penalizes infeasible solutions that violate constraints, guiding the swarm towards the feasible region.
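The penalty construction in Protocol 3 can be sketched as a wrapper that turns f and the constraint functions into a single unconstrained objective. This is a minimal sketch; the helper name `penalized`, the default λ = 1e6, and the toy problem are assumptions for illustration.

```python
import numpy as np


def penalized(f, ineq=(), eq=(), lam=1e6):
    """Return F(X) = f(X) + lam * (sum_i max(0, g_i(X))^2 + sum_j h_j(X)^2).

    ineq : callables g_i with the convention g_i(X) <= 0 when satisfied
    eq   : callables h_j with h_j(X) = 0 when satisfied
    lam  : large positive penalty coefficient (lambda)
    """
    def F(x):
        violation = sum(max(0.0, g(x)) ** 2 for g in ineq)
        violation += sum(h(x) ** 2 for h in eq)
        return f(x) + lam * violation
    return F


# Example problem: minimise x0^2 + x1^2 subject to x0 + x1 >= 1,
# written in the g(X) <= 0 form as g(X) = 1 - x0 - x1.
f = lambda x: x[0] ** 2 + x[1] ** 2
g = lambda x: 1.0 - x[0] - x[1]
F = penalized(f, ineq=[g], lam=1e6)

print(F(np.array([0.5, 0.5])))  # feasible, no penalty: 0.5
print(F(np.array([0.0, 0.0])))  # infeasible, penalty dominates: 1000000.0
```

F can be handed to any SSA loop in place of the raw objective. If λ is too small, infeasible points can still win; if it is very large, the landscape becomes steep near the feasibility boundary, so λ is usually tuned per problem.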

Research Reagent Solutions

The table below lists key computational "reagents" essential for working with and improving the Salp Swarm Algorithm.

| Research Reagent / Tool | Function in SSA Research |
|---|---|
| Benchmark Test Suites (CEC2014, CEC2020) | Standardized sets of functions (unimodal, multimodal, composite) to rigorously evaluate and compare algorithm performance against state-of-the-art methods [2] [3] [7]. |
| Opposition-Based Learning (OBL) | A population initialization strategy that generates solutions opposite to the random initial population, enhancing diversity and improving convergence speed [6]. |
| Gaussian and Lévy Flight Distributions | Probability distributions used to generate step sizes for randomization, helping the algorithm escape local optima and explore the search space more effectively [3] [4]. |
| Chaotic Maps (e.g., Singer's map) | A deterministic system that produces chaotic sequences to replace random number generators, potentially improving the convergence rate and stability of the algorithm [4]. |
| Penalty Function Methods | A constraint-handling technique that transforms a constrained problem into an unconstrained one by adding a penalty for constraint violations to the objective function [4]. |
| Sigmoid Transfer Function | A function used to map continuous algorithm values to a binary (0/1) space, enabling the application of SSA to discrete problems like feature selection [6]. |
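As an illustration of the chaotic-map entry in the table above, the sketch below generates a Singer-map sequence that could stand in for uniform random numbers such as c2 or c3. The map form $x_{k+1} = \mu(7.86x_k - 23.31x_k^2 + 28.75x_k^3 - 13.302875x_k^4)$ is the one commonly used in chaotic metaheuristics; the starting value x0 = 0.7, μ = 1.07, and the function name `singer_sequence` are assumptions of this sketch, not settings reported in [4].

```python
import numpy as np


def singer_sequence(n, x0=0.7, mu=1.07):
    """Return n iterates of Singer's chaotic map; for this mu and x0 the
    iterates stay inside the open interval (0, 1)."""
    xs = np.empty(n)
    x = x0
    for k in range(n):
        x = mu * (7.86 * x - 23.31 * x ** 2 + 28.75 * x ** 3 - 13.302875 * x ** 4)
        xs[k] = x
    return xs


seq = singer_sequence(1000)
# Hypothetical use: chaotic values in place of c2 = rng.random(d) for d = 5.
c2 = singer_sequence(5)
print(seq.min(), seq.max())
```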

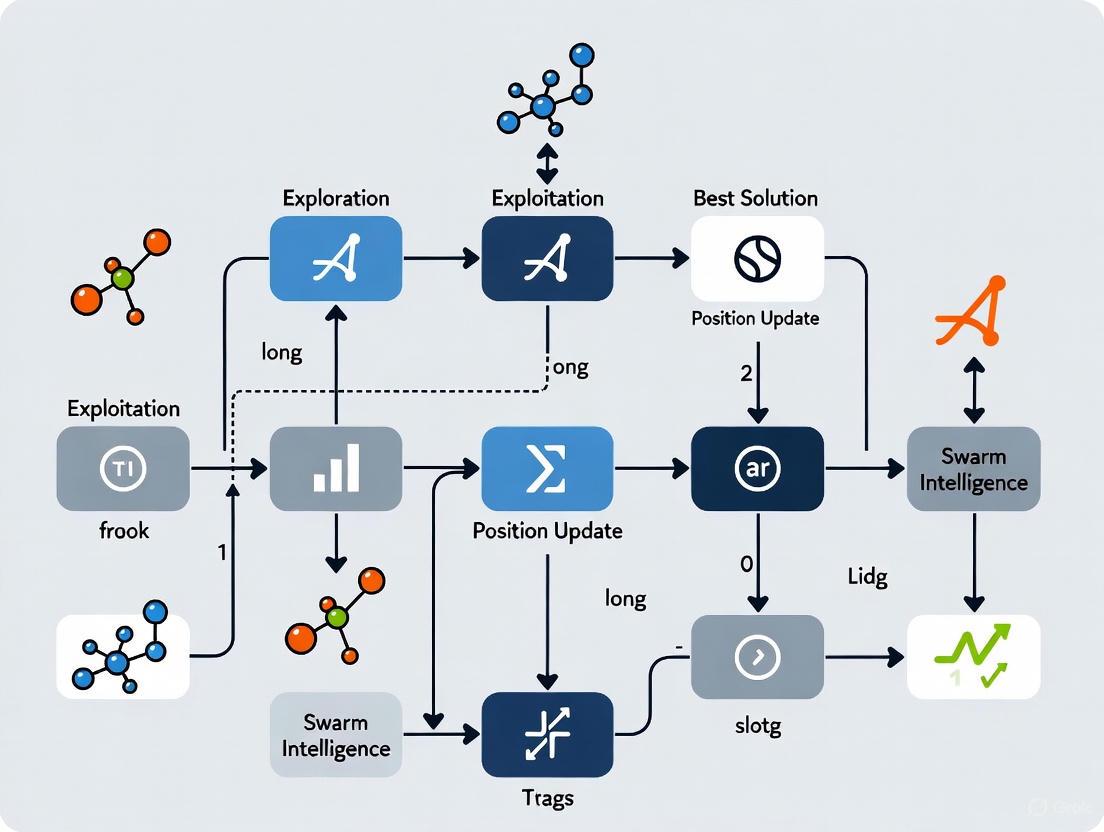

SSA Core Workflow and Improvement Pathways

The following diagram illustrates the fundamental workflow of the Salp Swarm Algorithm and integrates key improvement strategies to address the exploration-exploitation balance.

Decoding the Leader-Follower Dynamics in SSA Population Structure

Frequently Asked Questions (FAQs)

1. What is the core principle behind the leader-follower dynamic in the Salp Swarm Algorithm (SSA)?

The Salp Swarm Algorithm (SSA) is a nature-inspired metaheuristic that simulates the swarming behavior of salps, marine organisms that form long chains for efficient locomotion and foraging. The core population structure divides the salp swarm into two distinct roles [1]:

- Leader: The first salp in the chain, responsible for guiding the group's movement. Its position is updated with respect to the best-known food source (the current optimal solution in the search space) [1] [7].

- Followers: The remaining salps in the chain. They do not follow the leader directly; instead, each follower updates its position relative to its immediate predecessor, so the leader's movement propagates down the chain in a cascading manner [1].

This division creates a cooperative mechanism where the leader explores promising regions, and the followers exploit and refine these areas, balancing global and local search [1].

2. My SSA implementation is converging to sub-optimal solutions. What could be the cause and how can I improve it?

Premature convergence is a recognized limitation of the standard SSA, often caused by an imbalance between exploration and exploitation or a lack of population diversity [6] [7] [8]. Several enhanced methodologies have been proposed to address this:

- Incorporate Opposition-Based Learning (OBL): Use OBL during population initialization and later iterations to enhance population diversity and exploration capabilities [6] [8].

- Employ a Novel Local Search: Integrate a local search algorithm, such as a Variable Neighborhood Search (VNS), to improve exploitation and refine solutions in the local region around the best-found salp [6] [9].

- Implement a Self-Learning Mechanism: Develop an adaptive SSA variant where search agents can dynamically select from multiple search strategies (e.g., a novel multi-food source strategy) based on their past performance. This allows the algorithm to self-adapt to the problem's fitness landscape [7].

- Modify the Leader Update Strategy: Enhance the leader's exploration by modifying its position update formula to prevent over-reliance on a single food source and help the chain escape local optima [9].
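The opposition-based initialization mentioned above can be sketched as follows. It uses the basic opposite point lb + ub − x and keeps the fitter half of the combined population; this is the textbook OBL scheme, shown here as an assumption rather than the exact EOSSA or elite-OBL procedure [6] [8]. The function name `obl_init`, the asymmetric bounds, and the sphere objective are illustrative.

```python
import numpy as np


def obl_init(objective, lb, ub, n, rng):
    """Opposition-based initialisation (minimisation).

    Sample n random salps, build their opposites lb + ub - x, and keep the
    n fittest of the 2n candidates."""
    pop = rng.uniform(lb, ub, size=(n, len(lb)))
    opposite = lb + ub - pop                  # reflect each point through the box centre
    candidates = np.vstack([pop, opposite])
    fit = np.array([objective(x) for x in candidates])
    keep = np.argsort(fit)[:n]                # indices of the n best candidates
    return candidates[keep], fit[keep]


rng = np.random.default_rng(7)
lb, ub = np.full(3, -2.0), np.full(3, 8.0)
pop, fit = obl_init(lambda x: float(np.sum(x ** 2)), lb, ub, n=10, rng=rng)
print(pop.shape, fit[:3])
```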

3. How do I adapt the continuous SSA for discrete optimization problems like feature selection?

Applying SSA to discrete problems, such as feature selection, requires a transformation step. The standard process involves [6] [9]:

- Run Standard SSA: Execute the continuous SSA algorithm as usual. The positions of salps in the multi-dimensional search space represent potential solutions.

- Apply a Transformation Function: Convert the continuous position values of each salp into a binary form (0 or 1). A common method is to use the Sigmoid function as a binary transform. For each dimension, the value is set to 1 if the Sigmoid output of the position is greater than a random number, otherwise it is set to 0. A value of 1 indicates the feature is selected, and 0 indicates it is discarded [6].

- Evaluate Fitness: Use a wrapper-based method, where a classifier (e.g., an MLP neural network) evaluates the fitness (e.g., classification accuracy) of the binary feature subset [9].
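The Sigmoid transform in the second step can be sketched as below. It follows the rule described above (bit = 1 when the Sigmoid of the position exceeds a uniform random number); the function name `to_binary` is hypothetical, and the wrapper fitness from the third step (a classifier evaluated on the selected columns) is left out.

```python
import numpy as np


def to_binary(position, rng):
    """Map a continuous salp position to a 0/1 feature mask.

    Each dimension is set to 1 when sigmoid(x) > r, with r ~ U(0, 1) drawn
    independently per dimension; 1 = feature selected, 0 = discarded."""
    prob = 1.0 / (1.0 + np.exp(-position))
    return (prob > rng.random(position.shape)).astype(int)


rng = np.random.default_rng(3)
salp = np.array([-4.0, 0.0, 2.5, 6.0, -0.5])
mask = to_binary(salp, rng)
selected = np.flatnonzero(mask)   # indices of the selected features
print(mask, selected)
```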

4. What are the key parameters in SSA that need careful tuning, particularly concerning leader-follower dynamics?

The most critical parameter in SSA is c1, which is designed to balance exploration and exploitation over the course of iterations [1]. The parameter c1 is updated as [1]:

$$c_1 = 2e^{-\left(\frac{4l}{L}\right)^2}$$

Where:

- $l$ is the current iteration number.

- $L$ is the maximum number of iterations.

This equation ensures c1 decreases adaptively over time, favoring exploration (larger steps) in early iterations and exploitation (finer steps) in later iterations [1]. Other parameters like c2 and c3 are random numbers that introduce stochasticity into the search process [1].
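Evaluating the c1 formula at a few points shows how quickly it decays: c1 is 2 at l = 0, 2e^(-1) ≈ 0.736 at l = L/4, 2e^(-4) ≈ 0.0366 at l = L/2, and 2e^(-16) ≈ 2.25 × 10^-7 at l = L. The helper below (a hypothetical name) simply codes the formula above.

```python
import math


def c1_schedule(l, L):
    """SSA exploration coefficient c1 = 2 * exp(-(4 l / L)^2)."""
    return 2.0 * math.exp(-((4.0 * l / L) ** 2))


L = 100
for l in (0, 25, 50, 100):
    print(f"l = {l:3d}  c1 = {c1_schedule(l, L):.3g}")
```

Most of the decay happens in the first half of the run, so during the second half the leader takes very small steps around the food source; this is one motivation for the adaptive replacements of c1 discussed in this article.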

Troubleshooting Common Experimental Issues

Problem: The salp chain fragments, leading to poor convergence.

- Symptoms: The algorithm fails to converge, or the fitness of the population deteriorates.

- Solution: Implement a chain rejoining method. If the chain breaks (e.g., due to a salp moving out of bounds), allow each isolated salp to identify and reconnect with the best salp within its neighborhood, reforming the chain structure and restoring information flow [9].

Problem: High computational complexity when solving complex problems.

- Symptoms: The algorithm takes an excessively long time to find a satisfactory solution.

- Solution:

- Introduce an "IC counter" to track iterations where the best solution does not improve. This can be used to trigger local searches only when necessary, reducing unnecessary computations [9].

- For multi-objective problems, maintain a repository of non-dominated solutions. Implement a repository maintenance procedure to manage its size efficiently, preventing computational bottlenecks [1].
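A minimal sketch of repository maintenance for a multi-objective SSA, assuming every objective is minimised. The names `dominates` and `update_repository` are hypothetical, and when the archive overflows this sketch removes a random member; crowding- or neighbourhood-based removal, which better preserves spread along the Pareto front, is a common alternative.

```python
import numpy as np


def dominates(a, b):
    """True if objective vector a Pareto-dominates b (minimisation)."""
    return bool(np.all(a <= b) and np.any(a < b))


def update_repository(repo, candidate, max_size, rng):
    """Insert candidate into a list of mutually non-dominated objective vectors."""
    # Reject the candidate if an archived member dominates or duplicates it.
    if any(dominates(r, candidate) or np.array_equal(r, candidate) for r in repo):
        return repo
    # Drop members that the candidate dominates, then add it.
    repo = [r for r in repo if not dominates(candidate, r)]
    repo.append(candidate)
    # Size control (simplified): remove a random member when over capacity.
    if len(repo) > max_size:
        repo.pop(int(rng.integers(len(repo))))
    return repo


rng = np.random.default_rng(0)
repo = []
for v in ([1, 5], [2, 2], [5, 1], [3, 3], [0.5, 6]):
    repo = update_repository(repo, np.array(v, dtype=float), max_size=10, rng=rng)
print([r.tolist() for r in repo])  # [[1.0, 5.0], [2.0, 2.0], [5.0, 1.0], [0.5, 6.0]]
```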

Problem: The algorithm gets stuck in local optima when applied to high-dimensional datasets.

- Symptoms: Consistent convergence to a solution that is not globally optimal.

- Solution: Combine multiple strategies for a robust approach:

Experimental Protocols & Data Presentation

Table 1: Enhanced SSA Variants and Their Core Methodologies

| Algorithm Variant | Core Enhancement | Primary Application Domain | Key Improvement Reported |
|---|---|---|---|
| Self-learning SSA (SLSSA) [7] | Dynamic selection from four search strategies based on a probability model. | Global Optimization, MLP Model Training | Higher solution accuracy, stability, and convergence speed. |
| EOSSA [6] | Opposition-Based Learning, Elite OBL, and Variable Neighborhood Search. | Feature Selection in Intrusion Detection | Superior accuracy and fewer selected features compared to 18 other algorithms. |
| OPLSSA [8] | Pinhole-Imaging-Based Learning and Orthogonal Experimental Design. | Global Optimization (CEC2017 Benchmarks) | Better performance in escaping local optima. |
| ISSA (for Feature Selection) [9] | Novel leader update, chain rejoining, and a novel local search algorithm. | Feature Selection on UCI Datasets | Higher classification accuracy and reduced feature subsets. |

Table 2: Key Research Reagent Solutions for SSA Experimentation

| Reagent / Component | Function in the SSA Framework | Example / Note |
|---|---|---|
| Opposition-Based Learning (OBL) | Enhances population diversity during initialization and search. | Calculates opposite positions to explore unseen regions of the search space [6]. |
| Variable Neighborhood Search (VNS) | A local search operator to improve exploitation and refine solutions. | Used in EOSSA to deepen the search around promising solutions [6]. |
| Sigmoid Function | Converts continuous salp positions to binary values for discrete problems. | Essential for feature selection tasks; determines if a feature is selected (1) or not (0) [6]. |
| Repository (for Multi-Objective SSA) | Stores a set of non-dominated Pareto optimal solutions. | Requires a maintenance mechanism to manage size and diversity [1]. |
| Self-Learning Probability Model | Dynamically adjusts the usage frequency of different search strategies. | Allows the algorithm to adapt its behavior based on the success history of each strategy [7]. |

The Scientist's Toolkit: Visualizing SSA Dynamics

The following diagram illustrates the core workflow and leader-follower position updates in the standard Salp Swarm Algorithm.

Diagram: SSA Workflow and Update Mechanisms. This diagram shows the iterative process of SSA. The leader's position is updated relative to the best-known solution (F), while followers update their positions based on the average of their own and their predecessor's position, creating the chain movement [1].

Understanding the Exploration-Exploitation Trade-Off in Optimization

Frequently Asked Questions (FAQs)

1. What is the exploration-exploitation trade-off and why is it a problem in my SSA experiments?

The exploration-exploitation dilemma is a fundamental challenge in decision-making where you must balance gathering new information (exploration) with using existing knowledge to maximize rewards (exploitation) [10]. In simple terms, it’s choosing between trying something new to see if it’s better versus sticking with what already works [10].

In the context of the Salp Swarm Algorithm (SSA), this trade-off is critical. The basic SSA has a propensity to fall into local optima [11], meaning it exploits known regions of the search space too greedily without sufficiently exploring potentially better, undiscovered areas. Like many swarm intelligence algorithms, it relies on a "fixed and monotonic search pattern" for each agent [7]. When your drug discovery objective function is complex and multimodal, this imbalance can prevent you from finding the globally optimal molecular structure.

2. How can I quantitatively diagnose a poor explore-exploit balance in my SSA runs?

You can diagnose this issue by monitoring the following quantitative metrics during your optimization experiments:

- Population Diversity: Track the average distance of salps from the population centroid or the leader. A rapid decrease and stabilization at a very low value indicates premature convergence and over-exploitation.

- Fitness Stagnation: Record the number of consecutive iterations where the improvement in the global best fitness (the food source) falls below a negligible threshold. Prolonged stagnation suggests the algorithm is trapped and not exploring effectively.

- Reward History of Search Strategies: If using an advanced algorithm like the Self-learning SSA (SLSSA) [7], monitor the reward history associated with each search strategy. A single strategy dominating the others can indicate an imbalance.
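The first two metrics can be tracked with a few lines of code. This is a sketch under assumptions: diversity is measured as the mean Euclidean distance to the population centroid, `tol` is an arbitrary improvement threshold, and the names `population_diversity` and `StagnationCounter` are hypothetical. Calling both once per iteration and logging the values is enough to spot the over-exploitation pattern in the table below (diversity collapsing early while the stagnation count keeps growing).

```python
import numpy as np


def population_diversity(pop):
    """Mean Euclidean distance of the salps from the population centroid."""
    centroid = pop.mean(axis=0)
    return float(np.mean(np.linalg.norm(pop - centroid, axis=1)))


class StagnationCounter:
    """Count consecutive iterations in which the global best fitness improved
    by less than tol (minimisation)."""

    def __init__(self, tol=1e-8):
        self.tol = tol
        self.best = np.inf
        self.count = 0

    def update(self, best_fitness):
        if self.best - best_fitness > self.tol:   # meaningful improvement
            self.best, self.count = best_fitness, 0
        else:
            self.count += 1
        return self.count


counter = StagnationCounter(tol=1e-6)
history = [10.0, 5.0, 5.0, 5.0 - 1e-9, 4.0]
counts = [counter.update(f) for f in history]
print(counts)                                                    # [0, 0, 1, 2, 0]
print(population_diversity(np.array([[0.0, 0.0], [2.0, 0.0]])))  # 1.0
```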

The table below summarizes a framework for analyzing this balance, adapted from a mean-variance approach used in molecular generation [12] [13].

Table: Framework for Analyzing Exploration-Exploitation Performance

| Metric | Indicates Over-Exploitation | Indicates Over-Exploration | Target Balance |
|---|---|---|---|
| Population Diversity | Rapidly decreases and remains very low | Fluctuates widely without a general convergence trend | Gradually decreases over time as the search focuses |
| Fitness Stagnation | Occurs early in the run, best fitness is poor | Occurs frequently, with no clear convergence | Occurs later in the run after a period of steady improvement |
| Strategy Rewards (SLSSA) | One strategy (e.g., local search) has a very high reward | All strategies have similar, low rewards | Multiple strategies earn significant, balanced rewards [7] |

3. What are the most effective strategies to improve this balance in SSA for drug design problems?

Several strategies have been developed to enhance the SSA's ability to balance exploration and exploitation:

- Adaptive Parameter Control: Instead of fixed parameters, use adaptive mechanisms for critical parameters like c1 and α. For example, the Enhanced Knowledge-based SSA (EKSSA) uses an exponential function to adaptively balance the leader's and followers' movements [11].

- Multiple Search Strategies: Equip the algorithm with a portfolio of distinct search strategies. The Self-learning SSA (SLSSA) uses four different strategies and employs a "self-learning mechanism" that dynamically assigns execution probability to each strategy based on its recent performance in producing quality solutions [7].

- Learning from Past Performance: Implement a reward calculation scheme. In SLSSA, strategies that successfully improve solutions are given reasonable rewards, which then influences how often they are used in future iterations [7].

- Mutation and Learning Operators: Integrate mechanisms like a Gaussian walk-based position update to enhance global search ability and a dynamic mirror learning strategy to create mirrored search regions and escape local optima, as seen in EKSSA [11].

Table: Comparison of Advanced SSA Variants

| Algorithm | Key Mechanism for Balance | Reported Advantage | Potential Drawback |
|---|---|---|---|
| Self-learning SSA (SLSSA) [7] | Self-learning strategy with a probability model and four distinct search strategies. | Dynamically adapts to problems with various characteristics; superior convergence speed and accuracy. | Marginal increase in computational time [7]. |
| Enhanced Knowledge SSA (EKSSA) [11] | Adaptive parameters c1/α, Gaussian walk mutation, dynamic mirror learning. | Superior performance in numerical optimization and seed classification tasks; prevents local optima. | Requires configuration of new strategy parameters. |

Experimental Protocols & Methodologies

Protocol: Implementing a Self-Learning Mechanism in SSA

This protocol is based on the methodology of the Self-learning Salp Swarm Algorithm (SLSSA) [7].

- Define a Strategy Pool: Assemble a portfolio of at least four distinct search strategies. These should include the original SSA leader-follower updates plus additional strategies like a novel multiple food sources search or generalized oppositional learning [7].

- Initialize a Probability Model: Assign an equal initial probability to each strategy in the pool.

- Iterate and Execute:

- For each salp in the population, select a search strategy from the pool according to the current probability distribution.

- Execute the selected strategy to generate a new candidate solution.

- Evaluate the fitness of the new candidate.

- Calculate Rewards and Update Probabilities:

- After a predefined number of iterations (learning period), calculate a reward for each strategy. The reward is based on the ratio of fitness improvement contributed by the strategy to the total improvement of the population [7].

- Update the probability of selecting each strategy. Strategies with higher rewards receive a higher probability in the next learning period.

- Repeat: Continue the iterative process of selection, execution, and reward-based probability updates until the termination criteria are met.
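The selection and reward steps of this protocol can be sketched as a small class. It follows the description above (rewards as each strategy's share of the population's total fitness improvement within a learning period), but the probability floor `floor`, the direct mapping from reward share to probability, and the class name `StrategySelector` are assumptions of the sketch rather than the published SLSSA update rules [7]. The search strategies themselves are represented only by integer indices.

```python
import numpy as np


class StrategySelector:
    """Reward-based selection among k search strategies (minimisation)."""

    def __init__(self, k, rng, floor=0.05):
        self.k = k
        self.rng = rng
        self.floor = floor                 # probability mass always spread evenly
        self.p = np.full(k, 1.0 / k)       # equal initial probabilities
        self.gain = np.zeros(k)            # improvement credited in this period

    def choose(self):
        """Pick a strategy index according to the current probabilities."""
        return int(self.rng.choice(self.k, p=self.p))

    def record(self, s, old_fit, new_fit):
        """Credit strategy s with the fitness improvement it produced."""
        self.gain[s] += max(0.0, old_fit - new_fit)

    def end_period(self):
        """Turn each strategy's share of total improvement into probabilities."""
        total = self.gain.sum()
        if total > 0:
            reward = self.gain / total
            self.p = self.floor / self.k + (1.0 - self.floor) * reward
        self.gain[:] = 0.0


sel = StrategySelector(4, np.random.default_rng(0))
sel.record(0, 10.0, 4.0)   # strategy 0 improved a salp by 6
sel.record(1, 10.0, 8.0)   # strategy 1 improved a salp by 2
sel.record(2, 5.0, 7.0)    # strategy 2 made things worse: no credit
sel.end_period()
print(sel.p)
```

The floor keeps every strategy selectable, so a strategy that happened to perform poorly in one learning period can still earn rewards later.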

The following workflow diagram illustrates the self-learning adaptation process in SLSSA:

The Scientist's Toolkit: Research Reagent Solutions

This table details key computational "reagents" used in advanced SSA research for balancing exploration and exploitation.

Table: Essential Components for Enhancing SSA

| Research Reagent (Component) | Function in the Algorithm | Application Context |
|---|---|---|
| Multiple Search Strategy Pool [7] | Provides a diverse set of behaviors for agents, enabling adaptation to different search space geometries. | Core to self-learning algorithms like SLSSA. Replaces the single, fixed update rule of basic SSA. |
| Probability Model [7] | The mechanism that dictates how often each search strategy is used. It is the "brain" of the self-learning system. | Used in SLSSA to track and update the selection probability of each strategy in the pool based on rewards. |
| Reward Calculation Scheme [7] | Quantifies the effectiveness of each search strategy, typically based on the fitness improvement of solutions it produces. | Feeds back into the probability model in SLSSA to reinforce successful strategies and suppress poor ones. |
| Adaptive Parameter c₁ [11] | A key parameter in SSA that controls the step size of the leader. Adaptive control directly balances exploration vs. exploitation. | Implemented in EKSSA using an exponential function to adjust c₁ over iterations. |
| Gaussian Walk Mutation [11] | A perturbation operator that uses a Gaussian (normal) distribution to create random moves, enhancing global search capability. | Applied in EKSSA after the basic position update to help salps jump out of local optima. |
| Dynamic Mirror Learning [11] | Generates mirrored copies of solutions in the search space to explore symmetrical regions, strengthening local search. | Used in EKSSA to expand the search domain around promising areas and refine solutions. |

This technical support center provides troubleshooting guidance for researchers facing the common challenge of premature convergence in the basic Salp Swarm Algorithm (SSA). The content is structured to help you diagnose issues, understand the underlying causes, and implement proven solutions to improve your algorithm's performance.

Frequently Asked Questions (FAQs)

Why does my SSA simulation consistently converge to a suboptimal solution?

This is a classic symptom of premature convergence, where the algorithm gets trapped in a local optimum. The basic SSA's search strategy lacks precision in guiding the population toward the global optimal regions of the solution space. Its follower salps rely heavily on the leader, which can cause the entire chain to stagnate if the leader is not in a promising area [14]. Furthermore, the algorithm often suffers from a lack of population diversity and insufficient exploitation mechanisms to refine solutions once a promising region is found [9] [15].

What is the fundamental reason for SSA's imbalance between exploration and exploitation?

The core issue lies in the algorithm's design. The leader's update mechanism may not explore the search space effectively, while the followers' movement is overly dependent on the leader's position. This can lead to a lack of diversity and cause the swarm to converge prematurely [9]. Research indicates that SSA has a "weak exploitation strength for neighbor exploration," meaning it struggles to perform fine-grained searches around good solutions to find the very best one [15].

Are there quantitative studies that demonstrate this weakness?

Yes, numerous studies have benchmarked SSA against standard test suites. The basic SSA demonstrates slower convergence rates and higher probabilities of getting stuck in local optima compared to enhanced variants, especially on complex, multimodal functions from benchmark sets like CEC 2017 and CEC 2020 [14] [16]. The table below summarizes performance comparisons from recent literature.

Quantitative Performance Evidence

The following table summarizes key findings from recent studies that highlight the limitations of the basic SSA and the improvements achieved by modified versions.

| Algorithm Variant | Key Enhancement | Reported Improvement Over Basic SSA | Source |
|---|---|---|---|
| Evolutionary SSA (ESSA) | Evolutionary search strategies & advanced memory mechanism | Ranked 1st in optimization effectiveness (84.48%, 96.55%, 89.66% for 30/50/100 dimensions) | [14] |
| Competitive Learning SSA (CLSSA) | Integration with Competitive Swarm Optimization (CSO) | Outperformed other optimizers in 86% of CEC 2015 benchmark functions | [17] |
| Locally Weighted SSA (LWSSA) | Locally weighted approach & mutation operator | Enhanced optimization ability and predictive power for cardiovascular risk assessment | [16] |
| Local Search SSA (LS-SSA) | Incorporation of a local search technique | Improved convergence rate and escape from local minima stagnation | [15] |

Experimental Protocol: Diagnosing Local Optima Issues

To systematically identify and confirm local optima problems in your SSA experiments, follow this diagnostic workflow.

SSA Local Optima Diagnosis Workflow

Enhanced SSA Methodology

Based on successful research, here are two core strategies to mitigate local optima entrapment in SSA. The logical relationship between these enhancement strategies and their goals is illustrated below.

SSA Enhancement Strategies

Detailed Enhancement Protocols

- Implement an Advanced Memory Mechanism

- Purpose: To enhance diversity and prevent premature convergence by storing not only the best solutions but also some inferior ones, which can provide valuable genetic diversity for future iterations [14].

- Procedure:

- Initialize an empty archive.

- At each iteration, store the best solution found.

- Also, store a small number of randomly selected inferior solutions based on a stochastic universal selection method that considers their fitness values [14].

- In subsequent iterations, allow the population to interact with solutions from this archive to influence movement, thereby introducing new search directions.

Integrate a Local Search and Mutation Operator

- Purpose: To guide the search toward locally promising regions and inject randomness to escape local optima [16].

- Procedure:

- Locally Weighted Search: After the standard SSA update, for each salp, probe its immediate neighborhood. Evaluate fitness in this local region and refine the salp's position toward the best neighboring solution [16].

- Mutation for Followers: Apply a mutation operator to the positions of the follower salps. This generates new random positions, increasing the randomness and exploration capability throughout the search process and helping the algorithm break out of local traps [16].
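A minimal sketch of both operators, assuming NumPy, a minimization problem, and that row 0 of the population array is the leader. The exact locally weighted formula and mutation rule used in LWSSA are not given here, so this substitutes a plain greedy neighbourhood probe and a uniform-reset mutation; `local_probe`, `mutate_followers`, `radius`, `n_neighbors` and `rate` are hypothetical names.

```python
import numpy as np


def local_probe(x, f, objective, lb, ub, radius, n_neighbors, rng):
    """Sample neighbours in a box of half-width `radius` around x and move to the
    best one only if it improves on f (greedy local refinement)."""
    cand = x + rng.uniform(-radius, radius, size=(n_neighbors, x.size))
    cand = np.clip(cand, lb, ub)
    cand_f = np.array([objective(c) for c in cand])
    j = int(np.argmin(cand_f))
    if cand_f[j] < f:
        return cand[j], float(cand_f[j])
    return x, f


def mutate_followers(pop, lb, ub, rate, rng):
    """Uniform-reset mutation on followers only: each follower coordinate is
    redrawn uniformly in [lb, ub] with probability `rate`."""
    out = pop.copy()
    mask = rng.random(out.shape) < rate
    mask[0, :] = False  # row 0 is treated as the leader and left untouched
    random_pos = rng.uniform(lb, ub, size=out.shape)
    out[mask] = random_pos[mask]
    return out
```

Keeping the probe greedy means it can only improve a salp, while the mutation deliberately ignores fitness; the two therefore pull in opposite directions (exploitation versus exploration), which is the balance the procedure is after.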

The Scientist's Toolkit: Research Reagent Solutions

| Tool Name | Function in SSA Research |
|---|---|
| CEC Benchmark Suites (e.g., CEC 2017, CEC 2020, CEC 2015) | Standardized sets of test functions for rigorously evaluating algorithm performance, convergence speed, and robustness against premature convergence [14] [17] [15]. |
| Advanced Memory Archive | A data structure that stores a diverse set of solutions (both high and low fitness) during optimization to maintain population diversity and prevent premature convergence [14]. |
| Stochastic Universal Selection | A selection method used to regulate the archive by choosing individuals probabilistically based on their fitness, helping to preserve useful genetic traits [14]. |
| Local Search Heuristic (e.g., Locally Weighted Approach) | A subroutine that performs fine-grained, iterative probing and refinement of solutions within a neighborhood to improve local exploitation [16]. |
| Mutation Operator | A function that introduces random changes to salp positions (particularly followers) to increase exploration and help the algorithm escape local optima [16]. |

The Critical Role of Parameter c1 in Balancing Search Strategies

Troubleshooting Guide: Common Issues with Parameter c1

The parameter c1 in the Salp Swarm Algorithm (SSA) is crucial for balancing exploration (searching new areas) and exploitation (refining known good areas). Issues with this parameter often manifest in the following ways during experiments [18] [3].

Table 1: Troubleshooting Common c1-Related Issues

| Observed Symptom | Potential Root Cause | Recommended Solution |
|---|---|---|
| Algorithm converges prematurely to a local optimum | c1 value decreases too rapidly, forcing excessive exploitation and insufficient exploration [3]. | Implement an adaptive adjustment mechanism for c1 using a gradually decreasing exponential function to better balance the search phases [18] [3]. |
| Slow convergence speed; algorithm fails to settle on a solution | Poor balance between exploration and exploitation from a non-optimal static c1 value [16]. | Integrate a Gaussian walk-based position update after the initial c1-guided update to enhance global search capability and help escape local optima [3]. |
| Low diversity in the salp population in later iterations | Followers and leader lose exploration capability as the standard c1 mechanism stagnates [16]. | Employ a dynamic mirror learning strategy to expand the search domain by creating mirrored solutions, thereby strengthening local search and preventing stagnation [18] [3]. |

Frequently Asked Questions (FAQs)

Q1: What is the specific function of the parameter c1 in the standard Salp Swarm Algorithm?

In the standard SSA, c1 is the coefficient primarily responsible for balancing exploration and exploitation across iterations [3]. It is defined as \( c_1 = 2\,e^{-\left(\frac{4l}{T_{max}}\right)^2} \), where l is the current iteration and T_max is the maximum number of iterations [3]. Under this formula, c1 starts close to 2, which promotes exploration early in the search, and decays non-linearly towards zero, which favors exploitation as the algorithm converges [3].
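To make the decay concrete, the short Python function below evaluates the standard schedule c₁ = 2·exp(-(4·l/T_max)²), the same form listed in Table 3 of this article. It is only the baseline formula; the adaptive variants discussed next replace it with their own schedules.

```python
import math


def c1_standard(l, t_max):
    """Standard SSA coefficient c1 = 2 * exp(-(4 * l / t_max) ** 2)."""
    return 2.0 * math.exp(-((4.0 * l / t_max) ** 2))


# Example: sample the schedule over a 100-iteration run.
for l in (0, 25, 50, 100):
    print(l, round(c1_standard(l, 100), 6))
```

The value drops to 2/e (about 0.74) a quarter of the way through the run and is already below 0.04 at the halfway point, so most of the exploratory step size is spent in the first half of the iterations.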

Q2: Our research involves optimizing support vector machines (SVMs) for seed classification. The basic SSA performs poorly. What enhanced c1 strategies are proven to work?

Recent research has successfully addressed this exact problem. The Enhanced Knowledge-based SSA (EKSSA) incorporates an adaptive adjustment mechanism for the parameter c1 (and α) to more effectively balance the salp population's search behavior [18] [3]. When hybridized with an SVM classifier (forming EKSSA-SVM), this approach has demonstrated higher classification accuracy for seed classification tasks compared to the basic SSA and other state-of-the-art algorithms [18] [3]. The adaptive mechanism helps optimize the SVM's hyperparameters more effectively.

Q3: Are there other strategies that can complement c1 tuning to improve SSA's performance?

Yes, tuning c1 is highly effective, but it can be powerfully complemented by other strategies. Research shows that integrating a Gaussian walk-based position update after the initial update phase can significantly enhance the global search ability of individuals, helping the swarm escape local optima [3]. Furthermore, a dynamic mirror learning strategy can expand the search domain by creating mirrored solutions, which strengthens local search capability and further prevents premature convergence [3]. These strategies work synergistically with an adaptive c1.

Experimental Protocol: Implementing an Adaptive c1 Parameter

The following protocol is based on the Enhanced Knowledge-based SSA (EKSSA), which has been validated on thirty-two CEC benchmark functions and real-world classification tasks [18] [3].

1. Objective: To enhance the performance of SSA by replacing the standard c1 update rule with an adaptive mechanism that better balances exploration and exploitation.

2. Materials/Reagents: Table 2: Essential Research Reagent Solutions for Algorithm Testing

| Item Name | Function/Description |
|---|---|
| CEC Benchmark Test Functions (e.g., CEC2017, CEC2021) | A standardized set of numerical optimization problems used to rigorously evaluate and compare the performance of optimization algorithms against known global optima [16]. |
| Real-World Datasets (e.g., Seed Classification Data) | Applied datasets used to validate the algorithm's performance on practical problems, such as hyperparameter optimization for machine learning classifiers like SVM [18] [3]. |
| Comparative Algorithm Suite (e.g., GWO, PSO, AO, HBA) | A collection of other state-of-the-art optimization algorithms used for performance benchmarking to statistically prove the superiority of the proposed method [18] [3]. |

3. Methodology:

- Step 1: Algorithm Initialization. Initialize the salp population positions randomly within the search space boundaries as defined in the standard SSA [3].

- Step 2: Adaptive c1 Mechanism. Implement an adaptive adjustment for the parameter c1 using a strategy informed by exponential functions. This strategy is designed to manage the transition from exploration to exploitation across iterations more effectively than the basic SSA formula [18] [3].

- Step 3: Leader Position Update. Update the leader's position using the new adaptive c1 value. The core update equation remains \( X_j^{leader} = F_j \pm c_1\left((UB_j - LB_j)\,c_2 + LB_j\right) \), where the sign is determined by a random variable \( c_3 \) [3].

- Step 4: Complementary Enhancement Strategies. Apply the Gaussian walk-based position update and the dynamic mirror learning strategy to strengthen global search and avoid premature convergence [3].

- Step 5: Performance Evaluation. Execute the algorithm on the selected CEC benchmark functions and real-world datasets. Record performance metrics such as convergence speed, solution accuracy (best fitness value), and statistical significance compared to other algorithms [18] [3].
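Steps 1 to 3 can be sketched as a single position update, assuming NumPy. The caller supplies c1, so either the standard schedule or an adaptive one can be plugged in; the EKSSA adaptive formula itself is not reproduced. The 0.5 threshold on c3 and the midpoint follower rule follow the commonly used reference SSA code; the single leader (row 0) matches this article's description, whereas some implementations treat the first half of the swarm as leaders. The function name `ssa_step` is hypothetical.

```python
import numpy as np


def ssa_step(pop, food, c1, lb, ub, rng, n_leaders=1):
    """One SSA update: leaders jump around the food source F, followers move to
    the midpoint with their predecessor. Returns a new, bound-clipped array.

    pop: (N, D) positions; food: (D,) best solution; lb, ub: (D,) bounds.
    """
    new = pop.copy()
    n, d = pop.shape
    span = ub - lb
    for i in range(n_leaders):
        c2 = rng.random(d)
        c3 = rng.random(d)
        step = c1 * (span * c2 + lb)  # c1 * ((UB_j - LB_j) * c2 + LB_j)
        new[i] = np.where(c3 < 0.5, food + step, food - step)
    for i in range(n_leaders, n):
        # Follower update uses the predecessor's already-updated position.
        new[i] = 0.5 * (new[i] + new[i - 1])
    return np.clip(new, lb, ub)
```

Because followers average with their predecessor, information from the leader propagates down the chain one salp per update, which is what gives SSA its gradual, chain-like exploitation.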

Workflow Visualization: Adaptive c1 in EKSSA

The following diagram illustrates the integration of the adaptive c1 parameter and complementary strategies within the Enhanced Knowledge Salp Swarm Algorithm workflow.

Advanced Strategies and Hybrid Models for Enhanced SSA Performance

Adaptive Parameter Control Mechanisms for Dynamic Balance

This technical support center provides targeted guidance for researchers implementing adaptive parameter control mechanisms in Salp Swarm Algorithm (SSA) variants. These resources address the critical challenge of balancing exploration and exploitation—a core focus in modern SSA research—particularly for applications in computational drug discovery and complex engineering optimization. The following troubleshooting guides, experimental protocols, and visualizations directly support scientists in overcoming common implementation barriers.

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: My improved SSA variant converges prematurely to local optima. Which parameter control strategies can improve exploration?

- A: Premature convergence often indicates insufficient exploration capability. Implement these adaptive mechanisms:

- Gaussian Mutation Strategy: After basic position updates, apply a Gaussian walk-based position update to enhance global search ability [3]. The Gaussian distribution's random nature helps escape local optima.

- Dynamic Mirror Learning: Create mirrored search regions around current solutions to expand the search domain and strengthen local search capability, preventing stagnation [3].

- Hybrid Mutation Operators: Integrate Cauchy-Gaussian phased mutation operators. Use Cauchy mutations for global exploration in early phases and Gaussian mutations for local refinement in later phases [19].
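A minimal sketch of a phased Cauchy-Gaussian mutation, assuming NumPy. The exact operator in [19] is not given here; this version switches from Cauchy to Gaussian noise at a fixed fraction of the run, and the step `scale` (a fraction of the search range) and the `switch` point are hypothetical settings.

```python
import numpy as np


def phased_mutation(x, t, t_max, lb, ub, rng, scale=0.05, switch=0.5):
    """Cauchy-distributed perturbation before `switch * t_max`, Gaussian afterwards.

    Cauchy noise has heavy tails, so early mutants occasionally make long jumps
    (exploration); Gaussian noise keeps late mutants close to x (refinement).
    The step is `scale` times the search range; the result is clipped to [lb, ub].
    """
    if t < switch * t_max:
        noise = rng.standard_cauchy(x.shape)
    else:
        noise = rng.standard_normal(x.shape)
    return np.clip(x + scale * (ub - lb) * noise, lb, ub)
```

In practice the mutant usually replaces the original only if its fitness is better, so the heavy-tailed early phase can explore without degrading the population.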


Q2: How can I automatically balance exploration and exploitation across different optimization phases?

- A: Effective phase-balancing requires adaptive parameter adjustment:

- Adaptive c₁ Parameter: Implement an exponential function to adaptively adjust parameter c₁ based on iteration count, enabling a smooth transition from exploration to exploitation [3] [5].

- Logarithmic Adaptive Parameters: Use logarithmic functions to control the extent of exploration and exploitation throughout generations, providing a mathematically sound transition mechanism [5].

- Cosine Annealing Strategy: Incorporate a cosine annealing strategy to dynamically regulate flock proportions and update cycles, maintaining search diversity while improving convergence precision [19].
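The cosine annealing idea can be illustrated with the standard annealing curve v(t) = v_min + ½(v_max − v_min)(1 + cos(πt/T)). How [19] applies it to flock proportions is not specified here, so the sketch below assumes, hypothetically, that it sets the share of leaders, decaying from `r_max` to `r_min` so that more salps explore early and more follow later.

```python
import math


def cosine_annealed(t, t_max, v_max, v_min):
    """Cosine annealing: v_max at t = 0, v_min at t = t_max."""
    return v_min + 0.5 * (v_max - v_min) * (1.0 + math.cos(math.pi * t / t_max))


def leader_count(t, t_max, pop_size, r_max=0.5, r_min=0.1):
    """Hypothetical role allocation: number of leaders in a swarm of pop_size,
    with the leader share cosine-annealed from r_max down to r_min."""
    share = cosine_annealed(t, t_max, r_max, r_min)
    return max(1, round(share * pop_size))
```

Compared with a linear decay, the cosine curve stays near `r_max` at the start and near `r_min` at the end, so the swarm spends longer in each regime and transitions quickly in the middle of the run.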


Q3: The optimization performance of my SSA implementation is highly sensitive to initial population quality. How can I mitigate this?

- A: Population initialization quality significantly impacts final results:

- Elite Perturbation Initialization: Combine low-discrepancy sequences (like good point sets) with Gaussian perturbations to generate uniformly distributed initial populations with enhanced local exploration capabilities [19].

- Memory Archive Mechanism: Implement an advanced memory mechanism that stores both best and inferior solutions identified during optimization, enhancing diversity and preventing premature convergence [14].


Q4: What methods can maintain population diversity throughout the optimization process to avoid stagnation?

- A: Maintaining diversity requires deliberate algorithmic design:

- Stochastic Universal Selection: Regulate the solution archive by selecting individuals according to their fitness values, preserving useful genetic material [14].

- Dynamic Role Allocation: Develop a dynamic role allocation mechanism that adaptively adjusts subgroup proportions and update cycles based on population diversity metrics [19].

- Chain Rejoining Method: When the salp chain becomes fragmented (simulating real-world disruptions), implement a method where each salp reconnects with the best neighbor, maintaining collective search capability [9].


Experimental Protocols and Methodologies

Protocol 1: Benchmarking Adaptive SSA Variants Using CEC Functions

This protocol provides a standardized methodology for evaluating the performance of adaptive SSA variants, based on established experimental practices in the field [14] [3].

- Objective: Quantitatively evaluate the optimization performance, convergence speed, and solution quality of novel SSA variants.

- Materials: CEC 2017 and CEC 2020 benchmark function suites [14].

- Experimental Setup:

- Population Size: 30-50 individuals

- Dimensions: 30, 50, and 100 dimensions [14]

- Maximum Iterations: 1000 iterations or until convergence criteria met

- Comparison Algorithms: Include basic SSA, GWO, PSO, and other state-of-the-art algorithms

- Procedure:

- Initialize populations using elite perturbation initialization [19].

- For each iteration, update leader positions using the adaptive c₁ parameter [3].

- Apply Gaussian walk-based position updates to enhance global search [3].

- Implement dynamic mirror learning for local refinement [3].

- Update memory archive with best and inferior solutions [14].

- Record best fitness, convergence curves, and computational time.

- Performance Metrics:

- Solution quality (best fitness value)

- Convergence speed (iterations to reach threshold)

- Statistical significance (Wilcoxon signed-rank test)

- Optimization effectiveness (percentage outperforming other algorithms) [14]
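The bookkeeping for these metrics can be sketched with NumPy alone. `optimizer(objective, rng)` is a hypothetical interface returning the best fitness of one run, and the effectiveness function implements one simple reading of "percentage of functions on which an algorithm is best"; published definitions differ in how ties and losses are counted. For the Wilcoxon signed-rank test, SciPy's `scipy.stats.wilcoxon(x, y)` can be applied to the paired per-run results of two algorithms.

```python
import numpy as np


def run_trials(optimizer, objective, n_runs, seed=0):
    """Best fitness from n_runs independent runs; optimizer(objective, rng) -> float."""
    return np.array([optimizer(objective, np.random.default_rng(seed + r))
                     for r in range(n_runs)])


def iterations_to_threshold(curve, threshold):
    """First iteration at which a best-so-far curve reaches `threshold`, else None."""
    hits = np.flatnonzero(np.asarray(curve, dtype=float) <= threshold)
    return int(hits[0]) if hits.size else None


def effectiveness(mean_table, alg, tol=1e-12):
    """Percentage of functions where `alg` has the lowest (ties allowed) mean fitness.

    mean_table[algorithm][function] -> mean best fitness over independent runs.
    """
    others = [a for a in mean_table if a != alg]
    funcs = list(mean_table[alg])
    wins = sum(all(mean_table[alg][f] <= mean_table[o][f] + tol for o in others)
               for f in funcs)
    return 100.0 * wins / len(funcs)
```

Seeding each run with `seed + r` makes every algorithm see the same sequence of seeds, which keeps the per-run results paired for the signed-rank test.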

Protocol 2: SSA for Hyperparameter Optimization in Drug Discovery

This protocol adapts SSA for optimizing machine learning classifiers in pharmaceutical applications, particularly relevant for drug discovery pipelines [3] [20].

- Objective: Optimize Support Vector Machine (SVM) hyperparameters for compound classification in drug discovery.

- Materials: Chemical compound datasets (e.g., PubChem, ChemBank) [20], SVM classifier.

- Experimental Setup:

- Search Space: SVM hyperparameters (C, γ)

- Fitness Function: Classification accuracy via cross-validation

- Population Size: 20-30 salps

- Adaptive Parameters: Exponential adjustment of c₁ and α [3]

- Procedure:

- Initialize salp population with random hyperparameter values within bounds.

- For each salp, train SVM with proposed hyperparameters and evaluate accuracy.

- Update leader positions toward best-performing hyperparameters.

- Apply Gaussian mutation to 20% of population to escape local optima.

- Implement dynamic mirror learning around top 10% solutions.

- Continue for 100 iterations or until accuracy plateaus.

- Validation: Compare with grid search, random search, and other optimization algorithms.
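The link between a salp and an SVM can be sketched as a decoding step plus a fitness wrapper, assuming NumPy. Each salp position is treated as (log₂C, log₂γ); the bounds follow the coarse grid commonly suggested in the LIBSVM practical guide and are an assumption, not values from [3]. `cv_accuracy(C, gamma)` is a user-supplied callable; with scikit-learn it could be `lambda C, g: cross_val_score(SVC(C=C, gamma=g), X, y, cv=5).mean()`, where `X` and `y` stand for the compound dataset.

```python
import numpy as np

# Position = (log2 C, log2 gamma); assumed bounds from the LIBSVM coarse grid.
LB = np.array([-5.0, -15.0])
UB = np.array([15.0, 3.0])


def decode(position):
    """Map a salp position in log2 space to (C, gamma), clipped to the bounds."""
    log_c, log_g = np.clip(position, LB, UB)
    return float(2.0 ** log_c), float(2.0 ** log_g)


def make_fitness(cv_accuracy):
    """Turn cv_accuracy(C, gamma) -> mean cross-validated accuracy into a minimization
    objective (1 - accuracy). Results are cached because each call retrains the SVM."""
    cache = {}

    def fitness(position):
        key = tuple(np.round(np.clip(position, LB, UB), 6))
        if key not in cache:
            cache[key] = 1.0 - cv_accuracy(*decode(position))
        return cache[key]

    return fitness
```

Searching in log space lets the salps move across several orders of magnitude of C and γ with uniform step sizes, which is the usual practice for SVM tuning.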

Quantitative Performance Data

Table 1: Comparative Performance of SSA Variants on CEC 2017 Benchmark Functions

| Algorithm | Dimension | Ranking Position | Optimization Effectiveness | Key Adaptive Mechanism |
|---|---|---|---|---|
| ESSA [14] | 30 | 1st | 84.48% | Evolutionary search strategies |
| ESSA [14] | 50 | 1st | 96.55% | Advanced memory mechanism |
| ESSA [14] | 100 | 1st | 89.66% | Stochastic universal selection |
| EKSSA [3] | 30 | 1st (in study) | Superior to 8 algorithms | Gaussian walk & mirror learning |
| Adaptive SSA [5] | Multiple | Competitive | Better convergence | Logarithmic adaptive parameters |

Table 2: Application Performance of Adaptive SSA Variants in Practical Domains

| Application Domain | Algorithm | Performance Improvement | Adaptive Parameters Utilized |
|---|---|---|---|
| Seed Classification [3] | EKSSA-SVM | Higher classification accuracy | Adaptive c₁ and α parameters |
| Path Planning [21] | SSA-A* | 78.2% fewer searched nodes, 48.1% faster planning | Heuristic function optimization |
| Feature Selection [9] | ISSA | Enhanced classification accuracy with fewer features | Novel local search algorithm |
| Engineering Optimization [5] | Adaptive SSA | Better solution quality vs. GWO, BAT, TLBO | Self-adaptive parameters |

Visualization of Workflows and Relationships

Adaptive SSA Control Flow

Experimental Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for SSA Research and Development

| Research Reagent | Function/Purpose | Example Implementation |
|---|---|---|
| CEC Benchmark Suites [14] [3] | Standardized test functions for algorithm performance evaluation | CEC 2017, CEC 2020, CEC 2014 benchmark functions |
| Adaptive c₁ Parameter [3] [5] | Balances exploration vs. exploitation across iterations | c₁ = 2·exp(-(4·l/T_max)²) with exponential adjustment |
| Gaussian Mutation Operator [3] [19] | Enhances global search capability and escape from local optima | Position update with Gaussian-distributed random steps |
| Memory Archive Mechanism [14] | Stores diverse solutions to maintain population diversity | Stochastic universal selection of best and inferior solutions |
| Dynamic Mirror Learning [3] | Creates mirrored search regions to strengthen local search | Solution reflection around hyperplanes with adaptive boundaries |
| Cosine Annealing Strategy [19] | Dynamically regulates population proportions and update cycles | Adaptive role allocation based on cosine-annealed parameters |

Integrating Gaussian Mutation and Walk Strategies for Global Search

The Salp Swarm Algorithm (SSA), a metaheuristic technique inspired by the swarming behavior of salps in deep oceans, has gained significant attention for solving complex optimization problems. Its simple structure, minimal control parameters, and ease of implementation have made it particularly valuable across various domains, including engineering design, renewable energy systems, and drug discovery [4]. However, like many population-based optimization algorithms, SSA faces a fundamental challenge: effectively balancing exploration (searching new areas of the solution space) and exploitation (refining known good solutions) [14]. This balance is crucial for avoiding premature convergence to local optima while efficiently locating global optima in high-dimensional, complex search spaces.

The integration of Gaussian mutation and walk strategies represents a significant advancement in addressing SSA's limitations. These probabilistic techniques introduce controlled randomness that enhances the algorithm's global search capabilities while maintaining its computational efficiency [4] [3]. By leveraging the Gaussian distribution's properties, researchers have developed SSA variants that more effectively navigate multi-modal fitness landscapes, making them particularly valuable for real-world optimization challenges where the search space characteristics are unknown in advance [7].

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: How does Gaussian mutation differ from Gaussian walk in enhanced SSA variants?

Gaussian mutation typically applies a random perturbation to candidate solutions using values drawn from a Gaussian distribution, primarily enhancing local search refinement [3]. In contrast, Gaussian walk utilizes a sequence of steps generated from Gaussian distributions to explore broader areas of the search space, significantly improving global exploration capabilities [4]. The EKSSA algorithm implements Gaussian walk after the basic position update phase to help salps escape local optima [3].
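The distinction can be illustrated with two small functions, assuming NumPy and a minimization problem. They show the generic difference (one perturbation versus a chain of perturbations whose best point is kept) and are not the exact EKSSA or GRW-SSA operators; the names and the step size `sigma` are hypothetical.

```python
import numpy as np


def gaussian_mutation(x, sigma, rng):
    """One-shot perturbation: x + N(0, sigma^2) in every coordinate."""
    return x + sigma * rng.standard_normal(x.shape)


def gaussian_walk(x, f, objective, sigma, n_steps, lb, ub, rng):
    """A chain of Gaussian steps starting at x. The walk keeps moving from each new
    point regardless of fitness, but the best point visited is returned (greedy),
    so it can reach farther than a single mutation of the same sigma."""
    best_x, best_f = x.copy(), f
    cur = x.copy()
    for _ in range(n_steps):
        cur = np.clip(cur + sigma * rng.standard_normal(x.shape), lb, ub)
        f_cur = objective(cur)
        if f_cur < best_f:
            best_x, best_f = cur.copy(), f_cur
    return best_x, best_f
```

After n steps the walk's typical displacement grows roughly like sigma·√n, which is why a walk suits global exploration while a single mutation suits local refinement.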

Q2: What is the appropriate balance between Gaussian operations and standard SSA operations?

Research indicates that effective balance is achieved through adaptive parameter control rather than fixed ratios. The GRW-SSA algorithm maintains this balance by using Gaussian random walk specifically to improve follower utilization while introducing a multi-strategy leader approach for re-dispersion [4]. Similarly, EKSSA implements adaptive adjustment mechanisms for parameters c1 and α to dynamically balance exploration and exploitation throughout the optimization process [3].

Q3: Why does my Gaussian-enhanced SSA converge prematurely on high-dimensional problems?

Premature convergence often results from insufficient exploration capability or improper step size calibration in the Gaussian operations. The SLSSA approach addresses this by incorporating a self-learning mechanism that dynamically selects from multiple search strategies based on their recent performance [7]. Additionally, ensure your implementation includes a re-dispersion strategy when stagnation is detected, as demonstrated in GRW-SSA's multi-strategy leader approach [4].
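A self-learning mechanism of this kind can be sketched as adaptive operator selection, assuming NumPy. This is a generic scheme (roulette choice, with probabilities re-estimated every `period` uses from Laplace-smoothed success rates and a floor `p_min`), not SLSSA's published probability model; the class name and parameters are hypothetical.

```python
import numpy as np


class StrategySelector:
    """Adaptive choice among k search strategies (e.g. Gaussian walk, mirror
    learning, standard SSA update). Every `period` reports, the selection
    probabilities are reset from each strategy's smoothed success rate."""

    def __init__(self, n_strategies, period=50, p_min=0.05, seed=None):
        assert n_strategies * p_min < 1.0, "p_min too large for this many strategies"
        self.k = n_strategies
        self.period = period
        self.p_min = p_min  # floor so no strategy is ever switched off
        self.probs = np.full(n_strategies, 1.0 / n_strategies)
        self.success = np.zeros(n_strategies)
        self.trials = np.zeros(n_strategies)
        self.rng = np.random.default_rng(seed)
        self.uses = 0

    def choose(self):
        return int(self.rng.choice(self.k, p=self.probs))

    def report(self, strategy, improved):
        """Record whether applying `strategy` improved the salp it was used on."""
        self.trials[strategy] += 1
        self.success[strategy] += float(bool(improved))
        self.uses += 1
        if self.uses % self.period == 0:
            rate = (self.success + 1.0) / (self.trials + 2.0)  # Laplace smoothing
            self.probs = self.p_min + (1.0 - self.k * self.p_min) * rate / rate.sum()
            self.success[:] = 0.0
            self.trials[:] = 0.0
```

The probability floor matters on high-dimensional problems: a strategy that is unproductive early (such as a long-range walk) stays available for the later phase when the swarm may need it to escape stagnation.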

Q4: How can I validate that my Gaussian integration properly enhances global search?

Validation should include benchmark testing on standard functions with known optima and comparison against established algorithms. The GRW-SSA was evaluated using 23 benchmark test functions and 21 real-world optimization problems, showing statistically significant improvement over competing algorithms [4]. The EKSSA algorithm was tested on thirty-two CEC benchmark functions, demonstrating superior performance compared to eight state-of-the-art algorithms [3]. Performance metrics should include solution accuracy, convergence speed, and consistency across multiple runs.

Q5: What computational overhead does Gaussian integration introduce?

Gaussian operations typically add modest computational overhead primarily through random number generation and position updates. The GRW-SSA was designed specifically to enhance performance without considerable computational burdens [4]. SLSSA achieves significant performance improvement with only a marginal increase in time cost compared to the original SSA [7]. For large-scale problems, implementation efficiency can be improved through vectorized operations and parallel processing where possible.

Troubleshooting Guides

Issue 1: Poor Convergence Accuracy

- Symptoms: Solutions consistently stagnate at suboptimal values; algorithm fails to improve best solution over iterations.

- Possible Causes:

- Overly aggressive exploitation drowning out exploration

- Inadequate step sizes in Gaussian walk

- Poor balance between leader and follower updates

- Solutions:

- Implement adaptive step size control based on iteration count

- Introduce Gaussian walk after basic position updates as in EKSSA [3]

- Apply multi-strategy leaders for re-dispersion when stagnation is detected [4]

- Utilize a self-learning mechanism to dynamically select search strategies based on performance [7]
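Stagnation detection and re-dispersion can be sketched as follows, assuming NumPy and a minimization problem. This is an illustrative rule, not GRW-SSA's multi-strategy leader formula: the worst `frac` of the swarm is scattered, half with a wide Gaussian around the best solution and half uniformly over the bounds. The function names, the 0.2 spread factor, and `patience` are hypothetical.

```python
import numpy as np


def is_stagnating(best_history, patience=15, tol=1e-10):
    """True if the best-so-far fitness improved by less than `tol`
    over the last `patience` iterations."""
    if len(best_history) <= patience:
        return False
    return best_history[-patience - 1] - best_history[-1] < tol


def redisperse(pop, fit, best, lb, ub, frac, rng):
    """Scatter the worst `frac` of the swarm: half around `best` with a wide
    Gaussian, half uniformly in [lb, ub]. Fitness must be re-evaluated afterwards."""
    n, d = pop.shape
    n_move = max(1, int(frac * n))
    worst = np.argsort(fit)[-n_move:]
    out = pop.copy()
    span = ub - lb
    half = n_move // 2
    out[worst[:half]] = best + 0.2 * span * rng.standard_normal((half, d))
    out[worst[half:]] = rng.uniform(lb, ub, size=(n_move - half, d))
    return np.clip(out, lb, ub)
```

Only the worst salps are moved, so the best solutions (and the food source) survive the re-dispersion.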

Issue 2: High Computational Time

- Symptoms: Algorithm requires excessive time per iteration; doesn't scale well with problem dimension.

- Possible Causes:

- Inefficient Gaussian random number generation

- Overly complex fitness evaluations

- Poorly optimized position update procedures

- Solutions:

Issue 3: Parameter Sensitivity

- Symptoms: Small parameter changes cause large performance variations; difficult to find stable configuration.

- Possible Causes:

- Overfitting to specific problem types

- Inadequate parameter adaptation mechanisms

- Solutions:

Quantitative Performance Analysis

Benchmark Function Results

Table 1: Performance Comparison of SSA Variants on Benchmark Functions

| Algorithm | Average Error (CEC 2017) | Convergence Speed | Success Rate (%) | Key Enhancement |
|---|---|---|---|---|
| GRW-SSA [4] | Not Specified | High | Not Specified | Gaussian random walk for followers; Multi-strategy leaders |
| EKSSA [3] | Superior to 8 comparison algorithms | Fast | Not Specified | Gaussian walk; Adaptive parameters; Mirror learning |
| SLSSA [7] | High solution accuracy on CEC2014 | High convergence speed | Not Specified | Self-learning with multiple search strategies |
| Standard SSA [4] | Inferior to enhanced variants | Slower | Lower | Basic leader-follower structure |

Real-World Application Performance

Table 2: Performance of Gaussian-Enhanced SSA in Practical Applications

| Application Domain | Algorithm | Performance Improvement | Key Metric |
|---|---|---|---|
| Electric Vehicle Charging Scheduling [4] | GRW-SSA | Outperformed existing algorithms | Charging revenues and power grid stability |
| Seed Classification [3] | EKSSA-SVM | Higher classification accuracy | Classification accuracy |
| MLP Classifier Training [7] | SLSSA | Outperformed competing algorithms | Solution accuracy and convergence speed |
| Cognitive Radio System [5] | Adaptive SSA | Better results than BA, GWO, TLBA, DA | Transmission parameter optimization |

Experimental Protocols

Standard Implementation Protocol for Gaussian-Enhanced SSA

Population Initialization

- Generate initial salp positions using \( X_{i,j} = rand_{i,j} \cdot (UB_{i,j} - LB_{i,j}) + LB_{i,j} \) [3]

- Set population size based on problem dimensionality (typically 30-100 agents)

- Define search space boundaries [LB, UB] for each dimension

Parameter Configuration

Main Optimization Loop

- While iteration < maximum iterations:

- Evaluate fitness for all salps

- Update food source (best solution) position

- Update leader position using Eq. (1) from [7]

- Update follower positions incorporating Gaussian strategies

- Apply Gaussian walk/mutation for global search enhancement

- Implement adaptive parameter adjustment

- Apply re-dispersion strategy if stagnation detected [4]
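The loop above can be assembled into one runnable sketch, assuming NumPy and a minimization problem. It combines the initialization formula, the standard c1 schedule and leader-follower update, a short greedy Gaussian walk around the food source with an iteration-decaying step size, and re-dispersion of the worst 30% after `patience` iterations without improvement. These combinations and constants are illustrative assumptions, not a reproduction of GRW-SSA, EKSSA, or SLSSA.

```python
import math
import numpy as np


def gaussian_ssa(objective, lb, ub, n=30, t_max=300, patience=20, seed=0):
    """Minimize `objective` on the box [lb, ub]; returns (best_x, best_f, history)."""
    rng = np.random.default_rng(seed)
    lb, ub = np.asarray(lb, dtype=float), np.asarray(ub, dtype=float)
    d, span = lb.size, ub - lb
    pop = rng.random((n, d)) * span + lb  # X_ij = rand_ij * (UB_j - LB_j) + LB_j
    fit = np.array([objective(x) for x in pop])
    k = int(np.argmin(fit))
    food, food_f = pop[k].copy(), float(fit[k])
    history, stall = [food_f], 0

    for t in range(1, t_max + 1):
        c1 = 2.0 * math.exp(-((4.0 * t / t_max) ** 2))
        # Leader (row 0): jump around the food source, sign chosen by c3.
        c2, c3 = rng.random(d), rng.random(d)
        step = c1 * (span * c2 + lb)
        pop[0] = np.where(c3 < 0.5, food + step, food - step)
        # Followers: midpoint with the (already updated) predecessor.
        for i in range(1, n):
            pop[i] = 0.5 * (pop[i] + pop[i - 1])
        pop = np.clip(pop, lb, ub)
        fit = np.array([objective(x) for x in pop])
        k = int(np.argmin(fit))
        improved = bool(fit[k] < food_f)
        if improved:
            food, food_f = pop[k].copy(), float(fit[k])
        # Greedy Gaussian walk from the food source with a linearly decaying step.
        sigma = 0.1 * span * (1.0 - t / t_max) + 1e-8
        for _ in range(5):
            trial = np.clip(food + sigma * rng.standard_normal(d), lb, ub)
            f_trial = float(objective(trial))
            if f_trial < food_f:
                food, food_f, improved = trial, f_trial, True
        # Re-dispersion of the worst salps when the search stagnates.
        stall = 0 if improved else stall + 1
        if stall >= patience:
            worst = np.argsort(fit)[-max(1, int(0.3 * n)):]
            pop[worst] = rng.uniform(lb, ub, size=(worst.size, d))
            stall = 0
        history.append(food_f)
    return food, food_f, history
```

For example, `gaussian_ssa(lambda v: float(np.sum(v ** 2)), [-10.0] * 5, [10.0] * 5)` minimizes a 5-D sphere function; the returned history is the best-so-far curve needed for the convergence analysis.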


Termination and Analysis

- Return best solution found

- Record convergence history

- Perform statistical analysis of results

Validation Methodology

Benchmark Testing

Real-World Application

- Apply to relevant domain problems (EV charging, classifier training, etc.)

- Compare performance against domain-specific benchmarks

- Evaluate practical metrics (classification accuracy, revenue improvement, etc.)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for Gaussian-Enhanced SSA Research

| Component | Function | Implementation Example |
|---|---|---|
| Gaussian Random Walk | Enhances global exploration capability | Applied to followers in GRW-SSA to improve search space coverage [4] |
| Gaussian Mutation | Provides local search refinement | Used in EKSSA for position updates after initial phase [3] |
| Adaptive Parameter Control | Dynamically balances exploration/exploitation | EKSSA's adaptive adjustment of parameters c1 and α [3] |
| Multi-Strategy Leaders | Prevents stagnation at local optima | GRW-SSA's re-dispersion approach when stagnation detected [4] |
| Self-Learning Mechanism | Automatically selects effective search strategies | SLSSA's probability model based on strategy performance [7] |
| Mirror Learning Strategy | Enhances local search capability | EKSSA's solution mirroring to expand search domain [3] |
| Benchmark Function Suites | Algorithm validation and comparison | CEC2014, CEC2017, CEC2020 test problems [14] [7] |
| Statistical Testing Framework | Validates performance significance | Wilcoxon signed-rank test used in GRW-SSA evaluation [4] |

The integration of Gaussian mutation and walk strategies represents a significant advancement in addressing the fundamental challenge of balancing exploration and exploitation in the Salp Swarm Algorithm. Through various implementations including GRW-SSA, EKSSA, and SLSSA, researchers have demonstrated that Gaussian-based approaches substantially enhance SSA's global search capabilities while maintaining computational efficiency [4] [3]. These enhancements have proven valuable across diverse application domains, from optimizing electric vehicle charging schedules to improving classification accuracy in machine learning tasks.

The continuing evolution of SSA variants shows particular promise in addressing complex real-world optimization problems where search space characteristics are unknown in advance. Future research directions may focus on hybrid approaches that combine Gaussian strategies with other metaheuristic techniques, application-specific adaptations for drug discovery and molecular design, and theoretical analysis of convergence properties for Gaussian-enhanced swarm intelligence algorithms.

Leveraging Dynamic Mirror Learning to Escape Local Optima

Frequently Asked Questions (FAQs)

1. What is Dynamic Mirror Learning (DML) and how does it help the Salp Swarm Algorithm?

Dynamic Mirror Learning (DML) is an optimization strategy that creates mirrored solutions around a central point in the search space to enhance exploration. In the context of the Salp Swarm Algorithm (SSA), DML helps the algorithm escape local optima by dynamically expanding the search region through solution mirroring. This process strengthens local search capability and prevents premature convergence by generating new, symmetric candidate solutions that may reside in more promising areas of the search space [11]. The "dynamic" aspect refers to the adaptive nature of this process, where the mirroring intensity adjusts based on the algorithm's current state and performance.

2. My SSA implementation converges too quickly to suboptimal solutions. How can DML address this?

Rapid convergence to suboptimal solutions indicates poor exploration and dominance of exploitation in your SSA. DML directly counters this by:

- Creating Diversity: When the population diversity drops below a threshold, DML generates mirrored solutions that explore orthogonal directions in the search space [11].

- Escaping Local Basins: The mirroring operation effectively "jumps" solutions across local optima valleys, enabling exploration of disconnected promising regions [11].

- Balancing Search Dynamics: By alternating between original and mirrored search phases, DML maintains a better exploration-exploitation balance throughout the optimization process.

3. What parameters control the DML process in enhanced SSA variants?

The key parameters for DML implementation include:

- Mirroring Probability (\( p_m \)): Determines how frequently mirroring operations occur (typically 0.1-0.3) [11].

- Diversity Threshold (\( \theta_d \)): The population diversity level that triggers mirroring operations.

- Mirroring Intensity (\( \alpha_m \)): Controls the distance of mirrored solutions from the original, often adapted based on current iteration and fitness landscape characteristics [11].

4. How does DML differ from other local optima avoidance techniques like mutation operators?

While both techniques aim to escape local optima, DML differs fundamentally:

- Structural vs. Random: DML uses systematic symmetry-based solution generation, whereas mutation employs random perturbations [11].

- Information Preservation: Mirrored solutions maintain structural relationships with originals, while mutated solutions may lose this information.

- Search Space Coverage: DML more effectively explores symmetrical regions of the search space that might be overlooked by random mutation.

Troubleshooting Guides

Problem: Stagnation in Late-Stage Optimization

Symptoms: Good initial progress slows dramatically after 60-70% of iterations, with minimal fitness improvements despite continued computation.

Diagnosis: This indicates exhausted diversity in the salp population, where followers cluster too tightly around the leader without exploring new regions.

Solution:

- Implement Adaptive DML Trigger:

- Progressive Mirroring Intensity: Increase the mirroring intensity \( \alpha_m \) as stagnation persists:

- Initial stagnation: \( \alpha_m = 0.1 \times \) search-space diameter

- Extended stagnation (10+ iterations): \( \alpha_m = 0.25 \times \) search-space diameter

- Critical stagnation (20+ iterations): \( \alpha_m = 0.4 \times \) search-space diameter [11]

- Segment-Based Mirroring: Apply DML only to the most clustered dimensions identified by variance analysis.
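The intensity schedule and the variance analysis for segment-based mirroring can be sketched as below, assuming NumPy. "Search-space diameter" is interpreted here as the length of the box diagonal, which is an assumption; the share of dimensions to mirror (`fraction`) is a hypothetical setting.

```python
import numpy as np


def search_space_diameter(lb, ub):
    """Euclidean length of the box diagonal, used as the 'search-space diameter'."""
    return float(np.linalg.norm(np.asarray(ub, float) - np.asarray(lb, float)))


def mirroring_intensity(stall, diameter):
    """alpha_m from the stagnation schedule: 0.1, 0.25 or 0.4 of the diameter."""
    if stall >= 20:
        return 0.4 * diameter
    if stall >= 10:
        return 0.25 * diameter
    return 0.1 * diameter


def most_clustered_dims(pop, lb, ub, fraction=0.3):
    """Dimensions with the smallest range-normalized variance across the swarm,
    i.e. the candidates for segment-based mirroring."""
    var = pop.var(axis=0) / (np.asarray(ub, float) - np.asarray(lb, float)) ** 2
    m = max(1, int(round(fraction * pop.shape[1])))
    return np.argsort(var)[:m]
```

Normalizing each variance by the squared range of its dimension keeps wide and narrow dimensions comparable, so the selection reflects clustering rather than differing units.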

Problem: Excessive Computational Overhead from DML

Symptoms: Algorithm runtime increases unacceptably, with minimal performance gains.

Diagnosis: The DML is likely generating too many mirrored solutions or applying mirroring too frequently.

Solution:

- Selective Mirroring: Apply DML only to the best 20-30% of solutions rather than the entire population [11].

- Dimensional Sampling: Mirror only a random subset (50-70%) of dimensions in each application.

- Lazy Evaluation: Implement fitness approximation for mirrored solutions, fully evaluating only the most promising candidates.

- Iteration Batching: Apply DML every k iterations (e.g., k=5) rather than every iteration.
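The selective, dimension-sampled and batched variants can be combined in one operator. The sketch below assumes minimization, a point reflection `2 * center - x` as in the integration protocol of this section, and clamping to the bounds as the boundary handling; `selective_mirror`, `dml_due` and their parameters are hypothetical names chosen for illustration.

```python
import random

def selective_mirror(population, fitness, center, lb, ub,
                     top_frac=0.25, dim_frac=0.6, rng=random):
    """Mirror only the best `top_frac` of solutions, and only a random
    `dim_frac` of their dimensions (minimisation: lower fitness is better)."""
    n, d = len(population), len(population[0])
    order = sorted(range(n), key=lambda i: fitness[i])
    chosen = order[:max(1, int(top_frac * n))]
    mirrored = []
    for i in chosen:
        x = list(population[i])
        for j in rng.sample(range(d), max(1, int(dim_frac * d))):
            # Point reflection through the centre, clamped back into [lb, ub].
            x[j] = min(max(2 * center[j] - x[j], lb[j]), ub[j])
        mirrored.append(x)
    return mirrored

def dml_due(iteration, k=5):
    """Iteration batching: apply DML only on every k-th iteration."""
    return iteration % k == 0
```

With a population of 30, `top_frac=0.25` and `dim_frac=0.6` mirror 7 solutions in 60% of their coordinates, so the extra fitness evaluations per DML call stay a small fraction of the population.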

Problem: DML Disrupts Promising Convergence

Symptoms: Good convergence patterns are broken by mirroring operations, causing fitness regression.

Diagnosis: The mirroring intensity or frequency is too high, causing overshooting of promising regions.

Solution:

- Elitism Preservation: Always preserve the current global best solution without mirroring.

- Adaptive Mirroring: Reduce the mirroring probability when consistent improvement is detected.

- Gradient-Informed Mirroring: Use approximated gradient information to guide mirroring direction away from performance cliffs.
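Elitism and adaptive mirroring probability can be sketched as below. The geometric decay rule (halve the probability for every consecutive improving iteration, with a floor) is a hypothetical choice made for illustration; the text above only says the probability should be reduced while improvement continues. Minimization is assumed.

```python
def adaptive_mirror_prob(p_base, improving_streak, decay=0.5, p_min=0.01):
    """Shrink the mirroring probability geometrically while the best
    fitness keeps improving (hypothetical decay rule)."""
    return max(p_min, p_base * decay ** improving_streak)

def apply_with_elitism(population, fitness, mirror_fn):
    """Mirror every solution except the current global best (minimisation)."""
    best = min(range(len(population)), key=lambda i: fitness[i])
    return [list(x) if i == best else mirror_fn(x) for i, x in enumerate(population)]
```

Starting from p_m = 0.2, two improving iterations in a row lower the probability to 0.05, and a long improving streak bottoms out at `p_min`, so mirroring never switches off entirely.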

Experimental Protocols & Implementation

Standardized DML-SSA Integration Protocol

Objective: Integrate Dynamic Mirror Learning into the standard SSA framework to enhance local optima avoidance.

Materials:

- Algorithm Base: Standard Salp Swarm Algorithm implementation

- Benchmark Functions: CEC2014 or CEC2017 test suite for validation [7] [11]

- Performance Metrics: Mean fitness, standard deviation, convergence rate, success rate

Procedure:

- Initialize standard SSA population and parameters

- For each iteration:
  a. Execute standard SSA leader-follower updates.
  b. Calculate the population diversity metric:
  \[ \text{diversity} = \frac{1}{N \cdot D} \sum_{i=1}^{N} \sqrt{\sum_{j=1}^{D} \left(x_{i,j} - \bar{x}_j\right)^2} \]
  where N is the population size, D the number of dimensions, and \(\bar{x}_j\) the population mean in dimension j.
  c. If diversity < threshold and no improvement for k iterations:
     i. Select promising solutions for mirroring (top 30%).
     ii. Generate mirrored solutions: \(x_{mirrored} = 2 x_{center} - x_{original}\).
     iii. Apply boundary handling to mirrored solutions.
     iv. Evaluate fitness of mirrored solutions.
     v. Merge with the original population and select the best N individuals [11].

- Continue until termination criteria met

Parameters for Initial Implementation:

| Parameter | Recommended Value | Purpose |
|---|---|---|
| Population Size | 30-50 | Balance exploration and computation |
| Mirroring Probability (p_m) | 0.2 | Frequency of DML application |
| Diversity Threshold (θ_d) | 0.1 × search-space range | Trigger for DML activation |
| Stagnation Count (k) | 5-10 | Iterations without improvement before DML |
| Mirroring Intensity (α_m) | 0.1-0.3 × search-space diameter | Controls mirroring distance [11] |

Validation Protocol for DML Effectiveness

Objective: Quantitatively verify DML performance improvements in SSA.

Procedure:

- Select 5-10 multimodal benchmark functions with known local optima

- Run 30 independent trials each for:

- Standard SSA

- DML-enhanced SSA

- 2-3 other state-of-the-art algorithms for comparison

- Record for each trial:

- Final solution quality

- Convergence iteration

- Number of local optima escapes

- Computational time

- Perform statistical analysis (t-test, Wilcoxon) to verify significance

Success Criteria:

- DML-SSA shows statistically significant improvement over standard SSA (p < 0.05)

- Performance comparable or superior to other advanced algorithms

- Acceptable computational overhead (<50% time increase) [11]
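For the statistical step, a dependency-free sketch of the two-sided Wilcoxon rank-sum (Mann-Whitney) test is shown below, applied to the final fitness values of, say, 30 DML-SSA runs versus 30 standard SSA runs. It uses the normal approximation with average ranks for ties and, as a simplification, no tie correction in the variance; in practice a statistics library routine would normally be used instead.

```python
import math

def rank_sum_test(a, b):
    """Two-sided Wilcoxon rank-sum test, normal approximation.
    Returns (z, p); z < 0 means sample `a` tends to have smaller values."""
    pooled = sorted([(v, 0) for v in a] + [(v, 1) for v in b])
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        avg = (i + j) / 2 + 1          # average 1-based rank of the tie group
        for k in range(i, j + 1):
            ranks[k] = avg
        i = j + 1
    n1, n2 = len(a), len(b)
    r1 = sum(r for r, (_, g) in zip(ranks, pooled) if g == 0)
    u1 = r1 - n1 * (n1 + 1) / 2        # Mann-Whitney U for sample a
    mu = n1 * n2 / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u1 - mu) / sigma
    p = math.erfc(abs(z) / math.sqrt(2))   # two-sided p-value
    return z, p
```

For minimization, a negative z with p < 0.05 indicates that the first algorithm reached significantly lower final fitness than the second.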

Performance Comparison Table

The table below summarizes quantitative performance comparisons between SSA variants and competing algorithms on CEC benchmark functions, demonstrating the effectiveness of DML integration:

| Algorithm | Average Rank (CEC2014) | Success Rate (%) | Local Optima Escapes | Computational Overhead |
|---|---|---|---|---|
| Standard SSA | 6.8 [7] | 62.5 [7] | 3.2/run [11] | Baseline |
| SSA with DML | 3.2 [11] | 85.7 [11] | 7.8/run [11] | +18% [11] |
| Self-learning SSA (SLSSA) | 2.9 [7] | 88.3 [7] | 8.1/run [7] | +25% [7] |
| EKSSA (with DML) | 2.4 [11] | 91.2 [11] | 9.3/run [11] | +22% [11] |
| GWO with Mirror Reflection | 3.7 [22] | 79.4 [22] | 6.4/run [22] | +15% [22] |

Research Reagent Solutions

Essential computational "reagents" for implementing and testing DML-enhanced SSA:

| Research Reagent | Function | Implementation Notes |
|---|---|---|
| CEC Benchmark Suite | Performance validation | Provides standardized test functions with known optima [7] [11] |
| Diversity Metric Calculator | DML triggering | Monitors population spread to activate mirroring [11] |
| Adaptive Parameter Controller | Dynamic tuning | Adjusts mirroring intensity based on search progress [11] |
| Solution Mirroring Operator | Core DML mechanism | Generates symmetric solutions across search space [11] |
| Boundary Handling Module | Constraint management | Ensures mirrored solutions remain feasible [11] |
| Statistical Analysis Toolkit | Performance verification | Validates significance of improvements [7] [11] |

Workflow Visualization

Implementation Code Snippet
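The integration protocol above can be sketched in plain Python as follows. This is an illustrative sketch, not the implementation from [11]: it uses a single leader as described earlier in this article, the commonly used 0.5 threshold on the random number that chooses the sign of the leader's step, and a toy sphere objective. Three choices are assumptions because the protocol leaves them open: x_center is taken as the midpoint of the search bounds (so the mirror reduces to opposition-based learning, x_m = lb + ub - x), the default diversity threshold is 0.1 × search-space diameter divided by D to match the 1/(N·D) normalization of the metric, and the stagnation counter is reset after each DML step so that mirroring does not fire on every iteration.

```python
import math
import random

def sphere(x):
    """Toy objective (minimum 0 at the origin), used only for the demo."""
    return sum(v * v for v in x)

def diversity(pop):
    """Protocol metric: summed distances to the centroid divided by N * D."""
    n, d = len(pop), len(pop[0])
    mean = [sum(x[j] for x in pop) / n for j in range(d)]
    return sum(math.sqrt(sum((x[j] - mean[j]) ** 2 for j in range(d))) for x in pop) / (n * d)

def dml_ssa(f, lb, ub, n=30, iters=200, k=5, theta=None, top_frac=0.3, seed=0):
    rng = random.Random(seed)
    d = len(lb)

    def clip(x):  # boundary handling: clamp every coordinate into [lb, ub]
        return [min(max(v, lb[j]), ub[j]) for j, v in enumerate(x)]

    pop = [[rng.uniform(lb[j], ub[j]) for j in range(d)] for _ in range(n)]
    fit = [f(x) for x in pop]
    b = min(range(n), key=fit.__getitem__)
    food, food_fit = list(pop[b]), fit[b]
    if theta is None:  # ASSUMPTION: 0.1 x diameter, rescaled by 1/D like the metric
        theta = 0.1 * math.dist(lb, ub) / d
    stagnant = 0
    for t in range(1, iters + 1):
        c1 = 2 * math.exp(-(4 * t / iters) ** 2)  # shrinks: exploration -> exploitation
        # Leader: random step around the food source, scaled by c1.
        leader = []
        for j in range(d):
            step = c1 * ((ub[j] - lb[j]) * rng.random() + lb[j])
            leader.append(food[j] + step if rng.random() >= 0.5 else food[j] - step)
        pop[0] = clip(leader)
        # Followers: midpoint of own position and the (updated) salp ahead.
        for i in range(1, n):
            pop[i] = clip([(pop[i][j] + pop[i - 1][j]) / 2 for j in range(d)])
        fit = [f(x) for x in pop]
        b = min(range(n), key=fit.__getitem__)
        if fit[b] < food_fit:
            food, food_fit, stagnant = list(pop[b]), fit[b], 0
        else:
            stagnant += 1
        # DML step: low diversity AND k iterations without improvement.
        if diversity(pop) < theta and stagnant >= k:
            order = sorted(range(n), key=fit.__getitem__)
            chosen = order[:max(1, int(top_frac * n))]
            # ASSUMPTION: x_center = bound midpoint, so 2*x_center - x = lb + ub - x.
            mirrored = [clip([lb[j] + ub[j] - pop[i][j] for j in range(d)]) for i in chosen]
            merged = list(zip(fit, pop)) + [(f(m), m) for m in mirrored]
            merged.sort(key=lambda pair: pair[0])  # keep the best N individuals
            fit = [p[0] for p in merged[:n]]
            pop = [p[1] for p in merged[:n]]
            if fit[0] < food_fit:
                food, food_fit = list(pop[0]), fit[0]
            stagnant = 0  # ASSUMPTION: avoid re-firing DML on every iteration
    return food, food_fit
```

For example, `dml_ssa(sphere, [-5.0] * 5, [5.0] * 5, iters=300)` returns the best position and its fitness. Because the sphere is symmetric about the bound midpoint, mirroring leaves its fitness unchanged; an asymmetric multimodal benchmark (such as a shifted CEC function) is needed to observe DML's effect.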

Multi-Objective SSA Frameworks for Complex Problem-Solving

FAQs: Core Concepts of Multi-Objective Salp Swarm Algorithm (MSSA)

Q1: What is the fundamental principle behind the Salp Swarm Algorithm, and how is it adapted for multi-objective problems?

A1: The standard Salp Swarm Algorithm (SSA) is a nature-inspired metaheuristic that mimics the foraging behavior of salps in the ocean, which form a chain-like structure. The first salp in the chain acts as a leader, guiding the movement direction based on the food source (representing the best solution), while the followers update their positions based on the preceding individual's position [23]. This creates a balance between focused direction and group diversity.

For multi-objective problems, this structure is extended into a Multi-Objective SSA (MSSA). Instead of a single leader guiding the swarm towards one objective, the MSSA framework typically incorporates mechanisms to handle multiple, often conflicting, goals simultaneously. This can be achieved by:

- Maintaining an archive of non-dominated solutions (Pareto-optimal solutions) found during the search.

- Using multiple leaders selected from this archive to guide the salp chain, ensuring exploration of different regions of the Pareto front [24].

- Employing a fuzzy decision-making approach to select the best compromise solution from the Pareto-optimal set after the optimization process is complete [25].
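The archive in the first point rests on Pareto dominance. A minimal sketch, assuming every objective is minimized and solutions are represented only by their objective vectors (tuples), is:

```python
def dominates(a, b):
    """True if objective vector a Pareto-dominates b (all objectives minimised)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def update_archive(archive, candidates):
    """Merge candidates into the archive, drop duplicates, and keep only
    mutually non-dominated vectors."""
    pool = []
    for p in archive + candidates:
        if p not in pool:
            pool.append(p)
    return [p for p in pool if not any(dominates(q, p) for q in pool)]
```

Multiple leaders can then be drawn from the returned list (for example at random) to steer different parts of the salp chain.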

Q2: In the context of my research, what do "exploration" and "exploitation" mean, and why is balancing them critical?

A2: In MSSA, exploration refers to the algorithm's ability to investigate new and unknown regions of the search space to avoid getting trapped in local optima. Exploitation refers to the ability to intensively search around the promising regions already found to refine the solutions.

An imbalance can lead to two primary failures:

- Poor Exploration (Over-exploitation): The salp chain converges too quickly to a sub-optimal solution, missing potentially better solutions in other areas. This is often a symptom of the leader's influence being too dominant [9].

- Poor Exploitation (Over-exploration): The algorithm keeps searching randomly without ever converging to a high-quality, refined solution, wasting computational resources [26].

A successful MSSA framework dynamically balances these two phases to ensure a thorough yet efficient search for the Pareto-optimal set.
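In the original single-objective SSA, much of this balance is carried by the coefficient c1 = 2·exp(-(4t/L)²), where t is the current iteration and L the maximum number of iterations; c1 scales the leader's random step around the food source. The short sketch below only evaluates that schedule; MSSA variants may modify it.

```python
import math

def ssa_c1(t, max_iter):
    """Standard SSA coefficient c1 = 2 * exp(-(4t / L)^2)."""
    return 2 * math.exp(-(4 * t / max_iter) ** 2)

# With L = 1000: c1 is 2.0 at t = 0, about 0.74 at t = 250,
# about 0.037 at t = 500, and about 2.3e-7 at t = 1000.
```

Large early values let the leader jump across much of the search range (exploration); near-zero late values confine it to the neighbourhood of the best solution (exploitation).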

Q3: My MSSA implementation is converging to a local Pareto front too quickly. What are the common causes and solutions?

A3: Premature convergence is a frequent challenge. The table below outlines common causes and their potential fixes.

Table 1: Troubleshooting Premature Convergence

| Cause | Description | Potential Solution |
|---|---|---|
| Lack of Population Diversity | Initial salp positions are too similar or diversity is lost in early iterations. | Use chaotic maps or opposition-based learning for initialization [27]. Introduce a chain rejoining method that allows isolated salps to reconnect with the best neighbor if the chain fragments [9]. |
| Overly Dominant Leader | The leader's position exerts excessive influence on the entire chain. | Modify the leader's position update formula to enhance exploration [9]. Use multiple leaders from the non-dominated archive to guide different parts of the chain [24]. |
| Insufficient Perturbation | The followers' movement is too deterministic, limiting search space coverage. | Integrate a Lévy flight operator or a crossover and mutation strategy into the followers' position update to introduce stochastic jumps [28] [27]. |
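A common way to generate Lévy-distributed steps is Mantegna's algorithm, sketched below with β = 1.5. How the jump enters the follower update differs between papers; the `levy_follower` form here (standard follower midpoint plus a Lévy jump scaled by the distance to the current best) is a hypothetical illustration, as is its `scale` parameter.

```python
import math
import random

def mantegna_sigma(beta=1.5):
    """Standard deviation of the numerator in Mantegna's algorithm."""
    num = math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
    den = math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2)
    return (num / den) ** (1 / beta)

def levy_step(dim, beta=1.5, rng=random):
    """Heavy-tailed step vector: u / |v|^(1/beta), u ~ N(0, sigma^2), v ~ N(0, 1)."""
    sigma_u = mantegna_sigma(beta)
    return [rng.gauss(0, sigma_u) / max(abs(rng.gauss(0, 1)), 1e-12) ** (1 / beta)
            for _ in range(dim)]

def levy_follower(prev, cur, best, scale=0.01, rng=random):
    """Hypothetical follower update: SSA midpoint plus a scaled Lévy jump."""
    step = levy_step(len(cur), rng=rng)
    return [(c + p) / 2 + scale * s * (c - b) for c, p, b, s in zip(cur, prev, best, step)]
```

Most steps are small, but occasional long jumps let a follower leave a crowded region, which is the stochastic perturbation the table calls for.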

Q4: How can I validate the performance of my MSSA results against other multi-objective algorithms?

A4: You should use established multi-objective performance metrics to quantitatively compare the quality of the obtained Pareto fronts. The table below summarizes key metrics.

Table 2: Key Performance Metrics for Multi-Objective Algorithms

| Metric | Purpose | Interpretation |
|---|---|---|
| Generational Distance (GD) | Measures the average distance between the obtained Pareto front and the true Pareto front. | A lower GD value indicates better convergence and proximity to the true Pareto front [23]. |
| Inverted Generational Distance (IGD) | Measures both convergence and diversity by calculating the distance from the true Pareto front to the obtained front. | A lower IGD value indicates a better overall performance in terms of both convergence and diversity [23]. |
| Spread (Δ) | Assesses the diversity and distribution of solutions along the obtained Pareto front. | A lower spread value (closer to 0) indicates a more uniform and well-distributed set of solutions [23]. |
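GD and IGD differ only in the direction of the nearest-point search, which the sketch below makes explicit. It uses the simple arithmetic-mean variant of both metrics; some papers instead take the square root of the summed squared distances divided by the number of points, so values are only comparable when the same definition is used. Spread is omitted here.

```python
import math

def _min_dist(p, front):
    """Euclidean distance from point p to its nearest neighbour in `front`."""
    return min(math.dist(p, q) for q in front)

def generational_distance(obtained, true_front):
    """Mean distance from each obtained point to the true front (convergence)."""
    return sum(_min_dist(p, true_front) for p in obtained) / len(obtained)

def inverted_generational_distance(obtained, true_front):
    """Mean distance from each true-front point to the obtained set
    (penalises both poor convergence and gaps in coverage)."""
    return sum(_min_dist(q, obtained) for q in true_front) / len(true_front)
```

If the obtained set contains only the two extremes of a three-point true front, GD is 0 (every obtained point lies on the front) while IGD is positive because the middle of the front is not covered.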

Experimental Protocols & Methodologies

This section provides a detailed guide for implementing a robust MSSA framework, using a case study on economic emission dispatch.

Case Study: Dynamic Economic Emission Dispatch (DEED) using MSSA

The DEED problem is a classic, high-dimensional multi-objective problem in power systems that aims to simultaneously minimize fuel costs and atmospheric pollutants over a scheduling period [25].

Step-by-Step Methodology:

Problem Formulation:

- Objective 1: Fuel Cost. Modeled as the sum of a quadratic and a sinusoidal function (to account for the valve-point effect).

F1 = Σ [a_i + b_i*P_i + c_i*P_i^2 + |d_i * sin(e_i * (P_i_min - P_i))|]

- Objective 2: Emission. Modeled as the sum of a quadratic and an exponential function.

F2 = Σ [α_i + β_i*P_i + γ_i*P_i^2 + η_i * exp(δ_i * P_i)]

- Constraints: Include power balance, generator output limits, and ramping rate constraints [25].

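The two objectives can be evaluated for one dispatch interval as sketched below; for DEED they are summed over all intervals of the scheduling period. The per-unit coefficients are hypothetical placeholders passed as dictionaries, and the power-balance check ignores transmission losses, which is a simplifying assumption.

```python
import math

def fuel_cost(P, units):
    """F1 for one interval: quadratic cost plus the valve-point term."""
    return sum(u["a"] + u["b"] * p + u["c"] * p ** 2
               + abs(u["d"] * math.sin(u["e"] * (u["pmin"] - p)))
               for p, u in zip(P, units))

def emission(P, units):
    """F2 for one interval: quadratic plus exponential emission term."""
    return sum(u["alpha"] + u["beta"] * p + u["gamma"] * p ** 2
               + u["eta"] * math.exp(u["delta"] * p)
               for p, u in zip(P, units))

def balance_violation(P, demand):
    """Power-balance residual (losses ignored); usable as a penalty term."""
    return abs(sum(P) - demand)
```

Each salp's position is then a vector of unit outputs, and a constraint-handling scheme (penalty or repair) decides how `balance_violation` and the ramp limits affect F1 and F2.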

Algorithm Initialization:

- Define MSSA Parameters: Set the population size (number of salps), maximum number of iterations, and the archive size for storing non-dominated solutions.

- Initialize Salp Positions: Randomly generate the initial population of salps within the generator's operational bounds. Each salp's position represents a potential power output schedule for all generators.

MSSA Main Loop:

- Evaluation: Calculate both objective functions (F1 and F2) for each salp in the population.

- Non-Dominated Sorting & Archive Update: Identify non-dominated solutions from the current population and update the external archive. If the archive exceeds its size, use a crowding distance measure to prune the least diverse solutions.

- Leader Selection: Select the leader for the salp chain from the non-dominated archive. A common method is to select the least crowded solution to enhance diversity.

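- Position Update: Move the leader relative to the archive member selected as the food source, and update each follower from its own position and that of the salp ahead of it in the chain, as in standard SSA.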

- Local Search (Optional - Memetic MSSA): To boost exploitation, hybridize MSSA with a local search algorithm like Adaptive β-hill climbing. This algorithm acts as a "meme" to refine solutions (local refinement) while MSSA acts as the "gene" for broad exploration (global refinement) [26].
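Archive pruning and least-crowded leader selection can both be driven by the crowding-distance measure popularized by NSGA-II, sketched below for an archive of objective vectors. This is one possible realization of the steps above; other MSSA variants use grid- or niche-based neighbour counts instead.

```python
import math

def crowding_distance(front):
    """NSGA-II style crowding distance for a list of objective vectors."""
    n = len(front)
    if n <= 2:
        return [math.inf] * n
    dist = [0.0] * n
    for m in range(len(front[0])):
        order = sorted(range(n), key=lambda i: front[i][m])
        lo, hi = front[order[0]][m], front[order[-1]][m]
        dist[order[0]] = dist[order[-1]] = math.inf   # always keep the extremes
        if hi == lo:
            continue
        for k in range(1, n - 1):
            dist[order[k]] += (front[order[k + 1]][m] - front[order[k - 1]][m]) / (hi - lo)
    return dist

def prune_archive(archive, max_size):
    """Remove the most crowded member one at a time until the archive fits."""
    archive = list(archive)
    while len(archive) > max_size:
        cd = crowding_distance(archive)
        archive.pop(min(range(len(archive)), key=cd.__getitem__))
    return archive

def least_crowded_leader(archive):
    """Leader = archive member with the largest crowding distance."""
    cd = crowding_distance(archive)
    return archive[max(range(len(archive)), key=cd.__getitem__)]
```

Boundary points receive infinite distance, so this greedy rule always favours an extreme of the front; a roulette wheel over capped crowding distances is a simple way to spread leader selection across the whole archive.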

Termination and Decision Making:

- The loop repeats until a stopping criterion (e.g., max iterations) is met.

- Finally, a fuzzy decision-making mechanism is applied to the non-dominated solutions in the archive to select the single best compromise solution for the decision-maker [25].
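A widely used form of this fuzzy step assigns each objective a linear membership (1 at its best value in the archive, 0 at its worst), sums the memberships per solution, normalizes over the archive, and picks the maximum. The sketch below assumes minimization and that the archive holds only objective vectors.

```python
def fuzzy_best_compromise(front):
    """Best compromise via normalised linear membership functions.
    Returns (chosen objective vector, normalised membership of every member)."""
    n_obj = len(front[0])
    f_min = [min(p[m] for p in front) for m in range(n_obj)]
    f_max = [max(p[m] for p in front) for m in range(n_obj)]

    def membership(v, m):
        if f_max[m] == f_min[m]:
            return 1.0
        return (f_max[m] - v) / (f_max[m] - f_min[m])   # 1 at best, 0 at worst

    scores = [sum(membership(p[m], m) for m in range(n_obj)) for p in front]
    total = sum(scores)
    mu = [s / total for s in scores]
    best = max(range(len(front)), key=mu.__getitem__)
    return front[best], mu
```

For a three-point front of (cost, emission) pairs (100, 10), (60, 30) and (40, 80), the extremes each score 1.0 while (60, 30) scores about 1.38, so the balanced schedule is selected.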

The workflow below illustrates this experimental process.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a Multi-Objective SSA Framework

| Component / 'Reagent' | Function in the 'Experiment' | Exemplary Implementation |
|---|---|---|
| Non-Dominated Archive | Stores the best-found trade-off solutions (Pareto front) during the optimization process. | An external list updated each iteration using Pareto dominance rules. Crowding distance is used for pruning [25]. |
| Leader Selection Mechanism | Guides the exploration direction of the salp chain. Critical for balancing exploration and exploitation. | Select the least crowded solution from the archive to promote diversity, or a random solution to prevent bias [24]. |