Balancing Convergence and Diversity in Evolutionary Multi-Task Optimization: Strategies, Applications, and Biomedical Implications


Abstract

Evolutionary Multi-Task Optimization (EMTO) represents a paradigm shift in computational problem-solving, enabling the concurrent optimization of multiple tasks through strategic knowledge transfer. This article provides a comprehensive analysis of the critical challenge in EMTO: balancing convergence speed with population diversity to prevent premature convergence and negative knowledge transfer. We explore foundational concepts, advanced methodological frameworks including adaptive transfer mechanisms and domain adaptation techniques, and troubleshooting strategies for mitigating performance degradation. The article further presents rigorous validation protocols and comparative analyses of state-of-the-art algorithms, with specific emphasis on applications relevant to researchers, scientists, and drug development professionals seeking to leverage EMTO for complex biomedical optimization problems.

The Fundamental Trade-Off: Understanding Convergence and Diversity in EMTO

Core Principles of Evolutionary Multi-Task Optimization

FAQs: Addressing Common Researcher Questions

Q1: What is negative transfer, and how can I mitigate it in my EMTO experiments?

Negative transfer occurs when knowledge exchanged between tasks is unhelpful or misleading, degrading optimization performance. This is a primary risk when tasks are dissimilar. To mitigate it:

- Use Adaptive Transfer Strategies: Implement mechanisms that predict the success rate of information exchange between tasks based on historical data, allowing the algorithm to control both the probability of transfer and the selection of beneficial source tasks [1] [2].

- Employ Explicit Mapping: For tasks with different dimensionalities, use methods like linear domain adaptation (LDA) based on multi-dimensional scaling (MDS) to learn robust linear mappings in a low-dimensional latent space, enabling more stable and effective knowledge transfer [3].

Q2: How can I balance convergence and diversity in a multi-task setting?

Balancing convergence (finding optimal solutions) and diversity (exploring the search space) is a core challenge. Advanced EMTO algorithms use multi-stage strategies:

- Early Stage: Allow relatively free information exchange between tasks to accelerate initial convergence and thoroughly explore the search space for each task [1].

- Later Stage: Introduce controlled knowledge transfer. For example, use a success rate prediction function to assess the effectiveness of information and control its exchange, thereby shifting the focus to maintaining population diversity and preventing premature convergence [1]. Incorporating strategies like a Golden Section Search (GSS)-based linear mapping can also help explore new, promising regions of the search space [3].
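The two-stage idea above can be sketched as a single function that returns the probability of triggering cross-task transfer. This is a minimal illustration, not the formulation from [1]: the stage boundary (`stage_split`), the early-stage probability, the floor, and the Laplace-smoothed success rate are all assumptions chosen for clarity.

```python
def transfer_probability(evals_used, max_evals, successes, attempts,
                         stage_split=0.5, early_prob=0.5, floor=0.05):
    """Return the probability of triggering cross-task transfer.

    Early stage: a fixed, fairly permissive probability (assumed value).
    Later stage: the smoothed historical success rate of past transfers.
    """
    if evals_used < stage_split * max_evals:
        return early_prob
    # Laplace smoothing keeps the estimate defined when attempts == 0.
    rate = (successes + 1) / (attempts + 2)
    # A small floor keeps some transfer alive so the estimate can recover.
    return max(floor, rate)
```

In use, the caller would increment `attempts` after every transfer event and `successes` whenever the transferred individual improved the target task.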

Q3: My algorithm is converging prematurely. What steps can I take?

Premature convergence often stems from excessive or misdirected knowledge transfer. To address this:

- Diversify the Population: Integrate strategies that promote exploration, such as a GSS-based linear mapping, to help the population escape local optima [3].

- Implement Competitive Scoring: Use a competitive scoring mechanism that quantifies the outcomes of transfer evolution versus self-evolution. This allows the algorithm to adaptively reduce unhelpful transfer and favor evolutionary paths that maintain diversity [2].

- Design Dislocation Transfer: Rearranging the sequence of decision variables during transfer can increase individual diversity and improve convergence [2].

Troubleshooting Guides for Experimental Issues

Issue 1: Poor Performance Due to Negative Transfer

Problem: The performance on one or more tasks is worse when optimized together compared to being solved in isolation, indicating harmful knowledge transfer.

| Troubleshooting Step | Action & Verification |
|---|---|
| Check Task Relatedness | Verify if the tasks have latent synergy. Performance degradation is common when optimizing highly unrelated tasks together. |
| Enable Adaptive Control | Switch from a fixed transfer probability to an adaptive strategy that selects source tasks and controls transfer intensity based on a competitive scoring of evolutionary success [2]. |
| Validate with Benchmarks | Test your algorithm on standard benchmark suites (e.g., CEC17-MTSO, WCCI20-MTSO) to confirm the issue is not specific to your problem design [2]. |

Issue 2: Ineffective Knowledge Transfer in High/Unequal Dimensionality Tasks

Problem: Knowledge transfer is ineffective when component tasks have high or different numbers of decision variables.

| Troubleshooting Step | Action & Verification |
|---|---|
| Implement Subspace Alignment | Apply a method like MDS-based Linear Domain Adaptation. This creates low-dimensional subspaces for each task and learns a mapping between them to facilitate more robust transfer [3]. |
| Review Transfer Operator | Ensure your crossover or migration operator is designed to handle dimensional mismatch, for example, through variable shuffling or selective transfer strategies. |

Issue 3: Algorithm Falling into Local Optima

Problem: The population converges quickly to a suboptimal solution, lacking diversity.

| Troubleshooting Step | Action & Verification |
|---|---|
| Audit Transfer Frequency | In the early stages, ensure the algorithm is not overly reliant on transfer. A multi-stage approach that allows extensive independent search first can help [1]. |
| Integrate Diversity Mechanisms | Incorporate a GSS-based linear mapping strategy to explore new areas of the search space and avoid local traps [3]. |
| Analyze Success Rates | Use an information transfer success rate prediction function in the later stages to control exchange and maintain diversity [1]. |

Experimental Protocols & Data

Standardized Evaluation Protocol for Multi-Task Single-Objective Optimization (MTSOO)

The following protocol, based on the CEC 2025 Competition, ensures fair and comparable results [4].

| Protocol Aspect | Detailed Specification |
|---|---|
| Benchmark Problems | Use the prescribed test suites, which include nine complex problems (each with 2 tasks) and ten 50-task benchmark problems [4]. |
| Independent Runs | Execute 30 independent runs of the algorithm for each benchmark problem. Each run must use a different random seed. It is prohibited to execute multiple sets of runs and select the best one [4]. |
| Termination Criterion | The maximal number of function evaluations (maxFEs) is 200,000 for all 2-task problems and 5,000,000 for all 50-task problems. One function evaluation is counted for calculating the objective value of any component task [4]. |
| Parameter Setting | The parameter setting of an algorithm must remain identical for every benchmark problem within the test suite. All settings must be reported in the final submission [4]. |
| Data Recording | For each run, record the Best Function Error Value (BFEV) for every component task at predefined evaluation intervals (k*maxFEs/Z, where Z=100 for 2-task and Z=1000 for 50-task problems). Save results for each benchmark in separate ".txt" files [4]. |
| Overall Ranking | The overall ranking considers performance on each component task across all computational budgets. The exact formulation of the ranking criterion is released after the competition submission deadline to avoid calibration bias [4]. |
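The data-recording rule (checkpoints at k*maxFEs/Z, with one evaluation counted per objective call on any component task) can be sketched as follows. The class name `BfevRecorder`, the assumption of minimization, and the default optimum of 0 for each task are illustrative choices, not part of the competition specification.

```python
def checkpoint_evaluations(max_fes, z):
    """Evaluation counts k * max_fes / z for k = 1..z."""
    return [k * max_fes // z for k in range(1, z + 1)]


class BfevRecorder:
    """Track the best function error value (BFEV) per task at each checkpoint."""

    def __init__(self, n_tasks, max_fes, z, optima=None):
        self.checkpoints = checkpoint_evaluations(max_fes, z)
        # Known optimal objective values; assumed to be 0 when not supplied.
        self.optima = optima or [0.0] * n_tasks
        self.best = [float("inf")] * n_tasks
        self.fes = 0          # shared counter across all component tasks
        self.rows = []        # one row of per-task BFEVs per checkpoint

    def record(self, task_id, value):
        """Call once for every objective evaluation of any component task."""
        self.fes += 1
        self.best[task_id] = min(self.best[task_id], value)
        nxt = len(self.rows)
        if nxt < len(self.checkpoints) and self.fes == self.checkpoints[nxt]:
            self.rows.append([b - o for b, o in zip(self.best, self.optima)])
```

With maxFEs = 200,000 and Z = 100, the checkpoints fall at 2,000, 4,000, and so on up to 200,000 evaluations; each entry in `rows` can then be written out as one line of the results file.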

Performance Metrics for Constrained Multi-Modal Multi-Objective Problems

When evaluating algorithms for constrained multi-modal multi-objective optimization, the following metrics are commonly used, as in the M3TMO algorithm study [1]:

- Inverted Generational Distance (IGD): Measures convergence and diversity by calculating the distance from a set of reference points on the true Pareto front to the nearest solution in the obtained solution set.

- Hypervolume (HV): Measures the volume of the objective space dominated by the obtained solution set and bounded by a reference point, capturing both convergence and spread.
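Both metrics can be sketched in a few lines. The version below assumes minimization, and the hypervolume routine handles only the two-objective case (higher dimensions need a dedicated algorithm). The function names are illustrative and are not taken from the M3TMO study.

```python
import math


def igd(reference_front, solutions):
    """Mean distance from each reference point to its nearest obtained solution."""
    total = 0.0
    for r in reference_front:
        total += min(math.dist(r, s) for s in solutions)
    return total / len(reference_front)


def hypervolume_2d(solutions, ref_point):
    """Area dominated by a 2-objective minimization set, bounded by ref_point."""
    # Only points strictly better than the reference point contribute.
    pts = sorted(p for p in solutions
                 if p[0] < ref_point[0] and p[1] < ref_point[1])
    area, best_f2 = 0.0, ref_point[1]
    for f1, f2 in pts:                 # ascending in the first objective
        if f2 < best_f2:               # point is non-dominated so far
            area += (ref_point[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area
```

Lower IGD is better, while higher hypervolume is better; reporting both guards against a set that converges well but covers only part of the front.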

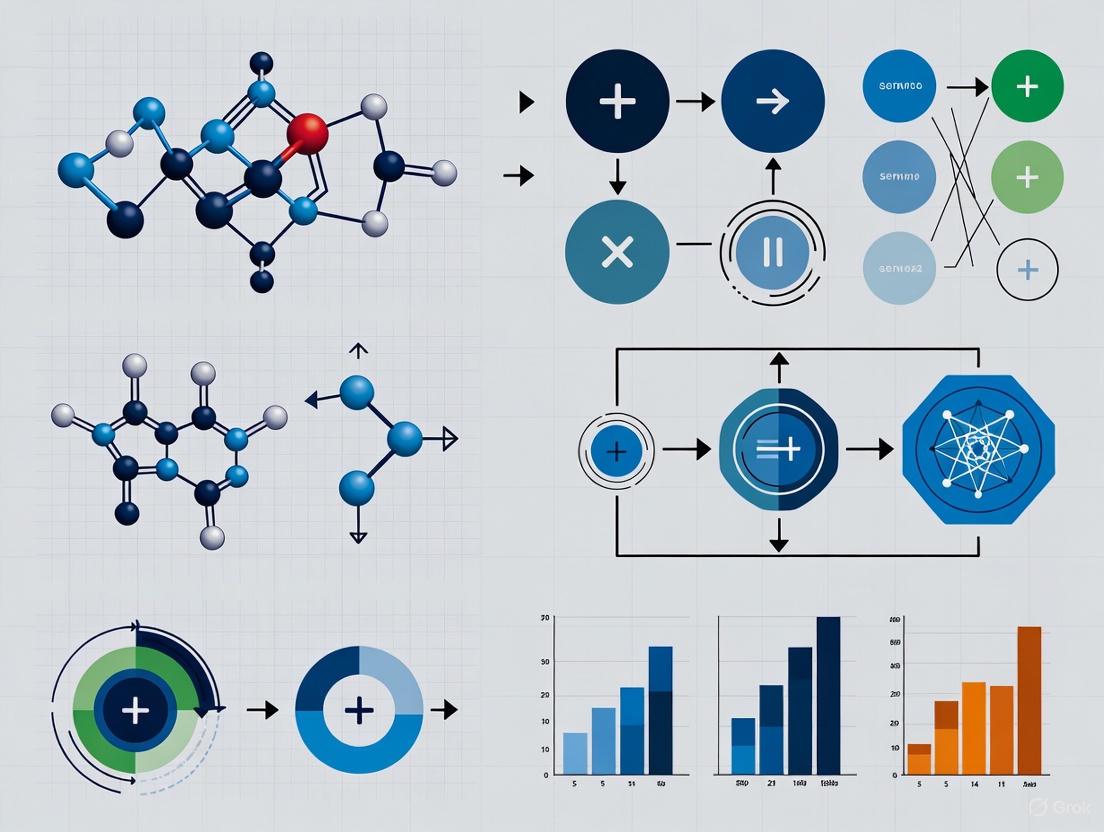

Key Algorithm Workflows & Relationships

MFEA-MDSGSS Knowledge Transfer Workflow

M3TMO Multi-Stage Strategy for Constrained Optimization

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Component | Function in EMTO Experiment |
|---|---|
| Multi-Factorial Evolutionary Algorithm (MFEA) | The foundational algorithmic framework that enables implicit knowledge transfer between tasks by using a unified search space and skill-factor based crossover [3]. |
| Success Rate Prediction Function | A specialized function used in multi-stage algorithms to assess the historical effectiveness of information exchange between tasks, allowing for adaptive control of transfer to improve diversity [1]. |
| Multi-Dimensional Scaling (MDS) & Linear Domain Adaptation (LDA) | Used together to create low-dimensional subspaces for tasks and learn robust linear mappings between them. This facilitates effective knowledge transfer, especially for tasks with high or differing dimensionality [3]. |
| Golden Section Search (GSS) Linear Mapping | An evolutionary operator that promotes exploration of new regions in the search space, helping populations escape local optima and maintain diversity during the search process [3]. |
| Competitive Scoring Mechanism | A metric system that quantifies the outcomes of transfer evolution versus self-evolution. It enables the algorithm to adaptively select source tasks and set transfer probabilities, thereby reducing negative transfer [2]. |

FAQ: Fundamental Concepts

What do "convergence" and "diversity" mean in Evolutionary Multitask Optimization (EMTO)?

In EMTO, convergence refers to the ability of an algorithm to steer the population toward the true optimal solutions for each task. Diversity refers to the maintenance of a wide variety of solutions within the population, which prevents premature convergence to local optima and ensures a good spread of solutions across the Pareto front in multi-objective problems. The core challenge is balancing these two competing goals; excessive focus on convergence can kill diversity, while too much diversity can stagnate progress [3] [5].

Why is balancing convergence and diversity particularly challenging in large-scale optimization?

In large-scale problems involving many decision variables, it becomes difficult to simultaneously and effectively manage diversity using specific parameters in both the objective space (which deals with solution quality) and the decision space (which deals with the variables themselves). A lack of balance here hinders the algorithm's performance [6].

What is "negative transfer" and how does it impact EMTO?

Negative transfer occurs when knowledge shared between tasks is unhelpful or misleading. For example, if one task converges prematurely to a local optimum, transferring this knowledge can pull other tasks into the same local optimum, degrading overall performance. This is a significant risk when optimizing dissimilar tasks simultaneously [3] [2].

Troubleshooting Guides

Problem: Algorithm converges prematurely to a local optimum. Premature convergence indicates a loss of diversity, often caused by negative transfer from other tasks.

- Diagnosis: Monitor the population's fitness and distribution. A rapid decline in fitness variance or a cluster of solutions in one region of the search space are key indicators.

- Solution: Implement mechanisms that explicitly preserve diversity.

- Strategy 1: Integrate a Golden Section Search (GSS)-based linear mapping strategy. This helps explore more promising areas in the search space, preventing tasks from getting trapped [3].

- Strategy 2: Employ an Entropy-based Diversity Preservation Strategy. This uses information entropy to actively maintain a diverse set of solutions in the objective space [6].

- Protocol: Follow the workflow in Diagram 1 to integrate a diversity preservation component into your EMTO framework.
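The diagnosis step above (watching fitness variance and spatial clustering) could be monitored with helpers like these. The collapse rule, its `window` and `ratio` parameters, and the function names are assumptions made for illustration rather than thresholds taken from the cited work.

```python
import math
import statistics


def fitness_variance(fitnesses):
    """Population variance of fitness values within one task."""
    return statistics.pvariance(fitnesses)


def mean_distance_to_centroid(population):
    """Average Euclidean distance of individuals from the population centroid."""
    dim = len(population[0])
    centroid = [sum(ind[d] for ind in population) / len(population)
                for d in range(dim)]
    return sum(math.dist(ind, centroid) for ind in population) / len(population)


def diversity_collapsed(history, window=5, ratio=0.1):
    """Flag a collapse when the latest value is below `ratio` times the
    value recorded `window` generations earlier (assumed heuristic)."""
    if len(history) <= window:
        return False
    return history[-1] < ratio * history[-1 - window]
```

Logging either measure once per generation and passing the resulting list to `diversity_collapsed` gives an early warning that a diversity-preserving strategy should be triggered.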

Problem: Performance degradation due to negative knowledge transfer. This is common when tasks are unrelated or have differing dimensionalities, causing transferred knowledge to be harmful.

- Diagnosis: Track the performance of individual tasks before and after knowledge transfer events. A consistent performance drop after transfer is a clear sign.

- Solution: Use robust knowledge transfer frameworks that assess task similarity and adapt accordingly.

- Strategy 1: Apply Multidimensional Scaling (MDS) with Linear Domain Adaptation (LDA). This establishes low-dimensional subspaces for each task and learns a robust linear mapping between them, facilitating more stable and effective transfer, even for tasks of different dimensions [3].

- Strategy 2: Implement a Competitive Scoring Mechanism. This quantifies the outcomes of transfer evolution versus self-evolution, allowing the algorithm to adaptively select beneficial source tasks and reduce the probability of negative transfer [2].

- Protocol: The methodology for MDS-based LDA involves:

- For each task, use MDS to construct a low-dimensional representation of its population.

- Employ LDA to learn a linear mapping matrix between the subspaces of each task pair.

- Use this mapping to transform and transfer solutions during the evolutionary process [3].
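A rough sketch of the first two steps, using NumPy, is given below. It uses classical MDS (eigendecomposition of the double-centred squared-distance matrix) and an ordinary least-squares fit for the linear map. Two points are assumptions rather than details from [3]: the rows of the two latent populations are taken to be paired already (for example by fitness rank), and the step that maps a latent point back into the target task's decision space is left out.

```python
import numpy as np


def classical_mds(points, n_components):
    """Embed `points` (n x d) into n_components dimensions via classical MDS."""
    x = np.asarray(points, dtype=float)
    # Pairwise squared Euclidean distances.
    sq = np.sum((x[:, None, :] - x[None, :, :]) ** 2, axis=-1)
    n = sq.shape[0]
    centering = np.eye(n) - np.ones((n, n)) / n
    gram = -0.5 * centering @ sq @ centering
    eigvals, eigvecs = np.linalg.eigh(gram)           # ascending eigenvalues
    top = np.argsort(eigvals)[::-1][:n_components]    # largest first
    scale = np.sqrt(np.clip(eigvals[top], 0.0, None))
    return eigvecs[:, top] * scale


def learn_linear_mapping(source_latent, target_latent):
    """Least-squares M such that source_latent @ M approximates target_latent.

    Assumes row i of both arrays refers to paired individuals.
    """
    mapping, *_ = np.linalg.lstsq(source_latent, target_latent, rcond=None)
    return mapping
```

Because both tasks are embedded with the same `n_components`, the learned matrix is square even when the original tasks have different numbers of decision variables.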

Problem: Poor performance on Multiobjective Multitask Optimization Problems (MMOPs). Ineffective knowledge transfer that ignores the objective space leads to a poor balance of convergence and diversity across multiple objectives.

- Diagnosis: Analyze the obtained Pareto fronts for each task. A front with poor coverage or convergence indicates the issue.

- Solution: Adopt algorithms that perform collaborative knowledge transfer across both search and objective spaces.

- Strategy: Utilize a Bi-Space Knowledge Reasoning (bi-SKR) method. This method exploits population distribution in the search space and evolutionary information in the objective space to acquire more comprehensive knowledge, preventing transfer bias [5].

- Protocol: Implement the Collaborative Knowledge Transfer-based Multiobjective Multitask PSO (CKT-MMPSO) framework, which uses bi-SKR and an Information Entropy-based Collaborative Knowledge Transfer (IECKT) mechanism to adaptively switch between transfer patterns [5]. The general structure is shown in Diagram 2.

Experimental Protocols & Performance Data

Protocol 1: Implementing an MDS-GSS Framework for Single- and Multi-Objective MTO

This protocol is based on the MFEA-MDSGSS algorithm, which is designed to mitigate negative transfer and avoid local optima [3].

- Initialization: Generate a population of individuals for each task.

- Subspace Creation: Use Multidimensional Scaling (MDS) to establish a low-dimensional subspace for each task's population.

- Subspace Alignment: Apply Linear Domain Adaptation (LDA) to learn the mapping relationships between the subspaces of different tasks.

- Evolutionary Cycle: For each generation:

- Crossover/Mutation: Perform standard evolutionary operations.

- Knowledge Transfer: Use the learned mappings to transfer individuals between tasks.

- Diversity Preservation: Apply the GSS-based linear mapping strategy to explore new regions.

- Termination: Repeat until a stopping criterion is met (e.g., max iterations).
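The text does not spell out how MFEA-MDSGSS builds its GSS-based linear mapping, so the sketch below shows only the standard golden section search and one plausible way to use it: as a line search along the segment between two individuals, with mild extrapolation beyond both ends. The extrapolation range and the helper `line_search_between` are assumptions for illustration.

```python
import math

INV_PHI = (math.sqrt(5) - 1) / 2  # reciprocal of the golden ratio, about 0.618


def golden_section_search(f, lo, hi, tol=1e-6):
    """Minimize a unimodal function f on [lo, hi]."""
    c = hi - INV_PHI * (hi - lo)
    d = lo + INV_PHI * (hi - lo)
    fc, fd = f(c), f(d)
    while hi - lo > tol:
        if fc < fd:
            # Minimum lies in [lo, d]; reuse c as the new right probe.
            hi, d, fd = d, c, fc
            c = hi - INV_PHI * (hi - lo)
            fc = f(c)
        else:
            # Minimum lies in [c, hi]; reuse d as the new left probe.
            lo, c, fc = c, d, fd
            d = lo + INV_PHI * (hi - lo)
            fd = f(d)
    return (lo + hi) / 2


def line_search_between(objective, a, b, lo=-0.5, hi=1.5):
    """Search along a + t * (b - a) for t in [lo, hi] and return the best point."""
    def point(t):
        return [x + t * (y - x) for x, y in zip(a, b)]
    t_best = golden_section_search(lambda t: objective(point(t)), lo, hi)
    return point(t_best)
```

Golden section search is only guaranteed to find the minimum when the function is unimodal on the interval; on a multimodal landscape it still returns a point, which is acceptable when the goal is exploration rather than exact optimization.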

Table 1: Key Components of the MFEA-MDSGSS "Research Reagent Solutions"

| Research Reagent | Function in the Experiment |
|---|---|
| Multidimensional Scaling (MDS) | Creates comparable, low-dimensional latent representations of high-dimensional task search spaces [3]. |
| Linear Domain Adaptation (LDA) | Learns a robust linear mapping to align the latent subspaces of different tasks, enabling stable knowledge transfer [3]. |
| Golden Section Search (GSS) | Provides a heuristic for a linear mapping strategy that helps the population escape local optima and explore promising areas [3]. |
| Multifactorial Evolutionary Algorithm (MFEA) | Serves as the base optimizer framework that handles multiple tasks simultaneously in a unified search space [3]. |

Protocol 2: Evaluating Balance in Large-Scale Optimization

This protocol outlines the evaluation of a particle swarm optimizer designed for large-scale problems, focusing on dual-space diversity [6].

- Algorithm Setup: Configure the velocity update structure to integrate diversity preservation in both objective and decision spaces.

- Mechanism Activation:

- Activate the entropy-based strategy for objective space diversity.

- Activate the adaptive difference-mutation strategy for decision space diversity.

- Dynamic Learning: Utilize a dynamic convergence learning strategy to balance the two diversity mechanisms with convergence pressure.

- Benchmarking: Run the algorithm on large-scale benchmark test suites (e.g., CEC17-MTSO, WCCI20-MTSO) [2].

- Performance Measurement: Compare results against state-of-the-art algorithms using metrics like accuracy and convergence speed.

Table 2: Quantitative Performance Comparison on Benchmark Problems

| Algorithm | Key Feature | Reported Performance |
|---|---|---|
| CKT-MMPSO [5] | Collaborative knowledge transfer in search and objective spaces. | Demonstrated desirable performance and improved solution quality on multi-objective multitask benchmarks. |
| MTCS [2] | Competitive scoring mechanism for adaptive transfer. | Competitive and superior overall performance on multitask and many-task benchmark problems. |
| MFEA-MDSGSS [3] | MDS-based domain adaptation and GSS-based exploration. | Performed better on single- and multi-objective MTO benchmarks compared to state-of-the-art algorithms. |
| LS PSO with Dual-Space Diversity [6] | Entropy-based and mutation-based diversity in dual spaces. | Competitiveness in large-scale optimization and effectiveness in balancing convergence and diversity. |

Diagram 1: Workflow for an MDS-GSS based EMTO algorithm, integrating subspace alignment and diversity preservation.

Diagram 2: Collaborative knowledge transfer in CKT-MMPSO, leveraging information from both search and objective spaces.

Core Definitions in an EMTO Context

In Evolutionary Multitask Optimization (EMTO), knowledge transfer is the mechanism that allows different optimization tasks, solved concurrently, to share information, thereby potentially enhancing each other's performance [2]. The strategies for this sharing can be categorized as implicit or explicit.

The table below summarizes the core characteristics of these two approaches.

| Feature | Explicit Knowledge Transfer | Implicit Knowledge Transfer |
|---|---|---|
| Definition | The direct, articulated transfer of encoded information or solutions between tasks [7] [8]. | The automatic or indirect application of learned skills or behaviors across tasks [7] [9] [10]. |
| Nature | Logical, objective, and structured [11]. | Practical, intuitive, and applied [7] [10]. |
| Codification | Easily documented, stored, and transferred (e.g., in databases) [8] [10]. | Difficult to articulate and codify into documents [9]. |
| Primary Mechanism | Direct mapping and exchange of genetic material or model parameters [2]. | Learned evolutionary strategies or shared representation spaces [2]. |
| Example in EMTO | Transferring a promising solution vector from a source task to the population of a target task [2]. | A single search engine (e.g., L-SHADE) autonomously applying its high-performance operators across multiple tasks [2]. |

Mechanisms and Experimental Protocols

Explicit Knowledge Transfer Protocol

Explicit transfer involves the deliberate mapping and insertion of information from a source task into a target task.

Detailed Methodology: A common experimental protocol for explicit transfer in a multi-population EMTO setting involves the following steps [2]:

- Source Task Selection: Based on a metric (e.g., evolutionary score or task similarity), identify a suitable source task T_source for a given target task T_target.

- Knowledge Extraction: Select one or more high-quality individual solutions from the population of T_source.

- Transfer Operation: Map the genetic material (decision variables) from the source individual(s) to a suitable location in the search space of T_target. This may involve:

- Direct Transfer: Copying decision variables directly if the solution encodings are compatible.

- Dislocation Transfer: Rearranging the sequence of decision variables before transfer to increase population diversity and maximize evolutionary effects [2].

- Integration: The transferred genetic material is inserted into the population of T_target, often replacing less fit individuals, to guide the evolutionary process.
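The transfer and integration steps can be sketched as below. The text does not give the exact rearrangement rule used for dislocation transfer in [2], so a uniform random permutation is assumed here; minimization and a replace-the-worst integration rule are likewise illustrative choices.

```python
import random


def direct_transfer(source_individual):
    """Copy the decision variables unchanged (encodings must be compatible)."""
    return list(source_individual)


def dislocation_transfer(source_individual, rng=random):
    """Copy the source individual with its decision variables reordered.

    A random permutation is an assumption; the source only states that the
    sequence of variables is rearranged.
    """
    shuffled = list(source_individual)
    rng.shuffle(shuffled)
    return shuffled


def integrate(population, fitnesses, migrant, migrant_fitness):
    """Replace the worst individual (minimization) if the migrant is better."""
    worst = max(range(len(population)), key=fitnesses.__getitem__)
    if migrant_fitness < fitnesses[worst]:
        population[worst] = migrant
        fitnesses[worst] = migrant_fitness
        return True
    return False
```

The boolean returned by `integrate` is a convenient signal for any bookkeeping that tracks how often transfers actually help the target task.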

Implicit Knowledge Transfer Protocol

Implicit transfer achieves cross-task learning without direct solution mapping, often through shared structures or learned behaviors.

Detailed Methodology: The Competitive Scoring Mechanism (MTCS) is a sophisticated protocol for implicit transfer [2]:

- Dual Evolution Components: For each task, maintain two parallel evolution paths:

- Transfer Evolution: Generates new candidate solutions by leveraging knowledge inferred from other tasks.

- Self-Evolution: Generates new candidates using only task-specific information.

- Competitive Scoring: After each generation, calculate a score for both transfer and self-evolution components. This score quantifies the ratio of successfully evolved individuals and their degree of improvement.

- Adaptive Selection: Based on the competition scores, the algorithm autonomously adapts:

- The probability of initiating a knowledge transfer event.

- The selection of which source task to consult implicitly.

- Operator Application: A high-performance search engine (the "implicit knowledge") is applied across all tasks, and its behavior is refined by the competitive scoring feedback, thereby balancing convergence and diversity [2].
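To make the scoring and adaptation steps concrete, here is a minimal sketch. The text says only that the score reflects the ratio of successfully evolved individuals and their degree of improvement; combining the two as a product, using relative improvement, and nudging the probability by a fixed step are all assumptions, not the published MTCS formulas.

```python
def evolution_score(parent_fitness, child_fitness):
    """Score one generation of one evolution component (minimization).

    Assumed form: success ratio times mean relative improvement of successes.
    """
    gains = [(p - c) / (abs(p) + 1e-12)
             for p, c in zip(parent_fitness, child_fitness) if c < p]
    if not gains:
        return 0.0
    success_ratio = len(gains) / len(parent_fitness)
    return success_ratio * (sum(gains) / len(gains))


def update_transfer_probability(prob, transfer_score, self_score,
                                step=0.1, low=0.05, high=0.95):
    """Nudge the transfer probability toward whichever component scored higher."""
    if transfer_score > self_score:
        prob += step
    elif transfer_score < self_score:
        prob -= step
    # Clamp so that neither component is ever switched off entirely.
    return min(high, max(low, prob))
```

Calling `evolution_score` once for the transfer-generated offspring and once for the self-evolved offspring, then passing both scores to `update_transfer_probability`, closes the feedback loop described above.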

Troubleshooting Guides and FAQs

FAQ 1: How can I mitigate negative transfer in my EMTO algorithm?

- Problem: Negative transfer occurs when knowledge from a source task harms the performance of a target task, often due to low task relatedness or excessive transfer frequency [2].

- Solution:

- Implement an adaptive transfer strategy [2]. Use a competitive scoring mechanism, like MTCS, to quantify the effectiveness of transfer and self-evolution, allowing the algorithm to automatically reduce the probability of transfer when it is detrimental [2].

- Employ a dislocation transfer strategy [2]. Rearranging the sequence of decision variables during transfer can enhance individual diversity and improve convergence, reducing the risk of negative transfer.

FAQ 2: My EMTO algorithm is converging prematurely. How can I improve population diversity?

- Problem: The population for one or more tasks has lost genetic diversity, leading to stagnation at a local optimum.

- Solution:

- Adjust Transfer Intensity: Reduce the frequency or number of individuals involved in explicit knowledge transfer to prevent a single task from dominating others [2].

- Promote Self-Evolution: Recalibrate the balance between transfer and self-evolution using a scoring mechanism. If self-evolution scores higher, the algorithm will naturally favor it, preserving task-specific diversity [2].

- Diversity-Preserving Operators: Ensure that your mutation and crossover operators are strong enough to maintain diverse populations independently.

FAQ 3: How do I select the most appropriate source task for knowledge transfer?

- Problem: Selecting an irrelevant source task leads to inefficient optimization or negative transfer.

- Solution:

- Do not use a fixed strategy. Instead, use an adaptive selection process [2]. The competitive scoring mechanism in MTCS can be extended to track the historical success of transfers from specific source tasks. Tasks that consistently contribute to successful transfer evolution should be selected with higher probability.
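One way to track historical success per source task and sample accordingly is sketched below. The class `SourceTaskSelector`, the Laplace-smoothed success rate, and roulette-wheel sampling are illustrative assumptions; the text only states that consistently helpful tasks should be selected with higher probability.

```python
import random


class SourceTaskSelector:
    """Pick a source task with probability proportional to past success."""

    def __init__(self, n_tasks):
        self.success = [[0] * n_tasks for _ in range(n_tasks)]
        self.attempts = [[0] * n_tasks for _ in range(n_tasks)]

    def update(self, target, source, improved):
        """Record whether a transfer from `source` improved `target`."""
        self.attempts[target][source] += 1
        self.success[target][source] += int(improved)

    def weights(self, target):
        """Smoothed success rates; a task never selects itself."""
        n = len(self.success)
        return [0.0 if s == target else
                (self.success[target][s] + 1) / (self.attempts[target][s] + 2)
                for s in range(n)]

    def choose(self, target, rng=random):
        w = self.weights(target)
        return rng.choices(range(len(w)), weights=w, k=1)[0]
```

The smoothing gives untried source tasks a weight of 0.5, so new candidates are still sampled occasionally instead of being starved by early winners.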

Signaling Pathways and Workflows

The following diagram illustrates the logical workflow of the adaptive knowledge transfer process in the MTCS algorithm, integrating both implicit and explicit elements.

Diagram 1: Adaptive Knowledge Transfer in MTCS.

Research Reagent Solutions

The table below details key algorithmic components used in advanced EMTO experiments.

| Research Reagent | Function in EMTO Experiment |
|---|---|
| Competitive Scoring Mechanism (MTCS) | Quantifies the outcome of transfer vs. self-evolution, enabling adaptive and autonomous control of knowledge transfer to reduce negative transfer [2]. |
| Dislocation Transfer Strategy | A specific explicit transfer operator that rearranges the sequence of an individual's decision variables to increase diversity and improve convergence [2]. |
| L-SHADE Search Engine | A high-performance evolutionary operator used as a core "implicit knowledge" component that can be applied across multiple tasks to assist rapid convergence [2]. |
| Multi-Armed Bandit Model | An adaptive operator selection mechanism that allows the algorithm to autonomously choose the most appropriate mutation operator for offspring generation during evolution [2]. |
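As an illustration of bandit-based operator selection, the sketch below uses the standard UCB1 rule. Which bandit policy the cited work actually uses is not stated here, so UCB1, the class name, and the reward definition (for example, 1.0 when an offspring improves on its parent) should be read as assumptions.

```python
import math


class Ucb1OperatorSelector:
    """Choose among mutation operators with the UCB1 bandit rule."""

    def __init__(self, operators, exploration=math.sqrt(2)):
        self.operators = list(operators)
        self.exploration = exploration
        self.counts = [0] * len(self.operators)
        self.rewards = [0.0] * len(self.operators)

    def select(self):
        """Return the index of the operator to apply next."""
        # Try every operator once before using the confidence bound.
        for i, c in enumerate(self.counts):
            if c == 0:
                return i
        total = sum(self.counts)

        def ucb(i):
            mean = self.rewards[i] / self.counts[i]
            bonus = self.exploration * math.sqrt(math.log(total) / self.counts[i])
            return mean + bonus

        return max(range(len(self.operators)), key=ucb)

    def update(self, index, reward):
        """Record the reward observed after applying operator `index`."""
        self.counts[index] += 1
        self.rewards[index] += reward
```

The exploration bonus shrinks as an operator is used more often, so weak operators are revisited occasionally without dominating the evaluation budget.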

Frequently Asked Questions (FAQs)

Q1: What is negative transfer in the context of Evolutionary Multitask Optimization (EMTO)?

Negative transfer refers to the phenomenon where the transfer of knowledge from one optimization task (the source task) to another (the target task) interferes with or degrades the performance of the target task [12]. It occurs when a previously learned, adaptive response for one problem is incompatible with or misleads the search for an optimal solution to a similar but different problem [12]. In EMTO, this can happen when the implicit correlations between tasks are low, causing knowledge transfer to reduce algorithm performance rather than improve it [13].

Q2: Why is managing negative transfer critical for balancing convergence and diversity?

Effectively managing negative transfer is fundamental to balancing convergence and diversity because unchecked negative transfer can prematurely narrow the population's diversity by pushing it toward suboptimal regions of the search space. This leads to a loss of genetic diversity and can trap the algorithm in local optima, hindering its ability to converge to the true Pareto front in multi-objective problems [2]. Mitigation strategies often aim to preserve beneficial diversity by selectively transferring knowledge, thus preventing the population from converging too quickly on misleading solutions.

Q3: What are the common signs that my EMTO experiment is suffering from negative transfer?

The primary indicators of negative transfer include:

- Slower Convergence Rate: The target task converges significantly more slowly than when solved in isolation [12] [2].

- Degraded Solution Quality: The final solutions found for the target task are of lower quality (e.g., worse hypervolume or higher error) compared to single-task optimization [13].

- Increased Error Rates: A higher frequency of poor-quality solutions is generated during the evolutionary process [12].

- Stagnation: The algorithm's performance plateaus at a suboptimal level, unable to escape a local optimum introduced by misleading knowledge [5].

Q4: How can I measure the impact of negative transfer in a controlled experiment?

You can quantify the impact by comparing the performance of a multitask algorithm against a single-task baseline. The following table summarizes key metrics for this comparison:

Table: Quantitative Metrics for Assessing Negative Transfer

| Metric | Description | How it Indicates Negative Transfer |
|---|---|---|
| Convergence Speed | Measures the number of iterations or function evaluations needed to reach a satisfactory solution [2]. | Slower convergence in the multitask setting versus single-task. |
| Optimality Gap | The difference in objective function value between the found solution and the known global optimum (or a high-quality reference point). | A larger optimality gap in the multitask setting. |
| Hypervolume (for Multi-objective) | The volume of the objective space covered by the obtained non-dominated solutions relative to a reference point [5]. | A smaller hypervolume in the multitask setting indicates poorer diversity and convergence. |
| Transfer Success Rate | The proportion of knowledge transfer events that lead to an improvement in the target task's solution [2]. | A low success rate indicates frequent detrimental transfers. |

Troubleshooting Guides

Issue: Slow Convergence and Performance Degradation in Target Task

Problem: When running a multitask optimization, one or more tasks are performing worse than if they were optimized independently. The algorithm's convergence has slowed, and solution quality has dropped.

Diagnosis: This is a classic symptom of negative transfer. The knowledge being shared between tasks is likely incompatible or misleading for the target task.

Solution: Implement an adaptive knowledge transfer strategy.

- Quantify Transfer Effects: Adopt a competitive scoring mechanism, like the one used in MTCS, to quantify the outcomes of both transfer evolution and self-evolution [2]. The score should reflect the ratio of successfully evolved individuals and their degree of improvement.

- Adapt Transfer Probability: Use the calculated scores to adaptively adjust the probability of initiating a knowledge transfer event. If transfer evolution scores are consistently low, reduce the transfer frequency [2].

- Select Source Tasks Intelligently: Base the selection of source tasks for a given target task on their historical evolutionary scores, favoring tasks that have previously provided beneficial knowledge [2].

Table: Methodology for the Competitive Scoring Mechanism (MTCS)

| Component | Implementation Detail |
|---|---|
| Objective | To balance transfer evolution and self-evolution, reducing the probability of negative transfer [2]. |
| Scoring | Scores are calculated based on the ratio of individuals that successfully evolve and the degree of improvement of those successful individuals [2]. |
| Adaptation | The probability of knowledge transfer is adaptively set based on the outcome of the competition between transfer and self-evolution scores [2]. |
| Source Task Selection | The source task for a given target task is selected based on its evolutionary score [2]. |

Issue: Loss of Population Diversity Leading to Local Optima

Problem: The population for a task has lost diversity and converged to a local optimum, seemingly influenced by another task's search path.

Diagnosis: Negative transfer has caused the over-representation of certain genetic material from the source task, overwhelming the target task's own search space exploration.

Solution: Employ a collaborative knowledge transfer mechanism that leverages multiple spaces.

- Bi-Space Knowledge Reasoning: Do not rely solely on the search space. Design a method, like the bi-SKR method in CKT-MMPSO, that combines population distribution information in the search space with evolutionary information (e.g., dominance relationships) in the objective space [5].

- Information Entropy for Stage Detection: Use information entropy to dynamically identify the current evolutionary stage (early, mid, late) of the population [5].

- Adaptive Transfer Patterns: Based on the identified stage, adaptively switch between different knowledge transfer patterns. For example:

- Early Stage: Favor patterns that enhance diversity.

- Late Stage: Favor patterns that enhance convergence [5].
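The stage-detection idea above can be sketched as follows. This is a minimal illustration, not the entropy formulation used in CKT-MMPSO [5]: the histogram-based entropy, the assumption that high entropy marks the early stage, the thresholds `early` and `late`, and the "balanced" mid-stage pattern are all hypothetical choices made for the example.

```python
import math

def population_entropy(population, lower, upper, bins=10):
    """Mean normalized Shannon entropy (0..1) of per-variable histograms."""
    n_vars = len(population[0])
    total = 0.0
    for d in range(n_vars):
        counts = [0] * bins
        width = (upper[d] - lower[d]) / bins
        for ind in population:
            idx = int((ind[d] - lower[d]) / width)
            counts[max(0, min(idx, bins - 1))] += 1  # clamp to valid bins
        h = 0.0
        for c in counts:
            if c:
                p = c / len(population)
                h -= p * math.log(p)
        total += h / math.log(bins)  # normalize by the maximum entropy
    return total / n_vars

def evolutionary_stage(entropy, early=0.7, late=0.3):
    """Map entropy to a stage; thresholds are illustrative assumptions."""
    if entropy >= early:
        return "early"
    if entropy <= late:
        return "late"
    return "mid"

def transfer_pattern(stage):
    """Early favors diversity, late favors convergence (mid is an assumption)."""
    return {"early": "diversity", "mid": "balanced", "late": "convergence"}[stage]

# Usage: a well-spread population versus a collapsed one (one variable in [0, 10]).
spread = [[i + 0.5] for i in range(10)]
collapsed = [[3.2] for _ in range(10)]
for pop in (spread, collapsed):
    stage = evolutionary_stage(population_entropy(pop, [0.0], [10.0]))
    print(stage, transfer_pattern(stage))
```

In practice the thresholds would need tuning per problem, and the entropy could equally be computed in the objective space.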

Diagram: Adaptive Knowledge Transfer Based on Evolutionary Stage

The Scientist's Toolkit: Research Reagent Solutions

This table outlines key algorithmic components ("reagents") for designing EMTO experiments resistant to negative transfer.

Table: Essential Reagents for Mitigating Negative Transfer in EMTO

| Reagent Solution | Function in the Experiment | Key Consideration |

|---|---|---|

| Association Mapping (e.g., partial least squares, PLS) | Strengthens the connection between source and target search spaces by extracting correlated principal components, enabling higher-quality, bidirectional knowledge transfer [13]. | Most effective when the source and target search spaces share correlated components that the mapping can extract. |

| Competitive Scoring Mechanism | Quantifies the outcomes of transfer vs. self-evolution, providing a metric to adaptively control transfer probability and select beneficial source tasks [2]. | Requires a clear definition of a "successful" evolutionary step. |

| Information Entropy | Measures population diversity and is used to divide the evolutionary process into distinct stages, allowing for stage-specific knowledge transfer strategies [5]. | Crucial for dynamically balancing exploration and exploitation. |

| Bi-Space Knowledge Reasoning | Mitigates transfer bias by exploiting information from both the search space (solution locations) and the objective space (fitness, dominance) to guide knowledge transfer [5]. | Increases computational complexity but provides a more holistic view. |

| Adaptive Population Reuse | Retains historically successful individuals and reuses their genetic information to guide evolution, helping to preserve valuable traits and prevent loss of diversity [13]. | The number of retained individuals must be managed to avoid excessive memory usage. |

Theoretical Frameworks for Dual Search Space Management

Frequently Asked Questions (FAQs)

1. What is the most significant cause of negative transfer when optimizing tasks with different dimensionalities, and how can it be mitigated? The primary cause is the difficulty in learning robust mapping relationships between high-dimensional tasks, particularly those with differing dimensionalities, from limited population data. This often induces significant negative transfer, where knowledge from one task hinders progress on another [3]. A prominent mitigation strategy is to use Multidimensional Scaling (MDS) to establish low-dimensional subspaces for each task. Subsequently, Linear Domain Adaptation (LDA) is employed to learn linear mapping relationships between these subspaces. This method aligns the latent manifolds of different tasks, enabling more stable and effective knowledge transfer, even for tasks of different dimensions [3].

2. Our multi-objective multitasking algorithm is converging prematurely. What strategies can help maintain population diversity? Premature convergence often occurs when knowledge transfer from a source task pulls a target task into a local optimum [3]. Several strategies can counteract this:

- Golden Section Search (GSS): Integrating a GSS-based linear mapping strategy can help explore more promising search areas, preventing tasks from becoming trapped in local optima and enhancing population diversity [3].

- Level-Based Learning: Instead of only learning from the global best solution, particles or individuals can learn from others at different, higher fitness levels. This utilizes a more diverse set of excellent information, preventing premature convergence caused by over-reliance on a single leader [14].

- Collaborative Knowledge Transfer: Leveraging information from both the search space and the objective space can help balance convergence and diversity. An information entropy-based mechanism can adaptively switch between different knowledge transfer patterns depending on the evolutionary stage [5].
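To make the level-based learning idea concrete, here is a minimal single-population sketch of the level-based learning swarm optimizer update, assuming minimization. The cross-task transfer used in MTLLSO [14] is not shown, and the function name, the default `n_levels` and `phi` values, and the in-place update are illustrative assumptions.

```python
import random

def llso_step(positions, velocities, fitness, n_levels=4, phi=0.4, rng=random):
    """One level-based learning update (in place); returns indices of the top level."""
    order = sorted(range(len(positions)), key=lambda i: fitness[i])  # best first
    size = len(order) // n_levels
    levels = [order[l * size:(l + 1) * size] for l in range(n_levels - 1)]
    levels.append(order[(n_levels - 1) * size:])  # last level takes the remainder

    # Update from the worst level upward; the top level (index 0) is left untouched.
    for l in range(n_levels - 1, 0, -1):
        for i in levels[l]:
            if l >= 2:
                # Two exemplars from two different better levels.
                l1, l2 = sorted(rng.sample(range(l), 2))
                k1, k2 = rng.choice(levels[l1]), rng.choice(levels[l2])
            else:
                # Second level: both exemplars come from the top level.
                k1, k2 = sorted(rng.sample(levels[0], 2), key=lambda k: fitness[k])
            for d in range(len(positions[i])):
                r1, r2, r3 = rng.random(), rng.random(), rng.random()
                velocities[i][d] = (r1 * velocities[i][d]
                                    + r2 * (positions[k1][d] - positions[i][d])
                                    + phi * r3 * (positions[k2][d] - positions[i][d]))
                positions[i][d] += velocities[i][d]
    return levels[0]

# Usage on a toy sphere problem with eight particles.
rng = random.Random(1)
pos = [[float(i), float(-i)] for i in range(8)]
vel = [[0.0, 0.0] for _ in range(8)]
fit = [sum(v * v for v in p) for p in pos]
top = llso_step(pos, vel, fit, n_levels=4, phi=0.4, rng=rng)
```

Because each particle draws its exemplars from several better levels rather than from one global best, the swarm is pulled toward a spread of good solutions instead of a single leader.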

3. How can we effectively identify which knowledge to transfer between tasks, especially when their optimal solutions are far apart? Relying solely on elite solutions for transfer can be ineffective when task optima are distant [15]. An adaptive method based on population distribution information is recommended. This involves:

- Dividing each task's population into sub-populations based on fitness.

- Using a metric like Maximum Mean Discrepancy (MMD) to calculate the distribution difference between sub-populations in the source task and the sub-population containing the best solution in the target task.

- Selecting individuals from the source sub-population with the smallest MMD value for transfer. This approach identifies and transfers solutions that are distributionally similar, rather than just those with the best fitness, which can be more effective for tasks with low inter-task relevance [15].
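The three steps above can be sketched as follows. This is an illustrative outline rather than the exact procedure of [15]: it assumes minimization, a shared (unified) search space for both tasks, an RBF kernel with a hypothetical bandwidth `gamma`, and the biased MMD estimator.

```python
import math

def rbf(x, y, gamma):
    """Gaussian (RBF) kernel between two solutions."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))

def mmd2(xs, ys, gamma=1.0):
    """Biased estimate of the squared Maximum Mean Discrepancy."""
    kxx = sum(rbf(a, b, gamma) for a in xs for b in xs) / len(xs) ** 2
    kyy = sum(rbf(a, b, gamma) for a in ys for b in ys) / len(ys) ** 2
    kxy = sum(rbf(a, b, gamma) for a in xs for b in ys) / (len(xs) * len(ys))
    return kxx + kyy - 2 * kxy

def split_by_fitness(population, fitness, k):
    """Split a population into k fitness-ordered sub-populations (best first)."""
    order = sorted(range(len(population)), key=lambda i: fitness[i])
    size = math.ceil(len(order) / k)
    return [[population[i] for i in order[j:j + size]]
            for j in range(0, len(order), size)]

def select_transfer_group(source_pop, source_fit, target_pop, target_fit, k=3, gamma=1.0):
    """Pick the source sub-population closest in distribution to the target's best group."""
    source_groups = split_by_fitness(source_pop, source_fit, k)
    target_best = split_by_fitness(target_pop, target_fit, k)[0]
    return min(source_groups, key=lambda g: mmd2(g, target_best, gamma))

# Usage: the target's best solutions sit near (5, 5), far from the source optimum.
source_pop = [[0.0, 0.0], [0.1, 0.0], [2.5, 2.5], [2.6, 2.5], [5.0, 5.0], [5.1, 5.0]]
source_fit = [1, 2, 3, 4, 5, 6]
target_pop = [[5.0, 5.1], [5.1, 5.1], [0.0, 0.0], [0.2, 0.1], [9.0, 9.0], [9.0, 9.1]]
target_fit = [1, 2, 3, 4, 5, 6]
chosen = select_transfer_group(source_pop, source_fit, target_pop, target_fit)
print(chosen)
```

In this toy case the selected group is the source task's worst-ranked sub-population, which illustrates why distributional similarity can be a better transfer criterion than fitness alone when task optima are far apart.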

4. For multi-objective multitask problems, how can we exploit relationships in the objective space to improve transfer? Most algorithms focus solely on knowledge transfer in the search space, ignoring potential relationships in the objective space [5]. A bi-space knowledge reasoning method can be designed to:

- Exploit distribution information of similar populations from the search space.

- Simultaneously leverage particle evolutionary information from the objective space.

- By combining knowledge from both spaces, this method prevents transfer bias and provides a more comprehensive foundation for generating promising solutions, thereby improving the overall quality of the non-dominated solution set [5].

Quantitative Data on Algorithm Performance

The following tables summarize experimental results from recent EMTO studies, providing a comparative view of algorithm performance on standard benchmarks.

Table 1: Performance Comparison on Single-Objective Multitask Benchmark Problems

| Algorithm Base | Key Transfer Mechanism | Key Diversity/Convergence Mechanism | Performance on Problems with Low Inter-Task Relevance |

|---|---|---|---|

| MFEA (Genetic) | Implicit (chromosome crossover) | Assortative mating | Susceptible to negative transfer [3] |

| MFEA-MDSGSS (Genetic) | Explicit (MDS-based LDA) | GSS-based linear mapping | Superior performance, mitigates negative transfer [3] |

| Adaptive MT (Distribution-based) | Explicit (MMD-based distribution transfer) | Improved randomized interaction probability | High solution accuracy and fast convergence [15] |

Table 2: Performance Comparison on Multi-Objective Multitask Benchmark Problems

| Algorithm Base | Knowledge Transfer Space | Adaptive Mechanism | Balance of Convergence & Diversity |

|---|---|---|---|

| MO-MFEA (Genetic) | Search Space | Implicit (crossover) | Acceptable balance but can be unstable [5] |

| CKT-MMPSO (PSO) | Search & Objective Space | Information Entropy | Desirable, adaptively switches patterns [5] |

| MTLLSO (PSO) | Search Space | Level-based learning | Satisfactory balance, utilizes diverse knowledge [14] |

Detailed Experimental Protocols

Protocol 1: Implementing MDS-based Linear Domain Adaptation for Knowledge Transfer

This protocol is designed to facilitate knowledge transfer between tasks with different search space dimensionalities, a common challenge in EMTO [3].

- Objective: To align the search spaces of two or more tasks to enable effective and robust knowledge transfer.

- Materials/Reagents: A population of solutions for each task, a Multifactorial Evolutionary Algorithm (MFEA) framework.

- Methodology:

- Subspace Creation: For each task \( T_i \), apply Multidimensional Scaling (MDS) to the current population's decision variables. The goal is to create a low-dimensional subspace \( S_i \) that preserves the pairwise distances or similarities between individuals as much as possible. The dimensions of these subspaces can be set to be equal, even if the original task dimensions differ.

- Mapping Learning: For a pair of tasks (source \( T_s \), target \( T_t \)), use Linear Domain Adaptation (LDA). This involves learning a linear mapping matrix \( M_{s \to t} \) that minimizes the discrepancy between the source subspace \( S_s \) and the target subspace \( S_t \). This matrix effectively "aligns" the two subspaces.

- Knowledge Transfer: To transfer a solution \( x_s \) from \( T_s \) to \( T_t \), first map it to its subspace representation. Then, use the learned matrix \( M_{s \to t} \) to project it into the target subspace \( S_t \). Finally, decode this projected point back to the original search space of \( T_t \) to create an offspring solution.

- Integration: This transfer mechanism is integrated into the evolutionary cycle of an MFEA, allowing for periodic cross-task knowledge exchange.
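The methodology above can be sketched in a few lines of NumPy. Several simplifications here are assumptions rather than details from [3]: the subspace is built with PCA via SVD (which gives the same embedding as classical MDS on Euclidean distances, up to sign, and also supplies a decoder), source and target individuals are paired by fitness rank when learning the mapping, and the mapping is fitted by ordinary least squares. All function names are hypothetical.

```python
import numpy as np

def fit_subspace(population, k):
    """Return (mean, basis) of a k-dimensional linear subspace for one task."""
    mean = population.mean(axis=0)
    _, _, vt = np.linalg.svd(population - mean, full_matrices=False)
    return mean, vt[:k]  # basis has shape (k, task_dimension)

def encode(x, model):
    """Project solutions from the task's search space into its subspace."""
    mean, basis = model
    return (x - mean) @ basis.T

def decode(z, model):
    """Map subspace coordinates back to the task's original search space."""
    mean, basis = model
    return z @ basis + mean

def learn_mapping(z_source, z_target):
    """Least-squares matrix M such that z_source @ M approximates z_target."""
    m, *_ = np.linalg.lstsq(z_source, z_target, rcond=None)
    return m

def transfer(x_source, source_model, target_model, m):
    """Source solution -> source subspace -> target subspace -> target space."""
    return decode(encode(x_source, source_model) @ m, target_model)

# Hypothetical demo: a 10-D source task and a 6-D target task that share a
# 3-D latent structure. Rows are assumed to be sorted by fitness in each task.
rng = np.random.default_rng(0)
latent = rng.normal(size=(20, 3))
source_pop = latent @ rng.normal(size=(3, 10))
target_pop = latent @ rng.normal(size=(3, 6))

source_model = fit_subspace(source_pop, k=3)
target_model = fit_subspace(target_pop, k=3)
M = learn_mapping(encode(source_pop, source_model), encode(target_pop, target_model))
offspring = transfer(source_pop[0], source_model, target_model, M)
print(offspring.shape)  # (6,)
```

Because both subspaces have the same dimension `k`, the mapping is a small `k x k` matrix that can be estimated from a limited population even when the original task dimensions differ.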

Protocol 2: Evaluating Algorithm Performance on Multi-Objective Multitask Problems

This protocol outlines the standard procedure for benchmarking EMTO algorithms on problems with multiple objectives per task [5].

- Objective: To quantitatively assess an algorithm's ability to find well-converged and diverse Pareto fronts for all tasks simultaneously.

- Materials/Reagents: Standard multi-objective multitask benchmark suites (e.g., adaptations of CEC2017), performance indicators (Hypervolume, IGD).

- Methodology:

- Experimental Setup: Run the algorithm on a selected benchmark problem for a fixed number of function evaluations or generations. Record the final population for each task.

- Performance Calculation: For each task, calculate quality indicators.

- Inverted Generational Distance (IGD): Measures convergence and diversity by calculating the average distance from each point in the true Pareto front to the nearest solution in the obtained set. A lower IGD indicates better performance.

- Hypervolume (HV): Measures the volume of the objective space covered by the obtained non-dominated solutions relative to a reference point. A higher HV indicates better performance.

- Statistical Analysis: Perform multiple independent runs of the algorithm. Use statistical tests (e.g., Wilcoxon rank-sum test) to compare the performance of the proposed algorithm against other state-of-the-art EMTO algorithms based on the IGD and HV metrics from these runs.

- Knowledge Transfer Analysis: Monitor the frequency and success of knowledge transfer events during the search process to correlate them with performance improvements.
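As a reference for the performance calculation step, the two indicators can be sketched as below. The example assumes minimization and, for hypervolume, exactly two objectives; the function names are illustrative, and established benchmarking toolkits would normally be used for problems with more objectives.

```python
import math

def igd(reference_front, obtained):
    """Average distance from each reference point to its nearest obtained solution."""
    return sum(min(math.dist(r, s) for s in obtained)
               for r in reference_front) / len(reference_front)

def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref` (two objectives, minimization)."""
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    hv, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        if f2 < best_f2:  # dominated points add no area and are skipped
            hv += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return hv

# Usage with a small three-point front and reference point (4, 4).
front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
print(hypervolume_2d(front, (4.0, 4.0)))  # 6.0
print(igd(front, front))                  # 0.0
```

Lower IGD and higher HV both indicate a better approximation of the Pareto front, so the two indicators should be reported per task and per run before any statistical comparison.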

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Methodological Components for Dual Search Space Management

| Research Reagent | Function in EMTO | Primary Reference |

|---|---|---|

| Multidimensional Scaling (MDS) | Reduces dimensionality of task search spaces to create alignable latent subspaces, mitigating negative transfer. | [3] |

| Linear Domain Adaptation (LDA) | Learns a linear mapping matrix to align the subspaces of different tasks, enabling robust knowledge transfer. | [3] |

| Golden Section Search (GSS) | A linear mapping strategy used to explore promising search areas, helping populations escape local optima. | [3] |

| Maximum Mean Discrepancy (MMD) | A metric to compute distribution differences between sub-populations, guiding the selection of transferable knowledge. | [15] |

| Level-Based Learning Swarm Optimizer (LLSO) | A PSO variant where particles learn from others at different fitness levels, maintaining diversity and preventing premature convergence. | [14] |

| Information Entropy | Used to adaptively divide the evolutionary process into stages and switch knowledge transfer patterns for balancing convergence and diversity. | [5] |

Advanced Algorithms and Transfer Mechanisms for Effective EMTO

Adaptive Multi-Task Optimization with Competitive Scoring Mechanisms

Troubleshooting Guides

Guide 1: Addressing Negative Knowledge Transfer

Problem: The algorithm exhibits performance degradation on certain tasks despite knowledge transfer.

Q1: How can I identify if my experiment is experiencing negative transfer?

- Symptoms: Decline in convergence speed on one or more tasks, population diversity loss, or stagnation in solution quality despite active knowledge transfer.

- Diagnosis: Monitor per-task performance metrics throughout evolution. A consistent performance drop coinciding with transfer events indicates potential negative transfer. Implement the competitive scoring mechanism from MTCS to quantify effects of transfer versus self-evolution [2].

Q2: What strategies can mitigate negative transfer in competitive scoring systems?

- Source Task Selection: Use evolutionary scores to adaptively select source tasks rather than random or fixed selection. Scores quantify improvement ratios and degree of enhancement from both transfer and self-evolution components [2].

- Transfer Probability Adjustment: Balance transfer evolution and self-evolution by allowing the algorithm to automatically set knowledge transfer probability based on competitive score outcomes [2].

- Dislocation Transfer: Implement the dislocation transfer strategy, which rearranges the sequence of each individual's decision variables, to increase diversity and improve convergence during knowledge transfer [2].

Guide 2: Balancing Convergence and Diversity

Problem: Solutions converge prematurely or lack sufficient diversity across tasks.

Q3: How does the competitive scoring mechanism balance convergence and diversity?

- Mechanism: MTCS uses two evolution components (transfer evolution and self-evolution) that compete via scoring. Each score reflects both the ratio of successfully evolved individuals and their degree of improvement, so the balance between exploration and exploitation is adjusted automatically [2].

- Implementation: The dislocation transfer strategy enhances diversity by rearranging decision variable sequences, while leadership group selection guides transfer to maintain convergence [2].

Q4: What parameters most significantly affect convergence-diversity balance?

- Key Parameters: Transfer probability settings, source task selection criteria, and leadership group size in dislocation transfer.

- Optimization Approach: Use adaptive rather than fixed parameters. Allow the competitive scoring mechanism to automatically adjust transfer probability based on quantified evolution outcomes [2].

Frequently Asked Questions

Algorithm Implementation Questions

Q: What distinguishes MTCS from other evolutionary multitask optimization algorithms? MTCS introduces a novel competitive scoring mechanism that quantifies outcomes of both transfer evolution and self-evolution. This allows automatic adjustment of knowledge transfer probability and source task selection, significantly reducing negative transfer while balancing convergence and diversity across tasks [2].

Q: How does the dislocation transfer strategy improve performance? The dislocation transfer strategy rearranges the sequence of individual decision variables to increase population diversity. It then selects leading individuals from different leadership groups to guide transfer evolution, effectively improving algorithm convergence [2].
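A rough sketch of the idea follows. The exact operators of MTCS are not given here, so everything below is an assumption made for illustration: a single leadership group formed from the best source individuals (the original selects from several groups), a uniform random permutation as the "dislocation", and a simple move of the target individual toward the dislocated leader controlled by a hypothetical `step` parameter.

```python
import random

def dislocate(individual, rng):
    """Return a copy of the individual with its decision variables reordered."""
    order = list(range(len(individual)))
    rng.shuffle(order)
    return [individual[i] for i in order]

def pick_leader(population, fitness, group_size, rng):
    """Pick one individual at random from the best `group_size` (minimization)."""
    ranked = sorted(range(len(population)), key=lambda i: fitness[i])
    return population[rng.choice(ranked[:group_size])]

def dislocation_transfer(target_ind, source_pop, source_fit, rng, group_size=3, step=0.5):
    """Move a target individual toward a dislocated leader from the source task."""
    leader = dislocate(pick_leader(source_pop, source_fit, group_size, rng), rng)
    return [t + step * (l - t) for t, l in zip(target_ind, leader)]

# Usage: with step=1.0 the child is simply a reordered copy of a source leader.
rng = random.Random(0)
src_pop = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0], [10.0, 11.0, 12.0]]
src_fit = [0.1, 0.2, 0.3, 0.4]
child = dislocation_transfer([0.0, 0.0, 0.0], src_pop, src_fit, rng, group_size=2, step=1.0)
```

The permutation injects variable combinations that neither task's population currently contains, while restricting leaders to the best-ranked individuals keeps the transferred material of high quality.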

Q: Can MTCS handle many-task optimization problems? Yes, MTCS has been validated on both multitask (2-3 tasks) and many-task (more than 3 tasks) benchmark problems, demonstrating superior performance compared to ten state-of-the-art EMTO algorithms [2].

Experimental Design Questions

Q: What are appropriate performance metrics for evaluating MTCS?

- Convergence metrics: Measure proximity to known optima for each task

- Diversity metrics: Assess solution distribution across trade-off surfaces

- Transfer efficiency: Quantify knowledge exchange effectiveness using competitive scores

- Computational efficiency: Evaluate resource requirements relative to performance gains [2]

Q: How should researchers set up control experiments for MTCS validation? Compare against established EMTO algorithms using standardized benchmark suites like CEC17-MTSO and WCCI20-MTSO. Include problems with varying intersection degrees (complete, partial, no intersection) and similarity levels (high, medium, low) to comprehensively assess performance [2].

Experimental Protocols and Data

Benchmark Performance Results

Table 1: MTCS Performance Comparison on Standard Benchmarks

| Benchmark Suite | Problem Type | Competitive Score Ratio | Transfer Efficiency | Overall Ranking |

|---|---|---|---|---|

| CEC17-MTSO | Complete Intersection | 0.89 | 0.92 | 1/10 |

| CEC17-MTSO | Partial Intersection | 0.85 | 0.88 | 1/10 |

| CEC17-MTSO | No Intersection | 0.82 | 0.79 | 2/10 |

| WCCI20-MTSO | High Similarity | 0.91 | 0.94 | 1/10 |

| WCCI20-MTSO | Medium Similarity | 0.87 | 0.86 | 1/10 |

| WCCI20-MTSO | Low Similarity | 0.83 | 0.81 | 2/10 |

Note: Performance metrics represent average values across multiple problem instances. Competitive score ratio measures the proportion of successful evolution events, while transfer efficiency quantifies the effectiveness of knowledge exchange between tasks [2].

Implementation Methodology

Competitive Scoring Mechanism Protocol:

- Initialize K populations for K tasks with uniform encoding

- Calculate Evolutionary Scores:

- Track successful evolution events for both transfer and self-evolution components

- Quantify improvement degree for successfully evolved individuals

- Compute competitive scores as weighted combination of success ratio and improvement magnitude

- Adaptive Transfer Setup:

- Use score differentials to set knowledge transfer probabilities

- Select source tasks based on historical transfer success scores

- Execute Dislocation Transfer:

- Rearrange decision variable sequences to increase diversity

- Select leading individuals from leadership groups

- Implement transfer with guided evolution

- Iterate until termination criteria met, updating scores each generation [2]
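The scoring and adaptation steps of this protocol can be sketched as follows. The formulas are illustrative assumptions, not the published MTCS equations [2]: the score is a weighted sum of the success ratio and the mean relative improvement of successful individuals, the transfer probability moves by a fixed `step` toward whichever component wins, and all parameter names and defaults are hypothetical. Minimization is assumed.

```python
def evolution_score(parent_fitness, child_fitness, weight=0.5):
    """Combine success ratio and mean relative improvement of successful offspring."""
    gains = [(p - c) / (abs(p) + 1e-12)
             for p, c in zip(parent_fitness, child_fitness) if c < p]
    if not gains:
        return 0.0
    success_ratio = len(gains) / len(parent_fitness)
    mean_gain = min(sum(gains) / len(gains), 1.0)  # cap so both terms lie in [0, 1]
    return weight * success_ratio + (1 - weight) * mean_gain

def update_transfer_probability(p, transfer_score, self_score,
                                step=0.1, p_min=0.05, p_max=0.95):
    """Raise the transfer probability when transfer wins, lower it when it loses."""
    if transfer_score > self_score:
        p += step
    elif transfer_score < self_score:
        p -= step
    return min(max(p, p_min), p_max)

def select_source_task(target, scores):
    """Choose the other task with the highest evolutionary score."""
    candidates = {task: s for task, s in scores.items() if task != target}
    return max(candidates, key=candidates.get)

# Usage: two of four offspring improve on their parents.
score = evolution_score([10.0, 10.0, 10.0, 10.0], [5.0, 10.0, 12.0, 9.0])
p_next = update_transfer_probability(0.5, transfer_score=score, self_score=0.2)
source = select_source_task("T1", {"T1": 0.9, "T2": 0.3, "T3": 0.5})
```

Keeping the probability within `[p_min, p_max]` is a design choice that prevents either component from being switched off permanently, so a task can recover if transfer becomes useful later in the run.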

The Scientist's Toolkit

Table 2: Essential Research Components for MTCS Implementation

| Component | Function | Implementation Notes |

|---|---|---|

| Competitive Scoring Module | Quantifies transfer vs self-evolution effectiveness | Calculate success ratios and improvement degrees per evolutionary event |

| Dislocation Transfer Engine | Rearranges decision variables to enhance diversity | Implement variable sequence permutation and leadership group selection |

| Adaptive Probability Controller | Dynamically adjusts knowledge transfer rates | Use score differentials to modulate transfer intensity between tasks |

| Multi-Population Framework | Maintains separate evolving populations for each task | Ensure uniform encoding across all task populations |

| L-SHADE Search Engine | Provides high-performance evolutionary operations | Embed as core search operator for rapid convergence [2] |

Algorithm Workflow Visualization

Figures (not reproduced here): MTCS algorithm flow; competitive scoring mechanism.

Dual-Mode Evolutionary Frameworks with Self-Adjusting Capabilities

Frequently Asked Questions (FAQs)

Q1: What is the primary goal of a self-adjusting dual-mode evolutionary framework in Multi-Task Optimization? The primary goal is to efficiently solve multiple optimization tasks simultaneously by curbing performance degradation. It achieves this through a self-adjusting strategy that guides the selection between different evolutionary modes based on spatial-temporal information, and employs mechanisms like variable classification and dynamic knowledge transfer to balance convergence speed and population diversity [16].

Q2: What is "negative transfer" and how can my experiment prevent it? Negative transfer occurs when knowledge shared between tasks is incompatible, leading to performance degradation instead of improvement [5]. To prevent it, your experimental setup should:

- Implement a dynamic weighting strategy for efficient knowledge utilization [16].

- Use a bi-space knowledge reasoning method that considers both search space distribution and objective space evolutionary information to reduce transfer bias [5].

- Employ a censor module or similar to estimate the usefulness of knowledge before transfer, filtering out unreliable information [17].

Q3: My algorithm is converging prematurely. Which component should I investigate first? First, investigate the self-adjusting strategy based on spatial-temporal information that guides the selection of evolutionary modes [16]. Ensure it correctly identifies population stagnation. You should also verify the parameters of the information entropy-based collaborative knowledge transfer mechanism, as it is designed to balance convergence and diversity by adapting transfer patterns across different evolutionary stages [5].

Q4: How do I quantify the balance between convergence and diversity in my results? Use established multi-objective optimization performance indicators. The referenced experiments often use metrics like Inverted Generational Distance (IGD) and Hypervolume (HV) to simultaneously measure convergence toward the true Pareto front and diversity of the solution set [16] [5]. Present these metrics in comparative tables against peer algorithms.

Troubleshooting Guides

Problem: Low Convergence Speed Across Multiple Tasks

Symptoms: The algorithm requires an excessive number of iterations to find acceptable solutions for one or more tasks, or fails to converge altogether.

Diagnosis and Resolution:

| Possible Cause | Diagnostic Check | Solution |

|---|---|---|

| Inefficient Knowledge Transfer | Analyze the transfer weights or success rates between tasks. Are they consistently low? | Implement a dynamic weighting strategy that prioritizes knowledge from high-performing tasks and reduces influence from low-performing ones [16] [5]. |

| Incorrect Evolutionary Mode Selection | Log the frequency of mode switches. Is the algorithm stuck in a mode inappropriate for the current evolutionary state? | Calibrate the spatial-temporal information thresholds in the self-adjusting strategy to trigger mode switches more effectively [16]. |

| Poor Variable Grouping | Check if variables with different attributes (e.g., position vs. scale) are being grouped and evolved with unsuitable operators. | Refine the classification mechanism for decision variables to ensure more coherent grouping, allowing for targeted evolution [16]. |

Problem: Loss of Population Diversity

Symptoms: The population collapses to a few similar solutions, leading to premature convergence and an inability to explore other areas of the Pareto front.

Diagnosis and Resolution:

| Possible Cause | Diagnostic Check | Solution |

|---|---|---|

| Over-exploitation in one mode | Monitor the diversity metric (e.g., spread) within each task over generations. Does it drop sharply after a mode switch? | Adjust the information entropy-based mechanism to initiate diversity-oriented knowledge transfer patterns earlier in the evolutionary process [5]. |

| Lack of Niche Preservation | Verify if the algorithm has a mechanism to promote solutions in underrepresented regions. | Introduce or strengthen a niche-based selection mechanism within the evolutionary operator pool to maintain diverse sub-populations [16]. |

| Biased Transfer | Check if knowledge transfer is overwhelmingly coming from a single, fast-converging task. | Use the collaborative knowledge transfer mechanism to balance the influence of convergence-focused and diversity-focused knowledge from different tasks [5]. |

Experimental Protocols

Protocol 1: Benchmarking Against State-of-the-Art Algorithms

This protocol validates the performance of a new self-adjusting dual-mode framework against established algorithms.

1. Objective: To test empirically whether the proposed framework significantly outperforms peer algorithms on multi-task optimization benchmark instances [16].

2. Materials/Reagents:

- Software Platform: MATLAB or Python with necessary optimization toolboxes.

- Benchmark Problems: A set of standardized multi-objective, multi-task optimization benchmark functions (e.g., ZDT, DTLZ series for multi-objective tasks) [16] [5].

- Peer Algorithms: Implementations of state-of-the-art algorithms for comparison, such as MO-MFEA and MOMFEA-SADE (the peers listed in the template table under Data Presentation).

3. Methodology:

- Step 1 - Parameter Setup: Define a common parameter set (population size, number of generations, etc.) for all algorithms to ensure a fair comparison.

- Step 2 - Independent Runs: Execute each algorithm (including the proposed one) on the selected benchmark problems for enough independent runs (e.g., 30) to support statistical testing and account for stochasticity.

- Step 3 - Performance Evaluation: For each run, calculate performance indicators like Hypervolume (HV) and Inverted Generational Distance (IGD) at fixed generation intervals.

- Step 4 - Data Collection & Analysis: Collect the final HV and IGD values from all runs. Perform statistical tests (e.g., Wilcoxon signed-rank test) to determine if performance differences are statistically significant.

4. Data Presentation: Summarize the quantitative results in a table for clear comparison. Below is a template:

Table 1: Performance Comparison (Mean ± Standard Deviation) on Benchmark Problems

| Benchmark Problem | Performance Indicator | Proposed Framework | MO-MFEA | MOMFEA-SADE |

|---|---|---|---|---|

| Problem A | Hypervolume | 0.85 ± 0.02 | 0.78 ± 0.03 | 0.80 ± 0.02 |

| Problem A | IGD | 0.05 ± 0.01 | 0.09 ± 0.02 | 0.07 ± 0.01 |

| Problem B | Hypervolume | 0.90 ± 0.01 | 0.82 ± 0.04 | 0.85 ± 0.03 |

| Problem B | IGD | 0.03 ± 0.01 | 0.08 ± 0.03 | 0.06 ± 0.02 |

Protocol 2: Validating the Self-Adjusting Mechanism

This protocol tests the efficacy of the core self-adjusting strategy.

1. Objective: To confirm that the self-adjusting strategy based on spatial-temporal information correctly guides evolutionary mode selection in response to different population states [16].

2. Methodology:

- Step 1 - Instrumentation: Modify the algorithm's code to log the trigger events and mode switches initiated by the self-adjusting strategy during a run.

- Step 2 - Controlled Run: Execute the algorithm on a benchmark problem and record the generation numbers at which mode switches occur.

- Step 3 - Correlation Analysis: Correlate the logged mode switches with key population metrics (e.g., diversity metric, rate of fitness improvement) calculated for the same generations. The switches should correlate with periods of stagnation or diversity loss.

- Step 4 - Ablation Study: Run a version of the algorithm with the self-adjusting mechanism disabled (e.g., forced to use a single mode) and compare its performance with the full algorithm using the methods from Protocol 1.

Workflow and System Diagrams

Figures (not reproduced here): dual-mode evolutionary framework workflow; bi-space knowledge reasoning logic.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational "Reagents" for EMTO Experiments

| Item | Function in the Experiment |

|---|---|

| Benchmark Problem Suites | Standardized test functions (e.g., ZDT, DTLZ) that serve as a controlled environment to evaluate and compare the performance of different EMTO algorithms [16] [5]. |

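| Performance Indicators (HV, IGD) | Quantitative metrics that act as assays for measuring algorithm performance. Hypervolume (HV) and Inverted Generational Distance (IGD) each capture both convergence and diversity: HV measures the objective-space volume dominated by the solution set relative to a reference point, while IGD measures the average distance from points on the true Pareto front to the nearest obtained solution [16] [5]. |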

| Knowledge Transfer Metrics | Custom metrics to track the frequency, source, target, and success rate of cross-task knowledge transfer, helping to diagnose positive or negative transfer effects [5]. |

| Statistical Testing Package | Software libraries (e.g., in Python or R) for performing statistical significance tests (e.g., Wilcoxon test) to ensure observed performance differences are not due to random chance [16]. |

| Dynamic Parameter Control | The implementation of the self-adjusting strategy that acts as a regulator, dynamically tuning algorithm parameters (e.g., mode selection, transfer weights) in response to the current search state [16] [17]. |

Frequently Asked Questions (FAQs)

Q1: What is the core challenge in Evolutionary Multi-task Optimization (EMTO) that domain adaptation aims to solve?

A1: The core challenge is negative transfer, which occurs when knowledge shared between optimization tasks is dissimilar or misaligned, leading to performance degradation and premature convergence instead of improvement. Domain adaptation techniques, such as Multidimensional Scaling (MDS) and Auto-Encoding (AE), aim to align the search spaces of different tasks, enabling more effective and positive knowledge transfer. This is crucial for balancing convergence speed with population diversity in EMTO [18] [3].

Q2: In the context of EMTO, how does Auto-Encoding differ from traditional usage in machine learning?

A2: In traditional machine learning, auto-encoders are often used for static feature learning or dimensionality reduction on fixed datasets. In EMTO, they are adapted for dynamic, progressive domain adaptation. This means the auto-encoders are continuously updated throughout the evolutionary process to adapt to the changing populations, moving beyond static pre-trained models. Techniques like Segmented PAE (for staged alignment) and Smooth PAE (using eliminated solutions for gradual refinement) are specifically designed for this dynamic environment [18].

Q3: When should I prefer MDS over Auto-Encoding for domain adaptation in my experiments?

A3: The choice depends on the nature of your tasks and computational constraints. MDS-based Linear Domain Adaptation (LDA) is particularly effective when dealing with tasks of differing dimensionalities, as it learns a robust linear mapping in a compact latent space. It can be more stable when learning from limited population data. Conversely, Auto-Encoders are powerful for learning complex, non-linear mappings between tasks and can be integrated into the evolutionary process for continuous adaptation [3] [18].

Q4: What are the common signs of negative transfer in an EMTO experiment, and how can it be mitigated?

A4: Common signs include:

- Premature convergence of one or more tasks.

- A noticeable decline in solution quality after knowledge transfer operations.

- Stagnation of the population, where diversity is lost without corresponding improvements in convergence.

Mitigation strategies include employing explicit knowledge transfer controls, using information entropy to adapt transfer patterns during different evolutionary stages, and implementing robust mapping techniques like MDS-LDA to ensure task similarity before transfer [5] [3].

Troubleshooting Guides

Issue: Poor Convergence Due to Negative Transfer

Problem: Your multi-task algorithm is converging slower than single-task baselines, or solution quality is degrading, indicating potential negative transfer.

| Possible Cause | Recommended Solution | Key References |

|---|---|---|

| Highly dissimilar tasks with unaligned search spaces. | Implement MDS-based Linear Domain Adaptation (LDA) to project tasks into aligned low-dimensional subspaces before transfer. | [3] |

| Static or misaligned knowledge transfer mechanism. | Adopt a Progressive Auto-Encoder (PAE) that updates continuously using the evolving population to dynamically align domains. | [18] |

| Lack of adaptive control over transfer. | Use an Information Entropy-based Collaborative Knowledge Transfer (IECKT) mechanism to adaptively switch transfer patterns based on evolutionary stage. | [5] |

Step-by-Step Protocol: Implementing MDS-LDA for Negative Transfer Mitigation

- Population Sampling: For each task ( T_i ), select a representative subset of the current population.

- Subspace Construction: Apply Multidimensional Scaling (MDS) to the selected samples from each task to construct a low-dimensional subspace ( S_i ). This reduces the dimensionality and captures the essential manifold of the task.

- Mapping Learning: Use Linear Domain Adaptation (LDA) to learn a linear mapping matrix ( M{i→j} ) between the subspaces ( Si ) and ( S_j ) of a source and target task.

- Knowledge Transfer: To transfer knowledge from ( Ti ) to ( Tj ), map a solution from ( Si ) to ( Sj ) using ( M{i→j} ), then decode it back to the original search space of ( Tj ).

- Integration: Introduce the mapped solution into the population of the target task ( T_j ) [3].
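The five steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the implementation from [3]. It assumes classical (Torgerson) MDS for subspace construction, ordinary least squares for the linear mapping, least-squares encoder and decoder matrices to move between each search space and its subspace, and samples that are paired row by row (for example, by fitness rank). The names `classical_mds`, `fit_linear_map`, and `build_transfer` are hypothetical.

```python
import numpy as np

def classical_mds(X, k):
    """Embed the rows of X into k dimensions with classical (Torgerson) MDS."""
    n = X.shape[0]
    sq = np.sum(X ** 2, axis=1)
    D2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T   # squared Euclidean distances
    J = np.eye(n) - np.ones((n, n)) / n              # centering matrix
    B = -0.5 * J @ D2 @ J                            # double-centered Gram matrix
    vals, vecs = np.linalg.eigh(B)                   # eigenvalues in ascending order
    idx = np.argsort(vals)[::-1][:k]                 # keep the k largest
    return vecs[:, idx] * np.sqrt(np.maximum(vals[idx], 0.0))

def fit_linear_map(A, B):
    """Least-squares matrix M such that A @ M approximates B."""
    M, *_ = np.linalg.lstsq(A, B, rcond=None)
    return M

def build_transfer(X_src, X_tgt, k=2):
    """Return a function that maps source-task solutions into the target search space.

    Assumption: row r of X_src corresponds to row r of X_tgt (e.g. same fitness rank).
    """
    mu_s, mu_t = X_src.mean(axis=0), X_tgt.mean(axis=0)
    Z_s, Z_t = classical_mds(X_src, k), classical_mds(X_tgt, k)   # subspaces S_i, S_j
    E_s = fit_linear_map(X_src - mu_s, Z_s)   # encoder: source space -> S_i
    M = fit_linear_map(Z_s, Z_t)              # mapping M_{i->j}: S_i -> S_j
    D_t = fit_linear_map(Z_t, X_tgt - mu_t)   # decoder: S_j -> target space
    return lambda x: (np.atleast_2d(x) - mu_s) @ E_s @ M @ D_t + mu_t
```

A call such as `build_transfer(sample_i, sample_j, k=2)(solution)` returns a candidate in the target task's dimensionality, which can then be clipped to the target bounds and inserted into the target population. Because every stage is linear, the quality of the transfer depends heavily on how the two samples are paired.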

Issue: Loss of Population Diversity

Problem: The population for one or more tasks has lost diversity, leading to premature convergence and an inability to explore promising regions of the search space.

| Possible Cause | Recommended Solution | Key References |

|---|---|---|

| Over-exploitation from aggressive knowledge transfer. | Integrate a Golden Section Search (GSS)-based linear mapping strategy to explore new, promising areas and escape local optima. | [3] |

| Ineffective balancing of convergence and diversity. | Implement a bi-space knowledge reasoning (bi-SKR) method that leverages both search space distribution and objective space evolutionary information to guide transfer. | [5] |

| Static resource allocation to tasks. | Employ an adaptive solver that dynamically allocates computational resources to different tasks based on their solving state, preventing one task from dominating. | [18] |

Step-by-Step Protocol: Leveraging GSS for Diversity Enhancement

- Identify Promising Direction: Within the latent subspace of a task, identify a search direction towards an unexplored region, often away from current local optima.

- Define Search Interval: Establish an interval along this direction for a linear search.

- Golden Section Search: Apply the GSS algorithm to efficiently sample new points within this interval. The GSS reduces the interval of uncertainty in a way that minimizes the number of function evaluations required to find an improved region.

- Population Update: Evaluate the newly generated points and incorporate high-quality, diverse individuals back into the population to help escape local optima [3].
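The line-search step can be illustrated with a standard golden section search. The sketch below assumes a minimization problem and a fitness that is roughly unimodal along the chosen direction. The helper `line_search_point` and its `t_max` parameter are hypothetical names for illustration and are not taken from [3].

```python
import math
import numpy as np

INV_PHI = (math.sqrt(5.0) - 1.0) / 2.0  # 1/phi, about 0.618

def golden_section_search(f, a, b, tol=1e-6, max_iter=200):
    """Minimize a unimodal scalar function f on the interval [a, b]."""
    c = b - INV_PHI * (b - a)
    d = a + INV_PHI * (b - a)
    fc, fd = f(c), f(d)
    for _ in range(max_iter):
        if abs(b - a) < tol:
            break
        if fc < fd:
            # Minimum lies in [a, d]; the old c becomes the new d (one new evaluation).
            b, d, fd = d, c, fc
            c = b - INV_PHI * (b - a)
            fc = f(c)
        else:
            # Minimum lies in [c, b]; the old d becomes the new c (one new evaluation).
            a, c, fc = c, d, fd
            d = a + INV_PHI * (b - a)
            fd = f(d)
    return (a + b) / 2.0

def line_search_point(fitness, x, direction, t_max=1.0):
    """Search along x + t * direction for t in [0, t_max] and return the best point found."""
    direction = direction / np.linalg.norm(direction)
    t = golden_section_search(lambda t: fitness(x + t * direction), 0.0, t_max)
    return x + t * direction
```

Each iteration shrinks the interval by the factor 1/φ while reusing one of the two interior evaluations, which is why only a single new fitness evaluation is needed per step.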

Experimental Protocols & Data

Detailed Protocol: Progressive Auto-Encoding (PAE)

This protocol outlines the methodology for integrating a Progressive Auto-Encoder into an EMTO algorithm [18].

- Initialization: Initialize separate populations for each task (in a multi-population framework) or a unified population (in a multifactorial framework).

- Auto-Encoder Training: Train the auto-encoder using one of two variants:

  - Segmented PAE: Train distinct auto-encoders at different, predefined stages of the evolutionary process (e.g., early, mid, late). Each stage's auto-encoder is trained on the current population data to capture phase-specific features.

  - Smooth PAE: Continuously update a single auto-encoder throughout the run. Use a memory buffer that includes high-quality eliminated solutions from previous generations to facilitate gradual and refined domain adaptation without forgetting useful past knowledge.

- Latent Space Alignment: The trained encoder projects solutions from different tasks into a shared latent space. The alignment is learned implicitly through the reconstruction objective of the auto-encoder.

- Knowledge Transfer: Select parent solutions from this shared latent space for crossover or mapping operations. Offspring are then decoded back to their native task's search space.

- Evaluation & Selection: Evaluate new solutions and perform environmental selection to update the population(s). Repeat from the Auto-Encoder Training step until the termination criterion is met.
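The "Smooth PAE" idea of refitting one model each generation on the current population plus a buffer of eliminated solutions can be sketched as follows. This is a deliberately simplified stand-in, not the method of [18]: a linear auto-encoder fitted in closed form via SVD replaces the neural auto-encoder, and the class name `SmoothLinearAE` and its parameters are hypothetical.

```python
import numpy as np

class SmoothLinearAE:
    """Linear auto-encoder refit every generation on population + memory buffer."""

    def __init__(self, latent_dim, buffer_size=50):
        self.k = latent_dim
        self.buffer_size = buffer_size
        self.buffer = None  # eliminated but high-quality solutions from past generations

    def update(self, population, eliminated=None):
        # Append newly eliminated solutions and keep only the most recent ones.
        if eliminated is not None and len(eliminated):
            buf = eliminated if self.buffer is None else np.vstack([self.buffer, eliminated])
            self.buffer = buf[-self.buffer_size:]
        data = population if self.buffer is None else np.vstack([population, self.buffer])
        self.mean = data.mean(axis=0)
        # The top-k right singular vectors give the optimal linear encoder/decoder pair.
        _, _, vt = np.linalg.svd(data - self.mean, full_matrices=False)
        self.W = vt[: self.k].T  # shape (d, k)

    def encode(self, x):
        return (x - self.mean) @ self.W

    def decode(self, z):
        return z @ self.W.T + self.mean
```

With one such model per task and a common `latent_dim`, a cross-task move could be written as `target_ae.decode(source_ae.encode(x))`. Note that this sketch does nothing to align the latent axes of different tasks beyond what the reconstruction objective provides, so it should be read as a structural illustration only.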

Performance Data from Benchmark Studies

The following tables summarize quantitative results from key studies, providing a benchmark for expected performance.

Table 1: Performance Comparison of MDS-based Algorithms on Single-Objective MTO Benchmarks [3]

| Algorithm | Average Best Fitness (Task 1) | Average Best Fitness (Task 2) | Positive Transfer Rate | Negative Transfer Rate |

|---|---|---|---|---|

| MFEA-MDSGSS | 0.941 | 0.885 | 92.5% | 3.8% |

| MFEA-AKT | 0.905 | 0.842 | 85.1% | 8.5% |

| MFEA | 0.872 | 0.811 | 78.3% | 15.2% |

| Traditional EA (Single-Task) | 0.860 | 0.802 | - | - |

Table 2: Performance of Auto-Encoder based Methods on Real-World Applications [18] [19]

| Application Domain | Algorithm | Key Metric | Performance |

|---|---|---|---|

| Drug Target Identification | optSAE + HSAPSO | Classification Accuracy | 95.52% |

| Multi-objective MTO | MO-MTEA-PAE | Hypervolume Indicator | ~15% improvement over MO-MFEA |

| Production Scheduling | MTEA-PAE | Makespan Improvement | ~10% faster convergence |

Workflow Visualization

The following diagram illustrates the logical workflow of a domain adaptation process integrating both MDS and Auto-Encoder techniques within an EMTO framework.

Diagram 1: Integrated DA Workflow in EMTO.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Materials for Domain Adaptation in EMTO

| Item Name | Function / Purpose | Example / Note |

|---|---|---|

| Domain Adaptation Toolbox for Medical (DomainATM) | An open-source platform (MATLAB) for fast facilitation and customization of feature-level and image-level domain adaptation methods. | Useful for medical data analysis; provides a user-friendly GUI and interface for self-defined algorithms [20]. |

| Progressive Auto-Encoder (PAE) | A dynamic domain adaptation technique to continuously align search spaces throughout the evolutionary process, preventing static model limitations. | Implement as "Segmented PAE" for stage-wise alignment or "Smooth PAE" for gradual refinement [18]. |

| MDS-based Linear Domain Adaptation (LDA) | Mitigates negative transfer in high-dimensional tasks by learning robust linear mappings between low-dimensional subspaces created by MDS. | Effective for tasks with differing dimensionalities [3]. |

| Information Entropy-based Collaborative Knowledge Transfer (IECKT) | A mechanism to balance convergence and diversity by adaptively selecting knowledge transfer patterns based on the evolutionary stage. | Divides evolution into stages (early, mid, late) for different transfer strategies [5]. |

| Benchmark Platform (MToP) | A standardized benchmarking platform for Evolutionary Multi-task Optimization. | Essential for fair comparison and validation of new algorithms against state-of-the-art methods [18]. |

Multi-Population vs. Multi-Factorial Evolutionary Frameworks

Frequently Asked Questions

Q1: What is the fundamental difference between multi-population and multi-factorial evolutionary frameworks?

Multi-population models primarily use spatial separation (e.g., island models) to maintain population diversity and prevent premature convergence: subpopulations evolve independently with occasional migration [21]. In contrast, the multi-factorial evolutionary algorithm (MFEA) can be viewed as a novel multi-population model in which each task-specific group of individuals acts as a subpopulation evolved for one task, while knowledge is transferred implicitly across tasks through a unified representation and assortative mating [22]. The key distinction lies in MFEA's explicit design for concurrent optimization of multiple tasks by exploiting potential genetic complementarities.

Q2: How can I prevent negative transfer when knowledge is shared between unrelated optimization tasks?

Negative transfer occurs when knowledge sharing hinders performance, often due to transferring information between unrelated tasks. Implement a Population Distribution-based Measurement (PDM) to dynamically evaluate task relatedness during evolution [23]. This technique uses:

- Similarity measurement: Assesses landscape similarity between tasks

- Intersection measurement: Evaluates the degree of intersection of global optima

Combine PDM with a Multi-Knowledge Transfer (MKT) mechanism that employs both individual-level and population-level learning operators to regulate knowledge transfer based on the computed relatedness [23].

Q3: Why does my multi-population algorithm converge to local optima despite using multiple subpopulations?

This "population drift" phenomenon often occurs due to:

- Insufficient diversity maintenance between subpopulations

- Ineffective migration policies that don't preserve elitist solutions

- Poor balancing of exploration and exploitation across populations

Implement an elitist probability-based migration policy that considers only the Pareto front during migration [21]. Additionally, adaptively adjust the number of migrants and migration interval based on population size and problem dimensionality, as classical recommendations may not suit modern high-dimensional problems [21].
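A simplified version of Pareto-front-only migration between two subpopulations of the same multi-objective problem might look like the sketch below. It assumes minimization, draws migrants uniformly at random from the source front, and only overwrites dominated individuals in the destination so that the destination's own elites survive. These choices, and the function names, are illustrative assumptions rather than the exact policy of [21].

```python
import numpy as np

def pareto_front_mask(F):
    """Boolean mask of the non-dominated rows of objective matrix F (minimization)."""
    n = F.shape[0]
    mask = np.ones(n, dtype=bool)
    for i in range(n):
        # Row j dominates row i if it is no worse everywhere and better somewhere.
        dominates_i = np.all(F <= F[i], axis=1) & np.any(F < F[i], axis=1)
        mask[i] = not dominates_i.any()
    return mask

def migrate(src_X, src_F, dst_X, dst_F, n_migrants, rng=None):
    """Copy up to n_migrants source-front individuals over dominated destination ones."""
    rng = np.random.default_rng() if rng is None else rng
    front = np.flatnonzero(pareto_front_mask(src_F))
    slots = np.flatnonzero(~pareto_front_mask(dst_F))  # destination elites are kept
    m = min(n_migrants, front.size, slots.size)
    new_X, new_F = dst_X.copy(), dst_F.copy()
    if m == 0:
        return new_X, new_F
    chosen = rng.choice(front, size=m, replace=False)
    replaced = rng.choice(slots, size=m, replace=False)
    new_X[replaced], new_F[replaced] = src_X[chosen], src_F[chosen]
    return new_X, new_F
```

The number of migrants and the interval between calls to `migrate` are the parameters that would be adapted to population size and problem dimensionality.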

Q4: How do I handle badly scaled objective functions in real-world multi-objective optimization problems?

For badly scaled objective spaces common in real-world applications like mechanical design problems:

- Implement a fitness function with normalization to handle disparate scales [24]

- Employ a heterogeneous operator strategy combining Genetic Algorithm operators (for enhanced convergence) and Differential Evolution operators (to tackle variable linkages) [24]

This approach maintains diversity while ensuring effective convergence across differently scaled objectives.
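As a small illustration of the normalization step, the sketch below rescales each objective to [0, 1] using the ideal and nadir points estimated from the current population. Min-max scaling is one common choice and is an assumption here; [24] may use a different normalization.

```python
import numpy as np

def normalize_objectives(F, eps=1e-12):
    """Min-max normalize each objective column of F using population ideal/nadir points."""
    ideal = F.min(axis=0)
    nadir = F.max(axis=0)
    # eps guards against division by zero when an objective is constant.
    return (F - ideal) / np.maximum(nadir - ideal, eps)
```

For example, objectives measured in millimetres and in newtons end up on the same scale, so distance-based diversity measures and scalarized fitness values are no longer dominated by the objective with the largest numeric range.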

Q5: What strategies effectively balance convergence and diversity in multi-population frameworks for constrained problems?

The Adaptive Coevolutionary Multitasking (ACEMT) framework demonstrates success through:

- Dual auxiliary tasks: Constraint relaxation (for diversity) and constraint selection (for convergence) [25]

- Dynamic constraint handling: Adaptively narrows constraint boundaries to facilitate exploration [25]

- Knowledge transfer: Enables complementary optimization focus between coevolving populations [25]
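The idea of a relaxed constraint boundary that narrows over time can be sketched with a generic ε-constraint schedule. This is a standard technique used here for illustration and is not claimed to be the adaptation rule of ACEMT [25]. The polynomial decay, the `power` parameter, and the function names are assumptions.

```python
import numpy as np

def total_violation(g):
    """Sum of constraint violations per individual; g has shape (n, m), g <= 0 is feasible."""
    return np.clip(g, 0.0, None).sum(axis=1)

def epsilon_level(gen, max_gen, eps0, power=2.0):
    """Relaxation level that starts at eps0 and shrinks to 0 at max_gen."""
    frac = min(gen / max_gen, 1.0)
    return eps0 * (1.0 - frac) ** power

def relaxed_feasible(g, gen, max_gen, eps0):
    """Individuals treated as feasible by the relaxed auxiliary task at generation gen."""
    return total_violation(g) <= epsilon_level(gen, max_gen, eps0)
```

Early in the run, slightly infeasible individuals count as feasible and keep the auxiliary population spread across the boundary region; by the final generation only truly feasible individuals pass the test.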

Table 1: Troubleshooting Common Experimental Issues

| Problem | Root Cause | Solution | Key References |

|---|---|---|---|

| Negative transfer between tasks | High knowledge transfer between unrelated tasks | Implement dynamic task-relatedness measurement (PDM) with adaptive transfer | [23] |

| Population drift to local optima | Insufficient diversity preservation in migration | Adopt elitist probability-based migration focusing on Pareto front | [21] |

| Poor performance on badly-scaled objectives | Disparate objective function scales | Use normalized fitness functions with heterogeneous operators | [24] |

| Slow convergence in high-dimensional spaces | Ineffective variable grouping | Implement multi-stage adaptive weighted optimization (MPSOF) | [26] |

| Difficulty handling constraints | Imbalance between constraint satisfaction and objective optimization | Apply adaptive coevolutionary multitasking with dual auxiliary tasks | [25] |

Experimental Protocols & Methodologies

Protocol 1: Implementing Hybrid Knowledge Transfer (HKT) for Multi-Task Optimization

Purpose: To enable effective knowledge transfer across related optimization tasks while minimizing negative transfer.

Materials: Standard evolutionary algorithm framework, benchmark multi-task optimization problems.

Procedure:

- Initialize a unified population with random skill factor assignment

- Design Population Distribution-based Measurement (PDM):

  - Calculate similarity measurement based on distribution characteristics of evolving populations

  - Compute intersection measurement to evaluate overlap of global optima

  - Dynamically update task relatedness metrics each generation

- Implement Multi-Knowledge Transfer (MKT) mechanism:

  - Individual-level learning: Share evolutionary information between solutions with different skill factors based on similarity

  - Population-level learning: Replace unpromising solutions with transferred solutions from assisted tasks based on intersection measurement

  - Regulate transfer intensity adaptively based on PDM outputs

- Evaluate on CEC 2017 multi-task optimization test suite [23]

Expected Outcome: Superior performance compared to fixed random mating probability approaches, with better balance between convergence and diversity.
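To make the data flow of this protocol concrete, the sketch below computes two relatedness scores from population samples and turns them into transfer settings. The formulas are illustrative placeholders chosen for simplicity and are not the PDM measures defined in [23]. The sketch assumes that both populations live in a unified [0, 1] search space and are sorted best-first, and all function names are hypothetical.

```python
import numpy as np

def similarity(pop_a, pop_b):
    """Illustrative similarity in (0, 1]: closeness of per-dimension means and spreads."""
    pop_a, pop_b = np.asarray(pop_a, float), np.asarray(pop_b, float)
    gap = (np.abs(pop_a.mean(0) - pop_b.mean(0)).mean()
           + np.abs(pop_a.std(0) - pop_b.std(0)).mean())
    return 1.0 / (1.0 + gap)

def intersection(elite_a, elite_b):
    """Illustrative overlap in [0, 1]: per-dimension overlap of the elite bounding ranges."""
    elite_a, elite_b = np.asarray(elite_a, float), np.asarray(elite_b, float)
    lo = np.maximum(elite_a.min(0), elite_b.min(0))
    hi = np.minimum(elite_a.max(0), elite_b.max(0))
    overlap = np.clip(hi - lo, 0.0, None)
    span = np.maximum(elite_a.max(0), elite_b.max(0)) - np.minimum(elite_a.min(0), elite_b.min(0))
    ratio = np.divide(overlap, span, out=np.zeros_like(span), where=span > 0)
    return float(ratio.mean())

def transfer_plan(pop_a, pop_b, n_elite, n_unpromising):
    """Map the two scores to individual-level and population-level transfer settings."""
    sim = similarity(pop_a, pop_b)
    inter = intersection(pop_a[:n_elite], pop_b[:n_elite])
    return {
        "mating_probability": sim,                         # individual-level learning
        "n_replaced": int(round(inter * n_unpromising)),   # population-level learning
    }
```

Recomputing `transfer_plan` every generation gives the dynamic behaviour described in the procedure: as the populations of two tasks drift apart, both the cross-task mating probability and the number of replaced solutions fall.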

Protocol 2: Multi-Population Multi-Stage Adaptive Weighted Optimization (MPSOF)

Purpose: To address large-scale multi-objective optimization problems while maintaining diversity and avoiding local optima.

Materials: Large-scale multi-objective benchmark functions, computational resources for multiple populations.

Procedure:

- Stage I - Multi-population initialization:

  - Create multiple subpopulations with different initialization strategies

  - Maintain separate weight vectors for each subpopulation

- Stage II - Adaptive mixed-weight individual updating:

  - Process optimal weight vectors from each subpopulation

  - Calculate weight vector repetition frequency

  - Adaptively select individuals for updating based on evolutionary status

  - Generate new individuals using diversified weight combinations

- Stage III - Global optimization:

  - Integrate promising solutions from all subpopulations

  - Perform final refinement focusing on convergence and distribution

- Evaluate using Inverted Generational Distance (IGD), Hypervolume, and Spacing metrics [26]

Expected Outcome: Improved performance on large-scale problems compared to single-population weighted optimization frameworks.
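Two of the evaluation metrics named in the procedure are simple to compute directly. The sketch below gives common textbook definitions of IGD and of Schott's Spacing, assuming minimization and objective matrices with one row per solution. Hypervolume is omitted because an exact implementation is considerably longer.

```python
import numpy as np

def igd(reference, approx):
    """Inverted Generational Distance: mean distance from each reference point
    to its nearest point in the approximation set (lower is better)."""
    d = np.linalg.norm(reference[:, None, :] - approx[None, :, :], axis=2)
    return float(d.min(axis=1).mean())

def spacing(approx):
    """Schott's Spacing: spread of nearest-neighbour L1 distances (0 means evenly spaced)."""
    d = np.abs(approx[:, None, :] - approx[None, :, :]).sum(axis=2)
    np.fill_diagonal(d, np.inf)  # ignore each point's distance to itself
    nearest = d.min(axis=1)
    return float(np.sqrt(((nearest - nearest.mean()) ** 2).sum() / (len(nearest) - 1)))
```

IGD requires a reference set sampled from the true Pareto front, so it measures convergence and coverage together, while Spacing needs only the obtained set and reflects uniformity alone.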

Protocol 3: Adaptive Coevolutionary Multitasking (ACEMT) for Constrained Problems