AI-Powered Comparative Genomics: Decoding Evolutionary Processes for Biomedical Innovation



Abstract

This article synthesizes the latest conceptual and technological advances in comparative genomics to elucidate the evolutionary processes shaping biological diversity. We explore foundational mechanisms of genomic evolution, from de novo gene birth to regulatory element conservation, and detail cutting-edge methodologies, including AI-driven tools and large-scale databases, that are revolutionizing the field. For a research-focused audience, we address key challenges in data analysis and interpretation, while highlighting validation strategies and biomedical applications in zoonotic disease tracking, antimicrobial discovery, and drug target identification. The integration of these perspectives provides a comprehensive framework for leveraging evolutionary insights to advance human health.

Core Evolutionary Mechanisms: From Genomic Sequence to Functional Innovation

De novo gene origination represents a paradigm shift in our understanding of evolutionary innovation, challenging the long-held belief that new protein-coding genes must necessarily derive from pre-existing genetic templates [1] [2]. This process involves the emergence of functional genes from previously non-coding DNA sequences through the acquisition of open reading frames (ORFs), regulatory elements, and functional capacity [3] [4]. Once considered evolutionary rarities, de novo genes have been identified across all domains of life, from bacteria to plants and animals, with particularly high origination rates observed in flowering plants [1] [3].

The study of de novo genes provides crucial insights into the fundamental mechanisms driving evolutionary innovation and adaptive evolution [1] [3]. These genes can integrate into and modify pre-existing gene networks, primarily through mutation and selection, and their origination rates appear stable across various organisms [3]. Evidence now demonstrates that de novo genes play substantive roles in phenotypic and functional evolution across diverse biological processes, with detectable fitness effects that can shape species divergence [3].

Table 1: Key Characteristics of De Novo Genes Across Organisms

| Feature | Plants | Animals | Human |
|---|---|---|---|
| Typical Protein Length | Short (<100 amino acids) [1] | Variable, often short [2] | Short to medium [5] |
| Structural Features | Low intrinsic structural disorder, lacking conserved domains [1] | Enriched in disordered regions [2] | Varied structural properties [5] |
| Expression Pattern | Highly restricted spatiotemporal patterns, stress-responsive [1] | Often testis-biased, tissue-specific [2] | Temporospatial expansion in tumors [5] |
| Evolutionary Fate | ~25-30% become essential [1] | Rapid turnover, some stabilized by selection [2] | Some associated with human-specific traits [5] |

Genomic and Molecular Mechanisms

Genomic Architecture Facilitating De Novo Emergence

Plant genomes provide an exceptionally fertile ground for de novo gene origination due to their unique architectural features [1]. Large-scale comparative genomic analyses reveal that extensive noncoding regions, comprising up to 85% of some plant genomes, harbor abundant cryptic open reading frames that can potentially evolve into functional genes [1]. This vast noncoding landscape, combined with frequent whole-genome duplications and chromosomal rearrangements characteristic of plant evolution, creates numerous opportunities for the emergence of novel coding sequences [1].

Transposable elements (TEs) play a particularly crucial role as catalysts for de novo gene birth in plants [1]. TEs, which constitute 45-85% of many plant genomes, actively facilitate gene origination through multiple mechanisms. TE insertions can directly provide promoters, enhancers, and transcription factor binding sites that activate transcription of nearby noncoding sequences [1]. Additionally, TEs mediate chromosomal rearrangements that bring together previously separated noncoding fragments, creating novel transcriptional units [1]. Analysis of rice, maize, and Arabidopsis genomes reveals that approximately 30-40% of recently originated de novo genes show clear associations with TE activity [1].

Molecular Features of De Novo Proteins

De novo genes exhibit distinctive molecular signatures that differentiate them from conserved genes and facilitate rapid functional exploration [1]. These genes typically encode remarkably short proteins, often less than 100 amino acids, with high intrinsic disorder content and lacking recognizable conserved domains [1]. This structural "permissiveness" appears advantageous rather than detrimental—the abundance of disordered regions allows de novo proteins to escape strict folding constraints that govern canonical proteins, enabling them to act as flexible molecular probes capable of transient interactions and regulatory fine-tuning [1].

Studies in rice, Arabidopsis, and other plants consistently show that de novo proteins have lower intrinsic structural disorder (ISD) values, reduced GC content, and fewer secondary structure elements compared to conserved genes [1]. These properties enable rapid evolutionary testing of novel biochemical functions while minimizing the risk of misfolding and aggregation, essentially providing organisms with a low-cost experimental platform for molecular innovation under selective pressures [1].

Research Methods and Experimental Protocols

Comparative Genomics Identification Pipeline

Objective: To identify candidate de novo genes through comparative genomic analysis across related species.

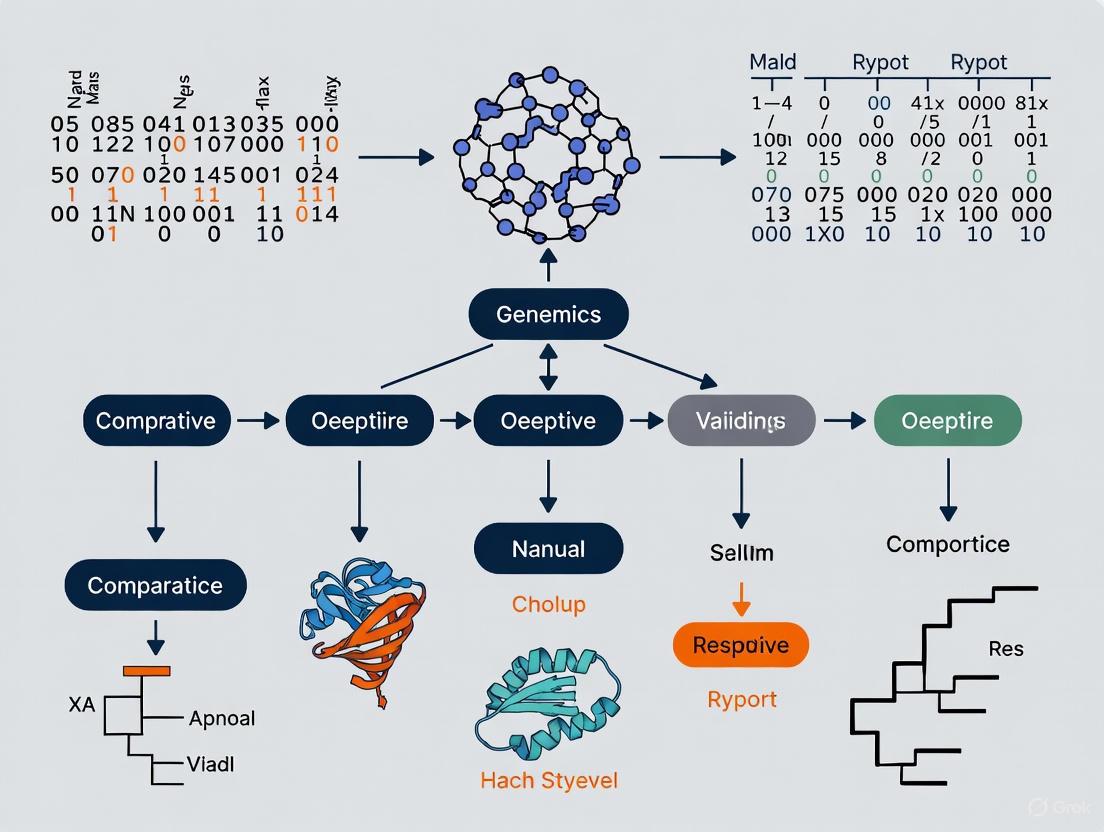

Figure 1: Computational identification workflow for de novo genes.

Protocol Steps:

High-Quality Genome Assembly

- Generate chromosome-level assemblies for focal species and closely related taxa

- Use PacBio or Oxford Nanopore long-read sequencing for comprehensive coverage [6]

- Annotate genes using evidence-based pipelines (RNA-seq, homology)

Ortholog Mapping and Phylostratigraphy

- Perform all-against-all BLAST or DIAMOND searches of predicted proteomes

- Construct phylogenetic trees for gene families using maximum likelihood methods

- Apply phylostratigraphy to classify genes by evolutionary age [1]

Synteny Analysis

- Use whole-genome alignment tools like Cactus for high-confidence synteny identification [1]

- Identify conserved non-genic regions in ancestral species corresponding to candidate genes in descendant species

- Verify absence of coding potential in ancestral sequences

Ancestral Sequence Reconstruction

- Reconstruct ancestral sequences using probabilistic methods (PAML, HyPhy)

- Analyze selective constraints (dN/dS ratios) [1]

- Confirm non-coding status through multiple sequence alignment
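The "verify absence of coding potential" step above can be illustrated with a simple open reading frame scan. The sketch below is a minimal illustration, not the pipeline used in the cited studies: the function names and the 30-codon threshold are assumptions chosen for the example, and a real analysis would also weigh transcription and translation evidence.

```python
from typing import List, Tuple

STOP_CODONS = {"TAA", "TAG", "TGA"}
_COMPLEMENT = str.maketrans("ACGTacgt", "TGCAtgca")


def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA string."""
    return seq.translate(_COMPLEMENT)[::-1]


def find_orfs(seq: str, min_codons: int = 30) -> List[Tuple[str, int, int, int]]:
    """Scan six frames for ATG..stop ORFs with at least `min_codons` codons.

    Returns (strand, frame, start, end) tuples. Coordinates are 0-based,
    end-exclusive, relative to the scanned strand; the span includes the
    stop codon, the codon count does not. `min_codons` is an assumed,
    illustrative threshold.
    """
    orfs = []
    for strand, s in (("+", seq.upper()), ("-", reverse_complement(seq).upper())):
        for frame in range(3):
            start = None
            for i in range(frame, len(s) - 2, 3):
                codon = s[i:i + 3]
                if start is None and codon == "ATG":
                    start = i  # first in-frame start codon opens the ORF
                elif start is not None and codon in STOP_CODONS:
                    if (i - start) // 3 >= min_codons:
                        orfs.append((strand, frame, start, i + 3))
                    start = None  # look for the next ORF in this frame
    return orfs


def has_coding_potential(seq: str, min_codons: int = 30) -> bool:
    """True if any frame contains an ORF of at least `min_codons` codons."""
    return bool(find_orfs(seq, min_codons))
```

Under these assumptions, a candidate is consistent with de novo origin when the descendant locus passes `has_coding_potential` while the syntenic ancestral or outgroup sequence does not, for example because of a premature stop codon.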

Table 2: Key Bioinformatics Tools for De Novo Gene Identification

| Tool Category | Specific Tools | Application | Key Parameters |
|---|---|---|---|
| Genome Assembly | Canu, Flye, Hifiasm | Generate chromosome-level assemblies | Minimum contig N50: 1 Mb |
| Gene Prediction | BRAKER, AUGUSTUS, GeMoMa | Evidence-based gene annotation | Integration of RNA-seq, protein homology |
| Comparative Genomics | Cactus, OrthoFinder, BLAST | Identify lineage-specific genes | E-value < 1e-5, coverage >50% |
| Selection Analysis | PAML, HyPhy, SLiM | Calculate dN/dS ratios (PAML, HyPhy); simulate selection scenarios (SLiM) | dN/dS > 1 indicates positive selection |
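The homology thresholds in Table 2 (E-value < 1e-5, coverage > 50%) can be applied to tabular search output to flag genes without detectable outgroup homologs. The following sketch assumes the standard 12-column BLAST tabular layout (`-outfmt 6`) and a separately supplied dictionary of query lengths; the function names are hypothetical, and coverage is computed per alignment rather than across merged alignments, which is a simplification.

```python
import csv
from typing import Dict, Iterable, Set


def genes_with_homologs(blast_tsv: Iterable[str],
                        query_lengths: Dict[str, int],
                        max_evalue: float = 1e-5,
                        min_coverage: float = 0.5) -> Set[str]:
    """Return query IDs with at least one hit passing both thresholds.

    Expects 12-column tabular lines: qseqid sseqid pident length mismatch
    gapopen qstart qend sstart send evalue bitscore.
    """
    found = set()
    for row in csv.reader(blast_tsv, delimiter="\t"):
        if not row or row[0].startswith("#"):
            continue  # skip blank and comment lines
        qseqid = row[0]
        qstart, qend = int(row[6]), int(row[7])
        evalue = float(row[10])
        # Fraction of the query covered by this single alignment.
        coverage = (abs(qend - qstart) + 1) / query_lengths[qseqid]
        if evalue < max_evalue and coverage > min_coverage:
            found.add(qseqid)
    return found


def lineage_specific_candidates(all_genes: Iterable[str],
                                blast_tsv: Iterable[str],
                                query_lengths: Dict[str, int]) -> Set[str]:
    """Genes of the focal species with no qualifying outgroup hit."""
    return set(all_genes) - genes_with_homologs(blast_tsv, query_lengths)
```

Genes returned by `lineage_specific_candidates` are only candidates: absence of a hit can also reflect rapid divergence or annotation gaps, which is why the synteny and ancestral-sequence steps follow.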

Functional Validation Through CRISPR Screening

Objective: Experimentally validate the functional significance of candidate de novo genes using CRISPR-Cas9 technology.

Figure 2: CRISPR screening workflow for functional validation.

Protocol Steps:

sgRNA Design and Library Construction

- Design 3-5 sgRNAs per candidate de novo gene targeting coding regions

- Include non-targeting controls and essential gene targeting positive controls

- Clone sgRNA library into lentiviral vector (lentiCRISPRv2)

- Validate library representation through next-generation sequencing

Cell Line Engineering and Screening

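- Transduce target mammalian cell lines at low MOI (0.3-0.5); lentiviral vectors do not transduce plant cells, so plant protoplasts require a different delivery method such as PEG-mediated transfection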

- Apply selection pressure (puromycin 1-5 μg/mL) for 5-7 days

- Maintain sufficient library coverage (>500 cells per sgRNA)

- Passage cells for 3-4 weeks to allow phenotypic manifestation

Sequencing and Analysis

- Extract genomic DNA at multiple timepoints (T0, T14, T28)

- Amplify integrated sgRNA sequences with barcoded PCR

- Sequence on Illumina platform (minimum 50x coverage per sgRNA)

- Analyze differential sgRNA abundance using MAGeCK or BAGEL algorithms

Phenotypic Validation

- For hits showing significant depletion, generate individual knockout clones

- Assess phenotypic consequences: proliferation assays, transcriptomics, stress challenges

- Conduct rescue experiments with cDNA complementation
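The screening parameters above (MOI 0.3-0.5, >500 cells per sgRNA, comparison of T0 with later timepoints) imply some simple arithmetic that is worth making explicit. The sketch below assumes that integrations per cell follow a Poisson distribution with mean equal to the MOI, and it computes a plain depth-normalized log2 fold change; it is not a substitute for MAGeCK or BAGEL, and all function names are illustrative.

```python
import math
from typing import Dict


def cells_to_transduce(n_sgrnas: int, coverage: int = 500, moi: float = 0.3) -> int:
    """Cells to expose to virus so that transduced cells reach coverage x library size.

    Assumes Poisson-distributed integrations, so the transduced
    fraction is 1 - exp(-MOI).
    """
    fraction_transduced = 1.0 - math.exp(-moi)
    return math.ceil(n_sgrnas * coverage / fraction_transduced)


def single_integrant_fraction(moi: float) -> float:
    """Among transduced cells, the expected fraction carrying exactly one sgRNA."""
    return moi * math.exp(-moi) / (1.0 - math.exp(-moi))


def log2_fold_changes(t0: Dict[str, int], t_end: Dict[str, int],
                      pseudocount: float = 1.0) -> Dict[str, float]:
    """Per-sgRNA log2 fold change after scaling counts to reads per million."""
    total0, total1 = sum(t0.values()), sum(t_end.values())
    lfc = {}
    for guide, count0 in t0.items():
        rpm0 = count0 / total0 * 1e6
        rpm1 = t_end.get(guide, 0) / total1 * 1e6
        lfc[guide] = math.log2((rpm1 + pseudocount) / (rpm0 + pseudocount))
    return lfc
```

For a 1,000-sgRNA library at MOI 0.3 and 500-fold coverage, this estimate comes to roughly 1.9 million cells, with about 86% of transduced cells expected to carry a single integrant. Negative fold changes for guides targeting a candidate gene, relative to non-targeting controls, indicate depletion.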

Research Reagent Solutions

Table 3: Essential Research Reagents for De Novo Gene Studies

| Reagent Category | Specific Examples | Application | Key Features |
|---|---|---|---|
| Sequencing Platforms | PacBio Revio, Oxford Nanopore PromethION | Genome assembly, isoform sequencing | Long-read capability, direct RNA sequencing |
| CRISPR Systems | lentiCRISPRv2, Alt-R S.p. Cas9 Nuclease | Functional gene validation | High efficiency, minimal off-target effects |
| Single-Cell RNA-seq | 10x Genomics Chromium, Parse Biosciences | Expression profiling at cellular resolution | Cell-type specific expression patterns |
| Mass Spectrometry | Thermo Fisher Orbitrap Eclipse, timsTOF | Proteomic validation of novel proteins | High sensitivity for low-abundance proteins |
| Library Prep Kits | SMART-Seq v4, NEBNext Ultra II | RNA/DNA library preparation | Low input requirements, high complexity |

Case Studies and Applications

Plant De Novo Genes in Stress Adaptation

Several well-characterized examples in plants demonstrate the functional importance of de novo genes in adaptation [1]. The rice OsDR10 gene confers pathogen resistance, while the Arabidopsis AtQQS gene regulates carbon-nitrogen metabolism and enhances disease resistance [1]. Recent research has identified Rosa SCREP as a de novo gene regulating eugenol biosynthesis, and numerous other de novo genes have been implicated in stress tolerance, reproductive success, and developmental regulation [1]. These discoveries underscore that de novo genes are not merely evolutionary noise but can provide substantive adaptive benefits.

Population genomic evidence increasingly supports the functional importance of de novo genes in plant adaptation [1]. Expression analyses consistently show that plant de novo genes exhibit highly restricted spatiotemporal patterns, often being activated only during specific developmental stages, in particular tissues, or in response to environmental stresses—suggesting fine-tuned regulatory roles in adaptive responses [1]. Selection-signature analyses (e.g., dN/dS ratios and population frequency distributions) show that de novo genes follow diverse evolutionary trajectories, with many genes (especially those involved in stress response and reproduction) being subject to positive or balancing selection [1].

Human De Novo Genes in Cancer and Therapeutics

Recent research has identified 37 young human de novo genes with clear evolutionary trajectories that show significant upregulation and temporospatial expression expansion across tumors [5]. Functional studies demonstrated that depletion of 57.1% of these genes suppresses tumor cell proliferation, underscoring their roles in tumorigenesis [5]. This discovery has important translational implications, as these young de novo genes represent potential neoantigens for cancer immunotherapy.

As a proof of concept, researchers developed mRNA vaccines expressing ELFN1-AS1 and TYMSOS—young genes specifically expressed during early development but reactivated exclusively in tumors [5]. In humanized mice, these vaccines triggered specific T cell activation and inhibited tumor growth [5]. The antigens derived from these genes are immunogenic and capable of eliciting antigen-specific T cell activation in colorectal cancer patients, highlighting the clinical potential of targeting de novo genes in oncology [5].

Emerging Technologies and Future Directions

AI-Driven De Novo Gene Design

Recent advances in generative artificial intelligence have opened new possibilities for designing functional de novo genes [7]. The Evo genomic language model can leverage genomic context to perform function-guided design that accesses novel regions of sequence space [7]. By learning semantic relationships across prokaryotic genes, Evo enables a genomic 'autocomplete' in which a DNA prompt encoding genomic context for a function of interest guides the generation of novel sequences enriched for related functions, an approach termed semantic design [7].

This technology has been successfully applied to generate novel anti-CRISPR proteins and type II and III toxin–antitoxin systems, including de novo genes with no significant sequence similarity to natural proteins [7]. The in-context design of proteins and non-coding RNAs with Evo achieves robust activity and high experimental success rates even in the absence of structural priors, known evolutionary conservation or task-specific fine-tuning [7]. This represents a paradigm shift from analyzing naturally evolved de novo genes to actively engineering synthetic de novo genes with predetermined functions.

Single-Cell Resolution of De Novo Gene Expression

The application of single-cell RNA sequencing (scRNA-seq) technologies has revolutionized our understanding of de novo gene expression patterns and regulation [2]. Research in Drosophila testes has demonstrated that de novo genes exhibit tightly regulated expression rather than transcriptional noise, with complex expression patterns—some appearing only in specific cell types, while others are active much earlier in development [2]. The most active window for de novo gene expression in Drosophila is during the spermatocyte phase of sperm development [2].

These findings challenge earlier assumptions about de novo genes representing mere transcriptional noise and instead support their roles as finely regulated functional components of the genome. The creation of searchable databases cataloging gene expression across tissues at single-cell resolution provides valuable resources for exploring de novo gene function in specific cellular contexts [2]. This approach is particularly powerful for identifying roles in development and tissue-specific functions that might be masked in bulk transcriptome analyses.

The Role of Transposable Elements as Genomic Innovation Catalysts

Transposable Elements (TEs), once dismissed as "junk DNA," are now recognized as powerful catalysts of genomic innovation and key drivers of evolutionary processes [8] [9]. These mobile genetic sequences, which constitute approximately 45% of the human genome and up to 90% of some plant genomes like maize, function as dynamic engines that generate genetic diversity, rewire regulatory networks, and shape genome architecture across evolutionary timescales [8] [10]. The discovery of TEs by Barbara McClintock in the 1940s fundamentally challenged the view of the genome as a static entity, introducing instead the concept of the "dynamic genome" [8].

In comparative genomics, understanding TE dynamics provides crucial insights into species differentiation, adaptive evolution, and the emergence of novel regulatory mechanisms. TEs contribute to genome evolution through various mechanisms including serving as sources of novel regulatory sequences, mediating chromosomal rearrangements, and generating structural variants that can lead to new gene functions [9] [11]. This application note provides researchers with current protocols and analytical frameworks for investigating the role of TEs in genomic innovation, with particular emphasis on their implications for evolutionary biology research and potential applications in biomedical science.

Quantitative Landscape of Transposable Elements Across Species

The abundance, diversity, and activity of TEs vary dramatically across species, reflecting their diverse evolutionary histories and genomic strategies. The table below summarizes the quantitative variation of TEs across representative eukaryotic species, highlighting their significant contributions to genome size and organization.

Table 1: Transposable Element Composition Across Eukaryotic Genomes

| Species | Total Genomic TE Content | Retrotransposons (Class I) | DNA Transposons (Class II) | Notable Active Elements |
|---|---|---|---|---|
| Homo sapiens (Human) | ~45% [8] [9] | ~42% total [10] | ~2% [10] | LINE-1, Alu, SVA, HERV-K [8] [9] |
| Mus musculus (Mouse) | ~40% [10] | ~39% total [10] | Similar proportion to human [10] | B2 SINEs, IAP, Etns [11] [10] |
| Zea mays (Maize) | ~90% [10] | ~85% total [10] | ~5% [10] | Ac/Ds system [10] |
| Gossypium spp. (Cotton) | 57% (D5) - 81% (K2) [12] | LTR retrotransposons dominant (Gypsy) [12] | Variable | Lineage-specific LTR expansions [12] |
| Bees (75 species) | 4.4% - 82.1% [13] | Variable across families | Variable across families | Lineage-specific accumulations [13] |

Recent comparative studies across 75 bee genomes reveal astonishing variation in TE content, ranging from 4.4% in Apis dorsata to 82.1% in Xylocopa violacea, demonstrating that TE dynamics are a major factor in genome size variation across closely related species [13]. This variation is largely responsible for genome size differences, with lineages exhibiting unique signatures of TE accumulation [13]. In the cotton genus (Gossypium), differential TE expansion has been directly linked to post-transcriptional regulatory divergence following species divergence, with TE content ranging from 57% to 81% across different genome types [12].
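Genome-wide TE percentages such as those above are usually derived by summing annotated repeat intervals and dividing by assembly size. Because annotations often overlap or nest, a naive sum overcounts; the sketch below merges overlapping intervals first. It assumes BED-style 0-based, half-open coordinates, and the function names are illustrative rather than part of any annotation tool.

```python
from collections import defaultdict
from typing import Iterable, Tuple

Interval = Tuple[str, int, int]  # (chrom, start, end), 0-based half-open


def merged_length(intervals: Iterable[Interval]) -> int:
    """Total bases covered by the intervals, counting overlaps only once."""
    by_chrom = defaultdict(list)
    for chrom, start, end in intervals:
        by_chrom[chrom].append((start, end))
    total = 0
    for spans in by_chrom.values():
        spans.sort()
        cur_start, cur_end = spans[0]
        for start, end in spans[1:]:
            if start <= cur_end:          # overlapping or adjacent: extend
                cur_end = max(cur_end, end)
            else:                         # gap: close the current block
                total += cur_end - cur_start
                cur_start, cur_end = start, end
        total += cur_end - cur_start
    return total


def te_fraction(intervals: Iterable[Interval], genome_size: int) -> float:
    """Fraction of the assembly annotated as TE."""
    return merged_length(intervals) / genome_size
```

Applied per TE class, the same calculation yields class-level breakdowns like those in the table, provided each class is merged separately.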

Table 2: Active TE Families in the Human Genome and Their Characteristics

| TE Family | Class | Autonomy | Approximate Length | Key Structural Features | Genomic Abundance |
|---|---|---|---|---|---|
| LINE-1 (L1) | Non-LTR Retrotransposon | Autonomous | ~6 kb [8] | 5' UTR, ORF1, ORF2, 3' UTR, poly-A tail [8] | ~17-20% of genome [8] |
| Alu | Non-LTR Retrotransposon | Non-autonomous | ~300 bp [8] | Two monomers, A- and B-boxes, poly-A tail [8] | ~11% of genome [8] |
| SVA | Non-LTR Retrotransposon | Non-autonomous | 2-3 kb [8] | CCCTCT repeat, Alu-like, VNTR, SINE-R [8] | ~0.2% of genome [8] |
| HERV-K (HML2) | LTR Retrotransposon | Autonomous | 9-10 kb [8] | LTRs, gag, pol-pro, env genes [8] | ~1% of genome [8] |

Mechanisms of Genomic Innovation

Regulation of 3D Genome Architecture

TEs significantly contribute to the evolution of 3D genome organization by serving as binding sites for architectural proteins such as CTCF, which shapes nuclear architecture by creating loops, domains, and compartment borders [11]. Recent research demonstrates that 8-37% of loop anchor and TAD (Topologically Associating Domain) boundary CTCF sites across multiple mammalian species are derived from TEs, with species-specific distributions of contributing TE families [11].

In mouse cells, SINE elements contribute disproportionately to 3D genome organization, accounting for 63.3-76.9% of TE-derived loop anchor CTCF sites despite occupying approximately 5% less genomic space compared to other species [11]. The human genome shows more balanced contributions from LINE, LTR, and DNA transposon classes [11]. This TE-mediated rewiring of chromatin architecture creates species-specific regulatory landscapes that can facilitate new interactions between regulatory elements and genes.

Diagram 1: TE-mediated 3D genome reorganization. TEs can introduce novel CTCF binding sites that reshape chromatin architecture, creating new regulatory interactions.

Post-Transcriptional Regulatory Innovation

Beyond their well-established roles in transcriptional regulation, TEs significantly impact post-transcriptional processes including alternative splicing, translation efficiency, and microRNA-mediated regulation [12]. In cotton species, TE expansion has been shown to contribute to the turnover of splice sites and regulatory sequences, leading to changes in alternative splicing patterns and expression levels of orthologous genes [12].

TE-derived sequences can form upstream open reading frames (uORFs) that regulate translation and generate novel microRNAs that fine-tune gene expression networks [12]. These mechanisms demonstrate how TEs provide raw material for the evolution of complex regulatory hierarchies that operate at multiple levels of gene expression control.

Species-Specific Adaptation and Evolution

TEs drive species-specific adaptation through several mechanisms, including the formation of lineage-specific regulatory elements and genes. Research in cotton species has revealed that TE activity contributes to the formation of species-specific genes, with significant enrichment of TEs found in these genes compared to conserved orthologs [12].

The presence of conserved TE insertions in orthologous gene families correlates with evolutionary relationships, with closely related species sharing similar TE insertion profiles while distantly related species show significant divergence [12]. This phylogenetic signal demonstrates the utility of TEs as markers for evolutionary studies and underscores their role in species differentiation.

Experimental Protocols for TE Analysis

Protocol 1: Genome-Wide TE Annotation and Manual Curation

Comprehensive TE annotation requires a combination of computational prediction and manual curation to generate high-quality TE libraries suitable for evolutionary analyses [14].

Materials and Reagents:

- High-quality genome assembly

- Computing cluster or high-performance computer (minimum 16 GB RAM for small genomes)

- RepeatModeler2 for de novo TE family identification

- RepeatMasker for homology-based annotation

- BEDTools for genomic interval operations

- MAFFT or MUSCLE for multiple sequence alignment

- Alignment viewer (AliView or BioEdit)

Procedure:

- De Novo TE Prediction: Run RepeatModeler2 on the target genome assembly to identify putative TE families.

- Homology-Based Annotation: Use RepeatMasker with the generated TE library to annotate TEs in the genome.

- Manual Curation: For each putative TE family, extract full-length copies from the genome using BEDTools.

- Multiple Sequence Alignment: Generate and manually inspect alignments of TE copies.

- Consensus Generation: Create refined consensus sequences from curated alignments, paying particular attention to structural features (ORFs, terminal repeats, target site duplications).

- Classification: Classify TEs based on structural characteristics and homology to known elements using Wicker et al. (2007) and Feschotte & Pritham (2007) classification schemes [14].

Troubleshooting Tips:

- Chimeric sequences (fusions of distinct TEs) are common in automated predictions and require manual separation.

- For degenerate TEs, consider reconstructing ancestral sequences using methods like that described by Kojima (2024) [15].

- Validate problematic classifications by examining protein domains (Pfam database) and conserved motifs.
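The consensus-generation step in the procedure above can be sketched as a majority-rule vote per alignment column. This is a simplified illustration under stated assumptions: the thresholds (`min_fraction`, `min_occupancy`) and the function name are hypothetical, and curated libraries typically rely on manual inspection and dedicated tools rather than a plain vote.

```python
from collections import Counter
from typing import List


def majority_consensus(aligned: List[str],
                       min_fraction: float = 0.5,
                       min_occupancy: float = 0.5) -> str:
    """Majority-rule consensus of equal-length aligned sequences.

    Columns where fewer than `min_occupancy` of the copies carry a base are
    dropped (treated as insertions private to a few copies). A column with no
    base above `min_fraction` of the non-gap characters becomes 'N'.
    """
    if len({len(s) for s in aligned}) != 1:
        raise ValueError("sequences must be aligned to equal length")
    n = len(aligned)
    consensus = []
    for column in zip(*aligned):
        bases = [b.upper() for b in column if b not in "-."]
        if len(bases) < min_occupancy * n:
            continue  # mostly gaps: skip this column
        base, count = Counter(bases).most_common(1)[0]
        consensus.append(base if count / len(bases) > min_fraction else "N")
    return "".join(consensus)
```

Inspecting which columns collapse to `N` or are dropped is a quick way to spot the chimeric or degenerate regions mentioned in the troubleshooting tips.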

Diagram 2: TE annotation and curation workflow. Manual curation is essential for generating high-quality TE libraries from automated predictions.

Protocol 2: Functional Analysis of TE-Derived Regulatory Elements

This protocol outlines methods for investigating the functional impact of TEs on gene regulation, particularly their role in 3D genome organization and enhancer function.

Materials and Reagents:

- Curated TE library

- Hi-C or ChIA-PET data for 3D genome structure

- Chromatin immunoprecipitation (ChIP) data for CTCF, H3K27ac, or other relevant marks

- CRISPR-Cas9 system for genome editing

- RNA-seq library preparation kit

- qPCR reagents for validation

Procedure:

Identify TE-Derived Regulatory Elements:

- Intersect TE annotations with ChIP-seq peaks for architectural proteins (CTCF) and enhancer marks (H3K27ac).

Analyze 3D Genome Contributions:

- Overlap TE-derived CTCF sites with loop anchors and TAD boundaries from Hi-C data.

- Calculate species-specific contributions using comparative genomics approaches.

Functional Validation:

- Design sgRNAs targeting candidate TE-derived regulatory elements.

- Transfect CRISPR-Cas9 components into appropriate cell lines.

- Confirm deletion by PCR and Sanger sequencing.

- Assess impact on 3D genome structure using Hi-C or 4C-seq.

- Evaluate gene expression changes by RNA-seq or qRT-PCR.

Evolutionary Analysis:

- Map TE insertions onto phylogenetic trees to determine evolutionary timing.

- Correlate lineage-specific TE insertions with phenotypic differences.

Applications in Drug Development:

- Identify TE-derived regulatory elements that affect disease-relevant genes.

- Explore species-specific TE insertions that may explain differential drug responses.

- Develop biomarkers based on polymorphic TE insertions for personalized medicine.
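The intersection steps in this protocol (TE annotations against CTCF sites at loop anchors or TAD boundaries) reduce to interval overlap queries. The sketch below estimates the fraction of sites overlapping a TE and tallies the contributing TE classes. It assumes BED-style half-open coordinates and treats any overlap as "TE-derived", which is a simplification of the criteria a published analysis would apply; all names are illustrative.

```python
import bisect
from collections import Counter, defaultdict
from typing import Dict, Iterable, List, Set, Tuple

TE = Tuple[str, int, int, str]   # (chrom, start, end, te_class)
Site = Tuple[str, int, int]      # (chrom, start, end)
Index = Dict[Tuple[str, str], Tuple[List[int], List[int]]]


def build_index(tes: Iterable[TE]) -> Index:
    """Merge TE intervals per (class, chrom); return sorted start and end lists."""
    grouped = defaultdict(list)
    for chrom, start, end, te_class in tes:
        grouped[(te_class, chrom)].append((start, end))
    index = {}
    for key, spans in grouped.items():
        spans.sort()
        starts, ends = [spans[0][0]], [spans[0][1]]
        for start, end in spans[1:]:
            if start <= ends[-1]:
                ends[-1] = max(ends[-1], end)
            else:
                starts.append(start)
                ends.append(end)
        index[key] = (starts, ends)
    return index


def overlapping_classes(index: Index, site: Site) -> Set[str]:
    """TE classes with at least one base of overlap with the site."""
    chrom, start, end = site
    hits = set()
    for (te_class, te_chrom), (starts, ends) in index.items():
        if te_chrom != chrom:
            continue
        i = bisect.bisect_left(starts, end) - 1  # last TE starting before site end
        if i >= 0 and ends[i] > start:
            hits.add(te_class)
    return hits


def te_contribution(tes: Iterable[TE], sites: Iterable[Site]):
    """Return (fraction of sites overlapping any TE, per-class site counts)."""
    index = build_index(tes)
    sites = list(sites)
    per_class, derived = Counter(), 0
    for site in sites:
        classes = overlapping_classes(index, site)
        if classes:
            derived += 1
            per_class.update(classes)
    fraction = derived / len(sites) if sites else 0.0
    return fraction, dict(per_class)
```

Running `te_contribution` separately on each species' annotations gives comparable per-species fractions and class breakdowns. For genome-scale data, a dedicated interval tool such as BEDTools would be the more practical choice.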

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Transposable Element Analysis

| Reagent/Resource | Function | Example Applications | Key Features |
|---|---|---|---|
| RepeatModeler2 [14] | De novo TE discovery | Identification of novel TE families | Integrates RECON, RepeatScout, and LTRharvest algorithms |
| Earl Grey [13] | TE annotation pipeline | Comprehensive repeat annotation | Specialized for non-model organisms, consistent classification |
| Ancestral Genome Reconstruction [15] | Identification of degenerate TEs | Finding evolutionarily old TE-derived sequences | Reveals ~10.8% more TEs in human genome than standard methods |
| Manual Curation Toolkit [14] | Refinement of TE consensus sequences | Generating gold-standard TE libraries | Includes CD-HIT, BLAST+, BEDTools, MAFFT, AliView |
| Hi-C/ChIA-PET [11] | 3D genome architecture mapping | Identifying TE contributions to chromatin organization | Reveals loop anchors and TAD boundaries derived from TEs |
| CRISPR-Cas9 [11] | Functional validation | Testing regulatory impact of specific TEs | Enables precise deletion of TE-derived regulatory elements |

Transposable elements serve as fundamental catalysts of genomic innovation, driving evolutionary processes through multiple mechanisms including 3D genome restructuring, regulatory network rewiring, and species-specific adaptation. The protocols and analytical frameworks presented here provide researchers with comprehensive tools to investigate TE-mediated genomic innovation in evolutionary and biomedical contexts. As recognition of TE functional importance grows, these dynamic genomic elements will continue to reveal insights into genome evolution, species diversification, and the molecular basis of phenotypic diversity. The integration of advanced sequencing technologies with sophisticated computational methods promises to further illuminate the extensive contributions of TEs to genomic innovation across the tree of life.

Within the vast non-coding landscape of eukaryotic genomes lies a critical class of functional elements that govern transcriptional regulation. Conserved Non-coding Elements (CNEs) are genomic sequences that exhibit an extraordinary degree of evolutionary conservation, often exceeding that of protein-coding exons [16]. These elements are disproportionately involved in regulating genes that control multicellular development and differentiation, and their disruption is frequently associated with disease pathogenesis [16] [17]. This Application Note provides a structured overview of the quantitative landscape, definitive experimental protocols, and essential research tools for the identification and functional validation of CNEs, framed within the context of comparative genomics and evolutionary biology research.

Quantitative Landscape of Non-Coding Conservation

Prevalence and Conservation Metrics

Systematic genomic studies have enabled the quantification of CNEs and their conservation patterns across species. The data reveal that while a significant fraction of the human genome is functionally constrained, only a minority of this comprises protein-coding sequences.

Table 1: Genome-Wide Conservation Statistics

| Metric | Value | Context/Species | Reference |
|---|---|---|---|
| Functionally Constrained Human Genome | ~5% | Total genome under selection | [17] |
| Annotated Protein-Coding Exons | ~1.5% | Fraction of human genome | [17] |
| Likely Functional CNEs | ~3.5% | Fraction of human genome | [17] |
| Sequence-Conserved Heart Enhancers | ~10% | Mouse-Chicken comparison | [18] |
| Positionally Conserved Heart Enhancers (via IPP) | ~42% | Mouse-Chicken comparison | [18] |
| Ultraconserved Regions (UCRs) | 481 segments | >200 bp, 100% identity (Human/Rat/Mouse) | [19] |

Functional Classification of Conserved Elements

CNEs can be categorized based on their sequence properties and functional roles. The following table summarizes key types of conserved non-coding regions and their characteristics.

Table 2: Types of Conserved Non-Coding Elements and Their Features

| Element Type | Definition | Key Characteristics | Functional Role |
|---|---|---|---|
| Ultraconserved Regions (UCRs) | >200 bp with 100% identity across species [19] | Often transcribed (T-UCRs); dysregulated in cancer [19] | Largely unelucidated; some under miRNA control [19] |
| Conserved Non-Coding Elements (CNEs) | Non-coding sequences with extreme conservation [16] | Cluster near developmental genes; form Genomic Regulatory Blocks (GRBs) [16] | Predominantly developmental enhancers [16] |
| Human Accelerated Regions (HARs) | Genomic regions with accelerated substitution rates in humans [19] | Bidirectionally transcribed as lncRNAs; evidence of positive selection [19] | Potential roles in human brain evolution (e.g., HAR1) [19] |
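The UCR definition in the table (>200 bp with 100% identity across species) translates directly into a scan for runs of identical, ungapped alignment columns. The sketch below is a minimal illustration of that definition on a pre-computed multiple alignment; the function name is hypothetical, and coordinates are reported in alignment columns rather than genome positions.

```python
from typing import List, Tuple


def identical_runs(aligned: List[str], min_length: int = 201) -> List[Tuple[int, int]]:
    """Return half-open (start, end) column spans of perfect identity.

    A column qualifies when every sequence carries the same base and none has
    a gap or 'N'. The default `min_length` of 201 encodes ">200 bp".
    """
    if len({len(s) for s in aligned}) != 1:
        raise ValueError("sequences must be aligned to equal length")
    runs = []
    run_start = None
    width = len(aligned[0])
    for i, column in enumerate(zip(*aligned)):
        bases = {b.upper() for b in column}
        identical = len(bases) == 1 and bases.isdisjoint({"-", "N"})
        if identical and run_start is None:
            run_start = i
        elif not identical and run_start is not None:
            if i - run_start >= min_length:
                runs.append((run_start, i))
            run_start = None
    if run_start is not None and width - run_start >= min_length:
        runs.append((run_start, width))  # run extends to the alignment end
    return runs
```

Lowering `min_length` or relaxing the identity requirement moves the same scan from ultraconserved regions toward the broader CNE category, which is usually defined with model-based scores instead of a hard identity cutoff.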

Experimental Protocols for Identification and Validation

Computational Identification of CNEs

Objective: To identify putative conserved non-coding elements from genomic sequences using comparative genomics.


Procedure:

- Multi-Species Genome Alignment: Obtain high-quality genome assemblies for the species of interest and relevant outgroups. For deep conservation studies, include species spanning the evolutionary distance of interest (e.g., human, mouse, chicken, zebrafish). Perform whole-genome alignments using tools like MULTIZ [20] or Cactus [21].

- Identify Conserved Regions: Scan alignments for regions with a significantly reduced substitution rate. Use programs like phastCons [20] or GERP++ [17] that model neutral evolution and identify sequences evolving slower than the background rate.

- Filter Out Coding Sequences: Annotate and mask protein-coding exons using resources like RefSeq or Ensembl to ensure the focus remains on non-coding conservation [16] [20].

- Synteny-Based Orthology Mapping: For distantly related species where sequence alignment fails, use synteny-based algorithms like Interspecies Point Projection (IPP) [18]. This method projects genomic coordinates between species using flanking blocks of alignable sequences ("anchor points") and bridging species, identifying "indirectly conserved" orthologs.

- Annotate Genomic Context: Determine the genomic features associated with the identified CNEs (e.g., proximity to developmental genes, location within introns or gene deserts, overlap with known regulatory marks from ENCODE [17]).
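The core idea behind the synteny-based projection step above, locating a non-alignable position from flanking alignable anchor points, can be conveyed with linear interpolation. The sketch below is a deliberately simplified illustration and not the published IPP algorithm [18], which handles anchor selection and bridging species in its own way; the function names and the chaining through a single bridging species are assumptions made for the example.

```python
import bisect
from typing import List, Optional, Tuple

Anchor = Tuple[float, float]  # (position in source species, position in target species)


def project_point(anchors: List[Anchor], pos: float) -> Optional[float]:
    """Interpolate `pos` between its two flanking anchors on one chromosome.

    Returns None when `pos` lies outside the anchored range.
    """
    anchors = sorted(anchors)
    src = [a[0] for a in anchors]
    i = bisect.bisect_right(src, pos)
    if i == 0:
        return None                      # upstream of the first anchor
    if i == len(anchors):
        # Only an exact match on the last anchor can still be projected.
        return float(anchors[-1][1]) if src[-1] == pos else None
    (s0, t0), (s1, t1) = anchors[i - 1], anchors[i]
    fraction = (pos - s0) / (s1 - s0)
    return t0 + fraction * (t1 - t0)     # also works for inverted blocks


def project_via_bridge(src_to_bridge: List[Anchor],
                       bridge_to_target: List[Anchor],
                       pos: float) -> Optional[float]:
    """Chain two projections through a bridging species."""
    mid = project_point(src_to_bridge, pos)
    return None if mid is None else project_point(bridge_to_target, mid)
```

The closer the flanking anchors are to the projected point, the more trustworthy the result, which is why adding bridging species with denser anchors can rescue elements that a direct pairwise comparison would miss.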

In Vivo Functional Validation via Enhancer Assay

Objective: To experimentally validate the enhancer activity of a predicted CNE in a living organism.

Procedure:

- Cloning: Amplify the candidate CNE from genomic DNA using PCR. Clone this fragment upstream of a minimal promoter driving a reporter gene (e.g., LacZ, GFP, or mCherry) in a plasmid vector. The choice of minimal promoter is critical, as it should have negligible inherent enhancer activity [20].

- Preparation: Linearize the plasmid by restriction digest so that the bacterial backbone sequences can be separated from the construct. Purify the linear DNA fragment containing the CNE, promoter, and reporter gene. Resuspend in microinjection buffer at a typical concentration of 1-5 ng/μL.

- Microinjection: Microinject the purified DNA construct into the pronucleus of fertilized single-cell embryos (e.g., mouse) or the cytoplasm of zebrafish embryos [20]. For aquatic species, electroporation can be an efficient alternative.

- Analysis: Allow injected embryos to develop to pre-determined stages corresponding to key developmental time windows (e.g., E10.5-E11.5 for mouse organogenesis [18], specific somite stages for zebrafish). Fix embryos and stain for reporter activity (e.g., X-Gal staining for LacZ). For fluorescent reporters, analyze live or fixed embryos using fluorescence microscopy. Compare the expression pattern to the known expression profile of the putative target gene.

- Interpretation: A successful validation is concluded when the reporter expression pattern recapitulates all or part of the endogenous expression pattern of the nearby developmental gene, in a specific spatiotemporal context [16]. The CNE is then classified as a functional developmental enhancer.

This section catalogs essential reagents, data resources, and computational tools crucial for research on conserved non-coding elements.

Table 3: Key Research Reagents and Resources for CNE Studies

| Category | Resource/Reagent | Function and Application |

|---|---|---|

| Data Repositories | UCbase [16] | Database of ultraconserved elements (UCRs). |

| | UCNEbase [16] | Catalog of ultraconserved non-coding elements. |

| | VISTA Enhancer Browser [16] | Repository of in vivo validated enhancers. |

| | ANCORA [16] | Atlas of conserved regions across multiple animals. |

| Genomic Data | Zoonomia Project Alignments [21] | Whole-genome alignment of 240 mammalian species for identifying evolutionary constraint. |

| | ENCODE Data [17] | Functional genomic data (chromatin accessibility, histone marks) for annotating putative CREs. |

| Computational Tools | LiftOver [18] | Tool for mapping genomic coordinates between species based on sequence alignment. |

| | Interspecies Point Projection (IPP) [18] | Synteny-based algorithm for identifying orthologous regions in highly diverged species. |

| | GERP++ [17] | Identifies constrained elements by measuring evolutionary constraint from multi-species alignments. |

| Experimental Vectors | Reporter Plasmids (e.g., pGL4.23) | Vectors containing minimal promoter and reporter genes (luciferase, LacZ, GFP) for enhancer assays. |

| Model Organisms | Mouse (Mus musculus) | Primary model for in vivo transgenic validation of mammalian CNEs [16]. |

| | Zebrafish (Danio rerio) | Vertebrate model for high-throughput, transient in vivo enhancer assays [20]. |

| | Chicken (Gallus gallus) | Model for studying evolutionary conservation in birds and testing CNEs via electroporation [18]. |

Protein Evolution and the Expansion of Functional Repertoires

The field of protein evolution is being transformed by an influx of large-scale genomic data and innovative computational methods, enabling researchers to move beyond simple sequence comparisons to quantitative analyses of physico-chemical properties and high-throughput experimental evolution [22] [23]. These advancements are revealing the molecular mechanisms through which proteins gain new functions, insights that are critical for understanding evolutionary adaptation and for engineering proteins with novel functions in therapeutic and industrial applications. This Application Note synthesizes current methodologies and provides structured protocols for studying protein evolution, with a focus on the expansion of functional repertoires through gene duplication, the emergence of novel genes, and the experimental evolution of new functions.

Quantitative Analysis of Protein Evolution

From Sequence Letters to Quantitative Properties

Conventional phylogenetic analysis of proteins typically relies on counting mismatches in amino acid or coding sequences. However, this approach primarily captures the mutation component of evolution while overlooking the critical dimension of selection, which favors certain mutations based on their functional properties [23]. A more discriminating method converts amino acid sequences ("strings of letters") into quantitative representations based on their physico-chemical characteristics ("strings of numbers") [23] [24].
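As a minimal sketch of the "letters to numbers" conversion, the snippet below maps each residue to its Kyte-Doolittle hydropathy value and optionally smooths the profile with a sliding window. The function names and the example peptide are illustrative assumptions, and the source does not state which window size, if any, the cited analyses used.

```python
# Kyte-Doolittle hydropathy index for the 20 standard amino acids.
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5,
    "Q": -3.5, "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5,
    "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8, "P": -1.6,
    "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def to_hydropathy(sequence):
    """Convert an amino acid string into a list of hydropathy values.

    Non-standard symbols (X, gaps, etc.) raise KeyError in this sketch.
    """
    return [KYTE_DOOLITTLE[aa] for aa in sequence.upper()]


def sliding_mean(values, window):
    """Average the numeric profile over a sliding window of fixed size."""
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [sum(values[i:i + window]) / window
            for i in range(len(values) - window + 1)]


profile = to_hydropathy("MKTAYIAK")   # hypothetical example peptide
smoothed = sliding_mean(profile, 3)
print(profile)
print(smoothed)
```

The same pattern applies to any other per-residue scale (volume, solubility, and so on): only the lookup table changes.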

Table 1: Quantifiable Physico-Chemical Properties for Evolutionary Analysis

| Property | Biological Significance | Measurement Scale |

|---|---|---|

| Volume | Impacts steric constraints and packing efficiency | ų or cm³/mol |

| Hydropathy Index | Determines hydrophobicity/hydrophilicity and membrane association | Kyte-Doolittle scale |

| Solubility | Influences protein solubility and aggregation propensity | Log-scale or g/100mL |

| Octanol Interface | Measures partitioning behavior in biphasic systems | Free energy of transfer |

| Isoelectric Point (pI) | Determines charge characteristics at specific pH | pH units |

This quantitative framework enables the application of sophisticated mathematical tools from complex systems research, including autocorrelation, average mutual information, fractal dimension, and bivariate wavelet analysis [23]. These methods provide more nuanced measures of evolutionary distance that account for both mutation and selection pressures.

Mathematical Tools for Quantitative Analysis

Autocorrelation measures the linear dependence within a sequence, quantifying how values at different positions are related. The autocorrelation coefficient Rm ranges from -1 (perfect mirror images) to +1 (perfect synchrony), with 0 indicating no correlation [23].
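A minimal sketch of a lag-m autocorrelation coefficient for a numeric property series is given here. It uses one common estimator (lagged covariance divided by the total sum of squares); the exact estimator used in [23] is not given in this article, so treat the formula choice and the function name as assumptions.

```python
def autocorrelation(values, lag):
    """Lag-`lag` autocorrelation of a numeric series, bounded in [-1, 1]."""
    n = len(values)
    if not 0 <= lag < n:
        raise ValueError("lag must satisfy 0 <= lag < len(values)")
    mean = sum(values) / n
    total = sum((v - mean) ** 2 for v in values)
    if total == 0:
        raise ValueError("autocorrelation is undefined for a constant series")
    lagged = sum((values[i] - mean) * (values[i + lag] - mean)
                 for i in range(n - lag))
    return lagged / total


# An alternating series is strongly anti-correlated at lag 1.
series = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0]
print(autocorrelation(series, 0), autocorrelation(series, 1))
```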

Average Mutual Information is an information theory measure that quantifies the non-linear correlation between sequences, representing the amount of information shared between two species' sequence data. It is calculated as MI = H(X) + H(Y) - H(X,Y), where H(·) represents marginal or joint entropy [23].
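The entropy identity above translates directly into code for discrete sequences. The sketch below estimates the entropies from observed symbol frequencies; it assumes two equal-length, position-matched sequences, and continuous property values would first need to be binned (a step not shown). Function names are illustrative.

```python
from collections import Counter
from math import log2


def entropy(symbols):
    """Shannon entropy (bits) of the empirical symbol distribution."""
    counts = Counter(symbols)
    n = len(symbols)
    return -sum((c / n) * log2(c / n) for c in counts.values())


def mutual_information(x, y):
    """MI = H(X) + H(Y) - H(X,Y) for two position-matched sequences."""
    if len(x) != len(y):
        raise ValueError("sequences must have equal length")
    joint = list(zip(x, y))  # paired symbols give the joint distribution
    return entropy(x) + entropy(y) - entropy(joint)


print(mutual_information("AABB", "AABB"))  # identical sequences share all information
print(mutual_information("AABB", "ABAB"))  # statistically independent in this toy case
```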

Box Counting Dimension provides a fractal dimension estimate that serves as a quantitative measure of geometric complexity between sequences from different taxa. Values range between 1 (identity between taxa) and 2 (total independence between sequences), with smaller dimensions indicating closer relatedness [23].

Bivariate Wavelet Analysis enables pairwise comparison between taxa from the frequency domain, distinguishing hypermutable from conserved protein regions through cross-wavelet power plots and wavelet coherence analysis [23] [24].

Experimental Models for Protein Evolution

Phage-Assisted Continuous Evolution (PACE)

The PACE platform enables rapid directed evolution of proteins through continuous selection in bacterial hosts, performing up to 40 theoretical rounds of evolution every 24 hours [25]. This system uncouples gene-of-interest evolution from host genome evolution, allowing large gene populations to evolve over hundreds of generations with minimal intervention.

Table 2: PACE System Components and Functions

| Component | Type | Function in Evolution System |

|---|---|---|

| Selection Phage (SP) | Phage vector | Encodes the evolving gene of interest (e.g., T7 RNAP) |

| Accessory Plasmid (AP) | Bacterial plasmid | Provides essential gene III under control of target promoter |

| Mutagenesis Plasmid (MP) | Bacterial plasmid | Arabinose-inducible source of mutations in lagoon |

| Lagoon | Fixed-volume vessel | Continuous culture with ~40mL volume, 2.0 volume/h dilution |

| E. coli S109 cells | Host strain | Derived from DH10B; hosts phage and plasmid components |

PACE Experimental Protocol

System Setup and Pre-optimization

- Clone gene of interest (e.g., T7 RNA polymerase) into selection phage vector

- Continuously propagate helper phage for 6 days with arabinose induction to minimize potential fitness advantages from phage genome mutations

- Subclone wild-type gene into randomly chosen phage backbone from pre-optimization and sequence to verify correct cloning [25]

Evolution Conditions

- Inoculate lagoons with 5×10⁴ plaque-forming units (pfu) of starting SP

- Maintain continuous flow rate of 2.0 volumes/hour

- Sample lagoon populations at defined intervals (6, 12, 24, 30, 36, 48, 54, 60, 72, 78, 84, 96 hours)

- For multi-stage selections, use 40μL of lagoon sample from previous stage to reinitiate PACE with modified selection pressure [25]

Parameter Variation

Systematically vary mutation rates through arabinose induction levels of MP and selection stringency through promoter identity controlling pIII expression (e.g., hybrid T7/T3 promoter for low stringency, pure T3 promoter for high stringency) [25].
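The lagoon settings quoted in this protocol (about 40 mL at 2.0 volumes/hour) imply some simple continuous-culture arithmetic, sketched below. The calculation assumes an ideally mixed, fixed-volume vessel, which is a textbook chemostat simplification rather than anything stated in [25]; the function names are hypothetical.

```python
from math import exp, log


def flow_rate_ml_per_h(lagoon_volume_ml, dilution_per_h):
    """Medium flow needed to exchange the stated number of volumes per hour."""
    return lagoon_volume_ml * dilution_per_h


def mean_residence_time_h(dilution_per_h):
    """Average time a particle spends in an ideally mixed vessel."""
    return 1.0 / dilution_per_h


def washout_fraction(dilution_per_h, hours):
    """Fraction of a non-replicating population remaining after `hours`."""
    return exp(-dilution_per_h * hours)


def min_doublings_per_h(dilution_per_h):
    """Doublings per hour a population needs just to avoid being diluted out."""
    return dilution_per_h / log(2)


flow = flow_rate_ml_per_h(40.0, 2.0)
print(flow)                          # mL of fresh medium per hour
print(mean_residence_time_h(2.0))    # hours
print(washout_fraction(2.0, 3.0))    # non-propagating phage left after 3 h
print(min_doublings_per_h(2.0))
```

Under these assumptions, phage that fail to trigger gene III expression are diluted out within a few hours, which is the basis of the continuous selection.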

3Dseq: Protein Structure Determination from Experimental Evolution

The 3Dseq methodology leverages experimental evolution to determine protein structures [26].

This approach has successfully generated accurate 3D structures for β-lactamase PSE1 and acetyltransferase AAC6, confirming that genetic encoding of structural constraints can be captured through experimental evolution and computational analysis [26].

Comparative Genomics of Gene Family Evolution

Genomic Analysis of Functional Adaptation

Comparative genomics across related species reveals how gene family expansions drive functional adaptation. A study comparing Stratiomyidae (soldier flies) and Asilidae (robber flies) demonstrated lineage-specific expansions correlated with ecological specialization [27].

Table 3: Gene Family Expansions and Functional Specialization

| Taxonomic Group | Expanded Gene Families | Biological Functions | Ecological Correlation |

|---|---|---|---|

| Stratiomyidae | Digestive enzymes, metabolic genes | Proteolysis, metabolism | Decomposer lifestyle in decaying matter |

| Hermetia illucens (specific) | Olfactory receptors, immune response | Chemosensation, immunity | Adaptive ability in diverse decomposing environments |

| Asilidae | Longevity-associated genes | Cellular maintenance, stress response | Extended lifespan (1-3 years vs. short Stratiomyidae cycles) |

Protocol for Comparative Genomic Analysis

Genome Quality Assessment

- Download reference genome assemblies and annotations from NCBI and Darwin Tree of Life Project

- Use BUSCO 5.8.2 with diptera_odb10 database to assess genome completeness

- Filter annotation files to retain only the longest transcript per gene using OrthoFinder's primary_transcript.py script [27]
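The longest-transcript filter in the last step can be illustrated conceptually as follows. This is a simplified stand-in, not OrthoFinder's primary_transcript.py: it assumes transcripts have already been parsed into (gene ID, transcript ID, length) tuples, and the identifiers shown are made up.

```python
def longest_transcript_per_gene(transcripts):
    """Return {gene_id: transcript_id} keeping the longest transcript per gene.

    `transcripts` is an iterable of (gene_id, transcript_id, length) tuples.
    Ties are resolved in favour of the transcript seen first.
    """
    best = {}
    for gene_id, transcript_id, length in transcripts:
        if gene_id not in best or length > best[gene_id][1]:
            best[gene_id] = (transcript_id, length)
    return {gene: tx for gene, (tx, _) in best.items()}


# Hypothetical records for two genes.
records = [
    ("geneA", "geneA.t1", 900),
    ("geneA", "geneA.t2", 1500),
    ("geneB", "geneB.t1", 600),
]
primary = longest_transcript_per_gene(records)
print(primary)
```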

Repetitive Element Identification

- Run Earl Grey 5.1.1 pipeline with RepeatMasker and RepeatModeler2 for de novo TE identification

- Perform ten iterations of the "BLAST, Extract, Align, Trim" process for comprehensive TE library development

- Combine RepeatMasker output with LTR_Finder results using RepeatCraft

- Calculate Kimura distance using Earl Grey's divergence_calc.py script [27]

Orthogroup Identification and Synteny Analysis

- Use OrthoFinder 2.5.5 with "-M msa" argument for orthogroup assignment and species tree construction

- Construct species tree using STAG method with single-copy orthologs

- Convert GFF annotations to bed format for GENESPACE 1.2.3 synteny analysis [27]

Computational Advances in Evolutionary Analysis

Artificial Intelligence and Machine Learning

Machine learning approaches are reshaping evolutionary genomics through tools such as:

- Pythia: Predicts phylogenetic inference difficulty from multiple sequence alignments prior to tree construction [22]

- Adaptive RAxML-NG: Automatically adjusts tree search thoroughness based on Pythia difficulty scores [22]

- Educated Bootstrap Guesser: Uses machine learning to rapidly predict bootstrap support values [22]

- FANTASIA: Integrates protein language models for functional annotation beyond traditional sequence similarity [22]

Critical datasets enabling large-scale evolutionary analyses include:

- Y1000+ Project: Genomic, phenotypic, and environmental data from nearly all of the more than 1,000 known yeast species [22]

- MATEDB: Homogeneous genomic, transcriptomic, and functional database covering animal diversity [22]

- Vertebrate Genomes Project (VGP) and Darwin Tree of Life: Standardized reference genomes across diverse taxa [22]

- Microbial Protein Universe: Catalog of protein families from bacterial genomes and metagenomes [22]

The Scientist's Toolkit

Table 4: Essential Research Reagents and Resources

| Reagent/Resource | Application | Key Features |

|---|---|---|

| PACE System Components | Continuous protein evolution | SP, AP, MP plasmids; E. coli S109 host strain |

| OrthoFinder | Orthogroup inference | MSA-based phylogeny; STAG species tree construction |

| Earl Grey | Repetitive element annotation | Integrates RepeatMasker, RepeatModeler2, LTR_Finder |

| BUSCO | Genome completeness assessment | Diptera-specific database (diptera_odb10) |

| GENESPACE | Synteny analysis | Works with OrthoFinder output for cross-species comparison |

| Quantitative Analysis R Suite | Physico-chemical property analysis | Autocorrelation, mutual information, wavelet tools [23] [24] |

The integration of quantitative analysis methods, high-throughput experimental evolution platforms, and comparative genomics across diverse taxa provides unprecedented insights into the mechanisms of protein evolution and functional diversification. These approaches, supported by the rich data resources and computational tools now available, enable researchers to move beyond descriptive studies to predictive understanding of how protein functions evolve and expand. The protocols and methodologies detailed in this Application Note offer a roadmap for investigating protein evolution in both natural and laboratory settings, with applications ranging from basic evolutionary biology to drug development and protein engineering.

Evolutionary Constraints and Adaptation Across the Tree of Life

The increasing availability of genomic data from across the tree of life has revolutionized the study of evolutionary processes [22]. Comparative genomics provides a powerful framework for identifying the molecular basis of adaptations and the constraints that shape them. By analyzing genomes from diverse organisms, researchers can pinpoint evolutionary innovations, from new protein functions to large-scale genomic rearrangements, that underlie biological diversity [22]. This application note outlines current methodologies and resources for investigating these patterns, providing a practical guide for researchers exploring evolutionary constraints and adaptation.

Quantitative Frameworks in Evolutionary Genomics

Evolutionary genomics relies on quantitative measures to infer selection, constraint, and divergence. The following table summarizes key data types and metrics used in the field.

Table 1: Key Quantitative Data and Metrics in Evolutionary Genomics

| Data Type / Metric | Description | Application in Evolutionary Studies |

|---|---|---|

| dN/dS Ratio (ω) | Ratio of non-synonymous to synonymous substitution rates. | Inference of selective pressure: ω ~1 (neutral evolution), ω <1 (purifying selection), ω >1 (positive selection) [22]. |

| Gene Tree / Species Tree Discordance | Mismatch between genealogies of genes and the species phylogeny. | Uncovering biological processes like Incomplete Lineage Sorting (ILS), gene duplication/loss, and Horizontal Gene Transfer (HGT) [28]. |

| Phylogenetic Signal | Measure of how trait variation follows a phylogenetic structure. | Assessing the extent to which closely related species resemble each other, indicating evolutionary constraint [22]. |

| Convergent Evolution | Independent emergence of analogous traits in separate lineages. | Identifying robust adaptive solutions to common environmental challenges (e.g., metabolic adaptations) [29]. |

| Pangenome Metrics | Analysis of core (shared) and accessory (variable) genes within a species or clade. | Understanding genomic diversity, niche adaptation, and the dynamic nature of genomes, especially in microbes [22]. |
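The ω thresholds in the first row of the table can be written as a small helper. This is a sketch only: the tolerance band around 1 is an arbitrary assumption introduced here, and in practice selection is usually tested statistically (for example with likelihood ratio tests in codon-model software) rather than by thresholding a point estimate.

```python
def classify_omega(dn, ds, tolerance=0.1):
    """Return (omega, label) for given dN and dS substitution rates.

    `tolerance` is a hypothetical band around omega = 1 treated as neutral.
    """
    if ds <= 0:
        raise ValueError("dS must be positive to compute dN/dS")
    omega = dn / ds
    if omega > 1 + tolerance:
        label = "positive selection"
    elif omega < 1 - tolerance:
        label = "purifying selection"
    else:
        label = "approximately neutral"
    return omega, label


print(classify_omega(0.02, 0.20))
print(classify_omega(0.30, 0.10))
print(classify_omega(0.10, 0.10))
```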

Experimental and Computational Protocols

Protocol: Phylogenomic Inference and Dating of Evolutionary Events

This protocol details the steps for inferring a robust species phylogeny and estimating divergence times, addressing key challenges in assembling the Tree of Life [28].

I. Data Collection and Orthology Assessment

- Genome Acquisition: Source high-quality genome assemblies from databases like the Vertebrate Genomes Project (VGP) or the Earth Biogenome Project (EBP) [22].

- Homology Identification: Identify homologous gene families across the target species set using tools like OrthoFinder.

- Orthology Inference: Filter for Single-Copy Orthologs (SCOs) to minimize discordance from paralogy and HGT. For deeper phylogenetic analyses, this set may be limited (e.g., ~50 genes for all cellular life) [28].

II. Sequence Alignment and Curation

- Multiple Sequence Alignment (MSA): Align amino acid or nucleotide sequences for each SCO using aligners like MAFFT or Clustal Omega.

- Alignment Trimming: Trim unreliably aligned regions using tools like TrimAl or BMGE. Note that aggressive trimming can sometimes reduce accuracy [28].

- Uncertainty Assessment (Optional): Use tools like Pythia to predict the phylogenetic difficulty (signal strength) of each MSA prior to tree inference, allowing for appropriate analysis strategy [22].

III. Phylogenetic Inference

- Species Tree Reconstruction:

  - Concatenation: Combine all aligned SCOs into a supermatrix for analysis with maximum likelihood (e.g., RAxML-NG) or Bayesian methods (e.g., MrBayes).

  - Summary Methods: To account for gene tree discordance, use coalescent-based methods like ASTRAL, which estimate a species tree from a set of individual gene trees [28].

- Tree Search Heuristics: Employ adaptive search algorithms (e.g., adaptive RAxML-NG) that adjust computational effort based on the inferred difficulty of the alignment [22].
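The concatenation step can be sketched as follows: per-gene alignments are joined into one supermatrix, with gaps filling in for species missing from a gene, and the partition boundaries are recorded for downstream model assignment. The input layout (a dict of gene name to species-to-sequence dicts), the function name, and the toy sequences are assumptions made for illustration.

```python
def concatenate_alignments(gene_alignments, missing="-"):
    """Build a supermatrix and 1-based partition table from per-gene alignments.

    `gene_alignments` maps gene name -> {species: aligned sequence}.
    Species absent from a gene are padded with the `missing` character.
    """
    species = sorted({sp for aln in gene_alignments.values() for sp in aln})
    columns = {sp: [] for sp in species}
    partitions, offset = [], 0
    for gene, aln in gene_alignments.items():
        widths = {len(seq) for seq in aln.values()}
        if len(widths) != 1:
            raise ValueError(f"sequences in {gene} are not aligned to equal length")
        width = widths.pop()
        for sp in species:
            columns[sp].append(aln.get(sp, missing * width))
        partitions.append((gene, offset + 1, offset + width))
        offset += width
    supermatrix = {sp: "".join(parts) for sp, parts in columns.items()}
    return supermatrix, partitions


# Two hypothetical single-copy orthologs across three species.
genes = {
    "og1": {"spA": "MK", "spB": "MR"},
    "og2": {"spA": "LLV", "spC": "LIV"},
}
supermatrix, partitions = concatenate_alignments(genes)
print(supermatrix)
print(partitions)
```

The partition table is the kind of information a maximum likelihood program would need to fit separate substitution models per gene, although the file format for passing it differs between tools.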

IV. Divergence Time Estimation

- Fossil Calibration: Compile reliable fossil data to place minimum (and sometimes maximum) age constraints on specific nodes in the phylogeny.

- Molecular Clock Model: Apply a relaxed molecular clock model (e.g., in MCMCTree or BEAST2) to estimate divergence times, allowing substitution rates to vary across lineages [28].

- Time-Tree Inference: Run a Bayesian analysis to integrate the phylogenetic tree, sequence data, and fossil calibrations to produce a dated phylogeny with credible intervals on node ages.

Protocol: Identifying Molecular Convergence Using Protein Language Models

This protocol leverages artificial intelligence to identify convergent evolutionary changes at the molecular level that may be beyond the reach of traditional methods [22].

- Target Gene/Protein Set Selection: Define a set of candidate genes or proteins implicated in a convergent phenotypic adaptation (e.g., toxin resistance, vision proteins in disparate species).

- Sequence Retrieval and Pre-processing: Gather amino acid sequences for the target protein from a wide phylogenetic range of species, including both those with and without the trait of interest.

- Functional Annotation with Protein Language Models: Use pipelines like FANTASIA to generate deep, sequence-based functional annotations for each protein. This step can reveal remote homology and functional sites not detected by BLAST [22].

- Site-wise Evolutionary Analysis: For each sequence, calculate site-wise evolutionary rates or other substitution constraints.

- Identification of Convergent Substitutions: Statistically compare the patterns of substitution across the phylogeny to identify specific sites that have independently evolved similar biochemical properties in lineages sharing the convergent phenotype.

- Functional Validation: Prioritize identified sites for experimental validation (e.g., site-directed mutagenesis, biochemical assays) to confirm their role in the adaptive trait.
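As a deliberately naive sketch of the convergent-substitution step, the snippet below scans alignment columns for residues shared by all trait-bearing species and absent from every other species. It ignores the phylogeny and performs no statistical test, so it only generates candidates; it also assumes the trait-bearing species belong to independent lineages, which must be checked separately. Species names, sequences, and the function name are hypothetical.

```python
def candidate_convergent_sites(alignment, trait_species):
    """Return (column, residue) pairs fixed in trait species and absent elsewhere.

    `alignment` maps species -> aligned sequence (equal lengths assumed).
    Columns are reported with 0-based indices.
    """
    foreground = [seq for sp, seq in alignment.items() if sp in trait_species]
    background = [seq for sp, seq in alignment.items() if sp not in trait_species]
    if not foreground or not background:
        raise ValueError("need at least one species with and one without the trait")
    hits = []
    for col in range(len(foreground[0])):
        fg = {seq[col] for seq in foreground}
        bg = {seq[col] for seq in background}
        # One shared, non-gap residue in the foreground that the background lacks.
        if len(fg) == 1 and "-" not in fg and fg.isdisjoint(bg):
            hits.append((col, next(iter(fg))))
    return hits


alignment = {
    "sp1": "MKTAL",
    "sp2": "MRTAL",
    "sp3": "MKSAL",   # trait-bearing
    "sp4": "MRSAL",   # trait-bearing
}
hits = candidate_convergent_sites(alignment, trait_species={"sp3", "sp4"})
print(hits)
```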

Visualizing Workflows and Evolutionary Concepts

Phylogenomic Inference and Dating Workflow

The following diagram outlines the core protocol for reconstructing a dated Tree of Life, integrating steps from data collection to final time-tree estimation.

Mechanisms of Gene Tree / Species Tree Discordance

A fundamental challenge in phylogenomics is reconciling the different evolutionary histories of genes and species. This diagram illustrates the primary biological processes that cause this discordance [28].

Successful research in this field relies on curated data, advanced algorithms, and robust computational infrastructure.

Table 2: Essential Research Reagents and Resources for Evolutionary Genomics

| Resource / Tool | Type | Function and Application |

|---|---|---|

| Vertebrate Genomes Project (VGP) / Earth Biogenome Project (EBP) | Data Repository | Provides high-quality, standardized reference genome assemblies for comparative genomic studies across the tree of life [22]. |

| Y1000+ Project | Data Repository | A comprehensive resource of genomic, phenotypic, and environmental data for nearly all known yeast species, enabling genotype-phenotype linking [22]. |

| Pythia | Computational Tool | Predicts the difficulty of phylogenetic analysis from a multiple sequence alignment, allowing researchers to optimize their computational strategy [22]. |

| FANTASIA | Computational Pipeline | Integrates protein language models for functional annotation of proteins, enabling the discovery of function beyond the limits of sequence similarity [22]. |

| Single-Copy Orthologs (SCOs) | Data Filter | A curated set of genes used as the backbone for robust species tree reconstruction, minimizing artifacts from gene duplication and horizontal transfer [28]. |

| Unified Human Gastrointestinal Catalogue | Data Repository | An exhaustive catalogue of genes and protein families from human gut prokaryotes, serving as a model for understanding host-associated microbial evolution [22]. |

| ASTRAL | Computational Tool | Infers a species tree from a set of unrooted gene trees using the multi-species coalescent model, accounting for incomplete lineage sorting [28]. |

| Adaptive RAxML-NG | Computational Tool | A tree search heuristic that automatically adapts its thoroughness based on the predicted difficulty of the dataset, improving computational efficiency [22]. |

Next-Generation Tools and Workflows: From Data to Biomedical Insights

Comparative genomics provides a powerful framework for understanding the evolution, structure, and function of genes, proteins, and non-coding regions across species [30]. This approach systematically explores biological relationships and evolution to illuminate the genetic basis of phenotypic diversity, with profound implications for biomedical research [30] [31]. The field now leverages massive-scale genomic resources that have emerged from global consortia and technological advances in sequencing and bioinformatics.

This application note details practical methodologies for utilizing three pivotal resources: the Vertebrate Genomes Project (VGP), the Y1000+ Project, and the NIH Comparative Genomics Resource (CGR). We provide structured protocols for accessing and analyzing these datasets to investigate evolutionary processes and address human health challenges.

Large-scale genomic databases provide distinct data types and organisms of focus, making them suitable for different research applications. The table below summarizes the key quantitative and descriptive features of the VGP, Y1000+, and CGR resources for direct comparison.

Table 1: Comparative Overview of Major Genomic Databases and Resources

| Resource | Primary Scope & Organisms | Key Data Types | Primary Access Method | Notable Applications |

|---|---|---|---|---|

| Vertebrate Genomes Project (VGP) | Vertebrate species; Goal: reference genomes for all ~70,000 vertebrate species [22] [32] | High-quality, near-error-free, gap-free, chromosome-level, haplotype-phased genome assemblies [32] | Data accessible via public repositories (e.g., Darwin Tree of Life) [22] | Genome evolution, structural variant discovery, phylogenetic studies across vertebrates |

| Y1000+ Project | Yeast (subphylum Saccharomycotina); ~1,000 known yeast species [22] | Genomic, phenotypic, and environmental data [22] | Publicly available dataset (Resource: Opulente et al. 2024) [22] | Linking genotype to phenotype, metabolic niche breadth, trait evolution |

| NIH Comparative Genomics Resource (CGR) | Eukaryotic organisms [30] | Tools, interfaces, and high-quality data for connecting community resources with NCBI [30] | NCBI genomics toolkit and associated interfaces [30] | Zoonotic disease research, antimicrobial therapeutic discovery, enhancing genomic data interoperability |

Detailed Resource Protocols and Applications

Protocol: De Novo Genome Assembly with the VGP Pipeline

The VGP assembly protocol generates high-quality, diploid-aware reference genomes suitable for detecting complex structural variations and performing precise cross-species comparisons [32].

Experimental Workflow and Materials

Table 2: Research Reagent Solutions for VGP Genome Assembly

| Item Name | Function/Description |

|---|---|

| PacBio HiFi Reads | Provides long (10-25 kbp) reads with high accuracy (>Q20) to traverse repetitive regions and resolve complex genomic structures [32]. |

| Bionano Optical Maps | Genome-wide restriction maps used for scaffolding contigs, verifying assembly structure, and detecting misassemblies [32]. |

| Hi-C Data (Chromatin Conformation) | Provides long-range interaction information to scaffold contigs into chromosome-length sequences and perform haplotype phasing [32]. |

| VGP Assembly Pipeline | An integrated workflow that uses HiFi reads, Bionano, and Hi-C data to produce chromosome-level, haplotype-phased assemblies [32]. |

Figure 1: VGP genome assembly and scaffolding workflow.

Key Analysis Steps

- Data Generation: Sequence high-molecular-weight DNA to generate PacBio HiFi reads (target 30X coverage). Prepare libraries for Bionano optical mapping and Hi-C chromatin interaction analysis [32].

- Initial Assembly and Purging: Perform initial de novo assembly of HiFi reads into unitigs and contigs using a string graph-based assembler. Purge the primary assembly of false duplications, which often represent unresolved heterozygous alleles, moving these haplotigs to an alternate assembly file [32].

- Scaffolding and Phasing: Use Bionano maps to scaffold contigs into larger sequences, then apply Hi-C data to generate chromosome-scale scaffolds. Leverage Hi-C read-pairs to phase heterozygous blocks and assign contigs to parental haplotypes, producing a diploid-aware assembly [32].

- Quality Control: Evaluate assembly completeness (BUSCO), continuity (contig N50), and base-level accuracy (QV score). Manually curate using Hi-C contact maps to identify and correct misassemblies or missed joins [32].
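Two of the quality-control metrics named above have simple definitions that are easy to compute by hand. The sketch below calculates contig N50 from a list of contig lengths and converts an error count into a Phred-scaled QV; how the error count itself is estimated is outside the scope of this example, and the function names and numbers are illustrative.

```python
from math import log10


def n50(lengths):
    """Length L such that contigs of length >= L cover at least half the assembly."""
    if not lengths:
        raise ValueError("no contig lengths supplied")
    half = sum(lengths) / 2
    running = 0
    for length in sorted(lengths, reverse=True):
        running += length
        if running >= half:
            return length


def phred_qv(errors, total_bases):
    """Phred-scaled consensus quality: QV = -10 * log10(error rate)."""
    if errors <= 0 or total_bases <= 0:
        raise ValueError("errors and total_bases must be positive")
    return -10 * log10(errors / total_bases)


contigs = [2, 3, 4, 5, 6]           # toy contig lengths
print(n50(contigs))
print(phred_qv(1, 10_000))          # one error per 10 kb
```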

Protocol: Leveraging the Y1000+ Project for Genotype-Phenotype Mapping

The Y1000+ Project provides a unique resource for evolutionary genomics due to its comprehensive sampling of Saccharomycotina yeast species and associated phenotypic data [22].

Experimental Workflow

Figure 2: Y1000+ genotype-phenotype mapping workflow.

Analytical Methodology

- Data Acquisition and Curation: Download the uniformly processed Y1000+ dataset, which includes genome assemblies, phenotypic screens (e.g., carbon source utilization), and environmental isolation metadata [22].

- Phylogenetic Framework: Reconstruct a high-resolution phylogeny of the Saccharomycotina clade using a set of conserved single-copy orthologs. This tree serves as the evolutionary backbone for all comparative analyses [22].

- Trait Evolution Analysis: Map a discrete phenotypic trait of interest (e.g., "galactose utilization" or "cactophily") onto the phylogeny. Use methods like ancestral state reconstruction to infer the evolutionary history of the trait, identifying independent gains or losses [22].

- Correlative Genomics: Employ computational approaches such as phylogenetic regression or random forest models to identify genomic features (e.g., gene presence/absence, amino acid changes) whose evolutionary patterns are statistically correlated with the trait's distribution [22]. This can pinpoint genetic drivers of adaptation.
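To make the trait-evolution step concrete, here is a minimal sketch of ancestral state reconstruction using Fitch parsimony on a rooted binary tree. Parsimony is chosen here only for brevity; the source does not say which reconstruction method the Y1000+ analyses used, and likelihood-based methods are common alternatives. The tree, species labels, and trait states are made up.

```python
def fitch(tree, states):
    """Fitch parsimony on a rooted binary tree.

    `tree` is either a leaf name (str) or a 2-tuple of subtrees.
    `states` maps leaf name -> discrete trait state.
    Returns (set of most-parsimonious root states, minimum number of changes).
    """
    if isinstance(tree, str):
        return {states[tree]}, 0
    left, right = tree
    left_set, left_cost = fitch(left, states)
    right_set, right_cost = fitch(right, states)
    shared = left_set & right_set
    if shared:
        return shared, left_cost + right_cost
    # Disjoint child sets imply one state change on a branch below this node.
    return left_set | right_set, left_cost + right_cost + 1


# Hypothetical five-species tree; 1 = trait present, 0 = trait absent.
tree = ((("A", "B"), "C"), ("D", "E"))
states = {"A": 1, "B": 0, "C": 0, "D": 1, "E": 0}
root_states, changes = fitch(tree, states)
print(root_states, changes)
```

In this toy case the root is reconstructed as lacking the trait with two changes on the tree, which is the pattern one would interpret as two independent gains.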

Protocol: Utilizing CGR for Zoonotic Disease and Antimicrobial Research

The NIH CGR facilitates reliable comparative genomics for all eukaryotes, providing specialized tools and data to connect genomic variation with phenotypes relevant to human health, such as disease susceptibility and resistance mechanisms [30].

Application Workflow for Zoonotic Pathogen Research

Figure 3: CGR workflow for zoonotic disease research.

Step-by-Step Procedure

- Define a Comparative Question: Formulate a hypothesis, such as "Which key host receptor variants determine susceptibility to a broad-range virus?" or "Which AMPs in frog species show activity against drug-resistant bacteria?" [30].

- Select Genomes and Retrieve Data: Use the CGR interface at NCBI to select and download high-quality genome assemblies and annotated protein sequences for relevant species (e.g., bats, agricultural animals, humans, or diverse frog species) [30].

- Perform Comparative Analysis:

- For Zoonotic Disease: Identify and extract sequences of host factors (e.g., ACE2 for coronaviruses). Perform multiple sequence alignment and structural modeling to identify critical residues affecting virus binding and predict new potential host species [30].

- For Antimicrobial Peptides (AMPs): Use BLAST or profile hidden Markov models to discover novel AMP homologs in newly sequenced eukaryotic genomes by querying known AMP sequences from specialized databases (e.g., APD, DRAMP) against CGR-hosted genomes [30].

- Functional Inference: Synthesize candidate peptides in vitro for validation. Test antimicrobial activity against panels of resistant pathogens and assess cytotoxicity in human cell lines to evaluate therapeutic potential [30].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Comparative Genomics

| Category | Specific Tool / Resource | Function in Research |

|---|---|---|

| Databases & Catalogs | Y1000+ Project Data [22] | Provides a curated resource of genomic, phenotypic, and environmental data for nearly all known yeast species for genotype-phenotype mapping. |

| | Microbial Protein Family Databases [22] | Catalogs the microbial protein universe from bacterial genomes and metagenomes, enabling discovery of novel protein families and functions. |

| | Antimicrobial Peptide Databases (APD, DRAMP) [30] | Central repositories of known AMP sequences and structures used as references for discovering novel antimicrobials in genomic data. |

| Computational Tools | VGP Assembly Pipeline [32] | An integrated suite of tools for generating high-quality, chromosome-level, diploid-aware genome assemblies from multi-platform sequencing data. |

| | FANTASIA [22] | A pipeline that integrates protein language models for large-scale functional annotation of proteins beyond the reach of traditional similarity searches. |

| | Pythia & Adaptive RAxML-NG [22] | Machine learning tools for predicting phylogenetic inference difficulty and adapting search strategies, improving the efficiency and robustness of evolutionary trees. |

| Sequencing Technologies | PacBio HiFi Reads [32] | Long-read sequencing technology (10-25 kbp) with high accuracy (>99.9%) essential for resolving complex repeats and producing high-quality assemblies. |

| | Hi-C Data [32] | Chromatin conformation capture data providing long-range genomic contact information used for scaffolding assemblies to chromosome scale and for haplotype phasing. |

The VGP, Y1000+, and CGR resources provide the foundational data and specialized tools required to tackle complex questions in evolutionary and biomedical comparative genomics. The detailed application notes and protocols outlined here provide researchers with a practical framework for employing these resources to generate high-quality genomes, map genotypes to phenotypes, and investigate the genomic basis of disease and resistance. As these databases continue to expand and integrate with advanced computational methods like deep learning, they will undoubtedly unlock further transformative discoveries across the tree of life.

The integration of artificial intelligence (AI) and deep learning into biological research is changing how scientists study evolutionary processes. Within comparative genomics, two areas are advancing rapidly: protein language models (PLMs) and phylogenetic prediction methods. PLMs, adapted from natural language processing, learn evolutionary patterns from millions of protein sequences without explicit supervision, enabling advances in structure prediction, function annotation, and protein design [33] [34]. In parallel, phylogenetic prediction methods are becoming more sophisticated, and recent research shows that phylogenetically informed predictions significantly outperform traditional equation-based approaches across evolutionary studies [35]. Together, these technologies provide tools for decoding evolutionary histories, understanding functional divergence, and accelerating biomedical discovery within comparative genomics frameworks.

This article provides application notes and experimental protocols for leveraging these technologies in evolutionary research, offering practical guidance for researchers seeking to implement these methods in their investigations of evolutionary processes.

Protein Language Models in Evolutionary Research

Protein language models treat amino acid sequences as textual documents where residues form a 20-letter alphabet, applying transformer architectures similar to those used in natural language processing [33]. The fundamental insight is that evolutionary relationships encoded in sequence data can be captured through self-supervised learning on massive sequence databases. PLMs generally fall into three architectural categories: (1) encoder-only models (e.g., ESM, ProtBERT) that generate contextual embeddings for classification and prediction tasks; (2) decoder-only models (e.g., ProtGPT2, ProGen) specialized for conditional sequence generation; and (3) encoder-decoder models for sequence-to-sequence tasks [33] [36].

These models are typically pre-trained on databases like UniRef (containing over 240 million sequences) and Big Fantastic Database (BFD) using objectives like masked language modeling (MLM) where the model learns to predict randomly masked residues in sequences based on their context [33] [37]. This pre-training captures evolutionary constraints, structural constraints, and functional patterns without manual annotation. The resulting representations can then be fine-tuned for specific downstream applications with limited labeled data, making them particularly valuable for biological discovery where experimental annotations are scarce [34].
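The masked language modeling objective described above can be sketched in a few lines. This is a simplified illustration, not the training code of any particular PLM: the function name `mask_sequence`, the `<mask>` token string, the 15% masking rate, and the example sequence are all assumptions, and real implementations usually add refinements such as occasionally substituting random residues instead of the mask token.

```python
import random

MASK_TOKEN = "<mask>"  # assumed placeholder; real tokenizers define their own


def mask_sequence(sequence, mask_rate=0.15, seed=0):
    """Build one masked-language-modeling example from a protein sequence.

    Returns (tokens, targets):
      tokens  - list of residues with a random subset replaced by MASK_TOKEN
      targets - dict mapping each masked position to the original residue
    """
    rng = random.Random(seed)
    n_mask = max(1, round(len(sequence) * mask_rate))
    positions = sorted(rng.sample(range(len(sequence)), n_mask))
    tokens = list(sequence)
    targets = {}
    for pos in positions:
        targets[pos] = tokens[pos]   # what the model must recover
        tokens[pos] = MASK_TOKEN     # what the model actually sees
    return tokens, targets


# Toy sequence for illustration only.
tokens, targets = mask_sequence("MKTAYIAKQRQISFVKSHFSRQ", mask_rate=0.15, seed=1)
```

During pre-training, the model receives `tokens` and is penalized for assigning low probability to the residues stored in `targets`, which is how contextual constraints on each position are learned without manual labels.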

Application Notes for Evolutionary Analysis

Table 1: Protein Language Models and Their Applications in Evolutionary Research

| Model Class | Representative Examples | Primary Applications in Evolutionary Research | Key Advantages |
|---|---|---|---|
| Encoder-only | ESM-1b, ESM-2, ProtBERT, ProtTrans | Function prediction, mutation effect analysis, fitness landscape mapping, epistatic interaction detection | Captures bidirectional contextual information, excels at comparative analyses, produces fixed-length embeddings for classification |
| Decoder-only | ProtGPT2, ProGen | Protein design, ancestral sequence reconstruction, exploring sequence space beyond natural diversity | Autoregressive generation enables de novo protein design, can optimize for multiple properties simultaneously |
| Encoder-decoder | ProteinLM, T5-style models | Sequence optimization, function transfer between homologs, remote homology detection | Flexible input-output paradigm, suitable for conditional generation and translation tasks |

PLMs enable several key applications in evolutionary research. For function prediction, models like ESM-1b generate embeddings that capture functional constraints, achieving state-of-the-art performance in Gene Ontology term prediction and enzyme commission number classification [34]. For evolutionary trace analysis, PLMs can identify functionally important residues without multiple sequence alignments by assessing the impact of mutations through computed log-likelihood differences [37]. For ancestral sequence reconstruction, generative models like ProtGPT2 can sample plausible ancestral sequences, while encoder models can validate the functional viability of proposed reconstructions [38] [36].
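The log-likelihood difference mentioned above for mutation effect scoring can be illustrated as follows. The sketch assumes that a PLM has already produced a probability for each amino acid at one masked position; the probabilities in `probs_at_site` are invented for illustration and `mutation_score` is a hypothetical helper, not part of any PLM library.

```python
import math


def mutation_score(position_probs, wild_type, mutant):
    """Log-likelihood ratio: log p(mutant) - log p(wild type) at one position.

    Values near zero suggest a tolerated substitution; strongly negative
    values suggest the mutant residue is disfavored in this context.
    """
    return math.log(position_probs[mutant]) - math.log(position_probs[wild_type])


# Hypothetical model output for a single masked position (not real data).
probs_at_site = {"L": 0.70, "I": 0.20, "V": 0.08, "D": 0.02}

conservative = mutation_score(probs_at_site, wild_type="L", mutant="I")
disruptive = mutation_score(probs_at_site, wild_type="L", mutant="D")
```

In this toy case the leucine-to-aspartate substitution scores lower than leucine-to-isoleucine, which is the kind of ranking used to flag functionally constrained residues without building a multiple sequence alignment.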

Protocol: Protein Function Prediction Using PLM Embeddings

Purpose: Predict Gene Ontology (GO) terms for uncharacterized protein sequences using protein language model embeddings.

Materials:

- Computational Resources: GPU with ≥16GB memory (e.g., NVIDIA V100, A100)

- Software: Python 3.8+, PyTorch, Transformers library, scikit-learn, BioPython

- Model Checkpoints: ESM-2 (650M parameters) or ProtT5-XL from Hugging Face Hub

- Data: Protein sequences in FASTA format, reference GO annotations (e.g., from UniProt-GOA)

Procedure:

Sequence Preprocessing:

- Remove low-complexity regions and signal peptides using tools like SMART or Phobius

- Truncate sequences longer than 1024 residues (model-dependent) to accommodate context window

- For multi-domain proteins, consider processing domains separately

Embedding Generation:

- Load pre-trained model and its alphabet: `model, alphabet = esm.pretrained.esm2_t33_650M_UR50D()`

- Extract per-residue embeddings: tokenize with `alphabet.get_batch_converter()`, run `results = model(batch_tokens, repr_layers=[33])`, and read `results["representations"][33]`

- Generate sequence-level embeddings via mean pooling or attention-based pooling

- Reduce dimensionality using UMAP or PCA for visualization of evolutionary relationships

Classifier Training:

- Use hierarchical multi-label classification approach for GO term prediction

- Train Random Forest or XGBoost classifiers on PLM embeddings using known annotations

- Implement cross-validation stratified by protein families to avoid homology bias

- For deep learning approach, add prediction heads on top of frozen PLM embeddings

Validation and Interpretation:

- Assess performance using F-max and area under the precision-recall curve

- Compare against baseline methods (BLAST, InterProScan) to establish improvement

- Perform ablation studies to determine contribution of different model components

- Use SHAP or integrated gradients to interpret which sequence regions drive predictions
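The F-max metric named in the validation step can be computed as sketched below. The sketch assumes the protein-centric definition commonly used in community function-prediction benchmarks: at each score threshold, precision is averaged over proteins with at least one predicted term, recall is averaged over all proteins, and F-max is the best harmonic mean across thresholds. The term identifiers and scores are placeholders, and the sketch ignores propagation of terms up the GO hierarchy.

```python
def f_max(predictions, truths, thresholds=None):
    """Protein-centric F-max.

    predictions - list of dicts, one per protein, mapping term -> score in [0, 1]
    truths      - list of sets, one per protein, holding the true terms
    """
    if thresholds is None:
        thresholds = [i / 100 for i in range(1, 100)]
    best = 0.0
    for t in thresholds:
        precisions, recalls = [], []
        for scores, true_terms in zip(predictions, truths):
            predicted = {term for term, s in scores.items() if s >= t}
            hits = len(predicted & true_terms)
            if predicted:  # precision only counts proteins with predictions
                precisions.append(hits / len(predicted))
            recalls.append(hits / len(true_terms) if true_terms else 0.0)
        if not precisions:
            continue
        p = sum(precisions) / len(precisions)
        r = sum(recalls) / len(recalls)
        if p + r > 0:
            best = max(best, 2 * p * r / (p + r))
    return best


# Placeholder term names and scores, for illustration only.
predictions = [{"term_a": 0.9, "term_b": 0.4}, {"term_a": 0.2, "term_c": 0.8}]
truths = [{"term_a"}, {"term_c"}]
score = f_max(predictions, truths)
```

Because the threshold is optimized on the evaluation set itself, F-max is best reported alongside a threshold-free measure such as the area under the precision-recall curve.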

Troubleshooting: For low prediction accuracy on specific protein families, consider fine-tuning the PLM on family-specific sequences before embedding extraction. For memory limitations, use gradient checkpointing or switch to smaller model variants.
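Two steps of the procedure above, mean pooling and family-aware cross-validation, can be sketched without any deep learning dependencies. Both helpers (`mean_pool`, `family_folds`) are hypothetical names, the per-residue vectors are toy values standing in for real PLM output, and the greedy fold assignment is just one simple way to keep every protein family inside a single fold.

```python
def mean_pool(per_residue):
    """Average per-residue vectors (length L, dimension D) into one D-vector."""
    length = len(per_residue)
    dim = len(per_residue[0])
    return [sum(row[d] for row in per_residue) / length for d in range(dim)]


def family_folds(families, n_folds=3):
    """Assign proteins to folds so that no family is split across folds.

    families - list of family labels, one per protein
    Returns a list of fold indices with the same length and order.
    """
    sizes = {}
    for fam in families:
        sizes[fam] = sizes.get(fam, 0) + 1
    fold_load = [0] * n_folds
    fold_of_family = {}
    # Greedy balancing: largest families first, each into the emptiest fold.
    for fam in sorted(sizes, key=lambda f: (-sizes[f], f)):
        target = fold_load.index(min(fold_load))
        fold_of_family[fam] = target
        fold_load[target] += sizes[fam]
    return [fold_of_family[fam] for fam in families]


# Toy per-residue embeddings (2 residues, 2 dimensions) and family labels.
embedding = mean_pool([[1.0, 2.0], [3.0, 4.0]])
families = ["kinase", "kinase", "protease", "kinase", "transporter", "protease"]
folds = family_folds(families, n_folds=2)
```

Keeping homologous proteins together in one fold prevents a classifier from being tested on close relatives of its training sequences, which would otherwise inflate the reported accuracy.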

Phylogenetic Prediction Methods

Technical Foundations of Phylogenetically Informed Prediction

Phylogenetic prediction encompasses methods that explicitly account for evolutionary relationships when predicting trait values. These approaches leverage the fundamental insight that closely related species share similar characteristics due to common descent [39] [35]. Unlike standard regression models that treat data points as independent, phylogenetic methods incorporate a variance-covariance matrix derived from phylogenetic trees, which captures the expected non-independence due to shared evolutionary history [35].
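As a minimal sketch of how such a variance-covariance matrix can be derived from a tree, the code below assumes a Brownian motion model, under which the covariance between two tips equals the branch length they share between the root and their most recent common ancestor, and each diagonal entry equals the tip's root-to-tip distance. The `parents` encoding, the node names, and the branch lengths are illustrative assumptions.

```python
def root_path(node, parents):
    """Map each node on the path from the root to `node` to its depth."""
    chain = []
    while node is not None:
        parent, length = parents[node]
        chain.append((node, length))
        node = parent
    depths = {}
    depth = 0.0
    for name, length in reversed(chain):  # walk from the root downwards
        depth += length
        depths[name] = depth
    return depths


def phylo_vcv(tips, parents):
    """Brownian-motion covariance matrix: shared root-to-ancestor path length."""
    paths = {tip: root_path(tip, parents) for tip in tips}
    matrix = []
    for a in tips:
        row = []
        for b in tips:
            shared = set(paths[a]) & set(paths[b])
            # The deepest shared node is the most recent common ancestor.
            row.append(max(paths[a][n] for n in shared))
        matrix.append(row)
    return matrix


# Toy tree ((A:1,B:1):2,C:3), stored as node -> (parent, branch length).
parents = {
    "root": (None, 0.0),
    "AB": ("root", 2.0),
    "A": ("AB", 1.0),
    "B": ("AB", 1.0),
    "C": ("root", 3.0),
}
vcv = phylo_vcv(["A", "B", "C"], parents)
```

For this toy tree the sister tips A and B share two units of history while C shares none with either, so the matrix encodes exactly the non-independence that standard regression ignores.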

The field has evolved from distance-based methods like Unweighted Pair Group Method with Arithmetic Mean (UPGMA) and Neighbor-Joining (NJ) to character-based approaches including Maximum Parsimony (MP), Maximum Likelihood (ML), and Bayesian Inference (BI) [40] [41]. Recent advances demonstrate that phylogenetically informed predictions, which directly incorporate phylogenetic structure during imputation, significantly outperform predictive equations derived from phylogenetic generalized least squares (PGLS) or ordinary least squares (OLS) models, showing a 2- to 3-fold improvement in prediction accuracy [35].
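To illustrate what incorporating phylogenetic structure into a prediction can look like in the simplest case, the sketch below predicts a missing tip value as the conditional expectation under Brownian motion for a single trait with no predictor variables: the estimated root state plus a correction weighted by the covariance between the target and the observed tips. This intercept-only setup, the function names, and the toy numbers are assumptions for illustration; the approach evaluated in [35] may differ in detail.

```python
def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [list(matrix[i]) + [rhs[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[row][k] -= factor * aug[col][k]
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        known = sum(aug[row][k] * x[k] for k in range(row + 1, n))
        x[row] = (aug[row][n] - known) / aug[row][row]
    return x


def predict_tip(vcv_known, cov_target, values):
    """Predict a missing tip: mu + c^T C^{-1} (y - mu * 1).

    vcv_known  - covariance matrix C among tips with observed values
    cov_target - covariances c between the target tip and each observed tip
    values     - observed trait values y
    """
    ones = [1.0] * len(values)
    # Generalized least squares estimate of the root state.
    mu = sum(solve(vcv_known, values)) / sum(solve(vcv_known, ones))
    weights = solve(vcv_known, [v - mu for v in values])
    return mu + sum(c * w for c, w in zip(cov_target, weights))


# Toy tree ((A:1,B:1):2,C:3) with B unobserved; trait values are invented.
vcv_known = [[3.0, 0.0], [0.0, 3.0]]   # covariance among A and C
cov_target = [2.0, 0.0]                # covariance of B with A and with C
prediction = predict_tip(vcv_known, cov_target, values=[10.0, 4.0])
```

With these toy inputs the estimated root state is 7 and the prediction for B is about 9, pulled toward its sister tip A (value 10) rather than sitting at the overall mean, which is the behavior a fitted regression equation alone cannot reproduce.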

Application Notes for Comparative Genomics

Table 2: Phylogenetic Prediction Methods and Applications

| Method Category | Key Algorithms | Typical Applications in Evolutionary Research | Performance Considerations |
|---|---|---|---|
| Distance-based | Neighbor-Joining, UPGMA | Rapid tree building, large dataset screening, taxonomic classification | Computationally efficient but may oversimplify evolutionary processes |
| Character-based | Maximum Parsimony, Maximum Likelihood | Ancestral state reconstruction, trait evolution modeling, convergent evolution detection | More statistically rigorous but computationally intensive |
| Bayesian | Bayesian Inference with MCMC | Divergence time estimation, relaxed clock models, uncertainty quantification | Incorporates prior knowledge and provides posterior probabilities |

Phylogenetically informed predictions enable diverse applications in evolutionary research. For ancestral state reconstruction, these methods can infer morphological, physiological, or molecular characteristics of extinct ancestors [35]. For trait imputation, they can predict missing values in comparative datasets while accounting for phylogenetic autocorrelation [35]. In functional genomics, phylogenetic predictions can link genetic variation to phenotypic divergence across species [42]. For drug discovery, phylogenetic approaches can identify related species likely to produce similar bioactive compounds [39] [42].

Protocol: Phylogenetically Informed Trait Prediction

Purpose: Predict unknown trait values for species within a phylogenetic context using continuous trait data from related species.

Materials:

- Software: R with packages ape, phytools, nlme, caper; or specialized tools like BEAST, RevBayes

- Data: Phylogenetic tree (Newick or Nexus format), trait dataset with missing values, evolutionary model specifications

- Computational Resources: Standard desktop computer sufficient for most analyses; MCMC analyses may require high-performance computing

Procedure:

Data Preparation and Alignment:

- Curate phylogenetic tree ensuring tip labels match species in trait dataset

- For molecular data: perform multiple sequence alignment using MAFFT or MUSCLE

- Assess phylogenetic signal using Mantel test or Pagel's λ

- For morphological traits: verify homology of characters across taxa

Model Selection:

- Compare evolutionary models (Brownian motion, Ornstein-Uhlenbeck, Early Burst)

- Use AICc or Bayes factors for model comparison

- Validate model assumptions through residual diagnostics

- Consider multi-rate models if evolutionary rates vary across clades

Prediction Implementation:

- For Bayesian approaches: set up MCMC chain with appropriate priors

- Implement phylogenetic prediction using `phylopredict()` in phytools or custom scripts

- Run convergence diagnostics (Gelman-Rubin statistic, effective sample size)

- For maximum likelihood: use `contMap()` or `fastAnc()` functions for ancestral state reconstruction

Validation and Visualization:

- Use cross-validation by masking known values and assessing prediction accuracy

- Calculate prediction intervals to quantify uncertainty

- Visualize predictions on phylogeny using traitgram or phylomorphospace plots

- Compare performance against non-phylogenetic methods (OLS) to quantify improvement

Troubleshooting: For poor model convergence, adjust MCMC parameters or use different proposal mechanisms. For unrealistic predictions, check for phylogenetic signal and consider alternative evolutionary models. For computational bottlenecks with large trees, use approximate methods or divide into subtrees.
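The AICc comparison in the model selection step can be sketched as follows. The log-likelihoods are invented placeholders standing in for values that a model-fitting tool would report, and the parameter counts (two for Brownian motion, three each for Ornstein-Uhlenbeck and Early Burst) follow one common parameterization and should be checked against the software actually used.

```python
import math


def aicc(log_likelihood, n_params, n_obs):
    """Small-sample corrected AIC: AIC + 2k(k+1) / (n - k - 1)."""
    aic = -2.0 * log_likelihood + 2.0 * n_params
    return aic + (2.0 * n_params * (n_params + 1)) / (n_obs - n_params - 1)


def akaike_weights(scores):
    """Convert AICc scores into relative support values that sum to 1."""
    best = min(scores.values())
    rel = {m: math.exp(-0.5 * (s - best)) for m, s in scores.items()}
    total = sum(rel.values())
    return {m: r / total for m, r in rel.items()}


# Hypothetical fits: model -> (maximized log-likelihood, free parameters).
fits = {"BM": (-42.0, 2), "OU": (-40.5, 3), "EB": (-41.9, 3)}
n_species = 30

scores = {m: aicc(ll, k, n_species) for m, (ll, k) in fits.items()}
weights = akaike_weights(scores)
best_model = min(scores, key=scores.get)
```

In this toy comparison the Ornstein-Uhlenbeck model has the lowest AICc, but only by about half a unit relative to Brownian motion, so the Akaike weights would indicate that the data do not clearly separate the two models.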

Integrated Applications in Evolutionary Research

Synergistic Applications of PLMs and Phylogenetic Prediction

The integration of protein language models and phylogenetic prediction creates powerful synergies for evolutionary research. PLMs can generate evolutionary-informed protein embeddings that capture deep phylogenetic signals beyond what is apparent from sequence similarity alone [33] [37]. These embeddings can then serve as input for phylogenetic comparative methods, enabling more accurate reconstructions of ancestral protein states and evolutionary trajectories [35] [36].