Advancing Dynamic Modeling of Ontogeny: From Mechanistic Insights to Clinical Application in Drug Development

Abstract

This article provides a comprehensive framework for improving dynamic modeling of ontogeny to address critical challenges in drug development, particularly for pediatric and rare diseases. It explores the foundational principles of mechanistic modeling and the unique complexities of physiological maturation. The content details cutting-edge methodological approaches, including Model-Informed Drug Development (MIDD) frameworks, PBPK modeling, and hybrid machine learning techniques. It further addresses key troubleshooting strategies for model identifiability and optimization, alongside rigorous validation frameworks for regulatory acceptance. Designed for researchers, scientists, and drug development professionals, this resource synthesizes current state-of-the-art practices to enhance the prediction of drug safety and efficacy across developmental stages.

Understanding Ontogeny and Its Impact on Drug Disposition: Core Concepts and Challenges

Ontogeny refers to the development of an individual organism or biological system from the earliest stages to maturity [1]. In the context of clinical pharmacology and drug development, pediatric ontogeny encompasses all aspects of developmental biology that affect drug therapy from the fetus to the adolescent child [2]. Understanding these developmental changes is crucial for predicting how children of different ages will process medications, as the continually changing physiology of pediatric patients leads to rapid and often unpredictable changes in drug disposition [2].

The scientific community has collected vast amounts of information on pediatric ontogeny over the past 60 years, primarily from drug disposition studies in varying pediatric age groups [2]. However, the interplay between maturing drug metabolizing enzymes, transporters, and simultaneous changes in plasma protein binding, body composition, and absorption creates a complex environment that makes accurate estimates of drug clearance a daunting task [2]. This complexity is further compounded by the fact that the ontogeny of receptors—critical for understanding both drug efficacy and safety—is less clearly defined than that of metabolic enzymes [2].

Key Physiological Processes in Ontogeny

Organ Function and Metabolic System Development

Table 1: Developmental Changes in Key Physiological Parameters

| Physiological Parameter | Developmental Pattern | Clinical Significance |

|---|---|---|

| Renal Function | Glomerular filtration rate increases until ~1-2 years of age, then declines to adult levels; active secretion follows similar trajectory until age 2, then gradually increases into adulthood [2] | Critical for drugs primarily renally eliminated; rapid changes in first days of life [2] |

| Hepatic CYP Enzymes | Variable patterns for different CYP isoforms; CYP3A4 activity increases substantially in first days of life [3] | Affects clearance of hepatically metabolized drugs; requires age-appropriate dosing [3] |

| Transporters (OCT1) | Age-dependent increase in protein expression from birth up to 8-12 years; TM50 approximately 6 months [4] | Impacts drug distribution and elimination; must be considered in pediatric PBPK models [4] |

| Transporters (OATP1B1) | mRNA expression in neonates and infants 90-500 fold lower than in adults [4] | Significantly affects drug disposition for transporter substrates [4] |

| Intestinal P-gp | mRNA levels in neonates and infants comparable to adults [4] | Similar oral drug absorption patterns for P-gp substrates across ages [4] |

Membrane Transporter Ontogeny

Membrane transporters facilitate the active movement of drug molecules and endogenous compounds into and out of cells, significantly affecting drug absorption, distribution, and excretion [4]. The ontogeny of these transporters follows distinct patterns across different tissues:

Hepatic transporters: Organic Cation Transporter 1 (OCT1) shows a clear age-dependent increase in protein expression from birth through childhood, reaching 50% of adult levels at approximately 6 months (TM50 ~6 months) and mature expression by 8-12 years [4]. In contrast, Organic Anion Transporting Polypeptide 1B1 (OATP1B1) demonstrates an unusual pattern where mRNA expression in neonates and infants is substantially lower (90-500 fold) than in adults [4].

Intestinal transporters: P-glycoprotein (P-gp) mRNA levels in neonates and infants are generally comparable to adult levels, suggesting similar function throughout development [4]. Breast Cancer Resistance Protein (BCRP) distribution appears similar in fetal (5.5-28 weeks gestation) and adult samples [4].

Renal transporters: The ontogeny of renal transporters contributes to the changing drug excretion capacity throughout childhood, working in concert with the maturation of glomerular filtration and active secretion mechanisms [2].

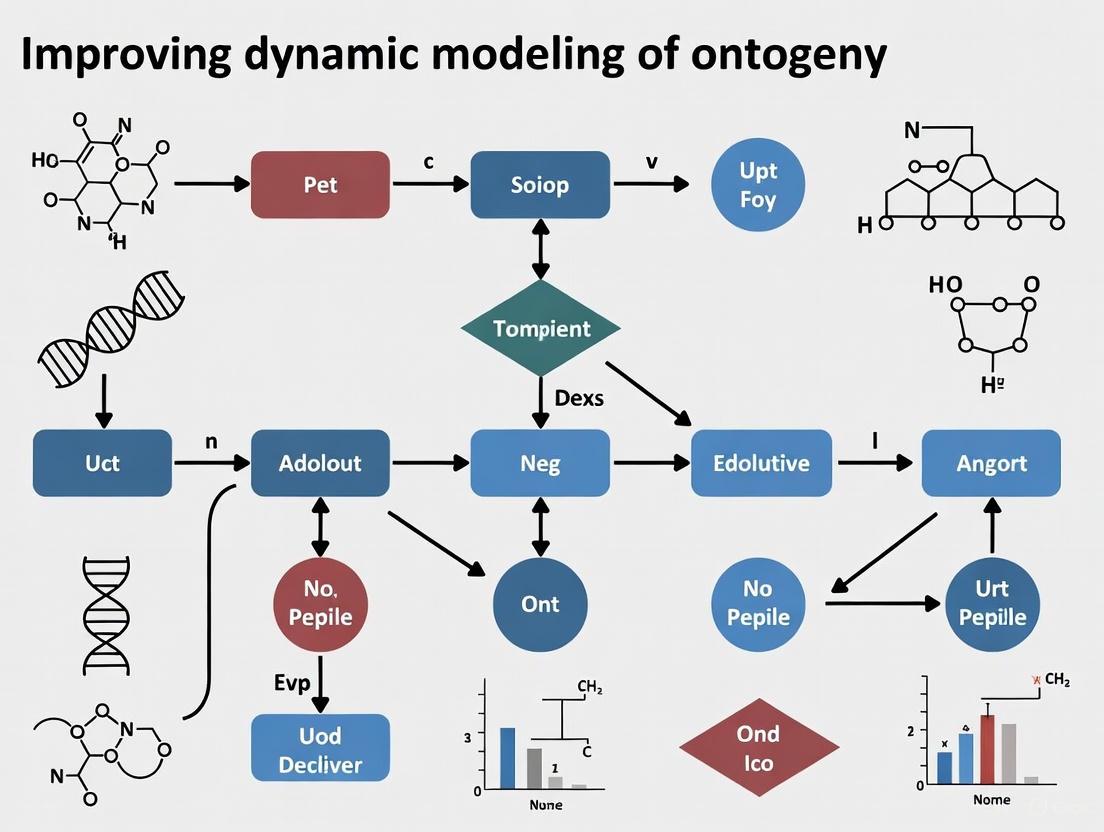

Figure 1: Key Physiological Systems Affected by Ontogeny

Modeling Approaches for Ontogenetic Processes

Physiologically Based Pharmacokinetic (PBPK) Modeling

PBPK modeling is a mechanistic approach to predicting drug pharmacokinetics from knowledge of human physiology and drug physicochemical properties [3]. It is particularly valuable for predicting drug behavior in under-studied populations such as pediatrics, where clinical trials are rarely conducted [3]. Because PBPK models incorporate patient-specific physiology, they are well suited to anticipating how drug pharmacokinetics in pediatric populations may differ from those in extensively studied adult populations [3].

Recent advances in PBPK modeling include the introduction of time-based changing physiology, which allows subjects to be redefined over time, incorporating changes due to growth and maturation [3]. This is particularly important for neonates who experience rapid growth and organ maturation over short time frames. Additionally, the ability to account for both gestational age and postnatal age has improved simulations in preterm infants, capturing pharmacokinetics in developmentally less mature neonatal subpopulations [3].

Integrated Dynamical Modeling with High-Dimensional Data

Novel approaches integrate dynamical modeling with high-dimensional single-cell data to understand cellular ontogeny in immune responses. These methods employ deep learning and stochastic variational inference to simultaneously model the structure and dynamics of observed marker expression via lower-dimensional representations of data [5]. This approach is particularly useful for modeling phenotypically diverse cell populations with highly distinct and time-dependent dynamics, such as tissue-resident memory T cells (TRM) during immune responses [5].

The integrated methodology contrasts with sequential approaches that first perform unsupervised clustering followed by dynamical modeling of cluster sizes. The integrated method jointly models the distribution of experimental data and underlying cellular dynamics, potentially providing more accurate representations of evolving biological systems [5].

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q: What is the primary challenge in modeling pediatric ontogeny for drug development?

A: The primary challenge lies in the complex interplay between multiple simultaneously developing systems. As described in the literature, "the interplay between maturing drug metabolizing enzymes, including phase I and phase II enzymes, and transporters coupled with simultaneous changes in plasma protein binding, body composition, absorption, etc. create an environment that makes accurate estimates of drug clearance a daunting task" [2].

Q: How can researchers address the significant knowledge gaps in neonatal ontogeny?

A: The scientific community has identified the necessity for creating an integrated knowledge base focusing on the ontogeny of drug metabolizing enzymes and impactful covariates, which can be extended to transporters, receptors, and other key factors in drug action [2]. Collaborative work and international efforts have improved our understanding of the interplay between developmental physiology and drug disposition [4].

Q: What recent advances have improved PBPK modeling in neonates?

A: Two important developments include: (1) the introduction of time-based changing physiology, allowing subjects to be redefined over time to incorporate growth changes, and (2) the ability to account for both gestational age and postnatal age in neonatal PBPK models, which is particularly important for preterm infants [3].

Q: How does membrane transporter ontogeny impact pediatric drug development?

A: Developmental changes in membrane transporter expression and activity can significantly alter drug exposure and clearance in pediatric patients. For example, the age-dependent increase in OCT1 expression impacts the disposition of its substrate drugs throughout childhood [4]. These ontogeny patterns must be incorporated into PBPK models to accurately predict drug behavior in children.

Troubleshooting Experimental Protocols

Problem: Diminished signal in ontogeny characterization experiments

Solution Protocol:

Repeat the experiment: Unless it is cost- or time-prohibitive, repeat the experiment, since simple mistakes may have occurred [6].

Verify experimental failure: Consider whether there are other plausible reasons for unexpected results. For example, "a dim fluorescent signal could indicate a problem with the protocol but it could also simply mean that the protein in question is not expressed at detectable levels in that specific type of tissue" [6].

Implement appropriate controls: Include both positive and negative controls to confirm experimental validity. "If we still fail to see a good fluorescent signal, it is likely that there is a problem with the protocol" [6].

Check equipment and materials: "Molecular biology reagents can be very sensitive to improper storage. Have the reagents been stored at the correct temperature or have they possibly gone bad?" [6].

Systematically change variables: "It's critical that you isolate variables and only change one at time" [6]. Generate a list of potential contributing factors and test them sequentially, beginning with the easiest to adjust.

Document everything: "Take very detailed notes in your lab notebook that you and the others in your group can go back and understand" [6].

Figure 2: Troubleshooting Protocol for Ontogeny Experiments

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Materials for Ontogeny Studies

| Reagent/Resource | Function | Application Notes |

|---|---|---|

| PBPK Software (Simcyp, GastroPlus, PK-Sim) | Simulates drug PK using physiological parameters and drug properties [3] | Incorporate ontogeny profiles for enzymes and transporters; account for gestational and postnatal age [3] |

| Tissue-specific mRNA Expression Data | Quantifies gene expression changes during development [4] | Critical for establishing ontogeny patterns of transporters and enzymes [4] |

| Proteomic Assays | Measures protein expression levels across development [4] | Provides more functional data than mRNA alone (e.g., OCT1 protein quantification) [4] |

| Validated Antibody Panels | Identifies cell populations and protein localization [5] | Enables high-dimensional phenotyping of diverse cell populations [5] |

| Flow Cytometry with High-Parameter Capability | Characterizes phenotypically diverse cell populations [5] | Essential for studying immune cell ontogeny and heterogeneity [5] |

| Clinical PK Data from Pediatric Populations | Validates PBPK model predictions [3] | Sparse for neonates but critical for model qualification [3] |

The systematic characterization of ontogenetic processes from neonates to adults represents a critical frontier in biomedical research, particularly for improving pediatric drug therapy. While significant challenges remain due to the complexity of developmental changes and ethical constraints in pediatric research, emerging technologies and collaborative approaches offer promising paths forward. The development of integrated knowledge bases, refinement of PBPK modeling platforms with time-based changing physiology, and application of novel computational methods to high-dimensional data will continue to enhance our understanding of ontogeny. These advances will ultimately support more effective and safer pharmacotherapy for pediatric patients across the developmental spectrum.

The Critical Role of Ontogeny in Pharmacokinetics and Pharmacodynamics

Frequently Asked Questions (FAQs)

1. What is ontogeny in the context of pharmacology? Ontogeny refers to the developmental maturation processes that affect drug therapy from the fetus to the adolescent child. This includes developmental changes in biological processes involved in drug disposition and action, such as the maturation of drug-metabolizing enzymes, transporters, and receptors, as well as changes in body composition and organ function [2] [4].

2. Why is incorporating ontogeny critical for pediatric drug development? Children are not small adults; they undergo complex developmental changes that significantly alter drug pharmacokinetics and pharmacodynamics. Understanding ontogeny is essential to predict drug exposure, efficacy, and safety accurately across different pediatric age groups, thereby avoiding subtherapeutic or toxic exposures [2] [7] [4]. This is particularly vital given the high prevalence of off-label drug use in pediatrics [4].

3. Which ontogeny factors are most important for predicting drug clearance? The most critical factors depend on the drug's elimination pathway.

- For hepatically metabolized drugs: The ontogeny of cytochrome P450 (CYP) enzymes (e.g., CYP3A4, CYP2D6) and Phase II enzymes (e.g., UGTs) is paramount [7].

- For renally eliminated drugs: The maturation of glomerular filtration rate (GFR) and active tubular secretion processes are key [2] [7].

- For transporter substrates: The ontogeny of membrane transporters in the liver (e.g., OATP1B1, OCT1), kidney (e.g., OATs, OCT2), and intestine (e.g., P-gp, BCRP) must be considered [4].

4. What are the main modeling approaches that incorporate ontogeny? The three principal approaches are:

- Physiologically Based Pharmacokinetic (PBPK) Modeling: A mechanistic approach that integrates physiological parameters and ontogeny functions for enzymes and transporters to predict drug exposure [2] [4] [8].

- Population Pharmacokinetic (PopPK) Modeling: A statistical approach that identifies and quantifies sources of variability in drug exposure, using covariates like body weight and age (as a surrogate for maturation) [9] [7] [10].

- Allometric Scaling: Uses body size (e.g., body weight) and fixed exponents to scale clearance and volume of distribution from adults to children, often combined with maturation functions [7] [10].

5. My PBPK model for children is inaccurate. What are common pitfalls? Common issues include:

- Using outdated or incorrect ontogeny profiles for your drug's specific elimination enzymes or transporters.

- Neglecting the ontogeny of key transporters, which can be a significant source of variability [4].

- Failing to account for the interplay between maturation and disease state on drug disposition [10].

- Insufficient model qualification for the intended predictive purpose [10].

Troubleshooting Guides

Issue 1: Poor Predictive Performance of Pediatric Pharmacokinetic (PK) Models

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Incorrect ontogeny function | - Verify that the ontogeny profile (enzyme/transporter) used matches the drug's primary elimination pathway. - Check whether the model uses a linear maturation model where a sigmoidal (Hill) model is more appropriate. | - Incorporate a scientifically justified, well-vetted ontogeny function for the relevant enzyme (e.g., from the PBPK software library). For renal clearance, use an established maturation model for GFR [7] [10]. |

| Over-reliance on size-based scaling only | - Plot observed clearance vs. body weight. If a strong age-dependent trend remains, maturation is not accounted for. | - Integrate a maturation function with allometric scaling. Use fixed allometric exponents (e.g., 0.75 for clearance) to avoid over-parameterization when combined with age-dependent maturation [10]. |

| Ignoring transporter ontogeny | - Review literature to determine if your drug is a substrate for key transporters like OATP1B1, OATP1B3, or OCT1. | - Incorporate recent data on transporter ontogeny into your PBPK model. Collaborative efforts have improved the available data for these proteins [4]. |
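The diagnostic in the second row (checking whether an age trend remains once body size is accounted for) can be sketched in a few lines. This is a minimal illustration, not a prescribed method: the function names, the use of a Pearson correlation as the trend measure, and all data values are assumptions made for the example.

```python
import math

def size_normalized_clearance(cl, weight_kg, exponent=0.75):
    """Remove the size effect by dividing out the allometric term (WT/70)^exponent."""
    return cl / (weight_kg / 70.0) ** exponent

def pearson(xs, ys):
    """Plain Pearson correlation; returns 0.0 if either series has no variance."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / math.sqrt(sxx * syy)

def residual_age_trend(ages, weights, clearances):
    """Correlation between age and size-normalized clearance.

    A value well above zero suggests that size-based scaling alone
    leaves an age-dependent (maturation) signal unexplained.
    """
    normalized = [size_normalized_clearance(c, w)
                  for c, w in zip(clearances, weights)]
    return pearson(ages, normalized)

# Hypothetical observations (age in years, weight in kg, clearance in L/h).
ages = [0.1, 0.5, 1.0, 2.0, 5.0, 10.0]
weights = [3.5, 7.0, 10.0, 12.0, 18.0, 32.0]
clearances = [0.18, 0.89, 1.55, 2.13, 3.28, 5.29]

print(f"residual age trend r = {residual_age_trend(ages, weights, clearances):.2f}")
```

A rank-based correlation or a simple plot would serve the same purpose; the point is only that the check is made on clearance after the allometric term has been divided out.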

Issue 2: High Variability in Pharmacodynamic (PD) Response in Pediatric Populations

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Use of an insensitive or non-validated PD endpoint | - Confirm the pain, sedation, or disease scale used has been validated for the specific pediatric age group and clinical scenario in your study. | - Use consensus-recommended scales like the Premature Infant Pain Profile (PIPP) for neonates or the Faces Pain Scale–Revised (FPS-R) for older children [9]. |

| Ontogeny of drug receptors or targets | - Literature search for known age-related differences in the expression or function of the drug's target receptor. | - When possible, incorporate known ontogeny of the drug target or physiological system into the PK-PD model. This is complex but critical for some drug classes [2] [10]. |

| Indirect response mechanisms | - Analyze the PK-PD data to see if the time course of effect lags behind the plasma concentration, suggesting an indirect mechanism. | - Use an indirect response PD model structure to account for the time delay between plasma concentration and observed effect [11]. |
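To make the last row concrete, the sketch below simulates one common indirect response structure, in which the drug inhibits the production of the response variable. The source does not specify which indirect response variant is intended, so the model form, the one-compartment IV bolus concentration profile, the explicit Euler integration, and every parameter value here are assumptions chosen for illustration only.

```python
import math

def concentration(t, c0, ke):
    """Plasma concentration after an IV bolus (one-compartment, assumed)."""
    return c0 * math.exp(-ke * t)

def simulate_indirect_response(c0, ke, kin, kout, ic50, t_end, dt=0.01):
    """Integrate dR/dt = kin * (1 - C/(IC50 + C)) - kout * R with explicit Euler.

    The response starts at its drug-free baseline kin/kout.
    Returns (times, responses) as lists.
    """
    r = kin / kout
    times, responses = [0.0], [r]
    steps = int(round(t_end / dt))
    for i in range(steps):
        c = concentration(i * dt, c0, ke)
        inhibition = c / (ic50 + c)
        r += dt * (kin * (1.0 - inhibition) - kout * r)
        times.append((i + 1) * dt)
        responses.append(r)
    return times, responses

# Hypothetical parameters: time in hours, concentration in mg/L.
times, responses = simulate_indirect_response(
    c0=10.0, ke=0.3, kin=10.0, kout=0.5, ic50=1.0, t_end=24.0
)
t_peak_effect = times[responses.index(min(responses))]
print(f"concentration peaks at t = 0 h; maximum effect occurs at t = {t_peak_effect:.2f} h")
```

In this structure the concentration is highest at time zero, while the largest deviation of the response from baseline appears later. That delay is the lag the diagnostic step looks for.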

Quantitative Ontogeny Data for Modeling

The following tables summarize key ontogeny patterns for major drug elimination pathways, essential for building dynamic models.

Table 1: Ontogeny Patterns of Major Human Cytochrome P450 (CYP) Enzymes

Data derived from in vitro hepatic microsomal studies and incorporated into PBPK platforms [7] [10].

| Enzyme | Reported Ontogeny Pattern | Key Milestone |

|---|---|---|

| CYP3A4 | Very low at birth; rapid increase after the first week; reaches ~50% adult activity by 1 month; peaks at 130-150% of adult levels around 1-2 years; declines to adult levels after puberty. | Reaches 50% adult activity at ~1 month postnatal. |

| CYP2D6 | Detectable in fetal liver; reaches ~50% adult activity by 1 year of age; matures slowly to adult levels by puberty. | Reaches 50% adult activity at ~1 year postnatal. |

| CYP1A2 | Not detectable at birth; activity rises slowly after birth; reaches 50% adult levels by ~1.5-2 years. | Reaches 50% adult activity at ~1.5-2 years postnatal. |

| CYP2C9 | Low activity at birth; reaches 50% adult activity by ~6 months; matures by ~5 years of age. | Reaches 50% adult activity at ~6 months postnatal. |

| CYP2C19 | Active at birth; may exceed adult activity levels during infancy. | Fetal and neonatal activity can be higher than in adults. |

Table 2: Ontogeny Patterns of Selected Hepatic and Renal Transporters

Data consolidated from quantitative proteomic and gene expression studies [4].

| Transporter | Organ | Reported Ontogeny Pattern |

|---|---|---|

| OATP1B1 | Liver | mRNA is very low in neonates and infants. Protein expression patterns are complex and may be higher in fetal livers than in term neonates, with potential variability due to genetic polymorphism. |

| OATP1B3 | Liver | Shows a clear age-dependent increase in protein expression. |

| OCT1 | Liver | Protein expression shows an age-dependent increase from birth, with maturation (TM50) estimated to occur around 6 months of age. |

| MRP2 | Liver | Protein abundance is low at birth and increases with age, reaching adult levels by 1-2 years. |

| P-gp | Intestine | mRNA levels in neonates and infants are generally comparable to adults. |

| OAT1 | Kidney | Not detectable in fetal kidney; expression increases after birth and matures during early childhood. |

| OAT3 | Kidney | Expression is low in the neonatal kidney and increases during the first year of life. |

Experimental Protocols for Key Assays

Protocol 1: Developing a Pediatric Physiologically Based Pharmacokinetic (PBPK) Model

This methodology outlines the steps for building and qualifying a PBPK model for pediatric exposure prediction, as demonstrated for drugs like diphenhydramine [8].

1. Objective: To predict systemic exposure of a drug in pediatric populations by leveraging adult data and incorporating ontogeny.

2. Materials and Software:

- Software: PBPK platform (e.g., PK-Sim, Simcyp, GastroPlus).

- Input Data:

- Drug-Specific Parameters: Physicochemical properties (log P, pKa), binding data (plasma protein binding), and in vitro ADME data (permeability, metabolic stability, enzyme/transporter kinetics).

- Clinical PK Data: Plasma concentration-time profiles from adult studies (both IV and oral, if available) for model building and verification.

- Pediatric Data: Published clinical PK studies in children for model evaluation.

3. Workflow Diagram: PBPK Model Development and Scaling

4. Procedure:

- Step 1: Adult Model Building. Develop a PBPK model for healthy adults using drug-specific parameters and verify it against observed adult PK data. The model must adequately describe absorption, distribution, metabolism, and excretion.

- Step 2: Pathway Identification. Determine the primary routes of elimination (e.g., specific CYP enzyme metabolism, renal filtration, transporter-mediated uptake).

- Step 3: Ontogeny Incorporation. Replace the adult values for key elimination pathways in the software with age-dependent ontogeny functions. This includes selecting the appropriate maturation profiles for enzymes (e.g., CYPs), transporters, and renal function.

- Step 4: Pediatric Scaling. Use the qualified adult model and simulate PK in virtual pediatric populations. The software will automatically adjust physiological parameters (organ sizes, blood flows, body composition) and the incorporated ontogeny functions based on age.

- Step 5: Simulation & Evaluation. Simulate the pediatric PK using the proposed dosing regimen. Compare the predicted exposure metrics (AUC, Cmax) with any available observed data from literature using pre-defined acceptance criteria (e.g., predicted/observed ratio within 2-fold) [8].
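The acceptance check in Step 5 can be expressed as a small helper. This is a sketch under stated assumptions: the function names and the example exposure values are hypothetical, and the 2-fold bound is simply the example criterion mentioned in the protocol, not a universal standard.

```python
def fold_ratio(predicted, observed):
    """Predicted/observed ratio for a positive exposure metric (e.g., AUC or Cmax)."""
    if predicted <= 0 or observed <= 0:
        raise ValueError("exposure metrics must be positive")
    return predicted / observed

def within_fold(predicted, observed, fold=2.0):
    """True if the predicted/observed ratio lies within [1/fold, fold]."""
    ratio = fold_ratio(predicted, observed)
    return 1.0 / fold <= ratio <= fold

def evaluate_predictions(metrics, fold=2.0):
    """Apply the fold criterion to a dict of {metric_name: (predicted, observed)}."""
    return {
        name: {"ratio": fold_ratio(pred, obs), "accepted": within_fold(pred, obs, fold)}
        for name, (pred, obs) in metrics.items()
    }

# Hypothetical predicted vs. observed values for one pediatric age group.
results = evaluate_predictions({
    "AUC (mg*h/L)": (42.0, 35.0),
    "Cmax (mg/L)": (3.1, 7.4),
})
for name, outcome in results.items():
    print(name, f"ratio={outcome['ratio']:.2f}", "accepted" if outcome["accepted"] else "rejected")
```

Fixing the criterion in code before running the pediatric simulations is one way to keep the acceptance criteria pre-defined, as the protocol requires.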

Protocol 2: Population PK Model Building with Allometric Scaling and Maturation

1. Objective: To characterize the typical population PK parameters and quantify the impact of size and maturation on drug clearance in a pediatric study population.

2. Materials and Software:

- Software: Nonlinear mixed-effects modeling software (e.g., NONMEM, Monolix, R).

- Input Data: Rich or sparse plasma concentration-time data from pediatric patients, along with covariate information (e.g., body weight, age, postmenstrual age, serum creatinine).

3. Procedure:

- Step 1: Base Model Development. Develop a structural PK model (e.g., one- or two-compartment) and a statistical model for inter-individual and residual variability without covariates.

- Step 2: Allometric Scaling. Introduce body size into the model. Typically, clearances (CL) are scaled using (Body Weight/70)<sup>0.75</sup> and volumes of distribution (V) are scaled using (Body Weight/70)<sup>1</sup> [10].

- Step 3: Maturation Function. For neonates, infants, and young children, add a maturation function to account for age-dependent changes in organ function that are not explained by size alone. A sigmoidal Emax or Hill model is often used for this purpose:

CL = CL<sub>std</sub> × (WT/70)<sup>0.75</sup> × [AGE<sup>HILL</sup> / (TM50<sup>HILL</sup> + AGE<sup>HILL</sup>)]

where TM50 is the age at which maturation reaches 50% of adult capacity, and HILL is the Hill coefficient describing the steepness of the maturation curve [7] [10].

- Step 4: Covariate Model Building. Evaluate other potential covariates (e.g., renal function using serum creatinine) to explain remaining inter-individual variability.

- Step 5: Model Validation. Validate the final model using techniques like bootstrap or visual predictive check to ensure its robustness and predictive performance.
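The clearance equation from Step 3 translates directly into code. The sketch below is illustrative only: the function names are invented for this example, AGE and TM50 must be in the same units (postmenstrual age in weeks is assumed here), and the parameter values are hypothetical rather than estimates for any particular drug.

```python
def maturation_fraction(age, tm50, hill):
    """Sigmoidal (Hill) maturation: fraction of adult capacity at a given age.

    `age` and `tm50` must share the same units.
    """
    if age <= 0:
        return 0.0
    return age ** hill / (tm50 ** hill + age ** hill)

def pediatric_clearance(cl_std, weight_kg, age, tm50, hill, exponent=0.75):
    """CL = CL_std * (WT/70)^exponent * AGE^HILL / (TM50^HILL + AGE^HILL)."""
    size_term = (weight_kg / 70.0) ** exponent
    return cl_std * size_term * maturation_fraction(age, tm50, hill)

# Hypothetical parameters: CL_std in L/h, ages as postmenstrual age in weeks.
CL_STD, TM50, HILL = 10.0, 50.0, 3.0

for label, weight, age in [
    ("term neonate", 3.5, 40.0),
    ("6-month infant", 7.5, 66.0),
    ("5-year child", 18.0, 300.0),
]:
    cl = pediatric_clearance(CL_STD, weight, age, TM50, HILL)
    print(f"{label}: CL = {cl:.2f} L/h")
```

Keeping the size and maturation terms as separate factors mirrors the structure of the equation and makes it easy to fix the allometric exponent while estimating only TM50 and HILL.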

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item / Resource | Function / Application in Research |

|---|---|

| Pediatric PBPK Software | Platforms like PK-Sim and Simcyp contain built-in virtual pediatric populations and curated ontogeny functions for enzymes and transporters, enabling mechanistic simulation of drug exposure [8]. |

| Human Ontogeny Data Repositories | Systematic knowledge bases (e.g., PharmGKB, Reactome) and published meta-analyses provide consolidated in vitro and in vivo data on the developmental trajectories of enzymes and transporters [2]. |

| Probe Substrates | Drugs with well-characterized and specific pathways (e.g., caffeine for CYP1A2, midazolam for CYP3A4) are used in clinical studies to phenotype the activity of a specific enzyme in different age groups [7]. |

| Validated Pediatric PD Scales | Standardized and age-appropriate tools (e.g., FLACC for pain, Ramsay Sedation Scale for sedation) are crucial for obtaining reliable pharmacodynamic data to build PK-PD relationships [9]. |

| Population PK Modeling Software | Tools like NONMEM are essential for analyzing sparse, real-world clinical PK data from pediatric patients to quantify the effects of covariates like weight and age on drug disposition [7] [10]. |

Frequently Asked Questions

Q: What are the primary data-related challenges in dynamic modeling of ontogenesis?

A: The key challenges stem from the multi-level nature of ontogenesis, which involves complex interactions between genetic and epigenetic regulation across different system levels. This creates a "dynamic landscape of inter-dependent regulative states," making it difficult to collect sufficient quantitative data, especially on spatial and temporal patterns emerging from local cell interactions [12]. Working with small populations intensifies this issue, as it limits the data available to parameterize and validate these complex models.

Q: How can I model ontogenetic processes despite limited experimental data?

A: A combined approach is often necessary. Start with model structures derived from fundamental biological principles (e.g., balance equations) [13]. Unknown parameters can then be adjusted to fit the limited available process data [13]. Leveraging modeling formalisms that support the integration of heterogeneous knowledge sources, such as Nets-Within-Nets (NWN), can also help compose a more complete model from disparate data snippets [12].

Q: My model simulation fails or the solver does not find a solution. What should I check?

A: Follow these troubleshooting steps:

- Initial Simulation: Ensure the initial simulation runs. Check for issues like components running empty (e.g., storages) or chattering problems that cause the simulation to stall [14].

- Solver Issues: If the optimization solver fails, check that your objective is well-defined and the sampling time is reasonable [14]. For gradient-based solvers, a common failure point is a lack of model smoothness; the problem must be twice continuously differentiable (C<sup>2</sup>-smooth). Avoid using non-smooth functions like `abs`, `min`, or `max` without smooth approximations [14].

- Initialization: Improve the initialization of control elements. While feasibility is not always required, avoiding significant constraint violations in the initial simulation is beneficial [14].
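For the smoothness point, one widely used family of replacements is based on a square-root regularization of the absolute value. The sketch below is an illustration rather than the approach of any specific solver or tool; the function names and the smoothing parameter `eps` are assumptions, and `eps` trades approximation accuracy against curvature near the kink.

```python
import math

def smooth_abs(x, eps=1e-3):
    """Differentiable approximation of |x|: sqrt(x^2 + eps^2)."""
    return math.sqrt(x * x + eps * eps)

def smooth_max(a, b, eps=1e-3):
    """Differentiable approximation of max(a, b) = (a + b + |a - b|) / 2."""
    return 0.5 * (a + b + smooth_abs(a - b, eps))

def smooth_min(a, b, eps=1e-3):
    """Differentiable approximation of min(a, b) = (a + b - |a - b|) / 2."""
    return 0.5 * (a + b - smooth_abs(a - b, eps))

# Compare exact and smoothed values away from and at the kink.
for x in (-2.0, 0.0, 0.5):
    print(f"x={x:+.1f}  abs={abs(x):.4f}  smooth_abs={smooth_abs(x):.4f}")
print(f"max(1, 3) ~ {smooth_max(1.0, 3.0):.4f}, min(1, 3) ~ {smooth_min(1.0, 3.0):.4f}")
```

The approximation error is largest at the kink (at most `eps` for `smooth_abs`) and shrinks rapidly away from it, so `eps` can usually be chosen small relative to the scale of the model variables.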

Q: Are there specific modeling tools that can help address these challenges?

A: Yes, the choice of formalism is critical. The Nets-Within-Nets (NWN) formalism is particularly suited for ontogeny research because it uses a single, uniform framework to represent the hierarchical organization of biological systems, from intracellular mechanisms to supra-cellular spatial structures [12]. This capability to handle different levels of regulation within one model helps manage complexity when data is limited. An implementation is available in the Renew simulation engine [12].

Experimental Protocols & Methodologies

Protocol 1: Developing a Dynamic Model from First Principles and Data

This methodology is adapted from general dynamic modeling guidelines for engineering and can be applied to biological systems like ontogeny [13].

- Define Objective: Clearly state the goal of the simulation (e.g., simulate the formation of a specific morphogenetic pattern).

- Create Schematic: Draw a diagram of the system, labeling all relevant variables and interactions.

- List Assumptions: Document all simplifying assumptions (e.g., "cell division occurs at a constant rate").

- Determine Spatial Dependence: Decide if the system requires Partial Differential Equations (PDEs) for spatial modeling or if Ordinary Differential Equations (ODEs) are sufficient.

- Write Dynamic Balances: Formulate balance equations (e.g., for mass, energy, species) based on conservation principles.

- Add Other Relations: Incorporate thermodynamic, reaction rate, or geometric relationships.

- Check Degrees of Freedom: Ensure the number of independent equations matches the number of unknown variables.

- Classify Variables:

- Inputs: Fixed values, disturbances, manipulated variables.

- Outputs: States, controlled variables.

- Simplify Equations: Use your listed assumptions to simplify the balance equations.

- Simulate: First, simulate steady-state conditions if possible. Then, perform a dynamic simulation (e.g., with an input step) to analyze the system's behavior [13].
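The final steps (write a balance, verify the steady state, then apply an input step) can be illustrated with a deliberately simple one-state model. Everything specific here is an assumption made for the example: the balance dN/dt = u - k·N (constant production `u`, first-order loss `k`), the explicit Euler scheme, and the parameter values.

```python
def simulate_balance(u_of_t, k, n0, t_end, dt=0.01):
    """Integrate the balance dN/dt = u(t) - k * N with explicit Euler.

    u_of_t: function returning the production input at time t (model input)
    k:      first-order loss rate constant
    n0:     initial value of the state N
    Returns (times, states) as lists.
    """
    n = n0
    times, states = [0.0], [n]
    steps = int(round(t_end / dt))
    for i in range(steps):
        t = i * dt
        n += dt * (u_of_t(t) - k * n)
        times.append((i + 1) * dt)
        states.append(n)
    return times, states

K = 0.1                      # loss rate (1/h), hypothetical
U_LOW, U_HIGH = 5.0, 10.0    # production rates (cells/h), hypothetical
N_STEADY = U_LOW / K         # analytical steady state: accumulation term set to zero

# 1) Steady-state check: starting at u/k, the state should not move.
_, steady = simulate_balance(lambda t: U_LOW, K, N_STEADY, t_end=50.0)
print(f"steady-state drift: {abs(steady[-1] - N_STEADY):.2e}")

# 2) Dynamic simulation: step the input from U_LOW to U_HIGH at t = 10 h.
def step_input(t):
    return U_HIGH if t >= 10.0 else U_LOW

times, states = simulate_balance(step_input, K, N_STEADY, t_end=200.0)
print(f"state after step: {states[-1]:.2f} (new steady state {U_HIGH / K:.2f})")
```

Running the steady-state case first is a cheap way to catch sign or unit errors in the balance before interpreting the dynamic response.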

Protocol 2: A Nets-Within-Nets Approach for Ontogenetic Pattern Formation

This protocol is based on the strategy used to model Vulval Precursor Cell (VPC) specification in C. elegans [12].

- System Decomposition: Identify the key hierarchical levels in the ontogenetic process (e.g., organism, tissue, cell, intracellular signaling pathways).

- Formalism Selection: Utilize the Nets-Within-Nets (NWN) formalism, where tokens in a high-level Petri net can themselves be lower-level Petri nets, representing the hierarchical structure [12].

- Model Construction:

- Represent each cell or major biological entity as a separate Petri net (token in the higher-level system net).

- Within each cell-net, model intracellular regulatory dynamics using places (representing biological states or conditions) and transitions (representing biochemical events).

- Define communication channels between cell-nets to model local inter-cellular interactions (e.g., signaling via morphogens).

- Stochastic Integration: Configure transitions to fire stochastically to capture the inherent randomness of biological systems [12].

- Simulation and Validation: Run stochastic simulations to observe emergent patterns. Compare the simulation outcomes with known experimental results, both physiological and from mutations, to validate the model [12].
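The nesting idea behind the protocol can be shown with a toy example in plain Python. This is not the Renew API and not the published VPC model: the class, the place and transition names, the single "deliver" channel, and the uniform random choice among enabled events are all simplifying assumptions meant only to show tokens of a system-level net that are themselves Petri nets.

```python
import random

class PetriNet:
    """Minimal Petri net: a marking (place -> token count) plus transitions."""

    def __init__(self, marking, transitions):
        self.marking = dict(marking)
        self.transitions = transitions  # name -> (input places, output places)

    def enabled(self):
        """Names of transitions whose input places hold enough tokens."""
        return [name for name, (inputs, _) in self.transitions.items()
                if all(self.marking.get(p, 0) >= n for p, n in inputs.items())]

    def fire(self, name):
        """Consume input tokens and produce output tokens."""
        inputs, outputs = self.transitions[name]
        for place, n in inputs.items():
            self.marking[place] -= n
        for place, n in outputs.items():
            self.marking[place] = self.marking.get(place, 0) + n

# Intracellular net (hypothetical): a received signal commits an undetermined cell.
CELL_TRANSITIONS = {
    "commit": ({"undetermined": 1, "signal": 1}, {"committed": 1}),
}

def make_cell():
    return PetriNet({"undetermined": 1, "signal": 0, "committed": 0}, CELL_TRANSITIONS)

def simulate(n_cells=3, n_signals=2, seed=0, max_steps=100):
    """System-level net: cell-nets are its tokens; a pool of signal tokens is
    delivered to cells through a communication channel. One enabled event is
    chosen uniformly at random per step (stochastic firing)."""
    rng = random.Random(seed)
    cells = [make_cell() for _ in range(n_cells)]
    signal_pool = n_signals
    for _ in range(max_steps):
        events = []
        if signal_pool > 0:
            events += [("deliver", i, None) for i in range(n_cells)]
        for i, cell in enumerate(cells):
            events += [("internal", i, name) for name in cell.enabled()]
        if not events:
            break  # nothing can fire at either level: the pattern is final
        kind, index, name = rng.choice(events)
        if kind == "deliver":
            signal_pool -= 1
            cells[index].marking["signal"] += 1
        else:
            cells[index].fire(name)
    return cells, signal_pool

cells, _ = simulate(seed=1)
print(["committed" if c.marking["committed"] else "undetermined" for c in cells])
```

Repeating the run with different seeds gives a distribution of final patterns, which is the kind of output that would be compared against experimental observations in the validation step.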

Research Reagent Solutions

The table below lists key resources used in computational modeling of ontogeny.

| Item/Reagent | Function in Research |

|---|---|

| Renew Software | An extensible editor and simulation engine for Reference Nets, a type of Nets-Within-Nets formalism. It allows for the simulation of hierarchical and stochastic models of ontogenesis [12]. |

| Petri Net Models | A graphical and mathematical modeling formalism used to represent and study systems with concurrent, distributed, and stochastic processes. It is the foundation for NWN [12]. |

| Ordinary Differential Equations (ODEs) | A mathematical framework used for modeling the continuous, deterministic dynamics of homogeneous systems, such as the concentration dynamics of molecules in a large cell population [12]. |

| Stochastic Simulation Algorithm | A computational method used to simulate the dynamics of a system where randomness is a key factor, such as in gene expression or signaling events involving small molecule counts [12]. |
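As a concrete instance of the last table entry, the sketch below implements Gillespie's direct method for a hypothetical birth-death process (constant synthesis, first-order degradation). The species, rate constants, and function name are illustrative assumptions, not values from the source.

```python
import random

def gillespie_birth_death(k_syn, k_deg, n0, t_end, rng):
    """Gillespie direct method for: 0 -> X (rate k_syn), X -> 0 (rate k_deg * n)."""
    t, n = 0.0, n0
    times, counts = [t], [n]
    while True:
        a_syn = k_syn          # propensity of synthesis
        a_deg = k_deg * n      # propensity of degradation
        a_total = a_syn + a_deg
        if a_total == 0.0:
            break
        t += rng.expovariate(a_total)   # waiting time to the next event
        if t > t_end:
            break
        if rng.random() * a_total < a_syn:
            n += 1
        else:
            n -= 1
        times.append(t)
        counts.append(n)
    return times, counts

T_END = 200.0
times, counts = gillespie_birth_death(k_syn=5.0, k_deg=0.1, n0=0,
                                      t_end=T_END, rng=random.Random(42))
print(f"{len(times) - 1} events, final count {counts[-1]}")
```

With small molecule counts, individual runs fluctuate around the deterministic steady state (k_syn / k_deg), which is the behavior an ODE model averages away.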

The Scientist's Toolkit: Essential Computational Methods

| Method | Application in Dynamic Modeling of Ontogeny |

|---|---|

| Nets-Within-Nets (NWN) | Models hierarchical organization and interplay between different regulatory layers (e.g., cell population dynamics and intracellular signaling) [12]. |

| Ordinary Differential Equations (ODEs) | Describes continuous concentration dynamics in largely homogeneous cellular compartments. Best for systems with large entity numbers [12]. |

| Stochastic Discrete-Event Simulation | Models inherently discrete and stochastic biological processes (e.g., plasmid dynamics, cell fate determination). Allows control over the granularity of observation [12]. |

| Hybrid Modeling | Combines continuous (e.g., ODE) and discrete (e.g., PN) modeling approaches to capture different aspects of a complex ontogenetic system within a single framework [12]. |

Quantitative Data for Dynamic Modeling

Table 1: WCAG 2.1 Color Contrast Ratios for Accessibility

Meeting these thresholds helps ensure that diagrams and visualizations are accessible to all researchers, including those with low vision or color blindness [15] [16].

| Content Type | Level AA (Minimum) | Level AAA (Enhanced) |

|---|---|---|

| Normal Body Text | 4.5 : 1 | 7 : 1 |

| Large-Scale Text (18pt+ or 14pt+bold) | 3 : 1 | 4.5 : 1 |

| User Interface Components & Graphical Objects | 3 : 1 | Not Defined |
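The ratios in the table can be checked programmatically. The sketch below follows the relative-luminance and contrast-ratio definitions published in WCAG 2.1; the function names are our own, and the gray value used in the example is only an illustration.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.1 definition)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(rgb_a, rgb_b):
    """Contrast ratio (L_lighter + 0.05) / (L_darker + 0.05), from 1 to 21."""
    la, lb = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

def passes_aa_normal_text(rgb_text, rgb_background):
    return contrast_ratio(rgb_text, rgb_background) >= 4.5

black, white, gray = (0, 0, 0), (255, 255, 255), (118, 118, 118)
print(round(contrast_ratio(black, white), 2))
print(passes_aa_normal_text(gray, white))
```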

Table 2: Key Characteristics of Modeling Formalisms for Ontogeny

| Formalism | Primary Strength | Best Suited for Ontogenetic Processes Involving... |

|---|---|---|

| Nets-Within-Nets (NWN) | Hierarchical organization; Multi-level regulation; Stochasticity [12]. | The interplay between different system levels (e.g., tissue patterning driven by intracellular signaling). |

| Ordinary Differential Equations (ODEs) | Continuous, deterministic dynamics of concentrations [12]. | Well-mixed systems with large numbers of molecules or cells where average behavior is key. |

| Stochastic Discrete-Event Models | Discrete, qualitative, and stochastic events; Controlled granularity [12]. | Processes with small entity numbers or where qualitative, stepwise changes are important (e.g., cell fate decisions). |


What is Model-Informed Drug Development and why is it particularly important for pediatric populations?

Model-Informed Drug Development (MIDD) is "an approach that involves developing and applying exposure-based biological and statistical models derived from preclinical and clinical data sources to inform drug development or regulatory decision-making" [17]. For pediatric populations, MIDD is especially crucial due to the practical and ethical limitations in collecting experimental pharmacokinetic (PK), pharmacodynamic (PD), and clinical data in children. These approaches leverage data from literature and older patients to quantify the effects of growth and maturation on Dose-Exposure-Response (DER) relationships [10].

How are regulatory agencies supporting the use of MIDD in pediatric drug development?

Regulatory agencies strongly encourage MIDD for pediatric studies. The FDA's MIDD Paired Meeting Program provides a formal mechanism for sponsors to discuss MIDD approaches with the Agency, including for pediatric development plans [18] [19]. The European Medicines Agency (EMA) also highlights that MIDD "can serve as the basis for dose/regimen selection, clinical trial optimisation, extrapolation, and posology claims" for children [10]. Recent FDA draft guidances, including "General Clinical Pharmacology Considerations for Pediatric Studies of Drugs, Including Biological Products," further elaborate on the role of modeling and simulation in pediatric drug development [20].

Core Challenges: Dynamic Modeling of Ontogeny

What specific physiological factors related to ontogeny must be accounted for in pediatric MIDD?

Modeling ontogeny—the process of growth and development—requires accounting for numerous dynamic physiological changes. The following table summarizes key ontogenetic factors and their impacts on drug disposition and response.

Table: Key Ontogenetic Factors to Consider in Pediatric MIDD

| Factor Category | Specific Parameters | Impact on Drug Disposition/Response |

|---|---|---|

| Body Size & Composition | Body weight, organ weight, water/fat composition [10] | Affects drug distribution volume and clearance [10] |

| Organ Function Maturation | Renal function [10], biliary clearance, cardiac output, GI tract parameters (pH, volume, transit times) [10] | Determines the maturation profile of drug absorption and elimination |

| Metabolic Enzyme Ontogeny | Cytochrome P450s (CYPs) [10] [20], uridine diphosphate-glucuronosyltransferases (UGTs) [10] | Governs the developmental trajectory of metabolic capacity, crucial for predicting PK |

| System-Specific Development | Neurological development [10], blood-brain barrier maturity [20] | Can influence drug targets, safety, and pharmacodynamic response |

What are the common pitfalls when modeling ontogeny, and how can they be avoided?

- Ignoring Maturation Functions: Using allometric scaling based on body size alone is insufficient. Maturation functions (e.g., sigmoid Emax or Hill models) must be incorporated to describe the time-dependent development of organ function and metabolic pathways, especially in neonates and infants [10].

- Incorrect Allometric Exponent Application: Using allometric exponents estimated from adult data for pediatric models is not advised, as adult exponents are influenced by factors like obesity. Fixed theoretical exponents (0.75 for clearance, 1.0 for volume) are often scientifically justified for children, but the approach must be specified and justified in the analysis plan [10].

- Failing to Account for Dynamic Changes: In rapidly developing populations like premature neonates, simply using baseline body weight is inadequate. Models must account for changing body weight and maturation over the course of treatment [10].

- Overlooking Disease-Ontogeny Interaction: The impact of the disease itself on maturation and ontogeny must be considered, as disease progression can alter physiological development [10].
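The first three pitfalls can be made concrete with a short sketch that combines fixed-exponent allometric scaling with a sigmoid (Hill-type) maturation function and re-evaluates clearance as the patient grows. All parameter values (adult clearance, TM50, Hill coefficient, the neonate's weights) are hypothetical placeholders, not values from [10].

```python
def maturation_fraction(pma_weeks, tm50_weeks, hill):
    """Sigmoid maturation: fraction of adult function at a postmenstrual age."""
    return pma_weeks ** hill / (tm50_weeks ** hill + pma_weeks ** hill)

def pediatric_clearance(cl_adult, weight_kg, pma_weeks,
                        tm50_weeks, hill, exponent=0.75, ref_weight_kg=70.0):
    """Clearance = adult value x allometric size term x maturation term."""
    size_term = (weight_kg / ref_weight_kg) ** exponent
    return cl_adult * size_term * maturation_fraction(pma_weeks, tm50_weeks, hill)

# Hypothetical drug and maturation parameters (assumptions for illustration).
CL_ADULT = 10.0   # L/h for a 70 kg adult
TM50 = 50.0       # PMA (weeks) at 50% of adult function
HILL = 3.0

# A premature neonate at the start of treatment and four weeks later:
cl_start = pediatric_clearance(CL_ADULT, 1.5, 32.0, TM50, HILL)
cl_later = pediatric_clearance(CL_ADULT, 2.1, 36.0, TM50, HILL)

# Size-only scaling ignores maturation and overstates neonatal clearance.
cl_size_only = CL_ADULT * (1.5 / 70.0) ** 0.75

print(f"start: {cl_start:.3f} L/h, +4 weeks: {cl_later:.3f} L/h, "
      f"size-only: {cl_size_only:.3f} L/h")
```

Updating both weight and postmenstrual age over the treatment course, rather than fixing them at baseline, is what lets the model track the rapid clearance changes seen in premature neonates.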

Methodologies and Experimental Protocols

What is a standard workflow for developing a pediatric pharmacokinetic model?

The following diagram illustrates the core workflow for developing and applying a pediatric PK model, integrating ontogeny and leveraging prior knowledge.

What are the key methodologies and reagent solutions used in pediatric MIDD?

Table: Essential Methodologies and Tools for Pediatric MIDD

| Methodology / Tool | Brief Explanation & Function |

|---|---|

| Population PK (PopPK) Modeling | Analyzes sparse data collected in pediatric patients to identify sources of variability and quantify the impact of covariates like weight and age. |

| Physiologically Based Pharmacokinetic (PBPK) Modeling | Mechanistic models incorporating tissue volumes, blood flows, and enzyme ontogeny information to simulate drug PK; highly valuable for pediatric dose prediction and formulation bridging [20]. |

| Disease Progression Modeling | Mathematical models of a disease's natural history without treatment; used for trial optimization and endpoint selection, especially critical in rare diseases [17]. |

| Clinical Trial Simulation (CTS) | Uses drug-trial-disease models to inform trial duration, select response measures, and predict outcomes; a priority area for FDA's MIDD Paired Meeting Program [18] [19]. |

| Extrapolation Methodologies | Approaches to leverage efficacy data from adult populations to reduce the burden of clinical trials in children, guided by quantitative models [10]. |

Troubleshooting Common Issues

My model poorly predicts neonatal pharmacokinetics. What could be wrong?

This is a common challenge. The solution often lies in a more refined incorporation of ontogeny.

- Check Enzyme Maturation Profiles: Ensure you are using the most current and compound-specific information on the ontogeny of relevant metabolic enzymes (CYPs, UGTs) and transporters [10] [20].

- Verify Renal Function Models: Glomerular filtration rate (GFR) and tubular secretion mature rapidly after birth. Use established maturation functions for renal clearance, especially for drugs primarily eliminated by the kidneys [10].

- Account for Unique Neonatal Physiology: Neonates have an immature blood-brain barrier, different body composition, and unique organ development. A simple allometric scaling from adults will not capture these nuances. Using a PBPK platform that includes robust neonatal ontogeny functions can be particularly helpful [20].

How can I justify my model-based pediatric dosing strategy to regulators?

Justification rests on model credibility and transparent communication.

- Conduct a Model Risk Assessment: Proactively assess and document the model's risk level, considering the "weight of model predictions" and the "potential risk of making an incorrect decision" [19]. This is a requested component of the FDA MIDD Paired Meeting Program.

- Use Comprehensive Visualizations: When submitting to regulators, provide clear plots showing predicted exposure metrics versus body weight and age on a continuous scale. Overlay the proposed dosing regimen and the reference adult therapeutic range to visually demonstrate adequacy [10].

- Engage Early via Regulatory Pathways: Utilize programs like the MIDD Paired Meeting Program to get FDA feedback on your proposed MIDD approach and modeling plans before finalizing your strategy [18] [17] [19].
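The data behind an exposure-versus-body-weight plot can be generated with a few lines of code. The sketch below is deliberately simplified: clearance is scaled by weight only (no maturation term), AUC is taken as dose divided by clearance, and the dose bands, adult dose, and reference range are hypothetical values chosen for illustration.

```python
ADULT_DOSE_MG = 100.0
CL_ADULT_L_PER_H = 10.0
ADULT_AUC = ADULT_DOSE_MG / CL_ADULT_L_PER_H          # mg*h/L
TARGET_RANGE = (0.8 * ADULT_AUC, 1.25 * ADULT_AUC)    # hypothetical reference range

def clearance(weight_kg):
    """Size-scaled clearance with a fixed 0.75 exponent (maturation omitted)."""
    return CL_ADULT_L_PER_H * (weight_kg / 70.0) ** 0.75

def banded_dose(weight_kg):
    """Hypothetical weight-band regimen under evaluation."""
    if weight_kg < 10.0:
        return 20.0
    if weight_kg < 20.0:
        return 35.0
    if weight_kg < 40.0:
        return 60.0
    return 100.0

def exposure_table(weights_kg):
    """Rows of (weight, dose, predicted AUC, within reference range?)."""
    rows = []
    for wt in weights_kg:
        dose = banded_dose(wt)
        auc = dose / clearance(wt)
        in_range = TARGET_RANGE[0] <= auc <= TARGET_RANGE[1]
        rows.append((wt, dose, auc, in_range))
    return rows

for wt, dose, auc, ok in exposure_table([5, 9, 10, 19, 20, 39, 40, 70]):
    print(f"{wt:>3} kg  dose {dose:>5.0f} mg  AUC {auc:6.2f}  "
          f"{'within' if ok else 'OUTSIDE'} reference range")
```

Evaluating weights on both sides of each band edge exposes the exposure jumps that a continuous-scale plot would show, which is where a proposed regimen most often needs adjustment.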

The Scientist's Toolkit: Research Reagent Solutions

Table: Key Reagent and Data Solutions for Pediatric MIDD

| Item / Solution | Function in Pediatric MIDD |

|---|---|

| In Vitro System Data | Data from recombinant enzymes or hepatocytes to inform enzyme-specific clearance and its ontogeny [10]. |

| Alternative Bio-specimens | Use of urine, saliva, or cerebrospinal fluid (CSF) to enable PK analysis where blood sampling is limited [20]. |

| Validated Biomarkers | Biomarkers for safety, efficacy, or disease progression that can be measured in small sample volumes and are consistent across age groups. |

| PBPK Software Platforms | Commercially available software with built-in pediatric and ontogeny modules to facilitate mechanistic modeling [20]. |

| Passive Integrated Transponder (PIT) Tags | Used in preclinical ontogeny studies (e.g., in animal models) to track individual growth and development over time, generating data for dynamic models [21]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is MIDD and why is it critical for SMA drug development? Model-Informed Drug Development (MIDD) uses mathematical and computational models to integrate multidisciplinary data, enhancing decision-making across all stages of drug development. For Spinal Muscular Atrophy (SMA), a rare genetic disease caused by mutations in the SMN1 gene, MIDD is particularly vital. It addresses unique challenges such as small patient populations, ethical constraints on clinical trials in children, and considerable variability in disease progression. MIDD helps optimize dosing, support extrapolation of data from adults to children, and enable more efficient and ethical clinical trial strategies, thereby accelerating the development of safe and effective treatments [22].

FAQ 2: Which MIDD approaches were used in the development of risdiplam? The development and regulatory approval of risdiplam, an oral SMN2-splicing modifier, was supported by two primary MIDD approaches [22]:

- Physiologically Based Pharmacokinetic (PBPK) Modeling: A PBPK model was developed to predict the drug-drug interaction (DDI) potential of risdiplam as a perpetrator of CYP3A4-based DDI in the pediatric population. This was crucial as a clinical DDI study was not feasible in pediatric patients with SMA. The model simulated the DDI effect with midazolam and demonstrated a low potential for clinically relevant interactions in children aged 2 months and older [22].

- Population PK (popPK) Modeling: A mechanistic popPK model integrated with the PBPK model was used to derive the in vivo flavin-containing monooxygenase 3 (FMO3) ontogeny, a key enzyme in risdiplam's metabolism. This refined ontogeny function improved the prediction of risdiplam pharmacokinetics in children and informed weight-based and fixed-dose recommendations for different age and weight groups [22].

FAQ 3: How can MIDD inform dosing strategies for pediatric patients? MIDD approaches, such as popPK analysis, directly support pediatric dose optimization. For instance, the popPK model for risdiplam identified that age and body weight influenced its pharmacokinetics. Based on this analysis, a weight-based dosing regimen was recommended for patients aged <2 years and for those ≥2 years with a body weight <20 kg. A fixed dose was recommended for patients ≥2 years old weighing ≥20 kg [22].
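The age and weight logic described in FAQ 3 can be written as a small decision function. This sketch returns only the regimen type, since the source gives no dose amounts; the function name is hypothetical, and the handling of patients at exactly 2 years or exactly 20 kg is an assumption.

```python
def regimen_type(age_years, weight_kg):
    """Classify a patient into the weight-based or fixed-dose group.

    Assumption: the boundaries are treated as age < 2 years and weight < 20 kg
    for weight-based dosing; everyone else receives the fixed dose.
    """
    if age_years < 0 or weight_kg <= 0:
        raise ValueError("age must be non-negative and weight positive")
    if age_years < 2.0:
        return "weight-based"
    if weight_kg < 20.0:
        return "weight-based"
    return "fixed"

for age, wt in [(0.5, 7.0), (5.0, 16.0), (10.0, 35.0)]:
    print(f"age {age} y, {wt} kg -> {regimen_type(age, wt)}")
```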

FAQ 4: What are the emerging therapeutic targets beyond SMN in SMA? While approved therapies like nusinersen, onasemnogene abeparvovec, and risdiplam target SMN protein restoration, the SMA drug pipeline includes promising "SMN-independent" therapies. These often target muscle function directly. A key emerging target is the myostatin pathway. Inhibiting myostatin, a protein that naturally limits muscle growth, is a strategy to increase muscle mass and strength. Investigational therapies like apitegromab and taldefgrobep alfa are designed to inhibit myostatin activation and are being evaluated, often in combination with SMN-dependent therapies [23].

Troubleshooting Common MIDD Challenges in SMA

Challenge 1: Accounting for Ontogeny in Pediatric PK Models

- Problem: Standard adult physiological parameters do not accurately predict drug metabolism and disposition in children, whose organ function and enzyme systems mature with age.

- Solution: Incorporate established ontogeny functions for relevant drug-metabolizing enzymes and transporters into PBPK models.

- Example from SMA: The risdiplam model successfully derived and applied an in vivo FMO3 ontogeny function from clinical data, which was critical for accurate PK prediction in children [22].

Challenge 2: Predicting Drug-Drug Interactions (DDIs) in Vulnerable Populations

- Problem: Conducting clinical DDI studies in pediatric or severely ill SMA patients is often unethical or unfeasible.

- Solution: Use PBPK modeling to extrapolate DDI risk from healthy adult studies to the target pediatric patient population.

- Example from SMA: A PBPK model simulated the DDI between risdiplam and midazolam (a CYP3A substrate) in children, concluding a low risk of clinically relevant interactions without needing a clinical trial [22].

Challenge 3: Optimizing Trial Design for Small Populations

- Problem: Rare diseases like SMA have small, heterogeneous patient populations, making traditional randomized controlled trials difficult.

- Solution: Use model-based meta-analysis (MBMA), disease progression models (DPM), and Bayesian trial designs to incorporate natural history data, reduce the required sample size, and maximize the information gained from every patient [22].

Experimental Protocols & Data

Protocol 1: Developing a PBPK Model for DDI Assessment

This protocol outlines the steps for using a PBPK model to assess drug-drug interaction potential, as demonstrated in the risdiplam case study [22].

- Model Development (in adults):

- Gather in vitro data on the drug's physicochemical properties and enzyme kinetics (e.g., CYP3A TDI parameters for risdiplam).

- Develop and qualify a PBPK model in a healthy adult population using clinical PK data from Phase I studies.

- Refine model parameters (e.g., adjust in vivo inactivation constant from in vitro values) to capture observed DDI data in adults.

- Model Extrapolation (to pediatrics):

- Scale the qualified adult PBPK model to a pediatric population by incorporating age-dependent physiological changes (e.g., body weight, organ size, blood flow).

- Integrate relevant enzyme ontogeny functions (e.g., for CYP3A and FMO3).

- Simulation and Analysis:

- Simulate the DDI with a common probe substrate (e.g., midazolam) across different pediatric age groups.

- Analyze the simulated exposure changes (AUC ratio) to determine clinical relevance.
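The final analysis step compares substrate exposure with and without the perpetrator drug. As a rough companion to a full PBPK simulation, the sketch below uses the standard static model for time-dependent (mechanism-based) inhibition of a single hepatic enzyme. It is not the PBPK model from [22], and every parameter value shown is hypothetical.

```python
def auc_ratio_tdi(inhibitor_conc, k_inact, k_i, k_deg, fm):
    """Static AUC ratio for a substrate under time-dependent enzyme inhibition.

    inhibitor_conc : unbound perpetrator concentration at the enzyme
    k_inact, k_i   : maximal inactivation rate and half-maximal concentration
    k_deg          : natural degradation rate of the enzyme
    fm             : fraction of substrate clearance via the affected enzyme
    """
    k_obs = k_inact * inhibitor_conc / (k_i + inhibitor_conc)
    enzyme_remaining = k_deg / (k_deg + k_obs)   # fraction of active enzyme left
    return 1.0 / (fm * enzyme_remaining + (1.0 - fm))

# Hypothetical inputs (same concentration units for inhibitor_conc and k_i).
ratio = auc_ratio_tdi(inhibitor_conc=0.02, k_inact=2.0, k_i=10.0,
                      k_deg=0.03, fm=0.9)
print(f"predicted substrate AUC ratio: {ratio:.2f}")
```

A ratio close to 1 indicates little change in substrate exposure. In a pediatric PBPK workflow the same comparison is made per simulated age group, with physiology and enzyme ontogeny changing the inputs.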

Protocol 2: Building a PopPK Model for Dose Selection

This protocol describes the development of a population pharmacokinetic model to inform dosing, as used for risdiplam and nusinersen [22].

- Data Collection: Pool rich or sparse PK data from multiple clinical trials, including data from healthy volunteers and patients (infants, children, adults) with varying demographics.

- Structural Model Development: Identify the model that best describes the drug's PK (e.g., a 2-compartment model with transit absorption for risdiplam).

- Statistical Model Development: Identify and quantify sources of inter-individual variability and residual unexplained variability.

- Covariate Analysis: Test the influence of patient demographics (e.g., body weight, age, renal function) and disease status on PK parameters. Use stepwise covariate modeling to identify statistically significant relationships.

- Model Validation: Validate the final model using diagnostic plots, visual predictive checks, and, if possible, external data.

- Simulation for Dosing: Use the validated model to simulate exposure under various dosing regimens. Recommend a dosing strategy that achieves target exposure across the population.
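The simulation step can be sketched with a stripped-down population model. For brevity this uses a one-compartment steady-state relationship (AUC = dose / CL) instead of the two-compartment structure mentioned above, with allometric weight scaling and log-normal inter-individual variability on clearance. The typical clearance, variability, target range, and weight distribution are hypothetical.

```python
import math
import random

def simulate_auc(dose_mg, weights_kg, cl_typical, omega_cl, rng):
    """Simulate individual AUCs with log-normal variability on clearance."""
    aucs = []
    for wt in weights_kg:
        eta = rng.gauss(0.0, omega_cl)   # individual random effect
        cl_i = cl_typical * (wt / 70.0) ** 0.75 * math.exp(eta)
        aucs.append(dose_mg / cl_i)
    return aucs

def fraction_in_target(aucs, low, high):
    return sum(low <= a <= high for a in aucs) / len(aucs)

rng = random.Random(2024)
weights = [rng.uniform(10.0, 20.0) for _ in range(1000)]  # hypothetical cohort

# Compare two candidate doses against a hypothetical target AUC window.
for dose in (20.0, 35.0):
    aucs = simulate_auc(dose, weights, cl_typical=10.0, omega_cl=0.3, rng=rng)
    frac = fraction_in_target(aucs, 8.0, 12.5)
    print(f"dose {dose:.0f} mg: {frac:.1%} of simulated patients in target window")
```

Repeating the comparison across candidate regimens and subgroups is how a validated model supports a dosing recommendation that achieves target exposure across the population.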

Table: Key MIDD Applications in Approved SMA Therapeutics

| Therapeutic / Class | MIDD Approach Applied | Key Application / Question Answered | Outcome / Impact |

|---|---|---|---|

| Risdiplam (small molecule, SMN2-splicing modifier) | PBPK Modeling | Predict CYP3A-mediated DDI risk in pediatric patients [22]. | Demonstrated low DDI risk, supporting labeling without a clinical DDI study in children. |

| Risdiplam | Population PK (popPK) Modeling | Identify sources of PK variability and optimize dosing [22]. | Recommended weight-based and fixed dosing regimens for different pediatric subgroups. |

| Nusinersen (antisense oligonucleotide) | Population PK (popPK) Modeling | Characterize PK in CSF and plasma across infant and child populations [22]. | Supported the approved dosing regimen (12 mg loading and maintenance doses). |

Table: Quantitative Data from SMA MIDD Case Studies

| Parameter / Metric | Value / Finding | Context / Model |

|---|---|---|

| Midazolam AUC Ratio (with/without Risdiplam) | 1.09 - 1.18 [22] | Simulated in pediatric patients (2 months-18 years) using PBPK; indicates low DDI potential. |

| Primary Metabolic Pathways of Risdiplam | FMO3 (75%), CYP3A (20%) [22] | Informing the need for ontogeny functions for these enzymes in pediatric models. |

| Nusinersen Dosing Regimen | 12 mg (loading & maintenance) [22] | PopPK analysis supported this fixed dose across age groups. |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Research Reagents for SMA and MIDD Research

| Item | Function / Application in SMA & MIDD |

|---|---|

| SMN2 Transgenic Mouse Models | In vivo models for studying disease pathogenesis, pharmacokinetic/pharmacodynamic relationships, and preclinical efficacy of SMN-targeting therapies [22]. |

| Induced Pluripotent Stem Cells (iPSCs) | Patient-derived cells that can be differentiated into motor neurons; used for in vitro disease modeling, toxicity screening, and studying basic disease mechanisms [24]. |

| Clinical PK/PD Datasets | Pooled data from healthy volunteer and patient trials; essential for developing and validating popPK and PK/PD models [22]. |

| Ontogeny Function Libraries | Mathematically described functions for the maturation of drug-metabolizing enzymes and transporters; critical input for PBPK models in pediatric drug development [22]. |

Workflow and Pathway Diagrams

MIDD Application Workflow in SMA

Risdiplam DDI Prediction Pathway

Methodological Innovations: PBPK, QSP, and AI-Driven Modeling Approaches

Physiologically Based Pharmacokinetic (PBPK) Modeling for Ontogeny

Frequently Asked Questions (FAQs)

FAQ 1: What is ontogeny and why is it critical for pediatric PBPK modeling? Ontogeny refers to the developmental changes in the biological processes that affect drug disposition in pediatric patients. This includes age-dependent changes in the expression and activity of membrane transporters and drug-metabolizing enzymes [4]. Incorporating accurate ontogeny information is essential because these developmental changes can significantly alter drug exposure and clearance in children compared to adults, leaving pediatric patients at risk for subtherapeutic or toxic exposures if not properly accounted for in dosing [4].

FAQ 2: My PBPK model predictions for children do not match observed data. What could be wrong? Mismatches between predictions and observations often stem from incomplete or inaccurate ontogeny profiles for the specific ADME (Absorption, Distribution, Metabolism, and Excretion) processes relevant to your drug [25]. Key troubleshooting steps include:

- Verify Clearance Mechanisms: Confirm that the ontogeny functions for all relevant clearance pathways (e.g., specific CYP enzymes, transporters) are correctly implemented and are appropriate for the age range being simulated [25].

- Review System Parameters: Ensure that the physiological parameters (e.g., organ volumes, blood flows, tissue composition) for the pediatric population in your software are accurate and up-to-date [26].

- Check for Knowledge Gaps: Significant knowledge gaps still exist in developmental biology. Consult recent literature to see if new ontogeny data for your drug's key transporters or enzymes have emerged [4].

FAQ 3: When is a PBPK model for ontogeny considered sufficiently validated? A PBPK model is generally considered qualified for a specific pediatric application when its predictions fall within a pre-defined acceptance benchmark (e.g., 2-fold) of observed clinical data for key pharmacokinetic parameters like AUC (Area Under the Curve) and Cmax (maximum concentration) [8] [27]. This involves demonstrating the predictive capability of the PBPK platform and the specific drug model for its intended context of use, such as predicting exposure in a particular pediatric age range [27] [25].
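The acceptance benchmark in FAQ 3 is straightforward to automate. The sketch below checks whether predicted AUC and Cmax fall within a chosen fold of the observed values; the function names and the example numbers are hypothetical.

```python
def within_fold(predicted, observed, fold=2.0):
    """True if predicted/observed lies between 1/fold and fold."""
    if predicted <= 0 or observed <= 0:
        raise ValueError("PK parameters must be positive")
    ratio = predicted / observed
    return 1.0 / fold <= ratio <= fold

def qualification_summary(pairs, fold=2.0):
    """Map each parameter name to (predicted/observed ratio, pass?)."""
    return {name: (pred / obs, within_fold(pred, obs, fold))
            for name, (pred, obs) in pairs.items()}

# Hypothetical predicted vs. observed values for one pediatric age group.
summary = qualification_summary({
    "AUC":  (1250.0, 980.0),
    "Cmax": (310.0, 120.0),
})
for name, (ratio, ok) in summary.items():
    print(f"{name}: pred/obs = {ratio:.2f} -> {'pass' if ok else 'fail'}")
```

The fold threshold is an argument rather than a constant because the acceptance criterion should be pre-defined for the intended context of use, and stricter limits may be appropriate for some applications.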

FAQ 4: Can I use a PBPK model to predict doses for children if no pediatric clinical trial data exists? Yes. A key strength of PBPK modeling is its "bottom-up" approach. By integrating drug-specific properties with the physiological and ontogeny information of a pediatric population, PBPK models can simulate drug PK in populations where no clinical studies have been conducted, such as for first-dose selection in pediatric trials [28] [26]. However, the confidence in such predictions depends on the quality of the underlying ontogeny data and the model's verification in other scenarios [29].

Troubleshooting Guides

Addressing Common PBPK Modeling Challenges

The table below summarizes frequent issues, their potential causes, and recommended solutions.

Table 1: Troubleshooting Guide for Ontogeny PBPK Modeling

| Problem | Potential Root Cause | Recommended Solution |

|---|---|---|

| Systematic over-prediction of drug exposure in infants | The ontogeny function for the primary drug-clearing enzyme or transporter is inaccurate, leading to an underestimation of clearance in this age group. | Re-evaluate the literature on the ontogeny of the relevant enzyme/transporter. Consider using a different, well-vetted ontogeny function within the PBPK platform if available. |

| Poor prediction of drug absorption in neonates | Incomplete knowledge of developmental changes in gastrointestinal physiology (e.g., gastric pH, intestinal surface area, bile salt levels) [8]. | Incorporate established ontogeny patterns for GI physiology. If available, use system data specific to preterm neonates or infants. Sensitivity analysis can help identify the most critical parameters. |

| High uncertainty in model predictions for a new chemical entity | Lack of clinical data for model evaluation and potential gaps in the ontogeny of relevant ADME processes. | Clearly document all assumptions. Use the PBPK model to explore different scenarios based on uncertainty. Prioritize obtaining in vitro data on specific enzymes/transporters involved to inform the model. |

| Difficulty in recruiting expert peer reviewers for the model | A common challenge noted by the modeling community, which can delay regulatory acceptance [29]. | Follow a rigorous model-building workflow and provide comprehensive documentation as per regulatory guidance (e.g., FDA's format for PBPK reports) to facilitate review [30] [31]. |

| Model cannot be transferred across different software platforms | Lack of standardization and interoperability between different PBPK modeling platforms [29]. | Maintain detailed records of all model parameters, equations, and assumptions. When possible, use open-source and transparent platforms like the Open Systems Pharmacology Suite to enhance reproducibility and transferability [25] [31]. |

Quantitative Ontogeny Data for Key Transporters

Incorporating accurate quantitative data is fundamental. The table below summarizes the ontogeny patterns of selected clinically relevant membrane transporters based on human data.

Table 2: Ontogeny Patterns of Selected Human Membrane Transporters [4]

| Membrane Transporter (Gene Name) | Reported Ontogeny Pattern |

|---|---|

| Hepatic OCT1 (SLC22A1) | Protein expression shows an age-dependent increase from birth, reaching a transition midpoint (TM50) at approximately 6 months, with adult levels achieved around 8-12 years [4]. |

| Hepatic OATP1B1 (SLCO1B1) | mRNA expression is very low in fetuses and neonates (500-fold and 90-fold lower than adults, respectively). Protein expression patterns from different studies show some variation, potentially influenced by age and genetic polymorphism [4]. |

| Hepatic OATP1B3 (SLCO1B3) | Protein expression is generally lower in infants (< 2.5 years) compared to adults. Some data suggest genetic polymorphism (*17) may influence its expression profile [4]. |

| Intestinal P-gp (ABCB1) | mRNA expression levels in neonates and infants are generally comparable to those in adults [4]. |

| Intestinal BCRP (ABCG2) | Tissue distribution and expression appear to be similar in fetal samples (as early as 5.5 weeks of gestation) and adult samples [4]. |

Experimental Protocols

Workflow for Developing a Pediatric PBPK Model

The following diagram illustrates the best-practice workflow for building and qualifying a PBPK model for pediatric extrapolation, integrating ontogeny information.

Workflow for Pediatric PBPK Model Development

This workflow is adapted from established best practices and tutorials in the field [26] [25] [31]. The process begins by developing a robust adult PBPK model, which serves as the foundation. The key step for pediatric extrapolation is the identification of the drug's clearance pathways and the subsequent incorporation of verified ontogeny functions for those specific enzymes and transporters [25]. The model is then scaled using age-dependent physiological system parameters. Finally, the model must be evaluated by comparing its predictions to any available observed pediatric data, with troubleshooting focused on the ontogeny assumptions if predictions fall outside acceptable limits [8] [27].

The Scientist's Toolkit

The following table lists key resources essential for conducting PBPK modeling for ontogeny.

Table 3: Key Resources for Ontogeny PBPK Modeling

| Tool / Resource | Function / Application |

|---|---|

| PBPK Software Platforms | Commercial (e.g., GastroPlus, Simcyp) and open-source (e.g., PK-Sim/MoBi) platforms provide integrated physiological databases, ontogeny functions, and modeling frameworks to build, simulate, and evaluate PBPK models [26] [25] [31]. |

| Ontogeny Databases | Compiled data on the age-dependent expression and activity of enzymes and transporters. These are often integrated within PBPK platforms but should be supplemented with ongoing literature review [4] [25]. |

| In Vitro-In Vivo Extrapolation (IVIVE) | A methodology used to quantify organ-level clearance by scaling data from in vitro systems (e.g., microsomes, hepatocytes) to the whole-body level in the PBPK model [26]. |

| Sensitivity Analysis Tools | Features within PBPK software that help identify which parameters (e.g., enzyme activity, tissue permeability) have the greatest impact on model output, guiding refinement efforts [26]. |

| Qualification/Validation Reports Documentation provided by software vendors or the community that demonstrates the predictive performance of the platform for specific uses, such as pediatric extrapolation [27] [25]. |
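The IVIVE entry in the table can be illustrated with a short calculation. The sketch below scales an in vitro hepatocyte intrinsic clearance to the whole liver, applies an ontogeny fraction, and converts the result to hepatic clearance with the well-stirred liver model. The scaling factors and drug parameters are hypothetical placeholders; in practice they come from the PBPK platform or the literature.

```python
def hepatic_clearance_well_stirred(clint_ul_min_per_million_cells, fu_plasma,
                                   cells_million_per_g_liver, liver_weight_g,
                                   q_h_l_per_h, ontogeny_fraction=1.0):
    """Scale in vitro CLint to whole-liver CLint, then apply the well-stirred model.

    Unit chain: uL/min/10^6 cells x 10^6 cells/g x g = uL/min, converted to L/h.
    """
    clint_l_per_h = (clint_ul_min_per_million_cells * cells_million_per_g_liver
                     * liver_weight_g * 60.0 / 1e6) * ontogeny_fraction
    return (q_h_l_per_h * fu_plasma * clint_l_per_h
            / (q_h_l_per_h + fu_plasma * clint_l_per_h))

# Hypothetical adult inputs.
cl_adult = hepatic_clearance_well_stirred(
    clint_ul_min_per_million_cells=10.0, fu_plasma=0.1,
    cells_million_per_g_liver=120.0, liver_weight_g=1800.0, q_h_l_per_h=90.0)

# Hypothetical infant: smaller liver, lower blood flow, enzyme at 40% of adult activity.
cl_infant = hepatic_clearance_well_stirred(
    clint_ul_min_per_million_cells=10.0, fu_plasma=0.1,
    cells_million_per_g_liver=120.0, liver_weight_g=250.0, q_h_l_per_h=15.0,
    ontogeny_fraction=0.4)

print(f"adult CLh: {cl_adult:.2f} L/h, infant CLh: {cl_infant:.2f} L/h")
```

Keeping the ontogeny fraction as a separate argument makes it easy to swap in alternative ontogeny functions during sensitivity analysis or troubleshooting.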

Quantitative Systems Pharmacology (QSP) for Pathway-Level Insights

Frequently Asked Questions (FAQs)

Q1: What is Quantitative Systems Pharmacology, and how is it distinct from traditional PK/PD modeling?

Quantitative Systems Pharmacology (QSP) is a computational approach that integrates biological pathways, pharmacology, and mathematical models for drug development [32]. Unlike traditional Pharmacokinetic/Pharmacodynamic (PK/PD) models which often focus on empirical relationships between drug concentration and effect, QSP uses a "bottom-up" approach to examine the interface between experimental drug data and the biological "system" [32]. This system can include specific disease pathways, physiological consequences of a disease, or various "omics" data (e.g., genomics, proteomics) [32]. While physiologically based pharmacokinetic (PBPK) modeling predicts PK outcomes in patient populations, QSP predicts pharmacodynamic (PD) and clinical efficacy outcomes, making it especially valuable for translating results from animal models to humans and recommending clinical doses [32].

Q2: When during the drug development process should QSP be employed?

QSP can and should be employed at all stages of drug development, from pre-clinical research through Phase 3 clinical trials [32]. Its use is particularly critical when:

- Evaluating a new Mechanism of Action or repurposing an existing drug [32].

- Translating PK/PD responses across species to better predict clinical outcomes from pre-clinical models [32].

- Forecasting drug responses in special populations (e.g., pediatrics, patients with comorbidities) via in silico patient simulations [32].

- Designing dosing regimens and rational selection of combination therapies for different patient populations [32].

Q3: My QSP model predictions do not align with our initial experimental data. What are the first steps I should take?

Begin by systematically verifying the foundational elements of your model.

- Review Model Assumptions: Re-examine the biological assumptions embedded in your model, particularly around the relevant pathways. QSP is valuable for simplifying complex biological systems by distinguishing between relevant and irrelevant pathways [32]. Ensure your model's core logic accurately reflects current biological understanding.

- Audit Input Data Quality and Relevance: Check the quality and context of the data used to parameterize your model. For models involving ontogenetic shifts, verify that data used for calibration is specific to the correct developmental stage, as diet and resource use can change with age and size [21]. Using inappropriate data can lead to significant prediction errors.

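- Check Parameter Identifiability and Sensitivity: Perform a sensitivity analysis to identify which parameters have the largest impact on your model's outputs, then check whether those parameters can be estimated uniquely from the available data (identifiability). Focus your refinement efforts on high-sensitivity parameters that the data can actually constrain.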
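As a minimal sketch of the sensitivity step above, the example below computes normalized local sensitivity coefficients by central finite differences. The model is a hypothetical one-compartment IV bolus PK model linked to an Emax effect; the function names and every parameter value are illustrative assumptions and are not taken from [32].

```python
import math

def effect_at(params, dose=100.0, t=4.0):
    """Effect at time t: one-compartment IV bolus PK with an Emax PD link (toy model)."""
    conc = dose / params["V"] * math.exp(-params["CL"] / params["V"] * t)
    return params["Emax"] * conc / (params["EC50"] + conc)

def normalized_sensitivities(model, params, rel_step=0.01):
    """Central-difference estimate of d ln(output) / d ln(parameter) for each parameter."""
    base = model(params)
    result = {}
    for name, value in params.items():
        up = dict(params, **{name: value * (1 + rel_step)})
        down = dict(params, **{name: value * (1 - rel_step)})
        result[name] = (model(up) - model(down)) / (2 * rel_step * base)
    return result

# Hypothetical parameter values (CL in L/h, V in L, EC50 in mg/L).
params = {"CL": 5.0, "V": 50.0, "Emax": 1.0, "EC50": 2.0}
sens = normalized_sensitivities(effect_at, params)

# Rank parameters by the magnitude of their influence on the output.
for name, s in sorted(sens.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {s:+.3f}")
```

A coefficient near zero indicates that the chosen output carries little information about that parameter. High sensitivity alone does not establish identifiability, because correlated parameters can compensate for one another.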

Q4: How can I improve the translation of my QSP model from a pre-clinical to a clinical context?

Improving translation requires a focus on the key interspecies differences.

- Incorporate Ontogenetic and Biological Scaling: Explicitly account for interspecies differences in the expression levels and characteristics of biological targets [32]. Do not simply assume a 1:1 relationship between animal and human physiology.

- Utilize Stage-Structured Populations: If the system involves life-stage-dependent behaviors (e.g., ontogenetic diet shifts), structure your population model to reflect this. Research has shown that stage-based models can be stronger predictors of prey response than total predator density models [21]. For example, in a system with a predator that changes its diet, the density of juvenile predators may correlate more strongly with one prey type, while adult density correlates with another [21].

- Leverage Available Clinical and "Omics" Data: Integrate available human "omics" data to refine the biological system within your model. Coupling "omics" with QSP can generate powerful insights that decrease uncertainty at key decision points [32].

Troubleshooting Guides

Problem: Model Fails to Capture Observed Efficacy in a Specific Patient Subpopulation

This often occurs when the model does not adequately account for population heterogeneity or specific physiological conditions.

Investigation and Resolution Protocol:

- Verify Comorbidity Factors: Check if the subpopulation has a known comorbidity (e.g., liver or kidney disease) that could alter the PD response [32]. Incorporate the known physiological impact of this comorbidity into your system model.

- Analyze Pharmacogenomic Data: Investigate whether the subpopulation has a higher prevalence of genetic polymorphisms that affect drug metabolism (e.g., rapid or reduced metabolizer phenotypes) or transporter expression [32]. Introduce these variabilities into your in silico population.

- Simulate the Subpopulation: Use your QSP platform to generate a virtual population that mirrors the characteristics of the subpopulation in question. Re-run simulations to see if the model can now recapitulate the observed clinical outcome.
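The subpopulation simulation described above can be sketched as follows. This is a deliberately simple illustration: the phenotype frequencies, clearance multipliers, typical clearance, and the use of AUC = dose / CL as the exposure metric are all assumptions made for the example, not values from [32].

```python
import math
import random
import statistics

# Hypothetical clearance multipliers relative to a normal metabolizer.
CL_MULTIPLIER = {"poor": 0.3, "normal": 1.0, "rapid": 1.8}

def virtual_population(n, phenotype_freqs, typical_cl=10.0, bsv_sd=0.25, seed=0):
    """Sample n virtual patients with a metabolizer phenotype and an individual clearance."""
    rng = random.Random(seed)
    names = list(phenotype_freqs)
    weights = [phenotype_freqs[k] for k in names]
    patients = []
    for _ in range(n):
        phenotype = rng.choices(names, weights=weights)[0]
        # Log-normal between-subject variability around the phenotype-specific clearance.
        cl = typical_cl * CL_MULTIPLIER[phenotype] * math.exp(rng.gauss(0.0, bsv_sd))
        patients.append({"phenotype": phenotype, "CL": cl})
    return patients

def median_auc(patients, dose=100.0):
    """Median exposure, using AUC = dose / CL for a linear one-compartment model."""
    return statistics.median(dose / p["CL"] for p in patients)

# Assumed phenotype frequencies for a reference population and an enriched subpopulation.
reference = virtual_population(2000, {"poor": 0.05, "normal": 0.85, "rapid": 0.10})
subpopulation = virtual_population(2000, {"poor": 0.40, "normal": 0.55, "rapid": 0.05})

print(f"Median AUC, reference:     {median_auc(reference):.1f}")
print(f"Median AUC, subpopulation: {median_auc(subpopulation):.1f}")
```

In a full QSP workflow the sampled clearances would feed the PD and disease components of the model, so that the simulated efficacy, not only the exposure, can be compared with the observed clinical outcome.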

Problem: Difficulty in Scaling a Pathway Model from an Animal Model to Humans

A common translational challenge arises from an oversimplified view of species differences.

Investigation and Resolution Protocol:

- Identify Key Interspecies Differences: Go beyond standard allometric scaling. Systematically catalog differences in the expression levels, kinetics, and dynamics of the biological targets within your pathway between the animal model and humans [32].

- Incorporate Stage-Based Dynamics: If the pathway or disease mechanism is influenced by ontogeny (development) or aging, ensure your model accounts for this. For instance, in a trophic interaction model, a stage-structured population that considers ontogenetic diet shifts provided a better prediction of prey response than a model based on total predator density [21]. Apply this principle to human developmental stages.

- Calibrate with Available Human Data: Use any available in vitro human data or early clinical biomarker data to recalibrate the scaled model. This helps to ground the model in human biology before making full-scale clinical predictions.
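To make the scaling steps above concrete, the sketch below starts from a conventional allometric baseline and then applies a separate correction for target expression. The 0.75 exponent is a commonly used convention, and the expression ratio, body weights, and parameter values are hypothetical placeholders that would need to come from measured data.

```python
def allometric_clearance(cl_animal, bw_animal, bw_human=70.0, exponent=0.75):
    """Baseline allometric scaling: CL_human = CL_animal * (BW_human / BW_animal) ** exponent."""
    return cl_animal * (bw_human / bw_animal) ** exponent

def scale_by_expression(value_animal, human_to_animal_expression_ratio):
    """Adjust a target-dependent parameter (e.g., a Vmax) by a measured expression ratio."""
    return value_animal * human_to_animal_expression_ratio

# Hypothetical rat values: 0.25 kg body weight, clearance of 1.0 L/h.
cl_human = allometric_clearance(cl_animal=1.0, bw_animal=0.25)

# Assumed: the target is expressed at 40% of the rat level in human tissue.
vmax_human = scale_by_expression(value_animal=5.0, human_to_animal_expression_ratio=0.4)

print(f"Scaled human clearance: {cl_human:.1f} L/h")
print(f"Scaled human Vmax:      {vmax_human:.1f}")
```

Keeping the two corrections as separate functions makes it explicit which parameters are scaled by body size and which by target biology, so that each assumption can be recalibrated independently once human data become available.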

Problem: Inability to Identify the Root Cause of a Predicted Safety Concern (e.g., Drug-Induced Liver Injury)

QSP models can predict adverse effects, but pinpointing the exact mechanism is key to mitigation.

Investigation and Resolution Protocol:

- Map the Safety Endpoint to Biomarkers: Link the predicted clinical safety endpoint (e.g., liver injury) to earlier, mechanistic biomarkers within your model [32]. This creates a traceable path from system perturbation to adverse outcome.

- Perform Virtual Knock-Out/Inhibition Studies: Use the model to perform in silico experiments. Systematically "knock-out" or inhibit specific pathways in the model to see which intervention alleviates the safety signal. This can help identify the most critical pathway responsible for the toxicity.

- Explore Dosing Regimen Adjustments: Leverage the model to test if alternative dosing regimens (e.g., different doses, dose frequencies, or combination therapies) can maintain efficacy while mitigating the predicted safety risk [32].
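A virtual knock-out study of the kind described above can be illustrated with a toy model. In this sketch two hypothetical pathways each contribute to an injury biomarker that is cleared by a first-order repair process; the pathway names, rate constants, and the simple Euler integration are assumptions chosen for readability, not a validated liver injury model.

```python
import math

# Hypothetical contribution of each pathway to biomarker production (per unit concentration).
RATES = {"pathway_A": 0.8, "pathway_B": 0.1}

def peak_injury_marker(activity, c0=10.0, k_elim=0.3, k_repair=0.2, dt=0.01, t_end=48.0):
    """Peak biomarker level; `activity` scales each pathway (1.0 = intact, 0.0 = knocked out)."""
    marker, peak = 0.0, 0.0
    for step in range(int(round(t_end / dt))):
        conc = c0 * math.exp(-k_elim * step * dt)  # drug concentration after a bolus
        production = sum(RATES[p] * activity.get(p, 1.0) * conc for p in RATES)
        marker += dt * (production - k_repair * marker)  # explicit Euler step
        peak = max(peak, marker)
    return peak

baseline = peak_injury_marker({})

# Knock out one pathway at a time and record the relative reduction in the peak signal.
reductions = {}
for pathway in RATES:
    knocked_out = peak_injury_marker({pathway: 0.0})
    reductions[pathway] = 1.0 - knocked_out / baseline
    print(f"{pathway} knocked out: peak reduced by {reductions[pathway]:.0%}")
```

The same loop can be run with partial inhibition (for example an activity of 0.5) or with a different dosing input to explore whether a regimen change lowers the signal as effectively as blocking the dominant pathway.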

Experimental Protocols for Key Cited Studies

Protocol: Evaluating the Impact of Stage-Structured Predator Populations on Prey Dynamics

This protocol is adapted from research on brown treesnakes, demonstrating how ontogenetic shifts can be formally incorporated into a dynamic model [21].

1. Objective: To quantify whether stage-structured population densities of a predator (based on ontogenetic diet shifts) are better predictors of specific prey population responses than total predator density.

2. Methodology:

- System Manipulation: Artificially manipulate the predator population density. In the cited study, this was achieved by removing approximately 40% of the brown treesnake population via toxic mammal carrion baits, which selectively targeted larger, rodent-eating individuals [21].

- Stage Class Definition: Define discrete stage classes for the predator based on known ontogenetic shifts in dietary preference. For example [21]:

- Class 1 (Juveniles): snout-vent length (SVL) < 700 mm; primarily consume ectothermic prey (e.g., lizards).

- Class 2 (Adults): SVL ≥ 900 mm; reliably consume endothermic prey (e.g., rodents).

- Population Monitoring: Conduct rigorous mark-recapture studies to estimate the total and stage-specific population densities of the predator over time. All captured individuals should be measured and marked (e.g., with PIT tags or scale clips) [21].

- Prey Response Monitoring: Implement standardized visual surveys along fixed transects to estimate prey detection rates (e.g., sightings-per-unit-effort, or SPUE) for the different prey types (e.g., lizards and mammals). Surveys should be conducted consistently before and after the predator manipulation [21].

- Data Analysis: Use statistical modeling (e.g., regression analysis) to evaluate the strength of the relationship between the response of each prey type and (a) the total density of the predator population and (b) the stage-specific densities of the predator population.

3. Application to QSP: The core principle of this protocol—using discrete, mechanism-based subpopulations to refine dynamic models—can be directly translated to QSP. For instance, a patient population could be segmented based on metabolizer status (e.g., CYP450 polymorphism) or disease severity, and the model's predictive power can be tested for these subpopulations versus the population as a whole.
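The data analysis step of this protocol can be sketched with synthetic data. The example below compares how well total predator density and juvenile density explain a prey detection rate using simple linear regression. All numbers are simulated under the stated assumption that lizard detections depend only on juvenile density; they are not data from [21].

```python
import random

def r_squared(x, y):
    """Coefficient of determination for a simple linear regression of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy ** 2 / (sxx * syy)

rng = random.Random(1)

# Synthetic survey occasions; densities are invented for illustration.
juveniles = [rng.uniform(5, 25) for _ in range(40)]
adults = [rng.uniform(5, 25) for _ in range(40)]
total = [j + a for j, a in zip(juveniles, adults)]

# Assumed mechanism: lizard sightings-per-unit-effort fall only as juvenile density rises.
lizard_spue = [10.0 - 0.3 * j + rng.gauss(0.0, 0.5) for j in juveniles]

r2_stage = r_squared(juveniles, lizard_spue)
r2_total = r_squared(total, lizard_spue)
print(f"R^2 using juvenile density: {r2_stage:.2f}")
print(f"R^2 using total density:    {r2_total:.2f}")
```

The same comparison carries over to the QSP setting by replacing stage classes with patient subgroups (for example metabolizer status) and the prey response with a clinical or biomarker endpoint.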

Protocol: Building and Validating an Integrative Drug-Disease QSP Model

1. Objective: To develop a computational model that integrates knowledge of drug action with disease pathways to predict clinical efficacy and safety outcomes.

2. Methodology:

- Systems Definition:

- Drug System: Incorporate the drug's mechanism of action, including binding kinetics, target engagement, and downstream signaling effects [32].

- Disease System: Map the key biological pathways related to the disease, including signal transduction, regulatory feedback loops, and pathophysiological consequences [32].

- Host System: Include relevant host factors such as pharmacogenomics, organ function (e.g., liver, kidney), and comorbidity effects on the PD response [32].

- Model Construction: Use a "bottom-up" approach to build a mathematical model (often ordinary differential equations) that quantitatively describes the interactions between the drug, disease, and host systems.

- Model Calibration and Validation:

- Calibration: Parameterize the model using in vitro and pre-clinical in vivo data.

- Validation: Test the model's predictions against independent experimental data sets that were not used for calibration. This can include data from animal models of disease or early clinical biomarker data [32].

- Model Application:

- Run simulations to forecast drug response in virtual patient populations, including special populations (e.g., pediatrics, renally impaired) [32].

- Use the model to propose and optimize dosing regimens and rational combination therapies [32].

- Identify knowledge gaps and suggest what additional experiments are needed to improve the model [32].
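A very small instance of the construction and application steps above is sketched below. It links a one-compartment drug model (drug system) to an indirect-response biomarker whose production the drug inhibits (disease system), and it represents a host factor as reduced clearance. The model structure, the parameter values, and the 50% clearance reduction used for renal impairment are illustrative assumptions rather than values from [32].

```python
import math

def simulate_biomarker(dose, cl, v=20.0, kin=10.0, kout=0.1,
                       imax=0.9, ic50=1.0, dt=0.01, t_end=72.0):
    """Biomarker time course after an IV bolus, integrated with explicit Euler steps.

    dR/dt = kin * (1 - imax * C / (ic50 + C)) - kout * R, with C(t) = dose / v * exp(-cl / v * t).
    """
    r = kin / kout  # biomarker starts at its untreated steady state
    values = [r]
    for step in range(int(round(t_end / dt))):
        conc = dose / v * math.exp(-cl / v * step * dt)
        inhibition = imax * conc / (ic50 + conc)
        r += dt * (kin * (1.0 - inhibition) - kout * r)
        values.append(r)
    return values

untreated = simulate_biomarker(dose=0.0, cl=2.0)
typical = simulate_biomarker(dose=100.0, cl=2.0)
# Host factor: assume renal impairment halves clearance (hypothetical value).
impaired = simulate_biomarker(dose=100.0, cl=1.0)

print(f"Biomarker nadir, typical patient:  {min(typical):.1f}")
print(f"Biomarker nadir, renal impairment: {min(impaired):.1f}")
```

A production QSP model would contain many more states and would normally use an adaptive ODE solver, but the layering is the same: drug exposure drives a mechanistic disease variable, and host factors enter as changes to specific parameters.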

Research Reagent Solutions

The table below details key materials and their functions as utilized in the featured ontogeny and QSP-related research.

| Research Reagent / Material | Function in Experiment / Field |
|---|---|
| Acetaminophen Toxic Baits | Used for the selective removal of a specific predator stage class (rodent-consuming snakes) to manipulate population structure and study top-down effects on prey [21]. |
| Passive Integrated Transponder (PIT) Tags | A unique identifier implanted into study animals (e.g., snakes) to enable robust mark-recapture studies and accurate tracking of individual growth, survival, and movement over time [21]. |
| High-Powered Headlamps | Essential equipment for conducting standardized nocturnal visual surveys to detect and count cryptic species (predators and prey) along established transects [21]. |
| Biological "Omics" Data (Genomics, Proteomics) | Data sources used in QSP model construction to identify intersecting disease themes and pathways, thereby decreasing uncertainty at key decision points in drug development [32]. |
| In Silico Patient Populations | Virtual populations generated within a QSP model that incorporate patient variability (e.g., genetics, organ function) to forecast drug response and optimize therapies before clinical trials [32]. |
| Computational Modeling Software | The platform used to implement, simulate, and analyze QSP models, which are a convergence of biological pathways, pharmacology, and mathematical models [32]. |

Signaling Pathway and Workflow Visualizations

[Diagrams not reproduced: Model Construction Workflow; Ontogenetic Shift Impact on Modeling; Drug-Disease System Integration.]

Integrating Machine Learning with Mechanistic Dynamic Models

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center is designed for researchers integrating machine learning with mechanistic dynamic models, specifically within the context of improving dynamic modeling of ontogeny and drug development. The guidance below addresses common technical challenges, provides validated experimental protocols, and lists essential research tools.

Frequently Asked Questions (FAQs)

Q1: Our hybrid model is overfitting to the training data. How can we improve its generalizability?

A1: Overfitting in hybrid models often arises from a mismatch between model complexity and data quantity.

- Diagnosis: The model performs well on training data but poorly on validation or test sets.

- Solution: Integrate synthetic data generation using your mechanistic model. Use the mechanistic model to generate in silico, multi-dimensional molecular time-series data that reflects known biological variability. This augmented dataset provides a more comprehensive training landscape, reducing overfitting and improving model generalizability for ontogeny applications [33].
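The augmentation idea can be sketched with a simple mechanistic generator. The example below uses a sigmoidal maturation function, a form commonly used to describe enzyme ontogeny, to produce noisy synthetic activity profiles across age. The parameter names (`tm50`, `hill`), their typical values, and the variability settings are hypothetical and are not taken from [33].

```python
import math
import random

def maturation_fraction(age_weeks, tm50, hill):
    """Fraction of adult activity at a given age (sigmoidal maturation function)."""
    return age_weeks ** hill / (tm50 ** hill + age_weeks ** hill)

def synthetic_profiles(n_profiles, ages, tm50_mean=40.0, hill_mean=3.0,
                       bsv_sd=0.2, noise_sd=0.03, seed=0):
    """Generate noisy in silico maturation profiles with between-subject variability."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_profiles):
        # Sample individual parameters from log-normal distributions.
        tm50 = tm50_mean * math.exp(rng.gauss(0.0, bsv_sd))
        hill = hill_mean * math.exp(rng.gauss(0.0, bsv_sd))
        # Add measurement noise to the mechanistic prediction at each sampled age.
        profile = [maturation_fraction(a, tm50, hill) + rng.gauss(0.0, noise_sd) for a in ages]
        data.append({"tm50": tm50, "hill": hill, "profile": profile})
    return data

ages = [1, 4, 12, 26, 52, 104, 260]  # postnatal age in weeks (illustrative sampling grid)
augmented = synthetic_profiles(500, ages)
print(len(augmented), "synthetic profiles with", len(augmented[0]["profile"]), "time points each")
```

The synthetic profiles would then be mixed with the measured data when training the ML component. The variability settings should be checked against real observations so that the augmented set does not simply encode the generator's own assumptions.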

Q2: How can we effectively incorporate sparse, multi-scale biological data into a single hybrid model?

A2: Leverage ML for data fusion and use the mechanistic model as a structural scaffold.

- Diagnosis: Data from different scales (e.g., molecular, cellular, tissue) are difficult to integrate, leading to poor model performance.

- Solution: Use machine learning, such as graph-based semi-supervised learning, to integrate the intensities from multiparametric measurements (e.g., from MRI or omics). The mechanistic model then uses this processed information to constrain its predictions, ensuring they are biologically plausible. This approach effectively leverages limited, multi-source data [34] [33].

Q3: Our mechanistic model is computationally expensive, slowing down hybrid model development. What are the options?

A3: Replace the computationally expensive components with a fast, accurate ML-based surrogate.

- Diagnosis: Simulations with the full mechanistic model are too slow for rapid parameter exploration or uncertainty analysis.

- Solution: Develop a neural network surrogate of the mechanistic model. For example, a 3D finite element model of embryonic patterning was successfully replaced by a neural network, enabling rapid parameter exploration and the discovery of new biological insights, such as the role of advection in morphogen gradient formation [33].
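The surrogate workflow can be illustrated at toy scale. In the sketch below a cheap analytic function stands in for the expensive mechanistic simulation, and a small one-hidden-layer network is trained on its outputs with plain gradient descent in NumPy. This is not the embryonic patterning model from [33]; the stand-in function, network size, and training settings are assumptions chosen only to show the pattern.

```python
import numpy as np

def mechanistic_model(x):
    """Stand-in for a slow simulation: a saturable response to a scaled parameter x."""
    return x / (0.2 + x)

rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(200, 1))  # sampled parameter values
y = mechanistic_model(X)                  # simulator outputs used as training targets

# One hidden layer of 16 tanh units.
W1 = rng.normal(0.0, 1.0, size=(1, 16))
b1 = np.zeros(16)
W2 = rng.normal(0.0, 0.1, size=(16, 1))
b2 = np.zeros(1)

def surrogate(x):
    """Return hidden activations and the surrogate prediction for inputs x."""
    hidden = np.tanh(x @ W1 + b1)
    return hidden, hidden @ W2 + b2

initial_mse = float(np.mean((surrogate(X)[1] - y) ** 2))

learning_rate = 0.05
for _ in range(5000):
    hidden, pred = surrogate(X)
    grad_pred = 2.0 * (pred - y) / len(X)              # gradient of MSE w.r.t. predictions
    grad_W2 = hidden.T @ grad_pred
    grad_b2 = grad_pred.sum(axis=0)
    grad_z = (grad_pred @ W2.T) * (1.0 - hidden ** 2)  # backpropagate through tanh
    grad_W1 = X.T @ grad_z
    grad_b1 = grad_z.sum(axis=0)
    W1 -= learning_rate * grad_W1
    b1 -= learning_rate * grad_b1
    W2 -= learning_rate * grad_W2
    b2 -= learning_rate * grad_b2

final_mse = float(np.mean((surrogate(X)[1] - y) ** 2))
print(f"Surrogate MSE before training: {initial_mse:.4f}, after: {final_mse:.4f}")
```

Before a surrogate is used for parameter exploration, its error should be checked on parameter values held out from training, and it should not be trusted outside the sampled parameter range.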

Q4: How can we ensure our hybrid model remains interpretable and biologically grounded?

A4: Prioritize "deep integration" where biological mechanisms are embedded within the ML architecture.

- Diagnosis: The model is a "black box," making it difficult to understand its predictions or gain biological insight.