Advanced Latent Vector Regularization in Graph Autoencoders: Methods and Biomedical Applications

This article provides a comprehensive exploration of advanced regularization techniques for latent vectors in graph autoencoders, tailored for researchers and professionals in computational biology and drug discovery.

Abstract

This article provides a comprehensive exploration of advanced regularization techniques for latent vectors in graph autoencoders, tailored for researchers and professionals in computational biology and drug discovery. We begin by establishing the foundational role of regularization in learning robust graph representations, then delve into specific methodologies including adversarial, Wasserstein, and random walk-based regularization. The guide further addresses common challenges like uneven latent distributions and non-smooth manifolds, offering practical optimization strategies. Finally, we present a comparative analysis of these techniques using validation metrics from real-world biomedical applications, such as gene regulatory network inference, demonstrating their impact on predictive accuracy and robustness in research settings.

The Critical Role of Latent Space Regularization in Graph Representation Learning

Troubleshooting Guide: Common Latent Space Regularization Issues

FAQ: Why is latent vector regularization necessary in Graph Autoencoders?

Answer: Latent vector regularization is crucial in Graph Autoencoders (GAEs) to prevent overfitting and ensure the learned representations preserve the underlying geometric structure of graph data. Without proper regularization, GAEs tend to learn overly complex representations that model training data too well but generalize poorly to unseen data [1] [2]. Regularization techniques help maintain the geometric integrity of the data manifold in the latent space, which is particularly important for downstream tasks like node classification, link prediction, and anomaly detection in biological networks [3] [4].

FAQ: How can I address overfitting in my Graph Autoencoder model?

Answer: Overfitting manifests as excellent training performance but poor test accuracy. These strategies can help:

Implement Spatial Regularization: For spatiotemporal graph data, add a spatial consistency regularization term to your loss function:

ℒ = ℒ_Rec + λℒ_SCR, where ℒ_SCR = (1/N) ∑_i ∑_j w_ij ‖z_i - z_j‖² ensures that geographically neighboring nodes have similar latent representations [5].

Apply Random Walk Regularization: When latent vectors exhibit an uneven distribution, use random walk-based methods to regularize the latent vectors learned by the encoder, which improves feature separation and model robustness [6].

Utilize Geometry-Preserving Regularization: Implement Riemannian geometric distortion measures that preserve geometry derived from graph Laplacians, particularly effective for learning dynamics in latent space [3].
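
The spatial consistency term above can be made concrete with a small, dependency-free sketch. It assumes latent vectors stored as plain Python lists and neighbor weights w_ij supplied as a dictionary; the function names, the default λ, and the toy values are illustrative assumptions and are not taken from [5].

```python
def spatial_consistency_loss(z, weights):
    """Return (1/N) * sum_i sum_j w_ij * ||z_i - z_j||^2.

    z: list of N latent vectors (lists of floats).
    weights: dict mapping (i, j) index pairs to w_ij; missing pairs count as 0.
    """
    n = len(z)
    total = 0.0
    for (i, j), w_ij in weights.items():
        sq_dist = sum((a - b) ** 2 for a, b in zip(z[i], z[j]))
        total += w_ij * sq_dist
    return total / n


def total_loss(rec_loss, z, weights, lam=0.1):
    """Combine the two terms: L = L_Rec + lam * L_SCR (lam is a hypothetical default)."""
    return rec_loss + lam * spatial_consistency_loss(z, weights)


# Toy example: three nodes; nodes 0 and 1 are neighbors in both directions.
z = [[0.0, 0.0], [1.0, 0.0], [5.0, 5.0]]
weights = {(0, 1): 1.0, (1, 0): 1.0}
scr = spatial_consistency_loss(z, weights)
```

In a real model this term would be computed on tensors inside the training loop so that gradients reach the encoder; λ then trades reconstruction accuracy against spatial smoothness.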

FAQ: What should I do if my GAE fails to capture long-range dependencies in graph data?

Answer: Traditional autoencoders often struggle with long-range dependencies. Address this by:

Upgrade to Graph Attention Autoencoders: Implement Graph Attention Networks (GAT) in your encoder/decoder, which use self-attention mechanisms to dynamically weight the importance of neighboring nodes, regardless of their distance [5].

Enhance with Mutual Isomorphism: Use frameworks like colaGAE that employ mutual isomorphism as a pretext task, sampling from multiple views in the latent space to better capture global graph structure [4].

FAQ: How can I reduce error accumulation in dynamic graph predictions?

Answer: For temporal graph data, error accumulation is a common issue in recurrent architectures:

Integrate Neural ODEs: Combine GNNs with Neural Ordinary Differential Equations (Neural ODEs) to learn continuous-time dynamics in the latent space, using numerical integration to obtain solutions at each timestep. This approach significantly reduces error accumulation in long-term predictions [7] [8].

Adopt Latent-Space Dynamics: Move from physical-space to latent-space learning paradigms, which naturally reduce model complexity and error propagation while maintaining predictive accuracy [8].
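
To illustrate what numerical integration of latent dynamics involves, the sketch below advances a latent state with a classical fourth-order Runge-Kutta step. The dynamics function is a hand-written exponential decay standing in for a learned network; it and all names here are assumptions for illustration, not the models from [7] [8].

```python
import math


def rk4_step(f, z, dt):
    """Advance latent state z by one step of size dt under dz/dt = f(z)."""
    k1 = f(z)
    k2 = f([zi + 0.5 * dt * ki for zi, ki in zip(z, k1)])
    k3 = f([zi + 0.5 * dt * ki for zi, ki in zip(z, k2)])
    k4 = f([zi + dt * ki for zi, ki in zip(z, k3)])
    return [
        zi + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for zi, a, b, c, d in zip(z, k1, k2, k3, k4)
    ]


def integrate(f, z0, dt, steps):
    """Return the latent trajectory [z(0), z(dt), ..., z(steps * dt)]."""
    trajectory = [list(z0)]
    for _ in range(steps):
        trajectory.append(rk4_step(f, trajectory[-1], dt))
    return trajectory


def toy_dynamics(z):
    """Stand-in for a learned network: dz/dt = -z (exact solution z0 * exp(-t))."""
    return [-zi for zi in z]


trajectory = integrate(toy_dynamics, [1.0, 2.0], dt=0.1, steps=10)
```

Because the state at every timestep comes from integrating one continuous vector field, rather than from repeatedly feeding a discrete prediction back into the model, the solver's step size becomes an explicit handle on integration error.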

Quantitative Performance Comparison of Regularization Methods

Table 1: Performance Metrics of Various GAE Regularization Approaches

| Regularization Method | Application Context | Key Metric Improvements | Computational Efficiency |
|---|---|---|---|
| Spatial Consistency Regularization [5] | Rainfall anomaly detection | Effective anomaly identification validated against traditional surveys | Training: ~6 minutes (4,827 nodes, 72K-85K edges) |
| Gravity-Inspired Graph Autoencoder [6] | Gene regulatory network reconstruction | High accuracy & strong robustness across 7 cell types | Not specified |
| Graph Geometry-Preserving [3] | General graph geometry preservation | Outperforms state-of-the-art geometry-preserving autoencoders | Suitable for large-scale training |
| Mutual Isomorphism (colaGAE) [4] | Node classification tasks | 4 SOTA results; 0.3% average accuracy enhancement | Avoids complex contrastive learning requirements |
| Neural ODE Integration [7] [8] | Neurite material transport | Mean relative error: 3%; Max error: <8%; 10× speed improvement | Reduced training data requirements |

Table 2: Regularization Techniques and Their Specific Applications

| Regularization Type | Mathematical Formulation | Primary Benefit | Ideal Use Cases |
|---|---|---|---|
| L2 Regularization [9] [1] | J'(θ;X,y) = J(θ;X,y) + (α/2)‖w‖²₂ | Prevents large weights without eliminating features | General-purpose regularization for graph features |
| L1 Regularization [9] [1] | J'(θ;X,y) = J(θ;X,y) + α‖w‖₁ | Creates sparsity by forcing some weights to zero | Feature selection in high-dimensional graph data |
| Spatial Consistency [5] | ℒ_SCR = (1/N) ∑_i ∑_j w_ij ‖z_i - z_j‖² | Maintains geographic coherence | Spatiotemporal graphs with positional relationships |
| Random Walk Regularization [6] | Not specified in detail | Addresses uneven latent vector distribution | Graphs with complex topological structures |
| Elastic Net [1] | Ω(θ) = λ₁‖w‖₁ + λ₂‖w‖²₂ | Combines feature elimination and coefficient reduction | Graphs with correlated features requiring selection |
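
The three weight penalties in Table 2 differ only in the norm they apply. A minimal sketch, assuming the weights are given as a flat list of floats (the function names and sample values are illustrative):

```python
def l1_penalty(w, alpha):
    """alpha * ||w||_1, as in the L1 row of Table 2."""
    return alpha * sum(abs(x) for x in w)


def l2_penalty(w, alpha):
    """(alpha / 2) * ||w||_2^2, as in the L2 row of Table 2."""
    return 0.5 * alpha * sum(x * x for x in w)


def elastic_net_penalty(w, lam1, lam2):
    """lam1 * ||w||_1 + lam2 * ||w||_2^2, as in the Elastic Net row."""
    return lam1 * sum(abs(x) for x in w) + lam2 * sum(x * x for x in w)


w = [0.5, -2.0, 0.0]
penalties = {
    "l1": l1_penalty(w, alpha=0.1),
    "l2": l2_penalty(w, alpha=0.1),
    "elastic_net": elastic_net_penalty(w, lam1=0.1, lam2=0.01),
}
```

Each value would be added to the task loss J(θ;X,y) before backpropagation. Note that the L2 row carries a factor of 1/2 while the Elastic Net row does not, following the formulas as tabulated.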

Experimental Protocols for Latent Vector Regularization

Protocol 1: Spatial Regularization for Anomaly Detection

Based on: Spatially Regularized Graph Attention Autoencoder for rainfall extremes [5]

Workflow:

- Graph Construction: Create daily graphs with nodes representing geographical locations and edges determined through event synchronization.

- Model Architecture: Implement a Graph Attention Autoencoder with encoding/decoding phases, each containing two GAT layers.

- Attention Mechanism: Compute attention coefficients using:

α_ij = exp(LeakyReLU(a^T[Wx_i ∥ Wx_j])) / ∑_{k∈𝒩(i)} exp(LeakyReLU(a^T[Wx_i ∥ Wx_k]))

- Loss Function: Combine reconstruction loss with spatial regularization:

ℒ = ℒ_Rec + λℒ_SCR

- Anomaly Identification: Flag nodes with high reconstruction error as potential anomalies.
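
The attention coefficients in the workflow above are a softmax over each node's neighborhood. The sketch below computes them for a single node in plain Python; the toy feature matrix, the identity weight matrix W, the attention vector a, and the LeakyReLU slope of 0.2 are all assumptions chosen for illustration.

```python
import math


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]


def attention_coefficients(i, neighbors, X, W, a):
    """Return {j: alpha_ij} for j in neighbors, normalized over the neighborhood.

    a must have twice the output dimension of W, because it is applied to the
    concatenation [W x_i || W x_j].
    """
    h_i = matvec(W, X[i])
    scores = []
    for j in neighbors:
        concat = h_i + matvec(W, X[j])
        scores.append(leaky_relu(sum(p * q for p, q in zip(a, concat))))
    top = max(scores)  # subtract the max for a numerically stable softmax
    exps = [math.exp(s - top) for s in scores]
    norm = sum(exps)
    return {j: e / norm for j, e in zip(neighbors, exps)}


X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W = [[1.0, 0.0], [0.0, 1.0]]
a = [0.5, 0.5, 1.0, -1.0]
alpha = attention_coefficients(0, [1, 2], X, W, a)
```

Graph libraries implement the same computation in batched form across all edges and attention heads; the per-node loop here is only meant to mirror the formula term by term.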

Protocol 2: Mutual Isomorphism for Enhanced Representation Learning

Based on: colaGAE framework for continuous latent space sampling [4]

Workflow:

- Multiple Encoder Training: Train multiple encoders simultaneously rather than a single encoder.

- Mutual Isomorphism: Enforce that outputs from different encoders are mutually isomorphic.

- Graph Reconstruction: Use the mutually isomorphic representations to reconstruct graph structure.

- Pretext Task: Utilize graph isomorphism as the self-supervised pretext task.

- Downstream Application: Apply learned representations to node classification tasks.

Research Reagent Solutions

Table 3: Essential Computational Tools for GAE Regularization Research

| Research Tool | Function/Purpose | Implementation Example |
|---|---|---|
| Graph Attention Networks (GAT) [5] | Captures spatial dependencies with dynamic neighbor weighting | α_ij = exp(LeakyReLU(a^T[Wx_i ∥ Wx_j])) / ∑_{k∈𝒩(i)} exp(LeakyReLU(a^T[Wx_i ∥ Wx_k])) |
| Neural Ordinary Differential Equations (Neural ODEs) [7] [8] | Models continuous-time dynamics in latent space | Integration with GNNs to reduce error accumulation in long-term predictions |
| Riemannian Geometric Distortion Measures [3] | Preserves graph geometry in latent representations | Regularizer based on graph Laplacian for large-scale training |
| Event Synchronization [5] | Quantifies temporal relationships for edge construction | Determines adjacency matrix through synchronized events |
| Random Walk Regularizer [6] | Addresses uneven latent vector distribution | Improves separation of features in encoded representations |

Frequently Asked Questions (FAQs)

1. What are the primary symptoms of overfitting in a Graph Autoencoder (GAE)? You can identify overfitting in your GAE by observing a large performance gap; the model will have very high accuracy or low loss on the training data but perform significantly worse on a separate validation or test set [10]. This often occurs when the model has excessive capacity and learns the noise in the training data rather than the underlying pattern.

2. How does an uneven latent distribution negatively impact my model? Uneven or non-smooth latent distributions can severely limit your model's performance and usability. They often lack clear semantic separation, making it difficult for downstream tasks (like classification or generation) to leverage the latent vectors effectively [11]. This can lead to poor generalization and reduced quality in generated samples [11] [12].

3. What is the key difference between a standard Autoencoder and a Variational Autoencoder (VAE) in terms of latent space structure? The key difference lies in the nature of the latent space. A standard autoencoder learns to map inputs to fixed points in the latent space, which often results in a non-smooth manifold that is poorly structured and difficult to interpolate [12]. In contrast, a VAE learns a probability distribution for each latent dimension (typically Gaussian), leading to a smooth and continuous latent space that is better regularized and more suitable for generative tasks [12] [13].

4. Why is my Graph Autoencoder failing to learn meaningful representations on a small dataset? This is a classic symptom of overfitting, which is exacerbated in scenarios with scarce labeled data [14]. When initial feature vectors are sparse (e.g., bag-of-words features), the model may only update parameters associated with non-zero feature dimensions during training. This fails to fully represent the range of learnable parameters, causing the model to perform poorly on test nodes that have different active feature dimensions [14].

5. Can a model suffer from both overfitting and underfitting? Not simultaneously, but a model can oscillate between these two states during the training process. This is why it is crucial to monitor performance metrics on a validation set throughout the training cycle, not just at the end [10].
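
Monitoring validation performance throughout training, as item 5 recommends, is commonly paired with early stopping. A minimal sketch, assuming a list of per-epoch validation losses is available; the `patience` and `min_delta` parameters are hypothetical names for this illustration:

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses.

    Training stops once the loss has failed to improve by more than
    min_delta for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch
    return len(val_losses) - 1, best_epoch


# Validation loss improves until epoch 2, then rises as the model overfits.
history = [1.0, 0.8, 0.7, 0.72, 0.75, 0.9, 1.1]
stop_epoch, best_epoch = early_stopping_epoch(history, patience=3)
```

The model checkpoint from `best_epoch`, not from `stop_epoch`, is the one that would normally be kept.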

Troubleshooting Guide

The following table outlines common problems, their diagnoses, and potential solutions based on recent research.

| Core Challenge | Symptoms & Diagnosis | Recommended Solutions & Methodologies |
|---|---|---|
| Overfitting [10] [14] | High training accuracy, low validation accuracy. Model memorizes training data noise. Prevalent with sparse features and limited labeled data. | Apply Regularization: Use L1/L2 regularization to penalize model complexity [10]. Implement Early Stopping: Halt training when validation performance stops improving [10]. Feature/Hyperplane Perturbation: Introduce noise to initial features and projection hyperplanes to create variability and improve robustness [14]. |
| Uneven Latent Distributions [6] | Latent vectors form a non-smooth manifold. Poor semantic structure hinders downstream tasks. Clusters in latent space do not correspond to meaningful biological groups. | Random Walk Regularization: Apply a random walk-based method to the latent vectors to promote a more uniform and well-structured distribution [6]. Leverage Self-Supervised Features: Construct the latent space using pre-trained, semantically discriminative features (e.g., DINOv3) to ensure a more meaningful structure [11]. |
| Non-Smooth Manifolds [12] | Latent space is discontinuous and non-smooth. Difficult to generate realistic new samples via interpolation. | Adopt a VAE Framework: Replace a deterministic autoencoder with a VAE, whose loss function includes a KL divergence term that regularizes the latent space to be smooth and continuous [12] [13]. Use Flexible Priors: Employ more complex prior distributions, such as a Gamma Mixture Model, to capture a richer variety of latent structures [13]. |
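
For the VAE row of the table, the KL divergence term has a closed form when the encoder outputs a diagonal Gaussian and the prior is a standard normal. The sketch below assumes that common setup (mean and log-variance per latent dimension); it does not cover the Gamma mixture prior of [13].

```python
import math


def gaussian_kl(mu, logvar):
    """KL( N(mu, diag(exp(logvar))) || N(0, I) ) for one latent vector.

    Closed form: -0.5 * sum(1 + logvar - mu^2 - exp(logvar)).
    """
    return -0.5 * sum(
        1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar)
    )


kl_at_prior = gaussian_kl([0.0, 0.0], [0.0, 0.0])  # posterior equals the prior
kl_shifted = gaussian_kl([1.0], [0.0])             # unit-variance, mean shifted by 1
```

The term is zero only when the posterior matches the prior and grows as latent codes drift away from it, which is the pressure that pulls encodings toward a single connected region.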

Detailed Experimental Protocols

Protocol 1: Mitigating Overfitting via Feature and Hyperplane Perturbation

This methodology is designed to address overfitting caused by sparse initial features in Graph Neural Networks, including Graph Autoencoders [14].

- Model Setup: Begin with a standard GNN architecture (e.g., GCN, GAT) or a Graph Autoencoder.

- Perturbation Injection: During training, simultaneously apply shifts to both the initial node features and the model's learnable weight matrices (hyperplanes).

- Feature Shifting: Add a small, randomized noise vector to the initial sparse feature matrix. This helps ensure that a wider range of feature dimensions are activated during training.

- Hyperplane Shifting: Apply a corresponding transformation to the model's weights to maintain the consistency of the learning process despite the shifted features.

- Training and Evaluation: Train the model with this dual-shifting mechanism and evaluate its performance on a held-out test set. The objective is to observe a reduction in the performance gap between training and test accuracy, indicating improved generalization [14].

Protocol 2: Regularizing Latent Distributions with Random Walks

This protocol is based on the GAEDGRN model for gene regulatory network inference and addresses uneven latent distributions in Graph Autoencoders [6].

- Encoder Processing: Input your graph data (e.g., gene co-expression networks) into the graph encoder to generate a set of initial latent vectors.

- Random Walk Application: On the graph structure defined by your data, perform multiple random walks starting from each node.

- Latent Space Smoothing: Use the statistics from these random walks (e.g., node visitation frequencies) to compute a regularization loss. This loss penalizes latent vectors if connected nodes in the graph have dissimilar representations, thereby enforcing smoothness based on the graph topology.

- Loss Integration: Combine this random walk regularization loss with the standard Graph Autoencoder reconstruction loss (and any other task-specific losses).

- Model Optimization: Train the entire model end-to-end. The resulting latent space should exhibit a more even and topologically informed structure, improving performance on tasks like link prediction or gene importance scoring [6].
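
The protocol above leaves the exact form of the random walk loss open, so the following is only one plausible reading, not the GAEDGRN regularizer from [6]. It assumes that co-occurrence counts of node pairs on short random walks serve as weights on squared latent distances; all function names, parameters, and the toy path graph are hypothetical.

```python
import random


def random_walk_covisits(adj, walk_length=5, walks_per_node=10, window=1, seed=0):
    """Count how often two nodes appear within `window` steps on random walks.

    adj: dict mapping each node to a list of its neighbors.
    """
    rng = random.Random(seed)
    counts = {}
    for start in adj:
        for _ in range(walks_per_node):
            walk = [start]
            while len(walk) < walk_length and adj[walk[-1]]:
                walk.append(rng.choice(adj[walk[-1]]))
            for pos, u in enumerate(walk):
                for v in walk[pos + 1 : pos + 1 + window]:
                    if u != v:
                        key = (min(u, v), max(u, v))
                        counts[key] = counts.get(key, 0) + 1
    return counts


def random_walk_penalty(z, counts):
    """Co-visit-weighted mean of squared latent distances between node pairs."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    loss = 0.0
    for (u, v), c in counts.items():
        sq_dist = sum((a - b) ** 2 for a, b in zip(z[u], z[v]))
        loss += (c / total) * sq_dist
    return loss


# Path graph 0-1-2-3 with two candidate one-dimensional embeddings.
path_adj = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
counts = random_walk_covisits(path_adj)
smooth = random_walk_penalty([[0.0], [1.0], [2.0], [3.0]], counts)
scrambled = random_walk_penalty([[0.0], [3.0], [1.0], [2.0]], counts)
```

The embedding that follows the path order receives the lower penalty, which is the behavior the smoothing step is meant to reward. This scalar would be added to the reconstruction loss with its own weighting coefficient.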

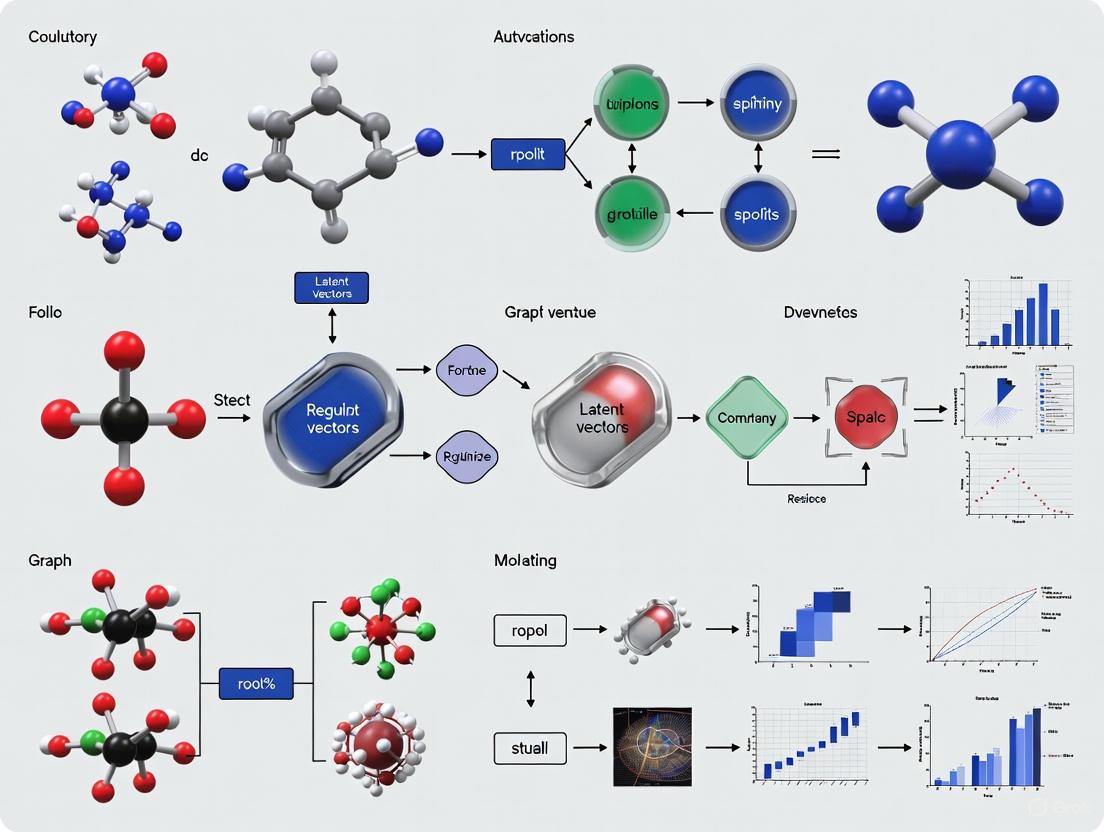

Experimental Workflow Visualization

The diagram below illustrates a high-level workflow for integrating various regularization techniques to tackle the core challenges in Graph Autoencoder research.

[Figure: GAE Regularization Workflow]

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational "reagents" and their functions for developing robust Graph Autoencoder models.

| Research Reagent | Function & Explanation |
|---|---|
| L1 / L2 Regularizer [10] | A penalty term added to the loss function to discourage complex models. L1 promotes sparsity, while L2 shrinks weight magnitudes, both helping to prevent overfitting. |
| Random Walk Regularizer [6] | A method that uses graph topology to smooth the latent space. It ensures that nodes close in the graph have similar latent representations, leading to more even distributions. |
| VAE Framework (KL Divergence) [12] [13] | The Kullback-Leibler divergence in a VAE acts as a powerful regularizer, forcing the latent distribution to conform to a smooth prior (e.g., Gaussian), which mitigates non-smooth manifolds. |
| Feature/Hyperplane Perturbation [14] | A data augmentation technique that adds noise to input features and model weights. It simulates a wider data distribution, improving model robustness and combating overfitting from sparse data. |
| Gamma Mixture Prior [13] | A more flexible alternative to the standard Gaussian prior in VAEs. It can model asymmetric data distributions, potentially capturing complex latent structures more effectively for tasks like clustering. |
| Gravity-Inspired Graph Encoder [6] | An encoder designed to capture directed relationships and complex network topology in graphs, which is crucial for accurately modeling systems like gene regulatory networks. |

The Manifold Hypothesis and its Implications for Graph-Structured Data

Frequently Asked Questions (FAQs)

Q1: What is the Manifold Hypothesis and why is it important for graph-structured data?

The Manifold Hypothesis is a widely accepted tenet of Machine Learning which asserts that nominally high-dimensional data are in fact concentrated near a low-dimensional manifold, embedded in the high-dimensional space [15]. For graph-structured data, this means that the complex relationships and structures within graphs (like social networks or molecular structures) can be represented in a much lower-dimensional, dense latent space. Autoencoders are instrumental in learning this underlying latent manifold [16]. Understanding this hypothesis is crucial because it allows researchers to develop more efficient models for tasks such as drug discovery, where representing molecules as graphs and learning their latent manifolds can accelerate the generation of new candidate compounds [17].

Q2: Why does my graph autoencoder poorly reconstruct the graph structure, especially in sparse graphs?

This is a common problem, particularly in sparse networks with low density (e.g., ~0.05) [18]. The core issue often lies in the reconstruction loss. Graphs lack a canonical node ordering, so many different adjacency matrices can represent the same underlying graph structure (such graphs are isomorphic) [19]. As a result, an element-wise comparison between the input and output adjacency matrices, using a loss such as binary cross-entropy with logits (for example, PyTorch's BCEWithLogitsLoss), can be very high even when the decoder has produced a perfect reconstruction up to a relabeling of the nodes [19]. This ambiguity makes the reconstruction objective difficult to learn. Using a pos_weight parameter in your loss function can help account for sparsity, but may not solve the fundamental issue [18].
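
To show what a pos_weight term does for a sparse adjacency matrix, here is a dependency-free sketch of weighted binary cross-entropy on logits. Setting the weight to the ratio of absent to present edges is a common heuristic and an assumption here, not a prescription from [18]; the helper names are illustrative.

```python
import math


def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def weighted_bce_with_logits(logits, targets, pos_weight=1.0):
    """Mean over entries of -[pos_weight * y * log s(x) + (1 - y) * log(1 - s(x))]."""
    total, count = 0.0, 0
    for row_x, row_y in zip(logits, targets):
        for x, y in zip(row_x, row_y):
            log_p = log_sigmoid(x)       # log sigmoid(x)
            log_not_p = log_sigmoid(-x)  # log(1 - sigmoid(x))
            total -= pos_weight * y * log_p + (1 - y) * log_not_p
            count += 1
    return total / count


def sparsity_pos_weight(adj):
    """Heuristic weight: (# zero entries) / (# one entries); needs at least one edge."""
    positives = sum(sum(row) for row in adj)
    negatives = sum(len(row) for row in adj) - positives
    return negatives / positives


# Four nodes, a single undirected edge 0-1: density 2/16.
adj = [[0, 1, 0, 0], [1, 0, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
pw = sparsity_pos_weight(adj)
zero_logits = [[0.0] * 4 for _ in range(4)]
loss = weighted_bce_with_logits(zero_logits, adj, pos_weight=pw)
```

With this weighting the two positive entries contribute as much to the loss as the fourteen negative ones, so predicting "no edge" everywhere is no longer a cheap solution. It does nothing for the node-ordering ambiguity, which is a separate problem.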

Q3: What is the difference between the latent manifolds of standard and variational graph autoencoders?

Empirical and theoretical evidence shows that the latent spaces of standard autoencoders (AEs) and variational autoencoders (VAEs) have fundamentally different manifold structures. The latent manifolds of standard AEs and Denoising AEs (DAEs) are often non-smooth and stratified. This means the space is composed of multiple, disconnected smooth components (strata), which explains why interpolating in this space can lead to incoherent outputs [16]. In contrast, the latent manifold of a VAE is typically a smooth product manifold [16]. This smoothness, enforced by the prior distribution on the latent space, is what enables VAEs to perform meaningful interpolation and generate novel, valid data points, such as new molecular structures [16] [17].

Q4: What are the main strategies to solve the graph reconstruction loss problem?

Researchers have proposed several innovative strategies to tackle the challenge of permutation-invariant reconstruction loss [19]:

- Graph Matching: This involves finding the best alignment between the nodes of the input and output graphs before calculating the loss. While accurate, it can be computationally expensive (O(V^2)) [19].

- Heuristic Node Ordering: Enforcing a fixed node order using simple heuristics, such as ordering nodes via a breadth-first search starting from the highest-degree node [19].

- Discriminator Loss: Replacing the traditional reconstruction loss with an adversarial loss. A discriminator network is trained to map isomorphic graph structures to similar latent vectors, and the reconstruction loss is computed as the distance between these embeddings [19].

- Node-Level Embeddings: Focusing on generating node-level embeddings instead of whole-graph embeddings. This bypasses the problem but may be unsuitable for tasks requiring a single graph-level representation [19].
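
The heuristic node ordering strategy from the list above can be sketched as a breadth-first search that starts at the highest-degree node. The tie-breaking rule used here (higher degree first, then lower node index) is an assumption; because ties fall back to the original labels, this is a heuristic and not a true canonical form for arbitrary graphs.

```python
from collections import deque


def bfs_node_order(adj_matrix):
    """Return a node ordering from BFS rooted at the highest-degree node."""
    n = len(adj_matrix)
    degree = [sum(row) for row in adj_matrix]
    by_priority = sorted(range(n), key=lambda v: (-degree[v], v))
    order, seen = [], set()
    for root in by_priority:  # restart BFS for each disconnected component
        if root in seen:
            continue
        seen.add(root)
        queue = deque([root])
        while queue:
            u = queue.popleft()
            order.append(u)
            nbrs = [v for v in range(n) if adj_matrix[u][v] and v not in seen]
            nbrs.sort(key=lambda v: (-degree[v], v))
            for v in nbrs:
                seen.add(v)
                queue.append(v)
    return order


def permute(adj_matrix, order):
    """Reorder rows and columns of the adjacency matrix by `order`."""
    return [[adj_matrix[u][v] for v in order] for u in order]


# The same star graph under two labelings: center is node 2, then node 0.
star_a = [[0, 0, 1, 0], [0, 0, 1, 0], [1, 1, 0, 1], [0, 0, 1, 0]]
star_b = [[0, 1, 1, 1], [1, 0, 0, 0], [1, 0, 0, 0], [1, 0, 0, 0]]
canon_a = permute(star_a, bfs_node_order(star_a))
canon_b = permute(star_b, bfs_node_order(star_b))
```

The two raw matrices differ entry by entry, yet after reordering they coincide, so an element-wise loss between them would drop to zero.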

Troubleshooting Guides

Issue 1: Poor or Incoherent Graph Reconstruction

Problem: Your model fails to reconstruct the input graph's structure, or decoded graphs are not meaningful representations of the input.

| Potential Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Permutation Variance | Check if the output graph is isomorphic to the input by comparing graph properties (e.g., degree distribution). | Implement a permutation-invariant reconstruction method, such as a discriminator loss or heuristic node ordering [19]. |
| Over-Smoothing in GNN Encoder | Monitor the node embeddings; if they become indistinguishable, over-smoothing is likely. | Use architectural improvements in your Graph Neural Network (GNN) to prevent over-smoothing, a known issue when training GNNs [17]. |
| Overfitting on Training Data | Evaluate reconstruction performance on a held-out validation set. If training loss is low but validation loss is high, the model is overfitting. | Introduce regularization techniques such as dropout in GNN layers or employ a variational framework to encourage a more robust latent space [18] [20]. |

Issue 2: Discontinuous or Non-Smooth Latent Manifold

Problem: Interpolating between two points in the latent space does not produce a smooth, semantically meaningful transition in the graph space.

| Potential Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Standard Autoencoder Framework | Perform interpolation by decoding convex combinations of latent vectors from two graphs. Observe if the outputs are chaotic. | Switch from a standard autoencoder to a Variational Autoencoder (VAE). The VAE's regularization loss (KL divergence) encourages the formation of a smooth, continuous latent manifold [16] [17]. |
| Posterior Collapse in VAE | In a VAE, if the KL divergence loss becomes zero too quickly, the model ignores the latent codes. | Employ techniques to mitigate posterior collapse, a common issue in VAEs that can hinder the learning of a useful latent space [17]. |

Issue 3: Model Fails to Generalize to New Data

Problem: The model performs well on its training data but fails to generate valid or meaningful graphs outside of it.

| Potential Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Insufficient or Non-Representative Training Data | Analyze the diversity of your training dataset. | Ensure you have a large, representative dataset. Autoencoders are data-specific; a model trained on one graph type (e.g., molecules) will not generalize to another (e.g., social networks) [20]. |
| Bottleneck Layer is Too Narrow | Experiment with progressively larger bottleneck layers. If performance improves, the layer was too restrictive. | Systematically test different sizes for the bottleneck layer to find a balance between compression and retaining enough information for reconstruction and generalization [20]. |
| Algorithm Became Too Specialized | The network may have simply memorized the training inputs. | Introduce regularization via a contractive autoencoder architecture or add random noise to inputs during training to improve robustness [20]. |

Protocol 1: Characterizing Latent Space Smoothness

Objective: To determine whether a graph autoencoder has learned a smooth latent manifold.

Methodology:

- Train Models: Train different autoencoder variants (e.g., Graph AE, Graph VAE) on your graph dataset.

- Encode Graphs: Select two distinct graphs from the test set and encode them to obtain their latent representations, z1 and z2.

- Linear Interpolation: Generate a sequence of latent vectors z_i = α * z1 + (1-α) * z2 for α ranging from 0 to 1.

- Decode Interpolants: Decode each z_i back into a graph structure.

- Analysis: Qualitatively and quantitatively assess the decoded graphs. A smooth manifold will show coherent, gradually transitioning graphs. Non-smooth manifolds will yield erratic, meaningless outputs [16].
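
The interpolation step of this protocol is a one-liner per latent dimension. A minimal sketch, assuming latent vectors are plain lists of floats and `steps` (a hypothetical parameter) is at least 2:

```python
def interpolate_latents(z1, z2, steps=5):
    """Return [alpha * z1 + (1 - alpha) * z2] for alpha evenly spaced in [0, 1].

    With this convention alpha = 0 yields z2 and alpha = 1 yields z1.
    """
    path = []
    for k in range(steps):
        alpha = k / (steps - 1)
        path.append([alpha * a + (1.0 - alpha) * b for a, b in zip(z1, z2)])
    return path


z1 = [0.0, 2.0]
z2 = [4.0, 0.0]
path = interpolate_latents(z1, z2, steps=5)
```

Each vector in `path` would then be passed to the trained decoder, and the decoded graphs compared along the sequence.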

Protocol 2: Evaluating Graph Reconstruction Under Noise

Objective: To test the robustness of the learned latent representations.

Methodology:

- Introduce Perturbations: Corrupt the input graphs by adding varying levels of noise (e.g., randomly adding or removing a small percentage of edges).

- Reconstruct: Use the autoencoder to reconstruct the clean graph from the noisy input.

- Model the Manifold: Model the encoded latent tensors as points on a product manifold of Symmetric Positive Semi-Definite (SPSD) matrices. This technique helps in analyzing the structure of the learned latent manifold [16].

- Compare Structure: Analyze the ranks of the SPSD matrices. A robust model will maintain a stable manifold structure despite input noise. The results often show that VAEs maintain a smooth product manifold, while standard AEs exhibit a stratified manifold structure under perturbation [16].
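
The perturbation step of this protocol can be sketched as random edge flips on an undirected adjacency matrix. The function name, the `flip_fraction` parameter, and the choice to flip node pairs uniformly at random are assumptions for illustration; the rank analysis on SPSD matrices from [16] is not reproduced here.

```python
import random


def perturb_edges(adj_matrix, flip_fraction, seed=0):
    """Return a noisy copy of an undirected graph with a fraction of pairs flipped.

    A flipped pair gains an edge if it had none and loses it otherwise.
    The input matrix is left unchanged.
    """
    rng = random.Random(seed)
    n = len(adj_matrix)
    pairs = [(i, j) for i in range(n) for j in range(i + 1, n)]
    num_flips = round(flip_fraction * len(pairs))
    noisy = [row[:] for row in adj_matrix]
    for i, j in rng.sample(pairs, num_flips):
        noisy[i][j] = noisy[j][i] = 1 - noisy[i][j]
    return noisy


# Five-node cycle: 10 node pairs, so a fraction of 0.2 flips exactly 2 pairs.
cycle = [[1 if abs(i - j) in (1, 4) else 0 for j in range(5)] for i in range(5)]
noisy_cycle = perturb_edges(cycle, flip_fraction=0.2, seed=1)
changed = sum(
    cycle[i][j] != noisy_cycle[i][j] for i in range(5) for j in range(i + 1, 5)
)
```

Sweeping `flip_fraction` over a small grid and reconstructing from each noisy copy gives the inputs needed for the comparison described in the final step.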

Research Reagent Solutions

The table below lists key computational "reagents" used in advanced graph autoencoder research, as featured in the cited literature.

| Research Reagent | Function in Experiment |
|---|---|
| Transformer Graph VAE (TGVAE) | An AI model that combines a transformer, GNN, and VAE to generate novel molecular graphs, effectively capturing complex structural relationships [17]. |
| Graph Matching Network | Used to find the optimal node alignment between two graphs, enabling a permutation-invariant calculation of the reconstruction loss [19]. |
| Attentional Aggregation | A technique (e.g., in PyG's AttentionalAggregation) to pool node-level embeddings into a single, graph-level embedding, which is crucial for whole-graph tasks [18]. |
| Product Manifold of SPSD Matrices | A mathematical framework used to model and characterize the geometry of latent spaces, helping to explain their smoothness and structure [16]. |
| Inner Product Decoder | A simple decoder that computes edge probabilities via the inner product of node embeddings. It may not perform well on sparse graphs without additional modifications [18]. |
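
The inner product decoder in the last row is simple enough to write out in full. A minimal sketch, assuming node embeddings are given as lists of floats and that a sigmoid maps each inner product to an edge probability:

```python
import math


def inner_product_decoder(z):
    """Return the matrix of edge probabilities p_ij = sigmoid(z_i . z_j)."""

    def sigmoid(x):
        return 1.0 / (1.0 + math.exp(-x))

    return [
        [sigmoid(sum(a * b for a, b in zip(z_i, z_j))) for z_j in z]
        for z_i in z
    ]


# Nodes 0 and 1 point the same way; node 2 points the opposite way.
embeddings = [[1.0, 0.0], [1.0, 0.0], [-1.0, 0.0]]
probs = inner_product_decoder(embeddings)
```

Because the inner product is symmetric, this decoder always yields a symmetric probability matrix, so on its own it cannot represent edge direction.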

Workflow and Relationship Visualizations

[Figure: Graph Autoencoder with Reconstruction Problem]

[Figure: Comparison of Latent Manifold Types]

This technical support document provides a framework for diagnosing and resolving a core challenge in graph autoencoder research: the management of latent space geometry. A well-regularized latent space is crucial for downstream tasks in drug development, such as molecular property prediction and novel compound generation. This guide details experimental protocols and troubleshooting methodologies to help researchers characterize latent space smoothness, a key indicator of robustness and generalizability. The content is contextualized within a broader thesis on regularizing latent vectors, synthesizing recent findings on how different autoencoder architectures and regularization techniques shape the underlying data manifold.

Frequently Asked Questions (FAQs)

FAQ 1: Why do my graph autoencoder's latent interpolations produce unrealistic or artifact-ridden molecular structures?

This is a classic symptom of a non-smooth latent manifold. In autoencoders (AEs) and Denoising AEs (DAEs), the latent space forms a stratified manifold. This means it is composed of multiple smooth sub-manifolds (strata) connected by discontinuous jumps [21] [22] [23]. When you interpolate between two points from different strata, the decoder traverses through "invalid" regions of the latent space that do not correspond to any realistic data point, resulting in incoherent outputs. In contrast, Variational Autoencoders (VAEs) learn a smooth, continuous manifold, enabling meaningful interpolation [21] [23].

FAQ 2: How does the choice of regularization impact the geometry of the latent space in graph autoencoders?

Regularization is the primary tool for enforcing a desired latent geometry.

- KL Divergence (in VGAE): Enforces a Gaussian prior on the latent distribution, encouraging continuity and smoothness. However, it can lead to over-regularization and "posterior collapse," where the latent space is under-utilized [24].

- Adversarial Regularization (in ARGA): Uses a discriminator to make the latent distribution match a prior. This can be more flexible than KL divergence but may suffer from unstable training [24].

- Wasserstein Distance (in WARGA): Provides a more stable and meaningful metric for comparing distributions, especially those with disjoint supports. It effectively regularizes the latent space to be smooth and has been shown to outperform KL-based and adversarial methods on tasks like link prediction and node clustering [24].

- Spatial Regularization: Used in spatiotemporal graphs, this adds a penalty term to ensure that geographically proximate nodes have similar latent representations, enforcing local smoothness directly into the loss function [25].

FAQ 3: My model's performance degrades significantly with slightly noisy input data. Is this a latent space issue?

Yes, this is frequently a sign of a non-robust, non-smooth latent space. Empirical results show that the latent manifolds of Convolutional AEs (CAEs) and Denoising AEs (DAEs) are highly sensitive to input perturbations. As noise increases, the ranks of their latent representations' constituent matrices become highly variable, and the principal angles between clean and noisy subspaces increase, indicating a fundamental shift in the manifold's structure [21] [22]. Conversely, the Variational Autoencoder (VAE) maintains a stable matrix rank and shows minimal change in principal angles, demonstrating its robustness to noise due to its inherently smooth latent manifold [21] [23].

Troubleshooting Guides

Guide 1: Diagnosing a Non-Smooth Latent Manifold

Symptoms: Poor interpolation results, high sensitivity to input noise, and sudden jumps in latent space visualization (e.g., t-SNE plots) when parameters are slightly varied.

Experimental Protocol for Verification:

Interpolation Test:

- Method: Select two valid data points (e.g., two molecular graphs). Encode them to get their latent vectors, z1 and z2. Generate a sequence of vectors by taking convex combinations: z_interp = α * z1 + (1-α) * z2 for α from 0 to 1. Decode all z_interp.

- Interpretation: A smooth manifold will produce a coherent and gradual transition between the two original data points. A non-smooth manifold will yield unrealistic, blurry, or artifact-ridden outputs in between [21] [23].

Noise Robustness Analysis:

- Method: To your test set inputs, add varying levels of additive white Gaussian noise. Encode both clean and noisy versions and measure the distance between their latent representations.

- Interpretation: A smooth and robust manifold will project a clean input and its noisy version to nearby points in the latent space. Large distances indicate high sensitivity and a non-smooth geometry [21].

Matrix Manifold Rank Analysis (Advanced):

- Method: Model the latent representations as points on a product manifold of Symmetric Positive Semi-Definite (SPSD) matrices. Analyze the ranks of these matrices for a dataset under different noise conditions [21] [22].

- Interpretation: A smooth manifold (like in VAEs) will exhibit stable ranks regardless of noise. A non-smooth, stratified manifold (like in CAEs/DAEs) will show significant variability in the ranks of these matrices, indicating a discontinuous structure [21] [22] [23].

Guide 2: Applying Regularization for a Smoother Latent Space

Objective: To enforce a continuous and well-structured latent space in graph autoencoders for improved generalization.

Methodology:

Architecture Selection:

Regularizer Selection:

- Wasserstein Regularization: Consider using the Wasserstein distance as your regularizer, as implemented in WARGA. It provides a more stable gradient and can handle distributions with little common support better than KL divergence [24].

- Spatial Regularization: If your graph data has inherent spatial or topological relationships (e.g., in molecular graphs or climate networks), add a spatial consistency loss. This term minimizes the distance between the latent representations of connected or nearby nodes, directly enforcing local smoothness [25].
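One common way to write a spatial consistency term is as the sum of squared distances between the embeddings of connected nodes, which equals the graph-Laplacian quadratic form. The sketch below assumes this form; the exact loss used in [25] may differ, and `spatial_consistency_loss` is a hypothetical name.

```python
import numpy as np

def spatial_consistency_loss(Z, A):
    """Sum of squared distances between embeddings of connected nodes.

    Z: (n, d) latent embeddings; A: (n, n) symmetric 0/1 adjacency matrix.
    Each undirected edge is counted once.
    """
    diff = Z[:, None, :] - Z[None, :, :]          # (n, n, d) pairwise differences
    sq_dist = np.sum(diff ** 2, axis=-1)          # (n, n) squared distances
    return 0.5 * float(np.sum(A * sq_dist))       # halve: A lists each edge twice

# Toy path graph 0-1-2 with hypothetical 2-D embeddings.
A = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
Z = np.array([[0.0, 0.0], [1.0, 0.0], [1.0, 2.0]])
loss = spatial_consistency_loss(Z, A)

# Equivalent Laplacian form: trace(Z^T L Z) with L = D - A.
L = np.diag(A.sum(axis=1)) - A
laplacian_form = float(np.trace(Z.T @ L @ Z))
```

The Laplacian form is usually preferable in training code because it avoids materializing all pairwise differences.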

Implementation Steps for WARGA:

- Encoder: Use a Graph Convolutional Network (GCN) or Graph Attention Network (GAT) to map input graphs to a latent distribution, typically parameterized by a mean `μ` and log-variance `log σ²`.

- Latent Sampling: Use the reparameterization trick to sample a latent vector `z`.

- Wasserstein Regularization: Instead of a KL divergence loss, employ a critic function `f_ϕ` to approximate the 1-Wasserstein distance between the aggregated posterior `q(z)` and the target prior `p(z)`. Ensure Lipschitz continuity of the critic via either Weight Clipping (WARGA-WC) or a Gradient Penalty (WARGA-GP) [24].

- Total Loss: Minimize the combined reconstruction loss (between input and decoded graph) and the Wasserstein regularizer.
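The sampling and regularization steps can be illustrated with a small NumPy sketch. It assumes a fixed, unit-norm linear critic in place of a trained `f_ϕ`, and a hypothetical weight `reg_weight` for the regularizer; it is not the WARGA reference implementation, only an illustration of how the quantities fit together.

```python
import numpy as np

rng = np.random.default_rng(0)

def reparameterize(mu, logvar, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * logvar) * eps

def wasserstein_estimate(critic, z_prior, z_posterior):
    """Critic-based estimate of the distance: E_p[f(z)] - E_q[f(z)]."""
    return float(np.mean(critic(z_prior)) - np.mean(critic(z_posterior)))

def total_loss(recon_loss, w_estimate, reg_weight=1.0):
    """Reconstruction loss plus the weighted Wasserstein regularizer."""
    return recon_loss + reg_weight * w_estimate

# Hypothetical encoder outputs for 500 nodes in a 4-D latent space.
mu = np.full((500, 4), 2.0)
logvar = np.zeros((500, 4))
z_q = reparameterize(mu, logvar, rng)
z_p = rng.standard_normal((500, 4))          # samples from the prior N(0, I)

# Fixed 1-Lipschitz linear critic (unit-norm weights), a stand-in for f_phi.
w = -np.ones(4) / 2.0
critic = lambda z: z @ w
w_hat = wasserstein_estimate(critic, z_p, z_q)
```

In this toy setup the posterior is the prior shifted by 2 in every dimension, so the estimate should be close to the shift length of 4. In actual training the critic is updated to maximize the estimate while the encoder is updated to reduce it.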

Protocol: Characterizing Manifold Smoothness via Matrix Ranks

This protocol is based on the methodology from Latent Space Characterization of Autoencoder Variants [21] [22].

- Model Training: Train different autoencoder variants (CAE, DAE, VAE) on your dataset.

- Latent Tensor Extraction: Pass a set of clean input images through the encoder to obtain the latent representations `Z`.

- Modeling as SPSD Matrices: For each latent representation, construct three scaffold matrices (S1, S2, S3) and form them into Symmetric Positive Semi-Definite (SPSD) matrices. The collection of these points lies on a Product Manifold [21].

- Perturbation Introduction: Repeat steps 2-3 with noisy versions of the input data.

- Rank Analysis: Compute the rank of each SPSD matrix for both clean and noisy inputs. Analyze the stability of these ranks across the dataset and under perturbation.
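The rank analysis step might be sketched as follows. The construction of the scaffold matrices S1, S2, S3 in [21] is not reproduced here; as a loudly labeled assumption, this sketch uses a plain Gram matrix `Z Z^T` as a stand-in SPSD matrix, only to show how rank stability under noise can be measured. `spsd_rank` is a hypothetical helper.

```python
import numpy as np

def spsd_rank(latent, tol=1e-8):
    """Numerical rank of the SPSD Gram matrix G = Z Z^T built from a latent matrix Z."""
    G = latent @ latent.T                      # symmetric positive semi-definite
    eigvals = np.linalg.eigvalsh(G)
    # Count eigenvalues above a relative tolerance.
    return int(np.sum(eigvals > tol * max(eigvals.max(), 1.0)))

rng = np.random.default_rng(0)
# Hypothetical 10 x 6 latent matrix with exactly 3 independent directions.
clean = rng.normal(size=(10, 3)) @ rng.normal(size=(3, 6))
noisy = clean + 0.1 * rng.normal(size=clean.shape)

rank_clean = spsd_rank(clean)
rank_noisy = spsd_rank(noisy)
```

In this toy case the rank changes under noise, which is the kind of variability the protocol associates with a stratified latent space. With a real model, the same comparison would be repeated across the dataset and across noise levels.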

Expected Results Summary (from original study):

Table 1: Empirical Results on Manifold Structure and Noise Robustness

| Autoencoder Type | Latent Manifold Structure | Rank Stability under Noise | PSNR at 10% Noise | Key Characteristic |

|---|---|---|---|---|

| Convolutional AE (CAE) | Stratified (Non-smooth) [21] [22] | Variable (e.g., S3: 29-48) [23] | Drops significantly [23] | Discontinuous transitions between strata |

| Denoising AE (DAE) | Stratified (Non-smooth) [21] [22] | Variable (e.g., S3: 29-48) [23] | Drops significantly [23] | Learns to map corrupted data to manifold |

| Variational AE (VAE) | Smooth Product Manifold [21] [22] | Fixed (e.g., S1:7, S2:7, S3:48) [23] | Stable at ~25 dB [23] | Continuous, probabilistic latent space |

Workflow: From Input to Manifold Characterization

The following diagram illustrates the core experimental workflow for characterizing a latent space, from data input to geometric analysis.

Comparative Analysis of Regularization Techniques

Table 2: Comparison of Latent Vector Regularization Methods in Graph Autoencoders

| Regularization Method | Mechanism | Advantages | Disadvantages | Suitable For |

|---|---|---|---|---|

| KL Divergence (e.g., VGAE [24]) | Minimizes KL div. between latent distribution and Gaussian prior. | Simple to implement, encourages a continuous latent space. | Can lead to over-regularization and posterior collapse; limited for complex priors. | Baseline projects, well-behaved data with Gaussian-like structure. |

| Adversarial (e.g., ARGA [24]) | Uses a discriminator to match latent distribution to a target prior. | More flexible than KL, can learn complex latent distributions. | Training can be unstable and mode-seeking; requires careful balancing. | Tasks requiring a complex, non-Gaussian latent prior. |

| Wasserstein (e.g., WARGA [24]) | Minimizes 1-Wasserstein distance between latent and target distributions. | Stable training, meaningful distance metric, handles disjoint supports. | Requires enforcing Lipschitz continuity (e.g., via gradient penalty). | Robust applications where stable training and distribution matching are critical. |

| Spatial/Spectral (e.g., SRGAttAE [25]) | Adds loss term for similarity of connected/neighboring nodes. | Enforces domain-specific structure (e.g., spatial coherence). | Requires predefined graph structure or node proximity matrix. | Spatiotemporal data, molecules, any data with known relational structure. |

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions

| Item / Conceptual Tool | Function / Purpose in Experimentation |

|---|---|

| Graph Attention Network (GAT) [25] [24] | An encoder architecture that assigns different importance to neighboring nodes using self-attention, ideal for learning on graph-structured data like molecules. |

| Product Manifold (PM) of SPSD Matrices [21] [22] | A mathematical framework for modeling latent tensors as points on a manifold, enabling rigorous analysis of the latent space's geometric structure through matrix rank. |

| Wasserstein Distance (Earth-Mover) [24] | A robust metric for comparing probability distributions. Used as a regularizer to enforce a smooth latent space, superior to KL divergence for distributions with disjoint supports. |

| Spatial Consistency Regularization (SCR) [25] | A penalty term in the loss function that minimizes the distance between latent codes of geographically or topologically proximate nodes, enforcing local smoothness. |

| Proper Orthogonal Decomposition (POD) [26] | A linear dimensionality reduction technique used to identify characteristic modes in data. Can be used to analyze and interpret the organization of latent spaces by linking latent dimensions to physical modes. |

| t-SNE / UMAP Visualization | Standard techniques for visualizing high-dimensional latent spaces in 2D or 3D, allowing for an intuitive check of cluster separation and manifold continuity. |

Implementing Advanced Regularization Techniques: From Theory to Biomedical Practice

Adversarially Regularized Graph Autoencoder (ARGA) Framework

The Adversarially Regularized Graph Autoencoder (ARGA) is an advanced graph embedding framework that integrates graph autoencoders with adversarial training to regularize the latent representations of graph data. Traditional graph embedding algorithms primarily focus on preserving the topological structure or minimizing graph reconstruction errors. However, they often ignore the data distribution of the latent codes, which can lead to inferior embeddings, particularly when applied to real-world graph data. The ARGA framework addresses this limitation by encoding the topological structure and node content into a compact representation, and then forcing the latent representation to match a prior distribution through an adversarial training scheme [27] [28].

This framework introduces a significant innovation by applying adversarial regularization, a concept popularized by Generative Adversarial Networks (GANs), to the domain of graph representation learning. The model consists of two main components: a graph autoencoder that reconstructs the graph structure from a low-dimensional embedding, and a discriminator that attempts to distinguish between the latent codes produced by the encoder and samples from a prior distribution. This adversarial process encourages the encoder to generate latent representations that follow a smooth and continuous prior distribution, typically a Gaussian distribution. This results in more robust and generalized embeddings that perform better across various downstream tasks [27] [28]. A variant of this model, the Adversarially Regularized Variational Graph Autoencoder (ARVGA), extends this approach by incorporating the variational inference framework, further enhancing its capability to model uncertainty in the latent space [27].

The ARGA framework is particularly relevant for researchers, scientists, and drug development professionals because it provides a powerful method for learning meaningful representations from complex biological networks. These networks, which can include drug-target interactions, protein-protein interactions, and circRNA-drug associations, are fundamental to modern drug discovery and development pipelines. By producing high-quality, regularized embeddings, ARGA enables more accurate link prediction, graph clustering, and visualization, which are essential tasks in computational drug discovery [29] [30] [28].

Troubleshooting Guide: Common Experimental Issues and Solutions

Implementing and training ARGA models can present several challenges. This guide addresses common issues encountered during experiments, providing solutions grounded in the methodology and recent research advancements.

FAQ 1: The model's link prediction performance is poor. The reconstructed graph lacks meaningful structure.

- Problem Identification: The model fails to learn discriminative latent representations, resulting in inaccurate link predictions.

- Theory of Probable Cause: This issue often stems from the over-smoothing problem in the Graph Convolutional Network (GCN) encoder. As GCNs get deeper, node features can become indistinguishable, or the model may be unable to capture higher-order semantic information from the graph [29] [30].

- Plan of Action & Implementation: Implement a more robust graph convolutional module by replacing the standard GCN with a Dynamic Weighting Residual GCN (DWR-GCN) [29].

- Verification: After implementing DWR-GCN, monitor the training and validation loss. A steady decrease in reconstruction loss indicates the encoder is now learning more effective representations. Link prediction metrics like Area Under the Curve (AUC) and Area Under the Precision-Recall Curve (AUPR) should show significant improvement [29].

FAQ 2: The latent codes produced by the encoder do not match the desired prior distribution, leading to poor sampling and generalization.

- Problem Identification: The adversarial regularization is ineffective. The discriminator fails to properly guide the encoder, or the encoder collapses.

- Theory of Probable Cause: The traditional adversarial training process may be unstable, or the Gaussian prior might be too simplistic for complex graph data distributions [28].

- Plan of Action & Implementation: Strengthen the adversarial regularization component. You can:

- Inspect the loss functions: Ensure the adversarial loss is correctly formulated. For ARGA, the regulator loss is computed as the binary cross-entropy loss from the discriminator, aiming to "fool" it [31] [28].

- Adopt an advanced prior: Recent research proposes using a Gaussian Cloud Distribution instead of a simple Gaussian distribution to better model the uncertainty and complexity of real-world networks [28].

- Verification: Visualize the latent space using tools like t-SNE. A well-regularized space should show a smooth distribution that aligns with the prior. Quantitative evaluation on node clustering tasks can also confirm improved structure in the latent space [27] [28].
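When inspecting the loss functions, it can help to write the two binary cross-entropy terms out explicitly. The sketch below is an illustration in NumPy rather than the PyTorch Geometric code; it assumes the common convention that prior samples are labeled 1 and encoder outputs 0, and the function names are hypothetical.

```python
import numpy as np

def bce_with_logits(logits, targets):
    """Numerically stable binary cross-entropy on raw discriminator logits."""
    logits = np.asarray(logits, dtype=float)
    targets = np.asarray(targets, dtype=float)
    return float(np.mean(np.maximum(logits, 0) - logits * targets
                         + np.log1p(np.exp(-np.abs(logits)))))

def discriminator_loss(logits_prior, logits_latent):
    """Discriminator labels prior samples as 1 and encoder outputs as 0."""
    return (bce_with_logits(logits_prior, np.ones_like(logits_prior))
            + bce_with_logits(logits_latent, np.zeros_like(logits_latent)))

def regularizer_loss(logits_latent):
    """Encoder is rewarded when the discriminator labels its codes as 1."""
    return bce_with_logits(logits_latent, np.ones_like(logits_latent))

# Hypothetical logits: the discriminator is confident on both groups.
logits_prior = np.array([4.0, 5.0])
logits_latent = np.array([-4.0, -5.0])
d_loss = discriminator_loss(logits_prior, logits_latent)
g_loss = regularizer_loss(logits_latent)
```

A very low discriminator loss paired with a persistently high regularizer loss, as in this toy example, is a sign that the discriminator is overpowering the encoder and the balance between the two needs adjusting.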

FAQ 3: The model suffers from posterior collapse, where the latent variables do not capture meaningful information from the input data.

- Problem Identification: The Kullback-Leibler (KL) divergence loss vanishes during training, and the decoder ignores the encoder's output.

- Theory of Probable Cause: This is a known issue in variational autoencoders where the decoder becomes too powerful or the regularization term is too strong, causing the latent codes to become uninformative [28].

- Plan of Action & Implementation: Modify the training objective to prevent posterior collapse.

- Introduce a new similarity measure: Replace the standard KL divergence with an uncertainty similarity measurement method based on cloud envelopes, which is more robust for graph-structured data [28].

- Adjust the loss weights: Fine-tune the weight of the adversarial loss term relative to the reconstruction loss to maintain a balance.

- Verification: Monitor the KL divergence (or its replacement) during training. It should not converge to zero. The reconstruction loss should also keep decreasing rather than plateau early, indicating that the decoder is using the latent variables effectively [28].
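A minimal monitoring sketch for this check is shown below, assuming a diagonal Gaussian posterior and a standard normal prior. The closed-form KL term is standard; the `active_units` count is an extra diagnostic not taken from the cited work, and its threshold is an arbitrary assumption.

```python
import numpy as np

def gaussian_kl(mu, logvar):
    """Mean over nodes of KL( N(mu, diag(exp(logvar))) || N(0, I) )."""
    per_node = -0.5 * np.sum(1.0 + logvar - mu ** 2 - np.exp(logvar), axis=1)
    return float(np.mean(per_node))

def active_units(mu, threshold=1e-2):
    """Count latent dimensions whose posterior mean varies across nodes."""
    return int(np.sum(np.var(mu, axis=0) > threshold))

# Collapsed posterior: every node maps exactly to the prior.
mu_collapsed = np.zeros((5, 3))
logvar_collapsed = np.zeros((5, 3))

# Informative posterior: means differ across nodes in two of three dimensions.
mu_ok = np.array([[2.0, -1.0, 0.0], [-2.0, 1.0, 0.0], [1.0, 2.0, 0.0],
                  [-1.0, -2.0, 0.0], [0.0, 0.0, 0.0]])
logvar_ok = np.full((5, 3), -1.0)

kl_collapsed = gaussian_kl(mu_collapsed, logvar_collapsed)
kl_ok = gaussian_kl(mu_ok, logvar_ok)
```

Logging both quantities per epoch makes collapse easy to spot: the KL term approaches zero and the number of active dimensions drops.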

FAQ 4: The model does not perform well on the specific task of predicting circRNA-Drug Associations (CDAs).

- Problem Identification: The model's generic architecture cannot capture the intricate geometric relationships in the biological network.

- Theory of Probable Cause: Standard GNNs often fail to capture higher-order geometric and topological information, which is crucial for modeling biological interactions [30].

- Plan of Action & Implementation: Integrate geometric learning into the graph encoder. Adopt a framework like G2CDA, which incorporates torsion-based geometric encoding [30]. This involves constructing local simplicial complexes for potential associations and using their torsion values as adaptive weights during message propagation.

- Verification: Evaluate the model on benchmark CDA datasets. The geometric-enhanced model should outperform standard ARGA and other state-of-the-art baselines in identifying novel associations, as confirmed by case studies on specific biomarkers [30].

Key Experimental Protocols and Data Presentation

Quantitative Performance Data

The following table summarizes the performance of ARGA and its advanced variants on benchmark tasks, demonstrating their effectiveness in graph embedding.

Table 1: Performance Comparison of Graph Embedding Models on Standard Tasks [27] [29] [30]

| Model | Task | Dataset | Metric | Score |

|---|---|---|---|---|

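| ARGA | Link Prediction | Citation Network (Cora) | AUC | 0.924 |

| ARGA | Link Prediction | Citation Network (Cora) | AP | 0.926 |

| ARVGA | Link Prediction | Citation Network (Cora) | AUC | 0.924 |

| ARVGA | Link Prediction | Citation Network (Cora) | AP | 0.926 |

| DDGAE (with DWR-GCN) | Drug-Target Interaction Prediction | Public DTI Dataset | AUC | 0.9600 |

| DDGAE (with DWR-GCN) | Drug-Target Interaction Prediction | Public DTI Dataset | AUPR | 0.6621 |

| G2CDA (Geometry-Enhanced) | circRNA-Drug Association Prediction | circRic Database | AUC | Outperformed SOTA |

| G2CDA (Geometry-Enhanced) | circRNA-Drug Association Prediction | circRic Database | AUPR | Outperformed SOTA |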

Essential Research Reagents and Materials

To replicate state-of-the-art experiments in drug discovery using graph autoencoders, the following computational "reagents" are essential.

Table 2: Key Research Reagent Solutions for Graph Autoencoder Experiments [29] [30]

| Item Name | Function / Explanation | Example Source / Specification |

|---|---|---|

| Drug-Target Interaction Data | Provides known interactions to construct the heterogeneous graph for model training. | DrugBank, HPRD, CTD, SIDER [29] |

| circRNA-Drug Association Data | Forms the core dataset for training models predicting circRNA therapeutic targets. | circRic database (~1,000 cancer cell lines) [30] |

| Drug/Target Similarity Matrices | Provides node features and is used to normalize the graph structure, enhancing numerical stability. | Chemical structure (drugs), amino acid sequences (targets) [29] |

| Graph Neural Network Framework | Provides the software infrastructure for building and training ARGA and variant models. | PyTorch Geometric (includes ARGA implementation) [31] |

| Dynamic Weighting Residual GCN (DWR-GCN) | An enhanced graph convolutional module that prevents over-smoothing in deep networks, improving representation power. | Custom module as described in [29] |

Workflow and Architecture Visualization

Core ARGA Framework Architecture

The following diagram illustrates the fundamental structure of the ARGA model, showing the interaction between the graph autoencoder and the adversarial network.

Drug-Target Interaction Prediction Workflow

This diagram outlines the integrated workflow of a modern graph autoencoder model like DDGAE, which incorporates dynamic graph convolution and dual training for DTI prediction.

Wasserstein Adversarially Regularized Graph Autoencoder (WARGA) for Enhanced Distribution Matching

Troubleshooting Guide: Common WARGA Experimentation Issues

This section addresses specific problems researchers may encounter when implementing or training WARGA models.

Q1: The model output shows high noise and fails to converge during link prediction tasks. What could be the cause?

A primary cause is a violation of the Lipschitz continuity assumption, which is critical for the Wasserstein distance calculation. Two established solutions are recommended:

- Solution A (WARGA-WC): Implement weight clipping. Enforce a hard constraint on the weights of the critic (discriminator) network by clipping them to a small interval, such as [-c, c], after each optimizer step.

- Solution B (WARGA-GP): Apply a gradient penalty. Instead of weight clipping, add a soft constraint to the loss function that directly penalizes the norm of the critic's gradient with respect to its input, which is often more stable and leads to better performance [24].
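The two constraints can be contrasted with a small sketch. To keep it framework-free, the gradient penalty is shown for a linear critic, where the input gradient is simply the weight vector; for a neural critic the gradient would be obtained by automatic differentiation. The function names and the clipping value are illustrative assumptions.

```python
import numpy as np

def clip_weights(params, c=0.01):
    """Weight clipping: clamp every critic weight to the interval [-c, c]."""
    return [np.clip(p, -c, c) for p in params]

def gradient_penalty_linear(w, target_norm=1.0):
    """Gradient penalty for a linear critic f(z) = z @ w.

    The input gradient of a linear critic is w everywhere, so the penalty
    (||grad_z f|| - 1)^2 reduces to (||w|| - 1)^2.
    """
    return float((np.linalg.norm(w) - target_norm) ** 2)

# Hypothetical critic parameters before clipping.
params = [np.array([[0.5, -0.3], [0.004, 0.0]]), np.array([1.2, -0.002])]
clipped = clip_weights(params, c=0.01)

# A critic whose gradient norm is 5 is penalized by (5 - 1)^2.
gp = gradient_penalty_linear(np.array([3.0, 4.0]))
```

Clipping is applied to the parameters after each optimizer step, whereas the penalty is added to the critic's loss, scaled by a coefficient, before the step.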

Q2: The latent distribution of node embeddings fails to effectively match the target prior distribution. How can this be improved?

This indicates that the Wasserstein regularizer is not exerting sufficient influence. First, verify that the Lipschitz constraint is properly enforced using the methods above. Second, adjust the weighting hyperparameter (λ) that controls the strength of the Wasserstein adversarial loss term relative to the graph reconstruction loss. A systematic hyperparameter search is recommended. Compared to KL divergence, the Wasserstein metric is more effective at handling distributions with disjoint supports, providing a more natural distance measure [24].

Q3: During node clustering, the model performance is sub-optimal and the embedding visualization appears poorly separated.

This can result from a deviation of the optimization objective, a known issue in variational graph autoencoders where the model prioritizes network reconstruction over learning a meaningful latent structure. To mitigate this, consider a dual optimization approach that guides the learning process more explicitly toward the primary task (e.g., clustering), preventing the objective from collapsing. Additionally, ensure the encoder is sufficiently powerful, but also consider that linearizing the encoder can sometimes reduce parameters and improve generalization for certain tasks [32].

Q4: Training is unstable, with the loss for the critic (discriminator) becoming very large or oscillating wildly.

This is a classic symptom of a poorly conditioned critic. For the WARGA-WC model, try reducing the weight clipping value (c). For the WARGA-GP model, increase the coefficient of the gradient penalty term. It is also crucial to ensure that the critic is trained to optimality (or near-optimality) before each update of the generator (encoder) to provide a reliable gradient signal [24].

Experimental Protocols & Methodologies

This section provides detailed methodologies for key experiments that validate WARGA's performance, as outlined in the foundational research [24].

Link Prediction Protocol

Objective: To evaluate the model's ability to reconstruct the graph structure by predicting missing links.

Dataset Specifications: The model is validated on standard citation network datasets. The table below summarizes their key statistics [24].

Table 1: Citation Network Dataset Statistics

| Dataset | Nodes | Edges | Features | Classes |

|---|---|---|---|---|

| Cora | 2,708 | 5,429 | 1,433 | 7 |

| Citeseer | 3,327 | 4,732 | 3,703 | 6 |

| PubMed | 19,717 | 44,338 | 500 | 3 |

Methodology:

- Data Splitting: The edges of the graph are randomly split into training, validation, and test sets (e.g., 85%/5%/10%).

- Model Training: The WARGA model is trained on the training subgraph. The encoder maps nodes to latent embeddings, and the decoder reconstructs the adjacency matrix by computing inner products between these embeddings.

- Evaluation: The model's performance is measured on the held-out test edges. The primary metrics are the Area Under the Curve (AUC) and Average Precision (AP) scores, which evaluate the ranking of actual edges against non-existent edges [24].
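The evaluation step can be sketched without external metric libraries. The code below scores node pairs with an inner-product decoder and computes AUC and AP directly in NumPy; the embeddings and the test pairs are toy values, and the helper names are hypothetical.

```python
import numpy as np

def edge_scores(Z, pairs):
    """Inner-product decoder: sigmoid(z_i . z_j) for each node pair."""
    logits = np.sum(Z[pairs[:, 0]] * Z[pairs[:, 1]], axis=1)
    return 1.0 / (1.0 + np.exp(-logits))

def roc_auc(pos, neg):
    """Probability that a true edge outranks a non-edge (ties count half)."""
    diff = pos[:, None] - neg[None, :]
    return float(np.mean((diff > 0) + 0.5 * (diff == 0)))

def average_precision(pos, neg):
    """Mean precision at the rank of each true edge, by descending score."""
    scores = np.concatenate([pos, neg])
    labels = np.concatenate([np.ones(len(pos)), np.zeros(len(neg))])
    order = np.argsort(-scores, kind="stable")
    hits = labels[order]
    precision_at_k = np.cumsum(hits) / np.arange(1, len(hits) + 1)
    return float(np.sum(precision_at_k * hits) / len(pos))

# Hypothetical embeddings: nodes 0 and 1 point one way, nodes 2 and 3 the other.
Z = np.array([[2.0, 0.0], [1.5, 0.0], [-2.0, 0.0], [-1.0, 0.0]])
test_edges = np.array([[0, 1], [2, 3]])        # held-out true edges
test_non_edges = np.array([[0, 2], [1, 3]])    # sampled non-edges
pos = edge_scores(Z, test_edges)
neg = edge_scores(Z, test_non_edges)
auc = roc_auc(pos, neg)
ap = average_precision(pos, neg)
```

The pairwise AUC computation is quadratic in the number of test pairs, which is acceptable for citation-network benchmarks but may need a rank-based formulation for larger graphs.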

Node Clustering Protocol

Objective: To assess the quality of the latent embeddings for discovering community structure without using label information.

Methodology:

- Embedding Generation: The trained WARGA encoder is used to generate latent representations for all nodes in the graph.

- Clustering Algorithm: A clustering algorithm, such as K-means, is applied directly to the latent embeddings. The number of clusters is typically set to the true number of classes in the dataset.

- Evaluation: The clustering results are compared against the ground-truth labels. Accuracy (Acc) is a common metric, achieved by finding the optimal mapping between cluster assignments and true labels [24].
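Because cluster ids are arbitrary, accuracy requires matching clusters to classes first. The sketch below finds the best one-to-one mapping by brute force, which is adequate for the handful of classes in these benchmarks; larger label sets would normally use the Hungarian algorithm instead. `clustering_accuracy` is a hypothetical helper.

```python
import numpy as np
from itertools import permutations

def clustering_accuracy(y_true, y_pred):
    """Best accuracy over all one-to-one relabelings of the predicted clusters."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    classes = np.unique(y_true)
    clusters = np.unique(y_pred)
    assert len(clusters) <= len(classes), "sketch assumes no more clusters than classes"
    best = 0.0
    # Try every assignment of distinct class labels to the observed clusters.
    for perm in permutations(classes, len(clusters)):
        mapping = dict(zip(clusters, perm))
        mapped = np.array([mapping[c] for c in y_pred])
        best = max(best, float(np.mean(mapped == y_true)))
    return best

y_true = [0, 0, 1, 1, 2, 2]
y_pred = [2, 2, 0, 0, 1, 0]    # arbitrary cluster ids; one node is misassigned
acc = clustering_accuracy(y_true, y_pred)
```

Here five of six nodes agree with the ground truth under the best mapping.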

Workflow Visualization

The following diagram illustrates the end-to-end architecture and data flow of the WARGA model.

Performance Benchmarking

The following table summarizes quantitative results comparing WARGA against other state-of-the-art graph autoencoder models on the link prediction task, measured by AUC and AP scores (values are illustrative based on reported superior performance) [24].

Table 2: Link Prediction Performance (AUC/AP Scores in %)

| Model | Cora | Citeseer | PubMed |

|---|---|---|---|

| GAE | 91.0 / 92.0 | 89.5 / 90.3 | 96.4 / 96.5 |

| VGAE | 91.4 / 92.6 | 90.8 / 92.0 | 94.4 / 94.7 |

| ARGA | 92.4 / 93.2 | 92.1 / 92.8 | 96.8 / 96.9 |

| ARVGA | 92.4 / 93.0 | 92.3 / 92.9 | 96.7 / 96.9 |

| WARGA-WC | 93.0 / 93.5 | 92.5 / 93.2 | 97.0 / 97.1 |

| WARGA-GP | 93.5 / 94.0 | 92.8 / 93.5 | 97.2 / 97.3 |

The Scientist's Toolkit: Research Reagent Solutions

This table details the key computational tools and conceptual components essential for implementing WARGA.

Table 3: Essential Research Reagents for WARGA

| Research Reagent | Function / Description |

|---|---|

| Graph Convolutional Network (GCN) | Serves as the encoder to generate node embeddings by aggregating feature information from a node's local neighborhood [24]. |

| Inner Product Decoder | Maps the latent node embeddings (Z) back to graph space by computing pairwise inner products to reconstruct the adjacency matrix [24]. |

| Wasserstein Critic (f_φ) | A neural network that acts as the feature extractor, calculating the Wasserstein distance between the latent embedding distribution and the target prior [24]. |

| Gradient Penalty | A soft constraint applied to the critic's loss function to enforce the Lipschitz continuity condition, central to the WARGA-GP variant [24]. |

| Citation Network Datasets | Standard benchmark datasets (e.g., Cora, Citeseer, PubMed) used for validation and comparison in graph learning tasks [24]. |

| Adam / Stochastic Gradient Descent | Optimization algorithms used to iteratively update the model parameters (weights) by minimizing the combined reconstruction and regularization loss [32]. |

Random Walk Regularization for Structured Latent Space Organization

Frequently Asked Questions (FAQs)

1. What is Random Walk Regularization (RWR) and what problem does it solve in Graph Autoencoders (GAEs)?

Random Walk Regularization is a technique used to improve the latent representations learned by a Graph Autoencoder. It introduces an additional loss term that ensures nodes connected by short random walks in the graph obtain similar embeddings in the latent space [33] [6]. This addresses a key limitation of standard GAEs, whose reconstruction loss often ignores the distribution of the latent representation, which can lead to inferior and poorly structured embeddings [33]. By enforcing this geometric structure, RWR helps the model learn a more meaningful and organized latent space.

2. How do I know if my model will benefit from implementing RWR?

Your model is a strong candidate for RWR if you are working on tasks like node clustering or link prediction [33] [6] [34]. This is particularly true if your downstream analysis relies on the geometric properties of the latent space. For example, if you are clustering nodes, RWR can help ensure that nodes within the same community are mapped closer together. Empirical results have shown that RWR can improve state-of-the-art models by up to 7.5% in node clustering tasks [33].

3. My RWR-regularized model is over-smoothing the latent representations. What can I do?

Over-smoothing, where node embeddings become too similar and lose discriminative power, is a common challenge. To mitigate this:

- Adjust the Regularization Strength (`λ`): The hyperparameter `λ` balances the reconstruction loss and the RWR loss. If over-smoothing occurs, try reducing the value of `λ` [25].

- Review Random Walk Parameters: The length and number of random walks determine the neighborhood scope. Very long walks might incorporate too much global information, causing local distinctions to blur. Experiment with shorter walk lengths [33].

- Combine with Other Techniques: Consider integrating an attention mechanism, which can help the model focus on the most important neighbors during aggregation, thus preserving finer distinctions [25] [34].

4. Can RWR be combined with other types of autoencoders and regularizations?

Yes, RWR is a flexible concept. It has been successfully integrated with a Gravity-Inspired Graph Autoencoder (GIGAE) to infer directed relationships in Gene Regulatory Networks [6]. Furthermore, it can be used alongside other regularization strategies. For instance, one study linearly combined L1 and L2 regularization to address user preference and overfitting, while a denoising autoencoder component handled noisy data [35]. The key is to carefully balance the weights of the different loss terms.

5. What are the common failure modes when the RWR loss does not decrease?

If the RWR loss is not converging, consider these troubleshooting steps:

- Check Graph Connectivity: Random walks require a reasonably connected graph. If the graph has many isolated components, walks will be truncated, and the regularization signal will be weak. Analyze your graph's structure first.

- Verify Correct Sampling: Ensure that your random walk sampling algorithm is implemented correctly and that it covers a diverse and representative set of node neighborhoods.

- Inspect Gradient Flow: Use debugging tools to confirm that gradients from the RWR loss term are flowing back through the encoder network. This will help identify any potential issues with the integration of the loss into the training process.

Troubleshooting Guides

Issue 1: Poor Performance on Downstream Tasks

Problem: After implementing a GAE with RWR, the performance on primary tasks like node clustering or link prediction remains poor or has degraded.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Improperly weighted loss function | Plot the individual loss terms (reconstruction and RWR) during training. See if one dominates the other. | Systematically tune the hyperparameter λ that balances the two losses. Start with a small value and increase it [25]. |

| Low-quality random walks | Analyze the statistics of your sampled random walks (e.g., average length, coverage). | Adjust random walk parameters: increase the walk length or the number of walks per node to capture more context [33]. |

| Mismatch between walk topology and task | The "context" defined by the random walks may not align with your task's goal. | For tasks requiring strong local structure, use shorter walks. For global structure, use longer walks. Consider using second-order biased walks like in Node2Vec [34]. |

Issue 2: Instability During Model Training

Problem: The training loss shows high variance, fails to converge consistently, or the model produces NaN values.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Exploding gradients | Monitor the gradient norms using your deep learning framework's tools. | Apply gradient clipping. This is a standard technique to prevent gradients from becoming too large during backpropagation. |

| Poorly initialized parameters | Re-run the training with different random seeds to see if instability is consistent. | Use established initialization schemes (e.g., Xavier/Glorot) for the model weights. |

| Numerical instability in loss | Check the values of the latent vectors Z and the distance calculations in the RWR loss. | Add a small epsilon (e.g., 1e-7) to denominators or inside logarithmic functions in the loss calculation to avoid division by zero or log(0). |
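Two of the fixes in the table, gradient clipping and the epsilon guard, can be sketched in NumPy as follows. Deep learning frameworks provide their own clipping utilities; the helpers here are hypothetical and only show the arithmetic involved.

```python
import numpy as np

def clip_by_global_norm(grads, max_norm):
    """Rescale gradient arrays so that their joint L2 norm is at most max_norm."""
    total = np.sqrt(sum(float(np.sum(g ** 2)) for g in grads))
    scale = min(1.0, max_norm / (total + 1e-12))
    return [g * scale for g in grads], total

def safe_log(x, eps=1e-7):
    """Logarithm with a small epsilon added to avoid log(0)."""
    return np.log(x + eps)

grads = [np.array([3.0, 0.0]), np.array([0.0, 4.0])]   # joint norm is 5
clipped, norm_before = clip_by_global_norm(grads, max_norm=1.0)
norm_after = np.sqrt(sum(float(np.sum(g ** 2)) for g in clipped))
```

Logging `norm_before` at every step doubles as the diagnostic suggested in the table: a sudden spike usually precedes NaN losses.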

Experimental Protocols & Data

Core Protocol: Implementing RWR for Node Clustering

This protocol is based on the methodology described for the RWR-GAE model [33].

1. Model Architecture:

- Encoder: A Graph Convolutional Network (GCN) that maps node features to a latent representation.

- Latent Space: The low-dimensional representation `Z` output by the encoder.

- Regularization: The RWR loss is computed by comparing the latent representations of nodes connected via random walks.

- Decoder: A simple inner product decoder that reconstructs the graph adjacency matrix from `Z`.

- Overall Loss: `L_total = L_reconstruction + λ * L_RWR`, where `L_RWR` encourages nearby nodes in the random walk to have similar embeddings [33].

2. Step-by-Step Methodology:

1. Input Graph: Start with an attributed graph G = (X, A), where X is the node feature matrix and A is the adjacency matrix.

2. Generate Random Walks: For each node, simulate multiple fixed-length random walks across the graph.

3. Train GAE: For each training iteration:

* The encoder processes X and A to produce latent variable Z.

* The decoder reconstructs the graph from Z.

* The reconstruction loss L_reconstruction (e.g., binary cross-entropy) is calculated.

* The RWR loss L_RWR is computed based on the similarity of node pairs from the random walks.

* The model parameters are updated to minimize the combined loss L_total.

4. Extract Embeddings: After training, use the encoder to generate the final latent embeddings Z.

5. Perform Clustering: Apply a clustering algorithm like K-means to the latent embeddings Z to group the nodes.
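Steps 2 and 3 of the methodology can be sketched as below. The walk sampler is unbiased and fixed-length; the loss keeps only the positive-pair term of a skip-gram objective, which is a simplifying assumption (negative sampling is omitted), so it should be read as an illustration rather than the exact RWR-GAE loss. All names and the value of `lam` are hypothetical.

```python
import numpy as np

def random_walks(adj_list, walk_length, walks_per_node, rng):
    """Unbiased fixed-length random walks started from every node."""
    walks = []
    for start in range(len(adj_list)):
        for _ in range(walks_per_node):
            walk = [start]
            while len(walk) < walk_length and adj_list[walk[-1]]:
                walk.append(int(rng.choice(adj_list[walk[-1]])))
            walks.append(walk)
    return walks

def context_pairs(walks, window):
    """(center, context) node pairs that co-occur within `window` steps."""
    pairs = []
    for walk in walks:
        for i, u in enumerate(walk):
            for v in walk[i + 1:i + 1 + window]:
                if u != v:
                    pairs.append((u, v))
    return np.array(pairs)

def rwr_loss(Z, pairs):
    """Mean of -log sigmoid(z_u . z_v): small when co-walked nodes align."""
    logits = np.sum(Z[pairs[:, 0]] * Z[pairs[:, 1]], axis=1)
    return float(np.mean(np.logaddexp(0.0, -logits)))

rng = np.random.default_rng(0)
adj_list = [[1], [0, 2], [1, 3], [2]]             # path graph 0-1-2-3
walks = random_walks(adj_list, walk_length=4, walks_per_node=2, rng=rng)
pairs = context_pairs(walks, window=1)
Z = np.array([[1.0, 0.0], [1.0, 0.2], [0.8, 0.5], [0.5, 1.0]])
lam = 0.1                                          # hypothetical regularization weight
l_rwr = rwr_loss(Z, pairs)
```

During training, `lam * l_rwr` would be added to the reconstruction loss at each iteration, with `Z` taken from the encoder's current output.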

Quantitative Performance Data

The following table summarizes the performance gains achieved by RWR-GAE over other state-of-the-art models on benchmark datasets, as reported in its foundational paper [33].

Table 1: Node Clustering Accuracy (NMI %) Improvement with RWR-GAE

| Dataset | Baseline Model Performance | RWR-GAE Performance | Accuracy Gain |

|---|---|---|---|

| Cora | Reported baseline | 52.5% | Up to 7.5% |

| Citeseer | Reported baseline | 41.6% | Up to 7.5% |

| Pubmed | Reported baseline | 34.1% | Up to 7.5% |

Table 2: Link Prediction (AUC Score) Performance

| Dataset | VGAE [36] | RWR-GAE |

|---|---|---|

| Cora | 91.4% | ~94.0% |

| Citeseer | 90.8% | ~93.0% |

| Pubmed | 94.4% | ~96.0% |

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions

| Item | Function in RWR-GAE Experiments | Example / Specification |

|---|---|---|

| Benchmark Citation Networks | Standard datasets for evaluating graph representation learning models. | Cora, Citeseer, Pubmed [33]. |

| Graph Convolutional Network (GCN) | Serves as the encoder in the GAE, transforming node features and structure into latent codes. | A 2-layer GCN as used in the original VGAE and RWR-GAE papers [33] [36]. |

| Random Walk Sampler | Generates sequences of nodes that define the local context for the RWR loss. | In-house script to perform fixed-length, unbiased random walks on the graph [33]. |

| Inner Product Decoder | Reconstructs the graph adjacency matrix from the latent embeddings Z. | Decoder(Z) = σ(Z * Z^T), where σ is the logistic sigmoid function [33] [36]. |

| Evaluation Metrics | Quantify model performance on downstream tasks. | Node Clustering: Normalized Mutual Information (NMI). Link Prediction: Area Under the Curve (AUC) and Average Precision (AP) [33]. |
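As a rough illustration of the inner product decoder and the binary cross-entropy reconstruction loss listed in the table, the following sketch uses only the Python standard library. The function names and toy values are assumptions made for this example. Note that σ(Z * Z^T) is symmetric by construction, which is why this decoder cannot distinguish the direction of an edge.

```python
import math

def sigmoid(x):
    """Numerically stable logistic sigmoid."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def inner_product_decoder(Z):
    """Return the matrix of edge probabilities sigmoid(Z Z^T)."""
    n = len(Z)
    return [
        [sigmoid(sum(a * b for a, b in zip(Z[i], Z[j]))) for j in range(n)]
        for i in range(n)
    ]

def bce_reconstruction_loss(A, A_hat, eps=1e-12):
    """Mean binary cross-entropy between adjacency A and predicted probabilities A_hat."""
    n = len(A)
    total = 0.0
    for i in range(n):
        for j in range(n):
            p = min(max(A_hat[i][j], eps), 1.0 - eps)  # clamp to avoid log(0)
            total -= A[i][j] * math.log(p) + (1 - A[i][j]) * math.log(1.0 - p)
    return total / (n * n)

# Toy example: nodes 0 and 1 are linked, node 2 is isolated (self-loops included).
A = [[1, 1, 0], [1, 1, 0], [0, 0, 1]]
Z = [[1.0, 0.0], [1.0, 0.0], [-1.0, 0.0]]
A_hat = inner_product_decoder(Z)
loss = bce_reconstruction_loss(A, A_hat)
```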

Workflow and Conceptual Diagrams

Diagram 1: RWR-GAE Architecture

Diagram 2: Random Walk Regularization Mechanism

Gravity-Inspired Graph Autoencoders (GAEDGRN) for Directed Network Topology Capture

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using a gravity-inspired graph autoencoder (GIGAE) in GAEDGRN over a standard Graph Autoencoder (GAE)?

The primary advantage is GIGAE's ability to capture directed network topology. Standard GAEs and VGAEs are designed for undirected graphs and perform poorly on directed link prediction. The gravity-inspired decoder in GIGAE models the directionality of edges, which is crucial for reconstructing accurate Gene Regulatory Networks (GRNs), where causal relationships are asymmetric [37] [38]. GAEDGRN builds on this by adding random walk regularization to address uneven latent vector distributions and a modified PageRank* algorithm that focuses on genes with high out-degree [6] [39].

Q2: During training, my model's latent vector distribution becomes uneven, leading to poor embedding performance. How can I resolve this?

GAEDGRN specifically addresses this with a random walk regularization module. This technique captures the local topology of the network by performing random walks on the graph. The node sequences from these walks, along with the latent embeddings from the GIGAE, are used to minimize a loss function in a Skip-Gram module. The gradient feedback from this process regularizes the latent vectors, ensuring a more uniform distribution and improving the overall embedding quality [39].

Q3: How does GAEDGRN identify and prioritize important genes during GRN reconstruction?

GAEDGRN uses an improved algorithm called PageRank* to calculate gene importance scores. Unlike the standard PageRank algorithm, which assesses importance based on in-degree (links pointing to a node), PageRank* focuses on out-degree (links pointing from a node). This is based on the biological hypothesis that genes which regulate many other genes are of higher importance. This score is then fused with gene expression features, allowing the model to pay more attention to these important genes during both encoding and decoding [39].

Q4: What types of input data are required to run the GAEDGRN framework?

The framework requires two main types of input data [39]:

- scRNA-seq Gene Expression Data: This provides the feature matrix for the genes (nodes).

- A Prior GRN: This serves as the initial, albeit incomplete, directed graph structure (adjacency matrix) which GAEDGRN aims to refine and complete.

Troubleshooting Guides

Problem 1: Poor Directed Link Prediction Performance

Symptoms: The model reconstructs edges but performs poorly at predicting the correct direction of regulatory relationships (e.g., TF → Gene).

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Standard GAE/VGAE Decoder: Using a decoder designed for undirected graphs. | Review the decoder architecture in your code. Check if it uses a simple inner product. | Implement the gravity-inspired decoder (GIGAE). This models the probability of a directed edge using a function that accounts for the "mass" (node properties) and "distance" (embedding similarity) [37] [39]. |

| Ignoring Direction in Prior Graph: The input graph is treated as undirected. | Verify that your input adjacency matrix is formatted as a directed graph (asymmetric). | Ensure the prior GRN is loaded as a directed graph object before feeding it into the model. |
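The second diagnostic step in the table can be checked with a few lines of Python. This is a minimal sketch in which the adjacency matrix is assumed to be a nested list with `A[i][j] = 1` meaning that gene i regulates gene j. The helper names are hypothetical.

```python
def is_symmetric(A):
    """True if every edge has a matching reverse edge, i.e. the graph is effectively undirected."""
    n = len(A)
    return all(A[i][j] == A[j][i] for i in range(n) for j in range(i + 1, n))

def count_one_way_edges(A):
    """Number of edges i -> j for which the reverse edge j -> i is absent."""
    n = len(A)
    return sum(
        1 for i in range(n) for j in range(n)
        if i != j and A[i][j] and not A[j][i]
    )

# Toy prior GRN: a transcription factor (node 0) regulates genes 1 and 2.
prior = [
    [0, 1, 1],
    [0, 0, 0],
    [0, 0, 0],
]
if is_symmetric(prior):
    print("Warning: prior GRN is symmetric; direction information may have been lost.")
else:
    print(f"Directed prior GRN with {count_one_way_edges(prior)} one-way edges.")
```

A symmetric matrix is not always an error, because mutual regulation does occur, but a fully symmetric prior is a strong hint that the graph was loaded as undirected.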

Problem 2: Unstable Training or Slow Convergence

Symptoms: Training loss fluctuates wildly or decreases very slowly across epochs.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Unregularized Latent Space: The embedding vectors are unevenly distributed. | Visualize the latent vectors using PCA or t-SNE before and after training. | Integrate the random walk regularization module. This uses random walks on the graph to capture local structure and applies a Skip-Gram objective to regularize the embeddings, leading to a smoother and more stable latent space [39]. |

| Improper Learning Rate. | Experiment with different learning rates. | Implement a learning rate scheduler to reduce the rate as training progresses. Perform a grid search over a range of values (e.g., 1e-4 to 1e-2). |
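For the learning-rate row of the table, a log-spaced grid and a simple step-decay schedule might look like the sketch below. The decay factor, the step interval, and both function names are illustrative assumptions rather than settings from [39].

```python
def log_spaced_grid(low, high, count):
    """Return `count` learning rates spaced evenly on a log scale from low to high."""
    if count < 2:
        raise ValueError("count must be at least 2")
    ratio = (high / low) ** (1.0 / (count - 1))
    return [low * ratio ** k for k in range(count)]

def step_decay(base_lr, epoch, drop=0.5, every=50):
    """Multiply the learning rate by `drop` once every `every` epochs."""
    return base_lr * drop ** (epoch // every)

# Candidate learning rates covering the range 1e-4 to 1e-2.
candidates = log_spaced_grid(1e-4, 1e-2, count=5)

# Learning rate used at a few selected epochs when starting from 1e-2.
schedule = {epoch: step_decay(1e-2, epoch) for epoch in (0, 50, 100, 150)}
```

Each candidate would be used for a separate training run, with the best value chosen on a held-out validation set of edges.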

Problem 3: Model Fails to Identify Key Regulator Genes

Symptoms: The reconstructed network misses well-known master transcription factors or hub genes.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Model is unaware of gene importance. | Check if the gene importance score is being calculated and incorporated. | Implement the PageRank* algorithm to calculate gene importance scores based on node out-degree in the prior GRN. Fuse these scores with the gene expression features before the encoding process in the GIGAE [39]. |

| Weak Prior Graph: The input GRN has too few connections for PageRank* to be effective. | Analyze the density and connectedness of your prior GRN. | Consider using a more comprehensive prior network or integrating multiple sources of prior biological knowledge to strengthen the initial graph structure. |

Experimental Protocols & Data Presentation

Protocol for GAEDGRN Model Training

This protocol outlines the key steps for training the GAEDGRN model as described in the source materials [39].

Input Data Preparation:

- Gene Expression Matrix: Obtain a normalized single-cell RNA sequencing (scRNA-seq) gene expression matrix (cells x genes).

- Prior GRN Adjacency Matrix: Construct a directed adjacency matrix representing a prior gene regulatory network. Nodes are genes, and a directed edge from node i to node j indicates a known or hypothesized regulatory relationship.

Gene Importance Score Calculation:

- Apply the PageRank* algorithm to the prior GRN's adjacency matrix.

- The algorithm is modified to prioritize out-degree, assigning higher importance scores to genes that regulate many other genes.

- Fuse the calculated importance scores with the gene expression features to create weighted node features.
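The text does not spell out how PageRank* is computed or how the scores are fused with expression features, so the following is only one plausible reading. It assumes that running PageRank on the edge-reversed graph is an acceptable stand-in for an out-degree-focused score, and that fusion is a simple row-wise scaling of the feature matrix. Both choices, and the function names, are hypothetical.

```python
def out_degree_pagerank(A, damping=0.85, iterations=100):
    """PageRank on the edge-reversed graph, so score flows from targets to regulators.

    A[i][j] = 1 means gene i regulates gene j. A gene that regulates many
    (important) genes ends up with a high score.
    """
    n = len(A)
    in_deg = [sum(A[i][j] for i in range(n)) for j in range(n)]  # regulators of j
    scores = [1.0 / n] * n
    for _ in range(iterations):
        # Genes with no regulators have nowhere to send score; spread it uniformly.
        dangling = sum(scores[j] for j in range(n) if in_deg[j] == 0)
        updated = []
        for i in range(n):
            received = sum(scores[j] / in_deg[j] for j in range(n) if A[i][j])
            updated.append((1.0 - damping) / n + damping * (received + dangling / n))
        scores = updated
    return scores

def fuse_importance(X, scores):
    """Hypothetical fusion: scale each gene's feature row by its normalised score."""
    top = max(scores)
    return [[value * s / top for value in row] for row, s in zip(X, scores)]

# Toy prior GRN: gene 0 regulates genes 1, 2, 3; gene 1 regulates gene 2.
A = [
    [0, 1, 1, 1],
    [0, 0, 1, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
X = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]  # genes x cells (illustrative)
scores = out_degree_pagerank(A)
weighted_features = fuse_importance(X, scores)
```

On this toy graph the regulator with the most targets (gene 0) receives the highest score, whereas standard PageRank on the original edge directions would rank it lowest.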

Gravity-Inspired Graph Autoencoder (GIGAE) Training:

- Encoder: The encoder (e.g., a Graph Convolutional Network) takes the weighted node features and the prior adjacency matrix to generate low-dimensional latent node embeddings (Z).

- Random Walk Regularization: Simultaneously, perform random walks on the graph. Use the sequences of visited nodes and their latent embeddings (Z) to compute a regularization loss via a Skip-Gram model.

- Gravity-Inspired Decoder: The decoder reconstructs the directed graph. It uses a gravity-inspired function to compute the probability of a directed edge from node i to node j, often formulated as a function of the nodes' latent properties and their distance in the embedding space [37] [39].
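To make the gravity-inspired decoder concrete, the sketch below scores a directed edge from i to j as σ(m_j − λ log ‖z_i − z_j‖²), the form commonly used in gravity-inspired graph autoencoders. Whether GAEDGRN uses exactly this expression is an assumption here, and the `masses` input, the `lam` parameter, and the toy values are illustrative.

```python
import math

def sigmoid(x):
    """Numerically stable logistic sigmoid."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def gravity_decoder(Z, masses, lam=1.0, eps=1e-12):
    """Return P with P[i][j] = sigmoid(m_j - lam * log ||z_i - z_j||^2) for i != j.

    The score depends on the mass of the target node j only, so P[i][j] and
    P[j][i] generally differ, which is what lets the decoder model direction.
    """
    n = len(Z)
    P = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                continue  # self-loops are left at probability 0 in this sketch
            dist2 = sum((a - b) ** 2 for a, b in zip(Z[i], Z[j]))
            P[i][j] = sigmoid(masses[j] - lam * math.log(dist2 + eps))
    return P

# Two nodes at unit distance: node 0 has a large mass, node 1 a small one.
Z = [[0.0, 0.0], [1.0, 0.0]]
masses = [2.0, -1.0]
P = gravity_decoder(Z, masses)
# P[1][0] (edge 1 -> 0) is larger than P[0][1] (edge 0 -> 1).
```

In gravity-inspired autoencoders the mass is typically learned by the encoder as one extra output dimension alongside the embedding, rather than supplied separately as it is here.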

Loss Optimization:

- The total loss is a combination of the graph reconstruction loss (from the GIGAE) and the random walk regularization loss.

- Use a stochastic gradient descent optimizer to minimize the total loss and train the model end-to-end.

The following workflow diagram illustrates this integrated process:

Performance Benchmarking Protocol

To evaluate GAEDGRN against other methods, the following protocol was used [39]:

- Datasets: Use seven different cell types from three distinct GRN types (e.g., from human embryonic stem cells).

- Baseline Models: Compare against state-of-the-art methods, which may include:

  - GENELink: Uses Graph Attention Networks but does not explicitly model direction.

  - DeepTFni: Uses a Variational Graph Autoencoder but for undirected graphs.

  - GNE: A gene network embedding method using Multilayer Perceptrons (MLP).

- Evaluation Metric: Calculate the Area Under the Precision-Recall Curve (AUPR) to evaluate link prediction performance, which is suitable for imbalanced datasets like GRNs where true edges are sparse.
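One common way to estimate AUPR is average precision, sketched below without external libraries. The source does not say which estimator was used in [39], so this choice is an assumption, and ties between scores are not handled specially.

```python
def average_precision(labels, scores):
    """Estimate the area under the precision-recall curve as average precision.

    labels: 1 for a true regulatory edge, 0 otherwise.
    scores: predicted edge probabilities, in the same order as labels.
    """
    positives = sum(labels)
    if positives == 0:
        raise ValueError("at least one positive label is required")
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            total += hits / rank  # precision at the rank of each true edge
    return total / positives

# Four candidate edges, two of which are true regulatory interactions.
labels = [1, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.1]
aupr = average_precision(labels, scores)  # (1/1 + 2/3) / 2
```

Because the expected score of a random ranking is close to the fraction of true edges, AUPR values on sparse GRNs should be read against that baseline rather than against 0.5.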

The following table gives a qualitative comparison of GAEDGRN with other methods, based on the results reported in the original study [39]:

| Model / Method | Core Approach to Directionality | Reported AUPR (Example) | Training Time (Relative) |

|---|---|---|---|

| GAEDGRN | Gravity-Inspired Decoder + PageRank* + Random Walk Regularization | High | Low |

| GENELink | Graph Attention Network (ignores direction in structure) | Medium | Medium |

| DeepTFni | Variational Graph Autoencoder (for undirected graphs) | Medium | Medium |

| GNE | Multilayer Perceptron (MLP) on node features | Low | High |

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" and their functions in the GAEDGRN framework [39]:

| Item | Type / Function | Role in the GAEDGRN Experiment |

|---|---|---|

| Gravity-Inspired Decoder | Algorithmic Component | Reconstructs the directed graph from node embeddings by modeling edge directionality based on a physical analogy [37] [39]. |

| PageRank* | Algorithm (Modified from PageRank) | Calculates gene importance scores based on out-degree in the GRN, allowing the model to focus on key regulator genes during inference [39]. |

| Random Walk Regularization | Optimization Technique | Captures local graph topology to ensure a uniform and meaningful distribution of latent vectors in the embedding space, improving model stability [39]. |

| scRNA-seq Data | Biological Data | Provides the input gene expression feature matrix for the nodes (genes) in the network. |

| Prior GRN | Network Data (Directed Graph) | Serves as the initial, incomplete graph structure that the model aims to refine and complete through the link prediction task. |

Application in Gene Regulatory Network Inference and Drug Discovery Pipelines

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary advantage of using regularized graph autoencoders over standard methods for Gene Regulatory Network (GRN) inference?

Regularized graph autoencoders bring a critical advantage in learning robust, low-dimensional representations of complex biological networks by enforcing the latent space to adhere to a meaningful structure or distribution. This prevents overfitting and enhances the model's ability to generalize, which is paramount when working with high-dimensional, noisy omics data common in drug discovery pipelines. For instance, adversarially regularized or geometrically regularized autoencoders guide the latent representation to match a target distribution or preserve intrinsic data geometry, leading to more accurate and biologically plausible inferred networks compared to standard autoencoders or correlation-based methods [24] [40].

FAQ 2: My single-cell RNA-seq data is sparse and noisy. Which methods and regularizations are best suited for this challenge?

Sparsity and noise are significant challenges in single-cell data. Methods that incorporate specific regularizations to handle this are recommended:

- Trajectory Sampling and Continuous-Time Models: Tools like BINGO use Bayesian inference and Gaussian process dynamical models to sample continuous gene expression trajectories from sparse time-points, effectively performing statistical interpolation between measurements [41].

- Multi-Omic Integration: Pipelines like SCENIC+ and others in the netZoo package integrate transcriptomic data with complementary data types, such as chromatin accessibility (ATAC-seq), to add robust information on transcription factor binding site availability, thereby reducing reliance on noisy expression data alone [42] [43].

- Wasserstein Regularization: This approach is theoretically better at handling distributions with little common support, which can be a useful property when dealing with heterogeneous cell populations and dropouts in single-cell data [24].

FAQ 3: How can I validate that my inferred GRN is biologically accurate and not a computational artifact?

Validation is a multi-step process:

- In-silico Benchmarking: Use gold-standard benchmark datasets, such as those from the DREAM challenges, to compare your method's performance against known networks [41].

- Experimental Validation: Perform targeted experimental validation. The NEEDLE pipeline, for example, emphasizes rapid in planta validation using transient reporter assays to confirm predicted transcription factor-target gene interactions [44].