Adaptive Parameter Tuning for Multifactorial Evolutionary Algorithms: Strategies for Enhanced Optimization in Drug Discovery

Abstract

This article provides a comprehensive exploration of parameter tuning strategies for Multifactorial Evolutionary Algorithms (MFEAs), with a specific focus on applications in drug design and development. It covers foundational concepts, including the critical distinction between parameter tuning and control, and the unique challenges posed by the multi-task environment of MFEAs. The content delves into advanced methodological frameworks, such as multi-stage adaptation schemes and diversity enhancement mechanisms, and addresses common troubleshooting scenarios like premature convergence and parameter interaction. Furthermore, it presents a rigorous validation framework, comparing MFEA performance against state-of-the-art single-task evolutionary algorithms using both benchmark functions and real-world drug design problems. Aimed at researchers and drug development professionals, this guide synthesizes theoretical insights with practical applications to improve the efficacy and reliability of optimization in complex biomedical research.

The Core Concepts of Parameter Control in Evolutionary Computation

Defining Parameter Tuning vs. Parameter Control in Evolutionary Algorithms

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between parameter tuning and parameter control?

Parameter tuning is the process of finding good values for an algorithm's parameters before the run, and these values remain fixed throughout the entire optimization process. In contrast, parameter control adjusts the parameter values on-the-fly during the algorithm's execution, allowing them to change dynamically in response to the search progress [1] [2].

2. Why is parameter control often preferred for complex problems like those in drug discovery?

Parameter control allows an algorithm to adapt its behavior during the search. This is crucial because different stages of the optimization process often require different strategies; for instance, more exploration (global search) at the beginning and more exploitation (local refinement) towards the end. This dynamic adaptation can lead to more robust performance on complex, real-world problems while reducing the need for expensive pre-tuning [1] [3].

3. I'm new to Evolutionary Algorithms. Should I start with tuning or control?

It is generally recommended to start with established parameter tuning methods to establish a performance baseline. This involves using standard values from the literature or conducting simple tuning experiments. Once a baseline is established, you can explore parameter control methods to seek further performance improvements and robustness [2].

4. What are the main types of parameter control methods?

Parameter control methods can be broadly categorized into three types [1]:

- Deterministic: Parameters are changed according to a predetermined, fixed rule without using any feedback from the search.

- Adaptive: Changes are made based on feedback received from the search process (e.g., based on fitness or population distribution).

- Self-adaptive: The parameters are encoded into the individuals' chromosomes and are evolved alongside the solutions themselves.

5. Which key parameters of an Evolutionary Algorithm typically require adjustment?

The most common parameters that need adjustment are [4] [1] [3]:

- Population size (NP)

- Crossover rate (CR)

- Mutation rate and the scaling factor for mutation (F)

The optimal values for these parameters are highly dependent on the specific problem and search landscape.

Troubleshooting Guides

Issue 1: Poor Convergence or Stagnating Solutions

Problem: Your algorithm converges to a sub-optimal solution too quickly or stops improving before a satisfactory result is found.

Potential Causes and Solutions:

- Cause: Ineffective Exploration/Exploitation Balance.

- Solution: Implement a parameter control strategy. A simple deterministic option starts with a higher mutation rate (promoting exploration) and gradually reduces it over generations (shifting to exploitation); adaptive methods instead adjust parameters using feedback from the search. The L-SHADE algorithm family is a prominent example that uses adaptive F and CR parameters [3].

- Cause: Fixed Parameters are Unsuitable for the Problem Landscape.

- Solution: If using tuning, conduct a broader parameter sweep. Research suggests that the parameter space for EAs is often "rife with viable parameters," so testing a wider range of values can reveal a better configuration [2]. Consider using a meta-genetic algorithm to automate the search for good parameter sets [2].

Issue 2: Excessive Computational Cost

Problem: The time or resources required to find a good solution are prohibitively high.

Potential Causes and Solutions:

- Cause: Expensive Fitness Evaluations.

- Solution: This is common in drug discovery, such as when using flexible protein-ligand docking. Employ an evolutionary algorithm (EA) designed for efficiency. For example, the REvoLd algorithm was specifically designed to search ultra-large chemical spaces with full flexibility by docking only a few thousand molecules instead of billions, using a smart evolutionary protocol [5].

- Cause: Poorly Tuned Population Size.

- Solution: Use a parameter control method that dynamically reduces the population size during the run. Algorithms like L-SHADE incorporate a linear population size reduction (LPSR), which starts with a larger population for exploration and shrinks it to focus computational resources later [3].
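A minimal sketch of a linear population size reduction schedule, assuming the budget is counted in fitness evaluations (nfe) and that the worst individuals are discarded whenever the target size drops. The function names, the default minimum size of 4, and the 180 → 4 example are illustrative assumptions, not values taken from [3].

```python
def lpsr_population_size(nfe, max_nfe, n_init, n_min=4):
    """Target population size after `nfe` of `max_nfe` fitness evaluations.

    The size falls linearly from n_init (at nfe = 0) to n_min (at nfe = max_nfe).
    """
    progress = min(max(nfe / max_nfe, 0.0), 1.0)  # clamp progress to [0, 1]
    return round(n_init + (n_min - n_init) * progress)


def shrink_population(population, fitness, new_size):
    """Keep the `new_size` best individuals (minimisation) and drop the rest."""
    order = sorted(range(len(population)), key=lambda i: fitness[i])[:new_size]
    return [population[i] for i in order], [fitness[i] for i in order]


# Example schedule: 180 individuals shrinking to 4 over 100,000 evaluations
for nfe in (0, 25_000, 50_000, 100_000):
    print(nfe, lpsr_population_size(nfe, 100_000, n_init=180))
```

Because the schedule depends only on the evaluation counter, LPSR is a deterministic control rule: it needs no feedback from the search, which makes it cheap to combine with adaptive F and CR mechanisms.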

Issue 3: Algorithm Performs Inconsistently Across Different Problems

Problem: Your carefully tuned EA works well on one problem but fails to generalize to others.

Potential Causes and Solutions:

- Cause: Over-tuning to a Specific Problem.

- Solution: Shift from parameter tuning to a general parameter control method. The core strength of control methods is their robustness and flexibility across different problems, as they adapt to the problem at hand rather than relying on a one-size-fits-all setting [1].

- Cause: High Sensitivity to Initial Parameter Settings.

- Solution: Adopt algorithms that reduce the number of sensitive parameters. Some modern L-SHADE-based variants aim to be less sensitive to initial settings by using sophisticated adaptation mechanisms for F and CR [3].

Experimental Protocols for Parameter Adjustment

Protocol 1: Baseline Establishment via Parameter Tuning

This protocol is designed to find a robust set of static parameters before moving to more advanced control methods.

1. Objective: Identify a fixed set of parameters (Population Size, Crossover Rate, Mutation Rate/Scaling Factor) that provides acceptable performance across a set of benchmark problems representative of your domain.

2. Materials/Reagents:

- Software: Your Evolutionary Algorithm implementation (e.g., in Python, C++, or a framework like EvoTorch [6]).

- Hardware: Standard computing resources.

- Data: A curated set of benchmark problems (e.g., from CEC competition suites [3] or domain-specific problems).

3. Methodology:

- Step 1: Define the parameter ranges you wish to test (e.g., population size from 50 to 500).

- Step 2: Choose a tuning method. A simple random search across the parameter space has been shown to be effective and is often sufficient [2].

- Step 3: For each parameter set, run your EA on all benchmark problems. Perform multiple independent runs to account for stochasticity.

- Step 4: Evaluate performance using metrics like final fitness, convergence speed, and consistency.

- Step 5: Select the parameter set that offers the best overall robust performance.

4. Analysis: The output is a single, fixed parameter set to be used for subsequent experiments or as a baseline for comparing against parameter control methods.
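Steps 1 to 5 can be sketched as follows, assuming a toy DE/rand/1/bin as the EA under test and two classic benchmark functions (sphere and Rastrigin) standing in for domain problems. The parameter ranges, budgets, and the rank-based aggregation across problems are illustrative choices, not recommendations from [2].

```python
import math
import random
import statistics


def sphere(x):
    return sum(v * v for v in x)


def rastrigin(x):
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)


def run_de(problem, dim, np_, cr, f, generations, seed, bound=5.12):
    """Minimal DE/rand/1/bin (minimisation); returns the best fitness found."""
    rng = random.Random(seed)
    pop = [[rng.uniform(-bound, bound) for _ in range(dim)] for _ in range(np_)]
    fit = [problem(x) for x in pop]
    for _ in range(generations):
        for i in range(np_):
            r1, r2, r3 = rng.sample([j for j in range(np_) if j != i], 3)
            j_rand = rng.randrange(dim)  # at least one gene comes from the mutant
            trial = [
                pop[r1][d] + f * (pop[r2][d] - pop[r3][d])
                if rng.random() < cr or d == j_rand else pop[i][d]
                for d in range(dim)
            ]
            trial = [min(max(v, -bound), bound) for v in trial]  # clip to bounds
            t_fit = problem(trial)
            if t_fit <= fit[i]:  # greedy one-to-one replacement
                pop[i], fit[i] = trial, t_fit
    return min(fit)


def random_search_tuning(problems, n_configs=8, runs=3, dim=5, generations=30, seed=0):
    """Sample static (NP, CR, F) settings at random and rank them across problems."""
    rng = random.Random(seed)
    configs = [
        {"np": rng.randint(10, 40), "cr": rng.uniform(0.1, 1.0), "f": rng.uniform(0.3, 1.0)}
        for _ in range(n_configs)
    ]
    mean_rank = [0.0] * n_configs
    for problem in problems:
        # Median of the best fitness over independent runs (one seed per run)
        medians = [
            statistics.median(
                run_de(problem, dim, c["np"], c["cr"], c["f"], generations, s)
                for s in range(runs)
            )
            for c in configs
        ]
        # Rank-based aggregation keeps one problem's fitness scale from dominating
        order = sorted(range(n_configs), key=lambda idx: medians[idx])
        for rank, k in enumerate(order):
            mean_rank[k] += rank / len(problems)
    best = min(range(n_configs), key=lambda k: mean_rank[k])
    return configs[best], mean_rank


best_config, ranks = random_search_tuning([sphere, rastrigin])
print("selected static parameters:", best_config)
```

Using the median over seeds and averaging ranks rather than raw fitness is one way to reward the "best overall robust performance" asked for in Step 5; other robust statistics would work too.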

Protocol 2: Implementing a Simple Adaptive Parameter Control

This protocol outlines how to implement a basic adaptive mechanism for a mutation parameter.

1. Objective: Dynamically adjust the mutation scale F during a run to improve convergence and final solution quality.

2. Materials/Reagents:

- Software: An EA implementation that allows for modification of parameters during the run.

- Hardware: Standard computing resources.

- Data: Your target optimization problem.

3. Methodology:

- Step 1: Initialize a memory for successful F values, M_F, as an empty list.

- Step 2: For each generation, for each individual, generate its F value from a Cauchy distribution with a location parameter based on the mean of M_F and a fixed scale parameter (e.g., 0.1 or a modified value as suggested in recent research [3]).

- Step 3: Run the variation and selection steps of your EA.

- Step 4: After selection, for each successful individual (whose offspring was selected for the next generation), record its F value into M_F.

- Step 5: Periodically update the location parameter for the Cauchy distribution based on the contents of M_F (e.g., using a Lehmer mean).

4. Analysis: Compare the convergence curve and final result against the baseline from Protocol 1. A successful implementation should show more robust convergence and often a better final result.
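Steps 1, 2, 4, and 5 of this protocol can be sketched as a small helper object. Assumptions not stated in the protocol: the location starts at 0.5 while M_F is empty, the scale is fixed at 0.1, draws with F ≤ 0 are redrawn and draws above 1 are capped at 1 (a convention used in JADE/SHADE-style algorithms), and M_F is cleared after each update. The class name is hypothetical.

```python
import math
import random


class CauchyFAdapter:
    """Success-based adaptation of the DE scaling factor F (hypothetical helper)."""

    def __init__(self, init_location=0.5, scale=0.1, seed=None):
        self.location = init_location  # Cauchy location, used until the first update
        self.scale = scale             # fixed Cauchy scale parameter
        self.m_f = []                  # Step 1: memory of successful F values
        self.rng = random.Random(seed)

    def sample(self):
        """Step 2: draw F ~ Cauchy(location, scale); redraw if F <= 0, cap at 1."""
        while True:
            u = self.rng.random()
            f = self.location + self.scale * math.tan(math.pi * (u - 0.5))
            if f > 0.0:
                return min(f, 1.0)

    def record_success(self, f):
        """Step 4: store the F of an individual whose offspring survived selection."""
        self.m_f.append(f)

    def update(self):
        """Step 5: move the location to the Lehmer mean sum(F^2) / sum(F) of M_F."""
        if self.m_f:
            self.location = sum(f * f for f in self.m_f) / sum(self.m_f)
            self.m_f.clear()  # start collecting afresh for the next period
        return self.location


# Usage inside a DE loop (Step 3, variation and selection, is left to your EA):
adapter = CauchyFAdapter(seed=42)
f_values = [adapter.sample() for _ in range(20)]  # one F per individual
# ... suppose the offspring produced with f_values[3] and f_values[7] survived ...
adapter.record_success(f_values[3])
adapter.record_success(f_values[7])
print(adapter.update())
```

The Lehmer mean weights larger successful F values more heavily than the arithmetic mean would, which helps keep the location from drifting towards very small, overly conservative F values.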

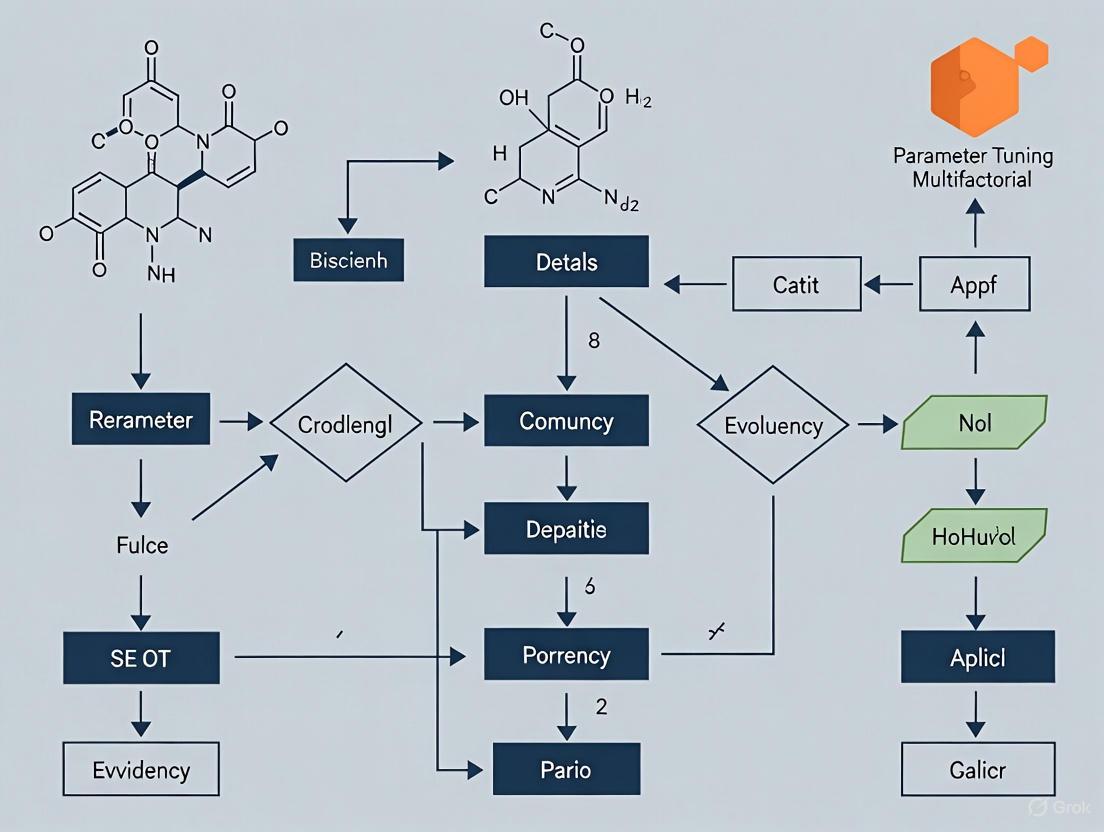

Parameter Adjustment: A Visual Workflow

The following diagram illustrates the fundamental difference between the parameter tuning and parameter control processes.

Research Reagent Solutions

The table below lists key algorithmic components and their functions, analogous to research reagents in a wet-lab environment.

| Research Reagent | Function in Parameter Adjustment |
|---|---|
| Meta-Genetic Algorithm [2] | An automated "reagent" for parameter tuning. It is a GA used to optimize the parameters of another GA, removing manual effort. |
| Success-History Based Adaptation [3] | A core "reagent" for parameter control. It maintains a memory of successful parameter values (F, CR) from previous generations and uses them to generate new values. |
| Linear Population Size Reduction (LPSR) [3] | A deterministic control "reagent" for the population size parameter. It linearly decreases the population to shift focus from exploration to exploitation. |
| Cauchy and Normal Distributions [3] | Mathematical "reagents" used to generate new values for parameters like F and CR in adaptive DE algorithms. Their scale parameters are critical for performance. |
| Decision Variable Scoring [7] | A specialized "reagent" for sparse optimization problems. It calculates and updates scores for each variable to guide crossover/mutation, improving sparsity. |

The Multifactorial Evolutionary Algorithm (MFEA) Framework and Its Unique Parameter Challenges

Frequently Asked Questions (FAQs)

Q1: What is the primary parameter challenge when first applying MFEA to a new set of problems?

The most immediate challenge is setting the Random Mating Probability (rmp). This single parameter controls the frequency of knowledge transfer between different optimization tasks. Without prior knowledge of how related your tasks are, setting an inappropriate rmp value can lead to negative transfer, where cross-task interference degrades performance rather than improving it [8].

Q2: Our multi-task optimization is suffering from performance degradation. How can we determine if negative transfer is the cause?

Performance degradation across tasks, or in one task while another improves, often signals negative transfer. This frequently occurs when the rmp value is too high for tasks that are not sufficiently related, forcing unproductive genetic exchange. To diagnose this, you can run a sensitivity analysis by testing a range of rmp values and observing the performance impact on each task [8].

Q3: Are there advanced algorithms that mitigate the rigidness of a fixed rmp parameter?

Yes, next-generation algorithms have been developed to dynamically manage knowledge transfer. MFEA-II introduces an adaptive rmp matrix that captures non-uniform synergies between different task-pairs, updating these values online during the search process. Other algorithms, like EMT-ADT, use a decision tree to predict an individual solution's "transfer ability" before allowing it to cross between tasks, thereby promoting positive transfer [8] [9].

Q4: For a researcher new to MFEA, what is a robust initial parameter set to begin experiments?

While the optimal parameters are problem-dependent, a viable starting point can be found. Research suggests that the parameter space for evolutionary algorithms often contains many viable configurations [2]. The following table summarizes commonly used parameters from literature that can serve as an initial setup.

Table: Foundational MFEA Parameters and Common Settings

| Parameter | Common Setting / Range | Function |
|---|---|---|
| Random Mating Probability (rmp) | 0.3 (or uses an adaptive matrix) [8] [9] | Controls cross-task crossover rate. |
| Population Size | Problem-dependent [2] | Number of individuals per generation. |
| Crossover Rate | Insensitive in some studies [2] | Controls intra-task gene recombination. |
| Mutation Rate | Can be highly sensitive [2] | Introduces new genetic material. |

Q5: Beyond parameter tuning, what other factors critically impact MFEA success?

Two factors are paramount: First, the relatedness of the tasks. MFEA thrives when tasks can share beneficial genetic building blocks. Second, a unified search space representation. All tasks must be encoded in a common format for the algorithm to operate, which can be a significant design challenge [8].
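For continuous tasks, one common way to build the unified search space is the random-key style encoding of the original MFEA: every individual is a vector in [0, 1]^D, where D is the largest task dimensionality, and it is decoded for a given task by linearly scaling its first D_k genes into that task's bounds. The sketch below assumes box-bounded continuous tasks; the `Task` class and the example bounds are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Task:
    name: str
    lower: Sequence[float]  # per-variable lower bounds of this task
    upper: Sequence[float]  # per-variable upper bounds of this task

    @property
    def dim(self) -> int:
        return len(self.lower)


def unified_dimension(tasks: Sequence[Task]) -> int:
    """Length of the shared chromosome: the largest task dimensionality."""
    return max(t.dim for t in tasks)


def decode(y: Sequence[float], task: Task) -> List[float]:
    """Map a unified [0, 1]^D chromosome into one task's own search space.

    Only the first task.dim genes are used; each is scaled linearly into
    [lower, upper] for the corresponding variable.
    """
    return [lo + (hi - lo) * g for g, lo, hi in zip(y[: task.dim], task.lower, task.upper)]


tasks = [Task("A", [-5.0] * 3, [5.0] * 3), Task("B", [0.0, 0.0], [10.0, 1.0])]
y = [0.5, 0.25, 1.0]  # one individual in the unified space (D = 3)
print(decode(y, tasks[0]), decode(y, tasks[1]))
```

For discrete representations such as fragment choices or molecular graphs, this linear decoding does not apply directly, and the mapping has to be designed for the problem at hand.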

Troubleshooting Guides

Issue 1: Negative Knowledge Transfer Between Tasks

Symptoms: Optimization performance for one or more tasks is worse in the multi-task environment than when solved independently.

Solution Protocol:

- Diagnosis: Conduct a per-task performance audit. Run each task independently with a simple optimizer (e.g., GA or PSO) and compare the results with the MFEA output [9].

- Apply an Adaptive Strategy: Implement an algorithm like MFEA-II that replaces the fixed rmp with an adaptive matrix. This allows the algorithm to automatically learn and exploit the specific relationships between each pair of tasks during the run [8] [9].

- Experimental Validation: After implementing the adaptive strategy, re-run the per-task performance audit to confirm that the performance degradation has been alleviated.

Issue 2: Inefficient Search and Slow Convergence

Symptoms: The algorithm requires excessive time to find satisfactory solutions, or it stalls prematurely.

Solution Protocol:

- Refine the Search Engine: The core search operator in MFEA (e.g., crossover and mutation) can be enhanced. Consider integrating more powerful algorithms like Success-History Based Adaptive Differential Evolution (SHADE) as the underlying search engine to improve efficiency and solution precision [8].

- Implement a Selective Transfer Strategy: Use a strategy like EMT-ADT to intelligently control knowledge transfer.

- Step 1: Define a metric to quantify the "transfer ability" of an individual solution.

- Step 2: Train a decision tree model online to predict which individuals are likely to result in positive transfer.

- Step 3: Allow only high-potential individuals to cross between tasks, conserving evolutionary resources [8].

- Benchmarking: Compare the convergence speed and final solution quality against the basic MFEA to quantify the improvement.

Table: Comparison of Advanced MFEA Variants for Troubleshooting

| Algorithm Variant | Core Mechanism | Best for Solving |
|---|---|---|
| MFEA-II [8] [9] | Online learning of an RMP matrix | Negative transfer due to fixed, inappropriate rmp |
| EMT-ADT [8] | Decision tree-based individual selection | Unproductive cross-talk wasting resources |
| EMTO-HKT [8] | Hybrid knowledge transfer (individual & population level) | Complex tasks with varying relatedness levels |

Experimental Protocols for Parameter Tuning

Protocol 1: Benchmarking Multi-Task Performance with MFEA-II

This protocol uses the online transfer parameter estimation in MFEA-II to address negative transfer [8] [9].

- Problem Formulation: Define your set of K distinct optimization tasks. For reliability engineering, these could be different Reliability Redundancy Allocation Problems (RRAPs) [9].

- Algorithm Setup: Configure the MFEA-II framework. Encode the solution structures of all K problems into a unified chromosome representation.

- Integration: Implement the adaptive rmp matrix, which is re-estimated online from the data generated during the run instead of being fixed in advance.

- Execution & Analysis: Run the algorithm. Evaluate performance based on the average best reliability (or other relevant metric) across tasks and the total computation time. Compare these results against those obtained from a basic MFEA and single-task optimizers like GA and PSO [9].
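The idea of a pairwise rmp matrix that changes during the run can be illustrated with a deliberately simplified stand-in. The sketch below nudges each off-diagonal rmp value towards the observed survival rate of cross-task offspring. This is a hypothetical heuristic (the class name, learning rate, and bounds are assumptions), not the probabilistic-model-based estimator that MFEA-II actually uses, so treat it as a teaching aid or a baseline rather than a reimplementation.

```python
import random


class SuccessBasedRmpMatrix:
    """Simplified pairwise rmp matrix driven by cross-task transfer success.

    NOTE: a heuristic stand-in, not MFEA-II's model-based rmp estimation.
    """

    def __init__(self, n_tasks, init_rmp=0.3, lr=0.1, rmp_min=0.05, rmp_max=0.95):
        self.rmp = [[1.0 if i == j else init_rmp for j in range(n_tasks)] for i in range(n_tasks)]
        self.lr, self.rmp_min, self.rmp_max = lr, rmp_min, rmp_max
        self.attempts = [[0] * n_tasks for _ in range(n_tasks)]
        self.successes = [[0] * n_tasks for _ in range(n_tasks)]

    def allow_transfer(self, i, j, rng=random):
        """Decide whether parents from tasks i and j may mate across tasks."""
        return i == j or rng.random() < self.rmp[i][j]

    def record(self, i, j, success):
        """Log one cross-task offspring; success = it survived into the next generation."""
        self.attempts[i][j] += 1
        self.attempts[j][i] += 1
        if success:
            self.successes[i][j] += 1
            self.successes[j][i] += 1

    def update(self):
        """Move each rmp[i][j] towards its observed success rate, then reset the counts."""
        n = len(self.rmp)
        for i in range(n):
            for j in range(n):
                if i != j and self.attempts[i][j] > 0:
                    rate = self.successes[i][j] / self.attempts[i][j]
                    new = (1 - self.lr) * self.rmp[i][j] + self.lr * rate
                    self.rmp[i][j] = min(max(new, self.rmp_min), self.rmp_max)
                    self.attempts[i][j] = self.successes[i][j] = 0
```

Clamping rmp to [rmp_min, rmp_max] keeps a small amount of transfer alive between every task pair, so a pair that looked unrelated early on can still be re-evaluated later in the run.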

Protocol 2: Testing Individual Transfer Ability with EMT-ADT

This protocol uses the EMT-ADT algorithm to screen individuals for productive knowledge transfer [8].

- Define Transfer Ability: Establish a quantitative metric to evaluate how much useful knowledge a candidate solution from one task contains for another task. This metric is the foundation for supervised learning.

- Model Training: During the evolutionary process, use the defined metric to label individuals. Construct a decision tree model (using the Gini coefficient for splitting) to predict the transfer ability of new individuals.

- Selective Mating: During the assortative mating phase, use the trained decision tree to identify promising individuals with high predicted transfer ability. Only these individuals are permitted to be used for cross-task crossover.

- Validation: Verify the effectiveness of the strategy by comparing the frequency of positive transfers and the final solution quality against a standard MFEA run.
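EMT-ADT trains a full decision tree; the sketch below keeps only the core idea, a Gini-impurity split search, as a one-split tree (a decision stump) used to screen candidates before cross-task crossover. The features (for example a candidate's normalized fitness on its own task and its similarity to the target task's elite) and the labels are hypothetical; defining and labeling "transfer ability" is the problem-specific step described above.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 1 - sum_k p_k^2 over the class proportions p_k."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def majority(labels, default=0):
    return Counter(labels).most_common(1)[0][0] if labels else default


def fit_stump(X, y):
    """One-split tree: choose the (feature, threshold) with the lowest weighted
    Gini impurity. Returns (feature, threshold, left_label, right_label)."""
    n, best = len(y), None
    for f in range(len(X[0])):
        for t in sorted({row[f] for row in X}):
            left = [lab for row, lab in zip(X, y) if row[f] <= t]
            right = [lab for row, lab in zip(X, y) if row[f] > t]
            score = (len(left) * gini(left) + len(right) * gini(right)) / n
            if best is None or score < best[0]:
                best = (score, f, t, majority(left), majority(right))
    return best[1:]


def predict(stump, x):
    f, t, left_label, right_label = stump
    return left_label if x[f] <= t else right_label


# Hypothetical labelled history: 1 = transfer was positive, 0 = it was not
X = [[0.9, 0.1], [0.8, 0.2], [0.2, 0.7], [0.1, 0.9]]
y = [1, 1, 0, 0]
stump = fit_stump(X, y)
candidates = [[0.85, 0.15], [0.15, 0.8]]
allowed = [x for x in candidates if predict(stump, x) == 1]  # only these may cross tasks
print(stump, allowed)
```

A deeper tree would apply the same split search recursively to each side until a depth or purity limit is reached.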

The Scientist's Toolkit: Essential Research Reagents

Table: Key Computational Tools for MFEA Research

| Tool / Component | Function in the MFEA Context | Application Note |
|---|---|---|
| Unified Search Space | A common encoding that represents solutions for all tasks. | Critical for crossover; design is non-trivial and problem-specific [8]. |
| Adaptive RMP Matrix | Replaces the scalar rmp to capture pair-wise task relationships. | Core component of MFEA-II; mitigates negative transfer [8] [9]. |
| Decision Tree Predictor | A supervised learning model to filter individuals for cross-task transfer. | Key to the EMT-ADT algorithm; improves transfer quality [8]. |
| SHADE Search Engine | A powerful differential evolution variant used as the core search operator. | Can be integrated into the MFEA paradigm to enhance search efficiency [8]. |
| Benchmark Problem Sets | Standardized multi-task problems for algorithm validation. | Examples include CEC2017 MFO benchmarks and reliability problems like series-system or bridge-system RRAPs [8] [9]. |

MFEA Workflow and Knowledge Transfer Logic

The MFEA framework consolidates multiple optimization tasks into a unified population. Each individual's Skill Factor is the task on which it performs best, that is, the task where it holds its best (lowest) factorial rank. The crucial Assortative Mating step, governed by the rmp parameter, determines when individuals assigned to different tasks may cross over, enabling knowledge transfer. Repeated evaluation and selection refine the population over generations, producing optimized solutions for all tasks simultaneously.
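The skill factor, scalar fitness, and assortative mating logic can be sketched as follows, following the rules of the original MFEA: rank every individual on every task, take the best-ranked task as the skill factor and 1 / (best rank) as the scalar fitness, and cross over only when the parents share a skill factor or a random draw falls below rmp, otherwise mutate each parent. The uniform crossover and Gaussian mutation are simple stand-ins, not the operators used in the MFEA paper, and all function names are hypothetical.

```python
import random


def factorial_ranks(costs):
    """costs[i][k] is individual i's cost on task k (minimisation; use
    float('inf') for tasks an offspring was not evaluated on).
    Returns ranks[i][k], the 1-based rank of individual i on task k."""
    n, n_tasks = len(costs), len(costs[0])
    ranks = [[0] * n_tasks for _ in range(n)]
    for k in range(n_tasks):
        for r, i in enumerate(sorted(range(n), key=lambda idx: costs[idx][k]), start=1):
            ranks[i][k] = r
    return ranks


def skill_factor_and_scalar_fitness(costs):
    """Skill factor = task with the lowest rank (ties go to the lower task index
    in this sketch); scalar fitness = 1 / that rank."""
    ranks = factorial_ranks(costs)
    skill = [min(range(len(r)), key=lambda k: r[k]) for r in ranks]
    scalar = [1.0 / min(r) for r in ranks]
    return skill, scalar


def uniform_crossover(a, b, rng):
    """Stand-in crossover: swap each gene with probability 0.5."""
    mask = [rng.random() < 0.5 for _ in a]
    child1 = [x if m else y for x, y, m in zip(a, b, mask)]
    child2 = [y if m else x for x, y, m in zip(a, b, mask)]
    return child1, child2


def gaussian_mutation(a, rng, sigma=0.1):
    """Stand-in mutation in the unified [0, 1] space."""
    return [min(max(g + rng.gauss(0.0, sigma), 0.0), 1.0) for g in a]


def assortative_mating(pa, pb, skill_a, skill_b, rmp, rng,
                       crossover=uniform_crossover, mutate=gaussian_mutation):
    """Cross over if the parents share a skill factor or with probability rmp;
    otherwise mutate each parent. Returns [(child, skill_factor), ...]."""
    if skill_a == skill_b or rng.random() < rmp:
        c1, c2 = crossover(pa, pb, rng)
        # Vertical cultural transmission: each child imitates a random parent's task
        return [(c1, rng.choice((skill_a, skill_b))), (c2, rng.choice((skill_a, skill_b)))]
    # No cross-task mating: each child keeps its own parent's task
    return [(mutate(pa, rng), skill_a), (mutate(pb, rng), skill_b)]
```

Each child is then evaluated only on the task given by its inherited skill factor, which is where MFEA saves evaluations compared with solving every task on every individual.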

Troubleshooting Guide: Common Parameter Control Issues

Problem 1: Algorithm Performance Stagnates Mid-Run

- Symptoms: Good initial progress slows or halts after a number of generations; algorithm appears to stop exploring.

- Diagnosis: Static parameters may be favoring exploration early on but are hindering necessary exploitation later in the search.

- Solution: Implement an adaptive parameter control method. Use feedback from the search, such as population diversity metrics or recent improvement rates, to dynamically adjust parameters like mutation rates. If population diversity falls below a threshold, increase the mutation rate to encourage exploration [10].
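A minimal sketch of this feedback loop, assuming diversity is measured as the mean Euclidean distance to the population centroid and that the mutation rate is adjusted multiplicatively. The threshold, multipliers, and clamping range are hypothetical and would need calibrating per problem; [10] does not prescribe them.

```python
import math


def mean_distance_to_centroid(population):
    """Diversity: mean Euclidean distance of individuals to the population centroid."""
    n, dim = len(population), len(population[0])
    centroid = [sum(ind[d] for ind in population) / n for d in range(dim)]
    return sum(math.dist(ind, centroid) for ind in population) / n


def adapt_mutation_rate(rate, diversity, threshold, up=1.5, down=0.9,
                        rate_min=0.001, rate_max=0.5):
    """Raise the rate when diversity falls below threshold; otherwise let it decay."""
    new_rate = rate * up if diversity < threshold else rate * down
    return min(max(new_rate, rate_min), rate_max)


# Called once per generation:
population = [[1.0, 1.0], [1.0, 1.01], [0.99, 1.0]]  # a nearly collapsed population
rate = adapt_mutation_rate(0.01, mean_distance_to_centroid(population), threshold=0.5)
print(rate)
```

Letting the rate decay while diversity is healthy means the boost is temporary: once the population spreads out again, the algorithm drifts back towards exploitation.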

Problem 2: Infeasible Solutions Dominate in Constrained Optimization

- Symptoms: The population converges on solutions that violate problem constraints; the algorithm struggles to find a feasible region.

- Diagnosis: The penalty weight for constraint violations in the fitness function is incorrectly balanced.

- Solution: Use a deterministic or adaptive schedule for the penalty weight. A deterministic approach would increase the penalty weight over time according to a pre-set rule (e.g., W(t) = (C * t)^σ). An adaptive method would adjust the weight based on the feasibility of recent best solutions [10].
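Both schedules can be sketched in a few lines. The parameter `exponent` plays the role of σ in W(t) = (C * t)^σ (renamed here to avoid confusion with the mutation step size σ), and the adaptive rule assumes a sliding window of k generations recording whether the best individual was feasible. The default values of C, the exponent, beta, and gamma are illustrative assumptions.

```python
def deterministic_penalty_weight(t, c=0.5, exponent=2.0):
    """W(t) = (C * t)^exponent: the weight grows with the generation counter t."""
    return (c * t) ** exponent


def adaptive_penalty_weight(w, recent_best_feasible, beta=0.7, gamma=1.2):
    """Rescale W from the feasibility of the best individual over the last k generations.

    recent_best_feasible holds one boolean per generation in the window.
    beta < 1 relaxes the penalty if the best stayed feasible throughout;
    gamma > 1 tightens it if the best stayed infeasible throughout;
    a mixed history leaves W unchanged.
    """
    if all(recent_best_feasible):
        return beta * w
    if not any(recent_best_feasible):
        return gamma * w
    return w


def penalised_fitness(objective, total_violation, w):
    """Fitness to minimise: objective plus the weighted constraint violation."""
    return objective + w * total_violation
```

Relaxing the weight while the best solutions stay feasible lets the search probe the boundary of the feasible region, where constrained optima often lie.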

Problem 3: High Computational Cost of Parameter Tuning

- Symptoms: Excessive time is spent testing different parameter values before the main run, with no guarantee that these settings will remain optimal.

- Diagnosis: Reliance on parameter tuning (offline adjustment) instead of parameter control (online adjustment).

- Solution: Transition to a self-adaptive parameter control framework. Encode parameters like mutation step sizes directly into the chromosome (e.g., (x1, …, xn, σ1, …, σn)). This allows the algorithm to evolve the best parameters for different stages of the search automatically, reducing pre-processing overhead [10] [11].
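A chromosome of the form (x1, …, xn, σ1, …, σn) can be mutated with the standard uncorrelated self-adaptive scheme from evolution strategies: the step sizes are mutated log-normally first, and the new step sizes then perturb the solution variables. The learning rates τ' = 1/sqrt(2n) and τ = 1/sqrt(2·sqrt(n)) and the lower bound eps0 are commonly recommended defaults, used here as assumptions.

```python
import math
import random


def self_adaptive_mutation(x, sigmas, rng=random, eps0=1e-8):
    """Uncorrelated self-adaptive mutation with one step size per variable.

    Returns (new_x, new_sigmas). Only new_x should be passed to the fitness
    function; the sigmas are selected indirectly through the x they produce.
    """
    n = len(x)
    tau_prime = 1.0 / math.sqrt(2.0 * n)       # global learning rate
    tau = 1.0 / math.sqrt(2.0 * math.sqrt(n))  # coordinate-wise learning rate
    common = tau_prime * rng.gauss(0.0, 1.0)   # one draw shared by all step sizes
    new_sigmas = [max(s * math.exp(common + tau * rng.gauss(0.0, 1.0)), eps0) for s in sigmas]
    new_x = [xi + si * rng.gauss(0.0, 1.0) for xi, si in zip(x, new_sigmas)]
    return new_x, new_sigmas


child_x, child_sigmas = self_adaptive_mutation([0.0] * 4, [0.5] * 4, random.Random(1))
print(child_x, child_sigmas)
```

Mutating the step sizes before the variables matters: the offspring's fitness then reflects the step sizes it actually carries, so selection can favor good σ values.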

Problem 4: Parameter Settings Are Problem-Specific

- Symptoms: Parameters tuned for one problem class perform poorly on a new, slightly different problem.

- Diagnosis: The parameter configuration is too specialized and lacks generality.

- Solution: Employ a general parameter control method. These methods are designed to be applicable across various algorithms, parameters, and problems, reducing the need for re-tuning and leveraging insights from broader search landscapes [1].

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between parameter tuning and parameter control?

- Answer: Parameter Tuning involves finding good parameter values before the main run through extensive preliminary testing; these values remain static throughout the evolutionary process. Parameter Control adjusts parameters on-the-fly during the execution of the algorithm, allowing it to dynamically respond to the state of the search [1].

FAQ 2: When should I choose a deterministic method over a self-adaptive one?

- Answer: Choose a deterministic method when you have strong prior knowledge about how the search should progress over time and prefer predictable, user-defined control. Choose a self-adaptive method when you lack this domain knowledge and want to delegate the parameter adjustment task to the evolutionary process itself, accepting less predictability for potentially greater autonomy [10].

FAQ 3: How does self-adaptation avoid "cheating" when parameters are part of the chromosome?

- Answer: In self-adaptation, an individual's encoded parameters (like a mutation step size, σ) influence how it is mutated, but its fitness is evaluated based only on the solution variables (x). An individual with a "good" σ that leads to better solutions will be selected for, thereby propagating good parameter values. This is different from encoding a penalty weight, which would directly alter the fitness evaluation and could be gamed [10].

FAQ 4: Can parameter control handle categorical parameters, like choosing between different operators?

- Answer: The focus of general parameter control is typically on numerical parameters. Methods that dynamically change the inner logic of operators (categorical parameters) are often considered under the separate category of "operator selection" [1].

Quantitative Data on Parameter Control Methods

Table 1: Classification and Characteristics of Common Parameter Control Methods

| Control Method | What is Controlled | How it's Controlled | Evidence for Change | Scope of Change |
|---|---|---|---|---|
| σ(t) = 1 - 0.9*t/T [10] | Mutation Step Size | Deterministic | Time / Generation Count | Entire Population |
| σ' = σ/c, if p_s > 1/5 [10] | Mutation Step Size | Adaptive | Successful Mutation Rate | Entire Population |
| (x1, …, xn, σ) [10] | Mutation Step Size | Self-adaptive | (Implicitly by Fitness) | Individual |
| W(t) = (C*t)^σ [10] | Penalty Weight | Deterministic | Time / Generation Count | Entire Population |
| W' = β*W, if champions feasible [10] | Penalty Weight | Adaptive | Constraint Satisfaction History | Entire Population |
| Adaptive Cauchy-based F & CR [11] | Scaling & Crossover Rate | Self-adaptive | Success-based Average & Cauchy Distribution | Individual |
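The two mutation-step-size rows controlled at population level translate directly into code. A minimal sketch, assuming p_s is the fraction of successful mutations measured over a recent window and using c = 0.9 as an illustrative value (values in the range 0.817 ≤ c ≤ 1 are commonly quoted for the 1/5 success rule).

```python
def deterministic_sigma(t, T):
    """sigma(t) = 1 - 0.9 * t / T: decays from 1.0 at t = 0 to 0.1 at t = T."""
    return 1.0 - 0.9 * t / T


def one_fifth_rule(sigma, success_rate, c=0.9):
    """Rechenberg's 1/5 success rule.

    More than 1/5 of mutations succeed -> enlarge steps (sigma / c);
    fewer than 1/5 succeed -> shrink them (sigma * c); exactly 1/5 -> keep sigma.
    """
    if success_rate > 0.2:
        return sigma / c
    if success_rate < 0.2:
        return sigma * c
    return sigma
```

The contrast is the one drawn in the table: the deterministic rule looks only at the generation counter, whereas the 1/5 rule reacts to evidence from the search (the observed success rate).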

Table 2: Experimental Parameters from a Self-Adaptive Differential Evolution (DESAP) Study [11]

| Parameter | Role in Algorithm | Self-Adaptation Method |
|---|---|---|
| Scaling Factor (F) | Amplifies difference vectors during mutation. | Encoded in each individual; updated based on successful values. |
| Crossover Rate (CR) | Controls gene mixing between target and mutant vectors. | Encoded in each individual; updated based on successful values. |
| Population Size (NP) | Number of individuals in the population. | Encoded in each individual; evolves to balance exploration/exploitation. |

Experimental Protocol: Implementing an Adaptive Cauchy Differential Evolution

This protocol details the methodology for implementing a state-of-the-art adaptive parameter control algorithm as described in research on constrained optimization [11].

Objective: To solve a constrained optimization problem by dynamically controlling the scaling factor (F) and crossover rate (CR) using a success-based Cauchy distribution.

Materials/Software Requirements: A programming environment (e.g., Python, MATLAB, C++) and a defined optimization problem with constraints.

Step-by-Step Procedure:

- Initialization:

  - Set the initial population size (NP) and randomly initialize the population of NP individuals within the parameter bounds.

  - Initialize each individual i with its own control parameters F_i and CR_i (common starting values are F_i = 0.5 and CR_i = 0.9).

- Main Generational Loop (repeat until the termination criteria are met):

  - Mutation: For each target vector X_i,G, generate a mutant vector V_i,G using a strategy like DE/rand/1: V_i,G = X_r1,G + F_i * (X_r2,G - X_r3,G), where r1, r2, r3 are mutually distinct random indices, all different from i [11].

  - Crossover: Generate a trial vector U_i,G by mixing the target and mutant vectors based on the individual's CR_i.

  - Selection: Compare the fitness of the trial vector U_i,G to its target vector X_i,G. If the trial vector is better or equal, it survives to the next generation and is considered a "successfully evolved individual."

  - Parameter Adaptation:

    - Calculate the average F and CR values from all successfully evolved individuals in this generation.

    - For each individual in the new generation, generate new F_i and CR_i values by drawing from a Cauchy distribution whose location (peak) is the corresponding average from the successful individuals. Because the Cauchy distribution has heavy tails, most new values land near the average while occasional ones take a large step away from it, balancing convergence and exploration [11].

- Termination: Upon meeting a stopping condition (e.g., maximum generations, convergence), output the best solution found.
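The procedure above can be sketched end to end as follows. Several details are assumptions rather than statements from [11]: constraints are folded into the fitness with a static penalty on a hypothetical test problem, newly drawn F values are clipped to [0.05, 1] and CR values to [0, 1], the Cauchy scale is fixed at 0.1, and the parameters are left unchanged in a generation where no trial vector succeeds.

```python
import math
import random


def cauchy(rng, loc, scale):
    """Draw from Cauchy(loc, scale) by inverting its CDF."""
    return loc + scale * math.tan(math.pi * (rng.random() - 0.5))


def clip(v, lo, hi):
    return min(max(v, lo), hi)


def adaptive_cauchy_de(fitness, bounds, np_=20, generations=200, scale=0.1, seed=0):
    """DE/rand/1/bin with per-individual F_i and CR_i re-drawn each generation from
    Cauchy distributions centred on the mean F and CR of successful individuals."""
    rng = random.Random(seed)
    dim = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(np_)]
    fit = [fitness(x) for x in pop]
    F, CR = [0.5] * np_, [0.9] * np_  # common starting values
    for _ in range(generations):
        good_F, good_CR = [], []
        for i in range(np_):
            r1, r2, r3 = rng.sample([j for j in range(np_) if j != i], 3)
            j_rand = rng.randrange(dim)
            trial = []
            for d, (lo, hi) in enumerate(bounds):
                if rng.random() < CR[i] or d == j_rand:  # binomial crossover
                    v = pop[r1][d] + F[i] * (pop[r2][d] - pop[r3][d])  # DE/rand/1 gene
                else:
                    v = pop[i][d]
                trial.append(clip(v, lo, hi))
            t_fit = fitness(trial)
            if t_fit <= fit[i]:  # better or equal: a successfully evolved individual
                pop[i], fit[i] = trial, t_fit
                good_F.append(F[i])
                good_CR.append(CR[i])
        if good_F:  # if nothing succeeded, keep the current parameters
            mean_F = sum(good_F) / len(good_F)
            mean_CR = sum(good_CR) / len(good_CR)
            F = [clip(cauchy(rng, mean_F, scale), 0.05, 1.0) for _ in range(np_)]
            CR = [clip(cauchy(rng, mean_CR, scale), 0.0, 1.0) for _ in range(np_)]
    best = min(range(np_), key=lambda k: fit[k])
    return pop[best], fit[best]


def constrained_sphere(x):
    """Hypothetical test: minimise sum(x^2) subject to x[0] + x[1] >= 1 (static penalty)."""
    violation = max(0.0, 1.0 - x[0] - x[1])
    return sum(v * v for v in x) + 1e3 * violation


best_x, best_f = adaptive_cauchy_de(constrained_sphere, [(-5.0, 5.0)] * 3)
print([round(v, 3) for v in best_x], round(best_f, 4))
```

For this toy problem the constrained optimum is x = (0.5, 0.5, 0) with fitness 0.5, which gives a quick sanity check; a real study would replace the static penalty with the constraint handling technique used in [11].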

Visualizing Parameter Control Taxonomies and Workflows

Parameter Control Classification

Adaptive Parameter Control Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a Parameter Control Research Framework

| Tool / Component | Function / Role in Research | Example Implementation |
|---|---|---|
| Benchmark Problem Suites | Provides standardized, diverse test functions for fair comparison and robustness evaluation of new algorithms. | CEC (Congress on Evolutionary Computation) constrained problem sets [12]. |
| Performance Metrics | Quantifies algorithm performance beyond simple "best fitness," enabling rigorous comparison. | Success rate, convergence speed, computational overhead, and performance measures for constrained problems [12]. |
| Long-Tail Probability Distributions | Used in parameter adaptation rules to generate large steps, helping the algorithm escape local optima. | The Cauchy distribution, used to generate new parameter values that can be far from the current successful average [11]. |
| Feedback Monitors | Tracks search progress in real-time, providing the data needed for adaptive control mechanisms. | Monitors for population diversity, recent improvement rates, and feasibility rates of best solutions [10]. |

The Exploration vs. Exploitation Trade-off in a Multi-Task Context

Frequently Asked Questions (FAQs)

Q1: What is the exploration-exploitation dilemma in the context of evolutionary algorithms? The exploration-exploitation dilemma describes the challenge of balancing two opposing strategies: exploitation, which involves selecting the best-known options based on current knowledge, and exploration, which involves testing new options that might lead to better future outcomes at the expense of short-term gains. Finding the optimal balance is crucial for maximizing long-term performance in decision-making problems like parameter tuning in evolutionary algorithms [13].

Q2: Why is maintaining a balance between exploration and exploitation particularly challenging in a multi-task environment? In a multi-task context, the algorithm must manage this trade-off across different tasks or fitness landscapes simultaneously. Over-exploiting one task can lead to premature convergence on that task while neglecting others, whereas excessive exploration can prevent all tasks from converging to satisfactory solutions efficiently. The optimal balance may also differ from one task to another.

Q3: What are some common symptoms of poor exploration in my algorithm? Common symptoms include:

- Premature Convergence: The population gets stuck in a local optimum early in the run, with a lack of genetic diversity.

- Loss of Niche Representation in Multi-Task Scenarios: The population becomes dominated by individuals optimized for a single task, failing to maintain good solutions for other tasks.

- Inability to Escape Local Optima: The algorithm cannot find better solutions even after many generations.

Q4: What are some common symptoms of poor exploitation? Common symptoms include:

- Slow or No Convergence: The population's average fitness improves very slowly or not at all, resembling a random walk.

- Large Performance Fluctuations: The best-found solution changes frequently without stabilizing.

- Failure to Refine Good Solutions: The algorithm discovers promising regions of the search space but cannot fine-tune the solutions to achieve high performance.

Q5: How can I use the REvoLd protocol to improve sampling in ultra-large search spaces? The REvoLd (RosettaEvolutionaryLigand) protocol is an evolutionary algorithm designed for efficient screening of ultra-large combinatorial chemical libraries. It uses a population of molecules and applies selection, crossover, and mutation over generations, guided by a flexible docking score. Protocol choices that aid exploration include conducting multiple independent runs and using mutation steps that switch fragments for low-similarity alternatives [5].

Troubleshooting Guides

Issue 1: Premature Convergence in Multi-Task Optimization

Problem: The algorithm converges quickly to a good solution for one task but fails to find competitive solutions for other tasks.

Diagnosis: This is a classic sign of over-exploitation on one task and insufficient exploration of the search space for other tasks.

Resolution:

- Increase Exploration Pressure: Adjust algorithmic parameters to favor exploration.

- Diversity Preservation Mechanisms: Implement or strengthen techniques like fitness sharing or niching to explicitly maintain sub-populations for different tasks.

- Review Migration Rates: In island models, ensure the migration rate is high enough to allow beneficial genetic material to spread between populations focused on different tasks, but not so high that it causes premature homogenization.

Experimental Protocol for Resolution:

- Objective: To determine the effect of mutation rate and niche radius on maintaining population diversity across multiple tasks.

- Methodology:

- Run your multifactorial evolutionary algorithm with a base parameter set.

- Systematically increase the mutation rate (e.g., from 1% to 5% to 10%) while keeping other parameters constant.

- In parallel, for algorithms with fitness sharing, systematically reduce the niche radius (σ_share) to force individuals to compete more directly with their nearest neighbors.

- Metrics: Monitor the Inverted Generational Distance (IGD) for each task and the overall population diversity (e.g., average Hamming distance between individuals).
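Both diversity quantities in this protocol take only a few lines to compute. The sketch below is minimal and rests on several assumptions that the protocol does not specify. Genotypes are fixed-length discrete lists. Hamming distance serves as the distance measure for both the diversity metric and the sharing function. The problem is maximization with non-negative raw fitness. Sharing uses the classic triangular kernel sh(d) = 1 - (d/σ_share)^α.

```python
from itertools import combinations
from typing import List, Sequence

Genotype = Sequence[int]

def hamming(a: Genotype, b: Genotype) -> int:
    """Number of positions at which two equal-length genotypes differ."""
    return sum(x != y for x, y in zip(a, b))

def mean_pairwise_hamming(population: Sequence[Genotype]) -> float:
    """Average Hamming distance over all unordered pairs (0.0 if fewer than two individuals)."""
    pairs = list(combinations(population, 2))
    if not pairs:
        return 0.0
    return sum(hamming(a, b) for a, b in pairs) / len(pairs)

def shared_fitness(population: Sequence[Genotype], raw_fitness: Sequence[float],
                   sigma_share: float, alpha: float = 1.0) -> List[float]:
    """Fitness sharing for a maximization problem with non-negative raw fitness.

    Each raw fitness is divided by its niche count: the sum of
    sh(d) = 1 - (d / sigma_share) ** alpha over all individuals closer than sigma_share.
    The individual itself contributes sh(0) = 1, so the niche count is never zero.
    """
    shared = []
    for ind, f in zip(population, raw_fitness):
        niche_count = 0.0
        for other in population:
            d = hamming(ind, other)
            if d < sigma_share:
                niche_count += 1.0 - (d / sigma_share) ** alpha
        shared.append(f / niche_count)
    return shared
```

Consider two identical genotypes plus one distinct genotype, with σ_share = 2. Each duplicate keeps half of its raw fitness, while the distinct individual keeps all of it. Lowering σ_share shrinks each niche, so fewer neighbours dilute an individual's fitness.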

Issue 2: Slow or Failed Convergence Across All Tasks

Problem: The algorithm seems to be "wandering" and does not refine solutions to achieve high performance on any task.

Diagnosis: This indicates over-exploration and a lack of effective exploitation.

Resolution:

- Increase Selection Pressure: Adjust parameters to more strongly favor the fittest individuals. This can be done by using a more aggressive selection strategy (e.g., tournament selection with a larger group size).

- Reduce Disruptive Genetic Operations: Lower the probability of high-disruption operations like large segment crossover or mutation. Focus on fine-tuning mutations with smaller effects.

- Re-evaluate Crossover Utility: If crossover between solutions from different tasks consistently produces low-quality offspring, consider restricting crossover to individuals within the same task niche.

Experimental Protocol for Resolution:

- Objective: To assess the impact of selection pressure and crossover rate on convergence speed.

- Methodology:

- Increase the tournament size in tournament selection from 2 to 3 or 4.

- Incrementally reduce the mutation rate.

- Experiment with different crossover rates (e.g., 60%, 80%, 95%).

- Metrics: Track the generational best fitness for each task. Successful exploitation should show steady improvement in these values (an upward trend when maximizing, a downward trend when minimizing).
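To see why a larger tournament raises selection pressure, consider the sketch below. It is a generic tournament selection, not code from any cited algorithm. It assumes maximization and draws contestants without replacement within each tournament. The demonstration compares the mean fitness of selected parents for k = 2, 3 and 4 on a toy population.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")

def tournament_select(population: Sequence[T], fitness: Sequence[float], k: int,
                      n_select: int, rng: random.Random) -> List[T]:
    """Return n_select winners; each is the fittest of k distinct, randomly drawn contestants (maximization)."""
    if not 1 <= k <= len(population):
        raise ValueError("tournament size k must be between 1 and the population size")
    winners = []
    for _ in range(n_select):
        contestants = rng.sample(range(len(population)), k)
        winners.append(population[max(contestants, key=lambda i: fitness[i])])
    return winners

# Larger tournaments favour fitter individuals more strongly (higher selection pressure).
population = list(range(20))
fitness = [float(i) for i in population]
for k in (2, 3, 4):
    chosen = tournament_select(population, fitness, k, 5000, random.Random(42))
    print(f"k={k}: mean fitness of selected parents = {sum(fitness[i] for i in chosen) / len(chosen):.2f}")
```

The mean fitness of the selected parents rises with k. This is the lever the protocol adjusts when it moves from size-2 to size-3 or size-4 tournaments.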

Quantitative Data on Algorithm Performance

The following table summarizes the performance of the REvoLd evolutionary algorithm compared to random screening, demonstrating the profound efficiency gains achievable with a well-tuned approach [5].

Table 1: Performance Benchmark of REvoLd Evolutionary Algorithm vs. Random Screening [5]

| Drug Target | Library Size Searched | REvoLd Total Molecules Docked | Hit Rate Improvement Factor vs. Random |
|---|---|---|---|
| Targets A-E (range across the five targets) | ~20 Billion | 49,000 - 76,000 | 869x - 1622x |

Table 2: Hyperparameter Optimization for Exploration-Exploitation Balance in REvoLd [5]

| Hyperparameter | Tested Value | Impact on Trade-off | Recommended Value |
|---|---|---|---|
| Initial Population Size | 200 | Provides sufficient variety to start optimization without excessive runtime cost. | 200 |
| Generations | 30 | Good balance; good solutions often found by generation 15, but discovery continues. | 30 (multiple runs advised) |
| Selection Pressure | High (Elitist) | Fast convergence but limited exploration. | Moderate (e.g., top 25% advance) |
| Low-similarity Mutation | Added | Keeps well-performing parts intact but enforces significant changes on small parts, boosting exploration. | Include |
| Crossover Rate | Increased | Enforces variance and recombination between well-suited ligands. | High |

Experimental Protocols for Parameter Tuning

Protocol 1: Systematic Grid Search for Multi-Task Balance

- Objective: To find the optimal combination of mutation rate and migration frequency in an island-based multi-task algorithm.

- Procedure:

- Define a grid of parameter values (e.g., mutation_rate = [0.01, 0.05, 0.1]; migration_frequency = [5, 10, 20] generations).

- Execute the algorithm for every combination of parameters in the grid.

- For each run, record the mean IGD across all tasks after a fixed number of generations.

- Output: A table or surface plot showing the performance landscape, allowing identification of the most robust parameter set.
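The grid-search loop of Protocol 1 can be written with `itertools.product`. In the minimal sketch below, `toy_run` is a hypothetical placeholder for your own multi-task algorithm. Its invented response surface exists only so the code runs end to end; it has no scientific meaning. Each grid point is scored by the mean IGD across tasks, averaged over several seeds, because single runs of a stochastic algorithm are noisy.

```python
import itertools
import random
from statistics import mean

def toy_run(mutation_rate, migration_frequency, seed):
    """HYPOTHETICAL stand-in for one run of a multi-task algorithm.
    Returns one IGD value per task (lower is better). The surface is invented
    purely so the sketch executes; replace it with a real algorithm call."""
    rng = random.Random(seed)
    base = abs(mutation_rate - 0.05) * 10 + abs(migration_frequency - 10) / 20
    return [base + rng.uniform(0.0, 0.05) for _ in range(3)]

def grid_search(run, grid, n_seeds=5):
    """Evaluate every combination; score = mean IGD over tasks, averaged over seeds."""
    results = {}
    for rate, freq in itertools.product(grid["mutation_rate"], grid["migration_frequency"]):
        per_seed = [mean(run(rate, freq, seed)) for seed in range(n_seeds)]
        results[(rate, freq)] = mean(per_seed)
    return results

grid = {"mutation_rate": [0.01, 0.05, 0.1], "migration_frequency": [5, 10, 20]}
results = grid_search(toy_run, grid)
best = min(results, key=results.get)
for (rate, freq), score in sorted(results.items()):
    print(f"mutation_rate={rate:<5} migration_frequency={freq:<3} mean IGD={score:.3f}")
print("best mean IGD at:", best)
```

The `results` dictionary maps each (mutation_rate, migration_frequency) pair to its score, which is the data needed for the table or surface plot described in the Output step.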

Protocol 2: Adaptive Parameter Control based on Population Diversity

- Objective: To dynamically adjust the mutation rate based on the current state of the population to automatically balance exploration and exploitation.

- Procedure:

- Define a threshold for population diversity (e.g., using entropy or Hamming distance).

- At each generation, calculate the current population diversity.

- If diversity drops below the threshold, increase the mutation rate to encourage exploration.

- If diversity is above the threshold, decrease the mutation rate to encourage exploitation.

- Output: An algorithm that self-tunes its exploration/exploitation pressure, leading to more robust performance across different problems.
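The control rule of Protocol 2 fits in a single function. The sketch below is minimal and built on assumptions the protocol leaves open: a multiplicative update factor of 1.5, clamping bounds of 0.001 and 0.5, and treating "diversity equal to the threshold" as sufficient diversity. Any diversity measure works as input, such as entropy or mean Hamming distance.

```python
def adapt_mutation_rate(rate: float, diversity: float, threshold: float,
                        factor: float = 1.5, min_rate: float = 0.001, max_rate: float = 0.5) -> float:
    """One control step of diversity-based mutation-rate adaptation.

    Below the diversity threshold the rate is multiplied by `factor` (more exploration);
    otherwise it is divided by `factor` (more exploitation). The result is clamped to
    [min_rate, max_rate] so the rate can neither vanish nor turn the search into a random walk.
    """
    rate = rate * factor if diversity < threshold else rate / factor
    return min(max(rate, min_rate), max_rate)

# Illustrative diversity readings over four generations, with a threshold of 0.2.
rate = 0.05
for diversity in (0.30, 0.12, 0.08, 0.25):
    rate = adapt_mutation_rate(rate, diversity, threshold=0.2)
    print(f"diversity={diversity:.2f} -> mutation rate {rate:.4f}")
```

A multiplicative update keeps the rate positive and reacts proportionally at every scale. The clamp guards against runaway growth during long low-diversity phases.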

Visualization of Workflows and Relationships

Figure: Troubleshooting logic for the exploration-exploitation trade-off.

Figure: Multi-task evolutionary algorithm workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Evolutionary Algorithm Research in Drug Discovery

| Tool / Reagent | Function / Purpose |
|---|---|
| RosettaLigand (REvoLd) | A flexible protein-ligand docking protocol used within an evolutionary algorithm framework to score and optimize molecules in ultra-large combinatorial libraries [5]. |
| Enamine REAL Space | A "make-on-demand" combinatorial chemical library comprising billions of readily synthesizable molecules, serving as a prime search space for virtual drug discovery campaigns [5]. |
| Multi-Armed Bandit (MAB) Algorithms | A set of classic methods (e.g., ε-greedy, UCB, Thompson Sampling) for managing the exploration-exploitation trade-off at decision points, often used within reinforcement learning and evolutionary frameworks [13]. |
| Genetic Operators (Crossover/Mutation) | The core "reagents" for generating new candidate solutions. Crossover exploits existing good building blocks, while mutation explores new genetic material [5]. |
| Intrinsic Reward Functions | In reinforcement learning, these are designed rewards (e.g., for visiting novel states) that encourage exploration by making it a goal in itself, converting the exploration-exploitation dilemma into a pure exploitation problem [13]. |
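One way a multi-armed bandit fits inside an evolutionary loop is to choose which genetic operator to apply next. The minimal sketch below uses the standard UCB1 rule. It makes two assumptions of its own: the reward is 1 when an operator's offspring beats its parent, and the operators' success probabilities are invented for the demonstration.

```python
import math
import random

class UCB1:
    """UCB1 bandit: choose the arm with the highest mean reward plus an exploration bonus."""

    def __init__(self, arms, c: float = math.sqrt(2)):
        self.arms = list(arms)
        self.c = c
        self.counts = {a: 0 for a in self.arms}
        self.totals = {a: 0.0 for a in self.arms}
        self.t = 0  # total number of pulls so far

    def select(self):
        for a in self.arms:  # try every arm once first
            if self.counts[a] == 0:
                return a
        return max(self.arms, key=lambda a: self.totals[a] / self.counts[a]
                   + self.c * math.sqrt(math.log(self.t) / self.counts[a]))

    def update(self, arm, reward: float) -> None:
        self.t += 1
        self.counts[arm] += 1
        self.totals[arm] += reward

# Hypothetical setting: reward 1 when an operator's offspring beats its parent.
# The success probabilities below are invented for the demonstration.
success_prob = {"crossover": 0.6, "mutation": 0.2}
rng = random.Random(0)
bandit = UCB1(success_prob)
for _ in range(500):
    op = bandit.select()
    bandit.update(op, 1.0 if rng.random() < success_prob[op] else 0.0)
print(bandit.counts)
```

The exploration bonus shrinks as an arm is pulled more often. The weaker operator is therefore still tried occasionally, but most applications go to the operator with the better observed success rate.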

Why Parameter Space is Ripe with Viable Configurations for Complex Problems

For researchers in drug development and computational sciences, finding optimal parameters for complex models often feels like searching for a needle in a haystack. Evolutionary algorithms (EAs) provide a powerful solution to this challenge by treating parameter configuration as an optimization problem itself. These metaheuristic, population-based algorithms imitate biological evolution processes including reproduction, mutation, recombination, and selection to explore high-dimensional parameter spaces efficiently [14].

Within this framework, Multifactorial Evolutionary Algorithms (MFEAs) represent a significant advancement, enabling simultaneous optimization across multiple related tasks. This parallel exploration allows knowledge gained while optimizing one task to inform and accelerate progress on other related tasks, making them particularly valuable for complex research problems with multiple objectives [15] [16].

Technical Support Center

Troubleshooting Common MFEA Experimental Issues

FAQ 1: How can I reduce negative knowledge transfer between tasks in my MFEA?

Problem: When optimizing multiple tasks simultaneously, transfers between tasks sometimes degrade performance rather than improving it.

Solution: Implement adaptive parameter control mechanisms:

MFEA-II introduces online transfer parameter estimation, learning random mating probability (rmp) values for each pair of tasks from data generated during the run rather than fixing rmp in advance [15]. Recent approaches such as SA-MFEA and LSA-MFEA maintain a historical memory of successful rmp values and adapt this parameter throughout the optimization process, significantly reducing harmful interactions [16].

Experimental Protocol:

- Initialize the population with random rmp values between 0 and 1

- Track transfer success rates across generations

- Update rmp probabilities based on historical performance

- Apply linear population size reduction (LPSR) in LSA-MFEA to enhance exploitation

- Validate on benchmark problems before applying to research data
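The sketch below shows the two mechanisms in this protocol. The rmp memory is a hypothetical illustration of keeping and reusing successful rmp values, in the spirit of success-history schemes; it is not the published SA-MFEA or LSA-MFEA update rule, and its memory size, initial value, and Gaussian spread are assumptions. The population-size function uses the linear reduction formula popularised by L-SHADE.

```python
import random

class RmpMemory:
    """HYPOTHETICAL success-history memory for the random mating probability (rmp).

    Illustrates the idea of keeping successful rmp values; it is not the published
    SA-MFEA or LSA-MFEA update rule.
    """

    def __init__(self, size: int = 5, init: float = 0.5, sigma: float = 0.1, seed: int = 0):
        self.memory = [init] * size
        self.sigma = sigma
        self.k = 0  # index of the next memory slot to overwrite
        self.rng = random.Random(seed)

    def sample(self) -> float:
        """Draw an rmp near a randomly chosen memory entry, clipped to [0, 1]."""
        centre = self.rng.choice(self.memory)
        return min(1.0, max(0.0, self.rng.gauss(centre, self.sigma)))

    def update(self, successful_rmps) -> None:
        """Store the mean rmp of cross-task matings whose offspring improved on a parent."""
        if successful_rmps:
            self.memory[self.k] = sum(successful_rmps) / len(successful_rmps)
            self.k = (self.k + 1) % len(self.memory)

def lpsr_size(nfe: int, max_nfe: int, n_init: int, n_min: int) -> int:
    """Linear population size reduction (L-SHADE form): n_init at nfe = 0, n_min at nfe = max_nfe."""
    return round(n_init + (n_min - n_init) * nfe / max_nfe)
```

In each generation, draw rmp values with `sample()`, record the values attached to transfers that produced improved offspring, and pass them to `update()`. Then truncate the population to `lpsr_size(...)` individuals, removing the worst first.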

FAQ 2: Why does my evolutionary algorithm converge prematurely on a pharmaceutical dataset?

Problem: The algorithm appears stuck in local optima, unable to explore the full parameter space for viable configurations.

Solution: Address both selection pressure and population diversity:

Table: Strategies to Prevent Premature Convergence

| Strategy | Mechanism | Application Context |
|---|---|---|
| Elitist Preservation [14] | Maintains best individuals across generations | Ensures monotonic fitness improvement |
| Restricted Mate Selection [14] | Limits mating to subpopulations | Reduces convergence speed, maintains diversity |
| Quality-Diversity Algorithms [14] | Separates solution finding from diversity maintenance | Explores wider parameter regions |
| Linear Population Size Reduction [16] | Adaptively reduces population size | Balances exploration and exploitation |

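Theoretical analyses show that elitist evolutionary algorithms converge to the global optimum with probability one as the number of generations tends to infinity, provided their variation operators can reach every solution with nonzero probability [14] [17]. This asymptotic guarantee says nothing about convergence speed, so practical implementations must still balance elitism with sufficient diversity maintenance. For drug discovery applications, where parameter spaces often contain multiple promising regions, maintaining population diversity is particularly crucial.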

FAQ 3: How do I determine if my parameter space contains sufficient viable configurations?

Problem: Uncertainty about whether the defined search space contains enough good solutions to justify optimization effort.

Solution: Perform preliminary landscape analysis:

Evolutionary algorithms ideally make no assumption about the underlying fitness landscape, allowing them to identify viable configurations even in complex parameter spaces [14]. The L2L (Learning to Learn) framework provides specialized tools for parameter space exploration, particularly valuable for neuroscience models and pharmacological applications [18].

Experimental Protocol for Landscape Assessment:

- Define parameter boundaries based on biological constraints

- Perform Latin Hypercube sampling for initial coverage

- Evaluate fitness correlation across nearby samples

- Estimate viable region density using preliminary optimization runs

- Apply fitness approximation techniques if computational complexity is prohibitive [14]
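The sampling and correlation steps of this protocol can be prototyped without any external library. The minimal sketch below has three parts. It implements Latin Hypercube sampling directly. It estimates local fitness correlation as the Pearson correlation between each sample's fitness and the fitness of its nearest other sample, which is one simple proxy for landscape smoothness chosen here as an assumption. The two-parameter bounds and the quadratic objective are placeholders.

```python
import math
import random

def latin_hypercube(n, bounds, rng):
    """n samples; each dimension is split into n equal strata and every stratum is used once."""
    columns = []
    for lo, hi in bounds:
        strata = list(range(n))
        rng.shuffle(strata)
        # One uniform draw inside each stratum, rescaled from [0, 1) to [lo, hi).
        columns.append([lo + (s + rng.random()) / n * (hi - lo) for s in strata])
    return [list(point) for point in zip(*columns)]

def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def neighbour_fitness_correlation(points, fitness):
    """Correlation between each sample's fitness and that of its nearest other sample.
    Values near 1 suggest a locally smooth landscape; values near 0 suggest a rugged one."""
    neighbour = []
    for i, p in enumerate(points):
        j = min((k for k in range(len(points)) if k != i), key=lambda k: math.dist(p, points[k]))
        neighbour.append(fitness[j])
    return pearson(fitness, neighbour)

# Example with a hypothetical two-parameter model and a smooth placeholder objective.
rng = random.Random(1)
points = latin_hypercube(50, [(0.0, 1.0), (-5.0, 5.0)], rng)
fitness = [x * x + y * y for x, y in points]
print(f"nearest-neighbour fitness correlation: {neighbour_fitness_correlation(points, fitness):.2f}")
```

On a rugged landscape the same statistic drops toward zero. That signals a need for larger samples or surrogate models before committing to full optimization runs.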

FAQ 4: What computational infrastructure do I need for large-scale parameter exploration?

Problem: Parameter space exploration becomes computationally prohibitive for high-dimensional problems.

Solution: Leverage high-performance computing (HPC) infrastructure with appropriate frameworks:

Table: Computational Solutions for Parameter Space Exploration

| Resource | Function | Implementation Example |
|---|---|---|
| HPC Infrastructure [18] | Enables parallel fitness evaluations | L2L framework execution |
| Fitness Approximation [14] | Reduces computational burden | Surrogate models, simplified simulations |
| Embarrassingly Parallel Optimization [18] | Simultaneous parameter set evaluation | Population-based algorithms |
| Gradient-Free Optimization [18] | Handles non-differentiable problems | Evolution strategies |

The L2L framework specifically addresses computational complexity by providing an easy-to-use Python-based framework for HPC infrastructure, supporting parallel execution of optimization targets [18]. For pharmaceutical researchers, this enables exploration of high-dimensional parameter spaces that would be infeasible with traditional computing resources.

The Scientist's Toolkit: Essential Research Reagents

Table: Key Computational Tools for MFEA Research

| Tool/Resource | Function | Application in Parameter Optimization |
|---|---|---|
| L2L Framework [18] | Two-loop optimization infrastructure | Enables meta-learning for parameter space exploration |
| BluePyOpt [18] | Neuroscience-focused optimization | Single-cell to whole-brain model parameterization |
| DEAP [18] | Evolutionary algorithms framework | Provides optimization algorithm implementations |
| SCOOP [18] | Parallelization framework | Enables distributed fitness evaluations |
| Benchmark Problems [16] | Algorithm validation | Many-task optimization performance assessment |
| Fitness Approximations [14] | Computational complexity reduction | Surrogate models for expensive evaluations |

The parameter space of complex biological and pharmacological problems contains numerous viable configurations that can be efficiently discovered through multifactorial evolutionary approaches. By implementing adaptive knowledge transfer mechanisms, maintaining appropriate population diversity, and leveraging modern computational infrastructure, researchers can navigate these high-dimensional spaces effectively. The continued development of MFEA methodologies promises enhanced capability for tackling the increasingly complex optimization challenges in drug development and computational biology.

Advanced Tuning Strategies and Their Application in Drug Design

Multi-Stage Parameter Adaptation Schemes for Dynamic Control

This technical support center provides specialized guidance for researchers implementing Multi-Stage Parameter Adaptation Schemes for Dynamic Control within multifactorial evolutionary algorithms (MFEAs). These advanced optimization approaches are essential for handling complex problems involving multiple distinct tasks simultaneously, where improper parameter control can lead to premature convergence, population stagnation, and negative knowledge transfer between tasks [19] [8]. The following troubleshooting guides and FAQs address specific implementation challenges, supported by experimental protocols and visualization tools to facilitate successful deployment in research applications, including pharmaceutical development where multi-task optimization frequently occurs.

Troubleshooting Guides

Frequently Asked Questions

1. How can I prevent premature convergence when implementing multi-stage parameter adaptation?

Premature convergence often indicates insufficient population diversity or improper balancing between exploration and exploitation across evolutionary stages [19].

Solution: Implement a multi-stage parameter adaptation scheme with distinct control mechanisms for each evolutionary phase [19]. For early stages, focus on exploration using wavelet basis functions to generate scaling factors [19]. In middle stages, transition using Laplace distributions [19]. For final stages, employ Cauchy distributions to fine-tune solutions [19]. Complement this with a diversity enhancement mechanism that uses hypervolume-based metrics to identify stagnant individuals and apply hierarchical intervention to reintroduce diversity [19].

Verification: Monitor population diversity using a hypervolume-based diversity metric throughout evolution [19]. If diversity drops below 15% of initial values before convergence criteria are met, increase perturbation intensity in your intervention mechanism [19].

2. What strategies effectively mitigate negative knowledge transfer in multifactorial evolutionary algorithms?

Negative transfer occurs when inappropriate genetic information flows between unrelated optimization tasks, degrading performance [8].

Solution: Implement an adaptive transfer strategy based on decision trees (EMT-ADT) to predict individual transfer ability before migration [8]. Define transfer ability metrics to quantify useful knowledge contained in potential transfer candidates [8]. Use a supervised learning model to select only promising positive-transfer individuals for cross-task knowledge exchange [8].

Verification: Compare success rates of transferred individuals versus locally generated individuals. If transferred individuals produce better offspring in less than 60% of cases, tighten transfer ability thresholds in your decision tree model [8].

3. How should I adapt control parameters across different evolutionary stages?

Fixed parameters throughout evolution cannot effectively address changing search requirements [19] [20].

Solution: Develop a success-history based adaptive mechanism that tracks and rewards successful parameter combinations [20]. For scaling factor F adaptation, consider using Taylor series expansion to represent the relationship between success rate and parameter values [20]. Employ multiple memory cells to store effective parameter values from different evolutionary stages and recall them based on current search characteristics [20].

Verification: Maintain memory cells for successful parameter values and monitor their utilization patterns. If certain memory cells are rarely accessed, adjust their initialization values or implement a recombination mechanism to improve quality [20].
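As a concrete reference point, the sketch below implements the original SHADE success-history memory, the best-known instance of success-history adaptation. F is drawn from a Cauchy distribution with scale 0.1 around a memory cell and CR from a normal distribution with standard deviation 0.1, and the memory starts at 0.5. A cell is updated with the improvement-weighted Lehmer mean of successful F values and the weighted arithmetic mean of successful CR values. The Taylor-series and multi-stage recall variants described above are not reproduced, and the fallback to equal weights when all improvements are zero is an assumption.

```python
import math
import random

def weighted_lehmer_mean(values, weights):
    """sum(w * v^2) / sum(w * v): pulls the mean toward larger successful values."""
    return (sum(w * v * v for v, w in zip(values, weights))
            / sum(w * v for v, w in zip(values, weights)))

class SuccessHistory:
    """SHADE-style historical memory of successful F and CR values (H memory cells)."""

    def __init__(self, h: int = 6, seed: int = 0):
        self.m_f = [0.5] * h
        self.m_cr = [0.5] * h
        self.k = 0  # next cell to overwrite
        self.rng = random.Random(seed)

    def sample(self):
        """Draw (F, CR) for one individual from a randomly chosen memory cell."""
        r = self.rng.randrange(len(self.m_f))
        cr = min(1.0, max(0.0, self.rng.gauss(self.m_cr[r], 0.1)))  # normal, clipped to [0, 1]
        while True:  # Cauchy(M_F[r], 0.1): regenerate if F <= 0, truncate to 1 if F > 1
            f = self.m_f[r] + 0.1 * math.tan(math.pi * (self.rng.random() - 0.5))
            if f > 0.0:
                return min(f, 1.0), cr

    def update(self, s_f, s_cr, improvements):
        """s_f, s_cr: parameters of trials that beat their parents; improvements: fitness gains."""
        if not s_f:
            return  # no success this generation: memory unchanged
        total = sum(improvements)
        weights = ([d / total for d in improvements] if total > 0
                   else [1.0 / len(s_f)] * len(s_f))
        self.m_f[self.k] = weighted_lehmer_mean(s_f, weights)
        self.m_cr[self.k] = sum(w * c for w, c in zip(weights, s_cr))
        self.k = (self.k + 1) % len(self.m_f)
```

Counting how often each cell index `r` is drawn, and how often that cell's values lead to successes, gives the utilization data the verification step asks for.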

4. What approaches maintain solution quality while addressing complex constraints in real-world applications?

Real-world problems like pharmaceutical formulation optimization often involve intricate constraints that challenge standard evolutionary operators [21].

Solution: Implement a repair algorithm to correct infeasible solutions while preserving their useful genetic information [21]. Combine this with a local search strategy that incorporates feedback from current optima and considers relative positions to the global optimum [21]. For problems with multiple coupling stages, employ a multi-stage differential evolution approach that optimizes subproblems in parallel [21].

Verification: Track the ratio of feasible to infeasible solutions generated each generation. If this ratio remains below 0.4 for more than 20 generations, adjust your repair algorithm to preserve more building blocks from parent solutions [21].
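The repair algorithm in [21] is problem-specific and is not reproduced here. As a stand-in, the sketch below uses a common bound-repair rule (also used in JADE): an out-of-range gene is moved halfway between the parent's value and the violated bound. This restores feasibility for box constraints while keeping the trial close to its parent. The sketch also tracks the feasible-to-infeasible ratio and checks the "below 0.4 for more than 20 generations" trigger from the verification step. General nonlinear constraints would need their own repair logic.

```python
def repair_midpoint(trial, parent, lower, upper):
    """Move each out-of-bound gene halfway from the parent's value to the violated bound."""
    repaired = []
    for x, p, lo, hi in zip(trial, parent, lower, upper):
        if x < lo:
            x = (lo + p) / 2.0
        elif x > hi:
            x = (hi + p) / 2.0
        repaired.append(x)
    return repaired

def feasible_to_infeasible_ratio(population, is_feasible):
    """Feasible count divided by infeasible count (infinity when nothing is infeasible)."""
    n_feasible = sum(1 for ind in population if is_feasible(ind))
    n_infeasible = len(population) - n_feasible
    return float("inf") if n_infeasible == 0 else n_feasible / n_infeasible

def repair_needs_adjustment(ratio_history, threshold=0.4, patience=20):
    """True when the ratio has stayed below `threshold` for more than `patience` consecutive generations."""
    run = 0
    for r in reversed(ratio_history):
        if r >= threshold:
            break
        run += 1
    return run > patience
```

Append one ratio per generation to a history list and call `repair_needs_adjustment` on it. Once it returns True, revisit how much parental material the repair step preserves.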

Comparative Analysis of Parameter Adaptation Techniques

Table 1: Performance Comparison of Parameter Adaptation Schemes in Differential Evolution

| Adaptation Technique | Key Mechanism | Optimization Context | Reported Advantages | Implementation Complexity |
|---|---|---|---|---|
| Multi-Stage Parameter Adaptation (MD-DE) [19] | Wavelet basis, Laplace/Cauchy distributions, Minkowski distance weighting | Numerical optimization on CEC2013-CEC2017 benchmarks | Balanced exploration-exploitation, effective stagnation avoidance | High |
| Success-History Adaptation (SHADE) [20] | Memory cells with historical successful parameters, Cauchy/normal distributions | Black-box numerical optimization (CEC2017, CEC2022) | Robust performance across diverse problems, simplified parameter tuning | Medium |
| Hyper-Heuristic Tuning [20] | Taylor series expansion, Student's t-distribution, upper-level DE tuning | Automated parameter adaptation design | Automatic design capability, flexibility in parameter response | Very High |
| Adaptive Transfer Strategy (EMT-ADT) [8] | Decision tree prediction of transfer ability, individual evaluation | Multifactorial optimization (CEC2017 MFO benchmarks) | Reduced negative transfer, improved solution precision | High |
| jDE Self-Adaptation [20] | Conditional parameter resetting with predetermined probabilities | Bound-constrained optimization problems | Simplicity, minimal computational overhead | Low |

Research Reagent Solutions

Table 2: Essential Algorithmic Components for Multi-Stage Parameter Adaptation Research

| Component | Function | Implementation Example |
|---|---|---|
| Wavelet Basis Functions [19] | Generate scaling factors in early evolutionary stages to promote exploration | Mexican hat or Morlet wavelets for F generation |
| Laplace Distribution [19] | Provide random values with heavier tails than a Gaussian for middle-stage parameter adaptation | Location parameter μ=0.5, scale parameter b=0.1 |
| Cauchy Distribution [19] [20] | Generate diverse parameter values with increased exploration probability | Location parameter from memory cells, scale parameter 0.1 |
| Minkowski Distance Weighting [19] | Guide historical memory pool updates based on individual proximity | p-norm distance calculation with p=2 (Euclidean) |
| Student's t-Distribution [20] | Flexible random distribution with tunable degrees of freedom for parameter sampling | Degrees of freedom ν=5, location from success history |
| Orthonormal Basis Filters (OBF) [22] | Parametrize dynamic system models with reduced parameters for adaptive control | Laguerre or Kautz filters for model identification |
| Decision Tree Classifier [8] | Predict transfer ability of individuals in multifactorial environments | Gini impurity splitting criterion, maximum depth 5-7 |

Experimental Protocols

Benchmark Validation Methodology

Comprehensive performance evaluation requires standardized testing across diverse problem domains:

Test Problem Selection: Utilize recognized benchmark suites including CEC2013 (28 functions), CEC2014 (30 functions), and CEC2017 (30 functions) for single-task numerical optimization [19]. For multifactorial optimization, employ CEC2017 MFO benchmarks and WCCI20-MTSO problems [8].

Performance Metrics: Record mean error values, standard deviations, and success rates across multiple independent runs [19]. For multifactorial environments, calculate factorial costs, factorial ranks, and scalar fitness values according to established definitions [8].

Statistical Validation: Perform Wilcoxon signed-rank tests with significance level α=0.05 to confirm performance differences [19]. Use Friedman ranking procedures when comparing multiple algorithms across various problems [20].

Real-World Validation: Apply algorithms to practical problems such as planetary gear design optimization [19], copper industry ingredient optimization [21], or drug formulation problems relevant to pharmaceutical applications.

Implementation Protocol for Multi-Stage Adaptation

Follow this detailed protocol to implement a robust multi-stage parameter adaptation scheme:

Initialization Phase:

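Early Stage Adaptation (Exploration Focus): Generate scaling factors with wavelet basis functions to promote broad exploration [19].

Middle Stage Adaptation (Transition Phase): Sample control parameters from Laplace distributions to shift gradually from exploration toward exploitation [19].

Late Stage Adaptation (Exploitation Focus): Sample control parameters from Cauchy distributions centered on values from the historical memory to fine-tune solutions [19].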

Diversity Preservation:

- Continuously calculate hypervolume-based diversity metrics [19].

- When diversity drops below threshold θ=15% of initial value, identify stagnant individuals using a stagnation tracker [19].

- Apply hierarchical intervention: first intensify local search, if no improvement, apply moderate perturbation, and for prolonged stagnation, replace worst-performing individuals [19].
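The stage logic and the hierarchical intervention can be sketched as follows. This is a hypothetical illustration, not the MD-DE implementation from [19]. The Laplace (μ=0.5, b=0.1) and Cauchy (scale 0.1, location from memory) settings come from the component table in this section. Several other choices are assumptions: the equal-thirds stage boundaries, the resample-until-valid rule, and the intervention thresholds. The wavelet-based early-stage generator is not specified in enough detail to reproduce, so a uniform draw stands in for it and is marked as a placeholder.

```python
import math
import random

def sample_laplace(mu: float, b: float, rng: random.Random) -> float:
    # The difference of two independent Exp(1) draws, scaled by b, is Laplace(0, b).
    return mu + b * (rng.expovariate(1.0) - rng.expovariate(1.0))

def sample_cauchy(loc: float, scale: float, rng: random.Random) -> float:
    # Inverse-CDF sampling of the Cauchy distribution.
    return loc + scale * math.tan(math.pi * (rng.random() - 0.5))

def stage_of(nfe: int, max_nfe: int, cuts=(1 / 3, 2 / 3)) -> str:
    """Map budget consumption to a stage; the equal thirds are an arbitrary assumption."""
    progress = nfe / max_nfe
    if progress < cuts[0]:
        return "early"
    return "middle" if progress < cuts[1] else "late"

def sample_scaling_factor(nfe: int, max_nfe: int, memory_loc: float, rng: random.Random) -> float:
    stage = stage_of(nfe, max_nfe)
    for _ in range(100):  # resample until F falls in (0, 1]
        if stage == "early":
            f = rng.uniform(0.5, 1.0)  # PLACEHOLDER for the wavelet-based generator in [19]
        elif stage == "middle":
            f = sample_laplace(0.5, 0.1, rng)
        else:
            f = sample_cauchy(memory_loc, 0.1, rng)
        if 0.0 < f <= 1.0:
            return f
    return min(max(f, 1e-3), 1.0)  # fallback after repeated failures (very unlikely)

def intervention(stagnant_generations: int, diversity: float, initial_diversity: float,
                 theta: float = 0.15, perturb_after: int = 5, replace_after: int = 15) -> str:
    """Hierarchical response for one individual once diversity < theta * initial diversity.
    perturb_after and replace_after are hypothetical thresholds."""
    if diversity >= theta * initial_diversity or stagnant_generations == 0:
        return "none"
    if stagnant_generations >= replace_after:
        return "replace"
    if stagnant_generations >= perturb_after:
        return "perturb"
    return "local_search"
```

The escalation order matches the protocol: local search is tried first, moderate perturbation follows, and replacement is reserved for individuals that stay stuck the longest.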

Multifactorial Optimization Protocol

For multifactorial environments with multiple simultaneous tasks:

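Unified Representation: Encode candidate solutions for all tasks in a single shared search space (in the standard MFEA, a unified [0, 1]^D space, where D is the largest dimensionality among the tasks) and decode them into each task's own space for evaluation.

Individual Assessment: Compute each individual's factorial costs, factorial ranks, skill factor, and scalar fitness according to the established MFEA definitions [8].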

Controlled Knowledge Transfer:

- Quantify transfer ability for each individual using improvement potential metrics [8].

- Build decision tree classifiers using Gini impurity to predict transfer success [8].

- Allow knowledge transfer only for individuals predicted to provide positive transfer [8].

- Adjust random mating probability (rmp) based on success rates of recent transfers [8].
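The individual-assessment bookkeeping follows fixed definitions from the MFEA literature. Factorial rank is an individual's position when the population is sorted by cost on one task. Skill factor is the task on which it ranks best. Scalar fitness is 1 divided by its best rank. The minimal sketch below computes all three from a cost matrix. It assumes minimization and uses `math.inf` for tasks an individual was not evaluated on. Ties are broken by list order here, whereas MFEA implementations often break them randomly.

```python
import math
from typing import List, Sequence, Tuple

def assess(factorial_costs: Sequence[Sequence[float]]) -> Tuple[List[List[int]], List[int], List[float]]:
    """factorial_costs[i][j]: cost of individual i on task j (lower is better).
    Returns factorial ranks, skill factors (task of best rank) and scalar fitness (1 / best rank)."""
    n, k = len(factorial_costs), len(factorial_costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: factorial_costs[i][j])
        for r, i in enumerate(order, start=1):
            ranks[i][j] = r
    skill = [min(range(k), key=lambda j: ranks[i][j]) for i in range(n)]
    scalar = [1.0 / min(row) for row in ranks]
    return ranks, skill, scalar

# Three individuals, two tasks; the third was never evaluated on task 2.
costs = [[1.0, 5.0], [2.0, 1.0], [3.0, math.inf]]
ranks, skill, scalar = assess(costs)
print(ranks, skill, scalar)
```

Individuals that lead on any task receive a scalar fitness of 1.0. This is how the MFEA compares individuals specialised on different tasks within one population.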

Advanced Diagnostics

Performance Validation Tests

Convergence Diagnostic: Plot best fitness values versus function evaluations across multiple independent runs. Healthy convergence shows steady improvement without prolonged plateaus exceeding 15% of total evaluation budget [19].

Diversity Monitoring: Track population diversity using hypervolume-based metrics throughout evolution. If diversity prematurely collapses, adjust perturbation intensity in your diversity enhancement mechanism [19].

Transfer Effectiveness: In multifactorial environments, monitor the success ratio of knowledge transfer. Calculate as the percentage of transfers resulting in improved offspring. Maintain above 60% for positive overall impact [8].

Parameter Sensitivity: Conduct a sensitivity analysis on key adaptation parameters, including memory size H, learning rates, and distribution parameters. Reported optimal ranges are typically H = 6-10 and learning rates of 0.3-0.7 [20].
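Two of these diagnostics reduce to simple counts over logged data. The sketch below is minimal and assumes minimization and a best-so-far value recorded at fixed evaluation intervals. It measures the longest plateau as a fraction of the evaluation budget, for comparison against the 15% guideline. It also computes the transfer success ratio, for comparison against the 60% guideline. The example history and transfer outcomes are illustrative, not measured results.

```python
def longest_plateau_fraction(best_history, evals_per_step, total_budget, tol=0.0):
    """Longest run of consecutive steps without an improvement larger than tol (minimization),
    expressed as a fraction of the total evaluation budget."""
    longest = current = 0
    for prev, cur in zip(best_history, best_history[1:]):
        if prev - cur > tol:
            current = 0
        else:
            current += 1
            longest = max(longest, current)
    return longest * evals_per_step / total_budget

def transfer_success_ratio(outcomes):
    """Share of cross-task transfers that produced improved offspring; 0.0 if none were attempted."""
    return sum(outcomes) / len(outcomes) if outcomes else 0.0

history = [10.0, 9.0, 9.0, 9.0, 9.0, 8.0]  # best-so-far value recorded every 100 evaluations
plateau = longest_plateau_fraction(history, evals_per_step=100, total_budget=500)
print(f"longest plateau: {plateau:.0%} of budget (flag if > 15%)")
ratio = transfer_success_ratio([True, False, True, True])
print(f"transfer success: {ratio:.0%} (aim for > 60%)")
```

Run both checks on each independent run rather than on averaged curves, because averaging can hide plateaus that occur at different times in different runs.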

Common Implementation Issues and Resolutions

Parameter Drift: Unbounded parameter changes leading to performance degradation. Implement OBF-ARX parametrization with orthonormal basis filters to maintain stability [22].

Computational Overhead: Complex adaptation schemes slowing optimization. Employ success-history with limited memory cells (H=6) and periodic rather than generational updates [20].

Constraint Handling Difficulties: Infeasible solutions dominating population. Integrate repair algorithms that preserve useful solution components while restoring feasibility [21].

Negative Transfer Persistence: Continued performance degradation despite transfer controls. Implement more conservative decision tree thresholds or semi-supervised learning to identify promising transfer candidates [8].

Leveraging Diversity Enhancement Mechanisms to Prevent Premature Convergence

Premature convergence is a fundamental challenge in evolutionary computation, occurring when a population loses genetic diversity too early, causing the search process to become trapped in local optima rather than progressing toward the global optimum [23]. This phenomenon is particularly problematic in complex optimization landscapes where maintaining exploratory capability is essential for finding high-quality solutions. Within the specific context of parameter tuning for multifactorial evolutionary algorithms, the precise management of population diversity becomes even more critical, as the interplay between different tasks and their shared search space can amplify the risk of premature stagnation.

The core of the problem lies in the balance between exploration and exploitation. While selection pressure drives the population toward better solutions, it can inadvertently eliminate valuable genetic material too quickly, causing the algorithm to converge on suboptimal solutions [23]. For researchers and drug development professionals, this translates to missed opportunities in discovering novel compound configurations, optimal treatment parameters, or efficient biomolecular structures. Understanding and implementing mechanisms to preserve diversity is therefore not merely a theoretical exercise but a practical necessity for achieving robust and reliable optimization outcomes in computationally expensive domains like pharmaceutical research.

Core Concepts: Understanding Diversity and Convergence

What is premature convergence and how can I identify it in my experiments?

Premature convergence occurs when an evolutionary algorithm's population loses diversity too quickly, becoming trapped in local optima before discovering the global optimum or sufficiently high-quality solutions [23]. In practical terms, you'll observe that the parental solutions can no longer generate offspring that outperform them, indicating a loss of exploratory power.

Key indicators of premature convergence include:

- Stagnation of Fitness: The average and best fitness values of the population show no significant improvement over consecutive generations.

- Loss of Allelic Diversity: Specific genes across the population converge to identical values, with an allele considered "converged" when 95% of individuals share the same value for that gene [23].

- Reduced Population Diversity: A noticeable decrease in the genotypic or phenotypic variation within the population, often measured using diversity metrics specific to your problem domain.

- Inability to Escape Local Optima: The algorithm consistently returns the same or very similar solutions regardless of parameter adjustments or extended runtimes.
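The allelic-diversity indicator above can be computed directly. The minimal sketch below counts a gene as converged when at least 95% of individuals share its most common value, as described in the list. It assumes a population of equal-length genotypes with hashable gene values.

```python
from collections import Counter

def converged_allele_fraction(population, threshold=0.95):
    """Fraction of gene positions at which at least `threshold` of the population shares one value."""
    n, length = len(population), len(population[0])
    converged = 0
    for g in range(length):
        top_count = Counter(ind[g] for ind in population).most_common(1)[0][1]
        if top_count / n >= threshold:
            converged += 1
    return converged / length

# 20 individuals: gene 0 is shared by 19 of them (95%), gene 1 is split evenly.
population = [[0, i % 2] for i in range(19)] + [[1, 1]]
print(converged_allele_fraction(population))
```

If this fraction climbs toward 1.0 early in a run while fitness is still far from acceptable, the population is converging prematurely.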

What are the primary causes of premature convergence in evolutionary algorithms?

Several interconnected factors contribute to premature convergence, with their relative importance varying across problem domains:

- Excessive Selection Pressure: Overly aggressive selection mechanisms can cause the population to converge too rapidly around initially promising but ultimately suboptimal solutions.

- Insufficient Population Diversity: Small population sizes or inadequate diversity maintenance strategies fail to preserve the genetic variation necessary for continued exploration.

- Inadequate Genetic Operator Balance: Poorly calibrated crossover and mutation rates may either disrupt building blocks too frequently or fail to introduce sufficient novelty.

- Panmictic Population Structures: Traditional unstructured populations where every individual is eligible to mate with any other can allow slightly better individuals to rapidly dominate the gene pool [23].

- Self-Adaptive Mutation Mismanagement: While self-adaptive mutations can enhance local search, they may also accelerate convergence to local optima if not properly regulated [23].

Troubleshooting Guide: Common Scenarios and Solutions

My population diversity is decreasing too rapidly. What mechanisms can help?

When facing rapid diversity loss, several evidence-based mechanisms can help restore balance to your search process:

- Regional Mating Mechanisms: Implement a co-evolutionary approach where main and auxiliary populations explore different regions of the search space. When the main population stagnates, regional mating between populations can introduce diversity while preserving valuable genetic information [24].

- Opposition-Learning Based Diversity Enhancement: For differential evolution variants, incorporate opposition learning to renew stagnated individuals. This approach uses a stagnation indicator to identify trapped individuals and applies opposition-based learning to generate corresponding solutions in underrepresented regions of the search space [25].

- External Archive Optimization: Maintain an external archive of promising solutions with controlled diversity. The number of generations individuals stay in the archive can be optimized based on the successful evolution rate of the current population, preventing premature convergence while preserving useful genetic material [26].

- Memory-Based Approaches: Leverage historical information to prevent premature convergence. Techniques such as incorporating concepts from the Ebbinghaus forgetting curve can help maintain useful historical solutions while discarding outdated information [27].
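Opposition-based learning rests on one formula: the opposite of x within bounds [a, b] is a + b - x, computed per dimension. The generic sketch below applies it to individuals whose stagnation counter has reached a limit. It assumes minimization. It also makes two design choices of its own: an individual is replaced only when its opposite is better, and the counter is reset either way. This is a generic opposition-learning step, not the OLBADE algorithm from [25], and the objective and limit are hypothetical.

```python
from typing import Callable, List

def opposite(x: List[float], lower: List[float], upper: List[float]) -> List[float]:
    """Opposite point x' = lower + upper - x, per dimension."""
    return [lo + hi - xi for xi, lo, hi in zip(x, lower, upper)]

def renew_stagnated(population, fitness, stagnation, objective: Callable[[List[float]], float],
                    lower, upper, limit: int = 10) -> int:
    """For each individual whose stagnation counter reached `limit`, evaluate its opposite and
    keep it if it is better (minimization). Lists are modified in place; returns how many were replaced."""
    renewed = 0
    for i, x in enumerate(population):
        if stagnation[i] < limit:
            continue
        x_opp = opposite(x, lower, upper)
        f_opp = objective(x_opp)
        if f_opp < fitness[i]:
            population[i], fitness[i] = x_opp, f_opp
            renewed += 1
        stagnation[i] = 0  # design choice: give the individual a fresh counter either way
    return renewed

def shifted_sphere(x: List[float]) -> float:
    """Hypothetical objective with its optimum at (4, 4)."""
    return sum((xi - 4.0) ** 2 for xi in x)

population = [[-4.0, -4.0], [3.0, 3.5]]
fitness = [shifted_sphere(x) for x in population]
stagnation = [12, 2]  # generations without improvement
renewed = renew_stagnated(population, fitness, stagnation, shifted_sphere, [-5.0, -5.0], [5.0, 5.0])
print(renewed, population, fitness)
```

Only the first individual has stagnated long enough to be checked, and its opposite lands on the optimum. The second individual is left untouched.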

Table: Diversity Enhancement Mechanisms and Their Applications

| Mechanism | Primary Algorithm | Key Principle | Best For Problem Types |
|---|---|---|---|
| Regional Mating | Constrained Multi-objective Co-evolutionary Algorithm | Facilitates escape from local optima via inter-population mating | CMOPs with disconnected feasible regions [24] |
| Opposition Learning | Adaptive DE with Opposition-Learning (OLBADE) | Generates opposites of stagnated individuals using stagnation indicators | Single-objective, multimodal optimization [25] |
| Gaussian Similarity | Multi-Modal Multi-objective EA with Gaussian Similarity (MMEA-GS) | Balances diversity in decision and objective spaces simultaneously | MMOPs requiring balance in both spaces [28] |
| Diversity-First Selection | DESCA | Uses regional distribution index to rank individual diversity | Complex CMOPs with fragmented Pareto fronts [24] |
| Memory with Forgetting Curve | PSOMR | Augments memory using Ebbinghaus forgetting curve concepts | PSO applications needing historical solution management [27] |

How can I balance exploration and exploitation through parameter control?

Effective parameter control is essential for maintaining the exploration-exploitation balance throughout the evolutionary process:

- Adaptive Parameter Control with Non-linear Weighting: Implement a two-stage parameter adaptation strategy that adjusts control parameters based on evolutionary states. This approach uses non-linear fitness increment-based weighting to adapt parameters, shifting emphasis between exploration and exploitation as the run progresses [25].

- Wavelet Basis Functions and Cauchy Distributions: Employ wavelet basis functions and Cauchy distributions for generating scaling factors across different evolutionary stages. This hybrid approach provides different perturbation characteristics that can enhance both global exploration and local refinement [26].

- Success-Rate Based Parameter Adaptation: Utilize information from successfully evolved individuals to guide parameter adjustments. The dimension change information of these successful individuals can refine adaptive parameter control schemes, creating a self-reinforcing cycle of improvement [26].

- Donor Vector Perturbation: In differential evolution, complement existing trial vector generation strategies with donor vector perturbation. This additional diversity mechanism helps individuals escape local optima without significantly disrupting convergence progress [25].
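The Cauchy-sampling and success-based ideas can be sketched concretely. This sketch swaps in the well-known JADE scheme; it is not the wavelet-based method of [26]. In JADE, each scaling factor F is drawn from a Cauchy distribution centred on `mu_F`, and each crossover rate CR is drawn from a normal distribution centred on `mu_CR`. Both centres then move toward the values that produced successful trial vectors. The function names and default constants here are illustrative assumptions.

```python
import math
import random
from typing import List, Tuple


def sample_F(mu_F: float, rng: random.Random, gamma: float = 0.1) -> float:
    """Scaling factor from Cauchy(mu_F, gamma) via the inverse CDF.
    Non-positive draws are regenerated and draws above 1 are truncated to 1."""
    while True:
        F = mu_F + gamma * math.tan(math.pi * (rng.random() - 0.5))
        if F > 0.0:
            return min(F, 1.0)


def sample_CR(mu_CR: float, rng: random.Random, sigma: float = 0.1) -> float:
    """Crossover rate from N(mu_CR, sigma), clipped to [0, 1]."""
    return min(max(rng.gauss(mu_CR, sigma), 0.0), 1.0)


def lehmer_mean(values: List[float]) -> float:
    """Lehmer mean sum(v^2) / sum(v); weights larger successful F values more."""
    return sum(v * v for v in values) / sum(values)


def update_means(
    mu_F: float,
    mu_CR: float,
    successful_F: List[float],
    successful_CR: List[float],
    c: float = 0.1,
) -> Tuple[float, float]:
    """Shift the sampling centres toward parameter values whose trial vectors
    replaced their parents in this generation (success-based adaptation)."""
    if successful_F:
        mu_F = (1.0 - c) * mu_F + c * lehmer_mean(successful_F)
    if successful_CR:
        mu_CR = (1.0 - c) * mu_CR + c * sum(successful_CR) / len(successful_CR)
    return mu_F, mu_CR
```

The heavy tail of the Cauchy distribution occasionally produces large F values, which supports exploration. The Lehmer mean keeps `mu_F` from drifting toward small, purely exploitative values.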

My algorithm is stagnating in local optima. What restart strategies are effective?

When your algorithm shows clear signs of stagnation, these restart mechanisms can help reinvigorate the search:

- Dimension-Learning Based Restart: Identify stagnant individuals using a combination of stagnation tracking and diversity assessment indicators, then regenerate them using dimension-learning approaches that preserve valuable dimensional information from the evolutionary process [26].

- Population Partial Reinitialization: Reinitialize specific portions of the population that have shown limited improvement over successive generations while preserving elite individuals that may contain valuable building blocks [29].

- Subpopulation Restart Strategies: In algorithms with multiple subpopulations, implement staggered restart schedules where subpopulations showing diversity metrics below predetermined thresholds are reinitialized while others continue their search.

- Stagnation Indicator-Based Renewal: Develop explicit stagnation indicators that monitor both fitness improvement and population diversity, triggering renewal procedures when both metrics fall below acceptable thresholds for a specified duration [25] [26].
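Stagnation-indicator-based renewal and partial reinitialization can be combined in a minimal sketch. It assumes minimisation, box bounds, and a diversity measure defined as the mean distance to the population centroid. All thresholds and names (`StagnationMonitor`, `partial_reinit`) are hypothetical, not taken from [25] or [26].

```python
import math
import random
from typing import List, Optional


def centroid_diversity(pop: List[List[float]]) -> float:
    """Genotypic diversity: mean Euclidean distance of individuals to the centroid."""
    n, d = len(pop), len(pop[0])
    c = [sum(x[j] for x in pop) / n for j in range(d)]
    return sum(math.dist(x, c) for x in pop) / n


class StagnationMonitor:
    """Signals stagnation when the best fitness has not improved by more than
    min_improvement AND diversity is below min_diversity for `patience`
    consecutive generations (minimisation). Thresholds are hypothetical."""

    def __init__(self, min_improvement=1e-8, min_diversity=1e-3, patience=10):
        self.min_improvement = min_improvement
        self.min_diversity = min_diversity
        self.patience = patience
        self.best = math.inf
        self.count = 0

    def update(self, best_fitness: float, diversity: float) -> bool:
        improved = self.best - best_fitness > self.min_improvement
        self.best = min(self.best, best_fitness)
        if not improved and diversity < self.min_diversity:
            self.count += 1
        else:
            self.count = 0
        return self.count >= self.patience


def partial_reinit(pop, fit, lb, ub, fraction=0.5, n_elite=1,
                   rng: Optional[random.Random] = None) -> None:
    """Re-sample the worst `fraction` of the non-elite individuals uniformly
    within [lb, ub] (in place). Their fitness is set to inf so the caller
    knows to re-evaluate them; the n_elite best individuals are never touched."""
    rng = rng or random.Random()
    order = sorted(range(len(pop)), key=lambda i: fit[i])  # best first
    candidates = order[n_elite:]
    k = int(len(candidates) * fraction)
    for i in candidates[len(candidates) - k:]:
        pop[i] = [rng.uniform(l, u) for l, u in zip(lb, ub)]
        fit[i] = math.inf
```

In a generational loop, you would call `monitor.update(min(fit), centroid_diversity(pop))` once per generation. When it returns `True`, call `partial_reinit` and re-evaluate the individuals whose fitness is `inf`.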

Experimental Protocols and Methodologies

Standard experimental framework for evaluating diversity enhancement

When implementing diversity enhancement mechanisms, follow this standardized experimental protocol to ensure reproducible and comparable results:

- Benchmark Selection: Choose appropriate benchmark suites that represent the problem characteristics relevant to your research. Comprehensive evaluation should include functions from CEC2013, CEC2014, CEC2017, and CEC2022 test suites to ensure broad coverage of problem types [25].

- Baseline Establishment: Implement and test standard algorithms (e.g., classic DE, PSO, GA) without diversity enhancement to establish baseline performance metrics.

- Incremental Implementation: Introduce diversity enhancement mechanisms incrementally to isolate their individual contributions to performance improvement.

- Performance Metrics: Employ multiple performance metrics, including:
  - Solution accuracy (best, median, and worst objective values)
  - Convergence speed (number of function evaluations to reach target fitness)
  - Success rate (percentage of runs finding satisfactory solutions)
  - Diversity metrics (genotypic and phenotypic diversity measures)

- Statistical Validation: Perform multiple independent runs (typically 30-51) and apply appropriate statistical tests (e.g., the Wilcoxon signed-rank test) to assess the statistical significance of the results.

- Parameter Sensitivity Analysis: Systematically analyze the sensitivity of the proposed methods to their control parameters to establish robustness across different settings.
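The per-function metrics listed above can be computed with the standard library. This sketch assumes each run logs its final error and the evaluation count at which it first reached the target, with `None` if it never did. The logging convention and function name are hypothetical.

```python
import statistics
from typing import Dict, List, Optional


def summarize_runs(final_errors: List[float],
                   evals_to_target: List[Optional[int]]) -> Dict[str, Optional[float]]:
    """Summarise independent runs of one algorithm on one benchmark function.

    final_errors[i]: best objective error reached by run i (lower is better).
    evals_to_target[i]: evaluations run i needed to first reach the target
    fitness, or None if it never did (hypothetical logging convention).
    """
    hits = [e for e in evals_to_target if e is not None]
    return {
        "best": min(final_errors),
        "median": statistics.median(final_errors),
        "worst": max(final_errors),
        "success_rate_pct": 100.0 * len(hits) / len(evals_to_target),
        "mean_evals_to_target": statistics.mean(hits) if hits else None,
    }
```

The signed-rank test needs paired samples. One example is the per-function median errors of two algorithms across a benchmark suite. SciPy's `scipy.stats.wilcoxon(x, y)` implements this test.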

Implementation workflow for diversity-enhanced evolutionary algorithms

The following diagram illustrates a generalized workflow for implementing diversity enhancement mechanisms in evolutionary algorithms:

Figure 1. Implementation workflow for diversity-enhanced evolutionary algorithms, showing how diversity mechanisms integrate with standard evolutionary operations.

The Researcher's Toolkit: Essential Components for Diversity Maintenance

Key methodological components for diversity maintenance

Table: Essential Methodological Components for Diversity Maintenance

| Component | Function | Implementation Example |
|---|---|---|
| Regional Distribution Index | Assesses individual diversity based on regional distribution | Used in DESCA to rank individuals and guide selection [24] |
| Stagnation Indicator | Detects when populations or individuals stop improving | Combines fitness history and diversity metrics to trigger restarts [25] [26] |
| External Archive | Stores promising historical solutions for future use | Size controlled by successful evolution rate; uses timestamp-based decay [26] |
| Balanced Gaussian Distance | Enhances environmental selection by considering both decision and objective spaces | Prevents solutions crowded in only one space in MMEA-GS [28] |
| Opposition Learning Operator | Generates solutions opposite to current stagnated individuals | Creates corresponding solutions in underrepresented regions [25] |
| Donor Vector Perturbation | Complements existing mutation strategies in DE | Increases population diversity without disrupting convergence [25] |
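An external archive with timestamp-based decay can be sketched as follows. Each entry stores the generation in which it was added and is purged once it exceeds a maximum age. The `lifetime_from_success_rate` mapping, which keeps solutions longer when few offspring succeed, is a hypothetical linear rule chosen for illustration. It is not the rule used in [26]. The random eviction when the archive is over capacity is also an assumption.

```python
import random
from typing import List, Optional, Sequence, Tuple


def lifetime_from_success_rate(success_rate: float, min_age: int = 5, max_age: int = 50) -> int:
    """HYPOTHETICAL rule: the lower the fraction of successful offspring, the
    longer archived solutions are retained (linear interpolation)."""
    sr = min(max(success_rate, 0.0), 1.0)
    return round(max_age - (max_age - min_age) * sr)


class AgedArchive:
    """External archive storing (solution, generation added) pairs."""

    def __init__(self, capacity: int, rng: Optional[random.Random] = None):
        self.capacity = capacity
        self.items: List[Tuple[List[float], int]] = []
        self.rng = rng or random.Random()

    def add(self, x: Sequence[float], generation: int) -> None:
        self.items.append((list(x), generation))
        if len(self.items) > self.capacity:  # over capacity: drop a random entry
            self.items.pop(self.rng.randrange(len(self.items)))

    def purge(self, generation: int, max_age: int) -> None:
        """Timestamp-based decay: discard entries older than max_age generations."""
        self.items = [(x, g) for x, g in self.items if generation - g <= max_age]

    def solutions(self) -> List[List[float]]:
        return [x for x, _ in self.items]
```

Once per generation, you might call `archive.purge(gen, lifetime_from_success_rate(rate))`, where `rate` is the fraction of trial vectors that replaced their parents.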

Quantitative performance comparison of diversity enhancement approaches

Table: Performance Comparison of Diversity Enhancement Approaches on Standard Benchmark Problems

| Algorithm | Average Rank | Success Rate (%) | Diversity Metric | Convergence Speed | Key Strength |
|---|---|---|---|---|---|
| DESCA [24] | 2.1 | 94.3 | 0.782 | Medium-High | Balanced diversity-convergence |
| OLBADE [25] | 1.8 | 96.7 | 0.815 | High | Stagnation avoidance |
| ADE-DMRM [26] | 2.3 | 92.5 | 0.795 | Medium | Effective restart mechanism |
| MMEA-GS [28] | 2.5 | 89.8 | 0.831 | Medium | Dual-space diversity balance |
| PSOMR [27] | 3.1 | 87.2 | 0.763 | Medium-Low | Historical memory utilization |

Advanced Techniques: Multi-Modal and Constrained Optimization

How do diversity strategies differ for multi-modal multi-objective problems?

Multi-modal multi-objective optimization problems (MMOPs) present unique challenges: several distinct solutions in decision space may map to identical or nearly identical objective vectors [28]. In such cases, diversity maintenance requires specialized approaches:

- Dual-Space Diversity Balancing: Traditional methods that calculate crowding distance separately in decision and objective spaces can create imbalances. Gaussian similarity-based approaches simultaneously evaluate proximity in both spaces, promoting more balanced diversity [28].

- Hierarchical Archiving: Maintain separate archives for different solution clusters, ensuring that equivalent Pareto optimal solutions from different regions of the decision space are preserved throughout the optimization process.

- Niche Preservation Techniques: Implement fitness sharing or crowding mechanisms specifically designed to maintain subpopulations in different basins of attraction, preventing the entire population from converging to a single Pareto optimal region.
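Classic fitness sharing (Goldberg and Richardson) illustrates the niche-preservation idea. This is a standard technique swapped in here, not the Gaussian similarity of MMEA-GS. Each individual's raw fitness is divided by its niche count, measured in decision space, so crowded basins are penalised. The sketch assumes maximisation with positive raw fitness. The niche radius `sigma_share` is a problem-dependent assumption.

```python
import math
from typing import List, Sequence


def sharing(d: float, sigma_share: float, alpha: float = 1.0) -> float:
    """Sharing function sh(d) = 1 - (d / sigma_share)^alpha inside the niche, else 0."""
    return 1.0 - (d / sigma_share) ** alpha if d < sigma_share else 0.0


def shared_fitness(pop: List[Sequence[float]], fitness: List[float],
                   sigma_share: float, alpha: float = 1.0) -> List[float]:
    """Divide each (positive, maximised) raw fitness by its niche count,
    computed from decision-space distances to every population member."""
    out = []
    for xi, fi in zip(pop, fitness):
        niche = sum(sharing(math.dist(xi, xj), sigma_share, alpha) for xj in pop)
        out.append(fi / niche)  # niche >= 1 because sh(0) = 1 for xi itself
    return out
```

Two equally fit individuals 0.1 apart (with `sigma_share = 1`) each end up with fitness 1/1.9. An isolated individual keeps its full fitness, so selection favours the sparsely populated basin.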

What special considerations apply to constrained optimization problems?

Constrained optimization problems, particularly those with complex constraints that create disconnected feasible regions, require specialized diversity maintenance:

- Dual-Population Approaches: Maintain separate populations exploring constrained and unconstrained Pareto fronts, allowing transfer of genetic information between them when stagnation occurs [24].

- Constraint-Aware Diversity Metrics: Develop diversity measures that account for both objective space performance and constraint satisfaction, ensuring that diversity maintenance doesn't compromise feasibility.

- Feasible Region Boundary Exploration: Allocate specific resources to exploring the boundaries of feasible regions, as these often contain promising solutions in constrained optimization landscapes.
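Constraint-aware selection needs a measure of constraint satisfaction. A common building block is the total constraint violation combined with Deb's feasibility rules, shown below as a sketch. This standard technique is substituted for illustration; the cited co-evolutionary algorithms may use different comparison rules. It assumes minimisation, inequality constraints of the form g(x) ≤ 0, and equality constraints relaxed to |h(x)| ≤ eps, where the tolerance `eps` is an assumption.

```python
from typing import Sequence


def violation(g: Sequence[float], h: Sequence[float] = (), eps: float = 1e-4) -> float:
    """Total violation for constraints g_k(x) <= 0 and |h_k(x)| <= eps."""
    return sum(max(0.0, gk) for gk in g) + sum(max(0.0, abs(hk) - eps) for hk in h)


def better(f_a: float, cv_a: float, f_b: float, cv_b: float) -> bool:
    """Deb's feasibility rules (minimisation): True if solution a is preferred.
    1) both feasible -> lower objective wins;
    2) exactly one feasible -> the feasible one wins;
    3) both infeasible -> lower total violation wins."""
    if cv_a == 0 and cv_b == 0:
        return f_a < f_b
    if cv_a == 0 or cv_b == 0:
        return cv_a == 0
    return cv_a < cv_b
```

Strict feasibility-first comparison tends to pull the population away from infeasible regions quickly. This is one reason the dual-population approaches above keep a second population that ignores constraints.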

The following diagram illustrates the co-evolutionary approach with two populations for constrained optimization:

Figure 2. Co-evolutionary approach with two populations for constrained optimization, showing how stagnation triggers different diversity enhancement responses.

FAQ: Addressing Common Implementation Challenges

How do I determine the optimal balance between diversity maintenance and convergence speed?

Finding the optimal balance requires careful consideration of your specific problem domain and computational constraints:

- For problems with numerous local optima: Prioritize diversity maintenance (roughly 60-70% of the search effort), especially in early generations, then gradually shift toward convergence (60-70% of the effort) in later stages.

- For computationally expensive evaluations: Lean slightly toward convergence (55-60% focus) to maximize information gain from each evaluation, but maintain sufficient diversity (40-45%) to avoid catastrophic premature convergence.

- When using multiple populations or archives: Allocate approximately 70-80% of resources to convergence-focused search and 20-30% to diversity-preserving exploration.

- As a general rule: Monitor both diversity metrics and improvement rates, adjusting the balance dynamically when either falls below threshold values for consecutive generations.
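The schedule and the dynamic adjustment described above can be expressed as a small controller. It moves linearly from a 65% to a 35% diversity share as the evaluation budget is consumed, using the midpoints of the ranges above. It adds a temporary boost once a diversity metric stays below a threshold for several consecutive generations. The linear schedule, the boost size, and the class name are hypothetical choices, and an improvement-rate check could be added in the same way. The returned weight `w` might then blend selection scores as `(1 - w) * convergence_score + w * diversity_score`.

```python
def diversity_weight(progress: float, start: float = 0.65, end: float = 0.35) -> float:
    """Linear schedule for the share of effort given to diversity; progress is
    the fraction of the evaluation budget already used (0 to 1)."""
    p = min(max(progress, 0.0), 1.0)
    return start + (end - start) * p


class BalanceController:
    """Adds a temporary boost to the diversity weight once the diversity metric
    has stayed below div_threshold for `patience` consecutive generations.
    All thresholds are hypothetical defaults."""

    def __init__(self, div_threshold: float, patience: int = 5, boost: float = 0.15):
        self.div_threshold = div_threshold
        self.patience = patience
        self.boost = boost
        self.low_count = 0

    def weight(self, progress: float, diversity: float) -> float:
        self.low_count = self.low_count + 1 if diversity < self.div_threshold else 0
        w = diversity_weight(progress)
        if self.low_count >= self.patience:
            w = min(1.0, w + self.boost)
        return w
```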

What are the most common pitfalls when implementing diversity mechanisms?

Even well-designed diversity enhancement strategies can fail if these common pitfalls are not avoided:

- Overly Aggressive Diversity Preservation: Excessive focus on diversity can prevent necessary convergence, resulting in random search behavior and wasted computational resources.

- Inadequate Stagnation Detection: Overly sensitive stagnation indicators may trigger diversity mechanisms prematurely, while insensitive indicators may allow populations to remain trapped too long.

- Parameter Sensitivity: Many diversity mechanisms introduce additional parameters that require careful tuning specific to your problem domain.

- Computational Overhead: Some diversity preservation techniques (e.g., sophisticated niching methods) can significantly increase computational costs, reducing overall efficiency.

- Problem-Dependent Effectiveness: Diversity mechanisms that work well on one class of problems may perform poorly on others, necessitating careful mechanism selection based on problem characteristics.

How can I adapt these mechanisms for high-dimensional optimization problems?

High-dimensional problems present unique challenges for diversity maintenance:

- Dimensional Selection in Restarts: When implementing restart mechanisms, focus on dimensions that show minimal improvement or early convergence, rather than reinitializing all dimensions uniformly [26].

- Subspace Diversity Maintenance: Maintain diversity across different subspaces of the high-dimensional problem, as exhaustive diversity across all dimensions becomes computationally prohibitive.

- Adaptive Neighborhood Sizes: Use larger neighborhood sizes for diversity operations in early generations, gradually focusing on more localized diversity as the run progresses.

- Projection-Based Diversity Metrics: Implement diversity measures that operate on projected versions of the solution space to reduce computational complexity while preserving meaningful diversity information.
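Dimension-selective restarts can be sketched as follows. This version uses the per-dimension population spread as a proxy for "early convergence": a dimension counts as converged when its standard deviation falls below a small fraction of its range. Only those coordinates are re-sampled, and only for the chosen individuals. The tolerance `rel_tol` and both function names are hypothetical. The dimension-learning restart of [26] is more elaborate.

```python
import random
import statistics
from typing import List, Sequence


def converged_dimensions(pop: List[List[float]], lb: Sequence[float],
                         ub: Sequence[float], rel_tol: float = 1e-3) -> List[int]:
    """Indices of dimensions whose population std is below rel_tol * (ub - lb)."""
    d = len(pop[0])
    return [j for j in range(d)
            if statistics.pstdev([x[j] for x in pop]) < rel_tol * (ub[j] - lb[j])]


def dimension_restart(pop: List[List[float]], idx: Sequence[int], dims: Sequence[int],
                      lb: Sequence[float], ub: Sequence[float],
                      rng: random.Random) -> None:
    """Re-sample only the selected dimensions of the selected individuals (in place);
    all other coordinates, including those of the individuals not in idx, are kept."""
    for i in idx:
        for j in dims:
            pop[i][j] = rng.uniform(lb[j], ub[j])
```

Leaving unconverged dimensions untouched preserves the information the search has already gathered in them. This is the motivation given above for avoiding uniform reinitialization of all dimensions.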