Adaptive Knowledge Transfer in Evolutionary Multitasking: Advanced Strategies for Complex Optimization in Biomedicine

Abstract

This article comprehensively explores the paradigm of Evolutionary Multitasking Optimization (EMTO) with a focus on adaptive knowledge transfer mechanisms. It systematically covers the foundational principles of EMTO, detailing innovative methodological advances including self-adjusting dual-mode evolutionary frameworks, adaptive solver selection, and explicit autoencoding techniques. The discussion extends to critical troubleshooting aspects such as mitigating negative transfer and managing dynamic optimization environments, supported by empirical validation on benchmark suites and real-world applications. Tailored for researchers, scientists, and drug development professionals, this review highlights how adaptive knowledge transfer significantly enhances optimization efficiency in complex biomedical problems, from drug discovery to clinical protocol optimization, by effectively leveraging synergies between related tasks.

The Foundations of Evolutionary Multitasking: From Basic Principles to Knowledge Transfer Mechanisms

Defining Evolutionary Multitasking Optimization (EMTO) and Its Core Objectives

Frequently Asked Questions (FAQs)

Q1: What is Evolutionary Multitasking Optimization (EMTO) and what is its primary goal? A1: Evolutionary Multitasking Optimization (EMTO) is a branch of evolutionary computation that aims to solve multiple optimization tasks simultaneously within a single run, outputting the best solution found for each task. Unlike traditional evolutionary algorithms, which solve each problem in isolation, EMTO creates a multi-task environment in which a single population evolves and knowledge is transferred between different, potentially related, tasks. The primary objective is to improve overall search efficiency and solution quality by leveraging the implicit parallelism of population-based search and exploiting potential synergies between tasks [1].

Q2: What is 'negative transfer' and why is it a critical challenge in EMTO? A2: Negative transfer occurs when knowledge exchanged between tasks is not beneficial or is even detrimental, leading to performance degradation instead of improvement. This is a central challenge in EMTO because the relationships between tasks are often unknown beforehand. If the algorithm transfers knowledge between unrelated or poorly-matched tasks, it can misguide the search, impede convergence, and yield inferior solutions. Mitigating negative transfer is a key focus of modern EMTO research [2].

Q3: How can I adaptively control knowledge transfer in my EMTO experiments? A3: Adaptive control of knowledge transfer can be achieved through several advanced strategies:

- Machine Learning-Guided Transfer: Train an online model (e.g., a neural network) to predict whether offspring generated by a cross-task transfer will survive selection. This model can then guide individual-level transfer decisions, promoting positive transfer and suppressing negative transfer [2].

- Adaptive Operator Selection: Dynamically adjust the selection probability of different evolutionary search operators (e.g., GA, DE) based on their recent performance on specific tasks. This ensures the most suitable search strategy is used for each problem [3].

- Dynamic Random Mating Probability (rmp): Instead of a fixed rmp, implement mechanisms that allow this key parameter, which controls the frequency of inter-task crossover, to adapt during the optimization process based on measured inter-task similarities [3].
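The adaptive operator selection idea above can be sketched with a simple probability-matching rule. This is a minimal illustration, not the update rule of BOMTEA or any other cited algorithm: the function names, the floor `p_min`, and the success counts are all assumptions made for the example.

```python
import random

def update_operator_probs(successes, trials, p_min=0.1):
    """Probability matching: each operator keeps a floor p_min and receives a
    share of the remaining mass proportional to its recent success rate."""
    rates = {op: (successes[op] / trials[op] if trials[op] else 0.0) for op in trials}
    total = sum(rates.values())
    n = len(rates)
    if total == 0.0:
        return {op: 1.0 / n for op in rates}  # no evidence yet: stay uniform
    return {op: p_min + (1.0 - n * p_min) * rates[op] / total for op in rates}

def pick_operator(probs, rng):
    """Sample one operator name according to the current probabilities."""
    ops = list(probs)
    return rng.choices(ops, weights=[probs[op] for op in ops], k=1)[0]

# Hypothetical counts for one task over a recent window of generations:
# an offspring counts as a "success" if it survived selection.
trials = {"GA": 40, "DE": 40}
successes = {"GA": 6, "DE": 18}
probs = update_operator_probs(successes, trials)

rng = random.Random(0)
chosen = pick_operator(probs, rng)
```

Keeping a nonzero floor means an operator that performs poorly early on can still be sampled later, which matters because the best operator may change as the search moves from exploration to refinement.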

Q4: My multi-objective EMTO algorithm is converging prematurely. How can I enhance its population diversity? A4: For multi-objective EMTO, consider a two-stage adaptive knowledge transfer mechanism based on population distribution.

- In the first stage, use an adaptive weight to adjust the search step size of each individual, reducing the impact of negative transfer.

- In the second stage, dynamically adjust the search range of each individual based on a probability model of the population. This helps maintain diversity and escape local optima [4].

- Alternatively, employ a collaborative knowledge transfer mechanism that uses information entropy to balance convergence and diversity across different evolutionary stages [5].

Troubleshooting Common Experimental Issues

| Problem Area | Specific Issue | Potential Causes | Recommended Solutions |

|---|---|---|---|

| Knowledge Transfer | Consistent performance degradation in one or more tasks. | High likelihood of negative transfer due to low inter-task similarity. | Implement similarity estimation between tasks (e.g., using domain adaptation techniques like TCA) to filter transfers [5] [3]. |

| Algorithm Convergence | Slow convergence across all tasks. | Ineffective evolutionary search operator; insufficient or ineffective knowledge exchange. | Use an adaptive bi-operator strategy (e.g., BOMTEA) that combines GA and DE, letting the algorithm select the best operator per task [3]. |

| Multi-Objective Optimization | Poor diversity in the non-dominated solution set. | Search is trapped in local Pareto fronts; transfer mechanism overlooks objective space. | Adopt a collaborative transfer mechanism (e.g., CKT-MMPSO) that exploits information from both the search and objective spaces to balance convergence and diversity [5]. |

| Parameter Tuning | Sensitivity to the rmp parameter. | Fixed rmp value is not suitable for the specific task relationships in your problem. | Utilize algorithms with self-adaptive rmp (e.g., MFEA-II) that can online estimate and adjust transfer parameters [3] [2]. |

Experimental Protocols for Key EMTO Strategies

Protocol 1: Implementing Adaptive Knowledge Transfer using Machine Learning

This protocol is based on the MFEA-ML algorithm, which uses a machine learning model to guide transfer decisions [2].

- Initialization: Initialize a single population and assign skill factors (the task each individual is optimizing) randomly.

- Offspring Generation & Data Collection: For several initial generations, allow knowledge transfer to occur randomly. For each inter-task crossover, record the parent individuals and the survival status (success/failure) of the resulting offspring as training data.

- Model Training: Use the collected data to train a classifier (e.g., a Feedforward Neural Network) to predict the success of a potential transfer between two parent individuals from different tasks.

- Adaptive Transfer: In subsequent generations, before performing a crossover between individuals from different tasks, query the trained ML model. Only proceed with the crossover if the model predicts a high probability of successful offspring.

- Model Retraining: Periodically update the ML model with new data from recent generations to adapt to the changing search landscape.
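The protocol steps above can be sketched as follows. To keep the example dependency-free, a logistic regression stands in for the feedforward neural network named in the protocol. The single feature (distance between parents), the synthetic training data, and the 0.5 decision threshold are assumptions for illustration, not details of MFEA-ML.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(samples, labels, lr=0.5, epochs=300):
    """Batch gradient descent on the logistic loss; returns (weights, bias)."""
    dim, n = len(samples[0]), len(samples)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * dim, 0.0
        for x, y in zip(samples, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for j in range(dim):
                gw[j] += err * x[j]
            gb += err
        w = [wi - lr * g / n for wi, g in zip(w, gw)]
        b -= lr * gb / n
    return w, b

def predict_success(model, x):
    w, b = model
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def pair_features(parent_a, parent_b):
    # Hypothetical feature: Euclidean distance between parents in the unified space.
    return [math.dist(parent_a, parent_b)]

def should_transfer(model, parent_a, parent_b, threshold=0.5):
    """Gate an inter-task crossover on the predicted survival probability."""
    return predict_success(model, pair_features(parent_a, parent_b)) >= threshold

# Synthetic stand-in for the data collection phase: here a transfer "succeeds"
# when the parents are close. Real labels would be offspring survival outcomes.
rng = random.Random(0)
samples, labels = [], []
for _ in range(200):
    a = [rng.random() for _ in range(3)]
    b = [rng.random() for _ in range(3)]
    x = pair_features(a, b)
    samples.append(x)
    labels.append(1 if x[0] < 0.6 else 0)

model = train_logistic(samples, labels)
```

Retraining then amounts to calling `train_logistic` again on a sliding window of recent records, so the gate tracks the current stage of the search rather than the early generations.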

Protocol 2: A Two-Stage Knowledge Transfer for Multi-Objective EMTO

This protocol is designed to improve convergence and diversity in multi-objective problems (EMT-PD) [4].

- Stage 1 - Convergent Search:

- Build a probability model (e.g., a Gaussian distribution) that captures the search trend and distribution of the entire population.

- Extract knowledge from this model to guide the search.

- Apply an adaptive weight to adjust the step size of each individual's search, preventing large, potentially disruptive moves that could lead to negative transfer.

- Stage 2 - Diversity Enhancement:

- Dynamically adjust the search range for each individual based on the evolving population distribution.

- This expanded and adaptive search range helps the population explore new regions of the objective space, increasing diversity and aiding escape from local Pareto fronts.

- The switch between stages can be controlled by monitoring the improvement in hypervolume or spread of the non-dominated solution set.
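A rough sketch of the two stages, under strong simplifying assumptions: the probability model is a per-dimension Gaussian, decision variables are normalized to [0, 1], and the weight is a linear function of a recent transfer success rate. None of these choices is taken from EMT-PD, and all function names are hypothetical.

```python
import random
import statistics

def fit_gaussian(population):
    """Per-dimension mean and standard deviation of a population."""
    dims = list(zip(*population))
    return [statistics.fmean(d) for d in dims], [statistics.pstdev(d) for d in dims]

def adaptive_weight(recent_success_rate, w_min=0.1, w_max=0.9):
    """Larger steps toward the source model only while transfers keep succeeding."""
    return w_min + (w_max - w_min) * recent_success_rate

def stage1_step(x, source_mean, weight):
    """Convergent move: shift x toward the source model's mean by a fraction weight."""
    return [xi + weight * (m - xi) for xi, m in zip(x, source_mean)]

def stage2_sample(x, std, scale, rng, lower=0.0, upper=1.0):
    """Diversity move: perturb x within a range proportional to the population spread."""
    return [min(upper, max(lower, xi + rng.uniform(-scale * s, scale * s)))
            for xi, s in zip(x, std)]

rng = random.Random(42)
source_population = [[rng.random() for _ in range(4)] for _ in range(20)]
mean, std = fit_gaussian(source_population)

individual = [0.9, 0.1, 0.5, 0.5]
moved = stage1_step(individual, mean, adaptive_weight(0.25))
explored = stage2_sample(moved, std, scale=2.0, rng=rng)
```

A small weight in stage 1 keeps each transferred move short, which limits the damage if the source task turns out to be unrelated.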

The Researcher's Toolkit: Essential EMTO Components

| Component / Reagent | Function in EMTO Experiments | Key Considerations |

|---|---|---|

| Multifactorial Evolutionary Algorithm (MFEA) | The foundational framework for many EMTO algorithms. It uses a single population with "skill factors" and controls transfer via rmp [1]. | Ideal for getting started; however, its performance is highly sensitive to the fixed rmp setting. |

| Evolutionary Search Operators (GA & DE) | The variation operators that generate new offspring. GA (e.g., SBX) and DE (e.g., DE/rand/1) are commonly used [3]. | No single operator is best for all tasks. Using multiple adaptively (e.g., in BOMTEA) is often superior. |

| Random Mating Probability (rmp) | A key parameter that controls the probability of crossover between individuals from different tasks, thus regulating knowledge transfer [1] [3]. | A low rmp may stifle useful transfer, while a high rmp can cause negative transfer. Adaptive methods are preferred. |

| Skill Factor | A scalar tag assigned to each individual, identifying the primary task it is evaluating [1]. | Used to group the population by task and to identify candidates for inter-task crossover. |

| Benchmark Suites (CEC17, CEC22) | Standardized sets of test problems for fairly evaluating and comparing the performance of different EMTO algorithms [3]. | Essential for validating new algorithms against state-of-the-art methods before real-world application. |

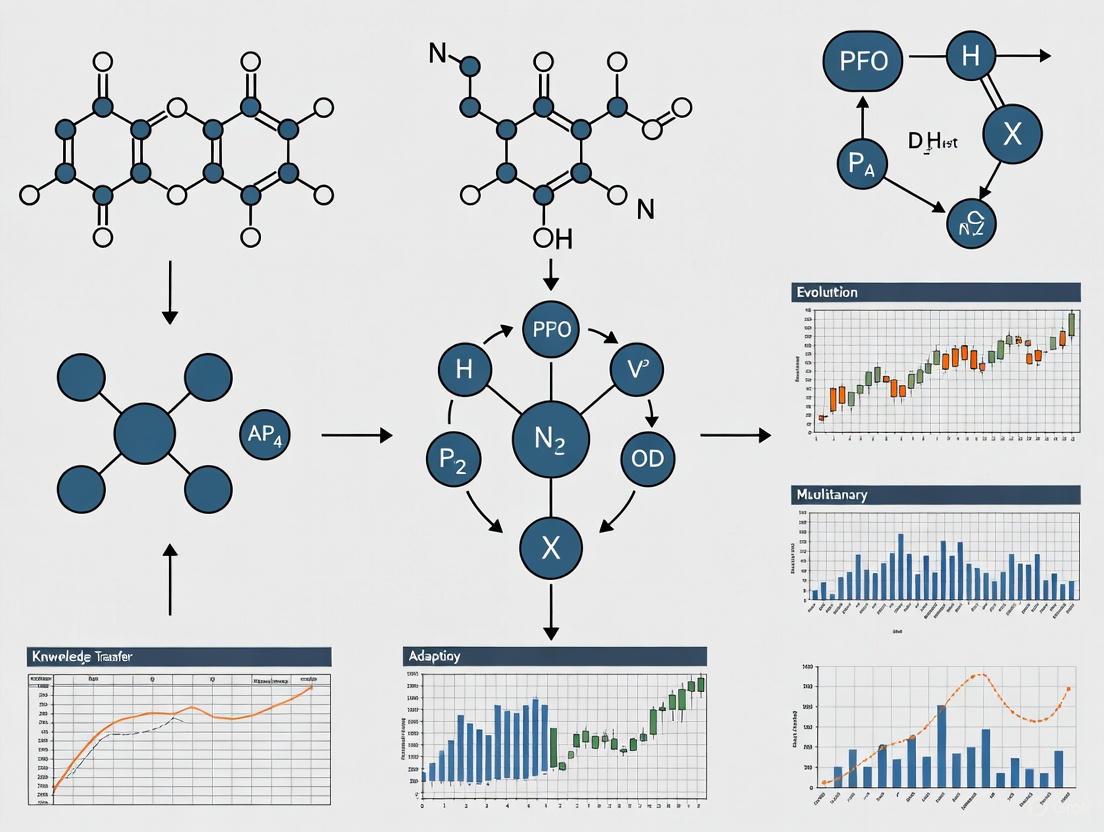

Workflow Visualization

Diagram: Adaptive Knowledge Transfer Workflow in EMTO - This diagram illustrates the core adaptive knowledge transfer workflow in a modern EMTO system.

Diagram: EMTO Experimental Benchmarking Protocol - This diagram outlines a typical experimental setup for benchmarking a new EMTO algorithm.

The Multifactorial Evolutionary Algorithm (MFEA) represents a pioneering computational framework in the field of evolutionary multitasking optimization (EMTO). Unlike traditional evolutionary algorithms that solve optimization problems in isolation, MFEA enables the simultaneous solution of multiple distinct optimization tasks within a single unified search process. This innovative approach leverages implicit knowledge transfer between tasks, allowing genetic information to be shared across different problem domains through cultural transmission and assortative mating mechanisms [6]. The fundamental insight driving MFEA development is that real-world optimization problems rarely occur in isolation, and leveraging potential synergies between related tasks can significantly accelerate convergence and improve solution quality across all optimized tasks [7].

Within the broader context of evolutionary multitasking with adaptive knowledge transfer research, MFEA establishes the foundational architecture upon which numerous advanced extensions have been built. The algorithm's core innovation lies in its ability to maintain a unified population of individuals that collectively address multiple tasks, with each individual specializing in a particular task while potentially carrying beneficial genetic material for other tasks [6]. This bio-inspired approach mirrors natural evolution, where species develop specialized traits while sharing a common genetic pool that can transfer advantageous characteristics across related species through mechanisms like horizontal gene transfer.

For research scientists and drug development professionals, MFEA offers particular promise in complex optimization scenarios such as multi-objective drug design, where simultaneous optimization of potency, selectivity, and pharmacokinetic properties is required, or in clinical trial optimization, where multiple trial parameters must be coordinated across different patient populations [8]. The algorithm's ability to implicitly transfer knowledge between related optimization tasks can significantly reduce computational costs and accelerate the discovery of optimal solutions in these high-stakes applications.

MFEA Fundamentals: Core Concepts and Terminology

Understanding MFEA requires familiarity with its specialized terminology and operational concepts, which extend beyond conventional evolutionary algorithms:

Factorial Cost (Ψᵢⱼ): The objective value of an individual solution (pᵢ) when evaluated on a specific task (Tⱼ) [6]. This represents the raw performance of a solution on a given task before any normalization or ranking.

Factorial Rank (rᵢⱼ): The relative standing of an individual when the entire population is sorted in ascending order according to their factorial cost for a particular task [6]. This ranking enables meaningful comparison across tasks with different objective function scales.

Scalar Fitness (φᵢ): A unified measure of an individual's overall performance across all tasks, defined as φᵢ = 1/minⱼ{rᵢⱼ} [6]. This scalar value determines selection probability during evolutionary operations.

Skill Factor (τᵢ): The index of the task on which an individual performs best, formally defined as τᵢ = argminⱼ{rᵢⱼ} [6]. The skill factor identifies an individual's specialization and determines which task it contributes to during evaluation.

Random Mating Probability (rmp): A crucial control parameter that determines the likelihood of crossover between individuals with different skill factors [6]. This parameter directly regulates the intensity of knowledge transfer between tasks.

Table 1: Key Properties of Individuals in MFEA

| Property | Symbol | Definition | Role in MFEA |

|---|---|---|---|

| Factorial Cost | Ψᵢⱼ | Objective value fⱼ(pᵢ) | Raw performance measure |

| Factorial Rank | rᵢⱼ | Performance ranking on task j | Enables cross-task comparison |

| Scalar Fitness | φᵢ | 1/minⱼ{rᵢⱼ} | Determines selection probability |

| Skill Factor | τᵢ | argminⱼ{rᵢⱼ} | Identifies task specialization |
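The definitions in Table 1 translate directly into code. The sketch below assumes minimization and a small, fully evaluated cost matrix. The function name and the example costs are illustrative, and ties are broken by population index here, a simplification chosen for this example.

```python
def mfea_properties(costs):
    """costs[i][j] is the factorial cost of individual i on task j (minimization).
    Returns factorial ranks, skill factors, and scalar fitness values."""
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        # Sort individuals by cost on task j; rank 1 is the best.
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    skill = [min(range(k), key=lambda j: ranks[i][j]) for i in range(n)]  # argmin_j r_ij
    fitness = [1.0 / min(ranks[i]) for i in range(n)]                     # 1 / min_j r_ij
    return ranks, skill, fitness

# Three individuals, two tasks with very different cost scales.
costs = [
    [0.2, 9.0],
    [0.5, 1.0],
    [0.9, 3.0],
]
ranks, skill, fitness = mfea_properties(costs)
# ranks   -> [[1, 3], [2, 1], [3, 2]]
# skill   -> [0, 1, 1]
# fitness -> [1.0, 1.0, 0.5]
```

Because scalar fitness depends only on ranks, the first two individuals are equally fit even though their raw costs live on different scales, which is exactly what makes cross-task selection possible.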

The operational workflow of MFEA maintains a single population that evolves to address all tasks simultaneously. In the canonical algorithm, every individual in the initial population is evaluated on all tasks so that factorial ranks and skill factors can be assigned. In later generations, each offspring is evaluated only on the task indicated by the skill factor it inherits from a parent, which keeps the evaluation budget manageable. Scalar fitness then provides a common selection pressure across tasks. Through assortative mating and vertical cultural transmission, MFEA facilitates implicit knowledge transfer: individuals with different skill factors may mate with a probability determined by rmp, allowing genetic material to flow between task domains [6]. Progress on one task can therefore accelerate progress on related tasks through the transfer of beneficial building blocks.
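Assortative mating and vertical cultural transmission can be sketched in a few lines. This is a simplified illustration: uniform crossover and Gaussian mutation stand in for whatever operators a real implementation uses (SBX and polynomial mutation are common choices), genomes are assumed to live in a unified [0, 1] space, and the function name is hypothetical.

```python
import random

def assortative_mating(pa, pb, skill_a, skill_b, rmp, rng, sigma=0.1):
    """Return two (genome, skill_factor) offspring from parents pa and pb."""
    if skill_a == skill_b or rng.random() < rmp:
        # Same task, or cross-task mating allowed with probability rmp.
        mask = [rng.random() < 0.5 for _ in pa]
        c1 = [a if m else b for a, b, m in zip(pa, pb, mask)]
        c2 = [b if m else a for a, b, m in zip(pa, pb, mask)]
        # Vertical cultural transmission: each child imitates the skill
        # factor of one parent chosen at random.
        return [(c1, rng.choice([skill_a, skill_b])),
                (c2, rng.choice([skill_a, skill_b]))]

    def mutate(genome):
        return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in genome]

    # No cross-task mating: each parent is mutated and the child keeps its task.
    return [(mutate(pa), skill_a), (mutate(pb), skill_b)]

rng = random.Random(1)
parent_a, parent_b = [0.1, 0.2, 0.3], [0.7, 0.8, 0.9]
isolated = assortative_mating(parent_a, parent_b, 0, 1, rmp=0.0, rng=rng)
mixed = assortative_mating(parent_a, parent_b, 0, 1, rmp=1.0, rng=rng)
```

With `rmp=0.0` the two tasks evolve independently inside one population, while with `rmp=1.0` every cross-task pair recombines, so the parameter acts as a single dial on transfer intensity.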

Troubleshooting Common MFEA Implementation Challenges

Negative Knowledge Transfer Issues

Problem: How can I identify and mitigate negative transfer between unrelated tasks?

Negative transfer occurs when knowledge exchange between dissimilar tasks degrades optimization performance, typically manifesting as slowed convergence, premature stagnation, or deterioration of solution quality on one or more tasks [7]. This frequently arises when tasks have misaligned fitness landscapes or competing objectives.

Diagnosis Protocol:

- Monitor per-task convergence curves for sudden plateaus or regression

- Calculate inter-task similarity metrics (Kullback-Leibler divergence, Maximum Mean Discrepancy) using population distribution statistics [9]

- Track transfer success rates by evaluating offspring fitness improvements from cross-task vs. within-task mating
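Two of the diagnostics above are easy to prototype. The sketch below assumes each task's subpopulation can be summarized by a diagonal Gaussian in the unified search space, and that each offspring is logged as a pair of flags. Both the record format and the function names are assumptions made for illustration.

```python
import math
import statistics

def diag_gaussian(pop):
    """Per-dimension mean and standard deviation (floored to avoid division by zero)."""
    dims = list(zip(*pop))
    return ([statistics.fmean(d) for d in dims],
            [max(statistics.pstdev(d), 1e-9) for d in dims])

def kl_diag_gaussians(pop_p, pop_q):
    """KL(P || Q) between diagonal Gaussians fitted to two subpopulations."""
    mp, sp = diag_gaussian(pop_p)
    mq, sq = diag_gaussian(pop_q)
    return sum(math.log(s1 / s0) + (s0 ** 2 + (m0 - m1) ** 2) / (2 * s1 ** 2) - 0.5
               for m0, s0, m1, s1 in zip(mp, sp, mq, sq))

def transfer_success_rates(records):
    """records: iterable of (is_cross_task, improved) flags, one per offspring.
    Returns (cross_task_rate, within_task_rate)."""
    cross = [imp for is_cross, imp in records if is_cross]
    within = [imp for is_cross, imp in records if not is_cross]
    rate = lambda xs: sum(xs) / len(xs) if xs else 0.0
    return rate(cross), rate(within)

task_a = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.9]]
task_b = [[x + 0.5 for x in ind] for ind in task_a]   # same shape, shifted
divergence = kl_diag_gaussians(task_a, task_b)

log = [(True, True), (True, False), (True, False), (False, True), (False, True)]
cross_rate, within_rate = transfer_success_rates(log)
```

A cross-task success rate that stays well below the within-task rate, combined with a large divergence between the two subpopulations, is the pattern that suggests negative transfer.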

Resolution Strategies:

- Implement adaptive rmp control using online transfer parameter estimation (MFEA-II approach) [6]

- Apply individual-level transfer filtering using machine learning models (MFEA-ML) to predict beneficial transfers [8]

- Utilize domain adaptation techniques like Linearized Domain Adaptation (LDA) or affine transformations to align task search spaces [6] [7]

- Employ explicit similarity learning to measure task relatedness and adjust transfer intensity accordingly [10]

Preventative Measures:

- Conduct preliminary task relatedness analysis before full optimization

- Implement conservative initial rmp values (0.1-0.3) for unknown task relationships

- Use multi-population architectures with controlled migration for highly dissimilar tasks [9]

Skill Factor Assignment Problems

Problem: Why does improper skill factor assignment degrade MFEA performance, and how can it be optimized?

Incorrect skill factor assignment leads to inefficient resource allocation, where individuals may specialize on tasks where they provide minimal contribution, wasting evaluations that could have been better applied to other tasks.

Diagnosis Indicators:

- Skill factor distribution becomes heavily imbalanced

- High-performing individuals consistently misassigned to tasks

- Excessive computational resources devoted to certain tasks with minimal improvement

Advanced Resolution Methods:

- Implement dynamic skill factor reassignment using ResNet-based adaptive assignment that leverages high-dimensional residual information [11]

- Apply Gini coefficient-based decision trees (EMT-ADT) to predict individual transfer ability and optimize assignments [6]

- Utilize random mapping mechanisms to enhance crossover operations and mitigate negative transfer risks [11]

Table 2: Skill Factor Assignment Strategies Comparison

| Method | Mechanism | Advantages | Limitations |

|---|---|---|---|

| Static Assignment | Fixed at initialization | Simple implementation | Inflexible to changing optimization landscape |

| Factorial Rank-Based | Reassign based on current rankings | Adapts to population changes | May cause oscillating assignments |

| ResNet Dynamic | Neural network prediction using residual learning | Handles complex task relationships | Increased computational overhead |

| Decision Tree Prediction | ML model based on transfer ability | Explicit transfer optimization | Requires training data collection |

Parameter Configuration Challenges

Problem: What are the optimal configurations for critical MFEA parameters like rmp, and how should they be adapted during optimization?

Parameter sensitivity represents a significant challenge in MFEA, with improper settings leading to suboptimal performance, particularly when task relatedness is unknown a priori.

Experimental Configuration Protocol:

- Initial rmp setting: For unknown task relationships, begin with rmp = 0.2-0.3 as a conservative baseline [6]

- Population sizing: Allocate 50-100 individuals per task, with minimum total population of 200 for multitask scenarios [8]

- Crossover operator selection: Choose operators based on problem domain (SBX for continuous, PMX for permutation problems) [11]

Adaptive Parameter Control Methods:

- Online rmp estimation: MFEA-II uses a symmetric matrix to capture non-uniform inter-task synergies, continuously adapted during search [6]

- Success-history based adaptation: Adjust parameters based on mutation success rates and transfer effectiveness [6]

- Golden Section Search (GSS): Apply GSS-based linear mapping to explore promising search areas and avoid local optima [7]

- Reinforcement learning control: Use multi-role RL agents to dynamically adjust where, what, and how to transfer [10]
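As a deliberately simple illustration of success-based parameter control, the sketch below nudges rmp up or down by comparing survival rates of cross-task and within-task offspring. This is not the MFEA-II estimator, which learns a matrix of pairwise transfer parameters. The step size, bounds, and function name are assumptions.

```python
def adapt_rmp(rmp, cross_survived, cross_total, within_survived, within_total,
              step=0.05, rmp_min=0.05, rmp_max=0.9):
    """Raise rmp when cross-task offspring survive more often than within-task
    offspring, lower it when they survive less often, and clamp the result."""
    if cross_total == 0 or within_total == 0:
        return rmp  # not enough evidence in this window
    cross_rate = cross_survived / cross_total
    within_rate = within_survived / within_total
    if cross_rate > within_rate:
        rmp += step
    elif cross_rate < within_rate:
        rmp -= step
    return min(rmp_max, max(rmp_min, rmp))

# Hypothetical counts from one generation window.
helpful = adapt_rmp(0.3, cross_survived=8, cross_total=20,
                    within_survived=4, within_total=20)    # transfer is paying off
harmful = adapt_rmp(0.3, cross_survived=2, cross_total=20,
                    within_survived=10, within_total=20)   # transfer is hurting
```

The lower bound keeps a trickle of cross-task mating alive, so the rule can detect it if two tasks become more compatible later in the run.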

Scalability to High-Dimensional Tasks

Problem: How can MFEA be effectively applied to tasks with high-dimensional search spaces or differing dimensionalities?

Traditional MFEA implementations struggle with high-dimensional optimization due to the curse of dimensionality and challenges in learning effective mappings between spaces of different dimensions.

Dimensionality Alignment Techniques:

- Multidimensional Scaling (MDS): Establish low-dimensional subspaces for each task before applying linear domain adaptation [7]

- Very Deep Super-Resolution (VDSR) models: Transform low-dimensional individuals into high-dimensional representations to model complex variable interactions [11]

- Block-level knowledge transfer: Segment individuals into distinct blocks before transfer to handle differing dimensions [9]

- Affine transformation: Learn mapping relationships between distinct problem domains to bridge dimensionality gaps [6]
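To make the idea of an affine mapping between task domains concrete, here is a minimal per-dimension version: it standardizes a solution with the source population's statistics and rescales it with the target's. It is far simpler than the learned transformations in the cited work. Filling extra target dimensions with the target mean is an assumption chosen for this sketch, as are the function names.

```python
import statistics

def fit_stats(pop):
    """Per-dimension mean and standard deviation (floored to avoid division by zero)."""
    dims = list(zip(*pop))
    return ([statistics.fmean(d) for d in dims],
            [max(statistics.pstdev(d), 1e-9) for d in dims])

def affine_transfer(x, source_pop, target_pop):
    """Map solution x from the source task's space into the target task's space
    using a diagonal affine transform: x' = (x - mu_s) / sigma_s * sigma_t + mu_t."""
    mu_s, sd_s = fit_stats(source_pop)
    mu_t, sd_t = fit_stats(target_pop)
    mapped = []
    for j in range(len(mu_t)):
        if j < len(mu_s):
            mapped.append((x[j] - mu_s[j]) / sd_s[j] * sd_t[j] + mu_t[j])
        else:
            mapped.append(mu_t[j])  # no source information for this dimension
    return mapped

source_pop = [[0.0, 0.0], [1.0, 2.0]]                     # 2-dimensional task
target_pop = [[10.0, 20.0, 30.0], [12.0, 24.0, 36.0]]     # 3-dimensional task
mapped = affine_transfer([1.0, 2.0], source_pop, target_pop)
# mapped -> [12.0, 24.0, 33.0]
```

If the source task has more dimensions than the target, the surplus source coordinates are simply ignored by the loop, which is the truncation counterpart of the padding used above.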

Implementation Workflow for High-Dimensional Problems:

- Perform dimensionality analysis across all tasks

- Apply MDS to project all tasks to aligned latent spaces

- Implement VDSR-based crossover operators for high-dimensional knowledge transfer

- Use block-level transfer with dimensionality-aware alignment

- Monitor transfer effectiveness and adjust strategy accordingly

Convergence Stagnation in Complex Landscapes

Problem: What techniques can address premature convergence or stagnation in complex multimodal landscapes?

MFEA populations may become trapped in local optima, particularly when optimizing tasks with rugged fitness landscapes or when negative transfer misdirects the search process.

Stagnation Identification Metrics:

- Population diversity measures (genotypic and phenotypic)

- Fitness improvement rates across multiple generations

- Transfer effectiveness ratios (successful vs. detrimental transfers)
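The first two metrics can be monitored with a few lines of code. The sketch assumes minimization, uses mean distance to the centroid as one possible genotypic diversity measure, and treats the window length and tolerance as tunable assumptions rather than recommended values.

```python
import math

def genotypic_diversity(pop):
    """Mean Euclidean distance of individuals to the population centroid."""
    centroid = [sum(d) / len(pop) for d in zip(*pop)]
    return sum(math.dist(x, centroid) for x in pop) / len(pop)

def is_stagnating(best_history, window=20, tol=1e-6):
    """True if the best cost improved by no more than a relative tol
    over the last `window` generations (minimization)."""
    if len(best_history) <= window:
        return False  # not enough history yet
    old, new = best_history[-window - 1], best_history[-1]
    return (old - new) <= tol * max(1.0, abs(old))

collapsed = [[0.5, 0.5]] * 10
spread = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

flat_run = [1.0] * 30
improving_run = [1.0 / (t + 1) for t in range(30)]
```

Tracking both signals per task helps separate two failure modes: low diversity with a flat cost curve suggests premature convergence, whereas healthy diversity with a flat curve points more toward an ineffective operator or unhelpful transfer.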

Advanced Convergence Enhancement Methods:

- Residual learning architectures: Generate high-dimensional residual representations to model complex variable interactions [11]

- Golden Section Search exploration: Implement GSS-based linear mapping to systematically explore promising regions [7]

- Multi-role reinforcement learning: Deploy specialized RL agents for task routing, knowledge control, and strategy adaptation [10]

- Complex network analysis: Use network structures to model and optimize knowledge transfer pathways between tasks [9]

Diagram 1: MFEA Operational Workflow - This flowchart illustrates the core procedural sequence of the Multifactorial Evolutionary Algorithm, highlighting the key stages from population initialization through to convergence checking.

Experimental Protocols and Methodologies

Standardized Benchmarking Protocol

To ensure reproducible evaluation of MFEA performance and facilitate meaningful comparison between algorithmic variants, researchers should adhere to the following standardized experimental protocol:

Benchmark Selection:

- CEC2017 MFO Benchmark Problems: Comprehensive set of single-objective multitask optimization problems [6]

- WCCI20-MTSO and WCCI20-MaTSO: IEEE World Congress on Computational Intelligence benchmark problems [6] [11]

- Network Robustness Optimization: Real-world combinatorial problems for algorithm validation [12]

Performance Metrics:

- Convergence Speed: Generations or function evaluations to reach target solution quality

- Solution Accuracy: Best objective values achieved for each task

- Transfer Effectiveness: Success rate of knowledge transfer operations

- Computational Efficiency: Runtime and resource consumption

Experimental Configuration:

- 30 independent runs per algorithm configuration, so that differences between algorithms can be assessed with statistical tests rather than single-run outcomes

- Population sizes: 100-500 individuals depending on problem complexity

- Termination criteria: 500-1000 generations or computational budget limits

- Comprehensive reporting of mean, standard deviation, and statistical test results (Wilcoxon signed-rank test)
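The reporting step can be sketched with the standard library alone. The function below computes the signed-rank sums of the Wilcoxon test for paired per-run results. It does not compute a p-value, for which a statistics package such as SciPy (`scipy.stats.wilcoxon`) is the more practical route. The result lists are made-up numbers for illustration.

```python
import statistics

def summarize(results):
    """Mean and sample standard deviation over independent runs."""
    return statistics.fmean(results), statistics.stdev(results)

def wilcoxon_signed_rank_sums(a, b):
    """Return (W_plus, W_minus) for paired samples a and b.
    Zero differences are dropped; tied absolute differences share the average rank."""
    diffs = [x - y for x, y in zip(a, b) if x != y]
    order = sorted(range(len(diffs)), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * len(diffs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        average_rank = (i + j) / 2 + 1          # positions i..j are tied
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    return w_plus, w_minus

# Hypothetical best costs from four paired runs of two algorithms.
algo_a = [1.0, 2.0, 3.0, 4.0]
algo_b = [2.0, 4.0, 6.0, 8.0]
w_plus, w_minus = wilcoxon_signed_rank_sums(algo_a, algo_b)
mean_a, std_a = summarize(algo_a)
```

Pairing runs by random seed, where the setup allows it, makes the signed-rank comparison more sensitive than comparing two unpaired sets of results.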

MFEA with Adaptive Transfer Strategy (EMT-ADT) Protocol

The EMT-ADT algorithm enhances traditional MFEA through decision tree-based transfer prediction [6]:

Implementation Steps:

- Define transfer ability indicator to quantify useful knowledge in transferred individuals

- Construct decision tree based on Gini coefficient to predict transfer ability

- Select promising positive-transfer individuals based on prediction results

- Integrate SHADE (Success-History based Adaptive Differential Evolution) as search engine
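The Gini-based split at the heart of a decision tree can be illustrated on a single feature. This is a one-level stump, not the EMT-ADT tree: the feature is a hypothetical scalar indicator per candidate individual, and labels mark whether a past transfer of that individual was positive (1) or not (0).

```python
def gini(labels):
    """Gini impurity of a list of binary labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    p = sum(labels) / n
    return 1.0 - p * p - (1.0 - p) * (1.0 - p)

def best_split(values, labels):
    """Threshold on one feature that minimizes the weighted Gini impurity.
    Returns (threshold, impurity); threshold is None if all values are equal."""
    best_threshold, best_score = None, float("inf")
    for threshold in sorted(set(values))[:-1]:   # the largest value cannot split
        left = [y for v, y in zip(values, labels) if v <= threshold]
        right = [y for v, y in zip(values, labels) if v > threshold]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(values)
        if score < best_score:
            best_threshold, best_score = threshold, score
    return best_threshold, best_score

def predict_positive_transfer(value, threshold, positive_side_is_right=True):
    """Classify a new candidate with the learned stump."""
    return (value > threshold) == positive_side_is_right

# Hypothetical indicator values and observed transfer outcomes.
indicator = [0.1, 0.2, 0.3, 0.8, 0.9]
outcome = [0, 0, 0, 1, 1]
threshold, impurity = best_split(indicator, outcome)
```

A full tree applies the same search recursively to each side of the split and across several features. The stump is enough to show how candidates with a low predicted transfer ability can be filtered out before crossover.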

Key Algorithmic Enhancements:

- Individual-level transfer ability assessment

- Supervised machine learning for transfer prediction

- Adaptive selection of transfer candidates

- Preservation of the generality of the MFO paradigm

Experimental Validation:

- Comparative testing against state-of-the-art algorithms

- Performance demonstration on CEC2017, WCCI20-MTSO, and WCCI20-MaTSO benchmarks

- Statistical significance confirmation of performance improvements

Machine Learning-Enhanced MFEA (MFEA-ML) Protocol

The MFEA-ML approach uses online machine learning to guide knowledge transfer at the individual level [8]:

Training Data Collection:

- Trace survival status of individuals generated by intertask transfer

- Collect features related to parent individuals and transfer outcomes

- Construct training dataset for transfer decision model

Model Architecture:

- Implement feedforward neural network (FNN) as primary machine learning model

- Alternative ML models may be substituted based on problem characteristics

- Online training and model updating during optimization process

Transfer Control Mechanism:

- ML model predicts beneficial transfer pairs at individual level

- Selective application of crossover based on model predictions

- Continuous model refinement through online learning

Validation Methodology:

- Comparison against MFEA, EMEA, MFEA-II, AT-MFEA, SREMTO, and other advanced algorithms

- Application to benchmark problems and engineering design scenario (BWBUG shape design)

- Demonstration of competitive performance and negative transfer reduction

Diagram 2: Machine Learning-Enhanced MFEA - This diagram illustrates the integration of machine learning for adaptive knowledge transfer control in MFEA-ML, showing how historical transfer data trains models to predict beneficial transfers.

Research Reagent Solutions: Algorithmic Components and Tools

Table 3: Essential MFEA Research Components and Their Functions

| Component | Type | Function | Implementation Example |

|---|---|---|---|

| SHADE Engine | Search Algorithm | Success-history based parameter adaptation | Differential evolution with historical memory [6] |

| Decision Tree Model | ML Classifier | Predict individual transfer ability | Gini coefficient-based tree (EMT-ADT) [6] |

| Feedforward Neural Network | ML Model | Individual-level transfer decisions | FNN with backpropagation (MFEA-ML) [8] |

| VDSR Model | Deep Learning | High-dimensional representation learning | Very Deep Super-Resolution networks [11] |

| ResNet Architecture | Deep Learning | Dynamic skill factor assignment | Residual Networks with skip connections [11] |

| Multidimensional Scaling | Dimensionality Reduction | Subspace alignment for transfer | MDS-based LDA [7] |

| Golden Section Search | Optimization Method | Promising region exploration | GSS-based linear mapping [7] |

| Complex Network Analysis | Analytical Framework | Knowledge transfer modeling | Network-based transfer structure [9] |

Frequently Asked Questions (FAQ)

Q1: How does MFEA fundamentally differ from traditional multiobjective optimization?

A: While multiobjective optimization addresses a single problem with multiple competing objectives, MFEA solves multiple distinct optimization tasks simultaneously. The key distinction lies in the nature of the problems being addressed: multiobjective optimization handles conflicting criteria within one problem, while MFEA leverages potential synergies between different problems through knowledge transfer [6].

Q2: What is the computational overhead of implementing advanced MFEA variants with machine learning components?

A: The computational overhead varies significantly by implementation. Basic MFEA introduces minimal overhead beyond standard evolutionary algorithms. ML-enhanced variants (MFEA-ML, EMT-ADT) typically increase computational requirements by 15-30% due to model training and inference [8]. However, this overhead is often offset by reduced function evaluations through more effective knowledge transfer, resulting in net computational savings for complex problems.

Q3: How can I determine the optimal rmp value for my specific multitask problem?

A: For problems with unknown task relatedness, start with conservative rmp values (0.1-0.3) and implement adaptive estimation strategies like those in MFEA-II [6]. For more controlled approaches, use offline task similarity analysis or online reinforcement learning methods [10] that dynamically adjust rmp based on transfer effectiveness.

Q4: Can MFEA handle tasks with completely different dimensionalities and search space characteristics?

A: Yes, but this requires specialized techniques. Modern approaches include MDS-based subspace alignment [7], affine transformations [6], and VDSR-based dimensionality transformation [11]. These methods create aligned latent spaces that enable effective knowledge transfer despite differing original dimensionalities.

Q5: What are the most effective strategies for minimizing negative transfer in practical applications?

A: The most effective strategies include: (1) individual-level transfer filtering using ML models [8], (2) explicit inter-task similarity learning [10], (3) block-level knowledge transfer [9], and (4) adaptive rmp control at the task-pair level [6]. For critical applications, implement multiple strategies with comprehensive transfer effectiveness monitoring.

Q6: How scalable is MFEA to many-task optimization scenarios (5+ tasks)?

A: Basic MFEA faces challenges with many-task optimization due to increased negative transfer risk and population management complexity. Enhanced approaches using complex network structures [9], multi-role reinforcement learning [10], and hierarchical knowledge transfer mechanisms have demonstrated improved scalability to 10+ tasks in benchmark studies.

Q7: What are the promising real-world application domains for MFEA beyond benchmark problems?

A: MFEA has shown particular promise in: (1) engineering design optimization (e.g., blended-wing-body underwater glider design) [8], (2) network robustness and influence maximization [12], (3) drug design and molecular optimization, and (4) complex supply chain optimization involving production and logistics tasks [6].

Emerging Research Directions and Future Developments

The field of evolutionary multitasking continues to evolve rapidly, with several promising research directions emerging from current MFEA research:

Meta-Learned Multitasking Policies: Reinforcement learning approaches that holistically address the "where, what, and how" of knowledge transfer through specialized agents for task routing, knowledge control, and strategy adaptation [10]. These systems show potential for generating generalizable transfer policies that adapt to diverse problem characteristics without manual redesign.

Complex Network-Inspired Architectures: Using network structures to model and optimize knowledge transfer pathways, with tasks as nodes and transfer relationships as edges [9]. This approach enables more efficient control of transfer interactions in many-task scenarios and provides analytical frameworks for understanding transfer dynamics.

Deep Learning Integration: Advanced neural architectures like VDSR and ResNet for enhancing specific MFEA components, including high-dimensional representation learning [11] and dynamic skill factor assignment. These approaches address fundamental limitations in handling complex variable interactions and adapting to changing task relationships.

Theoretical Foundations Development: While empirical success of MFEA is well-established, ongoing research aims to strengthen theoretical understanding of convergence properties, knowledge transfer mechanics, and performance boundaries in evolutionary multitasking environments.

For researchers implementing MFEA in scientific and drug development contexts, these emerging directions suggest increasing integration of adaptive machine learning components and theoretical insights that will enhance algorithm robustness and applicability to real-world optimization challenges.

Frequently Asked Questions (FAQs)

Q1: What is the practical purpose of defining Skill Factor, Factorial Rank, and Scalar Fitness in evolutionary multitasking algorithms?

These concepts are fundamental to the Multifactorial Evolutionary Algorithm (MFEA) and its variants, enabling a population-based search to optimize multiple distinct tasks simultaneously [13] [6]. They provide a mechanism to compare and rank individuals in a population when each individual might be evaluated on a different optimization task. The Skill Factor identifies the task an individual is best at, the Factorial Rank orders individuals based on their performance on a specific task, and the Scalar Fitness gives a unified measure of an individual's overall quality in the multitasking environment, guiding the selection process [13] [6].

Q2: During experimentation, an offspring's Factorial Rank appears inconsistent. What could be the cause?

Factorial Ranks are only comparable when they are computed over the same population from complete factorial costs [6]. In the basic MFEA, an offspring is evaluated on a single task (its inherited Skill Factor), so its rank on any other task is a placeholder rather than a measured value. A common implementation error is to treat such placeholder ranks as real, or to reuse ranks that were computed before parents and offspring were merged. If your variant needs reliable ranks on every task, evaluate the offspring on all component tasks before ranking. This comprehensive evaluation is computationally expensive but gives accurate ranking for subsequent selection and cultural transmission.

Q3: How can negative knowledge transfer impact these properties, and how can it be mitigated?

Negative transfer occurs when genetic material from a solution good for one task harms the performance of another, unrelated task [6]. This can manifest as a promising individual (with high Scalar Fitness) receiving a poor Factorial Rank on a new task after cross-task crossover. Mitigation strategies include adaptive transfer strategies that predict an individual's "transfer ability" before using it for crossover [6], online parameter estimation to control inter-task mating [6], and grouping similar tasks together to promote positive transfer [14].

Q4: Are these concepts applicable to multi-objective optimization?

Not directly. It is critical to distinguish between Multitask Optimization (MTO) and Multi-Objective Optimization (MOO) [15]. MTO aims to find the global optimum of each of several distinct tasks simultaneously, leveraging potential synergies between them. The concepts as defined here (Skill Factor, Factorial Rank, Scalar Fitness) rank individuals by a single scalar cost per task and are specific to MTO. In contrast, MOO deals with optimizing multiple, often conflicting, objectives within a single task to find a set of Pareto-optimal solutions.

Core Concept Definitions and Troubleshooting

For a researcher, a precise understanding of these definitions is crucial for correct implementation and interpretation of results. The following table summarizes the core properties of an individual in a multitasking environment [13] [6].

Table 1: Key Properties of an Individual in a Multitasking Environment

| Property | Mathematical Definition | Interpretation |

|---|---|---|

| Factorial Cost ($\Psi_j^i$) | $\Psi_j^i = \gamma\,\delta_j^i + F_j^i$ | The performance of individual i on task j, incorporating both the objective value ($F_j^i$) and the constraint violation ($\delta_j^i$), the latter weighted by a penalty multiplier ($\gamma$). |

| Factorial Rank ($r_j^i$) | The index of individual i when the population is sorted in ascending order of $\Psi_j$. | A relative performance measure for individual i on task j (lower rank is better). |

| Skill Factor ($\tau_i$) | $\tau_i = \arg\min_j \{ r_j^i \}$ | The specific task on which individual i performs the best (has the lowest Factorial Rank). |

| Scalar Fitness ($\varphi_i$) | $\varphi_i = 1 / \min_j \{ r_j^i \}$ | A unified fitness value in the multitasking environment, derived from the individual's best Factorial Rank across all tasks. |

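The calculation proceeds in a fixed order for every individual in the population: factorial costs are computed first, factorial ranks are then obtained by sorting the population once per task, and the skill factor and scalar fitness are finally derived from each individual's best rank.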
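As a minimal sketch of this calculation, the Python function below derives the three rank-based properties from a matrix of factorial costs. The function name `multitask_properties` and the example cost values are illustrative assumptions, and ties are broken by index here, whereas MFEA implementations commonly break them at random.

```python
from typing import List, Tuple


def multitask_properties(
    costs: List[List[float]],
) -> Tuple[List[List[int]], List[int], List[float]]:
    """costs[i][j] is the factorial cost of individual i on task j (lower is better)."""
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        # Sort the population by cost on task j; ranks start at 1.
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    # Skill factor: task with the best (lowest) rank; ties go to the lower task index.
    skill = [min(range(k), key=lambda j: ranks[i][j]) for i in range(n)]
    # Scalar fitness: reciprocal of the best rank across tasks.
    fitness = [1.0 / min(ranks[i]) for i in range(n)]
    return ranks, skill, fitness


# Three individuals, two tasks (hypothetical cost values).
costs = [
    [0.2, 9.0],
    [0.5, 1.0],
    [0.9, 3.0],
]
ranks, skill, fitness = multitask_properties(costs)
print(ranks)    # [[1, 3], [2, 1], [3, 2]]
print(skill)    # [0, 1, 1]
print(fitness)  # [1.0, 1.0, 0.5]
```

Individuals 0 and 1 are each the best on one task and therefore share the maximum scalar fitness, which is what allows a single population to retain specialists for every task.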

Experimental Protocols & Data Presentation

Protocol: Implementing a Basic Multifactorial Evolutionary Algorithm (MFEA)

The following methodology outlines the core MFEA procedure that leverages the defined concepts [13] [6].

- Initialization: Generate a random initial population of individuals. Encode the search space for all tasks into a unified representation.

- Skill Factor Assignment: Evaluate each individual on every task and assign its Skill Factor (τ) and Scalar Fitness (φ) using the definitions in Table 1.

- Assortative Mating & Crossover:

- Select two parent individuals, p1 and p2.

- If their Skill Factors are the same, or if a uniformly drawn random number falls below the random mating probability (rmp) parameter, create offspring via crossover.

- If their Skill Factors are different and the random number exceeds rmp, no crossover occurs; in the original MFEA, each parent then produces an offspring through mutation alone.

- Mutation: Apply mutation to the generated offspring.

- Vertical Cultural Transmission: Each offspring imitates the Skill Factor of one of its parents (chosen at random when the parents' Skill Factors differ) and is evaluated only on that task. Only the objective value for this single task is computed to save cost, unless a comprehensive evaluation on all tasks is required for specific algorithmic steps.

- Selection: Create the next generation by selecting elite individuals from the current population and the new offspring based on their Scalar Fitness.
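The procedure above can be sketched in a few dozen lines of Python. This is an illustrative sketch under stated assumptions, not a reference implementation: the arithmetic crossover, Gaussian mutation with standard deviation 0.05, the two shifted-sphere test tasks, and all function names are choices made here for brevity. Offspring are evaluated on one task only, and unevaluated tasks carry an infinite cost.

```python
import math
import random
from typing import Callable, List, Sequence, Tuple

Task = Callable[[Sequence[float]], float]


def rank_population(costs: List[List[float]]) -> Tuple[List[int], List[float]]:
    """Return skill factors and scalar fitness, ranking only measured (finite) costs."""
    n, k = len(costs), len(costs[0])
    best_rank = [math.inf] * n
    skills = [0] * n
    for j in range(k):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for rank, i in enumerate(order, start=1):
            if math.isfinite(costs[i][j]) and rank < best_rank[i]:
                best_rank[i], skills[i] = rank, j
    return skills, [1.0 / r for r in best_rank]


def mfea(tasks: List[Task], dim: int, pop_size: int = 20, generations: int = 40,
         rmp: float = 0.3, seed: int = 1) -> List[List[float]]:
    """Run a basic MFEA in the unified space [0, 1]^dim; return best cost per task per generation."""
    rng = random.Random(seed)
    k = len(tasks)
    genes = [[rng.random() for _ in range(dim)] for _ in range(pop_size)]
    costs = [[task(g) for task in tasks] for g in genes]  # initial: evaluate on every task
    skills, _ = rank_population(costs)
    history = [[min(row[j] for row in costs) for j in range(k)]]
    for _ in range(generations):
        new_genes, new_costs = [], []
        for _ in range(pop_size):
            a, b = rng.sample(range(pop_size), 2)
            if skills[a] == skills[b] or rng.random() < rmp:
                # Assortative mating: arithmetic crossover, child imitates a random parent.
                w = rng.random()
                child = [w * x + (1 - w) * y for x, y in zip(genes[a], genes[b])]
                skill = rng.choice((skills[a], skills[b]))
            else:
                child, skill = list(genes[a]), skills[a]
            # Gaussian mutation, clipped to the unified search space.
            child = [min(1.0, max(0.0, x + rng.gauss(0.0, 0.05))) for x in child]
            row = [math.inf] * k
            row[skill] = tasks[skill](child)  # selective evaluation on one task
            new_genes.append(child)
            new_costs.append(row)
        genes, costs = genes + new_genes, costs + new_costs
        skills, fitness = rank_population(costs)
        keep = sorted(range(len(genes)), key=lambda i: -fitness[i])[:pop_size]
        genes = [genes[i] for i in keep]
        costs = [costs[i] for i in keep]
        skills = [skills[i] for i in keep]
        history.append([min(row[j] for row in costs) for j in range(k)])
    return history


def shifted_sphere(center: float) -> Task:
    return lambda x: sum((xi - center) ** 2 for xi in x)


history = mfea([shifted_sphere(0.3), shifted_sphere(0.7)], dim=5)
print("initial best per task:", history[0])
print("final best per task:  ", history[-1])
```

Because the best individual on each task always receives scalar fitness 1, elitist selection on scalar fitness never loses the current best solution of any task, so the per-task best cost cannot get worse from one generation to the next.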

Quantitative Data from Comparative Studies

Empirical studies on benchmark problems demonstrate the performance of algorithms using these concepts. The following table summarizes sample results, where a higher average accuracy indicates better performance.

Table 2: Sample Algorithm Performance on CEC2017 Multitasking Benchmark Problems [15]

| Algorithm Class | Key Feature | Average Accuracy (Sample Range) | Key Strength |

|---|---|---|---|

| Genetic Algorithm (GA) (e.g., MFEA) | Implicit transfer via crossover | 70.9% - 71.9% | Foundational framework |

| Particle Swarm Optimization (PSO) (e.g., MTLLSO) | Level-based learning from superior particles | Significantly outperformed others in most problems | Faster convergence |

| Differential Evolution (DE) (e.g., EMT-ADT) | Adaptive transfer strategy using decision trees | Competitive performance on complex benchmarks | Mitigates negative transfer |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Components for Evolutionary Multitasking Research

| Item/Component | Function in the Experiment | Specification Notes |

|---|---|---|

| Benchmark Problem Sets | Provides standardized testbeds for comparing algorithm performance. | CEC2017 [15], WCCI20-MTSO, WCCI20-MaTSO [6]. |

| Random Mating Probability (rmp) | A key parameter controlling the rate of cross-task genetic transfer. | Often a scalar (e.g., 0.3) but can be adaptive or a matrix [6]. |

| Unified Search Space | A common encoding that represents solutions for all component tasks. | Dimension is the maximum of all task dimensions [15]. Critical for crossover. |

| Skill Factor (τ) Tag | A metadata tag attached to each individual, determining its primary task. | Used for assortative mating and for deciding which task's objective function to call. |

| Domain Adaptation Technique | Mitigates negative transfer by transforming search spaces to improve inter-task correlation. | e.g., Linearized Domain Adaptation (LDA) [6]. |

FAQs and Troubleshooting Guide

Q1: What is negative transfer and how can I mitigate it in my evolutionary multitasking experiments?

Negative transfer occurs when knowledge shared between optimization tasks is unhelpful or misleading, leading to deteriorated performance and impeded convergence [8]. This is a common challenge when tasks are not sufficiently related.

- Mitigation Strategies: You can implement algorithms designed for adaptive knowledge transfer. For example, the MFEA-ML algorithm uses a machine learning model to learn from the historical success of intertask transfers online, guiding future transfers at the individual level to inhibit negative transfers [8]. Another approach is the Two-Level Transfer Learning (TLTL) algorithm, which reduces random transfer by using elite individuals for inter-task learning, thereby improving search efficiency and convergence [13].

Q2: My multitask optimization is converging slowly. What could be the cause?

Slow convergence can stem from excessive diversity in the population due to simple and random inter-task transfer learning strategies [13].

- Troubleshooting Steps:

- Check Transfer Randomness: Review if your algorithm uses a purely random assortative mating strategy. Consider switching to a method that uses elite individuals or a learned model to guide transfer.

- Evaluate Task Relatedness: Confirm that the tasks being optimized simultaneously have underlying similarities. Solving unrelated tasks together can hinder performance.

- Consider Advanced Algorithms: Implement algorithms like MFEA-ML or TLTL that are specifically designed to enhance convergence rates through more intelligent transfer mechanisms [8] [13].

Q3: How do I measure the performance of a multitask optimization algorithm?

Performance in evolutionary multitasking is often evaluated by comparing the quality of solutions found for each task against solving them independently.

- Common Metrics: A standard approach is to use a performance index on a set of benchmark problems. Researchers track the convergence behavior and final objective function values achieved for all component tasks [8] [13]. It is also critical to account for the computational effort required [16].

Q4: Is evolutionary multitasking a plausible approach for real-world problems like drug development?

Yes, the paradigm shows significant potential for real-world applications. Evolutionary algorithms are versatile and can handle complex, real-world optimization problems without requiring mathematical properties like continuity [8]. Specifically, multiobjective evolutionary algorithms have been effectively used in bioinformatics challenges, such as the RNA inverse folding problem, which is a critical challenge in Biomedical Engineering [17]. This demonstrates the applicability of these methods to complex biological design problems relevant to drug development.

Experimental Protocols

Protocol 1: Adaptive Knowledge Transfer with Machine Learning (MFEA-ML)

This protocol outlines the methodology for implementing an adaptive knowledge transfer mechanism using a machine learning model, as described in Shen et al. [8].

- Population Initialization: Initialize a single population of individuals for a multifactorial evolutionary algorithm (MFEA). Each individual is represented in a unified search space.

- Skill Factor Assignment: Evaluate each individual on every optimization task and assign a skill factor, which is the task on which the individual performs best.

- Offspring Generation: Create offspring using crossover and mutation.

- Assortative Mating: If two parent individuals have the same skill factor, standard crossover is applied.

- Intertask Crossover: If parents have different skill factors, crossover is performed, facilitating implicit knowledge transfer.

- Training Data Collection: Trace the survival status (i.e., whether they are selected for the next generation) of the offspring generated via intertask crossover.

- Machine Learning Model Training: Use the collected data to train an online machine learning model (e.g., a feedforward neural network) to predict the success of a knowledge transfer between two given individuals.

- Adaptive Transfer: In subsequent generations, use the trained ML model to guide intertask crossover, promoting transfers that are likely to be beneficial and suppressing those that are not.
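The data collection, training, and adaptive transfer steps can be illustrated with a small online classifier. The sketch below swaps in a logistic regression for the feedforward neural network named in the protocol, purely to stay dependency-free. The single input feature (Euclidean distance between the two parents), the class name `TransferSuccessModel`, and the training samples are all hypothetical.

```python
import math
from typing import List, Sequence


class TransferSuccessModel:
    """Online logistic regression predicting whether an intertask offspring survives."""

    def __init__(self, n_features: int, learning_rate: float = 0.5) -> None:
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, features: Sequence[float]) -> float:
        z = self.bias + sum(w * x for w, x in zip(self.weights, features))
        z = max(-60.0, min(60.0, z))  # clamp so exp() stays well-behaved
        return 1.0 / (1.0 + math.exp(-z))

    def update(self, features: Sequence[float], survived: bool) -> None:
        # One stochastic-gradient step on the logistic loss.
        error = self.predict(features) - (1.0 if survived else 0.0)
        self.weights = [w - self.learning_rate * error * x
                        for w, x in zip(self.weights, features)]
        self.bias -= self.learning_rate * error


def parent_features(p1: Sequence[float], p2: Sequence[float]) -> List[float]:
    """Hypothetical feature vector: distance between parents in the unified space."""
    return [math.dist(p1, p2)]


# Hypothetical survival log: offspring of close parents survived, distant ones did not.
model = TransferSuccessModel(n_features=1)
for features, survived in [([0.1], True), ([0.9], False)] * 200:
    model.update(features, survived)

p_close = model.predict(parent_features([0.2, 0.2], [0.25, 0.2]))
p_far = model.predict(parent_features([0.0, 0.0], [1.0, 0.0]))
print(p_close > p_far)
```

In an evolutionary loop, `update` would be called once per intertask offspring after selection, and `predict` would gate intertask crossover, for example by allowing it only when the predicted survival probability exceeds a chosen threshold.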

Protocol 2: Two-Level Transfer Learning (TLTL)

This protocol details the two-level transfer learning algorithm from Ma et al. for enhancing convergence in evolutionary multitasking [13].

- Initialization: Initialize the population with a unified coding scheme.

- Upper-Level (Inter-Task) Transfer Learning: This level reduces randomness by leveraging elite individuals.

- With a probability tp, select parent individuals.

- Implement inter-task knowledge transfer via chromosome crossover between individuals from different tasks.

- Incorporate elite individual learning, where knowledge from the best-performing individuals is used to guide the search.

- Lower-Level (Intra-Task) Transfer Learning: This level operates within a single task.

- Perform intra-task knowledge transfer based on the information transfer of decision variables.

- This is an across-dimension optimization that helps accelerate convergence for individual tasks.

- Cooperative Evolution: The upper and lower levels work together in a mutually beneficial fashion, improving both global search efficiency and convergence speed.

Research Reagent Solutions

The following table lists key algorithmic components and their functions in evolutionary multitasking research.

| Research Reagent / Component | Function in Evolutionary Multitasking |

|---|---|

| Multifactorial Evolutionary Algorithm (MFEA) [8] [13] | A foundational framework that uses a single population to solve multiple tasks simultaneously, enabling implicit transfer through crossover. |

| Skill Factor (τ) [13] | A property assigned to each individual that identifies the optimization task on which it performs best, guiding selective evaluation and cultural transmission. |

| Factorial Cost / Rank [13] | A mechanism to compare and rank individuals from a population across different optimization tasks, allowing for cross-task selection. |

| Machine Learning Model (e.g., FNN) [8] | An online model trained to predict the success of knowledge transfer between specific individuals, enabling adaptive control of intertask crossover. |

| Inter-task Crossover [8] [13] | The primary operator for transferring genetic material between individuals from different tasks, facilitating implicit knowledge sharing. |

| Two-Level Transfer (TLTL) [13] | An algorithmic structure that separates learning into inter-task (upper-level) and intra-task (lower-level) transfer to improve efficiency and convergence. |

Workflow and Relationship Visualizations

[Diagrams not reproduced: Algorithm Comparison; Knowledge Transfer Spectrum; MFEA-ML Process.]

The Critical Role of Task Similarity and Complementarity in Effective Knowledge Exploitation

Welcome to this technical support center for Evolutionary Multitasking Optimization (EMTO), a cutting-edge paradigm in evolutionary computation that enables the simultaneous solving of multiple optimization tasks. By leveraging potential genetic complementarities between tasks, EMTO algorithms can achieve performance superior to traditional single-task optimization. However, a central challenge—and the focus of this guide—is managing knowledge transfer between tasks. Effective transfer can accelerate convergence and improve solution quality, while inappropriate transfer, known as negative transfer, can severely degrade performance [18] [19] [20].

This resource is designed as a practical troubleshooting guide for researchers and scientists implementing EMTO algorithms. The content is structured around frequently asked questions (FAQs) to help you diagnose and resolve common issues, with an emphasis on evaluating and harnessing task similarity and complementarity.

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: How can I detect and mitigate negative transfer between tasks?

Problem: My algorithm's performance on one or more tasks is worse than if I had optimized them independently. I suspect harmful genetic information is being transferred.

Diagnosis: You are likely experiencing negative transfer. This occurs when knowledge is shared between unrelated or negatively correlated tasks, disrupting the convergence process [19] [6]. It is often caused by a lack of control over the intensity and content of knowledge exchange.

Solutions:

- Implement an Adaptive Transfer Strategy: Instead of using a fixed random mating probability (rmp), employ an adaptive strategy. For instance, you can use a symmetric RMP matrix that is learned online to capture non-uniform inter-task synergies [6].

- Evaluate Task Relatedness Dynamically: Use online measurements to assess task similarity. Techniques include:

- Population Distribution-based Measurement (PDM): Evaluate task relatedness based on the distribution characteristics of the evolving population [18].

- Maximum Mean Discrepancy (MMD): A metric that can reflect the distribution difference of two sets in a high-dimensional space, helping to select more related source tasks [19].

- Filter Transferred Individuals: Define an indicator to quantify the "transfer ability" of each individual. Use a model, such as a decision tree, to predict and select only promising positive-transferred individuals for knowledge exchange [6].
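To make the MMD-based relatedness check concrete, the sketch below computes a biased estimate of the squared Maximum Mean Discrepancy between two populations with a Gaussian (RBF) kernel and picks the source task whose population is closest to the target. The kernel bandwidth, the function names, and the sample populations are assumptions made for illustration; the cited algorithm may use a different kernel or estimator.

```python
import math
from typing import Dict, Sequence

Population = Sequence[Sequence[float]]


def rbf(a: Sequence[float], b: Sequence[float], bandwidth: float) -> float:
    """Gaussian kernel between two solutions."""
    squared_distance = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-squared_distance / (2.0 * bandwidth ** 2))


def mmd_squared(xs: Population, ys: Population, bandwidth: float = 0.5) -> float:
    """Biased (V-statistic) estimate of squared MMD; 0 means identical samples."""
    def mean_kernel(ps: Population, qs: Population) -> float:
        return sum(rbf(p, q, bandwidth) for p in ps for q in qs) / (len(ps) * len(qs))

    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


def most_related_source(target: Population, sources: Dict[str, Population]) -> str:
    """Name of the source population with the smallest MMD to the target."""
    return min(sources, key=lambda name: mmd_squared(target, sources[name]))


# Hypothetical populations in a 2-D unified search space.
target = [[0.10, 0.20], [0.20, 0.10], [0.15, 0.15]]
sources = {
    "near": [[0.15, 0.25], [0.25, 0.15], [0.20, 0.20]],
    "far": [[0.80, 0.90], [0.90, 0.80], [0.85, 0.85]],
}
print(most_related_source(target, sources))  # near
```

The pairwise kernel sums cost O(n·m) evaluations per task pair, so in many-task settings it is usually applied to a subsample or to elite individuals only.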

FAQ 2: What are the best methods to measure similarity between tasks?

Problem: I am running a many-task optimization experiment, but I don't know which tasks are related enough to benefit from knowledge sharing.

Diagnosis: Selecting the wrong source tasks for a target task is a primary cause of negative transfer. You need a robust and computationally efficient way to evaluate inter-task relatedness.

Solutions:

- Similarity and Intersection Measurement (from PDM): This technique uses population characteristics to provide two perspectives:

- Similarity Measurement: Assesses the landscape similarity between tasks.

- Intersection Measurement: Estimates the degree of intersection of the global optima between tasks [18].

- Complex Network Analysis: Model your many-task problem as a directed network where nodes are tasks and edges are transfer relationships. Analyzing this network's properties (e.g., community structure, density) can provide insights into the overall transfer dynamics and help prune harmful connections [9].

- Online Source-Target Similarity Learning: Construct a probabilistic model based on the distribution of elite solutions from a source task. This model can then be used to evaluate its usefulness for a related target task, providing a principled way to select knowledge sources [6].

FAQ 3: How do I adaptively control the intensity of knowledge transfer?

Problem: I don't know how to set the frequency and amount of knowledge shared between tasks. A fixed setting doesn't work across different problem sets.

Diagnosis: The optimal intensity of knowledge transfer changes as the evolution proceeds. A fixed parameter, like a global rmp value, cannot adapt to these dynamic conditions [18] [20].

Solutions:

- Self-Regulated Framework (SREMTO): Dynamically adjust the intensity of knowledge interaction based on the degree of inter-task relatedness, which can be captured by the overlap of task groups in the population [6].

- Two-Level Learning Operator: Implement a hybrid strategy that uses different transfer mechanisms:

- Individual-Level Learning: Shares evolutionary information among solutions with different skill factors based on task similarity.

- Population-Level Learning: Replaces unpromising solutions with transferred solutions from assisted tasks based on the intersection of their optima [18].

- Balance Intertask and Intratask Evolution: Regulate the probability of knowledge transfer by comparing the relative effectiveness (e.g., evolution rate or offspring survival rate) of intertask evolution versus intratask self-evolution [19].
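One simple way to realize the last idea, balancing intertask and intratask evolution, is to nudge the transfer probability toward whichever kind of offspring survived more often in the previous generation. The update rule, step size, and bounds below are illustrative assumptions rather than the rule used in any cited algorithm.

```python
def update_transfer_probability(
    probability: float,
    inter_survived: int,
    inter_total: int,
    intra_survived: int,
    intra_total: int,
    step: float = 0.1,
    lower: float = 0.05,
    upper: float = 0.95,
) -> float:
    """Raise the transfer probability when intertask offspring survive more often, else lower it."""
    inter_rate = inter_survived / inter_total if inter_total else 0.0
    intra_rate = intra_survived / intra_total if intra_total else 0.0
    if inter_rate > intra_rate:
        probability += step
    elif inter_rate < intra_rate:
        probability -= step
    # Keep a minimum amount of both transfer and self-evolution.
    return min(upper, max(lower, probability))


# Intertask offspring survived 8/10 times versus 2/10 for intratask offspring.
raised = update_transfer_probability(0.3, 8, 10, 2, 10)
# The opposite situation lowers the probability.
lowered = update_transfer_probability(0.3, 1, 10, 5, 10)
# The lower bound prevents transfer from being switched off entirely.
floored = update_transfer_probability(0.05, 0, 10, 5, 10)
print(raised, lowered, floored)
```

Keeping the probability strictly inside (0, 1) means the algorithm keeps sampling both kinds of offspring, so it can detect when task relatedness changes later in the run.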

FAQ 4: My tasks have different solution spaces. How can I transfer knowledge between them?

Problem: The tasks I am optimizing have different numbers of decision variables (dimensions), making direct chromosomal crossover impossible.

Diagnosis: This is a common issue in real-world applications. Standard multifactorial evolutionary algorithms assume a unified representation, which breaks down when tasks have heterogeneous search spaces [6].

Solutions:

- Explicit Space Mapping: Learn a mapping between the distinct problem domains. For example, use an autoencoder to transform solutions from one search space to another, enabling effective knowledge transfer [6].

- Affine Transformation: Develop an affine transformation between tasks to enhance transferability. This can bridge the gap between problems from different domains by finding a superior intertask mapping [6].

- Transfer Vector with Adaptive Length: In swarm intelligence-based EMT algorithms, generate "transfer sparks" with an adaptive transfer vector. This vector has a promising direction and a length that can accommodate different spaces, facilitating the transfer of useful genetic information [21].

Quantitative Data on Knowledge Transfer Strategies

The table below summarizes key metrics and performance outcomes for several advanced knowledge transfer strategies, providing a comparison for your experimental planning.

Table 1: Comparison of Advanced Knowledge Transfer Strategies in EMTO

| Strategy / Algorithm | Core Mechanism | Key Metric for Relatedness | Reported Advantage |

|---|---|---|---|

| EMTO-HKT [18] | Hybrid multi-knowledge transfer | Population Distribution-based Measurement (PDM) | Superior convergence & solution quality on single-objective MTO benchmarks. |

| MFEA-AKT [20] | Adaptive crossover selection | Information collected during evolution | Automatically identifies appropriate crossover for transfer, leading to robust performance. |

| AEMaTO-DC [19] | Density-based clustering | Maximum Mean Discrepancy (MMD) | Competitive success rates on many-task problems; promotes synergistic convergence. |

| MTO-FWA [21] | Transfer sparks with adaptive vector | Current fitness information of other tasks | Better performance on single- and multi-objective MTO test suites. |

| EMT-ADT [6] | Decision tree prediction | Individual transfer ability indicator | Improves probability of positive transfer, enhancing solution precision. |

Experimental Protocols for Key Methodologies

Protocol 1: Implementing a Population Distribution-based Measurement (PDM)

This protocol is for dynamically evaluating task relatedness during the evolutionary process [18].

- Input: Evolving populations for each task.

- For each pair of tasks (e.g., Task A and Task B), at a given generation:

- Step 1 (Similarity Measurement): Calculate the distribution characteristics (e.g., mean, covariance) of the elite individuals for each task.

- Step 2: Compute a statistical distance (e.g., Kullback-Leibler divergence, Wasserstein distance) between the two distributions. A smaller distance indicates higher landscape similarity.

- Step 3 (Intersection Measurement): Evaluate the overlap of high-fitness regions by analyzing the proportion of individuals from Task A that perform well in Task B's search space, and vice versa.

- Step 4: Aggregate the similarity and intersection measurements into a single PDM score for the task-pair.

- Output: A relatedness matrix that can be used to adaptively control the RMP or select tasks for knowledge transfer.
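The four steps can be sketched as follows. Since the protocol does not fix the exact formulas, several choices here are assumptions: elites are modelled as Gaussians with diagonal covariance, similarity is the exponential of the negative symmetric Kullback-Leibler divergence, intersection is the fraction of one task's elites that fall below a "good enough" cost threshold on the other task, and the two are averaged with equal weight. All function names are hypothetical.

```python
import math
from statistics import fmean, pvariance
from typing import Callable, List, Sequence, Tuple

Point = Sequence[float]
Gaussian = Tuple[List[float], List[float]]


def diagonal_gaussian(points: Sequence[Point]) -> Gaussian:
    """Step 1: per-dimension mean and variance of the elite individuals."""
    columns = list(zip(*points))
    means = [fmean(c) for c in columns]
    variances = [pvariance(c) + 1e-6 for c in columns]  # small floor avoids division by zero
    return means, variances


def kl_divergence(p: Gaussian, q: Gaussian) -> float:
    """KL(p || q) for Gaussians with diagonal covariance."""
    (m1, v1), (m2, v2) = p, q
    return 0.5 * sum(math.log(b / a) + (a + (x - y) ** 2) / b - 1.0
                     for x, a, y, b in zip(m1, v1, m2, v2))


def similarity(elites_a: Sequence[Point], elites_b: Sequence[Point]) -> float:
    """Step 2: map the symmetric KL distance to (0, 1]; 1 means identical distributions."""
    ga, gb = diagonal_gaussian(elites_a), diagonal_gaussian(elites_b)
    return math.exp(-(kl_divergence(ga, gb) + kl_divergence(gb, ga)))


def intersection(elites: Sequence[Point], other_cost: Callable[[Point], float],
                 good_enough: float) -> float:
    """Step 3: fraction of one task's elites that also perform well on the other task."""
    return sum(other_cost(x) <= good_enough for x in elites) / len(elites)


def pdm_score(elites_a, elites_b, cost_a, cost_b, good_a, good_b, weight=0.5) -> float:
    """Step 4: aggregate similarity and two-way intersection into one score in [0, 1]."""
    overlap = 0.5 * (intersection(elites_a, cost_b, good_b)
                     + intersection(elites_b, cost_a, good_a))
    return weight * similarity(elites_a, elites_b) + (1.0 - weight) * overlap


def sphere(center: float) -> Callable[[Point], float]:
    return lambda x: sum((xi - center) ** 2 for xi in x)


elites_a = [[0.30, 0.31], [0.32, 0.29], [0.28, 0.30]]
elites_near = [[0.31, 0.30], [0.33, 0.30], [0.29, 0.31]]
elites_far = [[0.80, 0.80], [0.82, 0.79], [0.78, 0.81]]
score_near = pdm_score(elites_a, elites_near, sphere(0.3), sphere(0.31), 0.01, 0.01)
score_far = pdm_score(elites_a, elites_far, sphere(0.3), sphere(0.8), 0.01, 0.01)
print(score_near > score_far)
```

Evaluating `pdm_score` for every task pair yields the relatedness matrix described in the output step. Note that the intersection measure costs extra function evaluations, which should be counted against the evaluation budget.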

Protocol 2: Setting up a Density-Based Clustering for Knowledge Interaction

This protocol describes the cluster-based knowledge interaction mechanism used in AEMaTO-DC [19].

- Input: A target task and its selected related source tasks (chosen via MMD).

- Step 1 (Merge): Merge the subpopulations of the target task and the related source tasks into a single, combined population.

- Step 2 (Cluster): Apply a density-based clustering algorithm (e.g., DBSCAN) to the combined population. This will group individuals based on their proximity in the search space, regardless of their original task.

- Step 3 (Mating Selection): During the reproduction phase, restrict mating parents to individuals within the same cluster. This ensures that genetic material is shared between solutions that occupy similar regions of the fitness landscape.

- Step 4 (Priority Transfer): Within a cluster, preferentially select parents from different original tasks. This promotes knowledge transfer while maintaining population diversity and promoting convergence.
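Steps 3 and 4 can be sketched independently of the clustering library. The function below assumes cluster labels have already been produced by a density-based algorithm, with noise points labelled -1 as in scikit-learn's DBSCAN, and returns one mating pair per cluster, preferring a partner from a different original task. The function name and the rule of one pair per cluster are simplifying assumptions.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def select_mating_pairs(
    labels: Sequence[int],
    task_ids: Sequence[int],
    rng: random.Random,
) -> List[Tuple[int, int]]:
    """Pick one mating pair per cluster, preferring parents from different tasks."""
    clusters: Dict[int, List[int]] = defaultdict(list)
    for index, label in enumerate(labels):
        if label != -1:  # skip noise points
            clusters[label].append(index)

    pairs: List[Tuple[int, int]] = []
    for members in clusters.values():
        if len(members) < 2:
            continue
        first = rng.choice(members)
        others = [m for m in members if m != first]
        # Priority transfer: use a cross-task partner whenever the cluster has one.
        cross_task = [m for m in others if task_ids[m] != task_ids[first]]
        second = rng.choice(cross_task or others)
        pairs.append((first, second))
    return pairs


# Six individuals: cluster label and original task of each (hypothetical values).
labels = [0, 0, 0, 1, 1, -1]
task_ids = [0, 0, 1, 0, 0, 1]
pairs = select_mating_pairs(labels, task_ids, random.Random(0))
print(pairs)
```

In the example, cluster 0 contains individuals from both tasks, so its pair always crosses tasks, while cluster 1 falls back to same-task mating and the noise point is never selected.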

Workflow and System Diagrams

[Diagrams not reproduced: EMTO Knowledge Transfer Workflow; Hybrid Knowledge Transfer (HKT) Strategy.]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for an Evolutionary Multitasking Experiment

| Item / Concept | Function / Role in EMTO |

|---|---|

| Unified Representation | Encodes solutions from different tasks into a common search space, enabling cross-task operations [18] [13]. |

| Skill Factor (τ) | A property assigned to each individual, indicating the task on which it performs best. Crucial for assortative mating and vertical cultural transmission [13] [21]. |

| Random Mating Probability (RMP) | A scalar or matrix controlling the probability that two individuals with different skill factors will mate and produce offspring. The core parameter for implicit transfer [20] [6]. |

| Multifactorial Evolutionary Algorithm (MFEA) | The foundational algorithmic framework for EMTO, incorporating unified representation, assortative mating, and vertical cultural transmission [13] [21]. |

| Autoencoder / Affine Transformation | An explicit mapping function used to translate solutions or search spaces between dissimilar tasks, mitigating negative transfer [6]. |

| Decision Tree / Surrogate Model | A predictive model used to evaluate the quality of potential knowledge transfers or to reduce expensive function evaluations in costly optimization tasks [6]. |

| Complex Network Model | A structural tool for modeling and analyzing the topology of knowledge transfer between tasks, helping to optimize the transfer framework [9]. |

Advanced Methodologies and Real-World Applications of Adaptive Transfer Strategies

Self-Adjusting Dual-Mode Evolutionary Frameworks for Dynamic State Evolution

FAQs: Framework Fundamentals

Q1: What is the core innovation of a self-adjusting dual-mode evolutionary framework? The core innovation lies in its integration of two distinct evolutionary modes—typically an exploration mode and an exploitation mode—alongside a self-adjusting strategy that dynamically guides the selection between these modes based on real-time search information [22]. This is often combined with a classification mechanism for decision variables and a dynamic knowledge transfer strategy to mitigate performance degradation from inefficient evolution or negative transfer between tasks [22].

Q2: How does the self-adjusting strategy determine which evolutionary mode to use? The strategy uses spatial-temporal information gathered during the search process to guide the selection [22]. This involves monitoring the population's state and its progress over time to make an informed decision on whether to prioritize exploring new regions of the search space or exploiting the current promising areas.

Q3: What is "negative knowledge transfer" in evolutionary multitasking, and how can this framework reduce it? Negative knowledge transfer occurs when the exchange of genetic information between two unrelated or dissimilar optimization tasks hinders the performance of one or both tasks [3]. This framework combats this by using a dynamic weighting strategy for the transferred knowledge and by performing variable classification, which groups variables with different attributes to enable more targeted and effective transfer [22].

Q4: Why might a single evolutionary search operator (ESO) be insufficient for multitasking optimization? Different optimization tasks often have unique landscapes and characteristics. A single ESO may not be suitable for all tasks, as its performance can vary significantly [3]. For instance, Differential Evolution (DE) might excel on one set of problems, while a Genetic Algorithm (GA) performs better on another. Using multiple ESOs allows the algorithm to adapt to the specific needs of each task [3].

Q5: How is "knowledge" defined and utilized in these advanced evolutionary algorithms? Knowledge can be extracted from successful historical evolutionary information. For example, Artificial Neural Networks (ANNs) can be embedded in the algorithm to learn the relationship between an individual's current position and a promising evolutionary direction from past data [23]. This knowledge is then used to guide the current population, making the search more intelligent and efficient [23].

Troubleshooting Common Experimental Issues

Q1: Issue: The algorithm converges prematurely to a local optimum.

- Potential Cause & Solution: The balance between exploration and exploitation is skewed. Adjust the parameters of the self-adjusting strategy to favor the exploration mode for a longer duration. Incorporating a niching method can also help maintain population diversity and allow for the simultaneous exploration of multiple promising regions [23].

Q2: Issue: Knowledge transfer between tasks is degrading performance.

- Potential Cause & Solution: This indicates negative transfer, likely due to high dissimilarity between tasks. Implement a task similarity assessment before transferring knowledge. Use a dynamic weighting mechanism that reduces the influence of knowledge from dissimilar tasks and prioritizes transfer between highly correlated tasks [22] [23].

Q3: Issue: High computational cost per generation.

- Potential Cause & Solution: The cost may stem from complex knowledge-learning models (like ANNs) or frequent similarity calculations. Simplify the knowledge model or employ it selectively, for instance, only at certain generations or for a subset of the population. Using a block-level transfer instead of a full-solution transfer can also reduce overhead [3].

Q4: Issue: One evolutionary search operator is dominating, reducing adaptability.

- Potential Cause & Solution: The operator selection is not truly adaptive. Adopt an adaptive bi-operator strategy that explicitly monitors the performance (e.g., improvement in fitness) of each ESO and adjusts their selection probability accordingly. This ensures the most suitable operator is used for various tasks [3].

Q5: Issue: The algorithm performs poorly on new, unseen benchmark problems.

- Potential Cause & Solution: The framework may be over-fitted to its training benchmarks. Validate the algorithm's robustness on diverse and recently developed benchmark suites like CEC22 [3]. Ensure the self-adjusting mechanisms are general and not overly dependent on specific problem features.
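For the operator-dominance issue in Q4, a common generic remedy is probability matching: each operator keeps a running quality estimate based on the fitness improvement it produced, and selection probabilities follow those estimates with a floor so that no operator is ever starved. The sketch below is an assumption-laden illustration of that idea, not the update rule of BOMTEA or any other cited algorithm; the class name, decay factor, and minimum probability are hypothetical.

```python
import random
from typing import Dict, List, Sequence


class OperatorSelector:
    """Probability matching over a pool of evolutionary search operators."""

    def __init__(self, names: Sequence[str], p_min: float = 0.1, decay: float = 0.8) -> None:
        self.names: List[str] = list(names)
        self.p_min = p_min
        self.decay = decay
        self.quality: Dict[str, float] = {name: 1.0 for name in self.names}

    def probabilities(self) -> Dict[str, float]:
        total = sum(self.quality.values())
        k = len(self.names)
        if total <= 0.0:
            return {name: 1.0 / k for name in self.names}
        free = 1.0 - k * self.p_min  # probability mass shared according to quality
        return {name: self.p_min + free * self.quality[name] / total for name in self.names}

    def reward(self, name: str, improvement: float) -> None:
        # Exponential moving average of non-negative fitness improvements.
        gain = max(0.0, improvement)
        self.quality[name] = self.decay * self.quality[name] + (1.0 - self.decay) * gain

    def choose(self, rng: random.Random) -> str:
        probs = self.probabilities()
        return rng.choices(self.names, weights=[probs[n] for n in self.names])[0]


selector = OperatorSelector(["GA", "DE"])
for _ in range(20):
    selector.reward("DE", 1.0)  # DE kept improving the task
    selector.reward("GA", 0.0)  # GA produced no improvement
probs = selector.probabilities()
chosen = selector.choose(random.Random(0))
print(probs)
```

Maintaining one selector per task lets each task settle on the operator that suits its landscape, while the floor `p_min` keeps the weaker operator available in case its usefulness changes later in the search.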

Experimental Protocols & Methodologies

Protocol 1: Performance Benchmarking Against State-of-the-Art Algorithms

- Select Benchmark Problems: Use widely recognized multitasking benchmark sets, such as CEC17 and CEC22 [3].

- Choose Comparison Algorithms: Include established algorithms like MFEA [3], MFEA-II [3], and other recent advanced methods (e.g., BOMTEA [3], EMEA [3]).

- Define Performance Metrics: Common metrics include:

- Average Accuracy (Avg): The average best objective value found over multiple runs.

- Success Rate (SR): The percentage of runs where the algorithm finds a solution within a specified tolerance of the global optimum [23].

- Average Number of Function Evaluations (AFE): The average number of objective function evaluations required to reach a solution of a desired quality.
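As a worked example of these three metrics, the sketch below summarizes a set of independent runs on one task. It assumes minimization with a known global optimum, and it assumes that AFE is averaged only over runs that reached the target quality (runs that never reached it are recorded as `None`). The function name and sample data are illustrative.

```python
from statistics import fmean
from typing import List, Optional, Sequence, Tuple


def summarize_runs(
    best_values: Sequence[float],
    evals_to_target: Sequence[Optional[int]],
    optimum: float,
    tolerance: float,
) -> Tuple[float, float, Optional[float]]:
    """Return (Avg, SR in percent, AFE) for a set of independent runs."""
    avg = fmean(best_values)
    hits = sum(abs(value - optimum) <= tolerance for value in best_values)
    success_rate = 100.0 * hits / len(best_values)
    reached: List[int] = [e for e in evals_to_target if e is not None]
    afe = fmean(reached) if reached else None
    return avg, success_rate, afe


# Four hypothetical runs: best objective value and evaluations needed to hit the target.
best_values = [0.0, 0.002, 0.5, 0.0005]
evals_to_target = [1000, None, None, 3000]
avg, success_rate, afe = summarize_runs(best_values, evals_to_target,
                                        optimum=0.0, tolerance=1e-3)
print(avg, success_rate, afe)  # 0.125625 50.0 2000.0
```

Reporting SR alongside AFE matters because AFE computed over successful runs alone can look favorable for an algorithm that succeeds rarely.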

The table below illustrates how such a comparison can be reported. As its title indicates, the values are hypothetical.

Table 1: Hypothetical Performance Comparison on CEC17 Benchmarks

| Algorithm | Avg on CIHS | Avg on CIMS | Avg on CILS | Remark |

|---|---|---|---|---|

| Self-Adjusting Dual-Mode | -- | -- | -- | (The proposed method) |

| BOMTEA [3] | 1.15E-02 | 5.88E-03 | 2.56E-02 | Adaptive bi-operator |

| MFEA [3] | 5.21E-02 | 4.15E-02 | 1.89E-02 | Single operator (GA) |

| MFDE [3] | 3.58E-03 | 2.91E-03 | 5.74E-02 | Single operator (DE) |

Protocol 2: Ablation Study for Component Analysis

To validate the contribution of each component in the framework (e.g., the self-adjusting strategy, the variable classification mechanism, the knowledge transfer module), conduct an ablation study.

- Create Variants: Develop simplified versions of the full algorithm, each with one key component disabled.

- Run Experiments: Execute all variants on the same set of benchmark problems.

- Compare Results: Use statistical tests (e.g., Wilcoxon rank-sum test) to determine whether the performance degradation in each variant is significant, thereby supporting the importance of the removed component.
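For the comparison step, a rank-sum test on the final objective values of the full algorithm and one ablated variant might look like the sketch below. It is a standard-library illustration using the normal approximation; the function name is an assumption, and in practice a library routine such as `scipy.stats.ranksums` would normally be used instead.

```python
import math


def rank_sum_test(a, b):
    """Two-sided Wilcoxon rank-sum test using the normal approximation.

    Returns (z, p). Ties get average ranks; no tie correction is applied to the
    variance, so treat p as approximate for small or heavily tied samples.
    """
    pooled = sorted((v, i) for i, v in enumerate(list(a) + list(b)))
    ranks = [0.0] * len(pooled)
    start = 0
    while start < len(pooled):
        end = start
        while end + 1 < len(pooled) and pooled[end + 1][0] == pooled[start][0]:
            end += 1
        avg_rank = (start + end) / 2.0 + 1.0  # 1-based average rank of the tie group
        for k in range(start, end + 1):
            ranks[pooled[k][1]] = avg_rank
        start = end + 1
    n1, n2 = len(a), len(b)
    w = sum(ranks[:n1])  # rank sum of the first sample
    mu = n1 * (n1 + n2 + 1) / 2.0
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12.0)
    z = (w - mu) / sigma
    p = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided p-value
    return z, p
```

For a minimization problem, a negative `z` with a small `p` when `a` holds the full algorithm's results suggests that the full algorithm tends to reach lower objective values than the variant in `b`.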

Table 2: Key Components for Ablation Analysis

| Component | Function | Expected Impact if Removed |
|---|---|---|
| Self-Adjusting Mode Switch | Dynamically selects between exploration/exploitation based on search state [22]. | Reduced search efficiency; inability to adapt to different search phases. |
| Variable Classification | Groups decision variables by attributes for targeted evolution [22]. | Less efficient optimization, especially for problems with separable variables. |
| Dynamic Knowledge Transfer | Controls cross-task information flow with adaptive weights [22]. | Increased risk of negative transfer or missed synergistic opportunities. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Concepts

| Tool / Concept | Function / Definition | Application in Research |
|---|---|---|
| Differential Evolution (DE) | An ESO that generates new candidates by combining scaled differences of population vectors [3] [23]. | Serves as a powerful search operator, often used in an adaptive multi-operator pool [3]. |
| Simulated Binary Crossover (SBX) | A crossover operator that simulates the single-point crossover behavior of binary representations in real-valued space [3]. | Commonly used in Genetic Algorithms for real-parameter optimization within multitasking frameworks [3]. |
| Artificial Neural Network (ANN) | A computational model used to learn and approximate complex relationships from data [23]. | Embedded in EAs to learn from successful historical evolutionary directions and guide future search [23]. |
| Skill Factor (τ) | A property assigned to an individual, indicating the optimization task on which it performs the best [13]. | Enables efficient resource allocation in a multitasking environment by evaluating individuals on a single task [13]. |
| Random Mating Probability (rmp) | A key parameter in MFEA that controls the probability of crossover between individuals from different tasks [3] [13]. | A high fixed rmp can cause negative transfer; adaptive rmp strategies are a focus of modern research [3]. |
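To make the two search operators in the table concrete, the sketch below shows one common form of each: DE/rand/1 with binomial crossover, and SBX for a pair of real-valued parents. The function names, the default values of `f`, `cr`, and `eta`, and the clipping to a [0, 1] search space are assumptions for illustration rather than settings taken from [3] or [23].

```python
import random


def de_rand_1_bin(population, target_idx, f=0.5, cr=0.9, rng=random):
    """Build one trial vector with DE/rand/1 mutation and binomial crossover."""
    candidates = [i for i in range(len(population)) if i != target_idx]
    r1, r2, r3 = rng.sample(candidates, 3)  # needs at least 4 individuals
    target = population[target_idx]
    dim = len(target)
    j_rand = rng.randrange(dim)  # guarantees at least one mutated gene
    trial = []
    for j in range(dim):
        if rng.random() < cr or j == j_rand:
            mutant = population[r1][j] + f * (population[r2][j] - population[r3][j])
            trial.append(min(1.0, max(0.0, mutant)))  # assumed [0, 1] bounds
        else:
            trial.append(target[j])
    return trial


def sbx_pair(p1, p2, eta=2.0, rng=random):
    """Simulated binary crossover for two real-valued parents (no bound handling)."""
    c1, c2 = [], []
    for x1, x2 in zip(p1, p2):
        u = rng.random()
        if u <= 0.5:
            beta = (2.0 * u) ** (1.0 / (eta + 1.0))
        else:
            beta = (1.0 / (2.0 * (1.0 - u))) ** (1.0 / (eta + 1.0))
        c1.append(0.5 * ((1 + beta) * x1 + (1 - beta) * x2))
        c2.append(0.5 * ((1 - beta) * x1 + (1 + beta) * x2))
    return c1, c2
```

SBX preserves the parents' mean in every dimension, so the two children are placed symmetrically around it, while DE scales its step by the current spread of the population. This difference in search behavior is one reason the two are often combined in an operator pool.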

Workflow and System Diagrams

[Figure: Dual-Mode Evolutionary Framework Workflow]

[Figure: Knowledge Learning and Transfer Process]

The Multitasking Evolutionary Algorithm with Solver Adaptation (MTEA-SaO) is an advanced computational framework designed to solve multiple optimization tasks simultaneously. Unlike traditional evolutionary algorithms that use a single solver for all tasks, MTEA-SaO automatically selects and adapts the most suitable evolutionary solver for each task based on its unique characteristics, while enabling knowledge transfer between related tasks to improve overall performance and efficiency [24].

Frequently Asked Questions

Q1: What is the core innovation of the MTEA-SaO framework compared to previous Multitasking EAs? The core innovation lies in its adaptive solver selection mechanism. Traditional Multitasking Evolutionary Algorithms (MTEAs) typically employ a single solver (e.g., a specific genetic algorithm configuration) to handle all optimization tasks within a problem [24]. In contrast, MTEA-SaO explicitly maintains multiple solver subpopulations (e.g., one for Genetic Algorithms and another for Differential Evolution) and automatically identifies the best-fitting solver for each task's distinct landscape, such as whether it is convex, nonconvex, or multimodal [24]. This is coupled with a knowledge transfer strategy that leverages implicit similarities between tasks to accelerate convergence and avoid premature local optima [24].

Q2: How does the solver adaptation strategy determine which solver is "best" for a task? The adaptation strategy operates during an initial learning period [24]. It assigns different solvers to various subpopulations working on the same task and monitors their performance. The framework maintains success and failure memories to track the performance of each solver-task pairing [24]. Based on this tracked performance, it adaptively assigns computational resources to the most effective solvers, effectively learning and selecting the optimal solver for each task without requiring prior expert knowledge [24].
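A success/failure memory of this kind can be sketched as follows. The class name, the sliding-window length, the small `eps` term, and the proportional allocation rule are assumptions for illustration; the exact formulas used in [24] are not reproduced here.

```python
from collections import deque


class SolverMemory:
    """Sliding-window success/failure memory for one task (illustrative)."""

    def __init__(self, solvers=("GA", "DE"), learning_period=5, eps=0.01):
        self.solvers = list(solvers)
        self.eps = eps  # assumed offset that keeps a zero-success solver selectable
        # Each entry is a dict {solver: (successes, failures)} for one generation.
        self.window = deque(maxlen=learning_period)

    def log_generation(self, counts):
        """counts maps solver name -> (n_success, n_failure) for this generation."""
        self.window.append(dict(counts))

    def probabilities(self):
        """Selection probability of each solver from the windowed success rates."""
        if len(self.window) < self.window.maxlen:
            # Still inside the learning period: treat all solvers equally.
            return {s: 1.0 / len(self.solvers) for s in self.solvers}
        scores = {}
        for s in self.solvers:
            ns = sum(gen[s][0] for gen in self.window)
            nf = sum(gen[s][1] for gen in self.window)
            scores[s] = (ns / (ns + nf) if ns + nf else 0.0) + self.eps
        total = sum(scores.values())
        return {s: scores[s] / total for s in self.solvers}

    def allocate(self, budget):
        """Split an integer budget of offspring across solvers by probability."""
        probs = self.probabilities()
        shares = {s: int(budget * probs[s]) for s in self.solvers}
        # Hand any rounding remainder to the currently best solver.
        best = max(self.solvers, key=lambda s: probs[s])
        shares[best] += budget - sum(shares.values())
        return shares
```

One such memory would be kept per task. Until the window is full, every solver receives an equal share, which corresponds to the learning period described above.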

Q3: What causes negative knowledge transfer, and how does MTEA-SaO mitigate it? Negative knowledge transfer occurs when genetic material is exchanged between two highly dissimilar tasks, so that inappropriate information impedes the convergence of one or both tasks and degrades performance [2]. MTEA-SaO mitigates this by basing knowledge transfer on implicit similarities between tasks [24]. The embedded transfer strategy is designed to leverage helpful information, while the adaptive solver selection ensures each task is primarily driven by its most suitable solver, thus reducing reliance on potentially harmful transfers [24].

Q4: My experiment is converging slowly. How can I improve performance using the MTEA-SaO framework? Slow convergence can often be addressed by:

- Verifying Solver Adaptation: Ensure the learning period is sufficiently long for the framework to accurately identify the best solver for your specific tasks [24].

- Promoting Diversity: If the population loses diversity, it can hinder the discovery of better solutions. You can increase the population size or mutation rate (or the analogous parameters of the solvers in use) to foster greater genetic diversity and help the algorithm escape local optima [25].

- Leveraging Knowledge Transfer: The framework is designed to use knowledge from other tasks. Slow convergence might indicate that the implicit similarity measures or transfer parameters need tuning to enhance the utility of transferred knowledge [24] [2].

Q5: Can MTEA-SaO be applied to real-world problems, such as in drug development? Yes, the principles of evolutionary multitasking are highly applicable to complex, data-rich fields like drug development. For instance, a researcher could use MTEA-SaO to simultaneously optimize multiple molecular properties (such as binding affinity, solubility, and synthetic accessibility), each treated as a separate task. The adaptive solver selection would aim to find a well-suited search strategy for each property, while knowledge transfer could use shared patterns in the molecular data to accelerate the overall discovery process [2] [26].

Troubleshooting Guides

Issue: Solver Adaptation is Not Performing as Expected

Problem: The framework fails to consistently select the most efficient solver for one or more tasks, leading to suboptimal performance.

Diagnosis Steps:

- Check Learning Period Length: A learning period that is too short may not provide enough data for the framework to make a reliable judgment on solver effectiveness [24].

- Review Success/Failure Memory: Investigate the records of solver performance. If memories are updated too frequently or infrequently, the adaptation logic may become unstable [24].

- Analyze Task Characteristics: Verify that the available solvers in the pool are, in principle, capable of handling the specific characteristics of your tasks (e.g., using a local search solver for a highly multimodal task might be ineffective).

Resolution Steps:

- Extend the Learning Period: Increase the duration of the initial learning phase to allow for more robust performance data collection [24].

- Adjust Memory Update Parameters: Tune the parameters that control how success and failure are recorded and weighted to ensure a stable and accurate performance history [24].

- Expand Solver Pool: Consider incorporating a more diverse set of evolutionary solvers into the framework to increase the likelihood that a well-suited solver is available for every task [24].

Issue: Prevalence of Negative Knowledge Transfer

Problem: The performance of one or more tasks deteriorates, likely due to the transfer of unhelpful genetic information from dissimilar tasks.

Diagnosis Steps:

- Identify the Task Pairs: Analyze which tasks are interacting. Performance logs can often show which cross-task transfers are correlated with fitness degradation [2].

- Evaluate Implicit Similarity Measures: The method used to infer similarity between tasks might be inaccurate for your specific problem set [24].

Resolution Steps:

- Refine Transfer Controls: Implement or adjust a filtering mechanism for knowledge transfer. This could involve developing a machine learning model, similar to MFEA-ML, that learns to approve or block transfers between individual solutions based on their traits, moving beyond task-level similarity [2].

- Adjust Transfer Frequency and Intensity: Reduce the rate or amount of genetic material being exchanged between tasks. This can minimize the damage caused by individual negative transfer events [2].
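One simple way to adjust transfer frequency automatically is to compare how often cross-task offspring survive with how often within-task offspring do. The sketch below is a hypothetical rule, not the mechanism of MTEA-SaO or MFEA-ML; `transfer_prob` plays a role similar to rmp in MFEA, and the step size and bounds are assumptions.

```python
def adjust_transfer_prob(transfer_prob, transfer_success, transfer_total,
                         within_success, within_total,
                         step=0.05, p_min=0.0, p_max=1.0):
    """Nudge the transfer probability toward the better-performing offspring source."""
    if transfer_total == 0 or within_total == 0:
        return transfer_prob  # not enough evidence to change anything
    transfer_rate = transfer_success / transfer_total
    within_rate = within_success / within_total
    if transfer_rate < within_rate:
        transfer_prob -= step  # cross-task offspring underperform: transfer less
    elif transfer_rate > within_rate:
        transfer_prob += step  # cross-task offspring help: transfer more
    return min(p_max, max(p_min, transfer_prob))
```

Keeping `p_min` slightly above zero would let the algorithm keep probing whether transfer has become useful again later in the run.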

Issue: Algorithm Fails to Find a Satisfactory Solution

Problem: The optimization process stagnates, and the best-found solution is of poor quality.

Diagnosis Steps:

- Check for Premature Convergence: Examine the population diversity metrics. A rapid drop in diversity often indicates the population has converged to a local optimum [25].

- Verify Evolutionary Parameters: Ensure that parameters like population size, mutation rate, and crossover rate are appropriately set for the problem scale and complexity [27].

- Inspect Solver-Task Pairing: Confirm that the adaptive selection mechanism has not incorrectly paired a task with an unsuitable solver.
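For the premature-convergence check, one commonly used diversity measure is the mean distance of individuals from the population centroid. The sketch below assumes real-valued individuals stored as lists; the function names and the 5% collapse threshold are illustrative choices, not values from [25].

```python
import math


def mean_distance_to_centroid(population):
    """Average Euclidean distance of individuals from the population centroid."""
    n, dim = len(population), len(population[0])
    centroid = [sum(ind[j] for ind in population) / n for j in range(dim)]
    return sum(math.dist(ind, centroid) for ind in population) / n


def has_collapsed(diversity_history, ratio=0.05):
    """Flag likely premature convergence when diversity falls below ratio * initial."""
    return diversity_history[-1] < ratio * diversity_history[0]
```

Logging this value once per generation for each task gives a curve that can be read next to the best-fitness curve. A sharp drop in diversity while fitness is still poor is the pattern to look for.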

Resolution Steps:

- Increase Population Diversity: Restart the experiment with a larger population size or a higher mutation rate. This introduces more genetic diversity, helping the algorithm explore a wider area of the search space [25].

- Hybridize with a Local Search: After the MTEA-SaO run, use the best solution found as a starting point for a local search or a gradient-based method (if applicable) to refine the solution [25].

- Re-run the Adaptive Process: The stochastic nature of EAs means that multiple independent runs can yield different results. Perform several runs to gain confidence in the solver's adaptation and overall performance [24].
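The local-search refinement mentioned in the resolution steps can be as simple as a greedy perturbation search started from the best solution of the evolutionary run. The sketch below is one such stand-in under assumed settings (`step`, `shrink`, `iters`); it is not a method prescribed by [25], and a gradient-based optimizer may be preferable when derivatives are available.

```python
import random


def refine(solution, objective, step=0.1, shrink=0.95, iters=200, rng=random):
    """Greedy local refinement (minimization) around a starting solution."""
    best = list(solution)
    best_val = objective(best)
    for _ in range(iters):
        candidate = [x + rng.uniform(-step, step) for x in best]
        val = objective(candidate)
        if val < best_val:
            best, best_val = candidate, val  # accept only strict improvements
        else:
            step *= shrink  # narrow the neighborhood after a failed attempt
    return best, best_val
```

Because only improvements are accepted, the returned value is never worse than that of the starting solution, which makes the step safe to append to an existing pipeline.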

Experimental Protocols & Data

Key Experiment: Benchmarking MTEA-SaO Performance

Objective: To validate the performance of MTEA-SaO against state-of-the-art MTEAs and classical single-task evolutionary algorithms across various multitasking optimization (MTO) benchmark suites [24].

Methodology:

- Benchmark Selection: A series of standardized MTO benchmark problems with known characteristics and difficulties were selected [24].

- Algorithm Comparison: MTEA-SaO was compared against nine advanced MTEAs (including MFEA and MFEA-II) and six classical non-multitasking EAs [24].

- Performance Metrics: The primary metrics were the quality of the best solution found for each task and the convergence speed [24].

- Implementation: The specific MTEA-SaO implementation used two solvers: a Genetic Algorithm (GA) and Differential Evolution (DE). The solver adaptation and knowledge transfer strategies were activated as described in the framework [24].

Summary of Quantitative Results:

Table 1: Comparative Performance of MTEA-SaO vs. Other Algorithms

| Algorithm Category | Number of Algorithms Tested | Reported Outcome | Key Advantage Demonstrated |
|---|---|---|---|
| MTEA-SaO | 1 | Overall superior performance [24] | Automated solver selection & effective knowledge transfer [24] |
| Other MTEAs | 9 | Outperformed by MTEA-SaO [24] | - |
| Single-Task EAs | 6 | Outperformed by MTEA-SaO for MTO problems [24] | - |

Component Analysis: The researchers conducted ablation studies to isolate the contribution of each key component of MTEA-SaO.

Table 2: Impact of Key Components within MTEA-SaO

| Component | Function | Impact on Performance |
|---|---|---|
| Solver Adaptation | Automatically selects the best evolutionary solver (e.g., GA or DE) for each task [24]. | Directly improved efficiency and solution quality by matching solver to task characteristics [24]. |
| Knowledge Transfer | Allows sharing of genetic information between tasks based on implicit similarities [24]. | Accelerated convergence and helped avoid local optima, leading to better overall solutions [24]. |

Workflow Visualization

[Figure: MTEA-SaO High-Level Workflow]

The Scientist's Toolkit: Research Reagents & Solutions

Table 3: Essential Components for an MTEA-SaO Experiment

| Item / Concept | Function in the Experiment |
|---|---|
| Multitasking Optimization (MTO) Problem | The core problem definition, comprising multiple (K) optimization tasks to be solved concurrently [24]. |
| Solver Pool (e.g., GA, DE) | A set of different evolutionary algorithms. MTEA-SaO selects the most effective one from this pool for each task [24]. |
| Subpopulations | Distinct groups of candidate solutions, each potentially assigned to a different solver or task, facilitating parallel exploration [24]. |