Adaptive Constraint Handling Techniques for Evolutionary Optimization: From Foundations to Biomedical Applications


Abstract

Constrained Optimization Problems (COPs) present significant challenges across scientific domains, particularly in drug development where complex biochemical constraints must be balanced with multiple optimization objectives. This comprehensive review explores adaptive constraint handling techniques (CHTs) for evolutionary algorithms, addressing four critical dimensions: fundamental principles of constrained multi-objective optimization, innovative methodological approaches including deep reinforcement learning and repair mechanisms, troubleshooting strategies for complex constraint landscapes, and rigorous validation frameworks. By synthesizing cutting-edge research in adaptive CHTs, we provide researchers and drug development professionals with actionable insights for navigating discontinuous feasible regions, avoiding local optima, and accelerating convergence in computationally expensive optimization scenarios prevalent in biomedical research.

Understanding Constrained Optimization: Core Concepts and Challenges

Mathematical Definition of CMOPs

A Constrained Multi-Objective Optimization Problem (CMOP) can be mathematically defined by three key components: multiple objective functions to be optimized simultaneously, a set of constraints that solutions must satisfy, and decision variables with their boundaries [1] [2].

The standard formulation is as follows [1] [2]:

Minimize ( F(\mathbf{x}) = (f_1(\mathbf{x}), f_2(\mathbf{x}), \dots, f_M(\mathbf{x})) )

Subject to: [ \begin{align} g_i(\mathbf{x}) & \leq 0, \quad i = 1, \dots, p \\ h_j(\mathbf{x}) & = 0, \quad j = p+1, \dots, L \\ \mathbf{x} & \in S \subseteq \mathbb{R}^D \end{align} ]

Where:

- ( F(\mathbf{x}) ) is the objective vector comprising ( M ) conflicting sub-objectives

- ( \mathbf{x} = (x_1, x_2, \dots, x_D) ) is the decision vector in the D-dimensional space

- ( g_i(\mathbf{x}) ) represents inequality constraints

- ( h_j(\mathbf{x}) ) represents equality constraints

- ( S ) is the decision space defined by the variable bounds; the feasible region is the subset of ( S ) that also satisfies all constraints

Constraint Violation Measurement

To quantify how much a solution violates constraints, researchers use a constraint violation (CV) function [1] [2]:

[ CV(\mathbf{x}) = \sum_{i=1}^{p} \max(0, g_i(\mathbf{x})) + \sum_{j=p+1}^{L} \max(0, |h_j(\mathbf{x})| - \delta) ]

Here, ( \delta ) is a very small positive parameter (typically ( 10^{-4} )) used to relax equality constraints, making them numerically manageable [2]. A solution ( \mathbf{x} ) is considered feasible if ( CV(\mathbf{x}) = 0 ), and infeasible otherwise.
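The CV definition above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `constraint_violation`, the representation of constraints as callables, and the toy constraints are assumptions made here. The `delta` default mirrors the 10^-4 tolerance mentioned in the text.

```python
from typing import Callable, Sequence

Vector = Sequence[float]
Constraint = Callable[[Vector], float]


def constraint_violation(
    x: Vector,
    inequalities: Sequence[Constraint],
    equalities: Sequence[Constraint],
    delta: float = 1e-4,
) -> float:
    """Sum of max(0, g_i(x)) plus sum of max(0, |h_j(x)| - delta)."""
    ineq_part = sum(max(0.0, g(x)) for g in inequalities)
    eq_part = sum(max(0.0, abs(h(x)) - delta) for h in equalities)
    return ineq_part + eq_part


# Hypothetical toy problem: g(x) = x0 + x1 - 1 <= 0 and h(x) = x0 - x1 = 0.
toy_g = [lambda x: x[0] + x[1] - 1.0]
toy_h = [lambda x: x[0] - x[1]]

cv_feasible = constraint_violation([0.25, 0.25], toy_g, toy_h)   # both constraints hold
cv_infeasible = constraint_violation([1.0, 0.5], toy_g, toy_h)   # both constraints violated
```

A solution is then treated as feasible exactly when the returned value is zero, as in the definition above.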

Constrained Dominance Principle

For comparing solutions in CMOPs, the constrained dominance principle (( \prec_c )) is widely used [2]. A solution ( \mathbf{a} ) constrained-dominates a solution ( \mathbf{b} ) (( \mathbf{a} \prec_c \mathbf{b} )) if any of the following conditions holds:

- Both solutions are feasible, and ( \mathbf{a} ) dominates ( \mathbf{b} ) in objective space

- Solution ( \mathbf{a} ) is feasible while ( \mathbf{b} ) is infeasible

- Both solutions are infeasible, but ( \mathbf{a} ) has a smaller constraint violation than ( \mathbf{b} )
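The three conditions above translate directly into a pairwise comparison. The sketch below assumes minimization and that each solution is described by its objective vector and its precomputed overall constraint violation; the function names are illustrative and not taken from any cited work.

```python
from typing import Sequence


def dominates(fa: Sequence[float], fb: Sequence[float]) -> bool:
    """Pareto dominance for minimization: no worse everywhere, better somewhere."""
    no_worse = all(a <= b for a, b in zip(fa, fb))
    strictly_better = any(a < b for a, b in zip(fa, fb))
    return no_worse and strictly_better


def constrained_dominates(
    fa: Sequence[float], cva: float, fb: Sequence[float], cvb: float
) -> bool:
    """True if solution a constrained-dominates solution b."""
    if cva == 0.0 and cvb == 0.0:
        # Both feasible: fall back to ordinary Pareto dominance.
        return dominates(fa, fb)
    if cva == 0.0:
        # a is feasible, b is infeasible.
        return True
    if cvb == 0.0:
        # a is infeasible, b is feasible.
        return False
    # Both infeasible: the smaller violation wins.
    return cva < cvb
```

For example, `constrained_dominates([5.0, 5.0], 0.0, [1.0, 1.0], 0.3)` is true even though the second solution has better objective values, because feasibility takes priority.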

Real-World Applications of CMOPs

CMOPs arise frequently in real-world applications across various domains. The table below summarizes key application areas and examples:

Table: Real-World Application Areas of CMOPs

| Application Domain | Specific Examples | Key Constraints & Objectives |

|---|---|---|

| Drug Discovery [3] | Molecular optimization for protein-ligand binding (4LDE protein) [3], Glycogen synthase kinase-3 (GSK3) inhibitors [3] | Objectives: Improve bioactivity, drug-likeness (QED), synthetic accessibility [3]. Constraints: Structural alerts, ring size restrictions, reactive groups [3]. |

| Healthcare & Medical Decision Making [4] | Optimal cervical cancer screening/vaccination strategies [4], Statin start time optimization for diabetes patients [4], Radiation therapy treatment planning [4] | Objectives: Maximize health outcomes, minimize costs [4]. Constraints: Resource availability, clinical guidelines, dosage limits [4]. |

| Mechanical & Structural Design [2] | Robot gripper optimization [1] [5], Tall building design [5], Composite structures [5] | Objectives: Maximize performance, minimize weight/cost [2]. Constraints: Physical laws, safety regulations, material limits [2]. |

| Energy Systems [1] | Power system optimization [2], Energy-saving strategies [1] | Objectives: Minimize cost, maximize efficiency/reliability [1] [2]. Constraints: Demand-supply balance, transmission limits, emission caps [2]. |

| Transportation & Logistics [1] | Vehicle scheduling [1], Vehicle routing with time windows [5] | Objectives: Minimize travel time/distance, maximize service level [1] [5]. Constraints: Time windows, capacity limits, route continuity [5]. |

In-Depth Application: Constrained Molecular Optimization

In drug discovery, CMOPs are formulated to optimize multiple molecular properties while satisfying chemical constraints [3]. For a molecule ( \mathbf{m} ), the problem can be defined as:

Minimize ( F(\mathbf{m}) = (f_1(\mathbf{m}), f_2(\mathbf{m}), \dots, f_M(\mathbf{m})) )

Subject to: [ \begin{align} C_i^{\text{ineq}}(\mathbf{m}) & \leq 0, \quad i = 1, \dots, p \\ C_j^{\text{eq}}(\mathbf{m}) & = 0, \quad j = p+1, \dots, L \end{align} ]

Where ( f_k(\mathbf{m}) ) represents molecular properties like biological activity or drug-likeness, and constraints ( C(\mathbf{m}) ) include structural requirements like ring size limitations or avoidance of toxic substructures [3].

Classification of CMOPs

Based on the relationship between constrained and unconstrained Pareto fronts, CMOPs can be categorized into four types [2]:

Table: Classification of CMOP Types

| Type | Description | Challenge Level |

|---|---|---|

| Type I | Constrained Pareto Front (CPF) is identical to unconstrained Pareto Front (UPF) | Low |

| Type II | CPF is a subset of UPF | Medium |

| Type III | CPF partially overlaps with UPF | Medium-High |

| Type IV | CPF and UPF have no common regions | High |

This classification helps researchers select appropriate constraint-handling techniques, as the required balance between minimizing objectives and satisfying constraints varies across types [2].
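As a rough illustration of the four types, the set relation between the two fronts can be checked directly when both are available as finite samples. This is only a sketch under strong assumptions: both fronts are represented as sets of identical, discretized objective vectors (so exact set comparison is meaningful), and the function name `classify_cmop` is hypothetical. In practice the true fronts are rarely known in advance.

```python
from typing import Set, Tuple

Point = Tuple[float, ...]


def classify_cmop(upf: Set[Point], cpf: Set[Point]) -> str:
    """Map the set relation between UPF and CPF samples to Types I-IV."""
    if not upf or not cpf:
        raise ValueError("both fronts must contain at least one point")
    if cpf == upf:
        return "Type I"    # CPF identical to UPF
    if cpf < upf:
        return "Type II"   # CPF is a proper subset of UPF
    if cpf & upf:
        return "Type III"  # partial overlap
    return "Type IV"       # no common points


# Hypothetical sampled unconstrained front used for the examples below.
upf_sample = {(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)}
```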

Experimental Setup and Benchmarking

Standard Benchmark Problems

For standardized performance evaluation, researchers have developed benchmark suites of real-world CMOPs. The CEC'2020 test suite contains 57 real-world constrained optimization problems [6], while other suites offer up to 50 RWCMOPs collected from various domains [2].

Table: Real-World CMOP Benchmark Suites

| Benchmark Suite | Number of Problems | Application Domains Covered |

|---|---|---|

| CEC'2020 [6] | 57 problems | Engineering design, energy systems, process control |

| RWCMOP Suite [2] | 50 problems | Mechanical design (21), Chemical engineering (7), Process design (9), Power electronics (6), Power systems (7) |

Performance Metrics for CMOP Algorithms

When evaluating constrained multi-objective evolutionary algorithms (CMOEAs), researchers use multiple performance indicators [2]:

- Inverted Generational Distance (IGD): Measures convergence and diversity

- Hypervolume (HV): Measures the volume of objective space dominated by solutions

- Feasibility Ratio: Percentage of feasible solutions in the final population

- Success Rate: Percentage of successful runs reaching predefined targets
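The indicators listed above can be computed with short helper functions. The following sketch assumes minimization, Euclidean distance for IGD, and a two-objective problem for the hypervolume; the function names are illustrative. The hypervolume routine is a simple sweep that only handles the 2D case.

```python
import math
from typing import Sequence, Tuple

Point2D = Tuple[float, float]


def igd(reference_front: Sequence[Sequence[float]],
        obtained: Sequence[Sequence[float]]) -> float:
    """Mean distance from each reference point to its nearest obtained point."""
    if not reference_front or not obtained:
        raise ValueError("both point sets must be non-empty")
    total = sum(min(math.dist(r, s) for s in obtained) for r in reference_front)
    return total / len(reference_front)


def hypervolume_2d(points: Sequence[Point2D], ref: Point2D) -> float:
    """Area dominated by `points` and bounded by the reference point `ref`."""
    inside = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area = 0.0
    best_f2 = ref[1]
    for f1, f2 in inside:  # sweep in order of increasing f1
        if f2 < best_f2:   # only non-dominated points add new area
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area


def feasibility_ratio(violations: Sequence[float]) -> float:
    """Fraction of the population with zero overall constraint violation."""
    return sum(1 for cv in violations if cv == 0.0) / len(violations)
```

Lower IGD and higher HV indicate a better approximation of the front; both should be computed on feasible solutions only.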

Frequently Asked Questions (FAQs)

Fundamental Concepts

Q: What is the key difference between constrained single-objective and multi-objective optimization?

A: While single-objective constrained optimization aims to find a single optimal solution satisfying constraints, CMOPs must find a set of trade-off solutions (Pareto front) that balance multiple conflicting objectives while satisfying all constraints [1] [2]. The additional complexity arises from maintaining diversity among feasible solutions while approaching the true Pareto front.

Q: How do constraints affect the feasible region in CMOPs?

A: Constraints can significantly reshape the feasible space by [5] [2]:

- Making large portions of the search space infeasible

- Dividing the feasible space into narrow, disconnected regions

- Rendering parts of the unconstrained Pareto front infeasible

- Creating complex feasible boundaries that are challenging to navigate

Implementation Challenges

Q: Why do many algorithms struggle with Type III and Type IV CMOPs?

A: Type III and IV CMOPs present particular challenges because [2]:

- They require careful balancing between objective optimization and constraint satisfaction

- The constrained and unconstrained Pareto fronts have minimal or no overlap

- Algorithms must navigate through large infeasible regions to find feasible solutions

- Traditional penalty function methods often fail without proper adaptation

Q: What is the main limitation of penalty function methods for constraint handling?

A: The main limitations include [6]:

- Difficulty in setting appropriate penalty parameters without trial and error

- Different degrees of constraint violations require different penalty scales

- Poor performance when feasible regions are small or disconnected

- Tendency to get trapped in local optima when penalty parameters are too large

Algorithm Selection

Q: What are the advantages of multi-stage approaches for solving CMOPs?

A: Multi-stage frameworks (like MSEFAS) provide several advantages [5]:

- They can adaptively balance exploration and exploitation across different evolutionary phases

- Early stages can utilize promising infeasible solutions to maintain diversity

- Later stages can focus on convergence to the feasible Pareto front

- They can dynamically adjust search behavior based on population state

Q: How do adaptive constraint-handling techniques improve upon static methods?

A: Adaptive techniques (like dynamic ε) offer significant benefits [6] [1]:

- They dynamically adjust constraint tolerance based on feasible ratio and iteration count

- They maintain a reasonable proportion of virtual feasible solutions throughout optimization

- They can reshape individual positions adaptively based on constraint violation magnitude

- They reduce the need for manual parameter tuning during the optimization process

Troubleshooting Common Experimental Issues

Problem: Population Lacks Feasible Solutions

Symptoms:

- Zero or very few feasible solutions after many generations

- Population converging to infeasible regions

- Inability to improve constraint violation over iterations

Solutions:

- Implement dynamic ε-constraint handling [6]: Relax constraints early in the optimization, then tighten them progressively

- Use multi-population approaches [1]: Maintain separate populations focusing on objective improvement and constraint satisfaction

- Apply adaptive boundary constraint handling [6]: Reshape individual positions based on constraint violation extent to increase diversity

Problem: Premature Convergence to Suboptimal Feasible Regions

Symptoms:

- Algorithm finds feasible but poor-quality solutions

- Lack of diversity in the obtained Pareto front

- Inability to explore disconnected feasible regions

Solutions:

- Implement multi-stage optimization [5]: Divide optimization into stages with different goals:

  - Stage 1: Encourage approaching feasible region with diversity preservation

  - Stage 2: Enable spanning large infeasible regions to accelerate convergence

  - Stage 3: Refine solutions considering CPF-UPF relationship

- Use population state discrimination [1]: Monitor relative positions of main and auxiliary populations to dynamically switch search strategies

- Incorporate knowledge transfer mechanisms [5]: Enable information exchange between populations exploring different regions

Problem: Poor Performance on Specific CMOP Types

Symptoms:

- Algorithm works well on some problem types but fails on others

- Inconsistent performance across different benchmark problems

- Difficulty maintaining balance between constraints and objectives

Solutions:

- Employ algorithm selection based on problem characteristics [2]:

  - Type I/II: Focus more on objective optimization

  - Type III/IV: Prioritize constraint satisfaction initially

- Implement cooperative coevolution [1]: Use multiple populations with different constraint-handling techniques:

  - Main population: Focus on CPF with strict constraints

  - Auxiliary population: Explore UPF with relaxed constraints

- Apply adaptive resource allocation [1]: Distribute computational resources between populations based on their contribution to improvement

Essential Research Reagents and Tools

Table: Key Algorithmic Components for CMOP Research

| Component | Function | Examples/Implementation |

|---|---|---|

| Constraint Handling Techniques (CHTs) | Balance objective optimization with constraint satisfaction | ε-constraint [6], Stochastic Ranking [2], Adaptive Penalty [2] |

| Multi-Objective Evolutionary Algorithms (MOEAs) | Base optimizer for handling multiple objectives | NSGA-II [1], MOEA/D [2], NSGA-III [2] |

| Benchmark Suites | Performance assessment and comparison | RWCMOP Suite [2], CEC'2020 [6], MFs, CFs [2] |

| Performance Metrics | Quantitative evaluation of algorithm performance | IGD, HV [2], Feasibility Ratio, Success Rate [2] |

| Visualization Tools | Analysis of Pareto front and population distribution | Parallel coordinates, 2D/3D scatter plots, Heat maps of constraint violations |

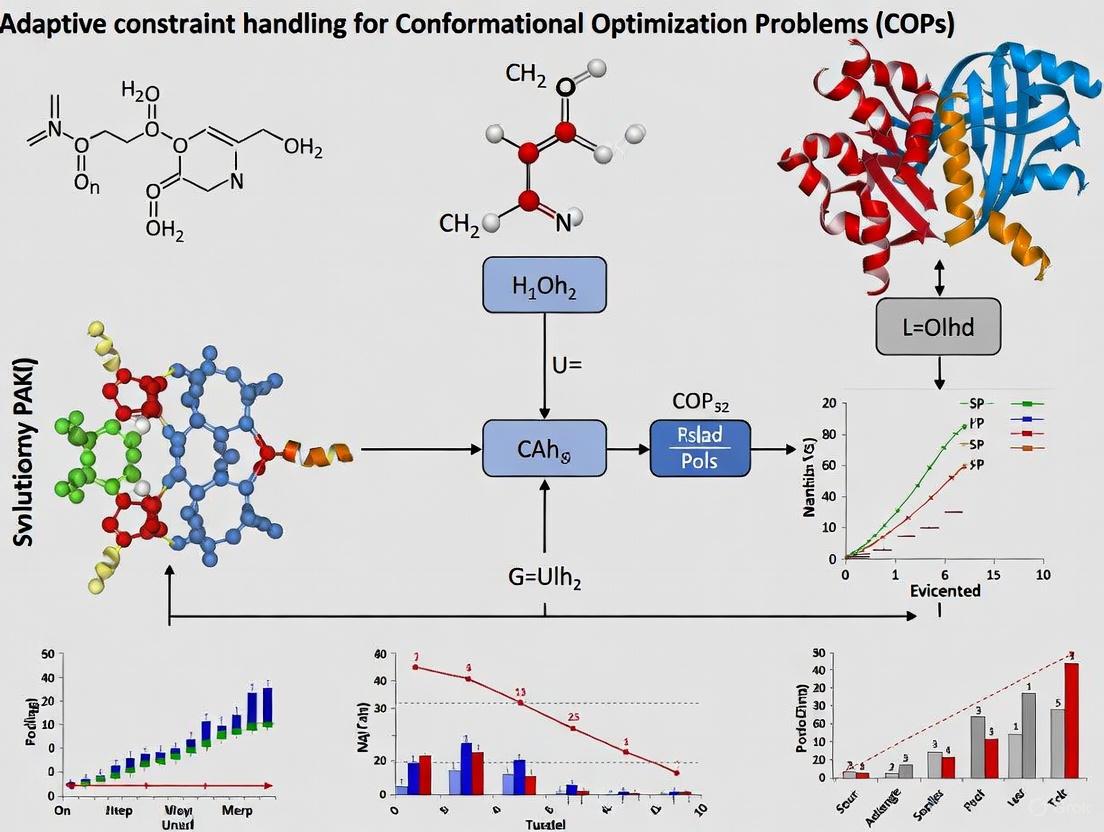

Methodological Workflows

The following workflow diagrams illustrate common experimental setups for CMOP research:

Dynamic ε-Constraint Handling Workflow

Multi-Stage Optimization Framework

Dual-Population Cooperative Framework

Frequently Asked Questions

1. What is a constraint violation (CV) and how is it calculated for a single constraint?

The constraint violation (CV) quantitatively measures how much a candidate solution x fails to satisfy a single constraint. The calculation differs for inequality and equality constraints [7] [8].

For an inequality constraint of the form g_i(x) ≤ 0, the violation is calculated as:

CV_i(x) = max(0, g_i(x))

This means the violation is zero if the constraint is satisfied (g_i(x) ≤ 0), and positive otherwise [9] [7].

For an equality constraint of the form h_j(x) = 0, it is typically relaxed into an inequality using a tolerance δ (often set to 10^-4) [9] [10]. The violation is then calculated as:

CV_j(x) = max(0, |h_j(x)| - δ)

A solution is considered to satisfy the equality constraint only if the absolute value of h_j(x) is less than or equal to δ [10].

2. How is the overall constraint violation for a solution computed?

The overall constraint violation G(x) or CV(x) is a single metric that aggregates the violation across all m constraints. This is typically done by summing the individual violations for each constraint [10] [7] [8]:

G(x) = ∑_{i=1}^{p} max(g_i(x), 0) + ∑_{j=p+1}^{m} max(|h_j(x)| - δ, 0)

A solution is considered feasible if and only if its overall constraint violation G(x) is equal to zero [9].

3. Our algorithm often gets trapped in local optima. How can adaptive constraint handling help?

Standard methods might prematurely reject all infeasible solutions, losing valuable information. Adaptive techniques dynamically adjust how constraints are treated during the optimization process to improve global exploration [8].

One method is Adaptive Constraint Relaxation, which starts with relaxed constraint boundaries to allow exploration of promising infeasible regions. As the optimization progresses, the constraints are gradually tightened, guiding the population toward the feasible region [7]. Another approach is the ε-constraint method, where a parameter ε controls the tolerance for accepting slightly infeasible solutions. This ε value can be adaptively decreased based on the iteration count or the feasibility ratio of the population, balancing the use of objective and constraint information over time [9].
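A decreasing ε schedule and the matching comparison rule can be sketched as follows. The polynomial decay used here is one commonly seen choice and is an assumption of this example, not necessarily the rule used in [9]; the names `epsilon_level`, `epsilon_better`, `cutoff_generation`, and `power` are likewise hypothetical. The comparison is written for a single objective under minimization.

```python
def epsilon_level(generation: int, cutoff_generation: int,
                  epsilon0: float, power: float = 5.0) -> float:
    """Tolerance that shrinks from epsilon0 to 0 at the cutoff generation."""
    if generation >= cutoff_generation:
        return 0.0  # from here on, only truly feasible solutions count
    return epsilon0 * (1.0 - generation / cutoff_generation) ** power


def epsilon_better(fa: float, cva: float, fb: float, cvb: float,
                   epsilon: float) -> bool:
    """True if solution a is preferred over b at the given tolerance."""
    if cva <= epsilon and cvb <= epsilon:
        # Both count as feasible at this tolerance: compare objectives.
        return fa < fb
    if cva == cvb:
        # Equal violation: the objective breaks the tie.
        return fa < fb
    # Otherwise the smaller violation wins.
    return cva < cvb
```

Early in the run a slightly infeasible solution with a good objective can win, for instance `epsilon_better(1.0, 0.05, 2.0, 0.0, 0.1)` is true, while the same pair compared with `epsilon = 0.0` favors the feasible solution.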

4. What are the best practices for measuring convergence in constrained evolutionary algorithms?

Measuring convergence requires assessing both the quality of objective function values and the feasibility of solutions.

- Use Feasibility Rates: Track the proportion of feasible solutions in the population over generations. A successful run should show this rate increasing over time [10].

- Monitor Key Metrics: Record the best, median, and worst objective values from the feasible solutions in the population across independent runs. This helps gauge the algorithm's accuracy and robustness [10].

- Employ Quality Indicators: For multi-objective problems, use established metrics such as Inverted Generational Distance (IGD) and Hypervolume (HV).

- Perform Statistical Validation: Conduct a sufficient number of independent runs (e.g., 31) and use statistical tests to ensure the significance of the results [10].

5. How can surrogate models be used for expensive constrained optimization?

In real-world applications like drug discovery, evaluating objectives and constraints can be computationally prohibitive [3] [11]. Surrogate-Assisted Evolutionary Algorithms (SAEAs) address this by using approximate models.

- Global Surrogates: Models like Radial Basis Functions (RBF) or Gaussian Processes (GPs) are built using all historically evaluated solutions. These surrogates are used to prescreen a large number of candidate solutions cheaply, selecting only the most promising ones for exact evaluation [11].

- Constrained Bayesian Optimization: A common approach uses the Constrained Expected Improvement (CEI) acquisition function. CEI is the product of the Expected Improvement (EI) for the objective and the Probability of Feasibility (POF) for the constraints, guiding the search toward optimal and feasible regions with minimal expensive function evaluations [12] [11].
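The product form of CEI described above can be written out with the standard closed-form expressions for a Gaussian prediction. The sketch assumes minimization, constraints in the form g(x) ≤ 0, a surrogate that returns a predictive mean and standard deviation at the candidate point, and statistically independent constraint models. All function names are illustrative and do not correspond to a specific library.

```python
import math
from typing import Sequence, Tuple


def normal_pdf(z: float) -> float:
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu: float, sigma: float, best_feasible: float) -> float:
    """EI over the best feasible value for a prediction N(mu, sigma^2)."""
    if sigma <= 0.0:
        return max(0.0, best_feasible - mu)  # deterministic prediction
    z = (best_feasible - mu) / sigma
    return (best_feasible - mu) * normal_cdf(z) + sigma * normal_pdf(z)


def probability_of_feasibility(
    constraint_predictions: Sequence[Tuple[float, float]]
) -> float:
    """Product of P(g_i <= 0) over independent Gaussian constraint models."""
    pof = 1.0
    for mu_g, sigma_g in constraint_predictions:
        if sigma_g <= 0.0:
            pof *= 1.0 if mu_g <= 0.0 else 0.0
        else:
            pof *= normal_cdf(-mu_g / sigma_g)
    return pof


def constrained_ei(mu: float, sigma: float, best_feasible: float,
                   constraint_predictions: Sequence[Tuple[float, float]]) -> float:
    """CEI = EI x POF."""
    return (expected_improvement(mu, sigma, best_feasible)
            * probability_of_feasibility(constraint_predictions))
```

A candidate whose constraint prediction sits exactly on the boundary (mean 0) has a POF of 0.5, so its CEI is half of its unconstrained EI.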

Troubleshooting Guides

Problem: The population lacks diversity and converges to an infeasible local optimum.

- Potential Cause 1: Overly strict constraint handling that prematurely discards all infeasible solutions, some of which may be close to feasible regions and carry valuable objective information [7].

- Solution:

  - Implement a two-stage strategy. In the first stage, relax constraints to explore the search space and identify promising regions, even if they are infeasible. In the second stage, strictly enforce constraints to refine solutions and achieve feasibility [7] [8].

  - Use an archive mechanism to store promising infeasible solutions. These solutions can be used later to provide diversity and help the population cross large infeasible regions [8].

- Potential Cause 2: The mutation and crossover strategies are not effectively maintaining population diversity.

- Solution:

  - Design evolution strategies for a dynamic population. For example, use different base solution selection rules for top-performing individuals and others to emphasize exploitation in promising regions while maintaining exploration elsewhere [11].

  - Integrate a diversity-based ranking into the selection process to prevent convergence on a small subset of the feasible region [8].

Problem: The optimization is computationally slow due to expensive constraint evaluations.

- Potential Cause: Each constraint evaluation requires a costly simulation or experiment.

- Solution: Implement a surrogate-assisted framework [11].

  - Build Surrogates: Construct approximation models (e.g., RBF, Kriging) for both the objective and constraint functions using an initial set of evaluated solutions.

  - Prescreen Candidates: Use these surrogates to predict the performance of a large number of candidate solutions, filtering out poor or highly infeasible ones.

  - Select for Exact Evaluation: Choose only a few high-potential solutions, as predicted by an infill criterion (e.g., CEI), for evaluation with the exact, expensive functions. This drastically reduces the number of costly evaluations required [11].
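The prescreening step can be illustrated with a very small helper. For brevity this sketch swaps the CEI infill criterion for a simpler feasibility-first ranking (predicted violation, then predicted objective). The surrogate predictors are passed in as plain callables, and the names `prescreen`, `predict_objective`, and `predict_violation` are hypothetical.

```python
from typing import Callable, List, Sequence, TypeVar

Candidate = TypeVar("Candidate")


def prescreen(
    candidates: Sequence[Candidate],
    predict_objective: Callable[[Candidate], float],
    predict_violation: Callable[[Candidate], float],
    budget: int,
) -> List[Candidate]:
    """Return the `budget` candidates most worth an exact, expensive evaluation."""
    ranked = sorted(
        candidates,
        key=lambda x: (max(0.0, predict_violation(x)), predict_objective(x)),
    )
    return ranked[:budget]


# Toy stand-ins for trained surrogates: minimize x^2 subject to x >= 1.
toy_candidates = [-2.0, -1.0, 0.0, 1.0, 2.0, 3.0]
selected = prescreen(
    toy_candidates,
    predict_objective=lambda x: x * x,
    predict_violation=lambda x: 1.0 - x,
    budget=2,
)
```

Only the returned candidates would then be passed to the exact objective and constraint functions, after which the surrogates are retrained on the enlarged data set.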

Problem: The algorithm struggles with problems that have disconnected feasible regions.

- Potential Cause: The feasible regions are scattered and separated by large infeasible "gaps," making it difficult for the population to find and transition between all feasible patches [7].

- Solution:

  - Apply a constraint-tightening strategy. Begin the search with highly relaxed constraints to connect the disparate feasible regions. Gradually tighten the constraints as the run progresses, guiding the population toward the true, disconnected feasible areas [7].

  - Utilize information from "promising infeasible solutions" (those with good objective values but moderate constraint violations). An adaptive step-size adjustment can use these solutions to guide the population across infeasible valleys toward other feasible regions [7].

Constraint Violation Metrics and Handling Techniques at a Glance

| Metric / Technique | Formula / Key Mechanism | Application Context |

|---|---|---|

| Single Constraint Violation [7] [8] | `CV_i(x) = max(0, g_i(x))` (Inequality); `CV_j(x) = max(0, \|h_j(x)\| - δ)` (Equality) | Fundamental building block for calculating overall violation for any type of constraint. |

| Overall Constraint Violation [9] [10] | `G(x) = ∑ max(g_i(x), 0) + ∑ max(\|h_j(x)\| - δ, 0)` | Determining if a solution is feasible (G(x) = 0) and for comparing infeasible solutions. |

| ε-Constraint Handling [9] | Relaxed comparison: if CV(x) ≤ ε, compare by objective; else, compare by CV. | Balancing objective and constraint search; ε can be self-adaptive based on population's feasibility ratio. |

| Feasibility Rule (CDP) [9] | 1) Feasible dominates infeasible. 2) Between feasible, better objective wins. 3) Between infeasible, lower CV wins. | Simple and popular method for comparing two solutions during selection. |

| Two-Stage Methods [7] | Stage 1: Explore with relaxed constraints. Stage 2: Exploit with strict constraints. | Solving problems with disconnected feasible regions or when the global optimum lies on a constraint boundary. |

Experimental Protocols for Evaluating Constraint Handling Techniques

Protocol 1: Benchmarking on Standard Test Suites

To validate a new constraint handling technique, it is crucial to test it against established benchmark problems.

- Select Benchmark Problems: Use standardized test suites like CEC2010 or CEC2017 for constrained real-parameter optimization [10]. These suites contain problems with diverse characteristics, such as separable/non-separable objectives and rotated constraints.

- Configure Experimental Settings: Set the search space dimensionality (e.g., D=10, 30) and the maximum number of function evaluations (MaxFEs) as defined by the benchmark (e.g., 200,000 for D=10) [10].

- Run Algorithms: Compare your algorithm against several state-of-the-art constrained evolutionary algorithms (e.g., IMODE, SHADE) [10].

- Performance Measurement: Execute multiple independent runs (e.g., 31) to ensure statistical significance. Compare results using performance metrics like best/median/worst objective values of found feasible solutions and feasibility rates [10].
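The per-run statistics named in the last step can be collected with a small summary function. This sketch assumes each independent run reports either its best feasible objective value or `None` when no feasible solution was found; the function name and the dictionary keys are illustrative choices.

```python
import statistics
from typing import Dict, Optional, Sequence


def summarize_runs(
    best_per_run: Sequence[Optional[float]],
) -> Dict[str, Optional[float]]:
    """Best/median/worst feasible objective and the share of feasible runs."""
    feasible = [value for value in best_per_run if value is not None]
    summary: Dict[str, Optional[float]] = {
        "feasibility_rate": len(feasible) / len(best_per_run),
        "best": None,
        "median": None,
        "worst": None,
    }
    if feasible:  # minimization: the smallest value is the best
        summary["best"] = min(feasible)
        summary["median"] = statistics.median(feasible)
        summary["worst"] = max(feasible)
    return summary


# Hypothetical outcome of four runs, one of which never reached feasibility.
example_summary = summarize_runs([3.0, None, 1.0, 2.0])
```

Here the feasibility rate is computed over runs, which matches its use alongside best, median, and worst values in the step above.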

Protocol 2: Real-World Validation in Molecular Optimization

For techniques aimed at applications like drug discovery, testing on real-world problems is essential [3].

- Problem Formulation: Define the constrained multi-objective optimization problem. Treat multiple molecular properties (e.g., bioactivity, drug-likeness) as objectives to be optimized. Stringent drug-like criteria (e.g., ring size constraints, substructure alerts) are treated as constraints [3].

- Algorithm Implementation: Implement a framework like CMOMO that uses a dynamic constraint handling strategy. This involves an initial unconstrained search for good properties, followed by a constrained search to find feasible molecules [3].

- Evaluation: Use a pre-trained encoder to embed molecules into a continuous latent space for efficient optimization. The success of the algorithm is measured by its ability to identify molecules with multiple desired properties that also strictly adhere to all drug-like constraints [3].

The Scientist's Toolkit: Essential Reagents for Constrained Optimization

| Item | Function in Constrained Optimization |

|---|---|

| CEC Benchmark Suites | Provides a standardized set of constrained optimization problems (e.g., CEC2010, CEC2017) for fair and reproducible comparison of algorithms [10]. |

| Differential Evolution (DE) | A versatile and popular evolutionary algorithm framework that serves as a foundation for many advanced constrained optimization algorithms (e.g., SHADE) [9]. |

| Radial Basis Function (RBF) / Gaussian Process (GP) | Surrogate models used to approximate expensive black-box objective and constraint functions, drastically reducing computational cost in SAEAs [11]. |

| Probability of Feasibility (POF) | A metric, often derived from a Gaussian process model, that estimates the likelihood that a candidate solution will satisfy all constraints. Used in acquisition functions like CEI [12] [11]. |

| Constraint Dominance Principle (CDP) | A simple yet powerful rule for comparing two solutions during selection, prioritizing feasibility and guiding the population toward feasible regions [8]. |

Workflow: Adaptive Constraint Handling

The following diagram illustrates a generalized workflow for an adaptive constraint handling technique, integrating concepts from two-stage methods and surrogate assistance.

Framework for Constrained Molecular Optimization

This diagram outlines the CMOMO framework, a specific two-stage approach for constrained multi-objective molecular optimization in drug discovery [3].

Frequently Asked Questions

Q: What is the No Free Lunch (NFL) Theorem in the context of optimization?

- A: The No Free Lunch Theorem states that when the performance of all optimization algorithms is averaged across all possible problems, they all perform equally well [13] [14]. This means there is no single "best" constraint-handling technique (CHT) that is superior for every Constrained Optimization Problem (COP) you may encounter [15].

Q: If no single technique is the best, how should I select a CHT for my research?

- A: Since no algorithm has a priori superiority, your selection strategy is crucial. The NFL theorem implies that you must leverage problem-specific knowledge to choose or design a CHT [14]. Success comes from tailoring the algorithm's inductive biases to the specific structure of your problem, rather than seeking a universal method [16] [17].

Q: My population is getting stuck in local optima. What CHT strategies can help?

- A: This is a common issue. Advanced CHTs incorporate mechanisms to escape local optima. For example, one approach is to use a simple population restart mechanism when a specific criterion indicates the population is trapped in a local optimum, particularly in the infeasible region [18]. Another strategy is to use a hybrid technique that adapts its search strategy based on the population's state [18].

Q: How can I handle problems with a large number of constraints?

- A: One effective method is to decompose the problem. A classification-collaboration constraint handling technique randomly classifies constraints into K classes, decomposing the original problem into K simpler subproblems [19]. Each subpopulation then evolves to handle its assigned subset of constraints, and they interact through learning strategies to collaboratively solve the original problem [19].
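The random classification step can be sketched as a shuffled split of constraint indices. The balanced round-robin assignment after shuffling is an assumption of this example; [19] may distribute constraints differently. The function names are hypothetical, and the collaboration between subpopulations is not shown.

```python
import random
from typing import List, Sequence


def classify_constraints(num_constraints: int, k: int,
                         rng: random.Random) -> List[List[int]]:
    """Randomly split constraint indices 0..num_constraints-1 into k classes."""
    if not 1 <= k <= num_constraints:
        raise ValueError("k must be between 1 and the number of constraints")
    indices = list(range(num_constraints))
    rng.shuffle(indices)
    # Deal the shuffled indices out round-robin so class sizes differ by at most 1.
    return [sorted(indices[c::k]) for c in range(k)]


def subproblem_violation(per_constraint_cv: Sequence[float],
                         constraint_class: Sequence[int]) -> float:
    """Violation seen by one subpopulation: only its own constraints count."""
    return sum(per_constraint_cv[i] for i in constraint_class)


# Example: 7 constraints split into 3 classes with a fixed seed.
classes = classify_constraints(7, 3, random.Random(0))
```

Because every constraint belongs to exactly one class, the subproblem violations add up to the overall violation of the original problem.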

Q: Are hybrid CHTs more effective than single-method approaches?

- A: Often, yes. Hybrid techniques are designed to leverage the strengths of different methods. For instance, a Hybrid Constraint-handling Technique (HCT) might define different population situations (infeasible, semi-feasible, feasible) and apply a tailored CHT for each situation [18]. This adaptive behavior can lead to more robust performance across various problem types.

Troubleshooting Common Experimental Issues

| Problem Description | Possible Causes | Recommended Solutions |

|---|---|---|

| Premature Convergence (Population loses diversity and gets stuck early) | Overly greedy feasibility rules; lack of diversity-preserving mechanisms. | Implement a restart mechanism [18]. Use a multi-objective approach to maintain a diverse set of solutions [20]. Introduce elite replacement strategies to accumulate experience [18]. |

| Inability to Find Feasible Solutions | Population is far from the feasible region; constraints are too restrictive. | For the "infeasible situation," use techniques that help the population move toward feasibility, like an elite replacement strategy [18]. Employ a repair strategy, such as Random Direction Repair (RDR), to guide infeasible solutions toward the feasible region [19]. |

| Poor Performance on Specific Problem Types | The chosen CHT's inductive bias does not align with the problem's structure. | Switch the CHT based on the problem's features (e.g., number of constraints, location of the optimum) [14]. Use an adaptive framework like ECO-HCT that automatically switches strategies based on population information [18]. |

| High Computational Cost | Complex CHTs; expensive fitness evaluations for feasibility. | Decompose the problem using a classification-collaboration technique to reduce the complexity per evaluation [19]. Use a predictive model like an estimation of distribution algorithm to guide the search and reduce evaluations [19]. |

Experimental Protocols & Methodologies

1. Protocol for Comparing CHT Performance

This protocol provides a standardized way to evaluate and compare different CHTs on your specific set of COPs.

- Objective: To empirically determine the most effective CHT for a given class of COPs.

- Benchmark Sets: Utilize standard constrained test suites such as IEEE CEC2006 (24 functions) and IEEE CEC2017 (28 functions) to ensure comparable results [18] [19].

- Performance Metrics: Define and measure the following for each algorithm:

  - Best Feasible Objective Value: The best f(x) found where G(x) = 0.

  - Feasibility Rate: The percentage of runs that find at least one feasible solution.

  - Average Computational Cost: Measured in number of function evaluations or time.

- Experimental Setup:

  - Run each algorithm over a sufficient number of independent runs (e.g., 30).

  - Use statistical tests (e.g., Wilcoxon signed-rank test) to validate the significance of performance differences.

2. Protocol for Implementing a Hybrid CHT (e.g., ECO-HCT)

This methodology outlines the steps to implement an adaptive hybrid technique.

- Step 1: Define Population Situations. Categorize the population's state based on the number of feasible individuals [18]:

- Infeasible Situation: Most individuals are infeasible.

- Semi-feasible Situation: A mix of feasible and infeasible individuals.

- Feasible Situation: Most or all individuals are feasible.

- Step 2: Assign CHTs to Situations.

- Infeasible: Focus on minimizing total constraint violation G(x). Use an elite replacement strategy to preserve good infeasible solutions that are close to feasibility [18].

- Semi-feasible: Balance objective and constraints using methods like stochastic ranking or an ɛ-constraint method [18] [19].

- Feasible: Focus on minimizing the objective function f(x) while maintaining feasibility.

- Step 3: Implement a Restart Mechanism. Define a criterion (e.g., no improvement in best feasible solution for N generations) to trigger a population restart and help escape local optima [18].
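
The three steps can be sketched as follows. This is an illustration only, not the ECO-HCT implementation: the ratio thresholds `low` and `high`, the function names, and the `patience` parameter (standing in for N) are assumptions, and the strategy labels simply restate Step 2.

```python
def feasible_ratio(violations, tol=0.0):
    """Fraction of individuals whose total violation G(x) is within tol."""
    return sum(1 for g in violations if g <= tol) / len(violations)

def classify_situation(violations, low=0.1, high=0.9):
    """Map the population state to one of the three situations.

    The thresholds low/high are illustrative assumptions, not values from [18].
    """
    ratio = feasible_ratio(violations)
    if ratio <= low:
        return "infeasible"
    if ratio >= high:
        return "feasible"
    return "semi-feasible"

# Step 2: one constraint handling strategy per situation.
CHT_BY_SITUATION = {
    "infeasible": "minimize G(x) with elite replacement",
    "semi-feasible": "stochastic ranking or epsilon-constraint",
    "feasible": "minimize f(x) while keeping feasibility",
}

def should_restart(best_feasible_history, patience):
    """Step 3: True if the best-so-far feasible value (minimization) has not
    improved during the last `patience` generations."""
    if len(best_feasible_history) <= patience:
        return False
    recent = best_feasible_history[-patience:]
    return min(recent) >= best_feasible_history[-patience - 1]

situation = classify_situation([0.5, 1.2, 0.0, 3.0])
strategy = CHT_BY_SITUATION[situation]
```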

The Scientist's Toolkit: Research Reagent Solutions

| Item / Concept | Function in Constrained Optimization |

|---|---|

| Constraint Violation (G(x)) | A metric quantifying how much a solution x violates all constraints. It is the foundation for most CHTs [18] [19]. |

| Feasibility Rule | A simple, powerful CHT that prefers feasible solutions over infeasible ones, and among feasible solutions, prefers those with a better objective value [19]. |

| ɛ-Constraint Method | A CHT that relaxes the feasibility requirement by allowing solutions with a constraint violation below a threshold ɛ to be treated as feasible, enabling a more gradual approach to the feasible region [19]. |

| Stochastic Ranking | A technique that introduces a probability P to balance the influence of objective function and constraint violation during selection, preventing overly greedy behavior [19]. |

| Multi-Objective Transformation | A method that transforms a COP into a multi-objective problem, treating constraint violations as separate objectives to be minimized [20]. |

| Penalty Function | A method that combines the objective function and constraint violation into a single function using penalty coefficients, which can be fixed, dynamic, or adaptive [19]. |

| Hybrid CHT (HCT) | A framework that combines different CHTs and switches between them adaptively based on the current state of the population during evolution [18]. |
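
As a rough illustration of the first three entries in the table, the sketch below computes G(x) and implements the two pairwise comparisons for a minimization problem. The function names and the tolerance `delta` are assumptions. The tie-breaking among infeasible solutions (smaller violation wins) follows the commonly used form of the feasibility rule rather than anything stated in the table.

```python
def total_violation(x, ineqs=(), eqs=(), delta=1e-4):
    """G(x): summed violation of g_i(x) <= 0 and of |h_j(x)| - delta <= 0."""
    g_part = sum(max(0.0, g(x)) for g in ineqs)
    h_part = sum(max(0.0, abs(h(x)) - delta) for h in eqs)
    return g_part + h_part

def feasibility_rule_better(f_a, G_a, f_b, G_b):
    """Feasibility rule: True if solution a is preferred over solution b."""
    if G_a == 0 and G_b == 0:      # both feasible: better objective wins
        return f_a < f_b
    if G_a == 0 or G_b == 0:       # exactly one feasible: it wins
        return G_a == 0
    return G_a < G_b               # both infeasible: smaller violation wins

def epsilon_better(f_a, G_a, f_b, G_b, eps):
    """Epsilon comparison: violations up to eps are treated as feasible."""
    if (G_a <= eps and G_b <= eps) or G_a == G_b:
        return f_a < f_b
    return G_a < G_b

# Illustrative constraints: x0 + x1 - 2 <= 0 and x0 - 1 = 0.
x = (1.0, 2.0)
G = total_violation(x, ineqs=[lambda v: v[0] + v[1] - 2.0],
                    eqs=[lambda v: v[0] - 1.0])
```

With `eps = 0` the epsilon comparison reduces to the feasibility rule, which is why the two are often implemented as one function with a shrinking threshold.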

Frequently Asked Questions

1. What are the fundamental types of constraints encountered in optimization problems? Optimization problems typically involve four main types of constraints [21]:

- Bound Constraints: These are simple lower and upper limits on individual variables (e.g., ( x ≥ l ) and ( x ≤ u )).

- Linear Inequality Constraints: Expressed in the matrix form ( A·x ≤ b ), they represent multiple linear conditions that the solution must not exceed.

- Linear Equality Constraints: Expressed as ( A_{eq}·x = b_{eq} ), they define exact linear relationships that the solution must satisfy.

- Nonlinear Constraints: These are general constraints of the form ( c(x) ≤ 0 ) and ( ceq(x) = 0 ), where ( c ) and ( ceq ) can be scalar or vector functions.

2. How should I efficiently formulate a constraint? For both efficiency and numerical stability, express each constraint with the earliest type in the following hierarchy that can represent it [21]:

- Bounds

- Linear equalities

- Linear inequalities

- Nonlinear equalities

- Nonlinear inequalities

For example, a constraint like ( 5x ≤ 20 ) should be formulated as the bound ( x ≤ 4 ) rather than as a linear or nonlinear inequality [21].

3. What is the standard mathematical representation for problems with both equality and inequality constraints? A constrained optimization problem is often written in the standard form [22]:

Minimize ( f(x) )

Subject to:

( g_i(x) ≤ 0 ), for ( i = 1, ..., m )

( h_j(x) = 0 ), for ( j = 1, ..., p )

Note that any "greater than or equal to" inequality can be converted to this "less than or equal to" form by multiplying both sides by -1 [21] [22].

4. What is a major challenge when optimizing molecular properties under drug-like constraints? A key challenge is the disconnected and irregular feasible molecular space created by stringent drug-like constraints (e.g., specific ring sizes) [23]. This makes it difficult to find molecules that are both high-performing and feasible. Effective strategies must balance property optimization with constraint satisfaction, often by dynamically handling constraints during the search process [23].

5. What are the Karush-Kuhn-Tucker (KKT) conditions? The KKT conditions are first-order necessary conditions for a solution to be optimal in a constrained nonlinear optimization problem. They extend the method of Lagrange multipliers to include inequality constraints [22]. For a solution to be a candidate optimum, there must exist Lagrange multipliers ( λ_i ) for the inequalities and ( μ_j ) for the equalities such that the gradient of the Lagrangian function is zero, the constraints are satisfied, the inequality multipliers are non-negative, and the complementary slackness condition holds: ( λ_i \cdot g_i(x) = 0 ) [22]. This means either an inequality constraint is active ( ( g_i(x) = 0 ) ) or its corresponding multiplier is zero.
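
A small worked example may help make the conditions concrete. The one-variable problem below is invented purely for illustration; it assumes a minimization problem and a Lagrangian of the form ( f + λ g ) with ( λ ≥ 0 ).

```latex
\begin{align}
\text{Minimize } & f(x) = x^2 \quad \text{subject to } g(x) = 1 - x \leq 0 \\
\mathcal{L}(x, \lambda) &= x^2 + \lambda (1 - x) \\
\text{Stationarity: } & 2x - \lambda = 0 \\
\text{Complementary slackness: } & \lambda (1 - x) = 0 \\
\text{Case } \lambda = 0: \; & x = 0, \text{ but } g(0) = 1 > 0 \text{, so this point is infeasible} \\
\text{Case } g(x) = 0: \; & x = 1, \; \lambda = 2 \geq 0
\end{align}
```

Only the second case satisfies every condition, so ( x = 1 ) with ( λ = 2 ) is the sole KKT point, and the constraint is active there.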

Experimental Protocol: Dynamic Cooperative Optimization for Constrained Molecular Problems

This protocol is adapted from the CMOMO framework for constrained multi-property molecular optimization [23].

1. Objective

To simultaneously optimize multiple molecular properties while satisfying several structural or drug-like constraints.

2. Materials and Reagents

| Item | Function in the Experiment |

|---|---|

| Lead Molecule (SMILES string) | The starting point for optimization, represented by its Simplified Molecular Input Line Entry System string. |

| Public Molecular Database | Used to construct a "Bank" library of high-property molecules similar to the lead for high-quality population initialization. |

| Pre-trained Molecular Encoder | A model that converts discrete molecular structures (SMILES) into continuous vector representations for efficient search. |

| Pre-trained Molecular Decoder | A model that converts continuous vectors back into valid molecular structures for property evaluation. |

| Property Evaluation Software | Tools to compute the target molecular properties (e.g., QED, PlogP) for each generated molecule. |

| Constraint Checking Scripts | Custom scripts to verify if a generated molecule adheres to the defined structural constraints (e.g., ring size rules). |

3. Methodology

Step 1: Population Initialization

- Construct a Bank library from a public database containing molecules with high desired properties that are structurally similar to the lead molecule.

- Encode the lead molecule and all molecules in the Bank library into a continuous latent space using the pre-trained encoder.

- Generate an initial population of molecules by performing linear crossover between the latent vector of the lead molecule and the latent vectors of molecules in the Bank library.
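
The last initialization step can be sketched as below. The text does not specify the exact crossover formula, so this sketch assumes a convex combination of two latent vectors with a random mixing weight; the function names and the plain-list vector representation are hypothetical, and the encoder that would produce the vectors is not shown.

```python
import random

def linear_crossover(lead_vec, bank_vec, lam):
    """Blend two latent vectors: lam * lead + (1 - lam) * bank."""
    return [lam * a + (1.0 - lam) * b for a, b in zip(lead_vec, bank_vec)]

def init_population(lead_vec, bank_vecs, size, seed=0):
    """Create `size` latent vectors between the lead and random Bank members."""
    rng = random.Random(seed)
    population = []
    for _ in range(size):
        partner = rng.choice(bank_vecs)   # a Bank molecule in latent space
        lam = rng.random()                # mixing weight in [0, 1)
        population.append(linear_crossover(lead_vec, partner, lam))
    return population

# Toy 2-dimensional latent vectors standing in for encoder outputs.
lead = [0.0, 0.0]
bank = [[1.0, 1.0], [2.0, 2.0]]
population = init_population(lead, bank, size=5)
```

Because every child lies on a segment between the lead and a Bank molecule, the initial population stays close to the lead while inheriting directions associated with high property values.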

Step 2: Dynamic Cooperative Optimization

This step involves an iterative evolutionary process that dynamically handles constraints.

- A. Unconstrained Scenario Search:

- Use a Vector Fragmentation-based Evolutionary Reproduction (VFER) strategy on the latent population to generate offspring in the continuous space.

- Decode both parent and offspring molecules back to discrete chemical structures (SMILES) using the pre-trained decoder.

- Evaluate the properties of all molecules.

- Select the best molecules for the next generation using a multi-objective selection strategy (e.g., NSGA-II) based solely on their property values, ignoring constraints at this stage. The goal is to first find molecules with good convergence and diversity of properties.

- B. Transition to Constrained Scenario:

- After iterating in the unconstrained scenario, shift the focus to finding molecules that are both high-performing and feasible.

- The selection criteria are modified to prioritize molecules that satisfy all constraints while maintaining good property values.

- C. Iteration:

- Repeat the reproduction, evaluation, and selection steps until a termination criterion is met (e.g., a maximum number of generations or convergence of the population).
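
The switch between the two scenarios can be illustrated with a deliberately simplified selection routine. It is not the CMOMO or NSGA-II selection: it keeps only the non-dominated candidates and omits ranking layers and crowding. Properties are assumed to be maximized, and the dictionary keys, the `stage` flag, and the fallback to the least-violating molecules are assumptions made for this sketch.

```python
def dominates(a, b):
    """True if property vector a is no worse than b everywhere and better
    somewhere (all properties maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def nondominated(cands):
    """Candidates whose property vector is not dominated by any other."""
    return [c for c in cands
            if not any(dominates(o["props"], c["props"]) for o in cands)]

def select(cands, stage):
    """Unconstrained stage: ignore violations. Constrained stage: feasible first."""
    if stage == "constrained":
        feasible = [c for c in cands if c["violation"] == 0]
        if feasible:
            cands = feasible
        else:
            # No feasible molecule yet: keep only the least-violating ones.
            best = min(c["violation"] for c in cands)
            cands = [c for c in cands if c["violation"] == best]
    return nondominated(cands)

# Made-up candidates with two properties each.
cands = [
    {"name": "A", "props": (0.9, 0.2), "violation": 1.0},
    {"name": "B", "props": (0.6, 0.6), "violation": 0.0},
    {"name": "C", "props": (0.5, 0.5), "violation": 0.0},
    {"name": "D", "props": (0.2, 0.9), "violation": 0.0},
]
stage1 = [c["name"] for c in select(cands, "unconstrained")]
stage2 = [c["name"] for c in select(cands, "constrained")]
```

In this toy case molecule A survives the unconstrained stage because of its strong first property, then drops out once feasibility is enforced.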

4. Expected Results

The algorithm is expected to output a set of Pareto-optimal molecules that represent the best trade-offs between the multiple optimized properties, all while satisfying the predefined drug-like constraints.

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Solution | Function |

|---|---|

| Pre-trained Molecular Encoder/Decoder | Enables a smooth and efficient search for molecules by translating between discrete chemical space (SMILES) and a continuous, meaningful latent space. |

| Constraint Handling Strategy (e.g., Dynamic) | Manages how constraints are applied during optimization. A dynamic strategy can first focus on finding high-performance solutions before filtering for feasibility, preventing premature convergence. |

| Multi-Objective Selection Algorithm (e.g., NSGA-II) | Manages the trade-offs between conflicting property objectives by selecting a diverse set of solutions that are non-dominated, forming a Pareto front. |

| Feasible Region Analyzer | A diagnostic tool to understand the search space defined by the constraints, helping to identify whether it is connected, convex, or disjoint, which impacts algorithm choice. |

Constraint Types in Optimization

The table below summarizes the primary constraint types used in numerical optimization, which form the basis for more complex constraint landscapes [21].

| Constraint Type | Standard Form | Key Characteristics |

|---|---|---|

| Bound Constraints | ( x ≥ l ) and ( x ≤ u ) | Simplest form; define min/max values for each variable. |

| Linear Inequality | ( A·x ≤ b ) | Define a convex, multi-dimensional half-space. |

| Linear Equality | ( A_{eq}·x = b_{eq} ) | Restrict solutions to a line or plane; reduce solution space dimension. |

| Nonlinear Constraints | ( c(x) ≤ 0 ) and ( ceq(x) = 0 ) | Most general form; can create non-convex, complex feasible regions. |

Adaptive Constraint Handling Workflow

The following diagram illustrates the logical flow of the dynamic cooperative optimization method (CMOMO) for handling complex constraints, which transitions from an unconstrained to a constrained search to effectively balance property optimization with constraint satisfaction [23].

Constrained Multi-Objective Optimization Problems (CMOPs) require the simultaneous optimization of multiple conflicting objectives while satisfying various constraints. These problems are ubiquitous in real-world applications, including engineering design, resource allocation, scheduling optimization, and drug development [24] [25]. A CMOP can be mathematically formulated as follows [25]:

Minimize: F(x) = (f₁(x), f₂(x), ..., fₘ(x))

Subject to:

gᵢ(x) ≤ 0, i = 1, ..., l

hᵢ(x) = 0, i = 1, ..., k

x = (x₁, x₂, ..., x_D)ᵀ ∈ ℝᴰ

Here, F(x) represents the objective vector with m conflicting objectives, gᵢ(x) are the l inequality constraints, hᵢ(x) are the k equality constraints, and x is a D-dimensional decision vector within the search space ℝᴰ [25]. The core challenge lies in balancing objective optimization with constraint satisfaction, a task for which Multi-Objective Evolutionary Algorithms (MOEAs) have proven particularly effective due to their population-based approach and ability to handle complex, non-linear landscapes [25].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between handling constraints in single-objective versus multi-objective optimization?

In single-objective optimization, the goal is to find a single optimal solution that satisfies all constraints. In multi-objective optimization, the aim is to find a set of trade-off solutions, known as the Pareto front. When constraints are involved, this becomes the Constrained Pareto Front (CPF). The challenge is magnified because you must balance not only multiple objectives but also ensure a diverse set of solutions satisfies all constraints. The principle remains similar—feasible solutions are preferred over infeasible ones—but the techniques must be integrated into the environmental selection process of a Multi-Objective Evolutionary Algorithm (MOEA) to manage a population of solutions, not just a single one [25].
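
One common way to carry the "feasible first" principle into a multi-objective selection step is the constraint dominance principle (CDP). The sketch below assumes all objectives are minimized and that each solution carries a single aggregated constraint violation value; the function names are illustrative.

```python
def dominates(f_a, f_b):
    """Pareto dominance for minimization."""
    return (all(a <= b for a, b in zip(f_a, f_b))
            and any(a < b for a, b in zip(f_a, f_b)))

def constrained_dominates(f_a, cv_a, f_b, cv_b):
    """Constraint dominance principle: True if a constrained-dominates b."""
    if cv_a == 0 and cv_b > 0:     # feasible beats infeasible
        return True
    if cv_a > 0 and cv_b == 0:
        return False
    if cv_a > 0 and cv_b > 0:      # both infeasible: smaller violation wins
        return cv_a < cv_b
    return dominates(f_a, f_b)     # both feasible: ordinary Pareto dominance

# Two feasible trade-off solutions do not dominate each other.
mutual = (constrained_dominates((1, 2), 0, (2, 1), 0),
          constrained_dominates((2, 1), 0, (1, 2), 0))
```

Replacing `dominates` with `constrained_dominates` inside non-dominated sorting is what turns a single comparison rule into a population-level mechanism.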

Q2: My algorithm converges prematurely to a local optimum. How can I encourage better exploration of the search space?

Premature convergence often occurs when the algorithm is overly greedy in selecting feasible solutions early on, trapping the population in a limited feasible region. Several strategies can mitigate this:

- Implement a Multi-Stage Framework: Relax constraints in the initial stage to allow exploration of infeasible regions that might contain useful information. In subsequent stages, gradually enforce strict constraints to pull promising solutions toward feasibility [26] [8].

- Use a Multi-Population Approach: Maintain separate populations with different tasks. For example, one population can focus on optimizing objectives without considering constraints, while another works on finding feasible solutions. Allowing knowledge transfer between these populations can enhance overall exploration [26].

- Employ an Archive Mechanism: An external archive can store promising infeasible solutions or those from under-explored regions. This information can be used later to guide the population out of local optima [8].

Q3: What does a "good" feasible solution proportion look like during evolution, and when should I intervene?

There is no universally ideal proportion, as it is highly problem-dependent. However, monitoring this proportion is crucial for adaptive techniques. A very low proportion of feasible solutions (e.g., near 0%) throughout the run suggests the constraints are very difficult, and your algorithm might need to relax them to find any feasible region. Conversely, a very high proportion (e.g., near 100%) very early might indicate premature convergence. A common intervention is to use an adaptive constraint relaxation mechanism. For instance, you can dynamically adjust a relaxation parameter ε based on the current feasible ratio, tightening it as more feasible solutions are found to refine the search [8].

Q4: How can I handle problems where the global optimum lies on a narrow feasible boundary?

This is a classic challenge. Techniques that are overly strict with feasibility can easily miss these narrow regions. Consider the following:

- ε-Constraint Method: This technique allows infeasible solutions with a constraint violation below a threshold ε to be treated as feasible. This enables the algorithm to "tunnel through" infeasible regions to reach distant or narrow feasible areas. The threshold ε can be adaptively decreased during the evolutionary process [27] [28].

- Stochastic Ranking: This method introduces a probability P of comparing infeasible solutions based on their objective function value, rather than always ranking by constraint violation. This provides a chance for infeasible solutions with good objective values (which might be near a narrow feasible boundary) to survive and contribute to the search [27] [19].
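
Stochastic ranking is usually implemented as a bubble-sort-like pass over the population. The following sketch assumes minimization; the parameter name `p_f` (the probability P above), its default value, the sweep count, and the seeding are illustrative choices rather than settings taken from the cited works.

```python
import random

def stochastic_ranking(objs, viols, p_f=0.45, sweeps=None, seed=0):
    """Return population indices ordered best-first by stochastic ranking."""
    rng = random.Random(seed)
    n = len(objs)
    order = list(range(n))
    for _ in range(sweeps if sweeps is not None else n):
        swapped = False
        for j in range(n - 1):
            a, b = order[j], order[j + 1]
            both_feasible = viols[a] == 0 and viols[b] == 0
            if both_feasible or rng.random() < p_f:
                out_of_order = objs[a] > objs[b]     # compare by objective
            else:
                out_of_order = viols[a] > viols[b]   # compare by violation
            if out_of_order:
                order[j], order[j + 1] = b, a
                swapped = True
        if not swapped:   # a full sweep without swaps means the order is stable
            break
    return order

objs = [3.0, 1.0, 2.0]
viols = [0.0, 0.5, 0.0]
by_violation = stochastic_ranking(objs, viols, p_f=0.0)
by_objective = stochastic_ranking(objs, viols, p_f=1.0)
```

The two extreme settings show the trade-off: `p_f = 0.0` never lets an infeasible solution win on objective value, while `p_f = 1.0` ignores violations entirely.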

Troubleshooting Common Experimental Issues

Issue 1: Poor Diversity in Final Solution Set

- Symptoms: The obtained solutions are clustered in a small area of the Constrained Pareto Front (CPF), failing to cover its full extent.

- Potential Causes:

- Over-emphasis on Feasibility: The Constraint Dominance Principle (CDP) can be too greedy, causing the population to lose diversity once it enters a feasible region.

- Poor Mating Selection: Selection pressure focuses only on convergence and feasibility, neglecting diversity maintenance.

- Solutions:

- Integrate Diversity Metrics: Incorporate diversity measures, such as crowding distance or angle-based selection, into the environmental selection process. This ensures that solutions in sparser regions are preserved [26].

- Use Decomposition-Based MOEAs: Algorithms like MOEA/D decompose the problem into several single-objective subproblems. This naturally promotes diversity by forcing the population to spread across these subproblems [25].

- Diversity-Based Archive Ranking: When updating an archive, rank solutions not only by feasibility and convergence but also by their contribution to population diversity [8].

Issue 2: Inability to Find Any Feasible Solutions

- Symptoms: The algorithm terminates without finding a single feasible solution.

- Potential Causes:

- Overly Strict Equality Constraints: Equality constraints are too tight, making the feasible space extremely small or a set of measure zero.

- Insufficient Exploration: The algorithm is not effectively exploring the search space and is missing feasible regions entirely.

- Solutions:

- Relax Equality Constraints: Convert equality constraints h(x) = 0 into inequality constraints |h(x)| - δ ≤ 0, where δ is a small tolerance value (e.g., 1e-4 or 1e-6). This is a standard and necessary practice [27] [25].

- Employ a Push-Pull Framework: In the "Push" stage, completely ignore constraints to encourage global exploration of the objective space. In the "Pull" stage, apply constraint handling techniques to guide the discovered promising solutions toward feasibility [26].

- Adaptive Relaxation: Implement an algorithm like ACREA, which adaptively relaxes constraints based on the current population's state, making it easier to find initial feasible solutions and then tightening the relaxation over time [8].

Issue 3: High Computational Cost

- Symptoms: The algorithm takes an impractically long time to converge, especially when function evaluations are expensive.

- Potential Causes:

- Inefficient Constraint Handling: The method for calculating and comparing constraint violations is computationally heavy.

- Lack of Surrogate Models: Every solution is evaluated using the true, expensive functions.

- Solutions:

- Surrogate-Assisted Evolution: Train machine learning models (e.g., Kriging, neural networks) to approximate the objective and constraint functions. The evolutionary algorithm then works primarily on these cheap surrogates, only occasionally calling the true expensive functions [27].

- Ensemble Constraint Handling: Techniques like RECHT use a ranking-based ensemble to dynamically select the most effective constraint handling technique, which can reduce the number of function evaluations required to find a good solution [28].

- Feasibility-Aware Mating Selection: Before generating offspring, ensure that parents are selected in a way that promotes the generation of feasible or promising infeasible solutions, reducing wasted evaluations [19].

Experimental Protocols for Key Constraint Handling Techniques

Protocol 1: Evaluating a Two-Stage Archive (CMOEA-TA) Algorithm

This protocol is based on the CMOEA-TA framework, which uses different strategies at different stages of evolution [26].

- Initialization: Randomly generate an initial population P and an empty archive A.

- Stage 1 - Exploration with Relaxed Constraints:

- Constraint Relaxation: Calculate the proportion of feasible solutions in the population. Based on this proportion and the overall constraint violation, relax the constraints. For example, treat a solution as "relaxed-feasible" if its total constraint violation CV(x) is less than a dynamically adjusted threshold ε.

- Archive Update: The archive A stores the best solutions found under these relaxed constraints, focusing on optimizing the objectives.

- Goal: Encourage the population to explore the entire search space, including promising infeasible regions, to build a global picture of the Pareto front.

- Stage 2 - Exploitation with Strict Constraints:

- Information Sharing: Merge the archive A with the current population P.

- Strict Constraint Handling: Switch to a strict constraint handling method, such as the Constraint Dominance Principle (CDP), to refine the solutions.

- Angle-Based Selection: To maintain diversity, use an angle-based selection strategy that prioritizes solutions that increase the spread of the population on the Pareto front.

- Termination: Repeat Stage 2 until a termination criterion (e.g., maximum number of evaluations) is met. The output is the set of non-dominated feasible solutions from the final population and archive.

Protocol 2: Implementing an Adaptive Constraint Relaxation (ACREA)

This protocol focuses on dynamically adjusting how constraints are handled based on the algorithm's progress [8].

- Initialization: Generate an initial population. Initialize the relaxation parameter ε to a large value.

- Main Loop:

- Evaluate & Classify: Evaluate all individuals and calculate their constraint violation CV(x). Classify them as feasible or infeasible based on the current ε.

- Archive Management: Maintain an archive that stores high-quality solutions (both feasible and promising infeasible ones) using a diversity-based ranking to ensure a good spread.

- Mating Selection: For parent selection, use a strict domination principle that strongly favors feasible solutions and high-quality infeasible solutions with low constraint violation.

- Adapt ε: Periodically, adjust the relaxation parameter ε based on feedback from the population. A simple rule is to decrease ε if the proportion of feasible solutions is high, and increase it if the proportion is too low. This adaptively balances exploration and exploitation.

- Termination: The algorithm outputs the non-dominated feasible solutions from the final population and archive.
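
The "simple rule" for adapting ε described in the main loop can be sketched as follows. This is not the actual ACREA update; the thresholds `low` and `high`, the multiplicative `factor`, and the optional cap `eps_max` are assumptions introduced for illustration.

```python
def relaxed_feasible_ratio(cvs, eps):
    """Fraction of individuals with CV(x) <= eps under the current relaxation."""
    return sum(1 for cv in cvs if cv <= eps) / len(cvs)

def adapt_epsilon(eps, feasible_ratio, low=0.2, high=0.8, factor=0.5, eps_max=None):
    """Tighten eps when many solutions pass, loosen it when few do."""
    if feasible_ratio >= high:
        eps = eps * factor        # plenty of relaxed-feasible solutions: tighten
    elif feasible_ratio <= low:
        eps = eps / factor        # almost none: relax further
    if eps_max is not None:
        eps = min(eps, eps_max)   # optional upper bound on the relaxation
    return eps

# One adaptation step on made-up constraint violations.
cvs = [0.0, 0.1, 0.4, 0.9, 2.0]
eps = 1.0
ratio = relaxed_feasible_ratio(cvs, eps)
new_eps = adapt_epsilon(eps, ratio)
```

A multiplicative update keeps ε positive and changes it on a relative scale, which is convenient when constraint violations span several orders of magnitude.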

Quantitative Data on Algorithm Performance

The following table summarizes the reported performance of several state-of-the-art algorithms on standard benchmark problems, as found in the literature. Metrics like IGD (Inverted Generational Distance) and HV (Hypervolume) are commonly used, where lower IGD and higher HV values indicate better performance.

Table 1: Performance Comparison of State-of-the-Art CMOEAs

| Algorithm | Key Mechanism | Reported Performance (vs. Competitors) | Best For |

|---|---|---|---|

| CMOEA-TA [26] | Two-Stage with Archive | "Far superior" in IGD and HV on 54 benchmark CMOPs. | Complex feasible regions, maintaining diversity. |

| ACREA [8] | Adaptive Constraint Relaxation | Best IGD in 54.6% and best HV in 50% of 44 benchmark tests. Best in 7 of 9 real-world problems. | Problems with large or narrow infeasible regions. |

| EALSPM [19] | Learning Strategies & Predictive Model | "Competitive performance" on CEC2010 and CEC2017 benchmarks. | Leveraging information from good individuals for faster convergence. |

| RECHT [28] | Ranking-Based Ensemble | "Superior performance" on CEC benchmarks, reduces function evaluations. | General-purpose, robust performance across diverse problems. |
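
For readers who want to reproduce the two metrics named above, the sketch below gives a plain implementation of IGD and a two-objective hypervolume, assuming all objectives are minimized. The function names, the reference front, and the reference point are illustrative; published comparisons typically rely on benchmark-specific reference sets and normalization that are not shown here.

```python
import math

def igd(reference_front, obtained):
    """Inverted Generational Distance: mean distance from each reference
    point to its nearest obtained point (lower is better)."""
    return sum(min(math.dist(r, s) for s in obtained)
               for r in reference_front) / len(reference_front)

def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref` for two minimized
    objectives (higher is better)."""
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        if f2 < best_f2:          # point adds a new horizontal slice
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area

igd_value = igd([(0.0, 1.0), (1.0, 0.0)], [(0.0, 1.0), (1.0, 1.0)])
hv_value = hypervolume_2d([(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)], ref=(4.0, 4.0))
```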

Research Reagent Solutions: Essential Algorithmic Components

In experimental optimization, think of these core algorithmic components as your essential "reagents" for designing a successful CMOEA.

Table 2: Essential Components for a CMOEA Framework

| Component / "Reagent" | Function | Examples |

|---|---|---|

| Base MOEA | Provides the core multi-objective optimization engine. | NSGA-II, MOEA/D, SPEA2 [25] |

| Constraint Handling Technique (CHT) | Manages how constraints are evaluated and used to guide selection. | Constraint Dominance Principle (CDP), ε-Constraint, Stochastic Ranking [27] [25] |

| Archiving Mechanism | Stores high-quality solutions (feasible and infeasible) during evolution for later use. | Two-Stage Archive, Diversity-Based Archive [26] [8] |

| Relaxation Strategy | Dynamically adjusts the strictness of constraints to aid exploration. | Adaptive ε-Level, Feasibility Proportion-based Relaxation [8] |

| Surrogate Model | Approximates expensive objective/constraint functions to reduce computational cost. | Kriging, Neural Networks, Polynomial Regression [27] |

Visualized Workflows

The following diagrams illustrate the logical structure of two prevalent algorithmic frameworks for solving CMOPs.

Diagram 1: Two-Stage Archive (CMOEA-TA) Workflow. This framework separates exploration (with relaxed constraints) from exploitation (with strict constraints), using an archive to bridge the two stages [26].

Diagram 2: Adaptive Constraint Relaxation (ACREA) Workflow. This algorithm features a continuous feedback loop where the constraint relaxation parameter ε is dynamically adapted based on the population's state [8].

Advanced Adaptive CHT Methodologies: Techniques and Implementations

Frequently Asked Questions (FAQs)

Q1: What are the common reasons for slow learning convergence when using DRL for CHT selection?

Slow learning convergence is often caused by a poorly designed state representation. If the state does not succinctly capture task-relevant information like feasibility, convergence, and diversity of the population, the agent struggles to learn an effective policy. Using raw coordinates or data that includes irrelevant information forces the agent to learn complex relationships from scratch, significantly reducing sample efficiency [29]. Furthermore, a lack of diversity in the training data, which can be mitigated by using multiple co-evolving populations, also hampers the learning process [30].

Q2: How can I design an effective state representation for my COP agent?

An effective state representation should be compact, informative, and generalizable. Instead of raw coordinates, it should encode meaningful relationships. A proven approach includes [29]:

- Directional information: Representing the direction to a goal as a compass direction.

- Distance information: Using normalized distance to a goal.

- Constraint awareness: Including information about constraint violations or the "danger" from nearby constrained regions.

- Diversity and convergence metrics: Incorporating information on the population's feasibility, convergence, and diversity to guide the search in COPs [30].

This transforms raw data into features that directly inform the optimal policy.

Q3: My DRL agent fails to satisfy state-wise constraints. What methods can help?

The State-wise Constrained Policy Optimization (SCPO) algorithm is specifically designed for this challenge. Unlike traditional Constrained MDP (CMDP) frameworks that often only consider cumulative constraints, SCPO provides guarantees for state-wise constraint satisfaction in expectation. It introduces the framework of Maximum Markov Decision Process and has been demonstrated to effectively bound the worst-case safety violation in high-dimensional tasks like robot locomotion [31].

Q4: Can Q-learning be integrated with other architectures to solve complex scheduling COPs?

Yes. A powerful example is the Two-stage Graph Attention Networks and Q-learning (TSGAT+Q-learning) framework for maintenance task scheduling. This method uses a graph neural network (GNN) to embed complex graph-structured information about the problem (like technicians and tasks). The output of this network is then used as the input state for a Q-learning agent, which makes the final scheduling decisions. This hybrid approach combines the representational power of GNNs with the decision-making capabilities of Q-learning to solve large-scale, constrained problems [32].

Troubleshooting Guides

Issue 1: Poor Generalization Across Different COP Datasets

Problem: Your DRL agent, trained on one dataset, performs poorly on another with a different distribution of constraints or objective functions.

Solution:

- Implement an Adaptive Learning Framework: Use a framework like Adaptive-DTA, which employs reinforcement learning to automatically search for and optimize the neural network architecture based on the specific dataset and task. This reduces reliance on fixed, manually-designed architectures [33].

- Use Multiple CHTs: Incorporate multiple constraint handling techniques (CHTs) within a co-evolutionary framework. Different populations can be assigned different CHTs, enhancing the algorithm's versatility and ability to handle a wider range of problems [30].

- Verify State Representation: Ensure your state representation is not over-fitted to the training dataset. Features should be relative (e.g., directional information, normalized distances) rather than absolute (e.g., specific coordinates) to improve generalization [29].

Issue 2: Inefficient or Unstable Training of the Q-Network

Problem: The Q-network fails to converge, or the training process is unstable with high variance in rewards.

Solution:

- Design a Better Reward Function: The reward should closely reflect the overall improvement of the population. In CEDE-DRL, rewards are based on the degree of improvement in feasibility, convergence, and diversity. For scheduling problems, novel rewards like an exponential reward function have been shown to speed up training [30] [32].

- Improve State Representation: Revisit the state design. A well-structured state that provides relevant information is crucial for stable learning. Poor state representations are a primary cause of slow and unstable training [29].

- Ensure Diverse Training Data: In co-evolutionary DRL, use multiple populations to generate diverse training samples. This improves the accuracy of the neural network model and stabilizes training by providing a richer set of experiences [30].

Issue 3: Agent Cannot Handle State-Wise Safety Constraints

Problem: The agent violates hard constraints that must be satisfied at every step (state-wise), not just on average.

Solution:

- Adopt a State-Wise Constrained Algorithm: Move beyond standard CMDP algorithms. Implement the SCPO algorithm, which is a general-purpose policy search method designed specifically for state-wise constrained reinforcement learning. It provides theoretical guarantees for state-wise constraint satisfaction [31].

- Refine State and Reward: Integrate explicit constraint violation metrics into the state representation. The reward function should also provide a strong, clear penalty for any state-wise constraint violation to guide the agent away from unsafe states.

Experimental Protocols & Methodologies

Protocol 1: CEDE-DRL for Constrained Optimization

This protocol is for solving COPs using a co-evolutionary framework with DRL [30].

- Initialization: Create four sub-populations, each assigned a different CHT to ensure generality.

- State Representation: For each population, define the state s to include metrics for:

- Feasibility (e.g., degree of constraint violation)

- Convergence (e.g., improvement in objective function)

- Diversity (e.g., distribution of solutions)

- Action and Reward: The DQN agent selects a parent population for mutation. The reward is the overall improvement of the population.

- Training: Train the DQN model using experiences generated from the co-evolving populations. An archive elimination mechanism helps avoid local optima.

- Evaluation: Compare performance against state-of-the-art algorithms on benchmark sets like CEC2017 and real-world problems from CEC2020.
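
A state vector of the kind described in the State Representation step might be assembled as in the sketch below. The specific features are assumptions made for illustration and are not the CEDE-DRL definitions: feasibility is summarized by the feasible ratio and mean violation, convergence by the improvement of the best objective value over the previous generation, and diversity by the spread of objective values.

```python
from statistics import mean, pstdev

def population_state(objs, viols, prev_best=None):
    """Build an illustrative state vector for one sub-population
    (single-objective minimization)."""
    feasible_ratio = sum(1 for v in viols if v == 0) / len(viols)
    mean_violation = mean(viols)
    best = min(objs)
    # Convergence: how much the best objective improved since last generation.
    improvement = 0.0 if prev_best is None else max(0.0, prev_best - best)
    # Diversity: population standard deviation of the objective values.
    diversity = pstdev(objs)
    return [feasible_ratio, mean_violation, improvement, diversity]

state = population_state(objs=[4.0, 2.0, 6.0], viols=[0.0, 1.0, 0.0], prev_best=3.0)
```

Using ratios and differences instead of raw coordinates keeps the state size independent of the problem dimension, which lets one trained agent be reused across benchmark functions.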

Protocol 2: Optimal Adaptive Allocation in Clinical Trials

This protocol uses DRL for adaptive patient allocation in phase II dose-ranging trials [34].

- Problem Setup: Predetermine K discrete dose levels and the total number of subjects N.

- State Definition: The state s is a vector containing:

- The mean response difference from placebo for each dose (Ȳ₂-Ȳ₁, Ȳ₃-Ȳ₁, ..., Ȳ_K-Ȳ₁)

- The standard deviation of responses for each dose (σ̂₁, ..., σ̂_K)

- The proportion of subjects allocated to each dose (n₁/N, ..., n_K/N)

- Action: Allocate the next block of patients to a dose based on the policy.

- Reward: The reward is based on the selected performance metric to be optimized, such as:

- Statistical Power

- Accuracy of Model Selection (MS)

- Accuracy of the Target Dose (TD)

- Mean Absolute Error (MAE) of the dose-response curve

- Optimization: Use DRL to find an allocation rule π* that directly optimizes the chosen metric, outperforming equal allocation or asymptotic methods like D-optimal.
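
The state vector from the State Definition step can be assembled from interim data roughly as follows. This is a sketch under stated assumptions: the function name and input layout are hypothetical, dose index 0 is taken to be placebo, the sample standard deviation is used as the estimate, and a dose with fewer than two observations is given a standard deviation of 0.0 as a placeholder.

```python
from statistics import mean, stdev

def trial_state(responses_by_dose, total_n):
    """Build the state from observed responses.

    responses_by_dose[0] holds placebo responses; the remaining entries hold
    the responses for the active doses. total_n is the planned sample size N.
    """
    means = [mean(r) for r in responses_by_dose]
    diffs = [m - means[0] for m in means[1:]]                 # mean minus placebo
    sds = [stdev(r) if len(r) > 1 else 0.0 for r in responses_by_dose]
    props = [len(r) / total_n for r in responses_by_dose]     # n_k / N
    return diffs + sds + props

# Made-up interim data for placebo and two active doses, with N = 12.
state = trial_state([[1.0, 3.0], [2.0, 4.0], [5.0, 7.0]], total_n=12)
```

For K dose levels the vector has 3K - 1 entries, so its length stays fixed throughout the trial even as more patients are enrolled.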

The Scientist's Toolkit: Research Reagent Solutions

| Item/Component | Function in the Experimental Framework |
|---|---|
| Co-evolutionary Populations | Maintains multiple populations with different CHTs to ensure sample diversity and improve neural network training accuracy [30]. |
| Deep Q-Network (DQN) | The core RL agent that evaluates the population state and selects suitable parent populations for mutation, guiding evolutionary direction [30]. |
| Directed Acyclic Graph (DAG) | Defines a flexible search space for neural network architectures, enabling automated model design in frameworks like Adaptive-DTA [33]. |
| Graph Neural Network (GNN) | Processes graph-structured data (e.g., molecular structures, task-agent networks) to learn meaningful embeddings for downstream decision-making [33] [32]. |
| State-wise Constrained Policy Optimization (SCPO) | A specialized algorithm for enforcing state-wise safety constraints, providing satisfaction guarantees in expectation [31]. |
| Exponential Reward Function | A specially designed reward signal (e.g., in TSGAT+Q-learning) to accelerate model training convergence in REINFORCE algorithms [32]. |

Workflow and System Diagrams

[Diagram: typical workflow for integrating Deep Q-Learning with state representation for adaptive CHT selection]

[Diagram: integration of a co-evolutionary structure with the DRL agent for more robust training]

Frequently Asked Questions

Q1: What is the primary advantage of using a multi-stage framework over a single-stage approach for Constrained Multi-Objective Optimization Problems (CMOPs)?

A multi-stage evolutionary framework addresses a key limitation of single-stage approaches: their inability to adapt to the changing characteristics of a population during the search process. By dividing the optimization into distinct phases, each with a specialized strategy, these frameworks can more effectively balance the conflict between constraint satisfaction and objective optimization. For example, the Multi-Stage Evolutionary Framework with Adaptive Selection (MSEFAS) uses different stages to encourage promising infeasible solutions to approach the feasible region, increase diversity, span large infeasible regions to accelerate convergence, and finally handle the relationship between constrained and unconstrained Pareto fronts. This adaptive, staged approach has demonstrated effectiveness in handling a wide range of CMOPs with complex feasible regions [5].

Q2: How does an algorithm adaptively determine when to switch between stages?

Advanced frameworks use performance metrics or population state features to trigger stage transitions autonomously. The MSEFAS framework, for instance, treats the optimization stage with higher validity of selected solutions as the first stage and the other as the second, effectively using solution quality to determine execution order [5]. Other approaches, like the Evolutionary Algorithm assisted by Learning Strategies and a Predictive Model (EALSPM), divide the evolutionary process into random learning and directed learning stages, where subpopulations interact using different strategies [19]. Furthermore, Deep Reinforcement Learning (DRL)-based methods can model this as a Markov Decision Process, using neural networks to learn the optimal policy for stage transitions based on real-time population state features [35].

Q3: Why does my algorithm converge to locally optimal, feasible solutions instead of exploring the global Pareto front?

This common issue, known as "feasibility-driven greediness," occurs when the algorithm prioritizes constraint satisfaction over objective optimization too aggressively. This is particularly problematic in CMOPs where the global optimum lies near constraint boundaries or where feasible regions are disconnected. To address this:

- Implement Feasibility Rules with Objective Information: Approaches like FROFI (Feasibility Rule with Objective Function Information) utilize objective function information to mitigate the well-known greediness of feasibility rules [19].

- Balance Search Efforts: Ensure your framework includes stages that specifically enable the population to span large infeasible regions. This helps maintain diversity and prevents premature convergence to the first encountered feasible region [5].

- Adaptive Constraint Handling: Techniques like the adaptive ε-constraint method control the level of constraint relaxation through heuristic rules based on the current population's constraint violation information, helping the population traverse infeasible regions to reach better feasible areas [19].
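To make the constraint-relaxation idea concrete, here is a minimal sketch of an ε-level comparison with a decaying ε. The polynomial decay schedule and the choice of the median initial violation as the starting level are assumptions (one common style of ε control), not necessarily the heuristic rules used in [19]; all function names are illustrative.

```python
from statistics import median
from typing import Sequence


def initial_epsilon(violations: Sequence[float]) -> float:
    """Assumed starting level: median violation of the initial population."""
    return median(violations)


def epsilon_level(generation: int, eps0: float, cutoff: int, cp: float = 2.0) -> float:
    """Relaxation level that shrinks from eps0 to 0 at generation `cutoff`."""
    if generation >= cutoff:
        return 0.0
    return eps0 * (1.0 - generation / cutoff) ** cp


def eps_better(f_a: float, v_a: float, f_b: float, v_b: float, eps: float) -> bool:
    """True if solution a is preferred over b (minimization).

    Solutions whose violation is within eps are treated as feasible and
    compared by objective value; otherwise the smaller violation wins.
    """
    if (v_a <= eps and v_b <= eps) or v_a == v_b:
        return f_a < f_b
    return v_a < v_b
```

Early in the run a large ε lets slightly infeasible solutions with good objective values survive, which is what allows the population to cross infeasible gaps; once ε reaches 0 the comparison reduces to the plain feasibility rule.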

Q4: How can I effectively utilize infeasible solutions during the optimization process?

Infeasible solutions can provide valuable information about promising search directions, especially when feasible regions are disconnected or narrow. The key is a balanced approach:

- Controlled Acceptance: Some multi-stage frameworks deliberately encourage promising infeasible solutions to approach the feasible region while increasing diversity in the early stages of optimization [5].

- Information Mining: Techniques like CORCO (Constraint and Objective Relationship Guided Optimization) mine the correlation between constraints and the objective to guide evolution, leveraging information from both feasible and infeasible solutions [19].

- Co-evolutionary Techniques: Algorithms like C-TAEA, CCMODE, and CCMO maintain multiple populations to achieve a balanced search in both feasible and infeasible regions [5].

Q5: What are the signs of poor stage sequencing, and how can it be diagnosed?

Poor stage sequencing manifests as:

- Slow Convergence: The population takes too long to approach the feasible region or the Pareto front.

- Diversity Loss: The population lacks spread across the Pareto front, often getting stuck in one area.

- Premature Convergence: The algorithm converges to a local optimum early in the process.

Diagnosis should involve monitoring key population metrics:

- Track the proportion of feasible solutions over generations.

- Monitor the change in population diversity (e.g., using spread or spacing metrics).

- Observe the rate of improvement in objective values and overall constraint violation.
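The monitoring steps above can be sketched with two per-generation indicators: the proportion of feasible solutions and Schott's spacing metric computed on objective vectors. The function names and the feasibility tolerance parameter are assumptions for illustration.

```python
import math
from typing import List, Sequence


def feasible_ratio(violations: Sequence[float], tol: float = 0.0) -> float:
    """Fraction of individuals whose total constraint violation is <= tol."""
    return sum(v <= tol for v in violations) / len(violations)


def spacing(front: List[Sequence[float]]) -> float:
    """Schott's spacing: spread of nearest-neighbour (Manhattan) distances.

    A value of 0 means the solutions are evenly spaced in objective space.
    """
    n = len(front)
    if n < 2:
        return 0.0
    nearest = [
        min(
            sum(abs(a - b) for a, b in zip(front[i], front[j]))
            for j in range(n)
            if j != i
        )
        for i in range(n)
    ]
    d_mean = sum(nearest) / n
    return math.sqrt(sum((d_mean - d) ** 2 for d in nearest) / (n - 1))
```

Logging both values every generation makes the symptoms easier to tell apart: a feasible ratio that jumps to 1 while spacing degrades points to diversity loss, whereas a ratio stuck near 0 points to slow convergence toward the feasible region.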

Q6: How can surrogate models be integrated into multi-stage evolutionary frameworks?

Surrogate models address computational cost by approximating expensive function evaluations. The Multi-Surrogate Framework with an Adaptive Selection Mechanism (MSFASM) demonstrates a two-stage integration:

- Algorithm Selection Stage: A reduced-dimensional Broad Learning System (BLS) adaptively selects the evolutionary algorithm with the best performance during the current optimization period.

- Optimization Stage: A multi-objective algorithm (e.g., NSGA-II) finds Pareto solutions that perform well on multiple surrogate models. An optimal point criterion then selects the final solutions without burdening the numerical simulator [36].

This approach improves adaptability to various problem types by addressing both algorithm and model selection [36].

Troubleshooting Guides

Issue 1: Population Diversity Collapse in Later Stages

Problem: The population loses diversity during the late-phase search, resulting in a poor approximation of the entire Pareto front.

Solution: Implement mechanisms to preserve diversity specifically in later stages.

- Recommended Technique: Introduce a diversity archive or modify the third stage of frameworks like MSEFAS to specifically consider the distribution of solutions along the Pareto front. Avoid relying solely on adaptive penalty terms in late optimization, as this can reduce diversity [5].

- Experimental Protocol:

- Setup: Use a CMOP benchmark with a disconnected feasible region or a discrete Pareto front (e.g., C-DTLZ series).

- Implementation: Augment your algorithm's final stage with a separate diversity archive. Use a crowding distance metric or a niche count to maintain a spread of solutions.

- Evaluation: Compare the performance (using metrics like Inverted Generational Distance and Spread) of the standard algorithm versus the diversity-augmented version over 30 independent runs.
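One way to realise the diversity archive in the implementation step is to truncate it by crowding distance, as in NSGA-II. The sketch below assumes the archive already holds mutually non-dominated, feasible objective vectors; `truncate_archive` and its one-at-a-time removal policy are illustrative choices rather than part of MSEFAS.

```python
import math
from typing import List, Sequence


def crowding_distance(front: List[Sequence[float]]) -> List[float]:
    """NSGA-II style crowding distance for each objective vector in `front`."""
    n = len(front)
    if n == 0:
        return []
    dist = [0.0] * n
    for m in range(len(front[0])):
        order = sorted(range(n), key=lambda i: front[i][m])
        lo, hi = front[order[0]][m], front[order[-1]][m]
        # Boundary solutions are always kept.
        dist[order[0]] = dist[order[-1]] = math.inf
        if hi == lo:
            continue
        for k in range(1, n - 1):
            gap = front[order[k + 1]][m] - front[order[k - 1]][m]
            dist[order[k]] += gap / (hi - lo)
    return dist


def truncate_archive(archive: List[Sequence[float]], capacity: int) -> List[Sequence[float]]:
    """Remove the most crowded member repeatedly until the archive fits."""
    archive = list(archive)
    while len(archive) > capacity:
        dist = crowding_distance(archive)
        archive.pop(dist.index(min(dist)))
    return archive
```

Recomputing the distances after every removal costs more than a single sort but avoids deleting several neighbours from the same dense cluster at once.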

Issue 2: Ineffective Handling of Problems with Disconnected Feasible Regions

Problem: The algorithm fails to find all disconnected feasible components, converging to only one.

Solution: Employ co-evolutionary or multi-population techniques that can explore multiple regions simultaneously.

- Recommended Technique: Adopt a classification-collaboration constraint handling technique, as seen in EALSPM. Here, constraints are randomly classified into K groups, decomposing the problem into K subproblems. K subpopulations then solve these subproblems, interacting through learning strategies [19].

- Experimental Protocol:

- Setup: Configure the EALSPM framework on a benchmark with disconnected feasible regions from CEC2010 or CEC2017.

- Parameters: Set the number of constraint classes, K. Subpopulation sizes can be equal. The random learning and directed learning stages facilitate interaction.

- Metrics: Measure the ratio of found feasible components to total known components and the coverage of the Pareto front over multiple runs.
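The random classification of constraints into K groups can be sketched as a shuffled partition of constraint indices. The function name `classify_constraints` and the balanced group sizes are assumptions; the source only states that the classification is random.

```python
import random
from typing import List, Optional


def classify_constraints(
    num_constraints: int, k: int, seed: Optional[int] = None
) -> List[List[int]]:
    """Randomly split constraint indices 0..num_constraints-1 into k groups.

    Each group defines one subproblem that keeps only its own constraints.
    Assumption: groups are kept as equal in size as possible.
    """
    if not 1 <= k <= num_constraints:
        raise ValueError("k must be between 1 and the number of constraints")
    rng = random.Random(seed)
    indices = list(range(num_constraints))
    rng.shuffle(indices)
    # Deal the shuffled indices round-robin into k groups.
    return [sorted(indices[i::k]) for i in range(k)]
```

Each of the K subpopulations would then evaluate constraint violation only over its own index group, while the original problem still uses all constraints.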

Issue 3: Poor Adaptive Selection of Evolutionary Operators

Problem: The framework's performance is highly variable because it fails to select the most effective evolutionary operator (e.g., mutation strategy) for different problems or search stages.

Solution: Implement a learning-based auto-configuration system.

- Recommended Technique: Integrate a foundation model like SuperDE, which uses Deep Reinforcement Learning (DDQN) for component auto-configuration. Trained offline on diverse COPs, it can recommend optimal per-generation configurations (like mutation strategies and constraint handling techniques) for unseen problems in a zero-shot manner [35].

- Experimental Protocol:

- Setup: Use the SuperDE model or implement a similar DRL-based controller. Define a pool of candidate components (e.g., 4 mutation strategies and 7 constraint handling techniques).

- State Features: Design state features that reflect the population's distribution regarding decision variables, objectives, and constraints.

- Training/Execution: For a new problem, use the pre-trained SuperDE model to map the current population state to the most suitable algorithm components for each generation.

- Validation: Compare the performance against state-of-the-art algorithms on benchmark test suites to verify generalization and optimization performance [35].
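For a self-implemented DRL-based controller, the execution step amounts to mapping Q-values to one entry of the component pool each generation. The sketch below assumes a joint action space over 4 mutation strategies and 7 constraint handling techniques; the strategy names, the `cht_i` placeholders, and the function `select_components` are hypothetical and do not describe SuperDE's actual interface.

```python
import random
from itertools import product
from typing import List, Optional, Sequence, Tuple

# Hypothetical component pool: 4 mutation strategies x 7 CHTs = 28 actions.
MUTATIONS: List[str] = ["rand/1", "best/1", "current-to-rand/1", "current-to-pbest/1"]
CHTS: List[str] = [f"cht_{i}" for i in range(7)]  # placeholder names
ACTIONS: List[Tuple[str, str]] = list(product(MUTATIONS, CHTS))


def select_components(
    q_values: Sequence[float],
    explore_prob: float = 0.0,
    rng: Optional[random.Random] = None,
) -> Tuple[str, str]:
    """Pick a (mutation, CHT) pair from Q-values with epsilon-greedy selection.

    q_values must hold one estimate per entry of ACTIONS, for example the
    output of a trained Q-network evaluated on the current population state.
    """
    if len(q_values) != len(ACTIONS):
        raise ValueError("expected one Q-value per action")
    rng = rng or random.Random()
    if rng.random() < explore_prob:
        return rng.choice(ACTIONS)  # exploration, used during training only
    best = max(range(len(q_values)), key=q_values.__getitem__)
    return ACTIONS[best]
```

Setting `explore_prob` to 0 gives the purely greedy choice that a pre-trained controller would use on an unseen problem.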

Experimental Protocols & Methodologies

Protocol 1: Validating a Multi-Stage Framework (MSEFAS)

This protocol outlines the steps to test the core adaptive mechanics of a multi-stage framework like MSEFAS [5].

1. Objective: Verify that the adaptive stage sequencing and the three-stage process lead to superior performance on CMOPs with varying characteristics.

2. Materials (Benchmarks): A diverse set of 56 CMOP benchmarks, including problems where the unconstrained Pareto front is partially or completely infeasible.

3. Procedure:

- Step 1: For the early phase, run the two initial stages (diversity-focused and convergence-focused) and measure the "validity of selected solutions" to determine the adaptive execution order.

- Step 2: Proceed with the selected stage order, allowing the population to evolve.

- Step 3: In the late phase, activate the third stage to handle the relationship between the constrained and unconstrained Pareto fronts.

- Step 4: Terminate upon reaching the maximum function evaluations.

4. Comparison: Compare results against 11 state-of-the-art constrained multi-objective evolutionary algorithms (CMOEAs).

5. Key Metrics:

- Inverted Generational Distance (IGD)

- Hypervolume (HV)

- Feasibility Ratio
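As a reference for the first metric, Inverted Generational Distance averages, over a sampled reference Pareto front, the distance from each reference point to its nearest obtained solution. The sketch below uses Euclidean distance; the function name `igd` and the convention of returning infinity for an empty solution set are assumptions.

```python
import math
from typing import List, Sequence


def igd(reference_front: List[Sequence[float]], obtained: List[Sequence[float]]) -> float:
    """Mean distance from each reference point to its closest obtained point.

    Lower is better. Only feasible obtained solutions should be passed in.
    """
    if not obtained:
        return math.inf  # assumed convention when no feasible solution exists
    total = sum(
        min(math.dist(ref, sol) for sol in obtained) for ref in reference_front
    )
    return total / len(reference_front)
```

Because every reference point contributes, IGD penalises both poor convergence and missing regions of the front, which is why it is usually reported alongside HV.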

Protocol 2: Testing a Two-Stage Algorithm with Learning (EALSPM)

This protocol tests an algorithm that divides evolution into random and directed learning stages [19].

1. Objective: Evaluate the effectiveness of classification-collaboration constraint handling and the two-stage learning process.

2. Materials: Benchmark functions from CEC2010 and CEC2017, plus two practical problems.

3. Procedure:

- Initialization: Randomly classify all constraints into K classes, creating K subproblems. Generate K corresponding subpopulations.

- Stage 1 - Random Learning: Subpopulations evolve their subproblems and interact using random learning strategies.

- Stage 2 - Directed Learning: Subpopulations interact using directed learning strategies to generate potentially better solutions for the original problem.

- Offspring Prediction: An improved estimation of distribution model, based on good individuals, is used to predict offspring.

4. Key Metrics:

- Best Objective Function Value Found

- Consistency in Finding Feasible Solutions

- Statistical Significance Tests (e.g., Wilcoxon rank-sum test)
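For the significance test, a Wilcoxon rank-sum comparison of two algorithms' per-run results can be sketched as follows. This version uses the large-sample normal approximation without tie correction, which is an assumption made to keep the example dependency-free; in practice an established routine such as `scipy.stats.ranksums` would normally be preferred.

```python
import math
from typing import Sequence, Tuple


def rank_sum_test(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the Wilcoxon rank-sum test."""
    n1, n2 = len(x), len(y)
    pooled = sorted([(v, 0) for v in x] + [(v, 1) for v in y])

    # Assign 1-based ranks, averaging the ranks of tied values.
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[k] = average_rank
        i = j + 1

    # Rank sum of the first sample and its null mean and standard deviation.
    w = sum(r for r, (_, label) in zip(ranks, pooled) if label == 0)
    mu = n1 * (n1 + n2 + 1) / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)

    z = (w - mu) / sigma
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p
```

Here `x` and `y` would hold the best objective values from independent runs of two algorithms on the same benchmark; a negative z indicates that the first algorithm tends to produce smaller values.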

Research Reagent Solutions

The table below details key algorithmic components and their functions in adaptive multi-stage evolutionary frameworks.