Accelerating Discovery: A Comprehensive Analysis of Convergence Speed in Evolutionary Multitasking Optimization


Abstract

This article provides a systematic analysis of convergence speed in Evolutionary Multitasking Optimization (EMTO), an emerging paradigm that simultaneously solves multiple optimization tasks by leveraging inter-task knowledge transfer. Targeting researchers and computational biologists, we explore foundational principles, advanced methodologies, and optimization techniques that enhance EMTO convergence rates. Through comparative analysis of state-of-the-art algorithms and validation frameworks, we demonstrate how accelerated EMTO convergence can transform complex problem-solving in biomedical research, including drug discovery and clinical optimization challenges. The synthesis of troubleshooting approaches and performance validation offers practical guidance for implementing EMTO in computationally expensive research domains.

Understanding Evolutionary Multitasking: Principles and Convergence Fundamentals

Evolutionary Multitasking Optimization (EMTO) is an emerging paradigm in computational intelligence that enables the simultaneous solving of multiple optimization tasks. By leveraging the implicit parallelism of population-based search, EMTO facilitates knowledge transfer (KT) between tasks, often leading to accelerated convergence speeds and superior solution quality compared to traditional single-task optimization [1].

Fundamental Principles and Key Algorithms

EMTO operates on the principle that useful knowledge gained while solving one task can improve the performance of another related task. The foundational algorithm in this field is the Multifactorial Evolutionary Algorithm (MFEA), which creates a multi-task environment where a single population evolves under the influence of multiple "cultural factors" or tasks [1].

Subsequent advancements have introduced various algorithmic improvements:

- MFEA-II incorporates online learning to adaptively adjust transfer parameters [2] [3].

- Adaptive EMTO (AEMTO) designs separate intra-task and inter-task evolution mechanisms [2].

- Multitasking Genetic Algorithm (MTGA) evaluates and removes bias between tasks to improve transfer quality [2].

More recent approaches include MTCS, whose competitive scoring mechanism quantifies the effects of transfer evolution and self-evolution in order to set the knowledge transfer probability adaptively [4]. PA-MTEA, built on an association mapping strategy, uses subspace projection and alignment matrices to enhance cross-task knowledge transfer [5], while the scenario-based self-learning transfer (SSLT) framework employs deep Q-networks to learn transfer strategies suited to different evolutionary scenarios [6].

Knowledge Transfer: Mechanisms and Adaptive Strategies

Effective knowledge transfer is the cornerstone of successful EMTO implementation. The table below summarizes the primary transfer mechanisms and their characteristics:

Table: Knowledge Transfer Mechanisms in EMTO

| Transfer Mechanism | Description | Key Features | Representative Algorithms |
|---|---|---|---|
| Implicit Transfer | Genetic material exchange through crossover between individuals assigned to different tasks [5]. | Simple implementation; relies on task similarity; risk of negative transfer [5]. | MFEA, MFDE, MFPSO [2] |
| Explicit Transfer | Active identification and transfer of high-quality solutions or solution space characteristics [5]. | Targeted transfer; can handle more diverse tasks; requires specialized mechanisms [5]. | EMFF, DA-MFEA, PA-MTEA [5] [3] |
| Adaptive Transfer | Self-regulating strategies that adjust transfer parameters based on online learning of task relationships [6] [2]. | Mitigates negative transfer; improved robustness across various scenarios [6]. | MFEA-AKT, MFEA-II, SSLT [6] [2] |

Advanced strategies address the critical questions of when to transfer and how to transfer knowledge. The competitive scoring mechanism in MTCS addresses these questions by using scores to quantify evolutionary outcomes, adaptively adjusting transfer probability based on the competition between transfer evolution and self-evolution [4]. Similarly, SSLT frameworks automatically select from multiple scenario-specific strategies (e.g., shape KT, domain KT, bi-KT) using reinforcement learning [6].
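The idea of letting two competing scores set the transfer probability can be illustrated with a minimal sketch. The update rule below is a hypothetical stand-in, not the formula used in MTCS [4]: it simply takes the share of the total score earned by transfer evolution and clips it so that neither mode is switched off entirely. The function name, the clipping bounds `p_min` and `p_max`, and the fallback value of 0.5 are all assumptions made for illustration.

```python
def update_transfer_probability(score_transfer, score_self, p_min=0.05, p_max=0.95):
    """Return a knowledge-transfer probability from two non-negative scores.

    Hypothetical rule (not the MTCS formula): the fraction of the total
    score earned by transfer evolution, clipped to [p_min, p_max].
    """
    total = score_transfer + score_self
    if total <= 0:
        # No evidence for either mode yet: stay neutral.
        return 0.5
    p = score_transfer / total
    return min(p_max, max(p_min, p))


# Transfer evolution scored three times higher than self-evolution.
p = update_transfer_probability(score_transfer=3.0, score_self=1.0)
print(p)
```

Clipping keeps a small amount of exploration in both modes, so a task that stops benefiting from transfer early on can still rediscover it later.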

Experimental Protocols and Performance Benchmarking

Standardized experimental protocols are essential for fair comparison of EMTO algorithms. Major competitions, such as the CEC 2025 Competition on Evolutionary Multi-task Optimization, provide established test suites and evaluation criteria [7].

Benchmark Problems and Evaluation Metrics

The WCCI20-MTSO and CEC17-MTSO benchmark suites are widely used for performance validation [4]. These suites contain problems categorized by the intersection degree of their solutions (complete intersection CI, partial intersection PI, no intersection NI) and similarity levels (high, medium, low) [4].

Standard experimental settings include [7]:

- 30 independent runs per algorithm with different random seeds

- Performance recording at predefined evaluation checkpoints (e.g., 100 checkpoints for 2-task problems)

- Evaluation using Best Function Error Value (BFEV) for single-objective problems and Inverted Generational Distance (IGD) for multi-objective problems
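The two metrics named above can be sketched in a few lines. This is an illustrative implementation under stated assumptions, not the competition's reference code: BFEV is taken as the gap between the best objective value found so far and the known optimum of a minimization problem, and IGD as the mean Euclidean distance from each reference-front point to its nearest obtained solution. The function names and the 1-based checkpoint convention are choices made for this example.

```python
import math


def bfev_at_checkpoints(best_so_far, optimum, checkpoints):
    """Best function error value at selected evaluation counts.

    best_so_far[i] is the best objective value after i + 1 evaluations
    (minimization); checkpoints are 1-based evaluation counts.
    """
    return [best_so_far[c - 1] - optimum for c in checkpoints]


def igd(reference_front, obtained_set):
    """Mean distance from each reference point to its nearest obtained point."""
    total = 0.0
    for ref in reference_front:
        total += min(math.dist(ref, sol) for sol in obtained_set)
    return total / len(reference_front)


# One run of a single-objective task with known optimum 1.0.
history = [5.0, 3.0, 3.0, 1.5]
print(bfev_at_checkpoints(history, optimum=1.0, checkpoints=[2, 4]))

# A two-objective example with a two-point reference front.
print(igd([(0.0, 1.0), (1.0, 0.0)], [(0.0, 1.0), (0.5, 0.5)]))
```

In a full protocol these values would be recorded for each of the independent runs and then summarized, for example by mean or median per checkpoint.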

Performance Comparison

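Reported results indicate that advanced EMTO algorithms frequently outperform both traditional single-task optimization and earlier EMTO baselines on these benchmarks. The following table summarizes the comparisons reported in recent studies; most entries are rankings against peer algorithms rather than numeric speed-ups: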

Table: Performance Comparison of EMTO Algorithms on Benchmark Problems

| Algorithm | Key Mechanism | Reported Convergence Improvement | Negative Transfer Mitigation | Application Context |
|---|---|---|---|---|
| MTCS [4] | Competitive scoring, dislocation transfer | Superior to 10 state-of-the-art EMTO algorithms | Adaptive probability and source task selection [4] | Multitask and many-task optimization |
| PA-MTEA [5] | Association mapping, adaptive population reuse | Superior to 6 advanced EMT algorithms | Bregman divergence alignment minimizes inter-task variability [5] | Benchmark problems and photovoltaic parameter extraction |
| SSLT [6] | Self-learning framework with DQN | Favourable against state-of-the-art competitors | Automatically selects appropriate scenario-specific strategies [6] | MTOPs and interplanetary trajectory design |
| APMTO [2] | Auxiliary population, adaptive similarity estimation | Outperforms state-of-the-art on CEC2022 | Similarity-based KT frequency adjustment [2] | Chinese semantic understanding (potential) |
| CA-MTO [3] | Classifier-assisted, knowledge transfer for surrogates | Competitive edge on expensive multitasking problems | PCA-based subspace alignment for sample transformation [3] | Expensive optimization problems |

The Scientist's Toolkit: Essential Components for EMTO Research

Table: Research Reagent Solutions for EMTO Experimentation

| Tool/Resource | Function | Example Implementation/Notes |
|---|---|---|
| MTO-Platform Toolkit [6] | Integrated platform for algorithm development and testing | Provides standardized environment for performance comparison |
| CEC Benchmark Suites [7] | Standardized problem sets for controlled experimentation | Includes CEC17-MTSO, WCCI20-MTSO with various task relationships |
| Domain Adaptation Techniques [3] | Enable knowledge transfer between heterogeneous tasks | Includes PCA-based subspace alignment, linear transformation |
| Surrogate Models [3] | Reduce computational cost for expensive problems | Classifiers (SVC) or regression models (GP, RBF) |
| Deep Q-Networks (DQN) [6] | Enable self-learning transfer strategies | Map evolutionary scenario features to optimal strategies |

EMTO in Drug Discovery: A Convergence Synergy

While EMTO originates from computational intelligence, its principles show significant potential for drug discovery, particularly for expensive multitasking optimization problems (EMTOPs), where evaluations involve time-consuming simulations or complex physical experiments [3].

In this context, classifier-assisted EMTO approaches integrate surrogate models like Support Vector Classifiers with evolutionary frameworks to distinguish promising solutions with minimal computational expense [3]. Knowledge transfer strategies further enhance this by transforming and aggregating labeled samples across related tasks, mitigating data sparseness issues common in early-stage drug development [3].

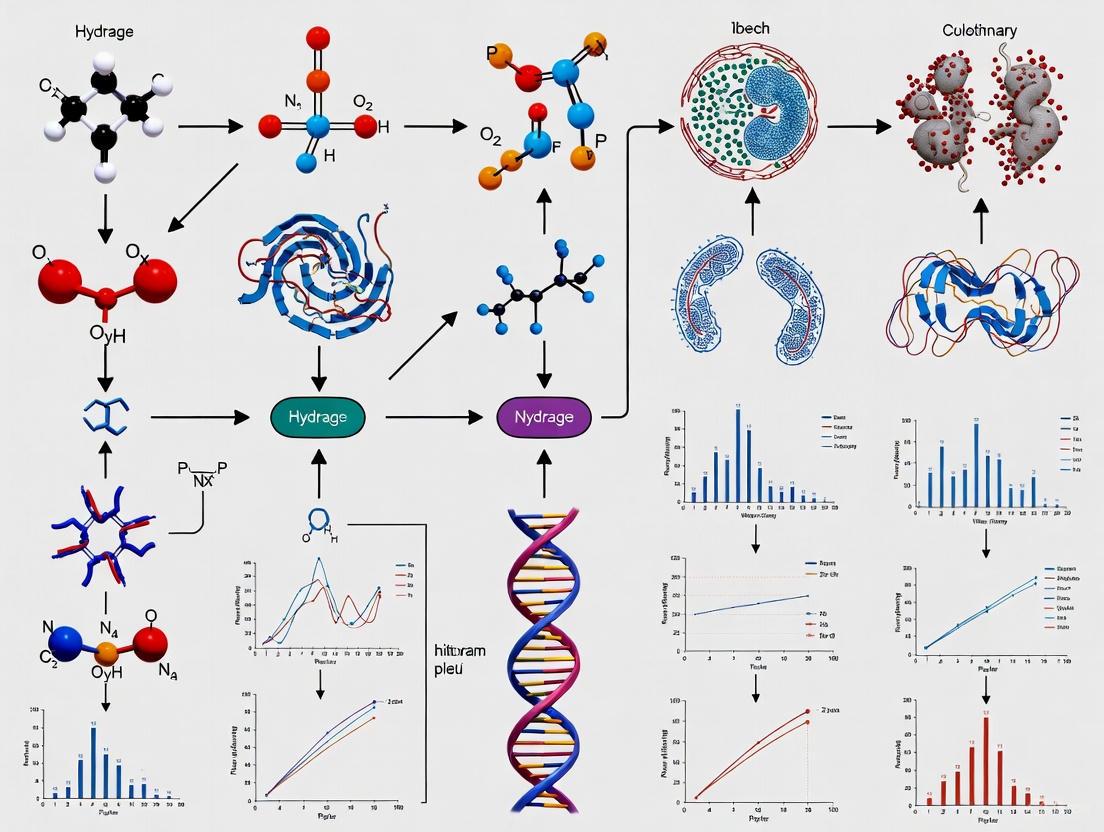

Figure 1: EMTO Knowledge Transfer Workflow. This diagram illustrates the adaptive process of knowledge transfer in evolutionary multitasking optimization, showing how competitive scoring and similarity assessment guide the choice between self-evolution and cross-task transfer.

Evolutionary Multitasking Optimization represents a paradigm shift in how computational optimization problems are approached, moving from isolated problem-solving to synergistic multi-task environments. The empirical evidence demonstrates that properly implemented knowledge transfer mechanisms can significantly enhance convergence speed and solution quality across diverse task types. As research progresses, EMTO continues to expand into more complex domains, including expensive optimization problems and real-world applications in drug discovery, where its ability to leverage latent synergies between tasks provides a distinct advantage over traditional approaches.

The Multifactorial Evolutionary Algorithm (MFEA) established the foundational paradigm of evolutionary multitasking optimization (EMTO) by enabling multiple optimization tasks to be solved simultaneously. This guide compares the performance of modern MFEA variants, analyzing their convergence speed and efficacy against other EMTO approaches, with supporting experimental data from recent research.

MFEA and the EMTO Paradigm

Evolutionary Multitask Optimization is an emerging field in evolutionary computation that aims to optimize multiple tasks concurrently by leveraging implicit parallelism of population-based search. The core principle involves transferring valuable knowledge across tasks during the evolutionary process, which can significantly enhance convergence speed and solution quality compared to traditional single-task optimization [1].

The Multifactorial Evolutionary Algorithm (MFEA), introduced by Gupta et al., represents the pioneering algorithm in this field. MFEA creates a unified search environment where a single population evolves while solving multiple tasks simultaneously. Each task is treated as a unique "cultural factor" influencing evolution, with knowledge transfer occurring through specialized genetic operations—assortative mating and vertical cultural transmission [1]. The algorithm uses a skill factor to identify which task an individual specializes in and a random mating probability (rmp) parameter to control cross-task reproduction [8].
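The interplay of skill factor, rmp, assortative mating, and vertical cultural transmission can be sketched as follows. This is a simplified illustration rather than the published MFEA operators: uniform crossover and clipped Gaussian mutation stand in for whatever variation operators a real implementation uses, genes are assumed to lie in a unified [0, 1] search space, and the function name and tuple layout are hypothetical.

```python
import random


def assortative_mating(parent_a, parent_b, rmp, rng):
    """Produce two children from parents given as (genes, skill_factor).

    Parents cross over if they share a skill factor, or otherwise with
    probability rmp; if not, each parent is mutated on its own.
    """
    genes_a, skill_a = parent_a
    genes_b, skill_b = parent_b

    if skill_a == skill_b or rng.random() < rmp:
        # Uniform crossover (a stand-in operator chosen for brevity).
        child1, child2 = [], []
        for x, y in zip(genes_a, genes_b):
            if rng.random() < 0.5:
                x, y = y, x
            child1.append(x)
            child2.append(y)
        # Vertical cultural transmission: each child imitates the
        # skill factor of a randomly chosen parent.
        return [
            (child1, rng.choice([skill_a, skill_b])),
            (child2, rng.choice([skill_a, skill_b])),
        ]

    def mutate(genes):
        # Gaussian perturbation clipped to the assumed [0, 1] space.
        return [min(1.0, max(0.0, g + rng.gauss(0.0, 0.1))) for g in genes]

    # No cross-task mating: each child keeps its parent's skill factor.
    return [(mutate(genes_a), skill_a), (mutate(genes_b), skill_b)]


rng = random.Random(42)
children = assortative_mating(([0.1, 0.2, 0.3], 0), ([0.7, 0.8, 0.9], 1), rmp=0.3, rng=rng)
for genes, skill in children:
    print(skill, genes)
```

A larger rmp produces more cross-task offspring and therefore more implicit transfer, which helps when tasks are related and risks negative transfer when they are not.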

Performance Comparison of MFEA Variants

Modern MFEA variants address key limitations of the original algorithm, particularly regarding negative transfer (where harmful rather than beneficial knowledge is shared) and adaptive operator selection. The table below summarizes experimental results for recent MFEA variants across standard benchmarks:

| Algorithm | Key Mechanism | Test Problems | Performance Findings | Convergence Speed |
|---|---|---|---|---|
| MFEA-MDSGSS [9] | Multidimensional scaling + golden section search | Single- & multi-objective MTO benchmarks | Superior to state-of-the-art algorithms | Faster convergence with higher solution quality |
| MFEA-DGD [10] | Diffusion gradient descent | Various multitask optimization problems | Faster convergence to competitive results | Provable convergence; benefits from knowledge transfer |
| MFEA-RL [11] | Residual learning crossover + dynamic skill factor assignment | CEC2017-MTSO, WCCI2020-MTSO | Outperforms state-of-the-art algorithms | Excellent convergence and adaptability |
| BOMTEA [8] | Adaptive bi-operator (GA + DE) strategy | CEC17, CEC22 benchmarks | Significantly outperforms comparative algorithms | Outstanding results via adaptive operator selection |
| EMT-ADT [12] | Decision tree-based adaptive transfer strategy | CEC2017, WCCI20-MTSO, WCCI20-MaTSO | Improved solution accuracy, especially for low-relevance tasks | Competitive performance on combinatorial problems |
| MTEA-PAE [13] | Progressive auto-encoding for domain adaptation | Six benchmark suites + real-world applications | Outperforms state-of-the-art algorithms | Enhanced convergence efficiency and solution quality |

Comparative Performance Insights

- MFEA-MDSGSS demonstrates particularly strong performance in mitigating negative transfer, especially between tasks with differing dimensionalities [9].

- BOMTEA's adaptive operator selection supports the view that no single evolutionary search operator is optimal for all tasks: DE/rand/1 performs better on CIHS and CIMS problems, while GA operators perform better on CILS problems [8].

- EMT-ADT addresses the challenge of low-relatedness tasks where traditional MFEAs often struggle with solution precision [12].

Detailed Experimental Protocols

Benchmarking Standards and Performance Metrics

Most MFEA variants are evaluated using standardized benchmark problems and performance metrics to ensure objective comparison:

- Common Benchmarks: CEC2017 Multitask Optimization Benchmark Problems, WCCI2020 Multi-Task Single-Objective (MTSO), and WCCI2020 Many-Task Single-Objective (MaTSO) benchmarks [12] [11].

- Performance Metrics: For convergence analysis, researchers typically use:

  - Average Fitness Convergence: Tracking the best fitness values across generations.

  - Solution Accuracy: Measuring the deviation from known optima.

  - Statistical Tests: Wilcoxon signed-rank test to verify significance of performance differences [9].
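To make the statistical test concrete, the sketch below computes the Wilcoxon signed-rank statistics for paired results, such as the final errors of two algorithms on the same set of problems. It is a minimal standard-library illustration that drops zero differences and assigns average ranks to ties; it does not compute a p-value, for which an established routine such as SciPy's `scipy.stats.wilcoxon` would normally be used. The function name and the return layout are choices made for this example.

```python
def wilcoxon_signed_rank(errors_a, errors_b):
    """Return (W_plus, W_minus, n) for paired samples.

    Zero differences are dropped; tied absolute differences share
    the average of the ranks they span.
    """
    diffs = [a - b for a, b in zip(errors_a, errors_b) if a != b]
    order = sorted(range(len(diffs)), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * len(diffs)

    i = 0
    while i < len(order):
        # Extend j over the run of equal absolute differences.
        j = i
        while j + 1 < len(order) and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1

    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    return w_plus, w_minus, len(diffs)


# Final errors of two algorithms on four problems (illustrative numbers).
print(wilcoxon_signed_rank([1.0, 2.0, 3.0, 4.0], [1.5, 1.0, 3.0, 2.0]))
```

With errors as the measured quantity, a small `W_plus` relative to `W_minus` suggests the first algorithm tends to reach lower error; whether the difference is significant depends on the p-value for the given `n`.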

MFEA-MDSGSS Experimental Methodology

The MFEA-MDSGSS algorithm employs these specific experimental protocols [9]:

- Component Ablation Study: Separate evaluation of MDS-based LDA and GSS-based linear mapping to quantify individual contributions.

- Parameter Sensitivity Analysis: Systematic investigation of key parameter influences on algorithm performance.

- Comparative Framework: Testing against multiple state-of-the-art EMTO algorithms across diverse problem types.
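For readers unfamiliar with the GSS component, the following is the generic textbook golden section search for a one-dimensional unimodal function. It is offered only as background: how MFEA-MDSGSS embeds the search in its linear mapping is not detailed here, and the function name, tolerance, and test function are assumptions of this sketch.

```python
import math

INV_PHI = (math.sqrt(5) - 1) / 2  # reciprocal of the golden ratio, about 0.618


def golden_section_search(f, lo, hi, tol=1e-6):
    """Approximate the minimizer of a unimodal function f on [lo, hi]."""
    c = hi - INV_PHI * (hi - lo)
    d = lo + INV_PHI * (hi - lo)
    fc, fd = f(c), f(d)
    while hi - lo > tol:
        if fc < fd:
            # Minimum lies in [lo, d]; the old c becomes the new d.
            hi, d, fd = d, c, fc
            c = hi - INV_PHI * (hi - lo)
            fc = f(c)
        else:
            # Minimum lies in [c, hi]; the old d becomes the new c.
            lo, c, fc = c, d, fd
            d = lo + INV_PHI * (hi - lo)
            fd = f(d)
    return (lo + hi) / 2


x_min = golden_section_search(lambda x: (x - 2.0) ** 2, 0.0, 5.0)
print(round(x_min, 4))
```

Each iteration reuses one of the two interior evaluations, so the bracket shrinks by a constant factor of about 0.618 at the cost of a single new function evaluation.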

EMT-ADT Validation Framework

EMT-ADT utilizes these validation approaches [12]:

- Transfer Ability Quantification: Defining and measuring individual transfer ability using Gini coefficient-based decision trees.

- Success-History Based Adaptive DE (SHADE): Implementing SHADE as the search engine to demonstrate MFO paradigm generality.

- Combinatorial Problem Testing: Additional validation on Traveling Salesman Problem (TSP) and Tree Routing Problem (TRP).
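The Gini-based splitting criterion behind such decision trees can be sketched briefly. The code assumes that "Gini coefficient-based" refers to the standard Gini impurity used in CART-style trees; the feature values, the binary labels (1 for an individual whose transfer helped, 0 otherwise), and the function names are hypothetical and not taken from EMT-ADT.

```python
from collections import Counter


def gini_impurity(labels):
    """Gini impurity of a label collection: 1 minus the sum of squared class shares."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def split_gini(values, labels, threshold):
    """Weighted Gini impurity after splitting on value <= threshold."""
    left = [lab for v, lab in zip(values, labels) if v <= threshold]
    right = [lab for v, lab in zip(values, labels) if v > threshold]
    n = len(labels)
    return len(left) / n * gini_impurity(left) + len(right) / n * gini_impurity(right)


# Hypothetical feature per individual and whether its transfer helped (1) or not (0).
feature = [0.1, 0.2, 0.8, 0.9]
helped = [0, 0, 1, 1]
print(gini_impurity(helped))
print(split_gini(feature, helped, threshold=0.5))
```

A tree builder would evaluate candidate thresholds this way and keep the split with the lowest weighted impurity, so that the leaves separate promising transfer candidates from unpromising ones.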

Visualization of MFEA Mechanisms

[Diagrams not reproduced: "MFEA Knowledge Transfer and Skill Factor Assignment" and "Advanced MFEA Variant Mechanisms".]

The Scientist's Toolkit: EMTO Research Reagents

This table details essential computational resources and methodologies for EMTO research and application development:

| Research Tool | Function in EMTO | Application Context |
|---|---|---|
| CEC2017/CEC2022 MTO Benchmarks | Standardized problem sets for algorithm comparison | Experimental validation and performance benchmarking [8] |
| Linear Domain Adaptation (LDA) | Aligns search spaces between different tasks | Facilitates knowledge transfer in cross-domain optimization [9] |
| Random Mating Probability (RMP) | Controls frequency of cross-task reproduction | Parameter tuning to balance exploration and exploitation [8] |
| Decision Tree Predictors | Predicts transfer ability of individuals | Identifies promising candidates for knowledge transfer [12] |
| Multi-Dimensional Scaling (MDS) | Establishes low-dimensional subspaces for each task | Enables knowledge transfer between different dimensionality tasks [9] |
| Progressive Auto-Encoding (PAE) | Learns mappings between problem domains | Dynamic domain adaptation throughout evolutionary process [13] |
| Skill Factor Assignment | Identifies task specialization for each individual | Enables implicit knowledge transfer in multifactorial framework [1] |

The MFEA framework continues to evolve with innovations addressing its core challenges. Modern variants demonstrate significant improvements in convergence speed and solution accuracy, particularly through adaptive transfer mechanisms, domain adaptation techniques, and hybrid operator strategies.

Future research directions include developing more sophisticated task-relatedness measures, creating theoretical foundations for EMTO convergence, and expanding applications to complex real-world problems such as drug discovery and personalized medicine optimization [1]. As these algorithms mature, they offer promising approaches for researchers and drug development professionals facing multiple interrelated optimization challenges.

In evolutionary computation, and particularly in research on the convergence speed of evolutionary multitasking, understanding knowledge transfer mechanisms is paramount for designing efficient algorithms. Knowledge can be categorized into three primary types (explicit, implicit, and tacit), each with distinct characteristics and transfer mechanisms. Explicit knowledge is easily articulated, codified, and transferred through formal documentation, while implicit knowledge represents the practical application of explicit knowledge to specific contexts [14] [15]. Tacit knowledge, deeply rooted in personal experience and intuition, is the most challenging to formalize and transfer [14] [16].

In evolutionary multitasking optimization (MTO), these knowledge types manifest differently. Explicit knowledge transfer often involves direct encoding of solutions or strategies, while implicit transfer leverages underlying similarities between tasks without conscious articulation [17]. The multifactorial evolutionary algorithm (MFEA) represents a pioneering approach to implicit transfer learning in MTO, where knowledge is shared across optimization tasks through chromosomal crossover operations [17]. Understanding the distinctions between these transfer approaches is crucial for researchers and drug development professionals seeking to accelerate convergence in complex optimization problems, such as those encountered in pharmaceutical research and development.

Theoretical Foundations: Knowledge Transfer in Evolutionary Computation

Explicit Knowledge Transfer Mechanisms

Explicit knowledge transfer in evolutionary computation involves the systematic encoding of information that can be readily documented and shared between optimization tasks. This approach is characterized by its codifiable nature, making it highly accessible and easily reproducible across different contexts [14]. In algorithmic terms, explicit knowledge might include well-defined solution structures, parameter settings, or convergence patterns that can be directly transferred between related optimization problems.

The strength of explicit knowledge transfer lies in its immediate applicability. Experimental studies have demonstrated that when explicit knowledge is acquired during initial learning phases, it can be transferred immediately to novel contexts without delay [18]. This characteristic is particularly valuable in drug development pipelines where rapid adaptation to new compound optimization tasks can significantly accelerate research timelines. However, the requirement for conscious articulation and formal encoding presents limitations when dealing with highly complex, unstructured problem domains where complete explicit documentation is impractical.

Implicit Knowledge Transfer Mechanisms

Implicit knowledge transfer operates through unconscious application of learned patterns and relationships, making it particularly valuable for complex problem domains where explicit articulation is challenging. In evolutionary multitasking, implicit transfer occurs through mechanisms like assortative mating and vertical cultural transmission, where knowledge is shared across tasks without explicit encoding [17]. This approach mirrors human cognitive processes where skills and patterns are acquired through experience rather than formal instruction.

Recent research has revealed that implicit knowledge transfer follows a different temporal pattern compared to explicit mechanisms. While explicit knowledge can be transferred immediately, implicit transfer often requires consolidation periods, with sleep playing a particularly crucial role in restructuring unconscious knowledge for future application [18] [19]. This finding has significant implications for designing evolutionary algorithms with memory mechanisms that mimic these natural cognitive processes. The structural robustness of implicitly acquired knowledge makes it more resilient under stressful conditions, such as when optimization problems encounter noisy fitness evaluations or dynamic environments [20].

Experimental Comparisons: Performance Metrics and Methodologies

Visual Statistical Learning Paradigm

The spatial visual statistical learning (SVSL) paradigm provides a robust experimental framework for investigating knowledge transfer mechanisms [18] [19]. In this approach, participants are exposed to scenes containing abstract shapes arranged in fixed spatial pairs, with the requirement to extract underlying statistical regularities. The methodology involves multiple phases:

- Phase 1: Participants view scenes composed of either exclusively horizontal or exclusively vertical shape pairs, depending on assigned condition.

- Phase 2: After a delay period (varied to test consolidation effects), participants view scenes containing both horizontal and vertical pairs constructed from novel shapes.

- Testing: Knowledge acquisition is assessed through a two-alternative forced-choice (2AFC) familiarity test comparing real pairs against foil pairs constructed from mixed shapes [18].

This experimental design allows researchers to precisely measure transfer learning effects by examining how exposure to one abstract structure influences the acquisition of novel structures. The paradigm effectively disentangles conscious (explicit) from unconscious (implicit) learning by combining objective performance measures with subjective awareness assessments [18].

Serial Response Time Task (SRTT) Methodology

The serial response time task (SRTT) represents another established protocol for investigating sequence learning through both implicit and explicit mechanisms [21]. This approach enables researchers to:

- Measure reaction time improvements as participants unconsciously or consciously learn sequence regularities.

- Apply the drift-diffusion model to disentangle specific cognitive processes affected by learning (stimulus detection, response selection, response execution).

- Independently manipulate explicit sequence knowledge and the opportunity to express such knowledge [21].

Research using this methodology has demonstrated that implicit sequence learning primarily benefits response selection processes, while explicit knowledge enables a shift from stimulus-based to plan-based action control, particularly under deterministic conditions [21].

Throwing Task Under Fatigue Conditions

Motor learning studies provide valuable insights into knowledge transfer resilience under challenging conditions. A recent throwing task experiment compared implicit (errorless) and explicit (errorful) training strategies under both physiological and mental fatigue [20]. The methodology included:

- Implicit Group: Started close to the target and progressively increased distance (error-minimizing approach).

- Explicit Group: Began at a significant distance from the target and gradually moved closer (error-prone approach).

- Fatigue Induction: Mental fatigue through 30-minute Stroop task; physical fatigue through maintained isometric contraction.

- Assessment: Retention tests and transfer tests under fatigue conditions [20].

This experimental approach demonstrates the practical implications of knowledge type on performance resilience, with significant applications to training protocol design in both clinical and industrial settings.

Table 1: Comparative Performance of Implicit vs. Explicit Knowledge Transfer

| Performance Metric | Implicit Transfer | Explicit Transfer | Experimental Context |
|---|---|---|---|
| Immediate Application | Limited immediate transfer; shows structural interference [18] | Strong immediate transfer capability [18] | Visual statistical learning |
| Post-Consolidation | Significant improvement after sleep (12 hours) [18] [19] | Minimal consolidation benefit [18] | Visual statistical learning |
| Fatigue Resilience | Maintains performance under mental and physical fatigue [20] | Significant performance degradation under fatigue [20] | Throwing task experiment |
| Process Specificity | Primarily benefits response selection and execution [21] | Enables shift to plan-based action control [21] | Serial response time task |
| Transfer Flexibility | Abstract structure transfer after consolidation [18] | Direct pattern application | Visual statistical learning |

Evolutionary Multitasking Algorithms: A Convergence Speed Perspective

Multifactorial Evolutionary Algorithm (MFEA)

The Multifactorial Evolutionary Algorithm (MFEA) represents a foundational approach to implicit knowledge transfer in evolutionary computation [17]. MFEA implements knowledge sharing through:

- Implicit transfer learning via chromosomal crossover between solutions from different tasks.

- Assortative mating that allows individuals with different skill factors to reproduce.

- Vertical cultural transmission where offspring randomly inherit genetic material and dominant tasks from parents [17].

While MFEA demonstrates the feasibility of implicit transfer, its simple random inter-task transfer strategy often results in slow convergence rates due to excessive diversity maintenance. This limitation has motivated researchers to develop more sophisticated algorithms with enhanced knowledge transfer mechanisms [17].

Two-Level Transfer Learning Algorithm (TLTL)

To address MFEA's convergence limitations, the Two-Level Transfer Learning (TLTL) algorithm implements a structured approach to knowledge transfer [17]. This enhanced framework includes:

- Upper-Level (Inter-task transfer): Implements knowledge transfer through chromosome crossover and elite individual learning to reduce randomness.

- Lower-Level (Intra-task transfer): Performs information transfer of decision variables for across-dimension optimization within the same task [17].

Experimental evaluations demonstrate that TLTL achieves outstanding global search capability and fast convergence rate by more effectively exploiting correlations and similarities between component tasks [17]. The algorithm's two-level structure enables more efficient knowledge exchange while maintaining appropriate diversity levels throughout the evolutionary process.

Evolutionary Multitasking-Based Multiobjective Optimization Algorithm (EMMOA)

For complex real-world applications like hybrid brain-computer interface (BCI) channel selection, the Evolutionary Multitasking-based Multiobjective Optimization Algorithm (EMMOA) represents a specialized approach [22]. EMMOA features:

- Two-stage framework that balances selected channel number and classification accuracy.

- Simultaneous optimization of motor imagery (MI) and steady-state visual evoked potential (SSVEP) classification tasks.

- Information transfer between related tasks to exploit underlying similarities [22].

This algorithm demonstrates how evolutionary multitasking with effective knowledge transfer can address practical optimization challenges in neuroscience and medical technology development, particularly when multiple conflicting objectives must be balanced.

Table 2: Evolutionary Multitasking Algorithms and Their Transfer Mechanisms

| Algorithm | Primary Transfer Mechanism | Knowledge Type | Convergence Performance | Application Context |
|---|---|---|---|---|
| MFEA [17] | Implicit transfer via chromosomal crossover | Primarily implicit | Slow convergence due to random transfer [17] | General multitasking optimization |
| TLTL [17] | Two-level transfer (inter-task & intra-task) | Implicit with elite guidance | Fast convergence rate [17] | General multitasking optimization |
| EMMOA [22] | Evolutionary multitasking mechanism | Implicit | Improved search efficiency [22] | Hybrid BCI channel selection |

Research Reagent Solutions: Experimental Toolkit

Table 3: Essential Research Materials and Their Functions

| Research Reagent/Resource | Function in Knowledge Transfer Research |
|---|---|
| Visual Statistical Learning Paradigm [18] | Tests abstraction and transfer of statistical regularities |
| Serial Response Time Task (SRTT) [21] | Measures implicit and explicit sequence learning |
| Drift-Diffusion Modeling [21] | Isolates specific cognitive processes affected by learning |
| Stroop Task Protocol [20] | Induces mental fatigue for testing knowledge resilience |
| Isometric Contraction Protocol [20] | Induces physical fatigue for testing knowledge resilience |
| Two-Alternative Forced Choice (2AFC) [18] | Assesses knowledge acquisition through familiarity judgments |
| Multifactorial Evolutionary Algorithm (MFEA) [17] | Provides baseline implicit transfer in optimization tasks |
| Non-dominated Sorting Genetic Algorithm-II (NSGA-II) [22] | Multiobjective optimization for comparison studies |

Knowledge Transfer Pathways in Evolutionary Multitasking

[Diagram not reproduced: "Knowledge Transfer Pathways in Evolutionary Multitasking", illustrating the key pathways and mechanisms for knowledge transfer in evolutionary multitasking environments.]

The comparative analysis of implicit versus explicit knowledge transfer mechanisms has significant implications for researchers and drug development professionals analyzing convergence speed in evolutionary multitasking. Explicit transfer approaches offer immediate application benefits but demonstrate limited resilience under fatigue and limited capacity for handling complex, unstructured problem domains. Conversely, implicit transfer mechanisms require consolidation periods but ultimately provide more robust, flexible knowledge application that withstands challenging conditions.

For evolutionary algorithm design, these insights suggest that hybrid approaches combining the immediate benefits of explicit transfer with the long-term robustness of implicit transfer may yield optimal convergence performance. The demonstrated role of sleep in consolidating implicit knowledge further suggests potential algorithmic analogs in the form of structured rest periods or memory consolidation mechanisms within evolutionary computation frameworks.

Future research in this domain should focus on developing more sophisticated knowledge taxonomies for evolutionary computation and designing explicit mechanisms for converting tacit knowledge into transferable forms without losing its essential contextual richness. Such advances promise to significantly accelerate convergence in complex optimization problems central to drug discovery and development pipelines.

Key Factors Influencing Convergence Speed in Multitasking Environments

Evolutionary Multitasking (EMT) represents a paradigm shift in computational optimization, enabling the simultaneous solving of multiple optimization tasks by exploiting their underlying synergies [5]. In an EMT setting, the convergence speed of an algorithm—the rate at which it approaches optimal or high-quality solutions—is paramount, especially for computationally expensive real-world problems like drug development [3]. The convergence speed is not governed by a single factor but by a complex interplay of algorithmic strategies for knowledge transfer, constraint handling, and population management. This guide provides a comparative analysis of state-of-the-art EMT algorithms, dissecting the key factors that influence their convergence performance through structured experimental data and detailed methodologies.
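One simple way to put a number on convergence speed is the count of fitness evaluations needed to reach a target error. The sketch below assumes a convergence curve recorded as (evaluations, best error) pairs; the function name, the target threshold, and the two example curves are illustrative and not drawn from any cited study.

```python
def evaluations_to_target(curve, target_error):
    """Return the first evaluation count at which best error <= target_error.

    curve is a list of (evaluations, best_error) pairs in increasing
    order of evaluations; returns None if the target is never reached.
    """
    for evaluations, best_error in curve:
        if best_error <= target_error:
            return evaluations
    return None


# Illustrative convergence curves for two hypothetical algorithms.
single_task = [(1000, 5.0), (2000, 1.2), (3000, 0.4), (4000, 0.05)]
multitask = [(1000, 2.0), (2000, 0.3), (3000, 0.04), (4000, 0.01)]

print(evaluations_to_target(single_task, target_error=0.1))
print(evaluations_to_target(multitask, target_error=0.1))
```

Comparing these counts across algorithms under the same evaluation budget gives a direct, if coarse, view of which one converges faster; a `None` result signals that the budget was exhausted before the target was met.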

Comparative Analysis of Advanced EMT Algorithms

The convergence speed in multitasking environments is critically influenced by the algorithm's core design. The table below compares several advanced EMT algorithms, highlighting their primary knowledge transfer mechanisms and their intended effect on convergence.

Table 1: Comparison of State-of-the-Art Evolutionary Multitasking Algorithms

| Algorithm Name | Core Knowledge Transfer Mechanism | Primary Convergence Goal | Key Innovation Focus |
|---|---|---|---|
| ETT-PEGR [23] | Evolutionary Tri-Tasking; two auxiliary tasks (concept-recommended & constraint-ignored) with a novel encoding-based transfer. | Accelerate convergence in large-scale, constrained problems. | Problem-specific auxiliary task design. |
| MFEA-MDSGSS [9] | Multidimensional Scaling (MDS) for subspace alignment & Golden Section Search (GSS) for linear mapping. | Mitigate negative transfer and avoid local optima. | Robust transfer between unrelated or differently-dimensioned tasks. |
| PA-MTEA [5] | Association Mapping via Partial Least Squares (PLS) & Adaptive Population Reuse (APR). | Enhance efficiency and comprehensiveness of bidirectional knowledge transfer. | Balancing global exploration and local exploitation. |
| CA-MTO [3] | Classifier-assisted (SVC) knowledge transfer with PCA-based subspace alignment for expensive problems. | Improve convergence speed and accuracy with limited fitness evaluations. | Data sparseness mitigation via sample aggregation. |

Experimental Protocols for Evaluating Convergence

To objectively compare the convergence performance of EMT algorithms, researchers rely on standardized experimental protocols.

Benchmark Problems and Real-World Cases

Performance is typically validated on benchmark suites and real-world problems. A common benchmark is the WCCI2020-MTSO test suite, a complex set of ten two-task problems designed for the 2020 competition on evolutionary multi-task optimization [5]. Real-world case studies provide critical validation, such as:

- Personalized Exercise Group Recommendation (PEGR): Modeled as a large-scale constrained multi-objective optimization problem [23].

- Parameter Extraction of Photovoltaic (PV) Models: A complex optimization problem in engineering [5].

- Expensive Multitasking Optimization Problems (EMTOPs): Problems where each fitness evaluation is computationally costly, such as those involving complex simulations [3].

Performance Evaluation Metrics

The convergence speed and quality of algorithms are measured using specific metrics:

- Convergence Accuracy: The quality of the best solution found, often measured by the final objective function value.

- Convergence Speed: The rate of improvement, which can be measured by the number of Fitness Evaluations (FEs) or iterations required to reach a solution of a certain quality [3].

- Data Efficiency: For expensive problems, the key metric is the algorithm's performance given a very limited budget of FEs [3].
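The speed and data-efficiency metrics above can be computed from a recorded best-so-far curve. The following is a minimal sketch, assuming minimization and a history list sampled every `evals_per_entry` fitness evaluations; the function names and the sampling convention are illustrative assumptions, not part of any cited benchmark protocol.

```python
from typing import Optional, Sequence


def evaluations_to_target(best_so_far: Sequence[float], target: float,
                          evals_per_entry: int = 1) -> Optional[int]:
    """Fitness evaluations spent when the best-so-far value (minimization)
    first reaches `target`; None if the target is never reached."""
    for index, value in enumerate(best_so_far):
        if value <= target:
            return (index + 1) * evals_per_entry
    return None


def best_within_budget(best_so_far: Sequence[float], budget: int,
                       evals_per_entry: int = 1) -> float:
    """Best objective value obtained within a budget of fitness evaluations."""
    entries = budget // evals_per_entry
    if entries <= 0:
        raise ValueError("budget is smaller than one recorded entry")
    return min(best_so_far[:entries])


# Hypothetical history: one entry per generation of 50 evaluations.
history = [9.0, 4.0, 4.0, 1.5, 0.2]
print(evaluations_to_target(history, target=2.0, evals_per_entry=50))  # 200
print(best_within_budget(history, budget=150, evals_per_entry=50))     # 4.0
```

Reporting both numbers per task, averaged over independent runs, keeps the speed metric and the limited-budget metric separate.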

Key Factors Influencing Convergence Speed

Knowledge Transfer Strategy

The design of the knowledge transfer mechanism is arguably the most critical factor for convergence speed.

- Explicit vs. Implicit Transfer: Modern algorithms increasingly favor explicit knowledge transfer, which actively extracts and maps high-quality solutions or landscape features from a source task to a target task. This approach is more directed and can reduce the risk of negative transfer—where unhelpful knowledge misguides the search and hinders convergence [5] [9].

- Subspace Alignment: To make transfer effective, especially between tasks with different characteristics, many algorithms project tasks into a shared low-dimensional subspace. MFEA-MDSGSS uses Multidimensional Scaling (MDS) to create these subspaces and Linear Domain Adaptation (LDA) to align them, enabling more robust and stable knowledge transfer [9]. Similarly, PA-MTEA uses Partial Least Squares (PLS) to find principal components that maximize correlation between tasks for more effective transfer [5].

Handling of Task Relatedness and Negative Transfer

The convergence speed of an EMT algorithm is highly dependent on its ability to handle unrelated or dissimilar tasks.

- The Negative Transfer Problem: When tasks are unrelated, knowledge from one task can mislead the search of another, causing premature convergence to poor local optima [9] [5].

- Mitigation Strategies: Advanced algorithms incorporate specific strategies to mitigate this. MFEA-MDSGSS introduces a GSS-based linear mapping strategy to help the population escape local optima and explore more promising regions, thus maintaining diversity and preventing premature convergence [9]. PA-MTEA's association mapping strategy aims to transfer only mutually beneficial information, reducing blind transfer [5].

Auxiliary Task Design and Problem Reformulation

Creating simpler, related auxiliary tasks can significantly boost convergence for a complex primary task.

- The ETT-PEGR Approach: This algorithm constructs two auxiliary tasks for its main Personalized Exercise Group Recommendation problem. The concept-recommended auxiliary task operates in a smaller concept space to speed up convergence, while the constraint-ignored auxiliary task helps the main task cross infeasible regions of the search space. This tri-tasking framework directly tackles the "curse of dimensionality" and complex constraints that slow down convergence [23].

Population Management and Exploitation-Exploration Balance

How an algorithm manages its population of solutions throughout the search process directly impacts convergence.

- Adaptive Population Reuse (APR): PA-MTEA uses an APR mechanism to reuse historically successful individuals adaptively. This guides the evolutionary direction and improves convergence performance by effectively balancing the exploration of new regions with the exploitation of known good solutions [5].

- Exploration-Convergence Tradeoff: There is a fundamental tension between exploring the search space widely and converging quickly to an optimum. This is particularly acute when using suboptimal parameters or settings, where a focus on rapid exploration might come at the cost of slower final convergence, and vice-versa [24]. Algorithms must be designed to manage this tradeoff effectively.

Visualization of Algorithm Workflows

The following diagram illustrates the high-level logical workflow and key components shared by advanced EMT algorithms, which contributes to their accelerated convergence.

Figure 1: Generalized workflow of advanced EMT algorithms, highlighting the iterative process of subspace alignment and explicit knowledge transfer that enhances convergence speed.

The Scientist's Toolkit: Essential Research Reagents

The experimental research and application of EMT algorithms rely on a suite of conceptual "reagents" and tools.

Table 2: Key Research Reagent Solutions in Evolutionary Multitasking

| Research Reagent / Tool | Function in EMT Research |
|---|---|
| Benchmark Suites (e.g., WCCI2020-MTSO) | Standardized test problems for fair and reproducible comparison of algorithm convergence performance and robustness [5]. |
| Multifactorial Evolutionary Algorithm (MFEA) | A foundational algorithmic framework for EMT that enables implicit knowledge transfer via assortative mating and vertical cultural transmission [3]. |
| Partial Least Squares (PLS) | A statistical method used for subspace projection and association mapping to maximize correlation between tasks for more effective knowledge transfer [5]. |
| Support Vector Classifier (SVC) | A classification model used as a surrogate in expensive optimization problems to prescreen solutions, reducing the number of computationally costly fitness evaluations [3]. |
| Bregman Divergence | A measure of distance between probability distributions, used in deriving alignment matrices to minimize variability between task domains during knowledge transfer [5]. |

Evolutionary Multitask Optimization (EMTO) is an emerging paradigm in computational intelligence that seeks to solve multiple optimization tasks simultaneously by exploiting latent complementarities and knowledge transfer between them. Unlike traditional single-task evolutionary algorithms (EAs), which start the search from scratch for each problem, EMTO improves the solving of each task by optimizing all tasks together and transferring knowledge between them. Formally, an MTO problem consisting of K tasks aims to find a set of solutions {x~1~, x~2~, …, x~K~} such that each x~i~ is the global optimum of its respective task [9]. This paradigm has demonstrated significant potential for accelerating convergence and enhancing solution quality across various domains, including vehicle routing, reliability redundancy allocation, and simulation-based process design [6].

The fundamental premise of EMTO rests on the observation that real-world problems rarely exist in isolation. For virtually all optimization problems, even black-box instances, useful knowledge can be mined from previously completed or ongoing tasks with substantially similar properties [3]. By exploiting the synergies between related tasks, EMTO algorithms can effectively navigate complex search spaces and overcome limitations of traditional evolutionary approaches. The convergence behavior of these algorithms, however, presents unique theoretical challenges that differ substantially from single-task optimization, primarily due to the complex dynamics of knowledge transfer between tasks with potentially disparate fitness landscapes and dimensionalities.

Theoretical Foundations of EMTO Convergence

From Single-Task to Multi-Task Evolutionary Paradigms

Traditional single-task evolutionary algorithms are population-based optimization methods inspired by natural selection and genetics, which have proven effective for solving individual optimization problems [9]. However, their convergence characteristics are fundamentally different from multi-task environments. In single-task optimization, convergence analysis typically focuses on how the population evolves toward the global optimum of a single fitness landscape, considering factors like selection pressure, genetic drift, and exploration-exploitation balance.

In contrast, EMTO introduces the additional dimension of knowledge transfer between tasks, creating a complex interplay between multiple search processes. The convergence speed in EMTO is influenced not only by the efficacy of evolutionary operators but also by the quality and quantity of knowledge exchanged between tasks. When properly implemented, this knowledge transfer can lead to accelerated convergence by allowing tasks to benefit from each other's discovered patterns and promising regions in the search space [9] [6]. However, inappropriate transfer can result in negative transfer, where knowledge from one task misguides the search direction of another, ultimately degrading convergence performance [9].

Key Convergence Challenges in EMTO

The theoretical analysis of EMTO convergence must address several unique challenges that distinguish it from single-task optimization. First, the curse of dimensionality presents a significant obstacle, as the decision space volume increases exponentially with the number of variables, leading to combinatorial explosion and adverse effects on search algorithms [25]. This challenge is compounded in multi-task environments where different tasks may have differing dimensionalities, making direct knowledge transfer problematic.

Second, negative transfer represents a critical convergence challenge in EMTO. This occurs when knowledge from distinct tasks that may not benefit each other is transferred during evolutionary search [9]. For example, if one task converges prematurely to a local optimum, the unhelpful knowledge transferred from it may mislead other tasks into the same local optimum, particularly when task similarity is low [9]. The risk of negative transfer is especially pronounced between tasks with dissimilar fitness landscapes or when robust mappings cannot be learned from limited population data [9].

Third, the dynamic balance between exploration and exploitation becomes more complex in multitask environments. While single-task algorithms must balance exploring new regions and refining known promising areas, EMTO must additionally balance intra-task search with inter-task knowledge transfer. This multi-level balance directly impacts convergence speed and solution quality across all tasks in an MTO problem.

Algorithmic Frameworks and Their Convergence Mechanisms

Multifactorial Evolutionary Algorithm (MFEA) and Variants

The Multifactorial Evolutionary Algorithm (MFEA), proposed by Gupta et al., represents the pioneering work in EMTO and establishes the foundational convergence mechanisms for the field [9] [3]. MFEA operates on a unified search space where individuals are encoded in a unified representation and assigned skill factors denoting their specialized tasks. Knowledge transfer occurs implicitly through chromosome crossover between individuals of different tasks, allowing genetic material to flow between optimization processes [9].

The convergence properties of basic MFEA, however, face limitations in scenarios with unrelated tasks or differing dimensionalities. To address these limitations, several enhanced variants have been developed:

MFEA-MDSGSS: Integrates multidimensional scaling (MDS) and golden section search (GSS) to improve convergence. The MDS-based linear domain adaptation method establishes low-dimensional subspaces for each task, facilitating robust knowledge transfer even between tasks with different dimensions. Meanwhile, the GSS-based linear mapping strategy helps avoid local optima and enhances population diversity, critical factors for maintaining convergence quality [9].

MFEA-II: Introduces an adaptive knowledge transfer mechanism that learns similarities between pairwise tasks by calculating the weight of mixed probability distribution models, thereby reducing negative transfer and improving convergence reliability [3].

MFEA-AKT: Implements adaptive knowledge transfer that dynamically adjusts transfer intensity based on task relatedness, optimizing convergence speed across diverse task combinations [9].
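For readers unfamiliar with the golden section search named in MFEA-MDSGSS, the following is a minimal sketch of the textbook one-dimensional procedure. It assumes a unimodal function on a closed interval. How MFEA-MDSGSS embeds GSS in its linear mapping strategy is not detailed here, so this block illustrates only the underlying search primitive, not the published algorithm.

```python
import math
from typing import Callable

INV_PHI = (math.sqrt(5.0) - 1.0) / 2.0  # inverse golden ratio, about 0.618


def golden_section_search(f: Callable[[float], float], lo: float, hi: float,
                          tol: float = 1e-6) -> float:
    """Approximate the minimizer of a unimodal function f on [lo, hi]."""
    c = hi - INV_PHI * (hi - lo)  # left interior point
    d = lo + INV_PHI * (hi - lo)  # right interior point
    fc, fd = f(c), f(d)
    while (hi - lo) > tol:
        if fc < fd:
            # Minimum lies in [lo, d]; the old left point becomes the right point.
            hi, d, fd = d, c, fc
            c = hi - INV_PHI * (hi - lo)
            fc = f(c)
        else:
            # Minimum lies in [c, hi]; the old right point becomes the left point.
            lo, c, fc = c, d, fd
            d = lo + INV_PHI * (hi - lo)
            fd = f(d)
    return (lo + hi) / 2.0


# Example on a simple quadratic whose minimizer is x = 2.
x_min = golden_section_search(lambda x: (x - 2.0) ** 2, 0.0, 5.0)
```

Each iteration reuses one of the two interior evaluations, so only one new function evaluation is needed per interval reduction.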

Explicit Transfer Methods for Enhanced Convergence

While MFEA and its variants primarily employ implicit transfer through genetic representation, another approach focuses on explicit knowledge transfer mechanisms that directly share information between tasks:

LDA-MFEA: Employs linear domain adaptation techniques to enable knowledge transfer between homogeneous or heterogeneous multitasking optimization problems. It introduces a linear transformation strategy to map tasks into a higher-order representation search space where knowledge can be transferred more efficiently, enhancing convergence particularly for tasks with explicit discrepancies in their fitness landscapes [3].

G-MFEA: A generalized MFEA that facilitates knowledge transfer among optimization problems with different optimum locations and dimensionalities through translation and shuffling of decision variables, addressing convergence challenges in functionally related but structurally different tasks [3].

EMT via Autoencoding: Uses denoising autoencoders to explicitly transfer high-quality solutions across tasks, where the autoencoder is trained on solutions sampled from the search spaces of optimization problems, creating a more direct pathway for beneficial knowledge exchange [9] [3].

Scenario-Based Self-Learning Frameworks

The Scenario-based Self-Learning Transfer (SSLT) framework represents a significant advancement in convergence assurance for EMTO. This approach categorizes the evolutionary scenarios that can arise in a multitask optimization problem (MTOP) into four situations and designs a corresponding scenario-specific strategy for each [6]:

- Only similar shape: Employing shape knowledge transfer to help the target population approximate the convergence trend of the source population

- Only similar optimal domain: Utilizing domain knowledge transfer to move populations to more promising search regions

- Similar function shape and optimal domain: Applying bi-knowledge transfer for comprehensive convergence acceleration

- Dissimilar shape and optimal domain: Relying on intra-task strategies to avoid disruptive knowledge transfer

SSLT employs Deep Q-Networks (DQN) as a relationship mapping model to learn the optimal correspondence between evolutionary scenarios and transfer strategies, enabling automatic adjustment of knowledge transfer policies during the optimization process [6]. This self-learning capability allows the algorithm to adapt its convergence strategy based on real-time search conditions, significantly reducing the risk of negative transfer while maximizing positive convergence synergies.

Surrogate-Assisted and Classification-Based Approaches

For expensive optimization problems where fitness evaluations are computationally costly, surrogate-assisted EMTO approaches have been developed to maintain convergence while reducing computational burden:

Classifier-Assisted Evolutionary Multitasking: Replaces traditional regression surrogates with classification models that distinguish the relative merits of candidate solutions, reducing sensitivity to limited training samples while maintaining convergence direction [3].

Knowledge Transfer with Domain Adaptation: Enriches training samples for task-oriented classifiers by sharing high-quality solutions among different tasks using PCA-based subspace alignment techniques, improving model accuracy and convergence reliability despite limited data [3].

Table 1: Comparative Analysis of EMTO Algorithm Convergence Mechanisms

| Algorithm | Knowledge Transfer Type | Convergence Assurance Mechanism | Applicable Scenario |
|---|---|---|---|
| MFEA | Implicit | Assortative mating and vertical cultural transmission | Tasks with similar representations |
| MFEA-MDSGSS | Explicit | MDS-based subspace alignment and GSS-based local optima avoidance | High-dimensional tasks with differing dimensionalities |
| SSLT | Self-learning | DQN-based strategy selection based on scenario features | Dynamic environments with varying task relatedness |
| LDA-MFEA | Explicit domain adaptation | Linear transformation to shared representation space | Homogeneous or heterogeneous tasks |
| CA-MTO | Classifier-assisted | SVC-based solution prescreening with cross-task sample transfer | Expensive optimization problems |

Experimental Analysis of Convergence Performance

Benchmarking Methodologies and Metrics

Rigorous experimental evaluation is essential for analyzing the convergence performance of EMTO algorithms. Standard benchmarking approaches utilize both synthetic and real-world problems to assess various convergence aspects:

Single-Objective MTO Benchmarks: Test problems designed to evaluate convergence speed and accuracy for tasks with single objectives, measuring performance metrics such as convergence generations, solution quality at termination, and success rates in locating global optima [9].

Multi-Objective MTO Benchmarks: Problems with multiple conflicting objectives that introduce additional convergence challenges, requiring algorithms to approximate Pareto-optimal fronts across multiple tasks simultaneously [9].

Real-World Applications: Complex problems from engineering and scientific domains, such as interplanetary trajectory design missions, which feature challenging characteristics like extreme non-linearity, massively deceptive local optima, and sensitivity to initial conditions [6].

Standard convergence metrics include convergence speed (number of generations or function evaluations to reach target accuracy), solution quality (deviation from known optima or hypervolume for multi-objective problems), and consistency (standard deviation of performance across multiple runs) [9] [6].

Quantitative Performance Comparison

Experimental studies demonstrate the superior convergence performance of advanced EMTO algorithms compared to both traditional single-task EAs and earlier multitasking approaches:

In comprehensive evaluations on single-objective and multi-objective MTO benchmarks, MFEA-MDSGSS performed better than the state-of-the-art algorithms it was compared against [9]. The algorithm's integration of MDS-based subspace alignment and GSS-based local optima avoidance contributed to its enhanced convergence characteristics, particularly for tasks with differing dimensionalities.

For the SSLT framework, experiments conducted on two sets of MTO problems and real-world interplanetary trajectory design missions confirmed the favorable performance of SSLT-based algorithms against competitors [6]. The framework's self-learning capability to select appropriate transfer strategies based on evolutionary scenarios proved essential for maintaining convergence across diverse task relationships.

In expensive optimization scenarios, the classifier-assisted CA-MTO algorithm demonstrated significant superiority over general CMA-ES in terms of both robustness and scalability, with the knowledge transfer strategy further helping it earn a competitive edge over state-of-the-art algorithms on expensive multitasking optimization problems [3].

Table 2: Convergence Performance Comparison Across EMTO Algorithms

| Algorithm | Convergence Speed | Solution Quality | Negative Transfer Resistance | Computational Efficiency |
|---|---|---|---|---|
| Standard MFEA | Moderate | High for related tasks | Low | High |
| MFEA-MDSGSS | High | High | High | Moderate |
| SSLT Framework | High | High | High | Moderate |
| LDA-MFEA | High | High | Moderate | Moderate |
| CA-MTO | Moderate-High | High | High | High for expensive problems |

Ablation Studies and Component Analysis

Ablation studies provide crucial insights into how individual components contribute to overall convergence performance. For MFEA-MDSGSS, ablation experiments confirmed the contribution of both the MDS-based LDA and the GSS-based linear mapping strategy to the algorithm's performance [9]. The MDS-based LDA was particularly effective in mitigating negative transfer in high-dimensional multitasking, while the GSS strategy helped the search avoid convergence to local optima.

Similar component analysis for the SSLT framework validated the importance of its four scenario-specific strategies and the DQN-based selection mechanism for maintaining convergence across diverse evolutionary scenarios [6]. The ensemble method for characterizing scenarios based on intra-task and inter-task features proved essential for appropriate strategy selection.

The Research Toolkit: Essential Methodologies for EMTO Convergence Analysis

Table 3: Research Reagent Solutions for EMTO Convergence Analysis

| Research Tool | Function in Convergence Analysis | Implementation Considerations |
|---|---|---|
| Multidimensional Scaling (MDS) | Aligns latent subspaces for knowledge transfer between tasks | Dimensionality selection, distance metric definition |
| Golden Section Search (GSS) | Prevents local optima convergence and maintains diversity | Section ratio parameter, application frequency |
| Deep Q-Network (DQN) | Learns optimal transfer strategy selection policies | State representation, reward function design |
| Linear Domain Adaptation (LDA) | Enables knowledge transfer between heterogeneous tasks | Transformation matrix learning, subspace alignment |
| Principal Component Analysis (PCA) | Reduces decision space dimensionality for efficient transfer | Variance retention threshold, component selection |
| Support Vector Classifier (SVC) | Prescreens solutions in expensive optimization problems | Kernel selection, hyperparameter tuning |
| Covariance Matrix Adaptation | Maintains effective search distribution in continuous spaces | Step size control, population size settings |
| Skill Factor Encoding | Tracks task specialization within unified population | Factorization method, inheritance mechanisms |

| Skill Factor Encoding | Tracks task specialization within unified population | Factorization method, inheritance mechanisms |

Visualization of EMTO Convergence Pathways

EMTO Convergence Pathway: This diagram illustrates the complex decision process in evolutionary multitask optimization, highlighting key convergence checkpoints and transfer strategy selection mechanisms.

Knowledge Transfer Mechanisms: This diagram details the core knowledge transfer process in advanced EMTO algorithms, highlighting subspace alignment and negative transfer prevention components critical for convergence assurance.

The theoretical analysis of EMTO convergence reveals a complex landscape where traditional single-task convergence theories must be extended to account for the dynamics of knowledge transfer between tasks. The progression from basic MFEA to advanced frameworks like MFEA-MDSGSS and SSLT demonstrates significant improvements in convergence speed, solution quality, and robustness against negative transfer. Key advancements include subspace alignment techniques for handling heterogeneous tasks, self-learning mechanisms for adaptive strategy selection, and classifier-assisted approaches for expensive optimization problems.

Future research directions in EMTO convergence analysis should focus on several promising areas. First, more sophisticated theoretical frameworks are needed to formally characterize convergence guarantees in multitask environments, particularly for algorithms with complex transfer mechanisms. Second, quantum-inspired evolutionary approaches for multitask optimization have been proposed as a route to substantially faster convergence, although the size of any speedup remains to be established [26]. Third, the integration of EMTO with emerging machine learning paradigms, such as meta-learning and neural architecture search, could yield new insights into cross-domain knowledge transfer and convergence behavior.

As EMTO continues to evolve, its convergence properties will remain a central focus of theoretical analysis and empirical validation. The field is poised to make significant contributions to complex optimization challenges across scientific and engineering domains, with convergence assurance serving as the cornerstone of these advancements.

Advanced Algorithms and Speed Enhancement Techniques in EMTO

Evolutionary Multitasking Optimization (EMTO) represents a paradigm shift in evolutionary computation, enabling the concurrent solution of multiple optimization tasks within a single algorithmic run. By exploiting potential synergies and complementarities between tasks, EMTO aims to improve the overall convergence characteristics and optimization efficiency across all problems. The fundamental principle behind this approach is the transfer of knowledge across tasks, which allows promising search directions or genetic material from one task to implicitly guide the exploration of other related tasks [27] [8]. This methodology has shown particular promise in complex real-world domains such as drug design and development, where researchers often need to optimize multiple molecular properties simultaneously, including binding affinity, solubility, synthetic accessibility, and toxicity profiles [28] [29] [30].

Despite considerable advancements in EMTO, a significant limitation persists in many existing algorithms: their reliance on a single evolutionary search operator (ESO) throughout the entire optimization process. Traditional multifactorial evolutionary algorithms typically utilize either genetic algorithms (GA) or differential evolution (DE) operators exclusively, without adapting to the distinct characteristics of different optimization tasks [27]. This one-size-fits-all approach fails to account for the varying landscape properties of different optimization problems, where no single operator performs optimally across all task types. For instance, empirical studies on the CEC17 MTO benchmarks have demonstrated that DE/rand/1 operators outperform GA operators on complete-intersection, high-similarity (CIHS) and complete-intersection, medium-similarity (CIMS) problems, while GA operators show superior performance on complete-intersection, low-similarity (CILS) problems [27] [8]. This performance variability underscores the fundamental limitation of single-operator approaches and highlights the need for more adaptive strategies.

The Bi-operator Evolutionary Algorithm for Multitasking (BOMTEA) represents a strategic response to these limitations. By integrating multiple evolutionary search operators and implementing an adaptive selection mechanism, BOMTEA dynamically adjusts its search behavior according to operator performance across different tasks and evolutionary stages. This adaptive capability allows the algorithm to overcome the performance plateaus often encountered by fixed-operator approaches, particularly when tackling diverse optimization problems with varying characteristics within a multitasking environment [27].

Fundamental Concepts and Algorithmic Framework

Core Components of Evolutionary Multitasking

In a typical evolutionary multitasking scenario, K distinct optimization tasks are solved simultaneously. Each task T~i~ (where i = 1, 2, ..., K) possesses its own search space Ω~i~ and objective function F~i~: Ω~i~ → ℝ. The collective goal of EMTO is to discover a set of optimal solutions {x~1~*, x~2~*, ..., x~K~*} that satisfies the condition specified in Equation 1 [27] [8]:

$$ \{x_1^*, x_2^*, \ldots, x_K^*\} = \arg\min \{F_1(x_1), F_2(x_2), \ldots, F_K(x_K)\} $$

To enable effective comparison and selection of individuals across multiple tasks within a unified population, EMTO algorithms employ several key concepts. The factorial cost represents an individual's performance on a specific task, incorporating both objective value and constraint violations. The factorial rank establishes a hierarchical ordering of individuals for each task based on their factorial costs. Each individual is assigned a skill factor indicating the task on which it performs best, and a scalar fitness value provides a unified measure of quality across all tasks [17].
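These quantities can be computed directly from a matrix of factorial costs. The sketch below assumes the definitions commonly used with MFEA, which the text above does not spell out: the factorial rank is the 1-based position of an individual when the population is sorted by cost on a task, the skill factor is the task with the best (lowest) rank, and the scalar fitness is the reciprocal of that best rank. The function names are illustrative.

```python
from typing import List, Tuple


def factorial_ranks(costs: List[List[float]]) -> List[List[int]]:
    """costs[i][j] is the factorial cost of individual i on task j (lower is better).
    Returns ranks[i][j], the 1-based rank of individual i on task j."""
    n, k = len(costs), len(costs[0])
    ranks = [[0] * k for _ in range(n)]
    for j in range(k):
        order = sorted(range(n), key=lambda i: costs[i][j])
        for position, i in enumerate(order, start=1):
            ranks[i][j] = position
    return ranks


def skill_factors_and_fitness(costs: List[List[float]]) -> Tuple[List[int], List[float]]:
    """Skill factor (0-based task index of the best rank) and scalar fitness
    (1 / best rank) for every individual."""
    ranks = factorial_ranks(costs)
    skill = [min(range(len(r)), key=lambda j: r[j]) for r in ranks]
    fitness = [1.0 / min(r) for r in ranks]
    return skill, fitness


# Three individuals evaluated on two tasks (hypothetical costs).
costs = [[1.0, 9.0], [2.0, 3.0], [5.0, 4.0]]
skill, fitness = skill_factors_and_fitness(costs)
print(skill)    # [0, 1, 1]
print(fitness)  # [1.0, 1.0, 0.5]
```

Because the scalar fitness depends only on ranks, individuals specializing in tasks with very different objective scales remain comparable within one population.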

Search Operators in Evolutionary Computation

BOMTEA primarily leverages two prominent evolutionary search operators: Differential Evolution (DE) and Simulated Binary Crossover (SBX) from Genetic Algorithms. The DE algorithm employs a differential mutation strategy that generates new candidate solutions by combining scaled differences between existing population members. The DE/rand/1 variant, commonly used in BOMTEA, follows the mutation scheme in Equation 2 [27] [8]:

$$ v_i = x_{r1} + F \cdot (x_{r2} - x_{r3}) $$

where v~i~ represents the mutated individual, x~r1~, x~r2~, and x~r3~ are distinct randomly selected individuals from the population, and F denotes the scaling factor. Following mutation, DE performs a crossover operation between the mutated individual v~i~ and the original individual x~i~ to produce a trial vector u~i~. Finally, a selection operation determines whether the trial vector or the original individual survives to the next generation based on their objective function values [27] [8].
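The mutation, crossover, and selection steps described above can be sketched as one DE generation. This is an illustrative single-task version, not BOMTEA's implementation: binomial crossover is assumed because the text does not name the crossover variant, and the default values of `f` and `cr` are placeholders.

```python
import random
from typing import Callable, List, Optional

Vector = List[float]


def de_rand_1_bin_step(population: List[Vector],
                       objective: Callable[[Vector], float],
                       f: float = 0.5, cr: float = 0.9,
                       rng: Optional[random.Random] = None) -> List[Vector]:
    """One generation of DE/rand/1 with binomial crossover (minimization)."""
    rng = rng or random.Random()
    n, dim = len(population), len(population[0])
    if n < 4:
        raise ValueError("DE/rand/1 needs at least 4 individuals")
    next_population = []
    for i, target in enumerate(population):
        # Three distinct donors, all different from the target individual.
        r1, r2, r3 = rng.sample([j for j in range(n) if j != i], 3)
        mutant = [population[r1][d] + f * (population[r2][d] - population[r3][d])
                  for d in range(dim)]
        # Binomial crossover: j_rand guarantees at least one gene from the mutant.
        j_rand = rng.randrange(dim)
        trial = [mutant[d] if (rng.random() < cr or d == j_rand) else target[d]
                 for d in range(dim)]
        # Greedy one-to-one selection between trial and target.
        next_population.append(trial if objective(trial) <= objective(target) else target)
    return next_population
```

Because selection is one-to-one and greedy, no individual's objective value can get worse from one generation to the next.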

In contrast, Simulated Binary Crossover (SBX) operates on pairs of parent solutions to produce offspring that preserve the parents' genetic information while exploring new regions of the search space. SBX employs a probability distribution to generate offspring near parent solutions, with the spread of offspring controlled by a distribution index parameter η~c~. The offspring solutions c~1~ and c~2~ are generated from parents p~1~ and p~2~ according to Equations 3 and 4 [27] [8]:

$$ c_{1,i} = \frac{1}{2} \left[ (1-\beta_i) \cdot p_{1,i} + (1+\beta_i) \cdot p_{2,i} \right] $$

$$ c_{2,i} = \frac{1}{2} \left[ (1+\beta_i) \cdot p_{1,i} + (1-\beta_i) \cdot p_{2,i} \right] $$

where β~i~ is a sample from a probability distribution that favors values near 1, ensuring that offspring solutions maintain a similar spread to their parents while allowing controlled exploration of the search space [27] [8].
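A minimal sketch of Equations 3 and 4 follows. The text does not give the sampling rule for β~i~, so the standard SBX rule based on a uniform random number u and the distribution index η~c~ is assumed here. Variable bounds and per-gene crossover probabilities are omitted for brevity.

```python
import random
from typing import List, Optional, Tuple


def sbx_beta(u: float, eta_c: float) -> float:
    """Spread factor for a uniform sample u in [0, 1) (standard SBX rule)."""
    if u <= 0.5:
        return (2.0 * u) ** (1.0 / (eta_c + 1.0))
    return (1.0 / (2.0 * (1.0 - u))) ** (1.0 / (eta_c + 1.0))


def sbx_crossover(p1: List[float], p2: List[float], eta_c: float = 2.0,
                  rng: Optional[random.Random] = None) -> Tuple[List[float], List[float]]:
    """Produce two offspring from two parents, gene by gene."""
    rng = rng or random.Random()
    c1, c2 = [], []
    for a, b in zip(p1, p2):
        beta = sbx_beta(rng.random(), eta_c)
        c1.append(0.5 * ((1.0 - beta) * a + (1.0 + beta) * b))
        c2.append(0.5 * ((1.0 + beta) * a + (1.0 - beta) * b))
    return c1, c2
```

For every gene the two offspring sum to the same value as the two parents, so the pair stays centered on the parents' midpoint. A larger η~c~ concentrates β~i~ near 1 and keeps offspring closer to the parents.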

BOMTEA: Algorithmic Architecture and Workflow

Core Mechanism and Adaptive Operator Selection

BOMTEA's innovative approach centers on its adaptive bi-operator strategy, which dynamically balances the utilization of DE and SBX operators based on their demonstrated performance throughout the evolutionary process. Unlike previous multi-operator approaches that employed fixed or random operator selection mechanisms, BOMTEA implements a performance-sensitive probability adjustment system that continuously monitors the effectiveness of each operator and allocates reproductive opportunities accordingly [27].

The algorithm maintains separate selection probabilities for each evolutionary search operator, initialized to equal values. As evolution progresses, these probabilities are periodically updated based on the quality of offspring produced by each operator. Operators that consistently generate offspring with superior fitness values receive increased selection probabilities, while underperforming operators see their probabilities diminished. This adaptive learning mechanism enables BOMTEA to automatically identify the most suitable operator for different tasks and evolutionary stages without requiring prior knowledge of problem characteristics [27].

The mathematical formulation of this adaptive mechanism operates through a credit assignment system that tracks the success rate of each operator in producing offspring that survive to subsequent generations. The selection probability P~op~ for operator op is updated according to Equation 5:

$$ P_{op} = \frac{S_{op}}{\sum_{j=1}^{N_{op}} S_j} $$

where S~op~ represents the success count of operator op, and N~op~ denotes the total number of operators. This probability update occurs at regular intervals throughout the evolutionary process, allowing BOMTEA to rapidly respond to changing search landscape characteristics [27].
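Equation 5 amounts to normalizing the success counts, which can be sketched as below. The uniform fallback when no operator has recorded a success yet is an assumption added here to avoid division by zero; the function names are hypothetical.

```python
import random


def update_operator_probabilities(success_counts):
    """Map per-operator success counts to selection probabilities (Eq. 5)."""
    total = sum(success_counts.values())
    if total == 0:
        # Assumed fallback: no successes recorded yet, so stay uniform.
        n = len(success_counts)
        return {op: 1.0 / n for op in success_counts}
    return {op: s / total for op, s in success_counts.items()}


def choose_operator(probabilities, rng=random):
    """Sample one operator name according to its selection probability."""
    ops = list(probabilities)
    weights = [probabilities[op] for op in ops]
    return rng.choices(ops, weights=weights, k=1)[0]


# Illustrative counts: DE produced 30 surviving offspring, SBX produced 10.
probs = update_operator_probabilities({"DE": 30, "SBX": 10})
picked = choose_operator(probs, rng=random.Random(0))
```

Note that a pure ratio can drive an operator's probability to zero, after which it can never recover. A practical implementation might therefore enforce a small lower bound on each probability; whether BOMTEA does so is not stated in the text.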

Knowledge Transfer Strategy

BOMTEA incorporates a sophisticated knowledge transfer mechanism that facilitates information exchange between different optimization tasks. This transfer occurs through the assortative mating procedure, where individuals with different skill factors may undergo crossover with a specified probability, known as the random mating probability (rmp) [27] [17].

The knowledge transfer strategy in BOMTEA includes safeguards against negative transfer, the phenomenon where the exchange of genetic material between incompatible tasks degrades performance. To mitigate this risk, the algorithm employs a transfer adaptation mechanism that monitors the success of cross-task transfers and adjusts the rmp parameter accordingly. Successful transfers that produce offspring with improved fitness lead to maintained or increased cross-task interaction, while unsuccessful transfers result in reduced transfer rates between incompatible tasks [27].
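The mating rule and the idea of adjusting rmp from observed transfer success can be sketched as follows. The `allow_crossover` rule follows the assortative mating description above. The `adapt_rmp` update (fixed step, 50% success threshold, clamping bounds) is a hypothetical rule chosen purely for illustration, since the source does not give BOMTEA's actual formula.

```python
import random


def allow_crossover(skill_a, skill_b, rmp, rng=random):
    """Assortative mating: same-task parents always mate;
    cross-task parents mate with probability rmp."""
    return skill_a == skill_b or rng.random() < rmp


def adapt_rmp(rmp, successes, attempts, step=0.05, lo=0.05, hi=0.95):
    """Hypothetical rmp update, not the published rule.

    Raises rmp when at least half of the cross-task offspring improved
    on their parents, lowers it otherwise, and clamps to [lo, hi].
    """
    if attempts == 0:
        return rmp  # No cross-task transfers observed; leave rmp unchanged.
    success_rate = successes / attempts
    rmp = rmp + step if success_rate >= 0.5 else rmp - step
    return min(hi, max(lo, rmp))


rmp_up = adapt_rmp(0.30, successes=8, attempts=10)    # transfers mostly helped
rmp_down = adapt_rmp(0.30, successes=1, attempts=10)  # transfers mostly hurt
```

Clamping rmp away from 0 keeps a small amount of cross-task mating alive, so that a pair of tasks that becomes useful later in the run can still be detected.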

Table 1: Key Components of BOMTEA Architecture

| Component | Implementation in BOMTEA | Advantage over Static Approaches |
|---|---|---|
| Operator Pool | DE/rand/1 + SBX | Combines exploration strength of DE with exploitation capability of GA |
| Selection Mechanism | Adaptive probability based on operator performance | Dynamically identifies optimal operator for each task |
| Knowledge Transfer | Adaptive random mating probability (rmp) | Balances transfer benefits against negative transfer risks |
| Population Management | Unified search space with skill factor tagging | Enables implicit knowledge sharing while maintaining task specificity |

Workflow Visualization

[Figure: BOMTEA workflow, highlighting the adaptive operator selection mechanism and the knowledge transfer process.]

Experimental Analysis and Performance Comparison

Benchmark Protocols and Evaluation Metrics

The performance evaluation of BOMTEA employs well-established multitasking optimization benchmarks, primarily the CEC17 and CEC22 test suites, which provide standardized problem sets for comparative analysis of evolutionary multitasking algorithms [27] [8]. These benchmarks encompass diverse problem characteristics, including complete-intersection high-similarity (CIHS), complete-intersection medium-similarity (CIMS), and complete-intersection low-similarity (CILS) problem types, allowing comprehensive assessment of algorithm performance across varying levels of inter-task relatedness [27].

Experimental protocols typically follow a standardized evaluation framework where all competing algorithms are executed with identical population sizes, function evaluation limits, and termination criteria to ensure fair comparison. The population size is commonly set to 30 individuals per task, with algorithms running until a predetermined maximum number of function evaluations is reached [27].

Performance quantification employs multiple metrics to capture different aspects of algorithmic effectiveness. The average accuracy measures solution quality across all tasks, while convergence speed assesses how rapidly algorithms approach near-optimal solutions. Additionally, task similarity metrics help quantify the degree of complementarity between optimization tasks, providing insights into the conditions under which knowledge transfer proves most beneficial [27] [17].
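One simple way to quantify convergence speed is the number of function evaluations needed to reach a target objective value. The sketch below assumes minimization and a recorded convergence history of `(evaluations_used, best_value_so_far)` pairs. The two histories are made-up numbers for illustration only and are not experimental results.

```python
def evaluations_to_target(history, target):
    """Return the first evaluation count at which the best-so-far
    objective value is at or below target, or None if never reached."""
    for evaluations, best_value in history:
        if best_value <= target:
            return evaluations
    return None


def mean_final_error(final_errors):
    """Average final error across tasks (lower is better)."""
    return sum(final_errors) / len(final_errors)


# Hypothetical convergence histories: (evaluations used, best value so far).
single_task = [(100, 5.0), (200, 1.2), (300, 0.4), (400, 0.09)]
multitask = [(100, 3.0), (200, 0.5), (300, 0.08), (400, 0.01)]

evals_single = evaluations_to_target(single_task, target=0.1)
evals_multi = evaluations_to_target(multitask, target=0.1)
speedup = evals_single / evals_multi  # > 1 means the multitask run was faster
```

Reporting evaluations-to-target alongside final accuracy separates two questions that a single end-of-run number conflates: how good the final solution is, and how quickly a usable solution was found.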

Comparative Performance Analysis

Experimental studies demonstrate that BOMTEA significantly outperforms single-operator evolutionary multitasking algorithms across diverse problem types. The following table summarizes the comparative performance of BOMTEA against prominent alternative algorithms on the CEC17 and CEC22 benchmark suites:

Table 2: Performance Comparison of BOMTEA Against Competing Algorithms

| Algorithm | Operator Strategy | CIHS Performance | CIMS Performance | CILS Performance | Overall Ranking |
|---|---|---|---|---|---|
| BOMTEA | Adaptive DE + SBX | Superior | Superior | Superior | 1st |
| MFEA | GA only | Moderate | Low | High | 3rd |
| MFDE | DE/rand/1 only | High | High | Moderate | 4th |
| EMEA | Fixed DE + GA | High | High | High | 2nd |
| RLMFEA | Random DE/GA | Moderate | Moderate | Moderate | 5th |

The performance advantages of BOMTEA are particularly pronounced in scenarios involving tasks with differing landscape characteristics, where the adaptive operator selection mechanism successfully identifies the most appropriate search operator for each task. On complete-intersection high-similarity (CIHS) problems, BOMTEA leverages the strengths of DE operators to achieve rapid convergence, while on complete-intersection low-similarity (CILS) problems, it automatically increases the utilization of SBX operators to maintain population diversity and avoid premature convergence [27].

The convergence speed analysis reveals that BOMTEA reaches solution quality comparable to that of single-operator approaches while using significantly fewer function evaluations, demonstrating its efficiency in leveraging operator complementarity. This accelerated convergence is attributed to the avoidance of the performance plateaus that commonly afflict single-operator approaches when they face diverse optimization tasks [27].

Application in Drug Design and Development

The principles implemented in BOMTEA find natural application in drug design and development, where researchers frequently need to optimize multiple molecular properties simultaneously. Evolutionary multitasking approaches enable the concurrent optimization of compounds for multiple target proteins or the simultaneous consideration of efficacy, safety, and synthesizability criteria [28] [29] [30].

In computer-aided drug design, molecular optimization is typically formulated as a multi-objective problem with competing criteria. Common objectives include quantitative estimate of drug-likeness (QED), synthetic accessibility (SA) score, biological activity against specific targets, and avoidance of toxicity endpoints. BOMTEA's adaptive operator strategy proves particularly valuable in this context due to the diverse landscape characteristics of different objective functions, which may benefit from different search operators throughout the optimization process [29].

Case studies in evolutionary multitasking fuzzy cognitive map learning for gene regulatory network reconstruction demonstrate the practical utility of BOMTEA's approach in biological domains. These applications involve learning multiple fuzzy cognitive maps simultaneously, where each map represents causal relationships between different biological entities. The adaptive knowledge transfer mechanism enables the sharing of common substructures or patterns across related learning tasks, significantly accelerating the convergence compared to single-task learning approaches [28].

Research Reagents and Computational Tools

The experimental validation of evolutionary multitasking algorithms like BOMTEA relies on specialized computational frameworks and benchmark resources. The following table outlines key components of the research toolkit for evolutionary multitasking studies:

Table 3: Essential Research Resources for Evolutionary Multitasking Studies

| Resource Category | Specific Tools | Function in Research | Application Context |
|---|---|---|---|
| Benchmark Suites | CEC17, CEC22 MTO Benchmarks | Standardized performance assessment | Algorithm comparison and validation |
| Molecular Representations | SELFIES, SMILES | Chemical structure encoding | Drug design applications |
| Drug-likeness Metrics | QED, SA Score | Compound quality evaluation | Multi-objective molecular optimization |
| Optimization Frameworks | MFEA, MFEA-II, MTGA | Baseline algorithm implementation | Performance benchmarking |
| Biological Networks | DREAM3, DREAM4 | Gene regulatory network reconstruction | Validation on biological datasets |

The CEC17 and CEC22 benchmark suites provide standardized problem sets specifically designed for evolutionary multitasking research, enabling direct comparison between different algorithmic approaches. These benchmarks include problems with varying degrees of inter-task similarity and complementarity, allowing researchers to assess algorithm performance under diverse multitasking scenarios [27] [8].

In drug design applications, molecular representation schemes such as SELFIES (SELF-referencing Embedded Strings) offer significant advantages over traditional SMILES representations by guaranteeing chemical validity of all generated structures, thereby improving optimization efficiency in evolutionary algorithms [29].

BOMTEA represents a significant advancement in evolutionary multitasking optimization through its innovative adaptive bi-operator strategy. By dynamically balancing the utilization of differential evolution and genetic algorithm operators based on their demonstrated performance, BOMTEA overcomes fundamental limitations of fixed-operator approaches and establishes a new state-of-the-art in multitasking optimization performance.

The algorithm's robust performance across diverse benchmark problems, particularly on the standardized CEC17 and CEC22 test suites, demonstrates its effectiveness in harnessing operator complementarity to accelerate convergence and improve solution quality. The adaptive operator selection mechanism enables BOMTEA to automatically tailor its search strategy to the characteristics of different optimization tasks without requiring prior knowledge or manual parameter tuning.

For drug development professionals and researchers, BOMTEA's methodology offers promising avenues for addressing complex multi-objective optimization challenges inherent in molecular design. The ability to simultaneously optimize multiple compound properties while adapting search behavior to the specific characteristics of each objective aligns closely with the practical requirements of computer-aided drug design.

Future research directions include extending the adaptive framework to incorporate broader sets of evolutionary operators, developing more sophisticated transfer learning mechanisms to enhance cross-task knowledge exchange, and applying BOMTEA to large-scale real-world drug design problems with numerous competing objectives. As evolutionary multitasking continues to evolve, adaptive operator strategies like those implemented in BOMTEA will play an increasingly crucial role in addressing the complex optimization challenges across scientific and engineering domains.

Domain adaptation (DA) addresses a fundamental challenge in machine learning: models trained on a source domain often experience significant performance degradation when applied to a target domain with different data distributions, a phenomenon known as domain shift [31] [32]. This problem is particularly acute in fields like drug development and biomedical research, where high-dimensional omics data and medical images exhibit substantial variability across institutions, patients, and experimental conditions [33] [32].