A Practical Guide to (μ,λ) Evolution Strategies: From Core Concepts to Biomedical Applications


Abstract

This tutorial provides a comprehensive exploration of (μ,λ) Evolution Strategies, a powerful class of stochastic optimization algorithms inspired by natural evolution. Tailored for researchers and drug development professionals, the article begins with foundational concepts and taxonomy, then progresses to practical implementation and parameter tuning. It covers advanced optimization techniques, comparative analysis with other algorithms, and concludes with specific applications and future directions in biomedical research, including drug design and clinical optimization challenges.

The Foundations of Evolution Strategies: From Biological Inspiration to Optimization Powerhouse

Evolution Strategies (ES) emerged in the 1960s as a novel optimization technique inspired by biological evolution, developed primarily by Ingo Rechenberg and Hans-Paul Schwefel at the Technical University of Berlin [1] [2]. Their work originated from practical engineering challenges that resisted solution by traditional optimization methods, leading them to formulate algorithms that mimicked the principles of natural selection [3] [4]. Unlike other evolutionary algorithms that developed during this period, ES initially focused on phenotypic-level evolution rather than genetic mechanisms, operating directly on continuous parameters without chromosomal abstractions [2] [4]. This approach distinguished ES from Genetic Algorithms (GAs) and Evolutionary Programming (EP), though all three methodologies would later be recognized as subclasses of Evolutionary Algorithms (EAs) [2].

The foundational philosophy behind ES was to subject a population of candidate solutions to simulated biological evolution, where new solutions were generated through random mutation of parameters and selected based on their performance [2]. This process enabled the solution of difficult optimization problems where standard methods failed due to noisy evaluations, lack of mathematical formulations, or complex search landscapes with multiple local optima [2]. The early success of ES in solving real-world engineering problems demonstrated its practical value and established it as a significant contribution to the field of optimization.

The Pioneers and Their Foundational Work

Key Innovators and Historical Context

The development of Evolution Strategies was primarily driven by Ingo Rechenberg, Hans-Paul Schwefel, and their colleague Bienert, who began collaborating in 1964 as students at the Technical University of Berlin [4]. Their pioneering work was motivated by the challenge of optimizing aerodynamic designs, particularly minimizing drag in wind tunnel experiments [3] [4]. This practical engineering context significantly influenced the development of ES, shaping it into a technique well-suited for the continuous parameter optimization problems commonly encountered in engineering disciplines [5].

The seminal work in Evolution Strategies began with Rechenberg's PhD thesis in 1971, which was later published as a book in 1973 [1] [4]. Schwefel followed with his own PhD dissertation in 1975, also published as a book in 1977 [4]. Both works were originally published in German, with Schwefel's book later translated into English, becoming a classical reference for the technique [4]. The earliest English-language paper on ES was published by Klockgether and Schwefel in 1970, focusing on the two-phase nozzle design problem that served as a key test case for the methodology [4].

Table: Key Historical Milestones in Early Evolution Strategies Development

| Year | Development | Key Contributors | Significance |
|---|---|---|---|
| 1964 | Initial development of ES | Bienert, Rechenberg, Schwefel | First application to aerodynamic design optimization [4] |
| 1970 | Two-phase nozzle problem paper | Klockgether, Schwefel | Early English-language publication demonstrating ES applications [4] |
| 1971 | Rechenberg's PhD thesis | Rechenberg | First comprehensive theoretical foundation for ES [4] |
| 1973 | "Evolutionsstrategie – Optimierung technischer Systeme nach Prinzipien der biologischen Evolution" published | Rechenberg | Seminal book establishing ES as an optimization methodology [1] |
| 1975 | Schwefel's PhD dissertation | Schwefel | Extended theoretical framework and applications [4] |
| 1977 | "Numerische Optimierung von Computer-Modellen mittels der Evolutionsstrategie" published | Schwefel | Comprehensive book on numerical optimization using ES [4] |

The First Evolution Strategy: (1+1)-ES

The simplest and earliest Evolution Strategy was the (1+1)-ES, which operated on a single parent that generated a single offspring each generation [1] [5]. In this approach, the algorithm selected the better of the parent or offspring to continue to the next generation, effectively implementing a form of greedy hill climbing with an evolutionary selection mechanism [3] [4]. The (1+1)-ES relied solely on mutation as the genetic operator, with each variable typically perturbed using Gaussian-distributed random numbers [2] [5].

A key innovation in the early ES was the 1/5 success rule, developed to adapt the mutation step size during the search process [4] [5]. This heuristic rule stated that the ratio of successful mutations to all mutations should be approximately 1/5 [4]. If the observed success rate was higher, the mutation step size was increased to explore the search space more broadly; if it was lower, the step size was decreased to focus on local refinement [4] [5]. This step-size control mechanism was a crucial advancement: it allowed ES to adjust their search parameters automatically during optimization, balancing exploration and exploitation without manual intervention.
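The rule can be illustrated with a minimal Python sketch of a (1+1)-ES. The adaptation window of 20 generations, the factor 0.85, and the `sphere` test function are illustrative assumptions, not values taken from the sources cited above.

```python
import random


def one_plus_one_es(f, x0, sigma=1.0, generations=500, window=20, factor=0.85, seed=0):
    """Minimise f with a (1+1)-ES whose step size follows the 1/5 success rule."""
    rng = random.Random(seed)
    parent, parent_fit = list(x0), f(x0)
    successes = 0
    for g in range(1, generations + 1):
        # Gaussian mutation of every coordinate with one shared step size.
        child = [xi + sigma * rng.gauss(0.0, 1.0) for xi in parent]
        child_fit = f(child)
        if child_fit < parent_fit:  # keep the better of parent and offspring
            parent, parent_fit = child, child_fit
            successes += 1
        if g % window == 0:
            rate = successes / window
            if rate > 0.2:
                sigma /= factor  # more than 1/5 successes: enlarge the step size
            elif rate < 0.2:
                sigma *= factor  # fewer than 1/5 successes: shrink the step size
            successes = 0
    return parent, parent_fit, sigma


def sphere(x):
    """Hypothetical test objective: sum of squares, minimum at the origin."""
    return sum(xi * xi for xi in x)


best, best_fit, final_sigma = one_plus_one_es(sphere, [5.0, -3.0, 2.0])
print(best_fit, final_sigma)
```

Because the parent is only replaced by a strictly better offspring, the returned fitness can never be worse than that of the starting point.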

Early Engineering Applications and Case Studies

The Two-Phase Nozzle Problem

One of the most significant early applications of Evolution Strategies was the optimization of a two-phase nozzle design, which demonstrated ES's capability to solve complex engineering problems that challenged traditional optimization methods [4]. This problem involved finding the optimal shape for a nozzle that would maximize the velocity of a fluid, requiring optimization in a high-dimensional search space with multiple constraints [4]. The successful application of ES to this problem provided compelling evidence of the method's practical utility and helped establish its credibility within the engineering community.

The two-phase nozzle problem was particularly suitable for ES because it involved continuous parameters, noisy evaluations, and a complex search landscape where gradient-based methods struggled [2]. By using mutation and selection operations directly on the nozzle shape parameters, ES was able to discover novel designs that improved upon conventional solutions [4]. This case study exemplified the ability of evolution-based optimization to produce unexpected yet effective solutions to engineering challenges, a characteristic that would become a hallmark of evolutionary computation approaches.

Wind Tunnel and Aerodynamic Design

The original motivation for developing Evolution Strategies came from aerodynamic design optimization in wind tunnels, where Rechenberg, Schwefel, and their colleagues sought to minimize drag on physical structures [3] [4]. These early applications required manual implementation of the evolutionary operations, with engineers physically modifying designs in the wind tunnel based on the principles of mutation and selection [3]. This hands-on approach necessitated efficient optimization strategies that could converge to good solutions with relatively few evaluations, leading to the development of the robust and sample-efficient (1+1)-ES [4].

In these wind tunnel experiments, each candidate solution represented a physical design that had to be constructed and tested, making evaluation expensive and time-consuming [3]. The ES approach proved valuable in this context because it could make progress toward better designs with fewer evaluations compared to exhaustive search or gradient-based methods that required derivative information [2]. The success of ES in these early engineering applications demonstrated its potential for expensive black-box optimization problems, where objective function evaluations are computationally costly or involve physical experiments.

Algorithmic Framework and Notation

The (μ,λ)-ES and (μ+λ)-ES Variants

Building on the simple (1+1)-ES, Rechenberg and Schwefel developed population-based Evolution Strategies that incorporated multiple parents and offspring, leading to the formal notation that would become standard in the field [1] [3]. The two primary variants were:

(μ,λ)-ES: In this approach, μ parents generate λ offspring through mutation, and the best μ offspring are selected to form the next generation, completely replacing the parents [1] [3]. This comma-selection strategy emphasizes exploration and prevents the retention of older solutions, which can be beneficial for dynamic problems or when continued exploration is desired [4].

(μ+λ)-ES: In this elitist approach, μ parents generate λ offspring, and selection occurs from the union of parents and offspring (μ + λ individuals), with the best μ selected for the next generation [1] [3]. This plus-selection strategy preserves the best solutions found so far, promoting convergence and refinement, which can be advantageous for static optimization problems [4].

The ratio of μ to λ determines the selection pressure within the algorithm: the smaller the fraction μ/λ of offspring that survive, the stronger the selection pressure [4]. A common configuration was μ = λ/2, though optimal settings depended on the specific problem characteristics [1].
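The difference between the two selection schemes can be sketched in a few lines of Python. The function names and the toy one-dimensional candidates are hypothetical, and minimization of `abs` is assumed as the objective.

```python
def comma_selection(offspring, mu, fitness):
    """(mu,lambda): pick the mu best from the offspring only (minimisation)."""
    if len(offspring) < mu:
        raise ValueError("comma selection needs at least mu offspring")
    return sorted(offspring, key=fitness)[:mu]


def plus_selection(parents, offspring, mu, fitness):
    """(mu+lambda): pick the mu best from parents and offspring together."""
    return sorted(parents + offspring, key=fitness)[:mu]


# Toy example: candidates are plain numbers, fitness is the distance from zero.
parents = [0.1, 2.0]
offspring = [0.5, -1.5, 3.0, 0.9]

print(comma_selection(offspring, 2, abs))          # [0.5, 0.9]
print(plus_selection(parents, offspring, 2, abs))  # [0.1, 0.5]
```

In this toy run the best parent (0.1) survives under plus selection but is discarded under comma selection, even though no offspring improves on it.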

Table: Comparison of Early Evolution Strategy Variants

| Characteristic | (1+1)-ES | (μ,λ)-ES | (μ+λ)-ES |
|---|---|---|---|
| Parent Population | 1 | μ | μ |
| Offspring Population | 1 | λ | λ |
| Selection Pool | Parent + Offspring | Offspring only | Parents + Offspring |
| Elitism | Yes | No | Yes |
| Exploration Emphasis | Low | High | Medium |
| Convergence Behavior | Greedy hill climbing | Continued exploration | Refinement and convergence |
| Historical Context | Original ES implementation | Extension to populations | Elitist variant for refinement |

Representation and Mutation Operations

Early Evolution Strategies used natural problem-dependent representations, where the problem space and search space were identical [1]. For continuous optimization problems, which were the primary application domain, candidate solutions were typically represented as real-valued vectors [4] [5]. This direct representation distinguished ES from Genetic Algorithms, which often used binary encodings of parameters [2] [5].

The primary variation operator in ES was Gaussian mutation, where each component of the solution vector was perturbed by adding a zero-mean Gaussian random variable [5]. The standard deviation of this mutation functioned as a step size parameter, controlling the magnitude of changes to the solution [4]. In self-adaptive ES, these strategy parameters (step sizes) were encoded alongside the solution parameters and evolved simultaneously, allowing the algorithm to adapt its search distribution during the optimization process [1] [4]. This self-adaptation mechanism was a distinctive feature of ES and contributed significantly to its performance on complex optimization problems.
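As a rough sketch of self-adaptive mutation, the Python snippet below stores one step size per coordinate next to the solution vector and mutates both together. The `Individual` class, the function name, and the two learning rates (a commonly used textbook choice) are assumptions for illustration, not details given in the cited sources.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Individual:
    x: list      # object parameters (the candidate solution)
    sigma: list  # strategy parameters: one step size per coordinate


def mutate(parent, rng):
    """Return a mutated copy; step sizes are perturbed first, then the solution."""
    n = len(parent.x)
    tau_global = 1.0 / math.sqrt(2.0 * n)            # assumed learning rate
    tau_local = 1.0 / math.sqrt(2.0 * math.sqrt(n))  # assumed learning rate
    shared = tau_global * rng.gauss(0.0, 1.0)        # one draw shared by all coordinates
    new_sigma = [
        s * math.exp(shared + tau_local * rng.gauss(0.0, 1.0)) for s in parent.sigma
    ]
    # Each coordinate receives zero-mean Gaussian noise scaled by its own step size.
    new_x = [xi + si * rng.gauss(0.0, 1.0) for xi, si in zip(parent.x, new_sigma)]
    return Individual(new_x, new_sigma)


rng = random.Random(42)
parent = Individual(x=[1.0, 2.0, 3.0], sigma=[0.5, 0.5, 0.5])
child = mutate(parent, rng)
print(child)
```

The multiplicative log-normal update keeps every step size positive, and an offspring that survives selection passes on the step sizes that produced it.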

The Experimental Protocol of Early ES

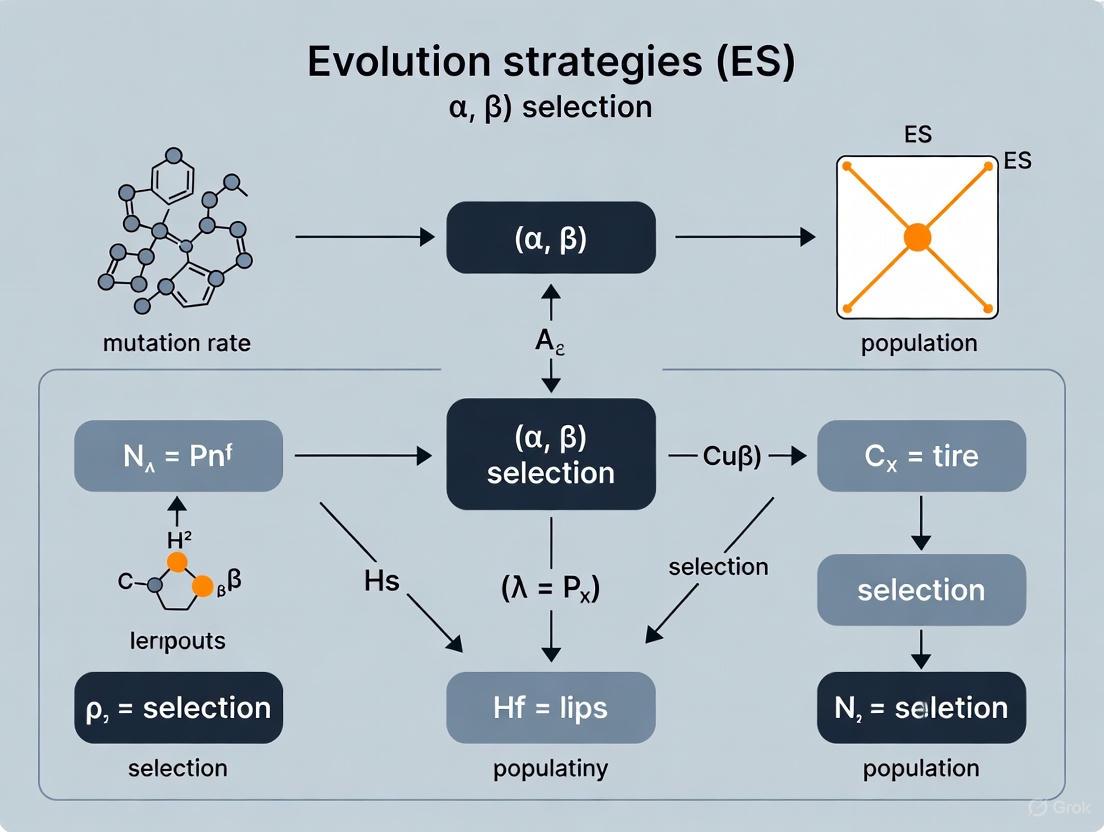

The implementation of early Evolution Strategies followed a systematic procedure that established the framework for subsequent developments in evolutionary computation. The diagram below illustrates the workflow of the (μ,λ)-ES algorithm, which represented a significant advancement over the initial (1+1)-ES approach.

Algorithmic Workflow and Implementation

The experimental protocol for early Evolution Strategies followed a generational approach that iteratively applied mutation and selection operations to improve candidate solutions [3] [4]. The process began with the initialization of a population of μ individuals, typically generated randomly within the defined search space bounds [3]. Each candidate solution was evaluated using the objective function specific to the optimization problem, with the goal of either minimizing or maximizing this function [2].

Following evaluation, the algorithm entered its main generational loop, where λ offspring were generated through mutation operations applied to the parent population [4]. In the simplest case, each parent produced approximately λ/μ offspring through Gaussian mutation of its solution vector [3]. The mutation process typically included mechanisms to ensure that offspring remained within feasible regions of the search space, often through bounded operations or rejection sampling [3]. After evaluating the newly generated offspring, the selection operation identified the most promising individuals to form the next generation, following either the (μ,λ) or (μ+λ) selection strategy [1] [4].
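One way to keep offspring feasible is rejection sampling with a clipping fallback, sketched below in Python. The function name, the `max_tries` limit, and the fallback are assumptions made for illustration; the text above does not prescribe a specific scheme.

```python
import random


def mutate_within_bounds(x, sigma, lower, upper, rng, max_tries=100):
    """Gaussian mutation that resamples until the offspring lies inside the box."""
    candidate = list(x)
    for _ in range(max_tries):
        candidate = [xi + sigma * rng.gauss(0.0, 1.0) for xi in x]
        if all(lo <= c <= hi for c, lo, hi in zip(candidate, lower, upper)):
            return candidate  # feasible offspring found
    # Fallback after too many rejections: clip the last draw onto the bounds.
    return [min(max(c, lo), hi) for c, lo, hi in zip(candidate, lower, upper)]


rng = random.Random(0)
child = mutate_within_bounds([0.9, -0.9], 0.5, [-1.0, -1.0], [1.0, 1.0], rng)
print(child)
```

Rejection sampling keeps the mutation distribution unbiased inside the feasible region, while clipping concentrates points on the boundary; which is preferable depends on the problem.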

This process continued until a termination criterion was satisfied, such as reaching a maximum number of generations, achieving a target solution quality, or observing stagnation in improvement across successive generations [4]. Upon termination, the best solution encountered during the search was returned as the result of the optimization process [3].
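The three termination criteria mentioned above can be combined in a small helper, sketched here in Python. The function name, the `patience` and `tol` parameters, and the assumption that `history` holds the best-so-far fitness after each generation (for minimization) are all illustrative.

```python
def should_stop(history, max_generations, target=None, patience=20, tol=1e-12):
    """Return the reason to stop, or None to continue.

    history: best-so-far fitness after each generation (minimisation).
    """
    generation = len(history)
    if generation >= max_generations:
        return "max_generations"
    if target is not None and history and history[-1] <= target:
        return "target_reached"
    # Stagnation: best-so-far improved by at most tol over the last `patience` generations.
    if generation > patience and history[-patience - 1] - history[-1] <= tol:
        return "stagnation"
    return None


print(should_stop([5.0, 4.0, 3.0], max_generations=3))       # max_generations
print(should_stop([5.0, 0.5], 100, target=1.0))              # target_reached
print(should_stop([1.0] * 30, 100, patience=20))             # stagnation
print(should_stop([3.0, 2.0, 1.0], 100))                     # None
```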

The Researcher's Toolkit: Essential Components

The implementation and application of early Evolution Strategies required several key components that formed the essential "research toolkit" for engineers and scientists working with these algorithms.

Table: Essential Components for Early Evolution Strategies Research

| Component | Function | Implementation Notes |
|---|---|---|
| Representation Scheme | Encoded candidate solutions | Real-valued vectors for continuous parameters [4] [5] |
| Mutation Operator | Generated new candidate solutions | Gaussian perturbation with adaptable step sizes [4] [5] |
| Selection Mechanism | Selected promising solutions | (μ,λ) or (μ+λ) selection strategies [1] [3] |
| Step Size Adaptation | Controlled mutation magnitude | 1/5 success rule or self-adaptive parameters [4] [5] |
| Constraint Handling | Maintained feasible solutions | Bound checking, rejection, or penalty methods [3] |
| Fitness Evaluation | Assessed solution quality | Problem-specific objective function [2] |

The representation scheme formed the foundation of ES implementations, with real-valued vectors directly encoding the parameters to be optimized [5]. The mutation operator introduced variation into the population, typically through Gaussian perturbation of solution vectors [4]. The selection mechanism implemented the evolutionary pressure that drove improvement across generations, with the specific strategy (comma or plus selection) influencing the algorithm's exploration-exploitation balance [1]. Step size adaptation mechanisms, such as the 1/5 success rule, enabled the algorithm to automatically adjust the magnitude of mutations during the search process [4] [5]. Constraint handling techniques ensured that solutions remained within feasible regions, often through bound checking and rejection of invalid candidates [3]. Finally, the fitness evaluation function encapsulated the specific optimization problem being solved, providing the quantitative measure that guided the search process [2].

Comparative Analysis with Contemporary Methods

During the same period that Rechenberg and Schwefel developed Evolution Strategies, other researchers were independently creating related evolutionary computation approaches, including Genetic Algorithms (GAs) by John Holland and Evolutionary Programming (EP) by Lawrence Fogel [2]. While these approaches shared inspiration from biological evolution, they differed in their representations, operators, and emphasis.

ES distinguished itself through its focus on continuous parameter optimization using direct real-valued representations, in contrast to GAs which initially emphasized discrete optimization using binary representations [2] [5]. ES also placed greater emphasis on mutation as the primary variation operator, while contemporary GAs typically relied more heavily on recombination operations [2] [5]. Additionally, ES operated primarily at the phenotypic level (direct parameter values), whereas GAs employed genotypic representations with chromosomal structures [2].

The competition between these different evolutionary approaches initially led proponents of each method to advocate for the superiority of their preferred approach [2]. However, over time, researchers recognized that each method had particular strengths and limitations, and they began to be viewed as complementary subclasses of Evolutionary Algorithms rather than competing methodologies [2]. This perspective enabled more productive research that leveraged the advantages of each approach for different problem types and domains.

The historical development of Evolution Strategies by Rechenberg and Schwefel established a foundation for evolutionary computation that continues to influence optimization research and practice today. Their pioneering work demonstrated the power of evolution-inspired algorithms for solving complex engineering problems and established methodological principles that would be extended and refined in subsequent decades. The early applications to aerodynamic design and nozzle optimization provided compelling evidence of the practical utility of these approaches, paving the way for their adoption across numerous domains and inspiring generations of researchers in evolutionary computation.

Evolution Strategies (ES) represent a class of stochastic, derivative-free optimization algorithms belonging to the broader field of evolutionary computation. Originally developed in the 1960s by Ingo Rechenberg and Hans-Paul Schwefel for solving complex engineering optimization problems, these algorithms imitate the principles of biological evolution—mutation, recombination, and selection—to iteratively improve a population of candidate solutions. The specific notation (μ,λ)-ES and the accompanying comma selection principle form a foundational concept within this field, enabling effective optimization across continuous, discrete, and combinatorial search spaces, including applications in drug development and computational biology.

The core terminology of μ (mu) and λ (lambda) provides a standardized way to describe the population dynamics of Evolution Strategies. In this nomenclature, μ represents the number of parent solutions in the population, while λ denotes the number of offspring solutions generated in each iteration (generation). The comma selection principle refers to the strategy where only the offspring population (λ) is considered for selection, and the parent population (μ) is completely replaced every generation. This contrasts with plus strategies (denoted (μ+λ)-ES), where selection occurs from the combined pool of both parents and offspring. The comma strategy embodies a more realistic model of biological evolution where no individual survives forever, which supports continued exploration of the search space and reduces the risk of premature convergence to local optima.

Core Algorithmic Components

The (μ,λ)-ES Algorithm Procedure

The canonical (μ,λ)-Evolution Strategy follows a structured procedure that iterates until a termination criterion is met. The algorithm maintains a population of μ candidate solutions, typically represented as real-valued vectors. Each iteration, or generation, involves generating λ new candidate solutions (offspring) through the application of mutation and potentially recombination operators to the parent solutions. Subsequently, the algorithm selects the μ best solutions exclusively from the λ offspring to form the parent population for the next iteration. This selective pressure, applied only to the newly generated offspring, drives the population toward better regions of the search space over successive generations.

Table 1: Key Data Structures and Parameters in (μ,λ)-ES

| Component | Type | Description |
|---|---|---|
| Population | Data Structure | An array of μ candidate solutions, each a real-valued vector [6]. |
| Fitness | Data Structure | An array of μ values representing the objective function evaluation of each candidate [6]. |
| μ (mu) | Parameter | The number of parent solutions in the population [6] [3] [7]. |
| λ (lambda) | Parameter | The number of offspring solutions generated each iteration [6] [3] [7]. |
| mutationStepSize | Parameter | The standard deviation for the Gaussian mutation operator; can be fixed or adapted [6] [3]. |
| maxIterations | Parameter | A common termination criterion specifying the maximum number of algorithm generations [6]. |

The following diagram illustrates the logical workflow and the flow of information in a standard (μ,λ)-Evolution Strategy.

The Role and Tuning of μ and λ

The relationship between μ and λ is critical to the performance of the ES. The ratio λ/μ, often called the "selection pressure," determines the algorithm's character. A higher ratio (e.g., λ = 7μ) promotes exploration and helps maintain diversity, making the algorithm more robust against local optima. In contrast, a lower ratio favors exploitation and can lead to faster convergence in the vicinity of a good solution, though potentially at the risk of premature convergence.

Table 2: Heuristics for Configuring μ and λ Parameters

| Parameter | Heuristic | Impact on Search Dynamics |
|---|---|---|
| μ (Parent Number) | Set proportional to the square root of problem dimensionality [6]. A larger μ increases diversity but also computational cost. | Larger μ: Enhanced diversity, broader exploration. Smaller μ: Faster convergence, risk of premature convergence. |
| λ (Offspring Number) | Typically λ > μ. A common rule of thumb is λ = 7μ [6]. Must be greater than or equal to μ for comma selection [4]. | Larger λ (vs. μ): Increased exploration, better escape from local optima. Smaller λ (vs. μ): Increased exploitation, faster convergence. |
| λ/μ Ratio | A standard heuristic is to set this ratio to 7 [6]. The ratio influences the "selection pressure" [8]. | High Ratio: High selection pressure, explorative search. Low Ratio: Low selection pressure, exploitative search. |
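The heuristics in Table 2 can be turned into a small helper for choosing starting values. This is only a sketch: the function name and the proportionality constant `mu_scale` are assumptions, since the table states proportionality to the square root of the dimension without giving a constant.

```python
import math


def suggest_population_sizes(dimension, mu_scale=1.0, ratio=7):
    """Starting values for mu and lambda from the rules of thumb in Table 2."""
    if dimension < 1 or ratio < 1:
        raise ValueError("dimension and ratio must be at least 1")
    # mu proportional to sqrt(dimension); mu_scale is a hypothetical constant.
    mu = max(1, round(mu_scale * math.sqrt(dimension)))
    lam = ratio * mu  # lambda = 7 * mu by default, so lambda >= mu always holds
    return mu, lam


print(suggest_population_sizes(100))  # (10, 70)
print(suggest_population_sizes(2))    # (1, 7)
```

These values are best treated as a first guess to be refined by pilot runs on the actual objective.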

For researchers in drug development, these parameters offer tunable knobs to balance the search for novel molecular structures (exploration) against the refinement of promising candidate compounds (exploitation). The comma selection principle's inherent forgetting of parents is particularly advantageous for dynamic optimization problems, such as those involving adaptive disease models or shifting biochemical assays.

The Comma Selection Principle: Theory and Application

The comma selection principle, denoted by the comma in (μ,λ)-ES, is a defining feature that differentiates it from the plus strategy. In comma selection, the parent population from generation g is completely replaced by the selected offspring, which become the parents for generation g+1. Formally, if P(g) is the parent population at generation g and C(g) is the offspring population generated from P(g), then the next parent population is P(g+1) = select_μ-best(C(g)), that is, the μ fittest members of C(g). This "forgetting" mechanism prevents individuals from surviving indefinitely and is a key factor in the self-adaptation of strategy parameters, such as mutation step sizes.

The theoretical foundation of comma selection is linked to its ability to facilitate self-adaptation. Because strategy parameters (like mutation rates) are inherited by offspring, selecting only from offspring allows the algorithm to favor individuals that not only have good objective values but also possess strategy parameters that are well-adapted to the current stage of the search. This process would be hindered if long-lived parents with potentially outdated strategy parameters were always preserved. Consequently, the comma strategy is often more effective at avoiding long stagnation phases caused by misadapted strategy parameters and is considered more "realistic" from an evolutionary biology perspective.

Experimental Protocol & Research Toolkit

Detailed Methodology for (μ,λ)-ES

For researchers seeking to implement or benchmark the (μ,λ)-ES, the following detailed protocol, synthesizing information from multiple sources, can be employed.

Initialization:

- Define the objective function f(y) to be minimized or maximized.
- Set the algorithmic parameters: μ (parent number), λ (offspring number, with λ ≥ μ), initial mutation step size (σ), and a termination criterion (e.g., maxGenerations or a fitness threshold).
- Initialize the population: Generate μ candidate solutions, typically by sampling uniformly from the defined bounds of the search space. Each individual can be a vector of real numbers y [3].

Generational Loop: Repeat until the termination criterion is met.

- Offspring Generation (λ individuals): For each of the λ offspring, create a new candidate solution.
  - Recombination (Optional but common): Select ρ ≤ μ parents (e.g., uniformly at random). Create a recombinant individual r by combining their genetic information. A standard method is intermediate recombination, where the recombinant is the weighted average of the selected parents [7].
  - Mutation: Apply mutation to the recombinant (or to a single parent if recombination is skipped).
    - Strategy Parameter Mutation: First, mutate the strategy parameters. For a simple step size σ, mutate it log-normally: σ_child = σ_parent * exp(τ * N(0,1)), where τ is a learning rate [7].
    - Object Parameter Mutation: Then, mutate the object parameters (the solution vector) using the mutated strategy parameter: y_child = y_parent + σ_child * N(0, I), where N(0, I) is a vector of independent Gaussian random variables [7].
- Evaluation: Evaluate the fitness F(y_child) of all λ offspring individuals. In a noisy environment, this might involve multiple evaluations or a surrogate model.
- Selection (Comma Principle): From the set of λ offspring only, select the μ individuals with the best (lowest for minimization, highest for maximization) fitness values. These selected individuals become the parent population for the next generation. The previous parent population is entirely discarded [6] [9].

Termination: Once the loop terminates (e.g., after maxGenerations), return the best solution found during the entire search process.
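The protocol above can be sketched as a compact Python function. Several details are assumptions made for illustration rather than part of the protocol: the learning rate τ = 1/√(2n), averaging the step sizes of the recombined parents, equal weights in the recombination, leaving offspring unclipped after initialization, and the `sphere` test objective.

```python
import math
import random


def mu_comma_lambda_es(f, bounds, mu=5, lam=35, rho=2, sigma0=1.0,
                       max_generations=200, seed=0):
    """Minimise f with a (mu,lambda)-ES using one self-adapted step size per individual."""
    if lam < mu or not 1 <= rho <= mu:
        raise ValueError("need lam >= mu and 1 <= rho <= mu")
    rng = random.Random(seed)
    n = len(bounds)
    tau = 1.0 / math.sqrt(2.0 * n)  # assumed learning rate

    # Initialisation: mu parents sampled uniformly within the bounds.
    parents = [([rng.uniform(lo, hi) for lo, hi in bounds], sigma0) for _ in range(mu)]
    best_y, best_f = None, math.inf

    for _ in range(max_generations):
        offspring = []
        for _ in range(lam):
            # Intermediate recombination: equal-weight average of rho random parents.
            chosen = rng.sample(parents, rho)
            y = [sum(p[0][i] for p in chosen) / rho for i in range(n)]
            sigma = sum(p[1] for p in chosen) / rho  # assumed: average step sizes too
            # Mutate the step size log-normally, then the solution vector.
            sigma_child = sigma * math.exp(tau * rng.gauss(0.0, 1.0))
            y_child = [yi + sigma_child * rng.gauss(0.0, 1.0) for yi in y]
            offspring.append((f(y_child), y_child, sigma_child))

        # Comma selection: the mu best offspring replace the parents entirely.
        offspring.sort(key=lambda item: item[0])
        parents = [(y, s) for _, y, s in offspring[:mu]]

        # Track the best solution of the whole run, since comma selection can lose it.
        if offspring[0][0] < best_f:
            best_f, best_y = offspring[0][0], offspring[0][1]

    return best_y, best_f


def sphere(y):
    """Hypothetical test objective: sum of squares, minimum at the origin."""
    return sum(v * v for v in y)


best_y, best_f = mu_comma_lambda_es(sphere, [(-5.0, 5.0)] * 3)
print(best_y, best_f)
```

Keeping a separate record of the best solution matters here: the parent population is discarded every generation, so the final population is not guaranteed to contain the best point visited.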

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for an Evolution Strategies Experiment

| Item / Component | Function in the ES "Experiment" | Research-Grade Example / Note |
|---|---|---|
| Objective Function | The function to be optimized; defines the problem landscape. | In drug development, this could be a scoring function predicting binding affinity, solubility, or a multi-objective combination of ADMET properties. |
| Representation (Genotype) | Encodes a candidate solution for the problem. | A real-valued vector representing molecular descriptors, a string representing a SMILES notation, or a direct parameterization of a molecular graph. |
| Mutation Operator | Introduces small, random variations to create new candidate solutions from existing ones. | Gaussian perturbation for real-valued parameters or specialized operators for molecular graphs (e.g., atom/bond changes). |
| Recombination Operator | Combines information from multiple parents to create new offspring. | Intermediate or discrete recombination of vectors [7]. In molecular design, this could be a crossover of molecular fragments. |
| Selection Operator | Determines which solutions are allowed to propagate to the next generation. | The comma-selection (μ,λ) rule itself. The "fitness" is the objective function value, guiding selection towards better solutions. |
| Strategy Parameter Adaptation | A mechanism to dynamically control the search, such as the mutation step size. | Self-adaptation of step sizes (σ) or more advanced methods like Covariance Matrix Adaptation (CMA) [7] [10]. |

Comparative Analysis and Advanced Variants

The (μ,λ)-ES is one of several configurations in the family of Evolution Strategies. A critical comparison with its counterpart, the (μ+λ)-ES, reveals distinct operational philosophies. In the (μ+λ) strategy, selection is performed on the union of the μ parents and the λ offspring. This "plus" selection is inherently elitist, as it guarantees that the best solution found so far is never lost. While this can lead to faster convergence on simple, static problems, it increases the risk of premature convergence on complex, multi-modal landscapes because the population can become trapped in a local optimum dominated by a long-lived, highly fit parent.

The comma strategy's forced forgetting of parents makes it more robust for dynamic optimization and better suited for the self-adaptation of internal strategy parameters. It aligns with a constant, explorative search of the fitness landscape. The 1/5 success rule, an early heuristic for step-size control, is historically tied to the (1+1)-ES but underscores the importance of balancing exploration and exploitation. Modern ES variants, such as the Covariance Matrix Adaptation ES (CMA-ES), often build upon the (μ,λ) comma selection principle. CMA-ES enhances the algorithm by adaptively learning the full covariance matrix of the search distribution, effectively rotating and scaling the mutation distribution to align with the topology of the objective function; in this way it captures a form of second-order information about the landscape [10]. For drug development professionals, this translates to more efficient navigation of complex, high-dimensional molecular fitness landscapes.

Evolution Strategies (ES) represent a cornerstone of evolutionary computation, a subfield of Computational Intelligence inspired by biological evolution for solving complex optimization problems [11] [1]. Developed in the 1960s by Ingo Rechenberg, Hans-Paul Schwefel, and their colleagues in Germany, ES were designed to tackle challenging optimization problems in engineering and industry [1] [9]. These algorithms operate on real-valued vectors, making them particularly well-suited for continuous optimization tasks where the search space is large and multidimensional [9] [12].

The taxonomy of Evolution Strategies is fundamentally characterized by their selection mechanisms, primarily distinguished by the notation (μ, λ)-ES and (μ + λ)-ES, where μ represents the number of parent solutions and λ denotes the number of offspring generated in each iteration [1] [6]. This nomenclature forms the basis for understanding how different ES variants manage the interplay between exploration and exploitation during the optimization process. While these algorithms share common evolutionary operators such as mutation and selection, their approach to population management differs significantly, leading to distinct algorithmic behaviors and performance characteristics across various problem domains [11] [1] [6].

Evolution Strategies have gained renewed attention in recent years due to their effectiveness in training deep neural networks [12] and solving complex black-box optimization problems where gradient information is unavailable or impractical to obtain [12]. Their population-based nature provides robustness against noisy, non-convex, and multimodal landscapes that challenge traditional gradient-based methods [12]. This technical guide examines the core principles, mechanisms, and practical implementations of ES variants within the broader context of evolutionary computation research.

Core Concepts and Terminology

Fundamental Principles

Evolution Strategies operate on populations of candidate solutions, applying iterative processes of variation and selection to progressively improve solution quality [1]. Each candidate solution is typically represented as a real-valued vector, encoding the parameters to be optimized [9]. Unlike genetic algorithms that often emphasize recombination operations, early ES variants focused primarily on mutation as the main variation operator [1], though modern implementations frequently incorporate sophisticated recombination mechanisms [9].

The evolutionary cycle in ES follows a generate-and-test paradigm: parents produce offspring through variation operators (primarily mutation), and the fittest individuals are selected to form the next generation [1] [6]. This process leverages the principles of natural selection, where solutions with higher fitness have a greater probability of influencing future generations [13]. Strategy parameters, which control the statistical properties of the mutation operator, often undergo self-adaptation alongside the solution parameters, enabling the algorithm to dynamically adjust its search characteristics during optimization [1] [12].

Key Definitions

μ (mu): The number of parent solutions in the population [11] [1] [6]. These individuals represent the current best solutions and form the basis for generating new candidate solutions through variation operators.

λ (lambda): The number of offspring solutions generated in each iteration (generation) [11] [1] [6]. Typically, λ is larger than μ to promote exploration of the search space.

Fitness: A quantitative measure of solution quality evaluated by an objective function [13]. In minimization problems, lower fitness values indicate better solutions, while in maximization problems, higher values are preferable.

Mutation: A variation operator that introduces random perturbations to candidate solutions [1] [13]. In ES, mutation typically involves adding normally distributed random values to solution components, with the mutation strength often controlled by strategy parameters.

Recombination: A variation operator that combines information from multiple parents to produce offspring [9]. This process simulates genetic crossover in biological evolution and promotes the mixing of beneficial traits within the population.

Selection: The process of choosing individuals based on their fitness to form the next generation [1] [6]. Selection mechanisms determine which solutions survive and reproduce, thereby driving the population toward better regions of the search space.

The (μ,λ)-Evolution Strategy

Algorithmic Framework

The (μ,λ)-Evolution Strategy employs a selection mechanism where only the offspring compete for survival [1] [6]. In each generation, the algorithm generates λ offspring from μ parents through mutation and potentially recombination [9]. After evaluating the fitness of all offspring, the best μ individuals are selected to become the parents of the next generation, completely replacing the current parent population [1] [6]. This approach ensures that all parents have recent ancestry, potentially facilitating better adaptation to local search landscapes.

A critical requirement for the (μ,λ)-ES is that λ must be greater than μ (typically λ ≈ 7μ) to ensure sufficient selection pressure [1] [6]. The complete replacement of parents each generation promotes exploration and helps the algorithm escape local optima, as no individual persists indefinitely regardless of its quality [14] [1]. This property makes the (μ,λ)-ES particularly suitable for dynamic optimization problems where the optimum may shift over time [14].

Procedural Implementation

The pseudocode for the conventional (μ,λ)-ES algorithm can be described as follows [9]:

1. Initialize a population of μ individuals randomly within the search space

2. While termination criterion is not met:

   2.1. Generate λ offspring by applying variation operators (mutation and/or recombination) to the parent population

   2.2. Evaluate the fitness of each offspring

   2.3. Select the best μ offspring to form the new parent population

3. Return the best solution found
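
The steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the function name `comma_es`, the toy `sphere` objective, the initialization box [-5, 5], the fixed mutation strength `sigma`, and all default values are hypothetical choices made for the example.

```python
import random


def sphere(x):
    """Toy objective for minimization: sum of squared coordinates."""
    return sum(v * v for v in x)


def comma_es(objective, dim, mu=3, lam=21, sigma=0.3, generations=100, seed=0):
    """Minimal (mu, lambda)-ES with a fixed mutation strength (assumption)."""
    if lam <= mu:
        raise ValueError("comma selection requires lam > mu")
    rng = random.Random(seed)
    # Step 1: random initial parents inside an assumed box [-5, 5]^dim.
    parents = [[rng.uniform(-5.0, 5.0) for _ in range(dim)] for _ in range(mu)]
    best_x, best_f = None, float("inf")
    for _ in range(generations):
        # Step 2.1: each offspring is a Gaussian mutation of a random parent.
        offspring = []
        for _ in range(lam):
            parent = rng.choice(parents)
            offspring.append([v + sigma * rng.gauss(0.0, 1.0) for v in parent])
        # Step 2.2: evaluate every offspring and rank them (lower is better).
        scored = sorted(((objective(x), x) for x in offspring), key=lambda t: t[0])
        # Step 2.3: the best mu offspring replace the parents entirely.
        parents = [x for _, x in scored[:mu]]
        # Comma selection can discard the best point, so record it separately.
        if scored[0][0] < best_f:
            best_f, best_x = scored[0]
    return best_x, best_f


if __name__ == "__main__":
    x, f = comma_es(sphere, dim=3, generations=200, seed=1)
    print(x, f)
```

Because the parents are discarded every generation, the sketch keeps a separate record of the best point seen so far; without it, step 3 could only return the best member of the final population.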

Table 1: Key Characteristics of (μ,λ)-ES

| Aspect | Description |

|---|---|

| Selection Mechanism | Comma-selection (μ < λ must hold) |

| Survival | Only offspring compete for survival; parents are completely discarded |

| Convergence | No theoretical guarantee of convergence to optimum [9] |

| Exploration | High exploration capability due to complete population replacement |

| Best Suited For | Dynamic environments, problems with moving optima [14] |

The workflow of the (μ,λ)-ES follows a strict generational replacement pattern, as visualized below:

Diagram 1: (μ,λ)-ES workflow showing complete replacement of parents by offspring.

The (μ+λ)-Evolution Strategy

Algorithmic Framework

The (μ+λ)-Evolution Strategy implements an elitist selection approach where both parents and offspring compete for survival [11] [1]. In each iteration, the algorithm generates λ offspring from μ parents through mutation and potentially recombination [9]. The key distinction from the (μ,λ)-ES lies in the selection phase: instead of selecting only from the offspring, the algorithm combines parents and offspring into a pooled population of size μ + λ, then selects the best μ individuals from this combined set to form the next generation [11] [1].

This plus-selection mechanism ensures that the best solutions found so far are always preserved in the population [11]. While this promotes exploitation of known good solutions and typically leads to faster convergence, it also increases the risk of premature convergence to local optima [1] [9]. The (μ+λ)-ES has been proven to converge in probability under certain conditions, providing theoretical guarantees not available for the comma-selection variant [9].

Procedural Implementation

The pseudocode for the conventional (μ+λ)-ES algorithm can be described as follows [9]:

1. Initialize a population of μ individuals randomly within the search space

2. While termination criterion is not met:

   2.1. Generate λ offspring by applying variation operators (mutation and/or recombination) to the parent population

   2.2. Evaluate the fitness of each offspring

   2.3. Combine parents and offspring into a single population of size μ + λ

   2.4. Select the best μ individuals from the combined population to form the new parent population

3. Return the best solution found
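
The only step that differs from the comma strategy is survivor selection. The sketch below isolates that step for both variants; the function names `select_comma` and `select_plus` and the one-dimensional toy data are hypothetical, and the objective is re-evaluated during sorting for brevity (a practical implementation would likely cache fitness values).

```python
def select_comma(parents, offspring, mu, objective):
    """(mu, lambda): survivors come from the offspring only."""
    return sorted(offspring, key=objective)[:mu]


def select_plus(parents, offspring, mu, objective):
    """(mu + lambda): parents and offspring compete in one pool."""
    return sorted(parents + offspring, key=objective)[:mu]


if __name__ == "__main__":
    f = lambda x: x[0] ** 2          # toy objective, minimization
    parents = [[0.1], [2.0]]
    offspring = [[1.0], [-0.5], [3.0]]
    print(select_plus(parents, offspring, 2, f))   # [[0.1], [-0.5]]
    print(select_comma(parents, offspring, 2, f))  # [[-0.5], [1.0]]
```

In the toy data the parent `[0.1]` is better than every offspring: plus-selection keeps it, while comma-selection drops it.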

Table 2: Key Characteristics of (μ+λ)-ES

| Aspect | Description |

|---|---|

| Selection Mechanism | Plus-selection |

| Survival | Parents and offspring compete together |

| Convergence | Proven convergence in probability [9] |

| Exploitation | High exploitation capability due to elitism |

| Best Suited For | Static environments, unimodal problems |

The workflow of the (μ+λ)-ES maintains an archive of best solutions through elitist selection, as visualized below:

Diagram 2: (μ+λ)-ES workflow showing competition between parents and offspring.

Comparative Analysis and Performance Characteristics

Selection Pressure and Population Dynamics

The fundamental distinction between (μ,λ) and (μ+λ) selection strategies lies in their approach to selection pressure and population management [1]. Comma-selection in (μ,λ)-ES imposes stronger selection pressure by forcing complete turnover each generation, which promotes exploration and diversity but may discard good solutions prematurely [14] [1]. Plus-selection in (μ+λ)-ES provides weaker but more consistent selection pressure by preserving elite solutions, accelerating convergence but potentially reducing population diversity over time [1] [9].

These differences in selection strategy directly impact how each algorithm balances exploration (searching new regions) and exploitation (refining known good solutions) [1]. The (μ,λ)-ES typically exhibits more exploratory behavior, making it better suited for multimodal problems where escaping local optima is crucial [14] [1]. Conversely, the (μ+λ)-ES demonstrates stronger exploitative characteristics, often achieving faster convergence on unimodal problems or in the final stages of optimization [1].

Practical Performance Considerations

Table 3: Comparative Analysis of (μ,λ)-ES vs. (μ+λ)-ES

| Characteristic | (μ,λ)-ES | (μ+λ)-ES |

|---|---|---|

| Selection Type | Comma-selection | Plus-selection |

| Population Management | Offspring replace parents completely | Best individuals selected from parents + offspring |

| Theoretical Convergence | Question remains open [9] | Converges in probability [9] |

| Local Optima Avoidance | Superior - can escape local optima [14] [1] | Inferior - may converge prematurely |

| Dynamic Environments | Better suited due to continuous renewal [14] | Less suited due to elitism |

| Parameter Sensitivity | Sensitive to μ/λ ratio | Sensitive to population size |

| Implementation Complexity | Simpler population management | Slightly more complex selection |

Empirical studies and theoretical analyses have revealed several important performance characteristics [1] [9]. The (μ+λ)-ES generally demonstrates faster initial convergence and is more "economical" in utilizing highly adapted individuals by preserving them across generations [9]. However, this advantage may become a limitation on complex multimodal problems, where the algorithm can become trapped in local optima [1] [9]. The (μ,λ)-ES typically shows more robust performance across diverse problem landscapes but may require more fitness evaluations to achieve comparable solution quality [1].

Extended ES Variants and Modern Developments

Canonical ES Variants

Beyond the fundamental (μ,λ) and (μ+λ) strategies, several canonical ES variants have played significant roles in the historical development of evolution strategies:

(1+1)-ES: The simplest evolution strategy, maintaining a single parent that produces a single offspring each generation [1] [9]. If the offspring has better or equal fitness, it replaces the parent; otherwise, the parent persists [1]. This is plus-selection (elitism) in its most basic form, and the strategy was among the earliest ES developed in the 1960s [1] [9].

(1+λ)-ES and (1,λ)-ES: Single-parent variants where one parent produces λ offspring [1]. In (1+λ)-ES, the best individual is selected from the parent and its offspring, while (1,λ)-ES selects only from the offspring [1]. These strategies are particularly useful in high-precision local search phases.

(μ/ρ,λ)-ES: Introduces the concept of recombination, where ρ ≤ μ represents the "mixing number" or the number of parents involved in producing each offspring [15]. The notation (μ/ρ + λ)-ES indicates recombination with plus-selection [15]. Recombination enables the exchange of genetic information between multiple parents, potentially creating more diverse and promising offspring [9].
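
To make the mixing number ρ concrete, the following sketch shows two common recombination operators applied to ρ parents drawn from a pool of μ. The function names and the uniform random choice of parents are assumptions made for illustration.

```python
import random


def intermediate_recombination(parents, rho, rng):
    """Average rho randomly chosen parents coordinate by coordinate."""
    chosen = rng.sample(parents, rho)  # requires rho <= len(parents)
    dim = len(chosen[0])
    return [sum(p[i] for p in chosen) / rho for i in range(dim)]


def discrete_recombination(parents, rho, rng):
    """Copy each coordinate from one of rho randomly chosen parents."""
    chosen = rng.sample(parents, rho)
    dim = len(chosen[0])
    return [rng.choice(chosen)[i] for i in range(dim)]


if __name__ == "__main__":
    rng = random.Random(0)
    pool = [[0.0, 0.0], [2.0, 4.0], [4.0, 8.0]]
    print(intermediate_recombination(pool, 3, rng))  # [2.0, 4.0]
    print(discrete_recombination(pool, 2, rng))
```

With ρ = μ, intermediate recombination reduces to the centroid of the whole parent population, which is why the first call always returns `[2.0, 4.0]` regardless of the random seed.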

Contemporary ES Approaches

Modern evolution strategies have evolved significantly from their canonical predecessors, incorporating sophisticated adaptation mechanisms and leveraging computational advances:

CMA-ES: Covariance Matrix Adaptation Evolution Strategy represents the state-of-the-art in ES research, adapting both the step sizes and the complete covariance matrix of the mutation distribution [14] [12]. CMA-ES typically employs a (μ/μw, λ) selection scheme, where μw denotes weighted recombination of all μ parents [14]. This approach enables the algorithm to learn appropriate search directions and scale automatically to problem difficulty.

Natural ES: Leverages concepts from information geometry, specifically the natural gradient, to update the search distribution parameters [1] [12]. This approach provides more principled update rules compared to heuristic adaptation mechanisms and has connections to other stochastic optimization techniques [12].

Derandomized ES: Removes stochastic components from the adaptation process, using deterministic updates based on successful mutation events [1] [12]. Derandomization improves algorithm stability and reduces sensitivity to random fluctuations in the fitness landscape.
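
As an illustration of the weighted recombination mentioned for CMA-ES, the sketch below computes one commonly used set of weights, which decrease logarithmically with rank, and applies them to a ranked parent list. The weight formula is an assumption brought in for the example rather than something specified in the text above, and the function names are hypothetical.

```python
import math


def recombination_weights(mu):
    """Positive, log-decreasing weights that sum to 1 (rank 1 is the best)."""
    raw = [math.log(mu + 0.5) - math.log(i) for i in range(1, mu + 1)]
    total = sum(raw)
    return [w / total for w in raw]


def weighted_mean(ranked_parents, weights):
    """Weighted average of parents sorted from best to worst."""
    dim = len(ranked_parents[0])
    return [sum(w * p[i] for w, p in zip(weights, ranked_parents))
            for i in range(dim)]


def effective_mu(weights):
    """Variance-effective selection mass, 1 / sum(w_i^2)."""
    return 1.0 / sum(w * w for w in weights)


if __name__ == "__main__":
    w = recombination_weights(5)
    print(w, effective_mu(w))
```

Equal weights would recover plain intermediate recombination; unequal weights let better-ranked parents pull the new mean further in their direction.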

Implementation Guidelines and Parameter Settings

Population Sizing and Ratio Selection

Appropriate parameter settings are crucial for achieving optimal performance with evolution strategies. The relationship between μ and λ significantly influences selection pressure and search characteristics [1] [6]. A common heuristic suggests setting λ = 7μ, providing sufficient selection pressure while maintaining population diversity [1] [6]. For the parent population size, setting μ proportional to the square root of problem dimensionality has shown robust performance across various problem domains [6].

The optimal μ/λ ratio may vary based on problem characteristics. For highly multimodal problems with many local optima, larger λ values (relative to μ) can enhance exploration capabilities [1] [6]. Conversely, for unimodal problems or during final convergence phases, smaller λ values may improve efficiency by reducing computational overhead [6]. Practical implementation often begins with standard ratios (λ/μ ≈ 5-7) followed by problem-specific fine-tuning.
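
The two heuristics above can be turned into a small starting-point helper. This is only a sketch: the function name `suggest_population` is hypothetical, and using a proportionality constant of 1 for the square-root rule is an assumption, since the text only states that μ should scale with the square root of the dimensionality.

```python
import math


def suggest_population(dim, ratio=7):
    """Heuristic starting sizes: mu ~ sqrt(dim), lam = ratio * mu."""
    mu = max(1, round(math.sqrt(dim)))  # proportionality constant 1 is assumed
    lam = ratio * mu
    return mu, lam


if __name__ == "__main__":
    print(suggest_population(16))            # (4, 28)
    print(suggest_population(100, ratio=5))  # (10, 50)
```

These values are meant as a first guess to be refined by problem-specific tuning, as described above.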

Mutation Control and Adaptation

Mutation represents the primary variation operator in most ES implementations, typically implemented as Gaussian perturbation of solution components [1]. The mutation strength (step size) critically impacts performance - too large steps cause erratic search behavior, while too small steps lead to premature convergence or stagnation [1] [6]. Modern ES employ various adaptation mechanisms to dynamically adjust mutation parameters:

1/5-Success Rule: A simple but effective heuristic that increases mutation step size when more than 20% of mutations are successful (produce better offspring), and decreases it otherwise [11] [1].

Self-Adaptation: Encodes strategy parameters (mutation strengths) within each individual and subjects them to evolution alongside solution parameters [1] [12]. Successful individuals propagate not only their solution characteristics but also their mutation strategy.

Derandomized Self-Adaptation: Uses population-level statistics to adjust mutation parameters deterministically, reducing stochastic effects while maintaining adaptation capabilities [1] [12].
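
The 1/5-success rule is the easiest of these mechanisms to show in code. The sketch below embeds it in a (1+1)-ES; the function name, the observation window of 10 mutations, and the adjustment factor 0.85 are assumptions chosen for illustration, and classical formulations tie the window length to the problem dimension.

```python
import random


def one_plus_one_es(objective, x0, sigma=1.0, iterations=1000, window=10,
                    factor=0.85, seed=0):
    """(1+1)-ES for minimization with a simplified 1/5-success rule."""
    rng = random.Random(seed)
    x, fx = list(x0), objective(x0)
    successes = 0
    for t in range(1, iterations + 1):
        y = [v + sigma * rng.gauss(0.0, 1.0) for v in x]
        fy = objective(y)
        if fy < fx:
            successes += 1          # count only strict improvements
        if fy <= fx:
            x, fx = y, fy           # offspring replaces parent if not worse
        if t % window == 0:
            if successes / window > 0.2:
                sigma /= factor     # many successes: take larger steps
            else:
                sigma *= factor     # few successes: take smaller steps
            successes = 0
    return x, fx, sigma


if __name__ == "__main__":
    sphere = lambda x: sum(v * v for v in x)
    print(one_plus_one_es(sphere, [3.0, 3.0, 3.0]))
```

On a smooth unimodal function such as the sphere, the step size shrinks together with the distance to the optimum, which is what allows the search to keep making progress at ever finer scales.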

Table 4: Research Reagent Solutions for Evolution Strategies

| Component | Function | Implementation Example |

|---|---|---|

| Population Structure | Maintains candidate solutions and their properties | S_Agent structure with coordinates and fitness [9] |

| Mutation Operator | Introduces variation for exploration | Gaussian perturbation with adaptable σ [1] |

| Recombination Operator | Combines information from multiple parents | Discrete or intermediate recombination of coordinates [9] |

| Selection Mechanism | Determines survival based on fitness | Comma or plus selection based on algorithm variant [1] |

| Step Size Adaptation | Controls mutation magnitude dynamically | 1/5-success rule or self-adaptation [1] |

| Fitness Evaluator | Assesses solution quality | Problem-specific objective function [6] |

Application in Scientific Domains and Recent Advances

Drug Development and Scientific Applications

Evolution Strategies have found significant application in scientific domains, particularly in drug development and biomedical research [16]. Their ability to handle high-dimensional, non-convex optimization problems makes them suitable for molecular design, protein folding, and chemical compound optimization [16]. In these applications, ES can efficiently navigate complex chemical spaces while accommodating multiple constraints and objective functions.

The integration of ES with machine learning approaches has opened new possibilities in scientific computing [16] [12]. For instance, ES can optimize neural network architectures or hyperparameters for drug discovery pipelines, such as in Huawei's PanGu Drug Model which accelerates drug discovery [16]. The black-box nature of ES makes them particularly valuable when dealing with complex simulators or experimental processes where gradient information is unavailable [12].

Synergy with Large Language Models

Recent research has explored synergistic relationships between Evolution Strategies and Large Language Models (LLMs) [16]. LLMs can enhance ES in multiple capacities: as optimizers themselves through natural language reasoning, as intelligent components within traditional ES for operator design or parameter control, and as high-level controllers for algorithm selection or generation [16]. This integration represents a promising direction for developing more adaptive and intelligent optimization systems.

The emerging paradigm of "LLMs for optimization solving" includes three main approaches: LLMs as standalone optimizers, low-level LLM-assisted optimization algorithms where LLMs enhance specific components, and high-level LLM-assisted optimization algorithms for algorithm selection and generation [16]. Each approach offers distinct advantages for different optimization scenarios and problem characteristics.

The taxonomy of Evolution Strategies, centered on the fundamental distinction between (μ,λ) and (μ+λ) selection mechanisms, provides a structured framework for understanding population management in evolutionary optimization. The (μ,λ)-ES with its comma-selection emphasizes exploration and is preferable for dynamic environments and multimodal problems, while the (μ+λ)-ES with its plus-selection favors exploitation and typically converges faster on unimodal landscapes. Contemporary variants like CMA-ES have refined these basic concepts through advanced adaptation mechanisms and recombination strategies.

Practical implementation of ES requires careful consideration of population sizing, mutation control, and selection mechanisms tailored to specific problem characteristics. The ongoing integration of ES with modern machine learning approaches, particularly Large Language Models, suggests a promising future where evolutionary optimization becomes increasingly adaptive, automated, and effective at solving complex real-world problems across scientific domains including drug development and biomedical research.

Evolution Strategies (ES) are a class of stochastic, population-based optimization algorithms that draw direct inspiration from the principles of natural evolution. Developed in the mid-1960s by Ingo Rechenberg and Hans-Paul Schwefel, these algorithms formalize biological concepts into a computational framework for solving complex problems in engineering, machine learning, and drug development [2] [17]. The core metaphor is straightforward: a population of candidate solutions undergoes iterative mutation and selection processes, where only the fittest solutions survive to reproduce, gradually guiding the population toward optimal regions in the search space.

This biological metaphor is powerful because it translates evolutionary mechanisms—mutation, recombination, and selection—into mathematical operators that efficiently explore high-dimensional, complex landscapes. Unlike gradient-based methods that require derivative information, evolution strategies operate as black-box optimizers, making them particularly valuable for problems where objective functions are noisy, non-differentiable, or multimodal with numerous local optima [18]. In drug development, this capability enables researchers to optimize molecular structures, predict protein folding, and design compounds with desired pharmacological properties where traditional methods struggle.

The (μ/μI, λ)-ES algorithm, which incorporates multi-recombination, represents a significant advancement in the field. Its performance and adaptation properties have been extensively studied, particularly for large population sizes on benchmark functions like the sphere model, providing theoretical foundations for its application to real-world scientific problems [19]. By understanding these biological metaphors and their computational implementations, researchers can harness evolution strategies for challenging optimization tasks across diverse scientific domains.

Biological Foundations and Computational Translation

Core Biological Concepts

Biological evolution operates through three fundamental mechanisms: mutation, recombination, and selection. Mutation introduces random changes in genetic information, creating novel traits that may enhance an organism's fitness. Recombination (crossover) shuffles genetic material between parents, producing offspring with combined characteristics. Selection ensures that individuals with traits best suited to their environment have higher reproductive success, gradually improving population fitness across generations [2]. In nature, this process has produced remarkably optimized structures and behaviors through cumulative, iterative refinement over millions of years.

Mathematical Formalization

Evolution Strategies translate these biological concepts into mathematical operators for optimization:

- Mutation corresponds to adding random noise (typically Gaussian) to solution parameters: \( x' = x + \sigma \mathcal{N}(0,1) \), where \( \sigma \) represents mutation strength [17] [18].

- Recombination combines parameters from multiple parents to create offspring, often through intermediate or discrete recombination.

- Selection mimics natural selection by preferentially retaining the best-performing solutions based on objective function evaluation [2] [17].
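
Written out for vectors, the three operators can be summarized as follows. This is a sketch under assumptions: the symbols \( \rho \) (number of recombined parents), \( z \) (standard normal vector), and the rank notation \( x_{k:\lambda} \) (the k-th best of λ offspring) are introduced here for illustration, and minimization is assumed.

```latex
\begin{aligned}
\langle x \rangle &= \frac{1}{\rho} \sum_{k=1}^{\rho} x_{k}
  && \text{(intermediate recombination of } \rho \text{ parents)} \\
x' &= \langle x \rangle + \sigma z, \qquad z \sim \mathcal{N}(0, I)
  && \text{(mutation with strength } \sigma \text{)} \\
P_{\text{next}} &= \{\, x'_{1:\lambda}, \dots, x'_{\mu:\lambda} \,\}, \qquad
  f(x'_{1:\lambda}) \le \dots \le f(x'_{\lambda:\lambda})
  && \text{(comma selection)}
\end{aligned}
```

Setting \( \rho = 1 \) removes recombination and recovers the mutation formula given in the list above.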

This formalization creates a powerful correspondence between biological evolution and computational optimization, where solution parameters represent genetic material, objective function evaluation determines fitness, and iterative application of evolutionary operators drives improvement.

Table: Biological to Computational Concept Mapping

| Biological Concept | Computational Implementation | ES Parameter |

|---|---|---|

| Genetic makeup | Solution vector (x) | Object parameters |

| Mutation rate | Step size (σ) | Mutation strength |

| Fitness | Objective function value | Quality measure |

| Generations | Iterations | Algorithm cycles |

| Population diversity | Solution spread | Sampling distribution |

Evolution Strategies: Core Algorithms and Variants

Notation and Terminology

Evolution Strategies employ specific notation to describe algorithm configurations:

- μ: Number of parents selected each iteration

- λ: Number of offspring generated each generation

- (μ,λ)-ES: Offspring replace parents completely each iteration

- (μ+λ)-ES: Parents and children compete for selection [17]

The comma strategy (μ,λ)-ES implements a more radical evolution approach where children always replace parents, enabling the algorithm to forget previous generations and potentially escape local optima. In contrast, the plus strategy (μ+λ)-ES is more conservative, preserving the best solutions found so far and implementing a form of elitism [17].

Algorithmic Framework

The basic ES algorithmic structure follows a simple, iterative process:

- Initialization: Generate an initial population of candidate solutions

- Repeat until termination criterion met:

  - Evaluation: Compute fitness of all candidate solutions

  - Selection: Choose the best μ solutions as parents

  - Recombination: Create new solutions by combining parent parameters

  - Mutation: Perturb recombined solutions with random noise

- Return best solution found [17] [18]

This framework maintains a population of candidate solutions that evolves over generations, with each iteration producing potentially better solutions through the application of evolutionary operators.

Table: Comparison of ES Variants

| Algorithm Variant | Selection Mechanism | Advantages | Disadvantages |

|---|---|---|---|

| (1+1)-ES | Single parent produces one offspring | Simple, efficient for local optimization | Premature convergence, no population diversity |

| (μ,λ)-ES | λ offspring replace μ parents | Maintains population diversity, escapes local optima | May forget good solutions |

| (μ+λ)-ES | Parents and offspring compete | Preserves best solutions (elitism) | May converge prematurely |

| CMA-ES | Adapts covariance matrix | Efficient for ill-conditioned problems | Computationally expensive |

Advanced Adaptation Mechanisms

Mutation Strength Adaptation

A critical advancement in Evolution Strategies is the ability to adapt mutation strengths during the search process. Fixed mutation parameters often require extensive tuning and perform poorly across diverse problem landscapes. Self-adaptation techniques encode strategy parameters (like mutation strength) directly into each individual, evolving them alongside object parameters [19].

Two primary approaches have emerged:

- Cumulative Step-size Adaptation (CSA): Adjusts mutation strength based on the evolution path—a weighted memory of previous steps. If consecutive steps correlate positively, step sizes increase; if they cancel out, step sizes decrease [19].

- Self-adaptive ES: Each solution carries its own mutation strength parameters that undergo mutation and selection. Log-normal mutation is commonly applied: \( \sigma' = \sigma \cdot \exp(\tau \cdot \mathcal{N}(0,1)) \), where \( \tau \) is a learning parameter [19].
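
Both approaches can be sketched as small update functions. The code below is illustrative only: the function names, the default learning parameter `tau = 1 / sqrt(2n)`, and the constants `c_sigma` and `d_sigma` are assumptions rather than values taken from the text, and the CSA update is written for the isotropic case without a covariance matrix.

```python
import math
import random


def lognormal_mutate(x, sigma, rng, tau=None):
    """Self-adaptation: mutate sigma first, then mutate x with the new sigma."""
    n = len(x)
    if tau is None:
        tau = 1.0 / math.sqrt(2.0 * n)  # assumed default learning parameter
    new_sigma = sigma * math.exp(tau * rng.gauss(0.0, 1.0))
    new_x = [v + new_sigma * rng.gauss(0.0, 1.0) for v in x]
    return new_x, new_sigma


def csa_update(path, sigma, mean_step, mu_eff, c_sigma=0.3, d_sigma=1.0):
    """One cumulative step-size adaptation update (isotropic case).

    mean_step is the recombined step divided by sigma, i.e. the (weighted)
    mean of the standard normal vectors of the selected offspring.
    """
    n = len(path)
    gain = math.sqrt(c_sigma * (2.0 - c_sigma) * mu_eff)
    new_path = [(1.0 - c_sigma) * p + gain * z for p, z in zip(path, mean_step)]
    norm = math.sqrt(sum(p * p for p in new_path))
    # Approximate expected length of an n-dimensional standard normal vector.
    expected = math.sqrt(n) * (1.0 - 1.0 / (4.0 * n) + 1.0 / (21.0 * n * n))
    new_sigma = sigma * math.exp((c_sigma / d_sigma) * (norm / expected - 1.0))
    return new_path, new_sigma


if __name__ == "__main__":
    rng = random.Random(0)
    print(lognormal_mutate([0.0, 0.0, 0.0], 0.5, rng))
    print(csa_update([0.0] * 4, 1.0, [3.0, 0.0, 0.0, 0.0], mu_eff=1.0))
```

In `csa_update`, a path that is longer than expected under random selection signals consistently aligned steps and increases the step size, while a shorter path signals steps that cancel out and decreases it.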

Covariance Matrix Adaptation ES (CMA-ES)

The CMA-ES represents the state-of-the-art in evolution strategies, adapting both the step size and the complete covariance matrix of the mutation distribution. This allows the algorithm to learn appropriate coordinate systems and scale correlations between variables, effectively adapting to the local topology of the objective function [18].

The CMA-ES updates the covariance matrix using information from successful search steps, effectively learning a second-order model of the objective function. This enables the algorithm to efficiently navigate ill-conditioned and non-separable problems that challenge simpler ES variants [18]. The visual guide to evolution strategies demonstrates how CMA-ES dynamically adjusts its search distribution, elongating and rotating to align with the objective function's contours [18].
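
The core idea of learning the covariance from successful steps can be illustrated with a simplified rank-μ style update. This sketch deliberately omits the rank-one update, the evolution paths, and step-size control that a full CMA-ES uses; the function name and the learning rate `c_mu = 0.2` are hypothetical.

```python
def rank_mu_update(cov, steps, weights, c_mu=0.2):
    """Blend the old covariance with weighted outer products of selected steps.

    steps[i] is (x_i - mean) / sigma for the i-th best offspring, and
    weights are positive recombination weights that sum to 1.
    """
    n = len(cov)
    # Shrink the old matrix, then add the weighted outer products.
    new_cov = [[(1.0 - c_mu) * cov[r][c] for c in range(n)] for r in range(n)]
    for w, y in zip(weights, steps):
        for r in range(n):
            for c in range(n):
                new_cov[r][c] += c_mu * w * y[r] * y[c]
    return new_cov


if __name__ == "__main__":
    identity = [[1.0, 0.0], [0.0, 1.0]]
    # Both selected steps point along the first axis.
    print(rank_mu_update(identity, [[2.0, 0.0], [2.0, 0.0]], [0.5, 0.5]))
```

In the usage example the variance along the first axis grows while the variance along the second axis shrinks, so later samples are stretched in the direction that recently produced good offspring.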

Implementation Methodologies

Experimental Setup and Parameter Configuration

Proper implementation of Evolution Strategies requires careful attention to parameter settings and experimental design:

Population Sizing:

- The ratio λ/μ (number of children per parent) typically ranges from 5 to 10

- Larger populations enhance exploration but increase computational cost

- For the (μ/μI, λ)-ES on the sphere function, optimal performance occurs with specific μ/λ ratios that balance exploration and exploitation [19]

Termination Criteria:

- Maximum number of generations or function evaluations

- Convergence threshold (minimal improvement over successive generations)

- Target objective function value

Initialization:

- Solution vectors initialized randomly within problem bounds

- Initial step sizes set to cover a significant portion of the search space
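
The termination and initialization guidelines above might be encoded as follows. All names and default values here are hypothetical, including the evaluation budget, the stagnation tolerance, the patience of 20 generations, and the choice of 30% of the search range for the initial step size.

```python
def should_stop(history, evaluations, max_evaluations=10_000,
                target=None, tolerance=1e-8, patience=20):
    """Return the reason to stop, or None to continue (minimization).

    history holds the best-so-far objective value after each generation.
    """
    if evaluations >= max_evaluations:
        return "budget"
    if target is not None and history and history[-1] <= target:
        return "target"
    if len(history) > patience:
        improvement = history[-patience - 1] - history[-1]
        if improvement < tolerance:
            return "stagnation"
    return None


def initial_sigma(lower, upper, fraction=0.3):
    """Initial step size as an assumed fraction of the search range."""
    return fraction * (upper - lower)


if __name__ == "__main__":
    print(should_stop([5.0, 4.0, 3.0], evaluations=100))  # None
    print(should_stop([1.0] * 25, evaluations=100))       # stagnation
    print(initial_sigma(-5.0, 5.0))
```

Returning the reason rather than a bare boolean makes it easier to report why a run ended when comparing experiments.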

The Sphere Function: A Benchmark Case

The sphere function, defined as \( f(x) = \sum_{i=1}^{n} x_i^2 \), serves as a fundamental benchmark for evaluating ES performance [19]. Despite its simplicity, analysis on the sphere provides crucial insights into algorithm behavior, progress rates, and adaptation properties.

Experimental protocols for sphere function analysis typically involve:

- Running multiple independent trials with random initializations

- Measuring progress rate (expected improvement per generation)

- Tracking mutation strength adaptation over time

- Comparing achieved results to theoretical predictions [19]

These experiments reveal how different ES variants and adaptation schemes perform under controlled conditions, informing parameter choices for more complex, real-world problems.
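
A minimal version of such a protocol is sketched below for a (μ/μ_I, λ)-ES with intermediate recombination of all μ parents. It is an illustration under assumptions: the mutation strength is held fixed, so step-size adaptation is not tracked, the progress rate is estimated as the average decrease in distance to the optimum per generation, and all names and parameter values are hypothetical.

```python
import math
import random
import statistics


def sphere(x):
    """Sphere benchmark: sum of squared coordinates."""
    return sum(v * v for v in x)


def run_trial(dim, mu, lam, sigma, generations, seed):
    """Run one trial and return the distance to the optimum per generation."""
    rng = random.Random(seed)
    mean = [rng.uniform(-5.0, 5.0) for _ in range(dim)]  # assumed start box
    distances = [math.sqrt(sphere(mean))]
    for _ in range(generations):
        offspring = [[m + sigma * rng.gauss(0.0, 1.0) for m in mean]
                     for _ in range(lam)]
        offspring.sort(key=sphere)
        best = offspring[:mu]
        # Intermediate recombination: the new mean is the centroid of the best mu.
        mean = [sum(x[i] for x in best) / mu for i in range(dim)]
        distances.append(math.sqrt(sphere(mean)))
    return distances


def mean_progress(trials=10, **kwargs):
    """Mean and standard deviation of the per-generation progress over trials."""
    rates = []
    for seed in range(trials):
        d = run_trial(seed=seed, **kwargs)
        rates.append((d[0] - d[-1]) / (len(d) - 1))
    return statistics.mean(rates), statistics.stdev(rates)


if __name__ == "__main__":
    print(mean_progress(trials=5, dim=10, mu=3, lam=12, sigma=0.1, generations=20))
```

Repeating the run over several seeds and reporting the spread alongside the mean gives a first check on whether observed differences between settings exceed trial-to-trial noise.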

Diagram: CMA-ES workflow.

Application in Scientific Research and Drug Development

Optimization in Drug Discovery

Evolution Strategies offer significant advantages for drug development professionals facing complex optimization problems:

- Molecular docking: Optimizing ligand-receptor binding interactions

- Pharmacophore modeling: Identifying essential structural features for biological activity

- Quantitative Structure-Activity Relationship (QSAR): Building predictive models linking chemical structure to biological activity

- Formulation optimization: Balancing multiple excipient ratios for optimal drug delivery

The black-box nature of ES makes them particularly valuable when the relationship between input parameters and outcomes is complex, noisy, or poorly understood—common scenarios in pharmacological research.

Research Reagent Solutions

Table: Essential Research Materials for ES Experiments

| Reagent/Resource | Function in ES Research | Application Context |

|---|---|---|

| Benchmark functions (Ackley, Rastrigin, Sphere) | Algorithm validation and performance assessment | Comparative studies of ES variants [17] [18] |

| Parallel computing infrastructure | Distributed fitness evaluation | Large-scale optimization problems [18] |

| Statistical analysis software | Performance metrics and significance testing | Experimental validation of results |

| High-performance computing clusters | Handling computationally expensive fitness evaluations | Drug discovery and molecular modeling |

| Visualization tools (Python, R) | Algorithm behavior analysis and result presentation | Research publications and methodology development |

Signaling Pathways and Workflow Visualization

The conceptual signaling pathway in Evolution Strategies illustrates how information flows through the algorithm, driving the adaptation process. This pathway connects environmental feedback (fitness evaluation) to algorithmic parameters (mutation strength), creating a closed-loop system that self-adjusts based on performance.

Diagram: ES signaling pathway.

Evolution Strategies represent a powerful implementation of biological optimization principles, transforming metaphors of mutation and selection into effective computational tools. The (μ/μI, λ)-ES framework, with its advanced adaptation mechanisms like cumulative step-size adaptation and covariance matrix learning, provides robust performance across diverse optimization landscapes [19] [18].

For researchers and drug development professionals, these algorithms offer distinct advantages for tackling complex optimization problems where traditional methods fail. Their black-box nature, parallelization capabilities, and ability to handle noisy, multimodal objectives make them particularly valuable for pharmacological applications ranging from molecular design to clinical trial optimization.

Future research directions include dynamic population size control, hybridization with local search methods, and application to multi-objective optimization problems in drug discovery. As theoretical understanding deepens and computational resources grow, Evolution Strategies will continue to provide valuable tools for solving some of the most challenging optimization problems in science and industry.

Evolution Strategies (ES) represent a class of evolutionary algorithms that have demonstrated remarkable effectiveness in navigating complex, high-dimensional search spaces common in real-world optimization problems. This technical guide examines the core algorithmic foundations of ES, particularly the (μ,λ) and (μ+λ) variants, and delineates their specific advantages over alternative optimization methods. By exploring theoretical principles, practical implementations, and applications within pharmaceutical research and quantitative finance, this review establishes why ES excels where gradient-based methods and other evolutionary approaches encounter limitations. The analysis incorporates quantitative performance comparisons, detailed experimental protocols, and visual workflow representations to provide researchers with a comprehensive framework for ES application in scientific domains characterized by rugged landscapes, noise, and high dimensionality.

Evolution Strategies (ES) belong to the family of evolutionary algorithms inspired by natural evolution principles, specifically designed for continuous parameter optimization [12]. Unlike genetic algorithms that often emphasize crossover operations, ES primarily rely on mutation and selection mechanisms, making them particularly suited for challenging real-valued optimization landscapes [20] [3]. The fundamental operation of ES follows a sample-and-evaluate cycle: the algorithm maintains a population of candidate solutions, evaluates their quality based on a fitness function, and generates new candidates through mutation of the most promising individuals [12].

Complex search spaces present specific challenges that render many optimization techniques ineffective. These challenges include high dimensionality, multimodality (multiple local optima), non-convexity, noise in fitness evaluations, and the absence of gradient information [12]. In such environments, traditional gradient-based methods frequently converge to suboptimal local minima and struggle with discontinuous or noisy objective functions. Similarly, other population-based algorithms may exhibit premature convergence or inefficient exploration-exploitation balance [3].

ES address these limitations through their inherent capacity for parallel exploration of search spaces, adaptive step-size control, and robustness to problematic landscape characteristics [12]. Their population-based nature enables simultaneous exploration of multiple regions, while self-adaptation mechanisms dynamically adjust mutation strengths to suit local landscape characteristics. These properties make ES particularly valuable for optimization problems in scientific domains such as drug discovery, where objective functions often exhibit the challenging characteristics mentioned above [21] [22].

Foundational Principles and Algorithmic Advantages

Core ES Variants and Terminology

ES use a standardized notation to describe their algorithmic configurations [3]. The primary parameters are:

- μ (mu): The number of parent solutions selected each generation

- λ (lambda): The total number of offspring generated each generation

- σ (sigma): The mutation step size, which may be self-adapted

Two primary ES variants dominate practical applications:

- (μ,λ)-ES: In this approach, the parent population is discarded each generation and the next μ parents are chosen only from the λ offspring, which requires μ < λ [3]. Because no individual survives more than one generation, this strategy emphasizes exploration and helps the search avoid stagnating on outdated solutions.

- (μ+λ)-ES: This conservative approach selects the best μ individuals from the union of parents and offspring (μ+λ) [3]. This elitist strategy preserves the best solutions found so far, potentially accelerating convergence.

The comma variant (μ,λ) generally exhibits better performance on multimodal problems as it more effectively escapes local optima, while the plus variant (μ+λ) typically demonstrates faster convergence on unimodal landscapes [3] [12].
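The difference between the two selection schemes can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function names `select_comma` and `select_plus`, the toy objective f(x) = x², and the assumption of minimization are all choices made for this example.

```python
def select_comma(offspring, fitness, mu):
    """(mu, lambda) selection: keep the best mu offspring; parents are discarded."""
    if mu >= len(offspring):
        raise ValueError("comma selection requires mu < lambda")
    return sorted(offspring, key=fitness)[:mu]


def select_plus(parents, offspring, fitness, mu):
    """(mu + lambda) selection: keep the best mu of parents and offspring combined."""
    return sorted(parents + offspring, key=fitness)[:mu]


def toy_objective(x):
    """Hypothetical 1-D objective to minimize: f(x) = x^2."""
    return x * x


parents = [0.1, 0.5]
offspring = [0.3, -0.4, 0.9, 1.2]

comma_survivors = select_comma(offspring, toy_objective, mu=2)
plus_survivors = select_plus(parents, offspring, toy_objective, mu=2)

print(comma_survivors)  # [0.3, -0.4]: the good parent 0.1 is lost
print(plus_survivors)   # [0.1, 0.3]: the good parent 0.1 is retained
```

In this toy case the comma scheme gives up the best parent (0.1), which is the price it pays for never holding on to an individual for more than one generation.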

Key Advantages in Complex Search Spaces

ES possess several distinctive properties that confer advantages in complex optimization environments:

Gradient-Free Operation: As black-box optimizers, ES require only objective function values, not gradient information [12]. This enables application to problems with non-differentiable, discontinuous, or noisy objective functions common in real-world applications like drug design and hyperparameter tuning [20] [22].

Adaptive Step-Size Control: Modern ES incorporate sophisticated step-size adaptation mechanisms, most notably the Covariance Matrix Adaptation (CMA) strategy, which automatically adjusts the search distribution to the local landscape geometry [12]. This allows ES to efficiently navigate ill-conditioned problems with variable sensitivity across dimensions.

Robustness to Noise: The population-based nature of ES provides inherent averaging effects that mitigate the impact of noisy fitness evaluations [12]. This resilience is particularly valuable in applications like financial modeling or clinical trial optimization where objective functions often contain substantial stochastic components [23].

Global Exploration Properties: Unlike local search methods, ES maintain a diverse population that simultaneously explores multiple regions of the search space [3]. This reduces susceptibility to premature convergence on suboptimal local minima in multimodal landscapes.

Table 1: Comparative Performance of Optimization Algorithms on Complex Landscapes

| Algorithm | High-Dimensional Search | Noisy Objectives | Multimodal Landscapes | Constraint Handling |
|---|---|---|---|---|
| Evolution Strategies | Excellent (with CMA) | Excellent | Very Good | Good |
| Gradient-Based Methods | Good (with modifications) | Poor | Poor | Excellent |
| Genetic Algorithms | Good | Good | Good | Fair |
| Particle Swarm Optimization | Good | Fair | Good | Fair |

Quantitative Performance Analysis

Benchmark Studies and Performance Metrics

Empirical evaluations on standardized benchmark functions provide quantitative evidence of ES effectiveness on complex landscapes. Research demonstrates that ES, particularly CMA-ES variants, consistently outperform or match competing approaches on non-convex, noisy, and ill-conditioned problems [12].

On the multimodal Ackley function, a canonical test case with numerous local minima, ES reliably locate the global optimum where many gradient-based methods become trapped in suboptimal regions [3]. This capability stems from ES maintaining sufficient population diversity to explore disparate regions of the search space simultaneously.

In high-dimensional settings, CMA-ES exhibits polynomial time complexity on convex quadratic functions and demonstrates robust performance on functions with variable conditioning [12]. The algorithm automatically adapts its search distribution to align with the topology of the objective function, effectively performing an unsupervised principal components analysis of promising regions.

Table 2: Performance Comparison on Standard Benchmark Functions

| Benchmark Function | Characteristics | ES Performance | Comparative Performance |
|---|---|---|---|
| Ackley | Multimodal, symmetric | 98% convergence to global optimum | Superior to gradient methods |
| Rosenbrock | Non-convex, curved valley | 92% convergence rate | Comparable to best alternatives |
| Rastrigin | Highly multimodal | 85% convergence to global optimum | Superior to most evolutionary algorithms |
| Sphere | Unimodal, convex | 100% convergence rate | Comparable to gradient methods |
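For readers who want to experiment with these landscapes, the four benchmark functions can be written as follows. These are the commonly used textbook definitions with their usual constants; Table 2 does not state the dimensionality or parameterization behind its figures, so the forms below are an assumption and are not guaranteed to match the setup that produced those numbers.

```python
import math


def sphere(x):
    """Unimodal, convex. Global minimum 0 at x = (0, ..., 0)."""
    return sum(xi * xi for xi in x)


def rosenbrock(x):
    """Curved, narrow valley. Global minimum 0 at x = (1, ..., 1)."""
    return sum(
        100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
        for i in range(len(x) - 1)
    )


def rastrigin(x):
    """Highly multimodal. Global minimum 0 at x = (0, ..., 0)."""
    return 10.0 * len(x) + sum(
        xi * xi - 10.0 * math.cos(2.0 * math.pi * xi) for xi in x
    )


def ackley(x):
    """Multimodal with a nearly flat outer region. Global minimum 0 at the origin."""
    n = len(x)
    mean_sq = sum(xi * xi for xi in x) / n
    mean_cos = sum(math.cos(2.0 * math.pi * xi) for xi in x) / n
    return -20.0 * math.exp(-0.2 * math.sqrt(mean_sq)) - math.exp(mean_cos) + 20.0 + math.e
```

All four are formulated for minimization, so any of them can be passed directly as the fitness function of an ES that minimizes.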

Real-World Application Performance

Beyond artificial benchmarks, ES deliver compelling performance in applied domains:

Drug Discovery: In pharmaceutical research, ES have been used to optimize molecular structures and predict compound activity, with one study reporting a 25-50% reduction in discovery timelines during preclinical stages when using AI-guided approaches that incorporate evolutionary optimization [22].

Quantitative Finance: In financial applications, ES demonstrate particular effectiveness for portfolio optimization and factor mining, outperforming traditional methods in environments with low signal-to-noise ratios [23].

Neural Network Training: ES achieve competitive performance with state-of-the-art policy optimization methods in reinforcement learning, successfully training deep neural networks with millions of parameters [12]. This showcases their scalability to high-dimensional problems.

A critical advantage in these applications is the resilience of ES to noise. Financial and biological data typically exhibit substantial stochastic components that undermine gradient-based approaches. Because ES average over a population and select on fitness rank rather than raw fitness values, noise effects are naturally dampened, which makes the optimization more robust [23] [12].
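One property behind this robustness is that rank-based selection uses only the ordering of fitness values, not their magnitudes. The short sketch below illustrates the point; the helper `select_best` and the sample values are hypothetical and chosen only for demonstration.

```python
import math


def select_best(candidates, fitness_values, mu):
    """Truncation selection: return the mu candidates with the lowest fitness."""
    order = sorted(range(len(candidates)), key=lambda i: fitness_values[i])
    return [candidates[i] for i in order[:mu]]


candidates = ["a", "b", "c", "d"]
raw = [3.0, 0.5, 120.0, 1.2]            # note the extreme outlier for "c"
rescaled = [math.log1p(v) for v in raw]  # any strictly increasing transform

chosen_raw = select_best(candidates, raw, mu=2)
chosen_rescaled = select_best(candidates, rescaled, mu=2)

print(chosen_raw)       # ['b', 'd']
print(chosen_rescaled)  # ['b', 'd']
```

The same parents are chosen before and after the transformation, so a single wildly inflated measurement cannot distort the search step the way it would distort a gradient estimate. Noise that changes the ordering itself can still mislead selection, which is where larger populations or repeated evaluations help.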

Experimental Protocol and Implementation

Standard (μ,λ)-ES Implementation

The following protocol outlines a standard implementation of the (μ,λ)-ES for continuous optimization problems:

Initialization:

- Set generation counter: \( t \leftarrow 0 \)

- Initialize population: Create λ individuals by sampling uniformly from the search space

- Initialize strategy parameters: Set initial step sizes σ for each dimension or individual

Main Loop:

- Evaluation: Compute fitness \( f(x_i) \) for all individuals \( i = 1, \ldots, \lambda \)

- Selection: Rank individuals by fitness and select the best μ parents

- Recombination: Create new offspring by recombining parent parameters (intermediate or discrete recombination)

- Mutation: Apply Gaussian mutation to strategy parameters and object variables: \( x_{\text{child}} \leftarrow x_{\text{parent}} + N(0, \sigma^2) \)

- Termination Check: Repeat until convergence criteria met or computational budget exhausted

Parameter Settings:

- Population sizing: \( \lambda/\mu \approx 7 \) is often effective

- Initial step size: \( \sigma \approx \) 1-10% of the search space range per dimension

- Termination: Stagnation of fitness or minimal step size
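The protocol above can be sketched as a compact, dependency-free Python function. This is one possible reading under stated assumptions, not a definitive implementation: the function name `mu_comma_lambda_es`, the single step size per individual, the learning rate τ = 1/√(2n), the geometric-mean recombination of step sizes, the fixed generation budget used in place of a stagnation test, and the sphere objective in the usage lines are all choices made for this example.

```python
import math
import random


def mu_comma_lambda_es(f, dim, bounds, mu=5, lam=35, generations=200, seed=0):
    """Minimize f with a self-adaptive (mu, lambda)-ES. Returns (best_x, best_f)."""
    if not 2 <= mu < lam:
        raise ValueError("need 2 <= mu < lambda")
    rng = random.Random(seed)
    low, high = bounds
    sigma0 = 0.05 * (high - low)       # 5% of the range (within the 1-10% guideline)
    tau = 1.0 / math.sqrt(2.0 * dim)   # assumed learning rate for sigma

    # Initialization: lambda individuals sampled uniformly, each carrying its own sigma.
    population = [
        ([rng.uniform(low, high) for _ in range(dim)], sigma0) for _ in range(lam)
    ]
    best_x, best_f = None, float("inf")

    for _generation in range(generations):
        # Evaluation, then (mu, lambda) truncation selection among offspring only.
        scored = sorted(((f(x), x, s) for x, s in population), key=lambda t: t[0])
        if scored[0][0] < best_f:      # bookkeeping only; not fed back into the search
            best_f, best_x = scored[0][0], list(scored[0][1])
        parents = scored[:mu]

        population = []
        for _child in range(lam):
            (_fa, xa, sa), (_fb, xb, sb) = rng.sample(parents, 2)
            # Intermediate recombination of object variables; geometric mean for sigma.
            x = [(a + b) / 2.0 for a, b in zip(xa, xb)]
            s = math.sqrt(sa * sb)
            # Mutate the strategy parameter first (log-normal), then the object variables.
            s *= math.exp(tau * rng.gauss(0.0, 1.0))
            x = [xi + s * rng.gauss(0.0, 1.0) for xi in x]
            population.append((x, s))

    return best_x, best_f


def sphere(x):
    return sum(v * v for v in x)


best_x, best_f = mu_comma_lambda_es(sphere, dim=5, bounds=(-5.0, 5.0))
print(best_f)
```

The defaults follow the λ/μ ≈ 7 guideline (μ = 5, λ = 35). The best-so-far record is kept outside the population so that the search itself remains a pure comma strategy.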

Diagram 1: Evolution Strategies Algorithm Workflow

Researcher's Toolkit: Essential Components for ES Implementation

Table 3: Research Reagent Solutions for ES Experimentation

| Component | Function | Implementation Example |
|---|---|---|
| Population Sampler | Generates candidate solutions | Gaussian distribution: \( x_{\text{child}} = x_{\text{parent}} + N(0, \sigma^2) \) |
| Fitness Evaluator | Assesses solution quality | Objective function specific to domain (e.g., drug binding affinity) |
| Selection Operator | Chooses parents for reproduction | (μ,λ)-truncation selection based on fitness ranking |
| Recombination Operator | Combines parental traits | Intermediate recombination: \( x_{\text{child}} = (x_{\text{parent1}} + x_{\text{parent2}})/2 \) |
| Step-Size Adaptation | Adjusts mutation strength | Self-adaptation: \( \sigma_{\text{child}} = \sigma_{\text{parent}} \cdot e^{N(0, \tau^2)} \) |
| Constraint Handler | Maintains feasible solutions | Boundary repair or penalty functions |
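Two of the components in Table 3, discrete recombination and constraint handling, are easy to misread from a one-line description, so a small sketch may help. The function names, the quadratic form of the penalty, and the penalty weight are illustrative assumptions; the table itself does not prescribe a particular repair or penalty scheme.

```python
import random


def discrete_recombination(parent_a, parent_b, rng):
    """Each coordinate of the child is copied from one parent chosen at random."""
    return [a if rng.random() < 0.5 else b for a, b in zip(parent_a, parent_b)]


def repair_to_bounds(x, lower, upper):
    """Boundary repair: clip every coordinate into [lower_i, upper_i]."""
    return [min(max(xi, lo), hi) for xi, lo, hi in zip(x, lower, upper)]


def penalized_fitness(f, x, lower, upper, weight=1000.0):
    """Penalty approach: add a quadratic cost for each bound violation (minimization)."""
    violation = sum(
        max(lo - xi, 0.0) ** 2 + max(xi - hi, 0.0) ** 2
        for xi, lo, hi in zip(x, lower, upper)
    )
    return f(x) + weight * violation


def sphere(x):
    return sum(v * v for v in x)


rng = random.Random(42)
child = discrete_recombination([1.0, 2.0, 3.0], [10.0, 20.0, 30.0], rng)

lower, upper = [-1.0, -1.0, -1.0], [1.0, 1.0, 1.0]
repaired = repair_to_bounds([-2.0, 0.5, 3.0], lower, upper)
penalized = penalized_fitness(sphere, [2.0, 0.0], [-1.0, -1.0], [1.0, 1.0])

print(repaired)   # [-1.0, 0.5, 1.0]
print(penalized)  # 1004.0
```

Repair keeps every evaluated point feasible, which matters when the objective cannot be computed outside the bounds. A penalty leaves the candidate untouched and lets selection push the population back toward the feasible region.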

Application in Pharmaceutical Research

The pharmaceutical industry presents particularly challenging optimization problems that align well with ES strengths. Key application areas include:

Drug Discovery and Design

ES have demonstrated significant utility in molecular design and optimization, where the search space encompasses numerous chemical compounds with complex structure-activity relationships [21] [22]. The multi-modal nature of these landscapes, combined with expensive and noisy evaluations, creates an environment where ES outperform gradient-based approaches.

Notably, ES have been integrated with AI approaches to accelerate drug discovery. According to [22], approximately 30% of new drugs in 2025 were discovered using AI methods, many of them incorporating evolutionary optimization strategies. The same source reports that these approaches reduce discovery timelines and costs by 25-50% in preclinical stages by navigating the chemical search space more efficiently.

Clinical Trial Optimization

ES effectively optimize clinical trial designs, which involve complex constraints, multiple objectives (efficacy, safety, cost), and substantial uncertainty [24]. The ability of ES to handle black-box, non-differentiable objective functions makes them suitable for simulating trial outcomes across parameter variations.

Bioprocess Optimization

In biomanufacturing, ES optimize complex biological systems for the production of therapeutics, where first-principles models are often incomplete or inaccurate [24]. ES can tune multiple process parameters (temperature, pH, nutrient feeds) jointly to maximize yield while satisfying quality constraints.

Diagram 2: ES Applications in Pharmaceutical Research

Comparative Analysis with Alternative Methods

Advantages Over Gradient-Based Methods

Gradient-based optimizers (e.g., SGD, Adam) dominate many machine learning applications but encounter fundamental limitations in complex search spaces:

Local Optima Entrapment: Gradient methods follow local improvement directions and frequently converge to suboptimal local minima in multimodal landscapes, whereas ES maintain global exploration capabilities [12].

Gradient Dependence: Many real-world optimization problems lack computable gradients due to non-differentiable components or simulator-based evaluations, rendering gradient-based methods inapplicable [3].

Noise Sensitivity: Stochastic gradients amplify noise in objective functions, while ES naturally average out noise effects through population-based evaluation [12].

Advantages Over Other Evolutionary Algorithms

While ES share the population-based approach with other evolutionary algorithms, they exhibit distinctive strengths:

Specialization for Continuous Domains: Unlike genetic algorithms originally designed for discrete representations, ES specifically address continuous parameter optimization with specialized mutation operators [3].

Step-Size Adaptation: Modern ES incorporate sophisticated step-size adaptation mechanisms (e.g., CMA) that automatically calibrate search distributions to local landscape geometry [12].

Reduced Parameter Sensitivity: Compared to particle swarm optimization and other metaheuristics, well-designed ES variants exhibit more robust performance across problem domains with minimal parameter tuning [12].