A Practical Framework for Validating Dynamical Models in Drug Development: From Fit-for-Purpose Principles to Regulatory Acceptance

Abstract

This article provides a comprehensive framework for validating dynamical models throughout the drug development pipeline. Targeting researchers, scientists, and drug development professionals, it explores foundational principles of Model-Informed Drug Development (MIDD), examines methodological applications of tools like PBPK and QSP, addresses common troubleshooting challenges, and establishes rigorous validation and comparative assessment protocols. By synthesizing current regulatory perspectives and emerging technologies, this guide aims to enhance model credibility, facilitate regulatory acceptance, and accelerate the delivery of innovative therapies to patients.

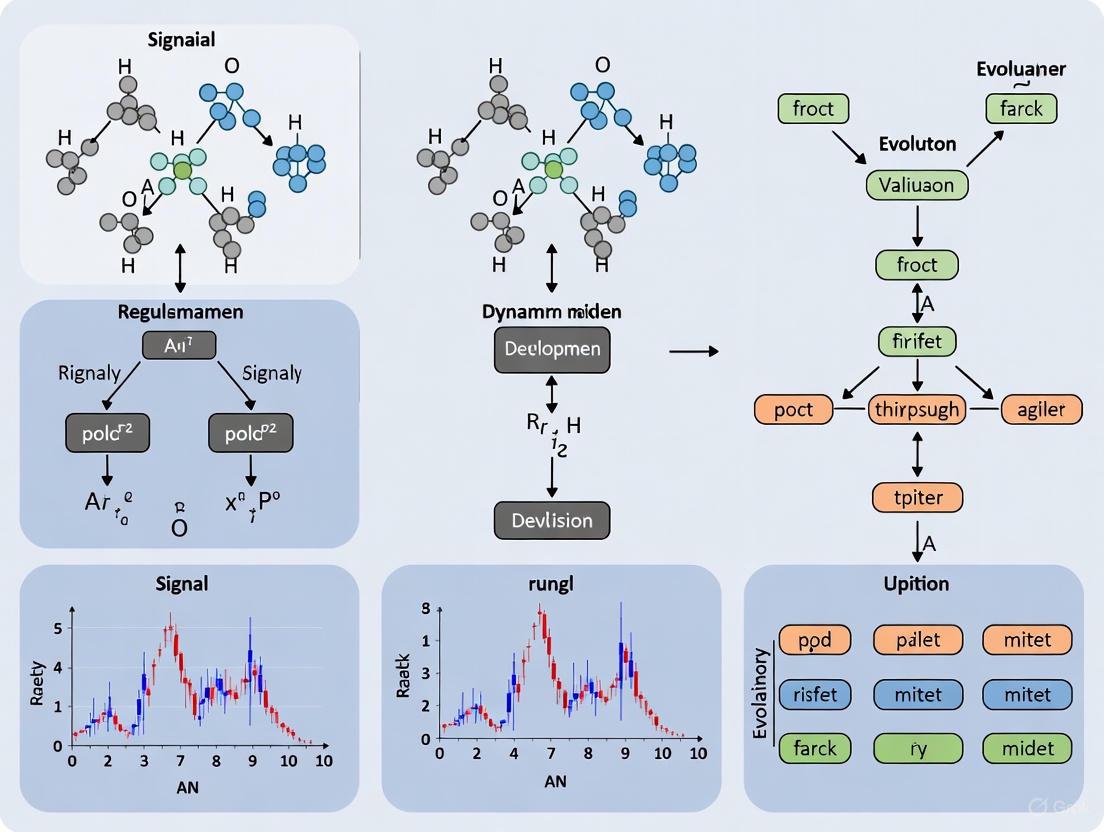

Understanding Dynamical Models in Modern Drug Development

Model-informed drug development (MIDD) employs quantitative frameworks to facilitate drug discovery and regulatory decision-making, transforming a traditionally empirical process into a more predictive and mechanistic science [1] [2]. Dynamical models provide a platform for knowledge integration and hypothesis testing, offering insights into biological systems and drug behaviors that would not be possible through experimental approaches alone [1]. Among these, four key computational approaches—Physiologically Based Pharmacokinetic (PBPK), Quantitative Systems Pharmacology (QSP), Population Pharmacokinetic (PopPK), and Agent-Based Modeling (ABM)—have emerged as cornerstones of modern pharmacology. Each model class possesses distinct foundational principles, applications, and validation pathways, making it critical for researchers to understand their complementary roles within the MIDD landscape. This guide provides a structured comparison of these methodologies, framed within the broader thesis of dynamical model validation, to inform their appropriate application in development research.

Model Frameworks at a Glance

The table below summarizes the core characteristics, applications, and validation criteria for PBPK, QSP, PopPK, and ABM.

Table 1: Comparative Overview of Key Dynamical Models in MIDD

| Feature | PBPK | QSP | PopPK | ABM |
|---|---|---|---|---|
| Core Philosophy | Bottom-up, mechanistic [3] | Bottom-up, systems-level [4] | Top-down, empirical [3] | Bottom-up, individual-based [1] |
| Primary Objective | Predict drug concentration in organs/tissues based on physiology [3] [2] | Understand drug effects on disease network biology [4] | Describe population trends and variability in drug exposure [3] [5] | Understand emergent system behaviors from individual interactions [1] |
| Spatiotemporal Resolution | Explicit spatial (anatomical) scales [1] | Often non-spatial, system-level | Non-spatial, homogeneous or empirical population average [6] | Explicit spatial and temporal scales [1] |
| Handling of Variability | Incorporates intersubject variability via "correlated" Monte Carlo methods [4] | Can incorporate variability, but not its primary focus | Quantifies inter- and intra-individual variability as a core output [3] [5] | A core strength; can model heterogeneity and stochastic events [1] |
| Key Applications in MIDD | Drug-drug interaction (DDI) prediction, pediatric dose extrapolation, first-in-human PK prediction [3] [2] [7] | Target evaluation, mechanistic PD, clinical trial simulation | Covariate analysis, dosing regimen justification, therapeutic drug monitoring [3] [5] | Preclinical mechanistic modeling, tumor growth/response, immune system dynamics [1] [6] |
| Typical Validation/Qualification | Model "qualification" and "verification" against clinical data; credibility assessment [4] | Qualification for intended purpose; biological plausibility [4] | Goodness-of-fit diagnostics, statistical criteria (e.g., AIC), predictive performance [8] [4] | Reproduction of emergent, system-level patterns not explicitly programmed [1] |
| Key Strength | Strong predictive power for untested clinical scenarios when physiology is known [4] | Integrates PK and complex PD in a network context | Efficiently identifies and quantifies sources of population variability from real-world data [3] [5] | Ideal for systems where spatial structure and cellular heterogeneity are critical [1] |
| Key Limitation | Limited by available mechanistic knowledge and in vitro data [3] | High complexity; many parameters may be unidentifiable | Compartments often lack physiological meaning; limited extrapolation [3] | Computationally intensive; rule-sets can be complex and difficult to validate [1] |

Core Characteristics and Applications

Physiologically Based Pharmacokinetic (PBPK) Modeling

PBPK modeling is a compartment and flow-based approach where each compartment represents a distinct physiological entity (e.g., an organ or tissue) [3]. It is a bottom-up, mechanistic framework that integrates a drug's physicochemical properties, in vitro data, and system-specific (physiological) parameters to predict pharmacokinetics (PK) across populations, including special groups like pediatrics or organ-impaired patients [3] [2] [7]. A key paradigm shift enabled by PBPK is the transition from "learn and confirm" to a "predict-learn-confirm-apply" cycle, largely due to the integration of in vitro-in vivo extrapolation (IVIVE) [4]. Its applications are broad, including the prediction of drug-drug interactions (DDIs) and the support of regulatory submissions, with over 70 publications in the journal CPT:PSP featuring PBPK in their title [4]. A primary strength is its ability to predict and extrapolate beyond the initial data used for model development, though this is limited by the available level of mechanistic knowledge [3] [4].
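IVIVE is the step that lets in vitro data feed a PBPK model. The sketch below is a minimal illustration, not a Simcyp implementation. It assumes the well-stirred liver model and uses typical adult scaling factors chosen only for illustration; these are not values from the cited studies. Under those assumptions, microsomal intrinsic clearance is scaled to whole-liver intrinsic clearance and then to hepatic blood clearance. `scale_clint` and `well_stirred_clh` are hypothetical helper names.

```python
# Illustrative IVIVE sketch (well-stirred liver model).
# All physiological scaling factors are ASSUMED typical adult values for illustration only.
MPPGL_MG_PER_G = 32.0   # assumed microsomal protein per gram of liver (mg/g)
LIVER_WEIGHT_G = 1500.0 # assumed adult liver weight (g)
Q_H_L_PER_H = 90.0      # assumed hepatic blood flow (L/h)


def scale_clint(clint_ul_min_mg: float) -> float:
    """Scale microsomal CLint (uL/min/mg protein) to whole-liver CLint (L/h)."""
    ul_per_min = clint_ul_min_mg * MPPGL_MG_PER_G * LIVER_WEIGHT_G
    return ul_per_min * 60.0 / 1e6  # uL/min -> L/h


def well_stirred_clh(clint_liver_l_h: float, fu_b: float, q_h: float = Q_H_L_PER_H) -> float:
    """Hepatic blood clearance (L/h): CLh = Qh * fu_b * CLint / (Qh + fu_b * CLint)."""
    return q_h * fu_b * clint_liver_l_h / (q_h + fu_b * clint_liver_l_h)


clint_liver = scale_clint(10.0)              # hypothetical in vitro CLint of 10 uL/min/mg
clh = well_stirred_clh(clint_liver, fu_b=0.1)  # hypothetical unbound fraction in blood
print(f"whole-liver CLint = {clint_liver:.1f} L/h, predicted CLh = {clh:.2f} L/h")
```

Because hepatic clearance is capped by blood flow in this model, the prediction approaches `Q_H_L_PER_H` as intrinsic clearance grows. This is one reason system parameters (flows, organ sizes) and compound parameters (CLint, fu) are kept separate.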

Quantitative Systems Pharmacology (QSP)

QSP can be viewed as an extension of PBPK modeling that also incorporates the pharmacodynamic (PD) effects of a drug on tissues and organs, providing a systems-level understanding of a drug's mechanism of action within a biological network [3] [4]. In broader terms, PBPK and other emerging disciplines fall under the umbrella of QSP approaches [4]. The objective of QSP is to quantitatively understand a biological or disease process in response to therapeutic modulation, with less initial emphasis on describing specific clinical observations compared to pharmacometric models [4]. This makes it particularly valuable for probing putative targets and understanding complex, non-linear biological systems.

Population Pharmacokinetic (PopPK) Modeling

In contrast to PBPK, PopPK modeling is a top-down, empirical approach that fits a model to all available pharmacokinetic data from a population simultaneously [3] [5]. Its compartments do not necessarily have direct physiological meaning but are mathematical constructs that describe the data [3]. A core function of PopPK is to identify and quantify sources of variability in a drug's kinetic profile, including the effects of intrinsic (e.g., age, weight, renal function) and extrinsic (e.g., concomitant drugs) covariates [3] [5]. PopPK models are built with nonlinear mixed-effects (NLME) methods and are integral for supporting dosing recommendations and informing drug labels. While traditionally developed through a manual, sequential process, recent advances demonstrate the successful automation of PopPK model development using machine learning, significantly reducing timelines and manual effort [8].
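The sketch below makes the NLME structure concrete. It simulates data from a hypothetical one-compartment PopPK model; it does not estimate one. The structure is as follows:

- Clearance carries a body-weight covariate with an assumed allometric exponent of 0.75.
- Interindividual variability enters as log-normal random effects (eta).
- Residual variability is proportional.

All parameter values are invented. Estimating them from real data would be done in NLME software such as NONMEM or Phoenix NLME.

```python
import math
import random

# Hypothetical model parameters for illustration; not estimates from any cited study.
TV_CL = 5.0       # typical clearance (L/h) at 70 kg
TV_V = 50.0       # typical central volume (L) at 70 kg
OMEGA_CL = 0.3    # SD of eta on CL (log scale): interindividual variability
OMEGA_V = 0.2     # SD of eta on V (log scale)
SIGMA_PROP = 0.1  # proportional residual (intra-individual) error SD


def individual_params(weight_kg, rng):
    """Fixed effects (allometric weight covariate) multiplied by exp(random effect)."""
    cl = TV_CL * (weight_kg / 70.0) ** 0.75 * math.exp(rng.gauss(0.0, OMEGA_CL))
    v = TV_V * (weight_kg / 70.0) * math.exp(rng.gauss(0.0, OMEGA_V))
    return cl, v


def simulate_subject(dose_mg, weight_kg, times_h, rng):
    """One-compartment IV bolus, C(t) = Dose/V * exp(-CL/V * t), plus proportional error."""
    cl, v = individual_params(weight_kg, rng)
    k_el = cl / v
    observed = []
    for t in times_h:
        pred = dose_mg / v * math.exp(-k_el * t)
        observed.append(pred * (1.0 + rng.gauss(0.0, SIGMA_PROP)))
    return cl, v, observed


rng = random.Random(2024)
times = [0.5, 1, 2, 4, 8, 12, 24]
population = [simulate_subject(100.0, wt, times, rng) for wt in (50, 70, 90)]
for cl, v, conc in population:
    print(f"CL={cl:.2f} L/h  V={v:.1f} L  C(24 h)={conc[-1]:.3f} mg/L")
```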

Agent-Based Modeling (ABM)

ABM is a simulation technique that focuses on describing individual components (agents) and their interactions with each other and the environment, from which population-level behaviors emerge [1]. Unlike equation-based models that assume homogeneity, ABM can naturally incorporate cellular heterogeneity and spatial distribution, which is critical for modeling complex processes like tumor growth and immune responses [1] [6]. ABM is particularly advantageous as a platform for knowledge integration because its highly visual output facilitates communication within interdisciplinary teams, and its emergent properties offer a unique means of identifying knowledge gaps when model predictions diverge from experimental observations [1]. Its application in pharmaceutical contexts, while growing, has been less extensive than other methods, but it is uniquely equipped to address questions involving multi-scale, heterogeneous biological systems [1].
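Emergence can be illustrated with a toy lattice model. This is a hypothetical sketch, not the germinal-center model from [1]. Each agent is a cell that, with an assumed probability per tick, divides into a free neighboring site. No growth law appears anywhere in the code. Even so, the population curve slows as interior cells run out of free neighbors, and that slowing is a system-level behavior arising purely from the local rule.

```python
import random

# Hypothetical toy ABM: cells on a square lattice (not the germinal-center model in [1]).
NEIGHBOR_OFFSETS = ((1, 0), (-1, 0), (0, 1), (0, -1))


class Cell:
    """A minimal agent occupying one lattice site."""

    def __init__(self, x: int, y: int):
        self.x, self.y = x, y


def step(cells, occupied, size, p_divide, rng):
    """One tick: each existing cell divides into a free neighboring site with probability p_divide."""
    daughters = []
    for cell in cells:
        if rng.random() >= p_divide:
            continue
        free = [
            (cell.x + dx, cell.y + dy)
            for dx, dy in NEIGHBOR_OFFSETS
            if 0 <= cell.x + dx < size and 0 <= cell.y + dy < size
            and (cell.x + dx, cell.y + dy) not in occupied
        ]
        if free:  # local rule: a fully surrounded cell cannot divide (contact inhibition)
            site = rng.choice(free)
            occupied.add(site)
            daughters.append(Cell(*site))
    cells.extend(daughters)  # daughters only start dividing on the next tick


def run(size=30, steps=60, p_divide=0.3, seed=1):
    """Return the population count after each tick; the growth curve is an emergent output."""
    rng = random.Random(seed)
    center = size // 2
    cells = [Cell(center, center)]
    occupied = {(center, center)}
    counts = [1]
    for _ in range(steps):
        step(cells, occupied, size, p_divide, rng)
        counts.append(len(cells))
    return counts


counts = run()
print(counts[::10])
```

A real pharmacological ABM would add agent types, signaling, and many stochastic replicates. The design principle is the same, however: rules are specified per agent, and population behavior is observed rather than imposed.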

Experimental Protocols and Case Studies

Protocol: A Comparative PBPK vs. PopPK Workflow for Pediatric Dose Selection

The following workflow was used to predict effective gepotidacin doses in pediatric patients with pneumonic plague, illustrating a direct comparison of the two methodologies [7].

Figure: PBPK vs. PopPK pediatric workflow.

Methodology Details:

- PBPK Model Construction: A full PBPK model for the drug gepotidacin was constructed in Simcyp using a "middle-out" approach. This integrated drug-specific parameters (physicochemical properties, in vitro ADME data) and was optimized with human PK data from a dose-escalation intravenous study [7].

- PopPK Model Development: A PopPK model was developed using pooled PK data from phase 1 studies with intravenous gepotidacin in healthy adults. The model identified body weight as a key covariate affecting clearance [7].

- Qualification/Verification: The PBPK model was qualified against clinical PK results from healthy adult and renally impaired populations. The PopPK model was evaluated using standard goodness-of-fit diagnostics [7].

- Pediatric Simulation: The qualified PBPK model simulated pediatric PK by incorporating age-dependent physiological changes (e.g., organ sizes, blood flows, enzyme maturation). The PopPK model used allometric scaling to project adult PK to children [7].

- Dose Selection: Dosing regimens were proposed such that the simulated pediatric exposures (e.g., AUC) fell within the target range established from effective and safe exposures in adults (or from animal models for biothreat indications) [7].

Key Findings: Both models successfully predicted gepotidacin exposures in children, and the proposed dosing regimens were weight-based for subjects ≤40 kg and fixed-dose for subjects >40 kg. The models produced similar AUC predictions, though Cmax predictions differed slightly. A notable divergence was that the PopPK model was considered suboptimal for children under 3 months due to the lack of explicit maturation functions for drug-metabolizing enzymes, a feature inherent to the PBPK approach [7].
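The PopPK arm of this workflow relied on allometric scaling. The sketch below shows one way to check a weight-banded regimen against a target exposure window. Only the 40 kg cutoff comes from the study. The adult clearance, target AUC, acceptance window, mg/kg dose, and fixed dose are hypothetical placeholders, not gepotidacin values. The scaling has no enzyme-maturation term, so it shares the limitation noted above for children under 3 months.

```python
# Hypothetical placeholders; NOT gepotidacin values from the study.
ADULT_CL_L_H = 10.0       # adult clearance at 70 kg (L/h)
TARGET_AUC = 100.0        # target AUC derived from adult exposures (mg*h/L)
AUC_WINDOW = (0.8, 1.25)  # assumed acceptable fraction of the target AUC
WEIGHT_CUTOFF_KG = 40.0   # weight-based at or below this weight, fixed dose above (study design)
FIXED_DOSE_MG = 750.0     # hypothetical fixed dose above the cutoff


def scaled_cl(weight_kg: float) -> float:
    """Allometric clearance with exponent 0.75; no maturation function."""
    return ADULT_CL_L_H * (weight_kg / 70.0) ** 0.75


def regimen_dose(weight_kg: float, mg_per_kg: float) -> float:
    """Weight-based dose at or below the cutoff, fixed dose above it."""
    return mg_per_kg * weight_kg if weight_kg <= WEIGHT_CUTOFF_KG else FIXED_DOSE_MG


def check_regimen(weights_kg, mg_per_kg):
    """Return (weight, AUC, within_window) per weight, using AUC = dose / CL (linear PK)."""
    lo, hi = (f * TARGET_AUC for f in AUC_WINDOW)
    rows = []
    for w in weights_kg:
        auc = regimen_dose(w, mg_per_kg) / scaled_cl(w)
        rows.append((w, auc, lo <= auc <= hi))
    return rows


for weight, auc, ok in check_regimen([10, 20, 40, 50, 70], mg_per_kg=20.0):
    print(f"{weight:>4} kg  AUC={auc:6.1f}  in window: {ok}")
```

With an exponent of 0.75, a single mg/kg dose yields lower AUC in lighter children. This is why simulated exposures are checked across the whole weight range rather than at one representative weight.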

Protocol: Agent-Based Model for Knowledge Integration and Hypothesis Testing

This protocol outlines the use of ABM to study the germinal center, a key mechanistic target in vaccinology, demonstrating its role in consolidating knowledge and testing biological hypotheses [1].

Figure: ABM hypothesis-testing workflow.

Methodology Details:

- Knowledge Integration: Existing information and constraints regarding the germinal center reaction were consolidated from the scientific literature to define the initial state and rules for the ABM [1].

- Rule-set Definition: Agents (e.g., B cells) were programmed with rules governing their interactions with other agents (e.g., T cells) and the microenvironment, based on proposed biological theories [1].

- Simulation and Emergence: Multiple simulations were run, and the aggregate interactions of the individual agents led to the emergence of system-wide patterns, such as germinal center kinetics, which were not explicitly programmed into the model [1].

- Hypothesis Testing: Models developed for different proposed theories of B-cell selection were compared. Those that failed to reproduce experimentally observed kinetics were rejected, providing evidence against the corresponding biological hypothesis [1].

- Experimental Design: The ABM was used to develop novel mechanistic insights and to identify critical timepoints and conditions to test in vivo, guiding the design of subsequent experimental studies [1].
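The rejection step can be sketched as a goodness-of-fit screen. In this minimal sketch, each candidate rule set's simulated kinetics are compared with observed kinetics by root-mean-square error (RMSE), and rule sets above an assumed tolerance are rejected. The curves, rule names, and tolerance are hypothetical. In practice, each simulated curve would be the mean over many stochastic ABM replicates, and the acceptance criterion would be justified for the question at hand.

```python
import math


def rmse(simulated, observed):
    """Root-mean-square error between a simulated and an observed time course."""
    return math.sqrt(sum((s - o) ** 2 for s, o in zip(simulated, observed)) / len(observed))


def screen_hypotheses(simulated_by_rule, observed, tolerance):
    """Map each candidate rule set to (RMSE, accepted); reject those that miss the data."""
    results = {}
    for name, curve in simulated_by_rule.items():
        error = rmse(curve, observed)
        results[name] = (error, error <= tolerance)
    return results


# Hypothetical normalized germinal-center size at successive time points (not study data).
observed = [0.0, 0.4, 0.9, 1.0, 0.7, 0.4, 0.2]
simulated_by_rule = {
    "selection_rule_A": [0.0, 0.35, 0.85, 1.0, 0.75, 0.45, 0.2],
    "selection_rule_B": [0.0, 0.6, 1.0, 1.0, 1.0, 0.95, 0.9],
}
result = screen_hypotheses(simulated_by_rule, observed, tolerance=0.1)
for name, (error, accepted) in result.items():
    print(f"{name}: RMSE={error:.3f} -> {'retain' if accepted else 'reject'}")
```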

Key Findings: The ABM approach yielded novel mechanistic insight into the impact of Toll-like receptor 4 (TLR4) signaling on the production of high-affinity antibodies, demonstrating the power of ABM as a platform for integrative hypothesis testing [1].

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 2: Key Research Reagents and Computational Platforms

| Tool Name | Type/Function | Application Context |
|---|---|---|
| Simcyp Simulator | Population-Based PBPK Simulator | Industry-standard platform for PBPK modeling, featuring IVIVE, DDI prediction, and pediatric/patient population modules [7] [4]. |
| NONMEM | Software for NLME Modeling | Long-established, widely used software for PopPK and PopPK/PD model development and simulation [8]. |
| Phoenix NLME | Software for PK/PD Modeling | An integrated software platform for performing population PK/PD analysis, used in regulatory submissions [5]. |
| pyDarwin | Machine Learning Library for PopPK | A library implementing optimization algorithms (e.g., Bayesian optimization, genetic algorithms) to automate PopPK structural model development [8]. |
| IVIVE Techniques | In Vitro-In Vivo Extrapolation | A critical methodology to separate compound and system parameters, allowing in vitro data (e.g., metabolic clearance) to be used as input for PBPK models [4]. |
| SpatialCNS-PBPK | R/Shiny Web-Based Platform | A specialized tool for physiologically based pharmacokinetic modeling of drug distribution in the human central nervous system and brain tumors [9]. |

PBPK, QSP, PopPK, and ABM are not competing methodologies but rather complementary tools in the MIDD toolkit. The selection of the appropriate model depends critically on the question to be answered and the type of data available [3]. PBPK excels in mechanistic, physiology-forward prediction; PopPK powerfully identifies and quantifies population variability from data; ABM is especially well suited to exploring emergent behaviors in heterogeneous, spatial systems; and QSP integrates these approaches to model drug effects on system-level biology. As the field evolves, the integration of these disciplines, facilitated by new algorithms and model assessment criteria, will further enhance their synergies and solidify the role of dynamical models in accelerating the development of safe and effective therapies [4].

The Critical Role of Validation in Regulatory Decision-Making and Patient Safety

Validation provides the critical evidence base that informs regulatory decisions and ensures patient safety throughout the therapeutic development lifecycle. In development research that relies on dynamical models, validation is the systematic process of confirming, through rigorous evidence generation, that a model, tool, or method is fit for its intended purpose. This process turns theoretical constructs into trusted instruments for decision-making. Examples include assessing instructional design models in educational research [10], predicting clinical outcomes with machine learning [11], and establishing bioanalytical methods for biomarker quantification [12]. The principle connecting these diverse applications is that proper validation bridges the gap between innovative development and reliable implementation, creating a robust framework for evaluating safety and efficacy across domains.

In regulatory science and patient safety, validation takes on heightened importance because decisions directly impact public health. As demonstrated in medication safety initiatives, effective remedies require more than individual effort—they demand systematically validated processes that account for human limitations and complex healthcare environments [13]. This article explores key validation paradigms, their experimental frameworks, and their critical role in creating a predictable, evidence-based pathway for regulatory decision-making and patient protection.

Comparative Analysis of Validation Frameworks Across Domains

Foundational Validation Frameworks in Regulatory Science

The validation of methods, models, and systems forms the bedrock of modern regulatory science, providing the evidence base for decisions that balance innovation with patient safety. Different frameworks have emerged to address specific validation needs across the therapeutic development lifecycle.

Table 1: Comparative Analysis of Validation Frameworks in Regulatory and Clinical Contexts

| Framework Name | Primary Domain | Key Validation Components | Regulatory Application |
|---|---|---|---|
| Bioanalytical Method Validation [12] | Biomarker Research | Accuracy, precision, selectivity, sensitivity, reproducibility | FDA guidance for industry on validating biomarker assays for regulatory decision-making |
| Regulatory Decision Pathway (RDP) [14] | Nursing Regulation | Behavioral choice evaluation, system analysis, mitigating/aggravating factors | State Boards of Nursing disciplinary decisions incorporating systems approach to errors |
| Real-World Evidence (RWE) Framework [15] | Pharmacoepidemiology | Data quality assessment, confounding control, protocol transparency, reproducibility | EMA utilization of real-world data for safety monitoring and effectiveness assessment |
| Machine Learning Model Validation [11] | Clinical Prediction | Internal-external validation, feature selection, performance metrics (AUROC) | Predicting systemic inflammatory response syndrome (SIRS) in polytrauma patients |

Performance Metrics Across Validation Studies

Quantitative metrics form the evidentiary foundation for validating predictive models and analytical methods across diverse applications. These metrics provide standardized measures for comparing performance and establishing fitness-for-purpose.

Table 2: Performance Metrics in Validation Studies Across Domains

| Validation Context | Primary Metrics | Performance Outcomes | Reference Standard |
|---|---|---|---|
| Machine Learning Clinical Prediction [11] | AUROC, OR, 95% CI | Random forest classifier: AUROC 0.89 (internal), 0.83 (external) | Retrospective-prospective clinical data from multiple trauma centers |
| Instructional Design Model Validation [10] | Post-test scores, attitudinal measures | Significant improvements in learning outcomes with validated model | Comparison with traditional instructional systems design approaches |
| Medication Error Prevention [13] | Error rates, preventable adverse events | Systematic approaches reduce errors versus individual focus | Published US medical error mortality estimates (e.g., 250,000 deaths annually) |

Experimental Protocols in Model Validation

Machine Learning Clinical Prediction Model Validation

The development and validation of machine learning models for clinical prediction represents a cutting-edge application of validation principles, exemplified by recent research on predicting Systemic Inflammatory Response Syndrome (SIRS) in polytrauma patients [11]. This protocol demonstrates the rigorous methodology required for creating clinically actionable tools.

Data Collection and Preprocessing: Researchers conducted a retrospective-prospective study of electronic medical records from multiple trauma centers. Inclusion criteria followed the Berlin definition of polytrauma with modifications: New Injury Severity Score (NISS) > 16 points plus physiological risk factors (hypotension, coagulopathy, etc.). Data preprocessing included transformation of Abbreviated Injury Scale scores into nine anatomical features, multivariate imputation of missing values (0.38% of baseline variables), and generation of additional laboratory value indicators. The final feature set contained 60 baseline variables and 7 outcome variables.

Model Development and Validation: Six machine learning models were developed: decision tree, random forest, logistic regression, support vector machine, gradient boosting classifier, and neural network. The dataset of 439 patients (52.4% with SIRS) was divided for internal and external validation. The random forest classifier demonstrated superior performance, with an AUROC of 0.89 (95% CI: 0.83-0.96) in internal validation and 0.83 (95% CI: 0.75-0.91) in external validation, showing robust predictive ability for SIRS risk within 24 hours of admission.
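AUROC and its confidence interval can be computed with two small functions. The sketch below computes AUROC as the probability that a randomly chosen positive case scores higher than a randomly chosen negative case, with ties counted as one half (the Mann-Whitney formulation). The interval comes from a percentile bootstrap. The text does not say which CI method the study used; the bootstrap here is one common choice and is an assumption. The labels and scores are hypothetical.

```python
import random


def auroc(labels, scores):
    """P(score of a random positive > score of a random negative); ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


def bootstrap_ci(labels, scores, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for AUROC (resampling patients with replacement)."""
    rng = random.Random(seed)
    idx = range(len(labels))
    stats = []
    while len(stats) < n_boot:
        sample = [rng.choice(idx) for _ in idx]
        ys = [labels[i] for i in sample]
        if 0 < sum(ys) < len(ys):  # skip resamples that contain only one class
            stats.append(auroc(ys, [scores[i] for i in sample]))
    stats.sort()
    return stats[int(alpha / 2 * n_boot)], stats[int((1 - alpha / 2) * n_boot) - 1]


# Hypothetical predicted SIRS probabilities; not data from the study.
labels = [0, 0, 1, 1, 0, 1, 0, 1, 1, 0]
scores = [0.1, 0.4, 0.35, 0.8, 0.2, 0.9, 0.55, 0.7, 0.6, 0.3]
print(f"AUROC = {auroc(labels, scores):.2f}, 95% CI = {bootstrap_ci(labels, scores, n_boot=500)}")
```

For external validation, the same functions would be applied to predictions on the held-out centers' patients, with the model left unchanged.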

Bioanalytical Method Validation for Biomarkers

The 2025 FDA Bioanalytical Method Validation guidance establishes the experimental protocols for validating biomarker assays used in regulatory decision-making [12]. This protocol emphasizes the critical role of validated methods in generating reliable evidence for drug development and approval.

Key validation parameters include accuracy, precision, selectivity, sensitivity, and reproducibility, following the ICH M10 framework. The guidance specifically addresses the challenges of biomarker quantification in complex biological matrices and establishes performance thresholds appropriate for regulatory use. Implementation of these validated methods enables sponsors to generate consistent, reliable data acceptable for FDA submissions, particularly for novel biomarkers supporting drug efficacy claims.
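Accuracy and precision are usually summarized per QC level as relative bias and coefficient of variation (CV). The minimal sketch below applies limits of ±15%, widened to ±20% at the LLOQ. These are the ICH M10 defaults for chromatographic assays and are used here as an assumption. Ligand-binding assays use wider limits under M10, and biomarker assays may need criteria adapted to their context of use. The replicate values are hypothetical.

```python
import statistics


def qc_summary(nominal, measured):
    """Return (relative bias %, CV %) for replicate measurements at one QC level."""
    mean = statistics.mean(measured)
    bias_pct = 100.0 * (mean - nominal) / nominal
    cv_pct = 100.0 * statistics.stdev(measured) / mean
    return bias_pct, cv_pct


def qc_passes(nominal, measured, at_lloq=False, limit_pct=15.0, lloq_limit_pct=20.0):
    """Accept if |bias| and CV are within the (assumed) limit, which is wider at the LLOQ."""
    bias_pct, cv_pct = qc_summary(nominal, measured)
    limit = lloq_limit_pct if at_lloq else limit_pct
    return abs(bias_pct) <= limit and cv_pct <= limit


# Hypothetical replicate results (ng/mL) at a 100 ng/mL QC level
print(qc_summary(100, [98, 102, 100, 101, 99]), qc_passes(100, [98, 102, 100, 101, 99]))
```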

Instructional Design Model Validation

In educational development research, Tracey (2009) documented a comprehensive validation protocol for an instructional design model incorporating multiple intelligences theory [10]. This systematic approach illustrates validation methodologies applicable beyond pharmaceutical contexts.

The validation process employed a multi-stage design: (1) initial model creation, (2) expert review for content validation, (3) testing by practicing instructional designers, and (4) evaluation of learning outcomes with 102 participants. The experimental design measured both post-test knowledge scores and attitudinal measures to assess model efficacy. This structured validation approach ensured the model was theoretically sound, practically applicable, and effective in improving learning outcomes—a methodology analogous to validation requirements in regulatory science.

Visualization of Validation Relationships and Workflows

Figure: Regulatory Decision Pathway for patient safety.

Figure: Validation approaches for decision-making.

Key Reagents for Validation Studies

Table 3: Essential Research Resources for Validation Studies

| Resource Category | Specific Examples | Function in Validation |
|---|---|---|
| Data Sources | Electronic Health Records, Claims Data, Patient Registries [15] | Provide real-world data for validating predictive models and treatment outcomes |
| Analytical Frameworks | Common Data Models, Standardized Terminologies [15] | Enable data harmonization and reproducible analyses across diverse datasets |
| Methodological Standards | ENCePP Code of Conduct, EU PAS Register [15] | Ensure study design quality and transparency for regulatory acceptance |
| Reference Materials | USP Compendial Standards [16] | Establish quality benchmarks for pharmaceutical validation and regulatory predictability |
| Risk Assessment Tools | FMEA, Risk Assessment Methodologies [17] | Support risk-based validation approaches and quality by design implementation |
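FMEA commonly ranks failure modes by a risk priority number (RPN), computed as RPN = severity × occurrence × detection, with each factor scored on an ordinal 1 to 10 scale. The minimal sketch below uses hypothetical failure modes for an assay workflow and an assumed action threshold. Real scoring scales and thresholds are set by each organization's quality system.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    """One row of a hypothetical FMEA worksheet; scores use an ordinal 1-10 scale."""
    name: str
    severity: int    # 1 = negligible effect, 10 = catastrophic
    occurrence: int  # 1 = very unlikely, 10 = almost certain
    detection: int   # 1 = almost certainly detected, 10 = undetectable

    def rpn(self) -> int:
        """Risk priority number = severity * occurrence * detection."""
        for score in (self.severity, self.occurrence, self.detection):
            if not 1 <= score <= 10:
                raise ValueError(f"{self.name}: scores must lie between 1 and 10")
        return self.severity * self.occurrence * self.detection


def prioritize(modes, action_threshold):
    """Failure modes at or above the (assumed) action threshold, highest RPN first."""
    flagged = [m for m in modes if m.rpn() >= action_threshold]
    return sorted(flagged, key=lambda m: m.rpn(), reverse=True)


modes = [
    FailureMode("Calibration standard mislabeled", severity=8, occurrence=2, detection=6),
    FailureMode("Sample stored above validated temperature", severity=7, occurrence=4, detection=5),
    FailureMode("Transcription error in run sheet", severity=5, occurrence=3, detection=3),
]
for m in prioritize(modes, action_threshold=90):
    print(m.name, m.rpn())
```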

Discussion: Integration of Validation Approaches for Patient Safety

The convergence of multiple validation frameworks creates a robust ecosystem for regulatory decision-making that prioritizes patient safety. The systems approach to error reduction, as embodied in the Regulatory Decision Pathway, shifts focus from individual blame to organizational learning and system design [14]. This philosophy aligns with the proactive validation of processes and methods advocated in pharmaceutical manufacturing [17] and the evidence-based framework for evaluating real-world data [15].

Machine learning model validation represents the cutting edge of predictive validation in clinical care. The successful prediction of SIRS in polytrauma patients [11] demonstrates how rigorous validation protocols can transform complex data into clinically actionable tools. This approach shares fundamental principles with the validation of instructional design models [10]—both require systematic development, expert input, and empirical testing to establish reliability and effectiveness.

The ongoing evolution of regulatory guidance, such as the 2025 FDA Bioanalytical Method Validation for Biomarkers [12], reflects the dynamic nature of validation science. As new technologies and data sources emerge, validation frameworks must adapt while maintaining scientific rigor and regulatory standards. This ensures that innovative approaches can be safely integrated into healthcare while protecting patient safety through evidence-based decision-making.

Validation serves as the critical bridge between innovation and implementation in regulatory decision-making and patient safety. Through the systematic application of validated methods, models, and frameworks—from bioanalytical techniques to predictive algorithms and regulatory decision tools—we establish the evidence base necessary for making sound decisions that protect patients while advancing therapeutic options. The continuous refinement of validation methodologies, coupled with transparent reporting and appropriate application of real-world evidence, will further strengthen this foundation. As validation science evolves, it will continue to provide the essential framework for integrating new technologies into clinical practice while maintaining the rigorous standards required for patient safety and public health protection.

Establishing Context of Use (COU) and Question of Interest (QOI) as Foundational Elements

In the realm of computational modeling for biomedical research and drug development, the establishment of a Context of Use (COU) and a Question of Interest (QOI) serves as the critical foundation for determining model credibility and regulatory acceptance. The COU provides a formal, concise description of how a model or tool will be applied in product development, while the QOI precisely defines the specific question, decision, or concern the model will address [18] [19]. These elements are not merely administrative formalities but constitute the bedrock upon which the entire model validation strategy is built, guiding the extent of verification, validation, and uncertainty quantification activities required [20] [21].

The regulatory landscape has evolved significantly, with agencies like the FDA and EMA now accepting evidence produced in silico (through modeling and simulation) alongside traditional experimental data [20] [19]. This shift has made the formal definition of COU and QOI increasingly important, as they form the basis for risk-informed credibility assessment frameworks such as the ASME V&V 40 standard [19] [22] [21]. Within Model-Informed Drug Development (MIDD), the "fit-for-purpose" principle dictates that modeling tools must be closely aligned with the QOI and COU to ensure they are appropriately matched to development milestones and regulatory needs [23].

Theoretical Framework: Definitions and Interrelationships

Core Definitions and Regulatory Context

- Context of Use (COU): A statement that "fully and clearly describes the way the medical product development tool is to be used and the medical product development-related purpose of the use" [24]. For biomarkers, the FDA specifies that the COU includes both the biomarker category and its intended use in drug development, often structured as "[BEST biomarker category] to [drug development use]" [18].

- Question of Interest (QOI): Describes "the specific question, decision or concern that is being addressed with a computational model" [19]. It states the scientific, engineering, or clinical question that the model is intended to answer, at least in part.

The Relationship Between COU, QOI, and Model Credibility

The interrelationship between COU and QOI forms a systematic framework for establishing model credibility, particularly within the ASME V&V 40 paradigm [19] [21]. The process begins with identifying the QOI, which then informs the definition of the COU—specifying how the model will be used to address the question. This sequential relationship drives the entire credibility assessment process, influencing risk analysis, validation planning, and ultimately determining whether a model possesses sufficient credibility for its intended application [19].

The following diagram illustrates this foundational relationship and the subsequent workflow in model credibility assessment:

Comparative Analysis: COU and QOI Across Applications

COU and QOI in Different Modeling Contexts

The application of COU and QOI spans multiple domains in biomedical research, from medical devices to pharmaceutical development. The table below compares how these foundational elements are applied across different contexts, along with their associated regulatory frameworks and credibility requirements.

Table 1: Comparison of COU and QOI Applications Across Biomedical Modeling Contexts

| Application Domain | Exemplary Question of Interest (QOI) | Exemplary Context of Use (COU) | Primary Regulatory Framework | Key Credibility Activities |
|---|---|---|---|---|
| Medical Devices [19] [22] | "What is the fracture risk at the femur for osteoporotic patients?" [22] | "To predict the absolute risk of fracture at the femur for a subject to inform a clinical decision" [22] | ASME V&V 40-2018 | Verification, Validation, Uncertainty Quantification |
| Biopharmaceutical Process Development [25] | "How to optimize an ultrafiltration process for a biopharmaceutical?" | "To support process design and inform control strategies in biopharmaceutical manufacturing" [25] | Integrated ASME V&V 40 & EMA QIG | Model qualification, risk-based validation |
| Cardiovascular Safety Pharmacology [19] | "What is the pro-arrhythmic risk of a new pharmaceutical compound?" | "To characterize torsadogenic effects of drugs through human ventricular electrophysiology modeling (CiPA initiative)" [19] | CiPA Initiative (FDA, CSRC, HESI) | Ion channel screening, clinical validation |
| Clinical Outcome Assessments [24] | "How to measure fatigue in cancer patients?" | "A patient-reported outcome measure to evaluate treatment response in Phase 3 clinical trials for breast cancer" [24] | FDA COA Guidance | Concept elicitation, cognitive interviewing |

Impact on Model Risk and Credibility Requirements

The specific combination of COU and QOI directly influences the model risk, which determines the rigor of required validation activities [19] [21]. Model risk is assessed as a combination of model influence (the contribution of the computational model to the decision relative to other evidence) and decision consequence (the impact of an incorrect decision on patient safety, business, or regulatory outcomes) [19] [21].

Table 2: Risk-Based Credibility Requirements Based on COU and QOI

| Model Influence Level | Low Decision Consequence | Medium Decision Consequence | High Decision Consequence |
|---|---|---|---|
| Low Influence (Supporting evidence, other data primary) | Minimal V&V | Basic V&V | Standard V&V |
| Medium Influence (Equal weight with other evidence) | Basic V&V | Standard V&V | Comprehensive V&V |
| High Influence (Primary evidence for decision) | Standard V&V | Comprehensive V&V | Extensive V&V with multiple approaches |
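In Table 2, each one-level step up in either model influence or decision consequence raises the required rigor by one tier. The matrix can therefore be encoded as the sum of two ordinal indices. This is an observation about the table as written, not a rule prescribed by ASME V&V 40. `required_rigor` is a hypothetical helper.

```python
LEVELS = ("low", "medium", "high")
RIGOR = (
    "Minimal V&V",
    "Basic V&V",
    "Standard V&V",
    "Comprehensive V&V",
    "Extensive V&V with multiple approaches",
)


def required_rigor(influence: str, consequence: str) -> str:
    """Look up the V&V tier in Table 2; raises ValueError for unknown levels."""
    i = LEVELS.index(influence.lower())
    j = LEVELS.index(consequence.lower())
    return RIGOR[i + j]  # the table is additive in the two ordinal levels


print(required_rigor("medium", "high"))
```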

Experimental Protocols and Methodologies

Protocol: Defining COU and QOI for Regulatory Submissions

Purpose: To systematically define COU and QOI for computational models intended for regulatory evaluation of biomedical products.

- Stakeholder Engagement: Engage a cross-functional team that includes modelers, clinicians, regulatory affairs specialists, and statisticians.

- QOI Formulation: Precisely articulate the specific question the model will address, ensuring it is focused, answerable, and relevant to the decision process.

- COU Specification: Develop a comprehensive COU statement describing:
  - Intended population and disease stage
  - Model scope and limitations
  - Stage of product development
  - How model outputs will inform decisions
  - Relationship to other sources of evidence

- Risk Assessment: Evaluate model influence and decision consequence to determine overall model risk.

- Documentation: Formally document both QOI and COU in the model development plan.

Example Output: "Prognostic biomarker to enrich the likelihood of hospitalizations during the timeframe of a clinical trial in phase 3 asthma clinical trials." [18]
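The documentation step can be made checkable by capturing the QOI and COU elements as a structured record. A minimal sketch, assuming a hypothetical `ContextOfUse` class whose fields mirror the protocol items above; it flags any element left blank before the model development plan is finalized. The example text is placeholder content loosely based on the asthma example, not a real submission.

```python
from dataclasses import dataclass, fields


@dataclass
class ContextOfUse:
    """Hypothetical record of the QOI and COU elements listed in the protocol above."""
    question_of_interest: str
    population_and_disease_stage: str
    scope_and_limitations: str
    development_stage: str
    decision_role: str           # how model outputs will inform decisions
    other_evidence: str          # relationship to other sources of evidence
    model_influence: str         # e.g. "low", "medium" or "high"
    decision_consequence: str    # e.g. "low", "medium" or "high"

    def missing_fields(self) -> list:
        """Names of elements left blank, so gaps surface before the plan is finalized."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Illustrative, partially completed record (all text is placeholder).
draft = ContextOfUse(
    question_of_interest="Does the biomarker identify patients likely to be hospitalized?",
    population_and_disease_stage="Adults with asthma",
    scope_and_limitations="",
    development_stage="Phase 3",
    decision_role="Enrichment of trial enrollment",
    other_evidence="",
    model_influence="medium",
    decision_consequence="high",
)
print(draft.missing_fields())  # ['scope_and_limitations', 'other_evidence']
```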

Protocol: Credibility Assessment Using ASME V&V 40 Framework

Purpose: To implement a risk-informed credibility assessment based on a defined COU and QOI.

- Credibility Goal Setting: Based on the model risk determined from COU and QOI, establish acceptability thresholds for validation metrics.

- Verification Activities:
  - Code verification: Identify and remove procedural errors in source code
  - Solution verification: Determine numerical accuracy of solutions

- Validation Activities:
  - Conduct experiments or gather reference data under conditions relevant to COU
  - Compare model predictions to experimental results
  - Quantify predictive accuracy using appropriate metrics

- Uncertainty Quantification:
  - Identify and characterize sources of uncertainty (aleatory and epistemic)
  - Propagate uncertainties through the model to output predictions

- Applicability Evaluation: Assess relevance of validation evidence to support the specific COU.
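The verification, validation, and uncertainty-quantification activities above can be illustrated on a toy model. The sketch assumes a one-compartment intravenous bolus model, C(t) = (Dose/V) exp(-k t), chosen only because it has a closed-form solution; the function names, parameter values, and the lognormal uncertainty on k are hypothetical. Solution verification checks that the error of an explicit Euler solver shrinks roughly in proportion to the step size, the absolute average fold error (AAFE) serves as one commonly used validation metric, and Monte Carlo sampling propagates parameter uncertainty to the predicted output.

```python
import math
import random
import statistics


def analytic_conc(t, dose, v, k):
    """Closed-form one-compartment IV bolus concentration: (dose / v) * exp(-k * t)."""
    return dose / v * math.exp(-k * t)


def euler_conc(t_end, dose, v, k, dt):
    """Explicit Euler solution of dC/dt = -k * C from C(0) = dose / v."""
    c = dose / v
    for _ in range(round(t_end / dt)):
        c -= k * c * dt
    return c


def euler_errors(dose=100.0, v=10.0, k=0.2, t_end=12.0, steps=(0.1, 0.05, 0.025)):
    """Solution verification: absolute error at t_end for successively halved steps."""
    exact = analytic_conc(t_end, dose, v, k)
    return [abs(euler_conc(t_end, dose, v, k, dt) - exact) for dt in steps]


def aafe(predicted, observed):
    """Validation metric: absolute average fold error, 10 ** mean(|log10(pred / obs)|)."""
    logs = [abs(math.log10(p / o)) for p, o in zip(predicted, observed)]
    return 10 ** (sum(logs) / len(logs))


def propagate_k_uncertainty(dose=100.0, v=10.0, k_mean=0.2, k_cv=0.2, t=12.0, n=5000, seed=1):
    """Uncertainty propagation: Monte Carlo over a lognormal k with the given mean and CV."""
    rng = random.Random(seed)
    sigma = math.sqrt(math.log(1 + k_cv ** 2))
    mu = math.log(k_mean) - sigma ** 2 / 2  # makes the lognormal mean equal k_mean
    conc = sorted(analytic_conc(t, dose, v, rng.lognormvariate(mu, sigma)) for _ in range(n))
    # Median and approximate 5th / 95th percentiles of the predicted concentration
    return statistics.median(conc), conc[int(0.05 * n)], conc[int(0.95 * n) - 1]


errs = euler_errors()
print([e1 / e2 for e1, e2 in zip(errs, errs[1:])])  # ratios near 2: first-order convergence
print(aafe([2.0, 5.0], [1.0, 5.0]))  # about 1.414
print(propagate_k_uncertainty())
```

In a real assessment, the solver under test would be the production code and the observed data would come from the validation experiments; the toy model simply makes the expected convergence rate and metric values easy to check by hand.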

Deliverable: Credibility assessment report documenting evidence that the model has sufficient credibility for the specific COU.

Table 3: Essential Research Reagent Solutions for COU/QOI Implementation and Model Validation

| Tool/Resource | Function/Purpose | Application Context |
|---|---|---|
| ASME V&V 40-2018 Standard [20] [19] | Provides a risk-based framework for assessing computational model credibility | Medical devices, biophysical models, regulatory submissions |
| R statistical environment [26] | Open-source platform for validation of virtual cohorts and analysis of in-silico trials | Virtual cohort validation, statistical analysis of trial data |
| SIMCor Web Application [26] | Menu-driven, open-source tool for validating virtual cohorts and applying validated cohorts in in-silico trials | Cardiovascular implantable device development, virtual cohort validation |
| Model-Informed Drug Development (MIDD) Tools [23] | Suite of quantitative approaches (PBPK, QSP, PPK/ER) aligned with COU and QOI | Drug discovery and development across all phases |
| Virtual Population Simulation [23] | Creates diverse, realistic virtual cohorts to predict outcomes under varying conditions | Clinical trial optimization, patient stratification |

The rigorous establishment of Context of Use and Question of Interest represents a paradigm shift in how computational models are developed, validated, and utilized in biomedical research and regulatory decision-making. These foundational elements create a structured framework for aligning model development with specific scientific and clinical needs while ensuring appropriate levels of validation based on a risk-informed approach [20] [19] [21].

The comparative analysis presented demonstrates that while the specific implementation of COU and QOI varies across applications, from medical devices to pharmaceutical development, the underlying principles remain consistent: precise definition of intent, clear articulation of application context, and risk-proportionate validation [25] [19] [22]. As the field advances, with increasing regulatory acceptance of in silico evidence and emerging technologies such as AI/ML, the disciplined application of COU and QOI frameworks will become increasingly critical for ensuring model credibility and, ultimately, patient safety [23] [26].

The Fit-for-Purpose (FFP) Initiative represents a strategic regulatory pathway established by the U.S. Food and Drug Administration (FDA) to facilitate the acceptance of dynamic tools in drug development programs [27]. This initiative addresses the evolving nature of certain Drug Development Tools (DDTs) that, while unable to undergo formal qualification, demonstrate substantial value for specific contexts of use. The FFP designation is granted following a thorough FDA evaluation of the submitted information, with successful determinations made publicly available to encourage broader adoption across the pharmaceutical industry [27] [28].

This initiative operates within the broader framework of Model-Informed Drug Development (MIDD), which employs quantitative modeling and simulation approaches to enhance drug development efficiency and regulatory decision-making [23] [28]. The FFP approach is fundamentally rooted in the principle that model development must be closely aligned with specific Questions of Interest (QOI) and Context of Use (COU), ensuring that methodologies are appropriately matched to development milestones from early discovery through regulatory approval [23]. This strategic alignment helps development teams select the right modeling tools at the right time to support decisions and improve outcomes for patients.

FFP Versus Traditional Model Qualification: A Paradigm Shift

The FFP Initiative introduces a flexible regulatory pathway that contrasts with traditional model qualification processes, particularly for dynamic tools whose applications may evolve across multiple drug development programs. Unlike static, one-time qualifications, the FFP approach acknowledges that some models with the same structure and parameter values can be reused across different development programs [28]. This paradigm is especially relevant for disease modeling, where a single model can be applied to multiple programs, and for commonly used structural components in physiologically-based pharmacokinetic (PBPK) modeling [28].

Table 1: Key Differences Between FFP and Traditional Model Qualification

| Aspect | Fit-for-Purpose Initiative | Traditional Qualification |
|---|---|---|
| Regulatory Basis | Pathway for dynamic, evolving tools [27] | Formal, static qualification process |
| Model Type | "Reusable" models applicable across programs [28] | Program-specific models |
| Validation Approach | Risk-based credibility assessment [28] | Fixed validation criteria |
| Context Dependence | Explicitly tied to Context of Use (COU) [23] | Broader, less context-specific |
| Evolution | Adapts to scientific and technological advances [28] | Generally fixed once qualified |
| Public Availability | Determinations publicly listed [27] | May not be publicly disclosed |

The risk-based credibility assessment framework for FFP models begins with identifying the Question of Interest and Context of Use [28]. The model influence (weight of model-generated evidence in the totality of evidence) and decision consequence (potential patient risk from incorrect decisions) collectively determine the model risk. For reusable models, this risk assessment must conservatively cover a broader spectrum of potential scenarios compared to program-specific models, potentially requiring more extensive validation activities and technical standards [28].

FFP-Designated Tools and Their Applications

Since its inception, the FDA has issued FFP determinations for several modeling and statistical approaches that have demonstrated utility across multiple drug development programs. These tools represent the practical implementation of the FFP paradigm and serve as benchmarks for future submissions.

Table 2: FDA-Approved Fit-for-Purpose Tools and Applications

| Disease Area | Submitter | Tool Name/Type | Trial Component | Issuance Date |
|---|---|---|---|---|
| Alzheimer's disease | The Coalition Against Major Diseases (CAMD) | Disease Model: Placebo/Disease Progression | Demographics, Drop-out | June 12, 2013 [27] |
| Multiple | Janssen Pharmaceuticals and Novartis Pharmaceuticals | Statistical Method: MCP-Mod | Dose-Finding | May 26, 2016 [27] |
| Multiple | Ying Yuan, PhD (MD Anderson Cancer Center) | Statistical Method: Bayesian Optimal Interval (BOIN) design | Dose-Finding | December 10, 2021 [27] |
| Multiple | Pfizer | Statistical Method: Empirically Based Bayesian Emax Models | Dose-Finding | August 5, 2022 [27] |

The MCP-Mod tool addresses dose-finding challenges through a multiple comparison procedure combined with modeling techniques, enabling more efficient identification of optimal dosing ranges during clinical development [27]. The Bayesian Optimal Interval (BOIN) design provides a novel approach to dose selection in oncology trials, improving upon traditional 3+3 designs through more efficient dose escalation algorithms [27]. These tools demonstrate how the FFP initiative facilitates the adoption of innovative methodologies that can accelerate therapeutic development while maintaining regulatory standards.
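To make the BOIN dose-escalation rule concrete: for a target dose-limiting toxicity (DLT) probability φ, the BOIN design derives an escalation boundary λe and a de-escalation boundary λd, and compares the observed DLT rate at the current dose against them. The sketch below assumes the commonly used defaults φ1 = 0.6φ and φ2 = 1.4φ; it omits the dose-elimination rule and other safeguards, so it illustrates the decision logic rather than substituting for the validated BOIN software.

```python
import math


def boin_boundaries(target, phi1=None, phi2=None):
    """Escalation (lambda_e) and de-escalation (lambda_d) boundaries for a target DLT rate."""
    phi1 = 0.6 * target if phi1 is None else phi1  # assumed default: highest "too low" DLT rate
    phi2 = 1.4 * target if phi2 is None else phi2  # assumed default: lowest "too toxic" DLT rate
    lam_e = math.log((1 - phi1) / (1 - target)) / math.log(
        target * (1 - phi1) / (phi1 * (1 - target)))
    lam_d = math.log((1 - target) / (1 - phi2)) / math.log(
        phi2 * (1 - target) / (target * (1 - phi2)))
    return lam_e, lam_d


def boin_decision(n_dlt, n_treated, target=0.3):
    """Compare the observed DLT rate at the current dose with the BOIN boundaries."""
    lam_e, lam_d = boin_boundaries(target)
    rate = n_dlt / n_treated
    if rate <= lam_e:
        return "escalate"
    if rate >= lam_d:
        return "de-escalate"
    return "stay"


print(boin_boundaries(0.3))  # roughly (0.236, 0.359)
print(boin_decision(1, 3))   # 'stay': 0.333 lies between the boundaries
```

Because the boundaries depend only on the target rate, they can be tabulated before the trial starts, which is part of what makes the design simpler to run than model-based alternatives.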

Methodological Framework for FFP Model Validation

The validation of FFP models follows a structured methodology that ensures robustness and reliability for regulatory decision-making. This methodological framework incorporates both technical and strategic considerations throughout the model development lifecycle.

Core Validation Protocol

The foundational protocol for FFP model validation centers on a comprehensive assessment aligned with the intended Context of Use. The process begins with explicit definition of the COU, which precisely specifies the boundaries within which the model will be applied [23] [28]. This is followed by model risk assessment based on the decision consequence and model influence within the totality of evidence [28]. The technical implementation phase involves model structure identification using biological, chemical, and pharmacological knowledge, followed by parameter estimation from relevant experimental or clinical data [28]. The critical model validation step employs external datasets not used in model development to verify predictive performance [28]. Finally, documentation and reproducibility measures ensure transparent reporting of all assumptions, limitations, and computational implementations [28].
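The parameter-estimation and external-validation steps can be sketched with a deliberately simple model. Assuming mono-exponential decline, ln C = ln C0 - k t, ordinary least squares on log concentrations yields C0 and k; predictions for an external dataset are then expressed as fold errors. Both datasets below are synthetic, and the two-fold acceptance window is a convention often used in PBPK evaluation, assumed here rather than taken from the cited framework.

```python
import math


def fit_monoexponential(times, concs):
    """Ordinary least squares on ln C = ln C0 - k * t; returns (C0, k)."""
    ys = [math.log(c) for c in concs]
    t_bar = sum(times) / len(times)
    y_bar = sum(ys) / len(ys)
    sxx = sum((t - t_bar) ** 2 for t in times)
    sxy = sum((t - t_bar) * (y - y_bar) for t, y in zip(times, ys))
    slope = sxy / sxx
    return math.exp(y_bar - slope * t_bar), -slope


def external_fold_errors(c0, k, times, observed):
    """Predicted / observed ratio for each point of an external (held-out) dataset."""
    return [c0 * math.exp(-k * t) / obs for t, obs in zip(times, observed)]


# Synthetic development data (C0 = 10, k = 0.2) and a synthetic external dataset.
c0, k = fit_monoexponential([1, 2, 4, 8], [10 * math.exp(-0.2 * t) for t in (1, 2, 4, 8)])
folds = external_fold_errors(c0, k, [3, 6, 12], [5.8, 3.1, 0.85])
within_two_fold = all(0.5 <= f <= 2.0 for f in folds)  # assumed acceptance criterion
print(round(c0, 3), round(k, 3), [round(f, 2) for f in folds], within_two_fold)
```

The key design point is the separation of data: the external points never enter `fit_monoexponential`, so the fold errors measure predictive performance rather than goodness of fit.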

Experimental Design Considerations

For reusable models, the experimental design must account for broader application scenarios than program-specific models. The Structured Process to Identify Fit-For-Purpose Data (SPIFD) provides a systematic framework for assessing data relevance and reliability [29]. This approach operationalizes the principle that data must be both reliable (representing intended underlying medical concepts) and relevant (representing the population of interest and capable of answering the research question) [29]. The SPIFD framework includes step-by-step processes for operationalizing and ranking minimal criteria required to answer research questions, systematically evaluating candidate data sources, and assessing operational feasibility including contracting logistics and time to data access [29].
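The SPIFD steps of screening candidate data sources against minimal criteria and then weighing operational feasibility can be expressed as a filter followed by a sort. This is a hypothetical sketch: the `DataSource` fields, the criteria names, and the use of time to data access as a tie-breaker are illustrative assumptions, not the published SPIFD templates.

```python
from dataclasses import dataclass, field


@dataclass
class DataSource:
    """Hypothetical summary of a candidate real-world data source."""
    name: str
    criteria_met: set = field(default_factory=set)  # criteria the source can satisfy
    months_to_access: float = 0.0  # operational feasibility (contracting, delivery)


def rank_sources(sources, minimal_criteria, preferred_criteria=frozenset()):
    """Keep sources meeting every minimal criterion, then rank by preferred criteria met and access time."""
    eligible = [s for s in sources if minimal_criteria <= s.criteria_met]
    return sorted(
        eligible,
        key=lambda s: (-len(preferred_criteria & s.criteria_met), s.months_to_access, s.name),
    )


candidates = [
    DataSource("Registry A", {"exposure", "outcome", "confounders"}, 9),
    DataSource("Claims B", {"exposure", "outcome"}, 3),
    DataSource("EHR C", {"exposure", "outcome", "confounders", "lab values"}, 6),
]
ranked = rank_sources(candidates, {"exposure", "outcome", "confounders"}, {"lab values"})
print([s.name for s in ranked])  # ['EHR C', 'Registry A']
```

Treating the minimal criteria as a hard filter and everything else as a ranking key mirrors the distinction between data that can answer the research question at all and data that is merely more convenient.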

Comparative Analysis of FFP with Other Model Development Frameworks

The FFP Initiative exists within an ecosystem of model development frameworks, each with distinct characteristics and applications. Understanding these relationships helps researchers select the appropriate pathway for their specific development needs.

Table 3: Comparative Analysis of Model Development Frameworks

| Framework | Primary Focus | Regulatory Status | Flexibility | Implementation Complexity |
|---|---|---|---|---|
| FFP Initiative | Dynamic, reusable models [27] | Case-by-case determination [27] | High | Moderate to High |
| Model Master File (MMF) | Intellectual property sharing |

The drug development process is a meticulously structured journey that transforms a scientific concept into a commercially available therapy. This pipeline, typically spanning 10 to 15 years and requiring an average investment of $2.6 billion, is designed to rigorously evaluate a drug candidate's safety and efficacy [30] [31]. The process follows a funnel model, where thousands of potential compounds are narrowed down to a single approved drug, with an overall probability of success for new molecular entities of only 12% [30]. This high attrition rate underscores the critical need for efficient strategies and tools to de-risk development and accelerate timelines.

The conventional path is defined by five sequential stages: Discovery and Development, Preclinical Research, Clinical Research, Regulatory Review, and Post-Market Safety Monitoring [30] [32] [33]. At each stage, developers face distinct scientific and regulatory questions. Model-Informed Drug Development (MIDD) has emerged as an essential framework, providing quantitative, data-driven insights that support decision-making across this entire lifecycle [23]. By aligning specific modeling and simulation tools with key development milestones, MIDD aims to improve the probability of technical success, reduce late-stage failures, and ultimately deliver new treatments to patients more efficiently.

The Five-Stage Drug Development Process

The standardized five-stage framework provides the backbone for all modern therapeutic development. Each stage has defined objectives, outputs, and decision gates that determine a candidate's progression.

Table 1: The Five Core Stages of Drug Development

| Stage | Primary Objectives | Typical Duration | Key Outputs & Decision Gates |
|---|---|---|---|
| 1. Discovery & Development | Identify disease target; Discover & optimize lead compound [30] [31]. | 3-6 years [31] | Selection of a promising preclinical candidate compound [31]. |
| 2. Preclinical Research | Assess biological activity & safety in non-human models [30] [33]. | 1-3 years [31] | Investigational New Drug (IND) application; FDA clearance to begin human trials [32] [31]. |
| 3. Clinical Research | Evaluate safety, efficacy, and dosing in humans [30] [32]. | 6-7 years [31] | Successful completion of Phase I, II, and III trials demonstrating safety and efficacy [30] [32]. |
| 4. Regulatory Review | Review all data for risk-benefit assessment [30] [33]. | ~1 year [31] | New Drug Application (NDA)/Biologics License Application (BLA) submission; FDA approval for marketing [30] [32]. |
| 5. Post-Market Monitoring | Monitor safety in real-world patient population [30] [33]. | Ongoing | Continual safety assessment; detection of rare or long-term adverse events [30] [33]. |

The clinical research phase (Stage 3) is itself subdivided, with each phase designed to answer specific questions about the candidate drug in humans.

Table 2: Phases of Clinical Research

| Clinical Phase | Sample Size | Primary Focus | Attrition Rate (Approx.) |
|---|---|---|---|
| Phase I | 20-100 volunteers [30] [32] | Initial human safety, tolerability, and pharmacokinetics [33] | ~30% fail [32] |
| Phase II | Up to several hundred patients [30] [32] | Preliminary efficacy, optimal dosing, and side effects [33] | ~67% fail [32] |
| Phase III | 300-3,000 patients [30] [32] | Confirm efficacy, monitor long-term safety, and compare to standard care [33] | ~70-75% fail [32] |
| Phase IV | Several thousand patients [30] [32] | Post-market surveillance; additional uses in broader populations [30] | N/A |
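Chaining the approximate per-phase failure rates in Table 2 shows how attrition compounds across clinical development. The sketch treats each phase as an independent gate, which simplifies reality; because these rates come from a different source than the overall 12% figure cited earlier, the two numbers should not be expected to agree exactly.

```python
from math import prod


def cumulative_success(failure_rates):
    """Probability of clearing every phase, treating each phase as an independent gate."""
    return prod(1 - f for f in failure_rates)


# Approximate failure rates from Table 2 (Phase I, Phase II, Phase III)
optimistic = cumulative_success([0.30, 0.67, 0.70])
pessimistic = cumulative_success([0.30, 0.67, 0.75])
print(f"{pessimistic:.1%} to {optimistic:.1%}")  # 5.8% to 6.9%
```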

Figure 1: The Drug Development Funnel. This visualization illustrates the high attrition of drug candidates through the development process, with only about 1 in 10,000 discovered compounds ultimately receiving approval [31].

Model-Informed Drug Development (MIDD): A Strategic Framework

Model-Informed Drug Development (MIDD) is a quantitative framework that uses pharmacological, pathophysiological, and trial models to inform drug development and regulatory decisions [23]. The core principle of MIDD is a "fit-for-purpose" approach, where the selection of modeling tools is strategically aligned with the "Question of Interest" and "Context of Use" at each development stage [23]. This alignment provides a data-driven foundation for key go/no-go decisions, helping to de-risk development and optimize resources.

The utility of MIDD is recognized by global regulatory agencies, including the FDA and EMA, and is addressed in harmonized guidance such as ICH M15 [23]. Evidence from development programs shows that a well-implemented MIDD approach can significantly shorten development cycle timelines, reduce discovery and trial costs, and improve quantitative risk estimates [23]. By simulating clinical scenarios and integrating prior knowledge, MIDD enables developers to explore more options virtually, design more efficient trials, and increase the probability of successful new drug approvals.

Alignment of MIDD Tools with Development Milestones

A diverse and sophisticated toolkit of modeling and simulation methodologies is available to support the modern drug development pipeline. The strategic application of these tools at the appropriate stage is critical for maximizing their impact.

Table 3: Alignment of MIDD Tools with Development Stages and Key Questions

| Development Stage | Key Questions of Interest (QOI) | Relevant MIDD Tools & Methodologies | Purpose & Impact |
|---|---|---|---|
| Discovery | What is the predicted biological activity of a compound based on its structure? [23] | Quantitative Structure-Activity Relationship (QSAR), AI/ML models [23] [34] | Prioritize compounds for synthesis; predict ADMET properties [23] [34]. |
| Preclinical | What is the safe starting dose for humans? How does physiology influence drug disposition? [23] | PBPK, FIH Dose Algorithms, QSP [23] | Enable mechanistic understanding & predict human PK/PD; determine first-in-human dose [23]. |
| Clinical | What is the population variability in drug exposure? What is the exposure-response relationship? [23] | PPK, ER, Semi-Mechanistic PK/PD, Adaptive Trial Design [23] | Optimize dosing regimens; identify subpopulations; support dose justification for trials [23]. |
| Regulatory Review | How to support evidence of effectiveness and safety for approval? [23] | Model-Integrated Evidence (MIE), Clinical Trial Simulation [23] | Strengthen regulatory submissions; support label claims and dosing recommendations [23]. |
| Post-Market | How to support label updates or manage safety in real-world use? [23] | PBPK, ER, MBMA [23] | Inform dosing in special populations; support new indications [23]. |

Figure 2: MIDD Tool Application Timeline. This diagram shows how different quantitative tools are typically applied across the development lifecycle, from discovery (QSAR, AI/ML) to post-market monitoring (PBPK, MBMA) [23].

The Rise of AI-Driven Platforms in Discovery

Artificial intelligence (AI) and machine learning (ML) have evolved from experimental curiosities into foundational capabilities for modern R&D, particularly in the discovery phase [35] [34]. These platforms claim to drastically shorten early-stage research and development timelines and cut costs by using machine learning and generative models to accelerate tasks traditionally reliant on cumbersome trial-and-error [35].

Leading AI-driven companies have demonstrated the potential of this technology. For instance, Insilico Medicine advanced an idiopathic pulmonary fibrosis drug from target discovery to Phase I trials in just 18 months, a fraction of the typical ~5-year timeline [35]. Similarly, Exscientia has reported in silico design cycles that are ~70% faster and require 10x fewer synthesized compounds than industry norms [35]. By the end of 2024, over 75 AI-derived molecules had reached clinical stages, signaling a paradigm shift in early discovery [35].

Table 4: Comparison of Leading AI-Driven Drug Discovery Platforms (2025 Landscape)

| AI Platform/Company | Core AI Approach | Key Clinical-Stage Achievement | Reported Impact |
|---|---|---|---|
| Exscientia [35] | Generative Chemistry; Centaur Chemist | Multiple clinical compounds (e.g., CDK7, LSD1 inhibitors) designed "at a pace substantially faster than industry standards" [35]. | ~70% faster design cycles; 10x fewer compounds synthesized [35]. |
| Insilico Medicine [35] | Generative AI; Target Identification | ISM001-055 for IPF: from target discovery to Phase I in 18 months [35]. | Compression of traditional ~5-year discovery/preclinical timeline [35]. |
| Schrödinger [35] | Physics-Enabled Molecular Design | Nimbus-originated TYK2 inhibitor (zasocitinib) advanced to Phase III trials [35]. | Physics-based simulations for high-accuracy molecular design [35]. |
| Recursion [35] | Phenomics-First AI | Merged with Exscientia (2024) to integrate phenomic screening with automated chemistry [35]. | High-content phenotypic screening on patient-derived samples [35]. |
| BenevolentAI [35] | Knowledge-Graph Repurposing | AI-driven target discovery and prioritization for internal and partnered programs [35]. | Leverages structured scientific literature and data for novel insights [35]. |

Experimental Protocols for Key Model Validation

The successful application of MIDD and AI tools relies on robust experimental protocols to generate high-quality data for model training and validation. The following key methodologies are drawn from the cited sources.

CETSA (Cellular Thermal Shift Assay) for Target Engagement

Purpose: To quantitatively validate direct drug-target engagement in physiologically relevant intact cells and tissues, bridging the gap between biochemical potency and cellular efficacy [34].

Workflow:

- Cell/Tissue Treatment: Intact cells or tissue samples are treated with the drug compound of interest or a vehicle control [34].

- Heating: Aliquots of the sample are heated to a range of different temperatures [34].

- Cell Lysis & Protein Solubilization: Samples are lysed, and the soluble (non-denatured, non-aggregated) protein fraction is separated from the insoluble fraction [34].

- Detection & Quantification: Target protein levels in the soluble fraction are quantified, typically using high-resolution mass spectrometry or immunoblotting. A shift in the thermal stability of the target protein (i.e., stabilization against heat-induced denaturation) in the drug-treated sample indicates direct binding and target engagement [34].
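The readout from the detection step is often summarized as a shift in apparent melting temperature (ΔTm) between vehicle- and drug-treated samples. A simplified sketch, assuming soluble fractions already normalized to the lowest temperature and estimating Tm by linear interpolation at the 50% crossing; in practice a sigmoidal curve fit is typically used, and the example values are synthetic.

```python
def apparent_tm(temps, soluble_fraction):
    """Temperature at which the soluble fraction first falls through 0.5 (linear interpolation)."""
    points = list(zip(temps, soluble_fraction))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= 0.5 >= f1 and f0 != f1:
            return t0 + (f0 - 0.5) * (t1 - t0) / (f0 - f1)
    raise ValueError("soluble fraction never crosses 0.5 in the tested range")


def delta_tm(temps, vehicle, treated):
    """Thermal shift: positive values mean the drug stabilized the target."""
    return apparent_tm(temps, treated) - apparent_tm(temps, vehicle)


temps = [40, 44, 48, 52, 56, 60]  # degrees C, synthetic
vehicle = [1.00, 0.90, 0.60, 0.30, 0.10, 0.05]
treated = [1.00, 0.95, 0.85, 0.60, 0.30, 0.10]
print(round(delta_tm(temps, vehicle, treated), 2))  # 4.0
```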

Application in Validation: This protocol provides system-level, quantitative confirmation that a drug candidate directly binds to its intended target within a complex cellular environment. This is a critical data point for validating predictions made by AI models regarding a compound's mechanism of action and for de-risking progression into later development stages [34].

AI-Guided Design-Make-Test-Analyze (DMTA) Cycle

Purpose: To rapidly compress the traditional hit-to-lead (H2L) optimization timeline from months to weeks through an integrated, AI-driven iterative process [35] [34].

Workflow:

- Design: AI models (e.g., deep graph networks, generative chemistry algorithms) are used to generate and prioritize novel molecular structures or virtual analogs based on a multi-parameter optimization goal (e.g., potency, selectivity, ADMET properties) [35] [34].

- Make: Prioritized compounds are synthesized, often leveraging high-throughput experimentation (HTE) and automated, robotics-mediated precision chemistry to accelerate production [35].

- Test: Synthesized compounds are tested in a battery of relevant in vitro and cellular assays to determine key pharmacological parameters (e.g., binding affinity, functional activity, cellular potency) [35] [34].

- Analyze: The resulting experimental data is fed back into the AI models, which learn from the new data and refine their predictions for the next cycle of compound design. This creates a closed-loop, learning system [35].
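The closed loop described above can be shown in miniature. Everything in this sketch is a stand-in: a single numeric "descriptor" replaces a molecule, a made-up function plays the assay, and a k-nearest-neighbour average plays the AI model. It is meant only to show how Test results flow back into the next Design round, not how any named platform works.

```python
import random


def assay(x):
    """'Test' stand-in: a toy potency score that peaks at descriptor value x = 3.0."""
    return -(x - 3.0) ** 2


def predict(history, x, k=3):
    """'Analyze' stand-in: mean score of the k tested compounds closest to x (k-NN)."""
    nearest = sorted(history, key=lambda rec: abs(rec[0] - x))[:k]
    return sum(score for _, score in nearest) / len(nearest)


def dmta(cycles=5, n_design=50, n_make=3, seed=0):
    """Run a toy Design-Make-Test-Analyze loop and return every (descriptor, score) tested."""
    rng = random.Random(seed)
    history = [(x, assay(x)) for x in (0.0, 0.5, 1.0)]  # initial hits
    for _ in range(cycles):
        best_x = max(history, key=lambda rec: rec[1])[0]
        # Design: propose virtual analogs around the current best compound
        designs = [best_x + rng.gauss(0.0, 0.75) for _ in range(n_design)]
        # Prioritize with the surrogate; 'make' the top picks plus one random analog to explore
        ranked = sorted(designs, key=lambda x: predict(history, x), reverse=True)
        made = ranked[: n_make - 1] + [rng.choice(ranked[n_make - 1:])]
        # Test the made compounds and feed the results back into the training history
        history.extend((x, assay(x)) for x in made)
    return history


results = dmta()
print(max(score for _, score in results))  # best score found; initial best was -4.0
```

The single random pick per cycle is a deliberate exploration term: a surrogate trained only on nearby compounds tends to rank close analogs of the current best highest, so some budget for less-favoured designs keeps the loop from stalling.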

Application in Validation: This iterative protocol validates and improves the predictive power of AI models. For example, a 2025 study used deep graph networks to generate over 26,000 virtual analogs, ultimately producing sub-nanomolar inhibitors with a 4,500-fold potency improvement over the initial hits [34]. The speed and quality of output from these cycles serve as a key performance metric for the underlying AI platforms.

The Scientist's Toolkit: Essential Research Reagents & Solutions

The execution of the experimental protocols above, and the generation of quality data for models, depends on a suite of essential research tools and reagents.

Table 5: Key Research Reagent Solutions for Model Validation Experiments

| Tool / Reagent | Function in Development & Validation |
|---|---|
| CETSA Kits/Reagents [34] | Provides standardized components for conducting Cellular Thermal Shift Assays to confirm direct target engagement of drug candidates in cells and tissues. |
| AI/ML Software Platforms (e.g., Exscientia's Centaur Chemist, Insilico's Generative AI) [35] | Integrated software suites for generative molecular design, virtual screening, and property prediction, forming the core of AI-driven discovery. |
| PBPK/QSP Software (e.g., GastroPlus, Simcyp) [35] [23] | Simulation platforms for physiologically-based pharmacokinetic and quantitative systems pharmacology modeling to predict human PK and pharmacology. |
| High-Throughput Screening (HTS) Libraries | Curated chemical libraries containing hundreds of thousands to millions of compounds for initial hit identification via robotic screening. |
| Patient-Derived Cell Lines & Organoids [35] | Biologically relevant cellular models that improve the translational predictivity of in vitro assays, used for phenotypic screening and validation. |
| Stable Isotope Labels & MS Standards | Critical for mass spectrometry-based proteomics and metabolomics in assays like CETSA, enabling precise quantification of proteins and metabolites. |

The strategic alignment of quantitative models with the five-stage drug development process represents a fundamental shift in how modern therapeutics are discovered and developed. The MIDD framework, powered by a "fit-for-purpose" philosophy and increasingly by sophisticated AI and machine learning, provides a structured approach to navigating the immense complexity and high attrition inherent in drug development [23].

The evidence is clear: the integration of these tools is no longer optional but a core component of an efficient and effective R&D strategy. From AI platforms compressing discovery timelines to PBPK models de-risking first-in-human studies, these methodologies are delivering on their promise to shorten timelines, reduce costs, and improve success rates [35] [36] [23]. For researchers and drug development professionals, mastering this evolving toolkit, from the underlying computational models to essential wet-lab validation protocols such as CETSA, is critical for driving the next wave of innovation and delivering new medicines to patients in need.

Implementing Validation Frameworks Across Model Types and Applications

The integration of artificial intelligence (AI) and machine learning (ML) into drug development represents a paradigm shift in how sponsors approach regulatory submissions. In early 2025, the U.S. Food and Drug Administration (FDA) issued its inaugural draft guidance titled "Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products" to address the exponential growth in AI utilization since 2016 [37]. This guidance establishes a structured framework for evaluating AI model credibility, defined as the "trust" in model outputs for a specific context of use (COU), across nonclinical, clinical, postmarketing, and manufacturing phases of drug development [37] [38]. The guidance explicitly excludes AI applications in drug discovery and operational efficiencies that do not directly impact patient safety, drug quality, or reliability of nonclinical or clinical study results [37].

At the core of this regulatory approach lies a risk-based credibility assessment that evaluates two critical dimensions: model influence (the proportion of AI-generated evidence relative to other evidence) and decision consequence (the impact of an incorrect model output) [37] [38]. This dual-axis assessment determines the appropriate level of regulatory scrutiny and validation rigor required, creating a sliding scale of evidence expectations proportionate to the potential risk to patients and product quality. The framework adapts principles from recognized standards like ASME V&V 40, emphasizing transparency, reproducibility, and context-specific validation [38]. For researchers and drug development professionals working with dynamical models, this framework provides a structured methodology for establishing model credibility while maintaining regulatory compliance.

The Seven-Step Assessment Framework

The FDA's risk-based framework comprises seven iterative steps that guide sponsors from problem definition through final adequacy determination [37]. This systematic approach ensures AI models are appropriately validated for their specific context of use while maintaining scientific rigor.

Foundational Steps (1-3): Definition and Risk Assessment

The initial framework steps establish the AI model's purpose, boundaries, and risk profile, forming the foundation for subsequent validation activities.

Step 1 – Define the Question of Interest: Researchers must precisely articulate the specific question, decision, or concern the AI model will address. For example, in commercial manufacturing, this might involve determining whether injectable drug vials meet established fill volume specifications. In clinical development, a question of interest could assess whether certain trial participants qualify as low risk for known adverse reactions and can forego inpatient monitoring after dosing [37].

Step 2 – Define the Context of Use (COU): The COU delineates the AI model's scope and role, including what will be modeled, how outputs will inform decisions, and whether other evidence (e.g., animal or clinical studies) will complement model outputs. A comprehensively defined COU establishes clear boundaries for model validation and application [37].

Step 3 – Assess AI Model Risk: This crucial step evaluates risk through the combined lens of model influence and decision consequence. Model influence represents the relative weight of AI-generated evidence compared to other evidence sources informing the question of interest. Decision consequence reflects the impact of an adverse outcome resulting from an incorrect model output. Higher levels of either factor increase overall model risk and corresponding regulatory oversight requirements [37].

Execution Steps (4-7): Implementation and Adequacy Determination

The subsequent framework steps translate the risk assessment into actionable validation activities and final adequacy determination.

Step 4 – Develop a Credibility Assessment Plan: This comprehensive plan details activities to establish model credibility for the specific COU. It must include complete descriptions of: (A) the model architecture, inputs, outputs, features, parameters, and rationale for the chosen modeling approach; (B) model development data practices, including training and tuning datasets; (C) model training methodologies, including learning approaches, performance metrics, regularization techniques, and quality assurance procedures; and (D) model evaluation strategies, including data collection, reference methods, agreement between predicted and observed data, and performance limitations [37].
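For the clinical example in Step 1 (classifying participants as low risk), part (D) of the plan might report agreement with a reference classification as sensitivity and specificity with confidence intervals. A minimal sketch, assuming a binary model output and using the Wilson score interval; the counts are hypothetical, and the draft guidance does not prescribe these particular metrics.

```python
import math


def wilson_ci(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95%)."""
    p = successes / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half = z / denom * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2))
    return centre - half, centre + half


def agreement_metrics(tp, fn, tn, fp):
    """Sensitivity and specificity, each with a Wilson interval, from confusion-matrix counts."""
    return {
        "sensitivity": (tp / (tp + fn), wilson_ci(tp, tp + fn)),
        "specificity": (tn / (tn + fp), wilson_ci(tn, tn + fp)),
    }


# Hypothetical counts against a reference classification
print(agreement_metrics(tp=90, fn=10, tn=180, fp=20))
```

Reporting intervals rather than point estimates makes it easier to compare performance against a credibility threshold set in advance, since a point estimate that clears the threshold may still carry an interval that does not.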

Step 5 – Execute the Plan: Implementation of the credibility assessment plan according to predefined protocols. The FDA emphasizes discussing the plan with the agency before execution to align expectations, identify potential challenges, and determine appropriate resolution strategies [37].

Step 6 – Document Assessment Results: Creation of a credibility assessment report detailing the AI model's credibility for the COU and documenting any deviations from the original plan. This report may be included in regulatory submissions or made available upon FDA request during inspections [37].

Step 7 – Determine Model Adequacy: Final evaluation of whether the AI model is appropriate for the COU. If inadequacies are identified, sponsors may: (A) reduce model influence by incorporating additional evidence types; (B) enhance development data or increase validation rigor; (C) implement risk mitigation controls; (D) revise the modeling approach; or (E) reject the model as inadequate for the intended COU [37].

Table 1: FDA's Seven-Step Risk-Based Credibility Assessment Framework

| Step | Key Activities | Regulatory Considerations |
|---|---|---|
| 1. Define Question | Articulate specific decision problem | Focus on clinically or quality-relevant outcomes |
| 2. Define COU | Establish model scope, boundaries, and role | Clear documentation of intended use and limitations |
| 3. Assess Risk | Evaluate model influence and decision consequence | Determines level of regulatory scrutiny required |
| 4. Develop Plan | Detail model architecture, data, training, evaluation | Early FDA engagement recommended |
| 5. Execute Plan | Implement validation activities | Document any protocol deviations |
| 6. Document Results | Create credibility assessment report | May be submitted proactively or upon request |
| 7. Determine Adequacy | Evaluate model suitability for COU | Multiple remediation paths available if inadequate |

Quantitative Comparison of Model Risk Assessment

The risk-based framework creates a two-dimensional assessment matrix that categorizes AI models according to their potential impact on regulatory decisions and patient safety.

Model Influence Assessment

Model influence represents the relative contribution of AI-generated evidence to the overall body of evidence informing a regulatory decision. This spectrum ranges from supplemental information to primary decision-driving evidence.

Low Influence Models: AI outputs provide supplemental information that comprises less than 50% of the total evidence base. Examples include operational efficiency tools, preliminary screening models, or supportive analytical applications where traditional evidence forms the decision foundation [37].

Medium Influence Models: AI outputs contribute substantially to the evidence base, roughly equivalent to other evidence sources. Examples include models informing patient stratification for clinical trials or providing intermediate endpoints for manufacturing process controls [37].

High Influence Models: AI outputs serve as the primary or sole evidence source for regulatory decisions. Examples include models directly determining dosage levels, serving as primary efficacy endpoints, or making definitive safety determinations without corroborating traditional evidence [37].

Decision Consequence Evaluation

Decision consequence reflects the potential impact of an incorrect model output on patient safety, product quality, or regulatory decision reliability.

Low Consequence Decisions: Incorrect outputs would result in minor disruptions, such as non-impacting manufacturing deviations, operational inefficiencies, or informational applications with no direct patient impact [37].

Medium Consequence Decisions: Incorrect outputs could lead to significant but manageable impacts, such as clinical trial protocol amendments, manufacturing batch reanalysis, or suboptimal dosing recommendations requiring correction [37].

High Consequence Decisions: Incorrect outputs could directly impact patient safety, lead to ineffective treatments, compromise product quality, or result in fundamentally incorrect regulatory approvals or rejections [37].

Table 2: Risk Matrix Combining Model Influence and Decision Consequences

| Decision Consequence | Low Model Influence | Medium Model Influence | High Model Influence |
|---|---|---|---|
| High | Moderate Risk | High Risk | Highest Risk |
| Medium | Low Risk | Moderate Risk | High Risk |
| Low | Lowest Risk | Low Risk | Moderate Risk |
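Because Table 2 is a lookup over two ordinal factors, it can be encoded directly. That is convenient when risk tiers are assigned consistently across a portfolio of models. This is a minimal sketch that reproduces the tiers in Table 2. The function and dictionary names are hypothetical, and the tier labels carry no regulatory meaning beyond what the table states.

```python
# Ordinal levels shared by both axes of the matrix.
LEVELS = ("low", "medium", "high")

# Table 2 encoded as {decision_consequence: {model_influence: risk_tier}}.
RISK_MATRIX = {
    "high":   {"low": "moderate", "medium": "high",     "high": "highest"},
    "medium": {"low": "low",      "medium": "moderate", "high": "high"},
    "low":    {"low": "lowest",   "medium": "low",      "high": "moderate"},
}


def model_risk(influence: str, consequence: str) -> str:
    """Return the Table 2 risk tier for a model-influence / decision-consequence pair."""
    influence, consequence = influence.lower(), consequence.lower()
    if influence not in LEVELS or consequence not in LEVELS:
        raise ValueError(f"levels must be one of {LEVELS}")
    return RISK_MATRIX[consequence][influence]


print(model_risk("high", "high"))   # highest
print(model_risk("low", "high"))    # moderate
print(model_risk("medium", "low"))  # low
```

Keying the outer dictionary by consequence mirrors the table's row layout, so each line of the dictionary can be checked against the corresponding table row.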

Experimental Protocols for Credibility Assessment

Establishing AI model credibility requires rigorous, standardized experimental protocols that evaluate performance across multiple dimensions relevant to the specific context of use.

Model Training and Validation Protocols

The FDA recommends comprehensive documentation of model training methodologies, including specific performance metrics with confidence intervals to quantify uncertainty [37].

Data Management Practices: Protocols must characterize training and tuning datasets, including source, composition, preprocessing techniques, and potential biases. Documentation should detail data management practices to ensure reproducibility and traceability [37].

Performance Metrics: Quantitative evaluation must cover multiple performance dimensions, including ROC curves, recall (sensitivity), positive and negative predictive values (positive predictive value is also called precision), true/false positive and true/false negative counts, positive and negative diagnostic likelihood ratios, and F1 scores. Confidence intervals should accompany all performance metrics to quantify estimation uncertainty [37].
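The threshold-based metrics listed above all derive from the four confusion-matrix counts, and a case-resampling bootstrap is one common way to attach confidence intervals to them. The percentile bootstrap used here is an assumption of this sketch, not a method prescribed by the text. ROC curves require continuous scores and are not shown. Function names are hypothetical.

```python
import random


def confusion_counts(y_true, y_pred):
    """Count TP, FP, TN, FN for binary labels where 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn


def _ratio(num, den):
    """Division that returns NaN (undefined) when the denominator is zero."""
    return num / den if den else float("nan")


def classification_metrics(y_true, y_pred):
    """Point estimates of the threshold-based metrics named in the text."""
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    sens = _ratio(tp, tp + fn)  # recall / sensitivity
    spec = _ratio(tn, tn + fp)
    ppv = _ratio(tp, tp + fp)   # precision
    npv = _ratio(tn, tn + fn)
    return {
        "sensitivity": sens,
        "specificity": spec,
        "ppv": ppv,
        "npv": npv,
        "f1": _ratio(2 * ppv * sens, ppv + sens),
        "lr_positive": _ratio(sens, 1 - spec),  # undefined (NaN) when specificity is 1
        "lr_negative": _ratio(1 - sens, spec),
    }


def bootstrap_ci(y_true, y_pred, metric, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for one metric, resampling cases with replacement."""
    rng = random.Random(seed)
    n = len(y_true)
    stats = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        value = classification_metrics([y_true[i] for i in idx], [y_pred[i] for i in idx])[metric]
        if value == value:  # NaN != NaN, so undefined resamples are skipped
            stats.append(value)
    stats.sort()
    lower = stats[int((alpha / 2) * (len(stats) - 1))]
    upper = stats[int((1 - alpha / 2) * (len(stats) - 1))]
    return lower, upper


# Hypothetical labels and predictions: TP=3, FN=1, FP=1, TN=5.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 0, 1, 0, 0, 1, 0]
print(classification_metrics(y_true, y_pred)["sensitivity"])  # 0.75
print(bootstrap_ci(y_true, y_pred, "sensitivity"))
```

With only ten cases the interval will be very wide, which is exactly the uncertainty that reporting confidence intervals is meant to expose.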

Validation Methodologies: Rigorous validation requires independent test datasets completely separate from development data. Protocols must document strategies to ensure data independence and avoid information leakage between training and testing phases. The applicability of test data to the specific COU must be explicitly demonstrated [37].
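A frequent source of leakage in longitudinal clinical data is splitting at the level of rows, so that the same patient contributes hourly records to both training and testing. A minimal sketch of a patient-level split follows, assuming records are dictionaries with a `patient_id` key (a hypothetical schema). The helper names are illustrative.

```python
import random


def patient_level_split(records, test_fraction=0.2, seed=42):
    """Split records so that every patient falls entirely in train or entirely in test."""
    patient_ids = sorted({r["patient_id"] for r in records})
    rng = random.Random(seed)
    rng.shuffle(patient_ids)
    n_test = max(1, round(test_fraction * len(patient_ids)))
    test_ids = set(patient_ids[:n_test])
    train = [r for r in records if r["patient_id"] not in test_ids]
    test = [r for r in records if r["patient_id"] in test_ids]
    return train, test


def check_no_leakage(train, test):
    """Return True if no patient contributes records to both partitions."""
    return not ({r["patient_id"] for r in train} & {r["patient_id"] for r in test})


# Hypothetical data: 10 patients with 3 hourly records each.
records = [{"patient_id": p, "hour": h} for p in range(10) for h in range(3)]
train, test = patient_level_split(records)
print(len(train), len(test), check_no_leakage(train, test))  # 24 6 True
```

Documenting the grouping key and the leakage check alongside the split is one way to make the independence claim traceable.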

Dynamic Model Evaluation Protocols

For dynamical models used in development research, additional specialized protocols address temporal patterns, irregular sampling, and evolving clinical states.

Temporal Validation Approaches: Dynamic models require time-aware validation strategies that account for concept drift and temporal dependencies. The Time-aware Bidirectional Attention-based LSTM (TBAL) model exemplifies approaches that handle irregular longitudinal data common in electronic medical records [39]. Such models incorporate dynamic variables (vital signs, laboratory results, medications) updated hourly to perform continuous mortality risk assessment in ICU patients [39].
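One generic time-aware strategy is rolling-origin (expanding-window) evaluation. Each fold trains on all records before a cutoff and tests on the next block, so the model is never scored on data that precede its training data. This is a sketch of that general technique, not the validation scheme reported for TBAL. The function name and the default of an initial 50% training window are assumptions. Ties in timestamps that straddle a cutoff would need explicit handling, and in practice the split would usually also be grouped by patient.

```python
def rolling_origin_splits(timestamps, n_folds=3, initial_fraction=0.5):
    """Yield (train_idx, test_idx) pairs where each test block follows all of its training data."""
    order = sorted(range(len(timestamps)), key=lambda i: timestamps[i])
    start = int(initial_fraction * len(order))
    block = (len(order) - start) // n_folds
    if start == 0 or block == 0:
        raise ValueError("not enough records for the requested folds")
    for k in range(n_folds):
        cut = start + k * block
        end = cut + block if k < n_folds - 1 else len(order)  # last fold takes the remainder
        yield order[:cut], order[cut:end]


# Hypothetical admission times (hours since study start), deliberately unsorted.
admission_hours = [30, 2, 18, 7, 25, 11, 40, 5, 33, 21]
for train_idx, test_idx in rolling_origin_splits(admission_hours, n_folds=2):
    print(sorted(admission_hours[i] for i in test_idx))
# [21, 25]
# [30, 33, 40]
```

Tracking performance across successive folds also gives an early signal of concept drift: a steady decline in later folds suggests the relationship between inputs and outcomes is shifting over time.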

Performance Benchmarks: Dynamic prediction models should be evaluated against traditional scoring systems. For example, the TBAL model achieved AUROCs (expressed as percentages) of 95.9 (95% CI 94.2-97.5) in MIMIC-IV and 93.3 (95% CI 91.5-95.3) in eICU-CRD for static mortality prediction, significantly outperforming conventional scores such as SAPS and APACHE [39]. In dynamic prediction tasks, the model achieved AUROCs of 93.6 (95% CI 93.2-93.9) and 91.9 (95% CI 91.6-92.1) in the two datasets, respectively [39].
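AUROC can be read as the probability that a randomly chosen patient who died receives a higher risk score than a randomly chosen survivor. That makes it straightforward to compare a model with a traditional severity score on the same patients. The sketch below uses this rank-based (Mann-Whitney) formulation with ties counted as one half. The data and the baseline score values are invented for illustration. Multiply by 100 to match the percentage scale used above. A formal paired comparison (for example, DeLong's test or a paired bootstrap) is not shown.

```python
def auroc(y_true, scores):
    """AUROC as P(score of random positive > score of random negative); ties count 1/2."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:  # O(n_pos * n_neg): fine for illustration, slow for large cohorts
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))


# Hypothetical outcomes (1 = in-hospital death) for 8 ICU stays.
died = [0, 0, 1, 0, 1, 1, 0, 1]
model_risk_scores = [0.1, 0.3, 0.8, 0.2, 0.7, 0.9, 0.4, 0.6]
baseline_severity = [12, 18, 15, 10, 20, 14, 16, 11]  # invented values for a traditional score

print(auroc(died, model_risk_scores))  # 1.0
print(auroc(died, baseline_severity))  # 0.5625
```

Because AUROC depends only on rankings, scores on different scales (a probability and an integer severity score) can be compared directly.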

External Validation Strategies: Validation across multiple institutions is essential for demonstrating generalizability. The TBAL model underwent cross-database validation yielding AUROCs of 81.3 and 76.1, supporting its robustness across healthcare systems [39]. Subgroup sensitivity analyses should evaluate performance consistency across age, sex, and disease severity strata [39].
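A subgroup analysis can be sketched as computing the same metric within each stratum and flagging strata that depart from the overall value. This minimal example uses recall (sensitivity) and an age split. The 10-percentage-point tolerance, the stratum labels, and the data are assumptions chosen for illustration, not thresholds from the cited study.

```python
from collections import defaultdict


def recall_by_subgroup(y_true, y_pred, groups):
    """Sensitivity (recall) within each subgroup; None where a subgroup has no positive cases."""
    tp, pos = defaultdict(int), defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 1:
            pos[g] += 1
            tp[g] += int(p == 1)
    return {g: (tp[g] / pos[g] if pos[g] else None) for g in sorted(set(groups))}


def flag_inconsistent(per_group, overall, tolerance=0.10):
    """List subgroups whose recall differs from the overall recall by more than `tolerance`."""
    return [g for g, v in per_group.items() if v is not None and abs(v - overall) > tolerance]


# Hypothetical labels, predictions, and age strata.
y_true = [1, 1, 0, 1, 1, 0, 1, 1]
y_pred = [1, 1, 0, 1, 0, 0, 0, 1]
groups = ["<65", "<65", "<65", "<65", ">=65", ">=65", ">=65", ">=65"]

overall = sum(p for t, p in zip(y_true, y_pred) if t == 1) / sum(y_true)
per_group = recall_by_subgroup(y_true, y_pred, groups)
print(per_group)                                # {'<65': 1.0, '>=65': 0.3333333333333333}
print(flag_inconsistent(per_group, overall))    # ['<65', '>=65']
```

In real analyses, small strata produce unstable estimates, so per-subgroup confidence intervals are usually more informative than a fixed tolerance.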

Diagram 1: FDA AI Credibility Assessment Workflow - This diagram illustrates the seven-step process for evaluating AI model credibility, highlighting the critical risk assessment phase where model influence and decision consequences determine the required level of regulatory scrutiny.

The Scientist's Toolkit: Essential Research Reagents and Materials

Implementing the FDA's risk-based credibility assessment framework requires specific methodological tools and documentation approaches tailored to dynamical models in development research.

Table 3: Essential Research Reagents and Materials for Credibility Assessment

| Tool Category | Specific Examples | Function in Assessment |
|---|---|---|
| Data Management | eICU-CRD, MIMIC-IV databases | Provide standardized, multicenter data for model development and external validation [39] |
| Model Architecture | Time-aware Bidirectional LSTM with attention mechanisms | Captures temporal dependencies in irregular longitudinal clinical data [39] |
| Performance Metrics | AUROC, AUPRC, F1-score, sensitivity, specificity | Quantifies model discrimination and classification performance [37] [39] |
| Validation Frameworks | Electronic Medical Record Longitudinal Irregular Data Preprocessing (EMR-LIP) | Standardizes handling of missing values and irregular sampling in clinical time series [39] |
| Interpretability Tools | Integrated gradients, attention visualization | Identifies key predictors and provides explanatory insights for model decisions [39] |
| Documentation Templates | Credibility Assessment Report, Model Specification Documents | Ensures comprehensive documentation of model development, validation, and limitations [37] |
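Irregularly sampled measurements must be mapped onto a regular time grid before an hourly-updated model can consume them. The sketch below uses a generic last-observation-carried-forward scheme with an "observed" mask. It is an assumption for illustration and is not the EMR-LIP procedure, whose details are not given here. Observations are `(hours_since_admission, value)` pairs, a hypothetical format.

```python
def hourly_grid(observations, n_hours):
    """Resample irregular (hour_offset, value) observations onto an hourly grid.

    Each hourly bin takes the last value recorded before the end of that hour.
    Bins before the first observation stay None. The returned mask is True only
    for bins that contain a fresh observation, so carried-forward values can be
    distinguished from new measurements.
    """
    obs = sorted(observations)
    values, observed = [], []
    j, last = 0, None
    for h in range(n_hours):
        seen_this_hour = False
        while j < len(obs) and obs[j][0] < h + 1:
            last = obs[j][1]
            seen_this_hour = True
            j += 1
        values.append(last)
        observed.append(seen_this_hour)
    return values, observed


# Hypothetical heart-rate readings at irregular times (hours since ICU admission).
heart_rate = [(0.2, 88), (0.9, 92), (3.5, 110)]
values, observed = hourly_grid(heart_rate, n_hours=5)
print(values)    # [92, 92, 92, 110, 110]
print(observed)  # [True, False, False, True, False]
```

Keeping the mask lets a time-aware model weight stale, carried-forward values differently from fresh ones instead of treating every grid cell as a real measurement.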

Comparative Analysis of Model Performance Metrics

Quantitative performance assessment requires multiple complementary metrics to fully characterize model behavior across different operational contexts.

Static vs. Dynamic Prediction Performance

The predictive performance of AI models varies significantly between static implementations (using only baseline data) and dynamic implementations (incorporating longitudinal data updates).

Static Prediction Performance: Models evaluated solely on data from the first 24 hours of observation demonstrate strong but limited performance. For example, the TBAL model achieved AUROCs of 95.9 (94.2-97.5) in MIMIC-IV and 93.3 (91.5-95.3) in eICU-CRD for mortality prediction using static variables [39]. Accuracy reached 94.1 in MIMIC-IV and 92.2 in eICU-CRD, with F1-scores of 46.7 and 28.1, respectively [39]. The gap between high accuracy and modest F1-scores is typical of low-prevalence outcomes such as ICU mortality, where correctly classified survivors dominate the accuracy calculation.
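A small worked example shows why accuracy and F1 can diverge so sharply when the outcome is rare. The cohort size and confusion-matrix counts below are hypothetical and are not taken from the TBAL study.

```python
def accuracy_and_f1(tp, fp, tn, fn):
    """Accuracy and F1 from confusion-matrix counts; F1 is 0.0 when there are no true positives."""
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    if tp == 0:
        return accuracy, 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return accuracy, 2 * precision * recall / (precision + recall)


# Hypothetical ICU cohort: 1,000 stays, 60 deaths (6% prevalence).
always_survive = accuracy_and_f1(tp=0, fp=0, tn=940, fn=60)   # predicts "survives" for everyone
modest_model = accuracy_and_f1(tp=20, fp=20, tn=920, fn=40)   # finds 1 in 3 deaths

print(always_survive)  # (0.94, 0.0)
print(modest_model)    # accuracy 0.94, F1 about 0.4
```

Both classifiers reach 94% accuracy, yet one identifies no deaths at all. This is why accuracy alone is a weak summary for mortality prediction and why precision-oriented metrics are reported alongside it.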

Dynamic Prediction Performance: Models incorporating continuously updated longitudinal data largely maintain their discrimination while offering greater clinical utility. The TBAL model achieved dynamic AUROCs of 93.6 (93.2-93.9) and 91.9 (91.6-92.1) in MIMIC-IV and eICU-CRD, respectively, with AUPRCs of 41.3 and 50.0 [39]. This approach maintained high recall for positive cases (82.6% and 79.1%), which is crucial for sensitive clinical applications [39].
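Unlike AUROC, whose chance level is 0.5, the chance level of AUPRC equals the outcome prevalence. AUPRC values should therefore be read against each cohort's event rate. Average precision, sketched below, is one common step-wise estimator of AUPRC. The text does not state which estimator the cited study used, and the data here are hypothetical.

```python
def average_precision(y_true, scores):
    """Average precision: mean of the precision values at the rank of each positive case.

    Cases are ranked by descending score. Python's sort is stable, so ties keep
    their input order (a simplification; libraries may treat ties differently).
    """
    ranked = sorted(zip(scores, y_true), key=lambda pair: -pair[0])
    n_pos = sum(y_true)
    if n_pos == 0:
        raise ValueError("average precision is undefined without positive cases")
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank  # precision at this positive's rank
    return total / n_pos


# Hypothetical scores for 10 patients, 2 of whom died (prevalence 0.2).
died = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]
risk = [0.9, 0.8, 0.3, 0.7, 0.2, 0.1, 0.4, 0.05, 0.5, 0.6]
print(average_precision(died, risk))  # about 0.833, versus a chance level of about 0.2
```

The two deaths are ranked 1st and 3rd, giving precisions of 1 and 2/3 at those ranks, hence (1 + 2/3) / 2.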

Benchmarking Against Traditional Scoring Systems

In the cited ICU mortality study, the AI model outperformed traditional prognostic scoring systems across multiple metrics, illustrating the potential of such models to enhance decision-making in drug development and clinical care.

Performance Advantages: Machine learning models show significant improvements over systems like SAPS and APACHE, which rely on static first-24-hour data and fail to account for evolving clinical states [39]. The TBAL model demonstrated 15-20% higher AUROC values compared to traditional scores in internal validations [39].

Generalizability Evidence: Cross-database validation between MIMIC-IV and eICU-CRD yielded AUROCs of 81.3 and 76.1 [39]. Although these values are lower than the internal validation results, they indicate reasonable robustness across healthcare systems and patient populations. This cross-institutional performance is particularly relevant for drug development programs spanning multiple clinical sites.

Diagram 2: AI Model Risk Assessment Matrix - This visualization represents the two-dimensional risk assessment framework combining model influence and decision consequences. The resulting risk classification determines the appropriate level of regulatory scrutiny and validation rigor required for AI models in drug development.

The FDA's risk-based credibility assessment framework provides a structured, scientifically rigorous approach to evaluating AI models in drug development. For researchers working with dynamical models, successful implementation requires meticulous attention to several key principles.

First, context-specific validation is paramount—model credibility cannot be established in isolation but must be demonstrated for the specific context of use and intended decision-making role. Second, comprehensive documentation of model architecture, training data, performance metrics, and limitations forms the evidentiary foundation for regulatory acceptance. Third, proactive regulatory engagement through pre-IND, Type C, or INTERACT meetings allows sponsors to align on validation strategies before committing significant resources [37] [38].

For dynamical models specifically, additional considerations include implementing lifecycle maintenance plans to monitor performance drift, establishing retesting triggers for model updates, and incorporating real-world evidence responsibly with a focus on reproducibility and traceability [37]. As AI continues to transform drug development, this risk-based framework provides both a roadmap for innovation and a safeguard for patient safety, enabling the responsible integration of advanced modeling techniques into regulatory decision-making.
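A lifecycle maintenance plan needs a concrete drift statistic and a concrete retesting trigger. The sketch below uses the population stability index (PSI), a common but not mandated choice, to compare a deployed input or score distribution against the reference distribution used at validation. The 0.25 threshold is a widely used rule of thumb assumed here, not a regulatory value. The function names and the simulated data are hypothetical.

```python
import math
import random


def population_stability_index(reference, current, bins=10):
    """PSI between a reference and a current sample of one input feature or model score.

    Bin edges are reference quantiles. A small floor on bin proportions avoids log(0).
    """
    ref = sorted(reference)
    edges = [ref[int(len(ref) * k / bins)] for k in range(1, bins)]

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            counts[sum(x >= e for e in edges)] += 1  # index of the bin containing x
        return [max(c / len(sample), 1e-6) for c in counts]

    p_ref, p_cur = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(p_ref, p_cur))


def needs_retesting(psi, threshold=0.25):
    """Hypothetical retesting trigger; 0.25 is a rule-of-thumb cutoff, not a regulatory value."""
    return psi > threshold


# Simulated data only: a stable and a mean-shifted version of one standardized input.
rng = random.Random(0)
reference = [rng.gauss(0.0, 1.0) for _ in range(5000)]
stable = [rng.gauss(0.0, 1.0) for _ in range(5000)]
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]

psi_stable = population_stability_index(reference, stable)
psi_shifted = population_stability_index(reference, shifted)
print(needs_retesting(psi_stable), needs_retesting(psi_shifted))  # False True
```

Input drift is only a proxy. Where outcome labels become available after deployment, the same trigger logic can be applied to recomputed performance metrics such as AUROC.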