A Multi-Strategy Grey Wolf Optimizer for Enhanced Multi-Kernel Learning in Biomedical Data Analysis

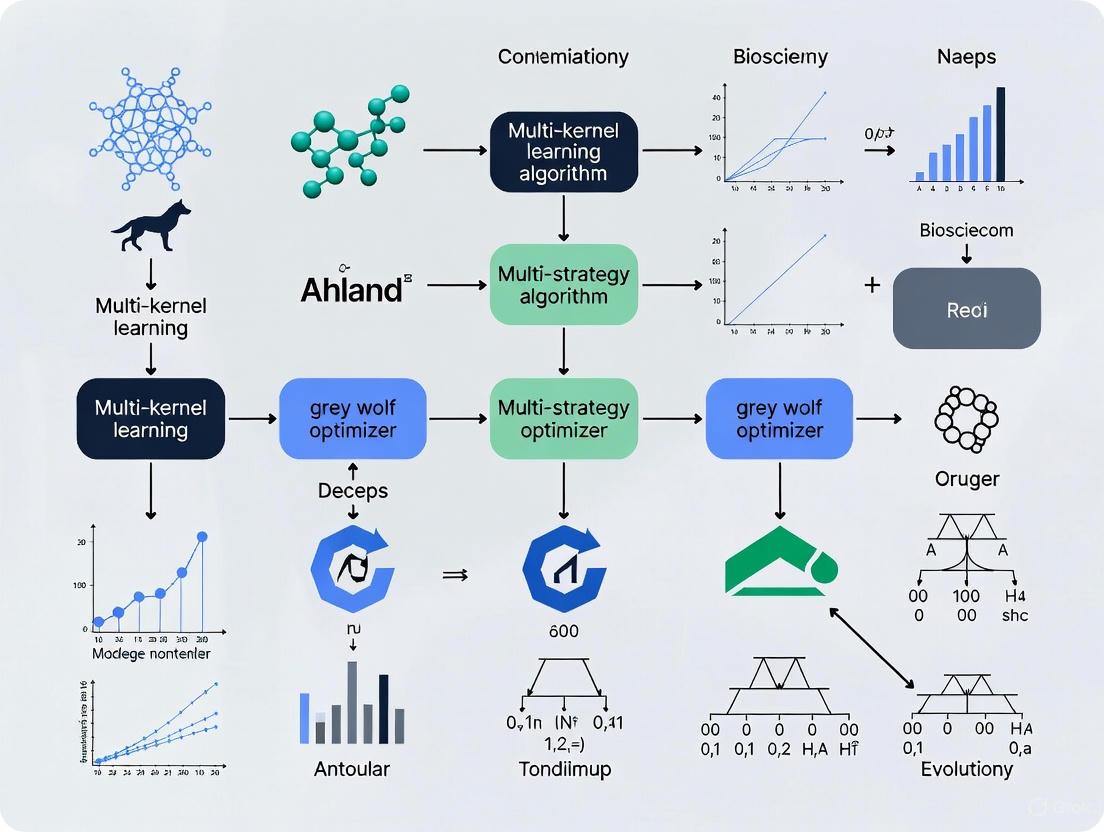



Abstract

This article presents a novel integration of a multi-strategy Grey Wolf Optimizer (GWO) with Multi-Kernel Learning (MKL) to address complex challenges in biomedical data mining and predictive modeling. The hybrid framework is designed to automate kernel selection and hyperparameter tuning, significantly improving the accuracy and robustness of models used for tasks such as disease diagnosis and drug discovery. We explore foundational MKL principles and the limitations of standard GWO, detailing methodological enhancements like dynamic parameter adjustment and hybrid mutation strategies. The performance of the optimized algorithm is rigorously validated against established methods on benchmark functions and real-world biomedical datasets, demonstrating superior predictive accuracy and feature selection capability. This approach offers researchers and drug development professionals a powerful, automated tool for integrating multi-source genomic and clinical data.

Foundations of Multi-Kernel Learning and Grey Wolf Optimization: Principles and Challenges

Multi-Kernel Learning (MKL) represents an advanced machine learning framework designed to integrate multiple, heterogeneous data sources by combining their respective similarity measures (kernels) into an optimal meta-kernel [1] [2]. This approach has gained significant traction in computational biology and bioinformatics, where researchers frequently need to integrate diverse omics datasets (genomics, transcriptomics, proteomics, etc.) obtained from the same biological samples [3] [2]. MKL provides a mathematical solution to the challenge of heterogeneous data integration by transforming different data structures—including vectors, strings, trees, and graphs—into standardized kernel matrices that capture pairwise similarities between samples [1] [4].

The fundamental principle behind MKL is that each kernel function \( k: \mathbb{R}^p \times \mathbb{R}^p \longrightarrow \mathbb{R} \) corresponds to an implicit mapping \( \phi: \mathbb{R}^p \longrightarrow \mathcal{H} \) that projects input data into a high-dimensional feature space \( \mathcal{H} \) without explicitly computing the transformation [2]. Through the "kernel trick," algorithms designed for linear data can be extended to handle nonlinear relationships by replacing standard dot products with kernel similarity values [2]. In multi-omics contexts, where biological systems often exhibit complex nonlinear interactions, this capability proves particularly valuable [2].

MKL frameworks typically combine multiple base kernels \( k_1, k_2, \ldots, k_m \) through a non-negative linear combination: \[ K = \sum_{i=1}^m \mu_i k_i \] where the \( \mu_i \geq 0 \) are the kernel weights [1]. How these weights are optimized is what differentiates the various MKL approaches; the result can be either a sparse solution (favoring only the most relevant data sources) or a non-sparse solution (smoothly integrating all available information) [4].
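The weighted sum above can be sketched in a few lines of NumPy. This is a minimal illustration rather than any particular MKL solver: the function name `combine_kernels` and the toy matrices `K1` and `K2` are assumptions introduced here, and the weights are simply given instead of learned.

```python
import numpy as np

def combine_kernels(kernels, weights):
    """Return K = sum_i mu_i * K_i for non-negative weights mu_i."""
    weights = np.asarray(weights, dtype=float)
    if np.any(weights < 0):
        raise ValueError("kernel weights must be non-negative")
    if len(kernels) != len(weights):
        raise ValueError("need exactly one weight per kernel")
    # Stack to shape (m, n, n) and contract over the kernel axis.
    return np.tensordot(weights, np.stack(kernels), axes=1)

# Toy example: two 3x3 kernel matrices computed on the same three samples.
K1 = np.eye(3)
K2 = np.ones((3, 3))
K = combine_kernels([K1, K2], [0.7, 0.3])
```

Because each base kernel is positive semi-definite and the weights are non-negative, the combined matrix is positive semi-definite as well, which is why the non-negativity constraint matters in practice.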

MKL Methodologies and Norm Optimization Strategies

The selection of norm constraints in MKL optimization leads to distinct algorithmic behaviors with significant implications for heterogeneous data integration. The three primary MKL variants—L∞, L1, and L2—differ in their regularization approaches and resulting kernel coefficient distributions [4].

Table 1: Comparison of MKL Norm Optimization Strategies

| MKL Type | Norm Optimization | Coefficient Sparsity | Use Case Advantages | Limitations |

|---|---|---|---|---|

| L∞-MKL | Optimizes infinity norm (max value) | High sparsity | Identifies most relevant sources from many irrelevant ones | "Winner-takes-all" effect; underutilizes complementary information |

| L1-MKL | Linear combination with L1 constraint | Moderate sparsity | Balanced selection of relevant sources | May exclude weakly relevant but complementary datasets |

| L2-MKL | Optimizes L2-norm in dual problem | Non-sparse | Thoroughly combines complementary information; better for prospective studies | Less effective with many irrelevant data sources |

L∞-MKL corresponds to L1 regularization on kernel coefficients in the primal problem, producing sparse solutions that assign dominant coefficients to only one or two kernels [4]. This approach benefits scenarios requiring distinction of relevant sources from numerous irrelevant ones. However, in biomedical applications with carefully selected data sources, this sparseness may be too selective, potentially overlooking complementary information [4].

L2-MKL represents an attractive alternative for biomedical contexts where most data sources are relevant. By yielding non-sparse kernel weights, L2-MKL facilitates more thorough information integration from all available sources [4]. Empirical results demonstrate that L2-norm kernel fusion can achieve superior performance in biomedical data integration, particularly when implemented within efficient frameworks like Least Squares Support Vector Machines (LSSVM) [4].

Application Notes: MKL for Multi-Omics Integration

Implementation Frameworks and Protocols

Multiple implementation frameworks exist for applying MKL to multi-omics data integration. The R package mixKernel provides comprehensive MKL tools compatible with the mixOmics package, implementing both consensus meta-kernels and topology-preserving approaches [3]. This implementation enables exploratory analyses through kernel Principal Component Analysis (kPCA) and kernel Self-Organizing Maps (kSOM) [3].

For supervised learning tasks, Support Vector Machines (SVMs) represent the most prevalent MKL implementation [1] [2]. The conventional SVM MKL formulation can be computationally intensive, leading to the development of more efficient LSSVM-based MKL algorithms that maintain comparable performance while reducing computational burden [4].

Recent research has introduced novel deep learning architectures for kernel fusion. The DeepMKL framework transforms input omics data using different kernel functions and guides their integration through supervised neural network optimization [2]. This approach leverages both kernel learning advantages and deep learning's flexibility [2].

Experimental Protocol for Multi-Omics Classification

Objective: Develop a predictive model for breast cancer subtyping using multi-omics data integration via MKL.

Input Data Requirements:

- Multi-omics datasets (e.g., gene expression, DNA methylation, protein expression) from the same patient samples

- Corresponding clinical annotations or phenotypic labels

- Training set (70-80%) and hold-out validation set (20-30%)

Step-by-Step Protocol:

Data Preprocessing

- Perform omics-specific normalization and batch effect correction

- Handle missing values through appropriate imputation methods

- Standardize features to zero mean and unit variance

Kernel Construction

- For each omics dataset, compute similarity matrices using appropriate kernel functions:

- Linear kernel: \( k(\mathbf{x}_i, \mathbf{x}_j) = \mathbf{x}_i^T \mathbf{x}_j \)

- Gaussian RBF kernel: \( k(\mathbf{x}_i, \mathbf{x}_j) = \exp(-\gamma \|\mathbf{x}_i - \mathbf{x}_j\|^2) \)

- Polynomial kernel: \( k(\mathbf{x}_i, \mathbf{x}_j) = (\mathbf{x}_i^T \mathbf{x}_j + c)^d \)

- Validate that all kernel matrices are positive semi-definite


Kernel Fusion and Weight Optimization

- Apply selected MKL method (L∞, L1, or L2) to compute optimal kernel weights

- Construct meta-kernel: \( K = \sum_{i=1}^m \mu_i k_i \) with \( \mu_i \geq 0 \)

- Validate integration using internal cross-validation

Model Training and Validation

- Train SVM classifier on the meta-kernel using training samples

- Optimize hyperparameters (regularization parameter C, kernel-specific parameters) via grid search

- Evaluate model performance on independent validation set

- Assess generalization through multiple cross-validation strategies

This protocol was successfully applied to analyze multi-omics breast cancer data from The Cancer Genome Atlas, demonstrating improved sample representation compared to single-omics approaches [3].
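The preprocessing, kernel construction, and fusion steps of the protocol can be sketched as follows. This is a hedged illustration under stated assumptions: the arrays `expression` and `methylation` are synthetic stand-ins for real omics blocks, the helper names are hypothetical, and the kernel weights are fixed by hand rather than produced by an L∞, L1, or L2 MKL solver.

```python
import numpy as np

def standardize(X):
    """Scale each feature to zero mean and unit variance."""
    std = X.std(axis=0)
    return (X - X.mean(axis=0)) / np.where(std > 0, std, 1.0)

def linear_kernel(X):
    return X @ X.T

def rbf_kernel(X, gamma):
    sq_norms = np.sum(X ** 2, axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * (X @ X.T)
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))

def polynomial_kernel(X, c=1.0, d=2):
    return (X @ X.T + c) ** d

def is_psd(K, tol=1e-8):
    """Symmetric, and smallest eigenvalue not meaningfully below zero."""
    if not np.allclose(K, K.T):
        return False
    return np.linalg.eigvalsh(K).min() >= -tol * max(1.0, np.abs(K).max())

rng = np.random.default_rng(0)
# Synthetic placeholders for two omics blocks measured on the same 20 samples.
expression = standardize(rng.normal(size=(20, 50)))
methylation = standardize(rng.normal(size=(20, 30)))

kernels = [
    rbf_kernel(expression, gamma=1.0 / 50),
    linear_kernel(methylation),
    polynomial_kernel(methylation),
]
all_psd = all(is_psd(K) for K in kernels)

# Hand-picked weights for illustration only; an MKL solver would learn these.
weights = np.array([0.5, 0.3, 0.2])
meta_kernel = sum(w * K for w, K in zip(weights, kernels))
```

The resulting `meta_kernel` could then be passed to any classifier that accepts a precomputed kernel matrix, for example scikit-learn's `SVC(kernel="precomputed")`, with the training and validation rows and columns sliced accordingly.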

Performance Assessment and Comparative Analysis

Recent benchmarking studies demonstrate that MKL-based models can compete with and frequently outperform more complex supervised multi-omics integration approaches, including Graph Neural Networks (GNNs) [2]. In systematic comparisons, traditional machine learning approaches like MKL showed competitive results against GNNs in multi-omics analysis, challenging the assumption that increasingly complex architectures necessarily yield superior performance [2].

Table 2: MKL Performance in Multi-Omics Applications

| Application Domain | Data Types Integrated | MKL Method | Key Findings | Performance Metrics |

|---|---|---|---|---|

| Breast Cancer Subtyping | Gene expression, DNA methylation, protein expression | Kernel SOM with consensus meta-kernel | Improved representation of biological system compared to single-omics | Enhanced cluster separation and biological interpretability |

| Microbial Community Profiling | Multiple metagenomic datasets from TARA Oceans expedition | Kernel PCA with topology preservation | Retrieved previous findings and revealed new sample structures | Comprehensive environmental insights |

| Membrane vs. Ribosomal Protein Classification | PPI networks, amino acid sequences, gene expression | SVM with multiple kernel integration | Improved classifier performance with integrated data vs. individual datasets | Enhanced classification accuracy |

| Protein Function Prediction | Gene expression, protein interaction, localization, phylogenetic profiles | Supervised kernel integration | Best performance with integrated datasets; equal information contribution from key sources | Optimal recovery of protein network information |

Integration with Multi-Strategy Grey Wolf Optimizer

Grey Wolf Optimizer Fundamentals and MKL Synergies

The Grey Wolf Optimizer (GWO) is a population-based metaheuristic algorithm that simulates the social hierarchy and hunting behavior of grey wolf packs [5] [6]. In the canonical GWO, the population is divided into four categories: alpha (α), beta (β), delta (δ), and omega (ω) wolves, representing a leadership hierarchy [5]. The optimization process mimics wolf hunting behavior through three main steps: searching for prey, encircling prey, and attacking prey [5].

The integration of GWO with MKL frameworks addresses critical challenges in multi-omics data integration, particularly in high-dimensional optimization landscapes where conventional approaches may converge to suboptimal solutions [7] [8]. Recent advancements in multi-strategy GWO variants have enhanced their applicability to complex computational biology problems:

Fusion Multi-Strategy GWO (FMGWO): Incorporates electrostatic field initialization for uniform population distribution, dynamic parameter adjustment with nonlinear convergence, and hybrid mutation strategies combining differential evolution and Cauchy perturbations [7].

Improved GWO with Multi-Stage Differentiation Strategies (IGWO-MSDS): Implements split-pheromone guidance in early iterations, hybrid Grey Wolf-Artificial Bee Colony strategy during mid-stage, and Lévy flight mechanisms in late stages to balance exploration and exploitation [8].

Multi-population Dynamic GWO (DLMDGWO): Utilizes dimension learning and Laplace mutation operators to enhance global search capability while maintaining population diversity [5].
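To make the perturbation operators named above more concrete, the following sketch shows generic Cauchy, Laplace, and Lévy-flight steps. These are textbook forms (the Lévy step uses Mantegna's algorithm), not the exact formulations of FMGWO, IGWO-MSDS, or DLMDGWO; the function names, scales, and the exponent `beta=1.5` are assumptions chosen for illustration.

```python
import math
import numpy as np

def cauchy_perturbation(position, scale, rng):
    """Heavy-tailed jump: occasional large moves help escape local optima."""
    return position + scale * rng.standard_cauchy(size=position.shape)

def laplace_mutation(position, scale, rng):
    """Laplace-distributed jump: sharper peak and fatter tails than a Gaussian."""
    return position + rng.laplace(loc=0.0, scale=scale, size=position.shape)

def levy_step(shape, rng, beta=1.5):
    """Levy-flight step lengths via Mantegna's algorithm."""
    sigma_u = (
        math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
        / (math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2))
    ) ** (1 / beta)
    u = rng.normal(0.0, sigma_u, size=shape)
    v = rng.normal(0.0, 1.0, size=shape)
    return u / np.abs(v) ** (1 / beta)

rng = np.random.default_rng(42)
lower, upper = np.zeros(4), np.ones(4)
wolf = np.full(4, 0.5)
# Mutated positions are clipped back into the search space.
mutated = np.clip(wolf + 0.01 * levy_step(wolf.shape, rng), lower, upper)
```

All three operators draw from heavy-tailed distributions, so most steps are small while a few are large; that mix is what lets them preserve diversity without disrupting convergence entirely.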

Protocol for GWO-Enhanced MKL Optimization

Objective: Optimize kernel weights and parameters in MKL using enhanced GWO algorithms.

Step-by-Step Protocol:

Problem Formulation

- Define the search space: kernel weights \( \mu_i \) with constraints \( \mu_i \geq 0 \) and \( \sum_i \mu_i = 1 \)

- Set kernel parameter ranges (e.g., γ for RBF kernels, d for polynomial kernels)

- Define fitness function: classification accuracy, regression error, or clustering quality

Enhanced GWO Initialization

Iterative Optimization

- Evaluate fitness for each search agent (wolf) using current kernel parameters

- Update alpha, beta, and delta positions based on fitness ranking

- Apply hybrid strategies (Lévy flight, Laplace mutation, dimension learning) to maintain diversity [8] [5]

- Dynamically adjust exploration-exploitation balance using adaptive parameter control

Convergence and Validation

- Monitor convergence using fitness improvement and population diversity metrics

- Apply local refinement strategies (hill-climbing, pattern search) near promising solutions

- Validate optimized MKL parameters on independent test datasets

The enhanced GWO variants on which this protocol builds have demonstrated superior performance in wireless sensor network coverage optimization [7] [8], suggesting potential for similar improvements in multi-omics data integration, where high-dimensional, heterogeneous datasets present analogous optimization challenges.
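The problem formulation step can be illustrated with a small fitness function that a GWO search agent would call. Everything here is a sketch under assumptions: a wolf is encoded as a vector in [0, 1] whose first entries become simplex-constrained kernel weights and whose last entry maps to a shared RBF γ on a log scale, and a simple regularized least-squares kernel classifier stands in for the SVM. The names `decode_wolf` and `mkl_fitness` and the synthetic data are hypothetical.

```python
import numpy as np

def rbf_kernel(X, gamma):
    sq = np.sum(X ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * (X @ X.T)
    return np.exp(-gamma * np.maximum(d2, 0.0))

def decode_wolf(position, n_kernels, log10_gamma_range=(-3.0, 1.0)):
    """Map a position in [0, 1]^(m+1) to weights on the simplex and a gamma."""
    raw = np.clip(position[:n_kernels], 0.0, 1.0)
    total = raw.sum()
    weights = raw / total if total > 0 else np.full(n_kernels, 1.0 / n_kernels)
    lo, hi = log10_gamma_range
    gamma = 10.0 ** (lo + np.clip(position[n_kernels], 0.0, 1.0) * (hi - lo))
    return weights, gamma

def mkl_fitness(position, blocks, y, train_idx, val_idx, ridge=1.0):
    """Validation accuracy of a least-squares kernel classifier (labels +1/-1)."""
    weights, gamma = decode_wolf(position, len(blocks))
    K = sum(w * rbf_kernel(X, gamma) for w, X in zip(weights, blocks))
    K_train = K[np.ix_(train_idx, train_idx)]
    coef = np.linalg.solve(K_train + ridge * np.eye(len(train_idx)), y[train_idx])
    predictions = np.sign(K[np.ix_(val_idx, train_idx)] @ coef)
    return float(np.mean(predictions == y[val_idx]))

rng = np.random.default_rng(0)
y = np.where(np.arange(40) % 2 == 0, 1.0, -1.0)
informative = y[:, None] + 0.5 * rng.normal(size=(40, 5))  # carries the label signal
noise = rng.normal(size=(40, 5))                           # carries none
train_idx, val_idx = np.arange(0, 30), np.arange(30, 40)

wolf = np.array([0.8, 0.2, 0.5])  # two raw weights, one gamma coordinate
accuracy = mkl_fitness(wolf, [informative, noise], y, train_idx, val_idx)
```

Normalizing the raw weights inside the decoder keeps every candidate feasible, so the optimizer itself only needs simple box constraints. Since GWO is usually written as a minimizer, `1 - accuracy` would be the value to minimize.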

Research Reagent Solutions for MKL Experiments

Table 3: Essential Computational Tools for MKL Implementation

| Tool/Category | Specific Implementation | Function/Purpose | Application Context |

|---|---|---|---|

| Software Packages | R mixKernel Package | Implements consensus and topology-preserving meta-kernels | Multi-omics exploratory analysis [3] |

| Software Packages | MATLAB L2 MKL Implementation | Solves L2-norm multiple kernel learning | Biomedical data fusion [4] |

| Software Packages | DeepMKL Framework | Neural network architecture for kernel fusion | Supervised multi-omics integration [2] |

| Optimization Libraries | Enhanced GWO Variants (FMGWO, IGWO-MSDS, DLMDGWO) | Metaheuristic optimization of kernel parameters | High-dimensional parameter tuning [7] [8] [5] |

| Kernel Functions | Linear Kernel \( k(\mathbf{x}_i, \mathbf{x}_j) = \mathbf{x}_i^T \mathbf{x}_j \) | Captures linear relationships in data | Initial analysis and baseline models |

| Kernel Functions | Gaussian RBF Kernel \( k(\mathbf{x}_i, \mathbf{x}_j) = \exp(-\gamma \lVert \mathbf{x}_i - \mathbf{x}_j \rVert^2) \) | Models nonlinear similarities with locality | Most common choice for omics data |

| Kernel Functions | Polynomial Kernel \( k(\mathbf{x}_i, \mathbf{x}_j) = (\mathbf{x}_i^T \mathbf{x}_j + c)^d \) | Captures feature interactions | Specific domain knowledge of interactions |

| Kernel Functions | Diffusion Kernel \( k = \exp(\beta H) \) | Graph-based similarity computation | Protein interaction networks [1] |

| Validation Frameworks | Repeated Cross-Validation | Robust performance estimation | Small sample size settings |

| Validation Frameworks | Independent Test Set Validation | Unbiased performance assessment | Sufficient sample availability |

Multi-Kernel Learning represents a powerful and flexible framework for heterogeneous data integration, particularly valuable in multi-omics research where diverse data types must be combined to construct comprehensive biological models. The integration of advanced optimization strategies, particularly multi-strategy grey wolf optimizers, addresses critical challenges in high-dimensional parameter spaces, enhancing both the efficiency and effectiveness of MKL implementations.

Future research directions include the development of more adaptive MKL formulations that automatically adjust to data characteristics, deeper integration of metaheuristic optimization with kernel learning frameworks, and extension of MKL to emerging data types in computational biology. As multi-omics technologies continue to evolve, MKL approaches—particularly when enhanced with sophisticated optimization strategies—will remain essential tools for extracting meaningful insights from complex, heterogeneous biomedical datasets.

Core Principles and Social Hierarchy of the Grey Wolf Optimizer (GWO)

The Grey Wolf Optimizer (GWO) is a population-based metaheuristic algorithm inspired by the social hierarchy and collective hunting behavior of grey wolves (Canis lupus) in nature. Introduced by Mirjalili et al. in 2014, GWO has gained significant traction for solving complex optimization problems across diverse domains including engineering, machine learning, and economics due to its simplicity, flexibility, and powerful search capabilities [9] [10]. The algorithm effectively mimics the leadership structure and cooperative hunting strategies of grey wolf packs, translating these natural behaviors into a mathematical model for optimization. GWO operates by simulating how grey wolves track, encircle, and attack prey, corresponding to the fundamental optimization phases of exploration and exploitation [9] [11]. Its effectiveness stems from a well-balanced mechanism that allows it to navigate the search space efficiently while avoiding premature convergence, making it a valuable tool for researchers and engineers dealing with multidimensional, nonlinear problems [12].

Social Hierarchy and Core Principles

Social Hierarchy of Grey Wolves

Grey wolves live in packs characterized by a strict social dominance hierarchy, which is central to the GWO algorithm. The pack is divided into four levels, each with distinct roles [9] [11] [13]:

- Alpha (α): The alpha represents the leader of the pack and is considered the most dominant wolf. The alpha wolf is responsible for making decisions about hunting, sleeping place, time to wake, and other activities. In the GWO algorithm, the alpha represents the best solution obtained so far in the search space [11] [13].

- Beta (β): The beta wolves are subordinate wolves that help the alpha in decision-making and other pack activities. They reinforce the alpha's commands throughout the pack and provide feedback to the alpha. In the optimization process, the beta represents the second-best solution [11] [13].

- Delta (δ): Delta wolves are subordinate to the alpha and beta but dominate the omega wolves. They perform specialized roles such as scouts, sentinels, elders, hunters, and caretakers. In GWO, the delta represents the third-best solution [11] [13].

- Omega (ω): The omega wolves are the lowest in the hierarchy and must submit to all other dominant wolves. They play the role of scapegoat but are essential for maintaining the pack's social structure. In GWO, the omega wolves represent the remaining candidate solutions that follow the alpha, beta, and delta [11] [13].

This social hierarchy is mathematically modeled in GWO to guide the optimization process, with the hunting (optimization) being directed by the alpha, beta, and delta wolves. The omega wolves update their positions based on the positions of these three leader wolves [11].

Hunting Mechanism (Core Principles)

The hunting behavior of grey wolves consists of three main phases, which form the core operational principles of the GWO algorithm [9] [11]:

- Tracking, Chasing, and Approaching the Prey (Exploration): This phase corresponds to the exploration of the search space. Wolves search for prey using scent, sound, and movement, which is analogous to exploring different regions in an optimization problem [9].

- Pursuing, Encircling, and Harassing the Prey until it Stops Moving (Exploitation): Once the prey is detected, wolves surround it to prevent escape. In GWO, this means narrowing down the search space and focusing on promising areas [9] [11].

- Attack towards the Prey (Convergence): The wolves finally attack the prey when it stops moving. In the context of optimization, this signifies converging to the best solution [9] [11].

Table 1: Summary of Grey Wolf Social Hierarchy and Its Algorithmic Representation

| Wolf Rank | Role in Natural Pack | Representation in GWO Algorithm |

|---|---|---|

| Alpha (α) | Leader; makes decisions for the pack | The best solution found so far |

| Beta (β) | Second-in-command; advises the alpha | The second-best solution |

| Delta (δ) | Specialized roles (scouts, hunters, etc.) | The third-best solution |

| Omega (ω) | Followers; maintain pack structure | The remaining candidate solutions |

Mathematical Model and Algorithmic Procedure

Encircling Prey

To mathematically model the encircling behavior of grey wolves, the following equations are proposed [11] [10] [14]:

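\[ \vec{D} = \lvert \vec{C} \cdot \vec{X}_p(t) - \vec{X}(t) \rvert \]

\[ \vec{X}(t+1) = \vec{X}_p(t) - \vec{A} \cdot \vec{D} \]

Where:

- t indicates the current iteration.

- A⃗ and C⃗ are coefficient vectors.

- X⃗p is the position vector of the prey.

- X⃗ is the position vector of a grey wolf.

- D⃗ represents the distance between the wolf and the prey.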

The vectors A⃗ and C⃗ are calculated as follows [11] [14]:

\[ \vec{A} = 2\vec{a} \cdot \vec{r}_1 - \vec{a} \]

\[ \vec{C} = 2 \cdot \vec{r}_2 \]

Where:

- r₁ and r₂ are random vectors in [0, 1].

- The components of a⃗ are linearly decreased from 2 to 0 over the course of iterations.
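A single encircling step can be sketched as below, using the commonly cited form of the update: D = |C · X_p − X| followed by X(t+1) = X_p − A · D, with A = 2a · r₁ − a and C = 2 · r₂ applied component-wise. The function name `encircle` and the example positions are assumptions for illustration.

```python
import numpy as np

def encircle(wolf, prey, a, rng):
    """One encircling update of a wolf toward a (known) prey position."""
    r1 = rng.random(wolf.shape)   # random vector in [0, 1)
    r2 = rng.random(wolf.shape)
    A = 2.0 * a * r1 - a          # each component lies in [-a, a]
    C = 2.0 * r2                  # each component lies in [0, 2)
    D = np.abs(C * prey - wolf)   # component-wise distance to the weighted prey
    return prey - A * D

rng = np.random.default_rng(0)
wolf = np.array([4.0, -3.0])
prey = np.array([1.0, 1.0])
early = encircle(wolf, prey, a=2.0, rng=rng)   # large a: wide, exploratory moves
late = encircle(wolf, prey, a=0.0, rng=rng)    # a = 0: lands exactly on the prey
```

With `a = 0` the coefficient A vanishes and the wolf moves straight onto the prey, which is the limiting behavior the linear decrease of a is designed to approach.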

Hunting Prey

In the abstract search space, the location of the optimum (prey) is not known. The GWO algorithm assumes that the alpha, beta, and delta wolves have better knowledge about the potential location of the prey. Therefore, the first three best solutions (alpha, beta, and delta) are saved, and the other search agents (omega wolves) are obliged to update their positions according to the position of the best search agents [11]. The mathematical model for the hunting behavior is as follows [11] [10] [14]:

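\[ \vec{D}_\alpha = \lvert \vec{C}_1 \cdot \vec{X}_\alpha - \vec{X} \rvert, \quad \vec{D}_\beta = \lvert \vec{C}_2 \cdot \vec{X}_\beta - \vec{X} \rvert, \quad \vec{D}_\delta = \lvert \vec{C}_3 \cdot \vec{X}_\delta - \vec{X} \rvert \]

\[ \vec{X}_1 = \vec{X}_\alpha - \vec{A}_1 \cdot \vec{D}_\alpha, \quad \vec{X}_2 = \vec{X}_\beta - \vec{A}_2 \cdot \vec{D}_\beta, \quad \vec{X}_3 = \vec{X}_\delta - \vec{A}_3 \cdot \vec{D}_\delta \]

\[ \vec{X}(t+1) = \frac{\vec{X}_1 + \vec{X}_2 + \vec{X}_3}{3} \]

Where:

- X⃗α, X⃗β, and X⃗δ represent the positions of the alpha, beta, and delta wolves, respectively.

- X⃗(t+1) is the updated position of an omega wolf.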
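The hunting update for one omega wolf can be sketched as follows, assuming the usual formulation in which each leader contributes one candidate position (leader − A · D, with fresh random A and C per leader) and the new position is the average of the three candidates. The name `hunt_update` and the sample coordinates are illustrative.

```python
import numpy as np

def hunt_update(wolf, alpha, beta, delta, a, rng):
    """Move an omega wolf toward the average of three leader-guided candidates."""
    candidates = []
    for leader in (alpha, beta, delta):
        A = 2.0 * a * rng.random(wolf.shape) - a
        C = 2.0 * rng.random(wolf.shape)
        D = np.abs(C * leader - wolf)
        candidates.append(leader - A * D)
    return np.mean(candidates, axis=0)

rng = np.random.default_rng(0)
alpha = np.array([0.0, 0.0])
beta = np.array([1.0, 0.0])
delta = np.array([0.0, 1.0])
omega = np.array([5.0, 5.0])
new_position = hunt_update(omega, alpha, beta, delta, a=1.0, rng=rng)
```

Averaging over three leaders rather than following the alpha alone is what gives GWO some robustness against a misleading best solution, although all omegas are still pulled toward the same small region.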

[Figure: GWO Position Update Mechanism. Position update of an omega wolf relative to the positions of alpha, beta, and delta in a 2D search space.]

Attacking Prey (Exploitation) and Search for Prey (Exploration)

The attacking of prey represents the exploitation phase in the GWO algorithm. This is achieved by decreasing the value of a⃗, which in turn decreases the fluctuation range of A⃗. When |A⃗| < 1, the wolves are forced to attack towards the prey, leading to convergence (exploitation) [11].

Conversely, the search for prey corresponds to the exploration phase. Grey wolves diverge from each other to search for prey and converge to attack prey. This divergence is mathematically modeled by utilizing A⃗ with random values greater than 1 or less than -1 to compel the search agents to diverge from the prey, thus emphasizing exploration. Furthermore, the C⃗ vector, with random values in [0, 2], provides random weights for the prey, also contributing to exploration [11].
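The link between the convergence factor and the exploration condition |A| > 1 can be made explicit with a short calculation. Since A = 2a · r₁ − a with r₁ uniform on [0, 1], each component of A is uniform on [−a, a], so the probability that a component satisfies |A| > 1 is 1 − 1/a when a > 1 and zero otherwise. The function names below are assumptions for illustration.

```python
def convergence_factor(t, max_iterations):
    """Linear decrease of a from 2 (t = 0) to 0 (t = max_iterations)."""
    return 2.0 * (1.0 - t / max_iterations)

def exploration_probability(a):
    """P(|A| > 1) for one component, with A uniform on [-a, a]."""
    return 0.0 if a <= 1.0 else 1.0 - 1.0 / a

schedule = [
    (t, convergence_factor(t, 100), exploration_probability(convergence_factor(t, 100)))
    for t in (0, 25, 50, 75)
]
```

Under the linear schedule this probability is 0.5 at the first iteration and reaches zero at the halfway point, so in the second half of the run any remaining exploration comes from the random weights in C rather than from A.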

Table 2: Key Parameters in the GWO Algorithm and Their Roles

| Parameter | Mathematical Definition | Role in Optimization | Impact on Search Behavior |

|---|---|---|---|

| A⃗ | \( \vec{A} = 2\vec{a} \cdot \vec{r}_1 - \vec{a} \) | Controls exploration vs. exploitation | \( \lvert \vec{A} \rvert > 1 \): promotes exploration (divergence). \( \lvert \vec{A} \rvert < 1 \): promotes exploitation (convergence). |

| C⃗ | \( \vec{C} = 2 \cdot \vec{r}_2 \) | Provides random weights for prey | Adds randomness to avoid local optima; simulates obstacles in nature. |

| a⃗ | Linearly decreases from 2 to 0 | Convergence factor | Balances exploration and exploitation over iterations. |

Experimental Protocols and Application Notes

Standard GWO Implementation Protocol

Purpose: To provide a foundational methodology for implementing the standard Grey Wolf Optimizer for numerical optimization and problem-solving [9] [11] [15].

Procedure:

- Initialization: Define the objective function, search space boundaries, and GWO parameters (population size `num_wolves`, maximum iterations `max_iterations`). Initialize a population of `num_wolves` wolves with random positions within the search space [15].

- Initial Fitness Evaluation: Calculate the fitness of each wolf based on the objective function.

- Hierarchy Assignment: Identify and assign the three wolves with the best fitness values as alpha (α), beta (β), and delta (δ). The remaining wolves are designated as omega (ω) [9] [13].

- Main Optimization Loop: Repeat until the stopping criterion is met (e.g., while `t < max_iterations`):

  a. Parameter Update: Decrease the value of the convergence factor `a` linearly from 2 to 0.

  b. Omega Position Update: For each omega wolf:

     i. Calculate coefficient vectors A⃗ and C⃗ using the updated `a` and random vectors r₁, r₂.

     ii. Calculate the distances D⃗α, D⃗β, D⃗δ from the alpha, beta, and delta wolves using the distance formula.

     iii. Calculate the intermediate position vectors X⃗1, X⃗2, X⃗3 influenced by alpha, beta, and delta.

     iv. Update the omega wolf's position using the average of X⃗1, X⃗2, and X⃗3 [11] [10].

  c. Boundary Handling: Check and ensure that all updated positions are within the defined search space boundaries. Apply clipping or other boundary constraints if necessary [15].

  d. Fitness Re-evaluation: Calculate the fitness of all updated wolves.

  e. Hierarchy Re-assignment: Update the alpha, beta, and delta wolves if any updated wolf has a better fitness [15].

- Termination: Once the loop terminates, return the alpha wolf's position as the best-found solution to the optimization problem.
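The procedure above can be sketched as a compact NumPy function for minimization. This is an illustrative implementation, not a reference one. One design choice differs slightly from the wording of the protocol and is an assumption made here: the three leaders are stored separately as best-so-far solutions and every wolf in the population is moved each iteration, so a good solution is never lost when the pack moves on. Boundary handling uses simple clipping.

```python
import numpy as np

def gwo(objective, lower, upper, num_wolves=20, max_iterations=200, seed=0):
    """Minimize `objective` over the box [lower, upper] with a basic GWO."""
    if num_wolves < 3:
        raise ValueError("need at least three wolves for alpha, beta, delta")
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    wolves = lower + rng.random((num_wolves, lower.size)) * (upper - lower)
    leader_pos = np.zeros((3, lower.size))   # alpha, beta, delta (best so far)
    leader_fit = np.full(3, np.inf)

    for t in range(max_iterations + 1):
        # Evaluate fitness, then refresh the three best-so-far leaders.
        fitness = np.array([objective(w) for w in wolves])
        pool_pos = np.vstack([leader_pos, wolves])
        pool_fit = np.concatenate([leader_fit, fitness])
        best3 = np.argsort(pool_fit)[:3]
        leader_pos, leader_fit = pool_pos[best3].copy(), pool_fit[best3].copy()
        if t == max_iterations:
            break

        a = 2.0 * (1.0 - t / max_iterations)  # convergence factor: 2 -> 0
        moved = np.zeros_like(wolves)
        for leader in leader_pos:
            A = 2.0 * a * rng.random(wolves.shape) - a
            C = 2.0 * rng.random(wolves.shape)
            D = np.abs(C * leader - wolves)
            moved += (leader - A * D) / 3.0   # average of X1, X2, X3
        wolves = np.clip(moved, lower, upper)  # boundary handling

    return leader_pos[0], leader_fit[0]

def sphere(x):
    return float(np.sum(x ** 2))

best_position, best_value = gwo(sphere, [-10.0] * 5, [10.0] * 5,
                                num_wolves=30, max_iterations=200, seed=1)
```

The sphere function is a standard unimodal test case with its minimum at the origin; on multimodal functions the same code is more likely to show the premature convergence that motivates the enhanced variants.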

Protocol for Enhanced GWO in Complex Model Tuning

Purpose: To detail the application of an Improved Grey Wolf Optimization (IGWO) strategy for tuning parameters in complex models, such as a Kernel Extreme Learning Machine (KELM), for tasks like disease diagnosis or financial prediction [16].

Procedure:

- Problem Formulation: Define the optimization objective. For KELM parameter tuning, the objective is to minimize classification error or maximize prediction accuracy by finding the optimal values for the kernel parameter (e.g., gamma, γ) and the penalty parameter (C) [16].

- Algorithm Enhancement Setup: Implement improvements to the standard GWO hierarchy, for example assigning local search to beta wolves and global search to omega wolves [16].

- Fitness Evaluation: The fitness of each wolf (candidate solution of C and γ) is evaluated by training a KELM model with those parameters and calculating its performance (e.g., classification accuracy) on a validation set.

- Iterative Optimization: Execute the enhanced GWO process, where the hierarchical structure improves the stochastic behavior and exploration capability. If a beta wolf finds a solution better than the current alpha, it replaces the alpha [16].

- Model Validation: Upon convergence, validate the final KELM model, configured with the parameters found by the best alpha wolf, on a separate testing dataset to assess its generalization performance.
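The fitness evaluation step can be sketched with a small KELM written directly in NumPy. The closed-form training rule used here, output weights β = (K + I/C)⁻¹ T with an RBF kernel matrix K and ±1-coded targets T, is the standard KELM solution; the function names, the log-scale encoding of the wolf as (log₁₀ C, log₁₀ γ), and the synthetic two-class data are assumptions for illustration and are not taken from [16].

```python
import numpy as np

def rbf(A, B, gamma):
    sq = np.sum(A ** 2, axis=1)[:, None] + np.sum(B ** 2, axis=1)[None, :]
    return np.exp(-gamma * np.maximum(sq - 2.0 * (A @ B.T), 0.0))

def kelm_fit(X, y, C, gamma):
    """Solve (K + I/C) beta = T for the KELM output weights."""
    classes = np.unique(y)
    T = np.where(y[:, None] == classes[None, :], 1.0, -1.0)
    K = rbf(X, X, gamma)
    beta = np.linalg.solve(K + np.eye(len(X)) / C, T)
    return {"X": X, "beta": beta, "classes": classes, "gamma": gamma}

def kelm_predict(model, X_new):
    scores = rbf(X_new, model["X"], model["gamma"]) @ model["beta"]
    return model["classes"][np.argmax(scores, axis=1)]

def kelm_error(params, X_train, y_train, X_val, y_val):
    """Fitness of a wolf encoding (log10 C, log10 gamma); lower is better."""
    C, gamma = 10.0 ** params[0], 10.0 ** params[1]
    model = kelm_fit(X_train, y_train, C, gamma)
    return float(np.mean(kelm_predict(model, X_val) != y_val))

rng = np.random.default_rng(0)
# Synthetic two-class data: Gaussian blobs centred at (-3, -3) and (3, 3).
X = np.vstack([rng.normal(-3.0, 1.0, size=(50, 2)), rng.normal(3.0, 1.0, size=(50, 2))])
y = np.array([0] * 50 + [1] * 50)
order = rng.permutation(100)
train, val = order[:70], order[70:]

error = kelm_error(np.array([0.0, 0.0]), X[train], y[train], X[val], y[val])
```

Searching over log₁₀ C and log₁₀ γ rather than the raw values is a common choice because both parameters typically vary over several orders of magnitude. A function like `kelm_error`, with the data arguments bound, can serve as the objective for any GWO variant.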

[Figure: Enhanced GWO Workflow for Parameter Tuning. Key stages of the enhanced GWO protocol for parameter optimization.]

Table 3: Essential Research Reagents and Computational Tools for GWO Research and Application

| Item / Tool Name | Type | Function / Purpose in GWO Research |

|---|---|---|

| Benchmark Function Suites | Software/Dataset | A collection of standardized optimization problems (e.g., CEC2017, 23 classic functions) used to validate, compare, and analyze the performance of GWO algorithms [16] [17]. |

| Kernel Extreme Learning Machine (KELM) | Software Model | A machine learning model whose hyperparameters (kernel bandwidth γ, penalty C) are often optimized using GWO to improve performance in classification and regression tasks [16]. |

| Computational Intelligence Library | Software Library | Frameworks like MATLAB, Python (with NumPy/SciPy), or Julia, which provide the necessary environment for implementing GWO and conducting numerical experiments. |

| Static and Dynamic Environment Simulators | Software Tool | Simulated environments (e.g., for robot path planning or wireless sensor network deployment) used as testbeds to evaluate GWO's ability to solve real-world spatial optimization problems [13] [7]. |

| Parameter Adaptation Framework | Methodological Framework | A structured approach for implementing non-linear or dynamic adjustment of the convergence factor a and other parameters to balance exploration and exploitation [14]. |

| Hybridization Strategy | Methodological Framework | A defined protocol for integrating GWO with other optimization algorithms (e.g., TLBO, CSA, PSO) to overcome limitations like premature convergence and enhance search capability [12] [14]. |

| Performance Metrics Suite | Analytical Tool | A set of quantitative measures (e.g., convergence accuracy, speed, stability, Wilcoxon signed-rank test, Friedman test) used to statistically compare GWO variants [17] [14]. |

Application in Multi-Kernel Learning and Future Directions

The integration of the Grey Wolf Optimizer, particularly its multi-strategy enhanced variants, with multi-kernel learning algorithms presents a promising research frontier. The core principles of GWO, social hierarchy and cooperative hunting, align well with the need to optimize complex, multi-parameter systems. In a multi-kernel learning context, an enhanced GWO can be employed to simultaneously optimize the combination weights of different kernels and the hyperparameters of each kernel, a task that is often high-dimensional and nonlinear [16]. The hierarchical structure of GWO allows the "leader" wolves to guide the search towards promising regions of the hyperparameter space, while the enhanced strategies (e.g., local search for beta, global search for omega) help maintain an effective balance between exploring diverse kernel combinations and exploiting the most performant ones [16] [13] [14]. This synergy can lead to more robust and accurate models for complex data in bioinformatics and drug development, such as integrating heterogeneous data sources from genomics, proteomics, and clinical records.

Future research directions highlighted in the literature include developing more sophisticated non-linear adjustment strategies for the convergence factor a to achieve a more refined balance between exploration and exploitation [14]. Furthermore, the creation of hybrid algorithms, such as the GWO-Teaching Learning Based Optimization (GWO-TLBO), demonstrates a path forward for compensating for GWO's weakness of premature convergence by leveraging the strengths of other algorithms [12]. The application of GWO in dynamic and constrained optimization problems, like mobile sensor network deployment, also pushes the development of more adaptive and robust variants [7]. For drug development professionals, these advancements translate into potentially more powerful tools for tasks like quantitative structure-activity relationship (QSAR) modeling, where optimizing multiple learning parameters can significantly improve predictive performance.
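As a concrete picture of what a non-linear adjustment of a might look like, the sketch below compares the standard linear schedule with two alternatives. The cosine and quadratic forms are hypothetical examples chosen for illustration; they are not the specific strategies proposed in [14].

```python
import math

def linear_a(t, T):
    """Standard schedule: a falls from 2 to 0 at a constant rate."""
    return 2.0 * (1.0 - t / T)

def cosine_a(t, T):
    """Illustrative alternative: slow decay early and late, fast in the middle."""
    return 1.0 + math.cos(math.pi * t / T)

def quadratic_a(t, T):
    """Illustrative alternative: stays high longer, then drops quickly."""
    return 2.0 * (1.0 - (t / T) ** 2)

T = 100
comparison = [(t, linear_a(t, T), cosine_a(t, T), quadratic_a(t, T)) for t in (0, 25, 50, 75, 100)]
```

All three schedules start at 2 and end at 0. The quadratic form never falls below the linear one, so it spends more iterations in the exploratory regime, while the cosine form sits above the linear schedule in the first half and below it in the second.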

The Grey Wolf Optimizer (GWO), a metaheuristic algorithm inspired by the social hierarchy and cooperative hunting behavior of grey wolves, has gained significant recognition for its straightforward implementation and minimal parameter configuration requirements [18] [19]. Despite its popularity, the conventional GWO exhibits fundamental limitations that restrict its effectiveness in solving complex optimization problems, particularly in high-dimensional spaces and real-world engineering applications. The two most critical challenges are premature convergence and exploration-exploitation imbalance [20] [5] [21].

Premature convergence occurs when the algorithm stagnates at local optima rather than continuing toward the global optimum [20]. This phenomenon is primarily attributed to the algorithm's inherent social hierarchy mechanism, where the positions and decisions of the leading wolves (Alpha, Beta, and Delta) disproportionately influence the entire pack's movement [21]. As iterations progress, this hierarchical influence causes rapid diversity loss within the population, trapping the search process in suboptimal solutions [5] [21]. The exploration-exploitation imbalance stems from inadequate coordination between global search (exploration) and local refinement (exploitation) throughout the optimization process [20] [7]. This imbalance manifests as either excessive wandering through the search space without convergence or hasty convergence to local minima [5].
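Diversity loss of the kind described above can be monitored with a simple statistic. The sketch below uses the mean Euclidean distance of the wolves to the population centroid, which is one common choice among several; the function name and the toy populations are assumptions for illustration.

```python
import numpy as np

def population_diversity(wolves):
    """Mean Euclidean distance of each wolf to the population centroid."""
    centroid = wolves.mean(axis=0)
    return float(np.mean(np.linalg.norm(wolves - centroid, axis=1)))

# A spread-out pack versus one that has collapsed onto a single point.
spread_out = np.array([[0.0, 0.0], [2.0, 0.0], [0.0, 2.0], [2.0, 2.0]])
collapsed = np.full((4, 2), 1.0)

diversity_spread = population_diversity(spread_out)
diversity_collapsed = population_diversity(collapsed)
```

A value that drops toward zero while the best fitness has stopped improving is a practical warning sign of premature convergence, and could be used to trigger a mutation or restart strategy.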

Quantitative Analysis of Standard GWO Limitations

Table 1: Experimental Evidence of Standard GWO Limitations Across Benchmark Functions

| Benchmark Category | Performance Metric | Standard GWO Performance | Primary Limitation Observed | Citation |
|---|---|---|---|---|
| CEC2017 & CEC2022 | Solution accuracy | Suboptimal on complex functions | Premature convergence | [5] |
| CEC2021 (10-Dimensional) | Friedman ranking | Lower ranking compared to variants | Exploration-exploitation imbalance | [20] |
| CEC2021 (20-Dimensional) | Friedman ranking | Lower ranking compared to variants | Exploration-exploitation imbalance | [20] |
| 12 Cancer Microarray Datasets | Feature selection accuracy | Lower classification accuracy | Premature convergence | [20] |
| 23 Standard Benchmark Functions | Convergence precision | Lower precision values | Premature convergence | [18] [19] |
| Large-scale Global Optimization (CEC2013) | Convergence speed | Slow convergence | Exploration-exploitation imbalance | [21] |

Table 2: Impact of GWO Limitations on Engineering Design Problems

| Engineering Application | Standard GWO Performance Issue | Consequence | Improved GWO Solution | Citation |
|---|---|---|---|---|
| WSN Coverage Optimization | Low global coverage efficiency (local optima) | Reduced monitoring efficacy under constrained resources | FMGWO achieves 98.63% coverage with 30 nodes | [7] |
| Cancer Microarray Data Classification | Degraded accuracy due to redundant features | Difficult classification process with extended computation time | EDGWO maintains high convergence speed and accuracy | [20] |
| Three-bar Truss Design | Suboptimal solution quality | Inefficient material usage | IGWO shows balanced exploration-exploitation capability | [22] |
| Vehicle Side Impact Design | Inability to escape local minima | Failure to meet safety or efficiency standards | IGWO demonstrates superior constraint handling | [22] |
| Economic Emission Dispatch | Convergence to local optimum | Higher operational costs | CSTKSO outperforms competing algorithms | [23] |

Root Cause Analysis: Technical Foundations of GWO Limitations

Social Hierarchy Mechanism and Diversity Loss

The standard GWO algorithm implements a rigid social hierarchy that categorizes population members into four levels: Alpha (α), Beta (β), Delta (δ), and Omega (ω) [18]. This structure creates a top-down information flow where Omega wolves update their positions exclusively based on the top three solutions (Alpha, Beta, Delta) [18] [21]. While this mechanism enables efficient knowledge transfer, it gradually diminishes population diversity as iterations progress [21]. The algorithm prioritizes the positions and decisions of the leading wolves, causing the entire population to converge toward the leaders' positions without sufficient exploration of alternative regions in the search space [21]. This diversity loss represents a fundamental cause of premature convergence, particularly when the leader wolves become trapped in local optima during early iterations [20] [5].
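The leader-driven update described above is usually written in the following form. This is the commonly cited standard GWO formulation rather than a quotation from the cited works; the symbols $\vec{X}$ (wolf position), $\vec{A}$, $\vec{C}$ (coefficient vectors), $\vec{r}_1, \vec{r}_2$ (uniform random vectors in $[0,1]$) and the scalar control parameter $a$ are introduced here for illustration.

```latex
\begin{aligned}
\vec{A} &= 2a\,\vec{r}_1 - a, \qquad \vec{C} = 2\,\vec{r}_2, \\
\vec{D}_{\ell} &= \left| \vec{C}_{\ell} \odot \vec{X}_{\ell} - \vec{X}(t) \right|, \qquad
\vec{X}'_{\ell} = \vec{X}_{\ell} - \vec{A}_{\ell} \odot \vec{D}_{\ell}, \qquad \ell \in \{\alpha, \beta, \delta\}, \\
\vec{X}(t+1) &= \tfrac{1}{3}\left( \vec{X}'_{\alpha} + \vec{X}'_{\beta} + \vec{X}'_{\delta} \right).
\end{aligned}
```

Because every wolf is pulled toward the average of three leader-based candidates, the population contracts around the leaders once $|\vec{A}|$ falls below 1, which is the diversity-loss mechanism discussed in this subsection.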

Linear Parameter Control and Exploration-Exploitation Imbalance

The standard GWO uses a control parameter a that decreases linearly from 2 to 0 over the iterations [20] [22]. This parameter governs the balance between exploration and exploitation by controlling the distance between wolves and prey: larger values push wolves away from the current leaders, while smaller values pull them in. The linear schedule cannot adapt to the complex landscape characteristics of real-world optimization problems [5] [21]. In the early stages, the linear decrease may cut valuable exploration short, while in later stages it may not concentrate enough effort on promising regions that need intensive exploitation [20]. This inflexible parameter adjustment is a structural limitation of the standard GWO and contributes to suboptimal performance on problems with multiple local optima or complex constraint structures [7] [22].
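To make the linear schedule concrete, here is a minimal sketch of the standard GWO loop in plain Python. It is an illustration under simple assumptions (box bounds, minimization, a sphere test function), not the implementation used in any of the cited studies; the function names `gwo` and `sphere` are hypothetical.

```python
import random


def sphere(x):
    """Convex test function with its minimum of 0 at the origin."""
    return sum(v * v for v in x)


def gwo(objective, dim, bounds, n_wolves=30, max_iter=200, seed=0):
    """Minimal standard GWO (minimization) with a linearly decreasing a."""
    rng = random.Random(seed)
    lo, hi = bounds
    wolves = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_wolves)]
    best_x, best_f = None, float("inf")
    for t in range(max_iter):
        ranked = sorted(wolves, key=objective)
        leaders = [ranked[0][:], ranked[1][:], ranked[2][:]]  # alpha, beta, delta
        f_alpha = objective(leaders[0])
        if f_alpha < best_f:
            best_x, best_f = leaders[0][:], f_alpha
        a = 2.0 * (1.0 - t / max_iter)  # linear decrease from 2 toward 0
        new_wolves = []
        for x in wolves:
            new_x = []
            for j in range(dim):
                candidates = []
                for leader in leaders:
                    A = 2.0 * a * rng.random() - a   # |A| > 1 favours exploration
                    C = 2.0 * rng.random()
                    D = abs(C * leader[j] - x[j])
                    candidates.append(leader[j] - A * D)
                value = sum(candidates) / 3.0        # average of the three guides
                new_x.append(min(hi, max(lo, value)))  # clamp to the box bounds
            new_wolves.append(new_x)
        wolves = new_wolves
    # Account for the population produced by the last update.
    final_best = min(wolves, key=objective)
    if objective(final_best) < best_f:
        best_x, best_f = final_best[:], objective(final_best)
    return best_x, best_f


if __name__ == "__main__":
    x, f = gwo(sphere, dim=5, bounds=(-10.0, 10.0))
    print("best objective value:", f)
```

Replacing the single line that computes `a` is enough to experiment with nonlinear schedules, which is why many of the variants discussed later start from that parameter.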

Enhanced GWO Frameworks: Multi-Strategy Integration Solutions

Elite-driven Grey Wolf Optimizer (EDGWO)

The EDGWO framework addresses standard GWO limitations through three key innovations [20]. First, it integrates social hierarchy with an enhanced search mechanism by establishing three local exploitation operators and three global exploration operators for the Alpha, Beta, and Delta wolves [20]. This strategy clarifies search responsibilities and strengthens global exploration capability. Second, the algorithm implements dynamic adjustment of search parameter values, enabling real-time adaptation of the three leader wolves' search behavior [20]. Finally, EDGWO incorporates a stochastic probabilistic search strategy that allows Omega wolves to randomly alternate between local search and global exploration [20]. This approach increases randomness and diversity throughout the search process, effectively mitigating premature convergence.

Multi-population Dynamic GWO (DLMDGWO)

The DLMDGWO algorithm introduces four sophisticated strategies to overcome standard GWO limitations [5]. The Base-distance Logistic Initialization (BDLI) method establishes dynamic boundaries to partition the initialization range, generating a high-quality uniform initial population distributed from the center to the edge of the search space [5]. The Multi-population Dynamic Strategy (MDS) implements a multi-population hunting mechanism that enhances wolf participation diversity and optimizes strategy selection through Fitness-Distance Correlation coefficients [5]. The Double Laplace Distribution Mutation (DLM) leverages Laplace distribution characteristics to enhance population diversity and global search capability [5]. Finally, Multi-strategy Dimension Learning optimizes population structure through fitness ranking and Small World Topology Dimension Learning [5].

Fusion Multi-strategy Grey Wolf Optimizer (FMGWO)

The FMGWO specifically targets WSN coverage optimization challenges through five integrated strategies [7]. Electrostatic field initialization ensures uniform population distribution, while dynamic parameter adjustment incorporates nonlinear convergence and differential evolution scaling [7]. The elder council mechanism preserves historical elite solutions, and alpha wolf tenure inspection with rotation maintains population vitality [7]. Finally, a hybrid mutation strategy combining differential evolution and Cauchy perturbations enhances diversity and global search capability [7]. This comprehensive approach enables FMGWO to achieve coverage rates up to 98.63% with only 30 nodes, significantly outperforming established algorithms like PSO, GWO, CSA, DE, GA, and FA [7].
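The idea of mixing differential evolution with Cauchy perturbations can be sketched as follows. This is an illustrative reading of a hybrid mutation, not FMGWO's published operator: the DE/rand/1 form, the 50/50 switching probability `p_de`, the scale factors, and the greedy replacement step are all assumptions made for this example.

```python
import math
import random


def cauchy_sample(rng, scale=1.0):
    """Zero-centred Cauchy draw via the inverse CDF (heavy tails, rare long jumps)."""
    return scale * math.tan(math.pi * (rng.random() - 0.5))


def hybrid_mutation(population, best, bounds, rng,
                    f_scale=0.5, p_de=0.5, cauchy_scale=0.1):
    """Return one mutant per individual; requires at least 4 individuals."""
    lo, hi = bounds
    n = len(population)
    mutants = []
    for i, x in enumerate(population):
        if rng.random() < p_de:
            # DE/rand/1: combine three distinct individuals other than i.
            r1, r2, r3 = rng.sample([k for k in range(n) if k != i], 3)
            trial = [population[r1][j] + f_scale * (population[r2][j] - population[r3][j])
                     for j in range(len(x))]
        else:
            # Cauchy perturbation around the current best solution.
            trial = [b + cauchy_sample(rng, cauchy_scale * (hi - lo)) for b in best]
        mutants.append([min(hi, max(lo, v)) for v in trial])
    return mutants


def greedy_select(population, mutants, objective):
    """Keep a mutant only when it improves on its parent (minimization)."""
    return [m if objective(m) < objective(p) else p
            for p, m in zip(population, mutants)]
```

The DE branch recombines information already present in the population, while the Cauchy branch occasionally produces long jumps away from the best solution; the greedy step ensures neither branch degrades an individual.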

Table 3: Comprehensive Comparison of Enhanced GWO Variants

| Algorithm Variant | Core Improvement Strategies | Key Performance Advantages | Application Domains | Citation |
|---|---|---|---|---|
| EDGWO | Elite-driven search operators, Dynamic parameter adjustment, Stochastic probabilistic search | Superior exploration-exploitation capabilities, Fast convergence speed | Feature selection, Medical data analysis | [20] |
| DLMDGWO | Multi-population dynamic strategy, Double Laplace mutation, Dimension learning | Better search efficiency, Solution accuracy, Convergence speed | Global optimization, Engineering design problems | [5] |
| FMGWO | Electrostatic field initialization, Elder council mechanism, Hybrid mutation | Higher coverage rates (98.63% with 30 nodes), Improved stability | WSN coverage optimization, IoT systems | [7] |
| IAGWO | Velocity incorporation, Inverse Multiquadric Function, Adaptive population updates | Outperforms in 88.2%-97.4% of cases across benchmarks | Large-scale problems, Practical engineering applications | [21] |
| IGWO | Lens imaging reverse learning, Nonlinear convergence based on cosine variation, Individual historical optimal integration | Balanced exploration-exploitation, Escaping local minima | Constrained engineering problems, Functional optimization | [22] |
| HMS-GWO | Hierarchical decision-making, Structured multi-step search process | 99% accuracy, Computational time of 3s, Stability score of 0.9 | Complex optimization problems, Engineering design | [18] |

Experimental Protocols for Enhanced GWO Evaluation

Benchmark Function Testing Protocol

Objective: Quantitatively evaluate the performance of enhanced GWO variants against standard GWO and other metaheuristic algorithms [20] [5] [21].

Materials and Setup:

- Test Suites: IEEE CEC2017, CEC2020, CEC2021, CEC2022, and CEC2005 benchmark functions [20] [5] [21]

- Performance Metrics: Solution accuracy, convergence speed, stability score, Friedman ranking [20] [18]

- Comparison Algorithms: Standard GWO, PSO, DE, GA, FA, and other GWO variants [20] [7] [21]

- Population Size: Typically 30-50 individuals [7] [18]

- Maximum Iterations: 500-1000 depending on problem complexity [20] [22]

Procedure:

- Initialize all algorithms with identical population sizes and maximum iterations [20] [5]

- Execute 30 independent runs for each algorithm-function combination so that the results support statistical comparison [20] [22]

- Record the best, worst, mean, and standard deviation of solution quality across the runs [5] [21]

- Perform Wilcoxon rank sum test and Friedman test for statistical comparison [20] [22]

- Generate convergence curves to visualize optimization progress across iterations [5] [21]
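The bookkeeping in the procedure above can be sketched in a few lines of standard-library Python. The optimizer here is a hypothetical stand-in (`random_search`), and `mean_ranks` computes only the average ranks that the Friedman test is based on, not its p-value; a statistics package would be needed for the full Wilcoxon and Friedman tests.

```python
import random
import statistics


def sphere(x):
    return sum(v * v for v in x)


def random_search(objective, dim, bounds, budget, rng):
    """Hypothetical stand-in optimizer: best of `budget` uniform samples."""
    lo, hi = bounds
    return min(objective([rng.uniform(lo, hi) for _ in range(dim)])
               for _ in range(budget))


def run_trials(optimizer, objective, dim, bounds, budget, n_runs=30):
    """One result per independent run; each run gets its own seed."""
    return [optimizer(objective, dim, bounds, budget, random.Random(seed))
            for seed in range(n_runs)]


def summarize(values):
    """Best, worst, mean, and sample standard deviation across runs."""
    return {"best": min(values), "worst": max(values),
            "mean": statistics.mean(values), "std": statistics.stdev(values)}


def mean_ranks(scores):
    """scores[alg] = one error per benchmark function (lower is better).

    Returns the average rank of each algorithm; ties share the mean rank.
    """
    algs = list(scores)
    n_funcs = len(next(iter(scores.values())))
    totals = {a: 0.0 for a in algs}
    for k in range(n_funcs):
        order = sorted(algs, key=lambda a: scores[a][k])
        i = 0
        while i < len(order):
            j = i
            while j + 1 < len(order) and scores[order[j + 1]][k] == scores[order[i]][k]:
                j += 1
            shared_rank = (i + j) / 2.0 + 1.0
            for a in order[i:j + 1]:
                totals[a] += shared_rank
            i = j + 1
    return {a: totals[a] / n_funcs for a in algs}
```

Giving every algorithm the same seeds, population size, and evaluation budget keeps the comparison fair, which is the point of the first step in the procedure.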

Feature Selection Application Protocol

Objective: Validate enhanced GWO performance on real-world feature selection problems, particularly medical data classification [20] [24].

Materials:

- Datasets: 12 cancer microarray datasets with high-dimensional features [20]

- Classification Algorithms: Support Vector Machines (SVM), k-Nearest Neighbors (k-NN) [24]

- Evaluation Metrics: Classification accuracy, number of selected features, computational time [20] [24]

Procedure:

- Preprocess datasets using normalization and handle missing values [20]

- Implement binary versions of enhanced GWO for feature selection [24]

- Apply k-fold cross-validation (typically k=10) to ensure reliable results [20]

- Compare classification performance against standard GWO and other feature selection methods [20] [24]

- Perform statistical significance tests (t-test with p<0.05) to verify improvement validity [24]

Engineering Design Optimization Protocol

Objective: Assess enhanced GWO performance on constrained engineering design problems [5] [22].

Materials:

- Engineering Problems: Three-bar truss design, vehicle side impact design, welded beam design [22]

- Constraint Handling: Penalty functions, feasibility-based rules [21] [22]

- Evaluation Metrics: Optimal design cost, constraint satisfaction, convergence speed [5] [22]

Procedure:

- Formulate engineering problems with objective functions and constraint equations [22]

- Implement constraint handling mechanisms within enhanced GWO frameworks [21] [22]

- Execute multiple independent runs to account for stochastic variations [5] [22]

- Compare results with known optimal solutions and other metaheuristic approaches [22]

- Analyze convergence behavior and solution quality across different problem types [5] [22]
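As an illustration of penalty-based constraint handling, the sketch below uses the three-bar truss problem in its commonly cited textbook form. The constants (load `P = 2`, allowable stress `SIGMA = 2`, bar length `LENGTH = 100`), the quadratic penalty, and the penalty weight `rho` are assumptions for this example and are not taken from the cited studies.

```python
import math

# Assumed constants of the commonly cited three-bar truss formulation.
P, SIGMA, LENGTH = 2.0, 2.0, 100.0


def truss_volume(x1, x2):
    """Objective: structural volume for cross-sections x1 and x2."""
    return (2.0 * math.sqrt(2.0) * x1 + x2) * LENGTH


def truss_constraints(x1, x2):
    """Stress constraints written as g(x) <= 0 when satisfied."""
    s2 = math.sqrt(2.0)
    denom = s2 * x1 * x1 + 2.0 * x1 * x2
    g1 = (s2 * x1 + x2) / denom * P - SIGMA
    g2 = x2 / denom * P - SIGMA
    g3 = 1.0 / (s2 * x2 + x1) * P - SIGMA
    return [g1, g2, g3]


def penalized_objective(x1, x2, rho=1e6):
    """Static quadratic penalty: feasible points keep their raw objective."""
    violation = sum(max(0.0, g) ** 2 for g in truss_constraints(x1, x2))
    return truss_volume(x1, x2) + rho * violation


if __name__ == "__main__":
    print(penalized_objective(0.9, 0.5))   # feasible: equals the raw volume
    print(penalized_objective(0.1, 0.1))   # infeasible: heavily penalized
```

Any unconstrained optimizer can then minimize `penalized_objective` directly. Feasibility-based rules, the other option listed above, instead compare candidates by constraint violation first and objective value second.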

Table 4: Essential Computational Resources for GWO Research

| Resource Category | Specific Tools & Benchmarks | Primary Function in Research | Access Information |
|---|---|---|---|
| Benchmark Test Suites | CEC2017, CEC2020, CEC2021, CEC2022, CEC2005 | Standardized performance evaluation of optimization algorithms | Publicly available from IEEE CEC conference websites |
| Medical Datasets | 12 Cancer Microarray Datasets | Validate feature selection performance in real-world scenarios | UCI Machine Learning Repository & public gene databases |
| Engineering Problem Sets | Three-bar truss, Vehicle side impact, Welded beam, Pressure vessel | Test constrained optimization capabilities | Standard engineering design problem collections |
| Statistical Analysis Tools | Wilcoxon rank sum test, Friedman test, t-test | Provide statistical significance for performance comparisons | Implemented in MATLAB, Python (SciPy), and R |
| Implementation Platforms | MATLAB, Python with NumPy/SciPy | Algorithm development and experimental testing | Open-source and commercial licenses available |

Integration with Multi-Kernel Learning Research

The enhanced GWO frameworks present significant opportunities for integration with multi-kernel learning methodologies. The dynamic exploration-exploitation balance achieved through elite-driven strategies and multi-population mechanisms can optimize kernel parameter selection and weighting in multi-kernel systems [20] [5]. The feature selection capabilities demonstrated by EDGWO on cancer microarray datasets directly apply to kernel function selection in multi-kernel environments [20] [24]. Furthermore, the constraint handling approaches developed for engineering design problems can be adapted to manage kernel combination constraints in multi-kernel learning architectures [5] [22].

The experimental protocols established for enhanced GWO evaluation provide a methodological foundation for assessing multi-kernel learning performance. The benchmark testing procedures ensure rigorous comparison of kernel optimization approaches [20] [21], while the feature selection protocols validate practical utility in high-dimensional data scenarios [20] [24]. The resource toolkit offers essential components for constructing comprehensive multi-kernel learning experiments, with standardized test functions and statistical evaluation methods [20] [5] [22].

The Rationale for Hybridizing MKL with Multi-Strategy GWO

The integration of Multi-Kernel Learning (MKL) and Grey Wolf Optimizer (GWO) represents a significant advancement in computational optimization, particularly for handling complex, high-dimensional data prevalent in modern scientific research. MKL enhances machine learning model flexibility by combining multiple kernel functions to capture diverse data characteristics, while GWO provides a robust metaheuristic approach for navigating complex solution spaces. The hybridization addresses critical limitations in traditional optimization methods, especially when applied to challenges in drug discovery and development, where model accuracy and computational efficiency are paramount.

The multi-strategy enhancement of GWO effectively counters its inherent tendencies toward premature convergence and local optima stagnation. This synergistic combination creates a powerful framework for optimizing predictive models in scenarios with complex, non-linear relationships, such as pharmaceutical data analysis and biological system modeling. The rationale for this hybridization stems from the complementary strengths of both approaches: MKL provides superior feature representation capabilities, while the enhanced GWO ensures efficient, global optimization of model parameters.

Theoretical Foundation and Synergistic Effects

Multi-Kernel Learning Fundamentals

Multi-Kernel Learning extends conventional kernel methods by employing multiple kernel functions to create a more expressive feature space. This approach allows models to capture heterogeneous patterns in data that single-kernel systems might miss. The combined kernel function typically follows the form:

$$K(x_i, x_j) = \sum_{m=1}^{M} \beta_m K_m(x_i, x_j)$$

where $\beta_m$ represents the weight for the m-th kernel $K_m$, subject to $\beta_m \geq 0$ and $\sum_{m=1}^{M} \beta_m = 1$. This formulation enables the integration of different data representations and similarity measures, making MKL particularly valuable for complex biological data, including protein structures, gene expressions, and chemical compound properties [25] [16].
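A minimal sketch of this weighted combination, in plain Python, is given below. The three base kernels, their default parameters, and the clip-and-rescale treatment of the weights are assumptions chosen for illustration; they are one simple way to satisfy the non-negativity and sum-to-one constraints, not a method prescribed by the cited works.

```python
import math


def linear_kernel(x, y):
    return sum(a * b for a, b in zip(x, y))


def polynomial_kernel(x, y, degree=2, coef0=1.0):
    return (linear_kernel(x, y) + coef0) ** degree


def rbf_kernel(x, y, sigma=1.0):
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))


def normalize_weights(raw):
    """Clip negatives to zero and rescale so the weights sum to one."""
    clipped = [max(0.0, w) for w in raw]
    total = sum(clipped)
    if total == 0.0:
        return [1.0 / len(raw)] * len(raw)  # fall back to uniform weights
    return [w / total for w in clipped]


def combined_kernel_matrix(X, kernels, weights):
    """K[i][j] = sum_m beta_m * K_m(X[i], X[j]) with normalized beta."""
    betas = normalize_weights(weights)
    n = len(X)
    return [[sum(b * k(X[i], X[j]) for b, k in zip(betas, kernels))
             for j in range(n)] for i in range(n)]


if __name__ == "__main__":
    X = [[0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
    K = combined_kernel_matrix(X, [rbf_kernel, polynomial_kernel, linear_kernel],
                               [2.0, 1.0, 1.0])
    for row in K:
        print(row)
```

Because a non-negative weighted sum of valid kernels is itself a valid kernel, the resulting matrix can be passed to any kernel method that accepts a precomputed kernel.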

The key advantage of MKL lies in its adaptive feature representation capability. Unlike single-kernel approaches that impose a fixed similarity metric across all data dimensions, MKL automatically learns the optimal combination of kernels specific to the problem domain. This flexibility is crucial in drug discovery applications where relationships between chemical structures, biological activities, and pharmacological properties exhibit different characteristics that may require different kernel functions for optimal representation [26].

Multi-Strategy Grey Wolf Optimizer Framework

The Grey Wolf Optimizer is a swarm intelligence algorithm inspired by the social hierarchy and hunting behavior of grey wolves. In the standard GWO, the population is divided into four groups: alpha (α), beta (β), delta (δ), and omega (ω), mimicking the leadership hierarchy of wolf packs. The optimization process simulates how these wolves encircle, hunt, and attack prey [27] [28].

Traditional GWO faces challenges in high-dimensional optimization spaces, including premature convergence and inadequate balance between exploration and exploitation. Multi-strategy enhancements address these limitations through several innovative mechanisms:

- ReliefF-based initialization: Optimizes initial population distribution using feature importance scores to improve convergence speed [27]

- Dynamic weighting mechanisms: Enhance elite wolf guidance through fitness-based weighting and competitive strategies [27]

- Hybrid exploration strategies: Incorporate differential evolution and Lévy flight to maintain population diversity [27]

- Self-repulsion strategies: Enable better local optimum escape through flattened hierarchy and repulsion learning [28]

- Hierarchical restructuring: Introduces new hierarchical mechanisms with random local and global search components [16]

Synergistic Rationale for Hybridization

The hybridization of MKL with multi-strategy GWO creates a powerful framework where the weaknesses of one approach are mitigated by the strengths of the other. MKL provides a flexible, expressive model architecture, while the enhanced GWO ensures robust parameter optimization in complex landscapes.

The primary synergistic effects include:

- Enhanced Optimization Capability: Multi-strategy GWO efficiently navigates the complex parameter space of MKL, which includes kernel weights and model hyperparameters [16]

- Adaptive Model Complexity: MKL's kernel combination flexibility allows the model to adapt to various data characteristics, while GWO optimizes this adaptation process [25]

- Prevention of Overfitting: The hybrid approach balances model complexity with generalization through optimized parameter selection [29] [16]

- Improved Convergence Behavior: Enhanced GWO strategies address the local optima problems that often plague kernel method optimization [27] [28]

This synergy is particularly valuable in drug discovery applications, where data relationships are complex, high-dimensional, and often non-linear [26].

Performance Analysis and Quantitative Comparison

Optimization Performance Benchmarks

Table 1: Performance Comparison of GWO Variants on Benchmark Functions

| Algorithm | Average Convergence Improvement | Local Optima Escape Rate | Computational Efficiency |
|---|---|---|---|
| Standard GWO | Baseline | Baseline | Baseline |
| IGWO [16] | 15-30% improvement | 25% improvement | Comparable |
| MIGWO [27] | 20-35% improvement | 30% improvement | 10-15% faster convergence |
| GWO-SRS [28] | 25-40% improvement | 35% improvement | 15-20% faster convergence |

Table 2: Classification Performance of Hybrid MKL-GWO Frameworks

| Application Domain | Dataset | Classification Accuracy | Comparison to Standard Methods |
|---|---|---|---|
| Medical Diagnosis [29] | IDRiD | 98.5-98.8% | 4-6% improvement over traditional SVM |
| Medical Diagnosis [29] | DR-HAGIS | 98.5-98.8% | 4-6% improvement over traditional SVM |
| Medical Diagnosis [29] | ODIR | 98.5-98.8% | 4-6% improvement over traditional SVM |
| UAV Link Prediction [25] | Professional UAV Swarm | 25.9% average improvement | Superior to similarity-based methods |
| Feature Selection [27] | 10 High-dimensional datasets | Significant improvement | Higher accuracy with smaller feature subsets |
| Financial Stress Prediction [16] | Financial datasets | 10-15% improvement | Better than PSO, GA, and standard GWO |

Analysis of Performance Advantages

The quantitative data demonstrates consistent performance improvements across diverse application domains. In medical diagnosis, the G-GWO (Genetic Grey Wolf Optimization) algorithm combined with KELM achieved classification accuracies of 98.5% to 98.8% on diabetic eye disease datasets, outperforming existing methods by 4-6% [29]. This performance enhancement stems from the effective optimization of KELM hyperparameters, specifically the kernel parameters and penalty coefficient, which critically influence model generalization capability.

For high-dimensional feature selection, MIGWO obtained smaller feature subsets while achieving higher classification accuracy compared to mainstream methods [27]. This demonstrates the algorithm's capability to identify meaningful patterns while eliminating redundant features—a critical requirement in drug discovery where minimizing feature dimensionality can significantly reduce computational requirements and enhance model interpretability.

In complex network applications, MSGWO-MKL-SVM improved link prediction accuracy in UAV swarm networks by 25.9% on average compared to conventional approaches [25]. This substantial improvement highlights the framework's effectiveness in handling dynamic, time-varying systems with strong randomness, similar to the complex biological networks encountered in pharmaceutical research.

Experimental Protocols and Implementation Guidelines

MKL-GWO Hybridization Protocol for Drug Discovery

Objective: To implement a hybrid MKL-GWO framework for drug-protein interaction prediction

Materials and Data Requirements:

- Chemical compound databases (ChEMBL, PubChem)

- Protein sequence and structure databases (PDB, UniProt)

- Known drug-target interaction datasets

- Computing environment: MATLAB/Python with parallel processing capability

Procedure:

Data Preprocessing and Feature Engineering

- Represent chemical compounds using molecular fingerprints and descriptors

- Encode protein sequences using physicochemical properties and sequence descriptors

- Normalize all features to zero mean and unit variance

- Split data into training (70%), validation (15%), and test (15%) sets
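The normalization and splitting steps can be sketched as follows. One detail is an assumption added here rather than stated in the protocol: the mean and standard deviation are estimated on the training portion only and then reused for the validation and test portions, which avoids leaking information from held-out data. Function names are hypothetical.

```python
import random
import statistics


def split_indices(n, seed=0, train=0.70, val=0.15):
    """Shuffle indices and cut them into train / validation / test parts."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = int(round(train * n))
    n_val = int(round(val * n))
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


def fit_scaler(rows):
    """Per-feature mean and population standard deviation (1.0 if constant)."""
    columns = list(zip(*rows))
    means = [statistics.fmean(c) for c in columns]
    stds = [statistics.pstdev(c) or 1.0 for c in columns]
    return means, stds


def transform(rows, means, stds):
    """Apply zero-mean, unit-variance scaling with previously fitted statistics."""
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]


if __name__ == "__main__":
    data = [[float(i), float(2 * i + 1)] for i in range(100)]
    train_idx, val_idx, test_idx = split_indices(len(data))
    means, stds = fit_scaler([data[i] for i in train_idx])
    val_scaled = transform([data[i] for i in val_idx], means, stds)
    print(len(train_idx), len(val_idx), len(test_idx))
```

For interaction data with strong class imbalance, a stratified split would usually be preferable; the plain shuffle above is kept only for brevity.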

Multi-Kernel Setup

- Implement three kernel types: Gaussian (RBF) kernel, polynomial kernel, and linear kernel

- Initialize kernel parameters: RBF bandwidth σ ∈ [0.1, 10], polynomial degree d ∈ {2,3,4}

- Construct combined kernel as weighted sum: K = β1 K_RBF + β2 K_poly + β3 K_linear

Multi-Strategy GWO Configuration

- Initialize wolf population size: 30-50 individuals

- Define position encoding: [C, γ, β1, β2, β3] for KELM parameters and kernel weights

- Implement ReliefF-based initialization for population diversity [27]

- Configure dynamic weighting mechanism for α, β, and δ wolves

- Set hybrid exploration parameters: DE crossover rate = 0.7, Lévy exponent β = 1.5 (distinct from the kernel weights β1, β2, β3)
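One way to realize the position encoding is to let every wolf live in the unit hypercube and decode it into model parameters when fitness is evaluated. The sketch below makes several assumptions that are not specified in the protocol: the log-scale mapping, the range for C, the reuse of the [0.1, 10] bandwidth range for the kernel parameter, and the rescaling of the three weights so that they sum to one.

```python
def decode_position(position, c_range=(1e-2, 1e3), gamma_range=(0.1, 10.0)):
    """Map a wolf position in [0, 1]^5 to {C, gamma, betas}.

    position = [C, gamma, beta1, beta2, beta3], all encoded in [0, 1].
    """
    u = [min(1.0, max(0.0, v)) for v in position]  # clamp to the unit cube

    def log_scale(t, lo, hi):
        # t = 0 gives lo, t = 1 gives hi, evenly spaced on a log axis.
        return lo * (hi / lo) ** t

    raw = u[2:5]
    total = sum(raw)
    betas = [w / total for w in raw] if total > 0.0 else [1.0 / 3.0] * 3
    return {
        "C": log_scale(u[0], *c_range),
        "gamma": log_scale(u[1], *gamma_range),
        "betas": betas,
    }


if __name__ == "__main__":
    print(decode_position([0.5, 0.5, 0.2, 0.2, 0.6]))
```

Keeping the search space normalized means the optimizer's step sizes behave the same way in every dimension, while the decoder carries the problem-specific scaling.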

Optimization Execution

- Set maximum iterations: 100-200 depending on problem complexity

- Employ fitness function: Classification accuracy on validation set

- Implement early stopping if fitness doesn't improve for 20 consecutive iterations

- Execute main optimization loop with position updates based on hierarchical guidance
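The iteration cap and the early-stopping rule can be wrapped around any optimizer step. In this sketch, `step` is a hypothetical callable that performs one iteration of position updates and returns the best validation fitness found in that iteration (higher is better); the `min_delta` tolerance is an added assumption.

```python
def optimize_with_early_stopping(step, max_iter=200, patience=20, min_delta=0.0):
    """Run `step(iteration)` until the budget is spent or progress stalls.

    Returns the best fitness seen and the best-so-far history per iteration.
    """
    best = float("-inf")
    stale = 0
    history = []
    for iteration in range(max_iter):
        fitness = step(iteration)
        if fitness > best + min_delta:
            best = fitness
            stale = 0          # progress made: reset the patience counter
        else:
            stale += 1
        history.append(best)
        if stale >= patience:  # no improvement for `patience` iterations
            break
    return best, history


if __name__ == "__main__":
    # Toy fitness that improves for six iterations and then plateaus.
    best, history = optimize_with_early_stopping(lambda it: min(it, 5) / 10.0)
    print(best, len(history))
```

The returned history doubles as the convergence curve, so it can be plotted directly when comparing optimizers.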

Model Validation

- Evaluate optimized model on test set using accuracy, precision, recall, and AUC

- Perform statistical significance testing (e.g., Wilcoxon signed-rank test)

- Compare against baseline methods (standard SVM, single-kernel KELM, PSO-optimized KELM)
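The four evaluation metrics can be computed from test-set labels and scores as sketched below. The 0.5 decision threshold, the binary 0/1 label convention, and the pairwise (rank-based) definition of AUC are assumptions for this illustration.

```python
def auc(y_true, scores):
    """Probability that a random positive outranks a random negative (ties count half)."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def classification_report(y_true, scores, threshold=0.5):
    """Accuracy, precision, recall, and AUC for binary labels in {0, 1}."""
    y_pred = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "auc": auc(y_true, scores),
    }


if __name__ == "__main__":
    print(classification_report([1, 1, 0, 0], [0.9, 0.4, 0.6, 0.1]))
```

Reporting AUC alongside accuracy matters for drug-target data, where negatives typically far outnumber positives and accuracy alone can look deceptively high.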

Protocol for High-Dimensional Feature Selection in Pharmaceutical Data

Objective: To select optimal feature subsets from high-dimensional genomic or chemical data

Materials:

- High-dimensional biological datasets (gene expression, metabolic profiles)

- Feature selection benchmark datasets from UCI repository

- Computing infrastructure with sufficient memory for large-scale data

Procedure:

Initial Population Generation using ReliefF

- Calculate feature importance scores using ReliefF algorithm [27]

- Rank features based on importance scores

- Initialize wolf positions biased toward high-importance features

- Ensure 20-30% of positions represent random feature combinations
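A possible shape for the biased initialization is sketched below. It assumes the ReliefF importance scores have already been computed elsewhere and are passed in as a list; the mapping from scores to inclusion probabilities (0.1 to 0.9) and the convention that a coordinate of at least 0.5 means "feature selected" are assumptions of this example, not details from [27].

```python
import random


def init_population(importance, n_wolves=30, random_fraction=0.25, seed=0):
    """Continuous positions in [0, 1]; a coordinate >= 0.5 marks a selected feature."""
    rng = random.Random(seed)
    lo, hi = min(importance), max(importance)
    span = (hi - lo) or 1.0
    # Higher importance -> higher chance of starting in the "selected" half.
    probs = [0.1 + 0.8 * (s - lo) / span for s in importance]
    n_random = int(random_fraction * n_wolves)
    population = []
    for i in range(n_wolves):
        if i < n_random:
            # Purely random wolves preserve diversity.
            wolf = [rng.random() for _ in importance]
        else:
            wolf = [0.5 + 0.5 * rng.random() if rng.random() < p
                    else 0.5 * rng.random()
                    for p in probs]
        population.append(wolf)
    return population


if __name__ == "__main__":
    pop = init_population([0.9, 0.5, 0.1, 0.0])
    print(len(pop), pop[-1])
```

Keeping a share of fully random wolves guards against the case where the importance scores themselves are misleading, for example when features are only informative in combination.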

Position Update with Adaptive Strategies

- Implement dynamic control parameter a that decreases nonlinearly from 2 to 0

- Apply competitive weighting for α, β, and δ wolves based on fitness values

- Incorporate differential evolution mutation with probability 0.3

- Utilize Lévy flight for random exploration with probability 0.2
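The adaptive pieces of this step can be sketched individually. The cosine schedule is only one possible nonlinear decrease from 2 to 0, the Lévy step uses Mantegna's algorithm with exponent 1.5, and treating the DE and Lévy probabilities as mutually exclusive branches of a single draw is an interpretation made for this example.

```python
import math
import random


def nonlinear_a(t, max_iter):
    """Cosine schedule: a(0) = 2, a(max_iter) = 0, slower decay early on."""
    return 2.0 * math.cos(0.5 * math.pi * t / max_iter)


def levy_step(rng, beta=1.5):
    """One Lévy-distributed step length via Mantegna's algorithm."""
    sigma_u = (math.gamma(1.0 + beta) * math.sin(math.pi * beta / 2.0)
               / (math.gamma((1.0 + beta) / 2.0) * beta * 2.0 ** ((beta - 1.0) / 2.0))
               ) ** (1.0 / beta)
    u = rng.gauss(0.0, sigma_u)
    v = rng.gauss(0.0, 1.0)
    while v == 0.0:               # avoid division by zero
        v = rng.gauss(0.0, 1.0)
    return u / abs(v) ** (1.0 / beta)


def choose_strategy(rng, p_de=0.3, p_levy=0.2):
    """Pick the update rule for one wolf in one iteration."""
    r = rng.random()
    if r < p_de:
        return "de_mutation"
    if r < p_de + p_levy:
        return "levy_flight"
    return "leader_guided"


if __name__ == "__main__":
    rng = random.Random(0)
    print([round(nonlinear_a(t, 100), 3) for t in (0, 50, 100)])
    print(choose_strategy(rng), levy_step(rng))
```

Compared with the linear schedule, the cosine form keeps a above 1 for longer, so the exploratory phase covers roughly the first two thirds of the run instead of the first half.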

Binary Position Conversion

- Apply sigmoid transfer function to convert continuous positions to binary

- Implement V-shaped transfer function for position updates [28]

- Update binary positions representing feature inclusion/exclusion
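The two transfer-function families behave differently, which the sketch below tries to make visible. The specific choices (logistic sigmoid for the S-shape, |tanh| for the V-shape, and the "set the bit" versus "flip the bit" update rules) are common conventions assumed here, not details confirmed by the cited works.

```python
import math
import random


def s_shaped(x):
    """Logistic sigmoid: probability that the bit is set to 1."""
    x = max(-60.0, min(60.0, x))  # guard against overflow in exp
    return 1.0 / (1.0 + math.exp(-x))


def v_shaped(x):
    """|tanh(x)|: probability that the current bit is flipped."""
    return abs(math.tanh(x))


def binarize_s(position, rng):
    """S-shaped rule: each bit is drawn afresh from its sigmoid probability."""
    return [1 if rng.random() < s_shaped(v) else 0 for v in position]


def binarize_v(position, current_bits, rng):
    """V-shaped rule: large |position| values flip the existing bit."""
    return [1 - b if rng.random() < v_shaped(v) else b
            for v, b in zip(position, current_bits)]


if __name__ == "__main__":
    rng = random.Random(0)
    pos = [2.0, -2.0, 0.0, 0.5]
    print(binarize_s(pos, rng))
    print(binarize_v(pos, [1, 1, 0, 0], rng))
```

With the S-shaped rule a coordinate near zero yields a coin flip, whereas with the V-shaped rule it leaves the current feature decision untouched, so the V-shaped form tends to preserve good subsets once they are found.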

Fitness Evaluation

- Use KNN classifier with 5-fold cross-validation for fitness assessment

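- Define fitness function: Fitness = α × Accuracy + (1 - α) × (1 - FeatureRatio), where α is a weighting coefficient (not the alpha wolf) and FeatureRatio is taken as the fraction of features selected

- Set α = 0.9 to prioritize accuracy while considering feature reduction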
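A short worked example of this fitness function follows. It assumes FeatureRatio means the number of selected features divided by the total number of features, and it takes the cross-validated accuracy as a given input rather than computing it.

```python
def feature_selection_fitness(accuracy, n_selected, n_total, alpha=0.9):
    """Weighted trade-off between accuracy and subset size (higher is better)."""
    feature_ratio = n_selected / n_total
    return alpha * accuracy + (1.0 - alpha) * (1.0 - feature_ratio)


if __name__ == "__main__":
    # 92% accuracy using 50 of 1000 features:
    # 0.9 * 0.92 + 0.1 * (1 - 0.05) = 0.828 + 0.095 = 0.923
    print(feature_selection_fitness(0.92, 50, 1000))
    # Same accuracy with 500 features scores lower: 0.828 + 0.05 = 0.878
    print(feature_selection_fitness(0.92, 500, 1000))
```

With the weighting at 0.9, one percentage point of accuracy is worth as much as removing nine percent of the features, so subset size acts mainly as a tie-breaker between similarly accurate candidates.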

Termination and Validation

- Terminate after 100 iterations or when fitness improvement < 0.001 for 10 iterations

- Validate selected features on independent test set

- Compare with conventional feature selection methods (filter, wrapper, embedded)

Computational Workflow and Signaling Pathways

Hybrid MKL-GWO Computational Workflow

Drug-Target Interaction Prediction Pathway

Research Reagent Solutions and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for MKL-GWO Implementation

| Tool/Category | Specific Examples | Function in MKL-GWO Research |
|---|---|---|
| Kernel Functions | Gaussian RBF, Polynomial, Linear, Sigmoid | Capture different similarity measures in data |
| Optimization Algorithms | GWO, Multi-strategy GWO, Genetic Algorithm, PSO | Optimize kernel weights and model parameters |
| Computational Frameworks | MATLAB, Python (Scikit-learn), WEKA | Implement MKL-GWO hybridization and testing |
| Performance Metrics | Classification Accuracy, Feature Reduction Ratio, Convergence Speed | Evaluate algorithm effectiveness and efficiency |
| Biological/Chemical Data | Drug-Target Interaction Databases, Protein Structures, Compound Libraries | Provide real-world validation datasets |
| Benchmark Datasets | UCI Repository Datasets, IDRiD, DR-HAGIS, ODIR | Standardized performance comparison |

The hybridization of Multi-Kernel Learning with Multi-Strategy Grey Wolf Optimizer represents a sophisticated computational framework that effectively addresses complex optimization challenges in drug discovery and development. The synergistic combination leverages MKL's flexible pattern recognition capabilities with GWO's enhanced global optimization power, resulting in superior performance across various pharmaceutical applications.

The multi-strategy enhancements to GWO—including ReliefF-based initialization, dynamic weighting, and hybrid exploration—specifically target the limitations of conventional optimization methods when handling high-dimensional, complex biological data. The experimental protocols and workflows presented provide researchers with practical guidelines for implementing this advanced computational approach in real-world drug discovery pipelines.

As pharmaceutical data continues to grow in complexity and volume, the MKL-GWO hybridization offers a promising pathway for accelerating drug development processes, improving prediction accuracy, and ultimately contributing to more efficient therapeutic discovery. Future research directions include adapting this framework for specific drug discovery domains and further enhancing the optimization strategies to address emerging challenges in pharmaceutical research.

Survey of Current MKL Implementations and Optimization Needs in Biomedicine

Application Notes: The State of Advanced Algorithms in Biomedical Research

The integration of sophisticated computational algorithms, including machine learning (ML) and metaheuristic optimizers, is accelerating progress in biomedical sciences. These technologies are enhancing capabilities in areas ranging from diagnostic procedures to the analysis of complex 'omics' data. The following application notes summarize the current landscape, key implementation challenges, and performance benchmarks.

Current Implementations and Clinical Integration

Machine learning, a dominant AI model in biomedicine, is being implemented to address critical challenges in healthcare delivery [30] [31]. These implementations often focus on creating robust data infrastructure and operational pipelines. For instance, one pediatric hospital program established a centralized data repository (SEDAR) that transforms electronic health record (EHR) data into a standardized, curated schema of 18 relationally structured tables [32]. This infrastructure supports the extraction of thousands of longitudinal clinical features, enabling the development of models for predicting patient outcomes, such as vomiting in pediatric oncology patients, to guide preemptive clinical interventions [32].

Retrieval-Augmented Generation (RAG) has emerged as a particularly effective method for enhancing large language models (LLMs) in biomedical contexts. A recent meta-analysis of 20 studies demonstrated that RAG implementation yields a statistically significant performance increase over baseline LLMs, with a pooled odds ratio (OR) of 1.35 (95% CI: 1.19-1.53, P = .001) [33]. This approach mitigates key LLM limitations, such as hallucination and outdated knowledge, by integrating current, relevant context from external databases directly into queries [33].

Algorithmic Optimization Needs and Opportunities

While high-level AI applications are being deployed, significant opportunities exist for optimizing the core algorithms themselves, particularly for complex biomedical problems. The Grey Wolf Optimization (GWO) algorithm and its variants exemplify this trend. The standard GWO algorithm, inspired by the social hierarchy and hunting behavior of grey wolves, is effective but can suffer from premature convergence and a tendency to become trapped in local optima [13] [21].

Recent research has focused on multi-strategy improvements to overcome these limitations. Enhanced GWO variants often incorporate several key strategies [13] [16] [21]:

- Modified Position Update Mechanisms: Achieving a better balance between global exploration and local exploitation of the search space [13].

- Dynamic Escape Strategies: Enabling the algorithm to break free from local stagnations [13].

- Reinforced Hierarchical Structures: Introducing new hierarchical mechanisms or adaptive weights for the leader wolves (α, β, δ) to improve stochastic behavior and exploration capabilities [16] [21].

- Hybridization with ML Models: Using improved GWO to optimize the parameters of machine learning models, such as the Kernel Extreme Learning Machine (KELM), for tasks like disease diagnosis and financial prediction [16].

These improved algorithms have demonstrated superior performance in solving large-scale global optimization problems and practical engineering applications, outperforming other state-of-the-art metaheuristic algorithms on numerous benchmark functions [21].

Table 1: Quantitative Performance of RAG-Enhanced LLMs in Biomedical Applications (Meta-Analysis of 20 Studies)

| Metric | Value | Interpretation |
|---|---|---|
| Pooled Effect Size (Odds Ratio) | 1.35 | RAG implementation increases the odds of correct performance by 35% compared to baseline LLMs [33]. |
| 95% Confidence Interval | 1.19 - 1.53 | The true effect size lies within this range with 95% confidence [33]. |
| P-value | .001 | The result is statistically significant [33]. |
| Between-Study Heterogeneity (I²) | 37% | Low to moderate heterogeneity among the included studies [33]. |

Table 2: Common Challenges and Pragmatic Solutions in Clinical ML Deployment

| Challenge Area | Specific Challenge | Pragmatic Solution |
|---|---|---|
| Clinical Scenario Identification | Clinical champions lack ML expertise to define projects [32]. | Shift from a static intake form to a dynamic, collaborative intake process with a data scientist [32]. |
| Data Infrastructure & Utilization | Data leakage and bias in cohort/label definition [32]. | Use global explanation methods (e.g., permutation importance), conduct ablation experiments, and run silent trials [32]. |
| MLOps & Workflow Integration | Aligning pipeline timestamps with clinical reality [32]. | Use data entry timestamps from the EHR for inference, not just measurement timestamps, to reflect data availability at the point of care [32]. |
| Algorithmic Fairness | Satisfying all fairness criteria is often impossible [32]. | Stratify model evaluations across subpopulations and collaborate with clinical champions to define context-specific fairness goals [32]. |

Experimental Protocols

This section provides detailed methodological workflows for implementing a machine learning pipeline in a clinical setting and for applying an improved optimizer to tune a biomedical model.

Protocol 1: Clinical Machine Learning Model Deployment Pipeline

This protocol outlines the end-to-end process for developing and deploying a predictive ML model in a healthcare environment, based on established MLOps principles [32].

Materials and Reagent Solutions

Table 3: Research Reagent Solutions for Clinical ML Deployment

| Item Name | Function / Description |
|---|---|
| Centralized Data Repository (e.g., SEDAR) | A standardized, curated data schema that transforms raw EHR data into a consistent, queryable format for efficient feature extraction [32]. |
| Medical Record Number (MRN) & Encounter ID | Relational identifiers that enable accurate linkage of patient-specific data across different clinical tables (e.g., lab results, diagnoses) over time [32]. |
| Orchestrated ML Pipeline | Automated, modular software steps that handle feature extraction, model training, evaluation, and selection, ensuring reproducibility and experimentation tracking [32]. |
| Fairness Evaluation Data | Demographic and socioeconomic data (e.g., sex, age, income quintile, language flag) used to stratify model performance and evaluate algorithmic bias across subpopulations [32]. |

Detailed Workflow

Workflow Description:

- Identify Clinical Scenario: Select a use case with a clear clinical champion, a measurable and important outcome (label), and a potential for ML to improve patient care or resource allocation (e.g., predicting vomiting in pediatric oncology patients) [32].

- Establish Data Infrastructure: Utilize a centralized, standardized data repository (like SEDAR) that ingests raw EHR data and transforms it into a consistent, longitudinal schema for reliable feature extraction [32].

- Define Cohort and Label: Precisely define the patient population and the target outcome in collaboration with clinical experts, carefully considering temporal relationships to avoid data leakage (e.g., ensuring features are available prospectively at the time of prediction) [32].

- Feature Extraction and Selection: Automatically extract a wide range of clinical features from the structured data tables. Use the orchestrated pipeline to perform feature selection and identify the most predictive variables [32].

- Model Training and Evaluation: Train multiple model architectures (e.g., logistic regression, tree-based models) on a static data set. Use cross-validation and hold-out tests to evaluate performance metrics (e.g., AUC, accuracy) [32].

- Fairness and Bias Assessment: Evaluate the final model's performance across key demographic and socioeconomic subpopulations to identify and quantify any disparate impacts or algorithmic biases [32].

- Set Classification Threshold: Work with clinical stakeholders to choose an appropriate probability threshold for classification. This decision balances the downsides of false positives (e.g., alert fatigue) against the consequences of false negatives (e.g., missed interventions) [32].

- Deploy via MLOps: Integrate the approved model into the clinical workflow using an MLOps platform. Begin with a "silent trial" to assess real-world performance without affecting care, followed by a carefully managed live deployment with continuous monitoring [32].
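The fairness assessment and threshold-setting steps can be prototyped with very little code. The following is a minimal sketch, assuming binary labels, predicted probabilities, and one subgroup label per patient. The function names, the toy data, and the 0.5 threshold are illustrative assumptions and are not part of the pipeline described in [32].

```python
from collections import defaultdict


def confusion_rates(y_true, y_prob, threshold):
    """Sensitivity and specificity of thresholded predictions."""
    tp = fp = tn = fn = 0
    for y, p in zip(y_true, y_prob):
        pred = 1 if p >= threshold else 0
        if pred == 1 and y == 1:
            tp += 1
        elif pred == 1 and y == 0:
            fp += 1
        elif pred == 0 and y == 0:
            tn += 1
        else:
            fn += 1
    return {
        "n": tp + fp + tn + fn,
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        "specificity": tn / (tn + fp) if (tn + fp) else float("nan"),
    }


def stratified_rates(y_true, y_prob, groups, threshold):
    """Compute the same metrics separately for each subgroup label."""
    buckets = defaultdict(lambda: ([], []))
    for y, p, g in zip(y_true, y_prob, groups):
        buckets[g][0].append(y)
        buckets[g][1].append(p)
    return {g: confusion_rates(ys, ps, threshold) for g, (ys, ps) in buckets.items()}


# Toy data (hypothetical): two subgroups with identical label balance.
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_prob = [0.9, 0.2, 0.6, 0.4, 0.8, 0.7, 0.3, 0.1]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

report = stratified_rates(y_true, y_prob, groups, threshold=0.5)
for group, metrics in sorted(report.items()):
    print(group, metrics)
```

Re-running `stratified_rates` over a range of thresholds gives clinical stakeholders a per-subgroup view of the false-positive versus false-negative trade-off before a threshold is fixed.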

Protocol 2: Optimizing a Biomedical Model with an Improved GWO

This protocol describes the methodology for employing a multi-strategy Improved Grey Wolf Optimizer (IGWO) to tune the parameters of a predictive biomedical model, such as a Kernel Extreme Learning Machine (KELM), for tasks like disease diagnosis [16].

Materials and Reagent Solutions

Table 4: Research Reagent Solutions for Optimizer-Based Model Tuning

| Item Name | Function / Description |
|---|---|
| Kernel Extreme Learning Machine (KELM) | A fast, single-hidden-layer neural network with a kernel function. Its performance is highly sensitive to its two key parameters: the penalty coefficient C and the kernel parameter gamma [16]. |
| Benchmark Biomedical Dataset | A standardized, publicly available dataset (e.g., for thyroid cancer diagnosis, financial distress prediction) used to train and validate the KELM model [16]. |
| Improved GWO (IGWO) | An enhanced metaheuristic optimizer with strategies like a modified hierarchical mechanism and dynamic escape strategies to avoid local optima while searching for the best KELM parameters [13] [16]. |
| Fitness Function | A performance metric (e.g., classification accuracy, Matthews Correlation Coefficient) that the IGWO seeks to maximize or minimize during its search for optimal parameters [16]. |
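To make the role of C and gamma concrete, here is a minimal KELM sketch using the commonly cited closed-form solution, in which the output weights solve (K + I/C) β = T for a kernel matrix K and one-hot targets T. The RBF kernel, the class name `KELM`, and the toy data are assumptions made for illustration. The implementation in [16] may differ in kernel choice and target encoding.

```python
import numpy as np


def rbf_kernel(A, B, gamma):
    """RBF kernel matrix with entries exp(-gamma * ||a - b||^2)."""
    sq = (A ** 2).sum(axis=1)[:, None] + (B ** 2).sum(axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-gamma * np.maximum(sq, 0.0))


class KELM:
    """Kernel Extreme Learning Machine classifier (illustrative sketch)."""

    def __init__(self, C=1.0, gamma=1.0):
        self.C = C          # penalty coefficient: larger C means weaker regularization
        self.gamma = gamma  # RBF width parameter: larger gamma means a narrower kernel

    def fit(self, X, y):
        X = np.asarray(X, dtype=float)
        y = np.asarray(y)
        self.classes_ = np.unique(y)
        # One-hot target matrix T, one column per class.
        T = (y[:, None] == self.classes_[None, :]).astype(float)
        K = rbf_kernel(X, X, self.gamma)
        # Closed-form output weights: (K + I / C) beta = T.
        self.beta_ = np.linalg.solve(K + np.eye(len(X)) / self.C, T)
        self.X_ = X
        return self

    def predict(self, X):
        scores = rbf_kernel(np.asarray(X, dtype=float), self.X_, self.gamma) @ self.beta_
        return self.classes_[scores.argmax(axis=1)]


# Toy, well-separated data (hypothetical) to show the call pattern.
X_train = [[0, 0], [0, 1], [1, 0], [5, 5], [5, 6], [6, 5]]
y_train = [0, 0, 0, 1, 1, 1]
model = KELM(C=10.0, gamma=0.5).fit(X_train, y_train)
pred = model.predict([[0.2, 0.2], [5.5, 5.5]])
print(pred)
```

Because training reduces to a single linear solve, each fitness evaluation inside an optimizer loop is cheap for small datasets, which is part of what makes KELM a convenient target for metaheuristic tuning.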

Detailed Workflow

Workflow Description:

1. Initialize IGWO Population: Generate an initial population of grey wolves, where the position of each wolf represents a candidate solution vector comprising the KELM parameters: the penalty coefficient C and the kernel parameter gamma [16].

2. Evaluate Fitness: For each wolf's position (parameter set), train a KELM model on the training subset of the biomedical dataset. Evaluate the model's performance (e.g., classification accuracy) on a validation set. This performance metric serves as the fitness value for the wolf [16].

3. Identify Leader Wolves: Select the three best solutions in the population based on their fitness values and designate them as the alpha (α), beta (β), and delta (δ) wolves, respectively [13] [16].

4. Update Omega Positions: The omega (ω) wolves, representing the worst solutions, are repositioned via a random global search to help maintain population diversity and explore new regions of the search space [16].

5. Update Beta Positions: A subset of wolves (Beta) performs a random local search around the current alpha position. If a beta wolf finds a better solution than the alpha, it replaces the alpha, enhancing local refinement [16].

6. Update Delta Positions: The remaining wolves (Delta) update their positions by following the weighted average of the α, β, and δ positions, as in the standard GWO algorithm, balancing exploration and exploitation [13] [16].

7. Apply Dynamic Escape Strategy: Monitor the convergence of the population. If the algorithm is detected to be stagnating in a local optimum for a predefined number of iterations, trigger an escape strategy (e.g., randomly resetting a portion of the population) to force further exploration [13].

8. Check Stopping Criteria: Repeat steps 2-7 until a maximum number of iterations is reached or a predefined performance accuracy is achieved. The final alpha wolf's position contains the optimized C and gamma parameters for the KELM model [16].
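The workflow above can be sketched as a compact loop. This is a simplified illustration under stated assumptions, not the IGWO of [13] or [16]: the subgroup sizes (one fifth of the pack each for local searchers and omega wolves), the Gaussian search radius, the rule that resets every second non-leader wolf on stagnation, and the function name `igwo_sketch` are all hypothetical. The fitness function is a toy surrogate that stands in for "train a KELM and return validation accuracy", and the search is carried out over log10(C) and log10(gamma), which is a design choice of this sketch.

```python
import random


def igwo_sketch(fitness, bounds, n_wolves=20, n_iter=60, patience=10, seed=0):
    """Maximize `fitness` over the box `bounds`; returns (best_position, best_fitness)."""
    if n_wolves < 5:
        raise ValueError("need at least 5 wolves")
    rng = random.Random(seed)

    def rand_pos():
        return [rng.uniform(lo, hi) for lo, hi in bounds]

    def clip(pos):
        return [min(max(x, lo), hi) for x, (lo, hi) in zip(pos, bounds)]

    wolves = [rand_pos() for _ in range(n_wolves)]
    best_pos, best_fit, stall = None, float("-inf"), 0
    n_omega = n_local = max(1, n_wolves // 5)  # assumed subgroup sizes

    for t in range(n_iter + 1):
        # Evaluate every wolf once and rank from best to worst.
        scored = sorted(((fitness(w), w) for w in wolves),
                        key=lambda fw: fw[0], reverse=True)
        if scored[0][0] > best_fit:
            best_fit, best_pos, stall = scored[0][0], list(scored[0][1]), 0
        else:
            stall += 1
        if t == n_iter:
            break  # the final pass only evaluates the last population

        ranked = [w for _, w in scored]
        leaders, rest = ranked[:3], ranked[3:]  # alpha, beta, delta leaders
        a = 2.0 * (1.0 - t / n_iter)            # linear convergence factor
        new_wolves = [list(w) for w in leaders]  # leaders are carried over

        # Local searchers: Gaussian perturbation around the alpha position.
        for _ in rest[:n_local]:
            new_wolves.append(clip([x + rng.gauss(0.0, 0.05 * (hi - lo))
                                    for x, (lo, hi) in zip(leaders[0], bounds)]))

        # Followers: standard GWO update guided by the three leaders.
        for w in rest[n_local:len(rest) - n_omega]:
            pos = []
            for d, x in enumerate(w):
                total = 0.0
                for lead in leaders:
                    A = 2.0 * a * rng.random() - a
                    C = 2.0 * rng.random()
                    total += lead[d] - A * abs(C * lead[d] - x)
                pos.append(total / 3.0)
            new_wolves.append(clip(pos))

        # Omega wolves: the worst solutions are re-sampled at random.
        new_wolves.extend(rand_pos() for _ in range(n_omega))

        # Escape: after `patience` stalled iterations, reset every second non-leader.
        if stall >= patience:
            for i in range(3, n_wolves, 2):
                new_wolves[i] = rand_pos()
            stall = 0
        wolves = new_wolves

    return best_pos, best_fit


def toy_fitness(pos):
    """Hypothetical surrogate with its peak at log10(C) = 1, log10(gamma) = -2."""
    log_c, log_gamma = pos
    return -((log_c - 1.0) ** 2 + (log_gamma + 2.0) ** 2)


best_pos, best_fit = igwo_sketch(toy_fitness, bounds=[(-3.0, 3.0), (-3.0, 3.0)])
print("best log10(C), log10(gamma):", best_pos, "fitness:", best_fit)
```

In a real run, `toy_fitness` would be replaced by a function that maps the position back to C and gamma, fits the model on the training split, and returns the validation metric. Since that call dominates the runtime, caching fitness values for unchanged leader positions is a natural refinement.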

Building the Hybrid Model: Methodologies and Biomedical Applications

The integration of nature-inspired optimizers into machine learning frameworks represents a frontier in computational intelligence research. For multi-kernel learning, the Grey Wolf Optimizer (GWO) is a particularly suitable metaheuristic because of its simple structure, minimal parameter requirements, and effective balance between exploration and exploitation [13] [21]. The standard GWO algorithm mimics the social hierarchy and collaborative hunting behavior of grey wolves, where the population is guided by the three best solutions (the α, β, and δ wolves) toward promising regions of the search space [13] [22]. However, when applied to the high-dimensional, multi-modal problems characteristic of multi-kernel learning and drug development applications, the conventional GWO exhibits limitations including premature convergence, inadequate population diversity, and a suboptimal balance between global and local search [21] [34].

To address these limitations, researchers have developed sophisticated enhancement strategies that significantly improve GWO's performance in complex optimization landscapes. Among these, electrostatic field initialization and dynamic parameter adjustment represent two particularly impactful approaches that directly enhance the algorithm's efficacy for data-intensive applications [7]. These strategies work synergistically to establish a more diverse initial population and adaptively control the algorithm's search behavior throughout the optimization process. For drug development professionals and researchers, these enhancements translate to more reliable and efficient optimization in critical tasks such as molecular docking, quantitative structure-activity relationship (QSAR) modeling, and pharmacokinetic parameter estimation, where multi-kernel approaches often provide superior modeling flexibility but present substantial optimization challenges.

Core Enhancement Strategies: Theoretical Foundations and Mechanisms

Electrostatic Field Initialization

The conventional GWO typically employs random population initialization, which can lead to uneven distribution of candidate solutions across the search space and potentially miss promising regions [7] [34]. Electrostatic field initialization addresses this limitation by simulating charged particles within an electrostatic field to achieve more uniform distribution of the initial wolf positions.

This initialization approach functions analogously to particles with similar charges repelling each other within a confined space, thereby achieving superior dispersion throughout the search domain [7]. The theoretical foundation lies in establishing maximum separation between initial candidates, which enables more comprehensive exploration of the solution space from the algorithm's inception. For multi-kernel learning applications, where different kernel functions may dominate in various regions of the feature space, this comprehensive initial exploration is particularly valuable as it reduces the likelihood of overlooking promising kernel combinations during early optimization stages.
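The repulsion analogy can be illustrated with a small simulation. The sketch below is only loosely modeled on the idea described above and is not the procedure from [7]: the inverse-square force law, the wall repulsion term, the decaying step size, and the function name `repulsion_init` are all assumptions chosen to keep the example short.

```python
import random


def repulsion_init(n_points, bounds, n_steps=40, step=0.05, seed=0):
    """Spread points inside a box by letting like charges repel (illustrative sketch)."""
    rng = random.Random(seed)
    dim = len(bounds)
    eps = 1e-3  # softening term that avoids division by zero
    # Work in the unit cube and rescale to `bounds` at the end.
    pts = [[rng.random() for _ in range(dim)] for _ in range(n_points)]
    for k in range(n_steps):
        step_k = step * (1.0 - k / n_steps)  # step size decays to zero
        moved = []
        for i, p in enumerate(pts):
            # Walls act as like charges, pushing points back toward the interior.
            force = [1.0 / (x + eps) ** 2 - 1.0 / (1.0 - x + eps) ** 2 for x in p]
            for j, q in enumerate(pts):
                if i == j:
                    continue
                diff = [a - b for a, b in zip(p, q)]
                dist = sum(d * d for d in diff) ** 0.5 + eps
                for d in range(dim):
                    force[d] += diff[d] / dist ** 3  # inverse-square repulsion
            norm = sum(f * f for f in force) ** 0.5
            if norm > 0.0:
                p = [min(1.0, max(0.0, x + step_k * f / norm))
                     for x, f in zip(p, force)]
            moved.append(list(p))
        pts = moved  # all points move simultaneously
    return [[lo + u * (hi - lo) for u, (lo, hi) in zip(p, bounds)] for p in pts]


def min_pairwise_distance(points):
    """Smallest Euclidean distance between any two points (a simple dispersion check)."""
    return min(sum((a - b) ** 2 for a, b in zip(p, q)) ** 0.5
               for i, p in enumerate(points) for q in points[i + 1:])


wolves = repulsion_init(12, bounds=[(0.0, 1.0), (-2.0, 2.0)])
print("minimum pairwise distance:", min_pairwise_distance(wolves))
```

Comparing `min_pairwise_distance` for this output against a purely random draw of the same size is a quick way to check whether the repulsion steps actually improve dispersion for a given problem dimension and pack size.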

Alternative population initialization strategies with similar objectives include:

- Lens Imaging Reverse Learning: Utilizes optical principles to generate reverse solutions, expanding initial coverage [22].

- Quantum Computing Principles: Employs quantum superposition and entanglement to create diverse initial populations [34].

- Latin Hypercube Sampling (LHS): A statistical method that ensures proportional representation of the entire parameter space [35].

- Chaotic Mapping: Uses deterministic chaotic sequences to generate populations with improved randomness characteristics [36].
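Of the alternatives listed, Latin Hypercube Sampling is the simplest to show in a few lines. The following is a minimal sketch of the basic scheme: each dimension is divided into as many equal strata as there are wolves, and each stratum is used exactly once. The function name and the example bounds are illustrative and are not taken from [35].

```python
import random


def latin_hypercube(n_points, bounds, seed=0):
    """Draw n_points so that every dimension has exactly one point per stratum."""
    rng = random.Random(seed)
    columns = []
    for lo, hi in bounds:
        strata = list(range(n_points))
        rng.shuffle(strata)  # independent random stratum order per dimension
        width = (hi - lo) / n_points
        # One uniform draw inside each stratum of this dimension.
        columns.append([lo + (s + rng.random()) * width for s in strata])
    # Transpose per-dimension columns into per-point coordinate lists.
    return [list(point) for point in zip(*columns)]


lhs_bounds = [(0.0, 1.0), (0.0, 10.0)]
lhs_points = latin_hypercube(10, lhs_bounds)
for point in lhs_points:
    print(point)
```

Unlike a plain uniform draw, this guarantees that no region of any single parameter's range is left unsampled, which is useful when the pack is small relative to the number of kernel parameters.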

Dynamic Parameter Adjustment

The standard GWO decreases its control parameter linearly over the iterations, a schedule that may not match the nonlinear search dynamics of real optimization landscapes, particularly in multi-kernel learning scenarios [7] [21]. Enhanced GWO variants implement nonlinear parameter adjustment strategies that balance the exploration and exploitation phases more effectively.

These dynamic parameter strategies typically involve the nonlinear adjustment of the convergence factor (a) and other control parameters based on cosine functions, adaptive mechanisms, or problem-specific characteristics [7] [22]. For instance, one improved GWO variant employs a nonlinear control parameter convergence strategy based on cosine variation to better coordinate global exploration and local exploitation capabilities [22]. This approach allows for more extensive exploration in early iterations while intensifying local search in later stages when converging toward optimal solutions.
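The difference between the linear and a cosine-based schedule is easy to see numerically. The cosine form below is one commonly used choice and is an assumption of this sketch. The exact expression in [22] may differ.

```python
import math


def a_linear(t, t_max, a_max=2.0):
    """Standard GWO schedule: a falls linearly from a_max to 0."""
    return a_max * (1.0 - t / t_max)


def a_cosine(t, t_max, a_max=2.0):
    """Assumed cosine schedule: a stays high early, then drops quickly toward 0."""
    return a_max * math.cos(0.5 * math.pi * t / t_max)


t_max = 100
for t in (0, 25, 50, 75, 100):
    print(f"t={t:3d}  linear={a_linear(t, t_max):.3f}  cosine={a_cosine(t, t_max):.3f}")
```

With this form, the cosine schedule keeps the convergence factor above the linear one for the whole run while reaching the same endpoints, so the pack spends more iterations in the wide-ranging regime before contracting.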

Additional parameter adaptation strategies include:

- Differential Evolution Scaling: Incorporates scaling factors from differential evolution to enhance global search capability [7].

- Lévy Flight Mechanisms: Introduce random steps drawn from a Lévy distribution to help the search escape local optima [35] [36].

- Fuzzy Inference Systems: Dynamically adapt parameters based on fuzzy rules reflecting search performance [36].
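As an illustration of the Lévy flight idea, the sketch below draws heavy-tailed steps with Mantegna's algorithm, a widely used approximation. The stability index of 1.5, the step scale of 1% of each parameter range, and the function names are assumptions of this example, and the variants in [35] and [36] may be parameterized differently.

```python
import math
import random


def mantegna_sigma(beta=1.5):
    """Scale of the numerator Gaussian in Mantegna's algorithm."""
    num = math.gamma(1.0 + beta) * math.sin(math.pi * beta / 2.0)
    den = math.gamma((1.0 + beta) / 2.0) * beta * 2.0 ** ((beta - 1.0) / 2.0)
    return (num / den) ** (1.0 / beta)


def levy_step(rng, beta=1.5):
    """One heavy-tailed step: u / |v|^(1/beta), with u and v Gaussian."""
    u = rng.gauss(0.0, mantegna_sigma(beta))
    v = rng.gauss(0.0, 1.0) or 1e-12  # guard against an exact zero
    return u / abs(v) ** (1.0 / beta)


def levy_perturb(position, bounds, rng, scale=0.01, beta=1.5):
    """Perturb a wolf position with Levy steps and clip it to the search box."""
    return [min(max(x + scale * (hi - lo) * levy_step(rng, beta), lo), hi)
            for x, (lo, hi) in zip(position, bounds)]


rng = random.Random(0)
levy_bounds = [(-3.0, 3.0), (-3.0, 3.0)]
stuck_wolf = [1.0, -2.0]
jumped = levy_perturb(stuck_wolf, levy_bounds, rng)
print("sigma:", mantegna_sigma(), "perturbed position:", jumped)
```

Most steps are small, which preserves local refinement, while the occasional very large step relocates a wolf far from a stagnating region.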

Table 1: Quantitative Comparison of GWO Enhancement Strategies

| Strategy Category | Specific Mechanism | Reported Performance Improvement | Application Context |

|---|---|---|---|

| Population Initialization | Electrostatic Field Initialization | Up to 98.63% coverage with 30 nodes [7] | WSN Coverage Optimization |

| Parameter Control | Nonlinear Convergence Factor (Cosine) | Significant improvement on CEC2014 benchmarks [22] | Functional Optimization |